A Tiered Validation Strategy for ML-Assisted Non-Target Analysis: From Foundational Concepts to Advanced Applications in Biomedical Research



Abstract

This article provides a comprehensive framework for implementing a robust tiered validation strategy in Machine Learning-assisted Non-Target Analysis (ML-NTA). Tailored for researchers, scientists, and drug development professionals, it bridges the gap between raw analytical data and environmentally or biologically actionable insights. The content systematically progresses from foundational principles of NTA and ML integration to advanced methodological applications, tackling common troubleshooting scenarios. It culminates in a detailed examination of multi-tiered validation, incorporating analytical verification, external dataset testing, and environmental plausibility assessments. By offering a structured pathway to ensure the reliability, interpretability, and real-world relevance of ML-NTA outputs, this guide aims to empower professionals in translating complex datasets into credible findings for drug discovery, environmental monitoring, and risk assessment.

Demystifying ML-Assisted NTA: Core Principles and the Critical Need for Tiered Validation

Core Principles and Definitions

Non-Target Analysis (NTA) represents a paradigm shift in analytical chemistry, moving from hypothesis-driven to discovery-based approaches. Unlike traditional targeted analysis that quantifies predefined compounds, NTA aims to comprehensively detect and identify a wide range of chemical substances without prior knowledge of the sample composition [1]. This capability is particularly valuable for discovering unknown contaminants, transformation products, and metabolites that would otherwise escape detection using conventional methods.

High-Resolution Mass Spectrometry (HRMS) serves as the analytical foundation for NTA by measuring the exact mass of compounds with high accuracy. Where conventional mass spectrometry measures nominal mass, HRMS distinguishes between molecules that share a nominal mass but differ in exact mass, such as cysteine (C3H7NO2S, approximately 121.0197 Da) and benzamide (C7H7NO, approximately 121.0528 Da), enabling precise molecular formula assignment and compound identification [2]. The high resolving power (typically ≥20,000) and mass accuracy (≤5 ppm) of modern HRMS instruments make this distinction possible [3].
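As a rough illustration of the cysteine/benzamide example, the sketch below computes both monoisotopic masses from standard isotope masses and derives the resolving power (m/Δm) needed to separate them. The function and variable names are illustrative assumptions, the masses are for the neutral molecules rather than the ions an instrument would actually record, and the last digit may differ slightly from rounded literature values.

```python
# Monoisotopic masses of the most abundant isotopes, in Da (standard reference values).
MONOISOTOPIC = {"C": 12.0, "H": 1.00782503, "N": 14.00307401,
                "O": 15.99491462, "S": 31.97207117}

def monoisotopic_mass(composition):
    """Sum isotope masses for an elemental composition such as {"C": 3, "H": 7}."""
    return sum(MONOISOTOPIC[element] * count for element, count in composition.items())

cysteine = monoisotopic_mass({"C": 3, "H": 7, "N": 1, "O": 2, "S": 1})
benzamide = monoisotopic_mass({"C": 7, "H": 7, "N": 1, "O": 1})

delta = benzamide - cysteine                 # mass difference in Da
separation_ppm = delta / cysteine * 1e6      # the same difference expressed in ppm
required_resolving_power = cysteine / delta  # m / Δm needed to separate the pair

print(f"cysteine  {cysteine:.4f} Da")
print(f"benzamide {benzamide:.4f} Da")
print(f"difference {delta:.4f} Da ({separation_ppm:.0f} ppm)")
print(f"required resolving power ~{required_resolving_power:.0f}")
```

The pair differs by about 0.033 Da, which is far larger than a 5 ppm tolerance window at this mass, so a formula assignment based on accurate mass can tell the two apart even though their nominal masses are identical.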

The integration of these fields has created a powerful platform for comprehensive chemical characterization across pharmaceutical, environmental, and biological research, particularly for addressing "known unknowns" and "unknown unknowns" in complex mixtures [4] [5].

The HRMS Working Principle

The operational principle of HRMS encompasses three fundamental stages that transform sample molecules into interpretable data, as detailed in Table 1.

Table 1: Fundamental Stages of High-Resolution Mass Spectrometry

| Step | Description | Common Techniques | Key Applications |
|---|---|---|---|
| Ionization | Converts neutral molecules to gas-phase ions | Electrospray Ionization (ESI), Matrix-Assisted Laser Desorption/Ionization (MALDI) | ESI for fragile biomolecules; MALDI for proteins and polymers |
| Mass Analysis | Separates ions by mass-to-charge ratio (m/z) | Time-of-Flight (TOF), Orbitrap, Fourier Transform Ion Cyclotron Resonance (FT-ICR) | TOF for rapid screening; Orbitrap for high resolution; FT-ICR for ultra-high resolution |
| Detection | Records ion intensity and exact mass | High-precision detectors | Quantification, structural elucidation, formula prediction |

Ionization converts analytes into gas-phase ions using soft techniques such as ESI, which operates at atmospheric pressure and preserves molecular integrity for accurate mass determination [2] [6]. The ions are then transferred into the vacuum region of the instrument, where low pressure prevents ion-molecule collisions. There, mass analyzers separate ions by their m/z values with high resolution, and detection systems generate mass spectra that reflect ion abundance and accurate masses [6].

Integrated NTA Workflow with HRMS

The complete NTA workflow integrates sample preparation, HRMS analysis, and advanced data processing in a systematic approach to uncover previously undetected chemicals. The following diagram illustrates this comprehensive process:

NTA-HRMS Integrated Workflow with Machine Learning Assistance

Sample Preparation and Extraction

Effective sample preparation is crucial for balancing selectivity and sensitivity in NTA. The goal is to remove interfering matrix components while preserving a broad spectrum of analytes [7]. Common extraction techniques include:

- Solid Phase Extraction (SPE): Often employs multi-sorbent strategies (e.g., Oasis HLB with ISOLUTE ENV+, Strata WAX, and WCX) to broaden chemical coverage [7]

- Green Extraction Techniques: QuEChERS, Microwave-Assisted Extraction (MAE), and Supercritical Fluid Extraction (SFE) improve efficiency by reducing solvent usage and processing time [7]

HRMS Data Acquisition and Processing

HRMS platforms, including quadrupole time-of-flight (Q-TOF) and Orbitrap systems coupled with liquid or gas chromatography (LC/GC), generate complex datasets essential for NTA [7]. Post-acquisition processing involves:

- Centroiding: Converting profile data to centroid data

- Peak Detection and Alignment: Identifying chromatographic peaks and aligning retention times across samples

- Componentization: Grouping related spectral features (adducts, isotopes) into molecular entities [7]

Quality assurance measures include confidence-level assignments (Level 1-5) and batch-specific quality control samples to ensure data integrity [7]. The output is a structured feature-intensity matrix serving as the foundation for machine learning analysis.
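To make the componentization step more concrete, here is a minimal sketch that groups 13C isotope peaks with their monoisotopic feature. It assumes singly charged ions, co-elution within a small retention time window, and a hypothetical feature layout (`id`, `mz`, `rt`, `intensity`); production tools such as those cited above also handle adducts, multiple charge states, and isotope ratio checks.

```python
C13_SHIFT = 1.003355  # mass difference between 13C and 12C, in Da

def group_isotopes(features, ppm_tol=5.0, rt_tol=0.05):
    """Map each feature id to a component id (the id of its monoisotopic feature).

    A feature joins the component of a lighter feature when it sits one 13C
    shift higher in m/z, co-elutes within rt_tol minutes, and is less intense.
    """
    ordered = sorted(features, key=lambda f: f["mz"])
    component = {}
    for f in ordered:
        component.setdefault(f["id"], f["id"])  # default: feature is its own component
        target = f["mz"] + C13_SHIFT
        tol = target * ppm_tol * 1e-6
        for g in ordered:
            if (abs(g["mz"] - target) <= tol
                    and abs(g["rt"] - f["rt"]) <= rt_tol
                    and g["intensity"] < f["intensity"]):
                component[g["id"]] = component[f["id"]]
    return component

# Hypothetical features: B is the M+1 isotope of A; C has a similar m/z but elutes later.
features = [
    {"id": "A", "mz": 300.10000, "rt": 5.00, "intensity": 1.0e6},
    {"id": "B", "mz": 301.10336, "rt": 5.01, "intensity": 1.5e5},
    {"id": "C", "mz": 301.10340, "rt": 7.50, "intensity": 2.0e5},
]
components = group_isotopes(features)
print(components)
```

Collapsing isotope and adduct signals in this way keeps the feature-intensity matrix from counting one compound several times, which would otherwise inflate correlated columns in downstream ML models.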

Experimental Protocols for Key NTA Applications

Protocol 1: ML-Assisted Contaminant Source Tracking

Objective: Identify contamination sources using ML-based NTA with a tiered validation strategy [7].

Table 2: Reagent Solutions for Contaminant Source Tracking

| Research Reagent | Function | Application Context |
|---|---|---|
| Mixed-mode SPE cartridges | Broad-spectrum analyte enrichment | Water sample preparation for NTA |
| LC-HRMS quality control samples | Monitor instrument performance | Batch-to-batch normalization |
| Certified Reference Materials (CRMs) | Verify compound identities | Analytical confidence assessment |
| Internal standard mixture | Correct retention time drift | Data alignment across batches |

Procedure:

- Sample Collection: Collect environmental samples (water, soil, biota) from potential contamination sources

- Extraction: Process using mixed-mode SPE to maximize compound coverage [7]

- HRMS Analysis: Analyze using LC-HRMS with ESI in both positive and negative modes

- Data Preprocessing:

  - Apply retention time correction and m/z recalibration

  - Perform peak matching across batches [7]

  - Generate feature-intensity matrix

- ML Analysis:

  - Conduct exploratory analysis using PCA and hierarchical clustering

  - Train supervised classifiers (Random Forest, SVM) on labeled samples

  - Apply feature selection to identify source-specific chemical indicators [7]

- Validation:

  - Tier 1: Verify identities using CRMs or spectral library matches

  - Tier 2: Assess generalizability with external datasets

  - Tier 3: Evaluate environmental plausibility using geospatial data [7]

Protocol 2: Structural Alert Screening for Hazard Prioritization

Objective: Prioritize potentially hazardous features using ML classification of MS/MS spectra [8].

Procedure:

- Data Acquisition: Collect MS/MS spectra for known compounds with and without structural alerts

- Feature Extraction:

  - Extract fragment masses and neutral losses from MS/MS spectra

  - Bin fragments and neutral losses to nearest 0.1 m/z

  - Create binary matrices indicating presence/absence of features [8]

- Model Training:

  - Train neural network classifier for organophosphorus alerts

  - Train Random Forest classifier for aromatic amine alerts

  - Optimize using cross-validation [8]

- Application to NTS Data:

  - Apply trained models to prioritize LC-HRMS features in environmental samples

  - Focus identification efforts on high-priority features [8]
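The feature extraction step of Protocol 2 can be sketched as follows: fragments and neutral losses (precursor m/z minus fragment m/z) are binned to the nearest 0.1 m/z and encoded as a presence/absence matrix. The input layout (a precursor m/z paired with a list of fragment m/z values) and the function names are assumptions made for illustration, not the representation used in [8].

```python
def spectrum_features(precursor_mz, fragment_mzs, width=0.1):
    """Return the set of binned fragment and neutral-loss features for one spectrum."""
    features = set()
    for mz in fragment_mzs:
        features.add(("frag", round(mz / width)))        # integer bin index avoids float keys
        loss = precursor_mz - mz
        if loss > 0:
            features.add(("loss", round(loss / width)))
    return features

def binary_matrix(spectra, width=0.1):
    """Build a presence/absence matrix: one row per spectrum, one column per binned feature."""
    per_spectrum = [spectrum_features(p, frags, width) for p, frags in spectra]
    columns = sorted(set().union(*per_spectrum))
    matrix = [[1 if c in feats else 0 for c in columns] for feats in per_spectrum]
    return columns, matrix

# Hypothetical spectra given as (precursor m/z, [fragment m/z, ...]).
spectra = [
    (250.10, [125.02, 98.98]),
    (300.15, [98.98, 201.17]),
]
columns, matrix = binary_matrix(spectra)
for (kind, idx), col in zip(columns, zip(*matrix)):
    print(f"{kind} {idx * 0.1:.1f}: {list(col)}")
```

Each row of `matrix` can then be passed to a classifier together with a label indicating whether the compound carries the structural alert of interest.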

Machine Learning Integration and Tiered Validation

Machine learning has redefined NTA potential by identifying latent patterns in high-dimensional HRMS data that traditional statistics often miss [7]. The tiered validation framework ensures ML outputs are both chemically accurate and environmentally meaningful, addressing the critical gap between analytical capability and decision-making.

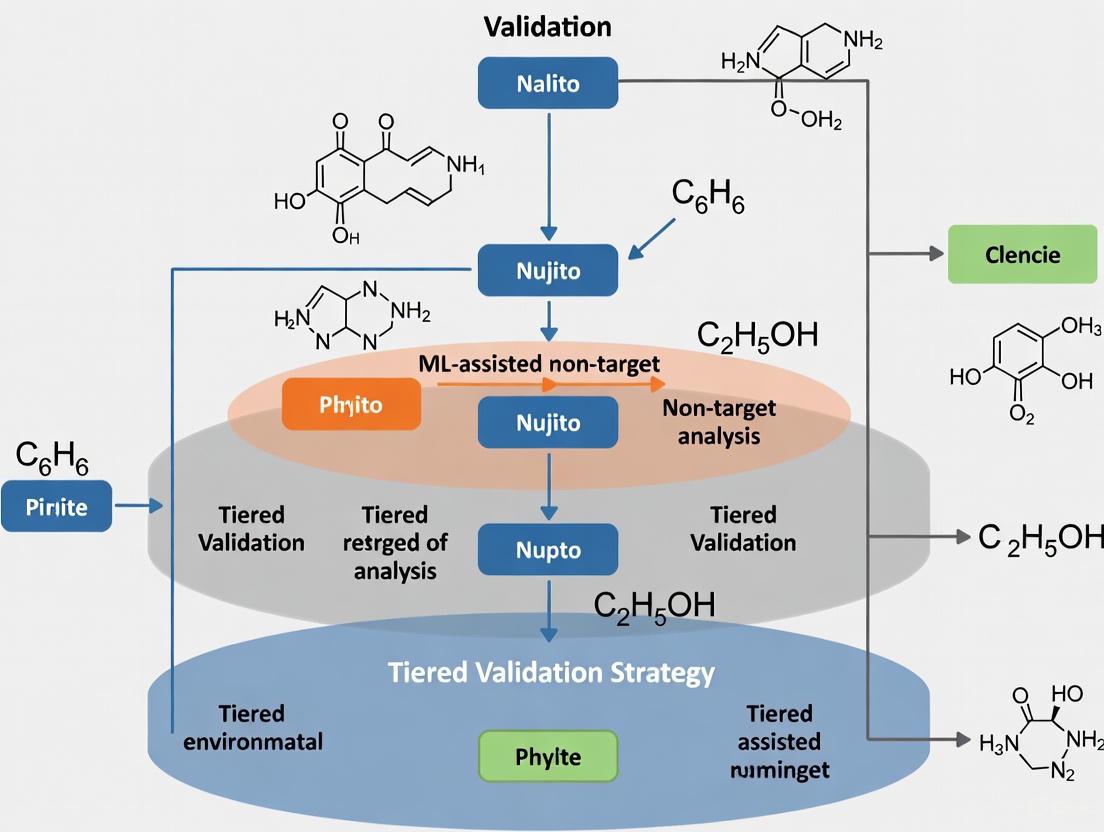

The following diagram illustrates the ML-assisted analysis framework with integrated validation:

ML-Assisted NTA Framework with Tiered Validation

ML-Oriented Data Processing and Analysis

The transition from raw HRMS data to interpretable patterns involves sequential computational steps:

- Data Preprocessing: Addresses data quality through noise filtering, missing value imputation (e.g., k-nearest neighbors), and normalization to mitigate batch effects [7]

- Exploratory Analysis: Identifies significant features via univariate statistics and prioritizes compounds with large fold changes

- Dimensionality Reduction: Techniques like PCA and t-SNE simplify high-dimensional data [7]

- Supervised ML Models: Including Random Forest and Support Vector Classifiers trained on labeled datasets to classify contamination sources [7]

Tiered Validation Strategy

A robust three-tiered validation framework ensures reliable ML-NTA outputs:

- Analytical Confidence: Verified using certified reference materials or spectral library matches to confirm compound identities [7]

- Model Generalizability: Assessed by testing classifiers on independent external datasets, complemented by cross-validation, to evaluate overfitting risks [7]

- Environmental Plausibility: Correlates model predictions with contextual data, such as geospatial proximity to emission sources or known source-specific chemical markers [7]

Applications and Current Challenges

NTA-HRMS has demonstrated significant utility across multiple domains, though challenges remain for full operationalization.

Table 3: NTA-HRMS Applications Across Sample Matrices

| Sample Matrix | Commonly Detected Chemicals | Analytical Platform | Key Applications |
|---|---|---|---|
| Water | PFAS, pharmaceuticals, pesticides | LC-HRMS (51%), GC-HRMS (32%), Both (16%) | Source tracking, emerging contaminant discovery |
| Soil/Sediment | Pesticides, PAHs, transformation products | GC-HRMS, LC-HRMS | Effect-directed analysis, contamination forensics |
| Human Biospecimens | Plasticizers, pesticides, halogenated compounds | LC-HRMS (ESI+/ESI-) | Biomarker discovery, exposure assessment |
| Consumer Products | Flame retardants, plasticizers | GC-HRMS, LC-HRMS | Safety evaluation, regulatory compliance |

Key Applications

- Environmental Monitoring: Identifying previously unknown pollutants and transformation products in water, soil, and air [5]

- Exposure Assessment: Characterizing human chemical exposures through biomonitoring [5]

- Pharmaceutical Analysis: Detecting unknown impurities and metabolites in drug development [1]

- Effect-Directed Analysis: Identifying bioactive compounds in complex mixtures through fractionation and bioactivity testing [4]

Current Challenges and Research Gaps

Despite significant advances, NTA-HRMS faces several challenges:

- Quantitative Limitations: Most NTA provides relative quantitation; absolute concentration estimates require additional calibration approaches [4]

- Data Complexity: Large, high-dimensional datasets require advanced computational tools and expertise [7]

- Standardization Needs: Lack of harmonized protocols and reporting standards across laboratories [3]

- Confidence Assessment: Varying levels of identification confidence require systematic reporting frameworks [3]

- Black-Box Models: Complex ML models like deep neural networks lack interpretability, limiting regulatory acceptance [7]

The integration of machine learning with NTA-HRMS continues to evolve, with future developments focusing on improved quantification methods, enhanced model interpretability, and standardized validation frameworks to bridge the gap between analytical capability and environmental decision-making [7] [4] [9].

The Role of Machine Learning in Interpreting Complex NTA Datasets

Non-target analysis (NTA) using high-resolution mass spectrometry (HRMS) has emerged as a vital approach for detecting thousands of chemicals without prior knowledge, proving particularly valuable for identifying emerging environmental contaminants and unknown compounds in complex samples [7] [9]. The principal challenge of NTA now lies not in detection itself, but in developing computational methods to extract meaningful environmental information from the vast chemical datasets generated by HRMS platforms [7]. Machine learning (ML) has redefined the potential of NTA by effectively identifying latent patterns within high-dimensional data, making these algorithms particularly well-suited for contamination source identification and compound characterization [7]. This document outlines a systematic framework and detailed protocols for implementing ML-assisted NTA within a tiered validation strategy for robust research outcomes.

Comprehensive Workflow of ML-Assisted NTA

The integration of ML and NTA for contaminant source identification follows a systematic four-stage workflow: (i) sample treatment and extraction, (ii) data generation and acquisition, (iii) ML-oriented data processing and analysis, and (iv) result validation [7]. Each stage requires careful optimization to ensure data quality and interpretable results.

Stage 1: Sample Treatment and Extraction

Sample preparation requires careful optimization to balance selectivity and sensitivity, achieving a compromise between removing interfering components and preserving as many compounds as possible with adequate sensitivity [7].

Protocol 1.1: Comprehensive Sample Preparation for ML-NTA

- Objective: To extract a broad range of compounds with sufficient recovery while minimizing matrix interference for downstream ML analysis.

- Materials: Solid phase extraction (SPE) systems, multi-sorbent materials (Oasis HLB, ISOLUTE ENV+, Strata WAX, WCX), QuEChERS kits, microwave-assisted extraction (MAE) systems.

- Procedure:

  - Select appropriate extraction technique based on sample matrix (water, soil, biological).

  - For broad-spectrum coverage, employ multi-sorbent SPE strategies combining complementary sorbents.

  - Perform extraction using optimized parameters (pH, solvent composition, volume).

  - Concentrate extracts under gentle nitrogen stream to appropriate volume.

  - Add internal standards for quality control of the extraction process.

- Quality Control: Include procedural blanks, replicates, and spiked samples to monitor contamination, precision, and recovery efficiency.

Stage 2: Data Generation and Acquisition

HRMS platforms, including quadrupole time-of-flight (Q-TOF) and Orbitrap systems, generate complex datasets essential for NTA [7].

Protocol 2.1: HRMS Data Acquisition for ML-Ready Datasets

- Objective: To generate high-quality, consistent HRMS data suitable for ML processing.

- Materials: LC-QTOF or LC-Orbitrap systems, quality control samples, data acquisition software.

- Procedure:

  - Perform chromatographic separation using reversed-phase or HILIC columns with appropriate gradients.

  - Acquire data in both positive and negative ionization modes with mass resolution >25,000.

  - Include data-dependent MS/MS acquisition for compound identification.

  - Inject quality control samples (pooled samples, solvent blanks) regularly throughout sequence.

  - Process raw data using software (e.g., XCMS, MS-DIAL) for peak picking, alignment, and componentization.

- Output: Structured feature-intensity matrix with rows representing samples and columns corresponding to aligned chemical features.

Stage 3: ML-Oriented Data Processing and Analysis

The transition from raw HRMS data to interpretable patterns involves sequential computational steps [7].

Table 1: Data Preprocessing Methods for ML-NTA

| Processing Step | Technique Options | Purpose | Key Parameters |
|---|---|---|---|
| Missing Value Imputation | k-nearest neighbors, half-minimum | Handle missing values | k value, imputation method |
| Normalization | Total Ion Current (TIC), probabilistic quotient | Mitigate batch effects | Reference sample, method |
| Data Alignment | Retention time correction, m/z recalibration | Standardize features across batches | Alignment tolerance, reference |
| Noise Filtering | Blank subtraction, coefficient of variation | Remove irreproducible features | Blank threshold, CV cutoff |

Protocol 3.1: Data Preprocessing Pipeline

- Objective: To transform raw feature-intensity data into a clean, normalized dataset ready for ML analysis.

- Software: Python/R with appropriate packages (scikit-learn, XCMS, CAMERA).

- Procedure:

  - Impute missing values using k-nearest neighbors (k=5) for features with values missing in <50% of samples.

  - Apply TIC normalization to correct for overall signal intensity variations.

  - Conduct data alignment across batches using statistical algorithms for retention time correction and m/z recalibration.

  - Remove features whose intensity in blanks exceeds 30% of the sample intensity, or that show high analytical variability (CV >30% in QC samples).

  - Apply data scaling (autoscaling, Pareto scaling) as appropriate for subsequent ML algorithms.
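The preprocessing steps above can be sketched with the Python standard library alone. This is a simplified illustration under stated assumptions: half-minimum imputation (the alternative listed in the preprocessing table) stands in for k-nearest neighbors to keep the example short, alignment is assumed to have been done upstream, and all function names, thresholds, and data values are hypothetical defaults mirroring the protocol text.

```python
from math import sqrt
from statistics import mean, stdev

def half_min_impute(matrix, max_missing=0.5):
    """Drop features missing in >= max_missing of samples; fill remaining gaps
    with half of the feature's minimum observed intensity."""
    n_samples = len(matrix)
    kept, columns = [], []
    for j in range(len(matrix[0])):
        column = [row[j] for row in matrix]
        observed = [v for v in column if v is not None]
        if (n_samples - len(observed)) / n_samples >= max_missing:
            continue
        fill = min(observed) / 2
        kept.append(j)
        columns.append([fill if v is None else v for v in column])
    return kept, [list(row) for row in zip(*columns)]

def tic_normalize(matrix):
    """Scale each sample (row) so that its intensities sum to 1."""
    normalized = []
    for row in matrix:
        total = sum(row)
        normalized.append([v / total for v in row])
    return normalized

def filter_features(sample_means, blank_means, qc_values, blank_ratio=0.3, cv_max=0.3):
    """Keep features whose blank signal is <= blank_ratio of the sample signal
    and whose coefficient of variation across QC injections is <= cv_max."""
    kept = []
    for j, (s, b, qc) in enumerate(zip(sample_means, blank_means, qc_values)):
        if b <= blank_ratio * s and stdev(qc) / mean(qc) <= cv_max:
            kept.append(j)
    return kept

def pareto_scale(matrix):
    """Mean-center each feature and divide by the square root of its standard
    deviation (assumes no feature is constant across samples)."""
    scaled_columns = []
    for column in zip(*matrix):
        mu, sd = mean(column), stdev(column)
        scaled_columns.append([(v - mu) / sqrt(sd) for v in column])
    return [list(row) for row in zip(*scaled_columns)]

# Hypothetical 4 samples x 3 features; None marks a missing intensity.
raw = [
    [100.0, 50.0, None],
    [120.0, None, None],
    [80.0, 40.0, 10.0],
    [100.0, 60.0, None],
]
kept, imputed = half_min_impute(raw)   # third feature is missing in 75% of samples
normalized = tic_normalize(imputed)
scaled = pareto_scale(normalized)

# Hypothetical per-feature summaries for the blank and QC filters.
qc_kept = filter_features(
    sample_means=[100.0, 50.0],
    blank_means=[5.0, 30.0],
    qc_values=[[98.0, 102.0, 100.0], [50.0, 52.0, 49.0]],
)
print(kept, qc_kept)
```

In practice an established implementation (for example the imputers and scalers in scikit-learn, which the protocol lists under Software) would normally replace these hand-written helpers; the sketch only shows the order and intent of the operations.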

Dimensionality Reduction and Pattern Recognition

Dimensionality reduction techniques simplify high-dimensional data, while clustering methods group samples by chemical similarity [7].

Table 2: ML Algorithms for NTA Data Analysis

| Algorithm Category | Specific Methods | NTA Applications | Advantages |
|---|---|---|---|
| Unsupervised Learning | PCA, t-SNE, HCA, k-means | Exploratory data analysis, sample clustering | No labels required, reveals intrinsic patterns |
| Supervised Classification | Random Forest, SVM, Logistic Regression | Source attribution, sample classification | High accuracy, handles non-linear relationships |
| Feature Selection | Recursive feature elimination, variable importance | Identify marker compounds, reduce dimensionality | Improves interpretability, reduces overfitting |

Protocol 3.2: Dimensionality Reduction and Classification

- Objective: To identify patterns in chemical profiles and build predictive models for sample classification.

- Software: Python/R with scikit-learn, SIMCA, or similar platforms.

- Procedure:

  - Perform Principal Component Analysis (PCA) to visualize sample clustering and identify outliers.

  - Apply t-distributed Stochastic Neighbor Embedding (t-SNE) for nonlinear dimensionality reduction.

  - Implement Random Forest classification with 100-500 trees to differentiate sample classes.

  - Use recursive feature elimination to identify most discriminative chemical features.

  - Validate model performance using cross-validation and independent test sets.

Tiered Validation Strategy for ML-Assisted NTA

Validation ensures the reliability of ML-NTA outputs through a three-tiered approach that bridges analytical rigor with real-world relevance [7].

Tier 1: Analytical Confidence Verification

Protocol 4.1: Analytical Validation Using Reference Materials

- Objective: To verify the accuracy of compound identification and quantification.

- Materials: Certified reference materials (CRMs), internal standards, spectral libraries.

- Procedure:

  - Analyze CRMs relevant to the sample matrix alongside experimental samples.

  - Confirm compound identities using Level 1-5 confidence rankings based on spectral matching.

  - Verify retention time stability and mass accuracy against known standards.

  - Calculate precision and accuracy for quantified compounds.

Tier 2: Model Generalizability Assessment

Protocol 4.2: External Validation of ML Models

- Objective: To evaluate model performance on independent datasets and assess overfitting risks.

- Materials: Independent sample sets from different batches or locations, validation software.

- Procedure:

  - Reserve 20-30% of samples as a hold-out test set before model training.

  - Use k-fold cross-validation (k=5 or 10) on the remaining training samples to assess model stability [10].

  - Apply trained models to completely independent external datasets.

  - Calculate performance metrics (accuracy, precision, recall, F1-score) on validation sets.
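A minimal sketch of the data partitioning in this protocol is given below: a stratified hold-out split made before any training, followed by stratified k-fold splits of the remaining samples. The function names, seed handling, and the two-class label vector are illustrative assumptions; libraries such as scikit-learn provide equivalent, more thoroughly tested utilities.

```python
import random
from collections import defaultdict

def stratified_holdout(labels, test_fraction=0.25, seed=0):
    """Split sample indices into train/test while preserving class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)
    train, test = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_test = max(1, round(len(indices) * test_fraction))
        test.extend(indices[:n_test])
        train.extend(indices[n_test:])
    return sorted(train), sorted(test)

def stratified_kfold(indices, labels, k=5, seed=0):
    """Yield (train, validation) index lists; each class is dealt round-robin into k folds."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i in indices:
        by_class[labels[i]].append(i)
    folds = [[] for _ in range(k)]
    for class_indices in by_class.values():
        rng.shuffle(class_indices)
        for position, i in enumerate(class_indices):
            folds[position % k].append(i)
    for f in range(k):
        validation = sorted(folds[f])
        training = sorted(i for g in range(k) if g != f for i in folds[g])
        yield training, validation

# Hypothetical labels: 20 samples from each of two contamination sources.
labels = ["industrial"] * 20 + ["agricultural"] * 20
train, test = stratified_holdout(labels, test_fraction=0.25)
splits = list(stratified_kfold(train, labels, k=5))
print(len(train), len(test), [len(val) for _, val in splits])
```

Keeping the hold-out indices out of every cross-validation fold is the design point here: any preprocessing or hyperparameter choice tuned on data that later serves as the test set would bias the reported performance upward.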

Tier 3: Environmental Plausibility Checks

Protocol 4.3: Contextual Validation with Environmental Data

- Objective: To correlate model predictions with contextual environmental information.

- Materials: Geospatial data, known source-specific chemical markers, historical contamination data.

- Procedure:

  - Compare model-predicted source contributions with known source locations.

  - Verify presence of established chemical markers for specific sources.

  - Assess temporal consistency of predictions with known emission patterns.

  - Evaluate quantitative relationships between predicted sources and measured concentrations.

Table 3: Tiered Validation Framework for ML-NTA

| Validation Tier | Validation Components | Acceptance Criteria | Outcome Metrics |
|---|---|---|---|
| Tier 1: Analytical Confidence | CRM analysis, spectral matching, mass accuracy | Mass error < 5 ppm, RT stability < 0.2 min, spectral match > 80% | Identification confidence levels (1-5), quantification accuracy |
| Tier 2: Model Generalizability | Cross-validation, external validation, hold-out testing | Cross-validation accuracy > 80%, minimal performance drop on external sets | Accuracy, precision, recall, F1-score, ROC curves |
| Tier 3: Environmental Plausibility | Geospatial correlation, marker consistency, temporal trends | Statistical significance (p < 0.05) with contextual data | Correlation coefficients, spatial clustering, temporal patterns |
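The Tier 1 acceptance criteria in the table can be expressed as a simple check. The sketch below is one possible encoding, assuming the spectral match is reported as a fraction between 0 and 1; the function names and the example m/z, retention time, and score values are hypothetical.

```python
def ppm_error(measured_mz, reference_mz):
    """Signed mass error in parts per million."""
    return (measured_mz - reference_mz) / reference_mz * 1e6

def tier1_check(measured_mz, reference_mz, measured_rt, reference_rt, match_score,
                max_ppm=5.0, max_rt_shift=0.2, min_score=0.80):
    """Evaluate the three Tier 1 criteria; returns (overall_pass, per-criterion results)."""
    checks = {
        "mass_error": abs(ppm_error(measured_mz, reference_mz)) < max_ppm,
        "rt_stability": abs(measured_rt - reference_rt) < max_rt_shift,
        "spectral_match": match_score > min_score,
    }
    return all(checks.values()), checks

# Hypothetical feature compared against a reference standard.
passed, details = tier1_check(300.1509, 300.1500, 6.42, 6.35, 0.86)
print(passed, details)

# Same feature with a larger mass deviation (about 6.7 ppm) fails the mass criterion.
failed, failed_details = tier1_check(300.1520, 300.1500, 6.42, 6.35, 0.86)
print(failed, failed_details)
```

Returning the per-criterion results alongside the overall verdict makes it easier to report why a feature was downgraded to a lower confidence level.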

Essential Research Reagent Solutions

Table 4: Key Research Reagents and Materials for ML-NTA Workflows

| Reagent/Material | Function | Application Notes |
|---|---|---|
| Multi-sorbent SPE cartridges (Oasis HLB, Strata WAX/WCX) | Broad-spectrum compound extraction | Combine complementary sorbents for increased coverage of polar and non-polar compounds [7] |
| Certified Reference Materials (CRMs) | Analytical validation | Verify accuracy of identification and quantification for quality assurance [7] |
| Stable isotope-labeled internal standards | Quantification and process control | Correct for matrix effects and recovery variations during sample preparation |
| Quality control samples (pooled QCs, solvent blanks) | Monitoring analytical performance | Evaluate system stability, reproducibility, and contamination throughout sequences [7] |
| Retention time index standards | Chromatographic alignment | Standardize retention times across batches and instruments for consistent feature alignment [7] |
| MS tuning and calibration solutions | Instrument calibration | Ensure mass accuracy and sensitivity according to manufacturer specifications |

Machine learning has transformed non-target analysis from a mere detection tool into a powerful interpretive framework for understanding complex environmental mixtures. The structured workflow and tiered validation strategy presented here provide researchers with a systematic approach for implementing ML-assisted NTA that balances innovation with analytical rigor. By adhering to these protocols and validation frameworks, researchers can generate chemically accurate, environmentally meaningful results that support informed decision-making in environmental monitoring, regulatory actions, and public health protection. Future advancements in explainable AI and integrated computational models will further enhance the applicability of ML-NTA in environmental risk assessment frameworks.

In machine learning-assisted non-target analysis (ML-assisted NTA), the path from raw feature detection to meaningful contaminant identification runs through a critical bottleneck: the computational and methodological challenge of transforming high-dimensional chemical feature data into reliable, source-specific identifications that can inform environmental decision-making [7]. The high dimensionality of these datasets significantly raises computational costs and complicates feature selection, often yielding suboptimal feature subsets [11]. Furthermore, early NTA approaches that prioritized signal intensity risked overlooking low-concentration but high-risk contaminants and failed to account for source-specific chemical interactions [7]. This protocol outlines a systematic, tiered-validation framework designed to address this bottleneck, enhancing the reliability and interpretability of ML-NTA for researchers and drug development professionals.

Quantitative Data in ML-NTA Workflows

The ML-NTA workflow generates and relies on multifaceted quantitative data. The table below summarizes key performance metrics for the machine learning models used in the data analysis stage.

Table 1: Key Performance Metrics for ML Models in Contaminant Source Classification

| Metric | Description | Typical Range in NTA Studies | Interpretation in NTA Context |
|---|---|---|---|
| Classification Accuracy | The correctness of the AI model's predictions in classifying contamination sources [12]. | Balanced accuracy of 85.5% to 99.5% has been reported for PFAS source classification [7]. | Must be balanced against other performance metrics like latency [12]. |
| Latency | The time taken for an AI model to process an input and produce an output [12]. | Critical for real-time applications; specific values are hardware and model-dependent. | Important for near-real-time monitoring applications. |
| Throughput | The number of tasks an AI system can handle within a given time frame [12]. | Dependent on data complexity and computational resources. | Indicates the efficiency of processing large batches of HRMS samples. |

The initial data acquisition stage produces a foundational quantitative dataset: a feature-intensity matrix. In this matrix, rows represent individual environmental samples, and columns correspond to the aligned chemical features detected by high-resolution mass spectrometry (HRMS), with cell values indicating the intensity or abundance of each feature [7].

Table 2: Quantitative Data Characteristics in HRMS-Based NTA

| Data Aspect | Quantitative Measure | Impact on Analysis |
|---|---|---|
| Feature Dimensionality | Can encompass thousands to millions of chemical features [7]. | Increases computational burden; necessitates robust feature selection. |
| Signal Intensity | Varies over several orders of magnitude between features. | Requires normalization; high-intensity features can dominate unsupervised analysis. |
| Confidence Levels | Assignment of Levels 1-5 for compound identification [7]. | Provides an ordinal confidence ranking for identifications rather than a continuous score. |

Experimental Protocols for a Tiered Validation Strategy

A tiered validation strategy is paramount to ensure that model outputs are both chemically accurate and environmentally meaningful. The following protocols provide a methodology for each tier.

Tier 1 Validation: Analytical Confidence Verification

Objective: To confirm the chemical identity of features prioritized by ML models.

Materials: Certified reference materials (CRMs), commercial spectral libraries, quality control (QC) samples.

Procedure:

- CRM Analysis: Analyze CRMs relevant to the suspected contamination sources alongside environmental samples. This verifies instrument calibration and retention time stability.

- Spectral Library Matching: Compare the acquired MS/MS spectra of prioritized features against commercial (e.g., NIST) and public (e.g., MassBank) spectral libraries. A match is typically considered confident when the forward and reverse spectral similarity scores exceed a threshold (e.g., >70%); a Level 1 identification additionally requires that the retention time and MS/MS spectrum agree with an authentic reference standard analyzed under the same conditions [7].

- Quality Control: Inject batch-specific QC samples (e.g., pooled samples) throughout the analytical sequence to monitor instrument performance and data reproducibility [7].
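To illustrate the forward and reverse similarity scores mentioned under Spectral Library Matching, the sketch below computes an unweighted cosine similarity between binned spectra. In the reverse score, query peaks that are absent from the library spectrum are ignored. This is a simplified assumption-laden version: the fixed 0.01 Da bins, the lack of intensity or m/z weighting, and the function names are illustrative choices, and commercial search software uses its own scoring variants.

```python
from math import sqrt

def binned(spectrum, width=0.01):
    """Sum peak intensities into fixed-width m/z bins keyed by integer bin index."""
    vector = {}
    for mz, intensity in spectrum:
        key = round(mz / width)
        vector[key] = vector.get(key, 0.0) + intensity
    return vector

def cosine(a, b):
    """Cosine similarity between two sparse intensity vectors."""
    dot = sum(v * b.get(k, 0.0) for k, v in a.items())
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def forward_reverse(query, library, width=0.01):
    """Forward score uses all query peaks; reverse score drops query peaks
    that have no counterpart in the library spectrum."""
    q, lib = binned(query, width), binned(library, width)
    forward = cosine(q, lib)
    reverse = cosine({k: v for k, v in q.items() if k in lib}, lib)
    return forward, reverse

# Hypothetical spectra as (m/z, intensity); the query carries one extra interfering peak.
library_spectrum = [(91.05, 100.0), (119.05, 40.0)]
query_spectrum = [(91.05, 100.0), (119.05, 40.0), (150.00, 60.0)]
forward, reverse = forward_reverse(query_spectrum, library_spectrum)
print(f"forward {forward:.3f}, reverse {reverse:.3f}")
```

A high reverse score combined with a lower forward score, as in this example, suggests that the library compound is present but the query spectrum contains additional signals, for instance from a co-eluting compound.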

Tier 2 Validation: Model Generalizability Assessment

Objective: To evaluate the performance and robustness of the trained ML classifier on independent data, ensuring it has not overfitted the training set.

Materials: An external dataset not used during model training or hyperparameter tuning.

Procedure:

- Data Splitting: Initially, split the annotated dataset into a training set (e.g., 70-80%) and a hold-out test set (e.g., 20-30%).

- Model Training & Cross-Validation: Train the selected ML model (e.g., Random Forest, Support Vector Classifier) on the training set. Use k-fold cross-validation (e.g., k=10) on this training set to optimize model parameters and obtain a preliminary performance estimate [7].

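- External Validation: Apply the final, optimized model to the completely unseen hold-out test set and, where available, to an independent external dataset from a different batch or location. Because neither was used for training or tuning, this provides an unbiased estimate of model performance.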

- Performance Metrics Calculation: Calculate key metrics from Table 1 (e.g., balanced accuracy, precision, recall) for both the cross-validation and external validation sets. A significant drop in performance on the external set indicates overfitting.
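The metrics named in the last step can be computed directly from true and predicted labels. The sketch below derives per-class precision, recall, and F1, takes balanced accuracy as the mean of per-class recall, and reports the drop between a cross-validation estimate and the external score. The function names, labels, the cross-validation value of 0.92, and the 0.10 drop threshold are hypothetical choices for illustration, not values from the cited studies.

```python
def source_report(y_true, y_pred):
    """Per-class precision/recall/F1 plus balanced accuracy (mean per-class recall)."""
    report, recalls = {}, []
    for cls in sorted(set(y_true)):
        tp = sum(t == cls and p == cls for t, p in zip(y_true, y_pred))
        fp = sum(t != cls and p == cls for t, p in zip(y_true, y_pred))
        fn = sum(t == cls and p != cls for t, p in zip(y_true, y_pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        report[cls] = {"precision": precision, "recall": recall, "f1": f1}
        recalls.append(recall)
    report["balanced_accuracy"] = sum(recalls) / len(recalls)
    return report

def performance_drop(cv_score, external_score, max_drop=0.10):
    """Return the drop and whether it exceeds a user-chosen (hypothetical) threshold."""
    drop = cv_score - external_score
    return drop, drop > max_drop

# Hypothetical external-set labels and predictions for two source classes.
y_true = ["industrial"] * 4 + ["agricultural"] * 4
y_pred = ["industrial", "industrial", "industrial", "agricultural",
          "agricultural", "agricultural", "agricultural", "industrial"]
report = source_report(y_true, y_pred)

# Hypothetical cross-validation balanced accuracy of 0.92 on the training set.
drop, overfit_flag = performance_drop(0.92, report["balanced_accuracy"])
print(report["balanced_accuracy"], round(drop, 2), overfit_flag)
```

Balanced accuracy is used here rather than plain accuracy because source classes in NTA studies are often imbalanced, and averaging per-class recall prevents a dominant class from masking poor performance on a rare one.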

Tier 3 Validation: Environmental Plausibility Check

Objective: To contextualize model predictions within real-world conditions and known source-receptor relationships.

Materials: Geospatial data on potential emission sources, historical contamination data, literature on source-specific chemical markers.

Procedure:

- Geospatial Correlation: Overlay the model-predicted contamination sources on a map with known locations of industrial, agricultural, or urban sites. High prediction probabilities for a specific source should correlate with proximity to that source type [7].

- Marker Compound Consistency: Investigate whether the features identified as important by the ML model (e.g., via Random Forest's feature importance) are known chemical markers for the predicted source. For example, the model might correctly identify certain PFAS compounds as highly indicative of fire-fighting foam runoff [7].

- Historical Comparison: Compare current model findings with historical data from the same or similar sites to assess the temporal plausibility of the identified sources.
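The geospatial correlation step can be sketched as a rank correlation between each sampling site's distance to a known source and the model's predicted probability for that source. The example below uses the haversine formula for great-circle distance and a hand-written Spearman correlation; the coordinates, probabilities, and function names are hypothetical, and a significance test (for example a permutation test) would still be needed before applying a p-value criterion.

```python
from math import asin, cos, radians, sin, sqrt

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in km between two points given in decimal degrees."""
    earth_radius_km = 6371.0
    phi1, phi2 = radians(lat1), radians(lat2)
    dphi = phi2 - phi1
    dlambda = radians(lon2 - lon1)
    a = sin(dphi / 2) ** 2 + cos(phi1) * cos(phi2) * sin(dlambda / 2) ** 2
    return 2 * earth_radius_km * asin(sqrt(a))

def ranks(values):
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result

def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the rank vectors."""
    rx, ry = ranks(x), ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sqrt(sum((a - mx) ** 2 for a in rx))
    sy = sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)

# Hypothetical source location and sampling sites: (latitude, longitude, predicted probability).
source = (52.00, 5.00)
sites = [(52.01, 5.00, 0.95), (52.05, 5.00, 0.80), (52.20, 5.00, 0.40), (52.50, 5.00, 0.10)]

distances = [haversine_km(source[0], source[1], lat, lon) for lat, lon, _ in sites]
probabilities = [p for _, _, p in sites]
rho = spearman(distances, probabilities)
print([round(d, 1) for d in distances], round(rho, 2))
```

A strongly negative correlation, as in this constructed example, is what the plausibility check expects: predicted probability for a source should fall as distance from that source grows. A weak or positive correlation would prompt a closer look at the model or at unrecorded sources.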

Workflow and Logical Pathway Diagrams

The following diagram illustrates the integrated workflow of ML-assisted NTA, from sample collection to validated identification, highlighting the critical bottleneck and the tiered validation strategy designed to address it.

The logical relationship between the core feature selection bottleneck and the information bottleneck principle is further detailed in the following diagram.

The Scientist's Toolkit: Research Reagent Solutions

Successful execution of the ML-NTA workflow relies on a suite of essential reagents, software, and analytical resources.

Table 3: Essential Research Reagents and Materials for ML-NTA

| Item Name | Function / Application | Specific Examples / Notes |
|---|---|---|
| Multi-Sorbent SPE Cartridges | Broad-spectrum extraction of analytes with diverse physicochemical properties from complex environmental matrices [7]. | Oasis HLB, ISOLUTE ENV+, Strata WAX, Strata WCX [7]. |
| Certified Reference Materials (CRMs) | Analytical confidence verification (Tier 1 Validation); used for instrument calibration and confirming compound identities [7]. | Source-specific CRMs (e.g., PFAS mixtures, pesticide mixes). |
| Quality Control (QC) Samples | Monitoring data integrity and instrumental performance throughout the analytical sequence [7]. | Pooled quality control samples, procedural blanks [7]. |
| HRMS Platform with Chromatography | Data generation and acquisition; provides the high-resolution mass spectral data and chromatographic separation needed for NTA [7]. | Orbitrap or Q-TOF systems coupled with LC or GC [7]. |
| Information Bottleneck Feature Selection Tool | Addresses the feature selection bottleneck by globally optimizing the selection of a feature subset (Xs) that is maximally informative about the source labels (Y) [11]. | Masked Deterministic IB (MDIB) neural network framework [11]. |
| Spectral Libraries | Compound annotation and identification via spectral matching (Tier 1 Validation) [7]. | NIST, MassBank, mzCloud. |
| ML Model Benchmarking Datasets | For training, testing, and benchmarking ML models for visualization and classification tasks [13]. | VizNet [13], VizML [13]. |

In machine learning (ML)-assisted non-targeted analysis (NTA) and pharmaceutical development, validation is more than best practice; it is an operational necessity. Black-box model complexity combined with pervasive data quality problems creates a risk landscape in which undetected errors can compromise scientific conclusions, regulatory submissions, and ultimately patient safety. As models grow more sophisticated, traditional validation approaches become insufficient, and a systematic, tiered strategy spanning data acquisition to model deployment is needed.

The stakes are substantial. In pharmaceutical research, data quality lapses have triggered regulatory application denials and significant market value erosion [14]. Similarly, ML models, particularly deep learning architectures, introduce distinct vulnerabilities through their non-deterministic behavior and opacity, which standard validation protocols do not adequately address [15]. This application note establishes a validation framework designed specifically for ML-assisted NTA research and provides experimentally validated protocols to ensure reliability amid these complexities.

The Dual Challenge: Black-Box Models and Data Quality

The Opacity Problem of Black-Box Models

Machine learning models, especially complex deep learning networks, often function as "black boxes" where the relationship between inputs and outputs lacks transparency. This opacity presents three critical validation challenges:

- Explainability Deficit: The inability to trace decision pathways hinders scientific acceptance and regulatory approval, particularly when models are used for critical decision-making in drug development [16].

- Unpredictable Failure Modes: Without transparent internal logic, models may fail unexpectedly on edge cases or data distributions not represented in training sets [15].

- Validation Complexity: Traditional sensitivity analysis becomes computationally intensive and difficult to interpret when inputs and outputs in neural network models lack the clear relationships found in statistical models [16].

The Data Quality Imperative

Data quality forms the foundational layer upon which all subsequent analysis rests. In pharmaceutical research and NTA studies, data challenges manifest uniquely:

- Incomplete Patient Records: Lead to potential misdiagnoses and flawed clinical trial outcomes [14].

- Inconsistent Drug Formulation Data: Generate errors in manufacturing and dosage calculations [14].

- Fragmented Data Silos: Hamper collaboration and real-time decision-making across research organizations [14].

- Data Drift: The gradual shift in real-world data distributions compared to training data causes model degradation over time, necessitating continuous monitoring [15].

Table 1: Documented Impacts of Poor Data Quality in Pharmaceutical Research

| Documented Outcome | Underlying Data Quality Issue | Source |

|---|---|---|

| FDA Application Denial | Clinical trial datasets lacking required nonclinical toxicology studies | [14] |

| Import Alert List Additions | 93 companies flagged for drug quality issues including record-keeping lapses | [14] |

| Manufacturing Site Penalties | Inadequate documentation and quality control measures delaying drug approval | [14] |

Tiered Validation Strategy for ML-Assisted NTA

A tiered validation strategy provides a structured approach to navigate the complexities of modern analytical pipelines. This multi-layered framework ensures comprehensive coverage from basic data quality to model performance in real-world scenarios.

The Four-Stage Workflow

ML-assisted NTA for contaminant source identification follows a systematic workflow comprising four critical stages [7]:

- Sample Treatment and Extraction: Balancing selectivity and sensitivity through purification techniques.

- Data Generation and Acquisition: Utilizing HRMS platforms to generate complex datasets for analysis.

- ML-Oriented Data Processing and Analysis: Applying computational methods to extract meaningful patterns.

- Result Validation: Implementing a multi-faceted approach to verify reliability and relevance.

The following diagram illustrates this comprehensive workflow and its key components:

Tiered Validation Protocol

The validation stage (Stage 4) implements a three-tiered approach to ensure comprehensive verification [7]:

Tier 1: Analytical Confidence Verification

- Objective: Confirm compound identities through analytical standards

- Protocol: Use certified reference materials (CRMs) or spectral library matches

- Acceptance Criteria: ≥95% match with reference spectra

Tier 2: Model Generalizability Assessment

- Objective: Evaluate performance on independent datasets

- Protocol: Validate classifiers on independent external datasets, complemented by k-fold cross-validation on the training data

- Acceptance Criteria: Balanced accuracy maintained within 5% of training performance

Tier 3: Environmental Plausibility Checks

- Objective: Correlate predictions with real-world context

- Protocol: Compare model outputs with geospatial proximity to emission sources and known source-specific chemical markers

- Acceptance Criteria: Statistical significance (p < 0.05) in expected directional relationships
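The three acceptance criteria above can be collected into a single check that is run for each model or candidate identification. This is a minimal sketch under stated assumptions: the class and field names are hypothetical, the spectral match score is assumed to be scaled to 0-1, and "within 5%" is interpreted as an absolute difference of 0.05 in balanced accuracy.

```python
from dataclasses import dataclass

@dataclass
class TierEvidence:
    spectral_match: float            # best CRM/library match score, scaled 0-1
    train_balanced_acc: float        # balanced accuracy on training data
    external_balanced_acc: float     # balanced accuracy on external data
    plausibility_p_value: float      # p-value of the plausibility test
    expected_direction: bool         # relationship has the expected sign

def evaluate_tiers(ev, min_match=0.95, max_acc_gap=0.05, alpha=0.05):
    """Return pass/fail per tier using the thresholds listed above."""
    tier1 = ev.spectral_match >= min_match
    # Assumption: "within 5%" means an absolute gap of at most 0.05.
    tier2 = abs(ev.train_balanced_acc - ev.external_balanced_acc) <= max_acc_gap
    tier3 = ev.expected_direction and ev.plausibility_p_value < alpha
    return {
        "tier1_analytical": tier1,
        "tier2_generalizability": tier2,
        "tier3_plausibility": tier3,
    }

# Hypothetical evidence for one validated model.
result = evaluate_tiers(TierEvidence(0.97, 0.92, 0.88, 0.01, True))
print(result)
```

Keeping the thresholds as parameters makes it straightforward to report exactly which criteria were applied in a given study.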

Experimental Protocols and Application

Case Study: Toxicity-Based Prioritization Framework

An automated toxicity-based prioritization framework for NTA demonstrates the practical implementation of tiered validation [17]. This integrated workflow combines spectral matching, retention time prediction, and toxicity assessment to prioritize environmental pollutants.

Table 2: Experimental Protocol for Toxicity-Based Prioritization

| Step | Methodology | Parameters Measured | Tools/Platforms |

|---|---|---|---|

| Sample Preparation | Solid phase extraction with multi-sorbent strategy | Analyte recovery rates | Oasis HLB, ISOLUTE ENV+ |

| Data Acquisition | LC-QTOF-MS with MSE mode (DIA) | Retention time, m/z, intensity | High-resolution mass spectrometer |

| Data Processing | Spectral library searching, QSRR-based RT prediction | Spectral matching scores, RT accuracy | EPA ToxCast, ChemSpider, PubChem |

| Toxicity Assessment | Multi-endpoint toxicity prediction | ToxPi scores, 6 toxicity endpoints | EPA TEST software |

| Prioritization | Combined algorithm of multiple filters | Tier assignment (1-5) | NTAprioritization.R package |

The workflow successfully processed a candidate list of 6,982 compounds from a sludge water sample, reducing it to a prioritized list of 2,779 compounds with 21 out of 28 spiked standards correctly identified and prioritized [17].

Visualization of the Prioritization Workflow

The toxicity-based prioritization framework integrates multiple data sources and analytical steps to efficiently identify compounds of concern:

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Key Research Reagent Solutions for ML-Assisted NTA

| Category | Specific Tools/Platforms | Function | Application Context |

|---|---|---|---|

| HRMS Platforms | Q-TOF, Orbitrap Systems | High-resolution mass detection for compound identification | Structural elucidation of unknown compounds [7] |

| Chromatography Systems | LC-ESI, GC-EI | Compound separation prior to mass analysis | Expanding chemical coverage for comprehensive analysis [17] |

| Spectral Libraries | EPA ToxCast, ChemSpider, PubChem | Reference databases for spectral matching | Compound identification and confirmation [17] |

| Toxicity Prediction | EPA TEST Software, ToxPi | Multi-endpoint toxicity assessment | Prioritization based on potential biological impact [17] |

| Data Processing | NTAprioritization.R Package | Automated prioritization workflow | Streamlined candidate evaluation and tier assignment [17] |

| Model Validation | Galileo Platform, Scikit-learn | Performance metrics tracking and model evaluation | Continuous validation and drift detection [18] [19] |

Validation Metrics and Performance Assessment

Quantitative Validation Metrics

Establishing comprehensive performance metrics is essential for objective model assessment. The following metrics provide multidimensional evaluation:

Table 4: Performance Metrics for Model Validation

| Metric Category | Specific Metrics | Optimal Range | Application Context |

|---|---|---|---|

| Classification Metrics | Accuracy, Precision, Recall, F1 Score | Domain-dependent (e.g., >0.85 for high-stakes) | Model performance evaluation [19] [20] |

| Model Discrimination | ROC-AUC | >0.8 (excellent), 0.7-0.8 (acceptable) | Binary classification tasks [19] |

| Regression Metrics | MAE, MSE, RMSE | Context-dependent (lower values preferred) | Continuous outcome prediction [20] |

| Clustering Quality | Silhouette Score, Davies-Bouldin Index | >0.5 (good clustering), lower values better for DBI | Unsupervised learning applications [20] |

| Source Classification | Balanced Accuracy | 85.5-99.5% (as demonstrated in PFAS classification) | Contaminant source identification [7] |
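For reference, the classification metrics in Table 4 follow directly from the confusion matrix of a binary classifier. The sketch below computes them in plain Python; the function name and the example counts are illustrative assumptions, not results from the cited studies.

```python
def binary_metrics(tp, fp, fn, tn):
    """Compute common classification metrics from confusion-matrix counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0          # sensitivity
    specificity = tn / (tn + fp) if (tn + fp) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {
        "accuracy": (tp + tn) / (tp + fp + fn + tn),
        "precision": precision,
        "recall": recall,
        "f1": f1,
        # Balanced accuracy averages per-class recall, so it is not
        # inflated by a dominant class.
        "balanced_accuracy": (recall + specificity) / 2,
    }

# Hypothetical imbalanced test set: 20 positives, 80 negatives.
m = binary_metrics(tp=18, fp=4, fn=2, tn=76)
for name, value in m.items():
    print(f"{name}: {value:.3f}")
```

On imbalanced data such as this example, accuracy and balanced accuracy diverge, which is why balanced accuracy is the more informative headline metric for source classification.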

Addressing Common Validation Challenges

Even with robust metrics, validation faces practical challenges that require specific mitigation strategies:

- Data Scarcity: When labeled data is limited, employ data augmentation, transfer learning from pre-trained models, and active learning to prioritize informative samples [18].

- Class Imbalance: Address unequal class distributions with techniques like SMOTE (Synthetic Minority Over-sampling Technique), weighted loss functions, and stratified sampling [18].

- Conflicting Metrics: Establish a clear metric hierarchy based on business goals and apply multi-objective optimization to find balanced solutions [18].

- Concept Drift: Implement automated monitoring to detect changes in data distributions that require model retraining [15].
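As one possible form of the automated drift monitoring mentioned above, a feature's distribution in new data can be compared with its distribution in the training data. The sketch below uses the two-sample Kolmogorov-Smirnov statistic, which is a technique chosen here for illustration and is not prescribed by the cited sources; the 0.3 alert threshold and the example intensities are likewise assumptions.

```python
from bisect import bisect_right

def ks_statistic(reference, current):
    """Maximum distance between the two empirical CDFs (0 = identical)."""
    ref, cur = sorted(reference), sorted(current)
    d = 0.0
    for v in sorted(set(ref) | set(cur)):
        f_ref = bisect_right(ref, v) / len(ref)
        f_cur = bisect_right(cur, v) / len(cur)
        d = max(d, abs(f_ref - f_cur))
    return d

def drift_detected(reference, current, threshold=0.3):
    """Flag drift when the KS statistic exceeds a hypothetical threshold."""
    return ks_statistic(reference, current) > threshold

# Hypothetical normalized intensities of one feature.
training_batch = [1.00, 1.10, 0.90, 1.20, 1.00, 0.95]
new_batch = [1.60, 1.70, 1.50, 1.80, 1.65, 1.55]

print(ks_statistic(training_batch, new_batch))
print(drift_detected(training_batch, new_batch))
```

In practice the threshold would be tuned per feature or replaced by a significance test, and a flagged feature would trigger review before any retraining.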

Validation in ML-assisted NTA research is not merely a technical checklist but a fundamental scientific principle that ensures research integrity and practical utility. The tiered validation strategy presented here provides a structured approach to navigate the complexities of black-box models and data quality challenges. By implementing these protocols, from analytical verification to environmental plausibility checks, researchers can build confidence in their findings and accelerate the translation of analytical data into actionable environmental and pharmaceutical insights.

The framework demonstrated in the toxicity-based prioritization case study, which successfully processed thousands of compounds while maintaining identification accuracy, showcases the practical implementation of these principles [17]. As ML applications continue to evolve in sophistication, so too must our validation methodologies, ensuring that scientific progress remains grounded in reliability and reproducibility.

Building the Workflow: A Step-by-Step Guide to ML-Oriented NTA and Prioritization Strategies

Within the framework of a tiered validation strategy for machine learning (ML)-assisted non-target analysis (NTA), the initial stage of sample treatment and extraction is paramount. This step transforms a raw, complex environmental or biological matrix into a purified analyte mixture suitable for high-resolution mass spectrometry (HRMS). The quality and comprehensiveness of the data generated by HRMS are fundamentally limited by the efficacy of this first sample preparation stage. Consequently, the selection and execution of extraction protocols directly influence the performance of downstream ML models by determining the diversity and integrity of the chemical features available for pattern recognition and source attribution [7]. This document provides detailed application notes and protocols for comprehensive extraction techniques, designed to establish a robust foundation for reliable ML-assisted NTA.

Core Principles of Extraction

The fundamental goal of sample preparation is to isolate target analytes from interfering components in the sample matrix while ensuring high recovery and preserving the chemical integrity of the constituents. The extraction process generally follows these stages: (1) the solvent penetrates the solid matrix; (2) solutes dissolve into the solvent; (3) solutes diffuse out of the solid matrix; and (4) the extracted solutes are collected [21]. Several factors critically influence extraction efficiency and must be optimized for specific applications [21]:

- Solvent Selection: Governed by the principle of "like dissolves like," where solvent polarity should match the polarity of the target solutes. Methanol (MeOH) and ethanol (EtOH) are frequently used as universal solvents for phytochemical investigations [21].

- Particle Size: A smaller particle size enhances solvent penetration and solute diffusion, improving yield. However, excessively fine particles can cause solute to be re-absorbed onto the solid material and make subsequent filtration difficult [21].

- Temperature: Elevated temperatures can increase solubility and diffusion rates but must be balanced against the risk of degrading thermolabile compounds or causing solvent loss [21].

- Extraction Duration: Yield increases with time until equilibrium is reached between the solute concentration inside and outside the solid material [21].

- Solvent-to-Solid Ratio: A higher ratio generally increases extraction yield, but an excessively high ratio is inefficient, requiring large solvent volumes and prolonged concentration times [21].

A variety of techniques, from conventional to modern, are available for sample treatment. The choice of method depends on the sample matrix, the physicochemical properties of the analytes, and the requirements for throughput, selectivity, and solvent consumption. The table below provides a comparative summary of key extraction methods.

Table 1: Comparison of Common Extraction Techniques Used in Sample Preparation

| Extraction Technique | Principle | Best For | Advantages | Disadvantages | Key Parameters |

|---|---|---|---|---|---|

| Maceration [21] | Solvent-assisted passive diffusion at room temperature. | Thermolabile compounds; simple setup. | Simple, low equipment cost. | Long extraction time, low efficiency. | Solvent type, particle size, soaking duration. |

| Percolation [21] | Continuous flow of fresh solvent through the sample bed. | Continuous processes; higher efficiency than maceration. | More efficient than maceration. | Can require more solvent than maceration. | Solvent flow rate, particle size, column packing. |

| Decoction [21] | Heating the sample in solvent, typically water. | Water-soluble, heat-stable compounds. | Efficient for hard plant tissues. | Not suitable for thermolabile or volatile compounds. | Boiling duration, pH, herb-to-water ratio. |

| Solid Phase Extraction (SPE) [7] | Selective adsorption/desorption of analytes from a liquid sample onto a solid sorbent. | Purification and concentration; selective class extraction. | High selectivity, clean-up, analyte enrichment. | Can be selective for certain properties, limiting broad coverage. | Sorbent chemistry (e.g., Oasis HLB, ENV+), wash/elution solvents. |

| Pressurized Liquid Extraction (PLE) [7] | Extraction with liquid solvents at elevated temperatures and pressures. | Fast and efficient extraction from solid matrices. | Fast, reduced solvent consumption, automated. | High equipment cost. | Temperature, pressure, solvent type, static/dynamic cycles. |

| Microwave-Assisted Extraction (MAE) [21] [7] | Heating the sample-solvent mixture via microwave energy. | Rapid heating and extraction. | Rapid, low solvent consumption, high yield. | Potential for non-uniform heating. | Microwave power, temperature, solvent dielectric constant. |

| Supercritical Fluid Extraction (SFE) [7] | Utilization of supercritical fluids (e.g., CO₂) as the extraction solvent. | Selective extraction of non-polar to moderately polar compounds. | Solvent-free (using CO₂), tunable selectivity, fast. | High equipment cost, limited for polar compounds. | Pressure, temperature, modifier addition. |

| QuEChERS [7] | "Quick, Easy, Cheap, Effective, Rugged, and Safe" method involving solvent extraction and salt-induced partitioning. | High-throughput multi-residue analysis (e.g., pesticides). | Rapid, high-throughput, minimal solvent. | May require further clean-up for complex matrices. | Salt mixtures, dispersive SPE sorbents for clean-up. |

Detailed Experimental Protocols

Protocol 1: Solid Phase Extraction (SPE) for Broad-Range Contaminant Enrichment

This protocol is optimized for the extraction of a wide range of emerging contaminants from water samples, forming a foundational step for ML-NTA workflows [7].

1. Research Reagent Solutions

Table 2: Essential Materials for SPE Protocol

| Item | Function |

|---|---|

| Oasis HLB SPE Cartridge (or equivalent) | Hydrophilic-Lipophilic Balanced copolymer sorbent for broad-spectrum retention. |

| ISOLUTE ENV+ / Strata WAX/WCX | Mixed-mode or ion-exchange sorbents used in a multi-sorbent strategy for expanded coverage. |

| HPLC-grade Methanol | Elution solvent for strongly retained analytes. |

| HPLC-grade Acetone | Elution solvent for a broader range of analytes. |

| Type 1 Water (LC-MS grade) | For sample preparation and cartridge conditioning. |

| Ammonium Formate / Acetate Buffer | For pH adjustment and ion-pairing in mobile phases. |

2. Procedure

- Conditioning: Sequentially pass 5-10 mL of methanol (or acetone) followed by 5-10 mL of Type 1 water through the SPE cartridge. Do not allow the sorbent bed to dry out.

- Sample Loading: Load the acidified/pretreated water sample (e.g., 100 mL to 1 L) at a controlled, slow flow rate (e.g., 5-10 mL/min).

- Washing: After sample loading, wash the cartridge with 5-10 mL of a water-methanol mixture (e.g., 95:5 v/v) to remove weakly retained matrix interferences.

- Drying: Remove residual water by drawing air or nitrogen through the cartridge for 10-30 minutes, or by centrifugation.

- Elution: Elute the retained analytes with 5-10 mL of a strong organic solvent (e.g., methanol, acetone, or a mixture) into a collection tube.

- Reconstitution: Evaporate the eluent to near dryness under a gentle stream of nitrogen. Reconstitute the dried extract in an appropriate volume (e.g., 100-200 µL) of initial mobile phase (e.g., water/methanol) compatible with the subsequent LC-MS analysis. Vortex thoroughly and transfer to an autosampler vial.
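To see what the volumes in this procedure imply, the short calculation below estimates the theoretical enrichment factor and the sample loading time. It is a sketch with hypothetical function names, and it assumes quantitative (100%) recovery unless a recovery fraction is supplied.

```python
def enrichment_factor(sample_volume_ml, final_volume_ul, recovery=1.0):
    """Theoretical concentration gain from sample to reconstituted extract."""
    return sample_volume_ml * 1000.0 / final_volume_ul * recovery

def loading_time_min(sample_volume_ml, flow_ml_per_min):
    """Time needed to pass the sample through the cartridge."""
    return sample_volume_ml / flow_ml_per_min

# Example: 500 mL of water reconstituted in 200 µL of mobile phase.
print(enrichment_factor(500, 200))
# Example: 1 L loaded at the slow end of the suggested flow range.
print(loading_time_min(1000, 5))
```

With these example values the extract is 2500-fold more concentrated than the original sample, and loading 1 L at 5 mL/min takes 200 minutes, which is worth considering when planning batch sizes.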

Protocol 2: Pressurized Liquid Extraction (PLE) from Solid Matrices

This protocol is designed for the efficient extraction of organic contaminants from solid samples such as soil, sediment, or biological tissue [7].

1. Research Reagent Solutions

Table 3: Essential Materials for PLE Protocol

| Item | Function |

|---|---|

| PLE System (e.g., Accelerated Solvent Extractor) | Automated system to maintain high temperature and pressure. |

| Diatomaceous Earth | Dispersant to mix with the sample for improved solvent contact. |

| Cellulose Filters | Placed at the ends of the extraction cell to prevent particulate clogging. |

| HPLC-grade Solvents (e.g., Acetone, Hexane, DCM) | Extraction solvents selected based on target analyte polarity. |

2. Procedure

- Sample Preparation: Homogenize the solid sample and mix it thoroughly with an inert dispersant like diatomaceous earth in a defined ratio.

- Cell Packing: Place a cellulose filter at the bottom of the stainless-steel extraction cell. Pack the sample-dispersant mixture into the cell, avoiding voids. Top with another cellulose filter.

- Extraction: Place the cell in the PLE system and set the extraction parameters. A typical method includes:

  - Solvent: Acetone:hexane (1:1 v/v) or other optimized mixtures.

  - Temperature: 100 °C.

  - Pressure: 1500 psi.

  - Heat Time: 5-10 minutes.

  - Static Time: 5-10 minutes.

  - Flush Volume: 60% of cell volume.

  - Purge Time: 60-90 seconds with nitrogen.

  - Cycles: 1-3 static cycles.

- Collection: The extracted analytes are collected in a sealed vial.

- Concentration: If necessary, concentrate the extract under a gentle nitrogen stream and reconstitute in a suitable solvent for analysis.

Workflow Integration and Visualization

The sample treatment and extraction stage is the critical first step in a multi-stage ML-assisted NTA workflow. The following diagram illustrates its position and relationship with subsequent stages, from data generation to final validation.

The specific choice of extraction technique dictates the chemical feature space that will be profiled. The diagram below outlines the decision-making process for selecting an appropriate technique based on the sample matrix.

This document outlines the detailed protocols for the data generation and acquisition stage within a tiered validation strategy for machine learning (ML)-assisted non-target analysis (NTA) using Liquid Chromatography-High-Resolution Mass Spectrometry (LC-HRMS). The quality and integrity of the data acquired at this stage are foundational for all subsequent ML and statistical analysis. Adherence to the standardized protocols described herein ensures the generation of consistent, high-fidelity data suitable for retrospective interrogation and model training [22] [23].

Essential Research Reagent Solutions

The following table details key reagents and materials essential for preparing and running HRMS samples, ensuring analytical reproducibility and accuracy.

Table 1: Key Research Reagent Solutions and Materials

| Item | Function / Description |

|---|---|

| Internal Standards | Isotopically-labeled compounds spiked into all samples and calibration standards to monitor instrument performance, correct for matrix effects, and validate the analytical run [23]. |

| Methanolic Standard Mixtures | Quality control (QC) samples containing approximately 100 reference compounds at known concentrations (e.g., 0.5 mg L⁻¹) used to verify system suitability, sensitivity, and chromatographic performance [23]. |

| Blank Matrices | Samples of the solvent or a blank biological matrix processed without analytes. Critical for identifying background contamination and ensuring the absence of carryover [23]. |

| HPLC Grade Water | Ultra-pure water used as a control matrix and for preparing mobile phases and standard solutions to minimize background interference [22]. |

| Structured Query Language (SQL) Database (e.g., ScreenDB) | A digital archive for parsed, peak-deconvoluted LC-HRMS data. Enables scalable, long-term storage and flexible, retrospective querying of vast datasets for NTA and method monitoring [23]. |

| Laboratory Information Management System (LIMS) | A system, such as STARLIMS, for managing sample metadata, case characteristics, and complementary quantitative data, ensuring traceability and connectivity with HRMS data [23]. |

HRMS Instrumentation and Data Acquisition Specifications

Precise configuration of the LC-HRMS platform is critical for acquiring comprehensive data. The parameters below are based on established, scalable NTA workflows [23].

Table 2: Example LC-HRMS Instrument Configuration for NTA

| Parameter | Specification |

|---|---|

| Chromatography | Reversed-phase liquid chromatography (RPLC) |

| Gradient Mode | Linear gradient (specific solvents and proportions should be defined per method) |

| Total Run Time | 15 minutes [23] |

| Mass Spectrometer | Q-TOF (e.g., Xevo G2-S) [23] |

| Ionization Source | Electrospray Ionization (ESI), positive and/or negative mode |

| Acquisition Mode | Data-independent acquisition (DIA / MSE), collecting low and high collision energy spectra concurrently [23] |

| Data Archiving | Parsing of peak-deconvoluted data to an SQL database (e.g., ScreenDB) for long-term, queryable storage [23] |

Experimental Protocols

Sample Preparation Protocol

Objective: To reproducibly extract and prepare samples for LC-HRMS analysis while maintaining analyte integrity.

Materials: Samples, internal standards mixture, appropriate solvents (e.g., methanol, acetonitrile), HPLC-grade water, centrifuges, vortex mixer.

Procedure:

- Sample Aliquoting: Precisely aliquot a defined volume or mass of each sample (e.g., 100 µL of plasma, 100 mg of tissue homogenate).

- Internal Standard Addition: Spike a known concentration of internal standards into every sample, calibration standard, and QC sample immediately prior to any preparation steps. This controls for variability in extraction and ionization [23].

- Protein Precipitation / Extraction: Add a precipitating solvent (e.g., cold acetonitrile, 3:1 v/v) to the sample. Vortex vigorously for 1-2 minutes.

- Centrifugation: Centrifuge at a high speed (e.g., 10,000-15,000 x g) for 10 minutes to pellet precipitated proteins and particulates.

- Supernatant Collection: Carefully transfer the clear supernatant to a new LC-MS compatible vial.

- Evaporation & Reconstitution: Evaporate the supernatant to dryness under a gentle stream of nitrogen or using a centrifugal evaporator. Reconstitute the dried residue in a defined volume of initial mobile phase or a suitable solvent mixture (e.g., 95:5 water:methanol). Vortex to ensure complete dissolution.

- Vialing: Transfer the final extract to an LC vial with insert for analysis.

Quality Control and System Suitability Protocol

Objective: To ensure the analytical system is performing within specified parameters and that the generated data is reliable.

Materials: Methanolic standard mixtures, blank matrices, internal-standard blank injections [23].

Procedure:

- Sequence Design: Integrate QC samples at regular intervals throughout the analytical sequence (e.g., at the beginning, after every 10-12 experimental samples, and at the end).

- QC Sample Set: For each batch, analyze:

  - A blank matrix to check for contamination.

  - An internal-standard blank injection (solvent) to monitor instrumental background.

  - Methanolic standard mixtures containing ~100 compounds at 0.5 mg L⁻¹ to assess sensitivity, retention time stability, and mass accuracy [23].

- Data Review: Before proceeding with full data analysis, review the QC data. The batch should be accepted only if predefined forensic QC criteria are met (e.g., stable retention time and intensity for internal standards, acceptable mass error, absence of significant contamination) [23].
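The sequence design and mass accuracy check described above can be sketched as follows. The injection labels, the default interval of 10 samples, and the function names are illustrative assumptions; acceptance limits for mass error are laboratory-specific and are not defined here.

```python
def build_sequence(samples, qc_interval=10):
    """Interleave QC injections: opening QC block, a QC standard after
    every `qc_interval` samples, and a closing QC standard."""
    sequence = ["blank_matrix", "is_blank_solvent", "qc_standard_mix"]
    for i, sample in enumerate(samples, start=1):
        sequence.append(sample)
        # Skip the mid-run QC if the closing QC would follow immediately.
        if i % qc_interval == 0 and i < len(samples):
            sequence.append("qc_standard_mix")
    sequence.append("qc_standard_mix")
    return sequence

def mass_error_ppm(measured_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6

# Hypothetical batch of 25 experimental samples.
sequence = build_sequence([f"sample_{i:02d}" for i in range(1, 26)])
print(len(sequence), sequence[:5])

# Hypothetical internal standard measured slightly above its exact mass.
print(round(mass_error_ppm(300.0015, 300.0000), 2))
```

The same `mass_error_ppm` value, tracked for each internal standard across the sequence, gives a simple trend line for deciding whether a batch meets the predefined QC criteria.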

Data Acquisition and Pre-processing Protocol

Objective: To acquire raw HRMS data in a manner that captures maximum information and to convert it into a structured, queryable format.

Materials: Prepared samples, configured LC-HRMS system, data processing software (e.g., UNIFI, XCMS), SQL database.

Procedure:

- Data Acquisition: Inject samples according to the established sequence and LC-HRMS method (Table 2), acquiring data in data-independent acquisition (DIA / MSE) mode to fragment all ions without precursor selection.

- Initial Data Processing: Process the raw data files through a peak-picking and componentization algorithm within the vendor's software (e.g., UNIFI). This step performs peak detection, groups co-eluting ions (including isotopes, adducts, and fragment ions) into components, and filters out non-chromatographic noise [23].

- Data Parsing to SQL Database: Export the componentized data and parse it into a structured SQL database (e.g., ScreenDB). This involves storing each ion signal (with its accurate mass, retention time, intensity, and link to its component) as a separate, queryable entry. This "decomponentized" storage is crucial for flexible, retrospective NTA [23].
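To illustrate the idea of storing each ion signal as a separate, queryable entry, the sketch below builds a small in-memory SQLite database and runs a retrospective m/z query. The table names, column names, and example values are hypothetical and do not reproduce the actual ScreenDB schema.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE component (
    component_id INTEGER PRIMARY KEY,
    sample_id    TEXT NOT NULL
);
CREATE TABLE ion_signal (
    ion_id       INTEGER PRIMARY KEY,
    component_id INTEGER NOT NULL REFERENCES component(component_id),
    mz           REAL NOT NULL,
    rt_min       REAL NOT NULL,
    intensity    REAL NOT NULL,
    energy       TEXT CHECK (energy IN ('low', 'high'))
);
CREATE INDEX idx_ion_mz ON ion_signal(mz);
""")

conn.executemany("INSERT INTO component VALUES (?, ?)",
                 [(1, "S001"), (2, "S002")])
conn.executemany(
    "INSERT INTO ion_signal (component_id, mz, rt_min, intensity, energy) "
    "VALUES (?, ?, ?, ?, ?)",
    [(1, 412.9664, 4.21, 1.2e5, "low"),
     (1, 368.9766, 4.21, 3.0e4, "high"),
     (2, 412.9668, 4.23, 8.0e4, "low"),
     (2, 250.1000, 6.80, 5.0e4, "low")],
)

def find_ions(conn, target_mz, ppm=5.0):
    """Return every stored ion within +/- ppm of target_mz, across samples."""
    delta = target_mz * ppm / 1e6
    sql = ("SELECT c.sample_id, i.mz, i.rt_min, i.intensity "
           "FROM ion_signal AS i "
           "JOIN component AS c ON c.component_id = i.component_id "
           "WHERE i.mz BETWEEN ? AND ? "
           "ORDER BY c.sample_id")
    return conn.execute(sql, (target_mz - delta, target_mz + delta)).fetchall()

hits = find_ions(conn, 412.9664)
for row in hits:
    print(row)
```

Because each ion keeps a link to its component, a hit on one m/z can be expanded to the co-eluting isotopes, adducts, and fragments without reprocessing raw files.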

Experimental Workflow and Data Flow Visualization

The following diagrams, generated using Graphviz, illustrate the logical flow of the experimental process and the subsequent data lifecycle.

Diagram 1: End-to-end workflow for HRMS-based sample analysis and data generation.

Diagram 2: Data flow from the SQL database to various applications, including ML training.

Within the systematic framework of Machine Learning-assisted Non-Target Analysis (ML-NTA) for contaminant source identification, Stage 3: ML-Oriented Data Processing serves as the critical computational bridge between raw analytical data and interpretable environmental insights [7]. This stage transforms the high-dimensional, complex data generated by high-resolution mass spectrometry (HRMS) into a structured format suitable for pattern recognition and machine learning modeling [7]. The primary objective is to extract meaningful chemical patterns and reduce data complexity while preserving diagnostically significant information essential for accurate contaminant source attribution [7]. The process is methodically sequenced into three core components: Data Preprocessing to ensure data quality and consistency, Dimensionality Reduction to mitigate the curse of dimensionality and enhance model generalization, and Clustering to uncover inherent group structures within the data without prior knowledge of sample labels [7]. The effective execution of this stage is a prerequisite for developing robust, interpretable, and generalizable ML models that can withstand rigorous tiered validation and provide actionable intelligence for environmental decision-making [7].

Data Preprocessing: Foundational Data Quality Assurance

Data preprocessing encompasses the initial set of operations designed to address data quality issues inherent in raw HRMS feature-intensity matrices, where rows represent samples and columns correspond to aligned chemical features [7]. This phase ensures the reliability and consistency of downstream analyses.

Core Preprocessing Techniques

The principal techniques employed in ML-NTA workflows include [24] [7] [25]:

- Missing Value Imputation: Data collections are frequently incomplete. Strategies include removing records with excessive missingness or estimating missing values using methods like k-nearest neighbors (KNN) imputation, which leverages similarities between samples to fill gaps intelligently [7].

- Noise Filtering: Low-abundance signals that are indistinguishable from instrumental noise are identified and removed to prevent them from obscuring genuine chemical patterns [7].

- Data Normalization: Techniques such as Total Ion Current (TIC) normalization are applied to correct for variations in overall signal intensity between samples, mitigating batch effects and making feature intensities comparable across the entire dataset [7].

- Data Alignment: Variations in analytical platforms or acquisition dates can cause misalignment. This process involves retention time correction, mass-to-charge ratio (m/z) recalibration, and peak matching to ensure chemical features are accurately aligned across all samples [7].
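As a concrete illustration of the normalization step above, the sketch below applies TIC normalization to a small feature-intensity matrix. It assumes that a sample's total ion current can be approximated by the sum of its aligned feature intensities; the function name and example values are hypothetical.

```python
def tic_normalize(matrix, scale=1.0):
    """Divide each sample's intensities by that sample's summed intensity.

    matrix: list of rows (samples), each a list of feature intensities.
    """
    normalized = []
    for row in matrix:
        tic = sum(row)
        if tic == 0:
            raise ValueError("sample has zero total intensity")
        normalized.append([scale * value / tic for value in row])
    return normalized

# Hypothetical samples: the second has the same composition as the first
# but twice the overall signal (e.g., a larger injection volume).
raw = [
    [100.0, 300.0, 600.0],
    [200.0, 600.0, 1200.0],
]
norm = tic_normalize(raw)
for row in norm:
    print([round(v, 3) for v in row])
```

After normalization both samples show the same relative profile, which is the behavior needed for intensities to be comparable across the dataset.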

Table 1: Standardized Data Preprocessing Protocol for HRMS Data in ML-NTA

| Processing Step | Standard Method/Protocol | Key Parameters & Considerations |

|---|---|---|

| Missing Value Imputation | k-Nearest Neighbors (KNN) Imputation [7] | - n_neighbors: Typically 5. - Distance metric: Euclidean. - Applied separately to each batch in cross-batch studies. |

| Noise Filtering | Abundance-based Thresholding [7] | - Remove features with intensity < 3x blank sample signal [7]. - Filter features present in < 10% of QC samples [7]. |

| Data Normalization | Total Ion Current (TIC) Normalization [7] | - Normalize each sample's feature intensities to its total ion count. - Robust to high missing value rates. |

| Data Alignment | Retention Time Correction & Peak Matching [7] | - Algorithms: XCMS [7]. - Critical for cross-batch/lab studies. - Orbitrap may require more stringent alignment than Q-TOF [7]. |

| Outlier Handling | Interquartile Range (IQR) Method [25] | - Identify outliers: Values < Q1 - 1.5 × IQR or > Q3 + 1.5 × IQR. - Decision: Remove, cap, or retain based on domain context [25]. |
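The thresholds in Table 1 can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not a reference implementation: the function names (`filter_noise`, `tic_normalize`, `iqr_outlier_mask`) and the toy matrices are hypothetical, the blank comparison uses each feature's mean sample intensity (the table does not say which summary to use), and a feature counts as "present" in a QC sample when its value is not NaN.

```python
import numpy as np

def filter_noise(X, blank, qc, blank_ratio=3.0, min_qc_fraction=0.10):
    """Return a boolean mask over features (columns) that pass both noise filters.

    X     : samples x features intensity matrix (NaN = not detected)
    blank : per-feature intensity measured in the blank sample
    qc    : QC samples x features intensity matrix (NaN = not detected)
    """
    # Assumption: compare the mean sample intensity with blank_ratio x blank signal.
    above_blank = np.nanmean(X, axis=0) >= blank_ratio * blank
    # Fraction of QC samples in which each feature was detected.
    qc_detection = np.mean(~np.isnan(qc), axis=0)
    return above_blank & (qc_detection >= min_qc_fraction)

def tic_normalize(X):
    """Divide each sample (row) by its total ion current, ignoring NaNs."""
    tic = np.nansum(X, axis=1, keepdims=True)
    return X / tic

def iqr_outlier_mask(values, k=1.5):
    """Flag values below Q1 - k*IQR or above Q3 + k*IQR."""
    q1, q3 = np.nanpercentile(values, [25, 75])
    iqr = q3 - q1
    return (values < q1 - k * iqr) | (values > q3 + k * iqr)

# Toy data: 3 samples x 3 features, one blank, two QC injections.
X = np.array([[100.0, 5.0, 40.0],
              [200.0, 4.0, np.nan],
              [300.0, 6.0, 60.0]])
blank = np.array([10.0, 3.0, 5.0])
qc = np.array([[150.0, np.nan, 50.0],
               [160.0, np.nan, 55.0]])

keep = filter_noise(X, blank, qc)        # feature 1 fails both filters
X_norm = tic_normalize(X[:, keep])       # each row now sums to 1 (NaNs ignored)
outliers = iqr_outlier_mask(np.array([1.0, 2.0, 3.0, 4.0, 100.0]))
```

Whether flagged outliers are removed, capped, or retained remains a domain decision, as the table notes; the mask only identifies candidates.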

Experimental Protocol: k-Nearest Neighbors (KNN) Imputation

Purpose: To replace missing values in the feature-intensity matrix with estimates derived from the most similar samples, preserving dataset structure and statistical power [7].

Procedure:

- Input: A peak table (samples × features) with missing values, denoted as NaN.

- Parameter Setting: Define the number of neighbors (k); a default of k=5 is often effective.

- Distance Calculation: For each sample containing a missing value in a specific feature, compute the Euclidean distance to all other samples based on the non-missing features.

- Neighbor Identification: Identify the k samples with the smallest Euclidean distance (the nearest neighbors).

- Value Imputation: Calculate the mean (for continuous data) or mode (for categorical data) of the target feature's values from the k nearest neighbors. Use this calculated value to replace the missing datum.

- Iteration: Repeat the distance calculation, neighbor identification, and value imputation steps until all missing values have been imputed.

- Output: A complete feature-intensity matrix ready for subsequent analysis.

Considerations: KNN imputation is computationally intensive for very large datasets. The choice of k and the distance metric can influence results and should be reported for reproducibility [7].
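As a hedged illustration of this protocol, the sketch below uses scikit-learn's `KNNImputer`, which measures distance with a NaN-aware Euclidean metric and fills each gap with the mean of that feature across the nearest neighbors. The toy peak table is invented for the example, and `n_neighbors=2` is chosen only because the table has four samples; it is not a recommendation over the k=5 default mentioned above.

```python
import numpy as np
from sklearn.impute import KNNImputer

# Hypothetical peak table: 4 samples x 3 features, one missing intensity.
peak_table = np.array([
    [1.0, 2.0, 3.0],
    [1.1, np.nan, 3.2],   # feature 1 is missing for this sample
    [0.9, 2.1, 2.9],
    [8.0, 9.0, 10.0],     # chemically dissimilar sample
])

# Uniform weights: the imputed value is the plain mean over the k neighbors.
imputer = KNNImputer(n_neighbors=2, weights="uniform", metric="nan_euclidean")
completed = imputer.fit_transform(peak_table)

# The two nearest samples (rows 0 and 2) supply 2.0 and 2.1, so the gap
# is filled with their mean rather than being pulled toward row 3.
imputed_value = completed[1, 1]
```

Reporting `n_neighbors`, the weighting scheme, and the distance metric alongside the results covers the reproducibility point raised in the considerations.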

KNN Imputation Workflow: This protocol replaces missing values using similar samples.

Dimensionality Reduction: Addressing the Curse of Dimensionality

HRMS-based NTA datasets are characteristically high-dimensional, containing thousands of chemical features (dimensions) per sample. This creates the "curse of dimensionality," leading to data sparsity, increased computational cost, and a high risk of model overfitting [26]. Dimensionality reduction techniques counteract this by transforming the data into a lower-dimensional space while preserving its essential structure [26] [27].

Techniques and Selection Criteria

Two primary approaches are feature selection and feature extraction [26].

Feature Selection identifies and retains a subset of the most relevant original features. This is valuable when interpretability is crucial, as the original feature meanings are retained. Methods include:

- Filter Methods: Use statistical measures (e.g., correlation, ANOVA) to rank features independently of the model [26].

- Wrapper Methods: Evaluate feature subsets based on their performance on a specific model (e.g., Recursive Feature Elimination) [26] [7].

- Embedded Methods: Integrate feature selection within the model training process (e.g., Random Forest importance) [26] [27].
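To make the distinction between filter and embedded methods concrete, the following sketch applies both to a synthetic dataset. Everything here is an assumption for illustration: the data are simulated so that only features 0 to 2 separate two hypothetical source classes, and the choices of `SelectKBest` with an ANOVA F-test and a 200-tree Random Forest are examples, not settings taken from the cited workflows.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectKBest, f_classif

rng = np.random.default_rng(0)
n_per_class, n_features = 30, 20

# Two hypothetical source classes; only features 0-2 carry a class signal.
labels = np.repeat([0, 1], n_per_class)
X = rng.normal(size=(2 * n_per_class, n_features))
X[labels == 1, :3] += 4.0

# Filter method: rank features by ANOVA F-score, independent of any model.
selector = SelectKBest(score_func=f_classif, k=3).fit(X, labels)
filter_selected = np.flatnonzero(selector.get_support())

# Embedded method: importances are a by-product of training the model.
forest = RandomForestClassifier(n_estimators=200, random_state=0).fit(X, labels)
embedded_ranked = np.argsort(forest.feature_importances_)[::-1][:3]
```

Because both approaches return indices of original features, the selected columns can still be traced back to specific m/z and retention time pairs, which is the interpretability advantage described above.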

Feature Extraction creates new, fewer features by transforming or combining the original ones. These new features often better capture underlying patterns, though they may lack direct interpretability [26].

Table 2: Comparative Analysis of Dimensionality Reduction Techniques for ML-NTA

| Technique | Type | Key Principle | Advantages | Limitations | Ideal Use Case in ML-NTA |

|---|---|---|---|---|---|

| Principal Component Analysis (PCA) [26] [7] [27] | Linear Feature Extraction | Finds orthogonal axes (PCs) of maximum variance in the data. | - Simple, fast, deterministic. - Preserves global structure. - Good for initial exploration. | - Assumes linear relationships. - Poor with complex nonlinear patterns. | Exploratory data analysis, visualization, preprocessing for linear models [26] [7]. |

| t-SNE [26] [7] | Nonlinear Feature Extraction | Preserves local similarities by modeling pairwise probabilities. | - Excellent for visualizing complex clusters. - Captures nonlinear structures. | - Computationally heavy. - Results depend on perplexity parameter. - Global structure not preserved. | Visualizing cluster separation and local sample relationships [26] [7]. |

| Linear Discriminant Analysis (LDA) [26] | Supervised Feature Extraction | Maximizes separation between pre-defined classes. | - Optimal for classification. - Enhances class separability. | - Requires labeled data. - Assumes normal data distribution. | Creating features for a classifier when sample sources are known [26]. |

| Autoencoders [26] | Nonlinear Feature Extraction | Neural network that learns compressed data representation. | - Powerful for complex, nonlinear data. - Can handle very high dimensionality. | - "Black-box" nature. - Computationally intensive. - Requires large datasets. | Extracting features from highly complex NTA datasets when other methods fail [26]. |

Experimental Protocol: Principal Component Analysis (PCA)

Purpose: To reduce data dimensionality by transforming features into a set of linearly uncorrelated principal components (PCs) that capture the maximum variance in the data [26] [27].

Procedure:

- Input: A preprocessed and complete feature-intensity matrix.

- Standardization: Standardize the dataset so that each feature has a mean of 0 and a standard deviation of 1. This prevents features with larger scales from dominating the variance.

- Covariance Matrix Computation: Calculate the covariance matrix to understand how the features vary from the mean with respect to each other.

- Eigendecomposition: Compute the eigenvectors and eigenvalues of the covariance matrix. The eigenvectors represent the directions of the new feature space (Principal Components), and the eigenvalues represent the magnitude of the variance carried by each PC.

- Sorting: Sort the eigenvectors by decreasing eigenvalue. The eigenvector with the highest eigenvalue is the first principal component.

- Projection: Select the top k eigenvectors (where k is the desired number of dimensions) and project the original data onto this new subspace to obtain the lower-dimensional representation.

Considerations: The number of components to retain (k) is a critical choice. It can be determined by looking for an "elbow" in a Scree Plot (plot of eigenvalues) or by retaining enough components to explain a sufficiently high proportion (e.g., >95%) of the total cumulative variance [26].
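The procedure above maps almost line for line onto NumPy. The sketch below is one possible rendering under stated assumptions: the function name `pca_eig`, the 0.95 default for the cumulative-variance target, and the simulated low-rank data are all illustrative, and the code assumes no feature has zero variance (such features would need to be removed before standardization).

```python
import numpy as np

def pca_eig(X, variance_target=0.95):
    """PCA by eigendecomposition of the covariance of standardized data.

    Returns (scores, explained_variance_ratio, k), where k is the smallest
    number of components whose cumulative explained variance reaches the target.
    """
    # Standardization: zero mean and unit standard deviation per feature.
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    # Covariance matrix of the standardized features.
    cov = np.cov(Z, rowvar=False)
    # eigh suits symmetric matrices; it returns eigenvalues in ascending order.
    eigvals, eigvecs = np.linalg.eigh(cov)
    # Sorting: largest eigenvalue (first principal component) first.
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    explained = eigvals / eigvals.sum()
    # Smallest k with cumulative explained variance >= target, capped at n_features.
    k = int(np.searchsorted(np.cumsum(explained), variance_target)) + 1
    k = min(k, len(eigvals))
    # Projection of the standardized data onto the top-k eigenvectors.
    scores = Z @ eigvecs[:, :k]
    return scores, explained, k

# Simulated data: 50 samples, 10 features driven by 2 latent factors plus noise.
rng = np.random.default_rng(1)
latent = rng.normal(size=(50, 2))
loadings = rng.normal(size=(2, 10))
X = latent @ loadings + 0.05 * rng.normal(size=(50, 10))

scores, explained, k = pca_eig(X)
```

Plotting `explained` against component index gives the Scree Plot mentioned above, so the elbow and the cumulative-variance criterion can be compared on the same output.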

PCA Procedure: This workflow reduces data dimensionality by identifying key variance directions.

Clustering: Discovering Inherent Group Structures

Clustering is an unsupervised machine learning technique that groups similar data points together based on their characteristics without using pre-defined labels [28]. In ML-NTA, this is pivotal for discovering natural groupings in the data, such as identifying samples that share a common contamination source or similar chemical profile [7].

Clustering Algorithms and Their Applications

The choice of algorithm depends on the expected data structure and the research question.

- Centroid-based (e.g., k-means): Organizes data around central points (centroids). It is efficient and scalable but requires pre-specifying the number of clusters (k) and is sensitive to outliers and non-spherical clusters [28] [29].

- Density-based (e.g., DBSCAN): Defines clusters as contiguous regions of high data density. Its key advantage is that it does not require k to be specified beforehand and can identify clusters of arbitrary shapes and noise points [28] [29].

- Hierarchical (e.g., HCA): Builds a tree of clusters (dendrogram), allowing visualization of relationships at multiple levels of granularity. It provides a rich view of data structure but can be computationally intensive for large datasets [28] [7] [29].

- Distribution-based (e.g., GMM): Assumes data is generated from a mixture of probability distributions. It provides probabilistic cluster assignments, which is useful for handling ambiguity, but requires specifying the number of distributions and can be computationally expensive [28] [29].

Table 3: Clustering Method Selection Guide for Environmental Sample Grouping

| Algorithm | Core Mechanism | Key Parameters | Pros | Cons | NTA Application Context |

|---|---|---|---|---|---|

| k-Means [28] [29] | Iteratively assigns points to nearest of k centroids. | k (number of clusters). | - Simple, fast, scalable (O(n)) [29]. - Easy to interpret. | - Sensitive to initial centroid guess & outliers [28] [29]. - Assumes spherical, similar-sized clusters. | Initial, efficient grouping of samples where the approximate number of source types is known. |

| DBSCAN [28] [29] | Groups dense regions; labels sparse areas as noise. | eps (neighborhood radius), min_samples (core point definition). | - Finds arbitrary shapes. - Robust to outliers. - No need to specify k. | - Struggles with varying densities [28] [29]. - Parameter choice is critical. | Identifying core and outlier samples in spatial/temporal gradients with unknown cluster count [7]. |

| Hierarchical (HCA) [28] [7] [29] | Builds a tree of clusters via merging/splitting. | Distance metric, linkage criterion. | - No need to specify k upfront. - Provides intuitive dendrogram. - Reveals data hierarchy. | - High computational cost (O(n²) typical) [29]. - Merging/splitting is irreversible. | Analyzing hierarchical source relationships (e.g., major source type -> sub-types) [7]. |

| Gaussian Mixture Model (GMM) [28] [29] | Fits data as a mixture of Gaussian distributions. | n_components (number of distributions). | - Provides soft (probabilistic) clustering. - Flexible cluster shape (covariance). | - Sensitive to initialization. - Can overfit if n_components is too high. | Modeling samples with partial membership to multiple contamination sources. |
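The practical differences in Table 3 are easiest to see side by side. The sketch below runs DBSCAN, hierarchical clustering, and a GMM on the same simulated two-dimensional scores (for example, the first two principal components). The data, the `eps=1.0` and `min_samples=5` values, and the Ward linkage are assumptions chosen for this toy geometry; real datasets need their own tuning.

```python
import numpy as np
from sklearn.cluster import DBSCAN, AgglomerativeClustering
from sklearn.mixture import GaussianMixture

# Two simulated source groups plus one isolated sample.
rng = np.random.default_rng(42)
source_a = rng.normal(loc=[0.0, 0.0], scale=0.3, size=(40, 2))
source_b = rng.normal(loc=[5.0, 5.0], scale=0.3, size=(40, 2))
outlier = np.array([[2.5, -4.0]])
scores = np.vstack([source_a, source_b, outlier])

# DBSCAN: no cluster count needed; samples in sparse regions get label -1 (noise).
db_labels = DBSCAN(eps=1.0, min_samples=5).fit_predict(scores)

# HCA (agglomerative, Ward linkage), cut into two clusters; outlier excluded here.
hca_labels = AgglomerativeClustering(n_clusters=2, linkage="ward").fit_predict(scores[:-1])

# GMM: soft assignment; each row gives membership probabilities per component.
gmm = GaussianMixture(n_components=2, random_state=0).fit(scores[:-1])
membership = gmm.predict_proba(scores[:-1])
```

In this toy case DBSCAN isolates the stray sample as noise without being told the number of clusters, while the GMM's `membership` matrix is the kind of output that would support partial attribution to more than one source.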

Experimental Protocol: k-Means Clustering

Purpose: To partition n samples into k clusters, where each sample belongs to the cluster with the nearest mean (centroid), minimizing within-cluster variance [28].

Procedure:

- Input: A dataset (often the output from a dimensionality reduction step like PCA for better performance).

- Parameter Initialization: Choose the number of clusters k. Methods to inform this choice include the Elbow Method (plotting within-cluster sum of squares vs. k) or domain knowledge.

- Centroid Initialization: Randomly select k data points from the dataset as the initial centroids.

- Assignment Step: Assign each data point to the closest centroid based on a distance metric (typically Euclidean distance).

- Update Step: Recalculate the centroids as the mean of all data points assigned to that cluster.

- Iteration: Repeat the assignment and update steps until the centroids no longer change significantly (convergence) or a maximum number of iterations is reached.

- Output: Cluster labels for each sample and the final centroid locations.

Considerations: k-means is sensitive to the initial random selection of centroids. It is good practice to run the algorithm multiple times with different initializations (n_init parameter) and use the result with the lowest within-cluster variance. The Elbow Method is a heuristic, not a definitive test for k [28].
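The protocol and the multiple-initialization advice can be sketched directly in NumPy. This is an illustrative implementation under assumptions, not a replacement for a tested library routine: the function name `kmeans`, its defaults, the handling of empty clusters (the previous centroid is kept), and the simulated three-group data are all choices made for the example.

```python
import numpy as np

def kmeans(X, k, n_init=10, max_iter=100, tol=1e-6, seed=0):
    """Lloyd's k-means with random restarts.

    Returns (labels, centroids, wcss) for the restart with the lowest
    within-cluster sum of squares (WCSS).
    """
    rng = np.random.default_rng(seed)
    best = None
    for _ in range(n_init):
        # Centroid initialization: k distinct data points chosen at random.
        centroids = X[rng.choice(len(X), size=k, replace=False)]
        for _ in range(max_iter):
            # Assignment step: nearest centroid by Euclidean distance.
            dist = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
            labels = dist.argmin(axis=1)
            # Update step: mean of assigned points (keep old centroid if empty).
            new_centroids = np.array([
                X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
                for j in range(k)
            ])
            shift = np.linalg.norm(new_centroids - centroids)
            centroids = new_centroids
            if shift < tol:  # convergence: centroids stopped moving
                break
        # Final labels and WCSS for the converged centroids.
        dist = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
        labels = dist.argmin(axis=1)
        wcss = float(np.sum((X - centroids[labels]) ** 2))
        if best is None or wcss < best[2]:
            best = (labels, centroids, wcss)
    return best

# Simulated scores: three groups of 30 samples each.
rng = np.random.default_rng(7)
centers = np.array([[0.0, 0.0], [6.0, 0.0], [3.0, 6.0]])
X = np.vstack([rng.normal(loc=c, scale=0.4, size=(30, 2)) for c in centers])

labels, centroids, wcss = kmeans(X, k=3, n_init=20)
# Elbow Method input: best WCSS for each candidate k.
elbow = {k: kmeans(X, k, n_init=20)[2] for k in range(1, 6)}
```

Plotting `elbow` shows where adding clusters stops reducing WCSS substantially; as noted above, that bend is a heuristic to weigh against domain knowledge rather than a definitive answer.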

k-Means Clustering: This algorithm partitions data into k clusters by minimizing variance.

The Scientist's Toolkit: Essential Research Reagents & Computational Solutions

This section details the critical software, libraries, and analytical tools required to implement the protocols described in this article.

Table 4: Essential Research Reagents & Computational Solutions for ML-NTA Data Processing

| Tool/Category | Specific Examples | Function in ML-Oriented Data Processing |

|---|---|---|

| Programming Languages & Core Libraries | Python, R | Primary languages for implementing the entire data processing pipeline, from data manipulation to model training and visualization. |

| Data Manipulation & Analysis | Pandas, NumPy (Python); dplyr (R) | Used for loading, cleaning, filtering, and transforming the feature-intensity matrix (e.g., handling missing values, normalization). |

| Machine Learning & Preprocessing | Scikit-learn (Python); caret (R) | Provides a unified interface for all major preprocessing techniques (imputation, scaling), dimensionality reduction algorithms (PCA, LDA), and clustering methods (k-means, DBSCAN, HCA). Essential for building reproducible pipelines. |

| Nonlinear Dimensionality Reduction & Advanced ML | t-SNE, UMAP, Autoencoders (e.g., using TensorFlow or PyTorch) | Specialized libraries for implementing complex feature extraction techniques that capture nonlinear patterns in the data, crucial for visualizing and understanding complex NTA datasets. |