Advances in In-Situ Monitoring of Environmental Pollutants: Real-Time Techniques for Public Health and Biomedical Research

Abstract

This article provides a comprehensive review of cutting-edge in-situ monitoring techniques for environmental pollutants, tailored for researchers, scientists, and drug development professionals. It explores the foundational principles driving the shift from traditional lab-based methods to real-time, on-site analysis. The scope spans from established methodologies like chemical sensors and biosensors to emerging technologies such as biomonitoring and big data integration. Critical challenges including sensor stability, data integration, and environmental variability are addressed, alongside a comparative analysis of technique validation. The synthesis underscores the profound implications of these advancements for ensuring environmental health, enhancing the reproducibility of biomedical research, and informing toxicological risk assessment in drug development.

The Critical Need for Real-Time Data: Foundational Principles of In-Situ Pollutant Monitoring

In environmental pollutant research, the ability to assess contamination accurately and promptly is paramount for public health protection and effective remediation [1]. For decades, the standard approach has relied on traditional laboratory methods, which involve collecting physical samples in the field and transporting them to a central facility for analysis. While these methods are valued for their sensitivity and accuracy, they are constrained by complex sample preparation, potential sample degradation, and significant delays between sampling and result availability [1] [2]. In contrast, in-situ monitoring represents a paradigm shift, enabling real-time, on-site measurement of pollutants directly within their environmental matrix. This Application Note defines in-situ monitoring, details its protocols, and systematically contrasts it with traditional laboratory analysis, giving researchers a framework for selecting appropriate methodologies for environmental pollutant research.

Core Concepts and Definitions

In-Situ Monitoring

In-situ monitoring refers to the direct, on-site analysis of environmental samples—be it air, water, or soil—without the need for removal and transport to a centralized laboratory. This approach leverages field-portable instruments to gather data in real-time or near-real-time, providing immediate insight into environmental conditions [2]. A key application is the real-time tracking of dynamic processes, such as monitoring natural labile copper (Cu') during the growth of a marine diatom to understand its bioavailability [3].

Traditional Laboratory Methods

Traditional methods involve the collection of grab or composite samples from a field site, followed by their preservation, transportation, and subsequent processing in a controlled laboratory setting. These analyses often involve sophisticated, stationary instruments and require extensive sample preparation [2] [4]. They have historically been the foundation for regulatory compliance and environmental quality assessment.

Comparative Analysis: In-Situ vs. Laboratory Methods

The following table summarizes the fundamental differences between these two approaches, highlighting key operational and performance characteristics.

Table 1: Comparison of In-Situ Monitoring and Traditional Laboratory Methods

| Characteristic | In-Situ Monitoring | Traditional Laboratory Methods |
|---|---|---|
| Analysis Location | On-site, in the field [2] | Off-site, in a centralized laboratory [2] |
| Time to Results | Real-time or near-real-time [5] [2] | Delayed (hours to weeks) due to transport and queuing [6] |
| Sample Preparation | Minimal or none; direct measurement [2] | Extensive (e.g., preservation, extraction, purification) [4] |
| Spatial Resolution | High; enables dense, strategic sampling and mapping of plumes [2] | Lower; constrained by cost and logistics of sample collection [7] |
| Temporal Resolution | High; capable of continuous monitoring to capture dynamics [5] | Low; typically discrete snapshots in time [7] |
| Data Utility | Rapid decision-making, early warning systems, process control [5] [8] | Regulatory compliance, reference data, method development [4] |
| Cost Structure | Lower operational cost per data point; higher initial instrument investment [2] | High per-sample cost due to labor, preparation, and disposal [2] |
| Environmental Footprint | Greener; minimal reagent use and analytical waste [2] | Higher; generates significant solvent and consumable waste [2] |
| Key Limitations | Higher detection limits, potential field interferences, limited multiplexing [2] | Sample degradation during transport, poor temporal representation, high cost of dense sampling [7] [2] |

Experimental Protocols

Protocol for In-Situ Monitoring of Bioavailable Copper in Aquatic Systems

This protocol is adapted from recent research on monitoring natural labile copper (Cu') during the growth of marine diatoms [3].

Principle

A functionalized iridium-needle electrode (Ir-NE) is used for voltammetric determination. The electrode is coated with agarose gel (AG-gel) as a protective layer and gold nanoparticles (AuNPs) which provide excellent electro-catalytic capacity. This setup allows for separation-catalysis detection, offering high sensitivity and anti-biofouling capability for direct, real-time measurement in a complex culture medium [3].

Research Reagent Solutions & Essential Materials

Table 2: Key Reagents and Materials for In-Situ Copper Monitoring

| Item | Function/Brief Explanation |
|---|---|
| Iridium-needle electrode (Ir-NE) | Base sensor platform for voltammetric measurements. |
| Gold Nanoparticles (AuNPs) | Functional coating that enhances sensitivity via electro-catalytic activity. |
| Agarose Gel (AG-gel) | Forms a protective layer on the electrode, enhancing stability and lifespan while providing anti-biofouling properties. |
| Culture Medium | The environmental matrix (e.g., for marine diatom Phaeodactylum tricornutum). |
| Standard Cu' Solutions | Used for calibration and quantification of labile copper concentration. |

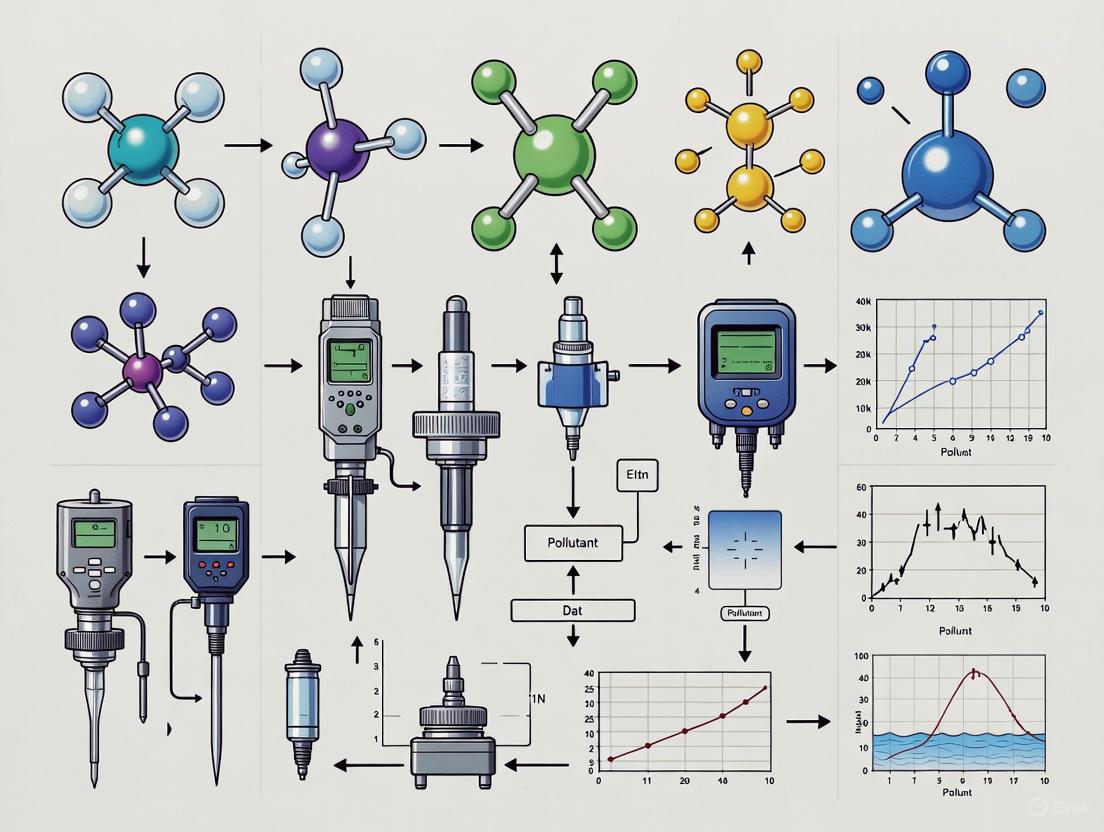

Workflow Diagram

The following diagram illustrates the sequential workflow for this in-situ monitoring experiment.

Step-by-Step Procedure

- Electrode Fabrication: Prepare the iridium-needle electrode (Ir-NE) substrate.

- Functionalization: Modify the electrode surface by depositing AuNPs followed by a coating of AG-gel. This enhances sensitivity, stability, and anti-biofouling properties [3].

- Calibration: Calibrate the functionalized electrode using standard solutions of labile copper (Cu') in a matrix similar to the sample to establish a quantitative relationship.

- In-Situ Deployment: Place the calibrated electrode directly into the culture medium containing the growing marine diatom (Phaeodactylum tricornutum).

- Real-Time Measurement: Initiate continuous or frequent voltammetric measurements to track changes in Cu' concentration throughout the diatom's growth cycle.

- Data Analysis: Correlate the measured Cu' concentrations or the Cu'/TdCu ratio with algal cell density to assess copper bioavailability [3].
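
The calibration step can be illustrated with a minimal sketch. It assumes the voltammetric peak current responds linearly to Cu' over the calibrated range, which the source does not state explicitly. The function names, units, and all numeric values are hypothetical and chosen only to make the arithmetic easy to follow.

```python
from statistics import mean


def fit_calibration(concentrations, peak_currents):
    """Ordinary least-squares fit of peak_current = slope * concentration + intercept."""
    if len(concentrations) != len(peak_currents) or len(concentrations) < 2:
        raise ValueError("need at least two paired calibration points")
    x_bar, y_bar = mean(concentrations), mean(peak_currents)
    sxx = sum((x - x_bar) ** 2 for x in concentrations)
    if sxx == 0:
        raise ValueError("standards must span more than one concentration")
    sxy = sum((x - x_bar) * (y - y_bar)
              for x, y in zip(concentrations, peak_currents))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept


def current_to_concentration(peak_current, slope, intercept):
    """Invert the calibration line to estimate concentration from a measured signal."""
    return (peak_current - intercept) / slope


# Hypothetical standards (arbitrary concentration and current units).
standards = [0.0, 5.0, 10.0, 20.0, 40.0]
currents = [0.5, 3.0, 5.5, 10.5, 20.5]

slope, intercept = fit_calibration(standards, currents)
sample_concentration = current_to_concentration(8.0, slope, intercept)
print(f"slope={slope:.3f}, intercept={intercept:.3f}, sample={sample_concentration:.2f}")
```

In practice the standards would be prepared in a matrix similar to the sample, as the procedure notes, and the fit would be checked for linearity before use.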

Protocol for Traditional Laboratory Analysis of Pollutants in Water

This generalized protocol is indicative of methods used for regulated compounds like PFAS (Per- and polyfluoroalkyl substances) in drinking water [4].

Principle

Solid-phase extraction (SPE) is used to isolate, concentrate, and purify target analytes from a large volume of water. The extracted analytes are then separated and quantified using liquid chromatography–tandem mass spectrometry (LC–MS/MS) [4].

Workflow Diagram

The multi-stage, time-intensive process for traditional laboratory analysis is outlined below.

Step-by-Step Procedure

- Field Sampling: Collect a representative water sample in a pre-cleaned container. Samples may be composited over time.

- Preservation & Transport: Chemically preserve the sample (e.g., by adjusting pH) to prevent degradation and ship it on ice to a certified analytical laboratory. This step can introduce a delay of days.

- Sample Preparation (SPE): In the laboratory, pass the water sample through a conditioned SPE cartridge to adsorb the target pollutants. The cartridge is then washed to remove interferences, and the concentrated analytes are eluted with an organic solvent. This process is time-consuming (it can take "four hours to a day") and generates organic waste [4].

- Instrumental Analysis (LC-MS/MS): Inject the extract into the LC-MS/MS system. The liquid chromatography column separates the complex mixture, and the mass spectrometer identifies and quantifies the specific pollutants.

- Data Processing & Reporting: Analyze the raw data, apply quality control checks, and generate a formal report. The entire process from sampling to final result can take days to weeks.

The Scientist's Toolkit: Key Technology Enablers

The advancement of in-situ monitoring is driven by several key technologies that form the modern environmental scientist's toolkit.

Table 3: Key Enabling Technologies for Modern Environmental Monitoring

| Technology | Function/Brief Explanation | Key Feature |
|---|---|---|
| Field-Portable XRF | On-site elemental analysis of solids (e.g., soil, sediments) for heavy metals [2]. | Non-destructive; provides immediate results for site screening. |
| Portable GC-MS | On-site separation and identification of volatile organic compounds (VOCs) in air, water, and soil [2]. | Gold-standard identification in the field; crucial for emergency response. |
| Biosensors | Biological recognition element (e.g., enzyme, antibody) coupled to a transducer for specific pollutant detection [1]. | High specificity and potential for miniaturization. |
| IoT Sensors | Networks of small, connected sensors that transmit data wirelessly for real-time tracking of parameters like temperature, pH, and specific ions [8] [9]. | Enables large-scale, continuous monitoring networks. |
| Advanced Spectrometers | Portable versions of UV-Vis, NIR, and Raman spectrometers for on-site molecular analysis [2]. | Versatile for a range of organic and inorganic pollutants. |

The contrast between in-situ monitoring and traditional laboratory methods is stark, representing a trade-off between speed, spatial/temporal resolution, and operational cost versus the ultimate sensitivity and regulatory acceptance often associated with established lab techniques [1] [2]. In-situ monitoring is indispensable for dynamic risk assessment, rapid site characterization, and understanding real-world biogeochemical processes where timely data is critical. Traditional methods remain essential for validation, compliance with specific regulations, and analyzing complex mixtures at trace levels.

The future of environmental pollutant research lies in interdisciplinary approaches and the intelligent integration of these complementary methodologies [1] [7]. Field-based studies capture essential ecosystem feedbacks, while controlled laboratory experiments reveal underlying mechanisms. Bridging this divide, through techniques like data assimilation and the development of more robust and sensitive field instruments, will be crucial for comprehensive public health protection and environmental stewardship [7].

The increasing anthropogenic load on environmental systems has necessitated the development of advanced in-situ monitoring techniques for detecting and quantifying key pollutants. Heavy metals, volatile organic compounds (VOCs), pharmaceuticals, and emerging contaminants represent significant risks to ecosystem integrity and human health due to their persistence, toxicity, and bioaccumulative potential [10] [11]. Traditional laboratory-based analysis methods, while accurate, often lack the temporal and spatial resolution required for comprehensive environmental assessment, particularly given the complex dispersion patterns of these contaminants in aquatic systems [3] [10]. This application note synthesizes current methodologies and protocols for in-situ monitoring of these pollutant classes, framed within a research context emphasizing real-time detection, spatial analysis, and advanced sensing technologies. The integration of geographic information systems (GIS), nano-enabled sensors, and advanced spectroscopic methods is transforming environmental monitoring from a descriptive to a predictive, integrative framework for environmental governance [10].

Application Notes

The monitoring of heavy metals (HMs) in aquatic environments has evolved significantly through the integration of geographic information systems (GIS) and advanced sensing technologies. GIS applications enable the spatial assessment and management of HMs across multiple scales, from localized aquifers to regional hydrological systems [10].

Spatial Monitoring Framework: A typical GIS-based environmental assessment for heavy metals involves a multi-stage process: (1) collection of water samples and chemical analysis to quantify HM concentrations; (2) georeferencing using GPS coordinates; (3) system development and integration through GIS software with specialized hydrological applications; and (4) spatial analysis to identify high-risk areas and model contaminant dispersion [10]. Case studies demonstrate that concentrations of certain heavy metals frequently surpass World Health Organization (WHO) thresholds, posing substantial risks to human health and aquatic ecosystems [10].

In-situ Metal Speciation Monitoring: Beyond total metal concentration, understanding metal bioavailability requires speciation analysis. A novel iridium-needle electrode (Ir-NE) functionalized with agarose gel (AG-gel) and gold nanoparticles (AuNPs) has been developed for the real-time in-situ monitoring of natural labile copper (Cu') in marine environments [3]. This sensor successfully achieved real-time in-situ monitoring of Cu' in the culture medium of the marine diatom Phaeodactylum tricornutum, demonstrating that Cu' or the Cu' to total dissolved Cu ratio (Cu'/TdCu) may be a more accurate indicator of copper bioavailability to marine diatoms than total dissolved copper (TdCu) [3].

Table 1: Advanced Monitoring Technologies for Heavy Metals in Aquatic Systems

| Technology/Method | Key Features | Target Analytes | Spatial Application Scale |
|---|---|---|---|
| GIS-based Spatial Modeling [10] | Integration with statistical techniques, remote sensing, and machine learning; predictive capability | Multiple heavy metals (e.g., Pb, Cd, Hg, As) | Local aquifers to regional hydrological systems |
| Functionalized Electrodes (AG-gel/AuNPs/Ir-NE) [3] | In-situ, real-time monitoring; high sensitivity; anti-biofouling capability; measures metal speciation | Labile copper and other bioavailable metal species | Microenvironments (e.g., algal culture media, sediment-water interface) |
| Passive Sampling Devices [12] | Time-integrated data; accumulates trace metals over time; improves detection of low-concentration metals | Broad range of metal contaminants | Point sources (e.g., industrial outfalls, stormwater discharges) |

Volatile Organic Compounds (VOCs) as Diagnostic Tools

VOC detection has important applications in clinical diagnostics and environmental monitoring, with a marked shift toward sensor-based approaches that offer rapid, cost-effective, and non-invasive analysis [13] [14].

Clinical Diagnostics via Bacterial VOC Profiling: In clinical wound management, quantifying VOCs released by bacteria provides a promising, non-invasive method for early infection detection [13]. This approach allows for continuous monitoring without invasive procedures, reducing patient discomfort and infection risk. Sensor technologies, including array-based, nano, and microsensors, are particularly advantageous over conventional spectroscopy methods due to their rapidity, affordability, and precision [13]. These sensors detect specific VOC biomarkers associated with bacterial metabolism, enabling prompt intervention.

Advanced VOC Sensing Technologies: Conventional VOC detection techniques like gas chromatography-mass spectrometry (GC-MS) are being supplemented or replaced by advanced sensing devices based on optical, electrochemical, and chemoresistive materials [14]. These advanced sensors demonstrate significant potential for non-invasive early diagnosis and disease monitoring through exhaled breath analysis, without compromising the accuracy and specificity of conventional techniques [14].

Table 2: Comparison of Conventional and Advanced VOC Detection Techniques

| Technique Category | Example Techniques | Key Advantages | Primary Limitations |
|---|---|---|---|
| Conventional Methods [14] | Gas Chromatography-Mass Spectrometry (GC-MS), Proton-Transfer-Reaction Mass Spectrometry (PTR-MS), Selected-Ion Flow-Tube Mass Spectrometry (SIFT-MS) | High accuracy and specificity; gold standard for compound identification | Often laboratory-bound; time-consuming; expensive equipment; requires skilled operators |
| Advanced Sensing Approaches [13] [14] | Optical, Electrochemical, and Chemoresistive Sensors; Array-based, Nano, and Micro-sensors | Rapid, cost-effective, non-invasive, precise; potential for point-of-care and continuous in-situ monitoring | Ongoing development to match the full specificity and multi-analyte capability of conventional methods |

Pharmaceutical Residues and Emerging Contaminants

Pharmaceutical residues and other emerging contaminants (ECs) represent a growing environmental concern, as they often escape conventional wastewater treatment processes and pose risks of endocrine disruption and antimicrobial resistance (AMR) [15] [16] [11].

Global Occurrence and Risk: A global synthesis of data from 101 peer-reviewed publications evaluated the occurrence of 20 pharmaceuticals in sewage treatment plants (STPs) [15]. Analgesics/anti-inflammatory drugs were found at the highest cumulative concentrations, particularly in North and South America. Compounds such as diclofenac, ibuprofen, sulfamethoxazole, and ciprofloxacin were frequently detected at high concentrations, sometimes exceeding 100,000 ng/L in STP influent [15]. While ibuprofen and naproxen showed high removal efficiencies (>80%), compounds like diazepam, carbamazepine, azithromycin, and clindamycin demonstrated persistence through conventional treatment [15].

API Contamination Hotspots from Manufacturing: Although direct releases from pharmaceutical manufacturing account for only about 2% of the total pharmaceutical load in the environment, they can create significant local contamination "hotspots" due to high concentrations of active pharmaceutical ingredients (APIs) [16]. This is particularly relevant given the geographical concentration of API production in regions like India and China, where a significant proportion of watersheds face medium to high water stress and wastewater treatment infrastructure may be limited [16]. These point-source discharges are a noted contributor to environmental antibiotic resistance [16].

Beyond PFAS: The Next Generation of Emerging Contaminants: Regulatory and research focus is expanding beyond PFAS to include other classes of ECs [12] [11]. These include:

- Nanomaterials and Engineered Nanoparticles (e.g., fullerenes, metal oxides) with complex environmental behavior and potential for bioaccumulation [12] [11].

- Microplastics and Nanoplastics, which are ubiquitous in aquatic environments and can adsorb organic pollutants, facilitating their transport [12] [11].

- Persistent Additives and Novel Industrial Chemicals, such as flame retardants and plasticizers [12].

Table 3: Selected Pharmaceuticals in Global Wastewater and Their Removal Efficiency

Data synthesized from 101 peer-reviewed publications on global pharmaceutical pollution [15].

| Pharmaceutical Compound | Therapeutic Class | Maximum Reported Influent Concentration (ng/L) | Typical Removal Efficiency in Conventional STPs |
|---|---|---|---|
| Diclofenac | Analgesic/Anti-inflammatory | >100,000 | Variable; often persistent |
| Ibuprofen | Analgesic/Anti-inflammatory | >100,000 | High (>80%) |
| Sulfamethoxazole | Antibiotic | >100,000 | Variable |
| Ciprofloxacin | Antibiotic | >100,000 | Moderate to High |
| Carbamazepine | Anticonvulsant | Data Not Specified | Low / Persistent (Negative Removal Observed) |
| Diazepam | Anxiolytic | Data Not Specified | Low / Persistent (Negative Removal Observed) |
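
The removal efficiencies in the table can be read as the relative drop in concentration between STP influent and effluent. The sketch below uses that common definition, which is assumed here rather than quoted from [15]. The compound labels and concentrations are hypothetical placeholders, not data from the cited synthesis. A negative result corresponds to "negative removal", where the effluent concentration exceeds the influent concentration.

```python
def removal_efficiency(influent_ng_l, effluent_ng_l):
    """Percent removal across a treatment plant: 100 * (influent - effluent) / influent."""
    if influent_ng_l <= 0:
        raise ValueError("influent concentration must be positive")
    return 100.0 * (influent_ng_l - effluent_ng_l) / influent_ng_l


# Hypothetical (influent, effluent) pairs in ng/L, for illustration only.
examples = {
    "well_removed_compound": (1000.0, 150.0),
    "persistent_compound": (200.0, 260.0),
}

efficiencies = {name: removal_efficiency(inf, eff)
                for name, (inf, eff) in examples.items()}

for name, value in efficiencies.items():
    label = "negative removal" if value < 0 else "net removal"
    print(f"{name}: {value:.1f}% ({label})")
```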

Experimental Protocols

Protocol: In-situ Monitoring of Bioavailable Copper in Marine Microenvironments

Objective: To achieve real-time, in-situ monitoring of natural labile copper (Cu') during the growth of a marine diatom, Phaeodactylum tricornutum, using a functionalized iridium-needle electrode [3].

Principle: The protocol employs an agarose gel (AG-gel) and gold nanoparticle (AuNPs) modified iridium-needle electrode (AG-gel/AuNPs/Ir-NE). The AG-gel acts as a protective layer, enhancing stability and lifespan, while the AuNPs provide excellent electrocatalytic capacity for voltammetric determination. This setup enables a separation-catalysis detection mechanism that offers high sensitivity and anti-biofouling capability [3].

Diagram 1: In-situ Cu' Monitoring Workflow

Materials and Reagents:

- Iridium-needle electrode (Ir-NE) [3]

- Gold nanoparticle (AuNP) suspension (for electrode functionalization) [3]

- Agarose gel (AG-gel) (for protective coating) [3]

- Marine diatom Phaeodactylum tricornutum culture

- Synthetic or natural seawater culture medium

- Copper standard solutions for calibration

- Voltammetric analyzer (e.g., potentiostat)

Procedure:

Electrode Fabrication and Functionalization:

- Clean and polish the iridium-needle electrode substrate.

- Electrodeposit or drop-cast gold nanoparticles (AuNPs) onto the electrode surface to enhance electrocatalytic activity.

- Apply a thin, uniform layer of agarose gel (AG-gel) over the AuNPs-modified surface. This gel layer acts as a protective barrier, conferring anti-biofouling properties and enhancing electrode stability for long-term in-situ deployment [3].

- Validate electrode performance using standard copper solutions.

Experimental Setup and Deployment:

- Inoculate Phaeodactylum tricornutum in culture medium under controlled conditions (e.g., light, temperature).

- Calibrate the functionalized AG-gel/AuNPs/Ir-NE sensor in the culture medium prior to diatom inoculation.

- Immerse the validated sensor directly into the diatom culture vessel for in-situ monitoring.

Real-time Measurement and Data Acquisition:

- Conduct voltammetric measurements (e.g., square-wave anodic stripping voltammetry) at predetermined time intervals throughout the diatom growth period.

- Continuously record the voltammetric signals corresponding to the concentration of natural labile copper (Cu').

Data Analysis and Correlation:

- Convert the electrochemical signals into Cu' concentrations using the pre-established calibration curve.

- Periodically measure and record the cell density of P. tricornutum.

- Perform correlation analysis between the measured Cu' concentrations (and the Cu'/TdCu ratio) and the diatom cell density to assess the relationship between copper bioavailability and algal growth [3].
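
The correlation step could be carried out along the following lines. This is a sketch that assumes a simple Pearson correlation is adequate, whereas the source does not specify which statistic was used. All values are invented for illustration and do not reproduce results from [3].

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    syy = sum((y - y_bar) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / sqrt(sxx * syy)


# Hypothetical time series sampled at the same time points (arbitrary units).
labile_cu = [4.0, 3.5, 3.0, 2.0, 1.0]          # Cu' from the calibrated sensor
total_dissolved_cu = [10.0, 10.0, 10.0, 10.0, 10.0]  # TdCu from a separate method
cell_density = [1.0, 2.0, 4.0, 8.0, 16.0]      # P. tricornutum cell counts

# Cu'/TdCu ratio at each time point.
ratios = [cu / td for cu, td in zip(labile_cu, total_dissolved_cu)]

r_ratio = pearson_r(ratios, cell_density)
print(f"Cu'/TdCu vs cell density: r = {r_ratio:.3f}")
```

With real data, a rank-based statistic or a time-lagged analysis may be more appropriate, since growth curves are rarely linear.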

Protocol: GIS-Based Spatial Assessment of Heavy Metal Contamination

Objective: To monitor and manage heavy metal (HM) contamination in water resources by assessing spatial distribution patterns, identifying pollution hotspots, and evaluating associated environmental and health risks [10].

Principle: This protocol uses geographic information systems (GIS) to integrate, visualize, and analyze georeferenced data on heavy metal concentrations in water. It combines spatial analysis with statistical techniques and machine learning to model contamination and inform management decisions [10].

Diagram 2: GIS-Based HM Assessment Workflow

Materials and Software:

- GPS device for precise sample location logging

- Water sampling equipment (bottles, filters, preservatives)

- Analytical instrumentation (e.g., ICP-MS, AAS) for HM quantification

- GIS software (e.g., ArcGIS, QGIS)

- Spatial database management system

- (Optional) Hydrological modeling applications (e.g., HEC-RAS, SWMM) and statistical/ML software (e.g., R, Python) [10]

Procedure:

Data Collection and Georeferencing:

- Design a sampling strategy covering the water resource of interest (e.g., river, lake, aquifer).

- Collect water samples following standardized protocols, ensuring chain of custody.

- Analyze samples in the laboratory using validated methods (e.g., ICP-MS) to quantify concentrations of target heavy metals.

- Record the geographic coordinates (latitude/longitude) of each sampling point using a GPS device, creating a georeferenced dataset [10].

System Development and Integration:

- Input the georeferenced heavy metal data into the GIS software.

- Develop a spatial database incorporating additional relevant layers, such as land use (industrial, agricultural), hydrological features, soil types, and population density.

- Integrate specialized hydrological or hydraulic models if required for predicting contaminant transport [10].

Spatial Analysis and Modeling:

- Use GIS interpolation techniques (e.g., kriging, inverse distance weighting) to generate continuous spatial distribution maps (thematic maps) of individual heavy metal concentrations.

- Identify contamination hotspots by overlaying concentration data with regulatory thresholds (e.g., WHO limits).

- Perform statistical and machine learning analysis to correlate heavy metal concentrations with potential anthropogenic sources (e.g., proximity to industrial discharges, agricultural runoff) [10].

- Conduct health risk evaluations by modeling exposure pathways for nearby populations.
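
The interpolation and hotspot steps can be sketched without GIS software. The example below implements plain inverse distance weighting (IDW) and flags grid nodes whose interpolated concentration exceeds a threshold. It assumes planar (projected) coordinates, so latitude/longitude would need projecting first. The sample values, grid, and `threshold_ug_l` are hypothetical; the applicable regulatory limit should be substituted.

```python
from math import hypot


def idw(x, y, samples, power=2.0):
    """Inverse-distance-weighted estimate at (x, y) from (xi, yi, value) samples."""
    numerator = denominator = 0.0
    for xi, yi, value in samples:
        distance = hypot(x - xi, y - yi)
        if distance == 0.0:
            return value  # exactly on a sampling point: return the measurement
        weight = 1.0 / distance ** power
        numerator += weight * value
        denominator += weight
    return numerator / denominator


# Hypothetical georeferenced measurements: (x, y, concentration in ug/L).
samples = [
    (0.0, 0.0, 5.0),
    (10.0, 0.0, 15.0),
    (0.0, 10.0, 25.0),
    (10.0, 10.0, 35.0),
]

threshold_ug_l = 10.0  # assumed screening threshold, not taken from the source
grid = [(gx, gy) for gx in (0.0, 5.0, 10.0) for gy in (0.0, 5.0, 10.0)]

center_estimate = idw(5.0, 5.0, samples)
hotspots = [(gx, gy) for gx, gy in grid if idw(gx, gy, samples) > threshold_ug_l]

print(f"estimate at grid center: {center_estimate:.1f} ug/L")
print(f"{len(hotspots)} of {len(grid)} grid nodes exceed the threshold")
```

IDW is used here only because it is compact. Kriging, which the protocol also mentions, additionally models spatial covariance and is normally run through a dedicated geostatistics package.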

Visualization and Reporting:

- Create interactive maps, charts, and dashboards to effectively communicate findings.

- Synthesize results into a decision support system to provide actionable insights for environmental managers, policymakers, and public health authorities [10].

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents and Materials for Pollutant Monitoring

| Research Reagent/Material | Function/Application | Key Characteristics |
|---|---|---|
| Gold Nanoparticles (AuNPs) [3] | Electrode functionalization for enhanced electrocatalytic detection of metals. | High surface-area-to-volume ratio; excellent conductivity; can be synthesized in controlled sizes. |
| Agarose Gel (AG-gel) [3] | Protective coating for in-situ electrodes; provides anti-biofouling properties. | Hydrophilic polymer; forms a porous, protective layer; enhances sensor stability and lifespan. |
| Passive Samplers (POCIS, Chemcatcher) [12] | Time-integrated sampling of trace organic contaminants (e.g., pharmaceuticals) from water. | Accumulates contaminants over time; provides a more representative picture of pollution levels than grab sampling. |
| Specialized GIS Software & Databases [10] | Platform for spatial data integration, analysis, and visualization of pollutant distribution. | Enables management of georeferenced data; supports advanced spatial analysis and modeling. |
| High-Resolution Mass Spectrometry (HRMS) [12] | Non-targeted screening for unknown emerging contaminants and transformation products. | High mass accuracy and resolution; enables identification of compounds not in standard target lists. |
| Reverse Osmosis (RO) & Nanofiltration Membranes [17] | Advanced treatment for removing micropollutants and salts from pharmaceutical wastewater. | High rejection rates for contaminants; key component in achieving high-purity water standards and Zero Liquid Discharge. |

Accurate detection and monitoring of environmental pollutants are paramount for effective public health initiatives and disease prevention [1]. The selection of sampling methodology is a critical determinant in data quality, influencing the reliability of risk assessments and the efficacy of mitigation strategies. For decades, grab sampling has been a conventional technique for environmental monitoring. However, its inherent limitations, particularly its inability to capture temporal variations in pollutant concentrations, have become increasingly apparent. This has created a significant demand for monitoring solutions that offer high temporal resolution, enabling researchers to observe dynamic changes and trends in contaminant levels over time [18]. This document outlines the core limitations of grab sampling, underscores the importance of temporal resolution, and provides detailed protocols for implementing advanced, continuous monitoring techniques.

Limitations of Grab Sampling

Grab sampling involves the collection of a discrete environmental sample (e.g., water, air) at a specific location and point in time. While modern systems offer improved safety and efficiency [19], the fundamental constraints of this method remain.

Core Technical and Methodological Constraints

- Snapshot-in-Time Data: Grab samples provide only a single data point, potentially missing short-term peaks, cyclical fluctuations, and transient pollution events that could be critical for risk assessment [20].

- Risk of Unrepresentative Data: The "snapshot" nature means concentrations can be highly susceptible to temporary conditions, leading to data that may not accurately represent average or worst-case exposure scenarios [21].

- Limited Scope for Identification: Grab sampling is poorly suited for non-targeted screening, as it may miss contaminants that are present intermittently. Studies show passive sampling can identify a higher number of contaminants, such as pharmaceuticals and pesticides, compared to grab sampling [20].

- Potential for Sample Degradation: Between sample collection and laboratory analysis, the integrity of the sample may be compromised through chemical or biological processes, despite preservation efforts [21].

- High Long-Term Costs: Although a single grab sample may seem inexpensive, the cumulative cost of numerous samples over time—reportedly ranging from $100 to $1,000 per sample for off-site analysis—can be substantial, especially for long-term monitoring programs [21].
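
The cumulative-cost point can be made concrete with simple arithmetic. The sketch below uses the reported $100 to $1,000 per-sample range [21]. The sampling frequency and programme duration are hypothetical, and labor, shipping, and equipment costs are ignored.

```python
def grab_sampling_cost_range(samples_per_year, years,
                             low_per_sample=100.0, high_per_sample=1000.0):
    """Return (low, high) cumulative off-site analysis cost for a sampling programme."""
    total_samples = samples_per_year * years
    return total_samples * low_per_sample, total_samples * high_per_sample


# Hypothetical programme: weekly grab samples at one site for five years.
low_cost, high_cost = grab_sampling_cost_range(samples_per_year=52, years=5)
print(f"260 samples: ${low_cost:,.0f} to ${high_cost:,.0f}")
```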

Comparative Analysis: Grab vs. Passive Sampling

The following table summarizes a key study comparing grab and passive sampling techniques for identifying contaminants of emerging concern (CECs) in wastewater effluent (WWE) and river water.

Table 1: Comparative performance of grab and passive sampling in a non-target screening study [20].

| Parameter | Grab Sampling | Passive Sampling |
|---|---|---|
| Total Compounds Identified (WWE) | Lower (e.g., missed 5 compounds found by passive samplers) | Higher (85 compounds identified) |
| Total Compounds Identified (River Water) | Variable (17-24, depending on date) | More consistent (47 compounds identified) |
| Ion Abundance / Signal Quality | Lower, leading to poorer quality MS2 spectra | Higher, providing better quality MS2 spectra for identification |
| Isotopic Pattern Match | Poorer (e.g., <80% for some compounds) | Superior (e.g., 4 out of 4 isotopes present) |
| Number of Fragments in MS2 | Lower | Higher |
| Susceptibility to Concentration Fluctuations | High | Low (integrates over time) |

The Critical Need for Temporal Resolution

Temporal resolution refers to the frequency at which measurements are taken over time. High temporal resolution is crucial for understanding the dynamics of environmental systems.
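
A small synthetic example shows why sampling frequency matters. It compares a single grab sample, a time-weighted average (roughly what an integrating passive sampler reports), and a continuous hourly record. The concentration series is invented for illustration and its units are arbitrary.

```python
# Hypothetical hourly concentrations over one day: a steady baseline
# with a short transient pollution event in the early afternoon.
hourly = [2.0] * 24
hourly[13] = 50.0
hourly[14] = 30.0

grab_sample = hourly[9]                          # one snapshot taken at 09:00
time_weighted_average = sum(hourly) / len(hourly)  # integrates over the whole day
peak_concentration = max(hourly)                 # only visible in the hourly record
peak_hour = hourly.index(peak_concentration)

print(f"grab sample at 09:00:  {grab_sample:.1f}")
print(f"time-weighted average: {time_weighted_average:.2f}")
print(f"peak (hour {peak_hour}):        {peak_concentration:.1f}")
```

The grab sample misses the event entirely. The time-weighted average registers that something happened but cannot say when or how high the peak was. Only the high-frequency record resolves the peak itself.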

Impact on Modeling Accuracy

Research indicates that incorporating temporal dependencies can substantially improve the predictive accuracy of air pollution models, although the benefit depends on the pollutant. A 2025 study on urban air pollution modeling found that including temporal lag features (autocorrelation) reduced RMSE for PM₁₀ and PM₂.₅ but increased it for NOx [18].

Table 2: Impact of temporal autocorrelation on machine learning model performance for predicting pollutant concentrations [18].

| Pollutant | Model Scenario | RMSE (µg/m³) | Performance Change |

|---|---|---|---|

| PM₁₀ | Without temporal lags | 92.56 | - |

| PM₁₀ | With temporal lags (AR) | 68.59 | 25.9% RMSE Reduction |

| PM₂.₅ | Without temporal lags | 61.10 | - |

| PM₂.₅ | With temporal lags (AR) | 37.30 | 38.9% RMSE Reduction |

| NOx | Without temporal lags | 7.90 | - |

| NOx | With temporal lags (AR) | 12.10 | 53.2% RMSE Increase |

The pollutant-specific nature of these results—where temporal data benefited PM predictions but not NOx—underscores the need for a tailored, resolution-aware modeling strategy [18].
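
To make "temporal lag features" concrete, the sketch below shows one common way to build them from a single time series and how the percentage change in RMSE can be computed. It is a minimal illustration, not the pipeline of the cited study [18]: the helper names `make_lag_features`, `rmse`, and `rmse_change_percent` and the short demo series are assumptions.

```python
from math import sqrt


def make_lag_features(series, lags=(1, 2, 3)):
    """Return (X, y): each row of X holds the values at t - lag; y holds the value at t."""
    max_lag = max(lags)
    X, y = [], []
    for t in range(max_lag, len(series)):
        X.append([series[t - lag] for lag in lags])
        y.append(series[t])
    return X, y


def rmse(y_true, y_pred):
    """Root-mean-square error between observed and predicted values."""
    return sqrt(sum((a - b) ** 2 for a, b in zip(y_true, y_pred)) / len(y_true))


def rmse_change_percent(rmse_without_lags, rmse_with_lags):
    """Negative values mean the lagged model has the lower (better) RMSE."""
    return 100.0 * (rmse_with_lags - rmse_without_lags) / rmse_without_lags


# Hypothetical hourly concentrations, used only to show the feature layout.
X_demo, y_demo = make_lag_features([10, 12, 15, 14, 13], lags=(1, 2))

# Relative RMSE change computed from the PM10 and NOx values reported in the table.
pm10_change = rmse_change_percent(92.56, 68.59)
nox_change = rmse_change_percent(7.90, 12.10)
```

Each row of `X_demo` pairs the previous one and two observations with the current target in `y_demo`, so any regression model can be trained with or without these columns to test whether autocorrelation helps for a given pollutant.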

Benefits for Public Health and Policy

High temporal resolution data provides a robust foundation for evidence-based decision-making, enabling:

- Refined Public Health Advisories: Real-time data allows for dynamic health warnings, such as during acute pollution events.

- Effective Source Attribution: Identifying patterns in pollution levels helps pinpoint specific sources and their operational timelines.

- Optimized Remediation Efforts: Continuous monitoring can assess the real-time effectiveness of cleanup actions, allowing for immediate adjustments.

- Comprehensive Risk Assessment: Capturing peak exposures and cumulative doses leads to more accurate evaluations of human and ecological health risks.

Experimental Protocols for Advanced Temporal Monitoring

Protocol: Non-Target Screening with Passive Samplers and HRMS

This protocol is adapted from methodologies used to identify pharmaceuticals, pesticides, and their transformation products in water [20].

1. Sampling Deployment

- Materials: Passive sampling devices (e.g., POCIS), grab sample bottles, field filters, coolers, chain-of-custody forms.

- Procedure:

- Deploy passive samplers in the water body for a defined period (e.g., 14 days).

- In parallel, collect grab samples at the time of deployment and retrieval.

- Record in-situ parameters (pH, temperature, dissolved oxygen).

- Store samples on ice and transport to the laboratory promptly for analysis.

2. Sample Preparation and Analysis

- Materials: Liquid Chromatography system coupled to a High-Resolution Mass Spectrometer (LC-HRMS), solid phase extraction (SPE) apparatus, analytical standards.

- Procedure:

- Extraction: Process passive samplers and grab samples using SPE to concentrate analytes.

- Instrumental Analysis: Analyze extracts using LC-HRMS in Data-Dependent Acquisition (DDA) mode. Use a C18 column with a water/acetonitrile gradient elution.

- Quality Control: Include procedural blanks and quality control samples spiked with internal standards.

3. Data Processing and Compound Identification

- Materials: HRMS data processing software, spectral libraries (e.g., mzCloud, NIST).

- Procedure:

- Process raw HRMS data to perform peak picking, alignment, and deconvolution.

- Identify compounds by matching acquired MS2 spectra to spectral libraries (Level 2a identification) [20].

- Increase confidence by employing retention time prediction models where possible.

- For final confirmation, analyze authentic analytical standards (Level 1 identification).
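
Before MS2 library matching, candidate compounds are often shortlisted by comparing measured and theoretical precursor m/z values within a mass tolerance. The sketch below shows that prefilter under stated assumptions: the 5 ppm tolerance, the library entries, and the function names are hypothetical and are not taken from the cited protocol [20].

```python
def ppm_error(measured_mz, theoretical_mz):
    """Mass error in parts per million relative to the theoretical m/z."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def candidates_within_tolerance(measured_mz, library, tol_ppm=5.0):
    """Return (name, ppm error) pairs within tol_ppm, closest match first."""
    hits = []
    for name, theoretical_mz in library.items():
        err = ppm_error(measured_mz, theoretical_mz)
        if abs(err) <= tol_ppm:
            hits.append((name, round(err, 2)))
    return sorted(hits, key=lambda hit: abs(hit[1]))


# Hypothetical library of theoretical precursor m/z values (not real compounds).
library = {"compound_A": 200.0000, "compound_B": 200.0030, "compound_C": 250.1000}

hits = candidates_within_tolerance(200.0006, library)
```

Candidates that pass this filter would still need MS2 spectral matching (Level 2a) and, for confirmation, an authentic standard (Level 1).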

Protocol: In-Situ Monitoring with Micro-Chemical Sensors

This protocol outlines the use of in-situ sensors for real-time monitoring of volatile organic compounds (VOCs) [21].

1. Sensor System Deployment

- Materials: Chemiresistor sensor array in a waterproof housing, data logging system, power supply, deployment fixture.

- Procedure:

- Calibrate the sensor array in the laboratory using training sets of target VOCs to establish a response pattern library.

- Emplace the sensor package in the subsurface or water column using a dedicated well or deployment fixture.

- Connect to a power source and data logger configured for continuous or frequent intermittent measurement.

2. Data Acquisition and Transmission

- Materials: Remote data transmission hardware (e.g., cellular or satellite modem).

- Procedure:

- Program the data logger to record electrical resistance measurements from each chemiresistor at set intervals (e.g., every 15 minutes).

- Transmit data in near real-time to a central server for access and analysis.

3. Data Analysis and Contaminant Characterization

- Materials: Data analysis software with pattern recognition capabilities, contaminant transport models.

- Procedure:

- Analyze the time-dependent resistance data using pattern recognition algorithms to identify and quantify specific VOCs based on the calibration training set.

- Use the temporal data series in inverse modeling with contaminant transport models to characterize the source location and composition.

Visualization of Methodologies

[Figure: Workflow for Advanced Pollutant Monitoring]

[Figure: In-Situ Chemiresistor Sensing Mechanism]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key materials and reagents for advanced environmental pollutant monitoring.

| Item | Function/Application |

|---|---|

| Passive Samplers (e.g., POCIS) | Time-integrative sampling of hydrophilic contaminants from water; provides a cumulative picture of exposure over deployment period [20]. |

| Chemiresistor Sensor Array | In-situ, real-time detection of VOCs; consists of polymers that swell upon VOC exposure, changing electrical resistance [21]. |

| LC-HRMS System | High-confidence identification of unknown pollutants and transformation products through accurate mass measurement and structural fragmentation [20]. |

| Discrete Interval Sampler | Collects no-purge, discrete groundwater samples from specific depths without agitation, preserving sample integrity for VOCs [22]. |

| Solid Phase Extraction (SPE) Cartridges | Concentration and clean-up of water samples prior to instrumental analysis, improving detection limits for trace-level pollutants [20]. |

| Deuterated Internal Standards | Correction for matrix effects and analyte loss during sample preparation and analysis, improving quantitative accuracy in mass spectrometry [20]. |

| Authentic Analytical Standards | Unambiguous confirmation of contaminant identity (Level 1 identification) and instrument calibration for quantitative analysis [20]. |

In-situ monitoring techniques provide critical, real-time data on environmental pollutants, serving as a foundational element for public health initiatives, quantitative risk assessment, and targeted disease prevention strategies. The ability to detect and measure contaminants directly in the environment enables a proactive approach to safeguarding human health. These monitoring data feed directly into the public health intervention model, which is structured across multiple tiers of prevention—from primordial efforts aimed at eliminating risk factors from populations to tertiary measures that manage established chronic diseases [23]. This document outlines detailed protocols and applications for leveraging in-situ monitoring within this public health framework, providing researchers and scientists with the methodologies to translate environmental data into actionable health protections.

Public Health Frameworks and the Role of Environmental Monitoring

Public health interventions are systematically categorized into several levels of prevention, each representing a different stage for applying strategies to avoid or mitigate disease. The continuous, real-time data provided by advanced in-situ monitoring technologies are vital for informing actions at every stage [24] [23].

Levels of Prevention and Corresponding Monitoring Applications

The table below delineates how in-situ monitoring data directly supports interventions at each stage of prevention.

Table 1: Linking In-Situ Monitoring Data to Levels of Prevention

| Level of Prevention | Goal of Intervention | Application of In-Situ Monitoring Data |

|---|---|---|

| Primordial [23] | Establish conditions that minimize future health risks for entire populations. | Identifying geographic areas with high baseline levels of air or water pollutants (e.g., PM2.5, heavy metals) to inform land-use planning and environmental policies. |

| Primary [23] | Reduce or eliminate risk factors in healthy individuals to prevent disease onset. | Triggering public health advisories (e.g., air quality alerts) to warn susceptible populations to reduce exposure during high-pollution events. |

| Secondary [23] | Detect and treat existing disease in its earliest, often asymptomatic, stages. | Pinpointing hotspots of known contaminants (e.g., carcinogens) to target community-level health screening programs for early detection of related illnesses. |

| Tertiary [23] | Manage established chronic disease to prevent complications and disability. | Tracking compliance with environmental regulations in areas with vulnerable populations (e.g., those with pre-existing heart or lung disease) to prevent exacerbations. |

Protocol: Human Health Risk Assessment Informed by In-Situ Data

The following protocol is adapted from the United States Environmental Protection Agency's (EPA) framework for conducting a Human Health Risk Assessment (HHRA) [25]. It integrates specific methodologies for utilizing in-situ monitoring data at each step to enhance the assessment's accuracy and relevance.

Planning and Scoping

Objective: To define the purpose, scope, and technical approach of the risk assessment.

- Key Activities:

- Identify the Population at Risk: Determine if the assessment will focus on the general population, sensitive lifestages (e.g., children, pregnant women), or highly exposed subgroups [25].

- Define the Environmental Hazard of Concern: Select the specific chemical, radiation, or biological pollutant to be assessed. In-situ monitoring is crucial for identifying the contaminants most prevalent in the area of interest [25] [26].

- Delineate the Exposure Scenario: Identify potential exposure pathways (air, water, soil) and routes (inhalation, ingestion, dermal contact) using local environmental data from sensors [25].

Step 1: Hazard Identification

Objective: To determine whether exposure to a stressor can cause an increase in the incidence of specific adverse health effects and characterize the quality of the evidence [25].

- Experimental Protocol:

- Data Collection: Gather evidence from:

- Epidemiological Studies: Statistical evaluations of human populations to find associations between exposure and effect. In-situ data provides critical, spatially-resolved exposure information that strengthens these studies [25].

- Toxicological Studies: Data from controlled animal studies (e.g., on rats, mice) are used to infer potential human hazard when human data are unavailable [25].

- Mode of Action (MoA) Analysis: Evaluate the sequence of key biological events, from cellular interaction to the adverse health outcome (e.g., cancer formation) [25].

- Weight of Evidence (WOE) Characterization: Synthesize all data to assign a qualitative descriptor (e.g., "Carcinogenic to humans," "Suggestive evidence of carcinogenic potential") to the stressor [25].

Step 2: Dose-Response Assessment

Objective: To quantify the relationship between the dose of a stressor and the probability or severity of the associated adverse health effect [25].

- Experimental Protocol & Data Analysis:

- Critical Effect Selection: Review all studied effects and select the adverse effect (or its precursor) that occurs at the lowest dose as the basis for risk assessment [25].

- Data Modeling: Fit mathematical models to the experimental dose-response data. The choice of model depends on the MoA:

- Linear Model: Often used for carcinogens, assuming no safe threshold.

- Non-Linear Model (e.g., Threshold): Used for non-cancer effects, identifying a dose below which no adverse effect is expected.

- Point of Departure (POD) Identification: Determine the dose level from the data that corresponds to a low, measurable effect (e.g., a Benchmark Dose). The POD is then extrapolated to estimate risk at lower, environmentally relevant exposure levels measured by in-situ monitors [25].

Table 2: Key Dose-Response Metrics and Calculations

| Metric | Definition | Application in Risk Assessment |

|---|---|---|

| Benchmark Dose (BMD) | The dose that produces a predetermined change in response rate (e.g., a 10% effect). Its statistical lower confidence limit is the BMDL. | The BMD, or more commonly the BMDL, is used as the Point of Departure (POD) for extrapolation to human exposure levels, providing a more robust alternative to the No-Observed-Adverse-Effect-Level (NOAEL). |

| Reference Dose (RfD) | An estimate (with uncertainty spanning perhaps an order of magnitude) of a daily oral exposure to the human population that is likely to be without appreciable risk of deleterious effects during a lifetime. | Calculated as RfD = POD / (Uncertainty Factors). Used to assess non-cancer risks from chronic exposure. |

| Cancer Slope Factor (SF) | An upper-bound estimate of the increased cancer risk per unit of lifetime average daily intake of a chemical, expressed in (mg/kg/day)⁻¹. | Used to estimate cancer risk: Risk = Exposure (mg/kg/day) × SF. A risk of 1E-6 indicates a 1 in 1,000,000 excess chance of developing cancer. |
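
As a worked example of the RfD relationship in the table, the sketch below divides a point of departure by the product of its uncertainty factors. The POD value and the two factors of 10 are hypothetical inputs chosen for illustration, and `reference_dose` is an illustrative name.

```python
from math import prod


def reference_dose(pod_mg_per_kg_day, uncertainty_factors):
    """RfD = POD / (product of uncertainty factors), in mg/kg/day."""
    total_uf = prod(uncertainty_factors)
    if total_uf <= 0:
        raise ValueError("uncertainty factors must multiply to a positive number")
    return pod_mg_per_kg_day / total_uf


# Hypothetical inputs: POD of 1.0 mg/kg/day, with factors of 10 for
# animal-to-human extrapolation and 10 for variability among humans.
rfd = reference_dose(1.0, [10, 10])
print(f"RfD = {rfd} mg/kg/day")
```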

Step 3: Exposure Assessment

Objective: To estimate the magnitude, frequency, duration, and route of exposure for the defined population [25]. This is the stage where in-situ monitoring directly feeds into the quantitative risk assessment.

- Experimental Protocol:

- Environmental Concentration Measurement: Deploy in-situ monitoring technologies (e.g., electrochemical biosensors for heavy metals, low-cost sensor pods for particulate matter) in relevant media (air, water, soil) to collect real-time concentration data [24] [26].

- Exposure Factor Evaluation: Collect data on human behavior patterns:

- Inhalation rates

- Ingestion of water and food

- Time-activity patterns (e.g., time spent outdoors)

- Body weight

- Exposure Calculation: Combine concentration and exposure factor data to estimate average daily dose (ADD).

- Formula:

ADD = (C × IR × EF × ED) / (BW × AT)

- Where: C = Concentration (from monitoring), IR = Intake Rate, EF = Exposure Frequency, ED = Exposure Duration, BW = Body Weight, AT = Averaging Time.
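
The ADD formula above translates directly into code. The following sketch assumes a drinking-water ingestion scenario with hypothetical input values. The units shown (mg/L, L/day, days/year, years, kg, days) are one consistent choice that yields mg/kg/day, not values prescribed by the source.

```python
def average_daily_dose(concentration, intake_rate, exposure_frequency,
                       exposure_duration, body_weight, averaging_time):
    """ADD = (C x IR x EF x ED) / (BW x AT); returns mg/kg/day for the units below."""
    if body_weight <= 0 or averaging_time <= 0:
        raise ValueError("body weight and averaging time must be positive")
    return (concentration * intake_rate * exposure_frequency * exposure_duration) / (
        body_weight * averaging_time
    )


# Hypothetical scenario (all values are illustrative assumptions):
add = average_daily_dose(
    concentration=0.01,       # mg/L, e.g. a sensor-derived mean concentration
    intake_rate=2.0,          # L/day of drinking water
    exposure_frequency=350,   # days/year
    exposure_duration=30,     # years
    body_weight=70.0,         # kg
    averaging_time=30 * 365,  # days (exposure duration expressed in days)
)
print(f"ADD = {add:.2e} mg/kg/day")
```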

Step 4: Risk Characterization

Objective: To integrate information from hazard identification, dose-response assessment, and exposure assessment to estimate the likelihood and severity of adverse health effects in the population [25].

- Data Analysis and Synthesis:

- Risk Estimation:

- For Non-Cancer Effects: Calculate the Hazard Quotient (HQ).

HQ = ADD / RfD. An HQ > 1 indicates potential for adverse effects.

- For Cancer Effects: Calculate excess cancer risk.

Risk = ADD × SF.

- Uncertainty and Variability Analysis: Describe major sources of uncertainty (e.g., animal-to-human extrapolation) and population variability.

- Risk Communication: Summarize the findings, assumptions, and public health implications in a clear, transparent manner for risk managers and stakeholders.
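
The two risk estimates in this step can be sketched as follows. All numerical inputs are hypothetical, and the function names are illustrative. For cancer risk, the dose is commonly averaged over a lifetime, which this simplified example does not model separately.

```python
def hazard_quotient(add_mg_per_kg_day, rfd_mg_per_kg_day):
    """HQ = ADD / RfD; values above 1 indicate potential for adverse effects."""
    if rfd_mg_per_kg_day <= 0:
        raise ValueError("RfD must be positive")
    return add_mg_per_kg_day / rfd_mg_per_kg_day


def excess_cancer_risk(add_mg_per_kg_day, slope_factor):
    """Risk = ADD x SF, with SF in (mg/kg/day)^-1; the result is unitless."""
    return add_mg_per_kg_day * slope_factor


# Hypothetical inputs for illustration only.
add = 2.0e-4   # mg/kg/day
rfd = 5.0e-4   # mg/kg/day
sf = 0.05      # (mg/kg/day)^-1

hq = hazard_quotient(add, rfd)
risk = excess_cancer_risk(add, sf)
exceeds_threshold = hq > 1
print(f"HQ = {hq:.2f}, excess cancer risk = {risk:.1e}")
```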

The following workflow diagram illustrates the integrated process of a Human Health Risk Assessment driven by in-situ monitoring.

The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

The following table details key reagents, materials, and technologies essential for conducting in-situ environmental monitoring and the associated public health research.

Table 3: Essential Research Tools for In-Situ Monitoring and Health Analysis

| Item / Technology | Function / Application |

|---|---|

| Whole-Cell Biosensors [26] | Genetically modified microorganisms that produce a measurable signal (e.g., light, fluorescence, electric current) in response to specific pollutants (e.g., hydrocarbons, heavy metals) or general toxicity. |

| Electrochemical Sensors [26] | Compact devices that measure electrical changes (current, potential) induced by chemical reactions with target pollutants. Ideal for in-situ measurements due to their portability and adaptability for on-line systems. |

| Low-Cost Sensor Pods (IoT) [24] | Networks of compact, often wireless, sensors that measure parameters like particulate matter (PM), ozone (O₃), and nitrogen dioxide (NO₂) at high spatial density, enabling community-level exposure assessment. |

| Reference Materials & Standards [24] [27] | Certified materials with known concentrations of pollutants, used for calibrating monitoring equipment and ensuring the quality and reliability (Quality Assurance/Quality Control) of generated data. |

| Data Standards (e.g., from E-Enterprise) [27] [28] | Common formats and definitions for environmental data elements. They ensure consistency, improve public access, and allow for seamless data sharing and integration across agencies and platforms. |

| Quality Control (QC) Samples [24] | Duplicate samples, blanks, and spikes processed alongside field samples to monitor precision, accuracy, and potential contamination during sample collection and analysis. |

Case Study: UCMR - A Regulatory Application of Monitoring Data

The Unregulated Contaminant Monitoring Rule (UCMR) program by the U.S. EPA is a prime example of a systematic, national-level public health initiative driven by environmental monitoring data [29].

- Objective: To collect nationwide occurrence data for contaminants suspected to be present in drinking water but lacking health-based standards. This data directly supports the EPA Administrator's decision on whether to regulate a contaminant [29].

- Protocol and Workflow:

- Contaminant Selection: Contaminants are prioritized from the Contaminant Candidate List (CCL) based on health effects information (e.g., carcinogenicity), public interest (e.g., PFAS), and the availability of a validated analytical method [29].

- Mandated Monitoring: Nationwide public water systems (PWSs) are required to collect water samples and analyze them for the list of UCMR contaminants. The program includes all large PWSs and a representative sample of small PWSs [29].

- Data Management and Analysis: All analytical results are stored in the National Contaminant Occurrence Database (NCOD). EPA uses these data to determine the frequency and levels of exposure across the U.S. population [29].

- Public Health Impact: The UCMR provides the critical occurrence data needed to make science-based regulatory decisions, ultimately protecting public health by identifying and controlling emerging drinking water contaminants [29].

On the Front Lines: A Guide to Current In-Situ Monitoring Technologies and Their Applications

Chemical sensor arrays, particularly those based on chemiresistors, have emerged as powerful tools for the in-situ monitoring of environmental pollutants, offering a robust solution for real-time, on-site detection of Volatile Organic Compounds (VOCs) [21]. These systems are crucial for characterizing contaminated sites, such as those regulated by the Superfund program or containing underground storage tanks, where traditional laboratory analyses are often prohibitively expensive and time-consuming [21].

The fundamental operating principle of a chemiresistor is a change in electrical resistance upon exposure to a target chemical analyte. A typical chemiresistor is fabricated by depositing a sensing material—often a polymer composite mixed with conductive carbon particles—onto electrode structures [21]. When VOC molecules interact with the sensing film, they are absorbed, causing the film to swell physically. This swelling increases the average distance between the conductive particles within the composite, thereby reducing the number of electrical pathways and increasing the overall electrical resistance of the film [21]. This process is fully reversible; upon removal of the VOC, the polymer desorbs the analyte, shrinks back to its original state, and the electrical resistance returns to its baseline value. The core mechanism of a chemiresistor is illustrated below.

The unique power of this technology lies in the use of a sensor array comprising multiple chemiresistors, each coated with a slightly different sensing material (e.g., different polymers). This creates a unique "fingerprint" response pattern for different VOCs or mixtures, enabling sophisticated pattern recognition algorithms to identify and quantify specific pollutants with high accuracy [21] [30].
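
The fingerprint idea can be sketched in a few lines. The example below converts raw resistances to fractional changes, (R - R0) / R0, and assigns the resulting pattern to the nearest reference fingerprint. This simple nearest-reference matcher stands in for the pattern recognition algorithms mentioned above and is not the method of the cited work. The three-sensor array, resistance values, and reference patterns are hypothetical.

```python
from math import sqrt


def fractional_response(resistance, baseline):
    """Relative resistance change (R - R0) / R0 for one sensor."""
    return (resistance - baseline) / baseline


def normalize(vector):
    """Scale to unit length so the pattern shape, not its magnitude, drives the match."""
    norm = sqrt(sum(v * v for v in vector))
    if norm == 0:
        raise ValueError("zero response vector cannot be normalized")
    return [v / norm for v in vector]


def classify(fingerprint, reference_patterns):
    """Return the label whose normalized reference pattern is closest (Euclidean)."""
    query = normalize(fingerprint)
    best_label, best_dist = None, float("inf")
    for label, pattern in reference_patterns.items():
        ref = normalize(pattern)
        dist = sqrt(sum((q - r) ** 2 for q, r in zip(query, ref)))
        if dist < best_dist:
            best_label, best_dist = label, dist
    return best_label


# Hypothetical three-sensor array: baseline and exposed resistances in ohms.
baselines = [1000.0, 1200.0, 900.0]
readings = [1050.0, 1212.0, 990.0]
responses = [fractional_response(r, r0) for r, r0 in zip(readings, baselines)]

# Hypothetical reference fingerprints, e.g. from a laboratory training set.
references = {"toluene": [0.10, 0.02, 0.20], "TCE": [0.02, 0.10, 0.05]}
label = classify(responses, references)
```

Normalizing each pattern separates identification (pattern shape) from quantification (pattern magnitude), which a calibration curve would handle in a fuller implementation.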

Experimental Protocols

Protocol for Fabrication of a Polymer-Composite Chemiresistor Array

This protocol details the creation of a basic chemiresistor array for VOC detection, suitable for laboratory validation and field deployment in environmental monitoring [21].

- Objective: To fabricate a multi-element chemiresistor array using polymer-carbon composite sensing films.

- Summary: An "ink" is prepared by dispersing conductive carbon particles within a polymer solution. This ink is precisely deposited onto a micro-fabricated electrode array, forming the core sensing elements.

Materials & Equipment:

- Substrate with patterned electrode array (e.g., gold or platinum interdigitated electrodes on silicon or alumina).

- Non-conductive polymer(s) (e.g., Polyepichlorohydrin, Polyisobutylene, Polysiloxane derivatives).

- Conductive carbon black particles.

- Suitable solvent (e.g., Tetrahydrofuran, Toluene, Cyclohexane).

- Precision micro-syringe or automated deposition system.

- Analytical balance.

- Ultrasonic bath.

- Vacuum oven or controlled hotplate.

Step-by-Step Procedure:

- Ink Formulation: For each unique polymer type, prepare a sensing ink. Dissolve the selected polymer in the solvent at a concentration of 1-5% (w/w). Subsequently, add conductive carbon black at a ratio of 1:2 to 1:4 (carbon:polymer, w/w) to the solution. Sonicate the mixture for 30-60 minutes to ensure homogeneous dispersion.

- Sensor Deposition: Mount the electrode array substrate securely. Using a micro-syringe or automated dispenser, deposit a small, controlled volume (typically 0.1 - 1 µL) of a specific polymer-carbon ink onto the active area of individual electrode pairs. Repeat this process, using a different polymer-based ink for each sensor element to create a diverse array.

- Film Curing: Place the deposited array in a vacuum oven or on a hotplate at a mild temperature (e.g., 40-60°C) for 1-2 hours. This step evaporates the solvent, leaving behind a stable polymer-carbon composite film.

- Baseline Stabilization: Before first use, condition the sensor array by exposing it to a stream of dry, purified air or nitrogen for several hours while monitoring the resistance signals. This ensures stable baseline readings.
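
The ink formulation arithmetic from the first step can be sketched as below. The example assumes that the 1-5% (w/w) polymer concentration is expressed relative to the combined mass of polymer and solvent. That interpretation, the batch size, and the function name `ink_recipe` are assumptions rather than details from the source.

```python
def ink_recipe(solution_mass_g, polymer_fraction, polymer_per_carbon):
    """Masses (g) of polymer, solvent, and carbon black for one sensing ink.

    polymer_fraction: polymer mass / (polymer + solvent) mass, e.g. 0.02 for 2% w/w.
    polymer_per_carbon: 2 to 4, matching carbon:polymer ratios of 1:2 to 1:4.
    """
    if not 0.01 <= polymer_fraction <= 0.05:
        raise ValueError("polymer fraction outside the 1-5% (w/w) range")
    if not 2 <= polymer_per_carbon <= 4:
        raise ValueError("carbon:polymer ratio outside the 1:2 to 1:4 range")
    polymer_g = solution_mass_g * polymer_fraction
    solvent_g = solution_mass_g - polymer_g
    carbon_g = polymer_g / polymer_per_carbon
    return {"polymer_g": polymer_g, "solvent_g": solvent_g, "carbon_g": carbon_g}


# Hypothetical batch: 10 g of 2% (w/w) polymer solution, carbon:polymer = 1:4.
recipe = ink_recipe(10.0, 0.02, 4)
print(recipe)
```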

Protocol for Field Deployment and In-Situ Monitoring of VOCs

This protocol outlines the procedure for deploying a packaged chemiresistor array for subsurface VOC monitoring, a key application in environmental remediation and public health protection [21].

- Objective: To deploy a chemiresistor array for long-term, in-situ characterization of VOC contaminants in the vadose zone.

- Summary: The sensor array is housed in a specialized, waterproof package and installed in a monitoring well to provide real-time data on VOC presence and concentration.

Materials & Equipment:

- Packaged chemiresistor array (e.g., housed in a stainless-steel package with a gas-permeable membrane).

- Data acquisition system with remote communication capabilities (e.g., cellular or satellite modem).

- Power supply (e.g., battery with solar panel).

- Monitoring well providing access to the vadose zone.

- Calibrated gas standards for field validation.

Step-by-Step Procedure:

- Pre-Deployment Calibration: Prior to deployment, perform a laboratory calibration of the sensor array. Expose the array to known concentrations of target VOCs (e.g., TCE, benzene, toluene) to generate a "training set" of response patterns. Use this data to train a pattern recognition model (e.g., Principal Component Analysis or machine learning classifier) [21] [31].

- Sensor Emplacement: Lower the packaged sensor array into the monitoring well to the desired depth within the vadose zone. Seal the well head to prevent atmospheric interference and secure the associated data and power cables.

- System Activation: Power on the data acquisition system. Initiate continuous or periodic resistance measurements from all sensors in the array. The system should transmit data to a central server at predefined intervals.

- Data Analysis & Validation: Monitor incoming data streams remotely. The trained pattern recognition model will automatically process the array's fingerprint responses to identify detected VOCs and estimate their concentrations. Periodically validate sensor readings by comparing them with concurrent soil-gas sample analyses performed via traditional laboratory methods (e.g., Gas Chromatography-Mass Spectrometry).

The overall workflow, from sensor response to data interpretation, is summarized below.

Performance Data and Analysis

The performance of a chemiresistor array is characterized by its sensitivity, selectivity, and stability. The following tables consolidate key quantitative data from sensor array studies [32] and list commonly targeted VOCs in environmental monitoring [21].

Table 1: Representative Performance of a Chemiresistor Array for Gas Discrimination (Adapted from UCSD Dataset) [32]

| Target Gas | Concentration Range (ppmv) | Typical Classification Accuracy* | Key Features for Identification |

|---|---|---|---|

| Ethanol | 5 - 1000 | Up to 99.8% | Characteristic response pattern across 16 sensors with 128 features. |

| Ethylene | 5 - 1000 | Up to 99.8% | Distinct fingerprint from steady-state and transient response features. |

| Ammonia | 5 - 1000 | 100% | Unique dynamic response (exponential moving average features). |

| Acetaldehyde | 5 - 1000 | 100% | Specific normalized resistance change (\|ΔR\|) pattern. |

| Acetone | 5 - 1000 | Up to 99.5% | Identified via combined steady-state and decay transient features. |

| Toluene | 5 - 1000 | Up to 99.7% | Recognized by its unique multi-sensor fingerprint. |

Note: Accuracy achieved using trained classifiers (e.g., SVM) on a 128-dimensional feature vector under controlled conditions.

Table 2: Common VOC Targets in Environmental Monitoring and Their Sources [21]

| Volatile Organic Compound (VOC) | Class | Typical Environmental Sources |

|---|---|---|

| Trichloroethylene (TCE) | Halogenated Hydrocarbon | Industrial solvent, metal degreaser, groundwater contaminant. |

| Benzene | Aromatic Hydrocarbon | Petroleum products, industrial chemical production. |

| Toluene, Xylene | Aromatic Hydrocarbon | Gasoline, solvents, paints, thinners. |

| Carbon Tetrachloride (CT) | Halogenated Hydrocarbon | Former solvent, refrigerant, precursor in chemical production. |

| Chloroform | Halogenated Hydrocarbon | By-product of water chlorination, solvent. |

| Hexane, Octane | Aliphatic Hydrocarbon | Gasoline, petroleum solvents. |

The Scientist's Toolkit: Research Reagents and Materials

Table 3: Essential Materials for Chemiresistor Array Development and Deployment

| Item | Function / Application |

|---|---|

| Interdigitated Electrode (IDE) Arrays | Provides the foundational transducer platform; the comb-like structure maximizes contact area with the sensing film for sensitive resistance measurements. |

| Diverse Polymer Libraries | Creates cross-reactive sensor arrays. Different polymers (e.g., polysiloxanes, polyethers) swell to different extents for various VOCs, generating unique fingerprint patterns. |

| Conductive Carbon Black | The conductive filler in the composite; its dispersion within the polymer matrix forms a percolation network whose resistance is modulated by polymer swelling. |

| Volatile Organic Compound Standards | Used for calibrating sensor arrays and generating training sets. High-purity standards are essential for developing accurate quantification and classification models. |

| Data Acquisition System with Multi-Channel Readout | Simultaneously measures and records resistance changes from all sensors in the array, enabling real-time fingerprint capture. |

| Stainless-Steel Sensor Package with Gas-Permeable Membrane | Protects the delicate sensor elements from harsh subsurface environments (e.g., moisture, soil) while allowing target VOCs to diffuse to the sensing films [21]. |

| Pattern Recognition Software | The analytical brain of the system. Uses algorithms (e.g., PCA, LDA, machine learning) to decode the complex fingerprint data from the array for VOC identification and concentration estimation [31]. |

The sustainable monitoring of environmental pollutants requires rapid, sensitive, and on-site screening techniques. Biosensors that incorporate whole-cell bioreporters, such as naturally bioluminescent bacteria, represent a promising technological solution for the rapid toxicity assessment of water samples [33]. These sensors leverage the physiological response of living organisms to provide a biologically relevant measure of toxicity, complementing conventional chemical analysis.

The bacterium Aliivibrio fischeri is a well-established bioreporter for toxicological studies. Its bioluminescence, a result of the enzymatic activity of luciferase encoded by the lux operon, is directly tied to cellular metabolic health [33]. When exposed to toxic substances, the metabolic disruption leads to a measurable decrease in light output, providing a rapid and functional measure of toxicity. Traditional methods based on A. fischeri (e.g., ISO 11348) require laboratory infrastructure and skilled personnel. Recent advances have successfully transitioned this assay into a portable, sustainable paper biosensor format, integrating sample analysis with smartphone-based detection and artificial intelligence (AI) for data interpretation, thus enabling effective in-situ monitoring [33].

Application Notes

This section outlines the core principles and performance data of the luminescent bacterial biosensor for toxicity screening.

Operating Principle

The biosensor operates on the principle of toxicity-induced quenching of bioluminescence. The A. fischeri bacteria are immobilized in a hydrogel matrix on a paper substrate. In the presence of a toxicant, cellular metabolism is compromised, reducing the synthesis of the luciferase enzyme or the availability of its substrates (FMNH₂ and a long-chain aldehyde). This results in a dose-dependent decrease in bioluminescence intensity, which is captured using a smartphone camera and quantified by a dedicated AI application [33].
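
The quenching principle is usually summarized as a percent inhibition relative to an unexposed control. The sketch below shows that calculation in its simplest form. It is not the algorithm of the smartphone application described in [33], standardized assays may apply additional corrections, and the intensity values are hypothetical.

```python
def percent_inhibition(sample_signal, control_signal):
    """Bioluminescence inhibition (%) relative to a toxicant-free control well."""
    if control_signal <= 0:
        raise ValueError("control signal must be positive")
    return 100.0 * (1.0 - sample_signal / control_signal)


# Hypothetical light intensities (arbitrary units) from one sensor image.
control_intensity = 2000.0
sample_intensities = [1900.0, 1400.0, 600.0]   # increasing toxicant dose

inhibitions = [percent_inhibition(s, control_intensity) for s in sample_intensities]
print([round(value, 1) for value in inhibitions])
```

Plotting inhibition against toxicant concentration for calibration standards gives the dose-response curve from which unknown samples can be read.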

Analytical Performance

The performance of the A. fischeri paper biosensor was evaluated against several classes of environmental contaminants. The following table summarizes its sensitivity for key pollutants.

Table 1: Analytical performance of the A. fischeri paper biosensor for selected contaminants.

| Contaminant | Class | Limit of Detection (LOD) or Tested Range |

|---|---|---|

| Microcystin-LR | Cyanotoxin | 0.23 ppb [33] |

| Sodium Hypochlorite (NaClO) | Disinfectant | 0.1 - 4.0 ppm (tested range) [33] |

| 3,5-Dichlorophenol | Organochlorine | 1.0 - 6.0 ppm (tested range) [33] |

| Lead (from Lead Nitrate) | Heavy Metal | 5.0 - 100 ppb (tested range) [33] |

The biosensor has been successfully applied to the analysis of real water samples, including tap water and industrial wastewater, showing promising results for on-site screening applications [33]. The integration of an on-board calibration curve and an AI-powered application allows for accurate quantification and minimizes interferences from varying smartphone camera resolutions [33].

Experimental Protocols

Biosensor Fabrication and Assay Procedure

Below is the detailed methodology for fabricating the paper biosensor and performing the toxicity assay.

Protocol: Fabrication of the Bioluminescent Paper Biosensor and Toxicity Assay

Principle: Immobilize Aliivibrio fischeri in an agarose hydrogel on a wax-patterned paper support to create a ready-to-use biosensor for toxicity screening based on bioluminescence quenching.

Research Reagent Solutions and Essential Materials:

Table 2: Key research reagents and materials.

| Item | Function/Specification |
|---|---|
| Aliivibrio fischeri | Naturally bioluminescent bioreporter strain (e.g., strain from Prof. Stefano Girotti) [33]. |
| Whatman 1 CHR paper | Cellulose chromatography paper used as the support for the biosensor [33]. |
| Lysogeny Broth (LB) Medium | Culture medium for growing A. fischeri, supplemented with high salinity (30 g/L NaCl) [33]. |
| Agarose | Polysaccharide used to form a hydrogel matrix for bacterial entrapment (0.5% w/v final concentration) [33]. |
| Wax Printer | (e.g., Phaser 8400 office) Used to create hydrophobic barriers on the paper, defining hydrophilic wells [33]. |
| Cardboard Dark Box | Used during signal acquisition to eliminate ambient light interference [33]. |
| Smartphone with AI App | (e.g., OnePlus 6T) Equipped with a custom application (e.g., "Scentinel") for image capture and data analysis [33]. |

Procedure:

Sensor Design and Fabrication:

- Design a circular flower-like pattern with one central and six peripheral hydrophilic wells (5 mm diameter) using presentation software (e.g., PowerPoint).

- Print the pattern onto Whatman paper using a wax printer.

- Heat the printed paper at 150°C for 1 minute to allow the wax to penetrate and form hydrophobic barriers.

- Seal the back of the sensor with adhesive tape to prevent leakage [33].
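
The flower-like layout described above can be sketched geometrically to check that the wax barriers between wells are wide enough before printing. The 5 mm well diameter comes from the protocol. The ring radius of 8 mm and the function names are assumptions made for illustration only.

```python
import math


def flower_well_centers(ring_radius_mm: float, n_peripheral: int = 6):
    """Return (x, y) well centers in mm: the central well first, then the ring."""
    centers = [(0.0, 0.0)]
    for k in range(n_peripheral):
        angle = 2.0 * math.pi * k / n_peripheral
        centers.append((ring_radius_mm * math.cos(angle),
                        ring_radius_mm * math.sin(angle)))
    return centers


def min_center_spacing(centers):
    """Smallest center-to-center distance between any two wells (mm)."""
    return min(math.dist(a, b)
               for i, a in enumerate(centers)
               for b in centers[i + 1:])


WELL_DIAMETER_MM = 5.0  # from the protocol
RING_RADIUS_MM = 8.0    # assumed value, not given in the source

centers = flower_well_centers(RING_RADIUS_MM)
barrier_mm = min_center_spacing(centers) - WELL_DIAMETER_MM
print(f"{len(centers)} wells, narrowest wax barrier = {barrier_mm:.2f} mm")
```

With six peripheral wells, neighbouring ring wells sit one ring radius apart, so the ring radius must exceed the well diameter for any barrier to remain.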

Bacterial Culture and Immobilization:

- Culture A. fischeri in LB medium with high salinity at 19°C with orbital shaking (140 rpm) until the optimal cell density is reached (OD600 ~5.0) [33].

- Prepare a 3% (w/v) agarose solution in sterile water by heating.

- Cool the agarose solution to approximately 60°C.

- Mix 80 μL of the 3% agarose with 420 μL of the bacterial suspension (OD600 = 5.0). The final mixture will be approximately 0.5% (w/v) agarose and at a suitable temperature (~30°C) for the cells [33].

- Immediately pipette 20 μL of the bacteria-agarose mixture into each hydrophilic well of the paper sensor.

- Allow the hydrogel to solidify by equilibrating the sensor at room temperature (25°C) for 30 minutes. The biosensor is now ready for use [33].
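
The mixing step above can be checked with a simple volume-weighted average. The sketch below reproduces the roughly 0.5% (w/v) final agarose concentration and the roughly 30°C mixing temperature. It assumes the bacterial suspension is at 25°C when mixed, that both liquids have equal heat capacity, and that no heat is lost to the tube. None of these assumptions is stated in the source.

```python
def volume_weighted_mix(vol_a_ul, value_a, vol_b_ul, value_b):
    """Volume-weighted average of a property (concentration or temperature)."""
    total = vol_a_ul + vol_b_ul
    return (vol_a_ul * value_a + vol_b_ul * value_b) / total


AGAROSE_UL, SUSPENSION_UL = 80.0, 420.0  # volumes from the protocol

# Agarose: 3% (w/v) stock diluted by an agarose-free bacterial suspension.
agarose_final = volume_weighted_mix(AGAROSE_UL, 3.0, SUSPENSION_UL, 0.0)

# Temperature: 60 °C agarose mixed with a suspension assumed to be at 25 °C.
temp_final = volume_weighted_mix(AGAROSE_UL, 60.0, SUSPENSION_UL, 25.0)

# Number of 20 µL wells one 500 µL batch can fill, ignoring pipetting losses.
wells_per_batch = int((AGAROSE_UL + SUSPENSION_UL) // 20)

print(f"final agarose: {agarose_final:.2f} % (w/v)")
print(f"mixing temperature: {temp_final:.1f} °C")
print(f"wells per batch: {wells_per_batch}")
```

If the suspension is taken directly from the 19°C culture instead, the same calculation gives a lower mixing temperature, so the hydrogel would set faster and should be pipetted promptly.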

Toxicity Assay Execution:

- Dispense a 30 μL volume of standard solution (for calibration) or the unknown water sample into the respective wells.

- Incubate the sensor at room temperature for 15 minutes.

- Place the sensor inside a cardboard dark box to avoid external light interference.

- Capture an image of the sensor using a smartphone camera with predefined settings (e.g., 30-second integration time, ISO 1600) [33].

- Analyze the image using the custom Android application (e.g., "Scentinel"), which uses an AI algorithm to interpolate the bioluminescent signal from the sample well against the on-board calibration curve and report a quantitative result, such as toxicity equivalents [33].
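
The final quantification step reads the sample well against the calibration wells imaged on the same sensor. The sketch below uses plain piecewise-linear interpolation as a stand-in for that step. The AI algorithm of the cited application is not described here, and the calibration values and function name are hypothetical.

```python
def interpolate_concentration(signal, calibration):
    """Estimate concentration from a signal using (concentration, signal) pairs.

    Assumes the signal decreases monotonically as concentration increases,
    as expected for a quenching assay.
    """
    points = sorted(calibration, key=lambda p: p[1])  # ascending signal
    lowest, highest = points[0][1], points[-1][1]
    if not lowest <= signal <= highest:
        raise ValueError("signal lies outside the calibrated range")
    for (c0, s0), (c1, s1) in zip(points, points[1:]):
        if s0 <= signal <= s1:
            if s1 == s0:
                return c0
            fraction = (signal - s0) / (s1 - s0)
            return c0 + fraction * (c1 - c0)


# Hypothetical on-board calibration: (concentration in ppm, signal in a.u.).
calibration_wells = [(0.0, 100.0), (1.0, 80.0), (2.0, 55.0), (4.0, 20.0)]

estimate = interpolate_concentration(67.5, calibration_wells)
print(f"estimated concentration: {estimate:.2f} ppm")
```

Because the calibration wells are captured in the same image as the sample, camera-to-camera differences affect both equally, which is the benefit the on-board calibration is meant to provide.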

Visualizations

Biosensor Workflow and Signaling Pathway

The following diagram illustrates the complete experimental workflow, from biosensor preparation to result analysis, and integrates the underlying biological signaling pathway of bioluminescence in A. fischeri.

lux Operon Regulation and Toxicity Mechanism

This diagram provides a more detailed view of the genetic regulation and biochemical pathway responsible for light production, and how toxicants interfere with this process.

Surface-Enhanced Raman Spectroscopy (SERS) has emerged as a powerful analytical technique for the in-situ monitoring of environmental pollutants, with applications across environmental and food safety analysis. SERS amplifies the inherently weak Raman scattering signals of molecules adsorbed onto, or in close proximity to, nanostructured metallic surfaces, typically gold or silver [34]. This amplification enables the detection of contaminants at trace concentrations directly in the field, a critical capability for modern environmental research [35] [36]. The technique combines molecular fingerprinting specificity, high sensitivity, and the potential for rapid, on-site analysis, which makes it well suited to monitoring pollutants such as pesticides and antibiotics in complex environmental matrices.

Principles of SERS

The remarkable sensitivity of SERS stems from two primary enhancement mechanisms. The electromagnetic (EM) mechanism is the dominant contributor: excitation of localized surface plasmon resonances in plasmonic nanostructures generates intense local electromagnetic fields, known as "hot spots" [35] [34]. When analyte molecules are located within these hot spots, their Raman signals can be enhanced by factors of 10^7 to 10^10 [35] [37]. The chemical (CM) mechanism involves charge transfer between the analyte molecule and the metal surface, which can further increase the signal, though to a lesser extent than the EM mechanism [35]. For effective SERS detection, analytes must adsorb to, or lie in close proximity to, the substrate surface, and the substrate itself must be robust, long-lived, and provide reproducible enhancements [35].
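
Enhancement factors such as those quoted above are often estimated experimentally with the analytical enhancement factor, which compares the signal per unit concentration on the SERS substrate with that of a normal Raman measurement. The sketch below applies this common definition. Whether the cited studies used exactly this form is an assumption, and the input values are hypothetical.

```python
import math


def analytical_enhancement_factor(i_sers, c_sers, i_raman, c_raman):
    """AEF = (I_SERS / c_SERS) / (I_Raman / c_Raman).

    Intensities must come from the same peak under matched acquisition
    conditions; concentrations must share the same unit.
    """
    if min(i_sers, c_sers, i_raman, c_raman) <= 0:
        raise ValueError("all inputs must be positive")
    return (i_sers / c_sers) / (i_raman / c_raman)


# Hypothetical values: counts for intensities, mol/L for concentrations.
aef = analytical_enhancement_factor(
    i_sers=5.0e4, c_sers=1.0e-9,
    i_raman=5.0e2, c_raman=1.0e-2,
)
print(f"AEF is about 10^{math.log10(aef):.1f}")
```

The analytical form averages over the whole illuminated area, so it generally understates the enhancement experienced by molecules sitting directly in a hot spot.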

Application Notes: SERS for Environmental Pollutant Monitoring

The following table summarizes recent, advanced SERS applications for detecting environmental pollutants in the field, showcasing the technique's versatility and high sensitivity.

Table 1: Advanced SERS Applications for In-Situ Environmental Monitoring

| Target Analyte(s) | SERS Substrate / Platform | Sample Matrix | Detection Limit / Performance | Key Innovation / Feature |
|---|---|---|---|---|
| Thiram, Carbendazim (CBZ), Nitrofurazone (NFZ) | Flexible Cellulose Nanofiber (CNF) / Gold Nanorod@Silver (GNR@Ag) [36] | Fruit surfaces (e.g., apples, chili peppers) | Thiram: 10⁻¹¹ M [36] | Flexibility for direct application on non-planar surfaces; Hydrophilic substrate with hydrophobic PDMS for evaporation enrichment, boosting sensitivity by 465% [36] |
| Various Pesticides | Biorecognition-element combined substrates (e.g., antibodies, aptamers) [35] | Food and environmental samples | Not specified; improves selectivity in complex matrices [35] | Integration of biorecognition molecules (antibodies, aptamers) with SERS substrates to create highly specific biosensors [35] |
| Sulfamethazine (SMT) | Recyclable SERS-DGT device with Au@g-C₃N₄ nanosheets [38] | Water | 1.031 - 761.9 ng mL⁻¹ [38] | Integrates in-situ sampling, pretreatment, detection, and photodegradation; Device is recyclable (4 cycles) [38] |
| Doxorubicin (Model Drug) | GO-Fe₃O₄@Au@Ag Nanocomposites [39] | In-vivo tumor microenvironments | Enables real-time monitoring of drug release [39] | pH-responsive drug release with real-time SERS monitoring; Also allows for MR imaging and photothermal therapy [39] |
| Pesticides | Gold Nanodomes; Nanoplasmonic Slot Waveguides [37] | Laboratory analysis | High SERS enhancement factors [37] | Comparison of free-space and waveguide-based SERS platforms; Waveguide approach suitable for lab-on-a-chip integration [37] |

Key Material Innovations

Table 2: Essential Research Reagent Solutions for SERS Substrate Fabrication

| Material / Reagent | Function in SERS Application |
|---|---|
| Gold (Au) and Silver (Ag) Nanoparticles | The most common plasmonic materials used to create SERS substrates. Their size, shape (e.g., nanospheres, nanorods), and composition are tuned to optimize surface plasmon resonance for maximum signal enhancement [35] [36]. |
| Graphene Oxide (GO) & g-C₃N₄ Nanosheets | Two-dimensional materials used as supports. They improve substrate stability, prevent nanoparticle aggregation, enhance adsorption of aromatic pollutants via π-π interactions, and can contribute to chemical enhancement and photocatalytic degradation of analytes [35] [39] [38]. |
| Magnetic Nanoparticles (e.g., Fe₃O₄) | Used in core-shell structures (e.g., Fe₃O₄@Au@Ag) to enable magnetic separation and preconcentration of analytes from complex samples, simplifying sample preparation and improving detection limits [35] [39]. |
| Biorecognition Elements (Antibodies, Aptamers) | Molecules engineered to bind specifically to a target pollutant. They are combined with SERS substrates to create highly selective biosensors that can identify specific analytes within complex mixtures like food extracts or environmental water [35]. |
| Cellulose Nanofibers (CNF) | Form a flexible, highly absorbent, and hydrophilic substrate backbone. This enables the creation of flexible SERS sensors that can conform to non-planar surfaces, such as the skin of fruits [36]. |
| Raman Reporters (e.g., 4-MPBA, 4-ATP) | Molecules with a strong, known Raman signature used to functionalize SERS probes. They can act as internal standards or, in traceable drug delivery systems, their signal change can indirectly monitor the release of a therapeutic agent [39] [36]. |

Experimental Protocols

This protocol details the use of a flexible, absorbent sensor for direct application on food surfaces.

Workflow: On-Site Pesticide Detection

Materials:

- SERS Substrate: Flexible CNF/GNR@Ag sensor.

- Equipment: Portable or handheld Raman spectrometer (e.g., 785 nm laser excitation).

- Reagents: Methanol or ethanol for pesticide extraction.

- Accessories: Hole-punched hydrophobic Polydimethylsiloxane (PDMS) film.

Procedure:

Substrate Fabrication:

- Synthesize gold nanorods (GNRs) via a seed-mediated growth method.

- Coat the GNRs with a silver shell (GNR@Ag) of optimized thickness to maximize "hot spot" formation.

- Integrate the GNR@Ag nanostructures with cellulose nanofibers (CNF) using a vacuum filtration method to form the flexible sensor film [36].

Sample Collection and Analyte Enrichment:

- Extract pesticides from the surface of an apple or chili pepper using a small volume of solvent.

- Apply the extract to the hydrophilic CNF/GNR@Ag sensor.

- Place the hole-punched hydrophobic PDMS film on top of the sample droplet. Evaporation is then confined to the hole, which drives microfluidic flow toward it and concentrates the analyte molecules within the small hole area, significantly enhancing the SERS signal [36].

SERS Measurement:

- Place the prepared sensor directly under the portable Raman spectrometer.

- Focus the laser beam onto the enriched area under the PDMS hole.

- Acquire SERS spectra with an appropriate integration time (e.g., 1-10 seconds).

Data Analysis:

- Identify the characteristic Raman fingerprint peaks of the target pesticide (e.g., Thiram).

- Perform quantitative analysis by constructing a calibration curve of peak intensity versus known pesticide concentration.
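
The calibration step above can be sketched as an ordinary least-squares fit. The example assumes that peak intensity is linear in the logarithm of concentration over the calibrated range, which is a common but not universal behaviour for SERS substrates and is not stated in the source. All data points are synthetic placeholders, not measured values.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Synthetic calibration standards: log10(concentration in M) vs peak intensity.
log_conc = [-10.0, -9.0, -8.0, -7.0, -6.0]
intensity = [200.0, 400.0, 600.0, 800.0, 1000.0]

slope, intercept = fit_line(log_conc, intensity)

# Invert the fitted line to estimate an unknown from its peak intensity.
unknown_intensity = 700.0
unknown_log_conc = (unknown_intensity - intercept) / slope
print(f"estimated concentration: {10 ** unknown_log_conc:.2e} M")
```

Estimates should only be reported for intensities that fall within the range spanned by the calibration standards.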

This protocol describes an all-in-one device for passive sampling and sensing of antibiotics in water bodies.

Workflow: In-Situ Antibiotic Sensing

Materials:

- SERS-DGT Device: Consisting of a binding gel with Au@g-C₃N₄ nanosheets, a diffusive gel, and a filter membrane.

- Equipment: Handheld Raman spectrometer, Xenon lamp for regeneration.

- Reagents: Au@g-C₃N₄ nanosheet suspension (synthesized via in-situ growth of Au nanoparticles on g-C₃N₄).

Procedure:

Device Preparation:

- Synthesize the Au@g-C₃N₄ nanosheet (Au@g-C₃N₄NS) suspension, which serves as the binding agent with SERS activity and photocatalytic properties.

- Assemble the SERS-DGT device by loading the Au@g-C₃N₄NS suspension into the device's binding layer, then covering it with a diffusive gel and a protective membrane [38].

Device Deployment and Sampling:

- Deploy the SERS-DGT device in the water body (e.g., a pond) for a predetermined time (24 to 180 hours). During this period, antibiotic molecules (e.g., Sulfamethazine, SMT) diffuse through the membrane and gel and are bound and enriched by the Au@g-C₃N₄NS [38].

In-Situ Sensing:

- Retrieve the device from the water.

- Place the device's binding layer directly under the handheld Raman spectrometer for SERS measurement, without any elution steps. The accumulated SMT is detected and quantified based on its SERS fingerprint [38].

Device Regeneration (Recycling):

- After measurement, expose the used binding phase to a Xenon lamp. The Au@g-C₃N₄NS acts as a photocatalyst, degrading the adsorbed SMT.

- Confirm degradation via the disappearance of the SMT SERS signal. The device can then be redeployed. This cycle has been validated for up to four reuses without significant performance loss [38].
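
For passive samplers of the diffusive-gradients-in-thin-films (DGT) type, the time-averaged concentration in the water is conventionally derived from the mass accumulated in the binding layer using C = M·Δg / (D·A·t). The sketch below applies that standard relation. How the cited device converts its SERS signal into accumulated mass is not described here, and every numerical value is hypothetical.

```python
def dgt_concentration(mass_ng, diffusion_thickness_cm, diff_coeff_cm2_s,
                      area_cm2, time_s):
    """Time-averaged concentration (ng/mL) from the standard DGT relation.

    C = M * dg / (D * A * t), with M in ng, dg in cm, D in cm^2/s,
    A in cm^2 and t in s, which gives ng/cm^3 (equivalent to ng/mL).
    """
    denominator = diff_coeff_cm2_s * area_cm2 * time_s
    if denominator <= 0:
        raise ValueError("D, A and t must all be positive")
    return mass_ng * diffusion_thickness_cm / denominator


# Hypothetical deployment: 24 h exposure with placeholder device parameters.
concentration = dgt_concentration(
    mass_ng=100.0,               # accumulated analyte mass (assumed)
    diffusion_thickness_cm=0.1,  # diffusive gel plus membrane (assumed)
    diff_coeff_cm2_s=5.0e-6,     # analyte diffusion coefficient (assumed)
    area_cm2=3.14,               # exposure window area (assumed)
    time_s=24 * 3600,            # shortest deployment time in the protocol
)
print(f"time-averaged concentration: {concentration:.2f} ng/mL")
```

Longer deployments accumulate more mass for the same water concentration, which is why extending the exposure time lowers the concentration that can be detected.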