Advancing Ecotoxicology: Navigating Regulatory Acceptance for New Approach Methodologies (NAMs)

Abstract

This article explores the transformative role of New Approach Methodologies (NAMs) in modernizing ecotoxicology and safety assessment. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive analysis of the scientific foundations, diverse applications, and strategic pathways for overcoming barriers to regulatory acceptance. The content synthesizes current evidence, from foundational definitions and technological breakthroughs—including in vitro models, organ-on-a-chip systems, and computational tools—to the practical and scientific hurdles impeding widespread adoption. It further examines critical validation frameworks and comparative analyses against traditional animal data, offering a forward-looking perspective on integrating NAMs into a new paradigm for chemical risk assessment that is more human-relevant, efficient, and ethically sound.

The New Paradigm: Understanding NAMs and Their Rise in Modern Ecotoxicology

New Approach Methodologies (NAMs) represent a transformative paradigm in toxicology, defined as any technology, methodology, approach, or combination thereof that can be used to replace, reduce, or refine (the 3Rs) animal toxicity testing, allowing for more rapid and effective prioritization and assessment of chemicals [1]. The transition from traditional animal testing to these human-relevant methods still faces significant challenges, including the lack of standardized validation criteria, but it is motivated by growing recognition that animal tests have limited reproducibility and limited power to predict human toxicity [2]. This shift is driven by the scientific imperative to improve the human relevance of safety assessments while aligning with ethical considerations and technological advancements.

The framework of NAMs extends across multiple disciplines and product development areas, encompassing in silico (computational), in chemico (abiotic measures of chemical reactivity), and in vitro (cell-based) assays, as well as alternative testing strategies employing omics technologies and innovative model systems [1] [3]. What makes these approaches "new" is not necessarily their recent development but their purposeful design and fit-for-purpose application in regulatory contexts to refine, reduce, and ultimately replace the reliance on traditional animal-based tests [1]. This evolution is pushing scientific and technological boundaries, increasing both the depth and pace of our understanding of toxic substance effects on human health and ecosystems.

Methodological Foundations of NAMs

Categorization of NAMs Technologies

NAMs encompass a diverse suite of technologies that can be categorized by their fundamental approach and application. In vitro systems include cell-based assays, reconstructed human tissue models (e.g., cornea-like epithelium, 3D human epidermis), and more complex microphysiological systems such as organ-on-a-chip technologies that mimic human organ functionality [4] [5] [6]. These systems provide human-relevant data at the cellular and tissue levels, enabling investigation of specific biological pathways and toxicity endpoints without using whole animals.

In silico approaches represent another critical category, leveraging computational power to predict chemical toxicity. These include quantitative structure-activity relationship (QSAR) models, artificial intelligence-based computational simulations of toxicity, and virtual tissue computer models that simulate how chemicals may affect human development [6] [7]. The U.S. Environmental Protection Agency (EPA) is developing virtual tissue models, which are among the most advanced methods currently under development, to reduce dependence on animal study data and to enable much faster chemical risk assessments [7].

Integrated testing strategies combine multiple lines of evidence through Defined Approaches and Integrated Approaches to Testing and Assessment (IATA). These frameworks allow for the collection, generation, evaluation, and integration of various data types for clear and transparent decision-making in chemical assessment [8]. For ecotoxicology specifically, NAMs can include alternative types of testing such as employing omics, or in vivo testing of non-protected taxonomic groups (e.g., many invertebrates) or some vertebrate life stages (e.g., fish and amphibian embryos) [1].

Key Methodological Workflows

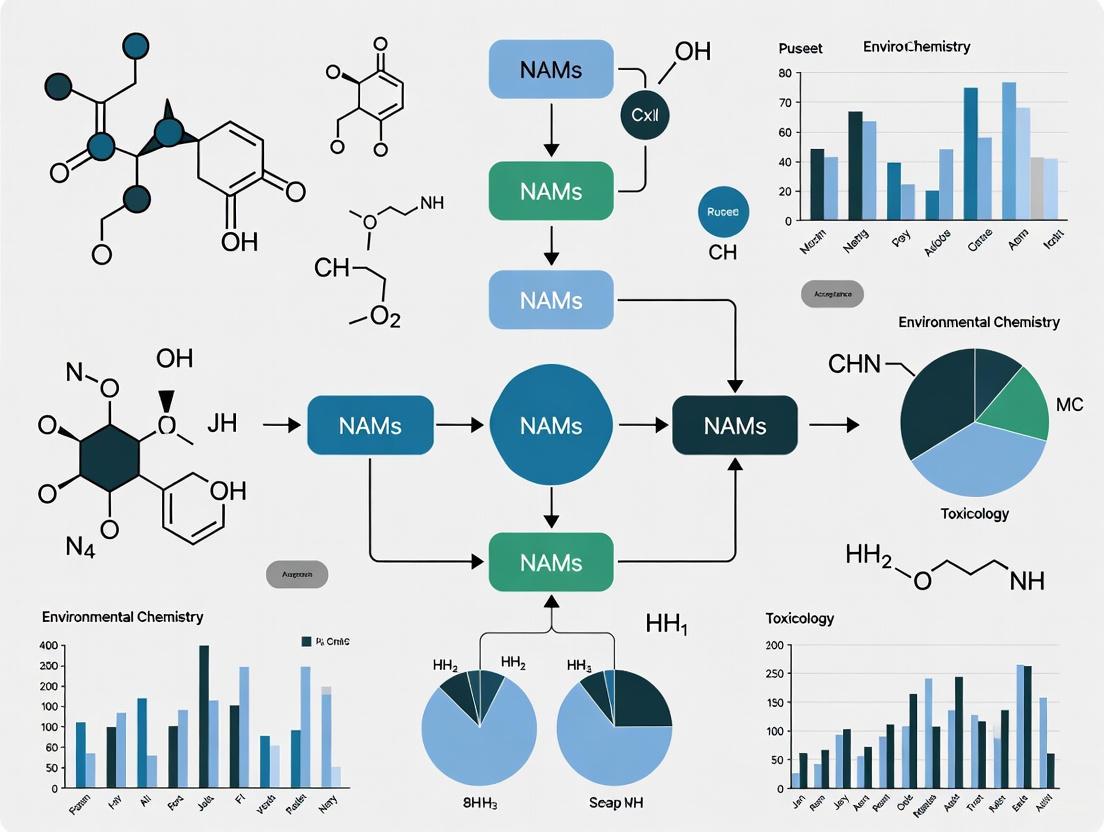

The implementation of NAMs follows structured workflows that vary based on the specific methodology and context of use. The following diagram illustrates a generalized workflow for NAMs application in chemical safety assessment:

Generalized NAMs Workflow for Chemical Safety Assessment

This workflow demonstrates the iterative nature of NAMs application, beginning with computational predictions, progressing through increasingly complex bioassay systems, and culminating in integrated data analysis for hazard characterization. The decision points allow for refinement and additional testing based on data adequacy and regulatory requirements.

For specific toxicity endpoints, more detailed workflows are employed. The following diagram illustrates a defined approach for skin sensitization assessment, one of the most advanced areas for NAMs implementation:

Defined Approach for Skin Sensitization Assessment (OECD TG 497)

This defined approach for skin sensitization integrates three non-animal assays (one in chemico, two in vitro) that address different key events in the adverse outcome pathway for skin sensitization: the molecular initiating event (protein binding, measured by DPRA), the cellular response (keratinocyte activation, measured by KeratinoSens), and the immune response (dendritic cell activation, measured by hCLAT). The integration of these lines of evidence through a standardized framework provides a comprehensive assessment without animal testing and is formally accepted under OECD Test Guideline 497 [5].

Experimental Protocols and Methodological Details

Protocol for In Vitro Skin Sensitization Assessment

The following detailed protocol outlines the methodology for implementing the Defined Approach for skin sensitization as described in OECD Test Guideline 497, which represents one of the most significant advances in replacing animal tests for regulatory decision-making [5].

Materials and Reagents:

- Test chemical at appropriate purity (>90% recommended)

- DPRA assay kit or components (synthetic peptides, HPLC system)

- KeratinoSens cell line (adherent transgenic keratinocytes)

- hCLAT cell line (THP-1 human monocytic leukemia cells)

- Cell culture media and supplements

- Positive controls (e.g., Cinnamic aldehyde for KeratinoSens)

- Fluorochrome-labelled anti-CD86 and anti-CD54 antibodies, with matching isotype controls

- Flow cytometer for hCLAT analysis

Procedure:

DPRA Execution:

- Prepare 0.667 mM solutions of the synthetic cysteine and lysine peptides (cysteine peptide in phosphate buffer, lysine peptide in ammonium acetate buffer)

- Incubate test chemical with peptides at 25°C for 24 hours

- Analyze by HPLC to quantify peptide depletion

- Calculate mean peptide depletion for categorization
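The depletion calculation in the DPRA steps above can be sketched as follows. This is a minimal illustration, not the guideline's reference implementation: the function names are hypothetical, and the class boundaries (6.38%, 22.62%, 42.47%) are the values I understand OECD TG 442C to use for its cysteine 1:10 / lysine 1:50 prediction model, so they should be checked against the current guideline text before use.

```python
from statistics import mean


def percent_depletion(sample_area: float, reference_areas: list[float]) -> float:
    """Peptide depletion (%) relative to the mean reference-control HPLC peak area."""
    reference = mean(reference_areas)
    return (1.0 - sample_area / reference) * 100.0


def dpra_reactivity_class(cys_depletion: float, lys_depletion: float) -> tuple[float, str]:
    """Average cysteine and lysine depletion and map it to a reactivity class.

    Assumed thresholds (verify against OECD TG 442C): 6.38, 22.62, 42.47.
    Negative depletion values are treated as zero before averaging.
    """
    avg = mean([max(cys_depletion, 0.0), max(lys_depletion, 0.0)])
    if avg <= 6.38:
        label = "no or minimal reactivity"
    elif avg <= 22.62:
        label = "low reactivity"
    elif avg <= 42.47:
        label = "moderate reactivity"
    else:
        label = "high reactivity"
    return avg, label
```

For example, `dpra_reactivity_class(30.0, 10.0)` averages to 20% depletion, which falls in the low-reactivity band under the assumed thresholds.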

KeratinoSens Assay:

- Culture KeratinoSens cells in recommended medium

- Expose to twelve concentrations of test chemical for 48 hours

- Measure luciferase activity as indicator of Nrf2 activation

- Calculate the fold induction at each concentration and determine the EC1.5 value (the concentration at which induction reaches 1.5-fold)
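As a rough sketch of the EC1.5 step, the value can be estimated by linear interpolation between the two tested concentrations that bracket the 1.5-fold threshold. The function name and return convention below are illustrative assumptions; statistical significance of the induction and the cell viability checks are deliberately left out.

```python
from typing import Optional, Sequence


def ec_threshold(concentrations: Sequence[float],
                 fold_inductions: Sequence[float],
                 threshold: float = 1.5) -> Optional[float]:
    """Interpolate the lowest concentration at which induction reaches `threshold`.

    Returns None when induction never crosses the threshold between two
    tested concentrations.
    """
    pairs = sorted(zip(concentrations, fold_inductions))
    for (c_a, i_a), (c_b, i_b) in zip(pairs, pairs[1:]):
        if i_a < threshold <= i_b:
            # Linear interpolation between the bracketing data points
            return c_a + (c_b - c_a) * (threshold - i_a) / (i_b - i_a)
    return None
```

With inductions of 1.2 at 2 µM and 1.8 at 4 µM, the interpolated EC1.5 lies halfway between the two concentrations, at about 3 µM.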

hCLAT Implementation:

- Culture THP-1 cells in RPMI 1640 with 10% FBS

- Expose to test chemical for 24 hours

- Stain cells with anti-CD86 and anti-CD54 antibodies

- Analyze by flow cytometry to determine relative fluorescence intensity

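- Determine the EC150 value for CD86 and the EC200 value for CD54 (CV75, the concentration giving 75% cell viability, is determined beforehand to set the dose range)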
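The relative fluorescence intensity (RFI) calculation can be sketched as below. The formula subtracts the isotype-control signal from both treated and vehicle cells; the positivity cut-offs (CD86 RFI of at least 150, CD54 RFI of at least 200, viability of at least 50%) are the values I understand the hCLAT guideline to use, and the function names are hypothetical. The requirement to confirm a call across independent runs is omitted here.

```python
from typing import Optional


def relative_fluorescence_intensity(mfi_treated: float,
                                    mfi_treated_isotype: float,
                                    mfi_vehicle: float,
                                    mfi_vehicle_isotype: float) -> float:
    """RFI (%) of a surface marker, corrected for isotype-control background."""
    denominator = mfi_vehicle - mfi_vehicle_isotype
    if denominator <= 0:
        raise ValueError("vehicle signal must exceed its isotype control")
    return (mfi_treated - mfi_treated_isotype) / denominator * 100.0


def hclat_single_run_call(cd86_rfi: float, cd54_rfi: float,
                          viability_pct: float) -> Optional[bool]:
    """Single-run call: True/False, or None if viability is too low to evaluate."""
    if viability_pct < 50.0:
        return None
    return cd86_rfi >= 150.0 or cd54_rfi >= 200.0
```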

Data Integration:

- Input results from all three assays into OECD TG 497 prediction model

- Apply decision rules to categorize sensitization potency (UN GHS subcategory 1A, subcategory 1B, or not classified)

- Generate final prediction with confidence metrics

Validation Criteria:

- Positive controls must yield expected responses in all assays

- Cell viability must be within acceptable ranges (above 70% for KeratinoSens, at least 50% for hCLAT)

- HPLC performance standards must be met in DPRA

- The test is considered valid only when all quality control criteria are satisfied
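One way to picture how the data integration and validity rules interact is the "2 out of 3" majority rule, which is one of the defined approaches covered by OECD TG 497 for hazard identification. The sketch below is an assumption-laden simplification: the function name is hypothetical, a run that fails quality control is passed as `None`, and borderline-range handling and the potency subcategorization (1A/1B) performed by the guideline's other defined approaches are not modelled.

```python
from typing import Optional


def two_out_of_three(dpra: Optional[bool],
                     keratinosens: Optional[bool],
                     hclat: Optional[bool]) -> Optional[bool]:
    """Majority call over three assay results.

    Each argument is True (positive), False (negative), or None
    (invalid or unavailable result). Returns None when no two
    valid results agree, i.e. the prediction is inconclusive.
    """
    valid = [call for call in (dpra, keratinosens, hclat) if call is not None]
    if valid.count(True) >= 2:
        return True   # predicted sensitizer
    if valid.count(False) >= 2:
        return False  # predicted non-sensitizer
    return None       # inconclusive
```

Under this rule a third assay is only needed when the first two disagree, which is why the sketch tolerates a missing result.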

This integrated testing strategy has demonstrated equivalent or superior performance compared to the traditional murine Local Lymph Node Assay (LLNA), providing a human-relevant, animal-free approach for skin sensitization assessment that is now widely accepted by regulatory agencies globally [5].

Protocol for Fish Cell Line Acute Toxicity Testing (OECD TG 249)

For ecotoxicology applications, the Fish Cell Line Acute Toxicity - RTgill-W1 assay represents a significant advancement in reducing and replacing fish testing for chemical safety assessment [5].

Materials and Reagents:

- RTgill-W1 cell line (rainbow trout gill epithelium)

- L-15/ex culture medium

- 96-well tissue culture plates

- Neutral red uptake assay reagents

- Positive control chemicals (e.g., 3,4-dichloroaniline)

- Test chemicals with known purity

Procedure:

Cell Culture Preparation:

- Maintain RTgill-W1 cells in L-15/ex medium at 19°C

- Seed cells in 96-well plates at optimal density (~20,000 cells/well)

- Culture for 2-3 days until 80-90% confluent

Chemical Exposure:

- Prepare concentration series of test chemical in L-15/ex

- Replace medium with chemical exposures in triplicate

- Include solvent controls and positive controls

- Incubate plates at 19°C for 24 hours

Viability Assessment:

- Perform neutral red uptake assay

- Incubate with neutral red solution (40 μg/mL) for 3 hours

- Extract dye with destaining solution (1% acetic acid, 50% ethanol)

- Measure absorbance at 540 nm with reference at 690 nm

Data Analysis:

- Calculate percent viability relative to solvent controls

- Generate concentration-response curves

- Determine IC50 values using appropriate statistical models

- Classify toxicity based on established thresholds
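The data analysis steps can be illustrated with a deliberately simple calculation: normalize each well to the solvent control, then estimate the 50% effect concentration by interpolating on a log-concentration scale. This is a sketch under stated assumptions, not the statistical model a guideline study would use (a fitted sigmoidal concentration-response curve is the usual choice), and the function names are hypothetical.

```python
import math
from typing import Optional, Sequence


def percent_viability(signal: float, solvent_control_mean: float,
                      blank: float = 0.0) -> float:
    """Viability (%) of an exposed well relative to the solvent control."""
    return (signal - blank) / (solvent_control_mean - blank) * 100.0


def ic50_log_interpolation(concentrations: Sequence[float],
                           viabilities: Sequence[float]) -> Optional[float]:
    """Estimate the concentration giving 50% viability.

    Interpolates linearly in log10(concentration) between the two tested
    concentrations that bracket 50%. Returns None if 50% is not bracketed.
    """
    pairs = sorted(zip(concentrations, viabilities))
    for (c_lo, v_lo), (c_hi, v_hi) in zip(pairs, pairs[1:]):
        if v_lo >= 50.0 > v_hi:
            fraction = (v_lo - 50.0) / (v_lo - v_hi)
            log_ic50 = math.log10(c_lo) + fraction * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None
```

A result of `None` signals that the tested range did not reach 50% loss of viability, in which case the range would need extending rather than extrapolating.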

This method provides a reliable indicator of acute fish toxicity while significantly reducing the number of fish required for chemical safety assessment, demonstrating the application of NAMs principles in ecotoxicology [5] [1].

Regulatory Landscape and Acceptance

Current Status of Accepted NAMs

The regulatory acceptance of NAMs has progressed substantially across multiple jurisdictions and toxicity endpoints. The following table summarizes key NAMs that have achieved regulatory acceptance for specific applications:

Table 1: Regulatory Acceptance of Select NAMs for Various Toxicity Endpoints

| Toxicity Area | Method/Approach | Regulatory Acceptance | Application/Impact |
|---|---|---|---|
| Skin Sensitization | Defined Approaches on Skin Sensitization | U.S.: OECD TG 497 (2021); EU: OECD TG 497 (2021) | Replacement of animal tests [5] |
| Ocular Irritation/Corrosion | Defined Approaches for Serious Eye Damage and Eye Irritation | U.S.: OECD TG 467 (2022); EU: OECD TG 467 (2022) | Replacement of rabbit Draize test [5] |
| Ocular Irritation | Reconstructed Human Cornea-like Epithelium Model | OECD TG 492 | Replacement of rabbit tests for eye irritation [4] |
| Skin Irritation | 3D Reconstructed Human Epidermis Model | OECD TG 439 | Accepted for human pharmaceuticals [4] |
| Ecotoxicity | Fish Cell Line Acute Toxicity - RTgill-W1 | U.S.: OECD TG 249 (2021); EU: OECD TG 249 (2021) | Reduces/replaces fish acute toxicity testing [5] |
| Endocrine Disruption | Rapid Androgen Disruption Activity Reporter Assay | U.S.: OECD TG 251 (2022); EU: OECD TG 251 (2022) | Replacement and reduction of animal use [5] |
| Pyrogenicity | In vitro pyrogen tests | FDA guidance | Replacement of rabbit pyrogen test [4] [3] |
| Developmental Neurotoxicity | Developmental neurotoxicity testing battery | OECD GD 377 (2023) | Accepted via OECD guidance [5] |

Recent regulatory initiatives demonstrate accelerating momentum for NAMs integration. The U.K. government has launched a bold new strategy with specific time-bound commitments, including fully replacing the rabbit pyrogen test by the end of 2025, ending skin and eye irritation/corrosion testing on animals by 2026, and fully replacing skin sensitization testing on animals by 2026 [9]. Similarly, the U.S. FDA announced in 2025 a groundbreaking plan to phase out animal testing requirements for monoclonal antibodies and other drugs, promoting the use of AI-based computational models, cell lines, and organoid toxicity testing instead [6].

Regulatory Validation Frameworks

The validation and qualification of NAMs for regulatory use follow structured frameworks that emphasize scientific rigor and context-specific application. The FDA's New Alternative Methods Program employs a qualification process that allows for the evaluation of alternative methods for a specific context of use, defining the boundaries within which available data adequately justify use of the tool [4]. This approach recognizes that NAMs may be suitable for specific applications even if they cannot completely replace animal tests for all endpoints.

The European Medicines Agency (EMA) collaborates with international partners on webinar series to explore the state of the science for NAMs application in specific areas like bioaccumulation assessment, promoting the adoption of Integrated Approaches to Testing and Assessment (IATA) that integrate multiple lines of evidence for clear and transparent decision-making [8].

A significant development in facilitating regulatory acceptance is the upcoming Collection of Alternative Methods for Regulatory Application (CAMERA), an interactive web-based user interface and database for accessing validated and qualified NAMs for regulatory contexts, with plans to deploy a publicly available Beta version in Q3 2025 [5]. This resource will substantially improve accessibility to approved methods and their appropriate contexts of use.

Essential Research Reagents and Tools

The implementation of NAMs requires specialized reagents, tools, and computational resources. The following table details key research solutions essential for conducting NAMs-based assessments:

Table 2: Essential Research Reagent Solutions for NAMs Implementation

| Reagent/Tool Category | Specific Examples | Function/Application | Regulatory Status |
|---|---|---|---|
| Reconstructed Tissue Models | EpiDerm, EpiSkin, SkinEthic RHE | In vitro skin corrosion/irritation testing | OECD TG 431, 439 [3] |
| 3D Tissue Equivalents | Cornea-like epithelium models, 3D human epidermis | Ocular and dermal irritation assessment | OECD TG 492, 439 [4] |
| Cell Line-Based Assays | KeratinoSens, hCLAT, IL-2 Luc assay | Skin sensitization, immunotoxicity screening | OECD TG 442D, 442E, 444A [5] |
| Microphysiological Systems | Organ-on-chip (liver, heart, immune organs) | Complex organ-level toxicity assessment | FDA qualification programs [4] [6] |
| Computational Tools | CHemical RISk Calculator (CHRIS), QSAR Toolbox | In silico toxicity prediction | FDA qualified for specific contexts [4] |
| High-Throughput Screening Platforms | ToxCast assay battery, High Throughput Transcriptomics | Rapid chemical prioritization and screening | EPA research applications [7] |
| Zebrafish Embryo Assays | EASZY assay - detection of endocrine active substances | Endocrine disruption screening | OECD TG 250 (2021) [5] |
| Fish Cell Lines | RTgill-W1 cell line assay | Acute fish toxicity prediction | OECD TG 249 (2021) [5] |
| Toxicogenomics Tools | High Throughput Phenotypic Profiling (HTPP) | Mechanistic toxicity assessment | EPA research applications [7] |

These tools are supported by publicly available data resources that enhance their utility and application. The EPA's computational toxicology data provides open access to high-throughput screening data on thousands of chemicals, while the Toxicity Reference Database (ToxRefDB) contains in vivo study data from over 6000 guideline studies for more than 1000 chemicals, serving as a resource for structured animal toxicity data for retrospective and predictive toxicology applications [7].

Implementation Challenges and Future Directions

Despite significant progress, the transition from animal studies to NAMs still faces multiple technological, industry, regulatory, and commercial challenges [3]. A primary limitation is that NAMs do not yet cover the full complexity of the toxicity endpoints that regulators require before fully authorizing a product, so important human risks could be missed if animal studies were dropped today [3]. This underscores the need for continued method development and validation to address the full spectrum of toxicity endpoints required for comprehensive safety assessment.

The lack of a unified framework for validation and acceptance represents another significant barrier to broader NAMs adoption [2]. Successful implementation requires a cross-industry approach to NAMs validation grounded in measurable quality standards and standardization, with clearly defined standards, standardized protocols, and transparent data sharing to accelerate integration into regulatory decision-making [2].

Future advancement depends on continued investment and collaboration. As noted by Labcorp, further government investment, international collaboration, global standardization, and updated regulatory frameworks are required to enable gaps to be filled and for NAMs to fulfill their potential to replace in vivo models completely [3]. Promising developments include the U.K.'s commitment to £30 million for a preclinical translational models hub and the FDA's $5 million in new funding to support its New Alternative Methods Program [9] [4].

For researchers implementing NAMs, success requires careful consideration of the specific context of use, selection of appropriately validated methods for targeted endpoints, and engagement with regulatory agencies early in the process to ensure alignment with acceptance criteria. As the field evolves, the gradual development and validation of NAMs for specific purposes will supplement animal use initially, with increasing replacement as the science and regulatory confidence advance [3].

The field of toxicology is undergoing a fundamental transformation, moving away from traditional animal-based testing toward a new paradigm centered on human biology and technological innovation. New Approach Methodologies (NAMs) represent a suite of innovative tools, including in vitro systems, in silico models, and advanced computational approaches, that are changing how chemical safety is assessed [10]. This shift is not merely technological: it is driven by the scientific limitations of existing methods, by compelling ethical considerations, and by economic imperatives. The convergence of these forces is accelerating the adoption of NAMs within regulatory ecotoxicology and human health assessment, creating a pivotal moment for researchers, regulatory agencies, and industry stakeholders. The transition represents more than just alternative test methods; it embodies a fundamental reimagining of toxicological safety assessment through more protective and relevant models that have a reduced reliance on animals [10]. This article examines the multidimensional drivers propelling this change and provides technical guidance for professionals navigating this evolving landscape.

Scientific Imperatives: Beyond Animal Model Limitations

The Poor Predictive Value of Traditional Models

The scientific case for NAMs begins with recognizing the critical limitations of conventional animal testing, particularly their questionable predictivity for human outcomes. Extensive research has documented that rodents, the most common test species in safety assessment, have a surprisingly low true positive human toxicity predictivity rate of only 40%–65% [10]. Despite this limited accuracy, these animal models have historically been treated as a "gold standard" for validating new methods, creating a scientific paradox where human-relevant biology is benchmarked against imperfect surrogates. This fundamental misalignment between animal models and human biology drives the scientific imperative for more physiologically relevant testing systems.
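To make the predictivity figures concrete, the true positive rate quoted above is the sensitivity of a 2x2 comparison between model calls and human outcomes. The sketch below shows the arithmetic only; the counts in the usage note are invented purely for illustration and are not taken from any cited study.

```python
def predictivity_metrics(true_pos: int, false_pos: int,
                         true_neg: int, false_neg: int) -> dict[str, float]:
    """Sensitivity, specificity, and balanced accuracy from a confusion matrix."""
    sensitivity = true_pos / (true_pos + false_neg)  # true positive rate
    specificity = true_neg / (true_neg + false_pos)  # true negative rate
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "balanced_accuracy": (sensitivity + specificity) / 2.0,
    }
```

With hypothetical counts of 50 true positives and 50 false negatives, `predictivity_metrics(50, 20, 80, 50)` gives a sensitivity of 0.5, meaning half of the human toxicants would have been flagged by the model.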

The scientific imperative is further strengthened by the growing recognition that NAMs do not aim to simply recapitulate animal tests without animals, but to provide more relevant information on chemicals to enable exposure-based safety assessment [10]. This represents a paradigm shift from observing toxicity in whole organisms to understanding mechanisms of action in human-relevant systems. The aim is to improve the overall approach to safety assessment rather than to find direct replacements for animal tests, acknowledging that a one-to-one replacement approach is often not scientifically achievable for complex endpoints [10].

Advanced Capabilities of New Approach Methodologies

NAMs leverage breakthroughs in biotechnology and computational sciences to overcome the limitations of traditional models. These approaches include microphysiological systems (organs-on-chips), high-throughput screening platforms, transcriptomics, proteomics, and sophisticated computational models including artificial intelligence and machine learning [11] [10]. These technologies enable researchers to study chemical effects on human biology at unprecedented resolutions, from molecular initiation events to tissue-level responses.

Table 1: Comparison of Traditional Animal Models vs. New Approach Methodologies

| Parameter | Traditional Animal Models | New Approach Methodologies (NAMs) |
|---|---|---|
| Biological Relevance | Limited human relevance (40-65% predictivity) [10] | High human relevance using human cells/tissues |
| Mechanistic Insight | Often observational with limited mechanistic data | High-resolution mechanistic data on pathways |
| Throughput | Low (weeks to months per study) | High (days to weeks for multiple compounds) |
| Cost per Compound | High (tens to hundreds of thousands of dollars) | Significantly lower, especially for screening |
| Regulatory Acceptance | Well-established but increasingly questioned | Growing, with case-by-case and systematic acceptance [2] [12] |
| Ethical Considerations | Significant animal use concerns | Aligns with 3Rs principles (Replacement, Reduction, Refinement) |

Artificial intelligence, especially machine learning and fuzzy logic systems, is now being widely used to predict chemical toxicity, enabling researchers to simulate thousands of chemical reactions and toxic interactions without laboratory experimentation [11]. These computational approaches are particularly valuable for prioritizing which compounds require urgent regulatory attention among the thousands of chemicals in commercial use. The integration of AI with experimental data creates powerful predictive models that continuously improve with additional data input.

Ethical Imperatives: The Evolution of Research Ethics

The 3Rs Framework and Regulatory Momentum

The ethical foundation for NAMs adoption rests on the principles of the 3Rs (Replacement, Reduction, and Refinement of animals in research), which have gained substantial traction within the scientific community [10]. This ethical framework has evolved from an aspirational guideline to an operational mandate embodied in recent legislation and regulatory policies worldwide. The FDA Modernization Act 2.0 (2022) represents a landmark in this evolution, explicitly authorizing the use of alternatives to animal testing and removing previous requirements for animal studies in biological product licensing [13]. Similarly, the Toxic Substances Control Act (TSCA), as amended by the Frank R. Lautenberg Chemical Safety for the 21st Century Act, directs the EPA to reduce and replace vertebrate animal testing to the extent practicable [13].

This regulatory momentum continues to accelerate, as evidenced by the FDA's 2025 Roadmap to Reducing Animal Testing in preclinical safety studies, which outlines a strategic, stepwise approach toward phasing out animal testing with scientifically-validated NAMs [13]. At least twelve U.S. states, including California, New York, and Virginia, have banned the sale of cosmetics tested on animals, creating a regulatory patchwork that further incentivizes non-animal approaches for consumer products [13]. Internationally, the European Union's upcoming REACH 2.0 revision, expected by the end of 2025, continues this trend by potentially introducing additional provisions that could further reduce animal testing requirements while maintaining safety standards [12].

Beyond Animal Welfare: Expanded Ethical Considerations

While animal welfare concerns remain a primary ethical driver, the ethical imperative for NAMs adoption has expanded to include human health protection and environmental justice. Traditional animal testing's limited predictivity for human outcomes raises ethical questions about relying on data that may not adequately protect human populations from chemical exposures [10]. NAMs using human cells and tissues offer the potential for more human-relevant safety assessments, potentially reducing the risk of approving chemicals that might prove harmful to people.

Furthermore, NAMs enable more comprehensive environmental risk assessment through approaches like ecosystem-level modeling and community-level toxicological assessments that consider food web interactions, habitat loss, and biodiversity impacts [11]. These approaches address ethical obligations to protect entire ecosystems rather than just individual species, supporting more informed environmental decision-making that acknowledges the complex interdependencies in natural systems.

Economic Imperatives: Efficiency and Innovation Drivers

Cost and Time Advantages of NAMs

The economic case for NAMs stems from their significant advantages in both cost efficiency and development timeline acceleration. While traditional animal studies can require months to years and cost tens to hundreds of thousands of dollars per chemical, NAMs enable rapid screening of multiple compounds simultaneously at a fraction of the cost. High-throughput in vitro systems and in silico models allow researchers to evaluate thousands of chemicals in days or weeks, providing early triage decisions that focus resources on the most promising candidates [11] [10]. This efficiency is particularly valuable for assessing the vast number of chemicals in commerce that lack comprehensive safety data.

The economic imperative extends beyond direct cost savings to include risk mitigation and innovation acceleration. By providing more human-relevant data earlier in development, NAMs help identify potential safety issues before significant resources are invested, reducing late-stage attrition rates that substantially impact development costs. For industries facing increasing regulatory scrutiny of chemical mixtures, NAMs offer practical approaches for assessing combination effects that would be prohibitively expensive and ethically challenging using traditional animal models [12].

Regulatory Efficiency and Digital Transformation

The ongoing digital transformation of regulatory science presents additional economic incentives for NAMs adoption. The European Union's transition toward digital labeling requirements and the implementation of the Digital Product Passport (DPP) create infrastructure ideally suited for NAMs data integration [12]. While this transition requires initial investment in digital tools and system upgrades, it ultimately streamlines regulatory compliance and supply chain communication. The 2024 "stop-the-clock" mechanism for CLP regulation implementation provides businesses with additional time to adapt to these digital requirements, representing a strategic opportunity to invest in NAMs-compatible infrastructure [12].

Table 2: Economic Impact Assessment of NAMs Implementation

| Economic Factor | Traditional Testing Paradigm | NAMs-Enabled Paradigm |
|---|---|---|
| Testing Costs | High per compound, especially for chronic endpoints | Lower per compound, especially for screening |
| Timeline | Months to years for complete assessment | Days to weeks for initial screening and prioritization |
| Regulatory Compliance | Increasing costs due to animal testing restrictions | Future-proofed against evolving regulatory restrictions |
| Data Utility | Primarily for regulatory compliance | Mechanistic data useful for product development and optimization |
| Infrastructure Needs | Established but increasingly contested | Requires initial investment but offers long-term advantages |

Technical Implementation: Frameworks and Methodologies

Validation and Regulatory Acceptance Frameworks

A critical challenge in NAMs implementation has been the lack of standardized validation and acceptance criteria [2]. In response, regulatory agencies and standards organizations have developed systematic frameworks to establish scientific confidence in NAMs for specific regulatory decisions. The Interagency Coordinating Committee on the Validation of Alternative Methods (ICCVAM) has proposed a modernized approach to validation that emphasizes integrating results from multiple in vitro, in chemico, and in silico approaches rather than finding alternatives to single in vivo tests [13]. Similarly, the Organisation for Economic Co-operation and Development (OECD) has established Test Guidelines for Defined Approaches (DAs), which combine specific data sources with fixed data interpretation procedures [10].

Successful examples of this framework approach include OECD Test Guideline 497 for skin sensitization and OECD TG 467 for eye irritation, which are now widely used in regulatory settings worldwide [10]. These Defined Approaches demonstrate that combinations of NAMs can provide reproducible, reliable safety decisions without animal data. The Next Generation Risk Assessment (NGRA) framework represents a more comprehensive implementation, defined as an exposure-led, hypothesis-driven approach that integrates in silico, in chemico and in vitro approaches, where NGRA is the overall objective, and NAMs are the tools used to achieve it [10].

Integrated Workflow for NAMs Implementation

The implementation of NAMs follows a systematic workflow that integrates multiple technologies and data sources. The diagram below illustrates a representative workflow for chemical safety assessment using NAMs:

Chemical Safety Assessment Workflow

This workflow begins with comprehensive chemical characterization, followed by sequential application of in silico, in vitro, and mechanistic approaches, culminating in integrated risk assessment. The tiered strategy allows for early termination of testing for clearly safe or hazardous compounds, conserving resources for chemicals requiring more extensive evaluation.
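The tiered logic described here, with early termination once a tier gives a clear answer, can be sketched as a simple loop. Everything in this example is hypothetical: the `Tier` class, the outcome labels, and the idea that each tier returns either a decision or `None` are illustrative assumptions rather than part of any published framework.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Tier:
    name: str
    # Returns "low concern", "high concern", or None when the tier is inconclusive
    assess: Callable[[str], Optional[str]]


def run_tiers(chemical: str, tiers: list[Tier]) -> tuple[str, list[tuple[str, Optional[str]]]]:
    """Apply tiers in order and stop at the first conclusive outcome."""
    trail: list[tuple[str, Optional[str]]] = []
    for tier in tiers:
        outcome = tier.assess(chemical)
        trail.append((tier.name, outcome))
        if outcome is not None:
            return outcome, trail  # early termination saves later tiers
    return "integrated assessment needed", trail
```

The returned trail records which tiers were actually run, which is useful when documenting why testing stopped where it did.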

Essential Research Reagents and Platforms

Successful implementation of NAMs requires specific research tools and platforms designed to replicate human biological responses. The table below details essential research reagents and their applications in NAMs-based assessment:

Table 3: Essential Research Reagent Solutions for NAMs Implementation

| Reagent/Platform | Function | Application Examples |
|---|---|---|
| Human Cell Lines (primary, iPSCs, immortalized) | Provide human-relevant biological system for toxicity assessment | HepaRG for hepatotoxicity, renal proximal tubule cells for nephrotoxicity |
| Organ-on-a-Chip Platforms | Microphysiological systems that mimic organ-level structure and function | Lung-on-chip for inhalation toxicity, blood-brain barrier models for neurotoxicity |
| High-Content Screening Assays | Multiparametric analysis of cellular responses using automated microscopy | Mitochondrial membrane potential, nuclear morphology, oxidative stress markers |
| Pathway-Specific Reporter Systems | Monitor activation of specific toxicological pathways | Antioxidant response element (ARE) reporters for oxidative stress |
| Multi-omics Reagents (transcriptomics, proteomics, metabolomics) | Comprehensive molecular profiling of chemical effects | RNA sequencing for mode-of-action analysis, mass spectrometry for metabolite identification |
| QSAR Software and Databases | Quantitative Structure-Activity Relationship modeling for prediction | OECD QSAR Toolbox, EPA's CompTox Chemicals Dashboard |

Case Studies: Demonstrating Real-World Application

Crop Protection Products: Captan and Folpet Assessment

A compelling case study demonstrating NAMs efficacy involves the crop protection products Captan and Folpet, where a multiple NAM testing strategy was performed using 18 in vitro studies [10]. The assessment included OECD Test Guideline-compliant assays for eye and skin irritation and skin sensitization, plus novel approaches like the GARDskin skin sensitization and rat EpiAirway acute airway toxicity assays. The NAMs package appropriately identified both chemicals as contact irritants, demonstrating that a suitable risk assessment could be performed with available NAM tests, broadly aligning with risk assessments conducted using existing mammalian test data [10]. This case illustrates how combinations of established and novel NAMs can provide comprehensive safety assessments for specific regulatory decisions.

Regulatory Integration: EPA and Health Canada Initiatives

Regulatory agencies worldwide are actively integrating NAMs into their chemical assessment frameworks. The U.S. Environmental Protection Agency (EPA) has developed a New Approach Methods Work Plan prioritizing activities to reduce vertebrate animal testing while continuing to protect human health and the environment [13]. Health Canada has proposed using NAM-based points of departure alongside physiologically based kinetic estimates of systemic exposures in regulatory decision-making [10]. These initiatives demonstrate the progressive regulatory acceptance of NAMs and provide templates for other agencies seeking to implement similar approaches.

The adoption of New Approach Methodologies represents a convergence of scientific necessity, ethical responsibility, and economic pragmatism. The scientific imperatives are clear: traditional animal models show limited predictivity for human outcomes, while NAMs offer more human-relevant, mechanistic insights into chemical toxicity. The ethical drivers are compelling: evolving regulatory policies and public expectation demand reduced animal testing while improving human health protection. The economic advantages are persuasive: NAMs offer faster, more cost-effective safety assessment that aligns with digital transformation of regulatory science.

For researchers and drug development professionals, navigating this changing landscape successfully requires embracing integrated testing strategies that combine multiple NAMs, engaging early with regulatory agencies on NAMs implementation, investing in digital infrastructure for data management and submission, and contributing to the growing body of case studies demonstrating successful NAMs application. The ongoing development of unified frameworks for validation and acceptance will further accelerate this transition, ultimately benefiting human health, environmental protection, and scientific innovation [2]. The paradigm shift toward NAMs is not merely inevitable but already underway, representing the future of toxicological science and regulatory safety assessment.

New Approach Methodologies (NAMs) represent a transformative shift in toxicology and safety assessment, moving away from traditional animal models toward more predictive, human-relevant systems. Formally coined in 2016, the term "NAM" encompasses a broad suite of innovative technologies and approaches used for the regulatory hazard or safety assessment of chemicals, drugs, and other substances [14]. These methodologies are defined by their ability to replace, reduce, or refine (the 3Rs) animal testing while improving the human relevance of safety evaluations [15] [3]. The driving forces behind NAM adoption include scientific limitations of animal models in predicting human responses, ethical pressures, economic benefits, and growing regulatory support [16] [14] [17].

The fundamental components of NAMs include in vitro models (cell- and tissue-based systems), in silico computational tools, and integrated testing strategies that combine multiple data sources [14]. These are not standalone techniques but rather a complementary toolkit designed to provide a more mechanistic understanding of toxicity pathways. This technical guide examines the core components of NAMs, their methodologies, and their integration within modern regulatory frameworks for ecotoxicology and drug development.

In Vitro Methodologies

Definition and Scope

In vitro NAMs utilize cell- or tissue-based systems maintained in controlled laboratory environments to assess biological responses to chemical compounds and pharmaceuticals. These models range from simple two-dimensional cell cultures to increasingly complex three-dimensional systems that better recapitulate tissue-level physiology and function [14]. The primary advantage of in vitro systems lies in their use of human-derived cells, which provides direct insight into human biological responses without species extrapolation uncertainties [16].

Key In Vitro Platforms and Applications

In vitro NAMs encompass diverse platforms, each with specific applications and technological sophistication. The table below summarizes the primary in vitro platforms and their representative uses in safety assessment.

Table 1: Key In Vitro Platforms and Their Applications in Safety Assessment

| Platform Category | Technical Description | Key Applications | Complexity Level |
|---|---|---|---|
| 2D Cell Cultures | Monolayers of cells on flat surfaces | Basic toxicity screening, high-throughput assays [14] | Low |
| 3D Spheroids & Organoids | Multicellular aggregates with cell-cell interactions | Disease modeling, developmental toxicity, tissue-specific responses [18] [14] | Medium |
| Microphysiological Systems (MPS)/Organ-on-a-Chip | Microengineered systems mimicking organ-level function | Pharmacokinetics, toxic mechanisms, tissue-tissue interfaces [16] [14] | High |
| Stem Cell-Based Models | Human embryonic or induced pluripotent stem cells (hESCs/hiPSCs) | Developmental and reproductive toxicity (DART), organogenesis studies [18] | Medium-High |

Detailed Experimental Protocol: Zebrafish Embryotoxicity Test (ZET)

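The Zebrafish Embryotoxicity Test (ZET) is one of the most cited NAMs in the literature for assessing developmental toxicity [18]. Although it uses whole embryos (eleutheroembryos) rather than isolated cells or tissues, it is commonly grouped with in vitro methods. Below is a generalized methodology.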

1. Test System Preparation:

- Organism: Wild-type or transgenic zebrafish (Danio rerio) embryos.

- Collection: Collect embryos from adult breeding pairs following natural spawning.

- Selection: At 4-6 hours post-fertilization (hpf), select fertilized, cleaving embryos under a stereomicroscope.

2. Test Article Exposure:

- Exposure Initiation: Transfer 20-30 embryos per treatment group to multi-well plates containing embryo medium.

- Concentration Range: Expose embryos to a minimum of five concentrations of the test substance, typically in a logarithmic series (e.g., 0.01, 0.1, 1, 10, 100 µM). Include a solvent control (e.g., 0.1% DMSO) and a positive control (e.g., 4 mg/L 3,4-dichloroaniline, the positive control specified in OECD TG 236).

- Exposure Conditions: Maintain plates at 28°C with a 14h:10h light:dark cycle. Refresh test solutions every 24 hours to ensure stable compound concentration.

3. Endpoint Measurement and Analysis:

- Observation Timepoints: Record phenotypic endpoints at critical developmental stages (24, 48, 72, and 96 hpf).

- Key Endpoints: Score for coagulation, lack of somite formation, non-detachment of tail, and lack of heartbeat [18]. Additional endpoints can include yolk sac edema, pericardial edema, and malformations of the head, jaw, and tail.

- Data Analysis: Calculate the percentage of embryos exhibiting lethal or sublethal malformations at each concentration. Determine the LC50 (lethal concentration for 50% of embryos) and EC50 (effect concentration for 50% of embryos for specific malformations) using statistical models (e.g., probit analysis).

4. Validation and Interpretation:

- Predictive Model: Apply the Fish Embryo Acute Toxicity (FET) test prediction model to classify the test substance's toxicity.

- Context of Use: The ZET provides information on the potential of a chemical to cause developmental defects. It is often used as part of an Integrated Approach to Testing and Assessment (IATA) for early screening [18].
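The LC50 calculation in step 3 can be illustrated with a short sketch. The code below is a simplified, unweighted approximation of probit analysis (a straight-line fit of probit-transformed mortality against log10 concentration), not the iteratively reweighted maximum-likelihood procedure used by dedicated statistics packages. The function name `probit_lc50` and the mortality counts are hypothetical and chosen only for illustration.

```python
import math
from statistics import NormalDist


def probit_lc50(concs, n_dead, n_total):
    """Estimate LC50 from a least-squares fit of probit(mortality) vs log10(conc).

    Simplified and unweighted; a full probit analysis would weight each
    group and fit by maximum likelihood.
    """
    std_normal = NormalDist()
    xs, ys = [], []
    for conc, dead, total in zip(concs, n_dead, n_total):
        p = dead / total
        # Clamp 0 % and 100 % responses so the probit stays finite.
        p = min(max(p, 0.5 / total), 1 - 0.5 / total)
        xs.append(math.log10(conc))
        ys.append(std_normal.inv_cdf(p))
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    intercept = mean_y - slope * mean_x
    # A probit of 0 corresponds to 50 % mortality.
    return 10 ** (-intercept / slope)


# Hypothetical data: 20 embryos per group, five concentrations in µM.
concs = [0.1, 1, 10, 100, 1000]
dead = [1, 5, 10, 15, 19]
lc50 = probit_lc50(concs, dead, [20] * len(concs))
print(f"Estimated LC50: {lc50:.2f} µM")
```

Because the example mortalities are symmetric around the middle concentration, the estimate lands at about 10 µM. The same function could be applied to counts of a specific malformation to obtain an EC50.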

Research Reagent Solutions for In Vitro NAMs

Table 2: Essential Research Reagents for Advanced In Vitro NAMs

| Reagent/Material | Function and Application |
|---|---|
| Human Induced Pluripotent Stem Cells (hiPSCs) | Foundation for generating patient-specific cell types for disease modeling and toxicity testing; used in developmental toxicity assays [18]. |
| Extracellular Matrix (ECM) Hydrogels | (e.g., Matrigel, collagen) Provide 3D scaffolding for organoid and spheroid formation, enabling complex cell-cell interactions. |
| Microfluidic Organ-Chip Devices | Polymer-based chips with micro-channels and chambers that house living cells to simulate organ-level physiology and fluid flow [14]. |
| Cryopreserved Primary Hepatocytes | Gold-standard human liver cells for evaluating hepatic metabolism and hepatotoxicity in 2D and 3D culture systems. |
| Mechanistic Biomarker Assay Kits | (e.g., CYP450 activity, apoptosis, oxidative stress) Quantify specific molecular initiating events and key events in Adverse Outcome Pathways. |

In Silico Methodologies

Definition and Scope

In silico NAMs comprise computational tools and models that predict chemical properties, biological activity, and toxicity based on chemical structure and existing data [14]. These methods are highly scalable, cost-effective, and can screen thousands of compounds virtually before any laboratory testing is initiated. They are particularly valuable for prioritizing chemicals for further testing and filling data gaps through quantitative structure-activity relationship (QSAR) models and read-across approaches [15].

Key In Silico Approaches and Applications

In silico methodologies leverage advances in computing power and data science to model complex biological interactions. The table below categorizes the primary computational approaches used in safety sciences.

Table 3: Key In Silico Approaches and Their Applications in Safety Assessment

| Approach Category | Technical Description | Key Applications | Data Input Requirements |
|---|---|---|---|
| Quantitative Structure-Activity Relationship (QSAR) | Statistical models linking chemical descriptors to biological activity | Predicting physicochemical properties, ecotoxicity, and specific toxicity endpoints [14] | Chemical structure, validated experimental data for training |
| Physiologically Based Pharmacokinetic (PBPK) Modeling | Mathematical models simulating Absorption, Distribution, Metabolism, and Excretion (ADME) | Extrapolating in vitro bioactivity data to human exposure contexts; predicting internal target site concentrations [16] | In vitro absorption/metabolism data, physiological parameters |
| Adverse Outcome Pathway (AOP) Frameworks | Structured knowledge assemblies linking molecular initiation to adverse outcomes | Organizing mechanistic data for use in Integrated Approaches to Testing and Assessment (IATA) [14] | Empirical data from in vitro and in silico studies for Key Events |
| Artificial Intelligence/Machine Learning (AI/ML) | Algorithms that identify complex patterns in large-scale biological and chemical data | Toxicity prediction, biomarker discovery, and enhancing QSAR/PBPK models [16] | Large, high-quality 'omics' or high-throughput screening (HTS) data |

Detailed Experimental Protocol: QSAR Model Development

The development of a Quantitative Structure-Activity Relationship (QSAR) model is a foundational in silico activity. The following outlines a standard workflow.

1. Dataset Curation:

- Data Collection: Compile a high-quality dataset of chemical structures and their associated experimental biological activity (e.g., IC50, LC50, binary toxic/non-toxic outcome) from reliable public (e.g., EPA's CompTox Chemicals Dashboard) or proprietary sources.

- Chemical Standardization: Process all structures to remove duplicates, salts, and standardize tautomers and stereochemistry. This ensures consistency in descriptor calculation.

- Dataset Division: Split the curated dataset into a training set (≈70-80%) for model building and a hold-out test set (≈20-30%) for final model validation.

2. Molecular Descriptor Calculation and Selection:

- Descriptor Calculation: Use cheminformatics software (e.g., PaDEL, RDKit, Dragon) to compute numerical descriptors representing chemical features (e.g., molecular weight, logP, topological indices, electronic properties).

- Descriptor Filtering: Remove constant or near-constant descriptors. Apply feature selection techniques (e.g., correlation analysis, genetic algorithms) to reduce dimensionality and avoid overfitting, retaining the most informative descriptors.

3. Model Building and Internal Validation:

- Algorithm Selection: Choose a machine learning algorithm appropriate for the data (e.g., Partial Least Squares for continuous outcomes; Random Forest or Support Vector Machines for classification).

- Model Training: Train the model using the selected descriptors and activity data from the training set.

- Internal Validation: Assess model performance using cross-validation (e.g., 5-fold or 10-fold) on the training set. Common metrics include Q² (for regression) and cross-validated accuracy (for classification).

4. Model Validation and Applicability Domain:

- External Validation: Use the hold-out test set to evaluate the model's predictive performance. Report standard metrics (e.g., R², RMSE for regression; accuracy, sensitivity, specificity for classification).

- Define Applicability Domain (AD): Characterize the chemical space where the model's predictions are considered reliable, based on the training set's descriptor ranges. Predictions for chemicals outside the AD should be treated with caution [15].
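Step 4 of the workflow above can be made concrete with a small, dependency-free sketch. It shows one simple way to define an applicability domain (a bounding box over the training set's descriptor ranges) together with the classification metrics named under external validation. The function names, descriptor values, and labels are hypothetical; real workflows typically compute descriptors with a cheminformatics toolkit and often use stricter AD definitions, such as leverage or distance-based methods.

```python
from typing import Dict, List, Sequence, Tuple


def descriptor_ranges(train: Sequence[Sequence[float]]) -> List[Tuple[float, float]]:
    """Return the (min, max) of each descriptor column in the training set."""
    return [(min(col), max(col)) for col in zip(*train)]


def in_domain(x: Sequence[float], ranges: List[Tuple[float, float]]) -> bool:
    """Bounding-box AD: every descriptor must fall inside the training range."""
    return all(lo <= value <= hi for value, (lo, hi) in zip(x, ranges))


def _ratio(num: int, den: int) -> float:
    return num / den if den else float("nan")


def classification_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Accuracy, sensitivity, and specificity for binary labels (1 = toxic)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    tn = sum(t == 0 and p == 0 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)
    return {
        "accuracy": _ratio(tp + tn, len(pairs)),
        "sensitivity": _ratio(tp, tp + fn),
        "specificity": _ratio(tn, tn + fp),
    }


# Hypothetical training descriptors: [molecular weight, logP].
train_descriptors = [[180.2, 1.5], [250.3, 3.1], [310.4, 2.2]]
ranges = descriptor_ranges(train_descriptors)
print(in_domain([200.0, 2.0], ranges))  # inside the training ranges
print(in_domain([450.0, 2.0], ranges))  # molecular weight outside the range

# Hypothetical hold-out labels and model predictions.
metrics = classification_metrics([1, 1, 0, 0, 1], [1, 0, 0, 0, 1])
print(metrics)
```

A bounding box is deliberately permissive: a query chemical can sit inside every individual range and still be far from any training compound, which is why it is best read as a minimum check rather than a full reliability estimate.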

Integrated Testing Strategies

Conceptual Framework

Integrated Testing Strategies (ITS) represent the pinnacle of the NAMs paradigm, combining data from multiple sources—in silico, in vitro, and existing in vivo data—within a structured framework to support a regulatory decision without new animal studies [14]. The core principle is weight-of-evidence (WoE), where complementary pieces of evidence are synthesized to build scientific confidence. ITS are often built around Adverse Outcome Pathways (AOPs), which provide a systematic map of the measurable key events leading from a molecular initiating event to an adverse outcome at the organism or population level [18] [14].

Components of Integrated Approaches

Successful integration relies on several conceptual and computational frameworks:

- Integrated Approaches to Testing and Assessment (IATA): These are pragmatic, hypothesis-based approaches that integrate multiple data sources to inform regulatory decisions for a specific hazard endpoint [18].

- Weight-of-Evidence (WoE) Frameworks: Structured methods for transparently weighing the strength, consistency, and relevance of all available data to reach a conclusion [18].

- Bioinformatics and Computational Integration Tools: AI/ML platforms and computational models (e.g., quantitative systems pharmacology (QSP) and PBPK models) that fuse high-dimensional in vitro and in silico data to generate human-relevant predictions [16].

Workflow for an Integrated Strategy

The following steps outline a generalized workflow for an ITS applied to chemical safety assessment.

1. Problem Formulation and Context of Use Definition:

- Clearly define the regulatory question and the specific context of use (e.g., screening, prioritization, or definitive hazard classification) [16]. This initial step dictates the required level of confidence and the stringency of the methods used.

2. AOP Development and Application:

- Identify relevant Adverse Outcome Pathways (AOPs) that link molecular initiating events (e.g., receptor binding) to the adverse outcome of concern. The AOP framework guides the selection of which key events to measure in vitro and which to predict in silico [14].

3. In Silico Profiling:

- Use QSAR models and read-across from structurally similar, data-rich chemicals to predict the potential for the molecular initiating event and other early key events [15]. This step helps flag potential hazards and design targeted in vitro tests.

4. Targeted In Vitro Assay Battery:

- Conduct a battery of in vitro tests designed to measure specific key events identified in the AOP. For example, for endocrine disruption, this might include assays for estrogen receptor (ER) and androgen receptor (AR) binding, and steroid hormone production [18].

5. PBPK Modeling and In Vitro to In Vivo Extrapolation (IVIVE):

- Use Physiologically Based Pharmacokinetic (PBPK) models to translate the effective concentrations from in vitro assays into equivalent human external exposure doses. This critical step contextualizes the bioactivity data for risk assessment [16].

6. Weight-of-Evidence Integration and Decision:

- Synthesize all data streams (in silico predictions, in vitro key event data, PBPK modeling output) within a WoE framework. The integration can be qualitative or supported by quantitative scoring systems. The outcome is a conclusion on the potential hazard and risk that can be used for regulatory decision-making [18] [16].
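Where step 6 is supported by a quantitative scoring system, one of the simplest possible forms is a weighted average across lines of evidence. The sketch below is purely illustrative: the `Evidence` type, the 0-to-1 concern scale, and the weights are assumptions made for the example, not an established WoE scheme, and any real scoring system would need to be justified and documented for its context of use.

```python
from typing import List, NamedTuple


class Evidence(NamedTuple):
    source: str
    concern: float  # 0 = no indication of hazard, 1 = strong indication (hypothetical scale)
    weight: float   # relative reliability/relevance assigned by the assessor


def weighted_concern(lines: List[Evidence]) -> float:
    """Weighted average of concern scores across all lines of evidence."""
    total_weight = sum(e.weight for e in lines)
    if total_weight <= 0:
        raise ValueError("At least one line of evidence needs a positive weight")
    return sum(e.concern * e.weight for e in lines) / total_weight


# Hypothetical evidence for an endocrine-disruption question.
evidence = [
    Evidence("QSAR prediction", concern=0.5, weight=1.0),
    Evidence("ER binding assay", concern=1.0, weight=2.0),
    Evidence("PBPK-based exposure margin", concern=0.0, weight=1.0),
]
score = weighted_concern(evidence)
print(f"Weighted concern score: {score:.3f}")
```

The value of a scheme like this lies less in the number itself than in forcing the weights and individual judgments to be written down, which makes the conclusion easier to audit.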

The adoption of NAMs in regulatory decision-making is actively evolving. Regulatory bodies like the FDA and EMA are promoting frameworks to enable their use, emphasizing the need for a clearly defined Context of Use (COU) for each application [16] [17]. A significant hurdle has been the traditional, resource-intensive validation process, which is now being modernized through Scientific Confidence Frameworks (SCFs). These SCFs provide a flexible, fit-for-purpose approach to demonstrate the reliability and relevance of a NAM for its specific COU, based on elements such as biological relevance, technical characterization, and data integrity [15].

Building scientific confidence also relies heavily on case studies that demonstrate the effectiveness of NAMs in a regulatory context and on interdisciplinary collaboration across developers, users, and regulators [15]. For clinical pharmacologists and toxicologists, this shift necessitates early involvement in defining the COU for NAMs and leveraging AI/ML and mechanistic modeling (PBPK, QSP) to translate NAM-derived data into clinically relevant predictions [16].

In conclusion, the core components of NAMs—in vitro methods, in silico tools, and integrated strategies—represent a paradigm shift toward a more human-relevant, mechanistic, and efficient approach to safety science. While challenges in standardization, validation, and regulatory harmonization remain, the collaborative efforts of researchers, industry, and regulators are accelerating the integration of these powerful methodologies into the mainstream of ecotoxicology and drug development.

The field of toxicology is undergoing a fundamental paradigm shift, moving from traditional animal-based safety assessments toward a new era of human-relevant, mechanism-based testing. This transition is being catalyzed by both ethical imperatives and growing scientific evidence highlighting the limitations of animal models, including species variation, costly and time-consuming trials, and frequent failures to accurately predict human responses [19]. New Approach Methodologies (NAMs) represent a broad category of innovative tools, including in vitro cell-based systems, organ-on-chip models, and in silico computational approaches, that offer more predictive, efficient, and human-relevant safety assessments [10] [19].

The regulatory landscape is rapidly evolving to keep pace with these scientific advancements. Global regulatory agencies are now actively developing frameworks and policies to facilitate the integration of NAMs into chemical and drug safety evaluation. This shift represents more than just a substitution of methods; it constitutes a comprehensive overhaul of safety assessment paradigms aimed at making them more predictive, responsive, and efficient [19]. This technical guide examines the current global regulatory momentum, analyzes landmark legislation and policies, and provides researchers with practical frameworks for navigating this new landscape, all within the context of advancing NAM acceptance in ecotoxicology and human health risk assessment.

Global Regulatory Landscape and Policy Frameworks

The acceptance and integration of NAMs into regulatory decision-making is progressing through coordinated efforts across international jurisdictions. These initiatives range from formal legislation to strategic work plans and guidance documents designed to build scientific confidence in alternative methods.

Table 1: Global Regulatory Initiatives Advancing NAMs

| Country/Agency | Policy/Legislative Initiative | Key Objectives | Status/Impact |
|---|---|---|---|
| United States (FDA) | FDA Modernization Act 2.0 (2022) & Recent Policy Announcements | Permits the use of alternative methods beyond animal testing for drug safety and efficacy [6]. | Active implementation; FDA announced plan to phase out animal testing for monoclonal antibodies [6]. |
| United States (EPA) | NAMs Work Plan under TSCA | Aims to reduce vertebrate animal testing while protecting human health and the environment [20]. | Ongoing; includes developing scientific confidence frameworks and case studies [20]. |
| European Union (EMA) | Regulatory Acceptance Pathways | Provides formal procedures (Qualification, Scientific Advice) for NAM developers to gain regulatory acceptance [21]. | Active pathways; CHMP can issue qualification opinions on NAMs for specific contexts of use [21]. |
| U.S. Congress (Proposed) | Bill S355 (2025) | Seeks to update FDA regulations, replacing "animal" test references with "nonclinical" tests [22]. | Introduced February 2025; would modernize regulatory language to accommodate alternatives [22]. |

United States: Legislative and Policy Developments

The United States has witnessed significant regulatory momentum through both legislative action and agency-led initiatives. The FDA Modernization Act 2.0, signed into law in 2022, was a pivotal legislative achievement that removed the mandatory requirement for animal testing for drugs and biologics, explicitly allowing the use of alternative methods. Building on this foundation, the FDA has taken groundbreaking steps to implement this authority. In a recent announcement, the agency revealed a plan to phase out animal testing requirements specifically for monoclonal antibody therapies and other drugs, replacing them with more effective, human-relevant methods [6]. This initiative encourages developers to leverage advanced computer simulations and human-based lab models, such as organoids and organ-on-a-chip systems, to predict drug safety and efficacy [6].

Concurrently, the Environmental Protection Agency (EPA) has advanced its own strategic approach under the Toxic Substances Control Act (TSCA). The EPA's NAMs Work Plan, first released in 2020 and updated in 2021, establishes a comprehensive framework to prioritize activities that reduce vertebrate animal testing while continuing to protect human health and the environment [20]. The plan's key objectives include evaluating regulatory flexibility for accommodating NAMs, developing baselines to assess progress, establishing scientific confidence in NAMs, and demonstrating their application to regulatory decisions through case studies [20].

Further legislative momentum is evidenced by the recent introduction of Bill S355 in February 2025. This bill would require the Secretary of Health and Human Services to publish a rule updating various FDA regulations to replace references to "animal" tests with "nonclinical" tests, thereby modernizing the language around research methodologies and potentially allowing for broader acceptance of alternative approaches [22].

European Union: Structured Pathways for Acceptance

The European Medicines Agency (EMA) has established formal, structured pathways to foster regulatory acceptance of NAMs. Unlike the US approach, which has relied heavily on recent legislation, the EU system emphasizes scientific validation and qualification procedures within existing regulatory frameworks. EMA provides multiple interaction mechanisms for NAM developers, including:

- Briefing Meetings: Hosted through EMA's Innovation Task Force (ITF), these meetings allow for informal discussions on NAM development and readiness for regulatory acceptance [21].

- Scientific Advice: Enables developers to ask specific scientific and regulatory questions about including NAM data in future clinical trial or marketing authorization applications [21].

- Qualification Procedure: For NAMs with sufficient robust data, developers can apply for CHMP qualification, which assesses the utility and regulatory relevance of a NAM for a specific context of use [21].

- Voluntary Submission of Data: Also known as the "safe harbour approach," this procedure allows developers to submit data for evaluation without immediate regulatory consequences, helping to build confidence and define contexts of use [21].

The core principles for regulatory acceptance in the EU framework include the availability of a defined test methodology, a clear description of the proposed NAM's context of use, and demonstration of the method's relevance, reliability, and robustness within that context [21].

Methodological Frameworks: IATA and AOP

The successful regulatory application of NAMs often requires their integration within broader methodological frameworks that provide structure and mechanistic understanding. Two key frameworks facilitating this integration are Integrated Approaches to Testing and Assessment (IATA) and the Adverse Outcome Pathway (AOP).

Integrated Approaches to Testing and Assessment (IATA)

IATA provides a systematic approach that combines multiple sources of information—from existing knowledge to targeted new data generated through NAMs—to conclude on the toxicity of chemicals. According to the OECD, IATA integrates and weighs all relevant evidence to support regulatory decision-making on potential hazards and risks [23]. This framework is particularly valuable for complex endpoints where no single NAM can provide a complete assessment.

Diagram 1: IATA framework for chemical risk assessment. This diagram illustrates the systematic integration of diverse data sources within an IATA to support regulatory decisions.

Adverse Outcome Pathway (AOP)

The Adverse Outcome Pathway framework provides a structured, mechanistic representation of the sequence of biological events leading from a molecular initiating event to an adverse outcome at the organism or population level. AOPs serve as a central organizing principle for toxicological data, creating a shared understanding of how chemicals perturb biological systems [23]. They are particularly valuable for supporting the development and application of NAMs by identifying key measurable events at cellular and molecular levels that can serve as indicators of potential adverse outcomes.

Diagram 2: Adverse Outcome Pathway (AOP) framework. This diagram shows the sequential chain of key events from molecular initiation to adverse outcome, with dashed lines indicating where NAMs can provide critical data.

Experimental Protocols and Methodologies

Defined Approaches for Skin Sensitization

The development of Defined Approaches (DAs) for skin sensitization represents a successful case study in regulatory acceptance of NAMs. A DA combines specific information sources—such as in chemico, in vitro, and in silico data—with a fixed data interpretation procedure to classify chemicals without additional animal testing [10]. The OECD Test Guideline 497 outlines approved DAs for this endpoint.

Protocol Overview:

- Key Event 1 Assessment (Molecular Initiating Event): Perform the Direct Peptide Reactivity Assay (DPRA) to measure a chemical's ability to covalently bind to skin proteins, a key molecular event in skin sensitization.

- Key Event 2 Assessment (Cellular Response): Utilize the KeratinoSens assay to assess the activation of the Nrf2 antioxidant response pathway in human keratinocytes, measuring luciferase gene reporter activity.

- Key Event 3 Assessment (Dendritic Cell Activation): Conduct the h-CLAT assay to evaluate surface marker expression (CD86 and CD54) in a human monocytic cell line (THP-1) following chemical exposure.

- Data Integration: Apply a fixed prediction model—such as the 2 out of 3 rule or a predefined integrated testing strategy—to the results from the individual assays to classify the skin sensitization potential of the test substance.

This DA has been validated against existing animal and human data, demonstrating similar or improved performance compared to the traditional Local Lymph Node Assay (LLNA) in mice [10].
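The "2 out of 3" data interpretation procedure mentioned in the protocol is simple enough to express directly. The sketch below is a minimal illustration under stated assumptions: each assay result has already been reduced to a positive or negative call (or `None` if the assay was not run), and the function name and output labels are hypothetical. It does not model borderline results or the applicability limits that the actual guideline addresses.

```python
from typing import Optional


def two_out_of_three(
    dpra: Optional[bool],
    keratinosens: Optional[bool],
    hclat: Optional[bool],
) -> str:
    """Classify by majority of available calls (True = positive, None = not run)."""
    calls = [c for c in (dpra, keratinosens, hclat) if c is not None]
    positives = sum(calls)
    negatives = len(calls) - positives
    if positives >= 2:
        return "sensitizer"
    if negatives >= 2:
        return "non-sensitizer"
    return "inconclusive"  # fewer than two concordant results


# Two concordant positives: the third assay is not needed.
print(two_out_of_three(True, True, None))
# Discordant first two assays resolved by the third.
print(two_out_of_three(True, False, False))
# Discordant pair with no third assay yet.
print(two_out_of_three(True, False, None))
```

Because two concordant results are sufficient, the third assay only needs to be run when the first two disagree.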

Next Generation Risk Assessment (NGRA) for Systemic Toxicity

For more complex endpoints like repeated dose or organ toxicity, Next Generation Risk Assessment (NGRA) represents an exposure-led, hypothesis-driven approach that integrates multiple NAMs [10] [19].

Protocol Overview:

- Exposure Assessment: Define realistic human exposure scenarios for the chemical of interest, including concentration, duration, and route of exposure.

- Bioactivity Assessment:

- Conduct high-throughput screening in a panel of human cell lines representing different tissues (e.g., liver, kidney, neuronal) to identify potential targets and mechanisms of toxicity.

- Employ transcriptomic analysis (RNA-seq) to identify pathway perturbations and derive benchmark concentrations for point of departure (PoD) calculations.

- Toxicokinetic Modeling: Use physiologically based kinetic (PBK) models to translate in vitro effective concentrations to human equivalent doses, factoring in absorption, distribution, metabolism, and excretion (ADME).

- Point of Departure (PoD) Derivation: Calculate the benchmark concentration (BMC) from in vitro concentration-response data, then use PBK modeling to convert this to a human equivalent dose.

- Risk Characterization: Compare the human equivalent dose with anticipated human exposure levels to determine the margin of safety.
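The last two steps reduce to a short calculation once a kinetic model is available. The sketch below assumes linear kinetics, so that a PBK model can be summarized by a single steady-state plasma concentration per unit dose; the function names and all numeric values are hypothetical and serve only to show the arithmetic. In practice the steady-state value would come from a PBK tool such as those listed in the toolkit table, and uncertainty and population variability would also be considered.

```python
def human_equivalent_dose(pod_um: float, css_um_per_mg_kg_day: float) -> float:
    """Reverse dosimetry under linear kinetics.

    pod_um: in vitro point of departure (µM).
    css_um_per_mg_kg_day: steady-state plasma concentration (µM) predicted
        for a dose of 1 mg/kg/day.
    Returns the dose (mg/kg/day) expected to produce a plasma
    concentration equal to the point of departure.
    """
    if css_um_per_mg_kg_day <= 0:
        raise ValueError("Steady-state concentration must be positive")
    return pod_um / css_um_per_mg_kg_day


def margin_of_safety(hed_mg_kg_day: float, exposure_mg_kg_day: float) -> float:
    """Ratio of the human equivalent dose to the estimated exposure."""
    if exposure_mg_kg_day <= 0:
        raise ValueError("Exposure must be positive")
    return hed_mg_kg_day / exposure_mg_kg_day


# Hypothetical inputs for illustration only.
pod = 10.0       # µM, lowest in vitro benchmark concentration
css = 2.0        # µM per 1 mg/kg/day, from a PBK model
exposure = 0.05  # mg/kg/day, estimated consumer exposure

hed = human_equivalent_dose(pod, css)
mos = margin_of_safety(hed, exposure)
print(f"Human equivalent dose: {hed:.2f} mg/kg/day, margin of safety: {mos:.0f}")
```

A larger ratio means that anticipated exposure sits further below the dose at which bioactivity is predicted, so the acceptability threshold for the ratio is a risk-management decision rather than an output of the calculation.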

This approach was successfully applied to crop protection products Captan and Folpet, where a package of 18 in vitro studies appropriately identified them as contact irritants, demonstrating that a suitable risk assessment could be performed with available NAM tests [10].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Reagents and Platforms for NAMs Research

| Reagent/Platform | Function | Application in NAMs |
|---|---|---|
| Primary Human Cells | Provide species-relevant biological responses from diverse donors. | Foundation for in vitro assay development; account for human variability [19]. |
| 3D Organoids & Spheroids | 3D cell cultures that better recapitulate tissue microarchitecture and function. | Model organ-specific toxicities; improve physiological relevance over 2D models [19] [23]. |
| Microphysiological Systems (MPS) | Multi-channel 3D microfluidic cell culture chips that simulate organ-level physiology. | "Organ-on-a-chip" models for assessing systemic toxicity and ADME [6] [19]. |
| High-Content Screening (HCS) Platforms | Automated microscopy and image analysis for multiparametric cellular assessment. | High-throughput phenotypic screening for toxicity pathways [19] [23]. |
| Omics Technologies (Genomics, Transcriptomics, Proteomics) | Comprehensive analysis of molecular changes in biological systems. | Identify mechanisms of toxicity; derive points of departure; support AOP development [19] [23]. |
| QSAR Tools & Computational Platforms | Predict chemical properties and toxicity based on structural features. | Priority setting; read-across; filling data gaps for untested chemicals [23]. |
| PBK Modeling Software (e.g., httk, GastroPlus) | Simulate absorption, distribution, metabolism, and excretion of chemicals in silico. | Translate in vitro concentrations to human relevant doses [23]. |

The regulatory momentum for NAMs is unmistakable and accelerating globally. From the FDA Modernization Act 2.0 and EPA's strategic work plans in the United States to the structured qualification procedures at the EMA in Europe, regulatory agencies are actively building frameworks to accept and implement these innovative approaches [6] [21] [20]. This paradigm shift is being driven by the convergence of ethical imperatives, scientific advancement, and regulatory leadership.

For researchers and drug development professionals, success in this new landscape requires not only technical expertise in developing and executing NAMs but also a sophisticated understanding of the regulatory pathways and evidence requirements for acceptance. The frameworks of IATA and AOP provide critical structure for integrating diverse data streams and building mechanistic understanding that can support regulatory decisions [10] [23]. As the field continues to evolve, emerging technologies—including advanced bioprinting, single-cell technologies, and explainable AI—are poised to further transform toxicology toward a fully human-relevant, animal-free future [19].

The ongoing collaboration between scientists, regulators, and industry stakeholders remains essential to build the scientific confidence and standardized approaches needed to fully realize the potential of NAMs in protecting human health and the environment while advancing ethical science.

The NAMs Toolbox: Methodologies, Technologies, and Real-World Applications in Ecotoxicology

The fields of toxicology and drug development are undergoing a profound transformation, driven by a regulatory and ethical push towards New Approach Methodologies (NAMs). These are defined as any technology, methodology, approach, or combination thereof that can provide information on chemical hazard and risk assessment while avoiding the use of animal testing [24]. This shift is accelerating the transition from traditional two-dimensional (2D) cell cultures to advanced three-dimensional (3D) in vitro models such as organoids and microphysiological systems (MPS), with Organ-on-a-Chip (OoC) technology at the forefront [25]. This evolution is critical: traditional 2D cultures lack biological complexity, and animal models suffer from species-translation issues, contributing to high drug failure rates in clinical trials [26]. The enactment of the FDA Modernization Act 2.0 in 2022, which removed the mandatory animal testing requirement for drug development, marked a pivotal moment for adopting these human biology-relevant tools [27]. This guide provides an in-depth technical overview of these systems, framed within the context of NAMs for ecotoxicology and regulatory acceptance, to equip researchers and drug development professionals with the knowledge to navigate this new paradigm.

From 2D to 3D: A Technical Comparison of In Vitro Systems

The Limitations of Conventional 2D Cell Cultures

Traditional 2D cell cultures, where cells grow as a monolayer on flat, rigid plastic surfaces, have been a laboratory staple for decades. These static models are simple and cost-effective but lack the physiological relevance of human biology [26]. In these systems, cell morphology, polarity, and signal transduction are inconsistent with in vivo conditions, leading to distorted cell behavior and a loss of tissue-specific functionality and heterogeneity [27]. This fundamental disparity causes experimental results to frequently deviate from clinical outcomes, limiting the predictive value of 2D models in drug screening and toxicology [27].

The Rise of 3D Models: Organoids and Spheroids

Three-dimensional models, such as spheroids and organoids, represent a significant leap forward. Organoids are self-organizing, three-dimensional structures derived from pluripotent stem cells (PSCs) or adult stem cells (ASCs) that replicate key features of tissues and organs [28].

- Adult vs. Pluripotent Stem Cell-Derived Organoids: ASC organoids are generated from tissue biopsies and retain adult metabolic functions, making them well-suited for toxicology and pharmacokinetics studies [28]. PSC-derived organoids, from embryonic or induced pluripotent stem cells (iPSCs), track developmental signals, providing insights into congenital diseases and early toxicities [28].

- Extracellular Matrix (ECM) Scaffolds: The self-assembly of organoids depends on a supportive ECM. While Matrigel has been the standard, it is undefined and animal-derived. Synthetic hydrogels made of polyethylene glycol (PEG) are emerging as xeno-free alternatives, allowing independent tuning of stiffness, degradability, and ligand density [28].

- Limitations of Conventional 3D Models: While superior to 2D cultures, many conventional 3D models lack multiscale organization, tissue-tissue interactions (e.g., vascular networks), and mechanical forces found in living tissues [28].

Organ-on-a-Chip: Integrating Microphysiology and Microfluidics

Organ-on-a-Chip (OoC) systems, or Microphysiological Systems (MPS), are bioengineered microfluidic devices designed to emulate the functional units of human organs [28] [25]. They bridge the gap between simple 3D cultures and human physiology by incorporating dynamic microenvironments.

- Microfluidic Control: OoC platforms provide precise control over fluid flow, gradients, and shear stress at microscale dimensions, enabling efficient nutrient delivery and waste removal [28].

- Physiological Relevance: These systems can replicate tissue-specific mechanical forces (e.g., peristalsis in gut-chips, breathing in lung-chips), cellular confinement, and physiologically relevant compartmentalization [28] [26].

- Multi-Organ Systems: The integration of multiple organ chips, known as body-on-a-chip or multi-organ-chip (MOC) systems, allows for the study of sequential multi-organ processes like absorption, distribution, metabolism, and excretion (ADME) of compounds [28] [27].
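To make the point about shear stress control concrete, the sketch below estimates wall shear stress from pump flow rate and channel geometry. It assumes laminar flow in a wide, shallow rectangular channel, for which the common approximation is τ = 6μQ / (w·h²). The default viscosity (0.7 mPa·s, roughly that of aqueous medium at 37 °C) and the channel dimensions are illustrative assumptions, not values from the cited sources.

```python
def wall_shear_stress_pa(flow_ul_min, width_um, height_um, viscosity_pa_s=7.0e-4):
    """Wall shear stress (Pa) in a wide, shallow rectangular channel.

    Uses tau = 6 * mu * Q / (w * h**2), which assumes laminar flow and width >> height.
    """
    q_m3_s = flow_ul_min * 1e-9 / 60.0  # 1 uL = 1e-9 m^3; per minute -> per second
    w_m = width_um * 1e-6
    h_m = height_um * 1e-6
    return 6.0 * viscosity_pa_s * q_m3_s / (w_m * h_m ** 2)

def flow_for_shear_ul_min(target_pa, width_um, height_um, viscosity_pa_s=7.0e-4):
    """Invert the same approximation: flow rate (uL/min) needed for a target shear stress."""
    w_m = width_um * 1e-6
    h_m = height_um * 1e-6
    q_m3_s = target_pa * w_m * h_m ** 2 / (6.0 * viscosity_pa_s)
    return q_m3_s * 60.0 / 1e-9

# Hypothetical channel: 1000 um wide, 200 um tall, perfused at 1 uL/min.
tau = wall_shear_stress_pa(1.0, 1000.0, 200.0)
print(f"shear stress: {tau:.5f} Pa ({tau * 10:.4f} dyn/cm^2)")  # 1 Pa = 10 dyn/cm^2
```

Because shear stress scales with the inverse square of channel height, small fabrication differences in height change the stress on cells far more than equivalent differences in width, which is one reason flow rates are usually set per chip design rather than copied between platforms.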

Table 1: Comparative Analysis of In Vitro Model Types

| Feature | 2D Cell Culture | 3D Organoids/Spheroids | Organ-on-a-Chip (MPS) |
|---|---|---|---|
| Architectural Complexity | Monolayer; lacks 3D structure | 3D structure; recapitulates some tissue organization | 3D structure with tissue- and organ-level functionality |
| Microenvironment | Static; lacks physiological cues | Static; limited nutrient diffusion to core | Dynamic fluid flow, shear stress, and mechanical forces |
| Cellular Interactions | Homotypic or limited co-culture | Homotypic and some heterotypic cell interactions | Enables complex heterotypic cell-cell and tissue-tissue interactions |
| Physiological Fidelity | Low; altered cell morphology and signaling | Medium; captures some aspects of tissue biology | High; mimics key aspects of human organ physiology |
| Throughput & Scalability | High | Medium | Medium to Low (increasing with automation) |
| Primary Applications | Basic research, high-throughput compound screening | Disease modeling, drug efficacy testing, personalized medicine | ADME studies, disease modeling, predictive toxicology, mechanistic studies |

The Scientist's Toolkit: Essential Reagents and Materials for OoC

Constructing and maintaining robust in vitro models, particularly organoids and OoCs, requires a suite of specialized reagents and materials.

Table 2: Key Research Reagent Solutions for Advanced In Vitro Models

| Reagent/Material | Function | Key Considerations & Examples |
|---|---|---|
| Stem Cell Sources | Starting material for generating organoids | Adult Stem Cells (ASCs): Isolated from biopsies, tissue-specific. Induced Pluripotent Stem Cells (iPSCs): Reprogrammed from somatic cells, patient-specific [28] [27]. |
| ECM Scaffolds | Provides a 3D supportive structure for cell growth and organization | Matrigel: Gold standard but undefined and animal-derived. Synthetic PEG Hydrogels: Defined composition, tunable stiffness, xeno-free [28]. |
| Soluble Factors | Directs stem cell differentiation and maintains tissue-specific functions | Growth Factors: EGF, Noggin, R-spondin for intestinal organoids [28]. Cytokines & Differentiation Inducers. |
| Microfluidic Device | Platform for housing the biological model and enabling perfusion | Materials: PDMS (common), plastics, glass. Fabrication: Soft lithography, micromilling, 3D printing [28]. |
| Cell Culture Media | Provides nutrients and maintains physiological pH | Must be tailored to the specific organ model; often requires specialized formulations for stem cell maintenance or differentiation. |

Experimental Protocols: Key Methodologies for OoC Workflows

Protocol 1: Establishing a Patient-Derived Organoid Culture

This protocol is foundational for creating biologically relevant models for personalized medicine and disease modeling [27].

- Tissue Acquisition and Processing: Obtain human tissue from biopsies or surgical resections. Mechanically dissociate and enzymatically digest the tissue to release individual cells or small crypt fragments.

- Embedding in ECM: Suspend the cell pellet in a cold, liquid ECM solution (e.g., Matrigel or a synthetic PEG hydrogel). Plate the mixture as small droplets in a pre-warmed culture dish and allow the matrix to polymerize.

- Organoid Culture Initiation: Overlay the polymerized ECM droplets with a specialized expansion medium containing a defined cocktail of niche factors. For intestinal organoids, this typically includes Wnt agonist (R-spondin1), BMP antagonist (Noggin), and Epidermal Growth Factor (EGF) to support long-term self-renewal [28].

- Passaging and Expansion: Mechanically break up the organoids and re-embed the fragments into new ECM every 5-14 days to maintain the culture.

Protocol 2: Integrating Organoids into a Microfluidic Chip

This protocol outlines the process for transitioning from a static organoid culture to a dynamic Organ-on-a-Chip system [28] [25].

- Chip Priming and Coating: Introduce a coating solution, such as an ECM protein (e.g., collagen IV, fibronectin), into the microfluidic channels of the sterilized chip. Incubate to allow coating adhesion, then rinse.

- Cell Seeding: Load a cell suspension (single cells or pre-formed organoid fragments) into the designated tissue chamber of the chip. Allow cells to adhere and organize under static conditions for a specified period (e.g., 2-24 hours).

- Initiation of Perfusion: Connect the chip to a perfusion system or pump. Begin a low flow rate of culture medium to gradually introduce shear stress and nutrient/waste exchange without detaching the cells.

- System Maintenance and Monitoring: Culture the chip under continuous or intermittent flow. Monitor the tissue morphology and function daily using integrated sensors or endpoint assays like microscopy, transepithelial electrical resistance (TEER), and collection of effluent for analysis.
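For the monitoring step, TEER readings are conventionally reported as unit-area resistance: the blank (cell-free) resistance is subtracted and the result multiplied by the membrane area. The sketch below applies that calculation to a daily series and flags days on which barrier integrity falls well below its running peak. The 80% threshold, the membrane area, and the readings are hypothetical values chosen for illustration.

```python
def teer_ohm_cm2(r_sample_ohm, r_blank_ohm, area_cm2):
    """Unit-area TEER: (measured resistance - blank resistance) x membrane area."""
    if area_cm2 <= 0:
        raise ValueError("membrane area must be positive")
    return (r_sample_ohm - r_blank_ohm) * area_cm2

def barrier_drop_days(daily_teer, fraction_of_peak=0.8):
    """Return the indices of days whose TEER is below a fraction of the running peak."""
    flagged = []
    peak = float("-inf")
    for day, value in enumerate(daily_teer):
        peak = max(peak, value)
        if value < fraction_of_peak * peak:
            flagged.append(day)
    return flagged

# Hypothetical raw readings (ohm) over five days on a 0.5 cm^2 membrane.
raw_readings_ohm = [260.0, 420.0, 610.0, 640.0, 380.0]
series = [teer_ohm_cm2(r, r_blank_ohm=150.0, area_cm2=0.5) for r in raw_readings_ohm]
print(series, barrier_drop_days(series))
```

Comparing against the running peak rather than the first reading avoids flagging the early days, when the barrier is still forming and TEER is expected to be low.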

Protocol 3: Conducting a Predictive Drug-Induced Liver Injury (DILI) Assay

This protocol exemplifies the application of a complex in vitro model (CIVM) for safety assessment, a core tenet of NAMs [26].

- Model Selection: Utilize a human Liver-Chip, which typically contains primary human hepatocytes and non-parenchymal cells (like Kupffer cells) in a fluidic environment.

- Compound Dosing and Exposure: Introduce the drug candidate into the perfusion medium at a range of clinically relevant concentrations. Include known hepatotoxicants (e.g., Acetaminophen) and non-toxic compounds as controls.

- Endpoint Analysis: After 5-14 days of exposure, assess multiple endpoints indicative of liver injury:

  - Cellular Viability: Measure lactate dehydrogenase (LDH) release in the effluent.

  - Functional Markers: Analyze albumin and urea production.

  - Histological Markers: Fix and stain the tissue for markers of apoptosis, necrosis, and oxidative stress.

- Data Integration and Prediction: Compare the multi-parameter response of the test compound to the controls. A study demonstrated that a Liver-Chip model correctly identified 87% of drugs that cause DILI in humans, outperforming conventional animal and spheroid models [26].
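The data integration step can be illustrated with a small sketch that flags compounds from two of the endpoints above and scores the calls against reference labels. The cutoffs, compound names, and readouts are entirely hypothetical and are not taken from the cited study. Sensitivity here means the fraction of known DILI-positive compounds that the assay flags, which is the kind of figure the 87% result refers to.

```python
from dataclasses import dataclass

@dataclass
class ChipReadout:
    name: str
    known_dili: bool     # reference label for the control compound
    ldh_fold: float      # LDH release relative to vehicle control
    albumin_fold: float  # albumin output relative to vehicle control

def flag_hepatotoxic(r, ldh_cutoff=2.0, albumin_cutoff=0.5):
    """Illustrative rule: flag if LDH release rises or albumin output falls past a cutoff."""
    return r.ldh_fold >= ldh_cutoff or r.albumin_fold <= albumin_cutoff

def sensitivity_specificity(readouts):
    """Score the flags against the reference labels."""
    tp = sum(1 for r in readouts if r.known_dili and flag_hepatotoxic(r))
    fn = sum(1 for r in readouts if r.known_dili and not flag_hepatotoxic(r))
    tn = sum(1 for r in readouts if not r.known_dili and not flag_hepatotoxic(r))
    fp = sum(1 for r in readouts if not r.known_dili and flag_hepatotoxic(r))
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    specificity = tn / (tn + fp) if (tn + fp) else float("nan")
    return sensitivity, specificity

# Hypothetical readouts for six reference compounds.
readouts = [
    ChipReadout("toxicant_A", True, 3.1, 0.40),
    ChipReadout("toxicant_B", True, 1.2, 0.45),
    ChipReadout("toxicant_C", True, 2.5, 0.90),
    ChipReadout("toxicant_D", True, 1.1, 0.95),
    ChipReadout("control_E", False, 1.0, 1.00),
    ChipReadout("control_F", False, 1.3, 0.80),
]
sens, spec = sensitivity_specificity(readouts)
print(f"sensitivity = {sens:.2f}, specificity = {spec:.2f}")
```

A real assay would set cutoffs from vehicle-control variability and would typically combine more endpoints, but reporting sensitivity and specificity separately, as here, keeps missed toxicants distinct from false alarms.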

Visualizing Workflows and Signaling in OoC Technology

Workflow for Organoid-on-Chip Model Generation

This diagram illustrates the key steps in creating a vascularized patient-derived Organ-on-a-Chip model for advanced therapeutic testing.

A Framework for NAMs in Environmental Risk Assessment

This diagram outlines a conceptual framework for integrating NAMs into environmental safety decision-making without generating new animal data [29].

Regulatory Acceptance and the Future within the NAMs Landscape

The regulatory landscape is rapidly adapting to embrace NAMs. The FDA's 2025 phased plan to prioritize non-animal testing methods, including OoCs and computational models, signifies deep regulatory commitment [28]. Programs like the FDA's Innovative Science and Technology Approaches for New Drugs (ISTAND) pilot are critical; in September 2024, a Liver-Chip model became the first OoC accepted into ISTAND, marking a significant step toward regulatory qualification [26].