AI and Machine Learning in Environmental Chemical Monitoring: From Predictive Toxicology to Precision Public Health


Abstract

This article provides a comprehensive overview of the transformative role of Artificial Intelligence (AI) and Machine Learning (ML) in monitoring environmental chemicals and assessing their risks. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles bridging AI with chemical engineering and toxicology. The scope ranges from methodological applications in water quality assessment and predictive toxicology to the optimization of models for real-world deployment and rigorous comparative validation of algorithms. By synthesizing the latest research and case studies, this review highlights how these technologies are enabling more efficient chemical risk assessment, informing the safety profiling of new compounds, and opening new frontiers in understanding the exposome's impact on human health.

The New Frontier: How AI is Revolutionizing Environmental Chemistry and Toxicology

The Convergence of Big Data and AI in Modern Toxicology

The field of toxicology is undergoing a profound transformation, moving from traditional, observation-based methods to a data-rich discipline powered by Big Data and Artificial Intelligence (AI). This convergence is particularly critical within environmental chemical monitoring, where the vast number of chemicals and their potential interactions with biological systems present a challenge far beyond the reach of manual analysis [1]. Machine learning (ML), a subset of AI, provides the computational framework to analyze these complex, high-dimensional datasets, enabling the prediction of toxicological endpoints and the identification of novel risk patterns [2]. The exponential growth in publications in this domain, from fewer than 25 per year before 2015 to 719 in 2024, underscores the rapid adoption and considerable potential of these technologies [1]. This document outlines detailed application notes and experimental protocols to guide researchers in leveraging Big Data and AI for advanced toxicological assessment.

The integration of ML into environmental chemical research has seen explosive growth, dominated by specific algorithms, geographic centers of excellence, and thematic research clusters.

Table 1: Growth and Thematic Focus of ML in Environmental Chemical Research (Data sourced from 3150 publications, 1985–2025) [1]

| Aspect | Quantitative Summary |
|---|---|
| Publication Volume | Over 3150 publications (1985–2025), with an exponential surge from 2020 (179 publications) to 2024 (719 publications). |
| Leading Countries | People's Republic of China (1130 publications), United States (863 publications), India (255 publications), Germany (232 publications), England (229 publications). |
| Prominent Institutions | Chinese Academy of Sciences (174 publications), United States Department of Energy (113 publications). |
| Dominant ML Algorithms | XGBoost, Random Forests, Support Vector Machines (SVMs), k-Nearest Neighbors (k-NN), Bernoulli Naïve Bayes, Deep Neural Networks (DNNs). |
| Key Research Clusters | ML model development, water quality prediction, QSAR applications, per-/polyfluoroalkyl substances (PFAS), and risk assessment. |
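To put a number on the "exponential surge" in Table 1, the short snippet below computes the compound annual growth rate implied by the 2020 and 2024 publication counts. It assumes smooth year-on-year compounding between those two endpoints, which is a simplification for illustration rather than a result reported by the bibliometric analysis [1].

```python
import math


def compound_annual_growth(start_count: float, end_count: float, years: int) -> float:
    """Constant yearly growth rate that turns start_count into end_count."""
    return (end_count / start_count) ** (1.0 / years) - 1.0


# Publication counts from Table 1: 179 papers in 2020, 719 in 2024.
rate = compound_annual_growth(179, 719, years=4)
# Years needed to double at that constant rate.
doubling_time = math.log(2) / math.log(1.0 + rate)
print(f"implied growth: {rate:.1%} per year; doubling time: {doubling_time:.1f} years")
```

Under that assumption, output grew by roughly 42% per year, doubling about every two years.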

Application Notes & Experimental Protocols

Protocol 1: Developing a Supervised ML Model for Toxicity Prediction

This protocol provides a generalized workflow for building a supervised ML model to predict a specific toxicological endpoint, such as receptor binding affinity or clinical toxicity.

The Scientist's Toolkit: Essential Reagents & Data Solutions

| Item | Function & Description |
|---|---|
| Chemical Databases | Curated datasets (e.g., PubChem, ChEMBL) providing chemical structures, properties, and associated toxicological endpoints for model training. |
| Toxicological Endpoint Data | Experimental data from in vitro assays (e.g., IC50) or in vivo studies, serving as the labeled data for supervised learning. |
| Molecular Descriptors | Numerical representations of chemical structures (e.g., molecular weight, logP, topological indices, fingerprint bits) that serve as input features for the ML model. |
| ML Algorithms (XGBoost/RF) | Ensemble learning methods effective for classification and regression tasks on structured data, known for high performance in toxicological QSAR models [1] [3]. |
| Model Validation Suite | A set of techniques and metrics (e.g., k-fold cross-validation, confusion matrix, ROC curves) to assess model robustness, prevent overfitting, and ensure predictive reliability [2]. |

Procedure:

- Define the ML Problem: Precisely formulate the toxicological question. Determine if it is a classification task (e.g., toxic/non-toxic) or a regression task (e.g., predicting a continuous value like LD50) [2].

- Construct and Curate the Dataset:

  - Data Acquisition: Gather a large dataset of chemicals with reliable, experimentally derived toxicological labels. Aim for a minimum of 1,000 samples, with 10,000–100,000 being ideal for most problems [2].

  - Data Cleansing: Remove duplicates, impute missing values, handle outliers, and group sparse classes. Convert categorical data to numerical formats (e.g., one-hot encoding) and normalize or scale the data [2].

  - Feature Engineering: Select the most relevant molecular descriptors or features. This can be achieved through statistical methods (e.g., variance filtering) or by leveraging domain expertise to discard irrelevant features, which improves model performance and interpretability [2].

- Model Training and Validation:

  - Data Splitting: Divide the dataset into a training set (typically 2/3) and a testing set (1/3). The testing set must remain completely unseen during training [2].

  - Algorithm Selection: Choose an appropriate algorithm (e.g., Random Forest, XGBoost). Train multiple models on the training set.

  - K-Fold Cross-Validation: To avoid relying on a single, possibly unrepresentative split, further divide the training data into 'k' folds (e.g., k=5). Train the model k times, each time using a different fold as validation and the remaining folds for training. Averaging the k scores provides a robust estimate of model performance [2].

  - Performance Evaluation: Use the held-out test set to evaluate the final model. Report metrics from the confusion matrix (accuracy, precision, recall) or regression metrics (Mean Squared Error) [2].

- Model Application and Iteration: The validated model can be deployed for predicting the toxicity of new chemical entities. The workflow is iterative, allowing for continuous model improvement with new data to minimize error and maximize predictive accuracy [2].
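The splitting, cross-validation, and evaluation steps of this protocol can be sketched in a few dozen lines. This is a minimal illustration under stated assumptions, not a reference implementation: the two descriptors and the toxic/non-toxic labels are synthetic, and k-nearest neighbours (k-NN) is swapped in for Random Forest/XGBoost so the example needs only the Python standard library. The helper names (`make_synthetic_dataset`, `k_fold_score`, and so on) are hypothetical.

```python
"""Minimal sketch of Protocol 1 using only the Python standard library.

Assumptions (not part of the protocol): descriptors and labels are synthetic,
and k-NN stands in for Random Forest/XGBoost.
"""
import math
import random


def make_synthetic_dataset(n=300, seed=0):
    # Two hypothetical descriptors already scaled to [0, 1]; the label is 1
    # ("toxic") when their sum exceeds 1.0, with 5% of labels flipped as noise.
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = (rng.random(), rng.random())
        y = int(x[0] + x[1] > 1.0)
        if rng.random() < 0.05:
            y = 1 - y
        data.append((x, y))
    return data


def knn_predict(train, x, k):
    # Majority vote of the k training compounds closest in descriptor space.
    nearest = sorted(train, key=lambda row: math.dist(row[0], x))[:k]
    return int(2 * sum(label for _, label in nearest) > k)


def accuracy(train, evaluation, k):
    return sum(knn_predict(train, x, k) == y for x, y in evaluation) / len(evaluation)


def k_fold_score(train, k, n_folds=5):
    # Each fold is used once for validation while the other folds train the model.
    folds = [train[i::n_folds] for i in range(n_folds)]
    scores = []
    for i in range(n_folds):
        fit_rows = [row for j, fold in enumerate(folds) if j != i for row in fold]
        scores.append(accuracy(fit_rows, folds[i], k))
    return sum(scores) / n_folds


def confusion_metrics(train, test, k):
    # Tally the confusion matrix on the held-out set, then derive the metrics.
    tp = fp = tn = fn = 0
    for x, y in test:
        pred = knn_predict(train, x, k)
        if pred and y:
            tp += 1
        elif pred:
            fp += 1
        elif y:
            fn += 1
        else:
            tn += 1
    return {
        "accuracy": (tp + tn) / len(test),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


data = make_synthetic_dataset()
random.Random(1).shuffle(data)
cut = 2 * len(data) // 3  # 2/3 training, 1/3 held-out test set
train, test = data[:cut], data[cut:]

# Select k by 5-fold cross-validation on the training set only; the test set stays unseen.
best_k = max([1, 3, 5, 7, 9], key=lambda k: k_fold_score(train, k))
print("chosen k:", best_k)
print(confusion_metrics(train, test, best_k))
```

Odd values of k are used so that a binary majority vote can never tie. With real data, the scaling described under Data Cleansing would be fitted on the training portion before the distance computations.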

Application Note 1: AI for Predictive Toxicology in Emergency Medicine

AI models are being developed to assist in the high-stakes environment of emergency toxicology, where rapid and accurate diagnosis is critical.

Protocol: Building a Poison Identification Model from Symptom Data

Objective: To develop a Deep Neural Network (DNN) model that identifies the causative agent in acute poisoning based on clinical symptom data.

Procedure:

- Data Source: Utilize a large-scale poison control database, such as the U.S. National Poison Data System (NPDS), which contains hundreds of thousands of records linking exposures to clinical outcomes [4].

- Case Selection: Filter the dataset to focus on single-agent poisonings for a defined set of high-priority drugs (e.g., acetaminophen, benzodiazepines, calcium channel blockers) [4].

- Feature Definition: Define clinical features (symptoms, vital signs, laboratory anomalies) that constitute the input variables (features) for the model.

- Model Architecture and Training:

  - Implement a DNN using frameworks like PyTorch or Keras.

  - The model should take the clinical feature vector as input and output a probability for each candidate poison.

  - Train the model to minimize the loss function (e.g., categorical cross-entropy) using the confirmed poison cases as labels [4].

- Model Validation: Assess the model's performance by measuring sensitivity and specificity for each poison. For example, published models have achieved overall specificities above 92%, exceeding 99% for specific toxins such as sulfonylureas and calcium channel blockers [4].
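The output and evaluation side of this protocol (a probability per candidate poison, a categorical cross-entropy loss, and per-poison sensitivity and specificity) can be illustrated without a deep-learning framework. In this sketch the logits are invented numbers standing in for the final layer of a trained PyTorch or Keras network, and the three classes are a hypothetical subset of the drugs named above; sensitivity and specificity are computed one-vs-rest.

```python
"""Output layer and per-poison evaluation for the DNN protocol, in plain Python.

Assumptions: the logits are invented stand-ins for a trained network's final
layer, and the class list is a hypothetical subset of the protocol's drugs.
"""
import math

CLASSES = ["acetaminophen", "benzodiazepines", "calcium channel blockers"]


def softmax(logits):
    # Subtracting the maximum keeps exp() from overflowing; probabilities sum to 1.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def categorical_cross_entropy(probs, true_index):
    # Per-case loss: negative log-probability given to the confirmed poison.
    return -math.log(probs[true_index])


def sensitivity_specificity(y_true, y_pred, cls):
    # One-vs-rest view: `cls` is the positive class, every other poison is negative.
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == cls and p == cls for t, p in pairs)
    fn = sum(t == cls and p != cls for t, p in pairs)
    fp = sum(t != cls and p == cls for t, p in pairs)
    tn = sum(t != cls and p != cls for t, p in pairs)
    sensitivity = tp / (tp + fn) if tp + fn else float("nan")
    specificity = tn / (tn + fp) if tn + fp else float("nan")
    return sensitivity, specificity


# Hypothetical network outputs for four cases and their confirmed poisons (class indices).
logits = [[2.0, 0.5, -1.0], [0.1, 1.5, 0.2], [-0.5, 0.0, 3.0], [1.2, 1.0, 0.1]]
y_true = [0, 1, 2, 1]

probs = [softmax(z) for z in logits]
mean_loss = sum(categorical_cross_entropy(p, t) for p, t in zip(probs, y_true)) / len(y_true)
y_pred = [max(range(len(p)), key=p.__getitem__) for p in probs]  # most probable poison

for i, name in enumerate(CLASSES):
    sens, spec = sensitivity_specificity(y_true, y_pred, i)
    print(f"{name}: sensitivity={sens:.2f}, specificity={spec:.2f}")
print(f"mean categorical cross-entropy: {mean_loss:.3f}")
```

With these made-up numbers the fourth case is misclassified as acetaminophen, so benzodiazepine sensitivity falls to 0.50 and acetaminophen specificity to 0.67, which shows why the protocol asks for metrics per poison rather than a single overall figure.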

Table 2: Performance Metrics of a DNN Model for Poison Identification (Example) [4]

| Poison / Drug Class | Sensitivity | Specificity |
|---|---|---|
| Acetaminophen | -- | >92% (Overall) |
| Benzodiazepines | -- | >92% (Overall) |
| Calcium Channel Blockers | -- | >99% |
| Sulfonylureas | -- | >99% |
| Lithium | -- | >99% |

Application Note 2: Visual Validation of QSAR/QSPR Models

The "black-box" nature of complex ML models poses a challenge for regulatory acceptance. Visual validation tools are essential for interpreting model behavior and establishing trust.

Protocol: Visual Validation of a QSAR Model using MolCompass

Objective: To visually identify regions of chemical space where a QSAR model's predictions are unreliable (model cliffs) by projecting predictions onto a 2D map.

Procedure:

- Generate Predictions: Run a set of compounds (e.g., a validation set) through the QSAR model to obtain predicted values and errors.

- Compute Molecular Descriptors: Calculate the same high-dimensional molecular descriptors used to train the parametric t-SNE model in MolCompass for all compounds in the validation set [5].

- Project onto Chemical Space: Use the pre-trained parametric t-SNE model within the MolCompass framework. This neural network maps the high-dimensional descriptors of each compound to a fixed X,Y coordinate on a 2D scatter plot, placing chemically similar compounds close together [5].

- Visualize Model Performance: Color the points on the scatter plot based on the model's prediction error for each compound. Alternatively, use color to represent the predicted value itself.

- Identify Model Weaknesses: Analyze the resulting map. Clusters of compounds with high prediction error indicate specific regions of chemical space (e.g., a particular molecular scaffold) where the model's Applicability Domain is weak and its predictions cannot be trusted [5].
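The final step, spotting regions of the map where errors concentrate, can be made semi-automatic once each compound has 2D coordinates and a prediction error. The sketch below assumes the coordinates already come from a projection such as MolCompass's parametric t-SNE (that projection is not reproduced here); the points and errors are hypothetical, and the square grid with a fixed error threshold is our own simple stand-in for visual inspection, not a MolCompass feature.

```python
"""Flag high-error regions on a 2-D chemical-space map.

Assumptions: (x, y) coordinates come from an external projection, the errors
are hypothetical, and grid binning is an illustrative heuristic.
"""
from collections import defaultdict


def high_error_cells(points, cell_size=1.0, threshold=0.5, min_count=3):
    """Return {grid cell: mean |error|} for populated cells above the threshold."""
    cells = defaultdict(list)
    for x, y, abs_error in points:
        # Floor division assigns each compound to a square cell (works for negative coordinates too).
        cells[(int(x // cell_size), int(y // cell_size))].append(abs_error)
    flagged = {}
    for cell, errors in cells.items():
        mean_error = sum(errors) / len(errors)
        # Require a minimum population so a single outlier does not flag a whole region.
        if len(errors) >= min_count and mean_error > threshold:
            flagged[cell] = round(mean_error, 3)
    return flagged


# Hypothetical projected compounds: (x, y, absolute prediction error).
points = [
    (0.2, 0.3, 0.10), (0.5, 0.8, 0.15), (0.7, 0.1, 0.05),  # well-predicted cluster
    (3.1, 2.2, 0.90), (3.4, 2.6, 1.20), (3.8, 2.9, 0.75),  # poorly predicted cluster
    (5.5, 0.4, 2.00),                                      # lone outlier, too few points
]
print(high_error_cells(points))
```

Only the cell holding the poorly predicted cluster is flagged; the isolated large error is ignored because its cell has fewer than `min_count` compounds. Cell size and threshold are tuning choices that depend on the scale of the projection.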

Challenges and Future Directions

Despite the promise, several challenges remain. Data quality and availability are paramount, as models require large amounts of high-quality, representative data to perform well [6]. The "black box" problem necessitates a focus on explainable AI (XAI) to build trust, especially for regulatory applications [1] [6]. Furthermore, models trained on one chemical domain or population may not transfer seamlessly to another, limiting their generalizability [6]. Future progress hinges on expanding chemical coverage, systematically coupling ML outputs with human health data, and fostering international collaboration to translate ML advances into actionable chemical risk assessments [1]. The integration of AI into environmental toxicology marks a shift from reactive observation to proactive, data-driven preservation of ecosystem and human health [7].

The field of process engineering has undergone a profound methodological transformation, shifting from reliance on pseudo-empirical correlations to data-driven machine learning (ML) approaches. This evolution is particularly evident in environmental chemical monitoring, where the ability to predict chemical behavior, transport, and toxicity has been revolutionized by computational advances. Machine learning is now reshaping how environmental chemicals are monitored and their hazards evaluated for human health [1]. This perspective traces this intellectual and technical journey, demonstrating how process engineering has matured from using limited correlative approaches to leveraging sophisticated ML algorithms that offer unprecedented predictive capabilities while introducing new epistemological challenges.

This transformation mirrors broader trends in computational toxicology, which has experienced a marked surge in publication activity over the past two decades [1]. The exponential growth in ML applications for environmental chemical research, with annual publications skyrocketing from fewer than 25 papers before 2015 to 719 in 2024, signals a fundamental paradigm shift in how engineers and scientists approach chemical risk assessment [1]. This article examines this transition within the context of environmental chemical monitoring, highlighting both the remarkable capabilities and significant ethical considerations inherent in modern ML approaches.

The Era of Pseudo-Empirical Correlations: Historical Context and Limitations

Before the advent of computational modeling, process engineers relied heavily on empirical correlations derived from limited experimental data. These approaches, while valuable within their original constraints, often suffered from oversimplification and limited domain applicability. The fundamental epistemological weakness of this era was the confusion of correlation with causation, a problem that persists in more sophisticated forms within some contemporary ML applications [8].

Historically, engineers developed quantitative structure-activity relationships (QSARs) that attempted to correlate molecular descriptors with biological activity or environmental fate parameters. While these approaches represented an advance over purely observational science, they were constrained by several factors:

- Limited dataset size: early correlations were built on small, homogeneous datasets

- Simplified linear assumptions: complex, non-linear relationships were often approximated as linear

- Domain specificity: models developed for one chemical class frequently failed to generalize to others

- Limited computational power: this restricted the complexity of relationships that could be captured

The ethical implications of these limitations became apparent when simplistic correlations were applied to complex biological and social phenomena. As [8] critically observes, the disregard for historical context in various application domains has led some ML researchers to repeat past mistakes, essentially reviving pseudoscientific approaches like physiognomy under a technological veneer. This problematic legacy underscores the importance of maintaining philosophical rigor alongside technical advancement.

The Machine Learning Revolution in Environmental Chemical Monitoring

Bibliometric Landscape and Research Trends

The integration of ML into environmental chemical research represents nothing short of a revolution. A comprehensive bibliometric analysis of 3,150 peer-reviewed articles from 1985-2025 reveals an exponential publication surge beginning in 2015, dominated by environmental science journals, with China and the United States leading in research output [1]. This growth trajectory closely mirrors the broader field of computational toxicology, indicating a fundamental shift in methodological approaches.

Table 1: Thematic Research Clusters in ML for Environmental Chemicals

| Research Cluster Focus | Representative Algorithms | Primary Applications |
|---|---|---|
| ML Model Development | XGBoost, Random Forests | General predictive model building |
| Water Quality Prediction | SVMs, Kolmogorov-Arnold Networks | Drinking water quality index prediction |
| QSAR Applications | Bayesian models, Neural Networks | Toxicological endpoint prediction |
| Risk Assessment | Ensemble methods, Explainable AI | Dose-response and regulatory applications |
| Emerging Contaminants | Graph Neural Networks (GNNs) | PFAS, microplastics, lignin, arsenic |

Eight distinct thematic clusters have emerged from this bibliometric analysis, centered on ML model development, water quality prediction, QSAR applications, and specific contaminant classes like per-/polyfluoroalkyl substances (PFAS) [1]. A distinct risk assessment cluster indicates the migration of these tools toward dose-response and regulatory applications, though significant gaps remain. Notably, keyword frequencies show a 4:1 bias toward environmental endpoints over human health endpoints, highlighting an important area for future research integration [1].

Advantages of ML Over Traditional Approaches

Machine learning approaches offer several distinct advantages over traditional pseudo-empirical correlations:

- Handling of high-dimensional data: ML algorithms can process complex, high-dimensional datasets that characterize modern chemical and toxicological research [1]

- Non-linear pattern recognition: Unlike classical regression with a pre-specified functional form, ML can learn complex, non-linear relationships directly from the data

- Predictive accuracy: Ensemble methods like XGBoost and random forests often outperform traditional regression approaches

- Adaptability: ML models can be continuously updated with new data, allowing for improved performance over time

The capacity of ML to handle "big data" facilitates probabilistic predictions and pattern recognition that are increasingly being applied in chemical risk assessment frameworks [1]. This represents a fundamental shift from an empirical science focused primarily on apical outcomes to a data-rich discipline ripe for AI integration.

ML-Enabled Detection of Soil Contaminants: A Case Study in Advanced Monitoring

Experimental Protocol and Workflow

A groundbreaking approach developed by researchers at Rice University and Baylor College of Medicine exemplifies the power of ML in environmental monitoring. Their method for identifying hazardous pollutants in soil—even ones never isolated or studied in a lab—combines light-based imaging, theoretical predictions, and machine learning algorithms [9]. The protocol can be broken down into the following key steps:

Sample Preparation: Soil samples are collected from the field and prepared for analysis using surface-enhanced Raman spectroscopy (SERS). The technique employs specially designed signature nanoshells to enhance relevant traits in the spectra [9].

Spectral Data Acquisition: SERS, a light-based imaging technique, analyzes how light interacts with molecules, recording the unique patterns, or spectra, of the light they scatter. These spectra serve as "chemical fingerprints" for each compound [9].

Theoretical Spectral Library Generation: Using density functional theory—a computational modeling technique that predicts how atoms and electrons behave in a molecule—researchers calculate the spectra of various polycyclic aromatic hydrocarbons (PAHs) and their derivatives based on molecular structure. This generates a virtual library of "fingerprints" for these compounds [9].

Machine Learning Analysis: Two complementary ML algorithms—characteristic peak extraction and characteristic peak similarity—parse relevant spectral traits in real-world soil samples and match them to compounds mapped out in the virtual library of spectra [9].

Validation: The method was tested on soil from a restored watershed and natural area using both artificially contaminated samples and control samples. Results demonstrated reliable detection of even minute traces of PAHs using a simpler and faster process than conventional techniques [9].
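The matching idea behind the Machine Learning Analysis step (extract characteristic peaks from a measured spectrum, then score them against a library of predicted spectra) can be illustrated with a toy example. This is not the published peak-extraction or peak-similarity algorithm [9]: it uses simple local-maximum peak picking and a cosine-style score over peaks paired within a wavenumber tolerance. The library entries, compound names, and the "measured" spectrum are invented numbers, not DFT predictions or SERS data for real PAHs.

```python
"""Toy characteristic-peak extraction and library matching for Raman-like spectra.

Assumptions: not the algorithms of [9]; all spectra and compound names are invented.
"""
import math


def synthetic_spectrum(peaks, start=700, stop=1700, step=2, width=6.0, baseline=0.02):
    # Sum of Gaussian bands on a flat baseline, sampled every `step` cm^-1.
    return [
        (wn, baseline + sum(h * math.exp(-(((wn - c) / width) ** 2)) for c, h in peaks.items()))
        for wn in range(start, stop + 1, step)
    ]


def extract_peaks(spectrum, min_intensity=0.2):
    # Keep local maxima above a noise floor as {wavenumber: intensity}.
    peaks = {}
    for i in range(1, len(spectrum) - 1):
        wn, inten = spectrum[i]
        if inten >= min_intensity and inten > spectrum[i - 1][1] and inten >= spectrum[i + 1][1]:
            peaks[wn] = inten
    return peaks


def peak_similarity(measured, reference, tolerance=10.0):
    # Pair each reference peak with the nearest measured peak within `tolerance`,
    # then normalise the intensity overlap by both peak-set magnitudes.
    dot = 0.0
    for ref_wn, ref_int in reference.items():
        near = [wn for wn in measured if abs(wn - ref_wn) <= tolerance]
        if near:
            closest = min(near, key=lambda wn: abs(wn - ref_wn))
            dot += ref_int * measured[closest]
    norm = math.sqrt(sum(v * v for v in reference.values())) * math.sqrt(
        sum(v * v for v in measured.values())
    )
    return dot / norm if norm else 0.0


# Hypothetical theoretical library: peak positions (cm^-1) and relative intensities.
LIBRARY = {
    "compound_A": {1000: 0.5, 1380: 1.0, 1600: 0.8},
    "compound_B": {800: 1.0, 1200: 0.6, 1450: 0.4},
}

# Invented "measured" spectrum whose bands sit a few cm^-1 away from compound_A's.
measured = extract_peaks(synthetic_spectrum({1002: 0.45, 1384: 0.95, 1596: 0.7}))
scores = {name: round(peak_similarity(measured, ref), 3) for name, ref in LIBRARY.items()}
best = max(scores, key=scores.get)
print("peaks:", sorted(measured), "scores:", scores, "best match:", best)
```

The tolerance is what lets small offsets between computed and measured band positions still count as a match, which is the role the facial-recognition analogy below assigns to the theoretical library.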

Table 2: Research Reagent Solutions for ML-Enabled Soil Contaminant Detection

| Reagent/Material | Specifications | Function in Protocol |
|---|---|---|
| Signature Nanoshells | Designed to enhance relevant spectral traits | Amplification of Raman spectroscopy signals |
| Soil Samples | From restored watershed and natural areas | Real-world validation matrix for method testing |
| PAH/PAC Standards | For artificially contaminated samples | Method calibration and performance validation |
| Density Functional Theory Code | Computational modeling technique | Prediction of molecular behavior and spectral properties |
| Raman Spectrometer | Portable or laboratory-grade | Acquisition of chemical fingerprint data |

The researchers compared this process to using facial recognition to find an individual in a crowd: "You can imagine we have a picture of a person when they're a teenager, but now they're in their 30s. On the theory side, we can predict what the picture will look like" [9]. This analogy highlights the predictive power of combining theoretical modeling with ML approaches.

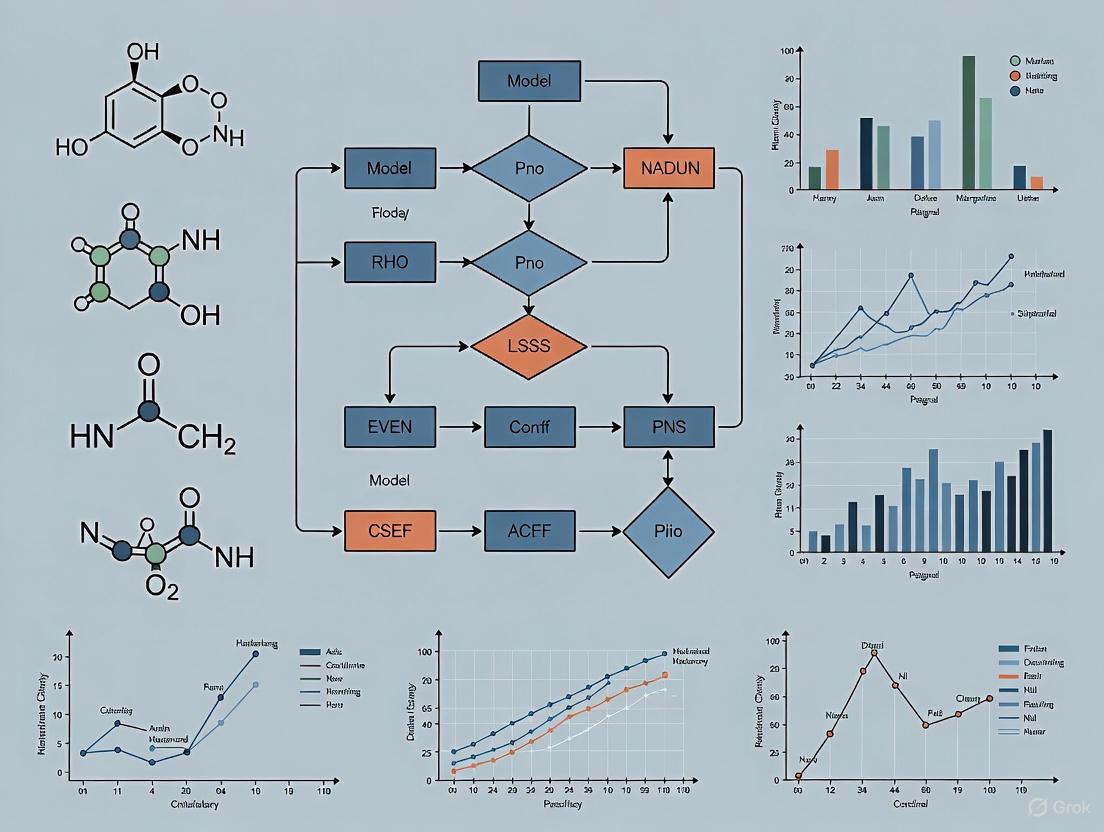

Workflow Visualization

Diagram 1: ML-enabled soil contaminant detection workflow integrating experimental and theoretical approaches

Ethical Implications and the Resurgence of Pseudoscience

Epistemological Concerns in ML Applications

The extraordinary capacity of deep learning methods to process vast amounts of complex data and extract intricate correlations has led to a troubling trend: the undue attribution of causality by designers and users [8]. This problem is particularly acute when ML systems are applied to sensitive domains that demand explainability, such as criminal justice, hiring decisions, or health assessments.

[8] contends that bestowing deep learning-based systems with "oracle-like" powers is not only scientifically unsound but also "akin to endorsing pseudosciences such as Lombrosianism, physiognomy, and social astrology." This criticism highlights how historical pseudoscientific approaches have been resurrected under the veneer of technological sophistication, often without proper acknowledgment of their problematic lineage.

Several concerning applications demonstrate this trend:

- Facial analysis software claiming to infer political orientation or sexual orientation [8]

- AI-driven judicial decision systems determining bail eligibility based on background data [8]

- Personality detection algorithms based solely on facial features [8]

- Criminality prediction tools utilizing facial features and gestural tics [8]

These applications revive discredited deterministic approaches under the guise of algorithmic objectivity, creating what [10] terms "the reanimation of pseudoscience in machine learning."

Ethical Framework for Responsible ML Implementation

The ethical challenges posed by ML in process engineering and environmental monitoring demand a structured framework for responsible implementation. The following principles should guide development and deployment:

Causal Humility: Explicit acknowledgment that correlation does not imply causation, no matter how sophisticated the pattern recognition [8]

Human Oversight: Maintaining expert human judgment throughout the ML lifecycle, particularly for sensitive applications [8]

Fairness Metrics: Prioritizing metrics that promote fairness over mere performance in model evaluation [8]

Domain Expertise Integration: Ensuring ML experts collaborate closely with domain specialists who understand the historical context and limitations of their field [8]

Transparency and Explainability: Developing methods that provide insight into model decision-making processes, particularly for high-stakes applications

The diagram below illustrates the critical integration of ethical considerations throughout the ML development lifecycle:

Diagram 2: Ethical framework integrating guardrails throughout the ML development lifecycle

Future Directions and Recommendations

As ML continues to transform process engineering and environmental chemical monitoring, several critical pathways emerge for responsible advancement:

Expanding Chemical Coverage: Current ML applications cover a limited subset of environmental chemicals. Systematic expansion of the substance portfolio is needed to address emerging contaminants [1].

Health Data Integration: The 4:1 bias toward environmental endpoints over human health endpoints must be addressed through systematic coupling of ML outputs with human health data [1].

Explainable AI Adoption: Complex "black box" models require complementary explainable AI workflows to build trust and facilitate regulatory acceptance [1].

International Collaboration: Translating ML advances into actionable chemical risk assessments will require fostering international collaboration across disciplines [1].

Validation Standards: Development of rigorous validation frameworks specific to ML applications in environmental monitoring to ensure reliability and reproducibility.

The field must also address the significant environmental footprint of ML itself. As [11] notes, AI systems require substantial natural resources—with training large language models consuming millions of liters of fresh water and AI's computing needs doubling yearly. Developing more efficient algorithms and sustainable computing practices represents an essential direction for future research.

The journey from pseudo-empirical correlations to machine learning in process engineering represents both tremendous scientific progress and a cautionary tale about the persistence of epistemological challenges. While ML approaches have revolutionized environmental chemical monitoring—enabling detection of previously unidentifiable contaminants, improving predictive accuracy, and accelerating risk assessment—they have also resurrected fundamental questions about correlation, causation, and scientific validity.

The responsible integration of ML into process engineering requires maintaining a delicate balance between leveraging its remarkable capabilities and resisting the temptation to treat it as an oracle. By learning from history, maintaining ethical vigilance, and prioritizing scientific rigor over expediency, the field can harness machine learning's potential while avoiding the repetition of past mistakes. The future of environmental chemical monitoring lies not in uncritical adoption of ML technologies, but in their thoughtful integration within a framework that respects the complexity of natural systems and the lessons of scientific history.

The application of artificial intelligence (AI) in chemical research is transforming how environmental chemicals are monitored, how their properties are predicted, and how their hazards are evaluated for human health [1]. Machine learning (ML), a subdiscipline of AI, provides powerful predictive capabilities by learning from datasets [12]. The three primary ML paradigms—supervised, unsupervised, and reinforcement learning—offer distinct approaches and are suited to different challenges in the chemical sciences. This document details their specific applications, protocols, and reagent solutions within the context of environmental chemical monitoring research, providing a practical toolkit for researchers and drug development professionals.

Supervised Learning in Chemical Research

Supervised learning utilizes labeled datasets to train predictive models for classification or regression tasks [12]. In chemical contexts, this typically involves using known molecular structures to predict properties or activities.

Application Notes

Supervised learning is the most widely applied ML paradigm in chemical research [1]. It is extensively used for Quantitative Structure-Activity Relationship (QSAR) modeling, toxicity assessment, and predicting physicochemical properties such as boiling point, melting point, and solubility [13]. Analyses of the research landscape show that ensemble methods like XGBoost and Random Forests are among the most cited algorithms for these tasks, prized for their predictive accuracy and robustness [1]. A significant application is predicting the environmental impacts of chemicals over their life cycle, where molecular-structure-based models offer a rapid and cost-effective alternative to traditional, slower life cycle assessments (LCA) [14].

Experimental Protocol: Molecular Property Prediction

Objective: To train a supervised learning model for predicting the boiling point of organic compounds from their molecular structures.

Workflow:

- Data Collection & Curation: Assemble a dataset of organic compounds with experimentally measured boiling points from databases like PubChem or DrugBank [13]. Remove duplicates and standardize chemical structures.

- Molecular Representation (Featurization): Convert molecular structures into a numerical format. Common representations include:

  - SMILES Strings: Convert SMILES into numerical vectors using embedders like Mol2Vec or VICGAE [15].

  - Molecular Descriptors: Calculate descriptors (e.g., molecular weight, logP, number of rotatable bonds) using toolkits like RDKit [13].

  - Molecular Fingerprints: Generate binary bit vectors representing the presence or absence of specific substructures.

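- Data Preprocessing: Split the dataset into training, validation, and test sets (e.g., 70/15/15). Apply feature scaling or normalization to the numerical data, fitting the scaling parameters on the training set only so that no information leaks from the validation or test sets.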

- Model Training & Validation: Train a selected algorithm (e.g., XGBoost, Random Forest, Support Vector Machine) on the training set. Use the validation set for hyperparameter tuning to optimize model performance.

- Model Evaluation & Prediction: Assess the final model on the held-out test set using metrics like Root Mean Square Error (RMSE) and R². Use the model to predict boiling points for new, unknown compounds.
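The split, scaling, tuning, and evaluation steps of this workflow can be sketched end to end with the standard library. This is a minimal sketch under loud assumptions: a single hypothetical descriptor (molecular weight) and synthetic boiling points replace a curated PubChem or DrugBank dataset, and one-feature ridge regression stands in for XGBoost, Random Forest, or SVM so that no packages are required. The penalty grid is arbitrary.

```python
"""Minimal sketch of the boiling-point protocol without third-party packages.

Assumptions: synthetic (molecular weight, boiling point) pairs and a
one-feature ridge regression in place of the protocol's algorithms.
"""
import math
import random


def make_synthetic_rows(n=200, seed=0):
    # Illustrative linear trend plus Gaussian noise; not real chemistry.
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        mw = rng.uniform(50, 300)
        rows.append((mw, 0.9 * mw + 50 + rng.gauss(0, 10)))
    return rows


def split_70_15_15(rows, seed=1):
    rows = rows[:]
    random.Random(seed).shuffle(rows)
    n_train, n_val = int(0.70 * len(rows)), int(0.15 * len(rows))
    return rows[:n_train], rows[n_train:n_train + n_val], rows[n_train + n_val:]


def fit_ridge_1d(xs, ys, lam):
    # xs are standardised with the training mean, so the intercept is the mean of ys.
    y_mean = sum(ys) / len(ys)
    slope = sum(x * (y - y_mean) for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + lam)
    return y_mean, slope


def predict(model, xs):
    intercept, slope = model
    return [intercept + slope * x for x in xs]


def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r_squared(y_true, y_pred):
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


train, val, test = split_70_15_15(make_synthetic_rows())

# Scaling parameters come from the training set only.
train_mw = [mw for mw, _ in train]
mean = sum(train_mw) / len(train_mw)
std = math.sqrt(sum((x - mean) ** 2 for x in train_mw) / len(train_mw)) or 1.0


def prepare(rows):
    # Apply the training-set scaling unchanged to any split.
    return [(mw - mean) / std for mw, _ in rows], [bp for _, bp in rows]


(x_tr, y_tr), (x_val, y_val), (x_te, y_te) = prepare(train), prepare(val), prepare(test)

# Hyperparameter tuning: pick the ridge penalty with the lowest validation RMSE.
best_lam = min([0.0, 1.0, 10.0, 100.0],
               key=lambda lam: rmse(y_val, predict(fit_ridge_1d(x_tr, y_tr, lam), x_val)))
model = fit_ridge_1d(x_tr, y_tr, best_lam)
y_hat = predict(model, x_te)
print(f"lambda={best_lam}, test RMSE={rmse(y_te, y_hat):.1f}, R^2={r_squared(y_te, y_hat):.3f}")
```

The test set is touched exactly once, after the penalty has been chosen on the validation set, which mirrors the held-out evaluation described in the final step.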

Table 1: Performance of Supervised Learning Models in Chemical Applications

| Application Area | Common Algorithms | Reported Performance Metrics | Key References |
|---|---|---|---|
| Chemical Property Prediction | XGBoost, Random Forest, SVMs | Accuracy up to 93% for critical temperature prediction [15] | [15] [13] |
| Toxicity & Environmental Impact | Random Forest, Bernoulli Naïve Bayes, Graph Neural Networks (GNNs) | High predictive accuracy for receptor binding/antagonism; enables rapid LCA [1] [14] | [1] [14] |
| Water & Air Quality Monitoring | SVMs, Multilayer Perceptrons, XGBoost | Improved forecasting and high-resolution mapping of pollutants (e.g., PM2.5) [1] | [1] [16] |

Unsupervised Learning in Chemical Research

Unsupervised learning discovers inherent patterns, clusters, or structures from unlabeled data [12]. It is invaluable for exploratory data analysis in large chemical datasets.

Application Notes

In chemical research, unsupervised learning is primarily used to map the "chemical space," which helps in understanding the diversity of chemical libraries and identifying novel compound clusters [13]. Techniques like clustering (e.g., k-means, hierarchical clustering) and dimensionality reduction (e.g., PCA, t-SNE) are fundamental. They can group compounds with similar structural or property profiles, aiding in lead identification and prioritization for experimental testing. Furthermore, these methods are applied to analyze complex environmental data, such as identifying co-occurrence patterns of pollutants or clustering water quality samples to track pollution sources [1].

Experimental Protocol: Chemical Space Mapping

Objective: To analyze a large chemical library and identify naturally occurring clusters of compounds based on their molecular descriptors.

Workflow:

- Data Compilation: Load a chemical library (e.g., from ZINC15 or an in-house collection). Standardize and curate the structures [13].

- Descriptor Calculation: Use a cheminformatics toolkit like RDKit to compute a comprehensive set of molecular descriptors (e.g., topological, electronic, and physicochemical descriptors) for all compounds.

- Dimensionality Reduction: Apply Principal Component Analysis (PCA) to the high-dimensional descriptor matrix. This projects the data onto a new set of orthogonal axes (Principal Components) that capture the maximum variance, reducing the dimensions to 2D or 3D for visualization.

- Clustering Analysis: Apply a clustering algorithm like k-means to the original descriptor space or the principal components. This will assign each compound to a specific cluster based on similarity.

- Visualization & Interpretation: Create a scatter plot of the compounds using the first two or three principal components. Color the points by their cluster assignment. Analyze the clusters to identify regions of chemical space with high density or interesting properties.
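The workflow above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions: the descriptor matrix is assumed to have been computed already (for example with RDKit) and loaded as a 2-D array with one row per compound; PCA is computed by singular value decomposition, and k-means is a plain Lloyd's-algorithm loop with random initialization. The function names are illustrative, not part of any library.

```python
import numpy as np

def standardize(X):
    """Z-score each descriptor column; constant columns are only centered."""
    mu = X.mean(axis=0)
    sd = X.std(axis=0)
    sd[sd == 0] = 1.0
    return (X - mu) / sd

def pca(X, n_components=2):
    """Project centered data onto its leading principal components via SVD."""
    Xc = X - X.mean(axis=0)
    _, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    explained = S**2 / np.sum(S**2)          # fraction of variance per component
    return Xc @ Vt[:n_components].T, explained[:n_components]

def kmeans(X, k, n_iter=100, seed=0):
    """Lloyd's algorithm: alternate nearest-centroid assignment and centroid update."""
    rng = np.random.default_rng(seed)
    centroids = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(n_iter):
        dists = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        new_centroids = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        if np.allclose(new_centroids, centroids):
            break
        centroids = new_centroids
    return labels, centroids

# Hypothetical descriptor matrix: two groups of 20 compounds, 5 descriptors each
rng = np.random.default_rng(1)
X = np.vstack([rng.normal(0, 0.1, (20, 5)), rng.normal(5, 0.1, (20, 5))])
scores, explained = pca(standardize(X), n_components=2)
labels, _ = kmeans(scores, k=2)
```

The `scores` array gives the 2-D coordinates for the scatter plot and `labels` the colors. In practice, scikit-learn's `PCA` and `KMeans` classes cover the same steps with more robust initialization (k-means++); the number of clusters `k` still has to be chosen, for example with the silhouette score.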

Reinforcement Learning in Chemical Research

Reinforcement Learning (RL) involves an agent that learns to make optimal sequential decisions by interacting with an environment and receiving feedback in the form of rewards [17] [12]. Its application in chemical sciences is emerging and focuses on optimization problems.

Application Notes

While more common in healthcare for dynamic treatment regimes [12], RL is gaining traction in chemistry for molecular design and reaction optimization. In de novo drug design, the RL agent acts as a "molecule generator," with the environment being a predictive model for a desired property (e.g., binding affinity, solubility). The agent is rewarded for generating molecules that improve this property, learning to propose optimal chemical structures over time [13]. RL is also used to optimize complex, multi-step processes, such as chemical reaction conditions or industrial chemical manufacturing, to maximize yield or minimize energy consumption [17] [11].

Experimental Protocol: Molecular Optimization with RL

Objective: To employ an RL agent to optimize a lead compound for improved binding affinity.

Workflow:

- Problem Formulation:

- State (s): The current molecular structure.

- Action (a): A permissible molecular modification (e.g., adding/removing a functional group).

- Reward (r): The change in predicted binding affinity after the modification.

- Policy (π): The strategy the RL agent uses to select the next action.

- Agent & Environment Setup: The agent is the RL algorithm (e.g., Proximal Policy Optimization, PPO). The environment is a simulation that includes the starting molecule and a pre-trained supervised model (the "reward predictor") that scores new structures for binding affinity.

- Training Loop:

- The agent takes an action to modify the current molecule.

- The environment returns the new molecule and a reward based on the change in the predicted property.

- The agent updates its policy to maximize cumulative reward over multiple steps.

- Iteration & Convergence: This loop repeats for thousands of episodes. The agent learns a policy that guides it through chemical space toward regions of high binding affinity.

- Output: The process yields a set of optimized molecular structures proposed by the RL agent for synthesis and experimental validation.
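The training loop above can be illustrated with a deliberately tiny, dependency-free sketch. It swaps PPO for tabular Q-learning (a simpler value-based method and the tabular precursor of DQN), and it replaces real molecules with a hypothetical four-slot scaffold in which each slot is a functional group that is either present or absent. The `WEIGHTS` list is an invented stand-in for the pre-trained reward predictor. States, actions (toggle one group, or stop), and rewards (change in predicted affinity) follow the formulation above; the policy is epsilon-greedy over the learned Q-values.

```python
import random
from itertools import product

# Hypothetical reward predictor: per-group contributions to predicted affinity
WEIGHTS = [1.5, -1.0, 2.0, 0.8]
N_SLOTS = len(WEIGHTS)
STOP = N_SLOTS  # extra action that ends the episode

def predicted_affinity(state):
    return sum(w for w, bit in zip(WEIGHTS, state) if bit)

def step(state, action):
    """Apply one action; the reward is the change in predicted affinity."""
    if action == STOP:
        return state, 0.0, True
    new_state = list(state)
    new_state[action] = 1 - new_state[action]  # add or remove the functional group
    new_state = tuple(new_state)
    return new_state, predicted_affinity(new_state) - predicted_affinity(state), False

def greedy(q_row):
    return max(range(len(q_row)), key=q_row.__getitem__)

def train(episodes=5000, max_steps=20, alpha=0.1, gamma=0.8, epsilon=0.2, seed=0):
    """Tabular Q-learning with an epsilon-greedy behaviour policy."""
    rng = random.Random(seed)
    q = {s: [0.0] * (N_SLOTS + 1) for s in product((0, 1), repeat=N_SLOTS)}
    for _ in range(episodes):
        state = (0,) * N_SLOTS  # starting lead compound: no optional groups
        for _ in range(max_steps):
            if rng.random() < epsilon:
                action = rng.randrange(N_SLOTS + 1)
            else:
                action = greedy(q[state])
            next_state, reward, done = step(state, action)
            # Bootstrap unless the agent chose to stop (time limit is not terminal)
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            if done:
                break
            state = next_state
    return q

def optimize(q, max_steps=20):
    """Roll out the greedy policy from the starting compound."""
    state = (0,) * N_SLOTS
    for _ in range(max_steps):
        state, _, done = step(state, greedy(q[state]))
        if done:
            break
    return state

q_table = train()
best = optimize(q_table)
```

With these toy weights the greedy policy adds groups 0, 2, and 3 and leaves group 1 off. A real pipeline would replace `step` with chemically valid structure edits and the weight table with the trained property model, which is where policy-gradient methods such as PPO become necessary because the state space is far too large for a table.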

Table 2: Reinforcement Learning in Optimization Contexts

| Application Area | Common Algorithms | Key Metrics & Outcomes | Key References |
|---|---|---|---|
| Molecular Design & Optimization | Policy Gradient Methods (e.g., PPO), Actor-Critic Methods | Generates novel, optimized structures meeting target criteria (solubility, binding) [17] [13] | [17] [13] |
| Industrial Process Control | Deep Q-Networks (DQN), Hybrid Methods | Reduces energy consumption in manufacturing by 20-30% [11] | [17] [11] |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Data Resources for AI in Chemical Research

| Tool/Resource Name | Type | Primary Function in Chemical AI Research |
|---|---|---|
| RDKit | Cheminformatics Library | Open-source toolkit for cheminformatics, including descriptor calculation, fingerprint generation, and molecular operations [13]. |
| ChemXploreML | Desktop Application | User-friendly app for predicting molecular properties using ML, without requiring deep programming skills [15]. |
| PubChem / DrugBank | Chemical Database | Public repositories of chemical molecules and their biological activities, used for data collection and model training [13]. |
| TensorFlow Agents / Ray RLlib | RL Framework | Libraries for developing and training Reinforcement Learning agents, applicable to molecular optimization tasks [17]. |
| VOSviewer / R | Bibliometric Analysis Tool | Software for mapping and analyzing scientific literature trends, useful for understanding the research landscape [1]. |

The application of machine learning (ML) and artificial intelligence (AI) in environmental chemical research represents a paradigm shift in how scientists monitor chemical hazards, assess ecological impacts, and evaluate human health risks. This emerging interdisciplinary field leverages computational power to analyze complex, high-dimensional environmental datasets that characterize modern chemical and toxicological research [1]. As the volume of research accelerates, bibliometric analysis has become an essential tool for mapping the intellectual structure, temporal evolution, and collaborative networks within this rapidly evolving domain [1] [18]. These quantitative assessments provide valuable insights into publication patterns, citation networks, and keyword trends, enabling researchers and policymakers to identify research fronts, consolidate evidence, and prioritize resources [18].

This application note presents a comprehensive bibliometric framework for analyzing the exponential growth and thematic clusters in ML applications for environmental chemical monitoring. We provide detailed protocols for data collection, processing, and visualization, along with structured tables summarizing key quantitative findings. Additionally, we introduce essential computational tools and reagents that constitute the researcher's toolkit for conducting bibliometric studies in this field. The insights generated through these methodologies reveal how ML is reshaping environmental chemical research, from molecular-level toxicology to ecosystem-scale monitoring [1].

Quantitative Landscape of ML in Environmental Chemical Research

Publication Growth and Geographic Distribution

Bibliometric analyses reveal striking exponential growth in publications at the intersection of machine learning and environmental chemicals. Analysis of 3,150 peer-reviewed articles from 1985-2025 shows that publication output remained modest until approximately 2015, with fewer than 25 papers published annually [1]. A notable shift occurred around 2020, when publications surged to 179, then rose by roughly 68% to 301 in 2021, and reached 719 by 2024 [1]. This trend reflects a broader acceleration in AI applications across environmental research, with one analysis of 4,762 publications noting a marked increase since 2010 [18].

Table 1: Annual Publication Growth in ML for Environmental Chemical Research

| Year Range | Publication Characteristics | Growth |
|---|---|---|
| 1985-2015 | Modest output (<25 papers/year) | Minimal growth |
| 2020 | Sharp increase to 179 publications | Significant surge |
| 2021 | Rose to 301 publications | ~68% growth over 2020 |
| 2024 | Reached 719 publications | Sustained exponential growth |

Geographically, research production is dominated by a few key countries. Analysis reveals that 4,254 institutions across 94 countries have contributed to this field [1]. The People's Republic of China leads with 1,130 publications, followed by the United States with 863 publications [1]. Other significant contributors include India (255 publications), Germany (232 publications), and England (229 publications) [1]. Notably, the United States demonstrates stronger collaborative networks, evidenced by a higher total link strength (TLS of 734) compared to China (TLS of 693) [1]. At the institutional level, the Chinese Academy of Sciences leads with 174 publications, followed by the United States Department of Energy with 113 publications [1].

Table 2: Geographic Distribution of Research Output

| Country | Number of Publications | Total Link Strength (Collaboration) |
|---|---|---|
| China | 1,130 | 693 |
| United States | 863 | 734 |
| India | 255 | Not specified |
| Germany | 232 | Not specified |
| England | 229 | Not specified |

Thematic Clusters and Research Foci

Co-citation and co-occurrence analyses reveal distinct thematic clusters within the ML-environmental chemical research landscape. One comprehensive analysis identified eight major clusters, among them (1) ML model development, (2) water quality prediction, (3) quantitative structure-activity relationship (QSAR) applications, and (4) per- and polyfluoroalkyl substances (PFAS) [1]. A distinct risk assessment cluster indicates that these tools are migrating toward dose-response and regulatory applications [1].

The algorithmic landscape is dominated by specific ML approaches. XGBoost and random forests are the most cited algorithms, while deep learning architectures such as convolutional neural networks (CNNs) and graph neural networks (GNNs) are increasingly applied to complex environmental data [1]. In broader environmental research, Artificial Neural Networks (ANNs) are the most frequently used ML technique, followed by Support Vector Machines (SVMs) [19].

Application domains show a distinct pattern of emphasis. Keyword frequency analysis reveals a 4:1 bias toward environmental endpoints over human health endpoints [1]. This suggests that while ML applications for ecological monitoring are well-established, connections to human health outcomes remain underexplored. Emerging topics include climate change, microplastics, and digital soil mapping, while lignin, arsenic, and phthalates appear as fast-growing but understudied chemicals [1].

Experimental Protocols for Bibliometric Analysis

Data Collection and Preprocessing

Protocol 1: Database Query and Search Strategy

- Database Selection: Select primary databases such as Web of Science Core Collection (WoSCC) or Scopus for their comprehensive coverage of peer-reviewed literature [1] [18].

- Search Query Formulation:

- Field Restrictions: Apply field tags to search title, abstract, and keywords for comprehensive retrieval [1]

- Temporal Filtering: Define appropriate date ranges based on research objectives (typically 20+ years for evolutionary analysis) [1]

- Document Type Limitation: Restrict to article-type documents written in English to maintain consistency [1]
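The search strategy can be assembled programmatically so that the exact query is recorded and reproducible. This hedged sketch assumes Web of Science advanced-search syntax, where `TS=` searches the topic fields (title, abstract, keywords) and `PY=`, `DT=`, and `LA=` restrict publication year, document type, and language; verify these tags against the current Web of Science help pages before use. The search terms in the example are illustrative, not the query used in [1], and `build_wos_query` is a hypothetical helper.

```python
def build_wos_query(concept_groups, start_year, end_year):
    """Assemble a Web of Science advanced-search string.

    Terms within a concept group are OR-ed together; groups are AND-ed.
    Field tags (assumed): TS = topic, PY = publication year,
    DT = document type, LA = language.
    """
    blocks = []
    for terms in concept_groups:
        quoted = " OR ".join(f'"{term}"' for term in terms)
        blocks.append(f"TS=({quoted})")
    filters = [f"PY=({start_year}-{end_year})", "DT=(Article)", "LA=(English)"]
    return " AND ".join(blocks + filters)

# Illustrative concept groups (hypothetical terms)
query = build_wos_query(
    [["machine learning", "deep learning", "random forest"],
     ["environmental chemical*", "pollutant*", "contaminant*"]],
    1985, 2025,
)
print(query)
```

Keeping synonyms in separate groups makes it easy to document, and later re-run, how each concept in the research question was operationalized.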

Protocol 2: Data Extraction and Cleaning

- Record Export: Export full records and cited references in compatible formats (e.g., CSV, plain text) [1]

- Data Validation: Check for and remove duplicate entries using digital object identifiers (DOIs) or title matching [20]

- Field Standardization: Normalize author names, affiliations, and keyword variations to ensure accurate counting [19]

- Missing Data Handling: Flag records with incomplete metadata (e.g., missing DOI, affiliation, or keywords) and decide, for each analysis, whether to complete them manually or exclude them
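DOI- and title-based deduplication can be sketched as follows. It is a minimal example assuming records have already been parsed into dictionaries with optional `"doi"` and `"title"` keys (a hypothetical layout; real exports from Web of Science or Scopus use their own field names). A record is treated as a duplicate if either its normalized DOI or its normalized title has been seen before, which also catches copies where one export lacks the DOI.

```python
import re

def normalize_doi(doi):
    """Lower-case a DOI and strip common URL or 'doi:' prefixes."""
    if not doi:
        return None
    doi = doi.strip().lower()
    doi = re.sub(r"^(https?://(dx\.)?doi\.org/|doi:)", "", doi)
    return doi or None

def normalize_title(title):
    """Lower-case and collapse punctuation/whitespace so trivial variants match."""
    return re.sub(r"[^a-z0-9]+", " ", (title or "").lower()).strip()

def deduplicate(records):
    """Keep the first record for each DOI or normalized title."""
    seen, unique = set(), []
    for rec in records:
        keys = set()
        doi = normalize_doi(rec.get("doi"))
        if doi:
            keys.add(("doi", doi))
        title = normalize_title(rec.get("title"))
        if title:
            keys.add(("title", title))
        if keys & seen:
            continue  # duplicate of an earlier record
        seen |= keys
        unique.append(rec)
    return unique

records = [
    {"doi": "10.1000/ABC", "title": "ML for Water Quality"},
    {"doi": "https://doi.org/10.1000/abc", "title": "ML for water quality."},
    {"doi": None, "title": "ML for Water-Quality"},
    {"doi": "10.1000/xyz", "title": "Another paper"},
]
cleaned = deduplicate(records)
```

Exact title matching can merge genuinely different papers that share a generic title, so flagged title-only matches are worth a manual check.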

Analytical Workflow for Bibliometric Mapping

The following diagram illustrates the comprehensive workflow for conducting bibliometric analysis in this field:

Protocol 3: Temporal Trend Analysis

- Annual Publication Counting: Calculate yearly publication counts to identify growth patterns [1]

- Cumulative Trend Analysis: Plot cumulative publications to visualize acceleration phases [20]

- Growth Rate Calculation: Compute compound annual growth rates for specific periods [21]

- Citation Burst Detection: Identify papers with sudden citation increases using algorithms like Kleinberg's burst detection [20]
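The counting and growth-rate steps reduce to a few lines of arithmetic. This sketch uses the figures reported in [1] (179 papers in 2020, 301 in 2021, 719 in 2024); the helper names are hypothetical, and year-over-year growth is only computed where the preceding year is present.

```python
from collections import Counter

def annual_counts(publication_years):
    """Number of records per publication year, sorted by year."""
    return dict(sorted(Counter(publication_years).items()))

def year_over_year_growth(counts):
    """Relative growth versus the immediately preceding year (where both years exist)."""
    return {year: counts[year] / counts[year - 1] - 1
            for year in counts if counts.get(year - 1, 0) > 0}

def cagr(start_count, end_count, n_years):
    """Compound annual growth rate between two counts n_years apart."""
    return (end_count / start_count) ** (1 / n_years) - 1

# Figures reported in [1]
counts = {2020: 179, 2021: 301, 2024: 719}
print(year_over_year_growth(counts))
print(cagr(counts[2020], counts[2024], 4))
```

For these figures, growth from 2020 to 2021 is about 68%, and the 2020-2024 compound annual growth rate is roughly 42% per year.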

Protocol 4: Thematic Cluster Identification

- Keyword Co-occurrence Analysis:

- Cluster Generation:

- Use clustering algorithms (e.g., modularity-based, hierarchical) to identify thematic groups [19]

- Determine optimal cluster resolution through parameter sensitivity testing

- Cluster Labeling:

- Extract representative terms based on frequency and centrality metrics

- Apply natural language processing for label generation when needed [21]
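Keyword co-occurrence counts, the input to the clustering step, can be computed as below. This is a sketch assuming each paper's keywords are available as a list of strings; pairs are counted once per paper (full counting), and the per-keyword sum of link weights corresponds to the "total link strength" reported by tools such as VOSviewer. Function names and the example keywords are illustrative.

```python
from collections import Counter
from itertools import combinations

def keyword_cooccurrence(keyword_lists, min_occurrences=1):
    """Count keyword occurrences and pairwise co-occurrences across papers."""
    normalized = [sorted({k.strip().lower() for k in kws if k.strip()})
                  for kws in keyword_lists]
    occurrences = Counter(k for kws in normalized for k in kws)
    keep = {k for k, n in occurrences.items() if n >= min_occurrences}
    links = Counter()
    for kws in normalized:
        # Sorted input gives each unordered pair a single canonical key
        for a, b in combinations([k for k in kws if k in keep], 2):
            links[(a, b)] += 1
    return occurrences, links

def total_link_strength(links):
    """Sum of co-occurrence weights attached to each keyword."""
    tls = Counter()
    for (a, b), weight in links.items():
        tls[a] += weight
        tls[b] += weight
    return dict(tls)

papers = [
    ["Machine learning", "PFAS"],
    ["machine learning", "water quality", "XGBoost"],
    ["PFAS", "QSAR", "machine learning"],
]
occurrences, links = keyword_cooccurrence(papers)
tls = total_link_strength(links)
```

The resulting weighted pairs form the edge list that modularity-based or hierarchical clustering then partitions into themes; raising `min_occurrences` removes rare keywords before clustering.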

Protocol 5: Collaboration Network Mapping

- Node Definition: Define nodes as countries, institutions, or authors based on research questions [1]

- Edge Weighting: Establish collaboration strength based on co-authorship frequency [21]

- Centrality Metrics: Calculate betweenness, closeness, and eigenvector centrality to identify key connectors [20]

- Community Detection: Apply community detection algorithms to identify collaborative subnetworks [21]
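The node, edge, and centrality steps can be sketched without a graph library. This example assumes each paper is reduced to the list of countries on its author byline (hypothetical toy data below); edge weight is the number of co-authored papers per country pair, weighted degree gives each country's total link strength, and betweenness centrality uses Brandes' algorithm on the unweighted graph. In practice, a library such as NetworkX provides these measures along with closeness, eigenvector centrality, and community detection.

```python
from collections import Counter, deque
from itertools import combinations

def collaboration_network(country_lists):
    """Build an undirected co-authorship graph; edge weight = shared papers."""
    nodes, weights = set(), Counter()
    for countries in country_lists:
        unique = sorted(set(countries))
        nodes.update(unique)
        for a, b in combinations(unique, 2):
            weights[(a, b)] += 1
    adj = {n: set() for n in nodes}
    for a, b in weights:
        adj[a].add(b)
        adj[b].add(a)
    return adj, weights

def weighted_degree(weights):
    """Total link strength of each node."""
    tls = Counter()
    for (a, b), w in weights.items():
        tls[a] += w
        tls[b] += w
    return dict(tls)

def betweenness(adj):
    """Brandes' algorithm for unweighted, undirected graphs."""
    bc = dict.fromkeys(adj, 0.0)
    for s in adj:
        stack, preds = [], {v: [] for v in adj}
        sigma = dict.fromkeys(adj, 0)
        sigma[s] = 1
        dist = dict.fromkeys(adj, -1)
        dist[s] = 0
        queue = deque([s])
        while queue:  # BFS counting shortest paths
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = dict.fromkeys(adj, 0.0)
        while stack:  # back-propagate pair dependencies
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    return {v: b / 2 for v, b in bc.items()}  # each pair was counted twice

papers = [["China", "United States"], ["United States", "Germany"],
          ["China", "United States", "India"], ["Germany"]]
adj, weights = collaboration_network(papers)
tls = weighted_degree(weights)
bc = betweenness(adj)
```

In this toy network the United States has the highest total link strength and is the only connector between Germany and the other countries, which is what its betweenness score captures.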

Visualization Approaches for Bibliometric Data

Thematic Evolution and Conceptual Structure

The following diagram illustrates the relationship between major thematic clusters and their applications in environmental chemical research:

Protocol 6: Network Visualization Development

- Software Selection: Utilize specialized bibliometric software (VOSviewer, CiteSpace, or bibliometrix in R) [1] [20]

- Layout Optimization: Apply force-directed algorithms (e.g., Fruchterman-Reingold, Kamada-Kawai) for clear network representation [19]

- Visual Encoding:

- Map node size to publication count or citation frequency

- Represent cluster affiliation through color coding

- Scale edge thickness according to collaboration strength or co-citation frequency [1]

- Interactivity Implementation: Enable zoom, filter, and detail-on-demand features for exploratory analysis

Protocol 7: Temporal Evolution Mapping

- Time Slicing: Divide dataset into consecutive time periods (typically 2-5 year intervals) [1]

- Overlay Visualization: Create multiple network maps for different periods and compare structural changes [19]

- Thematic Evolution Analysis: Track cluster emergence, merger, fragmentation, or disappearance across time slices [21]

- Trajectory Mapping: Visualize the development path of specific research themes using alluvial diagrams or theme rivers [21]
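Time slicing and a simple emergence check can be sketched as follows. This assumes records are available as `(year, keywords)` pairs; the helpers are hypothetical, and "emerging" here simply means a keyword absent from one slice and present in the next, a much cruder signal than the burst detection or thematic-evolution maps produced by CiteSpace or bibliometrix.

```python
from collections import Counter

def time_slices(start_year, end_year, width):
    """Consecutive, non-overlapping (start, end) windows; the last may be shorter."""
    return [(y, min(y + width - 1, end_year))
            for y in range(start_year, end_year + 1, width)]

def keyword_counts_by_slice(records, slices):
    """records: iterable of (year, [keywords]); returns {slice: Counter}."""
    counts = {s: Counter() for s in slices}
    for year, keywords in records:
        for lo, hi in slices:
            if lo <= year <= hi:
                counts[(lo, hi)].update(k.lower() for k in keywords)
                break
    return counts

def emerging_terms(counts, slices):
    """Keywords present in a slice but absent from the preceding one."""
    return {later: sorted(set(counts[later]) - set(counts[earlier]))
            for earlier, later in zip(slices, slices[1:])}

slices = time_slices(2015, 2023, 3)
records = [(2016, ["QSAR"]), (2019, ["QSAR", "PFAS"]), (2022, ["microplastics", "PFAS"])]
counts = keyword_counts_by_slice(records, slices)
new_terms = emerging_terms(counts, slices)
```

Each slice's `Counter` can also feed the co-occurrence analysis separately, giving one network map per period for the overlay comparison.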

Table 3: Essential Software Tools for Bibliometric Analysis

| Tool Name | Primary Function | Application in Environmental ML Research | Access |
|---|---|---|---|
| VOSviewer [1] | Network visualization and clustering | Co-citation analysis, keyword co-occurrence mapping | Free |
| CiteSpace [20] | Temporal pattern detection, burst identification | Emerging trend analysis, research front identification | Free |
| R bibliometrix package [21] | Comprehensive bibliometric analysis | Data preprocessing, multiple analysis capabilities | Open source |
| Python (scikit-learn, NLTK) [21] | Text mining, NLP, machine learning | Topic modeling, abstract analysis, LDA implementation | Open source |
| CitNetExplorer | Citation network analysis | Document clustering, knowledge diffusion pathways | Free |

Table 4: Key Data Sources for Bibliometric Studies

| Database | Coverage Strengths | Export Capabilities | Limitations |
|---|---|---|---|
| Web of Science Core Collection [1] | High-quality journal coverage, citation data | Comprehensive record export | Limited conference proceedings |
| Scopus [18] | Broader coverage, including more international journals | Flexible export options | Subscription required |
| Google Scholar | Broadest coverage including grey literature | Limited bulk export capabilities | Data quality variability |

Application Notes and Interpretation Guidelines

Key Findings and Research Gaps

Bibliometric analyses consistently identify several critical research gaps in the application of ML to environmental chemical research. There remains a significant disparity between environmental and human health focus, with keyword frequencies showing a 4:1 bias toward environmental endpoints [1]. This indicates a need for greater integration of human health data with ML outputs to better assess public health implications of chemical exposures [1].

There are also notable gaps in chemical coverage: while emerging contaminants such as microplastics are receiving increasing attention, substances such as lignin, arsenic, and phthalates appear as fast-growing but understudied chemicals [1]. The field would benefit from expanding the substance portfolio to ensure comprehensive chemical risk assessment [1].

Methodologically, there is growing recognition of the need for explainable AI (XAI) workflows to enhance model transparency and trust in critical environmental applications [1] [18]. The "black-box" nature of many complex ML models remains a barrier to their adoption in regulatory decision-making [18].

Future Directions and Emerging Trends

Based on bibliometric trends, several future research directions appear promising:

- Integration of Multi-modal Data: Combining traditional chemical monitoring with novel data sources (e.g., remote sensing, IoT sensors, citizen science) [18] [22]

- Advanced Hybrid Models: Developing frameworks that combine mechanistic models with data-driven ML approaches for improved interpretability [20]

- Cross-disciplinary Collaboration: Fostering partnerships between environmental scientists, computer scientists, and regulatory experts [1]

- Real-time Monitoring Systems: Leveraging ML for dynamic chemical risk assessment and early warning systems [18] [23]

The field is also witnessing the rise of specialized ML applications in areas such as wastewater treatment optimization [20], indoor air quality prediction [23], and life cycle assessment [24], indicating a maturation of the research landscape beyond foundational methods to domain-specific implementations.

By employing the protocols and tools outlined in this application note, researchers can systematically map the evolving landscape of ML applications in environmental chemical research, identify emerging opportunities, and facilitate evidence-based research planning and resource allocation in this rapidly advancing field.

The field of environmental chemical risk assessment is undergoing a profound transformation, driven by the increasing volume and variety of data and the need to evaluate more chemicals than traditional methods can accommodate [1] [25]. The core challenge lies in effectively integrating these multifarious data sources—including chemical properties, environmental monitoring data, toxicological studies, and exposure information—into a cohesive analytical framework. Machine learning (ML) and artificial intelligence (AI) have emerged as powerful technologies capable of translating these complex datasets into actionable risk assessments [1] [26]. This Application Note defines the central data integration challenge and provides detailed protocols for implementing ML-driven solutions that enable a more holistic understanding of chemical risks.

Bibliometric analysis of the field reveals an exponential publication surge from 2015 onward, with China and the United States leading research output [1]. The literature identifies eight major thematic clusters, including ML model development, water quality prediction, quantitative structure-activity relationship (QSAR) applications, and risk assessment methodologies [1]. Despite this growth, a significant gap persists: keyword frequency analysis shows a 4:1 bias toward environmental endpoints over human health endpoints, highlighting the need for more integrated approaches [1].

The Data Integration Landscape

Data Source Heterogeneity

The first dimension of the integration challenge involves managing diverse data types and formats from disparate sources:

- Chemical Structure Data: Molecular descriptors, structural fingerprints, and physicochemical properties

- Environmental Monitoring Data: Air, water, and soil quality measurements from fixed and mobile sensors [1]

- Toxicological Data: Results from in vitro assays, traditional animal studies, and high-throughput screening

- Exposure Data: Consumer use patterns, environmental fate information, and biomonitoring results

- Omics Data: Genomic, proteomic, and metabolomic profiles from advanced analytical techniques [27]

- Geospatial Data: Location-specific environmental conditions and population distribution information

Current Limitations and Research Gaps

Traditional risk assessment approaches struggle with this data complexity, creating a significant discrepancy between the number of chemicals requiring assessment and those actually evaluated [25] [26]. The European Commission's Joint Research Centre has identified that the current process is hampered by a lack of experts for evaluation, interference of third-party interests, and the sheer volume of potentially relevant information from disparate sources [25].

Table 1: Key Research Gaps in Data Integration for Chemical Risk Assessment

| Research Gap | Impact on Risk Assessment | Potential ML Solution |
|---|---|---|
| Chemical Coverage Bias | Fast-growing chemicals (e.g., lignin, arsenic, phthalates) remain understudied [1] | Transfer learning from data-rich to data-poor chemical classes |
| Health Endpoint Neglect | 4:1 keyword-frequency bias toward environmental over human health endpoints [1] | Multi-task learning for simultaneous environmental and health prediction |
| Data Standardization | Diverse formats, protocols, and terminology hinder integration [26] | Natural language processing for automated data harmonization |
| Model Interpretability | Complex AI models function as "black boxes", limiting regulatory acceptance [26] | Explainable AI (XAI), including Integrated Gradients attribution [28] |

Quantitative Analysis of ML Applications

Bibliometric analysis of 3,150 peer-reviewed publications (1985-2025) reveals the evolving landscape of ML in environmental chemical research [1]. The data show a notable shift in 2020, when publications rose sharply to 179, then increased by roughly 68% to 301 in 2021, and reached 719 in 2024 [1]. This growth trajectory highlights the accelerating interest and investment in computational approaches for environmental monitoring.

Table 2: Dominant Machine Learning Algorithms in Environmental Chemical Research

| Algorithm Category | Specific Methods | Primary Applications | Citation Frequency |
|---|---|---|---|
| Ensemble Methods | XGBoost, Random Forests | Water quality prediction, heavy-metal contamination mapping [1] | Most cited algorithms [1] |
| Neural Networks | Multitask Neural Networks, Graph Neural Networks (GNNs) | Molecular property prediction, river network modeling [1] | Fastest growing approach |
| Traditional Classifiers | Support Vector Machines (SVM), k-Nearest Neighbors (k-NN) | Chemical classification, receptor binding prediction [1] | Established baseline methods |
| Dimensionality Reduction | PCA, OPLS, O2PLS | Spectral data analysis, omics integration [27] | Essential for preprocessing |

Experimental Protocols

Protocol 1: Multi-Sensor Fusion for Environmental Monitoring

Objective: Implement a sensor fusion framework to improve the accuracy of environmental parameter prediction using heterogeneous sensor data.

Background: Multi-sensor fusion addresses individual sensor weaknesses by combining multiple data sources to decrease uncertainty and increase reliability, robustness, and accuracy [29]. The methodology operates at three abstraction levels: data-level, feature-level, and decision-level fusion [29].

Materials and Reagents:

- Environmental Sensors: Acoustic, vibration, and atmospheric sensors for simultaneous data capture

- Data Acquisition System: Multi-channel system capable of synchronous sampling

- Computational Environment: Python with TensorFlow/Keras or R with appropriate ML libraries

- Reference Analytical Method: Gold-standard laboratory equipment for validation

Procedure:

Sensor Deployment and Data Collection:

- Deploy multiple heterogeneous sensors (acoustic, vibration, gas, particulate) in the target environment

- Collect synchronous data streams at appropriate sampling frequencies (minimum 1 Hz for most applications)

- Record environmental conditions (temperature, humidity) that may affect sensor performance

Data Preprocessing:

- Apply synchronization algorithms to align temporal data streams

- Implement noise reduction filters appropriate for each sensor type

- Normalize data using z-score or min-max scaling based on data distribution
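A minimal NumPy sketch of the preprocessing steps, assuming each sensor delivers sorted timestamps (in seconds) and values as arrays. The dictionary layout, the shared-grid linear interpolation used for synchronization, and the moving-average noise filter are illustrative choices; sensor-specific filters (e.g., band-pass filters for acoustic data) may be more appropriate in practice.

```python
import numpy as np

def synchronize(streams, rate_hz):
    """Resample every stream onto one shared time grid by linear interpolation.

    streams: {name: (timestamps, values)} with timestamps sorted ascending.
    The grid covers only the interval observed by all sensors.
    """
    start = max(t[0] for t, _ in streams.values())
    end = min(t[-1] for t, _ in streams.values())
    grid = np.arange(start, end + 1e-9, 1.0 / rate_hz)
    return grid, {name: np.interp(grid, t, v) for name, (t, v) in streams.items()}

def moving_average(x, window):
    """Simple smoothing filter; mode='same' zero-pads, so edges are attenuated."""
    return np.convolve(x, np.ones(window) / window, mode="same")

def zscore(x):
    sd = x.std()
    return (x - x.mean()) / sd if sd > 0 else x - x.mean()

def min_max(x):
    span = x.max() - x.min()
    return (x - x.min()) / span if span > 0 else np.zeros_like(x)

# Hypothetical streams sampled at different instants
streams = {
    "gas": (np.array([0.0, 1.0, 2.0, 3.0]), np.array([0.0, 2.0, 4.0, 6.0])),
    "pm": (np.array([0.5, 1.5, 2.5]), np.array([10.0, 20.0, 30.0])),
}
grid, aligned = synchronize(streams, rate_hz=2)
pm_scaled = zscore(aligned["pm"])
```

Z-scoring suits roughly symmetric signals, while min-max scaling keeps bounded signals in [0, 1] but is sensitive to outliers, which is the distribution-based choice the protocol refers to.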

Feature Extraction:

- Calculate statistical features (mean, variance, skewness, kurtosis) for sliding time windows

- Extract frequency-domain features using Fast Fourier Transform (FFT) for vibrational/acoustic data

- Generate cross-sensor correlation features to capture interdependent relationships
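The three feature-extraction steps can be sketched in NumPy. This assumes signals have already been synchronized at a known sampling rate `fs`; the window size, step, and the choice of the dominant FFT frequency as the single frequency-domain feature are illustrative assumptions (band energies or spectral centroids are common alternatives). Kurtosis is reported as excess kurtosis.

```python
import numpy as np

def sliding_windows(x, size, step):
    """Stack overlapping windows of length `size`, advancing by `step` samples."""
    return np.array([x[i:i + size] for i in range(0, len(x) - size + 1, step)])

def window_features(windows, fs):
    """Mean, variance, skewness, excess kurtosis, and dominant frequency per window."""
    mean = windows.mean(axis=1)
    var = windows.var(axis=1)
    centered = windows - mean[:, None]
    sd = np.sqrt(var)
    sd_safe = np.where(sd > 0, sd, 1.0)
    skew = (centered**3).mean(axis=1) / sd_safe**3
    kurt = (centered**4).mean(axis=1) / sd_safe**4 - 3.0
    spectrum = np.abs(np.fft.rfft(centered, axis=1))
    freqs = np.fft.rfftfreq(windows.shape[1], d=1.0 / fs)
    dominant = freqs[spectrum.argmax(axis=1)]
    return np.column_stack([mean, var, skew, kurt, dominant])

def cross_correlation(a_windows, b_windows):
    """Pearson correlation between two sensors, window by window."""
    a = a_windows - a_windows.mean(axis=1, keepdims=True)
    b = b_windows - b_windows.mean(axis=1, keepdims=True)
    denom = np.sqrt((a**2).sum(axis=1) * (b**2).sum(axis=1))
    safe = np.where(denom > 0, denom, 1.0)
    return np.where(denom > 0, (a * b).sum(axis=1) / safe, 0.0)

# Hypothetical 5 Hz vibration signal sampled at 100 Hz for 2 s
fs = 100
t = np.arange(200) / fs
vibration = np.sin(2 * np.pi * 5 * t)
windows = sliding_windows(vibration, size=100, step=50)
features = window_features(windows, fs)
corr = cross_correlation(windows, sliding_windows(2 * vibration + 1, 100, 50))
```

Each row of `features` describes one time window; concatenating such rows across sensors, together with the cross-sensor correlations, gives the fused feature vector used in the next step.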

Fusion Model Implementation:

- Develop separate neural network translators for each sensor type to predict the target parameter

- Implement feature-level fusion by concatenating feature vectors from multiple sensors

- Apply decision-level fusion using ensemble methods (Voting, Multi-view stacking) to combine predictions
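Feature-level and decision-level fusion differ mainly in where information is combined. The sketch below illustrates both under a simplifying assumption: ordinary least-squares models stand in for the per-sensor neural network translators, and decision-level fusion is a (weighted) average of per-sensor predictions, i.e. soft voting for regression. Multi-view stacking would instead train a meta-model on those predictions. All names and the synthetic data are hypothetical.

```python
import numpy as np

def fit_linear(X, y):
    """Least-squares linear model with intercept (stand-in for a neural network)."""
    A = np.column_stack([X, np.ones(len(X))])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef

def predict_linear(coef, X):
    return np.column_stack([X, np.ones(len(X))]) @ coef

def feature_level_fusion(feature_blocks, y):
    """Concatenate per-sensor feature matrices column-wise and fit one model."""
    X = np.hstack(feature_blocks)
    return fit_linear(X, y), X

def decision_level_fusion(predictions, weights=None):
    """Combine per-sensor predictions by (weighted) averaging."""
    P = np.column_stack(predictions)
    if weights is None:
        weights = np.ones(P.shape[1])
    weights = np.asarray(weights, dtype=float)
    return P @ (weights / weights.sum())

# Hypothetical features from two sensors and a target driven by both
rng = np.random.default_rng(0)
acoustic = rng.normal(size=(50, 2))
vibration = rng.normal(size=(50, 3))
target = acoustic @ np.array([1.0, 2.0]) + vibration @ np.array([0.5, -1.0, 3.0]) + 4.0

fused_coef, X_fused = feature_level_fusion([acoustic, vibration], target)
per_sensor = [predict_linear(fit_linear(block, target), block)
              for block in (acoustic, vibration)]
decision_fused = decision_level_fusion(per_sensor)
```

Because the synthetic target depends on both sensors, the feature-level model can reproduce it exactly while each single-sensor model cannot; with real data, weights for decision-level fusion are often set from each model's validation error.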

Model Interpretation:

- Use Integrated Gradients to identify temporal regions with greatest influence on predictions [28]

- Quantify relative contribution of each sensor modality to overall prediction accuracy

- Validate against reference measurements and calculate performance metrics (RMSE, MAE, R²)
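Integrated Gradients and the validation metrics can both be written compactly. In this sketch the model is a hypothetical `tanh` of a linear score with an analytic gradient; for a real network the gradient would come from automatic differentiation. Each input position (for example, one time step of a sensor signal) receives an attribution, and the attributions approximately sum to the difference between the model output at the input and at the baseline (the "completeness" property), which is a useful sanity check. Summing attributions over the inputs belonging to one sensor is one way to estimate that modality's contribution.

```python
import numpy as np

def integrated_gradients(grad_fn, x, baseline, steps=200):
    """Midpoint Riemann approximation of Integrated Gradients on the straight
    path from `baseline` to `x`."""
    alphas = (np.arange(steps) + 0.5) / steps
    path = baseline + alphas[:, None] * (x - baseline)
    avg_grad = np.mean([grad_fn(p) for p in path], axis=0)
    return (x - baseline) * avg_grad

def regression_metrics(y_true, y_pred):
    """RMSE, MAE, and coefficient of determination (R^2)."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    err = y_pred - y_true
    rmse = float(np.sqrt(np.mean(err**2)))
    mae = float(np.mean(np.abs(err)))
    r2 = float(1 - np.sum(err**2) / np.sum((y_true - y_true.mean())**2))
    return {"RMSE": rmse, "MAE": mae, "R2": r2}

# Hypothetical differentiable model f(x) = tanh(w . x)
w = np.array([0.5, -1.0, 2.0])
model = lambda x: np.tanh(w @ x)
model_grad = lambda x: (1 - np.tanh(w @ x) ** 2) * w

x = np.array([1.0, 0.5, 0.2])
baseline = np.zeros(3)
attributions = integrated_gradients(model_grad, x, baseline)
metrics = regression_metrics([1, 2, 3, 4], [1, 2, 3, 5])
```

The choice of baseline (here all zeros) strongly affects the attributions; for sensor signals a "quiet" reference segment is often more meaningful than zeros.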

Validation: Systematic experiments using this methodology have demonstrated successful mapping of machine acoustics to power consumption with 5.6% error, tool vibration to power consumption with 8.2% error, and fused acoustics and vibration data to power with 2.5% error [28].

Multi-Sensor Fusion Workflow for Environmental Monitoring

Protocol 2: Chemical Grouping and Read-Across using Generative AI

Objective: Employ generative AI and ML approaches to group chemicals by structural and toxicological similarity for efficient risk assessment.

Background: Chemical grouping and read-across allows prediction of properties for data-poor chemicals using information from similar, data-rich chemicals. Generative AI enhances this process by efficiently identifying and categorizing chemicals, handling large datasets where traditional methods falter due to volume and complexity [26].

Materials:

- Chemical Databases: PubChem, ChEMBL, or internal compound libraries

- Molecular Representation Tools: RDKit or OpenBabel for descriptor calculation

- Generative AI Framework: GPT-based architectures or variational autoencoders

- Validation Dataset: Chemicals with known toxicological profiles for method verification

Procedure:

Chemical Representation:

- Calculate molecular descriptors (topological, electronic, thermodynamic)

- Generate structural fingerprints (ECFP, FCFP) for similarity assessment

- Create learned representations using graph neural networks for complex structural relationships
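Fingerprint-based similarity is the usual starting point for grouping. This sketch assumes each fingerprint has been reduced to the set of its "on" bit indices (for example, from RDKit Morgan/ECFP-style fingerprints); the compound names, bit sets, and the 0.5 threshold are hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity of two fingerprints given as sets of on-bits.
    Two empty fingerprints are treated as dissimilar (0.0) by convention here."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def similarity_matrix(fingerprints):
    """Pairwise Tanimoto matrix over a {name: on-bit set} mapping."""
    names = list(fingerprints)
    return names, [[tanimoto(fingerprints[i], fingerprints[j]) for j in names]
                   for i in names]

def similar_pairs(fingerprints, threshold=0.5):
    """Compound pairs whose similarity meets a grouping threshold."""
    names = list(fingerprints)
    pairs = []
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            s = tanimoto(fingerprints[a], fingerprints[b])
            if s >= threshold:
                pairs.append((a, b, s))
    return pairs

fps = {"A": {1, 2, 3, 4}, "B": {1, 2, 3, 5}, "C": {7, 8}}
names, matrix = similarity_matrix(fps)
candidate_groups = similar_pairs(fps, threshold=0.5)
```

Similarity thresholds for read-across are endpoint- and fingerprint-dependent, so the cut-off should be justified for each assessment rather than fixed globally.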

Chemical Space Mapping:

- Apply dimensionality reduction techniques (PCA, t-SNE, UMAP) to visualize chemical space

- Implement clustering algorithms (k-means, hierarchical clustering) to identify natural groupings

- Validate clusters using internal metrics (silhouette score) and external toxicological knowledge
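The silhouette score used for internal validation compares each compound's mean distance to its own cluster (a) with its mean distance to the nearest other cluster (b), giving (b - a) / max(a, b). The NumPy sketch below follows that definition with Euclidean distances, scores singleton clusters as 0, and requires at least two clusters; scikit-learn's `silhouette_score` offers the same metric with other distance options. The toy coordinates are hypothetical.

```python
import numpy as np

def silhouette_score(X, labels):
    """Mean silhouette coefficient over all points (Euclidean distance)."""
    X = np.asarray(X, dtype=float)
    labels = np.asarray(labels)
    D = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    clusters = np.unique(labels)
    scores = np.zeros(len(X))
    for i in range(len(X)):
        same = labels == labels[i]
        if same.sum() <= 1:
            continue  # singleton cluster: silhouette defined as 0
        a = D[i, same].sum() / (same.sum() - 1)  # self-distance is 0, so excluded
        b = min(D[i, labels == c].mean() for c in clusters if c != labels[i])
        scores[i] = (b - a) / max(a, b)
    return float(scores.mean())

X = [[0, 0], [0, 1], [20, 0], [20, 1]]
good = silhouette_score(X, [0, 0, 1, 1])
bad = silhouette_score(X, [0, 1, 0, 1])
```

Values near 1 indicate compact, well-separated groups; values near or below 0 suggest that the grouping, or the chosen number of clusters, should be revisited alongside toxicological knowledge.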

Read-Across Model Development:

- For each chemical cluster, identify source compounds with complete toxicological data

- Build predictive models using random forests or gradient boosting within each cluster

- Apply genetic algorithms to optimize feature selection and model parameters
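A compact way to see the read-across step is similarity-weighted k-nearest-neighbour prediction within a group. Note that this swaps in a simpler technique than the random forest or gradient boosting models named above, and it omits the genetic-algorithm tuning. It assumes fingerprints as sets of on-bits and a measured endpoint value for each data-rich source compound; `k`, `min_similarity`, and the example values are hypothetical.

```python
def read_across(target_fp, sources, k=3, min_similarity=0.3):
    """Similarity-weighted k-NN read-across.

    sources: list of (fingerprint_set, measured_value) for data-rich analogues.
    Returns (prediction, neighbours) or (None, []) if no analogue is similar enough.
    """
    def tanimoto(a, b):
        union = len(a | b)
        return len(a & b) / union if union else 0.0

    scored = sorted(((tanimoto(target_fp, fp), value) for fp, value in sources),
                    reverse=True)
    neighbours = [(s, v) for s, v in scored[:k] if s >= min_similarity]
    if not neighbours:
        return None, []  # outside the applicability domain of the group
    total = sum(s for s, _ in neighbours)
    prediction = sum(s * v for s, v in neighbours) / total
    return prediction, neighbours

# Hypothetical source compounds with measured endpoint values
sources = [({1, 2, 3, 4, 5}, 10.0), ({1, 2, 3}, 20.0), ({9, 10}, 100.0)]
prediction, neighbours = read_across({1, 2, 3, 4}, sources)
```

Returning the contributing analogues alongside the prediction keeps the read-across justification transparent, and returning nothing when no analogue passes the threshold is a crude applicability-domain check.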

Generative AI for Data Augmentation:

- Train variational autoencoders on known chemical-toxicity pairs

- Generate novel chemical representations within identified activity cliffs

- Use generative models to propose hypothetical structures for targeted testing

Validation and Uncertainty Quantification:

- Implement cross-validation within chemical clusters to assess predictivity

- Calculate uncertainty metrics using conformal prediction or Bayesian approaches

- Perform external validation with held-out test sets of recently evaluated chemicals
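Conformal prediction turns point predictions into intervals with a coverage guarantee, assuming calibration and new compounds are exchangeable. This is a minimal split-conformal sketch for regression: absolute residuals on a held-out calibration set define the interval half-width. The function name and numbers are illustrative.

```python
import math

def conformal_interval(cal_true, cal_pred, new_pred, alpha=0.1):
    """Split conformal interval with nominal coverage 1 - alpha."""
    residuals = sorted(abs(t - p) for t, p in zip(cal_true, cal_pred))
    n = len(residuals)
    rank = math.ceil((n + 1) * (1 - alpha))  # 1-based rank of the conformal quantile
    if rank > n:
        # Too few calibration points for this alpha: interval is unbounded
        return new_pred - math.inf, new_pred + math.inf
    q = residuals[rank - 1]
    return new_pred - q, new_pred + q

# Hypothetical calibration set with residuals 0.1, 0.2, ..., 1.0
cal_true = [0.0] * 10
cal_pred = [0.1 * i for i in range(1, 11)]
low, high = conformal_interval(cal_true, cal_pred, new_pred=5.0, alpha=0.2)
```

Running the calibration separately within each chemical cluster gives group-specific intervals, at the cost of needing enough calibration compounds per group.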

Application Notes: Generative AI can also make literature review more efficient by classifying and ranking the quality of clinical and non-clinical data, helping researchers access and synthesize relevant information quickly [26]. In addition, ML models trained on existing chemical toxicity profiles can predict the potential toxicity of new chemicals, accelerating screening processes [26].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for Integrated Risk Assessment

| Tool/Category | Specific Solution | Function in Risk Assessment |
|---|---|---|
| Multivariate Analysis Software | SIMCA [27] | Provides specialized interfaces for spectroscopy and omics data analysis, enabling pattern recognition in complex environmental datasets |
| Sensor Fusion Platforms | POFM (Prediction of Optimal Fusion Method) [29] | Machine learning-based approach that predicts the best fusion method for a given set of sensors and data characteristics |
| Data Standardization Frameworks | EPA Data Standards [30] | Provides consistency in definitions and formats for data elements and values, improving access to meaningful environmental data |
| Multi-sensor Hardware | Acoustic, Vibration, and Gas Sensors [28] | Capture complementary information about environmental conditions, enabling comprehensive monitoring through data fusion |
| Generative AI Tools | Chemical Language Models [26] | Create new content or predictions based on existing data, revolutionizing data analysis and predictive modeling of complex biological systems |

Integrated Workflow for Holistic Risk Assessment

The following diagram synthesizes the protocols and methodologies into a comprehensive workflow for holistic risk assessment, illustrating how multifarious data sources integrate through ML and AI approaches:

Holistic Risk Assessment Workflow Integrating Multifarious Data Sources

Addressing the core challenge of integrating multifarious data sources requires a systematic approach that combines advanced ML techniques with domain expertise in toxicology and environmental science. The protocols outlined in this Application Note provide actionable methodologies for implementing multi-sensor fusion and chemical grouping strategies that can significantly enhance the efficiency and accuracy of risk assessment.

Future developments in this field will likely focus on several key areas: (1) expanding chemical coverage to address currently understudied substances; (2) systematically coupling ML outputs with human health data to address the current environmental bias; (3) adopting explainable AI workflows to increase regulatory acceptance; and (4) fostering international collaboration to translate ML advances into actionable chemical risk assessments [1]. Additionally, ongoing research must address critical challenges related to data bias and quality, lack of standardization, need for multidisciplinary collaboration, and model interpretability [26].

As the field evolves, the integration of Generative AI presents particularly promising opportunities for enhancing scientific-technical report generation and chemical safety data analysis [26]. By embracing these cutting-edge technologies while maintaining scientific rigor, researchers can transform the challenge of data multiplicity into an opportunity for more comprehensive and protective risk assessment paradigms.

From Code to Chemistry: Key ML Methodologies and Real-World Monitoring Applications

The accurate prediction of the Water Quality Index (WQI) is a critical challenge at the intersection of environmental science and machine learning (ML). As human activities and climate change intensify threats to water resources, ML models have emerged as powerful tools for assessing water quality, reducing monitoring costs, and informing policy decisions [31]. The application of ML in environmental chemical research has seen an exponential surge in publications since 2015, with China and the United States leading research output [1]. This document establishes performance benchmarks for ML models in WQI prediction and provides detailed experimental protocols to standardize methodologies across the research community, with particular relevance for environmental chemical monitoring applications.

Performance Benchmarks for ML Models in WQI Prediction

Recent studies have evaluated numerous machine learning algorithms for predicting WQI across diverse geographical contexts and water body types. The table below synthesizes performance metrics from key studies to establish current benchmarks.

Table 1: Performance benchmarks of machine learning models for WQI prediction

| Model Category | Specific Model | R² | RMSE | MAE | Dataset Context | Source |
|---|---|---|---|---|---|---|
| Stacked Ensemble | Stacked Regression (XGBoost, CatBoost, RF, GB, ET, AdaBoost + Linear Regression meta-learner) | 0.9952 | 1.0704 | 0.7637 | Indian rivers (1,987 samples) | [32] |
| Individual Ensemble | CatBoost | 0.9894 | 1.5905 | 0.8399 | Indian rivers (1,987 samples) | [32] |
| Individual Ensemble | Gradient Boosting | 0.9907 | 1.4898 | 1.0759 | Indian rivers (1,987 samples) | [32] |
| Neural Networks | Artificial Neural Network (ANN) | 0.97 | 2.34 | 1.24 | Dhaka's rivers, Bangladesh | [33] |
| Ensemble Methods | Random Forest Regression | 0.97 | N/A | N/A | Dhaka's rivers, Bangladesh | [33] |
| Boosting Algorithms | XGBoost | 97% accuracy (classification) | Logarithmic loss: 0.12 | N/A | Danjiangkou Reservoir, China (6-year data) | [31] |

The performance data suggest that stacked ensemble methods currently achieve the highest predictive accuracy for WQI, followed closely by individual ensemble algorithms such as Gradient Boosting and CatBoost. Because these figures come from different datasets, and because the XGBoost result in [31] is a classification accuracy rather than a regression R², cross-study comparisons are indicative rather than definitive. The strong performance of ensemble approaches can be attributed to their ability to reduce overfitting and generalize well across heterogeneous environmental datasets [32]. Neural networks also demonstrate strong capability in capturing complex, nonlinear relationships in water quality data [33].

Experimental Protocols for WQI Prediction

Data Collection and Preprocessing Protocol

Table 2: Essential water quality parameters for WQI prediction

| Parameter | Significance | Unit | Reported Influence |
|---|---|---|---|
| Dissolved Oxygen (DO) | Indicates aquatic ecosystem health | mg/L | Highest [32] |
| Biochemical Oxygen Demand (BOD) | Measures organic pollution | mg/L | High [32] |
| Conductivity | Indicates dissolved inorganic solids | µS/cm | High [32] |
| pH | Measures water acidity/alkalinity | pH units | High [32] |
| Total Phosphorus (TP) | Indicator of nutrient pollution | mg/L | Key indicator for rivers [31] |
| Ammonia Nitrogen | Indicator of organic pollution | mg/L | Key indicator for rivers [31] |
| Water Temperature | Affects chemical and biological processes | °C | Key for reservoirs [31] |
| Permanganate Index | Organic matter indicator | mg/L | Key indicator for rivers [31] |

Protocol Steps:

Data Sourcing: Collect water quality data from monitoring stations, public repositories (e.g., Kaggle's Indian Water Quality Data), or institutional databases. Ensure that datasets span a sufficient temporal range (multi-year data preferred) and represent diverse environmental conditions [32] [34].

Data Cleaning:

- Handle missing values using median imputation or K-Nearest Neighbors (KNN) imputation. KNN imputation has demonstrated superior performance in water quality datasets by preserving local data relationships [35].

- Identify and handle outliers using the Interquartile Range (IQR) method [32].

- Normalize parameters to a common scale (e.g., 0-1) to prevent dominance of variables with larger numerical ranges [32].
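The cleaning steps can be sketched with NumPy alone. This is a minimal illustration under stated assumptions: the column order (DO, BOD, pH), the readings, `k=2`, and the 1.5 × IQR fences are hypothetical choices, and outliers are clipped to the fences rather than dropped. scikit-learn's `KNNImputer` and `MinMaxScaler` provide tested versions of the imputation and scaling steps; whichever is used, the imputation and scaling statistics should be estimated on the training split only so that test data do not leak into preprocessing.

```python
import numpy as np

def iqr_clip(X, factor=1.5):
    """Clip each column to [Q1 - factor*IQR, Q3 + factor*IQR]; NaNs are ignored and kept."""
    q1, q3 = np.nanpercentile(X, [25, 75], axis=0)
    iqr = q3 - q1
    return np.clip(X, q1 - factor * iqr, q3 + factor * iqr)

def knn_impute(X, k=2):
    """Replace NaNs with the mean of the k nearest rows, measuring distance on
    the features both rows have observed; fall back to the column median."""
    X = np.asarray(X, dtype=float)
    filled = X.copy()
    for i in np.where(np.isnan(X).any(axis=1))[0]:
        missing = np.isnan(X[i])
        candidates = []
        for j in range(len(X)):
            if j == i or np.isnan(X[j, missing]).any():
                continue  # a donor must have every value that row i is missing
            shared = ~np.isnan(X[i]) & ~np.isnan(X[j])
            if shared.any():
                dist = np.sqrt(np.mean((X[i, shared] - X[j, shared]) ** 2))
                candidates.append((dist, j))
        nearest = [j for _, j in sorted(candidates)[:k]]
        if nearest:
            filled[i, missing] = X[nearest][:, missing].mean(axis=0)
        else:
            filled[i, missing] = np.nanmedian(X[:, missing], axis=0)
    return filled

def min_max_scale(X, lo=None, hi=None):
    """Scale columns to 0-1; pass training-set lo/hi when transforming test data."""
    lo = X.min(axis=0) if lo is None else lo
    hi = X.max(axis=0) if hi is None else hi
    span = np.where(hi > lo, hi - lo, 1.0)  # guard against constant columns
    return (X - lo) / span, lo, hi

# Hypothetical readings: columns are DO (mg/L), BOD (mg/L), pH
raw = np.array([
    [7.1, 2.0, 7.2],
    [6.8, np.nan, 7.0],
    [7.4, 2.4, 7.3],
    [6.9, 2.1, 7.1],
    [7.0, 40.0, 7.2],   # implausible BOD spike
    [7.2, 2.2, np.nan],
])
clipped = iqr_clip(raw)
imputed = knn_impute(clipped, k=2)
scaled, lo, hi = min_max_scale(imputed)
```

Clipping the BOD spike before imputation keeps it from distorting the neighbour averages used to fill the missing BOD value.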

Feature Selection: Apply Recursive Feature Elimination (RFE) with tree-based algorithms (e.g., XGBoost) to identify the most predictive parameters. This reduces dimensionality and measurement costs while maintaining accuracy [31].
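A compact sketch of the RFE loop follows. To stay dependency-free it swaps XGBoost importances for the absolute coefficients of a least-squares fit on standardized features; that substitution, the synthetic data, and the function name `rfe_linear` are assumptions for illustration. scikit-learn's `RFE` class wraps the same loop around any estimator that exposes `coef_` or `feature_importances_`, including tree ensembles.

```python
import numpy as np

def rfe_linear(X, y, names, n_keep):
    """Recursive feature elimination: refit, drop the weakest feature, repeat.
    Importance here is |coefficient| of a least-squares fit on standardized
    features (a stand-in for XGBoost importances)."""
    Xs = (X - X.mean(axis=0)) / X.std(axis=0)  # comparable coefficient scales
    yc = y - y.mean()
    keep = list(range(X.shape[1]))
    eliminated = []
    while len(keep) > n_keep:
        coef, *_ = np.linalg.lstsq(Xs[:, keep], yc, rcond=None)
        weakest = keep[int(np.argmin(np.abs(coef)))]
        eliminated.append(names[weakest])
        keep.remove(weakest)
    return [names[i] for i in keep], eliminated

# Synthetic data: only the first and third columns drive the target
rng = np.random.default_rng(0)
names = ["DO", "BOD", "pH", "Temperature"]
X = rng.normal(size=(200, 4))
y = 3.0 * X[:, 0] - 2.0 * X[:, 2] + 0.1 * rng.normal(size=200)
selected, dropped = rfe_linear(X, y, names, n_keep=2)
```

Because the target depends only on the first and third columns, the two noise columns are eliminated first.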

Model Training and Validation Protocol

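Data Partitioning: Split the dataset into training (70-80%), validation (10-15%), and test (10-15%) sets. For time-series data, split chronologically rather than randomly, training on earlier observations and testing on later ones, so that future information does not leak into model training.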
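For time-series data, a chronological split can be sketched as follows. This is a minimal sketch assuming 70/15/15 proportions and integer sampling days; `chronological_split` is a hypothetical helper name.

```python
import numpy as np

def chronological_split(timestamps, train_frac=0.7, val_frac=0.15):
    """Return index arrays (train, val, test) ordered by time, so the model is
    always evaluated on observations later than those it was trained on."""
    order = np.argsort(timestamps, kind="stable")
    n_train = int(round(len(order) * train_frac))
    n_val = int(round(len(order) * val_frac))
    return order[:n_train], order[n_train:n_train + n_val], order[n_train + n_val:]

# Hypothetical sampling dates (days since first sample), stored out of order
days = np.random.default_rng(1).permutation(20)
train_idx, val_idx, test_idx = chronological_split(days)
```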

Model Selection and Training:

- Implement a diverse set of algorithms including tree-based methods (XGBoost, Random Forest, CatBoost), neural networks (ANN, LSTM), and traditional regression models as baselines [33].

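- For ensemble stacking, use the two-level architecture reported in [32]: base learners (XGBoost, CatBoost, Random Forest, Gradient Boosting, Extra Trees, and AdaBoost) each predict WQI, and a Linear Regression meta-learner combines their predictions into the final estimate.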

- Optimize hyperparameters for each algorithm using grid search or Bayesian optimization with cross-validation.
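The stacking architecture can be sketched as below. This is an illustration, not the implementation used in [32]: to keep the example self-contained, the six tree-based base learners are replaced by two NumPy stand-ins (ordinary least squares and k-nearest-neighbour regression), and the data are synthetic. One assumption is worth making explicit: the meta-learner is fitted on out-of-fold predictions, so it learns to weight base models on data they did not see during training; fitting it on in-sample predictions would reward whichever base model overfits most.

```python
import numpy as np

class LinearRegression:
    """Ordinary least squares with an intercept (used as the meta-learner)."""
    def fit(self, X, y):
        self.w, *_ = np.linalg.lstsq(np.column_stack([np.ones(len(X)), X]), y, rcond=None)
        return self
    def predict(self, X):
        return np.column_stack([np.ones(len(X)), X]) @ self.w

class KNNRegressor:
    """Mean of the k nearest training targets (Euclidean distance)."""
    def __init__(self, k=5):
        self.k = k
    def fit(self, X, y):
        self.X, self.y = X, y
        return self
    def predict(self, X):
        d2 = ((X[:, None, :] - self.X[None, :, :]) ** 2).sum(axis=2)
        return self.y[np.argsort(d2, axis=1)[:, :self.k]].mean(axis=1)

def fit_stack(make_base_models, X, y, n_folds=5, seed=0):
    """Level 0: base models produce out-of-fold predictions.
    Level 1: a linear regression meta-learner is fitted on those predictions."""
    folds = np.array_split(np.random.default_rng(seed).permutation(len(X)), n_folds)
    oof = np.zeros((len(X), len(make_base_models)))
    for held_out in folds:
        train = np.setdiff1d(np.arange(len(X)), held_out)
        for m, make in enumerate(make_base_models):
            oof[held_out, m] = make().fit(X[train], y[train]).predict(X[held_out])
    meta = LinearRegression().fit(oof, y)
    bases = [make().fit(X, y) for make in make_base_models]  # refit on all data
    return bases, meta

def predict_stack(bases, meta, X):
    return meta.predict(np.column_stack([b.predict(X) for b in bases]))

def r2(y, yhat):
    return 1.0 - np.sum((y - yhat) ** 2) / np.sum((y - y.mean()) ** 2)

# Synthetic nonlinear target (hypothetical stand-in for WQI)
rng = np.random.default_rng(0)
X = rng.uniform(-2, 2, size=(400, 2))
y = X[:, 0] ** 2 + X[:, 1] + 0.1 * rng.normal(size=400)
X_tr, y_tr, X_te, y_te = X[:300], y[:300], X[300:], y[300:]

bases, meta = fit_stack([LinearRegression, lambda: KNNRegressor(k=5)], X_tr, y_tr)
r2_stack = r2(y_te, predict_stack(bases, meta, X_te))
r2_linear = r2(y_te, LinearRegression().fit(X_tr, y_tr).predict(X_te))
```

Substituting XGBoost, CatBoost, or other regressors should only require factories that return objects with scikit-learn-style `fit` (returning the fitted model) and `predict` methods.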

Model Validation:

- Apply k-fold cross-validation (typically k=5) to ensure robust performance estimation [32].

- Evaluate models using multiple metrics: R² (coefficient of determination), RMSE (Root Mean Square Error), MAE (Mean Absolute Error), and for classification tasks, accuracy and logarithmic loss [31] [33].

- Conduct sensitivity analysis to assess model stability against varying data conditions [33].
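The validation metrics and k-fold loop can be sketched as follows, together with a small grid search of the kind described under model selection. The ridge model, the penalty grid, and the synthetic data are assumptions chosen to keep the example self-contained; any regressor could take the place of the hypothetical `ridge_fit_predict`. The `log_loss` helper applies to classification-style WQI outputs such as those in [31].

```python
import numpy as np

def r2(y, yhat):
    return float(1.0 - np.sum((y - yhat) ** 2) / np.sum((y - np.mean(y)) ** 2))

def rmse(y, yhat):
    return float(np.sqrt(np.mean((y - yhat) ** 2)))

def mae(y, yhat):
    return float(np.mean(np.abs(y - yhat)))

def log_loss(y_true, proba, eps=1e-15):
    """Mean negative log-probability assigned to the true class
    (proba: one column per class; y_true: integer class labels)."""
    p = np.clip(proba[np.arange(len(y_true)), y_true], eps, 1.0)
    return float(-np.mean(np.log(p)))

def ridge_fit_predict(X_tr, y_tr, X_te, alpha):
    A = np.column_stack([np.ones(len(X_tr)), X_tr])
    penalty = alpha * np.eye(A.shape[1])
    penalty[0, 0] = 0.0  # leave the intercept unpenalized
    w = np.linalg.solve(A.T @ A + penalty, A.T @ y_tr)
    return np.column_stack([np.ones(len(X_te)), X_te]) @ w

def kfold_cv(X, y, alpha, k=5, seed=0):
    """Mean R², RMSE and MAE over k folds."""
    folds = np.array_split(np.random.default_rng(seed).permutation(len(X)), k)
    scores = []
    for held_out in folds:
        train = np.setdiff1d(np.arange(len(X)), held_out)
        pred = ridge_fit_predict(X[train], y[train], X[held_out], alpha)
        yt = y[held_out]
        scores.append([r2(yt, pred), rmse(yt, pred), mae(yt, pred)])
    return np.mean(scores, axis=0)

rng = np.random.default_rng(42)
X = rng.normal(size=(150, 5))
y = X @ np.array([1.5, -1.0, 0.0, 0.5, 0.0]) + 0.3 * rng.normal(size=150)

# Grid search: keep the penalty with the lowest mean cross-validated RMSE
grid = [0.01, 0.1, 1.0, 10.0, 100.0]
cv = {alpha: kfold_cv(X, y, alpha) for alpha in grid}
best_alpha = min(grid, key=lambda a: cv[a][1])
```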

Model Interpretation Protocol

SHAP Analysis: Implement SHapley Additive exPlanations (SHAP) to interpret model predictions and identify feature importance [32]. This provides both global interpretability (overall feature importance) and local interpretability (individual prediction explanations).
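The quantity SHAP estimates can be computed exactly when there are only a few features, which makes the idea concrete. The sketch below enumerates every feature coalition (2^n model evaluations, so it is only practical for small n) and, as a simplifying assumption, replaces features outside a coalition with their background mean. The `shap` library instead relies on efficient estimators, such as `TreeExplainer` for tree ensembles, and its own treatment of background data; the function `exact_shapley` and the toy models here are hypothetical.

```python
import itertools
import math
import numpy as np

def exact_shapley(f, x, background):
    """Exact Shapley values of f at x. Features outside a coalition are set to
    their background mean (one simple choice of reference point)."""
    x = np.asarray(x, dtype=float)
    ref = np.asarray(background, dtype=float).mean(axis=0)
    n = len(x)

    def value(coalition):
        z = ref.copy()
        for j in coalition:
            z[j] = x[j]
        return float(f(z[None, :])[0])

    phi = np.zeros(n)
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = math.factorial(size) * math.factorial(n - size - 1) / math.factorial(n)
            for S in itertools.combinations(others, size):
                phi[i] += weight * (value(S + (i,)) - value(S))
    return phi

# Hypothetical fitted linear model and background sample
rng = np.random.default_rng(0)
background = rng.normal(size=(100, 3))
w = np.array([2.0, -1.0, 0.5])
model = lambda X: X @ w + 3.0
x = np.array([1.0, 2.0, -1.0])
phi = exact_shapley(model, x, background)
```

For a linear model with a mean reference, each value reduces to `w_i * (x_i - mean_i)`, and the values always sum to f(x) minus the prediction at the reference (the efficiency property), which gives a quick sanity check.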

Uncertainty Quantification: Evaluate model uncertainty using techniques such as eclipsing rate analysis, particularly when comparing different WQI aggregation functions [31].
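Eclipsing can be illustrated with a toy calculation. The sketch assumes one common reading of the term in the WQI literature, in which an aggregated index rates a sample acceptable while at least one parameter's sub-index is poor; the 0-100 sub-index scale, the threshold of 50, the equal weights, and all values are hypothetical, and [31] may define the rate differently. It compares a weighted arithmetic mean with a minimum operator, which cannot eclipse by construction.

```python
import numpy as np

def weighted_mean_wqi(sub, weights):
    """Weighted arithmetic mean of sub-indices (rows: samples, cols: parameters)."""
    w = np.asarray(weights, dtype=float)
    return sub @ (w / w.sum())

def minimum_wqi(sub, weights=None):
    """Minimum-operator aggregation: the worst sub-index sets the index."""
    return sub.min(axis=1)

def eclipsing_rate(sub, aggregate, weights, threshold=50.0):
    """Share of samples rated acceptable overall (index >= threshold) even though
    at least one sub-index falls below the threshold."""
    index = aggregate(sub, weights)
    eclipsed = (index >= threshold) & (sub < threshold).any(axis=1)
    return float(eclipsed.mean())

# Hypothetical sub-indices on a 0-100 scale (higher = better) for DO, BOD, TP
sub = np.array([
    [90.0, 90.0, 20.0],   # one poor parameter hidden by two good ones
    [80.0, 70.0, 60.0],
    [40.0, 45.0, 30.0],
    [95.0, 85.0, 55.0],
])
weights = [1.0, 1.0, 1.0]
rate_mean = eclipsing_rate(sub, weighted_mean_wqi, weights)
rate_min = eclipsing_rate(sub, minimum_wqi, weights)
```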

[Figure: Workflow of the complete experimental pipeline for WQI prediction using machine learning]

Table 3: Essential resources for WQI prediction research

| Resource Category | Specific Tool/Resource | Function/Purpose | Example Implementations |
|---|---|---|---|
| Computational Algorithms | XGBoost, CatBoost, Random Forest | Base predictive models for WQI | Feature selection, standalone prediction [31] [32] |
| Computational Algorithms | Stacked Ensemble Methods | Combining multiple models for improved accuracy | Linear Regression as meta-learner [32] |
| Computational Algorithms | Artificial Neural Networks (ANN) | Capturing complex nonlinear relationships | Multilayer perceptrons for WQI prediction [33] |
| Interpretability Frameworks | SHAP (SHapley Additive exPlanations) | Model interpretation and feature importance analysis | Identifying key drivers of water quality [32] |
| Benchmark Datasets | LakeBeD-US | Standardized dataset for method comparison | 500M+ observations from 21 US lakes [34] |
| Benchmark Datasets | Indian Water Quality Data | Publicly available river quality data | 1,987 samples from Indian rivers (2005-2014) [32] |
| Feature Selection Methods | Recursive Feature Elimination (RFE) | Identifying most predictive parameters | Combined with XGBoost for parameter selection [31] |
| Uncertainty Quantification | Eclipsing Rate Analysis | Evaluating WQI model uncertainty | Comparing aggregation functions [31] |

Advanced Applications and Future Directions

Knowledge-Guided Machine Learning (KGML)

Integrating physical and ecological principles with machine learning has emerged as a promising approach for improving water quality predictions. KGML techniques have demonstrated success in forecasting lake temperature, phytoplankton dynamics, and phosphorus concentrations by combining mechanistic understanding with data-driven approaches [34]. This hybrid methodology is particularly valuable for predicting the evolution of complex water quality phenomena across spatial and temporal scales.
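As an illustration of how mechanistic knowledge can enter training, the sketch below adds a physical-consistency penalty to an ordinary data loss for lake temperature profiles: predicted water density, obtained from temperature with a standard empirical fresh-water relation, should not decrease with depth. This is one example of the KGML idea under stated assumptions, not the method of [34]; the function names, the weighting `lam`, and the profiles are hypothetical, and a real model would minimize this loss with respect to its parameters.

```python
import numpy as np

def water_density(temp_c):
    """Fresh-water density (kg/m^3) from temperature (deg C); maximal near 4 deg C."""
    t = np.asarray(temp_c, dtype=float)
    return 1000.0 * (1.0 - (t + 288.9414) * (t - 3.9863) ** 2 / (508929.2 * (t + 68.12963)))

def physics_guided_loss(pred, obs, lam=1.0):
    """Data loss plus a physical-consistency penalty.
    pred, obs: arrays of shape (time steps, depths), depths ordered shallow to deep;
    obs may contain NaN where no measurement exists."""
    observed = ~np.isnan(obs)
    data_loss = np.mean((pred[observed] - obs[observed]) ** 2)
    rho = water_density(pred)
    # Denser water resting above lighter water is unstable: penalize it
    violation = np.maximum(rho[:, :-1] - rho[:, 1:], 0.0)
    return float(data_loss + lam * violation.mean())

stable = np.array([[22.0, 18.0, 12.0, 8.0]])     # warm surface, cold bottom
inverted = np.array([[8.0, 12.0, 18.0, 22.0]])   # physically implausible profile
obs = np.array([[22.0, np.nan, 12.0, np.nan]])   # sparse observations
```

Penalizing density inversions rather than temperature inversions still permits winter profiles in which water colder than about 4 °C lies above slightly warmer, denser water.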

Real-Time Monitoring Systems

The integration of ML models with Internet of Things (IoT)-based water quality sensor networks enables real-time WQI prediction and proactive water resource management [32]. Stacked ensemble models with SHAP interpretability can be deployed in cloud-based architectures to provide continuous water quality assessment and early warning systems for pollution events.

Standardized Benchmarking

The development of standardized benchmark datasets like LakeBeD-US, available in both "Ecology Edition" and "Computer Science Edition," facilitates comparative methodological analysis and accelerates innovation in water quality prediction [34]. Such resources enable researchers to evaluate new algorithms against established baselines under consistent conditions.

[Figure: Relationships between key components in an advanced WQI prediction system]

Read-Across Structure-Activity Relationships (RASAR) and AI-Driven Predictive Toxicology

Read-Across Structure-Activity Relationship (RASAR) represents an emerging cheminformatics modeling approach that integrates the principles of quantitative structure-activity relationship (QSAR) with the similarity-based reasoning of read-across (RA) to create predictive models with enhanced accuracy [36]. This hybrid methodology has gained significant traction in predictive toxicology and environmental chemical research as it leverages the strengths of both parent approaches while mitigating their individual limitations. Traditional QSAR relies on statistical correlations between chemical descriptors and biological activity, whereas read-across is a non-statistical grouping approach that fills data gaps by extrapolating information from similar source compounds to a query chemical [36] [37]. The fusion of these methodologies has yielded quantitative RASAR (q-RASAR) and classification RASAR (c-RASAR) models that demonstrate superior predictive performance compared to conventional QSAR models across various toxicity endpoints and material properties [36] [38].

The genesis of RASAR aligns with the broader transformation of toxicology from a purely empirical science to a data-rich discipline ripe for artificial intelligence (AI) integration [39]. As chemical risk assessment faces challenges from high costs, low throughput, and uncertainties in cross-species extrapolation associated with traditional methods, AI-enabled prediction technologies have emerged as transformative solutions [37]. Machine learning (ML) and deep learning algorithms now provide powerful capabilities for analyzing massive datasets of chemical structures, biological activities, and toxicity profiles, enabling the identification of hidden patterns and relationships that inform high-accuracy predictive models [37] [39]. Within this context, RASAR has positioned itself as a particularly promising approach that embodies the "prediction-inspired intelligent training" paradigm, where prediction aspects are incorporated directly into the model development process [38].

Fundamental Concepts and Methodological Framework

Core Principles and Definitions

The foundational principle underlying both read-across and QSAR methodologies is the similarity principle: compounds with similar structural features are expected to show similar properties, biological activities, and toxicities [36]. Molecular structures determine molecular properties through specific characteristics, including atom types, bond types, functionalities, interatomic distances, the arrangement of functional groups within the molecular skeleton, branching, cyclicity, hydrogen-bonding propensity, and molecular size [36]. These structural elements dictate how molecules interact with biological systems through physicochemical forces.

Quantitative Read-Across (q-RA) applies the read-across concept within machine-learning-based supervised prediction frameworks and has outperformed QSAR-derived predictions in multiple applications [36]. The further evolution to quantitative Read-Across Structure-Activity Relationship (q-RASAR) generates QSAR-like statistical models by incorporating various similarity- and error-based descriptors computed from the original structural and physicochemical descriptors [36]. Unlike conventional QSAR models, where descriptors are derived directly from the chemical structure of the compound itself, RASAR descriptors for a query compound are computed from its close congeners based on similarity considerations [36] [38]. This fundamental difference embeds predictive capability directly into the learning process, resulting in what has been termed "prediction-inspired" modeling, which typically delivers better-quality predictions from the same amount of chemical information [36].
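The read-across step itself can be sketched as a similarity-weighted average over a query's closest congeners. The Gaussian-kernel similarity, `sigma`, `k`, and the toy descriptor and activity values below are assumptions for illustration; other similarity measures can be substituted, and descriptors are normally standardized first so that no single descriptor dominates the distance.

```python
import numpy as np

def gaussian_similarity(query, X_source, sigma=1.0):
    """Similarity in (0, 1] from squared Euclidean distance in descriptor space."""
    d2 = ((X_source - query) ** 2).sum(axis=1)
    return np.exp(-d2 / (2.0 * sigma ** 2))

def read_across(query, X_source, y_source, k=3, sigma=1.0):
    """Similarity-weighted mean activity of the k closest source compounds."""
    sim = gaussian_similarity(query, X_source, sigma)
    congeners = np.argsort(sim)[::-1][:k]
    w = sim[congeners]
    return float(w @ y_source[congeners] / w.sum()), congeners

# Hypothetical standardized descriptors (two per compound) and toxicity values
X_source = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [5.0, 5.0]])
y_source = np.array([1.0, 2.0, 3.0, 10.0])
pred, congeners = read_across(np.array([0.1, 0.1]), X_source, y_source, k=3)
```

The distant fourth compound is not among the congeners, so its high activity does not influence the prediction.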

RASAR Descriptors and Similarity Metrics

The RASAR framework employs composite functions and similarity-based descriptors that capture relationships between compounds. Key descriptors include:

- RA function: A core mathematical representation of the read-across prediction

- Average similarity: Measures the overall structural and property similarity between compounds

- Banerjee-Roy concordance measures (gm and gm_class): Quantitative expressions of concordance in properties and activities

- Banerjee-Roy similarity coefficients (sm1 and sm2): Newly proposed similarity indices that help analyze possible activity cliffs in training and test sets [38]

These descriptors are computed for query compounds from source compounds with known target properties, enabling predictions through well-validated models developed from training sets [36]. The similarity metrics and error considerations may be further refined with sophisticated machine learning approaches to advance the field [36].

Table 1: Key RASAR Descriptors and Their Functions

| Descriptor Category | Specific Metrics | Function in Model Development |
|---|---|---|
| Similarity Measures | Average similarity, sm1, sm2 | Quantify structural and property similarity between source and query compounds |
| Concordance Measures | gm, gm_class | Assess agreement in properties and activities between similar compounds |
| Error-Based Descriptors | RA function | Capture prediction errors and uncertainties in the read-across process |
| Composite Functions | Various combined metrics | Integrate multiple similarity and error considerations for enhanced predictions |
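The q-RASAR idea of computing descriptors from close congeners and then fitting a QSAR-like model on them can be sketched as follows. The three descriptors used here (a similarity-weighted read-across prediction, the mean similarity of the congeners, and the standard deviation of their activities) are simplified analogues chosen for illustration; they are not the published definitions of the RA function, gm, sm1, or sm2. The Gaussian similarity, `k`, `sigma`, the function names, and the synthetic data are likewise assumptions. The key detail is that, for training compounds, descriptors come from the other training compounds, since a compound must not serve as its own congener.

```python
import numpy as np

def rasar_descriptors(X_query, X_source, y_source, k=5, sigma=1.0, exclude_self=False):
    """Per query compound: [read-across prediction, mean similarity of the
    k congeners, SD of their activities]. With exclude_self=True (query set ==
    source set), a compound is never used as its own congener."""
    rows = []
    for i, q in enumerate(X_query):
        sim = np.exp(-((X_source - q) ** 2).sum(axis=1) / (2.0 * sigma ** 2))
        if exclude_self:
            sim[i] = -np.inf
        nn = np.argsort(sim)[::-1][:k]
        w = sim[nn]
        rows.append([w @ y_source[nn] / w.sum(), w.mean(), y_source[nn].std()])
    return np.array(rows)

def design(D):
    return np.column_stack([np.ones(len(D)), D])

def fit_q_rasar(X_train, y_train, k=5, sigma=1.0):
    D = rasar_descriptors(X_train, X_train, y_train, k, sigma, exclude_self=True)
    coef, *_ = np.linalg.lstsq(design(D), y_train, rcond=None)
    return coef

def predict_q_rasar(coef, X_query, X_train, y_train, k=5, sigma=1.0):
    return design(rasar_descriptors(X_query, X_train, y_train, k, sigma)) @ coef

# Hypothetical standardized descriptors and a synthetic endpoint
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
y = X[:, 0] + 0.5 * X[:, 1] ** 2 + 0.1 * rng.normal(size=200)
X_tr, y_tr, X_te, y_te = X[:150], y[:150], X[150:], y[150:]
coef = fit_q_rasar(X_tr, y_tr)
pred = predict_q_rasar(coef, X_te, X_tr, y_tr)
r2 = 1 - np.sum((y_te - pred) ** 2) / np.sum((y_te - y_te.mean()) ** 2)
```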

Experimental Protocols and Application Workflows