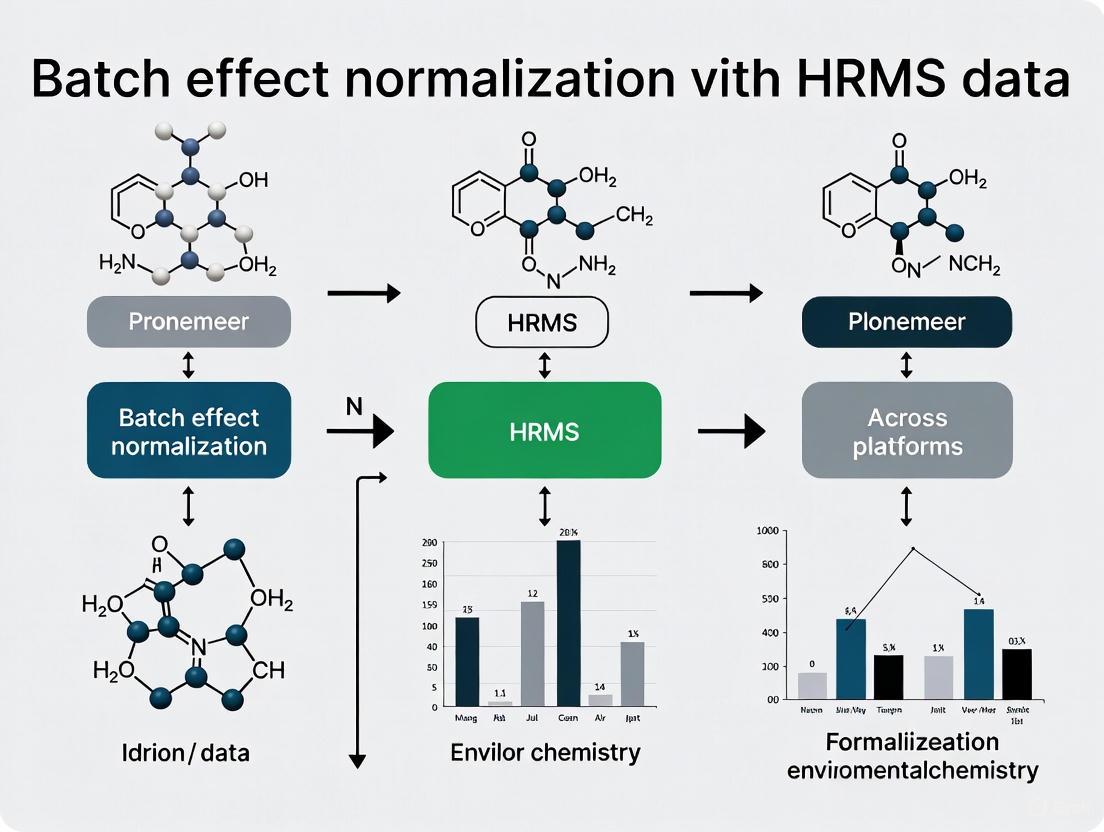

Batch Effect Normalization for HRMS Data: A Cross-Platform Guide for Robust Multi-Omic Integration

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on addressing the critical challenge of batch effects in High-Resolution Mass Spectrometry (HRMS) data across different analytical platforms. It covers the foundational principles of batch effects and their profound impact on data integrity and reproducibility in biomedical research. The scope extends to a detailed examination of current computational methodologies, including empirical Bayes frameworks, ratio-based scaling, and deep learning approaches, alongside practical strategies for troubleshooting and optimizing normalization workflows. Furthermore, the article presents a rigorous framework for the validation and comparative assessment of correction performance using benchmark datasets and quality metrics, equipping scientists with the knowledge to achieve robust and reliable cross-platform data integration in large-scale omics studies.

Understanding Batch Effects: The Hidden Threat to HRMS Data Integrity

Frequently Asked Questions

1. What is a batch effect in HRMS data? A batch effect is a form of unwanted technical variation introduced into high-throughput data by differences in experimental conditions. Such effects can arise over time, when using different instruments or labs, or when employing different analysis pipelines [1] [2]. In HRMS-based studies, such as proteomics or metabolomics, these effects are systematic variations that are unrelated to the biological signals of interest [3] [4].

2. What are the main sources of batch effects? Batch effects can arise at virtually every stage of an HRMS experiment. Key sources include:

- Sample Preparation: Differences in reagents, technicians, or protocols [3] [1].

- Instrumental Variation: Changes in instrument performance, maintenance, or calibration over time [3] [4].

- Data Acquisition: Variations in liquid chromatography conditions (e.g., retention time drift) or mass spectrometer settings across batches [3] [5].

- Study Design: A flawed or confounded design, where batches are not balanced with biological groups, can introduce batch effects that are impossible to fully separate from the biological signal [1] [2].

3. Why is it crucial to correct for batch effects? Uncorrected batch effects can lead to incorrect conclusions, reduce statistical power, and are a major contributor to the irreproducibility of scientific studies [1] [2]. In severe cases, they have led to retracted articles and invalidated research findings. For example, in a clinical trial, a batch effect caused by a change in RNA-extraction solution led to incorrect patient classifications, affecting the treatment regimens of 28 individuals [1] [2].

4. What is the risk of "over-correction"? Over-correction occurs when batch effect removal methods also remove genuine biological variation. This can hinder biomedical discovery by eliminating the very signals researchers are trying to detect. It is essential to use methods that balance the removal of technical noise with the preservation of biological diversity [3].

5. At which data level should batch effect correction be performed? The optimal stage for correction is an active area of research. However, a recent comprehensive benchmarking study in proteomics revealed that protein-level correction is the most robust strategy. The process of quantifying proteins from precursor and peptide-level data interacts with batch-effect correction algorithms, and performing correction at the protein level was found to be more effective [6].

Troubleshooting Guides

Guide 1: Diagnosing Batch Effects in Your Data

Before correction, you must identify the presence and severity of batch effects.

- Objective: To visually and statistically assess the impact of batch effects on your dataset.

- Principle: Technical variations from batches often constitute a major source of variance in the data, which can mask biological patterns.

Protocol:

- Data Preparation: Start with your feature-by-sample matrix (e.g., peak intensities for each sample).

- Dimensionality Reduction: Perform Principal Component Analysis (PCA) on the uncorrected data.

- Visual Inspection: Create a PCA scores plot, coloring the samples by their batch ID. If samples cluster strongly by batch rather than by biological group, a significant batch effect is present [4].

- Statistical Analysis: Use Principal Variance Component Analysis (PVCA) to quantify the proportion of total variance in the data that is attributable to the batch factor versus the biological factor of interest [6] [4]. A high variance component for batch indicates a need for correction.
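The PCA step and a variance summary can be sketched in a few lines of numpy. This is a simplified stand-in for PVCA, not PVCA itself: real PVCA fits a linear mixed model with batch and biological factors as random effects to each principal component, whereas this sketch only computes a one-way ANOVA R² of the batch factor on each PC and weights it by that PC's explained variance. The function names and the simulated data are hypothetical.

```python
import numpy as np


def pca_scores(X, n_components=5):
    """Project samples onto the top principal components (SVD of the column-centred matrix)."""
    Xc = X - X.mean(axis=0)
    U, S, _ = np.linalg.svd(Xc, full_matrices=False)
    explained = S**2 / np.sum(S**2)  # fraction of total variance per PC
    return U[:, :n_components] * S[:n_components], explained[:n_components]


def batch_r2(scores, batch):
    """Share of each PC's variance explained by the batch factor (one-way ANOVA R^2)."""
    batch = np.asarray(batch)
    r2 = []
    for pc in scores.T:
        total = np.sum((pc - pc.mean()) ** 2)
        within = sum(np.sum((pc[batch == b] - pc[batch == b].mean()) ** 2)
                     for b in np.unique(batch))
        r2.append(1.0 - within / total)
    return np.array(r2)


def weighted_batch_fraction(X, batch, n_components=5):
    """PVCA-like summary: batch R^2 per PC, weighted by the variance each PC explains."""
    scores, explained = pca_scores(X, n_components)
    r2 = batch_r2(scores, batch)
    return float(np.sum(r2 * explained) / np.sum(explained))


# Simulated example: 30 samples x 200 log-intensity features; batch B offset by +1.5
rng = np.random.default_rng(0)
batch = np.repeat(["A", "B", "C"], 10)
X_null = rng.normal(size=(30, 200))
X_shift = X_null.copy()
X_shift[batch == "B"] += 1.5
print(weighted_batch_fraction(X_null, batch), weighted_batch_fraction(X_shift, batch))
```

Plotting the first two columns of `pca_scores(...)[0]` coloured by `batch` gives the scores plot described above; a weighted batch fraction that drops after correction is the numeric counterpart of batches overlapping in that plot.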

Guide 2: Selecting a Batch Effect Correction Algorithm

Choosing the right method is critical, as no single tool is universally best.

- Objective: To select an appropriate batch effect correction algorithm (BECA) for your specific HRMS data.

- Principle: Different algorithms make different assumptions about the data. The choice depends on your data type, study design, and the nature of the batch effect [6] [1].

The table below summarizes standard and advanced methods:

Table 1: Common Batch Effect Correction Algorithms

| Method Name | Category | Key Principle | Considerations |
|---|---|---|---|
| ComBat [4] | Sample data-driven / Statistical | Uses an empirical Bayes framework to adjust for mean and variance shifts between batches. | Powerful but can be sensitive to model parameters and small batch sizes. |
| BERNN [3] | Deep Learning (Neural Network) | Uses a neural network with adversarial learning or triplet loss to create a batch-invariant representation that maximizes classification performance. | Can model complex, non-linear batch effects but requires significant data and computational resources. |
| Harmony [6] | Statistical | Iteratively clusters cells (or samples) and calculates a cluster-specific correction factor to integrate datasets. | Originally for single-cell RNA-seq, but can be extended to other omics data. |
| Ratio [6] | Scaling | Normalizes feature intensities in study samples by those in concurrently profiled universal reference samples. | Requires high-quality reference materials. Effective when batch effects are confounded with biological groups. |
| cytoNorm [7] | Data-driven (for Cytometry) | Uses a set of anchor nodes to align the quantiles of marker expressions from different batches. | Specifically designed for cytometry data; highlights the need for field-specific tools. |
| Internal Standard Scaling [4] | ISTD-based | Scales feature peak heights using the peak heights of spiked-in isotopically labelled internal standards. | Requires a robust suite of internal standards; effective for correcting systematic intensity drift. |

Diagram: A logical workflow for selecting a batch effect correction strategy.

Guide 3: A Two-Stage Preprocessing Protocol for Multi-Batch LC/MS Data

This guide addresses batch effects during the initial data preprocessing stage, which is critical for peak alignment and quantification before intensity-based correction.

- Objective: To achieve better peak detection, alignment, and quantification across multiple batches by integrating batch information directly into the preprocessing workflow [5].

- Principle: Traditional preprocessing treats all samples as a single group, leading to peak misalignment across batches. A two-stage approach performs optimal processing within batches first, then aligns the results between batches [5].

Diagram: Two-Stage Preprocessing Workflow for Multi-Batch LC/MS Data.

Protocol:

- Stage 1 - Within-Batch Processing: Process each analytical batch individually through standard preprocessing steps: peak detection, retention time (RT) correction, peak alignment, and weak signal recovery. This creates an optimal feature table for each batch [5].

- Create Batch-Level Matrices: Generate a representative feature matrix for each batch by averaging the RT and intensity values for each feature across samples within that batch.

- Stage 2 - Between-Batch Alignment: Treat each batch-level matrix as a "sample" and perform a second round of RT correction and feature alignment on them. This step aligns the features across all batches [5].

- Back-Mapping and Final Quantification: Map the aligned features from the batch-level analysis back to the original individual samples. A final weak signal recovery can be performed across all batches using the improved alignment [5].

- Post-Processing: Apply an intensity-based batch effect correction method (e.g., from Table 1) to the final, aligned feature table to remove any remaining intensity biases.
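The batch-level summary, between-batch alignment, and back-mapping steps above can be sketched as follows. This is a hypothetical illustration, not the algorithm from [5]: it assumes Stage 1 (within-batch peak detection, RT correction, and alignment) has already produced one feature table per batch, uses greedy matching with made-up tolerances (10 ppm, 0.2 min), replaces nonlinear between-batch RT correction with a single median RT offset, and leaves unmatched entries as NaN instead of performing weak signal recovery.

```python
import numpy as np


def batch_summary(mz, rt, intensities):
    """Stage 1 output -> one batch-level 'pseudo-sample' (mean intensity per feature)."""
    return {"mz": np.asarray(mz, float), "rt": np.asarray(rt, float),
            "intensity": np.nanmean(np.asarray(intensities, float), axis=0)}


def match_features(ref, other, ppm=10.0, rt_tol=0.2):
    """Greedy one-to-one matching of `other` features onto `ref` within m/z (ppm) and RT windows."""
    mapping, used = {}, set()
    for j in np.argsort(-other["intensity"]):  # match the most intense features first
        ppm_err = np.abs(ref["mz"] - other["mz"][j]) / ref["mz"] * 1e6
        rt_err = np.abs(ref["rt"] - other["rt"][j])
        hits = np.where((ppm_err <= ppm) & (rt_err <= rt_tol))[0]
        cands = [int(c) for c in hits if int(c) not in used]
        if cands:
            best = min(cands, key=lambda c: rt_err[c])
            mapping[int(j)] = best
            used.add(best)
    return mapping


def align_batches(summaries, ppm=10.0, rt_tol=0.2, wide_rt_tol=1.0):
    """Stage 2: align every batch-level summary to the first batch's feature list."""
    ref = summaries[0]
    mappings = [{j: j for j in range(len(ref["mz"]))}]
    for s in summaries[1:]:
        rough = match_features(ref, s, ppm, wide_rt_tol)
        # Simplification: one median RT offset instead of a nonlinear RT-correction curve
        diffs = [ref["rt"][r] - s["rt"][j] for j, r in rough.items()]
        offset = float(np.median(diffs)) if diffs else 0.0
        mappings.append(match_features(ref, dict(s, rt=s["rt"] + offset), ppm, rt_tol))
    return mappings


def back_map(sample_matrices, mappings, n_ref):
    """Write each batch's per-sample intensities into the shared (reference) feature columns."""
    blocks = []
    for X, mapping in zip(sample_matrices, mappings):
        out = np.full((X.shape[0], n_ref), np.nan)  # NaN = unmatched; weak-signal recovery would fill these
        for j, r in mapping.items():
            out[:, r] = X[:, j]
        blocks.append(out)
    return np.vstack(blocks)


# Toy example: two batches of two samples each, three compounds
mz = np.array([150.0, 200.0, 300.0])
rt = np.array([2.0, 5.0, 8.0])
X1 = np.array([[10.0, 20.0, 30.0], [12.0, 22.0, 32.0]])
order = [2, 0, 1]  # batch 2 lists the same compounds in a different order...
X2 = X1[:, order] * 1.1
b1 = batch_summary(mz, rt, X1)
b2 = batch_summary(mz[order] * (1 + 2e-6), rt[order] + 0.5, X2)  # ...with 2 ppm error and +0.5 min drift
maps = align_batches([b1, b2])
combined = back_map([X1, X2], maps, n_ref=len(mz))
```

The rough match with a wide RT window exists only to estimate the between-batch RT shift; the final match is then made with the tight window on drift-corrected retention times, which mirrors the "correct RT, then align" order of Stage 2.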

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Batch Effect Mitigation in HRMS Studies

| Item | Function in Batch Effect Control |
|---|---|
| Universal Reference Materials | A standardized sample (e.g., commercial quality control plasma or a custom mix) analyzed across all batches. Used to monitor technical performance and for Ratio-based normalization [6]. |
| Isotopically Labelled Internal Standards (ISTDs) | A set of stable isotope-labeled compounds spiked into every sample at known concentrations. Used to correct for sample-specific matrix effects and instrumental variation via ISTD-based scaling [4]. |
| Quality Control (QC) Samples | A pooled sample, typically an aliquot of all study samples, injected repeatedly throughout the analytical sequence. Used in QC-based methods to model and correct for signal drift within and between batches [4] [5]. |
| Standardized Protocol Documentation | Detailed, step-by-step documentation for every procedure from sample collection to data acquisition. Critical for identifying the source of batch effects and ensuring consistency across batches and labs [1]. |

Frequently Asked Questions

What is a batch effect? A batch effect is a technical source of variation in data that is unrelated to the biological questions of a study. These are non-biological differences introduced during sample processing, data acquisition, or analysis due to factors like different reagents, instruments, personnel, or processing dates [8] [2] [9].

How do I know if my data has a batch effect? Batch effects can be identified through exploratory data analysis. Common methods include:

- Visual Inspection: Using Principal Component Analysis (PCA) or UMAP/t-SNE plots to see if samples cluster by batch rather than by biological group [8] [7].

- Quantitative Metrics: Applying tests like the k-nearest neighbor batch effect test (kBET) or Local Inverse Simpson's Index (LISI) to statistically assess batch mixing [8] [10].

What is the difference between normalization and batch effect correction? These are two distinct but related steps:

- Normalization adjusts for technical variations like sequencing depth or library size across individual samples. It operates on the raw data to make samples comparable [8] [10].

- Batch Effect Correction addresses systematic differences between groups of samples (batches) processed at different times or under different conditions. It typically uses normalized data as its input [8].

Can I correct for a batch effect if my study design is confounded? If your biological variable of interest (e.g., 'disease' vs 'control') is perfectly aligned with batch (e.g., all controls in one batch and all diseases in another), it is impossible to statistically disentangle the biological signal from the technical batch effect. This underscores the critical importance of a balanced experimental design where biological groups are distributed across batches [9].
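Perfect confounding can be checked before any correction is attempted. A minimal sketch, assuming a linear model with batch and group as categorical factors: if the design matrix (intercept plus dummy columns for both factors) is rank-deficient, the group effect cannot be estimated separately from batch. The function names are hypothetical, and the rank test only detects perfect confounding; a full-rank but strongly unbalanced design (visible in the cross-tabulation) is still risky.

```python
import numpy as np


def design_is_confounded(batch, group):
    """True if group and batch dummies are linearly dependent (perfect confounding)."""
    batch, group = np.asarray(batch), np.asarray(group)
    cols = [np.ones(len(batch))]  # intercept
    cols += [(batch == b).astype(float) for b in np.unique(batch)[1:]]  # batch dummies
    cols += [(group == g).astype(float) for g in np.unique(group)[1:]]  # group dummies
    D = np.column_stack(cols)
    return np.linalg.matrix_rank(D) < D.shape[1]


def crosstab(batch, group):
    """Number of samples in each (batch, group) cell; empty cells reveal imbalance."""
    batch, group = np.asarray(batch), np.asarray(group)
    return {(b.item(), g.item()): int(np.sum((batch == b) & (group == g)))
            for b in np.unique(batch) for g in np.unique(group)}


print(design_is_confounded([1, 1, 2, 2], ["ctrl", "ctrl", "case", "case"]))  # True
print(design_is_confounded([1, 1, 2, 2], ["ctrl", "case", "ctrl", "case"]))  # False
```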

What are the signs of overcorrection? Overcorrection occurs when a batch effect removal method is too aggressive and removes genuine biological signal. Signs include [8]:

- The loss of known, canonical cell-type or disease-specific markers.

- A significant overlap of markers between distinct cell clusters.

- Cluster-specific markers being dominated by common, uninformative genes.

Troubleshooting Guides

Problem: Clustering in PCA/UMAP is Driven by Batch, Not Biology

Description: When visualizing your data, samples group together based on their processing batch instead of their biological condition (e.g., disease vs. control).

| Potential Cause | Recommended Action | Principles & Notes |
|---|---|---|
| Strong Technical Variation | Apply a suitable batch effect correction algorithm. | Choose a method appropriate for your data type and size. For large LC-MS datasets, newer deep learning models like BERNN may be effective [11]. |
| Confounded Design | Re-analyze the data, acknowledging the limitation. | If the design is confounded, statistical correction is not reliable. Conclusions must be drawn with extreme caution [9]. |
| Incorrect Normalization | Ensure proper normalization is performed before batch correction. | Normalization addresses cell-specific or sample-specific technical biases and is a prerequisite for effective batch correction [8] [10]. |

Workflow: Diagnosing and Correcting Batch-Driven Clustering

Problem: Inconsistent Biomarker Discovery Across Batches

Description: Features (e.g., metabolites or proteins) identified as significant in one batch do not replicate in another, hindering the identification of robust biomarkers.

| Potential Cause | Recommended Action | Principles & Notes |
|---|---|---|
| Uncorrected Intensity Drift | Use Quality Control (QC) samples or background correction methods to model and correct for signal drift over time [12]. | QC-based methods like QC-RLSC use pooled samples to track and correct instrumental variation [12]. |
| Peak Misalignment | Use preprocessing tools designed for multiple batches that perform alignment and weak signal recovery across batches [5]. | Traditional preprocessing that treats all samples as one group can misalign peaks, an error that cannot be fixed by post-hoc intensity correction [5]. |
| Insufficient Data Harmonization | For multi-platform studies, use integration methods that explicitly account for platform-specific differences. | Methods like Harmony, LIGER, or Seurat Integration are designed to find shared biological features across diverse datasets [8] [10]. |
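The QC-based drift correction in the first row can be sketched for a single feature: fit a smooth trend of QC intensity against injection order, then divide every injection by that trend. This is a simplified illustration in the spirit of QC-RLSC, not a reference implementation: it uses a plain local linear smoother with tricube weights and no robustness iterations, the `span` value and the QC layout are hypothetical, and in practice the fit is repeated for every feature (often within each batch).

```python
import numpy as np


def local_linear_fit(x_fit, y_fit, x_eval, span=0.75):
    """LOESS-style local linear smoother with tricube weights (no robustness iterations)."""
    x_fit = np.asarray(x_fit, float)
    y_fit = np.asarray(y_fit, float)
    k = min(len(x_fit), max(3, int(np.ceil(span * len(x_fit)))))  # neighbours per local fit
    preds = []
    for x0 in np.atleast_1d(np.asarray(x_eval, float)):
        d = np.abs(x_fit - x0)
        idx = np.argsort(d)[:k]
        h = 1.01 * d[idx].max()  # widen by 1% so the k-th neighbour keeps a small non-zero weight
        if h == 0:
            h = 1.0
        w = (1.0 - (d[idx] / h) ** 3) ** 3  # tricube weights
        A = np.column_stack([np.ones(len(idx)), x_fit[idx] - x0])
        sw = np.sqrt(w)
        beta, *_ = np.linalg.lstsq(A * sw[:, None], y_fit[idx] * sw, rcond=None)
        preds.append(beta[0])  # intercept = fitted value at x0
    return np.array(preds)


def qc_drift_correct(intensity, injection_order, is_qc, span=0.75):
    """Correct one feature by dividing by a drift curve fitted to the QC injections only."""
    y = np.asarray(intensity, float)
    x = np.asarray(injection_order, float)
    qc = np.asarray(is_qc, bool)
    trend = local_linear_fit(x[qc], y[qc], x, span)
    # Dividing by the trend removes drift; multiplying by the QC median keeps the original scale
    return y / trend * np.median(y[qc])


order = np.arange(1, 41)
is_qc = order % 5 == 1  # hypothetical layout: a pooled QC every fifth injection
true = np.where(is_qc, 100.0, np.linspace(50, 150, 40))
raw = true * (1 + 0.01 * order)  # simulated 1% per-injection signal drift
corrected = qc_drift_correct(raw, order, is_qc)
```

After correction the QC injections share one value, which is the behaviour QC-based methods aim for; bracketing the run with QCs at the start and end avoids extrapolating the trend beyond the last QC.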

Quantitative Evaluation of Batch Effect Correction

After applying a correction method, it is crucial to evaluate its performance. The table below summarizes key metrics.

| Metric Name | What It Measures | Interpretation |
|---|---|---|
| kBET [8] [10] | Whether local neighborhoods of cells contain a balanced mix of batches. | Lower rejection rates indicate better batch mixing. |
| LISI [10] | Diversity of batches (iLISI) and cell types (cLISI) in local neighborhoods. | Higher iLISI = better batch mixing. cLISI close to 1 (lower) = better cell-type separation. |
| PCA-based Visualization [8] [7] | Visual clustering of samples by batch in a low-dimensional plot. | Batches should overlap visually after successful correction. |
| Classification Performance [11] | Ability of a model to predict biological class in a batch-not-seen-during-training setting. | Strong performance indicates biological signal is preserved across batches. |
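The idea behind LISI can be illustrated with a simplified, unweighted version: for each sample, take its k nearest neighbours in a low-dimensional embedding (for example, PCA scores) and compute the inverse Simpson's index of the labels among them. This is an assumption-laden stand-in, not the published LISI: the real metric weights neighbours with a Gaussian kernel calibrated by perplexity, and kBET is not implemented here. The function names and `k=15` are hypothetical choices.

```python
import numpy as np


def knn_indices(X, k):
    """Indices of each row's k nearest neighbours (Euclidean), excluding the row itself."""
    d = np.sum((X[:, None, :] - X[None, :, :]) ** 2, axis=-1)
    np.fill_diagonal(d, np.inf)
    return np.argsort(d, axis=1)[:, :k]


def simple_lisi(X, labels, k=15):
    """Mean inverse Simpson's index of `labels` among each sample's k nearest neighbours."""
    labels = np.asarray(labels)
    nn = knn_indices(np.asarray(X, float), k)
    scores = []
    for row in nn:
        _, counts = np.unique(labels[row], return_counts=True)
        p = counts / counts.sum()
        scores.append(1.0 / np.sum(p ** 2))  # 1 = one label only; up to n_labels = even mix
    return float(np.mean(scores))


rng = np.random.default_rng(1)
batch = np.repeat(["A", "B"], 50)
mixed = rng.normal(size=(100, 2))  # batches interleaved in the embedding
separated = mixed + np.where(batch == "A", 0.0, 10.0)[:, None]  # batch B far away
print(simple_lisi(separated, batch), simple_lisi(mixed, batch))
```

Passing batch labels gives an iLISI-like score (higher is better mixing); passing biological labels gives a cLISI-like score (closer to 1 is better separation). The full pairwise distance matrix makes this sketch suitable only for modest sample counts.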

The Scientist's Toolkit: Essential Reagents & Materials for Batch Effect Management

| Item | Function in Batch Effect Mitigation |
|---|---|
| Pooled Quality Control (QC) Sample | A standardized sample run repeatedly throughout and across batches to monitor and correct for instrumental drift and technical variation [12]. |
| Standard Reference Material | A commercially available or internally validated standard with known concentrations of analytes used to calibrate instruments and compare performance across platforms and batches. |
| Balanced Block Study Design | A planned experimental design (not a reagent, but essential) that ensures biological groups of interest are evenly distributed across all batches, preventing confounding [9]. |

Workflow: A Two-Stage Preprocessing Approach for LC-MS Data

For LC-MS data, batch effects can be addressed during data preprocessing itself. The following workflow, adapted for HRMS, outlines a robust two-stage method [5].

Protocol Details:

- Stage 1 - Within-Batch Processing: Each batch is processed individually through peak detection, retention time (RT) correction, and feature alignment. A batch-level feature matrix is created, containing the average m/z, RT, and intensity for each feature in that batch [5].

- Stage 2 - Between-Batch Integration: The batch-level matrices are then aligned. This involves a second round of RT correction and feature alignment across batches. Finally, this aligned feature list is mapped back to the individual samples, allowing for the recovery of weak signals that may have been missed in some batches but detected in others [5].

- Advantage: This method prevents peak misalignment and omission across batches, problems that cannot be fixed by simply applying an intensity-based batch correction after standard preprocessing [5].

Frequently Asked Questions

1. What are the most common sources of batch effects in HRMS studies? Batch effects arise from both biological and non-biological confounding factors. Common technical sources include differences in instrument availability, sample collection timelines, operators, reagent batches, instrument maintenance, ion source variations, and sample-specific matrix effects. Even when using identical instrumentation, analyses performed over extended periods (months to years) will exhibit batch effects due to instrumental variation or differential compound degradation in stored samples [13] [4].

2. What is the difference between normalization and batch effect correction? These terms are often used interchangeably but refer to distinct procedures. Normalization involves sample-wide adjustments to align the distribution of measured quantities across samples, typically by aligning sample means or medians. Batch effect correction is a data transformation that corrects quantities of specific features across samples to reduce technical differences. In a proper workflow, normalization is performed prior to batch effect correction [14].
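Median normalization, one common way of aligning sample medians, can be sketched as follows. This is an illustrative choice, not the specific normalization used in [14]: it assumes a samples-by-features intensity matrix with missing values already coded as NaN (log2 of zero would give -inf), works on the log2 scale, and shifts each sample so that all sample medians coincide. The function name is hypothetical.

```python
import numpy as np


def median_normalize(X):
    """Sample-wise median normalization on the log2 scale (rows = samples, cols = features)."""
    logX = np.log2(np.asarray(X, float))
    medians = np.nanmedian(logX, axis=1, keepdims=True)  # one median per sample
    return logX - medians + np.nanmedian(medians)  # align every sample median to a common value


X = np.array([[1.0, 2.0, 4.0],
              [2.0, 4.0, 8.0]])  # sample 2 = sample 1 measured at twice the overall signal
print(median_normalize(X))  # both rows become [0.5, 1.5, 2.5]
```

The output is what a batch effect correction method would then take as input, following the order described above.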

3. Can batch effects be completely eliminated? Complete elimination is challenging and potentially harmful. Over-correction can remove essential biological variability, diminishing classification performance and statistical power. The goal is to reduce batch effects to a level where they no longer mask biological signals, while preserving genuine biological diversity [13] [15].

4. At what data level should batch effects be corrected in bottom-up proteomics? Recent evidence suggests protein-level correction is the most robust strategy. In MS-based proteomics, protein quantities are inferred from precursor and peptide-level intensities. Benchmarking studies comparing precursor, peptide, and protein-level corrections found that applying correction at the final protein level best enhances multi-batch data integration in large cohort studies [16].

Troubleshooting Guides

Problem: Biological Groups Cluster by Batch in PCA

Description: After initial data processing, Principal Component Analysis shows samples grouping primarily by analytical batch rather than biological condition.

Solution:

Apply a structured batch-effect correction workflow:

- Diagnose: Use Principal Variance Component Analysis to quantify variability associated with batches [4] [16].

- Correct: Apply a suitable algorithm like ComBat (empirical Bayes) [4] [15] or the PARSEC strategy (standardization and mixed modeling) [17].

- Validate: Re-run PCA and correlation analyses to confirm reduced batch clustering and improved biological group separation [14].

For severely confounded designs where biological groups are processed in entirely separate batches, use a ratio-based method (Ratio-G) if reference materials were profiled concurrently with study samples [15].

Problem: Significant Missing Data After Merging Batches

Description: When combining datasets from multiple batches or platforms, a large proportion of features contain missing values, complicating statistical analysis.

Solution:

- Use algorithms designed for incomplete data:

  - BERT (Batch-Effect Reduction Trees): A high-performance method that integrates incomplete omic profiles by decomposing the correction into a binary tree, retaining significantly more numeric values than other methods [18].

  - HarmonizR: An imputation-free framework that employs matrix dissection to integrate datasets with arbitrary missing value patterns [18].

Problem: Decreased Statistical Power After Batch Correction

Description: After batch effect correction, the ability to detect differentially expressed features is reduced, suggesting potential over-correction.

Solution:

- Verify correction method appropriateness: Ensure the method matches your experimental design (balanced vs. confounded) [15].

- Avoid over-correction: Some methods, particularly neural network-based approaches, can remove biological variance along with technical noise. Select methods that demonstrate a balance between batch effect removal and biological signal preservation [13].

- Consider protein-level correction: If working with proteomics data, apply correction at the protein level rather than the peptide/precursor level to enhance robustness [16].

Experimental Protocols for Batch Effect Management

Protocol 1: Post-Acquisition Correction with PARSEC

This three-step workflow improves cross-study comparability without requiring long-term quality control samples [17].

- Data Extraction: Combine and extract raw data from the different studies or cohorts to be analyzed.

- Standardization: Apply batch-wise standardization to the combined dataset.

- Filtering: Filter features based on analytical quality criteria to retain high-quality data for downstream analysis.
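The standardization step can be illustrated with within-batch autoscaling, where every feature is centred and scaled separately in each batch. This is a hypothetical sketch, not the published PARSEC code: the exact standardization and the mixed-modeling and filtering criteria of PARSEC [17] may differ. Note that batch-wise centring also removes any biological difference between batches, so it is only appropriate when biological groups are spread across batches.

```python
import numpy as np


def batchwise_standardize(X, batch):
    """Z-score each feature within each batch (rows = samples, cols = log-scale features)."""
    X = np.asarray(X, float)
    batch = np.asarray(batch)
    Z = np.empty_like(X)
    for b in np.unique(batch):
        rows = batch == b
        mu = np.nanmean(X[rows], axis=0)
        sd = np.nanstd(X[rows], axis=0, ddof=1)
        sd[sd == 0] = np.nan  # constant features cannot be scaled; they become NaN
        Z[rows] = (X[rows] - mu) / sd
    return Z


rng = np.random.default_rng(3)
batch = np.repeat(["b1", "b2"], 8)
X = rng.normal(10, 1, size=(16, 5))
X[batch == "b2"] = X[batch == "b2"] * 2 + 3  # batch 2: different scale and offset
Z = batchwise_standardize(X, batch)
```

After this step each batch has mean 0 and unit standard deviation for every feature, so the quality-based filtering step operates on comparable values.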

Protocol 2: Reference Material-Based Ratio Method

This method is particularly effective when batch effects are completely confounded with biological factors [15].

- Concurrent Profiling: In each analytical batch, profile both the study samples and one or more designated reference material samples.

- Ratio Calculation: Transform the absolute feature values of each study sample into ratios relative to the corresponding feature values in the reference material:

  Ratio = Feature_Study_Sample / Feature_Reference_Material

- Data Integration: Use the ratio-scaled values for all downstream analyses and cross-batch integrations.
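The ratio calculation can be sketched directly from the formula above. Two assumptions are made here: when a batch contains several reference-material injections, their mean profile is used as the denominator, and intensities are on the linear (not log) scale; on a log scale the ratio becomes a difference. The function name and toy data are hypothetical.

```python
import numpy as np


def ratio_to_reference(X, batch, is_reference):
    """Divide each study sample by the mean reference-material profile of its own batch."""
    X = np.asarray(X, float)
    batch = np.asarray(batch)
    is_reference = np.asarray(is_reference, bool)
    out = np.full_like(X, np.nan)
    for b in np.unique(batch):
        rows = batch == b
        ref_rows = rows & is_reference
        if not ref_rows.any():
            raise ValueError(f"batch {b!r} has no reference-material injection")
        ref_profile = np.nanmean(X[ref_rows], axis=0)  # per-feature reference values in this batch
        study_rows = rows & ~is_reference
        out[study_rows] = X[study_rows] / ref_profile
    return out[~is_reference]  # ratio-scaled study samples only


ref = np.array([10.0, 20.0, 40.0])
s1 = np.array([20.0, 20.0, 20.0])
s2 = np.array([5.0, 40.0, 80.0])
X = np.vstack([s1, ref, s2 * 3, ref * 3])  # batch 2 reads everything 3x higher
batch = np.array([1, 1, 2, 2])
is_ref = np.array([False, True, False, True])
print(ratio_to_reference(X, batch, is_ref))  # [[2. 1. 0.5] [0.5 2. 2.]]
```

Because the study sample and the reference in batch 2 carry the same threefold technical factor, it cancels in the ratio, which is why the method still works when batch and biological group are confounded.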

Protocol 3: Assessment and Correction using ComBat

An empirical Bayes method widely used for batch effect correction [4] [15].

- Data Preprocessing: Perform initial data filtering, log2 transformation, and quantile normalization on the feature intensity matrix.

- Batch Correction: Apply the ComBat algorithm to estimate hyperparameters for the distribution of batch effects by pooling information across features within a batch, then adjust intensities accordingly.

- Quality Assessment: Evaluate correction success using Principal Component Analysis, Hierarchical Clustering Analysis, and Principal Variance Component Analysis to confirm reduced batch-associated variability.
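The batch correction step can be sketched as a parametric empirical Bayes location/scale adjustment modeled on the published ComBat algorithm. This is a teaching sketch, not the `ComBat` function from the R package sva: it includes no biological covariates (so, as noted in the comparison table below, it can remove group differences in confounded designs), it uses a simplified absolute-change convergence test, and it assumes at least two samples per batch and no zero-variance features. For real analyses, an established implementation such as sva's `ComBat` is the safer choice.

```python
import numpy as np


def combat_sketch(Y, batch, max_iter=100, tol=1e-6):
    """ComBat-style parametric empirical Bayes adjustment without covariates.

    Y: features x samples, already filtered, log2-transformed and normalized.
    batch: one batch label per sample (column); each batch needs >= 2 samples.
    """
    Y = np.asarray(Y, float)
    batch = np.asarray(batch)
    levels = np.unique(batch)

    # 1. Standardize each feature: grand mean and pooled within-batch variance
    alpha = Y.mean(axis=1)
    resid = np.empty_like(Y)
    for b in levels:
        cols = batch == b
        resid[:, cols] = Y[:, cols] - Y[:, cols].mean(axis=1, keepdims=True)
    sigma = np.sqrt(np.mean(resid ** 2, axis=1))
    Z = (Y - alpha[:, None]) / sigma[:, None]

    Y_adj = np.empty_like(Y)
    for b in levels:
        cols = batch == b
        Zb = Z[:, cols]
        n = Zb.shape[1]
        gamma_hat = Zb.mean(axis=1)         # per-feature additive batch effect
        delta_hat = Zb.var(axis=1, ddof=1)  # per-feature multiplicative batch effect

        # 2. Priors estimated by pooling information across all features in this batch
        gamma_bar, tau2 = gamma_hat.mean(), gamma_hat.var(ddof=1)
        m, s2 = delta_hat.mean(), delta_hat.var(ddof=1)
        a_prior = (2 * s2 + m ** 2) / s2  # inverse-gamma shape (method of moments)
        b_prior = (m * s2 + m ** 3) / s2  # inverse-gamma scale

        # 3. Iterate the posterior (shrunken) estimates until they stop changing
        gamma_star, delta_star = gamma_hat, delta_hat
        for _ in range(max_iter):
            g_new = (n * tau2 * gamma_hat + delta_star * gamma_bar) / (n * tau2 + delta_star)
            ss = np.sum((Zb - g_new[:, None]) ** 2, axis=1)
            d_new = (b_prior + 0.5 * ss) / (n / 2 + a_prior - 1)
            change = max(np.max(np.abs(g_new - gamma_star)), np.max(np.abs(d_new - delta_star)))
            gamma_star, delta_star = g_new, d_new
            if change < tol:
                break

        # 4. Remove the shrunken batch effects and return to the original scale
        Y_adj[:, cols] = (sigma[:, None] * (Zb - gamma_star[:, None])
                          / np.sqrt(delta_star)[:, None] + alpha[:, None])
    return Y_adj


rng = np.random.default_rng(42)
batch = np.repeat([0, 1, 2], 10)
Y = rng.normal(10, 1, size=(200, 1)) + rng.normal(0, 0.5, size=(200, 30))
Y[:, batch == 1] += 1.0                                  # shared additive shift
Y[:, batch == 2] += rng.normal(0.5, 0.2, size=(200, 1))  # feature-specific shift
Y_adj = combat_sketch(Y, batch)
```

The shrinkage in step 3 is what distinguishes ComBat from plain per-batch centring and scaling: each feature's batch estimate is pulled toward the batch-wide average, which stabilizes the correction when batches are small.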

Batch Effect Correction Performance Comparison

Table 1: Comparison of Batch Effect Correction Algorithms

| Algorithm | Underlying Principle | Best For | Strengths | Limitations |
|---|---|---|---|---|
| ComBat [4] [15] | Empirical Bayes | General-purpose use, balanced designs | Effective mean and variance adjustment | May over-correct in confounded designs |
| Ratio-based [15] | Scaling to reference material | Confounded batch-group scenarios | Preserves biological signals relative to reference | Requires concurrent profiling of reference materials |
| Harmony [15] | PCA-based clustering | Multi-omics data integration | Iterative clustering with correction factors | Performance varies by data type |
| PARSEC [17] | Standardization & mixed modeling | Studies lacking long-term QCs | Combines batch and group effect correction | Three-step workflow may be complex |
| BERT [18] | Tree-based decomposition | Large-scale, incomplete data | High performance, retains more data | Newer method, less established |
| BERNN [13] | Neural Networks | Maximizing classification performance | Suite of models (VAE, DANN, invTriplet) | Potential over-correction, black-box nature |

Table 2: Quantitative Performance Metrics from Benchmarking Studies

| Study Context | Metric | Uncorrected Data | After Batch Correction | Correction Method |
|---|---|---|---|---|
| Multibatch WWTP Samples [4] | Batch-associated variability (via PVCA) | High | Significantly Reduced | ComBat |
| Multi-omics (Quartet Project) [15] | Signal-to-Noise Ratio (SNR) | Low | Improved | Ratio-based |
| LC-MS Classification [13] | Sample Classification Performance | Moderate | Strongest | BERNN (Neural Networks) |
| Incomplete Omic Data (6000 features) [18] | Retained Numeric Values (50% missing) | 50% | BERT: ~50% retained; HarmonizR: ~23-73% retained | BERT vs. HarmonizR |
| Protein-level vs. Peptide-level [16] | Coefficient of Variation (CV) | Higher at peptide level | Lower at protein level | Protein-level correction |

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for Batch Effect Management

| Item | Function in Batch Management | Application Notes |
|---|---|---|
| Universal Reference Materials (e.g., Quartet Project materials) [15] | Provides a stable benchmark for cross-batch normalization via ratio-based methods. | Essential for confounded study designs. Use one or more reference materials processed concurrently with each batch. |
| Isotopically Labelled Internal Standards [4] | Enables internal standard-based correction for signal drift and matrix effects. | Add to each sample at the start of preparation. A robust suite covering various compound classes is ideal. |
| Pooled Quality Control (QC) Samples [14] [4] | Monitors instrument performance and technical variation throughout the analytical run. | Create from an aliquot of all samples. Inject repeatedly throughout the batch sequence. |
| Certified Reference Materials [19] | Verifies analytical confidence and confirms compound identities during validation. | Used for tiered validation of machine learning models and analytical results. |
| Multi-sorbent SPE Cartridges [19] | Improves broad-spectrum analyte recovery during sample preparation, reducing a key source of variability. | Combining sorbents (e.g., Oasis HLB with ISOLUTE ENV+) expands compound coverage compared to single sorbents. |

Workflow Diagrams

Diagram 1: Comprehensive Batch Effect Management Workflow


Diagram 2: Data Processing Levels in Bottom-Up Proteomics


The Critical Need for Normalization in Multi-Batch and Longitudinal Studies

Technical Support Center

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between normalization and batch effect correction?

While both are preprocessing steps, they address different technical variations. Normalization operates on the raw data matrix to correct for cell-specific or sample-specific technical biases. This includes differences in sequencing depth (total reads per sample), library size, and RNA capture efficiency. Its goal is to make measurements from different samples directly comparable. In contrast, batch effect correction specifically addresses systematic technical variations introduced when samples are processed in different batches, sequencing runs, or laboratories, or with different platforms or protocols. Some correction methods (e.g., Harmony) operate on a dimensionality-reduced embedding of the normalized data, whereas others (e.g., ComBat) adjust the normalized feature matrix directly; in both cases the aim is to remove batch-associated variation while preserving biological signals [8] [10] [20].

2. How can I visually detect the presence of batch effects in my dataset?

The most common and effective way to identify batch effects is through visualization of unsupervised clustering.

- Principal Component Analysis (PCA): In the presence of a batch effect, the scatter plot of the top principal components (PCs) will show samples clustering primarily by their batch number rather than by their biological group (e.g., case/control). The first few PCs are often driven by the batch effect [8].

- t-SNE/UMAP Plots: Before correction, cells or samples from the same biological group but different batches will often form separate, distinct clusters. After successful batch correction, these samples should mix together and cluster based on their biological similarities [8].

3. My biological groups are completely confounded with batch (e.g., all controls in Batch 1, all cases in Batch 2). Can I still correct for batch effects?

This is a challenging confounded scenario. Most standard batch-effect correction algorithms (BECAs) may fail because they cannot distinguish true biological differences from technical batch variations. In this situation, the most effective solution is a ratio-based method (Ratio-G). This requires that you concurrently profiled a common reference material (e.g., a standardized control sample) in every batch. You then transform the absolute feature values of your study samples into ratios relative to the values of the reference material from the same batch. This scaling step effectively cancels out the batch-specific technical variation, making data across batches comparable [15].

4. What are the key signs that my batch effect correction might be overcorrected?

Overcorrection occurs when the correction algorithm removes genuine biological variation along with the technical noise. Key signs include [8]:

- A significant portion of your identified cluster-specific marker genes are actually housekeeping or widely expressed genes (e.g., ribosomal genes).

- There is a substantial overlap in the marker genes identified for different cell types or conditions.

- There is a notable absence of expected canonical markers for a known cell type present in your dataset.

- You find a scarcity of differential expression hits in pathways that are biologically expected to be active given your sample composition.

5. We are planning a long-term study. Should we run all samples in one large batch or multiple smaller batches?

Evidence suggests that running samples in multiple, smaller batches with an appropriate batch correction step is preferable to one large batch. Analyzing all samples in a single batch risks compound degradation during long-term storage, which can introduce its own form of bias. Running samples in multiple batches as they are collected, followed by a robust batch-effect correction method like ComBat, has been shown to successfully reduce the influence of batch effects and yield more reliable data than a single large batch [21].

Troubleshooting Guides

Issue: Poor Clustering After Batch Correction

Symptoms: After applying batch correction, your samples still cluster primarily by batch in a PCA plot, or biological groups fail to form distinct clusters.

Potential Causes and Solutions:

- Insufficient Normalization: Batch correction methods often assume that data has already been properly normalized.

  - Action: Confirm that sample-wise normalization was applied before batch correction.

- High Proportion of Sparse Features: Datasets with many features that appear only sporadically can challenge some algorithms.

  - Action: Apply a filter to remove features with very low detection frequency before alignment and correction [21].

- Algorithm Selection: The chosen method may not be suitable for your data structure.

  - Action: Try an alternative algorithm whose assumptions match your data type and study design.
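A detection-frequency filter like the one recommended for sparse features can be sketched as below. The rule used here is a hypothetical choice, not one taken from [21]: a feature is kept only if it is detected (finite and greater than zero) in at least `min_fraction` of the samples of every batch, so that features seen in just one batch do not enter cross-batch correction.

```python
import numpy as np


def filter_by_detection(X, batch, min_fraction=0.5):
    """Keep features detected in at least `min_fraction` of samples in every batch."""
    X = np.asarray(X, float)
    batch = np.asarray(batch)
    detected = np.isfinite(X) & (X > 0)  # NaN or 0 counts as 'not detected'
    keep = np.ones(X.shape[1], dtype=bool)
    for b in np.unique(batch):
        keep &= detected[batch == b].mean(axis=0) >= min_fraction
    return X[:, keep], keep


X = np.array([[1.0, np.nan, 3.0],
              [2.0, np.nan, np.nan],
              [1.0, 5.0, 2.0],
              [np.nan, 6.0, 2.0]])
batch = np.array([0, 0, 1, 1])
X_kept, keep = filter_by_detection(X, batch)
print(keep)  # [ True False  True]: feature 2 is never detected in batch 0
```

Requiring detection within each batch, rather than across the pooled dataset, is stricter; a pooled threshold keeps more features but lets batch-specific features through.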

Issue: Loss of Biological Signal After Correction (Suspected Overcorrection)

Symptoms: Known biological distinctions (e.g., between different cell types) are blurred or lost after correction. Expected marker genes are no longer differentially expressed.

Potential Causes and Solutions:

- Over-Aggressive Correction: The parameters of the correction method are too strong.

- Action: Re-run the correction with a lower correction strength parameter if available. For methods like Harmony, you can adjust the `theta` parameter (a lower value applies less correction) [10].


- Confounded Design: The experimental design has batch and biology completely confounded.

- Action: If a reference material is available, use the ratio-based method [15]. If not, be cautious in your interpretation, as statistical separation of batch and biology is intrinsically difficult.

Issue: Inconsistent Alignment of Metabolomics Features Across LC-MS Batches

Symptoms: Difficulty aligning peaks for the same metabolite across batches due to significant retention time (RT) shifts and m/z variance.

Potential Causes and Solutions:

- Chromatographic Variability: Different LC columns, systems, or mobile phase gradients between batches.

- Action: Use a computational alignment tool like metabCombiner. This software is designed specifically to align metabolomics features from disparate LC-MS experiments by determining a common set of compounds across batches, accounting for RT and m/z differences [22].

- Lack of Internal Standards:

- Action: Include isotopically labelled internal standards in every sample. These can be used for retention time correction during data pre-processing (e.g., in software like MS-DIAL) and for intensity normalization [21].

Experimental Protocols

Protocol 1: Basic Normalization of Bulk RNA-seq Data using edgeR

This protocol uses the edgeR package in R to perform library size normalization on a raw count matrix [20].

Input: Raw count matrix (genes x samples).
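As a language-neutral illustration of library-size normalization (the CPM entry in Table 2), the Python sketch below rescales each sample to one million total counts. It corresponds to what edgeR's `cpm()` returns when all normalization factors equal 1; it does not reproduce the TMM scaling factors that edgeR's `calcNormFactors()` computes, and the helper name is hypothetical.

```python
import numpy as np


def counts_per_million(counts):
    """Scale each sample (column) of a genes x samples count matrix to 1e6 total counts."""
    counts = np.asarray(counts, dtype=float)
    library_sizes = counts.sum(axis=0)       # total counts per sample
    return counts / library_sizes * 1e6      # broadcasts the per-column division


raw = np.array([
    [100, 200],
    [300, 600],
    [600, 1200],
])  # sample 2 was sequenced twice as deeply as sample 1
cpm = counts_per_million(raw)
print(cpm)
```

After scaling, the two toy samples have identical profiles, which is the point of library-size normalization: differences in sequencing depth no longer masquerade as expression differences.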

Protocol 2: Batch Effect Correction using a Ratio-Based Method with a Reference Material

This protocol is highly effective for confounded study designs and multi-omics data [15].

Prerequisite: A common reference material (e.g., a standardized control sample from the Quartet Project) must be profiled in every analytical batch.

Steps:

- Data Acquisition: For each batch, profile all study samples AND the common reference material.

- Feature Extraction: Process raw data to obtain absolute abundance values for each feature (e.g., metabolite, transcript) in each sample.

- Ratio Calculation: For every feature in every study sample, calculate a normalized ratio value.

Ratio (Study Sample) = Absolute Abundance (Study Sample) / Absolute Abundance (Reference Material)

- Perform this calculation separately within each batch.

- Data Integration: The resulting ratio-based values for each study sample can now be combined into a single, batch-corrected data matrix for downstream analysis.
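The ratio calculation can be sketched in a few lines of NumPy. The sketch assumes a features × samples matrix of positive abundances, a batch label per column, and a boolean flag marking reference-material injections; averaging replicate reference injections within a batch is an assumption of this sketch, and `ratio_correct` is a hypothetical helper name.

```python
import numpy as np


def ratio_correct(values, batches, is_reference):
    """Scale study samples by the reference material measured in the same batch.

    values: features x samples matrix of positive abundances.
    batches: batch label per sample (column).
    is_reference: True for columns that are reference-material injections.
    Returns the ratio matrix for study samples and their original column indices.
    """
    values = np.asarray(values, dtype=float)
    batches = np.asarray(batches)
    is_reference = np.asarray(is_reference, dtype=bool)
    ratios = np.full(values.shape, np.nan)
    for b in np.unique(batches):
        in_batch = batches == b
        ref_cols = in_batch & is_reference
        if not ref_cols.any():
            raise ValueError(f"batch {b!r} has no reference-material injection")
        # Assumption: replicate reference injections within a batch are averaged
        ref_profile = values[:, ref_cols].mean(axis=1, keepdims=True)
        study_cols = in_batch & ~is_reference
        ratios[:, study_cols] = values[:, study_cols] / ref_profile
    study = ~is_reference
    return ratios[:, study], np.flatnonzero(study)


# Two batches; batch "B" reads every feature twice as high as batch "A"
values = np.array([
    [10.0, 20.0, 30.0, 20.0, 40.0, 60.0],
    [5.0, 5.0, 10.0, 10.0, 10.0, 20.0],
])
batches = ["A", "A", "A", "B", "B", "B"]
is_reference = [True, False, False, True, False, False]
ratios, cols = ratio_correct(values, batches, is_reference)
print(ratios.tolist())  # [[2.0, 3.0, 2.0, 3.0], [1.0, 2.0, 1.0, 2.0]]
```

Many workflows compute the same quantity on a log scale (log abundance minus log reference), which turns the ratio into a difference; the two forms are equivalent up to the transform.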

Comparative Data Tables

Table 1: Comparison of Common Batch Effect Correction Algorithms

| Tool / Method | Underlying Principle | Strengths | Limitations / Best For |
|---|---|---|---|
| ComBat [15] [21] | Empirical Bayes method that pools information across features. | Effective at removing batch mean and variance; widely used in omics. | May not handle non-linear batch effects well. |
| Harmony [8] [10] [15] | Iterative clustering in PCA space with correction. | Fast, scalable to millions of cells; preserves biological variation. | Requires PCA first; limited native visualization. |
| Seurat Integration [8] [10] | Canonical Correlation Analysis (CCA) and Mutual Nearest Neighbors (MNN). | High biological fidelity; integrates with full Seurat workflow. | Computationally intensive for very large datasets. |
| Ratio-Based Method [15] | Scales feature values relative to a common reference material. | Remains effective in completely confounded batch-group scenarios, where other methods tend to fail [15]. | Requires a reference material to be run in every batch. |
| Scanorama [8] | MNN matching in dimensionally reduced spaces with similarity weighting. | High performance on complex data; produces corrected matrices. | Computationally demanding due to high-dimensional neighbor search. |

Table 2: Common Normalization Methods for Sequencing Data

| Method | Description | Application Notes |
|---|---|---|
| Counts Per Million (CPM) [20] | Scales counts by the total library size per sample. | Simple but does not account for RNA composition. Good for initial checks. |
| Trimmed Mean of M-values (TMM) [20] | Weighted trimmed mean of log expression ratios (M-values) between samples. | Assumes most genes are not DE. Robust and widely used in bulk RNA-seq (e.g., edgeR). |
| Log Normalization [10] | Library size normalization, scaled by a factor (e.g., 10,000), followed by log-transformation. | Standard in many scRNA-seq workflows (e.g., Seurat, Scanpy). Simple and effective. |
| SCTransform [10] | Regularized Negative Binomial regression that models technical noise. | Advanced method for scRNA-seq. Replaces scaling, normalization, and feature selection in Seurat. |
| Centered Log Ratio (CLR) [10] | Log-transforms the ratio of a feature's value to the geometric mean of all features in a sample. | Primarily used for normalizing antibody-derived tags (ADT) in CITE-seq data. |
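Two of the formulas in Table 2 are short enough to write out directly. The sketch below shows log normalization (scale each cell to a fixed total, then natural log of 1 + x) and a textbook centered log-ratio with a pseudocount. The pseudocount of 1 and the function names are assumptions, and toolkits such as Seurat implement CLR with slightly different details.

```python
import numpy as np


def log_normalize(counts, scale_factor=10_000):
    """Library-size scaling per cell (column) to `scale_factor`, then log(1 + x)."""
    counts = np.asarray(counts, dtype=float)
    return np.log1p(counts / counts.sum(axis=0) * scale_factor)


def clr(values, pseudocount=1.0):
    """Centered log-ratio per sample (column): log(x + c) minus its mean across features.

    Subtracting the mean log is the same as dividing by the geometric mean
    before taking the log.
    """
    logs = np.log(np.asarray(values, dtype=float) + pseudocount)
    return logs - logs.mean(axis=0, keepdims=True)


adt = np.array([
    [5, 50],
    [20, 10],
    [0, 3],
])  # 3 antibody tags x 2 cells (toy numbers)
print(clr(adt).sum(axis=0))        # each column sums to ~0 by construction
print(log_normalize(adt).shape)
```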

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Solutions for Multi-Batch Studies

| Item | Function in the Context of Batch Normalization |
|---|---|
| Reference Materials (e.g., Quartet Project references) [15] | Commercially available or in-house standardized samples derived from a well-characterized source. Profiled in every batch to enable ratio-based correction and quality control. |
| Isotopically Labelled Internal Standards [22] [21] | Chemical compounds identical to the analytes of interest but labelled with heavy isotopes (e.g., ¹³C, ¹⁵N). Added to each sample to correct for retention time shifts, ionization efficiency, and matrix effects, particularly in metabolomics and proteomics. |
| Pooled Quality Control (QC) Samples [21] | A single sample created by pooling a small aliquot of every study sample. Injected repeatedly throughout the analytical run to monitor and correct for instrumental drift over time. |
| Method Blanks [21] | Samples containing all reagents but no biological matrix. Used to identify and filter out background contaminants and chemical noise introduced during sample preparation. |

Workflow Visualization

Workflow for Normalization and Batch Correction in Multi-Batch Studies

A Practical Toolkit: Batch Effect Correction Algorithms and Their Implementation

In high-throughput genomic, transcriptomic, and metabolomic studies, batch effects are technical variations introduced when samples are processed in different experimental batches, using different equipment, reagents, or personnel. These non-biological variations can confound true biological signals, reduce statistical power, and even lead to spurious scientific conclusions if not properly addressed [2]. The need for effective batch correction is particularly acute in large-scale studies such as those utilizing High-Resolution Mass Spectrometry (HRMS), where data acquisition may span weeks or months [23] [21]. This technical guide provides an overview of three major algorithm families used for batch effect normalization, with specific application to cross-platform HRMS research.

Comparison of Major Algorithm Families

Table 1: Core Characteristics of Major Batch Effect Correction Algorithm Families

| Algorithm Family | Key Principle | Primary Use Cases | Key Assumptions | Common Implementations |
|---|---|---|---|---|
| Empirical Bayes | Uses Bayesian shrinkage to estimate and adjust for batch effects on both mean and variance parameters [24]. | Genomic studies, metabolomics, multi-batch studies with balanced designs [23] [24]. | Batch effects impact many features similarly; error terms typically normally distributed [24]. | ComBat (parametric & non-parametric), ber [23] [24]. |
| Ratio-Based | Applies scaling factors based on reference points, standards, or central tendencies to normalize data [25] [23]. | Targeted metabolomics, studies with quality control samples or internal standards [23] [21]. | A valid reference point (e.g., median, control sample) exists and is applicable to all features [25]. | Mean-centering, standardization, Internal Standard Scaling (ISS), LOWESS [23]. |
| Matrix Factorization | Decomposes data matrix into lower-dimensional factors, isolating batch effects from biological signals [26] [27]. | Nontarget analysis, imaging mass spectrometry, sparse datasets [26] [21]. | Batch effect variance is captured in dominant components distinct from biological signal [23]. | PCA, SVD, Independent Component Analysis (ICA), Non-negative Matrix Factorization (NMF) [26] [23]. |

Table 2: Performance Considerations and Data Requirements

| Algorithm Family | Handling of Severe Batch Effects | Data Distribution Requirements | Dependence on QC Samples | Software/Tools |
|---|---|---|---|---|
| Empirical Bayes | Effective for moderate to severe batch effects affecting both location and scale [24]. | Assumes normal distribution of error terms; parametric and non-parametric versions available [24]. | Not required; uses study data directly [23]. | ComBat in R/sva, ber, dbnorm R package [23] [24]. |
| Ratio-Based | Best for moderate batch effects primarily affecting signal location (mean) [25]. | No strong distributional assumptions; non-parametric [25]. | Required for QC-based methods; internal standards for ISS [23] [21]. | LOWESS, various custom scripts in R/Python [23]. |
| Matrix Factorization | Effective when batch effects are captured in dominant components of variance [26] [23]. | Works with non-Gaussian data; NMF specifically for non-negative data [26]. | Not required; uses study data directly [23]. | PCA, ICA, NMF in various programming environments [26] [23]. |

Experimental Protocols for Major Algorithms

Protocol 1: Empirical Bayes Method (ComBat) Implementation

The ComBat method uses an empirical Bayes framework to standardize data across batches. The following steps outline a standard implementation protocol [24] [23]:

- Input Data Preparation: Format your data as an M×N matrix, where M represents the number of metabolic features (e.g., m/z ratios) and N represents the number of samples.

- Model Parameterization: Apply the ComBat model, which assumes the data follow \( Y_{ijg} = \alpha_g + X_{ij}\beta_g + \gamma_{ig} + \delta_{ig}\varepsilon_{ijg} \), where \( Y_{ijg} \) is the signal for gene/feature g in sample j from batch i, \( \alpha_g \) is the overall signal, \( X_{ij}\beta_g \) represents biological covariates, \( \gamma_{ig} \) is the additive batch effect, \( \delta_{ig} \) is the multiplicative batch effect, and \( \varepsilon_{ijg} \) is the error term [24].

- Empirical Priors Estimation: Estimate the batch effect parameters (\( \gamma_{ig} \) and \( \delta_{ig} \)) using empirical Bayes shrinkage, which pools information across features within each batch for more robust estimation, particularly with small sample sizes [24].

- Data Adjustment: Adjust the data using the estimated parameters to remove batch effects while preserving biological signals of interest.

- Validation: Assess correction efficacy using PCA visualization and metrics such as adjusted R-squared to quantify residual batch-associated variance [23].
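To make these steps concrete, below is a compact NumPy sketch modelled on the parametric ComBat procedure described above: standardize each feature, estimate the additive (γ) and multiplicative (δ²) batch parameters, shrink them toward priors estimated across features by the method of moments, and rescale. It is a teaching sketch under stated assumptions (no biological covariates, no missing values, no non-parametric or reference-batch options, every feature non-constant), and `combat_sketch` is a hypothetical name; for real analyses the maintained `ComBat` function in the R `sva` package is the safer choice.

```python
import numpy as np


def combat_sketch(y, batches, tol=1e-4, max_iter=100):
    """Parametric empirical Bayes batch adjustment in the style of ComBat.

    y: features x samples matrix (e.g., log intensities) with no missing values.
    batches: length-n batch labels. Biological covariates are not modelled.
    """
    y = np.asarray(y, dtype=float)
    batches = np.asarray(batches)
    labels = np.unique(batches)
    n = y.shape[1]

    # 1. Grand mean (alpha_g) and pooled within-batch variance (sigma_g^2) per feature
    sizes = np.array([(batches == b).sum() for b in labels])
    batch_means = np.column_stack([y[:, batches == b].mean(axis=1) for b in labels])
    alpha = batch_means @ sizes / n
    resid = y.copy()
    for k, b in enumerate(labels):
        resid[:, batches == b] -= batch_means[:, [k]]
    sigma = np.sqrt((resid ** 2).mean(axis=1))

    # 2. Standardize so that features share a common scale
    z = (y - alpha[:, None]) / sigma[:, None]

    adjusted = np.empty_like(y)
    for b in labels:
        cols = batches == b
        zb = z[:, cols]
        nb = zb.shape[1]
        gamma_hat = zb.mean(axis=1)             # additive effect per feature
        delta2_hat = zb.var(axis=1, ddof=1)     # multiplicative effect per feature

        # 3. Priors pooled across features: normal for gamma, inverse gamma for delta^2
        gamma_bar, tau2 = gamma_hat.mean(), gamma_hat.var(ddof=1)
        m, s2 = delta2_hat.mean(), delta2_hat.var(ddof=1)
        a_prior = (2 * s2 + m ** 2) / s2
        b_prior = (m * s2 + m ** 3) / s2

        # 4. Alternate posterior updates until the estimates stabilise
        gamma_star, delta2_star = gamma_hat.copy(), delta2_hat.copy()
        for _ in range(max_iter):
            g_new = (nb * tau2 * gamma_hat + delta2_star * gamma_bar) / (nb * tau2 + delta2_star)
            ss = ((zb - g_new[:, None]) ** 2).sum(axis=1)
            d_new = (0.5 * ss + b_prior) / (nb / 2 + a_prior - 1)
            change = max(
                np.max(np.abs(g_new - gamma_star) / np.maximum(np.abs(gamma_star), 1e-12)),
                np.max(np.abs(d_new - delta2_star) / delta2_star),
            )
            gamma_star, delta2_star = g_new, d_new
            if change < tol:
                break

        # 5. Remove the shrunken effects and map back to the original scale
        adjusted[:, cols] = (
            sigma[:, None] * (zb - gamma_star[:, None]) / np.sqrt(delta2_star[:, None])
            + alpha[:, None]
        )
    return adjusted


# Simulated example: 200 features, two batches of 10, additive shift of 2 in batch "B"
rng = np.random.default_rng(0)
y = rng.normal(10, 1, size=(200, 1)) + rng.normal(0, 0.5, size=(200, 20))
batches = np.array(["A"] * 10 + ["B"] * 10)
y[:, batches == "B"] += 2.0
corrected = combat_sketch(y, batches)


def mean_gap(mat):
    """Average absolute difference between the two batch means, across features."""
    return np.abs(mat[:, batches == "B"].mean(axis=1) - mat[:, batches == "A"].mean(axis=1)).mean()


print(round(mean_gap(y), 2), round(mean_gap(corrected), 2))
```

Because the batch parameters are shrunk rather than removed exactly, a small residual gap between batch means is expected after correction; the validation step above is what decides whether it is acceptable.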

Protocol 2: Ratio-Based Normalization with Internal Standards

This approach is particularly valuable in HRMS-based metabolomics where internal standards are routinely used [23] [21]:

- Standard Selection: Identify and include a suite of stable isotopically-labeled internal standards (ISTDs) that represent various chemical classes in your analysis.

- Data Acquisition: Run samples across multiple batches, injecting QC samples (pooled from all samples) at regular intervals throughout each batch.

- Peak Alignment: Align peaks across all samples using retention time correction based on internal standards.

- Signal Drift Modeling: For each feature, model the relationship between signal intensity and injection order using QC samples, typically with LOWESS regression.

- Normalization Application: Scale the peak intensities of each feature in study samples using the corresponding ISTD or the drift model established from QCs.

- Performance Verification: Calculate the coefficient of variation for ISTDs across batches to confirm normalization efficacy.
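Steps 4 and 5 (QC-based drift modeling and scaling), plus a CV check in the spirit of step 6 (computed here on the pooled-QC injections), can be sketched as follows for a single feature. To stay dependency-free, the sketch uses a hand-written tricube-weighted local linear smoother, that is, LOWESS without its robustness iterations; production pipelines would more typically call a full LOWESS implementation such as statsmodels' `lowess`. The function names, the `frac` default, and the rescaling to the median QC intensity are assumptions.

```python
import numpy as np


def local_linear_smoother(x_fit, y_fit, x_eval, frac=2 / 3):
    """Tricube-weighted local linear regression (LOWESS without robustness iterations)."""
    x_fit, y_fit = np.asarray(x_fit, float), np.asarray(y_fit, float)
    k = min(len(x_fit), max(3, int(np.ceil(frac * len(x_fit)))))   # neighbours per local fit
    fitted = np.empty(len(x_eval))
    for i, x0 in enumerate(np.asarray(x_eval, float)):
        dist = np.abs(x_fit - x0)
        idx = np.argsort(dist)[:k]                     # k nearest QC injections
        h = dist[idx].max()
        h = h if h > 0 else 1.0                        # guard against a zero bandwidth
        sw = np.sqrt((1 - (dist[idx] / h) ** 3) ** 3)  # square roots of tricube weights
        X = np.column_stack([np.ones(len(idx)), x_fit[idx] - x0])
        beta = np.linalg.lstsq(X * sw[:, None], y_fit[idx] * sw, rcond=None)[0]
        fitted[i] = beta[0]                            # intercept = fitted value at x0
    return fitted


def qc_drift_correct(intensity, injection_order, is_qc, frac=2 / 3):
    """Divide one feature by its QC-fitted drift curve, then rescale to the median QC level."""
    intensity = np.asarray(intensity, float)
    order = np.asarray(injection_order, float)
    is_qc = np.asarray(is_qc, bool)
    drift = local_linear_smoother(order[is_qc], intensity[is_qc], order, frac)
    return intensity / drift * np.median(intensity[is_qc])


def cv_percent(x):
    """Coefficient of variation in percent (sample standard deviation / mean)."""
    x = np.asarray(x, float)
    return 100 * x.std(ddof=1) / x.mean()


# Simulated run: 30 injections, pooled QC every 5th, 1% signal loss per injection
order = np.arange(30)
is_qc = order % 5 == 0
true_level = np.where(is_qc, 1000.0, 400.0 + 10 * (order % 3))
observed = true_level * (1 - 0.01 * order)
corrected = qc_drift_correct(observed, order, is_qc)
print(f"QC CV before: {cv_percent(observed[is_qc]):.1f} %, after: {cv_percent(corrected[is_qc]):.2f} %")
```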

Protocol 3: Matrix Factorization for Batch Effect Removal

Matrix factorization techniques like PCA, ICA, and NMF can isolate and remove batch effects without requiring QC samples [26] [23]:

- Data Preprocessing: For imaging mass spectrometry (IMS) data, reshape the three-dimensional data cube (x, y, m/z) into a two-dimensional matrix (pixels × m/z values) and apply masking to exclude areas without sample [26].

- Factorization: Apply the chosen matrix factorization algorithm (e.g., PCA/SVD, ICA, or NMF) to the preprocessed matrix.

- Component Identification: Identify components corresponding to batch effects rather than biological signals of interest.

- Signal Reconstruction: Reconstruct the data matrix excluding batch-associated components.

- Validation: Compare pre- and post-correction data structure using clustering algorithms and variance explanation metrics.
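A minimal sketch of the PCA/SVD variant of this protocol follows. It reshapes a small simulated (x, y, m/z) cube into a pixels × m/z matrix, decomposes it with an SVD, flags components whose scores are dominated by batch (the between-batch share of a component's score variance above a cut-off), and reconstructs the matrix without them. The flagging rule, the 0.5 cut-off, and the function name are illustrative assumptions; ICA or NMF would replace the SVD step, and masking of off-sample pixels is omitted.

```python
import numpy as np


def remove_batch_components(x, batches, r2_threshold=0.5):
    """Reconstruct x (samples x features) without batch-dominated principal components."""
    x = np.asarray(x, float)
    batches = np.asarray(batches)
    center = x.mean(axis=0)
    u, s, vt = np.linalg.svd(x - center, full_matrices=False)
    scores = u * s                                  # sample scores on each component
    keep = np.ones(len(s), dtype=bool)
    for c in range(len(s)):
        if s[c] <= 1e-10 * s[0]:
            continue                                # numerically empty component
        sc = scores[:, c]
        total = ((sc - sc.mean()) ** 2).sum()
        between = sum(
            (batches == b).sum() * (sc[batches == b].mean() - sc.mean()) ** 2
            for b in np.unique(batches)
        )
        keep[c] = between / total <= r2_threshold   # drop if batch explains most variance
    cleaned = scores[:, keep] @ vt[keep] + center
    return cleaned, np.flatnonzero(~keep)


# Simulated IMS-like cube: 4 x 5 pixels, 20 m/z bins; pixels come from two slides
rng = np.random.default_rng(1)
cube = rng.normal(0, 1, size=(4, 5, 20))
x = cube.reshape(-1, cube.shape[-1])              # pixels x m/z
batches = np.repeat(["slide1", "slide2"], 10)
x[batches == "slide2"] += 3.0                     # additive slide effect on every m/z bin
cleaned, removed = remove_batch_components(x, batches)


def slide_gap(mat):
    """Average absolute difference between slide means, across m/z bins."""
    return np.abs(mat[batches == "slide2"].mean(axis=0) - mat[batches == "slide1"].mean(axis=0)).mean()


print(removed, round(slide_gap(x), 2), round(slide_gap(cleaned), 2))
```

Dropping a component removes everything it carries, including any biology aligned with batch, which is why the validation step matters.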

Frequently Asked Questions (FAQs)

Q1: How do I choose between parametric and non-parametric Empirical Bayes methods? Parametric ComBat assumes normally distributed error terms and uses parametric priors for the batch effect parameters, while non-parametric ComBat relaxes this distributional assumption. Use the parametric version when the data approximately meet the normality assumption, as it often provides more powerful shrinkage. Use the non-parametric version when the data severely violate normality, as it is more robust to distributional anomalies [24] [23].

Q2: What is the "reference batch" approach in Empirical Bayes methods and when should I use it? The reference batch approach modifies the standard ComBat model by designating one high-quality batch as a static reference to which all other batches are adjusted. This is particularly valuable in biomarker studies where a training set must remain fixed while applying corrections to subsequent validation cohorts, thus avoiding "set bias" where adding new batches alters previously corrected data [24].

Q3: When would ratio-based methods be preferable over more sophisticated approaches like Empirical Bayes? Ratio-based methods are preferable when you have reliable internal standards or QC samples that adequately represent analytical variation across your compound classes of interest. They are also advantageous when you need a transparent, easily interpretable normalization approach without complex statistical assumptions, particularly for targeted analyses where appropriate standards are available [23] [21].

Q4: How can I handle batch effects when my data has a high proportion of zeros or missing values? Matrix factorization methods, particularly non-negative matrix factorization (NMF), can be effective for sparse data with many zeros, as they don't assume a normal distribution [26]. For Empirical Bayes approaches, consider the non-parametric ComBat variant, which doesn't rely on normality assumptions and may be more robust to data sparsity [23].

Q5: What visualization and metrics can I use to evaluate batch correction success? Principal Component Analysis (PCA) plots should show batch mixing rather than separation by batch [23] [21]. Principal Variance Component Analysis (PVCA) can quantify the proportion of variance explained by batch before and after correction [21]. The dbnorm package provides an adjusted R-squared (adj-R²) score that measures the linear association between metabolite levels and batch, with lower values indicating successful correction [23].
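The idea behind an adj-R² batch score can be illustrated with a per-feature, batch-only linear model. This is a generic sketch, not the dbnorm package's own code, and the function name is hypothetical: values near 1 mean batch explains most of a feature's variance, while values near or below 0 mean it explains little. Features with zero variance would need to be excluded first.

```python
import numpy as np


def batch_adj_r2(values, batches):
    """Adjusted R^2 of a one-way (batch-only) linear model for each feature (row)."""
    values = np.asarray(values, float)
    batches = np.asarray(batches)
    labels = np.unique(batches)
    n, k = values.shape[1], len(labels)
    ss_tot = ((values - values.mean(axis=1, keepdims=True)) ** 2).sum(axis=1)
    ss_res = np.zeros(values.shape[0])
    for b in labels:
        grp = values[:, batches == b]
        ss_res += ((grp - grp.mean(axis=1, keepdims=True)) ** 2).sum(axis=1)
    r2 = 1 - ss_res / ss_tot
    return 1 - (1 - r2) * (n - 1) / (n - k)     # k - 1 batch dummies as predictors


batches = np.array(["A", "A", "A", "B", "B", "B"])
values = np.array([
    [1.0, 1.1, 0.9, 5.0, 5.1, 4.9],   # strong batch shift
    [1.0, 2.0, 3.0, 1.0, 2.0, 3.0],   # no batch effect
])
scores = batch_adj_r2(values, batches)
print(np.round(scores, 3))
```

Comparing the distribution of these scores before and after correction gives a simple numeric companion to the PCA plots.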

Table 3: Key Research Reagents and Computational Resources for Batch Effect Correction

| Resource Type | Specific Examples | Function in Batch Effect Correction |
|---|---|---|
| Internal Standards | Stable isotopically-labeled analogs of analytes [21]. | Serve as reference points for ratio-based normalization, correcting for signal drift and instrumental variation. |
| Quality Control (QC) Samples | Pooled samples representative of entire sample set [23]. | Monitor technical variation across batches; used in QC-based correction methods. |
| Reference Materials | Standard Reference Materials (SRMs) [21]. | Provide benchmark for data alignment and normalization across platforms and laboratories. |
| Software Packages | dbnorm R package [23]. | Compares and selects optimal batch correction method for specific datasets. |
| Software Packages | ComBat in R/sva package [24] [23]. | Implements Empirical Bayes framework for batch effect adjustment. |
| Software Packages | MS-DIAL [21]. | Performs data alignment and peak picking for HRMS data prior to batch correction. |
| Software Packages | FastICA, NMF packages [26]. | Implement matrix factorization algorithms for batch effect identification and removal. |

Workflow Diagrams for Algorithm Selection

Diagram 1: A decision workflow for selecting the most appropriate batch effect correction algorithm based on data characteristics and research needs.

Diagram 2: A sequential workflow of the Empirical Bayes (ComBat) batch correction process.

Frequently Asked Questions (FAQs)

Q1: My high-throughput proteomics data comes from multiple labs. Which batch-effect correction method is most robust when my sample groups are not evenly distributed across batches (confounded design)?

A1: In confounded designs, where biological groups are unevenly distributed across batches, Ratio-based methods and RUV-III-C are generally preferred. A 2025 benchmarking study demonstrated that Ratio methods are particularly effective in such scenarios because they use a universal reference sample to create a stable adjustment factor, reducing the risk of removing true biological signal. In contrast, methods like ComBat, which rely on the mean of the entire batch, can be misled by the overrepresentation of a particular biological group [16].
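The mechanism behind this recommendation can be seen in a deliberately extreme toy example (all numbers hypothetical): every case sample sits in batch b1, every control in batch b2, and a reference sample is measured in both. Per-batch median centering, used here as the simplest stand-in for any adjustment anchored on a batch's own center (it is not ComBat itself), removes the true case/control difference together with the batch offset, whereas subtracting the same-batch reference (the ratio method on a log scale) recovers it.

```python
import numpy as np

truth = {"ref": 10.0, "case": 11.0, "ctrl": 10.0}   # hypothetical log2 abundances
offset = {"b1": 0.0, "b2": 0.6}                      # hypothetical batch offsets

# Fully confounded layout: every case in b1, every control in b2, reference in both
samples = [("b1", "ref"), ("b1", "case"), ("b1", "case"), ("b1", "case"),
           ("b2", "ref"), ("b2", "ctrl"), ("b2", "ctrl"), ("b2", "ctrl")]
batch = np.array([b for b, _ in samples])
kind = np.array([k for _, k in samples])
y = np.array([truth[k] + offset[b] for b, k in samples])

# Median centering within each batch
med_centered = y.copy()
for b in offset:
    med_centered[batch == b] -= np.median(y[batch == b])

# Ratio method on the log scale: subtract the reference measured in the same batch
ratio = y.copy()
for b in offset:
    ratio[batch == b] -= y[(batch == b) & (kind == "ref")].mean()


def case_minus_ctrl(v):
    """Estimated case vs. control difference (true value: 1.0)."""
    return v[kind == "case"].mean() - v[kind == "ctrl"].mean()


print(case_minus_ctrl(med_centered), case_minus_ctrl(ratio))  # 0.0 1.0
```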

Q2: I am processing lipidomics data from minimal serum volumes (e.g., 10 µL). My internal standards show good reproducibility, but I still see batch effects. What should I check?

A2: First, verify that your internal standard normalization is applied correctly. A published LC-HRMS workflow for minimal serum volumes uses a simplified methanol/MTBE extraction and internal standard normalization to achieve high precision (RSD 5-6%) [28]. If batch effects persist:

- Check Standard Distribution: Ensure your internal standards are spiked evenly into all samples and cover a broad range of the chemical space you are measuring.

- Inspect for Sample-Specific Effects: Batch effects can be sample-specific [29]. Use quality control (QC) samples, like pooled study samples, to diagnose if the effect is global or influences specific sample types differently.

- Consider Advanced Methods: If simple normalization is insufficient, apply a formal batch-effect correction such as RUV-III-C or ComBat, with the injection batch as the batch variable.

Q3: At which data level—precursor, peptide, or protein—should I perform batch-effect correction in my bottom-up proteomics study?

A3: Recent comprehensive benchmarking indicates that protein-level correction is the most robust strategy [16]. While it is technically possible to correct at the precursor or peptide level, the study found that protein-level correction, performed after protein quantification (e.g., using MaxLFQ, iBAQ, or TopPep3), consistently yielded superior results in minimizing unwanted variation while preserving biological signal across various metrics and algorithms [16].

Q4: How can I handle batch effects in my data when I do not have any technical replicates or reference samples?

A4: This is a common challenge. Your options depend on the method:

- ComBat and Median Centering: These can be applied without replicates, but they carry a higher risk of removing biological signal if the study design is confounded [16].

- RUV-III-C: This method requires both technical replicates (or pseudo-replicates) and negative control features (genes or proteins not expected to be affected by the biological variables of interest) to estimate the unwanted variation [30] [31]. Without these, it cannot be used.

- Ratio Method: By definition, this method requires a reference sample measured in every batch [16].

- Alternative Strategy: If no replicates or controls are available, your best option is often to include "batch" as a covariate in your downstream statistical models (e.g., linear models for differential expression) to account for its variance.
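Including batch as a covariate can be sketched with an ordinary least-squares fit per feature. The toy data below are noise-free and hypothetical: the true group effect is 2, batch b2 adds 3, and groups are unevenly spread across batches, so the naive difference of group means is biased while the batch-adjusted coefficient is not. In practice a dedicated tool (e.g., limma in R, or statsmodels formulas in Python) would be used; the function here is a hypothetical illustration.

```python
import numpy as np


def group_effect_with_batch(y, group, batch):
    """OLS fit of y ~ intercept + group + batch dummies; returns the group coefficient."""
    y = np.asarray(y, float)
    batch = np.asarray(batch)
    levels = np.unique(batch)
    dummies = [(batch == b).astype(float) for b in levels[1:]]   # first level = baseline
    X = np.column_stack([np.ones_like(y), np.asarray(group, float), *dummies])
    if np.linalg.matrix_rank(X) < X.shape[1]:
        raise ValueError("group and batch are confounded; the effect is not identifiable")
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef[1]


group = np.array([0, 0, 1, 1, 0, 1, 1, 1])
batch = np.array(["b1"] * 4 + ["b2"] * 4)
y = 5 + 2 * group + 3 * (batch == "b2")
naive = y[group == 1].mean() - y[group == 0].mean()
adjusted = group_effect_with_batch(y, group, batch)
print(round(naive, 2), round(adjusted, 2))   # 2.8 2.0
```

The rank check makes the limitation explicit: if every sample of a group sits in a single batch, the model cannot separate the two effects.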

Comparison of Key Batch-Effect Correction Methods

The table below summarizes the core characteristics and performance of the four highlighted methods to guide your selection.

| Method | Core Mechanism | Data Requirements | Strengths | Weaknesses |
|---|---|---|---|---|
| ComBat | Empirical Bayes framework to adjust for location (mean) and scale (variance) shifts between batches [29] [16]. | Batch labels. | Effectively handles mean and variance shifts; widely used and validated. | Can be sensitive to outliers in small batches [29]; risks over-correction in confounded designs [16]. |
| Ratio | Scales feature intensities by the ratio between the study sample and a universal reference sample (e.g., a pooled standard) analyzed in the same batch [16]. | A universal reference sample analyzed in every batch. | Simple and intuitive; highly robust in confounded designs [16]. | Requires valuable MS run time for reference samples; performance depends on reference quality. |
| Median Centering | Centers the median (or mean) of each feature's intensity to zero (or a global median) within each batch [32] [16]. | Batch labels. | Computationally simple and fast; performs well in balanced designs [32]. | Only corrects for additive effects; less effective for complex batch effects; impacted by outliers [29]. |
| RUV-III-C | Uses technical replicates and negative control genes in a linear model to estimate and remove unwanted variation [16]. | Technical replicates (or pseudo-replicates) and negative control features. | Powerful and flexible; can handle multiple sources of unwanted variation simultaneously [30]. | Requires a well-designed experiment with replicates and reliable negative controls. |

Performance Metrics in Proteomics Benchmarking

A 2025 study evaluated these methods on multi-batch proteomics data, measuring performance using metrics like the coefficient of variation (CV) within technical replicates and the Matthews Correlation Coefficient (MCC) for identifying true differential expression. The results below highlight that Ratio and RUV-III-C methods often achieve the best balance between removing batch effects and preserving biological truth [16].

| Method | Coefficient of Variation (CV) | Matthews Correlation Coefficient (MCC) | Signal-to-Noise Ratio (SNR) |
|---|---|---|---|
| No Correction | High | Low | Low |
| ComBat | Low | Medium | Medium |
| Ratio | Lowest | High | High |
| Median Centering | Medium | Medium | Medium |
| RUV-III-C | Low | High | High |
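The two feature-level metrics in this table are straightforward to compute once replicate measurements and a truth set are available. In this sketch, CV is taken per feature across technical-replicate columns, and MCC compares boolean differential-expression calls against known truth; returning 0 when a confusion-matrix margin is empty is a convention assumed here, and the function names are hypothetical.

```python
import numpy as np


def replicate_cv(values):
    """Per-feature CV (%) across technical replicates (columns)."""
    values = np.asarray(values, float)
    return 100 * values.std(axis=1, ddof=1) / values.mean(axis=1)


def mcc(called, truth):
    """Matthews correlation coefficient between DE calls and known truth (booleans)."""
    called, truth = np.asarray(called, bool), np.asarray(truth, bool)
    tp = np.sum(called & truth)
    tn = np.sum(~called & ~truth)
    fp = np.sum(called & ~truth)
    fn = np.sum(~called & truth)
    denom = np.sqrt(float((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn)))
    return 0.0 if denom == 0 else (tp * tn - fp * fn) / denom


reps = np.array([
    [100.0, 110.0, 90.0],
    [50.0, 50.0, 50.0],
])  # 2 proteins x 3 technical replicates (toy numbers)
truth = np.array([True, True, True, False, False, False, False, False])
called = np.array([True, True, False, True, False, False, False, False])
print(replicate_cv(reps))
print(round(mcc(called, truth), 3))   # 0.467
```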

Experimental Protocols for Benchmarking Batch-Effect Correction

Protocol 1: Benchmarking Batch Correction in a Confounded Proteomics Study

This protocol is adapted from a large-scale benchmarking study [16].

Dataset Preparation:

- Obtain a multi-batch dataset with known ground truth, such as the Quartet protein reference materials, where sample types (D5, D6, F7, M8) are measured across multiple MS runs [16].

- For a confounded design (Quartet-C), intentionally distribute sample types unevenly across batches to simulate a realistic and challenging scenario.

Data Pre-processing and Quantification:

- Process raw MS files using a standard pipeline to generate precursor intensities.

- Perform protein quantification using one or more algorithms (e.g., MaxLFQ, iBAQ, TopPep3) to generate protein-level abundance matrices [16].

Batch-Effect Correction:

- Apply the four correction methods (ComBat, Ratio, Median Centering, RUV-III-C) to the protein-level data. For the Ratio method, designate one sample as the universal reference.

- For RUV-III-C, use the technical replicates within the Quartet data as input.

Performance Assessment:

- Feature-based: Calculate the Coefficient of Variation (CV) for technical replicates across batches. Lower CV indicates better precision.

- Truth-based: Since the true differences between Quartet samples are known, compute the Matthews Correlation Coefficient (MCC) to evaluate how well each method recovers true differential expression without false discoveries.

- Sample-based: Perform Principal Component Analysis (PCA) and calculate the Signal-to-Noise Ratio (SNR) to assess how well the data separates by biological group after correction.
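A sample-based SNR on PCA coordinates can be sketched as the ratio of the mean squared distance between samples of different groups to that between samples of the same group, expressed in decibels. This specific formula is an assumption for illustration; check the benchmark's published definition (for example, how PCs are weighted) before comparing numbers with it.

```python
import numpy as np


def pca_snr(x, groups, n_pcs=2):
    """SNR (dB) on the first PCs: between-group vs. within-group mean squared distance.

    x: samples x features; groups: biological group label per sample.
    """
    x = np.asarray(x, float)
    groups = np.asarray(groups)
    u, s, _ = np.linalg.svd(x - x.mean(axis=0), full_matrices=False)
    pcs = (u * s)[:, :n_pcs]                                       # sample scores
    d2 = ((pcs[:, None, :] - pcs[None, :, :]) ** 2).sum(axis=-1)   # pairwise squared distances
    same = groups[:, None] == groups[None, :]
    off_diag = ~np.eye(len(groups), dtype=bool)
    within = d2[same & off_diag].mean()
    between = d2[~same].mean()
    return 10 * np.log10(between / within)


x = np.array([[0.0, 0.0], [0.1, 0.0], [10.0, 0.0], [10.1, 0.0]])
groups = np.array(["A", "A", "B", "B"])
snr = pca_snr(x, groups)
print(round(snr, 1))
```

Higher values mean biological groups are well separated relative to replicate scatter; an SNR that drops after correction is one sign of overcorrection.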

Protocol 2: Applying Correction to LC-HRMS Lipidomics Data from Minimal Serum Volumes

This protocol is based on a workflow for integrated lipidomics and metabolomics [28].

Sample Preparation:

- Use a minimal volume of serum (e.g., 10 µL).

- Perform a simplified liquid-liquid extraction using a 1:1 (v/v) mixture of methanol and methyl tert-butyl ether (MTBE).

- Spike in a mixture of internal standards (IS) covering various lipid classes before extraction.

LC-HRMS Analysis:

- Analyze samples using a reversed-phase liquid chromatography system coupled to a high-resolution mass spectrometer.

- Acquire data in both positive and negative ionization modes.

- Crucially, distribute Quality Control (QC) samples—a pooled mixture of all study samples—evenly throughout the acquisition batch.

Data Pre-processing:

- Process raw data for peak picking, alignment, and annotation; the published workflow annotated over 440 lipid species across 23 classes.

- Apply the first level of normalization by dividing the peak area of each lipid feature by the peak area of its corresponding internal standard.
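The internal-standard step can be sketched as below. The sketch assumes that each lipid feature is paired with one class-matched internal standard through a lookup table; that mapping, the class labels, and the function name are illustrative assumptions, and conversion to concentrations using the spiked IS amount is left out.

```python
import numpy as np


def normalize_to_internal_standard(areas, feature_class, is_areas):
    """Divide each lipid's peak area by the area of the internal standard for its class.

    areas: features x samples peak areas.
    feature_class: class label per feature (e.g., "PC", "TG").
    is_areas: dict mapping class label -> per-sample peak areas of that class's IS.
    """
    areas = np.asarray(areas, float)
    out = np.empty_like(areas)
    for i, cls in enumerate(feature_class):
        if cls not in is_areas:
            raise KeyError(f"no internal standard assigned for class {cls!r}")
        out[i] = areas[i] / np.asarray(is_areas[cls], float)
    return out


areas = np.array([
    [200.0, 400.0],   # a PC species in two samples
    [30.0, 60.0],     # a TG species in two samples
])
classes = ["PC", "TG"]
is_areas = {"PC": [100.0, 200.0], "TG": [10.0, 20.0]}   # hypothetical IS peak areas
normalized = normalize_to_internal_standard(areas, classes, is_areas)
print(normalized)
```

In this toy case the second sample had twice the recovery of the first, and dividing by the class-matched standard removes that difference.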

Batch-Effect Diagnosis and Correction:

- Check the PCA plot of the QC samples. If the QCs do not cluster tightly, a significant batch effect is present.

- If internal standard normalization is insufficient, apply a second correction: use the `ComBat` function (from the `sva` R package) or `RUV-III-C`, with the injection batch as the primary factor.

- Validate the correction by confirming that the QC samples now form a tight cluster in the PCA plot.

Workflow and Method Selection Diagrams

Batch Effect Correction with RUV-III-C and Pseudo-Replicates

Batch Effect Correction Method Selector

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table lists key materials used in the experiments and workflows cited in this guide.

| Reagent / Material | Function / Explanation | Example Context |
|---|---|---|
| Universal Reference Sample | A standardized sample (e.g., pooled from all study samples or a commercial reference material) analyzed in every batch to enable ratio-based correction [16]. | Quartet protein reference materials; a pooled plasma sample. |
| Internal Standards (IS) | Chemically analogous compounds spiked into each sample at a known concentration to correct for technical variability during sample preparation and ionization [28]. | Stable isotope-labeled lipids or peptides added prior to extraction in lipidomics/proteomics. |
| Bridging Controls (BCs) | Identical technical replicate samples included on every processing plate or batch to directly measure and correct for batch-specific effects [29]. | 8-12 identical plasma samples on each plate in a PEA proteomics study. |
| Methanol:MTBE (1:1, v/v) | A simplified liquid-liquid extraction solvent mixture for simultaneous extraction of lipids and semi-polar metabolites from minimal serum volumes [28]. | 10 µL serum lipidomics workflow. |
| Pseudo-Replicates of Pseudo-Samples (PRPS) | In-silico samples created by grouping biologically homogeneous samples, enabling the use of RUV-III-C when physical technical replicates are unavailable [30] [31]. | Correcting library size, tumor purity, and batch effects in large-scale TCGA RNA-seq data. |
| Isobaric Tags (TMT, iTRAQ) | Multiplexing reagents that allow several samples to be pooled and analyzed in a single MS run, reducing inter-run variability but introducing a need for normalization within and across runs [33]. | Multiplexed proteomics experiments across multiple LC-MS/MS runs. |

Troubleshooting Guide: Batch Effect Correction in MS-Based Proteomics

This guide addresses common challenges researchers face when choosing the optimal stage for batch-effect correction in mass spectrometry-based proteomics.

1. Poor Data Integration After Multi-Batch Studies

- Problem: Biological signals remain obscured after integrating data from multiple batches, leading to irreproducible results.

- Solution: Implement protein-level batch-effect correction as your primary strategy. Benchmarking studies demonstrate it is the most robust approach, enhancing data integration in large cohort studies [16].

- Protocol: Use the MaxLFQ quantification method combined with Ratio-based batch-effect correction, which has shown superior prediction performance in large-scale clinical datasets [16].

2. Inconsistent Differential Expression Results

- Problem: Lists of differentially expressed proteins (DEPs) change drastically when re-analyzing data or including new batches.

- Solution: Apply correction at the protein level to maintain a stable relationship between protein quantification and batch-effect removal. This strategy improves the reliability of DEP identification, as measured by metrics like the Matthews correlation coefficient (MCC) [16].

- Protocol: When batch effects are confounded with biological groups, employ a Ratio-based correction method, which is particularly effective in such challenging scenarios [16].

3. Over-Correction and Loss of Biological Signal

- Problem: Batch-effect correction is too aggressive, removing genuine biological variation along with technical noise.

- Solution: Validate correction efficacy using both feature-based and sample-based metrics. Avoid applying algorithms blindly; instead, use positive controls like technical replicates to ensure biological signal is preserved [16] [4].

- Protocol: After correction, use Principal Variance Component Analysis (PVCA) to quantify the remaining variance attributable to batch factors. A successful correction should significantly reduce this component [16] [4].

Frequently Asked Questions

Q1: At which data level should I correct batch effects in my proteomics study? A1: Comprehensive benchmarking using real-world and simulated datasets indicates that batch-effect correction at the protein level is the most robust strategy. The process of aggregating precursor or peptide-level data into protein quantities interacts with batch-effect correction algorithms. Performing correction after protein quantification provides more consistent and reliable results across different experimental scenarios [16].

Q2: Which batch-effect correction algorithm should I use? A2: The optimal algorithm can depend on your specific dataset and quantification method. Benchmarking of seven common algorithms (ComBat, Median centering, Ratio, RUV-III-C, Harmony, WaveICA2.0, and NormAE) reveals that Ratio-based scaling is broadly effective, particularly when batch effects are confounded with biological groups. The MaxLFQ-Ratio combination has demonstrated superior performance in large-scale clinical applications [16]. ComBat, an empirical Bayes method, has also proven effective in reducing batch effects in HRMS data from environmental monitoring studies [4].

Q3: How can I quantitatively assess the success of my batch-effect correction? A3: Use a combination of feature-based and sample-based metrics for a comprehensive assessment [16]:

- Feature-based: Calculate the coefficient of variation (CV) within technical replicates across batches. For datasets with known truth, use correlation coefficients (RC) and Matthews correlation coefficient (MCC) to evaluate differential expression analysis.

- Sample-based: Evaluate the signal-to-noise ratio (SNR) in differentiating sample groups via PCA. Use Principal Variance Component Analysis (PVCA) to quantify the contribution of batch factors before and after correction [4].
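For the differential-expression metric, MCC summarizes agreement between the calls made after correction and the reference truth in a single value from -1 to 1. The minimal sketch below works on boolean call lists; the input lists are hypothetical.

```python
from math import sqrt

def matthews_cc(predicted, truth):
    """Matthews correlation coefficient for two equal-length boolean lists,
    e.g. differential-expression calls versus reference truth."""
    pairs = list(zip(predicted, truth))
    tp = sum(1 for p, t in pairs if p and t)
    tn = sum(1 for p, t in pairs if not p and not t)
    fp = sum(1 for p, t in pairs if p and not t)
    fn = sum(1 for p, t in pairs if not p and t)
    denom = sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return 0.0 if denom == 0 else (tp * tn - fp * fn) / denom

truth = [True, True, False, False, True, False]
calls = [True, False, False, False, True, True]
print(round(matthews_cc(calls, truth), 3))  # 0.333
```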

Q4: My data was acquired in multiple analytical batches. Should I have run everything in one batch instead? A4: No. Studies comparing single-batch versus multi-batch acquisition for long-term monitoring have shown that running samples in multiple, smaller batches with an appropriate batch-correction step is preferable to a single large batch. This approach avoids risks associated with compound degradation during long-term storage and effectively controls for instrumental variability through computational correction [4].

Performance Comparison of Batch-Effect Correction Strategies

The table below summarizes key quantitative findings from benchmarking studies to guide your method selection.

Table 1: Benchmarking Results for Batch-Effect Correction in Proteomics

| Correction Level | Recommended Use Case | Key Performance Metrics | Top-Performing Algorithm & Quantification Method Combinations |
|---|---|---|---|
| Protein-Level | Large-scale cohort studies; Confounded designs (batch mixed with biology) | High robustness, superior signal-to-noise ratio, reduced batch variance in PVCA | MaxLFQ + Ratio: Superior prediction performance in clinical data [16] |
| Peptide-Level | Studies requiring peptide-level analysis | Variable performance, interacts with protein quantification method | Varies significantly; requires dataset-specific benchmarking [16] |
| Precursor-Level | Limited application in proteomics; more common in metabolomics | Lower overall robustness for protein-level inference | Not generally recommended as the primary strategy for proteomics [16] |

Experimental Protocol: Protein-Level Batch-Effect Correction Workflow

This protocol outlines a standard workflow for implementing and validating protein-level batch-effect correction, based on methodologies from benchmark studies [16] [4].

1. Input Data Preparation

- Start with a protein abundance matrix (samples × proteins) derived from your chosen quantification method (e.g., MaxLFQ, iBAQ, TopPep3).

- Ensure the matrix is properly log2-transformed if required by the selected batch-effect correction algorithm.

- Compile a metadata file that clearly defines batch membership and biological groups for all samples.
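Before choosing an algorithm, it helps to tabulate how samples are distributed across batches and biological groups. The minimal sketch below assumes metadata rows stored as dictionaries with hypothetical `batch` and `group` keys. It counts samples per cell and reports whether the design is fully confounded.

```python
from collections import Counter

def design_counts(metadata):
    """Number of samples in each (batch, biological group) cell."""
    return Counter((row["batch"], row["group"]) for row in metadata)

def fully_confounded(metadata):
    """True when every batch holds a single biological group, so batch and
    group effects cannot be separated within any batch."""
    groups_by_batch = {}
    for row in metadata:
        groups_by_batch.setdefault(row["batch"], set()).add(row["group"])
    return all(len(g) == 1 for g in groups_by_batch.values())

metadata = [
    {"sample": "S1", "batch": "B1", "group": "case"},
    {"sample": "S2", "batch": "B1", "group": "control"},
    {"sample": "S3", "batch": "B2", "group": "case"},
    {"sample": "S4", "batch": "B2", "group": "control"},
]
print(design_counts(metadata))
print(fully_confounded(metadata))  # False: each batch contains both groups
```

In a fully confounded design, a location-only correction, or ComBat without a usable group covariate, risks removing biology along with batch. The ratio-based approach in Q2 avoids this by relying on reference samples in every batch.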

2. Algorithm Selection and Application

- Select an algorithm such as ComBat or Ratio-based correction. ComBat uses an empirical Bayesian framework to adjust for batch effects [4].

- Run the chosen algorithm, specifying the batch variable. If available, specify biological covariates to protect during correction.

- Code Example (Theoretical):
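The minimal sketch below illustrates the application step in Python rather than reproducing any package's API. For brevity, it uses per-batch median centering, one of the algorithms benchmarked in Q2, instead of ComBat. It adjusts location only, applies no empirical Bayes shrinkage, and cannot protect biological covariates, so it is only appropriate when groups are balanced across batches. The function name is hypothetical.

```python
from statistics import median

def median_center(matrix, batches):
    """Per-batch median centering of a log2 matrix (samples in rows).

    For each feature, subtract the batch median and add back the global
    median, so all batches share one median on the original scale.
    Differences in spread between batches are not adjusted.
    """
    out = [list(row) for row in matrix]
    for j in range(len(matrix[0])):
        global_med = median(row[j] for row in matrix)
        for b in set(batches):
            idx = [i for i, bb in enumerate(batches) if bb == b]
            batch_med = median(matrix[i][j] for i in idx)
            for i in idx:
                out[i][j] = matrix[i][j] - batch_med + global_med
    return out

log2_matrix = [[10.0], [10.5], [12.0], [12.5]]  # batch B2 sits 2 log2 units higher
centered = median_center(log2_matrix, ["B1", "B1", "B2", "B2"])
print(centered)  # [[11.0], [11.5], [11.0], [11.5]]
```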

3. Validation and Quality Control

- Perform Principal Variance Component Analysis (PVCA) on the data before and after correction to quantify the reduction in variance explained by the batch factor [16] [4].

- Visually inspect data integration using PCA plots. Batch clusters should merge post-correction, while biological groups should remain distinct.

- Calculate the coefficient of variation (CV) for technical replicates across different batches to confirm decreased technical variability.
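The CV check can be sketched as follows, assuming each feature has replicate measurements of the same material collected across batches, stored as log2 values. Here the values are back-transformed to the linear scale before computing the CV; that choice, the function names, and the data are assumptions for illustration.

```python
from statistics import fmean, median, stdev

def replicate_cv(log2_values):
    """CV (%) of one feature across technical replicates from different batches;
    log2 values are back-transformed to the linear scale first."""
    linear = [2.0 ** v for v in log2_values]
    return 100.0 * stdev(linear) / fmean(linear)

def median_replicate_cv(per_feature_values):
    """Median CV over features; each entry holds one feature's replicate values."""
    return median(replicate_cv(v) for v in per_feature_values)

before = [[10.0, 11.0, 10.0, 11.0], [8.0, 8.5, 8.0, 8.5]]  # batch offsets present
after = [[10.5, 10.6, 10.5, 10.4], [8.2, 8.3, 8.2, 8.3]]
print(median_replicate_cv(before), median_replicate_cv(after))
```

A lower median CV after correction indicates reduced technical variability across batches.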

Diagram Title: Protein-Level Batch-Effect Correction Workflow

Table 2: Essential Resources for Batch-Effect Correction Research

| Resource | Function/Description | Relevance to Batch-Effect Studies |
|---|---|---|
| Quartet Project Reference Materials | Four grouped reference materials (D5, D6, F7, M8) for multi-omics QC [16]. | Provides a ground-truth benchmark dataset with known relationships for developing and testing batch-effect correction methods. |
| Internal Standards (ISTDs) | Isotopically labelled compounds added to each sample for signal correction. | Used in QC-based and ISTD-based normalization to adjust for feature-specific intensity variations across batches [4]. |
| Pooled Quality Control (QC) Samples | Aliquots from all samples combined and injected repeatedly during a run. | Serves as a technical replicate to model and correct for signal drift and batch effects related to injection order [4]. |
| Reference Datasets (e.g., ChiHOPE) | Large-scale, real-world datasets from cohort studies (e.g., 1,431 T2D plasma samples) [16]. | Enables validation of batch-effect correction methods in a realistic, large-scale clinical proteomics context. |

Core Concepts: Batch Effect Normalization

What is a batch effect, and why is it particularly problematic in cross-platform HRMS studies?

Batch effects are systematic technical variations introduced during sample preparation, data acquisition, or analysis runs that are not related to the biological factors of interest. In cross-platform HRMS research, these effects are especially problematic because technical variations from different instruments or protocols can obscure true biological signals, leading to false discoveries and irreproducible results. Batch effect normalization is the data transformation process that corrects for these technical variations, making samples comparable across different batches and platforms [14].

How does 'dbnorm' specifically address the challenges of large-scale metabolomic studies?

The dbnorm package provides a comprehensive framework for batch effect correction in large-scale metabolomic datasets, which often suffer from signal drift across long-term data acquisition periods. It integrates multiple statistical models and provides diagnostic tools to help users select the most appropriate correction method for their specific dataset structure. Unlike single-algorithm approaches, dbnorm enables comparative assessment of different correction methods through scoring metrics and visual diagnostics, making it particularly valuable for cross-platform HRMS data where no single method performs optimally in all scenarios [34] [35].

Implementation Guide: Using the 'dbnorm' Package

What are the prerequisites and installation steps for 'dbnorm'?

dbnorm requires several R package dependencies from both CRAN and Bioconductor. Proper installation involves these steps:

CRAN Dependencies:

Bioconductor Dependencies:

Installation from GitHub:

After installation, load each required package with the library() function [34].

What is the recommended workflow for preparing data and applying 'dbnorm'?

The recommended dbnorm workflow follows a structured pipeline from data preparation through correction and validation, with specific requirements at each stage.

Data Preparation Requirements:

- Input data must be in CSV format with batches in the first column

- Data should be normalized and log2-scaled to account for high-abundance features

- Independent experiments should be in rows, features (variables) in columns [34]
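The layout above (batch labels in the first column, samples in rows, features in columns) can be read and log2-transformed as in the minimal sketch below. It assumes a header row of feature names and treats empty, "NA", zero, or negative cells as missing. The function name is hypothetical, and this is not part of dbnorm.

```python
import csv
import io
import math

def read_batch_csv(text, log_transform=True):
    """Parse CSV text with a header row and one row per sample: batch label in
    the first column, feature intensities in the remaining columns.
    Empty, "NA", zero, or negative cells become None (missing); other values
    are log2-transformed when log_transform is True."""
    reader = csv.reader(io.StringIO(text))
    features = next(reader)[1:]
    batches, matrix = [], []
    for row in reader:
        batches.append(row[0])
        values = []
        for cell in row[1:]:
            cell = cell.strip()
            x = 0.0 if cell in ("", "NA") else float(cell)
            if x <= 0:
                values.append(None)
            else:
                values.append(math.log2(x) if log_transform else x)
        matrix.append(values)
    return features, batches, matrix

features, batches, matrix = read_batch_csv("batch,M1,M2\nB1,1024,0\nB2,2048,NA\n")
print(features, batches, matrix)
```

If the file already holds normalized log2 values, pass `log_transform=False`.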

Missing Value Imputation:

dbnorm provides two functions for handling missing values:

- emvd(): Estimates missing values using the lowest detected value in the entire experiment

- emvf(): Estimates missing values using the lowest value for each specific feature [34]
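The two imputation strategies differ only in where the replacement minimum is taken from. The minimal sketch below mirrors the behaviour described for emvd() and emvf() on a matrix with `None` for missing values. It is not dbnorm's code, the function names are hypothetical, and any extra scaling the package may apply is not reproduced.

```python
def impute_min_dataset(matrix):
    """Replace missing values (None) with the lowest detected value in the
    whole matrix, as described for emvd()."""
    lowest = min(x for row in matrix for x in row if x is not None)
    return [[lowest if x is None else x for x in row] for row in matrix]

def impute_min_feature(matrix):
    """Replace missing values with the lowest detected value of the same
    feature (column), as described for emvf(). Raises ValueError if a
    feature has no detected values at all."""
    lows = [min(row[j] for row in matrix if row[j] is not None)
            for j in range(len(matrix[0]))]
    return [[lows[j] if x is None else x for j, x in enumerate(row)] for row in matrix]

m = [[10.0, None], [None, 6.0], [12.0, 7.0]]
print(impute_min_dataset(m))  # [[10.0, 6.0], [6.0, 6.0], [12.0, 7.0]]
print(impute_min_feature(m))  # [[10.0, 6.0], [10.0, 6.0], [12.0, 7.0]]
```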

What are the key functions in 'dbnorm' and when should each be used?

Table: Key Functions in the dbnorm Package

| Function Name | Primary Purpose | Key Features | Recommended Use Cases |
|---|---|---|---|
| Visodbnorm | Visualization and correction via multiple models | PCA plots, Scree plots, RLA plots; applies ComBat (parametric/non-parametric) and ber models | Initial exploration, datasets with <2000 features [34] |
| dbnormSCORE | Model performance evaluation | Calculates adjusted R-squared (adj-R²) for each model; generates correlation and score plots | Comparing model effectiveness, selecting optimal method [34] |
| dbnormNPcom | Individual model application | Specific application of non-parametric ComBat with clustering analysis | Large datasets requiring specific algorithm application [34] |
| hclustdbnorm | Hierarchical clustering analysis | Evaluates dissimilarity between identical samples using Pearson distance | Assessing correction quality for QC replicates [34] |

Method Comparison and Selection

What statistical models are available in 'dbnorm', and how do they differ?

dbnorm implements several established statistical models for batch effect correction, each with different theoretical foundations and performance characteristics.

Empirical Bayes Methods (ComBat):

- Parametric ComBat: Assumes parametric prior distributions for the batch-effect parameters, estimates them within an empirical Bayes framework, and applies shrinkage adjustment [35]

- Non-parametric ComBat: Makes fewer distributional assumptions; it is more flexible for complex data structures but may be less efficient with small sample sizes [35]

Linear Fitting Methods (ber):

- ber (Batch Effect Removal): Uses linear fitting for both location and scale parameters, originally developed for microarray data [35]

- ber-bagging: Applies bootstrap aggregation (bagging) to the ber algorithm with n=150 bootstrap samples, improving stability and performance [34]
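To illustrate what adjusting both location and scale means, the minimal sketch below standardizes each feature within each batch and maps it back onto the feature's overall mean and pooled within-batch standard deviation. It is a simplified location/scale adjustment, not the ber or ComBat implementation: there is no empirical Bayes shrinkage, no bagging, and no covariate protection. The function name is hypothetical.

```python
from math import sqrt
from statistics import fmean, pstdev

def location_scale_adjust(matrix, batches):
    """Per-feature location/scale batch adjustment without shrinkage.

    Within each batch a feature is standardized (batch mean, batch SD), then
    mapped onto the feature's overall mean and pooled within-batch SD.
    """
    labels = sorted(set(batches))
    idx = {b: [i for i, bb in enumerate(batches) if bb == b] for b in labels}
    out = [list(row) for row in matrix]
    for j in range(len(matrix[0])):
        col = [row[j] for row in matrix]
        overall_mean = fmean(col)
        stats, ss_within = {}, 0.0
        for b in labels:
            vals = [col[i] for i in idx[b]]
            m, s = fmean(vals), pstdev(vals)
            stats[b] = (m, s)
            ss_within += sum((v - m) ** 2 for v in vals)
        pooled_sd = sqrt(ss_within / len(col))
        for b in labels:
            m, s = stats[b]
            for i in idx[b]:
                z = (col[i] - m) / s if s > 0 else 0.0  # constant batch: center only
                out[i][j] = overall_mean + z * pooled_sd
    return out

raw = [[1.0], [3.0], [10.0], [14.0]]  # batch B2 is shifted and twice as spread
adjusted = location_scale_adjust(raw, ["B1", "B1", "B2", "B2"])
print(adjusted)
```

After adjustment, both batches share the same mean and spread for the feature, which removes a batch difference in variance that median centering alone would leave in place.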

How do I select the most appropriate model for my specific dataset?

dbnorm provides quantitative metrics to guide model selection through the dbnormSCORE function, which calculates adjusted R-squared values representing the proportion of variance explained by batch effects before and after correction.
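The minimal sketch below approximates this metric under a stated assumption: each feature is modelled as a linear function of batch alone (one-way ANOVA), and the largest adjusted R² across features is reported. dbnormSCORE's exact computation may differ, and the function names are hypothetical. With n samples and k batches, adj-R² = 1 - (1 - R²)(n - 1)/(n - k), which can be negative when batch explains nothing.

```python
from statistics import fmean

def batch_adj_r2(values, batches):
    """Adjusted R^2 of a one-way linear model: feature ~ batch."""
    n, labels = len(values), set(batches)
    k = len(labels)  # intercept + (k - 1) batch indicators
    grand = fmean(values)
    sst = sum((v - grand) ** 2 for v in values)
    ssb = 0.0
    for b in labels:
        grp = [v for v, bb in zip(values, batches) if bb == b]
        ssb += len(grp) * (fmean(grp) - grand) ** 2
    r2 = ssb / sst if sst > 0 else 0.0
    return 1 - (1 - r2) * (n - 1) / (n - k)

def max_batch_adj_r2(matrix, batches):
    """Largest per-feature adjusted R^2 (samples in rows, features in columns)."""
    return max(batch_adj_r2([row[j] for row in matrix], batches)
               for j in range(len(matrix[0])))

# Feature 0 is driven entirely by batch; feature 1 shows no batch difference.
data = [[1.0, 1.0], [1.0, 2.0], [5.0, 1.0], [5.0, 2.0]]
print(max_batch_adj_r2(data, ["A", "A", "B", "B"]))  # 1.0
```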

Table: Performance Comparison of Batch Effect Correction Methods

| Correction Method | Maximum Variability Explained by Batch (Adj-R²) | Consistency Across Features | Computational Efficiency | Best For |
|---|---|---|---|---|
| Raw Data | 0.50-1.00 (50-100%) | N/A | N/A | Baseline assessment [35] |
| Parametric ComBat | <0.01 (<1%) | High | Moderate | Most datasets with clear batch structure [35] |
| Non-parametric ComBat | ~0.60 (~60%) | Variable | Moderate | Complex batch effects with non-normal distributions [35] |
| ber | <0.01 (<1%) | High | High | Datasets with linear batch effects [35] |
| ber-bagging | <0.01 (<1%) | Very High | Lower | Maximum stability and performance [34] |
| Lowess (QC-based) | ~0.78 (~78%) | Variable | High | Datasets with quality control samples [35] |

The optimal model typically demonstrates the lowest maximum adj-R² value while maintaining consistent performance across all metabolic features. In comparative studies, both ber and parametric ComBat have shown superior performance with residual batch effects explaining <1% of variability [35].

Troubleshooting and FAQ

How do I resolve missing value errors during data preprocessing?

Missing values (NA or zero values) must be addressed before batch-effect correction. dbnorm provides two functions for missing-value imputation, emvd() and emvf().

The choice between methods depends on your data structure. Use emvd when you want consistent imputation across all features, and emvf when feature-specific baselines are more appropriate [34].

What should I do if PCA plots still show batch clustering after correction?

Persistent batch clustering after correction indicates incomplete batch effect removal. Follow this diagnostic protocol:

- Verify correction method effectiveness: Use dbnormSCORE() to quantify residual batch effects

- Check for extreme outliers: Examine probability density function plots using ProfPlotraw() and its counterparts for the corrected data

- Consider algorithm adjustment: Switch between parametric and non-parametric versions of ComBat

- Evaluate data transformation: Ensure proper log2-scaling was applied to abundance data [34]

If problems persist, consider applying multiple correction methods sequentially or investigating potential confounding between biological groups and batches.

Why does my analysis fail with large datasets (>2000 features), and how can I address this?

The Visodbnorm and dbnormSCORE functions are optimized for datasets with fewer than 2000 features for computational efficiency. For larger datasets:

Additionally, consider:

- Increasing computational resources or using high-performance computing environments

- Implementing feature filtering to remove low-variance metabolites before batch correction

- Using sampling approaches for diagnostic visualizations [34]
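Feature filtering can be sketched as keeping the most variable columns up to a cap. The minimal sketch below uses a default cap of 2000 to match the threshold mentioned above. The function name and cap are assumptions, not dbnorm behaviour.

```python
from statistics import pvariance

def keep_most_variable(matrix, features, max_features=2000):
    """Keep at most `max_features` columns with the highest variance across samples."""
    variances = [pvariance([row[j] for row in matrix]) for j in range(len(features))]
    ranked = sorted(range(len(features)), key=lambda j: variances[j], reverse=True)
    keep = sorted(ranked[:max_features])  # restore original column order
    return [features[j] for j in keep], [[row[j] for j in keep] for row in matrix]

matrix = [[1.0, 5.0, 9.0], [1.0, 6.0, 9.0], [1.0, 7.0, 12.0]]
kept_features, kept_matrix = keep_most_variable(matrix, ["m1", "m2", "m3"], max_features=2)
print(kept_features)  # ['m2', 'm3']
```

On uncorrected data, a feature's variance includes its batch component. This filter therefore tends to retain strongly batch-affected features rather than discard them.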

How can I validate that biological signals were preserved during batch correction?

Biological signal preservation can be validated through multiple approaches:

- Positive Control Analysis: Monitor known biological differences across conditions

- QC Replicate Correlation: Assess correlation between technical replicates or quality control samples

- Hierarchical Clustering: Use hclustdbnorm() to evaluate whether biologically similar samples cluster together post-correction

- Differential Analysis: Perform preliminary differential analysis to confirm expected biological findings [34]
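hclustdbnorm() is described as evaluating dissimilarity between identical samples using Pearson distance. The minimal sketch below computes that distance, not the package's implementation: it averages the pairwise 1 - r across replicate profiles. A lower value after correction means replicates agree more closely. Function names and data are hypothetical, and a constant profile would cause a division by zero.

```python
from itertools import combinations
from math import sqrt
from statistics import fmean

def pearson_distance(x, y):
    """1 - Pearson correlation between two samples' feature profiles."""
    mx, my = fmean(x), fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return 1.0 - sxy / sqrt(sxx * syy)

def mean_replicate_distance(replicates):
    """Average pairwise Pearson distance among replicate profiles of one sample."""
    return fmean(pearson_distance(a, b) for a, b in combinations(replicates, 2))

before = [[1.0, 2.0, 3.0, 4.0], [2.0, 1.0, 4.0, 3.0]]
after = [[1.0, 2.0, 3.0, 4.0], [1.1, 2.1, 3.1, 4.1]]
print(mean_replicate_distance(before), mean_replicate_distance(after))
```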

The dbnorm package provides built-in visualization functions including PCA plots, probability density function plots, and hierarchical clustering dendrograms to support these validation approaches.

Advanced Applications and Integration