Beyond Compliance: A 2025 Guide to Mapping R&D Data to Environmental Reporting Frameworks

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals navigating the complex process of translating intricate operational data into compliant environmental, social, and governance (ESG) disclosures. It addresses the foundational challenges of data fragmentation and materiality, outlines a methodological approach for cross-framework mapping, offers solutions for common data quality and supply chain obstacles, and establishes validation protocols for audit readiness. The content is specifically tailored to the unique context of biomedical R&D, covering everything from laboratory energy consumption and clinical trial travel to solvent waste management and supply chain sustainability, empowering professionals to transform reporting from a compliance burden into a strategic asset.

The Data Labyrinth: Why Mapping R&D Operations to ESG Frameworks is a Foundational Challenge

Frequently Asked Questions (FAQs)

FAQ 1: What is the core philosophical difference between the ISSB and CSRD/GRI frameworks that affects data mapping? The core difference lies in their definition of materiality. The ISSB (IFRS S1 and S2) uses financial materiality, focusing solely on sustainability matters that affect a company's enterprise value. In contrast, CSRD (using ESRS) and GRI employ double materiality, which requires reporting on both how sustainability issues affect the company and how the company impacts society and the environment [1] [2] [3]. This fundamental difference means a single data point, like greenhouse gas emissions, may need to be sliced, contextualized, and reported differently for each framework [1].

FAQ 2: What is the most significant data collection challenge for CSRD compliance? The most significant challenge is Scope 3 emissions data collection and the broader requirement for value chain reporting [3]. CSRD mandates that companies obtain data from all suppliers where feasible, moving beyond direct operations (Scope 1) and purchased energy (Scope 2) to the entire value chain [1] [3]. This is complex because it involves gathering consistent, audit-ready data from partners who may not have mature data collection systems themselves [4] [5].

FAQ 3: How can our research organization efficiently approach reporting when we have limited in-house ESG expertise? A recommended strategy is to "Build Once, Report Many" [1]. This involves:

- Starting with a Master Disclosure Matrix: Create a centralized matrix that aligns common disclosure topics across ISSB, GRI, and CSRD, tagging their source frameworks and metrics [1].

- Developing Unified Data Collection Templates: Consolidate data templates to capture datapoints once and use them across frameworks, identifying shared KPIs like Scope 1-3 emissions [1].

- Leveraging Technology: Implement ESG data management platforms that offer pre-built mapping templates and automated validation to reduce manual effort and errors [1] [6].
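As a rough illustration of the "Build Once, Report Many" idea, the sketch below models a tiny master disclosure matrix in Python. The class name, field names, and helper functions are hypothetical, and the framework references are limited to standards named in this guide plus ESRS E1; it is not an official or exhaustive mapping.

```python
from dataclasses import dataclass, field


@dataclass
class DisclosureTopic:
    """One row of a hypothetical master disclosure matrix."""
    topic: str
    metric: str
    # Framework name -> standard where the topic is reported (illustrative only).
    frameworks: dict[str, str] = field(default_factory=dict)


MATRIX = [
    DisclosureTopic(
        topic="Workplace safety",
        metric="Injury rate (e.g., TRIR)",
        frameworks={
            "GRI": "GRI 403: Occupational Health and Safety",
            "ISSB": "IFRS S1 (General Requirements)",
            "CSRD": "ESRS S1: Own Workforce",
        },
    ),
    DisclosureTopic(
        topic="GHG emissions",
        metric="Scope 1-3 emissions",
        frameworks={
            "GRI": "GRI 102: Climate Change",
            "ISSB": "IFRS S2",
            "CSRD": "ESRS E1: Climate Change",
        },
    ),
    DisclosureTopic(
        topic="Energy consumption",
        metric="Total energy consumption (kWh)",
        frameworks={
            "GRI": "GRI 103: Energy",
            "CSRD": "ESRS E1: Climate Change",
        },
    ),
]


def topics_for(framework: str) -> list[DisclosureTopic]:
    """All matrix rows that feed a given framework."""
    return [t for t in MATRIX if framework in t.frameworks]


def shared_topics(*frameworks: str) -> list[str]:
    """Topics that can be collected once and reused by every listed framework."""
    return [t.topic for t in MATRIX if all(f in t.frameworks for f in frameworks)]


if __name__ == "__main__":
    print(shared_topics("GRI", "ISSB", "CSRD"))
```

In practice the matrix would more likely live in a spreadsheet or database; the point of the sketch is only that each topic is stored once and tagged with every framework that consumes it.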

FAQ 4: Our data is scattered across departments. What is the first step to gaining control for reporting? The first step is to establish a robust data governance framework [6]. This means assigning clear ownership for each ESG data category (e.g., energy data to facility managers, diversity metrics to HR) and implementing standardized data collection processes with regular update schedules [2] [6]. Assigning roles like "ESG Controllers" to oversee data quality is an emerging best practice to ensure accountability [6].

Troubleshooting Guides

Issue 1: Inconsistent Data from Suppliers for Scope 3/Value Chain Reporting

Problem: Data received from suppliers is in inconsistent formats, of varying quality, or incomplete, making aggregation and reporting impossible.

Diagnosis: This is a common issue driven by a lack of standardized reporting requirements for small and medium-sized enterprises (SMEs) and the inherent complexity of global supply chains [4] [1].

Solution:

- Develop Supplier-Friendly Templates: Create simplified, standardized data collection templates for your suppliers, using common metrics from GRI or the Partnership for Carbon Accounting Financials (PCAF) where possible.

- Implement a Supplier Portal: Use technology platforms to provide a centralized portal for data submission, which can include built-in validation checks to improve data quality at the point of entry [1] [6].

- Engage and Educate: Proactively communicate with suppliers about your reporting requirements and the importance of data quality. Consider providing training or resources to help them build their own data collection capacity.

- Phased Approach: Start by focusing on your largest suppliers or those in high-impact sectors, then gradually expand the program [1].
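The point-of-entry validation mentioned above could look something like the following minimal sketch. The field names (`supplier_id`, `reporting_year`, `scope1_tco2e`, `scope2_tco2e`) and the rules themselves are assumptions chosen for illustration; a real supplier template would define its own.

```python
# Hypothetical required fields for a simplified supplier emissions template.
REQUIRED_FIELDS = {"supplier_id", "reporting_year", "scope1_tco2e", "scope2_tco2e"}


def validate_submission(row: dict) -> list[str]:
    """Return a list of problems found in one submission; empty means it passes."""
    problems = []

    # Treat absent keys and explicit None values as missing.
    missing = sorted(f for f in REQUIRED_FIELDS if row.get(f) is None)
    if missing:
        problems.append(f"missing fields: {', '.join(missing)}")

    for name in ("scope1_tco2e", "scope2_tco2e"):
        value = row.get(name)
        if value is None:
            continue  # already reported as missing
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            problems.append(f"{name} must be a number")
        elif value < 0:
            problems.append(f"{name} must not be negative")

    year = row.get("reporting_year")
    if year is not None and not (isinstance(year, int) and 2000 <= year <= 2100):
        problems.append("reporting_year must be a four-digit year")

    return problems


if __name__ == "__main__":
    good = {"supplier_id": "S-001", "reporting_year": 2024,
            "scope1_tco2e": 12.5, "scope2_tco2e": 30.0}
    bad = {"supplier_id": "S-002", "reporting_year": 2024, "scope1_tco2e": -5}
    print(validate_submission(good))
    print(validate_submission(bad))
```

Returning a list of messages rather than raising on the first error lets a portal show suppliers everything that needs fixing in one pass.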

Issue 2: Struggling with the "Double Materiality" Assessment for CSRD

Problem: The process of identifying which sustainability topics are material from both an impact and financial perspective is unclear and resource-intensive.

Diagnosis: Double materiality is a new concept for many organizations and requires cross-functional collaboration and structured stakeholder engagement [3] [5].

Solution:

- Follow a Structured Process: Begin with GRI 3: Material Topics, which provides a guided process for determining material topics [5].

- Map Your Value Chain: Identify all entities and stakeholders across your operations and value chain [3].

- Systematic Stakeholder Engagement: Engage with a representative range of stakeholders (investors, employees, communities, NGOs) through surveys, interviews, and panels to understand their concerns about your company's impacts [5].

- Cross-Functional Workshop: Convene a workshop with leaders from sustainability, finance, legal, operations, and HR to assess and prioritize the identified topics based on their significance [3].

Issue 3: Difficulty Mapping a Single Data Point to Multiple Frameworks

Problem: You have collected a data point, like "Workplace safety incidents," but are unsure how to report it correctly for GRI, ISSB, and CSRD.

Diagnosis: While themes overlap across frameworks, the specific metrics, granularity, and audience expectations differ [1].

Solution: Use the following table as a guide to map a single data point across the three primary frameworks.

Table: Data Point Mapping for "Workplace Safety"

| Framework | Relevant Standard | Key Reporting Requirements & Nuances |
|---|---|---|
| GRI | GRI 403: Occupational Health and Safety | Comprehensive focus. Requires data on injury rates (e.g., TRIR), work-related ill health, absenteeism, and detailed narratives on the management system, worker participation, and prevention programs [5]. |
| ISSB | IFRS S1 (General Requirements) | Investor-focused. Report on safety performance as a metric useful for understanding enterprise value. Focus on financial materiality: how safety incidents lead to operational downtime, litigation, reputational damage, and increased insurance costs [1]. |
| CSRD | ESRS S1: Own Workforce | Dual focus (Double Materiality). Report similar metrics to GRI (injury rates, ill health). Must also disclose how the company ensures the health and safety of its workers (impact materiality) and how safety incidents pose financial risks to the company (financial materiality) [7]. |

Experimental Protocols for Data Management

Protocol 1: Establishing the ESG Data Lifecycle

Purpose: To create a systematic, repeatable process for managing ESG data from collection to reporting, ensuring accuracy, auditability, and reliability [6].

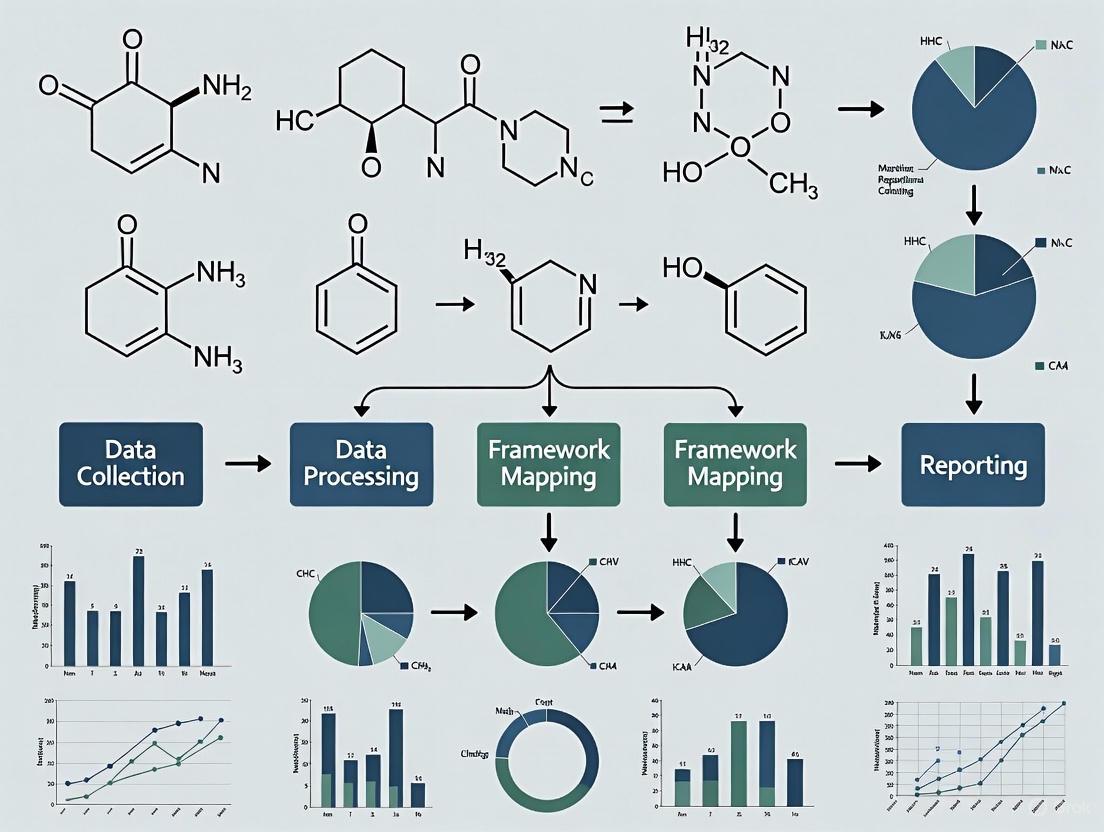

Workflow Diagram:

Methodology:

- Collection: Gather comprehensive data from diverse sources (IoT sensors, utility bills, HR systems, supplier portals). Automate capture where possible using APIs and integrations [4] [6].

- Verification: Validate data against source documentation. Apply validation rules to identify outliers. Implement approval workflows to confirm data meets quality standards before proceeding [6].

- Storage: Use secure, centralized repositories that maintain data integrity, version control, and audit trails. This serves as the single source of truth [6].

- Analysis: Transform raw data into actionable intelligence. Identify trends, benchmark performance, and calculate derived metrics to drive improvement and inform strategy [6].

- Reporting: Convert analyzed data into formatted communications for stakeholders, ensuring clear linkages back to source data for verification [6].

Data governance and integrity controls must be applied throughout all phases [6].
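To make the Verification step more concrete, here is a minimal sketch of one possible validation rule: flagging readings that sit far from the median of their series. The choice of a median absolute deviation (MAD) rule, the multiplier `k`, and the sample meter readings are all assumptions for illustration, not a method prescribed by the cited sources.

```python
from statistics import median


def flag_outliers(readings: dict[str, float], k: float = 5.0) -> list[str]:
    """Return the keys whose value lies more than k MADs from the median."""
    values = list(readings.values())
    center = median(values)
    mad = median(abs(v - center) for v in values)
    if mad == 0:
        # All typical values are identical; anything different is suspicious.
        return [key for key, v in readings.items() if v != center]
    return [key for key, v in readings.items() if abs(v - center) > k * mad]


# Invented monthly electricity readings (kWh); April looks like a decimal slip.
monthly_kwh = {
    "2025-01": 1200.0,
    "2025-02": 1180.0,
    "2025-03": 1250.0,
    "2025-04": 12100.0,
    "2025-05": 1210.0,
    "2025-06": 1190.0,
}

if __name__ == "__main__":
    print(flag_outliers(monthly_kwh))  # ['2025-04']
```

A flagged value is a prompt to check the source document, not proof of an error; genuine spikes (for example, a new freezer farm coming online) should pass the approval workflow with a note.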

Protocol 2: Conducting a Double Materiality Assessment

Purpose: To systematically identify and prioritize the sustainability topics that are material for CSRD and GRI reporting, based on their financial impact and impact on society and the environment [3] [5].

Methodology:

- Phase 1: Identification

  - Map the entire value chain, from sourcing to end-of-life.

  - Identify a longlist of potential sustainability topics from relevant frameworks (e.g., ESRS, GRI) and industry benchmarks [5].

- Phase 2: Assessment

  - Impact Materiality: Assess the company's actual and potential impacts on people and the environment for each topic. This requires engaging with affected stakeholders through surveys, interviews, and panels [3] [5].

  - Financial Materiality: Assess the potential of each sustainability topic to generate risks and opportunities that affect the company's financial performance, cash flows, and enterprise value in the short, medium, and long term [3].

- Phase 3: Prioritization

  - Convene a cross-functional team (sustainability, finance, risk, operations) to review the assessments.

  - Use a consistent scoring methodology to prioritize topics that are significant from either an impact or financial perspective, or both [3].

- Phase 4: Validation & Reporting

  - Document the entire process, including methodologies, stakeholders engaged, and rationale for decisions.

  - Disclose the results of the assessment and the list of material topics in the sustainability report [5].
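The prioritization rule in Phase 3 (a topic is material if it is significant from either perspective, or both) can be sketched as below. The 1-5 scale, the threshold of 3, and the example scores are assumptions made up for illustration; a real assessment would derive its scale and thresholds from the documented methodology.

```python
from dataclasses import dataclass


@dataclass
class TopicScore:
    topic: str
    impact: int     # hypothetical 1-5 score from the impact materiality assessment
    financial: int  # hypothetical 1-5 score from the financial materiality assessment


def prioritize(scores: list[TopicScore], threshold: int = 3) -> list[TopicScore]:
    """Keep topics that meet the threshold on either dimension, highest first."""
    material = [
        s for s in scores
        if s.impact >= threshold or s.financial >= threshold
    ]
    # Rank by the stronger dimension, then by combined score as a tie-breaker.
    return sorted(
        material,
        key=lambda s: (max(s.impact, s.financial), s.impact + s.financial),
        reverse=True,
    )


# Invented scores for three R&D topics mentioned in this guide.
scores = [
    TopicScore("Solvent waste", impact=4, financial=2),
    TopicScore("Clinical trial travel", impact=2, financial=2),
    TopicScore("Laboratory energy use", impact=3, financial=4),
]

if __name__ == "__main__":
    for s in prioritize(scores):
        print(s.topic, s.impact, s.financial)
```

Using `or` rather than `and` in the filter is what encodes double materiality here: a topic with a high impact score stays on the list even when its current financial score is low.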

The Researcher's Toolkit: Essential Solutions for ESG Data Mapping

This table details key resources and methodologies required for effective data mapping and reporting.

Table: Research Reagent Solutions for ESG Data Mapping

| Tool / Solution | Function & Application in Data Mapping |
|---|---|
| Master Disclosure Matrix | A centralized spreadsheet or database that aligns common ESG disclosure topics and tags their source frameworks (ISSB, GRI, CSRD), metrics, and reporting timelines. It is the foundational "map" for all reporting activities [1]. |
| ESG Data Management Platform | Purpose-built software (e.g., Coolset, Solvexia, Workiva) that automates data collection, validation, and reporting. These tools often include pre-built mapping templates for different frameworks and are essential for moving beyond error-prone spreadsheets [4] [1] [6]. |
| Unified Data Collection Template | Standardized internal templates used to capture ESG datapoints once from data owners. These are designed to be modular, allowing the same core data (e.g., kWh of energy) to be used across multiple frameworks with contextual adjustments, minimizing duplication of effort [1]. |
| Governance Framework (RACI Chart) | A clear assignment of Roles and Responsibilities (Responsible, Accountable, Consulted, Informed) for ESG data. This defines data owners (e.g., facility manager for energy data), stewards, and controllers, establishing accountability [6]. |
| Third-Party Assurance Provider | An independent auditor that provides verification (assurance) for ESG disclosures. Engaging them early ensures data collection processes are designed to be "audit-ready," enhancing credibility and meeting regulatory requirements for CSRD and others [4] [8] [3]. |

Fundamental Concepts and Definitions

What is the core difference between single and double materiality in the context of environmental reporting research?

Single materiality, often referred to as financial materiality, focuses only on how environmental, social, and governance (ESG) factors affect a company's financial performance [9]. In a research context, this means your analysis would be confined to how environmental data (e.g., emissions, water usage) translates into financial risks or opportunities that impact the company's bottom line [10].

Double materiality expands this view into a two-way assessment. It is a foundational concept in frameworks like the European Union's Corporate Sustainability Reporting Directive (CSRD) and requires evaluating both [11] [12]:

- Impact Materiality (Inside-Out): The company's impacts on the environment and society.

- Financial Materiality (Outside-In): How sustainability issues, in turn, create financial consequences for the company.

How do "financial materiality" and "impact materiality" differ in their analytical endpoints?

The distinction lies in the primary subject of the analysis. The table below summarizes the key differences, which are crucial for defining the scope of a research project.

| Feature | Financial Materiality | Impact Materiality |
|---|---|---|
| Core Question | How do environmental/sustainability issues affect the company's financials? [9] | How do the company's activities affect the environment and society? [11] |
| Analytical Direction | Outside-In (external factors impacting the firm) [13] | Inside-Out (firm's activities impacting the external world) [13] |
| Primary Research Endpoint | Financial performance, cash flows, cost of capital, enterprise value [12] [10] | Scale, scope, irremediability of impacts on people and the environment [11] [12] |
| Key Stakeholders for Analysis | Investors, lenders, financial analysts [9] | Affected communities, NGOs, civil society, regulators [12] |

Methodologies and Experimental Protocols

What is a standardized, step-by-step protocol for conducting a double materiality assessment in a research setting?

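A robust double materiality assessment, as outlined in the ESRS, can be structured as a multi-stage, iterative process [12]. In outline, the stages are identification of candidate topics, assessment of impact and financial materiality, prioritization against consistent criteria, and validation and reporting of the results.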

What are the key "research reagents" or essential components for a double materiality assessment?

In an experimental context, conducting this assessment requires specific inputs and tools. The table below details these essential components.

| Research Component | Function & Description | Example Sources & Tools |
|---|---|---|
| Stakeholder Input | Provides qualitative and quantitative data on perceived impacts and financial concerns. Critical for validating internal hypotheses [12]. | Interviews, surveys, focus groups with affected communities, investors, employees [12] [9]. |
| Sustainability Matter Lists | Standardized taxonomies of potential environmental and social topics serve as a checklist to ensure comprehensive coverage [12]. | ESRS Appendices, Global Reporting Initiative (GRI) Standards, SASB Industry Standards [12] [13]. |
| Sector & Peer Benchmarking | Provides context for determining the materiality of an issue by comparing it to industry norms and competitor disclosures [12]. | Peer sustainability reports, sector-specific benchmarks, analyst reports [12]. |
| Materiality Thresholds | The criteria (e.g., significance, severity, likelihood) used to judge whether an impact, risk, or opportunity is material [12] [13]. | Defined criteria for scale, scope, irremediability of impacts; potential financial effect on cash flows [12]. |

Technical Support: Troubleshooting Common Research Challenges

FAQ: In our analysis, we are encountering significant data gaps, particularly in the value chain. How can we address this?

Data incompleteness is a common and critical challenge in environmental and sustainability research [14]. Potential solutions include:

- Leverage Big Data Analytics: Explore the use of big data and advanced analytics as a cost-effective solution to fill data gaps. This can involve using satellite imagery (remote sensing), IoT sensors, or social media data to model or estimate missing environmental parameters [14].

- Explicitly Report Limitations: In your research documentation, transparently disclose the data gaps, their potential impact on your materiality conclusions, and the assumptions used to bridge these gaps. This is a requirement under standards like the CSRD [12] [9].

- Apply the Precautionary Principle: If severe negative impacts are plausible but data is insufficient, the precautionary principle may warrant treating the topic as material even without full quantification [12].

FAQ: Our model is suffering from spatial autocorrelation and poor generalization when predicting environmental impacts. What steps can we take?

This is a known pitfall in data-driven geospatial modeling for environmental research [15]. To enhance model accuracy:

- Account for Spatial Autocorrelation (SAC): Ensure your model validation methodology properly accounts for SAC. Techniques like spatial cross-validation, where training and test sets are separated in space, can reveal a model's true predictive power and prevent over-optimistic performance metrics [15].

- Incorporate Uncertainty Estimation: Implement methods to quantify the uncertainty of your predictions, especially when applying models to areas with different data distributions from the training set (the out-of-distribution problem) [15].

- Address Data Imbalance: Environmental data is often imbalanced (e.g., rare events like spills or species sightings). Use techniques such as stratified sampling or specialized algorithms to ensure your model can accurately predict minority classes [15].
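As a minimal sketch of spatial cross-validation, the code below groups sample locations into square grid blocks and holds out whole blocks together, so that test points are not immediate neighbors of training points within the same block. The function name, the grid-block scheme, and the sample coordinates are assumptions for illustration; dedicated geospatial libraries offer more sophisticated schemes (for example, buffer zones between folds), which this sketch does not attempt.

```python
import math
from collections import defaultdict


def spatial_block_folds(coords, block_size, n_folds):
    """Yield (train_idx, test_idx) pairs where each grid block stays in one fold."""
    # Assign every point to the grid cell that contains it.
    blocks = defaultdict(list)
    for i, (x, y) in enumerate(coords):
        cell = (math.floor(x / block_size), math.floor(y / block_size))
        blocks[cell].append(i)

    # Deal the blocks round-robin into folds, in a deterministic order.
    folds = [[] for _ in range(n_folds)]
    for j, cell in enumerate(sorted(blocks)):
        folds[j % n_folds].extend(blocks[cell])

    for test in folds:
        held_out = set(test)
        train = [i for i in range(len(coords)) if i not in held_out]
        yield train, sorted(test)


# Invented sampling locations in arbitrary map units.
coords = [(0.1, 0.2), (0.4, 0.9), (1.5, 0.3), (1.7, 0.8), (0.2, 1.6), (1.1, 1.9)]

if __name__ == "__main__":
    for train_idx, test_idx in spatial_block_folds(coords, block_size=1.0, n_folds=2):
        print("train:", train_idx, "test:", test_idx)
```

Comparing model scores from these folds with scores from ordinary random folds gives a rough sense of how much spatial autocorrelation was inflating the random-split result.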

FAQ: How do we ensure our materiality assessment is not biased towards easily quantifiable financial metrics at the expense of significant but hard-to-quantify impacts?

This is a fundamental challenge in balancing the two dimensions of double materiality.

- Structured Impact Assessment: Do not default to financial quantification for impact materiality. Use the prescribed qualitative criteria of scale, scope, and irremediability to assess the significance of an impact on people and the environment, independent of its current financial effect [11] [12].

- Iterative Re-evaluation: Recognize that a sustainability matter can be material from an impact perspective first. This can reveal future financial risks (e.g., new regulations, reputational damage) that may not be present on the balance sheet today but must be captured in the financial materiality assessment over longer time horizons [9]. The process is inherently iterative [12].

Technical Support Center

Troubleshooting Guides

Guide: Resolving Manual Data Transcription Errors

Problem: Laboratory staff manually re-enter data from analyzers into the Laboratory Information System (LIS) and Electronic Medical Record (EMR), leading to a high rate of transcription errors that compromise data integrity and patient safety [16].

Symptoms:

- Discrepancies between analyzer output and final recorded results.

- Increased instances of misplaced decimals or incorrect patient IDs in reports.

- Compliance audits revealing inconsistent or incomplete source data [16].

Resolution:

- Verify Instrument Interface Connectivity: Confirm that all laboratory analyzers are connected to the LIS via supported interfaces (e.g., HL7, FHIR, REST APIs) [16] [17].

- Enable Automatic Result Capture: Configure the LIS to automatically capture and ingest result data directly from each instrument, bypassing manual entry [16].

- Audit Data Provenance: Use the LIS to generate a complete audit trail for each result, documenting the instrument, reagent batch, user, and verification timestamp [16].

Verification:

- Monitor the error rate for transcribed data; successful implementation should reduce manual transcription errors to near-zero [16].

- Check that the audit trail for a sample result now automatically populates with instrument-level detail.
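One way to monitor the error rate described above is to compare analyzer output against what ended up in the record, sample by sample. The sketch below assumes both sides can be exported as simple mappings from a sample ID to a numeric result; the function name, the tolerance, and the sample values are hypothetical.

```python
def transcription_error_rate(analyzer: dict[str, float],
                             recorded: dict[str, float],
                             tol: float = 1e-9) -> tuple[float, list[str]]:
    """Return (share of mismatched results, sorted IDs of the mismatches).

    Only sample IDs present in both exports are compared.
    """
    shared = analyzer.keys() & recorded.keys()
    mismatched = sorted(
        s for s in shared if abs(analyzer[s] - recorded[s]) > tol
    )
    rate = len(mismatched) / len(shared) if shared else 0.0
    return rate, mismatched


# Invented example: sample S2 was keyed in with a misplaced decimal.
analyzer_output = {"S1": 4.2, "S2": 13.5, "S3": 0.85, "S4": 7.1}
recorded_results = {"S1": 4.2, "S2": 135.0, "S3": 0.85, "S4": 7.1}

if __name__ == "__main__":
    rate, ids = transcription_error_rate(analyzer_output, recorded_results)
    print(f"{rate:.0%} mismatched: {ids}")
```

Tracking this rate before and after enabling automatic result capture gives a simple, auditable measure of whether the change worked.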

Guide: Addressing Delays in Lab Result Reporting

Problem: Critical lab results are delayed in reaching clinicians, leading to prolonged emergency department stays, postponed treatments, and suboptimal patient outcomes [16].

Symptoms:

- Results are delivered to physicians via email or fax hours after tests are completed.

- Clinicians report spending excessive time chasing lab results.

- Evidence of duplicate test orders due to perceived result loss [16].

Resolution:

- Activate Real-Time Results Delivery: Ensure the LIS is configured for real-time, bi-directional data transmission with the hospital's EMR using certified HL7 or FHIR standards [16].

- Implement a Secure Provider Portal: Provide clinicians with instant, full-context access to verified lab results through a secure web portal, including alerts for critical values [16].

- Automate Reporting Workflows: Eliminate batch-reporting processes. Configure the system so that validated results are transmitted to the EMR and available in the portal immediately upon verification [16].

Verification:

- Measure the turnaround time (TAT) from test verification to clinician access; real-time delivery can reduce reporting delays by up to 80% [16].

- Confirm that a test result appears in the secure provider portal and the EMR simultaneously and immediately after final sign-off in the LIS.
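The TAT measurement above can be sketched as follows, assuming the LIS and portal can each export an ISO 8601 timestamp per result. The function names and every timestamp are invented for illustration and do not reproduce any figure from the cited sources.

```python
from datetime import datetime
from statistics import median


def turnaround_minutes(verified_at: str, available_at: str) -> float:
    """Minutes between result verification and clinician availability."""
    start = datetime.fromisoformat(verified_at)
    end = datetime.fromisoformat(available_at)
    return (end - start).total_seconds() / 60


def median_tat(events: list[tuple[str, str]]) -> float:
    """Median turnaround over (verified_at, available_at) pairs."""
    return median(turnaround_minutes(v, a) for v, a in events)


def percent_reduction(before: float, after: float) -> float:
    """Relative improvement of `after` over `before`, in percent."""
    return 100 * (before - after) / before


# Invented timestamps: batch reporting versus real-time delivery.
batch = [
    ("2025-03-01T08:00:00", "2025-03-01T10:00:00"),
    ("2025-03-01T09:15:00", "2025-03-01T10:45:00"),
    ("2025-03-01T11:00:00", "2025-03-01T13:30:00"),
]
real_time = [
    ("2025-04-01T08:00:00", "2025-04-01T08:30:00"),
    ("2025-04-01T09:15:00", "2025-04-01T09:35:00"),
    ("2025-04-01T11:00:00", "2025-04-01T11:45:00"),
]

if __name__ == "__main__":
    before, after = median_tat(batch), median_tat(real_time)
    print(before, after, percent_reduction(before, after))
```

The median is used rather than the mean so that a single badly delayed result does not dominate the comparison.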

Guide: Mitigating Vendor and AI Data Governance Gaps

Problem: Sensitive data is exposed because of governance failures, including unencrypted data at rest, poor vendor oversight, and uncontrolled use of AI tools, leading to high breach rates [18].

Symptoms:

- Inability to locate all sensitive data across clinical, administrative, and research systems.

- Security incidents involving third-party vendors or AI applications.

- Data stored on backups or storage systems without encryption [18].

Resolution:

- Close the Encryption Gap: Implement encryption for data at rest, not just in transit. This protects patient records, imaging files, and research repositories on storage systems [18].

- Establish Continuous Vendor Monitoring: Move beyond point-in-time questionnaires. Integrate vendor systems into your security monitoring for ongoing risk assessment [18].

- Integrate AI into Security Frameworks: Bring AI tools (e.g., for clinical decision support or billing) under formal governance. Track AI data access, enforce controls, and include AI transactions in security flow mapping [18].

Verification:

- Use data discovery tools to confirm that all identified sensitive data stores are now encrypted.

- Verify that the security operations center has visibility into file access patterns and data flows involving third-party vendors and internal AI tools.

Frequently Asked Questions (FAQs)

Q1: Our lab uses a modern LIS, but the hospital's corporate EMR doesn't seem to receive all our data. Where should we start troubleshooting?

A: Begin by diagnosing the "EMR handshake." First, verify that your LIS uses certified, bi-directional HL7 or FHIR standards compatible with the hospital's EMR (e.g., Epic, Cerner) [16]. Second, check the real-time results delivery configuration to ensure data transmission is not being held in a batch queue. The issue often lies in the interface engine between the two systems, not in the LIS or EMR themselves [16].

Q2: What are the most critical metrics for identifying a data silo problem?

A: Quantify the problem by tracking these key metrics [16] [19]:

- Manual Transcription Error Rate: Studies show rates of 3-4%, which can significantly alter clinical decisions [16].

- Result Turnaround Time (TAT): Delays can extend emergency department stays by 61% and postpone treatments by 43% [16].

- Operational Cost of Disconnects: Calculate the labor hours spent daily on managing system disconnects. A 50-person lab can waste over 2,600 hours annually [16].

Q3: How can we improve cross-departmental coordination to break down silos?

A: Implement two key strategies from organizational management [19]:

- Assign a Directly Responsible Individual (DRI): Appoint a single person with the authority and accountability to manage performance horizontally across IT, HR, finance, and clinical silos for a specific service line (e.g., spine care) [19].

- Use Integrated, Real-Time Dashboards: Provide DRIs and department leaders with dashboards that aggregate metrics from all systems into a shared view. This allows for real-time monitoring of issues like patient length of stay or discharge delays, rather than relying on 30-60 day old reports [19].

Q4: We need to map our operational lab data to the GRI and CDP environmental reporting frameworks. How can we ensure data consistency?

A: To avoid duplication and ensure consistency, leverage the official mapping resources provided by framework organizations. For instance, GRI and CDP have released a joint mapping tool that shows how disclosures under the GRI 102: Climate Change and GRI 103: Energy standards align with CDP's environmental datapoints [20] [21]. This allows you to apply the principle of 'write once, read many,' using the same high-quality operational data for different reporting purposes [20].

The impact of operational data silos can be measured in clinical errors, financial costs, and security risks. The tables below consolidate key quantitative data from the cited sources for easy comparison.

Table 1: Clinical and Operational Impact of Data Silos

| Metric | Impact Level | Consequence |
|---|---|---|
| Manual Transcription Error Rate [16] | 3-4% | Alters clinical decisions, leads to duplicate tests or missed diagnoses. |
| Lost Source Lab Data [16] | Up to 10.5% | Found in compliance audits due to inconsistent or incomplete entry. |
| Emergency Department Stay Extension [16] | 61% | Prolonged stays due to delays in lab reporting. |
| Postponement of Treatments [16] | 43% | Delays in receiving lab results directly impact treatment schedules. |
| Annual Labor Waste (50-person lab) [16] | 2,600 hours | Time spent on managing system disconnects and manual reconciliation. |

Table 2: Data Security and Governance Risks

| Metric | Impact Level | Context |
|---|---|---|
| Healthcare MFT Security Incidents [18] | 44% | Organizations experiencing incidents in the past year. |
| Healthcare Data Breaches [18] | 22% | Highest breach rate among all sectors surveyed. |
| Organizations Encrypting Data at Rest [18] | 11% | Highlights critical "encryption gap" despite secure data transit. |
| Vendor-Implicated Breaches [18] | Nearly 60% | Third-party vendors are a major risk vector. |
| AI-Related Security Incidents [18] | 26% | Organizations experiencing incidents related to AI tool use. |

Experimental Protocols and Workflows

Protocol for Implementing a Horizontal Data Governance Framework

This methodology provides a systematic approach to breaking down internal silos between lab, clinical, and corporate units, optimizing for overall system performance rather than isolated departmental goals [19].

1. Problem Identification and DRI Appointment:

- Define the Scope: Identify a specific, cross-functional workflow that is underperforming due to silos (e.g., the patient discharge process, management of a specific clinical service line).

- Appoint a Directly Responsible Individual (DRI): Assign a single person with the clear authority and accountability for the end-to-end performance of the identified workflow. The DRI's role is to manage horizontally across all involved silos (IT, HR, finance, clinical departments) [19].

2. Integrated Dashboard Development:

- Aggregate Cross-Silo Data: Work with the IT department to build a real-time dashboard that pulls metrics from all relevant systems (LIS, EMR, bed management, finance) into a single, shared view.

- Establish Actionable Benchmarks: Populate the dashboard with key performance indicators (KPIs). For example, a chief medical officer's dashboard should track length of stay (LOS) in hours for each patient floor, while a nurse's dashboard should monitor LOS for every patient on their floor, with drill-down capability to individual medical records [19].

3. Continuous Monitoring and Intervention:

- Enable Real-Time Intervention: The DRI and department leaders use the dashboard to identify and address bottlenecks as they occur (e.g., a patient staying longer than expected due to a missing lab result).

- Shift in Accountability: Support services like HR, IT, and legal are instructed that their objective is to support the DRI in achieving the overall performance outcome, rather than optimizing their own siloed objectives [19].

Protocol for Mapping Operational Data to ESG Reporting Frameworks

This methodology details the process for connecting internal operational data, such as energy consumption in lab facilities, to the specific disclosure requirements of environmental reporting frameworks like GRI and CDP.

1. Framework Alignment and Data Source Identification:

- Utilize Official Mapping Tools: Access and review interoperability resources, such as the GRI-CDP mapping, which tracks how disclosures under GRI 102 (Climate Change) and GRI 103 (Energy) align with the CDP questionnaire [20] [21].

- Identify Internal Data Owners: Determine which internal systems and personnel are responsible for the required data points (e.g., facility management for energy usage, lab operations for solvent and chemical waste data).

2. Centralized Data Aggregation and Validation:

- Automate Data Collection: Use ESG reporting software or data integration platforms to pull data from source systems (ERP, utility tracking, lab waste management) into a centralized, validated source of truth [22].

- Apply the 'Write Once, Read Many' Principle: Structure the data collection so that a single data point (e.g., total electricity consumption) can be used to fulfill multiple disclosure requirements across GRI, CDP, and other frameworks without duplication of effort [20].

3. Disclosure and Audit Preparation:

- Generate Framework-Specific Reports: Utilize the centralized data and mapping knowledge to produce tailored reports for each framework (e.g., a GRI Index, a completed CDP questionnaire).

- Maintain a Transparent Audit Trail: Ensure that all data points can be traced back to their operational source, with clear documentation of the methodologies and calculations used, ready for internal or external audit [22].
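The 'write once, read many' principle and the audit trail requirement can be combined in a small sketch: one stored data point, carrying its source and method, is fanned out to each framework that needs it. The class, the mapping table, and the target descriptions are hypothetical; they name GRI 103 (Energy) and the CDP questionnaire only at the level of detail given in this guide, not specific disclosure codes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataPoint:
    name: str
    value: float
    unit: str
    source: str  # operational system or document the value came from
    method: str  # how the value was derived


# Hypothetical routing table: internal data point -> framework targets.
FRAMEWORK_TARGETS = {
    "total_electricity_consumption": {
        "GRI": "GRI 103 (Energy)",
        "CDP": "CDP questionnaire (energy datapoints)",
    },
}


def disclosures_for(point: DataPoint) -> list[dict]:
    """Build one disclosure entry per framework from a single stored data point."""
    targets = FRAMEWORK_TARGETS.get(point.name, {})
    return [
        {
            "framework": framework,
            "reference": reference,
            "value": point.value,
            "unit": point.unit,
            # Provenance travels with every entry so auditors can trace it back.
            "source": point.source,
            "method": point.method,
        }
        for framework, reference in targets.items()
    ]


if __name__ == "__main__":
    electricity = DataPoint(
        name="total_electricity_consumption",
        value=36500.0,
        unit="kWh",
        source="utility invoices, lab building A",
        method="sum of monthly invoices",
    )
    for entry in disclosures_for(electricity):
        print(entry)
```

Because every framework entry is generated from the same frozen record, a correction to the underlying value propagates to all reports instead of being patched in each one separately.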

Workflow and System Relationship Diagrams

Data Flow in Siloed vs Integrated Lab Systems

Horizontal Governance for Service Line Performance

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Digital Interoperability "Reagents" for Data Integration

| Solution / Standard | Function | Role in Experimental Data Flow |
|---|---|---|
| HL7 / FHIR Standards [16] | Enable bi-directional communication between clinical systems (LIS, EMR). | Acts as the universal "buffer solution," allowing lab data to seamlessly move from analyzers to the clinical record without manual intervention, preserving data integrity. |
| RESTful APIs [16] [17] | Provide a modern, cloud-native method for systems to exchange data over the internet. | Functions as a "molecular linker," enabling fast and reliable connections between the LIS and external systems like reference labs, billing software, or future AI diagnostic tools. |
| GRI-CDP Mapping Tool [20] [21] | A resource that aligns disclosure requirements between two major sustainability reporting frameworks. | Serves as an "alignment catalyst," allowing researchers to efficiently map operational lab data (energy, waste) to standardized environmental reports, reducing duplication of effort. |
| True SaaS LIS [17] | A cloud-native Laboratory Information System with a multi-tenant architecture and automatic, zero-downtime updates. | Provides the "core growth medium" for digital operations, ensuring the lab's central data platform is always current, scalable, and free from the technical debt of legacy systems. |
| Integrated Security Governance [18] | A framework combining data discovery, access control, and vendor monitoring into a cohesive strategy. | Acts as a "universal protease inhibitor," protecting sensitive research and patient data by blocking exploitation paths across fragmented technology landscapes and third-party tools. |

For researchers and scientists, quantifying the environmental footprint of R&D activities presents a significant challenge. The core difficulty lies in mapping disparate, raw operational data onto standardized environmental reporting frameworks required by regulators and investors. This technical support center provides practical methodologies to bridge that gap, focusing on the key pillars of energy, waste, water, and supply chain impacts.

Frequently Asked Questions (FAQs)

FAQ 1: What are the most critical environmental metrics for an R&D facility to track? The most critical metrics form the foundation of most major reporting frameworks. Tracking these ensures compliance and identifies key areas for efficiency gains [23] [24].

- Energy & Emissions: Total energy consumption (kWh), and Greenhouse Gas (GHG) emissions broken down by Scope 1 (direct), Scope 2 (indirect from purchased energy), and Scope 3 (other indirect, including supply chain) [2] [25].

- Waste: Total waste generated, waste diverted from landfill (%), and recycling rate (%) [23].

- Water: Total water usage (cubic meters or tons) [25].

- Supply Chain: Environmental performance of suppliers, often captured via Scope 3 emissions and supplier audit results [2] [25].

FAQ 2: Our lab has energy data from utility bills, but how do we convert this to carbon emissions? This is a fundamental step for reporting. The conversion requires knowing the emission factor of your local electricity grid.

- Method: Use the formula:

Emissions (kg CO₂e) = Energy Consumed (kWh) × Emission Factor (kg CO₂e/kWh)

- Data Source: Your electricity provider or your country's environmental protection agency often publishes grid-specific emission factors. This conversion is essential for calculating your Scope 2 emissions [25].
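As a minimal sketch, the conversion above can be written as a small Python function. The function name and the emission factor are placeholders chosen for illustration; use the factor published for your own grid.

```python
def scope2_emissions_kg(energy_kwh: float, emission_factor_kg_per_kwh: float) -> float:
    """Emissions (kg CO2e) = energy consumed (kWh) x emission factor (kg CO2e/kWh)."""
    if energy_kwh < 0 or emission_factor_kg_per_kwh < 0:
        raise ValueError("energy and emission factor must be non-negative")
    return energy_kwh * emission_factor_kg_per_kwh


# Placeholder values for illustration only; substitute the factor published
# for your own grid and the kWh from your utility bill.
EXAMPLE_FACTOR = 0.5  # kg CO2e per kWh (assumed, not a real grid factor)
monthly_kwh = 12_000.0

emissions_kg = scope2_emissions_kg(monthly_kwh, EXAMPLE_FACTOR)
print(f"{emissions_kg:.1f} kg CO2e ({emissions_kg / 1000:.2f} t CO2e)")
```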

FAQ 3: How can we accurately track waste from numerous small-scale experiments? This is a common pain point. The solution involves moving from estimates to measured data.

- Standardized Protocol: Implement a lab-level waste segregation protocol. Use clearly labeled bins for different waste streams (e.g., general, recyclable plastic, glass, hazardous).

- Measurement: Weigh each waste stream at the point of collection using standardized digital scales. Track the weights in a central logbook or digital platform. This primary data is crucial for calculating accurate recycling and diversion rates [23].

FAQ 4: What is the simplest way to start accounting for our supply chain (Scope 3) environmental impact? Scope 3 emissions are complex, but a phased approach is effective.

- Initial Step: Begin by mapping your procurement spend and identifying "hotspot" categories—materials and reagents with the highest purchased volume or value.

- Data Collection: Deploy a supplier self-assessment questionnaire (SAQ) focused on these hotspots, asking for their environmental data (e.g., their energy use and waste generation) [2] [25]. This provides initial, supplier-reported data for your largest Scope 3 categories.
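The hotspot step can be sketched as a simple ranking of procurement categories by spend. The 80% coverage cut-off, the category names, and the spend figures below are assumptions made for illustration; choose a threshold that suits your own procurement profile.

```python
def spend_hotspots(spend_by_category: dict[str, float], coverage: float = 0.8) -> list[str]:
    """Return the largest categories that together reach `coverage` of total spend."""
    total = sum(spend_by_category.values())
    if total <= 0:
        return []
    ranked = sorted(spend_by_category.items(), key=lambda item: item[1], reverse=True)
    hotspots, running = [], 0.0
    for category, spend in ranked:
        hotspots.append(category)
        running += spend
        if running / total >= coverage:
            break
    return hotspots


# Hypothetical annual spend per procurement category.
procurement_spend = {
    "solvents": 50_000.0,
    "single_use_plastics": 35_000.0,
    "cell_culture_media": 10_000.0,
    "office_supplies": 5_000.0,
}
print(spend_hotspots(procurement_spend))
```

The returned categories are the ones whose suppliers would receive the questionnaire first.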

Troubleshooting Guides

Issue: Inconsistent Data for Sustainability Reporting

Problem: Data on energy, water, and waste is stored in different formats (paper logs, utility bills, supplier invoices), making consolidated reporting time-consuming and prone to error.

Solution: Implement a unified data collection and management protocol.

- Digitalize Data Entry: Create a simple, centralized digital form (e.g., a shared spreadsheet or internal web form) for all lab personnel to log waste measurements and water readings.

- Standardize Units: Mandate the use of consistent units across all inputs (e.g., kWh for energy, kilograms for waste, cubic meters for water).

- Automate Data Pulls: Where possible, use APIs or dedicated software to automatically pull data from smart meters and utility portals.

- Consolidate: Aggregate this data monthly into a master environmental data file. This creates a single source of truth for reporting against frameworks like GRI or SASB [23] [2].
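The standardize-then-consolidate steps above might look like the following sketch. The metric names, the tuple layout of a log entry, and the sample readings are assumptions for illustration; the conversion factors themselves are plain unit conversions.

```python
from collections import defaultdict

# Conversion factors into the standard unit for each metric.
# These are exact unit conversions; the metric names are illustrative.
TO_STANDARD = {
    ("energy", "kWh"): 1.0,
    ("energy", "MWh"): 1000.0,
    ("waste", "kg"): 1.0,
    ("waste", "g"): 0.001,
    ("water", "m3"): 1.0,
    ("water", "L"): 0.001,
}
STANDARD_UNIT = {"energy": "kWh", "waste": "kg", "water": "m3"}


def consolidate(entries):
    """Sum entries per (month, metric) after converting to the standard unit."""
    totals = defaultdict(float)
    for month, metric, value, unit in entries:
        try:
            factor = TO_STANDARD[(metric, unit)]
        except KeyError:
            raise ValueError(f"unsupported unit {unit!r} for metric {metric!r}") from None
        totals[(month, metric)] += value * factor
    return dict(totals)


# Hypothetical log entries: (month, metric, value, unit).
log = [
    ("2025-01", "energy", 1.5, "MWh"),
    ("2025-01", "energy", 250.0, "kWh"),
    ("2025-01", "waste", 4000.0, "g"),
    ("2025-01", "water", 2000.0, "L"),
]
for (month, metric), total in consolidate(log).items():
    print(month, metric, round(total, 3), STANDARD_UNIT[metric])
```

Rejecting unknown units at this stage, instead of guessing, keeps a mislabeled reading out of the master file.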

Issue: Low Waste Diversion Rate from Landfill

Problem: A large percentage of lab waste, including non-hazardous packaging materials, is being sent to landfill instead of being recycled or composted.

Solution: Conduct a waste audit and refine segregation workflows.

Table: Waste Stream Identification and Management

| Waste Stream | Common R&D Examples | Proper Management Pathway |

|---|---|---|

| Recyclables | Clean plastic pipette tip boxes, glass media bottles, cardboard | Recycling bin |

| Compostables | Biomass from non-hazardous cell cultures (e.g., yeast, algae) | Commercial composting |

| Hazardous Waste | Solvents, chemical reagents, biohazardous materials | Specialized hazardous waste disposal |

| General Waste | Contaminated plastics, mixed materials | Landfill (after reduction efforts) |

- Audit: Sort and weigh a day's worth of "general" lab waste to identify mis-categorized streams.

- Label: Update bin labels with clear, specific text and images of acceptable items.

- Train: Brief all personnel on the updated segregation protocol, emphasizing the economic and environmental costs of landfill [23].

- Track: Monitor the diversion rate monthly using the formula:

(Weight of Diverted Waste / Total Waste Generated) × 100 [23].
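A minimal sketch of the diversion-rate formula, using per-stream weights in kilograms. The weights are made up, and treating recyclables and compostables as the diverted streams (with hazardous waste counted in the total) is an assumption; adjust it to your own reporting boundary.

```python
def diversion_rate(weights_kg: dict[str, float], diverted_streams: set[str]) -> float:
    """(Weight of diverted waste / total waste generated) x 100."""
    total = sum(weights_kg.values())
    if total == 0:
        raise ValueError("no waste recorded for this period")
    diverted = sum(w for stream, w in weights_kg.items() if stream in diverted_streams)
    return diverted / total * 100


# Monthly weights per stream in kg (made-up numbers for illustration).
monthly_weights = {"recyclables": 30.0, "compostables": 10.0, "hazardous": 20.0, "general": 40.0}
rate = diversion_rate(monthly_weights, diverted_streams={"recyclables", "compostables"})
print(f"Diversion rate: {rate:.1f}%")
```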

Experimental Protocols for Environmental Data Collection

Protocol 1: Measuring Energy Consumption of a Lab-Scale Bioreactor

1. Objective: To quantify the direct energy footprint of a specific R&D process for accurate carbon accounting.

2. Methodology:

- Equipment: Lab-scale bioreactor, a calibrated wattmeter (plug-in energy monitor).

- Procedure: a. Connect the bioreactor and all ancillary equipment (heating mantle, pumps, controllers) to a power strip. b. Connect the power strip to the wattmeter, which is then plugged into the wall outlet. c. Reset the wattmeter to zero. d. Run the fermentation process per experimental parameters. e. Upon completion, record the total energy consumed in kilowatt-hours (kWh) from the wattmeter display.

- Data Analysis: Multiply the total kWh by your grid's emission factor to calculate the carbon footprint of the run. This provides precise data for your Scope 2 inventory [25].

Protocol 2: Quantifying Process Water Usage in a Chromatography Step

1. Objective: To accurately measure the water consumption of a purification step, a key metric for resource efficiency.

2. Methodology:

- Equipment: Liquid chromatography system, graduated cylinder or flow totalizer.

- Procedure: a. For buffer preparation: Measure the volume of all aqueous buffers and solutions used in the process using graduated cylinders. b. For system equilibration/cleaning: If the system uses a constant flow rate, use a stopwatch and a graduated cylinder to measure the flow rate (mL/min). Multiply by the total run time to calculate total volume. c. Sum all measured volumes to determine the total water consumption for the process, converting to cubic meters (1 m³ = 1000 L).

- Data Analysis: This primary data allows for the calculation of water intensity (e.g., water used per gram of purified product), a key efficiency metric [25] [24].
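The arithmetic in this protocol can be sketched as follows. The function names and the sample run (two buffers, two constant-flow steps, 0.5 g of product) are hypothetical; only the unit relationships (1 L = 1000 mL, 1 m³ = 1000 L) are fixed.

```python
def flow_volume_l(flow_rate_ml_per_min: float, run_time_min: float) -> float:
    """Volume in litres delivered at a constant flow rate over the run time."""
    return flow_rate_ml_per_min * run_time_min / 1000.0


def total_water_m3(buffer_volumes_l: list[float], flow_steps: list[tuple[float, float]]) -> float:
    """Sum buffer volumes and flow-derived volumes, then convert litres to m^3."""
    litres = sum(buffer_volumes_l)
    litres += sum(flow_volume_l(rate, minutes) for rate, minutes in flow_steps)
    return litres / 1000.0  # 1 m^3 = 1000 L


def water_intensity_l_per_g(total_m3: float, product_g: float) -> float:
    """Litres of water used per gram of purified product."""
    if product_g <= 0:
        raise ValueError("product mass must be positive")
    return total_m3 * 1000.0 / product_g


# Illustrative run: two buffers plus equilibration and cleaning steps.
buffers_l = [2.0, 1.5]
steps = [(5.0, 60.0), (10.0, 20.0)]  # (mL/min, minutes)
used_m3 = total_water_m3(buffers_l, steps)
print(used_m3, water_intensity_l_per_g(used_m3, product_g=0.5))
```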

The Scientist's Toolkit: Essential Reagents & Solutions

Table: Research Reagent Solutions for Environmental Footprint Analysis

| Item | Function in Footprint Analysis |

|---|---|

| Digital Wattmeter | Measures real-time and cumulative energy consumption (kWh) of individual lab instruments. |

| Bench Top Scale | Precisely weighs waste streams (e.g., plastic, glass, biomass) for mass balance calculations. |

| Flow Totalizer / Meter | Attaches to water outlets to measure total volume of water used in a specific process. |

| Supplier Self-Assessment Questionnaire (SAQ) | A standardized form to collect environmental performance data from material suppliers. |

| Data Consolidation Software | Spreadsheet or specialized ESG software to aggregate and manage all environmental data. |

Environmental Performance Data and Frameworks

To contextualize your findings, the table below summarizes key global data and reporting standards.

Table: Key Environmental Metrics and Reporting Frameworks

| Metric Category | Example Quantitative Data / Benchmark | Relevant Reporting Framework |

|---|---|---|

| Global CO₂ Emissions | 38.1B tonnes (fossil fuels, 2025 projection) [26] | GRI, CDP, TCFD [23] [2] |

| Waste Diversion Rate | Percentage of waste recycled/composted vs. landfilled [23] | GRI, SASB [23] |

| Water Usage | Total withdrawal in cubic meters [25] [24] | GRI, SASB (sector-specific) [2] |

| Scope 3 Emissions | Supply chain emissions; often the largest portion of a carbon footprint [25] | CDP, GRI, ISSB [2] |

Visual Workflows for Environmental Data Management

Diagram 1: From Lab Data to Sustainability Report

Diagram 2: Waste Stream Identification Logic

Frequently Asked Questions (FAQs)

FAQ 1: What are the core challenges when mapping our internal operational data to environmental reporting frameworks?

Researchers often face a complex puzzle when aligning their data with frameworks like those from the ISSB, GRI, or the EU's CSRD. The primary challenges include:

- Misaligned Definitions of Materiality: Different frameworks prioritize information differently. The ISSB focuses on financial materiality (how sustainability issues affect the company's enterprise value), GRI focuses on impact materiality (the company's impacts on society and the environment), and CSRD requires double materiality, which combines both perspectives [1]. Using the wrong lens can lead to non-compliant disclosures.

- Surface-Level Overlap: While themes like "climate" or "governance" appear across frameworks, the specific metrics, granularity, and calculation boundaries often differ [1]. A single data point, like GHG emissions, may need to be sliced and presented differently for each standard.

- Data Fragmentation and Quality: Operational data is often siloed across departments (e.g., HR, operations, supply chain) in disparate systems [27] [28]. This leads to inconsistencies, inaccuracies, and significant manual effort to compile for reporting.

- Rapidly Evolving Requirements: The regulatory landscape is changing quickly. For example, the European Sustainability Reporting Standards (ESRS) under CSRD include over 1,100 data points, many requiring forward-looking metrics [1]. Keeping pace with these changes demands agile data systems.

FAQ 2: How can I ensure our ESG data meets the "investor-grade" standard expected by regulators and the financial community?

Investor-grade data is transparent, comparable, and assured. To achieve this, you must treat ESG data with the same rigor as financial data [29].

- Implement Robust Data Governance: Establish clear ownership for each data category, validate data at the point of entry, and implement review and audit trails [1]. A centralized data hub is critical for audit readiness [30].

- Prepare for External Assurance: Under regulations like CSRD, limited assurance is already required, moving towards reasonable assurance [29]. Your processes and data must be able to withstand external audit. This requires transparent data collection, verifiable calculations, and documented methodologies [30].

- Build an ESG Control Framework: A formal control framework helps manage risks and strengthen the reliability of reporting. This involves identifying and assessing ESG risks, designing control measures, and monitoring their effectiveness [30].
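Validation at the point of entry, with an owner attached to every record, could be sketched like this. The `Entry` fields and the specific rules (allowed units, non-negative values, mandatory owner) are assumptions for illustration and not a prescribed control set.

```python
from dataclasses import dataclass

# Units accepted for each metric (illustrative policy).
ALLOWED_UNITS = {"energy": {"kWh"}, "waste": {"kg"}, "water": {"m3"}}


@dataclass
class Entry:
    metric: str
    value: float
    unit: str
    owner: str  # person or team accountable for this data category


def validate(entry: Entry) -> list[str]:
    """Return a list of problems; an empty list means the entry passes."""
    problems = []
    if entry.metric not in ALLOWED_UNITS:
        problems.append(f"unknown metric: {entry.metric}")
    elif entry.unit not in ALLOWED_UNITS[entry.metric]:
        problems.append(f"unit {entry.unit} not allowed for {entry.metric}")
    if entry.value < 0:
        problems.append("value must be non-negative")
    if not entry.owner.strip():
        problems.append("missing data owner")
    return problems


print(validate(Entry("energy", 1200.0, "kWh", "Facilities")))
print(validate(Entry("waste", -3.0, "lb", "")))
```

Returning every problem at once, instead of stopping at the first, lets the person entering the data fix the record in a single pass.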

FAQ 3: The FAIR principles (Findable, Accessible, Interoperable, Reusable) are a major topic in the scientific community. How do they apply to corporate environmental reporting?

The FAIR principles, while developed for scientific data, are directly applicable to corporate ESG data, particularly its Interoperability and Reusability [31] [32].

- Interoperability: This is the harmonization of data structure, formatting, and annotation. Using consistent, machine-readable formats and standardized vocabularies (ontologies) allows your data to be easily integrated and analyzed across different systems and by different stakeholders [31].

- Reusability: Data should be well-described with rich metadata so it can be reused for multiple purposes—whether for regulatory submission, investor reports, or internal research and development. Robust metadata provides crucial context on collection methods, lab protocols, and software versions, which is fundamental for replication and trust [31].
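As a rough sketch of these two principles, a measurement can be stored together with its metadata and serialized to a machine-readable format. The field names, identifier scheme, and sample values below are hypothetical and do not follow any particular community template.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class MeasurementRecord:
    """A measurement bundled with the context needed to reuse it later."""
    identifier: str        # stable ID so the record is findable
    metric: str
    value: float
    unit: str
    collected_on: str      # ISO 8601 date
    method: str            # collection method or lab protocol reference
    instrument: str
    software_version: str


record = MeasurementRecord(
    identifier="lab-a/energy/2025-01/bioreactor-01",
    metric="electricity_consumption",
    value=42.7,
    unit="kWh",
    collected_on="2025-01-31",
    method="Plug-in wattmeter, cumulative reading per run",
    instrument="wattmeter-07",
    software_version="1.0.0",
)

# JSON is machine-readable and portable across systems (interoperability).
payload = json.dumps(asdict(record), indent=2, sort_keys=True)
restored = MeasurementRecord(**json.loads(payload))
print(payload)
```

The round trip through JSON shows that the record survives being moved between systems without losing its context.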

FAQ 4: What is the strategic value of overcoming these data mapping challenges?

Beyond compliance, effective data mapping transforms sustainability reporting from a burden into a strategic advantage. It:

- Reduces Compliance Costs: A "build once, report many" approach avoids duplication of effort [1].

- Enhances Decision-Making: Reliable, integrated ESG data provides insights for better strategic planning and risk management [30].

- Builds Stakeholder Trust: Transparent and assured disclosures strengthen credibility with investors, customers, and employees [29] [28].

- Improves Resilience: Organizations that firmly embed ESG are better positioned to navigate regulatory changes and market shifts [30].

Troubleshooting Guides

Issue: Inconsistent Data Due to Varying Materiality Definitions

Problem: Your data collection is inconsistent because teams are confused about what to report for different frameworks (e.g., ISSB vs. CSRD).

Solution: Implement a Master Disclosure Matrix.

- Identify Core Themes: Start with common ESG areas like climate, governance, and labor that are present across all frameworks [1].

- Build a Centralized Matrix: Create a single source of truth that aligns disclosure topics, tags the source framework (ISSB, GRI, CSRD), flags required metrics, and notes areas of overlap and divergence [1]. This matrix simplifies complexity and ensures everyone is aligned on what data to collect and for which purpose.

Issue: Fragmented and Manual Data Collection Processes

Problem: Data is trapped in silos (spreadsheets, departmental databases), making collection slow, error-prone, and inefficient.

Solution: Develop Unified Data Collection Templates and Leverage Technology.

- Consolidate Templates: Wherever possible, design modular data templates to capture datapoints once and use them across multiple frameworks [1].

- Invest in a Centralized System: Adopt a purpose-built ESG data management platform. These systems can automate data collection from various sources (ERP, HR, supply chain), embed validation rules, and provide a single source of truth, drastically reducing manual effort and improving data quality [1] [28].

Issue: Data Lacks the Quality Needed for Audit and Assurance

Problem: Your ESG data is not "assurance-ready," creating regulatory and reputational risk.

Solution: Establish a Robust ESG Control Framework.

- Risk Identification: Map ESG-related risks across your organization and value chain. This aligns closely with performing a Double Materiality Assessment for CSRD [30].

- Implement Controls: Design and implement concrete control measures to mitigate identified risks that exceed your risk appetite [30].

- Monitor and Govern: Continuously monitor the effectiveness of controls through Key Risk Indicators (KRIs) and control testing. Report regularly to the board to ensure active oversight [30].

Experimental Protocols & Data

Protocol 1: Implementing a Master Disclosure Matrix

Objective: To create a centralized system for tracking and aligning ESG disclosure requirements across multiple reporting frameworks.

Methodology:

- Framework Analysis: Compile a list of all relevant frameworks (e.g., GRI, ISSB, CSRD). For each, list all required and recommended disclosures and metrics.

- Theme Mapping: Group these disclosures into core ESG themes (e.g., GHG Emissions, Water Usage, Board Diversity).

- Matrix Population: Create a spreadsheet or database with columns for: Disclosure Topic, GRI Code/ISSB Requirement/ESRS Datapoint, Metric, Data Source, Responsible Department, and Reporting Timeline.

- Gap & Overlap Analysis: Use the matrix to identify where one data point can satisfy multiple framework requirements and where unique, framework-specific data collection is needed.
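The matrix and its gap and overlap analysis can be prototyped in a few lines before committing to a spreadsheet or platform. In this sketch the data point names, owners, and requirement references are placeholders; replace the references with the actual codes from the published standards.

```python
from collections import defaultdict

# Each row links one internal data point to one framework requirement.
# Requirement references are placeholders; replace them with the actual
# codes taken from the published standards.
matrix = [
    {"data_point": "scope1_ghg_t", "framework": "GRI", "requirement": "GRI-REF-1", "owner": "Facilities"},
    {"data_point": "scope1_ghg_t", "framework": "ISSB", "requirement": "ISSB-REF-1", "owner": "Facilities"},
    {"data_point": "scope1_ghg_t", "framework": "CSRD", "requirement": "ESRS-REF-1", "owner": "Facilities"},
    {"data_point": "water_m3", "framework": "GRI", "requirement": "GRI-REF-2", "owner": "Facilities"},
    {"data_point": "water_m3", "framework": "CSRD", "requirement": "ESRS-REF-2", "owner": "Facilities"},
    {"data_point": "solvent_waste_kg", "framework": "CSRD", "requirement": "ESRS-REF-3", "owner": "EHS"},
]


def frameworks_by_data_point(rows):
    """Group the matrix so each data point lists the frameworks that need it."""
    grouped = defaultdict(set)
    for row in rows:
        grouped[row["data_point"]].add(row["framework"])
    return grouped


def overlap_and_unique(rows):
    """Split data points into shared (2+ frameworks) and framework-specific."""
    grouped = frameworks_by_data_point(rows)
    shared = {dp: sorted(fw) for dp, fw in grouped.items() if len(fw) > 1}
    unique = {dp: sorted(fw) for dp, fw in grouped.items() if len(fw) == 1}
    return shared, unique


shared, unique = overlap_and_unique(matrix)
print("Collect once, report many:", shared)
print("Framework-specific:", unique)
```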

Protocol 2: Conducting a Double Materiality Assessment

Objective: To identify which sustainability topics are material for reporting under frameworks like the CSRD, considering both financial and impact perspectives.

Methodology:

- Stakeholder Identification: Identify key internal and external stakeholders (investors, employees, customers, communities, regulators) [28].

- Impact Assessment: Evaluate and rank the significance of your organization's actual and potential impacts on people and the environment.

- Financial Assessment: Evaluate and rank the sustainability-related risks and opportunities that affect your organization's enterprise value.

- Consolidation: Combine the results of both assessments to determine a final list of material topics. This list forms the foundation of your CSRD report [30].

Data Presentation

Table 1: Comparison of Key Environmental Reporting Frameworks

| Framework | Governing Body | Primary Focus | Materiality Approach | Key Characteristics |

|---|---|---|---|---|

| CSRD/ESRS [1] [29] | European Union | Comprehensive sustainability transparency | Double Materiality (Impact + Financial) | Mandatory for ~50,000 companies; detailed, line-by-line disclosure templates; requires assurance. |

| ISSB (IFRS S1/S2) [1] [29] | IFRS Foundation | Information for capital markets | Financial Materiality | Aims to be a global baseline; incorporates SASB standards; focused on enterprise value. |

| GRI [1] [29] | Global Reporting Initiative | Impacts on economy, environment, people | Impact Materiality | Most widely adopted global standard; modular structure with sector-specific standards. |

Table 2: Essential "Research Reagent Solutions" for ESG Data Management

| Item | Function | Example/Description |

|---|---|---|

| ESG Data Management Platform [1] [27] | Centralizes data collection, validation, and reporting; enables audit trails and framework mapping. | Software like IRIS CARBON or Locus Technologies that automates workflows and integrates with existing systems. |

| Master Disclosure Matrix [1] | Serves as a single source of truth for tracking reporting requirements across all applicable frameworks. | A centralized spreadsheet or database linking data points to ISSB, GRI, and CSRD requirements. |

| Data Governance Framework [33] [30] | Defines people, policies, and processes for data decisions; ensures accountability and data integrity. | A framework assigning data ownership, validation procedures, and security protocols. |

| Control Framework (e.g., COSO ICSR) [30] | Provides structured internal controls over sustainability reporting to ensure data reliability and audit readiness. | A set of controls for processes like data collection, calculation, and management review of ESG metrics. |

| Reporting Format Templates [32] | Standardizes (meta)data structure for specific data types (e.g., water chemistry, GHG emissions) to ensure FAIRness. | Community-developed templates for consistently formatting data fields and metadata. |

Workflow Visualization

Title: ESG Data Mapping from Operations to Reporting

Building Your ESG Data Pipeline: A Step-by-Step Methodology for Life Sciences

Frequently Asked Questions

Q1: What is a "material topic" in the context of drug development and environmental reporting? A1: A material topic is an ESG (Environmental, Social, and Governance) issue that reflects a drug development company's significant economic, environmental, and social impacts, or one that substantively influences the assessments and decisions of stakeholders [1]. For environmental reporting under frameworks like CSRD, this is assessed through the principle of double materiality, meaning you must evaluate both:

- Inside-out impact: How your drug development activities impact the environment (e.g., solvent waste, energy consumption, water usage).

- Outside-in financial impact: How environmental risks and regulations (e.g., carbon pricing, waste disposal laws) present financial risks or opportunities to your development program [1] [27].

Q2: Why is this step so challenging for research scientists? A2: The primary challenge is the misalignment between operational lab data and the specific metrics required by ESG frameworks [27]. Common issues include:

- Data Silos: Environmental impact data (e.g., from waste logs, energy meters, lab equipment) is often fragmented across different departments and systems [27].

- Lack of Standardization: Unlike financial data, sustainability metrics can vary across reporting frameworks, making it difficult to collect data once and use it for multiple reports [1].

- Evolving Regulations: ESG standards are continuously refined, requiring agile data collection practices that can adapt to new requirements [27].

Q3: Our company is in the preclinical phase. Which environmental topics are most material for us? A3: While a full materiality assessment is needed, early-stage companies should prioritize topics where data is readily available and highly relevant to their activities. Key topics often include:

- Energy Consumption & GHG Emissions: From high-energy-use equipment (e.g., -80°C freezers, fume hoods) and transportation of biological samples [27].

- Water Usage: From laboratory processes and cleaning-in-place (CIP) systems [27].

- Waste Management: Particularly hazardous chemical and biological waste generated during research and development [34] [27].

- Supply Chain Sustainability: Environmental impacts of sourcing raw materials, reagents, and single-use plastics [1] [27].

Troubleshooting Guide: Identifying and Prioritizing Material Topics

| Problem/Symptom | Possible Root Cause | Diagnostic Steps | Recommended Solution & Fix |

|---|---|---|---|

| Cannot identify relevant environmental topics. | Lack of familiarity with ESG framework requirements (e.g., CSRD's ESRS, GRI). | 1. Review the list of mandatory and sector-specific data points in the ESRS [27]. 2. Benchmark against peer companies' sustainability reports. 3. Conduct stakeholder interviews with R&D, facilities, and EHS teams. | Build a Master Disclosure Matrix [1] to align and track potential topics against the frameworks you must report on. |

| Data for a topic is fragmented or unavailable. | Operational data (e.g., energy, waste) is not collected centrally or tracked at the project level. | 1. Map the data flow for a key metric (e.g., kg of solvent waste). 2. Identify where data is recorded (e.g., lab notebooks, facility invoices). 3. Audit the completeness and quality of these data sources. | Develop Unified Data Collection Templates [1] and establish robust data governance with clear ownership for each data category [1]. |

| Struggling to prioritize a long list of topics. | No clear, consistent methodology for scoring and ranking topics based on their impact and relevance. | 1. Define criteria for prioritization (e.g., significance of impact, influence on stakeholder decisions, regulatory imperative). 2. Score each topic on these criteria with a cross-functional team. | Use a prioritization matrix to visually plot topics based on agreed-upon scores. Focus first on high-impact, high-probability topics [34]. |

| Uncertain if a topic is "material" for reporting. | The concept of "double materiality" is not being applied correctly. | For each potential topic, ask two questions: 1. Impact Materiality: Does our drug development work create a significant impact on the environment through this topic? 2. Financial Materiality: Could this topic generate financial risks or opportunities for our company? | A topic is material if the answer to either question is "yes." [1] Document the rationale for your decision. |

Experimental Protocol: Double Materiality Assessment

Objective: To systematically identify, assess, and prioritize material environmental topics for ESG reporting within a drug development organization.

Methodology:

Identification of Topics & Stakeholders:

- Inputs: Brainstorming sessions with R&D, Clinical, CMC, Regulatory, and EHS teams. Review industry standards (e.g., GRI, SASB/ISSB, CSRD/ESRS) [1].

- Stakeholder Mapping: Identify key internal and external stakeholders (e.g., investors, regulators, patients, employees, suppliers).

Assessment of Impacts & Financial Relevance:

- Impact Materiality (Inside-Out): For each topic, assess the scale, scope, and irremediability of your company's environmental impact. Use available operational data (e.g., waste logs, energy bills) to inform the assessment [1].

- Financial Materiality (Outside-In): For each topic, assess the potential financial effects (risks and opportunities) over the short-, medium-, and long-term. Consider factors like changing regulations, market access, and cost of capital [1].

Prioritization of Topics:

- Use a scoring matrix to rank topics based on the results of the dual assessment. This can be a quantitative (e.g., 1-5 scale) or qualitative (e.g., High/Medium/Low) scoring system.

- Visualization: Plot the results on a materiality matrix to provide a clear visual representation of priority topics.

Validation & Review:

- Present the preliminary findings and the draft materiality matrix to senior management and relevant stakeholders for validation [34].

- Establish a process for annual review to ensure the assessment remains current.
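The scoring and prioritization steps can be sketched with a 1 to 5 scale. The threshold of 3, the tie-breaking rule, and the example scores are assumptions for illustration; the either-or test follows the rule stated in the troubleshooting table above.

```python
from dataclasses import dataclass


@dataclass
class TopicScore:
    topic: str
    impact: int      # inside-out score, 1 (low) to 5 (high)
    financial: int   # outside-in score, 1 (low) to 5 (high)


def is_material(score: TopicScore, threshold: int = 3) -> bool:
    """Material if either the impact or the financial score meets the threshold."""
    return score.impact >= threshold or score.financial >= threshold


def prioritize(scores: list[TopicScore], threshold: int = 3) -> list[TopicScore]:
    """Keep material topics, highest of the two scores first, then combined score."""
    material = [s for s in scores if is_material(s, threshold)]
    return sorted(
        material,
        key=lambda s: (max(s.impact, s.financial), s.impact + s.financial),
        reverse=True,
    )


# Hypothetical scores agreed by a cross-functional team.
scores = [
    TopicScore("Solvent waste", impact=5, financial=3),
    TopicScore("Energy and GHG emissions", impact=4, financial=4),
    TopicScore("Water usage", impact=3, financial=2),
    TopicScore("Biodiversity", impact=2, financial=1),
]
for s in prioritize(scores):
    print(f"{s.topic}: impact={s.impact}, financial={s.financial}")
```

The same two scores per topic are the coordinates you would plot on the materiality matrix.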

Double Materiality Assessment Workflow

The Scientist's Toolkit: Research Reagent Solutions for Environmental Data Management

| Research Reagent / Tool | Function in Context of Environmental Data |

|---|---|

| Lab Equipment Energy Monitors | Devices that measure real-time electricity consumption of specific high-load equipment (e.g., freezers, bioreactors), providing primary data for Scope 2 GHG emission calculations [27]. |

| Electronic Lab Notebooks (ELN) | Digital platforms for recording experimental data, which can be configured to systematically track volumes of solvents and reagents used, enabling more accurate waste and emission inventories. |

| Waste Tracking & Classification Software | Specialized systems to log, categorize, and quantify hazardous and non-hazardous lab waste streams, ensuring accurate data for environmental reporting [27]. |

| Carbon Accounting Software | Platforms that automate the collection, calculation, and management of GHG emission data (Scopes 1, 2, and 3), aligning it with frameworks like the GHG Protocol for reporting [27]. |

| ESG Data Management Platform | Centralized software (e.g., Locus, IRIS CARBON) designed to collect, map, and report ESG data against multiple frameworks (CSRD, GRI, ISSB), ensuring consistency and audit-readiness [1] [27]. |

Quantitative Data on Material Topic Challenges

Table 1: Key Challenges in Preparing for ESG Data Assurance [1]

| Challenge | Percentage of Companies Citing as Top Challenge |

|---|---|

| Lack of internal skills and experience | 42% |

| Evolving regulatory requirements | 38% |

| Data availability and quality | 36% |

| Integrating data from multiple sources | 34% |

| Cost and resource constraints | 29% |

Table 2: Common ESG Framework Requirements for Drug Development [1]

| Framework | Primary Focus | Materiality Approach | Key Environmental Metrics for Drug Dev |

|---|---|---|---|

| ISSB | Enterprise value; investor needs | Financial materiality | GHG Emissions (Scopes 1-3), Climate Risk, Water Usage |

| GRI | Broad stakeholder impact | Impact materiality | Energy, Water, Effluents and Waste, Biodiversity |

| CSRD | Comprehensive sustainability transparency for stakeholders | Double materiality | All GRI topics plus Circular Economy, Supply Chain Impacts, Pollution |

Materiality Matrix for Topic Prioritization

For researchers in drug development and the sciences, the proliferation of environmental, social, and governance (ESG) reporting frameworks presents a complex data management challenge. A Master Disclosure Matrix is a critical research tool that acts as a centralized database, systematically aligning disparate disclosure requirements from major frameworks like the Global Reporting Initiative (GRI), the Corporate Sustainability Reporting Directive (CSRD), and the International Sustainability Standards Board (ISSB) [1]. Its primary function is to map shared and unique data points, thereby reducing duplication, minimizing reporting fatigue, and ensuring data consistency across studies and regulatory submissions [1]. This guide provides a detailed experimental protocol for constructing such a matrix, tailored to the needs of scientific professionals navigating this intricate landscape.

Troubleshooting Guides and FAQs

FAQ 1: Why is a Master Disclosure Matrix particularly important for scientific and research-oriented organizations? Scientific organizations possess complex operational data related to energy-intensive lab equipment, solvent use, waste generation, and supply chain logistics. The matrix helps methodically identify which specific data points (e.g., Scope 3 emissions from chemical suppliers, water consumption in lab processes) are required by which framework, transforming scattered operational data into structured, auditable disclosures for stakeholders and regulators [1] [5].

FAQ 2: We have begun mapping but found that a single data point, like GHG emissions, is requested by all three frameworks. Why can't we just report the same number everywhere? While themes overlap, the frameworks differ in materiality, scope, and calculation boundaries [1]. The CSRD applies a double materiality perspective, requiring you to report both on your organization's impacts on the environment and on how sustainability issues create financial risks and opportunities, while the GRI centers on impact materiality [35]. The ISSB, conversely, focuses solely on financial materiality and enterprise value [1] [36]. Your experimental protocol for the matrix must capture these nuances to prevent non-compliant disclosures.

FAQ 3: What is the most common source of error when building the matrix for the first time? The most frequent error is treating the matrix as a one-time exercise [1]. These frameworks are dynamic. For instance, the new GRI 101: Biodiversity Standard becomes effective in 2026, introducing comprehensive supply chain reporting requirements [5]. Similarly, the ISSB is continuously enhancing its SASB Standards [36]. A static matrix will quickly become obsolete, leading to reporting inaccuracies.

FAQ 4: How can we manage data collection for disclosures that span multiple departments, such as lab operations, procurement, and facilities? This is a core challenge of cross-functional collaboration [5]. The solution is to establish robust data governance from the outset. Assign clear ownership for each data category (e.g., Procurement owns supplier environmental data, Facilities owns direct energy and emissions data) and implement a centralized data collection platform to break down departmental silos [1].

FAQ 5: Our initial materiality assessment identified numerous potential topics. How do we prioritize what to include in the matrix? Focus first on high-impact, high-frequency metrics that are common across most frameworks [1]. A practical starting point is Scope 1, 2, and 3 greenhouse gas emissions [1]. This provides a manageable "quick win" and establishes the data collection workflow before scaling up to include more complex topics like biodiversity impacts or a full human capital analysis.

Experimental Protocol: Constructing the Master Disclosure Matrix

Objective: To create a unified Master Disclosure Matrix that maps and aligns the disclosure requirements of the GRI, CSRD, and ISSB frameworks, enabling efficient, accurate, and audit-ready sustainability reporting.

Background: Companies face a complex data puzzle with over 600 ESG-related disclosure provisions globally [1]. The GRI, CSRD, and ISSB frameworks, while having thematic overlaps, differ significantly in their definitions, materiality approaches, and required granularity [1]. A systematic mapping methodology is essential to navigate this complexity.

Materials and Reagents

Table: Key Research Reagent Solutions for Matrix Construction

| Reagent Solution | Function in the Experiment |
|---|---|
| Official Framework Standards (e.g., GRI 102, ESRS, IFRS S1/S2) | Serve as the primary source templates for all disclosure requirements and metric definitions [37] [38] [35]. |
| Interoperability Guidance (e.g., GRI-CDP mapping, ISSB-EFRAG guidance) | Provides pre-identified alignments between frameworks, reducing initial mapping workload [20] [36]. |
| Centralized Database/Platform (e.g., ESG software, structured spreadsheet) | Acts as the reaction vessel for consolidating data, housing the matrix, and enabling collaboration [1]. |
| Governance Committee (Cross-functional team) | Catalyzes the process, ensures accountability, and validates materiality decisions across the organization [5] [1]. |

Methodology

Step 1: Identify Core ESG Themes and Material Topics

- Procedure: Begin by listing common ESG themes relevant to your research organization (e.g., climate change, energy, water, waste, biodiversity, human capital) [1]. Conduct a double materiality assessment as per GRI 3 and ESRS to determine which topics are significant from both an impact and financial perspective [5] [35].

- Data Analysis: Engage with key stakeholders (investors, regulators, community) to validate the material topics. Document the methodology and outcome of the assessment [5].

Step 2: Source and Populate Framework-Specific Disclosures

- Procedure: For each material topic, systematically review the GRI Topic Standards (e.g., GRI 102 for Climate, GRI 303 for Water), the relevant ESRS (e.g., ESRS E1 for Climate), and ISSB IFRS S2 (for Climate) and SASB Standards (for industry-specific metrics) [5] [36]. Extract every required disclosure, including narrative and quantitative metrics.

- Data Analysis: Input these discrete disclosure requirements into your centralized database. Tag each one with its source framework, topic, and a unique identifier.
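As a minimal sketch of the tagging described in Step 2, each extracted requirement can be stored as a small record. The `Disclosure` class, the `register` and `by_topic` helpers, and the identifier scheme (for example `CSRD-E1-6-S1`) are illustrative assumptions, not part of any framework; a spreadsheet with the same columns would serve equally well.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Disclosure:
    uid: str        # hypothetical internal identifier, unique across the matrix
    framework: str  # "GRI", "CSRD", or "ISSB"
    topic: str      # material topic from Step 1
    reference: str  # clause label as cited in the source standard
    kind: str       # "quantitative" or "narrative"


def register(registry: dict, disclosure: Disclosure) -> None:
    """Add a disclosure, refusing duplicate identifiers."""
    if disclosure.uid in registry:
        raise ValueError(f"duplicate identifier: {disclosure.uid}")
    registry[disclosure.uid] = disclosure


def by_topic(registry: dict, topic: str) -> list:
    """Return the sorted identifiers of all disclosures tagged with a topic."""
    return sorted(d.uid for d in registry.values() if d.topic == topic)


registry = {}
register(registry, Disclosure("CSRD-E1-6-S1", "CSRD", "Climate", "ESRS E1-6", "quantitative"))
register(registry, Disclosure("ISSB-S2-S1", "ISSB", "Climate", "IFRS S2", "quantitative"))
register(registry, Disclosure("GRI-303-W", "GRI", "Water", "GRI 303", "quantitative"))
print(by_topic(registry, "Climate"))
```

Rejecting duplicate identifiers at registration keeps later cross-references unambiguous, which matters once the matrix holds several hundred rows.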

Step 3: Map Alignments and Divergences

- Procedure: This is the core "reaction." For each disclosure from one framework, search for corresponding or related disclosures in the others. Note the nature of the relationship: is it an exact match, a partial match requiring adjusted calculation, or a unique requirement?

- Data Analysis: Use the interoperability guidance listed under Materials and Reagents as a starting point. Critically assess differences in scope (e.g., operational vs. value chain), calculation methodologies, and materiality lenses [1].
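The three relationship types named in Step 3 can be recorded explicitly so that unlinked requirements surface automatically. The following is a sketch under stated assumptions: the identifiers, the example assessments, and the `classify` helper are hypothetical, and a disclosure that appears in several links simply keeps the last relation recorded for it.

```python
from enum import Enum


class Relation(Enum):
    EXACT = "exact match"
    PARTIAL = "partial match, adjusted calculation required"
    UNIQUE = "unique requirement"


# Hypothetical identifiers and assessments, for illustration only.
all_ids = {"CSRD-E1-6-S1", "ISSB-S2-S1", "CSRD-E1-5", "SASB-ENERGY", "GRI-101-BIO"}
links = [
    ("CSRD-E1-6-S1", "ISSB-S2-S1", Relation.EXACT, "both reported in metric tons CO2e"),
    ("CSRD-E1-5", "SASB-ENERGY", Relation.PARTIAL, "breakdown and scope differ"),
]


def classify(all_ids: set, links: list) -> dict:
    """Give each disclosure its recorded relation, or UNIQUE if it has no link."""
    result = {uid: Relation.UNIQUE for uid in all_ids}
    for left, right, relation, _note in links:
        result[left] = relation
        result[right] = relation
    return result


relations = classify(all_ids, links)
# Anything that is not an exact match needs framework-specific handling.
needs_rework = sorted(uid for uid, rel in relations.items() if rel is not Relation.EXACT)
print(needs_rework)
```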

Step 4: Design and Execute Unified Data Collection

- Procedure: Based on the completed matrix, design modular data collection templates. The goal is to capture a data point once at its most granular level, which can then be reformatted for different framework needs [1].

- Data Analysis: Assign data owners for each KPI and establish a clear audit trail. Implement data validation rules at the point of entry to ensure quality [1].
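Validation at the point of entry, as called for in Step 4, might look like the sketch below. The required fields, the allowed unit list, and the `validate_entry` function are assumptions chosen for illustration; an organization would substitute its own KPI catalogue and unit conventions.

```python
REQUIRED_FIELDS = ("kpi", "value", "unit", "owner", "period")
ALLOWED_UNITS = ("kWh", "kg", "L", "tCO2e")  # assumed unit list, extend as needed


def validate_entry(entry: dict) -> list:
    """Return a list of human-readable problems; an empty list means the entry passes."""
    errors = []
    for field in REQUIRED_FIELDS:
        if entry.get(field) in (None, ""):
            errors.append(f"missing {field}")
    value = entry.get("value")
    if isinstance(value, (int, float)) and value < 0:
        errors.append("value must be non-negative")
    unit = entry.get("unit")
    if unit not in (None, "") and unit not in ALLOWED_UNITS:
        errors.append(f"unexpected unit: {unit}")
    return errors


good = {"kpi": "scope1_emissions", "value": 12.5, "unit": "tCO2e",
        "owner": "Facilities", "period": "2025-Q1"}
bad = {"kpi": "lab_energy", "value": -3, "unit": "MWh",
       "owner": "", "period": "2025-Q1"}
print(validate_entry(good))
print(validate_entry(bad))
```

Returning every problem at once, rather than stopping at the first, lets the data owner correct an entry in a single pass and leaves a complete record for the audit trail.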

Step 5: Validate, Assure, and Iterate

- Procedure: Test the matrix and data collection process with a pilot group or on a previous reporting period. Seek third-party assurance on a subset of data to verify the system's robustness [35].

- Data Analysis: Establish a quarterly review cycle to monitor and incorporate updates to the frameworks, ensuring the matrix remains a living document [1].
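A quarterly review cycle is easier to enforce when the matrix stores the date each framework was last checked. This sketch assumes a 91-day interval as an approximation of one quarter; the `overdue` helper and the dates shown are hypothetical.

```python
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=91)  # assumption: roughly one quarter


def overdue(last_reviewed: dict, today: date) -> list:
    """Return the frameworks whose last review is older than the interval."""
    return sorted(
        framework
        for framework, reviewed_on in last_reviewed.items()
        if today - reviewed_on > REVIEW_INTERVAL
    )


# Hypothetical review log.
last_reviewed = {
    "GRI": date(2025, 1, 10),
    "CSRD": date(2025, 4, 1),
    "ISSB": date(2024, 12, 1),
}
print(overdue(last_reviewed, today=date(2025, 5, 1)))
```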

Visualization of Workflow

The following diagram illustrates the logical workflow and iterative nature of constructing and maintaining the Master Disclosure Matrix.

Master Disclosure Matrix Development Workflow

Expected Results and Data Interpretation

A successfully executed protocol will yield a dynamic Master Disclosure Matrix. The table below provides a simplified example of what an output from Step 3 might look like for a common disclosure area.

Table: Example Master Disclosure Matrix Output for Climate-Related Metrics

| Material Topic & Data Point | GRI 102: Climate Change | ESRS E1 (CSRD) | IFRS S2 (ISSB) | Data Source & Owner | Notes on Alignment/Divergence |
|---|---|---|---|---|---|
| Gross Scope 1 Emissions | Required (GRI 102-13) | Required (ESRS E1-6) | Required (IFRS S2) | Facilities Dept. | High alignment: All require disclosure in metric tons of CO2e. Calculation methodology is aligned with GHG Protocol. |
| Climate Transition Plan | Required (GRI 102-15) | Required (ESRS E1-1) | Required if used (IFRS S2) | Strategy/CEO Office | Partial alignment: All require disclosure if a plan exists. GRI & CSRD emphasize "just transition" social aspects [5]. ISSB focuses on financial strategy [36]. |
| Scope 3 Emissions | Required (GRI 102-13) | Required (ESRS E1-6) | Required (IFRS S2) | Procurement & EHS | Alignment with nuance: All require disclosure. ISSB may provide relief for certain financed emissions [36]. Boundary definitions (e.g., R&D partners) must be checked for consistency. |
| Energy Consumption | GRI 103: Energy | Required (ESRS E1-5) | SASB Standards (Industry-specific) | Facilities & Lab Ops | Divergence: GRI & CSRD have detailed breakdowns. ISSB relies on SASB for industry-specific metrics, which may differ in scope [36]. |

Interpretation: The matrix makes interdependencies and conflicts explicit. It shows where a single data source can satisfy multiple frameworks (e.g., Scope 1 emissions) and where nuanced, framework-specific narratives are needed (e.g., transition plans). This allows researchers and sustainability teams to "build once, report many," significantly enhancing efficiency and data reliability [1].
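The "build once, report many" idea can be illustrated with a small sketch: granular emissions records are captured once, and the same aggregate then feeds each framework column of the matrix. The record fields, the sample values, and the `scope_total` and `report_rows` helpers are hypothetical; framework-specific narrative and boundary notes would still be added per column.

```python
# Hypothetical granular records, captured once at source level.
records = [
    {"site": "Lab A", "scope": 1, "source": "natural gas boiler", "tco2e": 40.0},
    {"site": "Lab B", "scope": 1, "source": "refrigerant loss", "tco2e": 2.5},
    {"site": "Lab A", "scope": 2, "source": "grid electricity", "tco2e": 120.0},
]


def scope_total(records: list, scope: int) -> float:
    """Sum emissions in metric tons CO2e for one GHG Protocol scope."""
    return sum(r["tco2e"] for r in records if r["scope"] == scope)


def report_rows(records: list, scope: int, columns: list) -> list:
    """Reuse one aggregate for every framework column that requests it."""
    total = scope_total(records, scope)
    sources = sorted({r["source"] for r in records if r["scope"] == scope})
    return [
        {"framework": column, "scope": scope, "tco2e": total, "sources": sources}
        for column in columns
    ]


rows = report_rows(records, scope=1, columns=["GRI 102", "ESRS E1", "IFRS S2"])
for row in rows:
    print(row["framework"], row["tco2e"])
```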

A significant challenge in modern Research and Development (R&D) is the disconnect between high-level sustainability reporting and granular operational data. While comprehensive frameworks like the Global Reporting Initiative (GRI) provide standardized metrics for environmental reporting, organizations consistently struggle to translate broad objectives into practical, tangible operations and extract the necessary data from core R&D processes [39]. This gap is particularly acute in drug development and scientific research, where detailed experimental data exists but is not structured to align with external reporting requirements. The failure to bridge this gap can lead to inaccurate reporting, inefficiency, and an inability to demonstrate the full environmental impact of R&D activities. This guide provides a structured approach to developing unified data collection templates that directly address this mapping challenge.

Core R&D Performance and Environmental Metrics

To create an effective unified template, you must first identify the key performance indicators (KPIs) from both R&D management and environmental reporting frameworks. The table below synthesizes essential metrics that serve this dual purpose.

Table 1: Unified R&D and Environmental Metrics for Data Collection

| Metric Category | Specific KPI | Calculation Formula | Data Source in R&D | Relevance to Environmental Reporting |
|---|---|---|---|---|
| Financial Investment | R&D Spending as % of Revenue [40] | (Total R&D Expenditure / Total Revenue) * 100% | Financial/ERP System | Indicates commitment to sustainable innovation. |
| Financial Investment | Return on Innovation Investment (ROI²) [40] | ((Financial Gain - Cost of Investment) / Cost of Investment) * 100% | Project financial tracking | Justifies spending on resource-efficient projects. |
| Pipeline Efficiency | Time-to-Market (TTM) [40] [41] | Average time from project start to market launch | Project management software | Longer cycles often correlate with higher cumulative resource/energy use. |
| Pipeline Efficiency | Idea Conversion Rate [40] | (Number of Implemented Ideas / Total Submitted Ideas) * 100% | Idea management platform | Measures efficiency, reducing waste on non-viable projects. |
| Output & Impact | Percentage of Revenue from New Products [40] | (Revenue from New Products / Total Revenue) * 100% | Sales & product database | Tracks commercial success of sustainable product innovations. |
| Output & Impact | Total Patents Filed [41] | Count of patents filed in a period | Legal/IP management system | Proxies for innovation output; green patents are a key ESG indicator. |
| Environmental Resource Use | Direct Energy Consumption | Total kWh from lab operations (per experiment/project) | Utility meters, equipment logs | Core GRI/GHG Protocol metric (GRI 302) [42] [43]. |
| Environmental Resource Use | Solvent & Water Usage | Volume of water/solvents used and treated | Inventory & purchasing systems | Material to GRI 303 (Water) and waste management reporting [44]. |
| Environmental Resource Use | Hazardous Waste Generation | Weight of hazardous waste by type (e.g., chemical, biohazard) | Waste manifest logs | Critical for GRI 306 (Waste) and operational footprint assessments [44] [39]. |
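The percentage formulas in Table 1 share one shape, so they can be computed with a single guarded helper. This is a minimal sketch; the function names and the sample figures are illustrative assumptions, and the inputs would come from the data sources listed in the table.

```python
def pct(numerator: float, denominator: float) -> float:
    """Express numerator / denominator as a percentage."""
    if denominator == 0:
        raise ValueError("denominator must be non-zero")
    return 100 * numerator / denominator


def rd_spend_pct(rd_expenditure: float, revenue: float) -> float:
    # (Total R&D Expenditure / Total Revenue) * 100%
    return pct(rd_expenditure, revenue)


def innovation_roi_pct(financial_gain: float, cost: float) -> float:
    # ((Financial Gain - Cost of Investment) / Cost of Investment) * 100%
    return pct(financial_gain - cost, cost)


def idea_conversion_pct(implemented: int, submitted: int) -> float:
    # (Number of Implemented Ideas / Total Submitted Ideas) * 100%
    return pct(implemented, submitted)


def new_product_revenue_pct(new_product_revenue: float, revenue: float) -> float:
    # (Revenue from New Products / Total Revenue) * 100%
    return pct(new_product_revenue, revenue)


# Illustrative figures only.
print(rd_spend_pct(25.0, 100.0))
print(innovation_roi_pct(150.0, 100.0))
print(idea_conversion_pct(3, 12))
```

Raising an error on a zero denominator, instead of returning zero, prevents a missing revenue figure from being silently reported as a valid KPI.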

Unified Data Collection Workflow

The following diagram illustrates the logical workflow for integrating R&D operational data with environmental reporting, turning disparate data points into compliant reports.

The Scientist's Toolkit: Essential Research Reagents & Materials

Accurate environmental reporting in wet labs depends on tracking the consumption and disposal of key materials. The following table details common reagents and their associated data tracking requirements.

Table 2: Key Research Reagent Solutions and Sustainability Tracking

| Item/Category | Primary Function in Experiment | Key Data to Collect for Sustainability |
|---|---|---|
| Organic Solvents (e.g., DMSO, Acetonitrile, Methanol) | Compound dissolution, mobile phase in HPLC, protein purification. | Volume purchased, volume disposed as hazardous waste, recycling rate. |
| Cell Culture Media & Reagents | Support growth of cellular models in drug screening and toxicity assays. | Volume used, plastic consumables (flasks, plates) associated, biohazard waste generated. |
| Antibodies & Assay Kits | Detection and quantification of specific proteins or biomarkers (ELISA, Western Blot). | Quantity used, packaging waste (plastic, cold chain materials), hazardous chemical components. |
| PCR & Molecular Biology Kits | Gene amplification, sequencing, and cloning. | Plastic consumable waste (tips, tubes, plates), energy consumption of thermocyclers, hazardous dye waste. |
| Chemical Catalysts & Ligands | Enable synthetic chemistry for novel compound creation. | Mass used, associated energy for reactions (heating, cooling), waste stream characterization. |

Troubleshooting Guides and FAQs

FAQ 1: Our experimental data is scattered across lab notebooks and local files. How can we systematically collect it for reporting?

Challenge: Inconsistent and manual data capture leads to gaps and inaccuracies, making it impossible to calculate metrics like solvent waste per project for GRI reporting [39].

Solution:

- Implement a Standardized Digital Template: Develop and deploy a simple, mandatory digital form for all researchers to complete at the end of each experimental procedure or batch. This template should capture the core data points from Table 1 and Table 2.

- Centralize Data Entry: Use a centralized platform (e.g., an Electronic Lab Notebook - ELN - or a custom database) to prevent data silos. This creates a single source of truth for both research progress and environmental impact [45].

- Automate Where Possible: Integrate data pulls from instrument software and purchasing systems to auto-populate fields like energy consumption and material volumes, reducing manual entry errors [39].
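To make the standardized template concrete, the sketch below models one end-of-experiment form as a record and rolls the entries up into solvent waste per project. The `ExperimentLog` fields and the sample values are assumptions for illustration; an ELN export or database table with equivalent columns would play the same role.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ExperimentLog:
    project: str          # internal project name
    solvent: str          # reagent tracked, e.g. from Table 2
    volume_used_l: float  # litres consumed in the procedure
    waste_kg: float       # hazardous waste generated
    energy_kwh: float     # instrument energy, if metered


def waste_per_project(logs: list) -> dict:
    """Total hazardous solvent waste (kg) for each project."""
    totals = defaultdict(float)
    for log in logs:
        totals[log.project] += log.waste_kg
    return dict(totals)


# Hypothetical entries submitted through the form.
logs = [
    ExperimentLog("Project Alpha", "Acetonitrile", 2.0, 1.5, 3.0),
    ExperimentLog("Project Alpha", "Methanol", 1.0, 2.0, 1.5),
    ExperimentLog("Project Beta", "DMSO", 0.5, 0.5, 0.75),
]
print(waste_per_project(logs))
```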

FAQ 2: How do we map our internal R&D terms (e.g., "Project Alpha Solvent Use") to standardized GRI metrics (e.g., "GRI 306-3 Waste Generated")?

Challenge: The language of the lab does not directly correspond to the categories used in sustainability frameworks, creating a significant mapping barrier [43].

Solution:

- Create a Data Dictionary: Develop an internal cross-reference table—a data dictionary—that explicitly defines which internal data points correspond to which external reporting metrics.

- Example: Internal data field "Q1_Acetonitrile_Waste_kg" maps to GRI 306-3 (Waste Generated) and is categorized as Hazardous Waste.

- Leverage Process Mining: For complex operational processes like "Purchase-to-Pay" for lab supplies, process mining techniques can analyze event logs to identify specific process steps with high environmental impact, creating a heat map for targeted data collection and mapping [39].
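A data dictionary of this kind can be kept as a simple lookup table. The sketch below uses the acetonitrile field from the example above; the methanol and DMSO field names, the sample values, and the `map_to_metrics` helper are hypothetical additions for illustration.

```python
from collections import defaultdict

# Internal field name -> (external metric, category).
DATA_DICTIONARY = {
    "Q1_Acetonitrile_Waste_kg": ("GRI 306-3 (Waste Generated)", "Hazardous Waste"),
    # Hypothetical second field, assumed to follow the same naming pattern.
    "Q1_Methanol_Waste_kg": ("GRI 306-3 (Waste Generated)", "Hazardous Waste"),
}


def map_to_metrics(record: dict, dictionary: dict) -> tuple:
    """Aggregate internal values by external metric and list fields with no mapping."""
    totals = defaultdict(float)
    unmapped = []
    for field, value in record.items():
        if field in dictionary:
            totals[dictionary[field]] += value
        else:
            unmapped.append(field)
    return dict(totals), sorted(unmapped)


# Hypothetical internal export; the DMSO field has no dictionary entry yet.
record = {
    "Q1_Acetonitrile_Waste_kg": 12.0,
    "Q1_Methanol_Waste_kg": 8.5,
    "Q1_DMSO_Waste_kg": 1.0,
}
totals, unmapped = map_to_metrics(record, DATA_DICTIONARY)
print(totals)
print(unmapped)
```

Reporting unmapped fields separately, instead of dropping them, shows where the dictionary needs a new entry before the next reporting cycle.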

FAQ 3: We have the data, but how do we prove its quality and accuracy to auditors and stakeholders?