Beyond the Hype: Assessing Predictive Accuracy of Machine Learning Models for Environmental and Biomedical Data

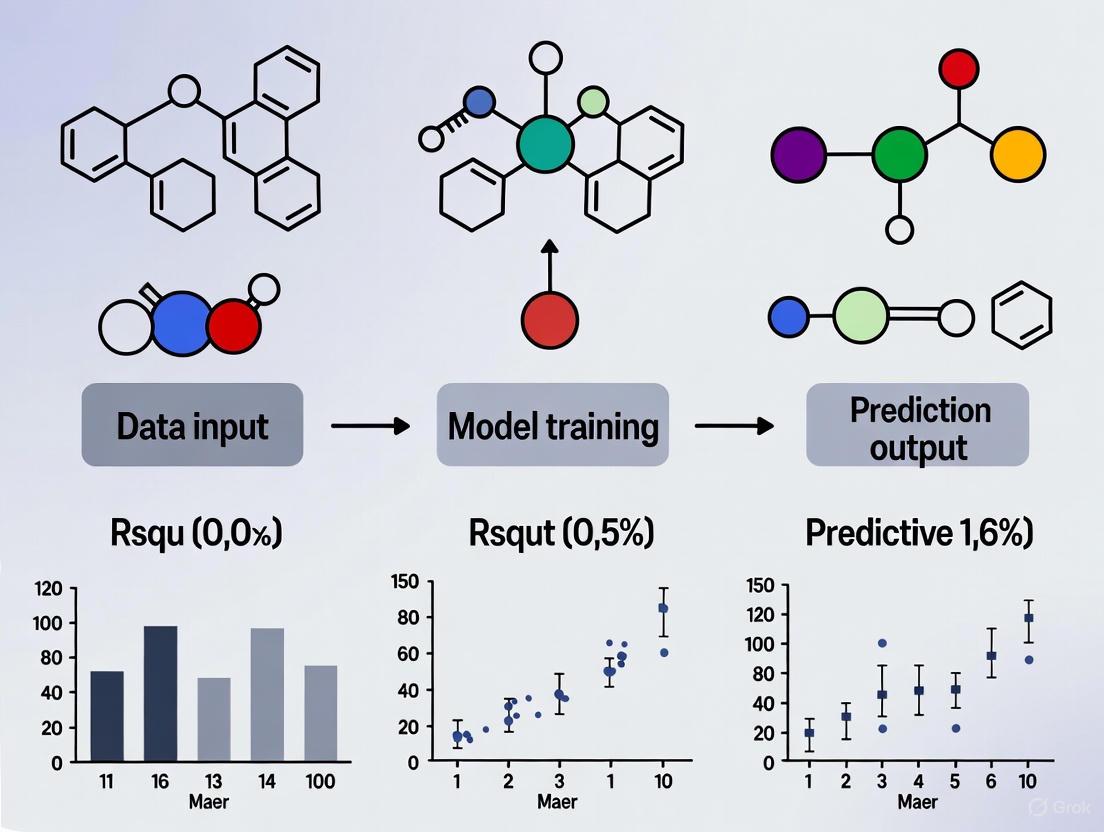

Abstract

This article provides a comprehensive assessment of machine learning (ML) model accuracy for environmental and biomedical data, a critical concern for researchers and drug development professionals relying on data-driven insights. It explores the foundational principles of data-driven modeling in complex environmental systems, reviews advanced methodological applications from water quality to climate science, and addresses significant troubleshooting challenges like data scarcity and spatial autocorrelation. The analysis critically evaluates validation frameworks and comparative performance of ML models against traditional methods, offering a rigorous guide for developing reliable predictive tools in biomedical and clinical research contexts.

The Foundation of Data-Driven Modeling: Core Principles and Environmental Data Complexities

Defining the Data-Driven Paradigm in Environmental Science

The field of environmental science is undergoing a profound transformation, shifting from a tradition of intuition-based and reactive management to a new, evidence-based paradigm centered on data-driven decision-making [1]. This approach, termed Data-Driven Environmental Management (DDEM), systematically utilizes data—from sensor readings and satellite imagery to community-sourced information—to inform and optimize environmental decisions and actions [1]. This represents a fundamental departure from older methods, moving towards a more proactive, predictive framework for tackling complex ecological challenges [1]. Concurrently, the broader scientific community has recognized data-driven science as the fourth paradigm of science, following empirical observation, theoretical science, and computational simulation [2]. The convergence of environmental science with advanced machine learning (ML) and the availability of vast, complex datasets is creating unprecedented opportunities to understand, manage, and improve our planetary systems [3] [4].

Core Components of the Data-Driven Workflow

The data-driven paradigm in environmental science is built upon a cyclical process that transforms raw data into actionable insights and measurable environmental improvements [1]. This process can be broken down into several key stages, supported by specialized tools and methodologies.

Table: Core Stages of the Data-Driven Environmental Science Workflow

| Stage | Core Activity | Key Tools & Methods |
|---|---|---|
| Data Acquisition | Collecting raw environmental data | IoT sensors, satellite remote sensing, citizen science initiatives [1] [5] |
| Data Processing | Cleaning, organizing, and managing data | Cloud computing platforms, data preprocessing algorithms [1] |
| Data Analysis | Extracting patterns and building models | Machine learning, statistical modeling, Geographic Information Systems (GIS) [1] |
| Insight Extraction | Interpreting results to generate knowledge | Data visualization dashboards, statistical inference [1] |
| Decision-Making & Action | Implementing data-informed interventions | Predictive management strategies, adaptive policy frameworks [1] |

Successfully implementing this workflow requires a suite of key resources and reagents. The table below details essential components for a research environment focused on machine learning for environmental impact prediction.

Table: Essential Research Reagents and Resources for Data-Driven Environmental Science

| Category | Item | Function / Application |
|---|---|---|
| Data Sources | LCA Databases (e.g., Ecoinvent) [6] | Provide standardized, high-quality life cycle inventory data for training and validating predictive models. |
| | Public Materials Databases (e.g., Materials Project, ICSD) [2] | Offer computed and experimental properties of known and hypothetical materials for environmental impact studies. |
| | Sensor Networks & Satellite Imagery [1] [5] | Enable continuous, real-time collection of critical environmental parameters like pollutant concentrations and habitat changes. |
| Software & Modeling Tools | Simulation Software (e.g., SimaPro) [6] | Used to generate reference LCA data for validating the predictions of novel machine learning models. |
| | Fuzzy Inference System (FIS) Generators [6] | Approaches like Fuzzy C-Means (FCM) and Subtractive Clustering create interpretable, non-linear models for complex environmental systems. |
| | Neuro-Fuzzy Modeling Platforms (e.g., ANFIS in MATLAB) [6] | Combine the learning power of neural networks with the transparent logic of fuzzy systems for predicting emissions. |
| Evaluation Frameworks | Statistical Testing Suites [7] | Used to assign statistical significance when comparing machine-learning models and ensure robustness of performance claims. |
| | Paired Evaluation Methods [8] | A simple, robust approach for evaluating ML model performance in small-sample studies and identifying the impact of confounders. |

Comparative Analysis of Machine Learning Approaches

The application of machine learning within the data-driven environmental paradigm spans a wide range of tasks, from classifying water quality for aquaculture to predicting the life-cycle environmental impacts of chemicals [3] [9]. Selecting the appropriate model and evaluation metric is critical for generating reliable, actionable results.

Model Performance in Environmental Management Tasks

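Different ML models excel in different environmental applications. The following table summarizes the performance of various models on two distinct tasks: optimizing water quality management in aquaculture and predicting CO2 emissions for agricultural products. The perfect aquaculture scores were obtained on a small (150-sample) synthetic dataset and should not be read as evidence of equally high accuracy on field data.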

Table: Comparative Performance of ML Models on Environmental Prediction Tasks

| Model / Application | Key Performance Metrics | Experimental Context & Dataset |
|---|---|---|
| Voting Classifier (Ensemble) | Accuracy: 100%, Cross-validation: High performance [9] | Task: Predict optimal water quality management actions for tilapia aquaculture. Dataset: Synthetic dataset of 150 samples, 21 water quality parameters, 20 management scenarios [9]. |
| Random Forest | Accuracy: 100%, Cross-validation: High performance [9] | |
| Gradient Boosting | Accuracy: 100%, Cross-validation: High performance [9] | |
| Neural Network | Accuracy: 100%, Mean Cross-validation Accuracy: 98.99% ± 1.64% [9] | |
| Adaptive Neuro-Fuzzy Inference System (ANFIS) | High accuracy in predicting CO2 equivalent emissions [6] | Task: Predict CO2 emissions for open-field strawberry production using data from greenhouse cultivation. Dataset: LCA data from Ecoinvent database; model trained and validated in MATLAB [6]. |
| Fuzzy C-Means (FCM) | Highest accuracy among FIS generation approaches [6] | |

A Guide to Evaluation Metrics for Model Comparison

Choosing the right metric is as important as choosing the right model. The table below outlines common evaluation metrics, guiding researchers on their appropriate use when comparing models for environmental science applications.

Table: Machine Learning Evaluation Metrics for Environmental Research

| Metric | Formula / Principle | Best Use Case in Environmental Science |
|---|---|---|
| Accuracy | (TP+TN)/(TP+TN+FP+FN) [7] | Provides a general overview when dataset classes are balanced. Less informative for imbalanced data (e.g., rare event prediction). |
| Sensitivity (Recall) | TP/(TP+FN) [7] | Critical when the cost of missing a positive event is high (e.g., failing to detect a toxic chemical spill). |
| Specificity | TN/(TN+FP) [7] | Essential when correctly identifying negative instances is paramount (e.g., confirming a water source is safe). |
| Precision | TP/(TP+FP) [7] | Important when false alarms (False Positives) are costly or resource-intensive (e.g., triggering an unnecessary emergency response). |
| F1-Score | 2 · (Precision · Recall)/(Precision + Recall) [7] | A balanced measure when seeking a harmonic mean between precision and recall, useful for overall model assessment on imbalanced data. |
| Area Under the ROC Curve (AUC) | Area under the Sensitivity vs. (1-Specificity) plot [7] | Evaluates the model's overall ranking capability across all possible classification thresholds. A value of 1 indicates perfect classification; 0.5 corresponds to random ranking. |
| Matthews Correlation Coefficient (MCC) | (TN·TP - FN·FP) / √((TP+FP)(TP+FN)(TN+FP)(TN+FN)) [7] | A robust metric for binary classification that produces a high score only if the model performs well across all four confusion matrix categories (TP, TN, FP, FN). |
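To make the formulas in the metrics table concrete, the following minimal sketch computes them from the four confusion-matrix counts. Reporting 0.0 when a denominator is zero is a convention chosen for this sketch, and libraries handle that case differently. The example counts are hypothetical. They show why accuracy alone misleads on rare events: a detector that never raises an alarm still scores 98% accuracy, while its sensitivity and MCC are zero.

```python
import math
from typing import Dict


def confusion_metrics(tp: int, tn: int, fp: int, fn: int) -> Dict[str, float]:
    """Threshold-based metrics for one binary confusion matrix.

    A ratio whose denominator is zero is reported as 0.0 (a convention of this
    sketch; other tools may return NaN or raise a warning instead).
    """

    def safe_div(num: float, den: float) -> float:
        return num / den if den else 0.0

    sensitivity = safe_div(tp, tp + fn)  # recall
    precision = safe_div(tp, tp + fp)
    mcc_den = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "accuracy": safe_div(tp + tn, tp + tn + fp + fn),
        "sensitivity": sensitivity,
        "specificity": safe_div(tn, tn + fp),
        "precision": precision,
        "f1": safe_div(2 * precision * sensitivity, precision + sensitivity),
        "mcc": safe_div(tn * tp - fn * fp, mcc_den),
    }


# Hypothetical rare-event detector that never raises an alarm:
# 20 true events among 1,000 samples.
silent = confusion_metrics(tp=0, tn=980, fp=0, fn=20)
print(silent["accuracy"], silent["sensitivity"], silent["mcc"])  # 0.98 0.0 0.0
```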

Experimental Protocols for Robust Model Assessment

To ensure that comparisons between machine learning models are fair and scientifically sound, researchers must adhere to rigorous experimental protocols. This is especially critical when dealing with the complex, often confounded, datasets common in environmental science.

Protocol 1: Paired Evaluation for Small-Sample Studies

Environmental datasets, such as those from specific crop studies or rare ecological events, are often limited in sample size. The paired evaluation method is a robust approach for these scenarios [8].

- Objective: To generate a detailed decomposition of performance estimates, identify outliers, and accurately compare models in the presence of known confounders without requiring data modification [8].

- Procedure:

- Pair Formation: From the test data, systematically form all possible unique pairs of samples. A pair is considered "rankable" if their true labels can be ordered. To account for measurement uncertainty, a parameter δ can be set to define a minimum required separation in the label space for a pair to be rankable [8].

- Model Ranking: For each rankable pair, assess whether the model correctly ranks the two samples (e.g., assigns a higher probability or score to the sample with the higher true label value) [8].

- AUC Estimation: Calculate the estimated AUC as the fraction of all rankable pairs that were ranked correctly. This aligns with the probabilistic interpretation of AUC [8].

- Model Comparison: To compare two models (A and B), create a 2x2 contingency table counting pairs that (i) both models rank correctly, (ii) only A ranks correctly, (iii) only B ranks correctly, and (iv) both models rank incorrectly. Statistical significance of the difference in performance can be assessed using tests like Fisher's exact test or McNemar's test [8].

- Visualization: The following diagram illustrates the logical workflow and decision points in the paired evaluation method.
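The pair-formation, ranking, AUC and comparison steps of Protocol 1 can be sketched in a few lines of Python. This is an illustrative sketch, not the implementation from [8]. It treats tied scores as incorrectly ranked (an assumption; conventional AUC credits ties with 0.5). It uses an exact McNemar test, which is a two-sided binomial test on the discordant counts. Because every sample appears in many pairs, the pairs are not independent, so the resulting p-value should be read as approximate.

```python
import math
from itertools import combinations
from typing import List, Sequence, Tuple


def rankable_pairs(y_true: Sequence[float], delta: float = 0.0) -> List[Tuple[int, int]]:
    """All index pairs whose true labels differ by more than delta."""
    return [(i, j) for i, j in combinations(range(len(y_true)), 2)
            if abs(y_true[i] - y_true[j]) > delta]


def ranks_correctly(y_true: Sequence[float], scores: Sequence[float], i: int, j: int) -> bool:
    """True if the sample with the higher label also receives the strictly higher score.

    Tied scores count as incorrect (an assumption of this sketch).
    """
    return (scores[i] - scores[j]) * (y_true[i] - y_true[j]) > 0


def paired_auc(y_true: Sequence[float], scores: Sequence[float], delta: float = 0.0) -> float:
    """Fraction of rankable pairs that the model orders correctly."""
    pairs = rankable_pairs(y_true, delta)
    if not pairs:
        raise ValueError("no rankable pairs for this delta")
    return sum(ranks_correctly(y_true, scores, i, j) for i, j in pairs) / len(pairs)


def compare_models(y_true: Sequence[float], scores_a: Sequence[float],
                   scores_b: Sequence[float], delta: float = 0.0):
    """2x2 counts (both, only A, only B, neither) and an exact McNemar p-value."""
    both = only_a = only_b = neither = 0
    for i, j in rankable_pairs(y_true, delta):
        a_ok = ranks_correctly(y_true, scores_a, i, j)
        b_ok = ranks_correctly(y_true, scores_b, i, j)
        if a_ok and b_ok:
            both += 1
        elif a_ok:
            only_a += 1
        elif b_ok:
            only_b += 1
        else:
            neither += 1
    n_discordant = only_a + only_b
    if n_discordant == 0:
        p_value = 1.0
    else:
        # Two-sided binomial test of the discordant counts against p = 0.5.
        tail = sum(math.comb(n_discordant, k)
                   for k in range(min(only_a, only_b) + 1)) / 2 ** n_discordant
        p_value = min(1.0, 2 * tail)
    return (both, only_a, only_b, neither), p_value


y = [0.1, 0.5, 0.9, 1.3]            # hypothetical continuous labels
model_a = [0.2, 0.4, 0.8, 0.9]
model_b = [0.3, 0.2, 0.9, 0.7]
print(paired_auc(y, model_a), paired_auc(y, model_b))  # 1.0 0.6666666666666666
print(compare_models(y, model_a, model_b))            # ((4, 2, 0, 0), 0.5)
```

Raising `delta` shrinks the set of rankable pairs to those whose labels are clearly separated. For the toy data above, `paired_auc(y, model_b, delta=0.5)` keeps only three pairs, and model B ranks all three correctly.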

Protocol 2: Statistical Testing for Model Comparison

When comparing the performance of two or more models, it is insufficient to simply report metric values. Statistical tests are required to determine if observed differences are significant [7].

- Objective: To assign statistical significance when comparing machine-learning models, ensuring that a perceived improvement is not due to random chance [7] [8].

- Procedure:

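- Data Collection: Obtain multiple values of the chosen evaluation metric (e.g., accuracy, F1-score) for each model, typically through repeated k-fold cross-validation or bootstrapping [7]. Because these folds or resamples share training data, the values are not strictly independent, which tends to make naive significance tests optimistic.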

- Test Selection:

- For comparing two models, a paired t-test can be used if the metric values are approximately normally distributed and the same data splits are used for both models [7].

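- For comparing multiple models on multiple datasets, more advanced tests like ANOVA with post-hoc tests are appropriate [7]; the non-parametric Friedman test followed by a Nemenyi post-hoc test is a common alternative when normality is doubtful.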

- As demonstrated in the paired evaluation protocol, Fisher's exact test or McNemar's test can be applied to contingency tables built from paired comparisons [8].

- Interpretation: A resulting p-value below a predetermined significance level (e.g., 0.05) suggests that the difference in model performance is statistically significant.
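The paired t-test from Protocol 2 reduces to a short calculation on the per-fold differences. The sketch below computes only the statistic and its degrees of freedom from the Python standard library. `scipy.stats.ttest_rel(scores_a, scores_b)` returns the same statistic together with a p-value. The fold scores are hypothetical. The sketch also assumes that both models were evaluated on exactly the same folds, in the same order.

```python
import math
import statistics
from typing import Sequence, Tuple


def paired_t_statistic(scores_a: Sequence[float],
                       scores_b: Sequence[float]) -> Tuple[float, int]:
    """Naive paired t statistic and degrees of freedom for per-fold metric values."""
    if len(scores_a) != len(scores_b) or len(scores_a) < 2:
        raise ValueError("need two equal-length sequences with at least two folds")
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    sd = statistics.stdev(diffs)  # sample standard deviation (n - 1 denominator)
    if sd == 0:
        raise ValueError("identical difference in every fold; t is undefined")
    t = statistics.mean(diffs) / (sd / math.sqrt(len(diffs)))
    return t, len(diffs) - 1


fold_scores_a = [0.80, 0.82, 0.78, 0.81, 0.79]  # hypothetical per-fold accuracies
fold_scores_b = [0.78, 0.80, 0.77, 0.80, 0.76]
t, df = paired_t_statistic(fold_scores_a, fold_scores_b)
print(round(t, 2), df)  # 4.81 4
```

With 4 degrees of freedom, the two-sided critical value at the 0.05 level is about 2.776, so this hypothetical difference would be reported as significant under the naive test.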

Advanced Considerations and Future Directions

The data-driven paradigm continues to evolve, pushing the boundaries of what is possible in environmental prediction and management. Key areas of advancement include the integration of physical laws with data-driven models and the development of frameworks for long-term, uncertain climate projections [4]. For instance, the Learning the Earth with AI and Physics (LEAP) initiative leverages AI to uncover patterns in vast climate datasets while embedding the physical laws and causal mechanisms of climate science into its algorithms [4]. Furthermore, addressing data scarcity remains a critical challenge. Future progress depends on establishing large, open, and transparent life-cycle assessment (LCA) databases and constructing more efficient, chemically relevant descriptors for model input [3]. The integration of large language models (LLMs) is also expected to provide new impetus for database building and feature engineering, further accelerating this transformative field [3].

Unique Characteristics of Environmental and Ecological Data

Environmental and ecological data present unique challenges and opportunities for machine learning (ML) applications. Unlike many other domains, ecological data are characterized by their complex spatial and temporal dependencies, high dimensionality, and multiscale interactions between biological and physical processes. As ecological systems face increasing pressures from climate change, biodiversity loss, and pollution, accurate predictive modeling has become essential for conservation planning, policy development, and sustainable resource management. This guide examines the distinctive attributes of environmental data through a comparative analysis of ML performance across multiple ecological applications, providing researchers with evidence-based insights for model selection and implementation.

Defining Characteristics of Environmental and Ecological Data

Environmental and ecological data possess several distinguishing features that directly impact ML model performance and selection strategies.

Spatial and Temporal Dependency

Ecological processes operate across nested spatial and temporal scales, creating complex dependency structures in the data. For instance, plant trait data collected along elevation gradients in Norway demonstrated how climate change impacts manifest differently across organizational levels from physiology to ecosystems [10]. This spatiotemporal autocorrelation violates the independence assumption common in many statistical models and requires specialized approaches that explicitly account for these dependencies.
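One widely used way to respect spatial dependence during validation is spatial block cross-validation. Samples are grouped into geographic blocks, and whole blocks are held out together, so nearby, autocorrelated points never sit on both sides of a train/test split. The sketch below assumes planar coordinates, a square grid of blocks, and round-robin assignment of blocks to folds. The block size is a hypothetical choice that in practice should exceed the range of spatial autocorrelation.

```python
import math
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def block_of(x: float, y: float, block_size: float) -> Tuple[int, int]:
    """Grid cell (block) containing a point; block_size uses the coordinates' units."""
    return math.floor(x / block_size), math.floor(y / block_size)


def spatial_block_folds(coords: Sequence[Tuple[float, float]], block_size: float,
                        n_folds: int = 5) -> List[Tuple[List[int], List[int]]]:
    """(train, test) index lists in which no spatial block appears on both sides."""
    blocks: Dict[Tuple[int, int], List[int]] = defaultdict(list)
    for idx, (x, y) in enumerate(coords):
        blocks[block_of(x, y, block_size)].append(idx)
    keys = sorted(blocks)
    if len(keys) < n_folds:
        raise ValueError("fewer spatial blocks than folds; use smaller blocks or fewer folds")
    folds = []
    for f in range(n_folds):
        test_keys = keys[f::n_folds]  # every n_folds-th block, so each block is tested once
        test = sorted(i for k in test_keys for i in blocks[k])
        test_set = set(test)
        train = [i for i in range(len(coords)) if i not in test_set]
        folds.append((train, test))
    return folds


# Hypothetical 10 x 10 sampling grid split into 5 x 5 blocks.
grid = [(float(x), float(y)) for x in range(10) for y in range(10)]
for train_idx, test_idx in spatial_block_folds(grid, block_size=5.0, n_folds=4):
    print(len(train_idx), len(test_idx))
```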

High Dimensionality and Multimodality

Modern ecological studies integrate diverse data types creating high-dimensional, multimodal datasets. The Norwegian plant trait study exemplifies this characteristic, combining 28,762 trait measurements with 2.26 billion leaf temperature readings, 3,696 ecosystem CO2 flux measurements, and high-resolution multispectral imagery [10]. Similarly, comprehensive water quality management in aquaculture requires simultaneous monitoring of 21 distinct parameters spanning physical, chemical, and biological indicators [9].

Nonlinearity and Threshold Responses

Ecological systems frequently exhibit nonlinear dynamics and threshold responses to environmental drivers. Research on Rose's mountain toad demonstrated counterintuitive survival patterns where adult mortality increased during wetter years despite the species' dependence on aquatic breeding habitats [11]. These complex nonlinear relationships challenge traditional modeling approaches but are well-suited to certain ML algorithms.

Comparative Performance of Machine Learning Models

Experimental evaluations across multiple environmental domains reveal significant variation in model performance depending on data characteristics and prediction tasks.

Table 1: Comparative Performance of ML Models Across Environmental Applications

| Application Domain | Top-Performing Models | Key Performance Metrics | Data Characteristics |
|---|---|---|---|
| Ground-level Ozone Prediction | XGBoost, Random Forest | R² = 0.873, RMSE = 8.17 μg/m³ [12] | Time-series pollution data with lagged features |
| Aquaculture Management | Neural Networks, Ensemble Methods | Mean cross-validation accuracy = 98.99% ± 1.64% (neural network) [9] | Multi-parameter water quality measurements (synthetic dataset) |
| Climate Emulation | Linear Pattern Scaling (LPS) | Outperformed deep learning on temperature prediction [13] | Climate model output data |
| Ecological Quality Assessment | CA-Markov Model | Predicted spatial ecological patterns [14] | Remote sensing imagery time series |
| Environmental Mobility | Random Forest Classification | F1 scores: 0.87 (very mobile), 0.81 (mobile), 0.96 (non-mobile) [15] | Chemical structure fingerprints |

Table 2: Model Performance Trade-offs in Environmental Applications

| Model Category | Strengths | Limitations | Ideal Use Cases |
|---|---|---|---|
| Tree-Based Models (XGBoost, RF) | High accuracy with tabular data, handles missing values well [12] | Limited extrapolation capability, less effective with spatial data | Pollution prediction, trait-based classification |
| Neural Networks | Excellent for complex patterns, high-dimensional data [9] | Data hunger, computational intensity, limited interpretability | Image analysis, complex system modeling |
| Physics-Informed Models | Strong extrapolation, incorporates domain knowledge [13] | May oversimplify complex processes | Climate projection, fundamental processes |
| Hybrid Approaches | Leverages strengths of multiple approaches [14] | Implementation complexity | Land use change, ecosystem forecasting |

Experimental Protocols and Methodologies

Lagged Feature Prediction for Ozone Pollution

The superior performance of XGBoost in ozone prediction (R² = 0.873) emerged from a rigorous experimental protocol incorporating historical context through lagged features [12].

Dataset Composition: The study utilized hourly ground-level air quality observations from January 1 to December 31, 2023, obtained from Station 1006A of the China National Environmental Monitoring Center, combined with meteorological reanalysis data from the ERA5-Land product.

Feature Engineering: The critical innovation was the incorporation of lagged features, including historical concentrations of ozone and nitrogen dioxide (NO₂) from the previous 1-3 hours. This temporal context significantly enhanced model performance compared to approaches using only current conditions.
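The lagging step can be sketched with the standard library alone. In the study, the lagged pollutant features were combined with ERA5-Land meteorological inputs. This sketch covers only the lagging step and assumes that the hourly series are already aligned and gap-free. The feature names (such as `O3_lag1`) and the example concentrations are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple

Row = Tuple[Dict[str, float], float]


def make_lagged_rows(series: Dict[str, Sequence[float]], target: str,
                     lags: Sequence[int] = (1, 2, 3)) -> List[Row]:
    """Turn aligned hourly series into (features, target) rows with lagged inputs.

    For every hour t with a full history, each series contributes its values at
    t-1, t-2, ... (named e.g. "O3_lag1"); the label is the target series at t.
    The first max(lags) hours are dropped because their history is incomplete.
    """
    n = len(series[target])
    if any(len(values) != n for values in series.values()):
        raise ValueError("all series must be aligned to the same hourly index")
    max_lag = max(lags)
    rows: List[Row] = []
    for t in range(max_lag, n):
        features = {f"{name}_lag{k}": values[t - k]
                    for name, values in series.items() for k in lags}
        rows.append((features, series[target][t]))
    return rows


# Hypothetical hourly concentrations in μg/m³.
hourly = {"O3": [40.0, 52.0, 61.0, 66.0, 58.0], "NO2": [30.0, 24.0, 18.0, 15.0, 21.0]}
rows = make_lagged_rows(hourly, target="O3")
print(len(rows), rows[0])  # the first row predicts hour 3 from hours 0 to 2
```

Only past values enter each row, which is what keeps the features usable at prediction time.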

Model Training Protocol:

- Feature Selection: XGBoost combined with SHAP analysis identified 11 key features from initial candidate variables, improving computational efficiency by 30% without sacrificing accuracy.

- Hyperparameter Tuning: Researchers employed GridSearchCV with TimeSeriesSplit (5-fold cross-validation) to prevent data leakage and maintain temporal integrity.

- Evaluation Metrics: Comprehensive assessment using R², RMSE, and MAE with particular attention to 24-hour prediction performance.
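The leakage-safe splitting and the three evaluation metrics can be illustrated with a small standard-library sketch. As far as we understand, `expanding_window_splits` mirrors the default behaviour of scikit-learn's `TimeSeriesSplit`: each test block follows all of its training data in time. In a real pipeline, one would instead pass `TimeSeriesSplit(n_splits=5)` as the `cv` argument of `GridSearchCV`. The function names here are illustrative.

```python
import math
from typing import Dict, Iterator, List, Sequence, Tuple


def expanding_window_splits(n_samples: int,
                            n_splits: int = 5) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train, test) index lists; each test block lies strictly after its training data."""
    test_size = n_samples // (n_splits + 1)
    if test_size == 0:
        raise ValueError("too few samples for the requested number of splits")
    first_test_start = n_samples - n_splits * test_size
    for start in range(first_test_start, n_samples, test_size):
        yield list(range(start)), list(range(start, start + test_size))


def regression_metrics(y_true: Sequence[float], y_pred: Sequence[float]) -> Dict[str, float]:
    """R², RMSE and MAE for one set of predictions."""
    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    mean_true = sum(y_true) / n
    ss_res = sum(r * r for r in residuals)
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("R² is undefined when y_true is constant")
    return {
        "r2": 1 - ss_res / ss_tot,
        "rmse": math.sqrt(ss_res / n),
        "mae": sum(abs(r) for r in residuals) / n,
    }


for train_idx, test_idx in expanding_window_splits(12, n_splits=5):
    print(train_idx[-1], test_idx)  # the last training hour always precedes the test hours

print(regression_metrics([1, 2, 3, 4], [1, 2, 3, 5]))  # {'r2': 0.8, 'rmse': 0.5, 'mae': 0.25}
```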

Water Quality Management Decision Support

The development of highly accurate ML models (mean cross-validation accuracy of 98.99% for the neural network) for tilapia aquaculture water quality management addressed the critical gap between prediction and actionable decisions [9].

Synthetic Dataset Development: Due to the absence of publicly available decision-focused datasets, researchers created a comprehensive synthetic dataset representing 20 critical water quality scenarios, including:

- Ammonia spikes (Total Ammonia Nitrogen = 2.0 mg/L)

- Low dissolved oxygen (DO = 4.0 mg/L)

- pH fluctuations (pH = 6.2)

- Combined stressors and emergency situations

Data Generation Methodology:

- Primary Parameter Setting: Key parameters for each scenario were set to values established in aquaculture literature.

- Secondary Parameter Generation: Remaining parameters were generated using realistic ranges and correlations derived from established literature.

- Controlled Variation: Multiple samples per scenario incorporated ±10-20% variation to simulate realistic measurement variability.
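The three generation steps can be sketched as a small sampler. This is a hypothetical illustration, not the procedure from [9]. It assumes uniform relative jitter of up to `max_variation` on the scenario-defining (primary) values. Secondary parameters are drawn independently from hypothetical plausible ranges, so the literature-derived correlations described above are not modelled. The parameter names and ranges are placeholders. The primary value mirrors the ammonia-spike scenario.

```python
import random
from typing import Dict, List, Tuple

Sample = Dict[str, object]


def generate_scenario_samples(scenario: str,
                              primary: Dict[str, float],
                              secondary_ranges: Dict[str, Tuple[float, float]],
                              n_samples: int,
                              max_variation: float = 0.15,
                              seed: int = 0) -> List[Sample]:
    """Synthetic samples for one management scenario.

    primary: literature-based values that define the scenario (e.g. TAN = 2.0 mg/L).
    secondary_ranges: hypothetical (low, high) ranges for the remaining parameters;
        they are sampled independently, so correlations are NOT modelled here.
    max_variation: relative jitter applied to primary values (0.15 means +/-15 %).
    """
    rng = random.Random(seed)  # seeded for reproducible datasets
    samples: List[Sample] = []
    for _ in range(n_samples):
        sample: Sample = {"scenario": scenario}
        for name, value in primary.items():
            sample[name] = value * (1 + rng.uniform(-max_variation, max_variation))
        for name, (low, high) in secondary_ranges.items():
            sample[name] = rng.uniform(low, high)
        samples.append(sample)
    return samples


ammonia_spike = generate_scenario_samples(
    "ammonia_spike",
    primary={"TAN_mg_per_L": 2.0},
    secondary_ranges={"DO_mg_per_L": (5.0, 8.0), "temperature_C": (26.0, 30.0)},  # hypothetical
    n_samples=8,
)
print(ammonia_spike[0])
```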

Preprocessing Pipeline:

- Class balancing using SMOTETomek to address imbalanced scenario frequencies

- Feature scaling to normalize parameter measurements

- Comprehensive evaluation including accuracy, precision, recall, F1-score with cross-validation
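SMOTETomek combines two steps. First, SMOTE oversamples the minority class by interpolating between neighbouring minority samples. Second, Tomek links are removed; a Tomek link is a pair of opposite-class samples that are each other's nearest neighbours, and removing these pairs cleans the class boundary. The sketch below covers only the SMOTE half, for a binary problem, to show where synthetic samples come from. In practice, `imblearn.combine.SMOTETomek().fit_resample(X, y)` performs both steps. Resampling is best applied to training folds only, so that synthetic points do not leak into evaluation.

```python
import math
import random
from typing import List, Sequence, Tuple

Point = List[float]


def smote_oversample(X: Sequence[Sequence[float]], y: Sequence[int], minority: int,
                     k: int = 5, seed: int = 0) -> Tuple[List[Point], List[int]]:
    """Binary-class SMOTE sketch: add synthetic minority points until both classes are equal.

    Each synthetic point lies on the segment between a randomly chosen minority
    sample and one of its k nearest minority neighbours (Euclidean distance).
    """
    rng = random.Random(seed)
    minority_pts = [list(x) for x, label in zip(X, y) if label == minority]
    if len(minority_pts) < 2:
        raise ValueError("need at least two minority samples")
    n_needed = (len(y) - len(minority_pts)) - len(minority_pts)
    X_out = [list(x) for x in X]
    y_out = list(y)
    for _ in range(max(0, n_needed)):
        base = rng.choice(minority_pts)
        neighbours = sorted((p for p in minority_pts if p is not base),
                            key=lambda p: math.dist(p, base))[:k]
        partner = rng.choice(neighbours)
        gap = rng.random()  # position along the segment, in [0, 1)
        X_out.append([b + gap * (n - b) for b, n in zip(base, partner)])
        y_out.append(minority)
    return X_out, y_out


X = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [5.0, 5.0], [6.0, 5.0], [5.0, 6.0], [6.0, 6.0], [5.5, 5.5]]
y = [1, 1, 1, 0, 0, 0, 0, 0]
X_bal, y_bal = smote_oversample(X, y, minority=1, k=2)
print(y_bal.count(0), y_bal.count(1))  # 5 5
```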

Climate Model Emulation Benchmarking

The demonstration that simpler physics-based models (Linear Pattern Scaling) can outperform deep learning for certain climate prediction tasks required careful experimental design to address natural variability in climate data [13].

Benchmarking Challenge: Natural climate variability (e.g., El Niño/La Niña oscillations) can distort standard evaluation metrics, creating misleading performance assessments.

Robust Evaluation Framework:

- Standard Benchmark Comparison: Initial comparison showed LPS outperforming deep learning on nearly all parameters, contradicting domain expectations for complex patterns.

- Variability-Adjusted Evaluation: Development of new evaluation methods with expanded data to address natural climate variability.

- Domain-Specific Validation: Revised evaluation confirmed deep learning advantages for local precipitation prediction while maintaining LPS superiority for temperature forecasting.

Implementation Insight: The study highlighted that model selection must consider specific prediction tasks, with deep learning showing particular value for problems involving extreme precipitation and aerosol impacts.
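In its simplest form, pattern scaling treats each grid cell's local value as a linear function of global-mean temperature, which explains why it is cheap to fit and hard to beat for temperature. The sketch below assumes an ordinary least-squares fit per cell. It may differ from the exact LPS configuration benchmarked in [13], and the toy two-cell data are hypothetical.

```python
from typing import List, Sequence, Tuple


def fit_pattern_scaling(global_temp: Sequence[float],
                        local_fields: Sequence[Sequence[float]]) -> List[Tuple[float, float]]:
    """Least-squares fit of local = intercept + slope * global_temp for every grid cell.

    global_temp: global-mean temperature per time step.
    local_fields: for each time step, the values of all grid cells.
    Returns one (intercept, slope) pair per grid cell.
    """
    n = len(global_temp)
    mean_g = sum(global_temp) / n
    var_g = sum((g - mean_g) ** 2 for g in global_temp)
    if var_g == 0:
        raise ValueError("global temperature must vary to fit a slope")
    coeffs = []
    for cell in range(len(local_fields[0])):
        series = [field[cell] for field in local_fields]
        mean_c = sum(series) / n
        cov = sum((g - mean_g) * (v - mean_c) for g, v in zip(global_temp, series))
        slope = cov / var_g
        coeffs.append((mean_c - slope * mean_g, slope))
    return coeffs


def emulate(coeffs: Sequence[Tuple[float, float]], global_temp_value: float) -> List[float]:
    """Local field implied by one global-mean temperature value."""
    return [a + b * global_temp_value for a, b in coeffs]


g = [0.0, 0.5, 1.0, 1.5]                              # hypothetical warming levels
fields = [[14.0 + 1.2 * t, 2.0 + 0.5 * t] for t in g]  # two hypothetical grid cells
print(emulate(fit_pattern_scaling(g, fields), 2.0))
```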

Visualization of Environmental Data Structures

The complex, multi-scale nature of environmental data requires specialized visualization approaches to understand relationships and dependencies.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Environmental ML research requires specialized data sources, processing tools, and analytical frameworks.

Table 3: Essential Research Tools for Environmental Machine Learning

| Tool Category | Specific Solutions | Function & Application | Representative Use |
|---|---|---|---|
| Data Sources | National Environmental Monitoring Center (CNEMC) data | Provides ground-level air quality observations for pollution modeling [12] | Ozone and NO₂ measurements for Beijing prediction |
| Data Sources | ERA5-Land reanalysis product | Supplies meteorological parameters (temperature, humidity, wind) [12] | Historical weather data for ozone prediction models |
| Data Sources | Vestland Climate Grid | Long-term climate and ecological monitoring across elevation gradients [10] | Plant trait-climate relationship studies |
| Processing Tools | Google Earth Engine (GEE) cloud platform | Processes multi-temporal remote sensing data for large-scale analysis [14] | Ecological quality assessment in Johor, Malaysia |
| Processing Tools | R packages (tidyverse, spectrolab, LeafArea) | Specialized statistical analysis and visualization of ecological data [10] | Plant functional trait data processing |
| Modeling Frameworks | Python Scikit-learn with TimeSeriesSplit | Prevents data leakage in temporal model validation [12] | Ozone prediction with lagged features |
| Modeling Frameworks | Adaptive Neuro-Fuzzy Inference Systems (ANFIS) | Combines neural networks with fuzzy logic for complex systems [6] | Life cycle assessment of agricultural products |
| Specialized Sensors | Multi-parameter water quality sensors | Monitors dissolved oxygen, pH, ammonia, temperature in aquaculture [9] | Real-time water quality management in tilapia farming |
| Specialized Sensors | Handheld hyperspectral sensors | Captures detailed spectral signatures of vegetation [10] | Plant trait and physiological status assessment |

The unique characteristics of environmental and ecological data—including spatiotemporal dependencies, multimodal sources, and complex nonlinearities—demand careful matching of machine learning approaches to specific prediction tasks. Evidence from comparative studies demonstrates that no single modeling approach dominates across all environmental applications. Instead, model selection must be guided by data characteristics, domain knowledge integration, and specific prediction requirements. Tree-based models like XGBoost excel with tabular environmental data, particularly when enhanced with temporal feature engineering, while simpler physics-based approaches remain valuable for fundamental climate processes. The most promising future direction lies in hybrid modeling frameworks that leverage the strengths of multiple approaches while explicitly accommodating the unique properties of environmental data through specialized preprocessing, feature engineering, and validation protocols tailored to ecological systems.

AI's role in environmental science is marked by a powerful contradiction: it is both a catalyst for a green technology revolution and a significant consumer of natural resources. For researchers and scientists, the key lies in strategically selecting and deploying models where their predictive accuracy and efficiency yield the greatest net environmental benefit. This guide objectively compares the performance of different AI approaches, providing the data and methodologies needed to inform these critical decisions.

Performance Comparison of AI Models in Environmental Research

The table below summarizes key quantitative findings on the performance and environmental impact of various AI models and approaches, providing a basis for comparison.

| Model / Approach | Reported Efficiency Gain / Performance | Environmental Cost / Impact | Key Application Context |
|---|---|---|---|
| AI for Environmental Data Analysis | Reduces decision-making time by >60% compared to traditional methods [16]. | Not specified; overall system efficiency is the primary metric. | General environmental data analysis and complex issue resolution [16]. |
| GPT-4 (Code Generation) | Can achieve functional correctness on programming problems using a multi-round correction process [17]. | Emitted 5 to 19 times more CO₂eq than human programmers for the same task [17]. | Solving programming problems from the USA Computing Olympiad (USACO) database [17]. |
| Smaller Models (e.g., GPT-4o-mini) | Can match human environmental impact when successful, but may have higher failure rates [17]. | Can match the environmental impact of human programmers upon success [17]. | Solving programming problems from the USA Computing Olympiad (USACO) database [17]. |
| Small Language Models (SLMs) | Cost-efficient, suitable for edge deployment, and easier to customize for specific domains [18]. | Lower infrastructure requirements and operational costs due to smaller size (1M-10B parameters) [18]. | Enterprise AI strategies, edge computing, and specialized agentic AI systems [18]. |
| Generative AI (Training) | N/A (Initial model creation phase). | GPT-3 training consumed ~1,287 MWh of electricity, generating ~552 tons of CO₂ [19]. | Training of large foundational models like OpenAI's GPT-3 and GPT-4 [19]. |
| Generative AI (Inference) | A single ChatGPT query consumes about 5x more electricity than a web search [19]. | Inference is estimated to account for 80-90% of total AI computing power and energy demands [20]. | Daily use of deployed models, such as queries to ChatGPT or other large language models [19] [20]. |

Experimental Protocols for Assessing AI Performance and Impact

To ensure objective and reproducible comparisons, researchers should adhere to standardized experimental protocols. The following methodologies are critical for evaluating both the functional performance and the environmental footprint of AI models.

Multi-Round Correction Process for Functional Accuracy

This protocol, derived from a comparative study of AI and human programmers, is designed to achieve functionally correct outputs from AI models, which is a prerequisite for a fair environmental impact assessment [17].

Objective: To iteratively guide an AI model to produce a functionally correct output (e.g., a piece of code that passes all test cases) and measure the resources consumed in the process [17].

Workflow:

- Problem Selection: Choose a task with clear, objective correctness criteria. The cited study used programming problems from the USA Computing Olympiad (USACO) database, which includes predefined test cases [17].

- Initial Prompting: The selected problem is formatted into a prompt and sent to the AI model via its API [17].

- Execution and Validation: The model's output is executed and validated against the ground-truth test cases [17].

- Iterative Correction: If the output fails, specific feedback based on the type of error (e.g., runtime error, wrong answer, time limit exceeded) is fed back to the model with a request to fix the code. This loop continues until either all tests pass or a predefined iteration limit (e.g., 100 rounds) is reached [17].
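The correction loop can be sketched as a generic driver. `model` and `run_tests` are hypothetical callables standing in for the API call and the USACO test harness. The feedback wording is illustrative, not the prompt used in [17]. The function returns the number of rounds because every extra round is another model call that adds to the energy bill.

```python
from typing import Callable, Optional, Tuple

Model = Callable[[str], str]                 # prompt -> candidate solution
TestRunner = Callable[[str], Optional[str]]  # solution -> None if all tests pass, else error type


def multi_round_correction(problem_prompt: str, model: Model, run_tests: TestRunner,
                           max_rounds: int = 100) -> Tuple[Optional[str], int]:
    """Query the model until its output passes all tests or max_rounds is reached.

    Returns (passing solution or None, number of model calls made).
    """
    prompt = problem_prompt
    for round_no in range(1, max_rounds + 1):
        candidate = model(prompt)
        error = run_tests(candidate)  # e.g. "runtime error", "wrong answer"
        if error is None:
            return candidate, round_no
        # Feed the error category back together with the failing attempt.
        prompt = (f"{problem_prompt}\n\nYour previous solution failed with: {error}.\n"
                  f"Previous solution:\n{candidate}\nPlease fix the code.")
    return None, max_rounds


# Toy stand-ins: the "model" only gets it right on its third attempt.
attempts = iter(["draft 1", "draft 2", "draft 3"])
solution, calls = multi_round_correction(
    "Solve problem X",
    model=lambda prompt: next(attempts),
    run_tests=lambda code: None if code == "draft 3" else "wrong answer",
)
print(solution, calls)  # draft 3 3
```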

The following diagram illustrates this iterative workflow:

Life Cycle Assessment (LCA) for Environmental Impact

This methodology provides a holistic framework for quantifying the environmental footprint of AI operations, crucial for making informed trade-off decisions [17].

Objective: To calculate the carbon dioxide equivalent (CO₂eq) emissions of AI inference requests, encompassing both operational and embodied energy costs [17].

Framework Components (based on the Ecologits LCA model): The total environmental impact is calculated using a two-part framework [17]:

- Usage Impacts: Operational energy consumed directly by the AI task, calculated as:

$$E_{\text{total}} = \text{PUE} \times \left(E_{\text{GPU}} + E_{\text{server}\setminus\text{GPU}}\right)$$

where PUE is the Power Usage Effectiveness of the data center; $E_{\text{GPU}}$ is the energy used by the GPUs, modeled as a function of the number of output tokens and the model's active parameters; and $E_{\text{server}\setminus\text{GPU}}$ is the energy used by the other server components.

- Embodied Impacts: Emissions from manufacturing the computing and cooling hardware, allocated to each inference request based on its resource consumption [17].

Application to Human Comparison: When comparing against human performance, as in the coding study, the environmental impact of human work is estimated using average computing power consumption (e.g., from running a laptop for the task duration) and associated emissions [17].
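The arithmetic of this two-part framework can be sketched as follows. This is not the Ecologits code. The per-request GPU and server energies are taken as inputs rather than derived from token counts, and converting energy to emissions through a single grid carbon-intensity factor is an assumption of the sketch. All numbers in the usage lines are hypothetical placeholders.

```python
def usage_energy_kwh(pue: float, e_gpu_kwh: float, e_server_other_kwh: float) -> float:
    """Operational energy: PUE * (GPU energy + energy of the rest of the server), in kWh."""
    if pue < 1.0:
        raise ValueError("PUE is total facility energy / IT energy, so it cannot be below 1")
    return pue * (e_gpu_kwh + e_server_other_kwh)


def request_emissions_kg(pue: float, e_gpu_kwh: float, e_server_other_kwh: float,
                         grid_kg_per_kwh: float, embodied_kg: float) -> float:
    """Usage emissions (energy x grid carbon intensity) plus the request's embodied share."""
    return usage_energy_kwh(pue, e_gpu_kwh, e_server_other_kwh) * grid_kg_per_kwh + embodied_kg


def human_emissions_kg(device_power_w: float, hours: float, grid_kg_per_kwh: float) -> float:
    """Baseline for a person doing the task on a computer drawing device_power_w watts."""
    return device_power_w / 1000.0 * hours * grid_kg_per_kwh


# Hypothetical placeholder values, for illustration only.
ai = request_emissions_kg(pue=1.2, e_gpu_kwh=0.002, e_server_other_kwh=0.001,
                          grid_kg_per_kwh=0.4, embodied_kg=0.0005)
human = human_emissions_kg(device_power_w=50, hours=2, grid_kg_per_kwh=0.4)
print(f"AI request: {ai:.5f} kg CO2eq, human baseline: {human:.3f} kg CO2eq")
```

In a multi-round protocol, the per-request figure would be summed over every model call made before the tests pass.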

The logical structure of this impact assessment is shown below:

The Scientist's Toolkit: Key Reagents & Materials

For researchers implementing and evaluating AI systems in environmental science, the following tools and concepts are essential.

| Item / Concept | Function / Relevance in Research |
|---|---|
| Life Cycle Assessment (LCA) | A standardized methodology (ISO 14044) for assessing the environmental impacts associated with all stages of a product's life, from raw material extraction to disposal. Critical for quantifying the true carbon cost of AI models [17]. |
| Power Usage Effectiveness (PUE) | A metric that measures a data center's energy efficiency. It is the ratio of total facility energy to IT equipment energy. A lower PUE indicates a more efficient facility and is a key variable in LCA calculations [17]. |
| Multi-round Correction Agent | An AI system designed to iteratively critique and correct its own outputs. This is a key experimental protocol for achieving functional accuracy in complex tasks, but it significantly increases the number of AI calls and energy use [17]. |
| Small Language Models (SLMs) | Models with 1 million to 10 billion parameters. They are a key reagent for reducing environmental impact due to their lower computational demands, suitability for edge deployment, and easier domain specialization [18]. |
| Pre-trained Models & APIs | Leveraging existing, general-purpose models via API or through fine-tuning. This is a key strategy for reducing energy consumption, as it avoids the immense cost of training new models from scratch [21]. |
| Ecologits Software Package | An open-source tool (version 0.8.1 cited) that employs LCA methodology to estimate the embodied and usage ecological impacts of AI inference requests, helping to automate impact calculations [17]. |

Strategic Implications for Research and Development

The data and methodologies presented lead to several critical conclusions for professionals in research and development:

- The Inference Problem is Paramount: While model training garners significant attention, the inference phase—the daily use of deployed models—is estimated to consume 80-90% of AI's computing power and is the primary driver of its long-term environmental footprint [20]. Optimization efforts must focus here.

- The High Cost of Marginal Gains: Research indicates that a substantial portion of training energy (about half, in one observation) can be spent chasing the last 2-3 percentage points of accuracy [22]. For many applications, accepting a "good enough" model can yield dramatic energy savings.

- Efficiency as a Driving Trend: The field is rapidly evolving towards more efficient practices. This includes the rise of Small Language Models (SLMs) [18], algorithmic improvements that double efficiency every eight to nine months (a trend termed "negaflops") [22], and hardware innovations that reduce precision without sacrificing performance [22].

- System-Level Optimization is Critical: Beyond the model itself, choices about data center location (for access to renewable energy and cooler climates) [22] [19], scheduling compute tasks for times of day with cleaner energy mixes [22], and using advanced cooling systems can drastically reduce the net environmental impact [21].

In conclusion, AI presents a dual reality for the green technology revolution. Its ability to accelerate environmental research is undeniable, yet this comes with a tangible resource cost. The path forward requires a meticulous, evidence-based approach where model selection is guided not only by predictive accuracy but also by computational efficiency, ultimately ensuring that the promise of AI contributes positively to global sustainability goals.

The predictive accuracy of machine learning (ML) models in environmental research is fundamentally constrained by the quality of the underlying data. While model architecture is often a focus of optimization, data-centric challenges—specifically data scarcity, class imbalance, and difficulties in measuring trace concentrations—represent significant and frequently underestimated pitfalls. These issues can lead to models that are imprecise, lack generalizability, or fail to detect critical, low-frequency environmental events. Success in this field requires a rigorous approach to data collection, preprocessing, and model evaluation to ensure that predictions are both statistically sound and actionable for researchers and policymakers. This guide objectively compares the performance of various methodological approaches and ML models designed to overcome these common data pitfalls, providing a framework for developing more reliable environmental forecasting tools.

Foundational Concepts and Data Pitfalls

Defining Core Data Challenges

- Data Scarcity: A "too-small-for-purpose sample size," which can ruin a study by resulting in overfitting, imprecision, and a lack of statistical power [23]. In environmental contexts, collecting extensive, high-quality labeled data is often prohibitively expensive or logistically complex.

- Class Imbalance: A common issue in classification problems where the classes of interest are not represented equally. In predictive monitoring, for instance, crucial events like pollutant spills or equipment failures are typically rare compared to normal operation data.

- Trace Concentrations: Measuring environmental variables that are present at very low levels, such as specific heavy metals or pollutants, is subject to significant challenges. Data can be error-prone and contain measurement and misclassification errors, which are often wrongly considered unimportant [23]. The "noisy data fallacy" is the misconception that only the strongest effects will be detected in such data, when in reality, the relationships can be complex and unpredictable [23].

The Impact of Data Pitfalls on Model Performance

These data challenges directly undermine the reliability of ML models. Data scarcity can cause a model to learn idiosyncrasies of a limited dataset that do not generalize to the broader population, a problem known as overfitting [23]. Class imbalance can lead a model to become biased toward the majority class, achieving high accuracy by simply ignoring the rare but often most important events. Finally, the presence of measurement error in data on trace concentrations can introduce bias and obscure the true relationships between variables, leading to flawed conclusions.
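The majority-class trap described above is easy to reproduce. The minimal sketch below uses a hypothetical monitoring record of 95 normal readings and 5 pollution events, and a degenerate "model" that always predicts "normal"; the function names and data are illustrative only.

```python
def accuracy(y_true, y_pred):
    """Fraction of all predictions that are correct."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def recall(y_true, y_pred, positive=1):
    """Fraction of actual positive events that the model catches."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    return tp / (tp + fn) if (tp + fn) else 0.0


# Hypothetical record: 0 = normal operation, 1 = pollution event.
y_true = [0] * 95 + [1] * 5
# Degenerate classifier that always predicts the majority class.
y_pred = [0] * len(y_true)

print(accuracy(y_true, y_pred))  # 0.95
print(recall(y_true, y_pred))    # 0.0
```

A 95% accuracy here coexists with a recall of zero on the events that matter, which is why minority-class metrics should be reported alongside accuracy for imbalanced environmental data.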

Comparative Analysis of Methodologies and Model Performance

Strategies for Mitigating Data Scarcity and Imbalance

Different strategies offer distinct advantages and trade-offs for handling scarce or imbalanced datasets. The table below summarizes the performance of common approaches based on documented experimental protocols.

Table 1: Performance Comparison of Mitigation Strategies for Data Scarcity and Imbalance

| Methodology | Experimental Protocol | Key Performance Findings | Advantages | Limitations/Disadvantages |
|---|---|---|---|---|
| Synthetic Data Generation [9] | Define critical scenarios based on literature/expert knowledge; generate parameter values using realistic ranges and correlations; introduce controlled variation (±10-20%) to base values. | Created a robust foundation for model development where real-world data were absent; enabled multiple ML models to achieve high accuracy (>98%) in decision-support tasks. | Directly addresses complete data absence; allows controlled, scenario-specific data creation. | Fidelity depends on the accuracy of the underlying assumptions and expert knowledge. |
| Algorithmic Data Balancing (SMOTETomek) [9] | Apply a hybrid sampling technique combining Synthetic Minority Over-sampling (SMOTE) with Tomek-link undersampling; integrate it into the preprocessing workflow before model training. | Effectively balanced a multi-class dataset, enabling robust model training; used in a study where top models achieved perfect accuracy on a held-out test set. | Addresses class imbalance directly in the data space; can improve model performance on minority classes. | May increase computational overhead; can introduce noise if not carefully tuned. |
| Simulation & Physics-Based Emulators [13] | Use simpler, physics-based models (e.g., Linear Pattern Scaling) to generate data or predictions; compare their performance against complex deep-learning models on a standardized benchmark. | In climate prediction, simpler models outperformed deep learning for estimating regional surface temperatures, while deep learning was better for local rainfall estimates. | Often more interpretable and computationally efficient; leverages existing scientific knowledge. | May lack the flexibility to capture complex, non-linear relationships as effectively as deep learning. |
| Ensemble Modeling (Voting Classifier) [9] | Combine predictions from multiple base models (e.g., Random Forest, XGBoost, Neural Networks); use majority or weighted voting to determine the final prediction. | Multiple ensemble and individual models achieved perfect accuracy (100%) on a test set for a water quality management task, with cross-validation confirming high robustness. | Leverages the strengths of diverse models, reducing variance and improving generalization. | Increased computational cost and complexity in training and deployment. |
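The SMOTETomek entry in Table 1 combines two steps: SMOTE creates synthetic minority samples by interpolating between a minority sample and one of its nearest minority-class neighbors, and Tomek-link removal cleans class boundaries by discarding points that form opposite-class, mutually-nearest-neighbor pairs. The from-scratch sketch below illustrates both on small 2-D tuples using only the Python standard library. It is a simplified teaching version, not the implementation used in [9]; in practice the `SMOTETomek` class in the imbalanced-learn package (`imblearn.combine`) performs both steps. Implementations differ on whether both members of a Tomek link are removed, and this sketch drops only the majority-class member. Function names and toy data are hypothetical.

```python
import math
import random


def _nearest(points, i, candidates, k):
    """Indices of the k candidates closest (Euclidean) to points[i], excluding i."""
    others = [j for j in candidates if j != i]
    others.sort(key=lambda j: math.dist(points[i], points[j]))
    return others[:k]


def smote(X, y, minority, n_new, k=3, seed=0):
    """Append n_new synthetic minority samples (needs at least two minority points)."""
    rng = random.Random(seed)
    idx = [i for i, label in enumerate(y) if label == minority]
    X_out, y_out = list(X), list(y)
    for _ in range(n_new):
        i = rng.choice(idx)
        j = rng.choice(_nearest(X, i, idx, k))
        gap = rng.random()  # interpolation factor in [0, 1)
        X_out.append(tuple(a + gap * (b - a) for a, b in zip(X[i], X[j])))
        y_out.append(minority)
    return X_out, y_out


def remove_tomek_links(X, y, majority):
    """Drop majority samples whose nearest neighbor is an opposite-class point that
    has them as its own nearest neighbor (a Tomek link)."""
    all_idx = range(len(X))
    nn = [_nearest(X, i, all_idx, 1)[0] for i in all_idx]
    drop = {i for i in all_idx
            if nn[nn[i]] == i and y[i] != y[nn[i]] and y[i] == majority}
    keep = [i for i in all_idx if i not in drop]
    return [X[i] for i in keep], [y[i] for i in keep]


# Hypothetical toy data: class 1 is the minority.
X = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0), (3.0, 3.0), (3.2, 3.0), (3.5, 3.5)]
y = [0, 0, 0, 0, 1, 0, 1]
X_bal, y_bal = remove_tomek_links(*smote(X, y, minority=1, n_new=4), majority=0)
```

Oversampling first and cleaning second mirrors the order implied by the combined technique's name; the synthetic points always lie on segments between existing minority samples, which is why the generated data can only be as representative as the minority samples it starts from.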

Model Performance on Processed Environmental Data

Once data challenges are mitigated, selecting an appropriate ML model is crucial. The following table compares the performance of various models applied to preprocessed environmental data.

Table 2: Comparative Performance of Machine Learning Models on Environmental Prediction Tasks

| Model | Application Context | Reported Performance Metrics | Comparative Advantage |
|---|---|---|---|
| Random Forest | Water Quality Management Decision Support [9] | 100% accuracy, 100% F1-score on test set. | High accuracy, robust to overfitting, handles non-linear relationships well. |
| Gradient Boosting (XGBoost) | Water Quality Management Decision Support [9] | 100% accuracy, 100% F1-score on test set. | High predictive power and efficiency; often a top performer on structured data. |
| Neural Network | Water Quality Management Decision Support [9] | 98.99% ± 1.64% mean accuracy (cross-validation). | High capacity for learning complex, non-linear patterns from data. |
| Linear Pattern Scaling (LPS) | Climate Emulation (Local Temperature) [13] | Outperformed deep-learning models on temperature prediction in a robust evaluation. | Superior for linear relationships, highly interpretable, computationally efficient. |
| Lasso Regression | Predicting PM2.5 Air Quality Index [24] | Model: PM2.5 AQI = 83.08 - 10.30 × Humidity - 0.13 × Temp; adjusted R²: 0.15; RMSE: 25.36. | Performs automatic variable selection, prevents overfitting via regularization. |
| Deep Learning Model | Climate Emulation (Local Precipitation) [13] | Outperformed Linear Pattern Scaling for precipitation prediction in a robust evaluation. | Best for capturing non-linearity and complex patterns in specific contexts like rainfall. |
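Table 2 reports the Lasso model's fit as an adjusted R² and an RMSE. The sketch below shows how these two metrics are conventionally computed; adjusted R² penalizes R² for the number of predictors p, which is why a two-predictor model with weak explanatory power (such as the humidity and temperature model above) can score as low as 0.15. The functions and the toy data are hypothetical illustrations, not the evaluation code of [24].

```python
import math


def rmse(y_true, y_pred):
    """Root-mean-square error, in the same units as the target."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def adjusted_r_squared(r2, n_samples, n_predictors):
    """1 - (1 - R^2) * (n - 1) / (n - p - 1)."""
    if n_samples - n_predictors - 1 <= 0:
        raise ValueError("need more samples than predictors + 1")
    return 1.0 - (1.0 - r2) * (n_samples - 1) / (n_samples - n_predictors - 1)


# Hypothetical toy data.
obs = [1.0, 2.0, 3.0, 4.0]
pred = [1.5, 1.5, 3.5, 3.5]
r2 = r_squared(obs, pred)                       # 0.8 (up to floating-point rounding)
adj = adjusted_r_squared(r2, len(obs), 1)       # about 0.7
err = rmse(obs, pred)                           # 0.5
```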

Experimental Protocols in Practice

Detailed Workflow: From Data Acquisition to Model Validation

The following diagram illustrates a consolidated experimental workflow, synthesized from multiple studies, for handling environmental data pitfalls.

Key Methodological Steps Explained

- Problem Definition & Scenario Identification: The process begins by clearly defining the research question and the specific environmental scenarios to be modeled, often derived from literature and expert consultation [9]. This step is critical for determining subsequent data needs.

- Data Acquisition & Preprocessing: Data is collected from relevant sources (e.g., IoT sensors, historical databases). Preprocessing involves cleaning the data and handling missing values, which is crucial as "any method of analysis may fail to accurately predict... performance" with incomplete data [25]. Feature scaling is also applied to normalize parameter ranges [9] [24].

- Exploratory Data Analysis (EDA): This phase involves visualizing and analyzing the data to understand distributions, identify potential imbalances, and detect outliers, as well as to check modeling prerequisites such as normality [24].

- Addressing Data Pitfalls: This is the core mitigation step. Based on the EDA, researchers may:

- Generate synthetic data to cover critical but unobserved scenarios [9].

- Apply class-balancing algorithms like SMOTETomek to rectify imbalanced datasets [9].

- Employ physics-based simulations to augment data or serve as a baseline, acknowledging that simpler models can sometimes outperform complex AI [13].

- Model Training & Selection: Multiple ML algorithms are trained on the processed dataset. Studies often compare a suite of models, from regularized linear regressions [24] to ensemble methods and neural networks [9].

- Robust Model Evaluation: A critical step to avoid skewed results. This involves using cross-validation to ensure robustness and applying improved benchmarking techniques that account for factors like natural climate variability, which can distort standard performance scores [13]. Comparing complex models against simpler baselines is essential.
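One common way to make the comparison against a simpler baseline quantitative is a mean-squared-error skill score, $1 - \text{MSE}_{\text{model}} / \text{MSE}_{\text{baseline}}$. The sketch below assumes a climatological-mean baseline computed from the training period only (so no test information leaks into it); the observations, predictions and baseline value are hypothetical.

```python
def mse(y_true, y_pred):
    """Mean squared error."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def skill_score(y_true, y_model, y_baseline):
    """MSE skill score: 1 is perfect, 0 matches the baseline, negative is worse than it."""
    return 1.0 - mse(y_true, y_model) / mse(y_true, y_baseline)


# Hypothetical held-out observations and predictions.
observed = [10.0, 14.0, 12.0, 16.0]
training_mean = 12.0  # climatology baseline from the training period only
baseline = [training_mean] * len(observed)
model = [11.0, 13.0, 12.0, 15.0]

print(skill_score(observed, model, baseline))  # 0.875
```

A complex model whose skill score is near zero (or negative) against such a baseline adds little beyond the simple reference, regardless of how its raw error looks in isolation.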

The Scientist's Toolkit: Essential Research Reagents & Solutions

In the context of computational environmental science, "research reagents" refer to the essential software tools, algorithms, and data preparation techniques that enable robust model development.

Table 3: Key Computational Reagents for Environmental ML Research

| Tool/Solution | Category | Function & Application |
|---|---|---|
| SMOTETomek [9] | Algorithmic Data Balancer | A hybrid sampling technique that reduces class imbalance by generating synthetic minority class samples (SMOTE) and cleaning the data space (Tomek links). |
| Synthetic Data Generator | Data Augmentation Tool | Creates representative datasets based on expert-defined scenarios and realistic parameter ranges, mitigating total data scarcity [9]. |
| Linear Pattern Scaling (LPS) [13] | Physics-Based Emulator | A simple, interpretable model based on physical relationships. Serves as a high-performance baseline and robust solution for certain linear prediction tasks. |
| Voting Classifier [9] | Ensemble Model | Combines predictions from multiple base estimators (e.g., Random Forest, XGBoost) to improve generalizability and accuracy. |
| Lasso/Ridge Regression [24] | Regularized Linear Model | Linear models with penalty terms that prevent overfitting and, in Lasso's case, perform automatic variable selection. Useful for datasets with many predictors. |
| Color Brewer 2.0 [26] | Visualization Aid | Provides empirically tested and accessible color palettes for data visualization, ensuring charts are interpretable for all audiences, including those with color vision deficiencies. |
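The Voting Classifier listed in Table 3 combines the class labels predicted by several base models. The sketch below is a minimal, optionally weighted hard-voting combiner written from scratch; scikit-learn provides the same idea as `sklearn.ensemble.VotingClassifier` (with `voting="hard"` or `voting="soft"`). The management-action labels and per-model predictions are hypothetical, and the tie-breaking rule (the label counted first wins) is an arbitrary choice of this sketch.

```python
from collections import Counter


def hard_vote(predictions_per_model, weights=None):
    """Combine per-model label lists by (optionally weighted) majority vote."""
    weights = weights or [1.0] * len(predictions_per_model)
    combined = []
    for sample_preds in zip(*predictions_per_model):
        tally = Counter()
        for label, weight in zip(sample_preds, weights):
            tally[label] += weight
        # most_common keeps insertion order among ties, so the first-counted label wins.
        combined.append(tally.most_common(1)[0][0])
    return combined


# Hypothetical predictions from three base models for three water samples.
rf = ["treat", "aerate", "none"]
xgb = ["treat", "none", "none"]
nn = ["aerate", "aerate", "none"]

print(hard_vote([rf, xgb, nn]))                     # ['treat', 'aerate', 'none']
print(hard_vote([rf, xgb, nn], weights=[1, 1, 3]))  # ['aerate', 'aerate', 'none']
```

Weighting lets a model that has proven more reliable in validation override the others, at the cost of an extra set of hyperparameters to tune.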

Navigating the pitfalls of data scarcity, imbalance, and trace concentration measurement is a prerequisite for developing accurate machine learning models in environmental research. Evidence shows that no single model or strategy is universally superior. The optimal approach depends on the specific problem context: simpler, physics-based models like Linear Pattern Scaling can be remarkably effective and efficient for well-understood, linear relationships [13], while ensemble methods and neural networks excel at capturing complex, non-linear patterns when sufficient, well-preprocessed data is available [9]. Crucially, the commitment to rigorous methodologies—including synthetic data generation, strategic class balancing, and, most importantly, robust evaluation against simple baselines—is what ultimately ensures model predictions are reliable and fit for purpose in guiding critical environmental decisions.

From Theory to Practice: Methodologies and Real-World Applications in Predictive Modeling

Machine learning (ML) has emerged as a transformative tool in environmental science, enabling researchers to analyze complex ecological systems, predict phenomena with high accuracy, and inform policy decisions. Environmental data present unique challenges, including high dimensionality, spatiotemporal dependencies, and complex nonlinear relationships between variables. Unlike traditional statistical methods that require explicit parameterization of physicochemical mechanisms, ML algorithms operate within a non-parametric paradigm, autonomously extracting discriminative features from multidimensional datasets by learning directly from the data [12]. This capability makes ML particularly valuable for modeling intricate environmental processes such as atmospheric chemistry, hydrological systems, and ecological patterns.

The application of ML in environmental prediction spans numerous domains including air and water quality monitoring, land use classification, energy consumption forecasting, and climate impact assessment. As noted in studies of urban heat distribution, while ML is already being used for predictions in environmental science, it remains crucial to assess whether data-driven models that successfully predict a phenomenon are representationally accurate and actually increase our understanding of the phenomenon [27]. This comparative guide examines the performance of key machine learning algorithms across various environmental prediction tasks, providing researchers with evidence-based insights for selecting appropriate methodologies for their specific applications.

Key Machine Learning Algorithms for Environmental Prediction

Algorithm Categories and Characteristics

Environmental prediction utilizes diverse machine learning approaches, each with distinct strengths for handling different data types and prediction tasks:

- Random Forest (RF): An ensemble learning method that constructs multiple decision trees during training and outputs the mode of the trees' classes (classification) or their mean prediction (regression).

- Support Vector Machines (SVM): Supervised learning models for classification and regression that, for classification, find the optimal (maximum-margin) hyperplane separating classes in a high-dimensional feature space.

- Artificial Neural Networks (ANN): Computing systems inspired by biological neural networks that learn to perform tasks from examples rather than from explicitly programmed, task-specific rules.

- Gradient Boosting Models (GBM), including XGBoost: Ensemble techniques that build models sequentially, with each new model attempting to correct the errors of the previous ones.

- Long Short-Term Memory (LSTM) networks: A type of recurrent neural network capable of learning long-term dependencies in sequential data, which makes them particularly valuable for time-series forecasting.
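To make "each new model attempting to correct the errors of the previous ones" concrete, the toy sketch below boosts one-split regression trees (stumps) on a single input feature under squared-error loss: every round fits a stump to the residuals left by the current ensemble and adds a shrunken copy of it. This illustrates the boosting idea only; XGBoost and other production libraries add regularization, multi-feature trees and many other refinements. Function names and data are hypothetical.

```python
def fit_stump(x, residuals):
    """Least-squares single-split regression stump on 1-D inputs."""
    best = None
    for threshold in sorted(set(x))[:-1]:  # excluding the max keeps both sides non-empty
        left = [r for xi, r in zip(x, residuals) if xi <= threshold]
        right = [r for xi, r in zip(x, residuals) if xi > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = (sum((r - left_mean) ** 2 for r in left)
               + sum((r - right_mean) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    if best is None:
        raise ValueError("need at least two distinct x values to split")
    _, t, left_mean, right_mean = best
    return lambda xi: left_mean if xi <= t else right_mean


def gradient_boost(x, y, n_rounds=50, learning_rate=0.1):
    """Return a predictor built from n_rounds stumps fitted to successive residuals."""
    base = sum(y) / len(y)  # start from the mean prediction
    stumps = []

    def predict(xi):
        return base + learning_rate * sum(stump(xi) for stump in stumps)

    for _ in range(n_rounds):
        # Residuals are the negative gradient of (half) squared loss at current predictions.
        residuals = [yi - predict(xi) for xi, yi in zip(x, y)]
        stumps.append(fit_stump(x, residuals))
    return predict


# Hypothetical step-shaped data.
model = gradient_boost([1, 2, 3, 4, 5, 6], [1, 1, 1, 5, 5, 5])
```

The learning rate shrinks each correction, so the ensemble approaches the data gradually over many rounds instead of fitting it in one step; this trade-off between rounds and shrinkage is one of the main tuning choices in gradient boosting.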

Performance Comparison Across Environmental Domains

Table 1: Comparative Performance of ML Algorithms in Environmental Prediction Tasks

| Environmental Domain | Best Performing Algorithm(s) | Performance Metrics | Key Findings | Citation |
|---|---|---|---|---|
| Water Quality Anomaly Detection | Modified Encoder-Decoder with Quality Index | Accuracy: 89.18%, Precision: 85.54%, Recall: 94.02% | Superior anomaly detection in treatment plants using adaptive quality assessment | [28] |
| Land Use/Land Cover Classification | Random Forest, Artificial Neural Network | Overall Accuracy: 94-96%, Kappa: 0.91-0.93 | RF and ANN outperformed SVM in urban LULC classification of Lusaka and Colombo | [29] |
| Ground-Level Ozone Prediction | XGBoost with Lagged Features | R²: 0.873, RMSE: 8.17 μg/m³ | Lagged Feature Prediction Model significantly enhanced accuracy across all algorithms | [12] |
| Corporate Green Innovation | Gradient Boosting Model | Superior to Linear Model, Decision Tree, and Random Forest | Better captured non-linear relationships in corporate environmental performance data | [30] |
| Coastal Wetland Classification | Random Forest | Higher accuracy than K-Nearest Neighbors | Pixel-based classification outperformed object-based analysis in heterogeneous areas | [31] |
| Energy Consumption Prediction | Ridge Algorithm | Lowest MSE across multiple sectors | Outperformed Lasso, Elastic Net, Extra Tree, RF, and K Neighbors in efficiency and accuracy | [32] |

Experimental Protocols and Methodologies

Data Preparation and Feature Engineering

Successful environmental prediction requires meticulous data preparation and strategic feature engineering. In the ozone prediction study, researchers implemented a Lagged Feature Prediction Model (LFPM) that incorporated historical concentrations of ozone and nitrogen dioxide from the past 3 hours as lagged features [12]. This approach recognized that ozone concentration is influenced by the accumulation effect of precursor pollutants and meteorological conditions with time lags. The experimental design utilized hourly ground-level air quality observations from the China National Environmental Monitoring Center network combined with meteorological parameters from the ERA5-Land reanalysis product with 0.25° × 0.25° spatial resolution.
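The lagged-feature idea can be sketched with plain Python lists. The hypothetical helper below pairs each hour t with the ozone and nitrogen dioxide values observed at t-1, t-2 and t-3, following the 3-hour window described above; rows without a full lag history are dropped. Variable names and values are illustrative, and in a pandas workflow the same result is usually obtained with `Series.shift`. Which current-hour values may serve as predictors is a modeling decision; the current ozone value is the prediction target, not a feature.

```python
def add_lag_features(columns, lags=(1, 2, 3)):
    """columns maps a variable name (e.g. 'o3', 'no2') to an hourly list of equal length.

    Returns one dict per usable hour containing the current values plus
    '<name>_lag<k>' entries; the first max(lags) hours are dropped.
    """
    lengths = {len(values) for values in columns.values()}
    if len(lengths) != 1:
        raise ValueError("all series must have the same length")
    n = lengths.pop()
    max_lag = max(lags)
    rows = []
    for t in range(max_lag, n):
        row = {name: values[t] for name, values in columns.items()}
        for name, values in columns.items():
            for k in lags:
                row[f"{name}_lag{k}"] = values[t - k]
        rows.append(row)
    return rows


# Hypothetical hourly concentrations.
hourly = {"o3": [10, 20, 30, 40, 50], "no2": [1, 2, 3, 4, 5]}
rows = add_lag_features(hourly)
# rows[0] -> {'o3': 40, 'no2': 4, 'o3_lag1': 30, 'o3_lag2': 20, 'o3_lag3': 10,
#             'no2_lag1': 3, 'no2_lag2': 2, 'no2_lag3': 1}
```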

For land use and land cover classification, the protocol involved acquiring Landsat Thematic Mapper (TM) and Operational Land Imager (OLI) imagery for multiple time periods (1995-2023) [29]. The images underwent radiometric and atmospheric correction before classification. Training data were collected through stratified random sampling, with reference polygons digitized using high-resolution ancillary data and field verification. Missing data from environmental sensors also undermine reliability; to address this, researchers have developed ensemble imputation methods that simultaneously account for the time dependence of each univariate series and the correlations among multivariate variables [33].

Model Training and Validation Approaches

Model validation in environmental prediction requires special consideration of temporal and spatial dependencies. In the ozone prediction study, researchers used TimeSeriesSplit with five splits to prevent data leakage and respect the temporal order of the data [12]. This approach progressively expands the training window while preserving time order, using the subsequent block of data as the validation set to simulate real-world forecasting. Hyperparameter tuning was performed using GridSearchCV from Python's scikit-learn library.
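The expanding-window scheme can be sketched in a few lines. The hypothetical generator below is intended to mirror the default behavior of scikit-learn's `TimeSeriesSplit(n_splits=5)` (no gap between training and test blocks, no cap on training size), where each test block has `n_samples // (n_splits + 1)` samples; it is an illustration to check against the scikit-learn documentation, not a replacement for that class.

```python
def expanding_window_splits(n_samples, n_splits=5):
    """Yield (train_indices, test_indices); training data always precede test data."""
    test_size = n_samples // (n_splits + 1)
    if test_size == 0:
        raise ValueError("too few samples for the requested number of splits")
    first_test_start = n_samples - n_splits * test_size
    for test_start in range(first_test_start, n_samples, test_size):
        train = list(range(test_start))  # everything before the test block
        test = list(range(test_start, test_start + test_size))
        yield train, test


# Hypothetical: 12 hourly observations, 5 splits.
for train, test in expanding_window_splits(12, 5):
    print(len(train), test)
# 2 [2, 3]
# 4 [4, 5]
# 6 [6, 7]
# 8 [8, 9]
# 10 [10, 11]
```

Because no validation index ever precedes a training index, the model is never scored on data from before the period it was trained on, which is the leakage that ordinary shuffled k-fold would allow for time series.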

For image classification tasks, standard validation metrics include Overall Accuracy (OA), Kappa coefficient, producer's accuracy, and user's accuracy [29] [31]. These metrics provide complementary information about classification performance: producer's accuracy measures the proportion of reference pixels of a class that are correctly classified (the complement of omission error), while user's accuracy indicates the probability that a pixel classified into a category actually represents that category on the ground.

Diagram 1: Experimental workflow for environmental prediction showing the sequential process from data collection to interpretation, with common algorithm-domain applications.

Research Reagent Solutions: Essential Tools for Environmental ML

Table 2: Essential Research Tools and Data Sources for Environmental Machine Learning

| Tool Category | Specific Tools/Sources | Application in Environmental ML | Key Features | Citation |
|---|---|---|---|---|
| Remote Sensing Platforms | Landsat TM/OLI, UAV-mounted multispectral sensors | Land use classification, vegetation monitoring | Multi-temporal data, various spatial resolutions | [29] [31] |
| Environmental Sensor Networks | IoT-based environmental sensors, China National Environmental Monitoring Center | Air/water quality monitoring, real-time data collection | Measure multiple parameters (CO, CO2, PM2.5, NO2, etc.) | [12] [33] |
| Meteorological Data Sources | ERA5-Land reanalysis, local weather stations | Climate and pollution modeling | Gridded data, multiple meteorological variables | [12] |
| Software Libraries | Python scikit-learn, TensorFlow, R caret | Model implementation and training | Pre-built algorithms, hyperparameter tuning tools | [12] [29] |
| Validation Frameworks | TimeSeriesSplit, k-fold Cross-Validation | Model performance assessment | Prevents data leakage, robust accuracy estimation | [12] |
| Feature Engineering Tools | SHAP, Principal Component Analysis | Feature selection and importance analysis | Model interpretability, dimensionality reduction | [12] |

Case Studies in Environmental Prediction

Urban Land Use and Land Cover Classification

A comprehensive study compared RF, ANN, and SVM for detecting spatio-temporal land use-land cover dynamics in Lusaka and Colombo from 1995 to 2023 [29]. The research utilized Landsat Thematic Mapper (TM) and Operational Land Imager (OLI) imagery, with results showing that RF and ANN models exhibited superior performance: both achieved a Mean Overall Accuracy (MOA) of 96% for Colombo, while for Lusaka RF and ANN achieved 96% and 94%, respectively. The RF algorithm notably produced slightly higher overall accuracy and kappa coefficients (0.92-0.97) than both the ANN and SVM models across both study areas. The study revealed significant land use changes, with vegetation expanding by 11,990 ha (60.4%) in Lusaka during 1995-2005, primarily through conversion of bare lands, while built-up areas grew substantially (71%) from 2005 to 2023.

Ground-Level Ozone Prediction in Beijing

A systematic comparison of nine machine learning methods for predicting ground-level ozone pollution in Beijing demonstrated the superior performance of XGBoost when combined with lagged features [12]. The study incorporated historical concentrations of ozone and nitrogen dioxide from the past 3 hours as lagged features. Initial results using only meteorological variables showed limited accuracy (LSTM-based methods achieved R² = 0.479). Adding five pollutant variables markedly improved predictive performance across all methods, with XGBoost achieving the highest accuracy (R² = 0.767). Further application of the Lagged Feature Prediction Model (LFPM) enhanced prediction accuracy for all nine methods, with XGBoost leading (R² = 0.873, RMSE = 8.17 μg/m³), representing a 125% relative improvement in R² compared to meteorological-variable-only predictions.

Water Quality Anomaly Detection

Research on water quality monitoring introduced a machine learning approach with a modified Quality Index (QI) for anomaly detection in treatment plants [28]. The method integrated an encoder-decoder architecture with real-time anomaly detection and adaptive QI computation, providing dynamic evaluation of water quality. Experimental results demonstrated superior performance with accuracy of 89.18%, precision of 85.54%, recall of 94.02%, and Critical Success Index of 93.42%. The revised QI was continuously updated using real-time sensor data, aiding decision-making in treatment operations. This approach highlighted the practical utility of combining machine learning with adaptive quality assessment for improving water treatment plant efficiency.

Diagram 2: Data-algorithm-application relationships in environmental prediction showing how different data types inform algorithm selection for specific environmental domains.

The comparative analysis of machine learning algorithms for environmental prediction reveals that algorithm performance is highly dependent on the specific application domain, data characteristics, and feature engineering strategies. Ensemble methods, particularly Random Forest and Gradient Boosting variants like XGBoost, consistently demonstrate strong performance across multiple environmental domains including air quality prediction, land use classification, and water quality monitoring. The success of these algorithms stems from their ability to model complex nonlinear relationships while maintaining robustness against overfitting.

The integration of domain knowledge through feature engineering significantly enhances model performance, as evidenced by the substantial improvements achieved through lagged features in ozone prediction [12] and adaptive quality indices in water quality monitoring [28]. Furthermore, the comparison of pixel-based versus object-based image analysis highlights the importance of matching analytical approaches to the heterogeneity of the environment being studied [31].

Future research directions should focus on developing hybrid models that leverage the strengths of multiple algorithms, improving model interpretability for environmental decision-making, and creating standardized validation frameworks that account for spatial and temporal autocorrelation in environmental data. As machine learning continues to evolve, its integration with process-based models may offer the most promising path toward both accurate prediction and enhanced understanding of environmental systems.

The optimization of water quality management is a cornerstone for the success and sustainability of tilapia aquaculture, with poor water quality remaining the primary cause of production losses, disease outbreaks, and environmental degradation [9]. Predictive modeling using machine learning (ML) has emerged as a transformative approach, moving beyond traditional monitoring to provide proactive, data-driven management decisions. However, a significant gap persists in the literature between simply predicting water quality parameters and recommending specific, actionable management actions—a gap that hinges on the predictive accuracy and reliability of the underlying models [9]. For researchers and professionals, the critical question is not merely which model can be applied, but how to select and validate a model that is "good enough" for specific operational contexts, a determination that requires a nuanced understanding of performance metrics and evaluation protocols [34]. This case study provides a comparative analysis of machine learning models for predicting water quality management actions, offering a framework for assessing model performance within the broader thesis of environmental data research.

Experimental Protocols: Methodologies for Model Development and Validation

The assessment of predictive model performance requires rigorously designed experiments. The following protocols, synthesized from recent studies, outline the standard methodology for developing and validating ML models for aquaculture water quality.

Dataset Development and Preprocessing

A primary challenge in this domain is the lack of standardized, public datasets. One study addressed this by creating a synthetic dataset based on an extensive review of literature and established aquaculture best practices [9].

- Scenario Definition: The researchers systematically defined 20 distinct water quality scenarios representing common challenges in Nile tilapia aquaculture. These included ammonia spikes, low dissolved oxygen, pH fluctuations, high salinity, and copper toxicity [9].

- Data Generation: For each scenario, key parameters were set to values identified in the literature (e.g., Dissolved Oxygen = 4.0 mg/L for a "low DO" scenario). The remaining parameters were generated using realistic ranges and correlations derived from established literature, with controlled variation (±10–20%) added to simulate realistic measurement noise [9].

- Preprocessing: The dataset of 150 samples was preprocessed using class balancing with SMOTETomek to address potential class imbalance and feature scaling to normalize the parameter ranges for model consumption [9].
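The scenario-based generation steps above can be sketched as follows. Each hypothetical scenario pins one key parameter (the 4.0 mg/L dissolved-oxygen value for the "low DO" case comes from the text; the ammonia value is a placeholder), the other parameters are drawn from assumed "normal" ranges, and every value is then perturbed by up to ±15%, inside the ±10-20% band described. The parameter ranges are illustrative assumptions rather than values from [9], and the parameter correlations used in that study are not modeled here.

```python
import random

# Hypothetical "normal" operating ranges (placeholders, not values from [9]).
NORMAL_RANGES = {"dissolved_oxygen": (5.0, 8.0), "ph": (6.5, 8.5), "ammonia": (0.0, 0.5)}

# Hypothetical scenarios: each pins a key parameter to a literature-style value.
SCENARIOS = {
    "low_do": {"dissolved_oxygen": 4.0},
    "ammonia_spike": {"ammonia": 2.0},
}


def generate_samples(scenario, n, variation=0.15, seed=0):
    """Return n labeled samples for one scenario with controlled +/- variation."""
    rng = random.Random(seed)
    pinned = SCENARIOS[scenario]
    samples = []
    for _ in range(n):
        row = {}
        for name, (low, high) in NORMAL_RANGES.items():
            base = pinned[name] if name in pinned else rng.uniform(low, high)
            # Controlled variation: scale the base value by a factor in [1 - v, 1 + v].
            row[name] = base * (1 + rng.uniform(-variation, variation))
        row["scenario"] = scenario  # the class label a model would learn to predict
        samples.append(row)
    return samples


dataset = generate_samples("low_do", 10) + generate_samples("ammonia_spike", 10, seed=1)
```

Because every label is defined by construction, a dataset like this tests whether models can recover the encoded rules; it cannot reveal how those models behave on real pond measurements, which is why the assumptions behind each scenario deserve as much scrutiny as the reported accuracies.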

Model Training and Validation Framework

A robust validation strategy is paramount to avoid over-optimistic performance reports and to ensure model generalizability.

- Model Selection: Studies typically compare a suite of ML algorithms to identify the most suitable approach. Commonly evaluated models include Random Forest (RF), Gradient Boosting, XGBoost, Support Vector Machines (SVM), and Neural Networks [9] [35]. Ensemble models, such as a Voting Classifier, are also employed to leverage the strengths of individual models [9].

- Performance Metrics: Predictive performance is evaluated using multiple metrics to provide a comprehensive view [9] [34]. Common metrics include:

- Accuracy: The proportion of total correct predictions.

- Precision: The proportion of correct positive predictions among all positive predictions (minimizing false positives).

- Recall: The proportion of actual positives correctly identified (minimizing false negatives).

- F1-Score: The harmonic mean of precision and recall.

- Cross-Validation: To ensure robustness, models are evaluated using k-fold cross-validation (e.g., 5-fold), which partitions the data into 'k' subsets, repeatedly training the model on k-1 folds and validating on the held-out fold [9] [36]. This process provides a mean performance score and a measure of its variability (e.g., ± standard deviation).
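The k-fold procedure described above can be sketched without any library. The hypothetical helpers below split indices into k contiguous folds whose sizes differ by at most one, train on k-1 folds, score on the held-out fold, and report the mean together with the sample standard deviation (`statistics.stdev`; some studies instead report the population standard deviation). The `train_and_score` callback stands in for whatever model-fitting code is used; in practice, indices are usually shuffled or stratified first unless the data are temporally ordered.

```python
import statistics


def k_fold_indices(n_samples, k=5):
    """Split indices 0..n-1 into k contiguous folds whose sizes differ by at most one."""
    base, extra = divmod(n_samples, k)
    folds, start = [], 0
    for i in range(k):
        size = base + (1 if i < extra else 0)
        folds.append(list(range(start, start + size)))
        start += size
    return folds


def cross_validate(n_samples, k, train_and_score):
    """train_and_score(train_idx, val_idx) -> score; returns (mean, sample std dev)."""
    folds = k_fold_indices(n_samples, k)
    scores = []
    for i, val_idx in enumerate(folds):
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        scores.append(train_and_score(train_idx, val_idx))
    return statistics.mean(scores), statistics.stdev(scores)


# Usage with a placeholder scorer; 150 samples and 5 folds give 30 validation samples per fold.
mean_score, sd_score = cross_validate(150, 5, lambda tr, va: len(va) / 30)
```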

The Critical Step of Accuracy Assessment

An accuracy assessment evaluates the correctness of a classification by comparing model predictions to a reference dataset, typically summarized in an error or confusion matrix [37] [38]. This matrix is the foundation for key metrics:

- User's Accuracy: Also known as precision, it reflects errors of commission (false positives). It is calculated from the rows of the confusion matrix, following the convention in which rows hold the classified (map) data [37].

- Producer's Accuracy: Equivalent to recall, it reflects errors of omission (false negatives). It is calculated from the columns of the confusion matrix, which hold the reference data [37].

- Kappa Statistic: A measure of agreement that corrects for chance, providing an overall assessment of classification accuracy [37].
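The row and column arithmetic behind user's accuracy, producer's accuracy, and the Kappa statistic can be sketched as follows. This is an illustrative helper with hypothetical counts, assuming the convention described above (rows = classified, columns = reference). Note that scikit-learn's `confusion_matrix(y_true, y_pred)` places the true labels on the rows, so its output would need to be transposed before being passed in.

```python
def accuracy_assessment(matrix):
    """Overall, user's and producer's accuracy plus Cohen's kappa.

    matrix[i][j] counts samples the model assigned to class i (row) whose
    reference class is j (column).
    """
    k = len(matrix)
    n = sum(sum(row) for row in matrix)
    row_totals = [sum(row) for row in matrix]
    col_totals = [sum(matrix[i][j] for i in range(k)) for j in range(k)]
    diagonal = [matrix[i][i] for i in range(k)]

    overall = sum(diagonal) / n
    # User's accuracy: correct / row total (1 - commission error)
    users = [diagonal[i] / row_totals[i] if row_totals[i] else 0.0 for i in range(k)]
    # Producer's accuracy: correct / column total (1 - omission error)
    producers = [diagonal[j] / col_totals[j] if col_totals[j] else 0.0 for j in range(k)]
    # Agreement expected by chance, from the row and column marginals
    expected = sum(row_totals[i] * col_totals[i] for i in range(k)) / n ** 2
    kappa = (overall - expected) / (1 - expected)
    return {"overall": overall, "users": users, "producers": producers, "kappa": kappa}

# Two-class example with hypothetical counts
result = accuracy_assessment([[50, 5],
                              [10, 35]])
```

For this hypothetical matrix, overall accuracy is 0.85 while kappa is about 0.69, lower because part of the raw agreement would be expected by chance alone.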

The following diagram illustrates the structured workflow for a robust predictive modeling experiment in this domain.

Comparative Model Performance: A Multi-Study Analysis

Different studies have evaluated a range of ML models, with performance varying based on the specific task (classification vs. regression), dataset characteristics, and optimization techniques.

Table 1: Comparative Performance of ML Models in Aquaculture Water Quality Prediction

| Study Focus | Best Performing Model(s) | Key Performance Metrics | Comparative Models |
|---|---|---|---|
| Management Action Classification [9] | Voting Classifier, Random Forest, Gradient Boosting, XGBoost, Neural Network | Accuracy: 100% (test set); Neural Network CV Accuracy: 98.99% ± 1.64% | Support Vector Machines, Logistic Regression |
| Water Quality Parameter Prediction [35] | Support Vector Machine (SVM) | Accuracy: ~99%; Correlation Coefficient: 0.99 for DO, pH, NH3-N, NO3-N, NO2-N | BPNN, RBFNN, LSSVM |
| Water Quality Classification [36] | HBA-Optimized XGBoost | Average Accuracy: 98.05% (5-fold CV); Highest Accuracy: 98.45% | (XGBoost optimized with the Honey Badger Algorithm) |

The high accuracy scores reported in these studies, particularly the perfect test-set scores achieved by several models [9], indicate the potential of ML for this application. However, these values must be interpreted in context. As highlighted in ecological informatics, a high value on one metric should not be accepted uncritically as proof of high predictive performance, because metric values can be influenced by factors such as prevalence (e.g., species prevalence) and study design [34].
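A toy example shows why a high score on one metric can mislead when one class is rare. The labels below are hypothetical: a model that never predicts the minority class still reaches 95% accuracy while finding none of the cases that need intervention, and balanced accuracy (the mean of recall and specificity) exposes the problem.

```python
# Hypothetical, highly imbalanced set: 95 "no action" (0) and 5 "intervene" (1) cases
y_true = [0] * 95 + [1] * 5
y_pred = [0] * 100  # a trivial model that always predicts the majority class

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
recall = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred)) / y_true.count(1)
specificity = sum(t == 0 and p == 0 for t, p in zip(y_true, y_pred)) / y_true.count(0)
balanced_accuracy = (recall + specificity) / 2

print(f"accuracy={accuracy:.2f} recall={recall:.2f} balanced={balanced_accuracy:.2f}")
# accuracy=0.95 recall=0.00 balanced=0.50
```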

Beyond a Single Metric: A Multi-Dimensional View

Model selection requires a balanced consideration of multiple performance attributes, not just a single accuracy score.

Table 2: Model Attributes and Suitability for Deployment

| Model | Key Strengths | Considerations for Deployment | Interpretability |
|---|---|---|---|
| Random Forest (RF) | High accuracy, robust to noise, handles nonlinear relationships [9] [39]. | Computationally expensive for very large datasets; "off-line" iterative nature can make incorporating new data complex [40]. | Medium (provides feature importance) |
| XGBoost | High predictive accuracy, computational efficiency, built-in regularization [9] [36]. | Requires careful hyperparameter tuning for optimal performance [36]. | Medium (provides feature importance) |
| Support Vector Machine (SVM) | Excellent generalization on small datasets, robust to overfitting [9] [35]. | Performance can be sensitive to kernel choice and hyperparameters [35]. | Low (often seen as a "black box") |
| Neural Network | Very high accuracy, capable of modeling extreme complexity [9]. | High computational cost; requires large amounts of data; prone to overfitting without validation [9] [40]. | Low (complex "black box") |
| Ensemble (Voting) | Leverages strengths of multiple models; often achieves top-tier performance and robustness [9]. | Increased complexity in training and maintenance [9]. | Varies (depends on base models) |
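The voting idea behind the ensemble row can be sketched as simple hard (majority) voting over the class labels predicted by several base models. This is a minimal illustration with hypothetical labels and a hypothetical helper name; it is not the configuration used in [9]. scikit-learn's `VotingClassifier` offers both hard and soft (probability-averaging) voting.

```python
from collections import Counter

def hard_vote(predictions_per_model):
    """Majority vote; predictions_per_model holds one equal-length label list per base model."""
    voted = []
    for labels in zip(*predictions_per_model):  # labels for one sample, one per model
        # most_common orders by count; ties go to the label encountered first (model order)
        voted.append(Counter(labels).most_common(1)[0][0])
    return voted

# Hypothetical management actions predicted by three base models for three ponds
rf_pred = ["aerate", "none", "water_exchange"]
xgb_pred = ["aerate", "water_exchange", "water_exchange"]
nn_pred = ["none", "water_exchange", "aerate"]
ensemble_pred = hard_vote([rf_pred, xgb_pred, nn_pred])
```

Hard voting only needs predicted labels, so it works with any base model; soft voting requires calibrated class probabilities but can break ties more sensibly.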

The Researcher's Toolkit: Essential Reagents and Computational Solutions

The experimental work in predictive water quality modeling relies on a combination of physical monitoring technologies and computational frameworks.

Table 3: Key Research Reagent Solutions for Aquaculture Water Quality Modeling

| Tool / Solution | Function / Description | Application in Research |
|---|---|---|
| IoT Sensor Array | A system of sensors for continuous, real-time monitoring of parameters like pH, DO, temperature, and turbidity [41]. | Generates the high-resolution temporal data required for training and validating predictive models [9] [41]. |
| Synthetic Data Generation | A methodology for creating realistic, labeled datasets based on expert-defined scenarios and literature ranges [9]. | Overcomes the critical barrier of scarce public datasets, enabling model development and initial testing [9]. |
| SMOTETomek | A hybrid data preprocessing technique that combines oversampling (SMOTE) and undersampling (Tomek links) [9]. | Addresses class imbalance in datasets, ensuring models are not biased toward the most frequent management scenarios [9]. |
| SHAP (SHapley Additive exPlanations) | A game theory-based approach to explain the output of any machine learning model [36]. | Provides post-hoc model interpretability, identifying which water quality parameters (e.g., Ammonia, DO) are most influential in predictions [36]. |
| Cross-Validation Framework | A resampling procedure (e.g., 5-fold) used to evaluate a model's ability to generalize to unseen data [9] [36]. | A core protocol for providing a robust estimate of model performance and mitigating overfitting [9]. |

This comparison guide demonstrates that multiple machine learning models, including Random Forest, XGBoost, SVM, and Neural Networks, can achieve exceptionally high predictive accuracy for water quality management in aquaculture, with several studies reporting results exceeding 98% [9] [35] [36]. Rather than identifying a single universally optimal model, the evidence indicates that model selection should be guided by specific deployment requirements, such as dataset size, need for interpretability, and computational constraints [9] [40]. The pursuit of predictive accuracy must be grounded in rigorous experimental protocols—including synthetic data generation, robust cross-validation, and comprehensive accuracy assessment using a confusion matrix—to ensure that models are not only statistically sound but also truly fit-for-purpose in supporting the complex decisions required for sustainable aquaculture [9] [37] [34].

The rapid degradation of air quality poses a pervasive threat to global public health and environmental stability, necessitating advanced predictive frameworks for timely intervention [42]. Traditional monitoring systems, often reliant on static ground-based stations, fall short in capturing the complex, non-linear spatiotemporal dynamics of air pollutants, leading to delayed public warnings [42]. Machine learning (ML) has emerged as a transformative tool, capable of processing complex, multi-source environmental data to deliver real-time air quality assessment and predictive health risk mapping [42]. This case study objectively compares the performance of various ML models applied to this critical task, situating the analysis within the broader thesis of assessing predictive accuracy in environmental data research. For researchers and scientists, understanding the relative strengths, experimental protocols, and performance benchmarks of these algorithms is paramount for developing effective early warning systems and pollution control strategies.

Performance Benchmarking of Machine Learning Models

A critical review of recent studies reveals a diverse landscape of ML algorithms applied to Air Quality Index (AQI) prediction and health risk assessment. The selection of an appropriate model hinges on a balance between predictive accuracy, computational efficiency, interpretability, and suitability for real-time deployment. The table below provides a comparative summary of model performances from key studies, using standardized evaluation metrics.

Table 1: Comparative Performance of Machine Learning Models for AQI Prediction

| Study & Context | Top-Performing Model(s) | Key Performance Metrics | Key Input Features | Other Models Tested |
|---|---|---|---|---|
| Amravati, India (2025) [43] | Decision Tree + Grey Wolf Optimization (GWO) | R²: 0.9896, RMSE: 5.9063, MAE: 2.3480 | PM2.5, PM10, NO2, NH3, SO2, CO, Ozone | Random Forest, CatBoost, SVR, Unoptimized Decision Tree |
| Gazipur, Bangladesh (2025) [44] | Gaussian Process Regression (GPR) | R²: >0.96, RMSE: 1.219 (Testing) | PM2.5, PM10, CO (selected via feature importance) | Ensemble Regression, SVM, Regression Tree, Kernel Approximation |
| Iğdır, Türkiye (2025) [45] | XGBoost | R²: 0.999, RMSE: 0.234, MAE: 0.158 | PM10, SO2, NO2, O3, and 5 meteorological variables | LightGBM, Support Vector Machine (SVM) |
| General Health Risk Assessment (2024 Review) [46] | Random Forest | Most popular algorithm (used in 34.62% of 26 reviewed studies) | Diverse clinical and demographic data | SVM, Neural Networks, DNN, XGBoost |

The data indicate that no single model is universally superior; performance depends strongly on the specific environmental context, data quality, and feature set. Ensemble methods such as Random Forest and XGBoost consistently demonstrate high predictive power and robustness across domains, including environmental and health data [42] [46] [45]. Furthermore, metaheuristic optimization algorithms such as Grey Wolf Optimization (GWO) can substantially improve base models like Decision Trees: in [43], the GWO-tuned Decision Tree achieved the highest R² (0.9896) among the models tested. For resource-constrained environments, simplified models using a minimal set of high-importance pollutants (e.g., PM2.5, PM10, CO) have achieved R² above 0.96 [44] without the complexity of processing numerous input features.
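Because the gains reported in [43] come from a metaheuristic, a compact sketch of the canonical Grey Wolf Optimizer may clarify how it searches: candidate solutions ("wolves") move toward the three best positions found so far (alpha, beta, delta), with a coefficient `a` that shrinks over time to shift from exploration to exploitation. This sketch minimizes a toy sphere function and is not the implementation from [43]. To tune a Decision Tree, the objective would instead be a hypothetical function mapping candidate hyperparameters (for example, rounded maximum depth and minimum samples per leaf) to cross-validated RMSE.

```python
import random

def grey_wolf_optimizer(objective, dim, lower, upper, n_wolves=20, n_iter=100, seed=0):
    """Minimize `objective` over the box [lower, upper]^dim with canonical GWO."""
    rng = random.Random(seed)
    wolves = [[rng.uniform(lower, upper) for _ in range(dim)] for _ in range(n_wolves)]
    leaders = []  # alpha, beta, delta: the three best positions found so far
    for t in range(n_iter):
        leaders = sorted(leaders + wolves, key=objective)[:3]
        a = 2.0 - 2.0 * t / n_iter  # decreases linearly from 2 toward 0
        new_wolves = []
        for wolf in wolves:
            position = []
            for d in range(dim):
                guided = []
                for leader in leaders:
                    A = 2.0 * a * rng.random() - a
                    C = 2.0 * rng.random()
                    D = abs(C * leader[d] - wolf[d])
                    guided.append(leader[d] - A * D)
                # Average the moves suggested by alpha, beta and delta, then clip to bounds
                position.append(min(max(sum(guided) / 3.0, lower), upper))
            new_wolves.append(position)
        wolves = new_wolves
    best = min(leaders + wolves, key=objective)
    return best, objective(best)

def sphere(x):
    """Toy objective with its minimum (0) at the origin."""
    return sum(v * v for v in x)

best, value = grey_wolf_optimizer(sphere, dim=2, lower=-10.0, upper=10.0)
```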

Detailed Experimental Protocols and Methodologies

The rigorous benchmarking of ML models requires a methodical approach to data handling, model training, and evaluation. The following workflow generalizes the experimental protocols common to the cited studies, providing a reproducible template for environmental data research.

Data Acquisition and Preprocessing

- Data Sourcing: Models are trained on historical air quality data from official monitoring networks [43] [45] or IoT-based sensor systems [44]. These datasets typically include concentrations of critical pollutants (PM2.5, PM10, NO2, O3, SO2, CO) and often incorporate meteorological variables (temperature, humidity, wind speed/direction) [42] [45].

- Data Cleaning: This critical step involves handling missing values, removing duplicate records, and identifying outliers, for instance with box plots, as in [44]. These steps improve data consistency and reliability for model training.

- Data Transformation: To prepare data for ML algorithms, normalization is commonly applied. Techniques like Min-Max scaling (to a 0-1 range) [44] or Z-score normalization [47] are used to bring all features onto a comparable scale, preventing models from being biased by variables with larger numerical ranges.
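The two normalization schemes can be written as below. This is a sketch with hypothetical PM2.5 values and hypothetical helper names; the key design assumption is that scaling parameters are learned from the training split only and then reused on test data, so that no information from the test set leaks into preprocessing.

```python
import statistics

def fit_min_max(train_values):
    """Learn min-max scaling from training data; maps the training range onto [0, 1]."""
    lo, hi = min(train_values), max(train_values)
    span = (hi - lo) or 1.0  # avoid division by zero for a constant feature
    return lambda x: (x - lo) / span

def fit_z_score(train_values):
    """Learn Z-score scaling (mean 0, SD 1 on the training data)."""
    mu = statistics.mean(train_values)
    sd = statistics.pstdev(train_values) or 1.0
    return lambda x: (x - mu) / sd

# Hypothetical PM2.5 readings (µg/m³): fit on the training split only, then reuse on test data
train_pm25 = [10.0, 20.0, 30.0, 50.0]
to_unit = fit_min_max(train_pm25)
to_z = fit_z_score(train_pm25)
print(to_unit(30.0), to_unit(60.0))  # 0.5 1.25: unseen values can fall outside [0, 1]
```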

Feature Engineering and Selection

- AQI Calculation: The AQI is frequently calculated from pollutant concentrations using standardized linear interpolation formulas based on national or international breakpoint tables [43] [44].

- Feature Importance: Identifying the most influential predictors is key to building efficient models. The Random Forest algorithm is often used for this purpose, as demonstrated by [44], which found PM2.5, PM10, and CO to be the most significant features, allowing for a simplified yet accurate predictive model.
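The breakpoint-based AQI calculation is a piecewise linear interpolation: for a concentration C between breakpoints C_lo and C_hi that map to index values I_lo and I_hi, the sub-index is I = (I_hi - I_lo) / (C_hi - C_lo) × (C - C_lo) + I_lo. The sketch below uses hypothetical breakpoint rows for illustration only; real studies must use the official national table. Taking the overall AQI as the maximum pollutant sub-index is a common convention, assumed here rather than taken from the cited studies.

```python
# Hypothetical breakpoint rows (C_lo, C_hi, I_lo, I_hi); illustrative values, not an official table
PM25_BREAKPOINTS = [
    (0.0, 30.0, 0, 50),
    (30.0, 60.0, 51, 100),
    (60.0, 90.0, 101, 200),
]

def sub_index(concentration, breakpoints):
    """Linearly interpolate a pollutant sub-index within the first matching breakpoint row."""
    for c_lo, c_hi, i_lo, i_hi in breakpoints:
        if c_lo <= concentration <= c_hi:
            return (i_hi - i_lo) * (concentration - c_lo) / (c_hi - c_lo) + i_lo
    raise ValueError(f"concentration {concentration} is outside the breakpoint table")

def overall_aqi(sub_indices):
    """Assumed convention: the overall AQI is the worst (highest) pollutant sub-index."""
    return max(sub_indices)

pm25_index = sub_index(45.0, PM25_BREAKPOINTS)  # falls in the (30, 60) -> (51, 100) row
```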

Model Training, Tuning, and Evaluation

- Data Splitting: A standard 80:20 split for training and testing sets is widely used to validate model performance on unseen data [43] [44].

- Hyperparameter Tuning: To maximize performance, models undergo systematic hyperparameter optimization. Methods used in the cited work include exhaustive grid search (e.g., scikit-learn's GridSearchCV) and metaheuristic search such as Grey Wolf Optimization [43].

- Model Validation: k-fold cross-validation (e.g., 5-fold or 10-fold) is essential for assessing model stability and generalizability, reducing the risk of overfitting [43] [44].

- Performance Metrics: Models are evaluated using a suite of metrics, each providing a different perspective [48]:

- R-squared (R²): The proportion of variance in the observed AQI that is explained by the model's predictions.

- Root Mean Square Error (RMSE): The square root of the mean squared error, expressed in AQI units; squaring penalizes larger errors more heavily.

- Mean Absolute Error (MAE): The average absolute error magnitude, also in AQI units, weighting all errors linearly.
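The 80:20 split and the three regression metrics can be sketched in a few lines of pure Python. The helper names and the toy AQI values are hypothetical. A shuffled split mirrors the description above, but for forecasting from time series a chronological split (training on earlier records, testing on later ones) may give a more realistic estimate, since random shuffling lets the model see observations adjacent in time to the test points.

```python
import math
import random

def split_80_20(n_samples, seed=0):
    """Shuffle sample indices and split them 80:20 into train and test index lists."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    cut = (n_samples * 4) // 5  # integer arithmetic avoids float rounding at the boundary
    return idx[:cut], idx[cut:]

def regression_metrics(y_true, y_pred):
    """R², RMSE and MAE for paired observed and predicted values."""
    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    ss_res = sum(r * r for r in residuals)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "r2": 1 - ss_res / ss_tot,           # share of variance explained
        "rmse": math.sqrt(ss_res / n),       # squaring weights large errors more
        "mae": sum(abs(r) for r in residuals) / n,
    }

# Toy observed vs. predicted AQI values (hypothetical)
scores = regression_metrics([50, 100, 150, 200], [60, 90, 150, 210])
```

For these toy values, MAE is 7.5, RMSE is about 8.66, and R² is 0.976.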

The experimental workflow relies on a suite of computational tools and data resources. The following table details these essential components, which form the backbone of research in this field.

Table 2: Key Research Reagent Solutions for ML-Based Air Quality Studies

| Tool / Resource | Type | Primary Function in Research | Example Use Case |
|---|---|---|---|
| Scikit-learn [43] | Software Library | Provides implementations of a wide array of ML algorithms (RF, SVM, DT) and data preprocessing utilities. | Model building, hyperparameter tuning with GridSearchCV, and metric calculation. |
| XGBoost / LightGBM [42] [45] | Software Library | High-performance, ensemble gradient boosting frameworks designed for speed and accuracy. | Handling structured environmental data and achieving state-of-the-art prediction scores. |
| Python (Pandas, NumPy) [43] | Programming Environment | Core language and libraries for data manipulation, analysis, and numerical computation. | Data cleaning, feature engineering, and integrating the entire modeling pipeline. |
| Jupyter Notebook [43] | Interactive Environment | An open-source web application for creating and sharing documents containing live code and visualizations. | Prototyping models, exploratory data analysis, and documenting the research process. |
| Centralized Air Quality Databases [43] [45] | Data Source | Repositories of historical and real-time pollutant concentration data from government and research institutions. | Sourcing ground-truth data for model training and validation. |
| IoT Sensor Networks [42] [44] | Data Source | Mobile and fixed sensors that generate real-time, high-resolution data on pollutants and meteorology. | Enabling continuous data flow for live model updates and real-time risk mapping. |