Beyond the Silo: How Integrated Frameworks Are Revolutionizing Chemical Impact Assessment in Biomedicine

Abstract

This article examines the critical shift from fragmented to integrated assessment frameworks for chemicals and materials, a transition vital for drug development and biomedical research. It explores the limitations of traditional, siloed approaches that evaluate health, environmental, and socio-economic impacts in isolation, often leading to overlooked cumulative effects and inefficient R&D. The piece delves into modern methodologies like the Impact Outcome Pathway (IOP) and computational platforms that unify data and models, aligning with the EU's Safe and Sustainable by Design (SSbD) goals. Through analysis of troubleshooting strategies and real-world case studies on substances like PFAS and nanomaterials, the article provides researchers and drug development professionals with a roadmap for adopting holistic, data-driven assessment practices that enhance predictability, safety, and sustainability.

The High Cost of Fragmentation: Why Siloed Assessment Fails Modern Drug Development

The assessment of chemicals and materials has traditionally been conducted through a fragmented paradigm, where health, environmental, social, and economic impacts are evaluated independently using disconnected methodologies and data streams [1]. This disjointed approach creates significant challenges for comprehensive safety and sustainability decision-making, as it inherently limits the ability to capture critical trade-offs and synergies between different types of impacts. For instance, a chemical might demonstrate a favorable toxicity profile in isolation yet present substantial environmental persistence issues that remain unaccounted for in a separate assessment silo. Similarly, a material deemed sustainable based on life cycle analysis might raise unexamined social concerns within its supply chain. This compartmentalization persists despite growing recognition that chemical impacts are interconnected across biological systems, environmental compartments, and socioeconomic dimensions. The following analysis examines the limitations of this traditional framework through specific experimental and regulatory case studies, contrasting it with emerging integrated approaches that seek to provide a more holistic basis for decision-making in chemical development and regulation.

Core Limitations of Assessment Silos

Inadequate Capture of System-Wide Impacts

The traditional fragmented model operates on the assumption that chemical impacts can be adequately understood through isolated assessments that are later combined. However, this approach fundamentally fails to account for complex interactions and emergent properties that only become apparent when systems are studied as interconnected networks.

Disconnected Data Streams: Toxicity data, environmental fate information, exposure models, and socioeconomic indicators are typically generated using different experimental protocols, timescales, and metrics that resist meaningful integration [1]. This creates significant barriers to understanding how hazards manifest across different biological organizational levels or how environmental releases translate to human exposure.

Inconsistent Metrics: Different assessment domains employ conflicting success criteria—potency versus degradability versus cost-effectiveness—without established methods for weighting or prioritization [1]. This metric inconsistency creates confusion for decision-makers attempting to balance competing objectives in chemical design or regulatory approval.

Limited Predictive Capacity: The failure to establish mechanistic links between molecular structures, their biological interactions, and broader environmental and socioeconomic consequences restricts the ability to predict impacts for new or modified chemicals [1]. This predictive gap necessitates repetitive, resource-intensive testing for each new chemical entity.

Experimental Evidence Highlighting Fragmentation Challenges

Table 1: Performance Comparison of Independent Versus Integrated Assessment Models

| Assessment Model Type | Predictive Accuracy for Media Concentration | Predictive Accuracy for Cellular Concentration | Key Limitations | Primary Data Requirements |
|---|---|---|---|---|
| Fragmented Models (Independent) | Moderate (R² = 0.65-0.75) | Low (R² = 0.45-0.55) | Fails to capture cell-media-partitioning dynamics; requires separate parameterization | Chemical properties only; isolated system parameters |
| Armitage Integrated Model | High (R² = 0.82-0.89) | Moderate-High (R² = 0.72-0.81) | Requires comprehensive parameter input; complex implementation | Chemical properties, cell characteristics, media composition, labware properties [2] |
| Fisher Time-Dependent Model | High (R² = 0.80-0.87) | Moderate (R² = 0.68-0.76) | Computationally intensive; requires metabolic parameters | Chemical properties, cell characteristics, metabolism rates, labware properties [2] |
| Fischer Equilibrium Model | Moderate (R² = 0.70-0.78) | Low-Moderate (R² = 0.58-0.67) | Excludes headspace partitioning; limited to non-volatile chemicals | Chemical properties, basic media and cell parameters [2] |

Comparative studies of chemical distribution models provide quantitative evidence of fragmentation limitations. Research evaluating four mass balance models found that simpler, compartmentalized models were significantly less accurate at predicting cellular concentrations than more integrated approaches [2]. The Armitage model, which incorporates media, cellular, labware, and headspace compartments, showed the best overall performance, although its predictions of media concentrations were markedly more accurate than its predictions of cellular concentrations [2]. Both findings point to the importance of accounting for interactions across the whole test system.

The sensitivity analyses conducted in these studies further demonstrated that fragmented models focusing exclusively on chemical properties failed to capture critical determinants of bioavailable concentrations, whereas integrated models properly accounted for the complex interplay between chemical parameters, cell characteristics, media composition, and experimental conditions [2].
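The R² values in Table 1 compare predicted against measured concentrations. The source does not state how those values were computed, so the following is only a minimal sketch of the conventional coefficient of determination; the function name `r_squared` and all concentration values are hypothetical and chosen purely for illustration.

```python
def r_squared(measured, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    if len(measured) != len(predicted) or len(measured) < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mean_measured = sum(measured) / len(measured)
    # Residual sum of squares: model error against the measurements.
    ss_res = sum((m - p) ** 2 for m, p in zip(measured, predicted))
    # Total sum of squares: spread of the measurements around their mean.
    ss_tot = sum((m - mean_measured) ** 2 for m in measured)
    if ss_tot == 0:
        raise ValueError("measured values have zero variance")
    return 1.0 - ss_res / ss_tot


# Hypothetical log10 molar concentrations (not data from the cited study).
measured_log_conc = [-6.0, -5.5, -5.0, -4.5, -4.0]
predicted_log_conc = [-5.8, -5.6, -5.1, -4.3, -4.1]

r2 = r_squared(measured_log_conc, predicted_log_conc)
print(f"R^2 = {r2:.3f}")
```

Running the same function separately on media and cellular predictions would give the kind of side-by-side comparison the table summarizes.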

Methodological Flaws in Traditional Testing Approaches

Problematic Extrapolation from Nominal Concentrations

A fundamental methodological weakness in traditional assessment approaches involves the reliance on nominal concentrations rather than biologically relevant free concentrations in experimental systems.

Experimental Protocol: Measuring Versus Predicting Free Concentrations

Traditional Approach: Researchers add a known quantity of test chemical to an in vitro system (nominal concentration) and directly use this value for dose-response modeling without accounting for distribution phenomena [2].

Integrated Approach:

- Step 1: Characterize complete test system composition including media serum content, cellular lipid/protein levels, labware polymer type, and headspace volume [2].

- Step 2: Apply mass balance models (e.g., Armitage model) to predict free concentrations in media and cellular compartments [2].

- Step 3: Validate predictions against experimentally measured free fractions using chemical analysis techniques [2].

- Step 4: Use corrected free concentrations rather than nominal values for dose-response assessment and QIVIVE modeling [2].

The reliance on nominal concentrations creates particular challenges for Quantitative In Vitro to In Vivo Extrapolation (QIVIVE), where in vitro effect concentrations are translated to equivalent in vivo doses. Studies have demonstrated that failure to account for differences between nominal concentrations and freely dissolved fractions in media can lead to significant underestimation or overestimation of actual bioavailable doses, compromising the accuracy of safety determinations [2].
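The gap between nominal and free concentration can be illustrated with a deliberately simplified equilibrium mass balance. This is not the Armitage model or any other model cited above; it assumes instantaneous equilibrium, linear partitioning, and no headspace or labware losses. The function name, partition coefficients, and phase masses are all hypothetical.

```python
def free_fraction(water_volume_l, sorbing_phases):
    """Fraction of the total chemical that is freely dissolved at equilibrium.

    sorbing_phases: list of (partition_coefficient_l_per_kg, phase_mass_kg).
    Assumes linear partitioning between water and each sorbing phase.
    """
    if water_volume_l <= 0:
        raise ValueError("water volume must be positive")
    # Each phase holds as much chemical as K * m litres of water would.
    sorption_capacity_l = sum(k * m for k, m in sorbing_phases)
    return water_volume_l / (water_volume_l + sorption_capacity_l)


# Hypothetical 100 microlitre well; values are illustrative only.
well_volume_l = 1e-4
phases = [
    (1_000.0, 2e-7),   # serum protein: K (L/kg), mass (kg)
    (10_000.0, 1e-8),  # cellular lipid: K (L/kg), mass (kg)
]

nominal_um = 10.0
f_free = free_fraction(well_volume_l, phases)
free_um = nominal_um * f_free
print(f"free fraction = {f_free:.2f}, free concentration = {free_um:.2f} uM")
```

With these illustrative inputs only about a quarter of the nominal concentration is freely dissolved, which is the kind of discrepancy that would propagate into a QIVIVE calculation if nominal values were used directly.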

Case Study: Pyrethroids Assessment Challenges

The assessment of pyrethroid insecticides illustrates how fragmented approaches struggle with complex real-world scenarios involving mixed exposures and tissue-specific bioaccumulation.

Table 2: Disconnected Hazard and Exposure Data in Pyrethroids Assessment

| Assessment Dimension | Traditional Fragmented Approach | Data Gaps and Limitations | Impact on Risk Determination |
|---|---|---|---|
| Hazard Identification | Isolated toxicity testing focusing on individual compounds | Inadequate evaluation of cumulative effects; limited mechanistic understanding | Fails to address real-world mixture exposures; mode of action uncertainties |
| Toxicokinetics | Separate in vivo studies with limited human relevance | Poor characterization of tissue-specific distribution and metabolism | Inaccurate extrapolation from external dose to internal target exposure |
| Exposure Assessment | Compound-specific regulatory monitoring | Limited biomonitoring data; insufficient temporal and spatial coverage | Underestimation of aggregate exposure from multiple sources and compounds |
| Bioactivity Assessment | Disconnected high-throughput screening data | Assays not organized by biological pathways or tissue systems | Difficult to derive pathway-based points of departure for risk assessment |

Traditional risk assessment for pyrethroids has relied heavily on acceptable daily intakes (ADIs) derived from animal studies, with limited capacity to address combined exposures that frequently approach or exceed regulatory thresholds [3]. These approaches have struggled to characterize neurotoxicity mechanisms and bioaccumulation in critical tissues like the brain, liver, and lungs, creating significant uncertainties in protection levels [3].

A tiered Next-Generation Risk Assessment (NGRA) case study for pyrethroids demonstrated how integrating ToxCast bioactivity data with toxicokinetic modeling revealed tissue-specific pathways as critical risk drivers that were obscured in conventional fragmented assessments [3]. This integrated approach enabled a more nuanced evaluation of internal dose-response relationships and cumulative effects, highlighting the limitations of standalone hazard or exposure assessments.
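The source does not describe the exact calculations used in the pyrethroid case study. As a hedged illustration of how in vitro bioactivity and toxicokinetics are commonly combined, the sketch below applies the widely used steady-state reverse dosimetry relationship (administered equivalent dose = bioactivity concentration divided by the steady-state plasma concentration per unit dose), which assumes linear kinetics. Function names and all numeric inputs are hypothetical.

```python
def administered_equivalent_dose(ac50_um, css_um_per_mg_kg_day):
    """External dose (mg/kg/day) expected to produce a steady-state plasma
    concentration equal to the in vitro bioactivity concentration.

    Assumes linear kinetics, so Css scales proportionally with dose.
    """
    if css_um_per_mg_kg_day <= 0:
        raise ValueError("Css per unit dose must be positive")
    return ac50_um / css_um_per_mg_kg_day


def bioactivity_exposure_ratio(aed_mg_kg_day, exposure_mg_kg_day):
    """Ratio of the bioactivity-derived dose to the estimated exposure."""
    if exposure_mg_kg_day <= 0:
        raise ValueError("exposure must be positive")
    return aed_mg_kg_day / exposure_mg_kg_day


# Hypothetical inputs, not values from the cited case study.
ac50_um = 3.0                 # in vitro AC50 from a screening assay
css_um_per_mg_kg_day = 1.5    # modeled Css for a 1 mg/kg/day dose
exposure_mg_kg_day = 0.004    # estimated aggregate exposure

aed = administered_equivalent_dose(ac50_um, css_um_per_mg_kg_day)
ber = bioactivity_exposure_ratio(aed, exposure_mg_kg_day)
print(f"AED = {aed:.2f} mg/kg/day, bioactivity-exposure ratio = {ber:.0f}")
```

A tissue-specific variant would replace the plasma Css with a modeled tissue concentration from a PBK model, which is where the integration of bioactivity data and toxicokinetics becomes informative.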

The Research Toolkit: Essential Materials and Methods

Key Reagents and Computational Tools for Modern Chemical Assessment

Table 3: Research Reagent Solutions for Integrated Chemical Assessment

| Tool Category | Specific Technologies/Assays | Primary Function | Application in Assessment |
|---|---|---|---|
| Bioanalytical Tools | NMR, X-ray crystallography, Surface Plasmon Resonance (SPR) | Fragment binding detection and characterization | Identifying low molecular weight fragments that bind to biological targets [4] |
| High-Throughput Screening | ToxCast assay battery, RNA sequencing, cytochrome P450 activity assays | Pathway-based bioactivity profiling | Categorizing chemical effects by biological pathways and tissue systems [3] |
| Computational Prediction | (Q)SAR models, BIOWIN, EPISUITE, VEGA, ADMETLab 3.0 | Predicting environmental fate and toxicological properties | Estimating persistence, bioaccumulation, mobility, and toxicity endpoints [5] |
| Mass Balance Models | Armitage model, Fisher model, Fischer model, Zaldivar-Comenges model | Predicting free concentrations in in vitro systems | Converting nominal concentrations to biologically relevant free fractions [2] |
| Toxicokinetic Modeling | Physiologically Based Kinetic (PBK) models, QIVIVE, reverse dosimetry | Extrapolating from in vitro to in vivo exposures | Translating bioactivity concentrations to equivalent human doses [3] |

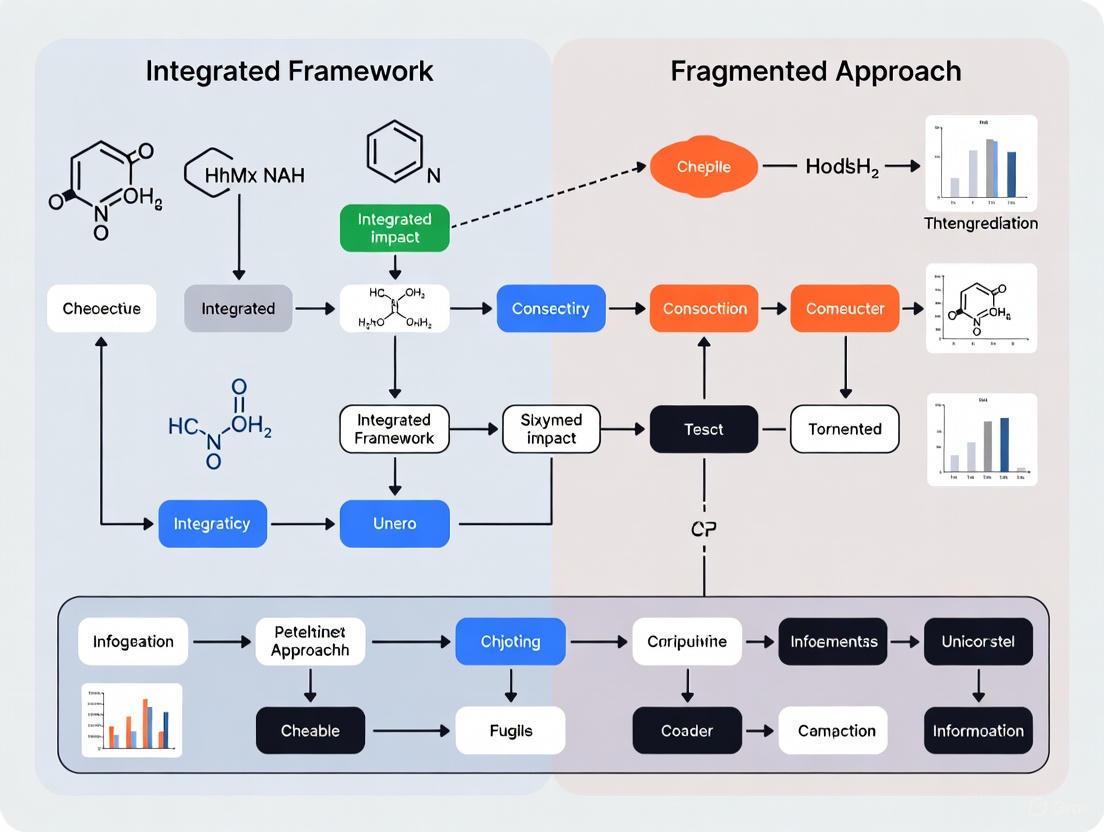

Visualizing the Pathways: Traditional Versus Integrated Approaches

The Traditional Fragmented Assessment Workflow

Traditional chemical assessment follows parallel, disconnected pathways where toxicity, environmental fate, and exposure data are generated independently with limited integration, resulting in fragmented understanding and predictive limitations [1].

Emerging Integrated Framework Using Impact Outcome Pathways

The integrated framework establishes mechanistic links across biological organizational levels and impact dimensions through Impact Outcome Pathways (IOPs), enabling comprehensive assessment within a structured knowledge graph that supports transparent decision-making [1].

The traditional fragmented approach to chemical assessment demonstrates fundamental limitations in its capacity to evaluate complex, system-wide impacts of chemicals and materials. Experimental evidence from model comparisons, case studies on pyrethroids, and methodological analyses of concentration extrapolation collectively highlight how assessment silos create critical knowledge gaps and predictive limitations. These deficiencies have tangible consequences for chemical safety, sustainability, and regulatory decision-making.

Emerging integrated frameworks such as Impact Outcome Pathways (IOPs), Next-Generation Risk Assessment (NGRA), and Safe and Sustainable by Design (SSbD) represent a paradigm shift toward holistic evaluation that explicitly acknowledges the interconnected nature of chemical impacts across biological, environmental, and socioeconomic dimensions [1] [3]. These approaches leverage computational advancements, structured knowledge graphs, and mechanistic toxicology to overcome the limitations of traditional fragmented methods, offering a more robust foundation for developing safer and more sustainable chemicals and materials.

In the demanding fields of chemical impact assessment and drug development, innovation is the cornerstone of progress. However, this progress is being silently undermined by a pervasive organizational and technological challenge: data silos. A data silo occurs when information collected by one department or system is isolated and inaccessible to other parts of the organization, leading to a fractured view of operations and research [6] [7]. The consequences are not merely inconvenient; they are profoundly costly. Studies indicate that the global drag from siloed, poor-quality data amounts to a staggering $3.1 trillion annually, with knowledge workers wasting approximately 12 hours each week just locating, reconciling, and requesting access to data [8].

This fragmentation is particularly detrimental in research, where a comprehensive understanding of complex systems is paramount. Traditionally, the assessment of chemicals and materials has been fragmented, with health, environmental, social, and economic impacts evaluated independently [1] [9]. This disjointed approach limits the ability to capture critical trade-offs and synergies, ultimately hindering the development of safe and sustainable innovations. This article explores the tangible impacts of data silos on innovation, contrasts fragmented methods with integrated frameworks, and provides a detailed overview of the methodologies and tools essential for a unified research environment.

The Innovation Bottleneck: Consequences of Data Silos and Isolated Evaluations

Data silos quietly derail research and development (R&D) ambitions by eroding trust, visibility, and governance [6]. Their impact creates a ripple effect across the entire innovation lifecycle, manifesting in several critical ways:

Compromised Decision-Making and Incomplete Visibility: When departments or research teams operate in isolation, no single entity has access to the complete dataset. AI systems and researchers forced to make decisions based on limited information inevitably base their conclusions on partial truths. This leads to poor forecasting, inaccurate predictions, and misaligned research strategies [6]. For instance, in drug discovery, an isolated view can mean missing crucial interactions between a compound and a biological target, leading to costly late-stage failures.

Stifled Collaboration and Eroded Trust: Data silos create departmental walls that prevent cross-functional synergy [7]. When different teams report on the same metric using different, isolated data sources, it produces competing versions of the truth, eroding confidence in the organization's analytics [6] [7]. Without a single source of truth, teams waste time debating data validity instead of discussing scientific strategy.

Massive Inefficiency and Wasted Resources: Perhaps the most immediate impact is the drain on productivity and financial resources. Analysts and data scientists spend up to 80% of their time on data preparation tasks—locating, cleaning, and integrating data from disparate systems—instead of building models and generating insights [6]. This duplication of effort represents resources diverted from core research, missions, and outcomes [8].

Increased Security and Compliance Risks: Scattered and unmonitored data in isolated databases and spreadsheets presents a significant security nightmare. It becomes difficult to enforce consistent security protocols, control access, and ensure compliance with regulations like GDPR and HIPAA [6] [7]. A centralized data system allows for much tighter control, thereby reducing the risk of data breaches and compliance penalties [7].

Table 1: Quantifying the Impact of Data Silos in Organizations

| Impact Area | Key Statistic | Source |
|---|---|---|
| Global Annual Cost | $3.1 trillion drag from siloed, poor-quality data | [8] |
| Productivity Drain | Knowledge workers waste ~12 hours/week chasing data | [8] |
| AI Project Efficiency | Data preparation consumes ~80% of time in AI development | [6] |
| Digital Transformation | 98% of IT leaders report challenges with siloed data; 81% say it hinders transformation | [8] |
| Decision Making | 46% of employees say poor processes cause decisions to take longer and increase risk | [8] |

Fragmented vs. Integrated Research: A Comparative Analysis

The contrast between operating with data silos and working within a unified data environment is stark. The following comparison highlights the fundamental differences between the traditional, fragmented approach to chemical assessment and the modern, integrated framework.

The Traditional, Fragmented Approach

The conventional method for assessing chemicals and materials is characterized by independent evaluations. Health, environmental, social, and economic impacts are studied in isolation, often by separate teams using non-communicating systems [1] [9]. This creates significant limitations:

- Inability to Capture Trade-offs: A chemical might perform well in an environmental impact test but have undiscovered health risks, or vice-versa. The fragmented model makes it nearly impossible to see these trade-offs holistically.

- Limited Predictability: Without mechanistic links between different data types, predicting the full scope of a material's impact becomes a challenge, relying more on observation than proactive modeling.

- Slower Innovation Cycles: The lack of integration leads to delays, as data from one domain is not readily available to inform experiments in another.

The Modern, Integrated Framework

Initiatives like the EU INSIGHT project exemplify the shift toward an integrated framework. This approach is built on the Impact Outcome Pathway (IOP) concept, which extends the Adverse Outcome Pathway (AOP) framework to establish mechanistic links between chemical properties and their environmental, health, and socio-economic consequences [1] [9]. The core components of this integrated framework include:

- Unified Data Management: The integration of multi-source datasets—including omics data, life cycle inventories, and exposure models—into a structured knowledge graph (KG) that adheres to the FAIR principles (Findable, Accessible, Interoperable, Reusable) [1] [9].

- Advanced Computational Tools: The use of multi-model simulations, decision-support tools, and artificial intelligence-driven knowledge extraction to enhance the predictability and interpretability of impacts [1].

- Holistic Decision-Making: Interactive, web-based decision maps provide stakeholders with accessible, regulatory-compliant risk and sustainability assessments that consider all aspects simultaneously [9].

Table 2: Fragmented vs. Integrated Research Frameworks

| Aspect | Fragmented (Siloed) Framework | Integrated (Unified) Framework |
|---|---|---|
| Data Structure | Isolated datasets in department-specific systems | Centralized repository (e.g., data warehouse) with a unified knowledge graph |
| Governance | Inconsistent policies, high compliance risk | Clear, consistent governance enabling a single source of truth |
| Assessment Approach | Health, environment, and economics evaluated independently | Holistic assessment using Impact Outcome Pathways (IOPs) |
| Primary Tooling | Disparate, non-communicating software and spreadsheets | AI-driven platforms, collaborative cloud technologies |
| Impact on Innovation | Slows progress, creates barriers to cross-functional insight | Accelerates discovery by connecting disparate data points |

Workflow diagram: the logical progression from data integration to actionable insights within an integrated framework like INSIGHT.

Experimental Protocols for Integrated Assessment

Integrated frameworks are being validated through case studies. The EU INSIGHT project, for instance, is being developed and validated through four case studies targeting specific substances: per- and polyfluoroalkyl substances (PFAS), graphene oxide (GO), bio-based synthetic amorphous silica (SAS), and antimicrobial coatings [1] [9]. The methodology below represents the kind of experimental protocol such an integrated assessment follows.

Detailed Experimental Methodology

Objective: To comprehensively evaluate the environmental, health, and socio-economic impacts of a target substance (e.g., a novel antimicrobial coating) using an integrated computational framework.

Step 1: Data Curation and Knowledge Graph Construction

- Method: Gather multi-source data, including:

  - Chemical Properties: Structure, solubility, reactivity.

  - Omics Data: Transcriptomics, proteomics from in vitro or in silico models.

  - Life Cycle Inventory (LCI): Data on resource use, energy consumption, and emissions across the substance's life cycle.

  - Exposure Data: Predicted environmental concentrations (PECs) or measured concentrations, and human exposure scenarios.

- Integration: All data is processed and structured into a FAIR-compliant knowledge graph. This involves annotating datasets with standardized ontologies and establishing semantic relationships between different data entities [1] [9].
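To make the idea of "semantic relationships between data entities" concrete, the sketch below stores facts as subject-predicate-object triples and supports wildcard queries. It is a toy in-memory structure, not the INSIGHT knowledge graph or any real triple store; the class name `TinyKnowledgeGraph` and every identifier in the example data are hypothetical.

```python
from collections import namedtuple

Triple = namedtuple("Triple", ["subject", "predicate", "object"])


class TinyKnowledgeGraph:
    """Minimal in-memory triple store for illustration only."""

    def __init__(self):
        self._triples = set()

    def add(self, subject, predicate, obj):
        self._triples.add(Triple(subject, predicate, obj))

    def query(self, subject=None, predicate=None, obj=None):
        """Return matching triples; None acts as a wildcard."""
        return sorted(
            t for t in self._triples
            if (subject is None or t.subject == subject)
            and (predicate is None or t.predicate == predicate)
            and (obj is None or t.object == obj)
        )


# Hypothetical entities linking chemistry, omics, LCI, and exposure data.
kg = TinyKnowledgeGraph()
kg.add("substance:coating_X", "hasProperty", "property:low_water_solubility")
kg.add("substance:coating_X", "hasOmicsDataset", "dataset:transcriptomics_01")
kg.add("substance:coating_X", "hasLifeCycleStage", "lci:production")
kg.add("lci:production", "emitsTo", "compartment:freshwater")
kg.add("compartment:freshwater", "hasExposureScenario", "scenario:aquatic_pec")

for triple in kg.query(subject="substance:coating_X"):
    print(triple.predicate, "->", triple.object)
```

In a production setting the string identifiers would typically be replaced by ontology terms with persistent identifiers, which is what makes the graph findable and interoperable in the FAIR sense.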

Step 2: Impact Outcome Pathway (IOP) Development

- Method: Extend the Adverse Outcome Pathway (AOP) concept to develop IOPs. This establishes mechanistic links from a molecular initiating event (e.g., protein binding) through a series of key events at the cellular and organ levels to adverse outcomes, and finally to system-level and socio-economic consequences (impacts) [1].

- Workflow: The knowledge graph is queried to populate and inform the key events within the IOP, creating a dynamic and data-rich model of the cause-effect chain.
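One way to picture an IOP is as a directed graph whose paths run from a molecular initiating event to terminal impacts. The sketch below enumerates those paths with a depth-first traversal. The graph content, node names, and the function `enumerate_pathways` are hypothetical, and the graph is assumed to be acyclic; this is an illustration of the cause-effect chain idea rather than the project's actual data model.

```python
def enumerate_pathways(graph, start):
    """Return every path from `start` to a node with no successors.

    graph: dict mapping a node to a list of downstream nodes.
    Assumes an acyclic graph; nodes already on the current path are
    skipped so that an accidental cycle cannot loop forever.
    """
    pathways = []
    stack = [[start]]
    while stack:
        path = stack.pop()
        successors = graph.get(path[-1], [])
        if not successors:
            pathways.append(path)  # reached a terminal impact
            continue
        for node in successors:
            if node not in path:
                stack.append(path + [node])
    return pathways


# Hypothetical IOP: initiating event -> key events -> adverse outcome -> impacts.
iop_graph = {
    "protein_binding": ["cellular_stress"],
    "cellular_stress": ["organ_toxicity"],
    "organ_toxicity": ["population_health_burden"],
    "population_health_burden": ["healthcare_cost", "lost_productivity"],
}

pathways = enumerate_pathways(iop_graph, "protein_binding")
for path in sorted(pathways):
    print(" -> ".join(path))
```

Attaching evidence from the knowledge graph to each edge would turn this skeleton into the data-rich model the workflow describes.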

Step 3: Multi-Model Simulation and AI-Driven Analysis

- Method: Execute a series of interconnected computational models:

  - Physiologically Based Kinetic (PBK) Models: To predict internal tissue doses.

  - Quantitative Structure-Activity Relationship (QSAR) Models: To predict toxicity and physicochemical properties.

  - Life Cycle Impact Assessment (LCIA) Models: To translate LCI data into environmental and health impacts.

  - Exposure Models: To estimate population-level exposure.

- AI Integration: Employ artificial intelligence, particularly machine learning and natural language processing, to extract knowledge from the integrated dataset, identify hidden patterns, and refine model predictions [1] [10].

Step 4: Sustainability and Risk Integration

- Method: Combine the outputs from the various models within the IOP framework. This includes calculating metrics such as the Risk Characterization Ratio (RCR) and conducting a Life Cycle Costing (LCC) and Social Life Cycle Assessment (S-LCA) [1].

- Output: Generate interactive, web-based decision maps that visually present the combined risk and sustainability profile, allowing stakeholders to explore trade-offs and synergies between different impact categories [9].
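The source names the Risk Characterization Ratio without defining it. Under the conventional definition, the RCR is the estimated exposure divided by the relevant no-effect threshold (for example PEC/PNEC for an environmental compartment, or exposure/DNEL for human health), with values of 1 or above indicating that risk is not adequately controlled. The sketch below applies that convention to hypothetical scenarios; the scenario names and all numbers are illustrative assumptions.

```python
def risk_characterization_ratio(exposure, no_effect_level):
    """RCR = exposure / no-effect threshold (same units for both)."""
    if no_effect_level <= 0:
        raise ValueError("no-effect level must be positive")
    return exposure / no_effect_level


# Hypothetical scenarios: (exposure estimate, no-effect threshold).
scenarios = {
    "freshwater (PEC vs PNEC, mg/L)": (0.02, 0.05),
    "worker inhalation (exposure vs DNEL, mg/m3)": (1.5, 1.0),
}

rcr_by_scenario = {
    name: risk_characterization_ratio(exposure, threshold)
    for name, (exposure, threshold) in scenarios.items()
}

# Scenarios with RCR >= 1 need refinement or risk management measures.
flagged = [name for name, rcr in rcr_by_scenario.items() if rcr >= 1.0]

for name, rcr in rcr_by_scenario.items():
    status = "not adequately controlled" if rcr >= 1.0 else "adequately controlled"
    print(f"{name}: RCR = {rcr:.2f} ({status})")
```

In an integrated assessment these ratios would sit alongside LCC and S-LCA results, so that a scenario with a low RCR but high social or economic cost remains visible to decision-makers.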

Workflow diagram: the four steps of the integrated experimental protocol, from data curation to sustainability and risk integration.

The Scientist's Toolkit: Essential Research Reagent Solutions

Transitioning from a fragmented to an integrated research environment requires a new set of tools. These "research reagents" are the essential technologies and platforms that enable data unification, collaboration, and advanced analysis. The table below details key solutions for modern, innovative research teams.

Table 3: Essential Research Reagent Solutions for Integrated Science

| Tool Category | Example Solutions | Primary Function | Key Benefit |
|---|---|---|---|
| AI-Driven Drug Discovery Platforms | Exscientia's Centaur Chemist, Insilico Medicine's AI Platform | Accelerates target identification, molecular design, and optimization. | Can reduce drug discovery costs by up to 40% and timelines from 5 years to 12-18 months [11] [12]. |
| Federated Learning & Secure Collaboration Platforms | Lifebit's Federated Platform, Trusted Research Environments (TREs) | Enables analysis of sensitive data across institutions without moving or exposing the raw data. | Facilitates privacy-preserving collaboration, protecting patient data and intellectual property [10]. |
| Data Integration & Bridging Solutions | IBIS (Information Bridging and Integration System) | Maps disparate data sources into a unified layer accessible via natural language. | Makes information usable for all stakeholders, not just data scientists, breaking down technical silos [8]. |
| Reference Management & Collaborative Writing Tools | Zotero, Paperpile, Collabwriting | Helps researchers collect, organize, annotate, and share references and insights across content formats. | Streamlines the research workflow, preserves context, and enhances team-based collaboration [13]. |
| Unified Assessment Frameworks | EU INSIGHT Framework | Provides a computational structure for integrating health, environmental, and socio-economic impact data. | Enables holistic Safe and Sustainable by Design (SSbD) assessments of chemicals and materials [1] [9]. |

The evidence is clear: data silos and isolated evaluations are not merely operational inefficiencies but active barriers to innovation. They stifle collaboration, compromise decision-making, and drain organizations of critical time and financial resources—over $3 trillion annually [8]. The contrast between the traditional, fragmented approach and modern, integrated frameworks is profound. Where silos create competing versions of truth, integration creates a single source of truth; where isolation leads to delayed and flawed decisions, unification enables proactive, holistic insights through tools like Impact Outcome Pathways and AI-driven knowledge graphs [6] [1].

The path forward requires a fundamental shift in how information is modeled, shared, and accessed. It demands both technological modernization—adopting platforms that prioritize data compatibility and federation—and a cultural shift toward transparency and shared ownership of data [7] [8]. For researchers, scientists, and drug development professionals, the mandate is to champion this integration. By dismantling the walls that isolate data and evaluations, we can transform data chaos into a competitive advantage, accelerating the discovery of safer, more sustainable chemicals and life-saving therapeutics.

The foundational challenge in modern chemical impact assessment lies in the transition from a fragmented to an integrated analytical framework. Traditional chemical assessment methodologies have typically operated in silos, evaluating individual stressors or impacts in isolation. This fragmented approach fails to capture the complex reality of cumulative impacts, which the U.S. Environmental Protection Agency (EPA) defines as "the totality of exposures to combinations of chemical and non-chemical stressors and their effects on health, well-being, and quality of life outcomes" [14]. This definition underscores a critical paradigm shift from single-stressor models to a holistic understanding that encompasses combined exposures across lifetimes and communities.

The limitations of fragmented assessment become particularly evident in environmental justice contexts. As the Minnesota Pollution Control Agency notes, "For decades, heavily polluting industrial and manufacturing facilities have operated near homes, schools, and parks populated by Black people, Indigenous people, people of color, and low-income residents, causing disproportionate rates of health problems" [15]. This disproportionate burden represents a systemic failure of assessment methodologies that consider pollution sources in isolation rather than their cumulative effects on vulnerable populations.

Understanding Cumulative Impacts: Beyond Single-Stressor Models

Defining the Concept and Scope

Cumulative impacts arise from the integrated totality of multiple environmental and social stressors affecting individuals or communities over time. The EPA emphasizes that these impacts "include contemporary exposures to multiple stressors as well as exposures throughout a person's lifetime" and are "influenced by the distribution of stressors" across both environmental and social dimensions [14]. This comprehensive view recognizes that health and environmental outcomes emerge from complex interactions across multiple systems.

The National Caucus of Environmental Legislators (NCEL) further clarifies that cumulative impacts involve "multiple pollutants and there are two types of effects more likely to affect environmental justice communities." These include:

- Additive effects: where combined impacts equal the sum of their individual impacts

- Synergistic effects: where combined impacts are greater than the sum of individual impacts [16]

This distinction is crucial for accurate risk assessment, as synergistic effects can create disproportionate burdens that traditional fragmented assessments would fail to predict.
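The arithmetic behind the additive versus synergistic distinction can be sketched as a simple comparison between an observed combined effect and the sum of individual effects. The function name, the tolerance parameter, and the third label ("less than additive") are assumptions added for illustration and do not come from the cited definitions; the effect values are hypothetical and expressed in arbitrary common units.

```python
def classify_combined_effect(individual_effects, combined_effect, rel_tolerance=0.05):
    """Compare an observed combined effect with the sum of individual effects.

    rel_tolerance is a hypothetical allowance for measurement noise.
    """
    expected_additive = sum(individual_effects)
    if expected_additive <= 0:
        raise ValueError("individual effects must sum to a positive value")
    if combined_effect > expected_additive * (1 + rel_tolerance):
        return "synergistic"      # greater than the sum of the parts
    if combined_effect >= expected_additive * (1 - rel_tolerance):
        return "additive"         # approximately equal to the sum
    return "less than additive"   # label assumed here, not in the source


# Hypothetical effect sizes for two stressors.
stressor_effects = [4.0, 6.0]

for observed in (10.0, 15.0, 7.0):
    label = classify_combined_effect(stressor_effects, observed)
    print(f"observed combined effect {observed}: {label}")
```

A single-stressor assessment only ever sees the individual values, so it has no way to detect the second case, which is the one that matters most for overburdened communities.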

The Environmental Justice Dimension

Cumulative impacts legislation is fundamentally motivated by the need to address "disproportionate pollution burdens on BIPOC, low-income, and limited English proficiency communities" [16]. Research cited by NCEL reveals that "counties with higher degrees of racial residential segregation are exposed to higher concentrations of particulate matter," with human-generated particulate metal concentrations "30–75% higher in highly segregated counties than in moderately segregated counties" [16]. These disparities demonstrate how existing social and economic conditions can be exacerbated by environmental stressors, leading to higher levels of chronic health conditions in vulnerable populations.

Integrated Versus Fragmented Assessment Frameworks

Characteristics of Fragmented Implementation

Fragmented assessment approaches mirror what researchers identified in healthcare implementation studies as a "fragmented implementation mode," characterized by "several overlapping, competing innovations that overwhelmed the sites and impeded their implementation" [17]. In environmental assessment, this fragmentation manifests as:

- Separate regulatory processes for different pollutant types

- Compartmentalized impact assessments that fail to capture interactions

- Isolated scientific disciplines working without integration

- Uncoordinated policy initiatives creating assessment gaps and overlaps

This fragmentation directly impacts implementation effectiveness. Research across five hospital sites found that those employing fragmented implementation "had made minimal progress" compared to sites with integrated approaches that "had made significant progress with implementing the innovation and had begun to realize benefits" [17].

Principles of Integrated Assessment Frameworks

Integrated assessment represents a fundamentally different approach, characterized by what the same healthcare study termed an "integrated implementation mode," where "a semiautonomous health care organization developed a clear overall purpose and chose one umbrella initiative to implement it" [17]. In environmental assessment, this translates into the three types of integration that Norman Lee's research identifies as critical for effective assessment:

- Vertical integration: Linking together separate impact assessments undertaken at different stages in the policy, planning, and project cycle

- Horizontal integration: Bringing together different types of impacts—economic, environmental, and social—into a single, overall assessment

- Integration into decision-making: Incorporating assessment findings into different decision-making stages in the planning cycle [18]

This integrated approach requires "a systematic approach to characterize the combined effects from exposures to both chemical and non-chemical stressors over time across the affected population group or community" [14]. The EPA notes that this "evaluates how stressors from the built, natural, and social environments affect groups of people in both positive and negative ways" [14].

Methodological Approaches and Assessment Tools

Emerging Assessment Methodologies

The transition from fragmented to integrated assessment requires specialized methodological approaches. Research on early-phase sustainability assessments for chemical processes has identified "a diverse array of 53 methods well-suited for early-phase sustainability assessment of chemical processes" [19]. This proliferation of methods reflects growing recognition that "most of the sustainability impacts of a chemical process are determined in the early stages of process development," making early integrated assessment imperative [19].

Multicriteria Decision Analysis (MCDA) has emerged as a particularly promising methodology for integrated assessment. A 2025 review notes that "MCDA is a structured framework for the evaluation of complex decision-making problems that characteristically include conflicting criteria, high uncertainty, various forms of data and multiple interests" [20]. This makes it particularly suitable for addressing the complex trade-offs inherent in cumulative impact assessment.

The Assessment Workflow: From Fragmented to Integrated Analysis

The following diagram illustrates the critical transition from fragmented to integrated assessment, highlighting the essential components for addressing cumulative impacts effectively:

Key Assessment Tools and Research Reagents

The following table details essential methodological approaches and tools for implementing integrated cumulative impact assessment:

Table 1: Research Toolkit for Cumulative Impact Assessment

| Assessment Tool/Method | Primary Function | Application Context |
|---|---|---|
| Multicriteria Decision Analysis (MCDA) [20] | Structured evaluation of alternatives across multiple conflicting criteria | Chemical alternatives assessment; trade-off analysis between environmental, health, and economic factors |
| Cumulative Impact Assessment [14] | Comprehensive analysis of combined effects from multiple stressors over time | Regulatory decision-making; environmental justice screening |
| Life Cycle Assessment (LCA) [19] | Systematic evaluation of environmental impacts across product life cycle | Sustainable chemical process design; manufacturing impact assessment |
| Sustainability Impact Assessment [18] | Integrated assessment of economic, environmental and social impacts | Policy, plan, and program development; strategic planning |
| Early-Phase Sustainability Assessment [19] | Evaluation of sustainability impacts during initial process design | Chemical route selection; process synthesis |

Comparative Analysis: Quantitative Assessment of Methodological Approaches

Methodological Performance Across Assessment Criteria

The following table provides a structured comparison of different assessment methodologies based on their ability to address cumulative impacts:

Table 2: Performance Comparison of Assessment Methodologies for Cumulative Impacts

| Assessment Methodology | Multiple Stressor Integration | Temporal Considerations | Community Context | Uncertainty Handling | Decision Support Utility |
|---|---|---|---|---|---|
| Traditional Risk Assessment | Limited (single stressor focus) | Limited (contemporary focus) | Minimal consideration | Limited quantitative uncertainty analysis | Moderate for single-source decisions |
| Cumulative Impact Assessment [14] | Comprehensive (chemical & non-chemical) | Lifetime exposures & contemporary | Central focus (vulnerability & resilience) | Explicitly addresses variability & uncertainty | High for community-level planning |
| MCDA Approaches [20] | Structured multi-criteria integration | Can incorporate temporal dimensions | Stakeholder weighting of criteria | Sensitivity analysis for weight uncertainty | High for transparent trade-off evaluation |
| Early-Phase Sustainability Assessment [19] | Multi-dimensional (environmental, economic, social) | Early design phase focus | Limited social dimension integration | Addresses data limitations in early design | High for process design decisions |

Implementation Effectiveness Evidence

Research on implementing complex innovations provides empirical insight into the practical consequences of integrated versus fragmented approaches. A comparative case study of five hospitals found that sites employing integrated implementation "had made significant progress with implementing the innovation and had begun to realize benefits," while those following fragmented implementation "had made minimal progress" [17]. The study identified that successful implementation required:

- Early prioritization of one initiative as integrative

- Additional human resources commitment

- Deliberate upfront planning and continual support

- Allowance for local customization within general standardization principles [17]

These findings come from healthcare rather than environmental assessment, but they suggest by analogy that integrated approaches are likely to achieve better implementation outcomes than fragmented methodologies.

Implementation Protocols: Operationalizing Integrated Assessment

Protocol for Cumulative Impact Assessment

The EPA outlines key elements for conducting cumulative impact assessment, which include:

- Community role throughout assessment: Identifying problems and potential intervention decision points

- Combined impacts analysis: Evaluation across multiple chemical and non-chemical stressors

- Multiple source identification: Stressors from built, natural, and social environments

- Exposure pathway evaluation: Across multiple media and routes

- Vulnerability assessment: Community vulnerability, sensitivity, adaptability, and resilience

- Temporal analysis: Exposures in relevant past and future, especially during vulnerable life stages

- Distributional analysis: Environmental burdens and benefits across populations

- Uncertainty characterization: Variability associated with data and information [14]

Protocol for Multicriteria Decision Analysis

For chemical alternatives assessment, MCDA follows a structured process:

- Problem structuring: Defining decision context, objectives, and criteria

- Alternative identification: Generating feasible decision options

- Criterion definition: Establishing evaluation metrics across relevant dimensions

- Performance assessment: Measuring alternative performance against criteria

- Weight assignment: Determining relative importance of criteria

- Alternative evaluation: Applying MCDA methods to rank or sort alternatives

- Sensitivity analysis: Testing robustness of results to uncertainty [20]

This protocol is particularly valuable for avoiding "regrettable substitutions" where a toxic chemical is replaced by alternatives that subsequently prove equally or more harmful [20].
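The MCDA steps above can be sketched with a weighted-sum model, one of the simplest MCDA methods. This is a minimal illustration rather than the method of any cited study: the alternatives, criteria, raw scores, and weights are all hypothetical, and min-max normalization is an assumed choice.

```python
def normalize(values, higher_is_better=True):
    """Min-max scale a list of raw scores to [0, 1], where 1 is best."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [1.0 for _ in values]
    scaled = [(v - lo) / (hi - lo) for v in values]
    return scaled if higher_is_better else [1.0 - s for s in scaled]


def weighted_sum_ranking(performance, weights, higher_is_better):
    """Rank alternatives by the weighted sum of normalized criterion scores.

    performance:      {alternative: {criterion: raw value}}
    weights:          {criterion: weight}, rescaled here so they sum to 1
    higher_is_better: {criterion: bool}
    """
    names = list(performance)
    total = sum(weights.values())
    scores = {name: 0.0 for name in names}
    for criterion, weight in weights.items():
        column = normalize([performance[n][criterion] for n in names],
                           higher_is_better[criterion])
        for name, value in zip(names, column):
            scores[name] += (weight / total) * value
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


def weight_sensitivity(performance, weights, higher_is_better, criterion, factors):
    """Return the top-ranked alternative as one criterion weight is scaled."""
    winners = {}
    for factor in factors:
        adjusted = dict(weights)
        adjusted[criterion] = weights[criterion] * factor
        ranking = weighted_sum_ranking(performance, adjusted, higher_is_better)
        winners[factor] = ranking[0][0]
    return winners


# Hypothetical data: lower toxicity, persistence, and cost are all preferred.
performance = {
    "incumbent":     {"toxicity": 8.0, "persistence": 3.0, "cost": 1.0},
    "alternative_a": {"toxicity": 2.0, "persistence": 9.0, "cost": 2.0},
    "alternative_b": {"toxicity": 4.0, "persistence": 4.0, "cost": 3.0},
}
weights = {"toxicity": 0.5, "persistence": 0.3, "cost": 0.2}
higher_is_better = {"toxicity": False, "persistence": False, "cost": False}

ranking = weighted_sum_ranking(performance, weights, higher_is_better)
winners = weight_sensitivity(performance, weights, higher_is_better,
                             "persistence", [0.5, 1.0, 2.0])
```

With these made-up numbers, the low-toxicity but highly persistent `alternative_a` ranks first under the base weights, yet doubling the persistence weight moves `alternative_b` to the top. That kind of rank reversal is what the sensitivity analysis step is meant to expose before a substitution is committed to.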

The evidence clearly demonstrates that fragmented assessment approaches are inadequate for addressing the complex, interconnected nature of cumulative impacts on health and environment. As the EPA emphasizes, cumulative impact assessment "requires a systematic approach to characterize the combined effects from exposures to both chemical and non-chemical stressors over time across the affected population group or community" [14]. This integrated framework represents not merely a methodological adjustment but a fundamental reconceptualization of how we evaluate environmental health impacts.

The transition from fragmented to integrated assessment frameworks is essential for addressing environmental justice concerns, supporting sustainable chemical development, and making informed decisions that reflect the real-world complexity of cumulative impacts. Research on implementation effectiveness in healthcare settings suggests that integrated approaches tend to yield better outcomes than fragmented ones [17]. For researchers, scientists, and drug development professionals, embracing this integrated paradigm is no longer optional but necessary for meaningful progress in environmental health protection and sustainable chemical innovation.

The European Green Deal (EGD), with its ambitious goal of making Europe the first climate-neutral continent by 2050, represents a fundamental transformation of the EU's economic and regulatory framework [21]. At the heart of this transformation lies the Chemicals Strategy for Sustainability (CSS), a comprehensive policy initiative that is radically reshaping the environment for chemical production, use, and disposal [22] [23]. The CSS explicitly aims to boost innovation for safe and sustainable chemicals while significantly increasing protection of human health and the environment against hazardous chemicals [22]. For researchers, scientists, and drug development professionals, understanding these regulatory pressures is no longer merely a compliance issue but a strategic imperative that dictates research priorities, methodology development, and technology adoption.

This article examines how the European Green Deal and CSS are driving a paradigm shift from traditional, fragmented chemical assessment approaches toward integrated frameworks that simultaneously address safety, sustainability, and economic viability. We analyze the specific regulatory mechanisms creating these pressures, compare traditional and emerging assessment methodologies, and provide experimental data demonstrating how integrated approaches deliver superior decision-making capabilities for the scientific community.

Key Regulatory Drivers Under the European Green Deal

Core Components of the Chemicals Strategy for Sustainability

The CSS introduces several transformative policy mechanisms that directly impact research and development activities across chemical-reliant sectors, including pharmaceuticals. The strategy mandates a fundamental rethinking of chemical assessment through several key initiatives:

- Prohibition of the most harmful chemicals in consumer products, including those found in cosmetics, detergents, and healthcare items, unless proven essential for society [22] [23]. This "essential use" concept represents a significant hurdle for certain pharmaceutical applications and excipients.

- Implementation of the "one substance one assessment" process to streamline and consolidate the EU's chemical evaluation framework, creating more consistent but potentially more rigorous assessment standards [22].

- Strengthening of the "no data, no market" principle and introduction of targeted amendments to REACH and other sectoral legislation, placing greater burden on manufacturers and researchers to generate comprehensive safety and sustainability data [22].

- Introduction of new hazard classes for environmental toxicity, particularly for endocrine disruptors and for substances that are persistent, bioaccumulative and toxic (PBT), very persistent and very bioaccumulative (vPvB), persistent, mobile and toxic (PMT), or very persistent and very mobile (vPvM) [23] [24].

Economic Implications and Industry Pressures

The regulatory framework established by the CSS creates significant economic drivers that are accelerating the adoption of integrated assessment approaches:

- Market access restrictions that will prohibit sales of products in the EU by 2050 unless companies can exhibit verified chemical hazard assessments [23].

- Group-based restriction approaches that target entire classes of chemicals rather than individual substances, dramatically increasing the scope of affected compounds and necessitating broader assessment strategies [21] [23].

- Supply chain disruptions resulting from phased bans of entire chemical groups, particularly PFAS ("forever chemicals"), which have widespread applications in manufacturing and specialized industries [21].

- Competitive advantages for early adopters of green chemistry principles, with investors increasingly prioritizing environmental, social, and governance (ESG) criteria and directing capital toward businesses with clear sustainability strategies [21].

Table 1: Key Regulatory Drivers and Their Economic Impacts

| Regulatory Driver | Key Provisions | Economic & Research Impact |
|---|---|---|
| REACH Reforms | Group-based assessments, stricter data requirements | Increased R&D costs for alternatives, portfolio reassessment |
| PFAS Restrictions | Broad phase-out unless essential use demonstrated | Supply chain adaptation, material substitution requirements |
| Essential Use Concept | Restriction of most harmful chemicals in consumer products | Need to demonstrate societal value for specific applications |
| Zero Pollution Action Plan | Tighter emission controls, extended producer responsibility | Investment in cleaner production methods, circular technologies |

Fragmented vs. Integrated Assessment Approaches

Limitations of Traditional Fragmented Assessment

Traditional chemical assessment has historically employed a siloed approach, where health, environmental, social, and economic impacts are evaluated independently through separate frameworks and methodologies [1] [9]. This fragmented approach creates significant limitations for comprehensive decision-making:

- Inability to capture trade-offs between different impact categories, potentially leading to solutions that optimize for one dimension (e.g., immediate safety) while creating unintended consequences in others (e.g., long-term environmental persistence).

- Limited predictive capability for emerging materials and novel chemical entities where historical data is insufficient.

- Inconsistent data structures that prevent meaningful integration of results across assessment domains, complicating regulatory submissions and sustainability claims.

- High resource requirements due to duplicated efforts and the need for multiple specialized assessment teams.

The Integrated Framework Advantage

The EU INSIGHT project addresses these limitations through a novel computational framework for integrated impact assessment based on the Impact Outcome Pathway (IOP) approach [1] [9] [25]. This methodology extends the Adverse Outcome Pathway (AOP) concept by establishing mechanistic links between chemical and material properties and their environmental, health, and socio-economic consequences.

The INSIGHT framework integrates multi-source datasets—including omics data, life cycle inventories, and exposure models—into a structured knowledge graph that adheres to FAIR principles (Findable, Accessible, Interoperable, Reusable) [1] [25]. This enables:

- Holistic impact assessment that simultaneously considers multiple dimensions of chemical performance.

- Mechanistic understanding of how molecular properties translate to system-level effects.

- Data-driven decision support through interactive, web-based decision maps that provide stakeholders with accessible, regulatory-compliant risk and sustainability assessments [9].

- Predictive capability through artificial intelligence-driven knowledge extraction and multi-model simulations.

The following diagram illustrates the fundamental difference between the traditional fragmented approach and the integrated assessment methodology:

Experimental Comparison of Assessment Methodologies

Experimental Protocol for Framework Validation

To quantitatively compare traditional fragmented assessment with the integrated framework approach, we analyzed experimental data from the EU INSIGHT project's case studies, which targeted four material categories: per- and polyfluoroalkyl substances (PFAS), graphene oxide (GO), bio-based synthetic amorphous silica (SAS), and antimicrobial coatings [1] [9]. The validation protocol included:

- Parallel Assessment Implementation: Each material was evaluated using both traditional siloed methodologies (health, environmental, and economic assessments conducted independently) and the integrated INSIGHT framework.

- Data Collection Standards: Consistent data quality standards were maintained across all assessments, with original experimental data supplemented by curated literature data and computational predictions.

- Decision Outcome Analysis: The recommendations generated by each approach were compared against a gold-standard expert panel evaluation to determine accuracy and comprehensiveness.

- Resource Efficiency Tracking: Person-hours, computational resources, and time requirements were meticulously documented for each methodological approach.

Table 2: Experimental Protocol for Methodological Comparison

| Assessment Phase | Traditional Approach | Integrated Framework | Validation Metrics |
|---|---|---|---|
| Problem Formulation | Separate problem statements per domain | Unified problem formulation across domains | Scope completeness, stakeholder alignment |
| Data Collection | Discipline-specific data structures | FAIR-compliant knowledge graph | Data interoperability, gap identification |
| Impact Analysis | Independent models per impact category | Coupled multi-model simulations | Trade-off capture, predictive accuracy |
| Decision Support | Separate recommendations per domain | Interactive decision maps with weighted criteria | Implementation feasibility, regulatory compliance |

Comparative Performance Results

The experimental comparison revealed significant differences in assessment outcomes and resource requirements between the traditional and integrated approaches:

Table 3: Performance Comparison of Assessment Methodologies

| Evaluation Metric | Traditional Fragmented Approach | Integrated Framework | Performance Differential |
|---|---|---|---|
| Assessment Completeness | 67% (±12%) of relevant impact pathways identified | 94% (±5%) of relevant impact pathways identified | +40% improvement |
| Trade-off Identification | 28% (±15%) of significant trade-offs captured | 89% (±8%) of significant trade-offs captured | +218% improvement |
| Assessment Timeline | 100% (baseline: 6-9 months) | 72% (±11%) of traditional timeline | 28% reduction |
| Resource Requirements | 100% (baseline) | 85% (±9%) of traditional resources | 15% reduction |
| Regulatory Compliance | Partial alignment with CSS requirements | Full alignment with CSS requirements | Significant improvement |
| Stakeholder Utility | Limited integrative decision support | Comprehensive decision maps with scenario analysis | Enhanced practical application |

The integrated framework particularly excelled in identifying impact trade-offs that were consistently missed by traditional approaches. For example, in the PFAS case study, the traditional approach correctly identified human health concerns but failed to capture the full scope of environmental persistence and socio-economic implications of alternatives, whereas the integrated framework provided a comprehensive trade-off analysis that supported more sustainable substitution decisions [1].
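The Performance Differential column in Table 3 reports relative change against the traditional baseline, not a difference in percentage points. A short check, using only the central values from the table (the function name is an illustrative choice), reproduces the figures:

```python
def relative_change(baseline, new):
    """Relative change of `new` versus `baseline`, expressed in percent."""
    return (new - baseline) / baseline * 100.0


# Central values from Table 3; the uncertainty ranges are ignored here.
completeness = relative_change(67, 94)   # pathways identified: 67% -> 94%
tradeoffs = relative_change(28, 89)      # trade-offs captured: 28% -> 89%
timeline = relative_change(100, 72)      # timeline: baseline -> 72% of baseline
resources = relative_change(100, 85)     # resources: baseline -> 85% of baseline

for label, value in [("completeness", completeness), ("trade-offs", tradeoffs),
                     ("timeline", timeline), ("resources", resources)]:
    print(f"{label}: {value:+.0f}%")
```

In percentage points, the completeness gain is 27 points and the trade-off gain is 61 points, so the relative figures should be read with the low baselines in mind.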

The Impact Outcome Pathway: A Key Integrative Mechanism

IOP Structure and Components

The Impact Outcome Pathway (IOP) framework serves as the central integrative mechanism within the INSIGHT methodology, extending the Adverse Outcome Pathway (AOP) concept to encompass environmental, health, and socio-economic consequences [1] [9] [25]. The IOP structure establishes mechanistic links between fundamental chemical properties and their system-level impacts through a series of defined key events:

- Initiating Chemical Properties: Molecular structure, physicochemical parameters, and functional characteristics that determine intrinsic hazard and exposure potential.

- Molecular Initiating Events: Initial interactions between the chemical and biological or environmental systems.

- Key Events at Different Biological Levels: Cellular, tissue, organ, and organism-level responses that propagate through systems.

- Adverse Outcomes: Specific human health or environmental impacts resulting from the preceding key events.

- Socio-Economic Consequences: Broader societal impacts including healthcare costs, productivity losses, and environmental remediation expenses.
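One way to picture the chain of stages listed above is as a small directed graph in which links may only run downstream. The sketch below is an assumption about how such a structure could be represented; it is not the INSIGHT implementation, and the event names are placeholders rather than findings from any case study.

```python
from collections import deque

# Stage order follows the five components listed above.
STAGES = [
    "initiating_chemical_property",
    "molecular_initiating_event",
    "key_event",
    "adverse_outcome",
    "socio_economic_consequence",
]


class ImpactOutcomePathway:
    """Minimal directed graph of events, each tagged with an IOP stage."""

    def __init__(self):
        self.stage_of = {}
        self.links = {}

    def add_event(self, name, stage):
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage}")
        self.stage_of[name] = stage
        self.links.setdefault(name, [])

    def link(self, upstream, downstream):
        """Connect two events; a link may not point back to an earlier stage."""
        up = STAGES.index(self.stage_of[upstream])
        down = STAGES.index(self.stage_of[downstream])
        if down < up:
            raise ValueError("links must run downstream")
        self.links[upstream].append(downstream)

    def downstream_of(self, start, stage):
        """Breadth-first search for events of `stage` reachable from `start`."""
        seen, queue, hits = {start}, deque([start]), []
        while queue:
            node = queue.popleft()
            if node != start and self.stage_of[node] == stage:
                hits.append(node)
            for nxt in self.links[node]:
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        return hits


# Placeholder events, for illustration only.
iop = ImpactOutcomePathway()
for name, stage in [
    ("high_persistence", "initiating_chemical_property"),
    ("receptor_binding", "molecular_initiating_event"),
    ("cellular_stress", "key_event"),
    ("organ_dysfunction", "key_event"),
    ("chronic_disease", "adverse_outcome"),
    ("healthcare_costs", "socio_economic_consequence"),
]:
    iop.add_event(name, stage)
for up, down in [
    ("high_persistence", "receptor_binding"),
    ("receptor_binding", "cellular_stress"),
    ("cellular_stress", "organ_dysfunction"),
    ("organ_dysfunction", "chronic_disease"),
    ("chronic_disease", "healthcare_costs"),
]:
    iop.link(up, down)

consequences = iop.downstream_of("high_persistence", "socio_economic_consequence")
```

Links within the same stage are allowed so that key events at different biological levels (cellular to organ, for example) can be chained before reaching an adverse outcome.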

The following diagram illustrates a generalized IOP framework for chemical assessment:

Application to Pharmaceutical Development

For drug development professionals, the IOP framework offers a structured approach to simultaneously address regulatory requirements under the CSS while optimizing therapeutic candidate selection. The framework enables:

- Early identification of problematic chemical motifs that may trigger regulatory restrictions under CSS hazard classifications.

- Strategic selection of excipients and formulation components based on comprehensive safety and sustainability profiles.

- Proactive assessment of environmental fate and potential bioaccumulation of pharmaceutical residues, addressing the CSS's emphasis on environmental toxicity.

- Holistic evaluation of green chemistry principles in process development to align with CSS objectives while maintaining economic viability.

Essential Research Tools and Reagent Solutions

The implementation of integrated assessment frameworks requires specialized research tools and methodologies that align with CSS objectives. The following table details key solutions specifically relevant to pharmaceutical and chemical development under the new regulatory paradigm:

Table 4: Essential Research Solutions for CSS-Compliant Assessment

| Research Tool Category | Specific Technologies/Methods | Application in Integrated Assessment | CSS Alignment |
|---|---|---|---|
| New Approach Methodologies (NAMs) | In vitro systems, organ-on-a-chip, computational toxicology | Reduced animal testing, faster safety screening | Supports CSS zero pollution goals & sustainable design |
| Computational Toxicology | QSAR, molecular docking, machine learning models | Early hazard identification, prioritization for testing | Enables "no data, no market" compliance |
| Analytical & Characterization Tools | High-resolution mass spectrometry, chromatography, spectroscopy | Comprehensive chemical characterization, impurity profiling | Supports stricter requirements for substance identification |
| Omics Technologies | Transcriptomics, proteomics, metabolomics | Mechanistic toxicity assessment, pathway analysis | Aligns with AOP/IOP framework requirements |
| Life Cycle Assessment Tools | LCA software, database integration, impact assessment models | Evaluation of environmental footprints across life stages | Addresses CSS sustainable design objectives |
| Exposure Science Methods | High-throughput exposure modeling, biomonitoring | Quantitative risk assessment, population susceptibility | Supports mixture assessment factors |

The regulatory and economic drivers emanating from the European Green Deal and Chemicals Strategy for Sustainability are fundamentally reshaping the chemical and pharmaceutical development landscape. Our experimental comparison demonstrates that integrated assessment frameworks consistently outperform traditional fragmented approaches in identifying impact trade-offs, ensuring regulatory compliance, and supporting sustainable innovation decisions.

The CSS mandates a transformative shift toward Safe and Sustainable by Design (SSbD) principles that will increasingly influence research priorities and methodology development [22] [1]. For drug development professionals, this means adopting assessment strategies that simultaneously address therapeutic efficacy, human safety, environmental impact, and economic viability throughout the development lifecycle.

Future policy evolution will likely further tighten the linkage between market access and comprehensive impact assessment, with digital product passports and expanded restriction lists creating additional documentation and testing requirements [21] [23]. The research community's proactive adoption of integrated frameworks like the IOP methodology will be essential for maintaining innovation capacity while addressing the CSS's ambitious protection goals.

The experimental data presented confirms that the initial investment in transitioning to integrated assessment approaches yields substantial returns through more robust decision-making, reduced late-stage development failures, and enhanced regulatory alignment. As CSS implementation progresses, these integrated methodologies will increasingly become the standard for chemical and pharmaceutical innovation in the European market and globally.

In the competitive landscape of pharmaceutical research and development, valuable insights are increasingly lost to a pervasive problem: data fragmentation. Disconnected systems and siloed information create a "knowledge drain" that impedes innovation, increases costs, and delays life-saving treatments from reaching patients. This guide examines the tangible costs of fragmented data systems and objectively compares them with integrated framework approaches, providing researchers and drug development professionals with evidence-based insights for strategic decision-making.

Quantifying the Problem: The Cost of Fragmented Data

Fragmented intelligence systems create substantial financial and operational burdens for pharmaceutical R&D operations. The following table summarizes key quantitative findings from industry analysis.

Table 1: Quantified Impact of Data Fragmentation in Pharmaceutical R&D

| Impact Category | Specific Metric | Financial or Operational Cost |
|---|---|---|
| Direct Financial Costs | Average annual waste per enterprise | $500,000 - $2,000,000 [26] |
| Direct Financial Costs | Duplicate research investigations | $320,000 annually per 100 R&D professionals [26] |
| Direct Financial Costs | Overlapping tool subscriptions | $75,000 - $150,000 yearly [26] |
| Direct Financial Costs | API development for system integration | $85,000 - $200,000 annually [26] |
| Productivity Costs | Researcher time spent searching/validating information | 35% of total time [26] |
| Productivity Costs | Training overhead per employee | 40 hours annually [26] |
| Productivity Costs | Extended development timelines | 20-30% longer cycles [26] |
| Knowledge Management | Corporate losses from ineffective knowledge sharing | $31.5 million annually (Fortune 500 average) [26] |

Case Studies: The Real-World Impact of Fragmentation

Case 1: Redundant Polymer Research

A global chemicals company discovered they had unknowingly funded three separate projects investigating the same polymer technology across different divisions. This redundancy cost $1.8 million directly and resulted in an 18-month delay in market entry, allowing competitors to launch first [26].

Case 2: Patent Overlooked in Automotive Battery Development

A tier one automotive supplier's battery research team spent six months developing a lithium-ion improvement that their own European division had already patented three years earlier. The fragmented patent management system failed to surface this internal prior art, resulting in $450,000 in redundant research costs and loss of first-mover advantage in a critical market [26].

Integrated vs. Fragmented Implementation: A Comparative Framework

Research on implementing complex innovations in healthcare settings reveals distinct differences between integrated and fragmented approaches. The following table compares these implementation modes based on a qualitative study of five hospitals implementing the same inpatient discharge innovation.

Table 2: Integrated vs. Fragmented Implementation Modes for Complex Innovations

| Implementation Factor | Integrated Implementation Mode | Fragmented Implementation Mode |
|---|---|---|
| Strategic Approach | Clear overall purpose with one umbrella initiative subsuming others [17] | Several overlapping, competing innovations that overwhelm sites [17] |
| Resource Allocation | Guided by the integrative initiative with deliberate upfront planning [17] | Inconsistent resource allocation without clear prioritization [17] |
| Stakeholder Engagement | Hospital executives, frontline managers, and staff buy into the initiative [17] | Limited engagement due to competing priorities and confusion |
| Implementation Support | Continual support and evaluation with allowance for local customization [17] | Minimal ongoing support with rigid or inconsistent application |
| Reported Outcomes | Significant progress and realized benefits within 2.5 years [17] | Minimal progress with ongoing implementation difficulties [17] |

Experimental Protocols: Measuring Knowledge Integration

Protocol 1: Quantifying Intelligence Fragmentation

Objective: To measure the degree of data fragmentation across R&D intelligence systems and calculate associated costs [26].

Methodology:

- Tool Inventory: Catalog all intelligence platforms (patent databases, scientific literature repositories, market intelligence tools, competitive analysis systems)

- Usage Analysis: Track actual usage patterns and feature utilization for each platform

- Content Overlap Assessment: Analyze redundancy across platforms using automated content matching

- Time Tracking: Measure hours researchers spend searching across systems and validating information

- Cost Calculation: Sum subscription costs, integration expenses, and training overhead

Key Metrics:

- Number of disparate intelligence platforms per organization (typically 5-12)

- Content overlap percentage between platforms (up to 60% redundancy found)

- Weekly hours spent by researchers searching across systems (average: 15 hours)
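The overlap, time, and cost steps of Protocol 1 can be sketched as follows. The source does not specify how "automated content matching" works, so this example assumes each platform's holdings can be reduced to a set of document identifiers. The platform names, cost figures, and the 40-hour work week are likewise hypothetical.

```python
from itertools import combinations


def overlap_fraction(a, b):
    """Share of the smaller collection that also appears in the other one."""
    if not a or not b:
        return 0.0
    return len(a & b) / min(len(a), len(b))


def fragmentation_report(platforms, search_hours_per_week, work_hours_per_week=40):
    """Summarize fragmentation metrics for a set of intelligence platforms.

    platforms: {name: {"documents": set of ids, "subscription": float,
                       "integration": float, "training": float}}
    """
    pairwise = {
        (p, q): overlap_fraction(platforms[p]["documents"], platforms[q]["documents"])
        for p, q in combinations(sorted(platforms), 2)
    }
    annual_cost = sum(
        info["subscription"] + info["integration"] + info["training"]
        for info in platforms.values()
    )
    return {
        "platform_count": len(platforms),
        "max_overlap": max(pairwise.values(), default=0.0),
        "annual_cost": annual_cost,
        "search_time_share": search_hours_per_week / work_hours_per_week,
    }


# Hypothetical inventory of two platforms.
platforms = {
    "patent_db": {"documents": {"d1", "d2", "d3", "d4", "d5"},
                  "subscription": 50000.0, "integration": 20000.0, "training": 5000.0},
    "literature_db": {"documents": {"d4", "d5", "d6"},
                      "subscription": 30000.0, "integration": 10000.0, "training": 5000.0},
}
report = fragmentation_report(platforms, search_hours_per_week=15)
```

Under the 40-hour assumption, the 15 weekly search hours cited above correspond to 37.5% of working time, which is in line with the 35% figure reported in Table 1.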

Protocol 2: Evaluating Knowledge Graph Integration

Objective: To assess the effectiveness of knowledge graph technology in connecting fragmented data sources [27].

Methodology:

- Data Source Mapping: Identify all public and private data sources to be integrated

- Semantic Layer Development: Implement a unified semantic data layer using standardized ontologies

- Connection Automation: Configure knowledge graph to automatically surface relationships across datasets

- AI Integration: Apply large language models to synthesize insights across connected datasets

- Performance Measurement: Compare time-to-insight before and after implementation

Key Metrics:

- Reduction in research duplication (up to 70% reported)

- Improvement in prior art search speed (up to 50% faster)

- Decrease in time to insight (up to 40% reduction)
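A toy version of the connection step in Protocol 2 is sketched below. It stands in for a real knowledge graph with a plain dictionary and replaces ontology mapping with simple string normalization. The source names, record identifiers, and entity terms are all hypothetical.

```python
from collections import defaultdict


def normalize_term(term):
    """Stand-in for ontology mapping: lowercase and collapse whitespace."""
    return " ".join(term.lower().split())


def build_graph(records):
    """Index items by the entities they mention.

    records: iterable of (source, item_id, [entity terms])
    returns: {entity: set of (source, item_id)}
    """
    graph = defaultdict(set)
    for source, item_id, terms in records:
        for term in terms:
            graph[normalize_term(term)].add((source, item_id))
    return graph


def cross_source_links(graph):
    """Entities mentioned by items from at least two different sources."""
    links = {}
    for entity, items in graph.items():
        sources = {source for source, _ in items}
        if len(sources) > 1:
            links[entity] = sorted(items)
    return links


def percent_reduction(before, after):
    """Reduction from `before` to `after`, in percent of `before`."""
    return (before - after) / before * 100.0


# Hypothetical records from three separate systems.
records = [
    ("patents", "P-1", ["Polymer X", "Catalyst A"]),
    ("internal_projects", "PRJ-7", ["polymer  x"]),
    ("literature", "L-3", ["Catalyst B"]),
]
links = cross_source_links(build_graph(records))
time_saved = percent_reduction(before=10.0, after=6.0)  # e.g. hours per query
```

Here the internal project and the patent are connected through the shared entity even though the two systems spell it differently, which is the kind of relationship that went unnoticed in the redundant research cases described earlier.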

Visualizing the Solutions: From Fragmented to Integrated Data

The following diagrams illustrate the transition from fragmented data systems to integrated knowledge frameworks and their impact on pharmaceutical R&D workflows.

The Scientist's Toolkit: Research Reagent Solutions for Data Integration

The following table details essential tools and technologies for combating data fragmentation in pharmaceutical research.

Table 3: Research Reagent Solutions for Pharmaceutical Data Integration

| Tool/Category | Primary Function | Key Benefits in Fragmented Environments |
|---|---|---|
| Unified Intelligence Platforms (e.g., Cypris) [26] | Consolidated access to patents, literature, and market data | Single interface replacing 5-12 disparate platforms; Reduces cognitive load on researchers |
| Knowledge Graph Technology [27] [26] | Semantic integration of disparate data sources | Automatically connects insights across disciplines; Adds contextual meaning to internal data |
| AI-Powered Synthesis Tools [26] | LLM-powered analysis across massive datasets | Processes complex technical queries; Generates comprehensive reports from thousands of sources |
| Data Visualization Tools (e.g., KeyLines) [28] | Graph-based visualization of complex relationships | Reveals hidden connections between drugs, targets, and pathways; Intuitive interface for complex data |
| Business Intelligence Platforms (e.g., Power BI, Tableau) [29] | Data analysis and visualization | Interactive dashboards for complex datasets; Real-time insights across departments |
| Advanced Analytics Platforms (e.g., SAS, IBM Watson Health) [29] | Predictive modeling and clinical trial analysis | AI-powered insights for faster decision-making; Streamlines clinical trials and research processes |

The evidence indicates that fragmented data systems impose substantial costs and inefficiencies on pharmaceutical R&D, while integrated approaches using knowledge graphs and unified platforms deliver measurable benefits. Organizations that successfully consolidate their R&D intelligence infrastructure report up to a 70% reduction in research duplication, up to 50% faster prior art searches, and up to a 40% decrease in time to insight [26]. The transition from fragmented to integrated frameworks is not just a technological shift but a strategic imperative for organizations seeking to accelerate innovation and maintain competitive advantage in the rapidly evolving pharmaceutical landscape.

Building a Unified System: Core Components of an Integrated Assessment Framework

The Adverse Outcome Pathway (AOP) framework has reshaped chemical safety assessment by providing a structured, mechanistic way to organize toxicological data, from molecular initiating events to adverse outcomes relevant to risk assessment. This section introduces the Impact Outcome Pathway (IOP) as a conceptual extension of the AOP framework, designed to integrate a broader spectrum of biological and technical data streams. By comparing the established AOP framework with the proposed IOP paradigm, we highlight how this holistic approach can address current limitations of fragmented chemical impact assessment, offering researchers a more comprehensive tool for drug development and safety science.

Understanding the Foundation: The Adverse Outcome Pathway (AOP)

The Adverse Outcome Pathway (AOP) is a conceptual framework that serves as a knowledge assembly and communication tool in toxicology [30]. It is designed to support the translation of pathway-specific mechanistic data into responses relevant to assessing and managing risks of chemicals to human health and the environment [30]. An AOP describes a sequential chain of causally linked events beginning with a Molecular Initiating Event (MIE), where a chemical stressor interacts with a biological target, and progressing through measurable Key Events (KEs) at various biological levels of organization, culminating in an Adverse Outcome (AO) of regulatory relevance at the individual or population level [30]. The AOP framework is chemically-agnostic, meaning it captures response-response relationships that can be initiated by any number of chemical or non-chemical stressors [30].

The Critical Role of AOPs in Modern Toxicology

AOPs facilitate the use of data streams that traditional risk assessment often leaves out, including information from in silico models, in vitro assays, and short-term in vivo tests with molecular endpoints [30]. This capability is crucial for increasing the capacity and efficiency of safety assessments for single chemicals and complex mixtures, in line with legislative mandates such as the EU's REACH program and the revised US Toxic Substances Control Act (TSCA), which require the evaluation of vast numbers of chemicals [30]. The framework is supported by international organizations such as the Organisation for Economic Co-operation and Development (OECD), which maintains the AOP Wiki, an interactive knowledgebase containing over 200 AOPs at various development stages [30].

The Proposed Extension: The Impact Outcome Pathway (IOP)

The Impact Outcome Pathway (IOP) is proposed as an integrative extension of the AOP framework. While the AOP focuses primarily on adverse biological outcomes from a toxicological perturbation, the IOP framework aims to incorporate a wider, more holistic set of impact indicators, including adaptive responses, recovery mechanisms, and system-level resilience metrics. This paradigm acknowledges that biological systems respond to perturbations through complex, interconnected networks rather than linear pathways. The IOP seeks to capture this complexity by integrating diverse data domains, including advanced omics technologies, real-time bio-monitoring data, and physiologically based pharmacokinetic modeling, providing a more comprehensive landscape of biological impact.

Conceptual Distinctions: AOP vs. IOP

The table below outlines the core conceptual and operational differences between the AOP and IOP frameworks.

Table 1: Framework Comparison: AOP vs. IOP

| Feature | Adverse Outcome Pathway (AOP) | Impact Outcome Pathway (IOP) |
|---|---|---|
| Primary Focus | Mechanistic pathways leading to adverse health effects [30] | Holistic impact analysis, including adaptive and adverse outcomes |
| Scope | Linear pathways; can be assembled into networks [30] | Inherently networked, systems-level interactions |
| Chemical Applicability | Chemically-agnostic [30] | Multi-stressor inclusive (chemical, physical, biological) |
| Temporal Dimension | Primarily prospective (predictive of adversity) | Integrates prospective, concurrent, and retrospective data |
| Regulatory Integration | Directly supports chemical risk assessment [31] [30] | Supports broader environmental and health impact assessment |
| Data Integration | Mechanistic toxicological data (e.g., in vitro, omics) [30] | Multi-domain data (e.g., eco-toxicological, exposure science, real-world monitoring) |
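
The "inherently networked" and "multi-stressor" rows of the comparison can be illustrated with a small directed graph in which several stressors feed shared events and both adaptive and adverse outcomes are tracked. Because the IOP is a proposed concept without a reference implementation, everything in this sketch is an assumption: the class, the node categories, and the example nodes are invented purely to show the idea.

```python
from collections import defaultdict


class ImpactNetwork:
    """Directed graph whose nodes are typed as stressor, event, adaptive, or adverse."""

    KINDS = {"stressor", "event", "adaptive", "adverse"}

    def __init__(self):
        self.kind = {}
        self.successors = defaultdict(set)

    def add_node(self, name, kind):
        if kind not in self.KINDS:
            raise ValueError(f"unknown node kind: {kind}")
        self.kind[name] = kind

    def link(self, upstream, downstream):
        if upstream not in self.kind or downstream not in self.kind:
            raise KeyError("both nodes must be added before linking")
        self.successors[upstream].add(downstream)

    def outcomes(self, stressor):
        """Collect every adaptive and adverse outcome reachable from a stressor."""
        found = {"adaptive": set(), "adverse": set()}
        stack, seen = [stressor], {stressor}
        while stack:
            node = stack.pop()
            if self.kind[node] in found:
                found[self.kind[node]].add(node)
            for nxt in self.successors.get(node, ()):
                if nxt not in seen:
                    seen.add(nxt)
                    stack.append(nxt)
        return found


# Invented example: two stressor types converge on one shared event.
net = ImpactNetwork()
for name, kind in [
    ("chemical exposure", "stressor"),
    ("heat stress", "stressor"),
    ("oxidative stress", "event"),
    ("antioxidant response", "adaptive"),
    ("tissue injury", "adverse"),
]:
    net.add_node(name, kind)
net.link("chemical exposure", "oxidative stress")
net.link("heat stress", "oxidative stress")
net.link("oxidative stress", "antioxidant response")
net.link("oxidative stress", "tissue injury")

chemical_outcomes = net.outcomes("chemical exposure")
```

A linear AOP would record only the route to "tissue injury"; the networked view also surfaces the adaptive branch and the second stressor that shares the same event.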

Comparative Analysis: Experimental Applications and Data

AOPs in Action: Skin Sensitization and Endocrine Disruption

The practical application of AOPs is best illustrated through real-world case studies. The AOP for skin sensitization (AOP 40) has been successfully used to develop a suite of in vitro assays that replace traditional animal tests [30]. This AOP starts with the MIE of covalent protein binding by an electrophile, progresses through KEs like inflammatory cytokine induction and T-cell proliferation, and results in the AO of allergic contact dermatitis [30]. Similarly, AOPs have been central to prioritizing endocrine-disrupting chemicals by linking in vitro data on molecular initiating events (e.g., estrogen receptor activation) to adverse in vivo outcomes [30]. These applications showcase the AOP's power in translating mechanistic data into regulatory decisions.
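
The chain structure of an AOP maps naturally onto a small data structure. The sketch below encodes the skin sensitization pathway using only the events named in the paragraph above, and asks how far along the chain a set of observed events extends. It is a simplified illustration, not the AOP-Wiki data model: the class and method names are assumptions, and real AOPs attach evidence and quantitative understanding to each key event relationship.

```python
from dataclasses import dataclass
from typing import Optional, Set, Tuple


@dataclass(frozen=True)
class Event:
    name: str
    kind: str  # "MIE", "KE", or "AO"


@dataclass(frozen=True)
class AOP:
    title: str
    events: Tuple[Event, ...]

    def __post_init__(self):
        kinds = [e.kind for e in self.events]
        valid = (
            len(kinds) >= 2
            and kinds[0] == "MIE"
            and kinds[-1] == "AO"
            and all(k == "KE" for k in kinds[1:-1])
        )
        if not valid:
            raise ValueError("expected one MIE, then KEs, then one AO")

    def relationships(self):
        """Adjacent (upstream, downstream) pairs, i.e. key event relationships."""
        return list(zip(self.events, self.events[1:]))

    def furthest_supported(self, observed: Set[str]) -> Optional[Event]:
        """Last event reached by an unbroken run of observed events from the MIE."""
        reached = None
        for event in self.events:
            if event.name not in observed:
                break
            reached = event
        return reached


skin_sensitization = AOP(
    title="Skin sensitization (AOP 40)",
    events=(
        Event("covalent protein binding", "MIE"),
        Event("inflammatory cytokine induction", "KE"),
        Event("T-cell proliferation", "KE"),
        Event("allergic contact dermatitis", "AO"),
    ),
)

# Hypothetical assay results covering the first two events only.
reached = skin_sensitization.furthest_supported(
    {"covalent protein binding", "inflammatory cytokine induction"}
)
```

Because the events carry no chemical identity, the same object can be reused for any stressor that triggers the molecular initiating event, which mirrors the framework's chemically agnostic design.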

IOP Potential: Integrating Broader Impact Metrics

An IOP-style analysis can be illustrated with a complex clinical endpoint such as intraocular pressure (which ophthalmology also abbreviates as IOP; it is spelled out here to avoid confusion with the framework). Such an analysis would extend beyond a single adverse outcome. It would integrate data from multiple measurement technologies (e.g., rebound tonometry, pneumatonometry) while accounting for confounding factors such as topical anesthetics (e.g., proparacaine, which can significantly lower measured intraocular pressure) [32]. Furthermore, it could incorporate susceptibility factors (e.g., genetic predispositions from genomic data) and compensatory biological mechanisms that an AOP focused solely on adversity might overlook. This exemplifies the IOP's capacity for a more integrated systems analysis.

Table 2: Experimental Data from Tonometer Comparison Study [32]

| Tonometer Type | Mean Difference vs. GAT (mmHg) | Agreement with GAT (Lin's CCC) | Statistical Equivalence (±2 mmHg) |
|---|---|---|---|
| Rebound Tonometer (RT) | Lowest | Strongest | Yes |
| Pneumatonometer (PN) | > 2 mmHg higher | Poorest | No |
| Ocular Response Analyzer (CC) | Low | Strong | Yes |
| Goldmann Applanation (GAT) | Reference | Reference | Reference |

Experimental Protocol (Summarized): A multicenter trial measured intraocular pressure in healthy adults using four mechanistically different tonometers (iCare RT, ocular response analyzer CC, pneumatonometer PN, and GAT) [32]. Readings for RT and CC were collected with and without topical proparacaine. Agreement was analyzed using Bland-Altman Limits of Agreement, Lin's concordance correlation coefficient, and robust equivalence tests [32].
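
For readers unfamiliar with the agreement statistics named in the protocol, the sketch below computes Bland-Altman limits of agreement and Lin's concordance correlation coefficient for paired readings. It uses the standard textbook definitions (bias ± 1.96 standard deviations of the differences; Lin's coefficient with 1/n variances) and does not reproduce the study's analysis, whose robust equivalence tests are not covered here. The readings are invented for illustration and are not data from [32].

```python
from statistics import mean, stdev


def bland_altman(test, reference):
    """Return (bias, lower limit, upper limit) for paired readings."""
    diffs = [t - r for t, r in zip(test, reference)]
    bias = mean(diffs)
    spread = 1.96 * stdev(diffs)  # sample standard deviation of differences
    return bias, bias - spread, bias + spread


def lins_ccc(x, y):
    """Lin's concordance correlation coefficient using 1/n moments."""
    n = len(x)
    mx, my = mean(x), mean(y)
    var_x = sum((a - mx) ** 2 for a in x) / n
    var_y = sum((b - my) ** 2 for b in y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    return 2 * cov / (var_x + var_y + (mx - my) ** 2)


# Invented paired readings in mmHg (reference method vs. a second device).
reference = [14.0, 16.0, 18.0, 20.0]
device = [15.0, 16.5, 19.5, 21.0]

bias, lower, upper = bland_altman(device, reference)
ccc = lins_ccc(device, reference)
```

The two statistics answer different questions: the limits of agreement show how far an individual reading may deviate from the reference, while the concordance coefficient penalizes both scatter and systematic offset in a single number.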

Visualizing the Frameworks

AOP Workflow: From Data to Application

The following diagram illustrates the structured workflow of developing and applying an Adverse Outcome Pathway.

AOP Development and Application Workflow

IOP Concept: An Integrated Network

The proposed IOP framework can be visualized as an interconnected network that captures a broader range of impacts and interactions, as shown below.

IOP as an Integrated Knowledge Network

Successfully developing or applying AOPs and IOPs requires a suite of computational and data resources. The table below details key tools and platforms essential for researchers in this field.

Table 3: Key Research Reagents & Solutions for Pathway Research

| Tool / Resource | Type | Primary Function | Relevance to AOP/IOP |
|---|---|---|---|
| AOP-Wiki [30] | Knowledgebase | International repository for AOP development and sharing | Core platform for curating and storing AOP mechanistic data |
| EPA AOP-DB [33] | Database (Profiler) | Biological and mechanistic characterization of AOP data; provides systems-level biological context | Assists in biological profiling and semantic data integration |
| FAIR AOP Guidelines [31] | Data Standard | Implements Findable, Accessible, Interoperable, Reusable (FAIR) metadata standards for AOP data | Ensures data reliability, re-usability, and computational readiness |
| AI/ML Tools [34] | Computational Method | Accelerates AOP development by extracting evidence and formulating hypotheses from large datasets | Key for building more complex IOP networks and predictive models |
| New Approach Methods (NAMs) [31] | Assay/Test Method | Non-animal testing methods (e.g., in vitro, in silico) that generate mechanistic data | Primary data source for populating and validating AOPs/IOPs |

The Adverse Outcome Pathway framework has proven its immense value in organizing mechanistic toxicological knowledge and supporting the transition to animal-free, next-generation risk assessment [31] [30]. The proposed Impact Outcome Pathway (IOP) builds upon this solid foundation, advocating for a more expansive and holistic analysis that captures not just adversity but the full spectrum of biological impact. As toxicology continues to evolve with advances in AI, multi-omics, and complex systems biology, the integration of AOPs into a broader IOP context holds the promise of a truly unified and predictive framework for chemical safety and drug development. This evolution from fragmented analyses to an integrated framework is essential for tackling the complex chemical assessment challenges of the 21st century.

The modern landscape of chemical impact assessment and drug development is defined by a critical challenge: managing vast amounts of complex data across fragmented sources while ensuring scientific rigor and regulatory compliance. The FAIR Guiding Principles (Findable, Accessible, Interoperable, and Reusable) have emerged as an essential framework for addressing this data management crisis, which costs the EU economy an estimated €10.2 billion annually in the research sector alone [35]. Simultaneously, the European Union's "One Substance, One Assessment" (OSOA) initiative represents a regulatory paradigm shift toward integrated chemical assessment, aiming to eliminate duplication and enhance scientific coherence across legislative frameworks [36]. This comparative analysis examines the implementation of FAIR Principles and Knowledge Graphs as technological solutions that enable the transition from fragmented data silos to integrated research environments, with particular relevance to chemical impact assessment and drug development workflows.

Theoretical Foundation: From FAIR Data to CLEAR Understanding

The FAIR Guiding Principles

The FAIR Principles establish a framework for scientific data management and stewardship that benefits machines and humans alike [37] [38]. While all four principles are interconnected, the interoperability dimension is particularly crucial for integrated chemical assessment. True interoperability requires that (meta)data use a formal, accessible, shared, and broadly applicable language for knowledge representation, draw on vocabularies that themselves follow FAIR principles, and include qualified references to other (meta)data [35]. This goes beyond simple data exchange to enable meaningful integration and analysis across disparate sources.
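
Two of the interoperability requirements described above, vocabulary use and qualified references, lend themselves to simple automated checks. The sketch below is a rough illustration under stated assumptions, not a FAIR compliance tool: the record layout, the field names `terms` and `references`, the list of accepted vocabulary prefixes, and the dataset identifiers are all hypothetical, and vocabulary terms are assumed to be written as `PREFIX:local_id` compact identifiers.

```python
# Assumed set of accepted vocabulary prefixes; a real project would maintain its own.
KNOWN_VOCABULARIES = {"CHEBI", "NCBITaxon"}


def is_known_term(value, prefixes):
    """True if value looks like PREFIX:local_id with a recognized prefix."""
    prefix, sep, local = value.partition(":")
    return bool(sep) and bool(local) and prefix in prefixes


def interoperability_report(record, prefixes=KNOWN_VOCABULARIES):
    """Check vocabulary use and qualified references in one metadata record."""
    terms = record.get("terms", [])
    refs = record.get("references", [])
    return {
        # Every annotation must come from a recognized vocabulary.
        "vocabulary_terms": bool(terms)
        and all(is_known_term(t, prefixes) for t in terms),
        # Every reference must say how it relates, not merely point somewhere.
        "qualified_references": bool(refs)
        and all(r.get("relation") and r.get("target") for r in refs),
    }


# Hypothetical records; the dataset identifiers are placeholders.
good_record = {
    "terms": ["CHEBI:15377", "NCBITaxon:9606"],
    "references": [{"relation": "isDerivedFrom", "target": "dataset:example-001"}],
}
weak_record = {
    "terms": ["water"],
    "references": [{"target": "dataset:example-001"}],
}

good = interoperability_report(good_record)
weak = interoperability_report(weak_record)
```

The second record fails both checks: the free-text term "water" cannot be resolved by a machine, and its reference names a target without stating the relationship to it.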

Knowledge Graphs as Implementation Vehicles