Beyond the Silos: Achieving FAIR Chemical Data Interoperability for Drug Discovery and Biomedical Research

Abstract

This article addresses the critical challenge of chemical data interoperability, a major bottleneck in life sciences R&D. For researchers, scientists, and drug development professionals, we explore the fragmentation of chemical identifiers and databases that hinders data reuse and AI-driven discovery. The article provides a comprehensive guide, from foundational principles like the FAIR guidelines and InChI identifiers to methodological approaches for implementation, common troubleshooting of data quality issues, and validation through real-world case studies. By outlining a path toward harmonized chemical data ecosystems, this resource aims to empower professionals to unlock the full potential of their data, accelerating innovation and improving collaborative outcomes.

The Chemical Data Interoperability Imperative: Why Silos Hinder Discovery

FAQs and Troubleshooting Guides

This section addresses common challenges researchers face with chemical data interoperability and provides practical solutions.

FAQ 1: Why is our organization's chemical data described as being in a "poor state," and how does this impact our R&D efficiency?

- Problem: Data is siloed, not findable, accessible, interoperable, or reusable (FAIR). There is a lack of consistency and quality; data is not curated, and data and metadata are not standardized [1].

- Impact: This is the most significant barrier not only to artificial intelligence and machine learning (AI/ML) but also to scientists using experimental data in decision-making. It leads to:

- Inefficiency: Scientists spend considerable time assembling information from multiple locations to make decisions rather than on innovation [1].

- Barriers to AI/ML: Data science models require structured, normalized, and accurate datasets. Inconsistent data is incompatible from a machine perspective, rendering AI/ML initiatives ineffective [1].

- Poor Reproducibility: A fragmented data landscape with inconsistent definitions compromises the end-to-end integrity of research and limits cross-study validation [2].

FAQ 2: We work at the interface of chemical and macromolecular crystallography. What specific interoperability challenges should we anticipate?

- Problem: Research combining small-molecule (chemical crystallography, CX) and macromolecular (MX) data faces unique obstacles [3].

- Troubleshooting Guide:

- Challenge: Terminology Differences. The term "ligand" has different meanings. In CX, it denotes an atom, ion, or molecule bound to a central metal atom. In MX/biochemistry, it denotes a substance that binds to a biomolecule. This can cause confusion in interdisciplinary research [3].

- Solution: Establish and use project-specific controlled vocabularies (CVs) agreed upon by all team members from different disciplines.

- Challenge: Incompatible File Formats and Software. CX and MX use specialized software that often has incompatible data formats [3].

- Solution: Identify and use software that can handle multiple file formats or develop scripts for conversion. Be prepared for a "circuitous route" to convert data, for example, to use small-molecule structural data in protein refinement software [3].

- Challenge: Varying Data Precision. Parameters like B factors (MX) and anisotropic displacement parameters (ADP) (CX) describe atomic displacement but are refined and interpreted differently due to the typical resolution of the structures [3].

- Solution: Do not directly compare these parameters. Understand the context of each and use validation reports specific to each discipline (e.g., IUCr's CheckCIF for CX, wwPDB validation for MX) [3].

FAQ 3: What is the tangible benefit of investing in data harmonization for predictive modeling?

- Problem: Models built on messy, unharmonized data have lower predictive power, leading to wasted resources on flawed experiments or poor drug candidates [4].

- Evidence-Based Solution: A study retraining an AI model with a harmonized dataset demonstrated significant accuracy improvements [4].

- Table: Impact of Data Harmonization on Predictive Model Accuracy [4]

| Metric | Improvement |

|---|---|

| Standard Deviation between predicted and experimental results | Reduced by 23% |

| Discrepancy in predicted vs. experimental ligand-target interactions | Decreased by 56% |

FAQ 4: What is a semi-automated method for harmonizing chemical property data from different sources?

- Problem: Chemical data for thousands of substances are available from sources like the REACH regulation, but they require systematic curation for use in risk and impact assessments [5].

- Experimental Protocol: Semi-Automated Data Harmonization [5]

- Objective: To derive a representative nominal value (e.g., mean) and confidence intervals for a given chemical property from multiple reported data points.

- Method Workflow:

- Data Collection: Assemble all reported data for a specific substance-property combination (e.g., octanol-water partition coefficients, Kow).

- Application of Criteria: Apply a set of aligned data selection and harmonization criteria to the dataset. This includes assessing data quality and relevance.

- Statistical Derivation: Calculate a representative mean value and related confidence intervals from the curated data points.

- Outcome: A reliable, harmonized value that reflects the quality and variability of the underlying data, suitable for use in various science and policy assessment frameworks.
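The statistical derivation step can be sketched in a few lines of Python. This is a minimal illustration, not the published method: the `quality_score` field, the cutoff of 2, the example log Kow values, and the use of a normal approximation for the interval are all assumptions made for the sketch.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def harmonize(records, max_score=2, confidence=0.95):
    """Return (mean, ci_low, ci_high, n) for records that pass the quality filter.

    `quality_score` is a hypothetical reliability rating (lower is better).
    """
    kept = [r["log_kow"] for r in records if r["quality_score"] <= max_score]
    if len(kept) < 2:
        raise ValueError("need at least two curated data points")
    m = mean(kept)
    sem = stdev(kept) / sqrt(len(kept))  # standard error of the mean
    # Normal approximation; a t-distribution would be more appropriate for small n.
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return m, m - z * sem, m + z * sem, len(kept)


# Illustrative values only, not real measurements.
records = [
    {"log_kow": 2.10, "quality_score": 1},
    {"log_kow": 2.30, "quality_score": 2},
    {"log_kow": 2.20, "quality_score": 1},
    {"log_kow": 3.90, "quality_score": 4},  # excluded by the quality criterion
]

m, ci_low, ci_high, n = harmonize(records)
print(f"n={n} mean={m:.2f} CI=({ci_low:.2f}, {ci_high:.2f})")
```

Keeping the filter and the statistics in one function makes the selection criteria explicit, so a human reviewer can adjust the cutoff and see how the derived value responds.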

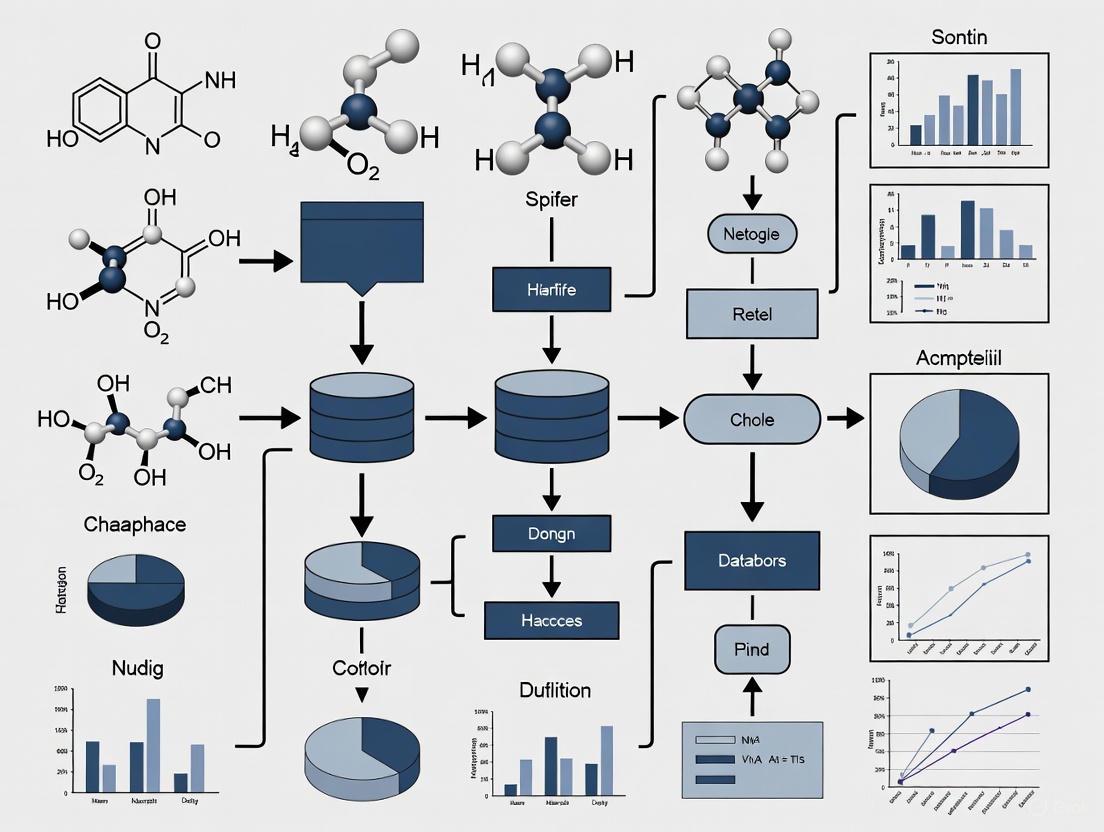

The diagram below illustrates the logic and workflow of this semi-automated harmonization process.

The Scientist's Toolkit: Essential Solutions for Data Interoperability

The following table details key reagents, tools, and methodologies essential for addressing chemical data interoperability issues.

Table: Key Research Reagent Solutions for Data Interoperability

| Item/Reagent | Function & Explanation |

|---|---|

| Controlled Vocabularies (CVs) & Ontologies | Standardized terminologies that resolve discrepancies in naming and definitions (e.g., defining "ligand" for a specific project). They are critical for enabling downstream computational use and making data interoperable [1] [6]. |

| FAIR Data Principles | A guiding framework to make data Findable, Accessible, Interoperable, and Reusable. Adhering to these principles transforms data from a research byproduct into a strategic organizational asset [1] [2]. |

| Automated Data Marshaling | The use of automated workflows and ETL (Extract, Transform, Load) pipelines to import, export, transform, and move data. This reduces manual effort, minimizes errors, and is central to scaling data preparation [1] [2]. |

| Semi-Automated Harmonization Method | A specific methodology that combines automated scripts with human expert oversight to curate, select, and derive representative values from disparate chemical data sources, as described in the experimental protocol above [5]. |

| Robust Data Governance Framework | A set of policies and standards that define data ownership, validation rules, and stewardship. It provides the organizational structure needed to maintain data quality and interoperability at scale [2]. |

| Data Catalogues & Metadata Management | Tools that provide context (glossaries, lineage) for data, making it understandable and accessible. They are essential for managing the provenance and reusability of complex chemical data [2]. |

Visualizing the Data Interoperability Challenge Ecosystem

The challenges of non-interoperable data are interconnected. The following diagram maps the core problems, their consequences, and the required foundational solutions.

FAQs on FAIR Principles and Chemical Data

What are the FAIR Principles and why are they important for chemical research? The FAIR Principles are a set of guiding principles to make digital assets, including data and metadata, Findable, Accessible, Interoperable, and Reusable [7]. They emphasize machine-actionability, which is the capacity of computational systems to find, access, interoperate, and reuse data with minimal human intervention [7]. In chemical research, adopting FAIR helps address challenges in data standardization and interoperability, which are crucial for areas like drug discovery and materials science. FAIR data is a fundamental enabler for digital transformation, allowing powerful analytical tools like artificial intelligence (AI) and machine learning (ML) to access data at scale [8].

How do I make my chemical data Findable? To make data findable, you must assign a globally unique and persistent identifier (like a DOI) to both the dataset and its metadata. The data should be described with rich metadata and be registered or indexed in a searchable resource [7] [9]. For chemical data, this means using standardized representations for molecular structures (e.g., InChI, SMILES) and ensuring they are part of the metadata record [10].

My data is sensitive. Can it still be FAIR? Yes. FAIR does not necessarily mean "open" or "free" [8]. The Accessible principle states that (meta)data should be retrievable by their identifier using a standardized protocol, which can include authentication and authorization steps [7] [9]. It is critical to implement security measures like authentication procedures, rules for access, and data encryption to protect privacy when working with sensitive data [11]. The metadata, which describes the data, should remain accessible even if the data itself is no longer available [7].

What does 'Interoperable' mean for a chemical dataset? Interoperability means that data can be integrated with other data and used with applications or workflows for analysis. This is achieved by using formal, accessible, shared, and broadly applicable languages for knowledge representation, such as standardized vocabularies, ontologies, and semantic models that follow FAIR principles themselves [7] [12]. In chemistry, this involves using community standards like the Allotrope Foundation Ontology to structure metadata [12].

How can I ensure my data is Reusable? The key to reusability is rich description and clarity. (Meta)data should be described with a plurality of accurate and relevant attributes [7]. This includes clear provenance (how the data was generated), licensing (terms of use), and detailed methodology that aligns with domain-specific community standards [13]. For experimental chemistry data, this means reporting both successful and failed synthesis attempts to create bias-resilient datasets for AI training [12].
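One way to make these four answers concrete is a metadata record checked against a per-principle list of fields. The sketch below is only an illustration: the field names, the `REQUIRED` grouping, and the placeholder DOI are assumptions, not part of any FAIR specification. The ethanol identifiers are included to show structure identifiers living inside the metadata record.

```python
# Hypothetical minimum fields per FAIR principle; adjust to your community standard.
REQUIRED = {
    "findable": ["identifier", "title", "inchi", "inchikey", "smiles"],
    "accessible": ["access_protocol", "access_rights"],
    "interoperable": ["vocabulary"],
    "reusable": ["license", "provenance"],
}


def missing_fields(record):
    """Map each FAIR principle to the required fields that are absent or empty."""
    gaps = {}
    for principle, fields in REQUIRED.items():
        absent = [f for f in fields if not record.get(f)]
        if absent:
            gaps[principle] = absent
    return gaps


record = {
    "identifier": "doi:10.xxxx/placeholder",  # placeholder, not a real DOI
    "title": "Solubility measurements for ethanol (example)",
    "inchi": "InChI=1S/C2H6O/c1-2-3/h3H,2H2,1H3",
    "inchikey": "LFQSCWFLJHTTHZ-UHFFFAOYSA-N",
    "smiles": "CCO",
    "access_protocol": "https",
    "access_rights": "restricted; authentication required",
    "vocabulary": "project controlled vocabulary v1",  # hypothetical
    "provenance": "generated by instrument export, batch 7",  # hypothetical
    # "license" is deliberately left out to show how a gap is reported.
}

gaps = missing_fields(record)
print(gaps)
```

Note that the record is restricted-access yet still passes the Accessible check, which mirrors the point that FAIR does not require data to be open.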

Troubleshooting Common FAIR Implementation Issues

| Problem Area | Common Issue | Potential Solution |

|---|---|---|

| Data Fragmentation | Data is scattered across various platforms, databases, and file formats, making it hard to locate and access [9]. | Implement a centralized research data infrastructure (RDI) or a FAIR-compliant Laboratory Information Management System (LIMS) to serve as a unified data backbone [12]. |

| Interoperability | Incompatible software systems and a lack of standardized data models or ontologies impede data exchange [9]. | Adopt and map metadata to structured, community-accepted ontologies (e.g., the Allotrope Foundation Ontology) to ensure semantic interoperability [12] [10]. |

| Data Quality & Documentation | Inadequate documentation, incomplete metadata, and inconsistent data formats affect reliability and reuse [9]. | Utilize electronic lab notebooks (ELNs) that enforce metadata capture at the point of data generation and use standardized templates for experimental workflows [8]. |

| Legal & Ethical Compliance | Concerns about data protection (e.g., GDPR), intellectual property, and confidentiality restrict data sharing [11] [9]. | Conduct a Data Protection Impact Assessment (DPIA), implement granular access controls, and seek explicit consent from participants where necessary [11]. |

| Cultural & Incentive Barriers | A traditional emphasis on publishing over data sharing, and a lack of recognition for data stewardship, discourages researchers [9]. | Advocate for institutional policies that recognize and reward data sharing, and provide training to foster a culture of open research [14] [11]. |

FAIRification Framework and Workflow

Implementing FAIR is a process often called "FAIRification." The following workflow diagram outlines the key stages for making a dataset FAIR, particularly in the context of high-throughput chemistry.

Essential Research Reagent Solutions for a FAIR Chemistry Lab

The following tools and solutions are critical for generating and managing FAIR chemical data.

| Item | Function in FAIR Context |

|---|---|

| Electronic Lab Notebook (ELN) | Captures experimental procedures, observations, and data at the source, ensuring data is attributable, legible, and contemporaneous (ALCOA+). A FAIR-compliant ELN helps structure data and push it directly into analytics software [8]. |

| Research Data Infrastructure (RDI) | A community-driven platform for standardizing and sharing data. It transforms experimental metadata into validated, structured formats (e.g., RDF graphs) using an ontology-driven model, making data findable and interoperable [12]. |

| Standardized Ontologies (e.g., Allotrope) | Provide a formal, shared language for describing chemical data and metadata. They are essential for achieving semantic Interoperability by ensuring that data from different instruments and labs can be integrated and understood uniformly [12] [10]. |

| Persistent Identifier Services | Assign globally unique and persistent identifiers (e.g., DOIs, Handles) to datasets and their components. This is a foundational requirement for ensuring the long-term Findability and citability of digital assets [7]. |

| Standard Molecular Identifiers (InChI, SMILES) | Provide consistent, non-proprietary representations of molecular structures. Their use in metadata is crucial for the accurate Findability and Interoperability of chemical data across different databases and platforms [15] [10]. |

Technical Support & Troubleshooting Guides

Common Identifier Generation Issues and Solutions

Table 1: Troubleshooting Guide for Common Chemical Identifier Issues

| Problem Scenario | Likely Cause | Solution | Prevention Best Practice |

|---|---|---|---|

| Different SMILES strings for the same molecule [16] [17] | Use of non-canonical SMILES algorithms. | Use a reliable, canonical SMILES generator or switch to InChI for a unique identifier [16] [17]. | Ensure your software uses a canonicalization algorithm. |

| InChI conversion fails for a structure [17] | The molecule may contain features not yet fully supported (e.g., specific polymers, atropisomers). | For polymers, use the non-standard InChI (prefix InChI=1B) with pseudo-element atoms (Zz or *) [17]. | Check the InChI Trust website for supported chemical features and known limitations. |

| Inability to distinguish between tautomeric forms. | Default InChI and SMILES may represent a single, dominant tautomer or a mobile hydrogen system [17] [18]. | Use the "FixedH" layer in non-standard InChI or specific isomeric SMILES to represent a specific tautomer [18]. | Understand the identifier's default handling of tautomerism for your application. |

| The same macroscopic substance maps to multiple molecular identifiers. | The substance (e.g., glucose in solution) is a mixture of multiple distinct molecular structures (tautomers, isomers) [19]. | Use a substance identifier (like PubChem SID) or a collection of all relevant molecular identifiers (CIDs) to represent the substance accurately [19]. | Differentiate between molecular-level (InChI, SMILES) and substance-level (CAS RN) identifiers. |

| CAS Registry Number lookup is expensive or inaccessible. | CAS RN is a proprietary identifier requiring licensing [19]. | Use InChI or SMILES as open alternatives. PubChem provides CAS RNs on its Substance pages, aggregated from public depositors [19]. | Utilize open databases like PubChem that may link to CAS RNs provided by depositors. |

Frequently Asked Questions (FAQs)

Q1: Why does my software generate a different SMILES string for caffeine than another tool? A: This is a classic issue with SMILES. While "canonical" SMILES algorithms aim to generate a unique string, the canonical form is dependent on the specific algorithm used by the software (Daylight, OpenEye, CDK, etc.) [16]. For caffeine, different algorithms can produce different, yet equally valid, canonical SMILES. InChI was designed to solve this problem by providing a single, standardized canonical representation [17].

Q2: When should I use InChIKey instead of the full InChI string? A: The InChIKey is a 27-character hashed version of the full InChI, designed for easy web searching and database indexing due to its fixed length [20]. Use the InChIKey for quick lookups and when storage space is a concern. However, the full InChI contains the complete, layered structural description and should be used whenever that detail is needed, because the hash cannot be converted back into a structure. Stereochemistry is hashed into the second block of the key, so stereoisomers normally receive different InChIKeys, but the individual layers can only be inspected in the full InChI.
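The fixed layout of an InChIKey makes it easy to validate and compare without any chemistry toolkit. The following sketch assumes the standard 14-10-1 block layout; the helper names are invented for illustration, and the two alanine keys are quoted as commonly listed in public databases and should be verified before being relied on.

```python
import re
from typing import NamedTuple

# 14 letters, hyphen, 8 letters + standard flag + version letter, hyphen, 1 letter.
INCHIKEY_RE = re.compile(r"^([A-Z]{14})-([A-Z]{8})([SN])([A-Z])-([A-Z])$")


class InChIKeyParts(NamedTuple):
    skeleton: str     # first block: hash of the core constitution
    detail: str       # second block hash: stereochemistry, isotopes, and similar layers
    standard: bool    # True when the flag is "S" (derived from a standard InChI)
    version: str      # InChI version letter
    protonation: str  # final character: protonation indicator


def parse_inchikey(key):
    """Split a well-formed InChIKey into its blocks, or raise ValueError."""
    match = INCHIKEY_RE.match(key.strip())
    if match is None:
        raise ValueError(f"not a well-formed InChIKey: {key!r}")
    skeleton, detail, flag, version, protonation = match.groups()
    return InChIKeyParts(skeleton, detail, flag == "S", version, protonation)


def same_skeleton(key_a, key_b):
    """True when two keys share the first block (same connectivity hash)."""
    return parse_inchikey(key_a).skeleton == parse_inchikey(key_b).skeleton


L_ALANINE = "QNAYBMKLOCPYGJ-REOHGBSASA-N"
D_ALANINE = "QNAYBMKLOCPYGJ-UWTATZPHSA-N"

print(same_skeleton(L_ALANINE, D_ALANINE))       # enantiomers share the first block
print(parse_inchikey(L_ALANINE).detail,
      parse_inchikey(D_ALANINE).detail)          # second blocks differ
```

Comparing only the first block is a convenient way to group stereoisomers of one connectivity, while comparing the whole key keeps them apart.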

Q3: Can InChI handle all types of chemical structures?

A: The standard InChI (prefix InChI=1S) covers the vast majority of organic and organometallic molecules, with reported reliability above 99.99% in tests on large databases [17]. However, some complex areas are still under active development. These include polymers (handled by the non-standard InChI=1B with pseudo-atoms), certain tautomers, and atropisomers [17]. It is less suitable for materials with variable compositions, like clays [19].
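Because an InChI is a slash-separated string, the prefix and layers can be inspected with plain string handling. The sketch below is a simplified reader, not a validator: the function name is invented, and it does not interpret repeated layers (for example those following a fixed-H layer) beyond listing them in order.

```python
def split_inchi(inchi):
    """Split an InChI string into version prefix, formula, and ordered layers."""
    prefix, sep, body = inchi.partition("=")
    if prefix != "InChI" or not sep:
        raise ValueError("string does not start with 'InChI='")
    version, *segments = body.split("/")
    formula = None
    layers = []
    for segment in segments:
        if segment and segment[0].islower():
            # Layers are introduced by a lowercase letter such as c, h, q, t, or f.
            layers.append((segment[0], segment[1:]))
        elif segment and formula is None:
            formula = segment
    return {
        "version": version,
        "is_standard": version.endswith("S"),
        "formula": formula,
        "layers": layers,
    }


ethanol = split_inchi("InChI=1S/C2H6O/c1-2-3/h3H,2H2,1H3")
print(ethanol["version"], ethanol["formula"], ethanol["layers"])
```

Checking `is_standard` before loading identifiers into a shared database is a cheap way to catch non-standard strings that would not match their standard counterparts.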

Q4: What is the fundamental difference between a CAS RN and an InChI? A: CAS RN is a substance-based identifier assigned by the Chemical Abstracts Service, often representing a commercially available material or a specific mixture [19]. InChI is a structure-based identifier algorithmically derived from a connection table representing a single molecular structure [21] [20]. A single substance (e.g., glucose) can have multiple InChIs for its different tautomeric forms, but it may have one CAS RN [19].

Q5: How do I represent a reaction or a polymer using these identifiers?

A: Extensions of the standard identifiers exist for this purpose. RInChI (Reaction InChI) is available for describing chemical reactions [17]. For polymers, a non-standard InChI (InChI=1B) can be used, often employing pseudo-element atoms (Zz or *) to represent connection points in the polymer chain [17].

Experimental Protocols for Identifier Harmonization

Protocol: Implementing Reference Standardization for Metabolomics Data Harmonization

Objective: To correct for systematic technical variations and enable cross-study and cross-laboratory harmonization of untargeted high-resolution metabolomics (HRM) data using a calibrated reference sample [22].

Key Research Reagent Solutions:

| Item | Function in the Protocol |

|---|---|

| Calibrated Reference Plasma Pool (e.g., NIST SRM 1950) | Serves as a long-term, chemically characterized standard for batch correction and quantification [22]. |

| Authentic Chemical Standards | Used to create standard curves for absolute quantification of metabolites in the reference material [22]. |

| Stable Isotope Labeled Internal Standards | Accounts for variability in sample preparation and instrument analysis [22]. |

| HILIC & C18 Chromatography Columns | Provide complementary separation mechanisms to increase metabolite coverage [22]. |

| High-Resolution Mass Spectrometer (e.g., LC-FTMS) | Detects thousands of metabolite features with high mass accuracy [22]. |

Methodology:

- Reference Material Characterization:

- Prepare a pooled reference material (e.g., from NIST or a custom pool) that is representative of your study samples.

- Analyze this reference material alongside a series of spiked authentic standards to build calibration curves for identified metabolites.

- Quantify the concentration of 200 or more metabolites in the reference material to create a calibrated "reference metabolome" [22].

- Concurrent Analysis:

- Analyze the calibrated reference sample at predefined intervals (e.g., with every batch of study samples) throughout your data collection period [22].

- Data Processing and Harmonization:

- For each identified metabolite in a study sample, calculate the ratio of its peak area to the peak area of the same metabolite in the concurrently analyzed reference sample.

- Use the known concentration of the metabolite in the reference sample to estimate its concentration in the study sample via this ratio.

- Apply this "reference standardization" to all study samples and across different studies to place metabolite measurements on a common, harmonized scale [22].
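The ratio calculation in the data processing step can be written as a short function. This is a minimal sketch of the arithmetic only; the function name, the dictionary layout, and every number are illustrative assumptions rather than values from the protocol.

```python
def reference_standardize(sample_areas, reference_areas, reference_conc):
    """Estimate concentrations as (sample area / reference area) * reference concentration."""
    estimates = {}
    for metabolite, area in sample_areas.items():
        ref_area = reference_areas.get(metabolite)
        ref_conc = reference_conc.get(metabolite)
        if not ref_area or ref_conc is None:
            continue  # metabolite not quantified in the reference; skip it
        estimates[metabolite] = area / ref_area * ref_conc
    return estimates


# Known concentration in the calibrated reference (illustrative, in micromolar).
reference_conc = {"metabolite_x": 50.0}

# Two batches with different instrument response for the same true sample level.
batch_a = reference_standardize({"metabolite_x": 2.0e6}, {"metabolite_x": 1.0e6}, reference_conc)
batch_b = reference_standardize({"metabolite_x": 1.0e6}, {"metabolite_x": 0.5e6}, reference_conc)

print(batch_a, batch_b)
```

Although the raw peak areas differ twofold between the batches, both resolve to the same estimated concentration, which is the harmonizing effect the protocol relies on.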

Workflow Diagram: Chemical Identifier Integration for Database Interoperability

The following diagram illustrates a logical workflow for resolving chemical identity across different databases using InChI as the key harmonizing agent.

Quantitative Comparison of Chemical Identifiers

Table 2: Characteristic Comparison of Major Chemical Identifiers

| Feature | CAS Registry Number (CAS RN) | IUPAC International Chemical Identifier (InChI) | Simplified Molecular-Input Line-Entry System (SMILES) |

|---|---|---|---|

| Type | Substance-based, proprietary registry identifier [19] | Structure-based, open-source line notation [21] [20] | Structure-based, open line notation [16] [23] |

| Governance | Chemical Abstracts Service (CAS), a division of the American Chemical Society (ACS) [19] | IUPAC & InChI Trust (Not-for-profit) [21] [20] | Originally Daylight CIS; OpenSMILES by Blue Obelisk community [16] |

| Canonical / Unique | Unique, as assigned by authority [19] | Canonical by design; one standard InChI per structure [17] [20] | Can be canonical, but algorithm-dependent [16] [17] |

| Key Strength | Widely used in regulatory and commerce; links to substances [19] | Free, open, and standardized; enables database interoperability [21] [20] | Human-readable; compact; widely supported [16] [23] |

| Key Limitation | Cost for access and integration; assignment logic not public [19] | Does not cover all of chemistry (e.g., some polymers); long string length [17] [19] | Multiple valid strings per molecule; canonical form not universal [16] [17] |

| Tautomer Handling | Assigned at the substance level [19] | Default layer treats some tautomers as identical; FixedH for specific forms [17] [18] | Represents the specific input structure; tautomers are distinct [18] |

| Reliability | High, as it is assigned by human experts [19] | Extremely high; tested at >99.99% on large databases [17] | Varies by implementation and canonicalization algorithm [16] |

FAQs on Chemical Database Interoperability

1. What are the most common technical sources of fragmentation in chemical databases? The most common technical sources are legacy systems, proprietary data formats, and inconsistent standards. Legacy systems, designed before modern interoperability was a concern, often create data silos and are incompatible with newer technologies [24]. Proprietary formats from different vendors lead to non-interoperable data, meaning systems cannot effectively communicate even when using the same overarching standards like HL7 or FHIR, which can be implemented in different ways [24]. Inconsistent adoption of standards for chemical identifiers and terminology leads to semantic misunderstandings, where data can be exchanged but its meaning is lost or misinterpreted [24].

2. How does a lack of semantic interoperability affect chemical research and AI initiatives? Semantic interoperability ensures that different systems can accurately interpret exchanged data. Without it, data becomes unreliable for advanced analytics and AI [24]. AI models operate on a "garbage in, garbage out" principle; if trained on data where the meaning of chemical identifiers or properties is inconsistent or flawed, the models will produce incorrect predictions and correlations. This poses a significant risk for research and drug development, where accurate data is critical for safety and efficacy [24].

3. What are the key regulatory trends impacting chemical data standards in 2025? A key trend is the global push for stronger chemical safety and sustainability regulations, which is increasing the demand for high-quality, interoperable data [25]. This includes the adoption of the Globally Harmonized System of Classification and Labelling of Chemicals (GHS) by more countries [25]. Furthermore, regulatory bodies like the European Chemicals Agency (ECHA) are promoting the use of New Approach Methodologies (NAMs), such as in vitro and computational tools, to reduce animal testing. This requires robust and standardized data to support alternative methods like read-across and quantitative structure-use relationship (QSUR) models [26].

4. What resources are available to help harmonize chemical exposure data? The U.S. Environmental Protection Agency's Chemical and Products Database (CPDat) is a key resource. Its latest version (v4.0) uses a rigorous data curation pipeline and controlled vocabularies to provide FAIR (Findable, Accessible, Interoperable, and Reusable) data on chemical compositions, functional uses, and list presences in products [27]. The database links records to original sources and maps chemical identifiers to harmonized DSSTox Substance Identifiers (DTXSIDs), supporting exposure assessments and prioritization workflows [27].

Troubleshooting Guides

Issue 1: Resolving Data Interpretation Errors from Inconsistent Standards

Problem: Data is successfully transferred between systems but contains errors or is misinterpreted upon receipt, indicating a failure of semantic interoperability.

Diagnosis and Resolution: This is often caused by inconsistent use of medical coding, terminology, or chemical identifiers across systems [24].

- Map Your Vocabularies: Identify the specific data fields (e.g., chemical names, units of measure, property codes) where discrepancies occur.

- Implement Controlled Vocabularies: Adopt and enforce the use of standardized, curated vocabularies. For chemical substances, use unique identifiers like DSSTox Substance Identifiers (DTXSIDs) to ensure accurate cross-referencing [27].

- Validate with a Reference Database: Use a reference resource like CPDat, which employs rigorous chemical curation and quality assurance (QA) workflows, to verify the accuracy and harmonization of your chemical identifiers and associated data [27].
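The first two steps above, mapping vocabularies and enforcing controlled terms, can be prototyped with a simple lookup that also reports what it could not map. The vocabulary entries and function names below are hypothetical examples, not terms from any published vocabulary.

```python
# Hypothetical controlled vocabulary: free-text variants -> one agreed term.
PROPERTY_VOCAB = {
    "log kow": "log_Kow",
    "logkow": "log_Kow",
    "log p": "log_Kow",
    "logp": "log_Kow",
}


def to_controlled(term, vocabulary):
    """Normalize case and whitespace, then look the term up; None if unknown."""
    key = " ".join(term.lower().split())
    return vocabulary.get(key)


def audit(terms, vocabulary):
    """Return (mapped, unmapped) so discrepancies are surfaced, not silently dropped."""
    mapped, unmapped = {}, []
    for term in terms:
        controlled = to_controlled(term, vocabulary)
        if controlled is None:
            unmapped.append(term)
        else:
            mapped[term] = controlled
    return mapped, unmapped


mapped, unmapped = audit(["LogP", "log  Kow", "Partition coeff."], PROPERTY_VOCAB)
print(mapped)
print(unmapped)
```

Returning the unmapped terms separately gives curators a worklist for extending the vocabulary instead of forcing a guess at ingestion time.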

Issue 2: Integrating Data from Legacy Systems and Proprietary Formats

Problem: Inability to access or integrate valuable historical data stored in outdated legacy systems or proprietary formats.

Diagnosis and Resolution: Legacy systems often lack modern Application Programming Interfaces (APIs) and use non-standard data formats [24].

- Assess the Data Source: Determine the original data format and the business logic of the legacy system.

- Develop a Data Pipeline: Create a custom extraction, transformation, and loading (ETL) pipeline. This involves:

- Extraction: Writing scripts to pull raw data from the legacy system.

- Transformation: Converting the proprietary data into a modern, standardized format (e.g., based on FHIR resources or other relevant standards). This step includes chemical curation to map reported names and CASRNs to verified identifiers like DTXSIDs [27].

- Loading: Ingesting the transformed and harmonized data into your target database or platform.

- Utilize Modern Curation Tools: Leverage platforms like the Factotum system used for CPDat, which provides tools for manual and script-based data extraction, curation, and QA tracking to make this process more efficient and reproducible [27].
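The extraction, transformation, and loading steps can be sketched end to end with the Python standard library. Everything specific here is an assumption for illustration: the semicolon-delimited export, the column names, the decimal-comma values, and the target table are stand-ins for whatever a real legacy system produces.

```python
import csv
import io
import sqlite3

# Stand-in for a legacy export: semicolon-delimited, stray spaces, decimal commas.
LEGACY_EXPORT = """NAME;CAS;VALUE
 Caffeine ; 58-08-2 ;1,5
WATER;7732-18-5;0,0
"""


def extract(text):
    """Extraction: read raw rows from the legacy export."""
    return list(csv.DictReader(io.StringIO(text), delimiter=";"))


def transform(rows):
    """Transformation: trim whitespace, unify case, convert decimal commas."""
    return [
        (
            row["NAME"].strip().lower(),
            row["CAS"].strip(),
            float(row["VALUE"].strip().replace(",", ".")),
        )
        for row in rows
    ]


def load(rows, conn):
    """Loading: insert the cleaned rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS measurement (name TEXT, casrn TEXT, value REAL)"
    )
    conn.executemany("INSERT INTO measurement VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(LEGACY_EXPORT)), conn)
loaded = conn.execute(
    "SELECT name, casrn, value FROM measurement ORDER BY name"
).fetchall()
print(loaded)
```

Keeping the three stages as separate functions makes each one testable on its own, which helps when the legacy format turns out to have more irregularities than expected.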

Experimental Protocols for Data Harmonization

Protocol 1: Chemical Curation and Identifier Harmonization Workflow

Objective: To map reported chemical identifiers from various sources to a standardized, verified substance identifier to ensure accurate data integration and interpretation.

Methodology:

- Data Acquisition: Collect chemical data records from source documents (e.g., safety data sheets, scientific literature). Key data points include reported chemical name and CASRN [27].

- Chemical Record Registration: Assign a unique internal chemical record ID to each entry from a source document [27].

- Identifier Mapping: Submit the reported chemical identifiers (name, CASRN) to a chemical curation service (e.g., EPA's DSSTox database) to be mapped to a unique DSSTox Substance Identifier (DTXSID) [27].

- Quality Assurance (QA): A second human curator checks the vocabulary assignments and extracted text for accuracy against the raw data file. This QA step is critical for data integrity [27].

- Data Delivery: Once QA is approved, the curated data—now linked to a verified DTXSID—is made available for use, with access to preferred names, verified CASRNs, and chemical structures [27].
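The registration and mapping steps can be sketched as follows. The CAS RN check-digit rule used here is the publicly documented one (a weighted digit sum modulo 10). The lookup table and its placeholder identifier are hypothetical stand-ins for a real curation service, and no actual DTXSID is shown.

```python
import re
from itertools import count

CASRN_RE = re.compile(r"^(\d{2,7})-(\d{2})-(\d)$")

# Hypothetical local lookup standing in for a chemical curation service.
HYPOTHETICAL_LOOKUP = {"58-08-2": "DTXSID-PLACEHOLDER-0001"}

_record_ids = count(1)


def casrn_is_valid(casrn):
    """Check format and check digit: weight digits 1, 2, 3... from the right."""
    match = CASRN_RE.match(casrn.strip())
    if match is None:
        return False
    digits = match.group(1) + match.group(2)
    total = sum(int(d) * w for w, d in enumerate(reversed(digits), start=1))
    return total % 10 == int(match.group(3))


def curate(reported_name, reported_casrn):
    """Register a record, validate its CAS RN, and attempt identifier mapping."""
    record = {
        "record_id": next(_record_ids),  # unique internal chemical record ID
        "reported_name": reported_name,
        "reported_casrn": reported_casrn,
        "dtxsid": None,
        "qa_approved": False,  # set only after a second curator's review
    }
    casrn = reported_casrn.strip()
    if not casrn_is_valid(casrn):
        record["status"] = "invalid_casrn"
    elif casrn in HYPOTHETICAL_LOOKUP:
        record["dtxsid"] = HYPOTHETICAL_LOOKUP[casrn]
        record["status"] = "mapped"
    else:
        record["status"] = "needs_manual_curation"
    return record


results = [
    curate("Caffeine", "58-08-2"),
    curate("Water", "7732-18-5"),
    curate("Caffeine (typo)", "58-08-3"),
]
for r in results:
    print(r["record_id"], r["status"], r["dtxsid"])
```

Leaving `qa_approved` false by default reflects the protocol's requirement that a second curator signs off before data delivery.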

Chemical Identifier Harmonization Workflow

Protocol 2: Building an Interoperable Chemical Database Pipeline

Objective: To establish a reproducible pipeline for aggregating, curating, and delivering chemical data that adheres to FAIR principles.

Methodology (Based on the CPDat Pipeline) [27]: The pipeline consists of three main stages:

- Intake Stage:

- Identify and prioritize publicly available data sources relevant to chemical use and exposure.

- Acquire data files (PDFs, spreadsheets) and extract relevant text and metadata, either manually or with custom scripts.

- Curation Stage:

- Use a data management platform (e.g., Factotum) to assign controlled vocabulary terms to extracted data entries.

- Curation is performed according to Standard Operating Procedures (SOPs) for different document types (composition, functional use, list presence).

- The chemical curation workflow (Protocol 1) is executed within this stage.

- A separate QA task is performed by a different curator to verify accuracy.

- Delivery Stage:

- Perform an Extract, Transform, Load (ETL) process to move data from the document-centric curation database to a public-facing, product/use-centric database.

- The final database is structured for easy public access and exploration.

FAIR Chemical Data Pipeline

Key Research Reagent Solutions

Table: Essential Resources for Chemical Database Interoperability Research

| Research Reagent / Resource | Function / Description |

|---|---|

| CPDat (Chemical and Products Database) | An EPA database providing curated data on chemical ingredients in products, functional uses, and general chemical presence lists. It uses controlled vocabularies and DSSTox IDs to support exposure assessments [27]. |

| DSSTox (Distributed Structure-Searchable Toxicity) | A public chemistry resource and database of quality-controlled chemical structures, providing unified and curated DTXSIDs for mapping disparate chemical identifiers [27]. |

| Factotum | An internal EPA data management and curation application that facilitates the collection, curation, and QA of chemical exposure data from public documents, forming the backbone of the CPDat pipeline [27]. |

| FHIR (Fast Healthcare Interoperability Resources) | An API-based standard for exchanging healthcare data. Its principles of structured, web-based data formats are increasingly relevant for standardizing chemical and toxicological data exchange [24]. |

| GHS (Globally Harmonized System) | An international standard for classifying chemicals and communicating hazard information via safety data sheets and labels. Its ongoing adoption is a key regulatory trend promoting global standardization [25]. |

| New Approach Methodologies (NAMs) | A collective term for non-animal testing methods (e.g., in vitro, computational, omics). Their use in regulatory decisions relies on high-quality, standardized data for read-across and QSUR models [26]. |

Table: Quantitative Impact of Interoperability Challenges

| Challenge Area | Quantitative Impact / Metric |

|---|---|

| Economic Impact | Lack of interoperability is estimated to cost the U.S. health system over \$30 billion annually, illustrating the massive financial burden of fragmented systems [24]. |

| Prevalence of Legacy Systems | A high percentage of healthcare providers report struggling with outdated systems, a key technical hurdle that is directly analogous to the chemical regulatory domain [24]. |

| Data Quality for AI | Poor data quality, often a direct result of semantic interoperability failures, is identified as a major barrier that can render AI models unreliable for clinical or research use [24]. |

Technical Support Center: Chemical Database Interoperability

Frequently Asked Questions (FAQs)

1. What are the most common causes of chemical data interoperability failure?

Interoperability failures most often occur due to incompatible chemical file formats and incorrect or ambiguous chemical identifiers. Using a linear notation like SMILES for database storage is efficient, but it lacks 3D spatial information, which is critical for applications like molecular docking [28]. Furthermore, chemical identifiers from different sources (e.g., common names, CAS numbers, IUPAC names) can be inconsistent. The International Chemical Identifier (InChI) was developed to solve this by providing a standardized, non-proprietary identifier that ensures all researchers refer to the same molecular entity, avoiding confusion across different software tools [28] [29].

2. How can I perform a structure search across multiple chemical databases at once?

You can use a SPARQL service with chemical search extensions to perform federated queries. The IDSM SPARQL service, for example, provides predicates like sachem:substructureSearch and sachem:similaritySearch that can be integrated into a SPARQL query [30] [31]. This allows you to execute a single query that searches for a specific molecular structure or substructure across multiple linked databases (such as ChEMBL or DrugBank) that have been indexed by the service, combining the results automatically [31].

3. My tools can't read the stereochemistry from my chemical file. What should I do?

Ensure you are using a file format that explicitly encodes stereochemical information. While SMILES can denote some stereochemistry, formats like MOL or SDF are more robust for storing and exchanging 3D structural data, including stereochemistry [28]. When working with databases, verify that the software tools and APIs you are using can read and interpret the stereochemical layer of InChI strings, as this capability is being increasingly embedded in modern cheminformatics platforms to enable accurate stereochemistry searches [32].

4. We are building a new chemical database. How can we ensure it is FAIR-compliant?

Adopting a structured data pipeline is key. A FAIR-compliant pipeline, like the one used for the Chemical and Products Database (CPDat), involves Intake, Curation, and Delivery stages [27]. This includes:

- Intake: Identifying and acquiring priority data sources.

- Curation: Using a controlled vocabulary and mapping all chemical substances to unique, verified identifiers (like DSSTox Substance IDs, or DTXSIDs) through a rigorous quality assurance process [27].

- Delivery: Implementing extraction, transformation, and loading (ETL) processes to publish the data in an interoperable format, often using semantic web standards like RDF and providing a SPARQL endpoint for querying [27].

Troubleshooting Guides

Issue: Failed Cross-Database Query with Chemical Structure

- Symptoms: A SPARQL query that includes a chemical structure search returns no results, times out, or returns an error.

- Resolution Workflow: Work through the diagnostic steps below in order; each step rules out one class of failure before the next is tested.

Diagnostic Steps and Actions:

Verify Chemical Structure Syntax:

- Action: Validate the structure representation (e.g., SMILES, InChI) using a separate tool like RDKit or the online InChI resolver [28] [29]. Ensure the notation is correct and describes a valid chemical structure.


- Expected Outcome: The tool successfully generates a molecular object or returns a valid InChI string.
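The original example for this step is not preserved, so the following is only a minimal sketch of such a check, assuming RDKit is installed. The helper name `is_valid_structure` is hypothetical; it relies on `Chem.MolFromSmiles` and `Chem.MolFromInchi` returning `None` when parsing fails.

```python
try:
    from rdkit import Chem, RDLogger  # third-party: pip install rdkit
    RDLogger.DisableLog("rdApp.*")    # silence parser messages for invalid input
except ImportError:                   # assumption: RDKit may be absent on some machines
    Chem = None


def is_valid_structure(text: str) -> bool:
    """Return True if RDKit can parse `text` as an InChI or a SMILES string."""
    if Chem is None:
        raise RuntimeError("RDKit is required for structure validation")
    text = text.strip()
    if not text:
        # An empty string parses to an empty molecule, so reject it explicitly.
        return False
    if text.startswith("InChI="):
        mol = Chem.MolFromInchi(text)
    else:
        mol = Chem.MolFromSmiles(text)
    return mol is not None


if __name__ == "__main__" and Chem is not None:
    # Benzene as SMILES, benzene as InChI, and a SMILES with an unclosed ring.
    for candidate in ["c1ccccc1", "InChI=1S/C6H6/c1-2-4-6-5-3-1/h1-6H", "C1CC"]:
        print(candidate, "->", is_valid_structure(candidate))
```

Running the check before the query is sent separates syntax problems in the structure from problems in the search service itself.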

Check Search Service Parameters:

- Action: Review the parameters for the structure search predicate. For the IDSM service, these include sachem:query, sachem:topn (to limit results), and mode parameters like sachem:tautomerMode or sachem:chargeMode [30].

- Expected Outcome: The query executes without syntax errors related to the procedure call.
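The original SPARQL pattern for this step is not preserved. As a rough illustration, the sketch below assembles a substructure query as a Python string using the parameters named above. The namespace IRI, the mode values (`ignoreTautomers`, `defaultChargeAsAny`), and the helper name `build_substructure_query` are assumptions to be checked against the IDSM documentation [30]; only the predicate names come from the text.

```python
# Assumption: namespace IRI of the Sachem predicates; confirm against the IDSM docs.
SACHEM_NS = "http://bioinfo.uochb.cas.cz/rdf/v1.0/sachem#"


def build_substructure_query(smiles: str, topn: int = 10,
                             tautomer_mode: str = "ignoreTautomers",
                             charge_mode: str = "defaultChargeAsAny") -> str:
    """Build a SPARQL query string for a Sachem substructure search.

    The mode values are illustrative defaults, not verified constants.
    """
    # SMILES may contain backslashes (bond stereo), which must be escaped
    # inside a SPARQL string literal, as must double quotes.
    literal = smiles.replace("\\", "\\\\").replace('"', '\\"')
    return "\n".join([
        f"PREFIX sachem: <{SACHEM_NS}>",
        "SELECT ?compound WHERE {",
        "  ?compound sachem:substructureSearch [",
        f'      sachem:query "{literal}" ;',
        f"      sachem:topn {int(topn)} ;",
        f"      sachem:tautomerMode sachem:{tautomer_mode} ;",
        f"      sachem:chargeMode sachem:{charge_mode} ] .",
        "}",
    ])


if __name__ == "__main__":
    print(build_substructure_query("c1ccccc1", topn=5))
```

Keeping the query in one builder function makes it easy to strip the optional mode parameters one at a time when diagnosing which of them the endpoint rejects.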


Confirm SPARQL Endpoint and Dataset:

- Action: Ensure the query is being sent to the correct SPARQL endpoint (e.g., https://idsm.elixir-czech.cz/) [30] [31]. Verify that the target dataset (e.g., ChEMBL, DrugBank) is available and indexed on that endpoint.

- Expected Outcome: The endpoint is accessible, and the specified datasets are listed as available.

Test with a Simple, Known Compound:

- Action: Isolate the problem by running a similarity or substructure search for a very common molecule (e.g., adenine or benzene) with minimal parameters.

- Expected Outcome: The simple query returns a list of known results, confirming that the service is functioning. If it fails, the issue may be with service availability or fundamental connectivity.

Issue: Chemical Identifier Mismatch During Data Integration

- Symptoms: Records for the same compound from different databases cannot be linked or are incorrectly merged, leading to data loss or corruption.

- Resolution Workflow: The following workflow ensures consistent chemical identification across sources.

Diagnostic Steps and Actions:

Standardize on an InChI-Based Identifier:

- Action: Convert all chemical records to a standard identifier. Use the InChIKey, a hashed version of the full InChI, for fast database indexing and lookup [28] [33]. The full InChI provides the detailed structural information.

- Example Protocol: Use a tool like RDKit or Open Babel to generate InChI and InChIKeys from existing structural files (e.g., MOL, SDF) or other identifiers.

- Example Code:
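The original example is not preserved; the following is a minimal sketch of the conversion described above, assuming RDKit built with InChI support. The helper name `smiles_to_inchi` is hypothetical; `Chem.MolToInchi` and `Chem.InchiToInchiKey` are RDKit functions.

```python
try:
    from rdkit import Chem  # third-party: pip install rdkit
except ImportError:         # assumption: RDKit may be absent on some machines
    Chem = None


def smiles_to_inchi(smiles: str) -> tuple[str, str]:
    """Return (Standard InChI, InChIKey) for a SMILES string."""
    if Chem is None:
        raise RuntimeError("RDKit is required for InChI generation")
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Could not parse SMILES: {smiles!r}")
    inchi = Chem.MolToInchi(mol)
    if not inchi:
        raise ValueError(f"InChI generation failed for: {smiles!r}")
    return inchi, Chem.InchiToInchiKey(inchi)


if __name__ == "__main__" and Chem is not None:
    # Two different SMILES spellings of ethanol should yield one identifier.
    for smiles in ["CCO", "OCC"]:
        print(smiles, *smiles_to_inchi(smiles))
```

Records from different sources can then be joined on the InChIKey column, with the full InChI kept alongside for verification.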

Cross-Reference via a Curated Registry:

- Action: Map reported chemical names and CAS numbers to a curated, non-proprietary substance identifier. The US EPA's DSSTox database provides such a service, assigning unique DTXSIDs that link multiple identifiers to a single, verified substance [27].

- Methodology: Submit a list of chemical identifiers (names, CASRN) to a chemical curation service or use the publicly available DSSTox resources to find the corresponding DTXSIDs.

Implement a Robust Curation Pipeline:

- Action: Adopt a data curation workflow with quality assurance checks. The CPDat pipeline, managed by the Factotum tool, uses Standard Operating Procedures (SOPs) for data extraction, cleaning, and the assignment of controlled vocabulary terms [27]. This ensures ongoing data quality and harmonization.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources and tools essential for overcoming chemical interoperability challenges.

| Tool/Resource Name | Function | Key Application in Interoperability |

|---|---|---|

| RDKit (Cheminformatics Library) [28] | Converts between chemical file formats; generates and validates chemical identifiers. | Core utility for scripting data standardization pipelines (e.g., SMILES to InChI, SDF generation). |

| Open Babel (Chemical Toolbox) [28] | Batch conversion of chemical file formats between hundreds of different types. | Pre-processing diverse datasets into a single, unified format for database loading or analysis. |

| IDSM SPARQL Service [30] [31] | Provides interoperable substructure and similarity search via a standard SPARQL endpoint. | Enables complex, federated queries across multiple chemical databases using structural search as a core component. |

| International Chemical Identifier (InChI) [28] [29] [33] | A non-proprietary, standardized identifier for chemical substances. | Serves as the master key for accurately linking and merging chemical records from disparate data sources. |

| DSSTox Substance Identifier (DTXSID) [27] | A unique identifier assigned to a curated chemical substance in the EPA's DSSTox database. | Provides a reliable, cross-referenced registry to resolve ambiguous chemical names and CAS numbers. |

| Factotum (Curation System) [27] | An internal EPA data management platform for curating chemical and exposure-related data. | Implements a reproducible, quality-assured pipeline for making chemical data FAIR (Findable, Accessible, Interoperable, Reusable). |

Building a Connected Ecosystem: Practical Strategies and Standards for Harmonization

What are InChI and InChIKey?

The IUPAC International Chemical Identifier (InChI) is a non-proprietary, standardized textual identifier for chemical substances that enables the precise encoding of molecular information in a machine-readable format [34]. Developed under the auspices of the International Union of Pure and Applied Chemistry (IUPAC) with principal contributions from the U.S. National Institute of Standards and Technology (NIST) and the InChI Trust, this open-source algorithm generates a unique character string representing a chemical structure [35] [36].

The InChIKey is a condensed, 27-character hashed version of the full InChI, designed to facilitate web searches for chemical compounds [34]. While the full InChI provides detailed structural information in a layered format, the InChIKey serves as a compact digital fingerprint ideal for database indexing and quick comparisons [37].

The Critical Need for Standardization in Chemical Databases

Chemical information faces a significant interoperability challenge due to the "Tower of Babel" of chemical names and identifiers [36]. For example, Valium (diazepam) has at least 291 different names in PubChem, while benzene has 498 depositor-supplied synonyms [36]. This naming inconsistency creates substantial barriers to finding and linking chemical information across diverse databases and research platforms.

InChI addresses this challenge by providing a single, canonical representation that can bridge different identification systems, enabling more effective data integration and discovery in chemical research [36].

Technical Foundation: Understanding InChI Structure and Generation

The Layered Architecture of InChI

The InChI identifier employs a hierarchical, layered structure that systematically encodes different aspects of molecular information [38]. Layers are separated by forward slashes (/), and each layer carries one kind of structural data:

Table: InChI Layers and Their Functions

| Layer | Prefix | Function | In Standard InChI? |

|---|---|---|---|

| Main Layer | None (formula), c, h | Contains chemical formula, atom connections, and hydrogen atoms | Always present |

| Charge Layer | q, p | Encodes charge state and proton information | Optional |

| Stereochemical Layer | b, t, m, s | Describes double bond, tetrahedral, and allene stereochemistry | Optional |

| Isotopic Layer | i | Specifies isotopic information | Optional |

| Fixed-H Layer | f | Identifies tautomeric hydrogens | Never included |

| Reconnected Layer | r | Provides structure with reconnected metal atoms | Never included |

This layered approach allows users to select the appropriate level of structural detail for their specific application [34]. The "Standard InChI" provides a consistent representation by excluding user-selectable options for handling stereochemistry and tautomeric layers, ensuring interoperability across different systems [36].
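To make the layered format concrete, here is a small sketch that splits an InChI string into its version and prefixed layers using only the standard library. The function name `split_inchi_layers` is hypothetical, and the parser is deliberately naive: it does no chemical validation and simply reports layers in the order they appear (a prefix can recur, for example after an isotopic layer, so a list is returned rather than a dictionary).

```python
def split_inchi_layers(inchi: str) -> tuple[str, list[tuple[str, str]]]:
    """Split an InChI into (version, [(layer_prefix, layer_body), ...]).

    The formula layer has no prefix letter and is reported as "formula".
    """
    prefix = "InChI="
    if not inchi.startswith(prefix):
        raise ValueError("not an InChI string")
    version, *parts = inchi[len(prefix):].split("/")
    layers: list[tuple[str, str]] = []
    for index, part in enumerate(parts):
        if not part:
            continue
        if index == 0 and not part[0].islower():
            # The first layer is the chemical formula unless it starts with
            # a lowercase prefix letter.
            layers.append(("formula", part))
        else:
            layers.append((part[0], part[1:]))
    return version, layers


if __name__ == "__main__":
    # L-alanine: main layer (formula, c, h) plus stereo layers (t, m, s).
    version, layers = split_inchi_layers(
        "InChI=1S/C3H7NO2/c1-2(4)3(5)6/h2H,4H2,1H3,(H,5,6)/t2-/m0/s1"
    )
    print("version:", version, "(standard)" if version.endswith("S") else "")
    for name, body in layers:
        print(f"{name:8s} {body}")
```

A version string ending in "S" marks a Standard InChI, which is a quick way to flag non-standard identifiers before records are merged.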

The InChI Generation Process

The InChI algorithm converts input structural information into a unique identifier through a rigorous three-step process [34]:

- Normalization: Removes redundant information and converts the structure to a core parent representation, handling issues such as tautomerism and formal charges.

- Canonicalization: Generates unique number labels for each atom in the structure, ensuring the same identifier is always produced for the same compound regardless of input orientation.

- Serialization: Assembles the normalized and canonicalized information into the final character string according to the layered format.

Figure: InChI Generation and Hashing Workflow

InChIKey: The Hashed Fingerprint

The InChIKey is derived from the full InChI string using the SHA-256 cryptographic hash algorithm [34]. Its 27-character fixed-length format consists of three hyphen-separated parts:

- First block (14 characters): Encodes the core molecular structure

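- Second block (10 characters): Eight characters encode the remaining structural features, including stereochemistry, isotopes, and charges; the ninth is a flag (S for Standard InChI, N for non-standard) and the tenth identifies the InChI version

- Third block (1 character): Indicates the protonation state (N for a neutral molecule). The current 27-character InChIKey does not carry a separate check character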

While hash collisions (different structures producing the same InChIKey) are theoretically possible, they are extremely rare in practice, with an estimated probability of only one duplication in 75 databases each containing one billion unique structures [34].
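The fixed 14-10-1 layout makes a cheap format check possible before any database lookup. The sketch below uses only the standard library; the names `parse_inchikey` and `InChIKeyParts` are hypothetical, and the reading of the ninth character of the second block as a standard/non-standard flag follows the InChI technical documentation rather than anything stated in this article. A key that passes this check is well-formed, not necessarily correct for a given structure.

```python
import re
from typing import NamedTuple, Optional

# Three hyphen-separated blocks of uppercase letters: 14, 10, and 1 characters.
_INCHIKEY_RE = re.compile(r"^([A-Z]{14})-([A-Z]{10})-([A-Z])$")


class InChIKeyParts(NamedTuple):
    first_block: str
    second_block: str
    third_block: str
    is_standard: bool


def parse_inchikey(key: str) -> Optional[InChIKeyParts]:
    """Return the blocks of a well-formed InChIKey, or None if malformed."""
    match = _INCHIKEY_RE.match(key.strip())
    if match is None:
        return None
    first, second, third = match.groups()
    # Assumption (InChI technical documentation): character 9 of the second
    # block is "S" for a Standard InChIKey and "N" for a non-standard one.
    return InChIKeyParts(first, second, third, is_standard=(second[8] == "S"))


if __name__ == "__main__":
    print(parse_inchikey("UHOVQNZJYSORNB-UHFFFAOYSA-N"))  # benzene
    print(parse_inchikey("UHOVQNZJYSORNB-UHFFFAOYSA"))    # truncated key
```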

Implementation Guide: Technical Support Center

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between InChI and registry numbers like CAS RN? InChI is structure-based and non-proprietary, meaning anyone can generate it from structural information without requiring assignment by an organization [35] [34]. Unlike authority-assigned registry numbers, InChI is computable, open, and provides human-readable (with practice) structural information in its layered format [34].

Q2: Why should I implement InChI when we already use SMILES in our database? While SMILES is widely used, different software implementations can generate different SMILES strings for the same molecule (caffeine has been shown to have up to 4,160 different SMILES representations) [21]. InChI provides a single, standardized canonical representation, ensuring that the same structure always produces the same identifier regardless of the software used to generate it [21].

Q3: Can InChI handle tautomers and stereochemistry? Yes, InChI has specific layers to encode stereochemical and isotopic information [34]. For tautomers, the standard InChI generates the same identifier for different tautomeric forms by normalizing to a core parent structure, while the non-standard InChI with the fixed-H layer (/f) can distinguish specific tautomers [34].

Q4: What are the limitations of InChI for database applications? InChI does not represent 3-dimensional atomic coordinates, and for very large molecules (such as proteins or polymers), the identifier can become excessively long [34]. Additionally, the current implementation has specific limitations in handling organometallic compounds and certain complex stereochemical environments [35].

Q5: How reliable is InChIKey for uniquely identifying compounds? While hash collisions are theoretically possible, they are extremely rare with current database sizes [34]. For critical applications where absolute certainty is required, it is recommended to verify matches using the full InChI string, which contains complete structural information [34].

Troubleshooting Common Implementation Issues

Problem: Different structures generating the same Standard InChI

- Cause: This typically occurs when using Standard InChI with tautomeric compounds. The Standard InChI intentionally normalizes different tautomers to the same core parent structure [34].

- Solution: Use the non-standard InChI with the fixed-H layer (/f) to distinguish specific tautomers when this level of discrimination is necessary for your application [34].

Problem: InChIKey collision suspected

- Cause: While extremely rare, hash collisions can theoretically occur when two different structures produce the same InChIKey [34].

- Solution: Always verify potential matches by comparing the full InChI strings. For critical applications, implement a confirmation step that checks the complete structural representation [34].

Problem: InChI generation fails for metal-containing compounds

- Cause: The Standard InChI disconnects all metal atoms during normalization, which can sometimes produce unexpected results for organometallic compounds [34].

- Solution: Use the non-standard InChI with the reconnected layer (/r), which maintains bonds to metal atoms and may provide more intuitive representations for these compounds [34].

Problem: Database search performance issues with full InChI strings

- Cause: The variable length and complexity of full InChI strings can impact database search performance, especially with large chemical collections [37].

- Solution: Implement a hybrid approach using InChIKey for initial indexing and fast lookup, with the full InChI stored for verification and detailed comparison when needed [37] [34].

Problem: Inconsistent InChI generation across different software tools

- Cause: While the InChI algorithm is standardized, different software implementations might use different options or preprocessing steps [38].

- Solution: Use the official InChI software library available from the InChI Trust (https://www.inchi-trust.org) to ensure consistency, and specify that you are using the Standard InChI for interoperability [36] [39].

Research Reagent Solutions: Essential Tools for Implementation

Table: Essential Resources for InChI Implementation

| Resource | Function | Access Information |

|---|---|---|

| InChI Software Library | Core algorithm for generating and parsing InChI identifiers | Available from InChI Trust (https://www.inchi-trust.org) under MIT License [39] |

| NCI/CADD Chemical Identifier Resolver | Web service for converting between different chemical representations | https://cactus.nci.nih.gov/chemical/structure [40] |

| InChI OER (Open Education Resource) | Training materials and educational content about InChI | https://www.inchi-trust.org/oer/ [21] |

| PubChem Sketcher | Web-based tool for drawing structures and generating InChIs | https://pubchem.ncbi.nlm.nih.gov/edit/ [39] |

| NIST WebBook InChI Search | Search thermodynamic data by InChI or InChIKey | https://webbook.nist.gov/chemistry/inchi-ser/ [41] |

| ChemSpider | Chemical structure database with extensive InChI search capabilities | https://www.chemspider.com [36] |

Experimental Protocols and Methodologies

Protocol: Generating and Validating InChI Identifiers

Objective: To consistently generate and verify Standard InChI and InChIKey identifiers for chemical structures.

Materials:

- Chemical structure in a supported format (MOL file, SMILES, etc.)

- Access to InChI generation software (standalone or library)

- Database or spreadsheet for tracking results

Procedure:

- Input Preparation: Ensure your chemical structure representation includes all necessary components: atoms, bonds, formal charges, and stereochemistry if applicable.

- Software Configuration: Set the InChI generator to produce Standard InChI (excluding tautomer and metal reconnection options) to ensure interoperability.

- Generation Execution:

- Input the structure to the InChI algorithm

- Execute the three-step process: normalization, canonicalization, and serialization

- Capture both the full InChI string and the derived InChIKey

- Validation:

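- Verify that the InChIKey has the expected 27-character format (blocks of 14, 10, and 1 characters) and that regenerating it from the stored InChI yields the same key

- Use a resolver service (e.g., NCI/CADD) to look up the InChIKey and confirm that the returned structure is consistent with the input; because the InChIKey is a hash, it can only be resolved for structures the service already knows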

- Cross-reference with public databases (PubChem, ChemSpider) when available

- Documentation: Record both the full InChI and InChIKey in your database, noting the software version and generation date.

Troubleshooting Tips:

- If stereochemistry is not properly encoded, verify that your input structure includes appropriate stereochemical descriptors.

- For large molecules, check for potential performance issues and consider processing in batches.

- If generated InChIs don't match expected results, compare using the Standard InChI options to ensure consistent processing.

Protocol: Database Integration and Cross-Referencing

Objective: To implement InChI-based searching and cross-referencing in chemical databases.

Materials:

- Existing chemical database with structure information

- InChI software library integrated with your database system

- Indexing framework for efficient search

Procedure:

- Batch Generation: Process all existing structures in your database to generate both Standard InChI and InChIKey identifiers.

- Database Schema Modification:

- Add dedicated columns for both full InChI and InChIKey

- Create indexes on the InChIKey field for fast searching

- Consider storing the individual layers if layer-specific querying is needed

- Integration Points:

- Implement pre-insert and pre-update triggers to automatically generate InChI identifiers for new or modified structures

- Create API endpoints that accept InChI or InChIKey for structure searching

- Cross-Database Interoperability:

- Use InChIKey to link your records with external databases (PubChem, ChemSpider)

- Implement resolution services to convert between different identifier types using InChI as the intermediate

- Validation and Quality Control:

- Periodically verify that identical structures have identical InChIs

- Check for and investigate any InChIKey collisions

- Monitor data source integration for consistency
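The schema and quality-control steps above can be sketched with SQLite from the Python standard library. Table, column, and function names here are illustrative assumptions, not a prescribed schema; the point is the pattern of looking up by indexed InChIKey, confirming with the full InChI, and periodically scanning for keys that map to more than one InChI. Automatic identifier generation via triggers is left out of this sketch.

```python
import sqlite3


def create_store(conn: sqlite3.Connection) -> None:
    """Create a compound table with dedicated InChI and InChIKey columns."""
    conn.execute(
        """CREATE TABLE IF NOT EXISTS compound (
               id       INTEGER PRIMARY KEY,
               name     TEXT,
               inchi    TEXT NOT NULL,
               inchikey TEXT NOT NULL
           )"""
    )
    # Index the short, fixed-length key; the full InChI is kept for verification.
    conn.execute(
        "CREATE INDEX IF NOT EXISTS idx_compound_inchikey ON compound (inchikey)"
    )


def add_compound(conn: sqlite3.Connection, name: str, inchi: str, inchikey: str) -> int:
    cur = conn.execute(
        "INSERT INTO compound (name, inchi, inchikey) VALUES (?, ?, ?)",
        (name, inchi, inchikey),
    )
    return cur.lastrowid


def find_compound(conn: sqlite3.Connection, inchi: str, inchikey: str) -> list[tuple[int, str]]:
    """Look up by InChIKey, then keep only rows whose full InChI also matches."""
    rows = conn.execute(
        "SELECT id, name, inchi FROM compound WHERE inchikey = ?", (inchikey,)
    )
    return [(row_id, name) for row_id, name, stored in rows if stored == inchi]


def suspected_collisions(conn: sqlite3.Connection) -> list[str]:
    """Return InChIKeys that are attached to more than one distinct InChI."""
    rows = conn.execute(
        "SELECT inchikey FROM compound "
        "GROUP BY inchikey HAVING COUNT(DISTINCT inchi) > 1"
    )
    return [key for (key,) in rows]


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    create_store(conn)
    add_compound(conn, "benzene", "InChI=1S/C6H6/c1-2-4-6-5-3-1/h1-6H",
                 "UHOVQNZJYSORNB-UHFFFAOYSA-N")
    print(find_compound(conn, "InChI=1S/C6H6/c1-2-4-6-5-3-1/h1-6H",
                        "UHOVQNZJYSORNB-UHFFFAOYSA-N"))
    print(suspected_collisions(conn))
```

Any key reported by `suspected_collisions` is more likely a data-entry or generation-option mismatch than a true hash collision, so it is worth inspecting the source records first.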

Database Interoperability Through InChI

The adoption of InChI and InChIKey as universal identifiers represents a foundational step toward resolving critical interoperability challenges in chemical databases. By providing a non-proprietary, standardized method for structure representation, these identifiers enable researchers to bridge disparate data sources, enhance discovery, and facilitate the integration of chemical information across the research ecosystem.

The hierarchical layered structure of InChI offers both precision and flexibility, allowing implementation at various levels of complexity depending on application requirements. When combined with robust troubleshooting protocols and the growing ecosystem of supporting tools, InChI provides a practical pathway for harmonizing chemical identification that serves the evolving needs of modern chemical research and data-intensive scientific discovery.

Frequently Asked Questions (FAQs)

Q1: What are the key advantages of the V3000 molfile format over the older V2000 standard?

The V3000 molfile format, an extension of the chemical table file family, introduces several critical enhancements that address limitations of the V2000 standard. It supports molecules with more than 999 atoms or bonds, which is a hard limit in V2000 [42]. Furthermore, V3000 provides more robust and flexible capabilities for representing complex chemical features, including enhanced stereochemistry (absolute, racemic, and relative stereo groups), Rgroups, and Sgroups (abbreviations/superatoms and polymer blocks) [43] [44]. Its structure is also more human-readable, using BEGIN/END blocks for different data sections like the atom and bond blocks [44].

Q2: How can I encode custom data or highlighting in a V3000 molfile?

You can use the user-specified collection block mechanism to extend the V3000 format. This allows you to create custom, tagged groupings of molecular features (atoms, bonds, etc.). For example, to highlight specific bonds in red, you could define a collection like this [43]:

In this example, "MM" is a user-defined namespace, "HIGHLIGHT" is the function, and "#FF0000" is a hexadecimal color code. It is important to note that readers who do not recognize this user-specified tag will typically ignore it, potentially with a warning, but will not reject the entire file [43].

Q3: What is the relationship between the ISO IDMP standards and HL7 FHIR in regulatory submissions?

ISO IDMP (Identification of Medicinal Products) is a suite of five standards (ISO 11615, 11616, 11238, 11239, 11240) that provide an international framework for uniquely identifying and describing medicinal products with consistent documentation and terminologies [45]. HL7 FHIR (Fast Healthcare Interoperability Resources) is a standard for exchanging healthcare information electronically using modern web technologies like RESTful APIs and XML/JSON [46].

The relationship is synergistic, not competitive. Regulatory agencies, like the European Medicines Agency (EMA), are leading efforts to use HL7 FHIR as the preferred data exchange format to transmit the rich, structured data defined by the IDMP data model. This approach enhances interoperability between systems in the pharmaceutical sector and supports a data-centric target operating model [47].

Q4: Our organization uses V2000 molfiles. What is the first step in transitioning to V3000?

The most critical first step is to assess your software ecosystem. Verify that all the software applications and databases in your workflow (e.g., chemical registries, visualization tools, calculation software) are capable of reading and, if necessary, writing the V3000 molfile format. While most modern cheminformatics toolkits support V3000, compatibility issues can still arise with older or more specialized software [44]. Once compatibility is confirmed, you can begin a phased transition, starting with using V3000 for new projects involving large molecules or complex stereochemistry.

Troubleshooting Guides

Issue 1: V3000 Molfile is Not Read by a Legacy Application

Problem: A V3000 molfile created with a new software tool cannot be opened by an older, legacy application, which may display an error or show an incorrect structure.

Solution:

- Check for V3000 Support: Confirm that the legacy application explicitly states support for "V3000," "CTab V3000," or "Extended MOLfiles." If not, it likely only supports V2000 [44].

- Down-convert to V2000: If the molecule is simple enough (has fewer than 1000 atoms and bonds and does not use advanced V3000-specific features), use a modern cheminformatics toolkit (e.g., RDKit, CDK, Open Babel) to convert the file to the V2000 format.

- Simplify the Structure: If the molecule uses advanced V3000 features like enhanced stereochemistry, consider saving a simplified version without these features for use with the legacy tool.

- Update or Replace Software: As a long-term solution, consider upgrading the legacy application or replacing it with a tool that supports modern standards.
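The first two steps can be sketched as follows. Version detection uses only the standard library and reads the version tag at the end of the counts line (the fourth line of a molfile); the down-conversion assumes RDKit is installed. The function names are hypothetical, and the conversion does not attempt to preserve V3000-only features such as enhanced stereo groups, so inspect the result before relying on it.

```python
try:
    from rdkit import Chem  # third-party: pip install rdkit
except ImportError:         # assumption: RDKit may be absent on some machines
    Chem = None


def molfile_version(block: str) -> str:
    """Return "V2000", "V3000", or "unknown" based on the counts line."""
    lines = block.splitlines()
    if len(lines) < 4:
        raise ValueError("a molfile needs a 3-line header and a counts line")
    counts_line = lines[3].rstrip()
    for tag in ("V2000", "V3000"):
        if counts_line.endswith(tag):
            return tag
    return "unknown"


def to_v2000(block: str) -> str:
    """Re-write a mol block as V2000 if it fits within the V2000 size limits."""
    if Chem is None:
        raise RuntimeError("RDKit is required for format conversion")
    mol = Chem.MolFromMolBlock(block, removeHs=False)
    if mol is None:
        raise ValueError("RDKit could not parse the mol block")
    if mol.GetNumAtoms() > 999 or mol.GetNumBonds() > 999:
        raise ValueError("structure exceeds the V2000 limit of 999 atoms/bonds")
    # RDKit writes V2000 by default for structures within the size limit.
    return Chem.MolToMolBlock(mol)


if __name__ == "__main__" and Chem is not None:
    v3000 = Chem.MolToMolBlock(Chem.MolFromSmiles("CCO"), forceV3000=True)
    print(molfile_version(v3000), "->", molfile_version(to_v2000(v3000)))
```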

Issue 2: FHIR Message Rejected by Regulatory Submission Gateway

Problem: A FHIR message generated for an IDMP-based submission to a regulatory authority (e.g., EMA's Product Management Service) is rejected.

Solution: Follow this systematic diagnostic workflow:

- Check Submission Status: In systems like Veeva Vault, the Product Data Submission record's status will change to "Submission Rejected." Use the provided link to download the detailed CSV error report [48].

- Baseline IDMP Records: Ensure all relevant Medicinal Product records have been baselined, meaning critical identifiers like the PMS ID, PCID, and MPID have been populated via the regulatory agency's API before submission [48].

- Validate FHIR Profile: Ensure your FHIR message strictly conforms to the required Implementation Guide (IG) specified by the regulatory authority. Use FHIR validation tools to check for conformity against profiles like QI-Core [46].

- Review Operation Type: Confirm that the FHIR message's operation type (e.g., "Manufacturing Enrichment") matches the intended regulatory activity and is supported by the gateway [48].

Issue 3: Inconsistent Substance Identification Across Global Dossiers

Problem: The same drug substance or product is identified differently in regulatory dossiers submitted to various national regulatory agencies (NRAs), hindering collaboration and mutual reliance.

Solution: Adopt the primary identifiers recommended by international working groups (like ICMRA) which are aligned with ISO IDMP standards [49].

Table 1: Primary Identifiers for Determining Product 'Sameness'

| Identifier Category | Specific Data Elements | Standard / Source |

|---|---|---|

| Substance | Drug Substance Name | ISO 11238 (Substance Identification) |

| Product | Dosage Form, Route of Administration, Unit of Presentation | ISO 11239 (Dosage Form & Route of Admin) |

| Organization | Marketing Authorization Holder (MAH) Name & Address, Manufacturer | ISO 11615 (Medicinal Product ID) |

| Application | Application Type (e.g., Chemical, Biological) | Regional Conventions |

- Implement ISO IDMP Standards: Begin structuring substance and product data according to ISO 11238 (substances) and ISO 11616 (pharmaceutical products). This creates a consistent foundation for identification globally [45].

- Use Controlled Vocabularies: Where possible, use internationally recognized controlled terminologies for fields like dosage form and route of administration to minimize regional variations in labeling [49].

- Leverage Unique Substance Identifiers: Utilize globally unique identifiers where available, such as the FDA's Unique Ingredient Identifier (UNII), which conforms to ISO 11238 concepts [45].

Data Standards Comparison

Table 2: Key Capabilities of V2000 vs. V3000 Molfiles

| Feature | V2000 | V3000 |

|---|---|---|

| Maximum Atoms/Bonds | 999 | Unlimited |

| Readability | Terse, fixed column widths | More human-readable, block-based |

| Stereochemistry | Basic parity | Enhanced (Absolute, AND/OR groups) [43] |

| Extension Mechanism | Limited properties block | Flexible user-defined collections [43] |

| Polymer & Mixtures | Limited Sgroup support | Comprehensive Sgroup and Rgroup blocks [42] |

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Digital Tools and Standards for Data Interoperability

| Tool / Standard | Function | Relevance to Research |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit | Converts between chemical file formats (V2000/V3000); generates and validates structures; calculates descriptors. Essential for pre-processing compound data [50]. |

| HL7 FHIR Resources | Standardized data elements (e.g., Substance, Medication) | Provides the "building blocks" to structure product and substance data for regulatory reporting and exchange, aligning with IDMP concepts [46] [47]. |

| InChI (International Chemical Identifier) | A non-proprietary identifier for chemical substances | A critical standard for establishing substance "sameness" across different databases and platforms, facilitating data linking and retrieval [50]. |

| SPOR (Substances, Products, Organisations, Referentials) | EMA's data management services | Provides the master data and controlled terminologies needed for IDMP implementation in the EU region [49]. |

| FHIR Implementation Guide (IG) | A set of rules for applying FHIR in a specific context (e.g., IDMP) | Ensures that FHIR messages are structured correctly for a particular regulatory purpose, such as submission to the EMA's PMS [46]. |

Technical Support Center

Troubleshooting Guides

Guide 1: Resolving Chemical Identifier Mapping Failures

Problem: Chemical curation processes fail to map reported chemical names or CASRNs to standardized substance identifiers (DTXSIDs), breaking the data pipeline [27].

| Step | Action | Expected Outcome | Tools/Logs to Check |
|---|---|---|---|
| 1 | Verify original chemical identifier in source document. | Confirm reported name/CASRN is correctly transcribed. | Factotum curation interface, original (M)SDS or data source file [27]. |
| 2 | Execute automated DSSTox mapping workflow. | Reported identifier is successfully mapped to a DTXSID [27]. | DSSTox curation logs, check for provisional DTXSID assignment [27]. |
| 3 | Initiate manual chemical curation. | Chemical curation team resolves conflict and assigns verified DTXSID [27]. | Internal curation ticket system, updated chemical record in Factotum [27]. |
| 4 | Re-run ETL (Extract, Transform, Load) process for affected data. | Curated data propagates to the public-facing CPDat database [27]. | ETL pipeline logs, CPDat public API or exploration application [27]. |

Underlying Cause: Mapping failures typically stem from typographical errors in source data, proprietary chemical names that are absent from standard dictionaries, or incorrect CASRNs [27].
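
Steps 2 and 3 of the guide can be sketched as a dictionary lookup with a fallback queue for manual curation. This is a minimal illustration under stated assumptions, not the DSSTox mapping workflow: `map_identifiers`, `MappingResult`, and the `SUBSTANCE-…` values are hypothetical placeholders, and real DTXSIDs would come from the curated DSSTox records.

```python
from dataclasses import dataclass, field


@dataclass
class MappingResult:
    mapped: dict[str, str] = field(default_factory=dict)      # reported name -> substance ID
    needs_curation: list[str] = field(default_factory=list)   # names left for manual review


def normalize(name: str) -> str:
    """Collapse whitespace and case so trivial variants share one lookup key."""
    return " ".join(name.split()).casefold()


def map_identifiers(reported: list[str], dictionary: dict[str, str]) -> MappingResult:
    """Map reported names to substance IDs; queue anything unmatched for curators."""
    lookup = {normalize(name): substance_id for name, substance_id in dictionary.items()}
    result = MappingResult()
    for name in reported:
        substance_id = lookup.get(normalize(name))
        if substance_id is None:
            result.needs_curation.append(name)
        else:
            result.mapped[name] = substance_id
    return result


# Placeholder dictionary; the IDs are not real DTXSIDs.
reference = {"Ethanol": "SUBSTANCE-0001", "Acetone": "SUBSTANCE-0002"}
outcome = map_identifiers(["  ethanol ", "ACETONE", "Solvent Blend X"], reference)
print(outcome.needs_curation)
```

Normalization only absorbs case and whitespace differences. Genuine typos and proprietary names still fall through to `needs_curation`, which mirrors the hand-off to manual curation in step 3.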

Preventive Measures:

- Implement automated data validation checks during the initial data intake stage [27].

- Use structured data extraction scripts to minimize manual entry errors [27].
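
One automated intake check that catches many transcription errors is the CASRN check digit. The last digit of a CAS Registry Number is the sum of the preceding digits, weighted 1, 2, 3, ... from right to left, modulo 10. The sketch below applies that rule; the function name is illustrative, and a passing check only shows that the number is internally consistent, not that it belongs to the reported chemical.

```python
import re

# CASRN layout: 2 to 7 digits, hyphen, 2 digits, hyphen, 1 check digit.
CASRN_PATTERN = re.compile(r"^(\d{2,7})-(\d{2})-(\d)$")


def is_valid_casrn(casrn: str) -> bool:
    """Return True if the string is a well-formed CASRN with a correct check digit."""
    match = CASRN_PATTERN.match(casrn.strip())
    if match is None:
        return False
    body = match.group(1) + match.group(2)
    check_digit = int(match.group(3))
    # Weight the body digits 1, 2, 3, ... starting from the rightmost one.
    total = sum(weight * int(digit) for weight, digit in enumerate(reversed(body), start=1))
    return total % 10 == check_digit


for candidate in ["7732-18-5", "64-17-5", "7723-18-5", "water"]:
    print(candidate, is_valid_casrn(candidate))
```

For water (7732-18-5) the weighted sum is 8 + 2 + 6 + 12 + 35 + 42 = 105, and 105 mod 10 = 5, which matches the check digit. The transposed entry 7723-18-5 sums to 104 and is rejected.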

Guide 2: Debugging Interoperability in a Loosely Coupled Component Architecture

Problem: A service component (e.g., a data processing module) fails to communicate with other components, leading to system errors or data silos [51].

| Step | Action | Expected Outcome | Tools/Logs to Check |
|---|---|---|---|
| 1 | Verify component interface definitions (APIs). | Confirm all components interact via well-defined interfaces without hidden dependencies [51] [52]. | Component design documentation, API contracts (e.g., OpenAPI specs) [52]. |
| 2 | Check communication protocols and data formats. | Ensure components agree on protocols (e.g., REST, messaging) and data formats (e.g., JSON, XML) [51] [52]. | Network configuration, message queue logs, data serialization/deserialization modules. |
| 3 | Test component in isolation (Unit Test). | The component functions correctly with mocked inputs and outputs [52]. | Unit testing frameworks, dependency injection container logs. |
| 4 | Test component interactions (Integration Test). | Data and commands flow seamlessly between components in an end-to-end workflow [52]. | System integration test logs, transaction traces, and monitoring dashboards. |

Underlying Cause: These failures often result from inconsistent data schemas between components, network connectivity issues, or unhandled exceptions in one component that affect others [51] [52].
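
Schema mismatches are the easiest of these causes to detect mechanically. As a rough sketch, a receiving component can compare each incoming message against an agreed contract before processing it. The schema, field names, and `schema_violations` helper below are hypothetical; in practice a shared OpenAPI or JSON Schema definition would usually play this role.

```python
from typing import Any

# Hypothetical contract for messages sent from an ingestion component to a curation component.
COMPOUND_MESSAGE_SCHEMA: dict[str, type] = {
    "compound_id": str,
    "inchikey": str,
    "source": str,
    "molecular_weight": float,
}


def schema_violations(message: dict[str, Any], schema: dict[str, type]) -> list[str]:
    """List every missing field or type mismatch, in schema order."""
    problems = []
    for field_name, expected_type in schema.items():
        if field_name not in message:
            problems.append(f"missing field: {field_name}")
        elif not isinstance(message[field_name], expected_type):
            actual = type(message[field_name]).__name__
            problems.append(f"{field_name}: expected {expected_type.__name__}, got {actual}")
    return problems


# A sender that serializes the weight as text and omits the source field.
bad_message = {
    "compound_id": "CMPD-1",
    "inchikey": "LFQSCWFLJHTTHZ-UHFFFAOYSA-N",
    "molecular_weight": "46.07",
}
print(schema_violations(bad_message, COMPOUND_MESSAGE_SCHEMA))
```

Returning the full list of violations, rather than failing on the first one, tends to make the integration logs in step 4 more useful.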

Preventive Measures:

- Adopt an event-driven architecture to decouple components further [52].

- Implement comprehensive logging and monitoring for each independent component [52].
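
Both preventive measures can be illustrated with a small in-process event bus. This is a sketch under simplifying assumptions: the `EventBus` class and topic names are hypothetical, and a production system would more likely use a message broker. The point is that publishers never call subscribers directly, and a failure in one subscriber is logged instead of propagating to the others.

```python
import logging
from collections import defaultdict
from typing import Callable

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("event_bus")

Handler = Callable[[dict], None]


class EventBus:
    """Route payloads by topic so components never reference each other directly."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._handlers[topic].append(handler)

    def publish(self, topic: str, payload: dict) -> int:
        """Deliver the payload to every subscriber; return the number of successful deliveries."""
        delivered = 0
        for handler in self._handlers[topic]:
            try:
                handler(payload)
                delivered += 1
            except Exception:
                # Log the failure and keep going so one component cannot break the rest.
                logger.exception("handler failed for topic %s", topic)
        return delivered


received: list[dict] = []
bus = EventBus()
bus.subscribe("compound.ingested", received.append)
bus.publish("compound.ingested", {"compound_id": "CMPD-1"})
```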

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between a data-informed and a data-driven approach in our research context?

A: The key difference lies in the role of data in decision-making:

- Data-Informed: Customer and experimental data help you evaluate design decisions you have already made. Experience and intuition still make the final call unless the data strongly suggests otherwise [53]. This approach suits analytics and usability evaluation.

- Data-Driven: Data from experiments shows you what to design next. Behavioral data is the primary driver of decisions and often overrides intuition. Ideas are tested continuously and the resulting data decides the outcome, which is central to de-risking product ideas in development [53].

Q2: Our team is struggling with data biases in chemical datasets used for QSAR modeling. How can we mitigate this?

A: Data biases can lead to incorrect conclusions and flawed models. To mitigate them [54]:

- Strive for diverse and representative samples: Ensure your chemical datasets cover a broad and relevant chemical space.

- Cross-validate with multiple sources: Use different data sources (e.g., PubChem, ChEMBL) to validate your findings [15].

- Incorporate negative data: For reliable predictive modeling, such as QSAR, include data on chemically similar but inactive compounds to improve model accuracy and generalizability [15].

- Involve a multidisciplinary team: Include chemists, data scientists, and domain experts in data analysis to identify potential biases from different perspectives [54].
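
Two of these checks are easy to automate before any model is trained: measuring how much negative data a dataset contains, and finding compounds whose activity labels disagree between sources. The sketch below assumes each source has been reduced to a mapping from a compound key (for example an InChIKey) to an `"active"` or `"inactive"` label; the function names and the `CMPD-…` keys are illustrative.

```python
from collections import Counter


def inactive_fraction(labels: list[str]) -> float:
    """Fraction of records labelled 'inactive'; 0.0 for an empty list."""
    counts = Counter(labels)
    total = sum(counts.values())
    return counts["inactive"] / total if total else 0.0


def conflicting_labels(source_a: dict[str, str], source_b: dict[str, str]) -> list[str]:
    """Return keys present in both sources whose activity labels disagree."""
    shared = source_a.keys() & source_b.keys()
    return sorted(key for key in shared if source_a[key] != source_b[key])


# Hypothetical labels extracted from two databases.
first_source = {"CMPD-1": "active", "CMPD-2": "inactive", "CMPD-3": "active"}
second_source = {"CMPD-2": "active", "CMPD-3": "active", "CMPD-4": "inactive"}

print(inactive_fraction(list(first_source.values())))
print(conflicting_labels(first_source, second_source))
```

A very low inactive fraction suggests the dataset under-represents negative data, and any conflicting keys are candidates for review by the multidisciplinary team.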

Q3: What are the most critical design principles to ensure a component-based architecture remains interoperable and reusable?

A: The core design principles for a successful Component-Based Architecture (CBA) are [52]:

- Modularity: Divide the system into cohesive, self-contained components, each with a single, well-defined purpose.

- Abstraction: Hide complex implementation details inside components, exposing only necessary, simple interfaces.

- Encapsulation: Components should encapsulate their own data and behavior, preventing external components from creating unwanted dependencies.

- Loose Coupling: Design components to have minimal knowledge of other components, interacting primarily through well-defined interfaces. This allows components to be replaced or updated with minimal impact [51] [52].

- Clear Interfaces: Define explicit APIs that specify the methods, inputs, outputs, and data formats for interaction [52].
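
In Python, loose coupling and clear interfaces can be expressed with `typing.Protocol`, which lets a component depend on the shape of a collaborator without importing its implementation. The class and method names below are hypothetical and chosen only to illustrate the principles; they are not taken from any framework described in the cited sources.

```python
from typing import Protocol


class Standardizer(Protocol):
    """Interface: anything with a matching standardize() method satisfies it."""

    def standardize(self, identifier: str) -> str: ...


class UppercaseStandardizer:
    """One interchangeable implementation; it never references IngestionService."""

    def standardize(self, identifier: str) -> str:
        return identifier.strip().upper()


class IngestionService:
    """Encapsulates its own records and relies only on the Standardizer interface."""

    def __init__(self, standardizer: Standardizer) -> None:
        self._standardizer = standardizer
        self._records: list[str] = []

    def ingest(self, raw_identifiers: list[str]) -> list[str]:
        cleaned = [self._standardizer.standardize(raw) for raw in raw_identifiers]
        self._records.extend(cleaned)
        return cleaned


service = IngestionService(UppercaseStandardizer())
print(service.ingest([" lfqscwfljhtthz-uhfffaoysa-n "]))
```

Because `IngestionService` only knows the `Standardizer` interface, a different implementation can be substituted, or a mock supplied in a unit test, without changing the service.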

Experimental Protocols & Methodologies

Protocol 1: Implementing a Component-Based, Data-Driven IoT Architecture

This protocol is adapted from research on creating interoperable IoT systems, which is methodologically analogous to building a federated chemical data platform [51].

1. Goal: To build a system architecture that supports interoperability between heterogeneous devices or data sources and incorporates a data-driven feedback loop for automation [51].

2. Methodology:

- Requirements Analysis: Identify all data sources (e.g., laboratory instruments, external databases like PubChem/ChEMBL) and key functional components needed [52].

- Define Component Boundaries: Decompose the system into independent components (e.g., "Data Ingestion," "Chemical Curation," "Analysis Engine") based on functionality [51] [52].

- Design Component Interfaces: Specify API contracts and communication protocols (e.g., REST/JSON) for each component [52].

- Implement Components: Develop each component as a standalone service, ensuring it encapsulates its own functionality and data. This allows for independent development and testing [51] [52].

- Integrate Data-Driven Feedback: Implement a central mechanism (e.g., a rules engine or machine learning model) that analyzes data from the components and sends automated commands back to them, creating a closed-loop system [51].

- Testing: Conduct unit testing on each component followed by integration testing to validate end-to-end workflows [52].
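
The data-driven feedback step can be sketched as a small rules engine: components emit readings, rules inspect them, and any resulting commands are sent back to a target component. Everything named below (`Reading`, `Command`, the `reactor_temp` source, and the 80.0 threshold) is a hypothetical illustration and is not taken from the cited IoT architecture.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Reading:
    source: str
    value: float


@dataclass
class Command:
    target: str
    action: str


Rule = Callable[[Reading], Optional[Command]]


def high_temperature_rule(reading: Reading) -> Optional[Command]:
    """Hypothetical rule: ask the heater to back off above an illustrative threshold."""
    if reading.source == "reactor_temp" and reading.value > 80.0:
        return Command(target="reactor_heater", action="reduce_power")
    return None


def run_feedback_cycle(readings: list[Reading], rules: list[Rule]) -> list[Command]:
    """Apply every rule to every reading and collect the commands to send back."""
    commands = []
    for reading in readings:
        for rule in rules:
            command = rule(reading)
            if command is not None:
                commands.append(command)
    return commands


readings = [Reading("reactor_temp", 85.0), Reading("reactor_temp", 60.0)]
print(run_feedback_cycle(readings, [high_temperature_rule]))
```

Keeping rules as plain functions means the central mechanism can later be replaced by a machine learning model with the same call signature, without touching the components that produce readings or accept commands.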

The workflow for this architecture and its data-driven feedback loop is illustrated below.

Protocol 2: Chemical Data Curation and Harmonization for Exposure Assessments

This protocol details the rigorous curation process used for the Chemical and Products Database (CPDat), which directly addresses chemical identifier interoperability [27].

1. Goal: To transform raw, heterogeneous chemical data from public sources into a FAIR (Findable, Accessible, Interoperable, Reusable) and harmonized database [27].

2. Methodology:

- Intake Stage:

- Curation Stage:

- Quality Assurance (QA) Stage:

- A second curator checks vocabulary assignments and extracted text against the original source file for accuracy [27].

- Delivery Stage:

- Perform an ETL process to move curated data from the document-centric curation database to the public-facing, product/use-centric database [27].
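
The delivery step amounts to a change of shape, from records keyed by source document to records keyed by product. The sketch below illustrates that transformation only; the field names (`product`, `substance_ids`, `qa_passed`) and the placeholder IDs are assumptions made for the example and do not reflect the actual CPDat schema or ETL code.

```python
from collections import defaultdict


def to_product_centric(documents: list[dict]) -> dict[str, list[str]]:
    """Group curated substance IDs by product, skipping documents that have not passed QA."""
    products: dict[str, set[str]] = defaultdict(set)
    for document in documents:
        if not document.get("qa_passed", False):
            continue
        for substance_id in document["substance_ids"]:
            products[document["product"]].add(substance_id)
    # Sort for deterministic output and drop duplicates reported by several documents.
    return {product: sorted(ids) for product, ids in sorted(products.items())}


# Hypothetical document-centric records with placeholder substance IDs.
curated_documents = [
    {"doc": "sds_001.pdf", "product": "Glass Cleaner", "substance_ids": ["SUBSTANCE-0001"], "qa_passed": True},
    {"doc": "sds_002.pdf", "product": "Glass Cleaner", "substance_ids": ["SUBSTANCE-0001", "SUBSTANCE-0003"], "qa_passed": True},
    {"doc": "sds_003.pdf", "product": "Paint Thinner", "substance_ids": ["SUBSTANCE-0002"], "qa_passed": False},
]
print(to_product_centric(curated_documents))
```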

The following diagram visualizes this multi-stage pipeline.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources and tools essential for experiments in component-based, data-driven framework design, particularly for solving chemical interoperability issues.

| Item Name | Function/Benefit | Application in Research Context |
|---|---|---|
| Controlled Vocabularies & Ontologies | Provides standardized terminology to harmonize data across different sources, enabling conceptual alignment and composability [27] [55]. | Used to categorize product uses and chemical functions in CPDat, ensuring consistent data interpretation and interoperability [27]. |
| Standardized Identifier Systems (e.g., DTXSID, InChI) | Unique, non-proprietary identifiers for chemical substances that facilitate unambiguous data exchange and linkage across disparate databases [15] [27]. | The cornerstone of chemical curation in CPDat, resolving conflicts between different chemical names and CASRNs to a single verified substance [27]. |
| Component-Based Architecture (CBA) | A software design methodology that builds systems from reusable, modular, and loosely coupled components, promoting flexibility, scalability, and easier maintenance [52]. | Serves as the structural foundation for proposed IoT and healthcare frameworks, allowing integration of diverse devices and services [56] [51]. |
| Data-Driven Feedback Loop | A system feature that uses analyzed data to automatically trigger actions or optimize processes, reducing reliance on manual intervention [51]. | A key feature in IoT architectures for enabling automation and intelligent system behavior based on sensor data analysis [51]. |
| Factotum (Curation Tool) | An internal web-based data management platform that supports reproducible data curation, quality assurance tracking, and provenance management [27]. | The central tool used in the CPDat pipeline for managing the intake, curation, and QA of chemical and product data [27]. |

The FAIR Guiding Principles—Findable, Accessible, Interoperable, and Reusable—provide a framework for managing scientific data to enhance its reuse by both humans and machines [7] [57]. In the chemical sciences, implementing these principles addresses critical challenges in data sharing and interoperability, which is essential for harmonizing chemical identifiers and resolving database interoperability issues [58] [59].

Several platforms have been developed to facilitate this FAIRification process. NFDI4Chem provides a specialized infrastructure for chemistry data, offering tools to make chemical research data findable through persistent identifiers and accessible through standardized protocols [60] [58]. Similarly, the FAIR4Health platform, while designed for health data, demonstrates a workflow applicable to sensitive research data, emphasizing data curation, validation, and anonymization [61].

Key FAIRification Platforms and Tools

NFDI4Chem for Chemical Sciences

NFDI4Chem is building tools and infrastructures specifically designed for FAIR chemistry data [58]. Key features include:

- RADAR4Chem Repository: A central service for depositing chemical research data with persistent identifiers [60]

- Terminology Service Integration: Connection to the TS4NFDI service allows selection of standardized terms from curated chemical ontologies [60]

- GitLab/GitHub Import: Supports direct import of data from code repositories, facilitating workflow integration [60]

FAIR4Health Solution Architecture

While focused on health research, the FAIR4Health architecture demonstrates a comprehensive approach to FAIRification that can inform chemical data practices:

Figure: FAIR4Health FAIRification workflow. Raw data is converted into FAIR data through curation and privacy-protection steps [61].

Technical Support Center: Troubleshooting Guides & FAQs

Frequently Asked Questions

Q: The standardized terminology suggestion function is not appearing in my RADAR4Chem keyword field. How can I fix this?

A: This function must be activated by your institution's RADAR4Chem curators via the "Edit Workspace" form. The curators need to assign one or more terminologies or ontology collections (e.g., the NFDI4Chem ontology collection) for configuration. Contact your institutional curator or the RADAR4Chem team at info@radar-service.eu for assistance [60].

Q: I cannot import data directly from my GitLab repository to RADAR4Chem. What should I do?

A: The GitLab/GitHub import option must be activated by FIZ Karlsruhe for your specific RADAR4Chem workspace. Contact the RADAR4Chem team at info@radar-service.eu to request activation of this feature for your workspace [60].

Q: How can I ensure my chemical data is interoperable with other research databases?

A: Use established chemistry data formats (CIF for crystal structures, JCAMP-DX for spectral data) and community-agreed metadata standards. Apply International Chemical Identifiers (InChIs) for all chemical structures, as they provide machine-readable descriptions that enable cross-database interoperability [58].
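
To show what "machine-readable" means here, the sketch below splits a standard InChI string into its layers using nothing but string operations. It is a simplified illustration: the `parse_inchi_layers` helper is hypothetical, it assumes a formula layer is present and that each prefix letter occurs only once, and real pipelines should generate and parse InChIs with the official InChI software or a cheminformatics toolkit.

```python
def parse_inchi_layers(inchi: str) -> dict[str, str]:
    """Split an InChI string into version, formula, and prefixed layers."""
    if not inchi.startswith("InChI="):
        raise ValueError("not an InChI string")
    version, *layers = inchi[len("InChI="):].split("/")
    parsed = {"version": version}
    if layers:
        # The first layer after the version is the molecular formula and has no prefix letter.
        parsed["formula"] = layers[0]
    for layer in layers[1:]:
        if layer:
            # Later layers start with a one-letter prefix, e.g. c (connections) or h (hydrogens).
            parsed[layer[0]] = layer[1:]
    return parsed


# Standard InChI for ethanol.
print(parse_inchi_layers("InChI=1S/C2H6O/c1-2-3/h3H,2H2,1H3"))
```

Because every database that stores the same InChI exposes the same layers, records can be joined on the identifier without relying on names or vendor-specific codes.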

Q: My FAIRified dataset includes sensitive research information. How can I maintain privacy while enabling reuse?

A: Implement data de-identification and anonymization techniques before making data available. The FAIR4Health Data Privacy Tool demonstrates one approach, applying privacy-preserving computation techniques that allow data analysis without exposing sensitive information [61].
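
As one small example of de-identification, direct identifiers can be replaced with keyed hashes before a dataset is shared. The sketch below uses HMAC-SHA256 from the Python standard library. It is not the method used by the FAIR4Health Data Privacy Tool, the field names are hypothetical, and keyed hashing is pseudonymization, not full anonymization: whoever holds the key can re-link records, and indirect identifiers are left untouched.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 digest (64 hex characters)."""
    digest = hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


def deidentify(record: dict, direct_identifiers: set[str], secret_key: bytes) -> dict:
    """Return a copy of the record with the listed fields pseudonymized."""
    cleaned = {}
    for name, value in record.items():
        if name in direct_identifiers:
            cleaned[name] = pseudonymize(str(value), secret_key)
        else:
            cleaned[name] = value
    return cleaned


# Hypothetical record; in practice the key would be stored securely, never in code.
example_key = b"replace-with-a-securely-stored-key"
record = {"donor_id": "D-001", "assay_result": 4.2}
print(deidentify(record, {"donor_id"}, example_key))
```

The same input and key always produce the same pseudonym, so records from one donor can still be grouped for analysis after the original identifier has been removed.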

Troubleshooting Common Experimental Scenarios

Problem: Inconsistent chemical identifier mapping across databases

Troubleshooting Approach: