Beyond the Straight Line: A Scientist's Guide to Troubleshooting Nonlinear Relationships in Environmental Scatterplots

This article provides a comprehensive framework for researchers and scientists to identify, analyze, and validate complex nonlinear relationships in environmental data.

Abstract

This article provides a comprehensive framework for researchers and scientists to identify, analyze, and validate complex nonlinear relationships in environmental data. Moving beyond traditional linear assumptions, we explore the foundational principles of nonlinearity, demonstrate advanced methodological approaches using explainable machine learning and AI, address common troubleshooting and optimization challenges, and establish rigorous validation protocols. The insights are tailored for professionals in drug development and biomedical research who rely on accurate environmental data modeling for critical decisions, covering techniques from initial scatterplot exploration to the implementation of cutting-edge, interpretable AI models for actionable insights.

Seeing the Patterns: From Basic Scatterplots to Identifying Nonlinearity in Environmental Data

The Critical Role of Scatterplots in Environmental Exploratory Data Analysis

Frequently Asked Questions

Q1: My scatterplot reveals a cloud of points with no clear linear trend. Does this mean there is no relationship between my environmental variables? Not necessarily. The absence of a linear pattern does not rule out a relationship; the variables may be related nonlinearly. For instance, research on the natural environment and health has found convincing evidence for nonlinear associations, where the relationship between two variables changes direction or strength across different ranges of values [1]. Instead of discarding the results, investigate these complex patterns further.

Q2: How can I formally check for a nonlinear relationship in my scatterplot? You can use specialized statistical techniques to explore these relationships:

- Locally Weighted Scatterplot Smoothing (LOWESS): This method fits many simple models to local subsets of your data to produce a smooth line that describes the potential nonlinear relationship [1].

- Piecewise Linear Regression: If an inflection point is visible (e.g., from a LOWESS curve), you can model the relationship as two or more connected straight lines that meet at that point [1].
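
The LOWESS step described above can be sketched in Python as follows. This is a minimal illustration, not the cited study's procedure: the data are synthetic, and the `frac` value of 0.3 is an arbitrary starting point that should be tuned for real data.

```python
import numpy as np
from statsmodels.nonparametric.smoothers_lowess import lowess

# Synthetic stand-in data (an assumption): a saturating trend plus noise.
rng = np.random.default_rng(0)
x = np.sort(rng.uniform(0, 10, 200))
y = 5 * (1 - np.exp(-0.5 * x)) + rng.normal(0, 0.5, x.size)

# frac is the share of points used in each local fit.
# Larger values give a smoother curve; 0.3 is only a starting guess.
smoothed = lowess(y, x, frac=0.3)  # (n, 2) array: sorted x, fitted y
x_fit, y_fit = smoothed[:, 0], smoothed[:, 1]
```

Plotting `x_fit` against `y_fit` on top of the raw scatterplot shows where the curve bends, which is a reasonable place to look for a candidate knot.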

Q3: How do I handle outliers in my environmental scatterplot? First, determine if the outlier is a valid data point or an error. Calculate the Z-score for the data point; a Z-score less than -3 or greater than 3 is typically considered an outlier [2]. If it is a valid measurement, it may represent a genuine, albeit extreme, environmental event. Do not remove valid outliers without careful consideration, as they can be highly informative.
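
As a rough sketch of the Z-score check, the snippet below flags points with |Z| > 3 using `scipy.stats.zscore`. The readings are synthetic and the injected extreme value is purely illustrative.

```python
import numpy as np
from scipy.stats import zscore

# Synthetic readings (an assumption) with one injected extreme value.
rng = np.random.default_rng(1)
values = rng.normal(20.0, 2.0, 500)
values[10] = 45.0

z = zscore(values)
outlier_idx = np.flatnonzero(np.abs(z) > 3)  # positions of flagged points
flagged = values[outlier_idx]
```

Flagged points are candidates for review, not automatic deletions: each one still needs to be checked against field notes or instrument logs.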

Q4: My scatterplot is used for sensor calibration. What does a good result look like? For calibration, a strong correlation is desired. The points on the scatterplot should lie neatly along a line, indicating that your sensor's readings closely follow those of a reference monitor. If the points are widely scattered, you should investigate the cause of the discrepancy [3].

Troubleshooting Guides

Issue 1: Unable to Identify Trends or Patterns in the Scatterplot

Problem: The scatterplot appears as an indistinct cloud of points, making it difficult to discern any clear relationship between the environmental variables.

Solution:

- Apply Smoothing Techniques: Use a LOWESS curve to overlay a trend line on your scatterplot. This helps visualize the underlying pattern without assuming a specific shape (e.g., linear or quadratic) [1].

- Check for Subgroups: Segment your data by a third variable (e.g., season, location, demographic factor). Plotting each subgroup with a different color or symbol can reveal patterns that are hidden in the aggregate data [3] [1].

- Transform Your Data: Apply mathematical transformations (e.g., log, square root) to one or both axes. This can sometimes linearize a nonlinear relationship, making it easier to interpret.

- Consult the Domain Literature: Understand the known mechanisms in your field. For example, the relationship between natural amenities and health was found to be nonlinear only after researchers specifically tested for it [1].
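
To illustrate the transformation step, the sketch below compares the linear correlation before and after a log transform. It assumes synthetic data with an exponential trend; real data may call for a different transform or none at all.

```python
import numpy as np

# Synthetic exponential relationship with multiplicative noise (an assumption).
rng = np.random.default_rng(2)
x = np.linspace(1, 10, 100)
y = 2.0 * np.exp(0.5 * x) * rng.lognormal(0.0, 0.1, x.size)

r_raw = np.corrcoef(x, y)[0, 1]          # linear correlation on the raw scale
r_log = np.corrcoef(x, np.log(y))[0, 1]  # linear correlation after log-transforming y

# On the log scale the relationship is a straight line: log(y) = intercept + slope * x
slope, intercept = np.polyfit(x, np.log(y), 1)
```

If the transformed correlation is clearly stronger, the transformed scale is usually the easier one to model and interpret.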

Issue 2: Poor Color Contrast Hampers Readability

Problem: Data points or trend lines are difficult to see against the background or from each other, especially when presenting to diverse audiences.

Solution:

- Choose a High-Contrast Palette: Select colors that stand out against both white and dark backgrounds. The official Google palette (#4285F4, #EA4335, #FBBC05, #34A853) offers strong, distinct colors [4].

- Test for Accessibility: Use online color contrast analyzers to ensure the combination of your data point colors and background meets accessibility standards (WCAG guidelines). This is crucial for users with color vision deficiencies [4].

- Use Different Point Shapes: In addition to color, differentiate data series or groups using distinct marker shapes (e.g., circles, squares, triangles). This provides a secondary visual cue.
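
The contrast check can also be scripted rather than done in an online tool. The sketch below implements the WCAG 2.x relative luminance and contrast ratio formulas in plain Python. The function names are hypothetical helpers introduced for illustration, and the white background is an assumption.

```python
def relative_luminance(hex_color: str) -> float:
    """Relative luminance of an sRGB color, following the WCAG 2.x definition."""
    hex_color = hex_color.lstrip("#")
    channels = [int(hex_color[i:i + 2], 16) / 255 for i in (0, 2, 4)]
    linear = [
        c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4
        for c in channels
    ]
    r, g, b = linear
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(color_a: str, color_b: str) -> float:
    """Contrast ratio between two colors, from 1 (identical) to 21 (black on white)."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


palette = ["#4285F4", "#EA4335", "#FBBC05", "#34A853"]
background = "#FFFFFF"  # assumed plot background
ratios = {color: contrast_ratio(color, background) for color in palette}
```

WCAG 2.1 asks for a ratio of at least 3:1 for graphical objects, so any palette entry that falls below that against your chosen background is a candidate for replacement or for a darker outline.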

Issue 3: Suspected Non-Linear Relationship

Problem: A visual inspection of the scatterplot suggests a curved relationship (e.g., U-shaped, S-shaped, or with a clear inflection point), but a standard linear model fits poorly.

Solution:

- Visual Inspection with LOWESS: Begin by fitting a LOWESS curve to get a non-parametric first estimate of the relationship's shape [1].

- Model Comparison: If an inflection point is suggested, fit a piecewise linear regression model with a knot at that point. Compare the fit of this model to a simple linear model using the Akaike Information Criterion (AIC) to confirm that the nonlinear model is a better fit [1].

- Interpret the Segments: In the piecewise model, interpret the slopes of the different segments separately. For example, a study found that in areas with low natural amenities, more amenities were associated with better health, but this relationship changed in high-amenity areas [1].
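
The model comparison step might look like the sketch below, which fits a straight line and a one-knot piecewise line by least squares and compares their AIC values. The data are synthetic, the knot at 0 is a hypothetical value standing in for one read off a LOWESS curve, and the AIC is the Gaussian least-squares form up to an additive constant.

```python
import numpy as np


def gaussian_aic(y, y_hat, n_params):
    """AIC for a least-squares fit with Gaussian errors (additive constant dropped)."""
    n = y.size
    rss = float(np.sum((y - y_hat) ** 2))
    return n * np.log(rss / n) + 2 * n_params


def fit_ols(design, y):
    """Ordinary least squares; returns coefficients and fitted values."""
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef, design @ coef


# Synthetic data (an assumption): the slope changes sign at the knot.
rng = np.random.default_rng(3)
x = np.linspace(-3, 3, 150)
knot = 0.0  # hypothetical inflection point
y = np.where(x < knot, 1.5 * x, -0.5 * x) + rng.normal(0, 0.4, x.size)

ones = np.ones_like(x)
X_linear = np.column_stack([ones, x])
# The hinge term max(0, x - knot) lets the slope change at the knot
# while keeping the two segments connected.
X_piecewise = np.column_stack([ones, x, np.maximum(0.0, x - knot)])

_, yhat_linear = fit_ols(X_linear, y)
coef_pw, yhat_piecewise = fit_ols(X_piecewise, y)

aic_linear = gaussian_aic(y, yhat_linear, n_params=3)        # intercept, slope, variance
aic_piecewise = gaussian_aic(y, yhat_piecewise, n_params=4)  # plus the slope change

slope_left = coef_pw[1]                # slope below the knot
slope_right = coef_pw[1] + coef_pw[2]  # slope above the knot
```

Here the knot is treated as fixed. If it is estimated from the same data, it arguably counts as one more parameter in the AIC penalty.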

Experimental Protocol: Analyzing Nonlinear Relationships in Environmental Data

This protocol outlines the steps for using scatterplots to uncover and model nonlinear relationships, using a public dataset on forest fires [2].

1. Objective: To investigate the relationship between temperature and the burned area of forest fires, testing for a potential nonlinear association.

2. Dataset: Forest Fire Data (e.g., forestfires.csv) [2].

Variables:

- Independent Variable: temp (temperature in Celsius)

- Dependent Variable: area (burned area in hectares)

3. Software & Reagent Solutions

| Item Name | Function/Brief Explanation |
|---|---|
| Python/R | Programming environments for statistical computing and graphics. |
| Pandas Library | Data manipulation and analysis toolkit for loading and preparing the dataset [2]. |
| SciPy Library | Provides the zscore function for outlier detection [2]. |
| LOWESS Function | Non-parametric smoothing function available in statsmodels (Python) or native in R. |
| forestfires.csv | A real-world dataset containing meteorological and fire data for analysis [2]. |

4. Methodology

- Data Preparation: Load the dataset using Pandas. Check for and handle missing values. Examine the distribution of the area variable, as it is often skewed [2].

- Outlier Detection: Calculate Z-scores for the area variable to identify and validate outliers. Retain valid outliers as they represent real extreme events [2].

- Initial Visualization: Create a basic scatterplot with temp on the x-axis and area on the y-axis.

- Nonlinear Trend Analysis: Overlay a LOWESS curve on the scatterplot to visualize the potential nonlinear trend [1].

- Model Fitting & Comparison:

- If the LOWESS curve suggests an inflection point, note its approximate location.

- Fit a piecewise linear regression model with a knot at this point.

- Fit a simple linear regression model.

- Compare the AIC values of both models; the model with the lower AIC is preferred [1].

- Interpretation and Reporting: Report the slopes from the piecewise model for each segment and describe how the relationship between temperature and burned area changes.
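
The data preparation step mentions that the area variable is often skewed. The sketch below shows one way to quantify that and to reduce it with a log1p transform. It uses a synthetic stand-in for the area column rather than the actual file; with the real dataset the column would presumably be loaded with something like `pd.read_csv("forestfires.csv")["area"]`, which assumes that file layout.

```python
import numpy as np
from scipy.stats import skew

# Synthetic stand-in for a burned-area column (an assumption):
# many zero or small fires and a few very large ones.
rng = np.random.default_rng(4)
area = rng.lognormal(mean=1.0, sigma=1.5, size=500)
area[rng.random(area.size) < 0.4] = 0.0

skew_raw = skew(area)            # strongly positive for right-skewed data
skew_log = skew(np.log1p(area))  # log1p handles zeros: log(1 + area)
```

Running the LOWESS and piecewise steps on the transformed response is often easier to read, at the cost of interpreting slopes on a log scale.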

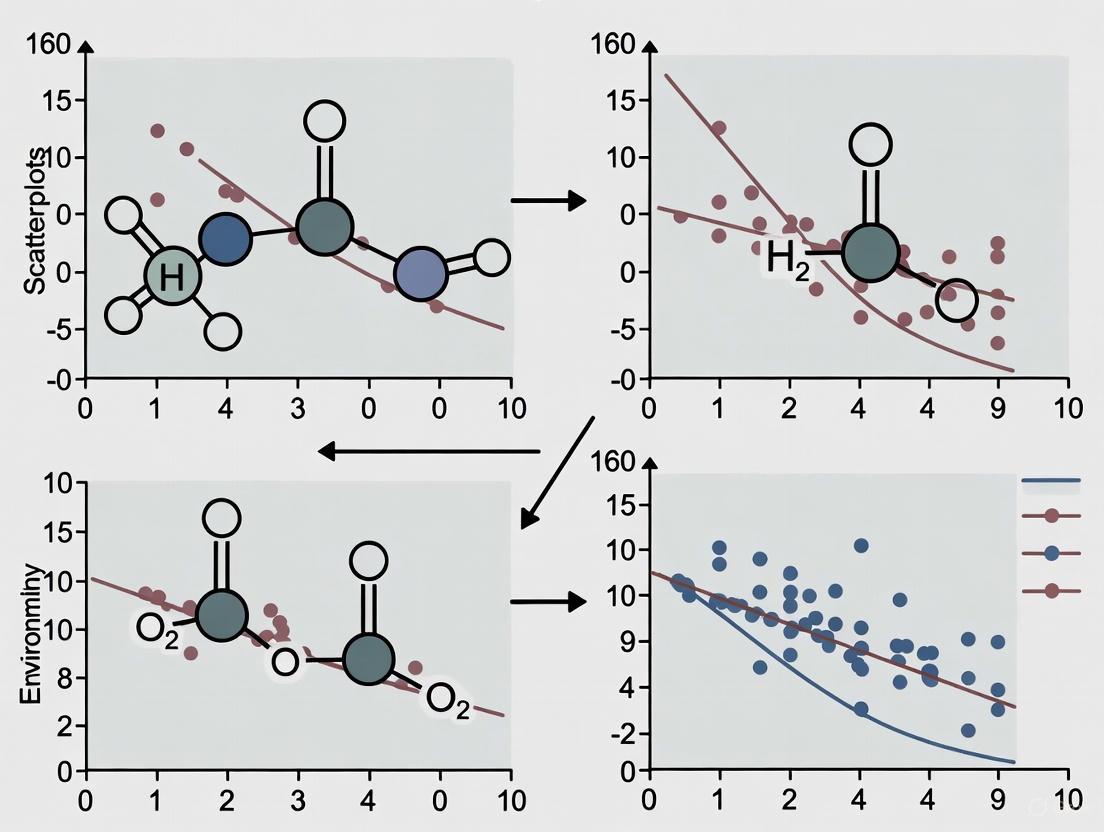

The workflow for this analysis can be summarized as follows:

Data Presentation and Key Metrics

Table 1: Common Nonlinear Relationship Types in Environmental Research

| Relationship Type | Description | Potential Environmental Example |

|---|---|---|

| U-shaped / Inverted U-shaped | A relationship where the effect reverses at a certain point. | The association between natural amenities and health, which was positive in one range and negative in another [1]. |

| Saturating / Logarithmic | A relationship where the effect is strong initially but plateaus. | The effect of a nutrient on plant growth, which diminishes after a certain concentration. |

| Threshold / Piecewise | A relationship with a clear breakpoint (knot) where the slope changes. | A study found an inflection point at NAS=0 for the relationship between natural amenities and health [1]. |

| Cyclical / Periodic | A relationship that repeats over a known period. | Diurnal or seasonal variations in air pollutant concentrations [3]. |

Table 2: Key Statistical Metrics for Scatterplot Analysis

| Metric | Use Case | Interpretation |
|---|---|---|
| Z-score | Outlier Detection | Identifies data points that are unusually far from the mean (typically \|Z\| > 3) [2]. |
| Skewness | Distribution Shape | Quantifies the asymmetry of a variable's distribution. A high positive skew is common in environmental data like fire area [2]. |
| Akaike Information Criterion (AIC) | Model Comparison | Used to compare the goodness-of-fit of different models, with a lower AIC indicating a better model that balances fit and complexity [1]. |

Frequently Asked Questions (FAQs)

1. My environmental scatterplot shows a clear grouping of data points, not a straight line. How can I objectively identify these clusters? The presence of clusters, rather than a linear or monotonic relationship, is a common nonlinear pattern. To move from visual suspicion to objective identification, you can use several established methods [5] [6]:

- Elbow Method: This method involves plotting the within-cluster sum of squares (WSS) against the number of clusters. The optimal number is often at the "elbow" of the plot, where the rate of WSS decrease sharply slows down [5] [7].

- Average Silhouette Method: This measures how well each data point lies within its cluster. The optimal number of clusters maximizes the average silhouette width across all data points [5] [6].

- Gap Statistic Method: This compares the total within-cluster variation for your data to that of a reference null distribution (e.g., uniform random data). The number of clusters that maximizes this "gap" is considered optimal [5] [6].

Each method has its strengths, and it is considered a best practice to use multiple methods to reach a consensus on the appropriate number of clusters [7].
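
A minimal Python sketch of the Elbow and Silhouette checks is shown below, using scikit-learn's k-means. The three synthetic blobs and the range of k values are assumptions chosen only to make the pattern visible.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.metrics import silhouette_score
from sklearn.preprocessing import StandardScaler

# Synthetic data (an assumption): three well-separated groups in two variables.
rng = np.random.default_rng(5)
centers = np.array([[0.0, 0.0], [6.0, 6.0], [0.0, 6.0]])
X = np.vstack([c + rng.normal(0, 0.7, (60, 2)) for c in centers])
X = StandardScaler().fit_transform(X)  # put both variables on the same scale

wss, sil = {}, {}
for k in range(2, 7):
    km = KMeans(n_clusters=k, n_init=10, random_state=0).fit(X)
    wss[k] = km.inertia_                      # within-cluster sum of squares (Elbow)
    sil[k] = silhouette_score(X, km.labels_)  # average silhouette width

best_k_silhouette = max(sil, key=sil.get)
```

Plotting `wss` against k gives the elbow curve, while `best_k_silhouette` is the value that maximizes the average silhouette width.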

2. How can I determine if my data has a threshold effect, where the response variable changes abruptly at a specific value? Identifying a threshold requires specialized regression techniques that go beyond standard linear models.

- Piecewise Regression (or Breakpoint Analysis): This method fits two or more linear regression models to different intervals of your independent variable. The point where these models connect is the estimated threshold or breakpoint.

- Statistical Testing: After fitting a piecewise model, you can perform hypothesis tests (e.g., using confidence intervals) on the breakpoint parameter to determine if the observed threshold is statistically significant and not due to random chance.
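
When the breakpoint is not known in advance, one simple option is a grid search over candidate values, keeping the one with the lowest residual sum of squares. The sketch below assumes synthetic data with a true breakpoint at 4, and `hinge_rss` is a hypothetical helper. Dedicated segmented-regression packages estimate the breakpoint and its confidence interval more rigorously.

```python
import numpy as np


def hinge_rss(x, y, breakpoint):
    """Residual sum of squares of a continuous two-segment fit at a given breakpoint."""
    design = np.column_stack(
        [np.ones_like(x), x, np.maximum(0.0, x - breakpoint)]
    )
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return float(np.sum((y - design @ coef) ** 2))


# Synthetic data (an assumption): the slope steepens after x = 4.
rng = np.random.default_rng(6)
x = np.sort(rng.uniform(0, 10, 200))
true_breakpoint = 4.0
y = (1.0 + 0.2 * x + 1.5 * np.maximum(0.0, x - true_breakpoint)
     + rng.normal(0, 0.5, x.size))

# Search only the central 80% of x so each segment keeps enough points.
candidates = np.linspace(np.quantile(x, 0.1), np.quantile(x, 0.9), 81)
rss = np.array([hinge_rss(x, y, c) for c in candidates])
estimated_breakpoint = candidates[np.argmin(rss)]
```

Repeating the search on bootstrap resamples of the data is one way to get a rough confidence interval for the breakpoint.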

3. My scatterplot suggests a relationship that flattens out, approaching a maximum or minimum value. What model should I use? This pattern, known as an asymptote, is typical in saturation or growth processes. You should employ nonlinear regression with models specifically designed to capture this behavior [8]. Common models include:

- Michaelis-Menten Model: Often used in enzyme kinetics, it describes a relationship that approaches a maximum value (asymptote) as the independent variable increases. Its formula is: \( y = \frac{V_{max} \cdot x}{K_m + x} \).

- Logistic Growth Model: This "S-shaped" curve models growth that is initially exponential but slows and approaches a carrying capacity (upper asymptote).

- Exponential Decay Model: This describes a relationship where the dependent variable decreases towards zero or a lower asymptote.

Fitting these models typically requires iterative optimization algorithms (e.g., Gauss-Newton, Levenberg-Marquardt) and careful selection of initial parameter values to ensure the model converges to the correct solution [8].

4. A colleague warned that a high correlation coefficient from a linear model on my scatterplot could be misleading. How is that possible? This is a critical and common issue. The correlation coefficient \( r \) measures only the strength of a linear relationship, so it can be dangerously misleading when applied to data with a strong but nonlinear pattern [9] [10]. A high \( r \) can mask curvature: a monotonic but strongly curved relationship may still yield a large \( r \) even though a straight line describes it poorly. The reverse also holds: a dataset following a perfect, symmetric U-shaped (quadratic) curve will have a linear correlation \( r \) close to zero, despite the obvious systematic relationship. This is why visual inspection of the scatterplot is an indispensable first step before any statistical calculation [9]. Relying solely on \( r \) can lead to the fallacious identification of associations [9].
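
Both failure modes are easy to reproduce. The sketch below uses noise-free synthetic curves, chosen only for illustration, to show a perfect U-shape with near-zero correlation and a strongly curved monotonic relationship with a fairly high one.

```python
import numpy as np

x = np.linspace(-3, 3, 101)

# Perfectly deterministic, symmetric U-shape: linear correlation is essentially zero.
y_u = x ** 2
r_u = np.corrcoef(x, y_u)[0, 1]

# Monotonic but strongly curved relationship: correlation is high,
# yet a straight line would describe it poorly.
y_exp = np.exp(x)
r_exp = np.corrcoef(x, y_exp)[0, 1]
```

In both cases a scatterplot exposes the problem at a glance, whereas the single number does not.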

Troubleshooting Guides

Issue: Ambiguous or Contradictory Results from Cluster Analysis

Problem: You have applied different methods (Elbow, Silhouette, Gap Statistic) to determine the number of clusters in your environmental data, but they suggest different optimal values (e.g., 2 vs. 4 clusters).

| Potential Cause | Solution |

|---|---|

| The data does not have well-separated clusters. The natural grouping in the data may be weak or ambiguous, leading to different methods interpreting the structure differently [6]. | Use a majority rule approach. Compute the 30 indices provided by the NbClust R package and choose the number of clusters recommended by the majority of indices [5]. |

| The "elbow" in the Elbow Method plot is not clear. This method is known to be sometimes subjective and ambiguous [5] [6]. | Prioritize the Gap Statistic or Silhouette Method. The Gap Statistic is a more sophisticated method that provides a statistical procedure to formalize the elbow heuristic [5]. The value of k that maximizes the Gap Statistic is typically chosen [6]. |

| The data has not been properly preprocessed. Clustering algorithms are sensitive to the scale of variables. | Standardize your data. Transform all variables to have a mean of zero and a standard deviation of one before performing clustering analysis [5]. |

Issue: Overplotting in Scatterplots Obscures Patterns

Problem: Your scatterplot has too many data points, causing them to overlap and making it impossible to see the density or the true nature of the relationship between variables [10].

| Potential Cause | Solution |

|---|---|

| Large sample size with limited plot area. | Use transparency (alpha blending). Reduce the opacity of each data point so that areas with a high density of points appear darker. |

| The data is discrete or rounded. | Jitter the data. Add a small amount of random noise to the position of each point to prevent perfect overlap. |

| The relationship is still not clear. | Use a 2D density plot or hexagonal binning. These plots summarize the density of points in a grid, using color to show areas of high and low concentration, making patterns and clusters much clearer. |
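
The three remedies in the table can be compared side by side. The sketch below uses matplotlib on synthetic, heavily overlapping data. The alpha, jitter width, and grid size are arbitrary illustrative values.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend so the sketch runs without a display
import matplotlib.pyplot as plt
import numpy as np

# Synthetic data (an assumption): a discrete x variable causes heavy overlap.
rng = np.random.default_rng(7)
x = rng.integers(0, 10, 5000).astype(float)
y = 0.5 * x + rng.normal(0, 1.0, x.size)

fig, axes = plt.subplots(1, 3, figsize=(12, 4))

# 1. Transparency: dense regions appear darker.
axes[0].scatter(x, y, alpha=0.1, s=10)
axes[0].set_title("Transparency")

# 2. Jitter: small random offsets separate points that share the same x value.
x_jittered = x + rng.uniform(-0.3, 0.3, x.size)
axes[1].scatter(x_jittered, y, alpha=0.1, s=10)
axes[1].set_title("Jitter + transparency")

# 3. Hexagonal binning: color encodes the number of points per cell.
hb = axes[2].hexbin(x, y, gridsize=25, cmap="viridis")
fig.colorbar(hb, ax=axes[2], label="points per bin")
axes[2].set_title("Hexagonal binning")

fig.tight_layout()
```

Calling `fig.savefig(...)` with a file name of your choice writes the comparison to disk.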

Experimental Protocols & Methodologies

Protocol 1: Determining the Optimal Number of Clusters using the Gap Statistic

The Gap Statistic is a robust method for determining the number of clusters by comparing the within-cluster variation of your data to that of a reference dataset with no inherent clustering structure [5] [6].

Step-by-Step Methodology:

- Cluster the Observed Data: For a range of cluster numbers \( k = 1, 2, \ldots, k_{max} \), apply a clustering algorithm (e.g., k-means) and compute the total within-cluster sum of squares, \( W_k \) [6].

- Generate Reference Data Sets: Generate \( B \) (e.g., 500) reference datasets using a uniform random distribution over the same range as your observed data [5] [6].

- Cluster the Reference Data: For each reference dataset \( b \) and each value of \( k \), compute the within-cluster sum of squares, \( W_{kb}^{*} \) [6].

- Compute the Gap Statistic: Calculate the gap for each \( k \) using the formula: \( \text{Gap}(k) = \frac{1}{B} \sum_{b=1}^{B} \log(W_{kb}^{*}) - \log(W_k) \), where \( W_{kb}^{*} \) is the within-cluster sum of squares for the \( b \)-th reference dataset [6].

- Choose the Optimal k: Select the smallest \( k \) such that \( \text{Gap}(k) \geq \text{Gap}(k+1) - s_{k+1} \), where \( s_{k+1} \) is the standard deviation of \( \log(W_{kb}^{*}) \) across the reference datasets at \( k+1 \), inflated by a factor of \( \sqrt{1 + 1/B} \) to account for simulation error [5] [6].
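
The steps above can be sketched in Python with scikit-learn's k-means. This is an illustrative implementation under stated assumptions: the data are three synthetic blobs, B is set to 20 to keep the run short (the protocol suggests far more), and `log_wk`, `gap_statistic`, and `choose_k` are hypothetical helper names.

```python
import numpy as np
from sklearn.cluster import KMeans


def log_wk(X, k, seed=0):
    """log of the total within-cluster sum of squares for a k-means fit."""
    km = KMeans(n_clusters=k, n_init=10, random_state=seed).fit(X)
    return np.log(km.inertia_)


def gap_statistic(X, k_max=6, B=20, seed=0):
    """Return candidate k values, Gap(k), and the simulation-adjusted s_k."""
    rng = np.random.default_rng(seed)
    lo, hi = X.min(axis=0), X.max(axis=0)
    ks = np.arange(1, k_max + 1)
    log_w = np.array([log_wk(X, k) for k in ks])

    ref = np.empty((B, ks.size))
    for b in range(B):
        # Reference data: uniform over the observed range of each variable.
        X_ref = rng.uniform(lo, hi, size=X.shape)
        ref[b] = [log_wk(X_ref, k) for k in ks]

    gap = ref.mean(axis=0) - log_w
    s = ref.std(axis=0) * np.sqrt(1 + 1 / B)
    return ks, gap, s


def choose_k(ks, gap, s):
    """Smallest k with Gap(k) >= Gap(k+1) - s_(k+1); falls back to the largest k."""
    for i in range(len(ks) - 1):
        if gap[i] >= gap[i + 1] - s[i + 1]:
            return int(ks[i])
    return int(ks[-1])


# Synthetic data (an assumption): three well-separated groups.
rng = np.random.default_rng(11)
centers = np.array([[0.0, 0.0], [6.0, 6.0], [0.0, 6.0]])
X = np.vstack([c + rng.normal(0, 0.7, (60, 2)) for c in centers])

ks, gap, s = gap_statistic(X, k_max=6, B=20)
best_k = choose_k(ks, gap, s)
```

For k-means, `inertia_` is the sum of squared distances to the cluster centers, which is used here as the within-cluster sum of squares.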

Protocol 2: Fitting a Nonlinear Asymptotic Model (Michaelis-Menten)

This protocol outlines fitting a model to data that approaches a saturation point [8].

Step-by-Step Methodology:

- Model Selection: Define the Michaelis-Menten model: \( y = \frac{V_{max} \cdot x}{K_m + x} \), where \( V_{max} \) is the maximum value (asymptote) and \( K_m \) is the half-saturation constant.

- Initial Parameter Estimation: Provide initial guesses for \( V_{max} \) and \( K_m \). A good guess for \( V_{max} \) is near the maximum observed \( y \)-value. The initial \( K_m \) can be set to the \( x \)-value at which \( y \) is roughly half of the guessed \( V_{max} \). The success of nonlinear regression heavily depends on these initial estimates [8].

- Model Fitting: Use an iterative optimization algorithm like the Levenberg-Marquardt algorithm, available in most statistical software, to find the parameter values that minimize the sum of squared residuals [8].

- Goodness-of-Fit Assessment: Evaluate the model using:

- Residual Plots: Check that residuals are randomly scattered around zero with no obvious pattern.

- Pseudo-R²: Calculate the proportion of variance explained by the nonlinear model.

- Confidence Intervals: Examine the confidence intervals for the parameters \( V_{max} \) and \( K_m \) to assess their precision.
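
One way to carry out this protocol in Python is with `scipy.optimize.curve_fit`, which uses Levenberg-Marquardt by default when no bounds are given. The sketch below assumes synthetic data generated with a maximum of 10 and a half-saturation constant of 5. The confidence intervals are a normal approximation based on the estimated covariance matrix.

```python
import numpy as np
from scipy.optimize import curve_fit


def michaelis_menten(x, v_max, k_m):
    return v_max * x / (k_m + x)


# Synthetic saturating data (an assumption).
rng = np.random.default_rng(8)
x = np.linspace(0.5, 50, 60)
y = michaelis_menten(x, 10.0, 5.0) + rng.normal(0, 0.3, x.size)

# Initial guesses as described in the protocol.
v_max0 = y.max()
k_m0 = x[np.argmin(np.abs(y - v_max0 / 2))]  # x where y is about half of v_max0

params, cov = curve_fit(michaelis_menten, x, y, p0=[v_max0, k_m0])
v_max_hat, k_m_hat = params

# Approximate 95% confidence intervals from the covariance matrix.
std_err = np.sqrt(np.diag(cov))
ci_low, ci_high = params - 1.96 * std_err, params + 1.96 * std_err

# Goodness of fit: residuals and a pseudo-R^2.
residuals = y - michaelis_menten(x, *params)
pseudo_r2 = 1 - np.sum(residuals ** 2) / np.sum((y - y.mean()) ** 2)
```

Plotting `residuals` against `x` completes the check: a visible trend would suggest the saturating form does not fit.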

Workflow Visualization

The following diagram illustrates the core decision process for recognizing and troubleshooting nonlinear patterns in scatterplots.

Research Reagent Solutions

The following table details key analytical "reagents" – in this context, software tools and statistical packages – essential for diagnosing and modeling nonlinear relationships.

| Tool / Solution | Function / Purpose |
|---|---|
| R Statistical Environment | An open-source software environment for statistical computing and graphics, essential for implementing a wide array of clustering and nonlinear modeling techniques [5]. |
| factoextra & NbClust R Packages | The factoextra package provides functions to easily compute and visualize the Elbow, Silhouette, and Gap Statistic methods. The NbClust package provides 30 indices for determining the optimal number of clusters in a single function call [5]. |
| Nonlinear Regression Algorithms (e.g., Gauss-Newton, Levenberg-Marquardt) | Iterative optimization algorithms used to estimate the parameters of nonlinear models (e.g., Michaelis-Menten) by minimizing the difference between the model's predictions and the observed data [8]. |
| Piecewise Regression Software Modules | Software tools (available in R, Python, etc.) capable of fitting segmented relationships and identifying breakpoints or thresholds in data. |
| Data Visualization Libraries (e.g., ggplot2 in R) | Powerful libraries for creating high-quality scatterplots, density plots, and residual plots, which are critical for the initial visual identification of patterns and for diagnosing model fit [9] [10]. |

Frequently Asked Questions (FAQs) & Troubleshooting Guides

Q1: My scatterplot of greenspace coverage versus PM2.5 concentration shows a nonlinear relationship. How should I interpret this? A common challenge in environmental scatterplot analysis is assuming linearity. Nonlinear patterns often reveal critical thresholds.

- Problem: The scatterplot shows a curved relationship, not a straight line.

- Diagnosis: This is expected. The relationship between environmental variables like greenspace and PM2.5 is often complex and nonlinear. A curved pattern suggests the effect of greenspace changes at different levels of coverage.

- Solution: Do not force a linear fit. Use interpretable machine learning (ML) models like XGBoost combined with SHAP (SHapley Additive exPlanations) analysis. This approach can identify specific thresholds where the relationship changes. For example, research has shown that the PM2.5 mitigation effect of greenspace coverage (G_PLAND) may strengthen only after it exceeds a threshold of 40% [11].

Q2: My analysis shows that adding greenspace sometimes increases local PM2.5. What could be causing this paradoxical effect? This frequently occurs when experimental scale and configuration are not properly considered.

- Problem: Green space interventions are linked to higher, not lower, PM2.5 readings.

- Diagnosis: At micro-scales, vegetation can act as a barrier, blocking the dispersion and ventilation of pollutants, thereby trapping them in specific areas [12]. This is often a result of poor greenspace configuration or placement in already poorly-ventilated areas.

- Solution:

- Check the Scale: This effect is most common at the micro-scale (e.g., a single street canyon). At macro (city-wide) or meso scales, greenspace is typically associated with lower PM2.5 [12].

- Optimize Configuration: Focus on improving ventilation. Avoid dense, solid green walls in areas with low wind flow. Use strategies that "promote ventilation through weakening sources and strengthening sinks" [12].

- Analyze Interactions: Use ML interaction plots to see if the PM2.5 increase occurs when high greenspace coverage is combined with specific urban form factors (e.g., low sky view factor, high building density).

Q3: How do I account for the interaction between green and blue spaces in my PM2.5 model? Ignoring co-effects can lead to an incomplete or biased model.

- Problem: The model does not capture how green and blue spaces work together.

- Diagnosis: Green and blue spaces (UGBS) have documented synergistic effects. For instance, humidity from water bodies can increase leaf moisture, enhancing the deposition of PM particles [13].

- Solution: Integrate metrics that quantify the spatial coupling of green and blue spaces into your model. Key metrics include:

- Distance from green space to the nearest blue space [13].

- Area of waterfront green spaces (e.g., green areas within 300m of a water body) [13].

- Use ML models to test for interaction effects. Studies have found that the co-mitigation effect is reinforced under specific conditions, such as when greenspace coverage is above 40% and the mean distance between blue space patches is below 200m [11].

Experimental Protocols & Key Data

The following table synthesizes quantitative thresholds identified from recent explainable ML studies on green-blue space landscapes and PM2.5.

Table 1: Documented Thresholds for PM2.5 Mitigation by Green-Blue Space Features

| Category | Metric | Key Threshold | Effect on PM2.5 | Source |
|---|---|---|---|---|
| Greenspace Composition | Greenspace Coverage (G_PLAND) | > 40% | Significant negative influence | [11] |
| Greenspace Composition | Urban Greenspace (UGS) Proportion | 25% - 30% | Desirable range for co-mitigation of PM2.5 and heat | [14] |
| Greenspace Configuration | Mean Greenspace Patch Size (G_AREA_MN) | > 50 hectares | Negative influence | [11] |
| Greenspace Configuration | Mean Greenspace Patch Size (G_AREA_MN) | < 12 hectares | Reinforces co-mitigation with blue space | [11] |
| Greenspace Configuration | Greenspace Aggregation Index | > 97 | Beneficial for co-mitigation | [14] |
| Greenspace Configuration | Greenspace Patch Density | > 1650 | Beneficial for co-mitigation | [14] |
| Blue Space Configuration | Blue Space Patch Contiguity (W_CONTIG_MN) | > 0.26 | Positive impact on PWP (mitigation) | [11] |
| Blue Space Configuration | Mean Distance Between Blue Patches (W_ENN_MN) | < 400 m | Positive impact on PWP (mitigation) | [11] |
| Blue Space Configuration | Mean Distance Between Blue Patches (W_ENN_MN) | < 200 m | Reinforces co-mitigation with greenspace | [11] |

Core Analytical Workflow Protocol

This protocol details the methodology for applying explainable ML to uncover nonlinear thresholds, as used in the cited studies [15] [11] [14].

Step 1: Data Collection and Integration

- PM2.5 Data: Obtain population-weighted PM2.5 exposure data or high-resolution spatial concentration data from monitoring networks or satellite-derived products.

- Green-Blue Space Metrics: Use GIS and remote sensing (e.g., high-resolution land cover classification datasets) to calculate landscape metrics. Key metrics include those in Table 1, derived from tools like FragStats.

- Covariates: Collect data on potential confounders (e.g., road density, building height, population density, industrial land use).

Step 2: Model Training and Validation

- Algorithm Selection: Employ a gradient boosting decision tree model such as XGBoost or LightGBM due to their high performance with tabular data and ability to capture complex nonlinearities.

- Training: Split data into training and testing sets (e.g., 80/20). Use k-fold cross-validation on the training set to tune hyperparameters and prevent overfitting.

- Validation: Validate the final model on the held-out test set. Evaluate performance using metrics like R², Root Mean Square Error (RMSE), or Mean Absolute Error (MAE).

Step 3: Model Interpretation and Threshold Extraction

- SHAP Analysis: Apply the SHAP framework to the trained model.

- Feature Importance: Use SHAP summary plots to identify the most influential green-blue space metrics.

- Partial Dependence Plots (PDPs): Generate PDPs for the top features to visualize their marginal effect on PM2.5 prediction. The inflection points on these plots reveal critical thresholds.

- Interaction Effects: Use SHAP interaction values to create 2D plots that reveal how combinations of variables (e.g., greenspace coverage and blue space connectivity) jointly influence PM2.5.
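
The three steps can be sketched end to end in Python. To stay dependency-light, this illustration swaps in scikit-learn's `GradientBoostingRegressor` for XGBoost or LightGBM and a hand-rolled partial dependence curve for the SHAP plots, so it is a stand-in for the cited workflow rather than a reproduction of it. All variables are synthetic, and the 40% threshold is built into the simulated response purely for demonstration.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import cross_val_score, train_test_split

# Synthetic data (assumptions): PM2.5 starts to fall only once
# greenspace coverage exceeds 40%, and rises with road density.
rng = np.random.default_rng(9)
n = 1500
green_cover = rng.uniform(0, 80, n)   # hypothetical greenspace coverage (%)
road_density = rng.uniform(0, 10, n)  # hypothetical covariate
pm25 = (50 - 0.4 * np.maximum(0, green_cover - 40)
        + 1.0 * road_density + rng.normal(0, 1.5, n))

X = np.column_stack([green_cover, road_density])
X_train, X_test, y_train, y_test = train_test_split(
    X, pm25, test_size=0.2, random_state=0
)

model = GradientBoostingRegressor(
    n_estimators=200, max_depth=3, learning_rate=0.05, random_state=0
)
# 5-fold cross-validation on the training set, then a final held-out check.
cv_r2 = cross_val_score(model, X_train, y_train, cv=5, scoring="r2").mean()
model.fit(X_train, y_train)
test_r2 = model.score(X_test, y_test)


def partial_dependence_curve(model, X, feature, grid):
    """Average prediction when one feature is forced to each grid value."""
    curve = []
    for value in grid:
        X_mod = X.copy()
        X_mod[:, feature] = value
        curve.append(model.predict(X_mod).mean())
    return np.array(curve)


grid = np.linspace(0, 80, 41)
pd_curve = partial_dependence_curve(model, X_train, feature=0, grid=grid)
```

Plotting `pd_curve` against `grid` should show a roughly flat segment followed by a decline, and the bend marks the candidate threshold. With the SHAP library, a dependence plot for the same feature plays the equivalent role.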

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Computational and Data Resources

| Item | Function in Analysis | Example/Tool Name |

|---|---|---|

| Explainable ML Library | Provides the implementation of model interpretation algorithms to uncover nonlinear relationships and thresholds. | SHAP (Shapley Additive Explanations) Python library |

| Gradient Boosting Framework | A powerful machine learning algorithm used to model the complex, nonlinear relationships between landscape variables and PM2.5. | XGBoost, LightGBM |

| Landscape Metric Calculator | Quantifies the spatial patterns of green and blue spaces from land cover maps (e.g., area, density, connectivity, shape). | Fragstats software |

| Geographic Information System (GIS) | Used for spatial data management, integration, calculation of spatial coupling metrics (e.g., blue-green distances), and visualization of results. | ArcGIS, QGIS |

| High-Resolution Land Cover Data | Provides the foundational map to identify green (vegetation) and blue (water) spaces for subsequent metric calculation. | UBGG-3m dataset [13], Copernicus CORINE Land Cover |

Workflow and Troubleshooting Visualization

[Figure: Research Workflow and Troubleshooting]

[Figure: Scale-Dependent Effects on PM2.5]

Pitfalls of Linear Assumptions in Complex Environmental Systems

Frequently Asked Questions

Q1: My linear model shows a statistically significant relationship. Why should I still be concerned about nonlinearity?

- A: A significant linear relationship can be misleading. It may capture only a portion of a more complex, nonlinear effect, leading to incorrect conclusions about the strength and nature of the relationship, especially at the extremes of your data. Assuming linearity where it does not exist can cause you to miss critical thresholds or saturation points in your environmental system [16].

Q2: What are the most common visual signs of nonlinearity in a scatterplot?

- A: Look for patterns that are not a straight line. Common indicators include a curved, U-shaped, or S-shaped cloud of data points; a pattern that appears to flatten out (saturate) at high or low values; or the presence of distinct clusters that suggest different relationships within subgroups of your data [16].

Q3: My dataset is large and high-dimensional. How can I effectively test for nonlinear relationships?

- A: Traditional regression struggles with high-dimensional data. Machine learning (ML) methods like Random Forest or XGBoost are particularly well-suited for this task. They can automatically model complex interactions and nonlinear effects without requiring you to specify their form beforehand. You can then use interpretability techniques like SHAP to understand and visualize these complex relationships [16].

Q4: How can I communicate complex nonlinear findings to a non-technical audience?

- A: Move beyond standard scatterplots. Use clear annotations on your charts to explain what is happening at different regions (e.g., "threshold effect visible here") [17]. Employ intuitive, colorblind-friendly color schemes [18] [19] and consider interactive visualizations that allow stakeholders to explore the data themselves [17]. Always provide a plain-language narrative that focuses on the key insight, not the model's complexity [17].

Troubleshooting Guides

Problem: A linear model provides a poor fit or misleading conclusions for your environmental data.

This guide helps you diagnose and resolve issues arising from incorrect linear assumptions.

| Step | Action | What to Look For | Common Pitfalls & Solutions |
|---|---|---|---|
| 1. Visual Diagnosis | Create a simple scatterplot of your variables. | Patterns that are not a straight line (e.g., curves, clusters, flattening trends) [16]. | Pitfall: Relying solely on correlation coefficients (r). Solution: Always visualize the raw data first. |
| 2. Residual Analysis | Plot the residuals (errors) of your linear model against the predicted values. | A random scatter of residuals indicates a good fit. A systematic pattern (e.g., U-shape) indicates a missing nonlinear relationship [16]. | Pitfall: Ignoring residual patterns if the R² is high. Solution: A nonlinear model is likely required. |
| 3. Model Comparison | Fit a nonlinear or machine learning model (e.g., XGBoost) and compare its performance to the linear model. | A significant improvement in prediction accuracy (e.g., higher R², lower Root Mean Square Error) [16]. | Pitfall: Overfitting a complex model to small data. Solution: Use cross-validation to ensure model robustness. |
| 4. Interpretation | Use interpretable ML techniques like SHAP (SHapley Additive exPlanations) to understand the nonlinear relationship. | The SHAP summary plot shows how a variable impacts the model's output across its entire range, revealing thresholds and saturation points [16]. | Pitfall: Treating the ML model as a "black box." Solution: SHAP provides both global and local interpretability. |
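
The residual analysis in step 2 can be made concrete with a short sketch. It fits a straight line to synthetic U-shaped data and then checks the residuals for leftover structure in two rough ways. Both checks are illustrative heuristics rather than formal tests.

```python
import numpy as np

# Synthetic data (an assumption): the true relationship is U-shaped.
rng = np.random.default_rng(10)
x = np.linspace(0, 10, 200)
y = 2 + 0.5 * (x - 5) ** 2 + rng.normal(0, 1.0, x.size)

# Fit a straight line and compute its residuals.
slope, intercept = np.polyfit(x, y, 1)
residuals = y - (slope * x + intercept)

# Check 1: fit a quadratic to the residuals. A leading coefficient
# far from zero means the linear model left curvature behind.
curvature = np.polyfit(x, residuals, 2)[0]

# Check 2: compare mean residuals in the outer and middle thirds of x.
outer = residuals[(x < 10 / 3) | (x > 20 / 3)].mean()
middle = residuals[(x >= 10 / 3) & (x <= 20 / 3)].mean()
```

Residuals that are positive at both ends and negative in the middle are the U-shaped pattern the table warns about.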

The following table summarizes a hypothetical experiment comparing linear and nonlinear models when analyzing a complex environmental relationship, such as the impact of building coverage on urban vitality [16].

| Model Type | R-Squared (R²) | Root Mean Squared Error (RMSE) | Key Insight from Model |

|---|---|---|---|

| Linear Regression | 0.45 | 12.5 | A 10% increase in building coverage is associated with a linear increase in vitality. |

| XGBoost (Nonlinear) | 0.72 | 7.1 | Positive impact on vitality peaks at ~60% building coverage, with diminishing returns beyond this threshold [16]. |
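For reference, the two metrics in the table are conventionally defined as follows. The notation is introduced here for illustration: y_i are the observed values, ŷ_i the model predictions, ȳ the mean of the observations, and n the number of test samples.

```latex
R^2 = 1 - \frac{\sum_{i=1}^{n} (y_i - \hat{y}_i)^2}{\sum_{i=1}^{n} (y_i - \bar{y})^2},
\qquad
\mathrm{RMSE} = \sqrt{\frac{1}{n} \sum_{i=1}^{n} (y_i - \hat{y}_i)^2}
```

A higher R² and a lower RMSE on held-out data both indicate a better fit, and RMSE is expressed in the units of the response variable.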

Experimental Protocol: Detecting Nonlinearity with Interpretable ML

This protocol outlines the methodology for using an interpretable machine learning framework to analyze the nonlinear relationship between the built environment and urban vitality, as demonstrated in recent research [16].

Objective: To investigate the potential nonlinear interactions between built environment factors (e.g., building coverage, population density) and urban vitality using an interpretable spatial machine learning framework.

Materials & Data Sources:

- Urban Vitality Metric: Data from location-based services (e.g., mobile phone data, social media check-ins) to represent human activity intensity.

- Macro-scale Built Environment Data: Point of Interest (POI) data, road network data, population density data, and land use data.

- Micro-scale Built Environment Data: Street view imagery analyzed via semantic segmentation models to calculate indicators like the Green View Index.

- Software: Python or R programming environment with libraries including XGBoost, SHAP, and geospatial processing tools (e.g., GDAL, GeoPandas).

Procedure:

- Data Collection & Processing:

- Gather multi-source data for the study area (e.g., a major city).

- Process spatial data to a consistent geographic unit (e.g., grid cells or census tracts).

- Use a semantic segmentation model (e.g., PSPNet) on street view images to extract micro-scale features like the percentage of sky, trees, and buildings [16].

- Variable Calculation:

- Calculate macro-scale variables based on the "5Ds" framework (Density, Diversity, Design, Destination Accessibility, Distance to transit) [16].

- Calculate the micro-scale Green View Index from street view imagery.

- Aggregate the urban vitality metric for each geographic unit.

- Model Training & Validation:

- Split the data into training and testing sets (e.g., 80/20).

- Train an XGBoost regression model to predict urban vitality using all built environment variables.

- Tune the model's hyperparameters using cross-validation on the training set.

- Validate the model's performance on the held-out test set using R² and RMSE.

- Nonlinear Interpretation with SHAP:

- Calculate SHAP values for the trained XGBoost model.

- Generate a SHAP summary plot to rank the importance of all features.

- Generate SHAP dependence plots for the top-ranked features to visualize their marginal effect on urban vitality, revealing any nonlinear patterns and thresholds [16].

Experimental Workflow Diagram

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function / Purpose |

|---|---|

| XGBoost Model | A powerful, scalable machine learning algorithm based on gradient boosting that excels at capturing complex nonlinear relationships and interactions in structured data [16]. |

| SHAP (SHapley Additive exPlanations) | A unified approach to interpreting model output, based on game theory. It quantifies the contribution of each feature to the prediction for any given instance, allowing for global and local interpretability of complex models [16]. |

| Semantic Segmentation Model (e.g., PSPNet) | A deep learning model used to partition street view images into semantically meaningful parts (e.g., sky, building, tree, road) to quantify micro-scale visual environmental features [16]. |

| Green View Index | A micro-scale metric calculated from street view imagery that quantifies the visibility of greenery from a pedestrian's perspective, providing ground-truthed data on street-level greenness [16]. |

| Spatial Cross-Validation | A validation technique used to assess model performance by partitioning data based on spatial location. It helps prevent over-optimistic results due to spatial autocorrelation, ensuring the model generalizes to new geographic areas [16]. |
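The spatial cross-validation idea in the last row can be sketched as follows. Units are grouped into coarse square blocks by coordinate, and whole blocks (rather than individual units) are assigned to folds, so neighboring units do not straddle the train/test boundary. The block size, the round-robin fold assignment, and the function name are illustrative assumptions, not the procedure used in [16].

```python
def spatial_block_folds(coords, block_size, n_folds):
    """Assign each (x, y) unit to a fold according to its spatial block.

    Returns one fold index per unit; all units in a block share a fold.
    """
    def block_of(point):
        x, y = point
        return (int(x // block_size), int(y // block_size))

    blocks = sorted({block_of(p) for p in coords})
    # Round-robin assignment of blocks to folds (a simplifying assumption).
    block_to_fold = {blk: i % n_folds for i, blk in enumerate(blocks)}
    return [block_to_fold[block_of(p)] for p in coords]

# Hypothetical 6 x 6 grid of cell centroids (coordinates in km).
coords = [(i + 0.5, j + 0.5) for i in range(6) for j in range(6)]
folds = spatial_block_folds(coords, block_size=2.0, n_folds=3)

for k in range(3):
    test_idx = [i for i, f in enumerate(folds) if f == k]
    train_idx = [i for i, f in enumerate(folds) if f != k]
    # Fit the model on train_idx and evaluate it on test_idx here.
    print(f"fold {k}: {len(train_idx)} training units, {len(test_idx)} test units")
```

Larger blocks give a stricter test of spatial generalization but leave fewer independent folds; the right size depends on the range of spatial autocorrelation in the data.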

Advanced Tools and Techniques: Quantifying Nonlinear Relationships with Explainable Machine Learning

Troubleshooting Guide: Common XGBoost & SHAP Issues in Environmental Research

FAQ: Model Performance and Training

Q: My XGBoost model for predicting ecosystem services is overfitting to the training data. What regularization strategies are most effective?

A: XGBoost includes built-in regularization parameters to prevent overfitting, which is crucial for ecological models that must generalize to new environmental conditions [20] [21]. Implement these strategies:

- Adjust Key Parameters: Increase lambda (L2 regularization) and alpha (L1 regularization) to penalize complex models. Set gamma to control the minimum loss reduction required for further splits.

- Limit Model Complexity: Reduce max_depth to create shallower trees and decrease subsample or colsample_bytree to use random subsets of data and features [21].

- Apply Early Stopping: Use the early_stopping_rounds parameter to halt training when validation performance stops improving [21].

Table: Key XGBoost Regularization Parameters for Environmental Data

| Parameter | Default Value | Recommended Range | Effect on Model |

|---|---|---|---|

| lambda (reg_lambda) | 1 | 1-10 | Increases L2 regularization to reduce leaf weights |

| alpha (reg_alpha) | 0 | 0-5 | Adds L1 regularization to encourage sparsity |

| gamma (min_split_loss) | 0 | 0.1-0.5 | Controls minimum loss reduction for split |

| max_depth | 6 | 3-8 | Limits tree depth to prevent over-complexity |

| subsample | 1 | 0.7-0.9 | Uses fraction of data to reduce overfitting |
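These settings can be collected into a single parameter dictionary. The values below are illustrative picks from within the recommended ranges, not tuned results. The dictionary is intended for the scikit-learn style estimator, xgboost.XGBRegressor(**params); that call is left as a comment so the snippet runs without XGBoost installed.

```python
# Illustrative starting values; each one is an assumption to be tuned.
params = {
    "objective": "reg:squarederror",
    "n_estimators": 1000,         # upper bound; early stopping picks the actual count
    "learning_rate": 0.05,
    "max_depth": 4,               # shallower trees
    "subsample": 0.8,             # fraction of rows sampled per tree
    "colsample_bytree": 0.8,      # fraction of features sampled per tree
    "reg_lambda": 5.0,            # L2 penalty on leaf weights
    "reg_alpha": 1.0,             # L1 penalty on leaf weights
    "gamma": 0.2,                 # minimum loss reduction to make a split
    "early_stopping_rounds": 50,  # requires an eval_set when fitting
}

# Recommended ranges copied from the table, used as a sanity check.
recommended_ranges = {
    "reg_lambda": (1, 10),
    "reg_alpha": (0, 5),
    "gamma": (0.1, 0.5),
    "max_depth": (3, 8),
    "subsample": (0.7, 0.9),
}
out_of_range = [
    name
    for name, (low, high) in recommended_ranges.items()
    if not low <= params[name] <= high
]
print("Parameters outside the recommended ranges:", out_of_range)

# Intended use (X_train, y_train, X_val, y_val are placeholders):
# model = xgboost.XGBRegressor(**params)
# model.fit(X_train, y_train, eval_set=[(X_val, y_val)])
```

Because early stopping relies on the validation set, that set should not also be used for the final performance estimate.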

Q: How should I handle missing environmental data in my dataset when using XGBoost?

A: XGBoost has a sparsity-aware algorithm that automatically handles missing values by learning a default direction for missing data in each tree node [21]. For environmental datasets with common missing sensor readings:

- Leave missing values as NaN or None rather than imputing

- Ensure your data is loaded into xgboost.DMatrix format, which is optimized for handling sparse inputs [21]

- The algorithm will learn whether missing values should go to left or right child nodes based on training loss reduction
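The idea of a learned default direction can be illustrated without XGBoost. The toy function below is a conceptual sketch only: it is not XGBoost's actual implementation, and the sensor readings are made up. For one candidate split, it sends all missing values left, then right, and keeps whichever direction gives the lower squared error.

```python
import math

def sse(values):
    """Sum of squared errors around the mean (0 for an empty list)."""
    if not values:
        return 0.0
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values)

def default_direction(x, y, threshold):
    """Choose the side ('left' or 'right') for missing x that minimizes total SSE."""
    left = [yi for xi, yi in zip(x, y) if not math.isnan(xi) and xi < threshold]
    right = [yi for xi, yi in zip(x, y) if not math.isnan(xi) and xi >= threshold]
    missing = [yi for xi, yi in zip(x, y) if math.isnan(xi)]
    loss_if_left = sse(left + missing) + sse(right)
    loss_if_right = sse(left) + sse(right + missing)
    return "left" if loss_if_left <= loss_if_right else "right"

nan = float("nan")
# Hypothetical readings: two soil-moisture values are missing.
soil_moisture = [0.10, 0.15, nan, 0.40, 0.45, nan]
water_yield = [1.0, 1.2, 3.1, 3.0, 3.2, 2.9]

direction = default_direction(soil_moisture, water_yield, threshold=0.30)
print("Missing values are routed to the", direction, "child")
```

Here the rows with missing moisture have responses that resemble the high-moisture group, so routing them with that group gives the lower loss. This is also why imputing a fixed value before training can discard information the model could otherwise use.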

FAQ: SHAP Interpretation Challenges

Q: My SHAP summary plots show unexpected feature importance rankings that contradict domain knowledge. How should I troubleshoot this?

A: This common issue in environmental research often stems from feature correlations or data leakage:

- Check for Multicollinearity: Use correlation matrices to identify highly correlated environmental variables (e.g., temperature and elevation). SHAP values can be unstable with correlated features.

- Validate Data Splitting: Ensure no data leakage between training and test sets, particularly with temporal environmental data.

- Use Multiple Interpretability Methods: Complement SHAP with partial dependence plots (PDP) to validate relationships [22].

- Examine Interaction Effects: Use shap.TreeExplainer(model).shap_interaction_values() to detect feature interactions that might be affecting importance.

Table: SHAP Value Interpretation Guide for Environmental Variables

| SHAP Pattern | Possible Interpretation | Example from Environmental Research |

|---|---|---|

| High variance for a feature | Strong but context-dependent effect | Precipitation showing threshold effects on water yield [23] |

| Consistent directional effect | Linear or monotonic relationship | Human Footprint Index negatively impacting biodiversity [22] |

| Mixed positive/negative values | Complex nonlinear relationship | Temperature effects on ecosystem services showing optimal ranges [22] |

| Clustered point groups | Subpopulation-specific effects | Urban vs. rural differences in built environment impacts [15] |

Q: How can I effectively visualize and communicate nonlinear relationships and threshold effects detected by XGBoost-SHAP to interdisciplinary teams?

A: For effective science communication:

- Create SHAP Dependence Plots: Isolate individual feature effects while coloring by interacting features

- Identify Threshold Values: Calculate specific inflection points where relationships change direction

- Use Actual Value Scales: Plot SHAP values against original measurement units (e.g., °C, mm precipitation) for intuitive interpretation

XGBoost-SHAP Workflow with Checkpoints

FAQ: Technical Implementation

Q: What are the current best practices for installing and configuring XGBoost to work efficiently with large-scale environmental datasets?

A: For optimal performance with environmental data:

- Installation: Use pip install xgboost for the latest stable release (currently 3.0.4) [21]

- Memory Management: For large spatial datasets, utilize xgboost.DMatrix for efficient memory handling and data compression [21]

- GPU Acceleration: Enable GPU support for faster training on large datasets using device='cuda' together with tree_method='hist' (the older tree_method='gpu_hist' setting has been deprecated since XGBoost 2.0)

- External Memory: For datasets exceeding RAM, use external memory configuration to stream from disk [24]

Q: How can I extract specific threshold values from SHAP plots to quantify critical points in environmental relationships?

A: To operationalize SHAP-detected thresholds in environmental management:
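One possible approach, sketched here under stated assumptions, is to bin the feature, average the SHAP values within each bin, and report where the binned mean changes sign. The function name, the equal-width binning, and the synthetic precipitation data are all hypothetical; in practice the inputs would be a feature column and its SHAP values from a fitted explainer.

```python
def shap_sign_change_thresholds(feature_values, shap_values, n_bins=10):
    """Return feature values at which the binned mean SHAP value changes sign."""
    low, high = min(feature_values), max(feature_values)
    width = (high - low) / n_bins
    sums = [0.0] * n_bins
    counts = [0] * n_bins
    for x, s in zip(feature_values, shap_values):
        idx = min(int((x - low) / width), n_bins - 1)  # the maximum goes in the last bin
        sums[idx] += s
        counts[idx] += 1

    # (bin center, mean SHAP) for every non-empty bin.
    binned = [
        (low + (i + 0.5) * width, sums[i] / counts[i])
        for i in range(n_bins)
        if counts[i] > 0
    ]

    thresholds = []
    for (x0, m0), (x1, m1) in zip(binned, binned[1:]):
        if m0 * m1 < 0:
            # Linear interpolation between neighboring bin centers.
            thresholds.append(x0 + (x1 - x0) * (0.0 - m0) / (m1 - m0))
    return thresholds

# Synthetic example: the SHAP value rises with precipitation and crosses zero near 600 mm.
precipitation = [200 + 10 * i for i in range(81)]
shap_vals = [0.01 * (p - 600) for p in precipitation]

thresholds = shap_sign_change_thresholds(precipitation, shap_vals, n_bins=8)
print("Estimated threshold(s) in mm:", thresholds)
```

The estimate is only as fine as the bin width, so thresholds should be reported with that resolution in mind and checked against the SHAP dependence plot. Repeating the calculation on bootstrap resamples is one way to gauge its stability.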

Experimental Protocols for Environmental Data Analysis

Protocol 1: Assessing Ecosystem Services with XGBoost-SHAP

Based on: Anhui Province Ecosystem Study (2000-2020) - Sustainability 2025 [22]; Wensu County ES Trade-offs Analysis - Frontiers 2025 [23]

Methodology:

- Data Collection: Compile spatial datasets across climatic (precipitation, temperature), topographic (elevation, slope), land use (remote sensing classifications), and anthropogenic (human footprint index) dimensions [22]

- Ecosystem Service Quantification: Calculate key metrics using established models:

- Water Yield (WY) - InVEST model

- Soil Conservation (SC) - RUSLE equation

- Carbon Sequestration - CASA model

- Biodiversity Maintenance - habitat quality indices [22]

- XGBoost Configuration:

- Set objective='reg:squarederror' for continuous ES variables

- Use early_stopping_rounds=50 with 70/30 training-validation split

- Optimize hyperparameters via 5-fold cross-validation [23]

- SHAP Analysis:

Protocol 2: Analyzing Urban Built Environment Impacts

Based on: Yantai Urban Vitality Study - ScienceDirect 2025 [15]

Methodology:

- Multidimensional Feature Engineering:

- Functionality: POI density, mixed-use indices

- Building Form: Floor area ratio, building density

- Accessibility: Network centrality, transit proximity

- Human Perception: Street view imagery, social media data [15]

- Urban Vitality Measurement: Quantify using multi-source geospatial data (mobile phone data, social media check-ins, nighttime lighting) [15]

- Model Training: Configure separate XGBoost models for daytime vs. nighttime vitality patterns

- Interpretation: Apply SHAP to identify synergistic and antagonistic interactions between built environment elements [15]

SHAP Interpretation Troubleshooting Path

Research Reagent Solutions: Essential Tools for XGBoost-SHAP Environmental Research

Table: Computational Tools for Environmental ML Research

| Tool/Resource | Function | Application in Environmental Research |

|---|---|---|

| XGBoost 3.0+ | Gradient boosting framework | Modeling complex nonlinear environmental relationships [24] [21] |

| SHAP Library | Model interpretation | Explaining feature effects and detecting thresholds [22] [23] |

| InVEST Model | Ecosystem service quantification | Calculating water yield, soil retention, habitat quality [22] |

| Google Earth Engine | Geospatial data processing | Accessing and processing satellite imagery for environmental variables [22] |

| PySal | Spatial analysis | Calculating spatial autocorrelation and neighborhood effects [15] |

| Cartopy/Geopandas | Spatial visualization | Mapping SHAP values and model predictions geographically [22] |

Table: Key Environmental Data Sources for ML Applications

| Data Category | Specific Metrics | Sources & Handling |

|---|---|---|

| Climate Data | Precipitation, Temperature, Evapotranspiration | WorldClim, CHIRPS, MODIS products [22] |

| Land Use/Land Cover | Classification maps, Change detection | CLCD, MODIS Land Cover, ESA CCI [22] |

| Topography | Elevation, Slope, Aspect | SRTM, ASTER GDEM [22] |

| Anthropogenic | Human Footprint Index, Nighttime Lights | Global Human Settlement Layer, VIIRS [22] |

| Ecosystem Services | Water yield, Carbon sequestration, Biodiversity | Model-derived (InVEST, CASA) [22] |

Frequently Asked Questions (FAQs)

Q1: What is the core difference in what PDPs and SHAP visualizations reveal about my model?

While both are interpretability tools, their focus is fundamentally different. PDPs show the average marginal effect of a feature on the model's predictions across your entire dataset [25]. In contrast, SHAP (SHapley Additive exPlanations) values explain individual predictions by quantifying the contribution of each feature to the difference between that instance's prediction and the average model output [26] [27]. Because these per-instance contributions are additive, SHAP's local explanations can be aggregated into consistent global interpretations.
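The additivity property behind SHAP can be checked on a toy problem. The sketch below computes exact Shapley values by enumerating every feature coalition, using a marginal expectation over a small background set. It is a conceptual illustration only: the shap library uses far more efficient algorithms, and the model, feature names, and data here are made up.

```python
from itertools import combinations
from math import factorial

def exact_shapley(model, x, background):
    """Exact Shapley values for one instance x by enumerating all coalitions."""
    n = len(x)

    def value(subset):
        # Mean prediction when features in `subset` take x's values and the
        # remaining features take the values of each background row in turn.
        preds = [
            model([x[i] if i in subset else row[i] for i in range(n)])
            for row in background
        ]
        return sum(preds) / len(preds)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                members = set(subset)
                total += weight * (value(members | {i}) - value(members))
        phi.append(total)
    return phi

def model(z):
    """Hypothetical response with one interaction and one purely additive term."""
    temperature, precipitation, elevation = z
    return 2.0 * temperature + temperature * precipitation - 0.5 * elevation

background = [[1.0, 0.0, 2.0], [2.0, 1.0, 0.0], [0.0, 2.0, 1.0], [1.0, 1.0, 1.0]]
x = [3.0, 2.0, 4.0]

phi = exact_shapley(model, x, background)
baseline = sum(model(row) for row in background) / len(background)

print("Shapley values:", phi)
print("Sum of values: ", sum(phi))
print("Prediction minus average output:", model(x) - baseline)
```

The last two printed numbers agree, which is the sense in which each feature's contribution accounts for part of the gap between one prediction and the average output. A PDP, by contrast, would report a single averaged curve per feature.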

Q2: My PDP line is nearly flat, suggesting a feature is unimportant, but my model's performance drops when I remove it. Why is this happening?

This is a classic limitation of PDPs. A flat line can be misleading because the PDP shows only the average effect [25]. It is possible that the feature has strong but opposing effects on different subsets of your data (e.g., high values push predictions up for some instances and down for others), which cancel each other out on average. To diagnose this, use Individual Conditional Expectation (ICE) plots to see the prediction line for each individual instance. If the ICE lines are not flat but cross, it indicates the presence of interaction effects that the PDP is hiding [25].

Q3: In my SHAP scatter plot, I see significant vertical dispersion for a single feature value. What does this mean, and how can I investigate it?

Vertical dispersion at a single feature value is a tell-tale sign of interaction effects in your model [28]. It means the impact of that feature on the prediction depends on the value of at least one other feature. You can investigate this with the color argument of shap.plots.scatter. When given the full SHAP values object, the library automatically tries to select the most likely interacting feature to color the points by, allowing you to visually identify the source of the interaction [28].

Q4: How can I make my interpretability plots accessible to colleagues with color vision deficiencies?

- Avoid Red-Green Color Palettes: These are the most common source of confusion [29] [30].

- Use High-Contrast, Colorblind-Friendly Palettes: Predefined palettes like tableau-colorblind10 are a safe and easy choice [30].

- Incorporate Patterns and Textures: For bar charts or fill areas, use patterns (e.g., dots, stripes, hashes) in addition to, or instead of, color [29] [30].

- Leverage Labels and Annotations: Directly label data series and trends instead of relying solely on a color-coded legend [29].

Troubleshooting Guides

Issue 1: Partial Dependence Plots Show Unrealistic or Misleading Relationships

| Symptom | Potential Cause | Solution / Diagnostic Action |

|---|---|---|

| PDP shows model behavior in data regions that are physically impossible (e.g., high rainfall with zero cloud cover). | The model is being probed with unrealistic data instances because the feature of interest is highly correlated with others. Forcing one feature to a specific value across the entire dataset breaks these natural correlations [25]. | 1. Check for strong feature correlations in your dataset. 2. Use Accumulated Local Effects (ALE) Plots instead of PDPs. ALE plots are specifically designed to handle correlated features by calculating differences in predictions within local intervals, avoiding out-of-distribution combinations. |

| The PDP line is flat, but the feature is known to be important from other metrics. | Averaging Effect: The feature has strong, opposing effects that cancel out on average [25]. | 1. Generate an ICE plot to visualize the trajectory of individual predictions. 2. Look for lines that have a clear slope but are oriented in different directions, confirming the cancellation. |

| The PDP is dominated by a few extreme values, making the general trend hard to see. | The distribution of the feature is highly skewed [25]. | 1. Always plot a histogram or density plot of the feature's distribution along the x-axis of the PDP. 2. Focus interpretation on regions where the data is densely populated. |

The following workflow can help you diagnose and resolve common issues with PDPs:

Issue 2: SHAP Scatter Plots are Noisy or Hard to Interpret

| Symptom | Potential Cause | Solution / Diagnostic Action |

|---|---|---|

| The scatter plot is a mess of points, making it difficult to discern any pattern. | Overplotting: Too many points are overlapping, hiding the density and true structure of the data [28]. | 1. Use the alpha parameter (transparency) to make points semi-transparent (e.g., alpha=0.2). This helps reveal dense areas [28]. 2. Reduce the dot_size to minimize overlap. 3. For categorical or binned data, add a small amount of x_jitter (e.g., x_jitter=0.5) to separate points that would otherwise form a single vertical line [28]. |

| The plot is dominated by a few outliers, compressing the majority of the data. | The feature or its SHAP values have a long-tailed distribution. | 1. Use the xmin/xmax and ymin/ymax parameters with percentile notation (e.g., xmin=age.percentile(1), xmax=age.percentile(99)) to zoom in on the main body of the data and exclude extreme outliers [28]. |

| It's unclear which feature is causing the interaction effects visible as vertical dispersion. | The default automatically selected feature for coloring may not be the most relevant for your research question. | 1. Manually specify the color parameter to test different features you suspect might be interacting. For example: shap.plots.scatter(shap_values[:, 'Age'], color=shap_values[:, 'Education-Num']) [28]. 2. Use shap.utils.potential_interactions() to get a ranked list of features likely to interact with your primary feature and plot the top candidates [28]. |

Experimental Protocols for Key Interpretability Methods

Protocol 1: Generating and Analyzing a Partial Dependence Plot

Purpose: To visualize the global average marginal effect of one or two features on the predictions of a trained machine learning model.

Materials: See "Research Reagent Solutions" below.

Procedure:

- Train Model: Train your chosen machine learning model (e.g., RandomForestRegressor) on your dataset [25].

- Select Feature: Choose the feature of interest for the PDP.

- Create Probing Dataset: For each unique value v of the selected feature:

- Make a copy of the original dataset.

- Set the value of the selected feature to v in every row of this copy.

- Use the trained model to generate predictions for this entire modified dataset [25].

- Calculate Average Prediction: Compute the average prediction for each unique value v [25].

- Plot: Create a line plot with the unique feature values on the x-axis and the average predictions on the y-axis.

- Interpretation: Analyze the plot to understand the relationship. A positively sloped curve indicates a positive correlation with the model's output, while a non-linear curve suggests a complex relationship. Always overlay a histogram of the feature's distribution to ensure your interpretation focuses on regions with sufficient data [25].
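The procedure above can be sketched from scratch in a few lines. The example uses a hypothetical two-feature model, chosen so that the effect of the first feature flips sign with the second, and a small evaluation grid in place of every unique feature value. Both are simplifying assumptions. It computes the individual (ICE) curves first and averages them to obtain the PDP.

```python
def ice_curves(model, data, feature_idx, grid):
    """One curve per row: predictions as the chosen feature sweeps over the grid."""
    curves = []
    for row in data:
        curve = []
        for v in grid:
            modified = list(row)        # copy the row, leaving the data untouched
            modified[feature_idx] = v   # force the feature to the grid value
            curve.append(model(modified))
        curves.append(curve)
    return curves

def partial_dependence(model, data, feature_idx, grid):
    """Average the ICE curves pointwise to obtain the partial dependence."""
    curves = ice_curves(model, data, feature_idx, grid)
    return [sum(c[k] for c in curves) / len(curves) for k in range(len(grid))]

def model(row):
    """Toy model: the effect of x0 is positive when x1 > 0 and negative otherwise."""
    x0, x1 = row
    return x0 if x1 > 0 else -x0

data = [[0.2, 1.0], [0.5, -1.0], [0.8, 1.0], [0.1, -1.0]]
grid = [0.0, 0.5, 1.0]

pdp = partial_dependence(model, data, 0, grid)
ice = ice_curves(model, data, 0, grid)

print("PDP:", pdp)
for row, curve in zip(data, ice):
    print("ICE for row", row, "->", curve)
```

In this toy case the PDP is flat while every ICE curve has a clear slope, which is the averaging effect described for flat PDP lines. With a real model, the same functions would wrap the fitted model's predict call.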

Protocol 2: Creating and Interpreting a SHAP Dependence Scatter Plot

Purpose: To visualize the impact of a single feature on the model's output for every instance in the dataset, and to identify interaction effects.

Materials: See "Research Reagent Solutions" below.

Procedure:

- Compute SHAP Values: Use a shap.Explainer (e.g., shap.TreeExplainer for tree-based models) on your trained model and dataset to compute a matrix of SHAP values. Each element in this matrix is the SHAP value for a specific feature and a specific data instance [26] [28].

- Generate Basic Scatter Plot: Use shap.plots.scatter(shap_values[:, 'Feature_Name']). This will create a plot where the x-axis is the value of the feature from the input data, and the y-axis is the corresponding SHAP value for that feature [28].

- Identify Interactions: Observe vertical dispersion of SHAP values for a single feature value. This indicates the feature's effect depends on another feature [28].

- Color by Interaction Feature: To investigate, add color=shap_values to the scatter function. This will automatically color the points by the feature with the strongest interaction. Alternatively, manually specify a feature you hypothesize is interacting [28].

- Interpretation: The SHAP value represents the magnitude and direction (positive/negative) of a feature's contribution for each instance. The coloring reveals how the value of a second feature influences this contribution.

The following diagram outlines the logical decision process for creating and refining SHAP scatter plots:

The Scientist's Toolkit: Research Reagent Solutions

This table details the essential software tools and libraries required for implementing the interpretability methods discussed in this guide.

| Item Name | Function / Application | Specification / Notes |

|---|---|---|

| SHAP (SHapley Additive exPlanations) Python Library | A unified framework for calculating and visualizing SHAP values to explain the output of any machine learning model. Provides both local and global explanations [26] [27]. | Core explainer classes include TreeExplainer (for tree-based models), KernelExplainer (model-agnostic), and Explainer (auto-selects best explainer). Key plots: scatter, beeswarm, waterfall [26] [28]. |

| Partial Dependence Plot Toolbox (PDPbox) | A Python library specifically designed for creating Partial Dependence Plots and Individual Conditional Expectation (ICE) plots [27]. | Useful for visualizing one-way and two-way interactions. Helps in identifying non-linear relationships and thresholds in the model's logic [27]. |

| Matplotlib | A core plotting library in Python used for creating static, animated, and interactive visualizations. | Used as the backend for many SHAP plots and for customizing plots (adding titles, labels, adjusting colors, etc.) [28] [30]. Essential for implementing PDPs from scratch [25]. |

| Scikit-learn | A fundamental library for machine learning in Python. | Provides datasets, model implementations (e.g., RandomForestRegressor), and data preprocessing utilities essential for the machine learning workflow that precedes model interpretation [25]. |

| XGBoost | An optimized distributed gradient boosting library, often used for high-performance machine learning. | A common model type used in research (e.g., [15] [16]) that is highly compatible with shap.TreeExplainer for fast and exact SHAP value calculation [26] [28]. |

| ColorBrewer / Color Oracle | Tools for selecting and testing color palettes to ensure visualizations are accessible to users with color vision deficiencies [29]. | Critical for accessibility. Helps researchers avoid problematic color combinations (like red-green) and select high-contrast, colorblind-friendly palettes for their plots [29]. |

This technical support center provides troubleshooting guidance for researchers analyzing the nonlinear relationships between built environment factors (e.g., density, land use mix, accessibility) and urban vitality metrics (e.g., pedestrian volume, social interaction intensity) using scatterplots. The complex, non-proportional nature of these relationships often presents challenges in visualization and interpretation. The following guides address these specific issues to ensure the validity and clarity of your research findings.

Frequently Asked Questions (FAQs)

Q1: My scatterplot shows a dense cluster of data points, making it impossible to see relationships. How can I fix this overplotting?

- A: Overplotting occurs when high data density causes points to overlap, obscuring patterns. To alleviate this:

- Apply Transparency: Reduce the alpha value of your data points to see overlapping areas.

- Reduce Point Size: Use smaller markers to minimize overlaps.

- Use a Heatmap (2D Histogram): This alternative visualization bins data points and uses color to represent density, clearly revealing patterns in overcrowded areas.

- Sample Your Data: For very large datasets, use a random subset of points for initial pattern recognition [31] [32].

Q2: I've identified a clear correlation in my scatterplot. Can I state that one built environment variable causes changes in urban vitality?

- A: No, correlation does not imply causation. An observed relationship might be driven by a third, unplotted variable that influences both of your variables (e.g., land value), the causal link could be reversed, or the pattern could be coincidental. Always critically examine your hypothesis and the underlying urban theory before making causal claims [31] [32].

Q3: The relationship in my scatterplot appears to be exponential, not a straight line. How should I proceed with the analysis?

- A: This indicates a nonlinear relationship, which is common in urban systems.

- Apply a Transformation: Use a logarithmic scale on one or both axes. This can transform an exponential curve into a more linear form, making it easier to model and interpret.

- Use Non-Linear Trendlines: Instead of a linear regression, fit a non-linear model (e.g., polynomial, exponential) to better capture the relationship [32].

Q4: My scatterplot has many outliers. Should I remove them?

- A: Not necessarily. Outliers should be investigated, not automatically deleted. In urban studies, an outlier (e.g., a district with extremely high vitality despite low density) may reveal a significant exception to the rule, warranting further qualitative investigation to understand the underlying reasons [31].

Q5: How can I ensure my scatterplot visualizations are accessible to readers with color vision deficiencies?

- A: Adhere to Web Content Accessibility Guidelines (WCAG).

- Contrast Ratio: Ensure a minimum contrast ratio of 4.5:1 for text and graphical elements against their background [33] [34].

- Dual Encoding: Do not rely on color alone. Use a combination of color and shape (e.g., circles vs. squares) or different texture patterns to distinguish between categorical groups [31].

- Test in Grayscale: Preview your scatterplot in black and white to verify that information is still perceivable.
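The contrast ratio can be computed directly from the relative-luminance formula in WCAG 2.x. The sketch below is a minimal standard-library version; the function names and the example colors are illustrative choices, not part of any cited tool.

```python
def relative_luminance(rgb):
    """WCAG 2.x relative luminance of an sRGB color given as 0-255 integers."""
    def linearize(channel):
        c = channel / 255
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

    r, g, b = (linearize(c) for c in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(color_a, color_b):
    """Contrast ratio between two colors, from 1 (identical) to 21 (black on white)."""
    lum_a, lum_b = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)

white = (255, 255, 255)
ratio_black = contrast_ratio((0, 0, 0), white)
ratio_grey = contrast_ratio((119, 119, 119), white)   # mid-grey marker or label

for name, ratio in [("black on white", ratio_black), ("grey on white", ratio_grey)]:
    verdict = "meets" if ratio >= 4.5 else "falls below"
    print(f"{name}: {ratio:.2f}:1 {verdict} the 4.5:1 minimum")
```

Running the check on the actual marker, text, and background colors of a figure before export catches low-contrast combinations that are easy to miss by eye.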

Troubleshooting Guides

Guide 1: Resolving Weak or No Apparent Correlation

Symptoms: Data points in the scatterplot are spread widely with no discernible pattern; the trend line is almost flat; statistical correlation coefficients are close to zero.

Diagnosis and Solutions:

| Step | Action | Expected Outcome |

|---|---|---|

| 1 | Check Variable Selection | Confirmed theoretical link between chosen built environment metric and vitality indicator. |

| 2 | Investigate Non-Linearity | A clear, interpretable pattern (e.g., logarithmic, threshold) emerges after transformation. |

| 3 | Control for Confounding Variables | The relationship becomes clearer and stronger when a third variable (e.g., income level, time of day) is accounted for. |

| 4 | Check for Interaction Effects | Different, stronger correlations are revealed within specific subgroups of the data. |
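Step 4 can be illustrated with a small synthetic example. The numbers and zone labels are invented for the sketch: the overall correlation is zero, yet each subgroup shows a perfect linear relationship in opposite directions.

```python
import math

def pearson(u, v):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(u)
    mu, mv = sum(u) / n, sum(v) / n
    cov = sum((a - mu) * (b - mv) for a, b in zip(u, v))
    su = math.sqrt(sum((a - mu) ** 2 for a in u))
    sv = math.sqrt(sum((b - mv) ** 2 for b in v))
    return cov / (su * sv)

# Hypothetical data: vitality rises with density in one zone type and falls in the other.
density = [1, 2, 3, 4, 5, 1, 2, 3, 4, 5]
vitality = [2, 4, 6, 8, 10, 10, 8, 6, 4, 2]
zone = ["residential"] * 5 + ["industrial"] * 5

r_all = pearson(density, vitality)

r_by_zone = {}
for label in sorted(set(zone)):
    idx = [i for i, z in enumerate(zone) if z == label]
    r_by_zone[label] = pearson([density[i] for i in idx], [vitality[i] for i in idx])

print(f"all units: r = {r_all:.2f}")
for label, r in r_by_zone.items():
    print(f"{label}: r = {r:.2f}")
```

A near-zero pooled correlation alongside strong within-group correlations is a cue to plot the groups separately or to model the interaction explicitly.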

Guide 2: Correcting for Non-Linear Data Distribution

Symptoms: The data points curve upwards or downwards, forming a parabola, S-shape, or other non-straight pattern. A linear trend line poorly fits the majority of data points.

Diagnosis and Solutions:

| Step | Action | Example Analysis |

|---|---|---|

| 1 | Visual Inspection | Plot the data and visually assess if the relationship curves. |

| 2 | Apply Logarithmic Scale | Apply a log scale to the axis of the variable suspected of having diminishing returns (e.g., log(Park Density)). |

| 3 | Fit a Non-Linear Model | Use statistical software to fit and plot a non-linear regression line (e.g., a polynomial regression of order 2 or 3). |

| 4 | Interpret the Coefficients | Interpret the coefficients of the non-linear model within the context of urban theory (e.g., "Vitality increases with density up to a threshold, then plateaus"). |

Experimental Protocols & Methodologies

Protocol 1: Data Preparation for Urban Vitality Scatterplots

Objective: To structure raw urban data into a format suitable for creating insightful scatterplots that test for nonlinear relationships.

Materials: Raw datasets (e.g., census tracts, sensor data, land use maps), statistical software (R, Python, Stata).

Procedure:

- Variable Definition: Precisely define your Independent Variable (X, e.g., "Street Network Density"), Dependent Variable (Y, e.g., "Peak Hour Pedestrian Count"), and potential Control Variables (Z, e.g., "Neighborhood Median Income").

- Data Cleaning: Handle missing values using appropriate imputation techniques. Check for and correct data entry errors.

- Normalization/Standardization: If variables are on different scales (e.g., density vs. monetary value), normalize or standardize them to ensure comparability, especially if creating composite indices.

- Data Structuring: Organize your data into a table where each row represents a single spatial unit (e.g., a city block, a census tract) and columns represent the variables [31].

- Output: A clean data table ready for visualization and analysis.

Protocol 2: Creating an Accessible Multi-Category Scatterplot

Objective: To generate a scatterplot that effectively visualizes the relationship between two primary variables while also incorporating a third categorical variable (e.g., land-use zone type), adhering to accessibility standards.

Materials: Prepared data table, visualization software (e.g., Python's Matplotlib/Seaborn, R's ggplot2).

Procedure:

- Create Base Plot: Plot the primary independent variable (X) against the dependent variable (Y).

- Encode Third Variable by Color: Map the categorical variable (e.g., land-use zone) to distinct colors. Use a color palette with sufficient contrast between categories [33].

- Encode by Shape: Simultaneously map the same categorical variable to different point shapes (e.g., circles, triangles, squares) to ensure accessibility for color-blind readers [31].

- Add Trend Lines: Add a separate trend line (linear or non-linear) for each category to illustrate group-specific relationships.

- Add Accessible Annotations: Include a clear legend, title, and axis labels. Ensure all text has a contrast ratio of at least 4.5:1 against the background [34] [35].

Data Presentation & Quantitative Summaries

| Relationship Type | Description | Example in Urban Context | Suggested Transformation |

|---|---|---|---|

| Logarithmic | Returns diminish as the independent variable increases. | Impact of green space area on perceived well-being. | Apply log(X) to the independent variable. |

| Exponential | Growth accelerates as the independent variable increases. | Spread of a cultural trend through a network of public spaces. | Apply log(Y) to the dependent variable. |

| U-Shaped (Quadratic) | The dependent variable is high at low and high values of X, but low in the middle. | Crime rates versus population density (low in rural and very dense areas, higher in suburbs). | Fit a polynomial model (e.g., Y ~ X + X²). |

| S-Shaped (Sigmoid) | Growth is slow, then rapid, then slows again, reaching a saturation point. | Adoption of a new transport technology across districts. | Fit a logistic or sigmoid function. |
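The exponential row of the table can be sketched as follows. If Y = a·exp(b·X), then log(Y) = log(a) + b·X, so an ordinary straight-line fit on log(Y) recovers both parameters. The data are synthetic and noise-free, and the values of a and b are arbitrary choices for the illustration.

```python
import math

def fit_line(x, y):
    """Ordinary least-squares fit of y = intercept + slope*x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return my - slope * mx, slope

# Synthetic exponential relationship: Y = 2.0 * exp(0.3 * X)
x = list(range(10))
y = [2.0 * math.exp(0.3 * xi) for xi in x]

# Transform the dependent variable, then fit a straight line.
log_y = [math.log(v) for v in y]
intercept, slope = fit_line(x, log_y)

a_hat = math.exp(intercept)   # back-transform the intercept
b_hat = slope                 # growth rate per unit of X

print(f"estimated a = {a_hat:.3f}, estimated b = {b_hat:.3f}")
```

With real, noisy data the fit on the log scale minimizes relative rather than absolute errors, so it is worth comparing the back-transformed curve against the raw scatterplot before interpreting the coefficients.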

Table 2: Essential Research Reagent Solutions for Urban Data Analysis

| Item | Function in Analysis | Example/Tool |

|---|---|---|

| Geographic Information System (GIS) | To process, manage, and analyze spatial data; calculate built environment metrics (density, mix, proximity). | ArcGIS, QGIS |

| Statistical Software | To perform correlation analysis, regression (linear and non-linear), and data visualization. | R, Python (Pandas, Scikit-learn), Stata |

| Data Visualization Library | To create, customize, and export scatterplots, heatmaps, and other diagnostic charts. | ggplot2 (R), Matplotlib/Seaborn (Python) |

| Accessibility Checker | To verify that colors used in visualizations meet WCAG contrast ratio requirements. | Colour Contrast Analyser, WebAIM Contrast Checker |

Mandatory Visualizations

Scatterplot Analysis Workflow

Third-Variable Encoding Strategies

Troubleshooting Guides and FAQs

Installation and Setup

Q: I'm encountering errors during the initial setup of YOLO on my system. What are the common causes and solutions?

A: Installation issues often stem from environment incompatibilities. Please verify the following:

- Python Version: Ensure you are using Python 3.8 or later [36].

- PyTorch Version: You must have PyTorch 1.8 or later correctly installed [36].

- Virtual Environment: Use a virtual environment (e.g., `conda` or `venv`) to prevent package conflicts [36].

- CUDA for GPU Usage: To utilize a GPU, confirm your system is CUDA compatible. Run `nvidia-smi` in your terminal to check the CUDA version. Verify PyTorch recognizes the GPU by running `import torch; print(torch.cuda.is_available())` in Python. This should return `True` [36].

Q: My YOLO model is not using the GPU during training, even though it's available. How can I force it to use the GPU?

A: You can explicitly specify the device for training. In your training configuration or command, set the device argument. For example:

- To use the first GPU: `device=0` [36]

- To use the CPU: `device=cpu` [36]

You can verify the active device in the training logs.

Model Training

Q: My model's training loss is not decreasing, or the performance metrics are poor. What parameters should I monitor and adjust?

A: Beyond the primary loss function, continuously track key performance metrics to diagnose model convergence [36]:

- Precision: Measures the model's accuracy when it makes a positive prediction.

- Recall: Measures the model's ability to find all positive samples.

- Mean Average Precision (mAP): A comprehensive metric that combines precision and recall, often reported at an IoU threshold of 0.5 (mAP@50) or averaged from 0.5 to 0.95 (mAP@50:95) [36] [37].

Tools like TensorBoard, Comet, or Ultralytics HUB are highly recommended for visualizing these metrics during training [36].

Q: How can I speed up the training process on a machine with multiple GPUs?

A: Leveraging multiple GPUs can significantly accelerate training. Ensure your system recognizes all of the GPUs, then modify your training command to list them and increase the batch size accordingly [36].

Note: The specific argument might be device or gpus depending on your YOLO version. Always adjust the batch size to fit within the total GPU memory.

Q: My custom model is only detecting the objects I trained it on, but I want it to also detect the objects from the original pre-trained model. Is this possible?

A: Yes, you can combine the capabilities of a pre-trained model and your custom model. Two common approaches are:

- Fine-Tuning: Start training from a pre-trained model (e.g., `yolov8n.pt`) on your custom dataset. This helps the model retain its original knowledge while learning new classes, provided your dataset contains annotations for all desired classes [38].

- Ensemble Models: Run inference using both your custom model and the pre-trained model separately on the same input, then combine their predictions programmatically [38].

Model Prediction and Performance

Q: How can I filter the model's predictions to show only specific object classes?

A: Use the classes argument to specify a list of class indices you want to detect. This is useful for focusing on specific environmental anomalies and reducing visual clutter [36].

Q: What is the difference between box precision, mask precision, and the precision in a confusion matrix?

A: These are distinct metrics evaluating different aspects of model performance [36]:

- Box Precision: Measures the accuracy of the predicted bounding boxes against the ground truth boxes, typically using the Intersection over Union (IoU) metric.

- Mask Precision: Relevant for segmentation tasks, it assesses the pixel-wise agreement between predicted masks and ground truth masks.

- Confusion Matrix Precision: A classification metric that represents the proportion of correct positive predictions (True Positives) against all positive predictions (True Positives + False Positives).
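The box and confusion-matrix definitions above reduce to a few lines of arithmetic. The sketch below is a minimal illustration: the `(x_min, y_min, x_max, y_max)` box format and the function names are assumptions made for the example, not the interface of any YOLO release.

```python
def iou(box_a, box_b):
    """Intersection over Union of two boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    intersection = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


def precision_recall(true_pos, false_pos, false_neg):
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    predicted = true_pos + false_pos
    actual = true_pos + false_neg
    precision = true_pos / predicted if predicted else 0.0
    recall = true_pos / actual if actual else 0.0
    return precision, recall


# A prediction usually counts as a true positive only if its IoU with a
# ground-truth box reaches the chosen threshold (0.5 for mAP@50).
predicted_box = (1, 1, 3, 3)
ground_truth_box = (0, 0, 2, 2)
print(iou(predicted_box, ground_truth_box) >= 0.5)
print(precision_recall(true_pos=8, false_pos=2, false_neg=4))
```

Mask precision follows the same idea, with pixel counts of the predicted and ground-truth masks taking the place of box areas.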

Experimental Protocols and Performance Data

Protocol for Deforestation Anomaly Detection

This protocol outlines the methodology for training a YOLO model to identify indicators of deforestation, such as tree stumps and logging machinery, from aerial imagery [39].

- Model Architecture: YOLOv8 or YOLOv11, potentially integrated with a LangChain agent for dynamic threshold adjustment and contextual reasoning [39].

- Data Source: Annotated satellite and drone imagery [39].

- Key Modifications:

  - Integration of a shallow feature detection layer (P2-scale) to improve the capture of small objects [40].

  - Use of reparameterized convolution modules (RCS-OSA) in the backbone and neck networks to enhance feature extraction while reducing computational load [40].

  - Adoption of Wise-IoU v3 (WIoU v3) as the bounding box regression loss function to improve localization accuracy and handle low-quality annotations [40].

- Training Augmentation: Extensive data augmentation is critical to simulate diverse weather and lighting conditions, improving model robustness for real-world deployment [37].

Table 1: Performance Metrics in Deforestation Detection

| Model Variant | Key Modifications | mAP@50 | Notes | Source |

|---|---|---|---|---|

| Baseline YOLO | - | ~0.07 | Baseline mAP for deforestation task | [39] |

| YOLO + LangChain | Context-aware agent, dynamic thresholds | Recall ↑ 24% | Reduced false positives, increased recall | [39] |

| SRW-YOLO (YOLOv11) | P2 layer, RCS-OSA, WIoU v3 | 79.1% | Precision: 80.6% on State Grid dataset | [40] |

Protocol for Infrastructure Anomaly Detection

This protocol describes the process for detecting anomalies, such as climbing activities or damaged cables, on telecommunications infrastructure [37].

- Model Architecture: A modified YOLOv8s model [37].

- Data Source: A custom dataset of fiber optic cables in various states (normal, sagging, detached, manipulated), including "climbing activities, poles, and person and animal" [37].

- Key Modifications: Optimizations to the model backbone and training on a well-balanced, scenario-rich dataset [37].

- Training Augmentation: Various augmentation approaches were used to enhance model performance and reduce overfitting [37].

Table 2: Performance Metrics in Infrastructure Anomaly Detection

| Model Variant | Epochs | mAP@50 | mAP@50:95 | Precision | Recall |

|---|---|---|---|---|---|

| YOLOv8s-modified | 20 | 78.9% | - | - | - |

| YOLOv8s-modified | 50 | 87.5% | - | - | - |

| YOLOv8s-modified | 100 | 97.3% | 71.5% | 96.9% | 86.6% |

| YOLOv8-original | 100 | 89.6% | 59.0% | - | - |

Data derived from experiments on fiber optic cable anomaly detection [37].

Workflow and Signaling Diagrams

Troubleshooting Workflow

Environmental Anomaly Detection Pipeline

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Tools and Datasets for Environmental Anomaly Detection

| Item | Function / Purpose | Example in Context |

|---|---|---|

| Pre-trained YOLO Model | Provides a foundational starting point with general feature detection capabilities, enabling faster convergence via transfer learning. | yolov8n.pt, yolo11n.pt [36] [38] |

| Custom Annotated Dataset | A domain-specific dataset with labeled objects of interest (e.g., tree stumps, sagging cables) essential for fine-tuning the model. | Datasets for "deforestation indicators" or "fiber optic cable anomalies" [39] [37]. |

| Data Augmentation Pipeline | A set of techniques (e.g., geometric transformations, color jitter) to artificially expand the training dataset, improving model robustness and reducing overfitting. | Used to simulate "diverse weather and lighting conditions" [37]. |

| GIS (Geographic Information System) | Integrates detected anomalies with spatial data, providing geolocated alerts and enabling analysis within an environmental context. | Used for "dynamic threshold adjustment" and "GIS-driven reporting" [39]. |

| High-Performance Computing (HPC) / GPU | Provides the computational power necessary for processing large-scale environmental data (e.g., satellite imagery) and training complex deep learning models. | Critical for handling "big data analytics" and "real-time processing" [41] [42]. |

Solving Real-World Problems: Overcoming Data and Model Challenges in Nonlinear Analysis

Addressing False Positives and Dynamic Thresholds in Real-Time Monitoring

Frequently Asked Questions

Q: What are the most common causes of false positives in environmental sensor data? A: The primary causes are sensor drift due to environmental exposure (e.g., temperature, humidity), transient environmental artifacts (e.g., sudden wind gusts, animal activity), and interference from particulates (e.g., pollen, dust) that scatter light similarly to the target analyte. Implementing a baseline correction protocol and data smoothing filters can mitigate these.

Q: How do I determine the optimal dynamic threshold for my specific monitoring application? A: Optimal thresholds are determined by analyzing historical data to establish a baseline signal distribution. Calculate the moving average and standard deviation over a defined window, then set the threshold to a multiple (e.g., 3x) of the moving standard deviation above the moving average. The specific multiplier should be calibrated based on your acceptable false positive rate.
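The moving-average rule described in this answer can be sketched with NumPy as follows. The window length, the default multiplier of 3, and the choice to exclude the current sample from its own baseline are assumptions for illustration; calibrate all three against your own historical data.

```python
import numpy as np


def dynamic_threshold(signal, window=24, multiplier=3.0):
    """Trailing mean plus `multiplier` trailing standard deviations.

    The first `window` entries are NaN because no full history exists yet.
    Each threshold uses only the samples before the current one, so a spike
    does not inflate its own threshold.
    """
    signal = np.asarray(signal, dtype=float)
    threshold = np.full(signal.shape, np.nan)
    for i in range(window, signal.size):
        history = signal[i - window:i]
        threshold[i] = history.mean() + multiplier * history.std(ddof=1)
    return threshold


def flag_exceedances(signal, window=24, multiplier=3.0):
    """Boolean mask of samples above the dynamic threshold (NaN compares False)."""
    signal = np.asarray(signal, dtype=float)
    return signal > dynamic_threshold(signal, window, multiplier)


# Synthetic example: a gently oscillating baseline with one injected spike.
readings = 10.0 + 0.5 * np.sin(np.arange(200))
readings[150] = 20.0
print(np.flatnonzero(flag_exceedances(readings)))
```

Lowering the multiplier makes the detector more sensitive at the cost of more false alarms, which is the trade-off to tune against the acceptable false positive rate.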

Q: My scatterplot shows a nonlinear relationship between two environmental variables. How should I adjust my analysis? A: Nonlinear relationships require moving beyond simple linear correlation coefficients. Apply local regression (LOESS) to model the trend. For threshold setting, segment the data range and establish different thresholds for each segment based on the local variance, ensuring sensitivity across the entire measurement scale.
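The segment-wise thresholding mentioned in this answer might look like the sketch below. It splits the X range into quantile-based segments and sets each segment's threshold to the local mean plus a multiple of the local standard deviation; the number of segments, the multiplier, and the mean-plus-k-SD rule are assumptions for illustration. The LOESS fit itself is not shown and would come from a statistics library.

```python
import numpy as np


def segment_thresholds(x, y, n_segments=4, multiplier=3.0):
    """Per-segment thresholds: local mean of y plus `multiplier` local SDs.

    Assumes every segment ends up with at least two points; heavily tied
    x values can leave a segment empty.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    # Quantile edges give each segment a similar number of observations.
    edges = np.quantile(x, np.linspace(0.0, 1.0, n_segments + 1))
    # Interior edges map each x to a segment index in 0..n_segments-1.
    segment = np.digitize(x, edges[1:-1])
    thresholds = np.array([
        y[segment == s].mean() + multiplier * y[segment == s].std(ddof=1)
        for s in range(n_segments)
    ])
    return edges, thresholds, segment


# Synthetic example: a curved trend whose scatter grows with x.
x = np.linspace(0.0, 10.0, 400)
y = x ** 2 + (1.0 + x) * np.sin(37.0 * x)
edges, thresholds, segment = segment_thresholds(x, y)
exceeds = y > thresholds[segment]  # compare each point with its own segment's threshold
print(np.round(thresholds, 1), int(exceeds.sum()))
```

Because each segment carries its own variance estimate, a reading that is unremarkable at the high end of the scale can still be flagged at the low end.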

Q: Can you recommend a standard protocol for validating a dynamic thresholding method? A: The validation protocol should involve three stages: 1) a retrospective test on a held-out historical dataset to calculate the false positive and false negative rates; 2) a controlled challenge test in which known concentrations of an analyte are introduced; and 3) a field trial in a controlled environment that simulates real-world conditions, used to finalize the threshold parameters.

The following table summarizes the performance of different dynamic threshold multipliers when applied to a historical dataset of particulate matter concentration.

| Threshold Multiplier | False Positive Rate (%) | False Negative Rate (%) | Overall Accuracy (%) |

|---|---|---|---|

| 2.0 | 8.5 | 1.2 | 90.3 |

| 2.5 | 4.3 | 2.1 | 93.6 |

| 3.0 | 1.8 | 3.5 | 94.7 |

| 3.5 | 0.9 | 5.1 | 94.0 |

| 4.0 | 0.5 | 7.3 | 92.2 |
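In the table above, each overall accuracy value equals 100 minus the sum of the two error rates, which suggests both rates are expressed as a share of all samples; that reading is an assumption. Under it, the choice of multiplier can be sketched as a small selection routine. The optional false-positive cap is a hypothetical parameter added for illustration.

```python
# Rows copied from the table: (multiplier, false positive %, false negative %).
RESULTS = [
    (2.0, 8.5, 1.2),
    (2.5, 4.3, 2.1),
    (3.0, 1.8, 3.5),
    (3.5, 0.9, 5.1),
    (4.0, 0.5, 7.3),
]


def overall_accuracy(false_pos_pct, false_neg_pct):
    """Accuracy in percent, assuming both rates are shares of all samples."""
    return 100.0 - false_pos_pct - false_neg_pct


def best_multiplier(rows, max_false_positive_pct=None):
    """Most accurate multiplier, optionally restricted by a false-positive cap."""
    candidates = [
        row for row in rows
        if max_false_positive_pct is None or row[1] <= max_false_positive_pct
    ]
    return max(candidates, key=lambda row: overall_accuracy(row[1], row[2]))[0]


print(best_multiplier(RESULTS))
print(best_multiplier(RESULTS, max_false_positive_pct=1.0))
```

Without a cap the routine picks the multiplier with the highest accuracy in the table; adding a cap shows how a stricter false-alarm budget shifts the choice toward a larger multiplier at the cost of more missed events.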

Research Reagent Solutions for Environmental Monitoring

| Item | Function |

|---|---|

| Calibration Standard Gases | Provides known concentration references for sensor calibration, essential for maintaining measurement accuracy and detecting sensor drift. |

| Particulate Matter (PM) Filters | Used in controlled challenges to validate sensor readings against gravimetric analysis, the gold standard for PM mass concentration. |

| Data Logging Solution | Hardware/software for high-frequency time-series data collection, forming the raw dataset for scatterplot analysis and threshold calculation. |

| LOESS Smoothing Software | Statistical package or library to perform Local Regression, crucial for identifying and modeling the underlying nonlinear trends in scatterplots. |

Dynamic Threshold Adjustment Workflow

Signal Interpretation Logic

Frequently Asked Questions (FAQs)