Bridging the Gap: A Data Science Roadmap for Emerging Contaminant Research in Biomedicine

Abstract

This article addresses critical research gaps at the intersection of data science and emerging contaminants (ECs), a pressing concern for researchers and drug development professionals. It explores the foundational challenges of translating complex environmental and biological data into meaningful insights, evaluates advanced machine learning methodologies for EC detection and risk assessment, and identifies common pitfalls like data leakage and inadequate causal inference. The content further examines validation frameworks and comparative analyses of regulatory science, providing a comprehensive roadmap for leveraging data-driven approaches to mitigate the health risks posed by pharmaceuticals, PFAS, and microplastics. The synthesis aims to foster robust, clinically relevant data science applications in environmental health and toxicology.

The Data Landscape: Defining Emerging Contaminants and Foundational Knowledge Gaps

Emerging contaminants (ECs)—primarily pharmaceuticals, per- and polyfluoroalkyl substances (PFAS), and microplastics—represent a pressing global challenge for environmental and human health. Their continuous release, persistence, and complex bioactivity necessitate advanced detection and remediation strategies. This whitepaper provides a technical overview of these contaminants, detailing their sources, environmental fate, and proven analytical methodologies. Furthermore, it frames these issues within the critical context of data science research gaps, highlighting the urgent need for more comprehensive, globally representative data and advanced computational models to fully understand and mitigate the risks these substances pose.

Contaminant Profiles and Environmental Impact

The following table summarizes the core characteristics, primary sources, and key environmental impacts of the three major classes of emerging contaminants.

Table 1: Profile of Major Emerging Contaminants

| Contaminant Class | Core Characteristics | Primary Sources | Key Environmental & Health Impacts |
|---|---|---|---|
| Pharmaceuticals [1] | Bioactive compounds designed to produce biological effects in humans and animals. | Wastewater effluent, agricultural runoff (veterinary medicines), improper disposal [1]. | Endocrine disruption in aquatic life (e.g., male fish developing female characteristics) [1]; contribution to antimicrobial resistance (AMR) [1] [2]; cytotoxic and genotoxic damage to aquatic organisms [1]. |
| PFAS ("forever chemicals") [3] | Large group of synthetic chemicals; persistent in the environment and bioaccumulative [3]. | Aqueous film-forming foam (AFFF) used in firefighting, industrial sites, food packaging, consumer products (e.g., stain-resistant fabrics) [3] [4]. | Reproductive effects (decreased fertility) [3]; developmental delays in children [3]; increased risk of certain cancers (e.g., prostate, kidney) [3]; reduced immune response [3]. |
| Microplastics [5] [6] | Plastic particles <5 mm in size; highly persistent; can adsorb other pollutants [6]. | Plastic mulch, wastewater sludge, tire wear, breakdown of larger items, atmospheric deposition [6] [7]. | Ingestion by soil and aquatic fauna, causing physiological harm [6]; uptake by plants and entry into the food chain [6]; in humans, linked to cardiovascular risks and potential neurotoxic effects [5] [7]; alteration of soil microbial structure and function [6]. |

Analytical Methodologies for Detection and Characterization

Robust experimental protocols are essential for the accurate identification and quantification of emerging contaminants in complex environmental matrices.

Detection of Pharmaceutical Residues

Liquid Chromatography with Tandem Mass Spectrometry (LC-MS/MS) is a cornerstone technique for detecting trace-level pharmaceuticals in water and soil.

- Sample Preparation: Water samples are filtered (0.7 µm glass fiber filter) to remove particulates. Solid samples (soil, sediment) require pressurized liquid extraction (PLE) or solid-liquid extraction. An internal standard (e.g., an isotope-labeled analog of the target analyte) is added before extraction to correct for matrix effects and losses during preparation.

- Solid Phase Extraction (SPE): Filtered water samples are passed through SPE cartridges (e.g., Oasis HLB) to concentrate and clean up the analytes. Cartridges are conditioned with methanol and reagent water, loaded with sample, dried, and eluted with a solvent like methanol.

- Instrumental Analysis: Extracts are analyzed via LC-MS/MS. Separation is achieved on a reversed-phase C18 column with a gradient of methanol and water, both containing 0.1% formic acid. MS/MS detection operates in Multiple Reaction Monitoring (MRM) mode for high specificity and sensitivity.

- Data Quantification: Quantification is performed using an internal standard calibration curve, comparing the peak area ratio of the analyte to its internal standard.
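The internal-standard calibration in the last step can be sketched in a few lines of Python. This is a minimal illustration, assuming a linear response of the area ratio (analyte / internal standard) over the calibrated range and an unweighted least-squares fit; the function names and all concentrations and peak areas are hypothetical, and real workflows typically add weighting, residual checks, and quality-control samples.

```python
# Minimal sketch of internal-standard calibration for LC-MS/MS quantification.
# Assumption: area ratio (analyte / internal standard) is linear in concentration.

def fit_calibration(conc, analyte_area, is_area):
    """Ordinary least-squares fit of area ratio = slope * conc + intercept."""
    ratios = [a / s for a, s in zip(analyte_area, is_area)]
    n = len(conc)
    mean_x = sum(conc) / n
    mean_y = sum(ratios) / n
    sxx = sum((x - mean_x) ** 2 for x in conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, ratios))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def quantify(analyte_area, is_area, slope, intercept):
    """Invert the calibration line to estimate the analyte concentration."""
    ratio = analyte_area / is_area
    return (ratio - intercept) / slope


# Hypothetical calibration standards (ng/L) with a constant internal-standard spike.
std_conc = [1.0, 5.0, 10.0, 50.0, 100.0]
std_analyte_area = [250.0, 1050.0, 2050.0, 10050.0, 20050.0]
std_is_area = [10000.0] * 5

slope, intercept = fit_calibration(std_conc, std_analyte_area, std_is_area)

# Hypothetical sample in which the matrix suppresses both signals by 20%:
# the area ratio is unchanged, so the internal standard corrects the estimate.
sample_conc = quantify(3240.0, 8000.0, slope, intercept)
print(f"Estimated concentration: {sample_conc:.1f} ng/L")
```

Because analyte and internal standard lose the same fraction of signal in this toy case, the estimate stays at 20 ng/L; that proportional correction is why co-eluting isotope-labeled analogs are favored as internal standards.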

Analysis of Microplastics

The analysis of microplastics typically involves a combination of visual, spectroscopic, and thermal techniques.

- Sample Digestion & Separation: Environmental samples (water, soil, tissue) undergo digestion in a 30% hydrogen peroxide (H₂O₂) solution to remove organic matter. Density separation with a saturated sodium chloride (NaCl) solution (about 1.2 g/cm³) is then used to float microplastics away from denser mineral particles; denser polymers such as PET and PVC may not float in NaCl and can be underestimated.

- Filtration and Identification: The separated particles are filtered onto membrane filters. Visual identification under a stereo-microscope is followed by confirmatory analysis via Fourier-Transform Infrared (FTIR) or Raman spectroscopy. These techniques provide a molecular "fingerprint" to identify the polymer type.

- Mass Quantification: For mass-based concentration, Pyrolysis-Gas Chromatography-Mass Spectrometry (Py-GC/MS) is used, where the polymer is thermally decomposed and the resulting fragments are analyzed to identify and quantify the plastic.
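Spectroscopic identification ultimately compares each particle spectrum against a reference library. The sketch below shows one common idea, ranking library entries by Pearson correlation, under stated assumptions: spectra are already baseline-corrected and resampled to a shared wavenumber grid, the five-point vectors are toy values rather than real polymer spectra, and the 0.7 acceptance threshold is an arbitrary placeholder. Dedicated spectral-matching software uses fuller preprocessing and its own scoring rules.

```python
from math import sqrt


def pearson(a, b):
    """Pearson correlation between two equal-length spectra."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0  # a flat spectrum carries no shape information
    return cov / sqrt(var_a * var_b)


def best_match(spectrum, library, min_score=0.7):
    """Return (library_name, score), or (None, score) if below the threshold."""
    scores = {name: pearson(spectrum, ref) for name, ref in library.items()}
    name = max(scores, key=scores.get)
    return (name if scores[name] >= min_score else None), scores[name]


# Hypothetical reference library on a shared (toy) wavenumber grid.
library = {
    "ref_polymer_A": [0.10, 0.90, 0.20, 0.10, 0.80],
    "ref_polymer_B": [0.80, 0.10, 0.10, 0.90, 0.20],
}
particle = [0.15, 0.85, 0.25, 0.10, 0.75]
match, score = best_match(particle, library)
print(match, round(score, 3))
```

Particles whose best score falls below the threshold are better reported as unidentified than forced into a polymer class.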

Research Reagent Solutions for EC Analysis

Table 2: Essential Reagents and Materials for Emerging Contaminant Analysis

| Research Reagent / Material | Primary Function in Experimental Protocol |
|---|---|
| Oasis HLB SPE Cartridge | A reversed-phase polymer sorbent for extracting a wide range of polar and non-polar pharmaceuticals and other ECs from water samples [8]. |
| Isotope-Labeled Internal Standards (e.g., ¹³C- or ²H-labeled analogs) | Added to samples prior to extraction to correct for matrix effects and analyte loss during sample preparation; crucial for accurate LC-MS/MS quantification. |
| Hydrogen Peroxide (H₂O₂) | Used in the digestion step of microplastics analysis to remove natural organic matter that would otherwise interfere with spectroscopic identification [6]. |
| Sodium Chloride (NaCl) Solution | Used for density separation to isolate microplastic particles from denser sediment and soil matrices during sample preparation [6]. |
| FTIR Microspectroscopy | A non-destructive analytical technique that identifies the polymer type of microplastic particles by measuring their absorption of infrared light, creating a unique spectral fingerprint [8]. |

The Critical Data Science Research Gap

While laboratory studies are vital, a significant chasm exists between our current data and a holistic understanding of ECs in natural ecosystems. The field of EC data science faces several common and pressing issues [9].

- Global Data Imbalance: There is a severe geographical bias in EC research, with approximately 75% of studies focused on North America and Europe, leaving the Global South drastically underrepresented despite often bearing a higher pollution burden [2]. This imbalance risks developing mitigation strategies that are inappropriate or even detrimental for regions with different pollution profiles and ecosystems [2].

- Methodological and Conceptual Shortcomings: Many data-driven models suffer from data leakage, where information from the test set is inadvertently used during training, leading to over-optimistic and non-generalizable predictions [9]. There is also an over-reliance on laboratory data, which frequently ignores critical real-world factors like matrix effects (e.g., the impact of soil or sediment composition), trace-level concentrations, and complex mixture toxicology [9].

- Insufficient Causal Discovery: The current focus of many models is primarily on prediction. There is a critical need for approaches that can reveal strong causal relationships and underlying mechanisms, moving beyond correlation to true understanding [9]. For PFAS, a key challenge is integrating data from abiotic (water, soil, air) and biotic (living organisms) systems to fully elucidate exposure pathways [10].
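Data leakage often enters through preprocessing rather than through the model itself. A minimal sketch, assuming a single numeric feature and simple z-score standardization: the scaling parameters are estimated from the training split only and then reused unchanged on the test split. The helper names and values are hypothetical; the same rule applies to imputation, feature selection, and any other step that learns from data.

```python
from statistics import mean, pstdev


def fit_scaler(train_values):
    """Estimate standardization parameters from training data only."""
    mu = mean(train_values)
    sigma = pstdev(train_values) or 1.0  # guard against zero variance
    return mu, sigma


def transform(values, mu, sigma):
    """Apply previously fitted parameters; never re-fit on new data."""
    return [(v - mu) / sigma for v in values]


# Hypothetical feature values (e.g., log-transformed concentrations).
train = [2.0, 4.0, 6.0, 8.0]
test = [20.0, 22.0]

# Correct: parameters come from the training split alone.
mu, sigma = fit_scaler(train)
train_z = transform(train, mu, sigma)
test_z = transform(test, mu, sigma)

# Leaky: fitting on train + test lets test-set information shape the training inputs.
leaky_mu, leaky_sigma = fit_scaler(train + test)

print(mu, leaky_mu)
```

In the leaky variant the two high test values pull the mean from 5.0 to about 10.3, so the model would be trained on inputs that already encode the test distribution.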

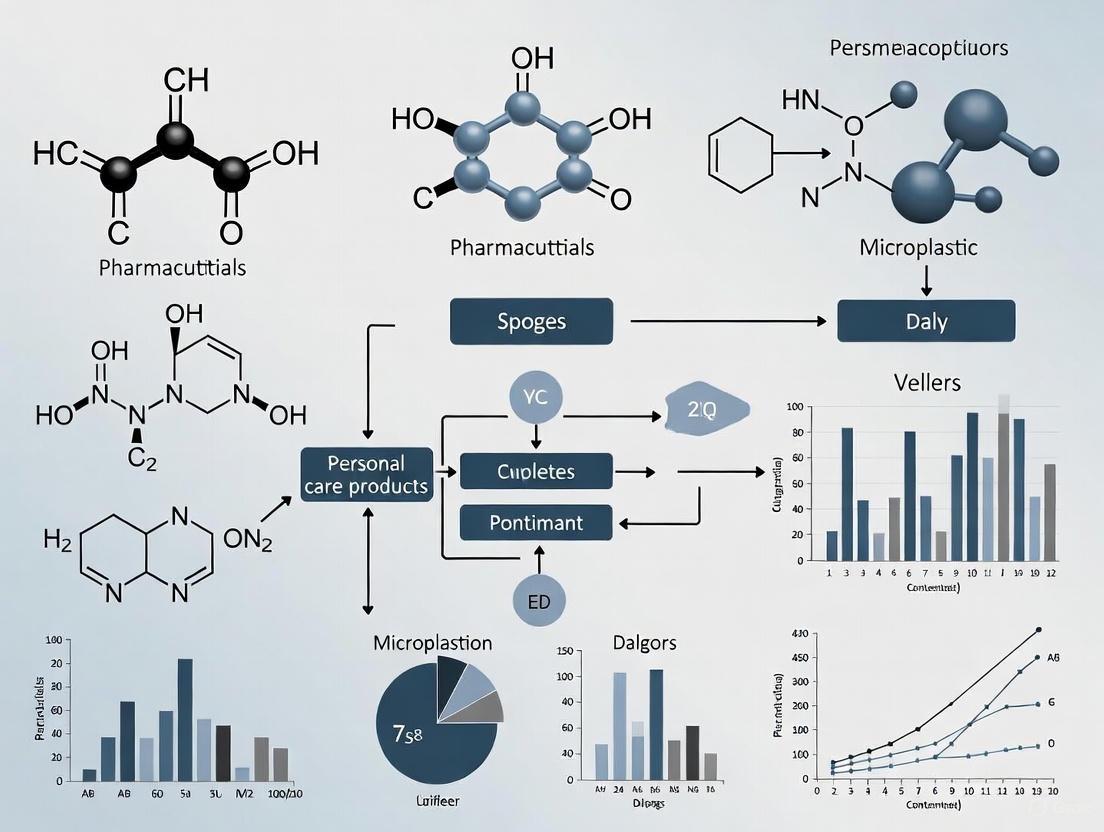

The following diagram illustrates the interconnected workflow for studying emerging contaminants, from sample collection to data analysis, and highlights the critical research gaps that currently limit the field.

Pharmaceuticals, PFAS, and microplastics each present unique and persistent threats to environmental and human health. Addressing these threats requires a dual approach: continuing to refine and apply robust analytical protocols for their detection, and simultaneously confronting the significant data science challenges that limit our understanding. Future research must prioritize closing the global data gap, developing models that are both predictive and mechanistically insightful, and fostering integrated research frameworks that connect laboratory findings with complex, real-world ecosystems. Without a concerted effort to address these research gaps, our ability to accurately assess risk and develop effective, equitable mitigation strategies for emerging contaminants will remain critically limited.

The application of data-driven approaches, particularly machine learning, has transformed the study of Emerging Contaminants (ECs) over the past decade. These methods increasingly replace or supplement traditional laboratory studies, leveraging continuously enriched datasets to predict contaminant behavior and risk. However, a significant and critical disconnect persists between computational findings and their actual meaning within natural eco-environmental systems [9]. While numerous reviews have organized knowledge by contaminant type, the fundamental data science challenges common across all EC categories remain insufficiently addressed. This whitepaper identifies the most pressing disconnects between laboratory data and real-world environmental meaning, proposing an integrated research framework to bridge these gaps. The issues span from methodological oversights like data leakage to conceptual challenges in translating simplified models to complex environmental scenarios where matrix effects, trace concentrations, and dynamic conditions dominate contaminant behavior. Without addressing these foundational issues, data science may generate precise yet environmentally irrelevant predictions, necessitating a paradigm shift toward mutual inspiration among computational, experimental, and field-based approaches [9].

Critical Disconnects in Current EC Data Science

Fundamental Data and Modeling Limitations

The table below summarizes the primary data and modeling limitations creating disconnects between laboratory studies and real-world environmental contexts.

Table 1: Key Data and Modeling Limitations in EC Research

| Limitation Category | Specific Challenge | Impact on Real-World Relevance |
|---|---|---|
| Data Quality & Complexity | Complicated biological/ecological data often simplified [9] | Loss of system-level interactions and emergent properties |
| Data Quality & Complexity | Matrix influence and trace concentrations ignored [9] | Overestimation of bioavailability and effects in natural systems |
| Modeling Artifacts | Data leakage in model validation [9] | Overly optimistic performance estimates with poor field generalizability |
| Modeling Artifacts | Insufficient causal relationships [9] | Accurate predictions without mechanistic understanding for intervention |
| Scenario Complexity | Oversimplified laboratory conditions [9] | Failure to capture multi-stressor interactions and dynamic exposures |
| Scenario Complexity | Spatial and temporal trends inadequately modeled [9] | Limited predictive capability across ecosystems and time scales |

The Ecological Validity Dilemma in Environmental Science

The fundamental challenge in bridging laboratory findings and environmental meaning mirrors the "real-world or the lab" dilemma long debated in psychological science [11]. This dilemma is a methodological choice between pursuing generality through traditional controlled laboratory research and demanding direct generalizability to complex "real-world" environments [11]. In EC research, it manifests as a tension between the controlled conditions needed for precise measurement and the environmental complexity in which these contaminants actually occur.

The concept of "ecological validity" has been widely advocated as a solution to this dilemma, with researchers calling for experiments that more closely resemble real-world conditions [11]. However, this concept remains ill-formed and lacks specificity, often leading to misleading conclusions when vaguely applied [11]. The key misunderstanding lies in conflating experimental realism with generalizability. An environmentally relevant EC study must specifically define the context of contaminant behavior and effects in which it is interested, rather than broadly claiming "real-world" relevance [11].

Critical assumptions underpinning the ecological validity debate include:

- Artificiality vs. Naturality: Laboratory conditions are often deemed "artificial" while field conditions are considered "natural," though most environmental contexts involve anthropogenic influences.

- Simplicity vs. Complexity: Controlled experiments necessarily simplify systems, potentially eliminating crucial interactions that determine EC fate and effects in natural environments [11].

- General vs. Specific Mechanisms: The pursuit of universal principles may overlook context-dependent phenomena that dominate environmental outcomes.

Proposed Integrated Research Framework

Bridging Conceptual and Methodological Divides

An integrated research framework for ECs must connect laboratory studies, computational approaches, and field observations through iterative refinement. The following diagram visualizes this essential tripartite relationship:

Experimental Protocol for Integrated EC Assessment

Bridging laboratory and environmental contexts requires standardized yet flexible methodologies that account for real-world complexity while maintaining scientific rigor. The following protocol outlines an integrated approach for EC assessment:

Phase 1: Contaminant Prioritization & Initial Characterization

- Step 1: Computational Pre-screening: Apply quantitative structure-activity relationship (QSAR) models and cheminformatics approaches to prioritize ECs based on persistence, bioaccumulation potential, and predicted toxicity using existing databases [12].

- Step 2: Analytical Method Development: Establish sensitive detection methods (LC-MS/MS, GC-MS) capable of measuring target ECs at environmentally relevant concentrations (ng/L to μg/L) in complex matrices [9].

- Step 3: Laboratory Toxicity Screening: Conduct standardized acute and chronic toxicity tests using representative organisms (algae, Daphnia, fish) at multiple trophic levels under controlled laboratory conditions.
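Step 1 can be illustrated with a screening-level ranking. The sketch below assumes that QSAR or database estimates of half-life, log Kow, and a chronic no-effect concentration are already available; the chemical names, property values, and cutoffs are hypothetical placeholders rather than regulatory criteria, and ranking by number of criteria met, then by toxicity, is one simple choice among many.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    half_life_days: float     # predicted environmental half-life
    log_kow: float            # log10 octanol-water partition coefficient
    chronic_noec_mg_l: float  # predicted chronic no-observed-effect concentration


# Illustrative cutoffs (assumptions, not regulatory thresholds).
PERSISTENCE_DAYS = 40.0
BIOACCUMULATION_LOG_KOW = 4.5
TOXICITY_NOEC_MG_L = 0.01


def pbt_flags(c):
    """Return (persistent, bioaccumulative, toxic) booleans for one candidate."""
    return (
        c.half_life_days > PERSISTENCE_DAYS,
        c.log_kow >= BIOACCUMULATION_LOG_KOW,
        c.chronic_noec_mg_l < TOXICITY_NOEC_MG_L,
    )


def prioritize(candidates):
    """Rank by criteria met (descending), then by NOEC (most toxic first)."""
    return sorted(candidates, key=lambda c: (-sum(pbt_flags(c)), c.chronic_noec_mg_l))


# Hypothetical candidates with placeholder property values.
candidates = [
    Candidate("chem_A", 120.0, 5.1, 0.002),
    Candidate("chem_B", 10.0, 2.0, 5.0),
    Candidate("chem_C", 60.0, 3.0, 0.5),
]
for c in prioritize(candidates):
    print(c.name, pbt_flags(c))
```

Log Kow is only a rough bioaccumulation proxy and is known to perform poorly for ionizable substances such as many PFAS, so a real workflow would substitute more suitable descriptors for those classes.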

Phase 2: Environmental Relevance Integration

- Step 4: Matrix Effect Quantification: Evaluate how environmental matrices (natural waters, sediments) modify EC bioavailability and effects through sorption experiments and chemical characterization [9].

- Step 5: Multi-stressor Experimental Designs: Incorporate relevant environmental co-factors (temperature, pH, background contaminants) using factorial designs to assess interactive effects [9].

- Step 6: Metabolomic & Biomarker Profiling: Apply high-throughput omics techniques to identify mechanistic pathways and sensitive biomarkers at environmentally relevant exposures [12].
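A full factorial layout for Step 5 can be generated programmatically. This minimal sketch assumes three two-level co-factors with hypothetical levels and three replicates per treatment; randomizing the run order with a fixed seed is a common precaution against time-dependent bias and keeps the design reproducible.

```python
import random
from itertools import product

# Hypothetical co-factors and levels; replace with study-specific values.
FACTORS = {
    "temperature_c": [15, 25],
    "ph": [6.5, 8.0],
    "background_mix": ["absent", "present"],
}


def full_factorial(factors, replicates=1, seed=42):
    """Return every factor-level combination x replicates, in randomized order."""
    names = list(factors)
    runs = []
    for combo in product(*(factors[name] for name in names)):
        for rep in range(1, replicates + 1):
            run = dict(zip(names, combo))
            run["replicate"] = rep
            runs.append(run)
    random.Random(seed).shuffle(runs)  # reproducible randomization of run order
    return runs


design = full_factorial(FACTORS, replicates=3)
print(len(design), design[0])
```

With 2 × 2 × 2 treatments and three replicates this yields 24 runs; the design grows multiplicatively with every added factor, which is why fractional designs are often considered when many co-factors are screened.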

Phase 3: Model Development & Field Validation

- Step 7: Ensemble Model Development: Build machine learning ensembles that integrate laboratory data with environmental parameters to predict field outcomes, implementing strict train-validation-test splits to prevent data leakage [9].

- Step 8: Mesocosm Validation: Test model predictions in intermediate complexity systems (mesocosms) that bridge laboratory and field conditions [13].

- Step 9: Field Verification: Conduct targeted field sampling and monitoring to validate predictions across spatial and temporal gradients, measuring both EC concentrations and biological effects [9].
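The strict splits called for in Step 7 matter most when samples share a site: repeated samples from one location are correlated, so a random row-level split can leak site-specific information into the test set. A minimal sketch, assuming each record carries a hypothetical `site_id` field, assigns whole sites to the training, validation, or test set so that no site appears in more than one split; the 60/20/20 proportions and the seed are arbitrary choices.

```python
import random


def group_split(records, group_key="site_id", fractions=(0.6, 0.2, 0.2), seed=0):
    """Split records into train/val/test so each group lands in exactly one split."""
    groups = sorted({r[group_key] for r in records})  # sort for reproducibility
    random.Random(seed).shuffle(groups)
    n_train = round(fractions[0] * len(groups))
    n_val = round(fractions[1] * len(groups))
    assignment = {}
    for i, g in enumerate(groups):
        if i < n_train:
            assignment[g] = "train"
        elif i < n_train + n_val:
            assignment[g] = "val"
        else:
            assignment[g] = "test"
    split = {"train": [], "val": [], "test": []}
    for r in records:
        split[assignment[r[group_key]]].append(r)
    return split


# Hypothetical data: 10 sites, 3 samples per site.
records = [{"site_id": f"S{s:02d}", "sample": k} for s in range(10) for k in range(3)]
split = group_split(records)
sites = {name: {r["site_id"] for r in rows} for name, rows in split.items()}
print({name: len(rows) for name, rows in split.items()})
```

The validation set is used for model selection and the test set is touched once, at the end, so that reported performance reflects sites the model has never seen.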

Phase 4: Iterative Refinement & Knowledge Integration

- Step 10: Model Updating: Refine computational models based on field observations, with particular attention to spatial and temporal extrapolation capacity [9].

- Step 11: Framework Integration: Incorporate validated findings into regulatory risk assessment frameworks and monitoring programs [12].
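For the temporal side of Step 10, one way to test extrapolation capacity is forward-chaining (rolling-origin) evaluation, in which a model is always trained on earlier years and tested on a later one. The sketch below only generates the folds; the sampling years and the two-year minimum training window are hypothetical.

```python
def forward_chaining_folds(years, min_train_years=2):
    """Yield (train_years, test_year) pairs that never train on future data."""
    ordered = sorted(set(years))
    for i in range(min_train_years, len(ordered)):
        yield ordered[:i], ordered[i]


# Hypothetical monitoring years (duplicates reflect multiple samples per year).
sample_years = [2018, 2018, 2019, 2020, 2020, 2021, 2022]
folds = list(forward_chaining_folds(sample_years))
for train_years, test_year in folds:
    print(train_years, "->", test_year)
```

An analogous scheme that holds out whole regions instead of years probes spatial extrapolation.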

Essential Research Toolkit for Integrated EC Studies

The table below details critical reagents, materials, and methodologies required for implementing the proposed integrated research framework.

Table 2: Research Reagent Solutions for Integrated EC Studies

| Tool Category | Specific Items | Function & Application |
|---|---|---|
| Analytical Standards | Stable isotope-labeled EC analogs (e.g., ¹³C-PFAS, d₄-microcystins) | Internal standards for precise quantification in complex matrices via LC-MS/MS |
| Passive Sampling Devices | POCIS (Polar Organic Chemical Integrative Samplers), SPMD (Semipermeable Membrane Devices) | Time-weighted average concentration measurement of ECs in water, porewater, and air |
| Biosensors & Assays | Enzyme-linked immunosorbent assays (ELISAs), whole-cell bioreporters, CALUX assays | High-throughput screening for specific EC classes and mode-specific toxicity |
| Omics Reagents | RNA/DNA extraction kits (soil, water, tissue), cDNA synthesis kits, PCR/qPCR reagents, next-generation sequencing library prep kits | Molecular profiling to detect exposure effects and identify mechanisms of action |
| Reference Materials | Certified reference materials (CRMs) for sediments, biota, water; proficiency testing samples | Quality assurance/quality control for method validation and inter-laboratory comparability |
| Data Science Tools | R/Python ML libraries (scikit-learn, TensorFlow), molecular descriptor software, spatial analysis tools (GIS) | Predictive model development, pattern recognition, and spatiotemporal analysis |
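Passive samplers such as POCIS yield a time-weighted average (TWA) water concentration by dividing the accumulated analyte mass by the volume of water effectively sampled: C_w = N / (R_s × t), where N is the mass in the sampler, R_s the compound-specific sampling rate, and t the deployment time. The sketch assumes integrative (linear) uptake and an already calibrated R_s; the function name and numbers are hypothetical.

```python
def twa_concentration(mass_ng, sampling_rate_l_per_day, days):
    """Time-weighted average water concentration (ng/L) from a passive sampler.

    Valid only while uptake is still in the linear (integrative) phase and
    the compound-specific sampling rate has been calibrated.
    """
    if sampling_rate_l_per_day <= 0 or days <= 0:
        raise ValueError("sampling rate and deployment time must be positive")
    sampled_volume_l = sampling_rate_l_per_day * days
    return mass_ng / sampled_volume_l


# Hypothetical deployment: 100 ng recovered, R_s = 0.2 L/day, 14-day exposure.
c_twa = twa_concentration(100.0, 0.2, 14)
print(f"TWA concentration: {c_twa:.1f} ng/L")
```

Because R_s varies with compound, flow, and temperature, the uncertainty in the sampling rate usually dominates the uncertainty of the TWA estimate.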

Visualization Framework for Enhanced Understanding

Strategic Visualization for Science-Policy Communication

Environmental visualizations serve as powerful framing devices at the science-policy interface, influencing how EC risks are perceived and acted upon by diverse audiences [14]. The production and circulation of visualizations involve multiple levels of framing that researchers must consciously address.

Effective visualization for EC communication requires balancing multiple competing demands. Producers must navigate trade-offs between clarity, correctness, and relevance while considering diverse audience perspectives [14]. When visualizations circulate beyond their original context, they frequently undergo modifications—including color adjustments, format changes, and data aggregation—that can introduce contrasting frames and alter their interpretive meaning [14]. This reframing during circulation represents a critical yet often overlooked dimension of environmental visualization that can significantly impact science-policy-society interactions.

Implementation Guidelines for Effective Visualization

Based on analysis of visualization challenges in environmental science [15] [14], the following guidelines support effective communication of EC research:

- Inclusive & Accessible Design: Create visualizations understandable by diverse audiences, including marginalized groups, through straightforward visual encodings (bar charts, donut charts) and consideration of color blindness, language barriers, and cultural differences [15].

- Interactive Exploration: Develop interactive visualizations that allow users to explore data, customize experiences, and understand consequences of different scenarios through "what-if" simulations, particularly effective in collaborative decision-making contexts [15].

- In-Situ Presentation: Reduce spatial indirection by presenting data in context, such as displaying local contamination levels on maps of specific watersheds, to enhance relevance and impact [15].

- Transparency & Credibility: Ensure visualizations maintain accuracy and integrity by clearly representing uncertainty, data sources, and methodological approaches to build trust among diverse stakeholders [15] [14].
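Representing uncertainty, as the last guideline recommends, starts with computing it. A minimal sketch, assuming a small set of independent concentration measurements from one site, uses a percentile bootstrap to attach a 95% interval to the mean, which can then be drawn as an error bar or shaded band. The data and the number of resamples are hypothetical, and the percentile bootstrap is only one of several interval methods.

```python
import random
from statistics import mean


def bootstrap_mean_ci(values, n_boot=2000, alpha=0.05, seed=1):
    """Percentile-bootstrap confidence interval for the mean."""
    rng = random.Random(seed)
    n = len(values)
    boot_means = sorted(mean(rng.choices(values, k=n)) for _ in range(n_boot))
    lower = boot_means[int((alpha / 2) * n_boot)]
    upper = boot_means[int((1 - alpha / 2) * n_boot) - 1]
    return mean(values), lower, upper


# Hypothetical concentrations (ng/L) measured at one monitoring site.
site_conc = [12.0, 15.5, 9.8, 22.1, 14.3, 18.7]
estimate, lower, upper = bootstrap_mean_ci(site_conc)
print(f"mean {estimate:.1f} ng/L (95% CI {lower:.1f} to {upper:.1f})")
```

With only a handful of samples the percentile bootstrap tends to give intervals that are too narrow, which is itself worth stating alongside the figure.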

Addressing the critical disconnects between laboratory data and real-world environmental meaning requires fundamental shifts in how EC research is conceptualized, conducted, and communicated. Moving beyond prediction as the primary objective, data science must increasingly serve to inspire novel scientific questions and guide targeted experimental and field investigations [9]. This mutually reinforcing relationship between computation, mechanism, and observation represents the most promising path toward meaningful understanding and effective management of emerging contaminant risks. The proposed integrated framework, which combines rigorous laboratory studies, causally aware ensemble modeling, and field validation in environmentally relevant contexts, provides a structured approach for bridging current disconnects. Furthermore, conscious attention to visualization design and science-policy communication helps ensure that the resulting insights inform decision-making and collective action on these pressing environmental challenges [15] [14]. As the number of unregulated contaminants continues to grow, outnumbering the substances covered by current regulatory frameworks by orders of magnitude [12], such integrative approaches become increasingly essential for proactive environmental protection and public health.

The Complexity of Biological and Ecological Data in Contaminant Research

The study of Emerging Contaminants (ECs) represents a critical frontier in environmental science, driven by the continuous introduction of new chemical and biological agents into global ecosystems [16]. These contaminants—including pharmaceuticals, personal care products, microplastics, per- and polyfluoroalkyl substances (PFAS), and pesticide residues—pose significant threats to environmental and human health through complex biological pathways [2] [17]. The fundamental challenge in EC research lies in the inherent complexity of biological and ecological data, which often reveals significant gaps between laboratory findings and their real-world environmental meaning [18]. This complexity is compounded by the trace concentrations, matrix effects, and complicated exposure scenarios that characterize environmental systems, creating substantial obstacles for accurate risk assessment and effective policy development.

The global data landscape for ECs is further characterized by profound imbalances, with considerably more research available for the Global North (GN) than for the Global South (GS) [2]. This disparity risks producing mitigation strategies based on GN pollution profiles that may be inappropriate or even detrimental for GS regions with different contaminant mixtures, ecosystems, and environmental risk factors [2]. Addressing these challenges requires advanced data science approaches that can integrate complex biological and ecological data while acknowledging the global inequities in current research efforts.

Key Complexities in Biological and Ecological Data

Fundamental Data Challenges

Data-driven approaches, including machine learning and ensemble modeling, face significant hurdles when applied to EC research due to several inherent complexities in biological and ecological systems [18]. These challenges stem from the multifaceted nature of environmental contamination and the limitations of current assessment methodologies.

Table 1: Core Data Complexities in Emerging Contaminant Research

| Complexity Factor | Impact on Data Quality | Research Consequences |
|---|---|---|
| Matrix Influence | Interference from complex environmental matrices (soil, sediment, water) | Altered contaminant bioavailability and detection accuracy |
| Trace Concentrations | Contaminants present at near-detection limit levels | Increased analytical uncertainty and potential for false negatives |
| Complex Biological/Ecological Data | Multivariate interactions across biological scales | Difficulty establishing causal relationships from correlative data |
| Data Leakage | Inappropriate preprocessing or validation methods | Overly optimistic model performance that fails in real-world applications |
| Spatiotemporal Variability | Dynamic concentration fluctuations across time and space | Challenges in representative sampling and trend identification |
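Trace concentrations near the detection limit produce censored data, and the way non-detects are handled can shift summary statistics noticeably. A minimal sketch, assuming non-detects are recorded as `None` and a single limit of detection (LOD) applies: rather than silently substituting one value, it reports the mean under the two extreme assumptions (non-detect = 0 and non-detect = LOD) together with the non-detect fraction. The values are hypothetical; formal censored-data methods such as Kaplan-Meier estimation or regression on order statistics are generally preferable when non-detects are common.

```python
def bounded_mean(measurements, lod):
    """Lower/upper bounds on the mean when non-detects (None) lie below the LOD."""
    detected = [m for m in measurements if m is not None]
    n = len(measurements)
    n_nd = n - len(detected)
    lower = sum(detected) / n                 # non-detects treated as 0
    upper = (sum(detected) + lod * n_nd) / n  # non-detects treated as the LOD
    return lower, upper, n_nd / n


# Hypothetical results (ng/L); None marks a non-detect.
results = [4.0, None, 6.0, None, 10.0]
low, high, nd_fraction = bounded_mean(results, lod=2.0)
print(f"mean between {low:.2f} and {high:.2f} ng/L; {nd_fraction:.0%} non-detects")
```

A wide gap between the two bounds signals that conclusions depend heavily on how non-detects are treated.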

The presence of ECs in environmental compartments creates particularly complicated data scenarios because many of these substances were designed to be biologically active at low concentrations [17]. Pharmaceuticals, for instance, are specifically engineered to produce biological effects in vertebrates, and these effects extend to non-target organisms in aquatic and terrestrial ecosystems [17]. This biological potency, combined with environmental persistence and transformation potential, generates data interpretation challenges that exceed those of traditional pollutants.

Global Data Imbalances and Representative Challenges

The current global distribution of EC research creates significant knowledge gaps that hinder comprehensive risk assessment and policy development. Recent analyses indicate that approximately 75% of EC research has focused on North America and Europe, even though most of the global population lives in Asia and Africa [2]. This disparity means that pollution profiles and biological impacts relevant to Global South regions may remain undetected or unprioritized, potentially leading to inappropriate interventions based solely on Global North data [2]. The consequences of this data imbalance extend beyond scientific understanding to affect global policy and resource allocation for environmental protection.

Advanced Methodologies for Complex Data Interpretation

Effect-Based Ecological Hazard Assessment

Traditional chemical-specific hazard assessment approaches have limitations in capturing the complex biological implications of EC exposures. Recent methodologies have evolved toward effect-based assessments that evaluate multiple hazard categories simultaneously. A 2025 study on the Great Lakes–Upper St. Lawrence River drainage demonstrated this approach by analyzing 21,441 surface water CEC concentrations from 7,162 samples collected at 1,021 sampling sites [17]. The assessment evaluated hazards to fish across 12 distinct effect categories, generating a database of 93,864 hazard scores that provided a more comprehensive biological impact perspective than conventional single-chemical assessments [17].

Table 2: Effect Categories and Hazard Incidence in Fish from CEC Exposure

| Effect Category | Elevated Hazard Incidence | Primary Contaminant Associations |
|---|---|---|
| Reproductive Effects | 39.5% of assessed samples | Endocrine-disrupting chemicals, hormones |
| Developmental Effects | 20.3% of assessed samples | Pharmaceuticals, PFAS |
| Mortality Effects | 20.4% of assessed samples | Pesticides, acute toxicity contaminants |
| Growth Effects | Not specified | Metabolic disruptors |
| Behavioral Effects | Not specified | Neuroactive compounds |
| Endocrine Effects | Not specified | Synthetic hormones, plasticizers |

The ecological hazard assessment methodology employed pairs of screening values to generate contaminant- and effect-specific ordinal hazard scores, creating a more nuanced interpretation framework than traditional quotient-based approaches [17]. This method revealed that the highest hazard levels to fish were broadly distributed and often associated with municipal areas, with mortality, reproductive, and developmental effect categories accounting for 17.5% of high hazard observations [17].
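The idea of converting a concentration into an ordinal score with a pair of screening values can be sketched as follows. This is a hypothetical illustration, not a reproduction of the procedure in [17]: the three-level scale, the boundary handling, the effect categories, and all screening values and concentrations are assumptions chosen for clarity.

```python
def ordinal_hazard(conc, lower_sv, upper_sv):
    """Score 0 (below lower screening value), 1 (between), or 2 (at/above upper)."""
    if lower_sv > upper_sv:
        raise ValueError("lower screening value must not exceed the upper one")
    if conc < lower_sv:
        return 0
    if conc < upper_sv:
        return 1
    return 2


# Hypothetical effect-specific screening-value pairs (ng/L) for one contaminant.
screening_values = {
    "reproduction": (5.0, 50.0),
    "development": (20.0, 200.0),
    "mortality": (500.0, 5000.0),
}
sample_concentrations = [2.0, 35.0, 120.0, 800.0]

scores = {
    effect: [ordinal_hazard(c, lo, hi) for c in sample_concentrations]
    for effect, (lo, hi) in screening_values.items()
}
for effect, effect_scores in scores.items():
    high_share = effect_scores.count(2) / len(effect_scores)
    print(f"{effect}: scores={effect_scores}, share at highest level={high_share:.0%}")
```

Scoring each effect category separately is what lets one concentration be low-hazard for mortality yet high-hazard for reproduction, the kind of contrast that a single quotient per chemical tends to hide.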

Transcriptomic Data and Mechanistic Network Models

Integrating transcriptomic data with mechanistic network models represents a cutting-edge approach for quantitative biological impact assessment. This methodology leverages hierarchically organized network models to investigate exposure impacts at molecular, pathway, and process levels [19]. The approach provides a coherent framework for interpreting system-wide responses to contaminants by integrating experimental measures with a priori knowledge about biological systems and molecular interactions [19].

Diagram 1: Transcriptomic Data Analysis Workflow

This systems biology-based methodology evaluates biological impact in an objective, systematic, and quantifiable manner, enabling computation of systems-wide and pan-mechanistic biological impact measures for active substances or mixtures [19]. Validation studies using both in vitro systems with simple exposures and in vivo systems with complex exposures have demonstrated the methodology's ability to recapitulate known biological responses matching expected or measured phenotypes [19]. The quantitative results showed agreement with experimental endpoint data for many assessed mechanistic effects, providing objective confirmation of the approach's utility across multiple research contexts.
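The hierarchical idea (molecular, then pathway, then process level) can be illustrated with a deliberately simplified aggregation. This sketch is a stand-in, not the network-based scoring described in [19]: it assumes gene-level log2 fold changes are already available, summarizes each pathway by the mean absolute fold change of its measured genes, and summarizes each process by the mean of its pathway scores. All gene, pathway, and process names are hypothetical.

```python
from statistics import mean


def pathway_scores(log2fc, pathways):
    """Mean |log2 fold change| over the measured genes of each pathway."""
    scores = {}
    for name, genes in pathways.items():
        measured = [abs(log2fc[g]) for g in genes if g in log2fc]  # skip unmeasured genes
        scores[name] = mean(measured) if measured else 0.0
    return scores


def process_scores(path_scores, processes):
    """Mean pathway score for each higher-level biological process."""
    return {
        proc: mean([path_scores[p] for p in members])
        for proc, members in processes.items()
    }


# Hypothetical gene-level results and a two-level hierarchy.
log2fc = {"gene1": 2.0, "gene2": -1.0, "gene3": 0.5, "gene4": 0.0}
pathways = {"pathway_A": ["gene1", "gene2"], "pathway_B": ["gene3", "gene4", "gene5"]}
processes = {"process_X": ["pathway_A", "pathway_B"]}

p_scores = pathway_scores(log2fc, pathways)
print(p_scores, process_scores(p_scores, processes))
```

Taking absolute values lets up- and down-regulation both count as perturbation; a network-based method would additionally weigh causal edge directions and statistical significance.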

One Health Perspective and Integrated Approaches

Addressing the complexity of EC impacts requires integrated approaches that recognize the interconnectedness of human, animal, and environmental health. The One Health perspective emphasizes interdisciplinary collaboration to understand and mitigate the impacts of ECs across these domains [16]. This approach acknowledges that emerging contaminants represent a planetary health challenge that cannot be adequately addressed through siloed research paradigms.

Source control and remediation strategies informed by the One Health perspective prioritize the integration of green and benign-by-design principles into production processes to eliminate hazardous materials from global supply chains [16]. Simultaneously, robust and socially equitable environmental policies at regional and international levels are essential for implementing effective contaminant management while acknowledging the disproportionate impacts of pollution on vulnerable communities worldwide [2] [16].

Experimental Protocols and Research Framework

Integrated Research Framework for Complex Data

Conventional laboratory studies often fail to capture the complexity of real-world environmental scenarios where multiple stressors interact across biological scales. An integrated research framework that connects natural field conditions, ecological systems, and large-scale environmental problems is urgently needed to advance EC risk assessment [18]. This framework must bridge the gap between controlled laboratory conditions and environmentally relevant exposure scenarios.

Diagram 2: Integrated Research Framework

The mutual inspiration among data science, process and mechanism models, and laboratory and field research represents a critical direction for future EC research [18]. This integrated approach moves beyond prediction-only purposes to inspire the discovery of fundamental scientific questions about contaminant behavior, biological effects, and ecological consequences across spatial and temporal scales.

Essential Research Reagent Solutions

The implementation of advanced methodologies for EC research requires specialized reagents and materials designed to address the challenges of complex biological and ecological data. These research tools enable more accurate detection, analysis, and interpretation of contaminant effects across biological scales.

Table 3: Essential Research Reagents and Materials for EC Studies

| Research Reagent/Material | Function in EC Research | Application Context |
|---|---|---|
| Transcriptomic Analysis Kits | Genome-wide expression profiling | Mechanistic network model development [19] |
| Effect-Specific Bioassays | Targeted hazard assessment | Ecological hazard screening across multiple effect categories [17] |
| Passive Sampling Devices | Time-integrated contaminant concentration measurement | Field deployment for representative exposure assessment [17] |
| Isotopic Tracers (¹³C/¹²C) | Carbon flux quantification in metabolic studies | Tracking contaminant fate and transformation in biological systems [20] |
| High-Throughput Screening Assays | Rapid in vitro bioactivity assessment | Priority setting and initial hazard identification [19] |

These research reagents and materials facilitate the generation of high-quality data necessary for understanding complex biological responses to EC exposures. Their appropriate application within integrated research frameworks strengthens the connection between laboratory findings and environmental relevance, ultimately supporting more accurate risk assessment and evidence-based policy development.

Future Directions and Research Priorities

Addressing the complexity of biological and ecological data in contaminant research requires strategic advances in multiple domains. Future research should prioritize ensemble models that reveal mechanisms and spatiotemporal trends, rest on defensible causal relationships, and are validated without data leakage [18]. Particular attention must be paid to matrix effects, trace concentrations, and complex exposure scenarios, which previous research has often neglected.
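A frequent source of data leakage in spatiotemporal EC datasets is allowing samples from the same sampling site to appear in both training and test folds, or fitting normalization statistics on the full dataset before splitting. The following is a minimal sketch, not a prescribed method: it assigns whole sites to folds and fits the scaler on the training fold only. The site labels and concentrations are hypothetical.

```python
from statistics import mean, pstdev

def group_kfold(groups, k):
    """Yield (train, test) index lists so each sampling site lands in exactly one test fold."""
    unique = sorted(set(groups))
    fold_of = {g: i % k for i, g in enumerate(unique)}  # round-robin site-to-fold assignment
    for fold in range(k):
        test = [i for i, g in enumerate(groups) if fold_of[g] == fold]
        train = [i for i, g in enumerate(groups) if fold_of[g] != fold]
        yield train, test

def fit_scaler(values):
    """Estimate (mean, sd) for standardization from training values only."""
    sd = pstdev(values)
    return mean(values), (sd if sd > 0 else 1.0)

# Hypothetical concentrations (ng/L) from four sampling sites A-D.
x = [12.0, 15.0, 11.0, 40.0, 42.0, 38.0, 5.0, 6.0, 20.0, 22.0]
sites = ["A", "A", "A", "B", "B", "B", "C", "C", "D", "D"]

for train, test in group_kfold(sites, k=2):
    # No site contributes samples to both sides of the split.
    assert not {sites[i] for i in train} & {sites[i] for i in test}
    mu, sd = fit_scaler([x[i] for i in train])        # fitted on the training fold only
    x_test_scaled = [(x[i] - mu) / sd for i in test]  # test fold reuses training statistics
```

In scikit-learn, `GroupKFold` provides the same grouped split, and wrapping preprocessing and model together in a `Pipeline` keeps every fitted step inside each fold.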

The global data imbalance in EC research represents both an ethical and a scientific challenge that must be addressed through equitable international collaborations [2]. Meaningfully including Indigenous Peoples and local communities in research design, implementation, and knowledge co-production is essential for developing representative global data and effective governance frameworks [2]. This inclusion is not merely a matter of social justice but a scientific necessity for building a comprehensive understanding of EC impacts across diverse ecosystems and cultural contexts.

Future methodological developments should also focus on enhancing causal inference capabilities in ecological risk assessment, moving beyond correlative relationships to establish mechanistic understanding of contaminant effects across biological scales. The integration of novel data streams from remote sensing, citizen science, and automated monitoring technologies offers promising avenues for capturing the spatiotemporal complexity of EC exposure and effects in natural systems.

Key Unmet Needs in EC Data Sourcing, Standardization, and Annotation

The data science pipeline for emerging contaminants (ECs) is fraught with critical challenges that hinder effective risk assessment and regulatory action. This whitepaper delineates the key unmet needs in sourcing, standardizing, and annotating EC data. We identify the proliferation of novel chemicals and their transformation products as a fundamental blind spot in data sourcing, a lack of cohesive standards for data integration, and the resource intensity of manual data annotation as primary bottlenecks. The analysis is framed within the context of advancing sustainable chemistry and protecting public health, providing researchers and drug development professionals with a detailed examination of these research gaps and proposing structured methodologies to address them.

Emerging contaminants (ECs), such as per- and polyfluoroalkyl substances (PFAS), pharmaceuticals, and halogenated flame retardants, represent a significant and growing challenge for environmental chemistry and public health [21]. The number of synthetic chemicals and products being used and produced that can contaminate the environment during their lifecycle has risen dramatically over the past 30 years [21]. Effective data science is critical for understanding the environmental fate, transport, and biological impact of these substances. However, the entire data lifecycle for ECs—from initial sourcing to final annotation—is plagued by systemic unmet needs that create critical research gaps. This whitepaper provides an in-depth technical analysis of these gaps, focusing on data sourcing, standardization, and annotation, and offers actionable experimental protocols and resources for the scientific community.

Unmet Needs in EC Data Sourcing

Data sourcing for ECs is fundamentally complicated by the vast and dynamic nature of the chemical universe and significant monitoring disparities.

The "Blind Spot" of Novel and Transformation Products

A primary challenge is the sheer volume of chemicals and their potential transformation products. Over 10,000 synthetic chemicals are used in plastic products alone, with hundreds of thousands more employed across other industries [21]. Standard analytical techniques, such as non-targeted analysis using high-resolution mass spectrometry, often fail to identify novel compounds or, more critically, the products formed when a parent chemical transforms in the environment. Some pharmaceuticals, PFASs, and other chemicals can transform into even more problematic compounds, but it is hard to identify these transformation products using standard approaches [21]. This creates a significant blind spot, as the environmental and health impacts of these transformation products may be greater than the original substance.

Disparate Monitoring and Funding Gaps

The infrastructure for monitoring ECs is inconsistent, particularly in small or disadvantaged communities. While the U.S. EPA's Emerging Contaminants in Small or Disadvantaged Communities (EC-SDC) grant program provides funding to address this—with a $945.7 million appropriation for FY 2025 [22]—the allocation and focus may not fully address the global scale and diversity of ECs. The grant program focuses heavily on PFAS in drinking water and contaminants on EPA's Contaminant Candidate Lists [23], potentially leaving other critical ECs under-monitored. This results in geographically and chemically skewed datasets that are not representative of the true global burden of EC contamination.

Table 1: Key Unmet Data Sourcing Needs and Their Implications

| Unmet Need | Description | Research Consequence |
|---|---|---|
| Transformation Product Identification | Inability to rapidly identify and source data on environmental and biological transformation products of ECs. | Incomplete risk assessments; underestimation of chemical persistence and toxicity. |
| Global Monitoring Inequity | Lack of consistent, harmonized monitoring data, especially from disadvantaged communities and developing nations. | Skewed datasets that do not represent true exposure landscapes, leading to environmental injustice. |
| Funding and Resource Allocation | EPA funding, while substantial, is non-competitively awarded to states/territories and may not target the most pressing research gaps [23]. | Critical data gaps remain unfilled if state-level priorities do not align with overarching scientific needs. |

Unmet Needs in EC Data Standardization

Without robust standardization, data from different sources cannot be integrated, compared, or meaningfully interpreted, crippling large-scale analysis.

Inconsistent Terminology and Data Structures

Research in network visualization has highlighted a fundamental challenge: a lack of clarification and uniformity between the terminology used across different surveys and databases [24]. For example, in dynamic network visualization, the concept of juxtaposition has been referred to as "small multiples," "static flipbooks," or "visualization of multiple timeslices" [24]. This problem is mirrored in EC research, where the same chemical may have multiple identifiers, and key properties may be defined and measured differently across studies. This inconsistency makes it nearly impossible to automatically merge datasets or perform meta-analyses.
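A first step toward merging such datasets is mapping every reported name or identifier onto one canonical key before any join. The sketch below assumes a curated synonym table already exists; the tiny table here is hypothetical and keyed on CAS Registry Numbers, whereas in practice it would be drawn from a chemical registry. Unresolved names are flagged for curation rather than guessed.

```python
# Hypothetical synonym table: normalized name/identifier -> canonical CAS RN.
SYNONYMS = {
    "pfoa": "335-67-1",
    "perfluorooctanoic acid": "335-67-1",
    "335-67-1": "335-67-1",
    "pfos": "1763-23-1",
    "perfluorooctanesulfonic acid": "1763-23-1",
    "carbamazepine": "298-46-4",
    "cbz": "298-46-4",
}

def normalize(name):
    """Lower-case and collapse whitespace so trivial spelling variants match."""
    return " ".join(name.strip().lower().split())

def harmonize(records):
    """Attach a canonical ID to each record; collect names that could not be resolved."""
    resolved, unresolved = [], []
    for rec in records:
        key = SYNONYMS.get(normalize(rec["chemical"]))
        if key is None:
            unresolved.append(rec["chemical"])  # route to manual curation, do not guess
        else:
            resolved.append({**rec, "cas_rn": key})
    return resolved, unresolved

study_a = [{"chemical": "PFOA", "value": 3.1}, {"chemical": "CBZ", "value": 120.0}]
study_b = [{"chemical": "Perfluorooctanoic  acid", "value": 2.7},
           {"chemical": "Compound X", "value": 9.9}]
merged, flagged = harmonize(study_a + study_b)
```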

Lack of a Unified Data Ecosystem

The absence of a centralized, curated repository for EC data that enforces common standards is a major impediment. Data exists in silos—regulatory data from the EPA, experimental data from academic journals, and monitoring data from various national and local programs. Integrating these disparate datasets requires significant manual effort due to incompatible formats and a lack of universal metadata descriptors. This prevents the formation of a comprehensive "network" of EC data where relationships between chemical structure, environmental fate, and biological activity can be easily visualized and analyzed [25] [24].
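Integrating silos also requires common units and a minimal shared metadata record that keeps provenance. The sketch below is illustrative only: the `Observation` fields and the unit table are assumptions, not an existing community standard, and a canonical chemical identifier (here a CAS RN) is assumed to have been assigned already.

```python
from dataclasses import dataclass

# Conversion factors to a common unit (ng/L); illustrative subset only.
TO_NG_PER_L = {"ng/L": 1.0, "ug/L": 1_000.0, "µg/L": 1_000.0, "mg/L": 1_000_000.0, "pg/mL": 1.0}

@dataclass(frozen=True)
class Observation:
    cas_rn: str           # canonical chemical identifier
    conc_ng_per_l: float  # concentration in the common unit
    matrix: str           # e.g., "surface water", "effluent"
    source: str           # provenance: which dataset the value came from

def to_common(cas_rn, value, unit, matrix, source):
    """Convert one reported value to ng/L, refusing units not in the table."""
    if unit not in TO_NG_PER_L:
        raise ValueError(f"unknown unit {unit!r} from {source}")
    return Observation(cas_rn, value * TO_NG_PER_L[unit], matrix, source)

observations = [
    to_common("335-67-1", 0.004, "ug/L", "surface water", "regulatory"),
    to_common("335-67-1", 3.1, "ng/L", "surface water", "academic"),
]
```

Rejecting unknown units, rather than passing values through unconverted, is a deliberate choice: silent unit mismatches are harder to detect downstream than an explicit error.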

Diagram 1: Data Standardization Workflow

Unmet Needs in EC Data Annotation

Data annotation—the process of enriching raw data with labels, tags, or markers—is vital for training machine learning (ML) models to interpret EC data, but it faces significant scalability and quality challenges [26].

Resource Intensity and Scalability

Annotation is a resource-intensive operation, making it expensive and time-consuming, which creates pressure on project budgets and timelines [26]. This is particularly acute for complex EC data types, such as 3D point clouds from environmental sensors or mass spectrometry spectra. The demand for high-quality annotated data is soaring, with the annotation market expected to grow at a CAGR of 26.5% from 2023 to 2030 [26]. This growth underscores the need for more efficient annotation methodologies to keep pace with the volume of data being generated.

Ambiguity and Annotator Bias

When data exhibits unclear or multiple interpretations, it confuses annotators, increasing the chances of incorrect label assignment [26]. For example, classifying the toxicity of a novel transformation product based on its chemical structure can be highly subjective. Furthermore, the personal opinions, perspectives, or judgments of individuals labeling the data can introduce annotator bias, leading to inconsistent or skewed annotations that detrimentally affect model performance and generalization [26].
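One standard way to quantify annotator disagreement is Cohen's kappa, which corrects raw agreement between two annotators for the agreement expected by chance. A minimal sketch follows; the hazard labels are hypothetical. (`sklearn.metrics.cohen_kappa_score` computes the same statistic.)

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / n**2
    if expected == 1.0:
        return 1.0  # convention here: both used one identical label for every item
    return (observed - expected) / (1 - expected)

# Hypothetical hazard labels for six transformation products.
annotator_1 = ["toxic", "toxic", "benign", "benign", "toxic", "benign"]
annotator_2 = ["toxic", "benign", "benign", "benign", "toxic", "toxic"]
kappa = cohens_kappa(annotator_1, annotator_2)
```

Here raw agreement is 4/6, but half of that is expected by chance, so kappa is about 0.33, a signal that the labeling guidelines need tightening.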

The Promise of AI-Assisted Annotation

A key trend in addressing these challenges is the rise of AI-assisted data annotation with human oversight. By 2025, AI-assisted annotation tools were expected to work more closely with human experts to ensure that annotations meet high quality standards, particularly in sensitive domains [27]. This human-in-the-loop (HITL) approach is essential for maintaining accuracy while improving scalability. Furthermore, generative AI models, such as GANs (Generative Adversarial Networks), show promise for synthetic data generation, which can reduce the need for extensive manual annotation, especially where collecting real-world data is difficult [27].
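A common HITL pattern routes model pre-labels by confidence: confident labels are accepted automatically, and uncertain ones go to a human reviewer whose decision overrides the model. The sketch below assumes a hypothetical pre-labeling model that emits `(item_id, label, confidence)` tuples; the 0.9 threshold is an arbitrary assumption that would be tuned against audited samples.

```python
def route(predictions, threshold=0.9):
    """Split model pre-labels into auto-accepted and human-review queues."""
    accepted, review = [], []
    for item_id, label, confidence in predictions:
        if confidence >= threshold:
            accepted.append((item_id, label))
        else:
            review.append((item_id, label))  # shown to the annotator as a suggestion
    return accepted, review

def merge_reviews(accepted, human_labels):
    """Final labels: human decisions for reviewed items, model labels otherwise."""
    final = dict(accepted)
    final.update(human_labels)
    return final

# Hypothetical pre-labels for particles in microscopy images.
preds = [("s1", "microplastic", 0.97), ("s2", "organic debris", 0.55),
         ("s3", "microplastic", 0.91), ("s4", "microplastic", 0.62)]
accepted, review = route(preds)
final = merge_reviews(accepted, {"s2": "microplastic", "s4": "organic debris"})
```

Reviewed items are also natural candidates for retraining the pre-labeling model, since they are the cases it found hardest.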

Table 2: Data Annotation Techniques and Applications for ECs

| Annotation Type | Description | Relevant EC Data Application |
|---|---|---|
| Semantic Segmentation | Assigns a class label to every pixel in an image. | Analyzing microscopic images to identify microplastic particles in environmental samples. |
| Time Series Annotation | Labels data points in a sequence over time. | Tracking the fluctuation of pharmaceutical concentrations in wastewater effluent. |
| 3D Point Cloud Annotation | Labels individual points in a 3D space. | Interpreting LiDAR or sensor data for modeling contaminant dispersion in a landscape. |
| Text Annotation | Tags specific text in documents for NLP. | Extracting ECs and their properties from scientific literature and regulatory documents. |

Diagram 2: AI-Human Annotation Workflow

Experimental Protocols for Addressing Key Gaps

This section provides a detailed methodology for an experiment aimed at tackling the critical unmet need of identifying transformation products.

Protocol: High-Throughput Identification of EC Transformation Products

Objective: To experimentally and computationally predict and validate the environmental transformation products of a target EC.

1. Sample Preparation and Stressor Exposure:

- Reagents: Prepare a 10 ppm stock solution of the target EC in relevant aqueous matrices (e.g., purified water or filtered natural water). Adjust the pH to cover an environmentally relevant range (e.g., 5.5, 7.0, 8.5).

- Stressors: Expose aliquots of the stock solution to various environmental stressors in controlled reactors:

- Photolysis: Using a solar simulator (e.g., Xenon arc lamp).

- Hydrolysis: At different pH levels and temperatures.

- Biodegradation: Using inoculum from activated sludge or river water.

- Controls: Include dark controls and sterile controls for each matrix.
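The stressor and control design in step 1 can be enumerated as an explicit run list so that every pH level receives each treatment and its matching control. This is a sketch under stated assumptions: the two hydrolysis temperatures are hypothetical (the protocol does not specify them), and additional matrices would be appended to `MATRICES`.

```python
from itertools import product

PH_LEVELS = (5.5, 7.0, 8.5)
HYDROLYSIS_TEMPS_C = (20, 40)   # hypothetical temperatures; not specified in the protocol
MATRICES = ("purified water",)  # add further matrices as needed

def run_list():
    """Enumerate (matrix, pH, treatment, temperature) runs; None = temperature not varied."""
    runs = []
    for matrix, ph in product(MATRICES, PH_LEVELS):
        runs += [
            (matrix, ph, "photolysis", None),
            (matrix, ph, "dark control", None),     # control for photolysis
            (matrix, ph, "biodegradation", None),
            (matrix, ph, "sterile control", None),  # control for biodegradation
        ]
        runs += [(matrix, ph, "hydrolysis", t) for t in HYDROLYSIS_TEMPS_C]
    return runs

runs = run_list()  # 1 matrix x 3 pH levels x 6 runs = 18 reactor runs
```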

2. High-Resolution Mass Spectrometry (HRMS) Analysis:

- Instrumentation: Use a Liquid Chromatography (LC) system coupled to a high-resolution mass spectrometer (e.g., Q-TOF or Orbitrap).

- Chromatography: Employ a C18 column with a water/acetonitrile gradient mobile phase.

- Data Acquisition: Run in full-scan MS mode (e.g., m/z 50-1000) with data-dependent MS/MS acquisition for fragmentation.
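Formula assignment in the processing step depends on the mass accuracy delivered by this acquisition. The sketch below computes neutral monoisotopic masses from simple formulas and keeps candidates within a ppm tolerance. Assumptions: the measured value has already been converted to a neutral mass (adduct removed), the 5 ppm window is illustrative, and the candidate list is hypothetical (C15H12N2O is the formula of carbamazepine). Real workflows also weigh isotope patterns and chemical plausibility rules.

```python
import re

# Monoisotopic masses (Da) of the most abundant isotopes.
MONO = {"C": 12.0, "H": 1.00782503207, "N": 14.0030740048,
        "O": 15.99491461956, "S": 31.9720712}

def formula_mass(formula):
    """Neutral monoisotopic mass of a simple formula such as 'C15H12N2O'."""
    mass = 0.0
    for element, count in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        mass += MONO[element] * (int(count) if count else 1)
    return mass

def ppm_error(measured, theoretical):
    """Mass error in parts per million."""
    return (measured - theoretical) / theoretical * 1e6

def candidate_formulas(measured, formulas, tol_ppm=5.0):
    """Keep formulas whose theoretical mass lies within tol_ppm of the measured mass."""
    return [f for f in formulas if abs(ppm_error(measured, formula_mass(f))) <= tol_ppm]

hits = candidate_formulas(236.0955, ["C15H12N2O", "C14H12N4", "C13H16O4"])
```

Only C15H12N2O survives (about +2.3 ppm); the other two candidates are roughly 40 to 45 ppm away despite sharing the same nominal mass.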

3. Computational Data Processing and Network Analysis:

- Software: Use Python with libraries such as networkx [25] or python-igraph [25] to construct a chemical reaction network.

- Workflow:

a. Peak Picking: Use peak-detection algorithms (e.g., XCMS) to extract all chromatographic peaks from the HRMS data.

b. Molecular Formula Assignment: Assign candidate molecular formulas to the parent EC and to all detected peaks.

c. Network Construction: Build a network whose nodes are the assigned molecular formulas (candidate chemicals) and whose edges represent plausible biochemical or photochemical transformations, encoded as formula differences (e.g., +O for hydroxylation, -H2 for dehydrogenation, -CH2 for demethylation).

d. Propagation: Starting from the parent EC node, traverse the network using the set of reaction rules to connect the parent to experimentally detected nodes (candidate transformation products).
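Steps c and d can be sketched with the standard library alone; networkx or python-igraph would supply equivalent graph structures and traversal routines. In this sketch the masses are hypothetical neutral monoisotopic masses, and the three-rule set and 0.003 Da tolerance are illustrative assumptions. Edges are directed because each rule describes a forward transformation.

```python
from collections import deque

# Illustrative transformation rules: label -> monoisotopic mass shift in Da.
RULES = {
    "+O (hydroxylation)": 15.994915,
    "-H2 (dehydrogenation)": -2.015650,
    "-CH2 (demethylation)": -14.015650,
}

def build_network(masses, rules=RULES, tol=0.003):
    """Directed edge a -> b whenever mass(b) - mass(a) matches a rule within tol (Da)."""
    edges = {m: [] for m in masses}
    for a in masses:
        for b in masses:
            if a == b:
                continue
            for label, shift in rules.items():
                if abs((b - a) - shift) <= tol:
                    edges[a].append((b, label))
    return edges

def propagate(edges, parent):
    """Breadth-first search from the parent; returns {mass: (generation, rule path)}."""
    found = {parent: (0, [])}
    queue = deque([parent])
    while queue:
        node = queue.popleft()
        generation, path = found[node]
        for nxt, label in edges[node]:
            if nxt not in found:  # first (shortest) route wins
                found[nxt] = (generation + 1, path + [label])
                queue.append(nxt)
    return found

# Hypothetical neutral monoisotopic masses of the parent and detected features.
features = [236.0950, 252.0899, 268.0848, 250.0742, 300.1234]
products = propagate(build_network(features), parent=236.0950)
```

Here 252.0899 is a first-generation product (+O), 268.0848 and 250.0742 are second-generation products, and 300.1234 is not connected to the parent by any rule, so it is left for other identification routes.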

4. Validation:

- MS/MS Fragmentation: Compare the experimental MS/MS spectra of tentatively identified transformation products with those of authentic standards or in-silico predicted spectra.

- Quantification: If standards are available, perform quantitative analysis to determine reaction kinetics.
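Degradation kinetics are often summarized under a pseudo-first-order assumption, ln(C) = ln(C0) - k·t, so the rate constant is the negative slope of ln(C) against time and the half-life is ln 2 / k. A minimal least-squares sketch follows, run on synthetic data. Whether first-order kinetics hold must be checked against the actual data. One common convention for isolating the stressor-specific term is to subtract the rate constant observed in the dark or sterile control.

```python
import math

def first_order_rate(times, concs):
    """Fit ln(C) = ln(C0) - k*t by ordinary least squares; return (k, half-life)."""
    ys = [math.log(c) for c in concs]
    t_bar = sum(times) / len(times)
    y_bar = sum(ys) / len(ys)
    sxy = sum((t - t_bar) * (y - y_bar) for t, y in zip(times, ys))
    sxx = sum((t - t_bar) ** 2 for t in times)
    k = -sxy / sxx
    return k, math.log(2) / k

# Synthetic decay curve with k = 0.1 per hour (not measured data).
times_h = [0, 1, 2, 4, 8]
concs = [10.0 * math.exp(-0.1 * t) for t in times_h]
k, t_half = first_order_rate(times_h, concs)  # t_half is about 6.93 h
```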

Table 3: The Scientist's Toolkit for Transformation Product Research

| Research Reagent / Tool | Function / Explanation |
|---|---|
| High-Resolution Mass Spectrometer (HRMS) | The core analytical instrument for accurately determining the mass of unknown compounds and their fragments, enabling formula prediction. |
| LC-Q-TOF or LC-Orbitrap | Specific HRMS configurations that combine separation power (LC) with high mass accuracy and fragmentation capability, ideal for non-target analysis. |
| Solar Simulator Reactor | A controlled system that exposes chemical solutions to simulated sunlight, allowing for the study of photodegradation pathways. |
| Python with networkx/igraph | Programming libraries essential for creating, manipulating, and analyzing the complex networks of chemicals and their transformation relationships [25]. |
| Authentic Chemical Standards | Commercially available pure samples of suspected transformation products; critical for confirming identifications and quantifying formation yields. |

The data science landscape for emerging contaminants is defined by profound unmet needs that stymie research and regulatory progress. The blind spots in sourcing data on novel chemicals and their transformation products, the Tower of Babel-like confusion in data standardization, and the scalability crisis in data annotation represent a triad of interconnected challenges. Addressing these gaps requires a concerted effort that combines advanced computational and high-throughput experimental methods, as outlined in this whitepaper. The adoption of AI-assisted workflows, the development and enforcement of common data standards, and a focus on predictive environmental chemistry are no longer optional but essential for building a sustainable and effective defense against the risks posed by emerging contaminants.

Advanced Analytical Tools: Machine Learning and Sensing Technologies for EC Discovery

Leveraging Machine Learning for EC Risk Prediction and Exposure Modeling

The rapid proliferation of emerging contaminants (ECs)—including pharmaceuticals, personal care products, per- and polyfluoroalkyl substances (PFAS), and microplastics—has created unprecedented challenges for environmental risk assessment. Traditional toxicological approaches, reliant on laboratory studies and linear models, are increasingly inadequate for characterizing the complex behavior and health impacts of these substances across diverse environmental matrices. In this context, machine learning (ML) has emerged as a transformative methodology, enabling researchers to decode complex, high-dimensional relationships between contaminant properties, environmental variables, and biological effects that elude conventional analytical frameworks [28] [9]. The integration of artificial intelligence into environmental chemistry represents a paradigm shift from observation-based to prediction-driven science, offering powerful tools for forecasting contaminant fate, bioavailability, and potential health risks.

Despite this promise, significant research gaps impede the full realization of ML's potential in EC risk assessment. Current studies exhibit substantial geographic imbalances, with China dominating research output (82.1% of 28 major studies on plant uptake) while Africa remains critically underrepresented despite prevalent contamination issues [29]. Furthermore, models frequently prioritize predictive accuracy over mechanistic interpretability, suffer from data leakage issues in validation protocols, and struggle with the "trace concentration and complex scenario" problem inherent to real-world EC exposure [9]. This technical review examines state-of-the-art ML applications in EC risk prediction and exposure modeling, with particular emphasis on bridging these methodological gaps through standardized workflows, explainable AI, and ecological validity enhancements.

Current Landscape of ML Applications in EC Research

Dominant ML Algorithms and Performance Characteristics

ML applications in environmental chemistry have grown exponentially since 2015, with publication output rising from fewer than 25 papers annually before 2015 to 719 publications in 2024 alone [28]. This expansion reflects a fundamental shift in methodological approaches toward data-driven discovery. Ensemble methods currently dominate the research landscape, with Random Forest (RF) and eXtreme Gradient Boosting (XGBoost) emerging as the most frequently cited algorithms due to their robust performance across diverse prediction tasks [29] [28]. These algorithms excel at handling the high-dimensional, nonlinear data structures characteristic of environmental chemical mixtures while providing intrinsic feature importance metrics that aid model interpretation.

Deep learning architectures—including Deep Neural Networks (DNNs), Recurrent Neural Networks (RNNs), and Long Short-Term Memory (LSTM) networks—increasingly complement traditional ML approaches, particularly for temporal forecasting of contaminant transport and spatial mapping of contamination hotspots [29]. The performance superiority of these ML approaches over traditional statistical models is particularly evident in complex prediction tasks such as plant uptake of contaminants, where ML models consistently demonstrate enhanced predictive accuracy for bioaccumulation factors across diverse plant species and contaminant classes [29].

Table 1: Dominant Machine Learning Algorithms in EC Research

| Algorithm Category | Specific Models | Primary Applications | Key Advantages |
|---|---|---|---|
| Ensemble Methods | Random Forest, XGBoost, Gradient Boosting | Contaminant classification, concentration prediction, risk assessment | Handles nonlinear relationships, provides feature importance, robust to outliers |
| Deep Learning | Deep Neural Networks, Recurrent Neural Networks, LSTM | Temporal forecasting, spatial mapping, high-dimensional pattern recognition | Captures complex temporal and spatial dependencies, automatic feature learning |
| Interpretable ML | SHAP, LIME, Bayesian Networks | Mechanism elucidation, regulatory decision support, risk communication | Model transparency, quantifies feature contributions; Bayesian networks can encode explicit causal assumptions |
| Traditional Classifiers | SVM, Logistic Regression, k-NN | Binary classification tasks, preliminary feature screening | Computational efficiency, simplicity, strong theoretical foundations |

Key Predictors for EC Exposure and Risk Modeling

Meta-analyses of ML applications reveal consistent patterns in feature importance across diverse prediction tasks. For plant uptake modeling, soil properties (particularly pH and organic matter content), compound-specific characteristics (logKow, molecular weight), and plant physiological traits emerge as the most influential predictors [29]. Similarly, in soil contamination studies of potentially toxic elements (PTEs), ML models identify soil pH, organic matter, industrial activities, and soil texture as critical variables enhancing prediction accuracy for spatial distribution and source identification [30].

The transition from single-contaminant to mixture exposure modeling represents a particularly advanced application of ML in environmental health. A study predicting depression risk from environmental chemical mixtures (ECMs) identified serum cadmium and cesium, along with urinary 2-hydroxyfluorene, as the most influential predictors among 52 candidate chemical exposures, achieving high predictive performance (AUC: 0.967) [31]. These findings highlight ML's capacity to decipher complex exposure-response relationships that traditional epidemiological approaches frequently miss because of their limitations in handling high-dimensional, correlated exposures.

Table 2: Key Predictive Features in ML Models for EC Risk Assessment

| Feature Category | Specific Variables | Influence on EC Behavior | Data Sources |
|---|---|---|---|
| Compound Properties | logKow, molecular weight, solubility, volatility | Determines environmental partitioning, bioavailability, and mobility | QSAR databases, laboratory measurements, chemical registries |
| Environmental Parameters | Soil pH, organic matter, temperature, dissolved oxygen | Modifies degradation rates, bioavailability, and transformation pathways | Field sensors, remote sensing, laboratory analysis |
| Biological Factors | Species traits, metabolic capacity, tissue type | Influences uptake, biotransformation, and trophic transfer | Ecological databases, -omics technologies, laboratory studies |
| Anthropogenic Drivers | Industrial discharges, land use, infrastructure age | Determines contamination sources, magnitude, and spatial patterns | Census data, permits, satellite imagery, utility records |

Experimental Protocols and Methodological Frameworks

Integrated Workflow for ML-Assisted EC Source Identification

The integration of non-target analysis (NTA) with machine learning represents a cutting-edge approach for contaminant source identification, employing a systematic four-stage workflow that transforms raw analytical data into actionable environmental insights [32]. This framework addresses the critical challenge of linking complex chemical signatures to specific contamination sources in heterogeneous environmental systems.

Figure 1: ML-Assisted Non-Target Analysis Workflow for EC Source Identification.

Stage (i): Sample Treatment and Extraction requires careful optimization to balance selectivity and sensitivity. Solid-phase extraction (SPE) remains the cornerstone technique, with multi-sorbent strategies (e.g., Oasis HLB with ISOLUTE ENV+) expanding contaminant coverage across diverse physicochemical properties [32]. Green extraction techniques like QuEChERS, microwave-assisted extraction (MAE), and supercritical fluid extraction (SFE) have gained prominence for large-scale environmental samples due to reduced solvent consumption and processing time while maintaining comprehensive analyte recovery.

Stage (ii): Data Generation and Acquisition relies on high-resolution mass spectrometry (HRMS) platforms, including quadrupole time-of-flight (Q-TOF) and Orbitrap systems, typically coupled with liquid or gas chromatographic separation (LC/GC). The critical data processing steps include centroiding, extracted ion chromatogram (EIC/XIC) analysis, peak detection, alignment, and componentization to group related spectral features into molecular entities [32]. Quality assurance measures—particularly confidence-level assignments (Levels 1-5) and batch-specific quality control samples—ensure data integrity for subsequent ML analysis.
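A common way to apply batch-specific QC samples is to inject a pooled QC repeatedly and drop features whose relative standard deviation (RSD) across those injections is too high to trust. The sketch below assumes a 30% RSD cut-off, a threshold often used in untargeted workflows but adopted here only as an assumption. The intensities are hypothetical.

```python
from statistics import mean, stdev

def qc_filter(qc_intensities, max_rsd=30.0):
    """Keep features whose RSD (%) across pooled-QC injections is at most max_rsd.

    qc_intensities: {feature_id: [intensity in each QC injection]}
    Returns {feature_id: rsd_percent} for the retained features.
    """
    kept = {}
    for feature, values in qc_intensities.items():
        rsd = stdev(values) / mean(values) * 100
        if rsd <= max_rsd:
            kept[feature] = rsd
    return kept

# Hypothetical pooled-QC intensities for two features over four injections.
qc = {"F1": [1000.0, 1050.0, 980.0, 1020.0], "F2": [500.0, 900.0, 300.0, 700.0]}
stable = qc_filter(qc)  # F1 (RSD about 3%) kept, F2 (about 43%) removed
```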

Stage (iii): ML-Oriented Data Processing transforms raw HRMS data into interpretable patterns through sequential computational steps. Initial preprocessing addresses data quality through noise filtering, missing value imputation (e.g., k-nearest neighbors), and normalization to mitigate batch effects [32]. Dimensionality reduction techniques like principal component analysis (PCA) and t-SNE simplify high-dimensional data, while clustering methods (hierarchical cluster analysis, k-means) group samples by chemical similarity. Supervised ML models, including Random Forest and Support Vector Classifiers, are then trained on labeled datasets to classify contamination sources, with feature selection algorithms optimizing model accuracy and interpretability.
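The Stage (iii) sequence can be chained in a scikit-learn pipeline so that imputation, scaling, and dimensionality reduction are re-fitted inside every cross-validation fold rather than on the full dataset. This is a sketch on synthetic data: the feature table, the two "sources", and all hyperparameters are assumptions. t-SNE is omitted because scikit-learn's `TSNE` has no `transform` method for unseen samples and therefore cannot sit inside a predictive pipeline.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.ensemble import RandomForestClassifier
from sklearn.impute import KNNImputer
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# Synthetic feature table: 60 samples x 40 HRMS features from two hypothetical sources.
y = np.repeat([0, 1], 30)
X = rng.lognormal(mean=2.0, sigma=0.5, size=(60, 40))
X[y == 1, :5] *= 3.0                    # five source-specific marker features
X = np.log1p(X)                         # log-transform intensities
X[rng.random(X.shape) < 0.05] = np.nan  # about 5% missing values

pipe = make_pipeline(
    KNNImputer(n_neighbors=5),          # k-nearest-neighbor imputation
    StandardScaler(),                   # normalization, fitted per training fold
    PCA(n_components=10),               # dimensionality reduction
    RandomForestClassifier(n_estimators=200, random_state=0),
)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(pipe, X, y, cv=cv, scoring="accuracy")
```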

Stage (iv): Result Validation employs a three-tiered approach to ensure analytical and environmental relevance. First, analytical confidence is verified using certified reference materials or spectral library matches. Second, model generalizability is assessed through external dataset validation and cross-validation techniques. Finally, environmental plausibility checks correlate model predictions with contextual data like geospatial proximity to emission sources or known source-specific chemical markers [32].

Interpretable ML Framework for Health Risk Assessment

The application of interpretable ML for linking environmental chemical mixtures to health endpoints represents a methodological advancement beyond traditional epidemiological approaches. A validated protocol for depression risk prediction from ECMs demonstrates this approach [31]:

Participant Selection and Data Preparation: The study analyzed data from 1,333 adults from the NHANES 2011-2016 cycles, with depression assessed via PHQ-9 scores (a score ≥10 indicating depression). Five categories of environmental chemicals were measured: polycyclic aromatic hydrocarbons (PAHs), metals, per- and polyfluoroalkyl substances (PFAS), phthalate esters (PAEs), and phenols. Urinary analyte concentrations were corrected for urine dilution using urinary creatinine, and all concentrations were natural-log-transformed to approximate normality.
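The exact correction used in [31] is not detailed here. One common approach expresses each urinary analyte per gram of creatinine before log-transforming. The sketch assumes analyte concentrations in µg/L and creatinine in mg/dL; including creatinine as a model covariate is an alternative some analyses prefer.

```python
import math

def creatinine_adjust(analyte_ug_per_l, creatinine_mg_per_dl):
    """Express a urinary analyte per gram of creatinine (ug/g creatinine).

    1 mg/dL creatinine = 0.01 g/L, so (ug/L) / (g/L) gives ug/g.
    """
    creatinine_g_per_l = creatinine_mg_per_dl * 0.01
    return analyte_ug_per_l / creatinine_g_per_l

def ln_transform(values):
    """Natural-log transform to reduce the right skew typical of exposure data."""
    return [math.log(v) for v in values]

# Hypothetical urine samples: (analyte in ug/L, creatinine in mg/dL).
samples = [(2.0, 100.0), (1.5, 50.0)]
adjusted = [creatinine_adjust(a, c) for a, c in samples]  # [2.0, 3.0] ug/g
log_adjusted = ln_transform(adjusted)
```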

Feature Selection with Recursive Feature Elimination: To optimize prediction from high-dimensional data, researchers applied Recursive Feature Elimination (RFE) with 10-fold cross-validation. Initially, 84 features (52 chemical exposure variables and 32 demographic/clinical covariates) were considered. RFE with Random Forest evaluated feature subset sizes of 5, 10, and 15, using both general control functions and RF-specific controls. The process was integrated within a bootstrap framework to validate feature selection consistency across resampled datasets.
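The key design point of RFE with cross-validation is that elimination must be re-run inside each training fold, so the held-out fold never influences which features are kept. The sketch below is a Python analogue built on scikit-learn's `RFE`, not a reproduction of the study's code. It uses synthetic data with 84 columns to mirror the candidate-feature count, small forests to keep runtime modest, and omits the bootstrap stability check.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import RFE
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline

# Synthetic stand-in for an 84-column exposure/covariate table (not NHANES data).
X, y = make_classification(n_samples=200, n_features=84, n_informative=8,
                           n_redundant=4, weights=[0.8, 0.2], random_state=0)

cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
results = {}
for size in (5, 10, 15):  # candidate subset sizes, as in the study design
    pipe = Pipeline([
        # Elimination happens inside each training fold (avoids selection leakage).
        ("rfe", RFE(RandomForestClassifier(n_estimators=30, random_state=0),
                    n_features_to_select=size, step=10)),
        ("rf", RandomForestClassifier(n_estimators=50, random_state=0)),
    ])
    results[size] = cross_val_score(pipe, X, y, cv=cv, scoring="roc_auc").mean()

best_size = max(results, key=results.get)
```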

Model Training and Evaluation: Nine supervised ML algorithms were evaluated: Neural Network (NN), Multilayer Perceptron (MLP), Gradient Boosting Machine (GBM), AdaBoost, XGBoost, Random Forest (RF), Decision Tree (DT), Support Vector Machine (SVM), and Logistic Regression (LR). Models were trained using 10-fold cross-validation with stratified sampling to maintain class distribution. The Random Forest model demonstrated superior performance (AUC: 0.967, F1 score: 0.91) in predicting depression risk from ECM exposures.
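The two reported metrics answer different questions. AUC is threshold-free: it equals the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative one. F1 depends on the decision threshold chosen. A minimal sketch with hypothetical labels and scores and an assumed 0.5 threshold (`sklearn.metrics.roc_auc_score` and `f1_score` compute the same quantities):

```python
def roc_auc(labels, scores):
    """AUC as P(random positive outranks random negative); ties count one half."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def f1(labels, predicted):
    """Harmonic mean of precision and recall for the positive class."""
    tp = sum(1 for y, p in zip(labels, predicted) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, predicted) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, predicted) if y == 1 and p == 0)
    return 2 * tp / (2 * tp + fp + fn) if tp else 0.0

# Hypothetical outcomes (1 = depression) and model risk scores.
labels = [1, 1, 1, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.5, 0.3, 0.2, 0.1, 0.05]
auc = roc_auc(labels, scores)                           # 14/15, about 0.93
f1_at_half = f1(labels, [int(s >= 0.5) for s in scores])  # 2/3 at threshold 0.5
```

The gap between the two values here shows why, with imbalanced outcomes, reporting both (and the threshold behind F1) is more informative than either alone.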

Model Interpretation and Mediation Analysis: SHapley Additive exPlanations (SHAP) quantified the relative contribution of individual predictors, identifying serum cadmium and cesium, and urinary 2-hydroxyfluorene as the most influential predictors. Mediation network analysis further implicated oxidative stress and inflammation as crucial pathways linking ECMs to depression, providing mechanistic plausibility to the statistical associations [31].
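To make the Shapley idea concrete, the sketch below computes exact Shapley values for one prediction by enumerating every coalition of features, with absent features set to a baseline. This is a simplified "baseline Shapley" convention. The SHAP library instead uses model-specific algorithms (e.g., for tree ensembles) and expectations over a background dataset. The three-feature risk model is hypothetical and includes one interaction term. Cost grows as 2^n, so brute force only suits small n.

```python
from itertools import combinations
from math import factorial

def shapley_values(model, x, baseline):
    """Exact Shapley values for one prediction; absent features take baseline values."""
    n = len(x)

    def value(coalition):
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(z)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))  # marginal contribution of i
        phi.append(total)
    return phi

def risk_model(z):
    """Hypothetical score over three log-exposures with a z0-z2 interaction."""
    return 0.5 * z[0] + 0.2 * z[1] + 0.3 * z[0] * z[2]

x, baseline = [2.0, 1.0, 1.0], [0.0, 0.0, 0.0]
phi = shapley_values(risk_model, x, baseline)  # about [1.3, 0.2, 0.3]
```

The attributions sum to the difference between the prediction and the baseline prediction (1.8), and the interaction term's contribution of 0.6 is split equally between the two interacting exposures.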

Table 3: Essential Research Reagents and Computational Resources for ML-EC Studies

| Category | Item | Specification/Purpose | Application Examples |
|---|---|---|---|
| Analytical Standards | Certified Reference Materials (CRMs) | Verify compound identities, validate quantitative analysis | PFAS mixtures, metal solutions, pesticide panels |
| Extraction Materials | Solid-Phase Extraction Cartridges | Multi-sorbent strategies for broad-spectrum extraction | Oasis HLB, ISOLUTE ENV+, Strata WAX/WCX |
| Chromatography | LC/GC Columns | High-resolution separation prior to MS detection | C18 columns, HILIC columns, chiral columns |
| Mass Spectrometry | HRMS Instruments | Structural elucidation, non-target analysis | Q-TOF, Orbitrap systems with LC/GC coupling |
| ML Libraries | Python/R Packages | Model development, validation, and interpretation | scikit-learn, XGBoost, SHAP, TensorFlow |
| Environmental Data | Geospatial Covariates | Enhance spatial prediction accuracy | Soil pH, organic matter, land use, climate data |

Visualization Framework for ML-EC Model Interpretation

Explainable AI Workflow for Environmental Mixture Risk Assessment

The black-box nature of complex ML models presents significant challenges for regulatory acceptance and scientific interpretation. Explainable AI (XAI) methods address this limitation by elucidating the reasoning behind model predictions, thereby building trust and facilitating mechanistic insights [31] [33].

Figure 2: Explainable AI Workflow for Environmental Mixture Risk Assessment.

The visualization framework illustrates the sequential process from raw exposure data to biological mechanism elucidation. Machine Learning Model Training begins with comprehensive data preprocessing, including handling missing values, normalization, and feature engineering specific to environmental chemical data [31]. Feature selection techniques, particularly Recursive Feature Elimination with cross-validation, identify the most informative subset of contaminants from complex mixtures. Model training incorporates rigorous cross-validation protocols to prevent overfitting and ensure generalizability.

Explainable AI Methods form the core of model interpretation. SHapley Additive exPlanations (SHAP) quantifies the marginal contribution of each chemical to the predicted risk, while Local Interpretable Model-agnostic Explanations (LIME) provides localized explanations for individual predictions [31] [33]. Partial Dependence Plots show how predicted risk changes with the concentration of a specific chemical, averaged over the observed values of all other chemicals in the mixture.
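A partial dependence curve can be computed directly. For each grid value, force the chemical of interest to that value in every observed row, predict, and average. A minimal sketch with a hypothetical two-exposure model and toy data follows. Because co-exposures are often strongly correlated, this forcing can create unrealistic concentration combinations; accumulated local effects (ALE) plots are one alternative that reduces this extrapolation.

```python
def partial_dependence(model, rows, feature, grid):
    """Average prediction with `feature` forced to each grid value in every row.

    Other features keep their observed values, so the curve averages over
    their empirical distribution.
    """
    curve = []
    for v in grid:
        preds = []
        for row in rows:
            z = list(row)
            z[feature] = v
            preds.append(model(z))
        curve.append(sum(preds) / len(preds))
    return curve

def mixture_model(z):
    """Hypothetical risk score with an interaction between exposures z0 and z1."""
    return 0.5 * z[0] + 0.3 * z[0] * z[1]

rows = [[1.0, 0.0], [2.0, 1.0], [3.0, 2.0]]  # toy log-exposure data
curve = partial_dependence(mixture_model, rows, feature=0, grid=[0.0, 1.0, 2.0])
# Mean z1 is 1.0, so the curve follows 0.8 * v: about [0.0, 0.8, 1.6].
```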

Mechanistic Validation bridges statistical associations with biological plausibility. Mediation analysis identifies intermediate biological pathways linking chemical exposures to health outcomes, while pathway enrichment tests determine whether chemicals associated with risk predictions target specific biological processes [31]. Biomarker correlation analyses substantiate model findings by examining relationships between identified priority chemicals and established biomarkers of effect.

Research Gaps and Future Directions

Despite rapid methodological advances, significant research gaps persist in the application of ML for EC risk prediction. Geographic representation remains heavily skewed, with China dominating research output (82.1% of plant uptake studies) while Africa is critically underrepresented despite documented contamination issues [29]. This imbalance risks developing models with limited transferability to diverse ecological and socioeconomic contexts. Future efforts should prioritize global data collection initiatives and transfer learning approaches to enhance model generalizability.
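Transferability can be checked directly by holding out whole regions rather than random samples. The sketch below fits an ordinary least-squares model on all regions but one and reports the held-out R² for each region. A sharp drop for one region signals that the model does not generalize to it. The regions and the region-specific response in the synthetic data are hypothetical. scikit-learn's `LeaveOneGroupOut` splitter implements the same splitting scheme.

```python
import numpy as np

def leave_one_group_out_r2(X, y, groups):
    """Fit OLS on every region but one; return the held-out R^2 for each region."""
    scores = {}
    for g in np.unique(groups):
        held_out = groups == g
        A = np.column_stack([np.ones(int((~held_out).sum())), X[~held_out]])
        beta = np.linalg.lstsq(A, y[~held_out], rcond=None)[0]
        pred = beta[0] + X[held_out] @ beta[1:]
        ss_res = np.sum((y[held_out] - pred) ** 2)
        ss_tot = np.sum((y[held_out] - y[held_out].mean()) ** 2)
        scores[str(g)] = float(1 - ss_res / ss_tot)
    return scores

# Synthetic data (hypothetical): the response in region "C" differs from "A" and "B"
rng = np.random.default_rng(0)
groups = np.repeat(np.array(["A", "B", "C"]), 100)
X = rng.normal(size=(300, 1))
slope = np.where(groups == "C", 0.5, 2.0)
y = slope * X[:, 0] + rng.normal(scale=0.1, size=300)
scores = leave_one_group_out_r2(X, y, groups)
print(scores)
```

Region C's negative held-out R² shows that a model trained on the other regions would mislead there. Random-split validation on the pooled data can mask this kind of failure.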

The interpretability-transparency gap represents another critical challenge. While complex ensemble and deep learning models often achieve superior predictive performance, their black-box nature complicates regulatory acceptance and mechanistic understanding [9] [32]. The integration of explainable AI techniques like SHAP represents significant progress, but further methodological development is needed to establish causal relationships rather than correlational patterns. Future research should prioritize hybrid approaches that couple ML's predictive power with process-based models' mechanistic foundations.
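Residual learning is one common hybrid design. A process-based model supplies the mechanistic baseline, and a data-driven model learns only what the mechanism misses. The sketch rests on several assumptions. It uses first-order decay, C(t) = C0·exp(-kt), with C0 and k treated as already calibrated. A least-squares regression on one hypothetical covariate (water temperature) stands in for a more flexible ML model. All observations are synthetic. The sketch illustrates the structure of a hybrid model, not a validated fate model.

```python
import numpy as np

def process_model(c0, k, t):
    """Process-based component: first-order decay C(t) = C0 * exp(-k * t)."""
    return c0 * np.exp(-k * t)

def fit_residual_model(features, residuals):
    """Data-driven component: least-squares fit to the process model's residuals."""
    A = np.column_stack([np.ones(len(residuals)), features])
    return np.linalg.lstsq(A, residuals, rcond=None)[0]

def hybrid_predict(c0, k, t, features, beta):
    """Mechanistic baseline plus the learned residual correction."""
    return process_model(c0, k, t) + beta[0] + features @ beta[1:]

# Synthetic data (hypothetical): decay from 100 ng/L with k = 0.1 per day, plus a
# temperature effect that the process model omits
rng = np.random.default_rng(0)
t = rng.uniform(0, 30, size=400)      # days since release
temp = rng.uniform(5, 30, size=400)   # water temperature, deg C
c_obs = process_model(100.0, 0.1, t) + 0.2 * (temp - 20) + rng.normal(scale=0.5, size=400)
feats = temp.reshape(-1, 1)
train, test = slice(0, 300), slice(300, 400)

beta = fit_residual_model(feats[train], c_obs[train] - process_model(100.0, 0.1, t[train]))
base_test = process_model(100.0, 0.1, t[test])
hybrid_test = hybrid_predict(100.0, 0.1, t[test], feats[test], beta)
rmse_process = np.sqrt(np.mean((c_obs[test] - base_test) ** 2))
rmse_hybrid = np.sqrt(np.mean((c_obs[test] - hybrid_test) ** 2))
print(rmse_process, rmse_hybrid)
```

Keeping the mechanistic term explicit means the learned part reads as a deviation from known decay behavior, which can make it easier to interpret.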

The data quality and standardization gap undermines model reproducibility and comparability across studies. Inconsistent feature reporting, limited data availability, and underexplored uncertainty-sensitivity coupling present substantial barriers to operationalizing ML approaches for regulatory decision-making [29] [9]. Concerted efforts to develop standardized databases, reporting frameworks, and benchmark datasets would substantially advance the field.
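The sketch below illustrates what a minimal shared reporting format might enforce. It defines a measurement record that validates the CAS Registry Number check digit and converts values to a common unit. The field set, unit table, and class name are hypothetical choices, not an existing standard.

```python
import re
from dataclasses import dataclass

# Hypothetical conversion table to a common reporting unit for aqueous samples (ng/L)
TO_NG_PER_L = {"ng/L": 1.0, "ug/L": 1e3, "μg/L": 1e3, "mg/L": 1e6}

def valid_cas(cas):
    """Check a CAS Registry Number's format and check digit."""
    match = re.fullmatch(r"(\d{2,7})-(\d{2})-(\d)", cas)
    if not match:
        return False
    body = (match.group(1) + match.group(2))[::-1]  # digits read right to left
    checksum = sum(pos * int(d) for pos, d in enumerate(body, start=1))
    return checksum % 10 == int(match.group(3))

@dataclass(frozen=True)
class ECMeasurement:
    """Hypothetical minimal record for pooling EC monitoring data across studies."""
    analyte_cas: str   # CAS Registry Number, avoids ambiguous trade or common names
    matrix: str        # e.g. "surface water", "wastewater effluent"
    value: float
    unit: str
    lod: float         # limit of detection, same unit as `value`
    method: str        # e.g. "LC-MS/MS"
    country_iso2: str  # ISO 3166-1 alpha-2 code, lets geographic coverage be tracked

    def __post_init__(self):
        if not valid_cas(self.analyte_cas):
            raise ValueError(f"invalid CAS number: {self.analyte_cas}")
        if self.unit not in TO_NG_PER_L:
            raise ValueError(f"unsupported unit: {self.unit}")

    def normalized(self):
        """Return (value in ng/L, LOD in ng/L, detected flag)."""
        factor = TO_NG_PER_L[self.unit]
        return self.value * factor, self.lod * factor, self.value >= self.lod

# Usage: the same hypothetical PFOA (CAS 335-67-1) result reported in two units
a = ECMeasurement("335-67-1", "surface water", 12.0, "ng/L", 0.5, "LC-MS/MS", "KE")
b = ECMeasurement("335-67-1", "surface water", 0.012, "ug/L", 0.0005, "LC-MS/MS", "KE")
print(a.normalized(), b.normalized())
```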

Finally, the translational gap between model predictions and actionable interventions remains largely unbridged. While ML excels at identifying contamination patterns and predicting risk, translating these insights into targeted remediation strategies, early warning systems, and evidence-based policies requires stronger collaboration between data scientists, environmental chemists, and public health professionals [33] [32]. Future work should focus on developing decision-support tools that integrate ML predictions with cost-benefit analyses and intervention planning frameworks to maximize public health impact.
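A decision-support layer can start as a budget-constrained selection problem. The goal is to choose the set of interventions with the largest total predicted risk reduction whose combined cost fits within a budget. The sketch solves this exactly as a 0/1 knapsack problem using dynamic programming. It assumes integer costs. It also assumes that risk reductions (for example, taken from an ML model's predictions) are additive across interventions. All intervention names and numbers are hypothetical.

```python
def select_interventions(options, budget):
    """Exact 0/1 knapsack by dynamic programming.
    options: (name, integer cost, predicted risk reduction) tuples.
    Returns (total risk reduction, chosen names) for the best set within budget."""
    best = [(0.0, [])] * (budget + 1)  # best[b]: optimum using total cost <= b
    for name, cost, gain in options:
        # Iterate budgets downward so each intervention is used at most once
        for b in range(budget, cost - 1, -1):
            candidate = best[b - cost][0] + gain
            if candidate > best[b][0]:
                best[b] = (candidate, best[b - cost][1] + [name])
    return best[budget]

# Hypothetical options: (intervention, cost in budget units, predicted risk reduction)
options = [
    ("advanced filtration at plant A", 5, 0.40),
    ("source control at facility B", 3, 0.25),
    ("ozonation at plant C", 4, 0.30),
    ("expanded monitoring in catchment D", 2, 0.10),
]
total_reduction, chosen = select_interventions(options, budget=7)
print(total_reduction, chosen)
```

In this example, the two mid-cost options together outperform the single most effective option. Greedy ranking by individual benefit would miss that combination.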

The study of emerging contaminants (ECs) is pivotal for environmental and public health, yet it is hampered by significant research gaps that limit our understanding of their full impact. Contaminants of emerging concern (CECs)—including pharmaceuticals, microplastics, per- and polyfluoroalkyl substances (PFAS), and antibiotic resistance genes—are ubiquitously present in the environment but remain critically under-characterized [2] [34]. A profound global data imbalance exists, with approximately 75% of CEC research focusing on North America and Europe, despite the majority of the world's population residing in Asia and Africa [2]. This geographical bias results in strategies that may be inappropriate or even detrimental for regions with different pollution profiles and environmental risks [2].

The core challenge extends beyond mere detection. Traditional laboratory methods, such as gas chromatography and high-performance liquid chromatography, are expensive (equipment can cost up to $100,000), time-consuming, and ill-suited for capturing the dynamic nature of ECs in complex environmental matrices [35] [34]. Furthermore, current research often overlooks complex scenarios including synergistic effects of contaminant mixtures, transgenerational impacts, and the influence of matrix effects at trace concentrations [9] [18] [34]. To bridge these gaps, the integration of advanced sensors with real-time detection platforms represents a paradigm shift, enabling a more comprehensive, accurate, and globally representative understanding of ECs.

Core Technologies in Advanced Sensor Platforms

Advanced sensor systems are revolutionizing environmental monitoring by moving from periodic, lab-based sampling to continuous, in-field analysis. These platforms leverage a variety of technological principles to achieve high sensitivity and specificity for ECs.

Biosensor Architectures and Mechanisms

Biosensors integrate a biological recognition element (e.g., enzymes, antibodies, whole cells, or nucleic acids) with a physicochemical transducer that converts the biological response into a quantifiable signal [35]. They are broadly classified based on their transduction principle:

- Optical Biosensors: Measure changes in light properties (absorbance, fluorescence, luminescence) resulting from the interaction between the bioreceptor and the target analyte. For example, a paper-based, cell-free biosensor utilizing allosteric transcription factors (aTFs) has been developed for detecting Hg²⁺ and Pb²⁺ in water with limits of detection (LOD) of 0.5 nM and 0.1 nM, respectively [35].

- Electrochemical Biosensors: Detect electrical changes (current, potential, or impedance) caused by biochemical reactions. Amperometric, enzyme-based biosensors have been used to detect polybrominated diphenyl ethers (PBDEs) in landfill leachates with an LOD as low as 0.014 μg/L [35].

- Piezoelectric Biosensors: Measure mass changes on a crystal surface, which shift the crystal's vibrational (resonant) frequency [35].
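Limits of detection like those quoted above are typically derived from calibration data. One common convention (ICH Q2) estimates the detection and quantitation limits as 3.3σ/S and 10σ/S. Here, S is the calibration slope and σ is the residual standard deviation of the regression. The sketch below applies this convention using only the standard library. The standard concentrations and signals are hypothetical, and the cited studies may have used a different definition (for example, the standard deviation of blank measurements).

```python
import math

def calibrate(conc, signal):
    """Unweighted least-squares calibration line: signal = intercept + slope * conc."""
    n = len(conc)
    mx, my = sum(conc) / n, sum(signal) / n
    sxx = sum((x - mx) ** 2 for x in conc)
    sxy = sum((x - mx) * (y - my) for x, y in zip(conc, signal))
    slope = sxy / sxx
    intercept = my - slope * mx
    residuals = [y - (intercept + slope * x) for x, y in zip(conc, signal)]
    s_res = math.sqrt(sum(r * r for r in residuals) / (n - 2))  # residual standard deviation
    return slope, intercept, s_res

def detection_limits(slope, s_res):
    """LOD = 3.3*sigma/S and LOQ = 10*sigma/S, with sigma = residual SD, S = slope."""
    return 3.3 * s_res / slope, 10.0 * s_res / slope

def to_concentration(signal, slope, intercept):
    """Invert the calibration line for an unknown sample."""
    return (signal - intercept) / slope

# Hypothetical standards (nM) and sensor responses (arbitrary fluorescence units)
conc = [0.5, 1.0, 5.0, 10.0, 50.0, 100.0]
signal = [2.1, 2.9, 11.1, 20.9, 101.1, 200.9]
slope, intercept, s_res = calibrate(conc, signal)
lod, loq = detection_limits(slope, s_res)
estimate = to_concentration(41.0, slope, intercept)
print(slope, intercept, lod, loq, estimate)
```

Over wide linear ranges such as 0.5–500 nM, the high standards dominate an unweighted fit, so weighted regression is often preferred.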

The performance of these biosensors is significantly enhanced by the integration of nanomaterials and hybrid designs. Nanomaterials such as gold nanoparticles, graphene, and carbon nanotubes boost sensitivity and functional efficiency by providing a large surface area for bioreceptor immobilization and enhancing signal transduction [35].

Commercially Deployed Sensor Systems

Beyond laboratory biosensors, robust commercial systems are being deployed for continuous environmental monitoring. These platforms demonstrate the practical application of sensor technology in real-world conditions:

- UviTec Water Quality Monitoring Platform: This system uses optical sensors and sophisticated analytics to measure key parameters like biochemical oxygen demand (BOD) and chemical oxygen demand (COD) in just five seconds, a significant advantage over traditional lab-based methods that can take days [36].

- Real-time Bacteria Sensor for Water: A fully automatic device for real-time detection of bacterial contamination in water systems ranging from drinking water to industrial ultra-pure water, operable without skilled personnel [37].

- ABB's MobileGuard: A laser-based system that detects gas leaks from oil and gas infrastructure with a sensitivity over 1,000 times higher than conventional technologies and can identify single parts per billion (ppb) of methane and ethane ten times faster than traditional equipment [36].

Experimental Protocols for Sensor Deployment and Validation

The successful implementation of advanced monitoring platforms requires rigorous methodologies. The following protocols outline the key steps for deploying and validating sensor systems for EC detection.

Protocol 1: Deployment of a Real-Time Water Quality Monitoring Station

Objective: To establish a continuous, in-situ monitoring station for detecting emerging water contaminants (e.g., pharmaceuticals, microplastics) in a water body.

Materials: Optical sensor platform (e.g., UviTec), flowmeter (e.g., AquaMaster), data logger, power supply (solar or grid), programmable auto-sampler, IoT communication module, calibration standards.

Procedure:

- Site Selection: Identify a location representative of the water body, considering flow dynamics, potential contamination sources, and accessibility for maintenance.

- Sensor Calibration: Prior to deployment, calibrate all sensors according to manufacturer specifications using a series of standard solutions spanning the expected concentration range of target contaminants.

- System Integration and Installation: Securely mount the sensor suite and flowmeter in the water. Connect the sensors to the data logger and power supply. Install the auto-sampler programmed to collect discrete water samples triggered by specific events (e.g., a spike in a sensor reading or a scheduled time).

- Data Acquisition and Transmission: Configure the data logger to record measurements at preset intervals (e.g., anywhere from every 5 seconds to every 15 minutes). The IoT module transmits the data in real time to a cloud-based platform for remote access and analysis [38] [39].

- Validation and Maintenance: Regularly validate sensor accuracy by comparing real-time data with laboratory analysis of the auto-collected discrete samples. Perform routine maintenance (e.g., cleaning optical windows, replacing membranes) as per the manufacturer's schedule to prevent biofouling and drift.
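The event trigger in the installation step can be as simple as a robust spike detector. The sketch below flags a reading when it departs from the rolling median of recent readings by more than a set number of robust standard deviations (1.4826 × MAD). After a trigger, it suppresses further triggers for a lockout period so that a single plume does not use up the sampler's bottles. The window length, threshold, lockout, and readings are hypothetical and would need tuning against each site's noise level.

```python
from collections import deque
from statistics import median

def spike_triggers(readings, window=12, threshold=5.0, lockout=6):
    """Return the indices at which the auto-sampler would fire.
    A reading triggers when it deviates from the median of the previous `window`
    readings by more than `threshold` robust SDs (1.4826 * MAD). After a trigger,
    the next `lockout` readings cannot trigger."""
    history = deque(maxlen=window)
    triggers, cooldown = [], 0
    for i, x in enumerate(readings):
        if len(history) == window and cooldown == 0:
            med = median(history)
            mad = median(abs(h - med) for h in history)
            scale = 1.4826 * mad if mad > 0 else 1e-9  # avoid division by zero
            if abs(x - med) / scale > threshold:
                triggers.append(i)
                cooldown = lockout
        elif cooldown > 0:
            cooldown -= 1
        history.append(x)
    return triggers

# Hypothetical optical-sensor readings with one contamination pulse
readings = [10.0, 10.2, 9.9, 10.1, 10.0, 9.8, 10.1, 10.2, 9.9, 10.0, 10.1, 9.9,
            10.0, 25.0, 24.0, 10.1, 10.0, 9.9, 10.2, 10.0, 10.1, 9.8]
triggers = spike_triggers(readings)
print(triggers)
```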

Protocol 2: Field Validation of an AI-Enhanced Biosensor Array

Objective: To validate the performance of a multi-analyte biosensor array against standard analytical methods in a complex environmental matrix.

Materials: Biosensor array (e.g., electrochemical or optical), reference samples (with known analyte concentrations), portable potentiostat/spectrometer (if required), sampling equipment, AI/ML analytics platform.

Procedure:

- Pre-Field Laboratory Testing: Characterize the biosensor's sensitivity, selectivity, and LOD for each target EC in a controlled laboratory setting using spiked buffer solutions.

- Field Sampling Campaign: Collect enough environmental samples (water, soil leachate) from multiple sites to support the planned statistical comparison, fixing the sample size in advance (e.g., by a power analysis). At each site, simultaneously deploy the biosensor array for in-situ measurement.

- Parallel Analysis: Split each field sample: one portion is analyzed immediately with the biosensor array, and another is preserved and transported to an accredited laboratory for analysis using standard methods (e.g., LC-MS/MS).

- Data Analysis and Model Training: The data from the biosensor and reference lab are used to train and validate machine learning models. These models are designed to compensate for matrix effects and improve the prediction of actual contaminant concentrations from the biosensor's complex signal output [9] [39].

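- Performance Metrics Calculation: Calculate key performance metrics to quantify the biosensor's field performance. These include accuracy (agreement with laboratory results, e.g., percent recovery), precision (repeatability across replicate measurements), and the coefficient of determination (R²) between biosensor and laboratory values.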
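The analysis and metric steps can be sketched together. In the sketch below, a least-squares model maps the raw biosensor signal plus a matrix covariate to the reference-laboratory concentration, as a simple stand-in for the ML models described above. Agreement is then summarized as mean recovery (accuracy), relative standard deviation of replicates (precision), RMSE, and R² against the 1:1 line. The covariate (dissolved organic carbon), its suppressive effect, and all data are hypothetical.

```python
import numpy as np

def field_metrics(reference, predicted):
    """Agreement between biosensor estimates and reference-laboratory values."""
    reference = np.asarray(reference, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    residual = reference - predicted
    ss_tot = np.sum((reference - reference.mean()) ** 2)
    return {
        "mean_recovery_pct": float(np.mean(predicted / reference) * 100),  # accuracy
        "rmse": float(np.sqrt(np.mean(residual ** 2))),
        # R^2 of predictions against the 1:1 line, so bias is penalized (unlike r^2)
        "r2": float(1 - np.sum(residual ** 2) / ss_tot),
    }

def repeatability_rsd(replicates):
    """Precision: relative standard deviation (%) of replicate readings of one sample."""
    r = np.asarray(replicates, dtype=float)
    return float(r.std(ddof=1) / r.mean() * 100)

def design(signal, covariates):
    """Design matrix: intercept, raw signal, and matrix covariate(s)."""
    return np.column_stack([np.ones(len(signal)), signal, covariates])

def fit_correction(signal, covariates, reference):
    """Least-squares map from raw signal plus matrix covariates to reference concentration."""
    return np.linalg.lstsq(design(signal, covariates), reference, rcond=None)[0]

# Synthetic campaign (hypothetical): dissolved organic carbon (DOC) suppresses the signal
rng = np.random.default_rng(0)
conc = rng.uniform(1, 50, size=100)  # reference LC-MS/MS concentration
doc = rng.uniform(0, 20, size=100)   # matrix covariate, mg/L
signal = 0.8 * conc - 0.5 * doc + rng.normal(scale=0.5, size=100)
train, test = slice(0, 60), slice(60, 100)

naive = field_metrics(conc[test], signal[test] / 0.8)  # buffer calibration only
beta = fit_correction(signal[train], doc[train], conc[train])
corrected = field_metrics(conc[test], design(signal[test], doc[test]) @ beta)
print(naive, corrected)
```

Computing R² against the 1:1 line, rather than as a squared correlation, keeps a systematic matrix bias visible in the metric.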

Developing and validating such an integrated monitoring system involves multiple interconnected stages, as visualized below.

Integrated Workflow for Advanced Environmental Monitoring

The Scientist's Toolkit: Essential Research Reagents and Materials

The development and operation of advanced sensor platforms rely on a suite of specialized reagents and materials. The following table details key components and their functions in the context of environmental monitoring for ECs.

Table 1: Research Reagent Solutions for Sensor Development and Environmental Monitoring

| Item | Function in Research/Application | Examples and Use Cases |
|---|---|---|
| Biological Recognition Elements | Provide specificity for target analyte binding. | Enzymes (e.g., laccase for phenol detection), aptamers, allosteric transcription factors (aTFs), whole cells (e.g., engineered E. coli) [35]. |
| Nanomaterials | Enhance signal transduction and sensor sensitivity. | Gold nanoparticles, graphene, and carbon nanotubes used to functionalize electrode surfaces or as fluorescent probes [35]. |
| Calibration Standards | Enable quantification of analyte concentrations and verification of sensor accuracy. | Certified reference materials (CRMs) for pharmaceuticals, PFAS, or heavy metals in environmental matrices [34]. |
| Environmental DNA (eDNA) | Non-invasive tool for biodiversity monitoring and species identification. | Genetic traces in water samples are analyzed to identify species (e.g., fish, marine mammals) present in an area, as in the SeaMe project [40]. |
| AI/Machine Learning Models | Process complex data, predict trends, and identify pollution sources. | Ensemble models analyze data from IoT sensor networks to forecast air quality changes or identify illicit discharge points [9] [38]. |

Quantitative Performance of Advanced Monitoring Technologies

The efficacy of any monitoring technology is ultimately judged by its quantitative performance. The following table summarizes key metrics for a selection of advanced sensors and platforms, providing a basis for comparison and selection.

Table 2: Performance Metrics of Advanced Environmental Sensors

| Technology / Platform | Target(s) | Key Performance Metrics | Application Context |
|---|---|---|---|
| Paper-based Cell-free Biosensor [35] | Hg²⁺, Pb²⁺ | LOD: 0.5 nM (Hg²⁺), 0.1 nM (Pb²⁺). Linear range: 0.5–500 nM (Hg²⁺), 1–250 nM (Pb²⁺). | On-site water quality screening |
| Enzymatic Biosensor [35] | Polybrominated diphenyl ethers (PBDEs) | LOD: 0.014 μg/L. | Analysis of landfill leachates |
| Whole-cell Microbial Biosensor [35] | Heavy metals | LOD: 0.1–1 μM. | General water quality monitoring |
| UviTec Platform [36] | BOD, COD | Analysis time: 5 seconds. | Real-time wastewater and surface water monitoring |
| MobileGuard [36] | Methane, ethane | Sensitivity: single-ppb detection. Speed: 10x faster than traditional equipment. | Leak detection in oil and gas infrastructure |
| Long-range Drone with HiDef [40] | Birds, marine mammals | Endurance: up to 15 hours. Carbon footprint: up to 90% reduction vs. aerial surveys. | Offshore wind farm environmental monitoring |