Conditional Probability Analysis: A Powerful Framework for Environmental Stressor Identification and Biomedical Risk Assessment

Abstract

This article provides a comprehensive exploration of conditional probability analysis as a critical tool for identifying environmental stressors and assessing risk. Tailored for researchers, scientists, and drug development professionals, it bridges methodologies from ecological risk assessment and clinical development. The content covers foundational principles, practical applications in regulatory and field settings, strategies for overcoming common methodological challenges, and advanced techniques for model validation. By synthesizing insights from environmental monitoring and biomedical assurance calculations, this resource offers a versatile probabilistic framework to support data-driven decision-making in complex, uncertain environments.

Understanding Conditional Probability: The Statistical Bedrock of Stressor Identification

Conditional probability is a fundamental concept in probability theory that measures the likelihood of an event occurring given that another event has already happened [1]. This powerful statistical tool enables researchers to update probabilities based on new information or observed conditions, making it indispensable for data-driven decision-making across scientific disciplines [2]. The notation P(A|B) represents the probability of event A occurring given that event B has occurred, read as "the probability of A given B" [1].

In environmental stressor identification research, conditional probability provides a mathematical framework for analyzing complex relationships between multiple stressors and biological responses. By understanding how the probability of specific environmental outcomes changes under different conditions, researchers can identify critical stressors, predict ecosystem responses, and prioritize management interventions [3] [4]. This approach moves beyond simple correlation analysis to establish predictive relationships that account for the complex dependencies inherent in environmental systems.

Theoretical Foundation

Mathematical Definition and Formula

The conditional probability of event A given event B is formally defined as:

P(A|B) = P(A∩B) / P(B), provided that P(B) > 0 [5] [1] [2]

Where:

- P(A|B) is the conditional probability of A given B

- P(A∩B) is the joint probability of both A and B occurring

- P(B) is the probability of event B

Rearranging the definition gives the probability multiplication rule, P(A∩B) = P(A|B) × P(B) [5]. The vertical bar (|) in the notation indicates the conditioning relationship, emphasizing that the probability of A is evaluated under the condition that B has occurred [1].

Distinction from Joint and Marginal Probability

It is crucial to distinguish conditional probability from related concepts:

- Joint probability P(A∩B) measures the likelihood of both events A and B occurring together without any conditioning [1]

- Marginal probability P(A) measures the likelihood of a single event without considering any other events

- Conditional probability P(A|B) assumes B has already occurred, effectively restricting the sample space to outcomes where B is satisfied [1] [2]

This distinction becomes particularly important in environmental stressor research, where researchers often need to differentiate between the overall probability of a stressor occurring and the probability of that stressor given specific environmental conditions [3].

Key Applications in Environmental Stressor Identification

Climate Stressor Analysis in Fisheries Management

Recent research has demonstrated the utility of conditional probability frameworks for analyzing regional perceptions of climate stressors across fishery management systems. Survey data revealed that perceptions of environmental stressors vary significantly across different regions, with adjacent regions more likely to agree on observed stressors than non-adjacent regions [3]. This spatial dependency creates an ideal application for conditional probability analysis.

Table 1: Regional Observation of Climate Stressors in US Fisheries

| Stressor Type | Regions Observing Current Impacts | Regions Predicting Future Impacts |
|---|---|---|
| Species Distribution Changes | 6 out of 8 regions | 2 out of 8 regions |
| Temperature Changes | 5 out of 8 regions | 3 out of 8 regions |
| Ocean Acidification | 4 out of 8 regions | 4 out of 8 regions |
| Oxygen Minimum Zone Expansion | 3 out of 8 regions | 5 out of 8 regions |

In this context, conditional probability allows researchers to estimate how likely a stressor is to be reported given regional characteristics. For example, P(StressorA|RegionX) is the probability that StressorA is reported given that the observation comes from RegionX, which supports management strategies targeted to regional vulnerabilities [3].

Projection of Environmental Stressors in Marine Ecosystems

Conditional probability frameworks also support assessment of future changes in environmental stressors using climate projection models. Research on seamount chains in the Southeast Pacific has used quantile regression, which models conditional quantiles of a response variable and is therefore closely related to conditional probability, to evaluate how key biogeochemical variables are projected to change under different climate scenarios [4].

Table 2: Projected Changes in Environmental Stressors for Southeast Pacific Seamounts

| Environmental Variable | SSP245 Scenario Trend | SSP585 Scenario Trend | Biological Impact |
|---|---|---|---|
| Temperature | Increase | Strong increase | Species migration, metabolic changes |
| Dissolved Oxygen | Variable (region-dependent) | Decrease in Salas & Gómez ridge | Habitat compression |
| pH | Decrease | Strong decrease | Calcification impairment |
| Chlorophyll-a | Mostly increase | Variable | Primary productivity changes |

This approach supports estimates of conditional probabilities such as P(OxygenDecline|HighEmissions_Scenario), providing information for conservation planning under uncertainty [4]. Regional context matters in the fisheries example as well: statistical modeling of the survey responses showed that perceptions of stressors are significantly predicted by the management region in which a respondent primarily works [3].

Activity Landscape Prediction for Compound Screening

In pharmaceutical development and toxicology screening, conditional probability methods predict compound activity landscapes—a crucial application for identifying chemical stressors. Research has shown that conditional probabilistic analysis can evaluate a compound comparison methodology's ability to provide accurate information about unknown compounds and prioritize active compounds over inactive ones [6].

The methodology involves calculating conditional probability estimation functions using the formula:

F(K,N)(x) = P(ΔA(N) ≤ A* | Sim(K,N) ≥ x)

This function gives the probability that a compound pair with a similarity value ≥ x also has an activity difference ≤ A* [6]. The approach prioritized compounds better than random sampling, although its applicability varied across compound comparison methods [6].
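The estimation function can be computed directly from pairwise comparison results. The sketch below assumes each compound pair has already been reduced to a (similarity, activity difference) tuple; the function name `cp_estimation`, the example pairs, and the choice of A* = 1.0 are hypothetical illustrations, not code or values from [6].

```python
def cp_estimation(pairs, x, a_star):
    """Empirical F(x) = P(activity difference <= a_star | similarity >= x).

    pairs: (similarity, activity_difference) tuples, one per compound pair.
    Returns None when no pair reaches similarity x (the condition is empty).
    """
    similar = [diff for sim, diff in pairs if sim >= x]   # pairs meeting the condition
    if not similar:
        return None
    close = sum(1 for diff in similar if diff <= a_star)  # ...that also meet the event
    return close / len(similar)

# Hypothetical (similarity, absolute activity difference) pairs
pairs = [(0.95, 0.2), (0.90, 0.4), (0.85, 1.5), (0.70, 0.3),
         (0.60, 2.1), (0.40, 1.8), (0.30, 0.9), (0.20, 2.5)]
for x in (0.2, 0.5, 0.8):
    print(f"F({x}) = {cp_estimation(pairs, x, a_star=1.0)}")
```

With these made-up pairs, F rises from 0.5 at x = 0.2 to about 0.67 at x = 0.8, the pattern expected when more similar pairs tend to have more similar activity.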

Experimental Protocols and Methodologies

Conditional Probability Calculation Protocol

Objective: To calculate conditional probabilities from empirical data for environmental stressor identification.

Materials and Equipment:

- Environmental monitoring dataset

- Statistical software (R, Python, or specialized probability software)

- Data visualization tools

Procedure:

- Data Preparation: Organize data into a contingency table format with stressor occurrences as rows and environmental conditions as columns.

- Joint Probability Calculation: Compute P(A∩B) by dividing the number of occurrences where both stressor A and condition B are present by the total number of observations.

- Marginal Probability Calculation: Compute P(B) by dividing the number of occurrences where condition B is present by the total number of observations.

- Validation: Verify that P(B) > 0, i.e., that condition B was observed at least once, so that the conditional probability is defined.

- Conditional Probability Calculation: Apply the formula P(A|B) = P(A∩B) / P(B).

- Sensitivity Analysis: Assess how changes in condition definition affect the conditional probability.

Interpretation: The resulting conditional probability represents the likelihood of observing stressor A when condition B is present. Values significantly different from the marginal probability P(A) indicate a dependency relationship between A and B [5] [1].
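The procedure above reduces to a few lines of arithmetic on a 2 × 2 contingency table. This is a minimal sketch assuming site counts have already been tallied; the function name and example counts are hypothetical. The returned ratio P(A|B) / P(A) equals 1 under independence, so values well away from 1 flag a possible dependency that should then be tested formally (for example, with a chi-square or Fisher's exact test).

```python
def conditional_from_counts(n_ab, n_a_only, n_b_only, n_neither):
    """Probabilities from a 2 x 2 contingency table of site counts.

    n_ab: sites with stressor A and condition B both present
    n_a_only: A present, B absent
    n_b_only: B present, A absent
    n_neither: neither present
    """
    n = n_ab + n_a_only + n_b_only + n_neither
    if n == 0:
        raise ValueError("no observations")
    p_joint = n_ab / n                  # joint probability P(A ∩ B)
    p_b = (n_ab + n_b_only) / n         # marginal probability P(B)
    p_a = (n_ab + n_a_only) / n         # marginal probability P(A)
    if p_b == 0:                        # validation: P(A|B) needs P(B) > 0
        raise ValueError("condition B never observed; P(A|B) is undefined")
    p_a_given_b = p_joint / p_b         # P(A|B) = P(A ∩ B) / P(B)
    ratio = p_a_given_b / p_a if p_a > 0 else float("nan")
    return {"P(A)": p_a, "P(B)": p_b, "P(A∩B)": p_joint,
            "P(A|B)": p_a_given_b, "ratio": ratio}

# Hypothetical tallies from 100 monitoring sites
result = conditional_from_counts(n_ab=18, n_a_only=12, n_b_only=12, n_neither=58)
for name, value in result.items():
    print(f"{name}: {value:.3f}")
```

With these counts, P(A|B) = 0.18 / 0.30 = 0.6, twice the marginal P(A) = 0.3.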

Stressor Identification Using Bayesian Methods

Objective: To identify significant environmental stressors using Bayesian conditional probability frameworks.

Materials and Equipment:

- Long-term environmental monitoring data

- Bayesian statistical software (Stan, PyMC3, JAGS)

- Computational resources for model fitting

Procedure:

- Prior Probability Specification: Define prior distributions for stressor occurrences based on historical data or expert knowledge.

- Likelihood Function Definition: Specify the probability of observed data given the model parameters.

- Posterior Probability Calculation: Apply Bayes' theorem to compute updated probabilities: P(Stressor|Data) = P(Data|Stressor) × P(Stressor) / P(Data)

- Model Convergence Checking: Verify sampler convergence using diagnostic statistics (for example, the Gelman-Rubin R-hat statistic and effective sample size) and trace plots.

- Posterior Analysis: Examine the posterior distributions to identify stressors with high probability of impact.

Interpretation: Bayesian methods provide a robust framework for updating stressor probabilities as new data becomes available, allowing researchers to quantify uncertainty in stressor identification [7] [1].
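Bayes' theorem in the procedure above can be illustrated without MCMC software by evaluating it on a grid. The sketch below is a deliberately simple stand-in for a Stan, PyMC3, or JAGS model: it assumes a single parameter p, the probability that a stressor affects a site, a binomial likelihood for observing impact at k of n monitored sites, and a flat prior. The function names and counts are hypothetical.

```python
from math import comb

def grid_posterior(k, n, grid_size=1001, prior=None):
    """Posterior for p, the probability that a stressor affects a site, after
    observing impact at k of n sites, by applying Bayes' theorem on a grid."""
    grid = [i / (grid_size - 1) for i in range(grid_size)]
    if prior is None:
        prior = [1.0] * grid_size                            # flat prior P(p)
    likelihood = [comb(n, k) * p**k * (1 - p) ** (n - k) for p in grid]  # P(Data | p)
    unnormalized = [lik * pr for lik, pr in zip(likelihood, prior)]
    evidence = sum(unnormalized)                 # discrete stand-in for P(Data)
    return grid, [u / evidence for u in unnormalized]        # P(p | Data)

def summarize(grid, posterior, threshold=0.5):
    """Posterior mean of p and posterior probability that p exceeds threshold."""
    post_mean = sum(p * w for p, w in zip(grid, posterior))
    prob_above = sum(w for p, w in zip(grid, posterior) if p > threshold)
    return post_mean, prob_above

# Hypothetical monitoring result: impact observed at 7 of 10 sites
grid, posterior = grid_posterior(k=7, n=10)
post_mean, prob_above = summarize(grid, posterior)
print(f"posterior mean = {post_mean:.3f}, P(p > 0.5 | data) = {prob_above:.3f}")
```

With a flat prior this model is conjugate: the exact posterior is Beta(1 + k, 1 + n − k), with mean (k + 1) / (n + 2), which the grid result should closely match. Models with many parameters need the sampling tools listed above.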

Visualization of Conditional Probability Relationships

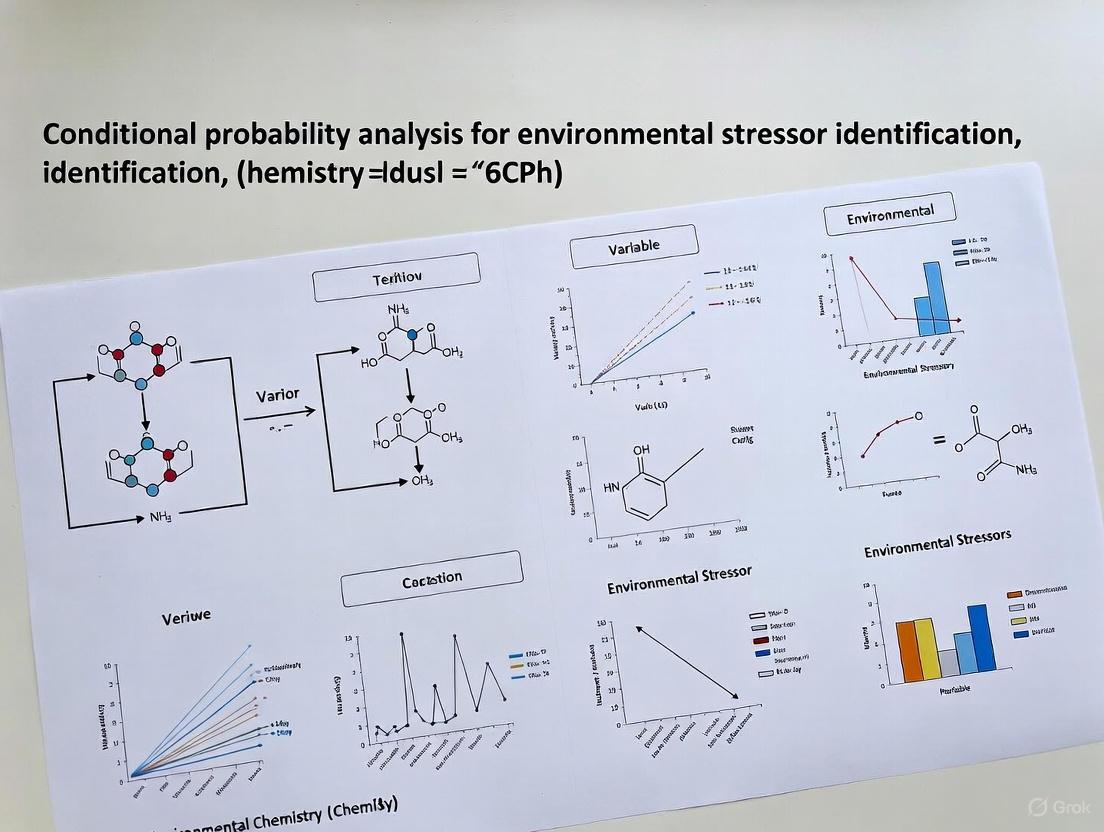

Conditional Probability Analysis Workflow

Bayesian Stressor Identification Framework

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Materials for Conditional Probability Analysis

| Research Tool | Function | Application Example |
|---|---|---|
| Statistical Software (R/Python) | Probability calculation and data analysis | Computing conditional probabilities from observational data |
| Climate Projection Models (CMIP6) | Future scenario generation | Projecting stressor probabilities under climate change |
| Bayesian Analysis Packages | Probabilistic modeling | Estimating posterior distributions for stressor impacts |
| Environmental Monitoring Equipment | Data collection | Measuring stressor presence and intensity in field studies |
| Geographic Information Systems | Spatial data analysis | Mapping regional variations in stressor probabilities |
| Survey Instruments | Perceptual data collection | Gathering expert assessments of stressor impacts [3] |
| Quantile Regression Tools | Distributional analysis | Assessing changes in entire distributions of environmental variables [4] |
| Compound Comparison Algorithms | Structural similarity assessment | Predicting activity landscapes for chemical stressors [6] |

Advanced Methodological Considerations

Handling Dependent Events in Environmental Systems

Environmental stressors rarely occur independently, creating challenges for conditional probability analysis. When events are dependent, the probability of their intersection is not simply the product of individual probabilities [2]. Researchers must identify and account for these dependencies to avoid biased estimates.

In fisheries management, for example, perceptions of species distribution changes were significantly determined by an individual's region, creating spatial dependencies that must be incorporated into probability models [3]. Similarly, in seamount ecosystems, multiple stressors like temperature increase and pH decrease often co-occur, requiring multivariate conditional probability approaches [4].

Law of Total Probability for Comprehensive Stressor Assessment

The law of total probability provides a framework for integrating conditional probabilities across multiple conditions:

P(A) = P(A|B₁) × P(B₁) + P(A|B₂) × P(B₂) + ... + P(A|Bₙ) × P(Bₙ)

This approach is particularly valuable when stressors manifest differently under various environmental conditions [2]. For example, the probability of oxygen minimum zone expansion might be calculated conditional on different climate scenarios, then combined according to the probability of each scenario occurring [4].
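The combination step can be written as a short function. This is a sketch only: the scenario-conditional probabilities and scenario weights in the usage example are hypothetical, and climate scenarios such as SSP245 and SSP585 are often not assigned probabilities at all, so any weights used here are an explicit modeling assumption.

```python
import math

def total_probability(conditionals, scenario_probs):
    """Law of total probability: P(A) = sum over i of P(A | B_i) * P(B_i).

    The scenarios B_i must be mutually exclusive and exhaustive, so their
    probabilities must sum to 1.
    """
    if len(conditionals) != len(scenario_probs):
        raise ValueError("need one P(A | B_i) per scenario")
    if not math.isclose(sum(scenario_probs), 1.0):
        raise ValueError("scenario probabilities must sum to 1")
    return sum(p_a * p_b for p_a, p_b in zip(conditionals, scenario_probs))

# Hypothetical inputs: P(OMZ expansion | scenario) and assumed scenario weights
p_omz_given_scenario = {"SSP245": 0.4, "SSP585": 0.8}
scenario_weight = {"SSP245": 0.6, "SSP585": 0.4}
scenarios = list(p_omz_given_scenario)
p_omz = total_probability([p_omz_given_scenario[s] for s in scenarios],
                          [scenario_weight[s] for s in scenarios])
print(f"P(OMZ expansion) = {p_omz:.2f}")
```

With these assumed inputs, P(OMZ expansion) = 0.4 × 0.6 + 0.8 × 0.4 = 0.56.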

Validation and Cross-Verification

Given the predictive applications of conditional probability in environmental management, validation is essential. Researchers should:

- Employ cross-validation techniques to assess model performance [6]

- Compare conditional probability estimates with mechanistic models where possible

- Conduct sensitivity analyses to identify influential assumptions

- Validate against independent datasets when available

In compound activity prediction, cross-validation has shown that conditional probability methods provide improved accuracy over random sampling, though the degree of success varies across methods [6]. Similar rigorous validation should be applied to environmental stressor identification.
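One way to cross-validate an empirical conditional probability model is to compare its out-of-fold Brier score (the mean squared difference between predicted probability and observed 0/1 outcome) with that of a model that always predicts the base rate. The sketch below assumes a single fixed stressor threshold c and assigns sites to folds by index (in practice sites would be shuffled first); the function names, data, and threshold are hypothetical. A lower score for the conditional model indicates that conditioning on the stressor adds predictive information.

```python
def fit_threshold_model(xs, ys, c):
    """Empirical P(impaired | x > c) and P(impaired | x <= c), plus the base rate.

    Falls back to the base rate when a group has no training sites.
    """
    base = sum(ys) / len(ys)
    above = [y for x, y in zip(xs, ys) if x > c]
    below = [y for x, y in zip(xs, ys) if x <= c]
    p_above = sum(above) / len(above) if above else base
    p_below = sum(below) / len(below) if below else base
    return p_above, p_below, base

def cv_brier(xs, ys, c, k=5):
    """k-fold cross-validated Brier scores: (conditional model, base-rate model)."""
    n = len(xs)
    cond_err = base_err = 0.0
    for fold in range(k):
        train = [i for i in range(n) if i % k != fold]   # deterministic folds
        test = [i for i in range(n) if i % k == fold]
        p_above, p_below, base = fit_threshold_model(
            [xs[i] for i in train], [ys[i] for i in train], c)
        for i in test:
            pred = p_above if xs[i] > c else p_below
            cond_err += (pred - ys[i]) ** 2
            base_err += (base - ys[i]) ** 2
    return cond_err / n, base_err / n   # each site is tested exactly once

xs = list(range(20))                     # hypothetical stressor values
ys = [1 if x >= 10 else 0 for x in xs]   # hypothetical impairment labels
ys[3], ys[15] = 1, 0                     # add some noise
cond, base = cv_brier(xs, ys, c=9.5, k=5)
print(f"conditional model Brier = {cond:.3f}, base-rate Brier = {base:.3f}")
```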

Conditional probability serves as a powerful analytical framework for identifying and assessing environmental stressors across diverse ecosystems. From fisheries management to pharmaceutical development, the ability to calculate and interpret probabilities conditional on specific observations or scenarios enhances our capacity to predict, prioritize, and manage complex environmental challenges.

The protocols and methodologies outlined in this document provide researchers with practical tools for implementing conditional probability analysis in their stressor identification research. By following structured approaches to probability calculation, dependency analysis, and model validation, scientists can generate robust, actionable insights to support evidence-based environmental decision-making.

The Role of Probability Surveys in Broad-Scale Ecological Risk Assessment

Probability-based surveys provide a critical methodological foundation for conducting ecological risk assessments over extensive geographic regions. By employing standardized sampling designs, these surveys generate unbiased, population-level estimates that enable researchers to quantify relationships between environmental stressors and ecological responses. This protocol details the application of conditional probability analysis within the framework of probability surveys, offering a robust empirical approach for estimating the likelihood of ecological impairment given the magnitude of exposure to specific environmental stressors. The integration of these methodologies allows for a data-driven assessment of risk that supports informed environmental management and regulatory decision-making.

Probability surveys utilize statistical sampling designs where each unit in the population has a known, non-zero probability of being selected. This foundational principle enables the extrapolation of findings from a limited set of sample locations to characterize conditions across vast and heterogeneous ecosystems, such as entire regional watersheds or biogeographical provinces [8]. The U.S. Environmental Protection Agency's (U.S. EPA) Environmental Monitoring and Assessment Program (EMAP) is a prime example of such an approach, systematically collecting biological, physical, and chemical data to evaluate the status and trends of ecological resources [9].

When coupled with conditional probability analysis, these surveys form a powerful tool for ecological risk assessment. Conditional probability analysis models the empirical relationship between stressor intensity and the probability of observing an adverse biological effect. This approach does not produce a single model equation but rather plots the probabilities of observing a defined impairment across a gradient of stressor intensity, providing a direct, quantitative estimate of risk [9]. This methodology is particularly valuable for informing the Analysis phase of the ecological risk assessment process, as outlined by the U.S. EPA, where it helps quantify the exposure-effects relationship [10].

Application Notes: Core Concepts and Case Studies

The practical application of these methods involves a sequence of steps from study design to risk estimation. The core workflow, illustrated in the diagram below, moves from regional sampling to actionable risk metrics.

Key Application Cases

The following cases demonstrate the real-world implementation of this approach across different ecosystems and stressors.

Table 1: Summary of Case Studies Applying Probability Surveys and Conditional Probability Analysis

| Ecosystem | Stressor | Biological Endpoint | Key Finding | Source |
|---|---|---|---|---|
| Mid-Atlantic Highland Streams | Percent Fines (silt/clay) in substrate | EPT Taxa Richness < 9 | Probability of impairment modeled against gradient of percent fines; n=99 sites. | [9] |
| Mid-Atlantic Freshwater Streams | Low Dissolved Oxygen (DO) | Benthic Community Impairment | Risk estimates consistent with U.S. EPA ambient water quality criteria for DO. | [8] |
| Virginian Biogeographical Province Estuaries | Low Dissolved Oxygen (DO) | Benthic Community Impairment | Broad-scale risk assessment validated against established water quality criteria. | [8] |
| Cangnan Offshore Area, China | Chlorophyll-a & Suspended Solids | Macrobenthic Biodiversity Damage | CPA used to define ecological thresholds for sustainable wind farm management. | [11] |

Quantitative Data in Risk Assessment

Modern probabilistic frameworks are also applied to emerging contaminants, moving beyond single threshold values to characterize the full distribution of risk.

Table 2: Probabilistic Ecological Risk Assessment (PERA) of Microplastics in the Hanjiang River

| Assessment Characteristic | Details | Finding |
|---|---|---|
| Pollutant | Small-sized microplastics (20–500 μm) | --- |
| Average Abundance | 7,278 particles/L (or 2.867 mg/L mass concentration) | Exceeded traditional methods by 2–3 orders of magnitude [12] |
| Dominant Morphology | 20–50 μm size group (64.7%), film-form (60.7%) | --- |
| Assessment Method | Species Sensitivity Distributions (SSD) & Joint Probability Curves (JPC) | Characterized likelihood of effects across species [12] |
| Risk Outcome | High chronic and acute ecological risk | More severe in mass-based than number-based assessment [12] |
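The SSD component of such an assessment can be sketched with a log-normal distribution fitted to species toxicity endpoints. This example assumes a log-normal SSD (a common but not universal choice), uses hypothetical endpoint values in mg/L, and does not reproduce the joint probability curve step or any data from [12]. The hazardous concentration for 5% of species (HC5) is the 5th percentile of the fitted distribution, and the potentially affected fraction at an exposure concentration is the fitted cumulative probability at that concentration.

```python
import math
from statistics import NormalDist, mean, stdev

def fit_lognormal_ssd(endpoints):
    """Fit a log-normal SSD: ln(toxicity endpoint) ~ Normal(mu, sigma) across species."""
    logs = [math.log(v) for v in endpoints]
    return NormalDist(mean(logs), stdev(logs))

def hazardous_concentration(ssd, fraction=0.05):
    """Concentration expected to affect the given fraction of species (HC5 by default)."""
    return math.exp(ssd.inv_cdf(fraction))

def potentially_affected_fraction(ssd, concentration):
    """Fraction of species whose endpoint lies below the exposure concentration."""
    return ssd.cdf(math.log(concentration))

# Hypothetical chronic endpoints (mg/L) for eight test species
endpoints = [0.5, 1.2, 2.0, 3.5, 5.0, 8.0, 12.0, 20.0]
ssd = fit_lognormal_ssd(endpoints)
print(f"HC5 = {hazardous_concentration(ssd):.3f} mg/L")
print(f"PAF at 1.0 mg/L = {potentially_affected_fraction(ssd, 1.0):.2%}")
```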

Detailed Experimental Protocols

This section provides a step-by-step guide for implementing a probability survey and conducting a conditional probability analysis for ecological risk assessment.

Protocol 1: Implementing a Probability-Based Survey Design

Objective: To collect unbiased, representative data on ecological responses and environmental stressors across a broad geographic region.

Materials & Reagents:

- GPS Unit: For precise spatial location of sampling sites.

- Field Sampling Kits: Specific to media (e.g., benthic kicknet with 595-micron mesh, water samplers, sediment corers).

- Preservatives: (e.g., ethanol for benthic macroinvertebrates) for sample integrity.

- Calibrated Multiparameter Meter: For in-situ measurement of parameters like dissolved oxygen, pH, conductivity, and temperature.

- Chain of Custody Forms: For documenting sample handling and transfer.

Procedure:

- Define the Target Population: Clearly specify the spatial extent of the ecosystem to be assessed (e.g., "all wadeable streams in the Mid-Atlantic Highlands").

- Develop Sample Frame: Create a list or GIS layer of all possible sample locations within the target population.

- Select Sample Sites: Use a randomized or stratified random design to select sites from the sample frame, ensuring each element has a known probability of selection. Stratification by factors like ecoregion or elevation can improve precision.

- Conduct Field Sampling:

- Execute sampling during specified index periods (e.g., spring low-flow) to minimize natural variability [9].

- At each site, collect concurrent measurements of the biological endpoint (e.g., benthic macroinvertebrate assemblage) and the environmental stressor(s) of concern (e.g., percent fines, dissolved oxygen).

- Adhere to standardized, published methods for all collection and handling procedures to ensure data consistency and quality [9].

- Laboratory Processing: Process biological samples (e.g., taxonomic identification of benthic organisms) and chemical samples according to established QA/QC protocols.
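The site selection step can be sketched as stratified simple random sampling without replacement. This sketch assumes a sample frame already labeled by stratum (for example, ecoregion); each selected site's inclusion probability is n_h / N_h for its stratum, and its design weight is the reciprocal. The function name, frame, and stratum labels are hypothetical, and operational surveys often use more elaborate, spatially balanced designs, which this does not implement.

```python
import random
from collections import defaultdict

def stratified_sample(frame, n_per_stratum, seed=None):
    """Stratified simple random sampling without replacement.

    frame: list of (site_id, stratum) pairs forming the sample frame
    n_per_stratum: dict mapping stratum -> number of sites to select
    Returns (site_id, stratum, inclusion_probability, design_weight) tuples.
    """
    rng = random.Random(seed)
    sites_by_stratum = defaultdict(list)
    for site_id, stratum in frame:
        sites_by_stratum[stratum].append(site_id)
    selected = []
    for stratum, sites in sites_by_stratum.items():
        n_h = n_per_stratum.get(stratum, 0)
        if not 1 <= n_h <= len(sites):
            # every element needs a known, non-zero inclusion probability
            raise ValueError(f"stratum {stratum!r}: need 1 to {len(sites)} sites, got {n_h}")
        pi = n_h / len(sites)                       # inclusion probability n_h / N_h
        for site_id in rng.sample(sites, n_h):
            selected.append((site_id, stratum, pi, 1.0 / pi))  # design weight 1 / pi
    return selected

# Hypothetical frame: 100 stream segments in one ecoregion, 50 in another
frame = ([(f"A{i:03d}", "ecoregion A") for i in range(100)]
         + [(f"B{i:03d}", "ecoregion B") for i in range(50)])
selected = stratified_sample(frame, {"ecoregion A": 10, "ecoregion B": 10}, seed=1)
# the sum of design weights estimates the number of sites in the frame
print(sum(weight for *_, weight in selected))
```

Summing the design weights of the selected sites recovers the size of the sample frame, which is a quick check that the inclusion probabilities are consistent; the same weights can later be carried into weighted conditional probability estimates.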

Protocol 2: Conditional Probability Analysis for Risk Estimation

Objective: To model the empirical relationship between stressor intensity and the probability of ecological impairment.

Materials & Software:

- Statistical Software: (e.g., R, S-Plus, Python with scikit-learn) capable of running regression and probability models.

- Curated Dataset: Combined stressor and response data from the probability survey.

Procedure:

- Define Impairment Threshold: Establish a dichotomous condition for the biological endpoint based on scientific literature or management goals. For example, define "impairment" as an EPT Taxa Richness of less than 9 [9].

- Prepare Data: Pair each biological response measurement with the concurrent stressor measurement from the same site.

- Model Development: Fit a statistical model (e.g., logistic regression, non-linear curve fit) to the data. The independent variable is the stressor intensity, and the dependent variable is the binary condition (impaired/not impaired) or the probability of impairment.

- Generate Conditional Probability Curve:

- Plot the fitted model to show how the probability of impairment changes across the observed gradient of the stressor.

- Calculate and plot 95% confidence intervals (e.g., as dashed lines) around the central tendency to express uncertainty [9].

- Interpret Results: The resulting curve allows for direct estimation of risk. For instance, one can read the probability of benthic impairment associated with a specific dissolved oxygen concentration [8].
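Logistic regression, one of the model options named in the procedure, can be fitted with a few lines of Newton-Raphson iteration. This sketch assumes a single stressor, a 0/1 impairment indicator, and data that are not perfectly separated (otherwise the coefficients diverge); the percent-fines values and impairment labels are hypothetical. The 95% band is a pointwise Wald interval computed on the logit scale and back-transformed, one common way to draw the dashed confidence lines; in practice a package such as R's `glm` or Python's statsmodels would normally be used instead.

```python
import math

def _sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def fit_logistic(x, y, max_iter=100, tol=1e-10):
    """Fit logit P(impaired) = b0 + b1 * stressor by Newton-Raphson.

    Returns (b0, b1, cov), where cov = (var_b0, cov_b0_b1, var_b1) is the inverse
    Fisher information evaluated just before the final (converged) update.
    """
    b0 = b1 = 0.0
    for _ in range(max_iter):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for xi, yi in zip(x, y):
            p = _sigmoid(b0 + b1 * xi)
            w = p * (1.0 - p)
            g0 += yi - p              # score (gradient of the log-likelihood)
            g1 += (yi - p) * xi
            h00 += w                  # Fisher information entries
            h01 += w * xi
            h11 += w * xi * xi
        det = h00 * h11 - h01 * h01
        d0 = (h11 * g0 - h01 * g1) / det  # Newton step: information^-1 @ score
        d1 = (h00 * g1 - h01 * g0) / det
        b0, b1 = b0 + d0, b1 + d1
        if max(abs(d0), abs(d1)) < tol:
            break
    return b0, b1, (h11 / det, -h01 / det, h00 / det)

def impairment_probability(b0, b1, cov, x, z=1.96):
    """Fitted P(impaired | stressor = x) with a pointwise Wald 95% interval."""
    var_b0, cov01, var_b1 = cov
    eta = b0 + b1 * x
    se = math.sqrt(var_b0 + 2.0 * x * cov01 + x * x * var_b1)
    return _sigmoid(eta), _sigmoid(eta - z * se), _sigmoid(eta + z * se)

# Hypothetical survey data: percent fines and impairment (e.g., EPT richness < 9)
fines = [5, 10, 15, 20, 25, 30, 35, 40, 45, 50, 55, 60]
impaired = [0, 0, 0, 1, 0, 0, 1, 0, 1, 1, 1, 1]
b0, b1, cov = fit_logistic(fines, impaired)
for f in range(0, 61, 10):
    p, lo, hi = impairment_probability(b0, b1, cov, f)
    print(f"fines {f:>2}%: P(impaired) = {p:.2f} (95% CI {lo:.2f}-{hi:.2f})")
```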

The Scientist's Toolkit: Essential Reagents & Materials

The following table lists key materials and their functions for conducting field surveys and subsequent analyses.

Table 3: Essential Research Reagent Solutions and Materials

| Item | Function/Application |
|---|---|
| Benthic Kicknet (595 μm mesh) | Standardized collection of benthic macroinvertebrate communities in wadeable streams [9]. |
| Laser Direct Infrared (LDIR) Imaging | Automated identification and quantification of small-sized microplastics (20-500 μm) in environmental samples, providing high-resolution abundance and polymer type data [12]. |
| Calibrated Dissolved Oxygen Sensor | Precise in-situ measurement of a key water quality stressor that can cause benthic impairment [8]. |
| Taxonomic Guides & Databases | Accurate identification of benthic organisms to the required taxonomic level (e.g., genus or species) for calculating metrics like EPT richness. |
| Statistical Software (R, S-Plus) | Performing conditional probability analysis, including non-linear curve fitting and confidence interval estimation [9]. |
| Species Sensitivity Distribution (SSD) Models | A probabilistic framework for integrating multi-species toxicity data with environmental monitoring data to quantify the likelihood of ecological risk from stressors like microplastics [12]. |

The integration of probability-based survey designs with conditional probability analysis constitutes a rigorous, empirical methodology for broad-scale ecological risk assessment. This approach directly addresses the challenge of extrapolating from discrete samples to landscape-level inferences, providing environmental managers with quantifiable estimates of the risk posed by environmental stressors. The protocols outlined herein, from field sampling to statistical modeling, offer a replicable framework for generating scientifically defensible evidence to inform watershed management, regulatory standards, and the conservation of ecological resources.

A fundamental challenge in environmental science is definitively linking observed biological impairment in aquatic ecosystems to its specific causes. These systems are often affected by multiple, co-occurring stressors originating from anthropogenic activities such as urbanization, agriculture, and resource extraction [13]. The Causal Analysis/Diagnosis Decision Information System (CADDIS), developed by the U.S. Environmental Protection Agency (EPA), provides a structured, weight-of-evidence framework to help scientists and resource managers identify the primary causes of biological impairment [14]. This framework is critical because management and restoration efforts often fail to improve biological conditions when they do not target the true primary stressors [13]. This application note details how conditional probability analysis (CPA) can be integrated within the CADDIS framework to strengthen causal assessments in aquatic systems, providing researchers with robust protocols for stressor identification.

Conceptual Framework for Stressor Identification

The process of linking stressors to biological effects follows a logical, evidence-based pathway. The diagram below outlines the core workflow for cause-effect analysis.

The framework begins with the observation of an undesirable biological effect, such as a reduced diversity of benthic macroinvertebrate communities. Investigators then list plausible candidate causes based on local knowledge and site conditions [14]. The core of the analysis involves generating and weighing multiple lines of evidence to evaluate the candidate causes. This includes examining the spatial and temporal co-occurrence of the stressor and effect, analyzing stressor-response relationships from field data, and incorporating data from laboratory or experimental studies [14] [13]. The evidence is then systematically compared to established criteria for causation. Finally, the cause(s) that best explain the observed impairment are identified, providing a scientifically defensible basis for management actions.

The Role of Conditional Probability Analysis

Conditional Probability Analysis (CPA) is a powerful empirical tool for quantifying stressor-response relationships from field data, particularly data collected through probability-based survey designs [15] [8]. It answers a critical question for causal assessment: What is the probability of observing a biological impairment given the presence or exceedance of a specific stressor?

Theoretical Foundation

CPA leverages the concept of conditional probability, expressed as P(Y|X), which is the probability of event Y (e.g., biological impairment) occurring given that event X (e.g., a stressor level is exceeded) has occurred [15]. Formally, it is calculated by dividing the joint probability of observing both events by the probability of the conditioning event:

P(Impairment | Stressor > Threshold) = P(Impairment ∩ Stressor > Threshold) / P(Stressor > Threshold) [15]

In practice, this involves:

- Dichotomizing the Biological Response: A threshold is applied to a continuous biological response metric to categorize sites as "impaired" or "not impaired" [15]. For example, a site might be classified as impaired if the relative abundance of clinger taxa is less than 40%.

- Calculating Probabilities: The probability of impairment is calculated across a gradient of the stressor variable. This involves determining the proportion of sites that are impaired within different ranges of the stressor value.

Application Workflow

The following diagram details the step-by-step process for implementing CPA.

For instance, an analysis might reveal that the probability of observing a low relative abundance of clinger taxa increases from 60% to 80% as the percentage of fine sediments in the substrate increases from 0% to 50% [15]. This provides strong, quantifiable evidence that fine sediment is a likely cause of impairment for this biological endpoint.

Integrated Analysis Protocols

Exploratory Data Analysis for Causal Assessment

Before conducting formal causal analyses like CPA, Exploratory Data Analysis (EDA) is an essential first step to identify general patterns, outliers, and relationships between potential stressors and biological responses [15]. Key EDA techniques include:

- Variable Distributions: Examine the distribution of stressor and response variables using histograms, boxplots, and quantile-quantile (Q-Q) plots. This helps in understanding the data's structure and identifying transformations (e.g., log-transformation) that may be needed for subsequent analyses [15].

- Scatterplots and Correlation Analysis: Scatterplots visually reveal the form (linear or non-linear) and strength of relationships between pairs of variables. Correlation coefficients (e.g., Pearson's r, Spearman's ρ) provide a quantitative measure of these associations and can reveal confounding factors where stressors are highly correlated with each other [15].
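Both correlation coefficients can be computed without special software. The sketch below assumes complete, non-constant paired data (the functions divide by the variance) and uses average ranks for ties in Spearman's ρ; the stressor values are hypothetical. A Spearman ρ near 1 alongside a lower Pearson r suggests a monotonic but non-linear relationship, and strongly correlated stressor pairs flag potential confounding.

```python
import math

def pearson(x, y):
    """Pearson's r for paired samples."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def average_ranks(values):
    """1-based ranks, with tied values sharing the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1          # average of positions i+1 .. j+1
        for pos in range(i, j + 1):
            ranks[order[pos]] = avg
        i = j + 1
    return ranks

def spearman(x, y):
    """Spearman's rho: Pearson's r computed on ranks."""
    return pearson(average_ranks(x), average_ranks(y))

fines = [2, 5, 8, 12, 15, 20, 25, 30]                  # hypothetical % fine sediment
nitrate = [0.1, 0.15, 0.2, 0.4, 0.5, 0.9, 1.6, 2.5]    # hypothetical mg/L
print(f"Pearson r = {pearson(fines, nitrate):.2f}")
print(f"Spearman rho = {spearman(fines, nitrate):.2f}")
```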

Protocol: Conducting a Conditional Probability Analysis

Objective: To quantify the probability of a biological impairment occurring given different levels of a potential stressor.

Materials and Data Requirements:

- A dataset from a probability-based survey design (e.g., EPA's Environmental Monitoring and Assessment Program) that includes concurrent measurements of biological condition and potential stressors [8].

- Statistical software (e.g., R, Python) or specialized tools like EPA's CADStat, which includes a module for calculating conditional probabilities [15].

Step-by-Step Procedure:

Define Biological Impairment:

- Select a relevant benthic macroinvertebrate metric (e.g., taxa richness, EPT index, relative abundance of clingers).

- Establish a scientifically justified threshold that dichotomizes the metric into "impaired" and "not impaired" categories [15].

Prepare Stressor Data:

- Select a continuous stressor variable (e.g., fine sediment percentage, nutrient concentration).

- Ensure the stressor data aligns spatially and temporally with the biological data.

Calculate Conditional Probabilities:

- For a given stressor threshold (Xc), identify all sites where the stressor value exceeds Xc.

- Among those sites, calculate the proportion that are biologically impaired.

- This proportion is the conditional probability P(Impairment | Stressor > Xc).

- Repeat this calculation for multiple stressor thresholds across the observed range of the data [15].

Visualize and Interpret Results:

- Plot the calculated conditional probabilities against the stressor thresholds to create a conditional probability curve.

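- Interpret the curve: A sharp increase in the probability of impairment over a specific stressor range is evidence consistent with a causal relationship. Because field data are correlative, weigh it alongside the other lines of evidence and check for correlated, potentially confounding stressors.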
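The calculation steps above translate directly into code. This sketch assumes per-site stressor values and a biological metric already dichotomized into impaired / not impaired (here, hypothetically, relative clinger abundance below 40%). The optional `weights` argument is an assumption for surveys whose sites carry design weights, in which case each proportion becomes a weighted proportion. All data values are hypothetical.

```python
def conditional_probability_curve(stressor, impaired, thresholds, weights=None):
    """P(impairment | stressor > Xc) for each threshold Xc.

    stressor: stressor value at each site
    impaired: True/False impairment status at each site
    weights: optional survey design weights (equal weights if omitted)
    Returns (Xc, probability, n_sites_exceeding) tuples; probability is None
    when no weight falls above Xc, because the conditioning event is then empty.
    """
    if weights is None:
        weights = [1.0] * len(stressor)
    curve = []
    for xc in thresholds:
        exceed = [(imp, w) for s, imp, w in zip(stressor, impaired, weights) if s > xc]
        total_w = sum(w for _, w in exceed)
        if total_w == 0:
            curve.append((xc, None, len(exceed)))
            continue
        impaired_w = sum(w for imp, w in exceed if imp)
        curve.append((xc, impaired_w / total_w, len(exceed)))
    return curve

# Hypothetical site data: percent fines and relative clinger abundance (%)
fines = [2, 5, 8, 12, 15, 20, 25, 30, 35, 40, 45, 50]
clinger_pct = [70, 65, 55, 60, 38, 50, 35, 42, 30, 25, 33, 20]
impaired = [c < 40 for c in clinger_pct]      # dichotomize: impaired if < 40%
for xc, p, n in conditional_probability_curve(fines, impaired, [0, 10, 20, 30, 40]):
    print(f"fines > {xc:>2}%: P(impaired) = {p:.2f} (n = {n})")
```

Reporting the number of sites above each threshold alongside the probability is worthwhile, because the estimate rests on fewer and fewer sites as Xc approaches the upper end of the observed range.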

Research Reagent Solutions and Tools

Table 1: Essential Tools and Data Sources for Stressor Identification Analysis

| Tool/Solution Name | Type | Primary Function | Key Features & Context of Use |
|---|---|---|---|
| CADDIS Platform | Information System | Framework & Guidance | Provides the structured, weight-of-evidence methodology for causal assessment, including volumes on Stressor Identification, sources, and data analysis techniques [14]. |
| CADStat | Software Tool | Data Analysis | A menu-driven software package that includes specific tools for conducting conditional probability analysis and correlation analysis within the CADDIS workflow [15]. |
| Probability Survey Data (e.g., EMAP) | Data Source | Empirical Data Input | Data from statistically designed surveys (e.g., EPA's Environmental Monitoring and Assessment Program) that are essential for generating unbiased, population-level estimates of risk using CPA [15] [8]. |
| Stressor-Response Databases | Database | Evidence Synthesis | Curated databases within CADDIS (Volume 5) that store and display evidence from scientific literature on causal pathways, helping to inform and evaluate hypotheses [14]. |

Application in Environmental Management

Applying this conceptual framework to real-world synthesis efforts reveals key stressors driving impairment. A major study in the Chesapeake Bay watershed, which utilized both literature review and regulatory impairment listings, identified geomorphology (physical habitat and sediment), salinity, and nutrients as the most frequently reported stressors causing biological impairment in freshwater streams [13]. This integrated approach allows resource managers to prioritize monitoring and restoration efforts. For example, knowing that physical habitat is a primary stressor in agricultural areas, while salinity is a major concern in urban and mining settings, enables targeted management actions that are more likely to succeed [13].

The combination of a rigorous conceptual framework like CADDIS, coupled with quantitative empirical tools like Conditional Probability Analysis, provides a powerful approach for moving from correlation to causation in complex environmental systems. This, in turn, lays the groundwork for effective and defensible watershed restoration and protection.

Bayesian statistics represents a fundamental approach to probabilistic inference that interprets probability as a measure of believability or confidence in an event occurring, rather than merely as a long-run frequency [16]. This philosophical framework provides researchers across environmental and clinical domains with powerful mathematical tools to rationally update prior beliefs in light of new evidence [17]. The core mechanism enabling this learning process is Bayes' theorem, which formally combines prior knowledge with current data to produce posterior distributions that represent updated understanding of parameters of interest [17].

The Bayesian approach has gained significant traction in both environmental and clinical research due to its transparent handling of uncertainty and its flexibility in incorporating diverse forms of evidence [18] [19]. In environmental science, Bayesian methods help address complex, multi-stressor problems where traditional frequentist approaches often struggle [20]. Similarly, in clinical research, Bayesian statistics enable more adaptive trial designs and facilitate the incorporation of historical data and expert knowledge [19]. This protocol document outlines the foundational principles and practical methodologies for applying Bayesian inference across these domains, with particular emphasis on their application within conditional probability analysis for environmental stressor identification research.

Theoretical Framework and Key Concepts

Core Principles of Bayesian Inference

Bayesian statistics operates on three essential ingredients: (1) prior distributions representing background knowledge about parameters before seeing current data; (2) likelihood functions expressing the probability of the observed data given specific parameter values; and (3) posterior distributions combining prior knowledge and observed evidence through Bayes' theorem [17]. The mathematical formulation of Bayes' theorem is:

$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}$$

where P(A|B) is the posterior probability of A given B, P(B|A) is the likelihood of B given A, P(A) is the prior probability of A, and P(B) is the marginal probability of B [16].

This framework enables researchers to treat unknown parameters as random variables described by probability distributions, contrasting with the frequentist view where parameters are fixed but unknown quantities [17]. This probabilistic treatment of parameters naturally accommodates uncertainty quantification throughout the analysis.
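As a worked illustration of both views, the sketch below applies Bayes' theorem to two events and then treats an impairment rate θ as a random variable with a conjugate Beta prior. All probabilities and counts are hypothetical, and the Beta-binomial model is one convenient choice among many possible priors and likelihoods.

```python
# Two small illustrations of Bayesian updating; all numbers are hypothetical.
from fractions import Fraction


def bayes_posterior(prior_a, p_b_given_a, p_b_given_not_a):
    """P(A|B) = P(B|A) P(A) / P(B), with P(B) from the law of total probability."""
    p_b = p_b_given_a * prior_a + p_b_given_not_a * (1 - prior_a)
    return p_b_given_a * prior_a / p_b


def beta_binomial_update(alpha, beta, successes, trials):
    """Conjugate update: Beta(alpha, beta) prior + binomial data -> Beta posterior."""
    return alpha + successes, beta + trials - successes


def beta_mean(alpha, beta):
    """Mean of a Beta(alpha, beta) distribution."""
    return alpha / (alpha + beta)


if __name__ == "__main__":
    # A = site impaired (prior 0.3); B = high stressor reading observed.
    print(bayes_posterior(Fraction(3, 10), Fraction(4, 5), Fraction(1, 5)))  # 12/19
    # Parameter view: uniform Beta(1, 1) prior on the impairment rate, 12 of 20 sites impaired.
    a, b = beta_binomial_update(1, 1, successes=12, trials=20)
    print(a, b, round(beta_mean(a, b), 3))
```

Exact fractions are used in the first example only to make the arithmetic easy to follow; ordinary floats work the same way.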

Comparative Advantages in Environmental and Clinical Contexts

Table 1: Advantages of Bayesian Methods in Environmental and Clinical Research

| Feature | Environmental Applications | Clinical Applications |
|---|---|---|
| Uncertainty Quantification | Explicitly represents uncertainty in complex ecological systems [20] | Propagates uncertainty through trial simulations and decision models [19] |
| Information Integration | Combines expert knowledge with observational data [21] | Incorporates historical data and external evidence into trials [19] |
| Adaptive Learning | Updates understanding as new monitoring data becomes available [18] | Enables adaptive trial designs with modifications based on interim results [19] |
| Complex System Modeling | Handles multiple interacting stressors and non-linear responses [22] | Models complex dose-response relationships and biomarker interactions [23] |

Bayesian Applications in Environmental Stressor Identification

Stressor-Response Analysis Using Bayesian Networks

Bayesian networks (BNs) have emerged as particularly valuable tools for identifying and quantifying environmental stressor-response relationships [24] [20]. These probabilistic graphical models represent systems as networks of interactions between variables via cause-effect relationship diagrams, enabling researchers to map interdependencies among environmental, social, and biological predictors [23]. A BN consists of two main components: (1) a directed acyclic graph (DAG) depicting conditional dependencies between variables, and (2) conditional probability distributions quantifying the strength and shape of these dependencies [21] [20].
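To make the two components concrete, the sketch below encodes a hypothetical three-node DAG (Sediment → Impaired ← Temperature) with made-up conditional probability tables and answers queries by brute-force enumeration of the joint distribution. Enumeration is only practical for very small networks; dedicated BN software uses more efficient inference algorithms.

```python
# Sketch: inference by enumeration in a tiny hypothetical Bayesian network.
# DAG: Sediment -> Impaired <- Temperature. All probabilities are made up.
from itertools import product

P_SEDIMENT_HIGH = 0.4  # prior for root node Sediment
P_TEMP_HIGH = 0.3      # prior for root node Temperature
# CPT: P(Impaired = True | sediment_high, temperature_high)
P_IMPAIRED = {
    (True, True): 0.9,
    (True, False): 0.7,
    (False, True): 0.5,
    (False, False): 0.1,
}
NAMES = ("sediment", "temperature", "impaired")


def joint(sediment, temperature, impaired):
    """Joint probability factorised along the DAG: P(S) * P(T) * P(I | S, T)."""
    p_s = P_SEDIMENT_HIGH if sediment else 1 - P_SEDIMENT_HIGH
    p_t = P_TEMP_HIGH if temperature else 1 - P_TEMP_HIGH
    p_i = P_IMPAIRED[(sediment, temperature)]
    return p_s * p_t * (p_i if impaired else 1 - p_i)


def query(target, evidence):
    """P(target = True | evidence), summing the joint over all consistent states."""
    num = den = 0.0
    for states in product((True, False), repeat=len(NAMES)):
        assignment = dict(zip(NAMES, states))
        if any(assignment[k] != v for k, v in evidence.items()):
            continue  # state contradicts the evidence
        p = joint(*states)
        den += p
        if assignment[target]:
            num += p
    return num / den


if __name__ == "__main__":
    print(query("impaired", {"sediment": True}))  # predictive: cause -> effect
    print(query("sediment", {"impaired": True}))  # diagnostic: effect -> cause
```

With these illustrative tables, the predictive query gives approximately 0.76, while the diagnostic query runs the reasoning backwards from an observed impairment to a candidate stressor.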

In freshwater ecosystem studies, for example, BNs have been successfully applied to identify how water quality and physical habitat stressors influence benthic macroinvertebrate response metrics [24]. Research demonstrates that in mountainous regions, water temperature and specific conductivity are prevalent stressors, while in agriculturally dominated regions, physical habitat alterations predominate [24]. These models enable researchers to predict changes in biological indicators based on habitat and water quality parameters, supporting the implementation of management frameworks such as resist-accept-direct (RAD) [24].

Meta-Analysis of Multiple Stressor Effects

Recent advances in Bayesian meta-analysis have enabled more systematic quantification of individual stressor effects across diverse ecosystems. A global synthesis of stressor-response relationships across five key riverine organism groups (prokaryotes, algae, macrophytes, invertebrates, and fish) utilized Bayesian meta-analyses to quantify responses to the most prevalent stressors [22]. This analysis revealed consistent biodiversity loss associated with elevated salinity, oxygen depletion, and fine sediment accumulation across taxa, while responses to nutrient enrichment and warming varied among organism groups [22].

Table 2: Bayesian Meta-Analysis of Stressor Effects on Riverine Taxa [22]

| Stressor | Prokaryotes | Algae | Macrophytes | Invertebrates | Fish |
|---|---|---|---|---|---|
| Salinity | Variable | Strong negative | Negative | Strong negative | Negative |
| Oxygen depletion | No clear trend | Weak negative | Positive | Strong negative | Negative |
| Fine sediment | Insufficient data | Weak negative | Negative | Strong negative | Negative |
| Nutrient enrichment | Contrasting (N+/P-) | Positive | Negative | Weak | Minimal |
| Warming | Positive | Variable | Negative | Negative | Positive |

The meta-analysis compiled 1,332 stressor-response relationships from 276 studies across 87 countries, with nearly half focusing on invertebrates [22]. This quantitative baseline enables more accurate prediction of biodiversity responses to increasing anthropogenic pressures and informs targeted conservation strategies.
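The core of a Bayesian random-effects meta-analysis can be sketched with a normal-normal model. This is a simplified illustration, not the model used in the cited synthesis: effect sizes are assumed to be on a common scale (for example, log response ratios) with known within-study variances, and the between-study variance τ² is fixed rather than given its own prior, which makes the posterior for the overall effect normal.

```python
# Sketch: normal-normal random-effects pooling with tau^2 treated as known.
# A full Bayesian meta-analysis would usually also place a prior on tau^2.
import math
from statistics import NormalDist


def pooled_posterior(effects, variances, tau2, prior_mean=0.0, prior_var=math.inf):
    """Posterior (mean, sd) of the overall effect mu.

    Model: y_i ~ N(mu, v_i + tau2) after integrating out study-level effects,
    with prior mu ~ N(prior_mean, prior_var); prior_var=inf gives a flat prior.
    """
    precision = 1.0 / prior_var          # 0.0 for a flat prior
    weighted_sum = prior_mean / prior_var
    for y, v in zip(effects, variances):
        w = 1.0 / (v + tau2)             # marginal precision of study i
        precision += w
        weighted_sum += w * y
    post_var = 1.0 / precision
    return weighted_sum * post_var, math.sqrt(post_var)


def prob_negative(mean, sd):
    """Posterior probability that the pooled effect is below zero (a loss)."""
    return NormalDist(mean, sd).cdf(0.0)


if __name__ == "__main__":
    # Hypothetical log response ratios of invertebrate richness to one stressor.
    m, s = pooled_posterior([-0.6, -0.3, -0.45], [0.04, 0.09, 0.02], tau2=0.02)
    print(round(m, 3), round(s, 3), round(prob_negative(m, s), 3))
```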

Bayesian Methods in Clinical Research and Drug Development

Evolution of Bayesian Clinical Trial Design

Bayesian methods have transformed clinical trial design and analysis through the implementation of adaptive designs that can modify trial characteristics based on accumulating data [19]. The historical development of Bayesian clinical trials has been influenced by foundational statisticians like Leonard J. Savage, with wider adoption facilitated by computational advances such as Markov Chain Monte Carlo (MCMC) methods [19]. These developments have enabled more efficient trial designs that can respond to emerging patterns while maintaining statistical rigor.

Notable examples of successful Bayesian trials include the I-SPY 2 platform trial for breast cancer and REMAP-CAP for critical care, which implemented adaptive randomization and used Bayesian methods to evaluate treatment efficacy across multiple subgroups [19]. These trials demonstrate how Bayesian approaches can accelerate therapeutic development by more efficiently allocating patients to promising treatments and incorporating external information through prior distributions.

Regulatory Acceptance and Implementation

Regulatory acceptance of Bayesian methods has grown substantially, with agencies like the FDA providing guidance on their use in medical product development [19]. The European Medicines Agency workshop on "The use of Bayesian statistics in clinical development", scheduled for June 2025, further signals the mainstream adoption of these approaches [25]. This regulatory acceptance has been facilitated by methodological advances that address potential concerns about subjectivity in prior specification and type I error control.

Bayesian methods offer particular advantages in settings where patient populations are limited, such as rare diseases, or where rapid decision-making is critical, as demonstrated during the COVID-19 pandemic [19]. The ability to incorporate external data through prior distributions and to make probabilistic statements about treatment effects aligns well with clinical decision-making processes.

Integrated Methodological Protocols

Protocol for Bayesian Network Development in Environmental Stressor Identification

Objective: To construct a Bayesian network for identifying key environmental stressors and quantifying their effects on biological endpoints.

Materials and Software:

- R statistical environment with bnlearn, BNSL, or gRain packages

- Python with pgmpy library (alternative)

- Commercial BN software (GeNIe, Netica, Hugin)

- Dataset with complete cases for structure learning

Procedure:

Problem Formulation and Variable Selection

- Define the environmental management objective

- Identify potential stressors (e.g., chemical, physical, biological)

- Select relevant biological response indicators

- Consider contextual variables (e.g., spatial, temporal, environmental)

Network Structure Development

- Option A: Expert-driven structure specification

  - Convene domain experts to identify causal relationships

  - Create directed acyclic graph (DAG) representing proposed causal structure

  - Validate structure with independent expert review

- Option B: Data-driven structure learning

  - Apply constraint-based algorithms (Grow-Shrink, Incremental Association)

  - Implement score-based algorithms (Hill-Climbing, Tabu Search)

  - Use hybrid approaches combining expert knowledge and algorithmic learning


Parameter Estimation

- Define conditional probability distributions for each node

- Use expert elicitation for prior probabilities where data are limited

- Apply Bayesian parameter estimation with observational data

- Validate conditional probabilities with holdout data
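For the parameter-estimation step, one simple Bayesian estimator for a binary node places an independent Beta prior on each parent configuration and updates it with observed counts. The prior pseudo-counts are where elicited expert knowledge can enter. Node names, records, and default pseudo-counts below are hypothetical, and the data are assumed complete and already discretised.

```python
# Sketch: Bayesian estimate of a binary node's conditional probability table.
# Each parent configuration gets an independent Beta(a, b) prior; a and b act as
# pseudo-counts and could come from expert elicitation (e.g. a belief of 0.7
# worth 10 observations -> a=7, b=3). Data and names are hypothetical.
from collections import Counter


def estimate_cpt(records, child, parents, a=1.0, b=1.0):
    """Posterior mean of P(child is True | parent configuration), observed configurations only."""
    totals, hits = Counter(), Counter()
    for row in records:
        key = tuple(row[p] for p in parents)
        totals[key] += 1
        hits[key] += bool(row[child])
    return {key: (hits[key] + a) / (totals[key] + a + b) for key in totals}


def cpt_lookup(cpt, key, a=1.0, b=1.0):
    """Fall back to the prior mean a / (a + b) for unobserved parent configurations."""
    return cpt.get(key, a / (a + b))


if __name__ == "__main__":
    recs = [
        {"sediment": "high", "impaired": True},
        {"sediment": "high", "impaired": True},
        {"sediment": "high", "impaired": False},
        {"sediment": "low", "impaired": False},
    ]
    print(estimate_cpt(recs, "impaired", ["sediment"]))
```

Holdout validation then compares these estimated probabilities with impairment frequencies in records not used for fitting.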

Model Validation and Refinement

- Conduct sensitivity analysis to identify influential parameters

- Compare predictions with independent datasets

- Use cross-validation to assess predictive performance

- Refine structure and parameters iteratively based on validation results

Application for Decision Support

- Enter evidence for observed variables

- Propagate probabilities through the network

- Identify critical pathways and leverage points

- Evaluate potential management interventions

Protocol for Bayesian Adaptive Trial Design in Clinical Research

Objective: To implement a Bayesian adaptive design for clinical trial optimization.

Materials and Software:

- Clinical trial simulation software (e.g., FACTS, East)

- R with rjags, RStan, or brms packages

- SAS with PROC MCMC or the BAYES statement available in procedures such as GENMOD and PHREG

- WinBUGS, OpenBUGS, or JAGS for MCMC sampling

Procedure:

Trial Objectives and Endpoint Specification

- Define primary and secondary endpoints

- Specify target product profile and success criteria

- Identify potential adaptive elements (dose selection, sample size, population enrichment)

Prior Distribution Elicitation

- Systematically review historical data and literature

- Convene expert panel for prior parameter specification

- Consider skeptical, enthusiastic, or non-informative priors based on context

- Document prior justification for regulatory submission

Adaptive Algorithm Specification

- Define adaptation rules and decision criteria

- Specify timing of interim analyses

- Establish stopping boundaries (efficacy, futility)

- Determine randomization ratios for response-adaptive randomization
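One common way to set randomization ratios in a response-adaptive design is to make allocation probabilities proportional to the posterior probability that each arm is best, often tempered by an exponent to reduce variability. The sketch assumes binary outcomes, independent Beta(1, 1) priors per arm, and an exponent of 0.5; these are illustrative choices, not a recommended design.

```python
# Sketch: response-adaptive randomization for a binary endpoint.
# Assumptions (illustrative only): independent Beta(1, 1) priors per arm and
# allocation proportional to P(arm is best)^power with power = 0.5.
import random


def prob_best(successes, failures, n_draws=10_000, seed=0):
    """Monte Carlo estimate of P(each arm has the highest response rate | data)."""
    rng = random.Random(seed)
    wins = [0] * len(successes)
    for _ in range(n_draws):
        draws = [rng.betavariate(1 + s, 1 + f) for s, f in zip(successes, failures)]
        wins[draws.index(max(draws))] += 1
    return [w / n_draws for w in wins]


def allocation_ratios(successes, failures, power=0.5, **kwargs):
    """Tempered allocation probabilities that sum to 1."""
    weights = [p ** power for p in prob_best(successes, failures, **kwargs)]
    total = sum(weights)
    return [w / total for w in weights]


if __name__ == "__main__":
    # Hypothetical interim data for three arms: responders and non-responders.
    print(allocation_ratios([12, 5, 6], [8, 15, 14]))
```

Tempering keeps some allocation to apparently weaker arms, so early noise does not lock the design onto one arm too quickly.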

Operating Characteristic Evaluation

- Simulate trial under multiple scenarios (null, alternative)

- Evaluate type I error rate and power

- Assess sample size distribution and trial duration

- Refine design parameters to achieve desirable operating characteristics
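Operating characteristics for a simple design can be checked as follows. The sketch assumes a hypothetical single-arm trial with a binary endpoint, a Beta(1, 1) prior, and a success rule of P(p > p0 | data) > 0.975 at a single final analysis with n = 40, p0 = 0.2 under the null and p = 0.4 under the alternative. For this one-look design the success probability can be computed exactly, so the Monte Carlo version serves as a check; designs with interim analyses and adaptations generally require simulation.

```python
# Sketch: operating characteristics of a hypothetical single-arm Bayesian design.
# Success rule: P(p > p0 | data) > cutoff, Beta(a0, b0) prior with integer a0, b0.
import math
import random


def posterior_prob_exceeds(x, n, p0, a0=1, b0=1):
    """P(p > p0 | x responders out of n) under a Beta(a0, b0) prior.

    Uses the identity P(Beta(a, b) > p0) = P(Binomial(a + b - 1, p0) <= a - 1),
    which holds for positive integer a and b.
    """
    a, b = a0 + x, b0 + n - x
    m = a + b - 1
    return sum(math.comb(m, j) * p0**j * (1 - p0) ** (m - j) for j in range(a))


def exact_success_rate(p_true, n, p0, cutoff=0.975):
    """Exact P(declare success) by summing the binomial pmf over winning outcomes."""
    return sum(
        math.comb(n, x) * p_true**x * (1 - p_true) ** (n - x)
        for x in range(n + 1)
        if posterior_prob_exceeds(x, n, p0) > cutoff
    )


def simulate_success_rate(p_true, n, p0, cutoff=0.975, n_sims=5000, seed=1):
    """Monte Carlo estimate of the same quantity by simulating whole trials."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(n_sims):
        x = sum(rng.random() < p_true for _ in range(n))
        wins += posterior_prob_exceeds(x, n, p0) > cutoff
    return wins / n_sims


if __name__ == "__main__":
    print("type I error:", exact_success_rate(0.2, 40, 0.2))
    print("power:", exact_success_rate(0.4, 40, 0.2))
    print("simulated power:", simulate_success_rate(0.4, 40, 0.2))
```

Changing n or the cutoff and re-running these functions is a small-scale version of the design-refinement loop described above.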

Trial Execution and Analysis

- Implement data monitoring committee charter

- Conduct interim analyses according to pre-specified plan

- Execute adaptations based on Bayesian decision criteria

- Perform final analysis incorporating all accumulated data

- Report posterior probabilities of treatment effect and associated uncertainty

Visualization of Bayesian Methodologies

Figure: Bayesian belief updating process.

Figure: Environmental stressor identification workflow.

Table 3: Essential Resources for Bayesian Analysis in Environmental and Clinical Research

| Resource Category | Specific Tools/Software | Primary Application | Key Features |
|---|---|---|---|
| Statistical Computing | R (bnlearn, RStan, brms) | General Bayesian modeling | Open-source, extensive package ecosystem, MCMC implementation |
| Specialized BN Software | GeNIe, Netica, Hugin | Bayesian network development | Graphical interface, efficient inference algorithms |
| Clinical Trial Software | FACTS, East | Bayesian adaptive trials | Specialized for clinical trial simulation and design |
| MCMC Engines | WinBUGS, OpenBUGS, JAGS, Stan | Complex hierarchical models | Flexible model specification, various sampling algorithms |
| Data Integration Tools | PREDICTION, R-meta | Meta-analysis and evidence synthesis | Bayesian hierarchical models, random-effects meta-analysis |

Bayesian methods provide a coherent framework for updating scientific beliefs with new evidence across diverse research contexts. In environmental science, they enable more nuanced understanding of complex stressor-response relationships, supporting more effective ecosystem management [24] [20]. In clinical research, they facilitate more efficient and ethical trial designs through adaptive methodologies [19]. The common thread across these applications is the Bayesian capacity to formally integrate prior knowledge with current data while explicitly quantifying uncertainty.

Future methodological developments will likely focus on improving computational efficiency for high-dimensional problems, enhancing methods for prior specification, and developing more sophisticated Bayesian machine learning approaches [21]. As these methods continue to evolve, they will further strengthen our ability to make informed decisions in the face of uncertainty across scientific domains.

From Theory to Practice: Implementing Conditional Probability Analysis in Environmental and Biomedical Settings

Conditional probability analysis provides a powerful empirical framework for estimating ecological risk by quantifying the likelihood of a biological response given the presence of an environmental stressor [26] [8]. Within this context, assessing risks to benthic invertebrate communities from low dissolved oxygen (DO) represents a critical application for environmental managers. Benthic communities are widely used biological indicators in environmental assessments due to their sedentary nature, predictable responses to pollution, and role in integrating stress over temporal scales [27] [28]. This case study outlines protocols for applying conditional probability analysis to estimate hypoxia-related risks to benthic communities, providing a methodological approach that can be adapted across aquatic systems.

Background and Significance

Hypoxia (typically defined as dissolved oxygen < 2 mg L⁻¹) constitutes a widespread form of anthropogenic habitat degradation in aquatic ecosystems [29]. In systems like Chesapeake Bay, hypoxia results from nutrient runoff, algal bloom deposition, high benthic respiration, and water column stratification [29]. The effects of low oxygen on benthos operate across multiple biological levels, from physiological stress (altered metabolic rates) to individual-level impacts (reduced growth and mortality), population-level changes (abundance shifts), and community-level alterations (species composition changes) [29].

Different benthic species exhibit varying tolerances to hypoxia, with bivalves and polychaetes often tolerating short-lived hypoxia (< 2 mg L⁻¹), while crustaceans and echinoderms may experience mortality from milder hypoxia (2-3 mg L⁻¹) lasting only hours [29]. The risk to benthic communities depends on multiple factors including critical oxygen levels, temporal duration of low oxygen, spatial extent of exposure, species-specific tolerances, and ontogenetic variations in tolerance [29].

Table 1: Benthic Community Response to Environmental Gradients in Chesapeake Bay (1996-2004) [29]

| Environmental Variable | Depth Relationship | Correlation with Benthic Density | Correlation with Benthic Biomass | Correlation with Diversity (H′) |
|---|---|---|---|---|
| Dissolved Oxygen | Negative correlation | Significant positive correlation | Significant positive correlation | Significant positive correlation |
| Water Depth | - | Significant negative correlation | Significant negative correlation | Significant negative correlation |
| Salinity | Variable with depth | Not primary factor | Contributory factor with depth/DO | Not primary factor |
| Sediment Silt-Clay | Increases with depth | Not primary factor | Not primary factor | Not primary factor |
| Temperature | Decreases with depth | Not primary factor | Not primary factor | Not primary factor |

Table 2: Oxygen Parameters and Benthic Community Status Across Ecosystems [29] [30]

| Ecosystem/Location | Dissolved Oxygen Range | Benthic Community Status | Key Environmental Context |
|---|---|---|---|
| Chesapeake Bay Mainstem | 0.49 - 7.26 mg L⁻¹ | Historically low diversity (2001-2004) correlated with severe hypoxia | Summer hypoxia, deep channels with stratification |
| Namibian Margin OMZ | 0-0.15 mL L⁻¹ (0-9% saturation) | Fossil coral mounds overgrown by sponges and bryozoans | Oxygen minimum zone, high organic matter supply |
| Angolan Margin OMZ | 0.5-1.5 mL L⁻¹ (7-18% saturation) | Living cold-water coral reefs on mounds | Moderate OMZ, internal tidal food supply |

Methodological Protocols

Field Sampling and Monitoring Design

Protocol 1: Probability-Based Environmental Monitoring

- Site Selection: Implement probability-based survey designs that ensure statistical representation of the target population of water bodies [26] [8]. Stratify sampling based on suspected hypoxia gradients and habitat types.

- Water Quality Measurement:

- Benthic Community Sampling:

Data Preparation and Metric Calculation

Protocol 2: Benthic Index Development

- Calculate M-AMBI Index:

  - Categorize invertebrate taxa into ecological groups (sensitive to tolerant) [27].

  - Compute AMBI index values using abundance-weighted tolerance scores [27].

  - Calculate Shannon's diversity (H′) and species richness [27].

  - Apply factor analysis to combine AMBI, H′, and richness into a single M-AMBI value (0-1 scale) with degraded sites closer to zero [27].

- Stratify by Habitat: Calculate separate M-AMBI expectations for different salinity zones (tidal freshwater, oligohaline, mesohaline, polyhaline, euhaline) to account for natural variation [27].
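The AMBI, diversity, and richness inputs can be computed as sketched below. Assumptions: taxa have already been assigned to AMBI ecological groups I to V from a regionally validated list, unassigned taxa are excluded from AMBI, and H′ uses log base 2 by default. The factor-analysis step that combines the three metrics into M-AMBI, and the salinity-zone reference conditions, are not reproduced.

```python
# Sketch of the AMBI, Shannon diversity (H') and richness inputs to M-AMBI.
# Assumptions: taxa already assigned to AMBI groups I-V (coded 1-5); unassigned
# taxa are excluded from AMBI; H' uses log base 2 by default. The M-AMBI factor
# analysis and salinity-zone references are not implemented here.
import math

# AMBI = (0*%GI + 1.5*%GII + 3*%GIII + 4.5*%GIV + 6*%GV) / 100
GROUP_WEIGHTS = {1: 0.0, 2: 1.5, 3: 3.0, 4: 4.5, 5: 6.0}


def ambi(abundances, groups):
    """Abundance-weighted tolerance score on a 0 to 6 scale (higher = more disturbed)."""
    assigned = {t: n for t, n in abundances.items() if t in groups}
    total = sum(assigned.values())
    return sum(GROUP_WEIGHTS[groups[t]] * n for t, n in assigned.items()) / total


def shannon(abundances, base=2):
    """Shannon diversity H' = -sum(p_i * log(p_i)) over taxa present."""
    total = sum(abundances.values())
    return -sum((n / total) * math.log(n / total, base) for n in abundances.values() if n > 0)


def richness(abundances):
    """Number of taxa with non-zero abundance."""
    return sum(1 for n in abundances.values() if n > 0)


if __name__ == "__main__":
    sample = {"Capitella": 40, "Nephtys": 25, "Macoma": 20, "Ampelisca": 15}  # hypothetical counts
    groups = {"Capitella": 5, "Nephtys": 2, "Macoma": 3, "Ampelisca": 1}      # hypothetical assignments
    print(ambi(sample, groups), shannon(sample), richness(sample))
```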

Conditional Probability Analysis

Protocol 3: Risk Estimation Using Conditional Probability

- Exposure-Response Modeling:

  - Define exposure thresholds based on dissolved oxygen criteria (e.g., <2 mg L⁻¹ for hypoxia) [29] [26].

  - Calculate conditional probability as P(Biological Impairment | DO Exposure) using monitoring data pairs [26] [8].

  - Model exposure-response relationships through empirical data plotting biological condition against DO gradients [26] [8].

- Risk Estimation:
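The conditional probability step can be sketched as a survey-weighted proportion. This assumes each site carries a design weight (the inverse of its inclusion probability) from the probability-based survey and a binary impairment flag, for example an M-AMBI value below a chosen boundary; variance estimation for the weighted ratio is not shown.

```python
# Sketch: survey-weighted conditional probability of benthic impairment given low DO.
# Assumption: each site has a design weight (inverse inclusion probability) and a
# binary impairment flag; names and data are hypothetical.


def weighted_cpa_low_do(do_mg_l, impaired, weights, threshold):
    """Weighted estimate of P(Impairment | DO < threshold); None if no sites qualify."""
    num = den = 0.0
    for do, imp, w in zip(do_mg_l, impaired, weights):
        if do < threshold:
            den += w
            if imp:
                num += w
    return num / den if den > 0 else None


def weighted_cpa_curve(do_mg_l, impaired, weights, thresholds):
    """Evaluate the weighted conditional probability for several DO thresholds."""
    return [(t, weighted_cpa_low_do(do_mg_l, impaired, weights, t)) for t in thresholds]


if __name__ == "__main__":
    do = [0.8, 1.5, 2.2, 3.1, 4.5, 6.0]   # bottom DO, mg/L
    imp = [1, 1, 0, 1, 0, 0]              # impaired flags
    w = [120.0, 80.0, 95.0, 60.0, 150.0, 110.0]  # design weights
    print(weighted_cpa_curve(do, imp, w, [1.0, 2.0, 3.0, 5.0]))
```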

Figure: Conditional probability analysis workflow for benthic risk assessment.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Analytical Approaches for Benthic Risk Assessment

| Category/Item | Function/Application | Protocol Specifications |
|---|---|---|
| Field Equipment | | |
| CTD Profiler | Measures depth-specific conductivity, temperature, dissolved oxygen | Calibrate before each survey; record bottom measurements [30] [27] |
| Van Veen or Ponar Grab | Collects standardized sediment samples for benthic analysis | Use consistent grab size (0.04 m² or 0.1 m²); replicate per station [27] |
| Laboratory Supplies | | |
| Sieving Apparatus | Separates benthic organisms from sediment | Standardized mesh size (0.5-1.0 mm) [27] |
| Preservation Solutions | Maintains specimen integrity for identification | 10% buffered formalin or 70% ethanol [27] |
| Analytical Approaches | | |
| AMBI Ecological Groups | Classifies taxa by pollution tolerance | Use regionally validated species classifications [27] |
| Random Forest Modeling | Ranks stressor importance in multiple stressor contexts | Machine learning approach for identifying key drivers [27] |
| Boosted Regression Trees | Models nonlinear stressor-response relationships | Handles multiple predictors; identifies threshold effects [28] |

Advanced Analytical Framework

Multiple Stressor Considerations

In complex environmental systems, dissolved oxygen rarely acts in isolation. Implement multivariate modeling approaches to address co-occurring stressors:

- Apply Boosted Regression Trees (BRTs) to rank relative importance of multiple stressors including nutrients, metals, micropollutants, and morphological alterations [28].

- Use Principal Component Analysis (PCA) to identify major environmental gradients that may confound simple DO-impact relationships [28].

- Account for natural covariates including salinity, temperature, depth, and sediment characteristics that create underlying patterns in benthic community structure [29] [28].

Data Interpretation Guidelines

Interpreting Conditional Probability Outputs:

- Calculate exceedance curves showing proportion of water bodies exceeding impairment thresholds across DO gradients [26] [8].

- Derive risk-based criteria by identifying DO thresholds where probability of benthic impairment exceeds management benchmarks [26] [8].

- Consider contextual factors including system productivity, tidal influence, and background variability when applying generalized risk relationships to specific water bodies [29] [30].
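The two interpretation steps can be sketched as follows: a weighted proportion of water bodies below a DO value, and a simple rule that reads a candidate criterion off a conditional probability curve. The rule (the highest DO threshold whose P(Impairment | DO < threshold) still meets the benchmark) is a hypothetical illustration that presumes impairment risk generally rises as DO falls; the benchmark itself is a management choice.

```python
# Sketch: interpreting conditional probability outputs for a DO criterion.
# The benchmark and all data are hypothetical; the selection rule is illustrative.


def proportion_below(do_mg_l, weights, x):
    """Weighted proportion of the sampled population with DO below x."""
    total = sum(weights)
    return sum(w for v, w in zip(do_mg_l, weights) if v < x) / total


def criterion_from_curve(curve, benchmark):
    """Highest DO threshold whose P(Impairment | DO < threshold) meets the benchmark.

    `curve` holds (do_threshold, probability) pairs; entries with probability
    None are ignored. Returns None if no threshold reaches the benchmark.
    """
    hits = [t for t, p in curve if p is not None and p >= benchmark]
    return max(hits) if hits else None


if __name__ == "__main__":
    curve = [(1.0, 0.9), (2.0, 0.75), (3.0, 0.5), (4.0, 0.3)]
    print(criterion_from_curve(curve, benchmark=0.7))
```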

Figure: Pathways of low dissolved oxygen effects on benthic communities.

Conditional probability analysis applied to probability-based monitoring data offers a robust empirical approach for estimating risks to benthic communities from low dissolved oxygen [26] [8]. This methodology enables researchers to quantify exposure-response relationships directly from field data, providing a scientifically defensible basis for establishing protective criteria and prioritizing management interventions. The protocols outlined herein facilitate standardized assessment across systems while allowing adaptation to regional conditions and specific management questions. As expanding oxygen minimum zones present growing threats to aquatic ecosystems worldwide [30], these approaches will become increasingly vital for effective environmental protection and resource management.

Application Context

Conditional probability analysis (CPA) is a statistical technique used in environmental science to quantify the likelihood of an ecological impairment occurring given the magnitude of a specific environmental stressor. The U.S. Environmental Protection Agency (EPA) employs this method to establish scientifically defensible, cause-effect relationships that inform water quality criteria and management decisions [31]. The analysis of Chlorophyll a (Chl-a) response to Total Phosphorus (TP) in Northeast Lakes provides a canonical example of this approach, linking a key nutrient stressor (TP) to a biological response indicator (Chl-a) that signifies eutrophication and potential harmful algal bloom risk [31] [32]. This protocol details the methods for conducting such an analysis, serving as a model for environmental stressor identification research.

Experimental Protocol

Study Design and Sampling Methodology

Data Origin and Temporal Scope: Data were collected under the EPA's Environmental Monitoring and Assessment Program (EMAP) for Surface Waters, Northeast Lakes Data [31] [33]. Sample collection occurred during the summer index period (July through September) across multiple years (1991-1994) [31].

Site Selection: The sampling design utilized an EMAP probability-based survey design, which allows for statistical inference to the broader population of lakes in the Northeastern United States [31] [33]. For related diatom studies, this included lakes with a surface area of at least 0.01 km² and a minimum depth of 1 meter [33].

Field Sampling Protocol:

- Water Column Sampling: A single grab sample was collected from the upper water column at 1.5 meters below the surface using a van Dorn sampler [31].

- Sample Type: This design yields a cross-sectional, observational dataset suitable for stressor-response modeling across a gradient of conditions.

- Target Analytes: Samples were analyzed for total phosphorus (TP, µg/L) and chlorophyll a (Chl-a, µg/L) concentrations [31].

Analytical and Statistical Procedures

Data Analysis Platform: The conditional probability analysis was performed using S-Plus Version 7.0 software with user-written scripts [31].

Defining the Biological Impairment: A key step is defining a threshold for an "unacceptable condition" for the biological response variable. In this analysis, a Chl-a concentration exceeding 30 µg/L was set as the impairment threshold [31].

Core Analytical Method - Conditional Probability Analysis:

- Conditional probability analysis does not produce a traditional regression equation. Instead, it models and plots the probabilities of observing the stated impairment (Chl-a > 30 µg/L) across a continuous gradient of the stressor (TP concentration) [31].

- The analysis generates a curve showing the probability of impairment, P(Impairment | X > Xc), for each stressor threshold Xc across the observed gradient.

- Uncertainty Estimation: The analysis includes calculating 95% confidence intervals (represented as dashed lines in the output plot) around the conditional probability curve [31].
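A sketch of the calculation for the lake example, assuming the exceedance form P(Chl-a > 30 µg/L | TP > x) and a percentile bootstrap for the 95% interval. The bootstrap is one common way to obtain such intervals and is not necessarily the method used in the EPA analysis; the data are hypothetical, and survey design weights are ignored.

```python
# Sketch: conditional probability of Chl-a > 30 ug/L given TP > x, with a
# percentile-bootstrap 95% interval. Data are hypothetical; survey weights ignored.
import random


def cond_prob(tp, chla, tp_threshold, chla_limit=30.0):
    """P(Chl-a > chla_limit | TP > tp_threshold); None if no lakes qualify."""
    subset = [c for t, c in zip(tp, chla) if t > tp_threshold]
    if not subset:
        return None
    return sum(c > chla_limit for c in subset) / len(subset)


def bootstrap_ci(tp, chla, tp_threshold, n_boot=2000, alpha=0.05, seed=42):
    """Percentile bootstrap interval obtained by resampling lakes with replacement."""
    rng = random.Random(seed)
    n = len(tp)
    stats = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        p = cond_prob([tp[i] for i in idx], [chla[i] for i in idx], tp_threshold)
        if p is not None:  # skip resamples with no lake above the threshold
            stats.append(p)
    stats.sort()
    lower = stats[int(alpha / 2 * len(stats))]
    upper = stats[int((1 - alpha / 2) * len(stats)) - 1]
    return lower, upper


if __name__ == "__main__":
    tp = [5, 8, 12, 15, 20, 25, 30, 40, 60, 80, 100, 150]   # ug/L
    chla = [2, 3, 4, 6, 8, 10, 15, 25, 35, 40, 50, 70]      # ug/L
    for x in (10, 20, 40):
        print(x, cond_prob(tp, chla, x), bootstrap_ci(tp, chla, x))
```

Repeating the interval calculation at each threshold traces the confidence bands around the conditional probability curve.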

Table 1: Key Parameters for Conditional Probability Analysis

| Parameter | Description | Value/Example |
|---|---|---|
| Independent Variable | Environmental stressor | Total Phosphorus (TP) concentration (µg/L) |
| Dependent Variable | Biological response indicator | Chlorophyll a (Chl-a) concentration (µg/L) |
| Impairment Threshold | Chl-a level defining "unacceptable" condition | > 30 µg/L [31] |
| Sample Size (n) | Number of lake observations | 483 [31] |
| Output | Functional relationship | P(Chl-a > 30 µg/L \| TP) |

Data Presentation

The primary output of this analysis is a graphical plot and associated data that characterize the stressor-response relationship. The following table summarizes the functional relationships and key quantitative findings derived from the EPA's analysis and related contemporary studies.

Table 2: Summary of Chl-a and TP Relationship Findings from Lake Studies

| Study / Analysis Focus | Key Quantitative Relationship or Finding |
|---|---|
| EPA Conditional Probability (NE Lakes) | Probability of Chl-a > 30 µg/L increases with rising Total Phosphorus concentration in the upper water column [31]. |
| National Lakes Assessment 2022 | 50% of U.S. lakes were in poor condition due to elevated phosphorus; 49% had poor Chl-a levels; 30% were hypereutrophic [34]. |
| Lake Gehu Study (2024) | Demonstrated a negative Chl-a:TP correlation at very high algal production efficiency (ETP); TP dominated interannual ETP variation (28.9% explanation) [35]. |
| Systematic Review (Lotic Ecosystems) | Meta-analysis confirmed positive mean effect sizes for TP-sestonic Chl-a and TN-benthic Chl-a relationships; effect strength can be influenced by measurement method and can saturate at high nutrient levels [36]. |

The Scientist's Toolkit

Table 3: Essential Research Reagents and Materials for Lake Condition Studies

| Item | Function / Application |
|---|---|
| Van Dorn Sampler | Water sampling device for collecting grab samples at specific depths (e.g., 1.5m) with minimal disturbance [31]. |
| Total Phosphorus (TP) Assay | Analytical method to measure the sum of all dissolved and particulate phosphorus forms, representing the integrated nutrient stressor [31] [36]. |
| Chlorophyll a Measurement | Spectrophotometry or fluorometry analysis of the photosynthetic pigment used as a proxy for algal biomass and eutrophication status [31] [36]. |
| Conditional Probability Model | Statistical script (e.g., for S-Plus/R) to model the probability of biological impairment across a stressor gradient, outputting the relationship with confidence intervals [31]. |
| Harmonized Diatom Dataset | Taxonomically consistent biological data from sediment cores, used for paleolimnological studies to reconstruct historical lake conditions and trends [33]. |

Workflow and Logical Diagram

Figure: Logical workflow for conducting a conditional probability analysis, from study design through to application in management.

Figure: Stressor-response relationship diagram. The core output of the analysis is visualized as a conditional probability curve, illustrating how the risk of ecological impairment increases with the stressor level.

In the high-stakes landscape of drug development, where late-stage failures incur tremendous financial and opportunity costs, conditional assurance has emerged as a powerful Bayesian framework for strategic decision-making. This methodology extends beyond traditional probability of success calculations by quantifying how achieving pre-defined success criteria in an initial study updates our beliefs about a drug's true treatment effect and impacts the predicted success of subsequent development stages [37]. The pharmaceutical industry has historically viewed development as a series of independent experiments, with compounds progressing based on "sufficiently positive data" without fully quantifying what this achievement means for future success probabilities [37]. Conditional assurance addresses this gap by providing a quantitative framework to transparently assess how a planned study de-risks later phase development, enabling organizations to make investment choices aligned with their risk tolerance and potential return.

The fundamental shift in perspective offered by conditional assurance is particularly valuable when researchers must prioritize compounds for development despite significant uncertainty about their mechanisms of action and therapeutic potential, a situation that parallels the prioritization of candidate stressors in environmental stressor identification research. By modeling how information collected in earlier phases narrows uncertainty about the true biological effect, drug development professionals can construct more robust development pathways and allocate resources to the candidates most likely to succeed in later-stage trials.

Theoretical Foundations and Mathematical Framework

From Power to Conditional Assurance

Traditional power calculations in clinical development assume a fixed, known treatment effect, a scenario that rarely reflects reality. Power is the probability that a study will achieve its success criteria conditional on a specific assumed treatment effect, so it offers limited value for portfolio-level decision-making when that assumption is uncertain [37]. Assurance, as introduced by O'Hagan et al., goes beyond power by incorporating current uncertainty about the true treatment effect through a design prior distribution π_D(Δ), which represents all available knowledge about the drug's effect [37]. The assurance calculation integrates the power function over this prior distribution:

$$P(S_1) = \int P(S_1 \mid \Delta)\,\pi_D(\Delta)\,d\Delta$$

where P(S₁|Δ) is the power function defining the probability of success for a given Δ, and π_D(Δ) is the design prior distribution.

Conditional assurance builds upon this foundation by calculating the predicted assurance of a subsequent study conditional on success in an initial study. The mathematical derivation involves updating the design prior based on the initial study's success to create a conditional design posterior, which then serves as the design prior for the subsequent study [37]. This Bayesian updating process formally incorporates the knowledge gained from the initial study's success to refine predictions about future studies.

Mathematical Derivation of Conditional Assurance

The conditional design posterior is calculated using Bayes' theorem, combining the likelihood of observing success in the initial study with the original design prior [37]:

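$$\pi_D(\Delta \mid S_1) = \frac{P(S_1 \mid \Delta)\,\pi_D(\Delta)}{\int P(S_1 \mid \Delta')\,\pi_D(\Delta')\,d\Delta'}$$

where the denominator is the assurance of the initial study. This updated distribution then becomes the design prior for calculating the conditional assurance of the subsequent study:

$$P(S_2 \mid S_1) = \int P(S_2 \mid \Delta)\,\pi_D(\Delta \mid S_1)\,d\Delta$$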

This framework allows for quantitative assessment of how an initial study's success de-risks subsequent development, measured by the absolute and relative difference between the conditional assurance and the unconditional assurance of the subsequent study [37].
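To make the assurance and conditional assurance calculations concrete, the Python sketch below evaluates them by midpoint-rule integration over a grid of Δ values. It assumes, for illustration only, a normal design prior π_D(Δ). It also assumes that each study succeeds when its normally distributed effect estimate, with known standard error, exceeds a critical value. All parameter values are hypothetical. The conditional design posterior never has to be normalized explicitly. By Bayes' theorem, ∫P(S₂|Δ)π_D(Δ|S₁)dΔ equals ∫P(S₁|Δ)P(S₂|Δ)π_D(Δ)dΔ divided by the assurance of the initial study.

```python
import math

def norm_cdf(z):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def norm_pdf(x, mean, sd):
    """Normal density, used here as the design prior pi_D(delta)."""
    z = (x - mean) / sd
    return math.exp(-0.5 * z * z) / (sd * math.sqrt(2.0 * math.pi))

def power(delta, crit, se):
    """P(S | delta): chance that an estimate ~ N(delta, se^2) exceeds `crit`."""
    return norm_cdf((delta - crit) / se)

def assurance_summary(prior_mean, prior_sd, crit1, se1, crit2, se2, n_grid=4000):
    """Return (assurance of study 1, unconditional assurance of study 2,
    conditional assurance P(S2 | S1)) via midpoint integration over delta."""
    lo = prior_mean - 10.0 * prior_sd
    width = 20.0 * prior_sd / n_grid
    a1 = a2 = joint = 0.0
    for i in range(n_grid):
        delta = lo + (i + 0.5) * width
        w = norm_pdf(delta, prior_mean, prior_sd) * width  # pi_D(delta) d(delta)
        p1, p2 = power(delta, crit1, se1), power(delta, crit2, se2)
        a1 += p1 * w          # assurance of the initial study (the Bayes denominator)
        a2 += p2 * w          # unconditional assurance of the subsequent study
        joint += p1 * p2 * w  # P(S1|delta) P(S2|delta) pi_D(delta)
    # Dividing by a1 is equivalent to integrating P(S2|delta) against pi_D(delta | S1).
    return a1, a2, joint / a1

# Hypothetical inputs: prior N(0.2, 0.2^2); a small first study, a larger second study.
a1, a2, ca = assurance_summary(0.2, 0.2, crit1=0.1, se1=0.1, crit2=0.1, se2=0.05)
print(f"P(S1)={a1:.3f}  P(S2)={a2:.3f}  P(S2|S1)={ca:.3f}")
print(f"absolute de-risking={ca - a2:.3f}  relative={(ca - a2) / a2:.1%}")
```

With a normal prior and normal estimates, each unconditional assurance also has the closed form Φ((μ − x)/√(τ² + se²)), which is a convenient check on the numerical integration. The conditional assurance is left to the integral because its closed form needs a bivariate normal CDF.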

Table 1: Key Probability Concepts in Drug Development Decision-Making

| Concept | Definition | Calculation | Application Context |
|---|---|---|---|
| Power | Probability of success given a fixed treatment effect | P(S\|Δ) where Δ is fixed | Traditional sample size determination |
| Assurance | Unconditional probability of success integrating uncertainty | ∫P(S\|Δ)π_D(Δ)dΔ | Study design with uncertain treatment effects |
| Conditional Probability | Probability of an event given another event has occurred | P(A\|B) = P(A∩B)/P(B) | General statistical inference |
| Conditional Assurance | Assurance of a future study given initial study success | P(S₂\|S₁) = ∫P(S₂\|Δ)π_D(Δ\|S₁)dΔ | Portfolio optimization and development sequencing |

Practical Implementation Protocols

Protocol for Calculating Conditional Assurance

Objective: Quantify how success in an initial study updates the probability of success for a subsequent study in the development pathway.

Materials and Data Requirements:

- Historical data on similar mechanisms of action or therapeutic classes

- Preclinical and early clinical data for the compound of interest

- Defined success criteria for both initial and subsequent studies

- Statistical software capable of Bayesian computation (e.g., R, Stan, PyMC)

Procedure:

- Specify Design Prior: Construct an initial design prior (π_D(Δ)) that represents current uncertainty about the true treatment effect, incorporating all available relevant data through formal meta-analytic or elicitation techniques [37].

- Define Success Criteria: Establish clear, quantitative success criteria for both the initial (S₁) and subsequent (S₂) studies, including minimal critical values (x₁, x₂) for decision-making.

- Calculate Initial Study Assurance: Compute the unconditional assurance for the initial study by integrating its power function over the design prior.

- Compute Conditional Design Posterior: Update the design prior using Bayes' theorem to account for the initial study's success, generating π_D(Δ|S₁).

- Calculate Conditional Assurance: Use the conditional design posterior as the new design prior to compute the assurance of the subsequent study.

- Quantify De-risking Value: Calculate both absolute and relative improvements in success probability for the subsequent study attributable to the initial study's success.

Validation and Sensitivity Analysis:

- Perform sensitivity analysis on the design prior specification

- Validate the model using historical development programs with similar characteristics

- Assess robustness to variations in success criteria and effect size assumptions
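The assurance, conditional assurance, and de-risking steps of the procedure can be sketched with Monte Carlo simulation, together with a basic sensitivity analysis on the design prior. The simulation estimates conditional assurance directly from the conditional probability definition P(S₂|S₁) = P(S₁∩S₂)/P(S₁). The sketch assumes a normal design prior and normally distributed study estimates with known standard errors. The prior settings, critical values, and function name are hypothetical. Each prior setting re-uses one random seed (common random numbers), which reduces simulation noise in comparisons between settings.

```python
import random

def simulate_assurance(prior_mean, prior_sd, crit1, se1, crit2, se2,
                       n_sims=50_000, seed=2024):
    """Monte Carlo estimates of P(S1), P(S2) and P(S2 | S1) = P(S1 and S2) / P(S1).

    Each simulated program draws a true effect from a normal design prior and runs
    both studies on that same effect; a study succeeds when its estimate
    (normal, known standard error) exceeds its critical value."""
    rng = random.Random(seed)  # same seed per call: common random numbers across settings
    n_s1 = n_s2 = n_both = 0
    for _ in range(n_sims):
        delta = rng.gauss(prior_mean, prior_sd)   # true effect drawn from the design prior
        s1 = rng.gauss(delta, se1) > crit1        # initial study outcome
        s2 = rng.gauss(delta, se2) > crit2        # subsequent study outcome, same delta
        n_s1 += s1
        n_s2 += s2
        n_both += s1 and s2
    p_s1, p_s2 = n_s1 / n_sims, n_s2 / n_sims
    return p_s1, p_s2, (n_both / n_sims) / p_s1

# Sensitivity analysis: vary the spread of the design prior, keep success criteria fixed.
results = {}
for prior_sd in (0.1, 0.2, 0.4):
    p1, p2, p2_given_1 = simulate_assurance(0.2, prior_sd, crit1=0.1, se1=0.1,
                                            crit2=0.1, se2=0.05)
    results[prior_sd] = (p1, p2, p2_given_1)
    print(f"prior sd {prior_sd}: P(S1)={p1:.3f} P(S2)={p2:.3f} "
          f"P(S2|S1)={p2_given_1:.3f} absolute gain={p2_given_1 - p2:.3f} "
          f"relative gain={(p2_given_1 - p2) / p2:.1%}")
```

The same loop can be extended to vary the prior mean or the critical values, which covers the robustness checks listed above.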

Protocol for Bayesian Machine Learning in Target Identification

Objective: Implement the BANDIT framework for drug target identification using diverse data types to inform early development decisions.

Materials:

- Compound structures and chemical descriptors

- Drug efficacy data (e.g., NCI-60 growth inhibition screens)

- Post-treatment transcriptional responses

- Reported adverse effects databases

- Bioassay results and known target databases

- Computational resources for large-scale similarity calculations

Procedure:

- Data Collection and Curation: Assemble approximately 20,000,000 data points across six distinct data types, including drug efficacies, transcriptional responses, structures, adverse effects, bioassays, and known targets [38].

- Similarity Calculation: Compute similarity scores for all drug pairs within each data type using appropriate metrics for each data modality.

- Likelihood Ratio Conversion: Convert the similarity scores for each data type into a separate likelihood ratio representing the evidence that the two drugs share a target.

- Total Likelihood Ratio Calculation: Combine individual likelihood ratios to obtain a Total Likelihood Ratio (TLR) proportional to the odds of two drugs sharing a target given all available evidence.

- Voting Algorithm Application: Implement a voting algorithm to predict specific targets for orphan compounds by identifying recurring targets across high-TLR shared target predictions.

- Experimental Validation: Design targeted experimental screens based on computational predictions to validate identified targets.
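The likelihood-ratio, TLR, and voting steps can be sketched in a few lines of Python. This is not the published BANDIT implementation. It assumes that each data type's similarity score is converted to a likelihood ratio by binning scores from drug pairs already known to share, or not share, a target. It also assumes that the per-type ratios are multiplied into a TLR, as in a naive Bayes model that treats the data types as conditionally independent. Under that assumption the posterior odds of a shared target equal the prior odds times the TLR, which is why the TLR is proportional to those odds. Bin edges, the smoothing pseudocount, the voting threshold, and all drug and target names are hypothetical.

```python
import bisect
from collections import Counter
from math import prod

def make_likelihood_ratio(shared_scores, nonshared_scores, bin_edges, pseudocount=1.0):
    """Return a function mapping a similarity score to
    P(score bin | shared target) / P(score bin | no shared target),
    estimated from labelled drug pairs with pseudocount smoothing."""
    n_bins = len(bin_edges) + 1

    def bin_probabilities(scores):
        counts = [pseudocount] * n_bins
        for s in scores:
            counts[bisect.bisect_right(bin_edges, s)] += 1
        total = sum(counts)
        return [c / total for c in counts]

    p_shared = bin_probabilities(shared_scores)
    p_nonshared = bin_probabilities(nonshared_scores)

    def likelihood_ratio(score):
        b = bisect.bisect_right(bin_edges, score)
        return p_shared[b] / p_nonshared[b]

    return likelihood_ratio

def total_likelihood_ratio(scores_by_type, lr_by_type):
    """Multiply per-data-type likelihood ratios (conditional independence assumed)."""
    return prod(lr_by_type[t](score) for t, score in scores_by_type.items())

def vote_targets(tlr_by_partner, known_targets, tlr_threshold, top_k=1):
    """For an orphan drug, count the known targets of partner drugs whose TLR
    clears the threshold and return the most frequently recurring targets."""
    votes = Counter()
    for partner, tlr in tlr_by_partner.items():
        if tlr >= tlr_threshold:
            votes.update(known_targets.get(partner, ()))
    return [target for target, _ in votes.most_common(top_k)]

# Hypothetical toy data: one bin edge splits "low" from "high" similarity.
lr = make_likelihood_ratio([0.8, 0.9, 0.7, 0.3], [0.1, 0.2, 0.6, 0.3], bin_edges=[0.5])
tlr = total_likelihood_ratio({"structure": 0.9, "bioassay": 0.9},
                             {"structure": lr, "bioassay": lr})
print(lr(0.9), lr(0.2), tlr)  # 2.0 0.5 4.0
predicted = vote_targets({"drugA": 5.0, "drugB": 3.0, "drugC": 0.2},
                         {"drugA": ["T1"], "drugB": ["T1", "T2"], "drugC": ["T3"]},
                         tlr_threshold=1.0)
print(predicted)  # ['T1']
```

In practice each data type would get its own likelihood-ratio function fitted to that modality's similarity scores. The same function is re-used here only to keep the toy example short.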

Table 2: BANDIT Framework Data Types and Discriminative Performance

| Data Type | Key Metrics | Discriminative Performance (D Statistic) | Utility in Target Identification |
|---|---|---|---|
| Drug Structure | Chemical descriptors, molecular fingerprints | 0.39 (highest single data type) | Primary driver for shared target prediction |
| Bioassay Results | Activity profiles across assay panels | 0.327 | Strong differentiator of shared targets |
| NCI-60 Efficacy | Growth inhibition (GI50) profiles | 0.331 | Effective for oncology target identification |
| Transcriptional Response | Gene expression changes post-treatment | 0.10 | Moderate predictive utility |
| Adverse Effects | Side effect similarity | 0.14 | Supplemental predictive value |
| Integrated Data (BANDIT) | Total Likelihood Ratio (TLR) | 0.69 | Superior to any single data type |
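Higher D values in Table 2 indicate better separation between drug pairs that share a target and pairs that do not. This sketch assumes D is the two-sample Kolmogorov-Smirnov statistic. Under that assumption, D is the largest vertical gap between the two empirical cumulative distribution functions, and the Python sketch below computes it directly. The function name and toy scores are hypothetical. `scipy.stats.ks_2samp` reports the same statistic if SciPy is available.

```python
import bisect

def ks_d_statistic(sample_a, sample_b):
    """Two-sample Kolmogorov-Smirnov D: the maximum absolute difference between
    the empirical CDFs of the two samples. Both CDFs are step functions that change
    only at observed values, so checking every observed value finds the maximum."""
    a, b = sorted(sample_a), sorted(sample_b)
    d = 0.0
    for x in a + b:
        cdf_a = bisect.bisect_right(a, x) / len(a)
        cdf_b = bisect.bisect_right(b, x) / len(b)
        d = max(d, abs(cdf_a - cdf_b))
    return d

# Hypothetical similarity scores for drug pairs with and without a shared target.
shared = [0.62, 0.71, 0.55, 0.80, 0.67]
not_shared = [0.20, 0.35, 0.58, 0.41, 0.30]
print(ks_d_statistic(shared, not_shared))
```

A D near 0 means the similarity score barely separates the two groups of pairs, and a D of 1 means the groups do not overlap at all.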

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Conditional Probability Analysis

| Reagent/Tool | Function | Application Context | Key Features |
|---|---|---|---|
| BANDIT Platform | Bayesian machine learning for target identification | Early-stage target discovery | Integrates 6+ data types; ~90% accuracy on 2000+ compounds |
| AutoSense Sensor Suite | Continuous physiological data collection | Stress measurement validation | Wireless ECG and respiration monitoring |
| Probabilistic Boolean Networks (PBN) | Modeling biological network dynamics | Signaling pathway analysis | Combines rule-based modeling with uncertainty principles |
| axe-core Accessibility Engine | Color contrast verification | Data visualization standards | Open-source JavaScript library for contrast validation |
| WebAIM Contrast Checker | Color contrast ratio evaluation | Scientific presentation accessibility | Checks against WCAG 2 AA standards (4.5:1 for text) |
| Bayesian Computational Tools | MCMC sampling, posterior estimation | Conditional assurance calculation | Stan, PyMC, JAGS for Bayesian inference |
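The 4.5:1 threshold cited for the WebAIM checker refers to the WCAG 2 contrast ratio, (L1 + 0.05) / (L2 + 0.05). Here L1 and L2 are the relative luminances of the lighter and darker colors. The small Python sketch below implements that calculation and can screen figure palettes before a dedicated checker is run. The function names and example colors are illustrative.

```python
def relative_luminance(rgb):
    """WCAG 2 relative luminance of an 8-bit sRGB color given as (r, g, b)."""
    def linearize(channel):
        c = channel / 255.0
        # sRGB transfer function; WCAG 2.0/2.1 print 0.03928 instead of 0.04045,
        # which gives identical results for 8-bit channel values.
        return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4
    r, g, b = (linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(fg, bg):
    """WCAG 2 contrast ratio between two colors, from 1:1 up to 21:1."""
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)

def passes_aa_text(fg, bg):
    """True if the pair meets the WCAG 2 AA threshold for normal-size text (4.5:1)."""
    return contrast_ratio(fg, bg) >= 4.5

white = (255, 255, 255)
print(round(contrast_ratio((0, 0, 0), white), 2))  # 21.0
print(passes_aa_text((0x76, 0x76, 0x76), white))    # True  (about 4.54:1)
print(passes_aa_text((0x77, 0x77, 0x77), white))    # False (about 4.48:1)
```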

Visualization of Methodological Frameworks

Conditional Assurance Calculation Workflow

BANDIT Target Identification Pipeline

Applications in Environmental Stressor Research

The integration of conditional assurance methodologies with environmental stressor identification is a promising frontier in drug development. The cStress model shows how rigorous computational approaches can be applied to stress measurement, achieving 89% recall with a 5% false positive rate in lab settings and 72% accuracy in field validation [39]. The model addresses challenges in data quality, confounding by physical activity, and feature discrimination through a pipeline of data collection, screening, cleaning, filtering, feature computation, normalization, and model training [39].

For stressor identification research, conditional assurance provides a framework for quantitatively assessing how well early biomarkers of stress response predict later physiological manifestations. By viewing stressor exposure and response as a developmental pathway, researchers can apply the same Bayesian updating principles to determine which early indicators meaningfully de-risk predictions about subsequent adverse outcome pathways. The BANDIT approach further offers a methodology for integrating diverse data types, from transcriptional responses to physiological measurements, to build more robust predictors of stressor effects [38].

Probabilistic Boolean Networks (PBNs) extend these applications by providing modeling frameworks that combine rule-based representation with uncertainty principles, suitable for describing biological systems at multiple scales from molecular networks to physiological responses [40]. In stressor identification, PBNs can model the complex interplay between environmental exposures, cellular responses, and organism-level outcomes, with the probabilistic components naturally accommodating the uncertainty inherent in biological systems.
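The Python sketch below illustrates how a PBN attaches probabilities to rule-based updates. It simulates a hypothetical three-node network linking an environmental exposure (E), a cellular stress response (R), and an organism-level adverse outcome (O). It assumes one common PBN variant, an independent PBN with synchronous updates. At every step each node picks one of its candidate Boolean rules according to that rule's selection probability, and all nodes update from the previous state. The network, rules, and probabilities are invented for illustration. Repeated simulation then estimates the probability of the outcome conditional on the exposure state.

```python
import random

# Hypothetical network: each node lists (Boolean rule, selection probability) pairs.
NETWORK = {
    "E": [(lambda s: s["E"], 1.0)],                           # exposure held constant
    "R": [(lambda s: s["E"], 0.8), (lambda s: s["R"], 0.2)],  # response usually follows exposure
    "O": [(lambda s: s["R"], 0.6), (lambda s: s["O"], 0.4)],  # outcome usually follows response
}

def pick_rule(rules, rng):
    """Choose one candidate rule for a node according to the selection probabilities."""
    u, cumulative = rng.random(), 0.0
    for rule, p in rules:
        cumulative += p
        if u < cumulative:
            return rule
    return rules[-1][0]  # guard against floating-point round-off in the cumulative sum

def step(state, network, rng):
    """Synchronous update: every node applies its chosen rule to the previous state."""
    return {node: bool(pick_rule(rules, rng)(state)) for node, rules in network.items()}

def outcome_probability(network, initial_state, n_steps=10, n_runs=5000, seed=0):
    """Monte Carlo estimate of P(O = 1 after n_steps | initial_state)."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_runs):
        state = dict(initial_state)
        for _ in range(n_steps):
            state = step(state, network, rng)
        hits += state["O"]
    return hits / n_runs

p_exposed = outcome_probability(NETWORK, {"E": True, "R": False, "O": False})
p_unexposed = outcome_probability(NETWORK, {"E": False, "R": False, "O": False})
print(f"P(O | E=1) ~ {p_exposed:.3f}, P(O | E=0) ~ {p_unexposed:.3f}")
```

Because each node's rule is re-drawn at every step, the same initial state can lead to different trajectories. That stochasticity is how the probabilistic component of a PBN represents biological uncertainty.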

Stressor identification is a critical scientific and regulatory process for determining the causes of biological impairment in water bodies. Within the framework of the Clean Water Act (CWA), accurate stressor identification directly informs regulatory actions, restoration goals, and management strategies. This document details the application of advanced probabilistic methods, specifically conditional probability analysis and related techniques, to enhance the objectivity and defensibility of stressor identification across key water management programs. These methodologies provide a quantifiable link between observed stressors and ecological effects, supporting decisions under conditions of uncertainty inherent in environmental systems.

Application Notes: The Role of Stressor Identification in Water Management Programs

The following table summarizes the purpose of stressor identification in major CWA programs and the level of certainty each program requires.

Table 1: Stressor Identification in Key Water Management Programs

| Program / Context | Regulatory Purpose | Role & Required Certainty of Stressor Identification |
|---|---|---|
| CWA Section 303(d): Impaired Waters Listings & TMDLs | Identify specific waterbodies violating water quality standards (including biocriteria) and develop Total Maximum Daily Loads [41]. | High accuracy and reliability are necessary to identify the cause(s) of impairment and establish load allocations [41]. |
| CWA Section 402: NPDES Permit Program | Regulate point source discharges through permits to prevent violations of water quality standards [41]. | Critical for fairness and success; stressor identification determines whether a discharge is the cause of biological impairment, especially when standards are being modified. A high degree of accuracy is required [41]. |
| Compliance & Enforcement | Take legal action against entities causing water quality violations [41]. | Requires a high degree of confidence and legal defensibility to clearly identify the pollution types and sources causing the violation [41]. |