Data Leakage in Machine Learning: A Critical Guide for Reliable Environmental Contaminant Research

Abstract

This article addresses the critical yet often overlooked challenge of data leakage in machine learning (ML) applications for environmental contaminant research. Aimed at researchers, scientists, and professionals, it provides a comprehensive framework covering the foundational concepts of data leakage, its impact on the validity of models predicting the eco-environmental risks of emerging contaminants, and methodological best practices for its prevention. The content further delves into advanced troubleshooting techniques for detecting leakage and offers robust validation strategies to ensure model reproducibility and real-world applicability, ultimately guiding the development of trustworthy, data-driven environmental insights.

What is Data Leakage? Foundational Concepts and Critical Risks in Environmental ML

In the field of machine learning, particularly in scientific domains such as environmental contaminant research, the integrity of the predictive modeling process is paramount. Data leakage represents a critical threat to this integrity, occurring when information from outside the training dataset—typically from the test set—is used to create the model [1]. This breach in protocol causes a model to appear highly accurate during training and validation phases, only to fail dramatically when deployed in real-world scenarios where future data is genuinely unseen [1]. The consequences extend beyond poor performance to include flawed scientific insights, misallocated resources, and compromised decision-making in critical environmental and health applications.

The fundamental purpose of predictive modeling is to create systems that can generalize to new, unseen data. To simulate this real-world condition, the established practice involves splitting available data into separate sets for training and validation [1]. Data leakage violates this core principle by blurring the boundary between what the model should learn from and what it should genuinely predict. In environmental contaminant research, where models may be used to forecast pollution levels or identify contamination sources, data leakage can lead to dangerously inaccurate assessments that undermine public health interventions and policy decisions.

Types and Mechanisms of Data Leakage

Data leakage manifests in several distinct forms, each compromising the model validation process through different mechanisms. Understanding these categories is essential for developing effective prevention strategies.

Target Leakage

Target leakage occurs when models incorporate data that would not be available at the time of prediction in a real-world deployment scenario [1]. This type of leakage creates an unrealistic relationship between features and the target variable, teaching the model to exploit information it wouldn't normally have access to.

A classic example involves credit card fraud detection. A model trained with a "chargeback received" column would appear highly accurate during validation because chargebacks almost always indicate confirmed fraud [1]. However, in practice, a chargeback typically occurs after fraud has been detected and would not be available when the system needs to make a real-time decision on whether to block a transaction. When deployed without this future information, the model's performance degrades significantly [1].
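
One way to guard against this kind of leakage is to record, for every candidate feature, whether its value is known at the moment a prediction must be made, and to drop anything that only becomes known afterwards. The sketch below illustrates the idea under that assumption; the feature names and the availability flags are hypothetical and are not taken from any real fraud dataset.

```python
# Hypothetical feature catalogue: True means the value is known at the moment
# the prediction must be made; False means it only becomes known afterwards.
FEATURE_AVAILABILITY = {
    "transaction_amount": True,
    "merchant_category": True,
    "hour_of_day": True,
    "chargeback_received": False,  # recorded only after fraud is confirmed
}


def drop_leaky_features(rows, availability):
    """Keep only the features that are available at prediction time."""
    allowed = {name for name, ok in availability.items() if ok}
    return [{k: v for k, v in row.items() if k in allowed} for row in rows]


rows = [
    {"transaction_amount": 120.0, "merchant_category": "fuel",
     "hour_of_day": 23, "chargeback_received": 1},
    {"transaction_amount": 15.5, "merchant_category": "grocery",
     "hour_of_day": 10, "chargeback_received": 0},
]

clean_rows = drop_leaky_features(rows, FEATURE_AVAILABILITY)
print(sorted(clean_rows[0]))
```

Keeping the availability flags in one explicit catalogue makes the decision reviewable by domain experts, who are usually best placed to say when a measurement actually becomes available.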

Train-Test Contamination

Train-test contamination arises when the separation between training and validation data is compromised, often during improper data splitting or preprocessing procedures [1]. This form of leakage can be subtle and unintentional, making it particularly dangerous in complex research pipelines.

A common manifestation occurs when standardization or normalization of numerical features is applied to the entire dataset before splitting into training and test sets [1]. When this happens, the model indirectly "sees" information from the test set during training because the preprocessing parameters (mean, standard deviation) were calculated using the complete dataset. The result is artificially inflated performance on the test set, as the model has effectively received prior knowledge about the distribution of the validation data [1].
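
The difference between the leaky and the correct ordering can be sketched in a few lines. The example below is a minimal illustration using only the Python standard library and synthetic values; the helper names `fit_scaler` and `apply_scaler` are hypothetical, and the data are random numbers rather than real measurements.

```python
import random
import statistics


def fit_scaler(values):
    """Return the mean and standard deviation estimated from `values`."""
    return statistics.fmean(values), statistics.pstdev(values)


def apply_scaler(values, mean, std):
    """Standardize `values` with previously fitted parameters."""
    return [(v - mean) / std for v in values]


random.seed(0)
# Synthetic concentrations (arbitrary units), for illustration only.
concentrations = [random.gauss(50.0, 10.0) for _ in range(100)]

# Split first, then fit the scaler on the training portion only.
train, test = concentrations[:80], concentrations[80:]
train_mean, train_std = fit_scaler(train)
train_scaled = apply_scaler(train, train_mean, train_std)
test_scaled = apply_scaler(test, train_mean, train_std)  # no refitting

# Leaky alternative, shown only for comparison: parameters from all the data.
leaky_mean, leaky_std = fit_scaler(concentrations)
print(f"train-only mean={train_mean:.3f}, full-data mean={leaky_mean:.3f}")
```

Any difference between the two sets of parameters is information about the test set that a leaky pipeline would pass to the model.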

Specialized Leakage in Scientific Research

In research domains such as neuroimaging and environmental science, additional specialized forms of leakage have been identified:

- Feature selection leakage: Selecting features or brain areas of interest from the entire pool of data rather than from the training data only [2] [3].

- Repeated subject leakage: When data from the same individual appears in both training and testing sets, particularly problematic in longitudinal studies or datasets with family members [2] [3].

- Covariate-related leakage: Performing covariate regression or site correction across the entire dataset before splitting rather than within the cross-validation folds [3].
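
Repeated-subject leakage has a direct analogue in environmental data, where several samples often come from the same monitoring site. A minimal sketch of a group-aware split follows, assuming each sample carries a group label; the function name and the site identifiers are hypothetical.

```python
import random


def group_train_test_split(groups, test_fraction=0.25, seed=0):
    """Split sample indices so that every group lands entirely on one side."""
    unique_groups = sorted(set(groups))
    rng = random.Random(seed)
    rng.shuffle(unique_groups)
    n_test = max(1, round(len(unique_groups) * test_fraction))
    test_groups = set(unique_groups[:n_test])
    train_idx = [i for i, g in enumerate(groups) if g not in test_groups]
    test_idx = [i for i, g in enumerate(groups) if g in test_groups]
    return train_idx, test_idx


# Hypothetical repeated measurements: three samples from each of eight sites.
site_ids = [f"site_{k}" for k in range(8) for _ in range(3)]
train_idx, test_idx = group_train_test_split(site_ids, test_fraction=0.25)

train_sites = {site_ids[i] for i in train_idx}
test_sites = {site_ids[i] for i in test_idx}
print(len(train_idx), len(test_idx), train_sites & test_sites)
```

The split is made over groups rather than rows, so no site (or subject, or family) can contribute samples to both sides.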

Table 1: Categories and Characteristics of Data Leakage

| Leakage Type | Definition | Common Causes | Primary Impact |
|---|---|---|---|
| Target Leakage | Inclusion of future/unavailable information during training | Improper feature selection; causal misunderstanding | Overfitting to unrealistic patterns |
| Train-Test Contamination | Breach of separation between training and validation data | Preprocessing before splitting; improper cross-validation | Artificially inflated performance metrics |
| Feature Selection Leakage | Selecting features using complete dataset statistics | Dimensionality reduction on full dataset; biomarker identification prior to splitting | Significant performance inflation, especially in low-signal domains |
| Subject-Level Leakage | Non-independent observations between training and test sets | Repeated measurements; family members in different sets; data duplication | Invalid generalizability claims; reduced reproducibility |

Quantitative Impact of Data Leakage

Recent empirical studies have quantified the dramatic effects of data leakage on model performance across different domains and data types.

Neuroimaging Case Study

A comprehensive 2023 study evaluated the effects of multiple leakage types on connectome-based machine learning models across four large datasets (ABCD, HBN, HCPD, PNC) and three phenotypes (age, attention problems, matrix reasoning) [3]. The research employed over 400 different pipelines to systematically assess how various forms of leakage impact prediction performance, as measured by Pearson's correlation (r) and cross-validation R² (q²) [3].
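
The two metrics respond differently to prediction errors, which matters when reading results of this kind. The sketch below assumes the common definition q² = 1 - SSE/SST, where SSE is the sum of squared prediction errors and SST is the sum of squared deviations of the observed values from their mean; the cited study's exact normalization may differ, and the numbers used here are invented for illustration.

```python
import math


def pearson_r(y_true, y_pred):
    """Pearson correlation between observed and predicted values."""
    n = len(y_true)
    mean_t, mean_p = sum(y_true) / n, sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)


def q_squared(y_true, y_pred):
    """Cross-validated R^2, assumed here to be 1 - SSE / SST."""
    mean_t = sum(y_true) / len(y_true)
    sse = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    sst = sum((t - mean_t) ** 2 for t in y_true)
    return 1.0 - sse / sst


# Invented example: predictions track the trend but are offset by 3 units.
y_true = [1.0, 2.0, 3.0, 4.0, 5.0]
y_pred = [4.0, 5.0, 6.0, 7.0, 8.0]

r = pearson_r(y_true, y_pred)
q2 = q_squared(y_true, y_pred)
print(f"r={r:.2f}, q2={q2:.2f}")
```

Because q² penalizes bias and scale errors while r does not, q² can be negative even when r is positive, meaning the model predicts worse than simply guessing the mean.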

Table 2: Quantitative Impact of Data Leakage on Model Performance (HCPD Dataset)

| Leakage Type | Impact on Attention Problems | Impact on Age Prediction | Impact on Matrix Reasoning |
|---|---|---|---|
| No Leakage (Baseline) | r=0.01, q²=-0.13 | r=0.80, q²=0.63 | r=0.30, q²=0.08 |
| Feature Leakage | Δr=+0.47, Δq²=+0.35 | Δr=+0.03, Δq²=+0.05 | Δr=+0.17, Δq²=+0.13 |
| Subject Leakage (20%) | Δr=+0.28, Δq²=+0.19 | Δr=+0.04, Δq²=+0.07 | Δr=+0.14, Δq²=+0.11 |
| Leaky Covariate Regression | Δr=-0.06, Δq²=-0.17 | Δr=-0.02, Δq²=-0.03 | Δr=-0.09, Δq²=-0.08 |
| Family Leakage | Δr=+0.02, Δq²=0.00 | Δr=0.00, Δq²=0.00 | Δr=0.00, Δq²=0.00 |

The findings reveal several critical patterns. First, the magnitude of performance inflation is inversely related to baseline performance—models with weaker baseline performance (like attention problems with r=0.01) showed dramatically greater inflation from leakage than strong baseline models (like age prediction with r=0.80) [3]. This pattern is particularly concerning for environmental contaminant research, where true effect sizes may be modest and signals subtle.

Second, not all leakage inflates performance; some forms actually degrade it. Leaky covariate regression consistently decreased prediction performance across all phenotypes [3]. This demonstrates that leakage can produce both optimistically biased performance measures (hindering reproducibility) and pessimistic ones (obscuring true effects).

Broader Domain Implications

A National Library of Medicine study found that across 17 different scientific fields where machine learning methods have been applied, at least 294 scientific papers were affected by data leakage, leading to overly optimistic performance reports [1]. This widespread occurrence highlights the systemic nature of the problem and the need for heightened awareness and prevention measures across scientific disciplines, including environmental research.

Detection and Prevention Methodologies

Experimental Protocols for Leakage Detection

Establishing rigorous experimental protocols is essential for identifying and preventing data leakage in research workflows. The following methodologies, adapted from empirical studies, provide a framework for maintaining data integrity:

Protocol 1: Proper Cross-Validation for Temporal Data

For time-series environmental data (e.g., contaminant concentration measurements), standard random splitting violates temporal dependencies. Instead, use:

- Chronological splitting: Ensure training data always precedes test data temporally [1]

- Rolling window validation: Train on window [t-n, t-1], test at time t, then increment [1]

- Walk-forward validation: Expand training window while maintaining temporal sequence [1]

- Seasonal adjustment: Account for periodic patterns when creating splits to prevent seasonal information leakage
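
The rolling-window and walk-forward schemes listed above can be sketched with a single index generator. This is a minimal illustration that assumes the samples are already sorted by time; the function name and its parameters are hypothetical.

```python
def walk_forward_splits(n_samples, initial_train, horizon=1, window=None):
    """Yield (train_indices, test_indices) pairs in chronological order.

    window=None -> expanding training window (walk-forward validation).
    window=k    -> fixed-length rolling window of the k most recent samples.
    """
    start = initial_train
    while start + horizon <= n_samples:
        train_start = 0 if window is None else max(0, start - window)
        train_idx = list(range(train_start, start))
        test_idx = list(range(start, start + horizon))
        yield train_idx, test_idx
        start += horizon


# Twelve hypothetical monthly measurements, already sorted by time.
expanding = list(walk_forward_splits(12, initial_train=6, horizon=2))
rolling = list(walk_forward_splits(12, initial_train=6, horizon=2, window=6))

for train_idx, test_idx in rolling:
    print(train_idx, "->", test_idx)
```

In both variants every training index precedes every test index, which is the property that chronological splitting is meant to guarantee.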

Protocol 2: Feature Selection Safeguards

Based on findings that feature leakage causes significant performance inflation [3]:

- Perform all feature selection, including dimensionality reduction and biomarker identification, strictly within training folds

- Apply selection results (e.g., feature subsets, transformation parameters) to validation data without re-computation

- For neuroimaging and environmental sensor data, select regions of interest or significant sensors using training data only [2]

- Document all feature selection procedures with explicit mention of how training-test separation was maintained
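
A minimal sketch of fold-wise feature selection is given below. It assumes a simple filter that ranks features by their absolute Pearson correlation with the target, which is only one of many possible selection rules; the helper names and the synthetic noise data are hypothetical.

```python
import random


def abs_correlation(xs, ys):
    """Absolute Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return abs(cov) / (vx * vy) ** 0.5 if vx > 0 and vy > 0 else 0.0


def select_top_k(X, y, rows, k):
    """Rank features using only the given rows and return the k best columns."""
    n_features = len(X[0])
    scores = [
        abs_correlation([X[i][j] for i in rows], [y[i] for i in rows])
        for j in range(n_features)
    ]
    return sorted(range(n_features), key=lambda j: scores[j], reverse=True)[:k]


def k_fold_indices(n_samples, n_folds):
    """Yield (train_rows, test_rows) for contiguous, non-overlapping folds."""
    fold_size = n_samples // n_folds
    for f in range(n_folds):
        test_rows = list(range(f * fold_size, (f + 1) * fold_size))
        held_out = set(test_rows)
        train_rows = [i for i in range(n_samples) if i not in held_out]
        yield train_rows, test_rows


random.seed(1)
# Synthetic data: 40 samples, 20 pure-noise features, and a noise target.
X = [[random.gauss(0, 1) for _ in range(20)] for _ in range(40)]
y = [random.gauss(0, 1) for _ in range(40)]

selected_per_fold = []
for train_rows, test_rows in k_fold_indices(len(X), n_folds=4):
    chosen = select_top_k(X, y, train_rows, k=3)  # training rows only
    # The chosen columns are applied to the held-out rows, not recomputed.
    X_test_reduced = [[X[i][j] for j in chosen] for i in test_rows]
    selected_per_fold.append(chosen)

print(selected_per_fold)
```

The selected subsets may differ from fold to fold, which is expected: each fold's selection reflects only what its training rows could reveal.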

Protocol 3: Preprocessing Integrity Verification

To prevent train-test contamination during data preprocessing [1]:

- Fit preprocessing transformations (scaling, normalization, imputation) exclusively on training data

- Apply the fitted transformers to validation data without refitting

- For multi-site studies, calculate and apply site-correction parameters within training folds only [3]

- Validate preprocessing independence by testing whether transformation parameters differ when calculated on full dataset versus training data only

The Researcher's Toolkit: Essential Safeguards Against Leakage

Table 3: Research Reagent Solutions for Preventing Data Leakage

| Tool/Category | Function | Implementation Examples |
|---|---|---|
| Stratified & Time Series Splitters | Creates data splits preserving distributional characteristics or temporal relationships | StratifiedKFold, TimeSeriesSplit (Scikit-learn), GroupShuffleSplit for dependent data |
| Pipeline Constructs | Encapsulates preprocessing and modeling steps to prevent cross-validation contamination | Pipeline and ColumnTransformer (Scikit-learn), tf.data.Dataset (TensorFlow) |
| Data Provenance Trackers | Monitors data lineage and transformation history across experimental iterations | MLflow, Weights & Biases, DVC (Data Version Control), custom experiment trackers |
| Model Validation Suites | Comprehensive performance assessment that supports leakage checks | cross_validate (Scikit-learn), check_estimator (Scikit-learn) for estimator API conformance, custom sanity checks |
| Domain-Specific Splitting Utilities | Handles specialized data dependencies (family, longitudinal, geographic) | GroupKFold for subject independence, LeaveOneGroupOut, spatial blocking for environmental data |

Red Flags and Diagnostic Indicators

Several indicators can signal potential data leakage during model development:

- Unusually high performance: Models showing significantly higher accuracy, precision, or recall than expected, especially on validation data [1]

- Suspicious training-validation gap: A gap between training and validation metrics that is much smaller than expected for the model's complexity may indicate contamination [1]

- Inconsistent cross-validation results: High variance across folds or performance that seems too consistent may indicate improper splitting [1]

- Unexpected feature importance: Heavy reliance on features that don't align with domain knowledge or would be unavailable during real-world prediction [1]

- Performance degradation on truly unseen data: Significant drop when moving from validation to external test sets or production deployment [1]
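
These indicators can be complemented by a direct check for duplicated records, one of the causes of subject-level leakage listed in Table 1. The sketch below is a minimal illustration that compares rounded feature rows between the two sets; the function name, the rounding tolerance, and the example rows are all hypothetical.

```python
def find_train_test_overlap(train_rows, test_rows, ndigits=6):
    """Return the test rows whose (rounded) values also appear in training."""
    def as_key(row):
        return tuple(round(value, ndigits) for value in row)

    seen_in_training = {as_key(row) for row in train_rows}
    return [row for row in test_rows if as_key(row) in seen_in_training]


# Hypothetical feature rows: (pH, temperature in deg C, concentration in ug/L).
train_rows = [(7.1, 18.5, 0.42), (6.8, 20.1, 0.37), (7.4, 17.9, 0.55)]
test_rows = [(6.8, 20.1, 0.37), (7.0, 19.0, 0.40)]

overlap = find_train_test_overlap(train_rows, test_rows)
print(f"{len(overlap)} of {len(test_rows)} test rows also occur in training")
```

An empty result does not rule out leakage, since near-duplicates and shared subjects or sites would pass this check, but a non-empty result is a clear signal that the split needs to be revisited.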

Implications for Environmental Contaminant Research

The methodologies and findings from neuroimaging and other fields have direct relevance to machine learning applications in environmental contaminant research. The systematic review of machine learning in air pollution epidemiology reveals parallel challenges and opportunities [4]. As environmental datasets grow in complexity and volume, traditional statistical methods face limitations, creating both the need for machine learning approaches and vulnerability to data leakage pitfalls.

In environmental monitoring applications, several domain-specific leakage risks emerge:

Temporal Leakage in Contaminant Forecasting: Predicting future contamination levels based on historical data requires strict chronological splitting. Using future measurements to inform predictions of past events represents a fundamental violation of causal structure that can create seemingly accurate but useless forecasting models.

Spatial Autocorrelation in Geographic Data: Environmental measurements from nearby locations are often correlated. Standard random splitting that places adjacent sampling points in both training and test sets can artificially inflate performance by allowing the model to effectively "cheat" through spatial proximity.
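
One common response to spatial autocorrelation is spatial blocking, in which whole grid cells rather than individual points are assigned to the test set. The following is a minimal sketch that assumes projected coordinates in consistent units; the function names, block size, and sampling points are hypothetical.

```python
import math


def block_id(x, y, block_size):
    """Map a coordinate to the grid cell (block) that contains it."""
    return (math.floor(x / block_size), math.floor(y / block_size))


def spatial_block_split(coords, block_size, test_blocks):
    """Assign whole grid blocks to the test set; all other points train."""
    train_idx, test_idx = [], []
    for i, (x, y) in enumerate(coords):
        if block_id(x, y, block_size) in test_blocks:
            test_idx.append(i)
        else:
            train_idx.append(i)
    return train_idx, test_idx


# Hypothetical sampling points (projected coordinates in km).
coords = [(0.5, 0.5), (0.8, 0.7), (1.2, 0.4), (1.9, 1.8), (2.5, 2.5), (2.9, 2.1)]
train_idx, test_idx = spatial_block_split(
    coords, block_size=1.0, test_blocks={(0, 0), (2, 2)}
)
print("train:", train_idx, "test:", test_idx)
```

Points close to a block boundary can still be near neighbors of points in the adjacent block, so the block size should be chosen with the expected autocorrelation range in mind.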

Instrumentation and Laboratory Effects: When data comes from multiple sensors or analytical techniques, preventing information leakage about measurement characteristics across splits is essential. Correcting for batch effects or sensor calibration must be performed within training data only.

Transferring the prevention protocols from neuroimaging to environmental contexts requires adapting the core principles while respecting domain-specific data structures. The workflow for environmental contaminant prediction would maintain the same rigorous separation but account for spatial and temporal dependencies unique to ecological systems.

Data leakage represents a fundamental challenge to the validity and reproducibility of machine learning models in scientific research, including environmental contaminant studies. The empirical evidence demonstrates that leakage can dramatically inflate—or in some cases deflate—performance metrics, leading to incorrect conclusions about model capability and potentially flawed real-world decisions.

The most effective approach to data leakage is comprehensive prevention through rigorous experimental design:

- Maintain strict separation between training and test data throughout the entire pipeline, from preprocessing through model evaluation

- Implement temporal and spatial splitting protocols that respect the inherent dependencies in environmental data

- Use pipeline constructs that encapsulate all data transformations and ensure they are fitted only on training data

- Apply domain expertise to scrutinize feature selections and model interpretations for realistic relationships

- Maintain healthy skepticism toward unexpectedly high performance and validate findings through multiple approaches

As machine learning applications in environmental research continue to expand, establishing and adhering to leakage prevention protocols becomes increasingly critical for producing reliable, actionable scientific insights. The methodologies and safeguards outlined provide a foundation for developing more robust predictive models that can genuinely advance our understanding and management of environmental contaminants.

The reproducibility crisis refers to the growing accumulation of published scientific findings that other researchers are unable to reproduce, striking at the core credibility of scientific knowledge [5]. This phenomenon, also termed the replicability crisis, represents a fundamental challenge across numerous scientific disciplines, undermining the reliability of theories built upon irreproducible results and potentially calling substantial portions of scientific knowledge into question [5]. While frequently discussed in relation to psychology and medicine, data strongly indicate that many other natural and social sciences are similarly affected [5].

The crisis gained prominence in the early 2010s through a series of pivotal events that exposed methodological flaws across fields [5]. These included failed replications of highly-cited social priming studies, controversial experiments on extrasensory perception that utilized common but flawed statistical practices, and alarming reports from biotech companies Amgen and Bayer Healthcare indicating replication rates of only 11-20% for landmark findings in preclinical cancer research [5]. Concurrently, metascience studies revealed how widespread questionable research practices—such as exploiting flexibility in data collection and analysis—dramatically increased false positive rates [5].

The machine learning (ML) field faces particular reproducibility challenges, with researchers often spending weeks attempting to reproduce "state-of-the-art" results from top-tier papers without success, frequently hampered by missing code, unspecified random seeds, and unresponsive authors [6]. This crisis represents not merely a technical failure but a systemic issue threatening the scientific enterprise's credibility, especially as data-driven approaches increasingly replace or assist traditional laboratory studies across fields like environmental science [7].

Defining the Scope: Reproducibility vs. Replicability

Within the reproducibility crisis discourse, terminological precision is crucial. Although sometimes used interchangeably, reproducibility and replicability represent distinct concepts with important technical differences [5] [8]:

Reproducibility refers to reexamining and validating the analysis of a given set of data, essentially obtaining the same or similar results when rerunning analyses from previous studies using the original design, data, and code [5] [8].

Replicability involves repeating an existing experiment or study with new, independent data to verify the original conclusions, obtaining similar results when repeating, in whole or part, a prior study [5] [8].

Researchers further categorize replication attempts into several distinct types [5]:

- Direct or exact replication: The experimental procedure is repeated as closely as possible to the original study.

- Systematic replication: The experimental procedure is largely repeated but with some intentional changes to specific parameters.

- Conceptual replication: The finding or hypothesis is tested using a completely different procedure or methodology.

The scientific method operationalizes objectivity through replication, serving as proof that knowledge can be separated from the specific circumstances (time, place, or persons) under which it was originally gained [5]. The inability to achieve consistent results through these processes therefore strikes at the very heart of scientific epistemology.

Quantitative Evidence of the Crisis Across Disciplines

Empirical studies across multiple fields reveal alarming rates of irreproducibility, though estimates vary considerably by discipline and methodology. The following table summarizes key findings from large-scale replication efforts:

Table 1: Reproducibility Rates Across Scientific Disciplines

| Field | Reproducibility Rate | Study Details | Year |
|---|---|---|---|
| Cancer Biology (Bayer Healthcare) | ~34% | Replication of published pre-clinical studies before drug development programs | 2011 [9] |
| Cancer Biology (Amgen) | ~11% | Replication of landmark pre-clinical cancer studies | 2012 [9] |
| Psychology | 36-68% | Large-scale collaborative replication projects (Many Labs) | 2011-2015 [5] [8] |
| Biomedical Research | ~50% | More recent systematic assessment | ~2024 [9] |
| Economics & Social Sciences | 30-70% | Various many-lab replication projects | 2010-2018 [8] |

Beyond these direct replication attempts, survey evidence further illustrates the pervasiveness of the problem. A Nature survey conducted in 2016 found that between 60-80% of scientists across various disciplines reported encountering hurdles in reproducing their peers' work, with 40-60% experiencing difficulties replicating their own experiments [8]. It is important to note, however, that some scholars question the existence of a full-blown "crisis," pointing to the lack of conclusive evidence quantifying its true scale and arguing that the current approach to addressing it may not adhere to the rigorous standards normally applied to the scientific method [10].

A Case Study in Machine Learning and Environmental Contaminant Research

The field of environmental contaminant research exemplifies both the promise and pitfalls of data-driven science, particularly as machine learning approaches increasingly replace or supplement traditional laboratory studies [7] [11]. Research on emerging contaminants (ECs)—such as antibiotics, microplastics, and PFAS—faces specific data science challenges that exacerbate reproducibility concerns [7] [11].

Common Data Issues in Contaminant Research

Table 2: Data Science Challenges in Environmental Contaminant Research

| Challenge | Impact on Reproducibility | Potential Solutions |
|---|---|---|
| Matrix Influence | Effects of complex environmental matrices on contaminant behavior are often ignored, limiting real-world applicability [7]. | Develop ensemble models that account for complex environmental interactions [7]. |
| Trace Concentration | Low concentration detection and effect prediction create signal-to-noise issues in ML models [7]. | Implement specialized detection algorithms and validation protocols. |
| Complex Scenarios | Oversimplified laboratory conditions fail to capture environmental complexity [7]. | Create integrated research frameworks combining lab and field studies [7]. |
| Data Leakage | Inadvertent sharing of information between training and test sets creates overly optimistic performance metrics [7]. | Implement rigorous validation schemes and preprocessing pipelines. |

Exemplary Approach: ML for High Voltage Insulator Contamination

A 2025 study on contamination classification of polluted high voltage insulators using leakage current demonstrates a robust methodological approach that addresses several reproducibility challenges [12]. This research developed a meticulous dataset under controlled laboratory conditions while incorporating critical parameters of temperature and varying humidity to reflect real-world environmental impact [12]. The methodology included:

- Dataset Generation: Artificially polluted insulators divided into three contamination classes (high, moderate, low) with leakage current measurements under varied environmental conditions [12].

- Feature Extraction: Comprehensive preprocessing and feature extraction from time, frequency, and time-frequency domains, with feature ranking to identify the most important variables [12].

- Model Optimization: Four distinct ML models (including decision trees and neural networks) trained using Bayesian optimization for parameter tuning [12].

Notably, this study achieved accuracies consistently exceeding 98%, with decision tree-based models exhibiting significantly faster training and optimization times compared to neural network counterparts [12]. This research exemplifies how carefully controlled data collection and processing can produce highly reproducible results even in complex environmental applications.

Experimental Protocols for Reproducible Research

Detailed Methodology: Leakage Current Experiment

The experimental protocol from the high-voltage insulator contamination study provides a template for reproducible research design in ML-driven environmental applications [12]:

1. Sample Preparation

- Porcelain insulators were artificially polluted according to standardized contamination protocols

- Three distinct contamination classes were established: high, moderate, and low contamination

- Contamination levels were quantitatively verified through direct measurement

2. Data Collection Under Controlled Conditions

- Leakage current measurements were taken under systematically varied laboratory conditions

- Critical environmental parameters (temperature, humidity) were precisely controlled and documented

- Multiple trials were conducted for each contamination class under identical conditions

3. Data Preprocessing Pipeline

- Raw leakage current signals were processed to remove artifacts and noise

- Data were normalized to account for experimental variations

- Dataset was partitioned using strict separation between training, validation, and test sets

4. Feature Extraction and Selection

- Features were extracted from multiple domains: time, frequency, and time-frequency

- Extracted features were ranked by importance using statistical methods

- Most predictive features were selected for model training

5. Model Training with Bayesian Optimization

- Four ML models were trained: decision trees, neural networks, and others

- Bayesian optimization technique was systematically applied to hyperparameter tuning

- Performance was evaluated using multiple metrics: accuracy, precision, recall, F1-score
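
The evaluation metrics named in the final step can be computed as sketched below. This is a generic illustration of accuracy and macro-averaged precision, recall, and F1 for a three-class problem; the averaging scheme is an assumption, the labels are invented, and none of the numbers reproduce the study's results [12].

```python
def classification_summary(y_true, y_pred, labels):
    """Accuracy plus macro-averaged precision, recall, and F1."""
    per_class = []
    for label in labels:
        pairs = list(zip(y_true, y_pred))
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        per_class.append((precision, recall, f1))
    n = len(labels)
    return {
        "accuracy": sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true),
        "precision": sum(p for p, _, _ in per_class) / n,
        "recall": sum(r for _, r, _ in per_class) / n,
        "f1": sum(f for _, _, f in per_class) / n,
    }


# Invented held-out labels for the three contamination classes.
labels = ["high", "moderate", "low"]
y_true = ["high", "high", "moderate", "moderate", "low", "low"]
y_pred = ["high", "moderate", "moderate", "moderate", "low", "low"]

summary = classification_summary(y_true, y_pred, labels)
print({name: round(value, 3) for name, value in summary.items()})
```

Reporting several metrics computed on a strictly held-out test set gives a fuller picture than accuracy alone, particularly when classes are imbalanced.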

Research Reagent Solutions

Table 3: Essential Materials for Reproducible ML-Environmental Research

| Research Reagent | Function in Experimental Protocol | Specifications for Reproducibility |
|---|---|---|
| Porcelain Insulators | Standardized test subject for contamination studies | Identical material composition and surface properties [12] |
| Contamination Simulants | Artificially reproduce environmental pollutant deposition | Precise chemical composition and concentration documentation [12] |
| Leakage Current Sensors | Measure electrical current flow across insulator surfaces | Calibration certification and measurement frequency specifications [12] |
| Environmental Chambers | Control temperature and humidity during experiments | Precision control parameters (±0.5°C, ±2% RH) and validation records [12] |
| Feature Extraction Algorithms | Convert raw signals into analyzable features | Code availability with version control and dependency documentation [12] |

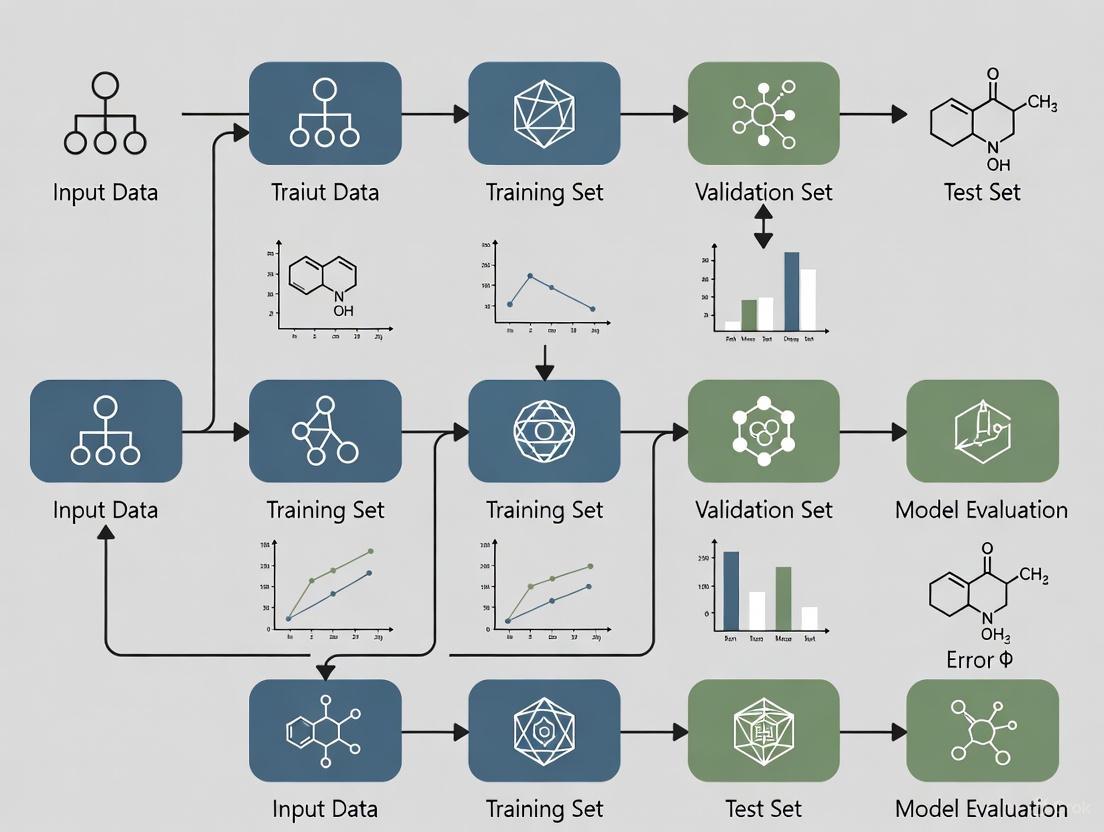

Visualization of Research Workflows and Relationships

The following diagrams illustrate key experimental workflows and conceptual relationships in reproducible research.

[Figure: Research Workflow for Environmental ML]

[Figure: Crisis Root Causes and Solutions]

Solutions and Emerging Approaches

Addressing the reproducibility crisis requires multi-faceted interventions targeting various stages of the research lifecycle. Promising approaches include:

Open Science Practices

Systematic adoption of open science practices represents one of the most promising avenues for improving reproducibility [8]. These include:

- Data and Code Sharing: Making datasets and analysis code publicly available enables other researchers to verify and build upon existing work [8].

- Study Preregistration: Registering study designs, hypotheses, and analysis plans before data collection reduces questionable research practices [8].

- Open Access Publishing: Making research findings freely available ensures broader scrutiny and validation [13].

However, evidence for the effectiveness of these interventions remains limited. A 2025 scoping review found that of 105 studies examining interventions to improve reproducibility, only 15 directly measured the effect on reproducibility or replicability, with the remainder addressing proxy outcomes like data sharing or methods transparency [8]. Moreover, 30 studies were non-comparative and 27 used cross-sectional observational designs that preclude causal inference [8].

Specialized Reproducibility-Focused Venues

New scholarly venues are emerging with reproducibility as a core principle. Computo, for example, is a journal for transparent and reproducible research in statistics and machine learning that requires submissions to be formatted as executable notebooks integrating text, code, equations, and references [13]. Each submission must be associated with a git repository configured to demonstrate dynamic and durable reproducibility of the contribution [13].

Computo distinguishes between "editorial reproducibility" (the ability to re-run provided code and obtain the same outputs) and "scientific reproducibility" (the robustness and generalizability of findings), acknowledging that complex fields like deep learning present unique challenges for reproducibility standards [13].

Community-Led Initiatives

The machine learning community has developed specific initiatives to address reproducibility, such as the Machine Learning Reproducibility Challenge, a conference venue for sharing reproducible methods and tools, investigating the reproducibility of papers from top conferences, and testing the generalizability of scientific findings through novel insights and empirical results [14].

These community efforts acknowledge that reproducibility in ML is often a "heroic act" that is "not efficient, not legal, not credited," as noted by Soumith Chintala of Meta in a keynote address [14], highlighting the systemic barriers to reproducible research even when technical solutions exist.

The reproducibility crisis affects a broad spectrum of scientific fields, from psychology and medicine to machine learning and environmental science. Quantitative evidence from large-scale replication efforts reveals concerning rates of irreproducibility, though precise estimates vary by discipline. The crisis stems from multiple root causes, including questionable research practices, insufficient methodological training, and a pervasive publish-or-perish culture that often prioritizes novel findings over robust verification.

The case of machine learning applications in environmental contaminant research illustrates both the specific challenges and potential solutions. Data leakage, matrix effects, and oversimplified experimental scenarios can compromise reproducibility, while careful study design, comprehensive feature engineering, and robust validation protocols can enhance it. Emerging approaches centered on open science, specialized reproducible research venues, and community-led initiatives offer promising paths forward.

Addressing the reproducibility crisis requires concerted effort across the scientific ecosystem—funders, institutions, publishers, and individual researchers all have roles to play in creating incentives for reproducibility and providing the tools and training necessary to achieve it. As scientific research grows increasingly complex and data-driven, ensuring the reliability and verifiability of published findings becomes ever more critical to maintaining public trust and advancing knowledge.

The application of machine learning (ML) to environmental contaminant research represents a paradigm shift in how scientists monitor, assess, and mitigate ecological threats. However, this promising intersection faces fundamental data vulnerability challenges that threaten the validity and real-world applicability of research findings. Environmental data possesses inherent characteristics—complex scenarios and trace concentrations—that create unique obstacles for ML workflows. These vulnerabilities are particularly problematic within the context of data leakage, where information from outside the training dataset inadvertently influences the model, creating overly optimistic performance metrics that fail to generalize to real-world conditions [7]. The matrix effect, where complex environmental matrices interfere with contaminant detection and quantification, further compounds these challenges by introducing systematic biases that can be amplified by ML algorithms [7] [11]. This technical analysis examines the core vulnerabilities of environmental data within ML workflows and proposes methodological frameworks to enhance research rigor.

Fundamental Vulnerabilities in Environmental Data

The Challenge of Complex Environmental Scenarios

Environmental systems operate as interconnected networks of biological, chemical, and physical processes that create multidimensional complexity difficult to capture in ML models. The integrated research framework encompassing natural fields, ecological systems, and large-scale environmental problems is often compromised when models are trained solely on simplified laboratory data [7]. This disconnect between training data and real-world complexity manifests in several critical ways:

Spatiotemporal Heterogeneity: Environmental contaminants distribute unevenly across landscapes and water bodies, with concentrations fluctuating based on seasonality, weather patterns, and anthropogenic activities. ML models trained on limited spatial or temporal data fail to capture these dynamics, leading to inaccurate predictions when applied to new contexts or timeframes [7].

Multivariate Interactions: Contaminants rarely exist in isolation; they interact with other compounds, environmental media, and biological systems in ways that alter their behavior, toxicity, and detectability. Most ML approaches struggle to model these higher-order interactions, especially when training data comes from reductionist laboratory studies that control for environmental variables [11].

Ecological System Complexity: The transition from controlled laboratory conditions to natural ecosystems introduces countless confounding factors—from microbial communities to sediment characteristics—that significantly impact contaminant fate and transport but are rarely comprehensively included in ML training datasets [7].

The Analytical Precision Problem at Trace Concentrations

Emerging contaminants (ECs) frequently exist in the environment at concentrations that push against the detection limits of analytical instrumentation, creating fundamental data quality challenges for ML applications. The trace concentration problem manifests across multiple dimensions of the ML pipeline [7]:

Signal-to-Noise Ratio Limitations: At part-per-billion or part-per-trillion levels, instrumental signals for target contaminants approach the noise floor of detection systems, creating inherent uncertainty in the training data itself. ML models trained on these noisy measurements may learn to amplify analytical artifacts rather than true environmental patterns.

Matrix Interference Effects: The presence of co-extracted compounds in environmental samples can suppress or enhance analyte signals, leading to inaccurate quantification. When these matrix influence effects are not consistent across samples, they introduce non-systematic errors that ML algorithms cannot easily distinguish from true concentration variations [7].

Censored Data Challenges: Measurements below method detection limits create left-censored datasets that require specialized statistical handling before they can be utilized in ML workflows. Common approaches (e.g., substitution with MDL/2) can introduce bias that propagates through the modeling process, particularly when censoring levels are high [11].

Table 1: Data Vulnerability Framework for Environmental ML Applications

| Vulnerability Category | Technical Manifestation | Impact on ML Model Performance |
|---|---|---|
| Complex Scenarios | Disconnect between laboratory training data and field conditions | Poor generalization to real-world environments; inaccurate spatial predictions |
| Trace Concentrations | High measurement uncertainty near detection limits | Reduced predictive accuracy; amplification of analytical noise |
| Matrix Effects | Signal suppression/enhancement from co-occurring substances | Systematic bias in concentration predictions; inaccurate source attribution |
| Spatiotemporal Dynamics | Non-stationary contamination patterns across space and time | Model degradation when applied to new locations or time periods |
| Multivariate Interactions | Unmeasured confounding variables in environmental systems | Omitted variable bias; incorrect causal inference |

The Data Leakage Crisis in Environmental ML Research

Mechanisms of Data Leakage in Environmental Contexts

Data leakage represents a critical threat to the validity of ML applications in environmental science, often creating an illusion of model performance that disintegrates when deployed in real-world settings. In the context of environmental contaminants, leakage occurs when information from outside the training dataset influences model development, typically through improper separation of data that should remain independent. The ensemble models designed to reveal mechanisms and spatiotemporal trends must be developed without data leakage to maintain their validity and predictive power [7]. Several specific leakage mechanisms plague environmental ML research:

Temporal Leakage: Using future data to predict past contamination events violates temporal causality, and it is a common error in environmental forecasting. For example, training models on water quality parameters that vary seasonally, without proper time-series splitting, can produce inflated performance metrics that do not hold up in actual forecasting scenarios [15].

Spatial Autocorrelation: Environmental data points collected from proximity to one another are typically more similar than distant points, violating the assumption of independence fundamental to many cross-validation approaches. When spatial dependencies are not properly accounted for during data splitting, models appear to perform well but cannot generalize to new geographic areas [16].

Feature Leakage: Including variables in training that would not be available during actual prediction scenarios creates feature-based leakage. In environmental contexts, this often occurs when using expensive laboratory measurements as predictors for field-deployable sensors or when incorporating downstream effects as predictors for upstream causes [7].
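The inflation described under temporal leakage can be reproduced on synthetic data. The following is a minimal sketch, not a result from the cited studies: it assumes a random-walk series as a stand-in for an autocorrelated contaminant signal, uses the time index as the only feature, and compares a shuffled K-fold split with a forward-chaining split. The model choice (k-nearest neighbours) and all variable names are illustrative assumptions.

```python
import numpy as np
from sklearn.model_selection import KFold, TimeSeriesSplit, cross_val_score
from sklearn.neighbors import KNeighborsRegressor

# Synthetic, strongly autocorrelated series (a random walk); purely illustrative.
rng = np.random.default_rng(0)
n = 500
t = np.arange(n, dtype=float).reshape(-1, 1)  # only feature: time index
y = np.cumsum(rng.normal(size=n))             # hypothetical contaminant signal

model = KNeighborsRegressor(n_neighbors=5)

# Shuffled K-fold: test points sit between training points in time,
# so the model can interpolate from neighbours that lie in the "future".
random_cv = KFold(n_splits=5, shuffle=True, random_state=0)
random_r2 = cross_val_score(model, t, y, cv=random_cv, scoring="r2").mean()

# Forward chaining: every test block lies strictly after its training data.
temporal_cv = TimeSeriesSplit(n_splits=5)
temporal_r2 = cross_val_score(model, t, y, cv=temporal_cv, scoring="r2").mean()

print(f"shuffled K-fold R^2:   {random_r2:.2f}")
print(f"forward-chaining R^2:  {temporal_r2:.2f}")
```

A large gap between the two scores is a warning sign that the shuffled estimate reflects interpolation between neighbouring time points rather than forecasting skill.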

Case Study: Data Leakage in Drinking Water Quality Prediction

Recent research applying ML to predict drinking water quality in California demonstrates how modeling decisions can introduce leakage with significant environmental justice implications. Studies have found that modeling choice transparency is critically important when using ML for environmental justice applications, as optimization parameter choices and classification threshold selections can dramatically affect error distribution across demographic groups [15]. In one analysis, altering classification thresholds changed which communities were most likely to be false negatives—a critical consideration when misclassification could expose vulnerable populations to contaminated water [15]. This exemplifies how technical decisions in the ML pipeline can either exacerbate or mitigate systemic environmental inequalities, moving beyond mere statistical accuracy to consequential real-world impacts.

Methodological Framework for Robust Environmental ML

Experimental Protocols for Vulnerability Mitigation

Implementing rigorous methodological protocols throughout the ML pipeline is essential for producing environmentally relevant models that maintain validity under complex real-world conditions. The following experimental frameworks address the core vulnerabilities of environmental data:

Integrated Validation Framework: Establish a multi-tiered validation approach incorporating (1) hold-out testing with strict spatiotemporal segregation, (2) external validation using completely independent datasets from different geographic regions or time periods, and (3) field validation comparing predictions with actual environmental measurements collected specifically for model verification purposes [7] [11].

Causal Relationship Development: Prioritize strong causal relationships in model development through incorporation of domain knowledge, mechanistic understanding, and causal inference techniques rather than relying solely on correlational patterns that may reflect spurious relationships or unmeasured confounding [7].

Uncertainty Quantification Protocol: Implement comprehensive uncertainty propagation that accounts for analytical measurement error, spatial interpolation uncertainty, and model parameter uncertainty, providing decision-makers with probabilistic predictions rather than point estimates, which is especially critical for trace-level contaminants [11].

Table 2: Research Reagent Solutions for Environmental ML Workflows

| Research Reagent | Technical Function | Application in Environmental ML |
|---|---|---|
| Ensemble Models | Combines multiple algorithms to improve predictive performance and robustness | Reduces variance in predictions; handles complex nonlinear relationships in environmental data |
| Explainable AI (XAI) | Provides interpretable insights into model decisions and feature importance | Identifies key drivers of contamination; builds regulatory trust in model outputs |
| Spatiotemporal Cross-Validation | Preserves data structure during model evaluation | Prevents data leakage from spatial autocorrelation and temporal autocorrelation |
| Censored Data Handling | Specialized statistical methods for values below detection limits | Maintains data integrity for trace-level contaminants without introducing bias |
| Multi-Modal Data Fusion | Integrates disparate data types (remote sensing, field measurements, laboratory assays) | Captures environmental complexity; improves model comprehensiveness |

Visualization of Environmental ML Workflow and Vulnerabilities

[Figure: Environmental ML Vulnerability and Mitigation Workflow]

[Figure: Data Leakage Prevention Protocol in Environmental ML]

The vulnerabilities inherent in environmental data—particularly complex scenarios and trace concentrations—represent significant but surmountable challenges for machine learning applications. Addressing these issues requires moving beyond predictive accuracy as the sole metric of model success toward a more comprehensive framework that prioritizes causal understanding, real-world applicability, and equity considerations. The mutual inspiration among data science, process and mechanism models, and laboratory and field research emerges as a critical pathway forward, ensuring that ML applications remain grounded in environmental reality rather than mathematical abstraction [7]. As the field continues to evolve, researchers must maintain rigorous standards for data quality, model transparency, and validation protocols to ensure that machine learning fulfills its potential as a tool for environmental protection rather than a source of misleading conclusions. By directly confronting the vulnerabilities outlined in this analysis, the environmental ML community can develop more robust, reliable, and equitable applications that effectively address the pressing challenge of environmental contamination.

In environmental contaminant research, data leakage represents a critical methodological pitfall that occurs when information from outside the training dataset is inadvertently used to create a model. This flaw produces overoptimistic performance metrics during development that vanish when the model encounters real-world data, leading to dangerously inaccurate environmental decisions [17]. The consequences are particularly severe in fields like contaminant prediction and risk assessment, where model outputs directly influence public health interventions and multi-million-dollar remediation strategies. This technical guide examines the origins and impacts of data leakage in machine learning (ML) for environmental science, providing researchers with robust detection and prevention methodologies to ensure model reliability and regulatory compliance.

The Data Leakage Problem in Environmental Research

Fundamental Concepts and Definitions

Data leakage in machine learning refers to the erroneous incorporation of information from outside the training dataset during model development, creating an unrealistic advantage that inflates performance estimates. This problem manifests through two primary mechanisms:

Feature Leakage: When datasets contain features that would not be available at the time of prediction in a real-world deployment scenario. In environmental monitoring, this might include using future contaminant concentration measurements to predict current levels or incorporating data from remediation sites that would not be available for uncontaminated locations.

Temporal Leakage: Particularly prevalent in time-series environmental data, this occurs when future observations influence the training of models intended for forecasting. For spatiotemporal contamination models predicting hexavalent chromium distributions, using data from multiple time periods without proper temporal segregation creates fundamentally flawed validation [18].
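Feature leakage of the kind defined above can often be caught with a plain inventory of when each feature becomes available. The sketch below is one hypothetical way to encode that check; the `FeatureSpec` class, the `audit_features` helper, and the example feature names and lags are all assumptions made for illustration, not part of any cited workflow.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeatureSpec:
    name: str
    # Days between the sampling event and the moment the value is usable.
    availability_lag_days: float


def audit_features(specs, prediction_lead_days=0.0):
    """Return names of features that are not yet available when a prediction is due.

    prediction_lead_days: days after sampling at which the prediction is made
    (0 means the prediction is needed at sampling time).
    """
    return [s.name for s in specs if s.availability_lag_days > prediction_lead_days]


# Hypothetical feature inventory for a field-deployable contaminant model.
specs = [
    FeatureSpec("water_temperature_sensor", 0.0),
    FeatureSpec("river_stage_gauge", 0.0),
    FeatureSpec("lab_icp_ms_result", 14.0),         # returned two weeks after sampling
    FeatureSpec("next_month_concentration", 30.0),  # a future measurement
]

leaky = audit_features(specs)
print(leaky)  # ['lab_icp_ms_result', 'next_month_concentration']
```

Keeping such an inventory next to the training code makes the availability assumption explicit and reviewable by domain experts.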

Prevalence in Environmental Contaminant Research

Recent bibliometric analyses reveal a concerning acceleration of ML applications in environmental chemical research, with publications surging from fewer than 25 annually before 2015 to over 719 in 2024 alone [16]. This rapid adoption has outpaced the implementation of rigorous methodological safeguards, creating fertile ground for inadvertent data leakage. The analysis of 3,150 peer-reviewed articles identified eight major research clusters, with water quality prediction and quantitative structure-activity relationship (QSAR) modeling among the most prominent domains where leakage frequently occurs [16].

Table 1: Domains Most Vulnerable to Data Leakage in Environmental ML

| Research Domain | Primary Leakage Risks | Typical Consequences |
|---|---|---|
| Water Quality Prediction [17] [16] | Temporal autocorrelation in sensor data; spatial autocorrelation in monitoring wells | Overestimation of prediction accuracy by 15-25% |
| Chemical Risk Assessment [16] | Use of test set chemicals during feature selection | False negative predictions for novel contaminants |
| Groundwater Contamination Forecasting [18] | Improper separation of spatiotemporal data | Faulty remediation planning and resource allocation |
| Environmental Health Risk Modeling [19] | Leakage of demographic or health outcome data into exposure features | Inaccurate identification of high-risk populations |

Case Studies: Leakage Consequences in Environmental Research

Groundwater Contamination Forecasting

At the Hanford 100-Area, a site historically contaminated with hexavalent chromium (Cr[VI]), researchers applied random forest algorithms to predict spatiotemporal contaminant distributions in groundwater [18]. The complex hydrogeology and multiple potential contamination pathways created significant challenges for traditional conceptual site models. The initial modeling approach improperly handled the temporal relationship between river stage fluctuations and contaminant measurements, creating a model that appeared highly accurate during validation but failed to provide reliable predictions for directing pump-and-treat operations. This case exemplifies how spatiotemporal dependencies in environmental systems present particularly subtle leakage pathways that can compromise remediation decisions with significant financial and environmental consequences [18].

Lead Contamination Risk Prediction

In Washington, DC, explainable machine learning models were developed to predict blood lead levels and school drinking water contamination using environmental, topographic, socioeconomic, and infrastructure features [19]. The research team implemented rigorous cross-validation techniques to prevent leakage between distinct geographical areas and between individual-level and community-level data sources. Models achieved exceptional discriminative performance (AUC = 0.90-0.95) specifically because they addressed potential leakage pathways during feature engineering [19]. This case demonstrates that proactive leakage prevention enables the development of reliable tools for prioritizing lead service line replacements and protecting vulnerable populations.

Diagram 1: Data leakage impact cascade

Detection and Prevention Methodologies

Experimental Design Protocols

Preventing data leakage begins with meticulous experimental design that respects the temporal and spatial dependencies inherent in environmental data collection. The following protocols provide robust safeguards:

Temporal Segregation: For time-series contamination data, establish a clear temporal cutoff where all data before a specific date is used for training and all subsequent data is reserved for testing. This approach is essential for groundwater contamination forecasting where seasonal patterns and multi-year trends create autocorrelation [18].

Spatial Blocking: When dealing with geographically distributed sampling (e.g., groundwater monitoring wells, air quality sensors), implement spatial blocking techniques that ensure nearby locations remain in either training or testing sets, preventing models from exploiting spatial autocorrelation as a false signal.

Feature Validation: Rigorously audit each feature to confirm its real-world availability at the time of prediction. For lead contamination risk models, this means verifying that infrastructure data (e.g., pipe material, building age) reflects historical records rather than current assessments [19].
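The spatial blocking protocol above can be sketched with scikit-learn's `GroupKFold`, using grid-cell membership as the group label. This is a minimal illustration under stated assumptions: the well coordinates are synthetic, the 2.5 km block size is arbitrary, and no buffer zone between blocks is applied.

```python
import numpy as np
from sklearn.model_selection import GroupKFold

rng = np.random.default_rng(42)
n_wells = 200
site_extent_m = 10_000
# Hypothetical well coordinates in metres within a 10 km x 10 km site.
xy = rng.uniform(0, site_extent_m, size=(n_wells, 2))

# Assign each well to a 2.5 km grid cell; the cell id is the blocking group.
block_size_m = 2_500
cell = np.floor(xy / block_size_m).astype(int)
n_cols = int(np.ceil(site_extent_m / block_size_m))
block_id = cell[:, 0] * n_cols + cell[:, 1]

cv = GroupKFold(n_splits=4)
for fold, (train_idx, test_idx) in enumerate(cv.split(xy, groups=block_id)):
    # No block may contribute wells to both sides of the split.
    shared = set(block_id[train_idx]) & set(block_id[test_idx])
    print(f"fold {fold}: {len(train_idx)} train wells, "
          f"{len(test_idx)} test wells, shared blocks: {len(shared)}")
```

Wells on either side of a block boundary can still be close together, so a block size chosen from the observed autocorrelation range, or an explicit buffer, would be needed in practice.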

Technical Implementation Framework

Implementing leakage prevention requires both algorithmic strategies and validation methodologies:

Nested Cross-Validation: Employ nested (double) cross-validation where the inner loop performs hyperparameter optimization and the outer loop provides unbiased performance estimation. A related sample-splitting idea, cross-fitting, underlies the double machine learning model used to assess China's industrial policy impacts on green economic growth [20].

Domain-Aware Splitting: Instead of random data splitting, use knowledge of the environmental domain to create semantically meaningful splits. For school drinking water contamination, this might involve splitting by school district rather than individual schools to prevent leakage of shared infrastructure characteristics [19].

Explainability Audits: Implement SHAP (SHapley Additive exPlanations) or similar interpretability frameworks to identify features with implausibly high predictive power that may indicate leakage [19]. This approach helped researchers validate that lead pipe density and social vulnerability—rather than leaked features—were genuinely driving contamination risk predictions.
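As a lightweight complement to the explainability audit described above, each feature can be scored on its own and flagged when it is implausibly predictive. The sketch below is a simplified proxy and not SHAP: the data are synthetic, the feature names are hypothetical, and the 0.98 AUC threshold is an arbitrary assumption that would need tuning per application.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score

rng = np.random.default_rng(7)
n = 600
y = rng.integers(0, 2, size=n)  # hypothetical "exceeds action level" label

features = {
    # Plausible but imperfect signal.
    "pipe_age_years": 40 + 10 * y + rng.normal(0, 15, size=n),
    # Pure noise.
    "distance_to_main_m": rng.normal(100, 30, size=n),
    # Leaked: a flag recorded only after an exceedance was confirmed.
    "remediation_ordered": y + rng.normal(0, 0.05, size=n),
}


def single_feature_auc(x, y):
    """Cross-validated ROC AUC of a model that sees one feature only."""
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
    clf = LogisticRegression(max_iter=1000)
    return cross_val_score(clf, x.reshape(-1, 1), y, cv=cv, scoring="roc_auc").mean()


aucs = {name: single_feature_auc(x, y) for name, x in features.items()}
suspicious = [name for name, auc in aucs.items() if auc > 0.98]

for name, auc in aucs.items():
    print(f"{name}: AUC = {auc:.2f}")
print("flag for review:", suspicious)
```

A flagged feature is not proof of leakage; it is a prompt for a domain expert to confirm how and when that variable is recorded.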

Table 2: Leakage Prevention Techniques for Environmental Data Types

| Data Type | Primary Prevention Method | Validation Approach | Tools/Implementations |
|---|---|---|---|
| Time-Series Contamination Measurements [18] | Forward chaining (e.g., TimeSeriesSplit) | Comparison of temporal vs. random split performance | scikit-learn TimeSeriesSplit, custom temporal validators |
| Spatial Environmental Sampling [18] | Spatial blocking with buffer zones | Spatial autocorrelation analysis of residuals | GIS integration, scikit-learn GroupKFold with spatial block labels |
| Structural Environmental Data (e.g., pipe materials) [19] | Temporal validation of feature availability | Domain expert feature audit | Feature documentation protocols, model cards |
| High-Throughput Screening Data [16] | Scaffold splitting based on chemical structure | Performance disparity analysis on novel compounds | RDKit, specialized cheminformatics splitting algorithms |

Diagram 2: Leakage prevention workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Methodological Tools for Leakage Prevention

| Tool/Category | Function | Implementation Example |
|---|---|---|
| Temporal Cross-Validation [18] | Prevents time-based leakage in monitoring data | scikit-learn TimeSeriesSplit with seasonality awareness |
| Spatial Cross-Validation [18] | Addresses spatial autocorrelation in environmental samples | Spatially blocked folds (e.g., scikit-learn GroupKFold over blocks) built from GIS coordinates of monitoring wells |
| Double Machine Learning [20] | Provides robust causal inference in high-dimensional settings | Orthogonalization for policy impact assessment on green growth |
| Explainable AI (XAI) Frameworks [19] | Identifies leaked features through interpretability | SHAP analysis for lead contamination risk factor identification |
| Chemical Splitting Algorithms [16] | Prevents leakage in QSAR and chemical risk assessment | Scaffold splitting based on molecular structure similarity |
| MLOps Platforms with Carbon Awareness [21] | Ensures reproducible, efficient model training | Kubernetes with autoscaling for lifecycle management |

Data leakage represents a fundamental challenge to the integrity of machine learning applications in environmental contaminant research. The consequences of undetected leakage extend beyond statistical anomalies to directly impact environmental decision-making, remediation resource allocation, and public health protection. As ML adoption accelerates across environmental science, the implementation of rigorous methodological safeguards against data leakage must become standard practice. Through temporal segregation, spatial blocking, domain-aware validation, and explainability audits, researchers can develop models that maintain their validity when deployed in real-world environmental management contexts. The future of trustworthy environmental ML depends on this methodological rigor, ensuring that promising laboratory results translate to genuine field efficacy.

Building Robust Pipelines: Methodological Strategies to Prevent Leakage in Contaminant Analysis

In environmental machine learning, the accurate prediction of phenomena—from contaminant concentrations to ecological shifts—hinges on the integrity of the model validation process. Data leakage, wherein information from the future inadvertently influences the model's understanding of the past, represents a pervasive threat to model validity, leading to overly optimistic performance estimates and models that fail in real-world deployment. This in-depth technical guide addresses the core challenge of implementing temporally correct data splitting for environmental time-series data, framing it within the broader thesis of mitigating data leakage in environmental contaminant research. Unlike traditional random train-test splits, which ignore the intrinsic temporal ordering of observations, methodologies that preserve chronological order are essential for producing reliable, generalizable models that can truly support scientific and regulatory decision-making [22] [23].

The consequences of improper splitting are particularly acute in environmental contexts. For instance, a model predicting NOx concentrations might achieve deceptively high accuracy if trained on a randomly shuffled dataset, as it could subtly memorize short-term patterns that are not causal. When deployed to forecast future pollution events, such a model would likely perform poorly, compromising early warning systems [24]. Similarly, in landslide detection, using satellite imagery from after an event to help identify precursors of that same event constitutes a severe temporal leak, invalidating any assessment of the model's predictive capability [25]. This guide provides researchers, scientists, and development professionals with the experimental protocols and theoretical foundation needed to implement robust temporal splitting, thereby ensuring the development of models that are both scientifically sound and practically useful.

Foundational Concepts: Why Time Series Demand Specialized Splitting

The Problem of Temporal Dependence and Data Leakage

Standard cross-validation techniques, such as K-Fold, operate on the assumption that data points are independently and identically distributed (i.i.d.). In this framework, randomly splitting the data into training and testing subsets is statistically valid. However, time-series data, by its nature, violates this core assumption. Environmental observations collected over time exhibit temporal dependence; the value at time t is often correlated with values at times t-1, t-2, and so on [22].

Applying random splitting to such data creates a fundamental flaw: the model may be trained on data points that chronologically occur after those in its test set. This allows the model to leverage "future" information to "predict" the past, a scenario that is impossible in a real-world forecasting context. This inflates performance metrics and constitutes data leakage, producing a model that has memorized temporal correlations rather than learned underlying causal or systemic patterns [23]. As noted in discussions on time-series cross-validation, "We cannot choose random samples... because it makes no sense to use the values from the future to forecast values in the past" [22].

Core Principles for Temporal Splitting

To avoid these pitfalls, any data splitting strategy for time series must adhere to two key principles established in the literature [22] [23]:

- Chronological Ordering: The training set must always consist of observations that occur strictly before those in the validation or test set. This mimics the real-world forecasting scenario where only past data is available to predict the future.

- Preservation of Temporal Structure: The sequence and dependencies within the data (e.g., seasonality, trends) must be maintained within the training and test sets. Shuffling individual data points destroys this structure and invalidates the model evaluation.
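The chronological-ordering principle is easy to verify programmatically before any model is trained. The helper below is a small sketch; the function name `is_chronological` and the example dates are assumptions introduced here for illustration.

```python
import numpy as np


def is_chronological(timestamps, train_idx, test_idx):
    """True if every training timestamp precedes every test timestamp."""
    ts = np.asarray(timestamps)
    return bool(ts[train_idx].max() < ts[test_idx].min())


# Hypothetical daily sampling dates (10 consecutive days).
dates = np.arange("2020-01-01", "2020-01-11", dtype="datetime64[D]")

ordered_train, ordered_test = np.arange(0, 7), np.arange(7, 10)
shuffled_train = np.array([0, 2, 3, 5, 6, 8, 9])
shuffled_test = np.array([1, 4, 7])

print(is_chronological(dates, ordered_train, ordered_test))    # True
print(is_chronological(dates, shuffled_train, shuffled_test))  # False
```

Running such a check on every fold produced by a splitter is a cheap guard against accidental shuffling upstream.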

Methodologies for Temporal Data Splitting and Cross-Validation

This section details established experimental protocols for splitting temporal data, progressing from simple single splits to more sophisticated cross-validation techniques.

Single Temporal Split

The most straightforward approach is a single split that reserves a contiguous block of the most recent data for testing.

- Protocol: The dataset is divided into two segments based on a selected point in time. All data before this point is used for training, and all data after is used for testing.

- Use Case: This is suitable for initial model development or when data is abundant. Its main limitation is that the evaluation is based on a single test period, which may not be representative of all temporal variations (e.g., a specific season or anomalous event) [24].

- Implementation: In a 10-year dataset of contaminant concentrations, one might use years 1-8 for training and years 9-10 for testing.
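The 10-year example above might look as follows in pandas. This is a sketch on synthetic data: the column names, the start date, and the lognormal concentrations are assumptions. It also shows scaling statistics being computed from the training block only, so the test period contributes nothing to preprocessing.

```python
import numpy as np
import pandas as pd

# Hypothetical 10-year monthly record of a contaminant concentration.
dates = pd.date_range("2010-01-01", periods=120, freq="MS")
rng = np.random.default_rng(1)
df = pd.DataFrame({
    "date": dates,
    "concentration_ug_L": rng.lognormal(mean=0.0, sigma=0.5, size=120),
})

# Years 1-8 (2010-2017) for training, years 9-10 (2018-2019) for testing.
cutoff = pd.Timestamp("2018-01-01")
train = df[df["date"] < cutoff]
test = df[df["date"] >= cutoff]

# Fit preprocessing on the training block only, then apply it to the test block.
mu = train["concentration_ug_L"].mean()
sigma = train["concentration_ug_L"].std()
test_scaled = (test["concentration_ug_L"] - mu) / sigma

print(len(train), len(test))  # 96 24
```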

Rolling Origin Cross-Validation (Forward Chaining)

A more robust and widely recommended method is Rolling Origin Cross-Validation, also known as forward chaining or evaluation on a rolling forecasting origin [26] [23]. This method creates multiple training-test splits, providing a more reliable estimate of model performance.

- Protocol: The process starts with a small training set. The model is trained and used to forecast the next period(s), which serve as the test set. The observations from that test period are then added to the training set for the next iteration, and the process repeats, rolling the origin forward [22] [23].

- Use Case: This is the canonical approach for time-series model selection and evaluation, as it effectively simulates the process of making successive forecasts in a live environment [23].

- Implementation Example: For a six-year series, the splits would be [23]:

- Fold 1: Train [1], Test [2]

- Fold 2: Train [1, 2], Test [3]

- Fold 3: Train [1, 2, 3], Test [4]

- Fold 4: Train [1, 2, 3, 4], Test [5]

- Fold 5: Train [1, 2, 3, 4, 5], Test [6]
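The fold listing above can be generated with a few lines of plain Python. The generator below is an illustrative sketch; its name and the `min_train` and `horizon` parameters are assumptions, not part of any cited library.

```python
def rolling_origin_folds(periods, min_train=1, horizon=1):
    """Yield (train, test) lists using an expanding training window."""
    for end in range(min_train, len(periods) - horizon + 1, horizon):
        yield periods[:end], periods[end:end + horizon]


years = [1, 2, 3, 4, 5, 6]
folds = list(rolling_origin_folds(years))
for i, (train, test) in enumerate(folds, start=1):
    print(f"Fold {i}: Train {train}, Test {test}")
```

Increasing `horizon` evaluates multi-period forecasts, and raising `min_train` avoids fitting the first folds on too little data.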

Time Series Split Cross-Validation

A variant of rolling origin, TimeSeriesSplit (as implemented in libraries like scikit-learn), uses an expanding training window that grows with each fold by default; capping the training size (the max_train_size parameter in scikit-learn) turns it into a fixed-size window that slides through the data [22] [27].

- Protocol: The dataset is split into n_splits + 1 segments. For each fold i, the first i segments are used for training, and the (i+1)th segment is used for testing. This ensures the test set is always ahead of the training set [22].

- Use Case: Ideal for evaluating how a model's performance changes as more data becomes available. It is a standardized method that is easy to implement using common programming libraries [27].

- Experimental Workflow: [Diagram: logical workflow for implementing a time-series cross-validation experiment, from data preparation to performance evaluation.]
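The segment-based protocol described in this subsection can be inspected directly with scikit-learn's `TimeSeriesSplit`. The sketch below uses 12 placeholder observations; the `max_train_size=3` value is an arbitrary choice made here to show the fixed-window behaviour.

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

X = np.arange(12).reshape(-1, 1)  # 12 time-ordered placeholder observations

# Default behaviour: the training window expands with every fold.
expanding = TimeSeriesSplit(n_splits=3)
expanding_folds = [(tr.tolist(), te.tolist()) for tr, te in expanding.split(X)]

# Capping the training size gives a fixed-size window that slides forward.
sliding = TimeSeriesSplit(n_splits=3, max_train_size=3)
sliding_folds = [(tr.tolist(), te.tolist()) for tr, te in sliding.split(X)]

for name, folds in [("expanding", expanding_folds), ("sliding", sliding_folds)]:
    for train_idx, test_idx in folds:
        print(f"{name}: train={train_idx} test={test_idx}")
```

In both variants every test index is larger than every training index, which is the property the protocol relies on.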

Advanced and Blocked Methodologies

For more complex scenarios, advanced methodologies offer additional safeguards.

- Nested Cross-Validation: This involves an outer loop for performance estimation and an inner loop for hyperparameter tuning, all while strictly preserving temporal order. This is critical for obtaining unbiased performance estimates when model selection is part of the process.

- Blocked Cross-Validation: Standard rolling windows can still leak information if the model uses lagged features. Blocked cross-validation introduces a gap, or "margin," between the training and validation folds to prevent the model from observing lagged values that are used both as a regressor and a response. A second gap between folds themselves prevents the model from memorizing patterns from one iteration to the next [22].

- Population-Informed Splitting for Multiple Time Series: When the dataset contains independent time series from different sources (e.g., multiple contaminant monitoring stations), the strict temporal ordering can be relaxed between these series. The training set can include all data from other stations, even if it is from a "future" date, because the series are independent. However, temporal order must be preserved within each station's data [22].
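Nested and blocked cross-validation can be combined. The sketch below is one possible arrangement under stated assumptions: a synthetic random-walk series, three lagged features, a ridge regressor, and a gap equal to the number of lags via the `gap` parameter of scikit-learn's `TimeSeriesSplit`. It does not implement the second gap between folds or the multi-station case.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV, TimeSeriesSplit

rng = np.random.default_rng(3)
n, n_lags = 300, 3
series = np.cumsum(rng.normal(size=n + n_lags))  # hypothetical concentration series

# Lagged design matrix: row t predicts series[t + n_lags] from the n_lags values before it.
X = np.column_stack([series[i:i + n] for i in range(n_lags)])
y = series[n_lags:]

# Outer loop: performance estimation, with a gap so that no training target
# reappears as a lagged feature in the test block.
outer = TimeSeriesSplit(n_splits=4, gap=n_lags)
outer_scores = []
for train_idx, test_idx in outer.split(X):
    # Inner loop: hyperparameter tuning, again in temporal order with a gap.
    inner = TimeSeriesSplit(n_splits=3, gap=n_lags)
    search = GridSearchCV(Ridge(), {"alpha": [0.1, 1.0, 10.0]}, cv=inner,
                          scoring="neg_mean_absolute_error")
    search.fit(X[train_idx], y[train_idx])
    # score() uses the same metric as the search (negative MAE).
    outer_scores.append(search.score(X[test_idx], y[test_idx]))

print("negative MAE per outer fold:", np.round(outer_scores, 3))
```

The test block of each outer fold is never seen by the inner search, so the reported scores are not biased by the choice of `alpha`.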

Quantitative Comparison of Splitting Methodologies

The table below summarizes the key characteristics, advantages, and limitations of the primary temporal splitting methods discussed.

Table 1: Comparative Analysis of Temporal Data Splitting Methodologies

| Methodology | Core Principle | Key Advantage | Primary Limitation | Ideal Use Case |
|---|---|---|---|---|
| Single Temporal Split | Single contiguous split into past (train) and future (test). | Simple to implement and understand; computationally efficient. | Performance evaluation relies on a single, potentially non-representative, test period. | Initial model prototyping with very large datasets. |
| Rolling Origin (Forward Chaining) | Training set expands to include each test set for the next iteration. | Closely mimics real-world forecasting; provides multiple performance estimates. | Training set size increases over time, conflating performance with data volume. | Robust model evaluation and selection for standard forecasting tasks. |
| Time Series Split (Fixed Window) | Training window of fixed size slides through the data. | Controls for training set size; evaluates performance consistently over time. | Does not utilize all available historical data for training in earlier folds. | Evaluating model performance under a fixed memory constraint. |
| Blocked Cross-Validation | Introduces gaps between training and validation sets. | Mitigates leakage from lagged features and between iterations; highly robust. | Reduces the amount of data available for training and validation. | Models that heavily rely on lagged observations or recurrent architectures. |
| Nested Cross-Validation | Outer loop for evaluation, inner loop for temporal tuning. | Provides unbiased performance estimate when hyperparameter tuning is required. | Computationally very expensive, especially with long time series. | Final model assessment and benchmarking in research publications. |

Case Studies in Environmental Research

Air Pollution Modelling (NOx Concentration)

A study on combined NOx concentration modelling highlights the importance of data splitting, where models using Artificial Neural Networks (ANN) and Random Forests (RF) were able to achieve strong fits (MAPE values of 18.3–18.5%) for predicting NOx levels. The careful structuring of the data, ensuring that models were trained on past data to predict future concentrations, was fundamental to obtaining reliable results that could inform pollution mitigation strategies [24]. The use of meteorological factors and past concentrations as features makes temporal splitting non-negotiable to avoid learning from future conditions.

Landslide Detection with Satellite Imagery

The Sen12Landslides dataset, a large-scale, multi-modal, and multi-temporal resource for satellite-based landslide detection, was explicitly designed to address temporal dynamics. The dataset includes "pre- and post-event timestamps" for landslide events, which are crucial for constructing temporally valid training and testing splits. Benchmark experiments using models like U-ConvLSTM and 3D-UNet, which leverage this temporal information, achieved F1-scores exceeding 83%. This underscores that using single or bi-temporal images can lead to models that misclassify regular land surface changes as landslides, whereas a proper multi-temporal setup allows the model to learn genuine event-based dynamics [25].

Vegetation Leaf Area Index (LAI) Estimation

Research on estimating high spatio-temporal resolution LAI using an Ensemble Kalman Filter-NDVI (ENKF-NDVI) model generated a time series from 2016 to 2022. The validation of this product with ground-based measurements (R² of 0.85, RMSE of 0.39) inherently required a temporal split where the model was trained on earlier data and validated on later periods to confirm its predictive capability for forest planning and management [28].

Implementing robust temporal models requires a suite of computational tools and data resources. The following table lists key resources and describes their function and their relevance to temporal splitting.

Table 2: Key Research Reagent Solutions for Temporal Modeling in Environmental Science

| Tool / Resource | Type | Primary Function | Relevance to Temporal Splitting |
|---|---|---|---|
| Scikit-learn (TimeSeriesSplit) | Python Library | Provides a ready-to-use implementation of time-series cross-validation. | Simplifies the process of creating multiple temporally valid train-test splits. [27] |
| Statsmodels (ARIMA) | Python Library | Offers a comprehensive suite for estimating and forecasting statistical models. | Used within each fold of a cross-validation loop to build and test time-series models. [27] |
| Sentinel-1/-2 & Copernicus DEM | Satellite Data | Provides multi-modal, multi-temporal satellite imagery and elevation data. | The foundational data for environmental time-series studies (e.g., landslides, LAI). Requires strict temporal splitting. [25] |
| High-Resolution Mass Spectrometry (HRMS) | Analytical Instrument | Generates complex, high-dimensional data for non-target analysis of contaminants. | Produces time-series data where machine learning models for source identification must avoid temporal leakage. [29] |
| Sen12Landslides Dataset | Benchmark Dataset | A curated dataset with pre- and post-event landslide imagery. | Serves as a benchmark for testing and validating spatio-temporal models with built-in temporal annotations. [25] |

Visualizing Model Architecture for Temporal Data

Advanced model architectures are being developed to better handle the challenges of long time-series forecasting. The following diagram illustrates the conceptual structure of a Temporal Mix of Experts (TMOE) model, which is designed to dynamically select relevant historical context and mitigate the influence of anomalous segments—a common issue in environmental sensor data [30].

In the realm of machine learning for environmental contaminant research, data preprocessing forms the foundational step that can determine the ultimate success or failure of predictive models. Data leakage during preprocessing represents a critical yet frequently overlooked threat that compromises model integrity, particularly in scientific applications such as groundwater pollution mapping and contamination classification. This phenomenon occurs when information from outside the training dataset—typically from the test set or future data—is used during model training, creating an overly optimistic performance assessment that fails to generalize in real-world scenarios [1]. In environmental research, where models inform public health decisions and resource allocation, such leakage can lead to inaccurate contamination risk assessments with significant societal consequences [31].

The insidious nature of preprocessing leakage lies in its ability to create models that appear highly accurate during validation yet perform poorly when deployed. A 2021 study analyzing scientific papers across 17 fields found that at least 294 publications were affected by data leakage, leading to overly optimistic performance metrics [1]. Within environmental contaminant research, this issue is particularly acute due to the complex, multivariate, and often sparse nature of contamination datasets [31]. As machine learning becomes increasingly integral to environmental science, establishing rigorous preprocessing protocols that prevent information leakage is paramount for generating reliable, actionable insights.

Understanding Preprocessing-Induced Data Leakage

Mechanisms of Leakage in Normalization and Feature Selection

Normalization leakage occurs when scaling parameters are calculated using the entire dataset before splitting into training and test sets. This common error allows the model to gain information about the global distribution of features, including those in the test set, which would not be available during actual deployment [32] [1]. For example, when predicting groundwater contaminant levels, applying min-max normalization across all samples before splitting can artificially inflate performance by allowing the model to "see" the range of values in the test set during training [33]. The proper approach involves calculating normalization parameters (e.g., mean, standard deviation, min, max) exclusively from the training data, then applying these same parameters to transform the test data [1].
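The difference between the leaky and the correct procedure can be shown in a few lines. This is a minimal sketch on synthetic data: the log-normal "concentrations", the sample size, and the 75/25 split are assumptions chosen only for illustration.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import MinMaxScaler

rng = np.random.default_rng(0)
# Hypothetical contaminant concentrations for 200 samples and 3 analytes.
X = rng.lognormal(mean=1.0, sigma=1.0, size=(200, 3))

X_train, X_test = train_test_split(X, test_size=0.25, random_state=0)

# Leaky: min and max are computed from all samples, including the test set.
leaky_scaler = MinMaxScaler().fit(X)

# Leakage-free: parameters come from the training data only and are then
# reused unchanged to transform the test data.
scaler = MinMaxScaler().fit(X_train)
X_train_scaled = scaler.transform(X_train)
X_test_scaled = scaler.transform(X_test)

print("max seen by leaky scaler:  ", leaky_scaler.data_max_)
print("max seen by correct scaler:", scaler.data_max_)
```

With the correct procedure, scaled test values may fall outside the [0, 1] range. That is expected: it reflects the fact that the test range was genuinely unknown when the scaler was fitted.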

Feature selection leakage represents another critical vulnerability, occurring when feature importance is evaluated using the entire dataset rather than only training data. This practice inadvertently reveals relationships between features and the target variable that exist in the test set, creating features that are artificially optimized for the specific dataset rather than generalizable patterns [33]. In contamination research, where identifying relevant environmental predictors is scientifically meaningful, this leakage can lead to incorrect conclusions about which factors truly influence contaminant transport and distribution [31]. For instance, when using recursive feature elimination or correlation-based selection, the evaluation must be performed solely on training data to prevent the model from learning test-specific patterns [33].
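One way to see this effect is to run feature selection on data that contains no signal at all. The sketch below uses purely random predictors and labels (an assumed setup, not data from the cited studies) and compares selecting features on the full dataset with selecting them inside each cross-validation fold.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
X = rng.normal(size=(60, 5000))  # pure-noise predictors
y = np.repeat([0, 1], 30)        # labels unrelated to X

# Leaky: the 20 "best" features are chosen using every sample, so the
# held-out folds have already influenced which features the model sees.
X_selected = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky_acc = cross_val_score(
    LogisticRegression(max_iter=1000), X_selected, y, cv=5
).mean()

# Leakage-free: selection is a pipeline step and is refitted on the
# training portion of each fold.
pipe = make_pipeline(
    SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000)
)
honest_acc = cross_val_score(pipe, X, y, cv=5).mean()

print(f"selection on all data:   {leaky_acc:.2f}")
print(f"selection inside folds:  {honest_acc:.2f}")
```

Because the labels are random, any accuracy well above chance in the first setting is produced by the selection step alone, not by a real relationship between predictors and target.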

Impact on Environmental Research Models

The consequences of preprocessing leakage in environmental contaminant research extend beyond statistical inaccuracy to real-world decision-making. A recent study on groundwater contamination revealed that machine learning models compromised by data leakage could dramatically underestimate the prevalence of co-occurring pollutants. The leaky models suggested that up to 80% of sampling locations had no contaminants above regulatory limits, whereas properly validated models indicated that only 15–55% of locations were contamination-free [31]. Such discrepancies directly affect remediation prioritization and public health protection efforts.

Leakage during preprocessing typically produces several characteristic warning signs: unrealistically high performance metrics on validation data, significant performance degradation when models are deployed on new data, and discrepancies between validation performance and real-world utility [34] [1]. In one case study involving contamination classification of high-voltage insulators, researchers noted that proper attention to preprocessing protocols and leakage prevention was instrumental in achieving consistently high accuracy exceeding 98% across multiple machine learning models [12].

Table 1: Impact of Data Leakage on Model Performance in Environmental Applications

| Model Aspect | With Data Leakage | Without Data Leakage | Impact on Environmental Decisions |
|---|---|---|---|
| Reported Accuracy | Inflated by 15-25% [1] | Reflects true performance | Prevents overconfidence in contamination predictions |
| Generalization to New Locations | Poor performance on new geographic areas [31] | Maintains consistent performance | Enables reliable expansion to unmonitored sites |
| Feature Importance | Identifies spurious correlations | Reveals causally relevant factors | Correctly identifies true contaminant sources |
| Regulatory Compliance Predictions | Underestimates contamination extent [31] | Accurate risk assessment | Proper prioritization of remediation resources |

Methodologies for Leakage-Free Preprocessing

Strategic Data Splitting Protocols

Chronological splitting represents a fundamental strategy for temporal environmental data, such as contaminant concentration measurements collected over time. This approach ensures that models are trained on past data and validated on future observations, directly simulating the real-world prediction scenario [1]. For spatial contamination data, grouped splitting techniques prevent leakage by ensuring that all samples from the same geographic location or sampling campaign reside in either training or test sets, avoiding artificial inflation of performance through spatial autocorrelation [35].
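Both splitting strategies can be sketched with pandas and scikit-learn. The column names (`sample_date`, `well_id`, `concentration`), the weekly sampling schedule, and the 80/20 proportions below are hypothetical and serve only to make the example runnable.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import GroupShuffleSplit

rng = np.random.default_rng(42)
n = 120
df = pd.DataFrame({
    "sample_date": pd.date_range("2020-01-01", periods=n, freq="W"),
    "well_id": rng.integers(0, 15, size=n),    # hypothetical site identifier
    "concentration": rng.lognormal(size=n),    # hypothetical measurement
})

# Chronological split: the earliest 80% of samples train the model,
# the most recent 20% are held out for testing.
df = df.sort_values("sample_date").reset_index(drop=True)
cutoff = int(len(df) * 0.8)
train_time, test_time = df.iloc[:cutoff], df.iloc[cutoff:]

# Grouped split: every sample from a given well lands on one side only.
splitter = GroupShuffleSplit(n_splits=1, test_size=0.2, random_state=0)
train_idx, test_idx = next(splitter.split(df, groups=df["well_id"]))
train_group, test_group = df.iloc[train_idx], df.iloc[test_idx]

print("latest training date:", train_time["sample_date"].max().date())
print("earliest test date:  ", test_time["sample_date"].min().date())
print("wells held out:      ", sorted(test_group["well_id"].unique()))
```

Note that `test_size` in `GroupShuffleSplit` refers to the share of groups, not of samples, so the held-out fraction of rows can differ from 20% when wells have unequal sample counts.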

Advanced computational tools now offer sophisticated solutions for leakage-free data partitioning. The DataSAIL framework, specifically designed for biological and environmental data, formulates optimal data splitting as a combinatorial optimization problem that minimizes similarity between training and test sets while preserving class distributions [35]. This approach is particularly valuable for contamination studies with limited sample sizes, where random splitting frequently results in highly similar molecules or environmental profiles appearing in both training and test sets. The DataSAIL algorithm employs clustering and integer linear programming to create splits where test samples demonstrate controlled dissimilarity from training instances, more accurately representing true out-of-distribution performance [35].

Pipeline-Based Preprocessing Implementation

Implementing preprocessing operations within a scikit-learn Pipeline provides a technical safeguard against normalization and feature selection leakage by automatically ensuring that all transformations are fitted exclusively on training data [33]. This approach encapsulates the entire sequence of preprocessing and modeling steps, guaranteeing that when the pipeline is applied to test data, the same training-derived parameters are used without information leakage from the test set.
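A minimal sketch of such a pipeline is shown below. The synthetic regression data, the choice of `SelectKBest` with four features, and the random forest settings are assumptions made for illustration, not a recommended configuration.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.feature_selection import SelectKBest, f_regression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(1)
# Hypothetical predictors; only columns 0 and 3 influence the target.
X = rng.normal(size=(300, 10))
y = 2.0 * X[:, 0] - X[:, 3] + rng.normal(scale=0.5, size=300)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=1
)

pipe = Pipeline([
    ("scale", StandardScaler()),                  # fitted on training data only
    ("select", SelectKBest(f_regression, k=4)),   # fitted on training data only
    ("model", RandomForestRegressor(n_estimators=100, random_state=1)),
])

# fit() learns scaling parameters, selected features, and the model from
# the training split; score() only applies them to the test split.
pipe.fit(X_train, y_train)
r2_test = pipe.score(X_test, y_test)
print(f"held-out R^2: {r2_test:.2f}")
```

Passing the same `pipe` object to `cross_val_score` or `GridSearchCV` extends the safeguard to cross-validation, because the whole pipeline is refitted on the training portion of every fold.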

The nested cross-validation approach provides an additional layer of protection against subtle leakage, particularly during hyperparameter tuning and model selection [1]. This methodology maintains multiple layers of data separation, with inner loops dedicated to parameter optimization and outer loops reserved for final performance estimation. For environmental contamination datasets with complex clusterings (e.g., samples from the same watershed, related chemical structures), grouped cross-validation ensures that all correlated samples remain within the same split, preventing the model from artificially learning cluster-specific patterns that wouldn't generalize to new contexts [35].
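Nested and grouped cross-validation can be combined by writing the outer loop explicitly. The following sketch assumes synthetic data, hypothetical watershed identifiers as groups, and a small ridge-regression grid; it is one possible arrangement rather than a prescribed protocol.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV, GroupKFold
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(7)
n = 200
groups = rng.integers(0, 20, size=n)   # hypothetical watershed IDs
X = rng.normal(size=(n, 6))
y = X[:, 0] + 0.5 * X[:, 1] + rng.normal(scale=0.5, size=n)

pipe = make_pipeline(StandardScaler(), Ridge())
param_grid = {"ridge__alpha": [0.1, 1.0, 10.0]}

# Outer loop: performance estimation on watersheds never used for tuning.
outer_folds = list(GroupKFold(n_splits=5).split(X, y, groups))
outer_scores = []
for train_idx, test_idx in outer_folds:
    # Inner loop: hyperparameter search restricted to the outer training
    # data, again keeping each watershed within a single fold.
    search = GridSearchCV(pipe, param_grid, cv=GroupKFold(n_splits=3))
    search.fit(X[train_idx], y[train_idx], groups=groups[train_idx])
    outer_scores.append(search.score(X[test_idx], y[test_idx]))

print("outer-fold R^2:", np.round(outer_scores, 2))
print("mean R^2:", round(float(np.mean(outer_scores)), 2))
```

The outer scores are the ones to report. The inner search's best score is selected as the maximum over the grid, so it is optimistically biased as a performance estimate.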

Domain-Specific Feature Engineering

In contamination research, temporal feature engineering requires particular vigilance to prevent leakage. Features such as rolling averages or seasonal decompositions must be computed using only historical data available at the time of prediction [1]. Similarly, when incorporating external datasets (e.g., land use records, weather data, industrial activity reports), researchers must ensure that these sources reflect information available prior to the prediction period rather than future data that wouldn't be accessible in operational scenarios [34].
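For rolling features, the distinction comes down to which observations fall inside the window. The sketch below uses a hypothetical ten-day concentration series and a three-day window to contrast a centered window, which includes the following day, with a window that is shifted so it ends on the day before the prediction.

```python
import numpy as np
import pandas as pd

dates = pd.date_range("2023-01-01", periods=10, freq="D")
# Hypothetical daily concentrations: 1.0, 2.0, ..., 10.0
df = pd.DataFrame({"concentration": np.arange(1.0, 11.0)}, index=dates)

# Leaky: a centered window averages the previous, current, and NEXT day.
df["roll_leaky"] = df["concentration"].rolling(window=3, center=True).mean()

# Leakage-free: shift by one day first, so the window covers only the
# three days strictly before the day being predicted.
df["roll_past"] = df["concentration"].shift(1).rolling(window=3).mean()

print(df)
```

On the fourth day (value 4.0), `roll_past` is the mean of 1.0, 2.0, and 3.0, while `roll_leaky` is the mean of 3.0, 4.0, and 5.0 and therefore depends on a value that would not yet have been measured.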

Causal validation of features represents another critical leakage prevention strategy, wherein domain experts assess whether proposed features would genuinely be available and causally relevant at the time of prediction [1]. For instance, when predicting groundwater contamination, using features derived from water treatment outcomes that haven't yet occurred would constitute target leakage, as these represent future information unavailable during actual monitoring.

Preprocessing Workflow with Leakage Prevention