Data Normalization Techniques for Heterogeneous Environmental Operations: A Comprehensive Guide for Researchers and Practitioners


Abstract

This article provides a systematic framework for applying data normalization techniques to complex, heterogeneous environmental datasets. Tailored for researchers, scientists, and drug development professionals, it addresses the critical challenge of transforming disparate environmental data into a comparable and reliable format for robust analysis. The content spans from foundational principles and a comparative analysis of methodological approaches to practical troubleshooting and validation strategies. By synthesizing current research and real-world case studies, this guide empowers professionals to select and implement optimal normalization methods, thereby enhancing the accuracy and interpretability of their environmental data-driven models and supporting advancements in biomedical and environmental health research.

Why Normalization is Non-Negotiable in Heterogeneous Environmental Data Science

In environmental studies, data normalization is a fundamental pre-processing step that transforms disparate data into a comparable format. This process adjusts values measured on different scales to a common scale, which is crucial for accurately analyzing complex, heterogeneous environmental datasets, such as metal concentrations in water or sustainability indicators across regions [1] [2] [3]. By eliminating redundancies and standardizing information, normalization enhances data integrity, ensures consistency, and enables meaningful comparison of data from diverse sources and locations [4]. This technical support guide provides researchers and scientists with the essential methodologies and troubleshooting knowledge to effectively apply data normalization in environmental operations research.

Frequently Asked Questions (FAQs)

1. Why is data normalization specifically important in environmental research? Environmental data often comes from diverse sources, formats, and units (e.g., pollution levels from different sensors, biodiversity metrics from various studies). Normalization provides a "common language," ensuring this data can be meaningfully compared, shared, and aggregated. This is vital for tackling transboundary issues like climate change and for creating reliable composite sustainability indices [4] [5].

2. My environmental dataset is highly skewed. Is this a problem? It can be. Many environmental parameters (e.g., metal concentrations in water) are naturally right-skewed. A test such as Shapiro-Wilk can flag the departure from normality (a p-value < 0.05 is evidence that the data are not normally distributed). Skewness can bias statistical analyses and regression models that assume normality. Normalization techniques, particularly logarithmic transformation, are often used to rescale such data, reduce skewness, and bring the distribution closer to Gaussian (normal), making the data more suitable for parametric analysis [1].

3. What is the difference between database normalization and data normalization for analysis?

- Database Normalization is a process of organizing data in a relational database to reduce redundancy and improve data integrity by following rules known as "normal forms" (e.g., 1NF, 2NF, 3NF) [2] [6] [3].

- Data (Value) Normalization for analysis involves transforming the actual numerical values of a dataset to a common scale without distorting differences in ranges. This is the primary focus for environmental data analysis and includes methods like Min-Max Scaling and Z-Score normalization [2] [3].

4. How do I choose the right normalization method for my environmental indicators? The choice depends on your data's characteristics and the goal of your analysis. The table below summarizes common techniques used in sustainability assessments and environmental research [5] [3].

Table 1: Common Data Normalization Techniques in Environmental Studies

| Method | Formula | Best Use Cases | Key Advantages | Key Drawbacks |
|---|---|---|---|---|
| Ratio Normalization | $x' = \frac{x}{r}$, where $r$ is a reference value | Creating simple, unit-less ratios for indicators. | Simple to compute and interpret. | Result is sensitive to the choice of reference value. |
| Z-Score (Standardization) | $z = \frac{x - \mu}{\sigma}$, where $\mu$ is the mean and $\sigma$ is the standard deviation | Data with Gaussian distribution; preparing for multivariate analysis. | Results in a mean of 0 and std. dev. of 1. Does not compress the bulk of the data as severely as Min-Max Scaling when outliers are present. | Does not bound the range of data, which can be problematic for some analyses; the mean and std. dev. are themselves sensitive to outliers. |
| Min-Max Scaling | $x' = \frac{x - \min(x)}{\max(x) - \min(x)}$ | When you need data bounded on a specific scale (e.g., [0, 1]). | Preserves relationships; outputs a standardized range. | Highly sensitive to outliers, which can compress the scale. |
| Target Normalization | $x' = \frac{x}{\text{target}}$ | Assessing performance against a specific goal or regulatory limit. | Interpretation is intuitive (e.g., >1 means exceeding target). | Dependent on a relevant and well-defined target value. |
| Log Transformation | $x' = \log(x)$ | Highly skewed data, such as contaminant concentrations. | Effectively reduces positive skew, producing a more normal distribution. | Not defined for zero or negative values. Interpretation can be less direct. |
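The formulas in Table 1 can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a reference implementation: the function names, the sample lead concentrations, and the 10 µg/L target are assumptions made for the example, and the Z-score uses the population standard deviation (the table does not say which variant is intended).

```python
import math
import statistics

def ratio_normalize(values, reference):
    """x' = x / r, where r is a chosen reference value."""
    return [x / reference for x in values]

def z_score(values):
    """z = (x - mean) / std, using the population standard deviation (assumption)."""
    mu = statistics.fmean(values)
    sigma = statistics.pstdev(values)
    return [(x - mu) / sigma for x in values]

def min_max(values):
    """x' = (x - min) / (max - min), bounded to [0, 1]."""
    lo, hi = min(values), max(values)
    return [(x - lo) / (hi - lo) for x in values]

def target_normalize(values, target):
    """x' = x / target; values above 1 exceed the target."""
    return [x / target for x in values]

def log_transform(values):
    """x' = log10(x); only defined for strictly positive values."""
    if any(x <= 0 for x in values):
        raise ValueError("log transform requires strictly positive values")
    return [math.log10(x) for x in values]

# Hypothetical lead concentrations in ug/L (illustrative values only)
lead = [2.0, 4.0, 6.0, 8.0, 30.0]
scaled = min_max(lead)
standardized = z_score(lead)
vs_limit = target_normalize(lead, target=10.0)  # hypothetical limit of 10 ug/L
logged = log_transform(lead)
```

Note how the single high value (30.0) pushes the other Min-Max scores toward the bottom of the [0, 1] range, which is the outlier sensitivity listed in the table.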

Troubleshooting Guides

Problem 1: Statistical Model Performing Poorly on Raw Environmental Data

Symptoms: Regression models are biased; cluster analysis groups data based on the scale of measurement rather than intrinsic properties; one variable with a large range dominates the model.

Diagnosis: The features (variables) in your dataset are measured on different scales, causing algorithms to weigh variables with larger ranges more heavily.

Solution: Apply value-based normalization before model training.

- Determine Distribution: Use a statistical test (e.g., Shapiro-Wilk) or visualizations (histogram, Q-Q plot) to check for normality [1].

- Select and Apply a Technique:

  - For non-normal, skewed data (common for concentrations), use Log Transformation [1].

  - For normally distributed data, use Z-Score Standardization, especially for distance-based algorithms [5] [3].

  - For data where the absolute minimum and maximum are known, use Min-Max Scaling to bound values to a range like [0, 1] [3].

- Validate: Re-run your model and compare performance metrics (e.g., R², accuracy) to the pre-normalized results.
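The diagnosis above (variables with larger ranges dominating distance-based methods) can be made concrete with a small sketch. The three samples and their conductivity and pH values are hypothetical, chosen only to show the effect; the helper names are likewise illustrative.

```python
import statistics

# Hypothetical samples: (conductivity in uS/cm, pH). Illustrative values only.
samples = {"A": (500.0, 6.0), "B": (510.0, 9.0), "C": (560.0, 6.1)}

def standardize_columns(rows):
    """Z-score each column (population standard deviation)."""
    columns = list(zip(*rows))
    means = [statistics.fmean(c) for c in columns]
    stds = [statistics.pstdev(c) for c in columns]
    return [tuple((v - m) / s for v, m, s in zip(row, means, stds)) for row in rows]

def ph_share(p, q):
    """Fraction of the squared Euclidean distance contributed by pH (column 1)."""
    squared = [(a - b) ** 2 for a, b in zip(p, q)]
    return squared[1] / sum(squared)

scaled = dict(zip(samples, standardize_columns(list(samples.values()))))

raw_share_ph = ph_share(samples["A"], samples["B"])
z_share_ph = ph_share(scaled["A"], scaled["B"])
print(f"pH share of A-B distance, raw: {raw_share_ph:.2f}, standardized: {z_share_ph:.2f}")
```

On the raw values, a 3-unit pH difference between A and B contributes under a tenth of their squared distance because conductivity is recorded in much larger numbers. After standardization, the same pH difference accounts for more than nine tenths of it.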

Problem 2: Inability to Combine Indicators for a Composite Sustainability Score

Symptoms: You have multiple environmental indicators (e.g., CO₂ emissions, water usage, biodiversity index) in different units and cannot aggregate them into a single composite score.

Diagnosis: Directly aggregating data with different units is mathematically invalid and produces meaningless results.

Solution: Normalize all indicators to a common, unit-less scale prior to aggregation [5].

- Choose a Normalization Scheme: Refer to Table 1. Z-Score and Min-Max Scaling are common for this purpose.

- Choose an Aggregation Function: Decide how to combine the normalized scores (e.g., weighted sum, arithmetic mean).

- Calculate Composite Score: Apply the aggregation function to the normalized values. Be aware that the choice of normalization method can influence the final ranking in the composite score [5].

Problem 3: Database for Environmental Samples is Bloated and Prone to Errors

Symptoms: The same data (e.g., site location details) is repeated across many records; updating a piece of information requires changes in multiple places, leading to inconsistencies.

Diagnosis: The database schema violates the principles of database normalization, leading to data redundancy and "update anomalies" [2] [6].

Solution: Restructure the database by applying normal forms.

- First Normal Form (1NF): Eliminate repeating groups. Create separate tables for related data and use a primary key.

  - Example: Instead of having `SampleID`, `Test1`, `Test2`, `Test3` columns, create a related `Tests` table with one row per sample-test combination [6].

- Second Normal Form (2NF): Meet 1NF and remove partial dependencies. Ensure all non-key attributes depend on the entire primary key.

  - Example: In a `Sampling` table with a composite key `(SampleID, AnalystID)`, the `AnalystName` depends only on `AnalystID`. Move `AnalystName` to a separate `Analysts` table [3].

- Third Normal Form (3NF): Meet 2NF and remove transitive dependencies. Ensure non-key attributes depend only on the primary key, not on other non-key attributes.
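A normalized layout along these lines can be sketched with Python's built-in `sqlite3` module. The table names, column names, and sample rows below are hypothetical and only loosely follow the examples above; a real schema would depend on the laboratory's data model.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE sites (
    site_id   INTEGER PRIMARY KEY,
    name      TEXT NOT NULL,
    latitude  REAL,
    longitude REAL
);
CREATE TABLE analysts (
    analyst_id INTEGER PRIMARY KEY,
    name       TEXT NOT NULL
);
CREATE TABLE samples (
    sample_id  INTEGER PRIMARY KEY,
    site_id    INTEGER NOT NULL REFERENCES sites(site_id),
    analyst_id INTEGER NOT NULL REFERENCES analysts(analyst_id),
    collected  TEXT NOT NULL
);
-- One row per sample-test combination instead of Test1, Test2, Test3 columns
CREATE TABLE tests (
    sample_id INTEGER NOT NULL REFERENCES samples(sample_id),
    analyte   TEXT NOT NULL,
    value     REAL NOT NULL,
    unit      TEXT NOT NULL,
    PRIMARY KEY (sample_id, analyte)
);
""")

# Illustrative rows (not real measurements)
conn.execute("INSERT INTO sites VALUES (1, 'River Station A', 51.50, -0.12)")
conn.execute("INSERT INTO analysts VALUES (1, 'J. Doe')")
conn.executemany("INSERT INTO samples VALUES (?, ?, ?, ?)",
                 [(101, 1, 1, "2024-01-15"), (102, 1, 1, "2024-02-15")])
conn.executemany("INSERT INTO tests VALUES (?, ?, ?, ?)",
                 [(101, "As", 4.2, "ug/L"), (101, "Pb", 1.1, "ug/L"), (102, "As", 3.9, "ug/L")])

# Site details live in one place, so a rename touches exactly one row
conn.execute("UPDATE sites SET name = 'River Station Alpha' WHERE site_id = 1")

rows = conn.execute("""
    SELECT s.sample_id, st.name, t.analyte, t.value
    FROM tests t
    JOIN samples s ON s.sample_id = t.sample_id
    JOIN sites st ON st.site_id = s.site_id
    ORDER BY s.sample_id, t.analyte
""").fetchall()
```

Because the site name is stored once, every joined result row reflects the update, which is the "update anomaly" the normal forms are meant to prevent.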

Experimental Protocols & Workflows

Protocol: Normalizing Metal Concentration Data Against Total Suspended Solids (TSS)

Background: In environmental forensics, understanding the relationship between metal concentrations and Total Suspended Solids (TSS) is critical. Raw data is often skewed, requiring normalization before analysis [1].

Materials:

- Dataset: Concentrations of metals (Arsenic, Lead, etc.) and TSS from multiple samples.

- Software: Statistical software (R, Python, SPSS) capable of statistical testing and transformations.

Methodology:

- Test for Normality: Perform the Shapiro-Wilk test on the raw metal and TSS data.

- Null Hypothesis (H₀): The data is normally distributed.

- If p-value < 0.05, reject H₀ and conclude the data is not normal, necessitating normalization [1].

- Apply Logarithmic Transformation: Calculate the base-10 logarithm (log₁₀) of each metal concentration and TSS value.

- Re-test for Normality: Perform the Shapiro-Wilk test again on the log-transformed data. The goal is a non-significant result (p-value > 0.05): failing to reject the null hypothesis means the test finds no evidence against normality in the transformed data, which is then treated as approximately normal [1].

- Proceed with Analysis: Use the log-normalized data in subsequent analyses, such as multivariate linear regression, to accurately model the relationship between metals and TSS.
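The four steps of this protocol might look as follows with NumPy and SciPy. The data are synthetic lognormal values generated only so the sketch runs end to end; they are not real measurements. The protocol mentions multivariate regression, whereas this sketch fits a single-predictor model with `scipy.stats.linregress` to stay short.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(42)
n = 200
# Synthetic, right-skewed stand-ins for field data (NOT real measurements)
tss = rng.lognormal(mean=3.0, sigma=0.8, size=n)             # mg/L
lead = 0.05 * tss ** 0.9 * rng.lognormal(0.0, 0.3, size=n)   # ug/L

# Step 1: Shapiro-Wilk on the raw concentrations
w_raw, p_raw = stats.shapiro(lead)

# Step 2: base-10 log transformation of both variables
log_tss = np.log10(tss)
log_lead = np.log10(lead)

# Step 3: re-test the transformed concentrations
w_log, p_log = stats.shapiro(log_lead)

# Step 4: regress log10(lead) on log10(TSS)
fit = stats.linregress(log_tss, log_lead)

print(f"raw: W={w_raw:.3f}, p={p_raw:.3g}")
print(f"log: W={w_log:.3f}, p={p_log:.3g}")
print(f"slope={fit.slope:.3f}, intercept={fit.intercept:.3f}, R^2={fit.rvalue ** 2:.3f}")
```

With real data, the re-test in Step 3 may still reject normality; in that case another transformation or a non-parametric method would be worth considering.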

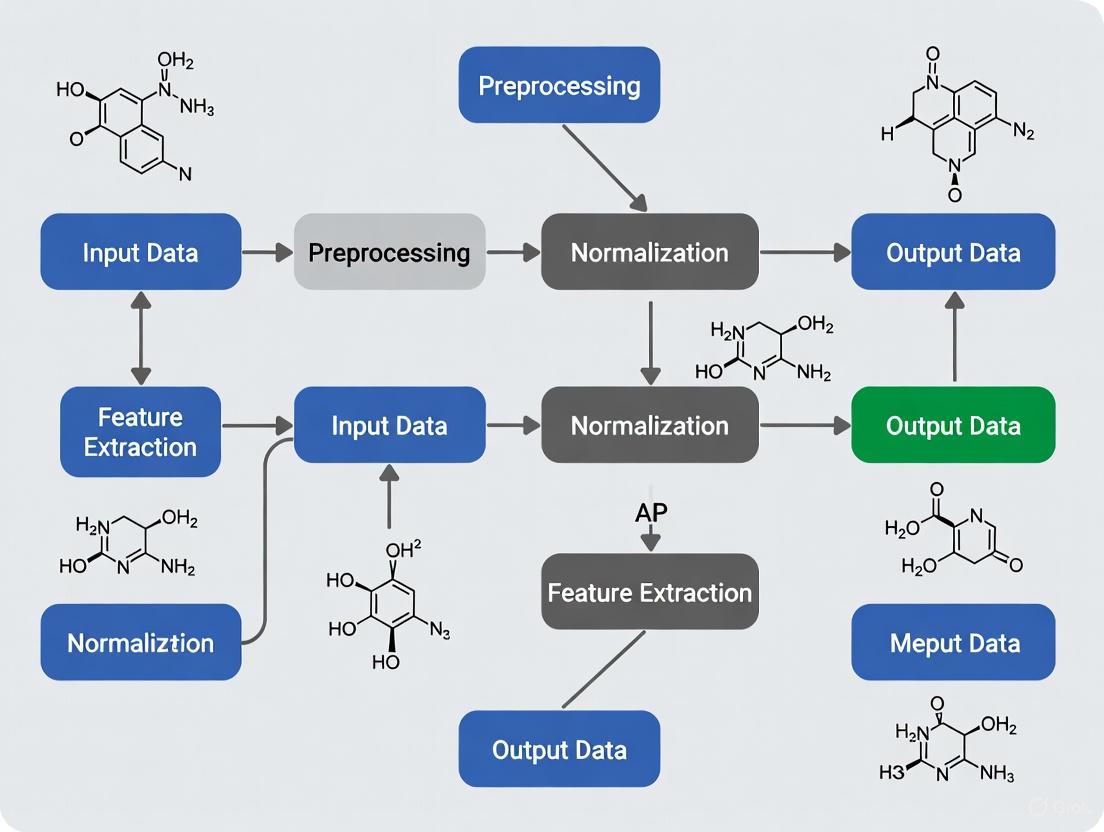

Diagram 1: Workflow for normalizing skewed environmental data.

Protocol: Constructing a Normalized Composite Sustainability Index

Background: Assessing progress toward sustainability requires combining diverse social, economic, and environmental indicators into a single, comparable index. Normalization is a mandatory step to render the different units comparable [5].

Materials:

- Indicator Dataset: Quantified values for all selected sustainability indicators.

- Reference Data: Targets, goals, or baseline values for target normalization (if applicable).

Methodology:

- Indicator Selection: Define a relevant set of indicators (e.g., GHG emissions, water consumption, employment rate).

- Directionality Alignment: Ensure all indicators are aligned so that a higher value universally means "more sustainable." This may require inverting some indicators (e.g., multiplying pollution metrics by -1).

- Normalization: Choose and apply a normalization method from Table 1 (e.g., Z-score for a balanced view, Min-Max for a bounded index).

- Weighting & Aggregation: Assign weights to each indicator based on importance and aggregate the normalized scores using a chosen function (e.g., weighted arithmetic mean).

- Interpretation: Analyze the final composite scores to compare the sustainability performance of different systems or time periods.
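A compact sketch of these steps, assuming Min-Max normalization and a weighted arithmetic mean. The indicator names, regional values, and weights are invented for illustration. Directionality is handled by taking 1 minus the scaled value for "lower is better" indicators, which gives the same result as multiplying by -1 before Min-Max scaling.

```python
# name: (values per region, higher_is_better, weight) -- all hypothetical
indicators = {
    "ghg_emissions_t_per_capita": ([4.0, 8.0, 12.0], False, 0.5),
    "water_use_m3_per_capita": ([50.0, 150.0, 100.0], False, 0.3),
    "employment_rate_pct": ([70.0, 60.0, 80.0], True, 0.2),
}
regions = ["North", "Central", "South"]

def min_max(values):
    """Rescale values to [0, 1]."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]

def aligned_scores(values, higher_is_better):
    """Normalize, then flip so that a higher score always means 'more sustainable'."""
    scaled = min_max(values)
    return scaled if higher_is_better else [1.0 - s for s in scaled]

total_weight = sum(weight for _, _, weight in indicators.values())
composite = [0.0] * len(regions)
for values, higher_is_better, weight in indicators.values():
    for i, score in enumerate(aligned_scores(values, higher_is_better)):
        composite[i] += weight * score / total_weight

index = dict(zip(regions, composite))
for region, score in sorted(index.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{region}: {score:.2f}")
```

Swapping `min_max` for a Z-score function, or changing the weights, can change the ranking, so the choices made in the normalization and weighting steps are worth reporting alongside the index.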

Diagram 2: Process for creating a normalized sustainability index.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Resources for Data Normalization in Environmental Research

| Item / Technique | Function / Purpose | Example Application in Environmental Studies |
|---|---|---|
| Shapiro-Wilk Test | A statistical test used to check if a dataset deviates significantly from a normal distribution. | Testing the normality of contaminant concentration data (e.g., Arsenic in water) before statistical analysis [1]. |
| Logarithmic Transformation | A mathematical transformation used to reduce positive skewness in data, making it more normally distributed. | Normalizing highly skewed data, such as metal concentrations or microbial counts, for use in parametric statistical tests [1]. |
| Z-Score Normalization | Transforms data to have a mean of zero and a standard deviation of one, centering and scaling the distribution. | Preparing different environmental indicators (e.g., temperature, pH, species count) for multivariate analysis or clustering [5] [3]. |
| Min-Max Scaler | Rescales data to a fixed range, typically [0, 1], based on the minimum and maximum values. | Creating a composite sustainability index where all indicators must contribute to a score on a fixed, bounded scale [3]. |
| Relational Database | A structured database that allows for the application of database normalization rules to minimize redundancy. | Storing environmental sample data, site information, and lab results efficiently and without duplication [2] [6]. |

Frequently Asked Questions (FAQs)

Diagnosing Heterogeneity in Your Dataset

Q1: How can I test if my environmental dataset requires normalization? To determine if your dataset requires normalization, you should first assess its statistical distribution. Environmental data, such as metal concentrations, often deviates from a normal (Gaussian) distribution, which is a key assumption for many parametric statistical tests.

- Experimental Protocol: Shapiro-Wilk Test for Normality

- Formulate Hypotheses: Null Hypothesis (H₀): The data is normally distributed. Alternative Hypothesis (H₁): The data is not normally distributed.

- Run the Test: Perform the Shapiro-Wilk test using statistical software (e.g., R, Python with SciPy). The test quantifies the similarity between your observed data and a normal distribution.

- Interpret the p-value: A p-value less than 0.05 (p < 0.05) leads to a rejection of the null hypothesis, indicating that your data are not normally distributed and that a normalizing transformation should be considered [1].

Q2: My meta-analysis shows inconsistent effect sizes. What are the potential sources of this heterogeneity? Heterogeneity in effect sizes across studies, common in fields like genomics and environmental science, can stem from multiple sources. Accurately identifying these is crucial for selecting the correct analytical model.

- Primary Sources of Variation:

- Genetic Ancestry: Differences in linkage disequilibrium patterns and allele frequencies between populations can cause effect size heterogeneity [7].

- Environmental Exposures: Varying environmental factors (e.g., climate, urban vs. rural status, lifestyle factors, pollutant levels) can interact with genetic variants or directly influence measurements, leading to heterogeneity that correlates with these exposures [7].

- Methodological Variation: Differences in sample collection, laboratory processing, and measurement technologies between studies introduce technical heterogeneity [8].

Selecting and Applying Normalization Methods

Q3: What are the primary normalization methods for heterogeneous environmental data? Different normalization methods are suited for different types of data and challenges. The table below summarizes common techniques.

Table 1: Common Normalization Techniques for Environmental Data

| Method | Principle | Best Used For | Key Considerations |
|---|---|---|---|
| Logarithmic Transformation [1] | Transforms skewed data using a logarithm to achieve a more normal (Gaussian) distribution. | Highly skewed data (e.g., metal concentrations, species counts). | Simple and effective for right-skewed data. Cannot be applied to zero or negative values. |
| Z-Score Normalization [5] | Rescales data to have a mean of 0 and a standard deviation of 1. | Comparing indicators measured in different units prior to aggregation. | Facilitates comparison but is sensitive to outliers. |
| Percent Relative Abundance [9] | Converts absolute counts to percentages within each sample. | Microbiome data and ecological community composition. | Easy to interpret but makes abundances within a sample interdependent. |
| Variance Stabilizing Transformation (VST) [9] | Applies a function to ensure data variability is not related to its mean value. | Data where variance scales with the mean (e.g., RNA-seq data). | Robust for data with large variances and small sample sizes. |
| Random Subsampling (Rarefaction) [9] | Randomly subsamples counts to the same depth across all samples. | Comparing species richness in microbiome datasets. | Reduces data depth, potentially increasing Type II errors. Debate exists on its appropriateness [9]. |
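Two of the count-based methods in the table, percent relative abundance and rarefaction, are simple enough to sketch with the standard library. The taxon counts are hypothetical, and `rarefy` is an illustrative helper (subsampling without replacement with a fixed seed), not the routine of any particular ecology package.

```python
import random

# Hypothetical taxon counts for two samples with different sequencing depths
samples = {
    "site_1": {"taxon_a": 120, "taxon_b": 60, "taxon_c": 20},
    "site_2": {"taxon_a": 900, "taxon_b": 90, "taxon_c": 10},
}

def percent_relative_abundance(counts):
    """Convert absolute counts to percentages of the sample total."""
    total = sum(counts.values())
    return {taxon: 100.0 * c / total for taxon, c in counts.items()}

def rarefy(counts, depth, seed=0):
    """Randomly subsample `depth` individuals without replacement."""
    pool = [taxon for taxon, c in counts.items() for _ in range(c)]
    if depth > len(pool):
        raise ValueError("depth exceeds the sample's total count")
    drawn = random.Random(seed).sample(pool, depth)
    return {taxon: drawn.count(taxon) for taxon in counts}

relative = {name: percent_relative_abundance(c) for name, c in samples.items()}

# Rarefy every sample to the depth of the shallowest one
depth = min(sum(c.values()) for c in samples.values())
rarefied = {name: rarefy(c, depth) for name, c in samples.items()}
```

After rarefaction both samples contain the same number of individuals, at the cost of discarding most of the counts from the deeper sample, which is the loss of data depth noted in the table.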

Q4: How do I implement a log normalization to address a non-normal distribution? Log transformation is a standard technique to correct for positive skewness in environmental data.

- Experimental Protocol: Log Normalization

- Test for Normality: First, confirm non-normality using the Shapiro-Wilk test [1].

- Apply Transformation: Create a new variable in your dataset where each value is the natural logarithm (or log₁₀) of the original value. For data with zeros, add a small constant (e.g., 1) to all values before transformation.

- Verify Success: Re-run the Shapiro-Wilk test on the new, log-transformed variable. A p-value greater than 0.05 means the test no longer finds evidence against normality, so the transformed data can be treated as approximately normal for subsequent parametric analysis [1].
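The "add a small constant" step can be sketched as follows. The counts are hypothetical, the constant of 1 follows the example in the protocol, and the moment-based skewness helper is an illustrative stand-in for a library routine.

```python
import math
import statistics

def skewness(values):
    """Moment-based sample skewness: m3 / m2**1.5."""
    mean = statistics.fmean(values)
    m2 = statistics.fmean([(v - mean) ** 2 for v in values])
    m3 = statistics.fmean([(v - mean) ** 3 for v in values])
    return m3 / m2 ** 1.5

# Hypothetical counts that include a zero, so log10(x) alone would fail
counts = [0, 3, 12, 150, 2400]

# Shift by a constant of 1 before taking the logarithm (assumed choice of constant)
shifted_log = [math.log10(x + 1) for x in counts]

print(f"skewness before: {skewness(counts):.2f}, after: {skewness(shifted_log):.2f}")
```

The size of the added constant is a modeling choice: it affects small values far more than large ones, so it is worth stating explicitly when reporting results.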

The following workflow diagram outlines the decision process for diagnosing and addressing heterogeneity in environmental datasets:

Troubleshooting Guides

Problem: Inconsistent Findings in Meta-Analysis Due to Ancestral or Environmental Heterogeneity

Symptoms: Wide confidence intervals in summary effect estimates, a high I² statistic indicating substantial heterogeneity, and opposing effect directions between studies.
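The I² statistic mentioned above is commonly derived from Cochran's Q under a fixed-effect, inverse-variance weighting: Q = Σ wᵢ(βᵢ − β̄)² with wᵢ = 1/seᵢ², and I² = max(0, (Q − df)/Q) × 100%, where df is the number of studies minus one. A minimal sketch, using invented effect sizes and standard errors:

```python
def heterogeneity(effects, std_errors):
    """Return the inverse-variance pooled effect, Cochran's Q, and I^2 (percent)."""
    weights = [1.0 / se ** 2 for se in std_errors]
    pooled = sum(w * b for w, b in zip(weights, effects)) / sum(weights)
    q = sum(w * (b - pooled) ** 2 for w, b in zip(weights, effects))
    df = len(effects) - 1
    i2 = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0
    return pooled, q, i2

# Hypothetical study-level effect sizes with opposing directions
effects = [0.10, 0.30, -0.20, 0.50]
std_errors = [0.10, 0.10, 0.10, 0.10]

pooled, q, i2 = heterogeneity(effects, std_errors)
print(f"pooled effect={pooled:.3f}, Q={q:.2f}, I^2={i2:.1f}%")
```

For these illustrative inputs most of the observed variation is attributed to between-study heterogeneity rather than sampling error, which is the pattern described in the symptoms.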

Solution: Employ advanced meta-regression models that account for structured heterogeneity.

Experimental Protocol: Environment-Adjusted Meta-Regression (env-MR-MEGA) This protocol is designed for genome-wide association study (GWAS) meta-analysis but is a robust framework for any environmental meta-analysis with summary-level data [7].

- Gather Summary-Level Data: Collect effect sizes, standard errors, and sample sizes for each variable of interest from all included studies.

- Define Ancestral and Environmental Covariates:

- Genetic Ancestry: Represent ancestry using principal components (PCs) derived from genome-wide data or, if unavailable, use predefined population labels as proxies [7].

- Environmental Exposures: Obtain study-level summaries of environmental factors (e.g., average BMI, percentage of urban residents, average pollutant exposure) [7].

- Model Fitting: Fit the env-MR-MEGA model. The model regresses the study-specific effect sizes (βᵢ) against the axes of genetic variation (PC1, PC2, ...) and the environmental covariates (E). The model is weighted by the inverse of the study-specific variances (seᵢ²) [7].

- Interpretation: The model tests two primary hypotheses:

- Association Test: Whether the genetic/environmental variable is significantly associated with the trait across all studies, after accounting for heterogeneity.

- Heterogeneity Test: Whether the axes of genetic variation and environmental covariates significantly explain the between-study heterogeneity.
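The core idea of the model-fitting step, regressing study-level effect sizes on ancestry axes and environmental covariates with inverse-variance weights, can be illustrated with a generic weighted least-squares fit. This is not the env-MR-MEGA software and omits its test statistics; it is a simplified sketch, and the covariate values, standard errors, and noise-free effect sizes are all constructed for the example.

```python
import numpy as np

# Hypothetical study-level summary data (illustrative values only)
pc1 = np.array([-1.0, -0.5, 0.0, 0.5, 1.0, 1.5])     # axis of genetic variation
env = np.array([0.2, 0.8, 0.5, 0.1, 0.9, 0.4])       # e.g. fraction of urban residents
se = np.array([0.05, 0.08, 0.04, 0.10, 0.06, 0.07])  # study-specific standard errors

# Effect sizes built without noise so the fit should recover the coefficients
beta = 0.10 + 0.50 * pc1 + 0.20 * env

# Design matrix: intercept, ancestry axis, environmental covariate
X = np.column_stack([np.ones_like(pc1), pc1, env])
w = 1.0 / se ** 2  # inverse-variance weights

# Weighted least squares: solve (X' W X) coef = X' W beta
XtW = X.T * w
coef = np.linalg.solve(XtW @ X, XtW @ beta)

# Weighted residual sum of squares: heterogeneity left unexplained by the covariates
residual_q = float(np.sum(w * (beta - X @ coef) ** 2))
print(coef, residual_q)
```

With real summary statistics the residual term would not be zero, and the published method [7] should be consulted for the exact association and heterogeneity tests.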

The following diagram visualizes the analytical workflow for the env-MR-MEGA model:

Problem: Aggregating Sustainability Indicators with Different Units

Symptoms: Inability to directly combine indicators into a composite sustainability score; results are biased towards indicators with larger numerical values.

Solution: Apply a rigorous normalization and aggregation framework.

- Experimental Protocol: Constructing a Composite Sustainability Index

Table 2: Properties of Normalization Schemes for Aggregation

| Normalization Scheme | Formula | Impact on Aggregate Score | Advantage | Disadvantage |
|---|---|---|---|---|
| Z-Score | $z = \frac{x - \mu}{\sigma}$ | Linear | Centers data, allows for comparison of outliers. | Sensitive to extreme values. |
| Ratio | $R = \frac{x}{R_{ref}}$ | Linear, depends on reference $R_{ref}$ | Intuitive and simple. | Choice of reference value is critical and subjective. |
| Target [0,1] | $T = \frac{x - \min}{\max - \min}$ | Linear, bounded | Easy to understand, results in a bounded score. | Highly sensitive to min and max values. |
| Unit Equivalence | $U = \frac{x}{E_{equiv}}$ | Linear, depends on equivalence $E_{equiv}$ | Useful when a functional equivalence is known. | Requires expert knowledge to set equivalence. |

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Heterogeneity Analysis

| Reagent / Tool | Function in Analysis |
|---|---|
| Statistical Software (R, Python) | Provides the computational environment for performing Shapiro-Wilk tests, data normalization, and advanced meta-regression models like env-MR-MEGA [1] [7]. |
| Shapiro-Wilk Test | A specific statistical test used as a reagent to diagnose the need for normalization by testing the null hypothesis that data is normally distributed [1]. |
| Genetic Principal Components (PCs) | Used as covariates in meta-analyses to quantify and adjust for heterogeneity stemming from population genetic structure [7]. |
| Study-Level Environmental Covariates | Summary-level data on factors like BMI or urban status, used as inputs in the env-MR-MEGA model to account for non-ancestral heterogeneity [7]. |
| Normalization Functions (e.g., Log, Z-score) | Mathematical functions applied to raw data to transform them onto a common scale, making different indicators comparable and suitable for aggregation [1] [5]. |

In statistical analysis, many foundational techniques, including t-tests, ANOVA, and linear regression, carry a critical underlying assumption: that the data are normally distributed [10] [11]. Violating this normality assumption can lead to misleading or invalid conclusions, making it a prerequisite for many parametric tests [10]. For researchers in environmental operations and drug development, verifying this assumption is not merely a statistical formality but an essential step to ensure the reliability of their findings.

This technical guide focuses on the Shapiro-Wilk test, a powerful statistical procedure developed by Samuel Shapiro and Martin Wilk in 1965 [10] [12]. It is widely regarded as one of the most powerful tests for assessing normality, particularly for small to moderate sample sizes [13] [11]. The test provides an objective method to determine whether a dataset can be considered to have been drawn from a normally distributed population, thereby guiding the appropriate choice of subsequent analytical techniques.

Understanding the Shapiro-Wilk Test

Core Principles and Hypotheses

The Shapiro-Wilk test is a hypothesis test that evaluates whether a sample of data comes from a normally distributed population [10] [14]. Its calculation is based on the correlation between the observed data and the corresponding expected normal scores [15] [11]. The test produces a statistic, denoted as W, which ranges from 0 to 1. A value of W close to 1 suggests that the data closely follow a normal distribution [13].

The test formalizes its assessment through the following hypotheses:

- Null Hypothesis (H₀): The data are normally distributed [10] [13].

- Alternative Hypothesis (H₁): The data are not normally distributed [10] [13].

Test Interpretation

The key to interpreting the Shapiro-Wilk test lies in the p-value. The p-value is the probability of obtaining a test statistic at least as extreme as the one observed if the null hypothesis of normality were true [16]. It is not the probability that the data are normal. The decision rule is straightforward:

- If p-value > α (typically 0.05): You fail to reject the null hypothesis. There is not enough evidence to conclude that the data deviates significantly from a normal distribution [10] [16].

- If p-value ≤ α (typically 0.05): You reject the null hypothesis. There is significant evidence to suggest that the data are not normally distributed [10] [16].

It is crucial to consider effect size and practical significance, especially with large sample sizes, as the test may detect trivial deviations from normality that have little practical impact on subsequent analyses [12] [16].

Troubleshooting Guide: Common Issues and Solutions

FAQ: Frequently Asked Questions

Q1: My sample size is very large (n > 2000), and the Shapiro-Wilk test gives a significant result (p < 0.05), but my Q-Q plot looks almost normal. What should I do? A: This is a common issue due to the test's high sensitivity in large samples [10] [14]. With large N, the test can detect minuscule, practically insignificant deviations from normality [11]. Your course of action should be:

- Prioritize Graphical Methods: Rely on Q-Q plots and histograms for a practical assessment of the deviation [11] [12].

- Assess Robustness: Many parametric tests (like t-test or ANOVA) are robust to mild deviations from normality, especially with large sample sizes [11].

- Consider Alternatives: If the deviation is pronounced in the plots, consider non-parametric tests, but do not base this decision solely on the significant p-value from the Shapiro-Wilk test.

Q2: What should I do if my data fails the normality test (p ≤ 0.05)? A: A significant result indicates that your data is not normally distributed, and using parametric tests may be inappropriate. You have two main options:

- Data Transformation: Apply transformations to make your data more symmetrical. Common transformations include:

  - Logarithmic (Log): Highly effective for right-skewed (positively skewed) data, common in environmental concentrations [1].

  - Square Root: Useful for data representing counts.

  - Box-Cox or Yeo-Johnson: More sophisticated, parameterized transformation methods that can handle a wider range of data [14].

  - Important: After transformation, you must re-run the Shapiro-Wilk test on the transformed data to check for improvement [1].

- Use Non-Parametric Tests: These tests do not assume a specific data distribution.

  - Instead of a t-test, use the Mann-Whitney U test (for two independent groups) or the Wilcoxon Signed-Rank test (for two related groups).

  - Instead of one-way ANOVA, use the Kruskal-Wallis H test.
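Both options are available in SciPy. The sketch below uses synthetic lognormal groups (the group names `upstream`, `downstream`, and `reference` are illustrative, not real data) to show a Box-Cox and a Yeo-Johnson transformation, followed by the Mann-Whitney U and Kruskal-Wallis tests.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)
# Synthetic right-skewed groups (NOT real measurements)
upstream = rng.lognormal(mean=0.0, sigma=0.7, size=60)
downstream = rng.lognormal(mean=1.0, sigma=0.7, size=60)
reference = rng.lognormal(mean=0.2, sigma=0.7, size=60)

# Option 1: parameterized transformations
# Box-Cox requires strictly positive data; lambda is estimated by maximum likelihood
boxcox_values, boxcox_lambda = stats.boxcox(upstream)
# Yeo-Johnson also accepts zero and negative values (shifted here to include some)
yj_values, yj_lambda = stats.yeojohnson(upstream - 1.0)
# Re-test after transforming
_, p_after = stats.shapiro(boxcox_values)

# Option 2: non-parametric comparisons on the untransformed data
u_stat, p_mw = stats.mannwhitneyu(upstream, downstream, alternative="two-sided")
h_stat, p_kw = stats.kruskal(upstream, downstream, reference)

print(f"Box-Cox lambda={boxcox_lambda:.2f}, Shapiro-Wilk p after transform={p_after:.3f}")
print(f"Mann-Whitney p={p_mw:.3g}, Kruskal-Wallis p={p_kw:.3g}")
```

For paired measurements, `scipy.stats.wilcoxon` provides the Wilcoxon signed-rank test mentioned above.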

Q3: How do I check for normality when my sample size is very small (n < 20)? A: The Shapiro-Wilk test is known for its high statistical power with small samples and is an excellent choice in this scenario [13] [11]. However, be aware that with very small samples, the test's power to detect non-normality is inherently limited—it may fail to reject the null hypothesis even when the population is non-normal [11]. Therefore, for small samples, it is critical to:

- Use the Shapiro-Wilk test.

- Examine graphical methods like Q-Q plots.

- Consider your prior knowledge about the data's source distribution.

Q4: What is the difference between the Shapiro-Wilk test and a t-test? A: These tests serve fundamentally different purposes:

- The Shapiro-Wilk test is a "normality test." Its sole purpose is to check if a single sample of data follows a normal distribution [14].

- The t-test is a "parametric test" that compares the mean values between two groups. It assumes the data within each group is normally distributed, which is why the Shapiro-Wilk test is often used before conducting a t-test to verify this assumption [14].

Troubleshooting Table

The following table outlines common problems, their likely causes, and recommended solutions for researchers using the Shapiro-Wilk test.

| Problem Encountered | Likely Cause | Recommended Solution |
|---|---|---|
| Significant test (p ≤ 0.05) with large sample size, but data looks normal on a plot. | Test is overly sensitive to trivial deviations in large datasets [10] [14]. | Base your decision on graphical plots (Q-Q plot, histogram) and the robustness of your intended parametric test [12]. |
| Data strongly fails the normality test (e.g., p < 0.01). | The underlying population distribution is not normal; data may be skewed or have heavy tails. | Apply a data transformation (e.g., log) or switch to a non-parametric statistical test [1] [16]. |
| Test result is non-significant (p > 0.05) with a very small sample (n < 10). | The test has low power to detect non-normality due to the small sample size [11]. | Do not over-interpret a "pass." Use graphical methods and consider the known behavior of the variable being measured. |
| Software warning: "p-value may not be accurate for N > 5000." | The algorithm's approximation may be less precise for very large samples [14]. | The p-value is likely still a good indicator of severe non-normality, but for large N, graphical assessment is paramount. |

Practical Implementation and Protocols

Experimental Protocol for Testing Normality

A robust normality assessment involves more than just running a single statistical test. Follow this step-by-step protocol:

1. Data Collection and Preparation: Gather your dataset. Ensure it is in a suitable numeric format, free of input errors and missing values that could skew the analysis [15].

2. Visual Inspection (First Pass): Create a histogram and a Q-Q plot (Quantile-Quantile plot) [11].

   - The histogram should show a rough bell-shaped curve.

   - The Q-Q plot should show data points closely following the diagonal line. Major deviations from the line suggest non-normality.

3. Statistical Testing: Perform the Shapiro-Wilk test.

   - This provides a quantitative, objective measure to complement your visual inspection.

4. Synthesis and Decision: Combine the evidence from the graphical and statistical analyses.

   - If both the plots and the test (p > 0.05) suggest normality, proceed with parametric tests.

   - If either the plots show clear non-normality or the test is significant (p ≤ 0.05), consider data transformation or non-parametric methods.

5. Iteration (If Applicable): If you transform the data, return to Step 2 and repeat the process on the transformed dataset.

Code Implementation

The Shapiro-Wilk test is readily available in most statistical software. Below are examples in two commonly used languages.

In Python (using SciPy):
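A minimal sketch using `scipy.stats.shapiro`, with `scipy.stats.probplot` supplying a numeric summary of the Q-Q plot (the correlation between ordered data and theoretical normal quantiles). The synthetic lognormal sample, the `check_normality` helper, and the default `alpha` of 0.05 are illustrative assumptions.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(1)
# Synthetic right-skewed sample standing in for measured concentrations
data = rng.lognormal(mean=1.0, sigma=0.9, size=150)

def check_normality(x, alpha=0.05):
    """Run Shapiro-Wilk and summarize the Q-Q fit for a 1-D sample."""
    w, p = stats.shapiro(x)
    # Without a plot argument, probplot returns the Q-Q points and (slope, intercept, r)
    (_, _), (_, _, r) = stats.probplot(x, dist="norm")
    return {
        "W": float(w),
        "p": float(p),
        "qq_r": float(r),
        "reject_normality": bool(p <= alpha),
    }

raw_result = check_normality(data)
log_result = check_normality(np.log(data))

print("raw:", raw_result)
print("log:", log_result)
```

A W statistic and Q-Q correlation closer to 1 after the log transformation suggest the transformed data sit closer to a normal distribution. Passing `plot=plt` (with `matplotlib.pyplot` imported as `plt`) to `probplot` draws the Q-Q plot itself.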

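In R: the base `stats` package provides `shapiro.test(x)`, where `x` is a numeric vector; it returns the W statistic and the p-value.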

The Scientist's Toolkit: Key Reagents and Materials

For researchers, especially in environmental and pharmaceutical fields, the "toolkit" for preparing data for normality testing includes both conceptual and practical items.

| Item / Concept | Function / Explanation | Relevance to Environmental/Drug Development |
|---|---|---|
| Shapiro-Wilk Test | A powerful statistical test to objectively assess if a data sample comes from a normal distribution [13] [11]. | Used to validate assumptions before applying parametric tests to data like chemical concentration levels or dose-response measurements. |
| Q-Q Plot (Graphical Tool) | A visual method to compare the quantiles of a data sample to a theoretical normal distribution [11]. | Helps identify the nature of deviations (e.g., skewness, outliers) in datasets such as pollutant concentrations or patient recovery times. |
| Log Transformation | A mathematical operation applied to each data point (using the natural logarithm) to reduce right-skewness [1] [14]. | Crucial for normalizing highly skewed environmental data (e.g., metal concentrations in water [1]) or biological assay data. |
| Non-Parametric Tests | Statistical tests (e.g., Mann-Whitney, Kruskal-Wallis) that do not assume an underlying normal distribution [16]. | The fallback option when data transformation fails to achieve normality, ensuring robust analysis of heterogeneous operational data. |
| Statistical Software (R/Python) | Programming environments with extensive libraries for statistical testing and data visualization. | Essential for automating the analysis workflow, from data cleaning and transformation to normality testing and final inference. |

While the Shapiro-Wilk test is often the best choice, other normality tests exist. The table below provides a concise comparison.

| Test Name | Key Characteristics | Best Used For |

|---|---|---|

| Shapiro-Wilk | High statistical power, especially for small samples [13] [11]. | Small to moderate sample sizes (n < 2000) where high power is needed. |

| Kolmogorov-Smirnov (K-S) | Compares the empirical distribution function of the sample to a normal CDF. Less powerful than Shapiro-Wilk [11]. | Large sample sizes, where its lower power for normality testing [11] matters less. |

| Anderson-Darling | An EDF test that gives more weight to the tails of the distribution than K-S [11]. | When sensitivity to deviations in the distribution tails is critical. |

| Jarque-Bera | Based on the sample skewness and kurtosis (third and fourth moments) [11]. | Large sample sizes, as a test for departures from normality based on symmetry and tailedness. |

For researchers in environmental operations and drug development, ensuring the validity of statistical conclusions is paramount. The Shapiro-Wilk test serves as a critical gatekeeper, providing a powerful and reliable method to verify the normality assumption that underpins many common analytical techniques. By integrating this test into a comprehensive workflow that includes visual inspection and sound judgment—especially regarding sample size—scientists can make robust, data-driven decisions. When normality is violated, the toolkit provides clear paths forward through data transformation or the use of non-parametric tests, ensuring that research findings remain credible and actionable.

Frequently Asked Questions

Q1: What is spatial autocorrelation and why is it a problem in environmental data analysis? Spatial autocorrelation describes how a variable is correlated with itself through space, essentially quantifying whether things that are close together are more similar than things that are far apart [17] [18]. It becomes a problem in statistical analysis because it violates the assumption of independence between observations, which is foundational to many traditional statistical models. This can lead to biased parameter estimates, incorrect standard errors, and ultimately, misleading conclusions about the relationships you are studying.

Q2: How can I technically test for spatial autocorrelation in my dataset? You can test for spatial autocorrelation using global and local indices. The most common method is Global Moran's I [17] [18]. The methodology involves:

- Defining Spatial Weights: First, determine which geographic units are neighbors. This can be done using the `poly2nb` function in R to create a neighbors list, specifying criteria such as any shared boundary point, including corners (Queen's case), or shared edges only (Rook's case) [18].

- Converting to a Weighted List: Convert the neighbors list into a spatial weights object using a function like `nb2listw` [17] [18].

- Running the Test: Conduct the Moran's I test using the `moran.test()` function from the `spdep` package in R, passing your data variable and the spatial weights object [17]. The output provides a statistic and a p-value to assess significance.
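To make the statistic itself concrete, the following is a small NumPy sketch of Global Moran's I with a permutation-based pseudo p-value. It is not the `spdep` implementation: the function names, the row-of-sites layout, and the binary weights are assumptions chosen for illustration.

```python
import numpy as np


def morans_i(values, weights):
    """Global Moran's I for a 1-D array and an (n, n) spatial weights matrix."""
    x = np.asarray(values, dtype=float)
    w = np.asarray(weights, dtype=float)
    z = x - x.mean()                      # deviations from the mean
    return (x.size / w.sum()) * (z @ w @ z) / (z @ z)


def permutation_p_value(values, weights, n_perm=999, seed=0):
    """One-sided pseudo p-value for positive autocorrelation via random relabeling."""
    rng = np.random.default_rng(seed)
    x = np.asarray(values, dtype=float)
    observed = morans_i(x, weights)
    sims = np.array([morans_i(rng.permutation(x), weights) for _ in range(n_perm)])
    return (1 + np.sum(sims >= observed)) / (n_perm + 1)


def chain_weights(n):
    """Binary contiguity weights for n sites arranged in a row (hypothetical layout)."""
    w = np.zeros((n, n))
    idx = np.arange(n - 1)
    w[idx, idx + 1] = 1.0
    w[idx + 1, idx] = 1.0
    return w


w5 = chain_weights(5)
gradient = [1, 2, 3, 4, 5]    # neighbors are similar: positive I
checker = [1, -1, 1, -1, 1]   # neighbors are dissimilar: negative I
print(morans_i(gradient, w5), morans_i(checker, w5))
```

Values near zero suggest no spatial pattern; the permutation p-value here plays the role that the analytical test plays in `moran.test()`.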

Q3: What are the main technical biases that can affect a geospatial analysis? Technical biases in geospatial analysis often stem from the data and algorithms used [19] [20]. The table below summarizes the key types:

Table: Types and Sources of Technical Bias in Geospatial Analysis

| Bias Type | Description | Potential Impact on Analysis |

|---|---|---|

| Data Bias [19] [20] | Arises from training datasets that are unrepresentative, incomplete, or reflect historical patterns of discrimination. | Results in models that perform poorly for underrepresented geographic areas or demographic groups, perpetuating existing inequities [21]. |

| Algorithmic Bias [19] | Unfairness emerging from the design and structure of machine learning algorithms themselves. | May optimize for overall accuracy while ignoring performance disparities across different regions or communities. |

| Measurement Bias [20] | Emerges from inconsistent or culturally biased data collection methods across different locations. | Creates skewed data that does not accurately reflect the true situation on the ground, leading to incorrect inferences. |

| Sampling Bias [20] | Occurs when data collection does not represent the entire population or geographic area of interest. | Leads to "hot spots" being over-represented while other areas are invisible in the data, misdirecting resources [22] [21]. |

Q4: My data is highly skewed. Which normalization technique should I use? Choosing a normalization technique depends on your data's distribution and the presence of outliers, which is common in heterogeneous environmental data [23] [24]. The PROVAN method, designed for socio-economic and innovation assessments, integrates multiple normalization techniques to enhance decision accuracy, which can be a robust approach for complex, skewed data [25]. For machine learning pipelines, consider these common scalers:

Table: Data Scaling and Normalization Techniques for Skewed Data

| Technique | Description | Best For |

|---|---|---|

| Min-Max Scaler [24] | Scales features to a specific range, often [0, 1]. | Data without extreme outliers, when you know the bounded range. |

| Standard Scaler [24] | Standardizes features by removing the mean and scaling to unit variance. | Data that is roughly normally distributed. Can be affected by outliers. |

| Robust Scaler [24] | Scales data using the interquartile range (IQR), making it robust to outliers. | Datasets with many outliers and skewed distributions. |
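The difference between the three scalers is easiest to see on data with one extreme value. The sketch below implements each formula directly in NumPy on hypothetical pollutant readings; the variable names and the data are assumptions for illustration.

```python
import numpy as np


def min_max_scale(x):
    """Rescale to [0, 1] using the observed minimum and maximum."""
    x = np.asarray(x, dtype=float)
    return (x - x.min()) / (x.max() - x.min())


def z_score_scale(x):
    """Center on the mean and divide by the standard deviation."""
    x = np.asarray(x, dtype=float)
    return (x - x.mean()) / x.std()


def robust_scale(x):
    """Center on the median and divide by the interquartile range."""
    x = np.asarray(x, dtype=float)
    q1, median, q3 = np.percentile(x, [25, 50, 75])
    return (x - median) / (q3 - q1)


# Hypothetical readings with a single extreme event in the last position.
readings = np.array([2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0, 500.0])

# How much of the scaled axis is left for the nine typical readings?
spreads = {}
for name, scaler in [("min-max", min_max_scale),
                     ("z-score", z_score_scale),
                     ("robust", robust_scale)]:
    typical = scaler(readings)[:-1]
    spreads[name] = float(typical.max() - typical.min())
    print(f"{name:8s} spread of typical readings: {spreads[name]:.3f}")
```

With min-max and z-score scaling the typical readings are squeezed into a narrow band by the extreme value, while the median/IQR version keeps them well separated.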

Q5: How can I mitigate AI bias in a geospatial decision support system? Mitigating AI bias requires a comprehensive strategy across the entire AI lifecycle [19]:

- Pre-processing: Fix bias in the training data before model development. This includes ensuring geographic and demographic representativeness in your datasets and applying techniques to give more weight to underrepresented groups [19].

- In-processing: Modify the learning algorithms themselves to build fairness directly into the model during training, such as through adversarial debiasing [19].

- Post-processing: Adjust the AI outputs after the model makes its initial decisions to ensure fair results across different geographic or demographic groups [19].

- Governance & Teams: Implement strong governance frameworks with accountability and establish diverse development teams to identify blind spots [19].

Troubleshooting Guides

Issue 1: Handling Spatial Autocorrelation in Regression Analysis

Problem: You are running a regression on environmental data but suspect that spatial autocorrelation in the residuals is invalidating your model's results.

Solution: Follow this workflow to diagnose and address the issue.

Detailed Protocol for Moran's I Test on Residuals [17] [18]:

- Fit a Standard Regression Model: Begin with your ordinary least squares (OLS) model.

- Extract Residuals: Obtain the residuals from the fitted model.

- Define Spatial Weights: Create a neighbors list (e.g., using `poly2nb`) and convert it to a listw object (e.g., using `nb2listw(..., style="W")` for row-standardized weights).

- Run Moran's I Test: Use the `moran.test()` function on the residuals, specifying the spatial weights object and the alternative hypothesis (e.g., `alternative="greater"` to test for positive autocorrelation).

- Interpret Results: A statistically significant Moran's I (p-value < 0.05) indicates significant spatial autocorrelation in the residuals, suggesting a spatial regression model like a Spatial Autoregressive (SAR) or Spatial Error Model (SEM) is needed.

Issue 2: Identifying and Correcting for Technical Bias in a Geospatial Dashboard

Problem: A geospatial dashboard for public health intervention is found to be systematically under-representing needs in certain communities, leading to biased resource allocation.

Solution: A multi-faceted approach to identify and correct the bias.

Detailed Methodology for Bias Audit:

Data Source Interrogation:

- Check for Sampling Bias: Evaluate if data collection points (e.g., health clinics, police reports) are evenly distributed across all communities. Underserved "pharmacy deserts" or "treatment deserts" can create massive data gaps [21] [26].

- Check for Historical Bias: Determine if the data reflects historical inequities in service provision or law enforcement. For example, using arrest data alone may over-represent drug abuse in over-policed communities [21] [20].

Algorithmic Logic Audit:

- Review Feature Selection: Identify if any input variables (features) act as proxies for protected attributes like race or income. For example, using ZIP code can perpetuate racial bias due to segregation [19] [20].

- Perform Cross-Group Performance Analysis: Calculate the dashboard's key metrics (e.g., predicted risk score, service allocation) separately for different demographic groups and geographic regions to identify performance disparities [19].

Stakeholder Feedback: Conduct focus groups with community health workers and residents from the underrepresented areas. Their lived experience can reveal context and biases that quantitative data misses [21].
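The cross-group performance analysis described above can be sketched as a per-group error summary. The district labels, the numbers, and the choice of mean absolute error are hypothetical; any metric the dashboard reports could be substituted.

```python
import numpy as np


def error_by_group(y_true, y_pred, groups):
    """Mean absolute error per group label, plus the largest gap between groups."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    groups = np.asarray(groups)
    mae = {}
    for g in np.unique(groups):
        mask = groups == g
        mae[str(g)] = float(np.mean(np.abs(y_true[mask] - y_pred[mask])))
    gap = max(mae.values()) - min(mae.values())
    return mae, gap


# Hypothetical observed need vs. dashboard estimate for two districts.
observed = [10, 12, 11, 30, 28, 32]
estimated = [10, 11, 12, 20, 18, 21]
district = ["north", "north", "north", "south", "south", "south"]

mae, gap = error_by_group(observed, estimated, district)
print(mae, gap)
```

A large gap between groups, as for the "south" district here, is a signal to revisit the data sources and features for that area rather than proof of a specific cause.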

Issue 3: Managing Skewed Data in Multi-Criteria Decision-Making (MCDM)

Problem: You are applying an MCDM method like TOPSIS to rank locations for a new environmental facility, but your criteria data is highly skewed, distorting the rankings.

Solution: Carefully select and potentially combine normalization techniques to handle the skew.

Experimental Protocol for the PROVAN Method [25]: The PROVAN (Preference using Root Value based on Aggregated Normalizations) method is designed for this purpose. It enhances robustness by integrating five different normalization techniques to avoid the pitfalls of relying on a single method. The general workflow is:

- Construct the Decision Matrix: Create a matrix where rows are alternatives (e.g., locations) and columns are criteria (e.g., environmental, economic factors).

- Apply Multiple Normalizations: Independently normalize the decision matrix using several techniques (e.g., vector, linear, max, logarithmic).

- Aggregate Normalized Matrices: Combine the multiple normalized matrices into a single aggregated matrix, often using a method like the Aczel-Alsina aggregation function to preserve the integrity of the different normalization outputs.

- Determine Criteria Weights: Calculate the weights of each criterion using a method like an extended WENSLO (Weights by ENvelope and SLOpe) that can incorporate multiple normalization strategies.

- Rank Alternatives: Use the aggregated normalized matrix and the criteria weights to compute a final score and rank for each alternative.
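The general shape of this workflow (normalize several ways, aggregate, weight, rank) can be sketched as follows. This is explicitly not the PROVAN algorithm: a plain arithmetic mean stands in for the Aczel-Alsina aggregation, the weights are supplied by hand rather than derived with WENSLO, only four normalizations are shown, and all criteria are assumed to be benefit criteria with values greater than 1.

```python
import numpy as np


def vector_norm(m):
    return m / np.sqrt((m ** 2).sum(axis=0))


def max_norm(m):
    return m / m.max(axis=0)


def min_max_norm(m):
    return (m - m.min(axis=0)) / (m.max(axis=0) - m.min(axis=0))


def log_norm(m):
    # Assumes every entry is > 1 so that all logarithms are positive.
    logs = np.log(m)
    return logs / logs.sum(axis=0)


def rank_alternatives(matrix, weights):
    """Average several column-wise normalizations, then rank by weighted sum."""
    m = np.asarray(matrix, dtype=float)
    w = np.asarray(weights, dtype=float)
    normalized = [f(m) for f in (vector_norm, max_norm, min_max_norm, log_norm)]
    # Rescale each result so every column sums to 1 before averaging,
    # which keeps any single technique from dominating the aggregate.
    rescaled = [n / n.sum(axis=0) for n in normalized]
    aggregated = np.mean(rescaled, axis=0)
    scores = aggregated @ w
    order = np.argsort(-scores)          # best alternative first
    return scores, order


# Hypothetical decision matrix: 3 candidate sites x 3 benefit criteria,
# with a heavily skewed first criterion.
sites = [[900.0, 9.0, 70.0],
         [50.0, 6.0, 65.0],
         [40.0, 8.0, 20.0]]
scores, order = rank_alternatives(sites, weights=[0.4, 0.3, 0.3])
print(scores, order)
```

Comparing the ranking from the aggregate with the ranking from each single normalization is a quick way to see how sensitive a decision is to the normalization choice.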

The Scientist's Toolkit

Table: Essential Analytical Reagents for Spatial and Bias-Aware Research

| Research 'Reagent' (Tool/Metric) | Function / Purpose |

|---|---|

| Global Moran's I [17] [18] | A global index to test for the presence and degree of spatial autocorrelation across the entire study area. |

| LISA (Local Indicators of Spatial Association) [17] | Provides a local measure of spatial autocorrelation, identifying specific hot spots, cold spots, and spatial outliers. |

| CRITIC Method [23] | An objective weighting method used in MCDM that determines criterion importance based on the contrast intensity and conflicting character between criteria. |

| PROVAN Method [25] | A robust MCDM ranking method that integrates five normalization techniques to improve decision accuracy for heterogeneous data. |

| Robust Scaler [24] | A data preprocessing technique that scales features using statistics that are robust to outliers (median and IQR). |

| Spatial Weights Matrix [17] [18] | A mathematical structure that formally defines the spatial relationships between geographic units in a dataset (e.g., contiguity, distance). |

| Task-Technology Fit (TTF) & PSSUQ [22] | Models and questionnaires used to evaluate the usability and sufficiency of decision support systems and dashboards from the user's perspective. |

The Impact of Poor Normalization on Model Performance and Scientific Inference

Troubleshooting Guides

Troubleshooting Guide 1: Diagnosing Poor Normalization in Heterogeneous Environmental Data

Q: How can I identify if my environmental dataset suffers from poor normalization? A: Poor normalization often manifests as model instability and biased scientific inferences. Key indicators include high variance in model performance across different data subsets, sensitivity to minor changes in training data, and coefficients that contradict established domain knowledge.

- Symptom: Model performance degrades when integrating new, heterogeneous data sources (e.g., combining sensor data with public participation reports).

- Diagnosis: Check for significant differences in scale and distribution between data sources. For instance, command-and-control regulatory data might be binary, while market-incentive data could be continuous monetary values [27].

- Solution: Apply domain-specific normalization protocols before integration, ensuring all variables contribute equally to the model.

- Symptom: A model predicting environmental pollution levels shows high accuracy on training data but fails to generalize to new geographical regions or time periods.

- Diagnosis: This can indicate that the model learned spurious correlations specific to the scale of the original dataset, rather than the underlying environmental processes [27].

- Solution: Implement and compare multiple normalization techniques (e.g., Z-score, Min-Max, Robust Scaling) and validate model performance on temporally and spatially distinct test sets.

The following workflow outlines a diagnostic protocol for identifying normalization issues:

Troubleshooting Guide 2: Resolving Normalization-Induced Bias in Scientific Inference

Q: My model's conclusions about the effectiveness of environmental regulations are heavily skewed. Could poor normalization be the cause? A: Yes. Improper normalization can artificially inflate or suppress the perceived importance of different variables, leading to flawed scientific inferences.

- Symptom: The measured impact of one type of environmental regulation (e.g., market-incentive) disproportionately dominates others (e.g., command-and-control or public-participation) in a multi-variable analysis [27].

- Diagnosis: Investigate if the variable representing the dominant regulation has a fundamentally larger scale or variance compared to others, causing the model to attribute more predictive power to it.

- Solution: Re-normalize all regulatory variables using a method that puts them on a common scale (e.g., Z-score), then re-run the analysis to assess if the inferred relationships change.

- Symptom: A mediating variable, such as "technological innovation," shows a statistically significant but counterintuitive relationship [27].

- Diagnosis: The process vs. product innovation indicators may have been normalized incorrectly, masking their true mediating role between regulation and pollution reduction.

- Solution: Normalize sub-constructs (process innovation, product innovation) separately before combining them into a composite "technological innovation" variable, ensuring each sub-construct contributes appropriately to the mediation analysis.

Frequently Asked Questions (FAQs)

Q1: What is the most critical step to avoid poor normalization when integrating heterogeneous environmental data? A: The most critical step is conducting a thorough exploratory data analysis (EDA) before any modeling. This involves visualizing the distributions (using histograms, box plots) of all variables from each data source to understand their original scales, variances, and the presence of outliers. This initial profiling guides the choice of the most appropriate normalization technique.

Q2: Does the choice of normalization technique depend on the type of environmental data? A: Absolutely. The table below summarizes recommended techniques based on data characteristics:

| Data Characteristic | Recommended Normalization Technique | Brief Rationale | Example in Environmental Research |

|---|---|---|---|

| Normally Distributed, Few Outliers | Z-Score Standardization | Centers data around mean with unit variance, preserving shape of distribution. | Standardizing temperature or pH readings from sensors. |

| Bounded Values (e.g., 0-100%) | Min-Max Scaling | Scales data to a fixed range (e.g., [0,1]), useful for indices. | Normalizing efficiency scores or capacity utilization. |

| Many Outliers, Skewed | Robust Scaling | Uses median and IQR, resistant to outliers. | Handling pollutant concentration data with extreme events. |

| Sparse Data | Max Absolute Scaling | Scales by maximum absolute value, preserving sparsity and zero entries. | Processing data from intermittent public participation reports. |

Q3: How can I validate that my normalization procedure has been effective? A: Effective normalization can be validated by:

- Post-normalization Distribution Check: Confirm that the normalized features have similar scales and distributions suitable for the chosen model.

- Model Stability: The model's performance (e.g., R², AUC) should become more consistent across different data splits and bootstrap samples.

- Sensitivity Analysis: The relative importance of model coefficients should align more closely with theoretical expectations from environmental science after normalization.

Experimental Protocols

Protocol 1: Evaluating Normalization Techniques for Mediation Analysis

Objective: To empirically determine the impact of different normalization techniques on the results of a mediation analysis examining how environmental regulation reduces pollution through technological innovation [27].

Methodology:

- Data Acquisition: Gather a panel dataset containing metrics for:

- Independent Variables: Three types of environmental regulation (Command-and-Control, Market-Incentive, Public-Participation).

- Mediating Variable: Technological Innovation, with sub-indicators for Process Innovation and Product Innovation.

- Dependent Variable: Environmental Pollution levels.

- Control Variables: Economic growth, industrial structure, etc. [27].

- Pre-processing: Create four versions of the dataset:

- Version A: Raw, unnormalized data.

- Version B: Z-Score normalized data.

- Version C: Min-Max normalized data.

- Version D: Robust scaled data.

- Model Execution: For each dataset version, run a hierarchical regression analysis to test the mediation effect of technological innovation.

- Comparison Metric: Compare the estimated coefficients, significance levels (p-values), and calculated mediating effect sizes across the four versions.
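A reduced version of the pre-processing and comparison steps can be sketched with ordinary least squares in NumPy. The sketch fits a single regression rather than the full hierarchical mediation model, and the synthetic predictors (`stringency`, `market`) and their coefficients are assumptions.

```python
import numpy as np


def ols_slopes(X, y):
    """OLS slopes; an intercept is fitted but not returned."""
    design = np.column_stack([np.ones(len(X)), X])
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)
    return beta[1:]


def z_score(X):
    return (X - X.mean(axis=0)) / X.std(axis=0)


def min_max(X):
    return (X - X.min(axis=0)) / (X.max(axis=0) - X.min(axis=0))


def robust(X):
    q1, med, q3 = np.percentile(X, [25, 50, 75], axis=0)
    return (X - med) / (q3 - q1)


rng = np.random.default_rng(0)
n = 200
# Hypothetical predictors: a 0-10 stringency index and a monetary incentive.
stringency = rng.uniform(0, 10, size=n)
market = rng.normal(50_000, 10_000, size=n)
X = np.column_stack([stringency, market])
pollution = 5.0 - 0.3 * stringency - 0.0001 * market + rng.normal(0, 0.5, size=n)

versions = {"A raw": X, "B z-score": z_score(X),
            "C min-max": min_max(X), "D robust": robust(X)}
slopes = {name: ols_slopes(Xv, pollution) for name, Xv in versions.items()}
for name, b in slopes.items():
    print(f"{name:10s} slopes: {b}")
```

In plain OLS with an intercept, these rescalings change the size of each coefficient (the z-score slope equals the raw slope times the predictor's standard deviation) but not the fitted values, so differences across versions mainly affect how coefficients are compared and interpreted.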

The logical flow of this experimental protocol is as follows:

Protocol 2: Testing Model Robustness Across Spatiotemporal Regimes

Objective: To assess whether proper normalization improves a model's ability to generalize across different time periods and geographical locations, a common challenge in environmental operations research.

Methodology:

- Data Splitting: Split the integrated environmental dataset into distinct spatiotemporal blocks (e.g., Data from 2011-2015 for Region X, 2016-2020 for Region Y) [27].

- Training and Normalization: Train a predictive model (e.g., for pollution levels) on one block. Crucially, fit the normalization parameters (e.g., mean, standard deviation) only on the training block.

- Testing: Apply the same fitted normalization parameters to the held-out test block before generating predictions.

- Evaluation: Measure the performance drop (e.g., increase in Mean Absolute Error) between training and test sets. A smaller performance drop indicates a more robust normalization method that generalizes better.
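The key point of the training and testing steps, fitting normalization parameters on the training block only, can be sketched in NumPy as below. The two synthetic blocks and the helper names are assumptions; scikit-learn's `StandardScaler` follows the same split through its `fit` and `transform` methods.

```python
import numpy as np


def fit_standardizer(X_train):
    """Learn per-feature mean and standard deviation from the training block only."""
    X_train = np.asarray(X_train, dtype=float)
    return X_train.mean(axis=0), X_train.std(axis=0)


def apply_standardizer(X, params):
    """Apply previously fitted parameters to any block, training or test."""
    mean, std = params
    return (np.asarray(X, dtype=float) - mean) / std


rng = np.random.default_rng(1)
# Hypothetical blocks: an earlier period in one region (train) and a later,
# shifted period in another region (test).
train_block = rng.normal(loc=[20.0, 300.0], scale=[2.0, 50.0], size=(500, 2))
test_block = rng.normal(loc=[23.0, 380.0], scale=[2.0, 50.0], size=(500, 2))

params = fit_standardizer(train_block)
train_scaled = apply_standardizer(train_block, params)
test_scaled = apply_standardizer(test_block, params)

print("train means:", train_scaled.mean(axis=0))
print("test means: ", test_scaled.mean(axis=0))
```

The test block is not centered at zero after scaling, and that is intended: the shift between regimes stays visible to the model instead of being erased by re-fitting the scaler on the test data.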

The Scientist's Toolkit: Research Reagent Solutions

Essential computational and data handling "reagents" for research in this field.

| Item / Tool | Function / Description | Application Context |

|---|---|---|

| Python (Scikit-learn) | A programming library providing robust implementations of StandardScaler (Z-score), MinMaxScaler, and RobustScaler. | The primary tool for implementing and comparing different normalization techniques in a reproducible pipeline. |

| R (dplyr, scale) | Statistical programming environment with comprehensive functions for data manipulation and normalization. | Used for statistical analysis, particularly for hierarchical regression and mediation analysis common in social science-oriented environmental research [27]. |

| Extract, Transform, Load (ETL) Pipelines | A system used to extract data from multiple sources, transform it (including normalization), and load it into a unified structure [28]. | Critical for physically integrating heterogeneous data from command-and-control, market-incentive, and public-participation sources into a single analysis-ready dataset. |

| Virtual Data Integration Systems | A system where data remains in original sources and is queried via a mediator, reducing implementation costs [28]. | Useful when working with highly sensitive or rapidly updating proprietary datasets that cannot be easily copied and normalized in a central warehouse. |

| Ontology-Based Integration | Using a formal representation of knowledge (ontology) to resolve semantic heterogeneity between data sources at a conceptual level [28]. | Helps ensure that when data is normalized, it is done so with a consistent understanding of what each variable represents (e.g., defining "technological innovation" consistently across studies). |

A Practical Toolkit: Selecting and Applying Data Normalization Methods

Troubleshooting Guides

Guide 1: Addressing Skewed Data and Failed Normality After Log Transformation

- Problem: My data is still skewed after applying a log transformation, or the transformation has made the skewness worse.

- Explanation: A common misconception is that log transformation always reduces skewness and makes data normal. However, this is only reliably true if the original data approximately follows a log-normal distribution. If this assumption is violated, a log transformation can sometimes exacerbate skewness or create new patterns [29].

- Solution:

- Diagnose: Before transforming, check the distribution of your raw data using histograms and Q-Q plots. If the data contains zeros or negative values, a standard log transformation will fail, as it is undefined for these values.

- Consider Alternatives:

- For data with zeros, use a generalized log (glog) transformation, such as the one implemented in VSN, which handles low-intensity values more gracefully and is defined for a wider range of values [30].

- Use Variance Stabilizing Normalization (VSN), which combines a glog transformation with calibration to stabilize variance across the dynamic range of measurements, making it robust for various data distributions common in molecular data [30] [31].

- Employ distribution-free methods, such as Generalized Estimating Equations (GEE), which do not rely on the normality assumption [29].

Guide 2: Handling Dominant Features and Poor Algorithm Performance

- Problem: My machine learning model (e.g., SVM, K-means) is performing poorly, likely because features with larger scales are dominating the model.

- Explanation: Algorithms that use distance calculations are sensitive to the scale of features. A feature with a broad range (e.g., annual income) can disproportionately influence the model compared to a feature with a smaller range (e.g., age in years) [32].

- Solution:

- Apply Z-Score Normalization: Standardize all features to have a mean of 0 and a standard deviation of 1 using the formula `z = (x - μ) / σ` [32] [33]. This ensures all features contribute equally to the distance calculations.

- Implementation:

- Calculate the mean (μ) and standard deviation (σ) for each feature.

- Subtract the mean from each data point and divide by the standard deviation.

- In Python, this can be done efficiently using libraries like NumPy or Scikit-learn's `StandardScaler`.
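To show why scale matters for distance-based algorithms, the NumPy sketch below measures how much of the total squared Euclidean distance each feature contributes before and after Z-score normalization. The four records and the helper `distance_share` are hypothetical.

```python
import numpy as np

# Hypothetical records: [annual income, age in years].
people = np.array([[30_000.0, 25.0],
                   [32_000.0, 60.0],
                   [90_000.0, 27.0],
                   [88_000.0, 58.0]])


def z_score(X):
    """Per-feature standardization: z = (x - mean) / std."""
    return (X - X.mean(axis=0)) / X.std(axis=0)


def distance_share(X):
    """Share of total squared pairwise Euclidean distance contributed by each feature."""
    diffs = X[:, None, :] - X[None, :, :]
    per_feature = (diffs ** 2).sum(axis=(0, 1))
    return per_feature / per_feature.sum()


print("raw shares:   ", distance_share(people))
print("scaled shares:", distance_share(z_score(people)))
```

On the raw data, income accounts for essentially all of the distance; after standardization each feature contributes half.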


- Problem: I need to combine datasets from different experimental batches, platforms, or sources, but systematic biases and different scales are making integration difficult.

- Explanation: Heterogeneous data from different sources often contains non-biological variation due to differences in sample handling, instrumentation, or calibration. Normalization is the process that aims to account for this bias and make samples more comparable [31].

- Solution:

- Choose a Robust Normalization Method:

- VSN is highly effective for this purpose, as it includes an affine transformation (calibration) to adjust for systematic differences between samples or arrays, followed by a variance-stabilizing glog transformation [30]. Studies have shown VSN performs consistently well in reducing variation between technical replicates in proteomic data [31].

- Other effective methods include Linear Regression Normalization and Local Regression Normalization [31].

- Workflow:

- For multiple arrays or samples, apply VSN globally to the entire dataset. The method will calibrate each column (sample) and then apply the glog transformation, making the samples directly comparable [30].

- Use the `meanSdPlot` function (in R) post-normalization to verify that variance has been stabilized across the entire range of mean intensities [30].


Frequently Asked Questions (FAQs)

FAQ 1: When should I use a log transformation versus a Z-score?

The choice depends entirely on your goal, as these methods address different problems.

| Method | Primary Goal | Ideal Use Case |

|---|---|---|

| Log Transformation | Stabilize variance and reduce right-skewness in positive-valued data. | Preparing data for analysis when the variance increases with the mean (e.g., gene expression counts, protein intensities) [29]. |

| Z-Score Normalization | Standardize features to a common scale with a mean of 0 and standard deviation of 1. | Preprocessing for machine learning algorithms that are sensitive to feature scale (e.g., SVM, K-means, PCA) [32] [33]. |

| VSN | Combine calibration and variance stabilization for multi-sample datasets. | Integrating data from multiple sources or arrays (e.g., microarray, proteomics) to remove systematic bias and stabilize variance across the dynamic range [30] [31]. |

FAQ 2: Can Z-scores be used to identify outliers?

Yes, Z-scores are a common tool for outlier detection. The underlying principle is that in a normal distribution, the vast majority of data points (99.7%) lie within three standard deviations of the mean. Therefore, data points with Z-scores greater than +3 or less than -3 are often considered potential outliers and can be flagged for further investigation [32]. This is particularly useful in fields like quality control.
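A minimal sketch of this rule in NumPy, assuming hypothetical sensor readings with one extreme value; the function name `flag_outliers` and the default threshold of 3 are illustrative.

```python
import numpy as np


def flag_outliers(values, threshold=3.0):
    """Return Z-scores and a boolean mask for |z| above the threshold."""
    x = np.asarray(values, dtype=float)
    z = (x - x.mean()) / x.std()
    return z, np.abs(z) > threshold


# Twenty typical readings around 10 and one extreme reading (hypothetical).
readings = np.array([9.5, 10.5] * 10 + [100.0])
z, is_outlier = flag_outliers(readings)

print("flagged positions:", np.flatnonzero(is_outlier))
print("flagged values:   ", readings[is_outlier])
```

Because the mean and standard deviation are themselves pulled by extreme values, this rule can miss outliers in small or heavily contaminated samples, so flagged points are best treated as candidates for review.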

FAQ 3: What does a Z-score of 0 mean?

A Z-score of 0 indicates that the data point's value is exactly equal to the mean of the dataset [34] [33]. It is located zero standard deviations away from the average.

FAQ 4: My data contains zeros or negative values. Can I still use a log transformation?

No, you cannot use a standard log transformation because the logarithm of zero or a negative number is undefined [29] [30]. In such cases, you should:

- Use a generalized log (glog) transformation as implemented in VSN, which is designed to handle these values [30].

- Apply a started log by adding a small constant to all values before transforming (e.g., `log(x + 1)`), though this requires careful choice of the constant.
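Both options can be sketched in NumPy. The second function uses the inverse hyperbolic sine, which is one common form of generalized log; it is shown here as a stand-in and is not the calibrated transformation that VSN fits. The function names and the `scale` parameter are assumptions.

```python
import numpy as np


def started_log(x, constant=1.0):
    """log(x + constant); only defined where x + constant > 0."""
    return np.log(np.asarray(x, dtype=float) + constant)


def arsinh_glog(x, scale=1.0):
    """arsinh(u) = log(u + sqrt(u**2 + 1)) with u = x / scale; defined for all real x."""
    return np.arcsinh(np.asarray(x, dtype=float) / scale)


values = np.array([-5.0, 0.0, 0.5, 10.0, 1000.0])

# The started log is applied to the non-negative values only.
non_negative = values[values >= 0]
print("started log:", started_log(non_negative))

# The arsinh transform handles zeros and negatives, and behaves like
# log(2x) for large x.
print("arsinh:     ", arsinh_glog(values))
```

For large values the two transforms differ by roughly a constant offset, so the practical difference lies in how they treat values near and below zero.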

Method Comparison Table

The following table summarizes the core characteristics of the three scaling and transformation methods for easy comparison.

| Method | Formula | Key Assumptions | Primary Advantage | Common Pitfalls |

|---|---|---|---|---|

| Log Transformation | `x_new = log(x)` | Data is positive-valued and ideally log-normally distributed [29]. | Compresses large values and can reduce right-skewness. | Fails with zeros/negative values; can increase skewness if assumptions are violated [29]. |

| Z-Score Normalization | `z = (x - μ) / σ` [32] [33] | No strong distributional assumption, but sensitive to outliers. | Places all features on a comparable, unitless scale. | Does not change the underlying distribution shape; outliers can distort mean and SD [32]. |

| Variance Stabilizing Normalization (VSN) | `x_new = glog(x, a, b)` (with calibration) [30] | Most features are unaffected by the biological effects under study and provide a stable reference subset. | Simultaneously calibrates samples and stabilizes variance, robust for low intensities [30] [31]. | More complex computationally; parameters are estimated from the data. |

Experimental Protocol: Normalization for Proteomic Data Analysis

This protocol outlines the steps for normalizing label-free proteomics data using VSN, based on methodology from a systematic evaluation of normalization methods [31].

Data Preprocessing

- Input: Raw mass spectrometry files.

- Software: Process raw files using software like Progenesis QI or MaxQuant for feature detection and peptide identification.

- Format: Export a non-normalized protein abundance matrix (samples in columns, proteins/peptides in rows).

Normalization with VSN

- Tool: Use the `vsn` package in R (or equivalent implementation).

- Key Consideration: VSN performs its own transformation, so the input data should be untransformed (do not log-transform beforehand) [31].

- Code Example:

Post-Normalization Quality Control

- Variance Stabilization Check: Use the `meanSdPlot` function to create a plot of standard deviation versus mean abundance. A successful normalization will show a roughly horizontal trend, indicating stable variance across the expression range [30] [31].

- Differential Expression Analysis: Proceed with statistical testing (e.g., t-tests, linear models) on the VSN-normalized data.

Workflow Diagram: Method Selection for Heterogeneous Data

The diagram below illustrates a logical decision pathway for selecting an appropriate scaling or transformation method based on data characteristics and analysis goals.

Research Reagent Solutions

The following table lists key software tools and packages essential for implementing the described normalization methods in a research environment.

| Item | Function | Key Application Context |

|---|---|---|

| VSN R Package [30] | Implements Variance Stabilizing Normalization. Performs calibration and a generalized log (glog) transformation on data. | Normalization of microarray and label-free proteomics data; integration of datasets with systematic bias. |

| Scikit-learn (Python) | Provides the `StandardScaler` class for efficient Z-score normalization of feature matrices. | Preprocessing for machine learning pipelines in Python, ensuring features are on a comparable scale. |

| NumPy (Python) [32] | A fundamental library for numerical computation. Enables manual calculation of Z-scores and other mathematical transformations. | Custom data preprocessing scripts and foundational numerical operations for data analysis. |

| Normalyzer Tool [31] | A tool designed to evaluate and compare the performance of multiple normalization methods on a given dataset. | Method selection for proteomics data; assessing the effectiveness of normalization in reducing non-biological variance. |

Frequently Asked Questions (FAQs)

1. What makes my microbiome or geochemical data "compositional"? Your data is compositional if each sample conveys only relative information. This occurs when your measurements are constrained to a constant total (e.g., proportions summing to 1 or 100%, or raw sequencing reads limited by the instrument's capacity). In such cases, an increase in the relative abundance of one component necessarily leads to an apparent decrease in one or more others [35] [36]. This constant-sum constraint violates the assumptions of standard statistical methods that treat each variable as independent.

2. Why can't I use standard correlation analysis on my compositional data? Using standard correlation (e.g., Pearson correlation) on raw compositional data almost guarantees spurious correlations [35] [36]. This problem was identified over a century ago by Karl Pearson. Because the data is constrained, the change in one component creates an illusory correlation between all the others. Consequently, correlation structures can change dramatically upon subsetting your data or aggregating taxa, leading to unreliable and non-reproducible results in network analysis or ordination [36].

3. My dataset contains many zeros (e.g., unobserved taxa). Can I still use Compositional Data Analysis? Yes, but zeros require special handling. Not all zeros are the same; they can be classified as:

- Rounded Zeros: Values below a detection limit.

- Count Zeros: Absences from a discrete counting process (common in microbiome data).

- Essential Zeros: True absences (e.g., a mineral not present in a rock formation).

Specialized R packages like `zCompositions` provide coherent imputation methods for zeros and non-detects, allowing for subsequent log-ratio analysis without distorting the data's properties [37].

4. What is the most robust log-ratio transformation to use? The choice depends on your question and data structure:

- CLR (Centered Log-Ratio): Excellent for PCA-like ordination (creating biplots) and when you need to analyze all components simultaneously. Its drawback is that it leads to a singular covariance matrix, making it unsuitable for some correlation-based methods.

- ILR (Isometric Log-Ratio): Ideal for maintaining Euclidean geometry for statistical modeling and for creating orthogonal balances, which can be designed to reflect a priori hypotheses (e.g., phylogenetic groupings) [38] [35].

- ALR (Additive Log-Ratio): Simple to compute but is not isometric, meaning it distorts distances, and its results depend on the chosen denominator component.

5. Is it acceptable to normalize my microbiome data using rarefaction or count normalization methods like TMM? While common, rarefaction (subsampling to an even depth) wastes data and reduces precision [36]. Methods like TMM from RNA-seq analysis are less suitable for highly sparse and asymmetrical microbiome datasets [36]. The core issue is that these methods do not fully address the fundamental problem of compositionality. The total read count from a sequencer is arbitrary and contains no information about the absolute abundance of microbes in the original sample; it only informs the precision of the relative abundance estimates [36]. Log-ratio transformations are a more principled approach.
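
The spurious-correlation effect described in FAQ 2 can be reproduced with a small simulation. The sketch below is illustrative only: the sample size, the lognormal parameters, and the helper name `pearson` are assumptions chosen for the demonstration. It draws three independent positive variables, closes each sample to proportions, and compares the Pearson correlation before and after closure.

```python
import math
import random


def pearson(x, y):
    """Plain Pearson correlation coefficient."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(vx * vy)


rng = random.Random(42)  # fixed seed so the run is repeatable

# Three independent "absolute abundances" per sample (hypothetical data).
raw = [[rng.lognormvariate(0, 0.5) for _ in range(3)] for _ in range(2000)]

# Closure: divide each sample by its total so the parts sum to 1.
closed = [[v / sum(row) for v in row] for row in raw]

r_raw = pearson([row[0] for row in raw], [row[1] for row in raw])
r_closed = pearson([row[0] for row in closed], [row[1] for row in closed])
print(f"raw: {r_raw:.3f}, closed: {r_closed:.3f}")
```

The raw variables are close to uncorrelated, while the closed proportions show a clearly negative correlation that comes purely from the constant-sum constraint.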

Troubleshooting Guides

Problem: You detect spurious correlations in your network analysis.

Symptoms:

- High number of strong negative correlations.

- Correlation structure changes drastically when you add or remove a few taxa from the analysis.

- Network appears overly connected with difficult-to-interpret modules.

Solution:

- Acknowledge Compositionality: Cease analysis using raw counts or proportions.

- Choose a Log-Ratio Transformation: Apply the CLR transformation to your data.

- Calculate a Robust Correlation Metric: Instead of standard correlation, use a proportionality metric derived from log-ratio variance. A suitable choice is the variance of log-ratios (VLR), which is not affected by the closure problem [39].

- Re-build Network: Construct your correlation network using the new proportionality matrix.
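
The variance of log-ratios used in the steps above can be sketched in a few lines. This is a minimal illustration, not the implementation of any cited package; the function name `vlr` and the count matrix are assumptions. A VLR of zero means two features are perfectly proportional, and the value is the same whether it is computed on raw counts or on closed proportions.

```python
import math
import statistics


def vlr(x, y):
    """Variance of the log-ratios ln(x_i / y_i) across samples."""
    return statistics.variance([math.log(a / b) for a, b in zip(x, y)])


# Hypothetical counts: rows are samples, columns are three taxa.
counts = [[10, 20, 5], [30, 60, 40], [5, 10, 50], [20, 40, 8]]
props = [[v / sum(row) for v in row] for row in counts]

taxa_counts = list(zip(*counts))  # one tuple per taxon
taxa_props = list(zip(*props))

vlr_proportional = vlr(taxa_counts[0], taxa_counts[1])  # taxon 1 is always 2x taxon 0
vlr_counts = vlr(taxa_counts[0], taxa_counts[2])
vlr_props = vlr(taxa_props[0], taxa_props[2])
print(vlr_proportional, vlr_counts, vlr_props)
```

Because the per-sample total cancels inside each ratio, closure does not change the result, which is why VLR is a safer basis for a network than correlation on proportions.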

Problem: Your data fails a normality test, preventing parametric statistics.

Symptoms:

- Shapiro-Wilk test or Q-Q plots indicate a highly skewed, non-Gaussian distribution [1].

- Data visualization shows a long tail toward higher values.

Solution:

- Test for Normality: Perform a Shapiro-Wilk test on your raw data. A p-value < 0.05 indicates a significant departure from normality [1].

- Apply a Log Transformation: Use a logarithmic (log) transformation or, for compositional data, a log-ratio transformation. Both reduce right skew; only the log-ratio form also addresses compositionality.

- Re-test for Normality: Confirm that the Shapiro-Wilk test no longer rejects the null hypothesis of normality for the transformed data (p-value > 0.05) [1].

- Proceed with Analysis: You can now use parametric statistics (e.g., linear regression, t-tests) on the transformed data.
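
To see why a log transformation helps with a long right tail, the sketch below compares sample skewness before and after the transformation. Skewness is only a rough stand-in here and does not replace the Shapiro-Wilk test in the steps above, which in Python is usually run with `scipy.stats.shapiro`. The function name `skewness` and the data values are illustrative assumptions.

```python
import math
import statistics


def skewness(values):
    """Population skewness (third standardized moment)."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return sum(((v - mean) / sd) ** 3 for v in values) / len(values)


# Hypothetical right-skewed concentrations (each value doubles the previous one).
raw = [1.0, 2.0, 4.0, 8.0, 16.0, 32.0, 64.0]
logged = [math.log(v) for v in raw]

print(f"skewness raw: {skewness(raw):.2f}")
print(f"skewness log: {skewness(logged):.2f}")
```

The raw values are strongly right-skewed, whereas the logged values are evenly spaced and therefore symmetric around their mean.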

Problem: Your dataset contains many zeros, preventing log transformation.

Symptoms:

- Errors, warnings, or infinite values (`-Inf`) when applying `log()`, because log(0) is undefined.

- A significant portion of your features are absent in many samples.

Solution:

- Classify the Zeros: Determine the nature of the zeros (rounded, count, or essential). For microbiome data, most are "count zeros" [37].

- Select an Imputation Method: Use a specialized package to replace zeros with sensible small values.

- Proceed with Log-Ratio Analysis: After imputation, you can apply any log-ratio transformation.
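
The idea behind zero replacement can be illustrated with a simple multiplicative scheme: each zero receives a small value, and the non-zero parts are shrunk so that the composition still sums to 1. This is a sketch under stated assumptions, not the algorithm used by any specific package; the function name `multiplicative_replacement` and the default `delta` are hypothetical choices.

```python
def multiplicative_replacement(composition, delta=1e-3):
    """Replace zeros by `delta` and rescale non-zero parts to keep the sum at 1."""
    total = sum(composition)
    props = [v / total for v in composition]
    n_zeros = sum(1 for p in props if p == 0)
    shrink = 1 - n_zeros * delta  # mass left for the observed parts
    return [delta if p == 0 else p * shrink for p in props]


# Hypothetical counts for four taxa in one sample.
counts = [0, 30, 0, 70]
imputed = multiplicative_replacement(counts)
print(imputed)
```

The ratios between the observed parts are unchanged by the replacement, so log-ratios among them are not distorted. The choice of `delta` should reflect the detection limit or sequencing depth of the real data.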

Comparative Table of Log-Ratio Transformations

Table 1: Key characteristics and use cases for common log-ratio transformations.

| Transformation | Acronym | Formula (for parts A, B, C) | Advantages | Disadvantages | Ideal Use Case |

|---|---|---|---|---|---|

| Additive Log-Ratio [35] | ALR | $\ln(A/C),\ \ln(B/C)$ | Simple to compute and interpret. | Not isometric; results depend on choice of denominator. | Comparing parts relative to a fixed, reference component. |

| Centered Log-Ratio [35] | CLR | $\ln\left( \frac{A}{g(A, B, C)} \right)$, with $g$ the geometric mean of all parts | Symmetric; good for PCA and covariance estimation. | Leads to singular covariance matrix (CLR coordinates sum to zero). | Creating biplots; analyses where all components are considered equally. |

| Isometric Log-Ratio [38] [35] | ILR | $\sqrt{\frac{rs}{r+s}} \ln\left( \frac{g(\text{parts}_1)}{g(\text{parts}_2)} \right)$, for groups of $r$ and $s$ parts | Maintains exact Euclidean geometry; orthogonal coordinates. | More complex to define; requires a sequential binary partition. | Any multivariate statistical analysis (regression, clustering). |
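
The three formulas in Table 1 can be written out for a three-part composition as follows. This is a minimal sketch using only the Python standard library; the function names, the example composition, and the particular sequential binary partition are illustrative assumptions.

```python
import math


def geometric_mean(parts):
    return math.exp(sum(math.log(p) for p in parts) / len(parts))


def alr(composition):
    """Additive log-ratio, using the last part as the denominator."""
    ref = composition[-1]
    return [math.log(p / ref) for p in composition[:-1]]


def clr(composition):
    """Centered log-ratio: each part relative to the geometric mean."""
    g = geometric_mean(composition)
    return [math.log(p / g) for p in composition]


def ilr_balance(numerator_parts, denominator_parts):
    """One ILR balance between two groups of parts."""
    r, s = len(numerator_parts), len(denominator_parts)
    ratio = geometric_mean(numerator_parts) / geometric_mean(denominator_parts)
    return math.sqrt(r * s / (r + s)) * math.log(ratio)


A, B, C = 0.2, 0.3, 0.5
alr_coords = alr([A, B, C])
clr_coords = clr([A, B, C])
# One possible sequential binary partition: {A} vs {B, C}, then {B} vs {C}.
ilr_coords = [ilr_balance([A], [B, C]), ilr_balance([B], [C])]
print(alr_coords, clr_coords, ilr_coords)
```

The CLR coordinates sum to zero, which is the source of the singular covariance matrix noted in the table. The ILR coordinates have the same Euclidean length as the CLR vector, which is what "isometric" means in practice.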

Experimental Protocol: Conducting a Full Compositional Data Analysis

This protocol provides a step-by-step guide for analyzing a typical microbiome dataset from raw counts to statistical inference.

1. Data Preprocessing and Filtering

- Objective: Remove low-quality samples and non-informative features.

- Steps:

2. Handling Zeros via Imputation

- Objective: Replace zeros to allow for log-ratio transformations.

- Steps (using R and `zCompositions`):

- Install and load the `zCompositions` package.

- Apply the multiplicative replacement method: `imputed_data <- cmultRepl(your_count_data, method="CZM", label=0)`.

- This function replaces zeros with positive probabilities drawn from a Bayesian model, preserving the compositional structure [37].

3. Log-Ratio Transformation and Ordination

- Objective: Visualize the data structure in a compositionally valid way.

- Steps (using R and `compositions` or `robCompositions`):

- Apply the CLR transformation: `clr_data <- clr(imputed_data)`.

- Perform a principal component analysis (PCA) on the `clr_data`.

- Create a biplot to visualize samples and variables (taxa) in the same space, identifying patterns and potential outliers [37].

4. Statistical Testing and Modeling

- Objective: Identify features differentially abundant between groups.

- Steps:

- Instead of methods like t-tests on proportions, use a log-ratio-based framework.

- Option A (Simple): Perform a MANOVA on the ILR coordinates of your data.

- Option B (Advanced): Use specialized tools like `propr` [39] or `coda4microbiome` [37], which are designed for high-dimensional compositional data and can identify associated features without spurious results.

The Scientist's Toolkit

Table 2: Essential software tools and packages for Compositional Data Analysis.

| Tool / Package | Language | Primary Function | Key Features / Notes |

|---|---|---|---|

| compositions [37] | R | General-purpose CoDA | Core package for acomp class, descriptive stats, visualization, and PCA. |

| robCompositions [37] | R | Robust CoDA | Focus on robust methods, includes PCA, factor analysis, and regression. |

| zCompositions [37] | R | Handling Irregular Data | Suite of methods for imputing zeros, nondetects, and missing data. |

| easyCODA [37] | R | Multivariate Analysis | Emphasizes pairwise log-ratios and variable selection. |

| compositional [39] | Python | General-purpose CoDA | Pandas/NumPy compatible, functions for CLR, VLR, and proportionality. |

| ggtern [37] | R | Visualization | Creates ternary diagrams using ggplot2 syntax. |

| coda4microbiome [37] | R | Microbiome Applications | Penalized regression for variable selection in microbiome studies. |

Key Reagent Solutions for Computational Analysis

Table 3: Essential "reagents" for a compositional data workflow.

| "Reagent" (Method/Concept) | Function in the Workflow |

|---|---|

| Shapiro-Wilk Test [1] | Diagnostic tool to check if data is normally distributed before/after transformation. |

| Log / Log-Ratio Transformation [1] [35] | Core operation to normalize data distributions and create a valid Euclidean geometry for relative data. |

| Aitchison Distance [35] | The correct metric for calculating distances between compositions, based on log-ratios. |

| Isometric Log-Ratio (ILR) Coordinates [38] [37] | Transforms compositions into Euclidean coordinates for use in any standard multivariate statistical method. |

| Multiplicative Replacement [37] | A specific "reagent" for the problem of zeros, replacing them with sensible estimates to permit log-transformation. |
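
The Aitchison distance listed in Table 3 is the Euclidean distance between the CLR vectors of two compositions. The sketch below illustrates that definition with the Python standard library; the function names and example compositions are assumptions for the illustration.

```python
import math


def clr(composition):
    """Centered log-ratio coordinates of a strictly positive composition."""
    logs = [math.log(p) for p in composition]
    mean_log = sum(logs) / len(logs)
    return [v - mean_log for v in logs]


def aitchison_distance(x, y):
    """Euclidean distance between the CLR coordinates of x and y."""
    return math.dist(clr(x), clr(y))


x = [0.2, 0.3, 0.5]
y = [0.1, 0.6, 0.3]
x_rescaled = [20, 30, 50]  # same composition as x, expressed in other units

d_xy = aitchison_distance(x, y)
d_same = aitchison_distance(x, x_rescaled)
print(d_xy, d_same)
```

Rescaling a sample does not change its distance to any other sample, so the metric depends only on relative information. Inputs must contain no zeros, which is why imputation comes first in the workflow.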

Troubleshooting Guides and FAQs

Common PQN Issues and Solutions

Q1: After applying PQN, my biological treatment variance seems to have decreased or disappeared. What could be the cause? A: This can occur if the assumption that the majority of features are not biologically altered is violated. Over-correction is also a known risk of machine learning-based normalization, where the model can overfit the data: SERRF, a machine learning-based method, has been noted to inadvertently mask treatment-related variance in some datasets [40].

- Solution: Validate your results by comparing the variance explained by treatment factors before and after normalization. Consider using a simpler method like LOESS or Median normalization if you suspect over-correction [40].

Q2: When should a reference sample be used in PQN, and how do I choose one? A: A reference spectrum is used to minimize the influence of experimental errors. It is typically the median or mean spectrum calculated from all samples or from a set of pooled Quality Control (QC) samples [41] [42] [43].

- Solution: For time-course or multi-batch experiments, using the median of pooled QC samples as a reference is more robust. For simpler study designs, the median of all study samples is sufficient [40] [44].

Q3: Why is a total area normalization sometimes recommended before performing PQN? A: Total area normalization (or total sum scaling) is often applied as a preliminary step to standardize the overall intensity of all samples. This can improve the performance of subsequent PQN by initially accounting for global differences in concentration or dilution [41] [43] [44].
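
The PQN procedure discussed in Q2 and Q3 can be sketched as follows: an optional total area normalization, a reference spectrum taken as the feature-wise median, and one dilution factor per sample taken as the median quotient against that reference. This is a simplified illustration, not a validated implementation; the function name `pqn`, the `total_area_first` parameter, and the spectra are assumptions.

```python
import statistics


def pqn(samples, total_area_first=True):
    """Probabilistic quotient normalization of a samples x features table."""
    if total_area_first:
        # Preliminary total area (total sum) normalization.
        samples = [[v / sum(row) for v in row] for row in samples]
    # Reference spectrum: feature-wise median across all samples.
    reference = [statistics.median(col) for col in zip(*samples)]
    normalized = []
    for row in samples:
        # Quotients against the reference; skip features with a zero reference.
        quotients = [v / ref for v, ref in zip(row, reference) if ref > 0]
        factor = statistics.median(quotients)  # most probable dilution factor
        normalized.append([v / factor for v in row])
    return normalized


# Hypothetical spectra: the second sample is the first one diluted by half.
spectra = [[10, 20, 30, 40], [5, 10, 15, 20], [12, 18, 33, 37]]
normalized = pqn(spectra)
print(normalized)
```

After normalization, the diluted sample matches the undiluted one, whether or not the total area step is applied. For multi-batch designs, the reference could instead be computed from pooled QC samples, as described in Q2.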

Common MRN Issues and Solutions

Q1: My MRN normalization factors are highly variable across replicates. Is this normal? A: The MRN method calculates a single scaling factor per sample based on the median of ratios across all genes/features. Some variability is expected, but high variability can indicate issues with the data.

- Solution: Investigate potential outliers in your samples. Check the initial data quality and the assumption that most features are not differentially expressed. MRN is less sensitive than other methods to parameters such as the number of upregulated genes [45].

Q2: For a simple two-condition experiment, does the choice between TMM, RLE, and MRN matter? A: For a simple two-condition, non-replicated design, these methods often yield similar results with minimal impact on the final analysis [46] [45].

- Solution: In more complex experimental designs (e.g., time-series, multiple factors), the MRN method has been shown to be more consistent and robust, producing a lower number of false discoveries in some simulations [45].

Performance Comparison of Normalization Methods

The table below summarizes the performance of PQN, MRN, and other common normalization methods across different data types, as evaluated in various studies.

Table 1: Normalization Method Performance Across Data Types

| Method | Recommended Data Types | Key Strengths | Key Limitations / Considerations |

|---|---|---|---|

| Probabilistic Quotient Normalization (PQN) | Metabolomics (RP, HILIC), Lipidomics [40] | Robust to dilution effects in complex biological mixtures; identified as optimal for metabolomics & lipidomics in temporal studies [40] [41]. | Relies on the assumption that the median metabolite concentration fold-change is approximately 1 [42]. |