Decoding Complexity: A Comprehensive Guide to Non-Target Analysis Data Interpretation with High-Resolution Mass Spectrometry

Abstract

This article provides a comprehensive framework for interpreting complex datasets generated by high-resolution mass spectrometry (HRMS) in non-targeted analysis (NTA). Covering foundational principles, methodological workflows, optimization strategies, and validation protocols, we address critical challenges including uncertainty management, data processing techniques, machine learning integration, and quantitative interpretation. Designed for researchers and analytical professionals, this guide synthesizes current best practices to enhance confidence in chemical identification, support robust study design, and facilitate the transition of NTA from research tool to decision-support application in biomedical and environmental health contexts.

Fundamental Principles and Core Concepts of Non-Targeted Analysis

In the fields of environmental monitoring, food safety, and pharmaceutical development, the ability to comprehensively characterize complex chemical mixtures is paramount. Traditional targeted analytical methods have long been the gold standard for quantifying specific, predefined analytes. However, the expanding universe of chemical substances, including numerous emerging environmental contaminants (EECs) and non-intentionally added substances (NIAS), has revealed the limitations of targeted approaches [1] [2]. These challenges have propelled the adoption of non-targeted analysis (NTA), a powerful paradigm that enables the detection and identification of unknown or unexpected chemicals without prior knowledge of their presence [3] [4].

NTA, often used as a blanket term that encompasses both suspect screening and true non-targeted analysis, represents a fundamental shift in analytical strategy [3]. This in-depth technical guide delineates the core principles, methodological workflows, and key differentiators of NTA from traditional targeted approaches, providing researchers and drug development professionals with a framework for selecting and implementing appropriate analytical strategies for their specific applications.

Core Definitions and Conceptual Differentiation

Targeted Analysis: The Conventional Paradigm

Targeted analysis is a quantitative analytical method designed to detect and measure specific, predefined analytes with a high degree of confidence [5]. This approach relies on the availability of authentic chemical standards for each target compound to optimize detection parameters, establish retention times, and generate calibration curves for accurate quantification [3]. The performance of targeted methods is evaluated using well-established metrics including selectivity (ability to differentiate the target analyte from interferents), sensitivity (limit of detection and quantification), accuracy (closeness to the true value), and precision (reproducibility across measurements) [5]. Targeted methods are ideal for regulatory compliance monitoring, routine quantification of known contaminants, and any application where the chemical targets are well-defined and reference standards are available.

Non-Targeted Analysis: The Exploratory Paradigm

Non-targeted analysis (NTA), also referred to as non-target screening or untargeted screening, is a theoretical concept broadly defined as "the characterization of the chemical composition of any given sample without the use of a priori knowledge regarding the sample's chemical content" [3]. Unlike targeted methods, NTA does not focus on specific predefined analytes but aims to comprehensively detect a wide range of chemicals present in a sample [4]. The resulting detections may be used to classify samples based on their entire chemical profile, and subsequent analyses may focus on identifying individual chemicals of interest [3].

A related approach, suspect screening analysis (SSA), occupies a middle ground between targeted and true non-targeted analysis. SSA involves the identification of chemicals by comparison to a predefined list or library containing known chemicals of interest, essentially narrowing the scope of the investigation to compounds of suspected relevance [3]. In practical usage, the term "NTA" is often applied as a blanket term that encompasses both suspect screening and true non-targeted analysis, particularly when workflows incorporate elements of both approaches [3].

Table 1: Fundamental Differences Between Targeted, Suspect Screening, and Non-Targeted Analysis

| Aspect | Targeted Analysis | Suspect Screening Analysis (SSA) | Non-Targeted Analysis (NTA) |
|---|---|---|---|
| Objective | Quantify specific, predefined analytes | Identify suspected chemicals from a predefined list | Comprehensively characterize sample chemical composition |
| Prior Knowledge Requirement | Complete (reference standards required) | Partial (suspect list required) | None |
| Scope of Analysis | Narrow (limited to target analytes) | Moderate (limited to suspect list) | Broad (theoretically unlimited) |
| Quantitative Capability | Fully quantitative | Semi-quantitative or qualitative | Primarily qualitative |
| Standard Dependence | Dependent on authentic standards | Not dependent, but improves confidence | Not dependent |
| Primary Application | Regulatory compliance, routine monitoring | Chemical forensics, hypothesis testing | Discovery, exploratory research, hazard identification |

The Analytical Spectrum: A Conceptual Workflow

The relationship between targeted, suspect screening, and non-targeted approaches can be viewed as a spectrum of analytical strategies ordered by how much prior knowledge they require and how much chemical space they cover. Targeted analysis sits at the narrow, fully specified end, suspect screening occupies the middle ground, and non-targeted analysis sits at the broad end, where no prior knowledge of the sample's content is assumed.

Methodological Workflows and Technical Implementation

Instrumentation and Analytical Platforms

The implementation of NTA relies heavily on high-resolution mass spectrometry (HRMS) platforms, which provide the mass accuracy and resolving power necessary to distinguish between thousands of chemical features in complex samples [1] [5]. Common HRMS instruments used in NTA include quadrupole time-of-flight (QTOF) and Orbitrap mass spectrometers, often coupled with separation techniques such as liquid chromatography (LC) or gas chromatography (GC) [6] [2]. The emergence of multidimensional separation techniques, including two-dimensional chromatography (LC×LC or GC×GC) and high-resolution ion mobility spectrometry (HRIMS), has further enhanced the peak capacity and separation power available for NTA, enabling more comprehensive analysis of complex mixtures [6].

The data acquisition modes commonly employed in NTA include data-dependent acquisition (DDA), which selects the most abundant ions for fragmentation, and data-independent acquisition (DIA), which fragments all ions within predefined mass windows [2]. Both approaches generate MS/MS spectral data that are crucial for compound identification, with each offering distinct advantages in coverage and reproducibility.
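The DDA selection rule described above can be sketched in a few lines. This is a minimal illustration rather than any vendor's acquisition logic: the function name `select_precursors`, the `top_n` and `exclusion_tol` parameters, and the example peak list are all hypothetical.

```python
from typing import List, Sequence, Tuple

Peak = Tuple[float, float]  # (m/z, intensity)

def select_precursors(survey_scan: List[Peak], top_n: int = 3,
                      excluded_mz: Sequence[float] = (),
                      exclusion_tol: float = 0.01) -> List[float]:
    """Return the m/z values of the top_n most intense survey-scan peaks,
    skipping any peak within exclusion_tol of an already-fragmented m/z."""
    def is_excluded(mz: float) -> bool:
        return any(abs(mz - ex) <= exclusion_tol for ex in excluded_mz)

    candidates = [p for p in survey_scan if not is_excluded(p[0])]
    candidates.sort(key=lambda p: p[1], reverse=True)  # most intense first
    return [mz for mz, _ in candidates[:top_n]]

# Hypothetical survey scan: the low-intensity peak at m/z 520.33 is never selected,
# which is how DDA can miss low-abundance compounds.
scan = [(150.05, 2e4), (195.09, 9e5), (300.10, 4e5), (413.27, 7e5), (520.33, 1e3)]
selected = select_precursors(scan, top_n=2, excluded_mz=[195.09])
```

In DIA, by contrast, no such ranking takes place: every ion inside each predefined isolation window is fragmented, which is why the resulting spectra need deconvolution.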

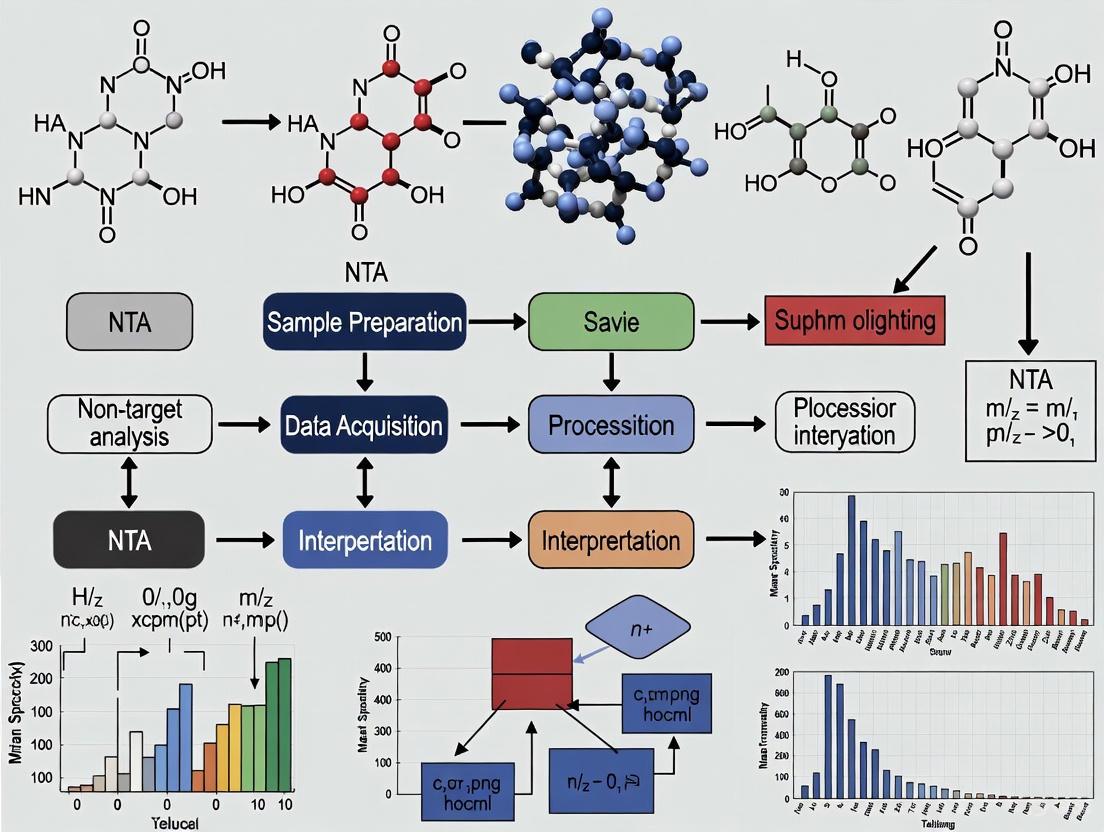

A comprehensive NTA study involves multiple interconnected steps, from initial study design to final data interpretation. The key stages and decision points of a generalized workflow are discussed in the subsections that follow.

Critical Methodological Differentiators

Sample Preparation and Study Design

Targeted methods employ optimized sample preparation techniques specifically tailored to the physicochemical properties of the target analytes, aiming to maximize extraction efficiency and minimize matrix effects for those specific compounds [3]. In contrast, NTA utilizes generic sample preparation protocols designed to extract a broad range of chemicals with diverse properties, inevitably introducing biases toward certain compound classes while potentially missing others [3] [2]. The study design in NTA must intentionally incorporate quality assurance and quality control (QA/QC) approaches, including procedural blanks, quality control materials, and internal standards, to enable performance assessment after data acquisition and analysis are complete [3].

Data Processing and Compound Identification

Data processing in targeted analysis is relatively straightforward, focusing on quantifying specific precursor-product ion transitions for each target analyte [5]. NTA, however, generates complex, high-dimensional datasets that require sophisticated data processing pipelines for feature detection, peak alignment, and molecular formula assignment [1]. The identification process in NTA follows confidence levels based on the available evidence, ranging from level 1 (confirmed structure with authentic standard) to level 5 (exact mass of interest) [7] [3].

Compound identification in NTA relies on multiple lines of evidence, including:

- Accurate mass measurements for elemental composition assignment

- MS/MS fragmentation patterns for structural elucidation

- Retention time and chromatographic behavior

- Collision cross-section (CCS) values when ion mobility spectrometry is employed [6]

- Isotopic patterns for element identification

The integration of computational tools and spectral libraries is essential for NTA data interpretation. Resources such as the NIST Mass Spectral Library, NORMAN Suspect List Exchange, and various open-source spectral databases support compound identification by providing reference spectra for comparison [7] [8].

Quantification Approaches

While targeted analysis provides absolute quantification using authentic standards and calibration curves, NTA typically offers semi-quantitative estimates based on assumed response factors or class-based calibration [4]. Recent advancements in quantitative non-targeted analysis (qNTA) aim to address this limitation by developing approaches for more accurate concentration estimation without reference standards for every compound [1] [4].

Performance Assessment and Quality Assurance

Performance Metrics for Targeted vs. Non-Targeted Analysis

The criteria for assessing method performance differ significantly between targeted and non-targeted approaches. The table below summarizes key performance metrics and their application in each paradigm:

Table 2: Performance Assessment in Targeted vs. Non-Targeted Analysis

| Performance Metric | Targeted Analysis Application | Non-Targeted Analysis Application |
|---|---|---|
| Selectivity | Ability to distinguish target analyte from interferents using unique ion transitions | Chemical space coverage, isomeric resolution, specificity of identification |
| Sensitivity | Limit of detection (LOD) and quantification (LOQ) for specific analytes | Feature detection rate, minimum identifiable concentration across chemical space |
| Accuracy | Agreement with true value using certified reference materials | Structural identification correctness, database matching accuracy |
| Precision | Reproducibility of quantitative results across replicates | Consistency of feature detection and identification across replicates |
| Uncertainty | Well-defined confidence intervals for concentrations | Multiple sources: feature detection, compound identification, quantification estimation |

Quality Assurance in Non-Targeted Analysis

Quality assurance in NTA presents unique challenges due to the absence of reference standards for most detected compounds [5]. Best practices include:

- Implementing comprehensive QA/QC protocols throughout the analytical workflow [3]

- Using internal standards covering diverse chemical classes to monitor system performance

- Incorporating blank samples to identify and filter contamination

- Assessing replicate consistency to ensure detection reliability

- Applying confidence levels for compound identification to communicate uncertainty [7] [5]
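As an illustration of the blank-based filtering listed above, the sketch below keeps only features whose intensity in the sample clearly exceeds their intensity in the procedural blank. The function name `filter_by_blank`, the feature identifiers, and the threshold `min_ratio=3.0` are assumptions chosen for the example, not values taken from the cited guidance.

```python
def filter_by_blank(sample, blank, min_ratio=3.0):
    """Keep features whose sample intensity is at least min_ratio times
    the intensity of the same feature in the procedural blank.

    sample, blank: dicts mapping a feature id to its intensity.
    Features not detected in the blank are always kept.
    """
    kept = {}
    for feature_id, intensity in sample.items():
        blank_intensity = blank.get(feature_id, 0.0)
        if blank_intensity == 0.0 or intensity / blank_intensity >= min_ratio:
            kept[feature_id] = intensity
    return kept

# Hypothetical feature table: F2 is dominated by background and is removed.
sample = {"F1": 5.0e5, "F2": 1.2e4, "F3": 8.0e4}
blank = {"F2": 1.0e4, "F3": 1.0e4}
filtered = filter_by_blank(sample, blank)
```

The appropriate ratio depends on the study; whatever value is used should be reported alongside the results.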

Initiatives such as the Best Practices for Non-Targeted Analysis (BP4NTA) have developed reporting frameworks and quality control metrics to improve the reliability and comparability of NTA results across different laboratories and studies [7] [3].

Advanced Applications and Future Directions

Prioritization Strategies for NTA Data Interpretation

The large number of features detected in NTA studies (often thousands per sample) creates a bottleneck in data interpretation, necessitating effective prioritization strategies to focus resources on the most relevant compounds [9]. Recent approaches include:

- Effect-directed prioritization: Integrating biological response data with chemical analysis to focus on bioactive compounds [9]

- Prediction-based prioritization: Using in silico tools to predict concentrations and toxicities for risk-based ranking [9]

- Chemistry-driven prioritization: Applying chemical intelligence (mass defect filtering, homologous series detection) to identify compounds of interest [9]

- Process-driven prioritization: Leveraging spatial, temporal, or technical processes to highlight relevant features [9]
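The mass defect filtering and homologous series detection mentioned under chemistry-driven prioritization can be illustrated with a Kendrick mass defect calculation. The sketch below rescales m/z values to a CF2 base unit, so that members of a CF2 homologous series share nearly the same defect. The function names, the grouping tolerance `kmd_tol`, and the example m/z list are assumptions for illustration; the first three values are meant to represent three consecutive perfluorocarboxylate [M-H]- ions.

```python
CF2_EXACT = 12.0 + 2 * 18.998403  # monoisotopic mass of a CF2 unit (Da)
CF2_NOMINAL = 50.0                # nominal mass of a CF2 unit

def kendrick_mass_defect(mz, base_exact=CF2_EXACT, base_nominal=CF2_NOMINAL):
    """Kendrick mass defect of an m/z value on the chosen base-unit scale."""
    kendrick_mass = mz * base_nominal / base_exact
    return round(kendrick_mass) - kendrick_mass

def group_homologues(mzs, kmd_tol=0.002):
    """Group m/z values whose Kendrick mass defects agree within kmd_tol."""
    groups = []
    for mz in sorted(mzs):
        kmd = kendrick_mass_defect(mz)
        for group in groups:
            if abs(group["kmd"] - kmd) <= kmd_tol:
                group["members"].append(mz)
                break
        else:
            # No existing group matched: start a new candidate series.
            groups.append({"kmd": kmd, "members": [mz]})
    return groups

# Three ions spaced by one CF2 unit plus one unrelated feature.
features = [362.9696, 412.9664, 462.9632, 300.1000]
series = group_homologues(features)
```

Features that fall into a group with several members are candidates for a homologous series and can be prioritized for identification. A real workflow would also check retention time trends within each group.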

The US Environmental Protection Agency's INTERPRET NTA tool exemplifies efforts to streamline NTA data review by integrating chemical metadata, predicted spectra, and hazard information to support defensible prioritization of chemical candidates [8].

Machine Learning and Artificial Intelligence in NTA

Recent advancements in machine learning (ML) and artificial intelligence (AI) are addressing key challenges in NTA, including:

- Structure identification: ML models are being developed to improve the accuracy of compound identification from MS/MS spectra [1]

- Toxicity prediction: Approaches like MSFragTox leverage MS/MS fragmentation data to directly predict toxicity endpoints, bridging analytical data and hazard assessment [10]

- Workflow optimization: Computational tools are enhancing various stages of the NTA workflow, from feature detection to compound annotation [1]

These developments are gradually transforming NTA from a purely exploratory tool toward a more robust approach capable of supporting chemical risk assessment and regulatory decision-making [1] [8].

Table 3: Key Research Reagent Solutions for Non-Targeted Analysis

| Resource Category | Examples | Function and Application |
|---|---|---|
| Spectral Libraries | NIST Mass Spectral Library, MassBank, mzCloud | Reference spectra for compound identification via spectral matching |
| Suspect Lists | NORMAN Suspect List Exchange, EPA's CompTox Chemicals Dashboard | Predefined lists of potential contaminants for suspect screening |
| Data Processing Tools | MS-DIAL, XCMS, OpenMS | Feature detection, peak alignment, and data preprocessing |
| Quantitative Prediction | MS2Quant | Concentration prediction from MS/MS spectra without standards |
| Toxicity Prediction | MS2Tox, MSFragTox | Toxicity estimation from fragmentation patterns |
| Identification Tools | CSI:FingerID, SIRIUS, CFM-ID | In silico fragmentation and compound structure elucidation |
| Data Integration Platforms | INTERPRET NTA (EPA) | Tools for reviewing, interpreting, and reporting NTA data quality |

Non-targeted analysis represents a paradigm shift in analytical chemistry, moving from hypothesis-driven targeted approaches to discovery-oriented comprehensive characterization. While targeted methods remain essential for precise quantification of known analytes, NTA provides unparalleled capability for detecting unknown and unexpected compounds across diverse sample matrices [4] [2]. The key differentiators between these approaches extend beyond technical implementation to encompass fundamental differences in philosophy, application, and performance assessment.

The ongoing development of standardized practices, advanced computational tools, and harmonized reporting frameworks is addressing current limitations in NTA, particularly regarding compound identification confidence and quantitative capability [5]. As these advancements mature, the integration of targeted and non-targeted approaches within unified analytical workflows offers the most powerful strategy for comprehensive chemical characterization, combining the quantitative rigor of targeted methods with the expansive scope of non-targeted discovery [3] [9].

For researchers and drug development professionals, understanding these complementary analytical paradigms is crucial for selecting appropriate methodologies to address specific research questions, whether the goal is precise quantification of defined targets or exploratory investigation of complex chemical mixtures. As NTA continues to evolve, its integration with emerging technologies like machine learning and high-resolution ion mobility spectrometry promises to further enhance its capabilities, ultimately strengthening environmental monitoring, pharmaceutical development, and public health protection.

Essential HRMS Concepts for Effective NTA Data Interpretation

Non-targeted analysis (NTA) using high-resolution mass spectrometry (HRMS) represents a paradigm shift in analytical chemistry, moving from the detection of predefined analytes to the comprehensive investigation of all detectable chemical species in a sample [11]. This approach is particularly crucial for addressing emerging environmental contaminants (EECs) such as pharmaceuticals, pesticides, and industrial chemicals that pose significant challenges for detection and identification due to their structural diversity and lack of analytical standards [1]. Unlike traditional targeted methods that screen for a specific list of known compounds, NTA focuses on assigning structures or formulae to unknown signals in HRMS data, making it an indispensable tool for discovering novel contaminants, characterizing complex mixtures, and responding to unknown chemical releases [12] [13]. The versatility of HRMS-based NTA allows it to be applied to virtually any sample medium, including air, water, sediment, soil, food, consumer products, and biological specimens, providing researchers with a powerful capability for chemical discovery and exposure characterization [14].

Core HRMS Principles for NTA

Mass Resolution and Accuracy

The exceptional resolution and mass accuracy of HRMS instruments form the foundational principle enabling effective NTA. High mass resolution allows the instrument to distinguish between ions with subtle mass differences, which is critical for separating compounds in complex mixtures and reducing false positives from isobaric interferences [11]. Modern HRMS systems, including time-of-flight (TOF), Orbitrap, and Fourier transform ion cyclotron resonance (FT-ICR) instruments, achieve resolving powers ranging from tens of thousands to several million, enabling precise separation of ions with minute mass differences [11]. Mass accuracy, typically expressed in parts per million (ppm), describes how closely the measured mass-to-charge ratio (m/z) agrees with the theoretical value. For NTA applications, mass accuracy within 3-5 ppm is generally required for confident molecular formula assignment, with higher accuracy significantly reducing the number of candidate formulas [13].
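Both quantities in this paragraph reduce to simple arithmetic, sketched below. The code assumes the common definition of resolving power, R = m/Δm, where Δm is the peak width at the m/z in question; the function names and example values are illustrative.

```python
def ppm_error(measured_mz, theoretical_mz):
    """Mass error in parts per million; positive when the measurement is high."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6

def min_resolvable_delta(mz, resolving_power):
    """Smallest m/z difference separable at a given resolving power (R = m / delta_m)."""
    return mz / resolving_power

# A 1.5 mDa deviation at m/z 300 corresponds to about 5 ppm.
error = ppm_error(300.0015, 300.0000)

# At R = 100,000, two ions near m/z 300 must differ by roughly 3 mDa to be separated.
delta = min_resolvable_delta(300.0, 100_000)
```

Because the ppm scale is relative, the same ppm tolerance corresponds to a wider absolute mass window at higher m/z.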

The combination of high resolution and accuracy allows for the determination of elemental compositions with high confidence, a capability that is fundamental for identifying unknown compounds when reference standards are unavailable [15]. This is particularly valuable for emerging contaminants like per- and polyfluoroalkyl substances (PFAS), where nontarget HRMS methods have led to the discovery of more than 750 PFAS belonging to more than 130 diverse classes in environmental samples, biofluids, and commercial products [15].

Chromatographic Separation Considerations

Effective NTA requires the integration of high-performance chromatographic separation with HRMS detection to manage sample complexity and reduce ion suppression. Liquid chromatography (LC), particularly reversed-phase liquid chromatography (RPLC), coupled with HRMS has emerged as a prominent methodology for analyzing complex mixtures [16]. The chromatographic step separates compounds based on their chemical properties before they enter the mass spectrometer, reducing matrix effects and resolving isomers that share the same m/z and would therefore be indistinguishable by MS alone.

The retention time (RT) of a compound provides valuable supplementary information for identification. While not a direct HRMS parameter, RT can be predicted from chemical structure using quantitative structure-retention relationship (QSRR) models and used as an additional filter to increase identification confidence [16]. Recent advancements have focused on developing calibrant-free predicted retention time indices (RTIs) through machine learning models to enhance identification probability without the need for extensive calibration standards [16]. For high-quality data, laboratories should monitor critical chromatographic parameters including resolution, peak shape, and retention time reproducibility across samples. One reported quality control benchmark is that more than 94% of compounds show a relative standard deviation below 20% for peak height [13].
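A peak-height reproducibility check of this kind can be computed directly from repeated quality control injections. The sketch below is a minimal example: the function names, the 20% default threshold argument, and the peak heights are hypothetical and only illustrate the calculation.

```python
from statistics import mean, stdev

def rsd_percent(values):
    """Relative standard deviation (sample standard deviation / mean) in percent."""
    return stdev(values) / mean(values) * 100.0

def fraction_passing(qc_heights, max_rsd=20.0):
    """Fraction of QC compounds whose peak-height RSD is below max_rsd."""
    passing = [name for name, heights in qc_heights.items()
               if rsd_percent(heights) < max_rsd]
    return len(passing) / len(qc_heights)

# Hypothetical peak heights for two QC compounds across three injections.
qc_heights = {
    "compound_A": [1.00e5, 1.05e5, 0.98e5],  # stable response
    "compound_B": [1.00e5, 3.00e5, 0.50e5],  # erratic response
}
passing_fraction = fraction_passing(qc_heights)
```

The same function can be applied to retention times to track chromatographic drift across a sequence.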

Tandem Mass Spectrometry (MS/MS) for Structural Elucidation

Tandem mass spectrometry (MS/MS or MSn) provides structural information critical for confident compound identification in NTA [1]. In MS/MS mode, precursor ions are isolated and fragmented through collisions with gas molecules, producing fragment ions that reveal structural characteristics of the original molecule [13]. The resulting fragmentation patterns serve as molecular fingerprints that can be matched against experimental or in silico reference spectra.

The acquisition of MS/MS data in NTA can follow either data-dependent acquisition (DDA) or data-independent acquisition (DIA) approaches. DDA selects the most abundant ions from the survey scan for fragmentation, providing clean spectra but potentially missing lower-abundance compounds. DIA fragments all ions within predefined mass windows, ensuring comprehensive coverage but producing more complex spectra that require advanced computational deconvolution [14]. For unknown identification, MS/MS spectra are searched against spectral libraries using similarity matching algorithms, though library coverage remains a challenge, with molecular networking and in silico fragmentation prediction serving as complementary strategies [13].
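To make the idea of similarity matching concrete, the sketch below computes a plain cosine score between two centroided spectra. It is a simplified illustration and not the scoring function of any particular library search tool: the function name `cosine_score`, the tolerance `mz_tol`, and the example spectra are assumptions.

```python
import math

def cosine_score(query, reference, mz_tol=0.01):
    """Cosine similarity between two centroided spectra.

    query, reference: lists of (m/z, intensity) tuples.
    Each query peak is paired with the closest unused reference peak
    lying within mz_tol; unpaired peaks contribute nothing to the dot product.
    """
    used = set()
    dot = 0.0
    for q_mz, q_int in query:
        best, best_diff = None, mz_tol
        for i, (r_mz, _) in enumerate(reference):
            diff = abs(q_mz - r_mz)
            if i not in used and diff <= best_diff:
                best, best_diff = i, diff
        if best is not None:
            used.add(best)
            dot += q_int * reference[best][1]
    norm_q = math.sqrt(sum(inten * inten for _, inten in query))
    norm_r = math.sqrt(sum(inten * inten for _, inten in reference))
    return dot / (norm_q * norm_r) if norm_q and norm_r else 0.0

# Hypothetical spectra: the query shares two of three fragments with the reference.
query = [(91.054, 100.0), (119.049, 40.0), (147.044, 10.0)]
reference = [(91.055, 100.0), (119.050, 35.0), (165.070, 20.0)]
score = cosine_score(query, reference)
```

Scores close to 1 indicate near-identical fragmentation patterns. Production tools usually add intensity weighting and precursor filtering, which this sketch omits.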

Table 1: Key HRMS Instrument Types and Their Characteristics in NTA

| Instrument Type | Typical Resolution | Mass Accuracy (ppm) | Strengths in NTA | Limitations |
|---|---|---|---|---|
| Time of Flight (TOF) | 40,000-100,000 | 1-5 | Fast acquisition speed, wide dynamic range | Requires frequent calibration for high accuracy |
| Orbitrap | 100,000-500,000+ | 1-3 | High resolution and accuracy, stable mass calibration | Slower acquisition at highest resolutions |
| FT-ICR | 1,000,000+ | <1 | Ultra-high resolution, exceptional mass accuracy | High cost, complex operation, limited accessibility |

NTA Workflow and Data Interpretation

Comprehensive NTA Workflow

The end-to-end NTA workflow encompasses multiple stages from sample preparation to final reporting, with each stage requiring careful execution to ensure data quality and interpretability. The stages are connected and run in sequence, as described below.

The initial stage involves sample preparation and quality control, where implementing robust quality assurance/quality control (QA/QC) procedures is essential for generating trustworthy data [13]. This includes using non-targeted standard quality control (NTS/QC) mixtures containing compounds covering a wide range of physicochemical properties to monitor critical data quality parameters such as mass accuracy (typically within 3 ppm), isotopic ratio accuracy, and peak height reproducibility [13]. HRMS data acquisition follows, employing either data-dependent or data-independent approaches to comprehensively capture the chemical composition of samples.

Feature detection and peak picking algorithms then process the raw HRMS data to detect chromatographic peaks and extract relevant information including m/z values, retention times, and intensities [14]. The subsequent compound annotation and identification phase represents the core interpretive challenge, where multiple lines of evidence are combined to assign chemical structures to detected features [1]. Finally, prioritization and confirmation steps help focus efforts on the most relevant compounds, followed by comprehensive data interpretation and reporting to translate findings into actionable insights [17].

Data Processing and Feature Identification

Data processing in NTA converts raw instrument data into chemically meaningful information through a multi-step procedure. Feature detection algorithms identify chromatographic peaks and assemble related ions (isotopes, adducts, and fragments) into features representing unique chemical entities [14]. This step requires careful parameter optimization to balance sensitivity (detecting true features) and specificity (avoiding false positives from noise).

Once features are detected, compound annotation begins with molecular formula assignment based on accurate mass measurements and isotopic patterns. The number of possible molecular formulas grows rapidly with increasing mass and with decreasing mass accuracy, highlighting the critical importance of high mass accuracy in HRMS instruments [11]. For example, a mass measurement of 300.1000 Da with 5 ppm accuracy (a window of about ±1.5 mDa) could correspond to dozens of plausible molecular formulas, while the same mass with 1 ppm accuracy (about ±0.3 mDa) might yield only a few possibilities.
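The effect of mass tolerance on the candidate list can be explored with a brute-force enumeration. The sketch below is deliberately crude and rests on several assumptions: it treats 300.1000 Da as a neutral monoisotopic mass, allows only C, H, N, and O, caps nitrogen and oxygen counts with the hypothetical limits `max_n` and `max_o`, and applies only a simple upper bound on hydrogen count. Real formula generators use more elements, isotope patterns, and additional chemical rules, so the counts produced here are not comparable to those of a full tool.

```python
# Monoisotopic masses (Da) of the elements considered.
MONO = {"C": 12.0, "H": 1.00782503, "N": 14.00307401, "O": 15.99491462}

def candidate_formulas(mass, ppm_tol, max_n=6, max_o=10):
    """Enumerate (C, H, N, O) counts whose monoisotopic mass lies within
    ppm_tol of the given neutral mass."""
    tol = mass * ppm_tol / 1e6  # absolute tolerance in Da
    hits = []
    for c in range(1, int(mass // MONO["C"]) + 1):
        for n in range(max_n + 1):
            for o in range(max_o + 1):
                rest = mass - c * MONO["C"] - n * MONO["N"] - o * MONO["O"]
                if rest < -tol:
                    break  # adding more oxygen only overshoots further
                h = round(rest / MONO["H"])  # only the nearest H count can fit
                if h < 0 or h > 2 * c + n + 2:
                    continue  # more hydrogens than a saturated acyclic molecule allows
                calc = (c * MONO["C"] + h * MONO["H"]
                        + n * MONO["N"] + o * MONO["O"])
                if abs(calc - mass) <= tol:
                    hits.append((c, h, n, o))
    return hits

hits_5ppm = candidate_formulas(300.1000, ppm_tol=5.0)
hits_1ppm = candidate_formulas(300.1000, ppm_tol=1.0)
print(len(hits_5ppm), "candidates at 5 ppm;", len(hits_1ppm), "at 1 ppm")
```

Every candidate found at 1 ppm is also found at 5 ppm, so tightening the tolerance can only shrink the list. Isotopic pattern matching is then used to discriminate among the formulas that remain.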

Structural elucidation leverages MS/MS fragmentation data through spectral matching against reference databases. The Universal Library Search Algorithm (ULSA) and similar tools compare experimental spectra to reference libraries, generating matching scores that indicate similarity [16]. However, library coverage remains incomplete, necessitating complementary approaches including molecular networking which groups compounds based on spectral similarity to identify structurally related compounds, and in silico fragmentation which predicts MS/MS spectra for candidate structures to expand identification capabilities beyond library coverage [13].

Confidence Assessment and Identification Levels

A critical aspect of NTA data interpretation is establishing confidence levels for compound identifications, as NTA data are inherently less certain than targeted data [14]. The scientific community has developed reporting standards that categorize identifications into different confidence levels based on the supporting evidence:

Table 2: Confidence Levels for Compound Identification in NTA

| Confidence Level | Required Evidence | Typical Uncertainty | Reporting Considerations |
|---|---|---|---|
| Level 1: Confirmed Structure | Match to reference standard using RT and MS/MS spectrum | Minimal | Considered definitive identification |
| Level 2: Probable Structure | Library spectrum match or in silico evidence | Moderate | Structure is plausible but not confirmed |
| Level 3: Tentative Candidate | Diagnostic evidence (e.g., class-specific fragments) | High | Class may be known but not exact structure |
| Level 4: Unknown Feature | Molecular formula or m/z only | Very High | Insufficient evidence for structural assignment |

This framework acknowledges that in NTA, unlike targeted analysis, if an analyst reports that a chemical is present in a sample, it may actually be absent (e.g., it may be an isomer or an incorrect identification), and if an analyst reports that a chemical is not present, it may actually be present but not correctly identified during data processing [14]. This inherent uncertainty necessitates careful reporting and interpretation of NTA results, with clear communication of confidence levels to stakeholders.

Advanced Applications of Machine Learning in NTA

Machine Learning-Enhanced Identification

Machine learning (ML) approaches are revolutionizing NTA by enhancing identification confidence and streamlining data interpretation workflows. ML models can leverage multiple data dimensions including MS/MS spectra, retention time information, and molecular descriptors to improve the discrimination between true positive and false positive identifications [1] [16]. One innovative approach introduces class probability of true positives (P(TP)) as a metric that leverages data from MS/MS spectra and calibrant-free predicted retention time indices through multiple ML models to enhance identification probability [16].

A demonstrated implementation involves three sequential ML models: first, a molecular fingerprint-based regression model that correlates molecular fingerprints to retention time indices; second, a cumulative neutral loss-based regression model that predicts expected RTI values using experimental MS/MS spectra; and finally, a binary classification model that integrates information from both retention and m/z domains to calculate P(TP) for each matched reference spectrum [16]. This approach has shown significant improvements in identification probability, with reported increases of 54.5%, 52.1%, and 46.7% for pesticides spiked in blank, 10× diluted, and 100× diluted tea matrices, respectively, compared to library matching alone [16].

ML Workflow for Enhanced Identification

The application of machine learning in NTA follows a structured workflow that integrates traditional analytical data with computational predictions.

The workflow begins with input data collection including MS/MS spectra, retention times, and m/z values. The first model (MF-to-RTI) uses random forest regression to correlate molecular fingerprints to true RTI values of calibrants, trained on diverse chemical structures to ensure coverage of structural diversity [16]. The second model (CNL-to-RTI) employs cumulative neutral loss masses as features to predict expected RTI values using experimental MS/MS spectra from known compounds, leveraging the discriminative power of fragmentation patterns [16].

The third model (binary classification) integrates features from both RTI and m/z domains, including RTI error between values derived from the first two models, monoisotopic mass, and parameters obtained from spectral matching algorithms [16]. This model calculates the probability of true positive (P(TP)) for each matched reference spectrum, with larger RTI errors indicating true negative spectral matches while smaller errors correlate with true positive matches [16]. The final output provides an enhanced identification probability that incorporates multiple dimensions of evidence, significantly improving upon traditional spectral matching alone.

Performance Assessment and Quality Assurance

NTA Performance Metrics

Assessing and communicating the performance of NTA methods presents unique challenges compared to targeted analyses. While targeted methods rely on well-established performance metrics for selectivity, sensitivity, accuracy, and precision, defining analogous metrics for NTA requires consideration of different study objectives [14]. Performance assessment in NTA typically focuses on three primary objectives: sample classification (distinguishing sample groups based on chemical patterns), chemical identification (confidently assigning structures to detected features), and chemical quantitation (estimating concentrations without reference standards) [14].

For chemical identification, performance can be evaluated using metrics derived from the confusion matrix, including recall (ability to correctly identify present compounds), precision (ability to avoid false identifications), and overall accuracy [14]. However, these metrics require knowledge of ground truth, which is often unavailable in true non-targeted applications. Alternative approaches include using identification confidence levels and reporting the distribution of identifications across these levels, or employing benchmark compounds with known presence/absence to characterize method performance [14].
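When benchmark compounds with known presence or absence are available, the confusion-matrix metrics named above follow from simple set arithmetic. The sketch below assumes that both the reported and the truly present compounds are drawn from the benchmark list; the function name and the compound labels are hypothetical.

```python
def identification_metrics(reported, truly_present, benchmark):
    """Recall, precision, and accuracy for identifications evaluated
    against a benchmark list with known ground truth."""
    reported, truly_present, benchmark = set(reported), set(truly_present), set(benchmark)
    tp = len(reported & truly_present)              # present and reported
    fp = len((reported & benchmark) - truly_present)  # reported but absent
    fn = len(truly_present - reported)              # present but missed
    tn = len(benchmark - reported - truly_present)  # absent and not reported
    return {
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "accuracy": (tp + tn) / len(benchmark) if benchmark else 0.0,
    }

# Six benchmark compounds: four spiked into the sample, two deliberately absent.
benchmark = ["A", "B", "C", "D", "E", "F"]
truly_present = ["A", "B", "C", "D"]
reported = ["A", "B", "C", "E"]  # D was missed, E is a false identification
metrics = identification_metrics(reported, truly_present, benchmark)
```

Here three of four spiked compounds are found and three of four reported identifications are correct, so recall and precision are both 0.75. These values describe performance only for the benchmark set and may not transfer to the wider chemical space in a sample.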

For quantitative NTA (qNTA), performance assessment becomes even more challenging due to the lack of reference standards for most compounds. Performance can be estimated using a set of chemical standards that represent different chemical classes, with metrics including accuracy (closeness to true concentration), precision (reproducibility across replicates), and linear dynamic range [14]. However, these metrics are necessarily limited to the available standards and may not represent performance for all detected compounds.
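
One possible way to summarize accuracy and precision for a set of standards is shown below. The symmetric fold error is an assumption chosen for this sketch because qNTA estimates can differ from true values by large factors; the source does not prescribe a specific statistic, and the concentration values are invented for illustration.

```python
from statistics import mean, median, stdev


def fold_error(estimated: float, true: float) -> float:
    """Symmetric fold error: 1.0 is perfect, 10.0 is one order of magnitude off."""
    if estimated <= 0 or true <= 0:
        raise ValueError("concentrations must be positive")
    return max(estimated / true, true / estimated)


def percent_rsd(replicates: list) -> float:
    """Relative standard deviation (%) across replicate estimates."""
    return stdev(replicates) / mean(replicates) * 100.0


# Hypothetical standards: (estimated, true) concentrations in the same units.
pairs = [(10.0, 5.0), (2.0, 4.0), (50.0, 50.0)]
errors = [fold_error(e, t) for e, t in pairs]
summary = {"median_fold_error": median(errors), "max_fold_error": max(errors)}

# Precision for one standard measured in triplicate (hypothetical values).
rsd = percent_rsd([100.0, 110.0, 90.0])
```

These summaries describe only the standards that were measured; extending them to other detected compounds requires the caution noted above.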

Quality Assurance and Control Procedures

Implementing robust quality assurance and control (QA/QC) procedures is essential for generating reliable NTA data. Recommended practices include:

- System Suitability Testing: Using standardized quality control mixtures containing compounds covering a wide range of physicochemical properties to verify instrument performance before sample analysis [13].

- Blank Analysis: Regularly analyzing procedural blanks to identify and subtract background contamination and carryover effects.

- Pooled Quality Control Samples: Injecting pooled samples throughout the analytical sequence to monitor instrument stability and performance drift over time.

- Reference Standard Controls: Including known reference compounds at various concentrations to validate identification and quantification capabilities.

Data quality parameters should be continuously monitored, including mass accuracy (typically within 3-5 ppm), retention time stability, intensity reproducibility, and chromatographic peak shape [13]. Any deviations beyond predefined thresholds should trigger investigation and potentially re-analysis of affected samples. These QA/QC measures help ensure that the complex data generated in NTA studies is trustworthy and fit for its intended purpose, whether for exploratory research or decision-support applications.
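
As a rough illustration of threshold monitoring, the sketch below computes the mass error in ppm and the retention time shift for a single QC compound. The 5 ppm limit follows the range quoted above; the 0.1 min retention time limit, the function names, and the example values are assumptions made for this example.

```python
def ppm_error(measured_mz: float, theoretical_mz: float) -> float:
    """Signed mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def check_qc_compound(measured_mz, theoretical_mz, measured_rt, expected_rt,
                      max_ppm=5.0, max_rt_shift=0.1):
    """Flag a QC compound whose mass error or RT shift exceeds the thresholds.

    max_rt_shift is in the same units as the retention times (assumed minutes).
    """
    ppm = ppm_error(measured_mz, theoretical_mz)
    rt_shift = measured_rt - expected_rt
    return {
        "ppm_error": ppm,
        "rt_shift": rt_shift,
        "mass_ok": abs(ppm) <= max_ppm,
        "rt_ok": abs(rt_shift) <= max_rt_shift,
    }


# Hypothetical QC compound with theoretical m/z 195.0877 expected at 3.21 min.
passing = check_qc_compound(195.0882, 195.0877, 3.30, 3.21)
failing = check_qc_compound(195.0897, 195.0877, 3.21, 3.21)
```

In practice a check like this would run for every compound in the QC mixture and every QC injection, so that drift can be seen across the sequence rather than at a single point.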

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for HRMS-Based NTA

| Reagent/Material | Function in NTA Workflow | Key Considerations |
|---|---|---|
| NTS/QC Mixture | Quality control for data quality assessment | Should contain 80+ compounds covering diverse physicochemical properties (molecular weights 126–1100 Da, log Kow -8 to 8.5) [13] |
| HRMS Instrument | High-resolution mass measurement | Orbitrap, TOF, or FT-ICR systems with resolution >50,000 and mass accuracy <5 ppm [11] |
| Chromatography System | Compound separation before MS detection | UHPLC systems with C18 columns most common; method should resolve early eluting polar analytes [13] |
| Spectral Libraries | Reference for MS/MS spectrum matching | Combine commercial, public, and in-house libraries; recognize limitations in coverage [16] |
| Molecular Networking Tools | Grouping related compounds by spectral similarity | Identifies molecular families when reference spectra are unavailable [13] |
| Retention Time Prediction Models | Providing additional evidence for compound identification | Machine learning models trained on diverse chemical sets improve transferability [16] |
| In Silico Fragmentation Tools | Predicting MS/MS spectra for candidate structures | Expands identification beyond library coverage; domain of applicability is crucial [13] |

Effective interpretation of NTA data requires a solid understanding of core HRMS concepts including mass resolution, accuracy, chromatographic separation principles, and fragmentation pattern analysis. The integration of machine learning approaches with traditional analytical techniques significantly enhances identification confidence by leveraging multiple dimensions of chemical information. As the field continues to evolve, standardized performance assessment methods and robust quality control procedures will be essential for generating reliable, reproducible data that can support environmental monitoring, public health protection, and regulatory decision-making. By mastering these essential HRMS concepts and maintaining critical assessment of data quality and uncertainty, researchers can fully leverage the powerful capabilities of non-targeted analysis to discover and characterize novel chemicals in complex samples.

Understanding the NTA Chemical Space and Detectable Coverage

Non-targeted analysis (NTA) using high-resolution mass spectrometry (HRMS) represents a paradigm shift in analytical chemistry, enabling the detection and identification of unknown chemicals without a priori knowledge of sample composition. This technical guide explores the fundamental concept of "chemical space" in NTA, defining the theoretical and practical boundaries of what is detectable and identifiable within a given analytical workflow. We examine the key methodological parameters that define the "detectable space," present standardized workflows for chemical space mapping, and discuss advanced computational tools that enhance NTA capabilities. Understanding and communicating the coverage and limitations of NTA methods is critical for advancing environmental monitoring, exposomics research, and drug development applications, ultimately supporting more reliable and reproducible chemical exposure assessments.

The concept of "chemical space" in non-targeted analysis refers to the multidimensional domain of chemical properties that characterizes the constituents of a sample [18]. In principle, NTA can detect and identify a broad range of organic compounds across diverse matrices, but in practice, no single method can cover the entirety of the chemical universe, which encompasses >10⁶⁰ possible organic compounds [18]. Instead, each NTA method accesses specific domains of chemical space through a combination of sample preparation, instrumental analysis, and data processing techniques [18] [19].

The fundamental challenge in NTA lies in determining whether the non-detection of an analyte indicates its true absence above a detection limit or represents a false negative resulting from workflow limitations [18]. To address this, researchers have proposed mapping the "detectable space" of NTA methods—the region of chemical space where compounds are amenable to detection and identification given specific methodological constraints [18] [19]. This conceptual framework allows for better communication of method capabilities, more accurate interpretation of results, and direct comparison between different NTA workflows [18] [20].

Defining the Detectable and Identifiable Chemical Space

Theoretical Framework

In NTA methodology, chemical space is described in terms of three regions [18]:

- The detectable space: Compounds amenable to detection using applied methods for sampling, preparation, and data acquisition

- The identifiable space: Compounds within the detectable space that can be confidently identified based on available spectral evidence and database matching

- The non-detectable space: Compounds not detectable or identifiable using the selected methods

The relationship between these spaces is hierarchical; the identifiable space is necessarily a subset of the detectable space, as identification requires additional confirmatory data beyond mere detection [18].

Key Parameters Defining Detectable Space

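Eight analytical parameters predominantly influence the region of chemical space accessible by an NTA method: sample matrix type, extraction solvent, extract pH, extraction/cleanup media, elution buffers, instrument platform, ionization type, and ionization mode [18] [19]. The table below groups them by category, alongside chromatographic separation: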

Table 1: Key Parameters Defining the Detectable Space in NTA

| Parameter Category | Specific Factors | Impact on Chemical Space |
|---|---|---|
| Sample Preparation | Sample matrix type, extraction solvent, extract pH, extraction/cleanup media, elution buffers | Determines which compounds are extracted from the matrix and prepared for analysis |
| Instrument Platform | LC-MS, GC-MS, ion mobility | Defines the physicochemical properties of amenable compounds (e.g., polarity, volatility) |
| Ionization Technique | Ionization type (ESI, APCI, EI), ionization mode (positive/negative) | Influences which compounds can be effectively ionized for detection |
| Separation | Chromatographic method, retention time | Separates complex mixtures into individual components |

These parameters work in concert to define the "method applicability domain" [18]. For example, LC-MS with electrospray ionization (ESI) is more amenable to polar, water-soluble compounds, while GC-MS with electron ionization (EI) better detects volatile, non-polar compounds [19]. The selection of extraction solvents and pH further narrows the chemical space by determining which compounds are efficiently recovered from specific sample matrices [18].

Experimental Workflows for Chemical Space Characterization

Comprehensive NTA Workflow

A generalized NTA workflow encompasses multiple stages, from sample preparation to compound identification, and each stage progressively narrows the final detectable space [21]. Sample preparation methods (e.g., extraction solvents, pH adjustment, clean-up media) determine initial compound recovery [18]. Data acquisition parameters (chromatography, ionization, mass analysis) further constrain detectable compounds based on their physicochemical properties [18] [19]. Finally, data processing approaches (feature detection, annotation algorithms) influence which detected features are ultimately identified [21].

Chemical Space Mapping Methodology

The proposed Chemical Space Tool (ChemSpaceTool) would implement a systematic approach to define the detectable space of any NTA method through sequential filtering [18]. This conceptual framework pares down the vast chemical universe into an Amenable Compound List (ACL) specific to a given methodology [18]. Each filtering step corresponds to a key methodological decision that excludes compounds outside the operable parameters. The resulting ACL represents the plausible detectable space and can be used both prospectively (to guide method selection) and retrospectively (to filter identification candidates) [18].
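
The idea of sequential filtering can be illustrated with a small Python sketch. This is not the ChemSpaceTool itself, which the source describes only as a proposal; the `Candidate` fields, the filter thresholds, and the four placeholder compounds are all hypothetical and chosen only to show how each methodological decision removes part of the candidate list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    mono_mass: float        # monoisotopic mass, Da
    log_kow: float          # log10 octanol-water partition coefficient
    ionizes_esi_pos: bool   # assumed flag: forms ions in ESI positive mode


def build_acl(candidates, filters):
    """Apply filters in order; return the survivors and a per-step count log."""
    remaining = list(candidates)
    log = [("start", len(remaining))]
    for label, keep in filters:
        remaining = [c for c in remaining if keep(c)]
        log.append((label, len(remaining)))
    return remaining, log


# Hypothetical method: reversed-phase LC, ESI+, scan range 100-1000 Da.
filters = [
    ("extraction/LC amenable (log Kow -1 to 6)", lambda c: -1.0 <= c.log_kow <= 6.0),
    ("ionizes in ESI+", lambda c: c.ionizes_esi_pos),
    ("within scan range", lambda c: 100.0 <= c.mono_mass <= 1000.0),
]

universe = [
    Candidate("cmpd_1", 194.08, -0.1, True),
    Candidate("cmpd_2", 412.97, 4.8, False),
    Candidate("cmpd_3", 78.05, 2.1, True),
    Candidate("cmpd_4", 326.37, 9.5, True),
]
acl, log = build_acl(universe, filters)
```

Keeping the per-step log makes it possible to report which methodological choice excluded a compound, which is useful when a non-detection has to be interpreted.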

Computational Tools for Chemical Space Analysis

Software Platforms for NTA

Various software tools have been developed to implement NTA workflows, each with distinct capabilities and limitations:

Table 2: Software Tools for Non-Targeted Analysis

| Software Tool | Type | Key Features | Applications |
|---|---|---|---|
| patRoon | Open-source (R) | Comprehensive workflow, environmental focus, combines multiple algorithms | Environmental monitoring, suspect screening [21] |
| Compound Discoverer | Commercial | Vendor-specific optimizations, user-friendly interface | General NTA, metabolomics [19] |
| MetFrag | Open-source | In silico fragmentation, compound identification | Structure elucidation [21] |
| SIRIUS/CSI:FingerID | Open-source | Molecular formula identification, structure database searching | Unknown compound identification [21] |
| XCMS | Open-source | Feature detection, peak alignment, statistical analysis | Metabolomics, exposomics [21] |
| MZmine | Open-source | Modular pipeline, visualization, vendor-neutral | General NTA, metabolomics [21] |

These tools help researchers navigate the complex data generated in NTA studies. Open-source platforms like patRoon provide tailored functionality for environmental applications and allow integration of various algorithms [21]. Commercial software often offers more user-friendly interfaces and vendor-specific optimizations but may limit data transparency and sharing [19].

Machine Learning in NTA

Machine learning (ML) approaches are increasingly applied throughout NTA workflows to enhance chemical space characterization [1]. ML algorithms improve compound identification through better prediction of retention times, collision cross-section values, and mass fragmentation patterns [1] [22]. These models can also prioritize features for identification based on likelihood of detection and potential toxicological concern [1]. Furthermore, ML techniques enable quantitative structure-property relationship modeling to predict a compound's amenability to specific analytical methods based on its physicochemical properties [1].

Research Reagent Solutions for NTA Workflows

Table 3: Essential Materials and Tools for NTA Experiments

| Category | Specific Examples | Function in NTA Workflow |
|---|---|---|
| Extraction Media | HLB (hydrophilic-lipophilic balance) sorbents, C18 silica, ion-exchange resins | Isolate and concentrate analytes from complex matrices [18] |
| Chromatography Columns | Reverse-phase C18, HILIC, GC capillary columns | Separate complex mixtures into individual components [18] |
| Ionization Sources | Electrospray ionization (ESI), atmospheric pressure chemical ionization (APCI), electron ionization (EI) | Generate gas-phase ions for mass analysis [19] |
| Mass Analyzers | Orbitrap, time-of-flight (TOF), quadrupole | Provide high-resolution mass measurements for molecular formula assignment [22] [19] |
| Data Processing Tools | patRoon, XCMS, MZmine, Compound Discoverer | Extract features, align chromatograms, and annotate compounds [21] [19] |
| Chemical Databases | PubChem, CompTox, NIST, mzCloud | Support compound identification through candidate structure lookup and spectral matching [21] |

Applications and Analytical Platforms in NTA

The detectable chemical space varies significantly across different analytical platforms and application areas. A review of NTA literature revealed that 51% of studies use only LC-HRMS, 32% use only GC-HRMS, and 16% use both platforms to expand chemical coverage [19]. This distribution reflects the complementary nature of these techniques, with LC-HRMS better suited for polar, thermally labile compounds and GC-HRMS more appropriate for volatile, non-polar analytes [19].

In environmental applications, the frequently detected chemical classes reflect both environmental prevalence and methodological biases [19]. Per- and polyfluoroalkyl substances (PFAS) and pharmaceuticals predominate in water samples due to their polarity and ionization efficiency in LC-ESI-MS [19]. Pesticides and polyaromatic hydrocarbons are more common in soil and sediment analyses, while flame retardants and plasticizers feature prominently in dust and consumer product studies [19]. In human biospecimens, plasticizers, pesticides, and halogenated compounds are frequently detected, reflecting exposure patterns and analytical considerations [19].

Characterizing the chemical space and detectable coverage of NTA methods is fundamental to producing reliable, interpretable, and comparable results across studies. The proposed frameworks for chemical space mapping, including the ChemSpaceTool concept, provide systematic approaches to define method boundaries and communicate capabilities [18]. As NTA continues to evolve, integration of machine learning approaches [1], development of open-source computational platforms [21], and community-wide standardization efforts [20] will enhance our ability to navigate the chemical exposome. Understanding the detectable space of NTA methods enables researchers to make informed decisions about method selection, appropriately interpret negative findings, and advance toward more comprehensive chemical exposure assessment.

Non-targeted analysis (NTA) using high-resolution mass spectrometry (HRMS) represents a paradigm shift from traditional targeted methods, enabling researchers to characterize the chemical composition of complex samples without a priori knowledge of their content [19] [20]. This discovery-based approach generates immensely complex datasets that require sophisticated data processing and interpretation strategies. The fundamental challenge lies in accurately reducing raw instrumental data into meaningful chemical information, a process that hinges on precise terminology and standardized workflows [23] [20]. Unlike targeted analysis, which focuses on specific predefined chemicals, NTA aims to capture a broader "chemical space" – the conceptual collection of all possible chemicals within a sample, limited only by methodological choices [19] [3]. Within this framework, three critical concepts form the foundation of data interpretation: features (the raw observations from instrumentation), annotations (the attribution of chemical characteristics to these features), and identifications (the confident assignment of a specific chemical structure) [23]. This technical guide establishes precise definitions, methodologies, and confidence assessment protocols for these core terminologies, providing researchers with a standardized framework for NTA data interpretation within drug development and related chemical research fields.

Defining the Fundamental Terminology

The Building Block: Molecular Features

In NTA, a feature represents the primary signal entity detected during data processing. Specifically, a feature is defined as a set of grouped, associated m/z-retention time pairs (mz@RT) that represent MS1 components for an individual compound, which may include isotopologues, adducts, and in-source product ion m/z peaks [23] [20]. Where no such associations exist, a feature may simply be a single mz@RT pair. This grouping is crucial as it distinguishes signals arising from a single chemical entity from the thousands of individual data points collected by the HRMS instrument [23]. The process of feature formation involves several computational steps that transform raw mass spectral data into these defined chemical signals, which subsequently serve as the substrates for all further annotation and identification efforts.
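
To make the grouping step concrete, the following sketch groups mz@RT pairs into features by looking for isotopologue peaks spaced by the 13C–12C mass difference (about 1.003355 Da). It is a deliberately simplified illustration: it assumes singly charged ions, ignores intensities (real tools also check isotope ratios), and uses invented peak values and tolerances.

```python
C13_DELTA = 1.003355  # mass difference between 13C and 12C, Da


def group_isotopologues(peaks, ppm_tol=5.0, rt_tol=0.05, max_iso=3):
    """Greedily group (mz, rt) pairs into features of co-eluting isotopologues.

    Assumes charge 1 and ignores intensity; unmatched peaks become
    single-pair features.
    """
    peaks = sorted(peaks)  # ascending m/z, so the monoisotopic peak comes first
    used = set()
    features = []
    for i, (mz, rt) in enumerate(peaks):
        if i in used:
            continue
        group = [(mz, rt)]
        used.add(i)
        for k in range(1, max_iso + 1):
            target = mz + k * C13_DELTA
            match = next(
                (j for j, (mz2, rt2) in enumerate(peaks)
                 if j not in used
                 and abs(mz2 - target) / target * 1e6 <= ppm_tol
                 and abs(rt2 - rt) <= rt_tol),
                None,
            )
            if match is None:
                break  # isotope series ends at the first missing member
            group.append(peaks[match])
            used.add(match)
        features.append(group)
    return features


# Hypothetical centroided peaks as (m/z, retention time in minutes).
peaks = [(195.0877, 3.21), (196.0911, 3.21), (197.0944, 3.22), (301.1410, 5.80)]
features = group_isotopologues(peaks)
```

Here the first three peaks collapse into one feature, while the last peak, which has no associated signals, remains a feature consisting of a single mz@RT pair.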

The Interpretive Step: Annotations

Annotation represents the next critical step in NTA data interpretation, defined as the attribution of one or more properties or molecular characteristics to an MS1 feature or its components (such as isotopologues or adducts), or to MS/MS product ions [23]. It is essential to recognize that annotations provide evidence but do not typically constitute sufficient proof to confidently identify a single compound. Examples of common annotations include: designation of an observed mz@RT as a specific adduct (e.g., [M+H]+, [M+Na]+), assignment of a molecular formula to a feature or an MS/MS product ion, and assignment of a suggested substructure to an MS/MS product ion [23]. Annotations thus represent hypothetical assignments that require further evidence before progressing to confident identification.
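
Adduct designation can be illustrated with a short calculation: two co-eluting peaks are consistent with an [M+H]+ / [M+Na]+ pair if both imply the same neutral monoisotopic mass. The sketch below assumes singly charged ions, covers only two adduct types, and uses invented m/z values; the function name and tolerance are hypothetical.

```python
# Mass added to the neutral molecule for each singly charged adduct, Da.
ADDUCT_SHIFTS = {
    "[M+H]+": 1.007276,    # proton
    "[M+Na]+": 22.989218,  # sodium atom minus one electron
}


def annotate_adduct_pair(mz_a: float, mz_b: float, ppm_tol: float = 5.0):
    """Return adduct hypotheses under which both peaks share one neutral mass."""
    hits = []
    for adduct_a, shift_a in ADDUCT_SHIFTS.items():
        for adduct_b, shift_b in ADDUCT_SHIFTS.items():
            if adduct_a == adduct_b:
                continue
            mass_a = mz_a - shift_a
            mass_b = mz_b - shift_b
            if abs(mass_a - mass_b) / mass_a * 1e6 <= ppm_tol:
                hits.append((adduct_a, adduct_b, round(mass_a, 4)))
    return hits


# Hypothetical co-eluting peaks from the same feature.
hypotheses = annotate_adduct_pair(195.0877, 217.0696)
```

The result is an annotation, not an identification: it supports a neutral mass for the feature but says nothing yet about which compound of that mass is present.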

The Confirmatory Stage: Identifications

Identification constitutes the highest level of confidence in NTA workflows, occurring when the annotated components, features, and/or product ions collectively provide sufficient evidence to attribute a specific compound to the detected feature, within a stated identification scope or confidence level [23]. The key distinction from annotation lies in the evidentiary standard – identification requires multiple lines of concordant evidence that collectively point to a single chemical structure with high confidence. This evidence hierarchy typically includes retention time matching with authentic standards, spectral library matching, and consistent fragmentation patterns, among other confirmatory data [23] [14].

Table 1: Comparative Definitions of Core NTA Terminology

| Term | Formal Definition | Key Characteristics | Examples |
|---|---|---|---|
| Feature | A set of grouped mz@RT pairs representing MS1 components for an individual compound [23] [20] | Represents raw instrumental observations; grouped signals from same compound; foundation for further analysis | Set of m/z peaks for isotopologues; group of adduct signals; single mz@RT where no grouping exists |
| Annotation | Attribution of properties/molecular characteristics to features or their components [23] | Provides evidence but not conclusive; represents hypothetical assignment; multiple annotations possible per feature | Adduct designation ([M+H]+); molecular formula assignment; substructure assignment from MS/MS |
| Identification | Confident attribution of a specific compound to a detected feature [23] | Requires sufficient evidence; states confidence level/scope; represents conclusive assignment | Match to authentic standard (RT, MS/MS); Level 1 confidence identification; definitive structure elucidation |

Experimental Workflows and Data Processing

From Raw Data to Features: Processing Steps

The transformation of raw HRMS data into meaningful features follows a multi-step computational workflow that intentionally reduces data complexity while preserving chemically relevant information. This data processing segment encompasses all steps that transform raw data into meaningful information prior to annotation and identification efforts, with inputs being raw or converted data files and outputs being lists of features in each sample with associated chromatography, MS, and MS/MS data [23]. As detailed in Table 2, these steps include both fundamental signal processing and advanced grouping algorithms that collectively enable the detection and definition of molecular features.

Table 2: Key Data Processing Steps in NTA Workflows

| Processing Step | Description | Purpose | Common Algorithms/Software |
|---|---|---|---|
| Initial m/z Detection | Selection of unique mz@RT pairs from raw data | Identify potential signals of interest | Vendor software, MZmine, MS-DIAL |
| Retention Time Alignment | Modifies RTs within a dataset based on representative compounds | Correct for chromatographic shift between runs | Cross-sample correlation algorithms |
| Isotopologue Grouping | Groups mz@RT pairs representing isotopologues of same compound | Reduce redundancy; link related signals | Isotope pattern recognition |
| Adduct Grouping | Groups mz@RT pairs representing adducts of same compound | Consolidate signals from same chemical entity | Adduct rule application |
| Between-Sample Alignment | Comparison of features across multiple samples | Identify same feature in different samples | MZ/RT window matching |
| Gap-Filling | Detection of features missed during initial selection | Improve comprehensiveness of feature detection | Recursive peak extraction |
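
The between-sample alignment step in the table can be sketched as simple m/z and retention time window matching. This is a minimal greedy version written for illustration; production tools typically correct retention times first and resolve ambiguous matches more carefully. The tolerances and feature values are assumptions.

```python
def align_features(sample_a, sample_b, ppm_tol=5.0, rt_tol=0.1):
    """Match features between two samples by m/z (ppm) and RT windows.

    Each feature is a (mz, rt) tuple. Returns index pairs (i, j); a feature
    in sample_b is matched at most once, preferring the closest RT.
    """
    matches = []
    unmatched_b = set(range(len(sample_b)))
    for i, (mz_a, rt_a) in enumerate(sample_a):
        best = None
        for j in sorted(unmatched_b):
            mz_b, rt_b = sample_b[j]
            within_mz = abs(mz_b - mz_a) / mz_a * 1e6 <= ppm_tol
            within_rt = abs(rt_b - rt_a) <= rt_tol
            if within_mz and within_rt:
                if best is None or abs(rt_b - rt_a) < abs(sample_b[best][1] - rt_a):
                    best = j
        if best is not None:
            matches.append((i, best))
            unmatched_b.discard(best)
    return matches


# Hypothetical feature lists from two injections.
sample_a = [(195.0877, 3.21), (301.1410, 5.80)]
sample_b = [(301.1412, 5.84), (195.0879, 3.25), (410.2000, 7.10)]
matches = align_features(sample_a, sample_b)
```

Features left unmatched after this step, such as the third entry of `sample_b`, are the natural candidates for gap-filling in the other sample.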

Workflow Visualization: From Raw Data to Confident Identification

The complete NTA workflow runs from raw data acquisition through feature detection and annotation to confident identification, with key decision points and confidence assessment stages along the way.

Quality Assurance and Method Validation

Robust quality assurance procedures are essential throughout the NTA workflow to ensure reliable results. Unlike targeted analyses with well-established validation frameworks, NTA requires specialized approaches to performance assessment [14]. Key considerations include: implementing blank samples to identify and subtract contamination; using quality control spikes to monitor instrument performance and feature detection rates; employing pooled quality control samples to assess reproducibility; and utilizing internal standards to evaluate extraction efficiency and matrix effects [23] [14]. For performance assessment, promising approaches include using the confusion matrix for qualitative study outputs (sample classification and chemical identification) and adapting estimation procedures from targeted methods for quantitative applications, with consideration for additional sources of uncontrolled experimental error [14]. These procedures help address the inherent uncertainties in NTA, where false positives (reporting a chemical present when it is actually absent) and false negatives (failing to detect a present chemical) can significantly impact data interpretation and subsequent decision-making [14].
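
Blank subtraction, the first of these measures, is often implemented as a ratio filter. The sketch below keeps a feature only if its sample intensity exceeds the blank intensity by a chosen factor; the factor of 3, the feature identifiers, and the intensities are illustrative assumptions, not values taken from the cited guidance.

```python
def blank_filter(sample_intensity: dict, blank_intensity: dict,
                 min_ratio: float = 3.0) -> dict:
    """Keep features absent from the blank or at least min_ratio times above it."""
    kept = {}
    for feature_id, intensity in sample_intensity.items():
        blank = blank_intensity.get(feature_id, 0.0)
        if blank == 0.0 or intensity / blank >= min_ratio:
            kept[feature_id] = intensity
    return kept


# Hypothetical aligned feature intensities (arbitrary units).
sample = {"F1": 9000.0, "F2": 1200.0, "F3": 500.0}
blank = {"F2": 1000.0, "F3": 100.0}
kept = blank_filter(sample, blank)
```

A stricter or looser ratio trades false positives against false negatives, so the chosen value belongs in the reported QA/QC details.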

Confidence Assessment and Reporting Standards

Establishing Identification Confidence Levels

Confidence in chemical identification exists on a spectrum, and standardized levels have been established to communicate the degree of certainty unambiguously. The highest confidence (Level 1) requires confirmation with an authentic chemical standard analyzed under identical experimental conditions, providing matching retention time and MS/MS spectrum [23] [14]. Level 2, a probable structure, requires compelling evidence such as a library spectrum match without retention time confirmation, or diagnostic fragmentation evidence that excludes other candidate structures [14]. Level 3, tentative candidates, applies when the evidence supports one or more possible structures but is insufficient to select a single one [23]. Level 4 applies when a molecular formula can be unambiguously determined but no structure can be proposed, and Level 5 when only an exact mass of interest is available, so that the chemical identity remains unknown but the feature is still distinguishable by its spectral data [14]. This tiered confidence framework enables researchers to communicate the certainty of their findings appropriately and prevents overinterpretation of insufficient data.

Reporting Standards and Metadata Requirements

Comprehensive reporting of experimental details is essential for interpreting NTA results and assessing their reliability. The Benchmarking and Publications for Non-Targeted Analysis (BP4NTA) Working Group has established guidelines to improve transparency and reproducibility across NTA studies [20]. Critical reporting elements include: complete description of sample preparation procedures; detailed instrumentation parameters and data acquisition methods; comprehensive documentation of data processing steps and software parameters with version information; clear description of annotation and identification criteria including scoring thresholds; and full disclosure of quality assurance/quality control measures and results [23] [20]. For suspect screening analyses, researchers must report the specific suspect list used, its source, version, and size, while for true NTA, the approaches for unknown compound identification should be thoroughly documented [3]. These reporting standards enable proper evaluation of study limitations, facilitate inter-laboratory comparisons, and support the growing integration of NTA data into regulatory decision-making frameworks.

Computational Tools for NTA Data Processing

The computational demands of NTA necessitate specialized software tools for data processing, annotation, and identification. Both commercial and open-source options are available, each with distinct strengths and applications. As evidenced by literature reviews, vendor-specific software (such as Thermo Compound Discoverer and Agilent MassHunter) is currently used in most studies (approximately 57%), while open-source platforms (including MZmine, MS-DIAL, and Cardinal) offer flexible, customizable alternatives [19] [24]. The selection of appropriate software depends on multiple factors including instrumental platform, sample type, study objectives, and computational resources. As shown in Table 3, these tools encompass the complete NTA workflow from raw data processing to final identification.

Table 3: Essential Research Reagents and Computational Tools for NTA

| Tool Category | Specific Examples | Primary Function | Application in NTA Workflow |
|---|---|---|---|
| Data Conversion Tools | ProteoWizard MSConvert, Reifycs Abf Converter | Convert proprietary vendor files to open formats | Pre-processing: Enables cross-platform data analysis |
| Commercial Processing Software | Compound Discoverer, MassHunter | Comprehensive workflow management | Data Processing: Feature detection, alignment, annotation |
| Open-Source Processing Platforms | MZmine, MS-DIAL, XCMS | Flexible data processing and analysis | Data Processing: Customizable workflows for feature detection |
| Spectral Libraries | NIST, mzCloud, MassBank | Reference spectra for compound matching | Annotation & Identification: Spectral comparison and matching |
| Visualization Tools | QUIMBI, Cardinal, SCiLS | Interactive data exploration and visualization | Data Interpretation: Spatial distribution, spectral analysis |
| In Silico Prediction Tools | CFM-ID, MetFrag, SIRIUS | Predict fragmentation spectra and structures | Annotation: Generate hypotheses for unknown compounds |

Chemical Standards and Reference Materials

While NTA aims to detect unknowns, reference standards remain essential for method validation, retention time calibration, and confident identification. Key categories include: isotope-labeled internal standards for quality control and semi-quantification; chemical class-specific standards for evaluating method performance for particular compound classes; retention time index standards for chromatographic alignment; and authentic chemical standards for definitive confirmation of identifications (Level 1) [14]. The strategic use of these materials throughout the NTA workflow, from initial method development to final confirmation, significantly enhances data quality and reliability.

The precise definitions and standardized application of the terms feature, annotation, and identification form the critical foundation for rigorous non-targeted analysis using high-resolution mass spectrometry. Features represent the fundamental observations detected through data processing; annotations provide interpretive hypotheses about chemical characteristics; and identifications constitute confident assignments of specific chemical structures based on sufficient evidence. As NTA methodologies continue to evolve and expand into new application areas including drug development, environmental monitoring, and exposomics research, consistent terminology and reporting standards become increasingly vital for scientific communication and data interoperability [19] [20] [14]. The frameworks, workflows, and confidence assessments presented in this technical guide provide researchers with a standardized approach for NTA data interpretation that promotes transparency, reproducibility, and appropriate confidence in analytical results. Through the continued adoption and refinement of these standards by the scientific community, NTA will increasingly deliver on its potential to comprehensively characterize complex chemical mixtures and uncover previously unrecognized chemical exposures and transformations.

Inherent Uncertainties in NTA and Strategies for Management

Non-targeted analysis (NTA) using high-resolution mass spectrometry (HRMS) has emerged as a powerful discovery tool for identifying unknown and unexpected chemicals across diverse sample matrices, from environmental samples to biological specimens [19]. Unlike targeted methods that provide definitive, quantitative data for predefined analytes, NTA generates information-rich data with inherent uncertainties that prevent its widespread acceptance by decision-makers [14]. If an analyst reports that a chemical is present in a sample, it may actually be absent; if reported absent, it may be present; and if a concentration is reported, the true value could be orders of magnitude different [14]. This technical guide examines the fundamental sources of uncertainty in NTA workflows and presents structured strategies for their management, enabling researchers to communicate data reliability effectively within chemical exposure and drug development research.

Uncertainty in NTA stems from multiple interconnected sources throughout the analytical workflow. These can be categorized into three primary domains: analytical, data processing, and identification uncertainties.

Analytical Uncertainties

The initial stage of NTA introduces significant uncertainties through sample preparation and instrumental analysis. The "detectable chemical space" – the subset of chemicals ultimately observed – is heavily influenced by eight key analytical considerations: sample matrix type, extraction solvent, pH, extraction/cleanup media, elution buffers, instrument platform, ionization type, and ionization mode [19]. For instance, the choice between liquid chromatography (LC) and gas chromatography (GC) platforms fundamentally alters detectable chemicals, with LC being more amenable to water-soluble compounds with polar functional groups, while GC better captures non-polar, volatile compounds [19]. This methodological bias represents a fundamental uncertainty in comprehensive exposome characterization.

Table 1: Analytical Techniques and Their Influence on Detectable Chemical Space

| Analytical Technique | Chemical Space Bias | Common Ionization Modes | Frequency of Use in NTA Studies |
|---|---|---|---|
| LC-HRMS | Polar, water-soluble compounds | ESI+, ESI-, APCI | 51% (LC-only); 16% (combined with GC) |
| GC-HRMS | Non-polar, volatile compounds | EI, CI | 32% (GC-only); 16% (combined with LC) |
| Direct Injection HRMS | No chromatography separation | Various | 1% |

Data Processing and Computational Uncertainties

The transformation of raw HRMS data into meaningful chemical information introduces computational uncertainties through peak picking, feature alignment, and database searching. Most NTA studies (approximately 57 of 76 reviewed studies) rely on vendor software (e.g., Thermo Compound Discoverer, Agilent MassHunter), while only a minority use open-source platforms (e.g., MZmine, MS-DIAL) [19]. This software dependency creates consistency challenges because different algorithms employ distinct parameters and confidence metrics. The feature detection process must distinguish true chemical signals from instrumental noise, and variations in sensitivity thresholds directly affect the reported chemical space. Similarly, molecular formula assignment from accurate mass measurements carries uncertainty, particularly for compounds with complex isotopic patterns or unusual elemental compositions.

Identification and Confidence Assessment Uncertainties

The most significant uncertainty in NTA lies in compound identification, where multiple orthogonal criteria are needed to establish confidence. The absence of universal standards for unknown identification means that, unlike targeted methods, NTA cannot provide unambiguous links between detected features and specific chemical structures [14]. Research indicates substantial variability in identification confidence, with many studies relying on level 3 identifications (tentative candidates not confirmed against reference standards) rather than level 1 (confirmed with reference standards) [19]. This uncertainty is compounded by isomeric compounds, which may share virtually identical mass spectra but possess different toxicological properties, potentially leading to misidentification in exposure assessment.

Strategic Framework for Uncertainty Management

Effective uncertainty management requires a systematic approach addressing each stage of the NTA workflow. The following strategies provide a structured framework for enhancing reliability in NTA data interpretation.

Experimental Design and Analytical Standardization

Implementing rigorous quality assurance/quality control (QA/QC) protocols forms the foundation for uncertainty management. Blank samples (method, procedural, and instrumental) must be incorporated to identify contamination, while pooled quality control samples (PQC) assess system stability and aid in distinguishing true features from artifacts [14]. For quantitative estimations, standard addition methods with internal standards spanning diverse physicochemical properties help account for matrix effects. Analytical standardization should also include reference materials when available, with established criteria for retention time stability, mass accuracy (< 5 ppm error), and signal intensity variation (< 30% RSD in QC samples) [14].
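The two numeric acceptance criteria above (mass error below 5 ppm, signal variation below 30% RSD in QC samples) can be checked with a few lines of code. The following is a minimal sketch, not a prescribed implementation: the function names are hypothetical, and the m/z and intensity values are illustrative only.

```python
import statistics

def ppm_error(measured_mz: float, theoretical_mz: float) -> float:
    """Signed mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6

def percent_rsd(values: list[float]) -> float:
    """Relative standard deviation (sample SD / mean) as a percentage."""
    return statistics.stdev(values) / statistics.mean(values) * 100.0

def passes_qc(measured_mz: float, theoretical_mz: float,
              qc_intensities: list[float],
              max_ppm: float = 5.0, max_rsd: float = 30.0) -> bool:
    """Apply the mass accuracy and QC intensity criteria from the text."""
    mass_ok = abs(ppm_error(measured_mz, theoretical_mz)) < max_ppm
    rsd_ok = percent_rsd(qc_intensities) < max_rsd
    return mass_ok and rsd_ok

# Illustrative values: one feature measured in five pooled QC injections.
qc_areas = [1.00e6, 1.10e6, 0.95e6, 1.05e6, 0.90e6]
print(round(ppm_error(195.0882, 195.0877), 2))   # mass error in ppm
print(round(percent_rsd(qc_areas), 2))           # intensity RSD in percent
print(passes_qc(195.0882, 195.0877, qc_areas))
```

Retention time stability could be added as a third check in the same style once a laboratory has fixed its own tolerance.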

Table 2: Confidence Levels for Chemical Identification in NTA

| Confidence Level | Identification Criteria | Required Data | Uncertainty Level |
|---|---|---|---|
| Level 1: Confirmed Structure | Match to authentic standard | Retention time, MS/MS spectrum, accurate mass | Low |
| Level 2: Probable Structure | Library spectrum match or diagnostic evidence | MS/MS spectrum, accurate mass | Medium |
| Level 3: Tentative Candidate | Library match without MS/MS or in silico prediction | Molecular formula, possible structure | High |
| Level 4: Unknown Feature | No structural information | Accurate mass, retention time | Highest |

Data Analysis and Computational Harmonization

Managing computational uncertainties requires transparent reporting of all processing parameters and implementation of standardized confidence frameworks. The Schymanski scale for confidence assessment provides a harmonized approach for communicating identification certainty [14]. For peak picking, signal-to-noise thresholds should be optimized and consistently applied, with manual verification of high-priority features. Molecular formula assignment should incorporate multiple scoring algorithms that consider isotopic patterns, elemental probability, and ring and double bond equivalents (RDBE). Implementing open-source computational workflows enhances reproducibility and allows cross-validation between different software platforms, addressing a critical gap in current NTA research [19].
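As one concrete example of a formula-level plausibility check, the ring and double bond equivalent can be computed directly from element counts. The sketch below uses the standard textbook formula and assumes the lowest common valences (N and P trivalent; O and S divalent, so they do not contribute). The function names are hypothetical.

```python
def rdbe(c: int = 0, h: int = 0, n: int = 0,
         halogens: int = 0, p: int = 0) -> float:
    """Ring and double bond equivalents: C - (H + X)/2 + (N + P)/2 + 1.

    Oxygen and sulfur are treated as divalent and therefore omitted.
    """
    return c - (h + halogens) / 2 + (n + p) / 2 + 1

def is_plausible_neutral(value: float) -> bool:
    """A neutral, even-electron molecule has a non-negative integer RDBE."""
    return value >= 0 and float(value).is_integer()

# Benzene (C6H6): one ring plus three double bonds.
print(rdbe(c=6, h=6))             # 4.0
# Caffeine (C8H10N4O2): oxygen does not enter the formula.
print(rdbe(c=8, h=10, n=4))       # 6.0
# A candidate formula with a negative RDBE can be rejected outright.
print(is_plausible_neutral(rdbe(c=2, h=7)))   # False
```

Ions observed in the mass spectrometer often give half-integer values, so a filter applied to ion formulas rather than neutral formulas would need a looser integer test.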

Uncertainty Quantification and Communication

Translating NTA results into actionable information requires clear quantification and communication of uncertainties. Confusion matrices can assess qualitative performance (sample classification, chemical identification) by comparing true positives, false positives, true negatives, and false negatives [14]. For quantitative applications, traditional accuracy and precision metrics should be applied with consideration for additional sources of uncontrolled experimental error [14]. Stakeholder communication should include clear confidence statements using standardized terminology, distinguishing between identified, annotated, and unknown features, with explicit acknowledgment of methodological limitations, such as coverage biases introduced by platform selection (LC vs. GC) and ionization techniques.
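The confusion-matrix assessment described above reduces to counting four outcomes and forming ratios. The sketch below assumes a spiked-sample design in which the set of truly present chemicals is known. The class and function names are hypothetical, and the compound labels are placeholders.

```python
from dataclasses import dataclass

def _ratio(numerator: int, denominator: int) -> float:
    """Return numerator/denominator, or NaN when the denominator is zero."""
    return numerator / denominator if denominator else float("nan")

@dataclass(frozen=True)
class ConfusionCounts:
    tp: int  # reported and truly present
    fp: int  # reported but absent
    tn: int  # not reported and absent
    fn: int  # not reported but present

    @property
    def true_positive_rate(self) -> float:
        return _ratio(self.tp, self.tp + self.fn)

    @property
    def false_positive_rate(self) -> float:
        return _ratio(self.fp, self.fp + self.tn)

    @property
    def false_negative_rate(self) -> float:
        return _ratio(self.fn, self.fn + self.tp)

    @property
    def precision(self) -> float:
        return _ratio(self.tp, self.tp + self.fp)

def confusion_from_sets(reported: set, present: set,
                        candidates: set) -> ConfusionCounts:
    """Count outcomes over a fixed universe of candidate chemicals."""
    return ConfusionCounts(
        tp=len(reported & present),
        fp=len(reported - present),
        tn=len(candidates - reported - present),
        fn=len(present - reported),
    )

candidates = set("ABCDEFGHIJ")       # chemicals the method could report
present = {"A", "B", "C", "D"}       # chemicals actually spiked
reported = {"A", "B", "C", "E"}      # chemicals the workflow reported
counts = confusion_from_sets(reported, present, candidates)
print(counts)
print(counts.true_positive_rate, counts.false_negative_rate)
```

The true negative count depends entirely on how the candidate universe is defined, which is itself a reporting decision worth stating alongside the rates.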

Experimental Protocols for Uncertainty Assessment

Protocol for Confidence Tier Assignment

This protocol establishes a standardized approach for assigning confidence levels to chemical identifications in NTA studies, adapted from community-established guidelines.

Level 1 Identification (Confirmed Structure)

- Acquire MS/MS spectrum of feature of interest

- Analyze authentic reference standard using identical chromatographic and MS conditions

- Confirm match of retention time (RT tolerance ± 0.1 min)

- Verify MS/MS spectral similarity (dot product score > 0.8)

- Record accurate mass measurement (mass error < 5 ppm)

Level 2 Identification (Probable Structure)

- Obtain high-resolution MS/MS spectrum

- Search against mass spectral databases (e.g., mzCloud, MassBank, NIST)

- Require minimum dot product score of 0.7 for spectral match

- Verify molecular formula from accurate mass (error < 5 ppm)

- Assess biological/environmental plausibility of proposed structure
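The dot product score used in the Level 1 and Level 2 criteria is a cosine similarity between two fragment spectra. The sketch below is a deliberately simplified version: it assumes peaks are matched by rounding m/z into fixed-width bins and uses unweighted intensities, whereas library search tools typically apply tolerance-based matching and intensity or m/z weighting. The function names and spectra are illustrative.

```python
import math

Spectrum = list[tuple[float, float]]  # (m/z, intensity) pairs

def binned(spectrum: Spectrum, bin_width: float = 0.01) -> dict[int, float]:
    """Sum intensities of peaks falling into the same m/z bin."""
    bins: dict[int, float] = {}
    for mz, intensity in spectrum:
        key = round(mz / bin_width)
        bins[key] = bins.get(key, 0.0) + intensity
    return bins

def cosine_score(query: Spectrum, reference: Spectrum,
                 bin_width: float = 0.01) -> float:
    """Cosine similarity (0 to 1) between two binned spectra."""
    q = binned(query, bin_width)
    r = binned(reference, bin_width)
    dot = sum(q[k] * r[k] for k in q.keys() & r.keys())
    norm_q = math.sqrt(sum(v * v for v in q.values()))
    norm_r = math.sqrt(sum(v * v for v in r.values()))
    if norm_q == 0.0 or norm_r == 0.0:
        return 0.0
    return dot / (norm_q * norm_r)

# Two spectra sharing their base peak but differing in one fragment.
query = [(100.0, 100.0), (150.0, 50.0)]
reference = [(100.0, 100.0), (200.0, 50.0)]
score = cosine_score(query, reference)
print(round(score, 3))
print(score >= 0.7)   # compare against the Level 2 threshold from the text
```

Fixed bins can split two peaks that lie close together on either side of a bin edge, so the bin width should be chosen with the instrument's mass accuracy in mind.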

Level 3 Identification (Tentative Candidate)

- Determine molecular formula from accurate mass (error < 5 ppm)

- Search compound databases (e.g., PubChem, ChemSpider) using molecular formula

- Prioritize candidates based on likelihood of occurrence

- Report as tentative structure with appropriate uncertainty qualifications
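The tier criteria above can be expressed as a small decision function. The sketch below encodes only the numeric thresholds stated in this protocol; the plausibility assessment for Level 2 remains a manual step. The `Evidence` fields and the choice to return Level 4 whenever the mass error exceeds 5 ppm are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Evidence:
    ppm_error: float                        # signed mass error of the feature
    standard_score: Optional[float] = None  # dot product vs authentic standard
    rt_delta_min: Optional[float] = None    # |RT feature - RT standard|, minutes
    library_score: Optional[float] = None   # dot product vs library spectrum
    has_candidate_structure: bool = False   # database candidate for the formula

def assign_level(e: Evidence) -> int:
    """Return a confidence level (1 to 4) following the protocol thresholds."""
    if abs(e.ppm_error) >= 5.0:
        return 4  # assumption: no formula-based claim without mass accuracy
    if (e.standard_score is not None and e.rt_delta_min is not None
            and e.standard_score > 0.8 and e.rt_delta_min <= 0.1):
        return 1  # confirmed against an authentic standard
    if e.library_score is not None and e.library_score >= 0.7:
        return 2  # probable structure from a library match
    if e.has_candidate_structure:
        return 3  # tentative candidate from a formula search
    return 4      # unknown feature

features = {
    "feature_001": Evidence(ppm_error=1.2, standard_score=0.92, rt_delta_min=0.05),
    "feature_002": Evidence(ppm_error=-2.0, library_score=0.75),
    "feature_003": Evidence(ppm_error=3.0, has_candidate_structure=True),
    "feature_004": Evidence(ppm_error=3.0),
}
for name, evidence in features.items():
    print(name, "-> Level", assign_level(evidence))
```

Keeping the thresholds as explicit constants in one function makes it easier to report them alongside the results, as the harmonization guidance recommends.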

Protocol for Performance Assessment Using Spiked Samples

This procedure quantifies method performance characteristics using chemically spiked samples to establish uncertainty metrics.

Sample Preparation

- Select 20-50 representative compounds spanning diverse physicochemical properties

- Prepare calibration curves in representative sample matrix across 3-5 orders of magnitude

- Spike samples at low, medium, and high concentrations (n=5 each)

- Include unspiked controls (n=5) to assess background levels

Data Acquisition and Processing

- Analyze samples in randomized order to minimize batch effects

- Process data using standardized NTA workflow

- Record detection frequency for each spiked compound

- Calculate accuracy as (measured concentration/true concentration) × 100%

- Determine precision as relative standard deviation (RSD) of replicate measurements
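The detection frequency, accuracy, and precision calculations in the steps above can be sketched for one spiked compound at one concentration level. In this illustration, a non-detect is recorded as `None`, accuracy is computed from the mean of the detected replicates, and all names and values are hypothetical.

```python
import statistics
from typing import Optional

def summarize_replicates(measured: list[Optional[float]],
                         true_conc: float) -> dict[str, float]:
    """Detection frequency, accuracy (%), and RSD (%) for spiked replicates."""
    detected = [v for v in measured if v is not None]
    nan = float("nan")
    summary = {
        "detection_frequency": len(detected) / len(measured) if measured else nan,
        "accuracy_pct": nan,
        "rsd_pct": nan,
    }
    if detected:
        mean = statistics.mean(detected)
        # Accuracy as (measured concentration / true concentration) x 100%.
        summary["accuracy_pct"] = mean / true_conc * 100.0
        if len(detected) >= 2 and mean != 0:
            # Precision as the relative standard deviation of the replicates.
            summary["rsd_pct"] = statistics.stdev(detected) / mean * 100.0
    return summary

# Five replicates spiked at 10 ng/mL; one replicate was not detected.
result = summarize_replicates([7.0, 8.0, None, 9.0, 8.0], true_conc=10.0)
for key, value in result.items():
    print(f"{key}: {value:.2f}")
```

Whether non-detects should be excluded, as here, or substituted with a value such as half the detection limit is a study design choice that affects both metrics and should be reported.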

Performance Metric Calculation

- For qualitative assessment: Calculate false positive rate (FPR) and false negative rate (FNR)

- For semi-quantitative assessment: Determine linearity (R²) and relative error (RE%)

- Establish limit of identification (LOI) as lowest concentration with ≥95% detection rate

- Document all parameters for comparison across laboratories and studies
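The limit of identification defined above can be derived from per-level detection outcomes. The sketch below takes a conservative reading that is an assumption rather than part of the protocol: the LOI is the lowest spiked concentration at which that level and every higher level reach the required detection rate. The function name and data are illustrative.

```python
from typing import Optional

def limit_of_identification(detections_by_conc: dict[float, list[bool]],
                            min_rate: float = 0.95) -> Optional[float]:
    """Lowest concentration whose level, and all higher levels, meet min_rate."""
    loi = None
    # Walk from the highest level downward and stop at the first failure.
    for conc in sorted(detections_by_conc, reverse=True):
        hits = detections_by_conc[conc]
        if not hits or sum(hits) / len(hits) < min_rate:
            break
        loi = conc
    return loi

# Detection outcomes for one compound, five replicates per spiking level.
outcomes = {
    0.1:   [True, True, False, False, False],
    1.0:   [True, True, True, True, False],
    10.0:  [True, True, True, True, True],
    100.0: [True, True, True, True, True],
}
print(limit_of_identification(outcomes))   # 10.0
```

With five replicates per level, a 95% criterion can only be met when all five are detected, so larger replicate numbers are needed if the threshold is to be resolved more finely.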

Visualization of NTA Uncertainty Management

NTA Uncertainty Sources and Relationships: This diagram illustrates the sequential nature of uncertainty propagation throughout the NTA workflow, from sample preparation to final quantification, highlighting specific uncertainty contributors at each stage.

NTA Uncertainty Management Workflow: This workflow diagram outlines key stages in managing NTA uncertainties, highlighting quality control checkpoints (green), high-uncertainty stages requiring special attention (red), and critical reporting phases (blue), with mitigation strategies at each step.

Essential Research Reagent Solutions

Table 3: Key Research Reagents for NTA Uncertainty Management

| Reagent / Material | Function in NTA Workflow | Uncertainty Addressed |
|---|---|---|
| Authentic Analytical Standards | Confirmation of compound identity | Identification uncertainty |
| Stable Isotope-Labeled Internal Standards | Correction for matrix effects and recovery | Quantitative uncertainty |
| Reference Materials (NIST, EPA) | Method validation and benchmarking | Method performance uncertainty |
| Quality Control Pooled Samples | Monitoring instrumental performance | Analytical variability |
| Blank Samples (Method, Procedural) | Contamination identification | False positive uncertainty |
| Retention Time Index Standards | Retention time alignment and prediction | Chromatographic variability |
| Ionization Efficiency Calibrants | Response factor estimation | Semi-quantification uncertainty |

Uncertainty management in non-targeted analysis represents both a fundamental challenge and a critical opportunity for advancing exposure science and drug development research. The uncertainties inherent throughout the NTA workflow, from analytical biases affecting the detectable chemical space to computational limitations in compound identification, necessitate systematic approaches to quality assurance and validation [14] [19]. By implementing the structured frameworks, experimental protocols, and uncertainty quantification strategies outlined in this guide, researchers can enhance the reliability and interpretability of NTA data. The advancing standardization of confidence assessment frameworks and the growing availability of open-source computational tools promise to reduce current limitations, ultimately supporting the transition of NTA from a research tool to a methodology capable of informing regulatory decisions and public health protection. As the field progresses, transparent communication of uncertainties will remain essential for appropriate interpretation and use of NTA results by diverse stakeholders across scientific disciplines.

Non-targeted analysis (NTA) using high-resolution mass spectrometry (HRMS) has emerged as a powerful analytical approach for detecting and identifying unknown and unexpected compounds across diverse sample matrices, including environmental, biological, and food samples [14]. Unlike targeted methods that focus on specific predefined analytes, NTA generates global chemical information, providing a comprehensive characterization of sample composition [14]. This capability makes NTA particularly valuable for discovering novel chemical stressors, retrospectively assessing past exposures through archived samples, and classifying samples based on their chemical profiles [14]. However, this information-rich data presents significant challenges in evaluation and interpretation, necessitating specialized experimental designs and performance assessment approaches distinct from traditional targeted methods.

Inherent uncertainty is a fundamental characteristic of NTA data that differentiates it from targeted analysis [14]. When analysts report a chemical as present through NTA, the compound may actually be absent because an isomer was misidentified; conversely, a reported absence may reflect failed detection rather than true absence [14]. Similarly, sample classification models may lack repeatability over time or transferability between instruments, and concentration estimates often lack confidence intervals, potentially deviating from true values by orders of magnitude [14]. These uncertainties have limited broader adoption of NTA data by decision-makers, creating an urgent need for methods that accurately measure and communicate the extent of uncertainty and its implications for specific use cases. This article defines and explores the three primary NTA study objectives (sample classification, chemical identification, and chemical quantitation) that structure most NTA projects and yield the results most useful for stakeholders [14].

Defining the Three Primary NTA Study Objectives

Sample Classification