Decoding Environmental Contaminants: A PCA Framework for Validating Anthropogenic vs. Natural Sources in Drug Development

Abstract

This article provides a comprehensive framework for using Principal Component Analysis (PCA) to discriminate between anthropogenic and natural source contributions, a critical task in environmental risk assessment for pharmaceutical development. It covers the foundational theory of PCA in source apportionment, details a step-by-step methodological workflow from data preprocessing to model interpretation, and addresses key troubleshooting and optimization strategies for robust analysis. By integrating validation techniques and comparative case studies from recent research, this guide equips scientists and drug development professionals with the tools to accurately identify contamination sources, inform targeted remediation strategies, and ensure the safety of pharmaceutical products and their supply chains.

The Critical Why: Foundational Principles of Source Apportionment with PCA

The Imperative for Source Discrimination in Environmental and Pharmaceutical Contexts

In both environmental science and pharmaceutical development, the ability to accurately discriminate between different sources of materials is paramount. In environmental contexts, this involves distinguishing natural origins from anthropogenic pollution, which is crucial for effective regulation and remediation. In the pharmaceutical industry, it ensures the authenticity, safety, and efficacy of natural products and final drug formulations. This guide explores how Principal Component Analysis (PCA) and its advanced derivatives serve as a powerful, unifying statistical framework for validating these source contributions across disparate fields. PCA achieves this by reducing the dimensionality of complex, multi-variable datasets (like spectral or compositional data), transforming them into a simpler set of components that highlight the greatest patterns of variation, thereby enabling clear visual and statistical separation of different sample origins.

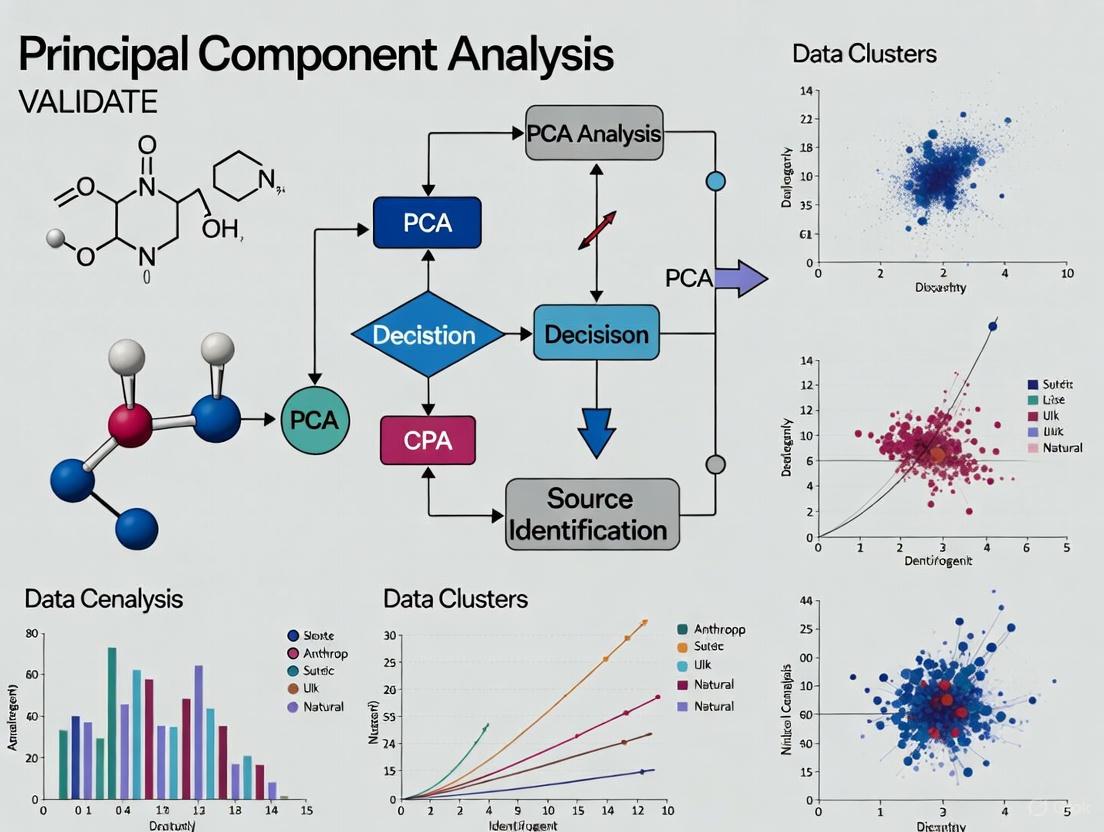

The following diagram illustrates the universal workflow of a source discrimination study, from sample analysis to final validation, which is applicable across both environmental and pharmaceutical contexts.

Comparative Experimental Data and Protocols

The application of PCA for source discrimination is demonstrated effectively through specific case studies in food safety, traditional medicine, and environmental monitoring. The quantitative outcomes from these distinct fields are summarized in the table below for direct comparison.

Table 1: Comparative Performance of PCA-Based Source Discrimination Methods

| Field of Application | Analytical Technique | PCA Variant | Key Discriminatory Variables | Performance Outcome | Reference |
|---|---|---|---|---|---|
| Food Safety (Flour) | Near-Infrared (NIR) Spectroscopy | Comparative PCA (cPCA) | Spectral profiles of talcum powder | Adulteration detected at levels as low as 0.3%; brand-related background factors eliminated | [1] |
| Traditional Medicine (Poria) | Raman Spectroscopy | Multi-matrix Projection PCA | Molecular fingerprint (polysaccharides, triterpenoids) | 97.5% accuracy in geographical origin identification | [2] |
| Environmental Science (Estuaries) | FTIR & Fluorescence Spectroscopy | PCA-APCS-MLR Receptor Model | Functional group composition (e.g., terrestrial, synthetic OM) | >85% compositional variance captured; validated against water quality data | [3] |

Detailed Experimental Protocols

1. Flour Adulteration Identification via NIR and cPCA

This protocol is designed to detect minute, deliberate contamination in food products.

- Sample Preparation: Obtain pure flour samples from different brands. Create adulterated samples by mixing pure flour with talcum powder at known, varying concentrations (e.g., from 0.1% to 5% by weight). Ensure homogeneous mixing.

- Spectral Acquisition: Use a NIR spectrometer to collect spectral data from each sample. A minimum of 20 spectral replicates per concentration level is recommended to ensure statistical robustness [1].

- Data Pre-processing: Apply standard spectral pre-processing techniques such as Standard Normal Variate (SNV) or multiplicative scatter correction to remove physical light-scattering effects and enhance chemical-based spectral features.

- cPCA Modeling: Implement the comparative PCA algorithm. This involves defining a "background" dataset (e.g., spectra from different flour brands) that the model will learn to ignore. The cPCA then effectively focuses on the variance specifically related to the adulterant, dramatically improving sensitivity and allowing detection at levels as low as 0.3% [1].
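The pre-processing and cPCA steps above can be sketched as follows. This is a minimal illustration on synthetic spectra, not the implementation used in [1]: it assumes the contrastive formulation, in which the background covariance is subtracted from the target covariance with a weight `alpha`, and every band shape, noise level, and the value `alpha=10.0` is invented for the example.

```python
import numpy as np

def snv(spectra):
    """Standard Normal Variate: centre and scale each spectrum (row) on its own."""
    spectra = np.asarray(spectra, dtype=float)
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / std

def contrastive_pca(target, background, alpha, n_components=1):
    """Scores along directions with high target variance but low background variance."""
    target_c = target - target.mean(axis=0)
    background_c = background - background.mean(axis=0)
    cov_target = target_c.T @ target_c / (len(target_c) - 1)
    cov_background = background_c.T @ background_c / (len(background_c) - 1)
    # The contrast matrix is symmetric, so eigh applies (eigenvalues ascending).
    eigvals, eigvecs = np.linalg.eigh(cov_target - alpha * cov_background)
    directions = eigvecs[:, np.argsort(eigvals)[::-1][:n_components]]
    return target_c @ directions

# Synthetic stand-in for NIR data (all shapes and magnitudes are made up).
rng = np.random.default_rng(0)
grid = np.linspace(0.0, 1.0, 40)
base_band = np.exp(-((grid - 0.3) / 0.2) ** 2)    # signal common to all flours
brand_band = np.sin(2 * np.pi * grid)             # brand-to-brand variation
talc_band = np.exp(-((grid - 0.7) / 0.03) ** 2)   # narrow adulterant feature

def simulate(talc_fraction):
    n = len(talc_fraction)
    brand = 0.05 * rng.normal(size=(n, 1)) * brand_band
    scatter = rng.uniform(0.8, 1.2, size=(n, 1))  # multiplicative light scattering
    noise = 0.001 * rng.normal(size=(n, grid.size))
    return scatter * (base_band + brand + talc_fraction[:, None] * talc_band) + noise

talc = rng.uniform(0.0, 0.05, size=200)           # 0 to 5% by weight
target = snv(simulate(talc))                      # adulterated samples, mixed brands
background = snv(simulate(np.zeros(200)))         # pure flours, mixed brands

cpca_score = contrastive_pca(target, background, alpha=10.0)[:, 0]
pca_score = contrastive_pca(target, background, alpha=0.0)[:, 0]  # alpha=0 is plain PCA
cpca_corr = abs(np.corrcoef(cpca_score, talc)[0, 1])
pca_corr = abs(np.corrcoef(pca_score, talc)[0, 1])
print(f"|corr| with talc fraction: cPCA {cpca_corr:.2f}, plain PCA {pca_corr:.2f}")
```

In this toy setting the first plain-PCA component follows the brand variation, while the contrastive component tracks the adulterant. With real spectra, `alpha` would need to be tuned, for example by scanning several values and inspecting the resulting projections.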

2. Geographical Origin Tracing of Poria via Raman and Multi-Matrix PCA

This protocol provides a non-destructive method for authenticating the origin of high-value medicinal herbs.

- Sample Collection & Preparation: Source authenticated Poria cocos samples from distinct geographical origins (e.g., Yunnan, Anhui, Shaanxi, Hubei). The samples should be prepared as powders to ensure consistent spectral acquisition.

- Raman Spectroscopy: Use a 785 nm laser excitation Raman spectrometer. Acquire multiple Raman spectra (e.g., 25 spectra per origin) to build a robust training dataset. Reserve an independent set of spectra (e.g., 10 per origin) for model testing [2].

- Multi-Matrix Projection Discrimination:

  - Step 1: Perform PCA separately on the spectral dataset from each geographical origin. This generates a unique eigenvector matrix (a spectral "fingerprint") for each origin.

  - Step 2: For each test spectrum, project it onto all four origin-specific eigenvector matrices, creating four different reconstructed spectra.

  - Step 3: Calculate the Euclidean distance between the original test spectrum and each of its four reconstructions. The geographical origin is assigned based on the reconstruction with the smallest Euclidean distance, indicating the best fit [2].
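The three projection steps can be sketched as below. This is an illustrative reading of the procedure rather than the code from [2]: the per-origin mean centring, the choice of three components, and the simulated single-peak "spectra" are all assumptions made for the example; only the origin names and the 25/10 training/test split come from the protocol above.

```python
import numpy as np

def fit_origin_model(spectra, n_components=3):
    """Step 1: PCA on one origin's spectra; returns its mean and principal axes."""
    mean = spectra.mean(axis=0)
    # Rows of vt are the principal axes of the centred training spectra.
    _, _, vt = np.linalg.svd(spectra - mean, full_matrices=False)
    return mean, vt[:n_components]

def reconstruction_distance(spectrum, model):
    """Steps 2-3: project onto one origin's axes, reconstruct, measure the misfit."""
    mean, axes = model
    reconstruction = mean + ((spectrum - mean) @ axes.T) @ axes
    return np.linalg.norm(spectrum - reconstruction)

def assign_origin(spectrum, models):
    """Assign the origin whose model reconstructs the spectrum most closely."""
    distances = {name: reconstruction_distance(spectrum, m) for name, m in models.items()}
    return min(distances, key=distances.get)

# Simulated spectra: one made-up characteristic band per origin.
rng = np.random.default_rng(1)
grid = np.linspace(0.0, 1.0, 60)
band_centres = {"Yunnan": 0.2, "Anhui": 0.4, "Shaanxi": 0.6, "Hubei": 0.8}

def simulate(centre, n):
    band = np.exp(-((grid - centre) / 0.05) ** 2)
    intensity = rng.normal(1.0, 0.1, size=(n, 1))
    return intensity * band + 0.02 * rng.normal(size=(n, grid.size))

models = {name: fit_origin_model(simulate(c, 25)) for name, c in band_centres.items()}
test_sets = {name: simulate(c, 10) for name, c in band_centres.items()}

hits = [assign_origin(s, models) == name for name, spectra in test_sets.items() for s in spectra]
accuracy = float(np.mean(hits))
print(f"test accuracy on simulated spectra: {accuracy:.2f}")
```

The simulated classes are far better separated than real Raman spectra would be, so the accuracy printed here says nothing about the 97.5% figure reported in [2].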

3. Estuarine Sediment Source Apportionment via Multi-Spectroscopy and PCA-APCS-MLR

This protocol is critical for identifying pollution sources in complex aquatic ecosystems.

- Field Sampling & Extraction: Collect surface sediment samples from various points in an estuary with contrasting land use. In the lab, extract the Water Extractable Organic Matter (WEOM) from each sediment sample.

- Multi-Spectroscopic Analysis:

  - FTIR Spectroscopy: Acquire infrared spectra of the WEOM. Develop and validate novel Infrared-Based Indices (IRIs) designed to be sensitive to functional groups from terrestrial, synthetic, and petroleum-derived organic matter [3].

  - Fluorescence Spectroscopy: Collect fluorescence excitation-emission matrices (EEMs) of the same extracts to gain complementary information on dissolved organic matter composition.

- Receptor Modeling: Integrate the spectroscopic data using the PCA-APCS-MLR model. The PCA step reduces the multi-spectral data to a few key components, each interpreted as a source (e.g., anthropogenic vs. natural). The component scores are then converted to Absolute Principal Component Scores (APCS) by subtracting the score of an artificial sample in which every variable is zero, and the Multiple Linear Regression (MLR) step regresses the total sediment organic matter on the APCS to quantify the contribution of each source [3].
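A compact sketch of the APCS-MLR calculation is given below, using the commonly described zero-sample construction. It is an assumption-laden illustration, not the pipeline from [3]: the function name `apcs_mlr`, the two simulated sources, and their profiles are hypothetical, and no rotation is applied to the components.

```python
import numpy as np

def apcs_mlr(indices, total, n_sources=2):
    """Return regression coefficients and per-sample source contributions."""
    mean = indices.mean(axis=0)
    std = indices.std(axis=0, ddof=1)
    z = (indices - mean) / std
    eigvals, eigvecs = np.linalg.eigh(np.cov(z, rowvar=False))
    order = np.argsort(eigvals)[::-1][:n_sources]
    # Scale the eigenvectors so that every component score has unit variance.
    coeffs = eigvecs[:, order] / np.sqrt(eigvals[order])
    scores = z @ coeffs
    # Score of an artificial sample in which every measured variable equals zero.
    zero_scores = ((0.0 - mean) / std) @ coeffs
    apcs = scores - zero_scores
    design = np.column_stack([np.ones(len(apcs)), apcs])
    beta, *_ = np.linalg.lstsq(design, total, rcond=None)
    contributions = apcs * beta[1:]          # one column per retained component
    return beta, contributions

# Simulated data: two hypothetical sources observed through five spectral indices.
rng = np.random.default_rng(5)
n_samples = 120
strengths = rng.gamma(shape=2.0, scale=1.0, size=(n_samples, 2))
profiles = np.array([[1.0, 0.8, 0.1, 0.2, 0.5],
                     [0.1, 0.2, 1.0, 0.9, 0.5]])
indices = strengths @ profiles + 0.02 * rng.normal(size=(n_samples, 5))
total = strengths.sum(axis=1) + 0.02 * rng.normal(size=n_samples)

beta, contributions = apcs_mlr(indices, total)
fitted = beta[0] + contributions.sum(axis=1)
r_squared = 1 - ((total - fitted) ** 2).sum() / ((total - total.mean()) ** 2).sum()
print(f"R^2 of the MLR fit: {r_squared:.3f}")
```

Unrotated components generally mix the physical sources, so in practice a rotation step and a check against independent tracers would precede any interpretation of the individual contribution columns.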

The Scientist's Toolkit: Essential Reagents and Materials

Successful implementation of source discrimination studies relies on a set of key reagents, materials, and software tools.

Table 2: Essential Research Toolkit for Source Discrimination Studies

| Tool Category | Specific Item / Technique | Primary Function in Source Discrimination |
|---|---|---|
| Analytical Instruments | Near-Infrared (NIR) Spectrometer | Rapid, non-destructive profiling of chemical composition in solids and liquids [1]. |
| Analytical Instruments | Raman Spectrometer | Provides molecular fingerprint data based on vibrational modes; ideal for non-destructive analysis [2]. |
| Analytical Instruments | Fourier-Transform Infrared (FTIR) Spectrometer | Identifies functional groups and organic compounds in environmental and material samples [3]. |
| Analytical Instruments | Mass Spectrometer (e.g., LC-MS, FT-ICR-MS) | Provides definitive identification and quantification of individual compounds; used for model validation [3]. |
| Chemometric Software | PCA & Comparative PCA (cPCA) | Core algorithm for dimensionality reduction and isolating target variance from complex backgrounds [1]. |
| Chemometric Software | PCA-APCS-MLR Receptor Model | A hybrid model that apportions contributions from multiple sources to a mixture [3]. |
| Experimental Design | Design of Experiments (DoE) | A systematic, statistical approach for optimizing analytical methods and evaluating their robustness [4] [5]. |
| Reference Materials | Authenticated Geographical Samples | Crucial for building and validating classification models for product origin [2]. |
| Reference Materials | Analytical Grade Solvents | Essential for sample preparation and extraction without introducing contaminating signals. |

The comparative data and methodologies presented in this guide show that PCA-based statistical frameworks can deliver robust source discrimination. From ensuring food and pharmaceutical authenticity to apportioning environmental pollution, these techniques turn complex analytical data into actionable, validated insights. The success of methods like cPCA and multi-matrix projection in fields as different as food safety, herbal medicine, and estuarine monitoring illustrates their versatility, giving researchers and regulators a practical means to validate natural and anthropogenic source contributions.

A critical challenge across scientific disciplines is untangling mixed signals from multiple sources. In environmental science, this means distinguishing human-made (anthropogenic) pollution from naturally occurring (geogenic) elements; in pharmacology, it involves separating a drug's therapeutic effects from background biological noise. Principal Component Analysis (PCA) is a powerful, unsupervised multivariate statistical technique for dimensionality reduction that is well suited to this kind of source identification. It works by transforming a large set of correlated variables into a smaller, more manageable set of uncorrelated variables called Principal Components (PCs), which capture the most significant patterns and variances in the data [6] [7]. This transformation allows researchers to visualize complex datasets, identify hidden structures, and apportion observed effects to their underlying sources, making it a valuable tool for validating anthropogenic versus natural contributions in complex systems.

The core objective of PCA is to distill high-dimensional data into its essential features without significant loss of information. This process reveals the dominant patterns of variance, where the first principal component (PC1) captures the direction of maximum variance in the dataset, and each subsequent component captures the next highest variance under the constraint of being orthogonal to preceding components [6]. By analyzing which original variables contribute most heavily to each component, researchers can infer the distinct processes or sources—such as industrial emissions, volcanic activity, or specific biological pathways—that generate these characteristic patterns within the data [8].

Fundamental Principles of PCA

Mathematical Foundation and Workflow

Principal Component Analysis operates on a fundamental principle of linear algebra, transforming original variables into a new coordinate system. The mathematical procedure begins with the covariance matrix (or correlation matrix for standardized data), which expresses the relationships between all pairs of variables in the dataset [9]. The algorithm then performs an eigen decomposition of this matrix, calculating the eigenvectors (which define the directions of the new component axes) and eigenvalues (which represent the amount of variance explained by each component) [9]. The resulting principal components are linear combinations of the original variables, weighted by their contributions to each pattern of variance [10].

The standard workflow for implementing PCA follows a structured sequence: (1) Data Standardization - transforming variables to have zero mean and unit variance to prevent dominance by variables with larger scales; (2) Covariance Matrix Computation - calculating how variables vary together; (3) Eigenvalue Decomposition - extracting eigenvectors and eigenvalues; (4) Component Selection - choosing the number of components to retain based on variance explanation; (5) Interpretation - analyzing component loadings to infer meaningful patterns and sources [8]. This systematic approach yields components that are mutually uncorrelated and ordered by the amount of variance they capture; whether a given component corresponds to a distinct physical source still has to be judged from its loadings.
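The five steps can be traced in a few lines of NumPy. The sketch below runs on a made-up dataset (two latent sources observed through six variables on very different scales); the 90% cumulative-variance cut-off used for component selection is one common rule of thumb and an assumption here, not a value taken from [8].

```python
import numpy as np

rng = np.random.default_rng(42)

# Hypothetical data: 200 samples, 6 variables driven by two latent sources.
latent = rng.normal(size=(200, 2))
mixing = rng.normal(size=(2, 6))
data = latent @ mixing + 0.1 * rng.normal(size=(200, 6))
data = data * np.array([1.0, 10.0, 100.0, 1.0, 10.0, 100.0])  # mixed measurement scales

# (1) Standardize every variable to zero mean and unit variance.
z = (data - data.mean(axis=0)) / data.std(axis=0, ddof=1)

# (2) Covariance of standardized data, which equals the correlation matrix.
cov = np.cov(z, rowvar=False)

# (3) Eigen-decomposition; eigh returns ascending eigenvalues, so reorder.
eigvals, eigvecs = np.linalg.eigh(cov)
order = np.argsort(eigvals)[::-1]
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

# (4) Keep the smallest number of components reaching 90% cumulative variance.
explained = eigvals / eigvals.sum()
n_keep = int(np.searchsorted(np.cumsum(explained), 0.90) + 1)

# (5) Loadings (eigenvector coefficients) and scores for interpretation.
loadings = eigvecs[:, :n_keep]
scores = z @ loadings

print("explained variance ratio:", np.round(explained, 3))
print("components retained:", n_keep)
```

Because the variables are standardized first, the eigenvalues sum to the number of variables, and the variance of each score column equals the corresponding eigenvalue.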

Key Distinctions from Related Methods

PCA is often confused with Factor Analysis (FA), as both are dimension-reduction techniques, but they possess fundamental philosophical and mathematical differences. PCA creates new variables (components) as linear combinations of the original measured variables, with the goal of explaining maximum total variance in the data. In contrast, Factor Analysis posits latent variables (factors) that are assumed to cause the observed patterns in the measured variables, distinguishing between common variance (shared across variables) and unique variance (specific to each variable plus error) [10]. This distinction becomes crucial when selecting the appropriate method for source identification, with PCA generally being preferred for data reduction and pattern recognition, while FA is more suited for testing theoretical models of underlying constructs.

Beyond FA, PCA also differs from other ordination methods like Principal Coordinate Analysis (PCoA) and Non-Metric Multidimensional Scaling (NMDS). While PCA operates directly on the original data matrix (typically using Euclidean distance), PCoA starts with a distance matrix and can accommodate various distance measures, making it more flexible for ecological community data [6]. NMDS further differs by preserving only the rank-order of dissimilarities between samples, making it suitable for non-linear relationships [6]. The table below summarizes these key distinctions:

Table: Comparison of Multivariate Dimension Reduction Techniques

| Characteristic | PCA | PCoA | NMDS | Factor Analysis |
|---|---|---|---|---|
| Input Data | Original feature matrix | Distance matrix | Distance matrix | Original feature matrix |
| Distance Measure | Covariance/Correlation matrix | Any distance measure | Any distance measure | Covariance/Correlation matrix |
| Variance Model | Explains total variance | Preserves relative distances | Preserves rank-order | Distinguishes common & unique variance |
| Primary Strength | Optimal variance capture | Flexible distance measures | Handles non-linear relationships | Models theoretical constructs |
| Typical Applications | Feature extraction, source identification | Ecological diversity, microbiome studies | Complex ecological communities | Psychology, social sciences |
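The link between PCA and PCoA can be checked numerically: when PCoA is run on plain Euclidean distances, its coordinates coincide with the PCA scores up to a sign flip of each axis. The sketch below demonstrates this on random data; it implements classical PCoA by double-centring the squared distance matrix and is meant only as an illustration of the relationship.

```python
import numpy as np

rng = np.random.default_rng(7)
x = rng.normal(size=(30, 5)) @ rng.normal(size=(5, 5))   # arbitrary correlated data
xc = x - x.mean(axis=0)

# PCA scores from the SVD of the centred feature matrix.
u, s, vt = np.linalg.svd(xc, full_matrices=False)
pca_scores = u[:, :2] * s[:2]

# PCoA: start from pairwise squared Euclidean distances only.
sq_dist = ((x[:, None, :] - x[None, :, :]) ** 2).sum(axis=2)
n = len(x)
centering = np.eye(n) - np.ones((n, n)) / n
gram = -0.5 * centering @ sq_dist @ centering             # double-centred matrix
eigvals, eigvecs = np.linalg.eigh(gram)
order = np.argsort(eigvals)[::-1][:2]
pcoa_scores = eigvecs[:, order] * np.sqrt(eigvals[order])

# The two ordinations agree up to the arbitrary sign of each axis.
same = np.allclose(np.abs(pca_scores), np.abs(pcoa_scores), atol=1e-8)
print("PCoA on Euclidean distances matches PCA:", same)
```

With a non-Euclidean dissimilarity (Bray-Curtis, for example) the two methods no longer coincide, which is where PCoA's flexibility becomes relevant.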

Comparative Performance of PCA

PCA Versus Clustering and Other Statistical Methods

The performance advantages of PCA become evident when compared to alternative statistical approaches like clustering algorithms. In a large-scale neuroimaging study comparing PCA with K-means clustering on identical data from 2,436 participants, PCA demonstrated superior goodness of fit when predicting diagnosis and body mass index (BMI) [11]. The study found that clustering methods artificially categorized continuous variables like age and BMI, while PCA more naturally represented the continuous gradients inherent in biological systems [11]. This advantage is particularly important when analyzing complex systems where boundaries between groups are ambiguous and variation occurs along spectra rather than discrete categories.

The performance of PCA also varies significantly depending on the analytical context, particularly in genetic association studies. Research has revealed that in SNP-set settings (testing multiple genetic variants against a single outcome), principal components with large eigenvalues tend to provide highest power, whereas in multiple phenotype settings (testing a single variant against multiple outcomes), higher-order components with smaller eigenvalues often yield better performance [12]. This counterintuitive finding highlights the importance of understanding context-specific PCA properties rather than applying the method generically across different study designs.

Application-Specific Performance Metrics

The effectiveness of PCA can be quantified through application-specific performance metrics across diverse fields. In financial portfolio risk analysis, PCA implementation improved risk assessment model accuracy from an adjusted R² of 0.62 to 0.88, while reducing portfolio risk exposure by 3.4% through more informed asset selection [13]. The correlation between PCA-extracted factors and portfolio performance increased from 0.82 to 0.88 over a four-year period, demonstrating its growing predictive alignment with market dynamics [13].

In process performance evaluation for manufacturing, PCA-based capability indices enabled robust assessment of multivariate process data without requiring the often-violated assumption of multivariate normality [7]. By transforming correlated quality characteristics into independent components, PCA provided accurate process capability metrics that would be unreliable using traditional multivariate methods, particularly for non-normal data distributions [7]. This flexibility across data types and structures further underscores PCA's utility as a robust analytical tool.

Table: Quantitative Performance Improvements Using PCA Across Disciplines

| Field/Application | Performance Metric | Without PCA | With PCA | Improvement |
|---|---|---|---|---|
| Financial Risk Analysis | Model Accuracy (Adj. R²) | 0.62 | 0.88 | +42% (relative) |
| Financial Risk Analysis | Portfolio Risk Exposure | Baseline | -3.4% | Risk Reduction |
| Genetic Studies | Statistical Power | Varies by component | Optimal component selection | Context-dependent |
| Neuroimaging | Goodness of Fit (vs. Clustering) | Lower for clustering | Superior for PCA | Better clinical correlation |
| Process Manufacturing | Data Distribution Assumptions | Multivariate normal required | No distribution assumption | Greater robustness |

Experimental Protocols for PCA Implementation

Standardized Workflow for Environmental Source Apportionment

A robust PCA protocol for environmental source identification requires meticulous data preparation and analytical standardization. A comprehensive study analyzing over 7,000 topsoil samples in Southern Italy established a standardized workflow that begins with Normal Score Transformation (NST) to stabilize variance and handle extreme outliers [8]. This preprocessing step pulls extreme values back toward normal ranges, creating a more suitable dataset for multivariate analysis without losing the meaningful information contained in outliers [8]. The transformed data then undergoes PCA with Varimax rotation, which maximizes the simplicity of the factor structure by enhancing the separation of variable loadings onto components, making interpretation more straightforward [8].

The experimental protocol continues with the spatialization of component scores, mapping them geographically to identify spatial patterns associated with different contamination sources [8]. In the Campania case study, this approach successfully discriminated between four primary independent sources controlling geochemical variability: two distinct volcanic districts, a siliciclastic component, and an anthropogenic component [8]. The integration of RGB composite maps further refined this differentiation by visualizing the coexistence and relative predominance of each source component across the region [8]. This comprehensive protocol provides a reproducible framework for environmental source apportionment in complex, anthropized regions.
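The two numerical steps of this protocol, the Normal Score Transformation and the Varimax rotation, can be sketched as follows. This is a generic illustration and not the code used in [8]: the rank-based transform with the plotting position (rank - 0.5)/n, the SVD-based Varimax iteration, and the simulated log-normal "concentrations" are assumptions chosen for the example, and loadings are scaled by the square root of the eigenvalue, as is usual before rotation.

```python
from statistics import NormalDist

import numpy as np

def normal_score_transform(values):
    """Map values to standard-normal quantiles by rank (ties broken by order)."""
    values = np.asarray(values, dtype=float)
    ranks = np.argsort(np.argsort(values)) + 1       # ranks 1..n
    probs = (ranks - 0.5) / len(values)              # strictly inside (0, 1)
    inv_cdf = NormalDist().inv_cdf
    return np.array([inv_cdf(p) for p in probs])

def varimax(loadings, max_iter=100, tol=1e-8):
    """Orthogonal Varimax rotation using the common SVD-based iteration."""
    n_vars, n_comp = loadings.shape
    rotation = np.eye(n_comp)
    objective = 0.0
    for _ in range(max_iter):
        rotated = loadings @ rotation
        target = rotated ** 3 - rotated * (rotated ** 2).sum(axis=0) / n_vars
        u, s, vt = np.linalg.svd(loadings.T @ target)
        rotation = u @ vt
        if s.sum() < objective * (1 + tol):          # no further improvement
            break
        objective = s.sum()
    return loadings @ rotation, rotation

# Skewed synthetic data: two groups of variables, each sharing a hidden factor.
rng = np.random.default_rng(11)
raw = rng.lognormal(mean=0.0, sigma=1.0, size=(300, 6))
raw[:, :3] *= rng.lognormal(size=(300, 1))
raw[:, 3:] *= rng.lognormal(size=(300, 1))

transformed = np.column_stack([normal_score_transform(col) for col in raw.T])
corr = np.corrcoef(transformed, rowvar=False)
eigvals, eigvecs = np.linalg.eigh(corr)
order = np.argsort(eigvals)[::-1][:2]
unrotated = eigvecs[:, order] * np.sqrt(eigvals[order])
rotated, rotation = varimax(unrotated)

compressed = normal_score_transform([1.0, 2.0, 3.0, 1000.0])
print("outlier 1000 maps to a normal score of", round(float(compressed[-1]), 2))
print("rotated loadings:\n", np.round(rotated, 2))
```

The rotation is orthogonal, so it redistributes variance between the retained components without changing how much of each variable they explain jointly.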

Diagram: Experimental Workflow for PCA-Based Source Apportionment

Protocol for Molecular Dynamics Analysis in Drug Discovery

In drug discovery, PCA provides crucial insights into protein dynamics and ligand interactions that simpler metrics like RMSD (Root Mean Square Deviation) cannot capture. The standard protocol begins with Molecular Dynamics (MD) simulations of protein-ligand complexes, typically running for 50-200 nanoseconds to adequately sample conformational space [9]. The atomic coordinates from trajectory frames are then used to construct a 3N × 3N covariance matrix, where N represents the number of atoms analyzed [9]. Diagonalization of this matrix produces eigenvalues (representing the variance along each collective coordinate) and eigenvectors (the principal components themselves), which are sorted in descending order of explained variance [9].

The analytical phase involves projecting the original trajectories onto the first two or three principal components to create 2D or 3D maps of conformational space [9]. These projections reveal distinct protein conformational states and transitions that might be obscured in conventional analyses. For example, a PCA might reveal that a protein samples three distinct macrostates during a simulation, while RMSD analysis of the same data suggests only a single, stable conformation [9]. This capability makes PCA particularly valuable for identifying allosteric effects and understanding how different ligand modifications influence protein flexibility and binding site geometry in Free Energy Perturbation (FEP) studies [9].
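The covariance construction and projection described above can be illustrated without any MD software. The sketch below substitutes a synthetic "trajectory" for real simulation output and assumes the frames are already aligned to a common reference (no rotational or translational fitting is performed); the atom count, the single collective mode, and the three artificial states are invented for the example.

```python
import numpy as np

rng = np.random.default_rng(3)
n_frames, n_atoms = 300, 10

# Synthetic trajectory: a reference structure displaced along one collective mode.
reference = rng.normal(size=(n_atoms, 3))
mode = rng.normal(size=(n_atoms, 3))
mode /= np.linalg.norm(mode)
state = np.repeat([-1.0, 0.0, 1.0], n_frames // 3)        # three artificial macrostates
thermal = 0.05 * rng.normal(size=(n_frames, n_atoms, 3))
trajectory = reference + 2.0 * state[:, None, None] * mode + thermal

# Flatten each frame to a 3N vector and build the 3N x 3N covariance matrix.
coords = trajectory.reshape(n_frames, 3 * n_atoms)
centred = coords - coords.mean(axis=0)
covariance = centred.T @ centred / (n_frames - 1)

# Diagonalize and sort by descending variance.
eigvals, eigvecs = np.linalg.eigh(covariance)
order = np.argsort(eigvals)[::-1]
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

# Project every frame onto the first two principal components.
projection = centred @ eigvecs[:, :2]
pc1_fraction = eigvals[0] / eigvals.sum()
print(f"PC1 captures {pc1_fraction:.1%} of the positional variance")
```

Plotting `projection[:, 0]` against `projection[:, 1]` would show three clusters along PC1 in this toy case, the kind of state separation that a single RMSD trace can hide.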

Essential Research Toolkit for PCA Implementation

Software and Computational Tools

Successful PCA implementation requires appropriate computational tools tailored to specific research domains. For general statistical analysis, platforms like R (with the built-in prcomp function and packages such as FactoMineR and factoextra), Python (with scikit-learn, pandas, and NumPy), and MATLAB provide robust, flexible environments for PCA computation and visualization [9]. Specialized software includes MDAnalysis for molecular dynamics trajectories [9], Flare for drug discovery applications [9], and IMSL (International Mathematics and Statistics Library) for manufacturing process capability analysis [7].

Many commercial statistical packages like SPSS, SAS, and JMP offer user-friendly PCA implementations with comprehensive visualization options, making the technique accessible to non-programmers [10]. The choice of software often depends on the scale of data, need for customization, integration with existing workflows, and domain-specific requirements. For extremely large datasets (e.g., genomic data), computational efficiency becomes a critical consideration, with some methods exhibiting complexity of O(n²d) for n samples and d dimensions [6].

Domain-Specific Reagents and Materials

The experimental inputs for PCA vary significantly across application domains, though all share the common requirement of high-quality, multidimensional data. The table below details essential "research reagents" and materials required for PCA across different fields:

Table: Essential Research Reagents and Materials for PCA Across Disciplines

| Field | Essential "Reagents"/Inputs | Function in PCA Workflow | Technical Specifications |
|---|---|---|---|
| Environmental Science | Topsoil Samples | Primary source of geochemical data | Composite sampling, 100×100m grid [8] |
| Environmental Science | ICP-MS Analysis | Quantifies elemental composition | Measures heavy metals, trace elements [8] |
| Environmental Science | Normal Score Transformation | Preprocessing for skewed data | Stabilizes variance, handles outliers [8] |
| Drug Discovery | Molecular Dynamics Trajectories | Raw protein-ligand coordinate data | 50-200ns simulation, all-atom resolution [9] |
| Drug Discovery | Crystal Structures | Reference conformations | High-resolution (<2.5Å) protein-ligand complexes [9] |
| Drug Discovery | MDAnalysis Toolkit | Trajectory analysis and PCA | Python package for MD analyses [9] |
| Neuroimaging | T1-Weighted MRI Scans | Cortical thickness measurements | Standardized protocols (ENIGMA) [11] |
| Neuroimaging | Clinical/Demographic Data | Correlation with components | Diagnosis, medication, BMI, age [11] |
| Manufacturing | Product Quality Measurements | Multivariate process data | Multiple dimensions per unit (e.g., weight, dimensions) [7] |
| Manufacturing | Engineering Specifications | Tolerance limits for components | Upper/lower specification limits [7] |

Diagram: Logical Relationships in PCA Workflow from Data to Interpretation

Case Studies in Source Validation

Mercury Pollution: Quantifying Natural vs. Anthropogenic Contributions

A compelling application of PCA in environmental source apportionment comes from research on atmospheric mercury at Canadian rural sites, where scientists combined long-term measurements with Positive Matrix Factorization (PMF) to quantify source contributions [14]. The study revealed that natural surface emissions dominated total gaseous mercury (TGM) at all three monitoring sites, accounting for 71-77.5% of annual TGM, while anthropogenic contributions showed consistent declining trends [14]. PCA-assisted analysis further delineated specific source types within these broad categories, identifying contributions from terrestrial re-emissions (24-26%), regional mercury emissions (11% at one site), oceanic re-emissions (6-8%), shipping emissions (10%), and local combustion (a few percent) [14].

This detailed source profiling enabled temporal analysis showing increasing contributions from natural surface Hg emissions (1.8% per year at one site) resulting from declining anthropogenic emissions and increasing oceanic re-emissions [14]. The study demonstrated striking seasonal patterns, with Hg pool contributions greater in cold seasons, while wildfire and surface re-emission contributions became more significant in warm seasons [14]. Such a nuanced picture of source dynamics is difficult to obtain without multivariate source apportionment, which highlights the value of these techniques in developing targeted environmental policies that address the most consequential emission sources.

Heavy Metal Contamination: Industrial Source Identification

In heavy metal contamination assessment, PCA enables precise identification of industrial sources through advanced statistical approaches. A study of soil heavy metals in Jiaozuo City, China, combined PCA with random forest models optimized by genetic algorithms to quantify specific influencing factors for pollution sources [15]. The analysis revealed three principal components representing distinct pollution sources with contribution rates of 47.2%, 33.3%, and 19.5%, respectively [15]. The first source was dominated by industrial activities, with factory density (27.7%) and distance from factory (36.3%) identified as the main factors, loading heavily with Cr, Cu, Mn, and Ni [15].

The second source represented a mixed natural/transportation influence, primarily affected by vegetation index (37.8%), road network density (16.8%), and proximity to roads (15.3%) [15]. The third source was linked to agricultural activities, with cultivated land density contributing 39.1% and As showing a high load (91.1%) [15]. This precise quantification of specific contributing factors moves beyond traditional source apportionment, which merely estimates percentage contributions of sources, providing instead a novel perspective for heavy metal source identification under complex environmental conditions [15].

Drug Discovery: Protein Conformational Analysis

In pharmaceutical research, PCA reveals subtle protein conformational changes induced by ligand binding that directly impact drug efficacy. Research has demonstrated that PCA of molecular dynamics trajectories can identify allosteric effects in dimeric proteins, where ligand binding at one site induces conformational changes at distant sites [9]. In one case study, PCA revealed that when only one binding site in a dimeric protein was occupied, the protein underwent significant restructuring, while occupancy of both sites resulted in a narrower conformational space closer to the initial structure [9]. This allosteric effect would have remained undetected using conventional analysis methods like RMSD.

PCA further supports drug discovery by identifying outlier compounds in congeneric series during Free Energy Perturbation (FEP) studies [9]. By projecting structures from FEP transformations onto PCA maps defined by reference simulations, researchers can quickly identify compounds that induce unusual protein conformations, potentially indicating undesirable binding modes or induced-fit effects [9]. This application allows medicinal chemists to prioritize compounds with optimal binding characteristics and understand the structural basis for energy predictions, ultimately accelerating the drug optimization process.

Principal Component Analysis stands as a versatile, powerful method for uncovering hidden source signatures across diverse scientific domains. Its mathematical foundation in covariance structure and eigen decomposition provides a robust framework for distilling complex, multivariate datasets into interpretable patterns that reveal underlying processes. Its demonstrated success in separating overlapping signals, whether anthropogenic and natural contributions to mercury pollution and soil heavy metal contamination or distinct conformational states of a protein, supports its role in source attribution studies.

As research datasets grow in dimensionality and complexity, PCA's importance as an analytical tool continues to increase. Future developments will likely focus on integration with machine learning approaches, enhanced computational efficiency for massive datasets, and improved visualization techniques for communicating complex multivariate relationships. The consistent performance of PCA across fields demonstrates its fundamental utility for researchers seeking to understand the hidden signatures embedded within their data, making it an indispensable component of the modern scientific toolkit.

Principal Component Analysis (PCA) is a powerful statistical technique for reducing the dimensionality of complex datasets, widely used to disentangle and quantify natural and anthropogenic pollution sources. This guide details the interpretation of core PCA outputs—loadings, scores, and variance—for accurate source identification, providing a structured comparison with alternative receptor modeling approaches.

Theoretical Foundations of PCA for Source Apportionment

Core Concepts and Definitions

Principal Component Analysis (PCA) is a dimensionality-reduction technique that transforms complex, high-volume datasets into a set of new, uncorrelated variables called principal components (PCs). These components are linear combinations of the original variables and are designed to successively maximize variance, thereby preserving as much statistical information as possible from the original data [16] [17]. The adaptability of PCA makes it particularly valuable for environmental forensics, where it helps identify underlying patterns in pollution data that signify different emission sources.

The technique operates by solving an eigenvalue/eigenvector problem derived from the covariance or correlation matrix of the original dataset [17]. In the context of source identification, each principal component potentially represents a distinct emission source or process, with the relative contributions of original chemical species to these components revealed through loadings, and the influence of this source across different samples revealed through scores [18] [19].

Mathematical Framework

Mathematically, PCA begins with a data matrix \( \mathbf{X} \) containing observations on \( p \) numerical variables for each of \( n \) entities or samples. PCA seeks linear combinations of the columns of \( \mathbf{X} \) with maximum variance, given by \( \mathbf{X}\mathbf{a} \), where \( \mathbf{a} \) is a vector of constants [17]. The variance of such a linear combination is \( \text{var}(\mathbf{X}\mathbf{a}) = \mathbf{a}'\mathbf{S}\mathbf{a} \), where \( \mathbf{S} \) is the sample covariance matrix. Identifying the linear combination with maximum variance equates to finding a \( p \)-dimensional vector \( \mathbf{a} \) that maximizes the quadratic form \( \mathbf{a}'\mathbf{S}\mathbf{a} \) subject to the unit-norm constraint \( \mathbf{a}'\mathbf{a} = 1 \), without which the variance could be made arbitrarily large [17].

The solution to this constrained optimization problem leads to the eigenvector equation: \[ \mathbf{S}\mathbf{a} = \lambda\mathbf{a} \] where \( \lambda \) is a Lagrange multiplier and also an eigenvalue of the covariance matrix \( \mathbf{S} \) [17]. The eigenvectors \( \mathbf{a}_k \) represent the PC loadings, while the projections of the original data onto these eigenvectors, \( \mathbf{X}\mathbf{a}_k \), are the PC scores. The eigenvalues \( \lambda_k \) represent the variances of the principal components, indicating how much variance each PC captures from the original dataset [18] [17].
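The eigenvector formulation above can be illustrated with a minimal NumPy sketch. The function name `pca_eig` and the synthetic data are assumptions made for illustration only; they do not come from the cited studies.

```python
import numpy as np

def pca_eig(X):
    """PCA by eigendecomposition of the sample covariance matrix S."""
    Xc = X - X.mean(axis=0)                 # mean-center each variable (column)
    S = np.cov(Xc, rowvar=False)            # p x p sample covariance matrix
    eigvals, eigvecs = np.linalg.eigh(S)    # eigh suits symmetric S; ascending order
    order = np.argsort(eigvals)[::-1]       # reorder so the largest variance comes first
    eigvals = eigvals[order]
    loadings = eigvecs[:, order]            # columns are the eigenvectors a_k
    scores = Xc @ loadings                  # projections X a_k
    return eigvals, loadings, scores

# Synthetic data (assumed): 200 samples, 4 variables driven by 2 latent factors
rng = np.random.default_rng(0)
latent = rng.normal(size=(200, 2))
mixing = np.array([[1.0, 0.8, 0.0, 0.1],
                   [0.0, 0.2, 1.0, 0.9]])
X = latent @ mixing + 0.1 * rng.normal(size=(200, 4))

eigvals, loadings, scores = pca_eig(X)
print("eigenvalues:", np.round(eigvals, 3))
print("variance of scores:", np.round(scores.var(axis=0, ddof=1), 3))
```

In this sketch the sample variance of each score column matches the corresponding eigenvalue, which is the property the text describes. The sign of each eigenvector is arbitrary, so loadings may come out flipped between software packages.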

Interpreting Key PCA Outputs for Source Identification

Loadings: Deciphering Source Profiles

PCA loadings, contained within the eigenvectors, are crucial for interpreting the meaning of each principal component in source identification studies. Loadings indicate the relative weight and direction of each original variable's contribution to a principal component [18]. In environmental source apportionment, each variable typically represents a specific chemical species or marker measured in the samples.

To interpret each principal component as a potential pollution source, researchers examine both the magnitude and direction (sign) of the loadings [18]. The larger the absolute value of a loading coefficient, the more important the corresponding variable is in calculating that component. For instance, in a study of PM2.5 sources, a component with high loadings for lead (Pb) and zinc (Zn) was interpreted as representing metals industry emissions, while a component with high loadings for elemental carbon (EC) and nitrogen dioxide (NO2) was identified as motor vehicle traffic [19]. The direction of the loadings indicates whether variables correlate positively (same direction) or negatively (opposite directions) within the component, potentially revealing complex source interactions or transformation processes.

Scores: Mapping Source Contributions

PCA scores represent the values that each individual sample would score on a given principal component [17]. These scores are calculated as linear combinations of the original data determined by the PC loadings [18]. In practical terms, scores indicate the relative contribution or influence of the source represented by a PC across different samples, locations, or time periods.

Scores can be visualized spatially to identify geographical patterns of source impacts or temporally to understand seasonal variations in source strength [19]. For example, in a nationwide PM2.5 source apportionment, plotting scores for a "biomass burning" component revealed highest impacts in the U.S. Northwest, while "residual oil combustion" showed elevated scores in Northeastern cities and major seaports [19]. Score plots also help identify outliers—samples with unusual source characteristics that may warrant further investigation [20].

Variance Explained: Determining Source Significance

The variance explained by each principal component indicates its importance in capturing the overall variability of the dataset. Eigenvalues represent the variances of the principal components, with larger eigenvalues indicating components that explain greater portions of the total variance [18]. The proportion of variance explained by each PC is calculated by dividing its eigenvalue by the total sum of all eigenvalues.

The cumulative proportion of variance explained by consecutive PCs guides researchers in deciding how many components (sources) to retain for interpretation [18]. In the representative output in Table 1, the first three principal components together explain 84.1% of the total variance. The Kaiser criterion (retaining components with eigenvalues >1, which is meaningful when PCA is run on the correlation matrix so that each standardized variable contributes unit variance) provides a common retention threshold, though the adequacy of variance explained ultimately depends on the specific application [18].

Table 1: Representative Eigenanalysis Output for Source Identification Studies

| Principal Component | Eigenvalue | Proportion | Cumulative Proportion |
|---|---|---|---|
| PC1 | 3.5476 | 0.443 (44.3%) | 0.443 (44.3%) |
| PC2 | 2.1320 | 0.266 (26.6%) | 0.710 (71.0%) |
| PC3 | 1.0447 | 0.131 (13.1%) | 0.841 (84.1%) |
| PC4 | 0.5315 | 0.066 (6.6%) | 0.907 (90.7%) |
| PC5 | 0.4112 | 0.051 (5.1%) | 0.958 (95.8%) |
| PC6 | 0.1665 | 0.021 (2.1%) | 0.979 (97.9%) |
| PC7 | 0.1254 | 0.016 (1.6%) | 0.995 (99.5%) |
| PC8 | 0.0411 | 0.005 (0.5%) | 1.000 (100.0%) |

Source: Adapted from Minitab's PCA interpretation guide [18]
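The proportions in Table 1 follow directly from the eigenvalues. The short sketch below recomputes them and applies the Kaiser criterion; only the eigenvalues are taken from the table, and the variable names are illustrative.

```python
import numpy as np

# Eigenvalues copied from Table 1
eigenvalues = np.array([3.5476, 2.1320, 1.0447, 0.5315,
                        0.4112, 0.1665, 0.1254, 0.0411])

proportion = eigenvalues / eigenvalues.sum()    # share of total variance per PC
cumulative = np.cumsum(proportion)              # running total across PCs
n_kaiser = int(np.sum(eigenvalues > 1.0))       # components with eigenvalue > 1

for k, (ev, p, c) in enumerate(zip(eigenvalues, proportion, cumulative), start=1):
    print(f"PC{k}: eigenvalue={ev:.4f}  proportion={p:.3f}  cumulative={c:.3f}")
print("Components retained under the Kaiser criterion:", n_kaiser)
```

The eigenvalues sum to 8, as expected for a correlation-matrix PCA of eight standardized variables, and three components exceed the eigenvalue threshold of 1.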

Comparative Analysis with Alternative Receptor Models

PCA vs. Absolute Principal Component Analysis (APCA)

While standard PCA identifies source profiles through loadings, it does not directly quantify mass contributions. Absolute Principal Component Analysis (APCA) extends PCA to provide quantitative source apportionment by scaling components using measured species concentrations [19]. In APCA, component scores are rescaled to represent absolute contributions to the total mass, enabling direct quantification of source impacts.

In a nationwide PM2.5 study, APCA was employed to quantify contributions from various sources after initial identification through standard PCA with varimax rotation [19]. This two-stage approach—using PCA for qualitative source identification followed by APCA for quantitative apportionment—provides a comprehensive framework for both understanding pollution sources and quantifying their contributions to ambient concentrations.

PCA-APCS-MLR: An Integrated Framework

The PCA-Absolute Principal Component Scores-Multiple Linear Regression (PCA-APCS-MLR) model represents a further refinement that integrates PCA with regression techniques for enhanced source quantification. This approach was successfully applied in a recent study deciphering natural and anthropogenic sources in estuarine sediment organic matter using multi-spectroscopic fingerprinting [3].

The PCA-APCS-MLR framework involves several stages: (1) PCA identifies potential sources and their profiles; (2) APCS calculates absolute component scores representing source contributions in each sample; and (3) MLR quantifies the relationship between source contributions and total mass, enabling precise apportionment. This integrated approach captured >85% of the compositional variance across all study sites, demonstrating its effectiveness for complex environmental systems [3].

Performance Comparison of Receptor Modeling Approaches

Table 2: Comparison of PCA-Based Receptor Models for Source Apportionment

| Model Type | Key Features | Strengths | Limitations | Typical Applications |
|---|---|---|---|---|
| Standard PCA | Identifies source profiles through loadings; orthogonal components maximize variance [17] | No prior knowledge of sources required; reduces data dimensionality; visualizable through biplots [20] | Qualitative rather than quantitative; rotational ambiguity in interpretation | Initial exploratory analysis; hypothesis generation; identifying source types [19] |
| APCA | Extends PCA with absolute scoring for mass apportionment; uses measured species concentrations [19] | Quantitative mass apportionment; utilizes same statistical foundation as PCA | Requires measured mass data; sensitive to outliers and missing data | Quantifying source contributions to PM2.5 [19]; national-scale source apportionment |
| PCA-APCS-MLR | Integrated framework combining PCA, absolute scores, and multiple linear regression [3] | High explanatory power (>85% variance); quantitatively links sources to concentrations | Complex implementation; requires validation with independent datasets | Estuarine sediment sourcing [3]; complex environmental systems with mixed sources |

Workflow and Methodological Protocols

Standardized PCA Protocol for Source Identification

The workflow for applying PCA in source identification studies proceeds through the three stages described below.

[Figure: PCA Workflow for Source Identification]

Data Preparation and Standardization: The initial stage involves collecting and preparing the compositional data, typically including trace elements, ions, organic carbon (OC), elemental carbon (EC), and other source markers. Continuous variables should be standardized (mean-centered and scaled by standard deviation) to prevent variables with larger ranges from dominating the analysis [16]. In source apportionment studies, it's sometimes beneficial to exclude secondary components (sulfates, nitrates, organic carbon) from the initial PCA to more clearly discern primary emission sources, incorporating them later in mass apportionment [19].

Computational Core: The mathematical heart of PCA involves calculating the covariance or correlation matrix and performing eigendecomposition to extract eigenvalues and eigenvectors [17]. The covariance matrix captures how variables deviate from the mean together, revealing relationships between different chemical species that suggest common sources. Eigendecomposition transforms this matrix into eigenvectors (loadings) and eigenvalues (variances) that define the principal components [16] [17].

Interpretation and Validation: The final stage involves selecting meaningful components (often using Kaiser criterion of eigenvalue >1 or scree plot analysis), interpreting loadings to identify source profiles, and analyzing scores to understand spatial/temporal patterns of source impacts [18]. Validation may involve comparing PCA results with independent measurements or applying quantitative extensions like APCA or PCA-APCS-MLR for mass apportionment [19] [3].

Advanced Protocol: PCA-APCS-MLR for Quantitative Apportionment

For researchers requiring quantitative source contributions, the PCA-APCS-MLR protocol provides a comprehensive approach:

Perform PCA on Standardized Data: Conduct PCA on the standardized dataset of compositional markers, retaining components with eigenvalues >1 [18] [3].

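Varimax Rotation: Apply varimax rotation to simplify the component structure. The rotation drives each loading toward either a large absolute value or near zero, so that each species loads strongly on as few components as possible, which enhances interpretability.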

Calculate Absolute Principal Component Scores (APCS): Compute APCS by introducing a hypothetical "zero" sample and scaling the scores relative to this baseline, transforming them into absolute contributions [3].

Multiple Linear Regression (MLR): Regress total measured mass concentrations against the APCS to obtain regression coefficients that represent the mass contribution of each source [3].

Validation: Validate source contribution estimates using independent indicators, such as comparing modeled anthropogenic contributions with bottom water quality measurements in estuarine studies [3].
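The steps above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the implementation used in [3]: the varimax step is omitted for brevity, the function name `pca_apcs_mlr` is hypothetical, and the data are synthetic.

```python
import numpy as np

def pca_apcs_mlr(C, total, n_components):
    """Sketch of PCA-APCS-MLR without rotation.

    C: (n_samples, n_species) concentrations; total: (n_samples,) total mass.
    """
    mean, std = C.mean(axis=0), C.std(axis=0, ddof=1)
    Z = (C - mean) / std                                # standardized concentrations
    eigvals, eigvecs = np.linalg.eigh(np.corrcoef(C, rowvar=False))
    keep = np.argsort(eigvals)[::-1][:n_components]     # largest eigenvalues first
    W = eigvecs[:, keep] / np.sqrt(eigvals[keep])       # scoring coefficients (unit-variance scores)
    scores = Z @ W
    z0 = (0.0 - mean) / std                             # hypothetical zero-concentration sample
    apcs = scores - z0 @ W                              # absolute principal component scores
    A = np.column_stack([np.ones(len(total)), apcs])    # intercept column plus APCS
    coef, *_ = np.linalg.lstsq(A, total, rcond=None)    # multiple linear regression
    contributions = apcs * coef[1:]                     # mass attributed to each component per sample
    return apcs, coef, contributions

# Synthetic example (assumed data): two sources, five species
rng = np.random.default_rng(1)
strengths = rng.uniform(0.0, 10.0, size=(300, 2))
profiles = np.array([[1.0, 0.8, 0.1, 0.0, 0.3],
                     [0.0, 0.2, 1.0, 0.9, 0.4]])
C = strengths @ profiles + 0.05 * rng.normal(size=(300, 5))
total = strengths @ np.array([1.0, 2.0]) + 0.5 * rng.normal(size=300)

apcs, coef, contributions = pca_apcs_mlr(C, total, n_components=2)
predicted = coef[0] + contributions.sum(axis=1)
r2 = 1.0 - np.sum((total - predicted) ** 2) / np.sum((total - total.mean()) ** 2)
print(f"R^2 of the regression: {r2:.3f}")
```

Because no rotation is applied here, the unrotated components are not guaranteed to align with individual physical sources, and some APCS values may be negative. In practice the rotated components and the regression intercept (the unexplained mass) should both be inspected before the contributions are interpreted.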

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Analytical Tools for PCA-Based Source Apportionment

| Category | Specific Tools/Reagents | Function in Source Identification |
|---|---|---|
| Chemical Speciation Networks | U.S. EPA CSN (Chemical Speciation Network) data [19] | Provides standardized, nationwide PM2.5 composition data for multivariate analysis |
| Molecular Tracers | Fatty acids, fatty alcohols, saccharides, lignin and resin products, sterols [21] | Serve as molecular markers for specific sources (e.g., biomass burning, terrestrial organics) |
| Spectroscopic Tools | FTIR (Fourier Transform Infrared) spectroscopy, Fluorescence spectroscopy [3] | Provides fingerprinting capabilities for organic matter source discrimination |
| Statistical Software | R programming language, MATLAB, Minitab, SPSS, XLSTAT [16] | Performs PCA calculations, eigenvalue decomposition, and visualization |
| Validation Techniques | Ultrahigh-resolution mass spectrometry (FT-ICR-MS) [3] | Confirms chemical relevance of identified sources through molecular correlation |
| Ancillary Data | Bottom water quality datasets, meteorological data [3] | Provides independent validation of source contribution estimates |

Application in Anthropogenic vs. Natural Source Discrimination

Case Study: Global Dust Emissions

PCA and related multivariate techniques have proven particularly valuable in quantifying the contributions of natural and anthropogenic dust sources across different climatic regions. A global simulation study revealed that while natural dust sources (primarily located in hyper-arid and arid regions like the Sahara and Arabian Desert) contributed 81.0% of global dust emissions, anthropogenic sources still accounted for a significant 19.0% [22]. This study demonstrated distinct spatial patterns: natural dust emissions dominated in arid regions with emission fluxes of 1-50 μg m⁻² s⁻¹, while anthropogenic dust emissions concentrated in semi-arid, sub-humid, and humid regions with generally lower fluxes of 0.1-10 μg m⁻² s⁻¹ [22].

The complex interplay between natural and anthropogenic sources was particularly evident in semi-arid regions, where mixed land cover types (grasslands, urban areas, croplands, open shrub lands) complicated simple discrimination. These regions accounted for 42.99% of global anthropogenic dust emissions, highlighting the importance of sophisticated statistical approaches like PCA for unraveling multiple source contributions in complex environments [22].

Case Study: Organic Aerosol Pollution in Urban Environments

In a comprehensive analysis of organic aerosols in Nanchang, Central China, receptor modeling approaches helped clarify the relative contributions of primary emissions and secondary formations to urban OA pollution. The results demonstrated that anthropogenic sources were the dominant determinant, contributing approximately 89% of primary organic carbon (POC) and primary organic aerosols (POA), and 60% of secondary organic carbon (SOC) and secondary organic aerosols (SOA) [21]. This clear discrimination between anthropogenic and natural influences, along with the quantification of primary versus secondary contributions, showcases the power of multivariate approaches in urban pollution studies.

Seasonal variations revealed further complexity: biogenic emissions exerted stronger influence during spring and summer, while anthropogenic emissions dominated in autumn and winter [21]. Such temporal patterns, identifiable through PCA score analysis, provide crucial information for targeted seasonal air quality management strategies.

Case Study: Estuarine Sediment Organic Matter

A recent innovative application of PCA-APCS-MLR successfully deciphered natural and anthropogenic sources in estuarine sediment organic matter across four South Korean estuaries with contrasting land use [3]. The study developed novel infrared-based indices (IRIs) from diagnostic FTIR absorbance features to capture source-specific functional group compositions linked to terrestrial, synthetic, and petroleum-derived organic matter.

The integrated framework, combining spectroscopic fingerprinting with receptor modeling, captured >85% of the compositional variance at all sites, providing a cost-effective alternative to more expensive molecular tools for routine source tracking in estuarine systems impacted by diverse anthropogenic pressures [3]. This approach demonstrates how PCA-based methods can be adapted and enhanced for specific environmental compartments and research questions.

The interpretation of PCA loadings, scores, and variance explained provides a robust foundation for identifying and apportioning pollution sources across diverse environmental contexts. When standard PCA is extended through approaches like APCA and PCA-APCS-MLR, it transitions from a qualitative exploratory tool to a quantitative method capable of discriminating between natural and anthropogenic contributions with high precision. The consistent application of these methods across global dust emissions, urban organic aerosols, and estuarine sediments demonstrates their versatility and effectiveness in addressing one of environmental science's most persistent challenges: reliably attributing pollution to its origins for more targeted and effective management.

In environmental and geochemical research, accurately distinguishing between anthropogenic (human-induced) and geogenic (naturally occurring) sources represents a fundamental challenge with significant implications for environmental risk assessment and remediation planning [8] [23]. The concept of "source end-members" refers to the definitive chemical profiles of pure contamination sources, which enable researchers to quantitatively apportion contributions from different sources in mixed environmental samples [24]. This distinction is particularly crucial in geochemically complex regions such as ore regions with historical mining activities, where naturally elevated concentrations of potentially toxic elements (PTEs) overlap with anthropogenic contamination [23]. Without robust methodological approaches to differentiate these sources, environmental assessments may misattribute contamination, leading to ineffective remediation strategies and misallocated resources.

Principal Component Analysis (PCA) has emerged as a powerful multivariate statistical technique for discerning patterns in complex environmental datasets and facilitating this critical distinction [8] [25]. By transforming correlated variables into a set of uncorrelated principal components, PCA captures the underlying structure of geochemical data, allowing researchers to identify distinct element groupings that reflect specific geochemical processes or sources [8]. This technical workflow provides a standardized, reproducible approach for characterizing anthropogenic and geogenic profiles across diverse environmental contexts, from soils and sediments to atmospheric particulate matter [8] [26].

Theoretical Framework and Key Concepts

Defining Geogenic and Anthropogenic End-Members

Geogenic sources derive from natural geological processes and exhibit chemical compositions reflecting local lithology, mineralogy, and weathering patterns. These natural backgrounds can be highly variable, with some regions exhibiting naturally elevated concentrations of potentially toxic elements due to geochemical haloes around mineralized zones [23]. In contrast, anthropogenic sources stem from human activities such as industrial production, mining, smelting operations, agricultural practices, and waste disposal, often introducing distinct chemical signatures into the environment [8] [23].

The fundamental principle of end-member mixing analysis leverages differences in chemical properties of environmental samples to quantify contributions from various sources without introducing man-made tracers [24]. This approach requires comprehensive characterization of potential source materials through detailed chemical analysis, recognizing that chemical compositions of end-members can vary both spatially and temporally [24]. In strongly anthropized regions, multiple independent sources often contribute to environmental contamination, requiring sophisticated statistical approaches to disentangle their respective contributions [8].

The Role of Principal Component Analysis in Source Discrimination

Principal Component Analysis serves as a dimensionality reduction technique that transforms correlated environmental variables into uncorrelated principal components, each representing linear combinations of original variables that capture maximum variance in the dataset [8]. The application of rotation methods such as Varimax produces a simpler structure, making it easier to associate element groupings with specific geochemical processes or sources [8]. The spatialization of PCA component scores can reveal primary independent sources controlling geochemical variability across a region, as demonstrated in the Campania region of Southern Italy where PCA successfully identified two distinct volcanic districts plus siliciclastic and anthropogenic components [8].
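To make the effect of Varimax rotation concrete, the sketch below uses a widely circulated SVD-based iteration for the varimax criterion. The function names and the small loading matrix are illustrative assumptions; statistical packages may differ in normalization (for example Kaiser normalization) and convergence settings.

```python
import numpy as np

def varimax(L, gamma=1.0, max_iter=100, tol=1e-8):
    """Rotate a (p x k) loading matrix toward simple structure (varimax when gamma=1)."""
    p, k = L.shape
    R = np.eye(k)                                   # start from the identity rotation
    d = 0.0
    for _ in range(max_iter):
        LR = L @ R
        grad = L.T @ (LR ** 3 - (gamma / p) * LR @ np.diag(np.sum(LR ** 2, axis=0)))
        u, s, vt = np.linalg.svd(grad)
        R = u @ vt                                  # nearest orthogonal matrix to the gradient
        d_new = s.sum()
        if d_new < d * (1 + tol):                   # stop once the objective stalls
            break
        d = d_new
    return L @ R, R

def varimax_criterion(L):
    """Sum over components of the variance of squared loadings."""
    return float(np.sum(np.var(L ** 2, axis=0)))

# Assumed example: a simple two-component structure, deliberately blurred by a 30-degree rotation
L0 = np.array([[0.9, 0.0], [0.8, 0.1], [0.1, 0.7], [0.0, 0.9]])
theta = np.deg2rad(30.0)
Q = np.array([[np.cos(theta), -np.sin(theta)],
              [np.sin(theta),  np.cos(theta)]])
L = L0 @ Q

rotated, R = varimax(L)
print("criterion before:", round(varimax_criterion(L), 4))
print("criterion after: ", round(varimax_criterion(rotated), 4))
```

The rotation is orthogonal, so each variable's communality (the row sum of squared loadings) is unchanged; only the distribution of loading across components changes. Column order and signs of the rotated loadings are arbitrary.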

PCA applications in environmental geochemistry have proven particularly valuable for examining spatial distribution of elements in soils [8], discriminating contributions from different sources [25], and identifying key variables for environmental monitoring purposes [25]. The interpretability of PCA results depends heavily on appropriate data pre-treatment and understanding of local geological and anthropogenic contexts [25].

Analytical Approaches and Methodological Framework

Critical Constituents for End-Member Characterization

Robust end-member characterization requires analysis of comprehensive constituent groups that provide diagnostic information about potential sources. Based on extensive literature review of tracers useful for oil and gas activities and other industrial processes, the following constituent groups provide critical information for distinguishing anthropogenic and geogenic sources [24]:

Table 1: Key Analytical Constituents for End-Member Characterization

| Constituent Group | Specific Parameters | Source Discrimination Utility |
|---|---|---|
| Dissolved Gases | Methane concentrations, carbon isotopic composition of methane (δ13C), hydrogen isotopic composition of methane (δ2H) | Distinguishes thermogenic (oil and gas sources) vs. biogenic (microbial processes) sources; identifies oxidation processes [24] |
| Major and Trace Elements | Major ions (Ca, Mg, Na, K, Cl, SO4), trace elements (As, Pb, Zn, Cu, Ti, Fe) | Element ratios (e.g., Pb/Ti, As/Fe) distinguish anthropogenic inputs from geogenic backgrounds; salinity sources [24] [23] [26] |
| Isotopic Tracers | Stable isotopes of water (δ2H, δ18O), dissolved carbon (δ13C), strontium (87Sr/86Sr), boron (δ11B), lithium (δ7Li) | Determine sources of water and salts; calculate groundwater ages; distinguish contamination sources [24] |
| Noble Gases | Helium (He), neon (Ne), argon (Ar) isotopic ratios | Identify atmospheric vs. deep subsurface sources; detect fluid injection impacts; determine recharge locations [24] |
| Organic Compounds | Volatile organic compounds (VOCs), polycyclic aromatic hydrocarbons (PAHs), dissolved organic carbon (DOC) fractions | Differentiate petroleum sources vs. other organic carbon sources; indicators of industrial activities [24] |
| Radioactive Elements | Radium (Ra), uranium (U) isotopes | Effective tracers of fluids from zones of oil and gas deposits; natural radioactive decay series [24] |

Experimental Design and Sampling Strategies

Comprehensive end-member characterization requires strategic sampling approaches that account for both spatial and temporal variability:

Vertical Profiling: Comparative analysis of topsoils (0-20 cm) and subsoils (40-80 cm) enables discrimination between surface anthropogenic inputs and natural geogenic backgrounds [23]. Subsoils typically reflect local geochemical backgrounds, while topsoils integrate both geogenic and anthropogenic contributions, with their difference indicating anthropogenic contamination [23].

Reference Sampling: Collection of samples from known anthropogenic sources (industrial emissions, mining waste, agricultural runoff) and representative geological materials (bedrock, unweathered parent material) provides essential reference end-members [23].

Temporal Sampling: For atmospheric particulates or dynamic water systems, time-series sampling captures temporal variations in source contributions, essential for understanding seasonal patterns or response to specific events [26].

Density and Scale: High-density sampling across geological and anthropogenic gradients ensures adequate representation of spatial heterogeneity, particularly in geochemically complex areas [8] [23]. The Campania region study analyzed over 7000 topsoil samples across 13,600 km² to adequately characterize regional patterns [8].

Laboratory Analytical Methods

Advanced analytical techniques are required to measure the comprehensive suite of constituents necessary for end-member characterization:

Table 2: Essential Analytical Methods for End-Member Characterization

| Analytical Technique | Measured Parameters | Application in Source Discrimination |
|---|---|---|
| ICP-MS/OES | Major and trace element concentrations | Elemental fingerprints for different source materials; calculation of element ratios [23] |
| Isotope Ratio Mass Spectrometry | Stable isotope ratios (C, H, O, Sr, B, Li) | Natural tracers of specific geological formations and anthropogenic processes [24] |
| Gas Chromatography with Combustion-IRMS | Carbon and hydrogen isotopic composition of methane | Distinguishes thermogenic vs. biogenic methane sources; identifies methane oxidation [24] |
| GC-MS | Volatile and semi-volatile organic compounds | Molecular markers for specific industrial activities or petroleum sources [24] |
| Gamma Spectrometry | Naturally occurring radioactive materials | Tracers for fluids from specific geological formations [24] |
| Ion Chromatography | Major anions and cations | Salinity sources; water-rock interaction indicators [24] |

Data Pre-Treatment and Statistical Analysis

Data Pre-Treatment for PCA

Appropriate data pre-treatment is essential for meaningful PCA results, as different pre-treatment methods can significantly influence outputs and interpretation [25]:

Normalization Techniques: Geochemical data often exhibit non-normal distributions with outliers that can strongly influence statistical analysis [8]. Normal Score Transformation (NST) stabilizes variance in datasets, pulling extreme outliers back to normal ranges and making data more suitable for multivariate analysis [8]. Alternative approaches include Box-Cox transformation and log-transformation, though results can vary moderately between methods [8].

Grain Size Normalization: For sediment datasets, granulometric normalization reduces the overwhelming influence of grain size on metal variability, allowing other factors including mineralogy and anthropogenic sources to be identified more clearly [25].

Outlier Management: Identification and removal of outlying samples is critical, as a small number of high/low magnitude samples can create factors that do not represent the majority of the data [25]. Statistical approaches such as the use of correlation matrices rather than transformations can help reduce outlier influence [25].

Centering and Scaling: Performing PCA on a correlation matrix corrects for magnitude and scale differences between variables measured in different units [25]. The choice of data center (sample means vs. control group means) should align with experimental design objectives [27].
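The pre-treatment steps above can be sketched as follows. This is one common rank-based form of a normal score transform followed by z-scoring; it is an assumption that this resembles the NST used in [8], whose exact implementation is not described here. Function names and data are illustrative.

```python
import numpy as np
from statistics import NormalDist

def normal_score_transform(x):
    """Rank-based normal scores for a 1-D array (assumes no tied values)."""
    x = np.asarray(x, dtype=float)
    n = len(x)
    ranks = np.argsort(np.argsort(x)) + 1          # ranks 1..n
    p = (ranks - 0.5) / n                          # plotting positions strictly inside (0, 1)
    inv_cdf = NormalDist().inv_cdf                 # standard normal quantile function
    return np.array([inv_cdf(float(pi)) for pi in p])

def zscore(X):
    """Mean-center each column and scale it to unit sample standard deviation."""
    X = np.asarray(X, dtype=float)
    return (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

# Synthetic skewed concentrations (assumed) with one extreme outlier
rng = np.random.default_rng(2)
x = rng.lognormal(mean=0.0, sigma=1.0, size=500)
x[0] = 1.0e4
nst = normal_score_transform(x)
print("raw max:", x.max(), " transformed max:", round(float(nst.max()), 2))

# PCA on z-scored data is equivalent to PCA on the correlation matrix
X = rng.lognormal(size=(100, 3)) * np.array([1.0, 100.0, 0.01])
Z = zscore(X)
print("column standard deviations after scaling:", Z.std(axis=0, ddof=1))
```

The transform preserves the rank order of samples while bounding the influence of the outlier. Tied values, which are common for measurements at detection limits, would need an explicit tie-handling rule that this sketch does not include.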

PCA Implementation and Interpretation

The PCA workflow involves multiple stages from data preparation to interpretation:

Component Selection: The number of meaningful components is determined through scree plot analysis, evaluating eigenvalues, and considering the percentage of variance explained [8]. In environmental applications, the first few components typically capture the major sources of variability, with subsequent components representing noise or minor influences [25].

Source Interpretation: Factor loadings indicate the correlation between original variables and principal components, revealing element associations that reflect specific geochemical processes or sources [8] [25]. For example, associations of Pb, Zn, and Cu typically indicate anthropogenic mining sources, while associations of Al, Ti, and Fe reflect natural lithogenic sources [23]. Factor scores indicate how strongly individual samples associate with each component, facilitating identification of samples with similar composition and potential sources [25].

Spatialization: Mapping PCA component scores across study areas reveals spatial patterns of source influences, highlighting areas dominated by specific anthropogenic or geogenic sources [8]. RGB composite maps of multiple component scores can further refine differentiation, emphasizing the coexistence or predominance of one component over another [8].
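As a small illustration of the RGB composite idea, the sketch below rescales three component-score columns to the range 0 to 1 so they can serve as red, green, and blue channels. It covers only the channel scaling; spatial interpolation onto a map grid, as would be needed for the maps in [8], is outside its scope, and the function name and data are assumptions.

```python
import numpy as np

def scores_to_rgb(scores):
    """Min-max rescale the first three score columns to [0, 1] as R, G, B channels."""
    s = np.asarray(scores, dtype=float)[:, :3]
    lo, hi = s.min(axis=0), s.max(axis=0)
    span = np.where(hi > lo, hi - lo, 1.0)         # guard against a constant column
    return (s - lo) / span

# Synthetic component scores (assumed) for 50 sampling locations
rng = np.random.default_rng(3)
scores = rng.normal(size=(50, 4))
rgb = scores_to_rgb(scores)
print(rgb[:3])
```

A location that plots as strongly red would be dominated by the first component, while mixed colors indicate coexisting influences. Min-max scaling is sensitive to extreme scores, so percentile clipping may be preferable for real data.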

Case Studies and Applications

Distinguishing Mining Contamination in the Ore Mountains

In the Ore Mountains (Czech Republic), a region with extensive historical mining and smelting activities, researchers employed empirical cumulative distribution functions (ECDFs) to distinguish geogenic and anthropogenic contributions to soil contamination by As, Cu, Pb, and Zn [23]. The approach combined detailed topsoil and subsoil sampling with element ratio analysis (As/Fe, Pb/Ti) to account for natural variability in soil composition. The study found that local backgrounds for As/Fe and Pb/Ti were naturally elevated (5.7-9.8 times and 2.1-2.7 times higher than global averages, respectively) due to geochemical haloes around ore veins [23]. Through ECDF analysis, researchers quantified anthropogenic contributions as approximately 16% and 12% for As/Fe and 17% and 14% for Pb/Ti in the two study areas, corresponding to topsoil enrichment of approximately 15-14 mg kg⁻¹ for As and 35-42 mg kg⁻¹ for Pb [23].

Regional Geochemical Mapping in Campania, Italy

A large-scale study in Campania (Southern Italy) applied PCA to over 7000 topsoil samples across 13,600 km² to identify sources controlling geochemical variability [8]. The methodology applied Normal Score Transformation to stabilize variance before conducting PCA with Varimax rotation [8]. The spatialization of four selected component scores revealed four primary independent sources: two distinct volcanic districts, a siliciclastic component, and an anthropogenic component [8]. RGB composite maps further refined this differentiation, demonstrating the value of PCA for identifying regional-scale geochemical patterns in complex geological settings with significant anthropogenic pressure [8].

Atmospheric Particulate Source Apportionment in Beijing

A two-year sampling study of total suspended particles (TSP) in Beijing used element ratios (Pb/Ti) to distinguish between periods dominated by geogenic or anthropogenic pollution [26]. The research demonstrated that chemical composition combined with meteorological data could reflect specific influences of distinct aerosol sources, with considerable variation in source contributions over the course of the year [26]. The interactions between aerosols from different sources were numerous, highlighting the complexity of source apportionment in urban atmospheres with multiple contamination sources [26].

Advanced Techniques and Methodological Considerations

Uncertainty Assessment in PCA Results

The application of bootstrap methods such as the Truncated Total Bootstrap (TTB) procedure enables assessment of uncertainty in PCA results [28]. This approach simulates multiple "virtual panels" from original data to investigate uncertainty in paired comparisons between samples [28]. Accounting for mutual dependencies in bootstrap-derived results prevents misleading conclusions that can arise when treating results from the same virtual panel as independent data [28]. Visualization of uncertainty regions for each sample facilitates more robust interpretation of differences between anthropogenic and geogenic sources.
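The general idea of resampling-based uncertainty can be sketched with an ordinary nonparametric bootstrap of the explained-variance proportions. This is explicitly not the Truncated Total Bootstrap procedure of [28], which is more elaborate; it is a simpler stand-in with hypothetical function names and synthetic data.

```python
import numpy as np

def explained_variance_ratio(X):
    """Proportion of variance per component for a correlation-matrix PCA."""
    eigvals = np.linalg.eigvalsh(np.corrcoef(X, rowvar=False))[::-1]   # descending
    return eigvals / eigvals.sum()

def bootstrap_evr(X, n_boot=500, seed=0):
    """Percentile 95% intervals for the explained-variance proportions."""
    rng = np.random.default_rng(seed)
    n = X.shape[0]
    reps = np.empty((n_boot, X.shape[1]))
    for b in range(n_boot):
        idx = rng.integers(0, n, size=n)           # resample rows (samples) with replacement
        reps[b] = explained_variance_ratio(X[idx])
    return np.percentile(reps, [2.5, 97.5], axis=0)

# Synthetic data (assumed): 150 samples, 4 variables driven by 2 latent factors
rng = np.random.default_rng(4)
latent = rng.normal(size=(150, 2))
X = latent @ np.array([[1.0, 0.8, 0.0, 0.1],
                       [0.0, 0.2, 1.0, 0.9]]) + 0.3 * rng.normal(size=(150, 4))

point = explained_variance_ratio(X)
ci = bootstrap_evr(X)
for k in range(X.shape[1]):
    print(f"PC{k + 1}: {point[k]:.3f}  (95% interval {ci[0, k]:.3f} to {ci[1, k]:.3f})")
```

Bootstrapping loadings or scores rather than eigenvalues requires aligning component signs and order across replicates, which this sketch does not attempt.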

Element Ratios and Diffuse Contamination Assessment

Element ratios normalized to conservative lithogenic elements (e.g., Pb/Ti, As/Fe, Zn/Al) provide powerful tools for distinguishing anthropogenic contributions from natural geochemical variability [23] [26]. This approach accounts for natural variations in soil composition due to differences in mineralogy and texture [23]. The assessment of diffuse contamination—widespread low-level contamination often challenging to detect—is particularly facilitated by ECDF analysis of element ratios in topsoil and subsoil pairs [23]. This method enables identification of diffuse contamination through systematic shifts in ECDF curves that affect most samples in a dataset rather than creating distinct outliers [23].
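A minimal sketch of the ECDF comparison is shown below, using synthetic paired Pb/Ti ratios in which the topsoil values are shifted upward by a constant factor to mimic diffuse contamination. The data, the 1.2 factor, and the use of a median ratio as a summary are assumptions for illustration, not the quantification method of [23].

```python
import numpy as np

def ecdf(values):
    """Return sorted values and their empirical cumulative probabilities."""
    x = np.sort(np.asarray(values, dtype=float))
    y = np.arange(1, len(x) + 1) / len(x)
    return x, y

# Synthetic paired samples (assumed): subsoil background and shifted topsoil
rng = np.random.default_rng(5)
subsoil_ratio = rng.lognormal(mean=-4.0, sigma=0.4, size=400)               # Pb/Ti in subsoil
topsoil_ratio = 1.2 * subsoil_ratio * rng.lognormal(0.0, 0.05, size=400)    # systematic upward shift

x_sub, y_sub = ecdf(subsoil_ratio)
x_top, y_top = ecdf(topsoil_ratio)

median_shift = np.median(topsoil_ratio) / np.median(subsoil_ratio)
print(f"topsoil/subsoil median ratio: {median_shift:.2f}")
```

Plotting `y_sub` against `x_sub` and `y_top` against `x_top` on a shared logarithmic axis would show the whole topsoil curve displaced to the right, the pattern the text associates with diffuse contamination. Point contamination would instead alter only the upper tail.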

Multi-Proxy Statistical Approaches

While PCA represents a powerful standalone technique, its effectiveness increases when integrated with complementary statistical approaches:

End-Member Mixing Analysis (EMMA): Chemical analyses of "end-member" samples enable quantification of each end-member's contribution to groundwater or soil samples, using differences in chemical properties without introducing man-made tracers [24].

Empirical Cumulative Distribution Functions (ECDFs): Comparison of ECDFs for potentially toxic elements and lithogenic elements in topsoils and subsoils facilitates distinction between natural topsoil enrichment, point contamination, and diffuse contamination [23].

Cluster Analysis: Combined with PCA, cluster analysis identifies groups of samples with similar chemical composition, potentially representing areas influenced by similar sources [28].
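
The end-member mixing idea in the list above reduces to a small linear problem: a sample's tracer concentrations are modeled as a weighted sum of end-member compositions, with weights summing to one. The sketch below solves that problem by least squares for three hypothetical end-members and three hypothetical tracers; the compositions, the function name `mixing_fractions`, and the soft sum-to-one weighting are assumptions for illustration, not the procedure of [24].

```python
import numpy as np

# Hypothetical end-member compositions (rows) for three tracers (columns).
end_members = np.array([
    [10.0, 2.0, 0.5],   # geogenic background
    [40.0, 9.0, 0.8],   # anthropogenic source A
    [15.0, 1.0, 6.0],   # anthropogenic source B
])

def mixing_fractions(sample, end_members, weight=1e3):
    """Least-squares mixing fractions with a softly enforced sum-to-one row."""
    k = end_members.shape[0]
    # Each tracer gives one equation; the extra row pushes sum(fractions) toward 1.
    A = np.vstack([end_members.T, weight * np.ones((1, k))])
    b = np.concatenate([sample, [weight]])
    fractions, *_ = np.linalg.lstsq(A, b, rcond=None)
    return fractions

# Build a sample from known fractions, then recover them.
true_fractions = np.array([0.6, 0.3, 0.1])
sample = true_fractions @ end_members
estimated = mixing_fractions(sample, end_members)
print(np.round(estimated, 3))
```

This version does not enforce non-negative fractions; with noisy field data, tracers are usually standardized first and a constrained solver is a safer choice.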

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Reagents and Materials for End-Member Characterization

| Category | Specific Items | Application and Function |

|---|---|---|

| Field Sampling | Stainless steel trowels, grab samplers, soil corers, GPS units | Collection of representative soil/sediment samples with accurate spatial referencing [25] |

| Sample Preservation | Chemical-grade acids, sterile containers, temperature-controlled storage | Preservation of sample integrity between collection and analysis [24] |

| Laboratory Analysis | ICP-MS/OES calibration standards, isotope reference materials, chromatography supplies | Quality assurance/quality control for analytical measurements [24] [23] |

| Data Analysis | Statistical software with multivariate analysis capabilities (R, SPSS, Statistica) | Implementation of PCA and complementary statistical techniques [8] [25] |

| Geospatial Analysis | GIS software, spatial interpolation tools | Spatialization of PCA results and mapping of source contributions [8] |

The characterization of anthropogenic and geogenic source end-members through Principal Component Analysis and complementary statistical techniques provides a robust framework for environmental source apportionment in complex systems. A methodological workflow that combines appropriate sampling design, comprehensive chemical analysis, careful data pre-treatment, and informed statistical interpretation enables researchers to distinguish between natural and human-derived contamination even in geochemically anomalous settings. The integration of element ratios, spatial analysis, and uncertainty assessment further strengthens these approaches, giving environmental managers a scientifically defensible basis for remediation decisions and risk assessments. As analytical capabilities advance and statistical methods become more sophisticated, the precision and reliability of source end-member characterization will continue to improve, supporting more effective environmental management in increasingly complex anthropized landscapes.

Principal Component Analysis (PCA) is a powerful statistical technique for simplifying complex, high-dimensional datasets. By transforming numerous correlated variables into a smaller set of uncorrelated principal components, PCA reveals underlying patterns and structures that are critical for interpreting real-world phenomena. This guide explores how PCA is applied to distinguish between natural and human-made sources of contamination in environmental science and to analyze high-dimensional data in drug discovery, providing a direct comparison of its performance against other dimensionality reduction methods.

A primary application of PCA in environmental science is identifying the origin of pollutants in soil and groundwater, which is a crucial first step for effective remediation and policy-making.

Case Study 1: Topsoil Contamination in Southern Italy

A 2025 study analyzed over 7,000 topsoil samples across the Campania region to determine the sources of geochemical elements [8].

- Experimental Protocol: Researchers collected topsoil samples based on methodologies from the EuroGeoSurveys geochemical mapping program [8]. The area was divided into a 100 × 100 m grid, and a composite sample was prepared at each site to improve representativeness [8].

- Key Findings and Source Discrimination: The PCA successfully isolated four independent sources controlling the region's geochemistry [8]. The subsequent spatial analysis mapped these components, revealing their geographic predominance and co-existence [8]. The results are summarized in the following table:

| Principal Component | Identified Source Type | Key Characteristics / Elements |

|---|---|---|

| Component 1 | Volcanic District 1 | Associated with the Somma-Vesuvius volcanic system [8]. |

| Component 2 | Volcanic District 2 | Associated with the Phlegraean Fields volcanic system [8]. |

| Component 3 | Siliciclastic Geology | Derived from sedimentary rocks like siltstones and sandstones in hilly regions [8]. |

| Component 4 | Anthropogenic Activity | Linked to urban, industrial, and agricultural inputs [8]. |
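
A pipeline of this kind (transform skewed concentrations, run PCA, rotate the retained components) can be sketched as follows. The example uses a rank-based normal score transformation and a varimax rotation on synthetic data with two latent sources. It is an illustrative reconstruction under stated assumptions, not the code or parameter choices of [8]: the data, the number of retained components, and all function names are hypothetical.

```python
import numpy as np
from statistics import NormalDist

def normal_score_transform(column):
    """Replace each value by the standard-normal quantile of its rank."""
    n = column.size
    ranks = np.argsort(np.argsort(column))      # 0 = smallest value
    probs = (ranks + 0.5) / n                   # strictly inside (0, 1)
    inv_cdf = NormalDist().inv_cdf
    return np.array([inv_cdf(p) for p in probs])

def varimax(loadings, max_iter=100, tol=1e-8):
    """Orthogonal varimax rotation of a (variables x components) loading matrix."""
    p, k = loadings.shape
    rotation = np.eye(k)
    criterion = 0.0
    for _ in range(max_iter):
        rotated = loadings @ rotation
        target = rotated**3 - rotated @ np.diag((rotated**2).sum(axis=0)) / p
        u, s, vt = np.linalg.svd(loadings.T @ target)
        rotation = u @ vt
        previous, criterion = criterion, s.sum()
        if previous != 0 and criterion / previous < 1 + tol:
            break
    return loadings @ rotation

# Synthetic, skewed "concentrations": 300 samples x 6 hypothetical elements,
# driven by two latent sources plus noise.
rng = np.random.default_rng(7)
sources = rng.normal(size=(300, 2))
mixing = np.array([[0.9, 0.8, 0.7, 0.0, 0.1, 0.0],
                   [0.0, 0.1, 0.0, 0.9, 0.8, 0.7]])
concentrations = np.exp(sources @ mixing + 0.3 * rng.normal(size=(300, 6)))

# 1) Normal score transformation, column by column.
transformed = np.column_stack([normal_score_transform(c) for c in concentrations.T])

# 2) PCA on the correlation matrix; keep the two leading components.
eigvals, eigvecs = np.linalg.eigh(np.corrcoef(transformed, rowvar=False))
order = np.argsort(eigvals)[::-1][:2]
loadings = eigvecs[:, order] * np.sqrt(eigvals[order])

# 3) Varimax rotation to concentrate each element on one component.
rotated_loadings = varimax(loadings)
print(np.round(rotated_loadings, 2))
```

Because the rotation is orthogonal, each element's communality (the row sum of squared loadings) is unchanged; only the way variance is shared between components is redistributed.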

Case Study 2: Groundwater Radioactivity and Nitrates in Southern Tunisia

A study of 33 groundwater samples from the Gafsa basin used PCA to differentiate sources of radioactive and nitrate contamination [29].

- Experimental Protocol: The study area is known for phosphate mining and agriculture [29]. Researchers collected groundwater samples and analyzed them for radioactive isotopes (e.g., radium) and nitrate concentrations [29]. The resulting data matrix was processed through PCA; the abstract does not mention any specific normalization step [29].

- Key Findings and Source Discrimination: PCA classified the samples into distinct groups based on contamination origin [29]. The analysis revealed that samples most impacted by human activity showed high concurrent levels of radium and nitrates [29].

| Contamination Source | Key Contributing Activities | Impact on Groundwater |

|---|---|---|

| Phosphate Mining | Extraction and processing of phosphate rock. | Primary source of radioactivity (e.g., radium) [29]. |

| Agricultural Runoff | Use of fertilizers and manures on crops. | Major source of nitrate (NO₃⁻) contamination [29]. |

| Fossil Geothermal Waters | Natural deep groundwater upwelling. | Contributed to radioactivity from the North Western Sahara Aquifer System [29]. |

Case Study 3: Groundwater Quality in Ho Chi Minh City, Vietnam

A study of 233 well-water samples from 2015 and 20 from 2019 used PCA combined with factor analysis (PCA/FA) to apportion pollution sources [30].

- Experimental Protocol: Water samples were analyzed for key parameters. In 2015, 8 parameters were measured, expanding to 15 in 2019 [30]. PCA/FA was applied to the dataset to identify latent factors representing different pollution sources.

- Key Findings and Source Discrimination: The analysis identified three major pollution sources, ranking them in order of importance: agricultural, urban, and industrial activities [30]. The Groundwater Quality Index (GQI) showed that water quality ranged from poor to very poor, with the agricultural area being the most severely degraded [30].

Health Sciences Case Study: Analyzing Drug-Induced Transcriptomic Data

Beyond environmental science, PCA is a foundational tool for analyzing high-dimensional data in health research, though it is often benchmarked against newer algorithms.

Case Study: Benchmarking Dimensionality Reduction for Drug Response

A 2025 study systematically evaluated 30 different dimensionality reduction (DR) methods, including PCA, on their ability to preserve biological information in drug-induced transcriptomic data from the Connectivity Map (CMap) dataset [31].

- Experimental Protocol: The benchmark used 2,166 drug-induced transcriptomic profiles from nine cell lines [31]. DR methods were tested under four conditions to evaluate their ability to preserve biological similarity [31]:

- Different cell lines treated with the same drug.

- Same cell line treated with different drugs.

- Same cell line treated with drugs having different Mechanisms of Action (MOAs).

- Same cell line treated with varying dosages of the same drug.

- Performance Metrics: Methods were evaluated using internal validation metrics (Davies-Bouldin Index, Silhouette score) and external validation metrics (Normalized Mutual Information, Adjusted Rand Index) that measured how well the low-dimensional embeddings agreed with known biological labels [31].
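
To make the distinction between internal and external validation concrete, the sketch below computes one metric of each kind on a toy two-dimensional embedding: the Silhouette score (no labels needed beyond the cluster assignment) and the Adjusted Rand Index (agreement with known labels). Both are written out from their standard definitions with NumPy. The embedding, the threshold-based "clustering", and the function names are assumptions for illustration and are unrelated to the benchmark's actual code [31].

```python
import numpy as np
from math import comb

def silhouette_score(X, labels):
    """Mean silhouette coefficient using Euclidean distances."""
    D = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    clusters = np.unique(labels)
    scores = np.zeros(len(X))
    for i in range(len(X)):
        same = labels == labels[i]
        if same.sum() == 1:
            continue                              # singleton cluster scores 0
        a = D[i, same].sum() / (same.sum() - 1)   # mean distance within own cluster
        b = min(D[i, labels == c].mean() for c in clusters if c != labels[i])
        scores[i] = (b - a) / max(a, b)
    return scores.mean()

def adjusted_rand_index(labels_true, labels_pred):
    """Adjusted Rand Index from the contingency table of two labelings."""
    _, t = np.unique(labels_true, return_inverse=True)
    _, p = np.unique(labels_pred, return_inverse=True)
    table = np.zeros((t.max() + 1, p.max() + 1), dtype=int)
    np.add.at(table, (t, p), 1)
    sum_cells = sum(comb(int(n), 2) for n in table.ravel())
    sum_rows = sum(comb(int(n), 2) for n in table.sum(axis=1))
    sum_cols = sum(comb(int(n), 2) for n in table.sum(axis=0))
    expected = sum_rows * sum_cols / comb(len(labels_true), 2)
    max_index = 0.5 * (sum_rows + sum_cols)
    return (sum_cells - expected) / (max_index - expected)

# Toy embedding: two well-separated groups standing in for two drug classes.
rng = np.random.default_rng(3)
embedding = np.vstack([rng.normal(0.0, 0.5, size=(20, 2)),
                       rng.normal(5.0, 0.5, size=(20, 2))])
known_labels = np.array([0] * 20 + [1] * 20)

# Stand-in for a clustering step: split on the first embedding coordinate.
predicted = (embedding[:, 0] > 2.5).astype(int)

sil = silhouette_score(embedding, predicted)
ari = adjusted_rand_index(known_labels, predicted)
print(f"Silhouette: {sil:.2f}, ARI: {ari:.2f}")
```

The ARI helper is undefined when both labelings are trivial (for example, a single cluster each), a case that a production implementation would need to handle.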

Performance Comparison of Dimensionality Reduction Methods

The benchmarking study provided a direct, data-driven comparison of PCA's performance against modern alternatives like UMAP and t-SNE [31].

| Dimensionality Reduction Method | Key Algorithmic Principle | Performance in Preserving Biological Structure | Suitability for Drug Response Data |

|---|---|---|---|

| PCA (Principal Component Analysis) | Identifies directions of maximal variance; linear transformation. | Relatively poor in preserving local biological similarity and cluster compactness compared to top performers [31]. | Good for global structure and interpretability; may obscure finer, local differences crucial for distinguishing drug MOAs [31]. |

| t-SNE (t-Distributed Stochastic Neighbor Embedding) | Minimizes divergence between high- and low-dimensional similarities; emphasizes local neighborhoods. | Top performer in separating distinct drug responses and grouping drugs with similar MOAs [31]. | Well-suited for capturing local cluster structures; effective for discrete drug responses [31]. |

| UMAP (Uniform Manifold Approximation and Projection) | Uses cross-entropy loss to balance local and global structure. | Top performer, comparable to t-SNE, with strong cluster separability [31]. | Offers improved global coherence; well-suited for studying discrete drug responses [31]. |

| PaCMAP (Pairwise Controlled Manifold Approximation) | Incorporates distance-based constraints for local and global structure. | Top performer, consistently high rankings across internal and external validation metrics [31]. | Effective at preserving both local detail and long-range relationships [31]. |

| PHATE | Models diffusion-based geometry to reflect manifold continuity. | Stronger performance in detecting subtle dose-dependent transcriptomic changes [31]. | Well-suited for datasets with gradual biological transitions, such as dose-response curves [31]. |

The study concluded that t-SNE, UMAP, and PaCMAP are excellent for studying discrete drug responses (e.g., different MOAs), but that most methods, including PCA, struggled with subtle dose-dependent changes, an area where spectral methods and PHATE showed more strength [31]. A key finding was that PCA's widespread use is not matched by top-tier performance in this biological context: it was outperformed by multiple non-linear techniques [31].

The Researcher's Toolkit for PCA-Based Studies

The following table details essential reagents, software, and methodological components for conducting PCA in environmental and health studies.

| Tool / Material | Function / Role in PCA Workflow |

|---|---|

| Geochemical Soil Samples | Primary environmental matrix for analyzing elemental concentrations and identifying geogenic vs. anthropogenic sources [8]. |

| Groundwater Samples | Primary environmental matrix for measuring chemical and radioactive contaminants to apportion pollution sources [29] [30]. |

| Cell Lines (e.g., A549, MCF7) | In vitro model systems used to generate drug-induced transcriptomic data for pharmacological analysis [31]. |

| Normal Score Transformation (NST) | A rank-based transformation that maps skewed geochemical data onto a standard normal distribution, limiting the influence of extreme outliers and making multivariate analysis more robust [8]. |

| Varimax Rotation | An orthogonal rotation method used in PCA to simplify the factor structure, making it easier to associate element groupings with specific processes or sources [8]. |

| Connectivity Map (CMap) Dataset | A comprehensive public resource of drug-induced transcriptomic profiles used as a benchmark for evaluating analytical methods in drug discovery [31]. |

| Internal Validation Metrics (DBI, Silhouette) | Metrics like the Davies-Bouldin Index (DBI) and Silhouette score that assess cluster compactness and separation without external labels, used to evaluate DR method quality [31]. |

The case studies demonstrate that PCA is a versatile and powerful method for source apportionment in environmental science, effectively distinguishing between natural geological signals and anthropogenic pollution in soil and water [8] [29] [30]. However, in the analysis of highly complex biological data like drug-induced transcriptomics, its performance is surpassed by modern non-linear dimensionality reduction techniques such as t-SNE, UMAP, and PaCMAP [31]. The choice of method should be guided by the data's nature and the research question—whether it requires capturing global variance (PCA) or intricate local structures (UMAP, t-SNE) [31].

From Theory to Practice: A Step-by-Step PCA Workflow for Source Validation