Disentangling Sources: A Framework for Separating Natural and Anthropogenic Drivers in Water Quality Data

Abstract

This article provides a comprehensive framework for researchers and scientists tasked with distinguishing between natural geological and human-induced influences on water quality. It covers foundational concepts of natural hydrogeochemical baselines and common anthropogenic contaminants, explores advanced methodological approaches including chemical fingerprinting, isotopic tracing, and machine learning models, addresses critical troubleshooting for data quality control and sampling design, and outlines validation techniques through multivariate statistics and case study analysis. The content is tailored to support environmental risk assessment and inform robust water resource management strategies.

Understanding the Baseline: Core Concepts of Natural and Anthropogenic Water Quality Influences

Frequently Asked Questions

FAQ: What is the core challenge in defining a natural hydrogeochemical baseline? The primary challenge is separating the influence of complex natural systems from anthropogenic (human) activities. Natural baselines are not static; they are dynamic and shaped by the interconnected processes of geology, climate, and biogeochemical cycles. Distinguishing these natural background levels from human-induced contamination is essential for accurate risk assessment and environmental management [1] [2].

FAQ: My water samples show elevated levels of certain elements. How can I tell if this is from natural geology or pollution? A combination of methods is needed. You should first characterize the local geology, as certain rock types like limestone can naturally lead to higher concentrations of elements like calcium and bicarbonate [2]. Then, use pollution indices (such as the Contamination Factor or Pollution Index) and ecological risk indices to quantify the degree of contamination and the likely extent of anthropogenic influence. For example, in a limestone quarry study, while most parameters were within guidelines, elements like As, Cr, Ni, and Pb in some samples were linked to pollution sources [2].

FAQ: How does climate change interfere with establishing a reliable baseline? Climate change alters key natural processes that govern water quality. It can exacerbate regional water scarcity and shift precipitation patterns, which affects how nutrients and contaminants are leached and transported through a watershed [1]. Furthermore, climate change can intensify marine stratification and deoxygenation, driving microbial processes that, for instance, increase the loss of nitrogen to the atmosphere, thereby changing natural biogeochemical cycles [3].

FAQ: What is a common methodological error when trying to separate natural and anthropogenic water consumption? A common error is treating the watershed as homogeneous. Some methods use a constant coefficient to estimate natural evapotranspiration (ET), which ignores the significant heterogeneity of climate, terrain, and soil conditions within a basin [1]. Advanced approaches using machine learning and remote sensing at a pixel level are now being developed to reduce this uncertainty and provide a more accurate separation [1].

The table below summarizes key parameters and indices used in a hydrogeochemical baseline and risk assessment study conducted around a limestone quarry [2].

Table 1: Measured Parameter Ranges and Guidelines

| Parameter | Measured Range | WHO Guideline | Notes |
|---|---|---|---|
| pH | 2.61 - 8.16 | - | Indicates strongly acidic to slightly alkaline conditions. |
| Dominant Ions | Ca²⁺, HCO₃⁻ | - | Mg-HCO₃ was the prevailing water type. |
| Arsenic (As) | Exceeded the guideline in some samples | 0.01 mg/L | Identified as a carcinogenic risk. |
| Lead (Pb) | Exceeded the guideline in some samples | 0.01 mg/L | Identified as a neurotoxic risk. |

Table 2: Irrigation Suitability Indices and Interpretation

| Index Name | Acronym | Measured Range | Suitability Interpretation |
|---|---|---|---|
| Sodium Adsorption Ratio | SAR | < 10 | Suitable for irrigation. |
| Magnesium Adsorption Ratio | MAR | 4.37 – 25.89% | Within the acceptable range. |
| Kelly's Ratio | KR | 0.06 – 0.37 | Suitable for irrigation. |
| Soluble Sodium Percentage | Na% | 5.16 – 16.57% | Suitable for irrigation. |
| Potential Salinity | PS | 43.38 – 162.75 | Elevated values suggest possible long-term soil salinization. |

Table 3: Pollution and Risk Assessment Indices

| Index Name | Acronym | Finding | Risk Classification |
|---|---|---|---|
| Pollution Index | PN | Low to moderate | Low to moderate contamination. |
| Potential Ecological Risk Index | PERI | 39.45 | Low ecological risk. |

Detailed Experimental Protocol: Hydrogeochemical Characterization and Risk Assessment

This protocol outlines the methodology for assessing water quality, establishing baselines, and evaluating human health risks, as derived from current research [2].

Objective: To determine the natural hydrogeochemical baseline of a watershed, assess its suitability for irrigation, and evaluate pollution levels and associated human health risks.

Step 1: Field Sampling and Laboratory Analysis

- Sample Collection: Collect water samples from various sources in the study area (e.g., rivers, groundwater wells, runoff).

- In-situ Measurements: Measure physical parameters like pH and temperature on-site using calibrated portable meters.

- Laboratory Analysis: Analyze samples using standard methods for major ions (Ca²⁺, Mg²⁺, Na⁺, K⁺, HCO₃⁻, Cl⁻, SO₄²⁻), e.g., ion chromatography for major anions and cations and titration for alkalinity (HCO₃⁻), and ICP-MS for Potentially Toxic Elements (PTEs) such as As, Cr, Ni, and Pb.

Step 2: Data Integrity and Organization

- Data Cleaning: Organize data in a spreadsheet, ensuring sample names are correct and units are consistent. Check for geologically unreasonable values (e.g., negative concentrations) and label them as "below detection" [4].

- Initial Assessment: Compare all measured parameters against World Health Organization (WHO) guidelines to identify immediate exceedances [2].
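The two data-integrity steps above can be sketched in Python as follows. This is a minimal, standard-library-only sketch under stated assumptions: the nested dictionary layout and the function names are illustrative, and the guideline table (WHO values of 0.01 mg/L for As and Pb, 0.05 mg/L for total Cr, 0.07 mg/L for Ni) should be checked against the current edition of the WHO Guidelines for Drinking-water Quality before use.

```python
# Illustrative guideline values in mg/L (verify against the current WHO edition).
WHO_GUIDELINES_MG_L = {"As": 0.01, "Pb": 0.01, "Cr": 0.05, "Ni": 0.07}
BELOW_DETECTION = "below detection"


def clean_value(value):
    """Flag missing or negative (geologically unreasonable) concentrations."""
    if value is None or value < 0:
        return BELOW_DETECTION
    return value


def screen_samples(samples, guidelines=WHO_GUIDELINES_MG_L):
    """Clean {sample_id: {element: mg/L}} data and list guideline exceedances.

    Returns (cleaned, exceedances), where each exceedance is a tuple
    (sample_id, element, measured_value, guideline_value).
    """
    cleaned = {}
    exceedances = []
    for sample_id, values in samples.items():
        cleaned[sample_id] = {el: clean_value(v) for el, v in values.items()}
        for element, limit in guidelines.items():
            value = cleaned[sample_id].get(element)
            # Skip elements that were not measured or were flagged below detection.
            if isinstance(value, (int, float)) and value > limit:
                exceedances.append((sample_id, element, value, limit))
    return cleaned, exceedances


samples = {  # hypothetical results, mg/L
    "W1": {"As": 0.025, "Pb": 0.004},
    "W2": {"As": -0.001, "Pb": 0.012},
}
cleaned, exceedances = screen_samples(samples)
print(cleaned["W2"]["As"])  # below detection
print(exceedances)  # [('W1', 'As', 0.025, 0.01), ('W2', 'Pb', 0.012, 0.01)]
```

Keeping the flag as a string rather than substituting zero or half the detection limit leaves that censoring decision explicit for later statistical steps.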

Step 3: Hydrochemical Classification and Irrigation Suitability

- Water Type Classification: Create a Piper or Durov diagram to classify the dominant water type (e.g., Mg-HCO₃).

- Calculate Indices: Compute irrigation suitability indices (SAR, MAR, KR, Na%, PS) using their standard formulas to assess potential impacts on soil [2].
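A minimal Python sketch of the irrigation indices follows, assuming the common textbook definitions with all ions in meq/L: SAR = Na/√((Ca + Mg)/2), MAR = 100·Mg/(Ca + Mg), KR = Na/(Ca + Mg), Na% = 100·(Na + K)/(Ca + Mg + Na + K), and PS = Cl + ½SO₄. Some studies use variants (for example, Na% without K), so check the formulas used in [2] before comparing values. The function names and the input sample are illustrative.

```python
import math

# Equivalent weight (g/eq) = molar mass / |ionic charge|.
EQUIVALENT_WEIGHT = {
    "Ca": 40.078 / 2, "Mg": 24.305 / 2, "Na": 22.990, "K": 39.098,
    "Cl": 35.453, "SO4": 96.06 / 2, "HCO3": 61.017,
}


def to_meq_per_l(mg_per_l):
    """Convert {ion: mg/L} to {ion: meq/L}."""
    return {ion: c / EQUIVALENT_WEIGHT[ion] for ion, c in mg_per_l.items()}


def irrigation_indices(mg_per_l):
    """Compute SAR, MAR (%), KR, Na% and PS (meq/L) from major-ion data in mg/L."""
    m = to_meq_per_l(mg_per_l)
    ca, mg, na, k = m["Ca"], m["Mg"], m["Na"], m.get("K", 0.0)
    return {
        "SAR": na / math.sqrt((ca + mg) / 2),
        "MAR": 100 * mg / (ca + mg),
        "KR": na / (ca + mg),
        "Na%": 100 * (na + k) / (ca + mg + na + k),
        "PS": m["Cl"] + 0.5 * m["SO4"],
    }


# Hypothetical sample chosen so that Ca = Mg = Na = SO4 = 2 meq/L and Cl = 1 meq/L.
sample = {"Ca": 40.078, "Mg": 24.305, "Na": 45.98, "K": 0.0, "Cl": 35.453, "SO4": 96.06}
for name, value in irrigation_indices(sample).items():
    print(f"{name}: {value:.2f}")
```

Converting to meq/L first matters because the indices compare ions by charge-equivalents, not by mass.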

Step 4: Pollution and Risk Assessment

- Pollution Evaluation: Calculate pollution indices (Contamination Factor - CF, Pollution Index - PN) to quantify the level of contamination from PTEs [2].

- Ecological Risk Assessment: Determine the Potential Ecological Risk Index (PERI) to evaluate risk to the local ecosystem [2].

- Human Health Risk Assessment:

  - Exposure Pathways: Model exposure through ingestion, inhalation, and dermal contact. Ingestion is typically the dominant pathway [2].

  - Risk Quantification: Calculate non-carcinogenic (hazard quotient) and carcinogenic risks for identified PTEs like As and Pb.
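The pollution and ecological risk indices in Step 4 can be sketched as below. This is an illustrative sketch, not the exact procedure of [2]. It assumes CF = C_measured / C_background, assumes that PN is the Nemerow pollution index, PN = √((P_mean² + P_max²)/2) with P_i = C_i / S_i against a standard S_i, and computes PERI as Σ Tr_i × CF_i using Hakanson's toxic-response factors (the Ni factor of 5 comes from later literature and is an assumption here). Background values, standards, and element coverage must come from your own study area.

```python
import math

# Toxic-response factors (Hakanson); Ni = 5 is an assumption from later studies.
TOXIC_RESPONSE = {"Hg": 40, "Cd": 30, "As": 10, "Cu": 5, "Pb": 5, "Ni": 5, "Cr": 2, "Zn": 1}


def contamination_factors(measured, background):
    """CF_i = measured concentration / background concentration, per element."""
    return {el: measured[el] / background[el] for el in measured}


def nemerow_index(measured, standards):
    """Nemerow-type index: sqrt((mean(P)^2 + max(P)^2) / 2), with P_i = C_i / S_i."""
    ratios = [measured[el] / standards[el] for el in measured]
    mean_p = sum(ratios) / len(ratios)
    return math.sqrt((mean_p ** 2 + max(ratios) ** 2) / 2)


def peri(cf):
    """PERI = sum of Er_i = Tr_i * CF_i over the elements supplied."""
    return sum(TOXIC_RESPONSE[el] * value for el, value in cf.items())


measured = {"As": 0.02, "Pb": 0.005}    # hypothetical, mg/L
background = {"As": 0.01, "Pb": 0.01}   # hypothetical local background, mg/L
standards = {"As": 0.01, "Pb": 0.01}    # WHO guideline values, mg/L
cf = contamination_factors(measured, background)
print(cf)        # {'As': 2.0, 'Pb': 0.5}
print(peri(cf))  # 22.5
print(round(nemerow_index(measured, standards), 2))
```

The Nemerow form weights the single worst element heavily through max(P), which is why one strongly enriched PTE can lift PN even when the mean is modest.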

Step 5: Interpretation and Governance

- Synthesize Findings: Integrate all hydrochemical, pollution, and risk data to differentiate natural background levels from anthropogenic contributions.

- Recommend Actions: Propose management strategies, such as continuous monitoring, wastewater treatment at pollution sources (e.g., quarries), and community health surveillance [2].

- Support SDGs: Frame the findings within the context of Sustainable Development Goals (SDG 6 - Clean Water and Sanitation) [2].

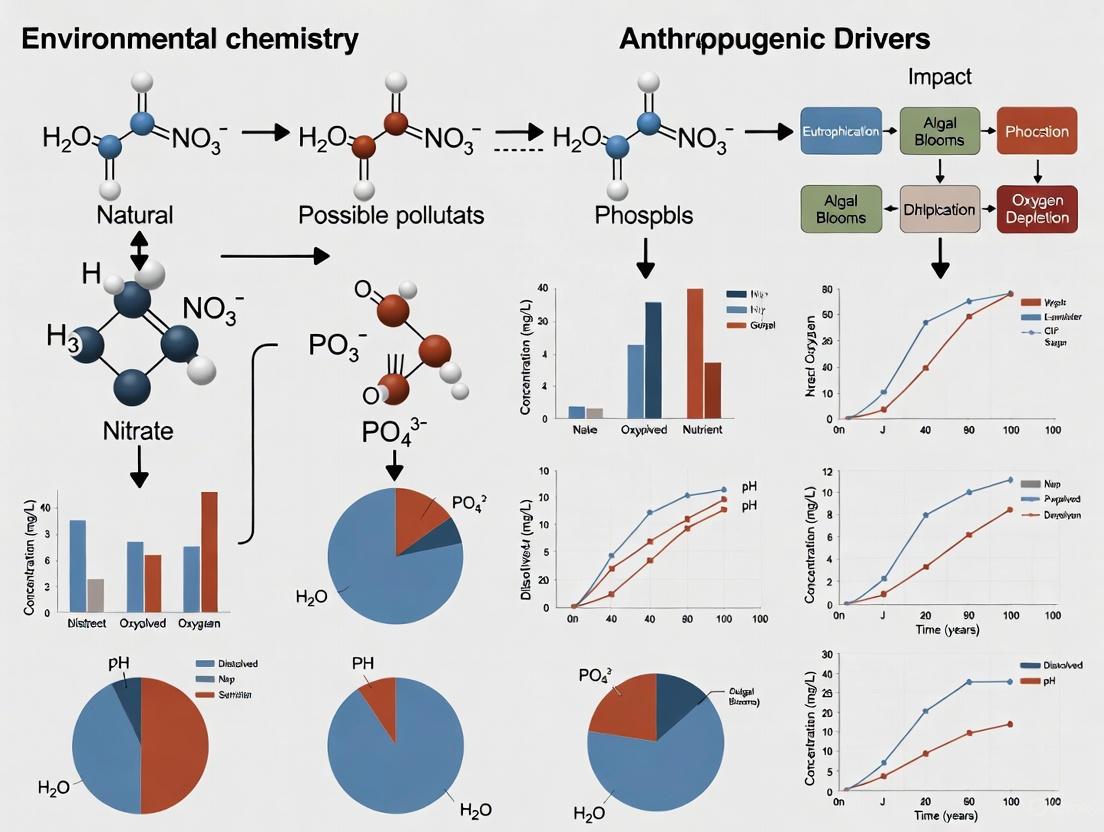

Workflow Diagram

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Reagents and Materials for Hydrogeochemical Analysis

| Item | Function / Application |
|---|---|
| Standard Reference Materials | Certified materials with known element concentrations used to calibrate analytical instruments and ensure the accuracy and precision of data [4]. |
| Acids (e.g., HNO₃) | High-purity acids used to preserve water samples (acidification prevents metals from precipitating or adsorbing to container walls) and to digest solid samples, keeping metals in solution for analysis. |
| Ion Chromatography System | Used for the quantitative analysis of major anions (e.g., Cl⁻, SO₄²⁻, NO₃⁻) and cations (e.g., Na⁺, K⁺, Ca²⁺, Mg²⁺) in water samples. |
| ICP-MS (Inductively Coupled Plasma Mass Spectrometry) | An analytical technique that provides extremely low detection limits for a wide range of elements, essential for measuring trace levels of Potentially Toxic Elements (PTEs) [4]. |
| XRF (X-Ray Fluorescence) | An instrumental method used for the non-destructive elemental analysis of solid samples like rocks and soils, providing data on major and trace elements [4]. |
| Geochemical Database & Plotting Software | Specialized software (e.g., IoGas, GCDkit) is used to manage large datasets, create standard classification plots, tectonic discrimination diagrams, and model geochemical processes [4]. |

Troubleshooting Guide: Identifying Anthropogenic Pollution Signatures

This guide helps researchers diagnose the dominant anthropogenic drivers in water quality datasets by providing characteristic signatures and diagnostic steps.

Q1: My water quality data shows elevated nitrogen and phosphorus levels. How can I determine if the source is agricultural?

A1: Nutrient pollution is a hallmark of agricultural runoff. Follow these steps to confirm an agricultural signature:

- Step 1: Check for Seasonal Patterns: Analyze your data for seasonal spikes. Concentrations of nitrate (NO₃⁻) and phosphorus are often highest during the wet, agricultural growing season or following fertilizer application periods [5] [6]. In contrast, a study in China found high nitrogen levels in the dry season in some managed watersheds, highlighting the importance of local context [5].

- Step 2: Correlate with Land Use: Cross-reference your sampling sites with land use maps. A strong positive correlation between nutrient levels and the proportion of upstream farmland, particularly within a 500-meter buffer zone, is a key indicator [7].

- Step 3: Look for Co-pollutants: Check for the presence of pesticides or herbicides in your samples, which are commonly associated with agricultural activities [8].

- Expected Data Signature: You will likely see a correlation between nutrient loads and specific agricultural land use types. For example, paddy fields and dryland farms are strongly correlated with nutrient and Chlorophyll-a concentrations [6].

Q2: I have detected E. coli and chloride spikes in an urban stream. What is the likely cause?

A2: This combination is characteristic of urban water pollution.

- Step 1: Analyze Temporal Trends: Examine the timing of the spikes. E. coli concentrations often peak following rainfall events due to combined sewer overflows (CSOs) or stormwater runoff washing waste from impervious surfaces into waterways [7]. Chloride (Cl⁻) spikes are typically highest in winter and early spring, coinciding with the application and subsequent runoff of road de-icing salts [7].

- Step 2: Correlate with Impervious Surfaces: Determine the relationship between pollutant concentrations and the extent of urban built-up land. Research shows a positive correlation between E. coli levels and the percentage of urban land cover within a 1000-meter buffer around sampling sites [7].

- Step 3: Review Local Infrastructure: Investigate whether the area has a combined sewer system, which is common in older cities and prone to overflows during heavy precipitation [7].

- Expected Data Signature: You will typically find that urban built-up land is a primary driver for these pollutants, while green spaces with higher NDVI (Normalized Difference Vegetation Index) are negatively correlated with them [7].
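Steps 1 and 2 of the temporal check can be sketched as follows. This is a minimal sketch under stated assumptions: the record fields (`month`, `rain_48h_mm`, `chloride_mg_l`, `ecoli_mpn_100ml`), the Northern Hemisphere meteorological seasons, and the 5 mm antecedent-rainfall threshold separating wet- and dry-weather samples are all hypothetical choices, not values from [7].

```python
from statistics import mean

# Northern Hemisphere meteorological seasons (assumption).
SEASONS = {12: "winter", 1: "winter", 2: "winter", 3: "spring", 4: "spring", 5: "spring",
           6: "summer", 7: "summer", 8: "summer", 9: "autumn", 10: "autumn", 11: "autumn"}


def seasonal_means(records, param):
    """Mean of `param` for each season present in the records."""
    groups = {}
    for r in records:
        groups.setdefault(SEASONS[r["month"]], []).append(r[param])
    return {season: mean(values) for season, values in groups.items()}


def wet_dry_ratio(records, param, rain_threshold_mm=5.0):
    """Mean `param` in wet-weather samples divided by mean in dry-weather samples."""
    wet = [r[param] for r in records if r["rain_48h_mm"] >= rain_threshold_mm]
    dry = [r[param] for r in records if r["rain_48h_mm"] < rain_threshold_mm]
    return mean(wet) / mean(dry)


records = [  # hypothetical urban stream samples
    {"month": 1, "rain_48h_mm": 0.0, "chloride_mg_l": 320, "ecoli_mpn_100ml": 150},
    {"month": 3, "rain_48h_mm": 12.0, "chloride_mg_l": 210, "ecoli_mpn_100ml": 2400},
    {"month": 7, "rain_48h_mm": 0.0, "chloride_mg_l": 45, "ecoli_mpn_100ml": 250},
    {"month": 7, "rain_48h_mm": 20.0, "chloride_mg_l": 35, "ecoli_mpn_100ml": 1600},
]
print(seasonal_means(records, "chloride_mg_l"))  # {'winter': 320, 'spring': 210, 'summer': 40}
print(wet_dry_ratio(records, "ecoli_mpn_100ml"))  # 10.0
```

A winter/spring chloride maximum together with a wet/dry E. coli ratio well above 1 is consistent with the de-icing salt and overflow/runoff signatures described above, but neither statistic identifies the source on its own.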

Q3: My analysis shows a mix of heavy metals in the water. How do I distinguish industrial influence from other sources?

A3: Heavy metals and metalloids such as arsenic, lead, and mercury are often indicators of industrial activity or mining.

- Step 1: Identify the Metal Portfolio: The specific metals present can point to particular industries. For example, arsenic is a frequent byproduct of industrial processes and a primary contributor to carcinogenic risk [6].

- Step 2: Conduct Spatial Analysis: Map the contamination against point sources. Unlike diffuse agricultural runoff, industrial pollution often shows a strong point-source gradient, with concentrations decreasing significantly with distance from a known discharge point, such as a factory or wastewater outfall [6].

- Step 3: Perform a Health Risk Assessment: Calculate the carcinogenic and non-carcinogenic risk to human health. A study in the Naoli River found the heavy metal risk for children exceeded acceptable limits, primarily driven by industrial-related arsenic [6].

- Expected Data Signature: Redundancy Analysis (RDA) will often show a strong association between heavy metal concentrations and specific industrial land use types [6].
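One way to test for the point-source gradient in Step 2 is to fit an exponential distance-decay model, ln(C) = a + b·d, along a transect downstream of a suspected discharge. The sketch below is an illustrative choice, not the method of [6]: it uses ordinary least squares on log-transformed concentrations (which must be positive), and the transect data are hypothetical. A clearly negative slope b with a high R² is consistent with, but does not prove, a point source.

```python
import math


def log_linear_decay(distances_m, concentrations):
    """OLS fit of ln(C) = a + b * distance. Returns (b per metre, R^2)."""
    y = [math.log(c) for c in concentrations]  # requires C > 0
    n = len(distances_m)
    mx, my = sum(distances_m) / n, sum(y) / n
    sxx = sum((x - mx) ** 2 for x in distances_m)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((x - mx) * (yi - my) for x, yi in zip(distances_m, y))
    slope = sxy / sxx
    r_squared = sxy ** 2 / (sxx * syy)
    return slope, r_squared


distances = [0, 250, 500, 1000, 2000]                      # metres downstream
arsenic = [100 * math.exp(-0.002 * d) for d in distances]  # hypothetical, ug/L
slope, r2 = log_linear_decay(distances, arsenic)
half_distance = math.log(2) / -slope  # distance over which concentration halves
print(f"slope={slope:.4f} per m, R^2={r2:.3f}, half-distance={half_distance:.0f} m")
```

Diffuse agricultural inputs would instead tend to give flat or rising profiles along the same transect.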

Data Presentation: Characteristic Signatures of Anthropogenic Drivers

The table below summarizes key indicators and data patterns for different anthropogenic pollution sources.

Table 5: Characteristic Signatures of Major Anthropogenic Drivers

| Anthropogenic Driver | Key Indicator Parameters | Typical Spatial Pattern | Typical Temporal Pattern |
|---|---|---|---|
| Agricultural Runoff | Nitrate (NO₃⁻), Phosphorus, Pesticides, Sediment [8] | Non-point source; correlates with upstream farmland area, especially paddy fields and dry land [6] | Peaks during wet seasons and/or following fertilizer application; high nitrogen can also occur in dry seasons [5] [7] |
| Urban Runoff | E. coli, Chloride (Cl⁻), Heavy Metals [7] | Non-point source; correlates with impervious surface cover (e.g., built-up areas) [7] | E. coli peaks after rainfall; Chloride peaks in winter/spring from de-icing salts [7] |
| Industrial Effluent | Heavy Metals (e.g., Arsenic, Lead), Sulfate (SO₄²⁻), specific industrial chemicals [6] | Often a point source; shows a steep gradient from discharge location [6] | Can be continuous or intermittent, depending on production cycles and wastewater treatment |

Experimental Protocols for Driver Identification

Protocol 1: Land Use and Water Quality Correlation Analysis

This methodology is used to quantitatively link water quality parameters to watershed land use.

- Watershed Delineation: For each water quality sampling point, delineate the corresponding drainage area (watershed) using GIS hydrological tools based on a Digital Elevation Model (DEM) [6].

- Land Use Quantification: Using land use/cover data (e.g., from ESA WorldCover or national datasets), calculate the percentage of each land use type (e.g., urban, agricultural, forest) within the delineated watersheds and within multiple circular buffer zones (e.g., 500 m, 1000 m) around each sampling site [6] [7].

- Statistical Analysis: Perform Redundancy Analysis (RDA) or linear regression to quantify the relationship between land use percentages and measured water quality parameters (e.g., NO₃⁻, E. coli, heavy metals) [6] [7]. This identifies which land uses are the strongest predictors of pollution.
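Before running a full RDA, a quick univariate screen can show which buffer scale tracks a pollutant most closely. The sketch below computes Spearman rank correlations between the share of farmland in each buffer and measured nitrate. It is a simplified stand-in for the RDA and regression named above, uses only the Python standard library, and the site values and buffer labels are hypothetical.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            result[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return result


def spearman(x, y):
    """Spearman rank correlation = Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Hypothetical % farmland in each buffer around five sampling sites, and nitrate (mg/L).
farmland_pct = {
    "500 m": [12.0, 35.0, 48.0, 60.0, 72.0],
    "1000 m": [20.0, 28.0, 55.0, 40.0, 66.0],
}
nitrate = [0.8, 1.9, 2.7, 3.5, 4.1]
for buffer, pct in farmland_pct.items():
    print(buffer, round(spearman(pct, nitrate), 2))
```

Rank correlation is used here because land-use percentages and concentrations are often skewed; with only a handful of sites, treat the coefficients as exploratory.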

Protocol 2: Trend-Based Metric for Isolating Human Impact

This method separates climatic effects from anthropogenic pressures on water quality trends.

- Data Collection: Compile long-term, seasonal water quality data (e.g., COD, DO) for both natural (minimally disturbed) and managed (human-impacted) watersheds that share similar climatic conditions [5].

- Trend Analysis: Calculate seasonal trends (e.g., slope of concentration over time) for each water quality parameter in both watershed types [5].

- Calculate T-NM Index: Compute the Trend-based Natural-Managed (T-NM) index. This index compares trends in managed watersheds to those in nearby natural watersheds, quantifying the extent to which human activities amplify or suppress natural climatic trends [5]. The formula is:

  - T-NM index = (Trend_managed - Trend_natural) / |Trend_natural| [5].

  - A positive value indicates that human activities shift the trend upward relative to the natural watershed, and a negative value indicates a downward shift. Whether this means amplification or suppression depends on the sign of the natural trend: for a decreasing natural trend, amplification (a steeper decline) yields a negative value.
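The trend and T-NM calculation can be sketched as follows. This sketch assumes an ordinary least-squares slope as the trend estimator (the study in [5] may use a different estimator, such as Sen's slope), and the seasonal COD series are hypothetical.

```python
def ols_slope(years, values):
    """Least-squares slope of values against time (units per year)."""
    n = len(years)
    mx, my = sum(years) / n, sum(values) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(years, values))
    sxx = sum((x - mx) ** 2 for x in years)
    return sxy / sxx


def t_nm_index(trend_managed, trend_natural):
    """T-NM = (Trend_managed - Trend_natural) / |Trend_natural|."""
    if trend_natural == 0:
        raise ValueError("T-NM is undefined when the natural trend is zero")
    return (trend_managed - trend_natural) / abs(trend_natural)


years = [2010, 2011, 2012, 2013, 2014]
cod_natural = [5.0, 4.9, 4.8, 4.7, 4.6]  # hypothetical summer COD, mg/L
cod_managed = [6.0, 5.8, 5.6, 5.4, 5.2]  # hypothetical summer COD, mg/L
index = t_nm_index(ols_slope(years, cod_managed), ols_slope(years, cod_natural))
print(round(index, 2))  # -1.0
```

Here the managed watershed declines twice as fast as the natural one (-0.2 vs -0.1 mg/L per year), so human activity amplifies the decreasing trend, and with the formula as written this appears as an index of -1.0.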

Diagnostic Workflow for Anthropogenic Driver Analysis

The diagram below outlines a logical workflow for diagnosing primary anthropogenic drivers based on water quality data.

The Scientist's Toolkit: Key Research Reagents & Materials

Table 6: Essential Reagents and Materials for Water Quality Source Analysis

| Item | Function in Analysis | Example Application |
|---|---|---|
| Ion Chromatography System | Quantifies concentrations of anions and cations in water samples [7]. | Measuring nitrate (NO₃⁻), sulfate (SO₄²⁻), and chloride (Cl⁻) ions to identify fertilizer or road salt contamination [7]. |
| ICP-MS (Inductively Coupled Plasma Mass Spectrometry) | Detects and quantifies trace heavy metals and elements at very low concentrations [6]. | Identifying and sourcing industrial pollution by analyzing for metals like arsenic, lead, and mercury [6]. |
| Colilert Test Kits (IDEXX) | Provides a standardized method for quantifying Escherichia coli (E. coli) bacteria in water samples [7]. | Detecting fecal contamination from sewage or animal waste in urban and agricultural settings [7]. |
| Multiparameter Water Quality Probe | Measures physico-chemical parameters in situ (on-site) at the time of sampling [7]. | Recording dissolved oxygen (DO), pH, temperature, and total dissolved solids (TDS), which provide context for other chemical analyses [7]. |
| GIS (Geographic Information System) Software | Used for watershed delineation, land use classification, and spatial analysis of pollution patterns [6]. | Correlating land use types (urban, agricultural) with water quality measurements at sampling sites [6] [7]. |

Frequently Asked Questions (FAQs)

Q: What is the most effective statistical method for linking land use to water quality? A: Multivariate techniques like Redundancy Analysis (RDA) are highly effective. RDA can quantify how much of the variation in your water quality data (e.g., nutrients, metals) is explained by different land use types (e.g., percentage of urban, agricultural, or forested land) in the watershed [6] [7].

Q: Why is it crucial to analyze seasonal water quality trends? A: Seasonal analysis helps disentangle natural climatic effects from human impacts. For example, one study found that in summer, a season heavily influenced by agricultural and urban runoff, human activities amplified decreasing COD (Chemical Oxygen Demand) trends by 22-158% [5]. Understanding these patterns is key to accurate source identification.

Q: How can I account for natural background variability in my data? A: Use a reference or "natural watershed" as a control. By comparing trends in your study area to those in a nearby, minimally disturbed watershed with similar climate, you can isolate the human-induced signal. The T-NM index is a metric designed specifically for this purpose [5].

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary indicators of anthropogenic influence on groundwater quality in urban areas like Kano? Anthropogenic influence is often indicated by elevated levels of specific chemical parameters. Key indicators include increased concentrations of nitrate (NO₃⁻), chloride (Cl⁻), and sulfate (SO₄²⁻), which are often linked to human activities [9]. In the Kano study, elevated levels of Electrical Conductivity, Total Dissolved Solids (TDS), Hardness, and certain major ions in urban and peri-urban districts were strong indicators of human impact, contrasting with areas dominated by natural geology [10]. The presence of these constituents, especially when correlated with known urban or agricultural land use, helps distinguish human pollution from natural background levels.

FAQ 2: Which statistical methods are most effective for differentiating natural and anthropogenic sources in water quality data? Multivariate statistical methods are highly effective for this purpose [11].

- Principal Component Analysis (PCA): Helps reduce data dimensionality and identify underlying factors (e.g., a "hardness" factor from natural geology vs. a "pollution" factor from sewage) that control water chemistry [10] [11].

- Correlation Analysis: Reveals relationships between parameters (e.g., a strong correlation between sodium and chloride may point to sewage contamination) [10] [9].

- Redundancy Analysis (RDA): A powerful method to quantitatively link water quality parameters (response variables) to specific environmental factors (explanatory variables) like land use or hydrogeology, directly testing hypotheses about driving forces [11].
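A compact PCA on standardized hydrochemical data can be written with NumPy, as sketched below. This is an illustrative eigen-decomposition of the correlation matrix, not the exact workflow of [10] or [11]; dedicated packages (for example, scikit-learn's `PCA` or R's `prcomp`) add rotation and diagnostics. The four-parameter dataset is hypothetical and was built so that Na⁺ and Cl⁻ vary together.

```python
import numpy as np


def pca(data, n_components=2):
    """PCA of a samples x parameters array via the correlation matrix.

    Returns (explained_variance_ratio, loadings), where loadings[j, k] is the
    correlation-scale loading of parameter j on component k.
    """
    x = np.asarray(data, dtype=float)
    # Standardize each parameter, since ions are measured on different scales.
    z = (x - x.mean(axis=0)) / x.std(axis=0, ddof=1)
    corr = np.cov(z, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(corr)  # eigenvalues in ascending order
    order = np.argsort(eigvals)[::-1]        # re-sort descending
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    ratio = eigvals / eigvals.sum()
    top = np.clip(eigvals[:n_components], 0, None)
    loadings = eigvecs[:, :n_components] * np.sqrt(top)
    return ratio[:n_components], loadings


params = ["Na", "Cl", "Ca", "HCO3"]
na = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
ca = np.array([3.0, 1.0, 4.0, 1.0, 5.0, 9.0])
data = np.column_stack([na, 2 * na, ca, 2 * ca])  # hypothetical, meq/L
ratio, loadings = pca(data)
for name, row in zip(params, loadings):
    print(name, np.round(row, 2))
print("explained variance:", np.round(ratio, 2))
```

In real data, a component loading heavily on Na⁺ and Cl⁻ is often read as a sewage or salt signal and one loading on Ca²⁺ and HCO₃⁻ as water-rock interaction, but such labels are interpretations to be checked against land use and ionic ratios.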

FAQ 3: My data shows high spatial variability. How can I model this to understand plume behavior? For spatially variable data, especially from large monitoring networks, spatiotemporal modeling tools are more accurate than analyzing trends at individual wells or single-time contour maps [12] [13]. The GroundWater Spatiotemporal Data Analysis Tool (GWSDAT) applies a spatiotemporal solute concentration smoother using penalized splines. This method simultaneously estimates spatial distribution and temporal trends, providing a coherent picture of dynamic contamination plumes, their stability, and migration pathways [12] [13]. This approach is less biased by missing data points or irregular sampling rounds.

FAQ 4: What are the best color scheme practices for creating clear and accessible groundwater quality maps? Effective color choices are crucial for accurate interpretation [14].

- Sequential Data: Use a single-hue sequential color bar (e.g., light to dark blue) for data like concentration levels, as it allows easy identification of high and low values [14].

- Diverging Data: Use a diverging color bar (e.g., blue to red) for anomaly maps, such as showing parameter levels against a background standard [14].

- Avoid Rainbows: Traditional rainbow color schemes increase the error rate in identifying values and should be replaced with more intuitive sequential or diverging schemes [14].

Troubleshooting Common Experimental & Data Analysis Issues

Problem 1: Inconsistent or "noisy" trends in time-series data from monitoring wells.

- Potential Cause: Natural hydrological fluctuations, seasonal effects, or sampling inconsistencies.

- Solution: Apply a nonparametric smoother to the time-series data for individual wells. This technique, available in tools like GWSDAT, estimates trends without assuming a fixed shape (linear or logarithmic), allowing the true direction—increasing, decreasing, or stable—to emerge from noisy data [12] [13].
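GWSDAT's own smoother is based on penalized splines [12] [13]. As a simpler stand-in that shows the same idea of estimating a trend without assuming a linear or logarithmic shape, the sketch below applies a Gaussian kernel (Nadaraya-Watson) smoother to one well's time series. The bandwidth and the 10% relative-change threshold used to label the trend are hypothetical tuning choices.

```python
import math


def kernel_smooth(times, values, bandwidth):
    """Nadaraya-Watson smoother with a Gaussian kernel, evaluated at each sample time."""
    smoothed = []
    for t0 in times:
        weights = [math.exp(-0.5 * ((t - t0) / bandwidth) ** 2) for t in times]
        smoothed.append(sum(w * v for w, v in zip(weights, values)) / sum(weights))
    return smoothed


def trend_direction(smoothed, rel_tol=0.10):
    """Label the smoothed series by its net change relative to its mean level."""
    scale = abs(sum(smoothed) / len(smoothed)) or 1.0
    change = (smoothed[-1] - smoothed[0]) / scale
    if change > rel_tol:
        return "increasing"
    if change < -rel_tol:
        return "decreasing"
    return "stable"


times = [0, 1, 2, 3, 4, 5, 6, 7]                   # years since first sampling
values = [1.0, 3.0, 2.0, 4.0, 3.0, 5.0, 4.0, 6.0]  # hypothetical, noisy mg/L
smooth = kernel_smooth(times, values, bandwidth=1.5)
print([round(s, 2) for s in smooth], trend_direction(smooth))
```

A larger bandwidth suppresses more of the sampling noise but can also flatten genuine short-lived changes, so the choice should be checked against the sampling interval.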

Problem 2: Difficulty in visualizing the evolution of a contamination plume over both space and time.

- Potential Cause: Relying on independent contour maps for each sampling event, which can be disjointed and difficult to compare.

- Solution: Use a spatiotemporal modeling approach. This method creates a smooth, continuous model of solute concentrations that changes over time, providing a more accurate and interpretable visualization of plume dynamics, including migration and dilution [13].

Problem 3: Uncertainty in health risk assessment due to variability in exposure parameters.

- Potential Cause: Deterministic health risk models that use single, fixed values for exposure parameters can over- or underestimate true risk.

- Solution: Employ a probabilistic health risk assessment using Monte Carlo simulation. This method runs thousands of simulations with variable input parameters (e.g., ingestion rate, body weight) to generate a probability distribution of risk, providing a more realistic and robust risk quantification [11] [9].

Problem 4: A chart or map is cluttered and the key message is not clear.

- Potential Cause: Excessive "chartjunk"—gridlines, labels, or decorative elements that do not convey information.

- Solution: Adhere to the principle of maximizing the data-ink ratio. Remove any non-essential elements from the visualization. Use annotations and highlight the most important data story, keeping other contextual data in the background for comparison [15].

The following table summarizes key physicochemical parameters and their implications for distinguishing water quality drivers, based on the research in Kano [10].

Table 7: Summary of Key Groundwater Quality Parameters and Their Interpretations from the Kano Study

| Parameter | Observed Range / Characteristics in Kano | Interpretation / Implication |
|---|---|---|
| pH | Slightly acidic to slightly alkaline | Indicates the corrosivity of water and influences chemical reaction rates. |
| Dissolved Oxygen (DO) | Generally poor levels | Suggests possible impact of organic pollutants or eutrophication (anthropogenic). |
| Major Hydrochemical Facies | Sodium-Chloride (Na-Cl) and Calcium-Magnesium-Bicarbonate (Ca-Mg-HCO₃) | Na-Cl facies often linked to anthropogenic urban pollution (e.g., sewage); Ca-Mg-HCO₃ is more typical of natural water-rock interactions [10]. |
| Trace Metals (e.g., Fe, Zn) | Generally low, but with localized elevations | Suggests overall low acute risk; sporadic increases point to localized contamination sources. |
| Spatial Variability | High heterogeneity across the five studied sites | Confirms the combined and varying influence of local geology (natural) and human activities (anthropogenic) across the region. |

Essential Experimental Protocols & Methodologies

Protocol for Multivariate Hydrochemical Characterization

This protocol outlines the steps for collecting and analyzing groundwater samples to characterize hydrochemistry and identify influencing factors [10].

- Site Selection & Sampling: Select monitoring points (wells) across different geological units and land use types (e.g., urban, agricultural, natural). In the Kano study, 51 samples were collected from five principal sites [10].

- Field Measurements: Measure field parameters in situ using a multiparameter probe: pH, Temperature, Electrical Conductivity (EC), Dissolved Oxygen (DO), and Turbidity [10].

- Sample Collection & Preservation: Collect water samples in clean, appropriate bottles and preserve them for laboratory analysis (e.g., refrigeration at 4°C for major ions; acidification with high-purity HNO₃ to pH < 2 for metals) [9].

- Laboratory Analysis: Analyze for major cations (Na⁺, K⁺, Mg²⁺, Ca²⁺), major anions (Cl⁻, SO₄²⁻, HCO₃⁻), and trace metals (Cr, As, Fe, Zn, Cu, Ni, Pb, Cd). Ensure charge balance error is <5% for data validity [10].

- Data Analysis & Interpretation:

  - Construct Piper Diagrams to identify dominant hydrochemical facies [10].

  - Perform Correlation Analysis and Principal Component Analysis (PCA) to identify groups of related parameters and potential sources [10] [11].

  - Use ionic ratios (e.g., Na⁺/Cl⁻, Ca²⁺/Mg²⁺) and stable isotope analysis (δ¹⁵N-NO₃⁻, δ¹⁸O-NO₃⁻) to pinpoint specific geochemical processes and nitrate sources [9].
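The charge-balance check and ionic ratios in this protocol can be sketched as follows, assuming all concentrations have already been converted to meq/L and using the common definition CBE (%) = 100 × (Σcations − Σanions) / (Σcations + Σanions). The sample values are hypothetical, and the Na⁺/Cl⁻ interpretation in the comments is a general rule of thumb rather than a finding of [9] or [10].

```python
CATIONS = ("Ca", "Mg", "Na", "K")
ANIONS = ("HCO3", "Cl", "SO4", "NO3")


def charge_balance_error(meq):
    """CBE (%) from {ion: meq/L}; ions missing from the dict count as zero."""
    cations = sum(meq.get(ion, 0.0) for ion in CATIONS)
    anions = sum(meq.get(ion, 0.0) for ion in ANIONS)
    return 100 * (cations - anions) / (cations + anions)


def ionic_ratios(meq):
    """Both ratios compare ions of equal charge, so equivalent and molar ratios coincide."""
    return {"Na/Cl": meq["Na"] / meq["Cl"], "Ca/Mg": meq["Ca"] / meq["Mg"]}


sample = {"Ca": 2.0, "Mg": 1.0, "Na": 1.0, "K": 0.0,
          "HCO3": 2.0, "Cl": 1.0, "SO4": 0.8}  # hypothetical, meq/L
cbe = charge_balance_error(sample)
print(f"CBE = {cbe:.2f}%: {'accept' if abs(cbe) < 5 else 'recheck'}")
# Na/Cl near 1 is consistent with halite dissolution; values well above 1 are
# often attributed to silicate weathering or cation exchange.
print(ionic_ratios(sample))
```

Samples failing the 5% criterion are usually re-analyzed or excluded before facies plots and PCA, since an unbalanced analysis can shift both.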

Protocol for Probabilistic Health Risk Assessment (HRA)

This protocol uses Monte Carlo simulation to quantify the uncertainty in non-carcinogenic health risks from contaminants like nitrate [11] [9].

- Hazard Identification: Identify the contaminant of concern (e.g., nitrate).

- Dose-Response Assessment: Obtain the reference dose (RfD) for the contaminant from relevant authorities (e.g., US EPA).

- Exposure Assessment: Calculate the chronic daily intake (CDI). The formula for ingestion is: CDI = (C × IR × EF × ED) / (BW × AT), where:

  - C = Concentration of contaminant in water (mg/L)

  - IR = Ingestion rate (L/day)

  - EF = Exposure frequency (days/year)

  - ED = Exposure duration (years)

  - BW = Body weight (kg)

  - AT = Averaging time (days); for non-carcinogenic effects, AT = ED × 365

- Monte Carlo Simulation:

  - Define probability distributions (e.g., log-normal, normal) for the variable exposure parameters (IR, BW, ED) instead of using single values.

  - Run a large number of simulations (e.g., 10,000) to compute a probability distribution for the Hazard Quotient (HQ = CDI / RfD).

- Risk Characterization: Interpret the results. An HQ > 1 indicates potential non-carcinogenic risk. Report the probability (percentage) of the population exceeding an HQ of 1 for different demographic groups (e.g., children, adults) [9].
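A minimal Monte Carlo version of the ingestion pathway is sketched below using only the Python standard library. All distributions and their parameters (log-normal ingestion rate around 1.5 L/day, normal body weight of 60 ± 10 kg truncated at 30 kg, uniform exposure duration of 20 to 30 years) and the concentration and RfD in the example call are hypothetical placeholders; in practice they should come from population-specific exposure factors and the relevant authority's RfD. Because AT = ED × 365 days for non-carcinogenic risk, ED cancels in the HQ; it is kept only to mirror the CDI formula.

```python
import math
import random
import statistics


def cdi(c, ir, ef, ed, bw, at):
    """Chronic daily intake (mg/kg/day) = (C x IR x EF x ED) / (BW x AT)."""
    return (c * ir * ef * ed) / (bw * at)


def monte_carlo_hq(c_mg_l, rfd, n=10_000, seed=1):
    """Return (median HQ, 95th-percentile HQ, fraction of simulations with HQ > 1)."""
    rng = random.Random(seed)
    hqs = []
    for _ in range(n):
        ir = rng.lognormvariate(math.log(1.5), 0.3)  # L/day (hypothetical)
        bw = max(rng.gauss(60, 10), 30)              # kg, truncated (hypothetical)
        ed = rng.uniform(20, 30)                     # years (hypothetical)
        ef = 365                                     # days/year
        at = ed * 365                                # days, non-carcinogenic case
        hqs.append(cdi(c_mg_l, ir, ef, ed, bw, at) / rfd)
    hqs.sort()
    p95 = hqs[int(0.95 * (n - 1))]  # simple order-statistic percentile
    exceed = sum(hq > 1 for hq in hqs) / n
    return statistics.median(hqs), p95, exceed


median_hq, p95_hq, p_exceed = monte_carlo_hq(c_mg_l=45.0, rfd=1.6)  # hypothetical inputs
print(f"median HQ={median_hq:.2f}, P95 HQ={p95_hq:.2f}, P(HQ>1)={p_exceed:.1%}")
```

Running the same function with child-specific distributions (lower body weight, different ingestion rate) gives the separate demographic exceedance probabilities described in the Risk Characterization step.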

Analytical Workflow for Source Separation

The following diagram illustrates the logical workflow for separating natural and anthropogenic drivers in a groundwater quality study.

Figure 1: Workflow for separating natural and anthropogenic drivers in groundwater quality studies.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 8: Essential Materials and Tools for Groundwater Quality and Source Separation Research

| Item / Solution | Function / Application |
|---|---|
| Multiparameter Field Probe | For in-situ measurement of critical physical parameters like pH, Electrical Conductivity (EC), Temperature, Dissolved Oxygen (DO), and Turbidity [10]. |
| Inductively Coupled Plasma Mass Spectrometry (ICP-MS) | Highly sensitive analytical technique for accurate determination of trace metal concentrations (e.g., Cr, As, Pb, Cd) in water samples [9]. |
| Ion Chromatography (IC) | Used for the simultaneous quantification of major anions (Cl⁻, SO₄²⁻, NO₃⁻) and cations (Na⁺, K⁺, Ca²⁺, Mg²⁺) in water samples [9]. |
| Stable Isotope Ratio Mass Spectrometer (IRMS) | For analyzing stable isotopes of water (δ²H-H₂O, δ¹⁸O-H₂O) and nitrate (δ¹⁵N-NO₃⁻, δ¹⁸O-NO₃⁻) to identify water sources and trace the origin of nitrate contamination [9]. |
| GWSDAT (GroundWater Spatiotemporal Data Analysis Tool) | User-friendly, open-source software for the visualization and spatiotemporal analysis of groundwater monitoring data, including trend analysis and plume diagnostics [12] [13]. |
| R / Python with Statistical Packages | Programming environments for performing advanced multivariate statistics (PCA, RDA), generating custom visualizations, and running probabilistic risk assessments with Monte Carlo simulation [11] [12]. |

Troubleshooting Guide: Common Data Interpretation Challenges

FAQ: Why do two lakes in the same region show diverging water quality trends despite similar external pressures?

The Problem: Researchers often observe that adjacent lakes exhibit different eutrophication trajectories, which can complicate the attribution of causes. This divergence suggests that local factors and internal lake processes may be overriding regional anthropogenic pressures.

Diagnosis and Solution

- Compare Trophic State Indices: Calculate and track multiple water quality indicators over time to identify which parameters are driving the divergence.

- Analyze Dominant Pollutants: Different primary pollutants (e.g., phosphorus vs. organic matter) indicate different pollution sources and pathways.

- Assess Historical Trajectories: Examine whether systems are on recovery trajectories or continuing to degrade, as this reveals the effectiveness of management interventions.
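To track trophic state over time, one widely used option is Carlson's (1977) trophic state index, sketched below. This is an assumption about method: the study in [16] may use a different trophic index formulation, so the function illustrates the idea rather than reproducing the values in the table below. Inputs are Secchi depth in metres and chlorophyll a and total phosphorus in µg/L; the classification thresholds of 30 and 50 follow the table, and the label for values below 30 is an assumption.

```python
import math


def carlson_tsi(sd_m=None, chl_ug_l=None, tp_ug_l=None):
    """Carlson (1977) TSI components and their mean; pass any subset of inputs."""
    parts = {}
    if sd_m is not None:
        parts["SD"] = 60 - 14.41 * math.log(sd_m)
    if chl_ug_l is not None:
        parts["CHL"] = 9.81 * math.log(chl_ug_l) + 30.6
    if tp_ug_l is not None:
        parts["TP"] = 14.42 * math.log(tp_ug_l) + 4.15
    if not parts:
        raise ValueError("provide at least one of sd_m, chl_ug_l, tp_ug_l")
    parts["mean"] = sum(parts.values()) / len(parts)
    return parts


def trophic_class(tsi):
    """Classify with thresholds of 30 and 50 (the 'oligotrophic' label is an assumption)."""
    if tsi > 50:
        return "eutrophic"
    if tsi >= 30:
        return "mesotrophic"
    return "oligotrophic"


tsi = carlson_tsi(sd_m=0.6, chl_ug_l=12.0, tp_ug_l=60.0)  # hypothetical lake survey
print({k: round(v, 1) for k, v in tsi.items()}, trophic_class(tsi["mean"]))
```

Comparing the individual components is also diagnostic: a TP-based TSI well above the chlorophyll-based value suggests that something other than phosphorus (light, grazing, macrophytes) is limiting algal growth.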

Table: Comparative Water Quality Trends in Macrophytic Lakes

| Water Quality Parameter | East Taihu Lake Trend (2005-2023) | Liangzi Lake Trend (2005-2022) | Primary Driver Identification |
|---|---|---|---|
| Trophic State Index (TSI) | Initial increase before 2018, then gradual decline; remains eutrophic (TSI > 50) | Consistent upward trend; mesotrophic (30 < TSI < 50) | Anthropogenic nutrient loading [16] |
| Total Phosphorus (TP) | Increase identified | Increase identified | Primary pollution driver in East Taihu Lake (p < 0.01) [16] |
| Chlorophyll a | Increase identified | Upward trend | Indicator of algal biomass response [16] |
| Chemical Oxygen Demand (CODMn) | Decline observed | Upward trend; dominant pollution parameter | Primary pollution driver in Liangzi Lake (p < 0.01) [16] |
| Ammonia-Nitrogen (NH₃-N) | Increase identified | Upward trend | Indicator of wastewater and agricultural inputs [16] |
| Secchi Depth (SD) | Decline observed | Upward trend | Indicator of water clarity and suspended solids [16] |
| Comprehensive Pollution Index (Pw) | Higher than Liangzi Lake | Lower than East Taihu Lake | Overall pollution burden indicator [16] |

FAQ: How can researchers distinguish between natural hydrological changes and anthropogenic impacts in floodplain lakes?

The Problem: Paleolimnological records often show asynchronous changes in different biological proxies, making it difficult to identify primary drivers and leading to conflicting interpretations of ecosystem responses.

Diagnosis and Solution

- Multi-Proxy Analysis: Employ complementary biological proxies (pigments, chironomids, cladocera) to detect phased ecosystem responses.

- Historical Timeline Reconstruction: Correlate ecosystem changes with documented anthropogenic events and hydrological modifications.

- Hydrological Connectivity Assessment: Evaluate how connection to main river channels mediates both natural and anthropogenic impacts.

Table: Asynchronous Responses to Hydrological Alteration in Luhu Lake

| Time Period | Chironomid Community Response | Algal/Pigment Response | Identified Primary Driver |
|---|---|---|---|
| Pre-1970 | Stable community dominated by Microchironomus tener-type | Low and stable algal production | Relatively natural conditions [17] |
| 1970-2000 | Major shift to Tanytarsus marmoratus-type dominance (~80%) | Gradual increase beginning | Hydrological alteration from dam construction [17] |
| Post-2000 | Community remains stable | Rapid increase in algal production | Combined effect of hydrological alteration AND increased nutrient influx [17] |

Experimental Protocols for Driver Separation

Water Quality Monitoring and Trophic State Assessment

Purpose: To systematically track water quality parameters that differentiate natural seasonal variations from anthropogenic pollution trends.

Methodology:

- Sample Collection: Collect water samples seasonally from standardized locations and depths

- Parameter Analysis:

- Nutrients: Analyze Total Phosphorus (TP), Total Nitrogen (TN), and Ammonia-Nitrogen (NH₃-N) using standard spectrophotometric methods

- Biological Response: Measure chlorophyll a as a proxy for algal biomass

- Physical and Organic-Load Parameters: Record Secchi Depth (SD) for water clarity and measure Chemical Oxygen Demand by the permanganate method (CODMn) as an indicator of organic pollution

- Index Calculation:

- Compute Trophic State Index (TSI) and Comprehensive Pollution Index (Pw) using established formulas [16]

- Conduct correlation analysis between water quality parameters and anthropogenic activity data
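The index-calculation step can be sketched as follows. This is a minimal example that assumes Carlson's (1977) trophic state equations (Secchi depth in metres, chlorophyll a and TP in µg/L) and defines Pw as the mean of single-factor ratios Ci/Si. The exact formulas used in [16] are not given here and may differ (modified trophic indices are common in Chinese lake studies), so treat the function names and any guideline values as hypothetical.

```python
import math

def carlson_tsi(secchi_m, chl_ug_l, tp_ug_l):
    """Carlson (1977) trophic state indices and their simple mean.

    Assumed units: Secchi depth in m, chlorophyll a and TP in ug/L
    (multiply TP in mg/L by 1000 before calling).
    """
    tsi_sd = 60.0 - 14.41 * math.log(secchi_m)
    tsi_chl = 9.81 * math.log(chl_ug_l) + 30.6
    tsi_tp = 14.42 * math.log(tp_ug_l) + 4.15
    return {
        "TSI_SD": tsi_sd,
        "TSI_CHL": tsi_chl,
        "TSI_TP": tsi_tp,
        "TSI_mean": (tsi_sd + tsi_chl + tsi_tp) / 3.0,
    }

def comprehensive_pollution_index(concentrations, guidelines):
    """Assumed form of Pw: mean of single-factor indices Pi = Ci / Si."""
    ratios = [concentrations[k] / guidelines[k] for k in concentrations]
    return sum(ratios) / len(ratios)
```

Computing the three component indices separately, rather than only their mean, makes it easier to see which variable is pushing a lake across a trophic boundary, which is the question raised by the diverging-lake comparison in this section.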

Troubleshooting Tip: When parameters show conflicting trends (e.g., decreasing TN but increasing TP), investigate specific anthropogenic sources such as wastewater discharge patterns or agricultural runoff composition [16].

Multi-Proxy Paleolimnological Reconstruction

Purpose: To disentangle long-term anthropogenic impacts from natural variability using sediment cores.

Methodology:

- Core Collection: Extract sediment cores using gravity or piston coring devices from accumulation zones

- Dating: Establish chronology using ²¹⁰Pb, ¹³⁷Cs, or ¹⁴C dating methods

- Proxy Analysis:

- Pigments: Extract and analyze chlorophyll and carotenoid pigments to reconstruct primary production history [17]

- Chironomids: Isolate, identify, and count chironomid head capsules to infer bottom-up food web changes [17]

- Statistical Analysis: Use CONISS (constrained incremental sum of squares) to identify significant zones of change in biological communities

- Historical Correlation: Compare proxy changes with documented historical events (dam construction, land use changes)
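CONISS is a stratigraphically constrained form of Ward's minimum-variance clustering: only adjacent samples (or zones) may merge, and each merge is the one that adds the least to the total within-cluster sum of squares. The sketch below is a plain NumPy re-implementation of that idea for illustration, not the code of any particular package. It assumes the proxy data are already transformed as needed (square-root transformation of percentages is common), and it does not include the broken-stick comparison often used to decide how many zones are significant.

```python
import numpy as np

def coniss(data):
    """Stratigraphically constrained incremental sum of squares clustering.

    data: samples ordered by depth (rows) x proxy variables (columns).
    Returns the merge history as ((start, stop), (start, stop), cost) tuples,
    where (start, stop) are half-open sample-index ranges and cost is the
    increase in total within-cluster sum of squares caused by that merge.
    """
    x = np.asarray(data, dtype=float)
    if x.ndim == 1:
        x = x[:, None]
    # Each cluster: (start, stop, number of samples, mean vector)
    clusters = [(i, i + 1, 1, x[i].copy()) for i in range(len(x))]
    merges = []
    while len(clusters) > 1:
        best_k, best_cost = None, np.inf
        # Only stratigraphically adjacent clusters are candidates
        for k in range(len(clusters) - 1):
            _, _, na, ma = clusters[k]
            _, _, nb, mb = clusters[k + 1]
            cost = na * nb / (na + nb) * float(np.sum((ma - mb) ** 2))
            if cost < best_cost:
                best_k, best_cost = k, cost
        a, b = clusters[best_k], clusters[best_k + 1]
        n = a[2] + b[2]
        mean = (a[2] * a[3] + b[2] * b[3]) / n
        merges.append(((a[0], a[1]), (b[0], b[1]), best_cost))
        clusters[best_k:best_k + 2] = [(a[0], b[1], n, mean)]
    return merges
```

Large merge costs late in the history mark the strongest zone boundaries. For a toy series such as `[0, 0, 0, 10, 10, 10]`, the final merge joins samples 0 to 2 with samples 3 to 5, which is where a zone boundary would be drawn.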

Troubleshooting Tip: When proxies show asynchronous responses (e.g., chironomid changes preceding algal responses), consider differential sensitivity to various stressors—chironomids may respond more directly to hydrological change while algae respond more to nutrient inputs [17].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Reagents and Materials for Lake Ecosystem Research

| Item | Function/Application | Technical Specifications |
|---|---|---|
| Water Quality Sampling Kit | Collection and preservation of water samples for nutrient analysis | Includes acid-washed bottles, preservatives (H₂SO₄ for nutrients), cold chain equipment [16] |
| Secchi Disk | Measurement of water transparency | Standard 20 cm diameter disk with alternating black/white quadrants; deployment apparatus with calibrated line [16] |
| Filtration Apparatus | Chlorophyll a extraction and analysis | Glass fiber filters (0.7 μm pore size), vacuum pump, acetone for extraction, spectrophotometer/fluorometer [16] |
| Sediment Corer | Collection of undisturbed sediment sequences for paleolimnological study | Gravity corer, piston corer, or freeze corer; core extrusion equipment [17] |
| Microscope with Counting Chamber | Identification and enumeration of biological indicators | Compound microscope with 100-400x magnification; Sedgewick-Rafter or similar counting chamber for chironomids/diatoms [17] |

Research Workflow: Separating Natural and Anthropogenic Drivers

Decision Framework for Management Interventions

Troubleshooting Guide: Isolating Natural Climate Signals in Water Quality Data

This guide helps researchers diagnose and correct for the influence of natural climate variability in water quality datasets, ensuring a clearer identification of anthropogenic signals.

Problem 1: Unexplained Seasonal or Decadal Shifts in Water Quality Parameters

You observe cyclical fluctuations in parameters like Chemical Oxygen Demand (COD) or Dissolved Oxygen (DO) that do not correlate with known human activities.

| Observed Anomaly | Potential Natural Driver | Diagnostic Experiment & Data to Collect |
|---|---|---|
| Rapid, short-term cooling; increased water turbidity; altered pH [18]. | Volcanic Eruptions (sulfate aerosols, ash) [18] [19]. | Verify: Cross-reference event timing with the Smithsonian Global Volcanism Program database. Analyze satellite data for aerosol optical depth (AOD) and local temperature records. |
| Multi-year warming or cooling trends correlating with ~11-year cycles [18]. | Solar Cycles (variation in solar irradiance) [18] [19]. | Verify: Obtain time-series data for total solar irradiance (TSI) and sunspot numbers from NASA. Perform spectral analysis on your water quality data to detect matching periodicities. |
| Millennial-scale and longer trends in temperature and hydrological patterns (dominant orbital periodicities of roughly 20,000, 41,000, and 100,000 years) [20] [19]. | Orbital Forcings (Milankovitch Cycles: eccentricity, obliquity, precession) [18] [19]. | Verify: Use paleoclimatic proxy data (ice cores, ocean sediments) to establish long-term baselines. Statistically detrend datasets to remove these very low-frequency oscillations. |
| Periodic warming (El Niño) or cooling (La Niña) altering precipitation, runoff, and river flow [18]. | El Niño-Southern Oscillation (ENSO) [18]. | Verify: Monitor oceanic Niño index (ONI). Correlate with local precipitation and discharge data to understand impacts on pollutant concentration and dilution [5]. |
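The spectral check suggested for solar cycles can be sketched with a simple FFT periodogram. This assumes an evenly spaced, gap-free series (for example, annual means spanning several decades, since several full cycles are needed to resolve an ~11-year period); for irregular sampling a Lomb-Scargle periodogram is the usual substitute. The function name and the synthetic series are illustrative only, and a peak near 11 years is a prompt for further testing against irradiance records, not proof of solar forcing.

```python
import numpy as np

def dominant_period(values, dt=1.0):
    """Return the period (in units of dt) with the largest periodogram power.

    Assumes an evenly spaced, gap-free series such as annual means.
    """
    y = np.asarray(values, dtype=float)
    t = np.arange(len(y)) * dt
    # Remove a linear trend so long-term drift does not dominate low frequencies
    slope, intercept = np.polyfit(t, y, 1)
    resid = y - (slope * t + intercept)
    power = np.abs(np.fft.rfft(resid)) ** 2
    freqs = np.fft.rfftfreq(len(resid), d=dt)
    k = int(np.argmax(power[1:])) + 1  # skip the zero-frequency (mean) term
    return 1.0 / freqs[k]

# Example: 110 years of a synthetic series with an 11-year cycle plus a trend
years = np.arange(110)
series = 0.05 * years + np.sin(2 * np.pi * years / 11)
print(round(dominant_period(series), 2))  # 11.0
```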

Problem 2: Failure to Statistically Separate Natural and Anthropogenic Influences

Your model cannot confidently attribute water quality changes (e.g., COD/DO trends) to specific causes.

- Step 1 – Identify the Problem: Define whether the issue is with interannual trends or specific seasonal patterns (e.g., summer DO reductions) [5].

- Step 2 – List All Possible Explanations: Create a comprehensive list of drivers, including both natural (e.g., seasonal rainfall, geological background) and anthropogenic factors (e.g., land use changes, point source pollution) [21].

- Step 3 – Collect Baseline Data: Gather data from nearby natural watersheds with minimal human impact. Consistent trends in these areas suggest climatic dominance, providing a baseline for natural variability [5].

- Step 4 – Eliminate Explanations: Use controlled models (e.g., the T-NM index) to quantify the human amplification or suppression effect on the natural baseline trend [5].

- Step 5 – Check with Experimentation: Implement multivariable analysis. In natural watersheds, factors like rainfall and slope may explain most variation, while in managed watersheds, landscape metrics (e.g., Shannon Diversity Index) may dominate, clarifying the primary driver [5].

- Step 6 – Identify the Cause: The variance that remains unexplained after accounting for the natural baseline can be provisionally attributed to anthropogenic activities, provided that measurement error and unmeasured natural drivers are small.
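Steps 5 and 6 can be made concrete with a commutative variance-partitioning sketch. This is a standard regression technique, not necessarily the procedure used in [5]: fit ordinary least-squares models with natural predictors only, anthropogenic predictors only, and both, then split R² into unique, shared, and unexplained fractions. The predictor groups and function names are hypothetical, and the "unexplained" share still contains measurement noise and unmeasured drivers rather than a purely anthropogenic signal.

```python
import numpy as np

def r_squared(X, y):
    """R^2 of an ordinary least-squares fit of y on X (with intercept)."""
    X1 = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(X1, y, rcond=None)
    resid = y - X1 @ coef
    return 1.0 - (resid @ resid) / np.sum((y - y.mean()) ** 2)

def partition_variance(natural, human, y):
    """Split explained variance between natural and anthropogenic predictors.

    natural: (n, k1) array, e.g. rainfall, slope, season indicators.
    human: (n, k2) array, e.g. land-use or landscape metrics.
    """
    r_nat = r_squared(natural, y)
    r_hum = r_squared(human, y)
    r_all = r_squared(np.column_stack([natural, human]), y)
    return {
        "natural_only": r_all - r_hum,
        "human_only": r_all - r_nat,
        "shared": r_nat + r_hum - r_all,
        "unexplained": 1.0 - r_all,
    }
```

A large "shared" fraction signals that natural and human predictors are collinear (for example, land use concentrated on gentle slopes), in which case attribution to either group alone is weak.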

Diagnostic Workflow Diagram

This diagram outlines the logical process for diagnosing the influence of natural climate drivers on water quality data.

Frequently Asked Questions (FAQs)

Q1: What are the most significant natural climate forcings I need to account for in my water quality models? The most significant forcings are orbital changes (Milankovitch cycles affecting long-term climate over thousands of years), volcanic eruptions (injecting aerosols that cause short-term global cooling), and solar radiation variations (linked to the ~11-year sunspot cycle) [18] [19]. Attribution analysis shows that seasonal factors and rainfall can account for over 70% of water quality variation in natural watersheds, highlighting their primary role [5].

Q2: How can a volcanic eruption on the other side of the world affect local water quality data? Large volcanic eruptions at low latitudes can inject sulfur dioxide (SO₂) high into the stratosphere, where winds distribute it globally [18]. These gases form sulfate aerosols that scatter incoming solar radiation, leading to a measurable drop in surface temperature for 1-2 years [18] [19]. This can alter local precipitation patterns, reduce photosynthetic activity in water bodies, and change runoff dynamics, thereby affecting parameters like COD and DO.

Q3: What is a practical method to disentangle the impact of natural climate variability from human pollution in a specific river basin? A robust method involves using a paired watershed approach [5]. Compare long-term seasonal water quality trends from a managed watershed against those from a nearby natural watershed with similar climate but minimal human impact. Consistent trends in both suggest climatic dominance. The difference in the magnitude and direction of trends can then be quantified as the human impact using a metric like the T-NM index [5].

Q4: Why is there a time lag between a climate forcing and its full impact on surface temperature or water systems? This lag is primarily due to the immense heat capacity of the global ocean [20]. The oceans absorb vast amounts of heat, giving the climate system a "thermal inertia" [20]. This means that even after a radiative imbalance occurs (e.g., from increased greenhouse gases or volcanic aerosols), it may take years or decades for the full surface temperature response to be realized, which in turn gradually influences aquatic systems [20].

Experimental Protocol: Attributing DO Fluctuations to Climatic vs. Anthropogenic Drivers

Objective: To determine the primary driver(s) of dissolved oxygen (DO) depletion in a freshwater system during summer months.

1. Hypothesis Development:

- Null Hypothesis (H₀): Summer DO variations are not significantly influenced by natural climate drivers.

- Alternative Hypothesis (H₁): Natural climate drivers (e.g., temperature from solar cycles, runoff patterns from ENSO) are a significant factor in summer DO variations.

2. Data Collection Protocol:

- Water Quality Data: Obtain high-frequency (daily/weekly) time-series data for DO, water temperature, COD, and pH for at least a 15-year period from monitoring stations [5].

- Climate Data: Collect concurrent local air temperature, solar irradiance, and precipitation data.

- Large-Scale Climate Indices: Compile data for the Oceanic Niño Index (ONI) and Total Solar Irradiance (TSI) records.

- Anthropogenic Data: Gather data on seasonal wastewater discharge volumes, agricultural fertilizer application schedules, and land-use changes.

3. Controlled Data Analysis:

- Trend Analysis: Perform seasonal Mann-Kendall trend analysis on DO concentrations to identify significant increasing or decreasing patterns, separately for natural and managed watersheds [5].

- Correlation Analysis: Calculate correlation coefficients between DO levels and potential drivers (water temperature, ONI, TSI, fertilizer use).

- Multivariable Modeling: Build regression models (e.g., multiple linear regression or machine learning models) with DO as the dependent variable. Use climate indices and anthropogenic data as independent variables. The relative contribution of each variable reveals the primary drivers [5].
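The seasonal Mann-Kendall step can be sketched as below, following the Hirsch-Slack form: the Mann-Kendall S statistic and its variance are computed within each season (comparing only values from the same season across years) and then summed before forming a normal Z score. This minimal version assumes at most one value per season per year, ignores ties in the variance, and ignores serial correlation between seasons; the function names are hypothetical, and dedicated packages such as pymannkendall handle those refinements.

```python
import math
from statistics import NormalDist

def mk_s_and_var(values):
    """Mann-Kendall S and its no-ties variance for one season's series."""
    x = [v for v in values if v is not None]  # drop missing years
    n = len(x)
    s = 0
    for i in range(n - 1):
        for j in range(i + 1, n):
            diff = x[j] - x[i]
            s += (diff > 0) - (diff < 0)  # sign of each later-minus-earlier pair
    var = n * (n - 1) * (2 * n + 5) / 18.0
    return s, var

def seasonal_mann_kendall(series_by_season):
    """series_by_season: dict season -> values in chronological order.

    Returns (S, Z, two-sided p-value).
    """
    s_total, var_total = 0, 0.0
    for values in series_by_season.values():
        s, var = mk_s_and_var(values)
        s_total += s
        var_total += var
    if var_total == 0:
        return s_total, 0.0, 1.0
    # Continuity correction toward zero
    if s_total > 0:
        z = (s_total - 1) / math.sqrt(var_total)
    elif s_total < 0:
        z = (s_total + 1) / math.sqrt(var_total)
    else:
        z = 0.0
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return s_total, z, p
```

Running this separately for natural and managed watersheds, as the trend-analysis step describes, gives directly comparable S and Z values for each watershed type.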

Experimental Attribution Workflow

This diagram details the key steps in the experimental protocol for attributing causes of water quality changes.

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Research |
|---|---|
| T-NM Index | A trend-based metric used to isolate and quantify the asymmetric amplification or suppression effects of human activities on natural climatic water quality trends [5]. |
| Multivariable Regression Models | Statistical models that simulate water quality parameters (e.g., COD, DO) using multiple explanatory variables (climate and human) to partition the variance and attribute causes [5]. |
| Paired Watershed Study Design | A methodological framework comparing a "natural" watershed (climate control) with a "managed" watershed to isolate the impact of human activities from background natural variability [5]. |
| Oceanic Niño Index (ONI) | A primary indicator for monitoring the El Niño-Southern Oscillation (ENSO), used to correlate large-scale climate patterns with local hydrological and water quality data [18]. |
| Total Solar Irradiance (TSI) Data | A key dataset from satellite observations used to correlate periodic changes in the sun's energy output with long-term trends in water temperature and ecosystem productivity [18]. |

Advanced Techniques for Source Separation: From Chemical Tracers to Machine Learning

Chemical Fingerprinting and Isotopic Tracers for Source Identification

Frequently Asked Questions (FAQs)

Q1: What is the core principle behind using isotopic tracers for source identification? Isotopic tracers operate on the principle that different sources of pollutants often have distinct isotopic "fingerprints." For example, nitrate from manure and sewage has a different isotopic composition (δ¹⁵N) than nitrate from synthetic fertilizers. By measuring these ratios in environmental samples, researchers can trace the pollutant back to its origin [22].

Q2: When should I use a multi-isotope approach versus a single isotope? A multi-isotope approach (e.g., combining δ¹⁵N-NO₃, δ¹⁸O-NO₃, δ¹³C) is highly recommended for complex systems. While a single isotope can provide clues, multiple isotopes provide convergent lines of evidence, greatly increasing the accuracy of your source apportionment and helping to account for overlapping signatures or isotopic fractionation during biogeochemical processes [22] [23].

Q3: My isotopic data is ambiguous. What could be the cause? Ambiguity often arises from isotopic fractionation, where physical or biological processes alter the original isotopic signature. Alternatively, you might be dealing with mixed sources that have overlapping signatures. To resolve this, consider:

- Using Compound-Specific Isotope Analysis (CSIA): This provides isotopic data for individual compounds, reducing ambiguity from bulk sample analysis [23].

- Incorporating Molecular Markers: Combine isotopic data with molecular biomarkers like n-alkanes or PAHs to strengthen your conclusions [23].

- Applying Statistical Models: Use models like End-Member Mixing Analysis (EMMA) to quantitatively apportion sources [22].

Q4: How do I distinguish anthropogenic organic matter from natural sources in sediments? An integrated approach is most effective. This involves measuring bulk elemental contents (TOC, TN) and their stable isotopes (δ¹³C, δ¹⁵N), and then refining the analysis with molecular markers and their CSIA. For instance, aliphatic hydrocarbons (n-alkanes) can indicate natural plant waxes, while polycyclic aromatic hydrocarbons (PAHs) are often markers for anthropogenic combustion [23].

Troubleshooting Guides

Issue: Low Signal-to-Noise Ratio in Complex Environmental Samples

Problem: It is difficult to detect the target isotopic signal against a high background of natural organic matter.

| Step | Action | Rationale |
|---|---|---|
| 1 | Pre-concentration | Use solid-phase extraction (SPE) or similar techniques to concentrate the target analytes, improving detectability. |
| 2 | Purification | Employ chromatographic methods to separate the compound of interest from interfering substances in the sample matrix. |
| 3 | Switch to CSIA | Move from bulk isotope analysis to Compound-Specific Isotope Analysis to isolate the signal of the specific compound [23]. |

Issue: Overlapping Isotopic Signatures from Multiple Sources

Problem: Two or more potential pollution sources have similar or overlapping isotopic values, making them impossible to distinguish with the current tracer set.

| Step | Action | Rationale |
|---|---|---|
| 1 | Expand the Isotopic Suite | Incorporate additional isotopes. For nitrate, adding δ¹⁸O to δ¹⁵N can help separate soil nitrogen from fertilizer nitrate [22]. |
| 2 | Integrate Complementary Tracers | Use chemical or molecular markers. For organic matter, combining δ¹³C with n-alkane distributions provides a more robust source identification [23]. |
| 3 | Apply Advanced Statistical Models | Implement multivariate statistical methods or machine learning models to quantitatively apportion contributions from multiple sources [22] [5]. |

Experimental Protocols

Protocol 1: Tracing Nitrate Sources in Shallow Groundwater with Nitrate Isotopes

This protocol is adapted from a study on shallow groundwater in a large irrigation area [22].

1. Sample Collection:

- Collect groundwater samples from monitoring wells or springs in clean, acid-washed HDPE bottles.

- Filter samples immediately in the field through a 0.45 μm membrane filter.

- For nitrate isotope analysis, preserve samples by freezing until analysis.

2. Chemical and Isotopic Analysis:

- Major Ions: Analyze cations (Ca²⁺, Mg²⁺, Na⁺, K⁺) by Ion Chromatography (IC) or Inductively Coupled Plasma Optical Emission Spectrometry (ICP-OES), and anions (Cl⁻, SO₄²⁻, NO₃⁻) by IC; HCO₃⁻ is usually determined by acid titration (alkalinity). This helps classify water type and understand the geochemical background [22].

- Nitrate Isotopes (δ¹⁵N-NO₃ and δ¹⁸O-NO₃): Analyze using the denitrifier method or by chemical conversion, followed by Isotope Ratio Mass Spectrometry (IRMS).

3. Data Interpretation:

- Source Identification: Plot δ¹⁵N-NO₃ against δ¹⁸O-NO₃. Different nitrate sources (e.g., manure & sewage, soil nitrogen, chemical fertilizer) often cluster in distinct regions of the cross-plot [22].

- Quantitative Apportionment: Use a model like End-Member Mixing Analysis (EMMA) to calculate the proportional contribution of each identified source to the total nitrate concentration.
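For three sources and two isotopes, the mass-balance core of an end-member mixing calculation reduces to a 3 × 3 linear system: the source fractions must sum to one and must reproduce the sample's δ¹⁵N and δ¹⁸O. The sketch below solves that system with NumPy. The end-member values in the usage example are hypothetical placeholders, not values from [22], and the approach ignores isotopic fractionation (e.g., denitrification) and end-member uncertainty, which Bayesian mixing models such as MixSIAR or simmr are designed to handle.

```python
import numpy as np

def three_source_fractions(sample, end_members):
    """Solve f1 + f2 + f3 = 1 and sum(f_i * delta_i) = delta_mix for two isotopes.

    sample: (d15N, d18O) of the mixture, in per mil.
    end_members: dict name -> (d15N, d18O); exactly three entries.
    Negative fractions mean the sample lies outside the mixing triangle.
    """
    names = list(end_members)
    if len(names) != 3:
        raise ValueError("this closed-form sketch needs exactly three sources")
    d15n = [end_members[k][0] for k in names]
    d18o = [end_members[k][1] for k in names]
    a = np.array([[1.0, 1.0, 1.0], d15n, d18o])
    b = np.array([1.0, sample[0], sample[1]])
    fractions = np.linalg.solve(a, b)
    return dict(zip(names, fractions))

# Hypothetical end members (per mil), for illustration only
example_sources = {
    "manure_sewage": (12.0, 4.0),
    "soil_N": (5.0, 0.0),
    "fertilizer": (0.0, 8.0),
}
print(three_source_fractions((7.5, 3.6), example_sources))
```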

Protocol 2: Apportioning Natural and Anthropogenic Organic Matter in Sediments

This protocol is based on an integrated approach used for lake sediments [23].

1. Sample Collection and Preparation:

- Collect surface sediment samples (e.g., top 2 cm) using a grab sampler or corer.

- Freeze-dry the samples and homogenize them with a mortar and pestle.

- Sieve the dried sediment to a consistent particle size (e.g., < 63 μm) for analysis.

2. Bulk Analysis:

- Total Organic Carbon (TOC) and Total Nitrogen (TN): Determine using an elemental analyzer.

- Bulk Stable Isotopes (δ¹³C and δ¹⁵N): Analyze the stable isotope ratios of the bulk sediment using an Elemental Analyzer coupled to an IRMS.

3. Molecular Marker and CSIA Analysis:

- Lipid Extraction: Extract aliphatic and aromatic hydrocarbons from the sediment using a Soxhlet apparatus or accelerated solvent extractor with a dichloromethane/methanol solvent mixture.

- Fractionation: Separate the total extract into different fractions (e.g., aliphatic hydrocarbons, aromatic hydrocarbons) using silica gel column chromatography.

- Molecular Analysis:

- Analyze n-alkanes and Polycyclic Aromatic Hydrocarbons (PAHs) using Gas Chromatography-Mass Spectrometry (GC-MS).

- Perform Compound-Specific Isotope Analysis (CSIA) on target compounds (e.g., n-alkanes) using GC-Isotope Ratio Mass Spectrometry (GC-IRMS) to obtain δ¹³C values for individual molecules [23].

4. Data Interpretation:

- Use molecular ratios (e.g., Carbon Preference Index for n-alkanes, PAH isomer ratios) to differentiate between natural (terrestrial, aquatic) and anthropogenic (petrogenic, pyrogenic) sources.

- Combine the molecular data with the CSIA data to quantitatively constrain the contributions of the different organic matter sources.
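Two of the diagnostic ratios mentioned above can be computed as below. The CPI follows the widely used Bray and Evans form over C24 to C34, where values well above 1 suggest odd-carbon-dominated terrestrial plant waxes and values near 1 suggest petroleum or heavily degraded inputs. The fluoranthene/(fluoranthene + pyrene) cut-offs of 0.4 and 0.5 follow Yunker and co-workers. Both are screening heuristics that other studies define slightly differently, and the input format (a dict of concentrations keyed by carbon number) is an assumption.

```python
def carbon_preference_index(alkanes):
    """Bray & Evans CPI; alkanes maps carbon number -> concentration."""
    odd = sum(alkanes.get(c, 0.0) for c in (25, 27, 29, 31, 33))
    even_low = sum(alkanes.get(c, 0.0) for c in (24, 26, 28, 30, 32))
    even_high = sum(alkanes.get(c, 0.0) for c in (26, 28, 30, 32, 34))
    return 0.5 * (odd / even_low + odd / even_high)

def fla_pyr_source(fluoranthene, pyrene):
    """Fla/(Fla+Pyr) ratio with a coarse source label."""
    ratio = fluoranthene / (fluoranthene + pyrene)
    if ratio < 0.4:
        label = "petrogenic"
    elif ratio <= 0.5:
        label = "petroleum combustion"
    else:
        label = "grass/wood/coal combustion"
    return ratio, label
```

These ratios narrow down candidate sources qualitatively; the CSIA δ¹³C values are then what constrain the proportions.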

Workflow Visualization

Diagram 1: Isotopic Source Identification Workflow

Diagram 2: Multi-Method Approach for Organic Matter

Key Research Reagent Solutions

The following table details essential materials and reagents used in chemical fingerprinting and isotopic tracer studies.

| Reagent/Material | Function in Experiment | Key Considerations |
|---|---|---|
| Reference Isotopic Standards | Calibrate the isotope ratio mass spectrometer (IRMS) and ensure data accuracy and comparability. | Must be certified for specific isotopes (e.g., USGS standards for δ¹⁵N, IAEA standards for δ¹⁸O). |
| Solid-Phase Extraction (SPE) Cartridges | Pre-concentrate and purify target analytes (e.g., nitrate, organic compounds) from complex water samples. | Select sorbent phase based on target analyte chemistry (e.g., anion exchange for nitrate, C18 for organic compounds). |
| Organic Solvents (Dichloromethane, Methanol) | Extract lipid biomarkers (e.g., n-alkanes, PAHs) from solid samples like sediments. | High purity (GC-MS grade) is critical to avoid contamination and interfering signals. |
| Silica Gel | Separate complex total lipid extracts into fractions (e.g., aliphatic, aromatic) via column chromatography. | Must be activated by heating before use to remove moisture and ensure consistent performance. |
| Denitrifying Bacteria or Chemical Conversion Reagents | Convert aqueous nitrate into N₂O gas for δ¹⁵N and δ¹⁸O analysis via IRMS. | The denitrifier method requires bacteria that lack N₂O reductase (e.g., Pseudomonas aureofaciens); chemical alternatives (e.g., cadmium reduction followed by azide conversion) are also used. |

Table: Nitrate Source Contributions

| Nitrate Source | Relative Contribution | Key Identifying Isotopic Tracer(s) |
|---|---|---|
| Manure and Sewage | Largest contributor | δ¹⁵N (typically enriched) |
| Soil Organic Nitrogen (SON) | Significant contributor | δ¹⁵N, δ¹⁸O |
| NH₄⁺-based Fertilizer | Significant contributor | δ¹⁵N (typically depleted), δ¹⁸O |

Table: Bulk Organic Matter Properties by Land-Use Type

| Land-Use Type | TOC (%) | TN (%) | δ¹³C (‰) | δ¹⁵N (‰) |
|---|---|---|---|---|
| Urban Areas | 3.9 ± 3.2 | 0.1 ± 0.1 | -25.6 ± 1.1 | Not reported |
| Old Industrial Complexes | 6.3 ± 6.8 | 2.3 ± 6.1 | -25.9 ± 1.7 | Not reported |
| Lake Sediment | 0.7 ± 0.3 | < 0.1 | -24.5 ± 2.2 | 4.2 ± 2.7 |

Leveraging Water Quality Indices (CWQI) to Track Anthropogenic Impact

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: My CWQI results show water quality deterioration, but I cannot identify if the cause is anthropogenic or natural. What analytical steps should I take?

A: We recommend implementing a T-NM index framework to decouple these influences [5]. This trend-based metric isolates asymmetric human amplification and suppression effects by comparing watersheds under managed conditions with nearby natural watersheds sharing similar climatic backgrounds. Calculate seasonal trends for parameters like COD and DO across both watershed types. Human activities typically intensify or attenuate natural trends by 22–158% and 14–56%, respectively, with strongest effects observed during summer months [5].

Q2: How can I account for seasonal variability when using CWQI to track long-term anthropogenic impact?

A: Seasonal factors can explain up to 47.08% of water quality variation [5]. Implement these approaches:

- Collect high-resolution data across multiple seasons, especially summer when anthropogenic effects are most pronounced [5]

- Apply multivariable models that separately analyze seasonal trends

- Use remote sensing data (e.g., Sentinel-2) to capture spatial and temporal patterns at higher resolution [24]

Q3: What parameters should I include in my CWQI to best detect anthropogenic influence?

A: Beyond conventional parameters (DO, BOD, COD, TSS, ammonia, pH), consider including:

- Chloride and sulfate: Key indicators of urban and industrial contamination [25]

- Nitrogen and phosphorus compounds: Indicators of agricultural runoff [26]

- Petroleum hydrocarbons (∑n-Alks, ∑PAHs): Critical for rivers affected by shipping and industrial activity [26]

Prioritize parameters based on the likely pollution sources in your study area.

Q4: How can I address data scarcity when calculating CWQI for anthropogenic impact assessment?

A: Implement computational approaches:

- Apply Monte Carlo simulation to your CWQI model to generate probability distributions of water quality status from limited samples [26]

- Use remote sensing retrieval with Sentinel-2 data to estimate parameters like NH4+-N and TP across broader areas [24]

- Leverage machine learning frameworks to identify patterns in incomplete datasets [5]

Q5: My CWQI shows "good" water quality, but biological indicators suggest ecosystem impairment. Why this discrepancy?

A: This common issue arises because CWQI primarily reflects chemical parameters. To resolve:

- Integrate biological indicators with your CWQI assessment [25]

- Analyze individual parameter excursions rather than just the final index value [27]

- Examine the F3 (amplitude) component of CCME WQI, which quantifies how much guidelines are exceeded [27]
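Because F3 is easy to misread, the sketch below computes all three CCME WQI factors from a set of test results. It follows the CCME formulation (excursion = value/objective − 1 for upper limits and objective/value − 1 for lower limits such as DO; F3 = nse/(0.01·nse + 0.01); WQI = 100 − √(F1² + F2² + F3²)/1.732), but the input layout, the function name, and any example objectives are assumptions for illustration.

```python
import math

def ccme_wqi(samples, objectives):
    """samples: dict variable -> list of measured values.
    objectives: dict variable -> (limit, kind), kind 'max' or 'min'."""
    failed_vars, failed_tests, total_tests = 0, 0, 0
    excursion_sum = 0.0
    for var, values in samples.items():
        limit, kind = objectives[var]
        var_failed = False
        for v in values:
            total_tests += 1
            if kind == "max" and v > limit:
                excursion_sum += v / limit - 1.0
            elif kind == "min" and v < limit:
                excursion_sum += limit / v - 1.0
            else:
                continue  # test passed
            failed_tests += 1
            var_failed = True
        failed_vars += var_failed
    f1 = 100.0 * failed_vars / len(samples)    # scope: share of variables failing
    f2 = 100.0 * failed_tests / total_tests    # frequency: share of tests failing
    nse = excursion_sum / total_tests          # normalized sum of excursions
    f3 = nse / (0.01 * nse + 0.01)             # amplitude: size of exceedances
    wqi = 100.0 - math.sqrt(f1**2 + f2**2 + f3**2) / 1.732
    return {"F1": f1, "F2": f2, "F3": f3, "WQI": wqi}
```

Reporting F1, F2, and F3 alongside the final score shows whether a "good" index hides a few large exceedances (high F3, low F1/F2), which is one way the chemistry-versus-biology discrepancy in Q5 can arise.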

Common CWQI Calculation Issues and Solutions

| Problem | Possible Causes | Solutions |
|---|---|---|
| Inconsistent trend interpretation | Failure to separate natural and anthropogenic drivers | Apply T-NM index to compare managed vs. natural watersheds [5] |
| High seasonal variability | Single-season sampling or analysis | Collect multi-season data; use seasonal trend analysis [5] |
| Insufficient parameter selection | Over-reliance on conventional parameters only | Include specific anthropogenic markers (e.g., hydrocarbons, chloride) [25] [26] |
| Limited data confidence | Small sample size | Implement Monte Carlo simulation to estimate probabilities [26] |
| Spatial resolution limitations | Point sampling missing spatial patterns | Incorporate remote sensing data (e.g., Sentinel-2) [24] |

Experimental Protocols for CWQI-Based Anthropogenic Impact Assessment

Protocol 1: Isolating Anthropogenic Influence Using the T-NM Index Framework

Purpose: To quantitatively separate natural and anthropogenic influences on water quality trends.

Materials:

- Water quality monitoring data (15+ years recommended)

- Watershed classification data (natural vs. managed)

- Climatic data (precipitation, temperature)

Methodology:

- Watershed Classification: Classify watersheds as "natural" (minimal human impact) and "managed" (significant human activities) [5]

- Parameter Selection: Focus on COD and DO as key indicators of organic pollution and ecosystem health [5]

- Trend Analysis: Calculate seasonal trends for both watershed types over the study period

- T-NM Index Calculation: Compute the T-NM index to quantify human amplification or suppression of natural trends:

- Compare trends between matched natural and managed watershed pairs

- Calculate the direction and strength of human intervention

- Attribution Analysis: Use multivariable models to attribute variation to natural vs. anthropogenic factors
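The T-NM index formula itself is not given in this section, so the sketch below does not implement it. Instead it illustrates the underlying paired-watershed logic with a simpler, hypothetical stand-in: estimate each watershed's trend with the Theil-Sen slope, then express the managed-watershed trend relative to the natural one. In this stand-in, a positive value means human activity intensified the natural trend, a value between −100% and 0 means it attenuated it, and a value below −100% means the direction was reversed.

```python
from statistics import median

def theil_sen_slope(years, values):
    """Median of all pairwise slopes (Theil-Sen estimator)."""
    slopes = [
        (values[j] - values[i]) / (years[j] - years[i])
        for i in range(len(years))
        for j in range(i + 1, len(years))
        if years[j] != years[i]
    ]
    return median(slopes)

def human_effect_percent(years, natural, managed):
    """Hypothetical stand-in metric, NOT the published T-NM index.

    Returns (natural slope, managed slope, relative effect in %).
    """
    s_nat = theil_sen_slope(years, natural)
    s_man = theil_sen_slope(years, managed)
    if s_nat == 0:
        raise ValueError("natural trend is zero; relative effect undefined")
    return s_nat, s_man, (s_man / s_nat - 1.0) * 100.0
```

Applied per season, this makes the expected outcome of "22-158% intensification or 14-56% attenuation" concrete as a comparison of slopes, though the published index may normalize differently.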

Expected Outcomes:

- Quantification of human amplification/attenuation effects (typically 22-158% intensification or 14-56% attenuation) [5]

- Identification of seasons with strongest anthropogenic influence (typically summer) [5]

Protocol 2: Monte Carlo-CWQI for Data-Limited Situations

Purpose: To generate robust water quality assessments from limited monitoring data.

Materials:

- Limited water quality monitoring data (minimum 20 sampling points recommended)

- Parameter-specific water quality guidelines

- Computational resources for simulation

Methodology:

- Data Collection: Conduct monitoring for key parameters (TN, NH4+-N, TP, ∑n-Alks, ∑PAHs recommended) [26]

- Single Factor Pollution Index Calculation: For each parameter, calculate Pi = Ci/C0, where Ci is measured value and C0 is guideline value [26]

- CWQI Calculation: Compute baseline CWQI = (1/n)∑Pi for n parameters [26]

- Monte Carlo Simulation:

- Set Pi values as random variables

- Perform thousands of iterations (recommended: 10,000+)

- Generate probability distribution of possible CWQI values

- Interpretation: Classify water quality based on CWQI probability distribution:

- 0-0.4: Clean; 0.4-0.7: Slight pollution; 0.7-1.0: Moderate pollution; 1.0-2.0: Serious pollution; >2.0: Very serious pollution [26]
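A minimal Monte Carlo sketch of the steps above follows. The protocol does not state which distribution the Pi values should follow, so this version assumes each parameter's concentration is lognormal, with the mean and standard deviation of the log-transformed observations. The guideline values, seed, and function name are hypothetical, and a distribution fitted to your own data (or bootstrap resampling) should replace that assumption.

```python
import numpy as np

CLASS_EDGES = [0.4, 0.7, 1.0, 2.0]
CLASS_LABELS = ["Clean", "Slight pollution", "Moderate pollution",
                "Serious pollution", "Very serious pollution"]

def monte_carlo_cwqi(observations, guidelines, n_iter=10_000, seed=42):
    """observations: dict parameter -> measured concentrations (> 0).
    guidelines: dict parameter -> guideline value C0 in the same units."""
    rng = np.random.default_rng(seed)
    pi_draws = []
    for param, values in observations.items():
        logs = np.log(np.asarray(values, dtype=float))
        sigma = logs.std(ddof=1) if logs.size > 1 else 0.0
        # Assumption: lognormal concentrations parameterized from the log data
        ci = rng.lognormal(mean=logs.mean(), sigma=sigma, size=n_iter)
        pi_draws.append(ci / guidelines[param])   # Pi = Ci / C0
    cwqi = np.mean(pi_draws, axis=0)              # CWQI = (1/n) * sum(Pi)
    classes = np.digitize(cwqi, CLASS_EDGES)      # 0..4, matching CLASS_LABELS
    probs = {label: float(np.mean(classes == k))
             for k, label in enumerate(CLASS_LABELS)}
    return cwqi, probs
```

The returned class probabilities are what the interpretation step reads off: a site is reported as, for example, "slight pollution with about 90% probability" rather than with a single index value.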

Expected Outcomes:

- Probability-based CWQI estimates that express how likely each water quality class is, giving more statistical confidence than a single deterministic index value [26]

- Identification of main pollutants through Spearman rank correlation analysis [26]

Protocol 3: Remote Sensing-Enhanced CWQI Monitoring

Purpose: To overcome spatial and temporal limitations of point sampling.

Materials:

- Sentinel-2 multispectral imagery

- Field validation data for water quality parameters

- Image processing software (e.g., GIS, Python/R with appropriate libraries)

Methodology:

- Field Data Collection: Collect simultaneous field measurements and satellite imagery acquisitions [24]

- Model Development: Establish regression relationships between satellite band reflectances and water quality parameters [24]

- Parameter Retrieval: Develop algorithms for:

- Comprehensive Water Quality Index (CWQI)

- NH4+-N concentrations

- Total Phosphorus (TP) concentrations [24]

- Model Validation: Compare remote sensing estimates with field measurements

- Spatial Application: Apply models to entire water body using satellite imagery
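The model-development and validation steps can be sketched as an ordinary least-squares fit between band reflectances and a field-measured parameter, scored with the mean relative error used in the expected outcomes for this protocol. The band choice (for example, Sentinel-2 B3, B4, and B5), the purely linear form, and the function names are assumptions; published retrieval models often use band ratios, log transforms, or machine learning instead.

```python
import numpy as np

def fit_band_model(reflectance, measured):
    """Least-squares fit: measured ~ b0 + sum(b_k * band_k).

    reflectance: (n_samples, n_bands) array; measured: (n_samples,) array.
    """
    X = np.column_stack([np.ones(len(reflectance)), reflectance])
    coef, *_ = np.linalg.lstsq(X, measured, rcond=None)
    return coef

def predict(coef, reflectance):
    """Apply fitted coefficients to new pixels or validation samples."""
    X = np.column_stack([np.ones(len(reflectance)), reflectance])
    return X @ coef

def mean_relative_error(observed, predicted):
    """Mean of |predicted - observed| / |observed|."""
    observed = np.asarray(observed, dtype=float)
    return float(np.mean(np.abs(predicted - observed) / np.abs(observed)))
```

Fitting on one subset of matched field/satellite samples and computing the error on a held-out subset mirrors the separate model-validation step; the same `predict` call can then be applied to every water pixel for spatial mapping.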

Expected Outcomes:

- Spatial maps of CWQI and key parameters across entire water bodies [24]

- Average relative errors of ~9.8% for CWQI, 19.4% for NH4+-N, and 24.7% for TP [24]

Methodological Workflows

Methodological Framework for CWQI-Based Anthropogenic Impact Assessment

Monte Carlo-CWQI Framework for Data-Limited Situations

Research Reagent Solutions and Essential Materials

Key Analytical Methods for CWQI-Based Research

| Method/Technique | Primary Function | Key Parameters | Applicability to Anthropogenic Impact Studies |
|---|---|---|---|
| CCME WQI Framework [27] | Standardized water quality assessment | F1 (Scope), F2 (Frequency), F3 (Amplitude) | Baseline method; allows comparison across regions |
| T-NM Index [5] | Separates natural vs. anthropogenic trends | Seasonal COD/DO trends, amplification/attenuation factors | Critical for attribution studies; quantifies human influence |
| Monte Carlo Simulation [26] | Handles data uncertainty | Probability distributions, confidence intervals | Essential for data-limited environments |
| Remote Sensing Retrieval [24] | Spatial water quality assessment | CWQI, NH4+-N, TP from satellite imagery | Overcomes point sampling limitations |
| Structural Equation Modeling [28] | Tests complex driver relationships | Pathway coefficients, model fit indices | Identifies indirect and direct anthropogenic effects |
| Spearman Rank Correlation [26] | Identifies main polluting factors | Correlation coefficients, significance levels | Prioritizes management actions on key pollutants |

Essential Parameters for Anthropogenic Impact Detection

| Parameter Category | Specific Parameters | Anthropogenic Linkages | Detection Methods |
|---|---|---|---|
| Conventional Indicators | DO, BOD, COD, pH, TSS | General pollution assessment | Field sensors, lab analysis [29] |
| Nutrient Indicators | TN, NH4+-N, TP | Agricultural runoff, wastewater | Spectrophotometry, chromatography [26] |
| Industrial Markers | Chloride, sulfate | Industrial discharge, urban runoff | Ion chromatography, field sensors [25] |
| Emerging Concerns | ∑PAHs, ∑n-Alks | Petroleum contamination, shipping | HPLC, GC-MS [26] |
| Biological Indicators | Fecal coliforms | Sewage contamination | Culturing, molecular methods [27] |

Data Presentation and Analysis Tables

Table 1: Seasonal Anthropogenic Influence on Water Quality Parameters Across China (2006-2020)

| Season | Watersheds with Significant COD Reduction | Watersheds with Significant DO Increase | Watersheds with Significant DO Reduction (Summer) | Strength of Human Influence |
|---|---|---|---|---|
| Spring | 17.9% | 13.3% | <3% | Moderate |
| Summer | 12.3% | Not specified | 9.2% | Strongest (22-158% intensification) |
| Fall | 22.2% | 19.7% | <3% | Moderate |
| Winter | 22.5% | 25.5% | <3% | Weakest |

Data synthesized from national-scale analysis of 195 natural and 1540 managed watersheds [5]

Table 2: CWQI Classification Schemes and Interpretation Guidelines

| Index System | Quality Categories | Value Ranges | Key Applications | Anthropogenic Sensitivity |
|---|---|---|---|---|
| CCME WQI [27] | Excellent (95-100), Good (80-94.9), Fair (65-79.9), Marginal (45-64.9), Poor (0-44.9) | 0-100 | General aquatic ecosystem protection | Moderate (depends on parameter selection) |
| Monte Carlo-CWQI [26] | Clean (0-0.4), Slight Pollution (0.4-0.7), Moderate Pollution (0.7-1.0), Serious Pollution (1.0-2.0), Very Serious Pollution (>2.0) | ≥ 0 | Data-limited environments, specific pollution studies | High (when including anthropogenic markers) |
| Custom CWQI [25] | Context-dependent classification | Variable | Targeted studies, regional adaptations | Customizable based on local anthropogenic pressures |
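
The CCME WQI combines its three factors as WQI = 100 − √(F1² + F2² + F3²) / 1.732, where F1 is the percentage of variables that fail their objective at least once, F2 is the percentage of individual tests that fail, and F3 is derived from the normalized sum of excursions (nse) as F3 = nse / (0.01·nse + 0.01). The sketch below implements that calculation and maps the result onto the CCME categories in the table above. The function names, variables, objectives, and measurements are hypothetical.

```python
import math

# CCME WQI categories from the classification table, as lower bounds.
CATEGORIES = [(95.0, "Excellent"), (80.0, "Good"), (65.0, "Fair"), (45.0, "Marginal"), (0.0, "Poor")]


def ccme_wqi(measurements, objectives):
    """measurements: {variable: [values]}; objectives: {variable: (limit, "max" | "min")}.

    "max" objectives fail when a value exceeds the limit (e.g. TP); "min" objectives fail
    when a value falls below it (e.g. DO), so "min" values must be positive.
    """
    n_variables = len(measurements)
    n_tests = sum(len(values) for values in measurements.values())
    failed_variables = failed_tests = 0
    excursion_sum = 0.0
    for variable, values in measurements.items():
        limit, kind = objectives[variable]
        variable_failed = False
        for x in values:
            if kind == "max" and x > limit:
                excursion_sum += x / limit - 1.0
            elif kind == "min" and x < limit:
                excursion_sum += limit / x - 1.0
            else:
                continue  # test passed
            failed_tests += 1
            variable_failed = True
        failed_variables += int(variable_failed)
    f1 = 100.0 * failed_variables / n_variables  # scope
    f2 = 100.0 * failed_tests / n_tests          # frequency
    nse = excursion_sum / n_tests                # normalized sum of excursions
    f3 = nse / (0.01 * nse + 0.01)               # amplitude
    return 100.0 - math.sqrt(f1 ** 2 + f2 ** 2 + f3 ** 2) / 1.732


def ccme_category(wqi):
    """Map a WQI score to its CCME category."""
    for lower, label in CATEGORIES:
        if wqi >= lower:
            return label
    return "Poor"  # guards tiny negative values caused by rounding of 1.732


# Hypothetical quarterly data (mg/L) and objectives.
data = {"DO": [8.0, 5.0, 7.5, 6.8], "TP": [0.05, 0.12, 0.08, 0.09], "NH4+-N": [0.3, 0.4, 0.2, 0.5]}
objectives = {"DO": (6.0, "min"), "TP": (0.1, "max"), "NH4+-N": (1.0, "max")}
wqi = ccme_wqi(data, objectives)
print(f"CCME WQI = {wqi:.1f} ({ccme_category(wqi)})")
```

Because F1 counts any variable that fails even once, the score is sensitive to which parameters are included, which is the "depends on parameter selection" caveat in the table.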

Table 3: Attribution of Water Quality Variation to Different Driver Categories

| Watershed Type | Seasonal Factors | Climate (Rainfall) | Topography (Slope) | Land Use Patterns | Human Management |
|---|---|---|---|---|---|
| Natural Watersheds | 47.08% | 25.37% | 17.40% | Not dominant | Minimal |
| Managed Watersheds | Secondary influence | Modified by human activities | Modified by human activities | 10.66-11.58%* | Primary driver |

*As measured by the Shannon Diversity Index (11.58%) and Largest Patch Index (10.66%) of land use [5]

Machine Learning and AI Models for Pattern Recognition and Anomaly Detection

Frequently Asked Questions (FAQs)

Q1: My anomaly detection job has failed and is stuck in a 'failed' state. What steps should I take to recover it?

A1: To recover from a failed state, follow this three-step recovery procedure [30]:

- Force stop the datafeed using the Stop Datafeed API with the `force` parameter set to `true`. Example:

  ```
  POST _ml/datafeeds/my_datafeed/_stop
  {
    "force": true
  }
  ```

- Force close the job using the Close Anomaly Detection Job API with the `force` parameter set to `true`. Example:

  ```
  POST _ml/anomaly_detectors/my_job/_close?force=true
  ```

- Restart the job via your machine learning application's job management interface. If the job fails again immediately, inspect the node logs for persistent errors related to the specific job ID [30].

Q2: What is the minimum amount of data required to build an effective anomaly detection model?

A2: Data requirements vary by metric type [30]:

- For sampled metrics (e.g., `mean`, `min`, `max`): a minimum of eight non-empty bucket spans or two hours of data, whichever is greater.

- For count-based metrics (e.g., `count`, `sum`): the same as sampled metrics, that is, eight buckets or two hours.

- For other non-zero/null metrics: a minimum of four non-empty bucket spans or two hours, whichever is greater.

As a general rule of thumb, for reliable modeling, aim for more than three weeks of data for periodic patterns or a few hundred buckets for non-periodic data [30].
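
The minimum-data rule reduces to simple arithmetic on the bucket span. This sketch encodes it; the function groupings follow the examples listed above, any other function name (such as the placeholder `"other"`) falls back to the four-bucket rule, and the helper name is hypothetical. It returns a floor, not the multi-week history recommended for reliable periodic models.

```python
from datetime import timedelta

# Functions named in the examples above; anything else uses the four-bucket rule.
EIGHT_BUCKET_FUNCTIONS = {"mean", "min", "max", "count", "sum"}


def minimum_data_span(function, bucket_span):
    """N non-empty bucket spans or two hours of data, whichever is greater."""
    n_buckets = 8 if function in EIGHT_BUCKET_FUNCTIONS else 4
    return max(n_buckets * bucket_span, timedelta(hours=2))


for function, span in [("mean", timedelta(minutes=15)), ("sum", timedelta(hours=1)),
                       ("other", timedelta(minutes=10))]:
    print(function, span, "->", minimum_data_span(function, span))
```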

Q3: How can I evaluate the performance of my unsupervised anomaly detection model when I lack labeled data?

A3: For unsupervised models, conventional accuracy metrics can be misleading. Instead, focus on [30]:

- Operational Correlation: Track real-world incidents and see how well they correlate with the model's anomaly predictions.

- Score Ranking: Evaluate whether the model ranks periods in which known anomalies occurred higher than normal periods.

When labeled data are available, use metrics suited to imbalanced datasets, such as precision, recall, and the F1-score, rather than plain accuracy [31].
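
The sketch below shows why accuracy misleads on imbalanced anomaly data and one way to quantify the score-ranking check. The ranking statistic is the probability that a period with a known anomaly receives a higher score than a normal period (ties count half), which is equivalent to the ROC AUC of the scores. Function names, labels, and scores are hypothetical.

```python
def precision_recall_f1(y_true, y_pred):
    """Binary metrics for flagged anomalies (1 = anomaly)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t and p)
    fp = sum(1 for t, p in zip(y_true, y_pred) if p and not t)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t and not p)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def ranking_score(scores, is_anomaly):
    """Probability that a known-anomaly period outscores a normal one (equals ROC AUC)."""
    pos = [s for s, a in zip(scores, is_anomaly) if a]
    neg = [s for s, a in zip(scores, is_anomaly) if not a]
    if not pos or not neg:
        raise ValueError("need at least one anomalous and one normal period")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical: one true anomaly in ten periods, and a model that never raises an alarm.
y_true = [0, 0, 0, 0, 1, 0, 0, 0, 0, 0]
never_alarm = [0] * 10
accuracy = sum(t == p for t, p in zip(y_true, never_alarm)) / len(y_true)
print(accuracy, precision_recall_f1(y_true, never_alarm))  # high accuracy, zero recall

scores = [0.1, 0.2, 0.1, 0.3, 0.9, 0.2, 0.4, 0.1, 0.2, 0.3]
print(ranking_score(scores, y_true))
```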

Q4: My model struggles with high false positive rates. How can I improve it?

A4: High false positives often stem from an inability to distinguish normal environmental variations from true anomalies. To address this [31]:

- Incorporate Context: Use models that account for seasonal patterns (e.g., higher turbidity after storms) to prevent contextually normal events from being flagged.

- Leverage Machine Learning: ML models can learn complex, normal patterns from historical data, reducing alerts for harmless variations that rule-based systems would flag.

- Model Retraining: Implement continuous learning or periodic retraining to allow the model to adapt to slow drifts in normal behavior, such as gradual land-use changes [30].
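
One simple way to incorporate context is to judge each observation against a baseline for its own season. This sketch uses the calendar month as a proxy for season and a z-score threshold of 3, both assumptions; storm-driven context such as antecedent rainfall would need additional covariates. The function names and turbidity values are hypothetical.

```python
import statistics
from collections import defaultdict


def fit_monthly_baseline(history):
    """history: iterable of (month, value). Returns {month: (mean, population SD)}."""
    by_month = defaultdict(list)
    for month, value in history:
        by_month[month].append(value)
    return {m: (statistics.fmean(v), statistics.pstdev(v)) for m, v in by_month.items()}


def is_contextual_anomaly(month, value, baseline, threshold=3.0):
    """Flag a value only if it is extreme relative to its own calendar month."""
    mean, sd = baseline[month]
    if sd == 0:
        return value != mean  # no historical spread: any deviation is unusual
    return abs(value - mean) / sd > threshold


# Hypothetical turbidity (NTU): wet-season months are naturally high and variable.
history = [(1, v) for v in (4, 5, 6, 5, 5)] + [(6, v) for v in (40, 50, 60, 50, 50)]
baseline = fit_monthly_baseline(history)
print(is_contextual_anomaly(6, 45, baseline))  # typical for June
print(is_contextual_anomaly(1, 45, baseline))  # extreme for January
```

The same 45 NTU reading is unremarkable in the wet season but highly unusual in the dry season, which is exactly the distinction a single global threshold cannot make.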

Troubleshooting Guides

Issue: Model Fails to Adapt to Seasonal Changes and Sudden Shifts in Water Quality Data

Problem Description: The model's performance degrades over time, failing to account for seasonal hydrological patterns (like monsoon-related nutrient runoff) or sudden, persistent shifts in baseline water quality parameters, leading to inaccurate anomaly detection [5].

Diagnosis Steps:

- Visualize Temporal Trends: Plot the specific parameter (e.g., nitrate concentration) over a multi-year period. Look for recurring seasonal patterns or a definitive step-change that the model has not captured.

- Analyze Model Residuals: Check if the errors (differences between model predictions and actual values) show a non-random pattern over time, indicating unlearned systematic trends.

- Check Model Update Mechanisms: Review whether the model employs online learning or if it was trained on a static, outdated dataset that doesn't reflect current conditions.

Resolution Methods: Adaptive anomaly detection frameworks, such as the one described in [30], balance model stability against responsiveness to genuine change through several techniques:

- Dynamic Pattern Recognition: The algorithm runs continuous hypothesis tests on various time windows to detect significant evidence of new or changed periodic patterns, updating the model when changes are confirmed.

- Error Monitoring: The model learns an optimal decay rate by monitoring forecast bias and error distribution, allowing it to gradually adapt to slow drifts.

- Change Point Detection: For sudden shifts, hypothesis testing is used to detect changes in scaling, value shifting, or large time shifts (e.g., daylight saving time effects), triggering a model update.
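
For illustration, a step change in a series mean can be located with a least-squares single change-point search. This is a deliberately simple stand-in, not the hypothesis-testing procedure used in [30]. The minimum segment length, the decision threshold you would apply to the explained fraction, the function names, and the nitrate series are all assumptions.

```python
def sse(segment):
    """Sum of squared deviations from the segment mean."""
    m = sum(segment) / len(segment)
    return sum((x - m) ** 2 for x in segment)


def best_mean_shift(series, min_segment=5):
    """Find the single split that best explains a step change in the mean.

    Returns (split_index, fraction_of_variance_explained); split_index is None when
    no split improves the fit or the series is too short for two segments.
    """
    if min_segment < 1:
        raise ValueError("min_segment must be at least 1")
    if len(series) < 2 * min_segment:
        return None, 0.0
    total = sse(series)
    best_index, best_cost = None, total
    for k in range(min_segment, len(series) - min_segment + 1):
        cost = sse(series[:k]) + sse(series[k:])
        if cost < best_cost:
            best_index, best_cost = k, cost
    explained = 1.0 - best_cost / total if total > 0 else 0.0
    return best_index, explained


# Hypothetical daily nitrate (mg/L) with a persistent shift after day 10.
nitrate = [1.0, 1.2, 0.9, 1.1, 1.0, 0.8, 1.1, 1.0, 0.9, 1.2,
           5.0, 5.1, 4.9, 5.2, 4.8, 5.0, 5.1, 4.9, 5.0, 5.0]
print(best_mean_shift(nitrate))
```

A large explained fraction at a plausible index is a cue to confirm the shift and refit the model on post-change data; applying the search recursively to each segment (binary segmentation) extends it to multiple change points.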

Preventive Measures:

- Implement a scheduled retraining pipeline using a rolling window of the most recent data (e.g., the last 24-36 months).

- Ensure your training dataset encompasses at least two full annual cycles to capture seasonal variability effectively [30].

- Consider sequence models such as Temporal Convolutional Networks (TCN) or LSTM networks, which are adept at learning temporal dependencies in time series data [32] [33].
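
A scheduled retraining job can enforce the first two preventive measures together: select the most recent window and refuse to train unless each calendar month is represented in at least two different years. The 36-month window, the helper names, and the coverage check itself are assumptions for illustration.

```python
from collections import defaultdict
from datetime import date


def months_before(d, as_of):
    """Whole calendar months between the month of d and the month of as_of."""
    return (as_of.year - d.year) * 12 + (as_of.month - d.month)


def rolling_training_window(records, as_of, window_months=36, min_cycles=2):
    """Keep (date, value) records from the last `window_months` calendar months.

    Raises ValueError unless every calendar month appears in at least `min_cycles`
    distinct years, i.e. the window spans the required number of annual cycles.
    """
    window = [(d, v) for d, v in records
              if d <= as_of and months_before(d, as_of) < window_months]
    years_seen = defaultdict(set)
    for d, _ in window:
        years_seen[d.month].add(d.year)
    missing = [m for m in range(1, 13) if len(years_seen[m]) < min_cycles]
    if missing:
        raise ValueError(f"calendar months {missing} have fewer than {min_cycles} years of data")
    return window


# Hypothetical monthly nitrate series, Jan 2019 to Jun 2024.
records = [(date(y, m, 15), 1.0) for y in range(2019, 2025) for m in range(1, 13)
           if date(y, m, 15) <= date(2024, 6, 30)]
window = rolling_training_window(records, as_of=date(2024, 6, 30))
print(len(window), window[0][0], window[-1][0])
```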

Issue: Differentiating Natural vs. Anthropogenic Patterns in Water Quality Data

Problem Description: A researcher cannot determine whether a rising trend in riverine total nitrogen (TN) is due to increased fertilizer use (anthropogenic) or changes in precipitation patterns (natural), which is crucial for informing policy decisions [34].

Diagnosis Steps:

- Data Segmentation: Analyze trends separately in natural (minimally disturbed) watersheds and heavily managed watersheds. Consistent trends across both types suggest a dominant climatic driver [5].

- Calculate the T-NM Index: Employ a trend-based metric like the T-NM index to quantify human amplification or suppression effects on natural trends. This index isolates asymmetric human impacts, which are often most pronounced in summer [5].

- Attribution Analysis: Use explainable machine learning models (e.g., SHAP analysis on Random Forest models) to quantify the relative contribution of factors like rainfall (natural) versus land-use indices (anthropogenic) to the observed variation [5] [34].
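
The data-segmentation step can be prototyped by estimating a robust trend for each watershed and comparing group medians. This sketch uses the Theil-Sen slope, our choice rather than the reference method. The ratio it returns is only an illustrative amplification factor and is NOT the T-NM index defined in [5]. The function names and watershed series are hypothetical.

```python
import statistics
from itertools import combinations


def sen_slope(times, values):
    """Theil-Sen slope: median of all pairwise slopes, robust to outliers."""
    slopes = [(values[j] - values[i]) / (times[j] - times[i])
              for i, j in combinations(range(len(times)), 2) if times[j] != times[i]]
    return statistics.median(slopes)


def compare_trends(natural, managed):
    """natural/managed: lists of (times, values), one pair per watershed.

    Returns (natural median slope, managed median slope, managed/natural ratio).
    The ratio is an illustrative amplification factor only, NOT the T-NM index.
    """
    nat = statistics.median([sen_slope(t, v) for t, v in natural])
    man = statistics.median([sen_slope(t, v) for t, v in managed])
    ratio = man / nat if nat != 0 else float("nan")
    return nat, man, ratio


# Hypothetical summer COD means (mg/L) for two natural and two managed watersheds.
years = [2016, 2017, 2018, 2019, 2020]
natural = [(years, [10.0, 10.5, 11.0, 11.5, 12.0]), (years, [8.0, 8.4, 8.8, 9.3, 9.6])]
managed = [(years, [15.0, 16.0, 17.0, 18.0, 19.0]), (years, [12.0, 12.9, 14.1, 15.0, 16.0])]
print(compare_trends(natural, managed))
```

Slopes of similar sign and magnitude in both groups point toward a shared, likely climatic, driver; a ratio well above 1 suggests human amplification, and a ratio below 1 or a sign reversal suggests suppression. A formal significance test (for example, Mann-Kendall) would be needed before drawing conclusions.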

Resolution Protocol:

- Compile a Multivariable Dataset: Gather data on climate (precipitation, temperature), watershed attributes (slope, soil type), socio-economic factors (fertilizer application, population density), and landscape metrics (e.g., Shannon Diversity Index, Largest Patch Index) [5] [34].

- Train Separate Models: Develop one model for natural watersheds and another for managed watersheds.

- Compare Driver Importance: Contrast the attribution results of the two models. In the reference study, seasonal factors explained 47.08% of the variation; in natural watersheds, rainfall (25.37%) and slope (17.40%) dominated, whereas in managed watersheds landscape and socio-economic factors became more influential, led by the Shannon Diversity Index (11.58%) and the Largest Patch Index (10.66%) [5].
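
The separate-model comparison can be sketched with permutation importance, used here as a dependency-free stand-in for the SHAP analysis mentioned above. The two linear "models" and the features (rainfall, slope, Shannon Diversity Index) are hypothetical placeholders for trained Random Forests. With scikit-learn you would typically fit `RandomForestRegressor` and call `sklearn.inspection.permutation_importance`, or compute SHAP values with `shap.TreeExplainer`. The resulting shares are not comparable to the percentages reported in [5].

```python
import random


def permutation_importance(predict, X, y, n_repeats=20, seed=0):
    """Percent share of importance per feature for a fitted model `predict(row) -> float`.

    Each feature's raw importance is the mean increase in MSE when that column is
    shuffled; shares are normalized to sum to 100 (negative increases clipped to 0).
    """
    rng = random.Random(seed)

    def mse(rows):
        return sum((predict(r) - t) ** 2 for r, t in zip(rows, y)) / len(y)

    base = mse(X)
    raw = []
    for j in range(len(X[0])):
        increase = 0.0
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            shuffled = [row[:j] + [c] + row[j + 1:] for row, c in zip(X, column)]
            increase += mse(shuffled) - base
        raw.append(max(increase / n_repeats, 0.0))
    total = sum(raw)
    return [100.0 * r / total if total > 0 else 0.0 for r in raw]


# Hypothetical stand-ins for trained models; features = [rainfall, slope, SHDI].
def natural_model(r):
    return 2.0 * r[0] + 1.0 * r[1]


def managed_model(r):
    return 0.3 * r[0] + 2.5 * r[2]


data_rng = random.Random(1)
X = [[data_rng.uniform(0, 1) for _ in range(3)] for _ in range(200)]
for name, model in [("natural", natural_model), ("managed", managed_model)]:
    y = [model(row) for row in X]
    shares = permutation_importance(model, X, y)
    print(name, [f"{s:.1f}%" for s in shares])
```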

Issue: Detecting Pattern Anomalies in Multivariate Sensor Data

Problem Description: Standard point anomaly detection methods are failing to identify complex, multi-sensor pattern anomalies, such as a distorted peak in dissolved organic carbon that spans multiple time steps, potentially indicating a sensor malfunction or a significant hydrological event [33].

Diagnosis Steps:

- Data Visualization: Manually inspect the time series data for specific, recurring anomalous shapes (e.g., "flat peaks," "double peaks") that have been documented by domain scientists but are not being caught by existing rules [33].

- Review Model Type: Confirm that you are using a model capable of learning sequential dependencies. Traditional methods like mean/standard deviation or models that only look at single data points are unsuitable for pattern anomaly detection [35] [33].

Resolution Methods: Adopt a deep learning-based framework designed for multivariate time series:

- Model Selection: Employ architectures like Multivariate Multiple Convolutional Networks with LSTM (MCN-LSTM) or Temporal Convolutional Networks (TCN). These models excel at capturing spatial (across sensors) and temporal (over time) relationships within the data [32] [33].

- Automated Machine Learning (AutoML) Pipeline: For complex pattern anomalies, consider an end-to-end AutoML framework such as HF-PPAD. This framework [33]:

  - Uses Time-Series Generative Adversarial Networks (TimeGAN) to synthesize realistic, labeled time series data with injected peak-pattern anomalies, addressing the scarcity of ground-truth labels.

  - Automatically selects and optimizes the best model from a pool (e.g., TCN, InceptionTime, LSTM, ResNet, MiniRocket) based on your preference for accuracy versus computational cost.
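
TimeGAN itself is beyond a short example, but the underlying idea of synthesizing labeled anomalies can be illustrated with simple rule-based injection: truncate a clean storm peak into a plateau (a "flat peak") and label the affected time steps. The signal shape, cap fraction, window width, and function names are hypothetical, and this is not the HF-PPAD implementation.

```python
import math


def storm_series(n=200, period=50):
    """Hypothetical fDOM-like signal: baseline 1.0 with a storm peak (height 5.0) each period."""
    return [1.0 + 4.0 * math.exp(-((t % period) - period / 2) ** 2 / 20.0) for t in range(n)]


def inject_flat_peak(series, peak_index, half_width=4, cap_fraction=0.6):
    """Return (corrupted_copy, labels) with the peak truncated into a plateau.

    Points within `half_width` of `peak_index` that exceed cap_fraction * peak value
    are clipped to that cap and labeled 1 (anomalous); all other labels are 0.
    """
    corrupted = list(series)
    labels = [0] * len(series)
    cap = cap_fraction * series[peak_index]
    lo = max(0, peak_index - half_width)
    hi = min(len(series), peak_index + half_width + 1)
    for t in range(lo, hi):
        if corrupted[t] > cap:
            corrupted[t] = cap
            labels[t] = 1
    return corrupted, labels


clean = storm_series()
corrupted, labels = inject_flat_peak(clean, peak_index=75)
print(sum(labels), max(corrupted[70:81]))
```

Sliding windows cut from such corrupted series, with a window labeled anomalous if it contains any labeled step, can then serve as training data for sequence models such as TCN or LSTM.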

Experimental Protocols & Data

Table 1: Performance Metrics of Selected Anomaly Detection Models

This table compares the performance of different machine learning models as reported in recent studies for water quality monitoring tasks.

| Model Name | Application Context | Key Performance Metrics | Reference |
|---|---|---|---|
| MCN-LSTM (Multivariate Multiple Convolutional Networks with LSTM) | Real-time water quality sensor monitoring | Accuracy: 92.3% | [32] (Sensors, 2023) |
| Modified QI with Encoder-Decoder | Water treatment plant anomaly detection | Accuracy: 89.18%, Precision: 85.54%, Recall: 94.02% | [36] (Scientific Reports, 2025) |
| HF-PPAD Framework (best model instance) | Watershed peak-pattern anomaly detection | No single metric listed; the framework selects the best model from a pool (e.g., TCN, InceptionTime, LSTM) based on user-defined accuracy/cost trade-offs | [33] (arXiv, 2023) |

Table 2: Key Drivers of Seasonal Water Quality Variation (China Study)

Attribution analysis from a national study showing the relative influence of different factors on seasonal COD and DO variations [5].

| Factor Category | Specific Factor | Contribution in Natural Watersheds | Contribution in Managed Watersheds |
|---|---|---|---|
| Overall Seasonal Factor | Seasonality | 47.08% | 47.08% |
| Natural Drivers | Rainfall | 25.37% | - |
| Natural Drivers | Slope | 17.40% | - |
| Anthropogenic Drivers | Shannon Diversity Index (Land Use) | - | 11.58% |
| Anthropogenic Drivers | Largest Patch Index (Land Use) | - | 10.66% |

Detailed Methodology: The T-NM Index for Isolating Anthropogenic Effects

This protocol is designed to quantify the human amplification or suppression of natural water quality trends [5].

Watershed Classification: Classify your study watersheds into two groups:

- Natural Watersheds: Minimally disturbed by human activities (e.g., 195 watersheds used in the reference study).