Ensemble Machine Learning for Spatiotemporal Analysis of Environmental Contaminants: From Fundamentals to Advanced Applications

Abstract

This comprehensive review explores the transformative potential of ensemble machine learning models in analyzing spatiotemporal trends of environmental contaminants. Targeting researchers, scientists, and environmental health professionals, the article systematically examines foundational principles, diverse methodological approaches, optimization strategies, and validation frameworks. By synthesizing cutting-edge research across air quality monitoring, water quality assessment, and soil contamination mapping, we demonstrate how ensemble techniques enhance prediction accuracy, improve generalization capabilities, and provide interpretable insights into complex environmental systems. The integration of explainable AI methods with ensemble frameworks addresses critical challenges in model transparency, enabling more reliable decision-making for environmental protection and public health initiatives.

Understanding Ensemble Models and Environmental Contaminant Dynamics

Ensemble learning is a machine learning paradigm that combines multiple models, often called base learners, to achieve better predictive performance than any single constituent model. Within environmental science, particularly in the complex field of spatiotemporal trends analysis for contaminants, ensemble methods have become indispensable for interpreting vast, heterogeneous datasets characterized by strong nonlinear dependencies across space and time. These approaches effectively mitigate the limitations and inherent biases of individual models, leading to more robust and generalizable predictions. This article delineates the core principles of ensemble learning, focusing on the critical distinction between homogeneous and heterogeneous ensembles, and provides a detailed examination of their applications, protocols, and implementation frameworks within environmental contaminants research.

Core Definitions and Theoretical Framework

Homogeneous Ensembles

Homogeneous ensemble methods utilize multiple instances of the same base learning algorithm. The diversity among the base learners, which is crucial for the ensemble's success, is artificially induced through techniques that manipulate the training data or the model's internal structure.

Key Strategies:

- Bagging (Bootstrap Aggregating): This method generates multiple bootstrap samples (random samples with replacement) from the original training dataset. A base learner is trained on each of these samples, and their predictions are aggregated, typically by a majority vote for classification or an average for regression. Bagging is highly effective at reducing variance and preventing overfitting, especially for high-variance models like decision trees. The Random Forest algorithm is a prominent example that combines bagging with random feature selection for each split in the tree [1].

- Boosting: This is a sequential strategy where base learners are trained one after the other, each concentrating on the errors of its predecessors. Reweighting variants such as AdaBoost adaptively change the distribution of the training data, assigning greater weight to harder-to-predict observations, whereas Gradient Boosting fits each new learner to the residual errors (the negative gradient of the loss) of the current ensemble. This sequential learning process primarily reduces bias, often leading to powerful predictive models [1].
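To make the two strategies concrete, the following is a minimal pure-Python sketch rather than a production implementation. It assumes a single numeric feature and uses a regression stump (one split) as the base learner; `fit_stump`, `bagging`, and `gradient_boosting` are illustrative names, and the toy data are invented for the example. Bagging averages stumps trained on bootstrap resamples, while the boosting loop fits each new stump to the current residuals under squared-error loss.

```python
import random
from statistics import fmean


def fit_stump(xs, ys):
    """Fit a one-split regression stump by least squares; return a predict function."""
    best = None  # (sse, threshold, left_mean, right_mean)
    for t in sorted(set(xs))[:-1]:  # the largest value cannot split the data
        left = [y for x, y in zip(xs, ys) if x <= t]
        right = [y for x, y in zip(xs, ys) if x > t]
        lm, rm = fmean(left), fmean(right)
        sse = sum((y - lm) ** 2 for y in left) + sum((y - rm) ** 2 for y in right)
        if best is None or sse < best[0]:
            best = (sse, t, lm, rm)
    if best is None:  # all x identical (possible in a bootstrap sample)
        m = fmean(ys)
        return lambda x: m
    _, t, lm, rm = best
    return lambda x: lm if x <= t else rm


def bagging(xs, ys, n_models=25, seed=0):
    """Average stumps trained on bootstrap samples (drawn with replacement)."""
    rng = random.Random(seed)
    n = len(xs)
    models = []
    for _ in range(n_models):
        idx = [rng.randrange(n) for _ in range(n)]
        models.append(fit_stump([xs[i] for i in idx], [ys[i] for i in idx]))
    return lambda x: fmean([m(x) for m in models])


def gradient_boosting(xs, ys, n_rounds=50, lr=0.1):
    """Sequentially fit stumps to residuals (squared-error gradient boosting)."""
    base = fmean(ys)
    residuals = [y - base for y in ys]
    stumps = []
    for _ in range(n_rounds):
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        residuals = [r - lr * stump(x) for x, r in zip(xs, residuals)]
    return lambda x: base + lr * sum(s(x) for s in stumps)


def mse(model, xs, ys):
    return fmean([(model(x) - y) ** 2 for x, y in zip(xs, ys)])


# Toy data (invented): a smooth nonlinear signal on one feature.
xs = [i / 10 for i in range(40)]
ys = [(x - 2.0) ** 2 for x in xs]

bagged = bagging(xs, ys)
boosted = gradient_boosting(xs, ys)
mean_y = fmean(ys)
baseline_mse = fmean([(mean_y - y) ** 2 for y in ys])
print("baseline:", baseline_mse, "bagged:", mse(bagged, xs, ys), "boosted:", mse(boosted, xs, ys))
```

In practice, library implementations (for example Random Forest or XGBoost, both named in this article) would replace these hand-written learners; the sketch only exposes the mechanics.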

Heterogeneous Ensembles

Heterogeneous ensemble methods combine predictions from multiple different types of learning algorithms. Diversity in this approach is innate, stemming from the distinct inductive biases and underlying assumptions of the various models.

Key Strategies:

- Blending or Stacking: This advanced technique uses a meta-learner to combine the predictions of the base models. The base models (e.g., a support vector machine, a decision tree, and a neural network) are first trained on the original data. Their predictions, ideally generated on held-out or out-of-fold samples so that the meta-learner does not simply reward overfitted base models, are then used as input features to train a final meta-model, which learns how best to combine them into the final output [2] [3]. This is particularly useful for leveraging the strengths of different algorithms on different patterns in the data.

- Weighted Averaging/Voting: A simpler approach where the predictions from different models are combined through a weighted average (for regression) or a weighted vote (for classification). The weights can be assigned based on the individual performance of each model on a validation set [4].
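The weighted-averaging idea can be sketched in a few lines. This example assumes one common weighting rule, weights proportional to the inverse of each model's validation mean squared error; the rule, the function names, and all numbers are illustrative assumptions, not values taken from the cited studies.

```python
def inverse_mse_weights(val_preds, y_val):
    """Map model name -> weight, proportional to 1 / validation MSE, summing to 1."""
    inv = {}
    for name, preds in val_preds.items():
        mse = sum((p - y) ** 2 for p, y in zip(preds, y_val)) / len(y_val)
        inv[name] = 1.0 / (mse + 1e-12)  # small constant guards against division by zero
    total = sum(inv.values())
    return {name: v / total for name, v in inv.items()}


def weighted_average(preds, weights):
    """Combine one prediction per model into a single ensemble prediction."""
    return sum(weights[name] * p for name, p in preds.items())


# Invented validation data: "rf" tracks the observations more closely than "svm".
y_val = [1.0, 2.0, 3.0, 4.0]
val_preds = {
    "rf": [1.1, 2.1, 2.9, 4.1],
    "svm": [1.5, 2.5, 3.5, 4.5],
}
weights = inverse_mse_weights(val_preds, y_val)
blended = weighted_average({"rf": 5.0, "svm": 6.0}, weights)
print(weights, blended)
```

Because the weights are learned on a validation set rather than the training set, a model that merely memorized its training data is not rewarded.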

Table 1: Comparative Analysis of Homogeneous and Heterogeneous Ensembles

| Feature | Homogeneous Ensembles | Heterogeneous Ensembles |

|---|---|---|

| Base Learners | Multiple instances of the same algorithm (e.g., all Decision Trees) [1] | Different types of algorithms (e.g., SVM, NN, RF combined) [2] [4] |

| Source of Diversity | Artificial manipulation of training data or model parameters [1] | Innate, from different model architectures and assumptions [2] |

| Common Strategies | Bagging, Boosting [1] | Stacking (Blending), Weighted Voting [2] [3] [4] |

| Primary Advantage | Effective at stabilizing and improving a single strong algorithm. | Can overcome inherent bias of any single model class [2]. |

| Inherent Bias | The ensemble can carry the inherent bias of the single base model type [2]. | Mitigates inherent bias by combining different model types [2]. |

| Computational Cost | Generally lower, as it involves training one algorithm type multiple times. | Can be higher, requiring training and tuning of multiple different algorithms. |

Application in Spatiotemporal Environmental Research

The prediction of environmental contaminants is a quintessential spatiotemporal problem, where concentrations vary across geographic locations and over time. Ensemble learning has proven highly effective in this domain by capturing complex, nonlinear relationships between pollutants and their drivers (e.g., meteorology, land use, emissions).

Case Studies and Quantitative Performance

Table 2: Ensemble Model Performance in Environmental Applications

| Application Domain | Ensemble Type | Base Learners Used | Reported Performance (Metric / Value) | Citation |

|---|---|---|---|---|

| Land Subsidence Prediction | Heterogeneous | Seq2Seq, GCN-Seq2Seq, DCRNN, GMAN [2] | Significantly higher accuracy than individual models [2] | [2] |

| Water Quality Classification | Heterogeneous (Voting) | Decision Tree, Logistic Regression, SVM [4] | Accuracy: 96.39% (Soft Voting) [4] | [4] |

| Ozone (O₃) Concentration Estimation | Geographically Weighted Ensemble | Neural Network, Random Forest, Gradient Boosting [1] | Cross-validated R²: 0.90 (Ensemble) [1] | [1] |

| Spatiotemporal Water Quality Variation | Heterogeneous (Stacking) | Ensemble Across-watersheds Model (EAM) with multiple base models [3] | Test set R²: 0.62 (DO), 0.74 (NH₃-N), 0.65 (TP) [3] | [3] |

| Pollutant Concentration Forecasting | Hybrid (CNN-LSTM with XGBoost) | CNN, LSTM, XGBoost [5] | Superior accuracy and higher R² vs. benchmark models [5] | [5] |

Experimental Protocol: Heterogeneous Ensemble for Spatiotemporal Prediction

The following protocol outlines a methodology for predicting an environmental variable, such as land subsidence or pollutant concentration, using a heterogeneous ensemble learning approach that explicitly accounts for spatiotemporal heterogeneity [2].

Phase 1: Data Preprocessing and Spatiotemporal Clustering

- Objective: Partition the dataset into internally homogeneous spatio-temporal clusters to account for heterogeneity.

- Steps:

- Data Consolidation: Compile a spatiotemporal data matrix where rows represent unique spatial locations (e.g., monitoring sites, grid cells) and columns represent sequential time points. Integrate all relevant predictor variables (e.g., land use, meteorological data, remote sensing data, chemical transport model outputs) [1].

- Two-Stage Hybrid Clustering:

- Stage 1 (Co-clustering): Apply the Bregman Block Average Co-clustering with I-divergence (BBAC_I) algorithm. This treats spatial and temporal dimensions equally, dividing the large-scale dataset into smaller, internally homogeneous spatio-temporal blocks or clusters [2].

- Stage 2 (Regionalization): Use the REgionalization with Dynamically Constrained Agglomerative clustering and Partitioning (REDCAP) method on the results from Stage 1. This further refines the clusters to reveal complex spatio-temporal structures that may not be captured by co-clustering alone [2].
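As an illustration of the data consolidation step in Phase 1, the sketch below pivots long-format monitoring records into the location-by-time matrix that a co-clustering step would take as input. The record layout, site identifiers, and values are assumptions made for the example; the BBAC_I and REDCAP stages themselves are not implemented here.

```python
def to_site_time_matrix(records):
    """Pivot a list of (site_id, time_key, value) tuples into a site x time matrix.

    Returns (sites, times, matrix); cells without an observation are None.
    """
    sites = sorted({r[0] for r in records})
    times = sorted({r[1] for r in records})
    row = {s: i for i, s in enumerate(sites)}
    col = {t: j for j, t in enumerate(times)}
    matrix = [[None] * len(times) for _ in sites]
    for site, t, value in records:
        matrix[row[site]][col[t]] = value
    return sites, times, matrix


# Invented records: site B has no observation for 2020-01.
records = [
    ("A", "2020-01", 1.0),
    ("A", "2020-02", 1.5),
    ("B", "2020-02", 2.0),
]
sites, times, matrix = to_site_time_matrix(records)
print(sites, times, matrix)
```

Keeping missing cells explicit (here as `None`) makes the gaps visible before any imputation or clustering decision is taken.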

Phase 2: Base Model Training and Prediction

- Objective: Train multiple, diverse base models on each spatio-temporal cluster to generate preliminary predictions.

- Steps:

- Model Selection: Select a suite of models that capture different aspects of spatiotemporal dependencies. A recommended set includes:

- A Sequence-to-Sequence model for pure time series forecasting.

- A Graph Convolutional Network-Sequence-to-Sequence (GCN-Seq2Seq) model to capture complex spatio-temporal dependencies in graph structures.

- A Diffusion Convolutional Recurrent Neural Network (DCRNN) to model dynamic changes in spatiotemporal relationships.

- A Graph Multi-Attention Network (GMAN) that incorporates attention mechanisms to weight the importance of different spatial and temporal signals [2].

- Training: Within each spatio-temporal cluster identified in Phase 1, independently train each of the selected base models.

- Prediction: Each trained base model generates a prediction for the target variable within its assigned cluster.


Phase 3: Heterogeneous Ensemble via Blending

- Objective: Combine the predictions from the base models into a single, superior prediction using a meta-learner.

- Steps:

- Create Meta-Features: For each spatio-temporal point in the dataset, the predictions from the four base models form a new feature vector.

- Train Meta-Learner: Use these meta-feature vectors and the corresponding true target values to train a blending model (meta-learner). A simple linear model or a more complex algorithm like XGBoost can serve as the meta-learner [2] [5]. This model learns the optimal way to weight and combine the base models' predictions.

- Generate Final Prediction: The trained blending model is applied to the base models' predictions to produce the final, ensemble prediction for the environmental target.
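The blending phase can be sketched with a linear meta-learner fitted by least squares. This is a simplified illustration under stated assumptions: two hypothetical base models stand in for the four named above, their predictions are assumed to come from samples not used to train them, and the helper names (`solve_linear_system`, `fit_linear_blender`, `blend`) and all numbers are invented for the example.

```python
def solve_linear_system(A, b):
    """Solve A x = b for a small dense system (Gaussian elimination, partial pivoting)."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x


def fit_linear_blender(meta_X, y, ridge=1e-8):
    """Fit y ~ b0 + sum_k w_k * base_prediction_k via the normal equations.

    A tiny ridge term keeps the system solvable when base predictions are
    nearly collinear. Returns [b0, w_1, ..., w_K].
    """
    rows = [[1.0] + list(r) for r in meta_X]
    p = len(rows[0])
    XtX = [[sum(r[i] * r[j] for r in rows) + (ridge if i == j else 0.0)
            for j in range(p)] for i in range(p)]
    Xty = [sum(r[i] * t for r, t in zip(rows, y)) for i in range(p)]
    return solve_linear_system(XtX, Xty)


def blend(coefs, base_preds):
    """Apply the fitted meta-learner to one vector of base-model predictions."""
    return coefs[0] + sum(w * p for w, p in zip(coefs[1:], base_preds))


# Invented meta-features: two base models whose errors have opposite signs.
y_true = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
model_1 = [1.3, 1.8, 3.1, 3.6, 5.2, 5.9]
model_2 = [0.7, 2.2, 2.9, 4.4, 4.8, 6.1]
coefs = fit_linear_blender(list(zip(model_1, model_2)), y_true)
print(coefs, blend(coefs, [3.1, 2.9]))
```

Here the meta-learner assigns roughly equal weight to both models because their errors cancel; a nonlinear meta-learner such as XGBoost would follow the same meta-feature layout.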

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Algorithms

| Item / Algorithm | Type / Category | Primary Function in Ensemble Workflow |

|---|---|---|

| Random Forest [1] | Homogeneous Ensemble (Bagging) | Serves as a robust base learner or standalone ensemble for tasks like classification and regression, effective with tabular data. |

| Gradient Boosting [1] | Homogeneous Ensemble (Boosting) | A powerful sequential ensemble method often used as a base learner or as the final meta-learner in stacking due to its high performance. |

| XGBoost [5] | Boosting Algorithm | An optimized implementation of gradient boosting frequently used for its speed and performance, both as a base model and a meta-learner. |

| Long Short-Term Memory (LSTM) [5] | Deep Learning Model | Base learner specialized for capturing long-term temporal dependencies in time-series data (e.g., pollutant concentration over time). |

| Graph Convolutional Network (GCN) [2] | Deep Learning Model | Base learner designed to operate on graph-structured data, capturing spatial dependencies between monitoring stations or geographic grid cells. |

| Bregman Co-clustering (BBAC_I) [2] | Clustering Algorithm | Part of the preprocessing pipeline to account for spatiotemporal heterogeneity by partitioning data into coherent clusters before model training. |

| SHAP (SHapley Additive exPlanations) [3] | Model Interpretation Tool | Provides post-hoc interpretability for complex ensemble models, quantifying the contribution of each input feature to the final prediction. |

Application Note: Quantitative Profiles of Key Environmental Contaminants

This section provides standardized reference data on major environmental contaminants, supporting exposure assessment and model variable selection in spatiotemporal analyses.

Table 1: Air Pollutants: Health Impacts and Exposure Levels

| Pollutant | Major Sources | Key Health Impacts | WHO Guideline Values | Population Exposure Metrics |

|---|---|---|---|---|

| PM2.5 | Wildfires, coal-fired power plants, diesel engines, wood-burning stoves [6] | Premature death, asthma attacks, heart attacks, strokes, preterm births, lung cancer [6] | 5 μg/m³ (annual), 15 μg/m³ (24-hour) [7] | 46% of U.S. population (156M) live in areas with failing grades for air quality [6] |

| Ground-level Ozone (O₃) | Photochemical reactions of NOx and VOCs from vehicles and industry [1] [7] | Respiratory irritant, asthma exacerbation, reduced lung function, shortened life [6] | - | 37% of U.S. population (125M) live in areas with unhealthy levels [6] |

| Nitrogen Dioxide (NO₂) | High-temperature combustion (vehicles, power generation) [7] | Airway irritation, aggravated respiratory diseases, asthma [7] | 10 μg/m³ (annual), 25 μg/m³ (24-hour) [7] | - |

| Sulfur Dioxide (SO₂) | Combustion of fossil fuels for heating, industries, power generation [7] | Asthma hospital admissions, emergency room visits [7] | 40 μg/m³ (24-hour) [7] | - |

Table 2: Regulated Water Contaminants and Pharmaceutical Pollutants

| Contaminant Category | Example Contaminants | Primary Concerns / Standards | Regulatory Status |

|---|---|---|---|

| U.S. EPA National Primary Standards [8] | Lead, Copper, Nitrate, Arsenic, Pathogens | Legally enforceable limits to protect public health [8] | NPDWRs (Legally enforceable) |

| U.S. EPA Secondary Standards [8] | Aluminum, Chloride, Iron, Manganese, Sulfate | Non-enforceable guidelines for cosmetic and aesthetic effects (taste, color, odor) [8] | NSDWRs (Non-enforceable) |

| Pharmaceutical Contaminants [9] | Antibiotics, NSAIDs (Ibuprofen), Synthetic Estrogens (EE2), Antidepressants | Ecosystem damage, antibiotic resistance, endocrine disruption in aquatic life [9] | Emerging concern; some on EU watch lists |

Table 3: Soil Contaminants and Health Implications

| Contaminant | Major Sources | Key Impacts |

|---|---|---|

| Metals [10] | Industrial activities, agricultural practices | Threat to food security and quality, human health risks via food chain [10] |

| Polycyclic Aromatic Hydrocarbons (PAHs) [7] | Incomplete combustion of organic matter, fossil fuels, tobacco smoke | Long-term exposure linked to lung cancer [7] |

| Pharmaceuticals [9] | Spreading of contaminated manure/sewage sludge, livestock grazing | Indirect human exposure via food chain, contribution to antibiotic resistance [9] |

Experimental Protocols: Ensemble Machine Learning for Spatiotemporal Contaminant Modeling

Protocol 1: High-Resolution Ozone (O₃) Prediction Using Geographically Weighted Ensemble Modeling

This protocol details a method for estimating daily ground-level O₃ at a high spatial resolution (1 km²) across large geographic areas, suitable for intra-urban health studies [1].

- Air Quality Monitoring Data: Daily maximum 8-hour average O₃ concentrations from regulatory monitoring networks.

- Predictor Variables: A consolidated set of 169 variables across categories [1]:

- Land Use Terms: Traffic density, industrial areas, urbanization indices.

- Meteorological Data: Temperature, solar radiation, wind speed, relative humidity.

- Chemical Transport Model (CTM) Outputs: Gridded simulations of atmospheric processes.

- Remote Sensing Observations: Satellite-derived data on atmospheric constituents.

II. Computational Equipment

- Software: R or Python programming environments with machine learning libraries (e.g., scikit-learn, TensorFlow, XGBoost).

- Hardware: High-performance computing resources are recommended. The dataset described is computationally intensive, requiring approximately 20 TB of storage [1].

III. Procedure

Data Consolidation (Stage 2)

- Use GIS techniques to create a unified data frame.

- Spatially join O₃ monitoring data and all 169 predictor variables at each monitor location and across a 1 km x 1 km grid covering the study area.

Data Preprocessing (Stage 3)

- Apply a machine learning algorithm (e.g., Neural Network, Random Forest) to impute missing values in the predictor variables across the grid.

Model Training (Stage 4)

- Train three distinct machine learning algorithms independently on the consolidated data at monitor locations:

- Neural Network (NN)

- Random Forest (RF)

- Gradient Boosting (GB)

- Tune the key hyperparameters for each algorithm (e.g., number of trees for RF, number of layers and neurons for NN).


Spatiotemporal Prediction (Stage 5)

- Use each of the three trained models to generate daily predictions of O₃ concentration for every 1 km² grid cell across the study region and time period.

Ensemble Blending (Stage 6)

- Create a final prediction for each grid cell and day by combining the predictions from the three individual models (NN, RF, and GB), using blending weights that vary by location rather than a single global average. This geographically weighted ensemble model typically outperforms any single algorithm [1].

Model Validation (Stage 7)

- Perform cross-validation by withholding data from entire monitors.

- Quantify model performance using the coefficient of determination (R²) against observations.

- Estimate spatiotemporal uncertainty by predicting the monthly standard deviation of the difference between predictions and observations.
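The validation stage can be illustrated with a small sketch of monitor-level withholding. It assumes each record carries a monitor identifier and assigns whole monitors to folds, so that no monitor contributes to both training and testing within a fold; the function names and monitor IDs are hypothetical.

```python
import random


def leave_monitors_out_folds(monitor_ids, n_folds=5, seed=0):
    """Yield (train_idx, test_idx) with each monitor's records kept in a single fold."""
    monitors = sorted(set(monitor_ids))
    random.Random(seed).shuffle(monitors)
    fold_of = {m: i % n_folds for i, m in enumerate(monitors)}
    for k in range(n_folds):
        test = [i for i, m in enumerate(monitor_ids) if fold_of[m] == k]
        train = [i for i, m in enumerate(monitor_ids) if fold_of[m] != k]
        yield train, test


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Invented monitor IDs, one per record.
ids = ["m1", "m1", "m2", "m2", "m3", "m3", "m4", "m5", "m5", "m6"]
folds = list(leave_monitors_out_folds(ids, n_folds=3))
for train, test in folds:
    print("held-out monitors:", sorted({ids[i] for i in test}))
```

Withholding entire monitors tests how well the model generalizes to unmonitored locations, which a random record-level split would overstate.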

IV. Expected Outcomes

- The model should achieve high performance, with an average cross-validated R² of 0.90 against daily observations and 0.86 for annual averages [1].

- Model performance is typically strongest in summer (R² of 0.88) due to more stable photochemical conditions [1].

Protocol 2: Explainable Ensemble Learning for Nitro-Aromatic Compound (NAC) Source Attribution

This protocol uses an explainable ensemble machine learning approach to identify and quantify the drivers of specialized pollutants, demonstrating application to NACs in Eastern China [11].

I. Data Collection and Preprocessing

- Field Observations: Collect 24-hour ambient particulate matter samples at diverse sites (urban, rural, background).

- Laboratory Analysis: Quantify concentrations of individual NACs (e.g., nitrophenols, nitrocatechols) using techniques like liquid chromatography-mass spectrometry.

- Ancillary Data:

- Meteorological Parameters: Temperature, relative humidity, solar radiation.

- Source Apportionment Data: Execute a Positive Matrix Factorization (PMF) model on the chemical composition data to obtain source contribution estimates (e.g., coal combustion, biomass burning, traffic emissions) for each sample.

II. Computational Setup

- Software: Python or R with ML libraries and the SHAP library for interpretability.

- Algorithms: Ensemble model combining multiple base learners (e.g., Random Forest, Gradient Boosting).

III. Procedure

Model Construction

- Train an ensemble machine learning (EML) model to predict measured NAC concentrations using the input features: PMF-resolved source contributions and meteorological parameters [11].

Interpretation with SHAP

- Apply the SHapley Additive exPlanations (SHAP) algorithm to the trained EML model.

- Use SHAP values to quantify the marginal contribution of each input variable (e.g., coal combustion source strength, temperature) to the model's prediction for each sample.

Driver Quantification

- Aggregate SHAP values across the entire dataset to calculate the global importance of each driving factor.

- The study using this method attributed NAC levels to: anthropogenic emissions (49.3%), meteorology (27.4%), and secondary formation (23.3%) [11].
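To show what a SHAP value measures and how per-sample values are aggregated into global shares, the sketch below computes exact Shapley values by enumerating feature orderings. This is feasible only for a handful of features and is not how the SHAP library computes its estimates; the toy linear model, background rows, and feature meanings are assumptions for illustration and do not reproduce the percentages reported in [11].

```python
from itertools import permutations
from statistics import fmean


def shapley_values(model, x, background):
    """Exact Shapley values for one sample x.

    Features absent from a coalition are filled in from each background row,
    and the model output is averaged over the background.
    """
    n = len(x)

    def value(present):
        return fmean([model([x[j] if j in present else b[j] for j in range(n)])
                      for b in background])

    phi = [0.0] * n
    orders = list(permutations(range(n)))
    for order in orders:
        present = set()
        prev = value(present)
        for j in order:
            present.add(j)
            cur = value(present)
            phi[j] += cur - prev  # marginal contribution of feature j in this ordering
            prev = cur
    return [p / len(orders) for p in phi]


def global_importance_shares(shap_rows):
    """Mean absolute Shapley value per feature, normalized to sum to 1."""
    mean_abs = [fmean([abs(r[j]) for r in shap_rows]) for j in range(len(shap_rows[0]))]
    total = sum(mean_abs)
    return [m / total for m in mean_abs]


# Hypothetical model over three inputs (e.g., a source contribution, temperature, humidity).
def toy_model(v):
    return 2.0 * v[0] - 1.0 * v[1] + 0.5 * v[2]


background = [[0.0, 0.0, 0.0], [2.0, 2.0, 2.0]]
phi = shapley_values(toy_model, [3.0, 1.0, 5.0], background)
shares = global_importance_shares([[4.0, 0.0, 2.0], [-2.0, 0.0, 2.0]])
print(phi, shares)
```

The per-sample values sum to the difference between the model output for that sample and the average output over the background, which is the property that allows contributions to be reported as shares.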

Spatiotemporal Analysis

- Repeat the SHAP analysis stratified by season or location to uncover shifting dominant factors, such as the dominant role of anthropogenic sources in urban areas and the influence of temperature at a mountain site in winter [11].

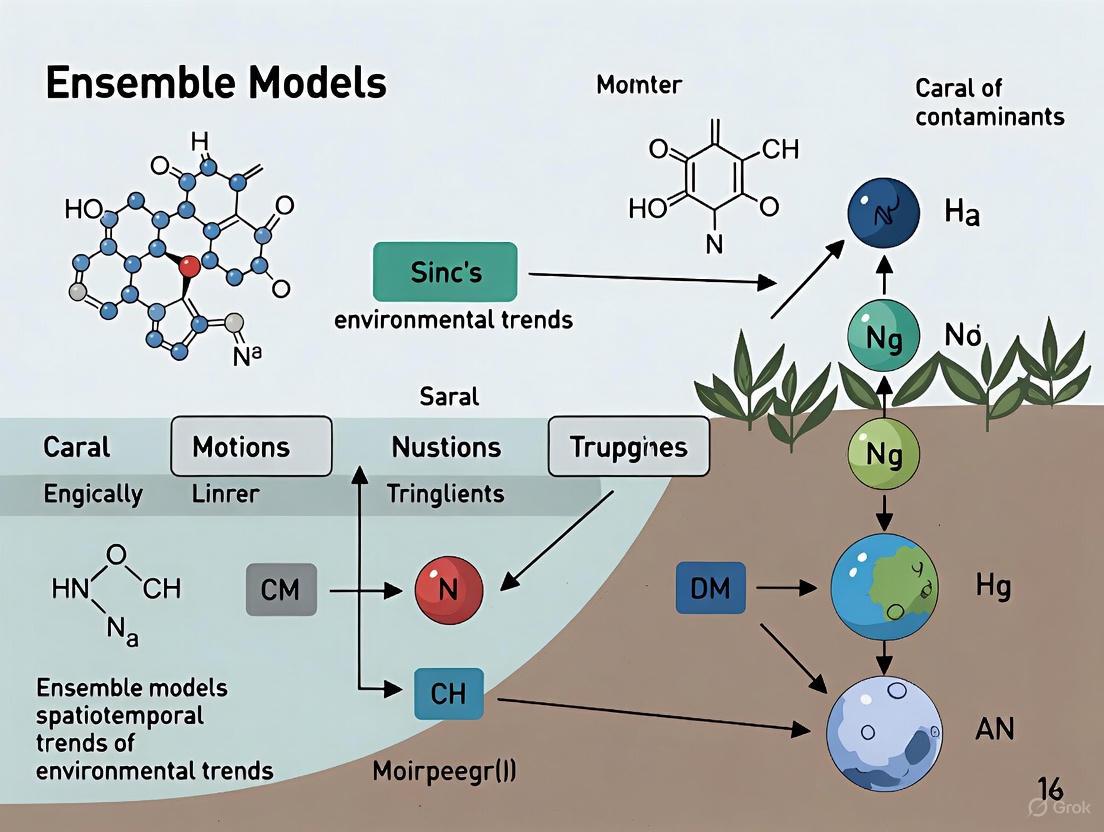

Visualization of Pathways and Workflows

Environmental Contaminant Impact Pathway

Ensemble Model Experimental Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Environmental Contaminant Research

| Research Reagent / Material | Function / Application | Specific Use-Case |

|---|---|---|

| Surface-based Pollutant Monitors | Provides ground-truth concentration data for model training and validation. | Measuring daily max 8-hr O₃ and PM2.5 at monitoring sites [1]. |

| Chemical Transport Model (CTM) Output | Provides gridded, physics-based simulations of atmospheric chemistry and pollutant dispersion. | Used as a key set of predictor variables in ensemble machine learning models [1]. |

| Positive Matrix Factorization (PMF) Model | A receptor model that resolves the relative contributions of different emission sources to measured pollutant concentrations. | Source apportionment of Nitro-aromatic Compounds (NACs) for use as model inputs [11]. |

| SHAP (SHapley Additive exPlanations) | An interpretable AI tool that quantifies the contribution of each input variable to a complex model's prediction. | Identifying key drivers (e.g., coal combustion vs. temperature) of NAC concentrations from the ensemble model [11]. |

| Cuckoo Search (CS) Metaheuristic Algorithm | A swarm-based optimization algorithm used to fine-tune the parameters of machine learning models for peak performance. | Optimizing the Random Forest model for spatio-temporal O₃ pollution modeling [12]. |

Spatiotemporal Data Characteristics in Environmental Monitoring

Environmental monitoring data is inherently spatiotemporal, capturing the geographic distribution and temporal evolution of contaminants and ecological parameters. These datasets are crucial for understanding the transport, transformation, and fate of environmental pollutants across landscapes and over time. The complex nature of spatiotemporal data presents both challenges and opportunities for researchers tracking environmental contaminants, particularly when integrating multiple data streams into ensemble modeling frameworks. Spatiotemporal characteristics in environmental monitoring encompass both the geographic positioning of sampling locations and the timing of measurements, creating multidimensional datasets that require specialized analytical approaches [13].

The moss technique, developed in Sweden in the late 1960s, represents one of the earliest systematic approaches to spatiotemporal environmental monitoring of atmospheric metal deposition [13]. This method exemplifies the core challenges of spatiotemporal data: samples are often collected on irregular grids that may differ between sampling years, with varying sampling density dependent on material availability [13]. Such irregularity complicates statistical analysis and trend detection, necessitating robust analytical methods that can accommodate these inherent data structures within ensemble modeling frameworks.

Key Characteristics of Spatiotemporal Monitoring Data

Fundamental Data Attributes

Spatiotemporal environmental monitoring data possesses distinct characteristics that influence analytical approaches and modeling strategies within ensemble frameworks. These characteristics determine how data can be integrated, analyzed, and interpreted to track contaminant trends and patterns.

Table 1: Core Characteristics of Spatiotemporal Environmental Monitoring Data

| Characteristic | Description | Implications for Analysis |

|---|---|---|

| Spatial Irregularity | Data collected on irregular grids with varying sampling density [13] | Requires geostatistical methods or spatial interpolation that accommodate uneven distribution |

| Temporal Resolution | Measurements collected at different time intervals (e.g., daily, seasonal, annual) [13] | Complicates trend analysis and requires temporal alignment for ensemble modeling |

| Multivariate Nature | Multiple parameters measured simultaneously (e.g., metals, meteorological factors) [13] [11] | Enables comprehensive assessment but increases analytical complexity |

| Varying Support | Differing spatial and temporal scales of measurement [14] [1] | Creates challenges for data integration and comparison across studies |

| Censored Values | Data below detection limits or above measurement thresholds [15] | Requires specialized statistical handling to avoid bias in trend analysis |

Data Quality Considerations

Data quality represents a fundamental aspect of spatiotemporal environmental monitoring, with significant implications for ensemble model performance and reliability. Quality control measures should include graphical procedures (histograms, box plots, time sequence plots) and descriptive numerical measures (mean, standard deviation, measures of skewness and kurtosis) to screen data as it is received from field or laboratory sources [15]. The handling of censored data—values below detection limits—requires particular attention, as common ad hoc approaches (treating as missing, using zero, or applying half the detection limit) can severely underestimate sample variance and introduce bias when standard statistical techniques are applied [15].
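A minimal screening routine along these lines might look as follows. It is a sketch assuming a plain list of numeric measurements with at least two distinct values and an optional detection limit; the function name and example values are invented, and it reports the share of values below the detection limit rather than attempting to correct for censoring.

```python
from math import sqrt


def screening_summary(values, detection_limit=None):
    """Descriptive screening measures for a batch of measurements.

    Skewness and kurtosis are the moment-based versions (kurtosis is 3.0
    for a normal distribution). Assumes at least two distinct values.
    """
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    m4 = sum((v - mean) ** 4 for v in values) / n
    summary = {
        "n": n,
        "mean": mean,
        "sd": sqrt(m2 * n / (n - 1)),  # sample standard deviation
        "skewness": m3 / m2 ** 1.5,
        "kurtosis": m4 / m2 ** 2,
    }
    if detection_limit is not None:
        # Flag, rather than silently substitute, values below the detection limit.
        summary["fraction_below_dl"] = sum(v < detection_limit for v in values) / n
    return summary


summary = screening_summary([1.0, 2.0, 3.0, 4.0, 5.0], detection_limit=2.5)
print(summary)
```

Reporting the censored fraction alongside the moments makes it easier to judge when substitution shortcuts would distort the variance.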

The integrity of environmental monitoring data can be compromised at multiple stages, from sample collection and preparation through to interpretation and reporting [15]. Gross errors resulting from data manipulation (transcribing, transposing, editing, recoding, unit conversion) can be detected through careful screening, while more subtle erroneous effects (repeated data, accidental deletion, mixed scales) require more sophisticated detection methods [15]. In multivariate contexts, outlier identification becomes increasingly complex, as observations may appear "unusual" even when reasonably close to the respective means of individual variables due to covariance structures [15].

Experimental Protocols for Spatiotemporal Data Analysis

Ensemble Machine Learning Framework for Contaminant Modeling

The integration of ensemble machine learning with spatiotemporal analysis represents a cutting-edge approach for environmental contaminant research. The following protocol outlines a comprehensive methodology for developing ensemble models capable of capturing complex spatiotemporal patterns in environmental monitoring data.

Table 2: Ensemble Machine Learning Protocol for Spatiotemporal Contaminant Analysis

| Stage | Procedure | Purpose |

|---|---|---|

| Data Consolidation | Integrate monitoring data with predictor variables (land use, meteorological, remote sensing, transport models) using GIS techniques [1] | Create unified data structure for analysis across spatial and temporal dimensions |

| Predictor Imputation | Apply machine learning to fill missing values in predictor variables [1] | Maintain dataset completeness and maximize usable observations |

| Multi-Algorithm Training | Implement diverse ML algorithms (neural networks, random forests, gradient boosting) [1] | Capture different aspects of spatiotemporal relationships through complementary approaches |

| Spatiotemporal Prediction | Generate predictions at high resolution across spatial and temporal domains [1] | Create comprehensive contaminant distribution maps at relevant scales |

| Ensemble Integration | Blend predictions from multiple algorithms into unified output [1] | Improve accuracy and robustness beyond individual model capabilities |

| Performance Validation | Conduct cross-validation with temporal and spatial withholding [1] | Assess model generalizability and identify potential overfitting |

| Uncertainty Quantification | Estimate spatiotemporal variation in prediction uncertainty [1] | Provide confidence intervals for model applications and decision support |
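The uncertainty quantification stage in the table above can be illustrated with a simple residual summary. This sketch assumes uncertainty is expressed as the standard deviation of prediction-minus-observation differences within each month; the record layout and numbers are invented, and months with fewer than two records are skipped because a sample standard deviation is undefined for them.

```python
from collections import defaultdict
from statistics import stdev


def monthly_residual_sd(records):
    """records: iterable of (month, observed, predicted).

    Returns {month: standard deviation of (predicted - observed)}.
    """
    by_month = defaultdict(list)
    for month, observed, predicted in records:
        by_month[month].append(predicted - observed)
    return {m: stdev(res) for m, res in sorted(by_month.items()) if len(res) >= 2}


# Invented records: (month, observed, predicted).
records = [
    (1, 10.0, 11.0), (1, 10.0, 9.0), (1, 12.0, 13.0), (1, 12.0, 11.0),
    (2, 5.0, 5.0), (2, 6.0, 6.0),
    (3, 7.0, 8.0),
]
sd_by_month = monthly_residual_sd(records)
print(sd_by_month)
```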

Diagram 1: Ensemble machine learning workflow for spatiotemporal analysis.

Explainable Ensemble Machine Learning with SHAP Analysis

For research requiring interpretability in ensemble modeling, the integration of SHapley Additive exPlanation (SHAP) analysis provides insights into factor importance and directionality across spatial and temporal contexts. This approach is particularly valuable for understanding the driving factors behind contaminant distribution patterns.

Data Integration and Preprocessing: Combine field observations of target contaminants with meteorological data, source apportionment results from receptor models like Positive Matrix Factorization (PMF), and other relevant predictor variables [11]. Ensure consistent spatial and temporal alignment across all datasets.

Ensemble Model Development: Implement multiple machine learning algorithms (e.g., random forest, gradient boosting, neural networks) using the consolidated dataset. Optimize hyperparameters for each algorithm through cross-validation appropriate for spatiotemporal data (e.g., spatial blocking, temporal withholding) [11].

Model Interpretation with SHAP: Apply SHapley Additive exPlanation analysis to the trained ensemble model to quantify the contribution of each predictor variable to the final model output. Calculate SHAP values for each observation-predictor combination to assess both the magnitude and direction of effects [11].

Spatiotemporal Factor Analysis: Aggregate SHAP values by geographic regions, seasons, or other relevant spatiotemporal groupings to identify how the importance of driving factors varies across space and time. This analysis reveals heterogeneous relationships that might be obscured in global feature importance measures [11].

Validation and Implementation: Validate model interpretations against physical and chemical understanding of the system. Implement the explainable ensemble framework for scenario analysis and hypothesis testing regarding contaminant sources, transport, and transformation processes [11].
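The spatiotemporal factor analysis step described above amounts to a grouped aggregation of SHAP values. The sketch below assumes SHAP values have already been computed for each sample and that each sample carries a grouping label such as a season; the feature names, labels, and values are invented for illustration.

```python
from collections import defaultdict


def mean_abs_shap_by_group(shap_rows, groups, feature_names):
    """Mean absolute SHAP value of each feature within each group."""
    sums = {}
    counts = defaultdict(int)
    for row, g in zip(shap_rows, groups):
        acc = sums.setdefault(g, [0.0] * len(feature_names))
        for j, v in enumerate(row):
            acc[j] += abs(v)
        counts[g] += 1
    return {g: {name: acc[j] / counts[g] for j, name in enumerate(feature_names)}
            for g, acc in sums.items()}


def dominant_factor(per_group):
    """Name of the feature with the largest mean absolute SHAP value in each group."""
    return {g: max(imp, key=imp.get) for g, imp in per_group.items()}


# Invented SHAP values for two features across four samples.
feature_names = ["coal_combustion", "temperature"]
shap_rows = [[0.8, -0.1], [0.6, 0.2], [0.1, -0.7], [-0.2, 0.9]]
groups = ["summer", "summer", "winter", "winter"]

per_group = mean_abs_shap_by_group(shap_rows, groups, feature_names)
print(per_group, dominant_factor(per_group))
```

Comparing the dominant factor across groups is one way to surface the heterogeneous relationships that a single global importance ranking would hide.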

Table 3: Research Reagent Solutions for Spatiotemporal Environmental Analysis

| Resource Category | Specific Tools & Techniques | Research Application |

|---|---|---|

| Machine Learning Algorithms | Random Forest, Neural Networks, Gradient Boosting [1] | Capturing nonlinear spatiotemporal relationships in contaminant data |

| Interpretability Frameworks | SHAP (SHapley Additive exPlanation) [11] | Quantifying factor importance and directionality in ensemble models |

| Spatial Analysis Tools | GIS software, Geographically Weighted Regression [14] | Analyzing spatial heterogeneity and geographic patterns in contaminants |

| Data Visualization Platforms | XmdvTool, Parallel coordinate plots [13] | Visual exploration of high-dimensional spatiotemporal monitoring data |

| Source Apportionment Methods | Positive Matrix Factorization (PMF) [11] | Identifying and quantifying contamination sources in multivariate data |

| Quality Control Protocols | Field QA/QC procedures, statistical screening methods [15] | Ensuring data integrity throughout collection and analysis pipeline |

Advanced Application: Ensemble Modeling for Ozone Pollution

A sophisticated application of ensemble modeling for spatiotemporal environmental data demonstrates the approach's capabilities for complex contaminant analysis. Research on ozone pollution modeling illustrates the integration of multiple machine learning algorithms with optimization techniques for enhanced prediction accuracy.

The optimization of spatiotemporal ozone pollution modeling using random forest ensemble methods with cuckoo search metaheuristic algorithms produced seasonal risk maps with area-under-the-curve (AUC) values of 95.2% for autumn, 97% for spring, 96.7% for summer, and 95.7% for winter [12]. This ensemble approach analyzed fourteen environmental factors to model seasonal ozone distribution, identifying altitude and wind direction as the most influential factors across seasons [12]. The methodology exemplifies how ensemble techniques can capture complex spatiotemporal patterns in environmental contaminants with high precision.
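
To make the role of the metaheuristic concrete, the following sketch shows a simplified cuckoo search over two hyperparameters. It is not the implementation used in [12]: the objective `toy_validation_error` is a hypothetical stand-in for the cross-validated error of a random forest, and the bounds, step scale `alpha`, and abandonment fraction `pa` are assumed values.

```python
import math
import random

def levy_step(rng, beta=1.5):
    """Draw one heavy-tailed step length using Mantegna's algorithm."""
    sigma = (
        math.gamma(1 + beta) * math.sin(math.pi * beta / 2)
        / (math.gamma((1 + beta) / 2) * beta * 2 ** ((beta - 1) / 2))
    ) ** (1 / beta)
    u = rng.gauss(0, sigma)
    v = rng.gauss(0, 1)
    return u / abs(v) ** (1 / beta)

def cuckoo_search(objective, bounds, n_nests=15, n_iter=100, pa=0.25, alpha=0.05, seed=0):
    """Minimise `objective` inside the box `bounds`; returns (best, best_score, history)."""
    rng = random.Random(seed)

    def random_nest():
        return [rng.uniform(lo, hi) for lo, hi in bounds]

    nests = [random_nest() for _ in range(n_nests)]
    scores = [objective(n) for n in nests]
    best_i = min(range(n_nests), key=scores.__getitem__)
    best, best_score = nests[best_i][:], scores[best_i]
    history = [best_score]

    for _ in range(n_iter):
        # 1) Propose new solutions by Levy flights and compare with a random nest.
        for i in range(n_nests):
            candidate = [
                max(lo, min(hi, x + alpha * (hi - lo) * levy_step(rng)))
                for x, (lo, hi) in zip(nests[i], bounds)
            ]
            score = objective(candidate)
            j = rng.randrange(n_nests)
            if score < scores[j]:
                nests[j], scores[j] = candidate, score
        # 2) Abandon the worst fraction `pa` of nests and rebuild them at random.
        worst = sorted(range(n_nests), key=scores.__getitem__, reverse=True)
        for i in worst[: int(pa * n_nests)]:
            nests[i] = random_nest()
            scores[i] = objective(nests[i])
        # 3) Keep track of the best solution found so far.
        i = min(range(n_nests), key=scores.__getitem__)
        if scores[i] < best_score:
            best, best_score = nests[i][:], scores[i]
        history.append(best_score)
    return best, best_score, history

def toy_validation_error(params):
    """Hypothetical error surface over (n_estimators, max_depth)."""
    n_estimators, max_depth = params
    return (n_estimators - 300) ** 2 / 1e4 + (max_depth - 12) ** 2

bounds = [(50, 1000), (2, 30)]
best, best_score, history = cuckoo_search(toy_validation_error, bounds)
```

In practice the objective would train and cross-validate the model for each candidate, with integer hyperparameters rounded before use, so the number of nests and iterations is limited mainly by training cost.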

Another large-scale study integrated multiple predictor variables and three machine learners into a geographically weighted ensemble model to estimate daily maximum 8-hour ozone concentrations at 1 km × 1 km resolution across the contiguous United States from 2000 to 2016 [1]. This ensemble model achieved an average cross-validated R² of 0.90 against observations, outperforming any single algorithm, and demonstrated strongest performance in the East North Central region (R² = 0.93) with slightly weaker performance in western mountainous regions (R² = 0.86) and New England (R² = 0.87) [1]. The research further quantified monthly model uncertainty across the spatial domain, providing essential context for interpreting predictions in environmental health studies.

Diagram 2: Ensemble modeling framework for ozone prediction.

Data Management and Visualization Protocols

Spatial and Temporal Data Integration

The integration of spatiotemporal monitoring data requires careful attention to data structures, formats, and quality assurance measures. Effective data management practices form the foundation for robust ensemble modeling and analysis of environmental contaminants.

Environmental Data Management Systems provide essential infrastructure for handling spatiotemporal data throughout its lifecycle, from collection through analysis to dissemination [16]. Data governance policies should establish frameworks for data access, use, storage, and retention across multiple projects, with these policies incorporated into specific data management plans for individual research initiatives [16]. For field data collection, proper planning is essential, including determination of data types and collection methods, development of field processes, implementation of quality assurance/quality control protocols, and comprehensive staff training [16].

When integrating data from multiple monitoring campaigns, researchers must address challenges such as varying analytical techniques, differing detection limits, changing numbers of measured chemical elements, and evolving analytical precision over time [13]. These factors can introduce systematic biases that complicate spatiotemporal trend analysis and require careful normalization or adjustment before inclusion in ensemble models. Visualization tools such as parallel coordinate and scatterplot displays enable exploratory data analysis of complex spatiotemporal datasets, facilitating the identification of patterns, relationships, and anomalies that might be overlooked in purely numerical analyses [13].

Visualization and Communication Strategies

Effective communication of spatiotemporal environmental monitoring data requires tailored approaches for different audiences and purposes. The selection of appropriate formats—such as reports, dashboards, infographics, maps, or videos—should align with audience needs and the specific message being conveyed [17]. Visual aids including graphs, charts, tables, and maps can significantly enhance communication effectiveness when designed according to data visualization best practices [17].

Accessibility considerations, particularly color contrast requirements, are essential for creating inclusive visualizations that are interpretable by users with diverse visual capabilities. The Web Content Accessibility Guidelines (WCAG) recommend a minimum contrast ratio of 4.5:1 for standard body text and 3:1 for large-scale text, while active user interface components and graphical objects such as icons and graphs should maintain at least a 3:1 contrast ratio against adjacent colors [18]. These guidelines ensure that visualizations remain interpretable for individuals with low vision or color vision deficiencies, who may experience reduced ability to distinguish elements with insufficient luminance differences [19].
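
The contrast ratios above can be checked programmatically when choosing map and chart palettes. The sketch below follows the WCAG definitions of relative luminance and contrast ratio; the function names and the `kind` argument are illustrative choices rather than part of any standard library.

```python
def relative_luminance(rgb):
    """Relative luminance of an sRGB colour given as three 0-255 integers."""
    def linearize(channel):
        c = channel / 255
        # Threshold as written in the WCAG 2.0/2.1 definition; for 8-bit
        # colours it gives the same result as the sRGB value 0.04045.
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4
    r, g, b = (linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(foreground, background):
    """WCAG contrast ratio, from 1 (identical colours) to 21 (black on white)."""
    lum = sorted((relative_luminance(foreground), relative_luminance(background)))
    return (lum[1] + 0.05) / (lum[0] + 0.05)

def passes_aa(foreground, background, kind="text"):
    """Check the thresholds quoted above: 4.5:1 for text, 3:1 otherwise."""
    minimum = 4.5 if kind == "text" else 3.0  # "large_text" or "graphic" use 3:1
    return contrast_ratio(foreground, background) >= minimum

black, white = (0, 0, 0), (255, 255, 255)
ratio_black_on_white = contrast_ratio(black, white)
```

Running such a check over every foreground and background pair in a legend is a quick way to catch color ramps whose lightest classes disappear against a white map background.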

When presenting spatiotemporal environmental data to stakeholders and public audiences, providing appropriate context and interpretation helps communicate the significance and implications of the findings [17]. This includes relevant background information, comparisons to benchmarks or standards, discussion of trends and patterns, and acknowledgment of limitations and uncertainties in the data. Framing the information within a compelling narrative structure further enhances engagement and understanding [17].

The Role of Remote Sensing and IoT in Contaminant Data Collection

The accurate characterization of spatiotemporal trends in environmental contaminants is a fundamental objective in modern public health and ecological research. Ensemble models, which integrate multiple machine learning algorithms and data sources, have emerged as powerful tools for predicting contaminant concentrations across space and time with high resolution. The performance of these models is critically dependent on the quality, density, and frequency of input data. Remote Sensing and Internet of Things (IoT) technologies now serve as pivotal platforms for supplying this data, enabling the collection of multi-scale, multi-pollutant information essential for training robust ensemble models [20] [21]. This document outlines application notes and experimental protocols for their effective deployment in contaminant monitoring campaigns, with a specific focus on supporting ensemble-based spatiotemporal modeling research.

Remote Sensing and IoT platforms capture complementary data that, when fused, provide a comprehensive picture of environmental contamination. Their core characteristics are summarized in Table 1.

Table 1: Comparison of Remote Sensing and IoT for Contaminant Data Collection

| Feature | Remote Sensing | IoT-Based Sensor Networks |

|---|---|---|

| Spatial Coverage | Extensive (Regional to Global) [22] | Localized (Point-based to Intra-urban) [23] |

| Spatial Resolution | Coarse to Moderate (e.g., 1 km²) [1] | Fine (Single-point measurements) [24] |

| Temporal Resolution | Low (Hours to Days, depends on satellite revisit) [22] | Very High (Real-time to Minutes) [24] [25] |

| Primary Contaminants Monitored | O₃, PM₂.₅, PM₁₀, NO₂, Water Chlorophyll-a, Turbidity [12] [26] [1] | NH₃, CO, NO₂, CH₄, CO₂, SO₂, O₃, PM₂.₅, PM₁₀, Water pH, DO, Turbidity [24] [25] [22] |

| Key Strengths | Synoptic view, historical archives, access to remote areas [22] | Real-time alerts, high-frequency time-series, ground-truthing [23] [25] |

| Key Limitations | Susceptible to atmospheric interference, indirect measurement (inversion required) [22] | Requires calibration/maintenance, limited spatial representativeness [23] |

The synergy between these technologies is key. IoT sensors provide dense, ground-truthed data for calibrating remote sensing imagery, while remote sensing extrapolates point measurements from IoT networks to create continuous spatial fields [21] [22]. This fused data layer is ideal for training and validating ensemble models that predict contaminant levels in unsampled locations and times.

Experimental Protocols for Integrated Data Collection

This section provides a detailed methodology for designing a monitoring campaign to generate data for spatiotemporal ensemble modeling of contaminants, using air quality as a primary example.

Protocol: Deployment of an IoT Sensor Network for Airborne Contaminants

Objective: To establish a distributed sensor network for collecting real-time, high-frequency data on airborne contaminants and meteorological parameters at fixed ground locations.

Materials and Reagents:

- Gas Sensors: Electrochemical sensors for NO₂, CO, SO₂, O₃; Metal-Oxide Semiconductor (MOS) sensors for CH₄, VOCs [24] [25].

- Particulate Matter (PM) Sensors: Optical particle counters (OPC) for PM₂.₅ and PM₁₀ [24].

- Meteorological Sensors: Capacitive humidity sensor, thermistor for temperature, anemometer for wind speed/direction, barometric pressure sensor [24] [1].

- Microcontroller & Data Acquisition: Waspmote, Arduino, or similar microcontroller with analog-to-digital converter (ADC) [22].

- Communication Module: 4G/LTE, LoRaWAN, or GSM/GPRS module for data transmission [22].

- Power Supply: Solar panel with battery backup or mains power.

- Calibration Gases: Standardized gas cylinders for target analytes for sensor calibration.

Methodology:

- Site Selection: Deploy sensors using a stratified random sampling design based on land use (industrial, residential, background). Geotag each node [1].

- Sensor Calibration: Prior to deployment, calibrate all sensors against reference-grade instruments in a controlled chamber using standard calibration gases over a range of expected concentrations and environmental conditions [23].

- Node Assembly & Enclosure: House sensors, microcontroller, communication module, and power supply in a weather-proof, ventilated enclosure to protect from elements while allowing air flow.

- Data Collection & Transmission: Program the microcontroller to record sensor readings at 5-10 minute intervals. Transmit data packets to a cloud platform (e.g., AWS IoT, Azure IoT, The Things Network) via the communication module [25] [22].

- Data Validation: Implement a two-stage validation. First, use embedded algorithms for basic range and spike tests. Second, on the server side, apply machine learning models (e.g., Random Forest) to identify and flag sensor drift or failure by comparing readings across the network [23] [21].
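
The first validation stage can be illustrated with a short sketch of range and spike tests. The thresholds, the window size, and the PM₂.₅ readings below are assumed values for illustration only; the spike test uses a median and median-absolute-deviation rule, which is one common choice and not necessarily the rule applied in [23] or [21].

```python
from statistics import median

def range_test(values, low, high):
    """Flag readings outside the physically plausible range [low, high]."""
    return [not (low <= v <= high) for v in values]

def spike_test(values, window=5, threshold=4.0, min_scale=1.0):
    """Flag readings far from the median of their neighbours.

    A reading is flagged when it deviates from the neighbourhood median by
    more than `threshold` times the median absolute deviation (MAD), with
    `min_scale` acting as a floor so that flat signals do not over-trigger.
    """
    half = window // 2
    flags = []
    for i, v in enumerate(values):
        neighbours = values[max(0, i - half): i] + values[i + 1: i + half + 1]
        if len(neighbours) < 2:
            flags.append(False)
            continue
        med = median(neighbours)
        mad = median([abs(n - med) for n in neighbours])
        flags.append(abs(v - med) > threshold * max(mad, min_scale))
    return flags

# Hypothetical PM2.5 readings (µg/m³) at a fixed sampling interval.
pm25 = [12.0, 13.0, 12.5, 250.0, 13.5, 12.0, -5.0, 14.0]
out_of_range = range_test(pm25, low=0.0, high=1000.0)
spikes = spike_test(pm25)
flagged = [i for i, (r, s) in enumerate(zip(out_of_range, spikes)) if r or s]
```

Flagged readings are better marked than deleted at this stage, so that the server-side models in the second stage can still compare them against neighbouring nodes.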

Protocol: Satellite-Based Remote Sensing of Ozone (O₃)

Objective: To acquire and process satellite imagery for estimating ground-level O₃ concentrations over a large spatial domain.

Materials and Software:

- Satellite Data: Acquire data from relevant satellite platforms (e.g., Landsat-9, Sentinel-5P) via USGS EarthExplorer or Copernicus Open Access Hub [22].

- Software: Python (with libraries like Rasterio, GDAL, Scikit-learn) or GIS software (e.g., ArcGIS, QGIS).

- Ancillary Data: Obtain data on environmental predictors: land use, elevation, road networks, and output from chemical transport models (CTMs) like CMAQ [12] [1].

- Ground Truth Data: Collocated, time-matched O₃ measurements from reference monitors or the validated IoT network.

Methodology:

- Data Acquisition & Preprocessing: Download satellite imagery for the study area and period. Perform atmospheric correction to remove the effects of aerosols and water vapor [22].

- Predictor Variable Extraction: Extract and process a comprehensive set of predictor variables for each satellite pixel and day. These typically include:

- Remote Sensing Data: Tropospheric NO₂ columns, aerosol optical depth (AOD), formaldehyde (HCHO) as a VOC proxy [1].

- Meteorological Data: Reanalysis data on temperature, wind speed/direction, relative humidity, and boundary layer height [12] [1].

- Static Geographic Data: Altitude, population density, distance to roads and industrial points [12] [1].

- Model Training for Inversion: Train an ensemble machine learning model (e.g., Random Forest optimized with Cuckoo Search metaheuristic) using the prepared predictor variables as inputs and the ground-level O₃ measurements from the IoT/reference network as the target output [12].

- Spatial Prediction & Validation: Apply the trained model to the entire stack of predictor variables to generate a continuous, gridded O₃ concentration map. Validate the final map using hold-out ground monitoring data, reporting performance metrics (e.g., R², MAE, AUC) [12] [1].

The following workflow diagram illustrates the integration of these protocols for ensemble model development.

Integrated Workflow for Contaminant Data Collection and Modeling

The Researcher's Toolkit

Table 2: Essential Research Reagent Solutions and Materials

| Item | Function/Application |

|---|---|

| Electrochemical Gas Sensors | Detect and quantify specific gaseous pollutants (e.g., O₃, NO₂, CO) in IoT nodes via electrochemical reactions [24] [25]. |

| Optical Particle Counters (OPC) | Estimate the mass concentration of particulate matter (PM₂.₅, PM₁₀) in air from the light scattered by individual particles, which are counted and sized optically [24]. |

| LoRaWAN Communication Module | Enables long-range, low-power wireless transmission of sensor data from field-deployed IoT nodes to cloud gateways [22]. |

| Calibration Gas Standards | Certified concentration gases used for periodic calibration of electrochemical and metal-oxide gas sensors to ensure data accuracy [23]. |

| Sentinel-5P Satellite Data | Provides global, daily measurements of atmospheric trace gases (NO₂, O₃, HCHO) for regional-scale contaminant modeling [1] [22]. |

| Cuckoo Search (CS) Metaheuristic | A swarm-based optimization algorithm used to fine-tune hyperparameters of machine learning models (e.g., Random Forest), enhancing prediction accuracy [12]. |

| Geographically Weighted Ensemble (GWE) | A modeling framework that combines predictions from multiple base learners (e.g., Neural Networks, Random Forest, Gradient Boosting) to improve robustness and accuracy across diverse geographic regions [1]. |

Data Integration and Modeling Protocols

Protocol: Building a Spatiotemporal Ensemble Model

Objective: To integrate IoT and remote sensing data into an ensemble machine learning model for predicting daily contaminant levels at high spatial resolution.

Methodology:

- Data Fusion: Create a spatiotemporal dataset where each record represents a unique location (e.g., 1 km × 1 km grid cell) and day. Fuse the following data layers:

- Dependent Variable: Daily ground-level contaminant concentration, derived from IoT sensors or regulatory monitors.

- Predictor Variables: Satellite-derived proxies, reanalysis meteorological data, static land use variables, and output from chemical transport models [1]. This can involve over 100 predictor variables.

- Model Training: Train multiple base learners on the fused dataset. Common algorithms include:

- Random Forest (RF): An ensemble of decision trees effective for capturing non-linear relationships [24] [12] [1].

- Long Short-Term Memory (LSTM): A type of recurrent neural network ideal for capturing temporal dependencies in time-series data [24].

- Gradient Boosting: A sequential ensemble technique that builds trees to correct errors of previous ones [1].

- Ensemble Optimization: Optimize the base learners using metaheuristic algorithms like Cuckoo Search (CS) to fine-tune their hyperparameters, maximizing predictive performance [12].

- Geographically Weighted Ensemble: Create a final prediction by combining the outputs of the optimized base models. The combination can be a simple average or a weighted average with learned weights; in a geographically weighted ensemble, those weights vary by location so that each base learner contributes most where it performs best [1]. Evaluate model performance rigorously using spatial, temporal, and sample-based cross-validation.
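
The final combination step can be sketched as follows. This is a deliberately simplified illustration in which each region receives its own inverse-error weights; it is an assumption made for clarity and not the weighting scheme of [1]. The region labels, model names, and validation values are hypothetical.

```python
from collections import defaultdict
from statistics import fmean

def regional_weights(validation_rows, model_names):
    """Inverse-MSE weights per region, normalised to sum to 1 within a region."""
    sq_errors = defaultdict(lambda: defaultdict(list))
    for row in validation_rows:
        for name in model_names:
            sq_errors[row["region"]][name].append((row["pred"][name] - row["obs"]) ** 2)
    weights = {}
    for region, per_model in sq_errors.items():
        # A small constant avoids division by zero for a perfect model.
        inverse = {name: 1.0 / (fmean(errs) + 1e-12) for name, errs in per_model.items()}
        total = sum(inverse.values())
        weights[region] = {name: value / total for name, value in inverse.items()}
    return weights

def ensemble_predict(region, preds, weights):
    """Weighted average of base-model predictions using that region's weights."""
    return sum(weights[region][name] * preds[name] for name in preds)

# Hypothetical held-out observations and base-learner predictions (ppb).
validation_rows = [
    {"region": "east", "obs": 40.0, "pred": {"rf": 41.0, "nn": 44.0, "gb": 42.0}},
    {"region": "east", "obs": 50.0, "pred": {"rf": 49.0, "nn": 54.0, "gb": 52.0}},
    {"region": "west", "obs": 30.0, "pred": {"rf": 36.0, "nn": 31.0, "gb": 33.0}},
    {"region": "west", "obs": 35.0, "pred": {"rf": 41.0, "nn": 34.0, "gb": 38.0}},
]
weights = regional_weights(validation_rows, ["rf", "nn", "gb"])
east_estimate = ensemble_predict("east", {"rf": 45.0, "nn": 48.0, "gb": 46.0}, weights)
```

With these toy numbers the random forest dominates in the east and the neural network in the west, which is the behavior a location-dependent weighting is meant to capture.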

Table 3: Exemplary Performance Metrics of AI Models in Contaminant Forecasting

| Contaminant | Model | Performance Metrics | Application Context |

|---|---|---|---|

| PM₂.₅ | Random Forest | R² = 0.84, MAE = 10.11 [24] | Industrial IoT Forecasting |

| O₃ | RF with Cuckoo Search Optimization | AUC = 0.97 (Spring) [12] | Spatio-temporal Risk Mapping |

| O₃ | Geographically Weighted Ensemble (GWE) | Average R² = 0.90 [1] | Continental-scale Daily Estimation |

| Temperature/Humidity | LSTM | R² = 0.99, MAE = 0.33 [24] | Industrial IoT Forecasting |

| Water Contamination | AquaDynNet (CNN) | Accuracy = 90.75%, AUC = 0.92 [26] | Remote Sensing Detection |

The following diagram outlines the architecture of a geographically weighted ensemble model that integrates multiple data sources and machine learning algorithms.

Ensemble Model Architecture for Contaminant Prediction

The integration of Remote Sensing and IoT technologies creates a data pipeline that is essential for advancing ensemble model-based research into the spatiotemporal dynamics of environmental contaminants. The protocols outlined provide a framework for generating the high-quality, multi-scale data required to train, validate, and apply these models. As these sensing technologies continue to advance alongside more powerful ensemble machine learning techniques, the ability to accurately monitor, forecast, and mitigate the public health and ecological impacts of environmental pollution should improve substantially.

Within the evolving field of environmental contaminants research, accurately modeling the complex, dynamic nature of pollutant dispersion presents a significant challenge. The spatiotemporal trends of contaminants are governed by non-linear interactions between meteorological conditions, emission patterns, and geographical factors. In this context, ensemble learning models have emerged as a powerful alternative to single-model approaches, offering enhanced predictive performance, improved stability, and superior generalization capabilities for forecasting environmental risks [27] [28]. This document details the quantitative advantages and provides standardized protocols for implementing ensemble models in research focused on the spatiotemporal analysis of environmental contaminants.

Quantitative Superiority of Ensemble Models

Empirical evidence from environmental science indicates that ensemble models frequently outperform single models across key performance metrics. The core principle behind this success is the combination of multiple base models (learners), which reduces the risk of relying on a single, potentially flawed, model structure. By integrating diverse predictions, ensemble methods mitigate individual model errors, leading to more accurate and reliable forecasts [29] [30].

The table below summarizes documented performance improvements of ensemble over single models in environmental forecasting applications:

Table 1: Documented Performance of Ensemble vs. Single Models in Environmental Research

| Application Area | Ensemble Model Type | Reported Performance Metric | Single Model Performance | Ensemble Model Performance | Reference Study Context |

|---|---|---|---|---|---|

| Building Energy Prediction | Heterogeneous Ensemble | Accuracy Improvement | Baseline (Single Model) | +2.59% to +80.10% | [27] |

| Building Energy Prediction | Homogeneous Ensemble | Accuracy Improvement | Baseline (Single Model) | +3.83% to +33.89% | [27] |

| Coastal Water Quality | Across-Watersheds Stacking (EAM) | Test Set R-squared (R²) | Lower R² (SWM & GWM) | R²: 0.62 (DO), 0.74 (NH₃-N), 0.65 (TP) | [3] |

| Urban Air Quality (PM2.5/PM10) | Random Forest / Decision Tree | Prediction Accuracy | Not Specified | 0.99 (PM2.5), 0.98 (PM10) | [28] |

Beyond raw accuracy, ensemble models exhibit enhanced robustness, making them less sensitive to noisy data, outliers, or slight perturbations in the input data [29] [30]. Furthermore, their generalization capability—the ability to perform well on new, unseen data—is often superior. This is critical for spatiotemporal modeling, where models must be applicable across different geographic regions and time periods not present in the training data [27] [3].
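
Generalization to unseen regions and periods is usually tested by holding out whole groups rather than random samples. The sketch below shows one way to build such splits; the helper names, region labels, and years are hypothetical.

```python
def leave_one_group_out(groups):
    """Yield (held_out_group, train_indices, test_indices) for each group."""
    for held_out in sorted(set(groups)):
        train = [i for i, g in enumerate(groups) if g != held_out]
        test = [i for i, g in enumerate(groups) if g == held_out]
        yield held_out, train, test

def forward_time_split(years, first_test_year):
    """Train on records before `first_test_year`, test on that year onwards."""
    train = [i for i, y in enumerate(years) if y < first_test_year]
    test = [i for i, y in enumerate(years) if y >= first_test_year]
    return train, test

# Hypothetical monitoring records: region of the site and sampling year.
regions = ["coast", "coast", "valley", "valley", "upland", "upland"]
years = [2018, 2020, 2018, 2020, 2019, 2021]

spatial_folds = list(leave_one_group_out(regions))
temporal_train, temporal_test = forward_time_split(years, first_test_year=2020)
```

Scores from these splits are typically lower than those from random k-fold splits, and the gap gives a rough indication of how much a model relies on spatial or temporal proximity to its training data.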

Ensemble Learning Protocols for Environmental Contaminants

This section outlines standardized protocols for developing ensemble models to predict spatiotemporal trends of environmental contaminants, such as PM₂.₅, heavy metals, or per-/polyfluoroalkyl substances (PFAS).

Protocol 1: Implementing a Heterogeneous Stacking Ensemble

Principle: Combine predictions from diverse base algorithms (e.g., tree-based, neural, linear) using a meta-learner to integrate their strengths [27] [31] [32].

Workflow:

- Data Preprocessing: Clean data, handle missing values, and normalize features. For spatiotemporal data, engineer features like temporal lags, spatial coordinates, and distance to pollution sources.

- Base Model Training: Train multiple diverse base models on the same training dataset.

- Meta-Feature Generation: Use k-fold cross-validation on the training set to generate out-of-fold predictions from each base model. These predictions become the input features (meta-features) for the meta-learner.

- Meta-Learner Training: Train a final model (the meta-learner) on the meta-features. A simple model, such as linear regression for continuous targets or logistic regression for classification, is often effective and reduces the risk of overfitting [31].

- Final Prediction: For new data, predictions from all base models are generated and fed into the trained meta-learner to produce the final ensemble forecast.
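
The workflow above can be sketched end to end with two toy base learners. Everything in this example is an illustrative assumption: the synthetic data, the single-feature linear and nearest-neighbor learners, and the one-parameter linear blend used as meta-learner. A real study would substitute its own base models and a fitted regression.

```python
import random
from statistics import fmean

def fit_linear(xs, ys):
    """Least-squares line y = a + b*x; returns a prediction function."""
    mx, my = fmean(xs), fmean(ys)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    a = my - b * mx
    return lambda x: a + b * x

def fit_knn(xs, ys, k=3):
    """k-nearest-neighbour regressor on one feature; returns a prediction function."""
    pairs = list(zip(xs, ys))
    def predict(x):
        nearest = sorted(pairs, key=lambda p: abs(p[0] - x))[:k]
        return fmean(y for _, y in nearest)
    return predict

def out_of_fold(fit, xs, ys, folds):
    """Predict every sample with a model that did not see it during fitting."""
    oof = [None] * len(xs)
    for fold in folds:
        held_out = set(fold)
        train = [i for i in range(len(xs)) if i not in held_out]
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        for i in fold:
            oof[i] = model(xs[i])
    return oof

def mse(pred, ys):
    return fmean((p - y) ** 2 for p, y in zip(pred, ys))

# Synthetic data: a linear trend plus a step that the linear model cannot follow.
rng = random.Random(0)
xs = [rng.uniform(0, 10) for _ in range(60)]
ys = [2.0 * x + 3.0 * (x > 5) + rng.gauss(0, 1) for x in xs]

# Five folds from shuffled indices.
indices = list(range(len(xs)))
rng.shuffle(indices)
folds = [indices[i::5] for i in range(5)]

# Meta-features: out-of-fold predictions of each base learner.
oof_lin = out_of_fold(fit_linear, xs, ys, folds)
oof_knn = out_of_fold(fit_knn, xs, ys, folds)

# Meta-learner: y ≈ w * linear + (1 - w) * knn, solved in closed form.
diff = [a - b for a, b in zip(oof_lin, oof_knn)]
w = sum(d * (y - b) for d, y, b in zip(diff, ys, oof_knn)) / sum(d * d for d in diff)
oof_stack = [w * a + (1 - w) * b for a, b in zip(oof_lin, oof_knn)]

# Final prediction: refit base learners on all training data, then blend.
final_lin, final_knn = fit_linear(xs, ys), fit_knn(xs, ys)
def stacked_predict(x):
    return w * final_lin(x) + (1 - w) * final_knn(x)
```

Because the blend weight is fitted only on out-of-fold predictions, the meta-learner never sees a base prediction for a sample that the base model was trained on, which is the leakage safeguard the protocol relies on.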

The following diagram visualizes this multi-stage workflow:

Protocol 2: Building a Homogeneous Boosting Ensemble

Principle: Sequentially train multiple instances of the same base algorithm, where each new model focuses on correcting errors made by the previous ones [29]. This is highly effective for reducing bias.

Workflow:

- Data Preprocessing: Same as Protocol 1.

- Initial Model Training: Train a first base model (e.g., a shallow decision tree) on the training data.

- Residual Calculation & Weight Adjustment: Calculate the prediction errors (residuals) of the current ensemble. In gradient boosting, these residuals become the training target for the next model; in AdaBoost-style boosting, the weights of mispredicted data points are increased instead.

- Subsequent Model Training: Train a new model on the residuals or on the reweighted dataset, so that it concentrates on the poorly predicted instances.

- Model Integration: Add the new model to the ensemble, typically scaled by a learning rate to reduce the risk of overfitting.

- Iteration: Repeat the residual calculation, model training, and integration steps for a predefined number of iterations or until performance converges.
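
A residual-fitting version of this loop can be written compactly. The sketch below implements gradient boosting for squared error with one-split regression stumps on a single feature; the diurnal concentration curve is synthetic and the number of rounds and learning rate are assumed values.

```python
import math
from statistics import fmean

def fit_stump(xs, residuals):
    """Best single-split regression stump on one feature (squared-error criterion)."""
    best = None
    for threshold in sorted(set(xs))[:-1]:  # last value excluded so both sides are non-empty
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        l_mean, r_mean = fmean(left), fmean(right)
        sse = sum((r - l_mean) ** 2 for r in left) + sum((r - r_mean) ** 2 for r in right)
        if best is None or sse < best[0]:
            best = (sse, threshold, l_mean, r_mean)
    _, threshold, l_mean, r_mean = best
    return lambda x: l_mean if x <= threshold else r_mean

def gradient_boost(xs, ys, n_rounds=50, learning_rate=0.1):
    """Fit stumps sequentially to the residuals of the current ensemble."""
    base = fmean(ys)                      # initial model: the mean
    stumps = []
    preds = [base] * len(xs)
    train_mse = [fmean((y - p) ** 2 for y, p in zip(ys, preds))]
    for _ in range(n_rounds):
        residuals = [y - p for y, p in zip(ys, preds)]   # errors of current ensemble
        stump = fit_stump(xs, residuals)                 # new model targets them
        stumps.append(stump)
        preds = [p + learning_rate * stump(x) for p, x in zip(preds, xs)]
        train_mse.append(fmean((y - p) ** 2 for y, p in zip(ys, preds)))

    def predict(x):
        return base + learning_rate * sum(s(x) for s in stumps)
    return predict, train_mse

# Synthetic diurnal concentration curve, one value per hour.
hours = list(range(24))
conc = [40 + 25 * math.sin(math.pi * (h - 8) / 12) for h in hours]
predict, train_mse = gradient_boost(hours, conc)
```

The training error falls with every round, which is why the number of rounds and the learning rate are normally chosen on validation data rather than on the training curve.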

The Scientist's Toolkit: Essential Reagents for Ensemble Research

Successfully implementing ensemble models requires leveraging a suite of computational tools and algorithms. The following table lists key "research reagents" in this domain.

Table 2: Essential Reagents for Ensemble Modeling Research

| Category | Reagent / Algorithm | Primary Function in Ensemble Research |

|---|---|---|

| Core Algorithms | Random Forest (RF) | A bagging-based homogeneous ensemble; excellent for benchmarking and capturing non-linear relationships. [29] [28] |

| Core Algorithms | XGBoost / LightGBM | Highly efficient gradient boosting frameworks; often achieve state-of-the-art results in structured data tasks. [31] [32] [33] |

| Core Algorithms | Stacking / Voting | A framework for heterogeneous combination; integrates predictions from diverse models like RF, SVM, and ANN. [27] [29] [31] |

| Data Preprocessing | SMOTE (Synthetic Minority Over-sampling Technique) | Addresses class imbalance in datasets (e.g., rare pollution events) by generating synthetic samples for minority classes. [31] |

| Data Preprocessing | Min-Max Scaler / Standard Scaler | Normalizes or standardizes feature scales to ensure stable and equitable model training. [5] |

| Model Interpretation | SHAP (SHapley Additive exPlanations) | Explains the output of any ensemble model by quantifying the contribution of each feature to a single prediction. [3] [31] [32] |

| Model Interpretation | LIME (Local Interpretable Model-agnostic Explanations) | Creates local, interpretable approximations to explain individual predictions from complex ensemble models. [32] |

| Optimization & Validation | k-Fold Cross-Validation | Robustly estimates model performance and helps detect overfitting by rotating training and validation subsets. [31] |

| Optimization & Validation | Hyperparameter Optimization (e.g., Grid Search, Bayesian) | Systematically tunes model parameters to maximize predictive performance on a given task. |

Implementing Ensemble Techniques for Contaminant Mapping and Prediction

Application Notes

Ensemble machine learning architectures have become a cornerstone in modern spatiotemporal environmental research, significantly enhancing the predictive accuracy and interpretability of models for contaminants. The following table summarizes the performance of various ensemble architectures as documented in recent scientific literature.

Table 1: Quantitative Performance of Ensemble Architectures in Environmental Research

| Ensemble Architecture | Application Context | Key Performance Metrics | Citation |

|---|---|---|---|

| Stacking (EAM) | Predicting spatiotemporal water quality variations across 432 coastal sites | Test set R²: Dissolved Oxygen (0.62), Ammonia Nitrogen (0.74), Total Phosphorus (0.65) | [3] |

| Stacking (Multiple Base Models + Linear Meta-Learner) | Forecasting Water Quality Index (WQI) using 1,987 river samples | R²: 0.9952, Adjusted R²: 0.9947, MAE: 0.7637, RMSE: 1.0704 | [34] |

| Stacking (ML/DL Models + Linear Regression Meta-Learner) | Spatiotemporal rainfall prediction in the Bengawan Solo River Watershed | MAE: 53.735 mm, RMSE: 69.242 mm, R²: 0.795826 | [35] |

| Ensemble Model (GAM + XGBoost) | Estimating spatiotemporal distributions of elemental PM2.5 | Superior interpretability for spatial variation and industry-related features | [36] |

| Committee Average / Median Ensemble | Global ecosystem service modeling (5 services including water supply & carbon storage) | 2-14% more accurate than individual models | [37] |

The application of these architectures provides distinct advantages. Stacking ensembles excel in achieving high predictive accuracy for complex, multi-parameter forecasting tasks like Water Quality Index prediction, where they can integrate the strengths of diverse base learners such as XGBoost, CatBoost, and Random Forest [34]. The "Ensemble Across-watersheds Model" demonstrates superior generalizability over single-watershed models, effectively capturing shared patterns across diverse geographical areas [3]. Furthermore, ensembles that combine process-based models with statistical learning, as seen in the Lake Erie nutrient response study, provide a robust framework for environmental forecasting and policy guidance [38].

Experimental Protocols

Protocol for Implementing a Stacking Ensemble for Water Quality Prediction

This protocol outlines the methodology for developing a stacking ensemble regression model, as validated for Water Quality Index forecasting [34].

Workflow Overview:

- Data Preprocessing: Handles missing values and outliers to ensure data quality.

- Base Model Training: Multiple diverse models are trained on the preprocessed data.

- Meta-Learner Training: A final model learns to best combine the base model predictions.

- Interpretation & Validation: Model predictions are explained and performance is rigorously evaluated.

Procedure:

- Data Preprocessing and Feature Engineering:

- Missing Data Imputation: Replace missing values using median imputation or time-series linear interpolation for temporal data [34] [39].

- Outlier Mitigation: Apply the Interquartile Range (IQR) method, winsorizing values outside the range [Q1 − 1.5 × IQR, Q3 + 1.5 × IQR] [34] [39]. For additional robustness, use a three-sigma rule to truncate extreme deviations [39].

- Noise Reduction: Employ smoothing techniques like Kalman filtering or sliding-median filters to suppress random noise while preserving underlying spatiotemporal trends [39].

- Data Normalization: Normalize all features to a consistent scale to optimize model training.
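
Two of the preprocessing steps above, median imputation and IQR-based winsorizing, are sketched below. The readings are hypothetical, and the quartile convention (`method="inclusive"`) is an assumption, since different software packages compute quartiles slightly differently.

```python
from statistics import median, quantiles

def impute_median(values):
    """Replace missing readings (None) with the median of the observed ones."""
    fill = median(v for v in values if v is not None)
    return [fill if v is None else v for v in values]

def winsorize_iqr(values, k=1.5):
    """Clip values to [Q1 - k*IQR, Q3 + k*IQR]; returns (clipped, (low, high))."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [min(max(v, low), high) for v in values], (low, high)

# Hypothetical total phosphorus readings (mg/L) with gaps and one extreme value.
raw = [2.1, 2.4, None, 2.2, 9.8, 2.3, 2.5, None, 2.0]
filled = impute_median(raw)
cleaned, (low, high) = winsorize_iqr(filled)
```

Winsorizing keeps the extreme sample in the dataset at the fence value instead of dropping it, which preserves the record for each location and day in the fused table.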

Base Model Training with Cross-Validation:

- Select a diverse set of 6-10 machine learning algorithms as base learners (e.g., XGBoost, CatBoost, Random Forest, Gradient Boosting, Extra Trees, AdaBoost, Support Vector Regression) [34] [35].

- To prevent data leakage and generate meta-features for the subsequent layer, train each base model using 5-fold cross-validation on the training set. The out-of-fold predictions from each fold are collected to form the meta-feature dataset for the training data.

Meta-Learner Training:

- The out-of-fold predictions from all base models serve as the input features (meta-features) for the meta-learner.

- The original target variable (e.g., WQI, contaminant level) remains the target for the meta-learner.

- A relatively simple, linear model such as Linear Regression or Ridge Regression is often employed as the meta-learner to learn the optimal combination of the base models' predictions [34] [35].

Model Interpretation and Validation:

- Apply SHapley Additive exPlanations analysis on the trained ensemble model to identify the most influential spatiotemporal features and quantify their marginal effects on the prediction [3] [34] [11]. This reveals key drivers, such as dissolved oxygen or specific anthropogenic sources.

- Evaluate the final model on a held-out test set that was not used at any stage of training, reporting metrics like R², MAE, and RMSE.
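
For reference, the three evaluation metrics named above can be computed as follows. The observed and predicted values are made-up numbers used only to show the calculation.

```python
import math
from statistics import fmean

def regression_metrics(observed, predicted):
    """Return R², MAE, and RMSE for paired observed and predicted values."""
    errors = [p - o for o, p in zip(observed, predicted)]
    mean_obs = fmean(observed)
    ss_res = sum(e * e for e in errors)                     # residual sum of squares
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)     # total sum of squares
    return {
        "R2": 1 - ss_res / ss_tot,
        "MAE": fmean(abs(e) for e in errors),
        "RMSE": math.sqrt(fmean(e * e for e in errors)),
    }

# Made-up held-out values.
observed = [10.0, 20.0, 30.0, 40.0]
predicted = [12.0, 18.0, 33.0, 39.0]
metrics = regression_metrics(observed, predicted)
```

MAE and RMSE are expressed in the units of the target variable, so they should be reported with those units, whereas R² is dimensionless.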

Protocol for Spatiotemporal Ensemble with GAM + XGBoost

This protocol is designed for scenarios requiring high interpretability of spatial variations, such as source apportionment of elemental PM2.5 [36].

Procedure:

- Temporal Decomposition:

- Fit a Generalized Additive Model to the time-series of the target contaminant at each monitoring station. Use meteorological factors (e.g., temperature, wind speed, relative humidity) as predictors to capture the temporal trend.

- Calculate the residuals (observed value minus GAM-predicted value) for each data point. These residuals represent the portion of the concentration not explained by meteorological temporal trends.

Spatial Modeling:

- Use the GAM residuals as the new target variable.

- Model these residuals using XGBoost, with time-invariant spatial predictors as features (e.g., land-use patterns, industrial area proximity, population density, topographic indices).

- This step explicitly models the spatial variation of the contaminant.

Final Prediction and Interpretation:

- The final spatiotemporal prediction is the sum of the GAM-predicted temporal component and the XGBoost-predicted spatial residual component.

- Perform SHAP analysis on the trained XGBoost model to identify which spatial features (e.g., industrial land use) are the primary drivers of residual variation.
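
The additive structure of this protocol can be shown with simple stand-ins. In the sketch below a least-squares line replaces the GAM in the temporal stage and a second line replaces XGBoost in the spatial stage; the temporal fit is also pooled across stations for brevity. These substitutions, the station names, and the synthetic data are assumptions made to keep the example self-contained, not the method of [36].

```python
import random
from statistics import fmean

def fit_line(xs, ys):
    """Least-squares intercept and slope for y = a + b*x."""
    mx, my = fmean(xs), fmean(ys)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return my - slope * mx, slope

def rmse(errors):
    return fmean(e * e for e in errors) ** 0.5

# Synthetic data: concentration depends on temperature (temporal driver)
# and on the industrial land-use fraction around each station (spatial driver).
rng = random.Random(1)
industrial_fraction = {"A": 0.1, "B": 0.5, "C": 0.9}
temps, fracs, concs = [], [], []
for day in range(30):
    temperature = 10 + 0.5 * day
    for station, frac in industrial_fraction.items():
        temps.append(temperature)
        fracs.append(frac)
        concs.append(5 + 0.8 * temperature + 20 * frac + rng.gauss(0, 1))

# Stage 1 (stand-in for the GAM): temporal component from meteorology.
t_intercept, t_slope = fit_line(temps, concs)
temporal = [t_intercept + t_slope * t for t in temps]
residuals = [c - p for c, p in zip(concs, temporal)]

# Stage 2 (stand-in for XGBoost): model the residuals with spatial predictors.
s_intercept, s_slope = fit_line(fracs, residuals)
spatial = [s_intercept + s_slope * f for f in fracs]

# Final prediction: temporal component plus spatial residual component.
final = [a + b for a, b in zip(temporal, spatial)]
rmse_temporal_only = rmse(residuals)
rmse_combined = rmse([c - p for c, p in zip(concs, final)])
```

Because the second stage is fitted only to what the first stage leaves unexplained, feature attributions computed on it describe spatial drivers of the residual and not drivers of the total concentration.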

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools for Ensemble Modeling

| Tool/Reagent | Function/Description | Application Example |

|---|---|---|

| SHapley Additive exPlanations | A game theory-based method to interpret complex model predictions, providing feature importance and direction of effect. | Identifying dissolved oxygen and specific anthropogenic emissions as key drivers of water/air quality [3] [34] [11]. |

| High-Performance Computing Cluster | Computational resources for training multiple base models and deep learning architectures, often with parallel processing. | Training ensembles of multiple machine learning and deep learning models for rainfall prediction [35]. |

| eXtreme Gradient Boosting | A highly efficient and effective gradient-boosting algorithm, frequently used as a base learner in ensembles. | Serving as a primary base model in stacking ensembles and in hybrid GAM+XGBoost frameworks [34] [36]. |

| Generalized Additive Model | A statistical model that captures nonlinear relationships using smooth functions of predictors. | Isolating the temporal component of pollutant concentrations by modeling the effect of meteorological variables [36]. |

| Positive Matrix Factorization | A receptor model that apportions measured contaminant levels to specific source profiles. | Providing input data on source contributions (e.g., coal combustion, traffic) for the ensemble model [11]. |

| CHIRPS Rainfall Data | A long-term, high-resolution global satellite-based rainfall dataset. | Serving as the primary spatiotemporal input for training and validating rainfall prediction ensembles [35]. |

| K-fold Cross-Validation | A resampling procedure used to assess model performance and, crucially, to generate out-of-fold predictions for stacking. | Creating the meta-feature dataset for training the meta-learner without data leakage [34]. |

Integration of Machine Learning and Deep Learning Models

Application Notes

Machine Learning Frameworks for Environmental Research

The selection of an appropriate machine learning framework is fundamental to developing robust ensemble models for spatiotemporal analysis. The following table summarizes key frameworks and their applicability to environmental contaminants research.

Table 1: Machine Learning Frameworks for Ensemble Modeling

| Framework | Primary Strengths | Environmental Research Applications | Ensemble Compatibility |

|---|---|---|---|

| TensorFlow | Production scalability, flexible deployment [40] | Large-scale spatiotemporal data processing, model serving for continuous monitoring | High - supports complex neural network architectures for integration with other models |

| PyTorch | Dynamic computational graphs, research flexibility [40] | Rapid prototyping of novel ensemble architectures, experimental model designs | High - excellent for combining multiple model types in custom workflows |

| Scikit-learn | Classical ML algorithms, simplicity [40] | Preprocessing environmental data, traditional statistical models in ensembles | Medium - ideal for random forests, gradient boosting in hybrid approaches |

| Keras | User-friendly API, modularity [40] | Accessible deep learning for domain experts, quick model iteration | Medium - acts as interface to TensorFlow/PyTorch for unified workflows |

| Apache Spark MLlib | Big data processing, scalability [40] | Continental-scale contaminant modeling, distributed computing for large datasets | Medium - handles data preprocessing for ensemble training on massive spatiotemporal data |

Ensemble Modeling Approaches for Spatiotemporal Data

Ensemble methods that integrate multiple machine learning algorithms often outperform their individual members when modeling complex environmental phenomena. Research on ozone pollution estimation [1], summarized in the table below, illustrates this: the geographically weighted ensemble reached an overall cross-validated R² of 0.90.

Table 2: Ensemble Model Performance for Spatiotemporal Contaminant Modeling

| Model Type | Average Cross-Validated R² | Best Performance Context | Key Advantages for Environmental Data |

|---|---|---|---|

| Neural Network | 0.90 (with ensemble) [1] | Complex nonlinear relationships | Captures intricate spatiotemporal interactions |

| Random Forest | 0.90 (with ensemble) [1] | Feature importance analysis | Handles high-dimensional predictor variables |

| Gradient Boosting | 0.90 (with ensemble) [1] | Sequential learning from residuals | Effective with heterogeneous data sources |

| Geographically Weighted Ensemble | 0.90 (overall) [1] | Regional variations (East North Central: R²=0.93) [1] | Combines strengths of all algorithms; outperforms any single model |

Emerging Trends Enhancing Ensemble Modeling

Several technological trends in machine learning are particularly relevant to advancing ensemble models for environmental contaminants research:

- Automated Feature Engineering: Streamlines identification of optimal predictors from diverse environmental datasets with minimal human intervention [41]

- Edge Computing: Enables real-time processing of contaminant data closer to source, reducing latency for monitoring applications [41]

- Federated Learning: Facilitates collaborative model training across institutions without sharing proprietary environmental data, addressing privacy concerns [41]

- MLOps Practices: Ensures reliable deployment and continuous monitoring of ensemble models in production environments [42]

Experimental Protocols

Protocol for Ensemble Modeling of Spatiotemporal Contaminants

This protocol outlines a comprehensive methodology for developing ensemble models to estimate environmental contaminant concentrations at high spatiotemporal resolution, adapted from successful approaches in air pollution modeling [1].

Data Acquisition and Preparation

Materials:

- Environmental monitoring data (contaminant concentrations)

- Geographic Information Systems (GIS) software

- Meteorological data sources

- Land use and demographic datasets

- Remote sensing data

- Chemical transport model outputs

Procedure:

- Acquire daily contaminant concentration measurements from monitoring networks across the study domain

- Collect and preprocess 169+ predictor variables across categories [1]:

- Meteorological parameters (temperature, solar radiation, wind speed, relative humidity)

- Land use variables (industrial areas, transportation networks, vegetation indices)

- Chemical transport model simulations

- Remote sensing observations

- Topographic and demographic data

- Apply GIS techniques to consolidate all variables into a unified spatiotemporal framework

- Handle missing values using appropriate imputation techniques

- Partition data into training/validation sets with temporal and spatial cross-validation
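The final partitioning step can be sketched with a small grouped splitter. This is an illustrative sketch under stated assumptions: the `grouped_folds` helper, the station and day arrays, and the fold counts are all hypothetical, and only NumPy is used. The key property is that every station (or time block) lands entirely in either the training or the test side of a fold.

```python
import numpy as np

def grouped_folds(groups, n_folds, seed=0):
    """Yield (train_idx, test_idx) pairs; each group is held out exactly once."""
    groups = np.asarray(groups)
    rng = np.random.default_rng(seed)
    shuffled = rng.permutation(np.unique(groups))
    for held_out in np.array_split(shuffled, n_folds):
        test_mask = np.isin(groups, held_out)
        yield np.flatnonzero(~test_mask), np.flatnonzero(test_mask)

# Hypothetical layout: 6 monitoring stations observed on 10 days each.
station = np.repeat(np.arange(6), 10)
day = np.tile(np.arange(10), 6)

# Spatial cross-validation: withhold whole stations.
spatial = list(grouped_folds(station, n_folds=3))

# Temporal cross-validation: withhold contiguous 5-day blocks.
temporal = list(grouped_folds(day // 5, n_folds=2))
```

scikit-learn's `GroupKFold` offers the same grouping guarantee and may be preferable in a production pipeline.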

Model Training and Implementation

Materials:

- Python/R programming environments

- Machine learning frameworks (TensorFlow, PyTorch, Scikit-learn)

- High-performance computing resources

Procedure:

- Implement three diverse machine learning algorithms [1]:

- Neural Networks: Configure architecture (layers, neurons), activation functions, regularization

- Random Forest: Set number of trees, maximum depth, feature consideration parameters

- Gradient Boosting: Define learning rate, number of boosting stages, tree complexity

- Train each model independently on the consolidated dataset

- Optimize hyperparameters for each algorithm using cross-validation

- Generate predictions from each model across the entire spatiotemporal domain
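The training procedure above could look roughly like this in scikit-learn. It is a sketch, not the configuration used in [1]: the data are synthetic, the hyperparameter grids are deliberately tiny and purely illustrative, and `GradientBoostingRegressor` is used as a generic gradient boosting implementation.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.model_selection import GridSearchCV
from sklearn.neural_network import MLPRegressor
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for the consolidated predictor table (assumption).
rng = np.random.default_rng(1)
X = rng.normal(size=(200, 4))
y = X[:, 0] ** 2 + X[:, 1] + rng.normal(scale=0.1, size=200)

# One (estimator, search grid) pair per algorithm; grid values are illustrative.
candidates = {
    "neural_network": (
        make_pipeline(StandardScaler(), MLPRegressor(max_iter=500, random_state=0)),
        {"mlpregressor__hidden_layer_sizes": [(16,), (32, 16)],
         "mlpregressor__alpha": [1e-4, 1e-2]},
    ),
    "random_forest": (
        RandomForestRegressor(n_estimators=50, random_state=0),
        {"max_depth": [None, 5], "max_features": [1.0, "sqrt"]},
    ),
    "gradient_boosting": (
        GradientBoostingRegressor(random_state=0),
        {"learning_rate": [0.05, 0.1], "n_estimators": [50, 100], "max_depth": [2, 3]},
    ),
}

# Tune each algorithm independently with cross-validation, then refit on all data.
fitted = {}
for name, (estimator, grid) in candidates.items():
    fitted[name] = GridSearchCV(estimator, grid, cv=3, scoring="r2").fit(X, y)

# Predictions from each tuned model, ready for the ensemble stage.
predictions = {name: search.predict(X) for name, search in fitted.items()}
```

The default `cv=3` splitter is random over rows; with real monitoring data a grouped or blocked splitter would usually be passed to `cv` instead.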

Ensemble Integration and Validation

Procedure:

- Develop a geographically weighted ensemble framework to combine predictions from all three models

- Implement a weighting scheme that accounts for regional performance variations

- Generate final contaminant estimates at high resolution (1 km × 1 km, daily) [1]

- Perform comprehensive validation:

- Temporal cross-validation (withhold time periods)

- Spatial cross-validation (withhold monitoring locations)

- Seasonal performance analysis

- Quantify model uncertainty by predicting monthly standard deviations of estimation errors
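One simple way to realize region-dependent weighting is to weight each model by its inverse error within each region. The sketch below is an assumption made for illustration and is not the weighting scheme of [1]; the function names, the two-region setup, and the synthetic predictions are hypothetical.

```python
import numpy as np

def regional_weights(y_true, preds, region):
    """Per-region model weights proportional to inverse mean squared error."""
    weights = {}
    for r in np.unique(region):
        mask = region == r
        mse = np.mean((preds[mask] - y_true[mask, None]) ** 2, axis=0)
        inv = 1.0 / np.maximum(mse, 1e-12)
        weights[r] = inv / inv.sum()
    return weights

def combine(preds, region, weights):
    """Weighted average of model predictions using each row's regional weights."""
    w = np.vstack([weights[r] for r in region])
    return np.sum(w * preds, axis=1)

# Synthetic example: model 0 is accurate in region 0, model 1 in region 1.
rng = np.random.default_rng(2)
n = 400
region = rng.integers(0, 2, size=n)
y_true = rng.normal(size=n)
noise_scale = np.where(region[:, None] == np.array([0, 1]), 0.1, 1.0)
preds = y_true[:, None] + rng.normal(size=(n, 2)) * noise_scale

# In practice the weights should come from held-out predictions, not training fits.
weights = regional_weights(y_true, preds, region)
ensemble = combine(preds, region, weights)

# Uncertainty summary: standard deviation of estimation errors per calendar month.
month = rng.integers(1, 13, size=n)
monthly_sd = {int(m): float(np.std(ensemble[month == m] - y_true[month == m]))
              for m in np.unique(month)}
```

Because each region leans on the model that performs best there, the combined estimate can beat every individual model over the whole domain, which is the behavior reported for the geographically weighted ensemble in Table 2.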

Workflow Visualization

[Figure: Ensemble Modeling Workflow]

[Figure: Ensemble Model Architecture]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Ensemble Modeling

| Tool/Category | Specific Examples | Function in Research Workflow |

|---|---|---|

| Core ML Frameworks | TensorFlow, PyTorch, Scikit-learn [40] | Foundation for implementing neural networks, random forests, and gradient boosting models |

| Specialized Libraries | Keras (API abstraction), Apache Spark MLlib (big data) [40] | Simplify model development and handle large-scale environmental datasets |

| Data Processing Tools | GIS software, Python Pandas, NumPy | Spatiotemporal data consolidation, feature engineering, and preprocessing |

| Validation Frameworks | Spatial/temporal cross-validation, performance metrics (R², RMSE) | Model evaluation, bias detection, and uncertainty quantification |

| Visualization Platforms | Matplotlib, Plotly, GIS mapping tools | Exploration of spatiotemporal patterns and model results communication |

Implementation Considerations

Data Quality and Governance

Ensemble model performance is critically dependent on data quality. Implement comprehensive data validation protocols to address missing values, measurement errors, and spatial inconsistencies. Establish reproducible data pipelines with version control for all input datasets, particularly when integrating multiple data sources with varying temporal resolutions and spatial coverages.

Computational Resource Management

High-resolution spatiotemporal modeling demands substantial computational resources. The referenced ozone modeling study consolidated approximately 20 TB of predictor variables across 11 million grid cells [1]. Plan for distributed computing approaches when working at continental scales, considering cloud computing platforms or high-performance computing clusters for model training and prediction.

Model Interpretation and Validation

While ensemble models often achieve superior predictive performance, their complexity can reduce interpretability. Implement model explanation techniques, such as SHAP analysis, to maintain scientific transparency. Employ rigorous spatial and temporal cross-validation strategies to avoid overfitting and ensure model generalizability across geographic regions and time periods.
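As a dependency-free illustration of model explanation, the sketch below computes permutation importance, a simpler model-agnostic alternative to SHAP: each predictor is shuffled in turn and the resulting increase in error is recorded. The function name, the synthetic data, and the linear stand-in model are assumptions made for the example.

```python
import numpy as np

def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Mean increase in MSE when each column is shuffled, one column at a time."""
    rng = np.random.default_rng(seed)
    baseline = np.mean((predict(X) - y) ** 2)
    scores = np.zeros(X.shape[1])
    for j in range(X.shape[1]):
        for _ in range(n_repeats):
            X_perm = X.copy()
            X_perm[:, j] = rng.permutation(X_perm[:, j])
            scores[j] += np.mean((predict(X_perm) - y) ** 2) - baseline
    return scores / n_repeats

# Synthetic data: column 0 matters most, column 2 not at all (assumption).
rng = np.random.default_rng(3)
X = rng.normal(size=(500, 3))
y = 3.0 * X[:, 0] + 0.5 * X[:, 1] + rng.normal(scale=0.1, size=500)

# A least-squares fit stands in for any trained ensemble's predict function.
coef, *_ = np.linalg.lstsq(X, y, rcond=None)
importance = permutation_importance(lambda A: A @ coef, X, y)
```

Any fitted ensemble can be passed in through `predict`; computing the scores on spatially or temporally held-out data gives a more honest picture than scoring on the training set.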

Feature Engineering for Spatiotemporal Environmental Data

Feature engineering is a critical prerequisite for accurate machine learning models in spatiotemporal environmental research. It creates predictive variables from raw data that capture spatial dependencies, temporal dynamics, and complex environmental relationships. For ensemble models analyzing spatiotemporal trends of environmental contaminants, careful feature engineering transforms heterogeneous data sources into meaningful predictors that improve both model performance and interpretability. This section outlines feature engineering methodologies tailored to environmental contaminant research, giving researchers practical tools to improve the predictive capability of ensemble machine learning approaches for environmental monitoring and public health protection.

Core Feature Categories for Environmental Data

Effective feature engineering for spatiotemporal environmental data requires systematic creation of predictors across several domains. The table below outlines core feature categories with specific examples from environmental research.

Table 1: Core Feature Categories for Spatiotemporal Environmental Modeling

| Category | Sub-category | Feature Examples | Environmental Application Examples |

|---|---|---|---|

| Spatial Features | Proximity Metrics | Distance to pollution sources, road networks, water bodies | Distance to industrial sites for ozone prediction [1] |

| | Spatial Lag | Mean pollutant values in neighboring areas | Spatial autocorrelation in water quality parameters [3] |

| | Land Use Patterns | Land cover percentages, impervious surface areas | Tree cover (55%) as threshold for water quality [3] |

| Temporal Features | Cyclical Encoding | sin/cos of hour, day, season | Seasonal ozone variations [1] |

| | Lagged Variables | Previous time steps (t-1, t-2, t-n) | Lag-based PM₂.₅ predictions [43] |

| | Temporal Trends | Moving averages, rate of change | Decadal trends in persistent organic pollutants [44] |

| Spectral & Transform Features | Decomposition | Fast Fourier Transform (FFT) | Spectral decomposition for PM₂.₅ forecasting [43] |

| | Indices | Spectral indices from satellite imagery | Landsat-derived indices for land cover classification [45] |

| Meteorological Features | Direct Measurements | Temperature, humidity, wind speed | Temperature (17-25 °C) thresholds for water quality [3] |

| | Derived Metrics | Atmospheric pressure, solar radiation | Relative humidity correlation with ozone formation [1] |

| Source & Emission Features | Chemical Transport | CMAQ model outputs | Gridded output from chemical transport models for ozone [1] |

| | Remote Sensing | AOD, AAI, gas column densities | MAIAC AOD at 550 nm for air quality mapping [46] |
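The temporal feature rows in the table (cyclical encoding, lagged variables, moving averages) can be sketched with a few NumPy helpers. The function names and the toy hourly series are hypothetical and serve only to show the transformations.

```python
import numpy as np

def cyclical(values, period):
    """Encode a periodic variable as (sin, cos) so the period's ends meet."""
    angle = 2.0 * np.pi * np.asarray(values, dtype=float) / period
    return np.sin(angle), np.cos(angle)

def lag(series, k):
    """Shift a series by k >= 1 steps; the first k entries have no history (NaN)."""
    series = np.asarray(series, dtype=float)
    out = np.full_like(series, np.nan)
    out[k:] = series[:-k]
    return out

def trailing_mean(series, window):
    """Moving average over the current and previous window - 1 observations."""
    series = np.asarray(series, dtype=float)
    out = np.full_like(series, np.nan)
    csum = np.cumsum(np.insert(series, 0, 0.0))
    out[window - 1:] = (csum[window:] - csum[:-window]) / window
    return out

# Toy hourly concentration series over two days (assumption).
hours = np.arange(48) % 24
concentration = 10.0 + 3.0 * np.sin(2.0 * np.pi * hours / 24.0)

hour_sin, hour_cos = cyclical(hours, period=24)
features = np.column_stack([
    hour_sin, hour_cos,
    lag(concentration, 1),            # value at t-1
    lag(concentration, 24),           # value at the same hour yesterday
    trailing_mean(concentration, 6),  # 6-hour moving average
])
```

The sine/cosine pair places hour 23 next to hour 0, which a raw hour column cannot do. Rows containing NaN (the warm-up period of the lags and the moving average) are typically dropped or imputed before training.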

Experimental Protocols for Feature Engineering

Protocol 1: Spatial Feature Engineering for Watershed Analysis

This protocol details the creation of spatial features for predicting water quality variations across watersheds, based on methodologies successfully applied in coastal urbanized areas [3].

Materials and Reagents

- GIS software (ArcGIS, QGIS)

- Watershed boundary data

- Land use/land cover maps

- Digital Elevation Model (DEM)

- Monitoring station location data

Procedure

- Spatial Data Collection: Compile multi-year land use data, elevation models, and monitoring locations for the target watersheds.

- Proximity Feature Calculation:

- Compute Euclidean distance from each monitoring point to coastline (critical threshold: 10 km [3])

- Calculate distance to nearest urban center and industrial areas

- Determine flow accumulation paths using DEM data

- Land Use Composition:

- Extract percentage of tree cover within buffer zones (critical threshold: 55% [3])

- Calculate impervious surface percentages

- Quantify agricultural and residential land use proportions

- Spatial Autocorrelation:

- Compute spatial lag variables using inverse distance weighting

- Calculate Moran's I to quantify spatial dependence

- Generate spatial cluster indicators using Getis-Ord Gi* statistic
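The proximity and spatial autocorrelation steps can be sketched in NumPy as follows. This is a minimal illustration assuming projected coordinates (so Euclidean distance is meaningful) and distinct station locations; the station grid, the coastline vertices, and the function names are hypothetical. The Getis-Ord Gi* statistic is omitted here.

```python
import numpy as np

def distance_matrix(a, b):
    """Euclidean distances between every row of a and every row of b."""
    diff = a[:, None, :] - b[None, :, :]
    return np.sqrt((diff ** 2).sum(axis=-1))

def idw_weights(dist, power=2.0):
    """Row-standardized inverse-distance weights with zero self-weight."""
    with np.errstate(divide="ignore"):
        w = 1.0 / dist ** power
    np.fill_diagonal(w, 0.0)
    return w / w.sum(axis=1, keepdims=True)

def morans_i(values, w):
    """Global Moran's I for a value vector and a spatial weight matrix."""
    z = values - values.mean()
    return (len(values) / w.sum()) * (z @ w @ z) / (z @ z)

# Hypothetical 5 x 5 grid of stations and a straight coastline at x = -3 (km).
gx, gy = np.meshgrid(np.arange(5.0), np.arange(5.0))
stations = np.column_stack([gx.ravel(), gy.ravel()])
coast = np.column_stack([np.full(5, -3.0), np.arange(5.0)])

# Proximity feature: distance from each station to the nearest coastline vertex.
dist_to_coast = distance_matrix(stations, coast).min(axis=1)

# Spatial lag: inverse-distance-weighted mean of the values at all other stations.
w = idw_weights(distance_matrix(stations, stations))
values = stations[:, 0].copy()  # smooth west-to-east gradient
lagged = w @ values

# A smooth gradient is positively autocorrelated, so Moran's I is above zero.
moran = morans_i(values, w)
```

For real watersheds, geodesic or along-channel distances may be more appropriate than straight-line distance, and dedicated libraries such as PySAL provide tested implementations of these statistics.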

Validation Method

- Perform spatial cross-validation by grouping data by watershed regions

- Assess feature importance using SHAP values to interpret spatial contributions [3]

Protocol 2: Temporal Feature Engineering for Air Quality Analysis