Evaluating Machine Learning Classifiers in Environmental Forensics: A Guide to Performance Metrics and Best Practices


Abstract

This article provides a comprehensive framework for selecting, applying, and interpreting performance metrics for machine learning classifiers in environmental forensics. Tailored for researchers and scientific professionals, it bridges the gap between theoretical data science and the practical demands of forensic investigations. The scope covers foundational metric principles, methodological applications to diverse evidence types—from chemical biomarkers to microbial communities—strategies for troubleshooting common data challenges, and rigorous validation protocols essential for legal admissibility. The guide aims to empower practitioners to build robust, reliable, and court-defensible ML models that enhance the accuracy and efficiency of environmental crime investigations.

Core Concepts: Why Performance Metrics Are Critical for ML in Environmental Forensics

The Role of Machine Learning in Modern Environmental Forensics

Environmental forensics involves the systematic investigation of environmental contamination to determine sources, timing, and responsibility. This field has progressively evolved from relying solely on conventional statistical methods to incorporating machine learning (ML) classifiers that can decipher complex, multivariate environmental data. The application of ML in this domain enables researchers to analyze large datasets, identify subtle patterns of contamination, and allocate liability based on probabilistic modeling of forensic evidence. By leveraging algorithms that learn directly from data, environmental forensic experts can address challenging problems including source attribution, pathway identification, and impact assessment, often more accurately than conventional statistical approaches alone allow.

The integration of machine learning into environmental forensics is driven by the growing complexity of environmental data and the need for robust, defensible analytical methods. Modern environmental monitoring generates massive datasets from diverse sources such as continuous emission monitoring systems, remote sensing platforms, and high-resolution chemical analysis. Traditional analytical techniques often struggle with the volume, variety, and veracity of this data, particularly when dealing with non-linear relationships and complex interactions between multiple environmental variables. Machine learning classifiers excel in precisely these scenarios, providing powerful tools for pattern recognition, anomaly detection, and predictive modeling that form the core of modern environmental forensic investigations.

Performance Metrics for ML Classifiers in Environmental Forensics

Evaluating the effectiveness of machine learning classifiers in environmental forensics requires specialized performance metrics that align with the field's unique requirements. While standard classification metrics such as accuracy, precision, and recall provide foundational insights, environmental applications often demand additional considerations including model interpretability, robustness to noise, and performance stability across diverse environmental conditions. The selection of appropriate metrics is further complicated by the frequent class imbalance in environmental datasets, where contamination events may be rare compared to background conditions.

For regression tasks common in environmental forecasting, metrics like Root Mean Square Error (RMSE), Mean Absolute Error (MAE), and coefficient of determination (R²) are routinely employed. The Nash-Sutcliffe efficiency (NSE) and Kling-Gupta Efficiency (KGE) offer specialized measures for hydrological and environmental models, assessing how well predictions match observations relative to the variability in the measured data [1]. In classification contexts, area under the receiver operating characteristic curve (AUC-ROC) provides a robust measure of a model's ability to discriminate between classes, which is particularly valuable for contamination detection and source identification problems. Environmental forensic applications must also consider computational efficiency and scalability, as models may need to process streaming data from monitoring networks in near real-time for rapid incident response.

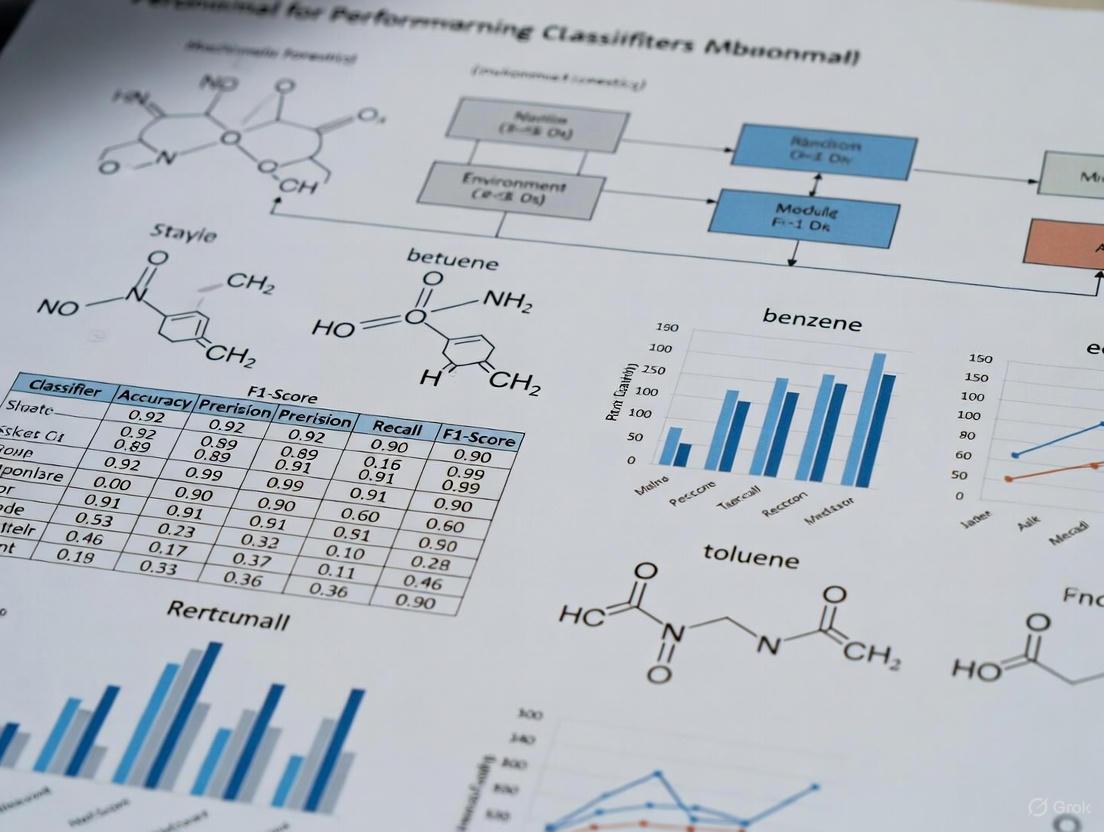

Table 1: Key Performance Metrics for Environmental Forensic ML Models

| Metric Category | Specific Metrics | Environmental Forensic Application |
|---|---|---|
| Overall Accuracy | Accuracy, F1-Score | General model performance assessment for classification tasks |
| Error Measurement | RMSE, MAE | Quantifying prediction error for continuous variables (e.g., contaminant concentrations) |
| Explanatory Power | R², NSE | Evaluating how well models explain variability in environmental data |
| Discriminatory Power | AUC-ROC, Precision-Recall | Assessing ability to distinguish between sources or contamination events |
| Stability Metrics | KGE, Variance in Cross-Validation | Measuring model consistency across different temporal or spatial contexts |
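The regression metrics named above can be written out in a few lines. The following is a minimal sketch, assuming the standard Nash-Sutcliffe definition and the 2009 form of the Kling-Gupta efficiency; the function names and the sample values are illustrative and do not come from the cited studies.

```python
import numpy as np

def rmse(obs, sim):
    """Root mean square error between observations and predictions."""
    obs, sim = np.asarray(obs, dtype=float), np.asarray(sim, dtype=float)
    return float(np.sqrt(np.mean((obs - sim) ** 2)))

def mae(obs, sim):
    """Mean absolute error between observations and predictions."""
    obs, sim = np.asarray(obs, dtype=float), np.asarray(sim, dtype=float)
    return float(np.mean(np.abs(obs - sim)))

def nse(obs, sim):
    """Nash-Sutcliffe efficiency: 1 is a perfect fit, 0 is no better than
    predicting the mean of the observations."""
    obs, sim = np.asarray(obs, dtype=float), np.asarray(sim, dtype=float)
    return float(1.0 - np.sum((obs - sim) ** 2) / np.sum((obs - obs.mean()) ** 2))

def kge(obs, sim):
    """Kling-Gupta efficiency (2009 form): combines linear correlation r,
    variability ratio alpha and bias ratio beta; 1 is a perfect fit."""
    obs, sim = np.asarray(obs, dtype=float), np.asarray(sim, dtype=float)
    r = np.corrcoef(obs, sim)[0, 1]
    alpha = sim.std() / obs.std()
    beta = sim.mean() / obs.mean()
    return float(1.0 - np.sqrt((r - 1) ** 2 + (alpha - 1) ** 2 + (beta - 1) ** 2))

# Illustrative values only (e.g., observed vs. predicted concentrations).
obs = [2.0, 4.0, 6.0, 8.0]
sim = [2.5, 3.5, 6.5, 7.5]
print(rmse(obs, sim), mae(obs, sim), nse(obs, sim), kge(obs, sim))
```

Reporting NSE and KGE alongside RMSE and MAE is useful because the first two are dimensionless, which makes them comparable across variables with different units.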

Comparative Analysis of ML Classifiers in Environmental Applications

Performance Across Environmental Domains

Rigorous benchmarking studies across diverse environmental applications reveal distinct performance patterns among machine learning classifiers. In a comprehensive comparison of five ML models for predicting climate variables in Johor Bahru, Malaysia, Random Forest (RF) demonstrated superior performance for most temperature-related variables, exhibiting the lowest error rates for Temperature at 2m (RMSE: 0.2182, MAE: 0.1679), Dew/Frost Point at 2m (RMSE: 0.2291, MAE: 0.1750), and Wet Bulb Temperature at 2m (RMSE: 0.1621, MAE: 0.1251) [1]. The study utilized 15,888 daily time series climate data points from NASA's Prediction of Worldwide Energy Resources (POWER) database, providing robust evidence of RF's capabilities with extensive environmental datasets.

Similarly, in aquatic toxicology and water quality monitoring, tree-based ensemble methods consistently outperform other approaches. Research comparing 10 machine learning models for predicting Chlorophyll a concentrations in western Lake Erie found that Gradient Boosting Decision Trees (GBDT) and Random Forest achieved the top two performances (R² = 0.84 and 0.82, respectively) following careful outlier removal and feature selection [2]. The critical importance of data preprocessing was highlighted by the substantial performance improvements observed after outlier removal, with RMSE decreasing by up to 92% for the optimal GBDT model. These findings underscore that model selection must consider both algorithmic capabilities and data quality management strategies.

Table 2: Comparative Performance of ML Classifiers in Environmental Applications

| Environmental Application | Best Performing Model(s) | Key Performance Metrics | Reference |
|---|---|---|---|
| Climate Variable Prediction | Random Forest | RMSE: 0.1621-0.2291 for temperature variables; R² > 0.90 | [1] |
| Water Quality Monitoring | GBDT, Random Forest | R² = 0.84 (GBDT), 0.82 (RF) for Chlorophyll a prediction | [2] |
| Contamination Classification | Decision Trees, Neural Networks | Accuracy > 98% for insulator contamination classification | [3] |
| Emission Pattern Analysis | Random Forest Classifier | Up to 100% accuracy for specific datasets | [4] |
| Metabarcoding Data Analysis | Random Forest | Superior performance in regression and classification without feature selection | [5] |

Specialized Applications in Forensic Contexts

In specialized forensic applications such as emission monitoring and contamination detection, machine learning classifiers demonstrate remarkable precision. A study analyzing Continuous Emission Monitoring Systems (CEMS) data from 107 waste discharge outlets in a chemical industrial park found that Random Forest classifiers (RFC) consistently achieved high accuracy (up to 100% for specific datasets) in identifying emission patterns and detecting data anomalies [4]. The research evaluated 17 machine learning models, with gradient boost-based methods also performing well. This capability to identify subtle pattern changes in emission data provides a powerful tool for detecting potential regulatory non-compliance that might escape conventional monitoring approaches.

For contamination classification of critical infrastructure components, experimental validations show exceptional model performance. In a study classifying pollution levels on high voltage insulators using leakage current data, both decision tree-based models and neural networks achieved accuracies consistently exceeding 98% [3]. The researchers developed a comprehensive dataset under controlled laboratory conditions that incorporated critical parameters of temperature and varying humidity, creating realistic scenarios for model evaluation. Notably, decision tree-based models exhibited significantly faster training and optimization times compared to neural network counterparts, highlighting the importance of computational efficiency in practical forensic applications where rapid analysis may be required.

Experimental Protocols and Methodologies

Standardized Experimental Framework

Implementing machine learning in environmental forensics requires systematic experimental protocols to ensure reproducible, defensible results. A robust methodology encompasses multiple phases from data acquisition and preprocessing to model validation and interpretation. Based on analyzed studies, successful implementations share common methodological elements while adapting specific approaches to particular environmental contexts.

The experimental workflow typically begins with comprehensive data collection from relevant environmental monitoring systems. For example, in the CEMS pattern analysis study, researchers collected emission data from 107 waste discharge outlets across 31 corporations in a chemical industrial park, categorizing outlets into 12 datasets based on monitoring parameters [4]. This systematic organization of data sources enabled targeted analysis of emission patterns specific to different industrial processes. Similarly, in the climate prediction study, researchers obtained 15,888 daily time series climate data points from NASA's POWER database, incorporating six distinct climate variables to capture multidimensional environmental dynamics [1].

Data preprocessing represents a critical phase where domain expertise intersects with machine learning best practices. The Lake Erie water quality study demonstrated that outlier removal using the Isolation Forest (IF) method dramatically improved model performance, with RMSE values decreasing by 35-92% across all 10 tested ML models [2]. This finding underscores that effective data cleaning is not merely a technical prerequisite but substantially influences model efficacy. Additional preprocessing steps commonly include data normalization, handling missing values through imputation techniques, and temporal alignment of multivariate time series data.
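Outlier removal with an Isolation Forest can be sketched with scikit-learn as follows. This is a minimal illustration on synthetic data, not the Lake Erie study's pipeline: the `contamination` value, the random seed, and the injected outliers are all assumptions made for the example.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

# Synthetic stand-in for two water quality parameters (hypothetical data).
rng = np.random.default_rng(0)
X = rng.normal(loc=10.0, scale=1.0, size=(200, 2))

# Inject five gross outliers far from the bulk of the data.
X[:5] = [[50.0, 50.0], [60.0, -20.0], [-30.0, 40.0], [80.0, 10.0], [10.0, 90.0]]

# contamination is the assumed share of outliers; it must be chosen per dataset.
iso = IsolationForest(contamination=0.05, random_state=0)
labels = iso.fit_predict(X)  # +1 for inliers, -1 for outliers

X_clean = X[labels == 1]
print(f"kept {X_clean.shape[0]} of {X.shape[0]} samples")
```

In a forensic setting, it is worth logging which samples were dropped and why, since an apparent outlier may itself be the contamination event of interest.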

Feature Engineering and Selection Strategies

Feature engineering and selection emerge as crucial determinants of model success across environmental forensic applications. Research on metabarcoding datasets indicates that while feature selection can improve model interpretability, it may impair performance for robust tree ensemble models like Random Forests [5]. This suggests that the optimal feature selection strategy depends on both dataset characteristics and the chosen modeling approach.

Comprehensive feature evaluation approaches yield significant dividends. The Lake Erie study exhaustively tested all 32,767 possible feature combinations of measured water quality parameters to identify optimal inputs for each ML model [2]. This rigorous approach identified particulate organic nitrogen (PON) as the most critical predictor for Chlorophyll a concentrations, providing valuable insights for targeted monitoring program design. Similarly, in the high voltage insulator contamination study, researchers extracted features from multiple domains (time, frequency, and time-frequency) from leakage current signals, with Bayesian optimization techniques used to identify optimal model parameters [3].
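The figure of 32,767 equals 2^15 - 1, the number of non-empty subsets of 15 candidate features. The source does not state the number of parameters, so 15 is an inference from that count, and the feature names below are placeholders. A short sketch of how such an exhaustive enumeration can be generated:

```python
from itertools import combinations

def all_feature_subsets(features):
    """Yield every non-empty subset of the given features, smallest first."""
    for k in range(1, len(features) + 1):
        yield from combinations(features, k)

# Placeholder names; 15 candidate features is inferred from 2**15 - 1 = 32,767.
features = [f"f{i}" for i in range(15)]

n_subsets = sum(1 for _ in all_feature_subsets(features))
print(n_subsets)
```

Because the number of subsets doubles with every added feature, this approach is only practical for small feature sets; each subset still requires a full model fit and validation.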

Model Validation and Interpretation Protocols

Robust validation methodologies are essential for establishing scientific credibility in environmental forensic applications. The climate prediction study employed multiple validation metrics including RMSE, MAE, R², Nash-Sutcliffe efficiency (NSE), and Kling-Gupta Efficiency (KGE) to comprehensively assess model performance from different perspectives [1]. This multi-faceted evaluation revealed that while Random Forest excelled in most metrics, Support Vector Regression demonstrated superior generalization in testing phases with the highest KGE value (0.88), highlighting the value of diverse performance assessment.

Temporal validation approaches address unique challenges in environmental time series data. Several studies implemented temporal splitting strategies where models are trained on historical data and tested on more recent observations, simulating real-world forecasting scenarios and preventing overly optimistic performance estimates from random data splitting. For the CEMS pattern analysis, researchers conducted temporal emission pattern analysis that revealed significant changes in 334 instances across collection weeks, with only 24 aligning with regulatory offsite supervision records [4]. This demonstrates how ML approaches can identify potential compliance issues that might escape conventional monitoring.
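One way to implement the temporal splitting described above is scikit-learn's `TimeSeriesSplit`, which always places the test fold after the training data in time. The sketch below uses 20 placeholder observations in chronological order; the cited studies may have used different splitting schemes.

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

# Twenty observations, assumed to be sorted chronologically (placeholder data).
X = np.arange(20).reshape(-1, 1)

tscv = TimeSeriesSplit(n_splits=4)
folds = list(tscv.split(X))

for train_idx, test_idx in folds:
    # Training indices always precede test indices, so no future data leaks in.
    print(f"train 0..{train_idx.max()}  test {test_idx.min()}..{test_idx.max()}")
```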

Essential Research Reagents and Computational Tools

The effective implementation of machine learning in environmental forensics requires both computational resources and domain-specific data assets. Benchmark datasets curated specifically for environmental applications have emerged as critical resources for model development and comparison. The ADORE dataset provides extensive information on acute aquatic toxicity in three relevant taxonomic groups (fish, crustaceans, and algae), incorporating ecotoxicological experiments expanded with phylogenetic, species-specific, and chemical properties data [6]. Similarly, the GEMS-GER dataset offers a benchmark for groundwater level modeling in Germany, containing 32 years of gapless weekly observations from 3,207 monitoring wells enriched with meteorological forcing variables and over 50 site-specific static attributes [7].

Specialized software tools form another essential component of the environmental forensic toolkit. For digital evidence handling and analysis, forensic tools such as Autopsy, FTK (Forensic Toolkit), and Volatility provide specialized capabilities for retrieving, inspecting, and analyzing digital evidence from various devices [8]. Meanwhile, AI-powered environmental impact analysis platforms like IBM Envizi, Microsoft Sustainability Manager, and Persefoni offer automated carbon accounting, predictive analytics, and compliance tracking functionalities that support large-scale environmental assessment [9].

Table 3: Essential Research Resources for ML in Environmental Forensics

| Resource Category | Specific Tools/Datasets | Primary Function | Accessibility |
|---|---|---|---|
| Benchmark Datasets | ADORE Dataset, GEMS-GER | Standardized data for model development and comparison | Open access [6] [7] |
| Digital Forensics Software | Autopsy, FTK, Volatility | Digital evidence retrieval and analysis | Mixed (open source and commercial) [8] |
| AI Environmental Platforms | IBM Envizi, Watershed, Persefoni | Enterprise-scale environmental impact analysis | Commercial [9] |
| Programming Frameworks | Python, R, Scikit-learn | Model development and implementation | Open source |
| Specialized Monitoring Equipment | CEMS, Remote Sensors, IoT Networks | Real-time environmental data collection | Commercial |

Machine learning has fundamentally transformed environmental forensics by providing powerful analytical capabilities for complex environmental data. The comparative analysis presented in this review demonstrates that tree-based ensemble methods, particularly Random Forest and Gradient Boosting variants, consistently deliver superior performance across diverse environmental applications including climate forecasting, water quality monitoring, and contamination detection. Their robust performance, relative interpretability, and resistance to overfitting make them particularly well-suited for environmental forensic investigations where defensible results are essential.

Future advancements in the field will likely focus on several key areas. Interpretable AI approaches will become increasingly important as regulatory and legal applications demand transparent decision-making processes. The integration of physical models with data-driven machine learning approaches represents another promising direction, potentially combining the mechanistic understanding of environmental processes with the pattern recognition capabilities of ML. Additionally, transfer learning methodologies may help address the common challenge of limited labeled data in specific environmental contexts by leveraging knowledge from related domains. As environmental challenges continue to evolve in complexity, machine learning classifiers will play an increasingly central role in uncovering the forensic evidence needed to protect environmental resources and assign responsibility for contamination events.

In the field of environmental forensics research, accurately identifying pollutants, tracing contamination sources, and assessing ecological risks relies heavily on machine learning classifiers. These models help researchers analyze complex environmental datasets, from spectral fingerprints of contaminants to genomic markers of biological indicators. However, the performance of these classifiers must be rigorously evaluated using metrics that align with the high-stakes nature of environmental decision-making. While accuracy provides a superficial measure of overall correctness, it can be dangerously misleading when dealing with imbalanced datasets common in environmental forensics, such as rare contamination events or endangered species detection [10] [11].

This guide provides an objective comparison of five key performance metrics—Accuracy, Precision, Recall, F1-Score, and AUC-ROC—within the context of environmental forensics research. We examine the mathematical foundations, practical applications, and limitations of each metric, supported by experimental data from relevant studies. By understanding these metrics' distinct characteristics, researchers and drug development professionals can select the most appropriate evaluation framework for their specific classification tasks, particularly when dealing with the complex, imbalanced datasets characteristic of environmental forensics and pharmaceutical research [12] [13].

Metric Definitions and Mathematical Foundations

Core Concepts and Formulas

All classification metrics derive from four fundamental outcomes in the confusion matrix: True Positives (TP), True Negatives (TN), False Positives (FP), and False Negatives (FN) [11] [14]. These elements represent the basic types of correct and incorrect predictions made by a binary classifier.

- Accuracy: Measures the overall proportion of correct predictions, calculated as (TP+TN)/(TP+TN+FP+FN) [10] [11] [14]. It assumes equal importance for all prediction types.

- Precision: Quantifies the reliability of positive predictions, calculated as TP/(TP+FP) [15] [11] [14]. Also called Positive Predictive Value, it answers "What fraction of positive identifications were actually correct?"

- Recall: Measures the ability to identify all actual positive instances, calculated as TP/(TP+FN) [15] [11] [14]. Also known as Sensitivity or True Positive Rate, it answers "What fraction of actual positives were correctly identified?"

- F1-Score: Represents the harmonic mean of Precision and Recall, calculated as 2×(Precision×Recall)/(Precision+Recall) [15] [11]. This metric balances the two components, penalizing extreme values in either direction.

- AUC-ROC: The Receiver Operating Characteristic (ROC) curve plots the True Positive Rate (Recall) against the False Positive Rate (FP/(FP+TN)) across all classification thresholds [12] [15] [16]. The area under this curve (AUC) provides a threshold-agnostic measure of model performance, ranging from 0.5 for random ranking to 1.0 for perfect separation.
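The threshold-dependent formulas above translate directly into code. The sketch below is a plain-Python illustration; the counts are hypothetical, chosen to represent a dataset with 300 positives and 9,700 negatives so that the gap between accuracy and the other metrics is visible.

```python
def classification_metrics(tp, tn, fp, fn):
    """Compute metrics from confusion matrix counts, returning 0.0 when a
    denominator is zero."""
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    fpr = fp / (fp + tn) if (fp + tn) else 0.0  # x-axis of the ROC curve
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1, "fpr": fpr}

# Hypothetical counts: 300 actual positives, 9,700 actual negatives.
m = classification_metrics(tp=100, tn=9000, fp=700, fn=200)
for name, value in m.items():
    print(f"{name}: {value:.4f}")
# accuracy is 0.91 while precision is 0.125 and recall is about 0.333
```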

Visualizing Metric Relationships and Trade-offs

The diagram below illustrates the logical relationships between core classification concepts and the inherent trade-offs between different metrics.

Figure: Metric Relationships and Trade-offs

The diagram above shows how all metrics derive from fundamental confusion matrix elements. A critical relationship exists between Precision and Recall, which typically trade off against each other: increasing one often decreases the other [11] [17] [14]. This trade-off emerges from adjusting the classification threshold: lowering the threshold can only maintain or increase Recall but usually decreases Precision, while raising the threshold generally has the opposite effect [11].
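The threshold effect can be seen on a toy example. The labels and scores below are made up for illustration, and `precision_recall_at` is a hypothetical helper rather than a library function.

```python
import numpy as np

# Made-up labels and classifier scores, sorted by score for readability.
y_true = np.array([0, 0, 0, 0, 0, 0, 1, 0, 1, 1])
scores = np.array([0.05, 0.10, 0.20, 0.30, 0.35, 0.45, 0.50, 0.60, 0.70, 0.90])

def precision_recall_at(threshold):
    """Precision and recall when scores >= threshold are called positive."""
    pred = scores >= threshold
    tp = int(np.sum(pred & (y_true == 1)))
    fp = int(np.sum(pred & (y_true == 0)))
    fn = int(np.sum(~pred & (y_true == 1)))
    precision = tp / (tp + fp) if (tp + fp) else 1.0  # convention when nothing is flagged
    recall = tp / (tp + fn)
    return precision, recall

for t in (0.65, 0.40, 0.25):
    p, r = precision_recall_at(t)
    print(f"threshold={t:.2f}  precision={p:.3f}  recall={r:.3f}")
```

As the threshold drops from 0.65 to 0.40, recall rises from 2/3 to 1.0 while precision falls from 1.0 to 0.6; lowering it further to 0.25 adds only false positives.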

Comparative Analysis of Metrics

Experimental Performance Comparison

The table below summarizes quantitative results from experimental studies in biomedical and environmental domains, demonstrating how different metrics portray model performance across varied applications.

| Study Context | Model Description | Accuracy | Precision | Recall | F1-Score | AUC-ROC | Key Insight |
|---|---|---|---|---|---|---|---|
| Clinical Trial Prediction [13] | OPCNN (Imbalanced Data: 757 approved vs 71 failed drugs) | 0.9758 | 0.9889 | 0.9893 | 0.9868 | 0.9824 | High scores across all metrics, with F1-Score balancing precision and recall effectively |
| Drug-Target Interaction [18] | GAN + Random Forest (BindingDB-Kd dataset) | 0.9746 | 0.9749 | 0.9746 | 0.9746 | 0.9942 | AUC-ROC provides the most optimistic assessment due to excellent class separation |
| Fraud Detection [17] | Binary Classifier (Imbalanced: 300 vs 9,700 transactions) | 0.9100 | 0.1250 | 0.3330 | 0.1818* | 0.8000* | Precision and Recall offer crucial insights missed by accuracy in imbalanced scenarios |
| Disease Diagnosis [10] | Decision Tree (Imbalanced cancer data) | 0.9464 | Low* | Low* | Low* | Not Reported | Accuracy misleadingly high while minority class (malignant) largely missed |

*Values estimated from context or calculated based on provided confusion matrices

Interpretation of Comparative Results

The experimental data reveal critical patterns in metric behavior. In the fraud detection and disease diagnosis examples, accuracy provides a misleadingly optimistic view of model performance (0.9100 and 0.9464, respectively), while precision and recall reveal significant deficiencies in identifying the positive class [10] [17]. The clinical trial prediction study demonstrates balanced performance across all metrics, suggesting effective handling of the inherent data imbalance [13]. Notably, the drug-target interaction study shows that AUC-ROC (0.9942) can present the most favorable assessment when a model has strong class separation capability, even when threshold-dependent metrics like accuracy and F1-score are slightly lower (0.9746 each) [18].

When to Use Each Metric: A Decision Framework

Metric Selection Guidelines

The choice of evaluation metric should align with your research objectives, dataset characteristics, and error cost implications. The table below provides a structured framework for selecting appropriate metrics in environmental forensics and drug development contexts.

| Research Scenario | Priority Metrics | Rationale and Application Examples |
|---|---|---|
| Balanced Class Distribution | Accuracy, AUC-ROC | When classes are approximately equal and all error types have similar costs [12] [19]. Example: Classifying general chemical vs. biological contaminants in water samples. |
| High Cost of False Positives | Precision | When incorrectly labeling negative instances as positive has serious consequences [11] [14]. Example: Identifying regulated toxic substances where false alarms trigger unnecessary costly remediation. |
| High Cost of False Negatives | Recall | When missing positive instances poses significant risks [11] [14]. Example: Early detection of highly contagious pathogens or rare endangered species in environmental DNA. |
| Imbalanced Datasets | F1-Score, PR-AUC | When positive class is rare and both false positives and false negatives matter [12] [10]. Example: Predicting drug trial failures or detecting rare contamination events. |
| Threshold Selection Uncertainty | AUC-ROC | When the optimal classification threshold is unknown and overall ranking ability is important [12] [15]. Example: Initial screening of compound libraries in drug discovery. |
| Comprehensive Assessment | MCC, Multiple Metrics | When a single balanced measure considering all confusion matrix elements is needed [13] [11]. Example: Final model evaluation for high-stakes environmental policy decisions. |

Special Considerations for Environmental Forensics

In environmental forensics research, several domain-specific factors influence metric selection. The field frequently deals with highly imbalanced datasets (e.g., rare pollution events, endangered species detection) where F1-Score and Precision-Recall curves typically provide more meaningful evaluations than accuracy or AUC-ROC [12] [10]. The regulatory and public health implications of misclassification often create asymmetric costs between false positives and false negatives, so the balance between precision and recall must be chosen for each application context [14].

Additionally, multi-class problems are common (e.g., identifying multiple contaminant sources), requiring adaptations of these binary metrics through macro, micro, or weighted averaging approaches [10]. Researchers should also consider stakeholder communication needs, as metrics like accuracy and F1-Score are generally more interpretable for non-technical audiences than AUC-ROC [12].
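The three averaging schemes can give noticeably different scores on the same predictions. The sketch below uses scikit-learn's `f1_score` on a made-up three-source problem in which the rarest source (label 2) is never predicted; the labels are illustrative only.

```python
from sklearn.metrics import f1_score

# Made-up labels for three contaminant sources (0, 1, 2); source 2 is rare.
y_true = [0, 0, 0, 0, 0, 0, 1, 1, 1, 2]
y_pred = [0, 0, 0, 0, 0, 1, 1, 1, 0, 0]

# zero_division=0 scores the never-predicted class as 0 without a warning.
macro = f1_score(y_true, y_pred, average="macro", zero_division=0)
micro = f1_score(y_true, y_pred, average="micro", zero_division=0)
weighted = f1_score(y_true, y_pred, average="weighted", zero_division=0)

print(f"macro={macro:.3f}  micro={micro:.3f}  weighted={weighted:.3f}")
```

Macro averaging treats every class equally, so the missed rare source pulls it down the most; micro averaging pools all decisions and here equals overall accuracy (0.7); weighted averaging falls in between.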

Experimental Protocols and Methodologies

Standard Evaluation Workflow

The diagram below illustrates a comprehensive experimental workflow for evaluating classification models in environmental forensics and pharmaceutical research contexts.

Figure: Model Evaluation Workflow

Detailed Methodological Components

Dataset Collection and Preparation: Environmental forensics studies might utilize spectral data, chemical measurements, or genomic sequences, while drug development research often employs chemical structures, target protein features, and clinical outcomes [13] [18]. Critical preprocessing includes handling missing values, normalization, and feature selection to enhance model performance.

Addressing Class Imbalance: Techniques such as the Synthetic Minority Over-sampling Technique (SMOTE), informed under-sampling, or using class weights during model training are essential for handling skewed distributions common in these domains [18]. Some advanced studies have employed Generative Adversarial Networks (GANs) to generate synthetic minority class samples, significantly improving model sensitivity and reducing false negatives [18].
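Of the techniques listed, class weighting is the simplest to illustrate without extra dependencies. The sketch below computes scikit-learn's "balanced" weights for a hypothetical 90/10 label split; many scikit-learn estimators accept the same scheme directly through `class_weight="balanced"`.

```python
import numpy as np
from sklearn.utils.class_weight import compute_class_weight

# Hypothetical labels: 90 background samples (0) and 10 contamination events (1).
y = np.array([0] * 90 + [1] * 10)
classes = np.array([0, 1])

# "balanced" weights are n_samples / (n_classes * count_per_class).
weights = compute_class_weight(class_weight="balanced", classes=classes, y=y)
class_weight = dict(zip(classes.tolist(), weights.tolist()))
print(class_weight)
```

Here each rare-class sample is weighted nine times as heavily as a background sample, so errors on contamination events cost more during training.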

Model Training and Validation: Implement appropriate cross-validation strategies (e.g., k-fold, stratified k-fold) to ensure reliable performance estimation, particularly with limited data [13]. Hyperparameter tuning should optimize for the metric most relevant to the research objective, not necessarily default accuracy.
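Stratified cross-validation scored on a metric other than accuracy might look like the following. This is a sketch on synthetic data from `make_classification`; the model, the fold count, and the roughly 90/10 class ratio are assumptions for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_validate

# Synthetic imbalanced dataset (about 10% positives); not real forensic data.
X, y = make_classification(n_samples=500, n_features=10,
                           weights=[0.9, 0.1], random_state=0)

# Stratification keeps the positive rate nearly identical in every fold.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
fold_pos = [int(y[test_idx].sum()) for _, test_idx in cv.split(X, y)]

model = RandomForestClassifier(n_estimators=50, random_state=0)
results = cross_validate(model, X, y, cv=cv, scoring=["accuracy", "f1"])

print("positives per test fold:", fold_pos)
print("mean accuracy:", results["test_accuracy"].mean())
print("mean F1:      ", results["test_f1"].mean())
```

Comparing the two means shows how far accuracy can sit above F1 on imbalanced data; a hyperparameter search would pass the same `scoring` choice to optimize for the metric that matters.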

Comprehensive Evaluation: Generate both ROC and Precision-Recall curves to understand model behavior across all thresholds [12] [16]. The Precision-Recall curve is particularly informative for imbalanced datasets where ROC curves may provide an overly optimistic view [12].
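The gap between the two curve summaries on imbalanced data can be shown with a small made-up ranking: 2 positives among 20 samples, placed third and sixth when sorted by score. The sketch uses scikit-learn's `roc_auc_score` and `average_precision_score` (the latter summarizes the Precision-Recall curve).

```python
import numpy as np
from sklearn.metrics import average_precision_score, roc_auc_score

# Twenty samples with strictly decreasing scores (made-up ranking).
scores = 1.0 - np.arange(20) / 20.0

# Only two positives, ranked third and sixth from the top.
y_true = np.zeros(20, dtype=int)
y_true[[2, 5]] = 1

roc_auc = roc_auc_score(y_true, scores)
avg_precision = average_precision_score(y_true, scores)

print(f"AUC-ROC: {roc_auc:.3f}")
print(f"Average precision (PR curve summary): {avg_precision:.3f}")
```

The AUC-ROC of about 0.83 looks respectable because most negatives rank below both positives, while the average precision of about 0.33 reflects that two of every three flagged samples near the top are false alarms.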

Essential Research Reagents and Computational Tools

Research Reagent Solutions

The table below details key computational tools and data resources essential for implementing the experimental protocols in environmental forensics and drug development research.

| Research Reagent/Tool | Function and Application | Example Use Case |
|---|---|---|
| MACCS Keys | Structural molecular fingerprints representing drug chemical features [18] | Encoding drug molecules for drug-target interaction prediction |
| Amino Acid/Dipeptide Composition | Feature extraction from protein sequences for target representation [18] | Representing target biomolecular properties in clinical trial prediction |
| Generative Adversarial Networks | Synthetic data generation for minority class in imbalanced datasets [18] | Addressing false negatives in rare event detection (e.g., drug failures) |
| BindingDB Database | Curated database of drug-target interaction information [18] | Benchmarking predictive models in pharmaceutical research |
| Random Forest Classifier | Ensemble learning method for classification tasks [18] | Robust prediction of drug-target interactions with high-dimensional data |
| scikit-learn Library | Python machine learning library with metric implementation [12] [10] [15] | Calculating accuracy, precision, recall, F1-score, and AUC-ROC |
| Cross-Validation Modules | Statistical method for robust performance estimation [13] | Reliable model evaluation with limited environmental or clinical data |

Selecting appropriate performance metrics is not merely a technical formality but a critical decision that reflects the fundamental priorities and cost structures of a research problem in environmental forensics and drug development. Accuracy serves as a useful starting point for balanced problems but becomes dangerously misleading with imbalanced datasets common in these fields. Precision-focused approaches minimize false alarms when incorrectly identifying negative instances carries high costs, while recall-oriented strategies ensure comprehensive detection when missing positive cases poses significant risks. The F1-Score provides a balanced perspective when both error types warrant consideration, and AUC-ROC offers a threshold-independent assessment of overall model discrimination capability.

The most robust evaluation strategy employs multiple metrics that align with specific research objectives, complemented by visualization tools like ROC and Precision-Recall curves. By applying the decision frameworks and experimental protocols outlined in this guide, researchers can make informed choices about model selection and optimization, ultimately enhancing the reliability and practical utility of classification systems in high-stakes environmental and pharmaceutical applications.

Understanding Confusion Matrices in a Forensic Context

In environmental forensics, accurately attributing pollution to its source is a critical task with significant legal and remediation implications. Machine learning (ML) classifiers have become indispensable tools for this purpose, capable of analyzing complex geochemical or chemical data to identify the origin of contaminants. The performance of these classifiers must be rigorously evaluated to ensure reliable, legally defensible results. Among the various evaluation tools, the confusion matrix stands as a fundamental, intuitive framework for visualizing and quantifying classifier performance [20]. This guide provides an objective comparison of common ML classifiers used in environmental forensics, with performance data contextualized through confusion matrices and their derived metrics, offering researchers a clear pathway for model selection in their investigations.

Theoretical Framework: The Confusion Matrix

Core Structure and Terminology

A confusion matrix is a specific table layout that allows visualization of an algorithm's performance in supervised classification. Each row of the matrix represents the instances in an actual class, while each column represents the instances in a predicted class [20]. This structure provides a complete picture of correct classifications and the types of errors made by a model.

For a binary classification task common in forensic analysis (e.g., "Pollutant from Source A" vs. "Pollutant not from Source A"), the matrix is a 2x2 grid with the following designations:

- True Positive (TP): The model correctly predicts the positive class (e.g., correctly identifies a sample from Source A).

- True Negative (TN): The model correctly predicts the negative class (e.g., correctly identifies a sample not from Source A).

- False Positive (FP): The model incorrectly predicts the positive class (Type I error). In a forensic context, this could lead to falsely attributing pollution to a source.

- False Negative (FN): The model incorrectly predicts the negative class (Type II error). This could lead to exonerating a true pollution source.
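The four cells defined above can be tallied directly from paired lists of actual and predicted labels. The following is a minimal sketch; the function name `confusion_counts` and the six sample labels are hypothetical and not drawn from any cited study.

```python
def confusion_counts(actual, predicted, positive="Source A"):
    """Tally TP, TN, FP and FN for a binary task with one positive class."""
    tp = tn = fp = fn = 0
    for truth, guess in zip(actual, predicted):
        if guess == positive and truth == positive:
            tp += 1   # correctly attributed to Source A
        elif guess == positive:
            fp += 1   # falsely attributed to Source A (Type I error)
        elif truth == positive:
            fn += 1   # true Source A sample missed (Type II error)
        else:
            tn += 1   # correctly identified as not from Source A
    return {"TP": tp, "TN": tn, "FP": fp, "FN": fn}

# Hypothetical labels for six samples
actual    = ["Source A", "Source A", "Other", "Other",    "Source A", "Other"]
predicted = ["Source A", "Other",    "Other", "Source A", "Source A", "Other"]
counts = confusion_counts(actual, predicted)
print(counts)
```

Keeping the counts in a dictionary keyed by cell name makes it harder to confuse FP with FN when the numbers are later passed to metric calculations.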

Key Metrics Derived from the Confusion Matrix

From the counts of TP, TN, FP, and FN, several essential performance metrics are calculated [20]:

- Accuracy: Overall, how often the classifier is correct. (TP+TN)/(TP+TN+FP+FN). Can be misleading with imbalanced datasets.

- Precision (Positive Predictive Value): When it predicts a positive class, how often is it correct? TP/(TP+FP). Crucial for minimizing false attributions.

- Recall (Sensitivity or True Positive Rate): How often does it correctly identify the actual positive samples? TP/(TP+FN). Important for ensuring a true source is not missed.

- F1-Score: The harmonic mean of precision and recall. 2 × (Precision × Recall)/(Precision + Recall). Provides a single metric that balances both concerns.

- Matthews Correlation Coefficient (MCC): A correlation coefficient between the observed and predicted classifications that is generally regarded as a balanced measure, especially useful for imbalanced classes [20].
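As a rough illustration of how these metrics behave on imbalanced data, the sketch below computes each of them from the four confusion-matrix counts. The function name and the counts (10 positive samples among 1,000) are hypothetical, and returning 0.0 when a denominator is zero is an assumed convention rather than a universal rule.

```python
import math

def metrics_from_counts(tp, tn, fp, fn):
    """Compute accuracy, precision, recall, F1 and MCC from confusion-matrix counts."""
    total = tp + tn + fp + fn
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    pr_sum = precision + recall
    f1 = 2 * precision * recall / pr_sum if pr_sum else 0.0
    mcc_den = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / mcc_den if mcc_den else 0.0
    return {
        "accuracy": (tp + tn) / total,
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "mcc": mcc,
    }

# Hypothetical imbalanced case: 10 samples truly from Source A, 990 from elsewhere
m = metrics_from_counts(tp=5, tn=985, fp=5, fn=5)
for name, value in m.items():
    print(f"{name}: {value:.3f}")
```

In this made-up case accuracy is 0.99 even though half of the true Source A samples are missed, while precision, recall, F1 and MCC all sit near 0.5, which is why accuracy alone is a weak basis for attribution claims.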

The following workflow diagram illustrates the process of building and evaluating a classifier, with the confusion matrix as the central evaluation tool.

Comparative Analysis of Machine Learning Classifiers

The choice of algorithm significantly impacts classification performance. Below is a comparative analysis of widely used classifiers, with experimental data drawn from forensic and environmental science applications.

Table 1: Comparative performance of classifiers across various forensic and environmental studies.

| Classifier | Application Context | Reported Accuracy | Key Strengths | Key Limitations |
|---|---|---|---|---|
| Support Vector Machine (SVM) | Chemical fingerprinting for environmental source tracking [21]; Satellite image classification [22] | 92-100% (Balanced Accuracy) [21]; 81.3% [22] | Effective in high-dimensional spaces; Clear margin of separation [23] | Memory-intensive; Requires careful hyperparameter tuning [23] |
| Random Forest | Oil spill origin identification [24]; Satellite image classification [22] | 91% [24]; 78.9% [22] | Reduces overfitting; Handles large datasets well; Provides feature importance [23] | Computationally intensive; Less interpretable than a single tree [23] |
| XGBoost | Speech audiometry prediction (Healthcare) [25] | High (Demonstrated balanced performance) [25] | High performance and speed; Effective at handling diverse data structures. | Can be less interpretable; Requires tuning. |
| Decision Tree | Base model for ensemble methods [23] | N/A (Typically lower than ensembles) | Easy to visualize and interpret; Minimal data preprocessing [23] | Prone to overfitting; Unstable to small data changes [23] |
| Naive Bayes | General use for small datasets and text classification [23] | N/A | Fast and efficient; Performs well with small datasets [23] | Assumes feature independence, which is rarely true [23] |

Detailed Experimental Protocols and Performance Metrics

To ensure reproducibility, this section outlines the methodologies from key studies cited in the comparison.

Protocol A: Chemical Fingerprinting for Source Tracking

This study [21] established a quantitative workflow for discriminating environmental sources using chemical fingerprints.

- Objective: To select diagnostic chemical features (a fingerprint) that can predict the presence of a specific pollution source in a novel environmental sample.

- Materials: 51 grab samples from five distinct chemical sources (agricultural runoff, headwaters, livestock manure, (sub)urban runoff, municipal wastewater).

- Methodology: Support Vector Classification was used to select the top 10, 25, 50, and 100 chemical features that best discriminate each source from all others. The model was iterated 1,000 times.

- Performance Metrics: The primary metric was cross-validation balanced accuracy, which accounts for imbalanced datasets.

- Results: The workflow achieved a cross-validation balanced accuracy of 92-100% for all sources. It also demonstrated high sensitivity, distinguishing the presence and absence of sources even at 10,000-fold dilutions in simulated mixtures [21].
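Balanced accuracy, the primary metric in Protocol A, is the mean of the per-class recall values. The sketch below shows why it is preferred over plain accuracy for one-vs-rest source discrimination. The 5-versus-46 class split and the label names are hypothetical and are not the class sizes reported in [21].

```python
def balanced_accuracy(actual, predicted):
    """Average the recall obtained on each class so small classes count equally."""
    recalls = []
    for cls in sorted(set(actual)):
        support = sum(1 for a in actual if a == cls)
        hits = sum(1 for a, p in zip(actual, predicted) if a == cls and p == cls)
        recalls.append(hits / support)
    return sum(recalls) / len(recalls)

# Hypothetical one-vs-rest labels: 5 samples from the target source, 46 from all others
actual = ["wastewater"] * 5 + ["other"] * 46
# A trivial classifier that never predicts the target source
predicted = ["other"] * 51

plain = sum(a == p for a, p in zip(actual, predicted)) / len(actual)
balanced = balanced_accuracy(actual, predicted)
print(f"plain accuracy: {plain:.3f}, balanced accuracy: {balanced:.3f}")
```

The trivial classifier scores about 0.90 on plain accuracy but only 0.50 on balanced accuracy, the same value expected from guessing.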

Protocol B: Forensic Geochemistry of Oil Spills

This study [24] integrated geochemical data with machine learning to identify the origin of oil spills in the Santos Basin.

- Objective: To develop a robust model for classifying the field origin of oil spills using geochemical biomarker data.

- Materials: 2200 presalt oil samples with 75 geochemical attributes.

- Methodology: The dataset was preprocessed, and 7 machine learning algorithms were evaluated. The Random Forest algorithm was implemented using Python's Scikit-learn library.

- Performance Metrics: Classification accuracy was the primary metric for model selection.

- Results: The Random Forest model achieved the highest classification accuracy of 91%. The model was successfully validated using independent oil samples from spill events and a natural seep, accurately predicting their field origins with high confidence [24].

Protocol C: Satellite Image Classification for Land Use

This study [22] provides a direct, empirical comparison of multiple classifiers on a remote sensing task, analogous to classifying large-scale environmental damage.

- Objective: To compare the Maximum Likelihood, SVM, and Random Forest techniques in classifying stages of cotton crops from satellite imagery.

- Materials: A RapidEye satellite image with 5 spectral bands. A random sample of 6000 pixels (2000 for training, 4000 for validation) was used.

- Methodology: The three classification techniques were applied to the same dataset, and their results were validated using confusion matrices and statistical tests in R software.

- Performance Metrics: The percentage of correct classification (PCC) was derived from the confusion matrices.

- Results: The confusion matrix analysis revealed that SVM correctly classified 81.325% of cases, outperforming Random Forest (78.925%) and the conventional Maximum Likelihood method (68.95%). Statistical confidence tests showed non-overlapping intervals, confirming SVM's superiority for this specific task [22].

Critical Analysis of Classifier Selection

The experimental data demonstrates that no single algorithm is universally superior. The optimal choice is highly context-dependent.

- For High-Dimensional Chemical Data: SVM has proven exceptionally effective, as shown by the 92-100% balanced accuracy in chemical fingerprinting [21]. Its ability to find complex separation boundaries in high-dimensional spaces is a key advantage for forensic chemometrics.

- For Robust Geochemical Source Attribution: Random Forest offers high accuracy (91% in oil spill identification) and the added benefit of feature importance, which can identify the most diagnostic biomarkers (e.g., specific terpanes or steranes) for a given source [24]. This provides both a prediction and scientifically interpretable insight.

- For Model Interpretability: While often outperformed in accuracy by ensemble methods like Random Forest or advanced algorithms like SVM, a single Decision Tree remains valuable when the model's decision path must be simple and auditable for legal testimony [23].

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key solutions and materials required for developing forensic classification models based on the experimental protocols analyzed.

Table 2: Key research reagents and computational tools for forensic classification projects.

| Item Name | Function/Application | Example from Cited Studies |
|---|---|---|
| Geochemical Biomarker Standards | Calibration and quantification of diagnostic compounds (e.g., terpanes, steranes) in environmental samples. | Used in oil spill forensics to generate the 75 predictive attributes for the ML model [24]. |
| Reference Environmental Sample Sets | Curated samples from known sources used to train and validate classification models. | 51 grab samples from five known chemical sources (e.g., agricultural runoff, wastewater) [21]. |
| Python with Scikit-learn Library | An open-source programming environment providing implementations of a wide array of machine learning algorithms. | Used to implement and evaluate the seven machine learning algorithms for oil classification [24]. |
| R Software with Specialized Libraries | A statistical computing environment used for data analysis, validation, and generating confusion matrices. | Used for validation and confidence testing of classification results using confusion matrices [22]. |
| High-Resolution Mass Spectrometry | Analytical technique for identifying and quantifying chemical compounds in complex environmental mixtures. | Gas Chromatography-Mass Spectrometry (GC-MS) was used to analyze saturated biomarker profiles [24]. |

The confusion matrix is more than a simple table; it is the cornerstone of rigorous classifier evaluation in environmental forensics. This guide demonstrates that while classifiers like Support Vector Machines and Random Forests consistently show high performance in forensic applications, the choice must be guided by the specific data structure and investigative question. By employing a standardized experimental protocol—from data collection using tools like mass spectrometry to model evaluation via confusion matrices in platforms like Python's Scikit-learn—researchers can generate reliable, defensible, and impactful results. This rigorous approach is essential for translating machine learning predictions into credible scientific evidence for environmental protection and legal accountability.

The Critical Link Between Model Performance and Legal Admissibility

The integration of machine learning (ML) into environmental forensics represents a paradigm shift, offering powerful new tools for analyzing complex ecological evidence. However, the path from a high-performing algorithm to courtroom-admissible evidence is fraught with technical and legal challenges. In legal contexts, a model's performance is not merely an academic metric; it is the foundation upon which its reliability and validity are judged under evidentiary standards such as the Daubert standard [26] [27] [28]. Proposed Federal Rule of Evidence 707 specifically targets "machine-generated evidence," requiring that it satisfies the same reliability requirements as expert testimony [27] [29]. For researchers and practitioners, understanding this critical link is essential for developing forensic tools that are not only scientifically sound but also legally defensible.

Machine Learning Performance Metrics as Legal Benchmarks

Under proposed Rule 707, the proponent of AI-generated evidence must show it is based on sufficient facts or data, is the product of reliable principles and methods, and reflects a reliable application of those principles to the case [27] [29]. Performance metrics directly address these legal requirements, transforming quantitative measures into indicators of evidentiary reliability.

Core Performance Metrics and Their Legal Significance

Table 1: Key ML Performance Metrics and Their Legal Relevance

| Performance Metric | Technical Definition | Legal Significance | Application in Environmental Forensics |
|---|---|---|---|
| Accuracy | Proportion of true results (both true positives and true negatives) among the total number of cases examined. | Demonstrates the model's fundamental correctness; foundational for establishing basic reliability. | Species identification from degraded environmental samples [30]. |
| Precision & Recall | Precision: Proportion of true positives against all positive predictions. Recall: Proportion of true positives identified from all actual positives. | Addresses specific error profiles. High precision minimizes false accusations; high recall ensures critical evidence isn't missed. | Tracking pollution sources to specific industrial sites. |
| Robustness | Ability to maintain performance with noisy, incomplete, or heterogeneous data. | Shows the method is fit for real-world conditions, not just ideal lab settings. | Analyzing mixed or low-quantity DNA samples from soil or water [31] [32]. |
| Explainability | The degree to which a model's decisions can be understood and traced by a human. | Counters the "black box" problem; essential for cross-examination and satisfying due process [33] [28]. | Justifying a conclusion about the age of a chemical spill. |

Experimental Protocols for Validating ML Classifiers in Forensic Research

Rigorous, documented experimental protocols are the cornerstone of legal admissibility. The following methodology, synthesizing best practices from forensic science literature, provides a framework for generating legally defensible validation data.

Protocol for Species Identification Using ML (Inspired by Wildlife Forensics)

This protocol details a process for developing an ML classifier to identify species from trace environmental DNA (eDNA), a common task in environmental crime investigations [30].

- 1. Sample Collection and Preparation: Environmental samples (soil, water, scat) are collected from crime scenes. DNA is extracted using a validated method, such as a phenol chloroform organic extraction protocol [30]. A negative control is processed alongside case samples to detect contamination from the start.

- 2. Data Generation and Preprocessing: Target genetic markers (e.g., CytB for mtDNA) are amplified and sequenced via Sanger sequencing [30]. The resulting sequences are analyzed and compared with reference databases (e.g., NCBI BLAST). For closely related species, additional analysis using STR panels or protein serology may be required [30].

- 3. Model Training and Validation: Sequenced data is used to train a classifier (e.g., a Convolutional Neural Network for image-based genetic data or a Random Forest model for STR data). The model is trained on a representative dataset that reflects the population involved in real cases, a key consideration under proposed Rule 707 [27]. Performance is evaluated using k-fold cross-validation, and metrics like accuracy, precision, and recall are recorded.

- 4. Independent Testing and Benchmarking: The final model is tested on a held-out dataset or, ideally, through a blind trial by an independent laboratory. This step mirrors the scientific principle of peer review and is critical for demonstrating general acceptance and reliability.
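The k-fold cross-validation mentioned in step 3 can be sketched as an index-splitting routine. This version is a stratified variant (an assumption, since the protocol does not specify stratification) so that each fold keeps roughly the same species proportions. The function name, species labels, and class sizes are hypothetical.

```python
import random

def stratified_k_fold(labels, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs, dealing each class evenly across k folds."""
    rng = random.Random(seed)
    by_class = {}
    for idx, label in enumerate(labels):
        by_class.setdefault(label, []).append(idx)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        for position, idx in enumerate(indices):
            folds[position % k].append(idx)
    for i in range(k):
        test_idx = sorted(folds[i])
        train_idx = sorted(idx for j, fold in enumerate(folds) if j != i for idx in fold)
        yield train_idx, test_idx

# Hypothetical reference set: 20 sequences of one species, 10 of another
labels = ["species_x"] * 20 + ["species_y"] * 10
splits = list(stratified_k_fold(labels, k=5))
for train_idx, test_idx in splits:
    n_minor = sum(labels[i] == "species_y" for i in test_idx)
    print(f"train={len(train_idx)} test={len(test_idx)} species_y in test={n_minor}")
```

Fixing the seed makes the split reproducible, which matters when the validation procedure itself may need to be documented and re-run for review.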

Table 2: Research Reagent Solutions for Forensic ML Validation

| Reagent / Material | Function in Experimental Protocol |
|---|---|
| Phenol Chloroform Organic Extraction Kit | Isolates high-purity DNA from complex environmental matrices for downstream analysis. |
| Sanger Sequencing Reagents | Generates the primary genetic sequence data used as input for the ML model. |
| Reference DNA Databases (e.g., NCBI) | Provides the ground-truth labeled data required for supervised model training and validation. |
| STR Multiplex Panels (e.g., OdoPlex) | Enables differentiation of closely related species where standard sequencing is insufficient [30]. |
| Validated Positive & Negative Controls | Ensures the entire analytical process, from wet lab to model inference, is functioning correctly. |

Workflow Diagram: From Sample to Admissible Evidence

The following diagram visualizes the integrated experimental and legal validation workflow, highlighting the critical decision points that impact legal admissibility.

The Legal Framework: From Model Output to Courtroom Evidence

The transition of an ML model's output from a research finding to courtroom evidence hinges on a legal framework designed to ensure reliability and fairness. Proposed Federal Rule of Evidence 707 is a direct response to this need, explicitly applying the Daubert/Rule 702 standard to machine-generated evidence offered without a testifying expert [26] [27] [29].

Navigating the Daubert Standard with Performance Metrics

A judge's gatekeeping role under Daubert involves assessing whether the proffered evidence is scientifically reliable. Performance metrics are the primary language for this assessment.

- Known or Potential Error Rate: This is the most direct link between model performance and the law. A model's error rate must be known and disclosed through rigorous testing [28]. For instance, a study evaluating AI in crime scene analysis reported performance variations across different scenarios (e.g., homicide vs. arson scenes), which is precisely the kind of contextual error rate information a court requires [34].

- Testing and Peer Review: The model's development and validation process must be subject to peer review and publication. This involves documenting the experimental protocols, such as the use of positive and negative controls in every test method, as seen in accredited wildlife forensic laboratories [30].

- General Acceptance: While not the sole factor, acceptance within the relevant scientific community is persuasive. Using standardized reagents and methodologies, like those in Table 2, helps align a novel ML tool with established forensic practices.

- Maintenance of Standards: The "black box" nature of some complex models like deep neural networks poses a significant challenge to the standards of testing and peer review [33] [28]. Therefore, there is a growing legal impetus to prioritize explainable AI (XAI) in forensic applications to allow for meaningful cross-examination.
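A disclosed error rate is more informative when it is reported with an uncertainty range, because a rate measured on a small validation set is only an estimate. The sketch below uses the Wilson score interval, which is one common choice and not a method prescribed by the cited sources. The validation figures (3 errors in 200 test samples) are hypothetical.

```python
import math

def wilson_interval(errors, n, z=1.96):
    """Approximate 95% Wilson score interval for an error rate of errors/n."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = errors / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)

# Hypothetical validation result: 3 misclassifications in 200 held-out samples
low, high = wilson_interval(errors=3, n=200)
print(f"observed error rate: {3 / 200:.3f}, interval: {low:.3f} to {high:.3f}")
```

Unlike the simple normal approximation, the Wilson interval still gives a non-zero upper bound when no errors are observed, so a validation run with zero errors does not translate into a claimed error rate of exactly zero.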

Diagram: The Legal Admissibility Decision Pathway

This flowchart outlines the judicial decision-making process for admitting AI-generated evidence under the proposed legal framework, showing where performance metrics directly influence the outcome.

For researchers in environmental forensics, the era of developing ML classifiers in a purely academic vacuum is over. The critical link between model performance and legal admissibility necessitates a paradigm where experimental design from the outset incorporates the stringent requirements of the courtroom. Performance metrics are the quantifiable bridge between a technically sound model and one that is legally robust. As the legal landscape evolves with rules like Proposed FRE 707, the responsibility falls on scientists to not only achieve high accuracy but to rigorously document, validate, and explain their models. By treating legal admissibility as a core design constraint, researchers can ensure their powerful analytical tools will stand up in court, thereby maximizing their impact in the critical fight against environmental crime.

Exploring Unsupervised vs. Supervised Learning and Their Evaluation Needs

In environmental forensics research, the selection between supervised and unsupervised learning paradigms is pivotal, dictated primarily by the availability of labeled data and the specific analytical goals, whether prediction or discovery. These approaches demand distinct evaluation protocols and performance metrics to validate their findings. This guide provides an objective comparison of their performance, supported by experimental data from environmental applications, detailing the experimental methodologies and essential tools required for implementation.

The foundational distinction in machine learning lies in the use of labeled datasets. Supervised learning algorithms are trained on labeled data, where each input example is paired with a correct output, enabling the model to learn the mapping function for predicting outcomes on new, unseen data [35]. This approach is analogous to learning with a teacher who provides the correct answers. In contrast, unsupervised learning algorithms analyze and cluster unlabeled datasets, discovering hidden patterns or intrinsic structures without human-provided labels [35]. This is akin to exploration, where the model identifies interesting features or groupings on its own.

This distinction directly influences their application in environmental forensics. Supervised learning is typically deployed for well-defined prediction or classification tasks, such as forecasting pollutant concentrations or classifying a sensor reading as "faulty" or "normal" [36]. Unsupervised learning is employed for exploratory data analysis, such as identifying novel anomaly patterns in sensor networks or segmenting geographical areas based on similar pollution profiles [35] [36]. The following sections will dissect their evaluation needs, supported by experimental data and detailed methodologies.

Core Differences and Evaluation Metrics

The core difference between supervised and unsupervised learning drives the need for fundamentally different evaluation frameworks, as summarized in Table 1.

Table 1: Core Differences and Evaluation Metrics

| Aspect | Supervised Learning | Unsupervised Learning |
|---|---|---|
| Data Requirements | Labeled data with known input-output pairs [35] | Unlabeled data without predefined categories [35] |
| Primary Goal | Predict specific outcomes for new data [35] [37] | Discover hidden patterns and structures [35] [37] |
| Common Evaluation Metrics | Accuracy, Precision, Recall, F1-Score, R², RMSE [36] [38] | Silhouette Score, Domain Expert Validation, Visual Inspection [35] |
| Typical Environmental Applications | Sensor calibration, predictive maintenance, pollutant classification [36] [38] | Anomaly detection in sensor networks, customer/region segmentation, novel pattern discovery [35] [36] |

Supervised Learning Evaluation

Supervised learning models are evaluated based on their predictive performance against a ground-truth dataset that is withheld during training (the test set). Common metrics include [36] [38]:

- Accuracy: The proportion of total predictions that are correct.

- Precision & Recall: Precision measures the correctness of positive predictions, while Recall measures the ability to find all positive instances.

- F1-Score: The harmonic mean of Precision and Recall, providing a single metric for model balance.

- R² (Coefficient of Determination): Indicates the proportion of variance in the dependent variable that is predictable from the independent variables.

- RMSE (Root Mean Square Error): Measures the average magnitude of the prediction errors.
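For the two regression metrics in the list above, a minimal sketch follows. The reference and calibrated readings are hypothetical values chosen only to make the arithmetic easy to follow.

```python
import math

def rmse(y_true, y_pred):
    """Root mean square error: typical size of the prediction error, in the target's units."""
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 minus residual variation over total variation."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot

# Hypothetical reference-instrument readings and model-calibrated sensor readings
reference = [10.0, 12.0, 15.0, 20.0, 23.0]
calibrated = [11.0, 12.0, 14.0, 21.0, 22.0]

print(f"RMSE = {rmse(reference, calibrated):.3f}")
print(f"R^2  = {r_squared(reference, calibrated):.3f}")
```

RMSE carries the units of the measured quantity, so values for different pollutants are not directly comparable, whereas R² is unitless.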

Unsupervised Learning Evaluation

Evaluating unsupervised learning is more complex due to the absence of ground truth. Common approaches include [35]:

- Internal Indices: Metrics like the Silhouette Score, which evaluates the cohesion and separation of created clusters.

- Domain Expert Validation: Human experts must validate that the discovered patterns (e.g., clusters or anomalies) are meaningful and logically sound [35]. For instance, an anomaly detected in sensor data must be confirmed by an analyst as a genuine fault and not random noise [35].

- Visual Inspection: Using visualization techniques to assess the quality of the clustering or dimensionality reduction.
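The Silhouette Score named under internal indices compares, for each point, its mean distance to its own cluster (a) with its mean distance to the nearest other cluster (b), and averages (b - a) / max(a, b) over all points. The sketch below assumes Euclidean distance, at least two clusters, and the common convention that a single-member cluster scores 0. The coordinates are hypothetical.

```python
import math

def silhouette_score(points, labels):
    """Mean silhouette coefficient over all points (Euclidean distance, >= 2 clusters)."""
    clusters = sorted(set(labels))
    scores = []
    for i, (point, label) in enumerate(zip(points, labels)):
        own = [math.dist(point, q) for j, (q, l) in enumerate(zip(points, labels))
               if l == label and j != i]
        if not own:
            scores.append(0.0)  # assumed convention for single-member clusters
            continue
        a = sum(own) / len(own)
        b = min(
            sum(math.dist(point, q) for q, l in zip(points, labels) if l == other)
            / labels.count(other)
            for other in clusters if other != label
        )
        scores.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(scores) / len(scores)

# Hypothetical two-feature pollution profiles forming two well-separated groups
points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
good_labels = [0, 0, 0, 1, 1, 1]
mixed_labels = [0, 1, 0, 1, 0, 1]

good = silhouette_score(points, good_labels)
mixed = silhouette_score(points, mixed_labels)
print(f"sensible clustering: {good:.3f}, arbitrary clustering: {mixed:.3f}")
```

A high score shows only that the clusters are geometrically compact and separated. Whether they correspond to real contamination sources still requires the domain expert validation described above.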

Experimental Data and Performance Comparison

Recent studies in environmental monitoring demonstrate the performance of both paradigms. A hybrid approach that uses unsupervised learning to generate labels for a subsequent supervised model is particularly effective, showcasing how the two can be combined.

Table 2: Experimental Performance in Environmental Applications

| Study Focus | Learning Type & Model | Key Performance Metrics | Result Summary |
|---|---|---|---|
| Sensor Anomaly Detection & Prediction [36] | Unsupervised: Isolation Forest (for labeling); Supervised: Random Forest, Neural Network, AdaBoost | Accuracy: Random Forest 99.93%; Neural Network 99.05%; AdaBoost 98.04% | A two-step method where Isolation Forest autonomously labeled unlabeled sensor data, which was then used to train supervised models with exceptional accuracy. |
| Low-Cost Air Quality Sensor Calibration [38] | Supervised: Eight regression algorithms (GB, kNN, RF, etc.) | CO2 Calibration (GB): R² = 0.970, RMSE = 0.442; PM2.5 Calibration (kNN): R² = 0.970, RMSE = 2.123; Temp/Humidity (GB): R² = 0.976, RMSE = 2.284 | Machine learning-based calibration significantly enhanced sensor accuracy, making LCS a viable alternative to reference-grade systems. |
| On-Board Animal Behavior Classification [39] | Supervised: SVM, ANN, RF, XGBoost | Quality Criteria: Accuracy, Runtime, Storage Requirements | SVM, ANN, RF, and XGBoost performed well. ANN, RF, and XGBoost were identified as most suitable for on-board classification due to runtime and storage efficiency. |

Detailed Experimental Protocols

To ensure reproducibility, this section outlines the methodologies from the key experiments cited.

Protocol 1: Two-Step Anomaly Detection for Predictive Maintenance

This methodology transforms unlabeled environmental sensor telemetry (e.g., temperature, humidity, CO, LPG, smoke) into a predictive model for sensor faults [36].

Unsupervised Anomaly Labeling:

- Algorithm: Isolation Forest.

- Process: The Isolation Forest algorithm is applied to the raw, unlabeled sensor data. It "isolates" anomalies by randomly selecting a feature and then randomly selecting a split value between the maximum and minimum values of the selected feature. The core premise is that anomalies are fewer and different, making them easier to isolate. Each data point is assigned an anomaly score.

- Output: Data points are labeled as "normal" or "anomalous" based on their scores, effectively creating a labeled dataset from previously unlabeled data.

Supervised Anomaly Prediction:

- Training: The newly generated labeled dataset is used to train supervised learning models.

- Models Tested: Random Forest, Neural Network (MLP Classifier), and AdaBoost.

- Validation: The performance of the trained models is evaluated on new, unseen sensor data using metrics such as accuracy, precision, recall, and F1-score to ensure robust predictive capability [36].

The following diagram illustrates this integrated workflow:

Diagram 1: Two-step anomaly detection and prediction workflow.
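The unsupervised labeling step of Protocol 1 can be sketched with a deliberately simplified Isolation Forest. This is an illustration of the random-split idea described above, not the implementation used in [36]: it builds every tree on the full dataset instead of a subsample, and the telemetry values, the injected fault, and the 0.6 score threshold are all assumptions.

```python
import math
import random

def average_path_length(n):
    """Expected path length of an unsuccessful binary-search-tree lookup over n points."""
    if n <= 1:
        return 0.0
    if n == 2:
        return 1.0
    return 2.0 * (math.log(n - 1) + 0.5772156649) - 2.0 * (n - 1) / n

def build_tree(rows, depth, max_depth, rng):
    """Split on a random feature at a random value between that feature's min and max."""
    if depth >= max_depth or len(rows) <= 1:
        return {"size": len(rows)}
    feature = rng.randrange(len(rows[0]))
    low = min(r[feature] for r in rows)
    high = max(r[feature] for r in rows)
    if low == high:
        return {"size": len(rows)}
    split = rng.uniform(low, high)
    return {
        "feature": feature,
        "split": split,
        "left": build_tree([r for r in rows if r[feature] < split], depth + 1, max_depth, rng),
        "right": build_tree([r for r in rows if r[feature] >= split], depth + 1, max_depth, rng),
    }

def path_length(node, row, depth=0):
    """Number of splits needed to reach a leaf, plus a correction for unsplit leaf points."""
    if "size" in node:
        return depth + average_path_length(node["size"])
    branch = "left" if row[node["feature"]] < node["split"] else "right"
    return path_length(node[branch], row, depth + 1)

def anomaly_scores(rows, n_trees=100, seed=0):
    """Score in (0, 1]; points isolated after few splits score closer to 1."""
    rng = random.Random(seed)
    max_depth = math.ceil(math.log2(len(rows)))
    trees = [build_tree(rows, 0, max_depth, rng) for _ in range(n_trees)]
    norm = average_path_length(len(rows))
    return [2 ** (-sum(path_length(t, r) for t in trees) / n_trees / norm) for r in rows]

# Hypothetical telemetry: (temperature, humidity) readings plus one injected fault
data_rng = random.Random(1)
readings = [(data_rng.gauss(20, 1), data_rng.gauss(40, 1)) for _ in range(50)]
readings.append((90.0, 5.0))

scores = anomaly_scores(readings)
threshold = 0.6  # assumed cutoff; in practice this is tuned or set via a contamination rate
labels = ["anomalous" if s > threshold else "normal" for s in scores]
print(f"injected fault: score={scores[-1]:.2f}, label={labels[-1]}")
```

The resulting `labels` list plays the role of the generated ground truth that the supervised models in the second step are trained on. Because those labels come from an algorithm, not from confirmed faults, high downstream accuracy measures agreement with the Isolation Forest and not necessarily with real sensor failures.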

Protocol 2: ML-Based Calibration of Air Quality Sensors

This protocol details the process for calibrating low-cost air quality sensors (LCS) using supervised learning to improve their accuracy against reference-grade instruments [38].

Data Collection:

- An IoT-based system collects concurrent measurements from LCS (e.g., PM2.5, CO2, temperature, humidity) and a high-accuracy reference instrument. Data is collected at a high frequency (e.g., one-minute resolution) under various real-world conditions, including exposure to emission sources like cigarette smoke, cooking, and cleaning agents [38].

Model Training & Evaluation:

- The LCS readings (and often environmental factors like temperature and humidity) are used as input features. The corresponding readings from the reference instrument are the target labels.

- A suite of supervised regression algorithms (e.g., Decision Tree, Linear Regression, Random Forest, k-Nearest Neighbors, AdaBoost, Gradient Boosting, Support Vector Machines) is trained to learn the mapping from raw LCS signals to reference values.

- The best-performing model for each sensor type is selected based on the highest R² value and the lowest RMSE and MAE (Mean Absolute Error) when compared to the reference data [38].

The Scientist's Toolkit: Essential Research Reagents & Materials

Implementing machine learning in environmental forensics requires a suite of computational tools and data resources.

Table 3: Essential Research Reagents & Materials

| Tool / Material | Function / Purpose | Example Use Case |
|---|---|---|
| Scikit-learn | Open-source library for classical ML algorithms; ideal for rapid prototyping [37]. | Implementing Random Forest for classification or k-means clustering. |
| TensorFlow / PyTorch | Open-source libraries for deep learning; suitable for production deployment and complex research, respectively [37]. | Building neural networks for complex sensor data pattern recognition. |
| Labeled Environmental Datasets | Datasets where sensor or spectral data is paired with known outcomes (e.g., contaminant type, concentration) [36] [40]. | Training and validating supervised learning models. |
| Unlabeled Sensor Telemetry | Large volumes of raw data from IoT networks without predefined labels [36]. | Applying unsupervised learning for anomaly detection or pattern discovery. |
| NSL-KDD Dataset | A benchmark dataset for network intrusion detection, useful for testing anomaly detection algorithms [40]. | Developing and testing models for cybersecurity in environmental monitoring networks. |

In environmental forensics, the choice between supervised and unsupervised learning is not a matter of superiority but of strategic alignment with the research objective and data landscape. Supervised learning offers high-accuracy, trustworthy predictions for well-defined problems with labeled data, as evidenced by its success in sensor calibration. Unsupervised learning provides unparalleled capability to explore unknown patterns in vast, unlabeled datasets, crucial for detecting novel anomalies. The emerging trend of hybrid methodologies, which leverage the strengths of both paradigms, represents a powerful frontier for developing intelligent, reliable, and proactive environmental monitoring and forensic analysis systems.

From Theory to Practice: Applying Metrics to Forensic Evidence Types

In environmental forensics, accurately attributing the source of an oil spill is critical for mitigating ecological damage, guiding remediation efforts, and assigning liability. Traditional geochemical analysis, while effective, often involves time-consuming laboratory processes and can be influenced by interpretative biases. The integration of machine learning (ML) classifiers offers a promising pathway to enhance the speed, objectivity, and accuracy of oil spill source attribution. This case study objectively evaluates the performance of various ML classifiers applied to geochemical data, providing a comparative analysis grounded in experimental data and defined performance metrics relevant to researchers and forensic scientists.

Comparative Performance of Machine Learning Classifiers

Data from recent peer-reviewed studies demonstrates the efficacy of different ML algorithms. The table below summarizes the performance metrics of top-performing classifiers from key experiments.

Table 1: Performance Metrics of Machine Learning Classifiers for Oil Spill Attribution

| Study Context | Best-Performing Classifier(s) | Accuracy | Precision | Recall/Sensitivity | F1-Score | Key Performance Notes |
|---|---|---|---|---|---|---|
| Santos Basin Geochemistry (Presalt Oils) [24] | Random Forest (RF) | 91% | Not Specified | Not Specified | Not Specified | Highest classification accuracy among 7 evaluated algorithms. |
| SPME-GC-MS Chemometric Analysis [41] | Spearman's Rank Correlation (SRC) & 3D Covariance | Not Specified | Not Specified | 100% (reported as True Positive Rate, TPR) | Not Specified | False Positive Rate (FPR) = 0%; optimal performance with no misclassifications on a validation set. |
| Gulf of Mexico SAR Slick Classification [42] | Random Forest (RF) | 73.15% | Not Specified | Not Specified | Not Specified | Maximum accuracy achieved; RF was the most robust algorithm in 81% of tested scenarios. |
| Southern California Granitic Rock Classification [43] | Decision Trees | 87% | 89% | 89% | 81% | Best values for classifying granitic rock samples in a supervised learning context. |
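
The metric columns in the table are all derived from the same confusion counts, and for multi-class problems precision, recall, and F1 are usually averaged over classes. The sketch below is illustrative only: the labels and sample counts are invented rather than taken from the cited studies, and macro-averaging is an assumption, since the table does not state which averaging each study used. It shows that a macro-averaged F1 can fall below both macro precision and macro recall.

```python
from typing import Dict, Sequence


def per_class_metrics(y_true: Sequence[str], y_pred: Sequence[str]) -> Dict[str, Dict[str, float]]:
    """Return precision, recall and F1 for every label seen in y_true or y_pred."""
    metrics = {}
    for label in sorted(set(y_true) | set(y_pred)):
        pairs = list(zip(y_true, y_pred))
        tp = sum(1 for t, p in pairs if t == label and p == label)
        fp = sum(1 for t, p in pairs if t != label and p == label)
        fn = sum(1 for t, p in pairs if t == label and p != label)
        precision = tp / (tp + fp) if (tp + fp) else 0.0
        recall = tp / (tp + fn) if (tp + fn) else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        metrics[label] = {"precision": precision, "recall": recall, "f1": f1}
    return metrics


def accuracy(y_true: Sequence[str], y_pred: Sequence[str]) -> float:
    """Fraction of samples whose predicted label matches the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_average(metrics: Dict[str, Dict[str, float]], key: str) -> float:
    """Unweighted mean of one metric over all classes."""
    return sum(m[key] for m in metrics.values()) / len(metrics)


# Hypothetical labels: eight oil samples from three made-up source fields A, B, C.
y_true = ["A", "A", "A", "B", "B", "C", "C", "C"]
y_pred = ["A", "A", "B", "B", "B", "C", "A", "C"]

m = per_class_metrics(y_true, y_pred)
print(f"accuracy        {accuracy(y_true, y_pred):.3f}")       # 0.750
print(f"macro precision {macro_average(m, 'precision'):.3f}")  # 0.778
print(f"macro recall    {macro_average(m, 'recall'):.3f}")     # 0.778
print(f"macro F1        {macro_average(m, 'f1'):.3f}")         # 0.756
```

Reporting all four values together, along with the averaging scheme, makes it easier to compare results across studies that use different class balances.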

Key Findings from Comparative Data

Random Forest's Robust Performance: The Random Forest algorithm performs well across different contexts, achieving the highest accuracy (91%) in classifying presalt oil samples from the Santos Basin [24] and proving to be the most robust model for distinguishing natural from anthropogenic oil slicks in the Gulf of Mexico [42], although its maximum accuracy there was lower (73.15%). Its ensemble nature, which reduces overfitting by averaging the predictions of multiple decision trees, makes it particularly suited for complex geochemical datasets.

High-Accuracy Alternative Methods: While not always classified as ML, chemometric approaches like Spearman's Rank Correlation and 3D covariance can achieve perfect discrimination (100% TPR, 0% FPR) under controlled conditions with specific analytical techniques like HS-SPME-GC-MS [41]. This highlights that the choice of data preprocessing and similarity metrics can be as critical as the classifier itself.

Context-Dependent Algorithm Suitability: The superior performance of Decision Trees in rock classification [43] underscores that no single algorithm is universally best. The optimal classifier depends on data characteristics, with Decision Trees offering high interpretability for multi-class problems, while Random Forest provides better generalization for larger, more complex feature sets.
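
To make the similarity-metric point above concrete, the following is a minimal sketch of Spearman's rank correlation between a spill profile and two candidate source profiles. It is not the pipeline of the cited study and does not cover the 3D covariance method; the peak intensities and the names `spill`, `candidate_1`, and `candidate_2` are invented for illustration.

```python
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho: Pearson correlation computed on the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical relative peak intensities for five compounds.
spill = [12.0, 30.5, 8.1, 44.0, 21.3]
candidate_1 = [10.9, 28.0, 9.0, 47.2, 19.8]  # same ordering as the spill
candidate_2 = [40.0, 9.5, 33.1, 12.2, 25.0]  # nearly reversed ordering

print(round(spearman(spill, candidate_1), 3))  # 1.0
print(round(spearman(spill, candidate_2), 3))  # -0.8
```

Because the statistic depends only on rank order, it is insensitive to overall signal scaling between runs, which is one reason preprocessing choices can matter as much as the classifier.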

Detailed Experimental Protocols and Workflows

The high performance of classifiers is underpinned by rigorous and methodical experimental protocols. The following workflow synthesizes the common steps from the cited studies.

Data Acquisition and Analytical Techniques

Reliable geochemical data forms the foundation of any robust classification model. Key methodologies include:

Gas Chromatography-Mass Spectrometry (GC-MS): This is the most widely used method for analyzing petroleum biomarker distributions (e.g., terpanes and steranes) [24]. These biomarkers provide diagnostic ratios that are highly resistant to weathering and serve as unique fingerprints for oil sources [24] [45].

Headspace Solid-Phase Microextraction GC-MS (HS-SPME-GC-MS): A greener, solvent-free approach that captures and analyzes the volatile organic compounds (VOCs) emitted from crude oil samples. This non-destructive method maintains sample integrity for further analysis [41].

Data Quality Objectives (DQOs): As emphasized in mineral oil spill studies, establishing clear DQOs is paramount. This involves rigorous quality control/assurance (QC/QA) procedures, including the use of blanks, replicates, and spikes to ensure data precision, accuracy, and representativeness [45].

Data Preprocessing and Exploratory Analysis

The "garbage in, garbage out" principle is critical in ML. The cited studies involve extensive data preparation [24]:

- Preprocessing: Cleaning the dataset by addressing missing values, removing duplicates, and detecting anomalous compositional data using algorithms like Isolation Forest. Data normalization (e.g., normal score transformation) is applied to ensure all variables are on a comparable scale.

- Exploratory Data Analysis (EDA) and Dimensionality Reduction: Techniques like Principal Component Analysis (PCA) and t-SNE (t-Distributed Stochastic Neighbor Embedding) are employed to transform multivariate data into a lower-dimensional space, revealing underlying patterns and structures [24] [44]. K-Means Clustering is also used as an unsupervised method to group similar samples before supervised classification [24] [43].
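
As an illustration of the normalization step mentioned above, the sketch below implements a simple normal score transformation using only the Python standard library. The plotting position `(rank - 0.5) / n` is one common convention and is an assumption here; the cited study may have used a different one. The concentration values are invented.

```python
from statistics import NormalDist
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def normal_score_transform(values: Sequence[float]) -> List[float]:
    """Map each value to the standard-normal quantile of its rank."""
    n = len(values)
    standard_normal = NormalDist()  # mean 0, standard deviation 1
    return [standard_normal.inv_cdf((r - 0.5) / n) for r in average_ranks(values)]


# Hypothetical, strongly skewed concentrations of one compound in five samples.
concentrations = [0.8, 15.2, 3.1, 240.0, 1.9]
scores = normal_score_transform(concentrations)
print([round(s, 3) for s in scores])  # [-1.282, 0.524, 0.0, 1.282, -0.524]
```

The transform preserves the rank order of the samples while removing the influence of the extreme value, so a single outlier no longer dominates distance-based methods such as PCA or K-Means.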

Model Validation and Robustness Testing

A classifier's performance on training data is insufficient; its robustness must be tested against independent data.

- Independent Sample Validation: The methodology developed for Santos Basin oils was validated using three independent oil samples (from spill events and a natural seep), successfully predicting their field origins with high confidence [24].

- Scenario-Specific Tuning: The Gulf of Mexico study highlighted that model performance is influenced by external factors. Creating Specific Classification Models (SCMs) tuned for specific seasons and satellite beam modes improved accuracy, with the best model achieving 83.05% accuracy in winter using ScanSAR Narrow B mode [42].
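
One simple way to see whether scenario-specific models are worth building is to break held-out accuracy down by scenario. The sketch below assumes each validation record carries a scenario key; the records, the `summer` scenario, and the labels are invented and do not reproduce the Gulf of Mexico results.

```python
from collections import defaultdict
from typing import Dict, Hashable, Iterable, Tuple

Record = Tuple[Hashable, str, str]  # (scenario, true label, predicted label)


def accuracy_by_scenario(records: Iterable[Record]) -> Dict[Hashable, float]:
    """Compute accuracy separately for each scenario key."""
    hits: Dict[Hashable, int] = defaultdict(int)
    totals: Dict[Hashable, int] = defaultdict(int)
    for scenario, truth, predicted in records:
        totals[scenario] += 1
        hits[scenario] += int(truth == predicted)
    return {scenario: hits[scenario] / totals[scenario] for scenario in totals}


# Hypothetical held-out predictions, keyed by (season, beam mode).
winter = ("winter", "ScanSAR Narrow B")
summer = ("summer", "ScanSAR Narrow B")
records = [
    (winter, "seep", "seep"), (winter, "spill", "spill"),
    (winter, "seep", "seep"), (winter, "spill", "seep"),
    (summer, "seep", "spill"), (summer, "spill", "spill"),
    (summer, "seep", "seep"), (summer, "spill", "seep"),
]

per_scenario = accuracy_by_scenario(records)
overall = sum(t == p for _, t, p in records) / len(records)
print(per_scenario[winter], per_scenario[summer], overall)  # 0.75 0.5 0.625
```

A pooled accuracy can hide a large gap between scenarios, which is the kind of gap that motivates tuning separate models per season or acquisition mode.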

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents, instruments, and software essential for conducting geochemical analysis and building classifiers for oil spill attribution.

Table 2: Essential Research Reagents and Solutions for Geochemical Analysis and ML

| Item Name | Function/Brief Explanation | Relevant Context |
|---|---|---|
| GC-MS System | Separates and identifies hydrocarbon compounds in oil samples; the workhorse for biomarker analysis (terpanes, steranes). | Petroleum Geochemistry [24] |
| HS-SPME Fibers | Captures volatile organic compounds (VOCs) from the headspace of crude oil samples for solvent-free analysis. | Green Analytical Chemistry [41] |
| Certified Reference Materials | Provides a known standard for instrument calibration and data validation, ensuring analytical accuracy and reliability. | Data Quality & Usability [45] |
| Python Libraries (e.g., Scikit-learn, Pandas) | Provides open-source tools for data preprocessing, implementing ML algorithms, and model evaluation. | Machine Learning Workflow [24] [43] |
| Synthetic Aperture Radar (SAR) Data | Enables detection of oil slicks as dark patches on the sea surface via satellite, used for initial spill identification. | Remote Sensing & Oil Slick Detection [46] [42] |

This evaluation indicates that machine learning classifiers, particularly Random Forest, can enhance the objectivity and accuracy of oil spill source attribution when applied to robust geochemical data. The experimental protocols share a common workflow, from rigorous data acquisition to independent validation, which is critical for generating defensible results in environmental forensic research. While classifier performance is context-dependent, the integration of ML with geochemical analysis represents a significant advancement, potentially reducing diagnostic workflows from days to minutes and providing a scalable solution for monitoring and protecting complex marine ecosystems. Future work should focus on standardizing data formats and developing automated machine learning (AutoML) pipelines to further increase the accessibility of these tools for the scientific community.

Microbial Source Tracking (MST) has emerged as a critical discipline in environmental forensics, enabling researchers to identify and quantify sources of fecal contamination in water bodies [47]. Traditional methods that rely solely on fecal indicator bacteria, such as Escherichia coli, are limited by their inability to distinguish between contamination from different host sources [48] [49]. The advent of high-throughput sequencing technologies, particularly those targeting the 16S rRNA gene, has revolutionized this field by allowing comprehensive profiling of microbial communities [48] [50]. When combined with machine learning-based community classifiers, these approaches provide a powerful framework for source attribution in complex environmental systems. This case study examines the performance of various MST methodologies, with particular emphasis on the integration of 16S rRNA data with community classification algorithms, and situates these techniques within the broader thesis that quantitative performance metrics are essential for advancing environmental forensics research.

Experimental Protocols in Microbial Source Tracking

Sample Collection and DNA Extraction

Standardized protocols for sample collection and processing are fundamental for generating reliable, comparable MST data. In aquatic environments, water samples (typically 0.5-1.5 L) are collected from sites representing potential pollution sources and affected sinks [48] [51]. Samples are filtered through membranes (0.2-0.4 μm) to concentrate microbial biomass, followed by DNA extraction using commercial kits such as the MoBio PowerWater kit [48]. Nucleic acid quality is assessed spectrophotometrically (e.g., NanoDrop) and concentration fluorometrically (e.g., Qubit) [48].

16S rRNA Gene Amplification and Sequencing

The V3-V4 hypervariable region of the bacterial 16S rRNA gene is amplified using primer pairs (e.g., 343F-804R or 338F-806R) [48] [51]. Library preparation incorporates dual index tags to enable multiplexing of samples, followed by high-throughput sequencing on Illumina platforms (e.g., MiSeq) with 2×250 bp paired-end reads [48] [51]. This targeted approach provides the taxonomic resolution necessary for distinguishing host-associated microbial communities.

Bioinformatic Processing

Sequencing data undergoes preprocessing to remove low-quality sequences and merge paired-end reads using tools such as PANDAseq [48]. Operational Taxonomic Units (OTUs) are clustered at 97% sequence similarity using algorithms like UCLUST within the QIIME pipeline, followed by taxonomic assignment against reference databases (e.g., Greengenes, SILVA) [48] [51]. Alternatively, more recent methods employ denoising algorithms (e.g., DADA2) to generate Amplicon Sequence Variants (ASVs) [50]. The resulting feature tables of taxonomic abundances serve as input for downstream statistical and machine learning analyses.
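
The feature table described above can be pictured as a samples-by-taxa matrix. The sketch below shows one common preparation step, converting raw read counts to relative abundances with a consistent column order, under the assumption that each sample is stored as a dictionary of counts. The sample names and counts are invented; real pipelines such as QIIME produce this table in their own formats.

```python
from typing import Dict, List, Tuple

FeatureTable = Dict[str, Dict[str, int]]  # sample -> taxon -> read count


def to_relative_abundance(counts: Dict[str, int]) -> Dict[str, float]:
    """Scale one sample's counts so they sum to 1."""
    total = sum(counts.values())
    if total == 0:
        raise ValueError("sample has no reads")
    return {taxon: count / total for taxon, count in counts.items()}


def to_matrix(table: FeatureTable) -> Tuple[List[str], List[str], List[List[float]]]:
    """Return (sample names, taxon names, rows) with taxa missing from a sample set to 0."""
    samples = sorted(table)
    taxa = sorted({taxon for counts in table.values() for taxon in counts})
    rows = []
    for sample in samples:
        relative = to_relative_abundance(table[sample])
        rows.append([relative.get(taxon, 0.0) for taxon in taxa])
    return samples, taxa, rows


# Hypothetical read counts for two samples.
feature_table = {
    "river_site_1": {"Bacteroides": 120, "Prevotella": 60, "Pseudomonas": 20},
    "sewage_ref": {"Bacteroides": 300, "Prevotella": 100},
}

samples, taxa, rows = to_matrix(feature_table)
print(taxa)     # ['Bacteroides', 'Prevotella', 'Pseudomonas']
print(rows[0])  # [0.6, 0.3, 0.1]
print(rows[1])  # [0.75, 0.25, 0.0]
```

Keeping the taxon order fixed across samples is what allows the rows to be passed directly to a classifier as feature vectors.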

Comparison of MST Methodologies

Library-Dependent vs. Library-Independent Methods

Microbial source tracking methodologies can be broadly categorized into library-dependent and library-independent approaches, each with distinct advantages and limitations as summarized in Table 1.

Table 1: Comparison of Major MST Methodologies

| Method Type | Examples | Target | Sensitivity Range | Specificity Range | Key Limitations |
|---|---|---|---|---|---|
| Library-Dependent | Antibiotic Resistance Analysis (ARA), Carbon Utilization | Cultured isolates (E. coli, enterococci) | 12-100% [47] | 0-100% [47] | Culture-based, time-consuming, database dependent |
| Library-Independent (Host-Specific Markers) | HF183 (human), Rum-2-Bac (ruminant) | Host-associated 16S rRNA genes | 20-100% [47] [49] | 54-100% [47] [49] | Limited to known markers, cross-reactivity issues |
| Community Analysis | SourceTracker, Random Forest | Entire microbial community via 16S rRNA | High (qualitative) [51] | High (qualitative) [51] | Computational complexity, requires reference database |

DNA versus rRNA Templates

The choice of genetic template significantly impacts MST assay performance. While DNA-based approaches target marker genes, rRNA-based methods leverage the higher copy numbers of ribosomal RNA to enhance detection sensitivity, which is particularly valuable for identifying low-level contamination [49]. However, this increased sensitivity may come at the cost of reduced specificity, as demonstrated by the HF183 human-associated marker, which showed lower specificity with an rRNA template (54%) than with its rDNA counterpart (>95%) [49]. This tradeoff between sensitivity and specificity must be weighed against the study objectives.

Performance Metrics for Method Evaluation

Rigorous assessment of MST methods requires standardized performance metrics including sensitivity (true positive rate), specificity (true negative rate), and accuracy (overall correctness) [47] [49]. These quantitative measures enable direct comparison between methodologies and inform selection of appropriate approaches for specific monitoring scenarios. For instance, mitochondrial DNA assays exhibit excellent performance (95-100% across metrics), but their targets are seldom detected in environmental waters, which limits their practical utility despite strong technical characteristics [49].
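
The three metrics named above follow directly from the counts of true and false positives and negatives in a marker validation study. The sketch below uses invented counts (a host-associated marker tested against 20 target-host and 50 non-target fecal samples) purely to show the arithmetic.

```python
from typing import Dict


def binary_metrics(tp: int, fp: int, tn: int, fn: int) -> Dict[str, float]:
    """Sensitivity, specificity and accuracy from confusion-matrix counts."""
    return {
        "sensitivity": tp / (tp + fn),  # true positive rate
        "specificity": tn / (tn + fp),  # true negative rate
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
    }


# Hypothetical validation: 18 of 20 target samples detected,
# 4 of 50 non-target samples falsely positive.
result = binary_metrics(tp=18, fp=4, tn=46, fn=2)
for name, value in result.items():
    print(f"{name}: {value:.3f}")
# sensitivity: 0.900
# specificity: 0.920
# accuracy: 0.914
```

Because accuracy weights the two classes by their sample counts, it should be reported alongside sensitivity and specificity rather than in place of them.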

Machine Learning Classifiers for Community-Based MST

Machine learning classifiers applied to microbial community data represent a paradigm shift in MST, moving beyond targeted markers to leverage the complete microbial assemblage for source attribution [51] [50]. This approach recognizes that different pollution sources harbor distinct microbial communities that serve as "fingerprints" for source identification, even after mixing and environmental processing [51].

Table 2: Performance of Machine Learning Classifiers in Environmental Forensics

| Classifier Algorithm | Application Context | Key Performance Metrics | Reference |
|---|---|---|---|
| Random Forest | Oil spill identification | 91% classification accuracy | [24] |
| Gradient Boosting Machine | PFAS source tracking (water) | AUC: 0.9864, Accuracy: 0.8929 | [52] |
| Distributed Random Forest | PFAS source tracking (soil) | AUC: 0.9936, Accuracy: 0.9787 | [52] |
| SourceTracker (Bayesian) | River contamination sourcing | Correctly identified 31/34 pollution sources | [51] |
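
The table reports both AUC and accuracy for the PFAS classifiers, and the two can differ noticeably because AUC measures ranking quality across all thresholds while accuracy depends on one chosen threshold. The sketch below computes AUC as the probability that a randomly chosen positive sample scores higher than a randomly chosen negative one. The labels, scores, and the 0.5 threshold are invented for illustration and are unrelated to the cited results.

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUC via pairwise comparison of positive and negative scores (ties count 0.5)."""
    positives = [s for label, s in zip(labels, scores) if label == 1]
    negatives = [s for label, s in zip(labels, scores) if label == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative sample")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


def accuracy_at(labels: Sequence[int], scores: Sequence[float], threshold: float) -> float:
    """Accuracy when scores at or above the threshold are called positive."""
    predictions = [1 if s >= threshold else 0 for s in scores]
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


# Hypothetical classifier scores for 3 positive and 4 negative samples.
labels = [1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.7, 0.3, 0.2, 0.1]

print(round(roc_auc(labels, scores), 3))           # 0.917
print(round(accuracy_at(labels, scores, 0.5), 3))  # 0.714
```

The pairwise form is quadratic in the number of samples, which is fine for a small validation set; library implementations use a sort-based equivalent.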

The SourceTracker Algorithm

SourceTracker implements a Bayesian algorithm that uses Gibbs sampling to estimate the proportional contributions of known source microbial communities to sink samples [51]. The cited study ran it with default parameters, including a rarefaction depth of 1,000, a burn-in of 100, and 10 restarts [51]. Validation through double-blind testing demonstrated its capability to correctly identify 31 out of 34 mixed pollution sources, supporting its reliability for environmental applications [51].
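
Rarefaction, one of the parameters mentioned above, means subsampling each sample's reads without replacement to a fixed depth so that samples with different sequencing effort become comparable. The sketch below illustrates the idea only; it is not SourceTracker's implementation, the function name `rarefy` and the read counts are hypothetical, and the Gibbs sampling step is not shown.

```python
import random
from collections import Counter
from typing import Dict


def rarefy(counts: Dict[str, int], depth: int, seed: int = 0) -> Dict[str, int]:
    """Subsample reads without replacement so the sample totals exactly `depth` reads."""
    total = sum(counts.values())
    if total < depth:
        raise ValueError(f"sample has {total} reads, fewer than depth {depth}")
    # Expand the count table into one entry per read, then draw `depth` of them.
    pool = [taxon for taxon, count in counts.items() for _ in range(count)]
    rng = random.Random(seed)  # fixed seed keeps the sketch reproducible
    return dict(Counter(rng.sample(pool, depth)))


# Hypothetical sink sample with 4,000 reads.
sink_counts = {"Bacteroides": 2500, "Prevotella": 1200, "Pseudomonas": 300}
rarefied = rarefy(sink_counts, depth=1000)
print(sum(rarefied.values()))  # 1000
```

Samples with fewer reads than the chosen depth cannot be rarefied and raise an error here; in practice they are usually excluded, so the depth is a tradeoff between retaining samples and retaining reads.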

Supervised Machine Learning Approaches