Explainable AI (XAI) in Environmental Chemical Risk Assessment: Transforming Toxicology and Drug Development

Abstract

This article explores the transformative role of Explainable Artificial Intelligence (XAI) in environmental chemical risk assessment, a critical field for drug development and public health. It addresses the inherent 'black box' problem of complex AI models by detailing how XAI techniques provide transparent, interpretable insights into chemical toxicity predictions. The scope covers foundational principles, key methodological applications like QSAR modeling and exposure assessment, strategies to overcome data and interpretability challenges, and the validation of XAI models for regulatory decision-making. Tailored for researchers, scientists, and drug development professionals, this review synthesizes current advancements and practical frameworks to build trust and enhance the reliability of AI-driven risk assessment.

The Urgent Need for Transparency: Why XAI is Revolutionizing Chemical Risk Assessment

The integration of artificial intelligence (AI) into predictive toxicology represents a paradigm shift from a purely empirical science to a data-rich discipline poised for technological transformation [1]. Modern toxicology faces the critical challenge of integrating multifarious information sources, a task for which AI and machine learning (ML) are uniquely suited [2]. However, the "black-box" nature of many complex AI models—where internal decision-making processes remain opaque—presents significant limitations for scientific and regulatory applications [1] [2] [3]. This opacity undermines trust, impedes regulatory acceptance, and limits the scientific value of AI-derived predictions [3] [4]. As toxicology increasingly informs high-stakes decisions in chemical risk assessment and drug development, resolving this transparency deficit through explainable AI (XAI) methodologies becomes imperative for advancing environmental chemical risk assessment research [3].

The Black Box Challenge in Toxicological Applications

The "black box" problem manifests when AI models, particularly deep learning and complex ensemble methods, achieve high predictive accuracy at the expense of interpretability [3] [4]. In toxicology, this opacity is problematic because model results must be scientifically justified to avoid employing erroneous or biased models, improve fitted models, and discover hidden patterns within data [3]. The lack of transparency ultimately affects trust in model predictions for forecasting, decision support, automation, and hypothesis generation [3].

This trust deficit is particularly critical in environmental and health applications where AI predictions inform high-stakes decision-making for environmental management, planning, and chemical risk assessment [3]. While the AI model may demonstrate high accuracy, the inability to understand its reasoning creates significant implementation barriers [4]. For instance, in emergency toxicology, where AI tools show promise for enhancing diagnostic accuracy and predicting clinical outcomes, the black-box nature complicates regulatory acceptance and clinical adoption [5].

Table 1: Performance Comparison of AI Models in Predictive Toxicology

| AI Model/Application | Performance Metrics | Interpretability Level | Key Limitations |
|---|---|---|---|
| RASAR (Read-Across Structure Activity Relationships) [1] [2] | 87% balanced accuracy across 9 OECD tests, 190,000 chemicals [1] [2] | Low (Black Box) | Limited explanation for predictions |
| Deep Neural Network for Poison Identification [5] | 97-98% specificity for specific drugs [5] | Low (Black Box) | Opaque decision process for toxic identification |
| Transformer Model for Environmental Assessment [4] | 98% accuracy, AUC 0.891 [4] | Medium (with XAI) | Requires additional explainability methods |
| Animal Test Reproducibility (Benchmark) [1] [2] | 81% average reproducibility across six OECD tests [1] [2] | High (Transparent) | Ethical concerns, time-consuming |

Explainable AI (XAI) Methodologies for Toxicological Research

Explainable AI (XAI) encompasses methods designed to illuminate the learning processes of AI models, enhancing understanding of what models have learned and the reasons behind specific predictions [3]. These methodologies are particularly valuable for environmental and Earth system sciences, where scientific justification based on evidence and systems understanding is essential [3].

Predominant XAI Techniques

The XAI landscape includes diverse approaches that can be categorized by their operation scope and model specificity:

SHAP (SHapley Additive exPlanations): This game theory-based approach is the most popular XAI method, featured in 135 articles according to a review of 575 publications [3]. It quantifies the contribution of each feature to individual predictions, providing both global and local interpretability.

LIME (Local Interpretable Model-agnostic Explanations): Employed in 21 studies, LIME approximates black-box models with interpretable local models to explain individual predictions [3].

Feature Importance Analysis: A fundamental interpretability method used in 27 articles that ranks input variables by their predictive influence [3].

Partial Dependence Plots (PDP): Visualizes the relationship between feature values and predicted outcomes, appearing in 22 studies [3].

Saliency Maps: Particularly useful for image and spatial data, this method was applied in 15 studies to highlight influential regions in input data [3] [4].
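To make the partial dependence idea concrete, the following is a minimal sketch rather than a reference implementation. The model `toy_toxicity_model`, the descriptor values, and the logP grid are hypothetical stand-ins; a real analysis would use a trained model and curated descriptors.

```python
from statistics import mean


def toy_toxicity_model(row):
    """Hypothetical stand-in for a trained black-box model.

    row = (logP, molecular_weight); returns a score clipped to [0, 1].
    """
    logp, mol_weight = row
    score = 0.15 * logp + 0.001 * mol_weight
    return max(0.0, min(1.0, score))


def partial_dependence(model, rows, feature_index, grid):
    """For each grid value, overwrite one feature in every row and
    average the model output over the whole dataset."""
    curve = []
    for value in grid:
        modified = [
            row[:feature_index] + (value,) + row[feature_index + 1:]
            for row in rows
        ]
        curve.append(mean(model(r) for r in modified))
    return curve


# Illustrative descriptor values, not real measurements.
dataset = [(1.0, 180.0), (2.5, 250.0), (4.0, 320.0), (5.5, 410.0)]
logp_grid = [0.0, 2.0, 4.0, 6.0]

pd_curve = partial_dependence(toy_toxicity_model, dataset, 0, logp_grid)
for value, avg in zip(logp_grid, pd_curve):
    print(f"logP={value:.1f} -> mean predicted score {avg:.3f}")
```

Because every row receives the same substituted value regardless of its other descriptors, the curve can be misleading when features are strongly correlated, which is the independence assumption noted for this method.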

Table 2: Explainable AI (XAI) Methods in Environmental and Toxicological Sciences

| XAI Method | Application Examples | Key Advantages | Implementation Considerations |
|---|---|---|---|
| SHAP/Shapley Values [3] | Ecology, remote sensing, water resources (135 studies) [3] | Solid theoretical foundation, both local and global explanations | Computationally intensive for large datasets |
| LIME [3] | Species distribution modeling, atmospheric sciences (21 studies) [3] | Model-agnostic, intuitive local explanations | Instability in explanations, sensitive to parameters |
| Feature Importance [3] | Geochemistry, soil science, environmental engineering (27 studies) [3] | Simple implementation, easy to communicate | Can be misleading with correlated features |
| Partial Dependence Plots [3] | Climate modeling, risk assessment (22 studies) [3] | Intuitive visualization of feature relationships | Assumes feature independence, fails to capture complex interactions |
| Saliency Maps [3] [4] | Image-based toxicity recognition, environmental assessments (15 studies) [3] [4] | Visual interpretation, identifies critical regions | Sometimes highlights irrelevant features, prone to noise |

Experimental Protocol: SHAP Analysis for Toxicity Prediction

Objective: To explain predictions from a black-box model for chemical toxicity using SHAP values.

Materials and Reagents:

- Chemical Dataset: Pre-screened compounds with known toxicological endpoints (e.g., ToxCast, Tox21)

- Computational Environment: Python 3.8+ with SHAP, pandas, scikit-learn, and matplotlib libraries

- AI Model: Gradient boosting machine (GBM) classifier for toxicity, trained in the first step of the procedure

Procedure:

- Model Training: Train a GBM classifier using chemical descriptors (molecular fingerprints, physicochemical properties) and toxicity labels.

- SHAP Explainer Initialization: Initialize a TreeSHAP explainer compatible with the GBM architecture.

- Explanation Generation: Calculate SHAP values for the test set predictions to determine feature contributions.

- Visualization:

  - Generate summary plots showing global feature importance across the entire dataset.

  - Create force plots for individual chemical predictions to illustrate local interpretability.

  - Produce dependence plots to reveal relationships between specific features and model outputs.

- Biological Validation: Correlate high-impact features with known toxicological mechanisms and pathways.

Expected Outcomes: The protocol yields quantifiable contributions of each molecular descriptor to toxicity predictions, enabling toxicologists to validate AI outputs against established biological knowledge and identify potentially novel structure-activity relationships.
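The additive attribution at the heart of this protocol can be illustrated without the SHAP library. The sketch below computes exact Shapley values by brute-force enumeration against a single reference input. It is not TreeSHAP, which produces the same kind of attribution far more efficiently for tree ensembles. The function `toy_model`, the three binary substructure features, and the all-zero reference are assumptions made purely for illustration.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, instance, background):
    """Exact Shapley values for one instance.

    Features outside a coalition take the background (reference) value,
    so the contributions sum to model(instance) - model(background).
    Cost grows as 2^n, so this is only feasible for a handful of features.
    """
    n = len(instance)

    def value(coalition):
        mixed = [instance[i] if i in coalition else background[i]
                 for i in range(n)]
        return model(mixed)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi


def toy_model(x):
    """Hypothetical toxicity score with an interaction between features 0 and 1."""
    return 0.5 * x[0] + 0.2 * x[1] + 0.3 * x[0] * x[1] + 0.1 * x[2]


chemical = [1.0, 1.0, 0.0]   # presence/absence of three hypothetical substructures
reference = [0.0, 0.0, 0.0]  # baseline: no substructure present

phi = shapley_values(toy_model, chemical, reference)
print(phi, sum(phi))
```

In this toy case the interaction term is split evenly between the two features that jointly produce it, and the three contributions sum to the difference between the prediction for the chemical and the prediction for the reference.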

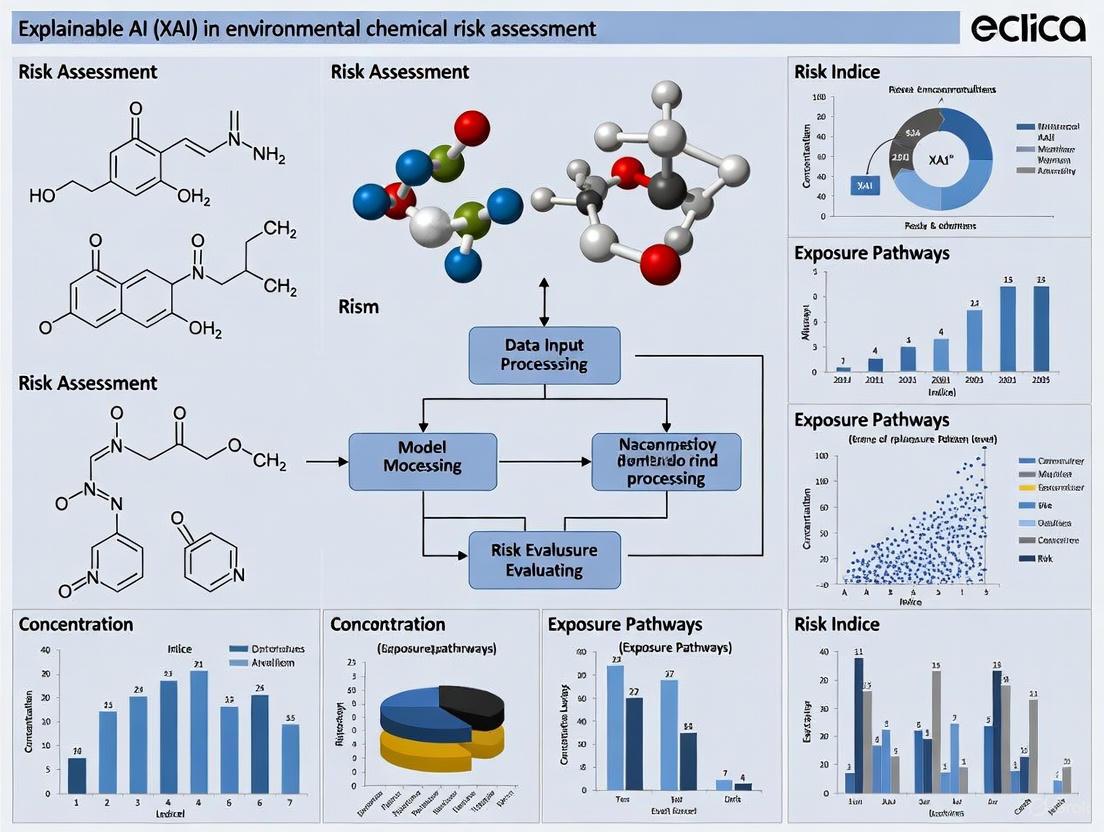

Visualization of XAI Workflows

XAI implementation workflow for toxicological AI

XAI-enhanced risk assessment pipeline

Table 3: Essential Research Resources for XAI in Predictive Toxicology

| Resource Category | Specific Tools/Platforms | Application in XAI Toxicology |
|---|---|---|
| Chemical Databases | ToxCast, Tox21, ChEMBL, PubChem | Provide curated chemical structures and associated toxicological data for model training and validation [2] [6] |
| XAI Software Libraries | SHAP, LIME, InterpretML, AIX360 | Implement explainability algorithms for interpreting black-box model predictions [3] [4] |
| ML Frameworks | Scikit-learn, PyTorch, TensorFlow, XGBoost | Enable development of predictive toxicology models with varying complexity levels [5] [4] |
| Toxicological Expert Systems | DEREK, OncoLogic, StAR | Provide knowledge-based reasoning for comparison with data-driven AI approaches [2] |
| High-Performance Computing | Cloud computing platforms, GPU clusters | Handle computational demands of large-scale toxicological data analysis and complex model explanations [2] |

Advanced Experimental Protocols

Protocol: Comparative Model Interpretability Assessment

Objective: Systematically evaluate and compare the interpretability of various AI models for predicting chemical carcinogenicity.

Materials:

- Chemical Dataset: ~10,000 compounds with carcinogenicity labels from EPA's Toxicity Forecaster (ToxCast)

- Molecular Descriptors: ECFP6 fingerprints, molecular weight, logP, H-bond donors/acceptors

- Software: Python with SHAP, LIME, PDPbox, and scikit-learn libraries

Procedure:

- Model Training: Implement five different algorithms:

  - Logistic Regression (baseline interpretable model)

  - Decision Tree (interpretable)

  - Random Forest (moderately complex)

  - Gradient Boosting Machine (complex)

  - Deep Neural Network (highly complex)

- Performance Evaluation: Assess predictive accuracy using 5-fold cross-validation with AUC-ROC, balanced accuracy, and F1-score.

- Explainability Analysis:

  - Apply SHAP to all models to generate feature importance rankings

  - Use LIME to explain 100 random individual predictions from each model

  - Generate partial dependence plots for top 5 features across models

  - Calculate explanation consistency metrics across different XAI methods

- Expert Validation: Engage three toxicology domain experts to qualitatively assess explanation plausibility and biological relevance.

Expected Outcomes: This protocol will quantify the trade-off between model complexity and explainability, identify optimal model configurations for specific toxicological endpoints, and establish best practices for XAI implementation in regulatory contexts.
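One possible way to operationalize the explanation consistency metrics in the procedure above is to compare the feature rankings produced by two XAI methods. The sketch below uses Spearman rank correlation and top-k overlap. The protocol does not specify which metrics to use, so both choices, along with the feature names and importance values, are assumptions.

```python
def ranks(scores):
    """Rank 1 = most important. Assumes no tied scores."""
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    result = [0] * len(scores)
    for rank, idx in enumerate(order, start=1):
        result[idx] = rank
    return result


def spearman(scores_a, scores_b):
    """Spearman rank correlation between two importance vectors (no ties)."""
    n = len(scores_a)
    ra, rb = ranks(scores_a), ranks(scores_b)
    d2 = sum((a - b) ** 2 for a, b in zip(ra, rb))
    return 1 - 6 * d2 / (n * (n ** 2 - 1))


def top_k_overlap(scores_a, scores_b, k):
    """Fraction of the k highest-ranked features shared by both methods."""
    top_a = set(sorted(range(len(scores_a)), key=lambda i: -scores_a[i])[:k])
    top_b = set(sorted(range(len(scores_b)), key=lambda i: -scores_b[i])[:k])
    return len(top_a & top_b) / k


# Hypothetical global importances from two methods for the same model.
features = ["logP", "mol_weight", "h_bond_donors", "h_bond_acceptors", "aromatic_rings"]
shap_importance = [0.40, 0.10, 0.25, 0.05, 0.20]
lime_importance = [0.30, 0.20, 0.35, 0.02, 0.18]

rho = spearman(shap_importance, lime_importance)
overlap = top_k_overlap(shap_importance, lime_importance, k=3)
print(f"Spearman rho = {rho:.2f}, top-3 overlap = {overlap:.2f}")
```

A low correlation between methods applied to the same model would be a signal to treat the individual explanations with caution before presenting them to domain experts.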

Protocol: Transformer Model with Integrated Explainability

Objective: Implement and explain a transformer-based model for environmental risk assessment using multi-source data.

Materials:

- Environmental Data: Multivariate and spatiotemporal datasets encompassing natural and anthropogenic indicators [4]

- Parameters: Water hardness, total dissolved solids, arsenic concentrations, and other pollution indicators [4]

- Computational Framework: Transformer architecture with saliency map explainability [4]

Procedure:

- Data Integration: Fuse heterogeneous environmental data sources into a unified tensor structure with spatial and temporal dimensions.

- Transformer Implementation:

  - Configure encoder layers with multi-head self-attention mechanisms

  - Implement positional encoding for temporal sequences

  - Train model to predict environmental risk levels (I-V) from input features

- Explainability Integration:

  - Compute saliency maps using gradient-based attribution methods

  - Identify top influential indicators for each prediction

  - Generate spatial heatmaps of feature importance across geographical regions

- Validation:

  - Compare model accuracy against traditional assessment methods (e.g., DRASTIC)

  - Correlate explanation results with known environmental contamination patterns

  - Assess actionable insights for targeted environmental management

Expected Outcomes: Development of a high-accuracy (target >95%) environmental assessment model with inherent explainability capabilities, enabling transparent environmental governance decisions and identification of critical pollution indicators [4].
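The gradient-based attribution step can be sketched on a small differentiable function. The example below approximates the gradient with central differences, whereas a deep learning framework would normally obtain it through automatic differentiation. The model `toy_risk_model`, its weights, and the scaled input values are hypothetical and do not come from the cited study.

```python
import math


def toy_risk_model(x):
    """Hypothetical differentiable stand-in for a trained network.

    x = [hardness, tds, arsenic], each scaled to a comparable range.
    Returns a risk probability between 0 and 1.
    """
    z = 0.8 * x[0] + 0.5 * x[1] + 2.0 * x[2] - 1.5
    return 1.0 / (1.0 + math.exp(-z))


def saliency(model, x, eps=1e-5):
    """Absolute central-difference gradient of the output w.r.t. each input."""
    scores = []
    for i in range(len(x)):
        up = list(x)
        down = list(x)
        up[i] += eps
        down[i] -= eps
        scores.append(abs((model(up) - model(down)) / (2 * eps)))
    return scores


indicator_names = ["hardness", "tds", "arsenic"]
sample = [0.6, 0.4, 0.3]

scores = saliency(toy_risk_model, sample)
ranking = sorted(zip(indicator_names, scores), key=lambda pair: -pair[1])
for name, score in ranking:
    print(f"{name}: {score:.4f}")
```

Repeating this per monitoring location and plotting the scores by coordinate is one way to obtain the spatial heatmaps mentioned in the procedure.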

The transformation of predictive toxicology through AI necessitates parallel advances in model interpretability. While black-box models often demonstrate superior predictive performance, their utility in scientific and regulatory contexts remains limited without appropriate explainability safeguards [3] [4]. The XAI methodologies and experimental protocols outlined provide a framework for developing transparent, trustworthy AI systems for toxicological prediction. As the field progresses, the integration of explainability should not be an afterthought but a fundamental design requirement—ensuring that AI-powered toxicology remains both predictive and comprehensible [2] [3]. This approach will ultimately bridge the gap between computational power and scientific insight, enabling more informed chemical risk assessment decisions while maintaining scientific rigor and regulatory compliance.

The field of environmental chemical risk assessment is undergoing a paradigm shift, moving from traditional empirical methods towards data-rich, artificial intelligence (AI)-driven approaches. Modern toxicology has evolved from a purely observational science to a discipline characterized by the generation of vast, multifaceted datasets from sources like high-throughput screening (e.g., ToxCast) and omics technologies [2]. While machine learning (ML) models show exceptional strength in analyzing these complex datasets to identify correlations between chemical exposures and biological outcomes, their frequent "black-box" nature has been a significant barrier to their adoption in regulatory and public health decision-making [7] [2]. Explainable AI (XAI) is emerging as a critical discipline that bridges this gap, transforming opaque correlations into interpretable, causal insights. This document outlines specific application notes and experimental protocols for integrating XAI into environmental health research, providing a practical toolkit for researchers and risk assessors.

Application Notes: XAI in Action

The following applications demonstrate how XAI is currently being deployed to solve real-world problems in environmental science, moving beyond prediction to mechanistic understanding.

Decoding Chemical Toxicity and Mode of Action

Application Objective: To predict the aquatic toxicity of organic compounds and interpret the molecular features and toxic modes of action (MOA) driving the predictions.

Background: Quantitative Structure-Activity Relationship (QSAR) models have long been used for toxicity prediction, but often lack transparency. XAI addresses this by identifying which chemical substructures contribute most to toxicity [7].

Key Findings:

- Ensemble Models for Robust Prediction: An ensemble model named "AquaticTox," which combines six diverse machine and deep learning methods (GACNN, Random Forest, AdaBoost, Gradient Boosting, Support Vector Machine, and FCNet), was developed to predict aquatic toxicity. This ensemble approach demonstrated superior performance compared to any single model [7].

- Illuminating the Black Box with LIME: In a study targeting nuclear receptors, researchers used the Local Interpretable Model-agnostic Explanations (LIME) method in conjunction with Random Forest classifiers. This XAI technique successfully identified specific molecular fragments that impact key receptor targets, including the androgen receptor (AR), estrogen receptor (ER), and aryl hydrocarbon receptor (AhR) [7]. This provides crucial insight into the potential endocrine-disrupting effects of chemicals.
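The local-surrogate idea behind LIME can be sketched in a few lines of NumPy. This is a simplified illustration and not the LIME library's implementation: the kernel, the sampling scheme, and the name `lime_style_explanation` are assumptions, and the four molecular fragments and the black-box function `toy_receptor_model` are hypothetical.

```python
import numpy as np


def lime_style_explanation(predict, instance, n_samples=2000,
                           kernel_width=0.75, seed=0):
    """Fit a proximity-weighted linear surrogate around one binary fingerprint."""
    rng = np.random.default_rng(seed)
    d = len(instance)
    # Mask value 1 keeps the original bit, 0 switches the fragment off.
    masks = rng.integers(0, 2, size=(n_samples, d))
    masks[0] = 1  # always include the unperturbed instance
    perturbed = masks * instance
    preds = np.array([predict(row) for row in perturbed])
    # Proximity: exponential kernel on the fraction of fragments removed.
    distance = 1.0 - masks.mean(axis=1)
    weights = np.exp(-(distance ** 2) / kernel_width ** 2)
    # Weighted least squares with an intercept column.
    design = np.hstack([np.ones((n_samples, 1)), masks])
    sw = np.sqrt(weights)
    coef, *_ = np.linalg.lstsq(design * sw[:, None], preds * sw, rcond=None)
    return coef[1:]  # one local weight per fragment


def toy_receptor_model(bits):
    """Hypothetical black box: fragments 0 and 2 drive predicted receptor activity."""
    return 0.1 + 0.6 * bits[0] + 0.3 * bits[2]


fragment_names = ["frag_A", "frag_B", "frag_C", "frag_D"]  # illustrative labels
chemical = np.ones(4, dtype=int)  # all four fragments present

local_weights = lime_style_explanation(toy_receptor_model, chemical)
for name, w in zip(fragment_names, local_weights):
    print(f"{name}: {w:+.3f}")
```

Because the toy black box is itself linear, the surrogate recovers its coefficients. For a nonlinear model such as a Random Forest, the weights would only approximate the model's behavior near the explained chemical.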

High-Resolution Environmental Exposure Assessment

Application Objective: To predict and interpret the Water Quality Index (WQI) in a watershed, identifying the most influential physicochemical parameters.

Background: Managing water resources requires analyzing complex environmental data. ML models can predict WQI, but without explainability, the results are not actionable for targeted management [8].

Key Findings:

- Gradient Boosting for Superior Accuracy: In a case study of the Ziz Basin, Morocco, an ensemble Gradient Boosting model was trained on 26 parameters from 80 monitoring stations. It achieved high predictive performance, making it a reliable tool for assessment [8].

- SHAP for Prioritizing Contaminants: The application of SHapley Additive exPlanations (SHAP), an XAI method, to the model identified the most influential water quality parameters. This allows environmental managers to prioritize intervention efforts on the most critical contaminants, moving from simply knowing the WQI to understanding how to improve it [8].

Uncovering Complex Drivers of Eco-Environmental Quality

Application Objective: To investigate the complex, non-linear drivers of eco-environmental effects resulting from land-use transitions.

Background: Traditional spatial models struggle to capture the non-linear relationships inherent in complex ecosystems. Conversely, standard ML models often ignore geographic spatial effects [9].

Key Findings:

- A Geospatial XAI (GeoXAI) Framework: A novel GeoXAI framework was implemented in the Poyang Lake Region, China, to address this. This framework integrates machine learning with geographic data, effectively capturing both non-linear relationships and spatial effects [9].

- Identifying Land-Use Impacts: The GeoXAI model revealed that the conversion of agricultural space to forest and lake spaces was the primary factor improving eco-environmental quality. Conversely, the occupation of forest and lake spaces by agricultural and residential uses was the main driver of degradation [9]. This provides a spatially-aware, causal understanding for land-use planning.

Table 4: Summary of XAI Applications in Environmental Health Research

| Application Area | Primary XAI Technique(s) | Key Interpretable Output | Regulatory or Scientific Impact |
|---|---|---|---|
| Chemical Toxicity | LIME, SHAP, Ensemble Learning | Toxicophore identification, MOA assignment [7] | Informs chemical prioritization and safer chemical design. |
| Water Quality | SHAP | Ranking of influential physicochemical parameters [8] | Enables targeted water resource management. |
| Eco-Environmental Assessment | Geospatial XAI (GeoXAI) | Identification of key land-use transitions and their spatial impact [9] | Supports sustainable territorial spatial planning. |
| Immunotoxicity | Interpretable Algorithms (e.g., rh-SiRF) | "Metal-microbial clique signatures" linking exposures to health [7] | Advances the framework for "precision environmental health". |

Experimental Protocols

This section provides a detailed, step-by-step protocol for implementing an XAI-driven analysis, using the prediction and interpretation of chemical toxicity as a representative example.

Protocol: XAI-Driven Toxicity Prediction with ToxCast Data

1. Objective: To build a high-performance, interpretable ML model for predicting a specific toxicity endpoint (e.g., endocrine disruption) using ToxCast data and to explain the model's predictions using SHAP.

2. Research Reagent Solutions

Table 5: Essential Computational Tools and Data Sources

| Item Name | Function / Description | Source / Example |
|---|---|---|
| ToxCast Database | A comprehensive high-throughput screening database providing bioactivity data for thousands of chemicals across hundreds of assay endpoints [10]. | U.S. EPA (https://www.epa.gov/chemical-research/toxicity-forecaster-toxcasttm-data) |
| Molecular Descriptors & Fingerprints | Numerical representations of chemical structures that serve as input features for QSAR models (e.g., ECFP, MACCS keys) [10]. | RDKit, PaDEL-Descriptor |
| Machine Learning Library | Software library providing implementations of ensemble and deep learning algorithms (e.g., Gradient Boosting, Random Forest). | Scikit-learn, XGBoost |
| XAI Framework (SHAP) | A game theory-based method to explain the output of any machine learning model, providing both global and local interpretability [8]. | SHAP (SHapley Additive exPlanations) Python library |
| Chemical Structure Drawing Tool | Software to visualize chemical structures and highlight features/functional groups identified by XAI. | ChemDraw, RDKit |

3. Methodology

Step 1: Data Acquisition and Curation

- Download the latest ToxCast data (e.g., `invitrodb`).

- Select a target endpoint of interest (e.g., estrogen receptor activity).

- Extract the chemical structures (SMILES) and their corresponding activity calls (active/inactive) from the relevant assays.

- Curate the data by removing duplicates and compounds with inconclusive results.
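The curation step might look like the following minimal sketch. The record keys `smiles` and `call`, the label strings, and the rule of discarding structures with conflicting calls are all assumptions made for illustration; they are not taken from the ToxCast schema. Matching on raw strings is also naive, since the same structure can be written as different SMILES, so a real pipeline would canonicalize structures with a cheminformatics toolkit first.

```python
from collections import defaultdict


def curate(records):
    """Deduplicate activity records and drop inconclusive results.

    records: iterable of dicts with hypothetical keys
             'smiles' and 'call' ('active', 'inactive', 'inconclusive').
    Returns a dict mapping each retained SMILES string to a single call.
    """
    calls_by_smiles = defaultdict(set)
    for rec in records:
        if rec["call"] in ("active", "inactive"):
            calls_by_smiles[rec["smiles"].strip()].add(rec["call"])
    # Keep one row per structure; discard structures with conflicting calls
    # (an assumed policy, other choices such as majority vote are possible).
    return {
        smiles: next(iter(calls))
        for smiles, calls in calls_by_smiles.items()
        if len(calls) == 1
    }


# Illustrative records, not real assay results.
raw_records = [
    {"smiles": "CCO", "call": "active"},
    {"smiles": " CCO", "call": "active"},            # duplicate with stray space
    {"smiles": "c1ccccc1O", "call": "inconclusive"},  # removed
    {"smiles": "CC(=O)O", "call": "inactive"},
    {"smiles": "CCN", "call": "active"},
    {"smiles": "CCN", "call": "inactive"},            # conflicting, removed
]

curated = curate(raw_records)
print(curated)
```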

Step 2: Feature Engineering

- Using a cheminformatics toolkit (e.g., RDKit), calculate a set of molecular descriptors and generate molecular fingerprints for each chemical.

- This transforms the structural information into a numerical feature matrix suitable for machine learning.

Step 3: Model Training and Validation

- Split the dataset into training (80%) and testing (20%) sets.

- Train multiple ML models, such as Random Forest, Gradient Boosting, and a simple Neural Network.

- Optimize the hyperparameters of each model using cross-validation on the training set.

- Evaluate the final models on the held-out test set using metrics like Accuracy, AUC-ROC, and Balanced Accuracy. Select the best-performing model for explanation [10].
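The split and two of the evaluation metrics in this step can be written out explicitly, which helps clarify what each number measures. The sketch below uses only the standard library. The stratified split (preserving the active/inactive ratio), the 0.5 decision threshold, and the label and score values are assumptions for illustration; in practice scikit-learn provides equivalent utilities.

```python
import random


def stratified_split(labels, test_fraction=0.2, seed=42):
    """Return (train_indices, test_indices), preserving class proportions."""
    rng = random.Random(seed)
    train_idx, test_idx = [], []
    for cls in sorted(set(labels)):
        idx = [i for i, y in enumerate(labels) if y == cls]
        rng.shuffle(idx)
        n_test = round(len(idx) * test_fraction)
        test_idx += idx[:n_test]
        train_idx += idx[n_test:]
    return sorted(train_idx), sorted(test_idx)


def balanced_accuracy(y_true, y_pred):
    """Mean of sensitivity and specificity for binary labels (1 = active)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    pos = sum(y_true)
    neg = len(y_true) - pos
    return 0.5 * (tp / pos + tn / neg)


def auc_roc(y_true, scores):
    """Probability that a random active outranks a random inactive (ties count 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Imbalanced toy labels: 20 actives, 80 inactives.
labels = [1] * 20 + [0] * 80
train_idx, test_idx = stratified_split(labels)

# Hypothetical model scores for a small held-out set.
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
y_score = [0.9, 0.7, 0.4, 0.6, 0.3, 0.2, 0.1, 0.05]
y_pred = [1 if s >= 0.5 else 0 for s in y_score]

ba = balanced_accuracy(y_true, y_pred)
auc = auc_roc(y_true, y_score)
print(f"balanced accuracy = {ba:.3f}, AUC-ROC = {auc:.3f}")
```

Balanced accuracy is usually more informative than plain accuracy for toxicity endpoints, where inactive compounds tend to outnumber actives.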

Step 4: Model Explanation with SHAP

- Initialize a SHAP explainer object compatible with the chosen model (e.g., `TreeExplainer` for tree-based models).

- Calculate SHAP values for the test set instances. SHAP values represent the contribution of each feature (molecular descriptor) to the prediction for each individual chemical.

- Global Interpretation: Generate a SHAP summary plot to visualize the overall importance of the top molecular features across the entire dataset.

- Local Interpretation: For a specific chemical of interest (e.g., one predicted to be highly active), create a SHAP force plot or waterfall plot to illustrate how each feature pushed the model's prediction from the base value to the final output.

4. Workflow Visualization

Signaling Pathways and Mechanistic Insights

XAI helps bridge statistical correlations to testable biological hypotheses by identifying key molecular initiators in adverse outcome pathways (AOPs). A prominent example is the activation of the Aryl Hydrocarbon Receptor (AhR), a key event in multiple toxicity pathways.

AhR Signaling Pathway and XAI Interpretation

- Ligand Binding: The pathway is initiated when a planar hydrophobic chemical (e.g., a dioxin or polycyclic aromatic hydrocarbon) enters the cell and binds to the cytosolic AhR.

- Nuclear Translocation and Dimerization: The ligand-bound AhR translocates to the nucleus, sheds its chaperone proteins, and dimerizes with the AhR nuclear translocator (ARNT).

- Gene Transcription: The AhR-ARNT complex binds to xenobiotic response elements (XREs) in the DNA, leading to the upregulation of genes, including those from the cytochrome P450 family (e.g., CYP1A1).

- Immunotoxicity and Adverse Outcomes: Sustained AhR activation can disrupt immune system function, a key event that XAI models have helped uncover. By utilizing large public datasets, researchers have built QSAR models that connect AhR-related key events to reveal potential immunotoxicity mechanisms [7].

The following diagram outlines this pathway and highlights where XAI provides causal insight.

The "black-box" nature of complex artificial intelligence (AI) models presents a significant barrier to their adoption in high-stakes domains like environmental chemical risk assessment. Explainable AI (XAI) has emerged as a critical field aimed at making AI decision-making processes understandable to humans, thereby bridging the gap between powerful predictive performance and practical utility. For researchers, scientists, and drug development professionals working in environmental toxicology, XAI provides the necessary tools to understand, trust, and effectively manage AI-driven risk assessments. The core principles of XAI—interpretability, transparency, and trustworthiness—form the foundational pillars that enable this understanding [2] [11].

Interpretability refers to the ability to comprehend the AI model's mechanics and the reasoning behind its specific predictions. Transparency ensures that the model's structure, operations, and limitations are open to examination. Trustworthiness builds upon these principles by guaranteeing that the model's decisions are reliable, fair, and accountable, which is particularly crucial when informing environmental regulations or public health policies [12] [11]. The integration of these principles is transforming environmental science, moving from opaque predictions to actionable, evidence-based insights for chemical risk management.

Quantitative Comparison of XAI Techniques

The field of XAI encompasses a diverse set of techniques, each with distinct methodological approaches and applicability. The table below summarizes the primary XAI categories, their operating mechanisms, and key performance characteristics relevant to environmental data analysis.

Table 6: Overview of Prominent XAI Technique Categories

| XAI Category | Core Methodology | Key Strengths | Common Techniques | Relevant Domains |
|---|---|---|---|---|
| Attribution-Based | Generates saliency maps by tracing model predictions back to input features using gradients or activations [13]. | Class-discriminative; requires no architectural changes; provides spatial localization. | Grad-CAM, FullGrad [13] | Computer vision, Environmental image analysis [12] [13] |
| Perturbation-Based | Assesses feature importance by modifying parts of the input and observing output changes [13]. | Model-agnostic; intuitive concept; does not require model internals. | RISE [13] | General predictive modeling, Sensor data analysis |
| Transformer-Based | Leverages built-in self-attention mechanisms to trace information flow across model layers [12] [13]. | Offers global interpretability; inherently more transparent architecture. | Self-attention maps [12] [13] | Multivariate spatiotemporal data analysis [12] |
| Model-Agnostic | Explains any black-box model by treating it as an input-output function [11]. | Highly flexible; applicable to any model type (e.g., Random Forests, Neural Networks). | SHAP, LIME, PDPs [11] | Quantitative prediction tasks, Biomedical sensing, Risk assessment [2] [11] |

Evaluations of these techniques reveal critical performance trade-offs. For instance, the perturbation-based method RISE demonstrates high faithfulness in reflecting the model's reasoning but is computationally expensive, limiting its use in real-time scenarios [13]. In contrast, Grad-CAM is efficient but produces coarser explanations and is limited to specific model architectures [13]. A systematic review of quantitative prediction tasks identified SHAP as the most frequently used technique, appearing in 35 out of 44 high-quality studies, followed by LIME, Partial Dependence Plots (PDPs), and Permutation Feature Importance (PFI) [11].
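Permutation-based importance is the simplest of the perturbation ideas discussed here and can be sketched with the standard library alone. The model `toy_model`, the two-feature dataset, and the choice of negative mean squared error as the score are hypothetical; scikit-learn offers a maintained implementation for real use.

```python
import random


def neg_mse(y_true, y_pred):
    """Negative mean squared error, so that higher is better."""
    return -sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def permutation_importance(model, X, y, metric, n_repeats=10, seed=0):
    """Average drop in the metric when one feature column is shuffled."""
    rng = random.Random(seed)
    baseline = metric(y, [model(row) for row in X])
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            shuffled = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - metric(y, [model(r) for r in shuffled]))
        importances.append(sum(drops) / n_repeats)
    return importances


def toy_model(row):
    """Hypothetical model that uses feature 0 and ignores feature 1."""
    return 2.0 * row[0]


# Illustrative data: feature 1 varies but never affects the prediction.
X = [[float(i), float((i * 3) % 8)] for i in range(8)]
y = [toy_model(row) for row in X]

importances = permutation_importance(toy_model, X, y, neg_mse)
print(importances)
```

The ignored feature receives an importance of zero, while shuffling the feature the model relies on degrades the score. As with other perturbation methods, the cost scales with the number of features times the number of repeats.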

Experimental Protocols for XAI in Risk Assessment

Protocol: Transformer-Based Environmental Assessment with Saliency Map Explanation

This protocol outlines the methodology for developing a high-precision, explainable environmental assessment model, adapted from a published study that achieved 98% accuracy and a 0.891 AUC using a Transformer model integrated with multi-source data [12].

1. Research Question and Objective Formulation:

- Define the specific environmental risk assessment objective (e.g., classifying water quality levels based on chemical contaminants).

- Formulate the research questions that the XAI model is expected to address, ensuring they align with the principles of transparency and trustworthiness [14].

2. Multi-Source Data Acquisition and Curation:

- Data Collection: Gather large-scale, multi-source datasets encompassing both natural and anthropogenic environmental indicators. Key data types include:

- Chemical Properties: Total dissolved solids, water hardness, heavy metal concentrations (e.g., arsenic).

- Spatiotemporal Data: Geographic and time-series data to capture regional and temporal variations [12].

- Data Preprocessing: Clean, normalize, and fuse the heterogeneous data sources into a structured format suitable for model training. Ensure data complies with FAIR principles (Findable, Accessible, Interoperable, and Reusable) [2].

3. Model Training and Validation:

- Model Selection: Implement a Transformer model architecture, chosen for its performance and inherent self-attention mechanisms that aid interpretability [12].

- Training Regime: Train the model on the curated dataset. Employ cross-validation techniques to ensure robustness.

- Performance Validation: Quantify model performance using standard metrics:

- Accuracy: Percentage of correct predictions.

- Area Under the Curve (AUC): Measure of the model's ability to distinguish between classes. The benchmark study achieved an AUC of 0.891 [12].

4. Explainability Analysis using Saliency Maps:

- Explanation Generation: Apply saliency map techniques to the trained Transformer model. These maps highlight which input features (e.g., arsenic concentration, total dissolved solids) most strongly influenced the model's final prediction for a given sample [12] [13].

- Output Interpretation: Analyze the generated saliency maps to identify the most influential risk indicators. In the benchmark study, this process identified water hardness, total dissolved solids, and arsenic concentrations as the most critical factors for the model's decisions [12].

5. Validation and Actionable Insight Generation:

- Domain Expert Validation: Present the model's predictions and corresponding saliency explanations to environmental science and toxicology experts. This step is crucial for grounding the AI's output in established scientific knowledge and building trust [14].

- Reporting: Translate the model's outputs and explanations into actionable insights for targeted environmental management and regulatory decision-making [12].

Protocol: Model-Agnostic Explanation for Toxicology Prediction

This protocol utilizes model-agnostic XAI tools like SHAP to explain predictions from any underlying model, making it highly versatile for various data types in toxicology [2] [11].

1. Problem Framing and Model Development:

- Define a quantitative prediction task, such as forecasting chemical toxicity based on molecular descriptors and assay data [2] [11].

- Develop or select a predictive model (e.g., a complex ensemble method or deep learning model). The model itself can remain a "black box."

2. Application of SHAP for Global and Local Explanations:

- Global Explanations: Calculate SHAP values for the entire dataset to understand the overall average impact of each feature on the model's output. This provides a global view of feature importance.

- Local Explanations: Calculate SHAP values for individual predictions to understand the rationale behind a specific risk assessment for a single chemical.

3. Explanation Synthesis and Risk Communication:

- Aggregate SHAP results to rank features by their overall importance in the model's predictive process.

- Use visualizations like summary plots and dependence plots to communicate how different features, such as specific chemical properties or results from high-throughput tests (ToxCast, Tox21), influence the predicted toxicity risk [2] [11].
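The global and local views described in this protocol can be sketched on a case where SHAP values have a closed form. For a linear model with features treated as independent, the SHAP value of feature i is w_i (x_i - mean(x_i)). The descriptor names, weights, and data below are hypothetical; a real workflow would obtain the values from the SHAP library applied to the trained model.

```python
# Hypothetical descriptors, linear-model weights, and a tiny dataset.
FEATURES = ["logP", "molecular_weight", "assay_hit_rate"]
WEIGHTS = [0.8, 0.002, 1.5]
INTERCEPT = -1.0

X = [
    [2.1, 180.0, 0.10],
    [4.5, 320.0, 0.60],
    [1.0, 150.0, 0.05],
    [3.3, 410.0, 0.35],
]


def predict(x):
    """Linear toxicity score for one chemical."""
    return INTERCEPT + sum(w * xi for w, xi in zip(WEIGHTS, x))


# Feature means define the baseline (expected) prediction.
means = [sum(row[j] for row in X) / len(X) for j in range(len(FEATURES))]
base_value = predict(means)


def local_shap(x):
    """Local explanation: per-feature contribution for one chemical."""
    return [w * (xi - m) for w, xi, m in zip(WEIGHTS, x, means)]


# Global explanation: mean absolute SHAP value of each feature.
shap_matrix = [local_shap(row) for row in X]
global_importance = {
    name: sum(abs(row[j]) for row in shap_matrix) / len(shap_matrix)
    for j, name in enumerate(FEATURES)
}
global_ranking = sorted(global_importance, key=global_importance.get, reverse=True)

print("global ranking:", global_ranking)
print("local explanation for chemical 1:", dict(zip(FEATURES, shap_matrix[1])))
```

Each local explanation sums, together with the base value, to the model's prediction for that chemical, which is the local accuracy property that summary and force plots rely on.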

Visualization of XAI Workflow

The following diagram illustrates the logical workflow and key decision points for implementing XAI in an environmental chemical risk assessment pipeline.

Diagram 1: XAI workflow for chemical risk assessment.

The Scientist's Toolkit: Key Research Reagents & Solutions

The table below details essential computational tools and conceptual frameworks that serve as the "research reagents" for implementing XAI in environmental chemical risk assessment.

Table 2: Essential Research Reagents for XAI in Environmental Risk Assessment

| Tool/Reagent | Type | Primary Function | Application Context |

|---|---|---|---|

| SHAP (SHapley Additive exPlanations) [11] | Software Library | Quantifies the marginal contribution of each feature to a model's prediction for any given instance. | Explaining predictions from any model (e.g., tree-based models, neural networks) for toxicological outcomes. |

| Grad-CAM & Variants [13] | Algorithm | Generates visual explanations for decisions made by convolutional neural networks (CNNs). | Interpreting models that process environmental image data (e.g., satellite imagery, digital pathology). |

| Saliency Maps [12] [13] | Explanation Output | Highlights the most influential input features in a model's prediction in a spatially coherent manner. | Identifying key indicators (e.g., water hardness, arsenic) in multivariate environmental data [12]. |

| RASAR (Read-Across Structure-Activity Relationships) [2] | Predictive Tool | An automated read-across tool that uses chemical similarity for toxicity prediction. | Provides a transparent and interpretable baseline model for chemical risk assessment, achieving 87% balanced accuracy in five-fold cross-validation [2]. |

| FAIR Data Principles [2] | Framework | Ensures data is Findable, Accessible, Interoperable, and Reusable. | Foundation for building trustworthy and auditable AI models on high-quality, curated toxicology data. |

| Transformer Models [12] | Model Architecture | A neural network architecture using self-attention mechanisms for handling sequential and multivariate data. | Building high-precision (e.g., 98% accuracy) models for spatiotemporal environmental assessment [12]. |

The field of toxicology is undergoing a profound transformation, evolving from a purely empirical science focused on observing apical outcomes of chemical exposure to a data-rich discipline ripe for the integration of artificial intelligence (AI) [2] [15]. This shift is driven by the exponential growth in toxicological data generated from diverse sources, including legacy animal studies, open scientific literature, high-throughput screening assays (e.g., ToxCast, Tox21), sensor technologies, and multi-omics platforms [2] [15]. The resulting information landscape is characterized by the "Five V's" of big data: Volume, Variety, Velocity, Veracity, and Value [15] [16]. AI, particularly machine learning (ML) and deep learning (DL), is uniquely suited to handle and integrate these large, heterogeneous datasets that are both structured and unstructured—a key challenge in modern toxicology [2] [15]. This technological synergy is enabling more predictive, mechanism-based, and evidence-integrated approaches to chemical safety assessment, ultimately promising to better safeguard human and environmental wellbeing across diverse populations [15].

The integration of Explainable AI (XAI) is particularly critical for regulatory acceptance and scientific understanding [2] [17]. While powerful AI models often function as "black boxes," XAI methods provide transparency by elucidating the mechanisms underlying chemical toxicity predictions [18] [19]. This capability to interpret model decisions is essential for building trust among researchers, regulators, and drug development professionals [19]. As the field progresses, XAI is emerging as a cornerstone for developing reliable and transparent models aligned with recommendations from international regulatory bodies [17].

Application Notes: AI-Driven Paradigms in Toxicology

Predictive Toxicology and Chemical Risk Assessment

AI-powered predictive toxicology represents one of the most significant applications of machine learning in chemical safety assessment. By training on existing datasets of chemicals and their toxicity profiles, ML models can predict the potential toxicity of new chemical entities, accelerating chemical screening and reducing reliance on animal testing [15]. For instance, the automated read-across tool RASAR (Read-Across Structure-Activity Relationships) achieved 87% balanced accuracy across nine OECD tests and 190,000 chemicals in five-fold cross-validation, outperforming the average 81% reproducibility of six OECD animal tests [2]. This suggests that well-validated AI approaches can provide more reliable toxicity predictions than some traditional animal-based methods.
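Balanced accuracy, the metric reported for RASAR, is the mean of sensitivity and specificity. It matters for toxicity data because non-toxic chemicals often outnumber toxic ones. The following sketch uses hypothetical confusion-matrix counts, not figures from [2].

```python
def balanced_accuracy(tp, fn, tn, fp):
    """Mean of sensitivity (toxic recall) and specificity (non-toxic recall)."""
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return (sensitivity + specificity) / 2.0


# Hypothetical imbalanced test set: 20 toxic and 180 non-toxic chemicals.
tp, fn = 16, 4     # toxic chemicals predicted toxic / non-toxic
tn, fp = 162, 18   # non-toxic chemicals predicted non-toxic / toxic

plain_accuracy = (tp + tn) / (tp + fn + tn + fp)
bal_accuracy = balanced_accuracy(tp, fn, tn, fp)

print(f"plain accuracy:    {plain_accuracy:.3f}")
print(f"balanced accuracy: {bal_accuracy:.3f}")
```

With these counts, plain accuracy is dominated by the majority class, while balanced accuracy weights both classes equally.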

The application of Explainable AI (XAI) further enhances the utility of these predictive models by unraveling the contribution of specific features to toxicity outcomes. A recent study implemented XAI, primarily through the SHAP (SHapley Additive exPlanations) method, to identify optimal in-silico biomarkers for cardiac drug toxicity evaluation [18]. The analysis revealed that an Artificial Neural Network (ANN) model coupled with eleven key in-silico biomarkers achieved outstanding classification performance for Torsades de Pointes (TdP) risk, with Area Under the Curve (AUC) scores of 0.92 for high-risk, 0.83 for intermediate-risk, and 0.98 for low-risk drugs [18]. This systematic approach to biomarker selection and model interpretation advances the field of cardiac safety evaluations under the Comprehensive In-vitro Proarrhythmia Assay (CiPA) initiative.

Table 1: Performance Metrics of AI Models in Predictive Toxicology

| Application Area | AI Technique | Key Performance Metrics | Reference |

|---|---|---|---|

| General Toxicity Prediction | RASAR (Read-Across) | 87% balanced accuracy across 9 OECD tests, 190,000 chemicals | [2] |

| Cardiac Drug Toxicity (TdP Risk) | Artificial Neural Network (ANN) with XAI | AUC: 0.92 (high-risk), 0.83 (intermediate-risk), 0.98 (low-risk) | [18] |

| hERG Inhibition Prediction | XGBoost | 84.4% accuracy, AUC: 0.876 | [18] |

| Arrhythmogenicity Classification | Support Vector Machine (SVM) | AUC: 0.963, 12.8% misclassification rate | [18] |

Environmental Chemical Risk Assessment via Nontarget Screening

The combination of Nontarget Screening (NTS) analysis with Computational Toxicology (CT) represents a promising "big data" solution for identification and risk assessment of environmental pollutants in complex mixtures [20]. NTS allows for simultaneous chemical identification and quantitative reporting of tens of thousands of chemicals in environmental matrices, while computational toxicology serves as a high-throughput means of rapidly screening chemicals for toxicity [20]. This integrated approach is particularly valuable for addressing the challenges posed by Contaminants of Emerging Concern (CECs) and complex chemical mixtures in environmental samples.

Two primary strategies have been proposed for combining NTS and CT in environmental studies [20]:

- Top-down strategy: Begins with observed adverse effects and works backward to identify causative chemicals.

- Bottom-up strategy: Starts with chemical analysis data and predicts potential biological effects.

A universal framework combining NTS and CT enables more comprehensive risk assessment of chemical mixtures and prioritization of pollutants for further testing and regulation [20]. Future enhancements to this paradigm are expected to involve multistep combination approaches, multidisciplinary databases, application platforms, multilayered functionality, effect validation, and standardization [20].

Emergency and Clinical Toxicology Applications

In emergency toxicology, where rapid and precise decision-making is critical for managing acute poisonings, AI has emerged as a valuable tool to enhance diagnostic accuracy, predict clinical outcomes, and improve clinical decision support systems [21]. The development of ToxNet at the Technical University of Munich represents a significant advancement in poison prediction. This architecture comprises a literature-matching network and a graph convolutional network functioning in parallel, optimized using inductive graph attention networks [21]. Trained on data from 781,278 recorded calls, this computer-aided diagnosis system outperformed both other algorithmic models and clinicians experienced in clinical toxicology [21].

Table 2: AI Applications in Emergency Toxicology

| Clinical Application | AI Technology | Performance/Utility | Reference |

|---|---|---|---|

| Poison Identification | ToxNet (Graph Convolutional Network) | Superior to experienced clinicians in some assessments | [21] |

| Snake Species Identification | Vision Transformer | 92.2% F1-score, 96.0% species-level accuracy | [21] |

| Digoxin Toxicity Detection | Deep Learning ECG Analysis | AUC: 0.929, non-inferior to cardiac specialists | [21] |

| Methanol Poisoning Triage | LSTM, Random Forest, XGBoost | Up to 99% specificity and 100% sensitivity for intubation prediction | [21] |

Experimental Protocols

Protocol: XAI-Based Cardiac Drug Toxicity Evaluation

This protocol outlines the methodology for implementing explainable artificial intelligence to identify optimal in-silico biomarkers for cardiac drug toxicity evaluation, based on the approach described in [18].

Objective: To develop an interpretable machine learning system for predicting Torsades de Pointes (TdP) risk of drugs using in-silico biomarkers and explainable AI techniques.

Materials and Reagents:

- In-vitro patch clamp experimental data for 28 drugs from CiPA group dataset (available at: https://github.com/FDA/CiPA/)

- Computational resources for in-silico simulations (hardware/software compatible with O'Hara-Rudy human ventricular cardiomyocyte model)

- Python programming environment with libraries: scikit-learn, TensorFlow/PyTorch, SHAP, NumPy, Pandas

Procedure:

Data Generation and Preprocessing:

- Employ the Markov chain Monte Carlo method to generate a detailed dataset for 28 drugs.

- Compute twelve in-silico biomarkers for each drug: \(\left(\frac{dV_m}{dt}\right)_{repol}\), \(\left(\frac{dV_m}{dt}\right)_{max}\), \(APD_{90}\), \(APD_{50}\), \(APD_{tri}\), \(CaD_{90}\), \(CaD_{50}\), \(Ca_{tri}\), \(Ca_{Diastole}\), \(qInward\), and \(qNet\).

- Split data into training set (12 drugs) and test set (16 drugs).

Machine Learning Model Training:

- Train multiple classifier types: Artificial Neural Networks (ANN), Support Vector Machines (SVM), Random Forests (RF), XGBoost, K-Nearest Neighbors (KNN), and Radial Basis Function (RBF) networks.

- Optimize hyperparameters for each model using Grid Search.

- Perform five-fold cross-validation to assess model stability.

Explainable AI Analysis:

- Apply SHAP (SHapley Additive exPlanations) method to quantify feature importance.

- Identify optimal biomarker subsets for each classifier type.

- Analyze directionality of biomarker contributions (risk increasing vs. decreasing).

Model Validation:

- Evaluate final model performance on independent test set (16 drugs).

- Calculate AUC values for high-risk, intermediate-risk, and low-risk drug classifications.

- Compare performance across classifier types with and without biomarker optimization.

Expected Outcomes: The ANN model coupled with the eleven most influential in-silico biomarkers is expected to show the highest classification performance with AUC scores of approximately 0.92 for high-risk, 0.83 for intermediate-risk, and 0.98 for low-risk drugs [18]. SHAP analysis will reveal that optimal biomarker selection varies for different classification models, providing insights into the mechanistic basis of cardiac drug toxicity.
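The per-class AUC values in the validation step can be computed one class at a time (one-vs-rest). The sketch below uses the rank-based definition of AUC: the probability that a randomly chosen drug of the target class receives a higher score than a randomly chosen drug of any other class, with ties counted as one half. The labels and predicted probabilities are hypothetical and are not CiPA results.

```python
def auc(pos_scores, neg_scores):
    """Rank-based AUC: fraction of (positive, negative) pairs ranked correctly."""
    wins = 0.0
    for p in pos_scores:
        for q in neg_scores:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))


def one_vs_rest_auc(labels, class_probs, target):
    """AUC for separating `target` from all other risk classes."""
    pos = [p[target] for y, p in zip(labels, class_probs) if y == target]
    neg = [p[target] for y, p in zip(labels, class_probs) if y != target]
    return auc(pos, neg)


# Hypothetical test-set labels and predicted class probabilities.
labels = ["high", "high", "intermediate", "intermediate", "low", "low"]
class_probs = [
    {"high": 0.7, "intermediate": 0.2, "low": 0.1},
    {"high": 0.4, "intermediate": 0.5, "low": 0.1},
    {"high": 0.3, "intermediate": 0.5, "low": 0.2},
    {"high": 0.5, "intermediate": 0.3, "low": 0.2},
    {"high": 0.1, "intermediate": 0.2, "low": 0.7},
    {"high": 0.2, "intermediate": 0.2, "low": 0.6},
]

auc_by_class = {
    risk: one_vs_rest_auc(labels, class_probs, risk)
    for risk in ("high", "intermediate", "low")
}
for risk, value in auc_by_class.items():
    print(f"{risk}: AUC = {value:.4f}")
```

With only 16 test drugs, each AUC rests on a small number of pairs, so reporting confidence intervals alongside the point estimates is advisable.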

Protocol: AI-Driven Nontarget Screening for Environmental Risk Assessment

This protocol describes the integration of Nontarget Screening (NTS) with Computational Toxicology (CT) for identification and risk assessment of environmental pollutants, following the framework proposed in [20].

Objective: To combine high-resolution mass spectrometry-based nontarget screening with computational toxicology tools for comprehensive characterization of chemical mixtures in environmental samples.

Materials and Reagents:

- Environmental samples (water, soil, sediment, biota)

- High-resolution liquid chromatography or gas chromatography mass spectrometry system

- Chemical database resources (e.g., CompTox Chemicals Dashboard, PubChem)

- Computational toxicology platforms (e.g., OPERA, TEST, VEGA)

- ML/DL platforms for toxicity prediction

Procedure:

Sample Preparation and Nontarget Screening:

- Extract chemicals from environmental matrices using appropriate techniques (Solid Phase Extraction for water, accelerated solvent extraction for solids).

- Analyze extracts using LC-HRMS or GC-HRMS with appropriate quality controls.

- Perform peak picking, componentization, and molecular formula assignment.

Compound Identification:

- Query experimental spectra against spectral databases (e.g., NIST, MassBank).

- Utilize in silico fragmentation tools (e.g., CFM-ID, MetFrag) for unknown annotation.

- Assign identification confidence levels per the Schymanski et al. framework.

Computational Toxicology Assessment:

- Import identified compounds and their concentrations into the computational toxicology workflow.

- Apply QSAR models and read-across approaches to predict toxicity endpoints.

- Utilize deep learning models for toxicity hazard assessment.

- Estimate exposure potential based on chemical use and detection frequency.

Risk Prioritization and Mixture Assessment:

- Calculate risk quotients based on predicted toxicity and estimated exposure.

- Prioritize chemicals based on risk-based ranking.

- Assess potential mixture effects using concentration addition or independent action models.

- Validate predictions with targeted bioassays where appropriate.

Expected Outcomes: This integrated approach enables simultaneous identification and risk assessment of thousands of chemicals in complex environmental matrices [20]. The protocol supports both "top-down" (effect-based) and "bottom-up" (chemical-based) strategies for chemical prioritization, facilitating more comprehensive assessment of contaminant mixtures in environmental samples.
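The risk prioritization and mixture assessment steps can be sketched with the standard formulas: a risk quotient RQ = MEC / PNEC per chemical, a summed RQ under concentration addition, and a combined effect of 1 - Π(1 - E_i) under independent action. The chemical names, concentrations, thresholds, and effect fractions below are hypothetical. In this workflow the PNEC values would be derived from predicted toxicity and the MEC values from the NTS quantification.

```python
# Hypothetical measured environmental concentrations (MEC) and predicted
# no-effect concentrations (PNEC), both in the same units (e.g. ug/L).
chemicals = {
    "chem_A": {"mec": 0.5, "pnec": 2.0},
    "chem_B": {"mec": 1.2, "pnec": 0.8},
    "chem_C": {"mec": 0.05, "pnec": 1.0},
}

# Risk quotient per chemical; RQ >= 1 flags a potential concern.
risk_quotients = {
    name: data["mec"] / data["pnec"] for name, data in chemicals.items()
}

# Risk-based ranking, highest quotient first.
priority = sorted(risk_quotients, key=risk_quotients.get, reverse=True)

# Concentration addition: mixture risk quotient is the sum of individual RQs.
rq_mixture = sum(risk_quotients.values())


def independent_action(effects):
    """Combined effect fraction for components acting independently."""
    unaffected = 1.0
    for effect in effects:
        unaffected *= 1.0 - effect
    return 1.0 - unaffected


# Hypothetical single-chemical effect fractions at the measured concentrations.
mixture_effect = independent_action([0.10, 0.20, 0.05])

print("priority order:", priority)
print(f"mixture RQ (concentration addition): {rq_mixture:.2f}")
print(f"mixture effect (independent action): {mixture_effect:.3f}")
```

Concentration addition is usually applied to similarly acting chemicals and independent action to dissimilarly acting ones. Which model fits a given mixture is a judgment the analyst has to make.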

Visualization: AI Workflows in Toxicology

XAI-Based Cardiac Toxicity Screening Workflow

Integrated NTS-CT Environmental Assessment Framework

Table 3: Key Research Reagent Solutions for AI-Enhanced Toxicology

| Resource Category | Specific Tool/Platform | Function in AI Toxicology | Application Example |

|---|---|---|---|

| Chemical Databases | CompTox Chemicals Dashboard | Provides curated chemical structures and properties for model training | Chemical identifier standardization for QSAR modeling [20] |

| Toxicity Data Repositories | ToxCast/Tox21 Database | Supplies high-throughput screening data for machine learning | Training set for predictive toxicology models [2] [15] |

| Computational Toxicology Platforms | OPERA, VEGA, TEST | Offers open-source QSAR models for toxicity prediction | Rapid hazard assessment for chemical prioritization [20] |

| XAI Libraries | SHAP (SHapley Additive exPlanations) | Interprets complex ML model predictions | Feature importance analysis in cardiac toxicity models [22] [18] |

| Workflow Management Systems | KNIME, Pipeline Pilot | Enables construction of reproducible analysis workflows | Integration of NTS and CT data streams [20] |

| Cardiac Cell Models | O'Hara-Rudy (ORd) Human Ventricular Model | Provides in-silico biomarkers for proarrhythmia risk | Simulation of drug effects on action potential [18] |

| Mass Spectrometry Tools | Various LC/GC-HRMS Platforms | Enables nontarget screening of complex mixtures | Identification of unknown environmental contaminants [20] |

| Deep Learning Frameworks | TensorFlow, PyTorch | Facilitates development of custom neural network models | Toxicity prediction from chemical structures [15] |

XAI in Action: Key Techniques and Real-World Applications for Chemical Safety

The adoption of artificial intelligence (AI) and machine learning (ML) in environmental chemical risk assessment has introduced a critical challenge: the "black-box" nature of complex models. As these models are increasingly used to predict chemical toxicity, environmental fate, and human health impacts, their lack of transparency poses significant limitations for regulatory acceptance and scientific trust. Explainable AI (XAI) has emerged as an essential solution to this problem, providing techniques that elucidate the underlying decision-making processes of ML models. In high-stakes fields like chemical risk assessment, where model predictions can influence regulatory decisions affecting public health and environmental policy, understanding how models arrive at their predictions is not merely advantageous—it is fundamental to scientific validity and ethical implementation [7] [2].

The transformation of toxicology from a purely empirical science to a data-rich discipline has created an environment where AI methods are uniquely suited to handle and integrate large, diverse data volumes [2]. However, this transition also highlights the tension between model complexity and interpretability. The same tension has been documented in other high-stakes domains; in cybersecurity, for example, "The lack of interpretability in AI-based intrusion detection systems poses a critical barrier to their adoption in forensic cybersecurity, which demands high levels of reliability and verifiable evidence" [23]. This challenge is equally pertinent to environmental health sciences, where the need for transparent, auditable, and trustworthy AI systems is paramount for regulatory decision-making and public health protection [7].

Fundamental XAI Concepts and Terminology

Core Principles of Explainable AI

Explainable AI operates on several foundational principles that distinguish it from conventional "black-box" modeling approaches. Interpretability refers to the ability to comprehend the mechanistic pathway from input data to model prediction, enabling researchers to understand which features the model uses and how it combines them to generate outputs. Fidelity measures how accurately an explanation captures the true reasoning process of the underlying model, not just correlative relationships in the data. Stability ensures that similar instances receive consistent explanations, preventing contradictory interpretations for nearly identical inputs. Causality represents the aspiration to move beyond correlative associations to identify cause-effect relationships, though this remains challenging in practice [23].

The distinction between global and local explainability represents another crucial concept in XAI. Global explainability aims to provide a comprehensive understanding of overall model behavior across the entire feature space, answering questions about which features are most important in general and how they interact. In contrast, local explainability focuses on individual predictions, clarifying why a specific chemical was classified as toxic or why a particular exposure level was deemed hazardous. As evidenced in environmental health applications, "XAI helps to understand 'black box' models, improving transparency in model predictions, which is essential for their applications in regulatory and public health decision-making" [7].

The XAI Taxonomy: Model-Specific vs. Model-Agnostic Approaches

XAI techniques can be categorized based on their relationship to the underlying ML model. Model-specific methods are intrinsically tied to particular algorithm architectures and leverage their internal structures to generate explanations. Examples include feature importance measures in tree-based models like Random Forest or attention mechanisms in deep learning architectures. These approaches typically offer high fidelity but limited flexibility across different modeling paradigms.

Model-agnostic methods constitute the majority of contemporary XAI techniques and can be applied to virtually any ML model. These methods treat the model as a true black box, analyzing input-output relationships without knowledge of the internal architecture. As demonstrated across multiple domains, "SHAP and LIME have gained prominence for offering both global and local interpretability" regardless of the underlying model complexity [23]. This flexibility makes model-agnostic methods particularly valuable in environmental chemical risk assessment, where researchers often experiment with multiple modeling approaches to address complex questions about chemical toxicity and environmental fate.

Prominent XAI Techniques: Theoretical Foundations

SHAP (SHapley Additive exPlanations)

SHAP represents one of the most mathematically rigorous approaches to explainable AI, rooted in cooperative game theory and specifically the concept of Shapley values. The fundamental principle behind SHAP involves calculating the marginal contribution of each feature to the final prediction by considering all possible combinations of features. This approach satisfies key mathematical properties including local accuracy (the explanation model matches the original model for the specific instance being explained), missingness (features not present in the instance have no impact), and consistency (if a model changes so that a feature's contribution increases, the SHAP value should not decrease) [23].

The mathematical foundation of SHAP makes it particularly valuable for environmental health applications where understanding feature interactions is crucial. For example, when assessing the toxicity of chemical mixtures, SHAP can help quantify the individual contribution of each chemical component while accounting for synergistic or antagonistic effects. Recent research has demonstrated that "SHAP, grounded in cooperative game theory, assigns consistent and accurate attribution values to features," making it especially suitable for high-stakes applications like chemical risk assessment [23]. In practical terms, SHAP explanations provide both global insights into overall model behavior and local explanations for individual predictions, creating a comprehensive interpretability framework for environmental health researchers.
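The "all possible combinations of features" idea can be made concrete by computing exact Shapley values for a small toy game. The value function below is a hypothetical mixture-toxicity score with one synergistic interaction; it is not a model from the cited studies. Exact enumeration is only feasible for a handful of features, which is why SHAP relies on approximations or model-specific shortcuts in practice.

```python
from itertools import combinations
from math import factorial


def shapley_values(value_fn, players):
    """Exact Shapley values by enumerating every coalition of the other players."""
    n = len(players)
    phi = {}
    for player in players:
        others = [q for q in players if q != player]
        total = 0.0
        for size in range(n):
            # Weight of a coalition of this size in the Shapley average.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for coalition in combinations(others, size):
                members = frozenset(coalition)
                marginal = value_fn(members | {player}) - value_fn(members)
                total += weight * marginal
        phi[player] = total
    return phi


def mixture_toxicity(components):
    """Hypothetical score: additive effects plus a synergy between A and B."""
    additive = {"A": 1.0, "B": 2.0, "C": 0.5}
    score = sum(additive[c] for c in components)
    if "A" in components and "B" in components:
        score += 1.0  # synergistic interaction term
    return score


phi = shapley_values(mixture_toxicity, ["A", "B", "C"])
for component, value in phi.items():
    print(f"{component}: {value:.3f}")
```

The synergy term is split equally between A and B, and the attributions sum to the score of the full mixture minus the score of the empty mixture.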

LIME (Local Interpretable Model-Agnostic Explanations)

LIME operates on a fundamentally different principle from SHAP, focusing on creating local surrogate models to explain individual predictions. The core intuition behind LIME is that complex global models may be too difficult to interpret overall, but their behavior in the immediate vicinity of a specific instance can be approximated by a simpler, interpretable model such as linear regression or decision trees. LIME generates perturbations of the instance being explained, observes how the black-box model behaves for these perturbed instances, and then weights these observations by their proximity to the original instance to fit an interpretable surrogate model [7] [23].

In environmental chemical risk assessment, LIME has proven particularly valuable for investigating unexpected model predictions. For instance, when a QSAR model identifies a seemingly benign chemical as highly toxic, LIME can help identify which specific molecular fragments or descriptors drove this classification. Research has shown that "utilizing the Local Interpretable Model-agnostic Explanations (LIME) method in conjunction with Random Forest (RF) classifier models, Rosa et al. identified molecular fragments impacting five key nuclear receptor targets: androgen receptor (AR), estrogen receptor (ER), aryl hydrocarbon receptor (AhR), aromatase receptor (ARO), and peroxisome proliferator-activated receptors (PPAR)" [7]. This capability to identify specific structural features associated with toxicity mechanisms makes LIME an invaluable tool for chemical safety assessment.
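The perturb, weight, and fit procedure behind LIME can be sketched from scratch with NumPy. This is an illustration of the idea under simplifying assumptions (Gaussian perturbations, an exponential proximity kernel, and unregularized weighted least squares), not the implementation in the LIME package. The black-box function and descriptor values are hypothetical.

```python
import numpy as np


def black_box(X):
    """Hypothetical nonlinear toxicity score for rows of descriptor values."""
    z = 2.0 * X[:, 0] - X[:, 1] + 0.5 * X[:, 0] * X[:, 2]
    return 1.0 / (1.0 + np.exp(-z))


def lime_explain(predict_fn, instance, n_samples=2000, scale=0.1,
                 kernel_width=0.25, seed=0):
    """Fit a proximity-weighted linear surrogate around one instance."""
    rng = np.random.default_rng(seed)
    # 1. Perturb the instance with small Gaussian noise.
    perturbed = instance + rng.normal(0.0, scale, size=(n_samples, instance.size))
    # 2. Query the black-box model on the perturbed samples.
    preds = predict_fn(perturbed)
    # 3. Weight samples by proximity to the original instance.
    dist = np.linalg.norm(perturbed - instance, axis=1)
    weights = np.exp(-(dist ** 2) / kernel_width ** 2)
    # 4. Weighted least squares on centered features plus an intercept.
    design = np.hstack([np.ones((n_samples, 1)), perturbed - instance])
    sqrt_w = np.sqrt(weights)
    coef, *_ = np.linalg.lstsq(design * sqrt_w[:, None], preds * sqrt_w, rcond=None)
    return coef[0], coef[1:]  # local intercept, local feature effects


chemical = np.array([0.4, 0.2, 1.0])  # hypothetical descriptor vector
intercept, local_effects = lime_explain(black_box, chemical)
print("local prediction estimate:", round(float(intercept), 4))
print("local feature effects:", np.round(local_effects, 4))
```

The explanation depends on the perturbation scale, kernel width, and random seed. That sensitivity is the source of the instability noted for LIME, so it is worth repeating the fit with different seeds before interpreting the coefficients.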

Additional XAI Techniques

Beyond SHAP and LIME, several other XAI techniques show promise for environmental health applications. Partial Dependence Plots (PDPs) visualize the relationship between a feature and the predicted outcome while marginalizing over the values of all other features, showing how the model's prediction changes as a specific feature varies. Individual Conditional Expectation (ICE) plots extend PDPs by showing the relationship for individual instances, revealing heterogeneity in model behavior. Permutation Feature Importance measures the decrease in model performance when a single feature is randomly shuffled, indicating which features the model relies on most heavily for accurate predictions [24].

Each technique offers distinct advantages and limitations, suggesting that a diversified approach to explainability may be most appropriate for comprehensive chemical risk assessment. As demonstrated in healthcare applications, the combination of multiple XAI techniques can provide complementary insights that enhance overall understanding and trust in model predictions [24].
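The three techniques above are simple enough to sketch directly. The example assumes a hypothetical model that ignores its third feature, along with synthetic data, so that the expected behavior is easy to check. Libraries such as scikit-learn provide production implementations of these methods.

```python
import numpy as np


def model(X):
    """Hypothetical fitted model: uses features 0 and 1, ignores feature 2."""
    return 3.0 * X[:, 0] + X[:, 1] ** 2


def ice_curves(predict_fn, X, feature, grid):
    """One curve per instance: prediction as `feature` sweeps over `grid`."""
    curves = np.empty((X.shape[0], len(grid)))
    for k, value in enumerate(grid):
        X_mod = X.copy()
        X_mod[:, feature] = value
        curves[:, k] = predict_fn(X_mod)
    return curves


def partial_dependence(predict_fn, X, feature, grid):
    """PDP is the average of the ICE curves over all instances."""
    return ice_curves(predict_fn, X, feature, grid).mean(axis=0)


def permutation_importance(predict_fn, X, y, feature, rng, n_repeats=10):
    """Mean increase in squared error after shuffling one feature column."""
    baseline = np.mean((predict_fn(X) - y) ** 2)
    increases = []
    for _ in range(n_repeats):
        X_perm = X.copy()
        X_perm[:, feature] = rng.permutation(X_perm[:, feature])
        increases.append(np.mean((predict_fn(X_perm) - y) ** 2) - baseline)
    return float(np.mean(increases))


rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))  # synthetic descriptor matrix
y = model(X)                   # targets the model reproduces exactly

grid = np.linspace(-2.0, 2.0, 9)
pdp_feature0 = partial_dependence(model, X, 0, grid)
importances = [permutation_importance(model, X, y, j, rng) for j in range(3)]

print("PDP for feature 0:", np.round(pdp_feature0, 3))
print("permutation importances:", np.round(importances, 3))
```

Shuffling the ignored feature leaves the error unchanged, so its importance is zero, while the PDP for feature 0 is a straight line whose slope equals the model coefficient.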

Quantitative Comparison of XAI Techniques

Table 1: Comparative Analysis of Prominent XAI Techniques

| Technique | Theoretical Foundation | Explanation Scope | Key Advantages | Documented Limitations | Environmental Health Applications |

|---|---|---|---|---|---|

| SHAP | Cooperative game theory (Shapley values) | Global & Local | Mathematical rigor; consistency guarantees; unified framework | Computational intensity; feature-independence assumption in common approximations | Toxicity prediction; chemical mixture assessment; exposure modeling [7] [23] |

| LIME | Local surrogate modeling | Local | Intuitive explanations; model-agnostic; computationally efficient | Instability to sampling variations; local fidelity concerns | Molecular fragment identification; structural alert discovery [7] [23] |

| Permutation Feature Importance | Model performance degradation | Global | Simple implementation; intuitive interpretation | Can be biased toward correlated features; no local explanations | Feature selection in QSAR models; biomarker identification [24] |

| Partial Dependence Plots | Marginal effect estimation | Global | Visual interpretability; captures non-linear relationships | Assumption of feature independence; ecological fallacy | Exposure-response relationship visualization [25] |

Table 2: Performance Metrics for XAI Techniques in Research Studies

| Study Context | ML Model | XAI Technique | Key Performance Metrics | Interpretability Insights |

|---|---|---|---|---|

| Intrusion Detection [23] | XGBoost | SHAP & LIME | Explanation stability: SHAP > LIME; Fidelity: SHAP (0.98), LIME (0.94) | SHAP provided more stable and globally coherent explanations |

| Chemical Hazard Prediction [25] | XGBoost, Random Forest | SHAP, ICE | ROC-AUC: 0.768 (toxicity), 0.917 (reactivity); Key descriptors: MIC4, ATSC2i | Identified critical molecular descriptors for hazard classification |

| Depression Risk Assessment [26] | Random Forest | SHAP | AUC: 0.967; F1 score: 0.91 | Serum cadmium and cesium identified as top risk predictors |

| Osteoporosis Risk [24] | XGBoost | SHAP, LIME, Permutation | Accuracy: 91%; Precision: 0.92; Recall: 0.91 | Age confirmed as primary risk factor, validating clinical knowledge |

XAI Experimental Protocols in Chemical Risk Assessment

Protocol 1: SHAP for Chemical Toxicity Prediction

Objective: To identify molecular features driving toxicity predictions in quantitative structure-activity relationship (QSAR) models and generate mechanistic hypotheses for experimental validation.

Materials and Reagents:

- Chemical Dataset: Curated toxicity data with molecular descriptors (e.g., PubChem, Tox21)

- Computational Environment: Python 3.8+ with SHAP package (v0.41.0)

- ML Models: XGBoost, Random Forest, or Deep Neural Networks

- Descriptor Software: RDKit (2022.09.5+) for molecular feature calculation

Procedure:

- Data Preparation: Curate a dataset of chemicals with reliable toxicity endpoints. Calculate molecular descriptors (e.g., topological, electronic, and geometrical descriptors) using cheminformatics software.

- Model Training: Split data into training (70%), validation (15%), and test sets (15%). Train multiple ML models using cross-validation and select the best performer based on ROC-AUC and precision-recall metrics.

- SHAP Explanation Generation:

- For global explanations: Compute SHAP values for the entire test set using shap.TreeExplainer() for tree-based models or shap.KernelExplainer() for other models.

- For local explanations: Select specific chemicals of interest and compute their SHAP values using the same explainer.

- Result Interpretation:

- Generate summary plots to visualize feature importance across the entire dataset.

- Create force plots for individual predictions to illustrate how features combine to yield the final prediction.

- Analyze dependence plots to understand interaction effects between key molecular descriptors.

- Validation: Compare identified key features with known toxicophores and structural alerts from scientific literature. Design experimental studies to test hypotheses generated from SHAP analysis.

This protocol has been successfully applied in recent research where "SHAP and ICE analyses identified key molecular descriptors such as MIC4, ATSC2i, ATS4i and ETAdEpsilonC as critical determinants for toxicity, flammability, reactivity, and RW respectively" [25].
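The 70/15/15 split in the Model Training step can be sketched with the standard library alone. The function name and seed are illustrative assumptions. For imbalanced toxicity endpoints, a stratified split that preserves the class ratio in each subset would usually be preferable.

```python
import random


def split_indices(n_samples, train_frac=0.70, val_frac=0.15, seed=42):
    """Shuffle sample indices and cut them into train/validation/test parts."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # fixed seed for reproducibility
    n_train = int(round(train_frac * n_samples))
    n_val = int(round(val_frac * n_samples))
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]      # remainder goes to the test set
    return train, val, test


train_idx, val_idx, test_idx = split_indices(1000)
print(len(train_idx), len(val_idx), len(test_idx))
```

SHAP values for the global explanation would then be computed on the rows selected by the test indices only, so that the explanations describe model behavior on unseen chemicals.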

Protocol 2: LIME for Chemical Mixture Toxicity Assessment

Objective: To interpret model predictions of mixture toxicity and identify contributing components in complex chemical mixtures.

Materials and Reagents:

- Mixture Toxicity Data: Experimental data on binary or complex mixture toxicities

- Component Characterization: Pure component chemical descriptors and concentrations

- Computational Resources: Python with LIME package (v0.2.0.1)

- Visualization Tools: Matplotlib (3.7.0+) for explanation visualization

Procedure:

- Data Representation: Develop feature representations that capture both chemical properties and relative proportions in mixtures.

- Model Development: Train ensemble models (Random Forest or Gradient Boosting) to predict mixture toxicity from component features and concentrations.

- LIME Implementation:

- For each mixture prediction of interest, generate perturbed samples by slightly varying component concentrations and descriptors.

- Obtain predictions from the black-box model for these perturbed samples.

- Fit a weighted linear model to the perturbed samples and their predictions.

- Extract coefficients from the local surrogate model as feature importance scores.

- Analysis:

- Identify which mixture components drive toxicity predictions for specific mixtures.

- Detect non-linear concentration-response relationships through multiple local explanations.

- Compare explanations across similar mixtures to identify consistent patterns.

- Mechanistic Hypothesis Generation: Use explanation results to propose molecular mechanisms of mixture toxicity for experimental validation.

This approach aligns with recent work that developed a "linear QSAR model to predict time dependent toxicities of binary mixtures of five antibiotics and found the number of hydrogen-bonded donor and positively charged pharmacophore point pairs at a topological distance of four bonds will significantly influence such mixture toxicity" [7].

Diagram 1: XAI Workflow for Chemical Risk Assessment - This diagram illustrates the comprehensive pipeline for applying explainable AI techniques in chemical risk assessment, from data preparation through experimental validation.

Table 3: Essential Research Reagents and Computational Resources for XAI in Chemical Risk Assessment

| Category | Item | Specifications | Application in XAI Workflows |
|---|---|---|---|
| Chemical Data Resources | Tox21 Database | ~10,000 chemicals; 70+ assay endpoints | Training and validating ML models for toxicity prediction [7] |
| Chemical Data Resources | PubChem Bioassay | 1,000,000+ compounds; 200+ bioassays | Feature generation and model training data [2] |
| Software Libraries | SHAP Python Package | Version 0.41.0+ | Unified framework for explaining model predictions [23] [25] |
| Software Libraries | LIME Python Package | Version 0.2.0.1+ | Local interpretable model-agnostic explanations [23] |
| Software Libraries | RDKit Cheminformatics | 2022.09.5+ release | Molecular descriptor calculation and feature engineering [25] |
| Computational Infrastructure | High-Performance Computing Cluster | 64+ GB RAM; 16+ CPU cores | Handling large-scale chemical datasets and complex models [2] |
| Computational Infrastructure | GPU Acceleration | NVIDIA A100 or V100 | Accelerating deep learning models and SHAP computations [23] |
| Reference Materials | OECD QSAR Toolbox | Version 4.5+ | Regulatory framework integration and analog identification [27] |
| Reference Materials | Chemical Regulatory Lists | EPA DSSTox; REACH | Benchmarking and validation against known hazardous chemicals [25] |

Implementation Framework and Best Practices

Strategic Selection of XAI Techniques

Choosing appropriate XAI techniques requires careful consideration of research objectives, model complexity, and audience needs. For regulatory submissions where auditability and reproducibility are paramount, SHAP provides mathematically rigorous explanations with consistency guarantees. For exploratory research aimed at hypothesis generation, LIME offers intuitive, case-specific insights that can guide experimental design. For model debugging and feature selection, Permutation Feature Importance efficiently identifies data leakage and redundant features [23] [25].
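As a rough illustration of how Permutation Feature Importance flags redundant features, the sketch below shuffles one descriptor column at a time and records the resulting increase in mean squared error. The synthetic data, the `model` function, and its coefficients are assumptions made for demonstration. In practice a fitted estimator and held-out data would take their place.

```python
import numpy as np

def permutation_importance(predict_fn, X, y, n_repeats=10, seed=0):
    """Mean increase in MSE when each feature column is shuffled."""
    rng = np.random.default_rng(seed)
    base_mse = np.mean((predict_fn(X) - y) ** 2)
    importances = np.zeros(X.shape[1])
    for j in range(X.shape[1]):
        increases = []
        for _ in range(n_repeats):
            X_perm = X.copy()
            # Break the link between feature j and the target.
            X_perm[:, j] = rng.permutation(X_perm[:, j])
            perm_mse = np.mean((predict_fn(X_perm) - y) ** 2)
            increases.append(perm_mse - base_mse)
        importances[j] = np.mean(increases)
    return importances

# Synthetic example: the response depends on descriptors 0 and 1 only.
data_rng = np.random.default_rng(42)
X = data_rng.normal(size=(500, 3))
y = 3.0 * X[:, 0] + 1.0 * X[:, 1]

def model(X):
    """Hypothetical fitted model that ignores descriptor 2."""
    return 3.0 * X[:, 0] + 1.0 * X[:, 1]

imp = permutation_importance(model, X, y)
print(imp)
```

A descriptor whose importance stays near zero under shuffling contributes nothing to the predictions and is a candidate for removal. An implausibly dominant descriptor, by contrast, is worth checking for leakage of the endpoint into the features.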

The complementary nature of these techniques suggests that a hybrid approach often yields the most comprehensive insights. Recent studies in cybersecurity and healthcare demonstrate that "the results confirm the complementary strengths of SHAP and LIME, supporting their combined use in building transparent, auditable, and trustworthy AI systems" [23]. This principle extends directly to chemical risk assessment, where different questions may require different explanatory approaches.

Addressing XAI Limitations in Chemical Applications

Despite their utility, XAI techniques present important limitations that researchers must acknowledge and address. Explanation stability remains a concern, particularly for LIME, where different random seeds can yield meaningfully different explanations. Feature correlation can distort importance measures in both SHAP and permutation methods. Cognitive overload may result from presenting too many explanations without strategic prioritization [23].

To mitigate these limitations, validate explanations by consulting domain experts and verifying them experimentally. Employ multiple techniques to triangulate findings and identify robust patterns. Incorporate domain knowledge constraints to filter out chemically implausible explanations. Recent research emphasizes that "prompt engineering and multi-step reasoning" can enhance the relevance and actionability of AI-generated explanations in scientific domains [27].
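One simple way to quantify explanation stability is to repeat an explanation under different random seeds and measure how much the top-ranked features overlap. The sketch below uses the mean pairwise Jaccard index of the top-k feature sets. The descriptor names and importance values are invented for illustration and do not come from any study cited here.

```python
from itertools import combinations

def top_k_features(importances, k):
    """Names of the k features with the largest absolute importance."""
    ranked = sorted(importances, key=lambda name: abs(importances[name]),
                    reverse=True)
    return set(ranked[:k])

def mean_jaccard_stability(runs, k=3):
    """Average pairwise Jaccard overlap of top-k feature sets across runs."""
    tops = [top_k_features(run, k) for run in runs]
    scores = [len(a & b) / len(a | b) for a, b in combinations(tops, 2)]
    return sum(scores) / len(scores)

# Hypothetical explanations of one prediction under three random seeds.
runs = [
    {"logP": 0.42, "TPSA": -0.31, "MolWt": 0.12, "HBD": 0.05},
    {"logP": 0.40, "TPSA": -0.28, "MolWt": 0.03, "HBD": 0.10},
    {"logP": 0.45, "TPSA": -0.30, "MolWt": 0.11, "HBD": 0.02},
]
stability = mean_jaccard_stability(runs, k=3)
print(f"Top-3 stability: {stability:.3f}")
```

A score of 1.0 means every run selected the same top-k features. Lower scores suggest that conclusions drawn from a single explanation should be treated with caution or triangulated with a second method.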

Future Directions and Emerging Applications

The integration of XAI with emerging AI paradigms represents the next frontier in chemical risk assessment. Generative AI methods show promise for creating synthetic chemical data that maintains privacy while enabling model explanation. Large Language Models (LLMs) are being developed as "dynamic interfaces to guide decision-making in complex data environments" for hazard assessment [27]. Federated learning approaches enable model explanation across distributed datasets without compromising data sovereignty.

The concept of causal explainability represents perhaps the most significant future direction, moving beyond correlative associations to identify causal mechanisms linking chemical structures to biological outcomes. As the field progresses, "AI should not just replicate human skills at scale" but rather "find new ways to do so" that enhance our fundamental understanding of chemical-biological interactions [2]. This perspective suggests that XAI will evolve from simply explaining predictions to actively driving scientific discovery in environmental health sciences.

The ethical implementation of XAI in regulatory contexts will require continued attention to documentation standards, bias mitigation, and validation frameworks. Recent proposals include "a checklist of ethical guidelines in data collection, data analysis, and data sharing in the AI era" with specific checkpoints such as "clear labeling of simulated or augmented data, proper documentation of model architecture and hyperparameter optimization to track bias, and implementation of XAI techniques to improve interpretability" [7]. As these frameworks mature, XAI promises to transform chemical risk assessment from a predominantly empirical science to a more predictive and mechanistic discipline capable of addressing the challenges posed by the thousands of new chemicals introduced into commerce each year.

The field of toxicology has progressively shifted from a purely empirical science to a data-rich discipline, creating an urgent need for innovative solutions that can handle large, diverse data volumes [2]. Quantitative Structure-Activity Relationship (QSAR) and Quantitative Structure-Property Relationship (QSPR) models have long served as crucial tools for predicting compound bioactivity and toxicity based on structural information [7]. However, these models have traditionally operated as "black boxes," providing predictions without mechanistic explainability, which has limited their acceptance in regulatory decision-making [28].

Explainable Artificial Intelligence (XAI) has emerged as a transformative approach to address this opacity, aiming to provide understandable explanations for model predictions and thereby increasing trust and transparency [7] [2]. The implementation of XAI in environmental chemical risk assessment represents a paradigm shift, moving from purely predictive models to interpretable systems that can elucidate the underlying structural features and mechanisms driving chemical toxicity and bioactivity [7] [29]. This transparency is particularly crucial for regulatory applications and public health decision-making, where understanding the "why" behind a prediction is as important as the prediction itself [7].

XAI Methodologies and Experimental Protocols

Core XAI Techniques for QSAR/QSPR

SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) represent the most widely adopted XAI methods in chemical informatics [29]. SHAP operates on game theory principles to allocate feature importance, providing both local and global explanations, while LIME creates locally faithful interpretable models around specific predictions [29]. These methods help researchers identify molecular fragments and structural features that significantly impact biological activity and toxicity endpoints [7].
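To make the game-theoretic allocation concrete, the following sketch computes exact Shapley values for a three-feature toy model by enumerating every coalition of features. It is not the SHAP library, which relies on faster approximations and model-specific algorithms. The toy model, its coefficients, and the all-zero baseline are assumptions for illustration.

```python
from itertools import combinations
from math import factorial

def shapley_values(value_fn, x, baseline):
    """Exact Shapley values of value_fn at instance x.

    Features outside a coalition are replaced by their baseline value.
    The cost grows as 2^n, so this only suits a handful of features.
    """
    n = len(x)

    def v(coalition):
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return value_fn(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                # Marginal contribution of feature i to coalition s.
                phi[i] += weight * (v(s | {i}) - v(s))
    return phi

def toy_model(z):
    """Hypothetical toxicity score with a logP x charge interaction."""
    logp, hbd, charge = z
    return 1.0 * logp + 0.5 * hbd + 2.0 * logp * charge

x = [2.0, 1.0, 1.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, x, baseline)
print(phi)
```

The attributions sum to the difference between the prediction at `x` and the prediction at the baseline, which is the additivity property that makes Shapley-based explanations attractive for auditing. The interaction term is shared equally between the two features that take part in it.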

The integration of these XAI methods with large language models (LLMs) through frameworks like XpertAI represents a cutting-edge advancement, combining the strengths of XAI and natural language generation to produce scientifically accurate, interpretable explanations [29]. This synergy enables the automatic generation of natural language explanations that connect structural features to target properties based on both model analysis and scientific literature evidence [29].

Protocol: Implementing XAI-Enhanced QSAR Modeling

Objective: To develop an interpretable QSAR model for predicting chemical toxicity using XAI methodologies.

Materials and Software Requirements:

- Python 3.8+

- RDKit for chemical representation

- Scikit-learn for machine learning algorithms

- SHAP and LIME libraries for explainability

- XGBoost for gradient boosting

- Molecular datasets with toxicity endpoints

Step-by-Step Procedure:

Data Preparation and Representation

- Curate chemical structures and associated toxicity data from reliable sources (e.g., EPA ToxCast, ChEMBL)

- Compute molecular descriptors (e.g., topological, electronic, geometrical) or generate fingerprint representations (e.g., MACCS keys, Morgan fingerprints)

- Perform data splitting (70% training, 30% testing) with appropriate stratification to maintain activity class distribution
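The stratified 70/30 split in the last step can be sketched with the standard library alone. The labels below are synthetic (20 toxic, 80 non-toxic) and only illustrate how class proportions are preserved. In a real workflow, scikit-learn's `train_test_split` with its `stratify` argument offers the same behavior.

```python
import random
from collections import defaultdict

def stratified_split(labels, test_fraction=0.3, seed=0):
    """Return (train_idx, test_idx) that preserve class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    train_idx, test_idx = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_test = round(len(indices) * test_fraction)
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return sorted(train_idx), sorted(test_idx)

# Synthetic, imbalanced activity labels: 1 = toxic, 0 = non-toxic.
labels = [1] * 20 + [0] * 80
train_idx, test_idx = stratified_split(labels)
toxic_in_test = sum(labels[i] for i in test_idx)
print(len(train_idx), len(test_idx), toxic_in_test)
```

Splitting within each class keeps the minority (toxic) class represented in both subsets, which matters when the evaluation metrics are sensitive to class imbalance.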

Model Training and Validation

- Train multiple machine learning algorithms (Random Forest, XGBoost, Neural Networks) using 5-fold cross-validation

- Optimize hyperparameters through grid search or Bayesian optimization

- Evaluate model performance using metrics including accuracy, precision, recall, F1-score, and AUC-ROC

- Apply external validation using hold-out test sets to assess generalizability
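For reference, the evaluation metrics listed above can be computed directly from confusion-matrix counts. The sketch below also reports balanced accuracy, which is often more informative than plain accuracy on imbalanced toxicity data. The labels and scores are made up for illustration, and the 0.5 decision threshold is an assumption.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, F1 and balanced accuracy (binary)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "balanced_accuracy": (recall + specificity) / 2,
    }

def roc_auc(y_true, scores):
    """AUC as the chance that a positive outranks a negative (ties = 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Illustrative labels (1 = toxic) and predicted probabilities.
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
scores = [0.9, 0.7, 0.4, 0.6, 0.3, 0.2, 0.1, 0.05]
y_pred = [1 if s >= 0.5 else 0 for s in scores]
metrics = classification_metrics(y_true, y_pred)
auc = roc_auc(y_true, scores)
print(metrics, auc)
```

The pairwise AUC computation is quadratic in the number of samples and is meant only to show what the metric measures. Library implementations use sorting-based algorithms that scale better.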

XAI Implementation and Interpretation

- Compute SHAP values for the trained model to determine global feature importance

- Apply LIME to generate local explanations for specific chemical predictions

- Identify critical molecular fragments and structural alerts contributing to toxicity

- Validate explanations against known toxicological mechanisms and structural alerts

Explanation Generation and Validation

- Integrate XAI outputs with literature evidence using retrieval-augmented generation (RAG) approaches

- Generate natural language explanations connecting structural features to toxicity mechanisms

- Engage domain experts to assess the scientific plausibility of generated explanations

- Refine models based on explanatory insights to improve both performance and interpretability

Quantitative Performance of XAI-Enhanced Models

Table 1: Performance Comparison of AI/ML Models in Toxicity Prediction

| Model Type | Application | Performance | Key Advantages |
|---|---|---|---|
| Ensemble Learning (AquaticTox) | Aquatic toxicity prediction across five species | Outperformed all single models [7] | Combines six diverse ML/DL methods; incorporates toxic mode of action (MOA) knowledge base |
| Automated Read-Across (RASAR) | Nine OECD tests across 190,000 chemicals | 87% balanced accuracy [2] | Exceeded animal test reproducibility (81%) |
| Multilayer Perceptron (MLP) | Lung surfactant inhibitors assessment | Best performance among classic and deep learning models [7] | Effective for specific endpoint prediction |
| Random Forest with LIME | Identification of molecular fragments impacting nuclear receptors | Enabled interpretation of "black box" predictions [7] | Critical for understanding endocrine disruption pathways |

Table 2: XAI Methods and Their Applications in Chemical Risk Assessment

| XAI Method | Implementation | Key Outcomes | Regulatory Relevance |
|---|---|---|---|
| SHAP (SHapley Additive exPlanations) | Integration into MolPipeline package for chemical compound tasks [30] | Automatic extraction of chemical information; visualization of significant contributions on molecular structure [30] | Facilitates comparison with known structural alerts; validates model explanations |
| LIME (Local Interpretable Model-agnostic Explanations) | Used with Random Forest classifiers for nuclear receptor targets [7] | Identified molecular fragments impacting AR, ER, AhR, ARO, and PPAR receptors [7] | Essential for understanding endocrine disruption mechanisms |
| XpertAI Framework | Combines XAI with Large Language Models (LLMs) [29] | Generates natural language explanations from raw chemical data; combines specificity with scientific accuracy [29] | Mimics scientific reasoning processes; enhances trust through literature-grounded explanations |
| Repeated Hold-out Signed-Iterated Random Forest (rh-SiRF) | Analysis of metal-microbiome interactions in intestinal inflammation [7] | Identified "metal-microbial clique signatures" associated with health outcomes [7] | Enables "precision environmental health" through detection of multiordered predictor combinations |

Advanced Applications and Workflows

Workflow: XAI-Driven Chemical Risk Assessment

Diagram: XAI-Driven Chemical Risk Assessment Workflow

Protocol: XAI for Mixture Toxicity Assessment

Objective: To predict time-dependent toxicities of binary chemical mixtures using interpretable QSAR modeling.

Special Considerations: Mixture toxicity represents a significant challenge in chemical risk assessment due to the lack of experimental data and complex interaction effects [7].

Experimental Procedure:

Data Collection and Curation

- Compile experimental data on binary mixture toxicities from scientific literature and databases

- Represent each chemical component using molecular descriptors and fingerprints

- Include interaction terms to capture potential synergistic or antagonistic effects
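One possible way to encode a binary mixture, shown here only as a sketch, is to combine fraction-weighted component descriptors with explicit pairwise interaction terms. The function name, the weighting scheme, and the product-form interaction term are assumptions for illustration and are not the representation used in the cited work.

```python
def mixture_features(desc_a, desc_b, frac_a):
    """Feature vector for a binary mixture of components A and B.

    desc_a, desc_b: equal-length lists of component descriptors.
    frac_a: fraction of component A in the mixture, between 0 and 1.
    Returns fraction-weighted descriptors followed by interaction terms.
    """
    if len(desc_a) != len(desc_b):
        raise ValueError("descriptor lists must have equal length")
    if not 0.0 <= frac_a <= 1.0:
        raise ValueError("frac_a must lie between 0 and 1")
    frac_b = 1.0 - frac_a
    weighted = [frac_a * a + frac_b * b for a, b in zip(desc_a, desc_b)]
    # The interaction term vanishes when either component is absent.
    interaction = [frac_a * frac_b * a * b for a, b in zip(desc_a, desc_b)]
    return weighted + interaction

# Illustrative descriptor values for two hypothetical components.
features = mixture_features([2.0, 1.0], [4.0, 3.0], frac_a=0.25)
print(features)
```

With this layout, a linear model assigns one coefficient to each interaction term, so a clearly positive or negative coefficient can be read as a hint of synergistic or antagonistic behavior for that descriptor pair, subject to experimental confirmation.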

Model Development with Interpretability Focus

- Develop linear QSAR models specifically designed for mixture toxicity prediction

- Incorporate explicit interaction descriptors between mixture components