Feature Selection Algorithms for Environmental Source Identification: A Data-Driven Guide for Researchers

Abstract

This article provides a comprehensive guide to feature selection algorithms for environmental source identification, tailored for researchers and scientists. It explores the foundational challenges of environmental data, including high dimensionality, sparsity, and compositionality. The review covers a suite of methodological approaches, from filter to wrapper and embedded methods, with specific applications in genomics, pollution tracking, and sensor calibration. It further addresses critical troubleshooting and optimization strategies for real-world data and offers a comparative analysis of algorithm performance and validation frameworks. The synthesis aims to equip professionals with the knowledge to build more accurate, robust, and interpretable models for identifying the sources of environmental phenomena.

The Unique Challenges of Environmental Data in Source Identification

Understanding High Dimensionality and the 'Curse of Dimensionality' in Metabarcoding and Genomic Data

Frequently Asked Questions

What is the "Curse of Dimensionality" in the context of genomic data?

The curse of dimensionality refers to the phenomena and challenges that arise when analyzing data with a vast number of features (dimensions), a common scenario in genomics and metabarcoding. As the number of dimensions increases, the volume of the feature space expands exponentially, causing the data within it to become sparse. This sparsity makes it difficult for machine learning models to learn meaningful patterns, increases computational costs, and heightens the risk of overfitting, where a model performs well on its training data but fails to generalize to new, unseen data [1] [2].

Why are metabarcoding datasets particularly prone to this curse?

Metabarcoding datasets are often characterized by a "short, fat data" problem, where the number of features (e.g., Operational Taxonomic Units or OTUs, Amplicon Sequence Variants or ASVs) far exceeds the number of samples gathered. For example, a dataset might have tens of thousands of ASVs but only a few hundred samples [3]. This high-dimensionality is compounded by the data's inherent sparsity and compositionality, creating an ideal environment for the curse of dimensionality to impair data analysis [3].

How can I tell if my model is suffering from the curse of dimensionality?

A primary indicator is a significant performance gap between your model's performance on the training data and its performance on a held-out validation or test set, suggesting overfitting. You might also observe that the model becomes computationally very expensive to train, or that distance-based metrics become less meaningful [2] [4].
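The loss of meaning in distance-based metrics can be illustrated with a small simulation. The sketch below is not taken from the cited studies: it uses synthetic, uniformly random points and a hypothetical helper named `relative_contrast` to show how the gap between the nearest and farthest neighbor shrinks as features are added.

```python
import math
import random


def relative_contrast(n_points, n_dims, seed=0):
    """Return (max - min) / min of the distances from one query point to all others."""
    rng = random.Random(seed)
    points = [[rng.random() for _ in range(n_dims)] for _ in range(n_points)]
    query, others = points[0], points[1:]
    dists = [math.dist(query, p) for p in others]
    return (max(dists) - min(dists)) / min(dists)


# Synthetic data only: 200 random points in 2 versus 2000 dimensions.
low = relative_contrast(200, 2)
high = relative_contrast(200, 2000)
print(f"relative contrast with 2 features:    {low:.2f}")
print(f"relative contrast with 2000 features: {high:.2f}")
```

When the contrast approaches zero, every sample looks roughly equally far from every other sample, which is one reason nearest-neighbor and clustering methods tend to struggle on raw ASV tables.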

What is the Hughes Phenomenon?

The Hughes Phenomenon describes the relationship between the number of features and a classifier's performance when the number of training samples is fixed. Initially, performance improves as more features are added. However, beyond an optimal point, additional features contribute mostly noise and irrelevant information, and the model's generalization performance degrades [2].

Troubleshooting Guides

Problem: Model Overfitting and Poor Generalization

Symptoms:

- High accuracy on training data but low accuracy on validation or test data.

- The model's predictions have high variance.

Solutions:

- Apply Feature Selection: Identify and retain only the most informative features.

- Filter Methods: Use statistical tests to select features independently of the model. Common methods include `SelectKBest` [4].

- Wrapper Methods: Use the model itself to evaluate feature subsets. A powerful technique is Recursive Feature Elimination (RFE), which has been shown to enhance the performance of models like Random Forest on metabarcoding data [3].

- Embedded Methods: Use models that perform feature selection as part of their training process. Random Forest and Lasso (L1) Regularization are excellent examples. Lasso shrinks the coefficients of irrelevant features to zero, effectively removing them [2] [4].

- Use Regularization: Techniques like L1 (Lasso) and L2 (Ridge) regularization add a penalty to the model's loss function to constrain its complexity and prevent overfitting [2] [4].
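To make the filter approach concrete, here is a minimal sketch of a univariate top-k filter in plain Python. It is an illustration rather than the implementation used in the cited work: the scoring function (absolute Pearson correlation), the helper names `pearson` and `top_k_features`, and the toy data are all assumptions chosen for brevity.

```python
import math


def pearson(xs, ys):
    """Pearson correlation; returns 0.0 when either input is constant."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / math.sqrt(vx * vy)


def top_k_features(X, y, k):
    """Score each column against y independently and keep the k best column indices."""
    n_features = len(X[0])
    scores = [abs(pearson([row[j] for row in X], y)) for j in range(n_features)]
    ranked = sorted(range(n_features), key=lambda j: scores[j], reverse=True)
    return sorted(ranked[:k])


# Toy data: 5 samples x 4 features (column 2 is constant, column 3 duplicates column 0).
X = [
    [1, 5, 0, 2],
    [2, 3, 0, 4],
    [3, 6, 0, 6],
    [4, 2, 0, 8],
    [5, 4, 0, 10],
]
y = [1.1, 2.0, 2.9, 4.2, 5.0]
selected = top_k_features(X, y, k=2)
print(selected)
```

The toy result also shows a known limitation of univariate filters: columns 0 and 3 carry the same information, yet both are kept because each feature is scored in isolation. To avoid leaking information from the test set, the scores should be computed on training folds only.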

Problem: High Computational Cost and Long Training Times

Symptoms:

- Model training takes an impractically long time.

- High memory usage during model training.

Solutions:

- Dimensionality Reduction: Transform your high-dimensional data into a lower-dimensional space.

- Principal Component Analysis (PCA): A linear technique that finds the directions of maximum variance in the data. It is highly effective for reducing computational load [2] [4].

- t-SNE (t-Distributed Stochastic Neighbor Embedding): A non-linear technique particularly useful for visualizing high-dimensional data in 2D or 3D, though it is less commonly used for pre-processing for machine learning models [2].

- Variance Thresholding: A simple, fast filter method that removes all features whose variance doesn't meet some threshold. This can rapidly reduce the number of features and is especially useful as an initial preprocessing step [3].
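Variance thresholding is simple enough to sketch in a few lines. The version below is a plain-Python illustration with a hypothetical helper name (`variance_threshold`) and a made-up count table; scikit-learn offers a `VarianceThreshold` transformer that serves the same purpose on real data.

```python
from statistics import pvariance


def variance_threshold(X, threshold=0.0):
    """Return indices of columns whose population variance is strictly above `threshold`."""
    n_features = len(X[0])
    keep = []
    for j in range(n_features):
        column = [row[j] for row in X]
        if pvariance(column) > threshold:
            keep.append(j)
    return keep


# Toy count table: 4 samples x 4 features; columns 0 and 2 never change.
counts = [
    [0, 12, 3, 0],
    [0, 15, 3, 1],
    [0, 9, 3, 0],
    [0, 20, 3, 0],
]
kept = variance_threshold(counts, threshold=0.0)
reduced = [[row[j] for j in kept] for row in counts]
print(kept)
print(reduced)
```

Because no model is fitted, the cost grows only linearly with the number of features, which is why this step works well as a first pass before more expensive wrapper methods.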

Problem: Low Predictive Performance on a Validation Set

Symptoms:

- The model fails to achieve satisfactory accuracy, R², or other performance metrics during cross-validation.

Solutions:

- Leverage Ensemble Methods: Algorithms like Random Forest and Gradient Boosting are often robust to the challenges of high-dimensional data. Benchmark analyses on metabarcoding data have shown that tree ensemble models consistently outperform other approaches, even without additional feature selection [3].

- Experiment with Data Representation: A benchmark study found that models trained on absolute ASV or OTU counts outperformed those using relative counts (i.e., compositional data). Normalization can obscure important ecological patterns, so consider using statistical methods designed for compositional data or models that can handle raw counts [3].

Experimental Protocols and Benchmark Data

The following table summarizes key findings from a benchmark analysis of feature selection and machine learning methods across 13 environmental metabarcoding datasets [3].

| Aspect | Key Finding | Recommendation |
|---|---|---|
| Best Performing Model | Tree ensemble models (Random Forest, Gradient Boosting) excelled in regression and classification tasks. | Start with Random Forest or Gradient Boosting as a baseline model. |
| Impact of Feature Selection | Feature selection is more likely to impair than improve the performance of tree ensemble models. | For tree ensembles, consider skipping an explicit feature selection step. |
| Recursive Feature Elimination | Enhanced Random Forest performance across various tasks when feature selection was beneficial. | If feature selection is needed, try RFE with a Random Forest estimator. |
| Variance Thresholding | Significantly reduced runtime by eliminating low-variance features. | Use for fast, initial feature pre-screening to reduce computational load. |
| Data Compositionality | Models trained on absolute counts outperformed those on relative counts. | Avoid converting to relative abundances; use absolute counts where possible. |

The Scientist's Toolkit: Research Reagent Solutions

| Item / Method | Function in Experiment |
|---|---|
| Validated Primer Sets (COI, rbcL, matK, ITS) | Ensures specific amplification of the target barcode region, reducing trial-and-error and improving reproducibility [5]. |
| BSA (Bovine Serum Albumin) | Mitigates the effects of PCR inhibitors often found in complex environmental samples, improving amplification success [5]. |
| PhiX Control Library | Spiked into low-diversity amplicon sequencing runs on Illumina platforms to improve base calling accuracy and cluster identification [5]. |
| dUTP/UNG Carryover Control System | Prevents contamination from previous PCR amplicons; UNG enzyme degrades uracil-containing DNA before amplification, leaving native DNA unaffected [5]. |
| Unique Dual Indexes (UDI) | Unique barcodes on both ends of sequencing adapters minimize index hopping (tag-jumping), which can cause sample cross-contamination in multiplexed runs [5]. |

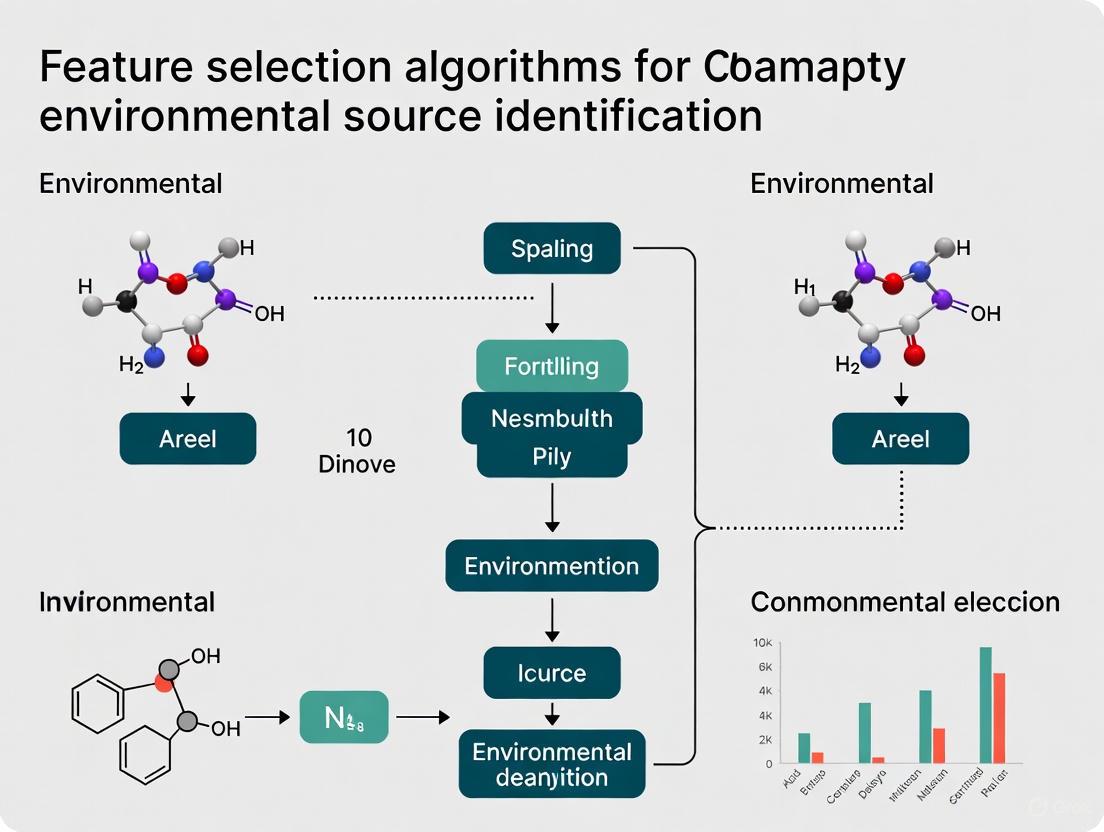

Workflow Visualization

The following diagram illustrates a recommended machine learning workflow for analyzing high-dimensional metabarcoding data, integrating solutions to the curse of dimensionality.

Recommended ML Workflow for Metabarcoding Data

Addressing Data Sparsity and Compositionality in Microbial Community Analysis

Frequently Asked Questions

1. Why do my microbial community datasets produce misleading machine learning results? Microbiome data from high-throughput sequencing are inherently compositional, meaning they represent relative proportions that sum to a constant rather than absolute abundances. This property violates fundamental assumptions of many statistical tests and machine learning algorithms, potentially leading to spurious correlations and erroneous conclusions [6] [7]. Additionally, these datasets are typically sparse, containing an excess of zero counts (often ~90%) due to rare taxa and sampling limitations, which further complicates analysis and interpretation [8] [9].

2. What is the practical difference between absolute and relative abundance in microbiome analysis? Absolute abundance refers to the actual quantity of a microbe in a unit volume of an ecosystem, while relative abundance represents the proportion of that microbe compared to all microbes detected in a sample [8]. Since sequencing data only provides relative information, you cannot determine from sequencing alone whether a microbe's increase in relative abundance represents actual growth or merely a decrease in other community members [8].
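A small arithmetic example shows why relative abundance alone can mislead. The numbers below are invented for illustration, and `relative_abundance` is a hypothetical helper: only one taxon changes in absolute terms, yet the relative abundance of the others shifts as well.

```python
def relative_abundance(counts):
    """Convert absolute counts per taxon into proportions that sum to 1."""
    total = sum(counts.values())
    return {taxon: n / total for taxon, n in counts.items()}


# Hypothetical absolute abundances (cells per mL) at two time points.
before = {"taxon_A": 100, "taxon_B": 300, "taxon_C": 600}
after = {"taxon_A": 100, "taxon_B": 300, "taxon_C": 100}  # only taxon_C declined

rel_before = relative_abundance(before)
rel_after = relative_abundance(after)

for taxon in before:
    print(f"{taxon}: {rel_before[taxon]:.2f} -> {rel_after[taxon]:.2f}")
```

Here `taxon_A` doubles in relative abundance even though its absolute abundance is unchanged, which is the ambiguity described above.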

3. How does data sparsity impact my differential abundance analysis? Sparsity, characterized by a high percentage of zero counts, presents significant challenges for statistical analysis. Excess zeros can bias statistical estimates, reduce power to detect true differences, and increase false discovery rates if not appropriately modeled [9]. The impact is particularly pronounced for rare taxa, which may be biologically relevant despite their low abundance [8] [9].

4. Which normalization methods effectively address compositionality? Several normalization strategies can mitigate compositional effects:

- Centered Log-Ratio (CLR) Transformation: Effectively handles compositional constraints but requires careful handling of zeros [6]

- Rarefying: Subsampling to equal sequencing depth helps with library size differences but discards valid data [8]

- Sampling Fraction Correction: Methods like those in ANCOM account for differential sampling efficiencies between samples [8]

No single method works optimally under all conditions—selection depends on your specific data characteristics and research question [9].
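As one example of these strategies, rarefying can be sketched as subsampling reads without replacement to a common depth. This is a simplified, plain-Python illustration with a hypothetical function name (`rarefy`) and invented counts; dedicated microbiome packages handle large tables far more efficiently.

```python
import random
from collections import Counter


def rarefy(counts, depth, seed=0):
    """Subsample `depth` reads without replacement from one sample's taxon counts."""
    total = sum(counts.values())
    if depth > total:
        raise ValueError("depth exceeds the sample's library size")
    # Expand the counts into one entry per read, then draw reads at random.
    reads = [taxon for taxon, n in counts.items() for _ in range(n)]
    rng = random.Random(seed)
    return Counter(rng.sample(reads, depth))


# Hypothetical sample with 623 reads, rarefied to 100 reads.
sample = {"ASV_1": 500, "ASV_2": 120, "ASV_3": 3}
rarefied = rarefy(sample, depth=100)
print(dict(rarefied))
```

The example makes the main drawback visible: 523 of the 623 reads are discarded, and a rare taxon such as `ASV_3` may disappear from the rarefied sample entirely.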

Troubleshooting Guides

Problem: Compositional Effects Creating False Positives

Symptoms: Apparent correlations between taxa that don't reflect biological reality; inconsistent results between different analysis approaches.

Solutions:

- Apply Compositionally-Aware Methods: Use tools specifically designed for compositional data (e.g., ALDEx2, ANCOM, Songbird) that do not assume taxon abundances vary independently of one another [6] [7]

- Centered Log-Ratio Transformation: Transform your data using CLR after addressing zeros with pseudo-counts or imputation [6]

- Reference-Based Approaches: Analyze taxon ratios rather than individual abundances to obtain valid inference [7]

- Focus on Rankings: In some cases, analyzing microbial rankings rather than abundances may be more robust to compositionality [8]

Experimental Protocol for Compositionality-Aware Analysis:

- Start with raw count data from your feature table

- Apply a zero-handling strategy (pseudo-count or imputation)

- Implement CLR transformation using the formula $\mathrm{CLR}(x)_i = \ln\left(x_i / g(x)\right)$, where $g(x)$ is the geometric mean of all taxa

- Verify transformation success by checking that data are approximately normally distributed

- Proceed with downstream analysis using standard statistical methods
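The zero-handling and CLR steps of this protocol can be sketched for a single sample as follows. This is a minimal illustration, not a replacement for a dedicated compositional-data package: the function name `clr` and the default pseudo-count of 1.0 are assumptions, and the appropriate zero-handling strategy depends on the dataset.

```python
import math


def clr(counts, pseudocount=1.0):
    """Centered log-ratio transform of one sample's counts.

    A pseudo-count is added first so that zeros do not produce log(0).
    """
    shifted = [c + pseudocount for c in counts]
    logs = [math.log(v) for v in shifted]
    mean_log = sum(logs) / len(logs)  # equals the log of the geometric mean g(x)
    return [value - mean_log for value in logs]


# Hypothetical raw counts for five taxa in one sample (one zero count).
sample = [120, 30, 0, 5, 845]
transformed = clr(sample)
print([round(v, 3) for v in transformed])
```

Two properties are worth checking on real data: the transformed values of each sample sum to zero, and, when no pseudo-count is needed, multiplying all counts by a constant leaves the result unchanged.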

Problem: Excessive Zeros Obscuring True Signals

Symptoms: Inability to detect differences in low-abundance taxa; model instability; reduced statistical power.

Solutions:

- Zero-Inflated Models: Use statistical approaches specifically designed for zero-inflated data (e.g., zero-inflated negative binomial models) [9]

- Appropriate Zero Handling: Classify zeros as true absences, technical dropouts, or sampling zeros, then apply targeted strategies [8]

- Aggregation: Analyze data at higher taxonomic levels (e.g., genus instead of ASV) to reduce sparsity

- Pre-filtering: Remove taxa with negligible prevalence across samples to reduce noise [9]
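Pre-filtering by prevalence is straightforward to express in code. The sketch below assumes a feature table stored as a dictionary of per-sample counts; the helper names (`prevalence_filter`, `sparsity`), the toy table, and the 40% cut-off are illustrative choices rather than recommended values.

```python
def prevalence_filter(table, min_prevalence=0.1):
    """Keep taxa detected (count > 0) in at least `min_prevalence` of samples."""
    kept = {}
    for taxon, counts in table.items():
        prevalence = sum(1 for c in counts if c > 0) / len(counts)
        if prevalence >= min_prevalence:
            kept[taxon] = counts
    return kept


def sparsity(table):
    """Fraction of zero entries in the whole table."""
    values = [c for counts in table.values() for c in counts]
    return sum(1 for v in values if v == 0) / len(values)


# Toy table: taxon -> counts across 5 samples.
table = {
    "ASV_1": [10, 0, 3, 8, 2],
    "ASV_2": [0, 0, 0, 1, 0],
    "ASV_3": [0, 0, 0, 0, 0],
    "ASV_4": [5, 6, 0, 0, 9],
}
filtered = prevalence_filter(table, min_prevalence=0.4)
print(sorted(filtered))
print(sparsity(table), sparsity(filtered))
```

Since rare taxa may still be biologically relevant, it is worth recording which taxa were removed so the choice of threshold can be revisited.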

Experimental Protocol for Zero Handling:

- Zero Classification:

- Identify taxa absent from positive controls as potential technical dropouts

- Flag taxa absent from entire sample groups as potential structural zeros

- Classify remaining zeros as sampling zeros

- Apply tailored solutions:

- For technical dropouts: Consider imputation or removal

- For structural zeros: Include in models as true absences

- For sampling zeros: Use models that account for sampling depth variation

- Validate with positive controls and spike-ins when available

Problem: Integrating Microbial Data with Environmental Variables

Symptoms: Poor prediction accuracy when combining microbiome and environmental data; difficulty identifying meaningful environmental predictors.

Solutions:

- Feature Selection: Implement methods like Boruta or correlation-based selection to identify the most relevant environmental covariates [10]

- Multi-Omics Integration: Use specialized frameworks (e.g., MixOmics) designed for integrating heterogeneous data types [6]

- Regularization: Apply penalized regression methods (e.g., LASSO, ridge) that handle high-dimensional predictor spaces [10]

Method Comparison Tables

Normalization Methods for Compositional Data

| Method | Key Principle | Advantages | Limitations | Best Use Cases |
|---|---|---|---|---|
| CLR Transformation | Log-ratio of components to geometric mean | Preserves relative information; enables standard statistical tests | Requires zero-handling; may distort distances | General-purpose; machine learning applications [6] |
| Rarefying | Subsamples to equal sequencing depth | Simple; intuitive; reduces library size effects | Discards valid data; introduces artificial uncertainty | Comparing community diversity; small datasets [8] |
| TSS (Total Sum Scaling) | Divides counts by total reads | Simple; preserves compositionality | Perpetuates compositionality issues; sensitive to dominant taxa | Preliminary exploration; when combined with compositional methods [6] |
| GMPR (Geometric Mean of Pairwise Ratios) | Uses pairwise ratios to estimate size factors | Robust to compositionality; handles zero-inflation | Computationally intensive; less established | Zero-inflated datasets; differential abundance [9] |

Feature Selection Approaches for Environmental Identification

| Method | Mechanism | Implementation | Performance Considerations |
|---|---|---|---|
| Boruta | Wrapper around Random Forest using permutation importance | Iteratively compares original feature importance to shadow features | High computational demand; identifies all relevant features [10] |
| Pearson's Correlation | Filters features based on linear relationship with outcome | Simple correlation coefficient calculation | Fast; only detects linear relationships [10] |
| LASSO (L1 Regularization) | Embedded feature selection via L1 penalty | Shrinks coefficients of irrelevant features to zero | Built into model training; requires careful tuning [10] |
| Recursive Feature Elimination | Iteratively removes least important features | Works with any ML classifier; backward selection approach | Computationally intensive; model-dependent results [11] |

Experimental Workflows

Microbial Community Analysis with Feature Selection

Tiered Validation Strategy for Environmental Source Tracking

Research Reagent Solutions

| Reagent/Tool | Function | Application Notes |
|---|---|---|
| Solid Phase Extraction (SPE) Cartridges | Comprehensive analyte recovery from environmental samples | Multi-sorbent strategies (e.g., Oasis HLB + ISOLUTE ENV+) provide broader chemical coverage [11] |
| QuEChERS Kits | Rapid extraction with minimal solvent use | Ideal for large-scale environmental samples; reduces processing time [11] |
| 16S rRNA Primers | Taxonomic profiling of bacterial communities | Selection critical for taxonomic resolution and bias minimization [6] [12] |
| Certified Reference Materials (CRMs) | Analytical validation and quality control | Essential for verifying compound identities in non-target analysis [11] |
| Mock Communities | Method validation and benchmarking | Contain known microbial compositions to assess technical variability [8] |
| DNA/RNA Stabilization Buffers | Preservation of nucleic acids pre-sequencing | Critical for accurate representation of in-situ microbial communities [6] |

The Problem of Spatial Autocorrelation and Imbalanced Data in Geospatial Modeling

Troubleshooting Guides

Troubleshooting Guide for Spatial Autocorrelation (SAC)

Problem: My model shows deceptively high predictive power during training but fails to generalize to new geographic areas.

Diagnosis Questions:

- Are your training samples clustered closely together in space?

- Are you predicting to locations far from your training data locations?

- Does your validation strategy randomly split data without considering geographic location?

Solutions:

- Quantify SAC: Calculate spatial autocorrelation indicators (like Moran's I) for your target variable and key predictors to determine the minimum independent sampling distance [13].

- Implement Spatial CV: Use spatial cross-validation, where data are split into spatially distinct folds, to test the model's ability to generalize to new locations [14].

- Include Spatial Features: Explicitly model spatial dependence by incorporating spatial coordinates or environmental covariates that capture the spatial structure as model features [15].
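Quantifying SAC with Moran's I can be sketched without any spatial library. The version below is a simplified illustration: it uses binary weights (1 for pairs of sites within a chosen distance, 0 otherwise), and the function name `morans_i`, the transect layout, and the values are all hypothetical.

```python
import math


def morans_i(coords, values, max_dist):
    """Moran's I with binary weights: w_ij = 1 if sites i and j lie within max_dist."""
    n = len(values)
    mean = sum(values) / n
    dev = [v - mean for v in values]
    cross, weight_sum = 0.0, 0.0
    for i in range(n):
        for j in range(n):
            if i != j and math.dist(coords[i], coords[j]) <= max_dist:
                cross += dev[i] * dev[j]
                weight_sum += 1.0
    denom = sum(d * d for d in dev)
    if weight_sum == 0 or denom == 0:
        raise ValueError("need at least one neighbor pair and non-constant values")
    return (n / weight_sum) * (cross / denom)


# Eight sites along a transect, spaced one unit apart.
coords = [(float(i), 0.0) for i in range(8)]
gradient = [1, 2, 3, 4, 5, 6, 7, 8]     # neighbors are similar
alternating = [1, 8, 1, 8, 1, 8, 1, 8]  # neighbors are dissimilar

print(round(morans_i(coords, gradient, max_dist=1.0), 3))
print(round(morans_i(coords, alternating, max_dist=1.0), 3))
```

Values near +1 indicate that nearby sites resemble each other, values near -1 indicate that they differ, and values close to zero suggest little spatial structure. Repeating the calculation over increasing `max_dist` values is one way to estimate the distance at which samples become approximately independent.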

Troubleshooting Guide for Imbalanced Data

Problem: My classifier has high overall accuracy but fails to identify the critical, rare events (e.g., pollution sources, rare species).

Diagnosis Questions:

- Is one class (e.g., "absence" or "common event") significantly more frequent than another (e.g., "presence" or "rare event")?

- Are you using simple accuracy as your primary performance metric?

Solutions:

- Use Appropriate Metrics: Do not rely on simple accuracy, which a classifier can inflate by always predicting the majority class. Adopt metrics like F1-score, Precision-Recall AUC (PR-AUC), or Balanced Accuracy [16] [17].

- Apply Resampling Techniques: Use algorithms like SMOTE to generate synthetic samples for the minority class or carefully downsample the majority class [16].

- Leverage Algorithmic Fixes: Use built-in class weighting in algorithms like Random Forest or XGBoost to penalize misclassifications of the minority class more heavily [16] [17].
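The gap between accuracy and imbalance-aware metrics is easy to demonstrate. The sketch below uses invented labels and a hypothetical helper (`imbalance_metrics`) to score a trivial classifier that never predicts the rare class.

```python
def imbalance_metrics(y_true, y_pred, positive=1):
    """Compute accuracy alongside metrics that are sensitive to the rare class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)

    def ratio(num, den):
        # Report 0.0 instead of failing when a denominator is empty.
        return num / den if den else 0.0

    recall = ratio(tp, tp + fn)
    specificity = ratio(tn, tn + fp)
    precision = ratio(tp, tp + fp)
    return {
        "accuracy": ratio(tp + tn, len(pairs)),
        "balanced_accuracy": (recall + specificity) / 2,
        "precision": precision,
        "recall": recall,
        "f1": ratio(2 * precision * recall, precision + recall),
    }


# 5 rare events among 100 samples; the "model" always predicts the common class.
y_true = [1] * 5 + [0] * 95
y_pred = [0] * 100
scores = imbalance_metrics(y_true, y_pred)
for name, value in scores.items():
    print(f"{name}: {value:.2f}")
```

The classifier scores 95% accuracy while detecting none of the rare events, which balanced accuracy (0.5) and F1 (0.0) make obvious.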

Frequently Asked Questions (FAQs)

Q1: What is spatial autocorrelation and why does it break my geospatial model? Spatial autocorrelation (SAC) is the concept that observations close to each other in space are more likely to be similar than observations further apart [13]. For example, the temperature measured at one location in a forest will be very similar to the temperature 10 meters away [13]. This violates the assumption of independence in many standard statistical models. When training and test data are not spatially separated, the model's performance appears high because it is effectively "cheating" by predicting on nearby, similar data. This leads to poor generalization and an overly optimistic performance estimate when the model is applied to new, distant geographic areas [14] [18].

Q2: My dataset is imbalanced. When should I use resampling vs. cost-sensitive learning? The choice depends on your dataset size and specific context. The table below summarizes guidance based on common scenarios [16]:

| Scenario | Recommended Strategy | Key Consideration |
|---|---|---|
| Severe imbalance with small dataset | SMOTE or ADASYN | Synthetic data generation can create variety without simple duplication [16]. |
| Large dataset with redundant majority class | Undersampling or BalancedBagging | Reduces computational cost with minimal information loss [16]. |
| High cost of false negatives | Cost-sensitive learning or Focal Loss | Directly increases the penalty for missing the rare class [16]. |
| Need for model interpretability | Class weighting or threshold adjustment | Avoids altering the original data distribution [16]. |

Q3: How can I validate my model if I suspect spatial autocorrelation? Traditional random train-test splits are insufficient. You must use spatial cross-validation [14]. This involves partitioning your data based on location, for example, using k-means clustering on spatial coordinates to create spatially distinct folds. The model is trained on data from several spatial folds and validated on the held-out fold. This tests the model's ability to predict in truly new locations, providing a more robust and realistic performance estimate for real-world deployment [14].
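A minimal way to build spatially distinct folds is sketched below. Note the substitution: instead of the k-means clustering mentioned above, this example assigns samples to square grid blocks, which is simpler to show without external libraries. The function names, block size, and coordinates are all hypothetical.

```python
import math
from collections import defaultdict


def spatial_block_folds(coords, block_size, n_folds):
    """Group sample indices into grid blocks, then deal whole blocks out to folds."""
    blocks = defaultdict(list)
    for idx, (x, y) in enumerate(coords):
        block_id = (math.floor(x / block_size), math.floor(y / block_size))
        blocks[block_id].append(idx)
    folds = [[] for _ in range(n_folds)]
    for i, block_id in enumerate(sorted(blocks)):
        folds[i % n_folds].extend(blocks[block_id])
    return folds


def train_test_splits(folds):
    """Yield (train_indices, test_indices), holding out one spatial fold at a time."""
    for k, test_idx in enumerate(folds):
        train_idx = [i for j, fold in enumerate(folds) if j != k for i in fold]
        yield train_idx, test_idx


# Six hypothetical sampling sites (x, y in km) forming four spatial clusters.
coords = [(0.1, 0.2), (0.3, 0.4), (5.1, 0.2), (5.2, 0.3), (0.2, 5.5), (5.5, 5.5)]
folds = spatial_block_folds(coords, block_size=1.0, n_folds=2)
for train_idx, test_idx in train_test_splits(folds):
    print("train:", train_idx, "test:", test_idx)
```

Samples from the same block never appear on both sides of a split. Neighboring blocks can still end up in different folds, so the block size should be chosen with the autocorrelation range of the data in mind.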

Q4: Are 60/40 class ratios considered "imbalanced"? A 60/40 split is moderately imbalanced [16]. While not as severe as a 99/1 split, it can still impact model performance, especially if the minority class is of critical interest (e.g., a rare but high-risk contaminant source) or if the dataset is very small. It is essential to monitor class-specific performance metrics (like recall for the minority class) rather than relying on overall accuracy [16].

Experimental Protocols & Data

Detailed Protocol: Correcting for Imbalanced Data in Species Distribution Models

This protocol is adapted from a systematic study on improving SDM performance [17].

Objective: To build a robust species distribution model using machine learning despite a strong class imbalance between species presence and absence records.

Materials:

- Software: R or Python with relevant ML libraries (e.g., `scikit-learn`, `caret`).

- Data: A dataset of species occurrence (presence/absence) linked to environmental variables.

Methodology:

- Data Preparation:

- Compile and clean species occurrence data and environmental raster data (e.g., climate, soil, topography).

- Extract environmental variable values at each presence and absence location.

- Calculate the prevalence of the species (number of presences / total observations).

- Model Training with Imbalance-Correction:

- Select a suite of machine-learning algorithms (e.g., Random Forest, Gradient Boosting, SVM).

- For each algorithm, train a model using several imbalance-correction methods:

- Base: No correction.

- Down-sampling: Randomly remove samples from the majority class (absence) to balance the classes.

- Up-sampling: Randomly duplicate samples from the minority class (presence).

- Class Weighting: Assign a higher penalty for misclassifying the minority class during model training.

- Use spatial cross-validation to tune hyperparameters and evaluate performance.

- Evaluation:

- Evaluate all models on a held-out test set that reflects the true, imbalanced class distribution.

- Use metrics robust to imbalance: True Skill Statistic (TSS), F1-score, and Precision-Recall curves [17].

- Select the model and correction method that provides the best balance of sensitivity (true positive rate) and specificity (true negative rate).

Key Finding from Literature: A systematic study found that all imbalance-correction methods (down-sampling, up-sampling, weighting) substantially improved model performance (TSS) over the base algorithms for 15 macroinvertebrate species. Down-sampling was a consistently effective and computationally efficient method [17].
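Three ingredients of this protocol (down-sampling, class weighting, and the True Skill Statistic) can be sketched in plain Python. This is an illustration under stated assumptions, not the code used in the cited study: the helper names are hypothetical, the data are synthetic, and the weighting formula (total samples divided by the number of classes times the class count) is one common "balanced" heuristic.

```python
import random
from collections import Counter


def downsample_majority(X, y, seed=0):
    """Randomly keep as many samples per class as the smallest class has."""
    counts = Counter(y)
    minority_size = min(counts.values())
    rng = random.Random(seed)
    keep = []
    for label in counts:
        idx = [i for i, v in enumerate(y) if v == label]
        keep.extend(rng.sample(idx, minority_size))
    keep.sort()
    return [X[i] for i in keep], [y[i] for i in keep]


def balanced_class_weights(y):
    """Weight each class inversely to its frequency."""
    counts = Counter(y)
    return {label: len(y) / (len(counts) * n) for label, n in counts.items()}


def true_skill_statistic(y_true, y_pred, positive=1):
    """TSS = sensitivity + specificity - 1 (assumes both classes occur in y_true)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    return tp / (tp + fn) + tn / (tn + fp) - 1.0


# Synthetic records: 5 presences and 45 absences (prevalence 0.1).
y = [1] * 5 + [0] * 45
X = [[i] for i in range(len(y))]
prevalence = sum(y) / len(y)

X_bal, y_bal = downsample_majority(X, y)
weights = balanced_class_weights(y)
print(prevalence, Counter(y_bal), weights)
print(true_skill_statistic([1, 1, 0, 0], [1, 0, 0, 0]))
```

Resampling and weighting should be applied to the training folds only, so that the held-out test set keeps the true class distribution, as the evaluation step requires.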

Detailed Protocol: Accounting for Spatial Autocorrelation in Citizen Science Data

This protocol is based on research that derived robust bat population trends from citizen science data [15].

Objective: To derive accurate population trends from spatially clustered citizen science monitoring data.

Materials:

- Software: R with packages for spatial analysis and Bayesian modeling (e.g., `INLA`).

- Data: Georeferenced time-series of species counts or occupancy from a citizen science program.

Methodology:

- Data Assessment:

- Map all survey locations to visually identify gaps and clusters in sampling effort.

- Test for spatial autocorrelation in the residuals of a standard non-spatial model using Moran's I.

- Spatial Model Building:

- Build a Bayesian hierarchical model using Integrated Nested Laplace Approximation (INLA).

- Include spatial random effects (e.g., a Gaussian Markov random field) to account for the spatial structure not explained by the environmental covariates.

- Also include relevant environmental variables (e.g., land cover, climate) as fixed effects.

- Model Validation and Trend Estimation:

- Compare the spatial model to a non-spatial model using metrics like Deviance Information Criterion (DIC) or Watanabe-Akaike information criterion (WAIC).

- Use the superior model to derive population trends, which will be more robust to the underlying spatial biases in the data [15].

Key Finding from Literature: Research on a UK bat monitoring program showed that while overall trends were broadly robust, accounting for spatial autocorrelation and environmental variables improved model fit and revealed important national-level differences masked by the overall British trend [15].

Visualizations

Geospatial AI Troubleshooting Workflow

Machine Learning-Oriented Geospatial Analysis Pipeline

Research Reagent Solutions

The following table details key computational tools and methodological "reagents" essential for tackling the discussed challenges in geospatial modeling for environmental source identification.

| Research Reagent | Function/Brief Explanation | Relevant Context |
|---|---|---|
| Spatial Cross-Validation | A validation technique that partitions data by spatial location to test model generalizability to new areas, directly countering Spatial Autocorrelation [14]. | Essential for any geospatial model to avoid over-optimistic performance estimates. |
| Integrated Nested Laplace Approximation (INLA) | A computational method for Bayesian hierarchical modeling that efficiently accounts for spatial random effects and complex error structures [15]. | Used for deriving robust population trends from spatially biased citizen science data [15]. |
| SMOTE & Variants | Synthetic Minority Over-sampling Technique; generates synthetic samples for the minority class to balance datasets, overcoming model bias toward the majority class [16]. | Applied in species distribution modeling and fraud detection to improve prediction of rare events [16] [17]. |
| Class Weighting | An algorithmic strategy that assigns a higher cost to misclassifying minority class samples during model training, improving sensitivity without resampling [16] [17]. | Supported natively in many ML algorithms (e.g., Scikit-learn, XGBoost); found to broadly improve SDM performance [17]. |
| Extremely Randomized Trees (ERT) | An ensemble ML algorithm that demonstrated optimal performance in learning the relationship between environmental factors and microbial community types [18]. | Used to identify key environmental factors (e.g., latitude, temperature) that collectively shape microbial communities [18]. |
| Feature Selection Techniques (SFS, LASSO) | Sequential Forward Selection (SFS) and Least Absolute Shrinkage and Selection Operator (LASSO) are methods to identify the most predictive features, enhancing model efficiency and interpretability [19]. | Critical for building robust models with small sample sizes, as often encountered in regional environmental forecasting [19]. |

Navigating Non-Linear Relationships and Complex Interactions in Ecological Systems

Troubleshooting Guides

Guide 1: Diagnosing and Addressing Non-Linear Ecological Responses

Problem: My model fails to predict an abrupt ecological change (e.g., population collapse) in response to gradual environmental pressure.

Solution:

- Check for Tipping Points: Non-linear responses often occur when environmental perturbations exceed critical thresholds. Model simulations show that irreversible, non-linear responses commonly occur in terrestrial ecosystems when vegetation removal exceeds 80%, especially for higher trophic levels and in less productive ecosystems [20].

- Re-evaluate Driver-Response Assumptions: Do not assume linear relationships. It is safer for scientists and managers to assume that pelagic ecosystems respond nonlinearly to environmental and human drivers [21]. Use methods designed to detect non-linearities and threshold responses.

- Inspect Trophic Levels: Non-linearity is often more pronounced for organisms in higher trophic levels. Predators are more sensitive to bottom-up resource limitation due to dynamic predator-prey interactions and patchily distributed resources [20].

- Assess Ecosystem Productivity: Low-productivity ecosystems may exhibit rapid, non-linear changes even at low levels of perturbation due to higher resource limitation [20].

Preventive Measures:

- Incorporate mechanistic models that simulate underlying biological interactions, which are better suited to predicting dynamic changes than purely statistical models [20].

- Use modeling approaches like System Dynamics that can explicitly represent feedback loops and non-linear relationships within socio-ecological systems [22].

Guide 2: Managing High-Dimensional Ecological Data for Feature Selection

Problem: My ecological dataset (e.g., from DNA metabarcoding) is too high-dimensional and sparse, making it difficult to identify features (e.g., species) relevant for prediction or classification.

Solution:

- Evaluate the Need for Feature Selection: For tree ensemble models like Random Forests, feature selection is more likely to impair model performance than to improve it for analyzing ecological metabarcoding data [23]. Test model performance with and without feature selection on your specific dataset.

- Select an Appropriate Algorithm: If feature selection is necessary, choose a method suited to your data and goals. A benchmark analysis suggests that Recursive Feature Elimination can enhance Random Forest performance across various tasks [23]. Other advanced multi-objective evolutionary algorithms (MOEAs) like DRF-FM are also designed for high-dimensional feature selection [24].

- Address Data Compositionality: Be aware that calculating relative counts (common in microbial ecology) can impair model performance. Novel methods to combat the compositionality of metabarcoding data may be required [23].

- Define Feature Relevance: Formally define "relevant" and "irrelevant" feature combinations to guide the search process toward subsets with high utility potential, thereby improving exploration efficiency [24].

Preventive Measures:

- For small sample datasets, integrate advanced feature selection methods (e.g., Sequential Forward/Backward Selection, Lasso Regression) with data augmentation techniques to enhance model robustness and predictive accuracy [19].

Frequently Asked Questions (FAQs)

FAQ 1: What is a non-linear response in an ecological system, and why is it important?

A non-linear response means that a small change in a driver (e.g., fishing pressure, pollution) creates a disproportionately large ecological response (e.g., stock collapse), instead of an incremental change [21]. This is critical because such "ecological surprises" can have broad, severe, and sometimes irreversible consequences, complicating management and prediction efforts [20] [21].

FAQ 2: My statistical model assumes linearity. How can I account for potential non-linear relationships?

You should adopt more robust modeling frameworks that can inherently capture complexity:

- System Dynamics (SD) Modeling: Effective for including explicit feedbacks between natural and social systems, and for modeling delays and non-linear relationships [22].

- Mechanistic Ecosystem Models: Simulate underlying biological interactions among individual organisms and processes, making them better suited for predicting dynamical changes in whole ecosystems compared to statistical models [20].

- Multi-objective Evolutionary Algorithms (MOEAs): Useful for feature selection tasks with high-dimensional data, as they can handle non-convex and non-linear relationships prevalent in ecological data [24].

FAQ 3: In feature selection, should I prioritize model accuracy or a minimal number of features?

This is a classic trade-off. The two primary objectives are minimizing the number of selected features and reducing the error rate [24]. However, these objectives are not equal. Error rate should be prioritized as the primary objective. A solution with poor error performance is generally unacceptable, even if it uses very few features. A bi-level selection framework can first ensure convergence on error rate before balancing it with feature count [24].
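The "error first, feature count second" priority can be illustrated with a small selection rule. This is a simplified sketch, not the bi-level framework of [24]: the candidate subsets, their error rates, and the `tolerance` parameter are all hypothetical values chosen for illustration.

```python
# Hypothetical candidate subsets: (name, cross-validated error rate, feature count).
candidates = [
    ("A", 0.120, 40),
    ("B", 0.128, 12),
    ("C", 0.300, 3),
    ("D", 0.124, 25),
]

def pick_subset(candidates, tolerance=0.01):
    """Keep subsets whose error is within `tolerance` of the best error,
    then return the one with the fewest features (ties broken by error)."""
    best_error = min(err for _, err, _ in candidates)
    acceptable = [c for c in candidates if c[1] <= best_error + tolerance]
    return min(acceptable, key=lambda c: (c[2], c[1]))

chosen = pick_subset(candidates)
print(chosen)
```

Subset C uses the fewest features but is rejected because its error rate is far from the best; among the subsets with acceptable error, the smallest one is preferred.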

FAQ 4: What are the biggest challenges in modeling socio-ecological systems (SES)?

Key challenges include [22]:

- Analyzing spatiotemporal dynamics of Ecosystem Services (ES) and SES.

- Integrating bidirectional relationships and feedback loops between human and ecological subsystems.

- Modeling human decision-making processes that consider multiple criteria.

- The significant requirement for diverse and high-quality information to parameterize models.

Table 1: Thresholds for Non-linear Responses in Modelled Terrestrial Ecosystems [20]

| Ecosystem Property | Perturbation | Threshold for Non-linear/Irreversible Change | Key Influencing Factors |

|---|---|---|---|

| Biomass & Abundance | Plant biomass removal | >80% removal | More pronounced in higher trophic levels and less productive ecosystems |

| Ecosystem Structure | Plant biomass removal | 80% - 90% removal | Leads to simplified structure, loss of high trophic levels, and reduced functional diversity |

| Functional Properties | Plant biomass removal | >50% - >90% removal (varies) | Trophic level range and body mass range decline substantially |

Table 2: Performance of Machine Learning Approaches on Ecological Data [23] [19]

| Method | Best Suited For / Key Finding | Note on Feature Selection |

|---|---|---|

| Random Forest (RF) | Excels in regression and classification tasks for metabarcoding data [23]. | Feature selection often impairs performance; models are robust without it in high-dimensional data [23]. |

| Recursive Feature Elimination (RFE) | Can enhance Random Forest performance [23]. | A wrapper-based feature selection method. |

| Extreme Gradient Boosting (XGBoost) | Outperforms other models for small-sample predictions (e.g., CO₂ emissions), especially with Gaussian noise augmentation [19]. | Benefits from feature selection techniques like SFS, SBS, and Lasso on small data [19]. |

| Long Short-Term Memory (LSTM) | Suitable for time-series forecasting [19]. | Shows greater sensitivity to noise [19]. |

Experimental Protocols

Protocol 1: Simulating Non-linear Ecosystem Responses using a General Ecosystem Model

This protocol is based on methodologies used in scientific research to model human impacts on complex ecosystems [20].

Objective: To project how ecosystems across different biomes respond to increasing levels of human pressure (e.g., land-use change) and identify potential thresholds for non-linear change and irreversibility.

Methodology:

- Model Selection: Use a general ecosystem model (e.g., the Madingley Model) that simulates all plants and non-microbial heterotrophs, their age/size-structuring, metabolism, growth, and predator-prey interactions [20].

- Define Simulation Scenarios:

  - Perturbation Gradient: Apply a gradient of plant biomass removal (e.g., from 0% to 95% of Net Primary Production) as a proxy for human land use [20].

  - Biome Selection: Run simulations across biomes with differing productivity and seasonality (e.g., tropical forest, temperate forest, arid shrubland, desert) [20].

- Measure Response Variables: Track key metrics across trophic levels (e.g., the biomass, abundance, structural, and functional properties listed in Table 1).

- Test for Reversibility: After escalating perturbation, run a second set of simulations where the pressure is gradually removed to see if the ecosystem recovers to its original state or settles into an alternative stable state [20].

- Data Analysis: Identify non-linearity by looking for disproportionate responses and tipping points where small increases in pressure cause large changes in ecosystem metrics [20].

Protocol 2: A Benchmark Workflow for Feature Selection on Ecological Metabarcoding Data

This protocol outlines a workflow for applying and evaluating feature selection methods to high-dimensional ecological data, as benchmarked in recent studies [23].

Objective: To identify a subset of informative taxa (features) from a metabarcoding dataset that are relevant for a specific ecological prediction or classification task.

Methodology:

- Data Preprocessing: Prepare your species abundance matrix. Note that using relative counts (compositional data) may impair model performance, and alternative normalization methods should be considered [23].

- Define the Learning Task: Clearly specify the target variable (e.g., an environmental parameter like pH, temperature, or a classification like healthy/diseased).

- Select and Apply Feature Selection Methods: Compare a suite of methods. These can include:

  - Filter Methods: Using statistical measures (e.g., Pearson correlation) between individual features and the target [19].

  - Wrapper Methods: Such as Sequential Forward Selection (SFS) and Sequential Backward Selection (SBS) [19].

  - Embedded Methods: Such as Lasso Regression [19] or feature importance from tree-based models.

  - Advanced MOEAs: For multi-objective feature selection (minimizing feature count and error rate simultaneously) [24].

- Model Training and Evaluation:

  - Train machine learning models (e.g., Random Forest, XGBoost) on the full feature set and on each of the selected feature subsets [23].

  - Use cross-validation to evaluate model performance based on accuracy, robustness, and generalization error.

- Benchmarking: Compare the performance of workflows (preprocessing + feature selection + model) to determine the optimal pipeline for your specific dataset [23]. The benchmark should answer whether feature selection improves analyzability for your task.
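The benchmarking step can be sketched as a loop over interchangeable selection stages. This is a minimal example under stated assumptions: the data are synthetic, the filter stage uses scikit-learn's `f_regression` (an F-statistic derived from each feature's Pearson correlation with the target), the embedded stage uses `LassoCV` via `SelectFromModel`, and wrapper methods are omitted only to keep the run short.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.feature_selection import SelectFromModel, SelectKBest, f_regression
from sklearn.linear_model import LassoCV
from sklearn.model_selection import KFold, cross_val_score
from sklearn.pipeline import Pipeline

# Synthetic stand-in for an abundance matrix with a continuous target (e.g., pH).
X, y = make_regression(n_samples=150, n_features=80, n_informative=10,
                       noise=10.0, random_state=0)

# Each entry is one selection strategy; "passthrough" keeps every feature.
selectors = {
    "none": "passthrough",
    "filter": SelectKBest(f_regression, k=20),
    "embedded": SelectFromModel(LassoCV(cv=3, random_state=0)),
}

cv = KFold(n_splits=5, shuffle=True, random_state=0)
results = {}
for name, selector in selectors.items():
    pipe = Pipeline([
        ("select", selector),
        ("model", RandomForestRegressor(n_estimators=50, random_state=0)),
    ])
    # Selection is refit inside each fold, so the comparison is leakage-free.
    results[name] = cross_val_score(pipe, X, y, cv=cv, scoring="r2").mean()

for name, score in results.items():
    print(f"{name:>8}: mean R^2 = {score:.3f}")
```

Adding a preprocessing stage (for example, an alternative normalization) as a further pipeline step would extend the comparison to full workflows.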

Workflow and Relationship Diagrams

Diagram 1: Analytical Workflow for Ecological Feature Selection

Diagram 2: Non-linear Ecosystem Response to Perturbation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Modeling and Analytical Tools

| Tool / Solution | Function | Application Context |

|---|---|---|

| General Ecosystem Models (GEMs) | Mechanistically simulate the dynamics of entire ecosystems, including all trophic levels. | Projecting ecosystem-wide impacts of human pressures and identifying potential collapse thresholds [20]. |

| System Dynamics (SD) Modeling | A simulation approach to model complex systems with explicit feedback loops, delays, and non-linearities. | Understanding interactions in Socio-Ecological Systems (SES), like land-use change dynamics [22]. |

| Multi-objective Evolutionary Algorithms (MOEAs) | Optimize multiple conflicting objectives simultaneously (e.g., feature count vs. error rate). | Performing feature selection on high-dimensional ecological data to find a Pareto-optimal set of solutions [24]. |

| Random Forest (RF) | A robust, ensemble machine learning algorithm for classification and regression. | Analyzing ecological metabarcoding datasets; often performs well without additional feature selection [23]. |

| Recursive Feature Elimination (RFE) | A wrapper-based feature selection method that recursively removes the least important features. | Can be used to enhance the performance of models like Random Forest on ecological data [23]. |

A Toolkit of Feature Selection Methods for Environmental Applications

In environmental source identification research, the ability to pinpoint the origin of contaminants accurately is paramount for effective remediation and policy-making. A significant challenge in building robust predictive models is the high-dimensional nature of environmental data, which often includes a vast number of potential chemical markers, meteorological parameters, and geographical features. Filter methods for feature selection provide a critical first step in tackling this challenge. These computationally efficient, model-independent techniques help refine the pool of features to the most relevant and non-redundant predictors, thereby enhancing model performance, interpretability, and generalizability. This technical support guide focuses on three core filter methods—Variance Thresholding, Correlation, and Mutual Information—framed within the context of environmental source tracking. The following FAQs and troubleshooting guides are designed to address specific, common issues researchers encounter when implementing these methods in their experiments.

Frequently Asked Questions (FAQs)

1. What are the primary advantages of using filter methods over other feature selection techniques in environmental studies?

Filter methods are particularly advantageous in the initial stages of environmental data analysis due to their computational efficiency and model independence [25]. They evaluate features based on intrinsic statistical properties of the data rather than a specific machine learning algorithm. This makes them fast and scalable for high-dimensional datasets, such as those generated from high-resolution mass spectrometry (HRMS) in non-targeted analysis [11]. Furthermore, their simplicity and speed make them ideal for a preliminary screening to rapidly narrow down thousands of potential chemical features to a manageable subset of candidates for further, more computationally intensive, analysis.

2. When should I avoid using the Variance Threshold method?

You should avoid relying solely on Variance Threshold when a feature's low variance is actually informative for your specific environmental target [26]. For instance, a compound that is consistently absent in background samples but consistently present at a low, constant concentration in a specific pollution plume could be a highly specific biomarker. Variance Threshold would filter this feature out. This method only assesses the variability within the feature itself and ignores the relationship between the feature and the target variable [26]. It is best used as an initial step to remove obviously uninformative, constant features.
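The plume-marker scenario can be reproduced with a toy example. The data below are synthetic and the concentrations are hypothetical; the sketch only shows that `VarianceThreshold` discards a feature that separates the two groups perfectly.

```python
import numpy as np
from sklearn.feature_selection import VarianceThreshold

rng = np.random.default_rng(0)
n = 100
# Hypothetical labels: 0 = background sample, 1 = plume sample.
source = np.array([0] * 50 + [1] * 50)

# Marker: absent in background, present at a low constant level in the plume.
marker = np.where(source == 1, 0.05, 0.0)
# Unrelated measurement with high variance.
noise = rng.normal(0.0, 10.0, size=n)

X = np.column_stack([marker, noise])
selector = VarianceThreshold(threshold=0.01).fit(X)
kept = selector.get_support()

print("Variances:", selector.variances_)
print("Kept [marker, noise]:", kept)
```

The marker has a variance of about 0.0006 and is removed, while the uninformative high-variance column is retained.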

3. How do I handle highly correlated features without losing potentially valuable information?

The standard practice is to identify pairs of highly correlated features and then remove one of them to reduce multicollinearity. To decide which feature to keep, you should evaluate their individual correlations with the target variable and retain the one with the stronger relationship [27] [28]. Alternatively, you can create a new feature that is a composite (e.g., an average or ratio) of the correlated ones if it has a chemically meaningful interpretation. Domain knowledge is crucial; if two correlated compounds are known to originate from different biochemical pathways, it might be worth keeping both despite the correlation.

4. Can I use Mutual Information for both regression and classification problems in source identification?

Yes. Mutual Information is a versatile metric that can be used for both regression (predicting a continuous value, like concentration) and classification (categorizing a pollution source) tasks. In Python's scikit-learn, you would use mutual_info_regression for continuous targets and mutual_info_classif for discrete targets [28]. This flexibility is valuable in environmental research, where tasks range from predicting contaminant concentrations (regression) to classifying samples by source type (classification).
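A short sketch of both functions on the same synthetic features follows. The variable names and the quadratic relationship are assumptions made for illustration; the target depends on the first feature non-linearly, which a linear correlation would largely miss.

```python
import numpy as np
from sklearn.feature_selection import mutual_info_classif, mutual_info_regression

rng = np.random.default_rng(0)
n = 500
x_signal = rng.uniform(-2, 2, size=n)   # feature related to the target
x_noise = rng.normal(size=n)            # unrelated feature
X = np.column_stack([x_signal, x_noise])

# Continuous target (e.g., a concentration) with a non-linear dependence.
y_conc = x_signal ** 2 + rng.normal(scale=0.1, size=n)
# Discrete target (e.g., a source class) derived from the same feature.
y_source = (np.abs(x_signal) > 1).astype(int)

mi_reg = mutual_info_regression(X, y_conc, random_state=0)
mi_clf = mutual_info_classif(X, y_source, random_state=0)

print("MI (regression):    ", mi_reg)
print("MI (classification):", mi_clf)
```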

5. My model performance decreased after feature selection. What might have gone wrong?

A decrease in performance often indicates that informative features were incorrectly removed. This can happen if:

- The threshold for selection was too aggressive. For example, a variance threshold that is too high might remove quasi-constant features that are key discriminators for a rare source.

- Important feature interactions were lost. Filter methods typically evaluate features independently [25] [26]. Two features that are weak predictors alone might be strong in combination. Re-evaluating your thresholds or considering wrapper or embedded methods might be necessary.

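- Data was not properly preprocessed. Variance Threshold is sensitive to scale, so applying it to features recorded in different units can lead to biased feature removal [26]. Bring features onto a comparable scale before thresholding on variance. Pearson correlation, by contrast, is unaffected by linear rescaling, but it can be distorted by outliers and strongly skewed distributions.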

Troubleshooting Guides

Issue 1: Inconsistent Feature Selection Results After Data Scaling

Problem: When you re-run your feature selection pipeline, different features are selected, especially after standardizing the data for Variance Thresholding.

Solution: This is a common pitfall. Variance is scale-dependent: the same concentrations expressed in parts per trillion are numerically 1,000 times larger than in parts per billion, so their variance is a million times larger even though the underlying variability is identical.

Experimental Protocol:

- Bring Features onto a Comparable Scale: Before applying Variance Threshold, rescale all features to a common range, for example [0, 1] using MinMaxScaler from sklearn.preprocessing. Avoid StandardScaler for this particular step: it gives every non-constant feature a variance of exactly 1, so a variance threshold applied afterwards can only remove exactly constant features.

- Apply Variance Threshold: After scaling, apply the VarianceThreshold selector. A common starting threshold is 0.01 or 0.05 to filter out quasi-constant features [26].

- Validate: Use the get_support() method to get a boolean mask of selected features and ensure the results are stable across runs.
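The interaction between scaling and variance thresholding can be checked directly. The sketch below uses hypothetical synthetic features and compares three options; using `MinMaxScaler` as the range-based alternative is an assumption made for illustration. It shows that `StandardScaler` gives every non-constant feature unit variance, so a threshold applied afterwards no longer distinguishes quasi-constant features.

```python
import numpy as np
from sklearn.feature_selection import VarianceThreshold
from sklearn.preprocessing import MinMaxScaler, StandardScaler

rng = np.random.default_rng(0)
n = 200
# Two genuinely variable features on very different numeric scales.
varied_large = rng.normal(50.0, 10.0, size=n)
varied_small = rng.normal(0.05, 0.01, size=n)
# Quasi-constant feature: zero everywhere except one sample.
quasi_constant = np.zeros(n)
quasi_constant[0] = 1.0
X = np.column_stack([varied_large, varied_small, quasi_constant])

def kept_features(data, threshold=0.01):
    """Boolean mask of features whose variance exceeds the threshold."""
    return VarianceThreshold(threshold).fit(data).get_support()

raw = kept_features(X)
standardized = kept_features(StandardScaler().fit_transform(X))
minmax = kept_features(MinMaxScaler().fit_transform(X))

print("Raw units:     ", raw)           # small-scale feature wrongly dropped
print("StandardScaler:", standardized)  # everything kept, filter is inert
print("MinMaxScaler:  ", minmax)        # only the quasi-constant feature dropped
```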

Issue 2: Managing Multicollinearity in Environmental Marker Panels

Problem: Your analysis identifies a set of potential chemical markers, but many are highly correlated, leading to an unstable and overfitted model when all are used.

Solution: Use Pearson's correlation to systematically identify and remove redundant features.

Experimental Protocol:

- Calculate Correlation Matrix: Compute the correlation matrix for all features in your dataset.

- Identify Highly Correlated Pairs: Define a threshold on the absolute correlation coefficient (e.g., |r| > 0.8 or |r| > 0.9). Iterate through the matrix to find feature pairs exceeding this threshold [26].

- Prioritize Feature-Target Relationship: For each correlated pair, calculate the correlation of each feature with the target variable (e.g., source label). Remove the feature with the lower absolute correlation with the target.

- Iterate: Continue this process until no highly correlated pairs remain.

The workflow for this systematic filtering process is outlined below.
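One way to express this filtering loop in code is sketched below. The helper `drop_correlated` and the marker names are hypothetical, the data are synthetic, and the source label is assumed to be numerically encoded (0/1) so that a Pearson correlation with the target can be computed.

```python
import numpy as np
import pandas as pd

def drop_correlated(X: pd.DataFrame, y: pd.Series, threshold: float = 0.8) -> list:
    """For each feature pair with |r| above the threshold, drop the feature
    with the weaker absolute correlation to the target; return kept columns."""
    target_corr = X.corrwith(y).abs()
    corr = X.corr().abs()
    dropped = set()
    cols = list(X.columns)
    for i, a in enumerate(cols):
        for b in cols[i + 1:]:
            if a in dropped or b in dropped:
                continue  # skip pairs involving an already removed feature
            if corr.loc[a, b] > threshold:
                dropped.add(a if target_corr[a] < target_corr[b] else b)
    return [c for c in cols if c not in dropped]

rng = np.random.default_rng(0)
n = 500
y = pd.Series(rng.integers(0, 2, size=n), name="source")  # encoded source label
marker_a = y + rng.normal(scale=0.3, size=n)               # informative marker
marker_b = marker_a + rng.normal(scale=0.3, size=n)        # noisier near-duplicate
marker_c = pd.Series(rng.normal(size=n))                   # unrelated marker
X = pd.DataFrame({"marker_a": marker_a, "marker_b": marker_b, "marker_c": marker_c})

kept = drop_correlated(X, y, threshold=0.8)
print(kept)
```

Because removing one feature can change which pairs remain, the function checks the set of dropped features before each comparison rather than working from a fixed list of pairs.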

Issue 3: Selecting the Optimal Number of Features with Mutual Information

Problem: Mutual Information ranks all features, but you need an objective way to determine the top k features to select for your model.

Solution: Combine Mutual Information with the SelectKBest selector, using cross-validation to find the k that gives the best model performance.

Experimental Protocol:

- Rank Features: Use mutual_info_classif or mutual_info_regression to get MI scores for all features.

- Use SelectKBest: Employ SelectKBest with the mutual information scorer to select different numbers of top k features.

- Cross-Validation Loop: For a range of potential k values, perform cross-validation on your predictive model (e.g., Random Forest). Use a performance metric like accuracy or F1-score for classification, or RMSE for regression.

- Plot and Choose: Plot the cross-validation performance versus the number of features (k). The optimal k is often at the "elbow" of the curve, where adding more features yields diminishing returns.
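The protocol above can be sketched as a loop over candidate k values. This is an illustrative example on synthetic data; the grid of k values is arbitrary, and `functools.partial` is used only to fix the random seed of the mutual information estimator.

```python
from functools import partial

from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectKBest, mutual_info_classif
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import Pipeline

# Synthetic stand-in for a marker panel with a binary source label.
X, y = make_classification(n_samples=200, n_features=40, n_informative=6,
                           n_redundant=2, random_state=0)

# Seeded scorer so that repeated runs rank features identically.
mi_scorer = partial(mutual_info_classif, random_state=0)

k_values = [2, 5, 10, 20, 40]
cv_scores = {}
for k in k_values:
    pipe = Pipeline([
        ("select", SelectKBest(mi_scorer, k=k)),
        ("model", RandomForestClassifier(n_estimators=50, random_state=0)),
    ])
    # Selection happens inside each fold, avoiding leakage from held-out data.
    cv_scores[k] = cross_val_score(pipe, X, y, cv=5, scoring="f1").mean()

best_k = max(cv_scores, key=cv_scores.get)
for k, score in cv_scores.items():
    print(f"k={k:>2}: mean F1 = {score:.3f}")
print("Best k by mean F1:", best_k)
```

Plotting `cv_scores` against k (for example with Matplotlib) gives the curve in which to look for the elbow; the highest-scoring k is not always the most parsimonious choice.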

Comparative Analysis of Filter Methods

The table below summarizes the key characteristics, use cases, and limitations of the three primary filter methods discussed.

| Method | Key Principle | Data Type | Primary Use Case | Key Advantages | Key Limitations |

|---|---|---|---|---|---|

| Variance Threshold | Removes features with low variance (little to no change in value) [27]. | Numeric | Preprocessing to remove constant and quasi-constant features [26]. | Fast, simple, effective for removing obviously uninformative data. | Ignores feature-target relationship; sensitive to data scaling [26]. |

| Correlation (Pearson's) | Measures linear relationship between two variables [27]. | Numeric | Identifying and removing redundant features (multicollinearity) [28]. | Intuitive; excellent for finding and reducing redundancy in feature sets. | Only captures linear relationships; can miss complex dependencies. |

| Mutual Information | Measures the dependency between two variables, quantifying how much information one reveals about the other [29]. | Numeric & Categorical (with encoding) | Capturing both linear and non-linear relationships between features and the target [28]. | Versatile; detects any kind of relationship, not just linear. | More computationally intensive than correlation. |

The Scientist's Toolkit: Essential Research Reagents & Software

The following table details key computational tools and their functions essential for implementing filter-based feature selection in environmental informatics.

| Item | Function in Analysis | Example/Note |

|---|---|---|

| Scikit-learn (sklearn) | A core Python library providing implementations of VarianceThreshold, mutual_info_classif, mutual_info_regression, and SelectKBest [27] [28]. Correlation matrices are computed separately, e.g., with the pandas DataFrame.corr() method. | The primary API for building the feature selection pipeline. |

| Pandas DataFrame | Data structure for storing and manipulating the feature-intensity matrix (samples x features) [27]. | Essential for handling tabular data, removing duplicates, and subsetting features. |

| High-Resolution Mass Spectrometer (HRMS) | Analytical instrument generating high-dimensional chemical data for non-target analysis (NTA) [11]. | e.g., Q-TOF or Orbitrap systems. Produces the raw data for source identification. |

| StandardScaler | A preprocessing class in sklearn.preprocessing used to standardize features by removing the mean and scaling to unit variance [26]. | Puts features on a common scale for scale-sensitive steps. Because it forces unit variance, apply Variance Threshold before standardizing or after a range-based scaler instead. |

| Seaborn/Matplotlib | Python libraries for visualization, used for plotting correlation heatmaps and mutual information scores [28]. | Aids in visual inspection of feature relationships and selection results. |

Troubleshooting Guides and FAQs

This technical support resource addresses common challenges researchers face when implementing feature selection methods in environmental source identification studies.

Recursive Feature Elimination (RFE) Basics

Q1: What is Recursive Feature Elimination and how does it work in environmental biomarker studies?

RFE is a wrapper-style feature selection algorithm that recursively removes the least important features from a dataset until a specified number of features remains [30]. The process works as follows:

- Initialization: Train your chosen estimator on the entire set of features

- Importance Calculation: Rank all features by their importance scores (from the coef_ or feature_importances_ attributes)

- Feature Elimination: Remove the weakest feature(s) based on the step parameter

- Recursion: Repeat the process on the remaining features until the target number of features is reached [31]

In environmental metabarcoding studies, RFE helps identify the most informative microbial taxa by eliminating redundant or irrelevant species, enhancing the analyzability of sparse, compositional datasets [23].
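The four steps above correspond to a few lines with scikit-learn's `RFE`. The following is a minimal sketch on synthetic data; the logistic regression estimator and the target of five features are assumptions chosen for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFE
from sklearn.linear_model import LogisticRegression

# Synthetic data: 20 features, of which 5 carry signal.
X, y = make_classification(n_samples=200, n_features=20, n_informative=5,
                           n_redundant=0, random_state=0)

# The estimator must expose coef_ or feature_importances_ after fitting.
estimator = LogisticRegression(max_iter=1000)

# step=1 removes one feature per iteration until 5 remain.
rfe = RFE(estimator, n_features_to_select=5, step=1).fit(X, y)

print("Selected mask:", rfe.support_)
print("Ranking (1 = selected):", rfe.ranking_)
```

Features with rank 1 are the selected ones; higher ranks indicate earlier elimination.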

Q2: How do I choose the optimal number of features to select?

Use RFECV (RFE with Cross-Validation) to automatically determine the optimal number of features. The RFECV visualizer plots cross-validated scores against the number of features, showing the point where additional features no longer improve performance [32]. For environmental datasets with known sparsity patterns, you can set n_features_to_select based on domain knowledge.
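A minimal sketch of `RFECV` from scikit-learn is shown below, on synthetic data and with an assumed logistic regression estimator. It reports the number of features chosen by cross-validation without plotting.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFECV
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold

# Synthetic data: 25 features, of which 5 carry signal.
X, y = make_classification(n_samples=200, n_features=25, n_informative=5,
                           n_redundant=0, random_state=0)

rfecv = RFECV(
    estimator=LogisticRegression(max_iter=1000),
    step=1,                      # remove one feature per iteration
    cv=StratifiedKFold(5),       # preserve class balance in each fold
    scoring="accuracy",
    min_features_to_select=1,
).fit(X, y)

print("Number of features chosen by CV:", rfecv.n_features_)
print("Selected mask:", rfecv.support_)
```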

Common RFE Implementation Issues

Q3: My RFE model performance fluctuates dramatically between iterations. What could be wrong?

This instability often stems from these technical issues:

- Insufficient Feature Importance Contrast: When many features have similar importance scores, elimination order becomes arbitrary. Solution: Use a larger step size or filter methods pre-selection [30]

- Data Leakage: Ensure RFE is fitted only on training data within a Pipeline

- Small Dataset Size: For high-dimensional environmental data with few samples, consider using RFECV with more folds or repeats

Technical Fix Pipeline:
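A minimal sketch of such a pipeline follows, assuming scikit-learn and synthetic data. Scaling and RFE are both placed inside the `Pipeline`, so they are refit on the training portion of every fold, and repeated stratified cross-validation is used to stabilize the estimate on a small sample. The estimator, feature count, and step size are illustrative choices.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFE
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RepeatedStratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Few samples, many features: a synthetic stand-in for environmental data.
X, y = make_classification(n_samples=100, n_features=50, n_informative=5,
                           random_state=0)

pipeline = Pipeline([
    ("scale", StandardScaler()),
    # step=5 removes five features per iteration, which also reduces the
    # arbitrariness of eliminating one near-tied feature at a time.
    ("select", RFE(LogisticRegression(max_iter=1000),
                   n_features_to_select=10, step=5)),
    ("model", LogisticRegression(max_iter=1000)),
])

# 5 folds repeated 3 times with different shuffles gives 15 scores.
cv = RepeatedStratifiedKFold(n_splits=5, n_repeats=3, random_state=0)
scores = cross_val_score(pipeline, X, y, cv=cv, scoring="accuracy")

print(f"Accuracy: {scores.mean():.3f} +/- {scores.std():.3f}")
```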

Q4: Which estimator should I use as RFE's base estimator for environmental data?

The choice depends on your data characteristics and problem type:

Table 1: Estimator Selection Guide for Environmental Data

| Data Type | Recommended Estimator | Rationale | Use Case Example |

|---|---|---|---|

| Linear relationships | LinearSVC (C=0.01, penalty="l1", dual=False) | Provides sparse coefficients for clear feature ranking [33]; the L1 penalty requires dual=False | Identifying linear pollutant gradients |

| Complex non-linear | RandomForestClassifier | Robust to outliers, provides impurity-based importance [23] | Microbial source tracking |

| High-dimensional omics | SVR(kernel="linear") | Handles high dimensionality well [31] | Metabolomic biomarker discovery |

| Sparse compositional | LogisticRegression(penalty='l1', solver='liblinear') | L1 regularization induces sparsity [33]; requires a solver that supports L1, such as liblinear or saga | Metabarcoding data analysis |

Tree-Based Feature Importance Challenges

Q5: Why do my tree-based feature importances seem biased toward high-cardinality features?

This is a known limitation of impurity-based importance (Mean Decrease in Impurity). High-cardinality features (e.g., continuous environmental measurements with many unique values) can appear more important because they have more split opportunities [34].

Solutions:

- Use Permutation Importance:

- Pre-process continuous features using binning to reduce cardinality

- Combine multiple importance metrics for robust feature selection
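The first solution can be sketched with scikit-learn's `permutation_importance`, computed on held-out data and shown next to the impurity-based scores for comparison. The data are synthetic and the model settings are illustrative.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

# Synthetic data: 10 features, of which 3 carry signal.
X, y = make_classification(n_samples=300, n_features=10, n_informative=3,
                           n_redundant=0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, stratify=y, random_state=0)

model = RandomForestClassifier(n_estimators=100, random_state=0)
model.fit(X_train, y_train)

# Impurity-based importance (MDI), derived from the training data.
mdi = model.feature_importances_

# Permutation importance: drop in test-set score when a feature is shuffled.
perm = permutation_importance(model, X_test, y_test,
                              n_repeats=10, random_state=0)

for i in range(X.shape[1]):
    print(f"feature {i}: MDI={mdi[i]:.3f}  "
          f"permutation={perm.importances_mean[i]:.3f} "
          f"+/- {perm.importances_std[i]:.3f}")
```

Evaluating on the test split matters: permutation importance computed on training data can still reward features the model has merely memorized.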

Table 2: Comparison of Feature Importance Methods

| Method | Advantages | Limitations | Computation Cost |

|---|---|---|---|

| Impurity-based (MDI) | Fast, native to tree models | Biased toward high-cardinality features [34] | Low |

| Permutation Importance | Unbiased, model-agnostic | Computationally expensive [34] | High |

| SHAP values | Theoretically grounded in Shapley values | Very computationally intensive [35] | Very High |

Q6: How can I validate that my selected features are biologically relevant in environmental studies?

Implement a multi-stage validation protocol:

- Statistical Validation: Use holdout sets and cross-validation to ensure selected features generalize

- Biological Plausibility Check: Compare with known ecological relationships from literature

- Temporal Stability: For time-series environmental data, verify feature importance consistency across sampling periods

- Independent Cohort Validation: Test selected features on geographically distinct datasets

In cotton environmental interaction studies, researchers combined RFE with SHAP analysis to identify key environmental drivers active during specific growth stages, then validated findings through sliding-window regression analysis [35].

Performance Optimization

Q7: My RFE implementation is too slow for large environmental datasets. How can I improve performance?

Optimization strategies for large environmental datasets:

- Increase Step Size: Set step=5 or higher to remove multiple features per iteration [31]

- Use Faster Estimators: Linear models train faster than tree-based methods for RFE

- Subsampling: Use strategic subsampling during elimination phases

- Parallelization: Leverage the n_jobs=-1 parameter where available

Q8: When should I avoid using RFE in environmental research?

RFE may be suboptimal when:

- Very High Dimensionality: With thousands of features and few samples, filter methods often perform better [23]

- Strong Multicollinearity: RFE can arbitrarily select among correlated features

- Computational Constraints: For rapid screening, use variance threshold or univariate selection instead [33]

- Tree Ensemble Models: Benchmark analyses show tree ensembles like Random Forests often perform well without additional feature selection [23]

Experimental Protocols

Protocol 1: RFE for Microbial Source Tracking

Application: Identify minimal microbial biomarker panels for contamination source identification [23]

- Data Preprocessing: Rarefy metabarcoding data to even sequencing depth, filter taxa present in <5% of samples

- Feature Elimination: Implement RFE with RandomForest estimator, 5-fold stratified cross-validation

- Validation: Assess selected features on temporal holdout samples using F1-score

- Biological Validation: Compare selected taxa with known host-associated microbial signatures

Protocol 2: Environmental Driver Identification

Application: Identify key environmental factors influencing phenotypic traits in crops [35]

- Data Collection: Aggregate environmental parameters (temperature, precipitation, humidity) across growth stages

- Window Analysis: Apply sliding-window regression to identify critical temporal windows

- Feature Selection: Use RFE with SHAP interpretation to select dominant environmental drivers

- Model Validation: Compare cross-environment prediction accuracy with and without selected drivers

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Tool/Reagent | Function | Application Notes |

|---|---|---|

| scikit-learn RFE/RFECV | Core feature selection implementation | Use in Pipeline to prevent data leakage [30] [31] |

| Yellowbrick RFECV | Visualization of feature selection performance | Ideal for determining optimal feature count [32] |

| SHAP (SHapley Additive exPlanations) | Feature importance interpretation | Validates biological relevance of selected features [35] |

| MetaBarcoding Data | Environmental sample source material | Filter low-abundance taxa before feature selection [23] |

| Random Forest Classifier | Robust estimator for RFE | Preferred for non-linear ecological relationships [23] [35] |

| Permutation Importance | Alternative to impurity-based importance | Unbiased feature ranking [34] |

Method Workflows

Key Benchmark Findings

Table 4: Performance Benchmarks of Feature Selection Methods in Environmental Studies

| Study Context | Optimal Method | Performance Gain | Key Insight |

|---|---|---|---|

| Environmental Metabarcoding (13 datasets) | Random Forest without feature selection | RFE improved RF performance in various tasks [23] | Feature selection more likely to impair than improve tree ensemble models [23] |

| Cotton G×E Interaction Analysis | RFE with Random Forest + SHAP | Improved cross-environment prediction accuracy by 0.02-0.15 [35] | Identified 0.1-2.4% of original environmental variables as key drivers [35] |

| Synthetic Dataset Benchmark | RFE with SVR(kernel='linear') | Accurate selection of 5 informative from 10 total features [31] | Effective elimination of redundant features while retaining informative ones [30] |

| High-Dimensional Microbiome Data | Ensemble models without feature selection | Robust performance without feature selection [23] | Novel methods needed to combat compositionality of metabarcoding data [23] |

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary advantage of causality-driven feature selection over traditional correlation-based methods for sensor calibration?

Causality-driven feature selection identifies features that have a genuine cause-effect relationship with the target variable, unlike correlation-based methods that may select features based on spurious correlations. This leads to models that are more robust and generalizable to new environments and changing conditions. In practice, this approach reduced the mean squared error for PM2.5 calibration by 33.2%, outperforming the 30.2% reduction achieved by SHAP value-based selection [36] [37].

FAQ 2: How does convergent cross mapping (CCM) differ from Granger causality in establishing causal relationships?

While both methods aim to establish causality, CCM is particularly effective for nonlinear dynamical systems commonly encountered in environmental monitoring. CCM tests whether historical information of one variable can reliably estimate states of another, making it suitable for complex systems where traditional linear causality tests may fail [36].

FAQ 3: What are the most common environmental factors that trigger calibration drift in low-cost sensors?

The primary environmental stressors affecting sensor calibration include: dust and particulate accumulation (obstructing sensor elements), humidity variations (causing condensation and chemical reactions), and temperature fluctuations (leading to physical expansion/contraction of components). These factors necessitate regular calibration to maintain data accuracy [38].

FAQ 4: When should researchers consider using causality-based feature selection instead of traditional filter or wrapper methods?

Causality-based approaches are particularly valuable when: (1) models must perform reliably under changing environmental conditions, (2) the research goal includes understanding underlying mechanisms rather than just prediction, and (3) the system under study is complex and dynamic, so that spurious correlations are common [36] [39].

FAQ 5: How can researchers validate that selected features truly represent causal relationships?

Validation should include: (1) testing model performance on datasets from different environments than the training data, (2) comparing with domain knowledge and physical principles, and (3) assessing invariance of relationships across different conditions and time periods [36].

Troubleshooting Common Experimental Challenges

Issue 1: Poor Model Generalization to New Environments

Symptoms: Model performs well on training data but accuracy drops significantly when deployed in new locations or under different environmental conditions.

Solutions:

- Implement causal feature selection using convergent cross mapping to identify environmentally invariant relationships

- Include diverse environmental conditions during the collocation period with reference instruments

- Test feature invariance by evaluating whether selected features maintain their relationship to the target across different subsets of your data [36]

Prevention: During initial experimental design, collect data across multiple seasons and varying environmental conditions to ensure sufficient diversity in your training dataset.

Issue 2: Handling Sensor Drift and Environmental Stressors

Symptoms: Gradual degradation of model performance over time, often with seasonal patterns or following extreme weather events.

Solutions:

- Implement preventative maintenance schedules based on environmental stressor exposure

- For dust-prone areas, establish regular cleaning protocols and consider protective housings

- In high-humidity environments, incorporate humidity compensation features in your models

- Monitor for calibration drift indicators such as unexpected changes in data trends or persistent mismatches with reference values [38]

Prevention: Document all maintenance and calibration activities meticulously, noting environmental conditions at the time of service to identify patterns in calibration drift.
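One simple way to monitor for "persistent mismatches with reference values" is a rolling bias check, sketched below. The window length, the tolerance, and the simulated drift are illustrative assumptions, not values recommended in [38]; suitable settings depend on the sensor and its sampling rate.

```python
import numpy as np

def rolling_bias(sensor, reference, window):
    """Trailing-window mean of (sensor - reference)."""
    resid = np.asarray(sensor, dtype=float) - np.asarray(reference, dtype=float)
    kernel = np.ones(window) / window
    # Element i is the mean residual over samples i .. i + window - 1.
    return np.convolve(resid, kernel, mode="valid")

def first_drift_index(sensor, reference, window=48, tolerance=2.0):
    """Index of the first sample at which |rolling bias| exceeds tolerance.

    Returns None when the bias never leaves the tolerance band.
    """
    bias = rolling_bias(sensor, reference, window)
    over = np.flatnonzero(np.abs(bias) > tolerance)
    return None if over.size == 0 else int(over[0] + window - 1)

# Simulated collocation record: drift of 0.02 units per step starts at t = 500.
rng = np.random.default_rng(0)
n = 1000
t = np.arange(n)
reference = 20.0 + rng.normal(scale=2.0, size=n)
noise = rng.normal(scale=0.5, size=n)
drift = np.where(t >= 500, 0.02 * (t - 500), 0.0)

healthy_idx = first_drift_index(reference + noise, reference)
drift_idx = first_drift_index(reference + noise + drift, reference)
print(healthy_idx, drift_idx)
```

Because the bias is averaged over a trailing window, the alarm lags the true onset of drift; a shorter window reacts faster but is more sensitive to noise.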

Issue 3: Weak Causal Signals in Complex Environmental Data

Symptoms: CCM analysis fails to identify strong causal relationships, or identified features do not improve model performance.

Solutions:

- Ensure sufficient time series length for CCM analysis—typically hundreds to thousands of observations

- Preprocess data to address missing values and outliers that can obscure causal relationships

- Consider multivariate CCM extensions that can handle complex interactions between multiple variables

- Validate with alternative causal discovery methods to confirm relationships [36]

Prevention: During data collection, prioritize longer time series over higher frequency measurements when studying causal relationships.

Experimental Protocols & Methodologies

Protocol 1: Convergent Cross Mapping for Causal Feature Selection

Purpose: To identify features with genuine causal relationships to the target variable for robust sensor calibration.

Materials:

- Time-series data from collocated low-cost and reference sensors

- Computational environment with CCM implementation (e.g., R, Python with appropriate packages)

Procedure:

- Data Preparation: Compile synchronized time-series data from all candidate features and reference measurements. Ensure sufficient data length (typically >500 observations).

- State Space Reconstruction: For each feature-target pair, reconstruct the state space using time-delay embedding.

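- CCM Analysis: Calculate the cross-mapping skill for each feature-target pair. In CCM, evidence that a feature causally influences the target comes from how well the feature's states can be estimated from the target's reconstructed state space, because a driving variable leaves its signature in the dynamics of the variable it drives.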

- Convergence Testing: Verify that cross-mapping skill increases with time series length—a key indicator of causality.

- Feature Ranking: Rank features based on their convergence properties and cross-mapping skill.

- Validation: Compare selected features with domain knowledge and test model performance with causality-selected features versus traditional methods [36].
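The embedding, cross-mapping, and convergence steps of this protocol can be sketched in plain NumPy. This is a simplified illustration, not the implementation used in [36] or in rEDM: it takes the first `lib_size` points as the library instead of random subsamples, and the embedding dimension `E`, delay `tau`, and the coupled logistic maps used as test data are assumptions chosen for the example.

```python
import numpy as np

def embed(x, E, tau):
    """Time-delay embedding; row t is (x[t], x[t - tau], ..., x[t - (E-1)*tau])."""
    x = np.asarray(x, dtype=float)
    start = (E - 1) * tau
    return np.column_stack(
        [x[start - k * tau: len(x) - k * tau] for k in range(E)]
    )

def cross_map_skill(source, target, E=2, tau=1, lib_size=None):
    """Estimate `source` from the shadow manifold of `target`.

    Skill that is high and grows with library size is read as evidence
    that `source` causally influences `target`.
    """
    M = embed(target, E, tau)
    s = np.asarray(source, dtype=float)[(E - 1) * tau:]
    if lib_size is not None:
        M, s = M[:lib_size], s[:lib_size]
    dists = np.linalg.norm(M[:, None, :] - M[None, :, :], axis=2)
    np.fill_diagonal(dists, np.inf)           # a point may not predict itself
    nn = np.argsort(dists, axis=1)[:, :E + 1]  # E + 1 nearest neighbours
    d = np.take_along_axis(dists, nn, axis=1)
    w = np.exp(-d / np.maximum(d[:, [0]], 1e-12))
    w /= w.sum(axis=1, keepdims=True)
    s_hat = (w * s[nn]).sum(axis=1)            # weighted neighbour average
    return float(np.corrcoef(s, s_hat)[0, 1])

# Toy system (assumed for illustration): x drives y, y does not affect x.
n, burn_in = 1100, 100
x, y = np.empty(n), np.empty(n)
x[0], y[0] = 0.4, 0.2
for t in range(n - 1):
    x[t + 1] = x[t] * (3.8 - 3.8 * x[t])
    y[t + 1] = y[t] * (3.5 - 3.5 * y[t] - 0.1 * x[t])
x, y = x[burn_in:], y[burn_in:]

lib_sizes = [25, 100, 400, 1000]
skill_x_drives_y = [cross_map_skill(x, y, lib_size=L) for L in lib_sizes]
skill_y_drives_x = [cross_map_skill(y, x, lib_size=L) for L in lib_sizes]
print(np.round(skill_x_drives_y, 2))
print(np.round(skill_y_drives_x, 2))
```

For feature ranking, the same function would be called once per candidate feature with the reference measurement as `target`, and features would be ordered by their skill at the largest library size together with how clearly that skill rises with library size. The full pairwise distance matrix limits this sketch to a few thousand observations.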

Protocol 2: Performance Comparison Framework

Purpose: To quantitatively evaluate improvements from causality-driven feature selection against traditional methods.

Procedure:

- Baseline Establishment: Train models using all available features and record performance metrics.

- Traditional Feature Selection: Implement SHAP value-based selection and mutual information ranking.

- Causal Feature Selection: Apply CCM-based method to identify causally relevant features.

- Model Training: Train identical model architectures using features selected by each method.

- Performance Assessment: Compare mean squared error, R-squared values, and computational efficiency across methods.

- Generalization Testing: Evaluate all models on held-out data from different environmental conditions than the training set [36].
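A bare-bones version of this comparison loop is sketched below. Ordinary least squares stands in for the "identical model architecture", and the feature subsets are hand-written index lists; in a real study they would come from SHAP ranking, mutual information, or CCM. The function names and the synthetic two-environment data are assumptions for illustration only.

```python
import numpy as np

def fit_predict_ols(X_train, y_train, X_test):
    """Least-squares linear model with intercept; returns test predictions."""
    A = np.column_stack([np.ones(len(X_train)), X_train])
    coef, *_ = np.linalg.lstsq(A, y_train, rcond=None)
    return np.column_stack([np.ones(len(X_test)), X_test]) @ coef

def compare_feature_sets(X_train, y_train, X_test, y_test, feature_sets):
    """Evaluate each feature subset on held-out data.

    `feature_sets` maps a method name to column indices and must contain
    a "baseline" entry (all features) used as the reference MSE.
    """
    mse = {}
    for name, cols in feature_sets.items():
        pred = fit_predict_ols(X_train[:, cols], y_train, X_test[:, cols])
        mse[name] = float(np.mean((y_test - pred) ** 2))
    base = mse["baseline"]
    return {name: {"mse": m, "mse_reduction_pct": 100.0 * (base - m) / base}
            for name, m in mse.items()}

rng = np.random.default_rng(0)

def make_env(n, spurious_tracks_target):
    """Feature 0 drives y everywhere; feature 1 tracks y only in training."""
    x0 = rng.normal(size=n)
    y = 2.0 * x0 + rng.normal(scale=0.3, size=n)
    if spurious_tracks_target:
        x1 = y + rng.normal(scale=0.3, size=n)
    else:
        x1 = rng.normal(size=n)
    return np.column_stack([x0, x1]), y

X_tr, y_tr = make_env(500, True)    # training environment
X_te, y_te = make_env(500, False)   # new environment, relationship broken
results = compare_feature_sets(
    X_tr, y_tr, X_te, y_te,
    {"baseline": [0, 1], "stable_only": [0]},
)
for name, r in results.items():
    print(name, round(r["mse"], 3), round(r["mse_reduction_pct"], 1))
```

The "MSE reduction" columns in the table that follows are defined the same way, as a percentage relative to the all-features baseline.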

Table 1: Comparative Performance of Feature Selection Methods for PM Calibration

| Feature Selection Method | PM1 MSE Reduction | PM2.5 MSE Reduction | Key Advantages |
|---|---|---|---|
| Causality-Driven (CCM) | 43.2% | 33.2% | Superior generalizability, physically meaningful features |
| SHAP Value-Based | 29.6% | 30.2% | Model-specific relevance, computational efficiency |
| Mutual Information | Not reported | Not reported | Captures nonlinear dependencies |
| All Features (Baseline) | 0% | 0% | Comprehensive but prone to overfitting |

Table 2: Environmental Stressors and Impact Mitigation Strategies

| Environmental Stressor | Impact on Sensor Performance | Recommended Mitigation |
|---|---|---|
| Dust & Particulate Accumulation | Physical obstruction of sensor elements, altered measurements | Regular cleaning, protective housings, strategic placement |
| Humidity Variations | Condensation, chemical reactions, short-circuiting | Humidity compensation algorithms, protective designs |
| Temperature Fluctuations | Component expansion/contraction, material stress | Thermal compensation, robust materials selection |
| Seasonal Variations | Combined effects of multiple stressors, long-term drift | Seasonal recalibration, multi-season training data |

Research Reagent Solutions

Table 3: Essential Research Tools for Causality-Driven Sensor Calibration

| Tool/Resource | Function | Implementation Examples |
|---|---|---|
| Convergent Cross Mapping Algorithms | Identify causal relationships in time-series data | Python (pyEDM), R (rEDM), custom implementations |
| Reference Grade Instruments | Provide ground truth for calibration development | Research-grade spectrometers, regulatory monitoring stations |
| Low-Cost Sensor Platforms | Target systems for calibration improvement | Optical particle counters (OPC-N3), electrochemical sensors |
| Feature Selection Frameworks | Compare multiple feature selection approaches | Scikit-learn, specialized benchmark frameworks [3] |

Workflow Visualization

Causality-Driven Feature Selection Workflow

Causal vs Traditional Feature Selection

Frequently Asked Questions (FAQs)

Q1: Does integrating environmental covariates always improve genomic prediction accuracy? No, the integration of environmental covariates does not automatically guarantee an improvement in prediction accuracy. The outcome is highly dependent on the dataset and how the environmental information is incorporated. Simple incorporation may increase or decrease accuracy, but the optimal use of feature selection to identify the most relevant environmental predictors can lead to significant improvements, with one study reporting accuracy gains between 14.25% and 218.71% in four out of six datasets in a leave-one-environment-out cross-validation scenario [40].
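A leave-one-environment-out evaluation can be sketched as below. The point it illustrates is that covariate selection must be repeated inside each training fold, so the held-out environment never influences which covariates are kept. The helper names, the top-k correlation filter, the ridge penalty, and the normalization of RMSE by the standard deviation of the held-out observations are assumptions for this example; [40] may define NRMSE and the selection step differently.

```python
import numpy as np

def top_k_by_correlation(X, y, k):
    """Indices of the k columns with the largest |Pearson r| against y."""
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    r = (Xc.T @ yc) / (np.linalg.norm(Xc, axis=0) * np.linalg.norm(yc) + 1e-12)
    return np.argsort(-np.abs(r))[:k]

def ridge_fit(X, y, alpha=1.0):
    """Closed-form ridge regression on centered data: (coef, intercept)."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc = X - x_mean
    coef = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]),
                           Xc.T @ (y - y_mean))
    return coef, y_mean - x_mean @ coef

def leave_one_environment_out(X, y, env, k=2, alpha=1.0):
    """Hold out each environment in turn; return {environment: NRMSE}."""
    scores = {}
    for held_out in np.unique(env):
        test = env == held_out
        train = ~test
        # Selection uses training environments only, to avoid leakage.
        cols = top_k_by_correlation(X[train], y[train], k)
        coef, intercept = ridge_fit(X[train][:, cols], y[train], alpha)
        pred = X[test][:, cols] @ coef + intercept
        rmse = np.sqrt(np.mean((y[test] - pred) ** 2))
        scores[str(held_out)] = float(rmse / y[test].std())
    return scores

# Synthetic data: 6 environments, 20 covariates, only the first two matter.
rng = np.random.default_rng(1)
env = np.repeat([f"env{i}" for i in range(6)], 40)
X = rng.normal(size=(env.size, 20))
y = 1.5 * X[:, 0] - 1.0 * X[:, 1] + rng.normal(scale=0.3, size=env.size)

nrmse = leave_one_environment_out(X, y, env)
print({name: round(v, 3) for name, v in nrmse.items()})
```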

Q2: When is feature selection necessary before integrating environmental data? Feature selection is particularly crucial when dealing with a high number of environmental covariates relative to the number of observations. It helps to avoid overfitting, reduces model complexity, and can enhance model performance by discarding redundant or irrelevant features. For instance, in a benchmark analysis of environmental datasets, while the optimal approach depended on the dataset, feature selection was more likely to impair the performance of robust models like Random Forests, suggesting that the need for feature selection should be evaluated based on the model and data characteristics [23].

Q3: What are common methods for selecting relevant environmental covariates? Two commonly evaluated methods are Pearson’s correlation and the Boruta algorithm [40]. Additionally, Recursive Feature Elimination (RFE) has been shown to enhance the performance of Random Forest models across various tasks in environmental metabarcoding analyses [23]. For ultra-high-dimensional data, supervised rank aggregation methods coupled with clustering have also been employed [41].

Q4: Can these approaches be applied to non-model species or field samples? Yes, methods like the ChronoGauge ensemble model, trained on model species data, can be applied to non-model species by identifying orthologs of informative gene features. This allows for predictions in species that lack large, dedicated training datasets, including samples collected from the field [42].

Q5: How is high-dimensional 'omics' data, like microbiome composition, integrated with environmental covariates? Dimensionality reduction techniques like Principal Component Analysis (PCA) are often used first to condense the high-dimensional data while preserving essential biological information. The resulting principal components can then be treated as intermediate traits and integrated into prediction models alongside host genomic and environmental data using specialized models like Neural Network GBLUP (NN-GBLUP) [43].

Troubleshooting Guides

Problem 1: Low Prediction Accuracy After Adding Environmental Covariates

Potential Causes and Solutions:

Cause: Irrelevant or Noisy Covariates. The environmental covariates added may be unrelated to the response variable, adding noise instead of signal.

- Solution: Implement feature selection methods (e.g., Boruta, Pearson's correlation) to identify and retain only covariates with predictive power for your specific trait [40].

- Action: Protocol: Boruta Feature Selection

- Install the Boruta package in R.

- Create a data frame where your environmental covariates are the features and your phenotypic trait is the response.

- Run the Boruta algorithm to identify all relevant covariates confirmed by a statistical test.

- Use the confirmed features in your final genomic prediction model.
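The idea behind Boruta, comparing each real covariate against shuffled "shadow" copies, can be illustrated in Python with scikit-learn. The sketch below is deliberately simplified and is not the Boruta algorithm itself: it counts how often a covariate's random-forest importance beats the best shadow feature over a fixed number of rounds, and omits Boruta's statistical test and iterative removal of rejected features. The function name, round count, and synthetic data are assumptions.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

def shadow_feature_hits(X, y, n_rounds=5, n_estimators=50, seed=0):
    """Count, per feature, the rounds in which it beats every shadow feature."""
    rng = np.random.default_rng(seed)
    X = np.asarray(X, dtype=float)
    n_features = X.shape[1]
    hits = np.zeros(n_features, dtype=int)
    for r in range(n_rounds):
        # Shadows: each column shuffled independently, destroying any link to y.
        shadows = np.column_stack([rng.permutation(col) for col in X.T])
        forest = RandomForestRegressor(n_estimators=n_estimators,
                                       random_state=seed + r)
        forest.fit(np.hstack([X, shadows]), y)
        importance = forest.feature_importances_
        real, shadow = importance[:n_features], importance[n_features:]
        hits += real > shadow.max()
    return hits

# Synthetic covariates: only column 0 carries signal for the trait.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5))
y = 3.0 * X[:, 0] + rng.normal(scale=0.1, size=200)

n_rounds = 5
hits = shadow_feature_hits(X, y, n_rounds=n_rounds)
confirmed = np.flatnonzero(hits == n_rounds)
print(hits, confirmed)
```

For a real analysis, the R Boruta package named in the protocol above handles the hit-count significance testing that this sketch leaves out.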

Cause: Suboptimal Model Choice. The model may not effectively capture the complex relationships between genotype, environment, and phenotype.

- Solution: Consider using ensemble models or methods designed for multi-source data. For example, multi-kernel models that integrate genomic, environmental, and secondary trait data have been shown to substantially improve prediction accuracy for traits like biomass partitioning in wheat [44]. Similarly, tree ensemble models like Random Forests are often robust without explicit feature selection for high-dimensional data [23].

Problem 2: Handling High-Dimensional Environmental and Omics Data

Potential Causes and Solutions:

Cause: The "p >> n" Problem. The number of features (p), such as thousands of environmental variables or microbial OTUs, far exceeds the number of observations (n), leading to model overfitting and high computational cost.

- Solution: Apply dimensionality reduction techniques before model integration.

- Action: Protocol: Dimensionality Reduction with PCA

- Standardize your high-dimensional data (e.g., rumen microbiome composition data).

- Perform PCA on the standardized data.

- Select the top principal components (PCs) that explain a sufficient amount of variation (e.g., 25-50% for microbiome data [43]). These PCs serve as a condensed representation of the original data.

- Integrate these PCs as intermediate traits or covariates in your prediction model.
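The PCA protocol above can be sketched with a singular value decomposition. The function name `pcs_for_variance` and the synthetic low-rank data are assumptions for illustration; the 50% target is taken from the upper end of the 25-50% range quoted above and would need to be chosen per dataset.

```python
import numpy as np

def pcs_for_variance(X, target=0.5):
    """Standardize X, run PCA via SVD, and keep the fewest leading PCs
    whose cumulative explained-variance ratio reaches `target`.

    Returns (scores, explained_ratio_of_kept_pcs).
    """
    X = np.asarray(X, dtype=float)
    std = X.std(axis=0, ddof=1)
    keep = std > 0                      # drop constant columns before scaling
    Z = (X[:, keep] - X[:, keep].mean(axis=0)) / std[keep]
    U, S, _ = np.linalg.svd(Z, full_matrices=False)
    explained = S ** 2 / np.sum(S ** 2)
    k = int(np.searchsorted(np.cumsum(explained), target) + 1)
    k = min(k, len(S))
    return U[:, :k] * S[:k], explained[:k]

# Synthetic "p >> n" data: 30 samples, 200 features, 3 latent gradients.
rng = np.random.default_rng(0)
latent = rng.normal(size=(30, 3))
X = latent @ rng.normal(size=(3, 200)) + 0.5 * rng.normal(size=(30, 200))

scores, explained = pcs_for_variance(X, target=0.5)
print(scores.shape, np.round(explained, 3))
```

The returned `scores` matrix has one row per sample and one column per retained PC, which is the form in which the PCs would enter a downstream prediction model as covariates. Compositional microbiome data may need a suitable transformation before standardization; that step is outside this sketch.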

Cause: Computational Limitations. The sheer volume of data makes analysis time-consuming or infeasible.

- Solution: Utilize efficient feature selection and computational frameworks. For ultra-high-dimensional genomic data, a multi-dimensional supervised rank aggregation (MD-SRA) approach provides a good balance between classification quality and computational efficiency, offering lower analysis time and data storage requirements compared to other methods [41].

Problem 3: Predicting Performance in Untested Environments

Potential Causes and Solutions:

Cause: Inadequate Environmental Characterization. The environmental data may not sufficiently capture the conditions of the target population of environments (TPE).

- Solution: Improve the spatial interpolation and sampling of environmental data. Using machine learning-based interpolation methods like Random Forest Spatial Interpolation (RFSI) and optimizing spatial sampling to exclude non-agricultural areas can significantly enhance the environmental characterization for predictions in untested locations [45].

- Action: Protocol: GIS-FA for Untested Environments

- Collect high-resolution environmental data (e.g., soil, weather, topography) via GIS for your TPE.

- Use RFSI to interpolate and create continuous surfaces of environmental variables.

- Fit a Factor Analytic (FA) model to your multi-environment trial data to obtain latent environmental loadings and genotypic scores.

- Use PLS regression to model the relationship between the interpolated environmental data and the FA loadings.

- Predict the loadings for untested locations and combine them with genotypic scores to obtain empirical BLUPs for genotype performance in those new environments [45].

Table 1: Impact of Feature Selection on Genomic Prediction with Environmental Covariates

| Dataset | Scenario | Performance Metric | Result | Key Finding |
|---|---|---|---|---|
| Six Diverse Datasets [40] | Leave-One-Environment-Out Cross-Validation | Normalized Root Mean Squared Error (NRMSE) | Improvement in 4/6 datasets (14.25% - 218.71%) | Feature selection (Pearson/Boruta) is crucial for optimal integration of environmental covariates. |
| Wheat Biomass Partitioning [44] | Multi-Kernel Model vs. Genomics-Only | Prediction Accuracy | Increase from 18% to 78% for 1000-grain weight | Integrating environmental covariates and secondary traits via multi-kernel models vastly improves accuracy. |