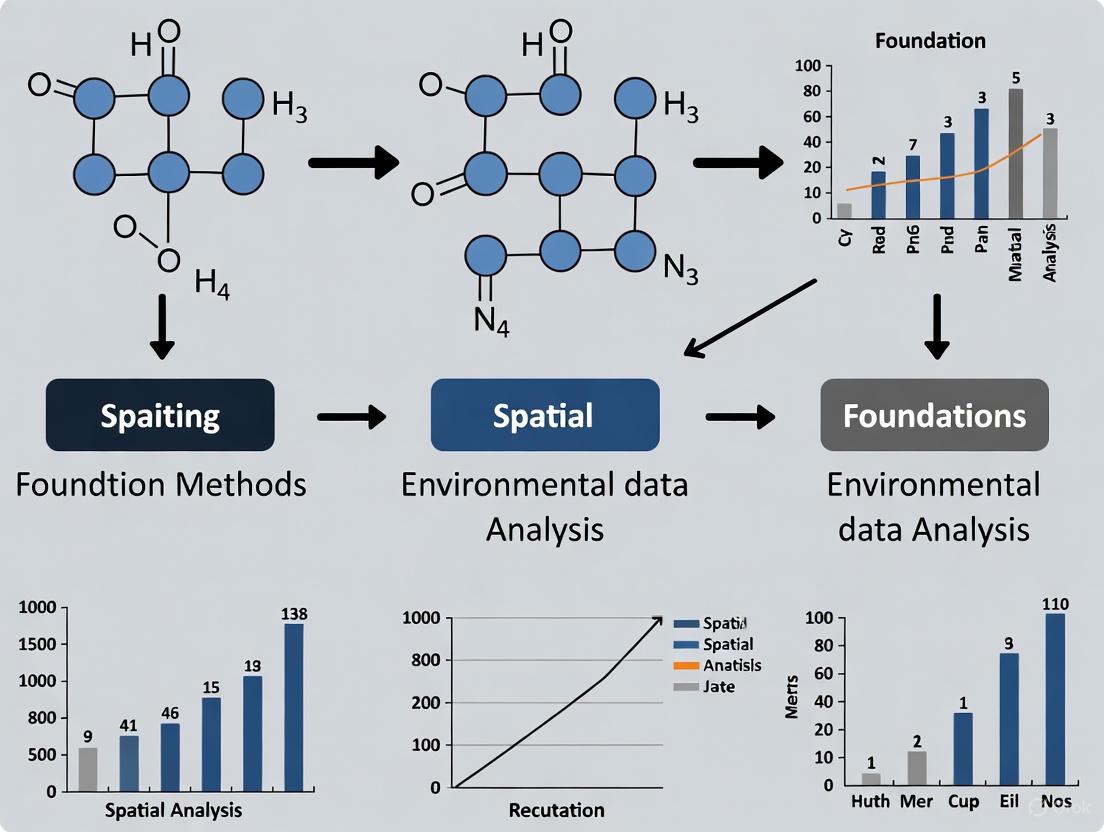

Foundational Methods for Spatial Analysis in Environmental Data: From Core Concepts to Advanced Applications

Abstract

This article provides a comprehensive overview of foundational spatial analysis methods for environmental data, tailored for researchers and drug development professionals. It covers the complete workflow from core concepts and geographic frameworks to practical methodological applications, addressing critical challenges like spatial autocorrelation and data imbalance. The content explores validation techniques and comparative performance of methods like kriging and IDW, while highlighting transformative technologies including cloud-native platforms, GeoAI, and real-time analytics that are reshaping environmental research and its applications in biomedical contexts.

Core Principles and Geographic Frameworks for Environmental Spatial Data

In the face of complex global challenges, from climate adaptation to rapid urbanization, researchers and scientists require robust methodological frameworks to structure their investigations. The Geographic Approach provides a systematic process for spatial problem-solving that is particularly vital for environmental data research and practice. This five-step methodology transforms raw data into actionable intelligence by leveraging the power of location-based analysis [1]. As geospatial technologies evolve, this approach has expanded from a linear path to a continuous iterative loop, enabling scientists to deepen their understanding of environmental processes through successive refinement [1]. The integration of continuous sensing technologies and AI has fundamentally transformed data collection from periodic sampling to real-time monitoring, creating what can be conceptualized as a "planetary nervous system" for environmental tracking [1].

The Five-Step Framework of the Geographic Approach

Step 1: Collect Data

The initial phase involves gathering the multi-faceted information required to understand a geographic situation. Traditional environmental research relied on manual data compilation, but technological advances have shifted this process toward continuous sensing infrastructure [1]. GIS professionals now architect systems that ingest diverse data streams from satellites, sensors, mobile devices, and field teams [1].

Experimental Protocol for Environmental Data Collection:

- Sensor Network Deployment: Establish a grid of environmental sensors (e.g., soil moisture, air quality, water quality) at predetermined intervals based on preliminary spatial analysis of the study area.

- Remote Sensing Data Acquisition: Procure time-series satellite imagery (e.g., Landsat, Sentinel) covering the temporal range of the study, ensuring consistency in resolution and atmospheric correction.

- Field Validation: Conduct ground-truthing expeditions to collect physical samples and precise GPS coordinates for calibration of remotely-sensed data.

- Data Integration: Develop an ETL (Extract, Transform, Load) pipeline to harmonize disparate data formats, projections, and temporal scales into a unified geodatabase.

The critical advancement in this phase is the shift from GIS experts as data handlers to systems architects who design infrastructures that maintain coherent, continuously updated representations of dynamic environmental processes [1].

Step 2: Visualize and Map

Visualization transforms raw geospatial data into understandable representations that reveal patterns, relationships, and trends. Modern environmental research utilizes interactive visualization environments that update continuously as conditions change, rather than static maps [1]. The most sophisticated expression of this capability is the creation of digital twins—virtual replicas of physical environments that synthesize multiple GIS layers and simulate future conditions [1].

Experimental Protocol for Environmental Visualization:

- Multi-dimensional Mapping: Develop layered maps incorporating topography, hydrology, land use, and anthropogenic factors using consistent coordinate systems and scale dependencies.

- Temporal Animation: Create time-series visualizations that illustrate environmental change dynamics, such as deforestation progression, urban heat island intensification, or coastal erosion.

- Interactive Dashboard Development: Build web-based visualization platforms that allow researchers to filter, query, and manipulate environmental data layers in real-time.

- Digital Twin Implementation: Construct a dynamic 3D model of the study area that integrates real-time sensor data with predictive models for scenario testing.

The power of contemporary visualization lies in creating time-aware representations that communicate both spatial and temporal patterns, requiring equal parts technical sophistication and thoughtful design [1].

Table 1: Visualization Techniques for Environmental Data Analysis

| Visualization Type | Environmental Applications | Data Requirements | Technical Considerations |
|---|---|---|---|
| Heat Maps | Pollution concentration, Species distribution, Temperature variation | Point data with intensity values | Kernel density parameters, Color ramp selection |
| Time-series Animations | Land cover change, Glacial retreat, Urban expansion | Multi-temporal raster data | Frame rate optimization, Change detection algorithms |
| 3D Digital Twins | Watershed management, Urban planning, Flood modeling | Elevation data, Building footprints, Real-time sensor feeds | Computational resources, Data integration protocols |
| Interactive Dashboards | Ecosystem monitoring, Disaster response, Resource management | Multiple vector and raster layers | Web GIS architecture, User interface design |
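The heat-map row above depends on a kernel density surface built from point data with intensity values. The following is a minimal sketch of that idea using a Gaussian kernel. The function name `kernel_density`, the sample readings, and the bandwidth of 1.0 are illustrative assumptions, not values from the cited sources.

```python
import math

def kernel_density(points, grid_x, grid_y, bandwidth):
    """Gaussian kernel density surface from weighted points.

    points: list of (x, y, intensity) tuples.
    grid_x, grid_y: coordinates of the cell centres along each axis.
    Returns one row per grid_y value, each row holding one density per grid_x value.
    """
    norm = 1.0 / (2.0 * math.pi * bandwidth ** 2)
    surface = []
    for gy in grid_y:
        row = []
        for gx in grid_x:
            total = 0.0
            for x, y, weight in points:
                d2 = (gx - x) ** 2 + (gy - y) ** 2
                total += weight * norm * math.exp(-d2 / (2.0 * bandwidth ** 2))
            row.append(total)
        surface.append(row)
    return surface

# Hypothetical pollution readings: (x, y, concentration)
readings = [(2.0, 2.0, 10.0), (2.5, 2.2, 8.0), (7.0, 7.0, 1.0)]
grid = [float(i) for i in range(10)]
density = kernel_density(readings, grid, grid, bandwidth=1.0)
```

The bandwidth controls how far each reading spreads: a small value produces isolated peaks, a large value smooths hotspots away. This is why the table lists kernel density parameters as a technical consideration.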

Step 3: Analyze and Model

The analysis phase applies spatial reasoning to understand relationships, test hypotheses, and predict outcomes. GIS professionals increasingly design systems that enable domain experts to conduct sophisticated analyses without deep technical knowledge of GIS tools [1]. For environmental research, this includes critical analyses of connectivity and flow—how materials, species, or pollutants move through landscapes, watersheds, or atmospheric systems [1].

Experimental Protocol for Spatial Analysis:

- Spatial Autocorrelation Assessment: Conduct Moran's I analysis to determine clustering patterns in environmental phenomena and adjust statistical models accordingly [2].

- Habitat Suitability Modeling: Implement Maximum Entropy (MaxEnt) or Generalized Linear Models (GLM) to predict species distribution based on environmental covariates [2].

- Hydrological Network Analysis: Delineate watersheds, flow paths, and drainage patterns using digital elevation models to understand contaminant transport.

- Land Use Change Detection: Apply machine learning classifiers (e.g., Random Forest, Support Vector Machines) to multi-spectral imagery to quantify landscape transformation.

A critical consideration in spatial analysis is addressing spatial autocorrelation (SAC), which, if ignored, can create deceptively high predictive performance metrics while actually producing poor model generalization [2]. Proper spatial validation methods are essential for accurate environmental modeling [2].
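As an illustration of the spatial autocorrelation assessment in the protocol above, here is a minimal sketch of global Moran's I with binary distance-band weights. The weighting scheme, the 4 x 4 grid, and the two test patterns are assumptions chosen for clarity. A full analysis would also test significance, for example by permutation.

```python
import math

def morans_i(coords, values, threshold):
    """Global Moran's I with binary weights: w_ij = 1 if the distance is <= threshold."""
    n = len(values)
    mean_value = sum(values) / n
    deviations = [v - mean_value for v in values]
    cross_products = 0.0
    weight_sum = 0.0
    for i in range(n):
        for j in range(n):
            if i != j and math.dist(coords[i], coords[j]) <= threshold:
                cross_products += deviations[i] * deviations[j]
                weight_sum += 1.0
    variance_term = sum(d * d for d in deviations)
    if weight_sum == 0 or variance_term == 0:
        raise ValueError("Moran's I is undefined: no neighbours or constant values")
    return (n / weight_sum) * (cross_products / variance_term)

# Hypothetical 4 x 4 grid of sampling sites with rook neighbours (distance 1)
coords = [(x, y) for x in range(4) for y in range(4)]
clustered = [10.0 if x < 2 else 0.0 for x, y in coords]    # high values grouped on one side
checkerboard = [float((x + y) % 2) for x, y in coords]     # alternating high and low values

clustered_i = morans_i(coords, clustered, threshold=1.0)
checkerboard_i = morans_i(coords, checkerboard, threshold=1.0)
```

Values near +1 indicate clustering, values near -1 indicate a dispersed (checkerboard-like) pattern, and values near the expectation of -1/(n-1) indicate no spatial structure.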

Step 4: Plan and Geodesign

Geodesign utilizes geographic intelligence to develop interventions—determining not just what exists, but what should be. Modern planning occurs through iterative cycles where design, impact assessment, and refinement happen simultaneously rather than sequentially [1]. Environmental researchers can immediately see the consequences of proposed interventions, enabling real-time understanding of trade-offs [1].

Experimental Protocol for Environmental Geodesign:

- Scenario Development: Create multiple alternative futures based on different policy decisions, climate projections, or management strategies.

- Impact Simulation: Model the cascading effects of each scenario on interconnected environmental systems using multi-criteria decision analysis.

- Stakeholder Integration: Develop participatory GIS tools that incorporate local knowledge and community values into the planning process.

- Adaptive Management Framework: Design monitoring protocols that trigger specific management responses when environmental thresholds are approached.
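The multi-criteria decision analysis mentioned under Impact Simulation can be sketched as a weighted sum over min-max normalized criteria. The scenario names, criteria, weights, and values below are hypothetical, and a weighted sum is only one of several MCDA techniques.

```python
def rank_scenarios(scenarios, weights, cost_criteria=()):
    """Rank scenarios by a weighted sum of min-max normalized criteria.

    scenarios: {scenario_name: {criterion: raw_value}}
    weights: {criterion: weight}; weights are rescaled to sum to 1.
    cost_criteria: criteria where lower raw values are better.
    """
    names = list(scenarios)
    total_weight = sum(weights.values())
    scores = {name: 0.0 for name in names}
    for criterion, weight in weights.items():
        raw = [scenarios[name][criterion] for name in names]
        low, high = min(raw), max(raw)
        for name in names:
            if high == low:
                normalized = 0.5  # criterion does not discriminate between scenarios
            else:
                normalized = (scenarios[name][criterion] - low) / (high - low)
            if criterion in cost_criteria:
                normalized = 1.0 - normalized
            scores[name] += (weight / total_weight) * normalized
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Hypothetical watershed scenarios
scenarios = {
    "status_quo": {"water_quality": 55, "habitat_connectivity": 0.40, "cost_musd": 0.0},
    "riparian_buffers": {"water_quality": 72, "habitat_connectivity": 0.65, "cost_musd": 4.0},
    "full_restoration": {"water_quality": 80, "habitat_connectivity": 0.80, "cost_musd": 12.0},
}
weights = {"water_quality": 0.4, "habitat_connectivity": 0.4, "cost_musd": 0.2}
ranking = rank_scenarios(scenarios, weights, cost_criteria=("cost_musd",))
```

Because the weights encode stakeholder priorities, rerunning the ranking with different weights is a simple way to make trade-offs explicit.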

A critical advancement in geodesign is the incorporation of broader perspectives beyond technical criteria, including community values, equity considerations, and long-term resilience [1]. The geographic framework helps balance multiple objectives that might otherwise conflict, making trade-offs explicit and measurable.

Table 2: Geodesign Applications in Environmental Research

| Planning Context | Key Spatial Analyses | Stakeholder Considerations | Outcome Metrics |
|---|---|---|---|
| Watershed Management | Hydrological modeling, Non-point source pollution tracking, Riparian buffer optimization | Agricultural interests, Municipal water needs, Recreational users | Water quality indices, Habitat connectivity, Economic impacts |
| Conservation Planning | Habitat connectivity analysis, Species distribution modeling, Climate resilience assessment | Landowner rights, Indigenous knowledge, Economic development goals | Biodiversity indices, Ecosystem services valuation, Landscape permeability |
| Renewable Energy Siting | Solar/wind resource assessment, Transmission corridor planning, Visual impact analysis | Community acceptance, Wildlife impacts, Grid integration costs | Energy production potential, Environmental footprint, Implementation timeline |
| Climate Adaptation | Vulnerability assessment, Managed retreat planning, Green infrastructure design | Social equity, Cultural preservation, Economic disruption | Risk reduction, Cost-benefit analysis, Community cohesion |

Step 5: Make Decisions and Act

The final phase converts spatial insights into actionable interventions, sharing findings, building consensus, and implementing solutions. Implementation typically reveals new questions and changing conditions, creating a feedback loop that returns to earlier steps in the geographic approach [1]. GIS professionals increasingly build systems that deliver location intelligence directly to decision-makers in context-appropriate formats [1].

Experimental Protocol for Decision Support:

- Real-time Monitoring Dashboard: Implement an operational display that shows current environmental conditions, resource status, and response team locations.

- Collaborative Decision Platform: Develop a web-based system that allows distributed stakeholders to examine the same information, propose alternatives, and work toward consensus.

- Adaptive Management Triggers: Establish quantitative thresholds that automatically trigger specific management actions when environmental conditions reach critical levels.

- Impact Evaluation Framework: Implement before-after-control-impact (BACI) monitoring to assess the effectiveness of interventions and inform future decisions.
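The BACI design in the last item estimates an intervention effect as the change at the impact site minus the change at the control site over the same period. A minimal sketch follows, with hypothetical nitrate concentrations in mg/L. A real evaluation would also model variance and temporal correlation.

```python
from statistics import mean

def baci_effect(control_before, control_after, impact_before, impact_after):
    """Difference-in-differences estimate of an intervention effect."""
    control_change = mean(control_after) - mean(control_before)
    impact_change = mean(impact_after) - mean(impact_before)
    return impact_change - control_change

# Hypothetical nitrate readings (mg/L) before and after a riparian restoration
control_before = [5.0, 5.2, 4.8]
control_after = [5.5, 5.3, 5.7]
impact_before = [8.0, 8.4, 7.6]
impact_after = [6.0, 6.2, 5.8]

effect = baci_effect(control_before, control_after, impact_before, impact_after)
```

In this made-up example the impact site fell by 2.0 mg/L while the control site rose by 0.5 mg/L, so the estimated effect of the intervention is a reduction of about 2.5 mg/L.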

The evolution in this phase is the shift from creating individual map products to architecting platforms that translate complex spatial analyses for different audiences and use cases [1]. Location intelligence becomes a shared reference point that grounds discussions in specific places and measurable impacts.

Research Reagent Solutions: Essential Tools for Spatial Analysis

Table 3: Key Research Tools and Platforms for Geographic Analysis

| Tool Category | Specific Solutions | Function in Research | Environmental Applications |
|---|---|---|---|
| GIS Platforms | ArcGIS Pro, QGIS | Spatial data management, analysis, and visualization | Multi-criteria decision analysis, Habitat suitability modeling, Land use change detection |
| Remote Sensing Software | ERDAS Imagine, ENVI | Processing satellite and aerial imagery | Vegetation index calculation, Change detection, Classification |
| Spatial Statistics | GeoDa, R-spatial | Analyzing spatial patterns and relationships | Spatial autocorrelation analysis, Hotspot detection, Regression modeling |
| Data Collection Tools | Field Maps, Survey123 | Mobile field data collection | Ground truthing, Environmental monitoring, Sample location tracking |
| Visualization Libraries | Python GeoMaps, Datashader | Creating interactive visualizations | Environmental dashboard development, Time-series animation, 3D modeling |

Challenges and Considerations in Geographic Analysis

While the Geographic Approach provides a powerful framework for environmental research, several specific challenges must be addressed to ensure robust outcomes:

Data Imbalance and Spatial Bias

Environmental data is frequently imbalanced: certain phenomena or classes are rare compared to others [2]. This creates challenges for predictive modeling, because algorithms that implicitly assume balanced class distributions may ignore minority-class occurrences [2]. In geospatial modeling, sparse or nonexistent data in certain regions poses particular difficulties for comprehensive analysis [2].

Spatial Autocorrelation

A fundamental aspect of geospatial modeling is spatial autocorrelation (SAC), the principle that nearby locations tend to have more similar values than distant ones [2]. Ignoring SAC during model validation can create deceptively high performance metrics while actually producing poor generalization capabilities [2]. Appropriate spatial validation methods, such as spatial cross-validation, are essential for accurate assessment of model performance [2].
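One common form of spatial cross-validation is blocking: samples are grouped into square spatial blocks, and whole blocks are held out together so that test points are not immediate neighbours of training points. The sketch below assumes planar coordinates. The block size and the round-robin fold assignment are illustrative choices, and blocking reduces but does not eliminate leakage at block edges.

```python
import math
from collections import defaultdict

def spatial_block_folds(coords, block_size, n_folds):
    """Return (train_indices, test_indices) pairs where each block is held out whole."""
    blocks = defaultdict(list)
    for index, (x, y) in enumerate(coords):
        key = (math.floor(x / block_size), math.floor(y / block_size))
        blocks[key].append(index)
    folds = [[] for _ in range(n_folds)]
    for k, key in enumerate(sorted(blocks)):
        folds[k % n_folds].extend(blocks[key])  # round-robin assignment of blocks to folds
    splits = []
    for test_indices in folds:
        held_out = set(test_indices)
        train_indices = [i for i in range(len(coords)) if i not in held_out]
        splits.append((train_indices, sorted(test_indices)))
    return splits

# Hypothetical 6 x 6 grid of sample locations, grouped into 2 x 2 unit blocks
coords = [(x + 0.5, y + 0.5) for x in range(6) for y in range(6)]
splits = spatial_block_folds(coords, block_size=2.0, n_folds=3)
```

The block size should be chosen with the autocorrelation range in mind: blocks much smaller than that range behave like ordinary random splits.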

Uncertainty Estimation

Understanding the accuracy of predictions is essential for applying trained models, yet many studies lack proper statistical assessment and the necessary uncertainty estimates [2]. This is particularly important in machine learning geospatial applications, where the distribution of the input data may differ from that of the sample used to build the model, a phenomenon known as the out-of-distribution problem [2].
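Two simple checks illustrate these ideas: a percentile bootstrap interval around a mean error, and a range check that flags inputs outside the covariate range seen in training. Both are minimal sketches under stated assumptions. The error values, the elevation covariate, and the function names are hypothetical, and a range check is only a crude proxy for out-of-distribution detection.

```python
import random
from statistics import mean

def bootstrap_interval(values, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for the mean of `values`."""
    rng = random.Random(seed)
    n = len(values)
    resampled_means = sorted(
        mean(rng.choices(values, k=n)) for _ in range(n_resamples)
    )
    lower = resampled_means[int((alpha / 2) * n_resamples)]
    upper = resampled_means[int((1 - alpha / 2) * n_resamples) - 1]
    return lower, upper

def outside_training_range(training_values, new_value):
    """Flag a covariate value that lies outside the range seen during training."""
    return not (min(training_values) <= new_value <= max(training_values))

# Hypothetical absolute prediction errors from a held-out spatial fold
abs_errors = [0.8, 1.1, 0.4, 2.3, 0.9, 1.7, 0.6, 1.2, 3.0, 0.5]
low, high = bootstrap_interval(abs_errors)

# Hypothetical elevation covariate (metres) in the training sample
training_elevation_m = [120, 340, 560, 410, 275]
flag = outside_training_range(training_elevation_m, 1850)
```

Reporting the interval alongside the point estimate, and flagging predictions made outside the training range, makes the limits of a model visible to downstream users.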

The Geographic Approach provides environmental researchers with a systematic framework for addressing complex spatial problems through its five interconnected steps. This methodology enables scientists to transform disparate environmental data into coherent understanding and actionable intelligence. The iterative nature of the process—continually cycling through data collection, visualization, analysis, planning, and action—creates a continuous learning system that adapts as understanding deepens and new questions emerge [1].

The power of this approach lies in its integration of geography as a unifying framework that aligns information from different sources, times, and perspectives [1]. When environmental data, social factors, economic considerations, and infrastructure capacity share a geographic foundation, they can be combined to reveal crucial relationships and inform sustainable decisions. For researchers tackling pressing environmental challenges, from biodiversity protection to climate adaptation, the Geographic Approach offers a structured path toward more resilient and equitable solutions.

Geospatial data, also referred to as spatial data, is information that identifies the geographic location and characteristics of natural or constructed features and boundaries on Earth [3]. This data is foundational to Geographic Information Systems (GIS), which are the tools used to analyze, visualize, and manage geospatial information [4]. In the context of environmental data research, spatial data provides the critical framework for understanding patterns and relationships in ecological processes, climate change, and resource distribution, enabling researchers to move from abstract numbers to place-based understanding.

The core value of spatial data lies in its integrative power. It weaves together disparate disciplines—such as geology, climatology, ecology, and sociology—into a coherent framework for understanding the world [1]. For environmental scientists and drug development professionals, this means public health data, environmental conditions, infrastructure capacity, and social demographics, when shared on a geographic foundation, can be combined to reveal crucial relationships that would otherwise remain invisible [1].

Core Data Types and Structures

Spatial data is broadly categorized into two main types, each with distinct structures and use cases. Understanding these is essential for selecting the appropriate data model for environmental research questions.

Vector Data

Vector data uses discrete geometric objects—points, lines, and polygons—to represent spatial features [3].

- Points: Defined by a single coordinate pair (X, Y), points represent features that are too small to be depicted as areas at the given scale. In environmental research, points can model locations of soil sampling sites, animal sightings, or monitoring stations [3].

- Lines: Formed by sequences of points, lines represent linear features such as rivers, roads, or topographic contours. Tracking pollutant dispersion along a river system is a typical application [3].

- Polygons: Closed loops of lines that enclose an area, polygons are used for features with a defined boundary and area. Examples include lakes, land use zones, watershed boundaries, or habitat ranges for species [3].
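The three vector primitives can be sketched with plain coordinate tuples. The helper names and the sample coordinates (in metres, in an assumed projected coordinate system) are illustrative. The polygon area uses the standard shoelace formula.

```python
import math

def line_length(vertices):
    """Total length of a polyline given as a list of (x, y) vertices."""
    return sum(math.dist(a, b) for a, b in zip(vertices, vertices[1:]))

def polygon_area(ring):
    """Area of a simple polygon (shoelace formula); the first vertex is not repeated."""
    n = len(ring)
    twice_area = sum(
        ring[i][0] * ring[(i + 1) % n][1] - ring[(i + 1) % n][0] * ring[i][1]
        for i in range(n)
    )
    return abs(twice_area) / 2.0

# Hypothetical features in projected coordinates (metres)
sampling_site = (500.0, 250.0)                                    # point
river = [(0.0, 0.0), (300.0, 400.0), (600.0, 400.0)]              # line
lake = [(0.0, 0.0), (200.0, 0.0), (200.0, 100.0), (0.0, 100.0)]   # polygon

river_length_m = line_length(river)
lake_area_m2 = polygon_area(lake)
```

These planar formulas assume projected coordinates. Lengths and areas computed directly on longitude and latitude values would not be in metres.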

Raster Data

Raster data is essentially pixel-based, representing the world as a continuous grid of cells [3]. Each cell contains a value representing information, making it ideal for data that varies continuously across space.

- Digital Elevation Models (DEMs): Each cell in a DEM contains a value representing the elevation of the Earth's surface at that location, crucial for watershed analysis and slope stability studies [3].

- Satellite Imagery: Cells contain color values that collectively form an image. This is invaluable for land cover mapping, change detection (e.g., deforestation), and environmental monitoring [3].

- Thematic Maps: Rasters can represent continuous phenomena like temperature, precipitation, or soil pH, where each cell's value corresponds to a measurement [3].
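A raster can be sketched as a matrix of values plus the georeferencing needed to map a coordinate to a cell. The example below assumes a north-up grid with square cells and a top-left origin. The elevation values, origin, and cell size are hypothetical.

```python
def cell_index(x, y, origin_x, origin_y, cell_size):
    """Map a coordinate to (row, col); the origin is the top-left corner of the grid."""
    col = int((x - origin_x) // cell_size)
    row = int((origin_y - y) // cell_size)   # rows increase southward
    return row, col

def sample_raster(raster, x, y, origin_x, origin_y, cell_size, nodata=None):
    """Return the cell value at (x, y), or `nodata` if the point is off the grid."""
    row, col = cell_index(x, y, origin_x, origin_y, cell_size)
    if 0 <= row < len(raster) and 0 <= col < len(raster[0]):
        return raster[row][col]
    return nodata

# Hypothetical 3 x 4 DEM (metres) with 10 m cells and top-left corner at (1000, 2030)
dem = [
    [120, 125, 130, 140],
    [110, 115, 122, 135],
    [100, 104, 118, 128],
]
elevation = sample_raster(dem, 1025.0, 2012.0, 1000.0, 2030.0, 10.0)
off_grid = sample_raster(dem, 990.0, 2000.0, 1000.0, 2030.0, 10.0)
```

The same lookup underlies extracting raster values at vector sampling points, which is a common step when joining the two data models.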

Table 1: Comparison of Vector and Raster Data Models

| Feature | Vector Data | Raster Data |
|---|---|---|
| Representation | Points, lines, polygons (discrete objects) | Grid of cells/pixels (continuous field) |
| Data Structure | Coordinate-based geometry | Matrix of values (rows & columns) |
| Best For | Precise features, boundaries, networks | Continuous data, imagery, surfaces |
| Examples | Roads, land parcels, sampling points | Elevation, satellite imagery, temperature |
| Environmental Use Cases | Habitat boundaries, river networks, site locations | Climate modeling, vegetation indices, flood inundation |

Supporting Data Components

Beyond geometry, spatial data includes other critical components:

- Attribute Data: These are tabular data that describe the characteristics of the spatial features. For a point representing a soil sample, attributes might include pH, organic content, and contaminant levels [3].

- Temporal Data: Many environmental analyses require understanding change over time. Temporal data associates a specific time or period with spatial features, enabling the tracking of phenomena like urban sprawl, shifting coastlines, or the spread of a disease vector [1] [3].

Spatial Relationships and Analysis Techniques

Spatial analysis is the process of examining the locations, attributes, and relationships of geographic features to address research questions. The Geographic Approach provides a logical, multi-stage framework for this process, comprising five interconnected steps: Collect Data, Visualize and Map, Analyze and Model, Plan and Geodesign, and Make Decisions and Act [1]. The following workflow diagram illustrates this continuous analytical process.

Core techniques in spatial analysis include:

- Spatial Querying: This involves selecting features based on their location or attribute values. An example is querying all water bodies within a specified distance of an industrial site.

- Overlay Analysis: This technique combines different spatial datasets to create a new composite layer. Overlaying soil type, slope, and land cover data is fundamental for erosion risk assessment [3].

- Proximity (Buffer) Analysis: This defines an area around a feature of interest. Creating a buffer around a protected wetland can help regulate activities in its sensitive periphery.

- Network Analysis: This studies connectivity and flow through networks, such as analyzing the path of a pollutant through a watershed or identifying optimal routes for field data collection [1].

- Spatial Statistics: These methods quantify spatial patterns, helping to identify statistically significant clusters (e.g., disease outbreaks) or trends across a landscape.
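Two of the techniques above, a distance-based spatial query and a containment test of the kind used in overlay analysis, can be sketched in a few lines. The feature names and coordinates are hypothetical and assumed to be planar. The containment test uses the standard ray-casting method.

```python
import math

def within_distance(features, centre, radius):
    """Spatial query: names of point features within `radius` of `centre`."""
    return [name for name, xy in features.items() if math.dist(xy, centre) <= radius]

def point_in_polygon(point, ring):
    """Ray casting: count edge crossings of a horizontal ray starting at the point."""
    x, y = point
    inside = False
    n = len(ring)
    for i in range(n):
        x1, y1 = ring[i]
        x2, y2 = ring[(i + 1) % n]
        if (y1 > y) != (y2 > y):                      # edge spans the ray's y value
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside

# Hypothetical water bodies (centroids, metres) near an industrial site
water_bodies = {"pond_a": (120.0, 80.0), "lake_b": (900.0, 400.0), "stream_c": (300.0, 150.0)}
industrial_site = (100.0, 100.0)
nearby = within_distance(water_bodies, industrial_site, radius=250.0)

# Hypothetical protected wetland boundary
wetland = [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0), (0.0, 10.0)]
inside_wetland = point_in_polygon((5.0, 5.0), wetland)
outside_wetland = point_in_polygon((15.0, 5.0), wetland)
```

A circular buffer around a point reduces to the same distance test. Buffers around lines and polygons need a geometry library, which this sketch does not attempt.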

A critical challenge in spatial analysis, particularly with aggregated data, is the Modifiable Areal Unit Problem (MAUP). This well-documented issue means that the results of an analysis can be sensitive to the choice of boundaries (the zonal effect) and the level of aggregation (the scale effect) [5]. For instance, analyzing socioeconomic data by census tract may yield different patterns than analyzing it by zip code. Researchers must be aware of this when interpreting results, and machine-guidance approaches are being developed to help analysts assess and mitigate its effects [5].
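A toy example makes the MAUP concrete: the same samples, aggregated under two different zonings, produce different pictures. The samples and zone boundaries below are invented for illustration.

```python
from collections import defaultdict
from statistics import mean

def zonal_means(samples, zone_of):
    """Aggregate (x, value) samples to zones defined by the function `zone_of`."""
    groups = defaultdict(list)
    for x, value in samples:
        groups[zone_of(x)].append(value)
    return {zone: mean(values) for zone, values in sorted(groups.items())}

# Hypothetical contaminant readings along a transect; a hotspot sits between x = 3 and x = 5
samples = [(0.5, 1), (1.5, 1), (2.5, 1), (3.5, 9), (4.5, 9), (5.5, 1), (6.5, 1), (7.5, 1)]

# Zoning A: two zones split at x = 4, which cuts the hotspot in half
two_zones = zonal_means(samples, lambda x: "west" if x < 4 else "east")

# Zoning B: three zones, with the hotspot isolated in the centre zone
three_zones = zonal_means(
    samples, lambda x: "west" if x < 3 else ("centre" if x < 5 else "east")
)
```

Under zoning A both zones average 3 and the hotspot disappears. Under zoning B the centre zone averages 9. Neither zoning is wrong, which is why results from aggregated data should be checked against alternative boundaries.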

Experimental Protocols for Spatial Analysis

This section outlines a generalized, replicable methodology for conducting a spatial analysis project in environmental research, from data acquisition to insight generation.

Data Acquisition and Preprocessing Protocol

Objective: To gather and prepare all necessary spatial and attribute data for analysis.

- Data Collection: Identify and acquire data from relevant sources. Modern GIS has shifted from periodic data capture to continuous sensing, ingesting streams from satellites, sensors, mobile devices, and field teams [1].

- Data Integration: Harmonize data from different sources, scales, and formats. This involves converting all data to a common coordinate reference system (CRS) to ensure alignment.

- Attribute Management: Compile and clean non-spatial data (e.g., lab results, survey data) and join them to the corresponding spatial features using a unique identifier.
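The integration and attribute steps can be sketched as follows: a coordinate conversion from WGS84 longitude and latitude to spherical Web Mercator (EPSG:3857), and a join of lab results to sites on a shared identifier. The field names and sample records are hypothetical. In practice a projection library such as pyproj would normally handle CRS conversion, and this hand-written formula covers only this one projection.

```python
import math

EARTH_RADIUS_M = 6378137.0  # sphere radius used by Web Mercator

def wgs84_to_web_mercator(lon_deg, lat_deg):
    """Convert WGS84 degrees to Web Mercator metres (valid away from the poles)."""
    x = EARTH_RADIUS_M * math.radians(lon_deg)
    y = EARTH_RADIUS_M * math.log(math.tan(math.pi / 4 + math.radians(lat_deg) / 2))
    return x, y

def join_attributes(features, table, key="site_id"):
    """Join attribute rows to features on `key`; report features with no match."""
    lookup = {row[key]: row for row in table}
    joined, unmatched = [], []
    for feature in features:
        attrs = lookup.get(feature[key])
        if attrs is None:
            unmatched.append(feature[key])
        else:
            joined.append({**feature, **attrs})
    return joined, unmatched

# Hypothetical sampling sites and lab results
sites = [
    {"site_id": "S1", "lon": -122.4, "lat": 37.8},
    {"site_id": "S2", "lon": 2.35, "lat": 48.85},
    {"site_id": "S3", "lon": 139.7, "lat": 35.7},
]
lab_results = [{"site_id": "S1", "ph": 6.8}, {"site_id": "S2", "ph": 7.4}]

projected = [{**s, "xy": wgs84_to_web_mercator(s["lon"], s["lat"])} for s in sites]
joined, unmatched = join_attributes(projected, lab_results)
```

Returning the unmatched identifiers rather than silently dropping them makes missing lab results visible during data cleaning.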

Spatial Modeling and Analysis Protocol

Objective: To apply spatial operations and statistical models to extract meaningful insights related to the research hypothesis.

- Exploratory Spatial Data Analysis (ESDA): Visualize the data using maps and charts to identify initial patterns, outliers, and data distributions.

- Hypothesis Testing: Formulate a spatial hypothesis (e.g., "The concentration of heavy metals is significantly higher downstream from the mining site").

- Execute Spatial Analysis:

  - Perform a buffer analysis to define zones of influence (e.g., upstream vs. downstream).

  - Use an overlay analysis to extract attribute values for sampling points within these zones.

  - Apply spatial statistical tests (e.g., a paired t-test or spatial regression) to determine if the observed differences are statistically significant.

- Modeling: For more complex phenomena, employ spatial modeling techniques. Generalized Additive Models (GAMs) and other spatially varying coefficient models can be used to accommodate non-linear relationships and space-time scaling issues in environmental data [5].
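For the example hypothesis above (higher heavy-metal concentrations downstream of the mining site), one simple option is a one-sided permutation test on the difference in group means. This swaps in a different technique from the t-test named in the protocol. The concentrations are hypothetical, and the test assumes samples are exchangeable, which strong spatial autocorrelation can violate.

```python
import random

def permutation_test(group_a, group_b, n_permutations=5000, seed=0):
    """One-sided test of mean(group_a) > mean(group_b) by random relabelling."""
    rng = random.Random(seed)
    n_a = len(group_a)
    observed = sum(group_a) / n_a - sum(group_b) / len(group_b)
    pooled = list(group_a) + list(group_b)
    at_least_as_extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        diff = sum(pooled[:n_a]) / n_a - sum(pooled[n_a:]) / (len(pooled) - n_a)
        if diff >= observed:
            at_least_as_extreme += 1
    # The +1 terms count the observed labelling itself, so p is never exactly zero
    p_value = (at_least_as_extreme + 1) / (n_permutations + 1)
    return observed, p_value

# Hypothetical lead concentrations (mg/kg) in sediment samples
downstream = [42.0, 38.0, 45.0, 50.0, 41.0, 47.0]
upstream = [12.0, 15.0, 11.0, 14.0, 13.0, 10.0]
observed_diff, p_value = permutation_test(downstream, upstream)
```

A small p-value here says only that the group difference is unlikely under random labelling. Attributing it to the mine still requires ruling out other upstream-downstream gradients.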

The entire analytical process, from raw data to actionable knowledge, can be visualized as a transformation pipeline, as shown in the following diagram.

The Scientist's Toolkit: Essential Research Reagents & Materials

In GIS-based environmental research, "research reagents" translate to core datasets, software tools, and analytical techniques. The following table details these essential components.

Table 2: Essential GIS Research Toolkit for Environmental Science

| Tool/Reagent | Type | Function in Analysis |
|---|---|---|
| Satellite Imagery (e.g., Landsat, Sentinel) | Raster Data | Provides base layers for land cover classification, change detection, and vegetation health monitoring (e.g., via NDVI). |
| Digital Elevation Model (DEM) | Raster Data | Represents topographic variation; essential for hydrological modeling, slope analysis, and habitat suitability studies. |
| GPS/GNSS Receiver | Data Collection Hardware | Precisely geolocates field samples, transects, and observation points for ground-truthing. |
| QGIS / ArcGIS | GIS Software Platform | The primary environment for data management, visualization, spatial analysis, and map creation. |
| PostGIS / Spatially-enabled Databases | Data Management | Stores, queries, and manages large, complex spatial datasets efficiently. |
| Python (Geopandas, Rasterio) | Programming Library | Enables automation of repetitive analyses, custom spatial algorithm development, and handling of big geospatial data. |
| OpenStreetMap (OSM) Data | Vector Data | Provides foundational layers of roads, buildings, water bodies, and points of interest for context and analysis. |
| Spatial Statistics (e.g., Global/Local Moran's I) | Analytical Method | Quantifies spatial autocorrelation to identify significant clusters or hotspots of a measured variable. |
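The NDVI mentioned in the first row is computed per cell as (NIR - Red) / (NIR + Red). The sketch below applies it to two small reflectance grids. The values are hypothetical, and real imagery would typically be read with a raster library rather than typed in as lists.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index for two equally sized reflectance grids."""
    result = []
    for nir_row, red_row in zip(nir, red):
        row = []
        for n, r in zip(nir_row, red_row):
            total = n + r
            # Cells with zero total reflectance are undefined; mark them as None
            row.append((n - r) / total if total != 0 else None)
        result.append(row)
    return result

# Hypothetical 2 x 2 surface reflectance grids (unitless, 0 to 1)
nir_band = [[0.5, 0.4], [0.1, 0.0]]
red_band = [[0.1, 0.1], [0.1, 0.0]]
vegetation_index = ndvi(nir_band, red_band)
```

Values close to 1 indicate dense green vegetation, values near 0 indicate bare surfaces, and negative values are typical of water.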

The field of spatial data collection is undergoing a fundamental transformation, moving from static, periodic snapshots to dynamic, continuous sensing paradigms. This evolution is critically reshaping foundational methods for spatial analysis in environmental data research, enabling unprecedented insights into complex biological and ecological systems. Traditional spatial transcriptomics technologies, for instance, have significantly advanced our capacity to quantify gene expression within tissue sections while preserving crucial spatial context information. However, these approaches have historically been limited to analyzing single two-dimensional slices, creating a theoretical concern regarding potential reduction in statistical power due to low gene expression coverage and the neglect of spatial relationships in the three-dimensional tissue context [6]. The emerging framework of continuous sensing addresses these limitations through integrated computational architectures that process heterogeneous data streams, enabling researchers to capture spatial phenomena as dynamic processes rather than discrete observations. This paradigm shift is particularly relevant for drug development professionals seeking to understand spatial-temporal patterns in disease progression and therapeutic response at cellular and molecular levels.

Traditional Foundations: Periodic Capture Methodologies

Spatial analysis in environmental research began with periodic capture methodologies, which provided foundational insights but carried inherent limitations. Spatial transcriptomics (ST) technologies exemplify this approach: a tissue sample is cut into multiple thin slices, each represented in a two-dimensional coordinate space, and each data point is a spot of one to 100 cells with its corresponding messenger RNA expression values [6]. These methodologies relied on discrete sampling intervals and manual alignment protocols, creating significant challenges for comprehensive tissue analysis.

The key limitation of periodic capture approaches lies in their fundamental structure: a single 2D coordinate space represents only a single slice of the tissue section, limiting comprehensive analysis of the entire tissue context [6]. This fragmentation necessitated complex computational alignment strategies to reconstruct a three-dimensional understanding from two-dimensional samples. Downstream analyses of single ST tissue slices have yielded better biological insights than single-cell RNA and bulk RNA analyses for applications such as cell-type identification and spatial clustering [6]. However, the manual alignment and integration of multiple tissue slices remained time-consuming and required significant technical expertise, creating bottlenecks in research workflows.

Table 1: Limitations of Periodic Spatial Capture Methods in Research Contexts

| Aspect | Technical Limitation | Impact on Research |
|---|---|---|
| Temporal Resolution | Discrete sampling intervals | Inability to capture dynamic processes and transient states |
| Spatial Comprehension | 2D representation of 3D phenomena | Loss of z-axis information and spatial relationships |
| Data Integration | Manual alignment requirements | Time-consuming processes requiring technical expertise |
| Statistical Power | Limited gene expression coverage per slice | Reduced analytical sensitivity for rare cell populations |

For environmental research, traditional remote sensing platforms operated on similar periodic principles, capturing raw spatial data through satellites or aircraft at specific intervals rather than continuously [7]. Geographic Information Systems (GIS) then managed, analyzed, and visualized this information in a spatial context, facilitating mapping and integration with other data sources [7]. While this approach generated valuable insights, the inherent lag between data capture and analysis limited its utility for understanding dynamic processes and real-time phenomena.

The Transition to Continuous Sensing Architectures

The evolution from periodic to continuous spatial sensing represents a fundamental architectural shift enabled by advances in multiple technology domains. This transition leverages multi-modal sensing platforms, real-time data processing, and AI-driven analytics to create responsive systems that capture spatial phenomena as dynamic processes. In urban environmental research, for example, continuous sensing frameworks employ hierarchical data fusion architectures that process heterogeneous sensor streams including visual, acoustic, and environmental data through advanced machine learning algorithms [8].

Conceptually, this shift reimagines the temporal dimension of spatial data collection, moving from snapshot documentation to sustained observation of phenomena as they unfold. It enables researchers to address critical gaps in traditional methodologies, particularly regarding temporal dynamics and system responsiveness.

Table 2: Core Components of Continuous Sensing Architectures

| Architectural Component | Function | Research Application Examples |
|---|---|---|
| Multi-modal Sensing Infrastructure | Complementary data collection across visual, acoustic, and environmental sensors | Correlating environmental factors with behavioral patterns in urban spaces [8] |
| Real-time Processing Framework | Sub-100ms response through optimized computational architectures | Dynamic optimization of urban open spaces based on current usage [8] |
| AI-Driven Analytics | Deep learning-based spatial optimization with reinforcement learning | Predicting spatial usage patterns and identifying optimal locations for urban amenities [8] |
| Continuous Feedback Loop | Sensing-planning-actuation cycles maintaining system responsiveness | Adaptive interventions responding to environmental changes within human perceptual thresholds [8] |

In biological research, an analogous transition is occurring in spatial transcriptomics, where automated and robust alignment and integration of multiple slices within and across datasets addresses the critical challenge of tissue heterogeneity and plasticity [6]. This approach recognizes that meaningful analysis requires capturing complete tissue context through multiple slices rather than relying on isolated two-dimensional representations. The computational foundation for this transition is a set of data fusion algorithms that integrate heterogeneous sensing data streams into coherent representations suitable for AI-driven optimization [8].

Technical Framework: Implementing Continuous Sensing Systems

Implementing continuous sensing systems requires a structured technical framework encompassing data acquisition, processing, and analysis components. The methodology integrates specialized hardware configurations with sophisticated computational pipelines to transform raw sensor data into actionable spatial insights.

Multi-Modal Data Acquisition Infrastructure

Continuous spatial sensing employs a hierarchical data acquisition system where different sensing modalities complement each other's limitations [8]. The technical infrastructure includes:

Visual Sensors: High-resolution cameras and depth sensors providing rich spatial information about movements, density, and utilization patterns through computer vision techniques. These systems enable automated density mapping but struggle in low-light conditions, creating data gaps addressed by complementary modalities [8].

Acoustic Monitoring Technologies: Audio sensors capturing sound-based environmental data reflecting activity levels, social interactions, and ambient conditions through sophisticated signal processing algorithms. These systems excel at activity detection regardless of illumination levels by filtering background noise and identifying specific sound signatures [8].

Environmental Sensors: Instruments monitoring temperature, humidity, air quality, wind speed, and lighting levels to establish baseline conditions that contextualize behavioral patterns observed through other modalities. These parameters directly influence user comfort, space attractiveness, and usage patterns [8].

Data Processing Methodologies

Data preprocessing for continuous sensing systems involves several critical stages including noise reduction, signal filtering, feature extraction, and temporal alignment of heterogeneous data streams [8]. Advanced preprocessing techniques employ machine learning approaches to automatically identify and correct sensor malfunctions, data gaps, and measurement anomalies that could compromise subsequent analysis reliability.
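The temporal alignment stage can be illustrated with a minimal sketch. It assumes each stream is a time-sorted list of `(timestamp, value)` pairs and that the latest reading within a tolerance window is an acceptable stand-in for the value at a grid time; the function name `align_streams`, the stream names, and the readings are hypothetical and are not taken from [8].

```python
from bisect import bisect_right

def align_streams(grid, streams, tolerance):
    """Align heterogeneous streams to a shared time grid.

    grid: sorted list of target timestamps (seconds).
    streams: dict of name -> time-sorted list of (timestamp, value).
    tolerance: maximum allowed age of a reading (seconds); older
               readings are reported as None, i.e. an explicit gap.
    """
    # Pre-extract timestamps once per stream for binary search.
    times = {name: [ts for ts, _ in samples] for name, samples in streams.items()}
    aligned = []
    for t in grid:
        row = {"t": t}
        for name, samples in streams.items():
            i = bisect_right(times[name], t) - 1  # last sample with ts <= t
            if i >= 0 and t - times[name][i] <= tolerance:
                row[name] = samples[i][1]
            else:
                row[name] = None
        aligned.append(row)
    return aligned

# Hypothetical readings from two sensors with different sampling times.
streams = {
    "temperature_c": [(0.0, 21.5), (10.0, 21.7), (20.0, 21.9)],
    "sound_db": [(1.0, 55.0), (4.0, 61.0), (19.0, 58.0)],
}
rows = align_streams([5.0, 10.0, 15.0, 20.0], streams, tolerance=6.0)
```

Reporting stale readings as `None` rather than silently carrying them forward keeps data gaps visible to later quality-control steps.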

The core analytical transformation occurs through data fusion algorithms that enable effective integration of heterogeneous sensing data streams into coherent information representations. Contemporary fusion approaches utilize probabilistic models, deep learning architectures, and ensemble methods to combine multi-modal data while preserving unique information content from each sensing modality [8]. These algorithms must balance computational efficiency and information completeness, ensuring real-time processing requirements are met without sacrificing fused data quality.
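As one simple instance of a probabilistic fusion rule, the sketch below combines independent estimates of the same quantity by inverse-variance weighting. This is an illustrative assumption (independent, unbiased sensors with known error variances), not the fusion algorithm used in [8]; the function name and the numbers are hypothetical.

```python
def fuse_estimates(estimates):
    """Inverse-variance weighted fusion of independent estimates.

    estimates: list of (value, variance) pairs, variance > 0.
    Returns (fused_value, fused_variance).
    """
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    fused = sum(w * value for w, (value, _) in zip(weights, estimates)) / total
    # The fused variance is never larger than the smallest input variance.
    return fused, 1.0 / total

# Hypothetical occupancy estimates: camera (12 people, variance 4)
# and acoustic sensor (16 people, variance 12).
value, variance = fuse_estimates([(12.0, 4.0), (16.0, 12.0)])
```

The more precise camera estimate dominates the result, while the acoustic estimate still reduces the overall uncertainty.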

Diagram 1: Continuous Sensing System Architecture

AI-Driven Analytical Framework

Artificial intelligence applications form the computational core of continuous spatial sensing systems, enabling sophisticated pattern recognition and predictive capabilities:

Supervised learning techniques, particularly support vector machines (SVM) and random forest algorithms, have demonstrated effectiveness in predicting spatial usage patterns and identifying optimal locations for various urban amenities based on historical data and environmental characteristics [8]. The mathematical foundation of these approaches employs optimization functions to define spatial classification boundaries and decision thresholds for pattern recognition in complex urban environments.

Deep learning models provide sophisticated pattern recognition and feature extraction mechanisms that process complex multi-dimensional sensing data [8]. Convolutional neural networks (CNNs) enable automatic feature extraction from spatial imagery without manual programming of detection rules, while recurrent neural networks (RNNs) and Long Short-Term Memory (LSTM) variants process temporal sequences to predict dynamic usage patterns by learning sequential dependencies in historical data.

Reinforcement learning algorithms enable dynamic decision-making processes for responsive spatial design by learning optimal strategies through iterative interaction with environments [8]. These algorithms utilize trial-and-error learning mechanisms to discover interventions that maximize predefined objectives, employing mathematical frameworks like the Bellman equation to represent optimal value functions for different spatial states.
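For reference, the Bellman optimality equation mentioned above is usually written in the following standard form. The notation is an assumption introduced here rather than taken from [8]: $s$ is a spatial state, $a$ an intervention drawn from the action set $A$, $R(s, a)$ the immediate reward, $P(s' \mid s, a)$ the probability of moving to state $s'$, and $\gamma \in [0, 1)$ a discount factor.

$$
V^{*}(s) = \max_{a \in A} \Big[ R(s, a) + \gamma \sum_{s' \in S} P(s' \mid s, a)\, V^{*}(s') \Big]
$$

The optimal value of a state is thus the best achievable immediate reward plus the discounted expected value of the states that follow.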

Experimental Protocols and Validation Methodologies

Rigorous experimental protocols are essential for validating continuous sensing systems and demonstrating their advantages over traditional periodic approaches. The validation methodology encompasses performance benchmarking, comparative analysis, and real-world deployment case studies.

Performance Metrics and Evaluation Framework

Experimental validation of continuous sensing systems requires comprehensive metrics that quantify performance across multiple dimensions:

Spatial Utilization Efficiency: Measured as the ratio of actively used area to total available space, with experimental results demonstrating a 34.2% increase compared to conventional static design approaches [8].

Flow Optimization Performance: Quantified through movement speed and path directness metrics, with experimental validation showing a 28.7% enhancement in pedestrian flow optimization [8].

Operational Efficiency: Assessment of resource utilization and cost-effectiveness, with experiments documenting a 22.3% reduction in operational costs compared to static approaches [8].

Alignment and Integration Accuracy: For spatial transcriptomics applications, evaluation measures include alignment error rates, spatial coherence scores, clustering accuracy, and gene expression coverage improvements [6].
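The first two metrics can be sketched as follows. The utilization ratio follows the definition given above; the definition of path directness (straight-line distance divided by walked distance) is a common convention assumed here, not one confirmed by [8], and all input numbers are hypothetical.

```python
from math import hypot

def utilization_efficiency(active_area_m2, total_area_m2):
    """Ratio of actively used area to total available area."""
    return active_area_m2 / total_area_m2

def path_directness(path):
    """Straight-line distance between endpoints divided by walked length.

    path: list of (x, y) positions in metres; 1.0 means a perfectly direct path.
    """
    walked = sum(hypot(x2 - x1, y2 - y1)
                 for (x1, y1), (x2, y2) in zip(path, path[1:]))
    straight = hypot(path[-1][0] - path[0][0], path[-1][1] - path[0][1])
    return straight / walked if walked else 1.0

def percent_change(baseline, new):
    """Relative change of a metric against its baseline, in percent."""
    return 100.0 * (new - baseline) / baseline

# Hypothetical values for a single plaza and a single pedestrian track.
efficiency = utilization_efficiency(600.0, 2400.0)
directness = path_directness([(0.0, 0.0), (3.0, 0.0), (3.0, 4.0)])
```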

Case Study: Urban Vertical Greening Assessment

A representative experimental implementation demonstrating continuous sensing methodology assessed vertical greening systems across Tokyo's 23 wards. The protocol employed:

Data Acquisition: Collection of 88,750 street-view images processed through a YOLOv8 deep learning model to identify and map 7,205 vertical greening systems, distinguishing green façades from living walls with computer vision precision [9].

Spatial Analysis: Application of ordinary least squares and geographically weighted regression to assess correspondence with four indicator groups of environmental and urban factors, quantifying distribution patterns and identifying spatial mismatches [9].

Demand Quantification: Development of a vertical greening demand index (VGDI) with hybrid analytic hierarchy process (AHP) and Entropy weights to translate spatial relationships into priority zones for intervention, operationalizing supply-demand alignment at city scale [9].

The experimental results revealed clustered, uneven distributions of vertical greening systems with the clearest correspondence to land use indicators, highlighting specific supply-demand gaps and actionable targets for policy intervention [9].
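The weighting step behind an index of this kind can be sketched with the entropy weight method combined with expert (AHP-style) weights. How the study in [9] merges the two weight sets is not stated here, so the multiplicative combination below is an assumption, as are the function names, the AHP weights, and the indicator values.

```python
from math import log

def entropy_weights(matrix):
    """Entropy weight method.

    matrix[i][j]: non-negative value of indicator j in zone i.
    Indicators whose values differ more across zones receive more weight.
    Assumes at least one indicator varies across zones.
    """
    n, m = len(matrix), len(matrix[0])
    k = 1.0 / log(n)
    divergences = []
    for j in range(m):
        column = [row[j] for row in matrix]
        total = sum(column)
        entropy = -k * sum((x / total) * log(x / total) for x in column if x > 0)
        divergences.append(max(0.0, 1.0 - entropy))  # clamp rounding noise
    scale = sum(divergences)
    return [d / scale for d in divergences]

def combine_weights(ahp, entropy):
    """Multiplicative combination of two weight sets, renormalized to sum to 1."""
    products = [a * e for a, e in zip(ahp, entropy)]
    scale = sum(products)
    return [p / scale for p in products]

def demand_index(matrix, weights):
    """Weighted sum of (already normalized) indicators for each zone."""
    return [sum(w * x for w, x in zip(weights, row)) for row in matrix]

# Hypothetical normalized indicators for three zones and two indicators.
indicators = [[0.5, 0.1], [0.5, 0.5], [0.6, 0.9]]
e_weights = entropy_weights(indicators)
hybrid = combine_weights([0.7, 0.3], e_weights)  # AHP weights are made up
scores = demand_index(indicators, hybrid)
```

In this toy example the second indicator varies far more across zones, so the entropy step raises its influence even though the hypothetical AHP weights favor the first.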

Diagram 2: Vertical Greening Assessment Workflow

Validation: AI-Driven Urban Space Optimization

A comprehensive validation case study conducted at Metropolitan Central Plaza, a 2.4-hectare transit-oriented public space in Shanghai's dense urban district, demonstrated the practical effectiveness of continuous sensing methodologies in real-world deployment [8]. The experimental protocol implemented:

Multi-Modal Sensing Infrastructure: Deployment of complementary visual, acoustic, and environmental sensors creating a hierarchical data acquisition system that continuously monitored spatial usage patterns, environmental conditions, and user behaviors across the urban space.

Real-Time Processing Framework: Implementation of optimized computational architectures achieving sub-100ms response times through intelligent caching strategies, enabling dynamic spatial adaptations within human perceptual thresholds.

Performance Validation: Quantitative assessment showing substantial improvements in user satisfaction metrics and environmental quality indicators, validating the methodology's effectiveness for continuous spatial optimization in complex urban environments [8].

The Researcher's Toolkit: Essential Solutions for Spatial Analysis

Implementing continuous spatial sensing requires specialized computational tools and analytical frameworks. The following table details essential solutions for researchers developing continuous spatial sensing capabilities:

Table 3: Essential Research Solutions for Continuous Spatial Sensing

| Tool/Category | Function | Specific Applications |
|---|---|---|
| YOLOv8 Deep Learning Model | Computer vision object detection | Mapping vertical greening systems from street-view imagery; automated spatial element identification [9] |
| Geographically Weighted Regression (GWR) | Spatial statistical analysis | Assessing location-specific relationships between environmental variables; identifying spatial mismatches [9] |
| Multi-Modal Data Fusion Architecture | Heterogeneous data integration | Processing complementary sensor streams (visual, acoustic, environmental) into unified spatial representations [8] |
| Spatial Transcriptomics Alignment Tools | Tissue slice integration | Automated alignment and integration of multiple 2D tissue slices into coherent 3D spatial contexts [6] |
| Reinforcement Learning Algorithms | Dynamic spatial optimization | Learning optimal design interventions through continuous interaction with environmental feedback [8] |
| Hybrid AHP-Entropy Weighting | Demand index quantification | Translating spatial relationships into priority zones through multi-criteria decision analysis [9] |

Advanced computational frameworks for spatial alignment and integration have become particularly critical for biological research, with at least 24 distinct methodologies currently proposed to address the specific challenge of aligning and integrating multiple tissue slices in spatial transcriptomics [6]. These tools can be categorized by methodological approach—statistical mapping, image processing and registration, and graph-based methods—each with specific strengths for different research contexts [6].

For environmental applications, the integration of remote sensing and GIS creates powerful capabilities for continuous spatial monitoring, enabling professionals to track environmental changes like impacts of climate change, desertification, or deforestation through large-scale and uninterrupted observation of areas [7]. This data is then processed by GIS to perform thorough analysis considering various factors such as land use patterns or population growth, supporting evidence-based decision-making for resource management and conservation strategies [7].

The evolution from periodic capture to continuous sensing represents a fundamental transformation in spatial data collection methodologies with profound implications for environmental research and therapeutic development. This paradigm shift enables researchers to move beyond static snapshots to dynamic, process-oriented understanding of complex spatial phenomena across biological and environmental domains. The integration of multi-modal sensing technologies with AI-driven analytical frameworks creates unprecedented capabilities for capturing spatial-temporal dynamics at multiple scales, from cellular interactions to urban ecosystems.

Future advancements will likely focus on enhancing computational efficiency, improving real-time processing capabilities, and developing more sophisticated fusion algorithms that can integrate increasingly diverse data streams. As these technologies mature, continuous spatial sensing will become increasingly central to foundational methods in environmental data research, enabling more responsive, adaptive, and evidence-based approaches to understanding and managing complex spatial systems across scientific disciplines.

The field of environmental research is undergoing a profound transformation in how spatial data is visualized and analyzed. The journey from static representations to dynamic, interactive digital models represents a paradigm shift in our ability to understand and respond to complex environmental challenges. This evolution is driven by advances in computational power, data availability, and analytical frameworks that enable researchers to move beyond descriptive mapping toward predictive simulation and interactive exploration.

Digital twins represent the cutting edge of this evolution, serving as living digital surrogates of physical objects and processes that evolve alongside their real-world counterparts [10]. Unlike traditional static maps or even interactive visualizations, digital twins are actionable, responsive models updated in near real-time by sensor data, device inputs, and other sources, enabling unprecedented capabilities for simulation, monitoring, and decision-support [10]. For environmental researchers and drug development professionals working with spatially-distributed data, these technologies offer new pathways for understanding environmental health determinants, modeling exposure pathways, and developing targeted interventions.

This technical guide examines the complete spectrum of visualization strategies available to modern environmental researchers, with particular focus on their application within spatial analysis frameworks. We will explore methodological foundations, implementation protocols, and emerging opportunities that define the current state of spatial visualization in environmental science.

Foundational Visualization Methods for Environmental Data

Static and Interactive Mapping Techniques

Static maps continue to serve essential functions in environmental research, particularly for publication, reporting, and communicating established spatial patterns. These visualizations are characterized by fixed representations that capture environmental conditions at specific points in time. Common static mapping approaches include choropleth maps for aggregated data, point maps for discrete observations, and symbol maps for representing quantitative differences across locations [11].

Interactive visualizations represent a significant advancement, enabling researchers to explore spatial-temporal dynamics through user-controlled interfaces. These systems typically feature filtering capabilities, zoom functionality, tooltips with detailed information on demand, and temporal sliders for animating change over time [11]. The technical implementation of interactive maps often leverages JavaScript libraries (such as Leaflet or D3.js) and web-based mapping platforms that support real-time data exploration without requiring advanced programming skills from end users.

Table 1: Comparative Analysis of Mapping Techniques for Environmental Data

| Visualization Type | Primary Environmental Applications | Technical Requirements | Interpretation Complexity | Spatiotemporal Flexibility |
|---|---|---|---|---|
| Static Choropleth | Policy reporting, publication figures | GIS software, standard visualization tools | Low | Single time point, aggregated areas |
| Interactive Web Maps | Public communication, exploratory data analysis | Web mapping libraries, cloud hosting | Low to moderate | Multiple time points, user-controlled zoom |
| Animated Temporal Sequences | Climate trend visualization, diffusion patterns | Video production, sequenced exports | Moderate | Fixed animation path, multiple time points |
| 3D Scene Visualization | Topographic analysis, urban canopy models | 3D rendering, specialized software | High | Static or controlled perspectives |
| Digital Twins | Predictive modeling, scenario testing, real-time monitoring | IoT sensors, cloud computing, AI/ML algorithms | High | Continuous updates, immersive interaction |

Color Theory and Visual Semiotics in Environmental Visualization

Effective color usage is fundamental to creating environmental visualizations that accurately and intuitively communicate complex data. Research demonstrates that color directly affects human information processing, influencing pattern recognition, memory retention, and attention allocation [12]. For environmental researchers, strategic color implementation follows several evidence-based principles:

Sequential color schemes utilize a single hue in varying saturations or gradients to represent continuous data such as pollution concentrations or temperature gradients [12] [13]. These palettes effectively communicate quantitative differences through intuitive lightness-to-darkness relationships, with lighter colors typically representing lower values and darker colors representing higher values [13].

Diverging color schemes employ two contrasting hues to represent deviation from a critical midpoint or baseline value, making them particularly valuable for visualizing parameters that have meaningful central values, such as temperature anomalies or pollution levels relative to regulatory standards [12] [13]. The center color should ideally be a light neutral tone (e.g., light grey) rather than pure white to maintain visual distinction [13].

Qualitative color schemes use distinct hues to represent categorical data without implied ordering, such as land cover classifications or ecosystem types [12]. Best practices limit these palettes to approximately seven clearly distinguishable colors to avoid visual confusion and support pre-attentive processing [12] [13].

Accessibility considerations require that color choices accommodate diverse visual abilities, including color vision deficiencies. Technical implementations should ensure sufficient contrast ratios (at least 4.5:1 for standard text) and avoid problematic color combinations, such as pairing red with green to encode opposing values [13]. Additionally, leveraging both hue and lightness variations ensures that visualizations remain interpretable when converted to grayscale [13].
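A contrast check of this kind can be sketched in a few lines. The code assumes the 4.5:1 threshold refers to the contrast ratio defined in the Web Content Accessibility Guidelines (WCAG), computed from the relative luminance of two sRGB colors; the source does not name the standard, so that link is an assumption.

```python
def _linearize(channel):
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(rgb):
    """Relative luminance of an (R, G, B) color with 0-255 channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(rgb_a, rgb_b):
    """Contrast ratio between two colors, from 1.0 (identical) to 21.0."""
    la, lb = relative_luminance(rgb_a), relative_luminance(rgb_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)

def passes_standard_text(rgb_a, rgb_b, threshold=4.5):
    """True if the pair meets the 4.5:1 threshold quoted in the text."""
    return contrast_ratio(rgb_a, rgb_b) >= threshold

white = (255, 255, 255)
label_ok = passes_standard_text((118, 118, 118), white)   # mid grey on white
label_bad = passes_standard_text((119, 119, 119), white)  # slightly lighter grey
```

Running the same check on every label and legend color pair before publication is a cheap way to catch low-contrast map annotations.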

Digital Twins: Architecture and Implementation

Conceptual Framework and Core Characteristics

Digital twins represent a fundamental advancement beyond traditional visualization approaches, creating dynamic digital surrogates that evolve alongside their physical counterparts [10]. The Destination Earth initiative, in which the European Centre for Medium-Range Weather Forecasts (ECMWF) is an implementing partner, exemplifies this approach, developing Earth-system digital twins that simulate planetary behavior with unprecedented resolution to better assess climate change implications and extreme event impacts [14].

Three defining characteristics distinguish digital twins from conventional spatial visualizations:

- Continuous Synchronization: Digital twins maintain active connections to their physical counterparts through continuous data streams from sensors, satellites, and other monitoring systems, enabling them to reflect near real-time conditions [10].

- Semantic Enrichment: Beyond geometric representation, digital twins incorporate layered semantic information that captures the functionality, relationships, and behaviors of environmental elements [10].

- Interactivity and Scenario Modeling: Advanced digital twins support interactive exploration and "what-if" scenario testing, allowing researchers to simulate interventions and forecast potential futures under different conditions [10] [14].

The DIDYMOS-XR project illustrates a comprehensive implementation framework for environmental digital twins, beginning with high-accuracy 3D model creation using photogrammetry and total stations, followed by semantic enrichment through object segmentation and classification, and culminating in continuous updating via automated sensor networks [10].

Technical Architecture and Workflow

The development of functional digital twins for environmental applications follows a structured workflow that transforms raw spatial data into interactive, semantically rich digital models. The DIDYMOS-XR framework provides a representative architecture that progresses through several technical phases [10]:

Digital Twin Development Workflow

This workflow produces digital twins with varying levels of sophistication. The initial "Day0 twin" represents the baseline digital model, which is subsequently enriched through continuous data integration to become a fully functional digital twin capable of supporting analytical and predictive applications [10].

Table 2: Digital Twin Capabilities for Environmental Applications

| Capability Category | Technical Components | Environmental Research Applications | Implementation Considerations |
|---|---|---|---|
| High-Resolution Modeling | Photogrammetry, laser scanning, satellite imagery | Microclimate modeling, urban heat island analysis, watershed delineation | Computational requirements, data storage, processing pipelines |
| Real-Time Sensor Integration | IoT networks, edge computing, data assimilation algorithms | Air/water quality monitoring, extreme weather response, ecological disturbance detection | Sensor calibration, data quality control, network latency |
| Semantic Scene Understanding | Machine learning classification, ontology development, relationship mapping | Habitat suitability assessment, infrastructure vulnerability, ecosystem service quantification | Training data requirements, domain knowledge integration |
| Interactive Scenario Modeling | Simulation engines, parameter adjustment interfaces, visualization dashboards | Climate adaptation planning, intervention effectiveness testing, disaster response planning | Computational performance, user interface design, model validation |
| Extended Reality Integration | VR/AR platforms, positioning systems, immersive visualization | Public engagement, planning stakeholder workshops, environmental education | Hardware requirements, user experience design, accessibility |

Methodological Protocols for Environmental Visualization

Geospatial Artificial Intelligence (GeoAI) Implementation

Geospatial Artificial Intelligence (GeoAI) represents the integration of artificial intelligence and machine learning methodologies with geospatial data and analysis [15]. This approach has emerged as a transformative methodology for environmental visualization and modeling, particularly through its ability to process massive datasets and identify complex spatial patterns that may elude traditional analytical approaches.

The implementation of GeoAI for environmental visualization follows a structured protocol:

Phase 1: Data Acquisition and Preparation

- Acquire multisource geospatial data including satellite imagery, administrative boundaries, sensor networks, and street-level imagery [15].

- Address data quality issues including missing values, spatial inconsistencies, and temporal mismatches through preprocessing and normalization [2] [15].

- Perform spatial-temporal alignment to ensure consistent resolution and coverage across data sources [15].

Phase 2: Algorithm Selection and Training

- Select appropriate machine learning architectures based on the analytical objective (e.g., convolutional neural networks for image classification, recurrent networks for temporal sequences) [2].

- Implement spatial cross-validation techniques to address spatial autocorrelation and avoid overoptimistic performance estimates [2].

- Apply regularization methods to enhance model generalizability beyond training data distributions [2].

Phase 3: Visualization and Interpretation

- Generate prediction surfaces with associated uncertainty estimates to communicate model reliability [2].

- Develop interactive interfaces that allow users to explore different scenarios and model parameters [16].

- Create explanatory visualizations that illustrate the relationship between input features and model predictions [15].

GeoAI approaches are particularly valuable for environmental health research, where they enable high-resolution exposure assessment and pattern detection across large populations and geographic areas [15]. Example applications include classifying greenspace from street view imagery, predicting air pollution concentrations at fine spatial scales, and identifying communities vulnerable to environmental hazards [15].
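The spatial cross-validation step in Phase 2 can be illustrated with a minimal block-based sketch: points are grouped into square grid blocks, and whole blocks, rather than individual points, are assigned to folds so that nearby (and therefore correlated) observations do not end up on both sides of a split. The block size, fold count, and coordinates are hypothetical, and this simple scheme adds no buffer between training and test blocks.

```python
def block_id(point, block_size):
    """Grid block (column, row) that an (x, y) point falls in."""
    x, y = point
    return (int(x // block_size), int(y // block_size))

def spatial_block_folds(points, block_size, n_folds):
    """Assign each point a fold index based on its grid block."""
    blocks = sorted({block_id(p, block_size) for p in points})
    fold_of_block = {b: i % n_folds for i, b in enumerate(blocks)}
    return [fold_of_block[block_id(p, block_size)] for p in points]

def train_test_splits(folds, n_folds):
    """Yield (train_indices, test_indices) for each fold in turn."""
    for k in range(n_folds):
        test = [i for i, f in enumerate(folds) if f == k]
        train = [i for i, f in enumerate(folds) if f != k]
        yield train, test

# Hypothetical monitoring sites (projected coordinates in km).
sites = [(0.5, 0.5), (0.7, 0.2), (5.2, 0.1), (5.9, 0.8), (0.3, 5.5), (5.5, 5.5)]
folds = spatial_block_folds(sites, block_size=5.0, n_folds=2)
splits = list(train_test_splits(folds, n_folds=2))
```

A block size on the order of the autocorrelation range is a common starting point, since smaller blocks leave correlated neighbors across the train/test boundary.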

Spatial Vulnerability Assessment Methodology

The development of spatial vulnerability indices represents an important application of advanced visualization strategies in environmental health research. These methodologies integrate diverse environmental and population data to identify areas where environmental risks and social vulnerability intersect [17]. A replicable protocol for constructing mortality-weighted vulnerability indices includes:

Vulnerability Index Development Protocol

This methodology improves upon traditional approaches by directly incorporating observed health outcomes (e.g., all-cause mortality) to weight index components, resulting in indices that more accurately reflect real-world health impacts [17]. The implementation produces vulnerability assessments across multiple spatial and temporal resolutions, enabling fine-grained analysis of population vulnerability patterns and trends [17].

Implementation Tools and Research Reagents

The successful implementation of advanced visualization strategies requires appropriate computational tools and data resources. The environmental research community benefits from a diverse ecosystem of commercial platforms, open-source tools, and specialized data products that support the progression from static mapping to interactive digital twins.

Table 3: Essential Research Reagents for Environmental Visualization

| Tool Category | Specific Platforms | Primary Functionality | Implementation Level |
|---|---|---|---|
| Geospatial Analysis | ArcGIS, QGIS, GRASS GIS | Spatial data management, geoprocessing, basic cartography | Beginner to Advanced |
| Statistical Programming | R (sf, terra packages), Python (geopandas, xarray) | Data cleaning, spatial statistics, custom algorithm development | Intermediate to Advanced |
| Interactive Visualization | Infogram, Datawrapper, Tableau | Web-based mapping, dashboard creation, public communication | Beginner to Intermediate |
| 3D Modeling & XR | Unity, Unreal Engine, WebXR | Digital twin development, immersive visualization, scenario simulation | Advanced |
| Sensor Integration | IoT platforms (AWS IoT, Azure IoT) | Real-time data streaming, sensor network management, edge computing | Intermediate to Advanced |
| Cloud Computing | Google Earth Engine, ECMWF's Digital Twin Engine | Large-scale data processing, model deployment, collaborative analysis | Intermediate to Advanced |

Platforms like Infogram offer AI-powered chart suggestion features that analyze environmental datasets and recommend appropriate visualization types, while tools like ClearPoint provide specialized functionality for integrating qualitative and quantitative data in management reporting [18] [16]. For digital twin implementation, the Destination Earth Digital Twin Engine exemplifies specialized platforms designed to support interactive access to models, data, and workflows through cloud-based solutions [14].

The evolution from static maps to interactive digital twins represents a fundamental transformation in how environmental researchers conceptualize, analyze, and communicate spatial information. This progression enables increasingly sophisticated approaches to understanding complex environmental systems, from basic pattern recognition to dynamic simulation and predictive modeling.

Digital twins in particular represent a paradigm shift by creating living digital representations that evolve alongside their physical counterparts, enabling researchers to move beyond observation to active exploration of "what-if" scenarios [10]. These technologies show particular promise for urban planning, environmental health assessment, climate adaptation, and sustainable development applications where complex systems interact across multiple spatial and temporal scales [10] [14].

As these technologies continue to mature, several emerging trends suggest future development directions. The integration of Geospatial Artificial Intelligence (GeoAI) will enhance our ability to extract meaningful patterns from massive environmental datasets [15]. Advances in extended reality interfaces will make complex environmental data more accessible and interpretable to diverse stakeholders [10]. Finally, increasingly sophisticated uncertainty quantification methods will improve the transparency and reliability of environmental visualizations [2].

For environmental researchers and drug development professionals, these advances offer unprecedented capabilities for understanding the spatial dimensions of environmental health, modeling exposure pathways, and developing targeted interventions. By strategically adopting appropriate visualization strategies across this spectrum—from purpose-built static maps to comprehensive digital twins—the research community can enhance both scientific understanding and public engagement with critical environmental challenges.

Exploratory Spatial Data Analysis (ESDA) constitutes a critical set of techniques designed to analyze spatial data to uncover patterns, trends, and relationships that might otherwise remain hidden in complex datasets. As a foundational methodology within geographic information science, ESDA emphasizes visual exploration and statistical interrogation of spatial distributions, providing researchers with powerful tools to understand the underlying structure of geographic phenomena [19]. Within environmental research and drug development contexts, ESDA serves as an indispensable first step in formulating hypotheses, guiding subsequent analytical approaches, and informing decision-making processes based on spatial evidence.

The fundamental premise of ESDA rests on the principle that spatial data possess inherent characteristics—specifically spatial autocorrelation and heterogeneity—that distinguish them from conventional datasets. Spatial autocorrelation refers to the systematic variation of a variable in geographic space, where nearby locations tend to exhibit more similar values than distant ones. ESDA methodologies specifically target the identification and quantification of such spatial effects, enabling researchers to move beyond aspatial analytical frameworks that may produce misleading results when applied to geographically referenced information [19] [20].

Theoretical Foundations of ESDA

Core Spatial Concepts

ESDA operates on several foundational spatial concepts that govern its application and interpretation. Spatial autocorrelation represents perhaps the most fundamental concept, describing the degree to which attribute values at one location are similar to values at nearby locations. Positive spatial autocorrelation occurs when similar values cluster together in space, while negative autocorrelation occurs when neighboring locations tend to hold dissimilar values, producing a checkerboard-like pattern. The detection and measurement of spatial autocorrelation forms a cornerstone of ESDA, as it violates the assumption of independence underlying many traditional statistical methods [19].
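A widely used global measure of spatial autocorrelation is Moran's I. The source does not name a specific statistic, so its use here is an illustrative choice; the sketch below assumes a binary adjacency weight matrix and four hypothetical cells arranged in a line.

```python
def morans_i(values, weights):
    """Global Moran's I for values observed at n locations.

    weights[i][j]: spatial weight between locations i and j
    (here 1 for neighbors, 0 otherwise, with a zero diagonal).
    """
    n = len(values)
    mean = sum(values) / n
    dev = [v - mean for v in values]
    s0 = sum(sum(row) for row in weights)  # sum of all weights
    cross = sum(weights[i][j] * dev[i] * dev[j]
                for i in range(n) for j in range(n))
    return (n / s0) * (cross / sum(d * d for d in dev))

# Four cells in a row; each cell neighbors the cells beside it.
line_weights = [
    [0, 1, 0, 0],
    [1, 0, 1, 0],
    [0, 1, 0, 1],
    [0, 0, 1, 0],
]
clustered = morans_i([1, 1, 5, 5], line_weights)    # similar values adjacent
alternating = morans_i([1, 5, 1, 5], line_weights)  # dissimilar values adjacent
```

The clustered arrangement yields a positive value and the alternating arrangement a negative one, matching the two cases described above.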

Spatial heterogeneity complements this concept by acknowledging that relationships between variables may not be constant across a study area. This geographic variation in relationships necessitates local approaches to spatial analysis rather than relying exclusively on global models that assume spatial stationarity. Environmental phenomena frequently exhibit both autocorrelation and heterogeneity, making ESDA particularly well-suited for ecological, epidemiological, and resource management applications where these spatial effects are inherent to the systems under investigation [21].
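One local approach is geographically weighted regression (GWR), which refits a regression at each target location using distance-decayed weights. The following is a minimal single-predictor sketch with a Gaussian kernel and a fixed bandwidth, both assumed for illustration; the data are synthetic, with a slope of 1 in the west and 3 in the east.

```python
from math import exp, hypot

def gwr_local_fit(target, coords, x, y, bandwidth):
    """Weighted least-squares fit of y = a + b*x centred on `target`.

    Observations are weighted by a Gaussian kernel of their distance
    to the target, so nearby observations dominate the local estimate.
    Returns (intercept, slope).
    """
    w = [exp(-0.5 * (hypot(cx - target[0], cy - target[1]) / bandwidth) ** 2)
         for cx, cy in coords]
    sw = sum(w)
    x_bar = sum(wi * xi for wi, xi in zip(w, x)) / sw
    y_bar = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - x_bar) * (yi - y_bar) for wi, xi, yi in zip(w, x, y))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope

# Synthetic transect: ten sites at easting 0..9 (northing 0).
coords = [(float(u), 0.0) for u in range(10)]
x = [1.0, 2.0, 3.0, 4.0, 5.0, 1.0, 2.0, 3.0, 4.0, 5.0]
y = [xi if u < 5 else 3.0 * xi for u, xi in enumerate(x)]

_, west_slope = gwr_local_fit((1.0, 0.0), coords, x, y, bandwidth=1.0)
_, east_slope = gwr_local_fit((8.0, 0.0), coords, x, y, bandwidth=1.0)
```

A single global regression on these data would report one compromise slope, whereas the local fits recover values close to 1 and 3 at the two ends of the transect.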

The Role of Geoinformatics

Modern ESDA is deeply intertwined with geoinformatics, which Ehlers (1993) defines as "art, science or technology dealing with the acquisition, storage, processing, production, presentation, and dissemination of geoinformation" [20]. This interdisciplinary field provides the technological infrastructure—including geographic information systems (GIS), remote sensing platforms, and spatial database management systems—that enables the practical implementation of ESDA techniques. The integration of ESDA within geoinformatics frameworks has revolutionized environmental data analysis by facilitating the visualization, manipulation, and interpretation of complex spatial relationships that would be difficult to discern through numerical analysis alone [20].

Geochemical data exemplify the typical structure of spatial datasets, expressed as X, Y, and Zi, where X and Y represent geographic coordinates, and Zi (i = 1, 2, …, k) represents attributes (e.g., element concentrations, biological markers, environmental measurements) at those locations [20]. Such point-referenced data serve as the fundamental input for ESDA, with the spatial referencing enabling the application of specialized analytical techniques that explicitly incorporate geographic context into the exploratory process.

Key Methodologies and Techniques

Spatial Pattern Visualization and Representation

The visualization of spatial distributions forms the most fundamental ESDA activity, providing researchers with intuitive understanding of data structure before applying more sophisticated analytical techniques. Effective spatial representation begins with appropriate geochemical mapping approaches that translate numerical measurements into visual representations that highlight spatial structure [20]. Reimann (2005) documented various classification methods for mapping geospatial data, including arbitrary class boundaries, standard deviation-based classifications, and percentile-based approaches, each offering distinct advantages for different analytical contexts [20].
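
The difference between classification schemes is easiest to see on skewed data. The following is a minimal sketch, assuming NumPy is available and using synthetic lognormal concentrations; the function names, break choices, and data are illustrative assumptions, not taken from Reimann (2005).

```python
import numpy as np

def percentile_breaks(values, percentiles=(25, 50, 75, 90, 95)):
    """Class boundaries placed at the requested percentiles."""
    return np.percentile(np.asarray(values, dtype=float), percentiles)

def std_breaks(values, multiples=(-1.0, 0.0, 1.0, 2.0)):
    """Class boundaries placed at mean + k * sample standard deviation."""
    v = np.asarray(values, dtype=float)
    return v.mean() + np.asarray(multiples) * v.std(ddof=1)

def classify(values, breaks):
    """Class index per value: 0 is below the first break, len(breaks) is above the last."""
    return np.digitize(np.asarray(values, dtype=float), breaks)

# Hypothetical right-skewed concentrations, for illustration only.
rng = np.random.default_rng(0)
conc = rng.lognormal(mean=3.0, sigma=0.8, size=200)

pct_classes = classify(conc, percentile_breaks(conc))
std_classes = classify(conc, std_breaks(conc))

# Number of samples that fall into each map class under the two schemes.
print("percentile classes:", np.bincount(pct_classes, minlength=6))
print("std-dev classes:   ", np.bincount(std_classes, minlength=5))
```

Percentile classes hold a fixed share of the samples regardless of the distribution, whereas standard deviation classes on skewed data tend to crowd most samples into the lower classes, which is one reason the choice of scheme changes the visual impression of a map.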

Table 1: Spatial Interpolation Methods for ESDA

| Method | Principle | Best Use Cases | Software Implementation |
|---|---|---|---|
| Inverse Distance Weighting (IDW) | Estimates values at unknown locations using weighted averages of known points, with weights inversely proportional to distance | Data with complete spatial coverage; preliminary exploration | ArcGIS, QGIS, GeoDAS [20] |
| Kriging | Uses variograms to model spatial dependence, providing optimal unbiased estimates with variance measures | Data with spatial autocorrelation; when uncertainty quantification is required | ArcGIS, R-gstat, GeoDAS [20] |
| Multifractal Interpolation Method (MIM) | Based on fractal theory; captures scaling properties and local singularities | Data with multiscale patterns; geochemical anomaly detection | GeoDAS [20] |
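
Of the methods in Table 1, IDW is the simplest to write out directly. The sketch below assumes NumPy and a power parameter of 2, which is a common default rather than a value given in the source; the function name `idw` and the sample points are hypothetical.

```python
import numpy as np

def idw(xy_known, z_known, xy_query, power=2.0):
    """Inverse distance weighted estimate at each query location."""
    xy_known = np.asarray(xy_known, dtype=float)
    z_known = np.asarray(z_known, dtype=float)
    xy_query = np.atleast_2d(np.asarray(xy_query, dtype=float))

    # Distance from every query point to every known point: shape (n_query, n_known).
    dist = np.linalg.norm(xy_query[:, None, :] - xy_known[None, :, :], axis=2)

    estimates = np.empty(len(xy_query))
    for i, row in enumerate(dist):
        coincident = row == 0.0
        if coincident.any():
            # A query point on top of a sample takes the sample value directly.
            estimates[i] = z_known[coincident].mean()
        else:
            weights = 1.0 / row**power
            estimates[i] = np.sum(weights * z_known) / np.sum(weights)
    return estimates

# Two hypothetical samples on a line and three query locations.
samples_xy = [(0.0, 0.0), (2.0, 0.0)]
samples_z = [10.0, 20.0]
print(idw(samples_xy, samples_z, [(1.0, 0.0), (0.0, 0.0), (0.5, 0.0)]))
```

Because the estimate is a weighted average, IDW never predicts outside the range of the observed values, and it provides no variance estimate; these are the main practical differences from kriging.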

Beyond basic mapping, local neighborhood analysis enables researchers to characterize spatial patterns through moving window operations that calculate statistics within defined geographic contexts. This approach facilitates the identification of spatial trends, patterns, and anomalies that may be obscured in global analyses. Zhang et al. (2007) demonstrated how local statistics can reveal subtle spatial patterns in environmental contamination data that would remain undetected using traditional analytical approaches [20].
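
A moving window operation on point data can be sketched as follows, assuming NumPy and a circular window of fixed radius. The function name `local_stats`, the radius, and the sample values are hypothetical, and the sketch does not reproduce the specific local statistics used by Zhang et al. (2007).

```python
import numpy as np

def local_stats(xy, z, radius):
    """Mean, standard deviation, and sample count within `radius` of each point."""
    xy = np.asarray(xy, dtype=float)
    z = np.asarray(z, dtype=float)

    dist = np.linalg.norm(xy[:, None, :] - xy[None, :, :], axis=2)
    inside = dist <= radius          # each point is inside its own window
    counts = inside.sum(axis=1)

    means = (inside * z[None, :]).sum(axis=1) / counts
    sq_dev = (z[None, :] - means[:, None]) ** 2
    stds = np.sqrt((inside * sq_dev).sum(axis=1) / counts)
    return means, stds, counts

# Hypothetical transect: three nearby samples and one isolated high value.
xy = [(0, 0), (1, 0), (2, 0), (10, 0)]
z = [1.0, 2.0, 3.0, 100.0]
means, stds, counts = local_stats(xy, z, radius=1.5)
print(means, stds, counts)
```

Comparing each value with its local mean, rather than with the global mean, is what allows locally unusual samples to stand out even when they are unremarkable in the overall distribution.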

Spatial Autocorrelation Measures

The quantification of spatial autocorrelation represents a cornerstone of ESDA, with several established statistics providing robust measures of spatial dependence:

Global Moran's I provides a single measure of spatial autocorrelation across an entire study area. Values typically fall between -1 (perfect dispersion) and +1 (perfect clustering), and values near the null expectation of -1/(n - 1), which approaches 0 as the number of observations n grows, indicate a random spatial arrangement. The statistic evaluates whether the overall pattern is clustered, dispersed, or random, but does not indicate where specific clusters are located.
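
The statistic can be computed in a few lines. The sketch below assumes NumPy, binary distance-band weights, and a permutation test for significance; these are illustrative choices, and the function names and the synthetic gradient are hypothetical. In practice a library such as PySAL would normally be used instead.

```python
import numpy as np

def distance_band_weights(xy, threshold):
    """Binary weights: 1 for distinct points within `threshold`, else 0."""
    xy = np.asarray(xy, dtype=float)
    dist = np.linalg.norm(xy[:, None, :] - xy[None, :, :], axis=2)
    return ((dist > 0) & (dist <= threshold)).astype(float)

def morans_i(z, w):
    """Global Moran's I for values z and spatial weights matrix w."""
    z = np.asarray(z, dtype=float)
    dev = z - z.mean()
    return len(z) / w.sum() * (dev @ w @ dev) / (dev @ dev)

def morans_i_permutation(z, w, n_perm=999, seed=0):
    """Observed I, its null expectation, and a two-sided pseudo p-value."""
    z = np.asarray(z, dtype=float)
    rng = np.random.default_rng(seed)
    observed = morans_i(z, w)
    expected = -1.0 / (len(z) - 1)
    # Shuffling values over locations simulates spatial randomness.
    sims = np.array([morans_i(rng.permutation(z), w) for _ in range(n_perm)])
    extreme = np.sum(np.abs(sims - expected) >= abs(observed - expected))
    return observed, expected, (extreme + 1) / (n_perm + 1)

# Hypothetical 6 x 6 grid with a smooth gradient, so neighbors hold similar values.
gx, gy = np.meshgrid(np.arange(6), np.arange(6))
xy = np.column_stack([gx.ravel(), gy.ravel()])
z = (gx + gy).ravel().astype(float)
w = distance_band_weights(xy, threshold=1.0)

observed, expected, p_value = morans_i_permutation(z, w)
print(f"I = {observed:.3f}, E[I] = {expected:.3f}, pseudo p = {p_value:.3f}")
```

The result depends on how neighbors are defined, so the weights specification (distance band, contiguity, k nearest neighbors) should be reported alongside the statistic.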

Local Indicators of Spatial Association (LISA) decompose global spatial autocorrelation into contributions from individual observations, enabling researchers to identify specific locations of spatial clusters (hot spots and cold spots) and spatial outliers. The LISA methodology, particularly through Local Moran's I, allows for the detection of statistically significant spatial clusters of high values (high-high), low values (low-low), and spatial outliers (high-low and low-high) [19].
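
A compact sketch of Local Moran's I follows, assuming NumPy, row-standardized weights, and conditional permutation for pseudo p-values. Because one test is run per location, the p-values are adjusted here with the Benjamini-Hochberg procedure, which is one possible correction and an assumption of this example. All function names and the synthetic field are hypothetical.

```python
import numpy as np

def row_standardize(w):
    """Scale each row of w to sum to 1 (rows without neighbors stay zero)."""
    w = np.asarray(w, dtype=float)
    sums = w.sum(axis=1, keepdims=True)
    return np.divide(w, sums, out=np.zeros_like(w), where=sums > 0)

def local_morans_i(z, w, n_perm=999, seed=0):
    """Local Moran's I, pseudo p-values, and quadrant labels (HH, LL, HL, LH)."""
    z = np.asarray(z, dtype=float)
    n = len(z)
    dev = z - z.mean()
    m2 = (dev @ dev) / n
    ws = row_standardize(w)
    lag = ws @ dev                      # mean deviation of each point's neighbors
    local_i = dev * lag / m2

    rng = np.random.default_rng(seed)
    p = np.empty(n)
    for i in range(n):
        # Conditional permutation: hold location i fixed, shuffle all other values.
        others = np.delete(dev, i)
        wi = np.delete(ws[i], i)
        sims = np.array([dev[i] * (wi @ rng.permutation(others)) / m2
                         for _ in range(n_perm)])
        p[i] = (np.sum(np.abs(sims) >= abs(local_i[i])) + 1) / (n_perm + 1)

    labels = np.where(dev >= 0,
                      np.where(lag >= 0, "HH", "HL"),
                      np.where(lag >= 0, "LH", "LL"))
    return local_i, p, labels

def benjamini_hochberg(p, alpha=0.05):
    """Boolean mask of tests rejected while controlling the false discovery rate."""
    p = np.asarray(p, dtype=float)
    n = len(p)
    order = np.argsort(p)
    passed = p[order] <= alpha * np.arange(1, n + 1) / n
    reject = np.zeros(n, dtype=bool)
    if passed.any():
        last = np.max(np.nonzero(passed)[0])
        reject[order[:last + 1]] = True
    return reject

# Hypothetical 6 x 6 field with a block of high values in one corner.
gx, gy = np.meshgrid(np.arange(6), np.arange(6))
xy = np.column_stack([gx.ravel(), gy.ravel()]).astype(float)
dist = np.linalg.norm(xy[:, None, :] - xy[None, :, :], axis=2)
w = ((dist > 0) & (dist <= 1.0)).astype(float)
field = np.zeros((6, 6))
field[:3, :3] = 10.0

local_i, p_values, labels = local_morans_i(field.ravel(), w)
significant = benjamini_hochberg(p_values)
print(labels.reshape(6, 6))
```

With row-standardized weights and no isolated points, the mean of the local statistics equals the global Moran's I, which is the sense in which LISA decompose the global measure.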

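Geary's C provides a complementary measure of spatial autocorrelation that is more sensitive to differences between adjacent values. Whereas Moran's I is built on cross-products of deviations from the mean, Geary's C is built on squared differences between neighboring locations, which makes it more responsive to local spatial patterns than to global structure. Its scale is inverted relative to Moran's I: values range from 0 to roughly 2, with values below 1 indicating positive autocorrelation, values near 1 indicating no autocorrelation, and values above 1 indicating negative autocorrelation.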
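
For comparison with Moran's I, Geary's C can be written directly from its definition. This is a minimal sketch assuming NumPy and a binary weights matrix; the four-point chain used as input is hypothetical.

```python
import numpy as np

def gearys_c(z, w):
    """Geary's C for values z and spatial weights matrix w."""
    z = np.asarray(z, dtype=float)
    w = np.asarray(w, dtype=float)
    n = len(z)
    sq_diff = (z[:, None] - z[None, :]) ** 2   # squared difference for every pair
    dev = z - z.mean()
    return (n - 1) * np.sum(w * sq_diff) / (2.0 * w.sum() * (dev @ dev))

# Four locations in a row; each is a neighbor of the adjacent ones.
chain = np.array([[0, 1, 0, 0],
                  [1, 0, 1, 0],
                  [0, 1, 0, 1],
                  [0, 0, 1, 0]], dtype=float)

c_trend = gearys_c([1, 2, 3, 4], chain)        # smooth trend: neighbors are similar
c_alternating = gearys_c([1, 0, 1, 0], chain)  # alternating values: neighbors differ
print(c_trend, c_alternating)
```

The smooth trend gives a value below 1 and the alternating pattern a value above 1, the opposite direction from Moran's I on the same data.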

Anomaly Detection Methods

The identification of anomalous spatial patterns constitutes a primary objective in many ESDA applications, particularly in environmental monitoring and resource exploration. Several specialized techniques have been developed specifically for this purpose:

Local Singularity Analysis (LSA) has emerged as a powerful tool for identifying weak geochemical anomalies in environmental and exploration datasets [20]. Developed by Cheng (2007), LSA characterizes local singular behavior in spatial patterns through the singularity exponent, which quantifies how concentration values change with scale in the neighborhood of a point [20]. The method has proven particularly effective for detecting subtle anomalies that might be obscured in conventional analysis.
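
The idea behind the singularity exponent can be sketched on gridded data. The example assumes NumPy, square windows, and the commonly used two-dimensional relation in which mean concentration within a window of size ε scales as ε^(α - 2), so that α is recovered as the log-log slope plus 2. The window sizes, function name, and synthetic grid are hypothetical, and this is not the GeoDAS implementation.

```python
import numpy as np

def singularity_exponents(grid, half_widths=(1, 2, 3, 4)):
    """Estimate the singularity exponent alpha at every cell of a positive-valued grid."""
    grid = np.asarray(grid, dtype=float)
    pad = max(half_widths)
    padded = np.pad(grid, pad, mode="reflect")
    rows, cols = grid.shape

    log_eps = np.log([2 * h + 1 for h in half_widths])   # window side lengths
    log_rho = np.empty((len(half_widths), rows, cols))    # log mean concentration
    for k, h in enumerate(half_widths):
        for r in range(rows):
            for c in range(cols):
                window = padded[r + pad - h: r + pad + h + 1,
                                c + pad - h: c + pad + h + 1]
                log_rho[k, r, c] = np.log(window.mean())

    # Least-squares slope of log_rho against log_eps, computed per cell.
    x = log_eps - log_eps.mean()
    y = log_rho - log_rho.mean(axis=0)
    slope = np.tensordot(x, y, axes=(0, 0)) / (x @ x)
    return slope + 2.0

# Hypothetical background of 1.0 with a single enriched cell in the middle.
grid = np.ones((21, 21))
grid[10, 10] = 50.0
alpha = singularity_exponents(grid)
print(alpha[10, 10], alpha[0, 0])
```

Under this convention, cells where concentration is locally enriched yield α below 2, undisturbed background yields α close to 2, and locally depleted cells yield α above 2.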

Fractal and Multifractal Modeling provides a theoretical framework for distinguishing anomalous patterns from background variation based on scaling properties. The concentration-area (C-A) fractal model serves as a fundamental technique for separating geochemical anomalies from background by identifying breakpoints in log-log plots of concentration versus area [20]. This approach has been extended through the spectrum-area (S-A) multifractal model, which operates in the frequency domain to identify anomalous patterns [20].
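
A minimal sketch of the C-A procedure follows, assuming NumPy, equal-area cells, and a two-segment least-squares fit to locate a single breakpoint. Published applications often fit several segments and choose breakpoints by inspection, so the automatic fit, the function names, and the synthetic data are illustrative assumptions.

```python
import numpy as np

def concentration_area(values, n_thresholds=25, cell_area=1.0):
    """Area occupied by cells with concentration >= each threshold."""
    v = np.asarray(values, dtype=float).ravel()
    v = v[v > 0]
    thresholds = np.geomspace(v.min(), v.max(), n_thresholds)
    area = np.array([(v >= t).sum() for t in thresholds]) * cell_area
    return thresholds, area

def two_segment_breakpoint(log_x, log_y, min_pts=3):
    """Fit two straight lines and return the x value where they intersect.

    Assumes the two fitted slopes differ; identical slopes have no breakpoint.
    """
    log_x = np.asarray(log_x, dtype=float)
    log_y = np.asarray(log_y, dtype=float)
    best_sse, best_fits = np.inf, None
    for k in range(min_pts, len(log_x) - min_pts + 1):
        fits, sse = [], 0.0
        for part in (slice(0, k), slice(k, None)):
            slope, intercept = np.polyfit(log_x[part], log_y[part], 1)
            resid = log_y[part] - (slope * log_x[part] + intercept)
            sse += float(resid @ resid)
            fits.append((slope, intercept))
        if sse < best_sse:
            best_sse, best_fits = sse, fits
    (s1, b1), (s2, b2) = best_fits
    return (b2 - b1) / (s1 - s2)

# Hypothetical data: lognormal background plus a smaller enriched population.
rng = np.random.default_rng(1)
values = np.concatenate([rng.lognormal(2.0, 0.4, 900),
                         rng.lognormal(4.0, 0.3, 100)])
thresholds, area = concentration_area(values)
keep = area > 0                                   # guard against log(0)
log_break = two_segment_breakpoint(np.log(thresholds[keep]), np.log(area[keep]))
print("candidate anomaly threshold:", np.exp(log_break))
```

The returned value is a candidate threshold only; the log-log plot should still be inspected to confirm that two straight segments describe the data reasonably well.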

Table 2: Anomaly Detection Techniques in ESDA

| Technique | Underlying Principle | Key Advantage | Application Context |
|---|---|---|---|
| Local Singularity Analysis (LSA) | Quantifies scaling behavior using singularity exponents | Detects weak anomalies; scale-independent | Mineral exploration; environmental contamination [20] |
| Concentration-Area (C-A) Fractal Model | Identifies breakpoints in concentration-area relationships | Distinguishes anomalies from background without arbitrary thresholds | Geochemical anomaly mapping; pollution studies [20] |
| Student's t-statistic | Measures significance of spatial correlation between anomalies and known occurrences | Provides statistical validation of anomaly significance | Target generation; hypothesis testing [20] |
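
The source does not give a formula for the Student's t-statistic in Table 2. One widely used formulation in mineral-potential mapping is the studentized contrast from the weights-of-evidence method, and the sketch below assumes that this is the intended statistic. The function name and the cell counts are hypothetical.

```python
import math

def studentized_contrast(anom_occ, anom_no_occ, bg_occ, bg_no_occ):
    """Contrast C = W+ - W- and its studentized value from a 2 x 2 cell count table.

    anom_occ     cells inside the anomaly that contain a known occurrence
    anom_no_occ  cells inside the anomaly without an occurrence
    bg_occ       cells outside the anomaly that contain a known occurrence
    bg_no_occ    cells outside the anomaly without an occurrence
    All four counts are assumed to be greater than zero.
    """
    n_occ = anom_occ + bg_occ
    n_no_occ = anom_no_occ + bg_no_occ

    # Positive and negative weights of evidence.
    w_plus = math.log((anom_occ / n_occ) / (anom_no_occ / n_no_occ))
    w_minus = math.log((bg_occ / n_occ) / (bg_no_occ / n_no_occ))

    contrast = w_plus - w_minus
    std_error = math.sqrt(1 / anom_occ + 1 / anom_no_occ + 1 / bg_occ + 1 / bg_no_occ)
    return contrast, contrast / std_error

# Hypothetical overlay of an anomaly map with 30 known occurrences on 1000 cells.
contrast, t_value = studentized_contrast(20, 80, 10, 890)
print(f"contrast = {contrast:.3f}, studentized contrast = {t_value:.2f}")
```

A large positive studentized contrast suggests that known occurrences fall inside the anomaly far more often than expected by chance; repeating the calculation across candidate thresholds is one way to compare alternative anomaly definitions.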

Experimental Protocols and Workflows

Comprehensive ESDA Workflow

A systematic ESDA workflow integrates multiple techniques in a logical sequence to maximize analytical insight while maintaining statistical rigor. The following protocol outlines a comprehensive approach to spatial pattern identification and anomaly detection:

Phase 1: Data Preparation and Exploration

- Step 1: Data Acquisition and Georeferencing: Collect spatially referenced data with appropriate metadata documenting collection methods, coordinate systems, and attribute definitions [20]. Ensure all observations include accurate geographic coordinates (X, Y) and attribute measurements (Zi).

- Step 2: Spatial Interpolation: Apply appropriate interpolation methods (e.g., IDW, kriging, MIM) to convert point data to continuous surfaces where necessary for visualization and analysis [20]. Validate interpolation results through cross-validation techniques.

- Step 3: Preliminary Visualization: Generate multiple map representations using different classification schemes (percentiles, standard deviations, natural breaks) to develop initial understanding of spatial distributions [20].
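
The cross-validation mentioned in Step 2 can be sketched as a leave-one-out loop: each sample is withheld in turn, predicted from the rest, and the errors are summarized. The example assumes NumPy and uses IDW as the interpolator; the function names, candidate power values, and synthetic samples are hypothetical.

```python
import numpy as np

def idw_predict(xy, z, target, power=2.0):
    """IDW estimate at a single target location."""
    dist = np.linalg.norm(xy - target, axis=1)
    if np.any(dist == 0):
        return z[dist == 0].mean()
    weights = dist ** -power
    return np.sum(weights * z) / np.sum(weights)

def leave_one_out(xy, z, power=2.0):
    """Mean error (bias) and RMSE from leave-one-out cross-validation."""
    xy = np.asarray(xy, dtype=float)
    z = np.asarray(z, dtype=float)
    n = len(z)
    predicted = np.empty(n)
    for i in range(n):
        keep = np.arange(n) != i          # withhold sample i
        predicted[i] = idw_predict(xy[keep], z[keep], xy[i], power)
    errors = predicted - z
    return {"mean_error": errors.mean(), "rmse": np.sqrt(np.mean(errors ** 2))}

# Hypothetical samples: a smooth surface plus noise at random locations.
rng = np.random.default_rng(2)
xy = rng.uniform(0, 10, size=(60, 2))
z = np.sin(xy[:, 0] / 2.0) + 0.1 * xy[:, 1] + rng.normal(0, 0.05, 60)

for power in (1.0, 2.0, 3.0):
    print(power, leave_one_out(xy, z, power))
```

The same loop can wrap any interpolator, which makes it a simple basis for comparing IDW settings with kriging on the same dataset.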

Phase 2: Spatial Autocorrelation Analysis

- Step 4: Global Spatial Autocorrelation: Calculate Global Moran's I to test the hypothesis of spatial randomness. A statistically significant result (p < 0.05) indicates structured spatial patterning warranting further investigation.

- Step 5: Local Spatial Autocorrelation: Compute LISA statistics to identify specific locations of spatial clustering (hot spots and cold spots) and spatial outliers. Apply appropriate multiple testing corrections to minimize false discoveries.

- Step 6: Spatial Scale Investigation: Analyze spatial autocorrelation at multiple distance bands to identify characteristic spatial scales of patterning using variogram analysis or multiscale Moran's I.
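
For the variogram analysis named in Step 6, the empirical semivariogram is half the mean squared difference between sample pairs, grouped by separation distance. The following is a minimal sketch assuming NumPy, isotropy, and equal-width lag bins; the function name and the transect data are hypothetical.

```python
import numpy as np

def empirical_variogram(xy, z, lag_width, n_lags):
    """Semivariance per lag bin [k * lag_width, (k + 1) * lag_width)."""
    xy = np.asarray(xy, dtype=float)
    z = np.asarray(z, dtype=float)

    i, j = np.triu_indices(len(z), k=1)            # every pair counted once
    dist = np.linalg.norm(xy[i] - xy[j], axis=1)
    sq_diff = (z[i] - z[j]) ** 2
    bin_index = np.floor(dist / lag_width).astype(int)

    centers = (np.arange(n_lags) + 0.5) * lag_width
    gamma = np.full(n_lags, np.nan)                # NaN where a bin has no pairs
    counts = np.zeros(n_lags, dtype=int)
    for b in range(n_lags):
        in_bin = bin_index == b
        counts[b] = in_bin.sum()
        if counts[b] > 0:
            gamma[b] = 0.5 * sq_diff[in_bin].mean()
    return centers, gamma, counts

# Hypothetical transect with a linear trend: semivariance keeps rising with distance.
xy = [(0, 0), (1, 0), (2, 0), (3, 0)]
z = [0.0, 1.0, 2.0, 3.0]
centers, gamma, counts = empirical_variogram(xy, z, lag_width=1.0, n_lags=4)
print(centers, gamma, counts)
```

The distance at which the semivariance levels off (the range) gives one estimate of the characteristic spatial scale; a curve that never levels off, as in this example, usually points to a trend that should be removed first.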

Phase 3: Anomaly Detection and Validation

- Step 7: Application of Anomaly Detection Methods: Implement specialized techniques such as LSA or C-A fractal analysis to identify statistical anomalies relative to background variation [20].

- Step 8: Spatial Association Analysis: Evaluate relationships between detected anomalies and potential causal factors or known occurrences using spatial correlation measures such as Student's t-statistic [20].

- Step 9: Interpretation and Hypothesis Generation: Synthesize results from multiple techniques to develop explanatory hypotheses regarding underlying spatial processes, with particular attention to anomalies confirmed through multiple methods.

Case Study Protocol: Geochemical Anomaly Detection

For researchers applying ESDA to environmental geochemical data, the following detailed protocol exemplifies the application of specific techniques for anomaly detection:

Objective: Identify statistically significant geochemical anomalies associated with potential mineralization or contamination sources.

Materials and Software Requirements:

- Geochemical sample data with precise coordinates and elemental concentrations

- GIS software with spatial statistical capabilities (ArcGIS, QGIS, or specialized tools like GeoDAS) [20]

- Statistical software for additional validation (R, Python with spatial libraries)

Methodology:

- Data Preprocessing: Log-transform concentration data to approximate normal distribution if necessary. Apply appropriate compositional data analysis techniques if working with closed-number systems (e.g., percentage data).

- Trend Surface Analysis: Fit polynomial trend surfaces to identify and remove large-scale regional patterns, leaving local residuals for anomaly detection.

- Multifractal Analysis: Apply the C-A fractal method by:

  - Creating a cumulative frequency distribution of concentration values

  - Plotting log(area enclosed by values at or above each concentration threshold) against log(concentration) and identifying breakpoints

  - Classifying values above the primary breakpoint as anomalous [20]

- Local Singularity Analysis: Implement LSA by:

  - Calculating concentration-area relationships across multiple scales around each sample point

  - Estimating singularity exponents that quantify divergence from normal scaling behavior

  - Classifying locations whose singularity exponents depart significantly from the non-singular background value as anomalous (on two-dimensional maps the background value is 2, with exponents below 2 marking local enrichment and exponents above 2 local depletion) [20]

- Spatial Validation: Compare detected anomalies with known mineral occurrences or contamination sources using Student's t-statistic to assess statistical significance of spatial associations [20].
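
The preprocessing and trend surface steps of this methodology can be sketched together: concentrations are log-transformed, a low-order polynomial in the coordinates is fitted by least squares, and the residuals are passed on to anomaly detection. The example assumes NumPy; the polynomial order, function name, and synthetic data are hypothetical.

```python
import numpy as np

def trend_surface_residuals(xy, z, order=1):
    """Fit a polynomial trend surface of the given order; return (trend, residuals)."""
    xy = np.asarray(xy, dtype=float)
    z = np.asarray(z, dtype=float)
    x, y = xy[:, 0], xy[:, 1]

    # Design matrix with every term x^a * y^b where a + b <= order.
    terms = [x**a * y**b for a in range(order + 1) for b in range(order + 1 - a)]
    design = np.column_stack(terms)

    coef, *_ = np.linalg.lstsq(design, z, rcond=None)
    trend = design @ coef
    return trend, z - trend

# Hypothetical 5 x 5 sampling grid: regional gradient plus one enriched sample.
gx, gy = np.meshgrid(np.arange(5), np.arange(5))
xy = np.column_stack([gx.ravel(), gy.ravel()]).astype(float)
log_background = 2.0 + 0.3 * xy[:, 0] - 0.1 * xy[:, 1]
conc = np.exp(log_background)
conc[12] *= np.exp(5.0)                 # enriched sample at the grid center

log_conc = np.log(conc)                 # log-transform before fitting
trend, residuals = trend_surface_residuals(xy, log_conc, order=1)
print("largest residual at sample", int(np.argmax(np.abs(residuals))))
```

Because the enriched sample also influences the fitted surface, robust fitting or an iterative refit without flagged samples may be preferable when anomalies are numerous.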

The Researcher's Toolkit: Essential Solutions for ESDA

Table 3: Essential Tools and Software for ESDA Implementation

| Tool/Category | Specific Examples | Function/Role in ESDA | Implementation Considerations |
|---|---|---|---|
| GIS Platforms | ArcGIS, QGIS, GeoDAS [20] | Primary environment for spatial data management, visualization, and analysis | GeoDAS specializes in fractal analysis; ArcGIS offers comprehensive toolset; QGIS provides open-source alternative |
| Statistical Software | R (spdep, gstat), Python (PySAL, GeoPandas) | Implementation of specialized spatial statistics and custom analyses | R offers extensive spatial statistics libraries; Python provides integration with machine learning workflows |
| Spatial Interpolation Tools | Kriging (GSTAT), IDW, Multifractal Interpolation [20] | Conversion of point data to continuous surfaces for visualization and analysis | Selection depends on data characteristics and study objectives |
| Anomaly Detection Specialized Tools | Local Singularity Analysis, C-A Fractal Model [20] | Identification of statistically significant spatial anomalies | Particularly valuable for detecting weak anomalies in noisy environmental data |
| Visualization Libraries | Matplotlib, D3.js, Tableau [22] | Creation of specialized visual representations of spatial patterns | Critical for effective communication of spatial patterns and relationships |

Applications in Environmental Research

ESDA methodologies find diverse applications across environmental research domains, each leveraging the capacity to identify meaningful spatial patterns and anomalies:

Climate Change Studies: Researchers employ ESDA to analyze spatial patterns of temperature and precipitation changes, identify regions experiencing anomalous warming or cooling trends, and map vulnerabilities to sea-level rise or extreme weather events. The spatial heterogeneity of climate change impacts makes ESDA particularly valuable for developing targeted adaptation strategies [21].

Biodiversity and Conservation: Spatial analysis techniques support conservation efforts by mapping species distributions, identifying critical habitats, and monitoring changes in biodiversity across landscapes. ESDA helps delineate biologically significant areas, track fragmentation effects, and optimize protected area designs [21].

Land Use and Land Cover Change: The analysis of land use change over time is a classic ESDA application, in which researchers track deforestation, urbanization, agricultural expansion, and habitat fragmentation patterns. Spatiotemporal ESDA enables the identification of change hotspots and the modeling of future development scenarios [21].