From Correlation to Causation: How Mechanistic AOP Models and Machine Learning Are Reshaping Drug Discovery

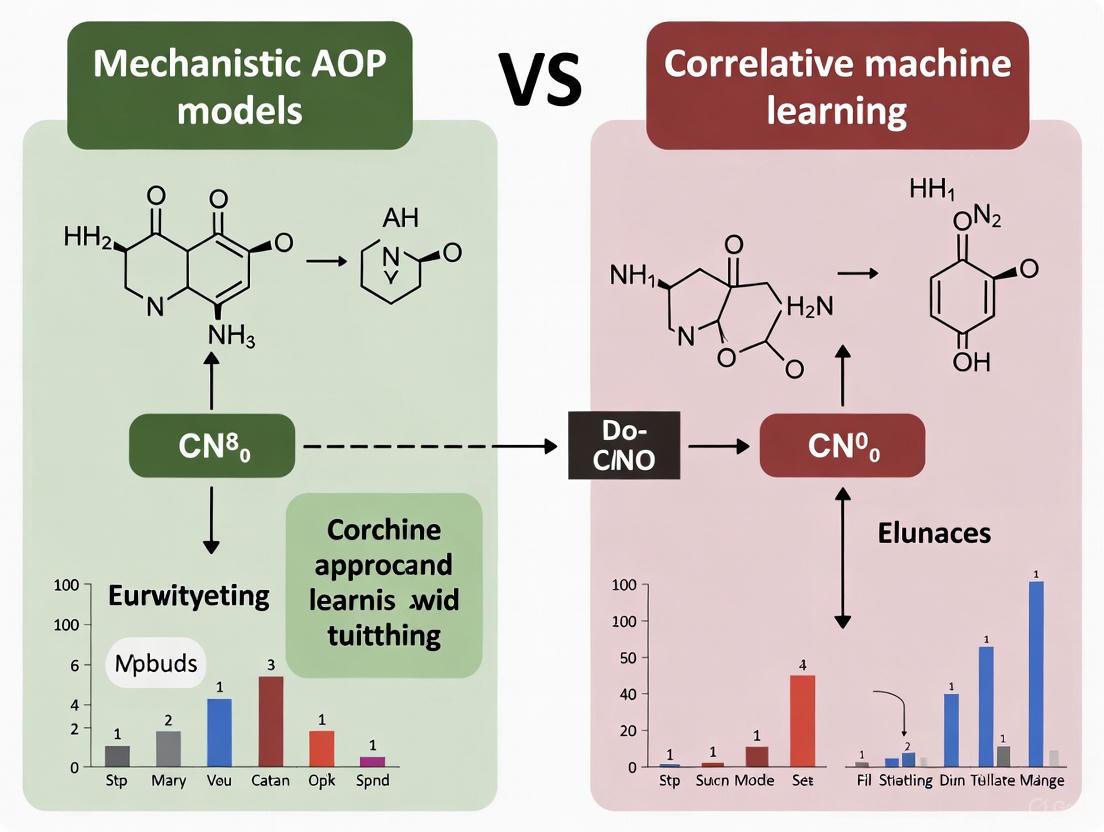

This article explores the paradigm shift from correlative machine learning to causal, mechanistic models in biomedical research and drug development.

Abstract

This article explores the paradigm shift from correlative machine learning to causal, mechanistic models in biomedical research and drug development. We examine the fundamental limitations of traditional correlation-based AI, which identifies patterns without understanding underlying causes, leading to fragile predictions and poor generalization. The piece introduces Adverse Outcome Pathways (AOPs) as a structured framework for representing mechanistic knowledge and demonstrates how they provide causal, interpretable models of disease pathways. Through comparative analysis and real-world case studies across toxicology, oncology, and therapeutic development, we illustrate how integrating mechanistic AOPs with machine learning's predictive power creates robust, reliable models that can predict intervention effects and answer counterfactual questions, ultimately enabling more efficient and successful drug discovery pipelines.

The Fundamental Shift: Why Correlation Is No Longer Enough in Biomedical AI

For decades, the machine learning revolution has been built on the foundation of correlation-based models, which excel at identifying statistical relationships and patterns in historical data [1]. These models learn from vast datasets to determine how certain inputs align with specific outputs, enabling predictions that have driven billions in economic value and transformed entire industries [1]. In drug discovery and development, correlation-based artificial intelligence (AI) has become particularly influential for predicting toxicity, estimating key variables in bioprocesses, and identifying potential drug candidates [2] [3].

However, these models operate primarily at the level of statistical association—they can identify that variables move together but cannot explain the underlying mechanisms or causal relationships [1]. This fundamental limitation becomes critically important in fields like pharmaceutical development, where understanding why a compound exhibits toxicity is as important as knowing that it does. As we enter an era demanding more interpretable and reliable AI systems, the scientific community is increasingly examining the trade-offs between correlation-based pattern recognition and mechanistic models built on understanding causal biological pathways [1].

Comparative Analysis: Correlation-Based vs. Mechanistic AOP Models

The table below summarizes the fundamental distinctions between these two approaches in toxicological prediction.

Table 1: Fundamental characteristics of correlation-based and mechanistic models

| Characteristic | Correlation-Based Models | Mechanistic AOP Models |

|---|---|---|

| Primary Focus | Identifying statistical patterns and associations in data [1] | Understanding cause-effect relationships and biological pathways [3] [4] |

| Core Question | "What" is happening? [1] | "Why" is it happening? [1] |

| Data Foundation | Historical datasets, often large-scale (e.g., Tox21, ToxCast) [3] | Biological knowledge of pathways (e.g., Adverse Outcome Pathways framework) [3] |

| Interpretability | Often "black box"; limited explanation capabilities [1] | High; built on transparent biological mechanisms [4] |

| Handling Novel Compounds | Limited to chemical space similar to training data | Potentially broader application based on mechanistic understanding |

| Regulatory Acceptance | Growing for early screening, but may require supplementary data [3] | Established for specific contexts (e.g., QSP, PBPK) [4] |

Experimental Comparison: Predictive Performance in Toxicity Assessment

To quantitatively evaluate both approaches, researchers conduct benchmarking studies using standardized datasets and experimental protocols. The following table summarizes typical performance metrics reported in the literature.

Table 2: Experimental performance comparison for toxicity prediction

| Model Type | Representative Endpoint | Reported AUROC | Key Strengths | Principal Limitations |

|---|---|---|---|---|

| Correlation-Based ML (Graph Neural Networks) | Hepatotoxicity, Cardiotoxicity (hERG) [3] | 0.75 - 0.90 [3] | High throughput, cost-effective for early screening [3] | Vulnerable to dataset bias; poor generalizability [1] |

| Correlation-Based ML (Random Forest, SVM) | Nuclear receptor signaling (Tox21) [3] | 0.70 - 0.85 [3] | Handles complex, high-dimensional data [3] | Cannot predict intervention effects [1] |

| Mechanistic AOP/QSP | Drug-Induced Liver Injury (DILI) [4] | Qualitative/Mechanistic Insight | Human-relevant predictions; explores "what-if" scenarios [4] | Model development can be resource-intensive [4] |

Detailed Experimental Protocol for Correlation-Based Models

Objective: To train and evaluate a correlation-based machine learning model for predicting compound hepatotoxicity using a public benchmark dataset.

Data Collection & Preprocessing:

- Data Source: Curate a dataset from public sources such as the DILIrank dataset (contains 475 compounds with annotated hepatotoxic potential) or Tox21 (8,249 compounds across 12 targets) [3].

- Molecular Representation: Encode chemical structures using one or more of: SMILES strings, molecular descriptors (e.g., molecular weight, clogP), or molecular fingerprints [3].

- Data Splitting: Split data into training (~80%) and test (~20%) sets using scaffold-based splitting to evaluate generalizability to novel chemical structures and prevent data leakage [3].
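The scaffold-based split described above can be sketched as a grouped split, in which every compound sharing a scaffold lands on the same side. This is a minimal illustration, not the protocol of any cited study: the scaffold keys are assumed to be precomputed strings (for example Bemis-Murcko scaffold SMILES from a cheminformatics toolkit), and the function name `scaffold_split` and the toy scaffold labels are hypothetical.

```python
from collections import defaultdict


def scaffold_split(scaffolds, test_fraction=0.2):
    """Assign whole scaffold groups to train or test so no scaffold spans both.

    `scaffolds` holds one scaffold key per compound (assumed precomputed).
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)

    # Largest groups first: common scaffolds fill the training set, while
    # groups that no longer fit go to the test set (an assumed convention).
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))

    train_cutoff = (1.0 - test_fraction) * len(scaffolds)
    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= train_cutoff:
            train.extend(group)
        else:
            test.extend(group)
    return train, test


# Toy input: ten compounds labelled by hypothetical scaffold names.
scaffolds = ["benzene"] * 4 + ["pyridine"] * 3 + ["indole"] * 2 + ["furan"]
train_idx, test_idx = scaffold_split(scaffolds)
print("train:", train_idx)
print("test:", test_idx)
```

Because groups are never divided, the realized split ratio is only approximately 80/20; that is the price of keeping test scaffolds unseen during training.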

Model Training & Evaluation:

- Algorithm Selection: Implement multiple algorithms for comparison, including Random Forest, XGBoost, and a Graph Neural Network (GNN) [3].

- Model Training: Train each model on the training set, using techniques like cross-validation to optimize hyperparameters.

- Performance Assessment: Evaluate models on the held-out test set using metrics including Accuracy, Precision, Recall, F1-score, and Area Under the ROC Curve (AUROC) [3].

- Interpretability Analysis: Apply post-hoc interpretability tools like SHAP (SHapley Additive exPlanations) to identify molecular substructures influencing predictions [3].
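To make the evaluation metrics above concrete, the following sketch computes AUROC from its rank interpretation (the probability that a randomly chosen toxic compound receives a higher score than a randomly chosen non-toxic one), together with precision, recall, and F1 at a fixed threshold. The labels and scores are invented toy values, and the 0.5 threshold is an assumption; in practice a library implementation would normally be used.

```python
def auroc(labels, scores):
    """AUROC as the fraction of positive/negative pairs ranked correctly."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUROC needs at least one positive and one negative")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a correct ranking
    return wins / (len(positives) * len(negatives))


def precision_recall_f1(labels, scores, threshold=0.5):
    """Threshold the scores, then compute precision, recall, and F1."""
    predicted = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for y, p in zip(labels, predicted) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, predicted) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, predicted) if y == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return precision, recall, f1


# Toy held-out set: 1 = hepatotoxic, 0 = non-toxic (invented values).
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.6, 0.3, 0.1]
print("AUROC:", auroc(labels, scores))
print("precision, recall, F1:", precision_recall_f1(labels, scores))
```

AUROC is threshold-free, whereas precision, recall, and F1 depend on the chosen cutoff, which is why benchmark papers tend to report both kinds of metric.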

Detailed Experimental Protocol for Mechanistic AOP Models

Objective: To develop a Quantitative Systems Pharmacology (QSP) model that simulates a known Adverse Outcome Pathway (AOP) for drug-induced liver injury.

Model Construction:

- AOP Framework Definition: Establish the AOP using a molecular initiating event (e.g., chemical binding to a receptor), a series of causally connected key events, and an adverse outcome at the organism level [3].

- Mathematical Formalization: Translate the AOP into a set of ordinary differential equations (ODEs) or rule-based systems that describe the dynamics of each key event [4].

- Parameterization: Populate the model with kinetic parameters and quantitative relationships obtained from relevant in vitro assays, scientific literature, and comparator molecule data [4].

Simulation & Validation:

- Virtual Population: Generate a population of in silico patients reflecting human physiological variability [4].

- Intervention Simulation: Simulate the administration of different drug doses to the virtual population and observe the progression through the AOP [4].

- Model Qualification: Validate the model's predictions by comparing its output against independent clinical or experimental data not used during model building [4].
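The construction and simulation steps above can be illustrated with a deliberately small ODE sketch: a three-state chain from molecular initiating event to key event to adverse outcome, integrated with forward Euler and run over a virtual population that varies in repair capacity. Every state, rate constant, dose value, and function name here is a hypothetical placeholder chosen for illustration; a real QSP model would be parameterized from assay and literature data as the protocol describes.

```python
import random


def simulate_injury(dose, k_bind=1.0, k_stress=0.5, k_injury=0.2,
                    k_repair=0.3, t_end=48.0, dt=0.05):
    """Forward-Euler integration of a three-state AOP chain.

    mie    : target engagement (molecular initiating event), saturable in dose
    stress : intermediate key event, driven by mie and cleared by repair
    injury : adverse-outcome marker, driven by stress and cleared by repair
    All states are dimensionless and all parameters are illustrative.
    """
    mie = stress = injury = 0.0
    for _ in range(round(t_end / dt)):
        d_mie = k_bind * (dose * (1.0 - mie) - mie)
        d_stress = k_stress * mie - k_repair * stress
        d_injury = k_injury * stress - k_repair * injury
        mie += dt * d_mie
        stress += dt * d_stress
        injury += dt * d_injury
    return injury


def virtual_population(n, seed=0):
    """Sample per-patient repair rates (log-normal spread is an assumption)."""
    rng = random.Random(seed)
    return [0.3 * rng.lognormvariate(0.0, 0.3) for _ in range(n)]


repair_rates = virtual_population(100)
low_dose = [simulate_injury(0.1, k_repair=k) for k in repair_rates]
high_dose = [simulate_injury(2.0, k_repair=k) for k in repair_rates]
print("mean injury, low dose :", sum(low_dose) / len(low_dose))
print("mean injury, high dose:", sum(high_dose) / len(high_dose))
```

Because the dose enters through an explicit mechanism, the same code answers "what if" questions for doses that were never observed, which is the property the protocol relies on. Qualification against independent data remains a separate step that this sketch does not attempt.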

Successful implementation of both modeling paradigms requires specific data resources and computational tools. The table below details key components of the modern computational toxicologist's toolkit.

Table 3: Essential research reagents and resources for predictive toxicology

| Resource Name | Type/Function | Application Context |

|---|---|---|

| Tox21 Dataset [3] | Publicly available benchmark dataset with qualitative toxicity measurements for 8,249 compounds across 12 biological targets. | Training and validation data for correlation-based ML models predicting nuclear receptor and stress response pathway activity. |

| DILIrank Dataset [3] | Curated dataset of 475 compounds annotated for their potential to cause Drug-Induced Liver Injury. | Critical for building and benchmarking both correlation-based and mechanistic models of hepatotoxicity. |

| hERG Central [3] | Extensive database containing over 300,000 experimental records on hERG channel blockade, linked to cardiotoxicity. | Supports classification and regression tasks for predicting compound cardiotoxicity risk. |

| Adverse Outcome Pathway (AOP) Framework [3] | Conceptual framework that organizes knowledge linking a Molecular Initiating Event (MIE) to an Adverse Outcome (AO) via Key Events (KEs). | Provides the structural backbone for developing mechanistic QSP and AOP models. |

| SHAP (SHapley Additive exPlanations) [3] | A game theory-based method to explain the output of any machine learning model. | Used for interpreting "black box" correlation-based models and identifying features driving predictions. |

The "age of correlation-based models" has provided powerful pattern recognition capabilities that continue to deliver significant value in high-throughput screening applications [3] [1]. However, their inherent limitation of recognizing patterns without understanding mechanisms presents critical challenges for drug development, where predicting the effects of interventions and generalizing to novel chemical spaces is paramount [1].

The future of predictive toxicology and drug development lies not in choosing one paradigm over the other, but in strategically integrating them. Correlation-based models can efficiently prioritize candidates and generate hypotheses, while mechanistic AOP and QSP models can provide deeper biological understanding and predict outcomes in uncharted territories [4]. This synergistic approach, leveraging the scale of AI-driven correlation with the explanatory power of mechanistic models, promises to enhance the efficiency, success rate, and human-relevance of the entire drug discovery pipeline [3] [4].

In the data-driven landscape of modern scientific research, correlation-based models, particularly those powered by machine learning (ML), have become indispensable for identifying patterns and making predictions from large datasets. These models excel at uncovering statistical relationships between variables, enabling tasks from image recognition to predictive analytics in drug discovery [1]. However, this reliance on correlation presents a fundamental challenge for scientific inquiry, which ultimately seeks to understand causal mechanisms. The core limitations of correlation—its susceptibility to confounding factors, its tendency to detect spurious links, and its resulting fragility in predictive power when applied to new contexts—pose significant risks in research and development, where decisions based on flawed inferences can lead to costly failures [1] [5].

This guide objectively compares correlative machine learning approaches with mechanistic models and the emerging paradigm of Causal AI within the specific context of Advanced Oxidation Process research for environmental science and drug development. In this part of the discussion, the abbreviation AOP denotes Advanced Oxidation Process rather than the Adverse Outcome Pathway framework described earlier. By framing this comparison through experimental data and methodological rigor, we aim to equip researchers with a clear understanding of when and why moving beyond mere correlation is not just beneficial, but necessary for robust and reliable scientific outcomes.

The Fundamental Limitations of Correlation-Based Analysis

Correlation is a measure of statistical association, but it does not imply causation. This foundational principle is often the first casualty in the rush to derive insights from big data. The inherent constraints of correlation-based analysis can be categorized into three critical areas, each with profound implications for scientific research.

Confounding and Spurious Links

A confounder is a variable, often unmeasured or hidden, that influences both the independent and dependent variables, creating a non-causal, spurious correlation between them [6]. Traditional ML models, which primarily operate on the first rung of Judea Pearl's "Ladder of Causation" (Association), are exceptionally adept at detecting these spurious links but incapable of distinguishing them from genuine causal relationships [1].

- Classic Example: The observed correlation between ice cream sales and shark attacks is not causal but is driven by a confounding variable—warm weather—which independently increases both swimming activity and ice cream consumption [1] [6].

- Research Implication: In AOP research, a correlation might be found between a specific catalyst property (e.g., surface area) and pollutant degradation efficiency. However, a confounder, such as the simultaneous presence of a specific functional group on the catalyst, could be the true causal driver. A correlation-based model would misleadingly attribute the effect to surface area, leading to inefficient catalyst optimization [7].
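The ice cream and shark attack example can be reproduced in a few lines of simulation. In the sketch below, a single confounder (temperature) drives two otherwise unrelated variables; the raw correlation between them is strong, but it vanishes once the confounder is regressed out of both. All variables are synthetic, and the noise levels and sample size are arbitrary assumptions.

```python
import math
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def residuals(ys, xs):
    """Residuals of y after a least-squares linear regression on x."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    return [(y - mean_y) - slope * (x - mean_x) for x, y in zip(xs, ys)]


rng = random.Random(42)
n = 5000
temperature = [rng.gauss(0.0, 1.0) for _ in range(n)]          # confounder
ice_cream = [t + rng.gauss(0.0, 0.5) for t in temperature]     # caused by temperature only
shark_attacks = [t + rng.gauss(0.0, 0.5) for t in temperature] # caused by temperature only

naive_r = pearson(ice_cream, shark_attacks)
adjusted_r = pearson(residuals(ice_cream, temperature),
                     residuals(shark_attacks, temperature))
print("raw correlation:", round(naive_r, 3))
print("correlation after adjusting for temperature:", round(adjusted_r, 3))
```

The adjustment works here only because the confounder was measured. With a hidden confounder, such as an unrecorded functional group on a catalyst, the same correction is not available from the data alone.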

Fragile Predictions and Poor Generalization

Correlation-based models learn patterns from their training data. When the underlying data distribution changes—a phenomenon known as distribution shift—the model's predictions often become unreliable due to poor external validity [1].

- The Problem of Distribution Shifts: A model trained on pre-pandemic data might fail dramatically when consumer behavior suddenly shifts. Similarly, an AOP prediction model trained on laboratory-grade water might perform poorly when predicting efficiency in real wastewater with complex, variable matrices [1] [7]. These models are brittle because they describe "what is" in a specific dataset rather than "what must be" due to underlying mechanisms.

- Inability to Handle Interventions: Correlative systems show what usually happens but cannot reliably predict the effects of deliberate changes or new interventions [1]. For instance, they can predict the degradation rate under historical conditions but cannot accurately forecast what would happen if an entirely new type of catalyst is introduced, as this represents a shift outside the observed data [8].

The Black Box and Bias Perpetuation

Correlation-based ML models often function as "black boxes," providing predictions without transparent explanations [1]. This opacity makes it difficult for researchers to audit results, spot flawed logic, or understand the model's failure modes. Furthermore, these models can inadvertently amplify existing biases in the training data. A stark example is a US healthcare algorithm that used healthcare spending as a proxy for medical needs. Due to historical inequities in access, Black patients had lower past spending, leading the algorithm to systematically underestimate their care needs, thus perpetuating the very bias it should have helped to eliminate [1].

Table 1: Core Limitations of Correlation-Based Models in Research

| Limitation | Description | Impact on Research & Development |

|---|---|---|

| Confounding | Inability to distinguish causal links from spurious correlations caused by a third, unobserved variable. | Leads to incorrect identification of key drivers, misdirecting R&D efforts and resource allocation. |

| Fragility under Distribution Shift | Poor performance when applied to data that differs from the training set (low external validity). | Models fail in real-world conditions or with new material classes, requiring constant retraining and validation. |

| Inability to Model Interventions | Cannot answer "what if" questions about actions or changes not present in the historical data. | Hinders the design of novel experiments, new molecules, or innovative catalyst structures. |

| Lack of Counterfactual Reasoning | Cannot reason about what would have happened under different circumstances for a specific case. | Prevents root-cause analysis, personalized treatment optimization, and true understanding of individual outcomes. |

Beyond Correlation: Paradigms for Causal Understanding

To overcome the limitations of correlation, scientific modeling must advance to higher levels of causal reasoning. This involves both established mechanistic approaches and innovative Causal AI frameworks.

Mechanistic Models: Deductive Reasoning from First Principles

Mechanistic models, also known as process-based or white-box models, seek to emulate the underlying physical, chemical, or biological processes governing a system. They are built on deductive reasoning from established scientific principles [8].

- Core Philosophy: These models start with a hypothesis about the causal mechanisms (e.g., reaction pathways, enzyme kinetics, fluid dynamics) and translate them into mathematical equations. They are calibrated and validated with experimental data, but their structure is based on causality [8].

- Exemplar: The Hodgkin-Huxley model of the nerve action potential is a paradigm of mechanistic modeling. It mathematically represents the causal mechanisms of ion channel dynamics to explain and predict neuronal electrical activity, a feat for which its creators won a Nobel Prize [8].

- Application to AOPs: A mechanistic model of an AOP would be based on chemical kinetics, representing the causal chain of reactions: catalyst activation, radical generation (•OH, SO4•-), and the subsequent oxidation of pollutant molecules [8].

Causal AI: A Framework for Inference and Intervention

Causal AI represents a revolutionary paradigm that integrates causal inference with machine learning. It aims to move beyond pattern recognition to model cause-and-effect relationships explicitly [1]. This approach operates on all three rungs of Pearl's Ladder of Causation:

- Association: Seeing/observing (What is?).

- Intervention: Doing/intervening (What if I do X?).

- Counterfactuals: Imagining (What would have happened if?).

Key methodologies include:

- Directed Acyclic Graphs (DAGs): Visual representations of assumed causal relationships between variables, which help identify confounding paths and guide analysis [1].

- Structural Causal Models (SCMs): A mathematical framework that combines DAGs with functional equations to formalize causal relationships, enabling the simulation of interventions and counterfactuals [1].

- Causal Inference Methods: Techniques like instrumental variables, difference-in-differences, and doubly robust estimation are designed to isolate causal effects from observational data in the presence of confounders, provided their identifying assumptions hold [1] [6].
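The three rungs can be made tangible with a small linear structural causal model in which a confounder Z affects both X and Y, and X has a true effect on Y. The sketch below contrasts the observational regression slope (association), the effect of forcing X to a value (intervention), and a unit-level counterfactual obtained by abduction, action, and prediction. The coefficients, the function names, and the assumption that Z is observed for the counterfactual step are all illustrative choices, not part of any cited method.

```python
import random

B_EFFECT = 0.5   # assumed true causal effect of X on Y
A, C = 1.0, 2.0  # assumed strengths of Z -> X and Z -> Y


def sample_unit(rng, do_x=None):
    """Draw one unit; if do_x is given, X is set by intervention, not by Z."""
    z = rng.gauss(0.0, 1.0)
    x = A * z + rng.gauss(0.0, 1.0) if do_x is None else do_x
    y = B_EFFECT * x + C * z + rng.gauss(0.0, 1.0)
    return z, x, y


def slope(xs, ys):
    """Least-squares slope of y on x."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    return (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
            / sum((x - mean_x) ** 2 for x in xs))


def counterfactual_y(z, x, y, new_x):
    """Abduction (recover the unit's noise), action (set X), prediction."""
    u_y = y - B_EFFECT * x - C * z
    return B_EFFECT * new_x + C * z + u_y


rng = random.Random(7)
n = 20000

# Rung 1, association: the observational slope mixes causal and confounded paths.
observed = [sample_unit(rng) for _ in range(n)]
obs_slope = slope([u[1] for u in observed], [u[2] for u in observed])

# Rung 2, intervention: compare do(X=1) with do(X=0).
y_do1 = [sample_unit(rng, do_x=1.0)[2] for _ in range(n)]
y_do0 = [sample_unit(rng, do_x=0.0)[2] for _ in range(n)]
ate = sum(y_do1) / n - sum(y_do0) / n

# Rung 3, counterfactual: what would Y have been for this unit under X = 4?
y_cf = counterfactual_y(z=1.0, x=2.0, y=3.5, new_x=4.0)

print("observational slope:", round(obs_slope, 2))
print("interventional effect:", round(ate, 2))
print("counterfactual Y:", y_cf)
```

In this model the observational slope is inflated well above the true effect of 0.5, while the interventional contrast recovers it up to sampling noise. The counterfactual step additionally requires the structural equations themselves, which is why it sits on the highest rung.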

Diagram 1: The Hierarchy of Causal Reasoning

Comparative Analysis: Mechanistic AOP Models vs. Correlative ML

The distinction between mechanistic and correlative approaches becomes stark when applied to a concrete research problem, such as predicting the efficiency of an Advanced Oxidation Process.

Experimental Protocol for an AOP ML Model

A recent study provides a clear protocol for a correlation-based ML approach to predicting organic pollutant degradation kinetics in a Fe-carbon catalyst/PMS system [7].

- Data Collection: A database was constructed from 27 published articles, collating data on:

- Catalyst Properties: Specific surface area, pore volume, Fe-Nx content, graphitic N content.

- Pollutant Properties: Parameters from the Linear Solvation Energy Relationship (LSER) model.

- Environmental Factors & Dosages: pH, temperature, catalyst dosage, pollutant concentration.

- Target Variable: The kinetic constant (k) of pollutant degradation.

- Data Preprocessing: Missing catalyst property data were imputed using a specialized ANN model (R² = 0.9151) to create a complete dataset.

- Model Building & Training: An Artificial Neural Network (ANN) was constructed. The model's hyperparameters were tuned, and its performance was evaluated using the coefficient of determination (R²) between simulated and experimental k values.

- Feature Importance Analysis: The trained model was analyzed to rank the importance of all input variables in predicting the kinetic constant.
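One common way to rank input variables for a trained model is permutation importance: shuffle one feature at a time and measure how much the fit degrades. The source does not state which importance method the study used, so the sketch below should be read as a generic illustration rather than a reproduction. The stand-in `toy_model`, its coefficients, and the synthetic data are hypothetical; only the feature names echo variables mentioned in the protocol.

```python
import random


def r_squared(y_true, y_pred):
    """Coefficient of determination between observed and predicted values."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def permutation_importance(model, rows, targets, seed=0):
    """Drop in R² when each feature column is shuffled independently."""
    rng = random.Random(seed)
    baseline = r_squared(targets, [model(r) for r in rows])
    drops = []
    for j in range(len(rows[0])):
        column = [r[j] for r in rows]
        rng.shuffle(column)
        shuffled = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
        drops.append(baseline - r_squared(targets, [model(r) for r in shuffled]))
    return drops


def toy_model(row):
    """Hypothetical stand-in for a trained network predicting k."""
    catalyst_dosage, pore_volume, ph = row
    return 3.0 * catalyst_dosage + 1.0 * pore_volume + 0.0 * ph


feature_names = ["catalyst_dosage", "pore_volume", "pH"]
data_rng = random.Random(1)
rows = [[data_rng.random() for _ in feature_names] for _ in range(500)]
targets = [toy_model(r) for r in rows]  # noise-free targets for clarity

importance = permutation_importance(toy_model, rows, targets)
total = sum(importance)
for name, drop in zip(feature_names, importance):
    share = 100.0 * drop / total if total > 0 else 0.0
    print(f"{name}: {share:.1f}%")
```

A ranking of this kind describes what the model relies on, not what causes the outcome: a feature can score highly simply because it is correlated with an unmeasured driver.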

Performance and Limitations of the Correlative ML Model

The ANN model achieved a high R² value of 0.9272, indicating close agreement between the simulated and experimental kinetic constants [7]. Feature analysis identified the top five influential variables as:

- Catalyst dosage (12.41%)

- Pore volume (7.06%)

- Pollutant dosage (6.36%)

- S value of LSER (5.35%)

- B value of LSER (4.24%)

While powerful for prediction within the scope of its training data, this approach has inherent limitations. It identifies statistical associations but does not confirm that catalyst dosage causes a change in the kinetic constant; an unmeasured confounder could be at play. Furthermore, its performance is contingent on the data distribution. If a novel catalyst with properties outside the training set is introduced, the model's predictions may fail, demonstrating its fragility [7].

Contrasting with a Mechanistic AOP Model Approach

A mechanistic model for the same AOP system would be constructed differently, focusing on representing the causal chain of events [8]:

- Hypothesis Formulation: Define the proposed reaction mechanism (e.g., radical vs. non-radical pathways).

- Equation Building: Translate the mechanism into a system of differential equations based on mass-action kinetics and adsorption isotherms (e.g., d[Pollutant]/dt = -k_OH [•OH][Pollutant] - k_SO4 [SO4•-][Pollutant]).

- Parameter Estimation: Use experimental data to estimate unknown parameters (e.g., rate constants k_OH, k_SO4).

- Validation and Prediction: Test the model's predictions against independent experimental results. Once validated, the model can be used to simulate the effects of entirely new interventions.
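The rate law in the equation-building step becomes easy to work with under one additional assumption that is not stated in the source: that the radical concentrations sit at a steady state. The two terms then collapse into a single observed rate constant k_obs = k_OH[•OH] + k_SO4[SO4•-], and the pollutant decays exponentially. The sketch below generates noise-free synthetic data from that solution, recovers k_obs by linear regression on the log-transformed concentrations, and then predicts the outcome of a new intervention. All numerical values are illustrative placeholders, not measured constants.

```python
import math


def k_observed(k_oh, oh, k_so4, so4):
    """Pseudo-first-order rate constant under steady-state radicals (assumed)."""
    return k_oh * oh + k_so4 * so4


def pollutant(t, p0, k_obs):
    """Analytic solution of d[P]/dt = -k_obs [P]."""
    return p0 * math.exp(-k_obs * t)


def fit_k_obs(times, concentrations):
    """Least-squares slope through the origin of ln(P0/P) versus t.

    Assumes times[0] == 0 so that concentrations[0] is P0.
    """
    p0 = concentrations[0]
    numerator = sum(t * math.log(p0 / c) for t, c in zip(times, concentrations))
    denominator = sum(t * t for t in times)
    return numerator / denominator


# Illustrative values: rate constants in 1/(M s), radical levels in M.
k_true = k_observed(k_oh=5e9, oh=1e-13, k_so4=1e9, so4=1e-12)

times = [0.0, 300.0, 600.0, 900.0, 1200.0]            # seconds
measured = [pollutant(t, 1.0, k_true) for t in times]  # synthetic "experiment"

k_fit = fit_k_obs(times, measured)
print("fitted k_obs (1/s):", k_fit)

# Prediction for an unobserved intervention: sulfate radical level doubled.
k_new = k_observed(k_oh=5e9, oh=1e-13, k_so4=1e9, so4=2e-12)
print("fraction remaining after 10 min, baseline:", pollutant(600.0, 1.0, k_fit))
print("fraction remaining after 10 min, doubled SO4 radical:", pollutant(600.0, 1.0, k_new))
```

The final two lines show the practical difference from a correlative fit: because each term of the rate law has a physical meaning, a change to one of them can be simulated directly, subject to the validity of the steady-state assumption.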

Table 2: Mechanistic vs. Machine Learning Modeling Approaches [8]

| Aspect | Mechanistic Modeling | Machine Learning (Correlative) |

|---|---|---|

| Primary Goal | Establish causal, mechanistic relationships between inputs and outputs. | Establish statistical relationships and correlations between inputs and outputs. |

| Data Requirements | Capable of handling small datasets. | Requires large datasets for training. |

| Handling Novelty | Once validated, can be used as a predictive tool for scenarios not present in the original data (e.g., new treatments). | Can only make predictions related to patterns within the data supplied; struggles with novelty. |

| Interpretability | High (White-box); provides understanding of the "why". | Low (Black-box); provides an answer without a mechanistic explanation. |

| Scalability | Difficult to scale and incorporate multiple space and time scales. | Excellent at tackling problems with multiple scales and high dimensionality. |

| Inductive/Deductive | Deductive: Reasons from general principles to specific predictions. | Inductive: Infers general patterns from specific data examples. |

Diagram 2: Contrasting Methodological Workflows

The Scientist's Toolkit: Research Reagent Solutions for AOP Studies

Table 3: Essential Materials and Reagents for AOP Catalyst and Efficiency Studies

| Reagent/Material | Function in AOP Research | Research Context |

|---|---|---|

| Fe-carbon Catalysts | Serves as the heterogeneous catalyst to activate peroxymonosulfate (PMS) and generate reactive oxygen species. | The core material under investigation; properties like Fe-Nx content and pore volume are key variables [7]. |

| Peroxymonosulfate (PMS) | The oxidant precursor activated by the catalyst to generate powerful sulfate (SO4•-) and hydroxyl (•OH) radicals. | A standard oxidant in AOP studies; its dosage is a critical experimental factor [7]. |

| Target Organic Pollutants | Model compounds (e.g., pharmaceuticals, dyes) used to quantify the degradation efficiency of the AOP system. | Pollutant properties (LSER parameters) are key inputs for predictive models [7]. |

| Artificial Neural Network (ANN) | A machine learning algorithm used to model complex, non-linear relationships between catalyst properties, conditions, and degradation kinetics. | Used as a correlative predictive tool to analyze variable importance and predict kinetic constants from a database [7]. |

| Linear Solvation Energy Relationship (LSER) | A model that describes the physicochemical properties of pollutants using parameters (S, B, etc.) related to solubility and polarity. | Provides quantitative descriptors for pollutant molecules as inputs for ML models [7]. |

The limitations of correlation—confounding, spurious links, and fragile predictions—are not merely statistical curiosities but fundamental obstacles to scientific progress. While correlative ML models offer powerful predictive capabilities within their training domain, they lack the causal understanding required for true scientific insight and reliable extrapolation to novel situations.

The future of robust research, particularly in complex fields like AOP optimization and drug development, lies in a synergistic approach. Mechanistic models provide the indispensable causal backbone and deductive power. Causal AI offers a rigorous framework for reasoning about interventions and counterfactuals from data. Correlative ML serves as a powerful tool for pattern detection and initial hypothesis generation from large-scale datasets. By integrating these paradigms, researchers can move beyond asking "what is correlated?" to the more profound and actionable questions of "why does it happen?" and "how can we effectively intervene?"

In the complex landscape of modern biological research, particularly in drug development, two distinct approaches have emerged for understanding and predicting compound effects: correlative machine learning and mechanistic reasoning. Correlative machine learning models, particularly those using deep learning algorithms, identify statistical patterns in large datasets to predict outcomes such as drug toxicity [9]. While these models can achieve high predictive accuracy, they often function as "black boxes" with limited transparency into the underlying biological causality. In contrast, mechanistic reasoning seeks to elucidate the causal chain of molecular events—from initial interaction to cellular and tissue-level responses—that explain how and why a biological effect occurs [10]. This comparative guide objectively examines both approaches through the lens of drug-induced toxicity prediction, providing researchers with experimental data and methodologies to inform their investigative strategies.

Theoretical Foundations: AOP Models vs. Correlative ML

Correlative Machine Learning in Toxicology

Machine learning approaches in toxicology leverage chemical structure data and biological activity profiles to build predictive models. These models utilize various algorithms including traditional methods like Random Forest (RF) and Support Vector Machine (SVM), alongside deep learning approaches such as Graph Neural Networks (GNN) and Transformers [9]. The predictive capability stems from identifying patterns in molecular descriptors, fingerprints, or graph-based representations that correlate with toxic outcomes. However, these models typically lack explicit biological pathway information, instead relying on statistical associations between chemical features and observed effects.

A significant limitation of purely correlative ML approaches is their limited performance when training data is scarce. Deep learning models in particular "often achieve suboptimal performance compared to traditional ML models when trained on small toxicity datasets, as DL models typically require large amounts of data for effective training" [9]. This data dependency restricts their applicability in early-stage drug development, where novel compounds may have little analogous toxicity data.

Mechanistic Reasoning in Biological Systems

Mechanistic reasoning represents a fundamentally different approach that focuses on constructing causal explanations for biological phenomena. According to research on biology undergraduates' reasoning processes, mechanistic reasoning involves "identifying entities across levels of organization and their relevant activities" and "exploring how processes interact and connect in a complex system" [10]. In the context of toxicology, this translates to building Adverse Outcome Pathways (AOPs) that describe sequential events from molecular initiating event to organism-level response.

Studies of student learning indicate that effective mechanistic models require connecting entities across biological organization levels with specific causal relationships. However, the students in that work often struggled with this integration, as "most connections were considered nonnormative and lacked important entities, leading to an abundance of unspecified causal connections" [10]. This highlights the challenge of building complete mechanistic understanding even when the goal is explicit causal explanation.

Experimental Comparison: Methodologies & Protocols

Experimental Design for Model Validation

Robust experimental design is critical for comparative evaluation of correlative ML and mechanistic approaches. The Design of Experiments (DOE) framework provides a systematic methodology for simultaneously investigating multiple factors and their interactions, offering significant advantages over traditional one-factor-at-a-time (OFAT) approaches [11]. DOE "requires fewer resources for the amount of information obtained, saving on time and materials" while providing "deeper insight into complex systems" [11].

For toxicity prediction studies, key experimental design principles include:

- Adequate replication: Ensuring sufficient biological replicates rather than just large feature datasets

- Appropriate controls: Including both positive and negative controls for assay validation

- Noise reduction: Using blocking strategies and covariates to minimize experimental variability

- Randomization: Preventing confounding factors and enabling rigorous interaction testing [12]

Power analysis should be conducted prior to experimentation to optimize sample size and ensure statistically valid comparisons between modeling approaches.
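As a rough illustration of such a power analysis, the sketch below estimates the number of replicates per group needed to detect a standardized difference between two approaches with a two-sided, two-sample comparison. It uses the normal approximation n = 2((z_(1-α/2) + z_power) / d)², which slightly underestimates the requirement of an exact t-test; the effect sizes, significance level, and target power shown are conventional example values, not recommendations from the source.

```python
import math
from statistics import NormalDist


def samples_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate per-group sample size for a two-sided two-sample test.

    effect_size is the standardized mean difference (Cohen's d).
    """
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1.0 - alpha / 2.0)
    z_power = standard_normal.inv_cdf(power)
    return math.ceil(2.0 * ((z_alpha + z_power) / effect_size) ** 2)


for d in (0.2, 0.5, 0.8):
    print(f"effect size {d}: {samples_per_group(d)} replicates per group")
```

The required sample size grows with the inverse square of the effect size, so halving the difference one hopes to detect roughly quadruples the number of biological replicates needed.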

Protocol for Mechanistic Model Construction

Building a mechanistic model of drug-induced toxicity requires systematic investigation of causal pathways:

- Entity Identification: Identify relevant biological entities across organizational levels (molecular, cellular, tissue, organ)

- Activity Characterization: Determine the specific activities and interactions between entities

- Connection Mapping: Establish causal relationships between activities across biological levels

- Validation Testing: Design experiments to test predicted causal relationships [10]

This protocol emphasizes the importance of explicit causal connections rather than merely associative relationships. For example, a complete mechanistic model of hepatotoxicity would identify specific metabolic enzymes, reactive intermediates, cellular stress pathways, and tissue damage markers in a connected causal sequence.

Protocol for Correlative ML Model Development

Developing correlative ML models for toxicity prediction follows a standardized workflow:

- Data Collection: Compile toxicity data from sources like TOXRIC, EPA DSSTox, or PubChem [9]

- Molecular Representation: Encode compounds using fingerprints (Morgan, MACCS), molecular descriptors, or graph-based representations

- Model Selection: Choose appropriate algorithms based on dataset size—traditional ML (RF, SVM, XGB) for smaller datasets, deep learning (GNN, Transformers) for large datasets

- Interpretability Analysis: Apply post-hoc interpretation methods like SHAP or counterfactual analysis to identify features driving predictions [9]

This protocol prioritizes predictive accuracy while acknowledging the need for interpretability methods to gain limited insights into potential mechanisms.
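The workflow above can be sketched end to end with a deliberately small stand-in. Instead of the RF, SVM, or GNN models named in the protocol, this example uses a k-nearest-neighbour vote over Tanimoto similarity, and the fingerprints are hand-written sets of "on" bits rather than real Morgan or MACCS fingerprints. All data and the names `TRAIN` and `predict_toxic` are hypothetical.

```python
def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto (Jaccard) similarity between two sets of 'on' fingerprint bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


# Toy training set: (on-bits of a hypothetical fingerprint, toxic label).
TRAIN = [
    (frozenset({1, 4, 7, 9}), 1),
    (frozenset({1, 4, 8, 9}), 1),
    (frozenset({2, 3, 5}), 0),
    (frozenset({2, 5, 6}), 0),
]


def predict_toxic(query: frozenset, k: int = 3):
    """Majority vote among the k most similar training compounds.

    Also returns the best similarity as a crude applicability-domain check.
    """
    ranked = sorted(TRAIN, key=lambda item: tanimoto(query, item[0]), reverse=True)
    votes = sum(label for _, label in ranked[:k])
    best_sim = tanimoto(query, ranked[0][0])
    return int(votes * 2 > k), best_sim


label, sim = predict_toxic(frozenset({1, 4, 7}))
novel_label, novel_sim = predict_toxic(frozenset({20, 21}))
print(label, sim)        # close analogue in the training set
print(novel_sim)         # no overlap with any training compound
```

The second query still receives a label even though it shares nothing with the training data, which is why the similarity score is returned alongside the prediction: a purely correlative model has no basis for judging structurally novel compounds.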

Comparative Performance Data

Table 1: Performance Comparison of Modeling Approaches for Toxicity Prediction

| Metric | Correlative ML (Random Forest) | Correlative ML (Deep Learning) | Mechanistic AOP Models |

|---|---|---|---|

| Prediction Accuracy (Acute Toxicity) | ~80% (rat LD50) [9] | Varies significantly with data size [9] | Dependent on pathway completeness |

| Data Requirements | Medium to Large datasets | Large datasets (>10,000 compounds) | Can work with smaller, focused datasets |

| Interpretability | Medium (requires SHAP/LIME) | Low (black box) | High (explicit pathways) |

| Domain Transferability | Limited to chemical space of training data | Limited without transfer learning | Higher when mechanisms are conserved |

| Handling Novel Compounds | Poor for structurally unique compounds | Limited without analogous training data | Possible if mechanism is understood |

| Experimental Validation Cost | High (requires wet-lab testing) | High (requires wet-lab testing) | Targeted (hypothesis-driven testing) |

| Regulatory Acceptance | Growing for screening | Emerging for specific endpoints | Well-established for risk assessment |

Table 2: Analysis of Model Strengths and Limitations

| Aspect | Correlative ML | Mechanistic AOP Models |

|---|---|---|

| Primary Strength | High predictive accuracy for data-rich domains | Causal understanding and biological insight |

| Key Limitation | Limited insight into biological mechanisms | Often incomplete knowledge of pathways |

| Resource Intensity | Computational resources | Domain expertise and experimental validation |

| Time to Implementation | Rapid once data is available | Lengthy pathway construction and validation |

| Error Analysis | Difficult to diagnose failure modes | Clear identification of knowledge gaps |

| Integration with Existing Knowledge | Data-driven, may contradict established knowledge | Builds upon established biological knowledge |

Visualizing Workflows and Pathways

Correlative ML Workflow for Toxicity Prediction

Mechanistic AOP Model Construction

Integrated Approach Combining Both Paradigms

Table 3: Key Research Resources for Toxicity Modeling

| Resource Type | Specific Tools/Databases | Function & Application |

|---|---|---|

| Toxicity Databases | TOXRIC, EPA DSSTox, ICE, ChemIDplus | Provide curated toxicity data for model training and validation [9] |

| Chemical Databases | PubChem, eChemPortal, NITE CRIP | Offer chemical structure information and properties [9] |

| Omics Databases | Various transcriptomics, proteomics databases | Supply mechanistic pathway information for AOP development [9] |

| Benchmark Databases | Specific toxicity benchmark datasets | Enable standardized model comparison and performance assessment [9] |

| Experimental Design Tools | JMP, R DOE packages | Facilitate statistical experimental design for model validation [11] [12] |

| Interpretability Tools | SHAP, Counterfactual Analysis | Provide post-hoc interpretation of ML model predictions [9] |

The comparative analysis reveals that correlative ML and mechanistic AOP models offer complementary rather than competing approaches to biological understanding. Correlative ML excels in rapid prediction and pattern recognition across large chemical spaces, while mechanistic models provide causal understanding and biological insight that is critical for interpreting unexpected results and extrapolating beyond training data. The most promising path forward involves integrating both approaches—using ML to identify patterns and generate mechanistic hypotheses, then employing targeted experiments to validate causal pathways, ultimately creating mechanism-informed ML models with enhanced predictive capability and interpretability. This integrated framework represents the most robust approach for addressing the complex challenge of drug-induced toxicity prediction and advancing the broader quest for causal understanding in biology.

Adverse Outcome Pathways (AOPs) represent a conceptual framework that organizes existing knowledge about biologically plausible and empirically-supported links between molecular-level perturbation of a biological system and an adverse outcome of regulatory relevance [13]. This framework has emerged as a critical tool in toxicology for addressing contemporary challenges, including the need to assess tens of thousands of chemicals while reducing animal testing, costs, and time required for chemical safety assessment [14] [13]. The AOP framework provides a structured approach to describing toxicological mechanisms that is not chemical-specific but rather focuses on the sequence of biological events that can be triggered by any stressor acting on a particular molecular target [14].

At its core, an AOP is a linear sequence that begins with a Molecular Initiating Event (MIE), where a chemical stressor directly interacts with a biomolecule, progresses through a series of measurable Key Events (KEs) at different levels of biological organization, and culminates in an Adverse Outcome (AO) at the individual or population level [15] [14] [13]. The relationships between these key events are described as Key Event Relationships (KERs), which detail the causal linkages between an upstream and downstream key event [13]. This structured approach provides the biological context for developing Integrated Approaches to Testing and Assessment (IATA) for regulatory decision-making [16].

Core Components of the AOP Framework

The Structural Elements of an AOP

The AOP framework is built upon specific, well-defined components that together describe the progression of toxicity from molecular interaction to adverse outcome:

Molecular Initiating Event (MIE): The initial point of interaction between a stressor (chemical or non-chemical) and a biological target at the molecular level. Examples include a chemical binding to a specific receptor, inhibiting an enzyme, or directly damaging DNA [14] [17]. The MIE represents the first "biological domino" in the sequence [14].

Key Events (KEs): Measurable biological changes at cellular, tissue, or organ levels that are essential to the progression from the MIE to the AO [14] [13]. These events represent intermediate steps in the pathway and must be both measurable and essential for progression toward the adverse outcome [13].

Key Event Relationships (KERs): Descriptions of the causal relationships between pairs of KEs, explaining how an upstream KE leads to a downstream KE [14] [13]. KERs are supported by three types of evidence: biological plausibility, empirical support, and quantitative understanding of the conditions under which the relationship holds [14].

Adverse Outcome (AO): A biological change at the level of the individual organism or population that is considered relevant for risk assessment or regulatory decision-making [14] [17]. Examples include impaired development, reduced reproduction, tumor formation, or population-level impacts [15] [14].
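The four components map naturally onto a small data structure. The sketch below is one possible representation, not an official AOP-Wiki schema; the class names, the `level` and `evidence` fields, and the level labels are assumptions. It is populated with the thyroid peroxidase pathway that this article uses as a case study.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class KeyEvent:
    name: str
    level: str          # assumed labels, e.g. "molecular", "organ", "individual"
    role: str = "KE"    # "MIE", "KE", or "AO"


@dataclass(frozen=True)
class KeyEventRelationship:
    upstream: KeyEvent
    downstream: KeyEvent
    evidence: str = "unspecified"   # hypothetical free-text confidence label


@dataclass
class AOP:
    title: str
    kers: list = field(default_factory=list)

    def ordered_events(self):
        """Return events from MIE to AO, assuming the KERs form one linear chain."""
        if not self.kers:
            return []
        events = [self.kers[0].upstream]
        for ker in self.kers:
            if ker.upstream != events[-1]:
                raise ValueError("KERs do not form a contiguous chain")
            events.append(ker.downstream)
        return events


events = [
    KeyEvent("thyroid peroxidase inhibition", "molecular", role="MIE"),
    KeyEvent("reduced thyroid hormone synthesis", "cellular"),
    KeyEvent("decreased circulating thyroxine", "organ"),
    KeyEvent("reduced thyroid hormone in developing brain", "tissue"),
    KeyEvent("altered neural cell differentiation/migration", "tissue"),
    KeyEvent("impaired cognitive function", "individual", role="AO"),
]
thyroid = AOP(
    "Thyroid disruption and developmental neurotoxicity",
    [KeyEventRelationship(up, down) for up, down in zip(events, events[1:])],
)
names = [event.name for event in thyroid.ordered_events()]
print(names)
```

Keeping KERs as separate objects, rather than storing only an ordered list of events, leaves room to attach evidence to each causal link individually.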

Foundational Principles of AOP Development

The development and application of AOPs are guided by five fundamental principles that ensure consistency and utility across the toxicological community:

AOPs are not chemical-specific: They depict generalized sequences of biological effects that can be initiated by any stressor acting on a particular molecular target [14] [13].

AOPs are modular and composed of reusable components: Key Events and Key Event Relationships can be shared across multiple AOPs, preventing redundancy and building interconnected networks [14] [13].

An individual AOP is a pragmatic unit of development: A single sequence of KEs and KERs linking one MIE to one AO represents a manageable unit for development and evaluation [13].

AOP networks are the functional unit of prediction: Most real-world scenarios involve multiple AOPs connected through shared KEs and KERs, providing a more comprehensive understanding of complex toxicity [14] [13].

AOPs are living documents: They evolve as new knowledge emerges, allowing for continuous refinement and expansion of the framework [14] [13].
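The modularity and network principles can be illustrated by indexing which Key Events appear in more than one pathway. The three pathways below are hypothetical placeholders, and `shared_key_events` is an illustrative helper rather than part of any AOP tool.

```python
from collections import defaultdict

# Hypothetical AOPs, each listed as an ordered sequence of event names.
AOPS = {
    "AOP-A": ["receptor X antagonism", "reduced hormone synthesis", "impaired development"],
    "AOP-B": ["enzyme Y inhibition", "reduced hormone synthesis", "reduced fertility"],
    "AOP-C": ["DNA alkylation", "mutation", "tumor formation"],
}


def shared_key_events(aops):
    """Map each event that occurs in two or more AOPs to the AOPs containing it."""
    index = defaultdict(set)
    for aop_id, events in aops.items():
        for event in events:
            index[event].add(aop_id)
    return {event: sorted(ids) for event, ids in index.items() if len(ids) > 1}


shared = shared_key_events(AOPS)
print(shared)
```

Shared events are the junctions of an AOP network: two stressors with different molecular targets can converge on the same downstream event, which is what makes the network, rather than a single pathway, the functional unit of prediction.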

AOPs in Practice: Applications and Workflows

Experimental Design and Workflow for AOP Development

The process of developing and applying an AOP follows a systematic workflow that integrates computational, in vitro, and in vivo approaches. The diagram below illustrates this iterative process.

AOP Development Workflow

The development process begins with problem formulation and extensive literature review to identify potential MIEs and KEs [13]. Researchers then systematically map the sequence of events from MIE to AO, establishing KERs supported by biological plausibility and empirical evidence [14] [13]. A formal weight-of-evidence assessment is conducted to evaluate the confidence in the AOP, followed by integration of the AOP into broader networks [13]. The process is iterative, with AOPs continually refined as new data emerges [14].

Essential Research Reagents and Tools for AOP Development

AOP research utilizes specific reagents, tools, and platforms that enable the construction, visualization, and application of pathways. The table below details these essential resources.

Table 1: Essential Research Tools for AOP Development

| Tool/Reagent Category | Specific Examples | Function in AOP Development |

|---|---|---|

| Knowledge Assembly Platforms | AOP-Wiki, Effectopedia, AOP Xplorer | Collaborative development of AOP descriptions; semantic annotation of knowledge; graphical representation of AOP networks [13] [18] |

| Data Repositories | Intermediate Effects Database | Host chemical-related data from non-apical endpoints; links empirical observations with AOP descriptions [18] |

| In Vitro Assay Systems | High-throughput screening assays, receptor binding assays, transcriptional activation assays | Measure Molecular Initiating Events and early Key Events; generate mechanistic data for AOP development [15] [14] |

| Analytical Tools | OECD Harmonised Templates, SeqAPASS | Standardized data reporting; cross-species conservation analysis of molecular targets [14] [13] |

| Computational Modeling Tools | Quantitative Structure-Activity Relationship (QSAR) models, kinetic models | Predict chemical interactions with biological targets; quantify relationships between Key Events [14] [13] |

AOPs vs. Correlative Machine Learning: A Comparative Analysis

Foundational Differences in Approach and Application

While both AOPs and correlative machine learning (ML) approaches aim to enhance predictive capabilities in toxicology, they differ fundamentally in their methodology, interpretability, and application. The table below systematically compares these approaches across multiple dimensions.

Table 2: Comparison of AOP and Correlative Machine Learning Approaches

| Feature | Adverse Outcome Pathways (AOPs) | Correlative Machine Learning |

|---|---|---|

| Primary Basis | Mechanistic understanding of biological pathways [15] [13] | Statistical patterns in data [19] |

| Interpretability | High (explicit biological events and relationships) [14] [13] | Variable (model-dependent; often "black box") [19] |

| Data Requirements | Curated biological knowledge from diverse sources [13] | Large, structured datasets for training [19] |

| Regulatory Acceptance | Established in international programs (OECD) [13] [18] | Emerging, with validation challenges [19] |

| Extrapolation Capability | Biologically-informed across species and conditions [14] | Limited to training data domains [19] |

| Chemical Applicability | Chemical-agnostic (applicable to any stressor acting on the MIE) [14] [13] | Dependent on chemical space of training data [19] |

| Temporal Resolution | Explicit sequence of events with causal relationships [15] [13] | Typically static correlations without temporal dynamics |

| Uncertainty Characterization | Qualitative strength of evidence for each KER [14] [13] | Quantitative confidence intervals based on model performance [19] |

Case Study: Thyroid Disruption and Developmental Neurotoxicity

The application of AOPs to thyroid disruption-mediated developmental neurotoxicity provides an illustrative example of the framework's utility. This AOP begins with the Molecular Initiating Event of chemical binding to and inhibition of thyroid peroxidase, leading to reduced synthesis of thyroid hormones (T4/T3) [17]. Key Events progress through: decreased circulating thyroxine levels; reduced thyroid hormone availability in developing brain tissue; altered neural cell differentiation/migration; and finally the Adverse Outcome of impaired cognitive function and neurodevelopmental deficits [17].

The strength of this AOP lies in its biological plausibility and strong empirical support, including evidence from epidemiological studies, experimental animal models, and in vitro systems [17]. This pathway has directly informed testing strategies for the Endocrine Disruptor Screening Program, highlighting how AOPs can guide targeted, mechanistic testing that reduces reliance on apical endpoint animal studies [17]. The diagram below visualizes this pathway.

Thyroid Disruption AOP

Quantitative AOPs: Bridging Mechanistic Understanding and Prediction

From Qualitative to Quantitative Frameworks

The evolution from qualitative to quantitative AOPs (qAOPs) represents a significant advancement in the field, enhancing the predictive power and regulatory utility of the framework [14]. Quantitative AOPs incorporate mathematical relationships that describe the dose-response, temporal, and incidence characteristics of Key Event Relationships [14]. This quantitative understanding enables prediction of the conditions under which a change in an upstream KE will cause a change in downstream KEs, ultimately allowing forecasting of the probability and severity of the Adverse Outcome based on early key events [14].
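A minimal sketch of this idea chains one response function per Key Event Relationship, so that a dose propagates from the MIE to a probability of the Adverse Outcome. Hill functions are used here only as a common, convenient choice; the parameter values in `KER_PARAMS` are invented for illustration and do not describe any real pathway.

```python
def hill(x: float, top: float, ec50: float, n: float) -> float:
    """Hill response: rises from 0 toward `top`, half-maximal at x == ec50."""
    if x <= 0:
        return 0.0
    return top * x**n / (ec50**n + x**n)


# Hypothetical response-response functions, one per KER (values are illustrative).
KER_PARAMS = [
    {"top": 100.0, "ec50": 10.0, "n": 1.0},  # dose -> MIE (% target inhibition)
    {"top": 100.0, "ec50": 50.0, "n": 2.0},  # MIE -> KE1 (% change)
    {"top": 1.0, "ec50": 40.0, "n": 4.0},    # KE1 -> AO (probability)
]


def propagate(dose: float) -> list:
    """Pass a dose through the chain, returning the response at each event."""
    responses = []
    signal = dose
    for params in KER_PARAMS:
        signal = hill(signal, **params)
        responses.append(signal)
    return responses


low = propagate(1.0)
high = propagate(100.0)
print([round(value, 4) for value in low])
print([round(value, 4) for value in high])
```

Because each link is steep only near its own half-maximal point, a small upstream perturbation can die out before reaching the outcome, while a larger one propagates; this is the threshold behaviour a qAOP is meant to capture.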

The transition to qAOPs requires systematic collection of data on the dynamics of key events, including response thresholds and the timing relationships between events [14]. This quantitative framework supports more confident extrapolation across species, as demonstrated by tools like EPA's SeqAPASS, which evaluates conservation of molecular targets across species to inform cross-species applicability of AOPs [14]. The diagram below illustrates the structure of a quantitative AOP network.

Quantitative AOP Network

AOPs in Chemical Prioritization and Risk Assessment

AOPs provide a scientifically robust foundation for chemical prioritization and risk assessment by organizing mechanistic data into formats directly applicable to regulatory decision-making [14]. The framework enhances the use of data from New Approach Methodologies (NAMs) by providing biological context for interpreting in vitro and high-throughput screening data [14] [17]. For example, a chemical causing a specific DNA mutation in an in vitro screening assay can be evaluated in the context of an AOP for liver cancer, where that DNA mutation serves as the Molecular Initiating Event [14].

The utility of AOPs extends to evaluating complex mixtures, where AOP networks can identify shared KEs across chemicals, informing hypothesis-driven testing of additive or synergistic effects [14]. This application is particularly relevant for contaminants of emerging concern, such as per- and polyfluoroalkyl substances (PFAS), where EPA researchers are developing AOPs relevant to human health and ecological impacts across a range of adverse outcomes including reproductive impairment, developmental toxicity, and metabolic disorders [17].

The Adverse Outcome Pathway framework represents a transformative approach in toxicology, shifting the paradigm from observational toxicology to mechanistic, pathway-based understanding of chemical effects on living systems. As a framework for organizing mechanistic knowledge, AOPs provide the biological context necessary to interpret data from New Approach Methodologies, supporting more human-relevant, efficient chemical safety assessment [15] [17]. The ongoing development of quantitative AOPs and AOP networks further enhances the predictive power of this framework, enabling more confident extrapolation from mechanistic data to adverse outcomes of regulatory concern.

While correlative machine learning approaches offer advantages in processing large datasets and identifying complex patterns, their "black box" nature and limited biological interpretability present challenges for regulatory decision-making [19]. The integration of ML techniques with AOP frameworks represents a promising direction for the field, where ML can identify potential key events and relationships from large datasets, while AOPs provide the mechanistic context and biological plausibility needed for regulatory acceptance. This synergistic approach leverages the strengths of both methodologies, advancing the ultimate goal of more efficient, human-relevant chemical safety assessment that reduces reliance on traditional animal testing while enhancing protection of human health and the environment.

The "Ladder of Causation," a conceptual framework introduced by Judea Pearl, describes a three-level hierarchy of causal reasoning that distinguishes between different types of questions and the capabilities required to answer them. This hierarchy is particularly relevant in scientific research and drug development, as it provides a lens through which to evaluate the limitations of purely correlative machine learning models and the necessity of mechanistic, causal models for robust scientific discovery. While traditional machine learning excels at finding patterns and associations (the first rung), it falls short in answering questions about interventions or hypothetical scenarios, which are the bedrock of experimental science and therapeutic development [20].

This framework is crucial for understanding the paradigm shift from correlative approaches to causal models. Correlative machine learning, which includes most deep learning applications, operates primarily on the first rung. Pearl characterizes this as "curve fitting"—associating a set of input variables (X) with an outcome (y) without underlying causal information [20]. In contrast, mechanistic Adverse Outcome Pathway (AOP) models aim to explicitly represent cause-effect relationships within a biological system, operating on the second and third rungs of the ladder. This allows researchers not only to predict what will happen under observation but also to anticipate the consequences of specific interventions and reason about why a particular outcome occurred.

The Three Rungs of Causal Reasoning

The Ladder of Causation consists of three distinct levels, each building upon the capabilities of the previous one. The following diagram illustrates this hierarchy and the typical questions asked at each level.

Rung 1: Association (Seeing)

The bottom rung of the ladder is Association, which involves reasoning about observations and correlations. At this level, one can answer questions based solely on passive observation of data, such as "How would seeing X change my belief about Y?" This is the domain of traditional statistics and most machine learning, including deep learning. A model operating at this level might identify that patients taking a certain drug have a lower incidence of a disease, but it cannot determine if the drug caused the improvement. The model merely recognizes a pattern or association in the available data. Pearl notes that while this "curve fitting" is powerful, it does not constitute genuine machine intelligence, as it lacks understanding of the underlying mechanisms [20].

Rung 2: Intervention (Doing)

The middle rung is Intervention, which involves asking "What if?" questions about active interventions. This requires understanding what would happen to a variable Y if we were to forcibly set another variable X to a specific value, denoted as do(X). This is the language of randomized controlled trials (RCTs) in drug development, where researchers actively administer a treatment to isolate its causal effect from confounding factors. A model operating at this level can predict the effect of a novel drug or therapy, even if that specific intervention has never been observed in the historical data. Moving from Rung 1 to Rung 2 requires a causal model that represents how variables influence one another.
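The gap between seeing and doing can be shown with a small simulation. The sketch below assumes a toy structural model in which disease severity (Z) influences both who receives treatment (X) and who recovers (Y); all probabilities are invented for illustration. Passing `do_x` overrides the treatment assignment, which is the simulated counterpart of do(X).

```python
import random


def simulate(n: int, do_x=None, seed: int = 0):
    """Draw n samples from a toy SCM: Z -> X, Z -> Y, X -> Y.

    Z: severe disease. X: treated. Y: recovered (all booleans).
    If do_x is given, X is set by intervention and ignores Z.
    """
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        z = rng.random() < 0.5
        if do_x is None:
            x = rng.random() < (0.8 if z else 0.2)  # severe patients are treated more often
        else:
            x = do_x
        p_recover = 0.5 + (0.2 if x else 0.0) - (0.4 if z else 0.0)
        y = rng.random() < p_recover
        rows.append((z, x, y))
    return rows


def recovery_rate(rows, x_value=None):
    """Fraction recovered, optionally restricted to rows with X == x_value."""
    chosen = [y for _, x, y in rows if x_value is None or x == x_value]
    return sum(chosen) / len(chosen)


observed = simulate(100_000)
assoc_diff = recovery_rate(observed, True) - recovery_rate(observed, False)
causal_diff = recovery_rate(simulate(100_000, do_x=True)) - recovery_rate(
    simulate(100_000, do_x=False)
)
print(round(assoc_diff, 3), round(causal_diff, 3))
```

In the observational data the treated group recovers slightly less often, because treatment is concentrated among severe cases, while the intervention recovers the benefit built into the model. A Rung 1 analysis of the same data would point in the wrong direction.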

Rung 3: Counterfactuals (Imagining)

The highest rung is Counterfactuals, which deals with retrospective questions and reasoning about "what might have been." It involves answering questions like "What would Y have been if X had been different?" Counterfactual reasoning is essential for assigning blame or credit, understanding the root cause of an outcome, and personalizing treatments. In drug development, a counterfactual question might be: "For this patient who recovered after taking the drug, would they have still recovered if they had not taken it?" Answering such questions requires a fully specified structural causal model, as it involves reasoning about a world that did not actually happen, but could have under different circumstances. Pearl emphasizes that this ability to imagine alternatives that aren't factual is a crucial component of causal reasoning [20].
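Counterfactual queries are typically computed in three steps: abduction, action, and prediction. The sketch below applies them to a single unit under an assumed linear structural equation, Y = intercept + slope * X + U; the equation, its coefficients, and the function name are hypothetical and chosen only to keep the arithmetic visible.

```python
def counterfactual_outcome(x_obs, y_obs, x_cf, slope=2.0, intercept=1.0):
    """Counterfactual Y for one unit under an assumed SCM: Y = intercept + slope*X + U.

    1. Abduction: recover this unit's noise term U from what was observed.
    2. Action: replace X with the counterfactual value.
    3. Prediction: recompute Y with the same U.
    """
    u = y_obs - (intercept + slope * x_obs)  # abduction
    x = x_cf                                 # action
    return intercept + slope * x + u         # prediction


# Observed: the unit received dose 1.0 and showed a response of 4.5.
# Query: what would the response have been with no dose?
y_cf = counterfactual_outcome(x_obs=1.0, y_obs=4.5, x_cf=0.0)
print(y_cf)
```

The unit-specific term U is what separates this from an interventional prediction: it carries forward everything about this particular patient that the observation revealed, which is why a fully specified structural model is required.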

Mechanistic AOP Models vs. Correlative ML: An Experimental Comparison

The fundamental distinction between mechanistic AOP models and correlative machine learning lies in their position on the Ladder of Causation. The following table summarizes their core differences across several key dimensions relevant to biomedical research.

Table 1: Quantitative Comparison of Mechanistic AOP Models and Correlative Machine Learning

| Feature | Mechanistic AOP Models | Correlative Machine Learning |

|---|---|---|

| Primary Rung of Causation | Rung 2 (Intervention) & Rung 3 (Counterfactuals) | Rung 1 (Association) |

| Core Function | Encode explicit cause-effect relationships; represent underlying biological mechanisms [21]. | Identify patterns, correlations, and associations from data without underlying causal information [20]. |

| Representation of Knowledge | Causal diagrams with directed arrows showing causal flow [21]. | Statistical models (e.g., neural networks, decision trees) mapping inputs to outputs. |

| Handling of Novel Interventions | High. Can predict outcomes of new treatments by modifying the model structure. | Low. Can only extrapolate based on patterns in past data. |

| Interpretability | High. The model structure is transparent and reflects biological understanding. | Low to Medium. Often a "black box," making it difficult to explain predictions. |

| Data Requirement | Can integrate diverse data types (in vitro, in vivo, in silico) to inform model parameters. | Requires large, high-quality datasets for training, which can be biased or incomplete. |

| Typical Experimental Use | Hypothesis generation, trial design, risk assessment, and understanding system-level effects. | Pattern recognition, classification, and prediction from observed data. |

Experimental Evidence Supporting Causal Diagrams

The value of explicit causal representations for understanding complex relationships is supported by empirical research. In a controlled study, participants who studied a causal diagram while reading an expository science text demonstrated a better understanding of the five causal sequences in the text compared to those who only read the text, even when study time was controlled [21]. This supports the causal explication hypothesis, which posits that causal diagrams improve comprehension by making the implicit causal structure of a system explicit in a visual format [21].

The experimental protocol for such a study typically involves:

- Participant Assignment: Randomly assigning participants to one of two groups: an experimental group (text-and-diagram) and a control group (text-only) [21].

- Material Presentation: Providing the experimental group with both the expository text and a causal diagram that visually represents the cause-effect relationships described. The control group receives only the text [21].

- Controlled Study Time: Allowing participants a fixed amount of time (e.g., 10 minutes) to study the materials to ensure that any differences in outcomes are not due to unequal study effort [21].

- Assessment: Administering tests to measure comprehension, specifically focusing on the understanding of causal sequences and interrelationships among steps in a cause-and-effect chain [21].

This protocol provides a template for evaluating the utility of causal models in specific research contexts, such as predicting drug toxicity or efficacy.

Implementing Causal Reasoning: A Toolkit for Researchers

A Generic Causal AOP Workflow

Implementing a causal modeling approach involves a specific workflow that moves from knowledge assembly to simulation and validation. The following diagram outlines a generalized protocol for building and testing a mechanistic AOP model, which can be adapted for various research scenarios in drug development.

The Scientist's Toolkit: Essential Reagents for Causal Research

Building and testing causal models requires a combination of conceptual frameworks and practical tools. The following table details key "research reagents" essential for work in this field.

Table 2: Essential Reagents for Causal Model-Based Research

| Item/Tool | Function/Benefit | Causal Rung Addressed |

|---|---|---|

| Causal Diagrams (DAGs) | Visual maps that make implicit causal assumptions explicit, aiding in identifying confounders and sources of bias [21]. | Rung 1 & 2 |

| Structural Causal Models (SCMs) | A mathematical framework combining graphical models and structural equations to formalize causal relationships, enabling counterfactual analysis. | Rung 2 & 3 |

| Do-Calculus | A set of mathematical rules that allow researchers to determine if a causal effect can be estimated from observational data, bridging Rung 1 and Rung 2. | Rung 2 |

| Randomized Controlled Trials (RCTs) | The gold-standard experimental protocol for establishing causal effects (the do operator) by actively intervening on a treatment variable. | Rung 2 |

| Causal Inference Software (e.g., DoWhy, CausalML) | Open-source libraries that implement algorithms for causal effect estimation from data using SCMs and DAGs. | Rung 2 & 3 |

| High-Throughput Screening (HTS) Data | Large-scale experimental data used to inform key relationships and parameters within a mechanistic AOP model. | Rung 1 |

| 'What-If' Simulation Platforms | Computational environments that allow researchers to simulate interventions and counterfactuals using a validated causal model. | Rung 2 & 3 |

Judea Pearl's Ladder of Causation provides a powerful framework for evaluating analytical approaches in scientific research. It clearly demonstrates that correlative machine learning, while useful for prediction, is fundamentally limited to the first rung of association. In contrast, mechanistic AOP models, which explicitly represent cause-effect relationships, operate on the higher rungs of intervention and counterfactuals. This allows them to answer the critical "what if" and "why" questions that are essential for reliable drug development and safety assessment. The experimental evidence indicates that making causal structure explicit enhances understanding of complex systems. For researchers and drug development professionals, embracing the tools and methodologies of causal modeling is not merely a technical improvement, but a necessary step toward achieving truly explainable, robust, and predictive science.

Building Causal Models: Methodologies and Real-World Applications in Drug Development

The Adverse Outcome Pathway (AOP) framework is a conceptual structure designed to organize and communicate knowledge concerning the sequence of measurable biological events that link a direct, molecular-level initial interaction of a chemical stressor (the Molecular Initiating Event, or MIE) to an Adverse Outcome (AO) of regulatory relevance at the organism or population level [22] [17]. AOPs serve as a foundational tool for translating mechanistic data from in silico models, in vitro assays, and high-throughput testing into predictions relevant for human health and ecological risk assessment [22]. This framework is inherently chemical-agnostic, meaning it describes biological response pathways that can be initiated by any number of chemical or non-chemical stressors, thereby facilitating a shift away from traditional, resource-intensive animal testing towards more efficient, pathway-based safety assessments [22] [17].

The core structure of an AOP is modular, consisting of a series of causally linked Key Events (KEs). These events are connected by Key Event Relationships (KERs), which describe the evidence supporting the causal inference from one key event to the next [22] [23]. This modular design allows for the re-use of key events across different AOPs, enabling the construction of more complex AOP networks that capture the pleiotropic and interactive effects common in real-world exposure scenarios [23]. The AOP framework does not seek to capture the full complexity of biology but provides a simplified, pragmatic scaffold to support prediction and decision-making [23].

Core Components of an AOP: From MIE to AO

An AOP provides a standardized and structured description of the progression of toxicity along a defined pathway. The following diagram illustrates the logical flow and core components of a generalized AOP, showing the cascade from the initial molecular interaction to the adverse outcome at the organism level.

The individual components of this pathway are:

- Molecular Initiating Event (MIE): The MIE is the initial point of interaction between a chemical stressor and a specific biological molecule within an organism [17] [18]. This event triggers the cascade of subsequent key events. Examples include a chemical binding to a specific receptor (e.g., estrogen receptor), inhibiting a critical enzyme (e.g., aromatase), or directly damaging DNA [22] [17].

- Key Events (KEs): Key Events are measurable, essential changes in biological state that occur between the MIE and the AO [17]. They represent a progression of toxicity across different levels of biological organization, from molecular and cellular changes to effects on tissues and organs. The causal linkage between these KEs is a defining feature of an AOP.

- Key Event Relationships (KERs): A KER describes the causal or mechanistic relationship between two adjacent Key Events [22] [23]. The KER documents the scientific evidence that supports the claim that a change in one key event is likely to lead to a change in the next. This evidence can be based on biological plausibility, empirical observations, or quantitative understanding.

- Adverse Outcome (AO): The AO is an adverse effect of direct regulatory significance at the individual level (e.g., cancer, organ failure, reduced fertility) or the population level (e.g., reduced population sustainability) [17] [18]. The AO is the endpoint that risk assessment aims to prevent, and the AOP framework provides the mechanistic justification for using data from earlier KEs to predict this outcome.

AOPs in Action: From Linear Pathways to Complex Networks

While individual AOPs are often presented as linear chains for clarity, real-world biological systems involve significant interconnectivity. The AOP framework accommodates this complexity through the concept of AOP networks, which are assemblages of individual AOPs that share one or more Key Events [23]. These networks provide a more realistic and holistic view of how different stressors can interact and lead to multiple or synergistic adverse outcomes.

The following diagram illustrates a simplified AOP network, demonstrating how shared Key Events can connect different pathways and create a more complex predictive model.

Quantitative AOPs (qAOPs)

To move beyond qualitative descriptions, the field is advancing towards the development of Quantitative AOPs (qAOPs). A qAOP formalizes the relationships between KEs using mathematical models that define the dose-response and time-course behaviors [22]. For example, a qAOP might use a feedback-controlled model of the hypothalamic-pituitary-gonadal axis to predict how a chemical that inhibits steroid synthesis leads to quantifiable reductions in reproductive capacity in fish [22]. These quantitative models are critical for defining the dynamic thresholds and modulating factors that determine whether a perturbation at the molecular level will ultimately propagate to an adverse outcome.
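To make the idea of quantitative KERs concrete, the sketch below chains three Hill-type response functions so that the output of one key event becomes the input of the next. It is not the hypothalamic-pituitary-gonadal model mentioned above; the functional form and every parameter value are assumptions chosen only for illustration.

```python
def hill(x, top, ec50, n):
    """Hill response curve: 0 at x = 0, rising toward `top` as x grows."""
    if x <= 0:
        return 0.0
    return top * x**n / (ec50**n + x**n)


def qaop_response(dose):
    """Propagate a dose through three hypothetical quantitative KERs."""
    # KER 1: external dose -> fractional activation of the MIE
    mie = hill(dose, top=1.0, ec50=10.0, n=1.0)
    # KER 2: MIE activation -> intermediate key event
    ke = hill(mie, top=1.0, ec50=0.5, n=2.0)
    # KER 3: intermediate key event -> adverse outcome (steep, threshold-like)
    ao = hill(ke, top=1.0, ec50=0.5, n=4.0)
    return {"MIE": mie, "KE": ke, "AO": ao}


if __name__ == "__main__":
    for dose in (1.0, 10.0, 100.0):
        print(dose, qaop_response(dose))
```

Because each downstream relationship is steeper than the one before it, a small perturbation at the MIE is damped almost completely while a large one passes through, which is one simple way a threshold for propagation to the adverse outcome can emerge.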

AOPs vs. Machine Learning: A Comparative Analysis of Mechanistic and Correlative Approaches

Within the context of modern toxicology and drug development, the mechanistic, hypothesis-driven AOP framework presents a distinct paradigm compared to data-driven, correlative machine learning (ML) approaches. The following table provides a structured comparison of these two methodologies, highlighting their complementary strengths and limitations.

Table 1: Comparative analysis of Adverse Outcome Pathway (AOP) and Machine Learning (ML) approaches.

| Feature | Adverse Outcome Pathway (AOP) | Machine Learning (ML) |
|---|---|---|
| Primary Objective | Establish causal, mechanistic relationships between a molecular perturbation and an adverse outcome [8]. | Establish statistical relationships and correlations between inputs and outputs from large datasets [8]. |
| Underlying Logic | Deductive reasoning: Uses established biological principles to make predictions about new scenarios, even those not present in the original data [8]. | Inductive reasoning: Identifies patterns and learns from past data to make predictions, but is limited to the scope and quality of the data supplied [8]. |
| Data Requirements | Can be developed and applied with small, targeted datasets focused on specific pathway components [8]. | Requires large, extensive datasets for training and validation to build accurate predictive models [8]. |
| Handling of Complexity | Can struggle with multi-scale complexity; AOP networks are used to manage interconnected pathways [23]. | Excels at tackling problems with multiple space and time scales by identifying complex, non-linear patterns [8]. |
| Interpretability & Insight | High interpretability; provides biological understanding and insight into mechanisms of action, which can inform intervention strategies [22] [8]. | Often operates as a "black box"; high predictive power but may offer limited mechanistic insight or understanding of causality [8]. |
| Regulatory Application | Directly supports mechanism-based risk assessment and the use of new approach methodologies (NAMs) by providing a biological rationale [22] [17]. | Primarily used for prioritization and screening of chemicals or for predicting properties based on structural similarities [8]. |

As the table illustrates, AOPs and ML are not inherently competitive but rather complementary. A synergistic approach, where ML models are used to analyze high-throughput data to identify potential MIEs or KEs, and AOPs provide the causal framework to validate and interpret these findings, represents the future of predictive toxicology [8]. Mechanistic models can provide the "why" that underpins the "what" predicted by machine learning.

Case Studies and Experimental Applications of AOPs

Case Study 1: Development of a Defined Approach for Skin Sensitization

The AOP for skin sensitization is one of the most developed and successfully applied examples in the framework. This AOP describes how electrophilic chemicals (stressor) covalently bind to skin proteins (MIE), leading to a cascade of KEs including inflammatory cytokine release and T-cell proliferation, ultimately resulting in the allergic response (AO) [22].

- Experimental Protocol: The testing strategy leverages a suite of in vitro and in chemico assays, each designed to measure a specific KE within the AOP [22]:

  - Direct Peptide Reactivity Assay (DPRA): Measures the MIE (covalent binding to proteins).

  - KeratinoSens or LuSens Assay: Measures the KE of keratinocyte activation and antioxidant response element pathway activation.

  - Human Cell Line Activation Test (h-CLAT): Measures the KE of dendritic cell activation and specific surface marker expression.

- Data Integration and Outcome: Data from these individual assays are integrated using Bayesian networks or other defined approaches to generate a categorical prediction (e.g., sensitizer/non-sensitizer) [22]. This AOP-based testing strategy has been formally adopted by the OECD, enabling the replacement of traditional in vivo tests for skin sensitization [22].
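The integration step can be illustrated with a simple majority rule across the three assays. This sketch is a stand-in for the Bayesian network integration mentioned above, not a reproduction of it, and it is not the regulatory procedure: it assumes each assay has already been reduced to a binary call, ignores borderline results, and uses a hypothetical function name.

```python
def two_out_of_three(dpra, keratinosens, hclat):
    """Combine three binary assay calls into one categorical prediction.

    Each argument is True (positive), False (negative), or None (no result).
    Two concordant calls decide the outcome; otherwise the result is inconclusive.
    """
    calls = [c for c in (dpra, keratinosens, hclat) if c is not None]
    positives = sum(calls)
    negatives = len(calls) - positives
    if positives >= 2:
        return "sensitizer"
    if negatives >= 2:
        return "non-sensitizer"
    return "inconclusive"


if __name__ == "__main__":
    # Hypothetical assay outcomes for three unnamed test chemicals.
    print(two_out_of_three(True, True, False))
    print(two_out_of_three(False, None, False))
    print(two_out_of_three(True, None, False))
```

Because each assay reports on a different key event, agreement between two of them amounts to concordant evidence at two points along the pathway, which is the mechanistic rationale for combining them rather than relying on any single test.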

Case Study 2: Prioritizing Endocrine Disrupting Chemicals

The US EPA's Endocrine Disruptor Screening Program faces the challenge of prioritizing over 10,000 chemicals for potential endocrine activity. AOPs provide the necessary linkage between high-throughput screening (HTS) data and adverse outcomes.

- Experimental Protocol: This approach relies on HTS assays to identify chemicals that interact with specific MIEs of concern [22]:

  - ToxCast/Tox21 HTS Assays: A battery of in vitro assays used to screen chemicals for MIEs such as estrogen receptor (ER) and androgen receptor (AR) binding, activation, and antagonism.

  - AOP Anchoring: The AOP framework "anchors" the HTS data by providing the biological context that links an MIE, such as receptor activation or enzyme inhibition, to adverse outcomes like reproductive dysfunction [22]. For example, AOP 25 explicitly describes the pathway from aromatase inhibition to reproductive failure in fish.

- Data Integration and Outcome: The HTS output is used to prioritize the chemicals most likely to act via endocrine MIEs. The associated AOPs provide the mechanistic evidence that supports the use of these in vitro bioactivity data for prioritization, significantly increasing the efficiency of the screening program [22] [17].

Successfully building and applying AOPs requires a combination of bioinformatics tools, experimental reagents, and data resources. The following table details key components of the AOP researcher's toolkit.

Table 2: Key research reagents, tools, and resources for AOP development and application.

| Tool/Resource Category | Specific Examples & Functions |
|---|---|
| AOP Knowledge Bases | AOP-Wiki [22] [18]: Central repository for collaborative AOP development. Effectopedia [18]: Platform for building quantitative, modular AOPs. Intermediate Effects DB [18]: Links chemical data to MIEs and KEs. |
| In Vitro Assay Systems | Cell-based assays (e.g., KeratinoSens, h-CLAT) [22]: Measure key events like cell activation. Receptor binding & transactivation assays: Quantify Molecular Initiating Events (MIEs) for endocrine pathways. High-Throughput Screening (HTS) platforms: Enable rapid testing of thousands of chemicals. |
| 'Omics Technologies | Transcriptomics (RNA-seq): Identifies gene expression changes as potential key events. Proteomics: Measures alterations in protein expression and modification. Metabolomics: Profiles changes in metabolite levels, linking molecular events to tissue/organ responses. |
| Computational Modeling Tools | Quantitative AOP (qAOP) models [22]: Mathematical models describing quantitative relationships between KEs. AOP Xplorer [18]: Computational tool for graphical representation of AOP networks. Bayesian Network Models [22]: Integrate data from multiple assays for probabilistic prediction. |
| Reference Chemicals | Potent agonists/antagonists (e.g., 17β-estradiol, flutamide): Used as positive controls in assay validation. Chemicals with known adverse outcomes: Essential for establishing and testing Key Event Relationships (KERs). |

The Adverse Outcome Pathway framework provides a powerful, structured, and mechanistic foundation for modernizing toxicology and risk assessment. By explicitly linking molecular perturbations to adverse outcomes through a series of causally connected key events, AOPs facilitate the use of mechanistic data in safety decisions, support the development of non-animal testing methods, and enable a more efficient and informative evaluation of chemicals. While distinct from correlative machine learning approaches, AOPs are highly complementary to them. The future of predictive toxicology lies in a synergistic paradigm where high-throughput, data-rich ML models are used to generate hypotheses and prioritize chemicals, and mechanism-rich AOPs are used to validate predictions, establish causality, and provide the biological context essential for credible and protective risk assessment.

In the context of mechanistic Adverse Outcome Pathways (AOPs) versus correlative machine learning (ML) research, Directed Acyclic Graphs (DAGs) and Structural Causal Models (SCMs) provide a formal framework for moving beyond prediction to causal understanding. While ML models excel at identifying correlative patterns from high-dimensional data, they inherently face challenges in establishing causality, a limitation particularly problematic in drug development where interventions are planned [24]. DAGs and SCMs address this gap by explicitly encoding causal assumptions, enabling researchers to identify confounders, guide data collection, and estimate causal effects—capabilities essential for translating mechanistic AOP models into reliable safety assessments [25] [24].

Directed Acyclic Graphs (DAGs)

A Directed Acyclic Graph (DAG) is a graphical causal model consisting of nodes (representing variables) and directed edges (arrows) showing the assumed causal influences between them, with no directed cycles [25]. DAGs encode qualitative causal knowledge, illustrating which variables are presumed to affect others [26].
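A small sketch can make the "no directed cycles" requirement concrete. The graph below is a hypothetical example that mixes AOP-style events with one assumed confounder (`body_weight`); the variable names are illustrative. It uses Python's standard-library `graphlib` to check acyclicity and to return an ordering in which every cause precedes its effects.

```python
from graphlib import CycleError, TopologicalSorter


def causal_order(parents):
    """Return the nodes with causes before effects.

    `parents` maps each node to the set of its direct causes.
    Raises graphlib.CycleError if the graph contains a directed cycle.
    """
    return list(TopologicalSorter(parents).static_order())


# Hypothetical causal graph: exposure -> MIE -> KE -> AO,
# with body_weight influencing both exposure and the outcome.
parents = {
    "exposure": {"body_weight"},
    "MIE": {"exposure"},
    "KE": {"MIE"},
    "AO": {"KE", "body_weight"},
}

order = causal_order(parents)

# A graph with a feedback loop is rejected, since it is not a DAG.
try:
    causal_order({"A": {"B"}, "B": {"A"}})
    cyclic_graph_rejected = False
except CycleError:
    cyclic_graph_rejected = True
```

Writing the graph as a mapping from each node to its parents mirrors how structural equations are specified, since each variable is defined in terms of its direct causes.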

Structural Causal Models (SCMs)

A Structural Causal Model (SCM) is a mathematical framework that formalizes the qualitative assumptions of a DAG [27]. An SCM is a tuple (V, F, N, Pₙ) where V represents endogenous variables, F is a collection of functions (structural equations) defining how each variable is caused by others, N represents exogenous (noise) variables, and Pₙ is their probability distribution [27]. The SCM framework provides the do-calculus, a set of rules for computing causal effects from observational data under the model's assumptions [24].

The logical relationship between a DAG and an SCM is that a DAG provides the qualitative structure, while the SCM provides the quantitative, functional form of the causal relationships.
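The distinction between observing and intervening can be sketched with a toy SCM. Everything here is an assumption made for illustration: the graph (Z → X, Z → Y, X → Y), the linear structural equations, the Gaussian noise, and the coefficient values. The true causal effect of X on Y is set to 2.0 by construction.

```python
import random
from statistics import fmean


def sample(n, seed=0, do_x=None):
    """Draw n samples from a toy SCM with graph Z -> X, Z -> Y, X -> Y.

    Passing do_x replaces the structural equation for X with a constant,
    which is what the do-operator means in an SCM.
    """
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        z = rng.gauss(0, 1)                                       # Z := N_Z
        x = 1.5 * z + rng.gauss(0, 1) if do_x is None else do_x   # X := f_X(Z, N_X)
        y = 2.0 * x + 3.0 * z + rng.gauss(0, 1)                   # Y := f_Y(X, Z, N_Y)
        rows.append((z, x, y))
    return rows


def slope(xs, ys):
    """Ordinary least-squares slope of ys on xs."""
    mx, my = fmean(xs), fmean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx


# Correlative estimate: regress Y on X in observational data (confounded by Z).
obs = sample(20000)
naive = slope([r[1] for r in obs], [r[2] for r in obs])

# Causal estimate: compare the mean of Y under do(X=1) and do(X=0).
# Reusing the seed holds the exogenous noise fixed across both interventions.
y1 = fmean(r[2] for r in sample(20000, seed=1, do_x=1.0))
y0 = fmean(r[2] for r in sample(20000, seed=1, do_x=0.0))
interventional = y1 - y0
```

In this setup the observational slope comes out well above 2.0 because Z drives both X and Y, while the interventional contrast recovers the coefficient that was built into the structural equation for Y.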

Comparative Analysis: DAGs/SCMs vs. Correlative Machine Learning

This section compares causal frameworks (DAGs/SCMs) with standard correlative ML approaches across capabilities critical for drug development.

Table 3: Performance Comparison of Causal Frameworks vs. Correlative ML

| Performance Metric | DAGs/SCMs | Correlative ML |
|---|---|---|
| Causal Effect Identification | Explicitly models and identifies causal effects using do-calculus [24] | Limited to detecting associations; prone to confounding [24] |
| Handling of Confounders | Graphically identifies confounders for adjustment via backdoor criterion [25] | No inherent mechanism; confounders can bias predictions [24] |
| Interpretability & Mechanism | High; provides transparent, interpretable causal structure [26] | Often low; "black box" models obscure reasoning [24] |
| Prediction Under Intervention | Can predict effects of interventions (do-operator) [25] | Predicts based on observed data; performance degrades under intervention [24] |
| Data Requirement Assumptions | Requires causal assumptions (often untestable) and domain knowledge [27] [24] | Primarily requires large, representative datasets for correlation |
| Handling of Unobserved Confounding | Acknowledges threat; some extensions (e.g., IV) can address it [24] | Highly vulnerable; leads to spurious correlations and flawed predictions [24] |

Experimental Protocols and Validation

Protocol 1: Bounding Causal Effects under DAG Uncertainty

A critical experimental protocol addresses the common critique that an assumed DAG may be incorrect [27].

- Objective: To compute bounds for causal queries, such as the Average Treatment Effect (ATE), over a collection of plausible DAGs compatible with imperfect prior knowledge, without enumerating all graphs exhaustively [27].

- Methodology: An efficient, gradient-based optimization method is employed. The method operates within the SCM framework, considering a set of plausible graphs G compatible with available knowledge. The optimization finds the minimum and maximum possible values of a target causal query across all SCMs compatible with any DAG in G and with the observed data distribution [27].

- Validation: The method is validated using synthetic data (both linear and non-linear) and real-world data. Performance is assessed based on coverage (whether the true effect lies within the bounds) and sharpness (width of the bounds) [27].

The workflow for this protocol involves defining a set of plausible graphs and then using an optimization procedure to find the bounds on the causal effect.
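The idea of bounding an effect over several plausible graphs can be illustrated with a deliberately simplified stand-in. The sketch below does not implement the gradient-based method described above; it uses brute-force enumeration over two hand-picked candidate DAGs, assumes linear relationships, and runs on simulated data. The candidate graphs, coefficients, and function names are all assumptions made for illustration.

```python
import random
from statistics import fmean


def slope(xs, ys):
    """Ordinary least-squares slope of ys on xs."""
    mx, my = fmean(xs), fmean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx


def residuals(ys, zs):
    """Centered residuals of ys after removing its linear dependence on zs."""
    b = slope(zs, ys)
    mz, my = fmean(zs), fmean(ys)
    return [(y - my) - b * (z - mz) for y, z in zip(ys, zs)]


def effect_estimate(x, y, z, adjust_for_z):
    """Linear effect of X on Y, optionally adjusting for Z by residualization."""
    if adjust_for_z:
        return slope(residuals(x, z), residuals(y, z))
    return slope(x, y)


# Each candidate DAG implies whether Z belongs in the adjustment set.
candidates = {
    "Z confounds X and Y": True,   # the back-door path X <- Z -> Y must be blocked
    "Z mediates X -> Y": False,    # adjusting would remove part of the total effect
}

# Simulated data (generated here from the confounding graph, true effect = 2.0).
rng = random.Random(0)
z = [rng.gauss(0, 1) for _ in range(5000)]
x = [1.5 * zi + rng.gauss(0, 1) for zi in z]
y = [2.0 * xi + 3.0 * zi + rng.gauss(0, 1) for xi, zi in zip(x, z)]

estimates = {name: effect_estimate(x, y, z, adj) for name, adj in candidates.items()}
lower, upper = min(estimates.values()), max(estimates.values())
```

The interval from `lower` to `upper` expresses what can be claimed without committing to either graph. Enumeration like this only works for a handful of candidates, which is the practical motivation for an optimization-based search over the set of plausible graphs.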

Protocol 2: Integrating Causal and Statistical Models in Social Network Analysis

This protocol from behavioral ecology illustrates a full Bayesian workflow for estimating causal drivers from noisy data, analogous to inferring network effects in biological systems [26].

- Objective: To estimate the causal effects of individual-, dyad-, and group-level features (network structuring features) on a latent social interaction network, which is only partially observed through behavioral samples [26].

- Methodology: