From Raw Data to Actionable Insights: A Guide to Exploratory Data Analysis in Environmental Monitoring

Abstract

This article provides a comprehensive guide to Exploratory Data Analysis (EDA) for researchers and scientists applying these techniques in environmental monitoring. It covers the foundational principles of EDA, from data integrity checks and handling missing values to graphical and numerical distribution analysis. The guide then explores advanced methodological applications, including multivariate analysis, machine learning integration, and specialized frameworks like Effect-Directed Analysis (EDA). It addresses critical troubleshooting and optimization strategies for dealing with outliers, censored data, and ensuring quality control. Finally, it examines validation and comparative techniques through case studies, potency balance analysis, and benchmarking against traditional methods. The synthesis aims to enhance the rigor of environmental data analysis and discusses its broader implications for evidence-based policy and biomedical research.

Laying the Groundwork: Core Principles and Data Integrity for Robust Environmental EDA

The Critical Role of EDA as a First Step in Data Analysis

Exploratory Data Analysis (EDA) is an indispensable approach that data scientists and researchers employ to analyze, investigate, and summarize datasets before formal modeling or hypothesis testing [1]. Originally developed by the American mathematician John Tukey in the 1970s, EDA techniques remain widely used in the data discovery process today [1]. The primary purpose of EDA is to understand data patterns, identify obvious errors, detect outliers or anomalous events, and find interesting relations among variables without making premature assumptions [1]. In environmental monitoring research, EDA serves as a critical first step that enables researchers to understand complex environmental systems, recognize spatial and temporal patterns, and design appropriate statistical analyses that yield meaningful results [2].

Within environmental monitoring, EDA helps researchers comprehend where outliers occur and how variables are related, which is particularly important when sites are likely affected by multiple stressors [2]. By conducting initial explorations of stressor correlations, environmental scientists can better relate stressor variables to biological response variables and identify candidate causes that should be included in causal assessment [2]. The exploratory nature of EDA provides insights that might be missed if researchers moved directly to confirmatory analysis, making it especially valuable for investigating complex environmental systems where relationships between variables are not fully understood.

Core Principles and Types of EDA

Fundamental Principles

EDA operates on several key principles that distinguish it from confirmatory data analysis. The approach emphasizes visualization techniques to uncover patterns, resistance to outliers through robust statistical measures, and iterative investigation that encourages researchers to let the data reveal its underlying structure [1]. Rather than testing formal hypotheses, EDA employs a flexible strategy to detect visible patterns that might suggest new hypotheses or research directions. This philosophy aligns particularly well with environmental monitoring, where researchers often begin with limited a priori knowledge about the complex interactions within ecosystems.

The open-ended investigative process of EDA allows environmental researchers to understand the distribution of environmental variables, recognize measurement errors, identify unexpected gaps in data collection, and discover potential relationships between stressors and biological responses [2]. This understanding is crucial for designing subsequent statistical analyses that are appropriate for the data's characteristics and distribution. For instance, examining the distribution of water quality parameters might reveal skewed distributions that require transformation before applying parametric statistical tests [2].
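As a minimal sketch of this check, the Python snippet below computes the sample skewness of a hypothetical set of total phosphorus results before and after a natural-log transform, one common remedy for right skew. The data and the `skewness` helper are illustrative assumptions, not taken from [2].

```python
import math
import statistics

def skewness(values):
    """Adjusted Fisher-Pearson sample skewness (G1); 0 for symmetric data."""
    n = len(values)
    mean = statistics.fmean(values)
    m2 = sum((v - mean) ** 2 for v in values) / n  # second central moment
    m3 = sum((v - mean) ** 3 for v in values) / n  # third central moment
    g1 = m3 / m2 ** 1.5
    return g1 * math.sqrt(n * (n - 1)) / (n - 2)   # small-sample adjustment

# Hypothetical total phosphorus results (µg/L) from 12 stream sites
total_p = [8, 10, 11, 12, 14, 15, 18, 22, 30, 45, 80, 150]

# The natural log is defined only for positive values; zeros or
# non-detects would need separate handling before this step.
log_total_p = [math.log(v) for v in total_p]

print(f"skewness, raw data: {skewness(total_p):.2f}")
print(f"skewness, log data: {skewness(log_total_p):.2f}")
```

If the log-transformed values look roughly symmetric, parametric tests can be run on the log scale; back-transformed means are then geometric rather than arithmetic means.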

Types of Exploratory Data Analysis

There are four primary types of EDA, each serving distinct purposes in the data investigation process [1]:

Univariate non-graphical analysis represents the simplest form of data analysis, where the examined data consists of just one variable. Since it deals with a single variable, it doesn't address causes or relationships. The main purpose is to describe the data and find patterns that exist within it through summary statistics including mean, median, mode, variance, range, and quartiles.
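The summary statistics listed above can be computed with Python's standard `statistics` module. This is a sketch using hypothetical nitrate values; quartile definitions vary between software packages, and `statistics.quantiles` uses its "exclusive" method by default.

```python
import statistics

# Hypothetical nitrate-N concentrations (mg/L) from one monitoring station
nitrate = [1.2, 0.8, 1.5, 1.2, 2.9, 1.1, 0.9, 1.2, 1.7, 1.4]

q1, _, q3 = statistics.quantiles(nitrate, n=4)  # default 'exclusive' method
summary = {
    "mean": statistics.fmean(nitrate),
    "median": statistics.median(nitrate),
    "mode": statistics.mode(nitrate),
    "variance": statistics.variance(nitrate),    # sample variance (n - 1)
    "range": max(nitrate) - min(nitrate),
    "q1": q1,
    "q3": q3,
}
for name, value in summary.items():
    print(f"{name:>8}: {value:.3f}")
```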

Univariate graphical analysis enhances non-graphical methods through visual representations that provide a more complete picture of the data. Common techniques include stem-and-leaf plots that show all data values and distribution shape, histograms that represent frequency or proportion of cases for value ranges, and box plots that graphically depict the five-number summary of minimum, first quartile, median, third quartile, and maximum [1].
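A minimal sketch of two of these univariate summaries in text form: the five-number summary (the values a box plot draws) and a stem-and-leaf display. The dissolved-oxygen readings and both helper functions are hypothetical, and the stem-and-leaf helper assumes non-negative integer data.

```python
import statistics
from collections import defaultdict

def five_number_summary(values):
    """(minimum, Q1, median, Q3, maximum). Quartile conventions differ between
    packages; the 'inclusive' method is used here as one common choice."""
    q1, median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return min(values), q1, median, q3, max(values)

def stem_and_leaf(values):
    """Text stem-and-leaf lines for non-negative integers: tens digits form
    the stems and units digits the leaves."""
    stems = defaultdict(list)
    for v in sorted(values):
        stems[v // 10].append(v % 10)
    lines = []
    for stem in range(min(stems), max(stems) + 1):  # keep empty stems visible
        leaves = "".join(str(leaf) for leaf in stems.get(stem, []))
        lines.append(f"{stem:>3} | {leaves}")
    return lines

# Hypothetical dissolved-oxygen saturation readings (%), rounded to integers
do_sat = [62, 71, 75, 78, 78, 81, 83, 84, 86, 88, 90, 93, 97, 104]

print(five_number_summary(do_sat))
print("\n".join(stem_and_leaf(do_sat)))
```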

Multivariate nongraphical analysis examines relationships between two or more variables through cross-tabulation or statistics without visual representations. These techniques typically show how variables interact within the dataset, revealing potential correlations or associations that might warrant further investigation.
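As a sketch of cross-tabulation, the snippet below counts hypothetical sites by land-use class and biological condition class using only the standard library. With pandas installed, `pandas.crosstab` produces the same kind of table; the records and the `cross_tabulate` helper are illustrative.

```python
from collections import Counter

def cross_tabulate(pairs):
    """Two-way frequency table as {row: {column: count}}, zero-filled."""
    counts = Counter(pairs)
    rows = sorted({r for r, _ in pairs})
    cols = sorted({c for _, c in pairs})
    return {r: {c: counts[(r, c)] for c in cols} for r in rows}

# Hypothetical site records: (dominant land use, biological condition class)
records = [
    ("urban", "poor"), ("urban", "poor"), ("urban", "good"),
    ("agricultural", "poor"), ("agricultural", "good"), ("agricultural", "good"),
    ("forested", "good"), ("forested", "good"), ("forested", "good"),
]

table = cross_tabulate(records)
for land_use, row in table.items():
    print(f"{land_use:<13} {row}")
```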

Multivariate graphical analysis uses graphics to display relationships between two or more variables through visualizations such as scatter plots, multivariate charts, run charts, bubble charts, and heat maps [1]. These representations help researchers identify complex interactions that might be difficult to detect through numerical summaries alone.

Table 1: Types of Exploratory Data Analysis and Their Applications in Environmental Monitoring

| EDA Type | Key Techniques | Environmental Monitoring Applications |
|---|---|---|
| Univariate Non-graphical | Summary statistics (mean, median, mode, variance, range) | Initial screening of individual water quality parameters (e.g., nutrient concentrations, metal levels) |
| Univariate Graphical | Histograms, box plots, stem-and-leaf plots | Examining distribution of pollutant concentrations across sampling sites [2] |
| Multivariate Nongraphical | Cross-tabulation, correlation coefficients | Assessing relationships between multiple stressors (e.g., temperature, pH, contaminant levels) [2] |
| Multivariate Graphical | Scatter plots, heat maps, bubble charts | Visualizing spatial and temporal patterns of pollution across watersheds [2] |

EDA in Environmental Monitoring: Methodologies and Workflows

Data Preparation and Quality Assessment

Careful data preparation is a critical preliminary step before conducting EDA in environmental monitoring contexts. It helps ensure that the proposed analysis is feasible, that valid results are obtained, and that analytical outcomes are not unduly influenced by anomalies or errors [3]. Data preparation should not be hurried; it is often the most time-consuming part of data analysis in environmental science. Researchers must check and clean electronic data before comprehensive analysis, which may involve formatting, collating, and manipulating datasets while preserving the ability to retrace every step back to the raw data [3].

Environmental data present unique challenges that EDA helps address. Data integrity issues can arise from multiple sources, including losses or errors during sample collection, preparation, interpretation, and reporting [3]. Even after data that have passed quality assurance/quality control (QA/QC) checks leave the field or laboratory, accidental alterations can occur during transcription, editing, recoding, or unit conversion, or when rows and columns are transposed. Effective screening combines graphical procedures (histograms, box plots, time sequence plots) with descriptive numerical measures (mean, standard deviation, coefficient of variation, skewness, and kurtosis) to detect these issues before formal analysis [3].
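The numerical screening measures named above can be sketched as follows. The example assumes a hypothetical unit-conversion slip in which one dissolved zinc result was entered in µg/L instead of mg/L; the `screening_summary` helper and all values are illustrative. Skewness is the moment coefficient g1, and kurtosis is reported as excess kurtosis (0 for a normal distribution).

```python
import statistics

def screening_summary(values):
    """Descriptive measures used to screen one variable before formal analysis."""
    n = len(values)
    mean = statistics.fmean(values)
    sd = statistics.stdev(values)                   # sample SD (n - 1)
    m2 = sum((v - mean) ** 2 for v in values) / n   # central moments
    m3 = sum((v - mean) ** 3 for v in values) / n
    m4 = sum((v - mean) ** 4 for v in values) / n
    return {
        "mean": mean,
        "sd": sd,
        "cv": sd / mean,                            # coefficient of variation
        "skewness": m3 / m2 ** 1.5,                 # moment coefficient g1
        "excess_kurtosis": m4 / m2 ** 2 - 3,        # 0 for a normal distribution
    }

# Hypothetical dissolved zinc results in mg/L. In the second series the last
# value was mistakenly entered in µg/L (1000 times too large).
clean = [0.021, 0.018, 0.025, 0.030, 0.022, 0.019, 0.027, 0.024]
with_unit_error = clean[:-1] + [24.0]

for label, data in [("clean", clean), ("unit error", with_unit_error)]:
    summary = screening_summary(data)
    print(label, {k: round(v, 3) for k, v in summary.items()})
```

The single mis-entered value inflates the coefficient of variation, skewness, and kurtosis at once, which is the kind of signal that should prompt a check against the original laboratory report before formal analysis.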

Two particularly challenging data issues in environmental monitoring include:

Outliers: Environmental researchers must exercise caution when labeling extreme observations as outliers. Statistical tests exist for identifying outliers, but simple descriptive statistics and graphical techniques, combined with the monitoring team's knowledge of the system, remain valuable tools [3]. In multivariate contexts, outlier identification becomes more complex: because the variables are correlated, an observation may be 'unusual' even when each of its values is reasonably close to the mean of the corresponding variable [3].

Censored data: Data below or above detection limits (left and right 'censored' data, respectively) are common in environmental datasets and require appropriate handling [3]. Unless a water body is degraded, failure to detect contaminants is common, leading to 'below detection limit' (BDL) recordings. Ad hoc approaches include treating observations as missing or zero, using the numerical value of the detection limit, or using half the detection limit, though each method has limitations [3].
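To show why the ad hoc substitutions are problematic, the sketch below applies each one to the same hypothetical pesticide data set, in which half of the results are below an assumed detection limit of 0.5 µg/L. It demonstrates sensitivity and is not a recommended method; the values, the limit, and the `substitute` helper are hypothetical.

```python
import statistics

DETECTION_LIMIT = 0.5  # hypothetical detection limit, µg/L

# Hypothetical atrazine results; None marks a "below detection limit" (BDL) result
results = [None, 0.7, None, 1.2, None, 0.9, None, 2.4, None, 0.6]

def substitute(values, replacement):
    """Replace BDL entries (None) with a fixed value, i.e. the ad hoc approach."""
    return [replacement if v is None else v for v in values]

detected = [v for v in results if v is not None]
estimates = {
    "BDL as missing": statistics.fmean(detected),
    "BDL = 0": statistics.fmean(substitute(results, 0.0)),
    "BDL = DL/2": statistics.fmean(substitute(results, DETECTION_LIMIT / 2)),
    "BDL = DL": statistics.fmean(substitute(results, DETECTION_LIMIT)),
}
censored_fraction = results.count(None) / len(results)

print(f"censored fraction: {censored_fraction:.0%}")
for method, mean in estimates.items():
    print(f"{method:>15}: mean = {mean:.3f}")
```

Here the estimated mean ranges from 0.58 µg/L (BDL set to zero) to 1.16 µg/L (BDL treated as missing) purely because of the substitution rule. Methods designed for censored data, such as those in the NADA package for R, avoid choosing an arbitrary substitute.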

Key EDA Techniques for Environmental Data

Environmental monitoring employs specific EDA techniques tailored to the characteristics of environmental data:

Variable Distribution Analysis represents an initial EDA step that examines how values of different variables are distributed [2]. Graphical approaches include:

- Histograms: These summarize a distribution by grouping observations into intervals (bins) and counting the observations in each interval. Because a histogram's appearance depends on how the intervals are defined, bin widths and boundaries should be chosen carefully [2].

- Boxplots: These provide compact distribution summaries through a box defined by the 25th and 75th percentiles, a line at the median, and whiskers extending to extreme values or a calculated span [2]. Boxplots are particularly useful for comparing distributions of different subsets of a single variable across sampling sites or time periods.

- Cumulative Distribution Functions (CDF): A CDF gives the probability that an observation of a variable is less than or equal to a specified value. A reverse CDF gives the probability that an observation exceeds a specified value. When constructed with weights (e.g., design weights derived from site inclusion probabilities in a probability-based survey), CDFs can estimate probabilities for statistical populations [2].

- Q-Q Plots: These graphical tools compare variables to theoretical distributions or other variables. A common application checks whether a variable is normally distributed, which informs subsequent statistical method selection [2].
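The coordinates behind a normal Q-Q plot can be computed without a plotting library. This sketch pairs sorted data with standard normal quantiles and summarizes how straight the resulting line is with a correlation coefficient (the idea behind probability-plot correlation checks). The plotting-position formula is one of several conventions, and both data sets are hypothetical.

```python
import math
import statistics

def normal_qq_points(values):
    """(theoretical, sample) quantile pairs for a normal Q-Q plot.

    Uses plotting positions (i - 0.5) / n, one common convention; other
    packages use variants such as Blom's (i - 0.375) / (n + 0.25)."""
    n = len(values)
    std_normal = statistics.NormalDist()  # mean 0, standard deviation 1
    theoretical = [std_normal.inv_cdf((i - 0.5) / n) for i in range(1, n + 1)]
    return list(zip(theoretical, sorted(values)))

def qq_correlation(values):
    """Pearson correlation between theoretical and sample quantiles.
    Values close to 1 indicate the points fall near a straight line."""
    xs, ys = zip(*normal_qq_points(values))
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical data sets: roughly symmetric vs. strongly right-skewed
symmetric = [4, 5, 5, 6, 6, 6, 7, 7, 8]
right_skewed = [1, 1, 1, 1, 2, 2, 3, 5, 12]
for name, data in [("symmetric", symmetric), ("right-skewed", right_skewed)]:
    print(f"{name:>12}: Q-Q correlation = {qq_correlation(data):.3f}")
```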

Scatterplots display matched data, with one variable on the horizontal axis and another on the vertical axis [2]. Environmental scientists typically plot the presumed influential variable (e.g., a stressor) on the horizontal axis as the independent variable and the responding attribute on the vertical axis as the dependent variable. Scatterplots help visualize relationships and reveal issues (e.g., outliers) that might influence subsequent statistical analyses [2]. They also expose data characteristics such as nonlinear relationships and non-constant variance around the central trend, either of which may call for analytical techniques other than simple linear regression [2].

Correlation Analysis quantifies the covariation of two random variables in matched data sets, usually expressed as a unitless correlation coefficient ranging from -1 to +1 [2]. The coefficient's magnitude indicates the standardized degree of association between the variables, while its sign indicates the direction of association. Environmental scientists employ different correlation measures:

- Pearson's product-moment correlation coefficient (r): Measures the degree of linear association between two variables.

- Spearman's rank-order correlation coefficient (ρ): Computed on the ranks of the data, so it measures monotonic (not necessarily linear) association and is less sensitive to outliers and skewed distributions.

- Kendall's tau (τ): Also rank-based, like Spearman's ρ, but expressed as a probability: the difference between the probability that two observations are ordered the same way on both variables (concordant) and the probability that they are ordered oppositely (discordant).

Different correlation coefficients may provide different estimates depending on data distribution, making EDA crucial for selecting appropriate measures [2].
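A small sketch makes the point concrete: for a hypothetical response that rises monotonically but exponentially along a stressor gradient, the rank-based coefficients report a perfect monotonic association while Pearson's r is noticeably lower. The helpers are illustrative implementations written for this example; the Kendall helper computes tau-a, which assumes no ties.

```python
import math
import statistics

def pearson(xs, ys):
    """Pearson product-moment correlation (linear association)."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

def average_ranks(values):
    """1-based ranks; tied values receive the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        for k in range(start, end + 1):
            ranks[order[k]] = (start + end) / 2 + 1
        start = end + 1
    return ranks

def spearman(xs, ys):
    """Spearman's rho: Pearson correlation of the ranks."""
    return pearson(average_ranks(xs), average_ranks(ys))

def kendall_tau_a(xs, ys):
    """Kendall's tau-a: (concordant - discordant) / number of pairs (no tie correction)."""
    n = len(xs)
    concordant = discordant = 0
    for i in range(n):
        for j in range(i + 1, n):
            sign = (xs[i] - xs[j]) * (ys[i] - ys[j])
            if sign > 0:
                concordant += 1
            elif sign < 0:
                discordant += 1
    return (concordant - discordant) / (n * (n - 1) / 2)

# Hypothetical stressor gradient with a monotonic but strongly nonlinear response
stressor = [1, 2, 3, 4, 5, 6, 7, 8]
response = [2 ** s for s in stressor]
print(f"Pearson r    = {pearson(stressor, response):.3f}")
print(f"Spearman rho = {spearman(stressor, response):.3f}")
print(f"Kendall tau  = {kendall_tau_a(stressor, response):.3f}")
```

In practice, `scipy.stats` provides `pearsonr`, `spearmanr`, and `kendalltau`; the latter computes tau-b by default, which corrects for ties.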

Conditional Probability Analysis (CPA) estimates the probability of some event (Y) given another event's occurrence (X), written as P(Y | X) [2]. In environmental monitoring, this typically involves dichotomous response variables created by applying thresholds to continuous response variables (e.g., poor quality vs. not poor quality). CPA estimates the probability of observing poor biological condition when particular environmental conditions exceed given values. Conditional probabilities are calculated by dividing the joint probability of observing both events by the probability of observing the conditioning event [2].
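The calculation described above can be sketched as follows for equally weighted sites. The sediment values, biological index scores, and the impairment threshold of 50 are hypothetical; with a probability survey design, each count would be replaced by a sum of design weights.

```python
def conditional_probability(stressor, poor, threshold):
    """Estimate P(poor | stressor >= threshold) as
    P(poor and stressor >= threshold) / P(stressor >= threshold).
    Each site counts equally (unweighted)."""
    n = len(stressor)
    exceeds = [s >= threshold for s in stressor]
    p_exceed = sum(exceeds) / n
    if p_exceed == 0:
        raise ValueError("no sites at or above the threshold")
    p_joint = sum(e and p for e, p in zip(exceeds, poor)) / n
    return p_joint / p_exceed

# Hypothetical data: percent fine sediment and a biological index at 10 sites
fine_sediment = [5, 12, 18, 25, 30, 35, 40, 55, 60, 70]
bio_index = [82, 75, 70, 45, 60, 40, 38, 30, 25, 20]

# Dichotomize the continuous response with a hypothetical impairment threshold
POOR_BELOW = 50
poor = [score < POOR_BELOW for score in bio_index]

for t in (5, 30, 55):
    p = conditional_probability(fine_sediment, poor, t)
    print(f"P(poor | sediment >= {t}%) = {p:.2f}")
```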

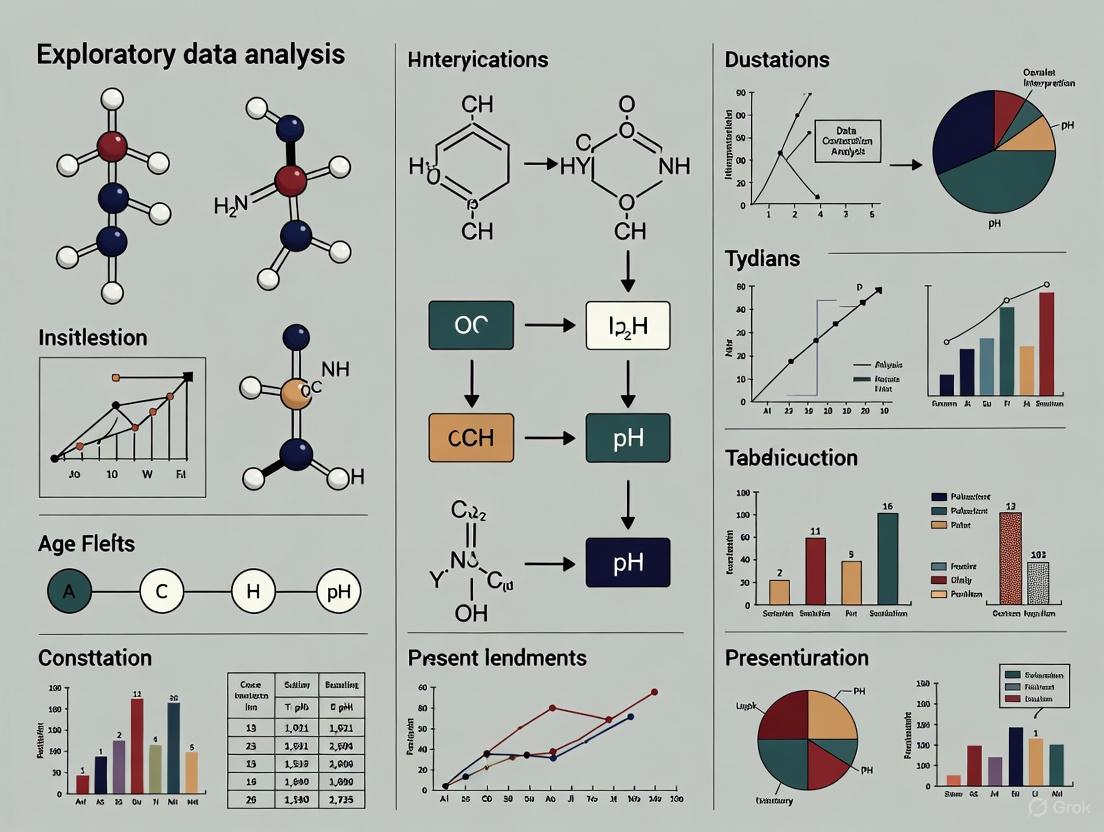

Diagram 1: Comprehensive EDA Workflow for Environmental Data Analysis. This diagram illustrates the sequential process of conducting exploratory data analysis in environmental monitoring contexts, from initial data preparation through insight generation.

Practical Implementation: Tools and Research Reagents

Computational Tools and Programming Languages

Implementing EDA in environmental monitoring requires appropriate computational tools and programming languages that facilitate data manipulation, visualization, and analysis. The most common data science programming languages used for EDA include [1]:

Python: An interpreted, object-oriented programming language with dynamic semantics. Its high-level built-in data structures, combined with dynamic typing and dynamic binding, make it attractive for rapid application development and as a scripting language for connecting existing components. In EDA, Python is commonly used to identify missing values in a dataset, which is crucial for deciding how to handle them in subsequent analysis and machine learning applications.
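As a minimal sketch of that use, the pandas snippet below counts missing values per column and per site in a hypothetical sonde export. The column names and values are assumptions for illustration.

```python
import numpy as np
import pandas as pd

# Hypothetical multiparameter sonde export; NaN marks a missing reading
df = pd.DataFrame({
    "site": ["A", "A", "B", "B", "C", "C"],
    "temp_c": [14.2, 14.8, np.nan, 15.1, 13.9, np.nan],
    "ph": [7.4, 7.5, 7.2, np.nan, 7.9, 8.0],
    "turbidity_ntu": [3.1, np.nan, np.nan, np.nan, 2.2, 2.5],
})

# Count and proportion of missing values per column
missing_counts = df.isna().sum()
missing_share = df.isna().mean()

# Missing values per site: clustering at one site may point to a sensor
# failure rather than values missing at random
missing_by_site = df.drop(columns="site").isna().groupby(df["site"]).sum()

print(missing_counts, missing_share.round(2), missing_by_site, sep="\n\n")
```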

R: An open-source programming language and free software environment for statistical computing and graphics, supported by the R Foundation for Statistical Computing. R is widely used by statisticians and data scientists for statistical modeling and data analysis, including in environmental research.

Both languages offer extensive libraries and packages specifically designed for EDA, including visualization libraries (ggplot2 in R, Matplotlib and Seaborn in Python), data manipulation frameworks (dplyr in R, Pandas in Python), and specialized statistical packages for handling environmental data challenges such as censored values and spatial correlations.

Table 2: Essential Computational Tools for Environmental Data Exploration

| Tool Category | Specific Tools/Libraries | Key Functions in Environmental EDA |
|---|---|---|
| Programming Languages | Python, R [1] | Data manipulation, statistical analysis, visualization |
| Data Visualization | ggplot2 (R), Matplotlib/Seaborn (Python) | Creating histograms, scatterplots, boxplots for environmental data |
| Statistical Analysis | statsmodels (Python), car (R) | Correlation analysis, distribution fitting, outlier detection |
| Specialized Environmental Packages | NADA (R), EnvStats (R) | Handling censored data, environmental trend analysis |
| Data Management | Pandas (Python), dplyr (R) | Data cleaning, transformation, handling missing values |

The Researcher's Toolkit: Essential EDA Techniques and Their Applications

Environmental researchers employ a diverse toolkit of EDA techniques to address different analytical needs throughout the investigation process. These techniques form the essential "research reagents" for extracting insights from complex environmental datasets:

Histograms: Used to visualize the distribution of environmental variables such as pollutant concentrations, enabling identification of skewness, multimodality, and outliers that might indicate data quality issues or meaningful environmental phenomena [2].

Boxplots: Provide compact visual summaries of variable distributions across different sites, time periods, or environmental conditions, facilitating quick comparisons and outlier detection [2]. The compact nature of boxplots makes them particularly valuable for environmental reports with space constraints.

Scatterplots: Essential for visualizing potential relationships between environmental variables, such as nutrient concentrations and biological response indicators, helping researchers identify linear and nonlinear associations before formal statistical modeling [2].

Correlation Analysis: Measures the strength and direction of association between pairs of environmental variables, with different correlation coefficients (Pearson's, Spearman's, Kendall's) appropriate for different data characteristics and distributions [2].

Q-Q Plots: Used to assess how well environmental data conform to theoretical distributions such as normality, informing decisions about data transformation and selection of appropriate statistical tests [2].

Cumulative Distribution Functions (CDF): Enable comparison of environmental variable distributions across different populations or assessment against environmental standards and guidelines, particularly when using weighted approaches that account for sampling design [2].
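The weighted CDF can be sketched as a simple ratio: the sum of design weights for sites at or below a value, divided by the total weight. This sketch assumes design weights equal to the inverse of each site's inclusion probability; the nitrate values, probabilities, and the 1.0 mg/L benchmark are hypothetical, and the code omits the variance estimation a full survey analysis would include.

```python
def weighted_cdf(values, weights, x):
    """Estimated proportion of the population with values <= x."""
    total = sum(weights)
    return sum(w for v, w in zip(values, weights) if v <= x) / total

def reverse_cdf(values, weights, x):
    """Estimated proportion of the population with values > x."""
    return 1.0 - weighted_cdf(values, weights, x)

# Hypothetical survey: nitrate (mg/L) at five sites and each site's inclusion probability
nitrate = [0.2, 0.5, 0.9, 1.4, 2.0]
inclusion_prob = [0.5, 0.5, 0.25, 0.1, 0.1]
design_weights = [1 / p for p in inclusion_prob]  # each site represents 1/p population units

GUIDELINE = 1.0  # hypothetical benchmark, mg/L
print(f"unweighted share <= guideline: {weighted_cdf(nitrate, [1] * 5, GUIDELINE):.2f}")
print(f"weighted share <= guideline:   {weighted_cdf(nitrate, design_weights, GUIDELINE):.2f}")
print(f"weighted share > guideline:    {reverse_cdf(nitrate, design_weights, GUIDELINE):.2f}")
```

Because the high-nitrate sites were sampled with low probability, they carry large weights, so the weighted estimate of the share meeting the benchmark is far lower than the unweighted one.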

Advanced EDA Applications in Environmental Research

Multivariate Analysis and Spatial Visualization

Environmental monitoring increasingly involves multivariate data analysis to understand complex interactions between multiple stressors and biological responses. Basic pairwise correlation analyses often provide insufficient insights for these complex systems, necessitating multivariate approaches to exploratory data analysis [2]. Multivariate graphical techniques enable researchers to visualize interactions between multiple variables simultaneously, revealing patterns that might be obscured when examining variables in isolation.

Spatial visualization represents another critical EDA component in environmental monitoring, as the geographic distribution of sampling sites and measured parameters often reveals patterns essential for understanding environmental phenomena [2]. Mapping data helps researchers recognize spatial relationships between samples, identify geographic hotspots of contamination, and understand regional variations in environmental conditions. These spatial patterns might suggest underlying geological, hydrological, or anthropogenic factors influencing measured parameters, guiding subsequent investigation and targeted monitoring efforts.

Effect Directed Analysis (EDA) in Environmental Toxicology

A specialized application of exploratory approaches in environmental science is Effect Directed Analysis (also abbreviated EDA, but distinct from exploratory data analysis), which combines biological-effect testing with chemical analysis to identify the causative agents in complex environmental mixtures [4] [5]. This methodology is particularly valuable for identifying emerging pollutants with diverse health effects that are difficult to predict from environmental concentrations alone [4]. The process involves three key components: (1) bioassays to evaluate effects on cells or organisms, (2) chromatographic fractionation to separate individual chemicals, and (3) multi-target and non-target chemical analysis of the samples [4].

The specificity, capabilities, and limitations of effect directed analysis depend on factors including the type of bioassay, the sample preparation and fractionation methods, and the instruments used to identify toxic pollutants [4]. High-resolution techniques such as time-of-flight mass spectrometry (ToF-MS), Fourier transform ion cyclotron resonance (FT-ICR) mass spectrometry, and Orbitrap mass spectrometry provide fingerprints of hidden contaminants in complex environmental samples, even at sub-parts-per-billion concentrations [4]. This approach has helped researchers establish cause-and-effect relationships for complex emerging contaminants and their mixtures, and it represents a sophisticated application of exploratory principles to identify previously unrecognized environmental hazards.

Exploratory Data Analysis remains a critical first step in environmental data analysis, providing researchers with essential insights into data structure, quality, and relationships before undertaking formal statistical testing or modeling. The visual and quantitative techniques comprising EDA help environmental scientists understand complex systems, identify data issues, recognize patterns, and generate hypotheses for further investigation. As environmental monitoring efforts generate increasingly large and complex datasets, the principles of EDA developed by Tukey decades ago continue to provide valuable guidance for extracting meaningful information from environmental data. By employing the comprehensive workflow outlined in this technical guide and utilizing the appropriate tools and techniques for their specific research context, environmental professionals can ensure their analytical approaches are well-founded and their conclusions are supported by thorough preliminary data investigation.

In the highly regulated realms of environmental monitoring, pharmaceutical development, and biomedical research, data integrity serves as the foundational bedrock for scientific credibility, regulatory compliance, and public safety. Data integrity refers to the complete accuracy, consistency, and reliability of data throughout its entire lifecycle, from initial collection and processing to final analysis, reporting, and archival [6] [7]. Within the context of exploratory data analysis (EDA) in environmental research, robust QA/QC measures are not merely administrative formalities but are scientifically essential for ensuring that the patterns, trends, and outliers revealed during EDA are genuine reflections of environmental conditions rather than artifacts of poor data management [2] [3].

The ALCOA+ principles provide a widely recognized framework for data integrity, mandating that all data be Attributable, Legible, Contemporaneous, Original, and Accurate, with the "+" extending these to include Complete, Consistent, Enduring, and Available [6] [8]. Regulatory bodies like the FDA and EPA increasingly demand strict adherence to these principles, and failures can result in severe consequences, including warning letters, study rejection, and reputational damage [6] [8]. This technical guide outlines the core QA/QC measures that safeguard data integrity, with a specific focus on their critical role in supporting valid exploratory data analysis within environmental monitoring research.

Core Principles and the QA/QC Framework

A robust Quality Assurance (QA) and Quality Control (QC) framework is essential for maintaining data integrity. QA encompasses the broad planned actions necessary to provide confidence that data quality requirements will be met, including study design, training, and documentation. QC comprises the specific technical activities used to assess and control the quality of the data as it is generated, such as calibration of instruments, replicate analyses, and control charts [9].
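Control charts are one of the QC tools named above. The sketch below derives Shewhart-style limits (mean ± 2s warning, mean ± 3s control) from hypothetical historical recoveries of a check standard and classifies new results. The data and function names are illustrative, and laboratories often apply additional run rules that are not implemented here.

```python
import statistics

def control_limits(history):
    """Shewhart-style limits from historical QC results (s = sample SD)."""
    center = statistics.fmean(history)
    s = statistics.stdev(history)
    return {
        "center": center,
        "warning": (center - 2 * s, center + 2 * s),
        "control": (center - 3 * s, center + 3 * s),
    }

def classify(result, limits):
    """Classify a new QC result against the warning and control limits."""
    low_c, high_c = limits["control"]
    low_w, high_w = limits["warning"]
    if not low_c <= result <= high_c:
        return "out of control"
    if not low_w <= result <= high_w:
        return "warning"
    return "in control"

# Hypothetical historical recoveries (%) of a laboratory check standard
history = [98, 101, 99, 102, 100, 97, 103, 100, 99, 101]
limits = control_limits(history)
for new_result in (101, 104.5, 107):
    print(new_result, classify(new_result, limits))
```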

Table 1: Core Aspects of Environmental Data Integrity [8] [9] [7]

| Aspect | Technical Definition | Role in QA/QC Framework |
|---|---|---|
| Accuracy | Closeness of a measured value to its true or accepted reference value. | Achieved through instrument calibration, use of certified reference materials, and method validation. |
| Reliability | Consistency and repeatability of data over time and under defined conditions. | Ensured via standardized operating procedures (SOPs), routine maintenance, and qualified personnel. |
| Completeness | Proportion of all required data points that are collected and available for analysis. | Managed through chain-of-custody forms, data review processes, and handling protocols for missing data. |
| Timeliness | Availability of data within a timeframe that allows for effective decision-making. | Governed by project schedules, data processing workflows, and rapid reporting systems for critical results. |
| Attributability | Ability to trace a data point to its source (person, instrument, time, and location). | Implemented via secure login credentials, audit trails, and detailed metadata capture. |
| Security | Protection of data from unauthorized access, alteration, or destruction. | Maintained through user access controls, audit trails, data backup, and encryption. |

The Quality Assurance Project Plan (QAPP) is a formal document that operationalizes this framework. The QAPP describes in comprehensive detail the QA/QC requirements and technical activities that must be implemented to ensure the results of environmental operations will satisfy stated performance criteria [9]. For researchers, a well-constructed QAPP is not a burden but a vital tool that pre-defines data quality objectives, standardizes protocols, and ultimately ensures that the data is fit for its intended purpose, including sophisticated exploratory and statistical analyses.

The Data Lifecycle: QA/QC Measures from Collection to Analysis

Data integrity must be maintained throughout the entire environmental data lifecycle. The integration of QA/QC measures at each stage creates a seamless chain of custody and quality, which is fundamental for trustworthy Exploratory Data Analysis.

Stage 1: Data Generation and Collection

This initial stage is critical, as errors introduced here are often impossible to correct later. Key QA/QC measures include:

- Standardized Sampling Protocols: Using scientifically valid, standardized procedures for sample collection, preservation, and transportation to prevent contamination or degradation [9].

- Instrument Calibration and Validation: Ensuring all monitoring instruments and sensors are properly calibrated against traceable standards and are functioning within specified parameters before and during data collection [8]. This includes Installation Qualification (IQ), Operational Qualification (OQ), and Performance Qualification (PQ) for critical systems [8].

- Automated Data Capture: Wherever possible, replacing manual, paper-based logging with automated digital systems to eliminate transcription errors and ensure data are recorded contemporaneously [6] [8]. Barcode systems can further enhance traceability and accuracy [6].

Stage 2: Data Processing and Management

Once collected, raw data often requires processing and secure storage.

- Data Validation and Verification: Implementing checks to confirm that data falls within expected ranges and is consistent with other related parameters. This includes checking for impossible values (e.g., negative concentrations) or extreme outliers that may indicate an error [3].

- Handling Censored Data: A significant challenge in environmental data is values reported as "Below Detection Limit" (BDL). Ad hoc approaches like substituting with zero, half the detection limit, or the detection limit itself can bias statistical analyses. For robust EDA, it is recommended to flag all censored data and, if a significant proportion (>25%) of data is censored, employ more sophisticated statistical methods for left-censored data [3].

- Secure Data Storage: Utilizing secure, managed databases with features like user access controls and audit trails to prevent unauthorized alteration and maintain a record of all changes to the data [6] [8]. Robust backup and disaster recovery plans are essential to ensure data endurance and availability [7].
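The validation and censoring checks in this list can be combined into a small screening step. The sketch below assumes results arrive as text strings in which a leading "<" marks a below-detection-limit value (a common reporting convention, assumed here), flags impossible and implausible values, and compares the censored fraction with the 25% threshold cited above. The `plausible_max` bound and the data are hypothetical.

```python
CENSORED_SHARE_THRESHOLD = 0.25  # proportion cited in the text [3]

def screen_results(reported, plausible_max):
    """Parse reported results and attach QC flags.

    A leading "<" is read as a left-censored (BDL) result; its number is the
    detection limit, not a measured value. `plausible_max` is a hypothetical,
    analyte-specific upper bound chosen by the project team."""
    records = []
    for raw in reported:
        text = raw.strip()
        censored = text.startswith("<")
        value = float(text.lstrip("<"))
        flags = ["BDL"] if censored else []
        if value < 0:
            flags.append("impossible: negative concentration")
        elif value > plausible_max:
            flags.append("outside plausible range")
        records.append({"reported": raw, "value": value,
                        "censored": censored, "flags": flags})
    censored_share = sum(r["censored"] for r in records) / len(records)
    return records, censored_share, censored_share > CENSORED_SHARE_THRESHOLD

# Hypothetical dissolved copper results (µg/L) as received from a laboratory
reported = ["<0.5", "0.8", "-0.2", "<0.5", "1.1", "45", "<0.5", "0.9"]
records, share, needs_censored_methods = screen_results(reported, plausible_max=10.0)
for r in records:
    if r["flags"]:
        print(r["reported"], r["flags"])
print(f"censored share: {share:.0%}; use censored-data methods: {needs_censored_methods}")
```

Flags mark values for review; whether an out-of-range value is an error or a real event is decided by investigation, not by the screen itself.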

Stage 3: Data Analysis and Reporting

This is the stage where EDA comes to the fore, and its validity depends entirely on the integrity of the preceding stages.

- Exploratory Data Analysis (EDA): EDA is an essential first step that uses a suite of graphical and numerical tools to identify general patterns, features, and potential issues in the data before formal hypothesis testing or modeling [2]. Key EDA techniques for verifying data quality and informing subsequent analysis are detailed in the next section.

- Managing Outliers: EDA often reveals outliers. It is critical to investigate the cause of an outlier before deciding to exclude it. A statistical outlier may be a genuine extreme value or a result of a measurement error. Exclusion should only occur if a compelling technical reason exists (e.g., a known sampling error); otherwise, the value should be retained and flagged for further scrutiny, as it may represent a critical environmental signal [3].

- Metadata and Documentation: Complete and accurate reporting must include comprehensive metadata describing the methodologies, instruments, and processing steps used. This ensures attributability and allows for the proper interpretation and replication of the analysis [9] [7].
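The flag-and-retain guidance for outliers can be sketched with the boxplot (Tukey) fences, Q1 - 1.5 × IQR and Q3 + 1.5 × IQR. Values outside the fences are marked for investigation but never dropped. The conductance data are hypothetical, and the 1.5 multiplier is the conventional boxplot default rather than a requirement from [3].

```python
import statistics

def flag_tukey_outliers(values, k=1.5):
    """Return every value with a flag; values beyond Q1 - k*IQR or Q3 + k*IQR
    are marked for investigation but are NOT removed."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [
        {"value": v, "flag": "investigate" if v < low or v > high else ""}
        for v in values
    ]

# Hypothetical specific conductance readings (µS/cm) from one station
conductance = [410, 425, 398, 440, 415, 432, 1210, 405, 420, 428]
flagged = flag_tukey_outliers(conductance)
for row in flagged:
    if row["flag"]:
        print(row)
```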

The Integral Role of Exploratory Data Analysis in QA/QC

Exploratory Data Analysis is a powerful component of the QC toolkit. By applying EDA techniques, researchers can assess data quality, identify potential integrity issues, and confirm that assumptions for subsequent statistical analyses are met [2] [3]. The workflow below visualizes this iterative process of using EDA for data quality assessment.

Diagram 1: EDA for QA/QC Workflow

Table 2: Key EDA Techniques for Data Quality Assessment [2] [3] [10]

| EDA Technique | Primary QA/QC Function | Methodology & Interpretation |
|---|---|---|
| Histograms & Boxplots | Examine the distribution of a single variable and identify potential outliers. | Methodology: Plot frequency of values (histogram) or a 5-number summary (boxplot). QA/QC Use: Reveals skewness, bimodality, and values outside the "whiskers" of the boxplot (potential outliers requiring investigation). |
| Scatterplots & Correlation Analysis | Visualize and quantify relationships between two variables. | Methodology: Plot one variable against another; calculate Pearson's (linear) or Spearman's (monotonic) correlation coefficient. QA/QC Use: Identifies expected/unexpected relationships, non-linearity, and clusters of data that may indicate sampling bias or data quality issues. |
| Quantile-Quantile (Q-Q) Plots | Assess if data follows a theoretical distribution (e.g., normality). | Methodology: Plot sample quantiles against theoretical quantiles. QA/QC Use: A straight line suggests the data follows the theoretical distribution. Significant deviations indicate skewness or heavy tails, informing the choice of subsequent statistical tests or the need for data transformation. |
| Spatial Mapping & Variograms | Evaluate spatial autocorrelation and trends for geospatial data. | Methodology: Map sample locations with posted results; a variogram plots semivariance against distance between points. QA/QC Use: Identifies spatial trends, clusters of high/low values, and the range of spatial correlation. Helps detect outliers that are anomalous in a spatial context. |
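The variogram row can be illustrated with the classical (Matheron) estimator, gamma(h) = [1 / (2 N(h))] × sum of (z_i - z_j)^2 over the N(h) point pairs separated by approximately distance h. The sketch below bins pair distances and reports the mean distance, semivariance, and pair count per bin. The transect coordinates and lead concentrations are hypothetical, and a real analysis would need many more points and attention to trends and anisotropy.

```python
import math
from itertools import combinations

def empirical_semivariogram(coords, values, bin_width, n_bins):
    """Classical (Matheron) estimator over distance bins [k*w, (k+1)*w).
    Returns (mean pair distance, semivariance, pair count) for non-empty bins."""
    sums = [0.0] * n_bins
    dists = [0.0] * n_bins
    counts = [0] * n_bins
    for (p, zp), (q, zq) in combinations(zip(coords, values), 2):
        d = math.dist(p, q)
        k = int(d // bin_width)  # distance bin index
        if k < n_bins:           # ignore pairs beyond the largest lag of interest
            sums[k] += (zp - zq) ** 2
            dists[k] += d
            counts[k] += 1
    return [
        (dists[k] / counts[k], sums[k] / (2 * counts[k]), counts[k])
        for k in range(n_bins)
        if counts[k] > 0
    ]

# Hypothetical sediment lead concentrations (mg/kg) at equally spaced points
# along a 5 km transect (coordinates in km)
coords = [(0, 0), (1, 0), (2, 0), (3, 0), (4, 0), (5, 0)]
lead = [10, 12, 19, 21, 30, 32]

for lag, gamma, n_pairs in empirical_semivariogram(coords, lead, bin_width=1.0, n_bins=6):
    print(f"lag {lag:.1f} km: semivariance {gamma:.1f} ({n_pairs} pairs)")
```

Here semivariance keeps rising with distance because the hypothetical data contain a linear trend; in practice such a trend would usually be removed before fitting a variogram model.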

The power of EDA is exemplified in its application to complex environmental challenges. For instance, one study used boxplots for geochemical mapping of stream sediments, successfully identifying outliers that corresponded with known mineralization sites despite complex variability from topography and climate [11]. Furthermore, in a multivariate context—common in water quality monitoring with measurements of numerous correlated parameters—multivariate EDA techniques are crucial, as an observation can be "unusual" even if it appears normal for each variable individually [3].
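The multivariate case can be sketched with the Mahalanobis distance, which measures how far an observation lies from the data centroid after accounting for correlation between variables. In the hypothetical conductance and chloride data below, one new observation has z-scores within ±1 for each variable yet a very large Mahalanobis distance, because it breaks the strong positive correlation. The chi-square cutoff assumes approximate bivariate normality, and with so few points the covariance estimate is rough; robust estimators such as the minimum covariance determinant are often preferred in practice.

```python
import math
import statistics

def z_score(value, sample):
    """Standardized distance of one value from the sample mean."""
    return (value - statistics.fmean(sample)) / statistics.stdev(sample)

def mahalanobis_2d(point, xs, ys):
    """Mahalanobis distance of `point` from the centroid of paired samples,
    using the sample covariance matrix S. The 2 x 2 inverse is written out:
    S^-1 = [[syy, -sxy], [-sxy, sxx]] / det(S)."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx, syy = statistics.variance(xs), statistics.variance(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / (len(xs) - 1)
    det = sxx * syy - sxy ** 2
    dx, dy = point[0] - mx, point[1] - my
    d2 = (syy * dx * dx - 2 * sxy * dx * dy + sxx * dy * dy) / det
    return math.sqrt(d2)

# Hypothetical paired specific conductance (µS/cm) and chloride (mg/L) results
conductance = [200, 250, 300, 350, 400, 450, 500]
chloride = [20, 26, 29, 36, 41, 44, 51]

# Screening cutoff for d^2: 97.5th percentile of chi-square with 2 df,
# which equals -2 ln(0.025), about 7.38
CUTOFF_D2 = -2 * math.log(0.025)

for new in [(450, 46), (250, 45)]:
    zx, zy = z_score(new[0], conductance), z_score(new[1], chloride)
    d = mahalanobis_2d(new, conductance, chloride)
    status = "unusual" if d ** 2 > CUTOFF_D2 else "consistent"
    print(f"{new}: z = ({zx:.2f}, {zy:.2f}), Mahalanobis d = {d:.2f} -> {status}")
```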

Essential Research Reagent Solutions and Tools

Implementing the QA/QC and EDA protocols described requires a suite of reliable tools and materials. The following table details key solutions for environmental monitoring research.

Table 3: Essential Research Reagent Solutions and Tools for Environmental Monitoring [6] [12] [8]

| Category / Item | Primary Function in QA/QC | Technical Specification & Application Notes |

|---|---|---|

| Validated Microbial Air Samplers (e.g., MAS-100) | Accurate and attributable collection of airborne viable contaminants in cleanrooms and manufacturing environments. | Samplers should be 21 CFR Part 11 compliant, with features like barcode tracking for full sample traceability and integration with EM software for direct data transfer [6]. |

| Calibrated Data Loggers (e.g., MadgeTech) | Continuous, accurate monitoring of critical environmental parameters (temperature, humidity, pressure). | Systems must have validated calibration certificates, secure digital storage, audit trails, and real-time alerting capabilities to maintain data integrity for GMP studies [12] [8]. |

| Laboratory Information Management System (LIMS) | Centralized management of sample lifecycle, associated data, and standard operating procedures (SOPs). | A compliant LIMS enforces SOPs, manages user access, maintains a complete audit trail, and ensures data is original, accurate, and secure [7]. |

| Certified Reference Materials (CRMs) | Calibration of analytical instruments and verification of method accuracy for specific analytes. | CRMs must be traceable to national or international standards and used consistently as part of QC procedures to demonstrate analytical accuracy [9]. |

| Statistical Software with EDA Capabilities (e.g., R, Python, CADStat) | Performing comprehensive exploratory data analysis and advanced statistical modeling. | Software should generate standard EDA graphics (histograms, Q-Q plots, scatterplot matrices) and support robust statistical tests for outlier detection and correlation analysis [2] [10]. |

In environmental monitoring research, data integrity is non-negotiable. It is the essential precondition for generating reliable knowledge, making sound regulatory and public health decisions, and maintaining scientific and public trust. A systematic approach—combining a strong QA/QC framework based on ALCOA+ principles with the rigorous application of Exploratory Data Analysis—provides a powerful methodology for achieving this integrity. By embedding these practices throughout the data lifecycle, from collection through to reporting, researchers and drug development professionals can ensure their data is not only compliant but also fundamentally worthy of confidence.

Within the framework of exploratory data analysis (EDA) for environmental monitoring research, understanding the distribution of the data is a critical first step. EDA is an analysis approach that identifies general patterns in the data, including outliers and features that might be unexpected [2]. In biological monitoring data, for example, sites are likely to be affected by multiple stressors, so initial exploration of data distributions and correlations is essential before relating stressor variables to biological response variables [2]. The distribution of environmental data, whether concentrations of pollutants in soil, water quality parameters, or air quality measurements, directly influences the selection of appropriate statistical analyses and the validity of subsequent conclusions. This guide provides an in-depth examination of three foundational graphical techniques for distribution analysis: histograms, boxplots, and quantile-quantile (Q-Q) plots. Each is presented with specific methodologies and applications tailored to environmental research.

Histograms

A histogram is a graphical representation that summarizes the distribution of a continuous dataset by dividing the observations into intervals (also called classes or bins) and counting the number of observations that fall into each interval [2]. The x-axis represents the range of the data, divided into consecutive bins, while the y-axis can represent the frequency (count), percent of total, fraction of total, or density of observations in each bin. Histograms are particularly useful for visualizing the shape, central tendency, and spread of a dataset, and for identifying potential outliers or unexpected features in environmental data, such as multi-modality which may indicate multiple populations [13].

Experimental Protocol and Implementation

The construction of a histogram involves several key steps, with choices at each step influencing the final visual output and interpretation.

- Data Preparation: Begin with a cleaned and validated dataset. Address issues such as missing data or values below detection limits appropriately, as these can skew distributional understanding [3].

- Bin Selection: The number and width of bins are crucial. While many software packages default to a reasonable number, the appropriate choice can depend on the data size and variability. Too few bins can obscure patterns, while too many can introduce noise. Formal rules like Sturges' rule or the Freedman-Diaconis rule can provide guidance, but iteration and domain knowledge are often required.

- Axis Labeling: The y-axis must be clearly labeled to indicate what is being measured (e.g., "Frequency," "Density").

- Interpretation: Analyze the resulting plot for shape (symmetric, skewed), center, spread, and any potential outliers or gaps.
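The bin-selection step can be made concrete with the two rules named above. The sketch below is illustrative only: it assumes NumPy is available, uses a synthetic lognormal sample as a stand-in for skewed concentration data, and the helper names `sturges_bins` and `fd_bin_width` are hypothetical. Sturges' rule sets the number of bins to \( \lceil \log_2 n \rceil + 1 \); the Freedman-Diaconis rule sets the bin width to \( 2 \cdot \mathrm{IQR} \cdot n^{-1/3} \).

```python
import math

import numpy as np


def sturges_bins(n: int) -> int:
    """Number of bins from Sturges' rule: ceil(log2(n)) + 1."""
    return math.ceil(math.log2(n)) + 1


def fd_bin_width(x) -> float:
    """Bin width from the Freedman-Diaconis rule: 2 * IQR * n^(-1/3)."""
    x = np.asarray(x, dtype=float)
    q1, q3 = np.percentile(x, [25, 75])
    return 2.0 * (q3 - q1) * x.size ** (-1.0 / 3.0)


# Synthetic, right-skewed "concentration" data (hypothetical, for illustration only).
rng = np.random.default_rng(42)
conc = rng.lognormal(mean=1.0, sigma=0.8, size=500)

print("Sturges bins:", sturges_bins(conc.size))
print("Freedman-Diaconis width:", round(fd_bin_width(conc), 3))

# NumPy implements both rules; comparing bin counts is a quick sanity check.
for rule in ("sturges", "fd"):
    edges = np.histogram_bin_edges(conc, bins=rule)
    print(rule, "->", len(edges) - 1, "bins")
```

Because the Freedman-Diaconis width depends on the IQR rather than the standard deviation, it is less affected by a long right tail. Sturges' rule implicitly assumes roughly normal data and tends to produce too few bins for large samples, so comparing both on the same data is a reasonable starting point before adjusting by eye.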

Table 1: Histogram Components and Interpretation Guide

| Component | Description | Considerations for Environmental Data |

|---|---|---|

| Bins (Intervals) | Contiguous, non-overlapping intervals into which data is grouped. | The appearance can depend on how intervals are defined. Soil contaminant data may require different bin widths than atmospheric gas concentrations. |

| Y-Axis (Frequency) | The count of observations within each bin. | Simplest to interpret but dependent on sample size. |

| Y-Axis (Density) | The frequency relative to the bin width, so that the area of each bar represents the proportion of data. | Allows for a direct representation of the probability distribution; required when using unequal bin widths. |

| Skewness | Asymmetry in the data distribution. | Environmental data (e.g., pollutant concentrations) are often positively skewed (long tail to the right) [13]. A log-transform may be needed to approximate normality [2]. |

Application in Environmental Monitoring

Histograms are indispensable for initial data screening. For instance, the U.S. EPA demonstrates the use of a histogram for log-transformed total nitrogen data from the Environmental Monitoring and Assessment Program (EMAP)-West Streams Survey [2]. The log-transform was applied to make the distribution of total nitrogen values more closely approximate a normal distribution, which is a common requirement for many parametric statistical tests. This simple transformation, guided by the histogram's shape, ensures subsequent analyses are more valid and powerful.
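The effect of the log-transform can also be checked numerically. The sketch below does not use the EMAP-West data; it assumes a synthetic lognormal sample as a stand-in for positively skewed nitrogen concentrations and assumes NumPy and SciPy are available. It compares sample skewness before and after taking logarithms.

```python
import numpy as np
from scipy.stats import skew

# Hypothetical stand-in for total nitrogen concentrations: lognormal data are
# a common idealization of positively skewed environmental concentrations.
rng = np.random.default_rng(0)
tn = rng.lognormal(mean=0.0, sigma=1.0, size=2000)

raw_skew = skew(tn)
log_skew = skew(np.log10(tn))  # log10 keeps the scale readable as orders of magnitude

print(f"skewness (raw):   {raw_skew:.2f}")
print(f"skewness (log10): {log_skew:.2f}")
```

Skewness is unchanged by rescaling, so the natural log and log10 give the same value here. A log-transform also requires strictly positive values, so zeros and values below detection limits must be handled before this step.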

Boxplots (Box-and-Whisker Plots)

A boxplot (or box-and-whisker plot) provides a compact, standardized visual summary of a data distribution based on its five-number summary: minimum, first quartile (25th percentile), median (50th percentile), third quartile (75th percentile), and maximum [2] [14]. Its design efficiently communicates the data's center, spread, and potential outliers, making it ideal for comparing distributions across different subsets of data, such as different sites, time periods, or environmental conditions.

Experimental Protocol and Implementation

The construction of a standard boxplot follows a specific statistical protocol:

- Calculate the Five-Number Summary: Determine the minimum, first quartile (Q1), median, third quartile (Q3), and maximum of the dataset.

- Draw the Box: A box is drawn from Q1 to Q3. A line inside the box marks the median.

- Calculate the Interquartile Range (IQR): IQR = Q3 - Q1.

- Draw the Whiskers: The upper whisker extends from Q3 to the largest data point less than or equal to Q3 + 1.5×IQR. The lower whisker extends from Q1 to the smallest data point greater than or equal to Q1 - 1.5×IQR.

- Plot Outliers: Any data points that fall outside the whiskers are typically plotted as individual points (e.g., dots or circles) and are considered potential outliers [2].
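The protocol above translates directly into a few lines of code. This is a minimal sketch assuming NumPy; the function name `tukey_box_stats` and the turbidity readings are hypothetical. Note that quartile definitions differ between software packages, so fences computed here may differ slightly from those drawn by another tool.

```python
import numpy as np


def tukey_box_stats(x, k: float = 1.5) -> dict:
    """Quartiles, IQR, whisker ends and outliers following the steps above.

    Quartiles use NumPy's default linear interpolation; other software may
    use different quartile definitions and give slightly different fences.
    """
    x = np.sort(np.asarray(x, dtype=float))
    q1, med, q3 = np.percentile(x, [25, 50, 75])
    iqr = q3 - q1
    lower_fence, upper_fence = q1 - k * iqr, q3 + k * iqr
    inside = x[(x >= lower_fence) & (x <= upper_fence)]
    return {
        "q1": q1, "median": med, "q3": q3, "iqr": iqr,
        "lower_whisker": inside.min(),  # smallest point >= Q1 - k*IQR
        "upper_whisker": inside.max(),  # largest point <= Q3 + k*IQR
        "outliers": x[(x < lower_fence) | (x > upper_fence)].tolist(),
    }


# Hypothetical turbidity readings (NTU) with one storm-event spike.
readings = [2, 3, 3, 4, 4, 5, 5, 6, 7, 30]
stats = tukey_box_stats(readings)
print(stats)
```

For these readings the upper fence is \( 5.75 + 1.5 \times 2.5 = 9.5 \), so the whisker stops at 7 and the value 30 is flagged as a potential outlier for investigation rather than automatic removal.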

Table 2: Boxplot Components and Their Statistical Meaning

| Component | Statistical Meaning | Visual Representation |

|---|---|---|

| Box | Represents the middle 50% of the data (the Interquartile Range, IQR). | The edges are at Q1 and Q3. |

| Median Line | The central value of the dataset. | A line within the box. |

| Whiskers | Show the range of "typical" data values, excluding outliers. | Extend to the minimum and maximum values within 1.5×IQR from the quartiles. |

| Outliers | Data points that are unusually far from the rest of the distribution. | Plotted as individual points beyond the whiskers. |

Application in Environmental Monitoring

Boxplots are particularly powerful for comparative analysis. A study on soil CO₂ in the Marble Mountains of California effectively used a Tukey boxplot to visualize measurements across an 11-point transect, allowing for easy comparison of the central tendency and variability of CO₂ levels across different sampling locations [14]. Similarly, boxplots can stratify data by a factor, such as site or tree type in a eucalyptus and oak study, and can be enhanced by using color to communicate additional categorical information [14]. This makes them ideal for assessing differences in pollutant concentrations between control and impact sites, or for visualizing seasonal variations in water quality parameters.

Figure 1: Boxplot Construction Workflow. This diagram outlines the key steps in creating a statistical boxplot, from data preparation to the final visualization, including outlier identification.

Quantile-Quantile (Q-Q) Plots

A quantile-quantile (Q-Q) plot is a graphical technique used to assess if a dataset plausibly came from a theoretical distribution (e.g., normal, lognormal, exponential) [15]. It is a scatterplot created by plotting two sets of quantiles against one another. If the data follows the theoretical distribution, the points will form a roughly straight line. Q-Q plots are more sensitive than histograms or boxplots to deviations from normality, especially in the tails of the distribution, making them a critical tool for validating assumptions underlying many parametric statistical methods common in environmental data analysis.

Experimental Protocol and Implementation

Creating a Q-Q plot involves a systematic comparison of empirical and theoretical quantiles.

- Sort and Rank Data: Sort the sample data in ascending order: \( x_{(1)} \leq x_{(2)} \leq \dots \leq x_{(n)} \).

- Calculate Theoretical Quantiles: For a sample of size \( n \), calculate the theoretical quantiles from the chosen distribution. For a normal Q-Q plot, the \( i \)-th theoretical quantile is often calculated for the proportion \( p_i = (i - 0.5)/n \) using the inverse cumulative distribution function of the standard normal distribution.

- Create Scatter Plot: Plot the sorted sample data (empirical quantiles) on the y-axis against the calculated theoretical quantiles on the x-axis.

- Assess Linearity: Assess whether the points fall approximately along a straight line. Deviations from linearity indicate deviations from the theoretical distribution.
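The four steps can be sketched as follows, assuming NumPy and SciPy. The helper names `normal_qq` and `qq_linearity` are hypothetical, and the data are a synthetic lognormal sample. As a simple numeric companion to the visual check, the sketch reports the correlation between theoretical and sample quantiles: values close to 1 indicate a nearly straight line. Formal critical values for this statistic are not shown.

```python
import numpy as np
from scipy.stats import norm


def normal_qq(x):
    """Return (theoretical, sample) quantiles for a normal Q-Q plot.

    Uses plotting positions p_i = (i - 0.5) / n, as described above.
    """
    sample = np.sort(np.asarray(x, dtype=float))  # empirical quantiles x_(1) .. x_(n)
    n = sample.size
    p = (np.arange(1, n + 1) - 0.5) / n
    theoretical = norm.ppf(p)                     # inverse standard-normal CDF
    return theoretical, sample


def qq_linearity(x) -> float:
    """Correlation of theoretical vs sample quantiles (1.0 = perfectly straight line)."""
    t, s = normal_qq(x)
    return float(np.corrcoef(t, s)[0, 1])


# Hypothetical right-skewed concentrations (lognormal), raw vs log-transformed.
rng = np.random.default_rng(1)
conc = rng.lognormal(mean=0.5, sigma=1.0, size=200)

r_raw = qq_linearity(conc)
r_log = qq_linearity(np.log(conc))
print(f"Q-Q correlation raw: {r_raw:.3f}, log: {r_log:.3f}")
```

To draw the plot, scatter the two arrays returned by `normal_qq` (theoretical on the x-axis, sample on the y-axis). SciPy's `scipy.stats.probplot` offers a ready-made alternative, but it uses slightly different plotting positions than \( (i - 0.5)/n \).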

Table 3: Interpreting Patterns in Normal Q-Q Plots

| Pattern Observed | Interpretation | Common in Environmental Data |

|---|---|---|

| Points follow a straight line | The sample data is consistent with the theoretical distribution (e.g., normal). | Suggests data may be suitable for parametric tests. |

| Points form an "S-shaped" curve | The sample data has heavier or lighter tails than the theoretical distribution. | Heavy tails indicate more extreme values than expected. |

| Points form a curved line | The sample data is skewed relative to the theoretical distribution. | Positive skew (curve upward) is very common for untransformed concentration data [15]. |

| Presence of outliers | One or a few points deviate sharply from the line formed by the bulk of the data. | May indicate contamination, measurement error, or genuine extreme events. |

Application in Environmental Monitoring

The Q-Q plot is a cornerstone for assumption checking. The U.S. EPA highlights its use in comparing EMAP-West total nitrogen observations and log-transformed total nitrogen observations to a normal distribution [2]. The plot clearly showed that the log-transform made the data approximate a normal distribution much more closely, thereby justifying its use in subsequent analyses. Similarly, in soil background studies, Q-Q plots and histograms are used to determine the presence of multiple populations within a dataset, which is critical for defining a representative background threshold value [13].

Figure 2: Q-Q Plot Creation and Assessment. This workflow details the process of creating a Q-Q plot, from data sorting to the final interpretation of distribution fit.

Table 4: Key Research Reagent Solutions for Distributional Analysis

| Tool or Resource | Function | Application Example |

|---|---|---|

| R Statistical Language | A powerful, open-source environment for statistical computing and graphics. | Creating histograms (hist()), boxplots (boxplot()), and Q-Q plots (qqnorm(), qqplot()) [14] [15]. |

| ggplot2 R Package | A widely-used R package based on the "Grammar of Graphics" that provides considerable control over plot aesthetics and layout [14]. | Generating publication-quality histograms, density plots, and boxplots with layered customization (e.g., color, faceting). |

| ColorBrewer Palettes | A tool designed for selecting color palettes that are perceptually uniform and colorblind-safe for maps and other graphics [16]. | Applying appropriate sequential, diverging, or qualitative color schemes to enhance readability and accessibility in visualizations [16] [17]. |

| ProUCL Software (EPA) | A specialized statistical software package developed by the U.S. EPA for environmental applications, particularly for analyzing datasets with non-normal distributions and nondetect values [13]. | Calculating background threshold values for soil contaminants, handling skewed (e.g., lognormal, gamma) distributions common in environmental data [13]. |

| Data Preprocessing Protocols | Established methods for handling common data issues like missing values, censored data (e.g., Below Detection Limit), and outliers [3]. | Ensuring data integrity before analysis; for example, using robust statistical methods or imputation for BDL data rather than simple substitution [3]. |

Integrated Workflow for Environmental Data Analysis

The graphical techniques described are not used in isolation but form part of a cohesive EDA workflow. The process typically begins with data preparation and integrity checks, which include identifying and appropriately handling missing data, censored values (e.g., below detection limits), and potential outliers [3]. Following this, the distribution of key variables is examined using histograms and density plots to understand their shape and general properties. Boxplots are then employed to compare distributions across different strata or groups, such as sites, seasons, or land-use types, which can reveal potential stressors or patterns. Finally, Q-Q plots are used for a formal assessment of distributional assumptions, such as normality, which is often a prerequisite for confirmatory statistical tests like analysis of variance (ANOVA) or linear regression. This iterative process of visualization and analysis ensures that environmental scientists build a robust understanding of their data, leading to more defensible and meaningful conclusions in their research.

This technical guide provides environmental researchers with a comprehensive framework for employing numerical summaries within Exploratory Data Analysis (EDA). Focusing on the core concepts of central tendency, spread, skewness, and kurtosis, we detail standardized protocols for quantifying and interpreting these measures in the context of environmental monitoring. The document integrates practical methodologies, visual workflows, and analytical toolkits specifically designed to address the complexities of environmental data, such as non-normal distributions and data comparability challenges, thereby establishing a rigorous foundation for subsequent statistical modeling and hypothesis testing.

Exploratory Data Analysis (EDA), a philosophy and set of techniques pioneered by John Tukey, is a critical first step in the data discovery process, enabling scientists to analyze data sets, summarize their main characteristics, and uncover underlying patterns [1]. In environmental monitoring research—where data often involves complex spatio-temporal structures from diverse measuring instruments—a robust EDA is indispensable for validating data quality, checking assumptions, and formulating sound hypotheses [18] [19]. This guide focuses on the essential numerical summaries that form the bedrock of EDA: measures of central tendency, spread, skewness, and kurtosis. Mastery of these concepts allows researchers to move beyond simple descriptive statistics and develop a deeper, more nuanced understanding of their data's distribution, which is vital for everything from detecting trends in climate data to assessing the impact of environmental interventions [20].

Foundational Concepts

The Role of Distributions

A fundamental concept in statistics is the probability distribution, which describes how likely the possible values of a random variable are [21]. The most recognized is the normal distribution, which is symmetric and follows a 'bell-shaped curve' [21]. However, environmental data frequently deviates from this ideal form. The shape of a distribution (where it is centered, how spread out it is, how symmetrical it is, and how heavy its tails are) directly influences the choice of summary statistics and the validity of subsequent inferential tests. Understanding these properties through numerical summaries ensures that analytical conclusions are built on an accurate representation of the data.

EDA in Environmental Research Context

Environmental data presents unique challenges, including spatial and temporal correlations, diverse data sources, and the presence of outliers [19]. Furthermore, achieving environmental data comparability—the ability to meaningfully compare information across different sources or periods—is a critical concern [22]. This requires standardization of methodologies, metrics, and reporting protocols. Consistent application of numerical summaries is a key step in this harmonization process, allowing for valid performance comparisons year-over-year, across different monitoring sites, or against regulatory benchmarks [22].

Core Numerical Summaries

Measures of Central Tendency

Central tendency measures the typical or middle values of a dataset [18]. The choice of measure is critical and depends on the nature of the data.

Table 1: Measures of Central Tendency

| Measure | Formula/Calculation | Ideal Use Case | Environmental Example |

|---|---|---|---|

| Mean | \( \bar{x} = \frac{\sum_{i=1}^{n} x_i}{n} \) [23] | Symmetrical, normally distributed data without significant outliers [21]. | Calculating average summer temperature from daily readings at a single station. |

| Median | Middle value in an ordered list [23]. | Skewed data or data with outliers [21] [23]. | Reporting the central value of contaminant concentration data, which is often skewed. |

| Mode | Most frequent value in a dataset [23]. | Categorical (nominal) data or identifying peaks in a frequency distribution [23]. | Identifying the most common species found in a water quality survey. |

Special Consideration for Environmental Data: The mean is highly susceptible to the influence of outliers, which are common in environmental datasets (e.g., a sudden pollutant spill) [23]. Therefore, for quantitative data with significant skew (e.g., an absolute skewness greater than 2.0), the median is the recommended measure of central tendency because it is more robust [23]. Special handling is also required for pH, because the pH scale is logarithmic. The mean pH must be calculated by first converting pH values to hydrogen ion concentrations, \( [\mathrm{H}^+] = 10^{-\mathrm{pH}} \), averaging these concentrations, and then converting the result back to pH, \( \mathrm{pH} = -\log_{10}\big(\overline{[\mathrm{H}^+]}\big) \) [23].
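The pH averaging rule is easy to get wrong in spreadsheets, so a short worked example may help. This sketch assumes NumPy; the function name `mean_ph` and the three pH values are hypothetical.

```python
import numpy as np


def mean_ph(ph_values) -> float:
    """Mean pH computed via hydrogen-ion concentration, as described above."""
    h = 10.0 ** (-np.asarray(ph_values, dtype=float))  # [H+] = 10^(-pH), mol/L
    return float(-np.log10(h.mean()))                   # pH = -log10(mean [H+])


ph = [6.0, 7.0, 8.0]
print(round(mean_ph(ph), 2))  # 6.43
print(np.mean(ph))            # 7.0 (naive arithmetic mean of pH, for comparison)
```

The concentration-based mean (about 6.43) sits below the naive mean of 7.0 because \( [\mathrm{H}^+] \) grows exponentially as pH falls, so the most acidic reading dominates the average.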

Measures of Spread

Spread (or dispersion) indicates how much the data values deviate from the central tendency [18].

Table 2: Measures of Spread

| Measure | Formula/Calculation | Interpretation |

|---|---|---|

| Variance (\( s^2 \)) | Squared deviations from the mean, summed and divided by \( n - 1 \): \( s^2 = \frac{1}{n-1}\sum_{i=1}^{n} (x_i - \bar{x})^2 \) [18]. | The average squared distance from the mean, expressed in squared units. Provides a basis for more advanced statistics. |

| Standard Deviation (\( s \)) | Square root of the variance [18]. | A typical distance of data points from the mean (a root-mean-square deviation). Reported in the original units of the data, making it more interpretable than the variance. |

| Range | Maximum value - Minimum value. | A simple measure of the total span of the data. Highly sensitive to outliers. |

| Interquartile Range (IQR) | \( Q_3 - Q_1 \) (the range of the middle 50% of the data). | A robust measure of spread that is largely unaffected by outliers. Used in the construction of boxplots. |
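The contrast between robust and non-robust summaries shows up clearly when a single extreme value is introduced. In this sketch (NumPy assumed; the readings and the helper name `summarize` are hypothetical), one spill-like spike replaces the largest baseline reading.

```python
import numpy as np


def summarize(x) -> dict:
    """Central tendency and spread measures from the tables above."""
    x = np.asarray(x, dtype=float)
    q1, q3 = np.percentile(x, [25, 75])
    return {
        "mean": x.mean(),
        "median": np.median(x),
        "sd": x.std(ddof=1),        # sample standard deviation (n - 1 denominator)
        "range": x.max() - x.min(),
        "iqr": q3 - q1,
    }


baseline = [10, 11, 12, 13, 14]  # hypothetical daily readings
spiked = [10, 11, 12, 13, 140]   # same readings with one spill-like spike

for label, data in (("baseline", baseline), ("spiked", spiked)):
    s = summarize(data)
    print(label, {k: round(float(v), 2) for k, v in s.items()})
```

The mean jumps from 12 to 37.2 and the range from 4 to 130, while the median (12) and IQR (2) do not move, which is why the median and IQR are preferred for skewed or spike-prone monitoring data.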

Skewness

Skewness is a measure of the asymmetry of a probability distribution [18] [21]. A distribution can be symmetric (zero skew), right-skewed (positive skew), or left-skewed (negative skew).

- Right Skew (Positive Skew): The tail of the distribution is longer on the right side. The mean is typically greater than the median [21] [24]. Example: Annual rainfall data in an arid region, where most years have low rainfall but a few years have very high rainfall.

- Left Skew (Negative Skew): The tail of the distribution is longer on the left side. The mean is typically less than the median [21]. Example: Relative humidity in a persistently humid forest, where most readings sit near the 100% ceiling and occasional drier readings form the left tail.

Interpretation of Skewness Values:

- Highly Skewed: +1 or more, or -1 or less [21].

- Moderately Skewed: Between +0.5 and +1, or -0.5 and -1 [21].

- Approximately Symmetric: Between -0.5 and +0.5 [21].
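The interpretation thresholds above can be applied programmatically. This sketch assumes NumPy and SciPy; the function `classify_skewness` is hypothetical, values falling exactly on a boundary are assigned to the more skewed class (an assumption, since the thresholds above do not specify), and the gamma-distributed sample is a synthetic stand-in for arid-region rainfall totals.

```python
import numpy as np
from scipy.stats import skew


def classify_skewness(g: float) -> str:
    """Label a skewness value using the thresholds listed above."""
    if abs(g) >= 1.0:
        return "highly skewed"
    if abs(g) >= 0.5:
        return "moderately skewed"
    return "approximately symmetric"


rng = np.random.default_rng(7)
# Hypothetical rainfall-like totals: many small values, a few very large ones.
rain = rng.gamma(shape=0.8, scale=50.0, size=1000)

g = skew(rain, bias=False)  # bias-corrected sample skewness
print(f"skewness = {g:.2f} -> {classify_skewness(g)}; "
      f"mean {rain.mean():.1f} > median {np.median(rain):.1f}")
```

Software differs in whether it applies a small-sample bias correction (SciPy's default is `bias=True`, i.e. uncorrected), so report which estimator was used when comparing values against these thresholds.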

Kurtosis

Kurtosis is a more subtle measure of the "tailedness" or the peakedness of a distribution compared to a normal distribution [18] [21]. It is often interpreted through the lens of excess kurtosis (calculated as sample kurtosis minus 3) [21].

- Mesokurtic (Excess Kurtosis ≈ 0): Tailedness similar to a normal distribution. A kurtosis value of 3 is mesokurtic [21] [24].

- Platykurtic (Excess Kurtosis < 0): Distributions with thinner tails. A kurtosis value of less than 3 is platykurtic [21].

- Leptokurtic (Excess Kurtosis > 0): Distributions with fatter tails. A kurtosis value greater than 3 is leptokurtic [21] [24]. Leptokurtic distributions are more prone to outliers [21].

Note: There has been historical controversy around kurtosis, with modern understanding emphasizing that outliers (fatter tails) dominate the kurtosis effect more than the peakedness of the distribution [24].
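Because software packages report kurtosis under both conventions, it helps to confirm which one is in use. The sketch below assumes NumPy and SciPy and uses synthetic samples from three reference distributions whose theoretical excess kurtosis is known (uniform -1.2, normal 0, Laplace 3). In `scipy.stats.kurtosis`, `fisher=True` returns excess kurtosis and `fisher=False` returns the plain (Pearson) value.

```python
import numpy as np
from scipy.stats import kurtosis

rng = np.random.default_rng(3)
n = 20_000
samples = {
    "uniform (platykurtic)": rng.uniform(size=n),  # theoretical excess kurtosis -1.2
    "normal (mesokurtic)": rng.normal(size=n),     # theoretical excess kurtosis 0
    "Laplace (leptokurtic)": rng.laplace(size=n),  # theoretical excess kurtosis 3
}

results = {}
for name, x in samples.items():
    excess = kurtosis(x, fisher=True)    # excess kurtosis (normal = 0)
    pearson = kurtosis(x, fisher=False)  # plain kurtosis (normal = 3)
    results[name] = (excess, pearson)
    print(f"{name:24s} excess={excess:6.2f}  pearson={pearson:6.2f}")
```

The two conventions always differ by exactly 3, so a reported value of about 3 for near-normal data indicates the plain convention, and a value near 0 indicates excess kurtosis.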

Experimental Protocols for Environmental Data

Protocol 1: Comprehensive Univariate Analysis

This protocol outlines the steps for a full numerical summary of a single environmental variable.

Objective: To fully characterize the distribution of a univariate environmental dataset (e.g., daily PM2.5 readings from a single sensor over one year).

Materials: The dataset and statistical software (e.g., R or Python).

Procedure:

- Data Validation: Check for and document missing values and obvious errors (e.g., negative concentrations).

- Calculate Central Tendency:

  - Compute the mean.

  - Compute the median.

  - Compare the mean and median. A large difference suggests skewness.

- Calculate Spread:

  - Compute the standard deviation and variance.

  - Compute the range and Interquartile Range (IQR).

- Calculate Higher Moments:

  - Compute skewness.

  - Compute kurtosis (and excess kurtosis).

- Interpretation: Synthesize the results. For example: "The PM2.5 data shows strong positive skewness (1.2) and leptokurtosis (excess kurtosis 4.5), indicating a distribution with most readings at lower concentrations but a long tail of high-concentration events and a higher propensity for extreme outliers than a normal distribution. Therefore, the median and IQR are more appropriate summaries than the mean and standard deviation."
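Protocol 1 can be wrapped in a single function so that every variable and site is summarized the same way. This is a sketch under stated assumptions, not a validated QA routine: NumPy and SciPy are assumed; the function name `univariate_summary` and the PM2.5 values are hypothetical; negative concentrations are treated as invalid and excluded after being counted (whether to exclude or merely flag them is a project-specific QA decision); and the reporting choice uses the "highly skewed" threshold of \( |g| \geq 1 \) from the skewness guidance above.

```python
import numpy as np
from scipy.stats import kurtosis, skew


def univariate_summary(values) -> dict:
    """Protocol 1 as a function (a sketch; thresholds follow the guidance above)."""
    x = np.asarray(values, dtype=float)

    # Step 1: data validation. Document, then exclude, missing and negative values.
    n_missing = int(np.isnan(x).sum())
    x = x[~np.isnan(x)]
    n_negative = int((x < 0).sum())
    x = x[x >= 0]

    q1, median, q3 = np.percentile(x, [25, 50, 75])
    g = float(skew(x))
    out = {
        "n": int(x.size), "n_missing": n_missing, "n_negative": n_negative,
        "mean": float(x.mean()), "median": float(median),            # Step 2
        "sd": float(x.std(ddof=1)), "var": float(x.var(ddof=1)),     # Step 3
        "range": float(x.max() - x.min()), "iqr": float(q3 - q1),
        "skewness": g, "excess_kurtosis": float(kurtosis(x)),        # Step 4
    }
    # Step 5: choose the headline summaries; |skewness| >= 1 counts as highly skewed.
    out["report"] = "median/IQR" if abs(g) >= 1.0 else "mean/SD"
    return out


# Hypothetical daily PM2.5 readings (ug/m3) with a gap, an invalid value and a smoke event.
pm25 = [8.1, 9.4, float("nan"), 7.7, 12.0, 55.3, -1.0, 10.2, 9.9, 8.8]
print(univariate_summary(pm25))
```

SciPy's `skew` and `kurtosis` default to uncorrected (biased) estimators, whereas pandas' `Series.skew` and `Series.kurt` apply small-sample corrections, so values can differ noticeably for short records.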

Protocol 2: Assessing Data Comparability Across Sites

This protocol ensures that data from different environmental monitoring stations can be meaningfully compared.

Objective: To compare the central tendency and distribution of a variable (e.g., nitrate concentration in streams) across multiple sampling sites.

Materials: Datasets from multiple sites, collected using standardized methods (e.g., consistent water sampling and lab analysis protocols) [22].

Procedure:

- Methodology Alignment: Confirm that data collection and processing methodologies are consistent across all sites (e.g., sampling depth, time of day, preservation methods, analytical technique) [22].

- Independent Univariate Analysis: For each site's dataset, perform Protocol 1.

- Comparative Summary: Create a summary table for easy cross-site comparison.

Table 3: Comparative Summary for Nitrate Concentrations (Hypothetical Data)

| Site ID | n | Mean (ppm) | Median (ppm) | Std. Dev. (ppm) | Skewness | Kurtosis (normal = 3) |

|---|---|---|---|---|---|---|

| Site A | 120 | 2.1 | 1.8 | 1.5 | 1.8 (high positive) | 5.1 (leptokurtic) |

| Site B | 115 | 5.3 | 5.2 | 2.1 | 0.3 (approx. symmetric) | 2.9 (platykurtic) |

| Site C | 118 | 1.5 | 1.1 | 1.2 | 2.1 (high positive) | 7.3 (leptokurtic) |

- Interpretation: Analyze the table. "Site B shows higher median nitrate levels with a symmetric distribution. Sites A and C have lower central tendencies but highly right-skewed distributions, indicating frequent low-level readings punctuated by occasional severe contamination events. This skewness must be accounted for in any downstream statistical tests."
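A comparative table like the one above can be generated directly from per-site data. This sketch assumes NumPy and SciPy; the function names `shape_label` and `site_table` are hypothetical, and the per-site values are synthetic lognormal draws rather than the hypothetical figures in the table. Kurtosis is reported on the plain scale (normal = 3) to match the table, using SciPy's bias-corrected estimators.

```python
import numpy as np
from scipy.stats import kurtosis, skew


def shape_label(g: float) -> str:
    """Skewness label using the +/-0.5 and +/-1 thresholds given earlier."""
    if abs(g) < 0.5:
        return "approx sym"
    side = "pos" if g > 0 else "neg"
    return ("high " if abs(g) >= 1.0 else "moderate ") + side


def site_table(data_by_site: dict) -> list:
    """Per-site summary rows mirroring the comparative table above."""
    rows = []
    for site, values in data_by_site.items():
        x = np.asarray(values, dtype=float)
        x = x[~np.isnan(x)]  # Protocol 1, step 1 (simplified to dropping NaNs)
        g = float(skew(x, bias=False))
        rows.append({
            "site": site, "n": int(x.size),
            "mean": float(x.mean()), "median": float(np.median(x)),
            "sd": float(x.std(ddof=1)), "skewness": g, "shape": shape_label(g),
            "kurtosis": float(kurtosis(x, fisher=False, bias=False)),  # normal = 3
        })
    return rows


# Synthetic nitrate data (ppm) for three hypothetical sites.
rng = np.random.default_rng(5)
sites = {
    "Site A": rng.lognormal(mean=0.6, sigma=0.7, size=120),
    "Site B": rng.lognormal(mean=1.65, sigma=0.1, size=115),
    "Site C": rng.lognormal(mean=0.1, sigma=0.8, size=118),
}
table = site_table(sites)
for row in table:
    print(f"{row['site']}: n={row['n']}, median={row['median']:.2f} ppm, "
          f"skew={row['skewness']:.2f} ({row['shape']}), kurtosis={row['kurtosis']:.2f}")
```

Computing every site with the same function is itself a small comparability safeguard: it guarantees identical estimators, quantile definitions, and handling of missing values across sites.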

Visual Workflows and Logical Relationships

The following diagram illustrates the decision-making process for summarizing and interpreting a univariate environmental dataset, integrating the concepts of central tendency, spread, and distribution shape.

Diagram 1: Workflow for summarizing a univariate environmental dataset.

The Scientist's Toolkit: Essential Analytical Reagents

In the context of data analysis, "research reagents" are the software tools and statistical functions required to perform EDA.

Table 4: Essential Research Reagent Solutions for Numerical EDA

| Tool / Function | Category | Primary Function | Example Use in Environmental Context |

|---|---|---|---|

| R Programming Language [18] [1] | Software Environment | Statistical computing and graphics. | Calculating spatial statistics for pollutant dispersion or performing complex time-series decomposition on climate data. |

| Python Programming Language [18] [1] | Software Environment | General-purpose programming with extensive data science libraries (e.g., Pandas, SciPy, NumPy). | Identifying missing values in large-scale sensor network data or building predictive models for resource use. |

| summary() / describe() | Descriptive Statistics | Provides a quick overview of central tendency and spread for all variables in a dataset. | Initial data screening for a multi-parameter water quality dataset. |

| skew() / kurtosis() (e.g., from SciPy) | Distribution Shape | Calculates the skewness and kurtosis of a dataset. | Quantifying the asymmetry and tailedness of species population count data. |

| Histogram & Q-Q Plot [21] | Graphical EDA | Visually assesses distribution shape and normality. | Diagnosing non-normality in ground-level ozone concentration data [19]. |

| K-means Clustering [18] [1] | Multivariate Analysis | An unsupervised learning algorithm that assigns data points into K groups. | Market segmentation in sustainable product studies or identifying patterns in remote sensing imagery for land cover classification. |

Numerical summaries are far more than simple descriptive statistics; they are the foundational language through which environmental data tells its story. A rigorous, methodical application of measures of central tendency, spread, skewness, and kurtosis, as outlined in this guide, allows researchers to move from raw data to robust insight. By embedding these analyses within a structured EDA process and utilizing the appropriate toolkit, scientists can ensure their work in environmental monitoring is built upon an accurate, defensible, and deeply informed understanding of the complex systems they study.

Exploratory Data Analysis (EDA) is an essential first step in any data analysis, serving to identify general patterns, detect outliers, and reveal unexpected features within datasets [2]. In environmental monitoring research, understanding these patterns is crucial before attempting to relate stressor variables to biological response variables [2]. However, real-world environmental datasets frequently present significant analytical challenges that complicate this process, primarily through missing values and censored data, such as values reported as Below Detection Limit (BDL).

Missing values are prevalent in environmental monitoring due to sensor failures, network outages, communication errors, or device destruction [25] [26]. Similarly, censored data occurs when analytical instruments cannot precisely quantify pollutant concentrations below certain detection thresholds, leading to left-censored datasets where values are known only to be below the Limit of Detection (LOD) [27]. Both issues, if not properly addressed, can lead to biased statistical analyses, inaccurate predictions, and ultimately flawed scientific conclusions and environmental policies [28] [27].

This technical guide examines advanced methodologies for handling these data quality issues within the context of environmental monitoring research, providing researchers with scientifically-grounded approaches to maintain data integrity throughout the analytical pipeline.

Handling Missing Data in Environmental Monitoring

The Scope of the Problem in Modern Sensor Networks

The scale of wireless sensor networks (WSNs) for environmental monitoring has expanded dramatically in recent years, generating extensive spatiotemporal datasets [26]. For instance, the "CurieuzeNeuzen in de Tuin" (CNidT) citizen science project deployed IoT-based microclimate sensors in 4,400 gardens across Flanders, recording temperature and soil moisture every 15 minutes [26]. Despite their value, such datasets often contain significant missing values due to random sensor failure, power depletion, network outages, communication errors, or physical destruction [26]. This data incompleteness hampers subsequent analysis and can weaken the reliability of conclusions drawn from sensor data [26].

Classification of Imputation Methods

Imputation methods for addressing missing data in environmental datasets can be categorized into three primary approaches based on their underlying strategy:

Table 1: Classification of Missing Data Imputation Methods for Environmental Monitoring

| Method Category | Key Methods | Underlying Principle | Best Use Cases |

|---|---|---|---|

| Temporal Correlation Methods | Mean Imputation, Linear Spline Interpolation [26] | Uses historical data from the same sensor location to estimate missing values | Single-sensor datasets with strong temporal autocorrelation |

| Spatial Correlation Methods | k-Nearest Neighbors (KNN), Multiple Imputation by Chained Equations (MICE), Markov Chain Monte Carlo (MCMC), MissForest [26] | Leverages measurements from spatially correlated sensors at the same time point | Dense sensor networks with high spatial correlation |

| Spatiotemporal Hybrid Methods | Matrix Completion (MC), Multi-directional Recurrent Neural Network (M-RNN), Bidirectional Recurrent Imputation for Time Series (BRITS) [26] | Combines both temporal patterns and spatial correlations for estimation | Large-scale sensor networks with both spatial and temporal dependencies |
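As an example of the simplest temporal approach in the table, the sketch below fills gaps in a single sensor's evenly spaced series by linear interpolation between the nearest valid readings. It assumes NumPy; the function name `interpolate_gaps` and the soil-temperature values are hypothetical. Spatial and spatiotemporal methods such as KNN, MissForest, or matrix completion are not shown.

```python
import numpy as np


def interpolate_gaps(values) -> np.ndarray:
    """Fill NaN gaps in one sensor's evenly spaced series by linear interpolation."""
    y = np.asarray(values, dtype=float).copy()
    t = np.arange(y.size)
    missing = np.isnan(y)
    # np.interp holds the first/last valid value constant beyond the ends of the series.
    y[missing] = np.interp(t[missing], t[~missing], y[~missing])
    return y


# Hypothetical 15-minute soil temperatures (degC) with two gaps.
soil_temp = [12.0, np.nan, np.nan, 13.5, 14.0, np.nan, 15.0]
print(interpolate_gaps(soil_temp))
```

Linear interpolation works well for short gaps in smooth signals but flattens any peaks or diurnal extremes that fall inside longer gaps, which is one reason methods that borrow information from neighboring sensors often perform better at higher missing rates.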

Performance Evaluation of Imputation Methods

Recent research has evaluated numerous imputation techniques under different missing data scenarios. A comprehensive study assessed 12 imputation methods on microclimate sensor data with artificial missing rates ranging from 10% to 50%, as well as more realistic "masked" missing scenarios that replicate actual observed missing patterns [26].
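The artificial-missingness evaluation described above can be sketched in three steps: hide a random fraction of known values, impute them, and score the imputation against the hidden truth. This sketch assumes missing-completely-at-random (MCAR) masking and uses per-sensor mean imputation as the baseline method. The synthetic sine-wave series and the helper names are illustrative assumptions. The realistic "masked" scenarios in [26] would instead copy observed gap patterns onto complete data.

```python
import math
import random
from typing import List, Optional


def mean_impute(series: List[Optional[float]]) -> List[float]:
    """Replace every gap with the mean of the sensor's own observed values."""
    observed = [v for v in series if v is not None]
    fill = sum(observed) / len(observed)
    return [fill if v is None else v for v in series]


def masked_rmse(series: List[float], missing_rate: float, seed: int = 0) -> float:
    """Hide a random fraction of a complete series (an MCAR mask), impute the
    hidden values, and return the RMSE against the values that were hidden."""
    rng = random.Random(seed)
    n_mask = max(1, round(missing_rate * len(series)))
    masked_idx = rng.sample(range(len(series)), n_mask)
    corrupted: List[Optional[float]] = list(series)
    for i in masked_idx:
        corrupted[i] = None
    imputed = mean_impute(corrupted)
    squared_errors = [(imputed[i] - series[i]) ** 2 for i in masked_idx]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Synthetic week of 15-minute readings with a daily cycle (96 steps per day)
truth = [15 + 5 * math.sin(2 * math.pi * t / 96) for t in range(96 * 7)]
for rate in (0.1, 0.3, 0.5):
    print(f"{rate:.0%} missing -> RMSE {masked_rmse(truth, rate):.2f}")
```

Mean imputation ignores the daily cycle, so its error here stays near the spread of the cycle itself. A method that exploits temporal or spatial structure should score well below this baseline.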

Table 2: Performance Comparison of Selected Imputation Methods for Environmental Sensor Data

| Imputation Method | Strategy | Performance Notes | Computational Complexity |
|---|---|---|---|
| Matrix Completion (MC) | Spatiotemporal (static) | Tends to outperform other methods in comprehensive evaluations [26] | Moderate to High |
| MissForest | Spatial correlations | Generally performs well; random forest-based solutions often outperform others [26] | Moderate |
| M-RNN | Deep learning | Effective for complex spatiotemporal patterns [26] | High |
| BRITS | Deep learning | Directly learns missing value imputation in time series [26] | High |
| K-Nearest Neighbors (KNN) | Spatial correlations | Shows high performance in some comparative studies [26] | Low to Moderate |
| MICE | Spatial correlations | Flexible framework for multiple variable types | Moderate |
| MCMC | Spatial correlations | Yields favorable results in some environmental applications [26] | Moderate |
| Spline Interpolation | Temporal correlations | Simple but effective for gap-filling in continuous series [26] | Low |
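One simple way to realize the spatial KNN idea is as follows. To fill a gap at time t, find the k sensors whose histories are most similar to the target sensor, then average their readings at t. This minimal sketch assumes that all sensors share the same time index. It uses a hypothetical similarity measure: the root-mean-square difference over co-observed time steps. Library implementations, and the KNN variant evaluated in [26], may differ in distance metric and weighting.

```python
import math
from typing import Dict, List, Optional

Series = List[Optional[float]]


def rms_distance(a: Series, b: Series) -> float:
    """Root-mean-square difference over time steps observed by both sensors."""
    diffs = [(x - y) ** 2 for x, y in zip(a, b) if x is not None and y is not None]
    return math.sqrt(sum(diffs) / len(diffs)) if diffs else math.inf


def knn_impute(network: Dict[str, Series], k: int = 2) -> Dict[str, Series]:
    """Fill each gap with the mean same-time reading of the k most similar sensors."""
    result = {name: list(vals) for name, vals in network.items()}
    for name, vals in network.items():
        for t, v in enumerate(vals):
            if v is not None:
                continue
            candidates = []
            for other_name, other in network.items():
                if other_name == name or other[t] is None:
                    continue  # a neighbor must have a reading at the same time
                d = rms_distance(vals, other)
                if math.isfinite(d):
                    candidates.append((d, other[t]))
            candidates.sort(key=lambda c: c[0])
            nearest = [value for _, value in candidates[:k]]
            if nearest:
                result[name][t] = sum(nearest) / len(nearest)
    return result


# Hypothetical garden temperature sensors; garden_D is in a different microclimate
network = {
    "garden_A": [10.0, 11.0, None, 13.0],
    "garden_B": [10.2, 11.1, 12.1, 13.2],
    "garden_C": [10.1, 10.9, 11.9, 12.9],
    "garden_D": [20.0, 21.0, 22.0, 23.0],
}
print(knn_impute(network, k=2)["garden_A"])
```

Neighbors are chosen from the original readings rather than from already-imputed values, so one imputed gap cannot propagate into another.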

Practical Implementation Considerations

When implementing imputation methods for environmental monitoring data, several practical considerations emerge:

- Proportion of Missing Data: Method performance can vary substantially with the percentage of missing values. For example, [26] evaluated methods at missing rates from 10% to 50%.

- Missing Data Mechanisms: Understanding whether data are Missing Completely at Random (MCAR), Missing at Random (MAR), or Missing Not at Random (MNAR) informs method selection. Most imputation methods assume MCAR or MAR, and they can be biased when missingness depends on the unobserved value itself (MNAR).

- Computational Resources: Simple methods like spline interpolation require minimal resources, while deep learning approaches like M-RNN and BRITS demand significant computational power [26].

- Real-time Processing Requirements: For near real-time monitoring systems, cloud-based data processing architectures that combine multiple algorithms may be necessary [25].
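Before choosing a method, it helps to profile the missingness itself. Two useful measures are the overall fraction missing and whether the gaps are scattered dropouts or long outages, such as those caused by power depletion. This minimal sketch assumes a regularly sampled series with `None` for missing readings. `missingness_profile` is a hypothetical helper:

```python
from typing import List, Optional


def missingness_profile(series: List[Optional[float]]) -> dict:
    """Summarize how much of a series is missing and how the gaps cluster."""
    n = len(series)
    n_missing = sum(v is None for v in series)
    longest = current = n_gaps = 0
    for v in series:
        if v is None:
            current += 1
            if current == 1:  # a new run of missing values starts here
                n_gaps += 1
            longest = max(longest, current)
        else:
            current = 0
    return {
        "fraction_missing": n_missing / n if n else 0.0,
        "n_gaps": n_gaps,
        "longest_gap": longest,
    }


sensor = [4.1, None, None, 4.3, 4.2, None, 4.0, None, None, None]
print(missingness_profile(sensor))
# {'fraction_missing': 0.6, 'n_gaps': 3, 'longest_gap': 3}
```

Two sensors with the same fraction missing can call for different methods. Temporal interpolation generally copes with many short gaps far better than with a single long outage.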

Figure 1: Method Selection Workflow for Missing Data Imputation. This diagram outlines a systematic approach for selecting appropriate imputation methods based on data patterns and correlation structures.

Statistical Approaches for Censored Data (BDL)

The Challenge of Left-Censored Environmental Data

In environmental monitoring, censored data most frequently occur when pollutant concentrations fall below analytical detection limits. The result is a left-censored dataset in which some values are known only to be below the Limit of Detection (LOD) [27]. This presents significant challenges for accurate statistical analysis and environmental risk assessment [27]. For instance, studies of atmospheric organochlorine pesticide (OCP) concentrations near Tibet's Namco Lake found many compounds below detection limits, complicating monitoring and risk assessment [27].

The problem is particularly consequential because non-detects do not necessarily equate to low risk. Research in the Namco Lake region found that while most OCPs were below detection limits in lake water, they were fully detected in fish. This suggests that trace pollutants can bioaccumulate through the food chain despite low environmental concentrations [27].

Traditional Methods and Their Limitations

Several traditional approaches have been used to handle left-censored environmental data:

Table 3: Traditional Methods for Handling Left-Censored Data (BDL Values)

| Method | Description | Advantages | Limitations |
|---|---|---|---|
| Simple Substitution (LOD/2) | Replaces non-detect values with LOD/2 or LOD/√2 | Simple, widely used, requires no specialized software | Can introduce significant bias, ignores variability below LOD [27] |
| Maximum Likelihood Estimation (MLE) | Estimates parameters assuming underlying distribution | Statistically rigorous, efficient with large samples | Can exhibit greater bias with small sample sizes (n < 160) [27] |
| Regression on Order Statistics (ROS) | Fits distribution to detected values, predicts non-detects | Good performance with lognormal data | Limited to lognormal distribution, not applicable to gamma-distributed data [27] |
| Tobit Models | Models latent variable through MLE | Valuable for regression-based inference | Requires normal distribution assumption, not ideal for estimating summary statistics [27] |
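Regression on order statistics can be sketched in a few lines for the simplest case of a single detection limit. This simplified version assumes lognormal data and places all non-detects at the lowest ranks. It computes Weibull plotting positions i/(n + 1), regresses the logged detected values on the corresponding standard normal quantiles, and uses the fitted line to supply values for the non-detects. Full ROS implementations also handle multiple detection limits; this sketch does not. `simple_ros` is a hypothetical name.

```python
import math
from statistics import NormalDist
from typing import List


def simple_ros(detects: List[float], n_nondetects: int) -> List[float]:
    """Simplified ROS for a single detection limit (lognormal assumption).

    Requires at least two distinct detected values. Returns the imputed
    non-detects (lowest ranks) followed by the sorted detected values.
    """
    n = len(detects) + n_nondetects
    # Normal quantiles of Weibull plotting positions i / (n + 1), i = 1..n
    z = [NormalDist().inv_cdf(i / (n + 1)) for i in range(1, n + 1)]
    z_nd, z_det = z[:n_nondetects], z[n_nondetects:]
    y_det = [math.log(v) for v in sorted(detects)]
    # Ordinary least squares fit of log(value) = a + b * z on detected values
    z_bar = sum(z_det) / len(z_det)
    y_bar = sum(y_det) / len(y_det)
    b = sum((zi - z_bar) * (yi - y_bar) for zi, yi in zip(z_det, y_det)) / sum(
        (zi - z_bar) ** 2 for zi in z_det
    )
    a = y_bar - b * z_bar
    # Predict non-detects from the fitted line and back-transform
    imputed = [math.exp(a + b * zi) for zi in z_nd]
    return imputed + sorted(detects)


# Hypothetical sample: 6 detects and 4 non-detects below an LOD of 0.5
detects = [0.62, 0.80, 1.10, 1.50, 2.30, 3.90]
filled = simple_ros(detects, n_nondetects=4)
print("imputed non-detects:", [round(v, 3) for v in filled[:4]])
print("estimated mean:", round(sum(filled) / len(filled), 3))
```

Because the imputed values come from a lognormal fit, this approach inherits the distributional limitation noted in the table: it is not appropriate for gamma-distributed data.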

Advanced Weighted Substitution Method

To address limitations of traditional approaches, recent research has developed a weighted substitution method (ωLOD/2) that significantly improves estimation accuracy for left-censored data [27]. This method derives weight expressions that eliminate bias for both lognormal and gamma distributions, which are common for environmental pollutant data [27].

The weighted value is calculated as:

$$\omega\mathrm{LOD}/2 = w \times \frac{\mathrm{LOD}}{2}$$

where the weight $w$ is approximated as a function of three quantities (denoted here $n$, $p$, and $\theta$), whose specific form is derived in [27]:

$$w \approx f(n,\ p,\ \theta)$$

This approach accounts for the three key factors that influence substitution accuracy:

- Sample size ($n$), which determines the smoothness of the empirical cumulative distribution function

- Percentage of observations below the LOD ($p$)

- Distribution parameters ($\theta$), which affect the shape of the distribution curve [27]
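Simple and weighted substitution differ only in the multiplier applied to the LOD, so a single function can cover LOD/2, LOD/√2, and ωLOD/2. In this sketch, non-detects are stored as `None` and the sample values are hypothetical. The weight `w` is a placeholder: the actual weight expressions derived in [27] are not reproduced here and must be taken from that study or its accompanying web application.

```python
import math
from statistics import mean, stdev
from typing import List, Optional


def substitute_nondetects(values: List[Optional[float]], lod: float,
                          factor: float = 0.5) -> List[float]:
    """Replace non-detects (None) with factor * LOD.

    factor = 0.5 gives LOD/2, factor = 1/sqrt(2) gives LOD/sqrt(2), and
    factor = 0.5 * w gives the weighted value w * LOD/2, where w must come
    from the weight expressions in the source study (not reproduced here).
    """
    return [factor * lod if v is None else v for v in values]


sample = [None, 0.42, None, 0.75, 1.30, None, 0.58, 2.10]  # hypothetical, ng/m^3
lod = 0.30
w = 1.1  # hypothetical weight, for illustration only
for label, factor in [("LOD/2", 0.5), ("LOD/sqrt(2)", 1 / math.sqrt(2)), ("w*LOD/2", 0.5 * w)]:
    filled = substitute_nondetects(sample, lod, factor)
    print(f"{label:12s} mean={mean(filled):.3f} sd={stdev(filled):.3f}")
```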

Distribution Considerations for Environmental Data

A critical consideration in handling censored environmental data is the underlying distribution of the pollutant concentrations. While many environmental scientists assume lognormal distributions for pollutants, research has shown that more than half of OCPs in the atmosphere of Namco Lake followed a gamma distribution [27]. This distinction is important because the median of gamma data does not align with the geometric mean, unlike lognormal data [27].
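The point about medians and geometric means can be checked by simulation. For lognormal data, the population median and geometric mean coincide (both equal exp(μ)); for a gamma distribution, they do not. The following sketch uses Python's standard-library samplers with arbitrarily chosen parameters (lognormal μ = 0, σ = 1; gamma shape 2, scale 1):

```python
import random
from statistics import geometric_mean, median

rng = random.Random(42)
n = 20000
lognormal = [rng.lognormvariate(0.0, 1.0) for _ in range(n)]
gamma = [rng.gammavariate(2.0, 1.0) for _ in range(n)]  # shape 2, scale 1

for name, data in [("lognormal", lognormal), ("gamma", gamma)]:
    print(f"{name:9s} median={median(data):.3f} geometric mean={geometric_mean(data):.3f}")
```

With these parameters, the gamma sample's geometric mean falls noticeably below its median. A summary statistic that is appropriate under a lognormal assumption can therefore mislead when the data are gamma-distributed.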

Table 4: Performance Comparison of Methods for Censored Data (Sample Size <160)

| Method | Arithmetic Mean Estimation | Geometric Mean Estimation | Standard Deviation Estimation | Distribution Flexibility |
|---|---|---|---|---|
| ωLOD/2 | Outperforms MLE and ROS in most scenarios [27] | Superior performance for lognormal data [27] | Bias within 5% in most cases [27] | Suitable for both lognormal and gamma distributions [27] |
| MLE | Can show greater bias with small samples [27] | Good performance with correct distribution assumption | Comparable to ωLOD/2 [27] | Requires correct distribution assumption |
| ROS | Not the best performer with small samples [27] | Limited to lognormal distribution | N/A | Limited to lognormal distribution [27] |
| LOD/2 Substitution | Potentially significant bias [27] | Reasonable when >50% data above LOD [27] | Often inaccurate | Distribution independent |
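Comparisons such as Table 4 rest on simulation. The procedure is to draw samples from a known distribution, censor them at an LOD, apply a method, and average the error against the known true value. The following is a minimal Monte Carlo harness for the arithmetic-mean bias of LOD/2 substitution, assuming lognormal(0, σ) data. It illustrates the procedure only and does not reproduce the results reported in [27]. `relative_bias_lod2` is a hypothetical name.

```python
import math
import random


def relative_bias_lod2(n: int, lod: float, sigma: float = 1.0,
                       reps: int = 2000, seed: int = 1) -> float:
    """Mean relative bias of the arithmetic mean after LOD/2 substitution,
    for lognormal(0, sigma) samples of size n censored at `lod`."""
    rng = random.Random(seed)
    true_mean = math.exp(sigma ** 2 / 2)  # E[X] for lognormal(mu=0, sigma)
    total = 0.0
    for _ in range(reps):
        sample = [rng.lognormvariate(0.0, sigma) for _ in range(n)]
        # Censor: anything below the LOD becomes a non-detect replaced by LOD/2
        substituted = [lod / 2 if x < lod else x for x in sample]
        total += (sum(substituted) / n - true_mean) / true_mean
    return total / reps


for lod in (0.0, 0.5, 1.0):
    print(f"LOD={lod}: relative bias {relative_bias_lod2(50, lod):+.3f}")
```

Replacing the substitution line with another estimator (MLE, ROS, or a weighted substitution) turns the same harness into a method comparison. The LOD values and σ used above are arbitrary.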

Figure 2: Analytical Workflow for Censored Data (BDL Values). This workflow guides researchers through appropriate method selection based on distribution characteristics and sample size considerations.

Computational Tools for Environmental Data Analysis

Table 5: Essential Computational Tools for Handling Data Quality Issues

| Tool/Resource | Function | Application Context |
|---|---|---|
| WebAIM Contrast Checker | Verifies color contrast ratios for data visualization accessibility [29] | Ensuring visualizations meet WCAG 2.1 AA standards (≥4.5:1 for normal text) [29] [30] |
| EnvStats R Package | Provides comprehensive tools for analyzing censored data, distribution fitting, and parameter estimation [27] | Implementing MLE for left-censored data under lognormal and gamma distributions [27] |
| Ajelix BI | Automated data visualization platform with AI-powered analytics [31] | Generating accessible charts and dashboards for environmental data communication |
| axe DevTools | Accessibility testing framework for data visualizations [30] | Identifying and resolving color contrast issues in web-based dashboards |
| Custom Web Applications | Specialized tools for specific methodological implementations [27] | Applying weighted substitution methods (ωLOD/2) without programming expertise |
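Contrast checkers such as the WebAIM Contrast Checker apply the WCAG 2.1 contrast-ratio definition. Each color's relative luminance is computed from linearized sRGB channels, and the ratio is (L1 + 0.05) / (L2 + 0.05), where L1 is the lighter color. The following sketch implements that calculation, which is useful for checking a chart palette in bulk. The function names are hypothetical.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert an 8-bit sRGB channel to linear light, as defined in WCAG 2.1."""
    c = channel_8bit / 255
    # WCAG 2.1 uses 0.03928 here; no 8-bit value falls between it and the sRGB 0.04045
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a: str, color_b: str) -> float:
    """WCAG contrast ratio (L1 + 0.05) / (L2 + 0.05), with L1 the lighter color."""
    lighter, darker = sorted((relative_luminance(color_a), relative_luminance(color_b)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


ratio = contrast_ratio("#767676", "#FFFFFF")
print(f"{ratio:.2f}:1, meets AA for normal text: {ratio >= 4.5}")
```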

Proper handling of missing and censored data is fundamental to maintaining scientific integrity in environmental monitoring research. The choice of imputation method for missing data should be guided by the underlying data structure, with spatial methods often outperforming temporal approaches in densely networked sensor systems [26]. For censored data, the novel weighted substitution method (ωLOD/2) provides significant advantages over traditional approaches, particularly for small sample sizes common in environmental monitoring [27].

As environmental datasets continue to grow in scale and complexity, employing statistically sound methods for addressing data quality issues becomes increasingly crucial. By implementing the methodologies outlined in this guide, researchers can enhance the reliability of their analyses, leading to more accurate environmental assessments and better-informed policy decisions. Future developments in artificial intelligence and machine learning promise even more sophisticated approaches to these persistent challenges in environmental data science [25] [32].

Advanced Techniques and Real-World Applications: From Multivariate Analysis to AI

In environmental monitoring research, the ability to visualize complex, multi-dimensional data is paramount for transforming raw measurements into actionable insights. Exploratory Data Analysis (EDA) serves as a critical first step, employing techniques to identify general patterns, detect outliers, and understand the relationships between variables before formal statistical modeling [2]. This process is especially vital in environmental science, where researchers often grapple with data from multiple stressors, geographic locations, and time periods [33] [2].

This guide details three powerful visualization techniques for relationship analysis: scatterplots, scatterplot matrices, and heat maps. When applied within the context of environmental monitoring—from tracking pollutant dispersion to analyzing biomarker responses—these tools form an essential component of the data science workflow, enabling researchers to formulate hypotheses and guide subsequent analytical decisions [34] [33].

Core Visualization Techniques

Scatterplots

A scatterplot is a fundamental graphical display that represents matched data by plotting one variable on the horizontal axis and another on the vertical axis [2]. Its primary strength lies in visualizing the relationship between two continuous variables.

- Purpose and Use Cases: Scatterplots are indispensable for revealing correlations and trends, and for flagging relationships that merit causal investigation. In environmental science, they are typically used to plot an influential parameter (independent variable) against a responsive attribute (dependent variable) [2]. They help answer questions such as, "How does the concentration of a specific chemical stressor relate to the decline in a biological population?"

- Revealing Data Issues: Scatterplots can effectively expose key characteristics of a dataset that might violate the assumptions of statistical models. These include:

  - Non-linear relationships where the pattern of points curves, indicating that a simple linear model may be inadequate [2].

  - Non-constant variance (heteroscedasticity), where the spread of data points widens or narrows across the range of values, suggesting the need for techniques like quantile regression or generalized linear models [2].

- Interpretation of Correlation: The overall pattern of points in a scatterplot provides a visual estimate of the correlation between two variables. This can be quantified using correlation coefficients like Pearson's r (for linear relationships) or Spearman's ρ (for monotonic relationships) [35] [2].
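Both coefficients can be computed directly. Spearman's ρ is simply Pearson's r applied to ranks, which is why it captures any monotonic relationship rather than only linear ones. The following self-contained sketch uses hypothetical concentration-response values and assigns average ranks to ties:

```python
import math
from typing import List


def pearson(x: List[float], y: List[float]) -> float:
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def average_ranks(values: List[float]) -> List[float]:
    """Ranks starting at 1, with tied values sharing their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x: List[float], y: List[float]) -> float:
    return pearson(average_ranks(x), average_ranks(y))


# A monotonic but strongly non-linear concentration-response (hypothetical values)
conc = [0.5, 1, 2, 4, 8, 16, 32]
response = [c ** 3 for c in conc]
print(f"Pearson r    = {pearson(conc, response):.3f}")
print(f"Spearman rho = {spearman(conc, response):.3f}")
```

In this example, Spearman's ρ is 1 because the response never decreases as concentration rises. Pearson's r is lower because the relationship is not a straight line.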

The following workflow outlines the standard process for creating and interpreting a scatterplot in environmental research.

Scatterplot Matrices

When dealing with more than two variables, a scatterplot matrix (or SPLOM) becomes an invaluable tool. It is a grid of scatterplots that allows for the simultaneous examination of pairwise relationships between multiple variables [2].

- Multivariate Exploration: A scatterplot matrix enables a comprehensive overview of a dataset by displaying all possible two-way interactions in a single, consolidated view. This is crucial for environmental studies, where systems are often affected by numerous interacting factors [2]. For example, a researcher can quickly assess relationships between several water quality parameters (e.g., nitrogen, phosphorus, turbidity, pH) and multiple biological indicators.

- Identifying Confounding Factors: By visualizing multiple relationships at once, researchers can spot confounding variables—factors that are correlated with both the putative stressor and the biological response—which is a critical step in causal analysis [2].

- Diagonal Utilization: The cells along the diagonal of the matrix, which would otherwise show a variable plotted against itself, are often used to display the distribution (e.g., a histogram or density plot) of each individual variable [36].
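The layout of a scatterplot matrix can be stated precisely. An off-diagonal cell (row, column) plots the column variable on the x-axis against the row variable on the y-axis, and each diagonal cell summarizes one variable. The sketch below builds that grid of panel data, places a simple histogram on the diagonal, and leaves drawing to a plotting library. (pandas, for example, provides `pandas.plotting.scatter_matrix` for the complete task.) The water-quality values are hypothetical.

```python
from itertools import product
from typing import Dict, List, Tuple


def histogram(values: List[float], bins: int) -> List[int]:
    lo, hi = min(values), max(values)
    width = (hi - lo) / bins or 1.0  # avoid zero width when all values are equal
    counts = [0] * bins
    for v in values:
        counts[min(int((v - lo) / width), bins - 1)] += 1  # maximum goes in last bin
    return counts


def splom_panels(data: Dict[str, List[float]], bins: int = 5) -> Dict[Tuple[str, str], tuple]:
    """Cell (row, col) holds (x=col, y=row) point pairs; diagonal cells hold histograms."""
    names = list(data)
    panels = {}
    for row, col in product(names, names):
        if row == col:
            panels[(row, col)] = ("hist", histogram(data[row], bins))
        else:
            panels[(row, col)] = ("scatter", list(zip(data[col], data[row])))
    return panels


water = {
    "nitrogen": [1.2, 2.5, 3.1, 0.8, 4.0],
    "phosphorus": [0.05, 0.11, 0.14, 0.03, 0.20],
    "turbidity": [3.0, 7.5, 6.8, 2.1, 9.9],
}
for (row, col), (kind, _) in splom_panels(water, bins=3).items():
    print(f"{row:>10s} vs {col:<10s} {kind}")
```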

Heat Maps

A heat map is a graphical representation of data where individual values contained in a matrix are represented as colors [37] [38]. This technique is exceptionally powerful for visualizing complex, high-dimensional data, such as that generated in modern environmental and biomarker studies [34].
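At its core, a heat map maps each matrix value to a color. For signed quantities such as correlation coefficients, a diverging scale centered on zero is a common choice. This minimal sketch assumes a simple linear blue-white-red scale over [-1, 1] and uses hypothetical biomarker correlations. Production plots would normally use a perceptually uniform colormap from a plotting library and check text contrast against the cell colors.

```python
from typing import List


def diverging_hex(value: float, vmin: float = -1.0, vmax: float = 1.0) -> str:
    """Map a value to a blue-white-red hex color; out-of-range values are clipped."""
    t = (min(max(value, vmin), vmax) - vmin) / (vmax - vmin)  # position in 0..1
    if t < 0.5:
        s = t / 0.5  # 0 at vmin (pure blue) -> 1 at the midpoint (white)
        r, g, b = s, s, 1.0
    else:
        s = (1.0 - t) / 0.5  # 1 at the midpoint (white) -> 0 at vmax (pure red)
        r, g, b = 1.0, s, s
    return "#{:02X}{:02X}{:02X}".format(*(round(255 * c) for c in (r, g, b)))


def heatmap_colors(matrix: List[List[float]]) -> List[List[str]]:
    return [[diverging_hex(v) for v in row] for row in matrix]


# Hypothetical correlation matrix for three biomarkers
corr = [[1.0, 0.6, -0.4],
        [0.6, 1.0, 0.1],
        [-0.4, 0.1, 1.0]]
for row in heatmap_colors(corr):
    print(" ".join(row))
```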