Handling Missing Data in Environmental Time Series: A Comprehensive Guide for Biomedical and Clinical Researchers

Abstract

This article provides a comprehensive framework for handling missing data in environmental time series, tailored for researchers and professionals in biomedical and clinical development. It addresses the critical gap between theoretical imputation methods and their real-world application, covering foundational concepts of missing data mechanisms, a comparative analysis of traditional and machine learning imputation techniques, strategies for troubleshooting common pitfalls, and robust validation frameworks. By integrating insights from recent studies in environmental monitoring and healthcare, the content offers practical guidance to ensure data integrity, improve analytical accuracy, and support reliable decision-making in research and drug development.

Understanding Missing Data Mechanisms and Their Impact on Environmental and Clinical Time Series

FAQs: Understanding Missing Data Mechanisms

What are MCAR, MAR, and MNAR, and why is distinguishing between them crucial?

The classification of missing data into MCAR, MAR, and MNAR is a foundational concept for handling incomplete datasets. Understanding the distinction is critical because the validity of your statistical analysis and the correctness of your conclusions depend on using methods appropriate for your missing data mechanism [1].

MCAR (Missing Completely at Random): The probability that a value is missing is unrelated to both the observed data and the unobserved data. For example, a water quality sensor might fail due to a random power outage, independent of the pollution levels it was measuring [2]. Analyses on data that are MCAR remain unbiased, though there is a loss of power.

MAR (Missing at Random): The probability that a value is missing may depend on observed data but not on the unobserved data. For instance, in a clinical trial, younger participants might be more likely to miss follow-up visits regardless of their unobserved health outcome. Modern statistical methods like multiple imputation or maximum likelihood estimation can provide valid results under MAR [3] [1].

MNAR (Missing Not at Random): The probability of missingness depends on the unobserved value itself. For example, in an air pollution study, sensors in highly polluted areas might fail more frequently due to the corrosive environment, meaning the missing data values are systematically related to the very pollution levels you want to measure. MNAR is the most challenging scenario and requires specialized techniques like selection models or pattern-mixture models [4] [2].
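The practical difference between the three mechanisms can be seen in a small simulation. The sketch below is illustrative only: the variable names, the humidity-pollutant relationship, and the loss probabilities are all invented for the example. It compares the mean of the values that remain observed with the true mean under each mechanism.

```python
import random
import statistics

def simulate_missingness(n=20000, seed=0):
    """Return the true mean and the observed-data mean under each mechanism."""
    rng = random.Random(seed)
    # Hypothetical data: humidity is always observed; the pollutant level
    # rises with humidity plus random noise.
    humidity = [rng.uniform(0, 1) for _ in range(n)]
    pollutant = [50 + 40 * h + rng.gauss(0, 10) for h in humidity]

    def observed_mean(p_missing):
        # Keep each value with probability 1 - p_missing(value, humidity).
        kept = [y for y, h in zip(pollutant, humidity)
                if rng.random() >= p_missing(y, h)]
        return statistics.fmean(kept)

    return {
        "true": statistics.fmean(pollutant),
        # MCAR: constant 30% chance of loss, unrelated to anything.
        "mcar": observed_mean(lambda y, h: 0.3),
        # MAR: loss depends only on the observed humidity.
        "mar": observed_mean(lambda y, h: 0.6 * h),
        # MNAR: loss depends on the pollutant value itself.
        "mnar": observed_mean(lambda y, h: 0.6 if y > 80 else 0.1),
    }

means = simulate_missingness()
for mechanism, value in means.items():
    print(f"{mechanism:>5}: {value:.2f}")
```

In this setup the MCAR mean stays close to the truth, while the naive observed means under MAR and MNAR are both pulled downward. The MAR bias could in principle be removed by conditioning on humidity, which is recorded; the MNAR bias cannot be corrected from the observed data alone.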

How can I determine if my data are MCAR, MAR, or MNAR?

Diagnosing the missing data mechanism involves a combination of statistical tests and logical, domain-based reasoning [4].

Testing for MCAR: You can use statistical tests like Little’s test or conduct logistic regression analyses where the outcome is a binary indicator of missingness and the predictors are other observed variables. If no observed variable is a significant predictor of missingness, the data may be consistent with MCAR, though this cannot be proven definitively [4].

Distinguishing MAR from MNAR: This is a more significant challenge, as there is no definitive statistical test because the crucial information is missing [3] [4]. Diagnosis often relies on:

- Domain Knowledge and Intuition: Consider the data collection process. Is it plausible that the value of the missing variable itself caused it to be missing? For sensitive topics like income or heavy smoking, MNAR is often likely [4].

- Analyzing Auxiliary Variables: Use variables correlated with the missing variable to infer patterns. If the missingness can be explained by other observed variables, it supports a MAR mechanism [4].

- Sensitivity Analysis: This is a recommended best practice. You test how your results change under different plausible assumptions for the missing data (e.g., under MAR vs. various MNAR scenarios). The robustness of your conclusions is then assessed across these different scenarios [3] [4].
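A lightweight version of the "does an observed variable predict missingness?" check can be sketched without a regression library. The example below is a simplified stand-in for the logistic regression described above, not a replacement for it: it computes a Welch t-statistic comparing an observed covariate between rows where the target is missing and rows where it is observed. The function name and the toy humidity and NO2 readings are hypothetical.

```python
import math
import statistics

def missingness_association(target, covariate):
    """Welch t-statistic for `covariate`, missing-target rows vs observed rows.

    `target` uses None for missing values; `covariate` must be fully observed.
    """
    miss = [c for t, c in zip(target, covariate) if t is None]
    obs = [c for t, c in zip(target, covariate) if t is not None]
    if len(miss) < 2 or len(obs) < 2:
        raise ValueError("need at least two rows in each group")
    se = math.sqrt(statistics.variance(miss) / len(miss)
                   + statistics.variance(obs) / len(obs))
    return (statistics.fmean(miss) - statistics.fmean(obs)) / se

# Toy data: NO2 readings tend to be missing when humidity is high.
humidity = [30, 35, 40, 45, 50, 80, 85, 90, 95, 88]
no2 = [12, 14, 13, 15, 16, None, None, 20, None, None]

t_stat = missingness_association(no2, humidity)
print(f"t = {t_stat:.2f}")
```

A large absolute value argues against MCAR because missingness tracks an observed variable. It does not separate MAR from MNAR, which still depends on domain knowledge and sensitivity analysis.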

What are the best practices for preventing missing data in environmental and clinical studies?

Prevention is always superior to statistical correction [3]. A proactive data management plan is essential.

- Study Design Phase: In clinical trials, use run-in periods to screen for participant compliance. In environmental monitoring, choose robust sensors and design redundant sampling networks. Minimize participant burden in PRO (Patient-Reported Outcome) studies by keeping questionnaires concise [3].

- Data Collection Phase: Ensure adequate training for field staff or clinical trial personnel. Implement rigorous quality assurance and quality control (QA/QC) procedures. For clinical trials, continue to collect outcome data even if a participant discontinues the treatment [3] [5].

- Data Management Phase: Develop a strong Data Governance framework and a detailed Data Management Plan (DMP). This includes standards for data storage, documentation, and security to prevent data loss [5].

What are robust methods for handling missing data in environmental time series?

Time series data present unique challenges due to temporal dependencies. Effective strategies often combine multiple methods.

- For Outlier Detection (which can be treated as missing): A hybrid approach is effective. Use:

- Statistical Methods: Z-Score, Interquartile Range (IQR).

- Machine Learning Models: Isolation Forest, Local Outlier Factor (LOF), which are robust to non-normal data distributions [6].

- For Imputation:

- Short Gaps: Linear interpolation is simple and can be highly effective (R² up to 0.97) [6].

- Longer or Curved Sequences: Use shape-preserving methods like Piecewise Cubic Hermite Interpolating Polynomial (PCHIP) or Akima interpolation, which minimize errors (MSE between 0.002–0.004) and preserve natural data trends [6].

- Advanced Methods: K-Nearest Neighbors (KNN) imputation or regression-based models that can incorporate spatial information from other sensor locations [6].
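To make the KNN idea concrete, here is a minimal pure-Python sketch that fills a gap at one sensor using the timestamps at which the other sensors read most similarly. It is an illustration under simplifying assumptions (unscaled Euclidean distance, fully observed donor rows, hypothetical function and variable names), not the procedure used in the cited study.

```python
import math

def knn_impute(rows, target_idx, k=3):
    """Fill missing (None) entries in column `target_idx`.

    Each row is one timestamp; each column is one sensor. Donor rows must be
    fully observed, and distance is computed on the non-target columns.
    """
    donors = [r for r in rows if all(v is not None for v in r)]
    filled = []
    for row in rows:
        new_row = list(row)
        if row[target_idx] is None and donors:
            feats = [j for j, v in enumerate(row)
                     if j != target_idx and v is not None]
            if feats:  # otherwise there is nothing to match on; leave the gap
                nearest = sorted(
                    donors,
                    key=lambda d: math.dist([row[j] for j in feats],
                                            [d[j] for j in feats]),
                )[:k]
                new_row[target_idx] = (
                    sum(d[target_idx] for d in nearest) / len(nearest)
                )
        filled.append(new_row)
    return filled

# Columns: station A, station B, station C (C has a gap in the last row).
readings = [
    [10.0, 11.0, 10.5],
    [20.0, 21.0, 20.5],
    [30.0, 31.0, 30.5],
    [40.0, 41.0, 40.5],
    [21.0, 22.0, None],
]
filled = knn_impute(readings, target_idx=2, k=2)
print(filled[-1])
```

In practice the sensor columns would usually be standardized first so that no single station dominates the distance, and the choice of k would be tuned on held-out data.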

Troubleshooting Guides

Problem: High Dropout Rate in a Clinical Trial with Patient-Reported Outcomes (PROs)

Background: A significant number of participants in your behavioral or drug intervention trial have dropped out, leading to monotonic missing data in your primary PRO, such as quality of life.

Diagnosis Steps:

- Define the Estimand: Before analysis, precisely define what you want to estimate. Specify how you will account for participants who dropped out in your research question [3].

- Diagnose the Mechanism:

- Check if dropout is associated with observed baseline data (e.g., baseline symptom severity, age, treatment arm) using logistic regression. If a relationship exists, the data are not MCAR [3] [4].

- Use domain knowledge. If patients on a more aggressive drug regimen drop out due to unrecorded side effects, the mechanism could be MNAR [3].

Solutions:

- Primary Analysis: Use statistically principled methods that assume data are MAR, such as multiple imputation (MI) or a mixed model for repeated measures (MMRM) [3].

- Sensitivity Analysis: Mandatory. Conduct analyses under MNAR assumptions (e.g., using pattern-mixture models or delta-adjustment) to see if your trial's conclusion changes. This assesses the robustness of your primary finding [3].
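The logic of a delta-adjustment sensitivity analysis can be sketched in a few lines. The example below is deliberately simplified and rests on loud assumptions: it uses single mean imputation as a stand-in for a proper MAR-based model (a real trial analysis would use multiple imputation within treatment arm), and the quality-of-life scores and delta values are invented.

```python
import statistics

def delta_adjusted_mean(values, delta):
    """Mean after imputing each missing entry as (observed mean + delta).

    delta = 0 corresponds to the MAR-style fill; a negative delta assumes
    that dropouts had worse outcomes than comparable completers.
    """
    observed = [v for v in values if v is not None]
    mar_fill = statistics.fmean(observed)
    completed = [v if v is not None else mar_fill + delta for v in values]
    return statistics.fmean(completed)

# Hypothetical quality-of-life scores; None marks participants who dropped out.
qol_scores = [62, 70, None, 58, None, 66, 74, None, 60, 70]

sensitivity = {
    delta: round(delta_adjusted_mean(qol_scores, delta), 2)
    for delta in (0, -5, -10)
}
print(sensitivity)
```

The question for the analyst is how large the shift has to be before the study conclusion changes, and whether a shift of that size is clinically plausible.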

Problem: Intermittent Missing Values in Environmental Sensor Data

Background: Your network of air quality sensors has intermittent missing readings due to transient communication failures or temporary sensor malfunctions.

Diagnosis Steps:

- Map the Missingness Pattern: Determine if the missing data is isolated or in blocks. Check for zero readings, which are often physically implausible for gas concentrations and should be treated as outliers or missing [6].

- Investigate Correlates: Analyze if missingness is related to other observed variables (e.g., a specific sensor unit, time of day, or extreme weather conditions like high humidity). This suggests a MAR mechanism [4] [6].

Solutions:

- Preprocessing: Identify and remove outliers using a hybrid method (e.g., IQR and Isolation Forest) and treat them as missing values [6].

- Tailored Imputation:

- For short, isolated gaps, use linear interpolation [6].

- For longer sequential gaps or data with natural curvature, use PCHIP or Akima interpolation to better preserve the trend and shape of the data [6].

- For multivariate datasets with several correlated sensors, leverage KNN imputation or regression models that use spatial correlations from other nearby stations to fill gaps [6].
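The "short gaps only" rule can be enforced in code so that long outages are not silently bridged by a straight line. The sketch below assumes evenly spaced timestamps and uses None for missing readings; the function name and the `max_gap` threshold are illustrative choices rather than values from the cited study.

```python
def interpolate_short_gaps(values, max_gap=3):
    """Linearly interpolate runs of None that are at most `max_gap` long.

    Longer runs, and gaps touching either end of the series, stay as None so
    they can be handled by a different method (e.g., PCHIP or KNN).
    """
    out = list(values)
    n = len(values)
    i = 0
    while i < n:
        if values[i] is not None:
            i += 1
            continue
        start = i
        while i < n and values[i] is None:
            i += 1
        end = i  # index of the first observed value after the gap
        gap = end - start
        if start == 0 or end == n or gap > max_gap:
            continue  # leading, trailing, or too long: leave untouched
        left, right = values[start - 1], values[end]
        for j in range(start, end):
            frac = (j - start + 1) / (gap + 1)
            out[j] = left + (right - left) * frac
    return out

series = [1.0, None, 3.0, None, None, None, None, 8.0, None]
filled = interpolate_short_gaps(series, max_gap=2)
print(filled)
```

Here the single missing point is filled, while the four-point outage and the trailing gap are left for a method better suited to longer sequences.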

Experimental Protocols for Handling Missing Data

Detailed Methodology: A Dual-Phase Framework for Environmental Time Series

This protocol is adapted from a study focused on improving gas and weather data quality [6].

1. Phase One: Outlier Detection and Removal

- Objective: Identify and flag anomalous data points that could skew analysis and imputation.

- Materials: A time series dataset (e.g., from environmental sensors) with timestamps.

- Procedures:

- Statistical Methods:

- Z-Score: Calculate for each data point. Flag points where |Z-Score| > 3 as potential outliers.

- Interquartile Range (IQR): Calculate the IQR (Q3 - Q1). Flag points below (Q1 - 1.5 × IQR) or above (Q3 + 1.5 × IQR).

- Machine Learning Methods:

- Isolation Forest: Fit the model to the data. This algorithm is efficient at isolating anomalies in high-dimensional data.

- Local Outlier Factor (LOF): Fit the model to identify samples with substantially lower density than their neighbors.

- Action: All flagged outliers and zero readings are removed and treated as missing values for the imputation phase.
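The statistical half of Phase One can be sketched with the standard library alone. The example below flags a point if either the Z-score rule or the IQR rule fires and converts flagged points and zero readings to None; the machine learning detectors (Isolation Forest, LOF) are not covered here because they need an external library. The function name and the toy CO2 readings are hypothetical, and the quartiles use the default method of `statistics.quantiles`, which may differ slightly from other software.

```python
import statistics

def flag_outliers(values, z_thresh=3.0, iqr_k=1.5):
    """Return a copy of `values` with zeros and outliers replaced by None."""
    valid = [v for v in values if v is not None and v != 0]
    mean = statistics.fmean(valid)
    sd = statistics.stdev(valid)
    q1, _, q3 = statistics.quantiles(valid, n=4)
    lo = q1 - iqr_k * (q3 - q1)
    hi = q3 + iqr_k * (q3 - q1)

    cleaned = []
    for v in values:
        if v is None or v == 0:
            cleaned.append(None)  # already missing, or implausible zero
        elif abs(v - mean) > z_thresh * sd or v < lo or v > hi:
            cleaned.append(None)  # flagged by the Z-score or the IQR rule
        else:
            cleaned.append(v)
    return cleaned

co2 = [410, 412, 0, 415, 409, 411, 980, 413, 414, 410, None, 412]
cleaned = flag_outliers(co2)
print(cleaned)
```

In this toy series the spike at 980 inflates the standard deviation enough that its own Z-score stays below 3, so only the IQR rule catches it. That masking effect is one reason to combine detectors rather than rely on a single rule.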


2. Phase Two: Missing Value Imputation

- Objective: Fill missing gaps in a way that restores temporal coherence and realistic trends.

- Procedures:

- Characterize the Gap: Determine if the missing sequence is short/isolated or a prolonged block.

- Apply Imputation Method:

- For short, isolated gaps: Apply linear interpolation.

- For longer sequential gaps or data with inherent curvature: Apply PCHIP or Akima interpolation. These methods are designed to preserve the shape of the data and avoid overshooting.

- Validation (if ground truth is known): Artificially remove some known values, apply the imputation, and calculate performance metrics like Mean Squared Error (MSE) and R-squared to select the best method.
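The validation step can be sketched as a small hold-out experiment: hide values that are actually known, impute them, and score each method on the hidden points only. The two imputers below (linear interpolation and last observation carried forward) and the toy series are illustrative assumptions chosen to keep the example short.

```python
def mse(truth, pred):
    return sum((t - p) ** 2 for t, p in zip(truth, pred)) / len(truth)

def r_squared(truth, pred):
    mean_t = sum(truth) / len(truth)
    ss_res = sum((t - p) ** 2 for t, p in zip(truth, pred))
    ss_tot = sum((t - mean_t) ** 2 for t in truth)
    return 1 - ss_res / ss_tot  # undefined if all held-out truths are equal

def linear_fill(values):
    """Linear interpolation between neighbouring observed points."""
    out = list(values)
    known = [i for i, v in enumerate(values) if v is not None]
    for a, b in zip(known, known[1:]):
        for j in range(a + 1, b):
            out[j] = values[a] + (values[b] - values[a]) * (j - a) / (b - a)
    return out

def locf(values):
    """Last observation carried forward."""
    out, last = [], None
    for v in values:
        last = v if v is not None else last
        out.append(last)
    return out

def score_method(series, holdout_idx, impute):
    """Mask the held-out indices, impute, and return (MSE, R^2) on them."""
    masked = list(series)
    for i in holdout_idx:
        masked[i] = None
    filled = impute(masked)
    truth = [series[i] for i in holdout_idx]
    pred = [filled[i] for i in holdout_idx]
    return mse(truth, pred), r_squared(truth, pred)

series = [2.0, 4.0, 6.0, 8.0, 10.0, 12.0, 14.0, 16.0]
holdout = [2, 5]
linear_scores = score_method(series, holdout, linear_fill)
locf_scores = score_method(series, holdout, locf)
print("linear:", linear_scores, "LOCF:", locf_scores)
```

A perfectly linear toy series trivially favours linear interpolation. On real data the held-out points should mimic the gap lengths actually observed, since a method that does well on isolated points may do poorly on long blocks.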

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials and Methods for Handling Missing Data

| Item Name | Type (Method/Software) | Primary Function/Benefit |

|---|---|---|

| Multiple Imputation (MI) | Statistical Method | Creates several complete datasets to account for uncertainty in the imputation process, valid under MAR [3]. |

| Mixed Model for Repeated Measures (MMRM) | Statistical Model | Uses all available data points under the MAR assumption; standard for primary analysis in clinical trials [3]. |

| Piecewise Cubic Hermite Interpolating Polynomial (PCHIP) | Interpolation Method | Excellent for time series; preserves data shape and monotonicity, minimizing error in sequential gaps [6]. |

| Isolation Forest | Machine Learning Algorithm | Unsupervised model efficient for detecting anomalies in multivariate data without needing normal distribution assumptions [6]. |

| Sensitivity Analysis | Analytical Framework | Tests the robustness of study conclusions by comparing results under different missing data assumptions (MAR vs. MNAR) [3] [4]. |

| Data Management Plan (DMP) | Governance Document | Provides a proactive framework for preventing missing data throughout the project lifecycle [5]. |

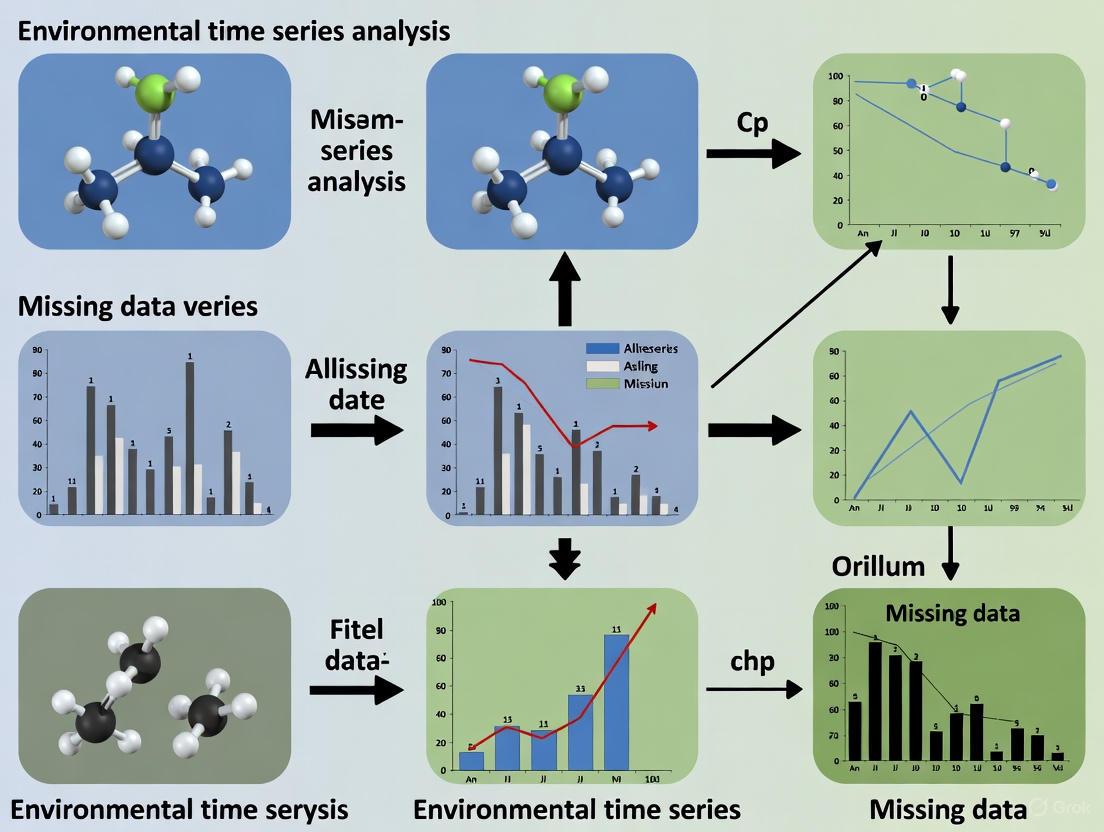

Diagrams and Workflows

Diagnostic and Handling Workflow for Missing Data

Diagram Title: Missing Data Mechanism Diagnostic Workflow

Comparison of Missing Data Mechanisms

Table: Key Characteristics of MCAR, MAR, and MNAR

| Characteristic | MCAR | MAR | MNAR |

|---|---|---|---|

| Definition | Missingness is unrelated to any data, observed or unobserved. | Missingness is related to other observed variables only. | Missingness is related to the unobserved missing value itself. |

| Potential Bias | None (only reduces power). | Can be accounted for with appropriate methods. | High risk of bias in standard analyses. |

| Common Handling Methods | Complete-case analysis, if low volume. | Multiple Imputation, Maximum Likelihood, Mixed Models. | Pattern-mixture models, Selection models, Sensitivity Analysis. |

| Clinical Example | A blood sample is lost in transit. | Younger participants are more likely to miss visits, regardless of outcome. | Patients feeling worse (unrecorded) drop out of a study. |

| Environmental Example | A sensor fails randomly due to a dead battery. | Sensors in a specific model fail more often (observed maker). | Sensors in highly polluted areas corrode and fail (unobserved level). |

Common Causes of Missingness in Sensor Data, EHRs, and Clinical Trials

In data-driven research, missing data is the rule rather than the exception. Effectively troubleshooting this issue requires understanding its origins. Missing data occurs when values are absent in specific fields or attributes within a dataset, which can arise during collection, storage, or processing [7]. In high-stakes fields like clinical research and environmental science, missing data can lead to biased estimates, reduced statistical power, and invalid conclusions, ultimately impacting scientific validity and decision-making [8] [9].

➤ FAQs: Diagnosing Missing Data Problems

FAQ 1: What are the different types of missing data mechanisms I might encounter?

Understanding the mechanism behind the missingness is the first critical step in choosing the correct handling method. The literature primarily defines three types, which describe whether the reason for missingness is related to the data itself [7] [10].

- Missing Completely at Random (MCAR): The probability of data being missing is unrelated to any observed or unobserved data. An example is a lab result missing due to a clerical error or a random sensor power failure [8] [10].

- Missing at Random (MAR): The probability of data being missing is related to other observed variables in the dataset but not the missing value itself. For instance, missing blood pressure readings might be related to a patient's age, which is recorded [8] [10].

- Missing Not at Random (MNAR): The probability of data being missing is related to the unobserved missing value itself. For example, a monitor may shut down during extreme pollution levels it cannot measure, or patients might be less likely to report sensitive information like high alcohol consumption [8] [11] [10].

FAQ 2: Why is EHR data so often incomplete, and how does it affect clinical trials?

Electronic Health Records (EHRs) are designed for clinical and billing purposes, not research, which leads to several inherent challenges [8] [12].

- Inconsistent Documentation: Provider workflows and localized clinical guidelines lead to variability in what data is recorded and when [13] [8].

- System Limitations: Data can be lost due to disconnection of sensors, errors in communicating with database servers, or electricity failures [10].

- Unstructured Data: Critical information is often buried in unstructured clinical notes, making it difficult to extract and analyze systematically [8].

When EHRs are used in clinical trials, this incompleteness is a major risk. A notable example is a randomized controlled trial where, despite American Diabetes Association guidelines, 70% and 49% of patients were missing HbA1C values at 3 and 6 months, respectively, because the data relied on unpredictable clinical encounters [13].

FAQ 3: What are the common failure points for sensor data in environmental monitoring?

Sensor data, particularly in environmental time-series, is highly susceptible to gaps [6].

- Power Source Failure: A frequent issue in field studies, especially in resource-limited areas, is battery power loss, which can lead to consecutive hours of missing data [11].

- Equipment Malfunction: Sensors can shut down due to high filter loading, extreme temperatures, or relative humidity beyond the manufacturer's operating range [11] [6].

- Sensor Communication Errors: Data transmission issues between the sensor and the central database can result in lost data points [10].

➤ Troubleshooting Guide: Identifying Causes of Missing Data

Use the following table to diagnose the likely causes of missingness in your data. This can guide your initial investigation and help you understand the underlying mechanism.

Table 1: Common Causes and Classifications of Missing Data Across Domains

| Data Source | Common Causes of Missingness | Typical Missing Mechanism | Real-World Example |

|---|---|---|---|

| Electronic Health Records (EHRs) | Inconsistent provider documentation; unstructured clinical notes; billing-oriented data entry; financial burden of ordering tests [8] [12]. | MAR, MNAR | A lab test is not ordered because the clinician, based on a patient's observed good health (observed data), deems it unnecessary (MAR) [12]. |

| Clinical Trials (EHR-based) | Reliance on routine clinical practice for data collection; patient drop-out; protocol deviations [13]. | Primarily MNAR | HbA1C values are missing because patients who feel sicker (unobserved health status) are less likely to return for follow-up (MNAR) [13]. |

| Environmental Sensors | Power/battery failure; extreme weather conditions; sensor malfunction; communication transmission errors [11] [6]. | MCAR, MAR, MNAR | A monitor shuts down due to extremely high temperatures (unobserved value), causing data to be missing (MNAR) [11]. |

➤ Experimental Protocols for Investigating Missingness

Before applying any imputation technique, it is essential to systematically characterize the nature of the missing data in your dataset. Here is a detailed methodology.

Protocol: Characterizing Missing Data Patterns

1. Compute the Proportion of Missing Data

- Calculate the percentage of missing values for each variable and each observation (e.g., patient, sensor). This helps decide whether a variable or observation should be a candidate for removal. A common rule of thumb is to consider rejecting variables with >50% missingness, though this is not risk-free [10].

2. Visualize and Analyze Missing Data Patterns

- Create visualizations (e.g., missingness matrices, heatmaps) to identify if missingness is isolated, in blocks, or follows a specific pattern. This can reveal systematic issues, such as all data from a particular sensor being missing after a certain date [6].

3. Investigate the Missing Data Mechanism

- For MCAR: Use statistical tests like Little's MCAR test to check if the missingness is completely random.

- For MAR/MNAR: Conduct logistic regression analyses where the response variable is the "missingness indicator" (1 for observed, 0 for missing) for a variable. If missingness is significantly associated with other observed variables, it suggests MAR. If you suspect it is related to the unobserved value itself (often inferred from domain knowledge), it suggests MNAR [11] [10].

4. Learn from Historical and Similar Data

- Use historical EHR data to estimate potential missing data rates for your variables of interest [13].

- Consult literature from similar studies to understand expected missingness rates. For example, various studies have shown considerable missing data rates for laboratory values in ambulatory settings [13].
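The first two steps of this protocol (how much is missing, and in what pattern) can be sketched with a few lines of standard-library Python. The column names and readings below are hypothetical; None marks a missing value.

```python
from itertools import groupby

def missing_fraction(column):
    """Share of entries in `column` that are missing (None)."""
    return sum(v is None for v in column) / len(column)

def gap_lengths(column):
    """Lengths of consecutive runs of missing values, in order of appearance."""
    return [
        sum(1 for _ in run)
        for is_missing, run in groupby(column, key=lambda v: v is None)
        if is_missing
    ]

table = {
    "pm25": [12, None, 14, None, None, None, 15, 16, None, 13],
    "temp": [20, 21, 22, None, 23, 24, 25, 24, 23, 22],
}

summary = {
    name: {"missing": missing_fraction(col), "gaps": gap_lengths(col)}
    for name, col in table.items()
}
for name, stats in summary.items():
    print(name, stats)
```

The gap-length list distinguishes isolated dropouts (runs of length 1) from block outages, which is the information needed to choose between simple interpolation and methods designed for longer sequences.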

The following workflow provides a logical pathway for diagnosing and responding to missing data based on the results of your initial analysis.

➤ The Scientist's Toolkit: Key Reagents & Methods

This table outlines essential "research reagents" — in this context, key methodological tools and concepts — that are fundamental for any researcher working with incomplete datasets.

Table 2: Essential Methodological Tools for Handling Missing Data

| Tool / Concept | Category | Primary Function | Key Consideration |

|---|---|---|---|

| Multiple Imputation by Chained Equations (MICE) [11] [9] | Model-Based Imputation | Creates multiple plausible values for each missing data point, accounting for uncertainty. | Generally assumes data is MAR [9]. |

| MissForest [9] | Model-Based Imputation | A random forest-based method for imputing missing values; can handle non-linear relationships. | Effective for mixed data types (continuous & categorical). |

| Denoising Autoencoders [9] [12] | Deep Learning Imputation | Learns a compressed data representation to reconstruct original inputs, naturally handling missing values. | Can identify complex patterns but requires large datasets and has issues with interpretability [9]. |

| Last Observation Carried Forward (LOCF) [11] | Univariate Time-Series | Fills gaps with the last recorded value. | Simple but can introduce significant bias. |

| Piecewise Cubic Hermite Interpolating Polynomial (PCHIP) [6] | Interpolation | A curvature-aware interpolation method that preserves the shape of time-series data. | Superior to linear interpolation for sequential gaps in environmental data [6]. |

| Vine Copulas [14] | Multiple Imputation | Models complex dependencies between variables (e.g., from multiple monitoring stations) to impute missing values, suitable for extremes. | Operates in a Bayesian framework, ideal for spatial-time series with tail dependence. |

Troubleshooting Guide: Diagnosing Missing Data Mechanisms

FAQ: How can I determine why my environmental time series data is missing?

Identifying the underlying mechanism of missing data is the crucial first step in selecting an appropriate handling method. The mechanism influences both the potential for bias and the choice of imputation technique [15].

- Missing Completely at Random (MCAR): The missingness is unrelated to any observed or unobserved data. For example, a water sample is lost due to a dropped vial.

- Missing at Random (MAR): The missingness is related to observed variables but not the missing value itself. For instance, the likelihood of a missing nitrate reading is higher on days with recorded heavy rainfall, but after accounting for rainfall, the missingness is random.

- Missing Not at Random (MNAR): The missingness is related to the unobserved missing value itself. A classic environmental example is when a sensor fails to record precisely because the pollutant concentration exceeds its detectable range [15] [16].

Diagnostic Steps:

- Visualize Missingness Patterns: Use plots (e.g., the aggr plot from the VIM package in R) to identify if missingness is random or appears in structured blocks [15].

- Conduct Statistical Tests: For MCAR, tests like Little's MCAR test can assess if the missing data pattern is random across all data partitions.

- Apply Domain Knowledge: Consult with field experts to understand data collection processes. Sensor failures, protocol changes, or refusal to participate in sub-studies can point to MAR or MNAR mechanisms [16].

Troubleshooting Guide: Addressing Block-Wise Missingness in Integrated Datasets

FAQ: My integrated multi-source dataset has entire blocks of data missing. What should I do?

In large-scale environmental studies, data is often collected from various sub-studies or monitoring networks. Block-wise missingness (or structured missingness) occurs when entire groups of variables are missing for a subset of subjects, often because they did not participate in a specific sub-study [16] [17]. A naive approach of listwise deletion would discard a vast amount of data.

Solution: Profile-Based Analysis

This method involves partitioning the dataset into groups, or "profiles," based on data availability across different sources [17].

- Step 1: Identify Profiles: For each sample (e.g., a monitoring station), create a binary indicator vector showing which data sources are available. Convert this vector into a profile identifier [17].

- Step 2: Form Complete Data Blocks: Group samples that share a compatible data profile. For example, samples with Profile A (Source 1 and 2 available) can be analyzed together for a model using only those sources [17].

- Step 3: Implement a Two-Step Algorithm: Learn model coefficients from each complete data block and then integrate them using a weighting scheme to build a unified model that uses all available information without imputation [17].
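Steps 1 and 2 can be sketched as follows. The source does not specify how the availability vector is turned into an identifier, so the binary-to-integer encoding here is an assumption, as are the function names and the toy station data. Step 3 (learning and weighting coefficients per block) is not shown.

```python
from collections import defaultdict

def profile_id(availability):
    """Encode a sequence of availability flags as an integer (assumed encoding).

    The first source is the most significant bit, e.g. (1, 1, 0) -> 0b110 = 6.
    """
    pid = 0
    for flag in availability:
        pid = (pid << 1) | int(bool(flag))
    return pid

def group_by_profile(samples):
    """Map each profile identifier to the samples that share it."""
    groups = defaultdict(list)
    for name, sources in samples.items():
        flags = [block is not None for block in sources]
        groups[profile_id(flags)].append(name)
    return dict(groups)

def compatible(sample_profile, model_profile):
    """True if the sample has every source the model needs."""
    return sample_profile & model_profile == model_profile

# Each station has up to three data sources; None marks a missing block.
stations = {
    "A": ([1.0], [2.0], None),
    "B": ([1.1], [2.2], [3.3]),
    "C": ([0.9], None, None),
    "D": ([1.2], [2.1], None),
}

profiles = group_by_profile(stations)
print(profiles)
```

With this encoding, a model that needs sources 1 and 2 (profile 6) can draw on stations A and D, and also on station B, whose profile 7 includes every source in profile 6. Station C cannot contribute to that model because it lacks source 2.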

Quantitative Comparison of Common Imputation Methods

The table below summarizes the performance and characteristics of various imputation methods as evaluated in recent studies.

Table 1: Comparison of Modern Imputation Methods

| Method | Reported Performance / Characteristics | Best Suited For | Key Considerations |

|---|---|---|---|

| Generative Adversarial Networks (GANs) | Excels over other deep learning methods for climate data imputation [18]. | Complex, high-dimensional data with nonlinear patterns (e.g., satellite-derived climate data). | High computational cost; requires significant data and expertise. |

| MissForest | Non-parametric, works well for mixed data types; shows stability and consistency in sensitivity analyses [15] [19]. | General-purpose use with mixed (continuous, categorical) data. | Based on Random Forests; robust to non-linear relationships. |

| k-Nearest Neighbors (kNN) | Produces imputed data closely matching original data; stable and consistent in sensitivity analysis [19]. | Datasets where local similarity between samples is a reasonable assumption. | Choice of 'k' and distance metric can impact results. |

| Multiple Imputation by Chained Equations (MICE) | Considered a gold standard for MAR data; incorporates imputation uncertainty [15] [20]. | Most scenarios with MAR data, particularly for statistical inference and estimation. | Can be computationally intensive; requires careful model specification. |

| Deterministic Imputation | Preferred for deployed clinical risk prediction models; easily applied to new patients [20]. | Prognostic model deployment where computational efficiency and simplicity are key. | Does not account for imputation uncertainty; outcome variable must be omitted from the imputation model [20]. |

Experimental Protocol: Evaluating Imputation Method Performance

This protocol outlines a robust procedure for comparing the accuracy of different imputation methods on your environmental dataset, based on a state-of-the-art evaluation framework [16].

Objective: To evaluate and select the best-performing imputation method for an environmental time series dataset with structured missingness.

Materials:

- A dataset with known values (as complete as possible).

- R or Python programming environment with relevant packages (e.g., mice, missForest, scikit-learn).

Method:

- Synthetic Data Generation with Realistic Missingness: Instead of removing data randomly, use a tool that mimics the real missingness patterns of your dataset. This involves:

- Identifying blocks of missingness corresponding to sub-studies or sensor networks using hierarchical clustering of missingness patterns.

- Modelling the dependence between variable correlation and co-missingness patterns.

- Imposing both structured (block-wise) and unstructured missingness that is informative (MAR) [16].

- Induce Artificial Missingness: On the synthetic dataset from Step 1, artificially remove a known proportion of values (e.g., 5%, 10%, 20%) using a specific mechanism (e.g., MCAR, MAR).

- Apply Imputation Methods: Run the candidate imputation methods (e.g., MICE, MissForest, kNN) on the dataset with induced missingness.

- Evaluate Accuracy: Compare the imputed values against the known, originally removed values. Use error metrics such as:

- Mean Absolute Error (MAE)

- Root Mean Square Error (RMSE)

- Mean Absolute Percentage Error (MAPE) [19]

- Sensitivity Analysis: Test the stability of the methods by varying their internal parameters and re-calculating the error metrics [19].
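The "induce, impute, evaluate" loop and the three error metrics can be sketched as below. For brevity this example removes values completely at random and uses mean imputation as the only candidate method; both are simplifying assumptions, and a faithful run of the protocol would impose realistic structured missingness and compare several imputers. All function names and the toy series are hypothetical.

```python
import math
import random

def induce_mcar(values, proportion, seed=0):
    """Return (masked copy, sorted removed indices) with values removed at random."""
    rng = random.Random(seed)
    n_remove = round(len(values) * proportion)
    removed = sorted(rng.sample(range(len(values)), n_remove))
    masked = list(values)
    for i in removed:
        masked[i] = None
    return masked, removed

def mae(truth, pred):
    return sum(abs(t - p) for t, p in zip(truth, pred)) / len(truth)

def rmse(truth, pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(truth, pred)) / len(truth))

def mape(truth, pred):
    # Percentage error; undefined when any true value is zero.
    return 100 * sum(abs((t - p) / t) for t, p in zip(truth, pred)) / len(truth)

def mean_impute(values):
    observed = [v for v in values if v is not None]
    fill = sum(observed) / len(observed)
    return [fill if v is None else v for v in values]

complete = [10.0 + (i % 7) for i in range(100)]  # toy series with known values
results = {}
for proportion in (0.05, 0.10, 0.20):
    masked, removed = induce_mcar(complete, proportion)
    filled = mean_impute(masked)
    truth = [complete[i] for i in removed]
    pred = [filled[i] for i in removed]
    results[proportion] = {
        "MAE": mae(truth, pred),
        "RMSE": rmse(truth, pred),
        "MAPE": mape(truth, pred),
    }
for proportion, scores in results.items():
    print(proportion, {k: round(v, 3) for k, v in scores.items()})
```

Errors are computed only on the removed positions, so values that were never hidden do not flatter the score. Repeating the loop over several seeds gives a sense of how stable the ranking of methods is.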

The following workflow diagram illustrates the experimental protocol for evaluating imputation methods:

The Researcher's Toolkit: Essential Software for Handling Missing Data

Table 2: Key Software Packages for Missing Data Imputation

| Tool / Package | Primary Function | Application Context |

|---|---|---|

| mice (R) | Multiple Imputation by Chained Equations [15]. | Gold standard for MAR data; ideal for statistical inference where accounting for uncertainty is critical. |

| missForest (R) | Non-parametric imputation using Random Forests [15]. | Robust imputation for mixed data types (continuous & categorical) without assuming a specific data distribution. |

| bmw (R) | Handles block-wise missing data in multi-source datasets [17]. | Integrating multi-omics or multi-network environmental data without imputing missing blocks. |

| scikit-learn (Python) | Provides various estimators (e.g., KNNImputer) and machine learning models that can be adapted for imputation. | Flexible, general-purpose machine learning workflows in Python. |

| softImpute (R) | Matrix completion for high-dimensional data [15]. | Useful for large-scale datasets like those from sensor networks or satellite imagery. |

| simputation (R) | Provides a simple, unified interface for several imputation methods [15]. | Streamlining data preprocessing workflows with a consistent syntax. |

Frameworks for Documentation and Disclosure in Scientific Reporting

Troubleshooting Guide: Common Documentation Issues

| Problem | Possible Cause | Solution |

|---|---|---|

| Missing data points in environmental time series | Sensor malfunction, transmission errors, or environmental interference [18] | Implement appropriate imputation methods (e.g., mean imputation, regression, machine learning techniques) based on data patterns [18] |

| Inaccessible data visualizations for colorblind users | Insufficient color contrast between foreground and background elements [21] [22] | Ensure all text and graphical objects meet WCAG AA contrast ratios (≥4.5:1 for normal text) [23] |

| Uncertainty in disclosing AI tool usage in research | Lack of standardized framework for reporting AI contributions [24] | Implement the Artificial Intelligence Disclosure (AID) Framework to transparently document AI use throughout the research process [24] |

| Ineffective scientific figures obscuring research findings | Poor geometry selection or suboptimal data visualization practices [25] | Prioritize message before selecting visualization; use high data-ink ratio geometries that match your data type [25] |

| Ethical uncertainty in disclosing genetic research findings | Unclear thresholds for determining what constitutes valid, valuable information worthy of disclosure [26] | Apply framework analyzing three key concepts: validity (analytic validity), value (clinical utility), and volition (participant preferences) [26] |

Frequently Asked Questions (FAQs)

What are the most effective methods for handling missing data in climate time series?

Conventional statistical techniques include mean imputation, simple and multiple linear regression, interpolation, and Principal Component Analysis (PCA). Advanced methods include artificial neural networks to identify complex patterns, with Generative Adversarial Networks (GANs) showing particular promise for climate data imputation [18].

How can I ensure my data visualizations are accessible to all readers?

Ensure all text elements maintain a minimum contrast ratio of 4.5:1 against their background (3:1 for large text). Use tools like the WebAIM Contrast Checker to verify ratios. Avoid using color as the sole means of conveying information, and consider how your visuals appear to users with various forms of color vision deficiency [21] [22] [23].
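The contrast ratio itself is straightforward to compute when a checker tool is not at hand. The sketch below follows the relative-luminance and contrast-ratio definitions published in WCAG 2.x for sRGB colors; the function names are illustrative, and the example colors are arbitrary.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(rgb):
    r, g, b = (_linearize(c) for c in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(foreground, background):
    """Contrast ratio between two (R, G, B) colors, from 1:1 up to 21:1."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)

white = (255, 255, 255)
for label, color in [("black", (0, 0, 0)),
                     ("gray #767676", (118, 118, 118)),
                     ("gray #777777", (119, 119, 119))]:
    ratio = contrast_ratio(color, white)
    verdict = "passes" if ratio >= 4.5 else "fails"
    print(f"{label} on white: {ratio:.2f}:1 ({verdict} AA for normal text)")
```

A check like this can be run over a figure's palette before publication, keeping in mind that contrast is only one aspect of accessibility and does not address reliance on color alone.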

What specific team capabilities enhance scientific disclosure through publications? R&D teams with higher proportions of PhD-trained researchers, younger scientists, and foreign-trained team members demonstrate greater success in scientific publishing. Team diversity and specific human resource allocations are crucial factors, as scientific disclosure requires distinctive capabilities beyond standard R&D activities [27].

How should I document the use of AI tools in my research workflow? Use the Artificial Intelligence Disclosure (AID) Framework, which provides a standardized structure for reporting AI tool usage. Include the specific tools and versions used, along with descriptions of how AI was employed across various research stages such as conceptualization, methodology, data analysis, and writing [24].

What are the key considerations for selecting the right data visualization geometry? First, determine your core message - are you showing comparisons, compositions, distributions, or relationships? Select geometries based on your data type: bar plots for amounts, density plots for distributions, scatterplots for relationships. Avoid misusing bar plots for group means when distributional information is available, and prioritize geometries with high data-ink ratios [25].

Experimental Protocols

Documentation Framework for Missing Data in Environmental Research

Title: Missing Data Handling Protocol

Objective: Establish standardized protocol for handling and documenting missing data in environmental time series research.

Procedure:

Data Assessment Phase:

- Identify extent and patterns of missingness using descriptive statistics

- Document percentage of missing values and potential mechanisms (MCAR, MAR, MNAR)

- Visualize missing data patterns using specialized plotting techniques

Method Selection:

- For low missingness (<5%): Consider simple imputation (mean, median, regression)

- For complex patterns: Implement advanced methods (multiple imputation, neural networks)

- Justify method choice based on data characteristics and research objectives

Implementation:

- Apply selected imputation method to dataset

- Create complete dataset for analysis while preserving original missing data indicators

- Document all parameters and assumptions of the imputation process

Documentation:

- Record percentage of missing values in final report

- Disclose imputation methodology in methods section

- Include sensitivity analysis comparing results with and without imputation
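The assessment and implementation phases above can be sketched in a few lines of pandas. This is a minimal illustration, not a prescribed implementation: the function name `summarize_missingness`, the hourly temperature values, and the choice of time-based linear interpolation are all assumptions made for the example.

```python
import numpy as np
import pandas as pd


def summarize_missingness(series: pd.Series) -> dict:
    """Summarize how much is missing and how long the gaps are."""
    is_missing = series.isna()
    # Give every run of consecutive identical flags its own id, then keep
    # only the runs made of missing values and count their lengths.
    run_id = is_missing.ne(is_missing.shift(fill_value=False)).cumsum()
    gap_lengths = is_missing[is_missing].groupby(run_id[is_missing]).size().tolist()
    return {
        "percent_missing": 100.0 * float(is_missing.mean()),
        "n_gaps": len(gap_lengths),
        "longest_gap": max(gap_lengths, default=0),
    }


# Hypothetical hourly temperature readings with two gaps.
index = pd.date_range("2024-01-01", periods=10, freq=pd.Timedelta(hours=1))
temperature = pd.Series(
    [5.0, 5.2, np.nan, np.nan, 6.1, 6.4, np.nan, 7.0, 7.1, 7.3], index=index
)

summary = summarize_missingness(temperature)

# Preserve the original missing-data indicator next to the imputed series,
# so later analyses can distinguish measured from filled values.
was_missing = temperature.isna()
imputed = temperature.interpolate(method="time")
report = pd.DataFrame({"value": imputed, "was_missing": was_missing})
```

Keeping `was_missing` alongside the filled values makes the sensitivity analysis in the documentation phase straightforward, because results can be recomputed on the measured subset alone.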

AI Use Disclosure Framework

Title: AI Disclosure Workflow

Objective: Implement standardized artificial intelligence disclosure process throughout research workflow.

Procedure:

Tool Identification:

- Record all AI tools and specific versions used

- Note dates of use and institutional instances where applicable

- Document any known biases or limitations of models or datasets

Phase-Specific Documentation:

- Conceptualization: Document AI assistance in developing research questions or hypotheses

- Methodology: Record AI contributions to study design or instrument development

- Information Collection: Note AI use in literature review or pattern identification

- Data Analysis: Document AI role in statistical analysis or theme identification

- Writing: Record AI assistance in editing, translation, or revision

Privacy and Security Considerations:

- Document data handling procedures when using AI tools

- Specify compliance with institutional privacy policies

- Note any identifiable data shared with AI systems

Statement Generation:

- Compile AID Statement using standardized format

- Include only headings relevant to actual AI usage

- Append statement to manuscript before acknowledgments section

Research Reagent Solutions

| Item | Function | Application Notes |

|---|---|---|

| Mean/Regression Imputation | Replaces missing values with statistical estimates | Best for low percentage missingness with random patterns; simple to implement but may reduce variance [18] |

| Multiple Imputation | Creates several complete datasets accounting for uncertainty | Superior for complex missing data mechanisms; provides better variance estimates than single imputation [18] |

| Neural Network Models | Identifies complex, nonlinear patterns in incomplete data | Effective for large datasets with complex missingness patterns; requires substantial computational resources [18] |

| Generative Adversarial Networks | Generates synthetic data to fill missing values | State-of-the-art for climate time series; particularly effective for multiple correlated variables [18] |

| Color Contrast Analyzers | Verifies accessibility compliance of visualizations | Essential for ensuring figures meet WCAG standards; use before publication [23] |

| AID Framework Template | Standardizes AI use disclosure | Provides consistent structure for reporting AI contributions across research phases [24] |

Missing Data Imputation Method Performance

| Method | Data Type Suitability | Complexity | Implementation Ease |

|---|---|---|---|

| Mean/Median Imputation | Continuous variables | Low | High |

| Regression Imputation | Continuous, correlated variables | Medium | Medium |

| Multiple Imputation | All variable types, MAR data | High | Low |

| k-Nearest Neighbors | Continuous, categorical data | Medium | Medium |

| Neural Networks | Complex patterns, large datasets | High | Low |

| Generative Adversarial Networks | Multiple correlated climate variables | Very High | Very Low |

Scientific Disclosure Capability Factors

| Factor | Impact on Publication Output | Evidence Strength |

|---|---|---|

| PhD-trained Researchers | Strong positive correlation | High [27] |

| Young Researchers | Moderate positive correlation | Medium [27] |

| Foreign-trained Team Members | Moderate positive correlation | Medium [27] |

| Basic Research Orientation | Limited direct impact | Low [27] |

| Diverse R&D Teams | Positive correlation | Medium [27] |

Accessibility Contrast Standards

| Element Type | WCAG AA Standard | WCAG AAA Standard |

|---|---|---|

| Normal Text | 4.5:1 | 7:1 |

| Large Text (18pt+, or 14pt+ bold) | 3:1 | 4.5:1 |

| Graphical Objects | 3:1 | Not specified |

| User Interface Components | 3:1 | Not specified |
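The ratios in the table can be checked programmatically. The sketch below follows the relative-luminance and contrast-ratio definitions given in WCAG 2.x; the function names and the example colors are illustrative choices, not part of any particular tool.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to its linear-light value (WCAG 2.x)."""
    c = channel / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb) -> float:
    """Relative luminance of an (R, G, B) color with 0-255 channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background) -> float:
    """Contrast ratio (lighter + 0.05) / (darker + 0.05), from 1 to 21."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground, background, large_text: bool = False) -> bool:
    """Check the WCAG AA text thresholds: 4.5:1 normal, 3:1 large text."""
    return contrast_ratio(foreground, background) >= (3.0 if large_text else 4.5)


# Black text on a white background gives the maximum ratio of 21:1.
black_on_white = contrast_ratio((0, 0, 0), (255, 255, 255))

# A mid-gray axis label on white: acceptable only as large text.
gray_label_ok = passes_aa((119, 119, 119), (255, 255, 255))
gray_label_ok_large = passes_aa((119, 119, 119), (255, 255, 255), large_text=True)
```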

A Practical Guide to Imputation Methods: From Simple Techniques to Advanced Machine Learning

FAQs: Choosing and Troubleshooting Interpolation Methods

1. How do I choose between simple imputation (Mean/Median) and interpolation (Linear/Spline) for my environmental time series?

The choice depends on the nature of your data and the missingness pattern. Simple imputation methods like mean or median are easy to implement and are defensible only when values are missing completely at random (MCAR) and the gaps are small. However, they ignore the temporal structure and can introduce significant bias, especially in data with trends or seasonality [28]. Interpolation methods, such as linear or spline, are preferred for time-series data because they use the temporal order and adjacent data points to provide more accurate estimates [29]. For environmental data like temperature or pollutant concentrations, which often change smoothly over time, interpolation methods generally provide superior accuracy [30].

2. My interpolated values for a sensor data series show unexpected "wiggles" or overshoots. What is causing this and how can I fix it?

This is a classic symptom of Runge's phenomenon, which can occur when using high-degree polynomial interpolation on evenly spaced data points [28]. The solution is to switch to a method that provides smoother transitions, such as spline interpolation. Spline interpolation, particularly cubic splines, divides the data range into segments and fits low-degree polynomials to each, ensuring smooth transitions (C² continuity) and avoiding the oscillation problems of high-degree polynomials [28]. For a sensor dataset with gaps, cubic spline interpolation has been demonstrated to provide high modeling accuracy [30].
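The effect is easy to reproduce on synthetic data. The sketch below is an illustration rather than a sensor example: it interpolates the classic Runge test function on 11 evenly spaced nodes, once with a single degree-10 polynomial and once with a cubic spline, and compares the worst-case error. The test function and node count are assumptions chosen to make the oscillation visible.

```python
import numpy as np
from scipy.interpolate import BarycentricInterpolator, CubicSpline


def runge(x):
    """Classic test function that provokes oscillation near the interval ends."""
    return 1.0 / (1.0 + 25.0 * x ** 2)


# 11 evenly spaced "observations" and a dense grid for evaluation.
x_nodes = np.linspace(-1.0, 1.0, 11)
y_nodes = runge(x_nodes)
x_dense = np.linspace(-1.0, 1.0, 1001)

# One global polynomial of degree 10 through all nodes.
polynomial = BarycentricInterpolator(x_nodes, y_nodes)
# Piecewise cubic polynomials joined with continuous second derivatives.
spline = CubicSpline(x_nodes, y_nodes)

poly_max_error = float(np.max(np.abs(polynomial(x_dense) - runge(x_dense))))
spline_max_error = float(np.max(np.abs(spline(x_dense) - runge(x_dense))))

print(f"max error, degree-10 polynomial: {poly_max_error:.3f}")
print(f"max error, cubic spline:         {spline_max_error:.3f}")
```

Both interpolants pass through every node, so the difference lies entirely in how they behave between nodes, which is where imputed values come from.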

3. After interpolating my dataset, how can I quantitatively assess the accuracy of the filled values?

Since the true values for missing data are unknown, the standard practice is to use cross-validation [28] [29]. A robust method is Leave-One-Out Cross-Validation (LOOCV):

- Procedure: Systematically remove each known data point one at a time, treat it as "missing," and interpolate its value using the remaining data.

- Assessment: Compare the interpolated values against the actual, known values that were removed.

- Metrics: Calculate error metrics like Mean Absolute Error (MAE) and Root Mean Squared Error (RMSE) to quantify accuracy [30]. A lower MAE and RMSE indicate better performance. Willmott's Index of Agreement is another metric used to assess the degree of model prediction error [30].
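A minimal sketch of this LOOCV procedure for linear interpolation is shown below. The helper name `loocv_linear` and the synthetic diurnal temperature curve are assumptions for illustration; endpoints are skipped because they cannot be interpolated from neighbors on both sides.

```python
import numpy as np


def loocv_linear(t, y):
    """Leave-one-out MAE and RMSE for linear interpolation (interior points)."""
    t = np.asarray(t, dtype=float)
    y = np.asarray(y, dtype=float)
    predictions = []
    for i in range(1, len(t) - 1):
        keep = np.arange(len(t)) != i          # treat point i as "missing"
        predictions.append(np.interp(t[i], t[keep], y[keep]))
    errors = np.array(predictions) - y[1:-1]   # interpolated minus actual
    mae = float(np.mean(np.abs(errors)))
    rmse = float(np.sqrt(np.mean(errors ** 2)))
    return mae, rmse


# Hypothetical hourly air temperature with a smooth diurnal cycle.
hours = np.arange(48)
temperature = 15.0 + 5.0 * np.sin(2.0 * np.pi * hours / 24.0)

mae, rmse = loocv_linear(hours, temperature)
print(f"LOOCV MAE = {mae:.3f}, RMSE = {rmse:.3f}")
```

Note that removing one point at a time only estimates accuracy for single-point gaps; longer gaps need the block-removal approach described in the experimental protocol later in this section.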

4. What are the fundamental differences between exact and inexact interpolators?

- Exact Interpolators (e.g., some kriging models, basic spline interpolation) produce values that are exactly equal to the observed values at all measurement locations. The interpolated surface passes perfectly through every data point [31].

- Inexact Interpolators (or smoothing interpolators) account for potential measurement error or uncertainty by allowing the model to predict values at sampling locations that are slightly different from the exact measurements. This often produces a smoother, more realistic surface that better reflects the overall spatial or temporal correlation of the dataset, especially when data is noisy [31].

5. When performing linear interpolation on my time series, should I consider the uncertainty of the interpolated values?

Yes, quantifying uncertainty is a critical best practice in modern data analysis [28]. Traditional deterministic methods like linear interpolation provide a single-point estimate but do not inherently convey the reliability of that estimate. Treating interpolated values with the same confidence as measured data is a common oversight that can lead to false confidence in downstream analyses [28]. For critical applications, consider exploring more advanced probabilistic frameworks like Gaussian Process Regression, which generates confidence intervals alongside point estimates [28]. For simpler methods, you can assess variability through the cross-validation errors described in FAQ #3.
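As a rough sketch of the probabilistic alternative, the example below fits a Gaussian Process to a synthetic series with a six-hour gap using scikit-learn's `GaussianProcessRegressor` and derives an approximate 95% interval for each filled value. The kernel choice, its starting hyperparameters, and the synthetic data are assumptions; a real application would need a kernel matched to the series.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import RBF, WhiteKernel

rng = np.random.default_rng(0)

# Hypothetical hourly temperature with measurement noise and a 6-hour gap.
t = np.arange(48, dtype=float)
y = 15.0 + 5.0 * np.sin(2.0 * np.pi * t / 24.0) + rng.normal(0.0, 0.3, t.size)
observed = np.ones(t.size, dtype=bool)
observed[20:26] = False

# Smooth signal (RBF) plus independent sensor noise (WhiteKernel).
kernel = RBF(length_scale=6.0) + WhiteKernel(noise_level=0.1)
gpr = GaussianProcessRegressor(kernel=kernel, normalize_y=True, random_state=0)
gpr.fit(t[observed].reshape(-1, 1), y[observed])

# Point estimates and predictive standard deviations inside the gap.
mean, std = gpr.predict(t[~observed].reshape(-1, 1), return_std=True)
lower = mean - 1.96 * std
upper = mean + 1.96 * std

for hour, m, lo, hi in zip(t[~observed], mean, lower, upper):
    print(f"t={hour:4.0f}  estimate={m:6.2f}  95% interval=[{lo:6.2f}, {hi:6.2f}]")
```

The interval width typically grows toward the middle of a gap, which is exactly the information a single-point linear estimate cannot convey.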

Comparative Analysis of Traditional Methods

The table below summarizes the key characteristics, advantages, and limitations of the methods discussed.

Table 1: Comparison of Traditional Statistical Methods for Handling Missing Data

| Method | Principle | Best For | Advantages | Limitations & Common Pitfalls |

|---|---|---|---|---|

| Mean/Median Imputation | Replaces missing values with the variable's mean or median. | Quick, simple analyses; completely random missingness. | Simple, fast to compute. | Ignores temporal structure; distorts data distribution and covariance; can introduce significant bias [28]. |

| Linear Interpolation | Estimates a value between two points by assuming a constant rate of change. Formula: $y = y_1 + (y_2 - y_1)\,\frac{x - x_1}{x_2 - x_1}$ [28] [29]. | Short gaps in time-series data with a roughly linear trend between points [28] [29]. | Simple, preserves first-order trends; computationally efficient. | Produces sharp corners at data points; poor representation of curved relationships; underestimates uncertainty [28]. |

| Cubic Spline Interpolation | Fits a series of piecewise cubic polynomials to segments of data, ensuring smoothness at the connections (knots). | Time-series data where smoothness is assumed; environmental data like temperature or air quality [30]. | Produces visually smooth and realistic curves; avoids Runge's phenomenon; high accuracy for short intervals [28] [30]. | Can be sensitive to outliers; may produce unrealistic overshoots if data is very noisy. |

Table 2: Typical Performance Metrics for Interpolation Methods in Environmental Data Modeling (e.g., Air Temperature, SO₂) [30]

| Method | Mean Absolute Error (MAE) | Root Mean Squared Error (RMSE) | Willmott's Index of Agreement |

|---|---|---|---|

| Linear Interpolation | Low to Moderate | Low to Moderate | High |

| Cubic Polynomial | Moderate | Moderate | Moderate to High |

| Cubic Spline | Lowest | Lowest | Highest |

Experimental Protocol: Evaluating Interpolation Methods

This protocol provides a step-by-step guide for comparing the performance of different interpolation methods on a time-series dataset, such as one from an environmental monitoring station.

Objective: To empirically evaluate and select the most accurate method for imputing missing values in a specific environmental time series (e.g., air temperature, pollutant concentration).

Materials & Computational Tools:

- Dataset: A time-series dataset with known values (e.g., from LSEG, government environmental monitoring networks like U.S. EPA AQS) [32] [33].

- Software: MATLAB [30], R [17], or Python with libraries like Pandas and SciPy [34].

- Key Functions: interpolate() in Pandas [34], spline functions in MATLAB [30].

Procedure:

- Data Preparation: Load your complete time-series dataset. It is crucial to begin with a high-quality dataset that has no missing values for the variable of interest to allow for validation.

- Introduce Artificial Gaps: Systematically remove data points from the complete series to create controlled, artificial gaps. Vary the gap length (e.g., 1 hour, 6 hours, 24 hours) and the variability of the data surrounding the gap to test method robustness [30].

- Apply Interpolation Methods: For each created gap, apply the interpolation methods under investigation:

- Mean/Median Imputation

- Linear Interpolation

- Cubic Spline Interpolation

- Cross-Validation & Error Calculation: For each method and gap scenario, compare the interpolated values ($X_i$) to the true, known values ($x_i$) that were removed. Calculate performance metrics:

- Mean Absolute Error (MAE): $\text{MAE} = \frac{1}{n}\sum_{i=1}^{n} |x_i - X_i|$

- Root Mean Squared Error (RMSE): $\text{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n} (x_i - X_i)^2}$

- Willmott's Index of Agreement [30]

- Result Analysis and Method Selection: Compare the error metrics across methods. The method with the lowest MAE and RMSE, and the highest Index of Agreement, is generally the most accurate for your specific dataset and typical gap patterns.
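The procedure above can be sketched end to end with pandas. Everything specific here is an assumption made for the illustration: the synthetic two-week hourly series, the four 6-hour gaps, and the helper `willmott_d`, which implements the original form of Willmott's Index of Agreement, $d = 1 - \sum (P_i - O_i)^2 / \sum (|P_i - \bar{O}| + |O_i - \bar{O}|)^2$. The cubic spline option in pandas requires SciPy to be installed.

```python
import numpy as np
import pandas as pd


def willmott_d(observed, predicted) -> float:
    """Willmott's Index of Agreement (original form, ranges from 0 to 1)."""
    o = np.asarray(observed, dtype=float)
    p = np.asarray(predicted, dtype=float)
    o_mean = o.mean()
    denominator = np.sum((np.abs(p - o_mean) + np.abs(o - o_mean)) ** 2)
    return float(1.0 - np.sum((p - o) ** 2) / denominator)


# Step 1: a complete synthetic series (hypothetical air temperature).
rng = np.random.default_rng(42)
hours = np.arange(24 * 14)
truth = pd.Series(
    15.0 + 8.0 * np.sin(2.0 * np.pi * hours / 24.0) + rng.normal(0.0, 0.3, hours.size)
)

# Step 2: introduce artificial 6-hour gaps at fixed positions.
gapped = truth.copy()
for start in (30, 100, 200, 290):
    gapped.iloc[start:start + 6] = np.nan
removed = gapped.isna()

# Step 3: apply the candidate methods.
candidates = {
    "mean": gapped.fillna(gapped.mean()),
    "linear": gapped.interpolate(method="linear"),
    "cubic_spline": gapped.interpolate(method="cubicspline"),
}

# Step 4: score each method on the removed points only.
results = {}
for name, filled in candidates.items():
    error = filled[removed] - truth[removed]
    results[name] = {
        "MAE": float(error.abs().mean()),
        "RMSE": float(np.sqrt((error ** 2).mean())),
        "d": willmott_d(truth[removed], filled[removed]),
    }

# Step 5: compare.
print(pd.DataFrame(results).T.round(3))
```

Repeating the run with other gap lengths and positions gives a more reliable ranking than a single configuration.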

The Researcher's Toolkit

Table 3: Essential Resources for Time-Series Interpolation Research

| Tool / Resource | Type | Primary Function in Research |

|---|---|---|

| Pandas (Python) | Software Library | Data manipulation and analysis; provides high-level interpolate() function for linear and spline methods on time series [34]. |

| MATLAB | Software Environment | Numerical computing; offers extensive built-in functions (e.g., interp1) for implementing linear, cubic, and spline interpolation with high accuracy [30]. |

| R Statistical Software | Software Environment | Statistical analysis; packages like bwm and built-in functions support advanced imputation and handling of complex missing data patterns [17]. |

| Geostatistical Kriging | Method | An advanced geostatistical interpolation technique that provides optimal estimates and uncertainty quantification, useful for spatial environmental data [33] [31]. |

| Leave-One-Out Cross-Validation (LOOCV) | Methodology | A standard technique for empirically assessing the accuracy and robustness of an interpolation method on a specific dataset [28] [29]. |

Workflow Diagram: Interpolation Method Selection

The diagram below outlines a logical workflow for selecting and validating an interpolation method based on the user's data characteristics and research goals.

Decision Workflow for Interpolation Method Selection

Frequently Asked Questions (FAQs)

Q1: What are the key advantages of using machine learning-based imputation methods like KNN, MissForest, and MICE over simple statistical methods for environmental time series data?

Simple methods like mean or median imputation are easy to implement but can distort the underlying distribution and relationships in the data, potentially leading to biased analyses [35] [36]. Machine learning methods offer significant advantages:

- Preservation of Data Structure: KNN and MissForest are designed to preserve the variance and covariance structure of the dataset, maintaining the relationships between variables, which is crucial for subsequent multivariate analysis [37] [36].

- Handling Complex Patterns: These algorithms can identify and leverage complex, non-linear relationships between variables to make more accurate predictions for missing values [38] [39].

- Flexibility: MICE can handle variables of different types (e.g., continuous, binary) simultaneously by specifying an appropriate model for each variable [40].

Q2: My environmental sensor data has over 30% missing values. Can I still use KNN imputation effectively?

Proceed with caution. While KNN can be used with higher missingness rates, its performance may degrade. KNN imputation works best when the proportion of missing data is small to moderate, typically ≤30% [37]. Beyond this threshold, the algorithm may struggle to find reliable nearest neighbors due to the increased sparsity of the data, leading to less accurate imputations [38] [37]. For high levels of missingness, MissForest has demonstrated robust performance, correctly imputing datasets with up to 40% randomly distributed missing values in some environmental applications [39].

Q3: Why is my MICE algorithm producing different results each time I run it, and how can I ensure reproducibility?

The MICE algorithm incorporates random elements, meaning it will produce different imputed values across separate runs unless you explicitly set a random seed [40]. This is a feature, not a bug, as it helps account for the uncertainty in the imputation process. To ensure reproducibility:

- Set a Random Seed: Always initialize the random number generator with a specific seed value before running the MICE algorithm in your code.

- Specify Model Parameters: Clearly define and document the number of imputations (m), the number of iterations, and the specific imputation models (e.g., linear regression for continuous variables, logistic regression for binary variables) used for each variable in the chain [36] [40].
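The sketch below shows one way to make a MICE-style run reproducible in Python, using scikit-learn's `IterativeImputer` as a stand-in for a full MICE implementation. The helper `run_mice`, the seed values, and the synthetic data are assumptions for illustration; `sample_posterior=True` is what makes each of the m completed datasets a random draw rather than a deterministic fill.

```python
import numpy as np
from sklearn.experimental import enable_iterative_imputer  # noqa: F401
from sklearn.impute import IterativeImputer

# Hypothetical three-variable dataset with correlated columns.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
X[:, 2] = 0.7 * X[:, 0] + 0.3 * rng.normal(size=200)
X_missing = X.copy()
X_missing[rng.random(X.shape) < 0.15] = np.nan


def run_mice(data, m=5, max_iter=10, base_seed=2024):
    """Return m completed datasets, each generated with its own fixed seed."""
    completed = []
    for k in range(m):
        imputer = IterativeImputer(
            sample_posterior=True,        # draw imputations, do not just predict
            max_iter=max_iter,
            random_state=base_seed + k,   # documented seed per imputation
        )
        completed.append(imputer.fit_transform(data))
    return completed


first_run = run_mice(X_missing)
second_run = run_mice(X_missing)

# Same seeds give identical imputations; different seeds within a run differ.
reproducible = all(np.array_equal(a, b) for a, b in zip(first_run, second_run))
varies_across_m = not np.allclose(first_run[0], first_run[1])
```

Recording `m`, `max_iter`, and `base_seed` in the methods section is enough for another analyst to regenerate the same completed datasets.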

Q4: I need to perform data imputation directly on an edge device with limited computational resources (like a Raspberry Pi). Are these methods feasible?

Yes, but your choice of method is critical. Research has successfully deployed both kNN and missForest on Raspberry Pi devices for environmental data imputation [39]. Considerations include:

- Computational Load: KNN can be computationally expensive for very large datasets due to the need for pairwise distance calculations, but it is often feasible for typical sensor data streams [39] [37]. MissForest, while accurate, is generally more computationally intensive than KNN [36].

- Execution Time: Studies show that for typical environmental sensor sampling periods, the execution times for both KNN and MissForest on a Raspberry Pi can be shorter than the sampling interval, making near real-time imputation possible [39].

- Recommendation: For edge computing, start with KNN imputation and monitor device performance. If resources allow, MissForest can provide higher accuracy.

Troubleshooting Guides

Issue 1: Poor Imputation Accuracy with KNN

Symptoms: Imputed values do not align with expected trends; high RMSE/MAE when validating with a test set.

Solution Steps:

- Standardize Your Data: KNN is a distance-based algorithm and is highly sensitive to variable scales. Ensure all numerical features are standardized (e.g., using StandardScaler or MinMaxScaler) before imputation [37].

- Tune the Hyperparameter k: The number of neighbors (k) is critical.

- Evaluate Distance Metrics: Try different distance metrics. Euclidean distance is common, but Manhattan or Cosine distance might be more suitable for your specific data characteristics [37] [36].

- Check for Sufficient Data: Verify that the dataset is not too sparse and that there are enough complete cases to find meaningful neighbors [37].
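The first two steps can be sketched with scikit-learn as follows. The synthetic weather variables, the 10% masking rate, and the candidate values of k are assumptions for illustration. Note that scikit-learn's `KNNImputer` uses a NaN-aware Euclidean distance, so trying other distance metrics would require a different implementation.

```python
import numpy as np
from sklearn.impute import KNNImputer
from sklearn.preprocessing import StandardScaler

# Hypothetical station data on very different scales.
rng = np.random.default_rng(1)
n = 300
temperature = rng.normal(20.0, 5.0, n)                               # degrees C
pressure = 101325.0 - 40.0 * temperature + rng.normal(0.0, 30.0, n)  # Pa
humidity = 80.0 - 1.5 * temperature + rng.normal(0.0, 2.0, n)        # percent
X_true = np.column_stack([temperature, pressure, humidity])

# Hide 10% of the known values so imputation accuracy can be measured.
mask = rng.random(X_true.shape) < 0.10
X_missing = np.where(mask, np.nan, X_true)

# StandardScaler ignores NaNs when fitting and keeps them when transforming.
scaler = StandardScaler().fit(X_missing)
X_scaled = scaler.transform(X_missing)
X_true_scaled = scaler.transform(X_true)


def validation_rmse(k: int) -> float:
    """RMSE on the hidden values, in standardized units, for a given k."""
    filled = KNNImputer(n_neighbors=k, weights="distance").fit_transform(X_scaled)
    return float(np.sqrt(np.mean((filled[mask] - X_true_scaled[mask]) ** 2)))


scores = {k: validation_rmse(k) for k in (1, 3, 5, 10, 20)}
best_k = min(scores, key=scores.get)

# Impute with the selected k and return to the original units.
final_scaled = KNNImputer(n_neighbors=best_k, weights="distance").fit_transform(X_scaled)
final = scaler.inverse_transform(final_scaled)
```

Without the scaling step, pressure (in Pa) would dominate every distance calculation and the other variables would contribute almost nothing to neighbor selection.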

Issue 2: The MICE Algorithm Fails to Converge or is Too Slow

Symptoms: The imputed values show large fluctuations between iterations; the process takes an excessively long time.

Solution Steps:

- Increase Iterations: The default number of iterations (often 10) may be insufficient for your dataset. Increase the number of cycles (e.g., 20, 50) and check for convergence [40].

- Simplify the Imputation Model: The computational cost increases with the number of variables. Include only variables that are predictive of the missingness or correlated with the incomplete variables in the imputation model. Avoid including a very large number of variables unnecessarily [40].

- Inspect Variable Types: Ensure you have specified the correct model type for each variable (e.g., linear regression for continuous, logistic regression for binary) in the MICE procedure [40].

- Use a Powerful Enough Machine: For very large datasets, consider running MICE on a system with more computational resources, as the chained equations can be resource-intensive [35].

Issue 3: Handling Mixed Data Types (Continuous and Categorical) with MissForest

Symptoms: Errors during model fitting or implausible imputed values for categorical features.

Solution Steps:

- Verify Implementation: Ensure you are using a MissForest implementation that natively handles mixed data types. The algorithm itself is designed to use regression models for continuous data and classification models for categorical data [38] [36].

- Preprocess Categorical Variables: If required by your software package, convert categorical variables into a numerical representation (e.g., label encoding) before passing them to the MissForest algorithm.

- Check Stopping Criterion: MissForest iterates until a stopping criterion is met. If the criterion is too loose or the maximum number of iterations is too small, it may stop before finding good estimates. You can adjust the stopping criteria (e.g., maximum number of iterations) if needed [36].

Performance Comparison of Imputation Methods

The following table summarizes quantitative findings from comparative studies on imputation techniques, which can guide method selection. RMSE (Root Mean Squared Error) and MAE (Mean Absolute Error) are common metrics, where lower values indicate better performance.

Table 1: Performance Comparison of Imputation Methods Across Various Studies

| Method | Reported Performance & Characteristics | Context / Dataset | Source |

|---|---|---|---|

| MissForest | Generally superior performance; lowest RMSE/MAE. Can handle mixed data types. Computationally intensive. | Healthcare diagnostic datasets (Breast Cancer, Heart Disease, Diabetes). | [36] |

| MICE | Strong performance, often second to MissForest. Accounts for uncertainty via multiple datasets. | Healthcare diagnostic datasets. | [36] |

| KNN Imputation | Robust and effective. Performance can degrade with high missingness (>30%) or large, sparse datasets. | Air quality data; general data imputation. | [38] [37] [36] |

| Mean/Median Imputation | Lower accuracy. Can distort data distribution and underestimate variance. Simple and fast. | Used as a baseline method in multiple comparative studies. | [35] [36] |

Experimental Protocol: Benchmarking Imputation Methods

This protocol provides a step-by-step methodology for evaluating and comparing the performance of KNN, MissForest, and MICE on an environmental time series dataset, as commonly practiced in research [38] [39] [36].

1. Data Preparation and Simulation of Missingness

- Obtain a Complete Dataset: Start with a high-quality environmental dataset (e.g., water quality, air pollution) that has no missing values to serve as your ground truth benchmark [42] [38].

- Introduce Missing Values Artificially: Randomly remove values from the complete dataset under the Missing Completely at Random (MCAR) mechanism. Common practice is to simulate missingness levels such as 5%, 10%, 20%, 30%, and 40% [38] [39] [36].

2. Application of Imputation Methods

- Configure Algorithms: Set up the three imputation methods with initial parameters.

- Perform Imputation: Run each algorithm on the dataset with artificially introduced missingness to generate completed datasets.

3. Performance Evaluation

- Calculate Error Metrics: Compare the imputed values against the original, known values from your benchmark dataset. Commonly used metrics include RMSE and MAE, where lower values indicate better performance.

- Assess Data Distribution: Use statistical tests (e.g., Kolmogorov-Smirnov) and visualizations (e.g., density plots, Q-Q plots) to check if the distribution of the imputed data is indistinguishable from the original benchmark distribution [39].
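A compact sketch of this benchmarking loop is given below, under several assumptions: the air-quality variables are synthetic, the imputers use near-default settings, and scikit-learn's `IterativeImputer` stands in for MICE as a single chained-equations imputation. MissForest is omitted because scikit-learn does not ship it; it could be added to the `imputers` dictionary from a package that provides a compatible `fit_transform` interface.

```python
import numpy as np
from scipy.stats import ks_2samp
from sklearn.experimental import enable_iterative_imputer  # noqa: F401
from sklearn.impute import IterativeImputer, KNNImputer, SimpleImputer

# Step 1: a complete synthetic benchmark (hypothetical pollutant readings).
rng = np.random.default_rng(7)
n = 500
pm25 = rng.gamma(4.0, 5.0, n)
no2 = 0.8 * pm25 + rng.normal(0.0, 3.0, n)
o3 = 60.0 - 0.5 * pm25 + rng.normal(0.0, 4.0, n)
truth = np.column_stack([pm25, no2, o3])

imputers = {
    "mean": SimpleImputer(strategy="mean"),        # baseline
    "knn": KNNImputer(n_neighbors=5),
    "iterative": IterativeImputer(max_iter=10, random_state=0),
}

results = {}
for rate in (0.05, 0.10, 0.20, 0.30, 0.40):
    # Step 1 (continued): MCAR masking at the chosen rate.
    mask = rng.random(truth.shape) < rate
    masked = np.where(mask, np.nan, truth)
    for name, imputer in imputers.items():
        # Step 2: impute.
        filled = imputer.fit_transform(masked)
        # Step 3: errors on the masked cells, plus a distribution check
        # for the first variable (imputed column versus original column).
        error = filled[mask] - truth[mask]
        ks = ks_2samp(filled[:, 0], truth[:, 0])
        results[(rate, name)] = {
            "RMSE": float(np.sqrt(np.mean(error ** 2))),
            "MAE": float(np.mean(np.abs(error))),
            "KS_pvalue": float(ks.pvalue),
        }

for (rate, name), scores in results.items():
    print(f"{rate:4.0%} {name:10s} RMSE={scores['RMSE']:6.2f} "
          f"MAE={scores['MAE']:6.2f} KS p={scores['KS_pvalue']:.3f}")
```

A small KS p-value for a method indicates that its completed column differs detectably from the original distribution, which is the typical signature of mean imputation at high missingness.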

Workflow Diagram: Data Imputation Process for Environmental Time Series

The following diagram illustrates the logical workflow for a robust experimental evaluation of imputation methods, from data preparation to decision-making.

Methodology Diagram: The MICE Algorithm

The MICE (Multiple Imputation by Chained Equations) algorithm operates through an iterative, cyclic process. The diagram below details the steps involved in one iteration for a simple dataset.

Research Reagent Solutions: Essential Tools for Data Imputation

Table 2: Key Software Tools and Packages for Implementing Imputation Methods

| Tool / Package Name | Function / Purpose | Example Use Case |

|---|---|---|

| Scikit-learn (sklearn.impute) | A comprehensive machine learning library in Python. Provides KNNImputer and IterativeImputer (which can be used for MICE). | Implementing KNN imputation and a base MICE algorithm for numerical data [35]. |

| MissingPy | A Python library specifically designed for missing data imputation. Contains implementations of MissForest and KNN. | Running the MissForest algorithm on a dataset with mixed data types [36]. |

| ImputeNA | A Python package offering automated and customized handling of missing values, supporting several standard techniques. | Quickly testing and comparing multiple simple and advanced imputation methods [36]. |

| R mice Package | A widely used and mature package in R for performing Multiple Imputation by Chained Equations (MICE). | Conducting a full MICE analysis with full control over imputation models for different variable types [40]. |

Frequently Asked Questions (FAQs)

1. What are the key advantages of RNN-based models like BRITS over traditional imputation methods for environmental data? RNN-based models excel at capturing temporal dependencies and complex missing patterns inherent in environmental time series (e.g., sensor data from water quality or climate monitoring). Unlike simple interpolation or statistical methods, models like BRITS treat missing values as variables within a bidirectional RNN graph, updating them during backpropagation to learn from both past and future context [43] [44]. This allows them to handle informative missingness, where the pattern of missing data itself is correlated with the target variable, a common scenario in real-world datasets [44] [45].

2. My climate time series has long gaps due to sensor failure. Will standard RNNs handle this effectively? Standard RNNs can struggle with long-range dependencies due to the vanishing gradient problem [43] [46]. For long gaps, consider advanced architectures:

- BRITS: Uses a bidirectional RNN to incorporate information from both before and after the gap [43].

- CSAI: Extends BRITS by incorporating a self-attention mechanism to better capture long-term dynamics [43].

- Dual-SSIM: Employs a dual-head sequence-to-sequence model with attention, which processes information before and after a missing gap separately, showing effectiveness in environmental applications like water quality monitoring [47].

3. Should I prioritize imputation accuracy or final task performance (e.g., classification) in my model? For downstream tasks like classification, a growing body of research suggests that prioritizing final task performance can be more effective. A highly accurate imputation is not always necessary for a successful classification outcome. End-to-end models that jointly learn imputation and classification allow the imputation process to be guided by label information, often leading to better results than a traditional two-stage process that separates imputation and classification [48].

4. What is a "non-uniform masking strategy" and why is it important for evaluation? Most models are evaluated using random masking (MCAR - Missing Completely At Random), which oversimplifies real-world missingness [43] [45]. A non-uniform masking strategy creates missing patterns that are correlated across time and variables (simulating MAR - Missing At Random, or MNAR - Missing Not At Random). Evaluating with these more realistic patterns is crucial, as benchmark studies show that imputation accuracy is significantly better on MCAR data than on MAR or MNAR data [43] [45].

Troubleshooting Guides

Issue 1: Poor Imputation Performance on Long-Term Climate Trends

Problem: Your model captures short-term fluctuations but fails to accurately impute long-term trends or seasonal patterns in climate data (e.g., temperature, precipitation).

Solutions:

- Architecture Modification: Integrate an attention mechanism with your RNN. Models like CSAI use self-attention for hidden state initialization to capture long- and short-range dependencies simultaneously [43].

- Leverage External Variables: If the long-term trend is correlated with other, more frequently measured variables (e.g., air pressure with temperature), ensure your model uses these correlated features. The recording patterns of correlated variables can provide strong clues for imputation [43] [49].

- Use a Hybrid Model: Consider a model like Dual-SSIM, which uses separate encoders to process temporal information before and after a missing gap, allowing it to better handle larger gaps [47].

Issue 2: Model Fails to Converge or Training is Unstable

Problem: During training, the loss function does not converge or shows high volatility.

Solutions:

- Inspect Gradient Flow: This is a classic sign of the vanishing/exploding gradient problem in RNNs. Switch from a vanilla RNN to a gated architecture like LSTM (Long Short-Term Memory) or GRU (Gated Recurrent Unit), which are designed to mitigate this issue [44] [46].

- Review Input Data and Masking: Ensure your input tensors correctly combine the observed data, masking matrix (m_t), and time-interval matrix (δ_t). In GRU-D, for example, these elements are critically used to adjust the input and hidden states [44].

- Check Imputation Consistency (for BRITS): BRITS uses consistency constraints between forward and backward imputations. Verify that this loss component is being calculated correctly, as it is essential for stable training [43].
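When checking the inputs, it helps to build the masking and time-interval matrices by hand for a tiny series and compare them with what the data loader produces. The sketch below follows the usual GRU-D convention: the mask is 1 where a value is observed, and the interval for each variable is the time elapsed since that variable was last observed, reset after every observation. The function name and the example values are illustrative assumptions.

```python
import numpy as np


def mask_and_delta(values, timestamps):
    """Build the masking matrix m_t and time-interval matrix delta_t.

    values:     array of shape (T, D) with NaN for missing entries
    timestamps: array of shape (T,) with the observation times s_t
    """
    values = np.asarray(values, dtype=float)
    s = np.asarray(timestamps, dtype=float)
    m = (~np.isnan(values)).astype(float)   # 1 = observed, 0 = missing
    delta = np.zeros_like(values)           # delta is 0 at the first step
    for t in range(1, len(s)):
        gap = s[t] - s[t - 1]
        # If the previous step was observed, the interval restarts at `gap`;
        # otherwise it keeps accumulating on top of the previous interval.
        delta[t] = gap + (1.0 - m[t - 1]) * delta[t - 1]
    return m, delta


# Two hypothetical variables sampled at irregular times.
nan = np.nan
values = np.array([
    [47.0, nan],
    [49.0, 15.0],
    [nan, 14.0],
    [40.0, nan],
    [nan, nan],
    [43.0, nan],
    [55.0, 15.0],
])
timestamps = np.array([0.0, 0.1, 0.6, 1.6, 2.2, 2.5, 3.1])

m, delta = mask_and_delta(values, timestamps)
print(m.T)
print(np.round(delta.T, 2))
```

A mismatch between these hand-built matrices and the tensors fed to the model (for example an inverted mask, or intervals that never reset) is a common source of unstable training.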

Issue 3: Model Does Not Generalize to Real-World Missing Patterns

Problem: The model performs well on your test set with random missingness (MCAR) but fails when deployed on real environmental data with structured missingness (e.g., all sensors fail during a storm).

Solutions:

- Re-evaluate Your Training Data: Train and evaluate your model using more realistic, non-random masking patterns that mimic the true missing data mechanisms (MAR/MNAR) in your application domain [43] [45].

- Incorporate Domain-Informed Decay: Models like CSAI introduce a domain-informed temporal decay function. This adjusts the model's attention to past observations based on clinical recording patterns, a concept that can be adapted to environmental data recording frequencies (e.g., how long a past rainfall measurement is relevant for imputing a current missing value) [43].

- Benchmark with Simple Methods: Compare your model's performance against simple methods like linear interpolation. Surprisingly, one benchmark study found that linear interpolation had the lowest RMSE across multiple missing data mechanisms and percentages for health time series, highlighting that complex models do not always win on all metrics [45].

Experimental Protocols & Benchmarking

Standardized Evaluation Protocol for Imputation Methods

To ensure fair and realistic comparison of different imputation methods, follow this protocol:

- Data Preparation: Start with a complete dataset (or one with a very low missing rate). For environmental data, this could be a high-quality time series of temperature or water pH from a well-maintained station [49].

- Introduce Missingness: Artificially mask values in the complete dataset using different mechanisms. Do not rely solely on MCAR.

- MCAR: Randomly mask values.

- MAR: Mask values based on other observed variables (e.g., mask humidity readings when temperature is high).

- MNAR: Mask values based on the variable itself (e.g., mask precipitation values when they exceed a certain threshold, simulating sensor failure during heavy rain) [7] [45].

- Vary Missing Rates: Test each mechanism at multiple missing rates (e.g., 5%, 10%, 30%) to assess robustness [45].

- Imputation: Apply the imputation methods (e.g., BRITS, M-RNN, simple interpolation) to the masked dataset.

- Performance Calculation: Compare the imputed values against the held-out ground truth using multiple metrics.
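The masking steps of this protocol can be sketched as follows. The data are synthetic, the variable names (`temperature`, `humidity`, `precipitation`) mirror the examples above, and the particular MAR rule (missingness probability rising with the rank of temperature) is one possible assumption among many, not a prescribed standard.

```python
import numpy as np

def mask_mcar(n, rate, rng):
    """Hide each value with the same probability, independent of the data."""
    return rng.random(n) < rate

def mask_mar(driver, rate, rng):
    """Hide values more often when an observed driver variable is high."""
    driver = np.asarray(driver, dtype=float)
    ranks = driver.argsort().argsort() / (len(driver) - 1)   # 0 = lowest, 1 = highest
    # Probability grows linearly with rank and averages `rate` (for rate <= 0.5).
    return rng.random(len(driver)) < np.clip(2.0 * rate * ranks, 0.0, 1.0)

def mask_mnar(target, rate):
    """Hide the largest values of the target variable itself."""
    target = np.asarray(target, dtype=float)
    return target > np.quantile(target, 1.0 - rate)

rng = np.random.default_rng(0)
n = 5000
temperature = rng.normal(20.0, 5.0, n)
humidity = rng.uniform(30.0, 90.0, n)
precipitation = rng.gamma(shape=0.5, scale=4.0, size=n)

results = {}
for rate in (0.05, 0.10, 0.30):
    results[rate] = {
        "mcar": np.where(mask_mcar(n, rate, rng), np.nan, humidity),
        "mar": np.where(mask_mar(temperature, rate, rng), np.nan, humidity),
        "mnar": np.where(mask_mnar(precipitation, rate), np.nan, precipitation),
    }
    achieved = {k: round(float(np.isnan(v).mean()), 3) for k, v in results[rate].items()}
    print(rate, achieved)
```

Keeping the boolean masks (or the original arrays) alongside the masked copies is what allows the final step to score imputations only on the held-out positions.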

Table 1: Key Metrics for Evaluating Imputation Performance [45]

| Metric | Formula | Interpretation and Use Case |
|---|---|---|
| Root Mean Square Error (RMSE) | $\sqrt{\frac{1}{n}\sum_{i=1}^{n}(y_i - \hat{y}_i)^2}$ | Measures the typical magnitude of imputation errors, penalizing large errors more heavily. Sensitive to large errors. |
| Mean Absolute Error (MAE) | $\frac{1}{n}\sum_{i=1}^{n}\lvert y_i - \hat{y}_i \rvert$ | Measures the average magnitude of errors. More robust to outliers than RMSE. |
| Bias | $\frac{1}{n}\sum_{i=1}^{n}(y_i - \hat{y}_i)$ | Measures the average direction and magnitude of error. Crucial for identifying systematic over/under-estimation; with this sign convention, a negative value indicates overestimation on average. |
| Dynamic Time Warping (DTW) | (Algorithmic calculation) | Measures similarity between two temporal sequences that may vary in speed. Useful for evaluating shape preservation in time series [47]. |
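The metrics in this table can be computed with a few lines of NumPy. The sketch below assumes `y_true` is the held-out ground truth, `y_imputed` is the imputed series, and `eval_mask` is a boolean array marking the artificially masked positions. The DTW function is a plain dynamic-programming version with an absolute-difference cost, written for illustration; dedicated libraries are faster on long series.

```python
import numpy as np

def imputation_metrics(y_true, y_imputed, eval_mask):
    """RMSE, MAE and bias computed only on the artificially masked positions."""
    keep = np.asarray(eval_mask, dtype=bool)
    err = np.asarray(y_true, dtype=float)[keep] - np.asarray(y_imputed, dtype=float)[keep]
    return {
        "rmse": float(np.sqrt(np.mean(err ** 2))),
        "mae": float(np.mean(np.abs(err))),
        "bias": float(np.mean(err)),   # y - y_hat, matching the table's sign convention
    }

def dtw_distance(a, b):
    """Classic O(len(a) * len(b)) dynamic time warping distance."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    n, m = len(a), len(b)
    cost = np.full((n + 1, m + 1), np.inf)
    cost[0, 0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            step = abs(a[i - 1] - b[j - 1])
            cost[i, j] = step + min(cost[i - 1, j], cost[i, j - 1], cost[i - 1, j - 1])
    return float(cost[n, m])

y_true = [1.0, 2.0, 3.0, 4.0, 5.0]
y_imputed = [1.0, 2.5, 3.0, 3.0, 5.0]
eval_mask = [False, True, False, True, False]

metrics = imputation_metrics(y_true, y_imputed, eval_mask)
shape_distance = dtw_distance(y_true, y_imputed)
print(metrics, shape_distance)
```

Restricting the point-wise metrics to `eval_mask` matters: including positions that were never masked would dilute the errors and make every method look better than it is.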

Sample Experimental Workflow

The following diagram illustrates a typical workflow for training and evaluating a deep learning imputation model like BRITS or CSAI.

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Tools and Resources for Deep Learning-Based Imputation

| Item | Function/Description | Example Use Case |
|---|---|---|
| PyPOTS Toolbox | An open-source Python toolbox specifically designed for machine learning tasks on partially observed time series. | Provides implementations of state-of-the-art models like CSAI, facilitating quick prototyping and benchmarking [43]. |
| Gated Recurrent Unit (GRU) / LSTM | RNN variants with gating mechanisms to control information flow, mitigating the vanishing gradient problem and capturing long-range dependencies. | The foundational building block for models like GRU-D, BRITS, and M-RNN [43] [44] [46]. |
| Masking Matrix (M) | A binary matrix indicating the presence (1) or absence (0) of an observation at a given time and feature. | Informs the model about the locations of missing values and is a direct input to many architectures [43] [44]. |
| Time Interval Matrix (δ) | A matrix recording the time elapsed since the last observation for each feature at each time step. | Used in models like GRU-D to apply a temporal decay to the influence of past observations [44]. |
| Bidirectional RNN (BRNN) | An RNN that processes sequence data in both forward and backward directions. | Core component of BRITS, allowing it to impute a missing value using both past and future context [43] [50]. |
| Self-Attention Mechanism | A mechanism that allows the model to weigh the importance of different elements in a sequence when encoding a specific element. | Used in CSAI and Transformers to capture long-range dependencies that are challenging for RNNs alone [43]. |

Frequently Asked Questions

1. What are the primary types of missing data mechanisms I need to know?

A three-category typology is standard for describing missing data [51]:

- MCAR (Missing Completely at Random): The fact that the data is missing is unrelated to any observed or unobserved variables. Example: a survey packet is lost in the mail [51].

- MAR (Missing at Random): The propensity for a value to be missing is related to other observed variables but not to the underlying missing value itself. Example: missing survey responses from homeless participants, where homelessness status was recorded [51].

- MNAR (Missing Not at Random): The propensity for a value to be missing is directly related to the value that would have been observed. Example: participants with increased drug use are less likely to respond to questions about it [51].

2. Why is simply deleting missing data (Complete Case Analysis) often a bad idea?

Complete Case Analysis, which drops any record with a missing value, is rarely appropriate for MAR or MNAR data [51]. It can lead to:

- Significant reduction in statistical power [51] [52].

- Biased parameter estimates, as the remaining complete cases may not be representative of the entire population [51] [52].

- Compromised generalizability of the study findings [52].

3. What is the key difference between single and multiple imputation?

- Single Imputation (e.g., mean imputation, Last Observation Carried Forward) replaces a missing value with one estimated value. This approach does not account for the uncertainty about the true value, often leading to biased estimates and artificially narrow confidence intervals [51] [53] [54].

- Multiple Imputation generates several different plausible values for each missing value, creating multiple complete datasets. After analyzing each dataset, the results are combined, accounting for the uncertainty of the missing data and providing more robust statistical inferences [14] [51] [53].

4. How do I handle missing data in environmental time series specifically?

Environmental time series present a unique challenge because simply deleting missing values disrupts the temporal dependence between data points [55]. Suitable methods include:

- Advanced machine learning models like Support Vector Machine Regression (SVMR) that can iteratively impute and predict missing values while estimating the time series model order [55].

- Vine copula models that can jointly model the time series of a target station and its neighboring stations, which is particularly useful for capturing dependence in extremes [14].

- Deep learning methods like Generative Adversarial Networks (GANs), which have shown promise in imputing missing climate data [49].

5. What should I do if I suspect my data is Missing Not at Random (MNAR)?

MNAR is the most challenging mechanism to address because the missingness depends on values you did not observe, so it cannot be fully explained or verified from the observed data alone [51]. Methods include:

- Pattern-mixture models or selection models, which stratify data by dropout patterns or jointly model the dropout and outcome processes [54].

- Sensitivity analyses, such as delta-adjustment imputation (or "tipping point" analysis), which systematically adjust imputed values to see how different MNAR assumptions affect your conclusions [54].

- Bayesian methods, which can incorporate expert knowledge or historical data to inform the model about the missing data process [54].
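The idea behind a delta-adjustment (tipping point) analysis can be illustrated with a deliberately simplified sketch. It assumes a two-arm comparison in which only the treatment arm has missing outcomes, and it uses the observed arm mean as a stand-in for a proper MAR imputation model; a real analysis would apply the shift within multiple imputation and track the confidence interval or p-value rather than the point estimate. The function name and toy numbers are illustrative.

```python
import numpy as np

def delta_adjusted_effects(treat, control, deltas):
    """Treatment-minus-control mean difference after shifting imputed values by each delta."""
    treat = np.asarray(treat, dtype=float)
    control = np.asarray(control, dtype=float)
    missing = np.isnan(treat)
    mar_fill = np.nanmean(treat)        # stand-in MAR imputation: observed arm mean
    control_mean = np.nanmean(control)
    effects = {}
    for delta in deltas:
        # Under the MNAR scenario, dropouts are assumed to differ from the MAR guess by delta.
        completed = np.where(missing, mar_fill + delta, treat)
        effects[float(delta)] = float(completed.mean() - control_mean)
    return effects

treat = [5.0, 6.0, 7.0, np.nan, np.nan]
control = [4.0, 4.0, 4.0, 4.0, 4.0]
deltas = [0.0, -2.5, -5.0, -7.5]

effects = delta_adjusted_effects(treat, control, deltas)
# Smallest shift (in absolute value) at which the apparent benefit disappears.
tipping_point = min((d for d, e in effects.items() if e <= 0), key=abs, default=None)
print(effects, tipping_point)
```

The question for the study team is then whether a shift as large as the tipping point is plausible for participants who dropped out. If it is not, the conclusion can be considered robust to that MNAR departure.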

Troubleshooting Guides

Problem: My analysis results seem biased after using mean imputation.

- Potential Cause: Mean imputation is a single imputation method that replaces every missing value with the same number. This shrinks the variance of the variable, weakens its relationships with other variables, and ignores the uncertainty about the missing values, which can severely bias parameter estimates, especially under MAR or MNAR mechanisms [51] [53].

- Solution: Shift to a method that accounts for uncertainty, such as Multiple Imputation or Maximum Likelihood estimation [51]. For time series data, consider model-based approaches like Mixed Models for Repeated Measures (MMRM) or machine learning techniques like Support Vector Machine Regression designed for sequential data [55] [54].
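The distortion caused by mean imputation is easy to reproduce on synthetic data. The sketch below simulates two correlated variables, removes 40% of one of them completely at random (the most favorable case for simple methods), and compares the variance and correlation before and after mean imputation. All numbers are simulated and serve only as an illustration.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 2000
x = rng.normal(0.0, 1.0, n)
y = 0.8 * x + rng.normal(0.0, 0.6, n)       # y is correlated with x

missing = rng.random(n) < 0.4               # MCAR missingness in y
y_obs = np.where(missing, np.nan, y)
y_mean_imp = np.where(missing, np.nanmean(y_obs), y_obs)   # mean imputation

var_true = y.var(ddof=1)
var_imp = y_mean_imp.var(ddof=1)
corr_true = np.corrcoef(x, y)[0, 1]
corr_imp = np.corrcoef(x, y_mean_imp)[0, 1]

print(f"variance:    true={var_true:.3f}  after mean imputation={var_imp:.3f}")
print(f"correlation: true={corr_true:.3f}  after mean imputation={corr_imp:.3f}")
```

Both the variance and the correlation drop after imputation even though the data are MCAR, which is why downstream regression coefficients and confidence intervals cannot be trusted.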

Problem: My dataset has a large block of missing climate sensor readings.

- Potential Cause: Sensor failure or system maintenance can lead to blocks of missing data in environmental time series [49] [55].

- Solution: Utilize spatial and temporal correlations. You can employ:

- Iterated Imputation and Prediction (IIP) algorithms that use correlation dimension estimation and machine learning to predict missing values [55].

- D-vine copula models that leverage information from neighboring monitoring stations to impute missing values in a target station, even when the neighbors also have missing data [14].

- Deep learning methods such as Generative Adversarial Networks (GANs), which have been shown to excel at imputing missing climate data [49].

Problem: I am facing high dropout rates in my clinical trial, and a regulator has criticized my use of Last Observation Carried Forward (LOCF).

- Potential Cause: LOCF assumes that a participant's outcome remains unchanged after dropout, which is often a clinically implausible assumption and can lead to biased estimates of treatment effects [53] [54].

- Solution: Pre-specify a more robust primary analysis method in your statistical plan. Regulators recommend:

- Mixed Models for Repeated Measures (MMRM) for primary analysis under the MAR assumption [54].

- Multiple Imputation for a flexible and robust approach to handling missing data [53] [54].

- For sensitivity analysis to assess potential MNAR, use methods like control-based imputation or delta-adjustment [54].

Decision Framework and Method Selection

The following table summarizes the recommended imputation methods based on the missing data mechanism and data type, particularly focusing on environmental time series.

Table 1: Method Selection Guide Based on Missingness Mechanism and Data Type

| Mechanism | Description | Recommended Methods | Common Applications & Notes |
|---|---|---|---|
| MCAR | Missingness is unrelated to any data [51]. | • Complete Case Analysis (if minimal missingness) [51] • Single Imputation (e.g., mean) [52] | Simple methods may be sufficient, but bias is still possible if the missing data rate is high. |
| MAR | Missingness is related to other observed variables [51]. | • Multiple Imputation [51] [52] • Maximum Likelihood [51] • MMRM (for longitudinal data) [54] | The primary recommended approaches for robust results. Suitable for clinical trials and observational studies [51] [54]. |
| MNAR | Missingness is related to the unobserved value itself [51]. | • Pattern-mixture models [54] • Selection models [54] • Sensitivity Analyses (e.g., delta-adjustment) [54] • Bayesian methods [54] | Used for worst-case scenario planning, often as part of sensitivity analysis. Challenging to implement and verify [51]. |
| Environmental Time Series (MAR/MNAR) | Missing data in sequential measurements with temporal dependence [14] [55]. | • Iterated Imputation & Prediction (IIP) with SVM Regression [55] • D-vine copula models (leverages spatial correlation) [14] • Generative Adversarial Networks (GANs) [49] | These methods explicitly model the temporal or spatial structure of the data, which is destroyed by simple deletion [55]. |

The logic of how to select an appropriate method based on the problem context can be visualized in the following workflow:

Diagram 1: Method Selection Workflow

Experimental Protocols for Key Methods

Protocol 1: Multiple Imputation using Rubin's Framework

Multiple Imputation is a robust method for handling MAR data that accounts for the uncertainty of missing values [51] [53].

- Impute: Create multiple (e.g., 3-5) complete datasets by replacing missing values with plausible ones. These values are drawn from a distribution that incorporates random variation [53].

- Analyze: Perform the desired standard statistical analysis (e.g., regression, ANOVA) on each of the completed datasets separately [53].

- Pool: Combine the results from the analyses of the multiple datasets:

- Average the parameter estimates (e.g., regression coefficients) to get a single estimate [53].

- Calculate the final standard error by combining the average of the squared standard errors from within each dataset (within-imputation variance) and the variance of the parameter estimates across the datasets (between-imputation variance) [53].
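The pooling step can be written out explicitly. In Rubin's rules, the total variance is the within-imputation variance plus the between-imputation variance inflated by a factor of (1 + 1/m), where m is the number of imputed datasets. The sketch below assumes you already have one point estimate and one standard error per imputed dataset; the function name `pool_rubin` and the example numbers are illustrative.

```python
import numpy as np

def pool_rubin(estimates, std_errors):
    """Pool per-dataset estimates and standard errors using Rubin's rules."""
    q = np.asarray(estimates, dtype=float)
    se = np.asarray(std_errors, dtype=float)
    m = len(q)
    pooled_estimate = q.mean()
    within = np.mean(se ** 2)          # average squared standard error
    between = q.var(ddof=1)            # variance of the estimates across datasets
    total = within + (1.0 + 1.0 / m) * between
    return float(pooled_estimate), float(np.sqrt(total))

# Regression coefficient and its standard error from five imputed datasets
estimates = [1.0, 1.2, 0.8, 1.1, 0.9]
std_errors = [0.2, 0.2, 0.2, 0.2, 0.2]

pooled_estimate, pooled_se = pool_rubin(estimates, std_errors)
print(f"pooled estimate = {pooled_estimate:.3f}, pooled SE = {pooled_se:.3f}")
```

The pooled standard error here is larger than the 0.2 reported by each individual analysis, which reflects the extra uncertainty that single imputation would have hidden.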

Protocol 2: Iterated Imputation and Prediction (IIP) for Environmental Time Series

This algorithm is designed for predicting time series with missing data by iteratively estimating the model order and imputing values [55].

- Initialization: Fill the missing values in the time series using a simple imputation method (e.g., linear interpolation) [55].

- Model Order Estimation: Use the Grassberger-Procaccia-Hough (GPH) algorithm on the currently imputed dataset to estimate the correlation dimension and thus the model order