In Silico Environmental Risk Assessment: A Computational Revolution in Chemical Safety for Researchers


Abstract

This article provides a comprehensive overview of in silico environmental risk assessment (ERA), a computational approach that uses mathematical models to predict the environmental fate and effects of chemicals. Tailored for researchers, scientists, and drug development professionals, we explore the foundational principles, including tiered approaches and the role of the Adverse Outcome Pathway (AOP) framework. The article details core methodologies like QSAR, read-across, and toxicokinetic-toxicodynamic models, supported by real-world applications in pesticide management and pharmaceutical assessment. We further address current challenges in model optimization and data integration, compare in silico performance against traditional methods, and validate its use through case studies and regulatory acceptance. Finally, the discussion outlines future directions, emphasizing the potential of these tools to enable proactive, animal-free safety evaluation in biomedical research and development.

Defining In Silico ERA: Core Principles and the Shift from Traditional Toxicology

In silico Environmental Risk Assessment (ERA) represents a computational paradigm that uses sophisticated software and mathematical models to predict the environmental fate and effects of chemicals, thereby supporting regulatory decision-making and safety evaluations. Rather than relying solely on live animal testing or physical experiments, this approach leverages the power of computer simulations to analyze molecular structures and predict potential hazards [1]. The core philosophy centers on using non-testing methods to fill data gaps, prioritize chemicals for further testing, and ultimately reduce reliance on traditional animal studies while increasing the efficiency and scope of risk assessments [2] [3] [4].

The development and adoption of in silico ERA have been largely driven by regulatory frameworks such as the European Union's Registration, Evaluation, Authorisation and Restriction of Chemicals (REACH), which explicitly advocates for the use of alternative methods to animal testing [4]. This methodological shift is particularly valuable for addressing the challenges posed by the vast number of chemicals in commercial use and the continuous emergence of new substances, including pharmaceuticals, pesticides, and industrial compounds, where traditional testing approaches would be prohibitively costly, time-consuming, and ethically concerning [2] [5].

Core Principles and Methodological Framework

Fundamental Components of In Silico ERA

In silico environmental risk assessment operates through several interconnected methodological approaches, each serving specific functions within a comprehensive assessment strategy:

Quantitative Structure-Activity Relationship (QSAR) Models: These computational models establish mathematical relationships between the chemical structure of compounds (described by molecular descriptors) and their biological activity or physicochemical properties. QSAR models enable the prediction of ecotoxicological endpoints and environmental fate parameters directly from molecular structure, serving as a primary tool for filling data gaps when experimental information is unavailable [1] [6].

Toxicokinetic-Toxicodynamic (TK-TD) Models: These biologically-based models simulate how chemicals are absorbed, distributed, metabolized, and excreted in organisms (toxicokinetics), and how they subsequently exert toxic effects at their target sites (toxicodynamics). They provide mechanistic insights into toxicity processes across different species and exposure scenarios [1].

Physiologically Based Pharmacokinetic (PBPK) Models: PBPK models represent the anatomy, physiology, and biochemistry of organisms to predict the internal dose metrics of chemicals in specific tissues and organs over time. They are particularly valuable for cross-species extrapolation, such as calculating human equivalent doses from animal studies [7].

Dynamic Energy Budget (DEB) Models: DEB models simulate how organisms acquire and utilize energy across various physiological processes, and how chemical stressors alter these energy allocations. They help predict sublethal effects and population-level consequences from individual-level exposure [1].

Read-Across and Chemical Categorization: This technique involves using data from tested chemicals (source compounds) to predict the properties of untested chemicals (target compounds) based on structural and mechanistic similarity. It requires establishing a valid hypothesis for why chemicals can be grouped together [4] [6].
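As a rough illustration of the QSAR idea described above, the sketch below fits a single-descriptor linear model relating log Kow to log(1/LC50). The data are synthetic placeholders rather than measurements, and the names `fit_qsar` and `predict` are hypothetical. Real QSAR tools use many descriptors and formal validation.

```python
import numpy as np

# Synthetic training set (illustrative values only, not measured data):
# descriptor = log Kow, response = log10(1/LC50) with LC50 in mol/L.
log_kow = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
log_inv_lc50 = np.array([1.9, 2.8, 3.6, 4.5, 5.3])

def fit_qsar(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    slope, intercept = np.polyfit(x, y, deg=1)
    return slope, intercept

def predict(slope, intercept, x_new):
    """Apply the fitted linear relationship to a new descriptor value."""
    return slope * x_new + intercept

slope, intercept = fit_qsar(log_kow, log_inv_lc50)

# Coefficient of determination on the training data (goodness-of-fit only;
# it says nothing about predictive performance on new chemicals).
fitted = predict(slope, intercept, log_kow)
ss_res = np.sum((log_inv_lc50 - fitted) ** 2)
ss_tot = np.sum((log_inv_lc50 - log_inv_lc50.mean()) ** 2)
r_squared = 1.0 - ss_res / ss_tot

# Prediction for an untested chemical with log Kow = 3.5,
# which lies inside the descriptor range of the training set.
predicted = predict(slope, intercept, 3.5)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, R^2={r_squared:.3f}")
print(f"predicted log(1/LC50) at log Kow 3.5: {predicted:.2f}")
```

The prediction is only meaningful because 3.5 falls within the range of descriptor values used for fitting. Extrapolating beyond that range is where such models become unreliable.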

The Tiered Assessment Approach

In silico ERA typically follows a tiered framework that progresses from simple screening-level assessments to more complex and refined evaluations [1]. This stepped approach ensures efficient resource allocation, where higher-tier (and typically more resource-intensive) assessments are reserved for chemicals that warrant further investigation based on lower-tier results.
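The escalation logic can be sketched in a few lines. This is a minimal illustration, assuming each tier can be reduced to a yes/no answer on whether the chemical remains of potential concern; `TierResult` and `run_tiered_assessment` are hypothetical names, and real tiers return quantitative estimates rather than booleans.

```python
from dataclasses import dataclass

@dataclass
class TierResult:
    tier: int
    of_concern: bool  # True if the estimate at this tier does not rule out concern

def run_tiered_assessment(assessors):
    """Run assessments in order of increasing complexity.

    `assessors` is a list of callables, lowest tier first; each returns True
    if the chemical remains of potential concern at that tier. Evaluation
    stops at the first tier that screens the chemical out.
    """
    result = None
    for tier, assess in enumerate(assessors, start=1):
        result = TierResult(tier=tier, of_concern=assess())
        if not result.of_concern:
            break  # lower-tier result is sufficient; do not escalate
    return result

# Hypothetical example: a Tier 1 screen flags concern, Tier 2 clears it,
# so the Tier 3 assessment is never run.
outcome = run_tiered_assessment([lambda: True, lambda: False, lambda: True])
print(outcome)
```

Passing callables rather than precomputed results keeps the expensive higher-tier work from running unless a lower tier calls for it.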

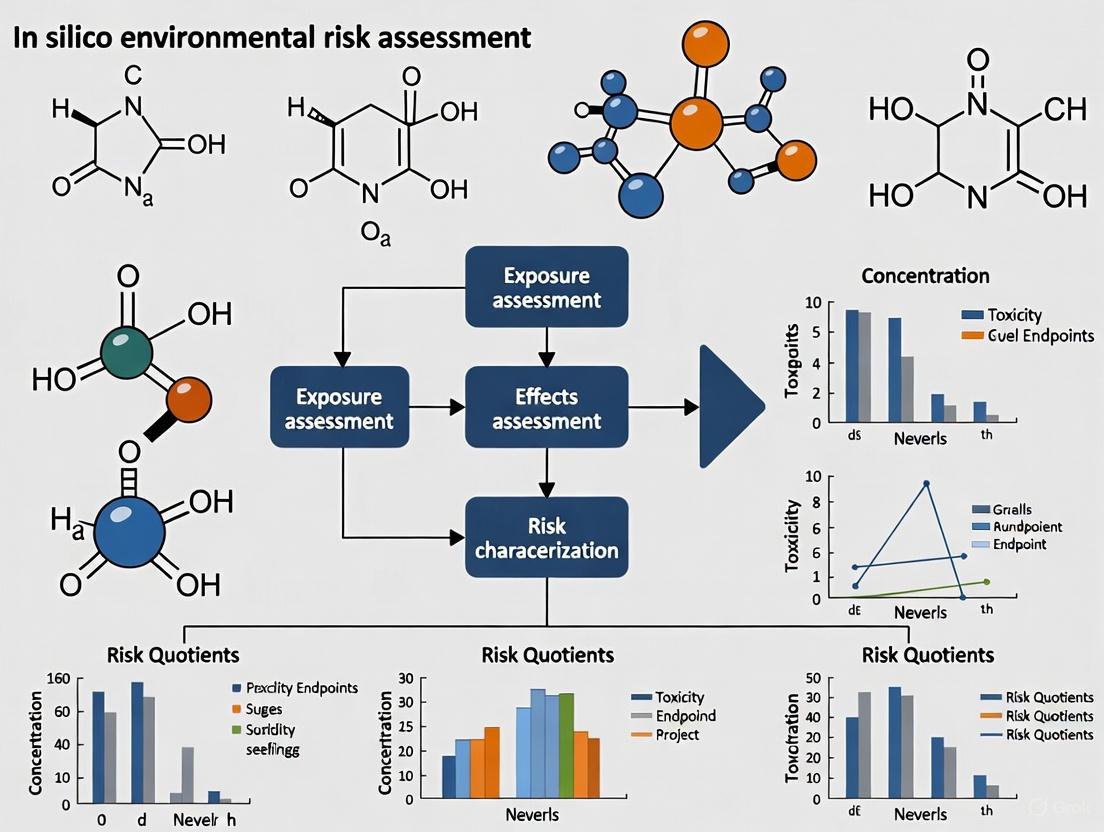

The diagram below illustrates this conceptual workflow and the interrelationships between different model types within a tiered assessment strategy:

Quantitative Advantages and Applications

Documented Benefits of In Silico Approaches

The adoption of in silico methods in environmental risk assessment provides substantial quantitative advantages over traditional testing paradigms, particularly in resource efficiency and animal welfare.

Table 1: Quantitative Benefits of In Silico ERA

| Benefit Category | Traditional Approach | In Silico Approach | Reference |
|---|---|---|---|
| Cost Requirements | Up to $9,919,000 for conventional pesticide testing | Potential savings of $50-70 billion for assessing 261 compounds | [2] |
| Animal Usage | High (∼8% of experimental animals used for toxicity testing) | Potential reduction of 100,000-150,000 test animals for 261 compounds | [2] |
| Testing Timeline | Chronic toxicity studies can take up to 2 years | Rapid predictions (hours to days) depending on model complexity | [2] |
| Regulatory Efficiency | Limited by data availability for thousands of chemicals | Enables screening and prioritization of large chemical inventories | [4] |

Practical Applications Across Chemical Classes

In silico ERA has demonstrated practical utility across diverse chemical domains and regulatory contexts:

Pesticide Risk Assessment: Computational tools have been developed to predict pesticide exposure in environmental compartments (air, water, soil) and toxicity to aquatic, terrestrial, and soil organisms. Models such as AGDISP predict pesticide spray drift, while specialized classifiers such as BeeTox identify bee-toxic chemicals with a reported specificity of 0.891 [2].

Per- and Polyfluoroalkyl Substances (PFAS) Evaluation: Integrated frameworks combine experimental data with PBPK modeling to calculate human equivalent doses and support the establishment of tolerable daily intakes for problematic compounds like PFOS and PFOA [7].

Pharmaceuticals and Personal Care Products (PPCPs): QSAR tools are employed to screen for persistent, mobile, and toxic (PMT) and persistent, bioaccumulative, and toxic (PBT) properties among emerging contaminants, enabling prioritization of concerning compounds for further testing and regulation [5].

Biofuel Development: In silico assessments evaluate the ecotoxicity of biofuel mixtures based on production conditions, predicting key parameters like biodegradability, bioaccumulation potential, and soil sorption coefficients to optimize for reduced environmental impact [8].

Experimental Protocols and Methodologies

Standardized Workflow for QSAR-Based Hazard Assessment

The application of QSAR models in environmental risk assessment follows a systematic protocol to ensure scientifically defensible results. The methodology described below outlines the process for screening chemicals for PMT/PBT properties, as applied to PPCPs [5]:

Chemical Selection and Representation: Curate a dataset of target chemicals with verified structural identifiers (e.g., Simplified Molecular Input Line Entry System [SMILES] or Chemical Abstract Service [CAS] registry numbers). For the PPCP assessment, 245 substances were included after quality filtering.

Molecular Descriptor Calculation: Generate physicochemical and structural descriptors for each compound using appropriate software tools. These descriptors numerically represent features relevant to chemical behavior and toxicity.

Model Selection and Application: Apply multiple QSAR tools to ensure comprehensive endpoint coverage and model consensus. The recommended toolkit includes:

- OECD QSAR Toolbox (v4.4 or higher): For mechanistic profiling, category formation, and read-across

- OPERA (v2.9 or higher): For predictions of physicochemical properties and environmental fate parameters

- EPI Suite (v4.1 or higher): For baseline toxicity and environmental persistence predictions

- QSAR-ME Profiler: For additional model verification

Applicability Domain Assessment: For each model prediction, evaluate whether the target chemical falls within the model's applicability domain (the chemical space for which the model was developed and validated). Predictions for chemicals outside this domain should be treated with caution.

Endpoint Prediction and Threshold Comparison: Compare predicted values against regulatory thresholds for persistence (e.g., half-life in water, soil, or sediment), bioaccumulation (e.g., Bioconcentration Factor [BCF]), and toxicity (e.g., Lethal Concentration 50 [LC50]) as defined in regulations such as REACH Annex XIII.

Data Integration and Weight-of-Evidence: Combine predictions across multiple models and endpoints to form a weight-of-evidence conclusion regarding PMT/PBT classification.

Validation and Uncertainty Characterization: Document the reliability of each prediction through measures of goodness-of-fit, predictive performance, and uncertainty quantification.
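The threshold-comparison and weight-of-evidence steps above can be sketched as follows. The threshold values are the ones commonly cited from REACH Annex XIII (freshwater half-life above 40 days, BCF above 2000, long-term NOEC/EC10 below 0.01 mg/L) and should be checked against the current legal text before any real use. Annex XIII frames the definitive toxicity criterion on long-term values, so this sketch uses a chronic NOEC rather than an acute LC50. The function names and all model outputs are hypothetical.

```python
# Screening thresholds as commonly cited from REACH Annex XIII
# (assumption: verify against the current legal text before regulatory use).
P_HALF_LIFE_FRESHWATER_DAYS = 40.0   # persistent if half-life exceeds this
B_BCF = 2000.0                       # bioaccumulative if BCF exceeds this
T_CHRONIC_NOEC_MG_L = 0.01           # toxic if long-term NOEC/EC10 is below this

def flag_pbt(half_life_days, bcf, chronic_noec_mg_l):
    """Compare one model's predictions against the P, B, and T thresholds."""
    return {
        "P": half_life_days > P_HALF_LIFE_FRESHWATER_DAYS,
        "B": bcf > B_BCF,
        "T": chronic_noec_mg_l < T_CHRONIC_NOEC_MG_L,
    }

def consensus(flags_per_model):
    """Fraction of models flagging each criterion (a simple evidence tally)."""
    n = len(flags_per_model)
    return {k: sum(f[k] for f in flags_per_model) / n for k in ("P", "B", "T")}

# Hypothetical predictions for one chemical from three different QSAR tools.
model_predictions = [
    {"half_life_days": 55.0, "bcf": 2500.0, "chronic_noec_mg_l": 0.05},
    {"half_life_days": 35.0, "bcf": 3100.0, "chronic_noec_mg_l": 0.008},
    {"half_life_days": 70.0, "bcf": 2200.0, "chronic_noec_mg_l": 0.02},
]
flags = [flag_pbt(**p) for p in model_predictions]
support = consensus(flags)
print(support)
```

A plain vote count treats every model as equally reliable. A fuller weight-of-evidence assessment would also weight each prediction by its applicability domain status and the model's documented performance.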

Integrated In Vitro - In Silico Testing Strategy

Advanced assessment approaches combine high-throughput in vitro bioassays with in silico modeling, as demonstrated in fish ecotoxicology [9]:

In Vitro Bioactivity Testing: Expose relevant cell lines (e.g., RTgill-W1 cells from rainbow trout) to concentration ranges of test chemicals. Measure multiple endpoints including:

- Cell viability (using miniaturized OECD TG 249 assay)

- Cytomorphological changes (using Cell Painting assay)

In Vitro Disposition (IVD) Modeling: Apply computational models that account for sorption effects (binding to plastic labware and cellular components) to predict freely dissolved concentrations that actually interact with biological targets.

Concentration Adjustment: Adjust nominal in vitro effect concentrations (e.g., Phenotype Altering Concentrations [PACs]) using IVD modeling to derive bioavailable concentrations.

In Vitro to In Vivo Extrapolation (IVIVE): Compare adjusted in vitro effect concentrations with in vivo fish toxicity data to establish protective correlations. In validation studies, this approach demonstrated that 73% of adjusted PACs were protective of in vivo toxicity, with 59% within one order of magnitude of measured lethal concentrations [9].
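The concentration adjustment and the two summary statistics reported above (share of protective PACs, share within one order of magnitude) can be computed as in the sketch below. It assumes the IVD model output can be summarized as a freely dissolved fraction per chemical, which is a simplification. All numbers are invented for illustration and the function names are hypothetical.

```python
import numpy as np

def adjust_for_free_fraction(nominal_conc, free_fraction):
    """Convert nominal concentrations to freely dissolved concentrations."""
    return np.asarray(nominal_conc, dtype=float) * np.asarray(free_fraction, dtype=float)

def summarize_ivive(adjusted_pac, in_vivo_lc50):
    """Return (fraction protective, fraction within one order of magnitude)."""
    adjusted_pac = np.asarray(adjusted_pac, dtype=float)
    in_vivo_lc50 = np.asarray(in_vivo_lc50, dtype=float)
    # Protective: the in vitro effect concentration does not exceed the in vivo LC50.
    protective = adjusted_pac <= in_vivo_lc50
    # Within one order of magnitude: |log10 ratio| <= 1, in either direction.
    within_10x = np.abs(np.log10(adjusted_pac / in_vivo_lc50)) <= 1.0
    return float(protective.mean()), float(within_10x.mean())

# Invented values for four chemicals (same concentration units throughout).
nominal_pac = [10.0, 5.0, 100.0, 2.0]
free_fraction = [0.5, 0.2, 0.9, 1.0]   # assumed output of an IVD model
in_vivo_lc50 = [20.0, 50.0, 30.0, 2.5]

adjusted = adjust_for_free_fraction(nominal_pac, free_fraction)
frac_protective, frac_within_10x = summarize_ivive(adjusted, in_vivo_lc50)
print(frac_protective, frac_within_10x)
```

The two statistics answer different questions: a PAC can be protective yet far below the in vivo value (overly conservative), or close to it yet slightly above (not protective).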

The Scientist's Toolkit: Software and Databases

Successful implementation of in silico ERA requires access to specialized software tools and curated databases. The table below summarizes key resources available to researchers in this field.

Table 2: Essential Computational Tools for In Silico ERA

| Tool Name | Type | Key Features | Application in ERA | Access |
|---|---|---|---|---|
| OECD QSAR Toolbox | Integrated Workflow System | 60+ databases, 150,000+ chemicals, 3M+ data points, read-across capability | Chemical categorization, metabolite prediction, data gap filling | Free |
| VEGA | QSAR Platform | 90+ models for properties, toxicity, and environmental fate | Predictions for bioaccumulation, aquatic toxicity, mutagenicity | Free |
| Toxtree | Rule-Based System | Cramer classification, structural alerts, threshold of toxicological concern | Hazard identification, priority setting | Free, open-source |
| Danish QSAR Database | Predictive Platform | Suite of 30+ models built in Leadscope software | Regulatory screening, early-stage hazard assessment | Free, web-based |
| EPI Suite | Predictive System | Estimates physicochemical properties and environmental fate | Prediction of persistence, bioaccumulation potential | Free |
| OPERA | QSAR Application | Predicts physicochemical properties and environmental fate parameters | High-throughput screening of chemical libraries | Free |
| AGDISP | Exposure Model | Predicts pesticide deposition and spray drift | Assessment of pesticide aerial transport and deposition | Not specified |

Future Perspectives and Challenges

The future development of in silico environmental risk assessment is moving toward more integrated approaches that address current limitations while expanding application domains. Key research priorities include:

Addressing Chemical Mixtures: Developing frameworks to assess the combined effects of multiple chemicals and other environmental stressors, moving beyond single-substance evaluations [1] [2].

Uncertainty Quantification: Improving methods to characterize and communicate uncertainties associated with in silico predictions, particularly for regulatory decision-making [4].

Advanced Model Integration: Creating interoperable computational frameworks that seamlessly combine QSAR, PBPK, TK-TD, and ecosystem-level models [1] [3].

Regulatory Acceptance: Establishing standardized validation protocols and reporting formats (e.g., QSAR Model Reporting Format [QMRF]) to build confidence in computational predictions for regulatory applications [4] [6].

Data Quality and Availability: Expanding curated, high-quality databases for model training and validation, addressing current gaps for specific chemical classes and endpoints [2] [5].

As these challenges are addressed, in silico methods are poised to become increasingly central to environmental risk assessment, enabling more proactive, comprehensive, and efficient evaluation of chemical safety in an increasingly complex chemical landscape.

Tiered Approaches and Weight of Evidence in Problem Formulation

Within the domain of in silico environmental risk assessment (ERA), problem formulation establishes the strategic foundation for the entire evaluation process. It defines the scope, objectives, and depth of the assessment by explicitly considering the specific regulatory or protection goals, the data availability, and the resources and timeframes involved [1]. Tiered approaches and Weight of Evidence (WoE) are two pivotal, interlinked concepts that operationalize problem formulation. A tiered approach provides a stepwise strategy, starting with simple, conservative models and progressing to more complex ones only as needed. Concurrently, a WoE approach offers a structured framework for integrating results from multiple in silico models and, potentially, other data sources to form a robust, reliable conclusion [10] [11]. This guide details the methodologies and applications of these principles for researchers and safety assessors.

Tiered Approaches in ERA: From Screening to Complex Modeling

Tiered approaches are designed to optimize efficiency in risk assessment. They begin with conservative, high-throughput methods to identify substances of potential concern, reserving more resource-intensive, sophisticated analyses for situations where they are truly necessary [1]. The following table summarizes a typical tiered framework for using in silico models in ERA.

Table 1: A Tiered Approach to the Application of In Silico Models in Environmental Risk Assessment

| Tier | Data Situation | Example In Silico Methods | Application Context | Outcome |
|---|---|---|---|---|
| Tier 1 (Screening) | No chemical property or ecotoxicological data available | Quantitative Structure-Activity Relationship (QSAR) models (e.g., ECOSAR) [12] [11] | Priority setting, initial hazard identification, data gap filling for high-volume chemicals | Conservative hazard estimates to screen out low-risk substances |
| Tier 2 (Refined) | Some data available, requiring more accurate characterization | Read-Across from data-rich analogue substances [13] | Hazard assessment for chemicals with some experimental data on analogues | More reliable, substance-specific hazard characterization |
| Tier 3 (Complex) | Data-rich situations or specific research questions | Biologically-Based Models (e.g., Toxicokinetic-Toxicodynamic (TK-TD) models, Dynamic Energy Budget (DEB) models, Physiologically Based Models (PBMs)) [1] [11] | Derivation of specific reference points, extrapolation to population-level effects, understanding mechanisms | High-resolution risk estimates, often for specific ecosystems or populations |

This logical progression through tiers is outlined in the workflow below.

The Weight of Evidence (WoE) Framework: Integrating Multiple Lines of Evidence

A WoE approach is a structured process for transparently assembling, weighing, and integrating multiple, sometimes conflicting, strands of evidence to reach a conclusive assessment [10]. It is particularly critical when using in silico methods, as no single model is universally reliable.

The process involves systematically combining results from complementary in silico tools, such as statistical-based QSARs and expert rule-based systems, and potentially integrating them with existing in vitro or in vivo data [10] [3]. The convergence of predictions from multiple independent models increases confidence in the assessment, while conflicting results signal a need for more refined analysis or indicate high uncertainty.

Table 2: Key In Silico Tools for Integration in a Weight of Evidence Framework

| Tool Category | Specific Tool/Model | Function in WoE | Key Endpoints |
|---|---|---|---|
| QSAR Platforms | ECOSAR (Ecological Structure Activity Relationships) [12] | Predicts aquatic toxicity for screening; provides quantitative estimates for data-poor chemicals. | Acute and chronic toxicity to fish, daphnids, algae. |
| Expert Knowledge Systems | OECD QSAR Toolbox, Derek Nexus | Identifies structural alerts associated with toxicity; provides mechanistically-supported predictions. | Genotoxicity, skin sensitization, endocrine disruption. |
| Open-Access Databases | ECOTOX Knowledgebase [12], EPA CompTox Chemicals Dashboard [11] | Provides curated in vivo ecotoxicity data for validation of in silico predictions and read-across. | Experimental toxicity values for aquatic and terrestrial species. |
| Toxicokinetic Models | High-Throughput Toxicokinetic (HTTK) or generic one-compartment models [11] | Predicts internal doses from external exposures, linking in vitro bioactivity to in vivo relevance. | Bioavailability, metabolism, half-life. |

The following diagram illustrates the dynamic process of synthesizing evidence from these diverse tools.

Experimental Protocols for Key In Silico Methodologies

Protocol for Read-Across Assessment

Read-across is a powerful data-gap filling technique that requires a rigorous, transparent protocol to be acceptable for regulatory purposes [13].

- Define Target Substance: Clearly identify the substance for which data is missing (the "target").

- Identify Analogues: Systematically search for potential source analogues based on:

  - Structural similarity (common functional groups, carbon chain length).

  - Similarity in physical-chemical properties (e.g., log Kow, molecular weight).

  - A common metabolic pathway or mode of action.

- Justify Analogue Selection: Provide a robust scientific rationale for the chosen analogues, demonstrating that the similarities are sufficient to support extrapolation for the specific endpoint in question.

- Data Collection and Evaluation: Gather all available experimental data for the source analogues for the target endpoint (e.g., ecotoxicity). Assess the reliability of these data.

- Address Uncertainties: Explicitly discuss any differences between the target and source analogues and how they might affect the prediction. This may involve providing a qualitative or quantitative uncertainty analysis.

- Documentation: Compile the entire assessment, including all data, justifications, and uncertainty analyses, in a complete and transparent report following guidelines from ECHA and OECD [13].
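The analogue identification and prediction steps of this protocol can be sketched with a simple similarity measure. The example below represents each substance as a set of structural feature labels, scores analogues with the Tanimoto coefficient, and predicts the target endpoint as a similarity-weighted mean of the source values. The feature labels, endpoint values, 0.5 similarity cutoff, and function names are all assumptions made for illustration. Similarity alone does not amount to the scientific justification the protocol requires.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two feature sets."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def read_across(target_features, sources, min_similarity=0.5):
    """Predict a target endpoint from source analogues.

    `sources` is a list of (name, feature_set, endpoint_value) tuples.
    Returns (prediction, names_of_analogues_used); prediction is None if
    no source reaches the similarity cutoff.
    """
    scored = [(tanimoto(target_features, f), name, value) for name, f, value in sources]
    used = [(s, n, v) for s, n, v in scored if s >= min_similarity]
    if not used:
        return None, []
    total = sum(s for s, _, _ in used)
    prediction = sum(s * v for s, _, v in used) / total
    return prediction, [n for _, n, _ in used]

# Hypothetical substances; endpoint values are invented log-scale toxicity values.
target = {"ester", "C6_chain", "aromatic"}
sources = [
    ("source_A", {"ester", "C6_chain", "aromatic", "hydroxyl"}, 1.0),
    ("source_B", {"ester", "aromatic"}, 2.0),
    ("source_C", {"amine"}, 5.0),
]
prediction, analogues = read_across(target, sources)
print(prediction, analogues)
```

Returning the list of analogues actually used supports the documentation step, since the report has to name and justify each source substance.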

Protocol for a WoE Assessment Using QSARs

This protocol ensures in silico toxicological assessments are performed and evaluated in a consistent, reproducible, and well-documented manner [10].

- Endpoint and Model Selection: Precisely define the toxicological endpoint of interest (e.g., fish acute toxicity). Select at least two complementary QSAR methodologies—one statistical-based (e.g., from EPA's ECOSAR) and one expert rule-based (e.g., from OECD QSAR Toolbox) [10].

- Prediction and Documentation: Run the predictions for the target chemical. Document the exact software, version, and all input parameters used.

- Assess Applicability Domain (AD): For each model, evaluate whether the target chemical falls within its AD—the chemical space on which the model was built and for which it is reliable. If outside the AD, the prediction should be discounted or used with extreme caution.

- Evaluate Reliability and Relevance: Determine the reliability of the prediction (based on the model's validation and AD) and its relevance to the specific assessment context.

- Integrate Findings: Synthesize the results from the different models:

  - If predictions are concordant and within the models' AD, confidence is high.

  - If predictions are discordant, investigate the cause (e.g., different structural alerts, one model outside its AD). The assessment may require additional models or default to the more conservative prediction.

- Final Confidence Statement: Issue a final conclusion on the hazard, accompanied by a clear statement on the overall confidence level based on the relevance, reliability, and consistency of the integrated evidence [10].
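The integration rules in this protocol can be written down as a small decision function. This is a sketch under simplifying assumptions: each model gives a binary hazard call, out-of-domain predictions are discarded rather than down-weighted, and a single in-domain prediction is rated "moderate". The `Prediction` class, the confidence labels, and the model names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    model: str
    toxic: bool       # binary hazard call for the endpoint
    in_domain: bool   # whether the chemical is inside the model's applicability domain

def integrate(predictions):
    """Return (hazard_call, confidence) from a list of model predictions."""
    usable = [p for p in predictions if p.in_domain]
    if not usable:
        return None, "low"            # nothing reliable to integrate
    calls = {p.toxic for p in usable}
    if len(calls) == 1:
        # Concordant: high confidence only if at least two models agree.
        confidence = "high" if len(usable) >= 2 else "moderate"
        return calls.pop(), confidence
    return True, "low"                # discordant: default to the conservative call

# Hypothetical example: a statistical and a rule-based model agree within their domains.
result = integrate([
    Prediction("statistical_qsar", toxic=True, in_domain=True),
    Prediction("rule_based_alerts", toxic=True, in_domain=True),
])
print(result)
```

In practice a discordant result would prompt an investigation of its cause before falling back on the conservative call. The function only encodes the fallback.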

Table 3: Key Research Reagent Solutions for In Silico ERA

| Tool Name | Type | Primary Function in Research |
|---|---|---|
| ECOSAR [12] | QSAR Software | Predicts acute and chronic toxicity of chemicals to aquatic organisms (fish, daphnids, green algae) based on chemical structure. |
| EPA CompTox Chemicals Dashboard [11] | Database | Provides access to curated physicochemical, toxicity, and exposure data for thousands of chemicals, supporting read-across and model development. |
| OECD QSAR Toolbox | Expert System | Identifies structural alerts, profiles metabolites, and facilitates grouping of chemicals for read-across to fill data gaps. |
| ECOTOX Knowledgebase [12] | Database | A curated repository of single-chemical ecological toxicity data for aquatic and terrestrial life, essential for validating in silico predictions. |
| OpenFoodTox [11] | Database | EFSA's open-source toxicological database on chemicals in food and feed, used for hazard identification and characterization. |

The future of in silico ERA lies in addressing increasingly complex challenges. Tiered and WoE approaches are fundamental to next-generation risk assessment (NGRA), which aims to holistically evaluate the impacts of multiple chemicals and multiple stressors (e.g., chemicals combined with temperature stress or pathogens) on living organisms and ecosystems [1] [11]. The development of landscape-based modeling approaches, which integrate geospatial data and landscape characteristics into exposure and effect assessments, represents the cutting edge of this field, moving risk assessment from a generic to a specific, systems-based context [1] [11]. The continued development and standardization of protocols for in silico methods, as surveyed in international initiatives [10], will be crucial for their wider regulatory acceptance and application. By firmly embedding tiered approaches and Weight of Evidence within problem formulation, the scientific community can ensure that in silico environmental risk assessment remains a robust, efficient, and transformative tool for protecting environmental health.

The Adverse Outcome Pathway (AOP) is a conceptual framework that systematically organizes existing knowledge concerning biologically plausible and empirically supported links between a molecular-level perturbation of a biological system and an adverse outcome at a level of biological organization relevant to regulatory decision-making [14]. The AOP framework provides a structure for capturing and representing the sequence of causal events that connect a molecular initiating event (MIE), such as the interaction of a chemical with a specific biological target, through a series of intermediate key events (KEs), to an adverse outcome (AO) of regulatory significance [15]. This framework facilitates greater integration and more meaningful use of mechanistic data in regulatory toxicology, potentially improving the efficiency and reliability of chemical safety assessment and environmental risk assessment [14].

Within the broader context of in silico environmental risk assessment research, AOPs play a pivotal role in supporting the transition from traditional chemical safety testing, which relies heavily on whole-animal studies, toward a more mechanistic and predictive approach [2]. By providing a structured representation of toxicity pathways, AOPs enable the development of computational models and in vitro testing strategies that can reduce reliance on animal testing, decrease costs, and accelerate the evaluation of chemical hazards [16] [2]. The framework supports a paradigm shift in toxicology toward greater utilization of mechanistic data for predicting adverse effects, which is particularly valuable for assessing the thousands of chemicals in commercial use with limited safety information [17].

Foundational Principles and Structure of AOPs

The development and application of AOPs are guided by five fundamental principles that ensure consistency and utility across the scientific community [14]:

AOPs are not chemical-specific: They describe generalizable biological pathways that can be initiated by any stressor (chemical, physical, or biological) capable of triggering the molecular initiating event.

AOPs are modular and composed of reusable components: The fundamental building blocks of AOPs are key events (KEs) and key event relationships (KERs), which can be shared across multiple AOPs.

An individual AOP is a pragmatic unit of development: A single linear sequence of KEs and KERs represents a manageable unit for initial development and evaluation.

Networks of AOPs are the functional unit of prediction: Most real-world scenarios involve interconnected AOPs that share common KEs and KERs, forming networks that better represent complex biological responses.

AOPs are living documents: They evolve over time as new scientific knowledge is generated, requiring periodic updates and refinements.

The core structural components of any AOP consist of [14] [16]:

- Molecular Initiating Event (MIE): The initial point of interaction between a stressor and a biological macromolecule (e.g., protein binding, receptor activation).

- Key Events (KEs): Measurable changes in biological state that are essential for progression along the pathway. These occur at different levels of biological organization (cellular, tissue, organ, organism).

- Key Event Relationships (KERs): Causal linkages between key events that describe how one event leads to another.

- Adverse Outcome (AO): An effect of regulatory significance at the individual or population level.
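One way to make the modular structure concrete is to store an AOP as an ordered sequence of events and derive the KERs from adjacent pairs. The sketch below assumes a strictly linear pathway and uses abstract placeholder labels instead of real biology. The `AOP` class and `shared_key_events` helper are hypothetical and not part of any AOP tooling.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AOP:
    name: str
    events: tuple  # ordered: MIE first, intermediate KEs, AO last

    @property
    def mie(self):
        return self.events[0]

    @property
    def adverse_outcome(self):
        return self.events[-1]

    @property
    def kers(self):
        """Key event relationships as (upstream, downstream) pairs."""
        return list(zip(self.events[:-1], self.events[1:]))

def shared_key_events(a, b):
    """Events common to two AOPs, i.e. the points where they join into a network."""
    return set(a.events) & set(b.events)

# Two placeholder pathways that converge on a common key event.
aop_1 = AOP("pathway_1", ("MIE_1", "KE_1", "KE_2", "AO_1"))
aop_2 = AOP("pathway_2", ("MIE_2", "KE_2", "AO_2"))

print(aop_1.kers)
print(shared_key_events(aop_1, aop_2))
```

Because events are plain reusable labels, the same key event can appear in several pathways. This reuse is what lets individual AOPs be assembled into networks.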

Visualizing the AOP Framework

The following diagram illustrates the generalized structure of an Adverse Outcome Pathway, showing the sequential progression from molecular initiation to adverse outcome:

Quantitative AOPs (qAOPs): From Qualitative to Quantitative Frameworks

While qualitative AOPs provide valuable conceptual frameworks, the development of quantitative AOPs (qAOPs) is essential for enabling predictive toxicology and risk assessment [16]. A qAOP incorporates mathematical models that quantitatively describe the relationships between key events, allowing for the prediction of the probability, timing, and severity of adverse outcomes based on the intensity or duration of exposure to a stressor [17]. The transition from qualitative AOPs to qAOPs represents a significant advancement in the field, as it enables more robust and reliable chemical safety assessments [16].

Methodological Approaches for qAOP Development

Several computational approaches have been employed to develop qAOPs, each with distinct advantages and applications [16]:

Response-Response Relationships: These involve fitting mathematical functions (e.g., regression models) to empirical data that describe the relationship between two adjacent key events. This approach is relatively straightforward but may lack biological mechanistic depth.

Biologically-Based Mathematical Modeling: This approach uses systems of ordinary differential equations to represent the underlying biological processes mechanistically. While more complex to develop, these models provide greater insight into the dynamics of the pathway.

Bayesian Networks (BNs): BNs are graphical models that represent probabilistic relationships among key events. They are particularly useful for handling uncertainty, integrating diverse data types, and modeling complex AOP networks with multiple branching pathways, provided the network contains no feedback loops [16]. Dynamic Bayesian Networks can further incorporate temporal aspects.
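For a linear pathway with binary key events, the Bayesian network calculation reduces to repeated marginalization along the chain. The sketch below illustrates that special case only. The conditional probabilities are invented and the function names are hypothetical. Branching networks would need a general-purpose BN library.

```python
def propagate(p_upstream, p_given_active, p_given_inactive):
    """P(downstream active), marginalizing over the upstream event's state."""
    return p_upstream * p_given_active + (1.0 - p_upstream) * p_given_inactive

def probability_of_adverse_outcome(p_mie, kers):
    """Propagate activation probability from the MIE to the AO.

    `kers` lists, in pathway order, one pair per key event relationship:
    (P(downstream | upstream active), P(downstream | upstream inactive)).
    """
    p = p_mie
    for p_active, p_inactive in kers:
        p = propagate(p, p_active, p_inactive)
    return p

# Invented conditional probabilities for a three-node chain MIE -> KE -> AO.
p_ao = probability_of_adverse_outcome(
    p_mie=0.5,
    kers=[(0.8, 0.1), (0.6, 0.0)],
)
print(round(p_ao, 4))
```

Each step depends only on the probability of the immediately upstream event. That holds for a chain without feedback, which is the restriction noted above.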

Workflow for qAOP Development

The conversion of a qualitative AOP to a quantitative qAOP follows a systematic process [16] [17]:

Comprehensive Literature Review: A thorough examination of existing scientific literature to gather qualitative and quantitative data relevant to the AOP components.

Data Extraction and Categorization: Quantitative data suitable for model development is extracted and categorized. Ideally, this includes studies that measure multiple key events simultaneously.

Model Structure Definition: Based on the AOP structure and available data, an appropriate mathematical modeling approach is selected.

Parameter Estimation: Model parameters are estimated using available experimental data through statistical fitting procedures.

Model Evaluation and Validation: The qAOP model is tested against independent datasets not used in model development to assess its predictive performance.

Uncertainty Analysis: Sources of uncertainty in the model predictions are identified and quantified.

Implementation and Application: The validated qAOP is implemented in user-friendly tools and applied for chemical risk assessment.
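The parameter estimation and evaluation steps can be illustrated with the simplest possible case, a linear response-response relationship between two adjacent key events. The measurements below are synthetic and a linear form is assumed purely for brevity. Real relationships are often nonlinear and would call for a different functional form.

```python
import numpy as np

# Synthetic paired measurements of two adjacent key events (illustrative only).
ke_up_train = np.array([0.0, 1.0, 2.0, 3.0])
ke_down_train = np.array([0.1, 2.0, 4.1, 5.9])

# Independent data held out from fitting, used only for evaluation.
ke_up_test = np.array([0.5, 2.5])
ke_down_test = np.array([1.0, 5.1])

# Parameter estimation: least-squares fit of a linear response-response relationship.
slope, intercept = np.polyfit(ke_up_train, ke_down_train, deg=1)

# Model evaluation: root-mean-square error on the held-out data.
predicted = slope * ke_up_test + intercept
rmse = float(np.sqrt(np.mean((predicted - ke_down_test) ** 2)))

print(f"slope={slope:.2f}, intercept={intercept:.2f}, held-out RMSE={rmse:.3f}")
```

Keeping the evaluation data separate from the fitting data is what distinguishes validation from goodness-of-fit. The held-out error is also a first, crude input to the uncertainty analysis step.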

Quantitative Modeling Techniques Used in qAOP Development

Table 1: Summary of Quantitative Modeling Approaches for qAOPs

| Modeling Approach | Key Features | Data Requirements | Advantages | Limitations |

|---|---|---|---|---|

| Response-Response Relationships [16] | Statistical fitting of functions between adjacent KEs | Paired measurements of adjacent KEs | Simple to implement; Minimal computational resources | Limited extrapolation capability; Less biological mechanistic depth |

| Biologically-Based Mathematical Models [16] | Systems of differential equations representing biological mechanisms | Time-course data; Kinetic parameters | Mechanistic insight; Good extrapolation potential | High data requirements; Computational complexity |

| Bayesian Networks (BNs) [16] [15] | Probabilistic graphs representing relationships between KEs | Conditional probability distributions | Handles uncertainty; Integrates diverse data types | Cannot model feedback loops without extensions |
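
As an illustration of the response-response approach in the first table row, the sketch below chains three fitted functions so that a concentration is propagated from the MIE through one key event to the adverse outcome. The Hill form and every parameter value are hypothetical; in practice each function would be fitted to paired measurements of adjacent key events.

```python
def hill(x: float, top: float, ec50: float, n: float) -> float:
    """Hill function rising from 0 to `top`, with half-maximum at x = ec50."""
    if x <= 0:
        return 0.0
    return top * x**n / (ec50**n + x**n)


def predict_ao(concentration: float) -> float:
    """Propagate an exposure concentration through MIE -> KE -> AO."""
    # MIE: percent enzyme inhibition as a function of concentration (hypothetical fit).
    inhibition = hill(concentration, top=100.0, ec50=2.0, n=1.0)
    # KE: fractional key event response as a function of percent inhibition.
    key_event = hill(inhibition, top=1.0, ec50=50.0, n=2.0)
    # AO: fraction of organisms affected as a function of the key event response.
    return hill(key_event, top=1.0, ec50=0.25, n=3.0)


for conc in (0.5, 2.0, 8.0):
    print(conc, round(predict_ao(conc), 3))
```

Each link only needs data on two adjacent key events, which is why this approach is simple to implement; the price is that the chain says nothing outside the range over which each function was fitted.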

AOP Case Studies in Environmental Risk Assessment

Case Study 1: Acetylcholinesterase Inhibition Leading to Neurodegeneration

AOP 281, "Acetylcholinesterase Inhibition Leading to Neurodegeneration," provides a well-characterized example of AOP development and the challenges associated with quantitative implementation [16]. This AOP describes the sequence of events through which inhibition of acetylcholinesterase (AChE) can ultimately lead to neurodegenerative effects:

The molecular initiating event is AChE inhibition, resulting in an excess of acetylcholine (ACh) in the synapse. This build-up of ACh leads to overactivation of muscarinic acetylcholine receptors (mAChR) within the brain, initiating local (focal) seizures. Through subsequent glutamate release and activation of NMDA receptors, the excitotoxicity propagates, leading to elevated intracellular calcium levels, status epilepticus, and ultimately cell death and neurodegeneration [16].

The qAOP development for this pathway faced several challenges, including the availability of quantitative data amenable to model development, the lack of studies measuring multiple key events simultaneously, and issues regarding model accessibility and transferability across platforms [16]. The case study highlighted the importance of improving key event and key event relationship descriptions in the AOP Wiki to facilitate the transition from qualitative to quantitative AOPs.

Case Study 2: Aromatase Inhibition Leading to Reproductive Dysfunction

AOP 25, "Aromatase Inhibition Leading to Reproductive Dysfunction," represents one of the more advanced AOPs with a developed quantitative component [16]. This AOP describes how inhibition of the aromatase enzyme, which converts androgens to estrogens, can lead to impaired reproduction in fish. The development of a qAOP for this pathway demonstrated that even AOPs with primarily textual descriptions in their quantitative understanding sections can be successfully converted to quantitative models [16]. This case study illustrates the potential for qAOPs to support predictive risk assessment for endocrine-disrupting chemicals in aquatic environments.

Visualizing the Acetylcholinesterase Inhibition AOP

Diagram: Key events and key event relationships in AOP 281 (Acetylcholinesterase Inhibition Leading to Neurodegeneration), including the positive feedback loop that exacerbates the adverse outcome.

AOPs in Regulatory Science and Chemical Safety Assessment

The AOP framework plays an increasingly important role in modernizing chemical safety assessment and environmental risk assessment paradigms. By providing a structured representation of toxicity pathways, AOPs support several key applications in regulatory science [14] [15] [2]:

Integrated Approaches to Testing and Assessment (IATA): AOPs provide the scientific basis for developing integrated testing strategies that efficiently combine non-animal methods, such as high-throughput in vitro assays and in silico models, for chemical hazard characterization.

Chemical Prioritization and Screening: AOPs enable the development of targeted testing strategies for identifying chemicals with specific modes of action, facilitating more efficient prioritization of chemicals for further testing.

Extrapolation Across Species: By focusing on conserved biological pathways, AOPs support extrapolation of effects across species, which is particularly valuable for ecological risk assessment.

Quantitative Risk Assessment: qAOPs provide a foundation for developing predictive models that can estimate the probability and magnitude of adverse effects at environmentally relevant exposure concentrations.

The use of AOPs in regulatory decision-making is facilitated by international collaborations, particularly through the Organisation for Economic Co-operation and Development (OECD) AOP Development Programme, which oversees the formal review and endorsement of AOPs [16]. This harmonized approach ensures that AOPs developed by the scientific community meet agreed-upon standards of scientific rigor and reliability for application in regulatory contexts.

Experimental Protocols and Methodologies for AOP Development

Weight of Evidence Assessment for AOP Development

The establishment of scientifically credible AOPs requires a systematic weight of evidence (WoE) assessment based on modified Bradford-Hill criteria [16]. This assessment evaluates three fundamental aspects of each Key Event Relationship (KER):

Biological Plausibility: Evaluation of the strength of evidence supporting a causal relationship between key events based on current understanding of biological systems.

Empirical Support: Assessment of the extent and consistency of experimental observations demonstrating that a change in the upstream key event leads to an appropriate change in the downstream key event.

Quantitative Understanding: Evaluation of the extent to which dose-response, temporal, and incidence relationships between key events are understood.

The WoE assessment is typically documented in the AOP Wiki (https://aopwiki.org/), the central repository for AOP knowledge, which provides standardized forms for capturing and evaluating the evidence supporting each KER [16].
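
One way to keep such an assessment machine-readable is to store the three ratings per KER and query them programmatically. The sketch below is an assumption-laden illustration: the class, the three-level scale, and the example ratings are hypothetical placeholders and do not reproduce the AOP Wiki forms or any published rating for AOP 281.

```python
from dataclasses import dataclass

LEVELS = {"low": 1, "moderate": 2, "high": 3}   # assumed ordinal scale


@dataclass(frozen=True)
class KerEvidence:
    upstream: str
    downstream: str
    biological_plausibility: str
    empirical_support: str
    quantitative_understanding: str

    def ratings(self) -> dict:
        return {
            "biological_plausibility": self.biological_plausibility,
            "empirical_support": self.empirical_support,
            "quantitative_understanding": self.quantitative_understanding,
        }

    def weakest_aspect(self) -> str:
        """Name of the lowest-rated aspect (first one on ties)."""
        r = self.ratings()
        return min(r, key=lambda name: LEVELS[r[name]])


def ready_for_quantification(kers) -> bool:
    """Hypothetical screening rule: every KER needs at least moderate quantitative understanding."""
    return all(LEVELS[k.quantitative_understanding] >= LEVELS["moderate"] for k in kers)


# Placeholder ratings for illustration only.
kers = [
    KerEvidence("AChE inhibition", "ACh accumulation", "high", "high", "moderate"),
    KerEvidence("ACh accumulation", "mAChR overactivation", "high", "moderate", "low"),
]
print([k.weakest_aspect() for k in kers], ready_for_quantification(kers))
```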

Essential Research Reagents and Tools for AOP-Related Research

Table 2: Key Research Reagents and Tools for AOP Development and Validation

| Reagent/Tool Category | Specific Examples | Research Application in AOP Context |

|---|---|---|

| In Vitro Bioassays [2] | Acetylcholinesterase activity assays; Aromatase inhibition assays; Receptor binding assays | Measuring Molecular Initiating Events (MIEs) and cellular-level Key Events (KEs) for high-throughput chemical screening |

| Biomarker Assays [15] | Oxidative stress markers; Hormone level measurements; Specific protein biomarkers (e.g., Vitellogenin) | Quantifying changes in intermediate Key Events (KEs) to track progression along the AOP |

| 'Omics Technologies [15] | Transcriptomics; Proteomics; Metabolomics | Discovering novel Key Events (KEs) and providing system-wide evidence for biological plausibility of Key Event Relationships (KERs) |

| Computational Models [16] [2] | Physiologically Based Kinetic (PBK) models; Bayesian Networks; Quantitative Structure-Activity Relationship (QSAR) models | Developing Quantitative AOPs (qAOPs); Extrapolating from in vitro to in vivo exposures and across species |

| Reference Chemicals [16] | Specific agonists/antagonists for molecular targets; Chemicals with well-characterized modes of action | Establishing biological plausibility and empirical support for Key Event Relationships (KERs) during AOP development |

Challenges and Future Directions in AOP Development

Despite significant progress in AOP development and application, several challenges remain to be addressed [16] [2] [17]:

Quantitative Data Gaps: Many existing AOPs lack the quantitative data necessary for developing robust qAOP models. There is a particular need for studies that measure multiple key events simultaneously across different biological levels.

AOP Network Complexity: Most adverse outcomes result from multiple interconnected pathways rather than simple linear sequences. Modeling these complex networks presents both conceptual and computational challenges.

Biological Variability: Accounting for inter-individual and cross-species variability in quantitative AOP models remains difficult but is essential for accurate risk assessment.

Technical Barriers: Model accessibility and transferability across different platforms and research groups need improvement to facilitate wider adoption of qAOPs.

Dynamic and Feedback Processes: Many biological systems include feedback mechanisms and adaptive responses that are not yet fully captured in current AOP frameworks.

Future directions in AOP research include the development of more sophisticated computational approaches for qAOP modeling, enhanced international collaboration for populating the AOP knowledgebase, and stronger integration of AOPs into regulatory decision-making processes [16] [2] [17]. The continued evolution of the AOP framework is expected to play a crucial role in advancing the goals of 21st-century toxicology and in silico environmental risk assessment, ultimately leading to more efficient, cost-effective, and human-relevant chemical safety assessment.

For decades, animal testing has served as the cornerstone of regulatory safety assessment for pharmaceuticals, pesticides, and industrial chemicals. However, a growing body of evidence demonstrates significant scientific and economic limitations in this approach. Animal models are poor predictors of human toxicity, with analysis suggesting they are "little better than what would result merely by chance—or tossing a coin—in providing a basis to decide whether a compound should proceed to testing in humans" [18]. Approximately 89% of novel drugs fail human clinical trials, with about half of these failures due to unanticipated human toxicity not detected in animal studies [18].

The economic implications are equally staggering. Rodent testing in cancer therapeutics adds an estimated 4 to 5 years to drug development and costs $2 to $4 million per compound. For industrial toxicity testing, completing all required animal studies for a single pesticide takes approximately 10 years and $3 million [18]. Compared with in vitro testing, animal tests range from 1.5× to >30× more expensive [18]. These scientific and economic limitations have catalyzed the development and adoption of New Approach Methodologies (NAMs), particularly in silico methods, which offer faster, cost-effective, and increasingly accurate alternatives for environmental risk assessment [19].

Limitations of Animal Testing: Scientific and Economic Concerns

Scientific Concordance and Predictive Value

The scientific foundation of animal testing is undermined by poor concordance with human outcomes. A review of 221 animal experiments found agreement with human studies just 50% of the time, essentially random chance [18]. Analysis of the U.S. National Toxicology Program concluded that toxicities other than carcinogenesis were not reproducible between rats and mice, between sexes, or compared with historic control animals [18].

Table 1: Concordance Between Animal Studies and Human Outcomes

| Study Focus | Number of Studies/Chemicals Analyzed | Concordance Rate | Key Finding |

|---|---|---|---|

| Animal experiment reproducibility | 221 experiments | 50% | Agreement with human studies no better than chance |

| Mouse to rat toxicity prediction | 37 chemicals | 55.3% (long-term), 44.8% (short-term) | Little better than random prediction |

| Pharmaceutical failure rate | N/A | 89% overall failure rate | ~50% due to unanticipated human toxicity |

| Post-marketing safety issues | 93 serious adverse outcomes | 19% | Only 19% identified in preclinical animal studies |

Notable Cases of Failed Prediction

Historical evidence reveals numerous concerning failures of animal studies to predict human toxicity:

- Vioxx (Merck): Withdrawn after release despite animal testing, associated with an estimated 88,000 heart attacks and 38,000 deaths [18]

- Thalidomide: Caused devastating phocomelia in 20,000-30,000 infants despite testing in 10 strains of rats, 11 breeds of rabbits, 2 breeds of dogs, 3 strains of hamsters, 8 species of primates, and various other species [18]

- TGN1412: An antibody given at 1/500th the dose found safe in animal studies rendered all 6 human volunteers critically ill within minutes [18]

- Fialuridine: Caused the deaths of 5 volunteers during phase II clinical trials despite being safe in multiple animal species at hundreds of times higher doses [18]

The Economic Burden of Animal Testing

The economic impact of reliance on animal testing extends beyond direct costs to include opportunity costs from abandoned potentially beneficial compounds and delayed market access:

- Direct costs: Pesticide testing can cost up to $9,919,000 overall, with chronic toxicity studies taking up to 2 years [2]

- Opportunity costs: Drugs falsely identified as toxic in animal studies are often abandoned, though the magnitude of this error is unknown as these compounds rarely proceed to human testing [18]

- Delay costs: Compassionate human use of ganciclovir demonstrated efficacy and safety in more patients than required for phase I/II trials, but FDA delayed approval for 4 years due to lack of animal studies [18]

In Silico Methodologies: A New Paradigm for Risk Assessment

Defining In Silico Environmental Risk Assessment

In silico environmental risk assessment (ERA) represents a paradigm shift from traditional animal-based testing to computational approaches that predict chemical behavior, toxicity, and environmental fate. These methodologies use computer simulations, mathematical models, and computational chemistry to evaluate potential hazards [20]. The European Commission encourages the use of validated in silico techniques such as (Q)SAR models as part of reduction, refinement, and replacement strategies for animal use [4].

The framework for ERA typically involves four distinct steps: hazard identification, exposure assessment, toxicity assessment, and risk characterization [2]. In silico tools can contribute to each of these steps, creating an integrated approach that minimizes animal use while providing robust safety data.
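
For the final step, risk characterization, a widely used convention is the risk quotient: a predicted environmental concentration (PEC) divided by a predicted no-effect concentration (PNEC), where the PNEC is derived from the lowest available effect concentration and an assessment factor. The source does not prescribe this calculation; the sketch below assumes it, and the numbers are invented for illustration.

```python
def pnec(lowest_effect_concentration: float, assessment_factor: float) -> float:
    """Predicted no-effect concentration, in the same units as the input concentration."""
    if assessment_factor <= 0:
        raise ValueError("assessment_factor must be positive")
    return lowest_effect_concentration / assessment_factor


def risk_quotient(pec: float, pnec_value: float) -> float:
    """Risk quotient; values of 1 or more flag a potential risk needing refinement."""
    return pec / pnec_value


# Hypothetical inputs in micrograms per litre: a predicted (in silico) EC50 and a modelled PEC.
predicted_ec50 = 100.0
pnec_value = pnec(predicted_ec50, assessment_factor=1000.0)
rq = risk_quotient(pec=0.5, pnec_value=pnec_value)
print(f"PNEC = {pnec_value} ug/L, RQ = {rq:.1f}, potential risk: {rq >= 1}")
```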

Key In Silico Approaches and Tools

Quantitative Structure-Activity Relationship (QSAR) Models

QSAR models correlate chemical structure with biological activity or properties using mathematical relationships. These models operate on the principle that structurally similar chemicals have similar properties or activities. The OECD QSAR Toolbox is a freely available software application that supports reproducible chemical hazard assessment, offering functionalities for retrieving experimental data, simulating metabolism, and profiling properties of chemicals [21].

Read-Across and Category Approaches

Read-across is a data gap filling technique where properties of a data-rich "source" chemical are used to predict the same properties for a similar, data-poor "target" chemical. The OECD Toolbox facilitates this approach by helping to find structurally and mechanistically defined analogues and chemical categories [21]. Tools like RAXpy enable read-across based on structural, biological, and metabolic similarities [22].
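
The core of read-across can be sketched as a similarity search followed by a weighted estimate. In the example below, fingerprints are plain sets of hypothetical structural features, similarity is the Tanimoto coefficient, and the threshold and number of analogues are arbitrary assumptions; this is not how the OECD Toolbox or RAXpy are implemented.

```python
def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto similarity: shared features divided by all features present."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def read_across(target_fp, sources, k=2, min_similarity=0.5):
    """Predict a property as the similarity-weighted mean of the k closest analogues.

    `sources` is a list of (name, fingerprint, measured_value) tuples.
    Returns (prediction, analogue_names); prediction is None if no analogue qualifies.
    """
    scored = sorted(
        ((tanimoto(target_fp, fp), name, value) for name, fp, value in sources),
        reverse=True,
    )
    analogues = [(s, n, v) for s, n, v in scored[:k] if s >= min_similarity]
    if not analogues:
        return None, []
    total = sum(s for s, _, _ in analogues)
    prediction = sum(s * v for s, _, v in analogues) / total
    return prediction, [n for _, n, _ in analogues]


# Hypothetical source chemicals with measured values and feature-set fingerprints.
sources = [
    ("A", frozenset({"aromatic_ring", "chloro", "hydroxyl", "methyl"}), 2.0),
    ("B", frozenset({"aromatic_ring", "hydroxyl"}), 1.0),
    ("C", frozenset({"nitro", "alkyl_chain"}), 4.0),
]
target = frozenset({"aromatic_ring", "chloro", "hydroxyl"})
prediction, used = read_across(target, sources)
print(prediction, used)
```

Returning the analogue names alongside the prediction keeps the justification visible, which matters because a read-across argument is judged on the choice of analogues as much as on the number.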

Exposure Assessment Models

For pesticide exposure assessment, models like AGDISP (AGricultural DISPersal model) predict pesticide deposition and spray drift and have been used to track atrazine drift up to 400 meters from application sites [2]. These tools help characterize environmental fate and potential human exposure without extensive field studies.

Integrated Testing Strategies

The most powerful applications bring multiple in silico approaches together within Integrated Approaches to Testing and Assessment (IATA), which combine different data sources to reach a conclusion on chemical toxicity [19]. IATA frameworks integrate and weigh all relevant existing evidence while guiding targeted new data generation.

Table 2: Key In Silico Tools for Environmental Risk Assessment

| Tool Name | Primary Function | Access | Key Features |

|---|---|---|---|

| OECD QSAR Toolbox [21] | Chemical hazard assessment | Free | 63 databases with 155K+ chemicals and 3.3M+ experimental data points; read-across and category formation |

| VERMEER Cosmolife [22] | Cosmetic risk assessment | Not specified | Evaluation of cosmetic ingredients and detailed investigation of risk scenarios |

| SILIFOOD [22] | Food contact material assessment | Free | Fast risk assessment of non-evaluated Food Contact Material substances |

| AGDISP [2] | Pesticide spray drift prediction | Not specified | Predicts pesticide deposition and drift in agricultural applications |

| BeeTox [2] | Bee toxicity prediction | Not specified | Graph attention convolutional neural network distinguishing bee-toxic chemicals with 83.7% accuracy |

| ToxEraser FCM [22] | Food contact material identification | Free | Identifies risky food contact materials and suggests safer alternatives |

Quantitative Benefits: Cost and Time Savings of In Silico Methods

The transition to in silico methods offers substantial quantitative benefits in both cost reduction and testing efficiency. Analysis of 261 compounds demonstrated that in silico methods could eliminate the use of 100,000–150,000 test animals and save $50–70 billion [2]. This represents a paradigm shift in the economics of toxicity testing.

A framework for cost-effectiveness analysis of toxicity tests accounts for cost, duration, and uncertainty through the metric of "cost per correct regulatory decision" [23]. This approach recognizes that a fivefold reduction in either cost or duration can be a larger driver of optimal methodology selection than a fivefold reduction in uncertainty, particularly for simpler regulatory decisions [23].
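
The intuition behind such a metric can be shown with a deliberately simplified calculation: total cost (testing plus the cost of delay) divided by the probability that the resulting decision is correct. This formula, the delay cost, and the in silico figures are assumptions made for illustration and are not the published method of [23]; only the 10-year, $3 million pesticide figures come from the text above.

```python
def cost_per_correct_decision(test_cost, duration_years, delay_cost_per_year, p_correct):
    """Simplified metric: (testing cost + cost of delay) / P(decision is correct)."""
    if not 0 < p_correct <= 1:
        raise ValueError("p_correct must be in (0, 1]")
    return (test_cost + duration_years * delay_cost_per_year) / p_correct


DELAY_COST = 100_000  # hypothetical cost of one year without a decision (USD)

# Animal package: duration and cost from the pesticide example; accuracy is assumed.
animal = cost_per_correct_decision(3_000_000, 10, DELAY_COST, p_correct=0.9)
# In silico package: every figure here is a hypothetical placeholder.
in_silico = cost_per_correct_decision(50_000, 0.5, DELAY_COST, p_correct=0.8)

print(f"animal: ${animal:,.0f} per correct decision; in silico: ${in_silico:,.0f}")
```

Under these assumptions the cheaper, faster method wins despite its lower accuracy, which is the qualitative point of the framework; different delay costs or accuracy values can reverse the ranking.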

Table 3: Economic Comparison: Animal Testing vs. In Silico Approaches

| Parameter | Animal Testing | In Silico Approaches | Advantage Ratio |

|---|---|---|---|

| Pesticide registration | ~10 years [18] | Significantly reduced (case-dependent) | >5× faster [23] |

| Pesticide testing cost | Up to $9,919,000 [2] | Dramatically reduced | 1.5× to >30× cost savings [18] |

| Cancer therapeutic rodent testing | $2-4 million [18] | Cost of software and computational resources | Potentially orders of magnitude |

| Animal use per 261 compounds | 100,000-150,000 animals [2] | Minimal to none | Near total replacement |

| Drug development timeline impact | Adds 4-5 years [18] | Can be integrated early and rapidly | Significant acceleration |

Implementation Framework: Protocols for In Silico ERA

Systematic Workflow for In Silico Assessment

A robust in silico environmental risk assessment follows a structured workflow that ensures scientific rigor and regulatory acceptance. The CADASTER project (Case studies on the Development and Application of in-Silico Techniques for Environmental hazard and Risk assessment) exemplifies this approach through its focus on collecting existing data and models, assessing quality of toxicity data, developing new QSAR models, and characterizing uncertainty [4].

Experimental Protocols for Model Development and Validation

Data Collection and Curation (Task 2.1 from CADASTER)

The foundation of any in silico assessment is robust data collection. The protocol includes:

- Literature search for endpoints relevant to environmental risk assessment

- Database mining of existing databases on risk and hazard assessment parameters

- Data quality assessment to characterize variability and uncertainty in available data

- Supplementation with new experimental data where essential for model development [4]

Model Development and Validation

The development of new QSAR models follows rigorous protocols:

- Descriptor calculation: Breaking chemical structures into fragments or calculating molecular descriptors

- Dataset splitting: Dividing data into training, calibration, and test sets to avoid overtraining [22]

- Model training: Using algorithms such as counter-propagation artificial neural networks (CPANN) or self-organising maps (SOM) [22]

- Applicability domain assessment: Defining the chemical space where the model provides reliable predictions [4]

- Validation: Assessing predictive power using external test sets and statistical measures
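
Two of these steps, the applicability domain check and external validation, can be sketched briefly. The bounding-box domain and the external Q² computed against the training-set mean are common simple choices, assumed here for illustration; the source does not state which methods CADASTER or the tools in [22] use, and all numbers are synthetic.

```python
def in_domain(descriptors, training_rows) -> bool:
    """Bounding-box applicability domain: each descriptor lies within the training range."""
    columns = list(zip(*training_rows))
    return all(min(col) <= d <= max(col) for d, col in zip(descriptors, columns))


def q2_external(observed, predicted, training_mean) -> float:
    """External Q2: 1 - (prediction error) / (spread of test values around the training mean)."""
    press = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss = sum((o - training_mean) ** 2 for o in observed)
    return 1.0 - press / ss


# Synthetic training descriptors: (log Kow, molecular weight).
training_rows = [(1.0, 100.0), (3.0, 250.0), (2.0, 180.0)]
print(in_domain((2.5, 200.0), training_rows))   # inside both ranges
print(in_domain((4.0, 200.0), training_rows))   # log Kow outside the training range

# Synthetic external test set: observed values and model predictions.
q2 = q2_external([2.0, 3.0, 4.0], [2.1, 2.9, 4.2], training_mean=3.0)
print(round(q2, 3))
```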

Integrated Risk Assessment Protocol

For comprehensive environmental risk assessment of pharmaceuticals and transformation products, researchers have successfully implemented protocols that:

- Evaluate biodegradability using predictive software

- Assess carcinogenicity and mutagenicity through computational models

- Apply chemometric analyses (HCA and PCA) to enhance result interpretation [24]

- Prioritize compounds of concern based on multiple endpoints
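
The final prioritization step can be sketched as a simple ranking over predicted endpoints. The equal-weight scoring rule, the class, and the compound names below are hypothetical; the approach in [24] relies on chemometric analyses (HCA and PCA) rather than this count.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prediction:
    name: str
    readily_biodegradable: bool
    mutagenic: bool
    carcinogenic: bool

    @property
    def concern_score(self) -> int:
        """Number of predicted endpoints that raise concern (0 to 3)."""
        return (not self.readily_biodegradable) + self.mutagenic + self.carcinogenic


def prioritize(predictions):
    """Highest concern first; ties are broken alphabetically for reproducibility."""
    return sorted(predictions, key=lambda p: (-p.concern_score, p.name))


# Hypothetical in silico predictions for a parent drug and two transformation products.
predictions = [
    Prediction("parent", readily_biodegradable=True, mutagenic=False, carcinogenic=False),
    Prediction("TP-1", readily_biodegradable=False, mutagenic=True, carcinogenic=True),
    Prediction("TP-2", readily_biodegradable=False, mutagenic=False, carcinogenic=False),
]
ranked = prioritize(predictions)
print([(p.name, p.concern_score) for p in ranked])
```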

Successful implementation of in silico approaches requires access to specialized software, databases, and computational resources. This toolkit enables researchers to replace animal testing while maintaining scientific rigor.

Table 4: Essential Research Reagent Solutions for In Silico Risk Assessment

| Tool Category | Specific Tools | Key Function | Regulatory Relevance |

|---|---|---|---|

| QSAR Platforms | OECD QSAR Toolbox [21], SARpy, CORAL, QSARpy [22] | Develop and apply QSAR models for various endpoints | Accepted in REACH assessments [4] |

| Read-Across Tools | RAXpy [22], Read-Across functionality in OECD Toolbox [21] | Identify analogues and fill data gaps using similar compounds | Recommended by EFSA and ECHA for genotoxicity assessment [19] |

| Exposure Models | AGDISP [2], TOXSWA | Predict environmental fate and concentration of chemicals | Used in pesticide registration and environmental monitoring |

| Toxicity Prediction | BeeTox [2], aiQSAR [22] | Predict specific toxicity endpoints for environmental organisms | Supports pesticide risk assessment and prioritization |

| Metabolic Simulators | Metabolism simulators in OECD Toolbox [21] | Predict metabolic pathways and transformation products | Critical for assessing persistence and bioaccumulation |

| Reporting Tools | QMRF reporting, Data matrix wizard in OECD Toolbox [21] | Generate transparent assessment reports | Essential for regulatory acceptance and review |

Validation and Regulatory Acceptance: Building Confidence in In Silico Methods

The regulatory acceptance of in silico methods depends on rigorous validation and demonstration of reliability. The CADASTER project addressed this through several key activities: development of methodologies for assessing applicability domains of models, characterization of uncertainty and variability, and sensitivity analysis of individual models [4].

Current regulatory frameworks increasingly recognize the value of in silico approaches. The REACH system requires that non-animal methods be used for the majority of tests in the 1-10 tonne band [4]. Similarly, the European animal testing bans for cosmetics (Regulation No 1223/2009) have accelerated development and validation of alternative approaches [25].

For complex endpoints, regulatory agencies including the U.S. EPA, EFSA, and ECHA have been developing frameworks to implement and use NAMs for regulatory applications [19]. The integration of in silico predictions with other data sources through "weight of evidence" approaches allows for confident decision-making even for complex endpoints.

The field of in silico environmental risk assessment continues to evolve rapidly, with several promising developments on the horizon. The integration of artificial intelligence and machine learning approaches is enhancing predictive capabilities, while initiatives for data FAIRification (Findable, Accessible, Interoperable, and Reusable) are improving data quality and accessibility [19].

Future advancements need to address several critical challenges, including better consideration of environmental exposure concentrations, interactions among mixtures of contaminants, and development of in silico models specifically tailored for ERA of emerging contaminants like pharmaceuticals [20]. Additionally, increasing the regulatory acceptance and standardization of these methods will be crucial for wider adoption.

In conclusion, in silico methods represent a scientifically robust and economically viable alternative to traditional animal testing for environmental risk assessment. The compelling evidence of cost savings, coupled with improved efficiency and growing regulatory acceptance, positions these approaches as key drivers in overcoming the limitations of animal testing. As computational power increases and algorithms become more sophisticated, the role of in silico methods is poised to expand further, ultimately leading to more predictive, cost-effective, and ethical chemical safety assessment.

Global chemical regulatory frameworks are actively driving a pivotal transformation in environmental and human health risk assessment. Spearheaded by REACH (Registration, Evaluation, Authorisation and Restriction of Chemicals) in the European Union, in conjunction with international standards from the Organisation for Economic Co-operation and Development (OECD) and specific sectoral regulations like those for cosmetics, regulators are increasingly mandating a move away from traditional animal-based testing. This shift is underpinned by the parallel goals of enhancing ethical standards and embracing more scientifically advanced, human-relevant methodologies. The core of this transformation lies in the adoption of New Approach Methodologies (NAMs), which include in silico (computational) tools, in vitro assays, and advanced data integration techniques. These approaches are recognized not merely as alternatives but as superior pathways for generating robust, efficient, and mechanistically insightful safety data, thereby firmly establishing the regulatory context that encourages and validates in silico environmental risk assessment research [26] [27] [28].

Regulatory Frameworks as Catalysts for Change

REACH and the Push for NAMs

The REACH regulation establishes a comprehensive framework for chemicals in the EU, creating a direct impetus for the development and use of alternative assessment methods. Its core processes—registration, evaluation, and restriction—increasingly incorporate provisions for non-animal data.

- Registration and Data Gaps: REACH requires that chemical substances produced or imported in quantities exceeding one tonne per year be registered with the European Chemicals Agency (ECHA). For this process, companies must identify and manage the risks linked to the substances [29]. ECHA's 2025 report on "Key Areas of Regulatory Challenge" explicitly outlines research needs where NAMs are critical, including for assessing neurotoxicity, immunotoxicity, endocrine disruption, and environmental endpoints like persistence and bioaccumulation [27].

- Analogical Reasoning and Read-Across: REACH explicitly endorses the use of read-across, where data from a tested "source" substance is used to predict the properties of a similar "target" substance. ECHA notes that NAMs can strengthen this process by "generating toxicokinetic and toxicodynamic data for candidate analog substances," thereby validating the assumptions of similarity and filling data gaps without additional animal testing [27].

- Specific Endpoint Challenges: The regulatory body highlights specific areas where in silico and in vitro methods are poised to reduce vertebrate testing. For instance, for fish toxicity, both short-term and long-term, NAMs are under development to predict adverse outcomes without the use of live animals. Similarly, for carcinogenicity, which traditionally relies on two-year rodent bioassays, there is an active push to "use alternative and new methods to speed up the detection of carcinogens" [27].

Cosmetic Regulations: A Vanguard for Non-Animal Approaches

Cosmetic regulations have been the most forceful drivers of the transition to NAMs, effectively creating a regulatory environment where non-animal methods are a necessity, not an option.

- The EU Animal Testing Ban: EU Cosmetic Regulation (EC) No 1223/2009 explicitly prohibits animal testing for finished cosmetic products and their ingredients [30] [31]. This ban has made the development and application of NAMs a commercial and regulatory imperative for the entire cosmetics industry operating in the EU market.

- Next-Generation Risk Assessment (NGRA): The cosmetics industry, in response to these regulations, is pioneering NGRA. This is defined as a "human-relevant, exposure-led, hypothesis-driven risk assessment approach, designed to prevent harm" [26] [28]. NGRA relies heavily on a suite of NAMs—including in silico and in vitro tools—to perform safety assessments without animal data. The International Cooperation on Cosmetics Regulation (ICCR) is actively working to define best practices to advance the regulatory acceptance of these methodologies [26].

- Global Harmonization of Cosmetic Rules: The influence of the EU's approach is global. The United States' Modernization of Cosmetics Regulation Act of 2022 (MoCRA) mandates safety substantiation for cosmetic products, a requirement that inherently promotes the use of advanced, efficient assessment techniques [30] [32]. Similarly, Brazil recently passed a law prohibiting the use of animals in cosmetic testing, further expanding the global market for which non-animal safety data is required [30].

OECD and the International Validation of Methods

The OECD plays a critical role in the international harmonization of chemical safety testing guidelines. The adoption of an OECD Test Guideline (TG) is a key step for a method to achieve widespread regulatory acceptance. The ongoing development and updating of TGs for NAMs provide the essential, globally recognized protocols that lend credibility and reliability to data generated through in silico and in vitro means, thereby facilitating their use in regulatory dossiers submitted under frameworks like REACH [33] [27].

Table 1: Regulatory Drivers for In Silico and NAM Adoption Across Frameworks

| Regulatory Framework | Key Mechanism | Impact on In Silico/NAM Adoption |

|---|---|---|

| REACH (EU) | Requirement for data on thousands of chemicals; endorsement of read-across [27]. | Creates a necessity to fill data gaps efficiently. Promotes use of (Q)SAR and other computational tools to meet information requirements. |

| EU Cosmetics Regulation | Full ban on animal testing for cosmetics [30] [31]. | Makes NAMs the only viable pathway for safety assessment, driving innovation in NGRA. |

| MoCRA (USA) | Requirement for safety substantiation of cosmetic products [30] [32]. | Encourages industry to adopt modern, efficient safety assessment methodologies, including in silico approaches. |

| OECD Guidelines | International validation and standardization of test methods [33]. | Provides the essential regulatory legitimacy and interoperability for non-animal methods across jurisdictions. |

Foundational In Silico Methodologies and Protocols

The regulatory push has accelerated the refinement and application of specific computational methodologies. These tools are integral to modern chemical safety and risk assessment pipelines.

Predictive Models for Transformation Products (TPs)

Understanding a chemical's environmental fate, particularly the formation and toxicity of TPs, is a critical aspect of a holistic risk assessment. In silico methods offer efficient solutions for prioritization and screening [33].

Rule-Based Models: These models are grounded in expert-curated, mechanistic reaction rules derived from experimental studies. They predict transformation pathways (e.g., hydroxylation, oxidation) by applying these predefined rules to a parent compound's structure. Their key strength is high interpretability, but they are limited to predicting known transformations [33].

- Protocol Example: Using a tool like enviPath to predict biotic degradation pathways.

- Input: Prepare the SMILES (Simplified Molecular-Input Line-Entry System) string of the parent compound.

- Parameter Setting: Select the desired transformation library (e.g., "biotic" for microbial degradation).

- Execution: Run the prediction algorithm to generate a tree of potential transformation products.

- Output Analysis: Review the predicted TPs and the proposed transformation pathways. The results are typically presented as a combination of chemical structures and reaction descriptions.
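
The logic of these steps can be illustrated with a toy rule engine. This sketch does not call enviPath and does not reflect its API or rule library: the two rules are hypothetical suffix rewrites on SMILES strings, chosen only because they happen to be correct for ethanol. Real tools match substructures on the molecular graph.

```python
from collections import deque

# Hypothetical toy rules: (rule name, SMILES suffix to match, replacement suffix).
RULES = [
    ("primary alcohol oxidation", "CO", "C=O"),
    ("aldehyde oxidation", "C=O", "C(=O)O"),
]


def predict_tps(parent: str, max_depth: int = 3):
    """Breadth-first expansion of a transformation tree.

    Returns a list of (substrate, rule name, product) steps.
    """
    pathways = []
    seen = {parent}
    queue = deque([(parent, 0)])
    while queue:
        smiles, depth = queue.popleft()
        if depth >= max_depth:
            continue
        for rule_name, pattern, replacement in RULES:
            if smiles.endswith(pattern):
                product = smiles[: -len(pattern)] + replacement
                pathways.append((smiles, rule_name, product))
                if product not in seen:       # avoid expanding a product twice
                    seen.add(product)
                    queue.append((product, depth + 1))
    return pathways


# Input: SMILES of the parent compound (ethanol).
for substrate, rule_name, product in predict_tps("CCO"):
    print(f"{substrate} --[{rule_name}]--> {product}")
```

The output mirrors the protocol's final step: each predicted product is reported together with the rule that generated it, which is the source of the interpretability attributed to rule-based models.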

- Protocol Example: Using a tool like

Machine Learning (ML) Models: These data-driven models can uncover complex, non-linear relationships between chemical structure and transformation potential or toxicity. They are trained on large datasets of chemical properties and biological activities. While powerful and flexible, their "black-box" nature can sometimes hinder mechanistic interpretation [33].

- Protocol Example: Training an ML model to predict acute aquatic toxicity.

- Data Curation: Compile a high-quality dataset of chemical structures and associated experimental toxicity values (e.g., LC50 for fish).

- Descriptor Calculation: Generate numerical molecular descriptors (e.g., topological, electronic, physicochemical) for all compounds in the dataset.

- Model Training & Validation: Use an algorithm (e.g., Random Forest, Support Vector Machine) to learn the relationship between descriptors and toxicity. The dataset is typically split into training and validation sets so that performance is measured on compounds the model has not seen, which exposes overfitting.

- Prediction: Apply the trained model to new chemicals to estimate their toxicity.
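The four steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production QSAR: the data are synthetic (generated from an arbitrary linear trend plus noise, not measured LC50 values), log P is the only descriptor, and ordinary least squares stands in for the Random Forest or SVM algorithms named above. The helper names `fit_line`, `r_squared`, and `predict` are illustrative.

```python
import random
import statistics

def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    mean_x, mean_y = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def r_squared(ys, preds):
    """Coefficient of determination of predictions against observed values."""
    mean_y = statistics.fmean(ys)
    ss_res = sum((y - p) ** 2 for y, p in zip(ys, preds))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - ss_res / ss_tot

# Step 1-2: synthetic (log P, -log10 LC50) pairs; assumed trend, not real data
rng = random.Random(0)
data = []
for _ in range(40):
    log_p = rng.uniform(0.0, 6.0)
    toxicity = 0.9 * log_p + 1.0 + rng.gauss(0.0, 0.3)
    data.append((log_p, toxicity))

# Step 3: hold out 20% of the compounds for validation
rng.shuffle(data)
split = int(0.8 * len(data))
train, valid = data[:split], data[split:]

slope, intercept = fit_line([x for x, _ in train], [y for _, y in train])
valid_preds = [slope * x + intercept for x, _ in valid]
valid_r2 = r_squared([y for _, y in valid], valid_preds)

# Step 4: apply the fitted model to a new compound
def predict(log_p):
    return slope * log_p + intercept

print(f"slope={slope:.2f}, intercept={intercept:.2f}, validation R2={valid_r2:.2f}")
```

A large gap between training and validation performance would suggest overfitting; with a single descriptor and a linear model, that risk is small, which is why this form is useful as a baseline before moving to more flexible algorithms.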


Integrated Workflows: The most advanced approaches combine rule-based and ML methods. Quantitative Structure-Activity Relationship (QSAR) models serve as a bridge, as they can be built using expert-defined descriptors or trained via ML. Similarly, read-across is increasingly enhanced by ML to identify optimal analogue substances and improve predictive accuracy [33].

The following diagram illustrates a typical computational workflow for the in silico assessment of transformation products, integrating both prediction and hazard evaluation:

Diagram: A computational workflow for predicting and prioritizing transformation products (TPs) for environmental risk assessment.

The reliability of any in silico model is contingent on the quality and breadth of its underlying data. Key resources for TP and toxicity data include:

- NORMAN Suspect List Exchange (NORMAN-SLE): A collaborative repository of suspect lists for screening chemical substances in the environment [33].

- PubChem: A public database of chemical molecules and their activities, which includes a "Transformations" section with parent-TP mappings [33].

- enviPath: A resource for predicting microbial biodegradation pathways and storing experimental data [33].

It is critical to note that while large language models (LLMs) might be prompted to propose TPs, they are not based on curated chemical rules and "should be treated with caution," as they may generate plausible but false information. Expert-curated databases are the recommended source [33].

The Scientist's Toolkit: Key Reagents and Research Solutions

Implementing a modern, in silico-driven risk assessment strategy requires a suite of computational and experimental tools. The table below details key components of this toolkit.

Table 2: Essential Research Tools for Next-Generation Risk Assessment

| Tool Category | Example / Solution | Function in Risk Assessment |

|---|---|---|

| Computational Prediction Platforms | enviPath, BioTransformer, OECD QSAR Toolbox | Predicts environmental transformation pathways and metabolites of parent compounds [33]. |

| Toxicity Prediction Models | (Q)SAR models, molecular docking simulations, AOP (Adverse Outcome Pathway) networks | Forecasts key toxicological endpoints (e.g., mutagenicity, endocrine activity) from chemical structure [33] [28]. |

| Data Curation & Analysis | NORMAN-SLE, PubChem Transformations, ShinyTPs | Provides curated data on known transformation products and supports text-mining for literature-derived TP information [33]. |

| Exposure Assessment | ECHA Use Maps, SPERCs (Specific Environmental Release Categories), SCEDs (Specific Consumer Exposure Determinants) | Provides standardized exposure scenarios for workers, consumers, and the environment for use in Chemical Safety Assessments (CSAs) under REACH [34]. |

| Integrated Workflow Software | Chesar (Chemical Safety Assessment and Reporting tool) | Enables companies to conduct, manage, and report their chemical safety assessments in a standardized and efficient manner [34]. |

| In Vitro Assays (for NGRA) | Transcriptomics, high-throughput screening assays, in vitro toxicokinetics | Generates human-relevant biological effect data to be integrated with in silico predictions and exposure data in a weight-of-evidence approach [26] [28]. |

The regulatory landscape, shaped by REACH, OECD, and groundbreaking cosmetic regulations, has unequivocally moved from merely accepting in silico methods to actively encouraging their development and application. The future of environmental risk assessment lies in integrated testing strategies that seamlessly combine in silico predictions, in vitro data, and human exposure information within a Next-Generation Risk Assessment (NGRA) framework. This approach is not only more ethical but also more scientifically relevant, efficient, and protective of human health and the environment. For researchers and drug development professionals, proficiency in these methodologies is no longer a niche specialty but a core competency required to navigate global regulatory requirements and contribute to the development of safer, more sustainable chemicals and products.

Tools of the Trade: Key In Silico Models and Their Real-World Applications

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone of computational toxicology and environmental risk assessment. These in silico models predict the biological activity or toxicity of chemicals based on their molecular structure, utilizing statistical and machine learning methods to establish relationships between chemical descriptors and biological endpoints. As regulatory agencies increasingly advocate for New Approach Methodologies (NAMs) to reduce animal testing, QSAR models have gained significant importance for supporting safety assessment of consumer products, pharmaceuticals, and environmental contaminants. This technical guide examines the fundamental principles, development methodologies, validation frameworks, and applications of QSAR modeling, with particular emphasis on environmental risk assessment contexts. The document also explores emerging trends, including the integration of artificial intelligence and knowledge-based approaches that enhance predictive capabilities beyond traditional structure-based paradigms.

In silico environmental risk assessment (ERA) represents a paradigm shift in how scientists evaluate the potential hazards of chemicals in the environment. As a fundamental component of this approach, QSAR modeling enables researchers to predict the environmental fate and toxicological effects of chemicals without exhaustive laboratory testing. The foundation of QSAR rests on the principle that chemical structure determines biological activity—a concept formally established by Corwin Hansch in 1962 but with roots extending back to earlier work on linear free-energy relationships by Hammett and others [35].

The regulatory landscape has increasingly embraced QSAR methodologies. The U.S. Food and Drug Administration's 2025 Roadmap to Reducing Animal Testing in Preclinical Safety Studies emphasizes the adoption of NAMs, while the FDA Modernization Act 3.0 further supports this transition by modernizing toxicological assessment requirements [36]. Similarly, the European Union's REACH regulation promotes the use of QSAR to fill data gaps, particularly following bans on animal testing for cosmetics [37]. These developments position QSAR as an essential tool for addressing the thousands of chemicals requiring assessment while reducing reliance on animal studies and containing costs.

Environmental risk assessment for chemicals like pesticides exemplifies the value of QSAR approaches. Traditional testing can cost nearly $10 million and take up to two years for a single compound, whereas in silico methods offer rapid, cost-effective alternatives that could potentially save $50-70 billion and spare 100,000-150,000 animals in the assessment of 261 compounds [2]. For pesticide risk assessment, QSAR models help characterize environmental behavior, exposure potential, and ecological effects across aquatic, terrestrial, and soil compartments.

Fundamental Principles of QSAR Modeling

Historical Development and Theoretical Foundation

QSAR modeling has evolved significantly since its inception more than fifty years ago. The field originated from physical organic chemistry, particularly the work of Louis Hammett who established linear free-energy relationships to explain substituent effects on chemical reactivity. Hansch and Fujita's pioneering research in the early 1960s formalized QSAR by demonstrating that biological activity could be correlated with physicochemical parameters through mathematical equations [35]. Their approach incorporated hydrophobicity (measured by octanol-water partition coefficients, log P) alongside electronic and steric parameters, establishing the Hansch equation that remains influential today.
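For orientation, one commonly quoted general form of the Hansch equation is given below. The coefficient symbols $k_1$ to $k_4$ are notation chosen here for illustration rather than taken from [35], and individual published models often omit some of the terms.

$$
\log\frac{1}{C} = -k_1(\log P)^2 + k_2\log P + \rho\,\sigma + k_3 E_s + k_4
$$

Here $C$ is the molar concentration producing a defined biological response, $\log P$ is the octanol-water partition coefficient, $\sigma$ is the Hammett electronic substituent constant, $E_s$ is the Taft steric parameter, and the coefficients are fitted by regression. The squared term lets activity pass through an optimum as hydrophobicity increases.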

The fundamental QSAR paradigm operates on the similarity principle—the concept that structurally similar compounds tend to have similar biological properties [38]. This principle enables the prediction of activities for untested compounds based on their structural resemblance to chemicals with known activity profiles. However, this principle has limitations, particularly when minor structural modifications result in significant toxicity changes, as exemplified by the drug pair ibuprofen (generally safe) and ibufenac (withdrawn due to hepatotoxicity), which differ by only a single methyl group [36].
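Structural similarity is often quantified with the Tanimoto (Jaccard) coefficient computed on sets of structural features. The sketch below is a minimal illustration only: the feature labels are hand-written stand-ins for fingerprint bits, not the output of any real fingerprinting tool, and `tanimoto` is an illustrative helper name.

```python
def tanimoto(features_a, features_b):
    """Tanimoto (Jaccard) coefficient: shared features / all features."""
    a, b = set(features_a), set(features_b)
    union = a | b
    if not union:
        return 0.0  # convention chosen here for two empty feature sets
    return len(a & b) / len(union)

# Hand-labelled structural keys (hypothetical stand-ins for fingerprint bits)
ibuprofen = {"benzene_ring", "isobutyl", "carboxylic_acid", "alpha_methyl"}
ibufenac = {"benzene_ring", "isobutyl", "carboxylic_acid"}

similarity = tanimoto(ibuprofen, ibufenac)
print(f"Tanimoto similarity: {similarity:.2f}")
```

A high score of this kind would flag the two compounds as close analogues, which is the situation in which relying on the similarity principle alone can mislead.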

Key Components of QSAR Models

All QSAR models comprise three essential components: (1) molecular descriptors that numerically encode structural and physicochemical properties; (2) an algorithm that establishes the relationship between descriptors and biological activity; and (3) a defined applicability domain that specifies the model's scope and limitations.

Molecular descriptors quantify aspects of molecular structure and properties, including:

- Physicochemical descriptors: Hydrophobicity (log P), electronic properties (sigma constants), steric parameters

- Topological descriptors: Molecular connectivity indices, fingerprint patterns

- Geometric descriptors: Molecular shape, size, surface areas

- Quantum chemical descriptors: HOMO/LUMO energies, molecular orbital properties

Algorithm selection depends on the modeling context, with options ranging from traditional regression methods to advanced machine learning approaches:

Table 1: Machine Learning Algorithms for QSAR Development

| Algorithm | Complexity | Applicability | Interpretability |

|---|---|---|---|

| k-Nearest Neighbors (KNN) | Low | Small datasets, similarity-based | High |

| Logistic Regression (LR) | Low | Linear relationships | High |

| Support Vector Machine (SVM) | Medium | Non-linear relationships | Medium |

| Random Forest (RF) | High | Complex datasets, feature importance | Medium |

| Extreme Gradient Boosting (XGBoost) | High | Large datasets, predictive accuracy | Medium |

The applicability domain (AD) defines the chemical space in which the model can be expected to predict reliably. It helps users recognize when a query compound falls outside the structural and descriptor range covered by the training set, which is crucial for regulatory acceptance [37].
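One of the simplest ways to operationalize an applicability domain is a descriptor range check: a query compound is flagged if any descriptor lies outside the minimum-maximum range seen in training. The sketch below assumes this bounding-box definition; it is not the method of any specific tool, other definitions (for example leverage- or distance-based ones) are also in use, and the descriptor values and function names are illustrative.

```python
def fit_domain(training_descriptors):
    """Record the min/max of each descriptor over the training set."""
    names = training_descriptors[0].keys()
    return {
        name: (
            min(row[name] for row in training_descriptors),
            max(row[name] for row in training_descriptors),
        )
        for name in names
    }

def descriptors_outside(domain, compound):
    """Return the descriptors for which the compound leaves the training range."""
    return [
        name for name, (low, high) in domain.items()
        if not low <= compound[name] <= high
    ]

# Illustrative descriptor values, not taken from a real dataset
training = [
    {"log_p": 1.2, "mol_weight": 150.0},
    {"log_p": 3.4, "mol_weight": 280.0},
    {"log_p": 4.9, "mol_weight": 320.0},
]
domain = fit_domain(training)

inside = descriptors_outside(domain, {"log_p": 2.5, "mol_weight": 200.0})
outside = descriptors_outside(domain, {"log_p": 7.1, "mol_weight": 300.0})
print(inside)   # empty list: no descriptor leaves the training range
print(outside)  # lists log_p, which exceeds the training maximum
```

Returning the offending descriptors, rather than a bare yes/no flag, makes it easier to document why a prediction was treated as out of domain.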

QSAR Development Workflow and Methodologies

Data Acquisition and Curation

The foundation of any robust QSAR model is a high-quality dataset with well-defined endpoints. For environmental applications, key toxicity endpoints include:

- Aquatic toxicity: LC50 for fish, Daphnia, algae

- Bioaccumulation potential: Bioconcentration factor (BCF)

- Environmental persistence: Biodegradation half-life

- Environmental mobility: Soil adsorption coefficient (Koc)

Data sources for QSAR development include publicly available databases (EPA ECOTOX, REACH registration dossiers) and proprietary collections. Critical curation steps involve checking for duplicates, verifying experimental conditions, and standardizing measurement units [35]. For regulatory applications, data should comply with standardized testing guidelines (OECD, EPA) to ensure consistency and reliability.
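The curation steps named above (duplicate handling and unit standardization) can be sketched as follows. This is a minimal example under stated assumptions: the record fields, the `CHEM-A`/`CHEM-B` identifiers, and the values are made up for illustration, and merging replicate measurements by their median is one possible policy rather than a prescribed one. Verifying experimental conditions is not covered here.

```python
import statistics
from collections import defaultdict

# Conversion factors to mg/L; extend as needed for other reported units
TO_MG_PER_L = {"g/L": 1000.0, "mg/L": 1.0, "ug/L": 0.001}

def curate(records):
    """Standardize units, drop unusable rows, and merge duplicates by median."""
    grouped = defaultdict(list)
    for record in records:
        factor = TO_MG_PER_L.get(record["unit"])
        if factor is None or record["lc50"] <= 0:
            continue  # unknown unit or non-physical value: exclude from modeling
        key = (record["chemical_id"], record["species"])
        grouped[key].append(record["lc50"] * factor)
    return {key: statistics.median(values) for key, values in grouped.items()}

# Made-up records for illustration only
raw = [
    {"chemical_id": "CHEM-A", "species": "fish", "lc50": 2.0, "unit": "mg/L"},
    {"chemical_id": "CHEM-A", "species": "fish", "lc50": 4000.0, "unit": "ug/L"},
    {"chemical_id": "CHEM-B", "species": "daphnia", "lc50": 0.5, "unit": "ppm"},
]
curated = curate(raw)
print(curated)
```

In this sketch the record with an unrecognized unit is silently skipped; in practice such rows would more likely be logged and reviewed manually than discarded.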

Chemical Representation and Feature Generation

Molecular structure representation forms the basis for descriptor calculation. The process typically begins with structure representation (SMILES, InChI, or 2D/3D molecular files), followed by geometry optimization and descriptor computation using tools such as PaDEL, RDKit, or Dragon.

Table 2: Essential Descriptor Categories for Environmental QSAR

| Descriptor Category | Key Parameters | Environmental Relevance |

|---|---|---|

| Hydrophobic | log P, log D, water solubility | Bioaccumulation, membrane permeability |

| Electronic | pKa, HOMO/LUMO energies, polarizability | Reactivity, transformation potential |

| Steric | Molecular weight, molar volume, refractivity | Molecular transport, enzyme interactions |

| Topological | Connectivity indices, molecular fingerprints | Similarity assessment, read-across |

| Quantum Chemical | Partial charges, electrostatic potential | Reaction pathways, metabolite formation |

Feature selection techniques (genetic algorithms, stepwise regression, Random Forest importance) help identify the most relevant descriptors, reducing dimensionality and minimizing the risk of overfitting.
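As a simpler stand-in for the techniques listed above, the sketch below uses a correlation filter: descriptors are ranked by their absolute Pearson correlation with the endpoint, weakly related ones are dropped, and a descriptor is skipped if it is nearly collinear with one already kept. This is not a genetic algorithm, stepwise regression, or Random Forest importance; the thresholds, descriptor names, and values are assumptions chosen for illustration, and the code assumes no descriptor column is constant.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

def select_descriptors(columns, endpoint, min_relevance=0.5, max_redundancy=0.9):
    """Keep relevant descriptors that are not near-duplicates of kept ones."""
    relevance = {name: abs(pearson(values, endpoint)) for name, values in columns.items()}
    ranked = sorted(columns, key=relevance.get, reverse=True)
    selected = []
    for name in ranked:
        if relevance[name] < min_relevance:
            break  # remaining descriptors are even less related to the endpoint
        redundant = any(
            abs(pearson(columns[name], columns[kept])) > max_redundancy
            for kept in selected
        )
        if not redundant:
            selected.append(name)
    return selected

# Illustrative values only
columns = {
    "log_p": [1.0, 2.0, 3.0, 4.0, 5.0],
    "log_p_copy": [1.0, 2.0, 3.0, 4.0, 5.1],  # nearly collinear with log_p
    "noise": [3.0, 1.0, 4.0, 1.0, 5.0],       # weakly related to the endpoint
}
endpoint = [1.0, 2.0, 3.0, 4.0, 5.0]

selected = select_descriptors(columns, endpoint)
print(selected)
```

A filter like this only captures linear, one-descriptor-at-a-time relationships, which is the main reason wrapper or embedded methods are often preferred for non-linear models.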

Model Building and Validation