In Silico Exposure Models for Air, Water, and Soil: A Comparative Review of Tools, Applications, and Best Practices

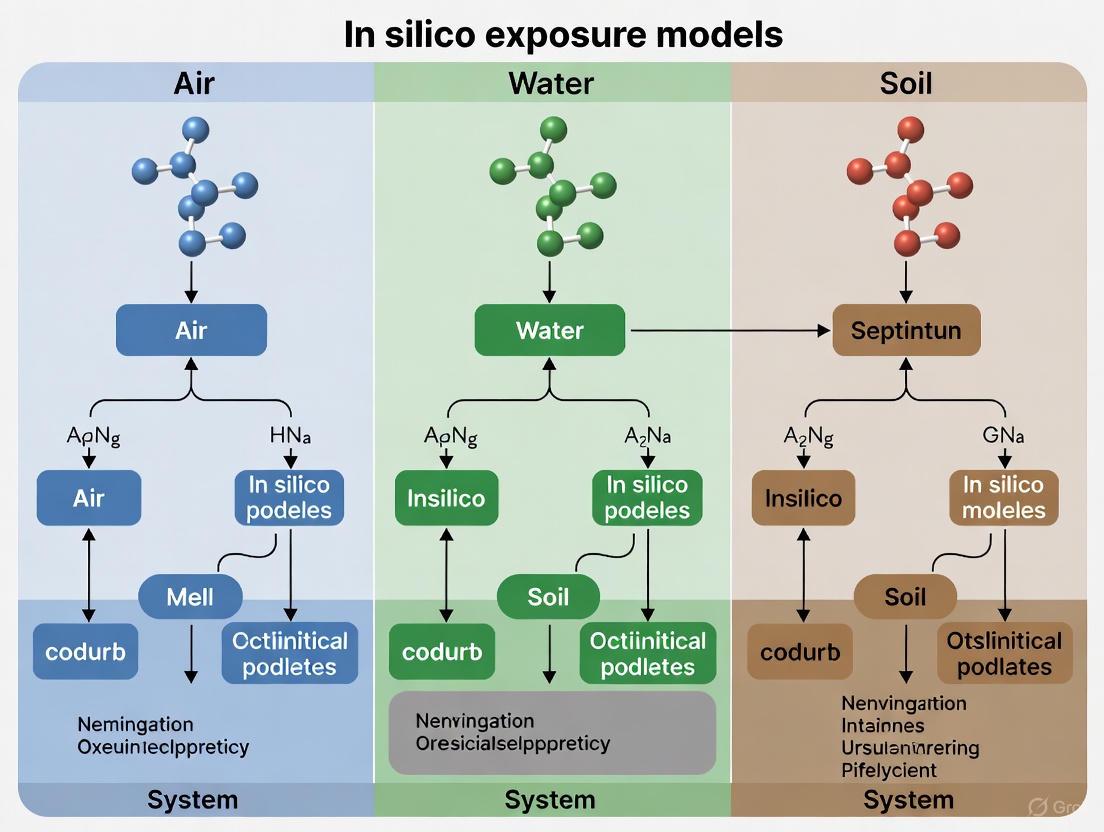



Abstract

This article provides a comprehensive comparison of in silico exposure models for air, water, and soil systems, addressing critical needs in environmental risk assessment and drug development. With increasing regulatory requirements and a push to reduce animal testing, computational tools have become essential for predicting chemical fate and exposure. We explore the foundational principles of these models, evaluate specific methodologies and software applications across different environmental compartments, address common challenges and optimization strategies, and present a rigorous validation framework. Designed for researchers, scientists, and drug development professionals, this review synthesizes current evidence to guide model selection and application, supporting more reliable and efficient chemical safety assessments.

Fundamental Principles and Landscape of Environmental Exposure Modeling

The Critical Role of In Silico Models in Modern Risk Assessment

In silico models, which use computational simulations to predict the environmental fate and biological effects of chemicals, have become indispensable tools in modern risk assessment. Their development is driven by the limitations of traditional methods, which are often complex, time-consuming, and costly [1]. In pesticide risk assessment, for example, conventional toxicity studies can take up to two years, cost millions of dollars, and require large numbers of experimental animals [1]. In silico approaches offer a powerful alternative by providing rapid, cost-effective, and accurate predictions, with the potential to save billions of dollars and spare hundreds of thousands of test animals [1].

These computational methods are particularly vital for assessing emerging contaminants such as pharmaceuticals and personal care products (PPCPs) and pesticides, which are increasingly detected in environmental compartments and pose potential risks to ecosystems and human health [2] [3]. This article provides a comparative analysis of in silico exposure models for air, water, and soil systems, detailing their methodologies, applications, and performance to guide researchers and drug development professionals.

Comparative Analysis of In Silico Exposure Models by Environmental Compartment

In silico tools have been adapted to assess chemical exposure and risk in diverse ecosystems. Their application varies significantly across different environmental compartments, each with distinct model types and representative tools.

Table 1: Overview of In Silico Models for Exposure Assessment by Environmental Compartment

| Environmental Compartment | Model Types | Representative Tools | Primary Application & Case Study |
|---|---|---|---|
| Air | Spray Drift & Deposition Models | AGricultural DISPersal (AGDISP) [1] | Predicts pesticide deposition and spray drift; successfully monitored atrazine drift up to 400 m from sorghum fields [1]. |
| Water | Fugacity-based Models, QSARs, Biodegradation Models | TOXSWA [1], VEGA [4] [5], EPI Suite [3] [5], OPERA [3] [5] | Models pesticide fate in stagnant ditches (TOXSWA) [1]; QSARs predict toxicity and environmental fate (e.g., persistence, bioaccumulation) for aquatic organisms [4] [5]. |
| Soil | Compartmental & Multimedia Fate Models | QSAR Toolbox [3], QSAR-ME Profiler [3] | Screening and prioritization of chemicals based on persistence, bioaccumulation, and toxicity (PBT) in soil and other media [3]. |

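For data-gap filling, these models are often chained in a structured computational workflow: QSAR models predict toxicity for a few surrogate species, and Interspecies Correlation Estimation (ICE) models extrapolate those values to a wider range of taxa, as described in the protocol below [4].

This coupled modeling approach enables the derivation of a Predicted No-Effect Concentration (PNEC), a critical value for determining ecological risk quotients [4].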
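The text does not say how the QSAR-ICE outputs are turned into a PNEC. One widely used route is a species sensitivity distribution (SSD): fit a log-normal distribution to the toxicity values for many taxa, take its 5th percentile (the HC5), and divide by an assessment factor; the risk quotient is then the exposure concentration divided by the PNEC. The sketch below assumes that route. The function names, the method-of-moments fit, the default assessment factor of 5, and the NOEC values are illustrative assumptions, not the procedure used in [4].

```python
import math
from statistics import NormalDist, mean, stdev

def hc5_lognormal(toxicity_values):
    """HC5 (hazardous concentration for 5% of species) from a log-normal SSD.

    Fits mean and standard deviation to log10-transformed values (method of
    moments). Values must be positive, in one unit, and at least two in number.
    """
    logs = [math.log10(v) for v in toxicity_values]
    z05 = NormalDist().inv_cdf(0.05)  # 5th percentile of the standard normal, about -1.645
    return 10 ** (mean(logs) + z05 * stdev(logs))

def pnec_from_hc5(hc5, assessment_factor=5.0):
    # Assumption: the assessment factor is chosen by the assessor; 5 is illustrative.
    return hc5 / assessment_factor

def risk_quotient(exposure_conc, pnec):
    # RQ >= 1 flags a potential ecological risk at the given exposure level.
    return exposure_conc / pnec

# Hypothetical chronic NOECs (ug/L) for several taxa, e.g. from QSAR-ICE output.
noecs = [12.0, 35.0, 80.0, 150.0, 400.0, 900.0]
hc5 = hc5_lognormal(noecs)
pnec = pnec_from_hc5(hc5)
print(f"HC5 = {hc5:.2f} ug/L, PNEC = {pnec:.2f} ug/L, RQ = {risk_quotient(0.5, pnec):.3f}")
```

Fitting on log10 values keeps the SSD symmetric in orders of magnitude, which suits toxicity data spanning several decades.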

Experimental Protocols and Model Validation

Protocol for Coupled QSAR-ICE Modeling

The integration of Quantitative Structure-Activity Relationship (QSAR) and Interspecies Correlation Estimation (ICE) models represents an advanced methodology for generating robust toxicity data. The protocol is as follows:

- Chemical Input Preparation: Define the chemical structure of the substance under investigation using Simplified Molecular Input Line Entry System (SMILES) notation or other structural descriptors [3].
- QSAR Model Execution:
  - Tool Selection: Utilize freely available platforms such as the VEGA platform (https://www.vegahub.eu) [4] or USEPA's T.E.S.T. [5].
  - Endpoint Prediction: Input the chemical structure to predict toxicity values (e.g., LC50, NOEC) for specific surrogate species (e.g., Daphnia magna, Pimephales promelas) [4].
  - Applicability Domain (AD) Check: Critically assess whether the prediction falls within the model's AD, which defines the chemical space for which it is reliable. Predictions outside the AD should be treated with caution [5].
- ICE Model Extrapolation:
  - Platform: Use the USEPA's Web-ICE application (https://www.epa.gov/webice) [4].
  - Procedure: Input the toxicity data obtained for the surrogate species from the QSAR step into the ICE model.
  - Output: The model extrapolates and generates predicted toxicity values for a wider range of taxonomic groups [4].
- Data Validation (Where Possible): Compare a subset of the QSAR-ICE predicted data with any available experimental data from databases like the USEPA ECOTOX (https://cfpub.epa.gov/ecotox) to verify model accuracy [4].
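ICE models are log-linear least-squares regressions relating the toxicity of a surrogate species to that of a predicted species. The sketch below applies such regressions to a QSAR-predicted surrogate value and then computes a symmetric fold difference against an observed value, as one possible way to carry out the validation step. The coefficients, species labels, and concentrations are hypothetical placeholders; real coefficients must be taken from Web-ICE for each surrogate and predicted species pair.

```python
import math

# HYPOTHETICAL coefficients (intercept a, slope b) for
#   log10(predicted-species value) = a + b * log10(surrogate value).
# Placeholders only; real values come from Web-ICE model outputs.
HYPOTHETICAL_ICE_MODELS = {
    "predicted species A": (-0.20, 1.05),
    "predicted species B": (0.10, 0.95),
    "predicted species C": (-0.35, 0.90),
}

def ice_extrapolate(surrogate_value, models):
    """Extrapolate one surrogate toxicity value (e.g. LC50, mg/L) to other taxa."""
    log_s = math.log10(surrogate_value)
    return {species: 10 ** (a + b * log_s) for species, (a, b) in models.items()}

def fold_difference(predicted, observed):
    """Symmetric fold difference (>= 1); 1.0 means perfect agreement."""
    ratio = predicted / observed
    return max(ratio, 1.0 / ratio)

# Hypothetical QSAR-predicted LC50 for the surrogate species (mg/L).
qsar_lc50 = 2.5
predictions = ice_extrapolate(qsar_lc50, HYPOTHETICAL_ICE_MODELS)

# Hypothetical experimental value for one taxon, e.g. retrieved from ECOTOX.
observed = {"predicted species A": 3.1}
for species, value in observed.items():
    pred = predictions[species]
    print(species, round(pred, 3), "fold difference:", round(fold_difference(pred, value), 2))
```

A symmetric fold difference treats over- and under-prediction alike, so a single threshold (for example, within 10-fold) can be applied to the whole validation subset.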

Protocol for PBPK Modeling in Drug Development

Physiologically Based Pharmacokinetic (PBPK) models are crucial for predicting drug exposure in humans. The standard workflow is as follows:

- System Characterization: Develop a mathematical model representing the human body as interconnected compartments (e.g., liver, gut, plasma) with blood flow rates [6] [7].

- Compound Characterization: Populate the model with the drug-specific physicochemical and biochemical parameters (e.g., solubility, permeability, metabolic rate constants) [7].

- Virtual Population Construction: Generate virtual populations that reflect the physiological variability of the target population (e.g., pediatric, geriatric, pregnant, or organ-impaired patients) using clinical and real-world data [6].

- Simulation and Validation: Execute the model to simulate drug concentration-time profiles in plasma and tissues. The model must be validated against any available clinical data to ensure its predictive reliability [6] [7].
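The workflow above can be illustrated with a deliberately small, flow-limited PBPK model. Blood, liver, and a lumped rest-of-body compartment are linked by blood flows (system characterization). Tissue:blood partition coefficients, an unbound fraction, and a hepatic intrinsic clearance describe the drug (compound characterization). The model then simulates the blood concentration after an intravenous bolus. All parameter values are hypothetical and virtual-population variability is omitted. The explicit Euler solver is chosen only to keep the example dependency-free; platforms such as GastroPlus or Simcyp use far richer model structures.

```python
def simulate_pbpk(dose_mg, params, t_end_h=24.0, dt_h=0.001):
    """Flow-limited three-compartment PBPK sketch (blood, liver, rest of body).

    IV bolus into blood; elimination only in the liver, proportional to the
    unbound concentration in venous blood leaving the liver.
    Returns (times_h, blood_conc_mg_per_L, amount_in_body_mg, eliminated_mg).
    """
    p = params
    a_blood, a_liver, a_rest, eliminated = dose_mg, 0.0, 0.0, 0.0
    times, c_blood = [0.0], [dose_mg / p["V_blood"]]
    for step in range(1, int(round(t_end_h / dt_h)) + 1):
        c_b = a_blood / p["V_blood"]
        c_liver_out = a_liver / p["V_liver"] / p["Kp_liver"]  # venous outflow conc
        c_rest_out = a_rest / p["V_rest"] / p["Kp_rest"]
        elim_rate = p["CLint"] * p["fu"] * c_liver_out          # mg/h
        d_blood = (p["Q_liver"] * c_liver_out + p["Q_rest"] * c_rest_out
                   - (p["Q_liver"] + p["Q_rest"]) * c_b)
        d_liver = p["Q_liver"] * (c_b - c_liver_out) - elim_rate
        d_rest = p["Q_rest"] * (c_b - c_rest_out)
        # Explicit Euler step; the small dt keeps it stable for these parameters.
        a_blood += d_blood * dt_h
        a_liver += d_liver * dt_h
        a_rest += d_rest * dt_h
        eliminated += elim_rate * dt_h
        times.append(step * dt_h)
        c_blood.append(a_blood / p["V_blood"])
    return times, c_blood, a_blood + a_liver + a_rest, eliminated

# Hypothetical adult-like parameters: volumes in L, flows and CLint in L/h.
params = {"V_blood": 5.0, "V_liver": 1.8, "V_rest": 60.0,
          "Q_liver": 90.0, "Q_rest": 300.0,
          "Kp_liver": 2.0, "Kp_rest": 1.0, "fu": 0.2, "CLint": 50.0}
times, conc, remaining, eliminated = simulate_pbpk(100.0, params)
print(f"C(24 h) = {conc[-1]:.4f} mg/L; eliminated = {eliminated:.1f} mg")
```

A quick mass-balance check (drug remaining in the body plus drug eliminated equals the dose) is a useful sanity test before comparing simulated profiles with clinical data.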

Performance Comparison of Key In Silico Tools

The performance of in silico models varies depending on their design, application domain, and the specific endpoint being predicted. The table below provides a comparative summary based on recent studies.

Table 2: Performance Comparison of Select In Silico Tools for Environmental Risk Assessment

| Tool Name | Primary Use | Key Endpoints | Reported Performance / Highlights |
|---|---|---|---|
| BeeTox (GACNN) [1] | Toxicity Prediction | Honeybee toxicity | Accuracy: 0.837; Specificity: 0.891; Sensitivity: 0.698 [1]. |
| VEGA QSAR Models [4] [5] | Toxicity & Fate Prediction | Ecotoxicity, Persistence, Bioaccumulation (Log Kow, BCF), Mobility (Log Koc) | Widely accepted; Arnot-Gobas & KNN-Read Across models found most appropriate for BCF prediction; OPERA model relevant for Log Koc [5]. |
| EPI Suite (KOWWIN) [5] | Fate Prediction | Log Kow | Identified as a relevant model for predicting bioaccumulation potential [5]. |
| BIOWIN (EPI Suite) [5] | Fate Prediction | Biodegradation/Persistence | Showed high performance in predicting persistence of cosmetic ingredients [5]. |
| AGDISP [1] | Exposure Prediction | Pesticide spray drift in air | Successfully validated for monitoring atrazine drift over long distances [1]. |
| Coupled QSAR-ICE [4] | Toxicity Extrapolation | Chronic toxicity for aquatic species | Effectively generated data to derive PNECs for BPA and alternatives (BPS, BPF), revealing equivalent ecological risks [4]. |
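The accuracy, specificity, and sensitivity reported for BeeTox are standard confusion-matrix statistics. The sketch below shows how they are computed, assuming "toxic" is the positive class; the counts are hypothetical and are not BeeTox results. With the reported figures, roughly 30% of truly toxic chemicals would be missed (1 - 0.698) while about 11% of non-toxic chemicals would be falsely flagged (1 - 0.891), a trade-off worth weighing when a model is used for screening.

```python
def classification_metrics(tp, tn, fp, fn):
    """Confusion-matrix statistics for a binary toxic / non-toxic classifier."""
    return {
        "accuracy": (tp + tn) / (tp + tn + fp + fn),  # share of all calls that are correct
        "sensitivity": tp / (tp + fn),               # toxic chemicals correctly flagged
        "specificity": tn / (tn + fp),               # non-toxic chemicals correctly cleared
    }

# Hypothetical test-set counts for illustration only.
print(classification_metrics(tp=40, tn=90, fp=10, fn=10))
```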

The Scientist's Toolkit: Essential Research Reagent Solutions

The effective application of in silico risk assessment relies on a suite of computational "reagents" and databases.

- QSAR Platforms (VEGA, EPI Suite, OECD QSAR Toolbox): These are fundamental software suites that provide collections of models for predicting a wide array of physicochemical, fate, and toxicological properties from molecular structure [3] [4] [5]. Their function is to fill data gaps for chemicals lacking experimental data.

- Toxicity Databases (USEPA ECOTOX): This database is an essential resource that aggregates curated experimental toxicity data for aquatic and terrestrial organisms. It serves as a critical source for model training, validation, and benchmarking [4].

- PBPK/PD Modeling Software (GastroPlus, Simcyp): These advanced simulation platforms are used to predict the absorption, distribution, metabolism, and excretion (ADME) of drugs in virtual human populations. They are key for evaluating inter-individual variability in drug exposure and response [6] [7].

- Molecular Dynamics (MD) & Docking Software (e.g., GROMACS, AutoDock): These tools simulate the interaction between a chemical and a biological macromolecule (e.g., a protein receptor) at an atomic level of detail. They help elucidate mechanisms of action, such as endocrine disruption [8] [9].

- Applicability Domain (AD) Assessment: This is not a single tool but a critical methodological component within QSAR models. It defines the chemical space where a model's predictions are considered reliable, thus serving as a vital quality control measure [5].
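Applicability domains can be defined in several ways. The simplest is a descriptor-range (bounding-box) check: a query chemical is inside the domain only if every descriptor falls within the range spanned by the training set. The sketch below implements that check with hypothetical descriptors (log Kow and molecular weight). Production platforms typically use more elaborate similarity- or leverage-based measures, so this illustrates the idea rather than how any specific tool defines its AD.

```python
def fit_range_domain(training_descriptors):
    """Per-descriptor (min, max) bounds from training rows of equal length."""
    return [(min(col), max(col)) for col in zip(*training_descriptors)]

def in_domain(query, bounds):
    """True if every descriptor of the query lies within the training range."""
    return all(lo <= x <= hi for x, (lo, hi) in zip(query, bounds))

# Hypothetical training set: [log Kow, molecular weight in g/mol].
training = [[1.2, 150.0], [3.4, 228.0], [2.1, 310.5], [4.8, 276.0]]
bounds = fit_range_domain(training)
print(in_domain([2.9, 240.0], bounds))  # True: inside both ranges
print(in_domain([6.5, 240.0], bounds))  # False: log Kow above the training maximum
```

A range check is cheap but can accept chemicals that sit in empty corners of the descriptor space, which is why distance- or similarity-based AD measures are usually preferred in practice.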

In silico models have fundamentally transformed the landscape of modern risk assessment. As demonstrated, a diverse arsenal of computational tools—from QSARs and ICE models for ecological risk to PBPK models for human health—now enables scientists to predict chemical exposure and toxicity with significant efficiency and growing accuracy. The critical comparison of these tools reveals that their performance is highly context-dependent, necessitating careful selection based on the environmental compartment, endpoint of interest, and the chemical's position within a model's applicability domain.

The ongoing integration of these models with artificial intelligence and expanding real-world data sources promises to further enhance their predictive power and regulatory acceptance. For researchers and drug development professionals, mastering this in silico toolkit is no longer optional but essential for navigating the complex challenges of ensuring chemical safety and environmental health in the 21st century.

In chemical risk assessment, accurately characterizing how humans and ecosystems are exposed to stressors is as crucial as determining the inherent toxicity of the chemicals. The conceptual framework for this characterization often divides the exposure environment into two distinct compartments: the near field and the far field [10]. The near field refers to microenvironments in close proximity to a receptor, such as the indoor environment of a home, vehicle, or workplace, where exposure occurs through direct contact with consumer products, materials, or indoor air [10]. In contrast, the far field encompasses the broader, indirect environment, including ambient air, surface water, soil, and foodstuffs, from which chemicals disperse and are transported before reaching a receptor [10]. Understanding the differences between these pathways is fundamental for developing accurate exposure models, which are essential tools for prioritizing chemicals for further testing and for informing regulatory decisions, particularly when monitoring data are scarce [11] [10].

This guide objectively compares the application of near-field and far-field models within the context of in silico exposure assessment for air, water, and soil systems. It provides a detailed comparison of their underlying principles, data requirements, and performance, supported by experimental data and case studies from the scientific literature.

Conceptual Frameworks and Model Definitions

The Near-Field (NF) Environment and Models

Near-field models are designed to quantify exposure from sources within a person's immediate vicinity. A quintessential example is the Near Field/Far Field (NF/FF) model, a well-accepted tool for precautionary exposure assessment in occupational and indoor settings [12] [13]. This model estimates exposures for an individual located close to an emission source, such as a worker at a bench applying a solvent or a process generating particulate matter [12]. The NF/FF model is fundamentally a two-box mass-balance model that treats the near field (a small volume enclosing the source and the receptor's breathing zone) and the far field (the rest of the room or surrounding environment) as separate but connected well-mixed compartments [12]. The model can incorporate complex, time-dependent emission functions to reflect real-world use patterns, such as an applied chemical mass whose emission rate decreases exponentially over time [12].
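For a constant emission rate G, the two-box mass balance has a well-known steady-state solution. The far-field concentration is G/Q, and the near-field concentration adds G/beta on top of it, where Q is the room ventilation rate and beta is the interzonal airflow between the two boxes. The sketch below computes that steady state and also integrates the time-dependent balance with an exponentially decaying emission G(t) = G0 * exp(-k t), the kind of emission function mentioned above. Parameter values are hypothetical, and explicit Euler integration is a simplicity choice, not the numerical method of any particular published NF/FF implementation.

```python
import math

def nf_ff_steady_state(G, Q, beta):
    """Steady-state concentrations (mg/m3) for constant emission G (mg/min),
    room airflow Q (m3/min) and near-field/far-field exchange rate beta (m3/min)."""
    c_ff = G / Q
    c_nf = c_ff + G / beta
    return c_nf, c_ff

def nf_ff_transient(G0, k, Q, beta, V_nf, V_ff, t_end, dt=0.01):
    """Euler integration of the two-box model with emission G(t) = G0 * exp(-k t).

    V_nf * dC_nf/dt = G(t) + beta * (C_ff - C_nf)
    V_ff * dC_ff/dt = beta * (C_nf - C_ff) - Q * C_ff
    Both boxes start clean; k = 0 gives a constant emission rate.
    """
    c_nf = c_ff = 0.0
    for step in range(int(round(t_end / dt))):
        g = G0 * math.exp(-k * step * dt)
        dc_nf = (g + beta * (c_ff - c_nf)) / V_nf
        dc_ff = (beta * (c_nf - c_ff) - Q * c_ff) / V_ff
        c_nf, c_ff = c_nf + dc_nf * dt, c_ff + dc_ff * dt
    return c_nf, c_ff

# Hypothetical task: 100 mg/min emitted, Q = 20 m3/min, beta = 5 m3/min,
# 2.1 m3 near field (roughly a 1 m radius hemisphere), 100 m3 room.
print(nf_ff_steady_state(100.0, 20.0, 5.0))  # (25.0, 5.0)
print(nf_ff_transient(100.0, 0.0, 20.0, 5.0, 2.1, 100.0, t_end=200.0))
print(nf_ff_transient(100.0, 0.05, 20.0, 5.0, 2.1, 100.0, t_end=30.0))
```

The G/beta term is why a worker next to the source can face concentrations several times higher than a room-average (single-box) estimate would suggest.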

The Far-Field (FF) Environment and Models

Far-field models estimate exposure from diffuse, indirect sources in the general environment. These models typically follow the pathway of a chemical from its release into an environmental medium (e.g., air, water, or soil) through its fate and transport, eventually predicting human exposure via ingestion of food and water, inhalation of ambient air, or contact with contaminated soil [10]. Examples of far-field models include RAIDAR, FHX, and USEtox [10]. These models are often applied for regional-scale assessment and prioritize chemicals based on metrics like the intake fraction, which represents the fraction of a chemical emitted from a source that is eventually taken in by a population [10]. The exposure setting for far-field models is defined by physical characteristics like groundwater flow, soil type, meteorological conditions, and land use, which affect the contaminant's movement and transformation [11].
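Intake fraction is a ratio of masses: the amount of an emitted chemical that a population takes in, divided by the amount emitted. For the inhalation route, a simple population-weighted form sums, over subpopulations, the number of people times their breathing rate times the emission-attributable increase in air concentration. The sketch below assumes that form, a default breathing rate of 20 m3/day, and hypothetical concentrations. Far-field models such as USEtox derive these quantities from fate and transport modeling rather than taking them as inputs.

```python
def inhalation_intake_fraction(emission_mg_per_day, populations,
                               conc_increments_mg_m3, breathing_rate_m3_day=20.0):
    """Dimensionless intake fraction for inhalation at steady state.

    iF = sum_i(N_i * BR * dC_i) / E, where N_i is the size of subpopulation i,
    BR the breathing rate and dC_i the emission-attributable air concentration.
    """
    intake_mg_per_day = sum(n * breathing_rate_m3_day * dc
                            for n, dc in zip(populations, conc_increments_mg_m3))
    return intake_mg_per_day / emission_mg_per_day

# Hypothetical: 1 kg/day emitted; three zones at increasing distance from the source.
iF = inhalation_intake_fraction(
    emission_mg_per_day=1e6,
    populations=[5e4, 5e5, 2e6],
    conc_increments_mg_m3=[2e-5, 2e-6, 1e-7],
)
print(f"iF = {iF:.2e}")  # grams inhaled per tonne emitted = iF * 1e6
```

Because iF is dimensionless, it can rank chemicals or sources independently of their absolute emission rates, which is how it supports prioritization.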

Visualizing the Integrated Exposure Pathway

The following diagram illustrates the logical relationship and primary pathways linking chemical sources to receptor exposure, differentiating between near-field and far-field environments.

Diagram Title: Near and Far Field Exposure Pathways

Comparative Analysis of Model Performance

The table below synthesizes the core characteristics of near-field and far-field modeling approaches based on comparative studies.

Table 1: Comparative Overview of Near-Field and Far-Field Exposure Models

| Feature | Near-Field Models | Far-Field Models |
|---|---|---|
| Primary Domain | Microenvironments (e.g., homes, vehicles, workplaces) [10] | General environment (e.g., regional air, water, soil) [10] |
| Typical Sources | Direct use of consumer products, off-gassing from materials, occupational handling [10] | Diffuse emissions to environment (e.g., pesticide spray drift, industrial effluent) [1] [10] |
| Exposure Pathways | Direct inhalation, dermal contact, dust ingestion [10] | Indirect ingestion (food, water), inhalation of ambient air, contact with soil [10] |
| Key Input Parameters | Emission rate from product/process, room volume, ventilation rate, duration of contact [12] [13] | Chemical emission rate to environment, physicochemical properties, meteorological & hydrological data [11] [1] |
| Representative Tools | NF/FF model, PRoTEGE [12] [10] | RAIDAR, USEtox, FHX, AGDISP [1] [10] |
| Temporal Scale | Short-term, task-based, or episodic exposure [12] | Long-term, continuous, or seasonal exposure [1] |
| Spatial Scale | Localized (cubic meters) [12] | Regional to continental [10] |

Experimental Data and Case Study Comparisons

Case Study 1: Performance of NF/FF for Particulate Matter

Experimental Protocol: A study tested the NF/FF model's performance in predicting particulate matter (PM) concentrations in a paint factory during powder pouring from big bags and small bags [13]. The experimental methodology was as follows:

- Measurement: PM concentration levels were measured during actual powder pouring operations.

- Dustiness Characterization: The dustiness index of the specific powders used was determined experimentally using a rotating drum apparatus.

- Model Application: The dustiness index was used as an input to the NF/FF model to predict mass concentrations of PM.

- Calibration: The handling energy factor, a model parameter that scales the dustiness index to reflect the energy of the industrial process, was adjusted so that the modeled concentrations matched the measured levels [13].
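Because the NF/FF concentrations are linear in the emission rate, calibrating a single multiplicative factor against a measurement reduces to a ratio. The sketch below assumes a hypothetical emission term G = H * DI * m_dot (handling energy factor times dustiness index times powder mass flow) and steady-state conditions, then solves for the H that reproduces a measured near-field concentration. The emission parametrization actually used in [13] is not given here and may differ; this only illustrates the calibration logic.

```python
def calibrate_handling_energy(c_nf_measured, dustiness_mg_per_kg,
                              mass_flow_kg_per_min, Q, beta):
    """Handling energy factor H that makes the steady-state NF concentration
    equal the measurement, assuming G = H * DI * m_dot (hypothetical form).

    Steady-state NF/FF: C_nf = G * (1/Q + 1/beta), so C_nf is linear in H.
    """
    emission_per_unit_h = dustiness_mg_per_kg * mass_flow_kg_per_min  # mg/min
    c_nf_per_unit_h = emission_per_unit_h * (1.0 / Q + 1.0 / beta)    # mg/m3
    return c_nf_measured / c_nf_per_unit_h

# Hypothetical pouring task: DI = 100 mg/kg, 5 kg/min poured,
# Q = 20 m3/min, beta = 5 m3/min, measured NF concentration 2.5 mg/m3.
H = calibrate_handling_energy(2.5, 100.0, 5.0, 20.0, 5.0)
print(H)
```

Repeating this for each powder and bag size would yield the material- and process-specific H values whose spread the study goes on to report.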

Results and Performance: The study found that the handling energy factor required to align the model with measurements varied considerably depending on the specific material and process, even for seemingly similar operations [13]. This indicates that while the NF/FF framework is applicable, accurate PM source characterization is critical and that process-specific handling energies need further refinement for robust model-based exposure assessment [13].

Case Study 2: Prioritizing Chemicals Using Multiple Models

Experimental Protocol: A model "Challenge" was conducted to compare how different modeling approaches prioritized a common set of chemicals based on exposure potential [10]. The methodology involved:

- Model Selection: Several far-field models (RAIDAR, FHX, USEtox) and a near-field model (PRoTEGE) were applied to the same set of chemicals.

- Input Assumptions: Models were run with both standardized unit emission rates and with more refined, scenario-specific emission estimates.

- Output Analysis: The resulting chemical rankings from each model were compared using statistical methods to assess their level of agreement [10].
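The statistical method used to compare rankings is not named here. Spearman's rank correlation is one common choice for comparing two orderings of the same chemicals. The sketch below computes it for two models' exposure estimates, assuming no tied values; the estimates are hypothetical.

```python
def rank_descending(values):
    """Rank 1 = highest exposure estimate. Assumes no ties."""
    order = sorted(range(len(values)), key=lambda i: values[i], reverse=True)
    ranks = [0] * len(values)
    for rank, idx in enumerate(order, start=1):
        ranks[idx] = rank
    return ranks

def spearman_rho(x, y):
    """Spearman rank correlation via 1 - 6*sum(d^2) / (n*(n^2 - 1)); no ties."""
    n = len(x)
    d2 = sum((rx - ry) ** 2 for rx, ry in zip(rank_descending(x), rank_descending(y)))
    return 1.0 - 6.0 * d2 / (n * (n * n - 1))

# Hypothetical exposure estimates for five chemicals from two far-field models.
model_a = [3.2e-4, 1.1e-6, 5.0e-5, 2.4e-7, 8.9e-5]
model_b = [1.5e-4, 4.0e-6, 9.1e-5, 1.0e-7, 2.2e-5]
print(spearman_rho(model_a, model_b))
```

Working on ranks rather than raw values means two models can agree perfectly on priority order even when their absolute estimates differ by orders of magnitude.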

Results and Performance: The analysis revealed that:

- There was close agreement in chemical rankings between the different far-field models when the assumed emission compartments (e.g., water vs. air) and rates were consistent.

- However, the ranking results were highly sensitive to the initial assumptions about emission rates.

- When comparing near-field and far-field model rankings, the agreement was lower, underscoring that these two classes of models capture fundamentally different exposure scenarios [10]. This highlights the importance of the exposure scenario and the mode of entry into the environment in determining the model outcome.

The Researcher's Toolkit for Exposure Modeling

Table 2: Essential Resources for In Silico Exposure Assessment

| Tool or Resource | Function/Description | Applicable Context |
|---|---|---|
| NF/FF Model | A two-box model for estimating exposure to airborne contaminants in indoor/occupational settings near an emission source [12] [13]. | Near-Field |
| USEtox | A far-field model that characterizes the fate, exposure, and toxicity of chemicals in a regional environment [10]. | Far-Field |
| RAIDAR | A far-field screening-level risk assessment model for chemical fate and effects in the environment [10]. | Far-Field |
| AGDISP | A model for predicting pesticide deposition and spray drift into air systems post-application [1]. | Far-Field |
| CompTox Chemistry Dashboard (U.S. EPA) | A database providing access to chemical properties, hazard, exposure, and risk data, useful for obtaining model inputs [14]. | Both |
| EPI Suite | A suite of physical/chemical property and environmental fate estimation programs, often used for predicting inputs like logP [15]. | Both |
| Dustiness Index | An experimentally determined measure of a powder's tendency to generate airborne particles, used to characterize PM source strength [13]. | Near-Field |
| Handling Energy Factor | A modifying factor used in exposure models to scale a dustiness index to reflect the energy of a specific industrial process [13]. | Near-Field |

The comparative analysis of near-field and far-field exposure models demonstrates that the choice of modeling framework is dictated by the specific research or regulatory question. Near-field models are indispensable for assessing exposures from direct, proximate sources in microenvironments, while far-field models are essential for evaluating population-scale exposures from indirect, diffuse environmental contamination. In the model comparison described above, far-field models agreed closely with one another when their emission assumptions were consistent, whereas agreement between near-field and far-field rankings was lower, reflecting their different domains [10].

A critical insight from empirical data is that the accuracy of both near-field and far-field models is profoundly sensitive to their input parameters, particularly the emission rate and, for near-field PM, the handling energy factor [13] [10]. This underscores that sophisticated model frameworks rely on high-quality, context-specific input data for robust predictions. For a comprehensive risk assessment, particularly for chemicals with complex life cycles, an integrated approach that considers both near-field and far-field exposure pathways is often necessary to fully characterize the potential for human and ecological exposure.

The European Union's chemical regulation REACH (Registration, Evaluation, Authorisation and Restriction of Chemicals) has long promoted the replacement, reduction, and refinement (3Rs) of animal testing in regulatory decision-making. Directive 2010/63/EU sets the goal of phasing out animal use for research and regulatory purposes in the EU as soon as scientifically possible, and several pieces of EU chemical legislation require animal testing only as a last resort [16]. In response to the European Citizens' Initiative "Save cruelty-free cosmetics," the European Commission is developing a detailed "Roadmap Towards Phasing Out Animal Testing for Chemical Safety Assessments," with publication intended by the first quarter of 2026 [16]. This roadmap will outline specific milestones and actions for transitioning toward an animal-free regulatory system for chemical safety assessments.

Concurrently, New Approach Methodologies (NAMs) have emerged as innovative, human-relevant tools that can potentially replace traditional animal testing. These include in silico (computational) approaches, advanced in vitro models, and microphysiological systems that may offer scientifically superior alternatives for safety assessment [17]. The regulatory landscape is evolving rapidly to accommodate these methodologies: in April 2025, the U.S. Food and Drug Administration released its own "Roadmap to Reducing Animal Testing," encouraging drug developers to use NAMs as the default rather than the exception [18]. This article examines the current state of in silico exposure models for environmental systems within this shifting regulatory framework.

In Silico Models for Environmental Exposure Assessment

In silico models represent a cornerstone of NAMs for environmental risk assessment, enabling researchers to predict chemical fate, distribution, and potential exposure without animal testing. These computational tools have gained significant traction for their ability to provide rapid, cost-effective assessments while reducing reliance on traditional animal studies.

Model Typologies and Their Applications

In silico models for environmental exposure assessment can be broadly categorized into three main classes, each with distinct capabilities and applications as summarized in Table 1.

Table 1: Classification of In Silico Models for Environmental Exposure Assessment

| Model Category | Primary Applications | Key Advantages | Inherent Limitations |
|---|---|---|---|
| Conventional Water Quality Models | Predicting contaminant concentrations in aquatic environments [19] | High prediction accuracy and spatial resolution [19] | Limited functionality beyond concentration prediction; handles only conventional contaminants [19] |
| Multimedia Fugacity Models | Simulating contaminant transport between different environmental media (air, water, soil, sediment) [19] | Excellent at depicting cross-media transport; handles numerous chemical types [19] | Assumes a uniform (well-mixed) concentration within each environmental compartment, so cannot resolve variation between different parts of the same medium [19] |
| Machine Learning (ML) Models | Contaminant identification, risk assessment, toxicity prediction, and concentration forecasting [19] | Applicable to diverse scenarios beyond concentration prediction; handles complex, non-linear relationships [19] | Outcomes can be difficult to interpret; requires substantial training data; "black box" concerns [19] |
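The well-mixed assumption of multimedia fugacity models is easiest to see in their simplest form, a Mackay-type Level I calculation. It distributes a fixed amount of a non-reacting chemical among compartments at equilibrium: every compartment shares one fugacity f, and the concentration in compartment i is Z_i * f, where Z_i is its fugacity capacity. The sketch below assumes three compartments (air, water, soil solids), standard Z-value expressions, and hypothetical volumes and chemical properties. Higher model levels add advection, degradation, and intermedia transfer rates.

```python
R = 8.314  # gas constant, Pa m3 / (mol K)

def level1_distribution(total_mol, henry_pa_m3_mol, koc_l_kg, volumes_m3,
                        f_oc=0.02, rho_soil_kg_m3=2400.0, temp_k=298.15):
    """Equilibrium (Level I) partitioning of a fixed amount among air, water, soil."""
    z_water = 1.0 / henry_pa_m3_mol
    z = {
        "air": 1.0 / (R * temp_k),
        "water": z_water,
        # Soil solids: Kd = Koc * f_oc (L/kg), times density, / 1000 L per m3.
        "soil": koc_l_kg * f_oc * rho_soil_kg_m3 * z_water / 1000.0,
    }
    vz_total = sum(volumes_m3[c] * z[c] for c in z)
    f = total_mol / vz_total                                     # common fugacity, Pa
    conc = {c: z[c] * f for c in z}                              # mol/m3
    fraction = {c: volumes_m3[c] * z[c] / vz_total for c in z}   # share of total amount
    return f, conc, fraction

# Hypothetical chemical (H = 100 Pa m3/mol, Koc = 1000 L/kg) and compartment volumes.
volumes = {"air": 1e10, "water": 2e7, "soil": 9e4}
f, conc, fraction = level1_distribution(1000.0, 100.0, 1000.0, volumes)
for compartment, share in fraction.items():
    print(f"{compartment}: {share:.1%}")
```

Each compartment gets a single concentration, which is exactly why such models cannot represent, for example, a concentration gradient along a river.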

Regulatory Context and Validation Frameworks

Under REACH, in silico approaches are explicitly encouraged for generating information on substance properties, particularly through the use of (quantitative) structure-activity relationship ((Q)SAR) models [20]. The European Chemicals Agency (ECHA) guidance acknowledges these methods for filling data gaps and conducting initial identifications of potential persistent, bioaccumulative, and toxic (PBT) properties when experimental data are unavailable.

The development of the EU's roadmap involves dedicated working groups on human health and environmental safety. The Environmental Safety Assessment Working Group (ESA WG) is tasked with breaking down the replacement of animal testing for assessing environmental hazards and risks into distinct objectives, proposing specific actions, and defining milestones [16]. The group identifies both short-term and long-term solutions, covering existing non-animal approaches ready for implementation as well as methods still in development.

For regulatory acceptance, in silico models must demonstrate scientific validity, reproducibility, and relevance to the specific endpoint being assessed. The FDA's "weight of evidence" philosophy encourages sponsors to integrate multiple data streams—including disease context, clinical need, drug target information, and in silico predictions—to form a comprehensive, human-relevant picture of drug safety and efficacy [18].

Comparative Performance of In Silico Exposure Models

Model Performance Across Environmental Compartments

In silico tools have demonstrated particular utility for pesticide risk assessment, with various models adapted for specific environmental compartments. Table 2 summarizes the capabilities and performance metrics of prominent models for assessing pesticide exposure in different environmental media.

Table 2: Performance of In Silico Models for Pesticide Exposure Assessment Across Environmental Compartments [1]

| Environmental Compartment | Representative Models | Primary Application | Key Performance Metrics |
|---|---|---|---|
| Air | AGDISP (AGricultural DISPersal model) | Predicting pesticide deposition and spray drift | Successfully monitored atrazine drift up to 400 m from application site [1] |
| Water | TOXSWA (TOXic substances in Surface WAters) | Predicting pesticide fate in water bodies | Validated against observed chlorpyrifos in water, sediment, and macrophytes in stagnant ditches [1] |
| Soil | k-NN persistence models [20] | Predicting pesticide persistence and mobility in soil | k-NN models for soil persistence showed accuracy >0.79 in training sets and >0.76 in test sets [20] |

The AGDISP model has been particularly effective for predicting pesticide spray drift, an important route to the atmosphere: approximately 30% of applied pesticides can enter the air through spray drift, volatilization, degradation pathways, and wind erosion [1]. More broadly, nearly 90% of pesticides applied to target surfaces may enter the wider environment, causing persistent pollution in modern agricultural systems.

Integrated Strategies for Environmental Hazard Assessment

Recent research demonstrates the power of combining multiple NAMs for comprehensive environmental hazard assessment. A 2025 study published in Environmental Toxicology and Chemistry detailed a strategy combining high-throughput in vitro assays with in silico modeling for fish ecotoxicology [21]. The methodology employed:

- A miniaturized version of OECD Test Guideline 249: a plate reader-based acute toxicity assay using RTgill-W1 cells

- The Cell Painting (CP) assay: adapted for use in RTgill-W1 cells, with imaging-based cell viability measurement

- In vitro disposition (IVD) modeling: accounts for sorption of chemicals to plastic and cells over time to predict freely dissolved concentrations

This integrated approach demonstrated that for 65 chemicals where comparison was possible, 59% of adjusted in vitro phenotype altering concentrations (PACs) were within one order of magnitude of in vivo fish toxicity lethal concentrations, with in vitro PACs proving protective for 73% of chemicals [21]. This showcases the potential of combined in vitro and in silico approaches to reduce or replace fish in toxicity testing.
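The two summary statistics reported for the fish study can be computed from paired in vitro and in vivo values. The sketch below assumes PACs already adjusted to freely dissolved concentrations by IVD modeling, treats "within one order of magnitude" as |log10(PAC / LC50)| <= 1, and counts a PAC as protective when it is at or below the in vivo LC50. The data pairs are hypothetical.

```python
import math

def in_vitro_in_vivo_agreement(pairs):
    """pairs: (adjusted in vitro PAC, in vivo fish LC50), same concentration units.

    Returns (share within one order of magnitude, share where PAC is protective).
    """
    n = len(pairs)
    within_10x = sum(1 for pac, lc50 in pairs if abs(math.log10(pac / lc50)) <= 1.0)
    protective = sum(1 for pac, lc50 in pairs if pac <= lc50)
    return within_10x / n, protective / n

# Hypothetical paired values (mg/L) for six chemicals.
pairs = [(1.0, 5.0), (20.0, 1.0), (0.01, 1.0), (3.0, 2.0), (0.5, 1.0), (0.05, 1.0)]
within, protective = in_vitro_in_vivo_agreement(pairs)
print(f"within 10x: {within:.0%}, protective: {protective:.0%}")
```

The two metrics answer different questions: closeness measures predictive agreement, while the protective share measures whether the in vitro value would err on the side of caution.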

Diagram 1: Integrated Testing Strategy for Environmental Hazard Assessment

Advanced Methodologies and Experimental Protocols

Machine Learning-Enabled Detection of Environmental Contaminants

Cutting-edge research is integrating theoretical spectral calculations with machine learning to identify environmental contaminants with unprecedented precision. A 2025 study established a physics-informed machine learning pipeline for detecting polycyclic aromatic hydrocarbons (PAHs) in contaminated soil [22]. The methodology operates in two distinct stages:

- Characteristic Peak Extraction (CaPE) algorithm: isolates distinctive spectral features from complex soil samples

- Characteristic Peak Similarity (CaPSim) algorithm: identifies analytes with high robustness to spectral shifts and amplitude variations

This approach demonstrated strong similarity values (>0.6) between density functional theory (DFT)-calculated and experimental Surface-Enhanced Raman Spectroscopy (SERS) spectra for multiple PAHs, confirming its discriminative capability [22]. The method successfully addressed the challenge of extraordinarily complex SERS spectral backgrounds created by the extensive number of molecules and microbes in soil samples.
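The CaPE and CaPSim algorithms are not described here in enough detail to reproduce. As a simplified stand-in, the sketch below illustrates the same two ideas: extract characteristic peaks, then compare spectra in a way that tolerates small shifts and ignores amplitude changes. It picks local maxima above a relative threshold and scores peak-position overlap within a tolerance window. This is a hypothetical Dice-style peak-matching score, not CaPE or CaPSim, and the synthetic spectra are illustrative only.

```python
import math

def extract_peaks(x, y, rel_threshold=0.1):
    """Positions of local maxima with intensity >= rel_threshold * max(y)."""
    cutoff = rel_threshold * max(y)
    return [x[i] for i in range(1, len(y) - 1)
            if y[i] > y[i - 1] and y[i] >= y[i + 1] and y[i] >= cutoff]

def peak_similarity(ref_peaks, sample_peaks, tol=5.0):
    """Dice-style score 2*matched / (n_ref + n_sample) on peak positions only.

    Each reference peak may match one unused sample peak within +/- tol
    (same units as x, e.g. cm^-1), so amplitudes do not affect the score.
    """
    unused = list(sample_peaks)
    matched = 0
    for p in ref_peaks:
        nearest = min(unused, key=lambda q: abs(q - p), default=None)
        if nearest is not None and abs(nearest - p) <= tol:
            matched += 1
            unused.remove(nearest)
    total = len(ref_peaks) + len(sample_peaks)
    return 2.0 * matched / total if total else 0.0

def synthetic_spectrum(x, centers, heights, width=4.0):
    """Sum of Gaussian bands; a toy stand-in for a calculated or measured spectrum."""
    return [sum(h * math.exp(-((xi - c) / width) ** 2) for c, h in zip(centers, heights))
            for xi in x]

x = [400.0 + 0.5 * i for i in range(2401)]  # 400-1600 cm^-1
reference = synthetic_spectrum(x, [600.0, 1000.0, 1380.0], [1.0, 0.6, 0.8])
sample = synthetic_spectrum(x, [603.0, 1003.0, 1383.0], [0.3, 1.0, 0.5])  # shifted, rescaled
ref_peaks, sample_peaks = extract_peaks(x, reference), extract_peaks(x, sample)
print(peak_similarity(ref_peaks, sample_peaks, tol=5.0))
```

Comparing peak positions rather than full intensity profiles is what makes such a score insensitive to the amplitude variations typical of SERS measurements.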

Integrated In Silico Strategy for Persistence Assessment

Under REACH, assessment of persistent, bioaccumulative and toxic (PBT) properties is mandatory for substances manufactured or imported at volumes exceeding one tonne per year [20]. Researchers have developed an integrated in silico strategy for predicting chemical persistence across sediment, soil, and water compartments:

The methodology employs k-nearest neighbor (k-NN) algorithms built using half-life (HL) data for each environmental compartment. These models demonstrated accuracies exceeding 0.79 and 0.76 in training and test sets, respectively, for all three compartments [20]. To support k-NN predictions, the strategy identifies:

- Structural alerts with high true-positive percentages using SARpy software

- Chemical classes related to persistence using IstChemFeat software

The final integrated model combines these elements to reach an overall conclusion on substance persistence, with results on external validation sets supporting its use for regulatory purposes and substance prioritization [20].
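The k-NN idea behind the persistence models is to assign a query chemical the majority persistence class of its most similar training chemicals. The sketch below assumes structures encoded as sets of structural features, compared with Tanimoto similarity, and a binary persistent ("P") / not persistent ("nP") label. The feature names, training data, and k = 3 are hypothetical, and the descriptors and similarity measure of the published models in [20] may differ.

```python
from collections import Counter

def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two sets of structural features."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0

def knn_persistence(query, training, k=3):
    """Majority vote among the k most similar training chemicals.

    training: list of (feature_set, label) with label "P" or "nP".
    """
    neighbours = sorted(training, key=lambda t: tanimoto(query, t[0]), reverse=True)[:k]
    votes = Counter(label for _, label in neighbours)
    return votes.most_common(1)[0][0]

# Hypothetical training chemicals described by structural features.
training = [
    ({"aromatic_ring", "aromatic_Cl", "ether"}, "P"),
    ({"aromatic_ring", "aromatic_Cl", "C-F"}, "P"),
    ({"C-F", "perfluoroalkyl"}, "P"),
    ({"ester", "primary_alcohol"}, "nP"),
    ({"carboxylic_acid", "primary_alcohol"}, "nP"),
    ({"ester", "amide"}, "nP"),
]
print(knn_persistence({"aromatic_ring", "aromatic_Cl"}, training))
print(knn_persistence({"ester", "primary_alcohol", "amide"}, training))
```

Using an odd k with two classes avoids tied votes; in an integrated strategy, structural alerts and chemical-class rules could then be used to confirm or flag such k-NN calls.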

Diagram 2: Machine Learning-Enabled Contaminant Detection Workflow

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Essential Research Reagents and Computational Platforms for In Silico Environmental Assessment

| Tool/Platform Name | Type | Primary Function | Application Context |
|---|---|---|---|
| SARpy | Software | Identifies structural alerts associated with chemical persistence [20] | REACH PBT/vPvB assessment; chemical prioritization |
| IstChemFeat | Software | Identifies chemical classes related to persistence [20] | REACH PBT/vPvB assessment; chemical categorization |
| k-NN Algorithms | Computational Method | Predicts persistence class based on chemical similarity [20] | Half-life prediction in sediment, soil, and water compartments |
| DeTox Database | In Silico Tool | Predicts developmental toxicity probability from chemical structure [23] | Developmental and Reproductive Toxicity (DART) screening |
| AGDISP | Environmental Model | Predicts pesticide deposition and spray drift into air systems [1] | Pesticide exposure assessment for aerial applications |
| TOXSWA | Environmental Model | Predicts fate of toxic substances in surface waters [1] | Pesticide exposure assessment in aquatic environments |
| ToxStudio | Software Suite | Addresses cardiac safety, off-target safety, and drug-induced liver injury [18] | Pharmaceutical safety assessment during drug development |

The regulatory landscape for chemical safety assessment is undergoing a profound transformation, driven by ethical concerns, scientific advancement, and policy evolution. In silico exposure models for air, water, and soil systems represent a cornerstone of this transition, offering human-relevant, efficient, and cost-effective alternatives to traditional animal testing.

While challenges remain—including model validation, regulatory acceptance, and interpretation of complex machine learning outputs—the direction is clear. With REACH establishing a framework for phasing out animal testing and regulatory agencies worldwide promoting NAMs, computational approaches will increasingly become the first line of assessment for chemical safety. As models continue to improve through integration with novel data streams and advanced artificial intelligence, their predictive power and regulatory acceptance will only increase, ultimately leading to more human-relevant safety assessment while reducing reliance on animal testing.

In silico models are indispensable in modern environmental science and drug development, offering a powerful means to predict chemical behavior and biological effects without constant laboratory testing. This guide objectively compares three core computational model types: Quantitative Structure-Activity Relationship ((Q)SAR), Toxicokinetic-Toxicodynamic (TKTD), and Machine Learning (ML) approaches. Framed within a broader thesis on exposure models for multi-media environmental systems (air, water, soil), this analysis provides researchers and scientists with a clear comparison of their operational principles, applications, and performance, supported by experimental data and protocols.

The table below summarizes the core characteristics, primary applications, and key outputs of the three model types, highlighting their distinct roles in environmental research and risk assessment.

Table 1: Core Characteristics of In Silico Model Types

| Feature | (Q)SAR Models | TKTD Models | Machine Learning (ML) Approaches |
|---|---|---|---|
| Core Principle | Relates chemical structure descriptors to a biological activity or property using statistical methods [5] [24]. | Mechanistically simulates the internal uptake (TK) and subsequent biological effects (TD) of a substance over time [25] [26]. | Learns complex, non-linear patterns from large datasets using algorithm-driven pattern recognition [27] [28]. |
| Primary Application | Predicting endpoint properties like biodegradation, bioconcentration, and toxicity [5] [24] [29]. | Forecasting time-resolved toxicity and bioaccumulation under dynamic exposure scenarios [25] [26]. | Tasks requiring high-dimensional pattern recognition and forecasting (e.g., air quality prediction, image-based risk mapping) [28] [30]. |
| Typical Output | A predicted quantitative value (e.g., Log BCF) or a classification (e.g., biodegradable/not) [5] [24]. | Time-course simulations of internal concentration, damage, and survival/impairment [25] [26]. | Predictive scores, classifications, or forecasts (e.g., PM2.5 concentration for the next 24 hours) [28] [30]. |
| Key Advantage | Cost-effective for high-throughput screening and filling data gaps [5] [24]. | High ecological relevance for realistic, fluctuating exposure conditions [25] [26]. | High predictive accuracy and adaptability to diverse, complex data types [28] [30]. |

Performance Data and Comparative Analysis

Predictive Performance in Environmental Fate Applications

(Q)SAR models are widely used for predicting critical environmental fate parameters. Their performance varies, and selecting the best-performing model for a specific endpoint is crucial. The following table summarizes the top-performing models for persistence, bioaccumulation, and mobility of cosmetic ingredients, as identified in a comparative study [5].

Table 2: Performance of (Q)SAR Models for Environmental Fate Prediction [5]

| Endpoint | Parameter | Top-Performing Model(s) | Key Finding |
|---|---|---|---|
| Persistence | Ready Biodegradability | Ready Biodegradability IRFMN (VEGA), Leadscope (Danish QSAR), BIOWIN (EPISUITE) | Showed the highest performance for classifying biodegradability. |
| Bioaccumulation | Log Kow | ALogP (VEGA), ADMETLab 3.0, KOWWIN (EPISUITE) | Most appropriate for predicting lipophilicity. |
| Bioaccumulation | Bioconcentration Factor (BCF) | Arnot-Gobas (VEGA), KNN-Read Across (VEGA) | Best for predicting bioaccumulation in fish. |
| Mobility | Soil Adsorption (Log Koc) | OPERA v.1.0.1 (VEGA), KOCWIN-Log Kow (VEGA) | Deemed most relevant for mobility assessment. |

For specific chemical classes, local (Q)SAR models can outperform general models. For instance, a local model for the Bioconcentration Factor (BCF) of organophosphate pesticides showed robust statistics: a cross-validated R² (Q²) of 0.709–0.722 and an external validation R² (Q²Ext) of 0.717–0.903 [24].
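The Q² statistics quoted above follow standard definitions. Leave-one-out Q² compares the predictive residual sum of squares (PRESS) with the total variance of the training responses. External Q² scores the held-out test set. Several external variants exist; the sketch below assumes the common Q²F1 form, which measures test-set residuals against deviations from the training-set mean. It fits plain least-squares MLR with NumPy and is not the QSARINS implementation.

```python
import numpy as np

def fit_mlr(X, y):
    """Ordinary least-squares MLR with intercept; returns [b0, b1, ..., bp]."""
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef

def predict_mlr(coef, X):
    return coef[0] + np.asarray(X, float) @ coef[1:]

def q2_loo(X, y):
    """Leave-one-out Q2 = 1 - PRESS / total sum of squares of the training responses."""
    X, y = np.asarray(X, float), np.asarray(y, float)
    press = 0.0
    for i in range(len(y)):
        mask = np.arange(len(y)) != i          # refit without compound i
        coef = fit_mlr(X[mask], y[mask])
        press += (y[i] - predict_mlr(coef, X[i:i + 1])[0]) ** 2
    return 1.0 - press / np.sum((y - y.mean()) ** 2)

def q2_ext_f1(y_train, y_test, y_test_pred):
    """External Q2 (F1 variant): test residuals relative to deviations from the training mean."""
    y_test = np.asarray(y_test, float)
    ss_res = np.sum((y_test - np.asarray(y_test_pred, float)) ** 2)
    ss_tot = np.sum((y_test - np.mean(y_train)) ** 2)
    return 1.0 - ss_res / ss_tot
```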

Accuracy in Forecasting and Toxicity Prediction

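Machine learning and TKTD models address complementary forecasting needs. ML models capture complex patterns in large, high-dimensional datasets, while TKTD models simulate time-resolved effects under fluctuating exposure.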

In air quality forecasting, a comparative study of ten ML models showed that hyperparameter optimization significantly improves performance. Support Vector Regression (SVR) tuned with Bayesian optimization achieved an R² of 0.9994 (reported as 99.94%), with a mean absolute error (MAE) of 0.0120 and a mean squared error (MSE) of 0.0005 [28]. Ensemble strategies that combine the strengths of multiple base models improved prediction accuracy further.
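The three metrics reported for the SVR model can be computed as shown below. R² is dimensionless. MAE and MSE are expressed in the units (or squared units) of the target variable, so small values like those above are only interpretable relative to how the target was scaled. This is a plain-Python sketch, not the evaluation code from [28].

```python
def regression_metrics(y_true, y_pred):
    """Coefficient of determination (R2), mean absolute error and mean squared error."""
    n = len(y_true)
    mean_true = sum(y_true) / n
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    mae = sum(abs(r) for r in residuals) / n
    mse = sum(r * r for r in residuals) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)   # variance around the observed mean
    r2 = 1 - (mse * n) / ss_tot                          # 1 - SS_res / SS_tot
    return {"R2": r2, "MAE": mae, "MSE": mse}
```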

For toxicity prediction, TKTD models such as the General Unified Threshold model of Survival (GUTS) are highly reliable. A novel variant, BufferGUTS, was developed for terrestrial above-ground exposure (e.g., honeybees). For 13 pesticides, it reproduced observed survival curves as well as or better than the existing GUTS-RED and BeeGUTS models, without increasing model complexity [25]. This makes it particularly suitable for event-based exposure scenarios such as contact or feeding.

Experimental Protocols

Protocol for Developing a Local (Q)SAR Model

The following workflow details the methodology for developing a local (Q)SAR model, as used for predicting the BCF of organophosphate pesticides [24].

- Data Curation: A dataset of 55 organophosphate pesticides with experimentally verified BCF values was compiled from the Pesticide Properties Database. The response variable was the logarithmic value of BCF (Log BCF).

- Descriptor Calculation and Pruning: Chemical structures were downloaded in SDF format, and 4,759 2D descriptors were calculated using PaDEL descriptor software. To reduce redundancy, constant descriptors were removed, as was one descriptor from each pair with a pairwise correlation >0.95. This left 853 descriptors for modeling.

- Data Splitting: The dataset was split into a training set (75% of compounds, n=41) and an external test set (25%, n=14) using two techniques: biological sorting (by response value) and structure-based splitting to ensure representativeness.

- Model Development: Multiple Linear Regression (MLR) models were developed using the Genetic Algorithm-Variable Subset Selection (GA-VSS) for descriptor selection, implemented in QSARINS software.

- Model Validation: Models were validated internally (e.g., leave-one-out cross-validation, yielding Q²) and externally on the held-out test set (Q²Ext). The applicability domain (AD) was analyzed to identify reliable predictions.
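The descriptor pruning and response-sorted splitting steps can be sketched with NumPy as follows. This illustration rests on assumptions and is not the exact procedure of [24]. The correlation filter greedily keeps the first descriptor of each highly correlated pair, so the result depends on column order. The split sorts compounds by response (e.g., Log BCF) and sends every fourth compound to the test set. The function names and the `offset` parameter are hypothetical.

```python
import numpy as np

def prune_descriptors(X, names, corr_threshold=0.95):
    """Drop constant descriptors, then greedily drop highly inter-correlated ones."""
    X = np.asarray(X, float)
    # 1) constant descriptors have zero range
    varying = [j for j in range(X.shape[1]) if X[:, j].max() > X[:, j].min()]
    # 2) keep a descriptor only if |r| <= threshold with every descriptor kept so far
    selected = []
    for j in varying:
        if all(abs(np.corrcoef(X[:, j], X[:, s])[0, 1]) <= corr_threshold for s in selected):
            selected.append(j)
    return X[:, selected], [names[j] for j in selected]

def sorted_response_split(y, test_every=4, offset=1):
    """Sort compounds by response and assign every `test_every`-th one to the test set."""
    order = np.argsort(np.asarray(y, float))
    test_idx = np.sort(order[offset::test_every])
    train_idx = np.setdiff1d(np.arange(len(order)), test_idx)
    return train_idx, test_idx
```

With 55 compounds and the default settings, `sorted_response_split` places 14 compounds in the test set and 41 in the training set, matching the split sizes reported above. For thousands of descriptors, computing the full correlation matrix once with `np.corrcoef(X, rowvar=False)` is faster than the pairwise loop.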

Protocol for Applying a TKTD Model (BufferGUTS)

This protocol outlines the procedure for applying the BufferGUTS model to honeybee survival data, as described in the terrestrial exposure study [25].

- Data Collection and Preprocessing: Survival data were obtained from standard regulatory reports (e.g., OECD guidelines 213, 214, 245). The dataset included 51 exposure scenarios for 13 pesticides across acute oral, chronic oral, and acute contact routes. Data were discretized into time-series exposure profiles.

- Exposure Normalization: To facilitate comparison across routes and substances, external exposure concentrations were converted to Toxic Units (TUs) based on effect thresholds.

- Model Parameterization: The BufferGUTS model was parameterized. This model introduces an intermediate "buffer" compartment (representing residues on the exoskeleton or in the gut) between the external concentration and the internal damage state of the organism. Key parameters include the dominant rate constant (kₚ), buffer dynamics, and the threshold (z) and killing rate (d) for the Stochastic Death (SD) mechanism.

- Model Calibration and Evaluation: Model parameters were fitted to the observed survival data from the training set. Performance was evaluated by comparing the simulated survival curves to the experimental data, assessing the goodness-of-fit. The model's performance was benchmarked against existing models like GUTS-RED and BeeGUTS.
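The BufferGUTS equations are not reproduced in this section, so the following is a hypothetical sketch of the buffer concept only. It is a GUTS-RED-style stochastic-death model with an extra first-order buffer compartment between external exposure and scaled damage. Exposure is assumed to be already expressed in toxic units. The parameters `k_b` (buffer depletion), `k_d` (damage dynamics), threshold `z`, killing rate `b` and background hazard `h_b` are illustrative names, not the published parameterization. Integration uses a simple explicit Euler scheme, so the time step must be small relative to 1/`k_b` and 1/`k_d`.

```python
import math

def simulate_buffer_guts_sd(exposure_tu, dt, k_b, k_d, z, b, h_b=0.0):
    """Hypothetical buffer + GUTS-RED-SD survival model (explicit Euler integration).

    exposure_tu: external exposure per time step, in toxic units (TU).
    Returns survival probabilities at t = 0, dt, 2*dt, ...
    """
    buffer_level = 0.0  # e.g. residues on the exoskeleton or in the gut (assumed first-order)
    damage = 0.0        # scaled damage, as in GUTS-RED
    cum_hazard = 0.0
    survival = [1.0]
    for c in exposure_tu:
        buffer_level += dt * (c - k_b * buffer_level)   # loaded by exposure, depletes at rate k_b
        damage += dt * k_d * (buffer_level - damage)    # damage tracks the buffer at rate k_d
        hazard = b * max(0.0, damage - z) + h_b         # stochastic death above threshold z
        cum_hazard += hazard * dt
        survival.append(math.exp(-cum_hazard))
    return survival
```

In a calibration step, these parameters would be fitted by maximizing the likelihood of the observed survival counts. That step is omitted here.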

Protocol for an ML-Based Air Quality Forecast

This protocol describes the methodology for building a high-accuracy ML model for air quality prediction, as demonstrated in a comparative study [28].

- Dataset Preparation: An air quality dataset with 9,357 hourly records of pollutants (PM2.5, NOx, CO, benzene) and meteorological data was used. The data were split chronologically, preserving temporal order, into 80% for training and 20% for testing.

- Model Selection and Hyperparameter Optimization: Ten regression models (XGBoost, LightGBM, Random Forest, SVR, etc.) were trained. Hyperparameters for each model were rigorously tuned using Bayesian Optimization and Randomized Cross-Validation to minimize overfitting and maximize performance.

- Ensemble Modeling: A stacking ensemble method was employed. Predictions from the base models were used as inputs to a meta-model (e.g., linear regression) to produce a final, aggregated prediction.

- Model Assessment: The performance of each model and the ensemble was evaluated on the test set using metrics such as R², Mean Absolute Error (MAE), and Mean Squared Error (MSE).
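A stacking ensemble trains a meta-model on out-of-sample predictions from several base models. The sketch below illustrates the idea with two deliberately simple NumPy base learners, ordinary least squares and k-nearest neighbours. These stand in for the XGBoost, LightGBM, SVR and other models used in [28], and the meta-model is linear. To respect temporal order, meta-features are generated by forward chaining: each block of the training period is predicted by base models fitted only on earlier blocks. The block count, `k`, and the function names are assumptions.

```python
import numpy as np

def fit_linear(X, y):
    """Ordinary least squares with intercept; returns a prediction function."""
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return lambda X_new: np.column_stack([np.ones(len(X_new)), X_new]) @ coef

def fit_knn(X, y, k=5):
    """k-nearest-neighbour regressor (Euclidean distance); returns a prediction function."""
    def predict(X_new):
        dist = np.linalg.norm(X_new[:, None, :] - X[None, :, :], axis=2)
        nearest = np.argsort(dist, axis=1)[:, :k]
        return y[nearest].mean(axis=1)
    return predict

BASE_LEARNERS = [fit_linear, fit_knn]

def stack_forecast(X, y, train_frac=0.8, n_blocks=5):
    """Chronological train/test split plus a forward-chained stacking ensemble."""
    n_train = int(len(y) * train_frac)          # no shuffling: earlier rows train, later rows test
    X_tr, y_tr, X_te = X[:n_train], y[:n_train], X[n_train:]
    edges = np.linspace(0, n_train, n_blocks + 1).astype(int)
    meta_X, meta_y = [], []
    for i in range(1, n_blocks):
        # fit base models on blocks before i, predict block i (out-of-sample meta-features)
        models = [learn(X_tr[:edges[i]], y_tr[:edges[i]]) for learn in BASE_LEARNERS]
        block = slice(edges[i], edges[i + 1])
        meta_X.append(np.column_stack([m(X_tr[block]) for m in models]))
        meta_y.append(y_tr[block])
    meta_model = fit_linear(np.vstack(meta_X), np.concatenate(meta_y))
    # refit base models on the full training period, then combine their test predictions
    final_models = [learn(X_tr, y_tr) for learn in BASE_LEARNERS]
    test_features = np.column_stack([m(X_te) for m in final_models])
    return meta_model(test_features), y[n_train:]
```

scikit-learn offers `StackingRegressor` for the same pattern. Its internal cross-validation must be chosen with care for time series, because shuffled or future-inclusive folds leak information from later hours into the meta-features.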

Workflow and Pathway Diagrams

Diagram 1: TKTD Model with Buffer Concept

Diagram 2: ML for Air Quality and Risk

Diagram 3: QSAR Model Development

The following table lists essential software tools and platforms used in the development and application of the featured in silico models.

Table 3: Essential Research Reagents and Computational Tools

| Tool/Resource | Function | Application Context |
|---|---|---|
| QSARINS | Software for developing MLR-based QSAR models with genetic algorithm variable selection and robust validation [24]. | Used to build and validate local QSAR models for organophosphate BCF prediction [24]. |
| PaDEL Descriptor | Open-source software for calculating 2D molecular descriptors and fingerprints from chemical structures [24]. | Generates input descriptors for QSAR model development [24]. |
| VEGA Platform | A freely available suite of (Q)SAR models for predicting toxicity, environmental fate, and physicochemical properties [5]. | Used for comparative assessment of model performance for cosmetic ingredients (e.g., CAESAR, Meylan models) [5]. |
| EPI Suite | A Windows-based suite of physical/chemical property and environmental fate estimation models developed by the US EPA. | Used for predicting properties like Log Kow (KOWWIN) and biodegradability (BIOWIN) [5]. |
| Python/R with ML Libraries (XGBoost, Scikit-learn) | Programming environments with libraries for implementing a wide range of machine learning algorithms and statistical analyses. | Core platforms for building and optimizing ML regression and classification models for air quality and other forecasts [28] [30]. |
| BufferGUTS Model | A specific TKTD model variant incorporating a buffer compartment to handle discrete exposure events in terrestrial arthropods [25]. | Applied to simulate honeybee survival data from pesticide exposure across different routes [25]. |

This guide provides a comparative analysis of four widely used in silico platforms—VEGA, EPI Suite, OPERA, and ADMETLab—for predicting the environmental fate and physicochemical properties of chemicals. The evaluation is framed within the context of exposure models for air, water, and soil systems. The analysis, based on recent benchmarking and application studies, reveals that while all platforms are valuable, their performance is highly endpoint-dependent. OPERA and ADMETLab often demonstrate superior overall predictivity, whereas VEGA and EPI Suite contain specific, well-regarded models for environmental parameters like persistence and bioaccumulation. The critical role of the Applicability Domain (AD) in evaluating prediction reliability is a consistent theme across studies [5] [31].

The table below summarizes the core characteristics and optimal use cases for each platform.

| Platform | Developer / Source | Primary Access | Key Strengths & Recommended Uses |
|---|---|---|---|
| VEGA | Mario Negri Institute | Freeware | Persistence: Ready Biodegradability IRFMN model [5]. Bioaccumulation: ALogP (for Log Kow), Arnot-Gobas, and KNN-Read Across (for BCF) [5]. Mobility: OPERA and KOCWIN-Log Kow models [5]. |
| EPI Suite | US EPA & Syracuse Research Corp. (SRC) | Freeware | Comprehensive Suite: Includes KOWWIN, BIOWIN, BCFBAF, KOCWIN, AOPWIN, etc. [32]. Persistence: BIOWIN model [5]. Bioaccumulation: KOWWIN (Log Kow) [5]. Regulatory Acceptance: Widely used for screening-level assessment [32] [33]. |
| OPERA | U.S. NIEHS | Open Source | Overall Performance: Identified as a recurring optimal choice in benchmarking [31]. Physicochemical Properties: Accurate predictions of boiling point and melting point [34]. Mobility: Relevant for Log Koc prediction [5]. |
| ADMETLab | N/A | Freemium / Commercial | Overall Performance: Exhibits good predictivity for PC and TK properties [31]. Bioaccumulation: Appropriate for Log Kow prediction [5]. Broad Applicability: Useful for a range of ADMET and property predictions [34]. |

Performance Comparison by Environmental Fate Endpoint

Recent comparative studies have evaluated these tools against specific, regulatory-relevant endpoints for environmental fate. The following table synthesizes findings from a 2025 study focused on cosmetic ingredients and other benchmarking efforts [5] [31] [34].

| Endpoint Category | Specific Endpoint | Recommended Platform(s) & Models | Performance Notes |
|---|---|---|---|
| Persistence | Ready Biodegradability | VEGA (Ready Biodegradability IRFMN), EPI Suite (BIOWIN), Danish QSAR (Leadscope) [5] | These models showed the highest performance for assessing environmental persistence [5]. |
| Bioaccumulation | Log Kow (Octanol-Water Partition Coefficient) | VEGA (ALogP), ADMETLab, EPI Suite (KOWWIN) [5] | These models were found to be the most appropriate for this key lipophilicity metric [5]. |
| Bioaccumulation | BCF (Bioconcentration Factor) | VEGA (Arnot-Gobas, KNN-Read Across) [5] | These models were identified as best for BCF prediction [5]. |
| Mobility | Log Koc (Soil Organic Carbon-Water Partition Coefficient) | VEGA (OPERA, KOCWIN-Log Kow), EPI Suite (KOCWIN) [5] [32] | VEGA's OPERA and KOCWIN models were deemed most relevant for predicting soil mobility [5]. |
| Physicochemical Properties | Boiling Point / Melting Point | OPERA, ACD/Labs Percepta [34] | Delivered the most accurate predictions in a study on Novichok agents [34]. |
| Physicochemical Properties | Vapour Pressure | EPI Suite, TEST [34] | Excelled in vapour pressure estimates for challenging chemical structures [34]. |

Experimental Protocols for Model Benchmarking

The performance data presented in this guide are derived from rigorous external validation studies. The standard protocol for such benchmarking involves several key stages, from data collection to chemical space analysis [31].

Data Collection and Curation

- Source Identification: Experimental datasets are collected from scientific literature and databases (e.g., PubMed, Web of Science, EPA ECOTOX) [31] [4].

- Standardization: Chemical structures are converted into a standardized SMILES notation. This process includes neutralizing salts, removing duplicates, and excluding inorganic/organometallic compounds [31].

- Data Consistency Check: For a given property, data points across different datasets are compared. Compounds with highly inconsistent experimental values (standardized standard deviation > 0.2) are removed to ensure dataset quality [31].
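The consistency filter can be sketched as follows. The exact definition of the "standardized standard deviation" is not given here. This sketch assumes it is the standard deviation of a compound's replicate values divided by the range of the property across the whole dataset. It also assumes structures have already been standardized to canonical SMILES, so that duplicates share a key.

```python
import statistics
from collections import defaultdict

def filter_inconsistent(records, threshold=0.2):
    """Average replicate values per compound, dropping compounds whose replicates disagree.

    records: iterable of (standardized_smiles, experimental_value) pairs.
    Assumed criterion: stdev(replicates) / (dataset max - dataset min) > threshold -> drop.
    """
    grouped = defaultdict(list)
    for smiles, value in records:
        grouped[smiles].append(value)
    all_values = [v for values in grouped.values() for v in values]
    value_range = max(all_values) - min(all_values)
    curated = {}
    for smiles, values in grouped.items():
        spread = statistics.stdev(values) if len(values) > 1 else 0.0
        if value_range == 0 or spread / value_range <= threshold:
            curated[smiles] = statistics.mean(values)
    return curated
```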

Model Prediction and Validation

- Tool Selection: Software platforms are selected based on availability, usability, and regulatory relevance [31].

- Prediction Execution: The curated chemical datasets are run through the selected platforms to obtain in silico predictions for the target properties.

- Performance Assessment: Predictions are compared against the curated experimental data. Statistical metrics such as the coefficient of determination (R²) for regression models and balanced accuracy for classification models are calculated [31].
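Balanced accuracy averages sensitivity and specificity. This stops a classifier from scoring well simply by predicting the majority class of an imbalanced dataset, for example one made up mostly of non-biodegradable chemicals. A minimal binary version:

```python
def balanced_accuracy(y_true, y_pred, positive=1):
    """Mean of sensitivity (recall on positives) and specificity (recall on negatives)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return (sensitivity + specificity) / 2
```

For `y_true = [1]*4 + [0]*8`, a model that always predicts 0 reaches a plain accuracy of 8/12 but a balanced accuracy of only 0.5.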

Applicability Domain and Chemical Space Analysis

- Applicability Domain (AD): The reliability of each prediction is evaluated based on whether the query chemical falls within the model's AD, a theoretical space defined by the structures and properties of the chemicals used to train the model. Predictions inside the AD are considered more reliable [5] [31].

- Chemical Space Mapping: Principal Component Analysis (PCA) is often performed on molecular fingerprints to visualize how the validation dataset relates to reference chemical spaces (e.g., industrial chemicals, pharmaceuticals). This confirms the relevance of the validation results for real-world applications [31].
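Applicability domains can be defined in several ways, such as descriptor ranges, similarity to training chemicals, or leverage, and each platform uses its own approach. One widely used option for regression QSARs is the leverage (Williams plot) criterion, sketched below with NumPy. A query is flagged as outside the domain when its leverage exceeds the warning threshold h* = 3(p + 1)/n, where p is the number of descriptors and n the number of training chemicals. This is a generic illustration, not the AD method of any platform named above.

```python
import numpy as np

def leverage_domain(X_train, X_query):
    """Return leverages of query chemicals and a boolean 'inside AD' flag.

    h_i = x_i (A^T A)^-1 x_i^T, where A is the training descriptor matrix with an
    intercept column; the warning leverage is h* = 3 (p + 1) / n.
    """
    A = np.column_stack([np.ones(len(X_train)), np.asarray(X_train, float)])
    Q = np.column_stack([np.ones(len(X_query)), np.asarray(X_query, float)])
    xtx_inv = np.linalg.pinv(A.T @ A)
    leverages = np.einsum("ij,jk,ik->i", Q, xtx_inv, Q)
    h_star = 3 * A.shape[1] / A.shape[0]
    return leverages, leverages <= h_star
```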

The following diagram illustrates this multi-stage validation workflow.

Successful in silico toxicology and environmental fate assessment relies on a combination of software, databases, and computational resources.

| Tool / Resource | Function & Purpose |
|---|---|
| SMILES Notation | A line notation for representing molecular structures, required as input for most QSAR platforms [33]. |
| PubChem PUG REST API | A public service to retrieve chemical structures (SMILES) and other data using CAS numbers or chemical names, facilitating dataset creation [31]. |
| RDKit | An open-source cheminformatics toolkit used for standardizing chemical structures, calculating molecular descriptors, and handling chemical data in Python [31]. |
| ECOTOX Knowledgebase (US EPA) | A comprehensive database compiling single-chemical toxicity data for aquatic and terrestrial organisms, essential for model validation [4]. |
| OECD QSAR Toolbox | A software application designed to help users group chemicals into categories and fill data gaps via read-across and QSAR models, supporting regulatory assessments. |

Critical Considerations for Platform Selection

The Central Role of the Applicability Domain

The Applicability Domain (AD) is a cornerstone for reliable (Q)SAR predictions. A 2025 comparative study highlighted that qualitative predictions, when classified by regulatory criteria, are generally more reliable than quantitative ones, and the AD plays an important role in evaluating this reliability [5]. Predictions for chemicals falling outside a model's AD should be treated with caution, regardless of the platform used. Tools like VEGA provide explicit AD assessments for each prediction, which is a key feature for risk assessment [5] [31].

Performance Across Property Types

Large-scale benchmarking indicates that predictive performance varies significantly between property types. A 2024 review found that models for physicochemical properties (average R² = 0.717) generally outperformed those for toxicokinetic properties (average R² = 0.639) [31]. This underscores the importance of selecting a platform that is benchmarked for the specific endpoint of interest.

A Framework for Model Selection

Given the endpoint-dependent performance, a strategic approach to platform selection is recommended. The following decision diagram outlines a workflow based on the user's primary objective and the specific property of interest.

The comparative analysis of VEGA, EPI Suite, OPERA, and ADMETLab reveals that no single platform is universally superior. EPI Suite remains a robust, freely available toolkit for comprehensive, screening-level environmental fate assessment, while VEGA hosts several best-in-class models for specific endpoints like biodegradation and bioconcentration. For general physicochemical properties and broad-scale benchmarking, OPERA and ADMETLab frequently emerge as top performers [5] [31] [34]. The most critical practice for researchers is to align the tool selection with the specific endpoint, verify the chemical's placement within the model's Applicability Domain, and consult multiple sources or conduct validation where possible, especially for novel or extreme chemical structures.

Model Implementation and Compartment-Specific Applications

Predicting how airborne substances transport through the atmosphere and ultimately result in human inhalation exposure is a critical challenge in environmental health sciences. In silico air system models are computational frameworks designed to simulate this entire pathway, from the initial release of a contaminant to its intake by the human respiratory system. Within the broader context of in silico exposure models for environmental systems, air models are uniquely complex due to the dynamic and turbulent nature of the atmosphere. These models are indispensable for proactive risk assessment, allowing researchers and drug development professionals to evaluate the potential human health impacts of airborne chemicals, pesticides, or particulate matter without relying solely on costly and time-consuming field studies [1] [35].

The core objective of these models is to bridge the gap between source emissions and internal human dose. This process involves several interconnected stages: atmospheric dispersion, where pollutants are transported and diluted by wind; environmental concentration, which determines the level of pollutants in the air people breathe; and human exposure and intake, which accounts for the duration of exposure and inhalation rates to calculate the final inhaled dose [36]. By integrating computational fluid dynamics (CFD), meteorological data, and human activity patterns, these models provide a powerful tool for quantifying inhalation exposures in various settings, from urban commutes to indoor occupational spaces.
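The last step of this source-to-dose chain can be written as a sum over microenvironments of concentration × inhalation rate × duration. The sketch below computes the inhaled mass and the time-weighted average concentration. The segment values in the usage note are hypothetical placeholders, not recommended exposure factors.

```python
def inhalation_summary(segments):
    """Inhaled mass and time-weighted average concentration.

    segments: (concentration ug/m3, inhalation rate m3/h, duration h) per microenvironment.
    Returns (inhaled mass in ug, time-weighted average concentration in ug/m3).
    """
    inhaled_ug = sum(c * ir * t for c, ir, t in segments)
    total_h = sum(t for _, _, t in segments)
    twa = sum(c * t for c, _, t in segments) / total_h
    return inhaled_ug, twa
```

For hypothetical segments `[(25.0, 0.5, 8.0), (60.0, 1.5, 1.0), (15.0, 0.6, 15.0)]` (µg/m³, m³/h, h), the inhaled mass is 325 µg and the time-weighted average concentration is about 20.2 µg/m³. The short, high-concentration commute segment contributes more to dose than its duration alone suggests, because the inhalation rate is also higher.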

Comparative Analysis of Modeling Approaches

Different computational approaches have been developed to model atmospheric transport and exposure, each with distinct methodologies, data requirements, and applications. The table below summarizes three primary categories of models used in this field.

Table 1: Comparison of In Silico Air System Model Types

| Model Type | Core Methodology | Typical Spatial Scale | Key Inputs | Primary Outputs | Strengths | Limitations |
|---|---|---|---|---|---|---|
| Computational Fluid Dynamics (CFD) Models | Solves Navier-Stokes equations for fluid flow numerically. | Microscale (e.g., a room, a street canyon) | 3D geometry, boundary conditions (velocity, pressure), emission source strength. | High-resolution 3D maps of pollutant concentration, airflow velocity, and pressure. | High spatial accuracy, models complex geometries and turbulence. | Computationally intensive, requires expertise to set up and validate. |
| Statistical Exposure Models | Uses regression and multivariate analysis on measured exposure data. | Local (e.g., a city, a commute route) | Empirical pollutant measurements, meteorology (e.g., temperature, humidity), travel mode, traffic density. | Personal or microenvironmental exposure levels, identification of key exposure factors. | Quantifies real-world variability, identifies significant predictors of exposure. | Relies on availability of extensive measurement data, less predictive for new scenarios. |
| Intake Fraction Models | Uses a fate and transport factor to link emission to intake. | Local to Regional | Emission rate, breathing rate, population density. | The fraction of a released pollutant that is inhaled by a population. | Simple, efficient for comparative risk screening and life-cycle assessment. | Low spatial resolution, does not provide concentration maps. |
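The intake fraction is the population's total inhaled mass divided by the mass emitted over the same period. The minimal sketch below rests on several assumptions: the population is divided into zones, each zone has a known incremental concentration attributable to the source, and a single population-average breathing rate applies. All inputs are hypothetical.

```python
def intake_fraction(zones, breathing_rate_m3_per_day, emission_ug_per_day):
    """iF = sum(P_i * BR * dC_i) / E  (dimensionless).

    zones: (population, incremental concentration in ug/m3 due to the source) pairs.
    """
    intake_ug_per_day = sum(pop * breathing_rate_m3_per_day * dc for pop, dc in zones)
    return intake_ug_per_day / emission_ug_per_day
```

With hypothetical zones `[(1_000_000, 0.01), (500_000, 0.002)]`, a breathing rate of 14 m³/day and an emission of 1 kg/day (10⁹ µg/day), the intake fraction is 1.54 × 10⁻⁴, i.e. about 154 grams inhaled per tonne emitted.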

Supporting Experimental Data and Validation

The validity of these models hinges on their ability to replicate real-world conditions, which is demonstrated through rigorous comparison with experimental data.

- CFD Model Validation: In one study, a CFD model was built to simulate an air purifier in a bio-aerosol test chamber. The model used the turbulent k-ε model in ANSYS Fluent to simulate airflow and particle tracking. Experimental data gathered using a TSI model 3321 Aerodynamic Particle Sizer (APS) showed a "close correlation" with the model's predictions for contaminant reduction over time, thereby validating the model's accuracy for simulating device performance [37].

- Statistical Model Insights: A travel mode exposure study in Barcelona conducted 172 trips measuring Black Carbon (BC), Ultrafine Particles (UFP), and CO. The study's pairwise design controlled for meteorology, and multivariate analyses revealed that travel mode was the dominant factor, explaining up to 70% of the variability in exposure to CO. The data showed car commuters experienced concentrations of particulate pollutants (PM2.5, BC, UFP) that were 2–3 times higher than cyclists and pedestrians on adjacent lanes [36]. This type of empirical data is crucial for building and validating statistical exposure models.

Experimental Protocols for Model Input and Validation

To ensure the reliability of in silico air system models, standardized experimental protocols are essential for generating high-quality input and validation data.

Protocol for Commuter Exposure Measurement

This protocol is designed to collect data on personal exposure across different transportation microenvironments, which can be used to build or validate statistical models [36].

- Route and Mode Selection: Define round-trip routes that incorporate various traffic conditions and urban configurations (e.g., street canyons, open roads). Plan for multiple travel modes (e.g., car, bus, bicycle, walking) to be tested.

- Pairwise Sampling Design: Conduct measurements for different travel modes concurrently on the same route. This controls for the effects of meteorology and background pollutant levels, allowing for a direct comparison of the microenvironment's contribution.

- Instrumentation and Calibration: Deploy portable, high-time-resolution monitors for the pollutants of interest, and calibrate all instruments before the sampling campaign. Key instruments measure:
  - Black Carbon (BC): using an aethalometer.
  - Ultrafine Particles (UFP): using a condensation particle counter.
  - Particulate Matter (PM2.5): using a laser photometer.
  - Carbon Monoxide (CO): using an electrochemical sensor.

- Data Collection: Execute trips during different times of day (e.g., morning rush hour, evening rush hour, off-peak) to capture temporal variability. Record GPS data, temperature, and relative humidity simultaneously.

- Data Processing and Analysis: Synchronize all data streams. Exclude trips with excessive instrument downtime (>25% data loss). Calculate mean exposure concentrations for each trip and mode. Use pairwise t-tests and multivariate regression analysis to determine statistically significant differences between modes and the factors explaining exposure variance.
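The trip-level processing steps above can be sketched in plain Python. Trips with more than 25% missing readings are discarded, per-trip means are computed, and concurrent trips by two modes are compared with a paired t statistic. Missing readings are assumed to be recorded as `None`. The p-value would come from a t distribution with n - 1 degrees of freedom (for example via `scipy.stats.ttest_rel`); that step is omitted to keep the sketch dependency-free.

```python
import math
import statistics

def trip_mean(samples, max_missing=0.25):
    """Mean of a trip's readings, or None if more than `max_missing` of them are missing."""
    valid = [s for s in samples if s is not None]
    if not samples or 1 - len(valid) / len(samples) > max_missing:
        return None
    return statistics.mean(valid)

def paired_t(mode_a_means, mode_b_means):
    """Paired t statistic and degrees of freedom; pairs with a discarded trip are dropped."""
    diffs = [a - b for a, b in zip(mode_a_means, mode_b_means)
             if a is not None and b is not None]
    n = len(diffs)
    t = statistics.mean(diffs) / (statistics.stdev(diffs) / math.sqrt(n))
    return t, n - 1
```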

Protocol for CFD Model Validation of Air Purification

This protocol outlines the steps for generating experimental data to validate CFD models simulating air purification devices [37].

- Controlled Chamber Testing: Place the air purification device in a sealed, controlled environmental test chamber (e.g., a bio-aerosol chamber).

- Contaminant Introduction: Introduce a known quantity and size distribution of test aerosol particles into the chamber to create a homogeneous initial concentration.

- Performance Monitoring: Use high-precision particle instrumentation, such as an Aerodynamic Particle Sizer (APS), to characterize the particle concentration in real-time at designated locations within the chamber. The device is turned on, and the decay in particle concentration is monitored over time.

- CFD Model Construction:
  - CAD Model Design: Create a precise digital replica of the test chamber and the air purification device using computer-aided design (CAD) software.
  - Meshing: Generate a computational mesh, dividing the CAD model into a finite volume grid where the equations of fluid motion can be solved.
  - Initial and Boundary Conditions: Set realistic initial conditions and boundary conditions (e.g., velocity inlet at the purifier, pressure outlets, no-slip walls) based on the experimental setup.

- Simulation Execution: Run the simulation using an appropriate turbulence model (e.g., k-ε model) to achieve a steady-state airflow. Subsequently, run a particle tracking simulation to model the reduction of contaminants over time.

- Model Validation: Compare the simulated particle reduction results from the CFD model with the experimental data obtained from the chamber test. A close correlation validates the model's accuracy, allowing it to be extended to simulate real-world scenarios like classrooms or offices.
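Chamber data and CFD output can both be reduced to concentration-time curves. One simple way to compare them is to fit a first-order decay constant to each curve. Under a well-mixed assumption, the difference between the device-on and natural decay constants then converts into a clean air delivery rate, CADR = V × (k_on - k_natural). The sketch below shows an assumed comparison metric, not the procedure used in [37].

```python
import math

def decay_rate(times_h, concentrations):
    """First-order decay constant k (1/h) from a least-squares fit of ln(C) against t."""
    logs = [math.log(c) for c in concentrations]
    n = len(times_h)
    t_mean = sum(times_h) / n
    l_mean = sum(logs) / n
    slope = (sum((t - t_mean) * (l - l_mean) for t, l in zip(times_h, logs))
             / sum((t - t_mean) ** 2 for t in times_h))
    return -slope

def cadr(volume_m3, k_device_on, k_natural):
    """Clean air delivery rate (m3/h) for a well-mixed chamber."""
    return volume_m3 * (k_device_on - k_natural)
```

Applying `decay_rate` to both the measured APS series and the simulated series gives two numbers that can be compared directly. However, the fit discards the spatial detail that motivates using CFD in the first place.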

Visualization of Modeling Workflows

The following diagrams, generated with Graphviz DOT language, illustrate the logical workflows for the key experimental and modeling protocols described above.

Commuter Exposure Assessment Workflow

CFD Model Validation Workflow

The Scientist's Toolkit: Key Research Reagents and Materials

The experimental and computational work in this field relies on a suite of specialized tools and reagents. The following table details essential items for conducting exposure assessments and model validation.

Table 2: Essential Research Reagents and Materials for Air System Modeling

| Item Name | Type/Category | Primary Function in Research |
|---|---|---|
| Aerodynamic Particle Sizer (APS) | Instrument | Measures the size distribution and concentration of aerosol particles in real-time, providing critical data for model validation [37]. |
| Portable Aethalometer | Instrument | Provides real-time, high-time-resolution measurements of Black Carbon (BC) concentration, a key tracer for traffic-related air pollution [36]. |
| Condensation Particle Counter (CPC) | Instrument | Counts the number concentration of ultrafine particles (UFP) in air, essential for assessing exposure to nanoparticles [36]. |
| Test Aerosols | Reagent | Particles of known composition and size (e.g., sodium chloride, polystyrene latex) used in controlled chamber experiments to calibrate instruments and validate CFD models [37]. |
| ANSYS Fluent | Software | A commercial Computational Fluid Dynamics (CFD) software package used to simulate airflow, turbulence, and particle dispersion in complex environments [37]. |
| AGDISP Model | Software | An in silico tool specifically designed for predicting pesticide spray drift and deposition, assessing exposure risk in air systems post-application [1]. |
| CAD Software | Software | Used to create precise digital geometries of test chambers, rooms, or urban environments, which form the basis for CFD model meshing [37]. |

Environmental risk assessment (ERA) for aquatic systems is a critical process for evaluating the impact of chemicals, such as pesticides and industrial compounds, on ecosystem health. This complex procedure involves hazard identification, exposure assessment, toxicity assessment, and risk characterization [1]. Traditionally reliant on extensive and costly toxicity testing, the field has increasingly adopted in silico computational tools to improve efficiency and accuracy. These models offer significant advantages, including reduced animal testing, lower costs, and faster assessment times, with potential savings of 50-70 billion USD and elimination of 100,000-150,000 test animals [1]. For researchers and drug development professionals, understanding the capabilities and limitations of these models is essential for predicting how substances behave in aquatic environments, particularly their persistence, bioaccumulation potential, and ecological impacts.

The challenge of assessing chemical fate is particularly acute for emerging contaminants such as per- and polyfluoroalkyl substances (PFAS). These compounds exhibit bioaccumulation behaviors that traditional models, designed for lipophilic compounds, do not adequately capture [38]. This section compares leading aquatic fate models, their operational methodologies, and their performance data to inform model selection for specific research applications.

Comparative Analysis of Aquatic Fate Models

Table 1: Overview of Aquatic Fate and Bioaccumulation Models

| Model Name | Primary Application | Chemical Classes | Spatial Scale | Temporal Scale | Key Outputs |
|---|---|---|---|---|---|
| BASS [39] | Population & bioaccumulation dynamics | Hydrophobic organics, metals (Cd, Cu, Hg, Pb, Ni, Ag, Zn) | Hectare | Day | Chemical concentrations in age-structured fish communities |
| OECD Tool [40] | Screening-level prioritization | Organic chemicals | Regional to global | Steady-state | Overall persistence (Pov), transfer efficiency (TE), characteristic travel distance |
| EPI Suite [40] | Property estimation | Broad organic chemicals | N/A | N/A | Bioaccumulation factor (BAF), degradation half-lives |
| PFAS-Specific Models [38] | PFAS bioaccumulation | Per- and polyfluoroalkyl substances | Food web | Steady-state | Concentrations in aquatic and terrestrial organisms |
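Multimedia screening tools such as the OECD Tool report overall persistence (Pov). Pov can be read as the steady-state mass of chemical in the environment divided by the total degradation flux. The sketch below assumes that the steady-state compartment masses and first-order degradation half-lives are already available, for example from a multimedia mass-balance run. The compartment names and numbers are hypothetical, and the code does not reproduce the OECD Tool's internal calculations.

```python
import math

def overall_persistence(masses, half_lives_days):
    """Pov (days) = total steady-state mass / total first-order degradation flux.

    masses: steady-state mass per compartment (any consistent unit)
    half_lives_days: degradation half-life per compartment (days)
    """
    total_mass = sum(masses.values())
    degradation_flux = sum(
        masses[c] * math.log(2) / half_lives_days[c] for c in masses
    )
    return total_mass / degradation_flux

# Hypothetical steady-state distribution (kg) and half-lives (days)
masses = {"air": 10.0, "water": 300.0, "soil": 690.0}
half_lives = {"air": 2.0, "water": 60.0, "soil": 120.0}
print(f"Pov = {overall_persistence(masses, half_lives):.1f} days")
```

With a single compartment, Pov reduces to the half-life divided by ln 2, which is the mean residence time against degradation. With several compartments, Pov is the inverse of the mass-weighted mean degradation rate constant. It is therefore dominated by the compartments that hold most of the chemical.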

Technical Specifications and Methodologies

Table 2: Technical Specifications of Featured Models

| Model | Mathematical Approach | Key Parameters | Uptake Pathways | Elimination Pathways |
|---|---|---|---|---|
| BASS [39] | Diffusion kinetics + bioenergetics | Gill morphometry, feeding rate, proximate composition | Dietary intake, respiratory diffusion | Egestion, respiration, excretion, mortality |
| OECD Tool [40] | Multimedia mass balance | Persistence (Pov), long-range transport (TE, CTD) | Intermedia transfer | Degradation in air, water, soil |
| PFAS Models [38] | Steady-state mass balance | Protein-water distribution (DPW), membrane-water distribution (DMW) | Dietary, respiratory | Renal, fecal, biliary, maternal transfer, metabolism |
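A generic one-compartment organism model can illustrate the steady-state mass-balance approach. In this model, dietary and respiratory uptake are balanced by first-order losses: C_B = (k1·C_W + kD·C_D) / Σk_elim. This is a general form common to kinetic bioaccumulation models. The PFAS models cited above add protein- and membrane-based partitioning and pathway-specific terms, which are not reproduced here. The function name and all rate constants below are hypothetical placeholders.

```python
def steady_state_body_concentration(k1, c_water, k_diet, c_diet, k_elim):
    """C_B = (k1*C_W + k_D*C_D) / (sum of first-order elimination rate constants).

    k1: respiratory uptake rate constant (L/kg/d)
    c_water: dissolved water concentration (mg/L)
    k_diet: dietary uptake rate constant (kg food/kg organism/d)
    c_diet: concentration in the diet (mg/kg)
    k_elim: dict of first-order loss rate constants (1/d), e.g. respiratory,
            fecal egestion, renal, growth dilution, metabolism
    """
    total_loss = sum(k_elim.values())
    return (k1 * c_water + k_diet * c_diet) / total_loss

# Hypothetical parameters for a single fish species
k_elim = {"respiratory": 0.05, "fecal": 0.01, "renal": 0.02, "growth": 0.002, "metabolism": 0.0}
c_b = steady_state_body_concentration(k1=150.0, c_water=1e-5, k_diet=0.02, c_diet=0.5, k_elim=k_elim)
baf = c_b / 1e-5  # bioaccumulation factor, L/kg
print(f"C_B = {c_b:.4f} mg/kg, BAF = {baf:.0f} L/kg")
```

Setting the dietary term to zero and keeping a single respiratory loss recovers the familiar ratio BCF = k1/k2.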

Model Performance and Experimental Validation

Quantitative Performance Metrics

The reliability of aquatic fate models is established through validation against laboratory and field data. The BASS model, for instance, has been successfully applied to predict PCB dynamics in Lake Ontario salmonids and methylmercury bioaccumulation in the Florida Everglades and in Virginia river systems [39]. Similarly, PFAS-specific bioaccumulation models perform well when predicting field-based bioaccumulation factors in fish. Their accuracy is quantified by two statistics: the mean model bias (MB), which captures systematic error, and its standard deviation, which captures random error [38].

For screening-level assessment, models like the OECD Tool have been validated against reference sets of well-characterized chemicals. In one extensive screening of 8,648 substances, models successfully identified chemicals fitting persistent organic pollutant (POP) and very persistent and very bioaccumulative (vPvB) profiles through percentile ranking against 148 reference contaminants [40]. This approach allows researchers to contextualize hazard scores of less-studied chemicals on a comparative scale.
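Percentile ranking against a reference set places a new chemical's hazard score on the scale defined by well-characterized contaminants. A minimal sketch follows. It uses the "mean" convention for ties, in which half of any tied reference values count as below. This matches the `kind='mean'` option of SciPy's `percentileofscore`. The reference scores shown are hypothetical.

```python
from bisect import bisect_left, bisect_right

def percentile_rank(score, reference_scores):
    """Percent of reference chemicals scoring below `score`; ties count half."""
    ref = sorted(reference_scores)
    below = bisect_left(ref, score)          # strictly lower reference scores
    ties = bisect_right(ref, score) - below  # reference scores equal to `score`
    return 100.0 * (below + 0.5 * ties) / len(ref)

# Hypothetical hazard scores for a small reference set of known contaminants
reference = [0.2, 0.5, 1.1, 1.8, 2.4, 3.0, 4.7, 6.5, 9.9, 15.0]
print(percentile_rank(3.0, reference))  # 55.0
```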

Experimental Protocols for Model Validation

Laboratory Bioconcentration Testing Protocol

- Exposure Chamber Setup: Organisms (typically fish) are maintained in flow-through aquaria with controlled temperature, pH, and oxygenation

- Chemical Dosing: Water is spiked with test compound at sublethal concentrations

- Sampling Regimen: Tissue samples collected at predetermined intervals during uptake and depuration phases

- Analytical Quantification: Chemical concentrations measured via LC-MS/MS or GC-MS

- Parameter Calculation: Uptake (k1) and elimination (k2) rate constants derived from concentration-time data

- Model Comparison: Predicted versus observed bioconcentration factors (BCF) statistically evaluated
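The parameter-calculation step can be sketched as a sequential fit. First, k2 is estimated from the log-linear depuration phase. Then k1 is estimated from the uptake phase with k2 held fixed, and the kinetic BCF is k1/k2. This is one simple approach. Simultaneous nonlinear fitting of both phases is a common alternative, especially with noisy data. The helper names and the noise-free synthetic data are hypothetical.

```python
import math

def _ols(x, y):
    """Ordinary least-squares intercept and slope for y = a + b*x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return my - slope * mx, slope

def fit_k2(t_dep, c_dep):
    """Depuration: ln C = ln C0 - k2*t, so k2 is minus the fitted slope (1/d)."""
    _, slope = _ols(t_dep, [math.log(c) for c in c_dep])
    return -slope

def fit_k1(t_up, c_up, c_water, k2):
    """Uptake: C(t) = k1*Cw*(1 - exp(-k2*t))/k2; with k2 fixed, fit k1 through the origin."""
    x = [c_water * (1.0 - math.exp(-k2 * t)) / k2 for t in t_up]
    return sum(xi * ci for xi, ci in zip(x, c_up)) / sum(xi * xi for xi in x)

def kinetic_bcf(t_up, c_up, t_dep, c_dep, c_water):
    """Return (k1 in L/kg/d, k2 in 1/d, BCF_k = k1/k2 in L/kg)."""
    k2 = fit_k2(t_dep, c_dep)
    k1 = fit_k1(t_up, c_up, c_water, k2)
    return k1, k2, k1 / k2

# Hypothetical noise-free data: k1 = 100 L/kg/d, k2 = 0.1 1/d, Cw = 0.01 mg/L
k1_true, k2_true, cw = 100.0, 0.1, 0.01
t_up = [1, 3, 7, 14, 21, 28]  # days of exposure
c_up = [k1_true * cw * (1 - math.exp(-k2_true * t)) / k2_true for t in t_up]
t_dep = [1, 3, 7, 14]  # days since transfer to clean water
c_dep = [c_up[-1] * math.exp(-k2_true * t) for t in t_dep]
k1, k2, bcf = kinetic_bcf(t_up, c_up, t_dep, c_dep, cw)
print(round(k1, 3), round(k2, 4), round(bcf, 1))  # 100.0 0.1 1000.0
```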

Field Validation Protocol for Bioaccumulation Models

- Site Selection: Identify ecosystems with known chemical contamination gradients

- Food Web Characterization: Sample water, sediment, and trophic species to establish dietary relationships

- Field Measurements: Collect physical-chemical parameters (pH, temperature, organic carbon)

- Tissue Residue Analysis: Measure chemical concentrations in all sampled organisms

- Model Parameterization: Input site-specific data and run simulations

- Performance Evaluation: Compare predicted versus observed bioaccumulation factors using statistical measures (MB, R², RMSE) [38]
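The performance-evaluation step can be expressed compactly. The sketch below uses one common formulation of model bias, MB = 10^(mean of log10(predicted/observed)). Values above 1 indicate systematic overprediction. The standard deviation of the log residuals describes the random component. Log transformation is an assumption made here because BAFs span orders of magnitude. R² is computed as the coefficient of determination on log values, not the squared Pearson correlation that some studies report instead. The function name and data are hypothetical.

```python
import math
import statistics

def bioaccumulation_performance(predicted, observed):
    """Bias and error statistics on log10-transformed values (needs >= 2 pairs)."""
    log_pred = [math.log10(p) for p in predicted]
    log_obs = [math.log10(o) for o in observed]
    resid = [lp - lo for lp, lo in zip(log_pred, log_obs)]  # log10(pred/obs)
    mb = 10 ** statistics.mean(resid)      # systematic bias factor
    sd_log = statistics.stdev(resid)       # random component, log10 units
    mean_obs = statistics.mean(log_obs)
    ss_res = sum(r * r for r in resid)
    ss_tot = sum((lo - mean_obs) ** 2 for lo in log_obs)
    return {
        "MB": mb,
        "sd_log": sd_log,
        "R2": 1.0 - ss_res / ss_tot,
        "RMSE_log": math.sqrt(ss_res / len(resid)),
    }

# Hypothetical predicted vs field-observed BAFs (L/kg)
observed = [120.0, 850.0, 3.1e3, 2.2e4, 9.0e4]
predicted = [200.0, 600.0, 5.0e3, 1.5e4, 1.6e5]
print(bioaccumulation_performance(predicted, observed))
```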

Advanced Modeling Approaches

Specialized Frameworks for Problematic Contaminants

Recent advances address challenging contaminant classes like PFAS, which deviate from traditional bioaccumulation paradigms due to their protein-binding affinity rather than lipid partitioning. Modern PFAS models incorporate six different distribution coefficients to represent equilibrium partitioning in organisms: albumin-water (DALB-W), transporter protein-water (DTP-W), structural protein-water (DSP-W), neutral lipid-water (DNL-W), phospholipid-water (DMW), and carbohydrate-water (DCW) [38]. These frameworks explicitly account for renal clearance mechanisms, which prove critical for accurately predicting the elimination of certain PFAS compounds from aquatic organisms [38].

High-Throughput Screening Applications

For rapid prioritization of large chemical inventories, simplified modeling approaches have been developed. The Screen-POP methodology combines persistence, bioaccumulation, and long-range transport metrics multiplicatively to identify potential POP and vPvB candidates [40]. This exposure-based hazard scoring enables efficient screening of thousands of chemicals, as demonstrated in assessments of Arctic contaminants and OECD country production volumes [40].
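The multiplicative combination can be sketched as follows. Each metric is divided by a reference value before the terms are multiplied, so a chemical that sits at the reference level on all three axes scores 1. The normalization constants and the function name are illustrative assumptions, not the Screen-POP parameters.

```python
import math

def multiplicative_hazard_score(pov_days, bioaccumulation, ctd_km,
                                ref_pov=180.0, ref_bio=5000.0, ref_ctd=1000.0):
    """Hypothetical exposure-based hazard score: product of normalized P, B and LRT metrics."""
    for value in (pov_days, bioaccumulation, ctd_km):
        if value <= 0:
            raise ValueError("metrics must be positive")
    return (pov_days / ref_pov) * (bioaccumulation / ref_bio) * (ctd_km / ref_ctd)

# Rank a few hypothetical chemicals: (Pov in days, BAF in L/kg, CTD in km)
chemicals = {
    "chem_A": (400.0, 20000.0, 3000.0),
    "chem_B": (30.0, 800.0, 500.0),
    "chem_C": (200.0, 6000.0, 150.0),
}
ranked = sorted(chemicals, key=lambda n: multiplicative_hazard_score(*chemicals[n]), reverse=True)
for name in ranked:
    score = multiplicative_hazard_score(*chemicals[name])
    print(f"{name}: score = {score:.3g} (log10 = {math.log10(score):.2f})")
```

Because the terms multiply, a chemical that is extreme on one axis but low on another receives only a moderate score. This behavior favors substances that are simultaneously persistent, bioaccumulative, and mobile.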

Model Selection Workflow for Aquatic Fate Assessment

Research Reagent Solutions and Essential Materials

Table 3: Key Research Reagents and Computational Tools for Aquatic Fate Studies

| Tool/Reagent | Function | Application Context | Example Sources |
|---|---|---|---|
| EPI Suite | Estimates physicochemical properties & BCF | Screening-level assessment for organic chemicals | US Environmental Protection Agency [40] |
| VEGA Platform | (Q)SAR modeling for persistence & bioaccumulation | Prioritization of cosmetic ingredients & industrial chemicals | VEGA QSAR Models [5] |
| Variant Albumin Proteins | In vitro measurement of protein-binding affinities | PFAS bioaccumulation studies | Equilibrium dialysis assays [38] |
| Solid-Supported Lipid Membranes | Determination of membrane-water distribution | Measuring phospholipid partitioning | Validated experimental methods [38] |
| OECD Tool | Calculates overall persistence & long-range transport | Regional to global exposure assessment | OECD Guidelines [40] |

The evolving landscape of aquatic fate models reflects increasing sophistication in addressing diverse chemical classes and ecosystem complexities. Traditional models like BASS and EPI Suite remain valuable for hydrophobic contaminants, while emerging frameworks specifically address the unique behaviors of PFAS and ionizable compounds. For researchers, selection criteria should prioritize alignment between chemical properties, model capabilities, and assessment goals, with particular attention to a model's representation of key partitioning processes and elimination pathways. As chemical diversity continues to expand, particularly with novel polymeric and electrolyte substances, ongoing model refinement will remain essential for accurate aquatic risk assessment and protective environmental management.

Understanding the behavior of chemicals in soil and sediment systems is fundamental to accurate environmental risk assessment. The interplay between sorption, degradation, and bioavailability determines the ultimate environmental fate and ecological impact of pesticides, pharmaceuticals, and other contaminants. Sorption describes the binding of chemicals to soil or sediment particles, while bioavailability refers to the fraction of a contaminant that is accessible for uptake or transformation by microorganisms [41] [42]. These processes are critical for predicting the persistence and mobility of chemicals, informing regulatory decisions, and developing effective remediation strategies for contaminated sites.

Traditionally, environmental fate models assumed that soil-sorbed contaminants were unavailable for biodegradation unless they first desorbed into the aqueous phase. However, a growing body of research challenges this assumption. It indicates that microorganisms can, under certain conditions, directly access sorbed fractions, leading to biodegradation rates higher than model predictions [41] [42]. This section compares key experimental methodologies and modeling approaches used to quantify these interactions and offers researchers a guide to available tools and their applications.

Comparative Analysis of Key Models and Experimental Approaches

Different experimental and computational approaches have been developed to elucidate the relationship between sorption and bioavailability. The table below compares three prominent methodologies cited in the literature.

Table 1: Comparison of Bioavailability Assessment Approaches

| Approach Name | Core Principle | Key Measured Parameters | Chemicals Studied | Reported Finding |
|---|---|---|---|---|
| Desorption-Biodegradation-Mineralization (DBM) Model [41] | Links sorption/desorption kinetics with microbial degradation. | Mineralization (CO₂ production), sorption isotherms, desorption rate coefficients. | Atrazine | Accurately predicted atrazine mineralization in many cases, but failed for high-sorption soil, suggesting direct microbial access to sorbed phase. |
| In Vitro Disposition (IVD) Model [21] | Accounts for chemical sorption to in vitro system components (plastic, cells) to predict freely dissolved concentration. | Phenotype altering concentrations (PACs), cell viability, bioactivity. | 225 diverse chemicals | Adjusting in vitro bioactivity using IVD modeling improved concordance with in vivo fish toxicity data for 59% of chemicals. |
| Soil Mineralization Assay [42] | Measures microbial conversion of a contaminant to CO₂ under various soil conditions to assess bioavailability. | Mineralization rate and extent, first-order degradation parameters. | Chlorobenzene | Mineralization rates exceeded predictions based on aqueous-phase concentration, indicating bacteria access sorbed contaminant. |

The Desorption-Biodegradation-Mineralization (DBM) Model

The DBM model is a mathematical framework designed to quantitatively evaluate the bioavailability of soil-sorbed contaminants. It integrates three key processes:

- Desorption: A three-site model distributes sorbed atrazine among equilibrium, rate-limited, and non-desorbable sites [41].

- Biodegradation: The model typically assumes that only the liquid-phase contaminant is available for biodegradation.

- Mineralization: The ultimate conversion of the contaminant to CO₂ is measured and predicted.
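The structure of the DBM model can be sketched as a small system of ordinary differential equations. The version below is a simplified illustration, not the published DBM parameterization. It makes the following assumptions:

- Equilibrium sorption is instantaneous and linear.
- Exchange with rate-limited sites is linear and first-order.
- The non-desorbable pool is constant.
- Biodegradation is first-order and acts on the dissolved phase only.
- A fixed fraction of degraded atrazine appears as CO₂.

All parameter names and values are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class DBMParams:
    rho: float     # soil-to-solution ratio (kg/L)
    kd_eq: float   # linear sorption coefficient, equilibrium sites (L/kg)
    kd_rl: float   # linear sorption coefficient, rate-limited sites (L/kg)
    alpha: float   # first-order exchange rate for rate-limited sites (1/h)
    k_bio: float   # first-order biodegradation rate of dissolved chemical (1/h)
    f_min: float   # fraction of degraded chemical mineralized to CO2

def simulate_dbm(p, c0, s_rl0, hours, dt=0.01):
    """Explicit-Euler integration per litre of slurry.

    Returns aqueous concentration C (mg/L), rate-limited sorbed S_rl (mg/kg),
    and cumulative degraded and mineralized mass (mg/L). Non-desorbable
    residues never exchange, so they do not appear in the dynamics.
    """
    c, s_rl, degraded, mineralized = c0, s_rl0, 0.0, 0.0
    capacity = 1.0 + p.rho * p.kd_eq  # dissolved plus equilibrium-sorbed pool per unit C
    for _ in range(int(round(hours / dt))):
        ds_rl = p.alpha * (p.kd_rl * c - s_rl)  # net sorption into rate-limited sites
        bio = p.k_bio * c                       # only the dissolved phase is degraded
        c += dt * (-p.rho * ds_rl - bio) / capacity
        s_rl += dt * ds_rl
        degraded += dt * bio
        mineralized += dt * p.f_min * bio
    return c, s_rl, degraded, mineralized

def total_mass(p, c, s_rl, s_nd, degraded):
    """Parent plus degraded mass per litre of slurry; constant over a run."""
    return (1.0 + p.rho * p.kd_eq) * c + p.rho * (s_rl + s_nd) + degraded

params = DBMParams(rho=0.5, kd_eq=2.0, kd_rl=5.0, alpha=0.05, k_bio=0.1, f_min=0.6)
c0, s_nd = 1.0, 0.5
s_rl0 = params.kd_rl * c0  # start with rate-limited sites at equilibrium
c, s_rl, deg, minz = simulate_dbm(params, c0, s_rl0, hours=48)
error = total_mass(params, c, s_rl, s_nd, deg) - total_mass(params, c0, s_rl0, s_nd, 0.0)
print(f"C = {c:.3f} mg/L, mineralized = {minz:.3f} mg/L, mass balance error = {error:.1e}")
```

Comparing such a run with measured CO₂ evolution tests the liquid-phase-only assumption. If observed mineralization outpaces even the fast-exchange limit (large `alpha`), the assumption does not hold, as reported for the Houghton muck soil [41].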

A key finding from the application of this model to atrazine was that its predictions were accurate for many soil types. However, in a Houghton muck soil with very high sorbed atrazine concentrations, observed mineralization rates were significantly higher than those predicted, even when assuming instantaneous desorption. This suggests that bacteria were able to directly access the sorbed atrazine, a phenomenon potentially facilitated by chemotaxis and cell attachment to soil particles [41].

Modeling Bioavailability and Thermodynamic Constraints

Beyond the DBM approach, other models have incorporated additional biological and physical constraints. For instance, biogeochemical models of atrazine degradation have been extended to include:

- Mass-transfer limitations across the cell membrane, which can be a critical factor at low contaminant concentrations [43].