In Silico vs Traditional Methods in Drug Discovery: A New Era for Efficacy, Risk, and Safety Assessment

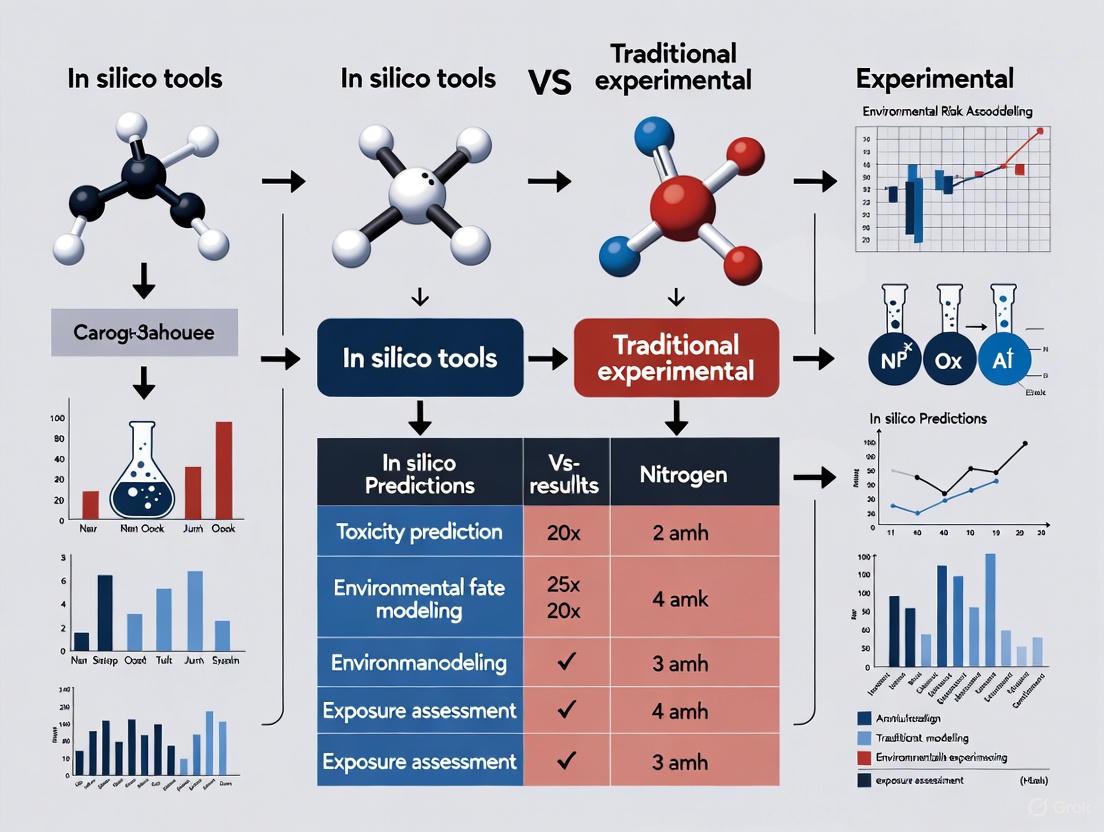


Abstract

This article provides a comprehensive comparison between in silico computational tools and traditional experimental methods for efficacy, risk, and safety assessment (ERA) in drug development. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of in silico technologies like PBPK, QSP, and AI models. The scope extends to their practical applications in virtual patient cohorts and drug repurposing, addresses key methodological challenges and optimization strategies, and critically examines validation frameworks and comparative effectiveness against conventional in vivo and in vitro approaches. The article synthesizes these insights to outline a future where integrated, model-informed drug development paradigms enhance precision, efficiency, and success rates.

The Rise of In Silico Technologies: Foundations for Modern Efficacy and Risk Assessment

The field of scientific research, particularly in drug development and environmental risk assessment (ERA), is undergoing a fundamental transformation. For decades, the traditional approach relying primarily on in vivo (within living organisms) and in vitro (in controlled laboratory environments) methodologies has been the cornerstone of discovery. However, a new paradigm is rapidly emerging, shifting the focus toward in silico (conducted via computer simulation) technologies. This transition represents more than a change in tools; it restructures how scientific inquiry is conducted, promising substantial gains in speed, cost-efficiency, and ethical compliance. The U.S. Food and Drug Administration (FDA) announcement in April 2025 of a plan to phase out animal testing requirements for monoclonal antibodies and other drugs underscores the regulatory momentum behind this shift, signaling that in silico methodologies are maturing from ancillary supports to central components of the scientific workflow [1].

This guide provides an objective comparison of these three methodological paradigms, framing the analysis within the context of modern environmental risk assessment and drug development. By examining the capabilities, limitations, and appropriate applications of each approach, we aim to equip researchers and scientists with the knowledge needed to navigate this evolving landscape.

Defining the Methodological Paradigms

In Vivo (Within the Living Organism)

In vivo research involves the study of biological processes within a whole, living organism. In the context of ERA and drug development, this typically refers to animal models (e.g., rodents, zebrafish) and, ultimately, human clinical trials. This approach provides a holistic view of a substance's effect within a complex, integrated physiological system, accounting for metabolism, organ-system interactions, and overall behavior [2].

In Vitro (Within the Glass)

In vitro methodologies involve experiments conducted with microorganisms, cells, or biological molecules outside their normal biological context. These are typically performed in controlled laboratory environments using tools like cell cultures, tissue samples, and multi-well plates. This approach allows for the isolation of specific biological pathways and high-throughput screening in a simplified system [3].

In Silico (Within the Silicon)

In silico methodologies use computer-based algorithms, models, and simulations to replicate and study complex biological systems. This paradigm leverages advanced computational techniques, including artificial intelligence (AI), machine learning (ML), molecular dynamics, and physiologically based pharmacokinetic (PBPK) modeling, to predict the behavior and effects of chemical entities or drugs under various conditions without the immediate need for physical experiments [3] [2]. The term originates from "silicon," the key material in computer chips.

Table 1: Core Definitions and Characteristics of the Three Methodologies

| Methodology | Core Principle | Key Tools & Systems | Primary Data Output |
|---|---|---|---|
| In Vivo | Study within a whole, living organism | Animal models (mice, rats), human clinical trials | Holistic physiological response, survival, behavior |
| In Vitro | Study in an artificial environment outside a living organism | Cell cultures, tissue samples, multi-well plates | Cellular response, protein binding, toxicity markers |
| In Silico | Study via computer simulation | AI/ML models, molecular docking, PBPK, QSAR | Predictive data on binding, toxicity, PK/PD, efficacy |

Comparative Analysis: Performance and Applications

The choice between in vivo, in vitro, and in silico methods is not a simple matter of superiority, but rather one of context and application. Each paradigm offers a distinct set of advantages and faces unique challenges, making them suited for different stages of research and development.

Quantitative Performance Comparison

The transformative impact of in silico methods is most evident in key performance metrics such as time, cost, and scalability. The following table provides a comparative summary based on recent data and case studies.

Table 2: Quantitative Comparison of Key Performance Metrics

| Metric | In Vivo | In Vitro | In Silico |
|---|---|---|---|
| Typical Timeline | Years (e.g., 3-6 years for animal+early clinical) [2] | Months to a year | Days to weeks [3] |
| Relative Cost | Exorbitant (Billions for a new drug) [1] | High (Reagents, cell cultures, labor) | Significantly lower (Up to 60% reduction in preclinical R&D) [3] |
| Throughput | Very Low | High | Exceptionally High (Thousands of virtual compounds screened simultaneously) [1] |
| Ethical Considerations | Major ethical concerns (3Rs) | Reduced concerns (cell/tissue use) | Minimal direct ethical concerns |
| Regulatory Acceptance | Gold standard for safety/efficacy | Accepted for early screening | Growing acceptance (FDA Modernization Act 2.0, EMA guidance) [1] [4] |
| Translational Value | High, but species differences exist | Limited by system simplification | Potentially high, but model-dependent [5] |

Advantages and Limitations in Practice

In Vivo Strengths and Weaknesses: The primary strength of in vivo studies lies in their ability to reveal unexpected systemic effects, complex immune responses, and overall pharmacodynamics in a fully integrated biological system. However, they are plagued by high costs, lengthy timelines, ethical controversies, and significant species-to-species translatability issues. The majority of drugs that show promise in animal models fail in late-stage human trials, highlighting a critical limitation of this paradigm [1] [5].

In Vitro Strengths and Weaknesses: In vitro methods excel in mechanistic studies, allowing researchers to isolate specific pathways and perform high-throughput screening in a controlled environment. They are more cost-effective than in vivo studies and raise fewer ethical concerns. Their main weakness is their inability to fully replicate the complexity of a living organism, often leading to poor extrapolation to whole-body outcomes [5].

In Silico Strengths and Weaknesses: In silico approaches offer unparalleled speed and scalability, enabling the testing of thousands of drug candidates, doses, and scenarios in a virtual space. They are highly cost-effective and eliminate ethical concerns related to animal testing. Their success, however, is entirely dependent on the quality and quantity of the underlying data used to build and train the models. Challenges include model inaccuracy for complex biological processes, the "black-box" nature of some AI algorithms, and the ongoing need for rigorous validation against experimental data to establish regulatory credibility [1] [3] [6].

Experimental Protocols and Workflows

Understanding the practical application of these methodologies requires a detailed look at their experimental workflows.

A Standard In Silico Workflow for Toxicity Prediction

The following diagram illustrates a generalized, iterative workflow for conducting an in silico study, such as predicting chemical toxicity or drug binding.

Diagram: In Silico Experiment Workflow. This shows the iterative process from hypothesis to validated model prediction.

- Define the Virtual Experiment: The process begins with a clear, quantitative hypothesis. Example: "Predict the binding energy between Chemical Candidate X and the HER2 receptor using free energy perturbation (FEP) calculations" [3].

- Tool Selection: Researchers select appropriate software based on the task (e.g., AutoDock Vina for molecular docking, OpenFOAM for fluid dynamics, Gaussian for quantum chemistry) [3].

- Data Preparation: Input data is gathered and prepared. This includes obtaining structural files (e.g., from the Protein Data Bank), chemical descriptors (e.g., SMILES strings), and setting experimental parameters (pH, temperature). Structures are often "cleaned" through energy minimization to avoid unrealistic conformations [3] [7].

- Run Simulation: The computational experiment is executed. A molecular dynamics run, for instance, might apply a force field like AMBER to define atomic interactions and simulate nanoseconds of protein movement, which can take days on high-performance computing clusters [3].

- Validation & Iteration: This is a critical step for regulatory and scientific credibility. The virtual results are compared against wet-lab assay data (e.g., comparing predicted IC50 to experimentally measured IC50). Discrepancies lead to model refinement, such as adjusting solvation parameters, and the cycle repeats until predictions are validated [3] [8] [6].
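The validation step above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed procedure: the IC50 values are made-up placeholders, the function names are hypothetical, and the 0.5 log-unit acceptance threshold is an assumption chosen only to show how a refinement decision might be triggered.

```python
import math

def to_pic50(ic50_nm):
    """Convert an IC50 in nanomolar to pIC50 (-log10 of the molar IC50)."""
    return 9.0 - math.log10(ic50_nm)

def validation_metrics(predicted_nm, measured_nm):
    """Return (RMSE, Pearson r) between predicted and measured pIC50 values."""
    pred = [to_pic50(x) for x in predicted_nm]
    meas = [to_pic50(x) for x in measured_nm]
    n = len(pred)
    rmse = math.sqrt(sum((p - m) ** 2 for p, m in zip(pred, meas)) / n)
    mean_p = sum(pred) / n
    mean_m = sum(meas) / n
    cov = sum((p - mean_p) * (m - mean_m) for p, m in zip(pred, meas))
    var_p = sum((p - mean_p) ** 2 for p in pred)
    var_m = sum((m - mean_m) ** 2 for m in meas)
    r = cov / math.sqrt(var_p * var_m)
    return rmse, r

# Hypothetical values for illustration only (nM); not data from any study.
predicted = [12.0, 150.0, 900.0, 45.0, 3000.0]
measured = [10.0, 200.0, 700.0, 60.0, 5000.0]

rmse, r = validation_metrics(predicted, measured)
needs_refinement = rmse > 0.5  # assumed acceptance threshold, in log units
print(f"RMSE = {rmse:.2f} log units, Pearson r = {r:.3f}, refine = {needs_refinement}")
```

Comparing on the pIC50 (log) scale rather than on raw concentrations keeps a tenfold error at 10 nM and a tenfold error at 10 µM equally weighted, which is usually what a potency comparison intends.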

The Synergistic Validation Cycle

A key modern concept is the perpetual refinement cycle, where in silico and experimental methods are integrated to continuously improve model accuracy and scientific insight.

Diagram: Perpetual Model Refinement Cycle. This synergistic loop integrates computational and experimental data.

The Scientist's Toolkit: Key Reagent Solutions

The transition to in silico methodologies requires a new set of "research reagents" – primarily software tools and data resources. The table below details essential solutions for setting up a computational research environment.

Table 3: Essential In Silico Research Reagents and Tools

| Tool Category | Example Software/Platforms | Primary Function | Key Capabilities |
|---|---|---|---|
| Molecular Docking & Dynamics | AutoDock Vina, GROMACS, AMBER, Glide [3] | Simulates interaction between drug and target protein | Predicts binding affinity, protein folding, molecular interactions |
| Toxicity & ADMET Prediction | ProTox-3.0, ADMETlab, DeepTox [1] | Predicts absorption, distribution, metabolism, excretion, and toxicity | Flags liver toxicity risks, predicts pharmacokinetics, early safety screening |
| Systems Biology & QSP | MATLAB SimBiology, Schrödinger Suite [3] [2] | Models complex biological systems and pharmacodynamics | Simulates disease progression, predicts patient-specific responses (Digital Twins) |
| Cheminformatics & QSAR | KNIME, Various QSAR software [3] [9] | Analyzes chemical data and quantitative structure-activity relationships | Predicts biological activity based on chemical structure, virtual screening |
| Data & Structure Resources | Protein Data Bank (PDB), UK Biobank [10] [3] | Provides foundational data for model building | Sources for protein structures, genomic data, and real-world evidence |

The shift from predominantly in vivo/in vitro to in silico methodologies is accelerating. Regulatory support, demonstrated by the FDA Modernization Act 2.0 and the FDA's April 2025 announcement on animal testing requirements, strengthens the role of computational approaches as credible and often indispensable [1] [4].

However, the future of research, particularly in critical fields like environmental risk assessment and drug development, is not a simple replacement of one paradigm by another. The most powerful and reliable strategy is a synergistic, integrated approach. In silico models are refined and validated using high-quality data from in vitro and in vivo studies. In return, these models can optimize and reduce the need for subsequent experimental work, guiding researchers toward the most promising candidates and experimental designs. As one computational biologist noted, the true potential lies in "bridging the gap between computational biology and experimental validation," creating a continuous cycle of prediction and empirical confirmation that accelerates discovery while enhancing its rigor and relevance [10] [6]. In this new era, the failure to employ in silico methods may soon be viewed not merely as a missed opportunity, but as an impractical and inefficient approach to scientific inquiry [1].

Environmental Risk Assessment (ERA) traditionally relies on in vitro and in vivo experimental data to characterize the potential hazards of chemicals and pollutants. While these methods provide valuable information, they are often resource-intensive, time-consuming, and raise ethical concerns regarding animal testing. The emergence of sophisticated in silico tools represents a paradigm shift, enabling researchers to simulate chemical disposition, biological interactions, and adverse outcomes through computational modeling. Among these tools, Physiologically Based Pharmacokinetic (PBPK) models, Quantitative Systems Pharmacology/Toxicology (QSP/QST) models, and Artificial Intelligence/Machine Learning (AI/ML) approaches have gained significant prominence. These methodologies offer mechanistic insights, enhance predictive capability, and support a more efficient evaluation of chemical risks, ultimately strengthening the scientific foundation of regulatory decision-making [11] [3] [12]. This guide provides a comparative analysis of these core in silico tools, evaluating their performance, applications, and integration within modern ERA frameworks.

Defining the Core In Silico Tools

Physiologically Based Pharmacokinetic (PBPK) Models are mathematical constructs that simulate the absorption, distribution, metabolism, and excretion (ADME) of chemicals within an organism. They represent the body as a network of anatomically meaningful compartments (e.g., liver, kidney, fat) interconnected by blood circulation. By integrating chemical-specific properties with physiological parameters, PBPK models quantitatively predict tissue-specific concentrations of a substance and its metabolites over time [11] [13]. This is particularly valuable for extrapolating across species, doses, and exposure scenarios, which are central challenges in ERA.

Quantitative Systems Pharmacology/Toxicology (QSP/QST) Models extend beyond pharmacokinetics to model the complex interactions between a chemical and biological systems, focusing on the mechanisms of action and the subsequent pharmacological or toxicological outcomes. QST models often integrate PBPK components with detailed molecular pathways and cellular responses to predict system-level effects, such as organ toxicity or disease progression [14]. They are particularly suited for understanding how perturbations at a molecular level cascade into adverse outcomes at the organism level.

Artificial Intelligence and Machine Learning (AI/ML) Models encompass a suite of data-driven approaches that learn patterns from large datasets to make predictions. In ERA, AI/ML algorithms can be applied to tasks such as quantitative structure-activity relationship (QSAR) modeling for toxicity prediction, virtual screening of chemical libraries, and analysis of high-throughput omics data [15] [12]. Unlike the mechanistic foundation of PBPK and QST, ML models often operate as "black boxes," but they excel in handling high-dimensional data and identifying complex, non-linear relationships that may be difficult to model mechanistically.

Comparative Performance and Application

The table below summarizes the core characteristics, strengths, and limitations of PBPK, QST, and AI/ML models for ERA applications.

Table 1: Comparative Analysis of Core In Silico Tools in Environmental Risk Assessment

| Feature | PBPK Models | QST Models | AI/ML Models |
|---|---|---|---|
| Primary Focus | Predicting internal tissue dose (pharmacokinetics) [11] | Predicting system-level biological effects (pharmacodynamics/toxicodynamics) [14] | Identifying patterns and predicting endpoints from chemical structure and bioactivity data [15] [12] |
| Core Application in ERA | Interspecies and cross-route extrapolation; risk assessment from internal dose [11] [16] | Mechanistic investigation of toxicity pathways; hypothesis testing [17] | High-throughput toxicity screening; ADME and bioactivity prediction [15] [12] |
| Key Advantage | Physiologically grounded, enabling credible extrapolations [13] | Holistic, systems-level understanding of adverse outcomes [17] | High speed and scalability for data-rich problems [3] [12] |
| Data Requirements | High: Requires in vitro/in vivo data for parameterization and validation [11] | Very High: Requires multi-scale data from molecular to physiological levels [17] | High: Quality and quantity of training data are critical for model performance [15] [12] |
| Interpretability & Transparency | High (Mechanistic) [11] | High (Mechanistic) [14] | Variable, often low ("Black Box") [12] |
| Regulatory Acceptance | Established in drug development; growing in chemical risk assessment [13] | Emerging, often used in a supportive role [14] | Growing for specific endpoints (e.g., QSAR, read-across) [15] |
| Computational Demand | Moderate to High [16] | High to Very High | Low to High, depending on model complexity |

Performance Evaluation: Experimental Data and Protocols

Quantitative Performance Metrics

Evaluating the performance of in silico tools requires assessing their predictive accuracy, computational efficiency, and reliability. The following table synthesizes experimental data and findings from published studies applying these tools.

Table 2: Experimental Performance Metrics of In Silico Tools

| Tool Category | Case Study / Chemical | Key Performance Metric | Result | Source |
|---|---|---|---|---|
| PBPK | Computational Time (Dichloromethane, Chloroform) | Simulation time savings from model optimization | 20-35% reduction in computational time achieved by reducing state variables | [16] |
| PBPK | Computational Workflow | Impact of fixed vs. time-varying parameters | Treating body weight and dependent quantities as constant parameters saved ~30% computational time | [16] |
| AI/ML (Generative AI) | Insilico Medicine (Idiopathic Pulmonary Fibrosis drug) | Discovery and preclinical timeline | Target to Phase I trials achieved in 18 months, significantly faster than traditional timelines | [18] |
| AI/ML (Generative Chemistry) | Exscientia | Design cycle efficiency | In silico design cycles ~70% faster, requiring 10x fewer synthesized compounds than industry norms | [18] |
| In Silico Screening | COVID Moonshot Project | Throughput and efficiency | 14,000 molecules screened in silico in weeks, identifying 30 promising antivirals | [3] |
| In Silico Toxicology | Toxicity Prediction | Reduction in animal testing | ML models for liver toxicity could potentially reduce animal testing by 30-50% | [3] |

Detailed Experimental Protocols

To ensure the reliability and reproducibility of in silico tools, standardized protocols are essential. Below are detailed methodologies for implementing PBPK modeling and AI/ML-based virtual screening, two cornerstone approaches in modern ERA.

Protocol 1: Development and Application of a PBPK Model for ERA

- Problem Definition: Clearly define the assessment goal, such as "Predict the concentration-time profile of Chemical X in the liver and kidney of rats following oral exposure to support dose-response analysis."

- Model Structure Definition: Select the relevant physiological compartments (e.g., liver for metabolism, kidney for excretion, fat for storage, and lumped slowly and richly perfused tissues). Define the routes of entry (e.g., oral, inhalation) and elimination [11] [16].

- Parameter Acquisition:

  - Physiological Parameters: Obtain species-specific values for organ weights, blood flow rates, and ventilation rates from peer-reviewed literature.

  - Chemical-Specific Parameters: Gather or experimentally determine parameters for the chemical of interest, including partition coefficients (tissue:air, tissue:blood), absorption rate constants, and metabolic constants (V~max~, K~m~) [11].

- Model Implementation: Code the differential equations representing mass balance in each compartment. Use mathematical software (e.g., R, MATLAB) or specialized platforms (e.g., GastroPlus, Simcyp). The model can be implemented in a stand-alone manner or using a flexible PBPK model template [16].

- Model Validation: Simulate existing in vivo kinetic studies and compare model predictions against independent experimental data (not used for parameterization). Statistical and graphical methods (e.g., goodness-of-fit plots) are used to assess predictive performance [11] [16].

- Simulation and Analysis: Run simulations for the ERA scenarios of interest (e.g., various exposure durations and levels). Conduct sensitivity analysis to identify the parameters to which the model outputs are most sensitive, guiding future research needs [16].
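To make the model-implementation step concrete, the sketch below codes the mass-balance equations for a deliberately small flow-limited model (blood, liver with saturable Michaelis-Menten metabolism, and one lumped "rest of body" compartment) and integrates them with a fixed-step fourth-order Runge-Kutta scheme. Everything here is an assumption made for illustration: the compartment set, the intravenous bolus dosing, the function name `simulate_pbpk`, and all parameter values are placeholders rather than values from the cited studies. In practice a dedicated platform or ODE solver would replace the hand-written integrator.

```python
def simulate_pbpk(dose_mg, t_end_h, dt_h=0.001):
    """Integrate a three-compartment flow-limited PBPK model with fixed-step RK4.

    Returns a list of (time_h, [blood, liver, rest, metabolized]) amounts in mg.
    """
    # Illustrative placeholder parameters (roughly rat-scaled), not measured data.
    V = {"blood": 0.02, "liver": 0.01, "rest": 0.22}  # compartment volumes (L)
    Q = {"liver": 0.9, "rest": 4.1}                   # blood flows (L/h)
    P = {"liver": 2.0, "rest": 1.5}                   # tissue:blood partition coefficients
    vmax, km = 5.0, 0.5                               # Michaelis-Menten constants (mg/h, mg/L)

    def derivs(a):
        # a = amounts (mg) in blood, liver, rest, plus cumulative amount metabolized
        c_blood = a[0] / V["blood"]
        c_liver_out = a[1] / V["liver"] / P["liver"]  # venous concentration leaving liver
        c_rest_out = a[2] / V["rest"] / P["rest"]     # venous concentration leaving rest
        metab = vmax * c_liver_out / (km + c_liver_out)
        uptake_liver = Q["liver"] * (c_blood - c_liver_out)
        uptake_rest = Q["rest"] * (c_blood - c_rest_out)
        return [-(uptake_liver + uptake_rest),  # blood
                uptake_liver - metab,           # liver
                uptake_rest,                    # rest of body
                metab]                          # cumulative metabolized

    state = [dose_mg, 0.0, 0.0, 0.0]  # intravenous bolus: whole dose starts in blood
    t = 0.0
    history = [(t, list(state))]
    for _ in range(int(round(t_end_h / dt_h))):
        k1 = derivs(state)
        k2 = derivs([s + 0.5 * dt_h * k for s, k in zip(state, k1)])
        k3 = derivs([s + 0.5 * dt_h * k for s, k in zip(state, k2)])
        k4 = derivs([s + dt_h * k for s, k in zip(state, k3)])
        state = [s + dt_h / 6.0 * (a + 2 * b + 2 * c + d)
                 for s, a, b, c, d in zip(state, k1, k2, k3, k4)]
        t += dt_h
        history.append((t, list(state)))
    return history

history = simulate_pbpk(dose_mg=1.0, t_end_h=2.0)
t_final, (a_blood, a_liver, a_rest, a_met) = history[-1]
print(f"t = {t_final:.1f} h: liver = {a_liver:.4f} mg, metabolized = {a_met:.4f} mg")
print(f"mass balance check: {a_blood + a_liver + a_rest + a_met:.6f} mg")
```

Tracking the cumulative metabolized amount as a fourth state makes a mass-balance check trivial: the four amounts should always sum to the administered dose, which is a quick sanity test before any comparison with kinetic data.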

Protocol 2: AI/ML-Based Virtual Screening for Toxicity Prediction

- Objective and Endpoint Definition: Define the toxicological endpoint for prediction, such as "Classify chemicals as mutagenic or non-mutagenic using a QSAR model."

- Curate Training Dataset: Assemble a high-quality dataset of chemicals with reliable experimental results for the endpoint. Public databases like the EPA's ToxCast or the NTP can be sources. Apply strict curation criteria for data quality, removing duplicates and compounds with conflicting results [15].

- Calculate Molecular Descriptors: For each chemical structure, compute numerical descriptors that encode structural and physicochemical properties (e.g., molecular weight, logP, topological surface area, electronic parameters) using software like PaDEL-Descriptor or RDKit [15].

- Model Training and Validation:

  - Split the dataset into a training set (e.g., 80%) and a hold-out test set (e.g., 20%).

  - Use the training set to build a predictive model using machine learning algorithms (e.g., Random Forest, Support Vector Machines, or Deep Neural Networks).

  - Apply cross-validation on the training set to optimize model hyperparameters and prevent overfitting.

- Model Evaluation: Use the untouched test set to evaluate the final model's performance. Report standard metrics such as accuracy, sensitivity, specificity, and receiver operating characteristic (ROC) curves [15].

- Application for Prediction: Apply the validated model to screen new, untested chemicals for potential toxicity, prioritizing them for further experimental evaluation.
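The split-train-evaluate portion of this protocol can be sketched with the Python standard library alone. Note what is swapped in: a simple nearest-centroid classifier stands in for the Random Forest, SVM, or neural network models named above, and the two "descriptors" are synthetic, pre-scaled random numbers rather than values computed by RDKit or PaDEL-Descriptor. All function names are hypothetical, and cross-validation is omitted for brevity.

```python
import random

def split_dataset(records, test_fraction=0.2, seed=0):
    """Shuffle records and split them into (training set, hold-out test set)."""
    shuffled = records[:]
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(round(len(shuffled) * test_fraction)))
    return shuffled[n_test:], shuffled[:n_test]

def train_centroids(train):
    """Fit a nearest-centroid model: the mean descriptor vector of each class."""
    sums, counts = {}, {}
    for x, y in train:
        acc = sums.setdefault(y, [0.0] * len(x))
        for i, v in enumerate(x):
            acc[i] += v
        counts[y] = counts.get(y, 0) + 1
    return {y: [v / counts[y] for v in acc] for y, acc in sums.items()}

def predict(centroids, x):
    """Assign x to the class whose centroid is closest (squared Euclidean distance)."""
    def dist2(c):
        return sum((a - b) ** 2 for a, b in zip(x, c))
    return min(centroids, key=lambda y: dist2(centroids[y]))

def classification_metrics(y_true, y_pred, positive=1):
    """Compute accuracy, sensitivity, and specificity from paired labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn) if tp + fn else float("nan"),
        "specificity": tn / (tn + fp) if tn + fp else float("nan"),
    }

# Synthetic, pre-scaled two-descriptor data: label 1 = "toxic", 0 = "non-toxic".
rng = random.Random(42)
records = [([rng.gauss(0.0, 0.7), rng.gauss(0.0, 0.7)], 0) for _ in range(50)]
records += [([rng.gauss(2.0, 0.7), rng.gauss(2.0, 0.7)], 1) for _ in range(50)]

train, test = split_dataset(records, test_fraction=0.2)
model = train_centroids(train)
y_true = [y for _, y in test]
y_pred = [predict(model, x) for x, _ in test]
print(classification_metrics(y_true, y_pred))
```

The test set is touched only once, after the model is fitted, which mirrors the "untouched test set" requirement in the evaluation step.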

Visualizing Workflows and Signaling Pathways

PBPK Model Workflow and Structure

The following diagram illustrates the generalized workflow for developing and applying a PBPK model, from problem definition to risk assessment application.

QST-Based Adverse Outcome Pathway (AOP)

Quantitative Systems Toxicology models often formalize the mechanistic understanding described in an Adverse Outcome Pathway (AOP). The diagram below depicts a generalized AOP, from molecular initiation to an adverse organism-level effect, which a QST model would mathematically represent.

AI/ML Model Development Cycle

The application of AI/ML in ERA typically follows an iterative cycle of training, validation, and prediction, as visualized below.

The effective application of in silico tools requires a suite of computational "reagents" – software, databases, and platforms that form the essential materials for modern ERA research.

Table 3: Essential Research Reagents for In Silico ERA

| Tool Category | Resource / Platform | Type / Function | Key Application in ERA |
|---|---|---|---|
| PBPK Modeling | GastroPlus, Simcyp Simulator | Commercial PBPK Platform | Simulating ADME and predicting internal dose in virtual human and animal populations. Industry-preferred (e.g., ~80% usage in FDA submissions) [13]. |
| PBPK Modeling | R/MCSim | Open-Source Modeling Framework | Implementing and simulating PBPK models using a combination of R for scripting and MCSim for efficient model specification and solution [16]. |
| AI/ML & Virtual Screening | AutoDock Vina, Glide | Molecular Docking Software | Predicting how a small molecule (e.g., environmental contaminant) interacts with a biological target (e.g., protein, receptor) [3]. |
| AI/ML & Cheminformatics | RDKit, PaDEL-Descriptor | Open-Source Cheminformatics Library | Calculating molecular descriptors and fingerprints from chemical structures for QSAR and machine learning modeling [15]. |
| AI/ML & Protein Structure | AlphaFold | AI-based Protein Structure Prediction | Accurately predicting the 3D structure of proteins, which is critical for understanding molecular interactions when experimental structures are unavailable [12]. |
| Data Integration & Modeling | Schrödinger Suite | Comprehensive Drug Discovery Platform | Integrates physics-based simulations (e.g., FEP) with machine learning for molecular design and optimization, applicable to toxicant design [18]. |
| General Workflow & Analytics | KNIME, Python (scikit-learn) | Data Analytics and ML Workflow Platform | Building, testing, and deploying end-to-end data pipelines for toxicity prediction and analysis of high-throughput screening data [3]. |

The integration of PBPK, QST, and AI/ML models into ERA represents a fundamental advancement toward a more predictive, efficient, and mechanistic toxicology. As demonstrated, each tool class offers distinct strengths: PBPK models provide a physiologically grounded framework for predicting tissue-specific dosimetry; QST models enable a systems-level understanding of toxicological pathways; and AI/ML models offer unparalleled speed and pattern recognition for data-driven prioritization and screening. The future of ERA lies not in the isolated application of any single tool, but in their strategic integration. A powerful approach involves using AI/ML to rapidly screen chemicals and inform parameter estimation for PBPK models, whose outputs of internal dose then serve as the input for QST models to predict adverse outcomes. This synergistic, fit-for-purpose use of in silico tools will continue to enhance the scientific rigor of environmental risk assessment while aligning with the global push to reduce, refine, and replace animal testing.

The study of underrepresented populations—including those with rare diseases, specific genetic subtypes, or ethnic minorities—presents a fundamental challenge in biomedical research. Traditional clinical trials and experimental methods often struggle to recruit sufficient participants from these groups, leading to significant gaps in understanding disease mechanisms and treatment efficacy across the full human spectrum. Virtual populations, defined as computer-generated simulations that mimic the clinical characteristics of real patients, have emerged as a powerful alternative for studying these underrepresented groups [19]. These in silico models enable researchers to simulate clinical trials, predict drug effects, and explore disease mechanisms without the recruitment barriers and ethical constraints of traditional studies [19] [20].

The integration of virtual populations represents a paradigm shift in environmental risk assessment (ERA) research and drug development. By creating digital representations of human variability, researchers can now investigate questions that were previously scientifically or ethically prohibitive, particularly for rare diseases and population subtypes where patient numbers are insufficient for traditional statistical analysis [21] [20]. This guide provides a comprehensive comparison between these innovative computational approaches and traditional experimental methods, offering researchers practical frameworks for implementation.

Virtual vs. Traditional Methods: A Comparative Analysis

Fundamental Capabilities and Limitations

Table 1: Core Methodological Comparison

| Aspect | Virtual Population Approaches | Traditional Experimental Methods |
|---|---|---|
| Population Representation | Can simulate rare genetic subtypes and underrepresented groups [19] [20] | Limited by recruitment feasibility and prevalence of condition [19] |
| Scalability | Highly scalable once initial framework established [22] | Limited by resources, time, and participant availability [19] |
| Time Requirements | Significantly reduced (from weeks to hours for simulations) [20] | Protracted timelines (often years for trial completion) [19] |
| Cost Factors | High initial development cost, lower per-simulation cost [19] | Consistently high costs throughout study duration [19] |
| Ethical Considerations | Reduces need for animal testing and human trial risks [21] [19] | Significant ethical oversight required for animal and human studies [19] |
| Regulatory Acceptance | Emerging frameworks, not yet standardized [19] [23] | Well-established pathways [19] |

Quantitative Performance Metrics

Table 2: Experimental Data Comparison

| Performance Metric | Virtual Population Applications | Traditional Method Equivalent | Experimental Evidence |
|---|---|---|---|
| Patient Recruitment | Unlimited virtual cohorts for rare diseases [19] [20] | Often impossible for ultra-rare subtypes [19] | Rare disease subtype testing where human trials were unfeasible [20] |
| Development Timeline | Reduced from years to hours for specific simulations [20] | Average 10 years from patent to approval [19] | Sanofi's AI programs accelerated research from weeks to hours [20] |
| Success Rate Prediction | Improved prediction of clinical outcomes [17] [20] | 90% failure rate of new drug candidates [20] | Asthma compound Phase 1b outcome accurately predicted by model [20] |
| Statistical Power | Achieved 80% power with 50-70 virtual patients in specific designs [24] | Requires larger sample sizes, especially for rare diseases [19] | Crossover designs showed highest efficiency in simulated trials [24] |
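The statistical power entry in the table refers to power estimated from simulated trials. A rough sketch of how such an estimate can be produced by Monte Carlo simulation for a crossover (paired) design follows. It does not reproduce the cited study: the effect size, the standard deviation of within-patient differences, and the function name `estimate_power` are assumptions, and a normal approximation to the paired t-test is used because the standard library has no t distribution.

```python
import random
import statistics

def estimate_power(n_patients, effect, sd_diff, n_trials=2000, alpha=0.05, seed=1):
    """Estimate the power of a crossover trial by simulating many virtual trials.

    Each virtual patient contributes one within-patient difference
    (treatment minus control), drawn from Normal(effect, sd_diff).
    """
    rng = random.Random(seed)
    z_crit = statistics.NormalDist().inv_cdf(1 - alpha / 2)  # two-sided threshold
    rejections = 0
    for _ in range(n_trials):
        diffs = [rng.gauss(effect, sd_diff) for _ in range(n_patients)]
        mean_d = statistics.fmean(diffs)
        se = statistics.stdev(diffs) / n_patients ** 0.5
        # Normal approximation to the paired t-test (an assumption of this sketch).
        if abs(mean_d / se) > z_crit:
            rejections += 1
    return rejections / n_trials

# Hypothetical standardized effect of 0.4 with unit SD of paired differences.
for n in (10, 30, 60):
    print(n, "virtual patients -> estimated power", estimate_power(n, 0.4, 1.0))
```

Because each virtual patient acts as their own control, between-patient variability drops out of the comparison, which is one plausible reason crossover designs need fewer patients than parallel-group designs for the same power.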

Methodological Frameworks: Implementing Virtual Population Strategies

Core Technical Approaches

Multiple computational methodologies enable the creation and utilization of virtual populations, each with distinct advantages and applications:

Agent-Based Modeling (ABM): Simulates individual agents (virtual patients) and their interactions within a system, particularly valuable for studying complex behaviors like disease transmission and immune responses [19]. ABM has been successfully applied in oncology to simulate tumor progression and combination therapy effects [19].

Quantitative Systems Pharmacology (QSP): Integrates disease biology, pathophysiology, and known pharmacology into a unified computational framework to create digital twins of human patients [20]. This approach enables simulation of a compound's mechanism of action on disease pathways and prediction of clinical outcomes [20].

AI and Machine Learning: Analyzes large datasets to identify patterns and generate synthetic datasets, especially valuable for augmenting small sample sizes in rare disease research [19]. These techniques can create virtual patients by learning from real patient data, uncovering hidden relationships within the data [19].

Genome-Scale Metabolic Reconstructions (GENREs): Predictive network models containing thousands of metabolic reactions and associated genes, enabling the study of systemic metabolic disorders and their manifestations across diverse populations [25].
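
Of the approaches above, agent-based modeling is the easiest to illustrate in a few lines. The sketch below is a toy susceptible/infected/recovered simulation in which each agent is a virtual individual. The function name `run_abm` and every parameter value are assumptions chosen for illustration; they are not taken from the cited studies.

```python
import random

def run_abm(n_agents=200, n_initial=5, p_transmit=0.05, p_recover=0.1,
            contacts=4, steps=50, seed=0):
    """Toy agent-based S/I/R simulation; returns (S, I, R) counts per step."""
    rng = random.Random(seed)
    state = ["I"] * n_initial + ["S"] * (n_agents - n_initial)
    history = []
    for _ in range(steps):
        new_state = list(state)
        for agent, status in enumerate(state):
            if status != "I":
                continue
            # Each infected agent meets a few randomly chosen agents.
            for other in rng.sample(range(n_agents), contacts):
                if state[other] == "S" and rng.random() < p_transmit:
                    new_state[other] = "I"
            # Infected agents recover with a fixed per-step probability.
            if rng.random() < p_recover:
                new_state[agent] = "R"
        state = new_state
        history.append((state.count("S"), state.count("I"), state.count("R")))
    return history

history = run_abm()
print("final (S, I, R):", history[-1])
```

Population-level curves emerge here from individual-level rules, which is the property that makes ABM attractive for transmission and immune-response questions, at the cost of run time that grows with the number of agents.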

Experimental Workflow for Virtual Population Generation

The creation of scientifically valid virtual populations follows a systematic process encompassing model design, parameterization, and validation [26]. The following workflow diagram illustrates this iterative process:

Figure 1: Virtual Population Development Workflow

This workflow emphasizes the iterative nature of virtual population development, where models are continuously refined based on validation results and emerging data [26]. The process begins with clearly defining study objectives, which determines the appropriate model structure and level of mathematical detail required [26].

Protocol for Virtual Clinical Trial Implementation

Based on established methodologies in the field [26], the following step-by-step protocol ensures robust virtual clinical trials:

Model Selection and Design:

- Develop a fit-for-purpose model balancing mechanistic detail with practical constraints

- Incorporate pharmacokinetic (PK) components describing drug concentration over time

- Include pharmacodynamic (PD) components predicting treatment safety and efficacy

- Tailor model complexity to available data and specific research questions
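
As a rough illustration of how PK and PD components fit together, the sketch below pairs a one-compartment intravenous bolus model with an Emax response curve. Both model forms and all parameter values are assumptions picked for simplicity; a real fit-for-purpose model would be chosen to match the question of interest.

```python
import math

def concentration(dose_mg, volume_l, clearance_l_per_h, t_h):
    """Plasma concentration (mg/L) after an IV bolus in a one-compartment model."""
    k_el = clearance_l_per_h / volume_l  # first-order elimination rate (1/h)
    return (dose_mg / volume_l) * math.exp(-k_el * t_h)

def effect(conc, e_max, ec50):
    """Emax pharmacodynamic model: effect saturates as concentration rises."""
    return e_max * conc / (ec50 + conc)

# Hypothetical parameter values, chosen only for illustration.
for t in [0, 1, 2, 4, 8, 12, 24]:
    c = concentration(dose_mg=100, volume_l=50, clearance_l_per_h=5, t_h=t)
    e = effect(c, e_max=1.0, ec50=0.5)
    print(f"t={t:>2} h  C={c:.3f} mg/L  E={e:.3f}")
```

Keeping the PK and PD parts as separate functions makes it straightforward to swap either one for a more mechanistic version as data allow.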

Parameter Estimation:

- Utilize available biological, physiological, and treatment-response data

- Apply sensitivity analysis to identify parameters most influential on outcomes

- Conduct identifiability analysis to determine which parameters can be reliably estimated

- Implement Bayesian inference or maximum likelihood estimation methods
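
The sensitivity-analysis step can be sketched with a simple one-at-a-time scheme. The example below estimates normalized local sensitivities by central finite differences on a hypothetical one-compartment model; the model, the 6-hour readout time, and the parameter values are all assumptions, and global methods are often preferred in practice.

```python
import math

def conc_at(params, t_h=6.0):
    """One-compartment IV bolus concentration at time t_h (hypothetical model)."""
    k_el = params["clearance"] / params["volume"]
    return (params["dose"] / params["volume"]) * math.exp(-k_el * t_h)

def normalized_sensitivity(model, params, name, rel_step=0.01):
    """Central-difference estimate of d(ln output) / d(ln parameter)."""
    up = dict(params)
    up[name] = params[name] * (1 + rel_step)
    down = dict(params)
    down[name] = params[name] * (1 - rel_step)
    base = model(params)
    return (model(up) - model(down)) / (2 * rel_step * base)

# Illustrative values only.
params = {"dose": 100.0, "volume": 50.0, "clearance": 5.0}
ranking = sorted(
    ((name, normalized_sensitivity(conc_at, params, name)) for name in params),
    key=lambda item: abs(item[1]),
    reverse=True,
)
for name, s in ranking:
    print(f"{name:<10} {s:+.3f}")
```

A value near +1 means the output scales proportionally with the parameter; parameters with coefficients close to zero are candidates for fixing rather than estimating.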

Virtual Population Generation:

- Introduce controlled variability in patient characteristics based on target population

- Ensure representation of relevant subgroups and underrepresented populations

- Validate virtual population against known clinical characteristics when possible

- Generate sufficient cohort size for statistical power [24]
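
One way to introduce controlled variability while guaranteeing subgroup representation is to sample each subgroup separately. The sketch below draws a single parameter (clearance) from a lognormal distribution per subgroup; the subgroup names, medians, and 30% coefficient of variation are hypothetical.

```python
import math
import random
import statistics

def generate_cohort(n_per_group, group_medians, cv=0.3, seed=0):
    """Sample clearance values per subgroup from lognormal distributions."""
    rng = random.Random(seed)
    sigma = math.sqrt(math.log(1 + cv ** 2))  # lognormal sigma for a given CV
    cohort = []
    for group, median in group_medians.items():
        for _ in range(n_per_group):
            clearance = rng.lognormvariate(math.log(median), sigma)
            cohort.append({"group": group, "clearance": clearance})
    return cohort

# Hypothetical subgroups and median clearances (L/h), for illustration only.
cohort = generate_cohort(200, {"adult": 5.0, "renal_impaired": 2.5})
adult_median = statistics.median(
    p["clearance"] for p in cohort if p["group"] == "adult"
)
print(len(cohort), "virtual patients; adult median clearance:", round(adult_median, 2))
```

Sampling a fixed number per subgroup, rather than sampling group membership at random, ensures that small subgroups are not lost by chance.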

Trial Simulation and Validation:

- Implement in silico clinical trials using the virtual population

- Compare simulation results with any available empirical data

- Refine model parameters and structure based on validation outcomes

- Conduct sensitivity analyses to understand robustness of conclusions
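
The trial-simulation step, and the question of how many virtual patients give adequate power, can be explored by repeated simulation. The sketch below assumes a parallel two-arm design with a normally distributed endpoint, a standardized effect of 0.5, and a normal-approximation test; these are illustrative assumptions and do not reproduce the designs evaluated in [24].

```python
import random
from statistics import NormalDist, mean, stdev

def simulate_trial(n_per_arm, effect, rng, sd=1.0):
    """Return True if a two-arm virtual trial detects the effect at alpha = 0.05."""
    control = [rng.gauss(0.0, sd) for _ in range(n_per_arm)]
    treated = [rng.gauss(effect, sd) for _ in range(n_per_arm)]
    se = (stdev(control) ** 2 / n_per_arm + stdev(treated) ** 2 / n_per_arm) ** 0.5
    z = (mean(treated) - mean(control)) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))  # normal approximation
    return p_value < 0.05

def estimate_power(n_per_arm, effect, n_sims=500, seed=1):
    """Fraction of simulated trials that reach significance."""
    rng = random.Random(seed)
    hits = sum(simulate_trial(n_per_arm, effect, rng) for _ in range(n_sims))
    return hits / n_sims

# Hypothetical effect size; sweep the cohort size to see where power levels off.
for n in (20, 40, 60, 80):
    print(n, "per arm -> estimated power", estimate_power(n, effect=0.5))
```

Running the same loop with `effect=0.0` gives the false-positive rate, which is a quick check that the simulated test behaves as intended.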

Signaling Pathways in Virtual Population Modeling

Virtual population models incorporate multiple interconnected signaling pathways that simulate biological processes. The following diagram illustrates key pathways and their interactions in a representative therapeutic area:

Figure 2: Key Signaling Pathways in Virtual Population Models

These interconnected pathways enable virtual population models to simulate how investigational compounds affect disease pathways and clinical outcomes across diverse populations [20]. The incorporation of population heterogeneity factors at multiple levels allows researchers to explore how genetic and demographic variations influence treatment responses.

Table 3: Research Reagent Solutions for Virtual Population Studies

| Tool Category | Specific Tools/Platforms | Primary Function | Application Context |

|---|---|---|---|

| AI/ML Platforms | PandaOmics, ChatGPT [19] | Target identification, data analysis | Drug discovery, patient stratification [21] |

| Biosimulation Software | Monte Carlo simulations, ODE solvers [19] [26] | Mathematical modeling of biological processes | PK/PD modeling, trial simulation [26] |

| Genome Analysis Tools | DipAsm, RepeatMasker, FALCON-Unzip [27] | Haplotype-resolved assembly, variant analysis | Genetic disease modeling, population genetics [27] |

| Pathway Modeling | Quantitative Systems Pharmacology (QSP) platforms [20] | Disease pathway simulation and perturbation | Mechanism of action studies, biomarker identification [20] |

| Data Generation | Synthetic data generation algorithms [23] | Create artificial data mimicking real patient data | Augmenting rare disease datasets, enhancing diversity [23] |

Virtual population technologies offer transformative potential for addressing long-standing representation gaps in biomedical research, particularly for rare diseases and underrepresented population subgroups. While traditional experimental methods remain essential for validation and foundational knowledge generation, in silico approaches provide complementary capabilities that can accelerate research and improve inclusivity.

The most promising path forward involves the intelligent integration of both methodologies, leveraging the control and scalability of virtual populations with the empirical validation of traditional trials. As regulatory frameworks evolve and computational methods mature, these hybrid approaches promise to make biomedical research more representative, efficient, and clinically relevant across the full spectrum of human diversity.

For researchers implementing these technologies, success depends on rigorous model validation, transparent methodology, and ongoing refinement based on emerging clinical evidence. When properly implemented, virtual populations represent not just a technological advancement, but an ethical imperative for ensuring that all populations benefit from biomedical progress.

The pharmaceutical industry is undergoing a profound structural transformation, moving from a reliance solely on traditional experimental methods to the integration of computational and model-based approaches. Model-Informed Drug Development (MIDD) is an essential framework that uses quantitative methods to inform drug development and regulatory decision-making [28]. This shift is driven by escalating clinical trial costs, which have surpassed USD 2.3 billion per approved drug on average, creating intense pressure to reduce physical trial sizes and optimize protocols via digital simulations [29]. Regulatory agencies worldwide, including the U.S. Food and Drug Administration (FDA) and the European Medicines Agency (EMA), are now actively encouraging MIDD approaches, boosting industry confidence in the use of in-silico evidence [29].

This evolution represents a fundamental change in how evidence is generated and evaluated across the drug development lifecycle. The International Council for Harmonisation (ICH) has developed the M15 guideline, "General Principles for Model-Informed Drug Development," to provide a harmonized framework for assessing MIDD evidence [30] [31]. This endorsement signals a regulatory maturation where in-silico methodologies are no longer supplementary but are becoming central to development strategies and regulatory submissions across all phases, from early discovery to post-market surveillance [28].

Regulatory Endorsement and Initiatives

FDA Leadership in MIDD Implementation

The FDA has established concrete programs to advance and integrate MIDD into drug development and regulatory review. The MIDD Paired Meeting Program, operating under the Prescription Drug User Fee Act (PDUFA VII) for fiscal years 2023-2027, provides sponsors with opportunities to discuss MIDD approaches with Agency staff [32]. This program specifically focuses on dose selection, clinical trial simulation, and predictive safety evaluation, offering both initial and follow-up meetings on the same drug development issues [32]. The agency's proactive stance is further demonstrated by the December 2024 issuance of the ICH M15 draft guidance, which outlines multidisciplinary principles for MIDD, including recommendations on planning, model evaluation, and evidence documentation [30].

The impact of these initiatives is already measurable. FDA's MIDD pilot program participation increased 23% year-over-year from 2023 to 2024, and over 65% of top 50 pharmaceutical companies now use in-silico modeling routinely [29]. This regulatory leadership has positioned the United States as the dominant market for in-silico clinical trials, accounting for 44% of global market value (USD 1.74 billion in 2024) [29].

EMA's Evolving Regulatory Framework

The EMA has paralleled FDA's advancements with its own initiatives to formalize the role of modeling in drug development. The Agency has proposed a new guideline on the assessment and reporting of mechanistic models used in MIDD, covering Physiologically Based Pharmacokinetic (PBPK), Physiologically Based Biopharmaceutics (PBBM), and Quantitative Systems Pharmacology (QSP) models [33]. This guideline addresses the need for standardized assessment of these increasingly utilized tools across all drug development phases [33].

EMA's participation in the ICH M15 guideline development further demonstrates a collaborative global effort to harmonize MIDD principles [31]. The guideline aims to "facilitate multidisciplinary understanding, appropriate use, and harmonized assessment of MIDD and its associated evidence," creating consistency in how regulatory agencies evaluate model-derived submissions [30]. This harmonization is particularly valuable for global drug development programs seeking simultaneous approvals across multiple regions.

Comparative Analysis: In-Silico vs. Traditional Methodologies

Quantitative Performance Metrics

The adoption of in-silico approaches is justified by demonstrated advantages across key development metrics. The following table summarizes the comparative performance between established in-silico tools and traditional methods they supplement or replace.

Table 1: Performance Comparison of In-Silico Tools Versus Traditional Methods

| Development Stage | In-Silico Tool | Traditional Method | Comparative Performance |

|---|---|---|---|

| Vaccine Development | AI-driven epitope prediction (MUNIS) | Motif-based prediction | 26% higher performance than prior algorithms; identifies genuine epitopes previously overlooked [34] |

| B-cell Epitope Prediction | Deep learning models (e.g., NetBCE) | Physicochemical scales/sequence conservation | 87.8% accuracy (AUC=0.945) vs. 50-60% accuracy for traditional methods [34] |

| Clinical Trial Efficiency | Virtual patient simulations & digital twins | Physical clinical trials | Reduces experimental workload, enhances prediction accuracy, shortens development timelines [29] |

| Drug Discovery | AI-based virtual screening | Experimental high-throughput screening | Rapidly evaluates 26.3 million peptide–allele pairs; identifies novel targets beyond conventional focus [34] |

| Market Impact | Comprehensive in-silico trial platforms | Traditional clinical development | Market projected to reach USD 6.39 billion by 2033, growing at 5.5% CAGR [29] |

Application-Specific Methodological Comparisons

Epitope Prediction and Vaccine Design

Traditional Experimental Protocols: Classical epitope identification relied on peptide microarrays, mass spectrometry, and ELISA assays. These methods are accurate but slow, costly, and limited in throughput [34]. For instance, traditional motif-based methods for T-cell epitopes often failed to detect novel alleles or unconventional epitopes [34].

In-Silico Methodologies: Modern AI tools use convolutional neural networks (CNNs), recurrent neural networks (RNNs), and graph neural networks (GNNs) to predict epitopes with significantly higher accuracy [34]. The experimental workflow for AI-driven epitope prediction involves:

- Data Curation: Assembling large-scale immunological datasets (>650,000 human HLA–peptide interactions) [34]

- Model Training: Using deep learning architectures to learn complex sequence-structure-immunogenicity relationships

- Validation: Experimental confirmation via in vitro HLA binding and T-cell assays [34]

- Application: Scanning entire pathogen proteomes to identify dozens of candidate antigens simultaneously

The MUNIS framework exemplifies this approach, successfully identifying known and novel CD8+ T-cell epitopes from viral proteomes with validation through HLA binding and T-cell assays [34]. Similarly, the GearBind GNN facilitated computational optimization of spike protein antigens, resulting in variants with 17-fold higher binding affinity for neutralizing antibodies [34].
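
The proteome-scanning step in the workflow above amounts to enumerating fixed-length peptides and scoring each peptide-allele pair. The sketch below shows that loop only. The scoring function is a deliberately crude placeholder (fraction of hydrophobic residues), not MUNIS or any trained predictor, and the sequence and 9-residue window are assumptions.

```python
from itertools import product

def enumerate_peptides(sequence, length=9):
    """Yield (start_index, peptide) for every window of the given length."""
    for start in range(len(sequence) - length + 1):
        yield start, sequence[start:start + length]

def toy_score(peptide, allele):
    """Placeholder for a trained predictor; NOT a real immunogenicity model.

    The allele argument is ignored here; a real model would condition on it.
    """
    hydrophobic = set("AILMFVWY")
    return sum(residue in hydrophobic for residue in peptide) / len(peptide)

def rank_candidates(proteome, alleles, top_k=3):
    """Score every peptide-allele pair and keep the top_k highest scores."""
    scored = []
    for (name, seq), allele in product(proteome.items(), alleles):
        for start, pep in enumerate_peptides(seq):
            scored.append((toy_score(pep, allele), name, start, pep, allele))
    return sorted(scored, reverse=True)[:top_k]

# Hypothetical sequence and allele label used purely as example input.
proteome = {"protein_A": "MKTLLVAGAVILFWYDERKSTNQ"}
top = rank_candidates(proteome, alleles=["HLA-A*02:01"])
for score, name, start, pep, allele in top:
    print(f"{name}[{start}] {pep} {allele} score={score:.2f}")
```

Replacing `toy_score` with a validated model is the only change needed to turn this loop into a real screening pass; the ranked output is what would then go forward to HLA binding and T-cell assays.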

Rare Disease Research and Drug Development

Traditional Limitations: Rare disease research faces fundamental challenges including small patient populations, limited biological samples, and lack of validated biomarkers [35]. Traditional approaches relying on animal models are often ill-suited to capture complex pathophysiology [35].

In-Silico Solutions: Computational approaches enable virtual patient cohorts, mechanism-based modeling, and in-silico trials that address these limitations [35]. The methodological workflow includes:

- Disease Characterization: Using AI-enhanced pipelines with whole-genome sequencing and EHR analysis for differential diagnosis [35]

- Target Identification: Network pharmacology and omics integration to identify therapeutic targets [35]

- Clinical Trial Simulation: Pharmacokinetic models and virtual control arms to optimize trial designs [35]

For Gaucher disease, computational tools like SNPs3D, SIFT, and PolyPhen predict the functional impact of novel GBA1 gene mutations and reconstruct mutant protein structures, offering critical insights when patient samples are scarce [35].

The Researcher's Toolkit: Essential In-Silico Solutions

The implementation of MIDD requires specialized computational tools and platforms. The following table details key solutions available to researchers, categorized by their primary application area.

Table 2: Essential Research Reagent Solutions for In-Silico Drug Development

| Tool Category | Representative Platforms | Primary Function | Regulatory Application |

|---|---|---|---|

| Pharmacometrics & QSP Modeling | Certara Platforms, Simulations Plus PBPK Tools | Pharmacometrics, QSP modeling, PBPK simulation, clinical optimization [29] | 62% of Certara's revenue from modeling & simulation; used for regulatory submissions [29] |

| Mechanistic Biological Modeling | Dassault Systèmes BIOVIA, SIMULIA | Virtual device testing, mechanistic biological modeling [29] | USD 1.3 billion life sciences segment; dominates virtual device testing [29] |

| Cloud-Based Trial Simulation | InSilicoTrials Technologies Platform | Cloud-based simulation for CE and FDA filings [29] | Regulator-trusted for CE and FDA filings [29] |

| AI-Driven Antigen Design | MUNIS, GraphBepi, NetMHC series | Epitope prediction, antigen optimization, immunogenicity prediction [34] | Identifies novel epitopes experimentally validated for vaccine design [34] |

| Mechanistic Model Assessment | ICH M15 Framework, EMA Mechanistic Models Guideline | Regulatory assessment of PBPK, PBBM, QSP models [33] [31] | Standardized framework for regulatory evaluation of mechanistic models [30] [33] |

Regulatory Workflows and Decision Pathways

The integration of MIDD into regulatory decision-making follows structured pathways that ensure rigorous evaluation. The following diagram illustrates the typical workflow for regulatory submission and assessment of model-informed evidence.

FDA Paired Meeting Program Pathway

The FDA's MIDD Paired Meeting Program provides a structured mechanism for early regulatory alignment on modeling approaches [32]. The process involves:

- Eligibility Determination: Applicants must have an active IND or PIND number; consortia or software developers must partner with a drug development company [32]

- Meeting Request Submission: Limited to 3-4 pages, containing product information, question of interest, MIDD approach, context of use, and specific questions for the Agency [32]

- Selection Prioritization: FDA prioritizes requests focusing on dose selection, clinical trial simulation, or predictive/mechanistic safety evaluation [32]

- Meeting Package Submission: Due 47 days before the initial meeting, containing detailed model development, validation, simulation plans, and model risk assessment [32]

- Paired Meetings: An initial meeting followed by a second meeting within approximately 60 days of receiving the meeting package [32]

This pathway exemplifies the regulatory endorsement of MIDD by creating dedicated channels for model discussion and alignment throughout the development process.

Experimental Validation Frameworks

Fit-for-Purpose Model Validation

A cornerstone of regulatory acceptance is the "fit-for-purpose" validation of models, which requires close alignment between the model's context of use and its evaluation strategy [28]. The framework includes:

- Context of Use Definition: Explicit specification of how model predictions will inform regulatory decisions [28] [32]

- Question of Interest Alignment: Ensuring the model addresses a specific development question with appropriate methodology [28]

- Model Risk Assessment: Evaluating the potential consequence of incorrect decisions based on model predictions [32]

- Validation Stratification: Implementing appropriate verification, calibration, and validation based on model impact [28]

A model is considered not fit-for-purpose when it fails to define the context of use, has poor data quality, lacks proper verification, or incorporates unjustified complexities [28].
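
The four failure conditions just listed can be captured as a simple pre-submission checklist. The sketch below is one hypothetical way to encode them; the class and field names are invented for illustration and do not correspond to any regulatory template.

```python
from dataclasses import dataclass

@dataclass
class ModelDossier:
    """Hypothetical summary of a model's readiness for regulatory use."""
    context_of_use: str          # how predictions will inform the decision
    data_quality_ok: bool
    verified: bool
    complexity_justified: bool

def fit_for_purpose_issues(dossier):
    """Return the list of criteria the dossier fails (empty list = none found)."""
    issues = []
    if not dossier.context_of_use.strip():
        issues.append("context of use not defined")
    if not dossier.data_quality_ok:
        issues.append("poor data quality")
    if not dossier.verified:
        issues.append("verification missing")
    if not dossier.complexity_justified:
        issues.append("unjustified complexity")
    return issues

draft = ModelDossier(context_of_use="", data_quality_ok=True,
                     verified=False, complexity_justified=True)
print(fit_for_purpose_issues(draft))
```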

Cross-Model Validation Techniques

Rigorous validation of in-silico predictions against experimental data is essential for regulatory confidence. Successful approaches include:

- Triangulation Strategy: For ultra-rare variants, combining multiple prediction tools (REVEL, MutPred, SpliceAI) with human expert adjudication [35]

- Bidirectional Workflows: Creating closed-loop systems where in-silico predictions inform wet-lab experiments, and experimental results refine computational models [35]

- Prospective Experimental Validation: Following AI-based predictions with in vitro binding assays, T-cell activation studies, and in vivo challenge models [34]

For example, the MUNIS T-cell epitope predictor demonstrated real-world validation by identifying novel epitopes in Epstein-Barr virus that were subsequently confirmed through in vitro T-cell assays [34]. Similarly, AI-optimized SARS-CoV-2 spike antigens showed 17-fold higher binding affinity in ELISA assays, confirming computational predictions [34].
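
The triangulation strategy listed above can be sketched as a consensus rule that escalates to a human expert whenever tools disagree. The example takes precomputed scores as input and does not call any tool. The 0.5 cutoffs are placeholders, not the tools' recommended thresholds, and the unanimous-vote rule is an assumption.

```python
def triangulate(scores, thresholds):
    """Classify a variant from several tool scores; defer to experts on disagreement."""
    votes = [scores[tool] >= thresholds[tool] for tool in thresholds if tool in scores]
    if not votes:
        return "no data"
    if all(votes):
        return "likely damaging"
    if not any(votes):
        return "likely benign"
    return "needs expert adjudication"

# Hypothetical cutoffs and scores; not the tools' recommended thresholds.
thresholds = {"REVEL": 0.5, "MutPred": 0.5, "SpliceAI": 0.5}
print(triangulate({"REVEL": 0.9, "MutPred": 0.8, "SpliceAI": 0.7}, thresholds))
print(triangulate({"REVEL": 0.9, "MutPred": 0.2, "SpliceAI": 0.1}, thresholds))
```

Requiring unanimity before an automatic call keeps the expert in the loop for exactly the ambiguous cases, which is where ultra-rare variants tend to fall.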

The regulatory evolution toward endorsement of Model-Informed Drug Development represents a fundamental shift in pharmaceutical development and assessment. The harmonized framework established through ICH M15, coupled with specific programs like the FDA's MIDD Paired Meeting Program and EMA's mechanistic models guideline, creates a structured pathway for integrating computational approaches into regulatory decision-making [30] [33] [32].

The comparative data indicate that in-silico methods offer substantial advantages over traditional approaches in specific contexts, particularly epitope prediction, rare disease research, and clinical trial optimization [34] [35]. The projected growth of the in-silico clinical trials market to USD 6.39 billion by 2033 suggests that this methodological transition is accelerating [29].

For researchers and drug developers, success in this evolving landscape requires meticulous attention to fit-for-purpose model validation, comprehensive documentation, and early regulatory engagement [28] [32]. As both FDA and EMA continue to refine their approaches to MIDD assessment, the integration of in-silico evidence will increasingly become standard practice rather than exception, ultimately accelerating the delivery of innovative therapies to patients while maintaining rigorous safety and efficacy standards.

From Theory to Practice: Methodological Applications of In Silico Tools in Drug Development

Creating and Utilizing Virtual Patient Cohorts for Clinical Trial Simulation

The development of new pharmaceuticals is a complex and costly endeavor, characterized by prolonged timelines, high failure rates, and escalating regulatory demands. Only about 10% of drug candidates successfully transition from patenting to market approval, with the average time from patenting to FDA approval taking approximately 10 years and costs exceeding $2.87 billion per new drug [19]. In recent years, the concept of virtual patient cohorts has emerged as a transformative solution to these challenges. Virtual patients are computer-generated simulations that mimic the clinical characteristics of real patients, enabling researchers to simulate clinical trials without involving human participants initially [19]. This in silico approach represents a paradigm shift from traditional reliance on animal and early-phase human trials, accelerated by regulatory evolution including the FDA's landmark decision to phase out mandatory animal testing for many drug types [1]. This article explores the creation and application of virtual patient cohorts for clinical trial simulation, comparing in silico methodologies with traditional experimental approaches in pharmaceutical research and development.

Methodological Foundations of Virtual Patient Generation

Defining Virtual Patients and Digital Twins

Virtual patients are computer-generated models that simulate the clinical characteristics of real patients, used within in silico studies to predict drug effects without initial human or animal testing [19]. These models range from population-representative virtual cohorts to sophisticated digital twins - virtual replicas of individual patients that integrate multi-omics data, biomarkers, lifestyle factors, and real-world data to simulate disease progression and therapeutic response with high temporal resolution [19] [1]. The key distinction lies in personalization: while virtual patient cohorts represent population diversity, digital twins are tailored to specific individuals and updated continuously with new clinical data.

Technical Approaches and Algorithms

Several methodological frameworks enable virtual patient generation, each with distinct advantages and computational considerations:

Table 1: Comparison of Virtual Patient Generation Methodologies

| Method | Key Features | Advantages | Limitations |

|---|---|---|---|

| Agent-Based Modeling (ABM) | Simulates individual agent interactions within a system [19] | Models complex behaviors and outcomes; suitable for disease transmission and immune responses [19] | Computationally intensive; limited scalability for very large populations [19] |

| AI and Machine Learning | Analyzes large datasets to identify patterns and make predictions [19] | Enhances simulation accuracy; facilitates synthetic datasets for rare diseases [19] | "Black box" problem reduces interpretability; risk of training data bias [19] |

| Digital Twins | Virtual replicas updated continuously with real-time clinical data [19] [1] | High temporal resolution; enables real-time intervention simulation [19] | Dependent on high-quality real-time data; computationally intensive to maintain [19] |

| Biosimulation/Statistical Methods | Uses mathematical models (ODEs, Monte Carlo) and statistical techniques (regression, bootstrapping) [19] | Cost-effective for small-scale data modeling; predicts diverse clinical scenarios [19] | Model assumptions may oversimplify complex systems; limited generalizability [19] |

Workflow for Virtual Patient Generation

The creation of physiologically plausible virtual patients follows a systematic workflow that transforms clinical data into validated computational representations:

Diagram 1: Virtual Patient Generation and Application Workflow

This workflow begins with comprehensive data integration from sources including electronic health records, clinical trials, and multi-omics databases (genomics, transcriptomics, proteomics) [1] [36]. Parameter distributions are then estimated, with lognormal distributions commonly assumed for physiological parameters [36]. Virtual patients are generated through sampling techniques like Latin Hypercube Sampling, followed by rigorous calibration and validation against real-world clinical outcomes [36]. The final stage involves deploying the validated virtual cohort for clinical trial simulation and therapeutic optimization.
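
The sampling step described above can be sketched as follows: Latin Hypercube Sampling draws one point from each equal-probability stratum per parameter, and the inverse normal CDF maps those points onto lognormal distributions. Parameter names, medians, and log-scale standard deviations are hypothetical, and parameters are sampled independently here, which ignores any correlations a calibrated model would include.

```python
import math
import random
from statistics import NormalDist

def latin_hypercube(n_samples, n_params, rng):
    """Return n_samples points in the unit cube, one per stratum in each dimension."""
    columns = []
    for _ in range(n_params):
        strata = [(i + rng.random()) / n_samples for i in range(n_samples)]
        rng.shuffle(strata)  # decouple the strata across dimensions
        columns.append(strata)
    return [tuple(col[i] for col in columns) for i in range(n_samples)]

def lognormal_patients(n_patients, medians, sigmas, seed=0):
    """Map Latin Hypercube points onto independent lognormal parameters."""
    rng = random.Random(seed)
    names = list(medians)
    std_normal = NormalDist()
    patients = []
    for point in latin_hypercube(n_patients, len(names), rng):
        patient = {}
        for name, u in zip(names, point):
            u = min(max(u, 1e-12), 1 - 1e-12)  # keep inv_cdf inside (0, 1)
            z = std_normal.inv_cdf(u)
            patient[name] = medians[name] * math.exp(sigmas[name] * z)
        patients.append(patient)
    return patients

# Hypothetical medians and log-scale standard deviations.
cohort = lognormal_patients(
    100,
    medians={"clearance": 5.0, "volume": 50.0},
    sigmas={"clearance": 0.3, "volume": 0.2},
)
print(cohort[0])
```

Because every stratum is sampled exactly once, a cohort of 100 covers the tails of each distribution more evenly than 100 purely random draws would.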

Comparative Analysis: In Silico Tools vs. Traditional Methods

Performance Benchmarking Across Development Metrics

Virtual patient technologies demonstrate significant advantages over traditional methods across key pharmaceutical development metrics:

Table 2: Performance Comparison: In Silico Tools vs. Traditional Methods

| Development Metric | Traditional Methods | Virtual Patient Approaches | Comparative Advantage |

|---|---|---|---|

| Timeline | 10+ years from patent to approval [19] | Early failure identification; accelerated simulation cycles [1] | Potential 12-month acceleration (e.g., COVID-19 therapies) [3] |

| Cost | >$2.87 billion per new drug [19] | Up to 60% reduction in preclinical R&D expenses [3] | Significant cost savings through improved success rates [19] |

| Success Rate | ~10% from patent to market [19] | Improved candidate selection; better trial design [19] [1] | Higher transition probability through development phases [19] |

| Patient Recruitment | Challenging, especially for rare diseases [19] | Synthetic cohorts; no recruitment barriers [19] | Enables studies for rare diseases previously impractical to trial [19] |

| Ethical Considerations | Animal testing and human trial risks [19] [1] | Reduced animal and human experimentation [19] [1] | Addresses ethical concerns of traditional approaches [19] |

Experimental Validation and Regulatory Acceptance

The growing regulatory acceptance of in silico approaches underscores their increasing credibility. The FDA has begun accepting in silico data as primary evidence in select cases, including model-informed drug development programs and virtual bioequivalence studies [1]. This shift follows demonstrated predictive accuracy across therapeutic areas:

In immuno-oncology, virtual patient cohorts have replicated real-world response patterns to immune checkpoint inhibitors. For example, a quantitative systems pharmacology model for immuno-oncology (QSP-IO) was successfully calibrated using multi-omics data from The Cancer Genome Atlas (TCGA) and validated against real patient data from the iAtlas database [36]. The virtual cohort demonstrated statistically equivalent distributions of key immune biomarkers (CD8/CD4 ratio, CD8/Treg ratio, M1/M2 macrophage ratio) compared to real patient populations [36].

In COVID-19 research, virtual patient cohorts simulated immune response differences in cancer and immunosuppressed patients, predicting that severe cases would exhibit decreased CD8+ T cells, elevated interleukin-6 concentrations, and delayed type I interferon peaks - predictions subsequently validated against clinical data [37].

Leading Platforms for Virtual Patient Implementation

Comparative Analysis of Commercial Solutions

Several specialized platforms have emerged as leaders in virtual patient technology, each with distinct capabilities and target applications:

Table 3: Leading Virtual Patient Platform Comparison

| Platform | Key Technology | Primary Applications | Validated Performance |

|---|---|---|---|

| Deep Intelligent Pharma | AI-native multi-agent platform; dynamic digital twins [38] | End-to-end R&D transformation; complex trial simulation [38] | 18% higher R&D automation efficiency vs. BioGPT/BenevolentAI [38] |

| Unlearn.AI | TwinRCTs for synthetic control arms [38] | Randomized controlled trials; reducing patient burden [38] | Up to 30% reduction in trial sample sizes [38] |

| Nova In Silico | Jinkō platform for virtual patient twins [38] | Therapeutic response simulation; accelerated development [38] | High precision in disease progression modeling [38] |

| Dassault Systèmes | 3DEXPERIENCE with SIMULIA for biomedical simulation [38] | Complex biomedical applications; medical device testing [38] | Industry-recognized for holistic simulation environments [38] |

Implementation Considerations and Limitations

Despite their transformative potential, virtual patient technologies face several implementation challenges. Virtual patient models can yield erroneous outcomes if improperly calibrated, and building them requires substantial expertise and computational resources [19]. Currently, standardized protocols for generating and utilizing virtual patient cohorts are lacking, creating reproducibility challenges [19]. Model accuracy remains dependent on the quality and completeness of input data, with risks of propagating biases present in training datasets [19] [38]. Additionally, regulatory frameworks for purely in silico evidence, while evolving rapidly, still require further development for broader acceptance [1].

Successful implementation of virtual patient methodologies requires both computational and experimental resources:

Table 4: Essential Research Resources for Virtual Patient Development

| Resource Category | Specific Tools & Databases | Function in Virtual Patient Development |

|---|---|---|

| Data Resources | TCGA, iAtlas, AURORA, HTAN [36] | Provide multi-omics data for model parameterization and validation [36] |

| Computational Tools | MATLAB, R, Python (SciPy/NumPy) | Statistical analysis, model implementation, and simulation execution |

| Modeling Frameworks | Agent-based platforms; QSP modeling tools [36] | Implement mechanistic models of disease progression and drug effects [36] |

| Validation Datasets | Historical clinical trial data; real-world evidence [19] | Benchmark virtual patient predictions against clinical outcomes [19] |

Virtual patient cohorts represent a fundamental transformation in clinical trial methodology, offering a powerful complement to traditional experimental approaches. By enabling more efficient, ethical, and inclusive drug development, these in silico technologies address critical limitations of conventional trials. The continuing evolution of artificial intelligence, multi-omics integration, and regulatory science will further establish virtual patients as indispensable tools in pharmaceutical development. As validation evidence accumulates and standardization improves, the integration of virtual patient cohorts alongside traditional methods promises to enhance success rates across the drug development pipeline, ultimately accelerating the delivery of innovative therapies to patients worldwide.

This guide objectively compares the performance of in silico tools against traditional experimental methods in early drug discovery, focusing on target engagement prediction and lead optimization. The analysis is framed within a broader thesis on computational tools for ecological risk assessment (ERA) research, providing researchers with a data-driven perspective on integrating these approaches.

Table 1: High-Level Comparison of Research Approaches in Early Discovery

| Feature | In Silico (Computational) | In Vitro (Test Tube) | In Vivo (Living Organism) |
|---|---|---|---|
| Core Principle | Biological experiments via computer simulation [39] | Studies in controlled environments outside living organisms [39] | Studies conducted with a whole, living organism [39] |
| Primary Context of Use in Early Discovery | Target ID, Virtual Screening, Docking, QSAR, Mechanism Modeling [35] | Cellular/molecular studies, initial efficacy/toxicity screening [39] | Understanding overall systemic effects, disease pathology [39] |
| Throughput & Scalability | Very High (runs numerous simulations quickly) [35] | High (can study many compounds at once) [39] | Low (time-consuming and resource-intensive) [29] |
| Cost Relative to Other Methods | Low (after initial model development) | Moderate [39] | Very High [29] |
| Animal Use | None (aligns with 3Rs principle) [39] | None [39] | Required [39] |
| Key Strength | Scalability, hypothesis generation from limited data, cost-effectiveness [35] [39] | Controlled environment, time-efficient, no animal use [39] | Reveals complex systemic interactions and whole-organism effects [39] |
| Key Limitation | Can be a simplification of biology; requires validation; model accuracy depends on input data [35] [39] | May not replicate precise conditions of a living organism [39] | Low scalability, high cost, ethical considerations [29] [39] |

Performance Comparison: Quantitative Data

Table 2: Quantitative Performance and Market Adoption of In Silico Methods

| Metric | Performance / Market Data | Context & Application |
|---|---|---|
| Market Size (2024) | USD 3.95 Billion [29] | Global In-Silico Clinical Trials Market, indicating widespread adoption. |
| Projected Market (2033) | USD 6.39 Billion [29] | Reflects a CAGR of 5.5% (2025-2033), showing expected growth. |
| Drug Development Cost Savings | Reduces experimental workload, shortens timelines, improves time-to-market [29] | Addresses average drug development cost >USD 2.3 billion per approved drug (2024). |
| Dominant Application (2024) | Drug Development (52% market share, USD 2.06 billion) [29] | Used for dosing optimization, toxicity prediction, and simulating population variability. |
| Regulatory Submission Growth | 19% Year-over-Year (2023–2024) [29] | Indicates growing regulatory acceptance for supporting approvals. |
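The market figures in the table can be cross-checked with the standard compound annual growth rate formula. The short sketch below assumes 2024 is the base year, so that 2025-2033 gives nine compounding periods; the function name `cagr` is illustrative and not taken from the cited report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.055 means 5.5%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures from the table, in USD billions.
market_2024 = 3.95
market_2033 = 6.39

# Assumption: 2024 is the base year, so 2025-2033 is nine compounding periods.
implied_rate = cagr(market_2024, market_2033, years=9)
print(f"Implied CAGR: {implied_rate:.2%}")

# Forward check: grow the 2024 figure at the quoted 5.5% for nine years.
projected_2033 = market_2024 * (1 + 0.055) ** 9
print(f"Projected 2033 market: USD {projected_2033:.2f} billion")
```

Under that assumption the implied rate rounds to the quoted 5.5%, and the forward projection lands within rounding distance of the 2033 figure.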

Experimental Protocols & Methodologies

In Silico Target Engagement & Docking

Objective: To predict the binding affinity and mode of interaction between a small molecule (ligand) and a biological target (protein) prior to synthesis or physical testing.

Detailed Workflow:

- Protein Preparation: Obtain the 3D structure of the target protein from a database such as the Protein Data Bank (PDB). The structure is then "cleaned" by removing water molecules and co-crystallized ligands, adding hydrogen atoms, modeling any missing residues or side chains, and optimizing side-chain conformations.

- Ligand Preparation: The 2D structure of the candidate molecule is drawn or imported from a chemical database. It is then converted into a 3D structure, and its geometry is minimized to the most stable conformation.

- Grid Generation: A grid box is defined around the protein's active site, specifying the spatial coordinates where the docking search will be conducted.

- Molecular Docking: An algorithm performs the docking simulation, sampling possible orientations and conformations of the ligand within the protein's active site.

- Scoring & Ranking: A scoring function evaluates each generated pose and ranks them based on the predicted binding affinity (often in kcal/mol). The top-ranked poses are analyzed for key molecular interactions (e.g., hydrogen bonds, hydrophobic contacts).
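Two steps of the workflow above are simple enough to sketch without a docking engine: defining the grid box around the active site, and ranking poses by score. The example below is a minimal illustration in plain Python, assuming the active-site atom coordinates and pose scores are already available. The coordinates, pose names, scores, and the 4 Å padding are all hypothetical, and the functions do not correspond to the API of any particular docking package.

```python
from dataclasses import dataclass

Coord = tuple[float, float, float]


def grid_box(site_atoms: list[Coord], padding: float = 4.0) -> tuple[Coord, Coord]:
    """Return (center, size) of an axis-aligned box enclosing the site atoms.

    `padding` (in angstroms) is added on every side; 4.0 is an arbitrary,
    illustrative choice rather than a recommended value.
    """
    mins = [min(atom[i] for atom in site_atoms) for i in range(3)]
    maxs = [max(atom[i] for atom in site_atoms) for i in range(3)]
    center = tuple((lo + hi) / 2 for lo, hi in zip(mins, maxs))
    size = tuple((hi - lo) + 2 * padding for lo, hi in zip(mins, maxs))
    return center, size


@dataclass
class Pose:
    pose_id: str
    score_kcal_mol: float  # more negative means stronger predicted binding


def rank_poses(poses: list[Pose]) -> list[Pose]:
    """Sort poses from most to least favorable predicted binding affinity."""
    return sorted(poses, key=lambda pose: pose.score_kcal_mol)


# Hypothetical active-site atom coordinates (angstroms).
site = [(10.0, 12.0, 8.0), (14.0, 15.0, 9.0), (12.0, 11.0, 13.0)]
center, size = grid_box(site)
print("grid center:", center, "grid size:", size)

# Hypothetical scores, as a docking run might report them.
poses = [Pose("pose_1", -6.2), Pose("pose_2", -8.9), Pose("pose_3", -7.4)]
ranked = rank_poses(poses)
print("best pose:", ranked[0].pose_id, ranked[0].score_kcal_mol)
```

In practice the box center and dimensions would be passed to the docking program's configuration, and the top-ranked poses would still need visual inspection of their interactions before being trusted.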

Quantitative Structure-Activity Relationship (QSAR) Modeling

Objective: To build a predictive model that relates a set of numerical descriptors (properties) of chemical compounds to their biological activity, enabling the virtual screening and optimization of lead compounds.

Detailed Workflow:

- Data Curation: A dataset of compounds with known biological activities (e.g., IC50, Ki) is assembled. The data is cleaned to remove duplicates and correct errors.

- Descriptor Calculation: Numerical descriptors representing the molecules' structural and physicochemical properties (e.g., molecular weight, logP, polar surface area, topological indices) are calculated for each compound.

- Dataset Division: The curated dataset is split into a training set (typically 70-80%) to build the model and a test set (20-30%) to validate its predictive power.

- Model Building: A machine learning algorithm (e.g., partial least squares regression, random forest, support vector machine) is applied to the training set to find a mathematical relationship between the descriptors and the biological activity.

- Model Validation: The model's predictive ability is rigorously assessed using the held-out test set. Key metrics include the coefficient of determination (R²) and the root mean square error (RMSE) of the test set predictions.
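The split, fit, and validate steps of this workflow can be illustrated end to end with a deliberately small example. The sketch below uses only the Python standard library and a single-descriptor least-squares line as a stand-in for the algorithms named above (PLS, random forest, SVM). The dataset is synthetic: the logP values, the pIC50 relationship, and the noise level are invented for illustration and do not come from any study.

```python
import math
import random


def fit_line(x: list[float], y: list[float]) -> tuple[float, float]:
    """Ordinary least squares for one descriptor: returns (slope, intercept)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def r2_and_rmse(y_true: list[float], y_pred: list[float]) -> tuple[float, float]:
    """Coefficient of determination and root mean square error."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot, math.sqrt(ss_res / len(y_true))


# Synthetic dataset (assumption): pIC50 = 0.8 * logP + 4.0 plus Gaussian noise.
rng = random.Random(0)
logp = [rng.uniform(0.0, 5.0) for _ in range(50)]
pic50 = [0.8 * x + 4.0 + rng.gauss(0.0, 0.3) for x in logp]

# Dataset division: shuffle indices, then 80% training / 20% test.
indices = list(range(len(logp)))
rng.shuffle(indices)
split = int(0.8 * len(indices))
train_idx, test_idx = indices[:split], indices[split:]

# Model building on the training set only.
slope, intercept = fit_line([logp[i] for i in train_idx],
                            [pic50[i] for i in train_idx])

# Model validation on the untouched test set.
test_pred = [slope * logp[i] + intercept for i in test_idx]
test_r2, test_rmse = r2_and_rmse([pic50[i] for i in test_idx], test_pred)
print(f"slope={slope:.2f} intercept={intercept:.2f} "
      f"test R2={test_r2:.2f} test RMSE={test_rmse:.2f}")
```

A real QSAR model would use many descriptors and a library implementation, but the discipline is the same: the test compounds play no part in fitting, and the metrics are reported on them alone.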

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Data Resources for In Silico Discovery

| Tool / Resource Category | Examples | Function in Research |
|---|---|---|
| Protein Structure Databases | RCSB Protein Data Bank (PDB) | Provides experimentally determined 3D structures of proteins and nucleic acids, essential for structure-based design and docking studies. |
| Chemical Compound Databases | PubChem, ZINC | Libraries of commercially available or known chemical compounds for virtual screening and lead identification. |
| Software for Molecular Modeling & Docking | AutoDock, GOLD, Glide, SWISS-MODEL [35], I-TASSER [35] | Platforms used for protein-ligand docking, homology modeling, and predicting protein structure and function. |
| Software for QSAR & Machine Learning | Python (Pandas, Scikit-learn), R | Programming environments with libraries for calculating molecular descriptors and for building and validating QSAR and machine learning models. |
| Variant Effect Prediction Tools | REVEL [35], MutPred [35], SpliceAI [35] | Algorithms that analyze genetic variants to predict their potential pathogenicity and impact on protein function, crucial for target validation. |
| Network Analysis Platforms | STRING [35], Cytoscape [35] | Tools for visualizing and analyzing protein-protein interaction networks, helping to understand disease pathways and identify novel targets. |

Drug discovery and environmental risk assessment (ERA) have traditionally relied on costly and time-consuming experimental methods. The emergence of sophisticated in silico tools is fundamentally shifting this paradigm, offering accelerated, cost-effective, and human-relevant predictive capabilities. This guide objectively compares the performance of these computational approaches against traditional methods, focusing on two critical advanced use cases: drug repurposing and predicting Drug-Induced Liver Injury (DILI). DILI remains a primary cause of drug attrition, accounting for approximately one in three market withdrawals and over 50% of acute liver failure cases in the Western world [40] [41]. Similarly, de novo drug discovery is a protracted process, taking 13-15 years and costing $2-3 billion on average, with a 90% attrition rate [42]. In silico methodologies are proving instrumental in mitigating these challenges, enhancing predictive accuracy while aligning with the 3Rs (Replacement, Reduction, and Refinement) principle in toxicology.

Performance Comparison: In Silico Tools vs. Traditional Methods

The following tables summarize quantitative performance data and characteristics of in silico tools compared to traditional experimental methods.

Table 1: Performance Comparison for DILI Prediction

| Method / Model | AUC | Accuracy | Key Advantages | Key Limitations |
|---|---|---|---|---|
| DILIGeNN (GNN) [43] | 0.897 | N/A | Learns directly from 3D molecular structures; state-of-the-art performance. | Complex model architecture; requires significant computational resources. |
| BioGL-GCN [44] | N/A | 79% | Integrates toxicogenomics and gene-gene interactions; validated with 3D PHH model. | Relies on quality of gene expression input data. |
| Ensemble (DNN-GATNN) [43] | 0.757 | N/A | Combines graph and fingerprint data for robust learning. | Ensemble approach can be computationally heavy. |
| Deep Neural Network (DNN) [43] | 0.713 | N/A | Effective at learning from complex molecular fingerprint data. | "Black box" nature; limited biological interpretability. |
| Traditional QSAR Models [45] | ~0.63-0.69 | ~59-69% | Cost-effective, rapid, and requires no physical compounds. | Struggles with complex biological mechanisms; limited interpretability. |
| In Vivo Animal Models [41] | Low Concordance (43-63%) | N/A | Provides systemic organism-level data. | Low concordance with human outcomes; ethically challenging; costly and slow. |
| In Vitro Cell Assays (HepG2) [40] | Variable | N/A | Human-relevant; medium-throughput. | Often lack metabolic competence; oversimplified biology. |
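For readers less familiar with the two metrics in the table, the sketch below shows one way they can be computed from a model's output. AUC is calculated here as the fraction of (DILI-positive, DILI-negative) pairs in which the positive compound receives the higher score, which is equivalent to the area under the ROC curve. The labels and scores are invented for illustration and are unrelated to the models listed.

```python
def roc_auc(labels: list[int], scores: list[float]) -> float:
    """AUC as the probability that a positive outscores a negative (ties count 0.5)."""
    positives = [s for label, s in zip(labels, scores) if label == 1]
    negatives = [s for label, s in zip(labels, scores) if label == 0]
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in positives
        for n in negatives
    )
    return wins / (len(positives) * len(negatives))


def accuracy(labels: list[int], scores: list[float], threshold: float = 0.5) -> float:
    """Fraction of compounds classified correctly at a fixed score threshold."""
    predictions = [1 if s >= threshold else 0 for s in scores]
    return sum(p == label for p, label in zip(predictions, labels)) / len(labels)


# Hypothetical data: 1 = DILI concern, 0 = no concern; scores are model outputs.
labels = [1, 1, 1, 0, 0, 0, 1, 0]
scores = [0.9, 0.8, 0.4, 0.3, 0.6, 0.2, 0.7, 0.5]

print("AUC:", roc_auc(labels, scores))
print("Accuracy at 0.5:", accuracy(labels, scores))
```

The two numbers answer different questions: AUC summarizes ranking quality across all thresholds, while accuracy depends on the single cutoff chosen, which is one reason the values reported for different models are not directly interchangeable.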

Table 2: Performance Comparison for Drug Repurposing

| Method / Strategy | Key Advantages | Reported Repurposing Examples | Limitations / Challenges |
|---|---|---|---|
| Signature-Based (e.g., CMap/LINCS) [42] | Unbiased discovery; can elucidate novel MoAs. | Sildenafil (Angina → Erectile Dysfunction) [42] | Requires high-quality, extensive gene expression databases. |
| Knowledge-Based (Network/Pathway) [42] | Leverages existing biological knowledge; hypothesis-driven. | Thalidomide (Morning sickness → Leprosy, Myeloma) [42] | Limited by incompleteness of existing knowledge graphs. |
| Structure-Based (Molecular Docking) [46] | Provides mechanistic hypotheses; well-established. | Various candidates for COVID-19 [46] | Computationally intensive; accuracy depends on protein model quality. |
| AI/ML-Based [42] [46] | Can integrate multi-omics data for novel predictions. | Bupropion (Depression → Smoking Cessation) [46] | Intellectual property protection can be challenging [46]. |
| Traditional (Serendipitous) [42] | Has led to major successes. | Aspirin (Inflammation → Antiplatelet) [42] | Unsystematic, unpredictable, and inefficient. |

Table 3: The Scientist's Toolkit - Essential Research Reagents and Resources

| Resource / Reagent | Type | Function in Research | Example Use Case |
|---|---|---|---|
| Primary Human Hepatocytes (PHH) [40] [44] | In Vitro Cell Model | Gold standard for human-relevant liver toxicology studies; retain metabolic competence. | Experimental validation of DILI predictions in 3D culture [44]. |
| HepaRG Cell Line [40] | In Vitro Cell Model | Differentiates into hepatocyte-like cells with strong metabolic enzyme expression. | Studying chronic drug effects and compounds requiring metabolic activation [40]. |
| LINCS L1000 Dataset [44] | Transcriptomics Database | Contains over 1.3 million gene expression profiles from drug-treated cell lines. | Training data for signature-based repurposing and DILI models [44]. |
| FDA DILIrank / DILIst [43] [44] | Curated Database | Benchmark datasets of drugs with verified DILI concern levels for model training and validation. | Serving as a ground truth for developing and benchmarking DILI prediction algorithms [43]. |
| Open TG-GATEs [47] | Toxicogenomics Database | Provides transcriptomic data from drugs across multiple concentrations and time points. | Concentration-response modeling and mechanistic studies of DILI [47]. |
| CSD, ChEMBL, PDB [48] | Chemical/Biological Database | FAIR (Findable, Accessible, Interoperable, Reusable) databases of chemical structures and bioactivities. | Structure-based screening and knowledge graph construction for repurposing [48]. |

Experimental Protocols for Key Studies

This protocol outlines the methodology for developing state-of-the-art GNN models like DILIGeNN.

- Data Curation: Obtain the latest FDA DILI dataset (e.g., DILIst). Standardize and curate molecular structures.

- Molecular Graph Generation: Convert each molecule into a graph representation where atoms are nodes and bonds are edges. Augment these graphs with 3D spatial and electrostatic features (e.g., bond lengths, partial charges) derived from molecular optimization.

- Model Training:

  - Implement and compare multiple GNN architectures (e.g., GCN, GAT, GraphSAGE, GIN).

  - Use a warm-start training strategy with repeated early stopping to avoid overfitting and improve generalization.

  - The model learns to map the augmented graph structure to a DILI risk classification (e.g., Most Concern vs. Less/No Concern).

- Model Validation: Perform strict scaffold-based splitting of the dataset to evaluate performance on structurally novel compounds. Report standard metrics like AUC and accuracy.
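The scaffold-based split in the validation step is what distinguishes this evaluation from a random split: every compound sharing a core scaffold must land on the same side. The sketch below shows one simple way to do this, assuming a scaffold key has already been computed for each compound (for example with a cheminformatics toolkit, which is not shown). The compound IDs, scaffold names, and the greedy largest-group-first assignment are illustrative assumptions, not the procedure used by the DILIGeNN authors.

```python
from collections import defaultdict


def scaffold_split(
    records: list[tuple[str, str]], test_fraction: float = 0.2
) -> tuple[list[str], list[str]]:
    """Split (compound_id, scaffold_key) records so no scaffold spans both sets.

    Groups are assigned greedily, largest first, to the training set until it
    is full; remaining groups go to the test set.
    """
    groups: dict[str, list[str]] = defaultdict(list)
    for compound_id, scaffold in records:
        groups[scaffold].append(compound_id)

    train_target = len(records) - round(test_fraction * len(records))
    train: list[str] = []
    test: list[str] = []
    for members in sorted(groups.values(), key=len, reverse=True):
        if len(train) + len(members) <= train_target:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


# Hypothetical compounds with precomputed scaffold keys.
records = [
    ("c1", "benzene"), ("c2", "benzene"), ("c3", "benzene"), ("c4", "benzene"),
    ("c5", "pyridine"), ("c6", "pyridine"), ("c7", "pyridine"),
    ("c8", "indole"), ("c9", "indole"),
    ("c10", "quinoline"),
]
train_ids, test_ids = scaffold_split(records, test_fraction=0.2)
print("train:", train_ids)
print("test:", test_ids)
```

Because whole scaffold groups move together, the achieved test fraction only approximates the requested one, and performance measured this way is usually lower, and more honest, than on a random split.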

This protocol describes an experimental workflow to biologically validate computational DILI predictions.

- Prediction Phase: Use a trained in silico model (e.g., BioGL-GCN) to predict the hepatotoxicity of a compound library.

- Cell Culture: Seed primary human hepatocytes (PHHs) in a 3D culture system (e.g., spheroids) to better mimic the in vivo liver environment.

- Compound Exposure: Treat the 3D PHH spheroids with the predicted DILI-positive and DILI-negative compounds across a range of physiologically relevant concentrations.

- Endpoint Assessment: After 48-72 hours of exposure, measure established endpoints of hepatotoxicity:

  - Cell Viability: Using ATP-based assays (e.g., CellTiter-Glo).

  - Liver-Specific Damage: Measure release of biomarkers like ALT and AST into the culture medium.

- Data Analysis: Compare the in silico predictions with the experimental viability and toxicity data to calculate the model's prediction accuracy.
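The final comparison step reduces to a confusion matrix once each compound has an experimental call. The sketch below assumes a compound is called hepatotoxic when spheroid viability falls below 50% of vehicle control. That cutoff, the compound names, and the viability values are hypothetical and chosen only to make the arithmetic visible. A real study would define the call from its own concentration-response data.

```python
# Assumption: viability below this percentage of vehicle control counts as toxic.
VIABILITY_CUTOFF = 50.0

# Hypothetical results: (compound, predicted DILI-positive?, viability % of control)
compounds = [
    ("cmpd_A", True, 22.0),
    ("cmpd_B", True, 41.0),
    ("cmpd_C", True, 88.0),
    ("cmpd_D", False, 95.0),
    ("cmpd_E", False, 91.0),
    ("cmpd_F", False, 35.0),
    ("cmpd_G", True, 12.0),
    ("cmpd_H", False, 79.0),
]

predicted_toxic = [predicted for _, predicted, _ in compounds]
observed_toxic = [viability < VIABILITY_CUTOFF for _, _, viability in compounds]

pairs = list(zip(predicted_toxic, observed_toxic))
tp = sum(p and o for p, o in pairs)              # predicted toxic, observed toxic
tn = sum((not p) and (not o) for p, o in pairs)  # predicted safe, observed safe
fp = sum(p and (not o) for p, o in pairs)        # predicted toxic, observed safe
fn = sum((not p) and o for p, o in pairs)        # predicted safe, observed toxic

prediction_accuracy = (tp + tn) / len(compounds)
sensitivity = tp / (tp + fn)  # share of experimentally toxic compounds flagged
specificity = tn / (tn + fp)  # share of experimentally safe compounds cleared

print(f"TP={tp} TN={tn} FP={fp} FN={fn}")
print(f"accuracy={prediction_accuracy:.2f} "
      f"sensitivity={sensitivity:.2f} specificity={specificity:.2f}")
```

Reporting sensitivity and specificity alongside accuracy matters for DILI in particular, since a missed hepatotoxic compound and a falsely flagged safe one carry very different costs.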

This protocol leverages high-throughput transcriptomic data for systematic drug repurposing.

- Disease Signature Generation:

  - Obtain gene expression data from diseased tissue (e.g., from GEO) and healthy controls.

  - Perform differential expression analysis to identify a unique "disease signature" (a set of up- and down-regulated genes).

- Drug Signature Query:

  - Access a large-scale drug perturbation database like LINCS L1000, which contains gene expression profiles from cell lines treated with thousands of compounds.

  - Extract the "drug signature" for each compound in the database.

- Pattern-Matching Analysis:

  - Use a connectivity metric (e.g., Kolmogorov-Smirnov test, cosine similarity) to compare the disease signature with all drug signatures.

  - The goal is to identify drugs whose signature is inversely correlated ("reversed") with the disease signature, implying a potential therapeutic effect.
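The pattern-matching step can be illustrated with cosine similarity, one of the two connectivity metrics mentioned above. In the sketch below each signature is a mapping from gene to a differential expression value (for instance a log fold change), and drugs are ranked so that the most strongly reversed signature comes first. The gene names, drug names, and values are placeholders, not data from LINCS L1000 or GEO.

```python
import math


def cosine_similarity(sig_a: dict[str, float], sig_b: dict[str, float]) -> float:
    """Cosine similarity over the genes the two signatures share."""
    genes = sorted(set(sig_a) & set(sig_b))
    dot = sum(sig_a[g] * sig_b[g] for g in genes)
    norm_a = math.sqrt(sum(sig_a[g] ** 2 for g in genes))
    norm_b = math.sqrt(sum(sig_b[g] ** 2 for g in genes))
    return dot / (norm_a * norm_b)


# Hypothetical disease signature: positive = up-regulated in disease.
disease_signature = {"GENE1": 2.0, "GENE2": 1.5, "GENE3": -1.0, "GENE4": -2.5}

# Hypothetical drug signatures, as might be extracted from a perturbation database.
drug_signatures = {
    "drug_X": {"GENE1": -1.8, "GENE2": -1.2, "GENE3": 0.9, "GENE4": 2.0},   # reverses
    "drug_Y": {"GENE1": 1.9, "GENE2": 1.4, "GENE3": -0.8, "GENE4": -2.2},   # mimics
    "drug_Z": {"GENE1": 0.5, "GENE2": -0.4, "GENE3": 0.3, "GENE4": 0.1},    # weak
}

connectivity = {
    drug: cosine_similarity(disease_signature, signature)
    for drug, signature in drug_signatures.items()
}

# Most negative score first: these drugs most strongly reverse the disease signature.
ranked_drugs = sorted(connectivity, key=connectivity.get)
for drug in ranked_drugs:
    print(f"{drug}: {connectivity[drug]:+.3f}")
```

A strongly negative score only nominates a candidate. Whether the reversal translates into therapeutic benefit still has to be tested experimentally.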