Large Language Models in Chemical Life Cycle Assessment: A New Frontier for Sustainable Drug Discovery

Abstract

This article explores the transformative potential and practical challenges of integrating Large Language Models (LLMs) into Chemical Life Cycle Assessment (LCA) for researchers and drug development professionals. It provides a comprehensive examination, from foundational concepts where LLMs can automate data-intensive LCA tasks, to methodological applications in drug discovery pipelines like target identification. The content addresses critical troubleshooting for limitations such as model hallucinations and outlines optimization strategies. Finally, it presents a rigorous validation framework, benchmarking LLM performance against expert review to equip scientists with the knowledge to responsibly leverage AI for accelerating sustainable biomedical research.

LLMs and LCA: Demystifying the Core Concepts and Environmental Context

What are Large Language Models? A Primer on Transformers, Tokens, and Training

Large Language Models (LLMs) are a category of deep learning models trained on immense datasets, enabling them to understand, generate, and manipulate natural language with remarkable proficiency [1]. They mark a significant shift in how humans interact with technology: they are among the first AI systems capable of handling unstructured human language at scale, moving beyond simple keyword matching to capture deeper context, nuance, and reasoning [1]. Their development underlies much of the recent surge in artificial intelligence and has become a cornerstone for applications in scientific domains, including chemical life cycle assessment (LCA) research. In LCA, LLMs offer the potential to automate the extraction and synthesis of chemical properties, environmental impact data, and regulatory information from the scientific literature, thereby accelerating sustainable drug development.

Foundational Architecture: The Transformer

At the heart of most modern LLMs lies the transformer architecture, introduced in the seminal 2017 paper "Attention Is All You Need" [2] [3]. This architecture overcame the limitations of earlier recurrent neural networks (RNNs) and long short-term memory (LSTM) networks, which processed data sequentially and were difficult to parallelize [3]. The key innovation of the transformer is the self-attention mechanism, which allows the model to weigh the importance of different words in a sequence relative to each other, regardless of their positional distance [1] [2]. Because attention is computed for all positions at once rather than step by step, training parallelizes well, which significantly reduces training time and allows models to handle long-range dependencies in text effectively [2].

The transformer architecture primarily consists of two components, though some models may use only one:

- Encoder: Processes the input sequence and builds a contextualized representation. It comprises multiple layers, each containing a multi-head self-attention mechanism and a feed-forward neural network [2] [4].

- Decoder: Generates the output sequence one token at a time, using information from the encoder and its own previous outputs. Each decoder layer has the same sub-layers as an encoder layer plus an additional attention sub-layer that attends over the encoder's output [2].

The following diagram illustrates the flow of information through a standard transformer architecture:

The Self-Attention Mechanism

The self-attention mechanism is the centerpiece of the transformer [1]. It allows the model to flexibly focus on relevant context while ignoring less important tokens. For each token in a sequence, self-attention calculates a weighted sum of the values of all tokens in the sequence (the token itself included), where the weights (attention scores) are determined by the compatibility between the token's query and the keys of all tokens [1]. This process enables the model to understand contextual relationships, such as resolving pronoun antecedents (e.g., knowing whether "it" refers to "the animal" or "the street" in a sentence) [4].
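
The weighted-sum description above can be sketched in a few lines. This is a minimal, single-head illustration of scaled dot-product attention using only the Python standard library; the toy vectors are made up, and the learned projections that produce queries, keys, and values in a real transformer are omitted as a simplifying assumption.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention for a single head.

    queries, keys: lists of vectors of dimension d_k; values: list of vectors.
    Returns (outputs, weights), one row per query token.
    """
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Compatibility of this token's query with every token's key.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        w = softmax(scores)  # attention weights sum to 1
        # Weighted sum of all value vectors.
        out = [sum(w_j * v[c] for w_j, v in zip(w, values)) for c in range(len(values[0]))]
        outputs.append(out)
        weights.append(w)
    return outputs, weights

# Toy two-token sequence (illustrative numbers only).
Q = [[1.0, 0.0], [0.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[1.0, 2.0], [3.0, 4.0]]
outputs, weights = self_attention(Q, K, V)
```

Each row of `weights` shows how strongly one token attends to every token in the sequence; here the first token attends mostly to itself because its query aligns with its own key.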

Core Components and Protocols

Tokenization: The Input Protocol

Tokenization is the foundational process of converting raw text into a format understandable by an LLM. It breaks down text into smaller, manageable units called tokens, which can be whole words, subwords, or characters [5] [6]. This process is crucial for models to handle rare words, typos, and multilingual text efficiently [6].

Workflow Protocol: The tokenization process follows a standardized, multi-step protocol:

- Step 1: Normalization: The input text is converted into a standard, machine-friendly form. This can include converting characters to lowercase, applying Unicode normalization, and trimming extra whitespace; the exact steps depend on the tokenizer [5].

- Step 2: Pre-tokenization: The normalized text is broken into preliminary chunks based on spaces and punctuation [5]. For example, "Let's explore!" might become ["Let", "'", "s", "explore", "!"].

- Step 3: Subword Segmentation: The chunks are further broken down into meaningful subword units using algorithms like Byte Pair Encoding (BPE) or WordPiece [5] [7]. This allows the model to process uncommon words (e.g., "unstoppable" → ["un", "stop", "able"]) [5].

- Step 4: Mapping to IDs: Each resulting token is mapped to a unique integer ID from the model's predefined vocabulary, which is fixed after training [5] [7]. Special tokens (e.g., beginning-of-sentence, end-of-sentence) are also added to manage sequence boundaries [5].
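
The four steps above can be traced end to end with a toy pipeline. The sketch below is not any real tokenizer: the vocabulary `VOCAB`, the special-token names, and the greedy longest-match segmentation (a simplified, WordPiece-like stand-in without the "##" prefix) are all assumptions chosen for illustration.

```python
import re
import unicodedata

# Hypothetical toy vocabulary; real vocabularies hold tens of thousands of entries.
VOCAB = {"<bos>": 0, "<eos>": 1, "<unk>": 2, "let": 3, "'": 4, "s": 5,
         "explore": 6, "!": 7, "un": 8, "stop": 9, "able": 10, "p": 11}

def normalize(text):
    """Step 1: Unicode-normalize, lowercase, and collapse whitespace."""
    text = unicodedata.normalize("NFKC", text).lower()
    return " ".join(text.split())

def pre_tokenize(text):
    """Step 2: split into runs of word characters and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)

def segment(chunk, vocab):
    """Step 3: greedy longest-match subword segmentation.

    If any part of the chunk cannot be matched, the whole chunk becomes <unk>.
    """
    pieces, start = [], 0
    while start < len(chunk):
        end = len(chunk)
        while end > start and chunk[start:end] not in vocab:
            end -= 1
        if end == start:
            return ["<unk>"]
        pieces.append(chunk[start:end])
        start = end
    return pieces

def encode(text, vocab=VOCAB):
    """Step 4: add boundary tokens and map every token to its integer ID."""
    tokens = ["<bos>"]
    for chunk in pre_tokenize(normalize(text)):
        tokens.extend(segment(chunk, vocab))
    tokens.append("<eos>")
    return tokens, [vocab[t] for t in tokens]
```

Calling `encode("Let's explore!")` yields the five chunks from Step 2 wrapped in boundary tokens. Because a lossless segmentation must account for every character, this toy vocabulary splits "unstoppable" into "un", "stop", "p", "able"; the three-piece split quoted in the text is best read as a simplified illustration.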

Table 1: Common Tokenization Algorithms and Their Characteristics

| Algorithm | Mechanism | Example Model Usage | Handling of 'unstoppable' |

|---|---|---|---|

| Byte Pair Encoding (BPE) [5] | Iteratively merges the most frequent pairs of characters or bytes. | GPT series [5] [7] | ["un", "stop", "able"] |

| WordPiece [5] | Merges subwords based on probability, not just frequency. | BERT [5] | ["un", "stop", "##able"] |

| Unigram [5] | Uses a probabilistic model to iteratively remove the least valuable tokens. | Not specified | ["un", "stop", "p", "able"] |
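
To make the BPE row concrete, the following sketch performs a single merge step on a tiny word-frequency table. The corpus and its counts are invented for illustration, and production implementations add details (byte-level fallback, end-of-word markers, tie-breaking rules) that are left out here.

```python
from collections import Counter

def most_frequent_pair(words):
    """words maps a tuple of symbols to its corpus frequency."""
    pairs = Counter()
    for symbols, freq in words.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return pairs.most_common(1)[0][0]

def merge_pair(words, pair):
    """Replace every adjacent occurrence of `pair` with one merged symbol."""
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged

# Hypothetical character-level corpus with word counts.
corpus = {tuple("stop"): 5, tuple("step"): 3, tuple("top"): 2}
pair = most_frequent_pair(corpus)   # the pair ("s", "t") occurs 8 times
corpus_after = merge_pair(corpus, pair)
```

Repeating these two calls until a target vocabulary size is reached gives the list of merges that a BPE tokenizer later replays on new text.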

Model Training Pipeline

The development of a sophisticated LLM is a multi-stage process designed to first instill broad knowledge and then refine the model's behavior for specific tasks or alignment with human preferences.

Protocol 1: Pretraining

- Objective: To build a base model (or foundation model) with general-world knowledge and language understanding by learning to predict the next token in a sequence [1] [8].

- Method: Self-supervised learning on a massive corpus of raw, unlabeled text (e.g., web pages, books, code) [1] [8]. The model adjusts its internal parameters (weights) through trillions of examples to minimize the error in its predictions, a process involving backpropagation and gradient descent [1].

- Output: A base model capable of next-token prediction but not yet refined for specific tasks like instruction following [8].
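
The "error in its predictions" that pretraining minimizes is usually the cross-entropy between the predicted next-token distribution and the token that actually follows. The sketch below computes that quantity for hand-written probability vectors; the three-token vocabulary and the numbers are illustrative assumptions, and no gradient step is shown.

```python
import math

def next_token_loss(probabilities, target_ids):
    """Average negative log-likelihood of the true next tokens.

    probabilities[t] is the predicted distribution over the vocabulary at
    position t; target_ids[t] is the index of the token that came next.
    """
    nll = [-math.log(p[t]) for p, t in zip(probabilities, target_ids)]
    return sum(nll) / len(nll)

# Two positions, vocabulary of three tokens (made-up probabilities).
confident = [[0.9, 0.05, 0.05], [0.1, 0.8, 0.1]]
uncertain = [[1 / 3, 1 / 3, 1 / 3], [1 / 3, 1 / 3, 1 / 3]]
targets = [0, 1]

loss_confident = next_token_loss(confident, targets)
loss_uncertain = next_token_loss(uncertain, targets)
```

A model that puts more probability on the correct tokens gets a lower loss; a uniform guess over three tokens gives a loss of ln 3.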

Protocol 2: Post-Training (Fine-Tuning and Alignment)

- A. Supervised Fine-Tuning (SFT)

- Objective: To adapt the base model to perform specific tasks or follow instructions [1] [8].

- Method: Training the model on a smaller, high-quality dataset of (input, output) pairs that demonstrate the desired task, such as question-answering or summarization [1]. This updates the model's weights to produce outputs closer to the human-provided examples.

- B. Reinforcement Learning from Human Feedback (RLHF)

- Objective: To further align the model's outputs with human preferences for qualities like helpfulness, safety, and style [1] [8].

- Method: Humans rank different model outputs, and a reward model is trained to predict these rankings. The LLM is then fine-tuned using reinforcement learning to maximize the reward, encouraging it to generate outputs that humans prefer [1].
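
One common way to train a reward model from rankings is a pairwise loss that pushes the score of the preferred output above the score of the rejected one. The text does not say which loss is used, so the formulation below (negative log-sigmoid of the score difference) is an assumption, shown only to illustrate the idea.

```python
import math

def pairwise_ranking_loss(reward_chosen, reward_rejected):
    """-log(sigmoid(r_chosen - r_rejected)), written as log(1 + exp(-diff))."""
    diff = reward_chosen - reward_rejected
    return math.log1p(math.exp(-diff))

# Scores are hypothetical reward-model outputs for two candidate answers.
loss_agrees = pairwise_ranking_loss(2.0, 0.0)     # model agrees with the human ranking
loss_disagrees = pairwise_ranking_loss(0.0, 2.0)  # model prefers the rejected answer
loss_tied = pairwise_ranking_loss(1.0, 1.0)       # equals ln 2
```

The loss is small when the reward model already ranks the human-preferred output higher and grows as it disagrees.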

Table 2: Key Concepts in LLM Operation and Deployment

| Concept | Description | Implication for Researchers |

|---|---|---|

| Inference [1] | The process where a trained model generates output for a given prompt, one token at a time. | The core operation for using an LLM in an application. |

| Context Window [1] [6] | The maximum number of tokens a model can process in a single interaction. It is the model's "short-term memory." | Limits the amount of text (e.g., a research paper, a long conversation) that can be processed at once. |

| Retrieval-Augmented Generation (RAG) [1] | A technique that connects an LLM to external knowledge bases, providing it with relevant, up-to-date information during inference. | Crucial for overcoming knowledge cut-offs and grounding model responses in specific, factual data (e.g., proprietary chemical databases). |
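
Because the context window caps how many tokens fit in one interaction, long documents are typically split into chunks before processing. The helper below is a minimal sketch of fixed-size chunking with optional overlap; the function name and the parameter values are illustrative, not taken from any particular library.

```python
def chunk_tokens(tokens, max_tokens, overlap=0):
    """Split a token list into windows of at most max_tokens.

    Consecutive windows share `overlap` tokens so that context at the
    boundaries is not lost.
    """
    if max_tokens <= 0 or not 0 <= overlap < max_tokens:
        raise ValueError("need max_tokens > 0 and 0 <= overlap < max_tokens")
    step = max_tokens - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + max_tokens])
        if start + max_tokens >= len(tokens):
            break  # the last window already reaches the end
    return chunks

# Ten placeholder token IDs, a 4-token window, and 1 token of overlap.
chunks = chunk_tokens(list(range(10)), max_tokens=4, overlap=1)
```

In practice the token list would come from the model's own tokenizer, since the context limit is counted in that tokenizer's units.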

The Scientist's Toolkit: Essential Research Reagents for LLM Experimentation

Table 3: Key "Research Reagent Solutions" for LLM Application Development

| Tool / Component | Function / Protocol | Relevance to Chemical LCA Research |

|---|---|---|

| Tokenizer [5] [7] | Converts raw text to token IDs and back. Different models (GPT, BERT) use different tokenizers. | Essential for preprocessing scientific literature, patents, and chemical data sheets before analysis by an LLM. |

| Base Model (e.g., LLaMA, GPT) [1] [9] | A pretrained, general-purpose LLM. Serves as the foundation for task-specific customization. | The starting point for building a domain-specific assistant for life cycle assessment without the prohibitive cost of pretraining. |

| Instruction-Tuned Model [1] | A model fine-tuned to follow user instructions and engage in conversation. | Ready-to-use for Q&A and summarization tasks (e.g., "Summarize the environmental impact of this solvent."). |

| Embedding Model [9] [2] | Converts text into numerical vectors (embeddings) that capture semantic meaning. | Enables semantic search across scientific corpora to find relevant studies based on meaning, not just keywords. |

| RAG Pipeline [1] | A system architecture that retrieves documents from a knowledge base and feeds them to an LLM to generate answers. | Allows an LLM to provide citations from trusted LCA databases and recent research, enhancing answer reliability. |

Application in Chemical Life Cycle Assessment Research

The technical components and protocols detailed above enable powerful applications of LLMs in chemical LCA and drug development. A primary use case is the automation of data extraction and synthesis. LLMs can be deployed to systematically scan and process vast scientific literature, technical datasheets, and regulatory documents to identify and extract key parameters relevant to LCA, such as energy consumption of synthesis pathways, greenhouse gas emissions, water usage, and toxicity profiles [1] [9]. Furthermore, through Retrieval-Augmented Generation (RAG), these models can be grounded in proprietary or highly specialized databases (e.g., Ecoinvent, PubChem), allowing researchers to build conversational interfaces that provide instant, cited answers to complex queries about chemical properties and their environmental impacts [1]. This capability significantly accelerates the early stages of drug development by providing rapid sustainability assessments, thereby fostering the design of greener pharmaceutical compounds and processes.
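
The retrieve-then-generate pattern described above can be sketched without any external service. In this minimal example a bag-of-words vector stands in for a learned embedding model, the three documents are invented placeholders rather than real LCA records, and the final call to an LLM is left out; only the retrieval and prompt-assembly steps are shown.

```python
import math
import re
from collections import Counter

def embed(text):
    """Stand-in embedding: word counts (a real system would use an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d["text"])), reverse=True)
    return ranked[:k]

def build_prompt(query, passages):
    """Assemble a grounded prompt that asks the model to cite source ids."""
    context = "\n".join(f"[{p['id']}] {p['text']}" for p in passages)
    return f"Answer using only the sources below and cite their ids.\n{context}\nQuestion: {query}"

# Hypothetical knowledge base entries (placeholders, not real data).
DOCS = [
    {"id": "doc-1", "text": "Solvent recovery by distillation reduces waste in synthesis."},
    {"id": "doc-2", "text": "Grid electricity mix affects greenhouse gas emissions of production."},
    {"id": "doc-3", "text": "Water usage of cooling towers depends on local climate."},
]
query = "greenhouse gas emissions of electricity"
top = retrieve(query, DOCS, k=1)
prompt = build_prompt(query, top)
```

Passing source ids into the prompt is what lets the generated answer carry citations back to the knowledge base.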

The Data-Intensive Challenge of Traditional Chemical Life Cycle Assessment

Life Cycle Assessment (LCA) has emerged as a critical tool for chemical companies under mounting pressure to reduce environmental impacts, comply with tightening regulations, and meet investor demands for clear sustainability strategies [10]. However, the application of traditional LCA to chemical products presents significant data-intensive challenges that complicate comprehensive environmental impact evaluation. The core of this challenge lies in the need for a comprehensive evaluation of a product's environmental footprint across its entire life cycle – from raw material extraction through production, use, and end-of-life phases [10].

The data requirements for credible chemical LCA are substantial, involving complex supply chains, multiple impact categories, and diverse geographical considerations. These requirements have become increasingly difficult to meet using traditional methodologies alone. Within this context, Large Language Models (LLMs) offer transformative potential to process, analyze, and generate insights from the vast datasets required for robust chemical LCA. The emergence of sophisticated LLM architectures and training approaches, including reinforced reasoning models and cultural learning-based adaptation frameworks, creates new opportunities to overcome longstanding bottlenecks in LCA data management and interpretation [11] [12].

The data-intensive nature of chemical LCA manifests across multiple dimensions, from supply chain complexity to regulatory requirements. The tables below summarize the key challenges and the corresponding data management requirements.

Table 1: Core Data Challenges in Chemical Life Cycle Assessment

| Challenge Dimension | Specific Data Requirements | Traditional Limitations |

|---|---|---|

| Supply Chain Complexity | Data from multiple tiers of suppliers; upstream and downstream emissions tracking [10] | Limited supplier transparency; incomplete Scope 3 emissions data [10] |

| Impact Assessment | Multiple environmental impact categories (GHG emissions, water use, toxicity, etc.) [10] | Data gaps for less common impact categories; methodological inconsistencies |

| Geographical Variability | Region-specific data for energy grids, transportation, and resource availability [10] | Overreliance on global averages; lack of localized data for specific production regions |

| Temporal Dynamics | Time-sensitive data for energy sources, technological evolution, and policy changes | Static assessments that quickly become outdated; insufficient longitudinal tracking |

| Regulatory Compliance | Evidence for claims under EU CSRD, ESPR, Product Environmental Footprint (PEF) [10] | Difficulty substantiating green claims; compliance documentation burdens |

Table 2: Data Management Requirements for Credible Chemical LCA

| Data Management Aspect | Minimum Requirements | Advanced Capabilities Needed |

|---|---|---|

| Data Collection | Primary data for core processes; secondary data for background systems [10] | Automated data extraction from diverse formats (PDFs, spreadsheets, databases) |

| Data Quality | Evidence for data quality indicators (precision, completeness, representativeness) | Intelligent data gap filling with uncertainty quantification |

| Data Integration | Consistent formatting across multiple data sources | Semantic integration of disparate data structures and terminology |

| Data Transparency | Documented data sources and methodological choices | Full audit trails with provenance tracking and version control |

| Data Interpretation | Identification of environmental "hotspots" across life cycle stages [10] | Predictive modeling of improvement scenarios; strategic priority setting |

LLM-Enhanced Experimental Protocols for Chemical LCA

Protocol: Automated Data Extraction and Categorization from LCA Databases

Purpose: To systematically extract, classify, and structure unstructured LCA data using LLMs to overcome data fragmentation challenges.

Materials and Reagents:

- LLM Platform: Access to foundation models (e.g., GPT-4, Claude, or domain-specific models) [13]

- Data Sources: Scientific literature, regulatory documents, supplier environmental declarations [10]

- Computational Environment: Python/R environment with LLM API access or local model deployment

- Validation Datasets: Curated LCA databases (e.g., Ecoinvent, GLAD) for benchmarking [14]

Procedure:

- Data Collection: Compile heterogeneous data sources including PDF reports, spreadsheet inventories, journal articles, and supplier sustainability disclosures.

- Pre-processing: Convert all documents to standardized text format while preserving structural elements (tables, headings, units).

- LLM Fine-tuning: Adapt base LLM using LCA-specific terminology and methodologies through continued pre-training on curated corpora [13].

- Information Extraction: Implement structured prompting to extract key LCA parameters including:

- Inventory flows (inputs/outputs with quantities and units)

- Geographical and temporal scope information

- Methodological choices (allocation rules, impact assessment methods)

- Data quality indicators

- Cross-Validation: Compare LLM-extracted data with manual extractions on a subset of documents to calculate accuracy metrics.

- Data Integration: Transform extracted information into a standardized LCA data format (e.g., ILCD, EcoSpold) for model import.

Validation Metrics:

- Extraction accuracy (>95% for numerical parameters)

- Unit conversion precision (>99%)

- Completeness of dataset construction (>90% of required fields)
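
The accuracy and completeness targets above can be checked with a short script once a manually extracted reference set exists. The sketch below is one possible scoring scheme, assuming each record is a flat mapping from field name to numeric value; the field names and numbers are hypothetical placeholders.

```python
import math

# Thresholds taken from the validation metrics listed above.
THRESHOLDS = {"accuracy": 0.95, "completeness": 0.90}

def extraction_accuracy(extracted, reference, rel_tol=1e-6):
    """Share of reference fields whose extracted value matches numerically."""
    matches = sum(
        1 for field, ref in reference.items()
        if field in extracted and math.isclose(extracted[field], ref, rel_tol=rel_tol)
    )
    return matches / len(reference)

def completeness(extracted, required_fields):
    """Share of required fields that were filled at all."""
    present = sum(1 for f in required_fields if extracted.get(f) is not None)
    return present / len(required_fields)

def passes(extracted, reference, required_fields):
    return (extraction_accuracy(extracted, reference) > THRESHOLDS["accuracy"]
            and completeness(extracted, required_fields) > THRESHOLDS["completeness"])

# Hypothetical manual reference and LLM output for one process record.
reference = {"electricity_kwh": 12.0, "steam_kg": 3.5, "co2_kg": 0.8, "water_l": 40.0}
extracted = {"electricity_kwh": 12.0, "steam_kg": 3.5, "co2_kg": 0.9, "water_l": 40.0}

accuracy = extraction_accuracy(extracted, reference)
coverage = completeness(extracted, list(reference))
ok = passes(extracted, reference, list(reference))
```

Here every field is present, but one value is wrong, so the record fails the accuracy threshold even though it is complete.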

Protocol: Predictive Hotspot Identification Using Multi-Modal LLMs

Purpose: To leverage LLM reasoning capabilities for identifying environmental impact hotspots and improvement priorities across chemical product life cycles.

Materials and Reagents:

- Reasoning-Enhanced LLM: Models with reinforced reasoning capabilities (e.g., models trained with reinforcement learning for reasoning tasks) [11]

- LCA Inventory Database: Structured inventory data for chemical processes

- Impact Assessment Methods: Standardized characterization factors (e.g., TRACI, ReCiPe)

- Visualization Tools: Graph generation libraries for result communication

Procedure:

- Data Integration: Load complete LCA model with inventory and impact assessment results.

- Contextual Analysis: Apply LLM to analyze process metadata including:

- Technology maturity and scalability

- Economic considerations and cost data

- Regulatory constraints and drivers

- Stakeholder priorities and sustainability goals

- Pattern Recognition: Utilize LLM to identify unusual patterns or discrepancies in impact distributions across life cycle stages.

- Scenario Generation: Create alternative improvement scenarios based on hotspot analysis:

- Raw material substitution options

- Process optimization opportunities

- Energy efficiency improvements

- Circular economy strategies (recycling, recovery)

- Priority Ranking: Apply multi-criteria decision analysis with LLM-assisted weighting of environmental, economic, and technical factors.

- Recommendation Formulation: Generate actionable improvement strategies with estimated impact reduction potential and implementation considerations.

Validation Metrics:

- Alignment with expert hotspot identification (>90% concordance)

- Comprehensiveness of improvement opportunities identified

- Actionability of recommendations for decision-makers
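
The protocol does not define how hotspots are flagged or how concordance with experts is scored. As one plausible reading, the sketch below flags any life cycle stage that contributes at least a chosen share of the total impact and measures agreement with an expert list by Jaccard overlap; the 20% threshold, the stage names, and the impact values are all assumptions.

```python
def contribution_shares(stage_impacts):
    """Fraction of the total impact attributable to each life cycle stage."""
    total = sum(stage_impacts.values())
    return {stage: value / total for stage, value in stage_impacts.items()}

def hotspots(stage_impacts, threshold=0.2):
    """Stages whose share of total impact meets the threshold."""
    shares = contribution_shares(stage_impacts)
    return {stage for stage, share in shares.items() if share >= threshold}

def concordance(model_hotspots, expert_hotspots):
    """Jaccard overlap between two hotspot sets (1.0 means identical)."""
    union = model_hotspots | expert_hotspots
    return len(model_hotspots & expert_hotspots) / len(union) if union else 1.0

# Hypothetical impact scores per stage (arbitrary units).
impacts = {"raw materials": 50.0, "synthesis": 30.0, "transport": 5.0,
           "use": 5.0, "end of life": 10.0}
flagged = hotspots(impacts)
expert = {"raw materials", "synthesis", "end of life"}
agreement = concordance(flagged, expert)
```

A deterministic baseline like this is also a useful cross-check on LLM-generated hotspot lists, since it depends only on the inventory numbers.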

Protocol: Regulatory Compliance and Reporting Automation

Purpose: To automate the generation of compliance documentation for evolving regulatory frameworks using LLM-based content synthesis.

Materials and Reagents:

- Regulatory Knowledge Base: Updated repository of LCA-related regulations (CSRD, ESPR, PEF, DPP) [10]

- Template Library: Standardized reporting templates for different regulatory requirements

- Claim Substantiation Database: Evidence requirements for environmental claims

- Validation Protocols: Methodology for verifying compliance completeness

Procedure:

- Regulatory Monitoring: Continuously update LLM knowledge base with latest regulatory developments and reporting requirements.

- Data Gap Analysis: Systematically identify missing data elements required for specific compliance demonstrations.

- Document Assembly: Generate draft compliance documentation by populating templates with project-specific LCA data.

- Claim Substantiation: Verify all environmental claims against underlying LCA evidence with appropriate uncertainty qualifications.

- Stakeholder Customization: Adapt reporting content and detail level for different audiences (regulators, investors, customers).

- Quality Assurance: Implement automated checks for consistency, completeness, and alignment with regulatory terminology.

Validation Metrics:

- Regulatory requirement coverage (100% of mandatory elements)

- Reduction in manual preparation time (>70%)

- First-pass regulatory acceptance rate (>95%)

Visual Workflows for LLM-Enhanced Chemical LCA

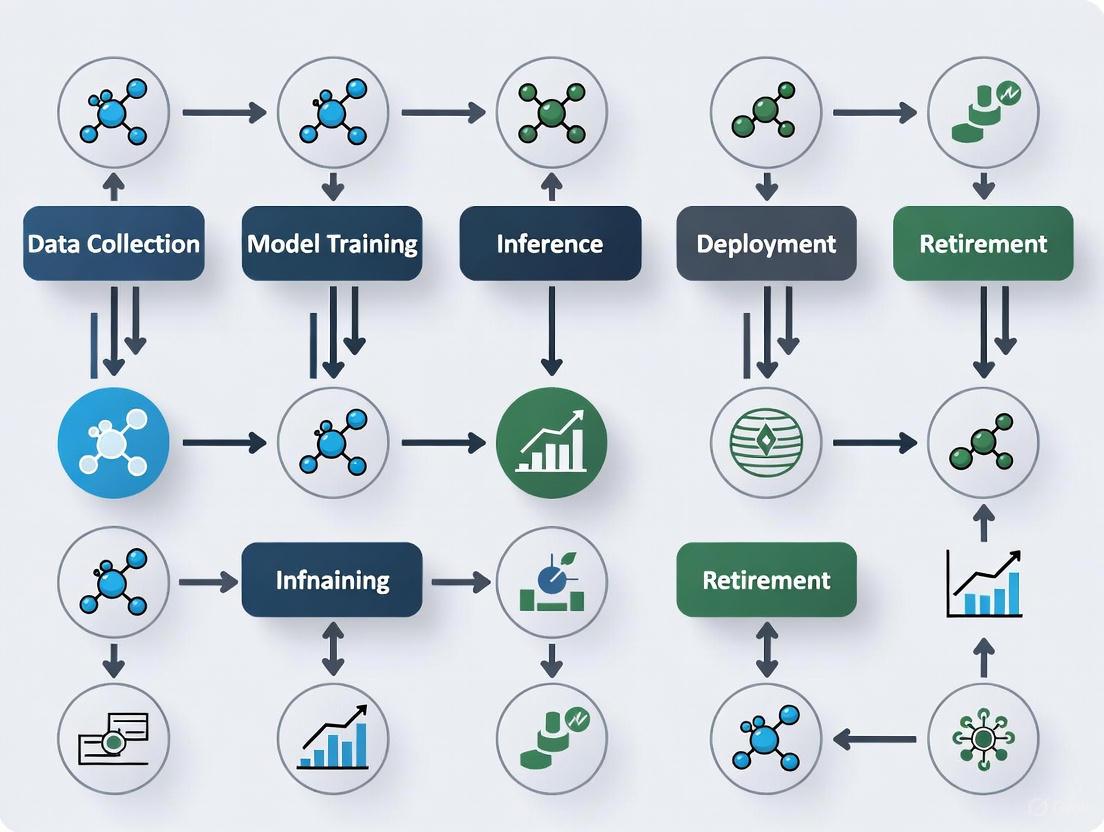

The following diagrams illustrate the integration of LLMs into traditional chemical LCA workflows, highlighting both current applications and emerging opportunities.

Diagram 1: Integration of LLMs within Traditional LCA Workflow. This diagram illustrates how LLM technologies enhance specific phases of the chemical LCA process, particularly in handling data-intensive tasks.

Diagram 2: LLM Architecture for Chemical LCA Data Processing. This diagram outlines the specialized LLM architecture required to transform diverse data inputs into actionable LCA insights, highlighting core processing capabilities.

Table 3: Key Research Reagent Solutions for LLM-Enhanced Chemical LCA

| Tool Category | Specific Solutions | Function in LCA Research |

|---|---|---|

| LLM Platforms & Models | Reasoning-enhanced LLMs (e.g., models with reinforced reasoning training) [11]; Domain-adapted models (e.g., models fine-tuned on chemical literature) | Perform complex pattern recognition across LCA datasets; generate insights from unstructured data; automate reporting tasks |

| LCA Databases & Data Sources | GLAD (Global LCA Data Access) [14]; Ecoinvent database; Proprietary chemical LCA data | Provide foundational life cycle inventory data; enable benchmarking and validation of LLM outputs; ensure data quality |

| Computational Infrastructure | High-performance computing clusters; Cloud-based LLM deployment platforms; Vector databases for embedding storage | Enable processing of large-scale LCA datasets; support fine-tuning of domain-specific models; facilitate rapid experimentation |

| Software & Libraries | Python LCA libraries (Brightway2, Activity-Browser); LLM frameworks (Hugging Face, LangChain); Visualization tools (Graphviz, Plotly) | Support end-to-end LCA modeling; integrate LLM capabilities into existing workflows; create interpretable visualizations |

| Validation & Benchmarking Tools | Standardized LCA datasets with known outcomes; Statistical analysis packages; Uncertainty quantification tools | Verify accuracy of LLM-generated insights; quantify uncertainty in predictions; ensure methodological robustness |

The data-intensive challenges of traditional chemical Life Cycle Assessment represent a significant bottleneck in the chemical industry's sustainability transformation. However, the integration of Large Language Models into LCA workflows offers promising pathways to overcome these limitations through automated data processing, intelligent pattern recognition, and enhanced decision support. By leveraging LLM capabilities for data extraction, analysis, and interpretation, researchers and practitioners can address the core challenges of data complexity, supply chain transparency, and regulatory compliance more effectively than with traditional methods alone.

The experimental protocols and visual workflows presented in this document provide a foundation for implementing LLM-enhanced approaches to chemical LCA. As LLM technologies continue to evolve—particularly in areas of reasoning, domain adaptation, and multimodal processing—their potential to transform chemical life cycle assessment will only increase. Future research should focus on validating these approaches across diverse chemical product categories, improving the integration of uncertainty quantification, and developing standardized benchmarks for evaluating LLM performance in LCA applications. Through continued innovation at the intersection of artificial intelligence and sustainability science, the chemical industry can accelerate its progress toward more sustainable products and processes.

The integration of Large Language Models (LLMs) into chemical research and drug development offers transformative potential for accelerating life cycle assessment (LCA) and molecular discovery. However, this capability comes with a significant and often overlooked environmental cost. The substantial energy and water consumption of training and deploying these models presents a critical paradox: the tools developed to advance science and sustainability are themselves resource-intensive [15] [16]. For researchers and drug development professionals, quantifying this footprint is essential for responsible AI deployment. This document provides detailed application notes and protocols to measure, benchmark, and mitigate the environmental impact of LLMs within a chemical LCA research context.

Quantitative Footprint of LLMs

The environmental footprint of LLMs is primarily measured through energy consumption (and its associated carbon emissions) and water use. The following tables summarize key quantitative data for benchmarking.

Table 1: AI Inference Operational Footprint (Per Prompt)

| Metric | Low-Efficiency Benchmark | High-Efficiency Benchmark (e.g., Gemini) | Equivalent Context |

|---|---|---|---|

| Energy | Up to 29 Wh per long prompt [17] | 0.24 Wh (median text prompt) [18] | Equivalent to watching TV for <9 seconds [18] |

| Carbon Emissions | — | 0.03 gCO2e [18] | — |

| Water Consumption | ~519 mL per 100 words [19] | 0.26 mL [18] | Five drops of water [18] |

Table 2: Projected Macro-Scale Demand from AI Data Centers

| Resource | Baseline Consumption (year) | Projected Consumption (year) | Context & Drivers |

|---|---|---|---|

| Power Demand | 55 GW (2023) [20] | 84 GW (2027) [20] | Demand is projected to rise 165% by 2030 relative to 2023, driven by high-density AI workloads [20]. |

| Electricity Consumption (Global) | 460 TWh (2022) [16] | Approaching 1,050 TWh (2026) [16] | AI is a major driver; could make data centers a top global electricity consumer [16]. |

| Direct Water Use (U.S.) | 66 billion liters (2023) [21] | Increasing in parallel with energy [19] | Driven by cooling needs; varies significantly by local climate and cooling technology [19] [21]. |

Experimental Protocols for Footprint Measurement

Accurately measuring the resource consumption of LLMs requires a comprehensive methodology that moves beyond theoretical chip-level calculations to account for real-world, full-system overhead.

Protocol: Comprehensive Life Cycle Inventory for LLM Inference

This protocol outlines a framework for quantifying the energy, carbon, and water footprint of an LLM inference task, such as an API call to a commercial model.

1. Goal and Scope Definition:

- Functional Unit: Define the system's function. For LLM inference, this is typically a single query or prompt, characterized by input and output token length [17].

- System Boundary: The assessment must include:

- Dynamic Power of Full System: Energy used by the primary ML accelerators (TPUs/GPUs), host CPUs, and RAM during active computation [18].

- Idle Power Attribution: Energy consumed by provisioned but idle capacity, required for reliability and traffic spikes [18].

- Data Center Overhead: Energy for cooling, power distribution, and other support infrastructure, measured by Power Usage Effectiveness (PUE) [18].

- Water Consumption: Water evaporated for on-site cooling and water consumed in the generation of the electricity used [19] [21].

2. Life Cycle Inventory (LCI) Data Collection:

- Energy Measurement: Combine direct power measurements with infrastructure multipliers.

- Method A (API-based): For commercial models, use a benchmarking framework that pairs API performance data (e.g., latency, tokens per second) with provider-specific environmental multipliers for energy and carbon [17].

- Method B (Infrastructure-aware): For open-source models, measure power draw at the GPU/TPU level using profiling tools (e.g., nvml for NVIDIA GPUs). To account for full-system consumption, a common heuristic is to double the GPU power draw to include CPUs, fans, and other overheads [15].

- Apply PUE: Multiply the total IT equipment energy by the data center's PUE to account for infrastructure overhead. Google's fleet-wide average PUE is 1.09, but this varies by facility [18].

- Calculate Carbon: Multiply total energy (kWh) by the local grid's carbon intensity (gCO2e/kWh) [18].

- Water Measurement: Calculate water footprint using direct and indirect factors.

- Direct Water: Multiply the total energy consumed (kWh) by the data center's Water Usage Effectiveness (WUE), reported in liters/kWh. The average WUE across data centers is 1.9 L/kWh [19].

- Indirect Water from Electricity: Multiply energy from the grid (kWh) by the water intensity of the power source (e.g., ~1.2 gallons/kWh, about 4.5 L/kWh, U.S. average in 2023) [19].

3. Interpretation:

- Report results per functional unit (e.g., energy/query, water/query).

- Conduct sensitivity analysis on key parameters (e.g., prompt length, model size, grid location) [15].

- Compare results against benchmarks (see Table 1) to contextualize performance.
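
The inventory arithmetic in this protocol reduces to a handful of multiplications. The sketch below chains them for Method B, using the PUE, WUE, and grid water figures quoted above; the per-query GPU energy and the grid carbon intensity are hypothetical inputs. It also assumes WUE is applied to IT equipment energy (its conventional definition), which is one reading of "total energy" in the text.

```python
LITERS_PER_GALLON = 3.785411784  # US gallon to liters

def inference_footprint(gpu_energy_kwh, pue, grid_gco2e_per_kwh,
                        wue_l_per_kwh, grid_water_l_per_kwh,
                        system_multiplier=2.0):
    """Per-query energy, carbon, and water estimate.

    system_multiplier is the "double the GPU draw" heuristic for CPUs,
    fans, and other host overheads.
    """
    it_energy = gpu_energy_kwh * system_multiplier       # full-system IT energy
    facility_energy = it_energy * pue                    # adds cooling and power distribution
    carbon = facility_energy * grid_gco2e_per_kwh        # gCO2e
    direct_water = it_energy * wue_l_per_kwh             # on-site cooling (assumption: per IT kWh)
    indirect_water = facility_energy * grid_water_l_per_kwh  # water embedded in electricity
    return {
        "facility_energy_kwh": facility_energy,
        "carbon_gco2e": carbon,
        "direct_water_l": direct_water,
        "indirect_water_l": indirect_water,
        "total_water_l": direct_water + indirect_water,
    }

result = inference_footprint(
    gpu_energy_kwh=0.001,                          # hypothetical measured GPU energy per query
    pue=1.09,                                      # fleet-wide figure quoted above
    grid_gco2e_per_kwh=400.0,                      # hypothetical grid carbon intensity
    wue_l_per_kwh=1.9,                             # average WUE quoted above
    grid_water_l_per_kwh=1.2 * LITERS_PER_GALLON,  # 1.2 gal/kWh converted to liters
)
```

Re-running the function with different prompt-level energy values or grid factors gives the sensitivity analysis called for in the interpretation step.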

Protocol: LLM-Assisted Data Retrieval for Chemical LCA

This protocol leverages a domain-specific LLM to automate life cycle inventory (LCI) data retrieval from scientific literature, significantly reducing the manual research time and associated environmental burden [22].

1. Model Selection and Retraining:

- Base Model: Select a suitable open-weight model (e.g., LLaMA-2-7B) [22].

- Domain Adaptation (Pre-training): Inject domain knowledge by continuing pre-training on a curated corpus of scientific texts related to the LCA domain (e.g., methanol production, plastic packaging EoL treatment) [22].

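- Task Fine-tuning: Fine-tune the model for the specific downstream tasks used in the workflow below: document classification (Stage 1) and question answering over retrieved text (Stage 3).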

2. Workflow Execution:

- Stage 1 - Document Identification: The fine-tuned classification model screens a corpus of literature (e.g., PDFs from PubMed, ACS Publications) to identify relevant studies [22].

- Stage 2 - Information Retrieval: A RAG pipeline is used to fetch the most relevant text chunks from the pre-identified documents based on a user query (e.g., "What is the global warming potential for producing 1 kg of methanol from biomass?").

- Stage 3 - Data Extraction: The fine-tuned Q&A model processes the retrieved context to generate a precise answer containing the requested LCI data [22].

3. Validation:

- Validate the framework's performance by comparing its extracted data against ground-truth sources like the USLCI database or manual expert extraction [22].

- Target performance metrics include high accuracy in document classification (>0.85) and high F1 scores for Q&A (>0.82) [22].
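
The text does not state how the Q&A F1 score is computed. A widely used convention for extractive question answering is token-overlap F1 between the predicted and reference answers, and the sketch below implements that convention as an assumption; the example strings are invented.

```python
from collections import Counter

def token_f1(prediction, reference):
    """Token-overlap F1 between a predicted and a reference answer."""
    pred = prediction.lower().split()
    ref = reference.lower().split()
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)

# Hypothetical model answer versus a manually extracted reference.
score_partial = token_f1("0.5 kg CO2 eq", "0.5 kg CO2 eq per kg methanol")
score_exact = token_f1("0.5 kg CO2 eq", "0.5 kg CO2 eq")
```

Averaging `token_f1` over a held-out set of question and answer pairs gives a single number that can be compared with the target above. For numeric LCI values, an additional exact-match check on the number and unit is advisable, since a partially overlapping answer can still carry the wrong quantity.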

The following workflow diagram illustrates the protocol for comprehensive footprint measurement and the LLM-assisted data retrieval for chemical LCA.

The Scientist's Toolkit: Research Reagents & Materials

This section details key "research reagents"—technologies and strategies—essential for developing and deploying more sustainable LLMs in a research environment.

Table 3: Key Reagents for Sustainable AI Research

| Reagent Solution | Function & Mechanism | Application in LCA Research |
|---|---|---|
| Mixture-of-Experts (MoE) Models | Activates only a small subset of the model's neural network for a given query, reducing computations and data transfer by 10-100x [18]. | Running large, multi-purpose models for various LCA tasks (e.g., data extraction, impact interpretation) with lower operational footprint. |
| Quantization (e.g., AQT) | Reduces the numerical precision of model weights (e.g., from 32-bit to 8-bit), decreasing memory use and energy consumption without significant quality loss [18]. | Deploying models on local infrastructure or with smaller hardware footprints for faster, less energy-intensive inference. |
| Advanced Cooling Systems | Dissipates heat more efficiently than air cooling. Immersion cooling, where hardware is submerged in dielectric fluid, offers significant energy and water savings [19] [21]. | Essential for siting high-performance computing (HPC) clusters for AI model training in water-stressed regions. Reduces direct operational water footprint. |
| Carbon-Aware Computing | Schedules and routes non-urgent AI training jobs to times and locations where grid carbon intensity is lowest (e.g., when solar/wind are abundant) [23]. | A strategy for researchers to minimize the carbon footprint of long-running model training or large batch inference jobs for LCA. |
| Retrieval Augmented Generation (RAG) | Grounds an LLM on a specific, external knowledge base (e.g., a proprietary LCI database) to reduce "hallucinations" and improve accuracy without retraining the entire model [22]. | Creating highly accurate, domain-specific LCA assistants that provide reliable data, reducing time and resource waste from error correction. |
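To make the carbon-aware computing entry concrete, the following minimal sketch picks the start time for a fixed-length job that minimizes average grid carbon intensity. It assumes an hourly forecast is already available; the forecast values and the function name `best_start_hour` are invented for illustration and do not come from the cited source.

```python
def best_start_hour(carbon_intensity, job_hours):
    """Return (start_index, mean_intensity) of the lowest-carbon contiguous window."""
    if job_hours <= 0 or job_hours > len(carbon_intensity):
        raise ValueError("job_hours must fit inside the forecast")
    best_start, best_mean = 0, float("inf")
    for start in range(len(carbon_intensity) - job_hours + 1):
        window = carbon_intensity[start:start + job_hours]
        mean = sum(window) / job_hours
        if mean < best_mean:
            best_start, best_mean = start, mean
    return best_start, best_mean


# Hypothetical hourly forecast in gCO2e/kWh (made-up numbers for illustration).
forecast = [450, 430, 400, 320, 210, 180, 190, 260, 380, 440]
start, mean_intensity = best_start_hour(forecast, job_hours=3)
```

A production scheduler would also need to respect deadlines and hardware availability, which this sketch leaves out.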

The environmental footprint of LLMs is a non-trivial factor that must be integrated into the planning and execution of chemical life cycle assessment research. By adopting the standardized measurement protocols, benchmarking against quantitative data, and leveraging the "reagents" of efficient models and computing strategies outlined in this document, researchers and drug development professionals can harness the power of AI responsibly. This ensures that the pursuit of scientific innovation and sustainability through AI does not come at an unacceptable cost to the planet.

The integration of Large Language Models (LLMs) into chemical life cycle assessment and drug development represents a fundamental transformation in research methodology rather than a replacement of human expertise. This paradigm shift positions AI as a collaborative partner that accelerates discovery while leveraging human scientific intuition. Chemical research has traditionally faced significant challenges, including efficiency bottlenecks where drug discovery requires screening 10⁴-10⁶ compounds over 5-10 years, data management difficulties with millions of dispersed chemical data points in heterogeneous formats, and complex system modeling challenges for problems like protein folding that demand enormous computational resources [24]. Within this context, LLMs have evolved from simple pattern recognition tools to sophisticated partners capable of augmenting human intelligence across the entire chemical research lifecycle.

The progression of AI in chemistry has moved through three distinct phases: the 1.0 stage (1980s-2010s) characterized by rules and statistical models like QSAR with limited generalization capability; the 2.0 stage (2010s-2020s) marked by deep learning approaches using CNNs for spectra and GNNs for molecular graphs that improved prediction accuracy but still required human experimental guidance; and the current 3.0 stage (2020s-present) defined by intelligent agent systems that create closed-loop cycles of "data input→model reasoning→experimental decision→result feedback→model update" [24]. This evolution has transformed LLMs from passive tools into active collaborators that enhance rather than replace scientific expertise, particularly in complex domains like chemical life cycle assessment where contextual understanding and multi-stage evaluation are critical.

Table: Evolution of AI in Chemical Research

| Phase | Time Period | Key Technologies | Capability Level | Human Role |
|---|---|---|---|---|
| AI 1.0 | 1980s-2010s | QSAR, Molecular Fingerprints, Statistical Models | Limited Generalization | Full experimental control |
| AI 2.0 | 2010s-2020s | Deep Learning (CNN, RNN, GNN), Pattern Recognition | Improved Prediction Accuracy | Experimental design & guidance |
| AI 3.0 | 2020s-Present | LLM Agents, Autonomous Experimentation, Closed-Loop Systems | Autonomous Research Capability | Strategic oversight & expertise integration |

Application Notes: LLM-Driven Acceleration in Chemical Research

Molecular Design and Optimization

LLMs function as force multipliers in molecular design by rapidly exploring chemical space and predicting structure-property relationships that would require extensive experimental investigation through traditional methods. Specialized scientific language models like ChemBERTa and MolBERT represent molecular structures as embeddings in continuous vector spaces, capturing complex chemical similarities and relationships that enable property prediction and analog generation [24] [25]. These models learn the mapping between molecular structure and chemical properties (structure-property relationships), allowing researchers to focus experimental efforts on the most promising candidates. For example, Chemformer models have demonstrated exceptional capability in reaction prediction and optimization tasks, achieving accuracy levels that surpass human chemists in specific domains [25].
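The idea of comparing molecules in an embedding space can be sketched in a few lines. The example below is an assumption-laden illustration: the three-dimensional vectors are invented stand-ins for embeddings that a model such as ChemBERTa would produce, and `rank_analogs` is a hypothetical helper, not part of any library.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def rank_analogs(query, library):
    """Sort library molecules by descending cosine similarity to the query embedding."""
    scored = [(name, cosine_similarity(query, vec)) for name, vec in library.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Toy 3-dimensional vectors standing in for model embeddings (invented values).
library = {
    "ethanol": [0.9, 0.1, 0.2],
    "propanol": [0.8, 0.2, 0.3],
    "benzene": [0.1, 0.9, 0.4],
}
query_methanol = [1.0, 0.0, 0.1]
ranking = rank_analogs(query_methanol, library)
```

Real embeddings have hundreds of dimensions, but the ranking logic is the same.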

The integration of multi-modal approaches represents a particular strength of LLM-enabled molecular design. By combining molecular graph data with spectral information, textual research findings, and experimental results, these systems develop a comprehensive understanding of chemical behavior that transcends single-data-type approaches. Vision Transformer architectures processing infrared spectra coupled with GNNs analyzing molecular structure have shown significantly improved prediction accuracy for complex chemical properties compared to single-modality approaches [24]. This multi-modal capability is especially valuable in chemical life cycle assessment, where environmental impact, synthetic complexity, and functional performance must be balanced simultaneously.

Reaction Prediction and Optimization

The application of LLMs to reaction prediction and optimization has demonstrated remarkable acceleration in synthetic planning, with systems like IBM's RXN for Chemistry achieving unprecedented accuracy in predicting reaction outcomes and suggesting optimal conditions [24]. These models leverage vast chemical corpora including patents, research articles, and experimental data to identify patterns and relationships that inform synthetic planning. The core capability lies in the models' capacity to process chemical representations—particularly SMILES strings and molecular graphs—to predict reactivity, selectivity, and potential side products with accuracy rates exceeding traditional computational methods while requiring minimal computational resources [26].

Beyond forward prediction, LLMs excel at retrosynthetic analysis, decomposing target molecules into feasible synthetic pathways using available starting materials. Systems leveraging transformer architectures trained on reaction databases can propose multiple synthetic routes with assessment of step efficiency, atom economy, and potential hazards [25]. When integrated with robotic experimentation platforms, these systems create closed-loop environments where predictions inform experiments, results refine models, and the cycle continues autonomously. For instance, the RoboChem platform demonstrated the capability to complete approximately 20 molecular syntheses and optimizations per week—equivalent to a traditional research team's six-month output—through this continuous integration of prediction and experimentation [26].

Table: Quantitative Performance of LLMs in Chemical Research Applications

| Application Area | Traditional Method Timeline | LLM-Accelerated Timeline | Performance Improvement | Key Enabling Technologies |
|---|---|---|---|---|
| Molecular Design | 12-18 months | 2-5 months | 90% reduction in lead identification time [26] | GNNs, Transformer Models, Molecular Embeddings |
| Reaction Optimization | 3-6 months | 2-4 weeks | 40% improvement in parameter optimization efficiency [26] | Retrosynthesis Algorithms, Condition Prediction Models |
| ADMET Prediction | 4-8 weeks | 1-2 days | Accuracy exceeding traditional QSAR methods [25] | Multi-task Learning, Transfer Learning |
| Experimental Execution | Manual processes (days) | Automated workflows (hours) | 30x increase in experimental throughput [26] | Robotic Platforms, Autonomous Lab Equipment |

Chemical Life Cycle Assessment

LLMs bring transformative capabilities to chemical life cycle assessment by integrating diverse data sources—from synthetic pathways and environmental impact databases to regulatory frameworks and economic factors—into a comprehensive analytical framework. Specialized models can process technical literature, patent databases, and chemical inventories to map the complete life cycle of chemical products, from raw material extraction through production, use, and disposal [27]. This systems-level analysis enables researchers to identify environmental hotspots, evaluate green chemistry alternatives, and predict unintended consequences before committing to extensive laboratory work or production scaling.

The capacity of LLMs to navigate complex, multi-dimensional constraints makes them particularly valuable for sustainable chemical design. Models can simultaneously optimize for functionality, synthetic efficiency, and environmental impact by accessing and processing specialized databases like Ecoinvent, GaBi, and US LCI that contain detailed environmental impact factors for thousands of chemical processes and materials [27]. For example, an LLM system might identify a catalytic alternative that reduces energy consumption by 40% while maintaining yield, or suggest a biodegradable structural analog that eliminates persistent environmental pollutants—decisions that would be extraordinarily time-consuming through manual literature review alone.

Experimental Protocols: Methodologies for LLM Integration

Protocol 1: LLM-Augmented Molecular Property Prediction

Objective: To predict key molecular properties (solubility, toxicity, biological activity) using LLM embeddings and validate predictions through experimental testing.

Materials and Reagents:

- Chemical compounds for validation (purity >95%)

- Molecular representation software (RDKit, OpenBabel)

- Pre-trained scientific LLM (ChemBERTa, MolBERT)

- Assay materials for experimental validation

- Statistical analysis software (Python, R)

Procedure:

- Molecular Representation: Convert chemical structures to standardized SMILES notation and compute molecular descriptors using RDKit. Generate alternative representations (molecular graphs, fingerprints) for multi-modal analysis.

- LLM Embedding Generation: Process molecular representations through pre-trained scientific LLMs to generate embedding vectors that capture structural and functional characteristics. For transformer models, use attention mechanisms to identify structurally significant molecular substructures.

- Property Prediction: Feed molecular embeddings into prediction heads (fully connected neural networks) trained on curated chemical datasets (ChEMBL, PubChem) to estimate target properties. Implement ensemble methods where multiple models contribute to final predictions with confidence intervals.

- Experimental Validation: Select compounds representing prediction confidence extremes (high-confidence vs. borderline predictions) for experimental testing. Perform standardized assays (e.g., solubility measurement, cytotoxicity testing) under controlled conditions.

- Model Refinement: Incorporate experimental results into training data for model fine-tuning. Implement active learning strategies to prioritize compounds for testing that maximize model improvement.
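The ensemble and active-learning steps above can be sketched as follows. This is a simplified illustration under stated assumptions: it uses the sample standard deviation across ensemble members as the uncertainty signal (the protocol mentions confidence intervals but does not fix a method), and the compound names and predicted values are invented.

```python
from statistics import mean, stdev


def ensemble_summary(predictions_by_model):
    """Per-compound mean and sample standard deviation across ensemble members."""
    compounds = predictions_by_model[0].keys()
    summary = {}
    for compound in compounds:
        values = [model[compound] for model in predictions_by_model]
        summary[compound] = (mean(values), stdev(values))
    return summary


def select_for_testing(summary, n):
    """Uncertainty sampling: choose the n compounds with the largest ensemble spread."""
    ranked = sorted(summary, key=lambda c: summary[c][1], reverse=True)
    return ranked[:n]


# Hypothetical log-solubility predictions from three models (invented values).
predictions = [
    {"cmpd_A": -2.1, "cmpd_B": -4.0, "cmpd_C": -0.5},
    {"cmpd_A": -2.0, "cmpd_B": -2.8, "cmpd_C": -0.6},
    {"cmpd_A": -2.2, "cmpd_B": -5.1, "cmpd_C": -0.4},
]
summary = ensemble_summary(predictions)
to_test = select_for_testing(summary, n=1)
```

Here the models disagree most about `cmpd_B`, so it is the compound whose measurement is expected to improve the ensemble the most.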

Validation Metrics:

- Prediction accuracy (R², RMSE) against held-out test sets

- Early recognition performance (enrichment factors at 1%, 5% of screened library)

- Computational efficiency (screening rate in molecules/second)

- Experimental confirmation rate (% of predictions validated)
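The first two metrics in this list have standard definitions, shown here as a dependency-free sketch. The function names are hypothetical, and the enrichment factor follows the common definition (hit rate in the top-ranked fraction divided by the hit rate in the whole library).

```python
import math


def rmse(y_true, y_pred):
    """Root mean squared error between measured and predicted values."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def enrichment_factor(scores, is_active, fraction):
    """Hit rate in the top-scoring fraction divided by the hit rate in the whole library."""
    n_top = max(1, int(round(len(scores) * fraction)))
    ranked = sorted(zip(scores, is_active), key=lambda pair: pair[0], reverse=True)
    hits_top = sum(active for _, active in ranked[:n_top])
    hits_all = sum(is_active)
    return (hits_top / n_top) / (hits_all / len(scores))
```

An enrichment factor of 1 means the ranking is no better than random selection; larger values mean actives are concentrated near the top of the ranked list.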

Protocol 2: Retrosynthetic Planning with LLM Guidance

Objective: To develop optimized synthetic routes for target molecules using LLM-based retrosynthetic analysis and validate routes through experimental execution.

Materials and Reagents:

- Target molecule specification (structure, purity requirements)

- Available starting material inventory

- Chemical reaction databases (Reaxys, SciFinder)

- Retrosynthetic planning software (ASKCOS, IBM RXN)

- Laboratory equipment for synthetic validation

Procedure:

- Route Generation: Input target molecule SMILES into LLM-powered retrosynthetic analysis system. Generate multiple synthetic routes using template-based and template-free approaches with step-by-step rationales.

- Route Evaluation: Assess generated routes using multi-criteria scoring including step count, atom economy, predicted yield, safety considerations, and starting material availability. Apply constraint optimization to prioritize routes aligning with sustainability principles.

- Condition Optimization: For selected routes, predict optimal reaction conditions (catalyst, solvent, temperature, concentration) using reaction outcome prediction models. Identify potential side products and purification challenges.

- Experimental Validation: Execute top-ranked synthetic routes in laboratory setting. Monitor reaction progress (TLC, LC-MS) and isolate products for characterization (NMR, HRMS).

- Knowledge Integration: Document experimental results and refinements to original predictions. Update model parameters to incorporate new synthetic knowledge, creating a self-improving system.
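The route evaluation step can be illustrated with a simple weighted-sum score. Everything specific here is an assumption made for the sketch: the weights, the 0-to-1 hazard scale, the `max_steps` normalization, and the three example routes are invented, and a real study would need to justify its own criteria and weights.

```python
from dataclasses import dataclass


@dataclass
class Route:
    name: str
    steps: int
    atom_economy: float      # fraction between 0 and 1
    predicted_yield: float   # overall fraction between 0 and 1
    hazard_score: float      # 0 (benign) to 1 (severe); hypothetical scale


# Illustrative weights; they sum to 1 so scores stay in the range 0 to 1.
WEIGHTS = {"steps": 0.2, "atom_economy": 0.3, "predicted_yield": 0.3, "hazard": 0.2}


def score_route(route, max_steps=10):
    """Weighted sum in which fewer steps and lower hazard score higher."""
    step_term = 1.0 - min(route.steps, max_steps) / max_steps
    return (
        WEIGHTS["steps"] * step_term
        + WEIGHTS["atom_economy"] * route.atom_economy
        + WEIGHTS["predicted_yield"] * route.predicted_yield
        + WEIGHTS["hazard"] * (1.0 - route.hazard_score)
    )


def rank_routes(routes):
    """Return routes sorted from best to worst score."""
    return sorted(routes, key=score_route, reverse=True)


routes = [
    Route("route_1", steps=6, atom_economy=0.55, predicted_yield=0.40, hazard_score=0.3),
    Route("route_2", steps=4, atom_economy=0.70, predicted_yield=0.35, hazard_score=0.6),
    Route("route_3", steps=3, atom_economy=0.80, predicted_yield=0.50, hazard_score=0.2),
]
best = rank_routes(routes)[0]
```

A weighted sum is the simplest choice; hard constraints (for example, excluding any route above a hazard limit) could be applied as a filter before ranking.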

Validation Metrics:

- Route feasibility (successful execution rate)

- Synthetic efficiency (yield, purity, step count reduction)

- Material efficiency (atom economy, E-factor)

- Prediction accuracy (condition success rate)
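The two material-efficiency metrics have simple closed forms, sketched below with hypothetical function names. Atom economy is the molar mass of the desired product divided by the summed molar masses of the reactants; E-factor is the mass of waste per unit mass of product. The esterification example uses rounded molar masses, and the batch masses are invented.

```python
def atom_economy(product_mw, reactant_mws):
    """Percent atom economy: desired-product molar mass over total reactant molar mass."""
    return 100.0 * product_mw / sum(reactant_mws)


def e_factor(total_input_mass, product_mass):
    """E-factor: mass of waste generated per unit mass of isolated product."""
    return (total_input_mass - product_mass) / product_mass


# Fischer esterification: acetic acid + ethanol -> ethyl acetate + water.
# Molar masses in g/mol, rounded to two decimals.
ae = atom_economy(product_mw=88.11, reactant_mws=[60.05, 46.07])

# Hypothetical batch: 12.0 kg of total inputs yielding 2.0 kg of product.
ef = e_factor(total_input_mass=12.0, product_mass=2.0)
```

Atom economy depends only on stoichiometry, whereas E-factor also captures solvents, excess reagents, and yield losses, so the two are usually reported together.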

Protocol 3: Chemical Life Cycle Assessment Using LLM Integration

Objective: To conduct comprehensive life cycle assessments of chemical products using LLM-powered data integration and analysis.

Materials and Reagents:

- LCA software (OpenLCA, SimaPro)

- LCA databases (Ecoinvent, GaBi, US LCI)

- Chemical process data (energy inputs, waste streams, emissions)

- LLM with access to scientific literature and regulatory databases

Procedure:

- Goal and Scope Definition: Define assessment boundaries, functional units, and impact categories. Use LLMs to identify relevant regulatory frameworks and industry standards for compliance.

- Inventory Analysis: Compile energy and material inputs, emissions, and waste streams across the chemical's life cycle. Deploy LLMs to extract and standardize data from disparate sources (technical reports, patents, supplier information).

- Impact Assessment: Calculate environmental impacts using standardized methods (ReCiPe, TRACI). Apply LLMs to identify impact hotspots and contribution analysis through natural language interpretation of results.

- Interpretation and Improvement: Generate improvement recommendations using LLM analysis of alternative materials, processes, and technologies. Create scenario comparisons with projected environmental benefits.

- Reporting and Documentation: Automate generation of assessment reports, executive summaries, and regulatory compliance documentation using LLM writing capabilities.
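The impact assessment and hotspot steps reduce to multiplying inventory flows by characterization factors and ranking the stages by their share of the total. The sketch below assumes a single impact category (global warming) and uses approximate 100-year GWP factors of the kind published by the IPCC; the inventory numbers are invented, and a real assessment would take its factors from the chosen method (e.g., ReCiPe or TRACI).

```python
# Approximate 100-year GWP characterization factors (kg CO2-eq per kg emitted).
# Illustrative values only; use the factors of the selected impact method in practice.
GWP100 = {"CO2": 1.0, "CH4": 28.0, "N2O": 265.0}


def stage_impacts(inventory):
    """Map each life cycle stage to its global warming impact in kg CO2-eq."""
    return {
        stage: sum(mass * GWP100[gas] for gas, mass in emissions.items())
        for stage, emissions in inventory.items()
    }


def contribution_analysis(impacts):
    """Return (stage, share of total) pairs sorted from largest to smallest."""
    total = sum(impacts.values())
    shares = [(stage, value / total) for stage, value in impacts.items()]
    return sorted(shares, key=lambda item: item[1], reverse=True)


# Hypothetical inventory per functional unit (kg emitted per kg of product).
inventory = {
    "raw_materials": {"CO2": 1.2, "CH4": 0.010},
    "synthesis": {"CO2": 3.5, "N2O": 0.002},
    "disposal": {"CO2": 0.4},
}
impacts = stage_impacts(inventory)
hotspots = contribution_analysis(impacts)
```

The sorted shares are the kind of structured result an LLM could then be asked to summarize in natural language, with the numbers themselves computed deterministically.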

Validation Metrics:

- Database coverage and relevance

- Uncertainty quantification in assessments

- Improvement potential identification rate

- Regulatory compliance alignment

The Scientist's Toolkit: Essential Research Reagents and Solutions

The effective implementation of LLM-accelerated chemical research requires both computational and experimental components working in concert. The following toolkit represents essential resources across both domains:

Table: Essential Research Reagents and Computational Tools for LLM-Accelerated Chemistry

| Tool Category | Specific Examples | Function | Access Method |
|---|---|---|---|
| Scientific LLMs | ChemBERTa, MolBERT, Geneformer | Domain-specific language understanding for chemical and biological data | API access, Open-source implementations |
| Chemical Databases | ChEMBL, PubChem, CAS | Curated chemical structures, properties, and bioactivity data | Public APIs, Licensed access |
| LCA Databases | Ecoinvent, GaBi, US LCI | Environmental impact factors for chemical processes | Licensed database access |
| Molecular Representation | RDKit, OpenBabel, SMILES | Standardized chemical structure representation and manipulation | Open-source libraries |
| Reaction Prediction | IBM RXN, ASKCOS | Retrosynthetic analysis and reaction condition prediction | Web interfaces, APIs |
| Automation Platforms | RoboChem, CLARify | Automated execution of chemical synthesis and testing | Integrated hardware-software systems |
| Multi-modal AI | Vision Transformer, GNNs | Processing diverse data types (spectra, structures, text) | Deep learning frameworks |
| Collaboration Frameworks | AutoGen, LangChain | Multi-agent systems for complex problem decomposition | Open-source frameworks |

Implementation Framework: From Theory to Practice

Human-AI Collaborative Workflows

The successful integration of LLMs into chemical research requires thoughtfully designed collaborative workflows that leverage the respective strengths of human and artificial intelligence. Effective frameworks position LLMs as research assistants that handle data-intensive tasks while humans provide strategic direction and nuanced interpretation. For example, in drug discovery, LLMs can rapidly identify potential lead compounds by scanning chemical space and predicting properties, while medicinal chemists apply their understanding of synthetic feasibility, the patent landscape, and clinical requirements to make final selections [25] [28]. This division of labor has been reported to yield large efficiency improvements, with systems like Insilico Medicine's Chemistry42 reducing the timeline for clinical candidate identification from the traditional 4-6 years to approximately 18 months while cutting costs to one-third of conventional approaches [24].

The human-AI interface is particularly critical for handling unexpected results and edge cases where training data may be limited. Researchers should establish protocols for LLM output validation, with clearly defined confidence thresholds that trigger human review. For instance, molecular predictions with confidence scores below 0.85 might automatically route to expert evaluation before experimental commitment. Similarly, contradictory predictions from multiple models (e.g., conflicting toxicity assessments) should flag for human arbitration. These guardrails ensure that the acceleration benefits of LLMs don't come at the cost of scientific rigor, particularly in regulated environments like pharmaceutical development where erroneous conclusions have significant consequences.
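The two guardrails described above (a confidence threshold and a disagreement check) can be expressed as a small routing function. This is a minimal sketch: the function name, the tuple format, and the reason strings are assumptions, and the 0.85 default simply mirrors the example threshold in the text.

```python
def needs_human_review(model_outputs, confidence_threshold=0.85):
    """Return (flag, reason) for a set of per-model predictions on one molecule.

    model_outputs: list of (label, confidence) tuples, one per model.
    """
    labels = {label for label, _ in model_outputs}
    if len(labels) > 1:
        # Contradictory predictions always go to a human, regardless of confidence.
        return True, "models disagree"
    lowest = min(conf for _, conf in model_outputs)
    if lowest < confidence_threshold:
        return True, "confidence below threshold"
    return False, "auto-accept"
```

For example, `needs_human_review([("toxic", 0.95), ("non-toxic", 0.97)])` flags the molecule because the models disagree, even though both are individually confident.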

Evaluation and Validation Frameworks

Robust evaluation methodologies are essential for assessing the performance and reliability of LLM systems in chemical research contexts. Beyond traditional accuracy metrics, evaluation should encompass scientific utility, innovation potential, and practical efficiency gains. Frameworks like GraphArena provide structured assessment approaches, categorizing outputs as Correct (scientifically valid and optimal), Suboptimal (scientifically valid but non-optimal), or Hallucinatory (scientifically invalid) [29]. This granular evaluation is particularly important for chemical applications where partially correct solutions might still have practical value, but scientifically invalid suggestions must be identified and filtered.
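The three-way labeling scheme can be sketched as follows. This is not the GraphArena implementation; it is a hypothetical illustration that assumes each output comes with a validity flag and an objective score that can be compared against a known reference optimum.

```python
from collections import Counter


def categorize(is_valid, objective, best_known, tolerance=1e-9):
    """Label one output as Correct, Suboptimal, or Hallucinatory.

    is_valid: whether the output passed domain validity checks
        (e.g., a parseable structure or a closed mass balance).
    objective, best_known: score of this output and of the reference optimum
        (higher is better); both are hypothetical inputs supplied by the evaluator.
    """
    if not is_valid:
        return "Hallucinatory"
    if objective >= best_known - tolerance:
        return "Correct"
    return "Suboptimal"


def summarize(results):
    """Fraction of outputs in each category."""
    counts = Counter(categorize(*r) for r in results)
    return {label: counts[label] / len(results)
            for label in ("Correct", "Suboptimal", "Hallucinatory")}
```

Reporting the Suboptimal fraction separately keeps usable but imperfect suggestions from being lumped together with invalid ones.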

Validation should occur across multiple dimensions including computational performance, experimental verification, and expert assessment of scientific plausibility. The AR-Bench framework offers methodologies for evaluating active reasoning capabilities—testing how well models can construct reasoning chains, propose hypotheses, gather evidence, and validate conclusions rather than simply retrieving memorized information [29]. This is especially relevant for chemical life cycle assessment, where complex trade-offs and multi-variable optimization require genuine reasoning rather than pattern matching. Implementing these comprehensive evaluation frameworks ensures that LLM acceleration delivers both speed and reliability, maintaining scientific standards while dramatically reducing development timelines.

The positioning of LLMs as accelerators rather than replacements in chemical research represents both a practical approach and a philosophical commitment to human expertise at the center of scientific discovery. The documented applications and protocols demonstrate that the most significant gains occur when LLMs handle data-intensive, repetitive, and pattern-recognition tasks while humans focus on strategic planning, creative problem-solving, and complex decision-making. This collaborative model has already demonstrated transformative potential across chemical life cycle assessment, molecular design, and drug development, with documented reductions in development timelines from years to months and substantial cost savings while maintaining scientific rigor.

Looking forward, the continued evolution of LLM capabilities—particularly in reasoning, multi-modal integration, and specialized scientific knowledge—promises even greater acceleration potential. However, the fundamental principle remains unchanged: these systems serve as amplifiers of human intelligence rather than autonomous scientists. The most successful research organizations will be those that strategically implement the protocols and frameworks outlined here, creating structured collaborations that leverage the unique strengths of both human and artificial intelligence. Through this approach, the chemical research community can address increasingly complex challenges—from sustainable chemistry to personalized therapeutics—with unprecedented speed and efficiency while maintaining the scientific integrity that remains the foundation of meaningful discovery.

The integration of Large Language Models (LLMs) and other artificial intelligence (AI) technologies into environmental science is creating a transformative paradigm for addressing complex sustainability challenges. This convergence is particularly impactful in the specialized domain of chemical life cycle assessment (LCA), where it enables researchers to quantify environmental impacts from raw material extraction to end-of-life treatment with unprecedented speed and precision [30] [31]. The application of these computational approaches is revolutionizing sustainable drug development by allowing researchers to rapidly predict and optimize the environmental footprints of pharmaceutical compounds and processes [32] [30]. However, effective collaboration across these disciplines requires a shared understanding of key terminologies, methodologies, and frameworks that bridge the computational and environmental domains. This document provides essential application notes and experimental protocols to equip researchers, scientists, and drug development professionals with the tools needed to leverage LLMs effectively within chemical LCA research, thereby facilitating more sustainable therapeutic development.

Key Terminologies: A Cross-Disciplinary Lexicon

Table 1: Foundational Terminologies Bridging AI and Environmental Science

| Terminology | Domain | Definition | Relevance to Chemical LCA |
|---|---|---|---|
| Large Language Model (LLM) | Artificial Intelligence | A deep learning model trained on vast amounts of text data to understand, generate, and manipulate human language [33]. | Processes scientific literature to extract life cycle inventory (LCI) data and environmental impact information [22]. |
| Life Cycle Assessment (LCA) | Environmental Science | A standardized methodology (ISO 14040/14044) for evaluating the environmental impacts associated with all stages of a product's life cycle [31]. | Provides the foundational framework for quantifying environmental impacts of chemicals and pharmaceuticals [30]. |
| Life Cycle Inventory (LCI) | Environmental Science | The phase of LCA involving the compilation and quantification of inputs and outputs for a product system throughout its life cycle [31]. | Serves as the primary data source for environmental impact calculations; often targeted for AI-assisted retrieval [22]. |
| Retrieval Augmented Generation (RAG) | Artificial Intelligence | A technique that enhances LLMs by retrieving relevant information from external knowledge bases before generating responses [22]. | Improves accuracy of LCI data extraction from scientific literature and databases [22]. |
| Zero-Shot Anomaly Detection | Artificial Intelligence | The capability of a model to identify anomalies or outliers in data without having been specifically trained on similar examples [33]. | Detects irregularities in environmental monitoring data from sustainable systems without task-specific training [33]. |
| Model Drift | Artificial Intelligence | The degradation of model performance over time due to changes in data distribution or relationships between variables [34] [31]. | Critical for maintaining accuracy in predictive LCA models as chemical processes and environmental data evolve [31]. |
| Prompt Injection | AI Security | A type of attack where maliciously crafted prompts manipulate LLM behavior to produce unintended outputs [34]. | A security concern when using LLMs for environmental data analysis in regulated contexts like pharmaceutical LCA [34]. |
| Carbon Footprint | Environmental Science | The total amount of greenhouse gases emitted directly or indirectly by an activity, product, or organization [30]. | A key impact category measured in chemical LCA, often predicted using machine learning models [30]. |
| LLM Observability | AI Operations | The practice of monitoring LLM applications in production to track performance, usage metrics, and output quality [34]. | Ensures reliability and compliance of AI systems used for automated LCA in pharmaceutical development [34]. |

Quantitative Performance Data in AI-Enhanced Chemical LCA

Table 2: Performance Metrics of AI/LLM Approaches in Chemical LCA Research

| AI Methodology | Application Context | Performance Metrics | Comparative Baseline | Reference |
|---|---|---|---|---|
| Sustain-LLaMA Framework (Fine-tuned LLaMA-2-7B) | LCI data extraction from scientific literature | Classification accuracy: 0.850-0.952; F1 score: 0.823-0.855 | Outperformed non-retrained LLaMA-2-7B and showed comparable/superior accuracy to ChatGPT-4o [22] | Kumar et al., 2025 [22] |
| SigLLM Framework (GPT-3.5 Turbo, Mistral) | Anomaly detection in sustainable infrastructure monitoring | Effectively detected anomalies across 11 datasets (492 univariate time series, 2,349 anomalies) [33] | Performed better than some deep learning transformers but ~30% less accurate than state-of-the-art specialized models (e.g., AER) [33] | Veeramachaneni et al., 2024 [33] |
| Molecular-Structure-Based ML | Prediction of chemicals' life-cycle environmental impacts | Most promising technology for rapid prediction; accuracy depends on training data quality and feature engineering [30] | Addresses limitations of conventional LCA: slow speed and high cost [30] | Green Carbon, 2025 [30] |
| AI-Enhanced Drug Discovery | Target identification and compound screening | Increased compound success rate from 10% (traditional) to 15-20%; reduced single-drug R&D costs by 30-50% [35] | Traditional drug development: 12-15 years, $2.6B average [35] | Zhong Lun, 2025 [35] |

Experimental Protocols for AI-Driven Chemical LCA

Protocol 1: LLM-Based Life Cycle Inventory Data Extraction

Objective: To implement a systematic framework for extracting Life Cycle Inventory (LCI) and environmental impact data from scientific literature using a fine-tuned LLM.

Materials and Reagents:

- Scientific literature corpus (PDF formats)

- High-performance computing resources (GPU clusters recommended)

- LLaMA-2-7B base model or similar open-source LLM

- Domain-specific environmental science texts for retraining

- Annotation tools for data labeling

Methodology:

1. Document Relevance Classification:

- Fine-tune a classification model (e.g., BERT-based classifier) on a labeled dataset of relevant and non-relevant scientific documents for your chemical domain (e.g., methanol production, plastic packaging).

- Apply the trained classifier to filter a large corpus of scientific literature, retaining only documents pertinent to the target LCI data.

- Validate classification accuracy (>0.85) on a held-out test set before proceeding [22].

2. Domain Adaptation Pre-training:

- Pre-train the selected LLM (e.g., LLaMA-2-7B) on the relevant scientific texts identified in Step 1.

- This injects domain-specific knowledge (e.g., LCA terminology, chemical processes) into the model's parameters.

- Use a language modeling objective with a context window sufficient for scientific paragraphs.

3. Question-Answering Model Fine-tuning with RAG:

- Fine-tune the domain-adapted LLM as a question-answering model specifically for LCI data extraction.

- Implement a Retrieval Augmented Generation (RAG) system where the model retrieves relevant text passages from the scientific literature before generating answers to LCI-related queries.

- Train the model on a curated dataset of LCI questions and their corresponding answers extracted from annotated texts.

- Target performance: F1 scores of 0.82-0.85 on held-out test questions [22].

4. Validation and Benchmarking:

- Validate the framework's extracted LCI data against established databases (e.g., USLCI) and manual expert extractions.

- Compare performance against general-purpose LLMs (e.g., ChatGPT-4o) to quantify improvement gains.
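The retrieval half of the RAG step can be illustrated without any model at all. The sketch below is a deliberately simplified stand-in: it ranks text chunks by bag-of-words cosine similarity rather than by the dense embeddings a production RAG system would normally use, the example passages are invented, and `retrieve` and `build_prompt` are hypothetical helper names.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase and split into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, chunks, k=2):
    """Return the k chunks with the highest bag-of-words cosine similarity to the query."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(c))), c) for c in chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [c for _, c in scored[:k]]


def build_prompt(query, context_chunks):
    """Assemble the grounded prompt that would be passed to the fine-tuned Q&A model."""
    context = "\n".join(f"- {c}" for c in context_chunks)
    return f"Answer using only the context below.\nContext:\n{context}\nQuestion: {query}"


# Invented example passages; no real study is quoted here.
chunks = [
    "The global warming potential of methanol from biomass was estimated per kg of product.",
    "Plastic packaging recycling rates vary by region.",
    "Electricity use for methanol synthesis dominated the inventory.",
]
query = "What is the global warming potential for producing 1 kg of methanol from biomass?"
top = retrieve(query, chunks, k=1)
prompt = build_prompt(query, top)
```

The generation step, in which the fine-tuned model answers from `prompt`, is omitted because it depends on the specific model and serving stack.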

Figure: Workflow for LCI Data Extraction

Protocol 2: Molecular-Structure-Based Prediction of Chemical Life-Cycle Impacts

Objective: To develop machine learning models for rapid prediction of life-cycle environmental impacts of chemicals directly from molecular structures, bypassing traditional LCA data requirements.

Materials and Reagents:

- Chemical databases with molecular structures (SMILES, InChI)

- LCA database with environmental impact factors

- Python environment with RDKit, Scikit-learn, PyTorch/TensorFlow

- High-performance computing resources for model training

Methodology:

1. Dataset Curation:

- Compile a comprehensive dataset pairing chemical structures with their life-cycle environmental impacts (e.g., carbon footprints, toxicity potentials).

- Address data scarcity by integrating multiple sources and applying data augmentation techniques where appropriate.

- Ensure data quality through rigorous validation against experimental measurements.

2. Molecular Feature Engineering:

- Compute molecular descriptors (e.g., molecular weight, octanol-water partition coefficient, topological surface area) from chemical structures.

- Alternatively, employ graph-based representations that directly encode molecular structure as graphs for use with graph neural networks.

- Identify features most predictive of LCA results through feature importance analysis.

3. Model Selection and Training:

- Implement and compare multiple machine learning algorithms, including random forests, gradient boosting machines, and graph neural networks.

- Train models to predict environmental impact categories (global warming potential, aquatic toxicity, etc.) from molecular features.

- Utilize cross-validation to optimize hyperparameters and prevent overfitting.

4. Model Validation and Interpretation:

- Validate model predictions against hold-out test sets of chemicals with known life-cycle impacts.

- Employ model interpretation techniques (e.g., SHAP values) to identify which molecular features drive specific environmental impacts.

- Integrate with LLMs for enhanced feature engineering and database building, as LLMs are expected to provide new impetus for these tasks [30].
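The cross-validation step can be shown in a dependency-free form. To keep the sketch short, it swaps in a one-feature ordinary least squares model in place of the random forests, gradient boosting machines, and graph neural networks named in the protocol; the descriptor and impact values are synthetic, and the folds are contiguous rather than shuffled.

```python
import math


def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def k_fold_rmse(xs, ys, k):
    """Mean held-out RMSE over k contiguous folds."""
    n = len(xs)
    fold_errors = []
    for fold in range(k):
        test_idx = set(range(fold * n // k, (fold + 1) * n // k))
        train = [(x, y) for i, (x, y) in enumerate(zip(xs, ys)) if i not in test_idx]
        test = [(x, y) for i, (x, y) in enumerate(zip(xs, ys)) if i in test_idx]
        slope, intercept = fit_line(*zip(*train))
        sq_err = [(y - (slope * x + intercept)) ** 2 for x, y in test]
        fold_errors.append(math.sqrt(sum(sq_err) / len(sq_err)))
    return sum(fold_errors) / k


# Synthetic data: a made-up descriptor (x) and a made-up impact score (y).
descriptor = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
impact = [2.1, 3.9, 6.2, 7.8, 10.1, 12.0]
cv_rmse = k_fold_rmse(descriptor, impact, k=3)
```

The same fold logic applies unchanged when `fit_line` is replaced by a more expressive model; only the fit and predict calls differ.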

ML Model for Chemical Impact Prediction
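The cross-validation and hold-out logic in Protocol 2 can be illustrated with a minimal sketch that uses only the Python standard library. It assumes a single numeric descriptor and a one-variable least-squares fit as a stand-in for the random forests, gradient boosting machines, or graph neural networks named above. The function names (`k_fold_indices`, `fit_line`, `cross_val_mae`) are hypothetical, and the data are synthetic placeholders, not values from any LCA database.

```python
import random
from statistics import mean


def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_idx, val_idx) pairs that partition the samples into k folds."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i in range(k):
        val = folds[i]
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, val


def fit_line(xs, ys):
    """Ordinary least squares for y = a * x + b with one descriptor."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("descriptor has zero variance in this fold")
    a = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return a, my - a * mx


def cross_val_mae(xs, ys, k=5):
    """Mean absolute error averaged over k validation folds."""
    fold_errors = []
    for train, val in k_fold_indices(len(xs), k):
        a, b = fit_line([xs[i] for i in train], [ys[i] for i in train])
        fold_errors.append(mean(abs(a * xs[i] + b - ys[i]) for i in val))
    return mean(fold_errors)


# Synthetic, illustrative data only: one descriptor versus an impact score.
xs = [float(x) for x in range(20, 220, 10)]
ys = [0.05 * x + 1.0 for x in xs]
print(cross_val_mae(xs, ys, k=5))
```

In practice the same split-fit-score loop would wrap a real model and descriptor matrix; the point of the sketch is only that every chemical is scored by a model that never saw it during fitting.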

Protocol 3: LLM Observability for Compliant Environmental Assessment

Objective: To implement LLM observability protocols that ensure reliability, compliance, and performance monitoring of LLM systems used in chemical LCA research, particularly for drug development applications.

Materials and Reagents:

- LLM observability platform (commercial or open-source)

- SDKs/APIs for application instrumentation

- Dashboard visualization tools

- Compliance checklists for pharmaceutical regulations

Methodology:

System Instrumentation:

- Integrate Software Development Kits (SDKs) or APIs into LLM applications to capture key telemetry data.

- Implement OpenTelemetry (OTel) integration for consistent generation of traces, metrics, and logs across distributed systems.

- Capture inputs, outputs, latency, token usage, and errors for all LLM interactions.

Performance and Quality Monitoring:

- Track key performance metrics: latency, throughput, token usage, and error rates.

- Implement quality assessment metrics specific to LCA applications: hallucination rates (factual inaccuracies in LCI data), relevance of retrieved information, and toxicity of outputs.

- Set up automated alerts for performance degradation or quality threshold violations.

Safety and Compliance Checks:

- Monitor for prompt injection attacks that might manipulate environmental assessment results.

- Detect potential leakage of sensitive data (e.g., proprietary chemical formulations).

- Track compliance signals relevant to pharmaceutical regulations and environmental reporting standards.

Visualization and Continuous Improvement:

- Create customized dashboards visualizing key metrics for different stakeholder groups.

- Implement A/B testing capabilities to compare different prompts, models, or retrieval strategies.

- Establish feedback loops for model retraining and refinement based on observed performance and emerging data patterns.

LLM Observability Framework
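The instrumentation and alerting steps of Protocol 3 can be sketched as a thin wrapper around each model call. This is a minimal illustration under stated assumptions, not an OpenTelemetry integration: `LLMMonitor`, `LLMCallRecord`, and `flaky_llm` are hypothetical names, token counts are approximated by whitespace splitting, and the thresholds are arbitrary example values.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class LLMCallRecord:
    prompt: str
    output: Optional[str]
    latency_s: float
    prompt_tokens: int   # crude proxy: whitespace-separated words
    output_tokens: int   # crude proxy: whitespace-separated words
    error: Optional[str] = None


@dataclass
class LLMMonitor:
    max_error_rate: float = 0.05
    max_mean_latency_s: float = 2.0
    records: List[LLMCallRecord] = field(default_factory=list)

    def call(self, llm: Callable[[str], str], prompt: str) -> Optional[str]:
        """Run one LLM call and record input, output, latency, tokens, and errors."""
        start = time.perf_counter()
        output, error = None, None
        try:
            output = llm(prompt)
        except Exception as exc:  # record the failure instead of losing it
            error = repr(exc)
        latency = time.perf_counter() - start
        self.records.append(LLMCallRecord(
            prompt=prompt,
            output=output,
            latency_s=latency,
            prompt_tokens=len(prompt.split()),
            output_tokens=len(output.split()) if output else 0,
            error=error,
        ))
        return output

    def alerts(self) -> List[str]:
        """Return human-readable alerts for any violated threshold."""
        if not self.records:
            return []
        n = len(self.records)
        error_rate = sum(r.error is not None for r in self.records) / n
        mean_latency = sum(r.latency_s for r in self.records) / n
        found = []
        if error_rate > self.max_error_rate:
            found.append(f"error rate {error_rate:.2f} exceeds {self.max_error_rate:.2f}")
        if mean_latency > self.max_mean_latency_s:
            found.append(f"mean latency {mean_latency:.2f}s exceeds {self.max_mean_latency_s:.2f}s")
        return found


def flaky_llm(prompt: str) -> str:
    """Stand-in for a real model client (hypothetical)."""
    if "fail" in prompt:
        raise RuntimeError("simulated provider error")
    return "stub answer"


monitor = LLMMonitor(max_error_rate=0.25, max_mean_latency_s=5.0)
monitor.call(flaky_llm, "Summarize LCI data for methanol production")
monitor.call(flaky_llm, "please fail")
print(monitor.alerts())
```

In a production setting the recorded fields would typically be exported as traces and metrics through an observability SDK rather than kept in memory, and quality signals such as hallucination rate would need their own evaluators.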

Table 3: Key Research Reagents and Computational Solutions for AI-Enhanced Chemical LCA

| Item/Resource | Category | Function/Application | Implementation Example |
|---|---|---|---|
| Sustain-LLaMA | Specialized LLM | Domain-adapted language model for extracting LCI and environmental impact data from scientific literature [22]. | Fine-tuned on methanol production and plastic packaging literature; achieves high accuracy in LCI data retrieval [22]. |
| React-OT Model | Chemistry AI Model | Accelerates transition state prediction in chemical reactions to sub-second speeds with high accuracy [32]. | Used in molecular simulation for drug discovery; improves understanding of reaction pathways and energy requirements [32]. |
| GPU Clusters (NVIDIA H100, A100) | Computational Hardware | Provides accelerated computing for training and running large AI models, including LLMs and molecular graph neural networks [36]. | Training of BloombergGPT used 512 A100 GPUs; essential for handling computational demands of AI-enhanced LCA [36]. |
| OpenTelemetry (OTel) | Observability Framework | Open-source framework for generating and collecting telemetry data (metrics, logs, traces) from LLM applications [34]. | Instruments LLM systems for chemical LCA to monitor performance, costs, and compliance requirements [34]. |
| PandaOmics Platform | Drug Discovery AI | AI platform for target identification using deep feature synthesis, causal inference, and pathway reconstruction on multi-omics data [35]. | Identified TNIK as promising anti-fibrotic target; enables more sustainable drug development through accurate early target prioritization [35]. |
| Retrieval Augmented Generation (RAG) | AI Architecture | Enhances LLM accuracy by retrieving relevant information from external knowledge bases before generating responses [22]. | Implemented in Sustain-LLaMA to improve precision of LCI data extraction from scientific literature [22]. |
| AI Credibility Assessment Framework | Regulatory Compliance | Risk-based framework for establishing credibility of AI models used in pharmaceutical development and regulatory submissions [37]. | FDA-proposed approach for evaluating AI models that generate data supporting drug safety, efficacy, or quality assessments [37]. |

Integrating LLMs and related AI technologies into chemical life cycle assessment opens a new frontier in sustainable pharmaceutical research and development. The protocols and frameworks presented here provide actionable methods for accelerating environmental impact assessment while maintaining scientific rigor and regulatory compliance. As these fields converge, researchers who have both the terminological foundation and the practical implementation guidelines outlined in this document will be well positioned to advance sustainable drug development. Human expertise must remain in the loop: the most successful implementations will pair computational power with scientific domain knowledge.

From Theory to Therapy: Applying LLMs in Drug Discovery and LCA Workflows

Within chemical life cycle assessment (LCA) and drug development research, the systematic review (SR) represents a cornerstone of evidence-based practice, yet its execution is notoriously slow and resource-intensive. The growing demand for high-quality SRs, coupled with the rapid emergence of new biomedical literature, creates a significant bottleneck in research and development pipelines. This application note details how Large Language Models (LLMs) are being leveraged to automate critical stages of the systematic review process, thereby accelerating biological summarization and therapeutic target evaluation. By framing this automation within the context of a broader thesis on LLMs in chemical LCA research, we provide researchers and drug development professionals with validated protocols and tools to enhance the efficiency, reproducibility, and scope of their evidence-synthesis activities.

Systematic reviews in biomedicine are methodologically rigorous and involve multiple sequential stages, from literature search to final reporting. Automation technologies, particularly LLMs, have been proposed to expedite this workflow, reduce manual workload, and minimize human error [38]. A comprehensive overview of SR automation studies indexed in PubMed indicates that automation techniques are being developed for all SR stages, though real-world adoption remains limited [38].

The distribution of automation efforts across the systematic review workflow is summarized in Table 1.

Table 1: Distribution of Automation Applications Across Systematic Review Stages

| Systematic Review Stage | Proportion of Automated Studies (%) | Primary Automation Goals |
|---|---|---|
| Search | 15.4% | Identifying relevant publications from databases [38]. |
| Record Screening | 72.4% | Prioritizing and selecting studies based on title/abstract [38]. |
| Full-Text Selection | 4.9% | Applying inclusion/exclusion criteria to full articles [38]. |
| Data Extraction | 10.6% | Extracting structured data (e.g., chemicals, impacts) from text [38] [22]. |
| Risk of Bias Assessment | 7.3% | Evaluating the methodological quality of included studies [38]. |
| Evidence Synthesis | 1.6% | Summarizing findings and generating conclusions [38]. |
| Reporting | 1.6% | Assisting in the drafting of the review manuscript [38]. |

The performance of these automated tools can vary significantly across different review topics. For instance, automated record screening, the most commonly targeted stage, shows large variations in sensitivity and specificity depending on the SR's subject matter [38]. This highlights the need for rigorous validation within a specific research domain, such as chemical LCA or drug target evaluation.
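Domain-specific validation of a screening tool usually starts from its sensitivity and specificity against human include/exclude decisions. The following minimal sketch shows that calculation; the function name `screening_metrics` and the example labels are hypothetical and purely illustrative.

```python
def screening_metrics(predicted_include, true_include):
    """Compute sensitivity and specificity of automated record screening.

    Both arguments are equal-length sequences of booleans, where True means
    the record is (predicted to be / actually) relevant to the review.
    """
    if len(predicted_include) != len(true_include):
        raise ValueError("prediction and reference lists must have equal length")
    tp = fp = tn = fn = 0
    for pred, truth in zip(predicted_include, true_include):
        if pred and truth:
            tp += 1
        elif pred and not truth:
            fp += 1
        elif not pred and not truth:
            tn += 1
        else:
            fn += 1
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    specificity = tn / (tn + fp) if (tn + fp) else float("nan")
    return {"tp": tp, "fp": fp, "tn": tn, "fn": fn,
            "sensitivity": sensitivity, "specificity": specificity}


# Illustrative labels for six records (not real screening results).
predicted = [True, True, False, False, True, False]
reference = [True, False, False, False, True, True]
metrics = screening_metrics(predicted, reference)
print(metrics)
```

For systematic reviews, sensitivity is usually the metric to protect, because a missed relevant study (a false negative) cannot be recovered later in the workflow.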

Application Note: Sustain-LLaMA for Data Retrieval in Chemical LCA

A prime example of a domain-specific LLM application is the "Sustain-LLaMA" framework, designed to retrieve Life Cycle Inventory (LCI) and environmental impact data from scientific literature [22]. This framework addresses a critical challenge in chemical LCA: the time-consuming and costly process of obtaining reliable, transparent LCI data.

Experimental Protocol for LCI Data Retrieval

The following protocol, adapted from Kumar et al. (2025), provides a step-by-step methodology for implementing an LLM-based data retrieval system [22].

- Objective: To automatically extract LCI and environmental impact data for a given chemical or process (e.g., methanol production, plastic packaging end-of-life treatment) from a corpus of scientific literature.

- Materials:

- Hardware: A standard high-performance computing workstation with a GPU (e.g., NVIDIA A100 with 40GB+ VRAM) is recommended for model fine-tuning and inference.

- Software: Python 3.8+, PyTorch or TensorFlow, Hugging Face Transformers library, and the "Sustain-LLaMA" framework code.

- Model: The LLaMA-2-7B model is used as the base architecture.

- Data: A curated corpus of scientific literature (PDFs or plain text) relevant to the target domain (e.g., methanol production).

- Procedure:

- Document Classification:

- Fine-tune a classification model (e.g., a BERT-based classifier) on a labeled dataset to identify and filter documents that are relevant to the LCA inquiry from a larger corpus.

- Validate the model's performance on a held-out test set, aiming for a high accuracy (e.g., >0.85) [22].

- Domain Knowledge Injection (Pre-training):

- Pre-train the base LLaMA-2-7B model on the selected, relevant texts from Step 1. This step injects specialized domain knowledge into the LLM's parameters.

- Question-Answering Model Fine-Tuning:

- Further fine-tune the pre-trained model from Step 2 on a custom Question-Answering (Q&A) task using a dataset of questions and answers derived from the literature.

- Implement a Retrieval Augmented Generation (RAG) architecture. The RAG system retrieves relevant text snippets from the corpus and conditions the LLM on them to generate accurate, evidence-backed answers.

- Validation and Benchmarking:

- Evaluate the final Q&A model's performance using metrics like the F1 score (target: >0.82) [22].

- Benchmark the model's accuracy and efficiency against baseline models, such as the vanilla LLaMA-2-7B without retraining, and general-purpose LLMs like ChatGPT-4o.


This framework demonstrates that a retrained LLM can achieve high accuracy in extracting complex environmental data, offering a scalable and precise tool for automating literature mining in chemical LCA research [22].
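The retrieval half of the RAG step in the protocol above can be sketched without any model at all. The example below is a minimal illustration, not the Sustain-LLaMA implementation: it assumes a bag-of-words cosine similarity in place of a learned embedding model, and the helper names (`tokenize`, `cosine`, `retrieve`, `build_prompt`) and the three passages are hypothetical placeholders.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase and split text into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, passages, top_k=2):
    """Return the top_k passages most similar to the question."""
    q_vec = Counter(tokenize(question))
    ranked = sorted(passages,
                    key=lambda p: cosine(q_vec, Counter(tokenize(p))),
                    reverse=True)
    return ranked[:top_k]


def build_prompt(question, passages, top_k=2):
    """Condition the model on retrieved snippets so answers stay evidence-backed."""
    hits = retrieve(question, passages, top_k)
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(hits, 1))
    return ("Answer using only the numbered context passages and cite them.\n"
            f"Context:\n{context}\n"
            f"Question: {question}\nAnswer:")


# Placeholder corpus snippets for illustration only.
passages = [
    "Methanol production from natural gas requires steam reforming followed by synthesis.",
    "Plastic packaging can be treated at end of life by recycling, incineration, or landfill.",
    "Electricity use is a major inventory flow in methanol synthesis from captured CO2.",
]
question = "What are the steps of methanol production from natural gas?"
print(build_prompt(question, passages))
```

The resulting prompt string would then be passed to the fine-tuned Q&A model; numbering the passages makes it possible to check each generated value against the snippet it cites.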

Workflow Visualization

The logical workflow for the Sustain-LLaMA protocol is outlined in the diagram below.

Figure 1: Sustain-LLaMA Workflow for LCI Data Retrieval from Literature.

Application Note: DrugGPT for Faithful Drug Analysis

In the context of drug development and target evaluation, the application of general-purpose LLMs is hindered by their tendency to produce "hallucinations"—factually incorrect but plausible-sounding content. The DrugGPT model was developed to address this critical challenge by ensuring recommendations are accurate, evidence-based, and traceable [39].

Experimental Protocol for Clinical-Quality Drug Analysis

This protocol outlines the methodology for building and evaluating a collaborative LLM for drug-related tasks, based on the DrugGPT framework [39].

- Objective: To provide accurate, evidence-based, and faithful answers to inquiries on drug recommendation, dosage, adverse reactions, drug-drug interactions, and general pharmacology questions.

- Materials:

- Knowledge Bases: Drugs.com, UK National Health Service (NHS), and PubMed.

- Base Models: Three instances of a general-purpose LLM (e.g., based on architectures like GPT or PaLM) to serve as the collaborative components.

- Datasets: For evaluation, use established benchmarks such as MedQA-USMLE, MedMCQA, ADE-Corpus-v2, DDI-Corpus, and PubMedQA [39].

- Procedure:

- Knowledge Base Integration:

- Construct a large medical knowledge graph (e.g., a Disease-Symptom-Drug Graph or DSDG) from the incorporated knowledge bases to model relationships between key entities.

- Implement Collaborative Mechanism:

- Inquiry Analysis LLM (IA-LLM): Implement this component using Chain-of-Thought (CoT) and few-shot prompting strategies to analyze user inquiries and determine what knowledge is required.

- Knowledge Acquisition LLM (KA-LLM): Use knowledge-based instruction prompt tuning to enable this component to efficiently extract relevant information from the knowledge bases and the DSDG.

- Evidence Generation LLM (EG-LLM): Employ CoT prompting, along with knowledge-consistency and evidence-traceable prompting, to generate the final answer based only on the evidence identified by the KA-LLM.

- Model Evaluation:

- Test the collaborative DrugGPT model on the designated downstream datasets.

- For multiple-choice tasks (e.g., MedQA), report accuracy. For recommendation tasks (e.g., ChatDoctor), calculate recall, precision, and F1 scores.
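The collaborative mechanism described in the procedure above (inquiry analysis, then knowledge acquisition, then evidence generation) can be sketched as three chained calls. This is a simplified illustration under stated assumptions, not the DrugGPT code: a single callable stands in for the three LLM instances, the knowledge base is a plain dictionary rather than a knowledge graph, and `scripted_llm`, the prompt wording, and the placeholder entries are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

LLM = Callable[[str], str]


def inquiry_analysis(llm: LLM, inquiry: str) -> str:
    """IA step: ask the model what knowledge the inquiry requires."""
    return llm("ANALYZE: Think step by step and state what knowledge is needed.\n"
               f"Inquiry: {inquiry}")


def knowledge_acquisition(llm: LLM, needs: str, kb: Dict[str, str]) -> List[str]:
    """KA step: let the model pick knowledge-base keys, then keep only real ones."""
    reply = llm("SELECT: Return a comma-separated list of knowledge-base keys.\n"
                f"Needs: {needs}\nAvailable keys: {', '.join(sorted(kb))}")
    keys = [k.strip() for k in reply.split(",")]
    # Keys that do not exist in the knowledge base are dropped, not trusted.
    return [f"{k}: {kb[k]}" for k in keys if k in kb]


def evidence_generation(llm: LLM, inquiry: str, evidence: List[str]) -> str:
    """EG step: answer from the retrieved evidence only, or decline."""
    if not evidence:
        return "Insufficient evidence in the knowledge base."
    numbered = "\n".join(f"[{i}] {e}" for i, e in enumerate(evidence, 1))
    return llm("ANSWER: Use only the numbered evidence and cite it.\n"
               f"Evidence:\n{numbered}\nInquiry: {inquiry}")


def answer_inquiry(llm: LLM, inquiry: str, kb: Dict[str, str]) -> Tuple[str, List[str]]:
    """Run the three steps in order and return the answer with its evidence."""
    needs = inquiry_analysis(llm, inquiry)
    evidence = knowledge_acquisition(llm, needs, kb)
    return evidence_generation(llm, inquiry, evidence), evidence


def scripted_llm(prompt: str) -> str:
    """Deterministic stand-in for a real model (hypothetical)."""
    if prompt.startswith("ANALYZE"):
        return "interaction data for drug_a and drug_b"
    if prompt.startswith("SELECT"):
        return "drug_a, drug_b, drug_z"  # drug_z is not in the knowledge base
    return "Stub answer citing [1] and [2]."


kb = {
    "drug_a": "placeholder monograph text for drug A",
    "drug_b": "placeholder monograph text for drug B",
}
answer, evidence = answer_inquiry(scripted_llm, "Can drug_a be taken with drug_b?", kb)
print(answer)
print(evidence)
```

Returning the evidence list alongside the answer is what makes the output traceable: a reviewer can check every cited item against the knowledge base entry it came from.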