Machine Learning for Smartphone-Based Environmental Analysis: Applications, Algorithms, and Best Practices

Abstract

This article explores the transformative role of machine learning (ML) in smartphone-based environmental analysis. It covers the foundational principles of using ML for tasks like pollution detection and biodiversity monitoring, detailing specific algorithms such as CNNs for image analysis and LSTMs for time-series forecasting. The article addresses key methodological challenges, including data quality and model optimization, and provides a framework for validating and comparing different ML approaches. Aimed at researchers and development professionals, it synthesizes current advancements and future directions for creating accurate, efficient, and accessible environmental monitoring tools.

The New Frontier: How Machine Learning is Revolutionizing Environmental Sensing

The integration of artificial intelligence (AI) technologies is fundamentally transforming environmental research and analysis. As climate change and environmental degradation accelerate, the need for sophisticated tools to monitor, model, and mitigate these challenges has never been greater. AI, and particularly its subfields of machine learning (ML) and deep learning, offers unprecedented capabilities for processing complex environmental datasets, identifying subtle patterns, and generating predictive insights at scales previously impossible. These technologies are now being deployed across diverse environmental domains, from tracking air and water pollution to monitoring biodiversity and ecosystem health [1] [2].

The emergence of smartphone-based environmental analysis represents a particularly significant development, democratizing data collection and enabling real-time monitoring through widely available consumer devices. This convergence of mobile technology and AI creates powerful new paradigms for environmental research, allowing scientists to gather and process environmental data with unprecedented spatial and temporal resolution. This technical guide examines the core concepts of AI, ML, and deep learning specifically within environmental contexts, providing researchers with the theoretical foundation and practical methodologies needed to leverage these technologies in smartphone-based environmental analysis research.

Core Definitions and Hierarchical Relationships

Artificial Intelligence (AI)

Artificial Intelligence represents the broadest concept, encompassing any technique that enables machines to mimic human intelligence. This includes problem-solving, learning, perception, and decision-making capabilities. In environmental contexts, AI systems are designed to tackle complex ecological challenges that require adaptive reasoning and sophisticated pattern recognition. For example, AI can power comprehensive environmental monitoring systems that integrate data from multiple sources—including satellite imagery, sensor networks, and citizen science reports—to provide holistic assessments of ecosystem health [3].

Machine Learning (ML)

Machine Learning is a subset of AI that focuses on algorithms that can learn from and make predictions based on data without being explicitly programmed for every scenario. ML algorithms identify patterns within data and use these patterns to build models that can make increasingly accurate decisions or predictions over time. In environmental science, ML has become indispensable for tasks such as predicting air quality levels based on historical data and weather patterns, classifying land use from satellite imagery, and identifying potential pollution sources through anomaly detection in sensor networks [1] [2]. The technology demonstrates "remarkable effectiveness" in aspects like material screening, performance prediction, instant detection, and global distribution simulation of pollutants [1].

Deep Learning

Deep Learning is a specialized subset of machine learning based on artificial neural networks with multiple layers (hence "deep") that can learn increasingly abstract representations of data. These architectures are particularly well-suited for processing unstructured data like images, audio, and text. In environmental applications, deep learning enables advanced capabilities such as automated species identification from camera trap images, analysis of satellite imagery to track deforestation, and processing of acoustic data to monitor bird populations or underwater ecosystems [4]. Deep learning models have demonstrated exceptional performance in environmental health applications, often outperforming traditional machine learning approaches [2].

Table 1: Core AI Concepts and Their Environmental Applications

| Concept | Definition | Primary Environmental Applications |
|---|---|---|
| Artificial Intelligence (AI) | Systems that mimic human intelligence to perform tasks | Environmental decision support systems, resource management optimization |
| Machine Learning (ML) | Algorithms that learn patterns from data without explicit programming | Air quality prediction, pollution source identification, climate modeling |
| Deep Learning | Multi-layered neural networks that learn hierarchical data representations | Species identification from images, satellite imagery analysis, acoustic monitoring |

Technical Methodologies and Experimental Protocols

Machine Learning Workflows for Environmental Data

The application of machine learning to environmental challenges follows a structured workflow that begins with data acquisition and proceeds through multiple stages of processing and analysis. For smartphone-based environmental research, this typically involves collecting data through mobile sensors or citizen science applications, preprocessing this data to ensure quality and consistency, training models to recognize relevant patterns, and deploying these models for environmental monitoring and analysis [1] [2].

A critical challenge in environmental ML is the frequent scarcity of high-quality training data, particularly for rare events or in geographically underrepresented regions [1]. To address this, researchers have developed several innovative approaches. Transfer learning allows models trained on large, general datasets to be adapted for specific environmental applications with limited data. Data augmentation techniques can artificially expand training datasets by creating modified versions of existing data. Synthetic data generation creates artificial training examples that reflect the statistical properties of real environmental data [1].
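As a rough illustration of data augmentation, the sketch below expands a tiny image dataset with random horizontal flips and brightness shifts. The function names, the grayscale list-of-rows image format, and the shift range are assumptions chosen for this example rather than details from the cited work.

```python
import random

def augment_image(image, rng, max_brightness_shift=20):
    """Return a randomly flipped, brightness-shifted copy of a grayscale image.

    `image` is a list of rows, each a list of 0-255 integer pixel values.
    """
    rows = [list(row) for row in image]      # copy so the input is untouched
    if rng.random() < 0.5:                   # horizontal flip about half the time
        rows = [row[::-1] for row in rows]
    shift = rng.randint(-max_brightness_shift, max_brightness_shift)
    # Apply one brightness shift to every pixel, clamped to the valid range.
    return [[min(255, max(0, p + shift)) for p in row] for row in rows]

def augment_dataset(images, copies_per_image=3, seed=0):
    """Keep the originals and append `copies_per_image` variants of each."""
    rng = random.Random(seed)                # seeded for reproducible experiments
    augmented = list(images)
    for image in images:
        for _ in range(copies_per_image):
            augmented.append(augment_image(image, rng))
    return augmented

sample = [[10, 50, 90], [20, 60, 100]]       # hypothetical 2x3 image
expanded = augment_dataset([sample], copies_per_image=3)
print(len(expanded))                         # 1 original + 3 augmented copies
```

Which transformations are safe depends on the task: a flip is harmless for sky images but could mislead a model that relies on orientation.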

Deep Learning Architectures for Environmental Analysis

Deep learning has enabled significant advances in environmental analysis through several specialized architectures:

Convolutional Neural Networks (CNNs) are particularly valuable for processing spatial environmental data. These networks use layered filters to automatically identify hierarchical patterns in images, making them ideal for analyzing satellite imagery, identifying species from photographs, or detecting pollution patterns in spatial data [4]. For example, researchers have used simplified one-dimensional convolutional neural networks (1DCNN) to analyze metallomic data for classifying malignant pulmonary nodules without needing to quantify metal element concentrations [2].
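To make the idea of a layered filter concrete, here is a minimal sketch of the operation a single convolutional layer applies: a small kernel slides across the image and responds where its pattern appears. The toy image and the hand-written edge kernel are assumptions for illustration; in a trained CNN the kernel values are learned from data.

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map.

    Like most deep learning libraries, this computes cross-correlation:
    the kernel is not flipped before it is applied.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0.0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output

def relu(feature_map):
    """Zero out negative responses, as the usual CNN activation does."""
    return [[max(0.0, v) for v in row] for row in feature_map]

# Toy 4x4 image: dark on the left, bright on the right.
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]
# Kernel that responds to dark-to-bright transitions from left to right.
vertical_edge = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]
feature_map = relu(conv2d_valid(image, vertical_edge))
print(feature_map)  # [[27.0, 27.0], [27.0, 27.0]]
```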

Recurrent Neural Networks (RNNs) and their variants, such as Long Short-Term Memory (LSTM) networks, are designed to process sequential data. These architectures are particularly useful for analyzing time-series environmental data, such as temperature records, pollutant concentrations over time, or seasonal patterns in ecosystem health [4]. Their ability to capture temporal dependencies makes them valuable for predicting environmental trends and identifying cyclical patterns.
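Before a sequence model such as an LSTM can be trained, a time series is usually cut into fixed-length input windows paired with the value to be predicted. The sketch below shows one common way to do this; the function name, the `lookback` and `horizon` parameters, and the sample readings are assumptions for illustration.

```python
def make_windows(series, lookback, horizon=1):
    """Split a time series into (input window, target) pairs.

    Each input holds `lookback` consecutive readings; the target is the
    reading `horizon` steps after the end of the window.
    """
    pairs = []
    last_start = len(series) - lookback - horizon
    for start in range(last_start + 1):
        window = series[start:start + lookback]
        target = series[start + lookback + horizon - 1]
        pairs.append((window, target))
    return pairs

pm25_hourly = [12.0, 15.0, 14.0, 18.0, 21.0, 19.0]   # hypothetical readings
pairs = make_windows(pm25_hourly, lookback=3)
for window, target in pairs:
    print(window, "->", target)
```

A series shorter than `lookback + horizon` yields no pairs, which is a quick check that enough data has been collected.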

Transformer Architectures have recently emerged as powerful tools for processing diverse environmental data types. Originally developed for natural language processing, transformers' attention mechanisms have been adapted for spatial and temporal environmental data analysis, enabling more effective modeling of complex relationships in heterogeneous environmental datasets [4].
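The attention mechanism at the core of a transformer can be sketched in a few lines. The example below implements plain scaled dot-product attention on Python lists; it omits the learned projection matrices, multiple heads, and positional encodings of a full transformer, and the sample vectors are made up for illustration.

```python
import math

def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    peak = max(scores)                       # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors.

    Returns the attended outputs and the attention weights per query.
    """
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Weighted average of the value vectors.
        context = [sum(w * v[c] for w, v in zip(weights, values))
                   for c in range(len(values[0]))]
        outputs.append(context)
        all_weights.append(weights)
    return outputs, all_weights

# Self-attention over three hypothetical 2-feature observations.
readings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(readings, readings, readings)
```

Each row of `weights` shows how much one observation draws on the others, which is what lets the model relate distant time steps or locations.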

Explainable AI (XAI) for Environmental Science

The "black box" nature of many ML and deep learning models presents particular challenges for environmental science, where understanding the reasoning behind predictions is often as important as the predictions themselves. Explainable AI (XAI) techniques have emerged to address this limitation by making model decisions more transparent and interpretable [2].

In environmental applications, techniques such as Local Interpretable Model-agnostic Explanations (LIME) are being used to identify which features in the input data most strongly influence model predictions [2]. For example, researchers have used LIME in conjunction with Random Forest classifiers to identify molecular fragments that impact key nuclear receptor targets relevant to environmental toxicology [2]. Similarly, the "repeated hold-out signed-iterated Random Forest" (rh-SiRF) algorithm helps identify "metal-microbial clique signatures" that reveal complex relationships between environmental exposures and health outcomes [2].

Smartphone-Based Environmental Analysis

Mobile AI Architectures for Environmental Monitoring

The integration of AI capabilities into smartphones has created unprecedented opportunities for distributed environmental monitoring. Modern mobile devices incorporate specialized AI processors, such as Google's Tensor G5, that enable on-device execution of sophisticated ML models without continuous cloud connectivity [5]. This capability is crucial for environmental monitoring in remote areas with limited connectivity and enables real-time analysis for time-sensitive applications.

Mobile environmental applications typically employ one of two architectural approaches: edge-based processing, where AI models run entirely on the smartphone, or hybrid architectures, where preliminary processing occurs on the device with more complex analysis handled in the cloud. Edge-based processing offers advantages in privacy, latency, and operation without network connectivity, while hybrid approaches can handle more computationally intensive analyses [5].
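One way a hybrid architecture might decide where to process an observation is sketched below. The routing rule, the function name, and the 0.8 confidence threshold are all hypothetical; a real application would tune them against its own latency, privacy, and accuracy requirements.

```python
def choose_processing_path(on_device_confidence, network_available,
                           confidence_threshold=0.8):
    """Decide where the final prediction for one observation should come from.

    Returns "edge" to keep the on-device result, or "cloud" to request a
    second opinion from a larger server-side model.
    """
    if not network_available:
        return "edge"    # offline: the on-device result is the only option
    if on_device_confidence >= confidence_threshold:
        return "edge"    # confident enough; avoid latency and data upload
    return "cloud"       # uncertain and online: escalate to the larger model

print(choose_processing_path(0.95, network_available=True))   # edge
print(choose_processing_path(0.40, network_available=True))   # cloud
print(choose_processing_path(0.40, network_available=False))  # edge
```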

Sensor Integration and Data Acquisition

Smartphones incorporate a diverse array of sensors that can be leveraged for environmental monitoring, including cameras, microphones, GPS receivers, accelerometers, and increasingly specialized environmental sensors. These capabilities enable a wide range of environmental data collection modalities:

- Visual Analysis: Smartphone cameras coupled with deep learning models can identify plant diseases, assess water quality through colorimetric assays, document pollution events, and monitor wildlife [4].

- Acoustic Monitoring: Microphones can capture environmental soundscapes for analyzing bird populations, detecting illegal logging or mining activities, and monitoring noise pollution [4].

- Location-Aware Sensing: GPS capabilities enable precise geotagging of environmental observations, creating rich spatial datasets for mapping pollution gradients, biodiversity distributions, and habitat fragmentation.
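Spatial analysis of geotagged observations often starts with the distance between two GPS fixes. The sketch below uses the standard haversine formula, which treats the Earth as a sphere; the mean-radius constant is an approximation and the function name is chosen for this example.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius; a spherical approximation

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

# One degree of latitude is roughly 111 km on this spherical model.
print(round(haversine_km(0.0, 0.0, 1.0, 0.0), 1))  # 111.2
```

For hyperlocal studies spanning only tens of metres, GPS error can exceed the distances of interest, so results at that scale should be read with care.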

The proliferation of smartphone-based environmental monitoring is generating massive datasets that fuel increasingly sophisticated AI models while raising important considerations for data standardization, quality control, and privacy protection.

Environmental Applications and Quantitative Analysis

Market Growth and Application Areas

The application of AI technologies to environmental challenges represents a rapidly growing field, with the global market for AI in environmental sustainability projected to grow from $19.8 billion in 2025 to $120.8 billion by 2035, representing a compound annual growth rate (CAGR) of 19.8% [3]. This growth is driven by increasing environmental awareness, adoption of AI technologies for sustainability solutions, and expanding government initiatives for environmental protection and climate action [3].
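The quoted growth rate can be checked directly from the two endpoint figures. The short sketch below applies the standard compound annual growth rate formula, (end / start)^(1 / years) - 1, to the values above; the function name is chosen for this example.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1

rate = cagr(19.8, 120.8, 2035 - 2025)   # USD billions, 10-year span
print(f"{rate:.1%}")                    # 19.8%
```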

Table 2: AI in Environmental Sustainability Market by Application (2025)

| Application Area | Market Share (%) | Key Use Cases |
|---|---|---|
| Climate Change Mitigation | 28.0% | Carbon emission monitoring, reduction strategies, climate impact assessment |
| Renewable Energy Optimization | 16.5% | Grid management, demand forecasting, infrastructure optimization |
| Water Resource Management | 12.8% | Quality monitoring, distribution optimization, pollution detection |
| Air Quality Monitoring | 9.7% | Pollution tracking, source identification, public health alerts |
| Biodiversity & Wildlife Monitoring | 8.3% | Species identification, habitat assessment, poaching prevention |
| Precision Agriculture | 8.1% | Resource optimization, yield prediction, sustainable practices |
| Waste Management | 7.5% | Sorting optimization, recycling efficiency, landfill management |
| Natural Disaster Prediction | 5.6% | Early warning systems, impact assessment, evacuation planning |

Performance Metrics and Environmental Impact

AI systems demonstrate significant performance improvements over traditional methods for environmental applications. In environmental data analysis, AI has achieved approximately 60% reduction in decision-making time compared to traditional methods while significantly improving computational efficiency [1]. These efficiency gains are critical for time-sensitive environmental interventions and rapid response to ecological threats.

However, the environmental benefits of AI applications must be balanced against the resource consumption of the AI systems themselves. Training large models has substantial environmental costs. For example, training Mistral Large 2 (123 billion parameters) produced approximately 20,400 metric tons of greenhouse gases (roughly equal to annual emissions from 4,400 gas-powered passenger vehicles) and consumed 281,000 cubic meters of water for cooling, approximately as much as an average U.S. family of four would consume in 500 years [5]. Inference operations also carry environmental costs, with the average prompt and response (400 tokens) emitting approximately 1.14 grams of greenhouse gases and consuming 45 milliliters of water [5].

The Researcher's Toolkit: Technical Specifications

Algorithmic Approaches for Environmental Applications

Environmental researchers applying AI techniques employ a diverse toolkit of algorithmic approaches suited to different data types and research questions:

- Random Forests and Ensemble Methods: These are frequently used for classification tasks such as land cover categorization and species distribution modeling, often demonstrating strong performance with structured environmental data [2].

- Support Vector Machines (SVMs): Effective for smaller environmental datasets and high-dimensional problems, such as hyperspectral image analysis or chemical fingerprint recognition [2].

- Neural Networks: Including Multilayer Perceptrons (MLPs) for quantitative structure-activity relationship (QSAR) modeling in toxicology and convolutional neural networks for image-based environmental monitoring [2].

- Transformer Models: Increasingly applied to diverse environmental data types, from satellite imagery time series to genomic data for biodiversity assessment [4].

Research Reagent Solutions

Table 3: Essential Research Components for AI-Driven Environmental Analysis

| Component | Function | Environmental Research Examples |
|---|---|---|
| Pre-trained Vision Models | Image classification and object detection | Species identification from camera trap images, pollution event detection |
| Transfer Learning Frameworks | Adaptation of general models to specific environmental tasks | Customizing generic image classifiers for local flora/fauna recognition |
| Sensor Fusion Algorithms | Integration of data from multiple smartphone sensors | Combining GPS, camera, and accelerometer data for habitat mapping |
| Edge AI Optimization Tools | Model compression for mobile deployment | Enabling real-time analysis on smartphones in field conditions |
| Geospatial Analysis Libraries | Processing of location-referenced environmental data | Mapping pollution gradients, analyzing spatial patterns in ecosystem health |
| Citizen Science Platforms | Crowdsourced data collection and annotation | Distributed environmental monitoring through participatory research |

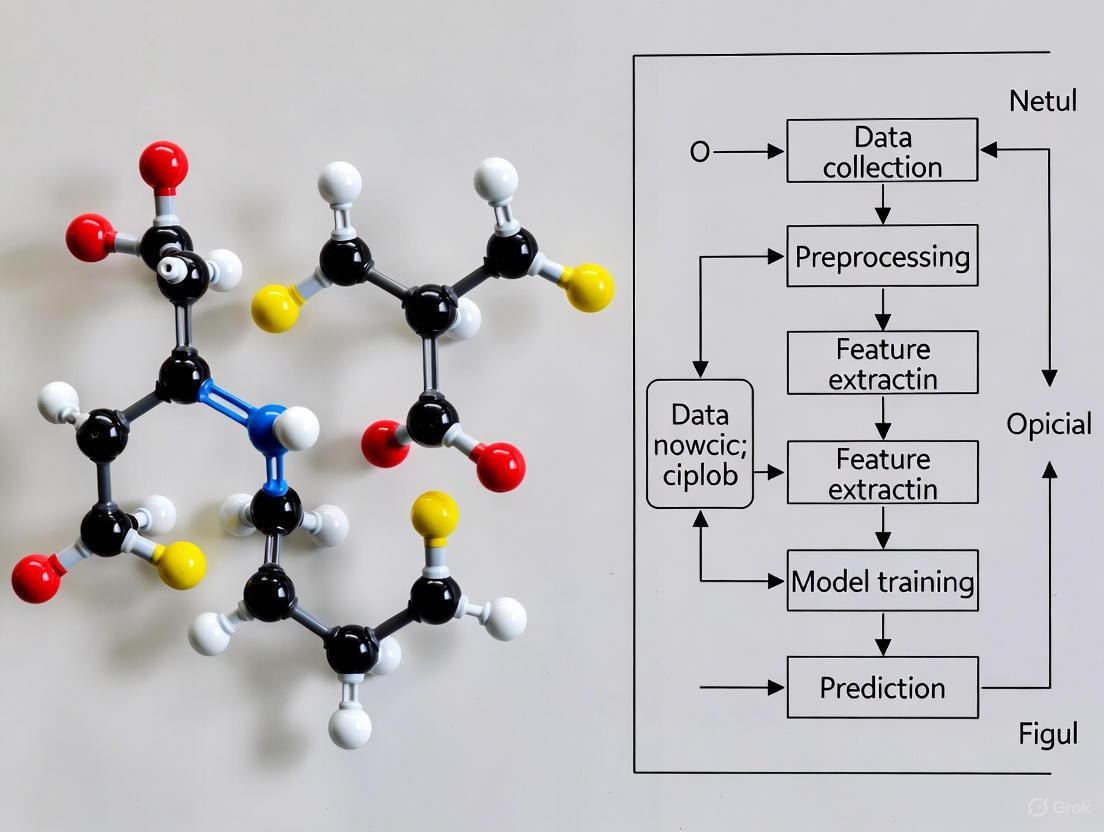

Visualizing Architectural Relationships and Workflows

[Figure: AI Architecture Environmental Applications Diagram]

[Figure: Environmental Analysis Workflow Diagram]

The integration of AI, ML, and deep learning into environmental science represents a paradigm shift in how we monitor, understand, and protect our natural world. These technologies enable researchers to process complex environmental datasets at unprecedented scales and speeds, revealing patterns and relationships that would remain hidden using traditional analytical approaches. The emergence of smartphone-based environmental analysis further democratizes this capability, distributing data collection and analysis across vast geographic areas and engaging citizen scientists in meaningful environmental monitoring.

As these technologies continue to evolve, several trends are likely to shape their future development in environmental contexts. The growing emphasis on explainable AI will address the "black box" problem of complex models, making AI-driven insights more trustworthy and actionable for environmental decision-makers [2]. Advances in edge computing will enable more sophisticated on-device analysis, reducing latency and bandwidth requirements while enhancing privacy [5] [4]. The integration of IoT networks with AI systems will create increasingly comprehensive environmental monitoring infrastructures, providing real-time insights into ecosystem health [3]. Finally, growing attention to the environmental costs of AI itself will drive development of more energy-efficient algorithms and hardware, ensuring that the benefits of AI in environmental applications are not undermined by its own resource consumption [6] [5].

For researchers working at the intersection of AI and environmental science, these developments offer unprecedented opportunities to address pressing ecological challenges while also demanding careful consideration of the ethical implications, resource constraints, and validation requirements inherent in applying these powerful technologies to complex natural systems.

The modern smartphone represents a convergence of advanced sensing, processing, and communication technologies, transforming it from a mere communication device into a powerful mobile sensor hub. This transformation is particularly impactful in environmental analysis research, where smartphones provide an unprecedented platform for distributed, real-time data collection. Machine learning serves as the critical enabling technology that unlocks the potential of these embedded sensors, turning raw data into actionable insights about our environment. This technical guide examines the capabilities of smartphones as sensor platforms and details the methodologies for leveraging them in environmental research, with a specific focus on the synergistic relationship between smartphone sensors and ML algorithms for environmental analysis.

Smartphone Sensor Ecosystem

The smartphone sensor ecosystem comprises a diverse array of hardware components capable of measuring physical, optical, and environmental parameters. These sensors form the foundational data sources for research applications.

Core Sensor Types and Capabilities

Smartphones integrate multiple sensor types that can be repurposed for environmental monitoring. Market estimates reflect the rapid advancement and integration of these components, although they vary by source. One estimate values the global smartphone sensors market at approximately USD 60 billion in 2023 and projects USD 120 billion by 2032 [7]. Another puts the market at over USD 114.5 billion in 2025, expanding to USD 432 billion by 2035 at a CAGR of 15.9% [8].

Table: Primary Smartphone Sensors and Environmental Research Applications

| Sensor Type | Measured Parameter | Environmental Research Application |
|---|---|---|
| Accelerometer | Acceleration forces, device orientation | Seismic activity monitoring, transportation mode detection |
| Gyroscope | Angular velocity, rotation | Precision motion detection for field data collection workflows |
| Magnetometer | Magnetic field strength | Detection of magnetic pollutants, indoor navigation |
| Ambient Light Sensor | Illuminance | Light pollution studies, solar exposure assessment |
| Proximity Sensor | Distance to nearby objects | User interaction logging, object detection |
| Microphone | Sound pressure, frequency | Noise pollution mapping, species identification via bioacoustics |
| Camera | Visible, and sometimes IR/UV spectra | Air quality visual assessment, water turbidity, plant health analysis |
| GPS | Geographic coordinates | Spatial data tagging, movement pattern analysis |
| Barometer | Atmospheric pressure | Weather forecasting, altitude determination |
| Newer/Specialized | Various | Hyper-local environmental monitoring |

Emerging Sensor Integration and Market Trends

The sensor landscape within smartphones is continuously evolving. A significant trend is the move toward non-contact sensors, which are projected to hold a 92.5% market share by 2035 [8]. These sensors, including camera and proximity sensors, are fundamental to modern smartphone interaction and enable features like augmented reality and gesture-based controls that have research applications.

Innovations like the MobilePhysics toolkit demonstrate the next frontier: leveraging existing sensors with computational physics and AI to measure parameters like air quality, smoke levels, temperature, and UV exposure [9]. This software-based approach, now embedded in Qualcomm's Snapdragon 8 Gen 3 processor using STMicroelectronics' direct time-of-flight (dToF) sensors, transforms standard smartphones into personal environmental monitoring systems without requiring additional hardware [9].

Furthermore, the integration of microfluidic sensors with smartphones creates powerful portable analytical tools for forensic, agricultural, and environmental monitoring [10]. These lab-on-a-chip devices enable cost-effective, on-site detection of pollutants and other analytes, with the smartphone providing imaging, processing, and communication capabilities.

Machine Learning for Sensor Data Analysis

Machine learning algorithms serve as the computational engine that transforms raw, multi-dimensional sensor data into meaningful environmental insights. The unique constraints and opportunities of mobile platforms dictate specific ML approaches.

ML Workflow for Smartphone-Based Environmental Analysis

A standardized workflow ensures robust and reproducible results. The process begins with data acquisition from the smartphone's sensor suite, followed by preprocessing to handle noise, outliers, and missing values. Feature engineering then extracts discriminative characteristics from the sensor data, which may include statistical features (mean, variance), frequency-domain features (FFT coefficients), or time-series characteristics. The model training phase can occur on-device (for latency and privacy) or on cloud servers (for complex models), with final deployment and inference enabling real-time environmental analysis.
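The feature-engineering step can be sketched as follows: a window of raw sensor samples is reduced to a mean, a variance, and a dominant frequency taken from a discrete Fourier transform. The function names and the synthetic 8 Hz test signal are assumptions for illustration, and the naive transform shown here is only practical for short windows; production code would normally use an FFT library.

```python
import cmath
import math
import statistics

def dft_magnitudes(signal):
    """Magnitudes of the discrete Fourier transform for bins 0..N//2 (naive O(N^2))."""
    n = len(signal)
    magnitudes = []
    for k in range(n // 2 + 1):
        coeff = sum(x * cmath.exp(-2j * cmath.pi * k * t / n)
                    for t, x in enumerate(signal))
        magnitudes.append(abs(coeff))
    return magnitudes

def extract_features(signal, sample_rate_hz):
    """Summarize one window of samples as statistical and frequency features."""
    magnitudes = dft_magnitudes(signal)
    # Skip bin 0 (the constant offset) when looking for the dominant frequency.
    dominant_bin = max(range(1, len(magnitudes)), key=lambda k: magnitudes[k])
    return {
        "mean": statistics.fmean(signal),
        "variance": statistics.pvariance(signal),
        "dominant_frequency_hz": dominant_bin * sample_rate_hz / len(signal),
    }

# Synthetic one-second window: an 8 Hz tone sampled at 64 Hz.
sample_rate_hz = 64
signal = [math.sin(2 * math.pi * 8 * t / sample_rate_hz) for t in range(64)]
features = extract_features(signal, sample_rate_hz)
print(features["dominant_frequency_hz"])  # 8.0
```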

Algorithm Selection and Model Optimization

Algorithm selection depends on the specific environmental analysis task, available computational resources, and latency requirements. For resource-constrained mobile environments, efficiency is paramount.

Lightweight Models for On-Device Inference: Traditional machine learning models like Random Forests, Support Vector Machines (SVM), and simpler Neural Networks often provide the best balance between accuracy and computational demand for tasks like activity recognition or basic classification [11]. These can be deployed directly on smartphones using frameworks like TensorFlow Lite or Core ML.

Deep Learning for Complex Patterns: For more complex environmental patterns such as image-based pollution assessment or audio-based species identification, deeper neural networks, including Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs), are more effective [11] [12]. These may require cloud-based processing or sophisticated on-device optimization.
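One widely used form of on-device optimization is quantization, which stores model weights as 8-bit integers instead of 32-bit floats. The sketch below illustrates the general affine quantization idea on a plain list of weights. It is not the internal implementation of TensorFlow Lite or Core ML, and the function names and sample weights are assumptions for this example.

```python
def quantize_int8(weights):
    """Map float weights to int8 codes using one shared scale and zero point."""
    w_min, w_max = min(weights), max(weights)
    w_min, w_max = min(w_min, 0.0), max(w_max, 0.0)   # keep 0.0 inside the range
    scale = (w_max - w_min) / 255 or 1.0              # fall back to 1.0 for all-zero input
    zero_point = round(-128 - w_min / scale)          # integer code that represents 0.0
    codes = [max(-128, min(127, round(w / scale) + zero_point)) for w in weights]
    return codes, scale, zero_point

def dequantize(codes, scale, zero_point):
    """Recover approximate float weights from int8 codes."""
    return [(c - zero_point) * scale for c in codes]

weights = [-0.52, -0.1, 0.0, 0.33, 0.75]              # hypothetical layer weights
codes, scale, zero_point = quantize_int8(weights)
restored = dequantize(codes, scale, zero_point)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, worst_error)
```

Storage drops by roughly a factor of four at the cost of a small rounding error per weight, which is why accuracy should be re-validated after quantization.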

Hybrid and Advanced Architectures: Research demonstrates that hybrid models combining multiple approaches can yield superior results. One study found that integrating the Capuchin Search Algorithm (CapSA) with a Multilayer Perceptron (MLP) for weight optimization significantly improved prediction accuracy for educational quality, an approach that can be adapted for environmental model calibration [11]. The CapSA algorithm is particularly suited for navigating complex solution spaces and avoiding local optima.

The expansion of 5G and 6G networks further enhances this ecosystem by providing the low-latency, high-bandwidth connectivity necessary for real-time sensor data transmission and cloud-based ML processing [8].

Experimental Protocols for Environmental Monitoring

This section provides detailed methodologies for implementing smartphone-based environmental data collection and analysis, with a focus on reproducibility and scientific rigor.

Protocol: Air Quality and Particulate Matter Monitoring

Objective: To utilize smartphone cameras and ML models for the semi-quantitative assessment of airborne particulate matter.

Materials and Equipment:

- Smartphone with high-resolution camera

- Reference air quality sensor (for calibration, if available)

- Standardized imaging target for color correction

- Tripod or stabilization platform

Methodology:

- Setup and Calibration: Place the smartphone on a stable surface with the camera facing a consistent scene. Under controlled conditions, capture reference images with a standardized color card. If available, collocate with a reference sensor for initial calibration.

- Data Collection: Capture images of the sky or a standardized surface at predetermined intervals (e.g., hourly). Ensure consistent camera settings (ISO, shutter speed, white balance). Record metadata including GPS coordinates, timestamp, and barometric pressure.

- Image Preprocessing: Extract image features known to correlate with aerosol optical depth, including contrast, hue, saturation, and intensity. Apply histogram equalization and correct for lens distortion.

- Model Application: Process the extracted features using a pre-trained regression model (e.g., SVM or Neural Network) to estimate PM2.5/PM10 concentrations. The model should be trained on a dataset pairing historical imagery with ground-truth sensor data.

- Data Aggregation: Contribute results to a centralized database for spatial-temporal analysis and model refinement.
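The feature extraction in the image preprocessing step might look like the sketch below, which reduces a set of RGB pixels to mean hue, mean saturation, mean intensity, and contrast. The function name, the flat pixel-list format, the use of standard deviation as the contrast measure, and the two sample colors are assumptions for illustration. Lens correction and histogram equalization are not shown.

```python
import colorsys
import math
import statistics

def image_features(pixels):
    """Summarize a list of (r, g, b) pixels, each channel in 0-255."""
    hsv = [colorsys.rgb_to_hsv(r / 255, g / 255, b / 255) for r, g, b in pixels]
    intensity = [(r + g + b) / (3 * 255) for r, g, b in pixels]
    # Hue is an angle on the color wheel, so average it as a unit vector
    # rather than as a plain number (0.99 and 0.01 are neighbors).
    sin_sum = sum(math.sin(2 * math.pi * h) for h, _, _ in hsv)
    cos_sum = sum(math.cos(2 * math.pi * h) for h, _, _ in hsv)
    mean_hue = (math.atan2(sin_sum, cos_sum) / (2 * math.pi)) % 1.0
    return {
        "mean_hue": mean_hue,
        "mean_saturation": statistics.fmean([s for _, s, _ in hsv]),
        "mean_intensity": statistics.fmean(intensity),
        "contrast": statistics.pstdev(intensity),   # spread of pixel intensities
    }

clear_sky = [(100, 150, 255)] * 4    # hypothetical saturated blue pixels
hazy_sky = [(180, 190, 200)] * 4     # hypothetical washed-out pixels
clear = image_features(clear_sky)
hazy = image_features(hazy_sky)
print(clear["mean_saturation"] > hazy["mean_saturation"])  # True
```

The resulting dictionary is the kind of feature vector a regression model could take as input, once paired with reference sensor readings for training.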

Validation: Compare smartphone-derived estimates with readings from certified air quality monitoring stations. Calculate performance metrics (R², RMSE) to quantify accuracy.
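Both validation metrics are straightforward to compute. The sketch below defines RMSE and the coefficient of determination (R²) for paired reference and smartphone readings; the sample values are made up for illustration.

```python
import math

def rmse(reference, estimates):
    """Root mean squared error, in the same units as the measurements."""
    squared = [(r - e) ** 2 for r, e in zip(reference, estimates)]
    return math.sqrt(sum(squared) / len(squared))

def r_squared(reference, estimates):
    """Share of the variance in the reference data explained by the estimates."""
    mean_ref = sum(reference) / len(reference)
    ss_res = sum((r - e) ** 2 for r, e in zip(reference, estimates))
    ss_tot = sum((r - mean_ref) ** 2 for r in reference)
    return 1 - ss_res / ss_tot

station_pm25 = [10.0, 20.0, 30.0, 40.0]      # hypothetical reference readings
smartphone_pm25 = [12.0, 18.0, 33.0, 39.0]   # hypothetical model estimates
print(round(rmse(station_pm25, smartphone_pm25), 3))       # 2.121
print(round(r_squared(station_pm25, smartphone_pm25), 3))  # 0.964
```

RMSE reports the typical error size in concentration units, while R² shows how well the estimates track variation in the reference data, so the two are best read together.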

Protocol: Water Quality Assessment via Microfluidic Integration

Objective: To analyze water samples for pollutants using smartphone-integrated microfluidic sensors and computer vision.

Materials and Equipment:

- Smartphone with macro-capable camera

- Disposable microfluidic chip designed for target analyte (e.g., heavy metals, nitrates)

- LED light source for consistent illumination

- Sample preparation kit (pipettes, vials, reagents)

Methodology:

- Chip Preparation and Sample Introduction: Load the water sample and necessary reagents into the injection ports of the microfluidic chip as per manufacturer protocol.

- On-Chip Analysis: Allow the sample to flow through the microfluidic channels where specific chemical reactions (e.g., colorimetric, fluorescent) occur.

- Image Capture: Place the chip on a uniform background and use the smartphone camera under controlled lighting to capture high-resolution images of the detection zones.

- Computer Vision Analysis: Use color thresholding algorithms or a CNN to analyze the color intensity or pattern in the detection zones. Relate this optical signal to analyte concentration using a pre-established calibration curve.

- Data Reporting: The smartphone app calculates and reports the concentration, tagging the result with spatiotemporal metadata for geographic mapping.

This protocol leverages the trend noted in research where "smartphone-integrated microfluidic sensors allow timely detection of pollutants in air, water, and soil, enabling quicker responses to hazards" [10].
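The calibration-curve step of the protocol can be sketched as a straight-line fit to standards of known concentration, which is then inverted to read off an unknown sample. This assumes the optical signal responds linearly over the working range; the standards, intensities, and function names below are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def concentration_from_signal(signal, slope, intercept):
    """Invert the calibration line to estimate concentration from a signal."""
    return (signal - intercept) / slope

# Hypothetical calibration standards and their measured color intensities.
standards_mg_per_l = [0.0, 5.0, 10.0, 20.0]
measured_intensity = [0.02, 0.27, 0.52, 1.02]

slope, intercept = fit_line(standards_mg_per_l, measured_intensity)
estimate = concentration_from_signal(0.40, slope, intercept)
print(round(estimate, 2))  # 7.6
```

Signals that fall outside the range covered by the standards should be flagged rather than extrapolated, since colorimetric responses often saturate at high concentrations.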

The Researcher's Toolkit

Implementing smartphone-based environmental analysis requires a suite of hardware and software "reagents." The table below details essential components.

Table: Essential Research Reagents for Smartphone-Based Environmental Analysis

| Category | Item/Solution | Function in Research |
|---|---|---|
| Hardware Platforms | Qualcomm Snapdragon series (with AI cores) | Provides the processing platform for on-device sensor fusion and ML inference. The Snapdragon 8 Gen 3 includes dedicated support for environmental monitoring toolkits [9]. |
| Software Frameworks | TensorFlow Lite, PyTorch Mobile | Enables the conversion and deployment of trained ML models onto mobile operating systems (Android, iOS) for real-time analysis. |
| Sensor Hub Technology | Sensor Hub ICs (e.g., from STMicroelectronics, Bosch) | Manages data from multiple sensors simultaneously while minimizing power consumption. The market for these is growing at a CAGR of 17.8% (2025-2033) [13]. |
| Specialized Sensors | STMicroelectronics dToF Sensor | Precisely measures distance. Used in advanced applications like the MobilePhysics toolkit for calculating smoke density and particulate matter levels [9]. |
| Calibration Standards | Colorimetric Reference Card, Certified Gas Samples | Provides a known reference for calibrating smartphone camera and other sensors, ensuring data consistency and accuracy across different devices and conditions. |
| Data Fusion Algorithms | Kalman Filters, Particle Filters | Software-based solutions that combine data from multiple sensors (e.g., GPS, accelerometer, camera) to produce a more accurate and reliable estimate of environmental conditions. |
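To give a sense of how the Kalman filters listed in the table work, the sketch below implements the simplest case: a one-dimensional filter that smooths noisy readings of a quantity assumed to change slowly. Fusing several sensors requires the multi-dimensional form, which is not shown. The function name, noise variances, and sample readings are assumptions for illustration.

```python
def kalman_1d(measurements, process_var, measurement_var,
              initial_estimate=0.0, initial_var=1.0):
    """Filter noisy scalar readings of a slowly drifting quantity."""
    estimate, variance = initial_estimate, initial_var
    filtered = []
    for z in measurements:
        # Predict: the state is assumed constant, so only the uncertainty grows.
        variance += process_var
        # Update: blend prediction and measurement by their relative certainty.
        gain = variance / (variance + measurement_var)
        estimate += gain * (z - estimate)
        variance *= (1 - gain)
        filtered.append(estimate)
    return filtered

# Hypothetical barometer readings (hPa) scattered around a steady pressure.
noisy_pressure = [1013.4, 1012.7, 1013.9, 1012.5, 1013.2, 1013.0]
smoothed = kalman_1d(noisy_pressure, process_var=0.01, measurement_var=0.5,
                     initial_estimate=noisy_pressure[0])
print([round(p, 2) for p in smoothed])
```

A larger `measurement_var` tells the filter to trust each new reading less, giving smoother output that reacts more slowly to genuine change.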

Data Management and Processing Architecture

The architecture for managing and processing data from smartphone sensor hubs is a critical component of a successful research framework. The diagram below illustrates the flow from data collection to actionable insight.

This architecture highlights several key considerations:

- On-Device Processing: Initial data filtering and feature extraction occur on the smartphone to reduce bandwidth requirements and preserve user privacy.

- Secure Transmission: Processed data is transmitted via 5G or Wi-Fi to cloud or edge computing resources, leveraging high-speed connectivity that is a major market growth driver [8].

- Centralized Analysis and Model Refinement: Aggregated data from many devices enables large-scale spatial analysis and the continuous retraining of ML models to improve accuracy.

- Feedback Loop: Improved models and calibration parameters can be pushed back to the smartphone sensor network, creating an adaptive, learning system.

Smartphones have unequivocally evolved into sophisticated mobile sensor hubs, capable of supporting rigorous environmental analysis research. Their value is multiplied when their sensor capabilities are coupled with machine learning, creating a powerful, distributed platform for monitoring air quality, water safety, and ecological health. While challenges related to data calibration, privacy, and standardization persist, the trajectory of the technology—driven by market growth, sensor miniaturization, and algorithmic advances—points toward an increasingly significant role for smartphones in the environmental scientist's toolkit. The integration of specialized hardware, robust software frameworks, and validated experimental protocols will further cement their position as indispensable tools for understanding and protecting our environment.

The integration of smartphone-based analysis with machine learning (ML) is revolutionizing environmental monitoring. These technologies enable the collection of high-resolution, spatiotemporal data at a scale and speed previously unattainable, transforming how researchers and scientists track changes in air and water quality, biodiversity, and climate indicators. This paradigm shift addresses critical data gaps in human-environment systems, supporting advanced sustainability science and policy [14]. By leveraging the ubiquitous nature of smartphones and the predictive power of ML, this approach facilitates a move from reactive, event-driven data collection to proactive "police patrol" monitoring, establishing essential baselines and identifying meaningful anomalies across global ecosystems [14]. This technical guide details the core methodologies, experimental protocols, and key technological frameworks underpinning this transformative field.

Machine Learning in Smartphone-Based Air Quality Monitoring

The deployment of low-cost sensors (LCSs) via smartphone and Internet of Things (IoT) platforms has created dense, hyperlocal air quality monitoring networks. However, data from these sensors can be influenced by environmental factors like temperature and humidity, necessitating robust calibration methods where machine learning excels.

Machine Learning for Sensor Calibration and Data Refinement

Experimental Protocol: ML-Based Calibration of Low-Cost Sensors

A standard methodology for enhancing the reliability of LCS data involves the following steps [15]:

- System Development: An IoT-based air quality monitoring system is constructed using common LCS types (e.g., for PM2.5, CO2) and a microcontroller (e.g., ESP8266) with wireless communication capabilities.

- Data Collection: The system collects high-frequency (e.g., one-minute resolution) data on target pollutants alongside environmental interferents like temperature and humidity. Data is transmitted to a cloud server for storage.

- Reference Comparison: Sensor measurements are collocated with a reference-grade instrument to generate a labeled dataset for model training and validation.

- Model Training and Evaluation: A suite of ML algorithms is applied to the dataset. The performance of each algorithm is evaluated using metrics such as R-squared (R²), Root Mean Square Error (RMSE), and Mean Absolute Error (MAE) to identify the best-performing model for each sensor type.
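The three evaluation metrics named in the last step can be computed directly from paired reference and calibrated values. The following is a minimal sketch using the standard definitions; the function name and the sample values are illustrative and not taken from the cited study.

```python
import math


def regression_metrics(reference, predicted):
    """Return R-squared, RMSE, and MAE for paired reference/predicted values."""
    n = len(reference)
    residuals = [r - p for r, p in zip(reference, predicted)]
    mae = sum(abs(e) for e in residuals) / n
    ss_res = sum(e * e for e in residuals)            # residual sum of squares
    rmse = math.sqrt(ss_res / n)
    mean_ref = sum(reference) / n
    ss_tot = sum((r - mean_ref) ** 2 for r in reference)  # total sum of squares
    r2 = 1.0 - ss_res / ss_tot
    return {"r2": r2, "rmse": rmse, "mae": mae}


# Hypothetical reference-instrument values and calibrated sensor outputs.
metrics = regression_metrics([10.0, 12.0, 22.0, 24.0], [11.0, 12.5, 21.0, 24.5])
```

RMSE and MAE carry the units of the measured quantity, so they are only comparable between sensors that report the same pollutant in the same units.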

A recent study systematically evaluating eight ML algorithms found that Gradient Boosting (GB) and k-Nearest Neighbors (kNN) achieved the highest calibration accuracy for CO2 and PM2.5 sensors, respectively [15]. The following table summarizes the quantitative performance of these top-performing models.

Table 1: Performance of Top Machine Learning Models for Low-Cost Sensor Calibration [15]

| Target Pollutant | Best-Performing ML Model | R² | RMSE | MAE |

|---|---|---|---|---|

| CO2 | Gradient Boosting (GB) | 0.970 | 0.442 | 0.282 |

| PM2.5 | k-Nearest Neighbors (kNN) | 0.970 | 2.123 | 0.842 |

| Temperature & Humidity | Gradient Boosting (GB) | 0.976 | 2.284 | - |
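To make the calibration idea concrete, the sketch below implements a small k-nearest-neighbours regressor that maps a raw sensor reading plus temperature and humidity to a reference-grade value. It is a toy illustration under stated assumptions: the feature layout, the training values, and the function names are hypothetical, and the cited study's actual pipeline and hyperparameters are not reproduced here.

```python
import math


def fit_scaler(rows):
    """Per-feature mean and standard deviation, so no feature dominates the distance."""
    cols = list(zip(*rows))
    means = [sum(c) / len(c) for c in cols]
    stds = [math.sqrt(sum((v - m) ** 2 for v in c) / len(c)) or 1.0
            for c, m in zip(cols, means)]
    return means, stds


def scale(row, means, stds):
    return [(v - m) / s for v, m, s in zip(row, means, stds)]


def knn_calibrate(train_x, train_y, query, k=3):
    """Predict the reference value as the mean of the k nearest training samples."""
    means, stds = fit_scaler(train_x)
    scaled_train = [scale(r, means, stds) for r in train_x]
    q = scale(query, means, stds)
    ranked = sorted((math.dist(q, r), y) for r, y in zip(scaled_train, train_y))
    nearest = ranked[:k]
    return sum(y for _, y in nearest) / len(nearest)


# Hypothetical collocation data: (raw PM2.5, temperature in C, relative humidity in %).
train_x = [(12.0, 20.0, 40.0), (15.0, 22.0, 55.0), (30.0, 25.0, 70.0),
           (33.0, 26.0, 72.0), (50.0, 30.0, 80.0)]
train_y = [10.0, 12.0, 22.0, 24.0, 35.0]   # reference-instrument PM2.5
calibrated = knn_calibrate(train_x, train_y, (31.0, 25.5, 71.0), k=2)
```

Including temperature and humidity as features is what lets the model correct for the environmental interference described in the protocol.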

Mobile Monitoring and High-Resolution Pollution Mapping

Beyond static sensors, smartphones and specialized sensors are deployed on mobile platforms, including vehicles, to capture pollution gradients at an unprecedented spatial resolution. A seminal study in Jinan, China, integrated data from 200 mobile cruising vehicles and 614 fixed micro-stations [16]. Using machine learning, the team reconstructed PM2.5 pollution maps with a high spatiotemporal resolution of 500 meters and 1 hour. This approach demonstrated that optimized mobile monitoring networks could reduce costs by nearly 70% while maintaining high precision [16]. Furthermore, the application of explainable AI (XAI) techniques, specifically SHapley Additive exPlanations (SHAP), identified secondary inorganic aerosols (SIA) as the primary drivers of PM2.5 pollution in the urban study area [16].
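Before any model can reconstruct a pollution map, mobile measurements have to be binned onto a space-time grid. The sketch below shows only that aggregation step at the 500 m and 1 hour resolution mentioned above. It assumes coordinates already projected to metres and timestamps in seconds; the function names and sample values are hypothetical, and the study's ML reconstruction itself is not shown.

```python
from collections import defaultdict

CELL_M = 500    # spatial resolution reported in the text (metres)
SLOT_S = 3600   # temporal resolution reported in the text (one hour, in seconds)


def grid_key(x_m, y_m, t_s):
    """Map a projected position and timestamp to a (column, row, hour) cell index."""
    return (int(x_m // CELL_M), int(y_m // CELL_M), int(t_s // SLOT_S))


def aggregate(samples):
    """samples: iterable of (x_m, y_m, t_s, pm25). Returns {cell: mean PM2.5}."""
    sums = defaultdict(lambda: [0.0, 0])
    for x, y, t, pm in samples:
        acc = sums[grid_key(x, y, t)]
        acc[0] += pm
        acc[1] += 1
    return {cell: total / count for cell, (total, count) in sums.items()}


# Hypothetical readings from cruising vehicles.
samples = [(100, 200, 10, 30.0), (450, 499, 3599, 50.0), (600, 200, 10, 80.0)]
grid = aggregate(samples)
```

Cells with no observations are simply absent from the result; filling those gaps is the part of the problem that the mapping model addresses.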

Smartphone-Driven Biodiversity Monitoring and Ecological Surveys

Smartphone apps have dramatically accelerated the collection of species occurrence data, leveraging citizen science and automated identification to create massive datasets for ecological research and conservation planning.

Community-Sourced Data and AI-Powered Identification

Experimental Protocol: Validating Community-Sourced Biodiversity Data

The workflow for utilizing smartphone-derived biodiversity data involves validation and integration into species distribution models (SDMs) [17].

- Data Collection via Mobile App: A mobile application (e.g., Biome, iNaturalist) is used to gather geotagged species observations from the public. These platforms incorporate AI-based species identification and gamification to encourage participation.

- Accuracy Assessment: The species identification accuracy of the community-sourced data is validated against expert-curated records for various taxonomic groups.

- Data Integration into SDMs: The validated community data is combined with traditional survey data. Species Distribution Models are then run using both the combined dataset and the traditional data alone.

- Model Performance Comparison: The accuracy of the SDMs is evaluated using metrics like the Boyce index to quantify the improvement gained by incorporating community-sourced data.

Research on the Biome app in Japan, which accumulated over 6 million observations, demonstrated the efficacy of this protocol. The AI-powered identification achieved high accuracy for certain taxa, and integrating this data into SDMs significantly improved distribution estimates, especially for endangered species [17]. The number of records required for an accurate model (Boyce index ≥0.9) dropped from over 2,000 with traditional data alone to around 300 when traditional data were blended with community-sourced data [17].

Table 2: Species Identification Accuracy in the Biome Mobile App [17]

| Taxonomic Group | Identification Accuracy |

|---|---|

| Birds, Reptiles, Mammals, Amphibians | >95% |

| Seed Plants, Molluscs, Fishes | <90% |

AI-Powered Ecological Surveys

In 2025, AI is enabling a transition from labor-intensive traditional surveys to highly automated, precise ecological monitoring. AI-powered platforms analyze satellite imagery, drone-captured data, and IoT sensor streams to automate species identification, habitat mapping, and detection of environmental stressors [18]. The performance improvements are substantial, as shown in the comparative table below.

Table 3: Traditional vs. AI-Powered Ecological Monitoring in 2025 [18]

| Survey/Monitoring Aspect | Traditional Method (Estimated Outcome) | AI-Powered Method (Estimated Outcome) | Estimated Improvement (%) in 2025 |

|---|---|---|---|

| Vegetation Analysis Accuracy | 72% | 92%+ | +28% |

| Biodiversity Species Detected per Hectare | Up to 400 species | Up to 10,000 species | +2400% |

| Time Required per Survey | Several days to weeks | Real-time or within hours | -99% |

| Resource (Manpower & Cost) Savings | High labor and operational costs | Minimal manual intervention, automated workflows | Up to 80% |

| Data Update Frequency | Monthly or less | Daily to Real-time | +3000% |

Experimental Protocols and Workflow Visualization

A generalized experimental workflow for smartphone-based environmental analysis research is depicted in the following diagram, illustrating the integration of data collection, machine learning, and outcome application.

Diagram 1: Smartphone Environmental Analysis Workflow.

The Scientist's Toolkit: Research Reagent Solutions

This section details key hardware, software, and data components essential for conducting smartphone-based environmental analysis research.

Table 4: Essential Research Reagents and Materials for Smartphone-Based Environmental Analysis

| Research Reagent / Material | Type | Function in Research |

|---|---|---|

| Low-Cost Air Quality Sensors (PM2.5, CO2) | Hardware | Measures target pollutant concentrations; core component of mobile or static monitoring nodes. |

| Microcontroller (e.g., ESP8266) | Hardware | Interfaces with sensors, manages data collection, and enables wireless data transmission to cloud platforms. |

| Open Data Kit (ODK) | Software | Open-source suite for building mobile data collection forms, used for self-administered smartphone surveys. |

| PurpleAir, AirNow Sensor Networks | Data | Provides extensive, real-time air quality data from public sensor networks for model training and validation. |

| Species Distribution Models (SDMs) | Algorithm | Statistical tools that use species occurrence records and environmental data to estimate geographic ranges and suitable habitats. |

| Community-Sourced Data (e.g., iNaturalist, Biome) | Data | Provides massive volumes of geotagged species observations for training AI models and ecological analysis. |

| SHapley Additive exPlanations (SHAP) | Algorithm | An Explainable AI (XAI) method that interprets ML model outputs, quantifying the contribution of each input feature. |

| Gradient Boosting (GB) / k-Nearest Neighbors (kNN) | Algorithm | High-performance ML algorithms used for calibrating low-cost environmental sensors against reference instruments. |

The confluence of smartphone technology and advanced machine learning has created a powerful new paradigm for environmental monitoring. The methodologies and protocols outlined in this guide demonstrate a fundamental shift towards data-driven, hyperlocal, and cost-effective research in air quality, biodiversity, and climate science. The ability to collect and intelligently analyze high-resolution spatiotemporal data is not only filling critical knowledge gaps but also empowering more precise and proactive environmental management and conservation strategies. As these technologies continue to evolve, with advancements in edge computing, 5G, and more sophisticated AI models, their role in understanding and protecting our planetary ecosystems will undoubtedly become even more central to global scientific and policy efforts.

The integration of machine learning (ML) with smartphone-based sensing represents a paradigm shift in environmental monitoring. This synergy enables a transition from centralized, expensive monitoring stations to distributed, real-time data acquisition and analysis. This technical guide explores how the convergence creates a two-part value proposition: it facilitates immediate, data-driven decision-making through intelligent alerts, and it empowers a new era of citizen science by democratizing environmental data collection and fostering public engagement in scientific discovery. Advanced machine learning models, including hybrids like MLP-CapSA and resource-efficient networks, are central to transforming raw sensor data into actionable intelligence and credible scientific findings [11] [19].

Technical Foundations of Smartphone-Based Environmental Analysis

The architecture of a smartphone-based environmental monitoring system rests on three core technical pillars: on-device sensors, machine learning models, and data communication protocols.

On-Device Sensing Capabilities

Modern smartphones are equipped with a sophisticated array of sensors capable of measuring a wide range of environmental parameters. These sensors act as the primary data acquisition layer.

- Physical Quantity Sensors: These include sensors for temperature, humidity, atmospheric pressure, light intensity, and sound level, which measure fundamental physical phenomena in the device's immediate surroundings [20].

- Motion and Position Sensors: Accelerometers, gyroscopes, and GPS sensors are instrumental in mobility applications, tracking movement, vibration, and geographic location, which can be correlated with environmental data for spatial analysis [20].

- Chemical Sensing (Emerging): While less common in standard devices, advancements in accessory and integrated sensors are beginning to allow for the detection of certain chemical attributes, such as air quality parameters [20].

Machine Learning Integration and Model Optimization

Machine learning models transform raw sensor readings into meaningful insights. Given the resource constraints of mobile devices, model optimization is critical.

- On-Device ML: Deploying ML models directly on smartphones eliminates cloud dependency, reduces latency by up to 50%, and enhances data privacy. Specialized hardware like Neural Processing Engines enables local inference for tasks like voice recognition and image classification [19].

- Model Optimization Techniques: To ensure performance on mobile hardware, techniques such as quantization (reducing the numerical precision of weights) and pruning (removing redundant weights or neurons) are employed. These methods can reduce model size by up to 75% and cut inference times by 30-50% without significant accuracy loss [19].

- Frameworks and APIs: Tools like TensorFlow Lite and PyTorch Mobile convert full models into a mobile-optimized format and deploy them on-device. The Android Neural Networks API (NNAPI) allows computations to be offloaded to dedicated hardware such as GPUs and DSPs, yielding latency reductions exceeding 40% compared to CPU-only processing [19].
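The size reduction attributed to quantization follows from simple arithmetic: storing each weight as an 8-bit integer instead of a 32-bit float uses one byte instead of four. The sketch below shows a basic affine (scale and zero-point) int8 scheme in plain Python. It is an illustration of the idea only, not the procedure used by TensorFlow Lite or PyTorch Mobile, and the weights are made up.

```python
import struct


def quantize_int8(weights):
    """Map floats to int8 codes using one scale and zero point for the whole tensor."""
    w_min, w_max = min(weights), max(weights)
    scale = (w_max - w_min) / 255.0 or 1.0          # avoid a zero scale for constant input
    zero_point = round(-128 - w_min / scale)        # code that represents w_min
    codes = [max(-128, min(127, round(w / scale) + zero_point)) for w in weights]
    return codes, scale, zero_point


def dequantize(codes, scale, zero_point):
    """Recover approximate float values from int8 codes."""
    return [(c - zero_point) * scale for c in codes]


weights = [-0.5, -0.1, 0.0, 0.2, 0.77]              # hypothetical layer weights
q, scale, zero_point = quantize_int8(weights)
restored = dequantize(q, scale, zero_point)

# One byte per int8 code versus four bytes per float32 value.
size_reduction = 1 - struct.calcsize("b") / struct.calcsize("f")
```

For these weights the round-trip error stays within half a quantization step, which is why accuracy often degrades only slightly; whether that holds for a given model has to be checked empirically.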

Table 1: Key Machine Learning Models for Environmental Analysis on Smartphones

| Model/Algorithm | Primary Application | Key Advantage | Citation |

|---|---|---|---|

| Hybrid MLP-CapSA | Predicting AI education quality (as a proxy for system performance) | High accuracy (R²=0.9803); effective weight optimization | [11] |

| LSTM/GRU Networks | Forecasting energy consumption and indoor air quality (IAQ) | >92% accuracy in time-series prediction of environmental parameters | [21] |

| Pre-trained Models (e.g., MobileNetV3) | Image-based environmental classification (e.g., plant health, pollution) | Fast deployment; high accuracy for real-time inference | [19] |

| Random Forest | Species identification and community structure prediction | High interpretability; handles mixed data types well | [22] [23] |

Experimental Protocols for Validated Research

The credibility of smartphone-based environmental research hinges on rigorous, reproducible experimental methodologies. The following protocols detail two key applications.

Protocol 1: Monitoring Indoor Air Quality (IAQ) and Energy Efficiency

This protocol, adapted from a study balancing IAQ with energy use in buildings, demonstrates the use of ML for multi-objective optimization [21].

1. Objective: To experimentally analyze and optimize HVAC system operation for simultaneous energy savings and maintenance of optimal IAQ using machine learning.

2. Materials and Setup:

- Data Acquisition System: A network of sensors measuring CO₂, particulate matter (PM2.5, PM10), temperature, humidity, and exogenous variables (time, date, rain). Over 35,000 records were collected [21].

- Computational Platform: A system capable of training and deploying recurrent neural network models.

3. Methodology:

- Data Collection: Sensor data is collected in real-time and aggregated into a structured dataset.

- Model Training and Validation: Several ML models, including RNN, LSTM, GRU, and CNN, are trained on the dataset. The models learn to predict future IAQ parameters and energy consumption. Models are validated for robustness using diverse datasets, and their predictions are explained using SHAP (SHapley Additive exPlanations) values [21].

- Implementation: The trained model (with GRU/LSTM achieving >92% accuracy) is deployed to provide real-time control signals to the HVAC system. This enables predictive and pre-emptive adjustments, ensuring energy is not wasted while IAQ remains within healthy thresholds [21].
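SHAP attributions are based on Shapley values, which average each feature's marginal contribution over all orders in which features can be revealed. For a handful of features this can be computed exactly, as in the sketch below. The toy model, its coefficients, and the baseline are hypothetical; practical SHAP tooling relies on approximations because exact enumeration grows factorially with the number of features.

```python
from itertools import permutations


def shapley_values(model, instance, baseline):
    """Exact Shapley values by enumerating every feature ordering."""
    n = len(instance)
    contrib = [0.0] * n
    orders = list(permutations(range(n)))
    for order in orders:
        current = list(baseline)           # start with every feature at its baseline
        prev = model(current)
        for i in order:
            current[i] = instance[i]       # reveal feature i
            new = model(current)
            contrib[i] += new - prev       # marginal contribution in this ordering
            prev = new
    return [c / len(orders) for c in contrib]


def toy_model(x):
    """Hypothetical predictor with one interaction term between features 0 and 2."""
    return 2 * x[0] + 3 * x[1] + x[0] * x[2]


phi = shapley_values(toy_model, instance=[1.0, 2.0, 3.0], baseline=[0.0, 0.0, 0.0])
```

The attributions sum to the difference between the prediction for the instance and the prediction for the baseline, and the interaction term is split evenly between the two features involved.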

Diagram 1: IAQ Optimization Workflow

Protocol 2: Citizen Science for Fossil Plant Identification

This protocol outlines a quantitative method for citizen scientists to contribute to paleobotany using machine learning for fossil identification, based on a study of Czekanowskiales [23].

1. Objective: To numerically classify and identify fossil plant genera and species based on morphological trait data using a combination of cluster analysis and supervised learning.

2. Materials:

- Sample Set: A dataset of 80 fossil specimens from 35 species, documented in 206 images from published literature and specimen infrastructures [23].

- Trait Data: Macroscopic (e.g., leaf dimensions, vein density) and cuticular (e.g., stomatal patterns) traits were manually measured and recorded.

3. Methodology:

- Trait Encoding: Qualitative traits (e.g., leaf shape) are converted into numerical values using label encoding or one-hot encoding for ML processing [23].

- Unsupervised Clustering: A hierarchical clustering algorithm is applied to the trait dataset to perform numerical taxonomy and group species without prior labels, validating traditional taxonomic groups [23].

- Supervised Model Training: Five algorithms are trained on the labeled trait data: Logistic Regression (LR), k-Nearest Neighbors (KNN), Naive Bayes (NB), Classification and Regression Tree (CART), and Support Vector Machine (SVM). Each model learns to map traits to genus and species names [23].

- Identification: The best-performing models (CART and LR in the source study) can be deployed as a mobile-friendly tool. Citizen scientists can input measurements and images of their finds for automated, quantitative identification, overcoming reliance on subjective expert judgment [23].
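The trait-encoding step can be sketched without any libraries. The example below shows label encoding and one-hot encoding for a qualitative trait. The leaf-shape values are hypothetical placeholders rather than traits from the cited dataset, and in practice a library such as pandas or scikit-learn would typically handle this.

```python
def label_encode(values):
    """Assign each distinct category an integer code (alphabetical order)."""
    classes = sorted(set(values))
    index = {c: i for i, c in enumerate(classes)}
    return [index[v] for v in values], classes


def one_hot_encode(values):
    """Represent each value as a 0/1 vector with a single 1 at its category position."""
    codes, classes = label_encode(values)
    vectors = [[1 if code == j else 0 for j in range(len(classes))] for code in codes]
    return vectors, classes


leaf_shapes = ["linear", "lanceolate", "linear", "filiform"]   # hypothetical trait values
codes, classes = label_encode(leaf_shapes)
vectors, _ = one_hot_encode(leaf_shapes)
```

Label encoding implies an ordering between categories, which suits tree-based models such as CART; one-hot encoding avoids that implied ordering and is usually the safer choice for distance-based or linear models such as KNN and LR.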

Table 2: Key Research Reagent Solutions for Environmental and Ecological Analysis

| Item/Reagent | Function/Application | Technical Specification/Note |

|---|---|---|

| IoT Sensor Node | Measures real-time environmental parameters (Temp, Humidity, CO₂, PM) | Integrates with microcontroller (Arduino) and HTTP/Wi-Fi for data transmission [24]. |

| Trait Encoding Scripts | Converts qualitative morphological observations into machine-readable data | Uses Label Encoding or One-Hot Encoding in Python/Pandas for ML readiness [23]. |

| TensorFlow Lite | Framework for deploying pre-trained ML models on mobile and edge devices | Enables real-time inference; supports quantization for model size reduction [19]. |

| SHAP (SHapley Additive exPlanations) | Explains the output of ML models, providing interpretability for predictive outcomes | Critical for validating model decisions in scientific contexts, such as IAQ predictions [21]. |

The Scientist's Toolkit

Implementing the above protocols requires a suite of software and methodological tools.

- iMESc App: An interactive R/Shiny-based application designed to streamline ML workflows for environmental data. It integrates tools for data pre-processing, visualization, and both unsupervised (Self-Organizing Maps, clustering) and supervised (Random Forest, SVM) algorithms, significantly reducing coding time and technical barriers [22].

- Accessible Data Visualization Principles: When presenting findings, ensure visualizations are accessible. This includes using high-contrast colors (≥4.5:1 for text), avoiding color as the sole means of conveying information, providing direct labels and alternative text, and offering data in supplemental formats (e.g., tables) [25].

The value proposition of machine learning in smartphone-based environmental analysis is robust and multi-faceted. It moves beyond simple data logging to enable real-time intelligent alerts for immediate intervention, as demonstrated in IAQ management. Concurrently, it powerfully enables citizen science by providing the public with accessible, quantitative tools for species identification and data collection, thereby expanding the scale and scope of environmental research. The continuous advancement of on-device ML, sensor technology, and user-friendly analytical platforms promises to further deepen this synergy, leading to smarter, more responsive environmental stewardship and a more engaged, scientifically literate public.

From Data to Decisions: ML Algorithms and Workflows for Smartphone Analysis

The proliferation of smartphones has ushered in a new era for environmental analysis research. These ubiquitous devices are equipped with a powerful suite of sensors, including high-resolution cameras, multi-axis inertial measurement units (IMUs), GPS, and microphones, transforming them into versatile, portable data acquisition systems. This capability enables researchers to collect high-frequency, multi-modal data across vast spatial and temporal scales, facilitating a data-driven approach to understanding complex environmental phenomena. Machine learning (ML) forms the computational backbone required to convert this raw, often noisy, sensor data into actionable insights. This whitepaper details a core algorithmic toolkit for smartphone-based research, focusing on three foundational ML architectures: Convolutional Neural Networks (CNNs) for image analysis, Long Short-Term Memory networks (LSTMs) for time-series data, and Random Forest (RF) for classification tasks. The effective application of these algorithms is critical for advancing research in areas such as precision agriculture, environmental monitoring, and human activity recognition.

The Core Algorithms

Convolutional Neural Networks (CNNs) for Image Analysis

CNNs are specialized deep learning architectures designed to process data with a grid-like topology, such as images. Their strength lies in automatically and adaptively learning spatial hierarchies of features from raw pixel data.

Theoretical Foundation: A CNN typically comprises three primary types of layers:

- Convolutional Layers: These layers apply a set of learnable filters (or kernels) to the input image. Each filter slides (convolves) across the input, computing the dot product between the filter weights and the local region of the input, producing a feature map that responds to specific visual patterns like edges, corners, and textures.

- Pooling Layers: These layers perform non-linear down-sampling, reducing the spatial dimensions of the feature maps. This operation decreases the computational load, provides a degree of translation invariance, and helps control overfitting. Max pooling, the most common technique, keeps only the maximum value within each pooling window.

- Fully-Connected Layers: After several rounds of convolution and pooling, high-level reasoning is performed by fully-connected layers. Every neuron in a fully-connected layer is connected to every activation in the preceding layer, culminating in a final layer that outputs class probabilities (for classification) or continuous values (for regression).
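The convolution and pooling operations described above can be traced by hand on a tiny input. The sketch below applies a single hand-written vertical-edge filter to a 5x5 image and then 2x2 max pooling. The image and kernel are illustrative, and, as in most deep learning frameworks, the "convolution" is implemented as cross-correlation (the kernel is not flipped).

```python
def conv2d(image, kernel):
    """Valid (no padding), stride-1 sliding dot product of kernel over image."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [[sum(image[i + a][j + b] * kernel[a][b]
                 for a in range(kh) for b in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]


def max_pool2d(fmap, size=2):
    """Non-overlapping max pooling: keep the largest value in each size x size window."""
    return [[max(fmap[i + a][j + b] for a in range(size) for b in range(size))
             for j in range(0, len(fmap[0]) - size + 1, size)]
            for i in range(0, len(fmap) - size + 1, size)]


# A 5x5 image that is dark (0) on the left and bright (1) on the right.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
edge_kernel = [[-1, 1],
               [-1, 1]]                      # responds to dark-to-bright vertical edges

feature_map = conv2d(image, edge_kernel)     # 4x4 map, non-zero only at the edge
pooled = max_pool2d(feature_map)             # 2x2 map after down-sampling
```

In a trained CNN the kernel values are learned rather than hand-written, but the arithmetic per layer is the same.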

Application in Smartphone Research: CNNs are predominantly used for tasks involving visual data captured by smartphone cameras.

- Precision Agriculture: A study on citrus leaf disease classification compared MobileNet CNN and a Self-Structured CNN (SSCNN). The SSCNN achieved a validation accuracy of 99%, outperforming MobileNet (92%), and was deemed more suitable for real-time smartphone deployment due to its computational efficiency [26].

- Environmental Monitoring: Research has explored using CNN-based regression models on mobile-captured images to predict air quality indices (AQI) and pollutant concentrations (e.g., PM2.5, NO2). This approach offers a cost-effective alternative to traditional, expensive sensor networks [27].

- Ergonomics and HCI: CNNs like MobileNetV2, Inception V3, and ResNet-50 have been employed to classify smartphone grip postures from images, with an ensemble model achieving an accuracy of 95.9%. This analysis helps in designing more ergonomic user interfaces [28].

Long Short-Term Memory (LSTM) for Time-Series Analysis

LSTM networks are a type of recurrent neural network (RNN) specifically engineered to capture long-range dependencies and temporal patterns in sequential data, a task at which traditional RNNs often fail due to the vanishing gradient problem.

Theoretical Foundation: The key innovation of the LSTM is its memory cell and gating mechanism, which regulates the flow of information. The cell state acts as a conveyor belt that runs through the entire sequence with only minor linear interactions, so information can flow along it largely unchanged. The gates are small neural network layers that selectively add information to or remove it from the cell state. They are:

- Forget Gate: Decides what information to discard from the cell state.

- Input Gate: Determines which new values derived from the current input are written to the cell state.

- Output Gate: Controls what part of the current cell state is output at the current time step.
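The interaction of the three gates is easiest to see in a single time step. The sketch below implements the standard LSTM update for one hidden unit and a scalar input; real networks use weight matrices over vectors, and the parameter layout and values here are purely illustrative.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def lstm_step(x, h_prev, c_prev, params):
    """One LSTM time step. params maps a gate name to (w_input, w_hidden, bias)."""
    def gate(name, activation):
        w_x, w_h, b = params[name]
        return activation(w_x * x + w_h * h_prev + b)

    f = gate("forget", sigmoid)         # fraction of the old cell state to keep
    i = gate("input", sigmoid)          # fraction of the candidate value to write
    g = gate("candidate", math.tanh)    # candidate value computed from the input
    o = gate("output", sigmoid)         # fraction of the cell state to expose
    c = f * c_prev + i * g              # conveyor-belt update of the cell state
    h = o * math.tanh(c)                # hidden state passed to the next step
    return h, c


# Illustrative parameters: forget gate almost fully open, input gate almost closed.
remember = {"forget": (0.0, 0.0, 20.0), "input": (0.0, 0.0, -20.0),
            "candidate": (1.0, 0.0, 0.0), "output": (0.0, 0.0, 0.0)}
h, c = lstm_step(x=0.3, h_prev=0.0, c_prev=2.0, params=remember)
```

With these parameters the cell state passes through the step almost unchanged, which is the mechanism that lets LSTMs retain information across long sequences.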

Application in Smartphone Research: LSTMs are ideal for analyzing time-series data from smartphone IMUs (accelerometer, gyroscope) and other sequential environmental readings.

- Human Activity Recognition (HAR): LSTM networks excel at classifying human activities (e.g., walking, running, using tools) from smartphone sensor data. A hybrid 4-layer CNN-LSTM model has been shown to enhance recognition performance by automatically learning spatial features and temporal representations, achieving high accuracy on public datasets like UCI-HAR [29]. Enhanced LSTM models incorporating attention and squeeze-and-excitation blocks have demonstrated accuracies of up to 99% on sensor-based HAR tasks [30].

- Advanced Environmental Forecasting: LSTM models, including hybrids with CNNs, are used for complex time-series predictions, such as forecasting PM2.5 and PM10 levels by learning from historical pollution and meteorological data [27].

Random Forest (RF) for Classification

Random Forest is a robust ensemble learning method that operates by constructing a multitude of decision trees at training time. It is renowned for its high accuracy, resistance to overfitting, and ability to handle high-dimensional data.

Theoretical Foundation: Random Forest introduces two key sources of randomness:

- Bagging (Bootstrap Aggregating): Each tree is trained on a bootstrap sample, a random sample of the original training data drawn with replacement.

- Random Feature Selection: At each split in the decision tree, the algorithm considers only a random subset of features. This de-correlates the individual trees.

For classification, the final output is the class selected by the majority of the trees. This collective decision-making process results in a model that is generally more accurate and stable than any single decision tree.
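Both sources of randomness, plus majority voting, fit into a short sketch. To stay compact, the example below uses one-split trees (decision stumps), so the random feature subset is drawn once per tree; a full Random Forest grows deeper trees and redraws the subset at every split. The data and function names are hypothetical.

```python
import random
from collections import Counter


def train_stump(rows, labels, feature_ids):
    """Pick the (feature, threshold) split with the fewest misclassifications."""
    best = None
    for f in feature_ids:
        for threshold in sorted({r[f] for r in rows}):
            left = [y for r, y in zip(rows, labels) if r[f] <= threshold]
            right = [y for r, y in zip(rows, labels) if r[f] > threshold]
            if not left or not right:
                continue
            l_lab = Counter(left).most_common(1)[0][0]
            r_lab = Counter(right).most_common(1)[0][0]
            errors = sum(y != l_lab for y in left) + sum(y != r_lab for y in right)
            if best is None or errors < best[0]:
                best = (errors, f, threshold, l_lab, r_lab)
    if best is None:   # no valid split in this sample: always predict the majority class
        majority = Counter(labels).most_common(1)[0][0]
        return (feature_ids[0], float("inf"), majority, majority)
    return best[1:]


def train_forest(rows, labels, n_trees=25, n_features=1, seed=0):
    rng = random.Random(seed)
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(len(rows)) for _ in rows]            # bagging
        feats = rng.sample(range(len(rows[0])), n_features)       # random feature subset
        forest.append(train_stump([rows[i] for i in idx],
                                  [labels[i] for i in idx], feats))
    return forest


def predict(forest, row):
    """Majority vote over all trees."""
    votes = [l_lab if row[f] <= t else r_lab for f, t, l_lab, r_lab in forest]
    return Counter(votes).most_common(1)[0][0]


rows = [(1.0, 1.2), (1.5, 0.8), (0.9, 1.1), (5.0, 5.2), (5.5, 4.8), (4.9, 5.1)]
labels = ["a", "a", "a", "b", "b", "b"]
forest = train_forest(rows, labels)
```

Individual stumps trained on unlucky bootstrap samples can be wrong, but the vote across many de-correlated trees averages those errors out.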

Application in Smartphone Research: RF is widely used for its interpretability and effectiveness in various classification tasks, even with smaller datasets.

- Android Malware Detection: A study on permission-based Android malware detection found that the Random Forest algorithm demonstrated superior performance, achieving an accuracy of 93.96%. The methodology also reduced the feature set size by up to 90% while maintaining this high accuracy, significantly improving the model's running time [31].

- Context-Aware Smartphone Usage Prediction: In predictive modeling of personalized smartphone usage (e.g., predicting call activity), Random Forest is among the suite of classic ML classifiers that have been effectively employed to classify user behavior based on temporal, spatial, and social contexts [32].

- Sensor-Based Hand Gesture Recognition: RF has been used in ensemble models, such as a voting meta-classifier with SVM and Logistic Regression, to classify data glove-captured hand gestures with an accuracy of 95.5% [28].

Quantitative Performance Comparison

The following tables summarize the performance of the discussed algorithms across various smartphone-based research applications.

Table 1: CNN Performance in Smartphone Image-Based Tasks

| Application Domain | Specific Task | CNN Model(s) Used | Reported Performance | Source |

|---|---|---|---|---|

| Precision Agriculture | Citrus Leaf Disease Classification | MobileNet, SSCNN | Training Acc: 98.38% (MobileNet), 98% (SSCNN); Validation Acc: 92% (MobileNet), 99% (SSCNN) | [26] |

| Ergonomics | Smartphone Grip Posture Recognition | Ensemble (MobileNetV2, ResNet-50, Inception V3) | 95.9% Accuracy | [28] |

| Environmental Monitoring | Air Quality (Pollutant) Prediction | Regression-based CNN | Mean Squared Error: 0.0077 (2 pollutants), 0.0112 (5 pollutants) | [27] |

Table 2: LSTM Performance in Smartphone Time-Series Tasks

| Application Domain | Specific Task | LSTM Model(s) Used | Reported Performance | Source |

|---|---|---|---|---|

| Human Activity Recognition | Recognition of Daily/Industrial Activities | LSTM with Attention & SE blocks | 99% Accuracy | [30] |

| Human Activity Recognition | Sensor-based Activity Recognition | 4-layer CNN-LSTM | Accuracy improvement of up to 2.24% over prior approaches | [29] |

| Environmental Forecasting | PM10 Level Prediction | GRU (a gated RNN closely related to LSTM) | Best results among RNN, LSTM, and GRU models | [27] |

Table 3: Random Forest Performance in Smartphone Classification Tasks

| Application Domain | Specific Task | Key Features | Reported Performance | Source |

|---|---|---|---|---|

| Cybersecurity | Android Malware Detection | Android Permissions | 93.96% Accuracy | [31] |

| Cybersecurity | Android Malware Detection | Reduced Permission Set (up to 90% fewer features) | 93.96% Accuracy (maintained) | [31] |

| Ergonomics | Hand Gesture Recognition | Voting Classifier (RF, SVM, LR) | 95.5% Accuracy | [28] |

Detailed Experimental Protocols

To ensure reproducibility, this section outlines detailed methodologies for key experiments cited in this whitepaper.

Protocol 1: CNN-Based Citrus Leaf Disease Classification [26]

- Data Acquisition: Collect 2,939 images of citrus leaves at the vegetative stage using a smartphone. The dataset should include both healthy and diseased leaves, with diagnoses validated by a plant pathologist.

- Data Preprocessing: Resize all images to a uniform resolution suitable for the chosen CNN input. Augment the dataset using techniques like rotation, flipping, and scaling to increase its size and variability.

- Dataset Splitting: Randomly split the preprocessed image dataset into a training set (e.g., 1787 images) and a validation set.

- Model Training:

  - Configure two CNN architectures: MobileNet (version 2) and a Self-Structured CNN (SSCNN).

  - Train both models on the same training set, using an appropriate optimizer and loss function (e.g., categorical cross-entropy).

  - Monitor the training and validation accuracy and loss over multiple epochs (e.g., 10-12).

- Model Evaluation: Evaluate each trained model on the held-out validation set. The primary metric for comparison is validation accuracy. The SSCNN is expected to achieve a higher validation accuracy (~99%) than MobileNet (~92%).

Protocol 2: LSTM-Based Human Activity Recognition [29]

- Data Collection: Use a smartphone's inertial sensors (accelerometer and gyroscope) to collect time-series data while participants perform a predefined set of activities (e.g., walking, sitting, standing, walking upstairs, walking downstairs).

- Data Preprocessing & Segmentation:

  - Apply a noise filter to the raw sensor data.

  - Segment the continuous data stream into fixed-width sliding windows (e.g., 2.56 seconds). Each window represents one data sample.

- Feature Extraction (for traditional ML) / Model Input Preparation (for LSTM):

  - For LSTM: The raw segmented sensor data can be fed directly into the network, allowing it to learn features automatically.

  - For traditional ML: Engineered features (e.g., mean, standard deviation) are calculated for each window.

- Model Training and Validation:

  - Design an LSTM-based network architecture. A hybrid CNN-LSTM model (e.g., 4-layer CNN-LSTM) can be used to first extract spatial features with CNN layers before processing the sequence with an LSTM layer.

  - Train the model using the segmented data.

  - Validate the model using a rigorous protocol such as 10-fold cross-validation or Leave-One-Subject-Out (LOSO) cross-validation to ensure generalizability.

- Performance Measurement: The primary evaluation metric is classification accuracy on the test set, comparing the predicted activities against the ground truth labels.
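The segmentation and Leave-One-Subject-Out steps above can be sketched as follows. This is an illustrative outline only: the 50 Hz sampling rate and the 50% window overlap are assumptions of the example (the protocol specifies only the 2.56-second window), and the function names are hypothetical.

```python
def sliding_windows(samples, window_size, step):
    """Yield fixed-width windows; a step smaller than window_size gives overlap."""
    for start in range(0, len(samples) - window_size + 1, step):
        yield samples[start:start + window_size]


def leave_one_subject_out(subject_ids):
    """Yield (held_out_subject, train_indices, test_indices) once per subject."""
    for held_out in sorted(set(subject_ids)):
        train = [i for i, s in enumerate(subject_ids) if s != held_out]
        test = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train, test


# Assumed sampling rate: 2.56 s at 50 Hz gives 128 readings per window.
SAMPLING_RATE_HZ = 50
WINDOW_SIZE = round(2.56 * SAMPLING_RATE_HZ)
STEP = WINDOW_SIZE // 2  # assumed 50% overlap

stream = list(range(512))  # stand-in for one axis of accelerometer data
windows = list(sliding_windows(stream, WINDOW_SIZE, STEP))

# One subject label per window-level sample (hypothetical labels).
subjects = ["s1", "s1", "s2", "s3"]
folds = list(leave_one_subject_out(subjects))
```

Each LOSO fold tests on windows from a participant the model has never seen, which is what makes it a stricter check of generalizability than a random split of windows.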

- Data Collection: Obtain a dataset of Android applications (APKs) containing both benign and malware samples.

- Feature Extraction: Perform static analysis on each APK to extract the list of requested permissions from the AndroidManifest.xml file. This yields a feature vector for each application in which each feature represents a specific Android permission.

- Feature Selection:

- Calculate a feature importance score for each permission (e.g., using Gradient Boosting).

- Rank the permissions based on their importance score and select the top N most important features, significantly reducing the dimensionality of the dataset (e.g., by 90%).

- Model Training:

- Train a Random Forest classifier on the training set, using both the full feature set and the reduced feature set.

- Model Evaluation:

- Evaluate the model on a separate test set. Compare the accuracy, precision, and recall of the model trained on the full feature set versus the reduced set.

- Compare the execution (training) time for both models. The model with the reduced feature set is expected to achieve comparable accuracy with a significantly shorter run-time.
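The feature-vector construction and top-N selection described above can be sketched as below. The sketch assumes the importance scores have already been computed elsewhere (for example by a gradient boosting model); the scores shown are made-up numbers, and the helper names are hypothetical.

```python
def to_feature_vector(requested_permissions, vocabulary):
    """Binary vector: 1 if the app requests the permission, else 0."""
    requested = set(requested_permissions)
    return [1 if permission in requested else 0 for permission in vocabulary]


def top_n_features(importances, n):
    """Return the indices of the n highest-scoring features, best first."""
    ranked = sorted(range(len(importances)),
                    key=lambda i: importances[i], reverse=True)
    return ranked[:n]


def reduce_features(vector, keep_indices):
    """Keep only the selected feature positions."""
    return [vector[i] for i in keep_indices]


vocabulary = [
    "android.permission.INTERNET",
    "android.permission.SEND_SMS",
    "android.permission.READ_CONTACTS",
    "android.permission.CAMERA",
]
app_permissions = ["android.permission.INTERNET", "android.permission.SEND_SMS"]

full_vector = to_feature_vector(app_permissions, vocabulary)

# Hypothetical importance scores, one per permission in the vocabulary.
importances = [0.05, 0.60, 0.30, 0.05]
keep = top_n_features(importances, n=2)
reduced_vector = reduce_features(full_vector, keep)
```

The same `keep` indices must be applied to both the training and test vectors so that the reduced-feature classifier sees a consistent input layout.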

Visualization of Model Architectures and Workflows

CNN-LSTM Hybrid Model for Human Activity Recognition

Random Forest for Android Malware Classification

Essential Research Reagent Solutions

The following table outlines the key "research reagents" — the datasets, software, and hardware — required for conducting smartphone-based ML research.

Table 4: Essential Research Reagents for Smartphone-Based ML Analysis

| Reagent Category | Specific Tool / Resource | Function in Research |
|---|---|---|
| Public Datasets | UCI-HAR Dataset [29] | Benchmark dataset for evaluating Human Activity Recognition models using smartphone sensor data. |
| Public Datasets | PlantVillage Dataset | Large public dataset of plant images, useful for training and validating agricultural disease detection models [26]. |
| Public Datasets | Android Permission-based Datasets [31] | Curated datasets of Android applications with labeled permissions, used for malware detection research. |
| Software Libraries | TensorFlow / Keras, PyTorch | Open-source deep learning frameworks used to build, train, and deploy CNN and LSTM models. |
| Software Libraries | Scikit-learn | Comprehensive machine learning library for implementing Random Forest and other classic ML algorithms, as well as for data preprocessing [31] [32]. |
| Hardware | Modern Smartphone | Primary data acquisition device, providing cameras, IMU sensors (accelerometer, gyroscope), and GPS. Also serves as a deployment platform for real-time models. |
| Computing Resources | GPU-Accelerated Workstation / Cloud Compute | Essential for reducing the time required to train complex deep learning models like CNNs and LSTMs. |

The synergistic application of CNNs, LSTMs, and Random Forest algorithms constitutes a powerful toolkit for advancing smartphone-based environmental analysis. CNNs provide the vision to interpret visual environmental indicators, LSTMs offer the ability to understand temporal patterns in sensor data, and Random Forest delivers robust and efficient classification. As smartphone sensors continue to improve and these machine learning algorithms are further refined and optimized for mobile deployment, their collective impact on research will only grow. This will enable the development of more sophisticated, real-time, and personalized systems for monitoring and responding to complex environmental dynamics, ultimately contributing to smarter and more sustainable interactions with our environment.

The integration of machine learning (ML) with smartphone technology has created a powerful paradigm for environmental analysis research. Smartphones, equipped with a diverse array of embedded sensors and significant processing capabilities, offer an unprecedented platform for collecting high-resolution environmental data and deploying analytical models at scale. This in-depth technical guide details the end-to-end workflow for developing ML systems within the context of smartphone-based environmental analysis, providing researchers and development professionals with a structured methodology from initial data collection to final model deployment. The proliferation of smartphones has enabled the creation of extensive datasets, with modern studies leveraging multi-sensor data collection that extends beyond Wi-Fi and Bluetooth to include inertial sensors, magnetometers, and environmental sensors [33]. This guide establishes a foundational framework for leveraging these capabilities in environmental research, with applications ranging from air quality monitoring to ecosystem health assessment.

Data Collection Methodologies

The data collection phase establishes the foundation for any successful ML application in environmental analysis. This process requires careful consideration of sensor selection, data recording protocols, and ethical frameworks.

Smartphone Sensor Capabilities

Modern smartphones contain a sophisticated array of sensors capable of capturing diverse environmental phenomena. The table below summarizes key sensors relevant to environmental analysis research:

Table 1: Smartphone Sensors for Environmental Data Collection

| Sensor Type | Environmental Measurement | Data Format | Research Application |
|---|---|---|---|
| Accelerometer | Vibration patterns, physical disturbances | Triaxial acceleration values (m/s²) | Seismic activity monitoring, infrastructure integrity |
| Magnetometer | Magnetic field strength | Microtesla (μT) | Detection of magnetic pollutants, geological mapping |
| Microphone | Ambient sound levels | Decibels (dB), frequency spectra | Noise pollution studies, biodiversity monitoring via acoustics |
| Ambient Light Sensor | Illuminance | Lux (lx) | Light pollution mapping, forest canopy density analysis |
| Barometer | Atmospheric pressure | Hectopascals (hPa) | Weather pattern prediction, altitude-corrected measurements |
| GPS | Location coordinates | Latitude, longitude | Spatial mapping of environmental parameters |
| Camera | Visual environmental features | RGB image data, video | Land use classification, pollution visualization |

Experimental Protocol for Multi-Modal Data Collection

Comprehensive environmental analysis often requires a multi-modal approach that combines multiple sensing modalities to overcome the limitations of individual sensors [34]. The following protocol ensures consistent, high-quality data collection:

Sensor Calibration: Prior to deployment, calibrate sensors against reference equipment. For example, calibrate smartphone microphones against a reference sound level meter at multiple frequencies (e.g., 250 Hz, 1 kHz, 8 kHz) and barometers against certified pressure standards.

Spatial-Temporal Sampling: Establish systematic sampling strategies that account for both spatial and temporal dimensions. For urban air quality studies, implement a grid-based collection pattern with timed intervals (e.g., samples collected at 100-meter intervals every 2 hours during peak pollution periods).

Multi-Modal Synchronization: Implement hardware-level timestamping with Network Time Protocol (NTP) synchronization to align data streams from different sensors. This enables precise temporal correlation between, for instance, camera observations and quantitative measurements from other sensors [34].

Contextual Metadata Recording: Document environmental conditions (temperature, humidity, weather conditions), device information (model, OS version), and collection parameters (orientation, placement) for each sampling event.

Ethical Compliance: Implement privacy-preserving techniques such as data anonymization and secure transmission, particularly when collecting visual or location data in sensitive areas [35]. Obtain necessary institutional review board (IRB) approvals for studies involving human subjects or data from private spaces.
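As one illustration of the sensor calibration step in the protocol above, a simple gain-and-offset correction can be fitted by ordinary least squares against paired reference readings. This is a sketch under the assumption that the sensor error is approximately linear; the function names and the barometer readings are hypothetical.

```python
def fit_linear_calibration(raw, reference):
    """Least-squares fit of reference = gain * raw + offset."""
    n = len(raw)
    if n < 2 or n != len(reference):
        raise ValueError("need at least two paired readings")
    mean_raw = sum(raw) / n
    mean_ref = sum(reference) / n
    sxx = sum((x - mean_raw) ** 2 for x in raw)
    if sxx == 0:
        raise ValueError("raw readings must not all be identical")
    sxy = sum((x - mean_raw) * (y - mean_ref) for x, y in zip(raw, reference))
    gain = sxy / sxx
    offset = mean_ref - gain * mean_raw
    return gain, offset


def apply_calibration(value, gain, offset):
    """Convert a raw sensor reading into a calibrated one."""
    return gain * value + offset


# Hypothetical paired readings in hPa: smartphone barometer vs. reference.
phone_hpa = [1000.0, 1005.0, 1010.0]
reference_hpa = [1001.5, 1006.5, 1011.5]

gain, offset = fit_linear_calibration(phone_hpa, reference_hpa)
calibrated = apply_calibration(1003.0, gain, offset)
```

Storing the fitted gain and offset alongside the device model in the contextual metadata makes it possible to re-apply or revise the correction later.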

Data Preprocessing Framework

Raw sensor data requires significant preprocessing to become suitable for ML model training. This phase transforms heterogeneous, noisy data streams into clean, structured features.

Preprocessing Pipeline

The preprocessing framework for smartphone-based environmental data consists of several critical stages:

Noise Reduction and Signal Filtering: Apply appropriate digital filters based on signal characteristics. For inertial sensor data, use a high-pass filter (cutoff frequency 0.1-0.5 Hz) to remove gravitational components, followed by a low-pass filter (cutoff frequency 15-20 Hz) to reduce high-frequency noise [35]. For audio environmental data, implement band-pass filtering to focus on relevant frequency ranges.

Data Imputation and Gap Filling: Address missing data points with a method matched to the gap length. For short gaps (<5 seconds) in environmental time series, employ linear interpolation. For longer gaps, use sensor fusion techniques to estimate missing values from correlated sensors [34].

Temporal Alignment: Resample heterogeneous data streams to a common sampling rate, then correct residual timing offsets between sensors using cross-correlation or dynamic time warping [34].

Feature Extraction: Derive informative features from raw sensor data. For environmental analysis, particularly relevant features include:

- Statistical Features: Mean, standard deviation, median, percentiles (25th, 75th)

- Spectral Features: Fast Fourier Transform (FFT) coefficients, spectral entropy, dominant frequencies

- Temporal Features: Autocorrelation coefficients, trend analysis, seasonal decomposition

- Cross-Sensor Features: Correlation coefficients between different sensor modalities
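A few of the statistical and spectral features listed above can be computed per window as in the following sketch. It uses only the Python standard library, and the dominant frequency comes from a naive O(n²) discrete Fourier transform written out for clarity; a production pipeline would normally use an FFT routine from a numerical library. The function names and the synthetic 5 Hz test signal are assumptions of the example.

```python
import cmath
import math
import statistics


def statistical_features(window):
    """Mean, standard deviation, median, and quartiles of one window."""
    q1, _, q3 = statistics.quantiles(window, n=4)
    return {
        "mean": statistics.mean(window),
        "std": statistics.stdev(window),
        "median": statistics.median(window),
        "p25": q1,
        "p75": q3,
    }


def dominant_frequency(window, sampling_rate_hz):
    """Frequency (Hz) of the strongest non-DC component, via a naive DFT."""
    n = len(window)
    mean = sum(window) / n
    centered = [x - mean for x in window]  # remove the DC offset
    best_bin, best_magnitude = 0, 0.0
    for k in range(1, n // 2 + 1):
        coefficient = sum(x * cmath.exp(-2j * cmath.pi * k * t / n)
                          for t, x in enumerate(centered))
        if abs(coefficient) > best_magnitude:
            best_bin, best_magnitude = k, abs(coefficient)
    return best_bin * sampling_rate_hz / n


# Synthetic window: a 5 Hz sine sampled at 50 Hz for 2 seconds.
SAMPLING_RATE_HZ = 50
window = [math.sin(2 * math.pi * 5 * t / SAMPLING_RATE_HZ) for t in range(100)]

features = statistical_features(window)
features["dominant_hz"] = dominant_frequency(window, SAMPLING_RATE_HZ)
```

The frequency resolution of this estimate is the sampling rate divided by the window length, so longer windows are needed to separate closely spaced frequencies.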

The following diagram illustrates the complete preprocessing workflow:

Data Quality Validation

Implement automated quality validation checks throughout the preprocessing pipeline:

- Sensor Integrity Verification: Detect sensor malfunctions through range checks (e.g., magnetometer readings outside Earth's typical 25-65 μT field) and consistency checks across redundant sensors.

- Signal Quality Indicators: Compute signal-to-noise ratios for each data segment and flag low-quality recordings for manual review or exclusion.

- Statistical Process Control: Establish control charts for key parameters to detect systematic deviations from expected distributions.
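The range check and signal-quality indicator above might be automated along the following lines. The 25-65 μT bounds come from the text; the review thresholds (`max_bad_fraction`, `min_snr_db`) are illustrative assumptions, and the signal-to-noise ratio is approximated as the power of the segment relative to the power of a separately recorded quiet reference.

```python
import math

# Typical magnitude of Earth's magnetic field at the surface, in microtesla.
EARTH_FIELD_RANGE_UT = (25.0, 65.0)


def field_magnitude(x, y, z):
    """Magnitude of a triaxial magnetometer reading."""
    return math.sqrt(x * x + y * y + z * z)


def out_of_range_fraction(values, valid_range=EARTH_FIELD_RANGE_UT):
    """Fraction of readings that fall outside the plausible range."""
    low, high = valid_range
    bad = sum(1 for v in values if not low <= v <= high)
    return bad / len(values)


def mean_power(samples):
    """Average squared amplitude of a segment."""
    return sum(s * s for s in samples) / len(samples)


def snr_db(segment, noise_reference):
    """Approximate SNR in dB: segment power over noise-reference power."""
    return 10.0 * math.log10(mean_power(segment) / mean_power(noise_reference))


def needs_review(magnitudes, segment, noise_reference,
                 max_bad_fraction=0.05, min_snr_db=10.0):
    """Flag a recording when either check fails (thresholds are assumptions)."""
    return (out_of_range_fraction(magnitudes) > max_bad_fraction
            or snr_db(segment, noise_reference) < min_snr_db)


# Hypothetical data: plausible field magnitudes and a clean audio segment.
flagged = needs_review([50.0, 48.0, 52.0], [10.0, -10.0], [1.0, -1.0])
```

Flagged segments can then be routed to manual review or excluded, as the checklist describes.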

Model Training and Algorithm Selection

The model training phase transforms preprocessed sensor data into predictive capabilities for environmental analysis.

Machine Learning Approaches for Environmental Analysis

Different environmental monitoring tasks require specialized algorithmic approaches:

Table 2: ML Algorithms for Smartphone Environmental Analysis

| Algorithm Category | Specific Algorithms | Environmental Applications | Performance Considerations |
|---|---|---|---|
| Traditional ML | Random Forest, SVM, XGBoost | Air/water quality classification, pollution source identification | AUC: 95-98%, Accuracy: 85-92% [35] |
| Deep Learning | CNN, LSTM, Transformer Networks | Complex pattern recognition in multi-modal sensor data, temporal forecasting | Improved accuracy but higher computational cost [33] |
| Hybrid Approaches | CNN-LSTM, MLP with nature-inspired optimizers | Predictive modeling of environmental trends, quality assessment | CCC: 0.96, R²: 0.98 [11] |
| Lightweight Models | Pruned Neural Networks, MobileNet | Real-time on-device environmental monitoring | 30-50% reduction in model size with <5% accuracy drop [35] |

Experimental Protocol for Model Development

A rigorous methodology ensures robust model performance across diverse environmental conditions:

Data Partitioning: Implement stratified splitting to maintain distribution of important environmental variables (e.g., seasonal variations, geographic diversity). Recommended split: 70% training, 15% validation, 15% testing.

Cross-Validation Strategy: Use grouped k-fold cross-validation (k=5), in which all data from the same location or time period are assigned to the same fold, to prevent leakage between training and test data and ensure generalizability.

Hyperparameter Optimization: Employ Bayesian optimization or nature-inspired metaheuristics such as the Capuchin Search Algorithm (CapSA) for efficient hyperparameter tuning. CapSA has demonstrated superior performance in environmental prediction tasks [11].

Model Training with Regularization: Implement early stopping with a patience of 10-20 epochs and apply appropriate regularization techniques (L1/L2, dropout) to prevent overfitting, particularly important with limited environmental datasets.

Ensemble Methods: Combine predictions from multiple models (e.g., Random Forest, Gradient Boosting, and Neural Networks) through stacking or averaging to improve robustness and accuracy.
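Two steps of the protocol above, grouped k-fold cross-validation and early stopping with a patience window, can be sketched in plain Python. The round-robin assignment of groups to folds is a simplification chosen for the example (it does not balance fold sizes), and the site labels, loss values, and function names are hypothetical.

```python
def grouped_k_fold(groups, k=5):
    """Yield (train_indices, test_indices), keeping each group in one fold."""
    unique_groups = sorted(set(groups))
    if len(unique_groups) < k:
        raise ValueError("need at least k distinct groups")
    # Round-robin assignment of whole groups to folds (simplification).
    fold_of = {group: i % k for i, group in enumerate(unique_groups)}
    for fold in range(k):
        test = [i for i, g in enumerate(groups) if fold_of[g] == fold]
        train = [i for i, g in enumerate(groups) if fold_of[g] != fold]
        yield train, test


def early_stopping_epoch(val_losses, patience=10):
    """Return the epoch index at which training would stop."""
    best_loss = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return len(val_losses) - 1  # patience never exhausted


# One location label per sample (hypothetical collection sites).
sites = ["siteA", "siteA", "siteB", "siteC", "siteC", "siteD", "siteE"]
folds = list(grouped_k_fold(sites, k=5))

# Hypothetical validation-loss curve; short patience for illustration.
stop_epoch = early_stopping_epoch([1.0, 0.8, 0.9, 0.85, 0.95, 0.7], patience=3)
```

Because no site appears in both the training and test indices of a fold, the resulting scores estimate performance at locations the model has not seen.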

The following diagram illustrates the model architecture selection and training workflow:

Performance Metrics for Environmental Models

Evaluation of environmental ML models requires comprehensive assessment across multiple dimensions: