Machine Learning for Trace Contaminant Detection: Advanced Strategies for Pharmaceutical and Biomedical Applications

Abstract

This article provides a comprehensive overview of machine learning (ML) methodologies for detecting and managing trace concentration contaminants, a critical challenge in drug development and biomedical research. It explores the foundational principles of computational toxicology and anomaly detection, details specific ML algorithms like One-Class SVM and Autoencoders for identifying contaminants in complex processes such as fermentation, and discusses advanced optimization techniques including hyperparameter tuning with Bayesian and Dragonfly algorithms. The content further compares model performance across various applications, from pharmaceutical drying to water quality monitoring, and examines validation frameworks to ensure model reliability and regulatory compliance. Tailored for researchers, scientists, and drug development professionals, this review synthesizes current trends, addresses practical implementation challenges, and highlights future directions integrating multimodal AI and explainable models for enhanced contaminant handling.

The Rising Imperative: Trace Contaminants and Computational Toxicology

The Critical Impact of Trace Contaminants on Drug Safety and Efficacy

Trace contaminants in pharmaceutical products are unintended biological, chemical, or physical substances present in drugs, biologics, and other formulations that can compromise product safety, efficacy, and quality. These contaminants can arise from various sources, including raw materials, manufacturing equipment, production processes, and personnel. Even at minimal concentrations, these impurities can significantly affect drug stability, bioavailability, and patient safety. The detection and control of these contaminants are therefore critical aspects of pharmaceutical manufacturing and regulatory compliance, ensuring that medications meet stringent quality standards before reaching consumers.

The pharmaceutical industry faces increasing challenges related to contamination control driven by stringent regulatory requirements, rising instances of drug recalls, and growing investments in advanced quality control systems. Market analysis indicates robust growth in the contamination detection sector, with particular expansion in the biologics and personalized medicine segments which demand higher levels of contamination control. North America currently leads this market with a 45.2% share, while the Asia-Pacific region is emerging as the fastest-growing market, driven by significant R&D investments from major pharmaceutical companies focused on enhancing detection speed, accuracy, and sensitivity.

Technical Support Center: Troubleshooting Guides

Microbial Contamination Troubleshooting Guide

Problem: Recurrent microbial contamination in cell culture samples

- Potential Cause: Inadequate aseptic technique or environmental control

- Troubleshooting Steps:

- Review personnel training records and observe aseptic technique during operations

- Increase environmental monitoring frequency for viable particulates

- Validate sterilization cycles for all media and reagents

- Implement rapid microbial methods for faster detection

- Audit HVAC system performance and room pressurization

- Preventive Measures: Establish comprehensive contamination control strategy covering facility design, equipment, utilities, raw materials, and personnel flows. Implement routine monitoring with statistical process control trending.
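As a rough illustration of the statistical process control trending mentioned above, the sketch below derives control limits from in-control baseline counts and flags new results that fall outside them. The mean ± 3 standard deviation rule, the function names, and the CFU values are all illustrative assumptions, not a prescribed method; count data from real environmental monitoring may call for a different chart type.

```python
from statistics import mean, stdev


def control_limits(baseline, k=3.0):
    """Return (lower, center, upper) limits from in-control baseline data.

    Assumes a simple Shewhart-style rule: center +/- k sample standard
    deviations, with the lower limit clipped at zero for count data.
    """
    center = mean(baseline)
    spread = stdev(baseline)
    return max(0.0, center - k * spread), center, center + k * spread


def out_of_control(values, limits):
    """Return the indices of values outside the control limits."""
    lower, _, upper = limits
    return [i for i, v in enumerate(values) if v < lower or v > upper]


# Hypothetical viable-particle counts (CFU) from routine monitoring.
baseline_cfu = [2, 3, 1, 2, 4, 3, 2, 1, 3, 2]
limits = control_limits(baseline_cfu)

new_cfu = [2, 3, 9, 1]
flagged = out_of_control(new_cfu, limits)
print(limits, flagged)
```

Flagged indices would typically trigger an investigation rather than an automatic batch decision.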

Problem: Endotoxin contamination in parenteral products

- Potential Cause: Biofilm formation in water for injection (WFI) system or container closures

- Troubleshooting Steps:

- Sample and test WFI system at multiple points including use points

- Inspect and sanitize storage loops and distribution systems

- Test container closures for endotoxin specifications

- Review sterilization validation studies for depyrogenation processes

- Audit component supplier quality systems

- Preventive Measures: Implement real-time endotoxin testing, establish sanitization frequency based on data, and qualify secondary packaging suppliers.

Chemical Contamination Troubleshooting Guide

Problem: Leachables and extractables in biologic formulations

- Potential Cause: Interaction between drug product and container-closure system

- Troubleshooting Steps:

- Conduct accelerated stability studies with multiple container lots

- Perform extractables and leachables profiling using LC-MS

- Review supplier change notifications for components

- Evaluate manufacturing process changes that may increase leaching

- Analyze compatibility with new drug substance variants

- Preventive Measures: Implement supplier change control protocols, maintain inventory of qualified components, and conduct predictive modeling of leachables.

Problem: Cross-contamination between product campaigns

- Potential Cause: Inadequate cleaning verification or facility design flaws

- Troubleshooting Steps:

- Review cleaning validation protocols and acceptance criteria

- Audit equipment design for cleanability and residue removal

- Evaluate changeover procedures and personnel practices

- Implement product-specific detection methods with appropriate sensitivity

- Assess facility airflow patterns and material flows

- Preventive Measures: Design dedicated equipment for highly potent compounds, establish health-based exposure limits, and implement continuous monitoring.

Table 1: Common Contamination Types and Detection Technologies

| Contamination Type | Common Sources | Primary Detection Methods | Typical Action Levels |

|---|---|---|---|

| Microbial | Personnel, raw materials, air, water | Rapid microbiological methods, PCR, colony counting | Sterile products: zero tolerance; Non-sterile: based on product type |

| Chemical | Raw materials, leaching, degradation | Chromatography (HPLC, GC), spectroscopy | Based on ICH guidelines Q3A-Q3D |

| Particulate | Equipment wear, environment, packaging | Light obscuration, microscopy, laser diffraction | Visible particles: zero tolerance; Subvisible: per product specification |

| Endotoxin | Water systems, components, personnel | LAL testing, recombinant methods | Based on product route of administration |

Experimental Protocols for Contaminant Detection

Machine Learning-Enhanced Contaminant Prediction Protocol

Purpose: To develop machine learning models for predicting spatial patterns of contaminants in pharmaceutical water systems.

Materials and Equipment:

- Historical water quality data (minimum 3 years)

- Environmental monitoring data

- Process parameters data

- Machine learning workstation with Python/R

- Statistical analysis software

Procedure:

- Data Collection: Compile historical data on chemical concentrations, microbial counts, and endotoxin levels from water system monitoring points. Include relevant predictors such as system age, maintenance records, and seasonal variations.

- Feature Engineering: Normalize data, handle censored values (non-detects), and select relevant predictors through correlation analysis and domain knowledge.

- Model Training: Implement random forest, gradient boosting, and neural network algorithms using k-fold cross-validation. Optimize hyperparameters through Bayesian optimization.

- Model Validation: Compare model performance using accuracy, precision, recall, and area under the ROC curve. Validate on a held-out dataset that was not used during training or tuning.

- Implementation: Deploy best-performing model for predictive monitoring and sampling planning.

Expected Outcomes: Classification models that predict exceedances of contamination thresholds with >80% accuracy, enabling targeted sampling and early intervention.
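The feature-engineering step in this protocol mentions censored values (non-detects) and normalization. A minimal sketch of one way to handle both is shown below. Substituting half the detection limit is only one simple convention and is an assumption here, as are the function names and sample values; more rigorous censored-data methods may be preferable for real datasets.

```python
def substitute_non_detects(values, detection_limit):
    """Replace non-detects (recorded as None) with detection_limit / 2.

    Half-limit substitution is an assumed, simple convention; it is not
    taken from the protocol above.
    """
    return [detection_limit / 2 if v is None else v for v in values]


def min_max_normalize(values):
    """Scale values linearly to the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


# Hypothetical endotoxin readings; None marks a result below the limit.
raw = [0.8, None, 1.5, None, 2.5]
filled = substitute_non_detects(raw, detection_limit=0.5)
scaled = min_max_normalize(filled)
print(filled)
print(scaled)
```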

Spectroscopy-Based Contamination Screening Protocol

Purpose: To implement UV absorbance spectroscopy with machine learning for rapid contamination screening during manufacturing.

Materials and Equipment:

- UV-Vis spectrophotometer with flow cell

- Reference standards for expected contaminants

- Data analysis software with machine learning capabilities

- Validation samples with known contamination levels

Procedure:

- System Calibration: Collect UV absorbance spectra for pure products and known contaminants at various concentrations.

- Model Development: Train machine learning algorithms (PCA, SVM, neural networks) to recognize spectral patterns associated with contamination.

- Method Validation: Challenge the system with blinded samples containing various contaminant types and concentrations.

- Implementation: Integrate with manufacturing process for real-time monitoring of critical control points.

- Continuous Improvement: Update model with new contamination data as it becomes available.

Expected Outcomes: Non-invasive, real-time contamination screening with minimal sample preparation and rapid results delivery.

Table 2: Advanced Detection Technologies for Trace Contaminants

| Technology | Detection Principle | Applications | Sensitivity | Advantages |

|---|---|---|---|---|

| PCR/Molecular Diagnostics | Genetic material amplification | Microbial contamination, viral detection | <10 CFU | High specificity, rapid results |

| Mass Spectrometry | Mass-to-charge ratio separation | Chemical contaminants, leachables | ppb to ppt range | Broad screening capability |

| Raman Spectroscopy | Inelastic light scattering | Chemical identity, crystallinity | Varies by compound | Non-destructive, minimal sample prep |

| Flow Cytometry | Light scattering and fluorescence | Microbial contamination, cell therapy | Single cell | Rapid counting and characterization |

| Biosensors | Biological recognition elements | Specific contaminants, endotoxin | High specificity | Real-time monitoring, portable |

Machine Learning Applications in Contaminant Research

Machine learning offers transformative potential for predicting and classifying contaminant risks in pharmaceutical manufacturing. Based on studies of machine learning for predicting contaminants in drinking water, random forest classification models have shown particular utility for groundwater contaminants, with categorical models for substances like arsenic and nitrate demonstrating good performance in predicting exceedances of regulatory thresholds. These classification models are especially valuable for designing targeted sampling programs by identifying high-risk areas, thereby optimizing resource allocation.

The application of machine learning to pharmaceutical contamination control faces similar challenges and opportunities. Successful implementation requires appropriate feature selection, model training protocols, and validation against known data. Current research indicates that continuous models (predicting exact concentration levels) show lower predictive power than classification models (predicting threshold exceedances), suggesting that larger datasets and additional predictors are needed for improved performance. This aligns with pharmaceutical industry needs where binary decisions (contaminated/not contaminated) often drive critical quality decisions.
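To make the distinction between continuous and classification outputs concrete, the sketch below converts measured and predicted concentrations into threshold-exceedance labels and scores the resulting binary decision. The threshold, the concentration values, and the function names are hypothetical and chosen only for illustration.

```python
def exceeds(concentrations, threshold):
    """Label each concentration True if it exceeds the action threshold."""
    return [c > threshold for c in concentrations]


def classification_metrics(actual, predicted):
    """Compute accuracy, precision, and recall for boolean labels."""
    tp = sum(a and p for a, p in zip(actual, predicted))
    fp = sum((not a) and p for a, p in zip(actual, predicted))
    fn = sum(a and (not p) for a, p in zip(actual, predicted))
    tn = sum((not a) and (not p) for a, p in zip(actual, predicted))
    return {
        "accuracy": (tp + tn) / len(actual),
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


# Hypothetical measured and model-predicted concentrations (same units).
measured = [2.0, 12.0, 8.0, 15.0, 4.0, 11.0]
predicted = [3.0, 10.5, 10.2, 9.0, 5.0, 13.0]
threshold = 10.0

metrics = classification_metrics(
    exceeds(measured, threshold), exceeds(predicted, threshold)
)
print(metrics)
```

Note that a prediction can be numerically far from the measured value and still land on the correct side of the threshold, which is one reason exceedance classification can be easier than exact concentration prediction.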

The integration of AI-driven systems into pharmaceutical contamination detection enhances product quality, improves productivity, and ensures the safety and efficacy of pharmaceutical products. The real-time monitoring capabilities of AI-driven systems enable prompt detection of defects, driving appropriate intervention and preventing the release of faulty products. As these technologies evolve, they offer the potential to move from reactive detection to proactive prediction of contamination events.

Frequently Asked Questions (FAQs)

Q: What are the most common sources of contamination in pharmaceutical manufacturing? A: The primary contamination sources align with the 5M diagram (Ishikawa diagram) categories: Manpower (personnel practices), Machine (equipment design and maintenance), Material (raw inputs), Method (procedures and processes), and Medium (environment). A robust Contamination Control Strategy systematically addresses each potential source through design controls, monitoring, and procedural governance.

Q: How does the regulatory landscape impact contamination control requirements? A: Regulatory standards like FDA's CGMP regulations and EU GMP Annex 1 establish minimum requirements for contamination control. These regulations emphasize that quality cannot be tested into products but must be built into the manufacturing process through proper design, monitoring, and control. The "C" in CGMP stands for "current," requiring companies to use technologies and systems that are up-to-date to prevent contamination, mix-ups, and errors.

Q: What is the role of a Contamination Control Strategy (CCS) per EU GMP Annex 1? A: According to EU GMP Annex 1, a CCS is "A planned set of controls for microorganisms, endotoxin/pyrogen and particles, derived from current product and process understanding that assures process performance and product quality." It should be a comprehensive, holistic document covering facility and equipment design, personnel flows, utilities, raw material controls, monitoring systems, and continuous improvement mechanisms.

Q: Why are biologics and cell therapy products particularly vulnerable to contamination? A: Biologics and cell culture samples are highly sensitive to contamination because they often contain complex molecules or living cells that cannot undergo terminal sterilization. These products provide rich growth media for microorganisms and are susceptible to subtle chemical changes. The expansion of biologics manufacturing is consequently driving increased adoption of advanced detection technologies with higher sensitivity requirements.

Q: How can machine learning improve traditional contamination detection methods? A: Machine learning enhances contamination detection by: (1) Identifying complex patterns in multivariate data that may elude conventional statistical process control; (2) Enabling predictive models that forecast contamination risks based on precursor events; (3) Classifying contamination types more accurately through pattern recognition; (4) Optimizing monitoring plans by identifying highest-risk sampling locations and frequencies.

Research Reagent Solutions

Table 3: Essential Reagents and Materials for Contamination Research

| Reagent/Material | Function | Application Examples | Quality Standards |

|---|---|---|---|

| High-Purity Solvents | Mobile phases, extraction | HPLC, GC analysis | HPLC grade, low UV absorbance |

| Culture Media | Microbial growth promotion | Sterility testing, environmental monitoring | USP/EP compliant, ready-to-use |

| PCR Reagents | Nucleic acid amplification | Mycoplasma testing, viral detection | Molecular biology grade, DNase-free |

| Reference Standards | Method calibration and validation | Quantifying specific contaminants | Certified reference materials |

| LAL Reagents | Endotoxin detection | Pyrogen testing | FDA-licensed, controlled |

| Chromatography Columns | Compound separation | HPLC, UHPLC analysis | Column certification available |

| Sample Preparation Kits | Concentration and cleanup | Solid-phase extraction | High recovery, minimal interference |

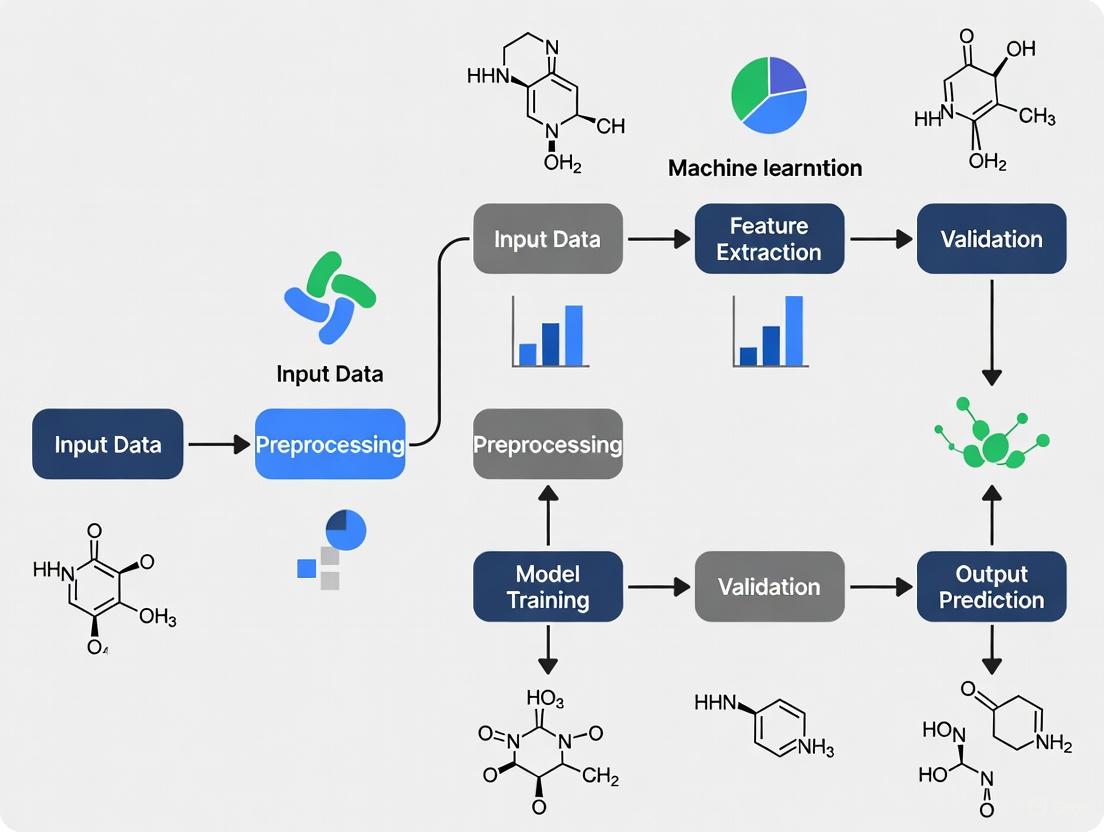

Workflow Visualizations

[Figure: ML-Enhanced Contamination Control Workflow]

[Figure: Contamination Detection Methodology Integration]

The field of toxicology is undergoing a fundamental transformation, moving away from traditional animal models toward advanced, human-relevant methods powered by artificial intelligence (AI) and machine learning (ML). This paradigm shift is particularly evident in the assessment of trace concentration contaminants, where modern computational approaches offer unprecedented precision in predicting biological effects. Regulatory agencies are now actively endorsing this transition—the U.S. Food and Drug Administration recently announced plans to phase out animal testing requirements for monoclonal antibodies and other drugs, replacing them with AI-based computational models and human-cell-based testing platforms [1]. This technical support center provides researchers, scientists, and drug development professionals with the practical frameworks needed to navigate this evolving landscape, offering specific troubleshooting guidance for implementing AI-driven approaches in contaminant assessment.

Frequently Asked Questions (FAQs)

FAQ 1: What specific AI/ML models are most effective for predicting toxicity of trace contaminants?

Random Forest and Support Vector Machines are among the best-validated algorithms for toxicity prediction. These models consistently demonstrate strong performance across multiple toxicity endpoints, including hepatotoxicity, cardiotoxicity, and carcinogenicity [2]. For predicting concentration ranges of trace organic contaminants (TrOCs) in complex matrices like water, Random Forest has shown particularly high classification accuracy (≥73% for most compounds) using easily measurable physicochemical parameters as predictors [3]. Gradient Boosting Machine (GBM) also performs well, with one study reporting a testing determination coefficient (DC) of 0.9372 for predicting water contamination indices [4].

Table 1: Performance Metrics of ML Algorithms for Toxicity Prediction

| Algorithm | Common Applications | Key Strengths | Reported Performance Metrics |

|---|---|---|---|

| Random Forest | Carcinogenicity, Cardiotoxicity, TrOC classification | Handles high-dimensional data well, provides feature importance | 73-83% accuracy for various endpoints [2] [3] |

| Support Vector Machine (SVM) | Carcinogenicity, Cardiotoxicity | Effective in high-dimensional spaces | 70-77% accuracy for various endpoints [2] |

| Gradient Boosting Machine (GBM) | Water quality assessment, Contamination indices | High predictive accuracy, strong generalization | Testing DC of 0.9372, MAE of 0.0063 [4] |

| k-Nearest Neighbors (kNN) | Carcinogenicity, Acute toxicity | Simple implementation, no training required | ~65-81% accuracy depending on endpoint [2] |

FAQ 2: What are the primary validation challenges for AI-based New Approach Methods (NAMs)?

Validating AI-based NAMs presents several interconnected challenges. Data quality remains a fundamental concern, as model performance depends heavily on consistent, well-curated datasets [5]. Model interpretability and transparency are also significant hurdles for regulatory acceptance—strategies like SHapley Additive exPlanations (SHAP) can help address this by quantifying feature importance [4]. Additionally, establishing standardized performance benchmarks across diverse chemical spaces and biological endpoints requires extensive collaboration between researchers, regulators, and industry stakeholders [5]. The dynamic nature of AI models also necessitates ongoing monitoring and refinement post-implementation to maintain predictive accuracy [5].

FAQ 3: How can researchers address contamination issues in trace element analysis?

Contamination control requires a multi-layered approach. Environmental contamination from laboratory air can introduce significant levels of elements including Ca, Si, Fe, Na, Mg, K, Tl, Cu, and Mn [6]. Effective strategies include:

- Utilizing HEPA-filtered clean rooms, which demonstrate dramatic reductions in blank levels for elements like Na, Ca, Fe, Zn, and Pb compared to conventional laboratory environments [6]

- Implementing controlled evaporation chambers when clean room access is limited [6]

- Applying advanced detection techniques such as Scanning Electron Microscopy with Energy-Dispersive X-ray spectroscopy (SEM-EDX) for elemental composition analysis of particulate contaminants [7]

- Using Inductively Coupled Plasma (ICP) spectroscopy for highly sensitive multi-elemental analysis of trace metal contamination [7]

FAQ 4: What easy-to-measure parameters can serve as surrogates for predicting trace contaminant concentrations?

Research indicates that conventional physicochemical parameters can effectively predict concentration ranges of hard-to-measure trace organic contaminants. Color, Chemical Oxygen Demand (COD), and UV Transmittance (UVT) have been identified as the top three predictive features for most investigated TrOCs, with Total Organic Carbon (TOC) and Total Suspended Solids (TSS) also showing significant predictive value [3]. This approach enables cost-effective monitoring through supervised classification algorithms that correlate these readily measurable parameters with contaminant concentration classes (low, medium, high).
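Before a classifier can be trained on surrogate parameters, each measured contaminant concentration needs a class label. The sketch below assigns low, medium, and high labels using tertile cut points. The tertile rule, the units, and the values are assumptions made for illustration; the cited study may define its concentration classes differently.

```python
from statistics import quantiles


def tertile_cut_points(concentrations):
    """Return the two cut points that divide the data into thirds."""
    low_cut, high_cut = quantiles(concentrations, n=3)
    return low_cut, high_cut


def concentration_class(value, low_cut, high_cut):
    """Map a concentration to 'low', 'medium', or 'high'."""
    if value <= low_cut:
        return "low"
    if value <= high_cut:
        return "medium"
    return "high"


# Hypothetical TrOC concentrations in ng/L.
measured_ng_per_l = [5, 1, 9, 3, 7, 2, 8, 4, 6]
low_cut, high_cut = tertile_cut_points(measured_ng_per_l)
labels = [concentration_class(v, low_cut, high_cut) for v in measured_ng_per_l]
print(labels)
```

These labels would then serve as the target variable, with surrogates such as colour, COD, and UVT as input features.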

Troubleshooting Guides

Issue 1: Poor Generalization of ML Toxicity Models

Problem: Models perform well on training data but poorly on external validation sets or novel chemical compounds.

Solution Protocol:

- Data Quality Assessment: Verify dataset consistency and identify conflicting toxicity assignments for the same chemicals across different sources [2]

- Feature Selection Optimization: Implement rigorous feature selection methods including Principal Component Analysis (PCA), F-score evaluation, or Monte Carlo Simulated Annealing (MC-SA) [2]

- Model Architecture Adjustment: Apply ensemble methods that combine multiple algorithms to improve robustness and predictive accuracy [2]

- Uncertainty Quantification: Incorporate uncertainty estimates into predictions to provide confidence intervals for toxicity classifications [5]
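The ensemble and uncertainty steps above can be sketched with a simple majority vote across models, reporting the fraction of models that agree as a crude confidence signal. The model names and predictions are hypothetical, and vote agreement is only a rough proxy: it is not a calibrated confidence interval.

```python
from collections import Counter


def majority_vote(predictions):
    """Return (winning label, fraction of models that voted for it)."""
    label, votes = Counter(predictions).most_common(1)[0]
    return label, votes / len(predictions)


# Hypothetical per-model predictions for three compounds.
per_model = {
    "random_forest": ["toxic", "non-toxic", "toxic"],
    "svm": ["toxic", "non-toxic", "non-toxic"],
    "gbm": ["toxic", "toxic", "non-toxic"],
}

# Regroup so that each entry holds all model votes for one compound.
votes_per_compound = list(zip(*per_model.values()))
ensemble = [majority_vote(votes) for votes in votes_per_compound]
print(ensemble)
```

Compounds with low agreement are natural candidates for follow-up testing or expert review.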

[Figure: Model Generalization Improvement Workflow]

Issue 2: Integration of AI-NAMs with Regulatory Requirements

Problem: Difficulty aligning AI-based approaches with regulatory validation standards for chemical safety assessment.

Solution Protocol:

- Implement Tiered Validation Strategy:

- Tier 1: Internal cross-validation with multiple data splits

- Tier 2: External validation with independent datasets

- Tier 3: Prospective validation in targeted case studies [5]

- Adopt Explainable AI (XAI) Frameworks:

- Apply SHAP analysis to quantify feature importance and enhance model transparency [4]

- Document mechanistic relevance of identified features to toxicological endpoints

- Provide confidence metrics for all predictions

- Leverage e-Validation Concepts:

- Utilize AI-powered reference chemical selection

- Implement mechanistic validation through pathway analysis

- Establish continuous monitoring systems for model performance [5]
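Tier 1 of the validation strategy above calls for internal cross-validation with multiple data splits. A minimal sketch of generating repeated k-fold index splits is shown below. The sample count, fold count, and seeds are arbitrary, and the function name is an illustrative assumption.

```python
import random


def k_fold_indices(n_samples, k, seed):
    """Yield (train_indices, test_indices) for each of k shuffled folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Deal the shuffled indices into k folds of near-equal size.
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test


# Repeat 5-fold cross-validation with three different shuffles.
splits = [
    split
    for seed in (0, 1, 2)
    for split in k_fold_indices(n_samples=10, k=5, seed=seed)
]
print(len(splits))
```

Reporting the spread of a metric across all splits, rather than a single split, gives a more honest picture of internal performance before moving to Tier 2.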

Issue 3: Contamination Interference in Trace Analysis

Problem: Environmental contamination compromising analytical accuracy for trace element detection.

Solution Protocol:

- Environmental Control Implementation:

- Conduct analyses in HEPA-filtered clean rooms where possible

- Use controlled evaporation chambers as a cost-effective alternative [6]

- Monitor blank levels regularly to detect contamination sources

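- Analytical Technique Selection: Use SEM-EDX to identify the elemental composition of particulate contaminants and ICP spectroscopy for sensitive multi-element analysis of trace metals [7].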

Table 2: Contamination Control Methods and Effectiveness

| Control Method | Technical Approach | Effectiveness Evidence | Practical Considerations |

|---|---|---|---|

| HEPA-Filtered Clean Rooms | Positive pressure with HEPA filtration (99.99% efficient for ≥0.3µm particles) | 4-14x reduction in blank levels for Na, Ca, Fe, Zn, Pb [6] | High infrastructure cost; suitable for core facilities |

| Controlled Evaporation Chambers | Simple enclosed systems with limited air exchange | Significant reduction vs. open bench (5.5x for Pb) [6] | Low-cost alternative; suitable for individual labs |

| SEM-EDX Analysis | Microscopy with elemental analysis | Identifies elemental composition of particulate contaminants [7] | Requires specialized equipment; excellent for source identification |

| ICP Spectroscopy | High-sensitivity multi-element analysis | Detects trace metal contamination at very low concentrations [7] | Quantitative results; requires method development |

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Research Reagents and Materials for AI-Enabled Trace Contaminant Research

| Item | Function | Application Notes |

|---|---|---|

| Curated Toxicity Datasets | Training and validation data for ML models | Quality impacts model performance; seek standardized datasets with consistent toxicity assignments [2] |

| Molecular Descriptors Software | Generates chemical features for QSAR modeling | PaDEL, MOE, and MACCS fingerprints commonly used; affects model interpretability [2] |

| SHAP Analysis Framework | Explains ML model outputs and feature importance | Critical for regulatory acceptance; provides quantitative feature importance metrics [4] |

| Organoid/Organ-on-a-Chip Systems | Provides human-relevant toxicity data for model training | Mimics human organ responses; can reveal toxic effects missed in animal models [1] |

| High-Quality Chemical Standards | Ensures analytical accuracy for trace contaminant detection | Essential for generating reliable training data; requires proper contamination controls [6] |

Experimental Protocol: Developing an ML Model for Trace Contaminant Toxicity Prediction

Phase 1: Data Curation and Preprocessing

- Data Collection: Compile toxicity data from diverse sources including in vitro assays, animal studies, and human adverse event reports [8]

- Chemical Standardization: Apply consistent structure standardization across all compounds (tautomer normalization, salt removal)

- Descriptor Calculation: Generate comprehensive molecular descriptors using software such as PaDEL, MOE, or custom algorithms [2]

- Data Splitting: Implement scaffold-based splitting to ensure structural diversity between training and test sets, preventing data leakage
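The scaffold-based splitting step can be sketched as a group-wise split in which every compound sharing a scaffold lands on the same side. The sketch assumes scaffold identifiers have already been computed by a cheminformatics toolkit; the compound names, scaffold labels, and the largest-groups-to-training heuristic are all illustrative assumptions.

```python
from collections import defaultdict


def scaffold_split(scaffold_by_compound, test_fraction=0.2):
    """Split compounds so that no scaffold appears in both sets."""
    groups = defaultdict(list)
    for compound, scaffold in scaffold_by_compound.items():
        groups[scaffold].append(compound)

    n_total = len(scaffold_by_compound)
    n_train_target = n_total - round(test_fraction * n_total)

    train, test = [], []
    # Assumed heuristic: fill training with the largest scaffold groups
    # first, so rarer scaffolds end up in the test set.
    for members in sorted(groups.values(), key=len, reverse=True):
        if len(train) + len(members) <= n_train_target:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


# Hypothetical compounds mapped to precomputed scaffold identifiers.
scaffolds = {
    "c1": "A", "c2": "A", "c3": "A", "c4": "A",
    "c5": "B", "c6": "B", "c7": "B",
    "c8": "C", "c9": "C",
    "c10": "D",
}
train, test = scaffold_split(scaffolds, test_fraction=0.3)
print(train, test)
```

Because the test set contains only scaffolds the model never saw, the resulting score is a stricter estimate of performance on novel chemistry than a random split would give.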

Phase 2: Model Development and Optimization

- Algorithm Selection: Test multiple algorithms including Random Forest, SVM, GBM, and neural networks

- Feature Selection: Apply appropriate feature selection methods (PCA, recursive feature elimination) to reduce dimensionality

- Hyperparameter Tuning: Conduct systematic hyperparameter optimization using grid or Bayesian search methods

- Cross-Validation: Perform nested cross-validation to obtain robust performance estimates

Phase 3: Model Validation and Interpretation

- External Validation: Test model performance on completely independent datasets not used in training [2]

- Mechanistic Interpretation: Apply SHAP analysis to identify key molecular features driving predictions and assess mechanistic plausibility [4]

- Uncertainty Quantification: Implement conformal prediction or Bayesian methods to provide prediction confidence intervals [5]

- Regulatory Alignment: Document validation process according to emerging guidelines for AI-based NAMs [5]
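As one way to picture the uncertainty quantification step, the sketch below applies split conformal prediction to a regression-style output: residuals on a held-out calibration set determine a margin that is added around each new point prediction. The residuals, the miscoverage level, and the function names are hypothetical, and classification endpoints would need a different conformal variant.

```python
import math


def conformal_quantile(calibration_residuals, alpha):
    """Return the margin for roughly (1 - alpha) coverage.

    Uses the standard split-conformal rank ceil((n + 1) * (1 - alpha))
    over absolute calibration residuals.
    """
    scores = sorted(abs(r) for r in calibration_residuals)
    n = len(scores)
    rank = math.ceil((n + 1) * (1 - alpha))
    if rank > n:
        # Too few calibration points to support this coverage level.
        return math.inf
    return scores[rank - 1]


def prediction_interval(point_prediction, margin):
    """Return a symmetric interval around a point prediction."""
    return point_prediction - margin, point_prediction + margin


# Hypothetical residuals (observed minus predicted) on a calibration set.
residuals = [0.1, -0.3, 0.2, -0.5, 0.4, 0.05, -0.15, 0.6, -0.25]
margin = conformal_quantile(residuals, alpha=0.2)
interval = prediction_interval(2.0, margin)
print(margin, interval)
```

The calibration set must be separate from the training data; otherwise the residuals understate the true error and the intervals come out too narrow.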

[Figure: ML Model Development Workflow]

Defining Trace Contaminants in Machine Learning Research

In the context of machine learning research, trace contaminants refer to minute, often undesired substances or signals within a dataset that can significantly impact model performance, analytical results, or the validity of scientific conclusions. Their detection is challenging due to their low concentrations or subtle signatures, which are often obscured by dominant patterns or noise in the data.

The table below summarizes the primary types of trace contaminants encountered across different research domains.

Table 1: Types of Trace Contaminants in Research Data

| Domain | Nature of Contaminant | Typical Manifestation | Primary Challenge |

|---|---|---|---|

| Environmental Science | Heavy Metal(loid)s (e.g., Cd, Hg) [9] | Low concentrations in urban river sediments [9] | Differentiating anthropogenic pollution from natural background levels [9] |

| Water Quality Monitoring | Trace Organic Contaminants (TrOCs) [3] | Pharmaceutical and personal care products in recycled water [3] | Costly and complex direct monitoring; requires surrogate prediction [3] |

| Fermentation Processes | Biological impurities [10] | Microbial contamination in fermentation batches [10] | Scarce labeled contamination data; need for unsupervised anomaly detection [10] |

| Groundwater Monitoring | Toxic Petroleum Hydrocarbons (e.g., BEX) [11] | Benzene, Ethylbenzene, and Xylenes at regulatory thresholds (e.g., 5 μg/L) [11] | Detecting plume migration in real-time using indirect sensor data [11] |

| LLM Training Data | Data Leakage [12] | Evaluation data present in the training set [12] | Inflated performance metrics that do not reflect true model capability [12] |

Essential Methodologies for Anomaly Detection

Detecting trace contaminants is typically framed as an anomaly detection problem. The choice of methodology depends on data availability, labeling, and the specific nature of the anomaly.

Unsupervised Machine Learning Models

When labeled contamination data is scarce, unsupervised models that learn only from "normal" data are highly effective [10]. Two prominent approaches include:

- One-Class Support Vector Machine (OCSVM): This model learns a decision boundary that encompasses the majority of "normal" data points in a high-dimensional space. Any data point falling outside this boundary is flagged as an anomaly or contaminant [10].

- Autoencoders (AE): These are neural networks trained to compress input data into a lower-dimensional latent space and then reconstruct the original input. The model is trained solely on normal data. During inference, a high reconstruction error indicates that the input data has patterns the model hasn't learned, signaling a potential anomaly or contaminant [10].
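The autoencoder decision rule described above reduces to comparing a reconstruction error against a threshold. The sketch below assumes the reconstructions come from an already-trained autoencoder (not shown) and sets the threshold at a percentile of the errors seen on normal data. The percentile rule, the error values, and the function names are illustrative assumptions.

```python
import math


def reconstruction_error(original, reconstructed):
    """Mean squared error between an input and its reconstruction."""
    return sum((a - b) ** 2 for a, b in zip(original, reconstructed)) / len(original)


def percentile_threshold(normal_errors, percentile=90):
    """Nearest-rank percentile of the errors observed on normal data."""
    ordered = sorted(normal_errors)
    rank = max(1, math.ceil(percentile * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical reconstruction errors from normal training batches.
normal_errors = [0.010, 0.012, 0.008, 0.015, 0.011,
                 0.009, 0.013, 0.014, 0.010, 0.012]
threshold = percentile_threshold(normal_errors, percentile=90)

# Hypothetical (input, reconstruction) pairs for two new batches.
new_batches = [
    ([1.0, 2.0, 3.0], [1.1, 1.9, 3.0]),  # reconstructed closely
    ([1.0, 2.0, 3.0], [1.5, 1.6, 3.3]),  # reconstructed poorly
]
flags = [reconstruction_error(x, x_hat) > threshold for x, x_hat in new_batches]
print(threshold, flags)
```

Lowering the percentile catches more anomalies at the cost of more false alarms, so the choice depends on how costly a missed contamination is.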

Supervised Classification for Contaminant Prediction

When concentration classes are known, supervised learning can predict contaminant levels using easy-to-measure surrogate parameters [3].

- Random Forest Classifier: This algorithm constructs multiple decision trees and aggregates their results. It has demonstrated superior performance in predicting the concentration range of Trace Organic Contaminants (TrOCs) using physicochemical parameters like colour, Chemical Oxygen Demand (COD), and UV Transmittance (UVT) as features, achieving accuracies of ≥73% for most compounds [3].

[Figure: logical workflow for selecting and applying these machine learning techniques to contamination detection]

Experimental Protocols for Key Scenarios

The first protocol uses OCSVM and Autoencoders to identify contaminated fermentation batches without labeled contamination data [10].

- Data Preprocessing:

- Input: Time-series data from 246 fermentation batches (223 normal, 23 contaminated).

- Steps: Handle missing/invalid values, convert timestamps, resample data to a uniform 5-second interval using linear interpolation, and forward-fill remaining gaps [10].

- Feature Engineering:

- Aggregated Statistics: Calculate mean, standard deviation, min, and max for each variable over the batch duration [10].

- Rolling Features: Compute a 5-step moving average to capture process stability and trends [10].

- Lag Features: Introduce 1-step time-shifted values to detect delayed effects of contamination [10].

- Model Training & Hyperparameter Optimization:

- Train OCSVM and Autoencoder models exclusively on the 223 normal batches.

- Use the Optuna platform in Python with Bayesian Optimization with Hyperband (BOHB) to optimize model hyperparameters for maximum F2-score, which prioritizes high recall (minimizing false negatives) [10].

- Anomaly Detection:

- For OCSVM: Data points classified as outliers are flagged as contaminated.

- For Autoencoders: Batches with a reconstruction error exceeding a set threshold are flagged as contaminated. This method achieved a recall of 1.0 and precision of 0.96 [10].
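The feature-engineering steps and the F2 objective from this protocol can be sketched as follows. The pH series and the confusion-matrix counts are hypothetical; the counts were chosen only to be consistent with a recall of 1.0 and a precision near 0.96, and are not taken from [10].

```python
from statistics import mean, stdev


def aggregate(values):
    """Batch-level summary statistics for one process variable."""
    return {"mean": mean(values), "std": stdev(values),
            "min": min(values), "max": max(values)}


def rolling_mean(values, window=5):
    """Moving average over each full window of consecutive steps."""
    return [mean(values[i - window + 1:i + 1])
            for i in range(window - 1, len(values))]


def lag(values, steps=1):
    """Shift the series by `steps`, padding the start with None."""
    return [None] * steps + values[:-steps]


def f_beta(tp, fp, fn, beta=2.0):
    """F-beta score; beta=2 weights recall more heavily than precision."""
    b2 = beta ** 2
    denom = (1 + b2) * tp + b2 * fn + fp
    return (1 + b2) * tp / denom if denom else 0.0


# Hypothetical pH readings from one fermentation batch.
ph = [7.0, 7.1, 7.0, 6.9, 7.0, 6.5, 6.0]
stats = aggregate(ph)
smoothed = rolling_mean(ph, window=5)
lagged = lag(ph, steps=1)

# Illustrative counts: all contaminated batches caught, one false alarm.
f2 = f_beta(tp=23, fp=1, fn=0)
print(stats, smoothed, lagged, round(f2, 4))
```

Because the F2-score penalizes false negatives more than false positives, optimizing for it favors models that rarely miss a contaminated batch, even if they raise a few extra alarms.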

This protocol uses labeled data to classify contamination levels on high-voltage insulators based on leakage current.

- Data Collection & Preprocessing:

- Generate a dataset of leakage current signals from insulators under varying pollution levels (High, Moderate, Low) and controlled environmental conditions (temperature, humidity) [13].

- Feature Extraction:

- Model Training & Optimization:

- Train multiple classifiers (e.g., Decision Trees, Neural Networks).

- Use Bayesian Optimization to tune the model parameters. Decision tree-based models have shown accuracies >98% with faster training times compared to neural networks [13].

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Resources for Contamination Detection Research

| Item / Technique | Function / Description | Application Example |

|---|---|---|

| Optuna (Python Platform) | A hyperparameter optimization framework to automate the search for the best model parameters [10]. | Used with BOHB to optimize OCSVM and Autoencoder models for fermentation [10]. |

| Bayesian Optimization | An efficient strategy for globally optimizing black-box functions, such as model hyperparameters [13]. | Tuning parameters of Decision Tree and Neural Network models for insulator contamination classification [13]. |

| In-Situ Sensors (pH, DO, EC, Redox) | Probes that measure indirect, easy-to-measure water quality parameters in real-time [11]. | Serving as input features for ML models to predict the presence of toxic petroleum hydrocarbons (BEX) in groundwater [11]. |

| Self-Organizing Maps (SOM) | An unsupervised neural network for clustering and visualizing high-dimensional data [9]. | Used in conjunction with other methods to identify major pollution sources (e.g., industrial, agricultural) in urban river sediments [9]. |

| Positive Matrix Factorization (PMF) | A receptor model that quantifies source contributions to pollution without prior source profiles [9]. | Identifying and apportioning five major sources of heavy metal(loid) pollution in an urban river [9]. |

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q: What is the single most important metric when evaluating a contamination detection model? A: The primary metric should be Recall (the ability to find all contaminated samples). A high recall minimizes false negatives, which is critical in safety and quality control. To avoid an excess of false alarms, however, tune the model on the F2-score, which weights recall more heavily than precision while still penalizing false positives [10].

Q: My model performs well in the lab but fails in real-world deployment. What could be wrong? A: This is often due to concept drift or unaccounted-for environmental variables. Ensure your training data encompasses the full range of operational conditions (e.g., lighting, humidity, sensor noise) [14] [11]. Implement a periodic retraining schedule and test your model's robustness against sensor noise, which can degrade accuracy by 10-20% [11].

Q: How can I detect contamination when I have very few or no labeled examples of it? A: Use unsupervised anomaly detection methods. Techniques like One-Class SVM and Autoencoders are designed specifically for this scenario. They learn the pattern of "normal" operation from your abundant clean data and flag any significant deviations as potential contamination [10].

Q: What is data contamination in the context of Large Language Models (LLMs), and why is it a problem? A: In LLMs, contamination refers to the leakage of benchmark evaluation data into the model's training set. This leads to inflated performance scores that do not reflect the model's true ability to generalize, jeopardizing the reliable measurement of progress in AI [12]. Detection methods range from simple string matching to more complex behavioral analysis [12].

Troubleshooting Common Problems

Problem: High False Positive Rate in Anomaly Detection

- Solution: Review your feature engineering. Extract more meaningful statistical, rolling, and lag-based features that better capture normal process variability [10]. Adjust the classification threshold to be less sensitive, balancing recall and precision.
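Threshold adjustment can be sketched in a few lines. The example assumes each batch already has an anomaly score (higher means more anomalous) and that a small labeled validation set exists; the function names and the toy scores are hypothetical.

```python
import numpy as np

def precision_recall(scores, labels, threshold):
    """labels: 1 = contaminated, 0 = normal; a batch is flagged if score > threshold."""
    flagged = scores > threshold
    tp = np.sum(flagged & (labels == 1))
    fp = np.sum(flagged & (labels == 0))
    fn = np.sum(~flagged & (labels == 1))
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return float(precision), float(recall)

def least_sensitive_threshold(scores, labels, min_recall=1.0):
    """Return the highest candidate threshold that still keeps recall >= min_recall."""
    candidates = np.concatenate([[scores.min() - 1.0], np.unique(scores)])
    best = None
    for t in candidates:  # candidates are in ascending order
        _, recall = precision_recall(scores, labels, t)
        if recall >= min_recall:
            best = float(t)
    return best

# Toy validation scores: four normal batches, two contaminated ones
scores = np.array([0.1, 0.2, 0.3, 0.4, 0.8, 0.9])
labels = np.array([0, 0, 0, 0, 1, 1])

sensitive = precision_recall(scores, labels, threshold=0.1)
chosen = least_sensitive_threshold(scores, labels)
relaxed = precision_recall(scores, labels, threshold=chosen)
print(sensitive, chosen, relaxed)
```

Raising the threshold only as far as the recall constraint allows removes false alarms without introducing false negatives on the validation data.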

Problem: Model Performance is Sensitive to Sensor Noise

- Solution: This is a common challenge. Combine hardware stabilization with data preprocessing techniques like adaptive smoothing on the sensor data. Analyze the impact of noise levels (e.g., 10-20%) on your model and preprocess the data accordingly to mitigate its effects [11].

Problem: Difficulty in Tracing the Source of Contamination

- Solution: Implement a multi-technique source tracing framework. Combine correlation analysis, clustering (e.g., Self-Organizing Maps), and source apportionment models (e.g., Positive Matrix Factorization) to accurately identify and quantify pollution sources [9].

Troubleshooting Guide: Database Queries and Data Quality

1. Why is my model's predictive accuracy poor despite using a large dataset? Poor model accuracy often stems from underlying data quality issues rather than the algorithm itself.

Potential Cause & Solution: The training data may be extracted from a single database with limited scope or inconsistent data formatting. Solution: Integrate data from multiple toxicological databases to create a more comprehensive and robust training set. For instance, combine high-throughput screening data from ToxCast [15] with traditional animal toxicity data from ToxRefDB [15] and detailed mechanistic data from other sources. This provides a more holistic view of chemical toxicity [16].

Potential Cause & Solution: The data may contain hidden contaminants or artifacts from the original experimental processes. Solution: Implement stringent data curation protocols. Consult laboratory guides on reducing contamination, such as ensuring the use of high-purity water and acids, and using appropriate, clean labware to minimize the introduction of trace elements that could skew experimental results [17]. Always check the certificates of analysis for reagents.

Experimental Protocol for Data Integration:

- Identify Key Databases: Select complementary databases (e.g., ToxCast for in vitro bioactivity, ToxRefDB for in vivo outcomes, ECOTOX for ecological data) [15].

- Map Chemical Identifiers: Use a common identifier (e.g., DTXSID from the CompTox Chemicals Dashboard) to align records across databases [15].

- Extract and Harmonize Data: Download datasets and harmonize endpoints (e.g., convert all dose-response data to a standard unit like µM).

- Apply Quality Filters: Remove data points flagged for quality issues or originating from high-contamination risk studies [17].

- Create a Unified Dataset: Merge the filtered and harmonized data into a single structured dataset for model training.
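The steps above can be sketched with pandas. The two small tables, their column names, and the identifiers are made up for illustration; real ToxCast and ToxRefDB exports have different layouts and would need their own mapping.

```python
import pandas as pd

# Hypothetical in vitro extract (placeholder identifiers and column names)
in_vitro = pd.DataFrame({
    "DTXSID": ["DTXSID-A", "DTXSID-B", "DTXSID-C"],
    "ac50": [12.0, 3.5, 0.8],
    "ac50_unit": ["uM", "mg/L", "uM"],
    "mol_weight": [180.2, 350.0, 94.1],    # g/mol, needed for mg/L -> uM
    "quality_flag": [False, False, True],  # True = flagged for quality issues
})

# Hypothetical in vivo extract
in_vivo = pd.DataFrame({
    "DTXSID": ["DTXSID-A", "DTXSID-B", "DTXSID-D"],
    "target_organ": ["liver", "kidney", "liver"],
})

def to_micromolar(value, unit, mol_weight):
    """Harmonize a concentration to micromolar (uM)."""
    if unit == "uM":
        return value
    if unit == "mg/L":
        # (mg/L) / (g/mol) = mmol/L; multiply by 1000 to reach umol/L
        return value / mol_weight * 1000.0
    raise ValueError(f"unsupported unit: {unit}")

# Harmonize endpoints to a single unit
in_vitro["ac50_uM"] = [
    to_micromolar(v, u, mw)
    for v, u, mw in zip(in_vitro["ac50"], in_vitro["ac50_unit"], in_vitro["mol_weight"])
]

# Apply the quality filter, then align records on the shared identifier
clean = in_vitro.loc[~in_vitro["quality_flag"], ["DTXSID", "ac50_uM"]]
unified = clean.merge(in_vivo, on="DTXSID", how="inner")
print(unified)
```

An inner join keeps only chemicals present in both sources; a left join would be the alternative when in vitro records without in vivo anchors should be retained.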

2. How can I efficiently find all available toxicological data for a specific chemical? A single database search is often insufficient and can miss critical historical data.

Potential Cause & Solution: Relying solely on current electronic databases may miss key older studies. Solution: Use a tiered database search strategy. Start with an aggregator like the EPA's CompTox Chemicals Dashboard, which provides access to a wide array of data sources [15]. Then, consult specialized databases and older literature indexes. A tragic case at Johns Hopkins University in 2001, where a volunteer died because researchers missed toxicity data from the 1950s by searching only a post-1966 database, underscores the critical importance of comprehensive, multi-source searches that include historical data [18].

Potential Cause & Solution: Search terms are too narrow. Solution: Use a platform like SciFinder, which searches both CAPLUS (from 1900) and MEDLINE (from 1946) simultaneously. Broaden searches by using controlled vocabularies (e.g., MeSH in MEDLINE) and chemical indexing terms to ensure all relevant studies are captured [18].

3. My model performs well on training data but generalizes poorly to new chemicals. What is wrong? This classic problem of overfitting often relates to the dataset's chemical diversity and the model's sensitivity.

Potential Cause & Solution: The training dataset has limited chemical structural diversity. Solution: Use the DSSTOX database from the EPA to access well-curated chemical structures. Expand your training set to include a wider range of chemical structures and use the database's associated physicochemical properties to ensure your model is trained on a representative chemical space [15].

Potential Cause & Solution: The model architecture may be overly sensitive to small input variations. Solution: Recent research into transformer architectures, which are becoming more common in AI-based toxicology models, shows that they naturally learn "low sensitivity functions." This inherent robustness makes them less likely to react dramatically to small changes in input data, which can improve generalization. Consider leveraging or developing models with this property [19].

Structured Data for Model Development

Table 1: Key Toxicological Databases for Model Training

This table summarizes major databases, their content, and primary applications in computational modeling.

| Database Name | Key Data Content | Data Format & Size | Primary ML Application | Access |

|---|---|---|---|---|

| ToxCast/Tox21 [15] | High-throughput screening (HTS) data; ~9000 chemicals tested in ~1000 assays. | Quantitative (e.g., AC50 values); Structured | Training models for hazard identification & prioritization; mechanism-of-action prediction. | Publicly available for download. |

| ToxRefDB [15] | Traditional in vivo animal toxicity data from guideline studies; >1000 chemicals. | Categorical outcomes (e.g., target organ effects); Structured | Providing in vivo anchor data for validating in vitro-informed models; chronic toxicity prediction. | Publicly available for download. |

| ECOTOX [15] | Single chemical exposure effects on aquatic and terrestrial species. | Experimental results (LC50, EC50); Structured | Building QSAR models for environmental risk assessment; ecotoxicology prediction. | Publicly available online. |

| ToxValDB [15] | Aggregated in vivo toxicity data and derived values from >40 sources; ~40,000 chemicals. | Mixed (experimental & derived values); Compiled | Large-scale model training and validation across diverse endpoints; data mining. | Publicly available for download. |

| CERAPP [15] | Curated data and model predictions for Estrogen Receptor activity for ~32,000 chemicals. | Categorical (active/inactive) & Continuous; Structured | Training and benchmarking molecular initiating event (MIE) models; collaborative project data. | Publicly available for download. |

Table 2: Research Reagent Solutions for Data Generation & Validation

Essential materials and tools for generating reliable toxicological data that feeds into these databases and models.

| Reagent / Tool | Function in Toxicology Research | Key Consideration for Trace Contaminant Work |

|---|---|---|

| High-Purity Water (ASTM Type I) [17] | Diluent for standards/samples; blank preparation. | Essential for parts-per-trillion (ppt) analysis; high resistivity (18 MΩ·cm) and low TOC are critical. |

| ICP-MS Grade Acids [17] | Sample digestion, preservation, and dilution. | Certificate of Analysis (CoA) must be checked for elemental contamination levels (e.g., Pb, Ni). |

| FEP/Quartz Labware [17] | Storage and preparation of low-concentration samples. | Use instead of borosilicate glass to avoid contamination from boron, silicon, sodium, and aluminum. |

| Powder-Free Gloves [17] | Personal protective equipment (PPE). | Powdered gloves contain high levels of zinc, which can contaminate samples and surfaces. |

| HEPA-Filtered Environment [17] | Provides clean air for sample preparation. | Significantly reduces airborne contaminants like aluminum, iron, and lead compared to a standard lab. |

Experimental Workflows & Data Relationships

Database Selection Workflow

This diagram outlines a logical workflow for selecting the most appropriate toxicological databases based on the research goal.

Model Validation Logic Flow

This chart describes the process of using multiple data sources to build and validate a computational toxicology model.

ML in Action: Algorithms and Real-World Detection Pipelines

Performance Comparison of Unsupervised Anomaly Detection Algorithms

Table 1: Algorithm performance comparison on synthetic dataset [20]

| Algorithm | Accuracy | Precision | Recall | F1 Score |

|---|---|---|---|---|

| One-Class SVM | High | High | High | High |

| Isolation Forest | Slightly higher than others | High | High | Highest |

| Robust Covariance | High | High | High | High |

| One-Class SVM with SGD | Moderate | High | Lower | Needs improvement |

| Local Outlier Factor | Variable | Variable | Variable | Requires tuning |

Table 2: One-Class SVM key hyperparameters and their effects [21] [22]

| Hyperparameter | Function | Default Value | Adjustment Effect |

|---|---|---|---|

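| nu (ν) | Upper bound on the fraction of training errors and lower bound on the fraction of support vectors; in effect, the expected share of outliers | 0.5 | Lower: fewer training points treated as outliers, fewer alarms; Higher: more points flagged as outliers, more potential false positives |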

| kernel | Defines decision boundary type | 'rbf' | 'linear', 'rbf', 'poly', 'sigmoid' - RBF captures complex non-linear relationships |

| gamma (γ) | Influence range of a single training example | 'scale' (1 / (n_features × X.var())) | Low: smoother boundary; High: more complex, sensitive to local variations |

| tol | Stopping criterion tolerance | 1e-3 | Smaller: more precise optimization but longer training |
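The effect of nu is easiest to see by fitting the same data with several values and counting how many training points end up outside the boundary. The data below is random and purely illustrative.

```python
import numpy as np
from sklearn.svm import OneClassSVM

rng = np.random.default_rng(0)
X = rng.normal(size=(500, 3))  # illustrative "normal" data

def training_outlier_fraction(nu):
    """Fit an RBF One-Class SVM and return the share of training points labeled -1."""
    model = OneClassSVM(kernel="rbf", nu=nu, gamma="scale").fit(X)
    return float(np.mean(model.predict(X) == -1))

fractions = {nu: training_outlier_fraction(nu) for nu in (0.01, 0.1, 0.5)}
for nu, frac in fractions.items():
    print(f"nu={nu}: {frac:.1%} of training points flagged")
```

The flagged share grows with nu, which is why the default of 0.5 is usually far too high for processes where contamination is rare.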

Table 3: Autoencoder training hyperparameters [23]

| Hyperparameter | Function | Impact on Performance |

|---|---|---|

| Code Size | Number of nodes in bottleneck layer | Smaller: more compression but potential information loss |

| Number of Layers | Depth of encoder/decoder networks | Deeper: can capture more complex patterns but risk overfitting |

| Loss Function | Metric for reconstruction error | MSE or Binary Cross-Entropy depending on input data range |

| Number of Nodes per Layer | Width of each layer | Progressive decrease in encoder, increase in decoder |

Troubleshooting Guide: One-Class SVM

Common Issue 1: Abnormal Decision Boundaries

Problem: Contour lines for OCSVM scores appear irregular or unlike expected ellipsoidal patterns [24].

Solution:

- This may indicate a software implementation issue - contact technical support for the machine learning library you're using [24]

- Verify your kernel function matches your data characteristics

- Ensure proper data preprocessing and normalization

Common Issue 2: Poor Anomaly Detection Performance

Problem: Model fails to identify true anomalies or generates excessive false positives [22].

Solution:

- Adjust the `nu` parameter: decrease to reduce false positives, increase to catch more anomalies [21] [22]

- Experiment with different kernel functions, particularly RBF for non-linear relationships [22]

- Tune the `gamma` parameter using grid search with cross-validation [22]

- Ensure training data represents "normal" patterns without contamination by anomalies

Common Issue 3: Handling High-Dimensional Data

Problem: Performance degradation with many features [22].

Solution:

- Leverage SVM's inherent strength in high-dimensional spaces [22]

- Use RBF kernel to handle non-linear relationships in complex feature spaces [22]

- Consider feature selection or dimensionality reduction as preprocessing step

Troubleshooting Guide: Autoencoders

Common Issue 1: High Reconstruction Error for Normal Data

Problem: Autoencoder fails to properly reconstruct normal instances [25].

Solution:

- Increase model capacity by adding more layers or neurons

- Widen bottleneck layer if it's too narrow [25]

- Verify training data quality and ensure it represents normal patterns

- Increase training dataset size if insufficient [25]

Common Issue 2: Poor Anomaly Discrimination

Problem: Similar reconstruction errors for normal and anomalous data [23].

Solution:

- Adjust bottleneck size - too large may not capture useful compression, too small may lose critical information [25]

- Introduce noise during training (denoising autoencoders) to improve robustness [25]

- Use contractive autoencoder architectures to improve feature learning [25]

- Ensure training data contains only normal instances for unsupervised approach

Common Issue 3: Training Instability

Problem: Model fails to converge or shows erratic training behavior [23].

Solution:

- Normalize input data to consistent range (typically 0-1)

- Use appropriate loss function (binary cross-entropy for 0-1 inputs, MSE otherwise) [23]

- Adjust learning rate and batch size

- Implement early stopping to prevent overfitting

Frequently Asked Questions

Q1: When should I choose One-Class SVM over Autoencoders for anomaly detection?

Answer: One-Class SVM is particularly effective for:

- High-dimensional data where it can capture complex boundaries [22]

- Scenarios with limited computational resources

- Applications requiring interpretable decision boundaries

- When you need strong theoretical guarantees on performance

Autoencoders are preferable when:

- Dealing with complex non-linear relationships in data [23]

- You need to learn feature representations for downstream tasks

- Working with sequential or image data where convolutional or recurrent architectures help

- You have sufficient data and computational resources for deep learning

Q2: How can I adapt these methods for detecting trace concentration contaminants?

Answer: For detecting trace organic contaminants:

- Use physicochemical parameters like colour, COD, and UV Transmittance as features [3]

- Implement semi-supervised approaches where normal water quality data is abundant but contaminant examples are rare

- For One-Class SVM, tune the `nu` parameter to reflect expected contamination frequency

- For autoencoders, use reconstruction error threshold to flag unusual concentration patterns

- Consider representation learning to identify surrogate markers for hard-to-measure contaminants [3]

Q3: What are the key differences between traditional SVM and One-Class SVM?

Answer:

Table 4: SVM vs. One-Class SVM comparison [21]

| Aspect | Traditional SVM | One-Class SVM |

|---|---|---|

| Training Data | Requires multiple labeled classes | Uses only one class (normal data) |

| Objective | Find boundary between classes | Find boundary around normal data |

| Output | Class membership | Normal vs. anomaly |

| Soft Margin | Penalizes misclassification errors | Penalizes deviations from normal boundary |

Q4: How do I determine optimal bottleneck size for autoencoders?

Answer:

- Start with bottleneck size approximately half the input dimension [23]

- Use reconstruction accuracy on validation set to guide selection [25]

- Consider the complexity of your data - more complex patterns may require larger bottlenecks

- Balance between compression and information preservation [25]

- Test multiple architectures and select based on anomaly detection performance, not just reconstruction error

Experimental Protocols

Protocol 1: One-Class SVM for Contaminant Detection

Materials: Water quality dataset with physicochemical parameters [3]

Methodology:

- Data Preparation:

- Collect features: colour, Chemical Oxygen Demand (COD), UV Transmittance (UVT), Total Organic Carbon (TOC) [3]

- Normalize features to zero mean and unit variance

- Split data: 70% normal samples for training, 30% for testing with known contaminants

Model Training:

- Initialize OneClassSVM with RBF kernel

- Set initial `nu=0.1` (assuming 10% contamination potential)

- Use `gamma='scale'` for automatic parameter setting

- Fit model using only normal training samples

Evaluation:

- Predict anomalies on test set

- Calculate precision, recall, and F1-score for contaminant detection

- Optimize the `nu` parameter using grid search
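Protocol 1 can be sketched end to end with scikit-learn. The data here is synthetic: the four columns stand in for colour, COD, UVT, and TOC, and the means and spreads are invented. For brevity the grid search scores each nu on the test set; a separate validation split would be needed in practice.

```python
import numpy as np
from sklearn.metrics import precision_recall_fscore_support
from sklearn.preprocessing import StandardScaler
from sklearn.svm import OneClassSVM

rng = np.random.default_rng(1)

# Synthetic stand-ins for colour, COD, UVT, TOC (illustrative values only)
normal = rng.normal(loc=[20, 50, 80, 5], scale=[3, 8, 4, 1], size=(400, 4))
contaminated = rng.normal(loc=[35, 90, 60, 10], scale=[3, 8, 4, 1], size=(30, 4))

# 70% of the normal samples for training; the rest plus known contaminants for testing
n_train = int(0.7 * len(normal))
X_train = normal[:n_train]
X_test = np.vstack([normal[n_train:], contaminated])
y_test = np.array([0] * (len(normal) - n_train) + [1] * len(contaminated))  # 1 = contaminated

# Zero mean, unit variance, with statistics taken from the training data only
scaler = StandardScaler().fit(X_train)
X_train_s = scaler.transform(X_train)
X_test_s = scaler.transform(X_test)

def evaluate(nu):
    """Fit on normal samples only and return (precision, recall, F1) on the test set."""
    model = OneClassSVM(kernel="rbf", nu=nu, gamma="scale").fit(X_train_s)
    pred = (model.predict(X_test_s) == -1).astype(int)  # -1 (outlier) -> 1 (contaminated)
    p, r, f1, _ = precision_recall_fscore_support(
        y_test, pred, average="binary", zero_division=0
    )
    return float(p), float(r), float(f1)

baseline = evaluate(0.1)  # initial setting from the protocol

# Simple grid search over nu, selecting on F1
grid = {nu: evaluate(nu) for nu in (0.01, 0.05, 0.1, 0.2)}
best_nu = max(grid, key=lambda nu: grid[nu][2])
print("baseline (nu=0.1):", baseline, "best nu:", best_nu)
```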

Protocol 2: Autoencoder for Anomaly Detection in Sensor Data

Materials: Time-series sensor data, TensorFlow/PyTorch framework [23]

Methodology:

- Data Preprocessing:

- Normalize sensor readings to [0,1] range

- Create sliding windows for temporal patterns

- Split into training (normal operations only) and testing (mixed normal/anomalous)

Model Architecture:

- Input layer matching sensor feature dimension

- Encoder: 2-3 layers with decreasing neurons (e.g., 64 → 32 → 16)

- Bottleneck: 8-12 neurons (compressed representation)

- Decoder: symmetric with encoder (e.g., 16 → 32 → 64)

- Output layer: same dimension as input

Training:

- Loss function: Mean Squared Error (MSE)

- Optimizer: Adam with learning rate 0.001

- Early stopping with patience=10 epochs

- Batch size: 32-128 depending on dataset size

Anomaly Detection:

- Calculate reconstruction error for each sample

- Set threshold based on 95th percentile of training reconstruction errors

- Flag samples exceeding threshold as anomalies
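Protocol 2 can be sketched without a deep learning framework. As an assumption made to keep the example dependency-light, scikit-learn's `MLPRegressor` trained to reproduce its own input stands in for a TensorFlow or PyTorch autoencoder; the signal, window length, and anomaly windows are synthetic.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view
from sklearn.neural_network import MLPRegressor

rng = np.random.default_rng(0)
WINDOW = 16  # hypothetical window length

def make_windows(signal, window=WINDOW):
    """Slice a 1-D signal into overlapping windows, one row per window."""
    return sliding_window_view(signal, window).copy()

# Synthetic "normal" sensor trace, already within the [0, 1] range
t = np.arange(600)
normal_signal = 0.5 + 0.1 * np.sin(2 * np.pi * t / 50) + rng.normal(0, 0.01, t.size)
X_train = make_windows(normal_signal)

# Synthetic anomalies: windows of unstructured noise
X_anom = rng.uniform(0, 1, size=(50, WINDOW))

# Symmetric encoder/decoder with an 8-unit bottleneck; the squared-error loss,
# Adam optimizer, learning rate, batch size, and patience follow the protocol
autoencoder = MLPRegressor(
    hidden_layer_sizes=(64, 32, 16, 8, 16, 32, 64),
    activation="relu",
    solver="adam",
    learning_rate_init=0.001,
    batch_size=32,
    early_stopping=True,
    n_iter_no_change=10,
    max_iter=500,
    random_state=0,
)
autoencoder.fit(X_train, X_train)  # target equals input

def reconstruction_error(model, X):
    """Mean squared reconstruction error per window."""
    return np.mean((model.predict(X) - X) ** 2, axis=1)

train_err = reconstruction_error(autoencoder, X_train)
threshold = np.percentile(train_err, 95)  # 95th percentile of training errors
anom_flags = reconstruction_error(autoencoder, X_anom) > threshold
print(f"threshold={threshold:.5f}, anomalies flagged={anom_flags.mean():.0%}")
```

By construction, a 95th-percentile threshold flags about 5% of the normal training windows, so the percentile directly sets the expected false alarm rate on normal data.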

Workflow Visualization

One-Class SVM Anomaly Detection Workflow

Autoencoder Anomaly Detection Architecture

The Scientist's Toolkit

Table 5: Essential research reagents and computational tools for anomaly detection experiments

| Tool/Resource | Function | Application Context |

|---|---|---|

| scikit-learn OneClassSVM | One-Class SVM implementation | General-purpose anomaly detection, high-dimensional data [21] [22] |

| TensorFlow/Keras, PyTorch | Deep learning frameworks | Autoencoder implementation and customization [23] |

| ECG Dataset | Benchmark dataset for validation | Testing anomaly detection performance [23] |

| Water Quality Parameters (Colour, COD, UVT) | Feature set for contaminant detection | Predicting trace organic contaminants [3] |

| Network Flow Data (NetFlow, IPFIX) | Network traffic features | Cybersecurity anomaly detection [26] |

| Grid Search Cross-Validation | Hyperparameter optimization | Tuning nu, gamma, and architectural parameters [22] |

| Reconstruction Error Metrics (MSE) | Autoencoder performance evaluation | Quantifying anomaly detection threshold [23] |

| Radial Basis Function (RBF) Kernel | Non-linear transformation | Handling complex decision boundaries in SVM [21] [22] |

In biopharmaceutical and industrial fermentation, microbial contamination poses a significant risk to product quality, patient safety, and operational efficiency. Contamination events can lead to costly batch losses, facility shutdowns, and drug shortages [27]. Detecting these events, especially those involving trace-level contaminants, presents a substantial challenge for researchers and drug development professionals. This case study explores the application of high-recall machine learning (ML) models for fermentation contamination detection, providing a technical framework for implementation within a research context focused on trace concentration contaminants.

The Critical Need for High-Recall Detection

The Problem of Contamination

Fermentation processes are vulnerable to contamination from various microorganisms, including bacteria, yeast, mold, and viruses. Sources are diverse, ranging from raw materials and operators to the processing environment itself [27] [28] [29]. In biopharmaceutical production, for instance, viral contamination of mammalian cell cultures (like CHO cells) has occurred in multiple documented incidents, primarily traced back to raw materials [27]. The consequences of undetected contamination are severe:

- Financial Losses: Batch discards, facility decontamination costs, and lost revenue.

- Patient Safety Risks: Potential exposure to adulterated therapeutic products.

- Operational Disruption: Extended downtime and regulatory complications [27] [30].

Why Recall is Paramount

In machine learning classification, recall (or true positive rate) measures the model's ability to identify all actual positive instances. It is calculated as:

\[ \text{Recall} = \frac{\text{True Positives (TP)}}{\text{True Positives (TP)} + \text{False Negatives (FN)}} \]

For contamination detection, a false negative (an undetected contamination event) is typically far more costly and dangerous than a false positive. A false negative could allow a contaminated batch to proceed, jeopardizing product safety and requiring extensive corrective actions. A false positive might only trigger an unnecessary, albeit costly, investigation. Therefore, maximizing recall ensures the model misses as few true contamination events as possible [31] [32].

Table 1: Key Classification Metrics for Contamination Detection

| Metric | Definition | Importance in Contamination Context |

|---|---|---|

| Recall (True Positive Rate) | Proportion of actual contaminants correctly identified. | Critical: Measures the ability to catch all contamination events. Minimizing false negatives is the primary goal. |

| Precision | Proportion of predicted contaminants that are actual contaminants. | Important but Secondary: A high value indicates fewer false alarms, but can be traded off for higher recall. |

| Accuracy | Overall proportion of correct predictions (both positive and negative). | Can be Misleading: Often high in imbalanced datasets (where contamination is rare) but fails to indicate detection capability. |

| Specificity | Proportion of actual non-contaminants correctly identified. | Context-Dependent: Important for operational efficiency, but secondary to recall for safety. |
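A short calculation shows why accuracy can mislead on imbalanced data. The first case uses the 223 normal / 23 contaminated split of the fermentation dataset [10] with a detector that never raises an alarm; the second set of counts is hypothetical.

```python
def classification_metrics(tp, fp, tn, fn):
    """Return recall, precision, accuracy, and specificity from confusion-matrix counts."""
    def ratio(num, den):
        return num / den if den else 0.0  # undefined ratios are reported as 0.0

    return {
        "recall": ratio(tp, tp + fn),
        "precision": ratio(tp, tp + fp),
        "accuracy": ratio(tp + tn, tp + fp + tn + fn),
        "specificity": ratio(tn, tn + fp),
    }

# Detector that labels every batch as normal: no alarms at all
always_normal = classification_metrics(tp=0, fp=0, tn=223, fn=23)

# Hypothetical high-recall detector: catches all 23 events at the cost of 10 false alarms
cautious = classification_metrics(tp=23, fp=10, tn=213, fn=0)

print(always_normal)
print(cautious)
```

The silent detector is right on more than 90% of batches yet misses every contamination event, while the cautious one accepts a few false alarms in exchange for missing none.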

Machine Learning Methodology for High-Recall Contamination Detection

Dataset and Preprocessing

A robust dataset is foundational. A study demonstrating ML for fermentation contamination used 246 batches of industrial fermentation data, containing 23 contaminated and 223 healthy batches [10]. Data preprocessing is critical for real-world industrial data, which often contains inconsistencies:

- Handling Inconsistencies: Drop empty/unusable rows/columns, convert data to valid numeric values, and manage invalid timestamps.

- Time-Series Alignment: Identify the most valid timestamp column for each batch, sort data chronologically, handle duplicate timestamps (e.g., using mean values), and resample to a uniform time interval (e.g., 5-second intervals).

- Missing Value Imputation: Use methods like linear interpolation or forward-fill to handle gaps in the data [10].
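These preprocessing steps can be sketched with pandas. The function name, the `timestamp` column, and the toy batch are assumptions; the study's actual code and column layout are not given in the source.

```python
import pandas as pd

def preprocess_batch(raw, time_col="timestamp"):
    """Clean one batch and resample it to a uniform 5-second grid."""
    # Drop rows and columns that are entirely empty
    df = raw.dropna(axis=0, how="all").dropna(axis=1, how="all").copy()

    # Invalid timestamps become NaT and are removed
    df[time_col] = pd.to_datetime(df[time_col], errors="coerce")
    df = df.dropna(subset=[time_col])

    # Non-numeric readings become NaN
    value_cols = [c for c in df.columns if c != time_col]
    df[value_cols] = df[value_cols].apply(pd.to_numeric, errors="coerce")

    # Average duplicate timestamps; groupby also sorts chronologically
    df = df.groupby(time_col)[value_cols].mean().sort_index()

    # Uniform 5-second interval, then fill gaps
    df = df.resample(pd.Timedelta(seconds=5)).mean()
    return df.interpolate(method="linear").ffill()

# Toy batch with a duplicate timestamp, an invalid timestamp, a gap, and an empty column
raw = pd.DataFrame({
    "timestamp": [
        "2024-01-01 00:00:00",
        "2024-01-01 00:00:05",
        "2024-01-01 00:00:05",
        "not-a-time",
        "2024-01-01 00:00:20",
    ],
    "pH": ["7.0", "7.2", "7.4", "7.1", "6.5"],
    "unused": [None] * 5,
})

clean = preprocess_batch(raw)
print(clean)
```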

Feature Engineering for Process Insight

Transforming raw time-series data into meaningful features is essential for model performance. Engineered features capture process dynamics and variability that may indicate contamination.

Table 2: Key Engineered Features for Contamination Detection

| Feature Category | Specific Examples | Rationale |

|---|---|---|

| Static Aggregated Statistics | Mean, Standard Deviation, Min, Max of process variables (e.g., pH, dissolved oxygen, temperature). | Captures central tendency, variability, and extremes. Shifts in these values can indicate contamination. |

| Rolling Window Features | Rolling mean over a window (e.g., 5 values). | Filters noise and highlights trends, helping detect gradual drifts caused by contaminants. |

| Lag Features | 1-step lagged values of process variables. | Captures temporal dependencies and delayed effects of contamination on process parameters. |

After feature engineering, the dataset is transformed into a structured format where each row represents a batch with engineered features and a contamination label, ready for model training [10].
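The three feature families can be sketched with pandas. How the study collapsed rolling and lag features into one row per batch is not stated in the source; summarizing every column with the same four statistics is an assumption made here, as are the function names.

```python
import pandas as pd

def add_time_features(df, window=5, lag=1):
    """Add a rolling mean and a lagged copy of every process variable."""
    out = df.copy()
    for col in df.columns:
        out[f"{col}_roll{window}"] = df[col].rolling(window, min_periods=1).mean()
        out[f"{col}_lag{lag}"] = df[col].shift(lag)
    return out

def aggregate_batch(df):
    """Collapse a batch's time series into one row of mean/std/min/max features."""
    stats = df.agg(["mean", "std", "min", "max"])
    return pd.Series({
        f"{col}_{stat}": stats.loc[stat, col]
        for col in stats.columns
        for stat in stats.index
    })

# Toy batch with a single process variable
demo = pd.DataFrame({"pH": [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]})
features = add_time_features(demo)
batch_row = aggregate_batch(features)
print(batch_row)
```

Stacking one such row per batch, together with its contamination label, yields the structured table described above.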

Model Selection and Hyperparameter Optimization for High Recall

Given the scarcity of labeled contamination data, the problem is well-suited for anomaly detection approaches, where models learn only from "normal" (non-contaminated) batches.

Recommended Models:

- One-Class Support Vector Machine (OCSVM): An unsupervised algorithm that defines a boundary around normal data points. Batches falling outside this boundary are flagged as anomalies. The study found OCSVM outperformed autoencoders in precision and specificity while achieving perfect recall [10].

- Autoencoders (AEs): Unsupervised neural networks trained to reconstruct their input data. The model learns a compressed representation of normal batch behavior. During inference, a high reconstruction error indicates an anomalous (potentially contaminated) batch that the model cannot accurately reconstruct [10].

Hyperparameter Optimization (HPO): To achieve high recall without excessive sacrifice of precision, systematic HPO is crucial.

- Tool: Use a Python platform like Optuna for parallel HPO execution.

- Algorithm: Bayesian Optimization with Hyperband (BOHB) is recommended to efficiently search the hyperparameter space.

- Objective: Prioritize optimization for the F2-score, which assigns double weight to recall compared to precision. This directly tunes the model to minimize false negatives [10].
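The F-beta score makes the weighting explicit: F_β = (1 + β²) · P · R / (β² · P + R), where P is precision, R is recall, and β = 2 gives the F2-score. A minimal sketch of the objective follows; the second pair of precision and recall values is hypothetical.

```python
def f_beta(precision, recall, beta=2.0):
    """F-beta score; beta > 1 favors recall, beta < 1 favors precision."""
    if precision == 0 and recall == 0:
        return 0.0
    b2 = beta ** 2
    return (1 + b2) * precision * recall / (b2 * precision + recall)

# Precision and recall reported for OCSVM in the study [10]
f2_high_recall = f_beta(precision=0.96, recall=1.0)

# Hypothetical detector with the opposite trade-off
f2_high_precision = f_beta(precision=1.0, recall=0.80)

print(f"{f2_high_recall:.3f} vs {f2_high_precision:.3f}")
```

Under F2 the detector that misses no events scores clearly higher than the one that never raises a false alarm, which is the behavior the optimization is meant to reward. The same function with a smaller beta (for example 1.5) shifts some weight back toward precision.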

The following workflow diagram illustrates the complete machine learning process for contamination detection:

Experimental Protocol and Performance

Implementation and Evaluation

In the referenced study, the trained ML models were benchmarked against a traditional threshold-based method (the mean ± 3σ rule). The results demonstrated the significant added value of the data-driven approach [10].

Table 3: Model Performance Benchmarking

| Model / Method | Recall | Precision | Specificity | Key Findings |

|---|---|---|---|---|

| One-Class SVM (OCSVM) | 1.0 | 0.96 | 0.99 | Achieved perfect recall without sacrificing precision and specificity. Outperformed autoencoders. |

| Autoencoders (AE) | 1.0 | Lower than OCSVM | Lower than OCSVM | Achieved perfect recall but with lower precision and specificity compared to OCSVM. |

| Traditional Threshold-Based (Mean ± 3σ) | Not Reported | Not Reported | Not Reported | Demonstrated inferior detection accuracy and robustness compared to both ML models. |
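For reference, the threshold-based baseline can be sketched as below. The source does not say which variables the rule was applied to or at what granularity, so the per-variable, batch-level formulation and the toy values are assumptions.

```python
import numpy as np

def fit_three_sigma(normal_values):
    """Derive per-variable mean ± 3σ limits from normal batches."""
    mean = np.mean(normal_values, axis=0)
    std = np.std(normal_values, axis=0, ddof=1)
    return mean - 3 * std, mean + 3 * std

def flag_batches(values, lower, upper):
    """Flag a batch if any variable leaves its mean ± 3σ band."""
    return np.any((values < lower) | (values > upper), axis=1)

rng = np.random.default_rng(0)
# Illustrative batch-level summaries (e.g., mean pH and mean temperature) for 223 normal batches
normal_batches = rng.normal(loc=[7.0, 30.0], scale=[0.1, 0.5], size=(223, 2))
lower, upper = fit_three_sigma(normal_batches)

new_batches = np.array([
    [7.0, 30.0],  # typical batch
    [7.8, 30.0],  # pH far outside the normal band
])
flags = flag_batches(new_batches, lower, upper)
print(flags)
```

Because each variable is checked in isolation, a rule of this kind cannot see deviations that only show up in the joint behavior of several variables, which is one plausible reason for the weaker results reported for it.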

The Scientist's Toolkit: Key Research Reagents & Solutions

Implementing this ML framework requires a combination of computational tools and domain-specific knowledge.

Table 4: Essential Research Reagents and Computational Tools

| Item / Solution | Function / Purpose |

|---|---|

| Python with Scikit-learn & Keras/TensorFlow | Core programming environment and libraries for implementing OCSVM and Autoencoder models. |

| Optuna HPO Platform | Python framework for efficient hyperparameter optimization, enabling parallel execution and BOHB. |

| Process Historian Data | Time-series data from bioreactors (e.g., pH, dissolved oxygen, temperature, pressure) used for feature engineering. |

| SHAP (SHapley Additive exPlanations) | Post-hoc model interpretation tool to identify which process variables most contributed to a contamination flag, aiding root-cause analysis [10] [4]. |

| Labeled Historical Batches | A dataset of past fermentation runs with known contamination outcomes, essential for model training and validation. |

| PCR Assays (e.g., BAX System) | Rapid, specific microbiological tests used to confirm model predictions and screen for specific spoilage organisms [33]. |

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: Our fermentation data is very noisy and has many missing points. Can ML models still be effective? Yes. The methodology explicitly includes robust data preprocessing steps to handle these real-world issues. Techniques like linear interpolation, forward-filling, and resampling to a uniform time interval are designed to create a clean, consistent dataset for modeling [10].

Q2: Why should we use an unsupervised model when we have some labeled contamination data? While having some labels is helpful, contamination events are rare, leading to a highly imbalanced dataset. Unsupervised models like OCSVM and Autoencoders are powerful because they do not require a large set of labeled contamination examples. They learn the pattern of "normal" operation and flag significant deviations, making them ideal for detecting novel or unforeseen contaminants [10].

Q3: How do we know if the model's hyperparameters are properly tuned for our specific process? The use of a systematic HPO framework like Optuna is critical. By defining the objective function to maximize the F2-score, you directly guide the optimization process to find hyperparameters that prioritize high recall. The performance of this tuning can be validated on a hold-out test set or via cross-validation before deployment [10].

Q4: A high-recall model will generate more false alarms. How do we manage this? This is a key operational consideration. While minimizing false negatives is the priority, a model with reasonable precision (like the OCSVM achieving 0.96) keeps false alarms manageable. Furthermore, each alarm should trigger a predefined investigation protocol, which can include rapid, targeted microbiological tests (e.g., PCR) to quickly confirm or rule out contamination, minimizing unnecessary batch discards [33] [27].

Troubleshooting Guide

Problem: Model exhibits high recall but unacceptably low precision in production.

- Potential Cause 1: Concept drift – the underlying process data distribution has changed since the model was last trained.

  - Solution: Implement a scheduled model retraining regimen using recent process data to keep the model's understanding of "normal" current [10].

- Potential Cause 2: Inadequate feature set – the engineered features may not capture the nuances leading to contamination.

- Potential Cause 3: The hyperparameter trade-off is too extreme.

  - Solution: Slightly adjust the HPO objective function to place a bit more weight on precision (e.g., using F1.5-score instead of F2-score) and retune the model.
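To see how the choice of beta shifts the trade-off, the sketch below implements the standard F-beta formula, F_beta = (1 + beta^2) * P * R / (beta^2 * P + R), and applies it to two hypothetical models. The precision and recall values are invented for illustration and do not come from the source.

```python
def fbeta(precision: float, recall: float, beta: float) -> float:
    """F-beta score: weighted harmonic mean of precision and recall."""
    if precision == 0.0 and recall == 0.0:
        return 0.0
    b2 = beta ** 2
    return (1 + b2) * precision * recall / (b2 * precision + recall)

# Two hypothetical candidate models (illustrative numbers only).
high_recall = {"precision": 0.70, "recall": 0.99}
balanced = {"precision": 0.85, "recall": 0.90}

for beta in (2.0, 1.5, 1.0):
    a = fbeta(high_recall["precision"], high_recall["recall"], beta)
    b = fbeta(balanced["precision"], balanced["recall"], beta)
    preferred = "high-recall" if a > b else "balanced"
    print(f"beta={beta}: high-recall={a:.3f}, balanced={b:.3f} -> prefers {preferred}")
```

With these numbers the F2-score favors the high-recall model while the F1.5-score favors the balanced one, which is the kind of shift the adjustment above is meant to produce.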

Problem: Contamination is detected by traditional methods but missed by the ML model.

- Potential Cause: The contamination signature is subtle and does not cause a significant deviation in the engineered features used by the model.

The integration of high-recall machine learning models, specifically One-Class SVM and Autoencoders, presents a powerful and accurate methodology for detecting fermentation contamination. By focusing on recall during model selection and hyperparameter optimization, this approach directly addresses the critical need to minimize false negatives, thereby safeguarding product quality and patient safety. This data-driven framework, which includes robust preprocessing, strategic feature engineering, and systematic optimization, offers a superior alternative to traditional threshold-based methods and provides a viable path for managing the ever-present risk of trace concentration contaminants in biopharmaceutical and industrial fermentation processes.

Frequently Asked Questions (FAQs)

Q1: My Random Forest model is predicting only a single class for all outputs. What could be wrong?

This is a common issue often traced to insufficient training data. The standard Random Forest algorithm in some software uses a default of 5000 input pixels per tree. If your total training pixels are fewer than this, the model cannot build effective, varied trees, crippling its predictive power [34]. The solution is to increase your training set size, ensuring you have many more than 5000 pixels in total. Furthermore, collect a balanced number of samples for each class and ensure your training data is saved correctly before running the classification [34].

Q2: How should I handle masked or "NoData" pixels in my classification?

When you mask an image using a polygon, the outer areas often become a class with a value of 0 (zero). The classifier will still process these pixels. A recommended best practice is to create a dedicated "edge" or "masked" class for all outer pixels during the training step. This prevents these areas from influencing the pixel statistics of your meaningful land cover or contaminant classes [34].

Q3: What are the key advantages of Support Vector Machines (SVM) for classification tasks?

SVMs are particularly powerful in several scenarios [35]:

- They are effective in high-dimensional spaces, even when the number of dimensions exceeds the number of samples.

- They are memory efficient because they use a subset of training points (support vectors) in the decision function.

- They are versatile through the use of different kernel functions (e.g., linear, radial basis function) to model non-linear decision boundaries.

Q4: My classified image appears all black or does not display correctly after processing. What steps should I take?

This can occur due to several pre-processing issues [34]:

- Check Band Resampling: Ensure all input rasters have the same spatial resolution. You may need to resample all bands to a common resolution before classification.

- Verify Projection: The coordinate reference system (CRS) of your image and training shapefiles must be identical. A reprojection of the image may be necessary.

- Inspect Pixel Values: The problem could be related to how "NoData" values are handled. Check the properties of the output band to ensure the "No-Data value used" is correctly defined.

Troubleshooting Guides

Issue: Poor Random Forest Classification Accuracy

Problem: Model accuracy is low, or one land cover class is consistently confused with another.

Solution: Follow this systematic guide to diagnose and resolve the issue.

| Step | Action | Rationale & Additional Details |
|---|---|---|
| 1 | Verify Training Data Size | Ensure total training pixels significantly exceed the default of 5000 per tree. For few samples, create polygons around sample points to multiply input data [34]. |
| 2 | Inspect Spectral Signatures | Plot and compare signatures of confused classes (e.g., soil vs. built-up). High similarity causes errors; collect more ROIs to better capture class variability [36]. |
| 3 | Apply Signature Threshold | Use a signature threshold to classify only pixels very similar to training inputs, reducing variability and potential for error [36]. |
| 4 | Check Pre-processing | Confirm correct atmospheric correction and reflectance conversion. Using images from different periods without separate training can hurt accuracy [34] [36]. |

Issue: Selecting Between Random Forest and SVM

Problem: Uncertainty about which algorithm to use for a contaminant prediction project.

Solution: Use the following decision guide based on your data characteristics and project goals.

Experimental Protocols & Data

Benchmarking Model Performance for Contaminant Prediction

The table below summarizes findings from a review of 27 U.S. drinking water studies that used machine learning to predict contaminants, providing a performance benchmark [37].

| Contaminant | Prevalence in Studies | Common Model Type | Reported Model Performance | Primary Data Source |
|---|---|---|---|---|
| Nitrate | 44% | Random Forest Classification | Good performance for binary classification (above/below threshold) | USGS National Water Information System (NWIS) |
| Arsenic | 30% | Random Forest Classification | Good performance for binary classification (above/below threshold) | USGS National Water Information System (NWIS) |
| Lead | - | Random Forest, Gradient Boosting | AUC: 0.90 - 0.95 in recent studies [38] | Integrated city data, school water tests |

Essential Research Reagent Solutions

This table lists key materials and data sources crucial for building predictive models of environmental contaminants.

| Item / Resource | Function / Application | Key Characteristics & Notes |
|---|---|---|
| USGS NWIS Database | Primary data source for groundwater contaminant concentrations. | Publicly available, extensive national coverage for contaminants like Arsenic and Nitrate [37]. |
| Water Quality Portal (WQP) | Integrated data repository combining USGS NWIS with other federal, state, and local data. | Over 290 million records; improves public access to consolidated water quality data [37]. |
| Lead Service Line Data | Critical infrastructure predictor variable for blood lead level models. | Key feature identified by explainable AI; density correlates with contamination risk [38]. |
| Social Vulnerability Data | Socioeconomic predictor variable for identifying high-risk populations. | A primary driver in city-wide predictions of lead exposure risk [38]. |

Workflow for a Contaminant Prediction Project

The following diagram outlines a standard workflow for a machine learning project aimed at predicting environmental contaminants, from data preparation to model interpretation.

Data Preprocessing and Feature Engineering for Noisy Industrial Data

Frequently Asked Questions (FAQs)

FAQ 1: What is the most effective way to handle missing data in time-series industrial data? Missing data is a common issue in industrial time-series datasets, such as those from fermentation processes. The most effective methodology involves a combination of:

- Resampling: First, resample the entire dataset to a uniform time interval (e.g., 5 seconds) to ensure consistent data points across all batches and variables [10].

- Interpolation: Use linear interpolation to estimate missing values that lie between known data points. For any values still missing after interpolation, apply a forward-fill (carrying the last valid observation forward) [10].

- Dropping Data: As a last resort, remove entire rows or columns only if they are largely empty and after careful consideration of the potential loss of critical information [39] [40].
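The three steps above can be sketched with pandas. This is a minimal illustration on a hypothetical sensor log; the column names, timestamps, and the 5-second interval are assumptions chosen to mirror the example in the text.

```python
import numpy as np
import pandas as pd

# Hypothetical irregularly sampled sensor log for one batch.
raw = pd.DataFrame(
    {
        "pH": [7.00, 7.02, np.nan, 7.08, 7.10],
        "dissolved_oxygen": [40.0, np.nan, 39.0, 38.5, np.nan],
    },
    index=pd.to_datetime([
        "2024-01-01 00:00:00", "2024-01-01 00:00:04", "2024-01-01 00:00:11",
        "2024-01-01 00:00:15", "2024-01-01 00:00:20",
    ]),
)

def preprocess(df: pd.DataFrame, interval_seconds: int = 5) -> pd.DataFrame:
    # 1. Resample to a uniform grid; bins without readings become NaN.
    uniform = df.resample(pd.Timedelta(seconds=interval_seconds)).mean()
    # 2. Linearly interpolate only gaps that lie between two known values.
    filled = uniform.interpolate(method="linear", limit_area="inside")
    # 3. Forward-fill whatever is still missing (e.g. trailing gaps).
    return filled.ffill()

clean = preprocess(raw)
print(clean)
```

Note that forward-fill cannot repair values missing at the very start of a batch, so leading gaps would need a separate decision (for example, trimming the first rows).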

FAQ 2: How can I improve my model's robustness against sensor inaccuracies and environmental noise? A key innovation for enhancing model robustness is the intentional introduction of noise during training. By adding Gaussian noise to your training data, you can simulate real-world sensor inaccuracies and environmental uncertainties. This technique acts as a regularization strategy, forcing the model to learn more generalized patterns rather than overfitting to the precise—and potentially inaccurate—training examples. In one case study, this method substantially reduced long-term prediction error in a thermal system from 11.23% to 2.02% [41].
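A minimal sketch of this kind of noise injection is shown below, using NumPy. The helper name `add_gaussian_noise`, the choice to scale noise to 2% of each feature's standard deviation, and the number of noisy copies are all assumptions for illustration; the cited case study's actual settings are not given here.

```python
import numpy as np

def add_gaussian_noise(X, noise_fraction=0.02, seed=None):
    """Return a copy of X with zero-mean Gaussian noise added to every feature.

    The noise standard deviation is noise_fraction times each feature's own
    standard deviation (an assumed scaling, chosen for illustration).
    """
    rng = np.random.default_rng(seed)
    X = np.asarray(X, dtype=float)
    sigma = noise_fraction * X.std(axis=0, keepdims=True)
    return X + rng.normal(0.0, 1.0, size=X.shape) * sigma

# Synthetic training matrix: rows are time steps, columns are sensor channels.
X_train = np.column_stack([
    np.linspace(20.0, 80.0, 500),  # e.g. temperature
    np.linspace(1.0, 1.5, 500),    # e.g. pressure
])

# Stack the clean data with noisy copies so the model sees perturbed variants.
noisy_copies = [add_gaussian_noise(X_train, seed=s) for s in range(3)]
X_augmented = np.vstack([X_train] + noisy_copies)
print(X_train.shape, "->", X_augmented.shape)
```

Noise should be added to training data only; validation and test sets are left untouched so that reported performance reflects real measurements.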

FAQ 3: My model is performing well on normal data but fails to detect contamination events. What should I prioritize? When detecting critical events like fermentation contamination, the most important metric to optimize for is Recall (the ability to find all positive samples). You must minimize false negatives, as failing to detect a contamination event can have severe consequences. To achieve this without completely sacrificing precision:

- Use the F2-score as your primary evaluation metric during model tuning, as it places more importance on recall than precision [10].

- Consider using one-class classification models like One-Class Support Vector Machines (OCSVM), which are trained only on normal data and have been shown to achieve high recall in contamination detection [10].

FAQ 4: What are the most important feature types for detecting anomalies in industrial processes? For time-series industrial data, the most discriminative features often come from engineered statistical summaries that capture process dynamics and variability. The table below summarizes key feature types and their utility.

Table 1: Key Feature Types for Industrial Anomaly Detection

| Feature Category | Specific Features | Utility in Anomaly Detection |
|---|---|---|
| Static Aggregated Statistics | Mean, Standard Deviation, Min, Max | Captures central tendency, variability, and extremes of a variable over a batch; shifts in these values can indicate anomalies [10]. |
| Rolling Window Features | Rolling Mean (e.g., over 5 steps) | Identifies gradual process drifts and improves stability by filtering short-term noise [10]. |
| Lag Features | 1-step lagged values | Helps models capture time-based dependencies and delayed effects of anomalies [10]. |
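The feature types in Table 1 can be computed with a few pandas calls. The sketch below uses a short synthetic pH series; the column names and the `min_periods=1` setting for the rolling window are illustrative assumptions.

```python
import pandas as pd

# Synthetic, uniformly sampled series for one batch (hypothetical values).
batch = pd.DataFrame({"pH": [7.00, 7.01, 7.03, 7.02, 7.05, 7.04, 6.90, 6.85]})

# Rolling-window feature: mean over the last 5 steps
# (fewer steps are used at the start of the batch).
batch["pH_roll_mean_5"] = batch["pH"].rolling(window=5, min_periods=1).mean()

# Lag feature: value from the previous time step (NaN for the first row).
batch["pH_lag_1"] = batch["pH"].shift(1)

# Static aggregated statistics: one set of summary values per batch.
static = batch["pH"].agg(["mean", "std", "min", "max"]).add_prefix("pH_")

print(batch)
print(static)
```

Rolling and lag features keep one row per time step, while the static statistics collapse the batch to a single row, so the two groups usually feed models at different granularities.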

FAQ 5: How much time should I allocate for data preprocessing in my project? Data preprocessing and management typically consume the largest portion of a data scientist's time in a machine learning project. You should anticipate spending approximately 60-80% of your total project time on these tasks, which include data cleaning, transformation, and feature engineering [39] [42].

Troubleshooting Guides

Problem: Model performance is poor due to a high number of outliers in the dataset. Outliers can distort the training process, especially for models sensitive to data scale.

- Step 1: Diagnosis. Visually identify outliers using boxplots. For a quantitative approach, use statistical methods like calculating Z-scores or the Interquartile Range (IQR) [40].

- Step 2: Action. Decide on a handling strategy based on the nature of the outliers and your domain knowledge. Options include:

  - Removal: If the outliers are confirmed to be measurement errors.

  - Capping: Transform outliers to a specified upper or lower limit.

  - Transformation: Use mathematical transformations to reduce the impact of extreme values [40].
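The diagnosis and the three handling options can be sketched with NumPy. This example uses the IQR rule with the conventional 1.5 multiplier; the readings, the multiplier, and the use of a log transform are assumptions for illustration, and the right choice depends on your process knowledge.

```python
import numpy as np

def iqr_bounds(values, k=1.5):
    """Lower and upper fences at k * IQR beyond the first and third quartiles."""
    q1, q3 = np.percentile(values, [25, 75])
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr

# Hypothetical temperature readings with one obvious spike.
temps = np.array([36.9, 37.0, 37.1, 37.0, 36.8, 37.2, 37.1, 45.0, 37.0, 36.9])

# Step 1: diagnosis with the IQR rule.
low, high = iqr_bounds(temps)
is_outlier = (temps < low) | (temps > high)

# Step 2: the three handling options.
removed = temps[~is_outlier]        # removal: drop flagged readings
capped = np.clip(temps, low, high)  # capping: pull values back to the fences
log_scaled = np.log1p(temps)        # transformation: compress large values

print("flagged readings:", temps[is_outlier])
print("fences:", round(low, 4), round(high, 4))
```

In a contamination-detection setting, removal should be applied with care: a reading that looks like an outlier may be the very deviation the model is meant to detect.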