Machine Learning-Powered Non-Target Analysis: A Systematic Framework for Contaminant Source Identification

Abstract

This article provides a comprehensive review of the integration of Machine Learning (ML) with High-Resolution Mass Spectrometry (HRMS)-based Non-Target Analysis (NTA) for the critical task of contaminant source identification. Aimed at researchers, scientists, and environmental professionals, it outlines a systematic four-stage workflow spanning sample treatment, data acquisition, ML-driven analysis, and validation. The content explores foundational concepts, details methodological applications with specific algorithms and case studies, addresses key troubleshooting and optimization challenges such as data quality and model interpretability, and establishes a tiered validation strategy. By translating complex chemical data into actionable environmental intelligence, this framework bridges the gap between analytical science and informed decision-making for environmental protection and public health.

The Foundational Shift: From Traditional Analysis to ML-Driven NTA

The Pollution Crisis and the Limits of Targeted Analysis

The rapid proliferation of synthetic chemicals has led to widespread environmental pollution from diverse sources such as industrial effluents, household personal care products, and agricultural runoff [1]. Conventional environmental monitoring strategies, which predominantly rely on targeted chemical analysis, are inherently limited to detecting predefined compounds [1]. As a result, they overlook a wide range of known "unknowns," including transformation products and emerging contaminants that remain unmonitored [1]. This fundamental limitation in targeted approaches creates significant blind spots in environmental assessment and necessitates a paradigm shift toward more comprehensive analytical strategies.

Non-targeted analysis (NTA) has emerged as a powerful alternative, enabling the detection and identification of thousands of chemicals without prior knowledge through high-resolution mass spectrometry (HRMS) [1] [2]. However, the principal challenge now lies not in detection alone but in developing computational methods to extract meaningful environmental information from vast chemical datasets [1]. The integration of machine learning (ML) with NTA represents a transformative advancement for contaminant source identification, offering the capability to identify latent patterns within high-dimensional data that traditional statistical methods often miss [1]. This article establishes a systematic framework for ML-assisted NTA, providing researchers with detailed protocols and applications to address the growing complexity of environmental pollution crises.

Quantitative Performance of ML-NTA Approaches

The effectiveness of machine learning in non-targeted analysis for source identification is demonstrated through various performance metrics across different methodologies. The table below summarizes quantitative results from key studies in the field.

Table 1: Performance Metrics of ML-NTA and Groundwater Contamination Identification Methods

| Application Domain | ML Method/Approach | Performance Metrics | Key Findings |
|---|---|---|---|
| Contaminant Source Classification | Support Vector Classifier (SVC), Logistic Regression (LR), Random Forest (RF) [1] | Balanced accuracy: 85.5% to 99.5% for classifying 222 PFASs in 92 samples [1] | ML classifiers successfully screen targeted and suspect substances across different sources. |
| Groundwater Point Source Inversion | Artificial Hummingbird Algorithm (AHA) with BPNN Surrogate [3] | MARE: 1.58%; R²: 0.9994 between surrogate and simulation model [3] | Surrogate model provided highly accurate estimates; AHA outperformed PSO and SSA. |
| Groundwater Areal Source Inversion | Artificial Hummingbird Algorithm (AHA) with BPNN Surrogate [3] | MARE: 2.03%; R²: 0.9989 between surrogate and simulation model [3] | Framework demonstrated strong robustness for different pollution scenarios. |
| Groundwater Source Identification | Rime Optimization Algorithm (RIME) with 1DCNN Surrogate [4] | R²: 0.9998 (surrogate); Average relative error: 8.88% (single identification) [4] | The 1DCNN surrogate maintained R² > 0.9993 under ±20% noise interference. |
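As an illustration of how the metrics in Table 1 are defined, the following is a minimal standard-library Python sketch. The function names are hypothetical, and the cited studies may have computed these quantities with library routines rather than code like this. Balanced accuracy is taken as the mean per-class recall, MARE as the mean of |estimate - true| / |true| expressed in percent, and R² as the usual coefficient of determination.

```python
# Hypothetical helper names; plain-Python versions of the metrics quoted in Table 1.


def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall, so a majority class cannot dominate the score."""
    recalls = []
    for cls in sorted(set(y_true)):
        idx = [i for i, y in enumerate(y_true) if y == cls]
        hits = sum(1 for i in idx if y_pred[i] == cls)
        recalls.append(hits / len(idx))
    return sum(recalls) / len(recalls)


def mean_absolute_relative_error(true_vals, est_vals):
    """MARE in percent: average of |estimate - true| / |true|, times 100."""
    terms = [abs(e - t) / abs(t) for t, e in zip(true_vals, est_vals)]
    return 100.0 * sum(terms) / len(terms)


def r_squared(reference, predicted):
    """Coefficient of determination of predicted values against reference values."""
    mean_ref = sum(reference) / len(reference)
    ss_res = sum((r - p) ** 2 for r, p in zip(reference, predicted))
    ss_tot = sum((r - mean_ref) ** 2 for r in reference)
    return 1.0 - ss_res / ss_tot


# Usage: 4 samples from source A, 2 from source B; one A sample is misclassified.
y_true = ["A", "A", "A", "A", "B", "B"]
y_pred = ["A", "A", "A", "B", "B", "B"]
print(balanced_accuracy(y_true, y_pred))  # 0.875 (plain accuracy would be 5/6)
```

Balanced accuracy matters here because source classes in field campaigns are rarely the same size; plain accuracy would reward a model that favors the largest class.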

Comprehensive Workflow for ML-Assisted NTA

The integration of machine learning and non-targeted analysis follows a systematic four-stage workflow that transforms raw data into actionable environmental insights [1]. The following protocol details each critical stage.

Stage 1: Sample Treatment and Extraction

Objective: To prepare environmental samples for analysis while maximizing the recovery of diverse compounds and minimizing matrix interference.

Critical Considerations:

- Extraction Selectivity: Balance the removal of interfering components with the preservation of as many compounds as possible [1].

- Broad-Spectrum Coverage: Employ multi-sorbent strategies to overcome the inherent selectivity of single-mode extractions [1]. For example, combine sorbents such as Oasis HLB with ISOLUTE ENV+, Strata WAX, and WCX [1].

- Efficiency Enhancement: Utilize green extraction techniques like QuEChERS, microwave-assisted extraction (MAE), and supercritical fluid extraction (SFE) to reduce solvent usage and processing time, particularly for large-scale environmental samples [1].

Protocol:

- Sample Preparation: Homogenize solid samples or filter liquid samples to remove particulates.

- Extraction: Process samples using optimized solid-phase extraction (SPE) with a mixed-sorbent cartridge.

- Purification: Apply additional clean-up steps such as gel permeation chromatography (GPC) if significant matrix interference is anticipated.

- Concentration: Gently evaporate extracts under nitrogen and reconstitute in a compatible solvent for instrumental analysis.

Stage 2: Data Generation and Acquisition

Objective: To generate high-quality, comprehensive chemical data using high-resolution mass spectrometry.

Critical Considerations:

- Platform Selection: HRMS platforms, including quadrupole time-of-flight (Q-TOF) and Orbitrap systems, are essential for resolving isotopic patterns, fragmentation signatures, and structural features [1].

- Chromatographic Separation: Couple HRMS with liquid or gas chromatography (LC/GC) to reduce sample complexity [1].

- Quality Assurance: Implement batch-specific quality control (QC) samples and confidence-level assignments (Levels 1-5) to ensure data integrity [1].

Protocol:

- Instrument Calibration: Calibrate the mass spectrometer according to manufacturer specifications before each batch.

- Data Acquisition: Analyze samples in randomized order, injecting pooled quality control (QC) samples at regular intervals throughout the sequence.

- Post-Acquisition Processing: Process raw data using computational workflows that include peak detection, retention time alignment, and componentization to group related spectral features (e.g., adducts, isotopes) into molecular entities [1].

- Feature Table Generation: Export a structured feature-intensity matrix, where rows represent samples and columns correspond to aligned chemical features, serving as the foundation for ML-driven analysis [1].
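The alignment and feature-table steps above can be sketched in a simplified form. This is a greedy illustration, not the algorithm used by XCMS or vendor software: each peak joins the first existing feature within an assumed 5 ppm m/z tolerance and 0.2 min retention-time tolerance (the values quoted for feature extraction in the data-processing section of this article), otherwise it starts a new feature. The function name `align_features` and the example peak lists are hypothetical.

```python
def align_features(samples, ppm_tol=5.0, rt_tol=0.2):
    """Greedily align per-sample peak lists into a samples x features intensity matrix.

    samples: dict of sample name -> list of (mz, rt_minutes, intensity) tuples.
    Returns (feature_ids, sample_names, matrix); matrix[i][j] is None when
    feature j was not detected in sample i.
    """
    groups = []      # running [mean_mz, mean_rt, n_members] per aligned feature
    assignment = {}  # (sample name, peak index) -> aligned feature index
    for name, peaks in samples.items():
        for k, (mz, rt, _) in enumerate(peaks):
            match = None
            for g, (gmz, grt, _n) in enumerate(groups):
                if abs(mz - gmz) / gmz * 1e6 <= ppm_tol and abs(rt - grt) <= rt_tol:
                    match = g
                    break
            if match is None:
                groups.append([mz, rt, 1])
                match = len(groups) - 1
            else:
                gmz, grt, n = groups[match]
                groups[match] = [(gmz * n + mz) / (n + 1), (grt * n + rt) / (n + 1), n + 1]
            assignment[(name, k)] = match

    sample_names = list(samples)
    matrix = [[None] * len(groups) for _ in sample_names]
    for i, name in enumerate(sample_names):
        for k, (_, _, intensity) in enumerate(samples[name]):
            j = assignment[(name, k)]
            prev = matrix[i][j]
            # If two peaks in one sample fall into the same feature, keep the larger one.
            matrix[i][j] = intensity if prev is None else max(prev, intensity)
    feature_ids = [f"{gmz:.4f}@{grt:.2f}" for gmz, grt, _ in groups]
    return feature_ids, sample_names, matrix


# Usage with made-up peak lists (m/z, RT in minutes, intensity).
peaks = {
    "S1": [(301.1410, 5.02, 1.0e5), (415.2110, 8.10, 2.0e4)],
    "S2": [(301.1412, 5.10, 1.2e5)],
    "S3": [(415.2115, 8.15, 3.0e4), (500.0000, 12.00, 5.0e3)],
}
ids, names, matrix = align_features(peaks)
for name, row in zip(names, matrix):
    print(name, row)
```

The `None` entries are the missing values that the preprocessing stage must later impute or filter.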

Stage 3: ML-Oriented Data Processing and Analysis

Objective: To process raw HRMS data and apply machine learning techniques for pattern recognition and source classification.

Critical Considerations:

- Data Quality: Address noise, missing values, and batch effects through rigorous preprocessing [1].

- Exploratory Analysis: Identify significant features via univariate statistics (t-tests, ANOVA) and prioritize compounds with large fold changes [1].

- Model Selection: Choose ML algorithms based on research goals, considering the complementary roles of unsupervised and supervised methods [1].

Protocol:

- Data Preprocessing:
  - Perform missing value imputation using methods like k-nearest neighbors (KNN).
  - Apply normalization (e.g., Total Ion Current (TIC) normalization) to mitigate batch effects.
  - Filter out low-abundance features and noise.

- Exploratory Data Analysis:
  - Conduct dimensionality reduction using Principal Component Analysis (PCA) or t-SNE to visualize sample clustering.
  - Apply clustering methods (e.g., hierarchical cluster analysis (HCA), k-means) to group samples by chemical similarity.

- Supervised Modeling:
  - Partition data into training and testing sets.
  - Train classifiers (e.g., Random Forest, Support Vector Classifier) on labeled datasets to predict contamination sources.
  - Apply feature selection algorithms (e.g., recursive feature elimination) to refine input variables and enhance model interpretability.
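A minimal sketch of the three preprocessing operations listed above, in plain Python. Assumptions: missing values are stored as `None`, KNN distances use only features observed in both samples, TIC normalization rescales each sample to the median total intensity, and the low-abundance filter keeps features at or above a user-chosen intensity in at least half of the samples. All function names are hypothetical; production pipelines typically rely on established libraries.

```python
import math
import statistics


def knn_impute(matrix, k=3):
    """Replace None with the mean of that feature in the k most similar samples that observed it."""

    def distance(a, b):
        # RMS difference over features observed in both samples.
        shared = [(x, y) for x, y in zip(a, b) if x is not None and y is not None]
        if not shared:
            return math.inf
        return math.sqrt(sum((x - y) ** 2 for x, y in shared) / len(shared))

    imputed = [row[:] for row in matrix]
    for i, row in enumerate(matrix):
        for j, value in enumerate(row):
            if value is not None:
                continue
            donors = sorted(
                (distance(row, other), other[j])
                for o, other in enumerate(matrix)
                if o != i and other[j] is not None
            )[:k]
            if donors:  # leave None if no other sample observed this feature
                imputed[i][j] = sum(v for _, v in donors) / len(donors)
    return imputed


def tic_normalize(matrix):
    """Scale each sample (row) so its total ion current equals the median TIC."""
    tics = [sum(v for v in row if v is not None) for row in matrix]
    target = statistics.median(tics)
    return [[None if v is None else v * target / tic for v in row]
            for row, tic in zip(matrix, tics)]


def filter_low_abundance(matrix, min_intensity, min_fraction=0.5):
    """Keep feature columns at or above min_intensity in at least min_fraction of samples."""
    n_samples = len(matrix)
    keep = [
        j for j in range(len(matrix[0]))
        if sum(1 for row in matrix if row[j] is not None and row[j] >= min_intensity)
        / n_samples >= min_fraction
    ]
    return keep, [[row[j] for j in keep] for row in matrix]
```

The order of the steps is a design choice: filtering before imputation, for example, avoids spending effort imputing features that will be discarded anyway.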

Stage 4: Result Validation

Objective: To ensure the reliability, accuracy, and environmental relevance of ML-NTA outputs.

Critical Considerations:

- Analytical Confidence: Verify compound identities using authentic standards or spectral library matches [1].

- Model Generalizability: Assess performance on independent external datasets [1].

- Environmental Plausibility: Correlate model predictions with contextual field data [1].

Protocol:

- Tier 1 - Analytical Validation:
  - Confirm the identity of key marker compounds using certified reference materials (CRMs) where available.
  - Validate spectral interpretations against high-quality library matches (e.g., Level 1 or 2 identification confidence).

- Tier 2 - Model Validation:
  - Evaluate trained classifiers on a held-out external test set.
  - Perform cross-validation (e.g., 10-fold) to evaluate overfitting risks.

- Tier 3 - Environmental Contextualization:
  - Compare model-predicted sources with known potential emission sources in the area.
  - Assess geospatial proximity of samples to identified contamination sources.
  - Evaluate whether identified chemical patterns align with known source-specific markers or transformation pathways [1].
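For the cross-validation in Tier 2, folds are usually stratified so that each source class is represented in every fold. The sketch below is a hypothetical standard-library stratified k-fold splitter; libraries such as scikit-learn provide an equivalent `StratifiedKFold`. Cross-validation on the training data complements, but does not replace, evaluation on an independent external test set.

```python
import random
from collections import defaultdict


def stratified_kfold(labels, k=10, seed=0):
    """Yield (train_indices, test_indices) with each class spread evenly over k folds."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    folds = [[] for _ in range(k)]
    offset = 0
    for label in sorted(by_class):
        members = by_class[label]
        rng.shuffle(members)
        for pos, idx in enumerate(members):
            # Continue the round-robin where the previous class stopped, to balance fold sizes.
            folds[(pos + offset) % k].append(idx)
        offset += len(members)

    for f in range(k):
        test = sorted(folds[f])
        train = sorted(i for g, fold in enumerate(folds) if g != f for i in fold)
        yield train, test


# Usage: 10 samples from source A and 5 from source B, split into 5 folds.
labels = ["A"] * 10 + ["B"] * 5
for train_idx, test_idx in stratified_kfold(labels, k=5):
    print(len(train_idx), [labels[i] for i in test_idx])
```

Each test fold here keeps the 2:1 ratio of A to B samples, so per-fold balanced accuracy is estimated on the same class mix as the full dataset.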

Advanced Applications: Groundwater Contamination Source Identification

The simulation-optimization framework represents a powerful application of advanced computational methods for identifying groundwater contamination sources, particularly when coupled with machine learning surrogates.

Simulation-Optimization Framework Protocol

Objective: To accurately identify groundwater contamination source characteristics (location, release history) and hydrogeological parameters through an inverse modeling approach.

Methodology:

- Numerical Simulation: Develop a groundwater flow and solute transport model using established codes (e.g., MODFLOW-2005 for flow and MT3DMS for transport) [3].

- Surrogate Model Development: Construct a machine learning surrogate model (e.g., Backpropagation Neural Network - BPNN, or 1D Convolutional Neural Network - 1DCNN) to approximate the complex simulation model, significantly reducing computational time [3] [4].

- Optimization Process: Implement a metaheuristic optimization algorithm (e.g., Artificial Hummingbird Algorithm - AHA, Rime Optimization Algorithm - RIME) to iteratively adjust source parameters and hydrogeological properties to minimize the difference between simulated and observed contaminant concentrations [3] [4].
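The simulation-optimization loop described above can be illustrated with a deliberately small inverse problem. Assumptions: the "simulation model" is the analytical solution for an instantaneous release in a 1D uniform flow field (velocity 0.5 and dispersion coefficient 1.0 in arbitrary consistent units), only the source location x0 and released mass are unknown, and the optimizer is plain random sampling followed by a shrinking-step local search. That optimizer is a stand-in, not AHA or RIME, and no surrogate is trained; in the workflow described here, a BPNN or 1DCNN surrogate would replace `forward_model` inside `misfit` to cut the cost of each evaluation. All names are hypothetical.

```python
import math
import random


def forward_model(x0, mass, x, t, velocity=0.5, dispersion=1.0):
    """Toy forward model: 1D instantaneous release of `mass` at x0 (t = 0) in uniform flow."""
    spread = 4.0 * dispersion * t
    return mass / math.sqrt(math.pi * spread) * math.exp(-((x - x0 - velocity * t) ** 2) / spread)


def misfit(params, observations):
    """Sum of squared differences between simulated and observed concentrations."""
    x0, mass = params
    return sum((forward_model(x0, mass, x, t) - c_obs) ** 2 for x, t, c_obs in observations)


def identify_source(observations, bounds, n_global=2000, n_local=3000, seed=0):
    """Stand-in optimizer: global random sampling, then a shrinking-step local search."""
    rng = random.Random(seed)

    def clip(value, lo, hi):
        return min(max(value, lo), hi)

    candidates = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_global)]
    best = min(candidates, key=lambda p: misfit(p, observations))
    best_f = misfit(best, observations)
    steps = [(hi - lo) / 10.0 for lo, hi in bounds]
    for _ in range(n_local):
        trial = [clip(b + rng.gauss(0.0, s), lo, hi) for b, s, (lo, hi) in zip(best, steps, bounds)]
        f = misfit(trial, observations)
        if f < best_f:
            best, best_f = trial, f
        steps = [s * 0.998 for s in steps]  # gradually narrow the search
    return best, best_f


# Usage: synthetic "observed" data from a known source, then recover it.
observed = [(x, t, forward_model(20.0, 500.0, x, t))
            for x in (30.0, 40.0, 50.0) for t in (20.0, 40.0, 60.0)]
(x0_hat, mass_hat), error = identify_source(observed, bounds=[(0.0, 50.0), (100.0, 1000.0)])
print(round(x0_hat, 2), round(mass_hat, 1))
```

With noise-free synthetic data the recovered parameters should land close to the true values (x0 = 20, mass = 500); real field inversions also estimate release history and hydrogeological parameters, which enlarges the search space considerably.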

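Key Formulations: The governing equations for groundwater flow and solute transport are represented by [3]:

Groundwater flow:

$$\frac{\partial}{\partial x_i}\left[K_{ij}(H - z)\frac{\partial H}{\partial x_j}\right] + W = \mu\frac{\partial H}{\partial t}$$

Solute transport:

$$\frac{\partial C}{\partial t} = \frac{\partial}{\partial x_i}\left(D_{ij}\frac{\partial C}{\partial x_j}\right) - \frac{\partial}{\partial x_i}\left(u_i C\right) + \frac{R}{n_e}$$

where Kᵢⱼ is the hydraulic conductivity, H is the water-level elevation, z is the elevation of the aquifer bottom (so H - z is the saturated thickness), W is the source/sink term (e.g., recharge or pumping), μ is the specific yield, C is the contaminant concentration, Dᵢⱼ is the hydrodynamic dispersion tensor, uᵢ is the average flow velocity, R is the solute source/sink term, nₑ is the effective porosity, and repeated indices imply summation.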
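To make the transport equation concrete, the sketch below solves a reduced version of it: one spatial dimension, constant dispersion coefficient D and velocity u ≥ 0, and no source/sink term (R = 0). It uses an explicit finite-difference scheme (upwind advection, central dispersion) purely for illustration; MT3DMS offers its own, more sophisticated solution schemes, and this code does not reproduce them. The function name is hypothetical.

```python
def advect_disperse_1d(c0, dx, dt, n_steps, velocity, dispersion):
    """Explicit finite differences for dC/dt = D d2C/dx2 - u dC/dx (constant D, u >= 0, no source).

    Upwind differencing for advection, central differencing for dispersion, and
    zero-gradient (ghost-cell) boundaries.
    """
    courant = velocity * dt / dx
    disp_number = dispersion * dt / dx ** 2
    # With these coefficients each update is a non-negative weighted average of
    # neighboring cells, which keeps the scheme stable and free of negative values.
    if velocity < 0 or courant + 2.0 * disp_number > 1.0:
        raise ValueError("dt too large for the explicit scheme, or negative velocity")
    c = list(c0)
    for _ in range(n_steps):
        padded = [c[0]] + c + [c[-1]]
        c = [
            padded[i + 1]
            + disp_number * (padded[i + 2] - 2.0 * padded[i + 1] + padded[i])
            - courant * (padded[i + 1] - padded[i])
            for i in range(len(c))
        ]
    return c


# Usage: a unit pulse in cell 25 moves downstream and spreads.
pulse = [0.0] * 50
pulse[25] = 1.0
result = advect_disperse_1d(pulse, dx=1.0, dt=0.5, n_steps=20, velocity=0.5, dispersion=0.5)
centroid = sum(i * v for i, v in enumerate(result)) / sum(result)
print(round(centroid, 3))  # 30.0: the centroid moves u * t / dx = 0.5 * 10 / 1 = 5 cells
```

Upwind differencing adds some numerical dispersion on top of D, which is one reason production transport codes offer higher-order or particle-based alternatives.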

Table 2: Optimization Algorithm Performance Comparison for Groundwater Contamination Identification

| Optimization Algorithm | Application Scenario | Performance Metrics | Comparative Advantage |
|---|---|---|---|
| Artificial Hummingbird Algorithm (AHA) [3] | Point & Areal Source Contamination | MARE: 1.58% (point source), 2.03% (areal source) [3] | Superior global search ability; outperformed PSO and SSA. |
| Rime Optimization Algorithm (RIME) [4] | Groundwater Contamination Source Identification | Avg. relative error: 8.88% (single identification), 5.88% (100 trials) [4] | Soft-rime and hard-rime search strategies help escape local minima. |
| Shuffled Complex Evolution (SCE-UA) [5] | PCE Contamination in Aquifers | Agreement with observed values under field conditions [5] | Robust parameter space exploration; effective in field applications. |
| Particle Swarm Optimization (PSO) [3] | Benchmarking Comparison | Higher MARE than AHA [3] | Used as a benchmark; less accurate than AHA in [3]. |
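Since PSO serves as the benchmark in Table 2, a compact global-best PSO is sketched below for reference. It uses constriction-style coefficients (inertia 0.7298, acceleration 1.49618) that are common defaults in the PSO literature, not values taken from [3]; AHA, RIME, and SCE-UA use different update rules and are not shown. The objective here is a simple test function; in a source-identification setting it would be the misfit between simulated (or surrogate-predicted) and observed concentrations. The function name is hypothetical.

```python
import random


def pso_minimize(objective, bounds, n_particles=20, n_iter=200, seed=0,
                 inertia=0.7298, c_cognitive=1.49618, c_social=1.49618):
    """Global-best particle swarm optimization within box bounds."""
    rng = random.Random(seed)
    dim = len(bounds)
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]                 # best position seen by each particle
    pbest_f = [objective(p) for p in pos]
    g = min(range(n_particles), key=lambda i: pbest_f[i])
    gbest, gbest_f = pbest[g][:], pbest_f[g]    # best position seen by the swarm
    for _ in range(n_iter):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (inertia * vel[i][d]
                             + c_cognitive * r1 * (pbest[i][d] - pos[i][d])
                             + c_social * r2 * (gbest[d] - pos[i][d]))
                lo, hi = bounds[d]
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lo), hi)
            f = objective(pos[i])
            if f < pbest_f[i]:
                pbest[i], pbest_f[i] = pos[i][:], f
                if f < gbest_f:
                    gbest, gbest_f = pos[i][:], f
    return gbest, gbest_f


# Usage: minimize a 2D sphere function whose minimum (0) is at the origin.
def sphere(p):
    return sum(x * x for x in p)


best, best_f = pso_minimize(sphere, [(-5.0, 5.0), (-5.0, 5.0)])
print(best, best_f)
```

Every particle is pulled toward the single swarm-wide best, which gives fast convergence on smooth problems but is also what makes PSO prone to premature convergence on multimodal misfit surfaces, the weakness that newer algorithms such as AHA and RIME target.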

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of ML-NTA workflows requires specific analytical reagents and computational resources. The following table details essential components for establishing these methodologies.

Table 3: Essential Research Reagents and Materials for ML-NTA Workflows

| Category | Item | Function/Purpose | Example Application/Notes |
|---|---|---|---|
| Sample Preparation | Mixed-mode SPE sorbents (e.g., Oasis HLB, ISOLUTE ENV+, Strata WAX/WCX) [1] | Broad-spectrum analyte extraction; reduces chemical bias. | Combining sorbents with different selectivities increases coverage of polar and non-polar compounds. |
| Sample Preparation | QuEChERS kits [1] | Rapid sample preparation with minimal solvent use. | Particularly useful for large-scale environmental sampling campaigns. |
| Instrumentation | High-Resolution Mass Spectrometer (Orbitrap, Q-TOF) [1] | Provides accurate mass measurements for unknown identification. | Enables formula assignment and distinction of co-eluting compounds. |
| Instrumentation | LC/GC Systems coupled to HRMS [1] | Chromatographic separation reduces sample complexity. | Essential for isolating individual compounds before mass analysis. |
| Data Processing | Reference Spectral Libraries (e.g., NIST, MassBank) [2] | Compound identification via spectral matching. | Critical for assigning confidence levels (e.g., Level 1-2 identification). |
| Data Processing | Computational Tools (e.g., XCMS, various NTA software) [1] | Peak picking, alignment, and feature table generation. | Creates structured data matrix for machine learning input. |
| QA/QC Materials | Certified Reference Materials (CRMs) [1] | Method validation and compound confirmation. | Used in validation stage to verify compound identities. |
| QA/QC Materials | Isotopically labeled internal standards | Quality control and semi-quantification. | Monitors analytical performance throughout sequence. |
| Computational | Machine Learning Libraries (Python/R) | Implementation of classification and pattern recognition. | Enables Random Forest, SVC, and other ML algorithms. |
| Computational | Optimization Algorithms (AHA, RIME, SCE-UA) [3] [4] [5] | Solving inverse problems in contamination source identification. | Reported to perform well in the cited studies; AHA outperformed PSO and SSA [3]. |

The integration of machine learning with non-targeted analysis represents a paradigm shift in environmental forensics, moving beyond the limitations of targeted approaches to address the complex reality of modern chemical pollution. The structured workflows, advanced simulation-optimization frameworks, and specialized reagents detailed in these application notes provide researchers with a comprehensive toolkit for tackling contamination crises. By leveraging these methodologies, scientists can more accurately identify pollution sources, reconstruct release histories, and ultimately contribute to more effective remediation strategies and evidence-based environmental decision-making. As the field continues to evolve, ongoing harmonization efforts through initiatives like the Benchmarking and Publications for Non-Targeted Analysis Working Group (BP4NTA) will be crucial for establishing standardized reporting practices and performance metrics that ensure the reliability and adoption of these powerful techniques [2].

High-Resolution Mass Spectrometry (HRMS) as the Engine for NTA

High-Resolution Mass Spectrometry (HRMS) serves as the fundamental analytical engine enabling comprehensive non-targeted analysis (NTA) for contaminant source identification research. Unlike targeted analytical methods that are restricted to predefined compounds, HRMS-based NTA provides a powerful approach for detecting thousands of known and unknown chemicals without prior knowledge, making it particularly valuable for identifying novel contaminants and transformation products in complex environmental samples [1] [6]. The exceptional mass accuracy (<5 ppm), high resolution (>25,000), and full-scan sensitivity of modern HRMS platforms, including quadrupole time-of-flight (Q-TOF) and Orbitrap systems, generate the complex datasets necessary for reliable compound annotation and molecular feature characterization [1] [7]. This capability is crucial for developing machine learning models that can identify contamination sources based on distinctive chemical fingerprints, ultimately bridging critical gaps between analytical detection and actionable environmental decision-making [1].

The integration of HRMS with chromatographic separation techniques, typically liquid or gas chromatography (LC/GC), further enhances compound detection and characterization by resolving isotopic patterns, fragmentation signatures, and structural features essential for confident compound annotation [1]. When coupled with advanced data processing workflows and machine learning algorithms, HRMS-generated data transforms from raw spectral information into interpretable patterns that can differentiate contamination sources with balanced accuracy ranging from 85.5% to 99.5% in controlled studies [1]. This technological synergy positions HRMS as the indispensable analytical foundation for next-generation environmental monitoring, source tracking, and risk assessment protocols.

Analytical Protocols for HRMS-Based NTA

Sample Preparation and Extraction Methods

Effective sample preparation is critical for maximizing analyte recovery while minimizing matrix effects that can compromise downstream HRMS analysis. Based on established protocols from environmental NTA studies, the following methods have proven effective for diverse sample matrices:

Solid Phase Extraction (SPE): A widely employed concentration technique utilizing multi-sorbent strategies (e.g., combining Oasis HLB with ISOLUTE ENV+, Strata WAX, and WCX) to broaden analyte coverage across different physicochemical properties [1]. Online-SPE systems provide automated analysis with minimal sample handling, as demonstrated in PFAS screening studies [6].

Green Extraction Techniques: Methods including QuEChERS (Quick, Easy, Cheap, Effective, Rugged, and Safe), microwave-assisted extraction (MAE), and supercritical fluid extraction (SFE) improve efficiency by reducing solvent usage and processing time, particularly beneficial for large-scale environmental sampling campaigns [1].

Infinity SPE Cartridges: Used for broad-spectrum contaminant extraction from water samples in urban source fingerprinting studies [7]. In that work, 1 L unfiltered water samples were processed through 3 mL, 100 mg cartridges packed with Osorb media, and internal standard RSDs of 39%-118% were reported [7].

HRMS Instrumentation and Data Acquisition

Standardized instrumental parameters ensure consistent generation of high-quality data suitable for machine learning applications:

LC-HRMS Analysis: Utilizing UHPLC systems coupled to Q-Exactive Orbitrap or Q-TOF mass spectrometers equipped with electrospray ionization (ESI) sources operated in positive and/or negative ionization modes [6] [7]. Full scan MS1 data (m/z range 100-1700) is acquired at resolution >50,000, followed by data-dependent MS/MS scans for compound identification.

Quality Assurance Protocols: Incorporation of batch-specific quality control samples, internal standard mixtures (e.g., 19 isotopically labeled PFAS), and solvent blanks analyzed every 12 samples to monitor instrument performance and correct for systematic variations [6] [7]. Acceptable performance criteria include mass error <5 ppm and retention time variation <0.2 minutes [7].
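The acceptance criteria above (mass error <5 ppm, retention-time variation <0.2 min) translate directly into a per-standard check. This is a minimal sketch with hypothetical function names; the internal-standard names and m/z values in the usage example are made up.

```python
def ppm_error(measured_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def qc_check(observations, max_ppm=5.0, max_rt_shift=0.2):
    """Check internal-standard observations against mass-error and retention-time criteria.

    observations: list of dicts with keys name, mz, mz_ref, rt, rt_ref (rt in minutes).
    Returns a list of (name, ppm_error, rt_shift, passed) tuples.
    """
    report = []
    for obs in observations:
        ppm = ppm_error(obs["mz"], obs["mz_ref"])
        rt_shift = obs["rt"] - obs["rt_ref"]
        passed = abs(ppm) < max_ppm and abs(rt_shift) < max_rt_shift
        report.append((obs["name"], ppm, rt_shift, passed))
    return report


# Usage with made-up values for two hypothetical internal standards.
batch = [
    {"name": "IS-1", "mz": 300.0009, "mz_ref": 300.0, "rt": 5.10, "rt_ref": 5.00},
    {"name": "IS-2", "mz": 250.0020, "mz_ref": 250.0, "rt": 7.00, "rt_ref": 7.05},
]
for name, ppm, shift, ok in qc_check(batch):
    print(f"{name}: {ppm:+.1f} ppm, RT shift {shift:+.2f} min, pass={ok}")
```

Because ppm error is relative, the same 0.002 Da deviation is more acceptable at high m/z than at low m/z, which is why mass tolerances are stated in ppm rather than in Da.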

Reference Materials: Use of certified reference materials (CRMs) and native standard mixtures (e.g., 30 PFAS compounds) for method validation and semi-quantitative estimation [6] [8].

Table 1: Standard HRMS Acquisition Parameters for NTA

| Parameter | Setting | Purpose |
|---|---|---|
| Mass Resolution | >50,000 FWHM | Sufficient to resolve isobaric compounds |
| Mass Accuracy | <5 ppm | Enables confident molecular formula assignment |
| Mass Range | 100-1700 m/z | Covers most environmental contaminants |
| Scan Rate | 1-5 Hz | Balances sensitivity and chromatographic definition |
| Collision Energies | 10-40 eV | Provides structural fragmentation information |
| Internal Standard Mass Correction | Continuous infusion | Maintains mass accuracy throughout run |
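The resolution setting in the table can be related to a specific separation problem with the rule-of-thumb relation R = m/Δm, where Δm is the m/z difference to be resolved. The sketch also encodes the general behavior of Orbitrap analyzers, whose resolving power scales with 1/√(m/z) relative to the value specified at a reference m/z. Both helpers are hypothetical illustrations; the example m/z values are made up, and the reference m/z of 200 is an assumption that varies by instrument.

```python
import math


def required_resolution(mz_a, mz_b):
    """Rule-of-thumb resolving power R = m / delta_m needed to separate two ions."""
    return ((mz_a + mz_b) / 2.0) / abs(mz_a - mz_b)


def orbitrap_resolution_at(mz, resolution_ref, mz_ref=200.0):
    """Orbitrap resolving power falls with the square root of m/z."""
    return resolution_ref * math.sqrt(mz_ref / mz)


# Usage: two hypothetical ions 0.01 Da apart near m/z 300.
print(f"{required_resolution(300.0, 300.01):.0f}")
print(orbitrap_resolution_at(800.0, 70000.0))  # 35000.0
```

The second helper shows why a nominal resolution quoted at a low reference m/z can fall well below it at the upper end of a 100-1700 m/z scan range.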

Data Processing Workflows

Raw HRMS data requires extensive processing to convert instrumental outputs into meaningful chemical features suitable for pattern recognition and machine learning analysis. The standard workflow encompasses:

Feature Extraction: Using software platforms (e.g., Compound Discoverer, FluoroMatch, XCMS, or Mass-Suite) to detect unique m/z-retention time pairs (mz@RT), group related spectral features (isotopologues, adducts), and align features across samples [6] [7] [9]. Parameters typically include mass tolerance <5 ppm and retention time tolerance <0.2 minutes.

Data Reduction: Applying blank subtraction (≥5-fold peak area relative to blanks), abundance thresholding (peak areas >5000), and replicate filtering (features detected in 100% of extraction replicates) to remove instrumental artifacts and environmental background [7].

Quality Control Metrics: Evaluating feature extraction accuracy (>99.5% with mixed chemical standards), retention time stability (RSD <5%), and internal standard precision (RSD 39%-118%) to ensure data quality [7] [10].
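The data-reduction filters above can be expressed as a single pass over a feature table. This sketch assumes each feature record holds its peak areas in the extraction replicates (`None` when not detected) and its blank peak area. Applying the 5000 area threshold to the replicate mean is an assumption, since the text does not specify whether the mean or each replicate is tested. The record layout and function name are hypothetical.

```python
def reduce_features(features, blank_fold=5.0, min_area=5000.0, min_replicate_fraction=1.0):
    """Return IDs of features passing replicate, abundance, and blank filters.

    features: dict of feature_id -> {"replicates": [area or None, ...], "blank": area}
    """
    kept = []
    for fid, record in features.items():
        reps = record["replicates"]
        detected = [a for a in reps if a is not None]
        # Replicate filter: detected in the required share of extraction replicates.
        if not detected or len(detected) / len(reps) < min_replicate_fraction:
            continue
        mean_area = sum(detected) / len(detected)
        # Abundance threshold (assumed to apply to the replicate mean).
        if mean_area <= min_area:
            continue
        # Blank filter: sample signal must be at least blank_fold times the blank signal.
        if mean_area < blank_fold * record.get("blank", 0.0):
            continue
        kept.append(fid)
    return kept


# Usage with made-up feature records.
table = {
    "f1": {"replicates": [1.0e5, 1.2e5, 0.9e5], "blank": 1.0e4},   # passes all filters
    "f2": {"replicates": [6.0e4, 6.0e4, 6.0e4], "blank": 2.0e4},   # fails blank filter
    "f3": {"replicates": [3000.0, 4000.0, 3500.0], "blank": 0.0},  # fails abundance
    "f4": {"replicates": [1.0e5, None, 1.0e5], "blank": 0.0},      # fails replicate filter
}
print(reduce_features(table))  # ['f1']
```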

The following workflow diagram illustrates the complete HRMS data generation and processing pipeline:

Application to Contaminant Source Identification

Chemical Fingerprinting for Source Discrimination

HRMS-enabled chemical fingerprinting provides powerful discrimination between contamination sources through comprehensive chemical profiling. Proof-of-concept studies demonstrate that source-specific HRMS fingerprints can differentiate municipal wastewater influent, roadway runoff, and urban baseflow with high specificity [7]. Key findings include:

Source-Specific Signatures: Analysis of urban water samples revealed 112 co-occurring compounds unique to roadway runoff and 598 compounds unique to wastewater influent across all sampled locations, providing statistically robust discrimination between source types [7].

Ubiquitous Indicator Compounds: Roadway runoff fingerprints consistently contained hexa(methoxymethyl)melamine, 1,3-diphenylguanidine, and polyethylene glycols across geographic areas and traffic intensities, suggesting potential for universal roadway runoff fingerprints [7].

Hierarchical Cluster Analysis (HCA): Successfully differentiated source types using Euclidean distances calculated from log-normalized peak areas with Ward's clustering method, visually revealing clusters of overlapping detections at similar abundances within each source type [7].
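The HCA step can be sketched as Ward agglomerative clustering on log-transformed peak areas. Assumptions: log normalization is taken as log10(area + 1) so that zero areas stay finite, and the Ward merge criterion is implemented with the standard Lance-Williams update on squared Euclidean distances. This is a small illustrative implementation with hypothetical names, not the code used in [7]; libraries such as SciPy provide optimized Ward linkage.

```python
import math


def log_transform(matrix):
    """log10(area + 1): compresses the dynamic range and keeps zero areas finite."""
    return [[math.log10(v + 1.0) for v in row] for row in matrix]


def ward_clusters(rows, n_clusters):
    """Agglomerative clustering with Ward linkage, cut at n_clusters (>= 1).

    Uses the Lance-Williams update on squared Euclidean distances.
    Returns clusters as sorted lists of row indices.
    """
    clusters = {i: [i] for i in range(len(rows))}
    d = {
        (i, j): sum((a - b) ** 2 for a, b in zip(rows[i], rows[j]))
        for i in clusters for j in clusters if i < j
    }
    next_id = len(rows)
    while len(clusters) > n_clusters:
        i, j = min(d, key=d.get)  # closest pair under the Ward criterion
        ni, nj = len(clusters[i]), len(clusters[j])
        merged = clusters.pop(i) + clusters.pop(j)
        d_ij = d[(i, j)]
        new_d = {}
        for k, members in clusters.items():
            nk = len(members)
            d_ki = d[(min(i, k), max(i, k))]
            d_kj = d[(min(j, k), max(j, k))]
            new_d[k] = ((ni + nk) * d_ki + (nj + nk) * d_kj - nk * d_ij) / (ni + nj + nk)
        d = {pair: v for pair, v in d.items() if i not in pair and j not in pair}
        for k, v in new_d.items():
            d[(k, next_id)] = v  # k < next_id always holds
        clusters[next_id] = merged
        next_id += 1
    return sorted(sorted(c) for c in clusters.values())


# Usage: made-up peak areas for three features in four samples.
areas = [
    [1.0e5, 10.0, 1.0e4],   # hypothetical runoff-like sample
    [1.2e5, 12.0, 0.9e4],
    [10.0, 1.0e5, 50.0],    # hypothetical wastewater-like sample
    [12.0, 1.1e5, 40.0],
]
print(ward_clusters(log_transform(areas), n_clusters=2))  # [[0, 1], [2, 3]]
```

The log transform keeps a few very intense features from dominating the Euclidean distances, so clusters reflect the overall pattern of detections rather than a single large peak.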

Machine Learning Integration for Pattern Recognition

The high-dimensional chemical feature data generated by HRMS provides ideal inputs for machine learning algorithms designed for source classification and apportionment:

Classification Performance: Support Vector Classifier (SVC), Logistic Regression (LR), and Random Forest (RF) classifiers applied to 222 PFAS features across 92 samples achieved balanced classification accuracy ranging from 85.5% to 99.5% for different contamination sources [1].

Feature Selection: Recursive feature elimination and variable importance metrics (e.g., from Partial Least Squares Discriminant Analysis) identify source-specific indicator compounds, optimizing model accuracy and interpretability [1].

Model Validation: A tiered validation approach integrating reference material verification, external dataset testing, and environmental plausibility assessments ensures model robustness for real-world applications [1].

Table 2: Machine Learning Performance for Source Identification

| Algorithm | Application | Performance | Key Advantages |
|---|---|---|---|
| Random Forest | PFAS source classification | 85.5-99.5% balanced accuracy | Handles high-dimensional data, provides feature importance metrics |
| Support Vector Classifier | Contaminant source identification | 85.5-99.5% balanced accuracy | Effective in high-dimensional spaces, versatile kernel functions |
| XGBoost | Vehicle-derived chemical source tracking | 93.3% accuracy on training data | Handling of missing values, regularization prevents overfitting |
| Logistic Regression | Qualitative source identification | 100% accuracy in dot-product approach | Interpretability, probabilistic outputs |
| PLS-DA | Indicator compound identification | Effective variable importance metrics | Handles collinearities, integrates well with spectral data |

Quantitative NTA (qNTA) for Risk Assessment

Semi-quantitative approaches extend NTA beyond compound identification to concentration estimation, supporting provisional risk assessments:

Global Calibration: Using existing native standards and internal standards to create regression-based models for estimating concentrations of untargeted compounds, with semi-quantitation methods achieving reasonable estimates for total PFAS concentrations [6] [8].

Performance Metrics: Quantitative NTA using global surrogate approaches shows decreased accuracy by approximately 4×, increased uncertainty by ~1000×, and reduced reliability by ~5% compared to targeted quantification methods, but remains valuable for priority screening [8].

Uncertainty Estimation: Bootstrap simulation techniques using expert-selected surrogates (n=3) instead of global surrogates (n=25) yield improvements in predictive accuracy (~1.5×) and uncertainty (~70×), though with slightly reduced reliability [8].
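The idea of propagating surrogate choice into concentration uncertainty can be illustrated with a deliberately simplified bootstrap. It assumes a linear response (concentration = peak area / response factor) and resamples the surrogate response factors with replacement; this is not the qNTA procedure of [8], and the function name and example values are hypothetical. A tightly clustered, expert-selected surrogate set yields a narrower interval than a broad set of dissimilar surrogates, which mirrors the qualitative trend described above.

```python
import random
import statistics


def bootstrap_concentration(peak_area, surrogate_rfs, n_boot=5000, level=0.95, seed=0):
    """Estimate concentration = area / mean RF, with a percentile bootstrap over surrogates.

    surrogate_rfs: response factors (peak area per unit concentration) of the
    surrogate standards. Returns (median, lower, upper) of the bootstrap estimates.
    """
    rng = random.Random(seed)
    estimates = []
    for _ in range(n_boot):
        resample = [rng.choice(surrogate_rfs) for _ in surrogate_rfs]
        estimates.append(peak_area / statistics.mean(resample))
    estimates.sort()
    tail = (1.0 - level) / 2.0
    lower = estimates[int(tail * (n_boot - 1))]
    upper = estimates[int((1.0 - tail) * (n_boot - 1))]
    return statistics.median(estimates), lower, upper


# Usage: a tight "expert" surrogate set versus a broad, dissimilar set (made-up RFs).
print(bootstrap_concentration(1.0e4, [48.0, 50.0, 52.0]))
print(bootstrap_concentration(1.0e4, [10.0, 50.0, 250.0, 30.0, 100.0]))
```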

The following diagram illustrates the machine learning framework for source identification:

Research Reagent Solutions

Table 3: Essential Research Reagents and Materials for HRMS-NTA

| Reagent/Material | Function | Application Example |
|---|---|---|
| Oasis HLB SPE Cartridges | Broad-spectrum analyte extraction from water samples | Enrichment of diverse contaminant classes in wastewater and surface water [1] |
| Infinity SPE Cartridges (Osorb media) | Comprehensive contaminant extraction | Urban source fingerprinting studies for roadway runoff and wastewater [7] |
| Multi-sorbent SPE (ISOLUTE ENV+, Strata WAX/WCX) | Enhanced coverage across chemical space | Complementary extraction of acidic, neutral, and basic compounds [1] |
| PFAS Native Standard Mix (30 compounds) | Method calibration and quantitative reference | Semi-quantitative estimation of novel PFAS in environmental waters [6] |
| Isotopically Labeled Internal Standards (19 PFAS) | Quality control and signal normalization | Correction for matrix effects and instrumental variation [6] [8] |
| QuEChERS Extraction Kits | Rapid sample preparation for solid matrices | Extraction of complex environmental samples with minimal solvent usage [1] |
| Reference Materials (CRM) | Method validation and quality assurance | Verification of compound identities and quantitative accuracy [1] [8] |

The Data Interpretation Bottleneck and the Rise of Machine Learning

The rapid proliferation of synthetic chemicals has led to widespread environmental pollution from diverse sources including industrial effluents, household personal care products, and agricultural runoff [1]. Conventional environmental monitoring strategies, predominantly reliant on targeted chemical analysis, are inherently limited to detecting predefined compounds, thereby overlooking a wide range of "known unknowns" including transformation products and emerging contaminants [1]. In this context, non-targeted analysis (NTA) using high-resolution mass spectrometry (HRMS) has emerged as a valuable approach for detecting thousands of chemicals without prior knowledge [1] [11] [12].

The principal challenge in environmental analysis has now shifted from chemical detection to data interpretation. The vast, complex datasets generated by HRMS-based NTA create a significant data interpretation bottleneck [1]. Early attempts to interpret these high-dimensional datasets utilized statistical methods such as univariate analysis and unsupervised clustering, but these approaches often struggle to disentangle complex source signatures as they prioritize abundance over diagnostic chemical patterns [1]. This limitation underscores the critical need for more sophisticated data interpretation frameworks capable of transforming raw chemical data into actionable environmental intelligence.

Machine Learning-Driven Solutions

Recent advances in machine learning (ML) have redefined the potential of NTA by providing powerful tools to overcome the data interpretation bottleneck [1]. Unlike traditional statistical methods, ML algorithms excel at identifying latent patterns within high-dimensional data, making them particularly well-suited for contamination source identification [1]. ML techniques have demonstrated remarkable performance in environmental applications, with classifiers such as Support Vector Classifier (SVC), Logistic Regression (LR), and Random Forest (RF) achieving balanced accuracy ranging from 85.5% to 99.5% when screening per- and polyfluoroalkyl substances (PFAS) across different sources [1].

The integration of ML with NTA follows a systematic four-stage workflow: (i) sample treatment and extraction, (ii) data generation and acquisition, (iii) ML-oriented data processing and analysis, and (iv) result validation [1]. This framework provides a structured approach for translating complex HRMS data into identifiable contamination sources, effectively addressing the interpretation bottleneck that has hindered traditional methods.

Experimental Protocols: ML-NTA Workflow

Stage 1: Sample Treatment and Extraction

Sample preparation requires careful optimization to balance selectivity and sensitivity: interfering components should be removed while as many compounds as possible are preserved at detectable levels [1]. Key extraction and purification techniques include:

- Solid Phase Extraction (SPE): Widely employed for its ability to enrich specific compound classes, though its inherent selectivity for certain physicochemical properties (e.g., polarity) limits broad-spectrum coverage [1].

- Multi-sorbent Strategies: Broader-range extractions can be achieved by combining sorbents such as Oasis HLB with ISOLUTE ENV+, Strata WAX, and WCX [1].

- Green Extraction Techniques: Methods including QuEChERS, microwave-assisted extraction (MAE), and supercritical fluid extraction (SFE) improve efficiency by reducing solvent usage and processing time, particularly beneficial for large-scale environmental samples [1].

These sample preparation methods ensure comprehensive analyte recovery while minimizing matrix interference, establishing a critical foundation for downstream ML analysis [1].

Stage 2: Data Generation and Acquisition

HRMS platforms, including quadrupole time-of-flight (Q-TOF) and Orbitrap systems, generate complex datasets essential for NTA [1]. Coupled with liquid or gas chromatographic separation (LC/GC), these instruments resolve isotopic patterns, fragmentation signatures, and structural features necessary for compound annotation [1].

Post-acquisition processing involves:

- Centroiding and peak detection

- Extracted ion chromatogram (EIC/XIC) analysis

- Peak alignment and componentization to group related spectral features (e.g., adducts, isotopes) into molecular entities [1]

Quality assurance measures, including confidence-level assignments (Level 1-5) and batch-specific quality control (QC) samples, ensure data integrity [1]. The output is a structured feature-intensity matrix where rows represent samples and columns correspond to aligned chemical features, serving as the foundation for ML-driven analysis [1].
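Processing software normally handles componentization. Its core idea is to link co-eluting features whose m/z differences match known isotope or adduct spacings, and that idea fits in a few lines. The sketch below is a minimal illustration under stated assumptions:

- only singly charged ions are considered;
- only two mass steps are checked: the ¹³C isotope step (1.003355 Da) and the [M+Na]+/[M+H]+ pair (21.981944 Da);
- the retention-time and m/z tolerances, the feature tuple format, and the `componentize` function are all hypothetical.

```python
from itertools import combinations

C13_SHIFT = 1.003355    # 13C - 12C mass difference (Da), singly charged ions
NA_H_SHIFT = 21.981944  # Na - H mass difference: [M+Na]+ minus [M+H]+ (Da)


def componentize(features, rt_tol=0.05, mz_tol=0.005):
    """Group co-eluting features linked by an isotope or adduct mass step.

    features: list of (feature_id, mz, rt_min) tuples (hypothetical format).
    rt_tol (min) and mz_tol (Da) are illustrative tolerances, not recommendations.
    Returns components as sorted lists of feature ids.
    """
    parent = {fid: fid for fid, _, _ in features}

    def find(x):
        # Union-find root lookup with path halving
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for (id_a, mz_a, rt_a), (id_b, mz_b, rt_b) in combinations(features, 2):
        if abs(rt_a - rt_b) > rt_tol:
            continue  # not co-eluting, so not treated as the same molecular entity
        delta = abs(mz_a - mz_b)
        if abs(delta - C13_SHIFT) <= mz_tol or abs(delta - NA_H_SHIFT) <= mz_tol:
            parent[find(id_a)] = find(id_b)  # merge the two components

    groups = {}
    for fid, _, _ in features:
        groups.setdefault(find(fid), []).append(fid)
    return sorted(sorted(g) for g in groups.values())


feats = [
    ("F1", 413.2662, 7.21),  # hypothetical [M+H]+
    ("F2", 414.2696, 7.22),  # 13C isotope of F1 (+1.0034 Da)
    ("F3", 435.2481, 7.21),  # [M+Na]+ of the same molecule (+21.9819 Da)
    ("F4", 301.1410, 9.80),  # unrelated feature at a different retention time
]
print(componentize(feats))  # [['F1', 'F2', 'F3'], ['F4']]
```

Real componentization tools also handle multiply charged ions, further adducts (e.g., [M+NH4]+ or [M-H]-), and peak-shape correlation. This pairwise check scales quadratically with the number of features, so it is only suitable for small illustrations.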

Stage 3: ML-Oriented Data Processing and Analysis

The transition from raw HRMS data to interpretable patterns involves sequential computational steps:

- Data Preprocessing: Addresses data quality through noise filtering, missing value imputation (e.g., k-nearest neighbors), and normalization (e.g., TIC normalization) to mitigate batch effects [1].

- Exploratory Analysis: Identifies significant features via univariate statistics (t-tests, ANOVA) and prioritizes compounds with large fold changes [1].

- Dimensionality Reduction: Techniques including Principal Component Analysis (PCA) and t-distributed Stochastic Neighbor Embedding (t-SNE) simplify high-dimensional data [1].

- Clustering Methods: Hierarchical Cluster Analysis (HCA) and k-means clustering group samples by chemical similarity [1].

- Supervised ML Models: Algorithms including Random Forest (RF) and Support Vector Classifier (SVC) are trained on labeled datasets to classify contamination sources, with feature selection algorithms (e.g., recursive feature elimination) refining input variables to optimize model accuracy and interpretability [1].
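The exploratory prioritization step combines univariate statistics with fold change. The sketch below is a minimal illustration under these assumptions:

- there are two sample groups (for example, hypothetical downstream and upstream sites);
- intensities are strictly positive, so zeros must be imputed or offset first;
- the thresholds are hypothetical: |log2 fold change| ≥ 1 and |Welch t| ≥ 2.

It reports only the t statistic. In practice a p-value and a multiple-testing correction (e.g., Benjamini-Hochberg) would also be needed, for instance via `scipy.stats.ttest_ind(..., equal_var=False)`.

```python
import math
from statistics import mean, variance


def welch_t(a, b):
    """Welch's t statistic for two independent samples with unequal variances."""
    se = math.sqrt(variance(a) / len(a) + variance(b) / len(b))
    return (mean(a) - mean(b)) / se


def prioritize(group_a, group_b, min_abs_log2fc=1.0, min_abs_t=2.0):
    """Return (feature_id, log2FC, t) for features passing both thresholds.

    group_a, group_b: dict mapping feature_id -> intensities in each group.
    Thresholds are hypothetical defaults. Results sorted by |log2FC|, largest first.
    """
    hits = []
    for fid in group_a:
        a, b = group_a[fid], group_b[fid]
        log2fc = math.log2(mean(a) / mean(b))  # requires positive intensities
        t = welch_t(a, b)
        if abs(log2fc) >= min_abs_log2fc and abs(t) >= min_abs_t:
            hits.append((fid, round(log2fc, 2), round(t, 2)))
    return sorted(hits, key=lambda h: -abs(h[1]))


downstream = {"F1": [800, 900, 1000], "F2": [100, 110, 90]}
upstream = {"F1": [100, 120, 80], "F2": [105, 95, 100]}
print(prioritize(downstream, upstream))  # [('F1', 3.17, 13.59)]
```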

Stage 4: Result Validation

Validation ensures the reliability of ML-NTA outputs through a three-tiered approach:

- Analytical Confidence Verification: Using certified reference materials (CRMs) or spectral library matches to confirm compound identities [1].

- Model Generalizability Assessment: Validating classifiers on independent external datasets, complemented by cross-validation techniques (e.g., 10-fold) to evaluate overfitting risks [1].

- Environmental Plausibility Checks: Correlating model predictions with contextual data, such as geospatial proximity to emission sources or known source-specific chemical markers [1].

This multi-faceted validation bridges analytical rigor with real-world relevance, ensuring results are both chemically accurate and environmentally meaningful [1].
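One simple form of the geospatial plausibility check asks whether a sample's predicted source type matches the type of the nearest known emitter. The sketch below makes the following assumptions:

- site and emitter coordinates are hypothetical;
- distance is great-circle (haversine) distance with a mean Earth radius of 6371 km;
- "nearest emitter" serves only as a crude proxy.

Real assessments would also weigh flow direction, distance thresholds, and land use.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in km between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def plausibility(site, predicted_source, emitters):
    """Compare a predicted source type with the type of the nearest known emitter.

    site: (lat, lon); emitters: list of (source_type, lat, lon).
    Returns (is_consistent, nearest_type, distance_km).
    """
    def dist(e):
        return haversine_km(site[0], site[1], e[1], e[2])

    nearest = min(emitters, key=dist)
    return nearest[0] == predicted_source, nearest[0], round(dist(nearest), 1)


# Hypothetical emitter inventory and sampling site
emitters = [("industrial", 52.10, 5.10), ("airport_afff", 52.30, 4.76)]
consistent, nearest_type, km = plausibility((52.11, 5.12), "industrial", emitters)
print(consistent, nearest_type)  # True industrial
```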

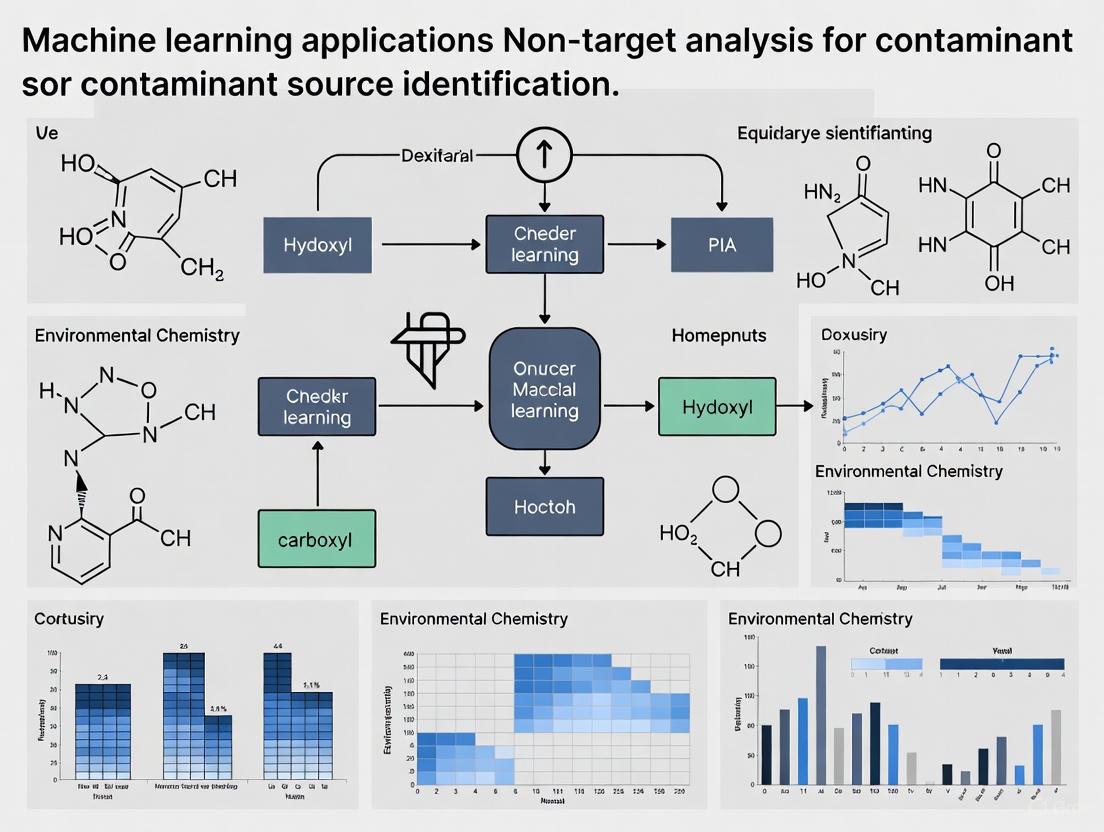

Figure 1: ML-NTA Workflow. The systematic process from sample collection to validated results.

Quantitative Performance of ML Algorithms in Environmental Applications

Table 1: Performance Metrics of Machine Learning Algorithms in Contaminant Classification

| ML Algorithm | Application Context | Reported Performance | Key Metrics | Reference |
|---|---|---|---|---|
| Light Gradient Boosting Machine (LGBM) | PFAS identification in water samples | >97% | Scores above 97% on five evaluation metrics | [13] |
| Random Forest (RF), Support Vector Classifier (SVC), Logistic Regression (LR) | PFAS source classification | 85.5-99.5% balanced accuracy | Range reported jointly across these classifiers and contamination sources | [1] |
| Deep Belief Neural Network (DBNN) | Groundwater contamination source identification | R² = 0.982 | RMSE = 3.77, MAE = 7.56% | [14] |
| Decision Tree-based Models | Insulator contamination classification | >98% accuracy | Fast training and optimization times | [15] |

Table 2: Three-Tiered Validation Framework for ML-NTA Studies

| Validation Tier | Purpose | Methods | Outcome Measures |
|---|---|---|---|
| Analytical Confidence | Verify compound identities | Certified reference materials (CRMs), spectral library matches | Confidence-level assignments (Level 1-5) |
| Model Generalizability | Assess performance on unseen data | External dataset testing, cross-validation (e.g., 10-fold) | Accuracy, precision, recall, F1-score on external data |
| Environmental Plausibility | Ensure real-world relevance | Geospatial correlation, source-specific marker alignment | Consistency with known contamination patterns |

Case Study: PFAS Identification with Machine Learning

A recent study demonstrated a novel machine learning-based pseudo-targeted screening framework for identifying per- and polyfluoroalkyl substances (PFAS) in water samples without requiring reference standards [13]. The framework integrates spectral feature engineering and model interpretability techniques to construct a discriminative PFAS recognition model from publicly available tandem mass spectrometry data.

Experimental Protocol: PFAS Screening

The methodology encompassed three main components:

- Dataset Preparation: MS2 data for various PFAS compounds was collected and curated from the MassBank database. Features related to experimental conditions were integrated to build a comprehensive training dataset [13].

- ML Model Construction: Ten different classifiers were trained and evaluated, with the Light Gradient Boosting Machine (LGBM) achieving outstanding predictive performance [13].

- Model Evaluation and Validation: External validation using both MassBank entries and experimentally measured LC-MS data confirmed the model's robustness and generalizability [13].

The LGBM model scored above 97% on all five evaluation metrics used in the study [13]. Model interpretation using SHAP (SHapley Additive exPlanations) revealed the fragment-based features that contributed most to PFAS classification, enhancing the transparency and chemical plausibility of the predictions [13].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Materials for ML-NTA Workflows

| Category | Item | Function/Application | Key Considerations |
|---|---|---|---|
| Extraction Materials | Solid Phase Extraction (SPE) cartridges (Oasis HLB, ISOLUTE ENV+, Strata WAX/WCX) | Comprehensive analyte enrichment from water samples | Multi-sorbent strategies enhance broad-spectrum coverage [1] |
| Chromatography | LC/GC columns | Compound separation prior to MS analysis | High-resolution separation critical for complex environmental samples [1] [12] |
| Mass Spectrometry | HRMS systems (Q-TOF, Orbitrap) | High-resolution data generation for NTA | Resolution, mass accuracy, and fragmentation capability essential [1] [12] |
| Data Processing Software | Open-source platforms (XCMS, MZmine, SIRIUS, MS-DIAL, patRoon, InSpectra) | Feature extraction, alignment, and compound annotation | patRoon enables algorithm comparison; InSpectra allows retrospective analysis [12] |
| ML Algorithms | Random Forest, LGBM, SVC, DBNN | Pattern recognition and contaminant classification | Balance between accuracy, interpretability, and computational efficiency [1] [13] [14] |
| Validation Materials | Certified reference materials (CRMs) | Analytical confidence verification | Essential for confirming compound identities [1] |

Figure 2: ML-NTA Data Logic. The logical flow from raw data to validated predictions.

The integration of machine learning with non-target analysis represents a paradigm shift in environmental contaminant identification, effectively addressing the data interpretation bottleneck that has long hampered comprehensive environmental monitoring. The structured workflows, validated performance metrics, and specialized tools detailed in these application notes provide researchers with a robust framework for implementing ML-NTA in contaminant source identification. As these methodologies continue to evolve, they hold significant promise for transforming how we detect, characterize, and ultimately mitigate the impact of emerging contaminants on environmental and public health.

Defining the Four-Stage Workflow for ML-Assisted NTA

Machine learning-assisted non-targeted analysis (ML-assisted NTA) represents a transformative approach for identifying unknown chemicals and attributing contamination to their sources in complex environmental samples. This workflow leverages high-resolution mass spectrometry (HRMS) to generate comprehensive chemical data, which is subsequently decoded using machine learning algorithms to identify patterns and source-specific chemical fingerprints. The integration of ML addresses the principal challenge of NTA, which lies not in detection but in extracting meaningful environmental information from vast, high-dimensional chemical datasets [1]. This application note delineates a standardized four-stage workflow, providing researchers and drug development professionals with detailed protocols for implementing this powerful analytical strategy.

The rapid proliferation of synthetic chemicals has led to widespread environmental pollution from diverse sources such as industrial effluents, agricultural runoff, and household products [1]. Conventional environmental monitoring, which relies on targeted analysis of predefined compounds, is inherently limited and overlooks many known "unknowns," including transformation products and emerging contaminants [1]. Non-targeted analysis (NTA), powered by high-resolution mass spectrometry (HRMS), has emerged as a valuable approach for detecting thousands of chemicals without prior knowledge [1] [16].

The core challenge of NTA now lies in developing computational methods to extract meaningful information from the complex HRMS datasets [1]. Machine learning (ML) algorithms are uniquely suited for this task, as they excel at identifying latent patterns within high-dimensional data, making them particularly effective for contamination source identification [1]. This document establishes a unified framework for ML-assisted NTA, systematically exploring how ML techniques transform raw HRMS data into source-specific chemical fingerprints through four critical stages, with particular emphasis on ML-oriented data processing and validation.

The Four-Stage Workflow

The integration of machine learning and non-targeted analysis for contaminant source identification follows a systematic four-stage workflow: (i) sample treatment and extraction, (ii) data generation and acquisition, (iii) ML-oriented data processing and analysis, and (iv) result validation [1]. A comprehensive overview of this workflow and the critical decisions at each stage is provided in Figure 1.

Stage 1: Sample Treatment and Extraction

Objective: Prepare representative samples that preserve the comprehensive chemical profile while minimizing interfering components.

Detailed Protocol:

- Sample Collection: Collect environmental samples (water, soil, sediment, biota, air) using clean procedures to avoid contamination. For water samples, store in amber glass containers at 4°C until extraction [17].

- Extraction Method Selection: Choose an extraction technique that balances selectivity and comprehensiveness:

- Solid Phase Extraction (SPE): Ideal for concentrating a broad range of compounds from water samples. Use multi-sorbent strategies (e.g., combining Oasis HLB with ISOLUTE ENV+, Strata WAX, and WCX) to broaden chemical coverage [1].

- Pressurized Liquid Extraction (PLE): Recommended for solid samples (soil, sediment) using solvents like methanol or acetone at high temperature and pressure [1] [17].

- QuEChERS: Employ for samples with high water content or complex matrices; effective for pesticide residues and other semi-polar compounds [1].

- Purification: Apply cleanup steps such as gel permeation chromatography (GPC) to remove macromolecular interferences (e.g., humic acids) if necessary for downstream analysis [1].

- Concentration: Gently evaporate extracts under a nitrogen stream and reconstitute in an injection solvent compatible with the chromatographic system (e.g., methanol for LC-MS) [17].

Key Considerations:

- The extraction method defines the initial "chemical space" detectable in the analysis. A generic, broad-range approach is preferred for untargeted discovery [17].

- Incorporate procedural blanks and quality control (QC) samples throughout to monitor contamination and performance.

Stage 2: Data Generation and Acquisition

Objective: Generate high-quality, comprehensive chromatographic and mass spectrometric data for all extractable components.

Detailed Protocol:

- Chromatographic Separation:

- Liquid Chromatography (LC): Use reversed-phase C18 columns with a broad generic gradient (e.g., 5-100% methanol or acetonitrile in water over 20-30 minutes) to separate a wide polarity range [17]. Maintain a column temperature of 40-50°C.

- Gas Chromatography (GC): Apply for volatile and semi-volatile non-polar compounds. Use phenylmethylpolysiloxane columns with a temperature ramp (e.g., 40-300°C) [17].

- High-Resolution Mass Spectrometry (HRMS):

- Instrumentation: Utilize Quadrupole Time-of-Flight (Q-TOF) or Orbitrap mass spectrometers capable of achieving a resolution >20,000 and mass accuracy ≤ 5 ppm [17].

- Ionization: Employ electrospray ionization (ESI) in both positive and negative modes for LC-HRMS to maximize coverage. Use electron ionization (EI) for GC-HRMS [16] [17].

- Data Acquisition: Operate in data-dependent acquisition (DDA) mode to collect both full-scan MS1 and fragmentation MS/MS (MS2) spectra for the most abundant ions in each cycle. Data-independent acquisition (DIA) is an alternative for comprehensive fragmentation data [18].

- Data Pre-processing: Convert raw instrument data into a structured feature table using software (e.g., XCMS, MS-DIAL, or vendor-specific tools). This involves:

- Peak picking and deconvolution

- Retention time alignment and correction

- Isotope and adduct annotation

- Generation of a feature-intensity matrix (samples × features) [1]

Quality Control: Inject and analyze solvent blanks, pooled QC samples, and standard reference materials periodically throughout the sequence to monitor instrument stability and data quality [1] [17].
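The mass-accuracy requirement given above (≤ 5 ppm) is evaluated as the relative deviation of a measured m/z from its theoretical value, scaled to parts per million. The minimal sketch below uses the deprotonated PFOA ion ([M-H]-, theoretical m/z 412.9664) as an illustrative reference. The measured values are hypothetical.

```python
def ppm_error(measured_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def within_tolerance(measured_mz, theoretical_mz, tol_ppm=5.0):
    """True if the absolute mass error is within tol_ppm."""
    return abs(ppm_error(measured_mz, theoretical_mz)) <= tol_ppm


PFOA_M_MINUS_H = 412.9664  # theoretical m/z of deprotonated PFOA

print(round(ppm_error(412.9680, PFOA_M_MINUS_H), 2))  # 3.87
print(within_tolerance(412.9680, PFOA_M_MINUS_H))     # True
print(within_tolerance(412.9700, PFOA_M_MINUS_H))     # False (about 8.7 ppm)
```

At m/z 400, a 5 ppm window corresponds to ±0.002 Da. This is why tolerances are usually expressed in ppm rather than as fixed Da windows across the mass range.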

Stage 3: ML-Oriented Data Processing and Analysis

Objective: Process the feature-intensity matrix to identify significant patterns, classify contamination sources, and select discriminatory chemical features.

Critical Data Preprocessing Steps: Before model training, the feature table must be rigorously preprocessed to ensure data quality and model robustness [1] [19].

- Missing Value Imputation: First remove features with excessive missingness (e.g., features missing in >80% of samples), then replace the remaining missing values using methods such as k-nearest neighbors (KNN) imputation [1].

- Noise Filtering: Remove features with low intensity or high analytical variance (e.g., >30% RSD in QC samples) [20].

- Normalization: Apply total ion current (TIC) or probabilistic quotient normalization (PQN) to correct for overall signal intensity differences between samples [1].

- Data Scaling: Use autoscaling (mean-centering and division by standard deviation) or Pareto scaling to make features comparable [19].
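The filtering, normalization, and scaling steps above can be sketched in plain Python. This is a minimal illustration, not a reference implementation. It makes the following assumptions:

- the data form a samples × features matrix stored as lists of lists, with `None` for missing values;
- the filters use the example thresholds from the list (>80% missing, >30% RSD in QC samples);
- PQN is computed against the median spectrum of all samples;
- imputation has already filled any gaps before normalization.

Function names are hypothetical.

```python
from statistics import mean, median, stdev


def filter_features(X, qc_rows, max_missing=0.8, max_qc_rsd=0.30):
    """Return indices of features that pass missingness and QC-RSD filters.

    X: samples x features (list of lists), None marks a missing value.
    qc_rows: row indices of pooled QC injections.
    """
    keep = []
    for j in range(len(X[0])):
        col = [row[j] for row in X]
        if sum(v is None for v in col) / len(col) > max_missing:
            continue  # detected in too few samples
        qc = [X[i][j] for i in qc_rows if X[i][j] is not None]
        if len(qc) < 2 or stdev(qc) / mean(qc) > max_qc_rsd:
            continue  # too few QC detections or analytically unstable
        keep.append(j)
    return keep


def pqn(X):
    """Probabilistic quotient normalization against the median spectrum.

    Assumes a complete matrix (impute missing values first).
    """
    ref = [median(col) for col in zip(*X)]
    normalized = []
    for row in X:
        factor = median([v / r for v, r in zip(row, ref) if r > 0])
        normalized.append([v / factor for v in row])
    return normalized


def pareto_scale(X):
    """Mean-center each feature and divide by the square root of its SD."""
    stats = [(mean(col), stdev(col) ** 0.5) for col in zip(*X)]
    return [[(v - m) / s if s > 0 else 0.0 for v, (m, s) in zip(row, stats)]
            for row in X]


raw = [
    [100.0, 20.0, None],
    [200.0, 40.0, None],
    [150.0, 35.0, None],
    [120.0, 25.0, 7.0],
    [140.0, 10.0, None],  # pooled QC
    [145.0, 30.0, None],  # pooled QC
]
print(filter_features(raw, qc_rows=[4, 5]))  # [0]
print(pqn([[1, 2, 4], [2, 4, 8], [3, 6, 12]]))  # every row becomes [2.0, 4.0, 8.0]
```

TIC normalization would instead divide each row by its own sum. Autoscaling divides by the standard deviation itself rather than its square root.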

The subsequent ML analysis workflow, encompassing exploratory analysis, model selection, and feature prioritization, is illustrated in Figure 2.

Detailed Protocol for ML Analysis:

- Exploratory Analysis and Dimensionality Reduction:

- Perform Principal Component Analysis (PCA), an unsupervised learning method, to visualize inherent sample clustering, identify outliers, and understand major sources of variance [1].

- Apply t-distributed Stochastic Neighbor Embedding (t-SNE) for non-linear dimensionality reduction if complex, non-linear patterns are suspected [1].

- Pattern Recognition and Classification:

- Unsupervised Clustering: Use k-means or Hierarchical Cluster Analysis (HCA) to group samples based on chemical similarity without prior knowledge of sample classes [1].

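- Supervised Classification: Train models on labeled datasets (e.g., samples with known contamination sources) to predict the sources of unknown samples. Commonly used algorithms include Random Forest (RF), Support Vector Classifier (SVC), Partial Least Squares Discriminant Analysis (PLS-DA), and Logistic Regression (LR); their characteristics are compared in Table 2.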

- Feature Selection: Use model-derived metrics (e.g., Variable Importance in Projection from PLS-DA, Mean Decrease in Gini from Random Forest) or dedicated algorithms like Recursive Feature Elimination (RFE) to identify the subset of chemical features most discriminatory between sources [1]. These features represent the potential "chemical fingerprint" of a source.
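PCA is normally computed with a library routine (for example, scikit-learn's `PCA` class). To show what the first principal component represents, the sketch below finds it by power iteration on the covariance matrix of mean-centered data. It assumes a complete matrix with no missing values and returns only PC1. It is intended for small illustrative matrices; further components would require deflation or an SVD.

```python
import random


def first_pc(X, n_iter=200, seed=0):
    """Return (loading_vector, sample_scores) for the first principal component.

    X: samples x features (list of lists), complete and already scaled if needed.
    """
    n, p = len(X), len(X[0])
    means = [sum(col) / n for col in zip(*X)]
    Xc = [[v - m for v, m in zip(row, means)] for row in X]  # mean-center
    # Sample covariance matrix C = Xc^T Xc / (n - 1)
    C = [[sum(Xc[k][i] * Xc[k][j] for k in range(n)) / (n - 1) for j in range(p)]
         for i in range(p)]
    rng = random.Random(seed)
    v = [rng.random() + 0.1 for _ in range(p)]  # random positive start vector
    for _ in range(n_iter):
        w = [sum(C[i][j] * v[j] for j in range(p)) for i in range(p)]
        norm = sum(x * x for x in w) ** 0.5
        v = [x / norm for x in w]  # converges to the dominant eigenvector
    scores = [sum(a * b for a, b in zip(row, v)) for row in Xc]
    return v, scores


loadings, scores = first_pc([[1, 2], [2, 4], [3, 6], [4, 8]])
print([round(x, 4) for x in loadings])  # [0.4472, 0.8944]
```

The sign of a principal component is arbitrary in general. Only the direction of the loading vector is meaningful.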

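Model Validation: Always use k-fold cross-validation (e.g., 5-fold or 10-fold) during model training to tune hyperparameters and obtain an initial estimate of model performance that helps reveal overfitting [1] [21]. Because the same folds guide hyperparameter selection, this estimate tends to be optimistic, so it does not replace validation on an independent external set (Stage 4).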
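Stratified k-fold cross-validation assigns samples to folds as follows: samples of each source class are shuffled and dealt round-robin across folds, so every fold keeps roughly the original class proportions. The sketch below illustrates this as a stand-in for library utilities such as scikit-learn's `StratifiedKFold`. The function name, seed, and labels are hypothetical.

```python
import random
from collections import defaultdict


def stratified_kfold(labels, k=5, seed=42):
    """Yield (train_indices, test_indices) for k stratified folds."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    rng = random.Random(seed)
    folds = [[] for _ in range(k)]
    offset = 0  # carry position across classes so fold sizes stay balanced
    for label in sorted(by_class):
        members = by_class[label][:]
        rng.shuffle(members)
        for pos, idx in enumerate(members):
            folds[(offset + pos) % k].append(idx)
        offset += len(members)

    all_idx = set(range(len(labels)))
    for fold in folds:
        yield sorted(all_idx - set(fold)), sorted(fold)


labels = ["industrial"] * 10 + ["wastewater"] * 5  # hypothetical source labels
for train, test in stratified_kfold(labels, k=5):
    print(len(train), len(test), sorted({labels[i] for i in test}))
    # each fold: 12 train, 3 test (2 industrial + 1 wastewater)
```

Hyperparameters would be tuned on the training indices of each fold and scored on the held-out indices. Any feature selection must also be repeated inside each fold to avoid information leakage.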

Stage 4: Result Validation

Objective: Ensure the reliability, chemical accuracy, and environmental relevance of the ML-NTA outputs through a multi-tiered validation strategy [1].

Detailed Protocol:

- Analytical Confidence Validation:

- Confidence-Level Assignment: Assign a confidence level (e.g., Level 1-5) to compound identifications based on the Schymanski et al. framework. Level 1 (confirmed structure) requires matching retention time and MS/MS spectrum with an authentic standard [1] [17].

- Reference Materials: Use Certified Reference Materials (CRMs) or commercially available analytical standards to verify the identity and chromatographic behavior of key marker compounds [1].

- Model Generalizability Validation:

- External Validation Set: Evaluate the final model's performance on a completely independent set of samples that were not used in any part of the training or cross-validation process. This is the gold standard for assessing real-world predictive power [1].

- Performance Metrics: Report balanced accuracy, F1-score, Matthews Correlation Coefficient (MCC), and area under the ROC curve to comprehensively evaluate model performance [21].

- Environmental Plausibility Assessment:

- Contextual Data: Correlate model predictions with geospatial data (e.g., proximity to known emission sources), land use information, or co-occurring traditional water quality parameters [1].

- Source-Specific Markers: Verify that the chemical features identified by the model align with known industrial or agricultural chemicals used in the sample area [1] [20].
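Balanced accuracy and the Matthews correlation coefficient are both straightforward to compute from true and predicted source labels. The sketch below computes balanced accuracy as the mean per-class recall. It also computes the multiclass MCC, which reduces to the familiar binary formula for two classes. The labels are hypothetical. In practice, `sklearn.metrics.balanced_accuracy_score` and `sklearn.metrics.matthews_corrcoef` provide tested implementations.

```python
from collections import Counter


def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall; less sensitive to unequal class sizes."""
    recalls = []
    for c in sorted(set(y_true)):
        idx = [i for i, t in enumerate(y_true) if t == c]
        recalls.append(sum(y_pred[i] == c for i in idx) / len(idx))
    return sum(recalls) / len(recalls)


def mcc(y_true, y_pred):
    """Multiclass Matthews correlation coefficient."""
    s = len(y_true)                                     # number of samples
    c = sum(t == p for t, p in zip(y_true, y_pred))     # correctly classified
    t_k, p_k = Counter(y_true), Counter(y_pred)         # true / predicted counts
    classes = set(t_k) | set(p_k)
    cov_tp = c * s - sum(t_k[k] * p_k[k] for k in classes)
    cov_pp = s * s - sum(p_k[k] ** 2 for k in classes)
    cov_tt = s * s - sum(t_k[k] ** 2 for k in classes)
    if cov_pp == 0 or cov_tt == 0:
        return 0.0  # undefined when only one class is present; report 0
    return cov_tp / (cov_pp * cov_tt) ** 0.5


y_true = ["ind"] * 4 + ["afff"] * 2
y_pred = ["ind"] * 3 + ["afff"] * 3
print(balanced_accuracy(y_true, y_pred))  # 0.875
print(round(mcc(y_true, y_pred), 4))      # 0.7071
```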

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 1: Key reagents, materials, and software for implementing the ML-NTA workflow.

| Category | Item | Function in the Workflow |
|---|---|---|
| Sample Preparation | Oasis HLB and mixed-mode SPE sorbents | Broad-spectrum extraction of diverse organic contaminants from water [1] |
| | QuEChERS Extraction Kits | Efficient extraction and cleanup for complex matrices (e.g., soil, biota) [1] |
| | Analytical Grade Solvents (MeOH, ACN, Acetone) | Sample extraction, reconstitution, and mobile phase preparation [17] |
| Data Acquisition | C18 Reversed-Phase UHPLC Columns | High-efficiency chromatographic separation of a wide polarity range [17] |
| | Instrument Tuning and Calibration Solutions | Ensures mass accuracy and reproducibility of the HRMS instrument [17] |
| | Retention Index Marker Standards | Aids in retention time alignment and prediction for compound identification [22] |
| Data Processing | NIST Mass Spectral Library | Primary reference library for identifying compounds from GC-EI-MS spectra [17] |
| | mzCloud / MassBank | MS/MS spectral libraries for LC-HRMS data [16] |
| | XCMS / MS-DIAL | Open-source software for peak picking, alignment, and feature table creation [1] |
| Machine Learning | scikit-learn (Python) / caret (R) | Core libraries providing a unified interface for numerous ML algorithms [21] [19] |
| | Compound Discoverer / MassHunter | Vendor software platforms offering integrated workflows from feature detection to statistical analysis [16] |

Performance of ML Algorithms in NTA

The selection of an appropriate machine learning algorithm is critical and depends on the specific research goal, data structure, and need for interpretability. Table 2 summarizes the performance characteristics of commonly used algorithms in NTA studies.

Table 2: Comparison of machine learning algorithms used in non-targeted analysis.

| Algorithm | Type | Key Strengths | Performance Notes | Best Suited For |
|---|---|---|---|---|
| Random Forest (RF) | Ensemble (Supervised) | High accuracy, robust to outliers, provides feature importance [21] | Achieved MCC of 0.8203 and ACC of 0.9185 in nanobody binding prediction [21] | General-purpose classification and feature ranking [1] |
| Support Vector Classifier (SVC) | Supervised | Effective in high-dimensional spaces, versatile via kernel functions [1] | Balanced accuracy of 85.5-99.5% for PFAS source classification [1] | Complex, non-linear classification problems |
| PLS-DA | Supervised | Handles multicollinearity, provides direct feature weights (VIP scores) [1] | Effective for identifying source-specific indicator compounds [1] | Dimensionality reduction and classification when features are highly correlated |
| Principal Component Analysis (PCA) | Unsupervised | Reduces dimensionality, identifies patterns and outliers [1] | Foundation for exploratory data analysis [1] | Initial data exploration, visualization, and outlier detection |
| AdaBoost | Ensemble (Supervised) | Combines multiple weak learners for high accuracy | Demonstrated strong performance with MCC of 0.7456 [21] | Boosting model performance on difficult-to-classify samples |
| Logistic Regression (LR) | Supervised | Simple, interpretable, provides probability outputs | Used for screening PFAS source markers [1] | Linear classification problems requiring model interpretability |

This application note has detailed a standardized four-stage workflow for machine learning-assisted non-targeted analysis, providing a robust framework for contaminant discovery and source identification. The integration of advanced HRMS instrumentation with powerful ML algorithms enables researchers to move beyond targeted analysis and gain a systems-level understanding of complex chemical environments. By adhering to the detailed protocols for sample preparation, data acquisition, ML-oriented processing, and multi-tiered validation, scientists can generate reliable, actionable data. The ongoing development of standardized methods, open-source data processing tools, and more comprehensive chemical databases will further solidify ML-assisted NTA as an indispensable tool in environmental monitoring, exposure science, and public health research.

Visual Workflows

Machine learning (ML) is revolutionizing the identification and tracking of environmental contaminants, enabling researchers to move beyond traditional targeted analysis. This is particularly critical for complex pollutants like per- and polyfluoroalkyl substances (PFAS), pharmaceuticals, and industrial chemicals, where non-targeted analysis (NTA) using high-resolution mass spectrometry (HRMS) generates complex, high-dimensional data [1]. ML algorithms excel at identifying latent patterns within this data, making them indispensable for contaminant source identification—a fundamental step in environmental protection and public health decision-making [1] [23]. This application note details specific protocols and methodologies where ML-driven NTA is successfully applied to track these pervasive contaminants, providing a framework for researchers in environmental chemistry and drug development.

Application Notes & Quantitative Performance

The integration of machine learning with non-targeted analysis has yielded significant advancements in detecting and sourcing various contaminant classes. The table below summarizes key performance metrics from recent studies.

Table 1: Performance Metrics of ML Models in Contaminant Tracking

| Contaminant Class | ML Model Applied | Key Application | Reported Performance Metrics |
|---|---|---|---|
| PFAS [13] | Light Gradient Boosting Machine (LightGBM) | Pseudo-targeted screening in water samples using MS2 data | Scores >97% on five evaluation metrics (e.g., precision, recall); strong generalizability on external validation datasets |
| PFAS [1] | Random Forest (RF), Support Vector Classifier (SVC), Logistic Regression (LR) | Source identification and classification of 222 PFAS in 92 samples | Balanced classification accuracy ranging from 85.5% to 99.5% across different contamination sources |
| Pharmaceuticals [24] | Deep Neural Networks (DNNs) | Bioactivity prediction and molecular design in drug discovery | Applied for pattern recognition in high-dimensional data; improves decision-making in development pipelines |
| Industrial Chemicals [23] | XGBoost, Random Forests | Predictive modeling for environmental hazard and risk assessment | Most cited algorithms in environmental chemical research (bibliometric analysis of 3150 publications) |

Detailed Experimental Protocols

Protocol 1: ML-Based Pseudo-Targeted Screening for PFAS in Aqueous Matrices

This protocol outlines a machine learning framework for the high-throughput identification of per- and polyfluoroalkyl substances (PFAS) in water samples without authentic analytical standards, using a pseudo-targeted screening approach [13].

1. Objective: To construct a robust ML model capable of accurately classifying PFAS compounds in complex environmental water samples based on tandem mass spectrometry (MS2) data.

2. Materials and Reagents:

- Water Samples: Environmental water samples (e.g., surface water, groundwater) collected in certified clean polypropylene containers; glass containers are generally avoided for PFAS because some PFAS adsorb to glass surfaces.

- Solid Phase Extraction (SPE) Cartridges: Mixed-mode or broad-spectrum sorbents (e.g., Oasis HLB, ISOLUTE ENV+) for compound enrichment [1].

- LC-MS Grade Solvents: Methanol, acetonitrile, and water for sample extraction and chromatography.

- Instrumentation: Liquid Chromatography system coupled to a High-Resolution Tandem Mass Spectrometer (LC-HRMS/MS).

3. Procedural Steps:

Step 1: Dataset Curation

- Collect PFAS MS2 spectral data from public repositories such as MassBank.

- Curate a dataset containing fragment ion information, precursor m/z, and retention time indices.

- Annotate data with known PFAS structures and their fragment-based features.

Step 2: Feature Engineering

- Calculate molecular descriptors and fragment-related features from the MS2 data.

- Perform correlation analysis (e.g., using Pearson correlation coefficient) to identify and remove highly redundant features.

- Split the curated dataset into training and testing subsets (e.g., 80/20 split).
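The redundancy filter and the 80/20 split in Step 2 can be sketched as follows. The greedy rule is an assumption for illustration: a feature is kept unless its absolute Pearson correlation with an already-kept feature exceeds a threshold. The 0.95 threshold is likewise assumed, and the feature names and values are hypothetical. For classification data, the split would usually also be stratified by class.

```python
import math
import random


def pearson(a, b):
    """Pearson correlation coefficient (assumes neither column is constant)."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    sa = math.sqrt(sum((x - ma) ** 2 for x in a))
    sb = math.sqrt(sum((y - mb) ** 2 for y in b))
    return cov / (sa * sb)


def drop_redundant(columns, names, threshold=0.95):
    """Greedy filter: keep a feature unless |r| > threshold with one already kept."""
    kept = []
    for j, col in enumerate(columns):
        if all(abs(pearson(col, columns[k])) <= threshold for k in kept):
            kept.append(j)
    return [names[j] for j in kept]


def split_indices(n, test_frac=0.2, seed=0):
    """Shuffle indices 0..n-1 and return (train, test) index lists."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_test = round(n * test_frac)
    return sorted(idx[n_test:]), sorted(idx[:n_test])


names = ["frag_feature_A", "frag_feature_A_x2", "precursor_mz"]
columns = [[1, 2, 3, 4, 5], [2, 4, 6, 8, 10], [5, 3, 4, 1, 2]]
print(drop_redundant(columns, names))  # ['frag_feature_A', 'precursor_mz']
train_idx, test_idx = split_indices(10)
print(len(train_idx), len(test_idx))   # 8 2
```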

Step 3: Model Training and Selection

- Train multiple classifier algorithms (e.g., ten different models, including LightGBM, RF, SVC).

- Optimize hyperparameters for each model using techniques like grid search or random search.

- Select the best-performing model based on comprehensive metrics (Accuracy, Precision, Recall, F1-Score, AUC-ROC).
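Exhaustive grid search simply scores every combination of candidate hyperparameter values and keeps the best. The sketch below assumes a caller-supplied `score_fn`, for example mean cross-validated balanced accuracy, where higher is better. The toy scoring function and the grid values are hypothetical, although `num_leaves` and `learning_rate` are genuine LightGBM parameter names. In practice, scikit-learn's `GridSearchCV` combines this loop with cross-validation.

```python
from itertools import product


def grid_search(param_grid, score_fn):
    """Evaluate every combination in param_grid; return (best_params, best_score).

    param_grid: dict parameter name -> list of candidate values.
    score_fn(params) -> float, higher is better.
    """
    keys = sorted(param_grid)
    best_params, best_score = None, float("-inf")
    for values in product(*(param_grid[k] for k in keys)):
        params = dict(zip(keys, values))
        score = score_fn(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


grid = {"num_leaves": [15, 31, 63], "learning_rate": [0.05, 0.1]}


def toy_score(p):
    # Hypothetical stand-in for a cross-validated score; peaks at (31, 0.05)
    return -abs(p["num_leaves"] - 31) - abs(p["learning_rate"] - 0.05)


best, score = grid_search(grid, toy_score)
print(best)  # {'learning_rate': 0.05, 'num_leaves': 31}
```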

Step 4: Model Interpretation and Validation

- Apply model interpretability tools like SHAP (SHapley Additive exPlanations) to identify critical fragment features contributing to PFAS classification.

- Validate the final model's generalizability using an external dataset not used during training, such as experimentally measured LC-MS data from new environmental samples.

Protocol 2: Source Identification of PFAS Using Supervised Classification

This protocol employs supervised machine learning for attributing environmental PFAS samples to specific contamination sources by recognizing chemical fingerprints [1].

1. Objective: To classify HRMS-based NTA data of environmental samples into known source categories (e.g., industrial effluents, fire-fighting foam runoff, household wastewater) using supervised ML models.

2. Materials and Reagents:

- Environmental Samples: A diverse set of samples from known and unknown source types.

- QC Samples: Include batch-specific quality control samples (e.g., pooled samples) to ensure data integrity [1].

- Data Processing Software: Use platforms capable of peak picking, alignment, and componentization (e.g., XCMS) to generate a feature-intensity matrix.

3. Procedural Steps:

Step 1: Data Generation and Preprocessing

- Analyze samples using HRMS to obtain raw spectral data.

- Process data to generate a feature-intensity matrix: perform peak detection, retention time alignment, and group related features (adducts, isotopes).

- Apply data preprocessing: filter noise, impute missing values (e.g., with k-nearest neighbors), and normalize data (e.g., Total Ion Current normalization).
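k-nearest-neighbor imputation fills a missing intensity with the average of the same feature in the most similar samples. The sketch below is a simplified illustration with these assumptions:

- similarity is a Euclidean distance over the features observed in both samples;
- that distance is rescaled by the fraction of shared features, similar in spirit to the NaN-aware Euclidean distance used by scikit-learn's `KNNImputer`;
- features have been scaled first, so high-intensity features do not dominate the distance.

The data are hypothetical.

```python
import math


def knn_impute(X, k=2):
    """Fill None entries with the mean of that feature in the k nearest
    samples that observed it.

    X: samples x features (list of lists), None marks a missing value.
    Returns a new matrix; the input is not modified.
    """
    n, p = len(X), len(X[0])
    out = [row[:] for row in X]
    for i in range(n):
        missing = [j for j in range(p) if X[i][j] is None]
        if not missing:
            continue
        neighbours = []
        for r in range(n):
            shared = [j for j in range(p)
                      if X[i][j] is not None and X[r][j] is not None]
            if r == i or not shared:
                continue
            d2 = sum((X[i][j] - X[r][j]) ** 2 for j in shared)
            # Rescale for the number of shared features so partial overlaps compare fairly
            neighbours.append((math.sqrt(d2 * p / len(shared)), r))
        neighbours.sort()
        for j in missing:
            donors = [X[r][j] for _, r in neighbours if X[r][j] is not None][:k]
            if donors:  # leave the gap if no neighbour observed this feature
                out[i][j] = sum(donors) / len(donors)
    return out


X = [
    [1.0, 2.0, None],
    [1.1, 2.1, 3.0],
    [0.9, 1.9, 5.0],
    [10.0, 20.0, 100.0],
]
print(knn_impute(X)[0])  # [1.0, 2.0, 4.0]
```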

Step 2: Feature Selection and Dimensionality Reduction

- Perform univariate statistical analysis (e.g., ANOVA) to identify features with significant variation across potential sources.

- Apply dimensionality reduction techniques like Principal Component Analysis (PCA) to visualize sample groupings and identify major patterns.
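The univariate screening in Step 2 ranks each feature by how strongly its intensity differs across source categories, and a one-way ANOVA F statistic is a common choice for this. The minimal sketch below computes F for one feature. It assumes at least two groups, with more observations in total than groups; the intensities are hypothetical. For p-values, `scipy.stats.f_oneway` returns both the F statistic and its p-value.

```python
def anova_f(groups):
    """One-way ANOVA F statistic for a single feature.

    groups: list of intensity lists, one per source category.
    F = (SS_between / (g - 1)) / (SS_within / (N - g))
    """
    values = [v for grp in groups for v in grp]
    N, g = len(values), len(groups)
    grand_mean = sum(values) / N
    group_means = [sum(grp) / len(grp) for grp in groups]
    ss_between = sum(len(grp) * (m - grand_mean) ** 2
                     for grp, m in zip(groups, group_means))
    ss_within = sum((v - m) ** 2 for grp, m in zip(groups, group_means) for v in grp)
    return (ss_between / (g - 1)) / (ss_within / (N - g))


# Hypothetical intensities of two features across three source categories
features = {
    "F1": [[1, 2, 3], [4, 5, 6], [7, 8, 9]],
    "F2": [[5, 6, 4], [5, 4, 6], [6, 5, 4]],
}
ranked = sorted(features, key=lambda f: anova_f(features[f]), reverse=True)
print(round(anova_f(features["F1"]), 2), ranked)  # 27.0 ['F1', 'F2']
```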

Step 3: Supervised Model Training

- Assign source labels to samples in the training set.

- Train classifiers such as Random Forest (RF) or Support Vector Classifier (SVC) on the labeled feature-intensity data.

- Use recursive feature elimination to refine input variables and optimize model performance and interpretability.
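Recursive feature elimination repeatedly refits the model, ranks the remaining features by importance, and discards the weakest until a target number remains. The loop below is a minimal sketch of that procedure. `importance_fn` is a hypothetical callable; in real use it would retrain the classifier on the remaining features and return its importances (for example, a Random Forest's impurity-based importances). The fixed importances in the example are invented for illustration. `sklearn.feature_selection.RFE` offers a tested implementation.

```python
def recursive_feature_elimination(features, importance_fn, n_keep):
    """Drop the least important feature one at a time until n_keep remain.

    importance_fn(remaining) -> dict feature -> importance, recomputed each
    round on the current feature subset (i.e., after refitting the model).
    Returns (selected_features, features_in_elimination_order).
    """
    remaining = list(features)
    eliminated = []
    while len(remaining) > n_keep:
        scores = importance_fn(remaining)
        weakest = min(remaining, key=lambda f: scores[f])
        remaining.remove(weakest)
        eliminated.append(weakest)
    return remaining, eliminated


# Hypothetical stand-in: fixed importances instead of a refitted classifier
toy_importances = {"F1": 0.40, "F2": 0.30, "F3": 0.05, "F4": 0.25}


def toy_importance_fn(remaining):
    return {f: toy_importances[f] for f in remaining}


selected, dropped = recursive_feature_elimination(
    ["F1", "F2", "F3", "F4"], toy_importance_fn, n_keep=2)
print(selected, dropped)  # ['F1', 'F2'] ['F3', 'F4']
```

Because importances are recomputed after each removal, RFE can promote a feature whose signal was previously shared with a correlated feature that has since been dropped. With fixed importances, as in this toy example, it reduces to a one-shot ranking.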

Step 4: Model Validation and Environmental Plausibility Check

- Validate model performance using k-fold cross-validation (e.g., 10-fold) and an independent test set.

- Assess environmental plausibility by correlating model predictions with contextual data (e.g., geospatial proximity to known emission sources) [1].

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful implementation of ML-driven NTA requires specific materials and software tools. The following table details key components of the research toolkit for these applications.

Table 2: Essential Research Reagents and Materials for ML-NTA Workflows

| Item Name | Function/Application | Specific Examples/Notes |
|---|---|---|
| High-Resolution Mass Spectrometer (HRMS) | Generates high-fidelity spectral data for non-targeted analysis; essential for detecting thousands of unknown chemicals [1]. | Quadrupole Time-of-Flight (Q-TOF), Orbitrap systems [1]. |
| Solid Phase Extraction (SPE) Sorbents | Enriches and cleans up samples, improving sensitivity and removing matrix interference for downstream analysis [1]. | Mixed-mode sorbents; Oasis HLB, ISOLUTE ENV+, Strata WAX, and WCX [1]. |
| Chromatography Systems | Separates complex mixtures before MS analysis, reducing ion suppression and providing retention time as a key feature for identification. | Liquid Chromatography (LC) or Gas Chromatography (GC) systems coupled to HRMS [1]. |
| Certified Reference Materials (CRMs) | Validates analytical methods and confirms compound identities, ensuring data quality and model reliability [1]. | Used for target compounds where available. |
| Data Processing Software | Converts raw HRMS data into a structured feature table suitable for ML analysis [1]. | Performs peak detection, retention time correction, and alignment (e.g., XCMS). |
| Machine Learning Frameworks | Provides the algorithmic foundation for building, training, and validating predictive models for source identification and classification. | TensorFlow, PyTorch, scikit-learn [24]. |

The Methodology Deep Dive: Building an ML-NTA Workflow for Source Tracking

The efficacy of non-target analysis (NTA) for identifying emerging environmental contaminants is fundamentally dependent on the initial sample preparation stage. Comprehensive analyte recovery from complex biological and environmental matrices is a critical prerequisite for generating high-quality data suitable for machine learning (ML) modeling. Inefficient or inconsistent recovery introduces biases and artifacts that can compromise subsequent chemical identification and source attribution. This protocol details optimized sample treatment and extraction procedures designed to maximize analyte recovery, ensure high reproducibility, and produce reliable data for ML-driven contaminant source identification research. The integration of robust sample preparation forms the foundational step in a workflow that aims to leverage computational models for enhanced environmental risk assessment [25].

The challenge in NTA lies in the vast structural diversity of analytes and the complexity of sample matrices, ranging from biological fluids to environmental waters and soils. Sample preparation serves to isolate, purify, and concentrate analytes of interest while removing interfering compounds. Recent advancements have focused on improving recovery efficiency and reproducibility through novel materials and techniques, which are essential for building accurate ML models that predict contaminant presence and origin [26] [25]. The following sections provide a detailed guide to achieving these objectives through carefully selected methods and protocols.

Current State and Emerging Trends in Sample Preparation

Traditional sample preparation techniques have included Protein Precipitation (PPT), Liquid-Liquid Extraction (LLE), and Solid-Phase Extraction (SPE). While these methods remain prevalent, they often suffer from limitations such as moderate reproducibility, high solvent consumption, and inadequate recovery for certain analyte classes. SPE, considered the gold standard for many applications, has been plagued by issues like inconsistent resin mass, channeling, and voiding in traditionally loose-packed cartridges, leading to variable recovery rates [27].

The field is witnessing a paradigm shift with the advent of novel extraction methods and material technologies. Key emerging trends include:

- Novel Extraction Methods: Techniques such as Microwave-Assisted Extraction (MAE), Ultrasound-Assisted Extraction (UAE), and Pressurized Liquid Extraction (PLE) offer improved recovery rates and reduced extraction times. MAE utilizes microwave energy to heat samples rapidly, accelerating the extraction of analytes from solid matrices. UAE employs ultrasonic waves to create cavitation bubbles, enhancing mass transfer, while PLE uses high pressure and temperature for efficient extraction [26].

- Advanced Sorbent Technologies: Nanotechnology has introduced innovative materials like magnetic nanoparticles and carbon nanotubes. These materials offer high surface areas and can be functionalized for selective analyte capture, thereby improving recovery efficiency and reducing matrix effects [26].

- Composite SPE Technology: A significant innovation is the development of composite SPE products that immobilize chromatographic resin within a porous plastic matrix. This design eliminates the inconsistencies of loose packing, ensuring consistent bed weights and optimal liquid flow. In the cited comparison, this technology achieved an average recovery of 91% with a relative standard deviation (RSD) below 2%, versus 88% recovery and about 6% RSD for traditional loose-packed plates; the main gain is therefore in reproducibility rather than in absolute recovery [27].
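Recovery and RSD figures like these are summary statistics over replicate spiked extractions. The following is a minimal sketch, assuming recovery is expressed relative to an unextracted standard at the same nominal amount and RSD is based on the sample standard deviation; the function name and the peak areas are hypothetical, not data from [27].

```python
from statistics import mean, stdev


def recovery_stats(measured, expected):
    """Return (mean % recovery, % RSD) for replicate extractions.

    measured: responses (e.g., peak areas) of extracted, spiked replicates.
    expected: response of an unextracted standard at the same nominal amount,
              i.e., the response that 100 % recovery would give.
    """
    if len(measured) < 2:
        raise ValueError("need at least two replicates to estimate RSD")
    recoveries = [100.0 * m / expected for m in measured]
    avg = mean(recoveries)
    rsd = 100.0 * stdev(recoveries) / avg  # sample standard deviation (n - 1)
    return avg, rsd


# Hypothetical peak areas for six replicates (n = 6) and a reference standard
areas = [9100, 9150, 9050, 9120, 9080, 9100]
rec, rsd = recovery_stats(areas, expected=10000)
print(f"recovery = {rec:.1f} %, RSD = {rsd:.2f} %")
```

Reporting RSD on the recovery values rather than on raw areas gives the same number, since the two differ only by a constant factor.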

These advancements are crucial for NTA, as they provide the consistent, high-quality data required for training and validating machine learning models in contaminant discovery [25].

Application Notes: Experimental Protocols

Composite Solid-Phase Extraction (SPE) Protocol

This protocol describes a method using composite C18 SPE plates for the extraction of a wide range of analytes from liquid samples. The composite technology ensures high reproducibility, which is vital for generating robust datasets for ML analysis [27].

- Principle: Analytes are retained on a C18 reversed-phase sorbent embedded in a porous plastic composite matrix. Interferences are washed away, and target analytes are eluted with a strong solvent.

- Applications: Sample cleanup and concentration for non-target analysis of emerging contaminants (e.g., pharmaceuticals, pesticides, industrial chemicals) in water, urine, or processed biological samples.

- Research Reagent Solutions:

| Item | Function in Protocol |
|---|---|
| Microlute CSi C18 Composite Plate (10 mg) | The core extraction medium; composite structure ensures even flow and high reproducibility. |
| Methanol (HPLC-grade) | Conditions the sorbent and serves as the elution solvent. |
| Water (HPLC-grade) | Equilibrates the sorbent after conditioning and is used as a wash solvent. |
| Acid/Base for pH adjustment | Neutralizes charge on acidic/basic analytes during load or creates charge for elution. |
| Agilent 1260 HPLC with MSD | Instrumentation for the final analysis of extracted samples. |

- Methodology:

- Conditioning: Add 500 µL of methanol to each well of the composite plate. Apply gentle vacuum or positive pressure until the solvent just passes through the bed.

- Equilibration: Immediately add 500 µL of HPLC-grade water to each well and pass it through until the water has just drained into the bed. Do not allow the sorbent to dry out completely between steps.

- Sample Loading: Adjust the pH of the 500 µL sample so that acidic and basic analytes are uncharged, which promotes their retention on the C18 sorbent. Apply the sample to the well and pass it through slowly.

- Washing: Add 500 µL of HPLC-grade water to each well to remove weakly retained interferences. Pass through completely.

- Elution: Elute the analytes with 2 × 250 µL of methanol. The pH of the methanol may be adjusted to ionize acidic or basic compounds and ensure efficient elution. Collect the eluate.

- Post-processing: Evaporate the collected eluate to dryness under a gentle stream of nitrogen. Reconstitute the dried sample in a solvent compatible with the subsequent analytical instrument (e.g., LC-MS mobile phase) [27].
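Two quantities in this protocol can be made explicit. The pH adjustment during loading and elution follows the Henderson-Hasselbalch relation, and the dry-down and reconstitution step determines how much the extract is concentrated. The sketch below assumes a placeholder pKa of 4.2 and a hypothetical 100 µL reconstitution volume (neither is specified in the protocol); the function names are illustrative only.

```python
def neutral_fraction(pH, pKa, acidic=True):
    """Fraction of an analyte in its uncharged form (Henderson-Hasselbalch).

    Acid HA: f = 1 / (1 + 10**(pH - pKa))
    Base B:  f = 1 / (1 + 10**(pKa - pH)), using the pKa of the conjugate acid BH+
    """
    exponent = (pH - pKa) if acidic else (pKa - pH)
    return 1.0 / (1.0 + 10.0 ** exponent)


def enrichment_factor(sample_volume_ul, reconstitution_volume_ul, recovery=1.0):
    """Concentration of the final extract relative to the loaded sample.

    recovery is a fraction (e.g. 0.91); both volumes are in microlitres.
    """
    if reconstitution_volume_ul <= 0:
        raise ValueError("reconstitution volume must be positive")
    return recovery * sample_volume_ul / reconstitution_volume_ul


# Loading: an acid with a placeholder pKa of 4.2, two pH units below its pKa
print(round(neutral_fraction(2.2, 4.2, acidic=True), 3))
# Elution: the same acid two pH units above its pKa is mostly ionized
print(round(1 - neutral_fraction(6.2, 4.2, acidic=True), 3))

# 500 uL sample; the 2 x 250 uL methanol eluate is dried and taken up in a
# hypothetical 100 uL of mobile phase; 91 % recovery
elution_volume_ul = 2 * 250
print(elution_volume_ul, enrichment_factor(500, 100, recovery=0.91))
```

The common rule of thumb of shifting the pH about two units away from the pKa follows directly from this relation: at that offset roughly 99% of the analyte is in the desired form.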

Ultrasound-Assisted Extraction (UAE) for Solid Matrices

This protocol is optimized for extracting analytes from complex solid matrices, such as soil, sediment, or tissue, which is a common challenge in environmental NTA.

- Principle: Ultrasonic energy creates cavitation bubbles in the solvent, which implode and generate micro-turbulence and high-velocity jets. This disrupts the sample matrix and enhances the mass transfer of analytes into the solvent.

- Applications: Extraction of organic contaminants from solid environmental samples or biological tissues prior to SPE cleanup and LC-MS analysis.

- Methodology:

- Sample Preparation: Homogenize and accurately weigh approximately 1 g of the solid sample into a centrifuge tube.

- Solvent Addition: Add a suitable extraction solvent (e.g., a dichloromethane/methanol mixture) at a solvent-to-sample ratio of 10:1 (v/w).

- Sonication: Place the tube in an ultrasonic bath or use an ultrasonic probe. Extract for 15 minutes at a controlled temperature (e.g., 30°C) to prevent analyte degradation.

- Separation: Centrifuge the mixture at 4000 rpm for 10 minutes to pellet the solid debris.

- Collection: Carefully decant or pipette the supernatant into a clean tube.

- Concentration: Repeat the extraction once or twice and combine the supernatants. Evaporate the combined extract to near dryness and reconstitute in a small volume of a solvent compatible with a downstream cleanup step (e.g., SPE) or direct analysis [26].
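The 10:1 (v/w) ratio fixes the solvent volume for whatever mass is actually weighed, and repeating the extraction recovers analyte left in the solid by earlier passes. A minimal sketch under a simple, hypothetical model in which each pass removes a constant fraction of the analyte still in the matrix; the 70% per-pass yield and the function name are illustrative, not measured values.

```python
def uae_plan(sample_mass_g, n_extractions, ratio_ml_per_g=10.0, single_pass_yield=None):
    """Solvent volumes for repeated UAE at a fixed solvent-to-sample ratio.

    Returns (mL per extraction, total mL combined, cumulative yield or None).
    The total is an upper bound, since some solvent stays with the pellet.
    The cumulative yield assumes (hypothetically) that every pass extracts the
    same fraction of the analyte remaining in the solid.
    """
    per_pass_ml = sample_mass_g * ratio_ml_per_g
    total_ml = per_pass_ml * n_extractions
    cumulative = None
    if single_pass_yield is not None:
        cumulative = 1.0 - (1.0 - single_pass_yield) ** n_extractions
    return per_pass_ml, total_ml, cumulative


# 1.05 g weighed; initial extraction plus two repeats; 70 % per-pass yield is illustrative
per_pass, total, yield_3 = uae_plan(1.05, n_extractions=3, single_pass_yield=0.70)
print(f"{per_pass:.1f} mL per pass, {total:.1f} mL combined, {yield_3:.1%} cumulative")
```

Under this model the marginal gain shrinks with each repeat, which is consistent with the protocol's advice to repeat the extraction only once or twice.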

Data Presentation and Analysis

The quantitative performance of different extraction techniques is critical for selection and validation. The following tables summarize recovery and reproducibility data from comparative studies, providing a basis for informed method selection.

Table 1. Percent Recovery of Selected Analytes using Composite vs. Loose Packed C18 SPE Plates (n=6) [27]

| Compound | Analyte Type | LogP [27] | Composite Plate | Loose Packed Plate |
|---|---|---|---|---|
| Atenolol | Basic | 0.16 | 91% | 88% |
| Pindolol | Basic | 1.75 | 92% | 89% |
| Dexamethasone | Neutral | 1.83 | 90% | 87% |
| Ketoprofen | Acidic | 3.12 | 92% | 90% |
| Naproxen | Acidic | 3.18 | 91% | 89% |
| Propranolol | Basic | 3.48 | 93% | 90% |
| Nortriptyline | Basic | 3.90 | 92% | 89% |
| Niflumic acid | Acidic | 4.43 | 91% | 86% |
| Average Recovery | | | 91% | 88% |

Table 2. Reproducibility (%RSD) of Recovery for Composite vs. Loose Packed C18 SPE Plates (n=6) [27]

| Compound | Composite Plate (%RSD) | Loose Packed Plate (%RSD) |
|---|---|---|
| Atenolol | < 2% | ~6% |
| Pindolol | < 2% | ~6% |
| Dexamethasone | < 2% | ~5% |
| Ketoprofen | < 2% | ~7% |
| Naproxen | < 2% | ~6% |
| Propranolol | < 2% | ~5% |
| Nortriptyline | < 2% | ~6% |
| Average %RSD | < 2% | ~6% |

These data show that composite SPE provides recovery comparable to, or slightly higher than, loose-packed plates, together with markedly better reproducibility (RSD below 2% versus roughly 5-7%). This low variability is a key asset for non-target analysis because it minimizes technical noise and makes true chemical patterns easier to detect, thereby improving the quality of data for machine learning applications [27] [25].

Workflow Integration for Machine Learning Applications

The sample treatment and extraction stage is the first and most critical physical data generation point in an integrated workflow for ML-based contaminant identification. The following diagram illustrates the logical flow from sample to model-ready data.

Sample Prep Workflow for ML-Grade Data

In this workflow, the Sample Treatment & Extraction module is governed by the protocols detailed in this section. Its output is a cleaned and concentrated extract ready for High-Resolution Mass Spectrometry (HRMS). A rigorous Quality Control checkpoint, assessing recovery and reproducibility against predefined thresholds (e.g., RSD < 5%), is essential. Extracts that fail QC may require a repeat extraction, so that only high-fidelity data proceed. Data that pass QC are then pre-processed into a format suitable for ML algorithms, which can identify patterns and correlations indicative of specific contaminant sources [25].
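The reproducibility part of the QC checkpoint can be sketched as a per-feature filter over replicate QC injections. This sketch covers only reproducibility (not recovery), assumes the replicate intensities are already grouped by feature, and uses the 5% RSD example threshold from the text; the feature IDs, intensities, and function name are hypothetical.

```python
from statistics import mean, stdev


def qc_pass_features(feature_table, max_rsd=5.0):
    """Keep features whose intensity %RSD across QC replicates is below max_rsd.

    feature_table maps a feature ID to its intensities in replicate QC injections.
    Returns {feature_id: rsd} for the features that pass.
    """
    passed = {}
    for feature_id, intensities in feature_table.items():
        if len(intensities) < 2:
            continue  # RSD is undefined with fewer than two replicates
        avg = mean(intensities)
        if avg <= 0:
            continue  # feature not detected in the QC samples
        rsd = 100.0 * stdev(intensities) / avg
        if rsd < max_rsd:
            passed[feature_id] = rsd
    return passed


qc = {
    "M301.14_T5.2": [1.00e5, 1.02e5, 0.99e5],  # stable feature
    "M415.21_T8.7": [4.0e4, 6.5e4, 2.1e4],     # irreproducible feature
}
print(qc_pass_features(qc))
```

Filtering at the feature level, rather than rejecting whole runs, keeps reproducible features even when a few unstable ones are present.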

The role of advanced sample preparation in enabling ML is profound. As one review notes, ML-assisted NTA can "significantly enhance the detection, quantification, and evaluation of emerging environmental contaminants," but this potential can only be realized with a foundation of reliable input data generated by robust extraction protocols [25].

The pursuit of comprehensive analyte recovery is not merely a technical objective but a fundamental requirement for the success of machine learning in non-target analysis and contaminant source identification. This section has outlined why the sample preparation stage is critical and has provided detailed protocols, particularly for composite SPE and UAE, aimed at the high recovery and reproducibility that ML modeling requires. By following standardized methods such as these, researchers can generate analytically robust and consistent datasets. Such data form the reliable foundation on which machine learning models can be trained and deployed to identify the origin and fate of emerging environmental contaminants, ultimately supporting more effective public health and environmental protection.

Within the framework of machine learning (ML) for non-target analysis (NTA), the generation of high-quality, structured data is a critical prerequisite for successful model training and contaminant source identification [1]. This stage transforms raw analytical signals from high-resolution mass spectrometry (HRMS) into a structured feature-intensity matrix, which serves as the foundational dataset for all subsequent ML-driven pattern recognition and classification tasks [1] [11]. The reliability of the final ML model is directly contingent upon the precision and comprehensiveness of the data produced in this phase.

Experimental Protocol: From Sample to Digital Feature Table

Instrumentation and Data Acquisition

The core of this protocol relies on High-Resolution Mass Spectrometry, typically coupled with liquid or gas chromatography (LC/GC). Key platforms include quadrupole time-of-flight (Q-TOF) and Orbitrap systems, which provide the high mass accuracy and resolution necessary for discerning thousands of chemical features [1] [28]. The data acquisition is performed in full-scan mode, often augmented with data-dependent (DDA) or data-independent (DIA) acquisition modes to collect fragmentation spectra (MS/MS) for compound annotation [29].
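Data-dependent acquisition decides on the fly which precursor ions to fragment. The following is a simplified sketch of top-N selection with dynamic exclusion. The intensity threshold, absolute m/z tolerance, and static exclusion list are hypothetical simplifications; real instrument firmware also screens charge states, excludes isotope peaks, and expires exclusions after a set time.

```python
def select_dda_precursors(survey_scan, top_n, min_intensity, excluded, mz_tol=0.01):
    """Pick up to top_n precursor m/z values for MS/MS from one survey scan.

    survey_scan: list of (mz, intensity) from the full-scan MS1 spectrum.
    excluded:    m/z values fragmented recently (dynamic exclusion list).
    mz_tol:      absolute tolerance (Da) for matching against the exclusion list.
    """
    candidates = sorted(survey_scan, key=lambda p: p[1], reverse=True)
    selected = []
    for mz, intensity in candidates:
        if intensity < min_intensity:
            break  # candidates are sorted, so all remaining ions are weaker
        if any(abs(mz - ex) <= mz_tol for ex in excluded):
            continue  # recently fragmented; skip to sample other ions
        selected.append(mz)
        if len(selected) == top_n:
            break
    return selected


scan = [(150.05, 5e3), (301.14, 2e5), (415.21, 8e4), (520.30, 1.5e5)]
print(select_dda_precursors(scan, top_n=2, min_intensity=1e4, excluded=[301.141]))
```

Dynamic exclusion is what lets DDA reach beyond the most abundant ions; without it, the same intense precursors would be fragmented scan after scan.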

Critical Data Acquisition Parameters:

- Mass Accuracy: Typically < 5 ppm for confident elemental composition assignment.

- Resolution: Typically > 25,000 (FWHM) to separate isobaric compounds.

- Chromatographic Separation: Essential to reduce sample complexity and matrix effects.
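The first two parameters reduce to short calculations: mass error in ppm, and the resolving power (m/Δm) needed to distinguish two nearby ions. A minimal sketch with hypothetical m/z values; the m/Δm criterion is the usual FWHM-based approximation, and cleanly separating peaks of very different intensity generally needs more.

```python
def ppm_error(measured_mz, theoretical_mz):
    """Mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def required_resolution(mz1, mz2):
    """Approximate resolving power (m / delta m, FWHM) needed to tell two ions apart."""
    if mz1 == mz2:
        raise ValueError("identical m/z values cannot be resolved")
    return ((mz1 + mz2) / 2) / abs(mz1 - mz2)


# Hypothetical m/z values, chosen only to illustrate the arithmetic
err = ppm_error(300.0012, 300.0000)
print(f"{err:.1f} ppm, within 5 ppm: {abs(err) < 5}")
print(f"R needed: {required_resolution(300.0000, 300.0100):,.0f}")
```

At m/z 300, a 5 ppm window corresponds to 0.0015 Da, which is why both specifications matter when assigning elemental compositions.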

Data Processing Workflow

The transformation of raw HRMS data into a feature-intensity matrix involves a multi-step computational process. The following workflow diagram outlines the key stages and their logical relationships.

Diagram 1: The HRMS Data Processing Workflow for Feature-Intensity Matrix Creation.
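The grouping step that turns per-sample peak lists into a feature-intensity matrix can be sketched as a greedy match on m/z (ppm) and retention-time tolerances. This is a simplified illustration, not the algorithm used by XCMS or any other package. It omits retention-time correction and gap filling, and the tolerances, function name, sample names, and peak values are hypothetical.

```python
def build_feature_matrix(peak_lists, ppm_tol=5.0, rt_tol=0.2):
    """Group peaks across samples into features; return (features, matrix).

    peak_lists: {sample_name: [(mz, rt_min, intensity), ...]}
    features:   list of (mean_mz, mean_rt) for each aligned feature
    matrix:     {sample_name: [intensity per feature, 0.0 if not detected]}
    """
    groups = []  # each group: {"mz": [...], "rt": [...], "hits": {sample: intensity}}
    all_peaks = sorted(
        (mz, rt, inten, sample)
        for sample, peaks in peak_lists.items()
        for mz, rt, inten in peaks
    )
    for mz, rt, inten, sample in all_peaks:
        match = None
        for g in groups:
            ref_mz = sum(g["mz"]) / len(g["mz"])
            ref_rt = sum(g["rt"]) / len(g["rt"])
            if (abs(mz - ref_mz) / ref_mz * 1e6 <= ppm_tol
                    and abs(rt - ref_rt) <= rt_tol
                    and sample not in g["hits"]):  # one peak per sample per feature
                match = g
                break
        if match is None:
            match = {"mz": [], "rt": [], "hits": {}}
            groups.append(match)
        match["mz"].append(mz)
        match["rt"].append(rt)
        match["hits"][sample] = inten
    features = [(sum(g["mz"]) / len(g["mz"]), sum(g["rt"]) / len(g["rt"])) for g in groups]
    matrix = {s: [g["hits"].get(s, 0.0) for g in groups] for s in peak_lists}
    return features, matrix


peaks = {
    "upstream": [(301.1410, 5.20, 1.2e5), (415.2100, 8.70, 3.0e4)],
    "downstream": [(301.1418, 5.25, 2.5e5)],
}
features, matrix = build_feature_matrix(peaks)
print(features)
print(matrix)
```

Non-detects are written as 0.0 here for simplicity; downstream ML steps may need to treat them as missing values rather than true zeros.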

Detailed Protocol Steps

Peak Picking and Chromatogram Deconvolution:

- Objective: To identify all chromatographic peaks from the raw data files.