Mapping the Exponential Rise of Machine Learning in Environmental Chemical Research: A 2025 Bibliometric Analysis of Trends, Gaps, and Future Directions

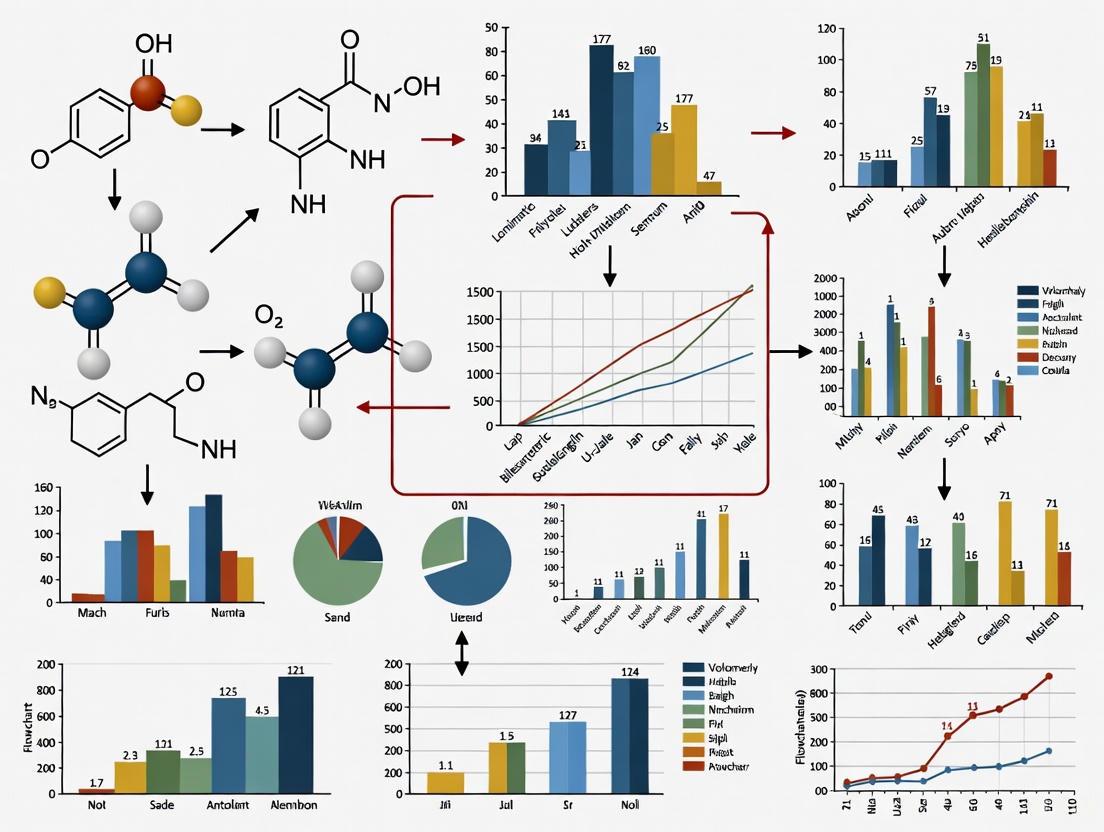


Abstract

This bibliometric analysis synthesizes findings from 3,150 peer-reviewed publications to map the rapid evolution of machine learning (ML) in environmental chemical research. The analysis reveals an exponential publication surge beginning in 2015, led by China and the United States, with XGBoost and random forests as the most frequently cited algorithms. The study identifies eight key thematic clusters, from water quality prediction to per- and polyfluoroalkyl substances (PFAS), and uncovers a 4:1 bias in keyword frequencies toward environmental endpoints over human health endpoints. For researchers and drug development professionals, this article provides a comprehensive landscape of methodological applications, practical insights into data and model limitations, and a forward-looking perspective on translating ML advances into actionable risk assessments and sustainable biomedical innovations.

The Landscape of ML in Environmental Chemicals: Exponential Growth, Thematic Clusters, and Global Research Leaders

The integration of machine learning (ML) into environmental chemical research represents a paradigm shift from traditional toxicological methods toward data-driven, predictive science. This transformation is reflected in explosive growth in scientific publications, as the global research community rapidly adopts these computational techniques. The publication trajectory in this interdisciplinary field is a useful indicator of technological adoption, emerging research priorities, and future directions for scientists, policymakers, and drug development professionals engaged in chemical risk assessment and environmental health. This technical analysis uses bibliometric data from peer-reviewed literature to quantify and characterize this surge, providing an evidence-based framework for understanding how ML applications in environmental chemistry evolved from 1996 to 2025. Situated within a broader thesis on bibliometric trends, it offers not only a quantitative assessment of growth patterns but also a breakdown of the methodological protocols and research tools driving this scientific revolution.

Quantitative Analysis of Publication Growth

Annual Publication Trends

The analysis of publication data from the Web of Science Core Collection reveals a dramatic acceleration in research output at the intersection of machine learning and environmental chemicals. The period from 1996 to 2015 was characterized by modest annual publication outputs, consistently remaining below 25 papers per year, indicating nascent-stage development and limited institutional engagement [1]. A significant inflection point occurred around 2015, marking the beginning of an exponential growth phase that has continued unabated through 2025.

Table 1: Annual Publication Count for Machine Learning in Environmental Chemical Research (1996-2025)

| Year | Publication Count | Cumulative Publications | Growth Rate |
|---|---|---|---|
| 1996-2014 | <25 per year | ~200 (estimated) | - |
| 2020 | 179 | ~700 (estimated) | >600% from 2015 |
| 2021 | 301 | ~1000 | 68% |
| 2024 | 719 | ~2500 | 139% (from 2021) |
| 2025* | 545 (mid-year) | ~3000 | Projected >2024 |

*Data for 2025 are partial, current as of mid-2025 [1].

The data indicate that approximately 75% of all publications in this domain have appeared since 2017, underscoring the recent acceleration [2]. With 545 publications already recorded by mid-year, 2025 is on pace to surpass the 2024 record, confirming the field's continued upward trajectory and sustained global research interest [1]. This growth pattern aligns with broader trends in computational toxicology and in artificial intelligence applications across scientific disciplines, but follows a distinctive acceleration pattern specific to environmental chemical applications [1].
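The growth figures in Table 1 follow from simple arithmetic on the annual counts. The sketch below recomputes them and adds a compound annual growth rate (CAGR) and a naive linear extrapolation for 2025; the CAGR and the assumption that "mid-year" means exactly half of the year are illustrative additions, not figures reported in [1].

```python
# Publication counts from Table 1; the 2025 value is a partial, mid-year count.
counts = {2020: 179, 2021: 301, 2024: 719}
partial_2025 = 545


def pct_change(old, new):
    """Percentage change from `old` to `new`."""
    return (new - old) / old * 100


def cagr(old, new, years):
    """Compound annual growth rate over `years` years, as a percentage."""
    return ((new / old) ** (1 / years) - 1) * 100


def annualize(partial_count, fraction_of_year):
    """Naive linear extrapolation of a partial-year count to a full year."""
    return partial_count / fraction_of_year


growth_2021 = pct_change(counts[2020], counts[2021])
growth_2021_2024 = pct_change(counts[2021], counts[2024])
annual_rate = cagr(counts[2021], counts[2024], years=3)
# Assumption: "mid-year" is treated as exactly half of the year.
projected_2025 = annualize(partial_2025, fraction_of_year=0.5)

print(f"2020 -> 2021: {growth_2021:.1f}%")
print(f"2021 -> 2024: {growth_2021_2024:.1f}% (CAGR {annual_rate:.1f}% per year)")
print(f"Naive 2025 projection: {projected_2025:.0f} (2024 actual: {counts[2024]})")
```

Run as-is, it prints 68.2% and 138.9%, matching the rounded 68% and 139% in the table, a CAGR of roughly 33.7% per year, and a naive 2025 projection of 1,090 publications. Publication output is rarely uniform across a year, so the projection only supports the qualitative claim that 2025 will exceed 2024.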

Geographic and Institutional Contributions

The global distribution of research output reveals concentrated expertise with emerging worldwide participation. An analysis of 4,254 institutions across 94 countries indicates that the People's Republic of China leads in raw publication volume with 1,130 publications, while the United States follows with 863 publications but demonstrates stronger collaborative networks as evidenced by a higher Total Link Strength (TLS) of 734 compared to China's 693 [1]. This suggests more extensive international partnerships in U.S.-led research initiatives.

Table 2: Top Contributing Countries and Institutions in ML for Environmental Chemical Research

| Rank | Country | Publications | Total Link Strength (TLS) | Leading Institution | Institutional Publications |
|---|---|---|---|---|---|
| 1 | China | 1,130 | 693 | Chinese Academy of Sciences | 174 |
| 2 | United States | 863 | 734 | U.S. Department of Energy | 113 |
| 3 | India | 255 | Data Not Provided | Data Not Provided | Data Not Provided |
| 4 | Germany | 232 | Data Not Provided | Data Not Provided | Data Not Provided |
| 5 | England | 229 | Data Not Provided | Data Not Provided | Data Not Provided |

Other significant contributors include India (255 publications), Germany (232 publications), and England (229 publications), reflecting the global scientific priority placed on this research domain [1]. At the institutional level, the Chinese Academy of Sciences leads with 174 publications over the past decade, followed by the United States Department of Energy with 113 publications, highlighting the pivotal role of major research organizations and national laboratories in advancing this field [1].

Methodological Framework for Bibliometric Analysis

Data Collection Protocol

The quantitative trends presented in this analysis derive from a rigorous bibliometric methodology designed to ensure comprehensive data capture and reproducibility. The primary data source was the Web of Science Core Collection, a curated database renowned for its quality-controlled scientific literature indexing [1]. The search query employed a Boolean logic structure: "machine learning" AND "environmental chemicals" applied across all searchable fields including title, abstract, author keywords, and Keywords Plus [1].

Temporal parameters were set to encompass publications from 1985 to 2025, ensuring capture of the complete historical trajectory while focusing analytical attention on the period of most significant growth (1996-2025) [1]. The dataset was filtered to include only article-type documents written in English, maintaining consistency in publication type and language accessibility [1]. The final refined dataset comprised 3,150 relevant publications that served as the foundation for all subsequent quantitative and thematic analyses [1].
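The inclusion criteria above can be expressed as a simple record filter. The sketch below assumes records exported from Web of Science as dictionaries keyed by WoS field tags; the sample records are hypothetical, and plain substring matching only approximates the database's own phrase search.

```python
# Field tags follow the Web of Science export format: TI title, AB abstract,
# DE author keywords, ID Keywords Plus, PY year, DT document type, LA language.
SEARCH_FIELDS = ("TI", "AB", "DE", "ID")
QUERY_TERMS = ("machine learning", "environmental chemicals")  # Boolean AND


def matches_query(record, terms=QUERY_TERMS):
    """True if every term appears in at least one searchable field.
    Plain substring matching is a simplification of WoS phrase search."""
    text = " ".join(record.get(tag, "") for tag in SEARCH_FIELDS).lower()
    return all(term in text for term in terms)


def passes_filters(record, start=1985, end=2025):
    """Apply the year range, article-only, and English-only restrictions.
    Exact matching on DT means values like 'Article; Early Access' need extra handling."""
    year = int(record.get("PY") or 0)
    return (
        start <= year <= end
        and record.get("DT", "").strip().lower() == "article"
        and record.get("LA", "").strip().lower() == "english"
    )


def select_records(records):
    return [r for r in records if matches_query(r) and passes_filters(r)]


# Hypothetical exported records for illustration.
records = [
    {"TI": "Machine learning prediction of PFAS toxicity",
     "AB": "We screen environmental chemicals ...", "PY": "2023",
     "DT": "Article", "LA": "English"},
    {"TI": "Deep learning for water quality",
     "AB": "Environmental chemicals in rivers", "PY": "2022",
     "DT": "Article", "LA": "English"},
    {"TI": "Machine learning and environmental chemicals: a review",
     "PY": "2021", "DT": "Review", "LA": "English"},
]
selected = select_records(records)
print(len(selected), "of", len(records), "records retained")
```

Only the first record survives: the second lacks the phrase "machine learning", and the third is a review rather than an article.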

Analytical Techniques and Software Tools

The analytical workflow employed multiple complementary approaches to extract meaningful patterns from the publication data:

- Descriptive Statistics: Basic publication metrics, including annual distribution, author contributions, and institutional affiliations, were generated using the Web of Science built-in data analysis tool [1].

- Network Analysis: VOSviewer version 1.6.20 was utilized for in-depth bibliometric mapping and network visualization [1]. The software performed several analytical operations:
  - Co-citation analysis of cited authors, cited sources, and cited references
  - Co-occurrence analysis of author keywords
  - Cluster analysis to identify major thematic structures within the literature

- Temporal and Statistical Analysis: The R programming environment version 4.2.2 provided complementary visualizations and statistical analyses, particularly focusing on:
  - Construction of temporal keyword evolution maps
  - Identification and visualization of frequently mentioned and emerging chemicals
  - Extraction of terms from abstracts, author keywords, and Keywords Plus
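The keyword co-occurrence step can be approximated in a few lines. The sketch below uses full counting (each publication adds one to every pair of distinct keywords it contains) with a minimum-occurrence threshold; it is a simplified illustration, not VOSviewer's implementation, and the keyword lists are hypothetical.

```python
from collections import Counter
from itertools import combinations


def keyword_cooccurrence(docs_keywords, min_occurrences=1):
    """Count keyword occurrences and pairwise co-occurrences (full counting:
    each document adds 1 to every pair of distinct keywords it contains)."""
    occurrences = Counter()
    pairs = Counter()
    for keywords in docs_keywords:
        # Normalize case and whitespace so "PFAS" and "pfas" merge.
        unique = sorted({k.strip().lower() for k in keywords if k.strip()})
        occurrences.update(unique)
        pairs.update(combinations(unique, 2))
    kept = {k for k, n in occurrences.items() if n >= min_occurrences}
    links = {p: n for p, n in pairs.items() if p[0] in kept and p[1] in kept}
    return occurrences, links


# Hypothetical author-keyword lists, one per publication.
docs = [
    ["Machine learning", "PFAS"],
    ["machine learning", "Water quality", "XGBoost"],
    ["Machine Learning", "PFAS", "QSAR"],
]
occurrences, links = keyword_cooccurrence(docs, min_occurrences=2)
print(occurrences.most_common(2))
print(links)
```

With a threshold of two occurrences, only "machine learning" and "pfas" remain, linked with strength 2 because they co-occur in two publications.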

This multi-method approach enabled both quantitative assessment and network-based insights into the development and intellectual structure of ML applications in environmental chemical research [1]. A similar B-SLR (Bibliometric-Systematic Literature Review) approach has been applied in related fields such as water quality prediction, where researchers collected 1,822 articles from the Scopus database and used topic modeling to analyze trends [3].

Experimental Protocols for Machine Learning Applications

The publication surge has been driven by innovative methodological applications of machine learning to specific environmental chemical challenges. Three prominent experimental protocols exemplify this trend:

Enhanced Spectral Library Matching

Objective: Improve the accuracy of mass spectrometry-based chemical identification through advanced spectral similarity algorithms beyond traditional cosine similarity [4].

Workflow:

- Data Acquisition: Collect high-resolution tandem mass spectrometry (HRMS) data from environmental samples [4].

- Spectral Comparison: Compare unknown spectra against reference databases (NIST, GNPS, MassBank) using ML-enhanced similarity algorithms [4].

- Similarity Scoring: Score candidate matches with ML-enhanced similarity algorithms, such as the embedding-based Spec2Vec and MS2DeepScore methods [4].

- Result Validation: Apply false discovery rate (FDR) estimation; the best-performing methods achieved a 5.8% FDR at a 0.75 similarity score threshold, compared with 9.6% for traditional dot product similarity [4].
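To make the scoring and validation steps concrete, the sketch below computes the traditional cosine (normalized dot-product) similarity that ML-enhanced methods are benchmarked against, plus a target-decoy FDR estimate at a chosen threshold. The peak-matching tolerance, the spectra, and the target-decoy scheme are assumptions for illustration; the source does not state here how its FDR values were estimated.

```python
import math


def cosine_similarity(spec_a, spec_b, tol=0.01):
    """Cosine (normalized dot-product) similarity between two centroided spectra.

    Each spectrum is a list of (mz, intensity) pairs. Each peak in spec_a is
    matched to the closest unused peak in spec_b within `tol` (an assumed m/z
    tolerance in Da); unmatched peaks contribute only to the norms.
    """
    used = set()
    dot = 0.0
    for mz_a, int_a in spec_a:
        best_j, best_diff = None, tol
        for j, (mz_b, _) in enumerate(spec_b):
            diff = abs(mz_a - mz_b)
            if j not in used and diff <= best_diff:
                best_j, best_diff = j, diff
        if best_j is not None:
            used.add(best_j)
            dot += int_a * spec_b[best_j][1]
    norm_a = math.sqrt(sum(i * i for _, i in spec_a))
    norm_b = math.sqrt(sum(i * i for _, i in spec_b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def fdr_at_threshold(target_scores, decoy_scores, threshold):
    """Target-decoy FDR estimate: decoy hits / target hits at or above threshold."""
    n_target = sum(s >= threshold for s in target_scores)
    n_decoy = sum(s >= threshold for s in decoy_scores)
    return n_decoy / n_target if n_target else 0.0


# Hypothetical query and reference spectra.
query = [(91.054, 100.0), (119.049, 40.0), (147.044, 15.0)]
reference = [(91.055, 95.0), (119.050, 45.0), (165.070, 10.0)]
print(f"cosine = {cosine_similarity(query, reference):.3f}")

# Hypothetical best-match scores for target and decoy library searches.
targets = [0.95, 0.90, 0.82, 0.71, 0.60]
decoys = [0.78, 0.55, 0.40]
print(f"FDR at 0.75: {fdr_at_threshold(targets, decoys, 0.75):.2f}")
```

At the 0.75 threshold three targets and one decoy pass, giving an estimated FDR of 0.33; raising the threshold trades identifications for a lower FDR, which is the trade-off the reported 5.8% versus 9.6% figures summarize.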

Chemical Mixture Analysis

Objective: Identify important components and interactions within complex environmental chemical mixtures associated with health outcomes [5].

Workflow:

- Exposure Assessment: Measure multiple chemical concentrations in biological or environmental samples (e.g., 62 chemicals in urine samples) [6].

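- Method Selection: Choose statistical ML methods that match the research question, for example weighted quantile sum (WQS) regression to estimate a cumulative mixture effect or signed iterative random forest (SiRF) to discover interactions [6].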

- Model Validation: Use simulation studies to compare method performance under varied sample sizes, number of pollutants, and signal-to-noise ratios [5].

- Implementation: Utilize integrated R package "CompMix" as a comprehensive toolkit for environmental mixtures analysis [5].
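The weighted quantile sum idea can be sketched as follows: each chemical is scored into quantiles, the scores are combined into a single index with non-negative weights that sum to 1, and the outcome is regressed on that index. In a real WQS analysis the weights are estimated from the data (typically with bootstrap resampling and constrained optimization); here they are fixed hypothetical values, the data are simulated, and the code does not reproduce the CompMix package.

```python
import numpy as np


def quantile_scores(x, n_quantiles=4):
    """Score each exposure column into 0..n_quantiles-1 using its empirical quantiles."""
    x = np.asarray(x, dtype=float)
    probs = np.linspace(0, 1, n_quantiles + 1)[1:-1]  # interior cut points, e.g. 0.25, 0.5, 0.75
    scores = np.empty(x.shape, dtype=int)
    for j in range(x.shape[1]):
        cuts = np.quantile(x[:, j], probs)
        scores[:, j] = np.searchsorted(cuts, x[:, j], side="right")
    return scores


def wqs_index(scores, weights):
    """Weighted quantile sum: non-negative weights that sum to 1."""
    w = np.asarray(weights, dtype=float)
    if np.any(w < 0) or not np.isclose(w.sum(), 1.0):
        raise ValueError("WQS weights must be non-negative and sum to 1")
    return scores @ w


rng = np.random.default_rng(0)
exposures = rng.lognormal(mean=0.0, sigma=1.0, size=(200, 3))  # hypothetical 3-chemical panel
weights = [0.6, 0.3, 0.1]  # hypothetical; real WQS estimates these from the data
index = wqs_index(quantile_scores(exposures), weights)
outcome = 0.5 * index + rng.normal(scale=0.2, size=index.size)  # simulated health outcome
slope, intercept = np.polyfit(index, outcome, deg=1)
print(f"Estimated mixture effect per index unit: {slope:.2f}")
```

Because the outcome was simulated with a true effect of 0.5 per index unit, the fitted slope should land close to 0.5; the weights, not the slope, are what identify the chemicals driving the mixture effect.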

Water Quality Prediction Modeling

Objective: Develop accurate predictive models for freshwater quality parameters using historical data and environmental variables [3].

Workflow:

- Data Collection: Compile large-scale datasets from in situ sensors, remote sensing, and hydrological models [3].

- Algorithm Selection: Apply the predominant techniques according to data characteristics:
  - Ensemble Models: Random Forest (RF), Light Gradient Boosting Machine (LightGBM), and Extreme Gradient Boosting (XGB), representing 43.07% of approaches [3].
  - Deep Neural Networks: Long Short-Term Memory (LSTM) and Convolutional Neural Networks (CNN) for complex temporal dynamics, representing 25.91% of approaches [3].
  - Traditional Algorithms: Artificial Neural Networks (ANN), Support Vector Machines (SVMs), and Decision Trees (DT) [3].

- Model Training & Validation: Use cross-validation and standard regression metrics (e.g., R², RMSE) computed for the target water quality parameters or indices [3].

- Interpretation: Apply explainable AI techniques such as SHAP (Shapley Additive Explanations) for model transparency [3].
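A minimal version of this workflow with scikit-learn is sketched below: a random forest regressor evaluated by 5-fold cross-validation with R² and RMSE. The predictors and target are simulated, hypothetical stand-ins for water quality variables. For interpretation it uses scikit-learn's permutation importance as a simpler, model-agnostic substitute for SHAP, which would require the separate `shap` package.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.inspection import permutation_importance
from sklearn.model_selection import KFold, cross_validate

rng = np.random.default_rng(42)
n_samples = 300
feature_names = ["temperature", "turbidity", "noise"]  # hypothetical predictors
X = rng.uniform(0.0, 1.0, size=(n_samples, 3))
# Simulated target driven by the first two predictors only.
y = 2.0 * X[:, 0] - 1.0 * X[:, 1] + rng.normal(scale=0.1, size=n_samples)

model = RandomForestRegressor(n_estimators=100, random_state=0)
cv = KFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_validate(model, X, y, cv=cv,
                        scoring=("r2", "neg_root_mean_squared_error"))
mean_r2 = scores["test_r2"].mean()
mean_rmse = -scores["test_neg_root_mean_squared_error"].mean()
print(f"5-fold CV: R2 = {mean_r2:.2f}, RMSE = {mean_rmse:.3f}")

# Permutation importance (computed on the training data for brevity;
# a held-out set is preferable in practice).
model.fit(X, y)
imp = permutation_importance(model, X, y, n_repeats=10, random_state=0)
for name, value in zip(feature_names, imp.importances_mean):
    print(f"{name}: {value:.3f}")
```

For real monitoring series, a time-aware split (e.g., `TimeSeriesSplit`) is usually more appropriate than shuffled folds, because shuffling lets the model see the future during training.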

The advancement of ML applications in environmental chemical research relies on a curated collection of computational tools, databases, and analytical resources. The following table catalogues the essential components of the research infrastructure driving the publication surge documented in this analysis.

Table 3: Essential Research Resources for ML in Environmental Chemical Studies

| Resource Category | Specific Tool/Database | Application Function | Key Characteristics |
|---|---|---|---|
| Mass Spectral Databases | NIST | Spectral library matching | 2,374,064 spectra; commercial [4] |
| | GNPS | Spectral library matching | 592,542 spectra; nonprofit [4] |
| | MassBank | Spectral library matching | 122,512 spectra; nonprofit [4] |
| Programming Frameworks | R Statistical Environment | Data analysis, visualization, statistical modeling | Comprehensive packages for mixtures analysis (CompMix) [1] [5] |
| | Python with ML libraries (scikit-learn, TensorFlow, PyTorch) | Algorithm development, deep learning models | Flexible implementation of custom neural architectures [7] |
| Bibliometric Software | VOSviewer | Network visualization, co-citation analysis | Identifies thematic clusters and research fronts [1] |
| Chemical Databases | PubChem/ChemSpider | Structural database retrieval | More than 100 million known chemical structures for candidate identification [4] |
| Specialized Algorithms | Spec2Vec/MS2DeepScore | Enhanced spectral similarity | Learned spectral embeddings (Spec2Vec is NLP-inspired; MS2DeepScore uses deep learning) [4] |
| | Signed Iterative Random Forest (SiRF) | Interaction discovery in mixtures | Identifies threshold-based synergistic effects [6] |
| | Weighted Quantile Sum (WQS) Regression | Mixture effect estimation | Creates summary index for cumulative risk [6] |

Thematic Research Clusters and Emerging Trends

Co-citation and keyword co-occurrence analyses of the 3,150 publications reveal distinct thematic clusters that characterize the intellectual structure of this research domain. Eight major research foci have emerged, including (1) ML model development and optimization, (2) water quality prediction, (3) quantitative structure-activity relationship (QSAR) applications, and (4) per- and polyfluoroalkyl substances (PFAS) research [1]. The most frequently cited algorithms across these clusters are XGBoost and random forests, reflecting their dominant position in the methodological toolkit [1].

A distinct risk assessment cluster indicates the migration of these tools toward dose-response modeling and regulatory applications, though a significant bias exists in keyword frequencies with a 4:1 ratio favoring environmental endpoints over human health endpoints [1]. Emerging topics rapidly gaining traction include climate change impacts, microplastics pollution, and digital soil mapping, while chemicals such as lignin, arsenic, and phthalates appear as fast-growing but understudied substances requiring further research attention [1].

The field shows a pronounced trend toward hybrid and explainable architectures, with increased application of interpretability techniques like SHAP (Shapley Additive Explanations) [3]. Emerging methodological approaches include Generative Adversarial Networks (GANs) for data-scarce contexts, Transfer Learning for knowledge reuse, and Transformer architectures that outperform LSTM in specific time series prediction tasks [3].

The quantitative analysis of publication trends from 1996 to 2025 reveals an unmistakable exponential surge in machine learning applications for environmental chemical research. The inflection point around 2015 marks a fundamental transition from theoretical exploration to widespread implementation, driven by converging factors including computational advances, data availability, and pressing environmental health challenges. The geographic distribution of research output demonstrates global leadership from China and the United States, with increasingly diverse international participation strengthening the field's knowledge base.

The methodological protocols and research resources detailed in this analysis provide both a retrospective understanding of the field's development and a prospective roadmap for future innovation. As the field matures, critical challenges remain in expanding chemical coverage, systematically integrating human health endpoints, adopting explainable artificial intelligence workflows, and fostering international collaboration to translate ML advances into actionable chemical risk assessments [1]. The ongoing publication surge suggests these challenges are actively being addressed by a growing global research community, positioning machine learning as an increasingly indispensable tool in environmental chemical research through 2025 and beyond.

This technical guide provides a comprehensive framework for analyzing country and institutional output within the domain of machine learning (ML) applications in environmental chemical research. Through bibliometric analysis, we delineate the methodological protocols for quantifying research contributions, visualizing collaborative networks, and identifying global leaders. The findings reveal a research landscape dominated by the United States and China in terms of publication volume, though with significant variations in collaborative impact and thematic focus. This whitepaper serves as an essential resource for researchers, scientists, and drug development professionals seeking to navigate the intellectual structure and strategic partnerships in this rapidly evolving, interdisciplinary field.

The integration of machine learning into environmental chemical research is reshaping traditional toxicological approaches, enabling the analysis of complex, high-dimensional datasets for improved chemical monitoring, hazard evaluation, and human health risk assessment [1]. This interdisciplinary field has experienced exponential growth in research output, necessitating systematic analyses to map its intellectual landscape. Bibliometric analysis offers a powerful, quantitative approach to examine academic literature, enabling researchers to identify trends, map collaboration networks, and analyze patterns within scientific fields through data-driven approaches [8] [9].

This guide, situated within a broader thesis on machine learning environmental chemicals bibliometric analysis, focuses specifically on the critical dimensions of country and institutional output. Understanding the geographic and organizational distribution of research is paramount for identifying knowledge centers, fostering strategic partnerships, and benchmarking performance. The objective is to provide a detailed methodological framework and present current findings on global leaders and collaborative networks, thereby offering strategic insights for researchers and policymakers navigating this domain.

Methodological Protocols for Bibliometric Analysis

A rigorous bibliometric analysis requires a structured, multi-step process to ensure comprehensiveness, accuracy, and meaningful interpretation of results. The following protocol, synthesized from established methodologies, is tailored for analyzing country and institutional contributions [10] [9].

Data Collection Strategy

Data Source and Search Query:

- Primary Database: Web of Science Core Collection is recommended due to its comprehensive coverage of high-impact journals and robust data structure for bibliometric analysis [8] [1] [10].

- Search Query: A typical query should combine terms related to ML ("machine learning," "artificial intelligence," "deep learning") with environmental chemical concepts ("environmental chemicals," "chemicals," "toxicity," "risk assessment"). The search can be applied across title, abstract, and keyword fields [1].

- Time Frame: Analyses often span multiple decades to capture evolutionary trends. For current trends, a focus from 2015 to the present is advisable due to the field's recent acceleration [1].

- Inclusion Criteria: Restriction to "article" document types and English language is common to maintain data consistency, though this may introduce linguistic bias.
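The query structure described above can be assembled programmatically. This sketch builds a Web of Science advanced-search string using the `TS=` (Topic) field tag, which searches titles, abstracts, author keywords, and Keywords Plus; the term lists are illustrative and should be adapted and tested in the database interface before use.

```python
def or_group(terms):
    """Quote each term and join the terms with OR inside parentheses."""
    return "(" + " OR ".join(f'"{t}"' for t in terms) + ")"


def build_topic_query(ml_terms, chem_terms):
    """Combine the two concept groups with AND using the WoS Topic (TS=) field tag."""
    return f"TS={or_group(ml_terms)} AND TS={or_group(chem_terms)}"


query = build_topic_query(
    ["machine learning", "artificial intelligence", "deep learning"],
    ["environmental chemicals", "toxicity", "risk assessment"],
)
print(query)
```

Keeping the term lists in code makes the search strategy easy to version and report alongside the results, which supports the reproducibility goals of the protocol.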

Data Preprocessing and Cleaning

Retrieved bibliographic records must be cleaned and standardized to ensure analytical accuracy [9]. Key steps include:

- Removal of Duplicates: Identifying and merging duplicate records from the dataset.

- Standardization of Names: Correcting variations in country, institution, and author names (e.g., "USA" and "United States" should be merged).

- Data Extraction: Exporting essential metadata, including titles, authors, affiliations, keywords, cited references, and publication dates into formats compatible with analysis software (e.g., plain text, Excel) [8].
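The deduplication and name-standardization steps might look like the following sketch. The alias table and records are hypothetical; in practice the alias list is built by inspecting the exported affiliations (VOSviewer can apply a similar mapping through a thesaurus file).

```python
import re

# Hypothetical alias table; extend it after inspecting your own export.
COUNTRY_ALIASES = {
    "usa": "United States",
    "united states": "United States",
    "peoples r china": "China",
    "people's republic of china": "China",
    "china": "China",
}


def standardize_country(name):
    """Map known spelling variants to one canonical country name."""
    return COUNTRY_ALIASES.get(name.strip().lower(), name.strip())


def record_key(record):
    """Deduplication key: the DOI if present, else a normalized title."""
    doi = (record.get("doi") or "").strip().lower()
    if doi:
        return "doi:" + doi
    title = re.sub(r"[^a-z0-9]+", " ", record.get("title", "").lower()).strip()
    return "title:" + title


def clean(records):
    """Drop duplicates (first occurrence wins) and standardize country names."""
    seen, cleaned = set(), []
    for r in records:
        key = record_key(r)
        if key in seen:
            continue
        seen.add(key)
        countries = sorted({standardize_country(c) for c in r.get("countries", [])})
        cleaned.append({**r, "countries": countries})
    return cleaned


# Hypothetical records containing duplicates and name variants.
records = [
    {"doi": "10.1000/ABC123", "title": "ML for PFAS screening", "countries": ["USA", "Peoples R China"]},
    {"doi": "10.1000/abc123", "title": "ML for PFAS screening.", "countries": ["United States"]},
    {"doi": "", "title": "Water Quality & ML", "countries": ["England"]},
    {"title": "water quality ML", "countries": ["England"]},
]
cleaned = clean(records)
print(len(cleaned), cleaned[0]["countries"])
```

Keying on the DOI first and falling back to a normalized title catches both exact duplicates and records that differ only in case or punctuation.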

Analytical Techniques and Software

A multi-software approach leverages the strengths of different tools for a holistic analysis [8] [10].

- VOSviewer: Ideal for constructing and visualizing networks of collaborative links between countries and institutions. It calculates Total Link Strength (TLS) as a key metric for collaboration intensity [1].

- CiteSpace: Excels in conducting co-citation analysis, burst detection, and visualizing the evolution of a research field over time. It is particularly useful for identifying emerging trends and pivotal publications [8] [11].

- Bibliometrix (R Package): Provides a suite of tools for comprehensive science mapping, including temporal trend analysis, author productivity, and thematic evolution [8] [9].
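For orientation, the sketch below shows how total link strength arises from co-authorship data. Under full counting, the link strength between two countries is the number of publications they co-author, and a country's TLS is the sum of the strengths of all its links. The publication list is hypothetical, and VOSviewer also offers fractional counting, which would produce different values.

```python
from collections import Counter
from itertools import combinations


def country_links(papers):
    """Full counting: each co-authored paper adds 1 to every country pair on it."""
    links = Counter()
    for countries in papers:
        for a, b in combinations(sorted(set(countries)), 2):
            links[(a, b)] += 1
    return links


def total_link_strength(links):
    """TLS of a node = sum of the strengths of all links that touch it."""
    tls = Counter()
    for (a, b), weight in links.items():
        tls[a] += weight
        tls[b] += weight
    return tls


# Hypothetical country lists, one per publication.
papers = [
    ["China", "United States"],
    ["United States", "Germany"],
    ["China", "United States", "England"],
    ["India"],  # single-country paper: contributes no links
]
tls = total_link_strength(country_links(papers))
print(tls.most_common())
```

Note that a country with many single-country papers can lead in publication count while having a modest TLS, which is exactly the distinction drawn between China and the United States in this analysis.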

Table 1: Key Software Tools for Bibliometric Analysis

| Software | Primary Function | Key Metric | Application in this Context |
|---|---|---|---|
| VOSviewer | Network Visualization | Total Link Strength (TLS) | Mapping country/institution collaboration networks. |
| CiteSpace | Evolution & Burst Detection | Centrality, Burst Strength | Identifying emerging institutions and paradigm-shifting papers. |
| Bibliometrix (R) | Comprehensive Science Mapping | Publication Growth, Thematic Map | Analyzing productivity trends and thematic focus of countries. |

Network Analysis Parameters

Configuring minimum thresholds is critical to balance network comprehensiveness and interpretability. The following parameters, derived from established studies, serve as a starting point [8]:

- Country Collaboration: Minimum number of documents per country ≥ 10.

- Institutional Collaboration: Minimum number of documents per institution ≥ 7.

- Author Collaboration: Minimum number of documents per author ≥ 4.

These thresholds filter out marginal contributors, allowing primary collaborative structures and major knowledge producers to be clearly visualized. The robustness of the resulting clusters can be statistically validated using modularity analysis (Q > 0.3) and silhouette coefficient analysis (>0.7) [8].
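The threshold and modularity checks can be illustrated with a small sketch. It filters nodes by document count and computes Newman's modularity Q for a given clustering of a weighted, undirected network. The partition is supplied by hand here, whereas VOSviewer and CiteSpace derive clusters with their own algorithms, so this is not a reproduction of either tool's internals.

```python
from collections import defaultdict


def filter_nodes(doc_counts, min_docs):
    """Keep only nodes (e.g., countries) with at least `min_docs` documents."""
    return {node for node, n in doc_counts.items() if n >= min_docs}


def modularity(edges, community):
    """Newman modularity Q of a weighted, undirected graph without self-loops.

    edges: {(u, v): weight}; community: {node: cluster label}.
    Q = sum over clusters c of [W_in(c) / m - (S(c) / (2m))**2], where W_in(c) is
    the edge weight inside c, S(c) the summed node strength in c, m the total weight.
    """
    strength = defaultdict(float)
    total = 0.0
    for (u, v), w in edges.items():
        strength[u] += w
        strength[v] += w
        total += w
    if total == 0:
        return 0.0
    inside = defaultdict(float)
    cluster_strength = defaultdict(float)
    for (u, v), w in edges.items():
        if community[u] == community[v]:
            inside[community[u]] += w
    for node, s in strength.items():
        cluster_strength[community[node]] += s
    return sum(inside[c] / total - (cluster_strength[c] / (2 * total)) ** 2
               for c in cluster_strength)


# Document counts for China and the US from this analysis; "Country X" is hypothetical.
doc_counts = {"China": 1130, "United States": 863, "Country X": 4}
print(sorted(filter_nodes(doc_counts, min_docs=10)))

# Toy network: two triangles with no links between them, one cluster each.
edges = {("a", "b"): 1, ("b", "c"): 1, ("a", "c"): 1,
         ("d", "e"): 1, ("e", "f"): 1, ("d", "f"): 1}
community = {"a": 0, "b": 0, "c": 0, "d": 1, "e": 1, "f": 1}
print(f"Q = {modularity(edges, community):.2f}")  # Q = 0.50
```

The toy network gives Q = 0.50, comfortably above the 0.3 rule of thumb, because all link weight falls inside the two clusters.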

Global Leaders: Country and Institutional Output

Quantitative analysis of publication data reveals clear global leaders in ML research for environmental chemicals. The following tables summarize the output and impact of the top contributing countries and institutions.

Table 2: Top Contributing Countries in ML for Environmental Chemical Research (Data sourced from [1])

| Rank | Country | Publication Count | Total Link Strength (TLS) | Key Characteristics |
|---|---|---|---|---|
| 1 | People's Republic of China | 1130 | 693 | Leads in volume; dominant role in shaping the research area. |
| 2 | United States | 863 | 734 | High publication output with the strongest collaborative network (highest TLS). |
| 3 | India | 255 | Data not specified | Significant volume, indicating growing engagement. |
| 4 | Germany | 232 | Data not specified | Major European contributor. |
| 5 | England | 229 | Data not specified | Strong research output within the European context. |

The data indicates a duopoly of China and the United States in terms of pure research volume. However, the Total Link Strength (TLS) reveals a critical nuance: while China leads in publication count, the United States maintains a more deeply integrated and extensive global collaborative network. This pattern of geographical dominance is consistent with findings in other AI-driven fields, such as sepsis research, where the US and China also lead in output, though the US often demonstrates a higher citation impact [8].
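One rough way to quantify this nuance is to normalize TLS by publication count using the figures in Table 2. This ratio is an illustrative calculation, not a metric reported in [1], and it depends on the counting method used to build the network.

```python
# Publication counts and TLS values from Table 2.
countries = {
    "China": {"publications": 1130, "tls": 693},
    "United States": {"publications": 863, "tls": 734},
}

# Link strength per publication: a crude intensity-of-collaboration indicator.
tls_per_pub = {name: d["tls"] / d["publications"] for name, d in countries.items()}
for name, ratio in tls_per_pub.items():
    print(f"{name}: {ratio:.2f} link strength per publication")
```

This gives about 0.61 for China and 0.85 for the United States, consistent with the reading that U.S. output is more densely embedded in international collaboration.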

Table 3: Leading Institutional Contributors in ML for Environmental Chemical Research (Data sourced from [1])

| Rank | Institution | Country | Publication Count |
|---|---|---|---|
| 1 | Chinese Academy of Sciences | China | 174 |
| 2 | United States Department of Energy | United States | 113 |
| 3 | Other prominent institutions | Various | Data not specified |

Institutional leadership is anchored by major national academies and government research bodies, highlighting the resource-intensive nature of cutting-edge research at the intersection of ML and environmental science.

Visualizing Collaborative Networks

The relationships between countries and institutions can be effectively modeled and visualized as networks. The following diagrams, generated using Graphviz DOT language, illustrate typical collaborative structures identified through bibliometric analysis.

Global Research Collaboration Network

The diagram above models the complex interplay between national and institutional collaboration. Key insights include:

- Core-Periphery Structure: The network often exhibits a structure with the most prolific countries (the US and China) at the core, with the strongest collaborative link between them [12].

- Hub-and-Spoke Model: The United States often acts as a central hub, maintaining strong collaborative ties (high TLS) with multiple other countries, which is consistent with the quantitative data in Table 2 [1].

- Institutional Alignment: Leading institutions' collaborative patterns generally mirror their respective countries' overall networks, though with specific, strong international partnerships that cross national boundaries.

The Scientist's Toolkit: Essential Research Reagents

Conducting a bibliometric analysis in this field requires a suite of digital "reagents" and tools. The following table details the essential components.

Table 4: Essential Tools for Conducting Bibliometric Analysis

| Tool / Resource | Category | Function | Application Note |
|---|---|---|---|
| Web of Science Core Collection | Data Source | Provides comprehensive bibliographic data for analysis. | Preferred for its structured data; Scopus is a common alternative. |
| VOSviewer | Analysis & Visualization | Creates maps based on network data (e.g., co-authorship, co-occurrence). | Excellent for intuitive visualization of collaborative networks [10]. |
| CiteSpace | Analysis & Visualization | Detects emerging trends, burst concepts, and intellectual turning points. | Crucial for dynamic, time-sliced analysis and finding pivotal papers [8] [11]. |
| Bibliometrix (R-package) | Analysis & Visualization | Performs a comprehensive suite of bibliometric analyses. | Ideal for reproducibility and integrating statistical analysis with science mapping [8] [9]. |
| Python / R | Programming Language | Data cleaning, preprocessing, and custom analysis. | Essential for handling large datasets and performing operations beyond GUI software capabilities [9]. |

Discussion and Future Directions

The analysis confirms the preeminent positions of China and the United States in the production of ML research for environmental chemicals. However, the distinction between volume and influence is critical. The higher TLS of the US suggests its research ecosystem is more globally integrated, potentially leading to greater visibility and impact, a pattern observed in other high-tech research domains [8] [12]. Future trends point toward several key developments:

- Rise of Explainable AI (XAI): As ML models are increasingly used for regulatory risk assessment, the demand for interpretable and transparent models will grow, shifting the focus from pure prediction to understanding and trust [8] [1].

- Multi-Omics Integration: Research is expected to increasingly incorporate multi-omics data (genomics, metabolomics) to build more comprehensive models of chemical toxicity, moving beyond traditional endpoints [8].

- Addressing Collaboration Gaps: The persistent inequality in global research dynamics, where collaborations between the Global North and Global Majority can be uneven, requires conscious effort to foster more equitable partnerships [12]. Supporting the agency of researchers in less dominant systems is key to a more pluralistic global research landscape.

In conclusion, this whitepaper provides a structured methodological framework and a snapshot of the current global landscape. For researchers and institutions, understanding these collaborative networks and output metrics is not merely an academic exercise but a strategic necessity for positioning, partnership formation, and driving innovation in the critical field of machine learning applications for environmental health.

The application of machine learning (ML) in environmental chemical research is fundamentally reshaping how scientists monitor chemical presence, evaluate ecological hazards, and assess human health risks. This transformation is driven by the need to analyze the complex, high-dimensional datasets that characterize modern chemical and toxicological research, moving the discipline beyond traditional empirical approaches toward a data-rich science ripe for artificial intelligence (AI) integration [13]. A comprehensive bibliometric analysis of 3,150 peer-reviewed articles from the Web of Science Core Collection (1985-2025) maps the intellectual structure and emerging trends within this rapidly evolving field [13]. It documents an exponential surge in publication output beginning in 2015, dominated by environmental science journals, with China and the United States leading global research contributions [13]. The field's conceptual structure crystallizes around eight distinct thematic clusters, providing a systematic map of research fronts from water quality prediction to per- and polyfluoroalkyl substances (PFAS) and chemical risk assessment.

Methodological Framework: Bibliometric Analysis and Data Processing

Dataset Collection and Processing

The bibliometric foundation of this analysis employed the Web of Science Core Collection as the primary data source, accessed on 16 June 2025 [13]. The search strategy utilized a precise query of "machine learning" AND "environmental chemicals" across all searchable fields, restricted to publications between 1985 and 2025 and limited to article-type documents in English [13]. This methodology yielded a final dataset of 3,150 relevant publications that served as the basis for all subsequent analyses [13].

Analytical Techniques and Visualization

For in-depth bibliometric mapping and network visualization, the study employed VOSviewer version 1.6.20 to perform several specialized analyses [13]. These included: (i) co-citation analysis of cited authors, cited sources, and cited references; (ii) co-occurrence analysis of author keywords; and (iii) cluster analysis to identify major thematic structures within the literature [13]. The R programming environment (version 4.2.2) provided complementary visualizations and statistical analyses, including temporal keyword evolution maps and identification of frequently mentioned and emerging chemicals based on terms extracted from abstracts, author keywords, and Keywords Plus [13].
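The temporal keyword analysis can be sketched as counting how often each author keyword appears per year and flagging keywords that first appear recently. The "emerging" rule and the records below are hypothetical; the source does not specify its exact criterion.

```python
from collections import defaultdict


def keyword_timeline(records):
    """Map keyword -> {year: number of documents using it} from (year, keywords) pairs."""
    timeline = defaultdict(lambda: defaultdict(int))
    for year, keywords in records:
        for kw in {k.strip().lower() for k in keywords}:
            timeline[kw][year] += 1
    return timeline


def emerging_keywords(timeline, recent_start, min_recent=2):
    """Keywords first seen in or after `recent_start` with at least `min_recent` uses since.
    This rule is an illustrative assumption, not the criterion used in the source."""
    found = []
    for kw, by_year in timeline.items():
        first_year = min(by_year)
        recent = sum(n for y, n in by_year.items() if y >= recent_start)
        if first_year >= recent_start and recent >= min_recent:
            found.append((kw, first_year, recent))
    return sorted(found, key=lambda t: (-t[2], t[0]))


# Hypothetical (publication year, author keywords) pairs.
records = [
    (2016, ["Random forest", "Arsenic"]),
    (2019, ["random forest"]),
    (2023, ["Microplastics", "Random forest"]),
    (2024, ["microplastics", "Climate change"]),
    (2025, ["climate change", "Microplastics"]),
]
timeline = keyword_timeline(records)
print(emerging_keywords(timeline, recent_start=2022))
```

Here "microplastics" and "climate change" are flagged, while "random forest" is not, because it already appeared in 2016 despite continued use.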

Figure 1: Bibliometric Analysis Workflow: From Data Collection to Thematic Clustering

The Eight Emerging Thematic Clusters

ML Model Development and Algorithm Applications

This foundational cluster focuses on the development and refinement of core machine learning algorithms specifically adapted for environmental chemical applications. Research in this domain centers on comparing algorithmic performance, optimizing model architectures, and adapting computational approaches for chemical data characteristics [13]. The cluster encompasses both classical machine learning approaches and advanced neural network architectures, with studies frequently deploying interpretable ML alongside classical learners including random forests, support vector machines (SVMs), gradient boosting, k-nearest neighbors (k-NN), and Bayesian models such as Bernoulli naïve Bayes [13]. Deep and multitask neural networks represent the cutting edge within this cluster, particularly for classifying complex molecular interactions such as receptor binding, agonism, and antagonism [13].

Table 1: Dominant ML Algorithms in Environmental Chemical Research

| Algorithm Category | Specific Methods | Primary Applications | Citation Prevalence |
|---|---|---|---|
| Ensemble Methods | XGBoost, Random Forests, Extremely Randomized Trees | Chemical classification, contamination prediction, risk assessment | Highest cited algorithms [13] |
| Neural Networks | Multilayer Perceptrons, Convolutional Neural Networks, Graph Neural Networks (GNNs) | Receptor binding prediction, spatial contamination mapping | Rapidly emerging [13] |
| Classical ML | Support Vector Machines (SVMs), k-Nearest Neighbors (k-NN) | Quantitative structure-activity relationship (QSAR) modeling | Consistently applied [13] |
| Bayesian Methods | Bernoulli Naïve Bayes | Endocrine disruption prediction, chemical prioritization | Specialized applications [13] |

Water Quality Prediction and Monitoring

The water quality prediction cluster represents a major application domain where ML models are deployed to forecast contamination events, assess drinking water safety, and monitor aquatic ecosystems. Research in this cluster utilizes diverse ML approaches including SVMs, Kolmogorov-Arnold Networks, multilayer perceptrons, and extreme gradient boosting (XGBoost) for drinking water quality index prediction [13]. Recent advances include graph neural networks (GNNs) that encode river network topology and frameworks for long-term calibration and validation in data-scarce regions [13]. This cluster demonstrates particular strength in addressing spatial and temporal patterns of contamination, with models designed to predict contaminant spread and concentration across watersheds and drinking water systems.

Quantitative Structure-Activity Relationship (QSAR) Modeling

QSAR modeling represents a mature yet rapidly evolving cluster focused on predicting chemical toxicity and environmental behavior based on molecular structures. This domain deploys interpretable ML alongside classical learners to classify receptor binding, agonism, and antagonism, with large-scale consensus efforts improving robustness and external predictivity [13]. Research has extended beyond the estrogen receptor to include classification models for the androgen receptor using k-NN, random forests, and Bernoulli naïve Bayes, and convolutional neural networks for the progesterone receptor [13]. These approaches demonstrate significant portability across different endocrine targets and toxicological endpoints, facilitating virtual screening of chemicals for environmental risk assessment.

PFAS (Per- and Polyfluoroalkyl Substances) Research

PFAS research represents a rapidly emerging thematic cluster, driven by growing regulatory attention and scientific concern about these persistent, bioaccumulative compounds. A PFAS-specific bibliometric analysis reports a dramatic increase in research output, with publications rising from just 7 in 2015 to 134 in 2024, indicating intensified global scientific attention [14]. Common PFAS compounds, particularly perfluorooctanoic acid (PFOA) and perfluorooctane sulfonic acid (PFOS), have been widely detected in surface water, groundwater, and soil [14]. ML applications in this cluster focus on tracking contamination sources, predicting environmental fate and transport, and identifying effective treatment methods, such as adsorption and photocatalysis, for PFAS removal [14].

Chemical Risk Assessment and Regulatory Applications

This cluster marks the migration of ML tools toward dose-response modeling and regulatory decision-making. A distinct risk assessment cluster has emerged within the bibliometric landscape, indicating the growing use of these computational tools to support chemical safety evaluations and regulatory guidelines [13]. However, keyword frequency analysis reveals a pronounced 4:1 bias toward environmental endpoints over human health endpoints, highlighting a critical gap in connecting environmental exposure data with human health outcomes [13]. Emerging approaches in this cluster seek to integrate mechanistic toxicology data with exposure science to develop more predictive risk assessment frameworks.
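A keyword-based endpoint ratio such as the 4:1 figure above can be computed by mapping each keyword to an endpoint category and tallying occurrences. The sketch below assumes two small, hypothetical keyword lists; the categorization actually used in [13] is not reproduced here, and the toy papers are constructed only to show the arithmetic.

```python
from collections import Counter

# Hypothetical keyword-to-endpoint lookups; a real study would curate these carefully.
ENVIRONMENTAL = {"water quality", "soil contamination", "air pollution", "aquatic toxicity"}
HUMAN_HEALTH = {"human health", "cancer risk", "epidemiology", "biomonitoring"}

def endpoint_ratio(keyword_lists):
    """Count keyword occurrences per endpoint group; return (environmental, health, ratio)."""
    counts = Counter()
    for keywords in keyword_lists:
        for kw in keywords:
            kw = kw.lower().strip()
            if kw in ENVIRONMENTAL:
                counts["environmental"] += 1
            elif kw in HUMAN_HEALTH:
                counts["human_health"] += 1
    env, health = counts["environmental"], counts["human_health"]
    ratio = env / health if health else float("inf")
    return env, health, ratio

# Toy author-keyword lists, one per paper
papers = [
    ["Water quality", "XGBoost"],
    ["soil contamination", "random forest", "Air pollution"],
    ["aquatic toxicity", "QSAR"],
    ["human health", "PFAS"],
]
env, health, ratio = endpoint_ratio(papers)
print(f"{env} environmental vs {health} health -> {ratio:.0f}:1")
```

Keywords that fall into neither list (here the algorithm and chemical names) are simply ignored, so the ratio depends heavily on how the two lists are defined.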

Air Quality Monitoring and Forecasting

The air quality monitoring cluster applies ML techniques to model atmospheric chemical concentrations, predict pollution episodes, and identify emission sources. Research in this domain uses hybrid directed graph neural networks with spatiotemporal meteorological fusion, ML-guided integration of fixed and mobile sensors for high-resolution PM2.5 mapping, and data-driven modeling of the long-range transport of wildfire emissions [13]. These frameworks are reported to improve forecasting accuracy and the precision of exposure assessment, providing useful tools for public health protection and environmental management.

Soil and Land Contamination Assessment

This cluster encompasses ML applications for predicting soil chemical concentrations, mapping contamination patterns, and assessing land quality impacts. Supervised learners including extremely randomized trees, gradient boosting, XGBoost, SVMs, and tuned random forests are being augmented with spatial regionalization indices to encode spatial dependence for mapping heavy-metal contamination from field to global scales [13]. Emerging topics within this cluster include digital soil mapping, which represents a fast-growing methodological innovation strengthening environmental surveillance and decision-making for land management.

Emerging Contaminants and High-Growth Chemical Domains

This forward-looking cluster identifies newly recognized chemical threats and rapidly expanding application domains for ML in environmental chemistry. Emerging topics include climate change, microplastics, and high-growth specialty chemicals such as those used in electronics and clean energy technologies [13]. Meanwhile, specific chemicals including lignin, arsenic, and phthalates appear as fast-growing but understudied substances in the literature [13]. The global specialty chemicals market, expected to grow from $641.5 billion in 2023 to $914.4 billion in 2030, underscores the importance of this research domain [15].
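Growth figures quoted as start and end values, such as the PFAS publication counts [14] and the specialty chemicals market projection [15], are easier to compare when converted to a compound annual growth rate (CAGR). The helper below is a minimal sketch using the standard formula; it is not taken from the cited sources.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1

# Figures quoted in the text
pfas_rate = cagr(7, 134, 2024 - 2015)          # PFAS-specific ML publications [14]
market_rate = cagr(641.5, 914.4, 2030 - 2023)  # specialty chemicals market, $ billion [15]
print(f"PFAS publications: {pfas_rate:.1%} per year")
print(f"Specialty chemicals market: {market_rate:.1%} per year")
```

For these inputs the publication count grows at roughly 39% per year, against about 5% per year for the projected market.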

Table 2: Emerging Contaminants and Research Focus Areas

| Emerging Contaminant Category | Specific Compounds/Materials | Research Trends | ML Applications |
|---|---|---|---|
| Persistent Organic Pollutants | PFAS (PFOA, PFOS), phthalates | Rapidly growing research attention [14] | Source tracking, treatment optimization, risk prediction [14] |
| Novel Materials | Microplastics, bioplastics, nanomaterials | Increasing detection in environmental matrices [13] | Environmental fate modeling, ecological impact assessment |
| High-Growth Specialty Chemicals | Electronic chemicals, specialty polymers, surfactants | Market expected to grow to $914.4B by 2030 [15] | Lifecycle assessment, alternative chemical design |
| Legacy Contaminants | Arsenic, lead, dioxins | Continued concern with new analytical approaches | Spatial prediction, exposure route identification, remediation planning |

Experimental Protocols and Methodological Approaches

QSAR Modeling Experimental Protocol

Quantitative Structure-Activity Relationship (QSAR) modeling is a cornerstone methodological approach across multiple thematic clusters. A typical protocol involves the following stages:

Dataset Curation: Compilation of chemical structures with associated experimental bioactivity data from public databases such as PubChem or specialized toxicology repositories. Data preprocessing includes standardization of chemical structures, removal of duplicates, and resolution of activity value discrepancies.
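The deduplication and discrepancy-resolution step can be sketched as grouping records by a standardized structure key and collapsing replicates. This sketch assumes the key (for example an InChIKey) was produced by an upstream standardization tool and that activities are on a log scale such as pIC50. The 1-log-unit spread used as the cut-off for discarding conflicting replicates is a hypothetical choice, not a published rule.

```python
from collections import defaultdict
from statistics import median

def curate(rows, max_spread=1.0):
    """Merge duplicate structures and resolve conflicting activity values.

    rows: iterable of (structure_key, activity) pairs. structure_key is assumed
    to come from an upstream standardization step (e.g., an InChIKey);
    activity is assumed to be on a log scale such as pIC50.
    """
    groups = defaultdict(list)
    for key, activity in rows:
        if key and activity is not None:  # drop records missing a structure or a value
            groups[key].append(activity)

    curated, rejected = {}, []
    for key, values in groups.items():
        if max(values) - min(values) > max_spread:
            rejected.append(key)  # replicates disagree too much to keep
        else:
            curated[key] = median(values)  # collapse agreeing replicates
    return curated, rejected

# Made-up example: KEY-A agrees, KEY-B conflicts, the last row lacks a structure
rows = [("KEY-A", 5.1), ("KEY-A", 5.3), ("KEY-B", 4.0), ("KEY-B", 6.2),
        ("KEY-C", 7.0), ("", 3.0)]
curated, rejected = curate(rows)
print(curated, rejected)
```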

Molecular Descriptor Calculation: Generation of numerical representations of chemical structures using specialized software (e.g., RDKit, PaDEL). Descriptors encompass topological, electronic, and physicochemical properties that serve as input features for ML models.
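Descriptor packages such as RDKit or PaDEL compute hundreds of descriptors from full structures. As a dependency-free illustration of the idea, the sketch below derives a few constitutional descriptors (molecular weight, heavy-atom count, halogen fraction) from a molecular formula alone. It is not the RDKit or PaDEL API, it ignores connectivity entirely, and its small atomic-mass table covers only a handful of elements.

```python
import re

# Standard average atomic masses (g/mol) for a few elements; extend as needed.
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "N": 14.007, "O": 15.999,
               "F": 18.998, "S": 32.06, "Cl": 35.45}

def formula_descriptors(formula: str) -> dict:
    """Compute simple constitutional descriptors from a molecular formula."""
    counts = {}
    for element, n in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] = counts.get(element, 0) + (int(n) if n else 1)
    mw = sum(ATOMIC_MASS[el] * n for el, n in counts.items())
    heavy = sum(n for el, n in counts.items() if el != "H")
    halogens = sum(counts.get(el, 0) for el in ("F", "Cl"))
    return {"mol_weight": round(mw, 2), "heavy_atoms": heavy,
            "halogen_fraction": halogens / heavy}

print(formula_descriptors("C8HF15O2"))  # PFOA
```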

Dataset Splitting: Division of data into training (∼70-80%), validation (∼10-15%), and test sets (∼10-15%) using stratified sampling to maintain activity class distribution. External validation compounds are often set aside completely during model development.
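Stratified splitting can be sketched by shuffling each activity class separately and cutting it at the same proportions. The 80/10/10 default below is one point inside the ranges quoted above, and the fixed seed is an assumption made for reproducibility.

```python
import random
from collections import defaultdict

def stratified_split(labels, fractions=(0.8, 0.1, 0.1), seed=42):
    """Return train/validation/test index lists that preserve class proportions."""
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    rng = random.Random(seed)
    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)  # shuffle within each class only
        n = len(indices)
        n_train = round(fractions[0] * n)
        n_val = round(fractions[1] * n)
        train.extend(indices[:n_train])
        val.extend(indices[n_train:n_train + n_val])
        test.extend(indices[n_train + n_val:])
    return train, val, test

labels = ["active"] * 20 + ["inactive"] * 80  # made-up, imbalanced labels
train, val, test = stratified_split(labels)
print(len(train), len(val), len(test))
```

In scikit-learn the same effect is usually obtained by calling `train_test_split` twice with its `stratify` argument.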

Model Training and Optimization: Application of multiple ML algorithms (e.g., random forests, SVM, neural networks) with hyperparameter tuning via cross-validation. Models are evaluated using metrics including accuracy, sensitivity, specificity, and area under the receiver operating characteristic curve (AUC-ROC).
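The evaluation metrics named above can be computed directly from labels, hard predictions, and scores. The sketch assumes binary labels with 1 = active. AUC-ROC is computed through its rank interpretation (the probability that a randomly chosen active outscores a randomly chosen inactive, ties counted as one half), which equals the area under the empirical ROC curve. The labels and scores are made up.

```python
def confusion_metrics(y_true, y_pred):
    """Accuracy, sensitivity (true positive rate) and specificity (true negative rate)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
    }

def roc_auc(y_true, scores):
    """AUC as P(score of random active > score of random inactive), ties counted as 0.5."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Made-up labels and model scores; hard predictions use a 0.5 threshold
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.7, 0.3, 0.2, 0.1, 0.35]
y_pred = [int(s >= 0.5) for s in scores]
print(confusion_metrics(y_true, y_pred), roc_auc(y_true, scores))
```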

Model Interpretation and Validation: Application of explainable AI techniques (e.g., SHAP, LIME) to identify structural features driving predictions. External validation using completely held-out compounds provides the most rigorous assessment of predictive performance.
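SHAP and LIME require their own libraries, so the sketch below swaps in a simpler model-agnostic technique, permutation importance: shuffle one feature column and measure how much accuracy drops. It is not SHAP or LIME. The rule-based `model` function is a hypothetical stand-in for a fitted classifier, and the data are synthetic.

```python
import random

def accuracy(model, X, y):
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)

def permutation_importance(model, X, y, n_repeats=10, seed=0):
    """Mean drop in accuracy when each feature column is shuffled independently."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            X_perm = [row[:j] + [value] + row[j + 1:] for row, value in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances

def model(row):
    """Hypothetical stand-in for a fitted classifier: only feature 0 matters."""
    return int(row[0] > 0.5)

# Synthetic data: feature 0 drives the label, feature 1 is random noise
data_rng = random.Random(1)
X = [[i / 19, data_rng.random()] for i in range(20)]
y = [model(row) for row in X]
print(permutation_importance(model, X, y))
```

Because the stand-in model ignores feature 1, shuffling it never changes accuracy, so its importance is exactly zero.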

Figure 2: QSAR Modeling Workflow: From Data Curation to Predictive Model

Water Quality Prediction Experimental Protocol

ML approaches for water quality prediction employ distinct methodological considerations tailored to spatial and temporal data characteristics:

Data Collection and Preprocessing: Compilation of historical water quality measurements from monitoring networks, satellite data, and environmental sensors. Handling of missing data through imputation techniques and normalization of parameters with different measurement scales.
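Gap filling and rescaling can be sketched with linear interpolation between observed neighbours followed by z-score standardization of each parameter. Linear interpolation is only one of many imputation choices, and the nitrate series below is made up.

```python
from statistics import mean, pstdev

def interpolate_gaps(series):
    """Fill interior None values by linear interpolation between observed neighbours."""
    filled = list(series)
    known = [i for i, v in enumerate(series) if v is not None]
    for left, right in zip(known, known[1:]):
        for i in range(left + 1, right):
            step = (series[right] - series[left]) * (i - left) / (right - left)
            filled[i] = series[left] + step
    return filled  # leading/trailing gaps stay None

def zscore(values):
    """Standardize one parameter to mean 0 and unit (population) standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        raise ValueError("constant series cannot be standardized")
    return [(v - mu) / sigma for v in values]

nitrate_mg_l = [2.0, None, None, 3.5, 4.0, None, 5.0]  # made-up daily readings
filled = interpolate_gaps(nitrate_mg_l)
scaled = zscore(filled)
print(filled, scaled)
```

In a real pipeline the mean and standard deviation would be estimated on the training period only and reused for later data, to avoid leaking information from the test set.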

Spatiotemporal Feature Engineering: Creation of features that capture geographical relationships (e.g., distance to pollution sources, upstream land use) and temporal patterns (e.g., seasonal variations, precipitation events). Integration of meteorological and hydrological data as predictive features.
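A few common temporal features can be sketched as follows: a cyclical day-of-year encoding so that late December and early January sit close together, a lagged copy of a series, and an antecedent precipitation sum over preceding steps. These are generic examples of the feature types described above; the rainfall values and window length are hypothetical.

```python
import math
from datetime import date

def seasonal_features(d: date) -> tuple[float, float]:
    """Encode day of year on a circle so 31 Dec and 1 Jan end up close together."""
    angle = 2 * math.pi * (d.timetuple().tm_yday - 1) / 365.25
    return math.sin(angle), math.cos(angle)

def lagged(values, lag):
    """Shift a series so each step sees the value observed `lag` steps earlier."""
    if lag < 1:
        raise ValueError("lag must be at least 1")
    return [None] * lag + values[:-lag]

def antecedent_sum(values, window):
    """Rolling sum over the previous `window` steps, excluding the current step."""
    return [sum(values[max(0, i - window):i]) for i in range(len(values))]

rain_mm = [0.0, 12.0, 3.0, 0.0, 25.0]  # made-up daily precipitation
print(seasonal_features(date(2024, 1, 1)))
print(lagged(rain_mm, 1))
print(antecedent_sum(rain_mm, 3))
```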

Model Architecture Selection: Implementation of algorithms capable of capturing spatiotemporal dependencies. Traditional approaches include random forests and gradient boosting, while advanced methods utilize graph neural networks that encode watershed topology or recurrent neural networks for temporal sequences.
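The idea behind encoding watershed topology can be illustrated with one neighbourhood-aggregation step over a directed river graph, in which each station receives the mean value of its direct upstream neighbours. This is a hand-weighted sketch of the message-passing operation that GNN layers learn, not a trainable GNN. The station names, edges, nitrate values, and the 0.5 self-weight are all hypothetical.

```python
from collections import defaultdict

# Hypothetical river network: each edge points downstream (upstream -> downstream).
EDGES = [("S1", "S3"), ("S2", "S3"), ("S3", "S4")]
nitrate = {"S1": 8.0, "S2": 2.0, "S3": 4.0, "S4": 3.0}

def upstream_mean(edges, features):
    """Message for each node: mean of its direct upstream neighbours (own value if none)."""
    upstream = defaultdict(list)
    for src, dst in edges:
        upstream[dst].append(src)
    messages = {}
    for node in features:
        parents = upstream.get(node, [])
        messages[node] = (sum(features[p] for p in parents) / len(parents)
                          if parents else features[node])
    return messages

def propagate(edges, features, self_weight=0.5):
    """Mix each node's own value with its upstream message (fixed weights, no learning)."""
    msgs = upstream_mean(edges, features)
    return {n: self_weight * features[n] + (1 - self_weight) * msgs[n] for n in features}

print(propagate(EDGES, nitrate))
```

A GNN layer would replace the fixed mean and self-weight with learned transformations and stack several such steps, so information can travel further down the network.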

Model Validation and Uncertainty Quantification: Evaluation using temporal or spatial cross-validation to assess generalizability. Quantification of prediction uncertainty through methods such as quantile regression or Bayesian approaches, particularly important for regulatory decision-making.
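Two of the ideas above can be sketched briefly. Expanding-window splits keep every test block strictly after its training data, so the model is never evaluated on the past using the future. The pinball (quantile) loss is the objective that quantile regression minimizes. The fold count and the 0.9 quantile below are illustrative choices; scikit-learn's `TimeSeriesSplit` implements a similar splitting scheme.

```python
def expanding_window_splits(n_samples, n_folds):
    """Yield (train_idx, test_idx) where every test block lies strictly after its training data."""
    fold = n_samples // (n_folds + 1)
    for k in range(1, n_folds + 1):
        train = list(range(0, k * fold))
        test = list(range(k * fold, min((k + 1) * fold, n_samples)))
        yield train, test

def pinball_loss(y_true, y_pred, q):
    """Quantile (pinball) loss; minimized when y_pred is the q-th conditional quantile."""
    total = 0.0
    for y, p in zip(y_true, y_pred):
        diff = y - p
        total += max(q * diff, (q - 1) * diff)
    return total / len(y_true)

for train_idx, test_idx in expanding_window_splits(12, 3):
    print(train_idx, test_idx)

# At q = 0.9, under-predicting by 2 costs far more than over-predicting by 2
print(pinball_loss([10], [8], 0.9), pinball_loss([10], [12], 0.9))
```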

Table 3: Key Research Reagent Solutions and Computational Tools

| Tool/Category | Specific Examples | Function/Application | Thematic Cluster Relevance |
|---|---|---|---|
| Bibliometric Software | VOSviewer, R bibliometric packages | Research landscape mapping, trend analysis, collaboration network visualization | Field overview and research gap identification [13] |
| ML Algorithms & Libraries | XGBoost, Scikit-learn, TensorFlow/PyTorch | Model development, predictive analytics, pattern recognition | All clusters, especially ML Model Development [13] |
| Literature & Chemical Databases | Web of Science, Scopus (literature); PubChem, TOXNET (chemical; TOXNET was retired in 2019) | Data source for model training, literature analysis, chemical property information | QSAR Modeling, PFAS Research [13] [14] |
| Molecular Descriptors | RDKit, PaDEL, Dragon | Chemical structure quantification, feature generation for ML models | QSAR Modeling, Chemical Risk Assessment [13] |
| Environmental Sensors | PFAS detection kits, multi-parameter water quality probes | Field data collection, model validation, monitoring network establishment | Water Quality Prediction, PFAS Research [16] |
| Explainable AI Tools | SHAP, LIME, partial dependence plots | Model interpretation, hypothesis generation, regulatory acceptance | Chemical Risk Assessment, QSAR Modeling [13] |

Research Gaps and Future Directions

The bibliometric analysis reveals several significant research gaps and strategic opportunities for advancing the field. First, a substantial imbalance exists between environmental and human health focus, with keyword frequencies showing a 4:1 bias toward environmental endpoints over human health endpoints [13]. This indicates a critical need for research that systematically couples ML outputs with human health data. Second, chemical coverage remains limited: substances such as lignin, arsenic, and phthalates appear as fast-growing but understudied [13]. Third, methodological challenges persist in model interpretability, highlighting the need to adopt explainable artificial intelligence workflows to enhance regulatory acceptance and scientific insight [13].

Future research should prioritize expanding the substance portfolio to encompass more diverse chemical classes, developing standardized protocols for model validation and reporting, fostering international collaboration to translate ML advances into actionable chemical risk assessments, and strengthening the integration between environmental monitoring data and human health endpoints [13]. As the field continues to evolve, these thematic clusters provide both a map of current research fronts and a compass pointing toward the most promising future directions at the intersection of machine learning and environmental chemical research.

Keyword co-occurrence mapping has emerged as a fundamental bibliometric technique for visualizing and understanding the intellectual structure of scientific fields. The method rests on the principle that the frequency with which keywords appear together in scientific publications reveals conceptual relationships and thematic connections within a research domain. When applied to interdisciplinary fields such as machine learning (ML) applications in environmental chemical research, co-occurrence analysis offers detailed insight into evolving research trends, knowledge gaps, and emerging frontiers. The exponential growth in ML applications for environmental chemical research, with publications surging from fewer than 25 annually before 2015 to over 719 in 2024, creates both the opportunity and the need for systematic mapping of this rapidly expanding knowledge landscape [13].

Within the context of a broader thesis on machine learning in environmental chemical research, keyword co-occurrence mapping serves as the essential cartographic tool that renders visible the hidden connections between methodological advances, chemical substances of concern, and environmental or health endpoints. This technical guide provides researchers with comprehensive methodologies for executing rigorous co-occurrence analyses, from data collection through visualization and interpretation, with specific application to the ML-environmental chemicals domain. By mastering these techniques, researchers can identify central research themes, trace conceptual evolution, and pinpoint strategic opportunities for future investigation at this critical interdisciplinary frontier.

Theoretical Foundations and Key Concepts

Bibliometric Principles Underlying Co-occurrence Analysis

Co-word analysis rests upon the fundamental premise that keywords assigned to scientific publications function as valid descriptors of their conceptual content. When two keywords frequently co-occur across a corpus of publications, this indicates a substantive conceptual relationship between the topics they represent. The strength of this relationship can be quantified through association measures such as co-occurrence frequency, proximity indices, and statistical measures of association [17]. In network terms, keywords constitute nodes while co-occurrence relationships form edges, creating a semantic network that mirrors the intellectual structure of a research field.

The analytical value of co-occurrence mapping extends beyond mere description to hypothesis generation and research forecasting. By examining clusters of tightly interconnected keywords, researchers can identify established research specialties. Similarly, weakly connected regions of the network may reveal underexplored interfaces between subfields, while emerging keywords with rapidly increasing co-occurrence patterns can signal new research fronts. Temporal analyses tracking these patterns over time provide unique insights into knowledge diffusion paths and the evolution of scientific paradigms [17].

Operational Terminology and Metrics

- Co-occurrence Frequency: The simple count of documents in which two keywords appear together. This raw frequency forms the basic weight assigned to edges in the network.

- Association Strength: A normalized measure of co-occurrence that accounts for the overall frequency of each keyword, often calculated as the ratio of actual co-occurrences to expected co-occurrences under independence assumptions.

- Centrality Measures: Network metrics that identify the most influential keywords, including:

  - Degree Centrality: The number of direct connections a keyword has to other keywords.

  - Betweenness Centrality: The extent to which a keyword lies on the shortest paths between other keywords, indicating its role as a conceptual bridge.

  - Closeness Centrality: The inverse of a keyword's average shortest-path distance to all other keywords; higher values mean the keyword reaches the rest of the network in fewer steps.

- Cluster/Community Detection: Algorithms that partition the network into groups of densely connected keywords representing thematic subfields.

- Modularity: A measure of the quality of network division into clusters, with higher values indicating well-separated communities.
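Several of these metrics can be computed in a few lines. The sketch below counts document-level co-occurrences, computes association strength as observed over expected co-occurrence (expected = f_i f_j / N under independence, as described above), and evaluates Newman modularity for a given partition. The keyword lists, the six-node graph, and its partition are toy inputs.

```python
from collections import Counter
from itertools import combinations

def cooccurrence(docs):
    """Keyword frequencies f_i and pairwise co-occurrence counts c_ij, counted per document."""
    freq, pairs = Counter(), Counter()
    for keywords in docs:
        kws = sorted(set(keywords))  # one count per document, fixed pair order
        freq.update(kws)
        pairs.update(combinations(kws, 2))
    return freq, pairs

def association_strength(freq, pairs, n_docs):
    """Observed / expected co-occurrence, with expected = f_i * f_j / N under independence."""
    return {(a, b): c * n_docs / (freq[a] * freq[b]) for (a, b), c in pairs.items()}

def modularity(edges, partition):
    """Newman modularity Q = sum over clusters of [L_c / m - (d_c / 2m)^2], weighted, undirected."""
    m = sum(edges.values())
    internal, degree = Counter(), Counter()
    for (a, b), w in edges.items():
        degree[partition[a]] += w
        degree[partition[b]] += w
        if partition[a] == partition[b]:
            internal[partition[a]] += w
    return sum(internal[c] / m - (degree[c] / (2 * m)) ** 2 for c in degree)

docs = [["ml", "pfas"], ["ml", "pfas"], ["ml", "water quality"], ["qsar", "toxicity"]]
freq, pairs = cooccurrence(docs)
print(association_strength(freq, pairs, n_docs=len(docs)))

# Two triangles joined by one edge, split into their natural clusters
edges = {("a", "b"): 1, ("b", "c"): 1, ("a", "c"): 1,
         ("d", "e"): 1, ("e", "f"): 1, ("d", "f"): 1, ("c", "d"): 1}
print(modularity(edges, {"a": 0, "b": 0, "c": 0, "d": 1, "e": 1, "f": 1}))
```

Association strength rewards pairs that co-occur more often than their individual frequencies would predict, which is why the rarer "qsar"/"toxicity" pair scores higher than the more frequent "ml"/"pfas" pair.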

Methodological Protocols for Co-occurrence Analysis

Data Collection and Preprocessing Framework

The foundation of any robust co-occurrence analysis is a comprehensive and representative bibliographic dataset. For research focusing on ML applications in environmental chemicals, the following protocol ensures data quality and relevance:

Database Selection and Search Strategy: Use established bibliographic databases such as the Web of Science Core Collection or Scopus, which provide standardized metadata and citation information. Construct a search query that captures the interdisciplinary nature of the field. The analysis summarized here used the query "machine learning" AND "environmental chemicals" [13]; a broader query such as ("machine learning" OR "deep learning" OR "artificial intelligence") AND ("environmental chemicals" OR "emerging contaminants" OR "chemical risk assessment") may capture adjacent terminology. Apply filters for document type (e.g., articles, reviews) and time span according to the research objectives.

Data Extraction and Cleaning: Download complete records, including titles, authors, abstracts, author keywords, indexed keywords (e.g., Keywords Plus), and references. The critical preprocessing step is keyword normalization, which merges variants (e.g., "ML" and "machine learning") through automated and manual methods; whether related but narrower terms such as "deep learning" are folded into "machine learning" or kept separate is an analytical choice that should be reported. This step, applied in a recent analysis of 3,150 publications on ML in environmental chemical research, ensures that conceptual relationships are represented accurately [13]. Remove ambiguous or overly broad terms that do not contribute to thematic discrimination.
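Keyword normalization can be sketched as lower-casing, collapsing whitespace, mapping variants through a thesaurus, and dropping stop terms and duplicates. The thesaurus entries and stop list below are hypothetical examples; VOSviewer accepts a thesaurus file for the same purpose, and OpenRefine can be used for the manual part.

```python
import re

# Hypothetical thesaurus: variant -> preferred term (all keys in lower case)
THESAURUS = {
    "ml": "machine learning",
    "machine-learning": "machine learning",
    "random forests": "random forest",
    "per- and polyfluoroalkyl substances": "pfas",
}
STOPLIST = {"analysis", "model", "study"}  # illustrative overly broad terms

def normalize_keyword(raw):
    """Lower-case, collapse internal whitespace, then apply the thesaurus."""
    kw = re.sub(r"\s+", " ", raw.strip().lower())
    return THESAURUS.get(kw, kw)

def clean_keywords(raw_keywords):
    """Normalize one record's keywords, dropping stop terms and duplicates (order kept)."""
    cleaned = []
    for raw in raw_keywords:
        kw = normalize_keyword(raw)
        if kw and kw not in STOPLIST and kw not in cleaned:
            cleaned.append(kw)
    return cleaned

print(clean_keywords(["ML", "Machine  Learning", "Random Forests", "PFAS", "Analysis"]))
```

Here "deep learning" is deliberately left out of the thesaurus, so it would remain a separate node; adding it is the kind of analytical choice noted above.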

Table 1: Data Collection Parameters for ML in Environmental Chemicals Research

| Parameter | Recommended Setting | Rationale |
|---|---|---|
| Database | Web of Science Core Collection | Comprehensive coverage with standardized keywords |
| Time Span | 1985-present (customizable) | Captures field evolution from early applications |
| Document Types | Articles, Review Articles | Focuses on primary research and synthesis |
| Search Field | Topic (Title, Abstract, Keywords) | Balances comprehensiveness and relevance |
| Dataset Size | 3,000+ publications (3,150 in the reference analysis) | Supports robust pattern identification [13] |

Analytical Workflow and Software Implementation

The transformation of raw bibliographic data into insightful co-occurrence maps follows a structured workflow, implemented with specialized software tools, that moves from network construction and analysis to visualization and interpretation.

Network Construction and Analysis: From the normalized keyword list, construct a co-occurrence matrix in which each cell holds the number of documents in which a keyword pair appears together. This matrix serves as input for network analysis software. Apply network reduction techniques such as minimum co-occurrence thresholds (e.g., 5-10 co-occurrences) to focus on meaningful relationships. Calculate standard network metrics, including density, centralization, and average path length, to characterize overall network structure. Employ community detection algorithms such as the Louvain method to identify thematic clusters [18]. In the ML-environmental chemicals domain, a recent analysis identified eight major thematic clusters, including ML model development, water quality prediction, quantitative structure-activity relationship (QSAR) applications, and contaminant-focused research such as per-/polyfluoroalkyl substances (PFAS) [13].
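Thresholding and two of the global metrics mentioned above (density and average path length) can be sketched on a small adjacency structure. The pair counts are made up and assumed to come from an earlier co-occurrence counting step; the threshold of 5 is the low end of the range suggested above. Average path length here is computed over connected pairs only, using unweighted breadth-first search.

```python
from collections import deque

def build_network(pair_counts, min_cooccurrence=5):
    """Keep only keyword pairs that co-occur at least `min_cooccurrence` times."""
    adjacency = {}
    for (a, b), count in pair_counts.items():
        if count >= min_cooccurrence:
            adjacency.setdefault(a, set()).add(b)
            adjacency.setdefault(b, set()).add(a)
    return adjacency

def density(adjacency):
    """Share of possible undirected edges that are present."""
    n = len(adjacency)
    edges = sum(len(nbrs) for nbrs in adjacency.values()) / 2
    return 2 * edges / (n * (n - 1)) if n > 1 else 0.0

def average_path_length(adjacency):
    """Mean shortest-path length (unweighted BFS) over all connected ordered node pairs."""
    total, pairs = 0, 0
    for source in adjacency:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            node = queue.popleft()
            for nbr in adjacency[node]:
                if nbr not in dist:
                    dist[nbr] = dist[node] + 1
                    queue.append(nbr)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs if pairs else 0.0

# Made-up co-occurrence counts
pair_counts = {("machine learning", "xgboost"): 12, ("machine learning", "water quality"): 9,
               ("xgboost", "water quality"): 3, ("water quality", "pfas"): 6,
               ("qsar", "toxicity"): 2}
net = build_network(pair_counts, min_cooccurrence=5)
print(sorted(net), density(net), average_path_length(net))
```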

Visualization and Interpretation: Create two-dimensional network maps using force-directed layout algorithms (e.g., Force Atlas 2 in Gephi) that position strongly connected keywords closer together. Visually represent clusters through color coding, node size proportional to frequency or centrality, and edge thickness proportional to co-occurrence strength. For the ML-environmental chemicals field, expect prominent clusters around specific algorithm types (XGBoost, random forests), environmental media (water, air, soil), and chemical classes (PFAS, heavy metals, pharmaceuticals) [13]. Interpret cluster labels by examining the most central and frequent keywords within each grouping, ensuring they accurately represent the thematic content.

Applied Tools for Mapping and Visualization

Comparative Analysis of Software Platforms

Multiple software platforms enable the implementation of co-occurrence analysis, each with distinct strengths and learning curves. The selection criteria should consider technical expertise, analysis depth requirements, and visualization needs.

Table 2: Software Tools for Keyword Co-occurrence Analysis

| Tool | Primary Use Case | Strengths | Limitations |
|---|---|---|---|
| VOSviewer | Beginner-friendly analysis with publication-ready visuals | Intuitive interface, specialized for bibliometrics, clear clustering | Limited customization, less suitable for very large datasets |
| Gephi | Advanced network analysis and customization | Extensive layout algorithms, plugin ecosystem, handles large networks | Steeper learning curve, requires separate data preprocessing [19] |
| R (Bibliometrix/biblioShiny) | Reproducible analysis pipelines and statistical rigor | Complete workflow integration, advanced statistics, high reproducibility | Programming knowledge required, less immediate visualization |
| InfraNodus | Online analysis with AI-enhanced interpretation | Web-based, structural gap analysis, AI recommendations | Subscription cost, node limits (~500) [20] |

Specialized Protocol: Gephi Implementation for ML-Environmental Chemicals Research

For researchers requiring maximum analytical flexibility, Gephi provides a powerful open-source solution. The following protocol specifics are adapted from established methodologies [18]:

Data Import and Network Creation: After installing Gephi, import the co-occurrence data (as an edge table or adjacency matrix) through its built-in spreadsheet/CSV import; no separate plugin is needed for this step. Configure the network as undirected, since co-occurrence is inherently symmetric. A typical analysis of ML in environmental chemicals research yields networks of 200-500 nodes after applying frequency thresholds [13]. The initially imported network will appear as a dense "hairball" that requires a layout algorithm.
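One way to hand co-occurrence counts to Gephi is an edge table in CSV form. The sketch below writes the Source, Target, Type and Weight columns that, to our knowledge, Gephi's spreadsheet importer recognizes; the keyword pairs and counts are made up, and an in-memory buffer stands in for a file.

```python
import csv
import io

def write_gephi_edges(pair_counts, handle):
    """Write an undirected, weighted edge table (Source, Target, Type, Weight)."""
    writer = csv.writer(handle)
    writer.writerow(["Source", "Target", "Type", "Weight"])
    for (a, b), weight in sorted(pair_counts.items()):
        writer.writerow([a, b, "Undirected", weight])

buffer = io.StringIO()
write_gephi_edges({("machine learning", "xgboost"): 12, ("pfas", "water quality"): 6}, buffer)
print(buffer.getvalue())
```

To write a real file, open it with `open("edges.csv", "w", newline="")` and pass the file object instead of the buffer.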

Network Layout and Cluster Identification: Apply the Force Atlas 2 layout algorithm with appropriate scaling to achieve a readable node distribution. Run the Modularity statistic (resolution 1.0-2.0) to detect thematic clusters; the reference analysis of this field reported eight thematic clusters [13]. Color nodes by the resulting Modularity Class attribute for visual discrimination. Compute degree with the Average Degree statistic and betweenness with the Network Diameter statistic to identify the most influential keywords.

Visual Enhancement and Export: Size nodes according to degree centrality or frequency to emphasize important concepts. Adjust edge thickness based on co-occurrence strength and apply alpha blending to reduce visual clutter from numerous connections. For the ML-environmental chemicals domain, expect to see central nodes representing key algorithms (XGBoost, random forests) bridging methodological and application clusters [13]. Export high-resolution visualizations (SVG/PNG) for publications and network files (GEXF) for future reanalysis.

Interpreting Results in the ML-Environmental Chemicals Context

Thematic Cluster Analysis and Research Trends

The interpretation of co-occurrence maps requires both quantitative network metrics and qualitative domain expertise. In the specific context of ML applications for environmental chemicals, several consistent thematic patterns emerge from recent bibliometric analyses:

Primary Research Clusters: Comprehensive mapping of 3,150 publications reveals eight thematic clusters, prominently including (1) ML model development and optimization, (2) water quality prediction and monitoring, (3) QSAR applications for toxicity prediction, and (4) contaminant-specific research on per-/polyfluoroalkyl substances (PFAS) [13]. The centrality of XGBoost and random forest algorithms across multiple clusters indicates their established utility for environmental chemical data.

Structural Patterns and Research Gaps: Keyword frequencies in this corpus show a 4:1 bias toward environmental endpoints over human health endpoints, highlighting a significant research gap in connecting environmental chemical data with health outcomes [13] [21]. Emerging keyword trajectories show rapidly growing attention to climate change, microplastics, and explainable AI, while lignin, arsenic, and phthalates appear as fast-growing but understudied chemicals [13].

Temporal Evolution and Emerging Frontiers

Longitudinal analysis of co-occurrence networks reveals how the field has evolved over time.

The publication surge from 2015 onward, with annual output rising by about 68% between 2020 (179 publications) and 2021 (301 publications), indicates rapid field maturation [13]. Recent network analyses show the emergence of a distinct risk assessment cluster, signaling migration of these tools toward dose-response modeling and regulatory applications. The increasing co-occurrence of "explainable AI" with chemical risk assessment keywords reflects growing attention to model interpretability in regulatory contexts [13] [21].
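Emerging keywords of the kind described above can be flagged by comparing the share of documents carrying each keyword in an early window with its share in a recent window. The sketch below is one simple way to do this, not the method used in [13]. The records, windows, and the 2x threshold are hypothetical, and keywords absent from the early window receive an infinite ratio.

```python
from collections import Counter

def keyword_shares(docs, start, end):
    """Fraction of documents published in [start, end] that carry each keyword."""
    window = [kws for year, kws in docs if start <= year <= end]
    counts = Counter(kw for kws in window for kw in dict.fromkeys(kws))  # dedupe, keep order
    return {kw: c / len(window) for kw, c in counts.items()} if window else {}

def emerging_terms(docs, early, recent, min_ratio=2.0):
    """Keywords whose recent document share is at least min_ratio times their early share."""
    before = keyword_shares(docs, *early)
    after = keyword_shares(docs, *recent)
    ranked = []
    for kw, share in after.items():
        ratio = share / before[kw] if kw in before else float("inf")
        if ratio >= min_ratio:
            ranked.append((kw, ratio))
    return sorted(ranked, key=lambda item: item[1], reverse=True)

# Made-up (year, keywords) records
docs = [
    (2019, ["random forest", "water quality"]),
    (2019, ["qsar", "random forest"]),
    (2020, ["water quality", "explainable ai"]),
    (2023, ["explainable ai", "risk assessment"]),
    (2024, ["microplastics", "explainable ai"]),
    (2024, ["random forest", "water quality"]),
]
print(emerging_terms(docs, early=(2019, 2020), recent=(2023, 2024)))
```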

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Analytical Tools for Co-occurrence Mapping Research

| Tool/Category | Specific Examples | Function in Analysis |
|---|---|---|
| Bibliometric Data Sources | Web of Science Core Collection, Scopus | Provides standardized metadata and citation data for analysis |
| Network Analysis Software | VOSviewer, Gephi, CitNetExplorer | Performs cluster detection, centrality calculations, and network visualization |
| Statistical Programming | R (Bibliometrix, igraph), Python (NetworkX) | Enables customized analysis pipelines and advanced statistical testing |
| Visualization Libraries | Cytoscape.js, Sigma.js, Graphviz | Creates interactive and publication-quality network visualizations |
| Data Cleaning Tools | OpenRefine, Custom scripts | Normalizes keyword variants and prepares structured data for analysis |

Keyword co-occurrence mapping provides an indispensable methodological framework for revealing the intellectual structure of machine learning applications in environmental chemical research. Through the rigorous application of the protocols outlined in this technical guide, researchers can transform overwhelming publication volumes into actionable intelligence about their field's conceptual organization, evolution, and emerging frontiers.

The specific findings from applications in the ML-environmental chemicals domain highlight several strategic priorities for future research: expanding the portfolio of studied chemicals, systematically coupling ML outputs with human health data, adopting explainable AI workflows, and fostering international collaboration to translate ML advances into actionable chemical risk assessments [13] [21]. As the field continues its exponential growth, co-occurrence mapping will remain an essential methodology for guiding research investments, identifying collaborative opportunities, and ensuring that machine learning applications effectively address the most pressing challenges in environmental chemical management.

A 2025 bibliometric analysis of 3,150 scientific publications reveals that machine learning (ML) is fundamentally reshaping the monitoring and hazard evaluation of environmental chemicals [1] [13]. This transformation is characterized by an exponential surge in ML application, dominated by algorithms such as XGBoost and random forests [1]. The analysis identifies eight major thematic research clusters, with a notable 4:1 research bias toward environmental endpoints over human health impacts [1] [21]. Within this landscape, lignin, arsenic, and phthalates have emerged as fast-growing yet understudied chemicals, presenting significant knowledge gaps despite their increasing environmental prevalence and potential health risks [1]. This whitepaper provides a technical guide to these chemicals, detailing their profiles, toxicological mechanisms, and the experimental and computational frameworks essential for advancing their risk assessment.

The assessment of environmental chemicals is undergoing a profound paradigm shift, moving from traditional toxicological methods toward data-rich disciplines powered by artificial intelligence [1]. The period from 2015 onward has witnessed exponential growth in the application of ML to environmental chemical research, with annual publication output surging from fewer than 25 papers pre-2015 to over 719 in 2024 [1] [13]. This growth is globally distributed, with China and the United States leading in research output, though the U.S. demonstrates stronger collaborative networks as measured by Total Link Strength [1] [13].

The intellectual structure of this field, as revealed through co-citation and co-occurrence analysis, has coalesced into eight thematic clusters centered on ML model development, water quality prediction, quantitative structure-activity relationship (QSAR) applications, per- and polyfluoroalkyl substances (PFAS), and increasingly, chemical risk assessment [1]. This whitepaper focuses on three chemicals—lignin, arsenic, and phthalates—that appear in this analysis as rapidly emerging substances with significant research gaps, particularly regarding their human health implications [1]. We examine their environmental profiles, toxicological mechanisms, and the integrated experimental-computational approaches needed to elucidate their health impacts.

Chemical Profiles and Research Gaps

Table 1: Profiles of Fast-Growing Yet Understudied Chemicals

| Chemical | Primary Sources & Applications | Human Exposure Routes | Key Health Concerns | Major Research Gaps |
|---|---|---|---|---|
| Lignin | Paper/pulp industry, biomass valorization, emerging bioproducts | Occupational inhalation, environmental contamination from industrial waste | Data limited; potential inflammatory and respiratory effects | Toxicity data scarce, metabolic pathways uncharacterized, biomarker identification needed |
| Arsenic | Natural geological deposits, contaminated groundwater, industrial processes | Drinking water, food chain, occupational exposure | Cancer (bladder, lung, skin), cardiovascular disease, neurotoxicity, diabetes [22] | Mechanisms of chronic disease progression, susceptibility factors, remediation optimization at scale |
| Phthalates | Plasticizers (PVC), personal care products, food packaging, medical devices [23] | Ingestion, inhalation, dermal absorption, placental transfer [23] [24] | Endocrine disruption, reproductive toxicity, developmental effects, metabolic syndrome [23] [24] | Low-dose chronic exposure effects, mixture toxicity, metabolic consequences of substitutes |

The table highlights critical commonalities across these chemicals: complex environmental fate, widespread human exposure, and insufficient characterization of long-term health impacts, particularly at environmentally relevant exposure levels.

Arsenic: A Prototypical Case for ML-Enhanced Risk Assessment

Environmental Persistence and Health Impacts

Arsenic is a well-established yet persistently challenging environmental toxicant. Arsenic-contaminated groundwater affects over 100 million people worldwide, including approximately 50 million in Bangladesh, where the WHO has described the situation as "the largest mass poisoning of a population in history" [22]. A 20-year longitudinal study published in JAMA, which followed nearly 11,000 adults in Bangladesh from 2000 to 2022, provides the strongest evidence to date that reducing arsenic exposure substantially lowers chronic disease mortality [22]. Participants who switched to safer wells experienced up to a 50% reduction in deaths from heart disease, cancer, and other chronic illnesses, with risk levels comparable to those of participants who had never been heavily exposed [22].

Experimental Protocol for Arsenic Exposure Assessment

Table 2: Key Research Reagents and Materials for Arsenic Studies

| Reagent/Material | Function/Application | Technical Specifications |
|---|---|---|
| Urine Collection Kits | Biomarker sampling for internal exposure assessment | Pre-acidified containers to preserve arsenic species integrity |
| Atomic Absorption Spectrophotometry | Quantification of total arsenic in biological/environmental samples | Detection limit ≤0.1 μg/L for water samples |
| HPLC-ICP-MS System | Arsenic speciation analysis | Capable of separating As(III), As(V), MMA, DMA |
| Certified Reference Materials | Quality assurance/quality control | NIST SRM 2668 (toxic elements, including arsenic, in frozen human urine) |
| Well Water Test Kits | Field-based arsenic screening | Colorimetric detection, range 0-100 μg/L |

Detailed Methodology for Arsenic Exposure Biomarker Analysis:

- Sample Collection: Collect spot urine samples in pre-screened arsenic-free containers, acidify to pH <2, and store at -20°C until analysis [22].

- Speciation Analysis: Use high-performance liquid chromatography coupled with inductively coupled plasma mass spectrometry (HPLC-ICP-MS) to separate and quantify arsenic species, including the inorganic forms As(III) and As(V) and the major metabolites monomethylarsonic acid (MMA) and dimethylarsinic acid (DMA).

- Quality Control: Include method blanks, duplicates, and a certified reference material (e.g., NIST SRM 2668) with each analytical batch to verify accuracy and precision, requiring recoveries of 85-115%.

- Data Normalization: Correct urinary arsenic concentrations for urine dilution using specific gravity (typical physiological range 1.005-1.030) or creatinine, to account for hydration status in epidemiological analyses.
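The quality-control and normalization steps above come down to simple arithmetic. The sketch below rests on several assumptions:

- the 85-115% recovery window from the protocol is used as the acceptance criterion;
- the widely used specific-gravity correction C_corr = C × (SG_ref − 1) / (SG_sample − 1) is applied, with an assumed reference SG of 1.020;
- the exposure metric is the sum of inorganic arsenic plus MMA and DMA.

Function names and example values are hypothetical.

```python
def percent_recovery(measured, certified):
    """Recovery of a certified reference material (CRM), in percent."""
    return 100.0 * measured / certified


def crm_passes(measured, certified, low=85.0, high=115.0):
    """True if CRM recovery lies inside the acceptance window (85-115% per the protocol)."""
    return low <= percent_recovery(measured, certified) <= high


def sg_corrected(conc, sg_sample, sg_ref=1.020):
    """Specific-gravity correction: C * (SG_ref - 1) / (SG_sample - 1).

    sg_ref = 1.020 is an assumed reference; studies often use their population median.
    """
    if sg_sample <= 1.0:
        raise ValueError("urine specific gravity must be greater than 1.0")
    return conc * (sg_ref - 1.0) / (sg_sample - 1.0)


def summed_arsenic(as_iii, as_v, mma, dma):
    """Inorganic arsenic plus its methylated metabolites (same units in, same units out)."""
    return as_iii + as_v + mma + dma


# Hypothetical example values (ug/L).
print(crm_passes(measured=9.4, certified=10.0))
total = summed_arsenic(as_iii=1.5, as_v=0.8, mma=1.9, dma=7.8)
print(round(sg_corrected(total, sg_sample=1.030), 2))
```

A concentrated sample (SG 1.030) is scaled down toward the reference and a dilute one is scaled up. Creatinine adjustment would replace `sg_corrected` with a division by urinary creatinine.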

The temporal relationship between arsenic exposure reduction and mortality risk decline provides a compelling evidence base for public health intervention, demonstrating that risks gradually decrease following exposure reduction, analogous to smoking cessation benefits [22].

Figure 1: Arsenic Toxicity and Intervention Pathway. This diagram illustrates the mechanistic pathway from arsenic exposure to chronic disease outcomes (yellow to red nodes) alongside the beneficial pathway following exposure reduction (green nodes).

Phthalates: Endocrine Disruption and Experimental Challenges

Exposure Ubiquity and Metabolic Fate

Phthalates are used extensively worldwide, with annual consumption exceeding 3 million tons and an estimated market value of about US$10 billion [23]. These compounds function as plasticizers in polyvinyl chloride (PVC) products and appear in diverse consumer goods, including personal care products, pharmaceuticals, food packaging, and medical devices [23] [24]. Because phthalates are not covalently bound to the polymer matrix, they can leach continuously into the environment throughout a product's life cycle [24].

Human exposure occurs primarily through ingestion, inhalation, and dermal absorption [23]. Of particular concern is transplacental transfer, which creates exposure during critical developmental windows [23]. Unlike many persistent organic pollutants, phthalates undergo relatively rapid biotransformation, with biological half-lives of approximately 12 hours [23]. Metabolism proceeds in two steps: hydrolysis to monoester metabolites, followed by conjugation to hydrophilic glucuronides catalyzed by uridine 5′-diphospho-glucuronosyltransferase (UGT) [23].
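To make the ~12 hour half-life concrete, the sketch below computes the fraction of an absorbed dose still in the body over time. It assumes a single compartment with first-order elimination, which is a simplification. Actual kinetics differ by parent compound and metabolite.

```python
import math


def fraction_remaining(hours, half_life_h=12.0):
    """Fraction of an absorbed phthalate dose not yet eliminated.

    Assumes one-compartment, first-order elimination with the ~12 h
    half-life cited above; real kinetics differ by compound and metabolite.
    """
    k = math.log(2) / half_life_h  # elimination rate constant (1/h)
    return math.exp(-k * hours)


for t in (6, 12, 24, 48):
    print(f"{t:>2} h after exposure: {fraction_remaining(t):.1%} remaining")
```

Under these assumptions, only about a quarter of a dose remains after one day. This is consistent with the common practice of treating a single spot urine sample as a marker of recent exposure and of collecting repeated samples for longer-term estimates.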

Experimental Protocol for Phthalate Toxicity Assessment

Detailed Methodology for Phthalate Endocrine Disruption Screening:

- Receptor Transactivation (Reporter Gene) Assays:
  - Culture human embryonic kidney (HEK293) cells stably transfected to express the estrogen receptor (ER) or androgen receptor (AR) together with a luciferase reporter driven by the corresponding hormone response element.
  - Expose cells to phthalates (DEHP, DBP, DEP, DiNP) and their major metabolites (MEHP, MECPP) across a concentration range of 0.1-100 μM for 24-72 hours.
  - Measure luciferase activity to quantify receptor activation (agonist mode) or inhibition of a reference agonist's response (antagonist mode), using 17β-estradiol and dihydrotestosterone as positive controls for ER and AR, respectively.
- Steroidogenesis Analysis:
  - Expose H295R adrenocortical carcinoma cells to phthalates for 48 hours.
  - Quantify testosterone, estradiol, and cortisol production by ELISA or LC-MS/MS.
  - Analyze expression of steroidogenic genes (CYP11A1, CYP17A1, CYP19A1) by qRT-PCR.
- Metabolite Quantification:
  - Collect urine samples from human cohorts or animal models.
  - Perform enzymatic deconjugation followed by solid-phase extraction.
  - Analyze phthalate metabolites by high-performance liquid chromatography-tandem mass spectrometry (HPLC-MS/MS) with isotope-labeled internal standards.
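The reporter-assay and qRT-PCR readouts above are usually summarized as a potency estimate and a fold change. The sketch below does two things:

- It estimates an EC50 by linear interpolation on log concentration. This is a deliberately crude stand-in for nonlinear Hill-curve fitting, used to keep the example dependency-free.
- It computes relative gene expression with the standard 2^-ΔΔCt method, which assumes near-100% PCR efficiency.

All data and Ct values are hypothetical.

```python
import math


def hill(conc, ec50, n=1.0, bottom=0.0, top=1.0):
    """Hill (log-logistic) concentration-response curve."""
    return bottom + (top - bottom) / (1.0 + (ec50 / conc) ** n)


def interpolate_ec50(concs, responses, half=0.5):
    """Estimate EC50 by linear interpolation on log10(concentration).

    A crude stand-in for nonlinear Hill fitting. Assumes responses are
    normalized (0 = vehicle, 1 = positive-control maximum) and rise with dose.
    """
    pts = sorted(zip(concs, responses))
    for (c1, r1), (c2, r2) in zip(pts, pts[1:]):
        if r1 <= half <= r2 and r2 != r1:
            frac = (half - r1) / (r2 - r1)
            log_c = math.log10(c1) + frac * (math.log10(c2) - math.log10(c1))
            return 10 ** log_c
    return None  # half-maximal response not bracketed by the tested range


def ddct_fold_change(ct_gene_trt, ct_ref_trt, ct_gene_ctl, ct_ref_ctl):
    """Relative expression by the 2^-ddCt method (assumes ~100% PCR efficiency)."""
    d_trt = ct_gene_trt - ct_ref_trt  # normalize to reference gene, treated cells
    d_ctl = ct_gene_ctl - ct_ref_ctl  # same for solvent-control cells
    return 2.0 ** -(d_trt - d_ctl)


# Hypothetical reporter data spanning the 0.1-100 uM range from the protocol.
concs = [0.1, 0.3, 1, 3, 10, 30, 100]
responses = [hill(c, ec50=8.0, n=1.2) for c in concs]
print(f"Interpolated EC50 ~ {interpolate_ec50(concs, responses):.1f} uM")

# Hypothetical CYP19A1 Ct values vs. a reference gene (treated vs. control).
print(ddct_fold_change(24.0, 18.0, 26.0, 18.0))  # 2^2 = 4-fold induction
```

For reporting, a proper nonlinear fit of the Hill model with confidence intervals would normally replace the interpolation step.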

Table 3: Research Toolkit for Phthalate Studies

| Reagent/Material | Function/Application | Technical Specifications |
|---|---|---|
| H295R Cell Line | In vitro steroidogenesis screening | ATCC CRL-2128 |
| Transfected HEK293 Cells | Nuclear receptor activation profiling | Stable transfection with ER/AR and response-element luciferase reporters |
| Isotope-Labeled Internal Standards | Mass spectrometry quantification | d4-MEHP, d4-MEP, d4-MBP for major metabolites |
| Glucuronidase Enzyme | Urine sample pretreatment | E. coli K12 β-glucuronidase (often preferred over Helix pomatia preparations, whose lipase activity can hydrolyze contaminating diesters) |
| Phthalate-Free Collection Materials | Contamination prevention in biomonitoring | Polypropylene or glass containers, verified blanks |
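The isotope-labeled internal standards in the table are typically used for isotope-dilution calibration. The analyte/label peak-area ratio is regressed against standard concentrations, and sample concentrations are then read back from the fitted line. The sketch below uses unweighted ordinary least squares; many validated methods use weighted (e.g., 1/x) regression instead. The calibration data are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept for a calibration curve."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def quantify(area_analyte, area_label, slope, intercept):
    """Concentration from the analyte / labeled-standard peak-area ratio."""
    ratio = area_analyte / area_label
    return (ratio - intercept) / slope


# Hypothetical MEHP calibration: standards (ng/mL) spiked with a fixed amount of d4-MEHP.
std_conc = [0.5, 1.0, 5.0, 10.0, 50.0]
area_ratio = [0.011, 0.021, 0.101, 0.201, 1.001]  # analyte area / labeled-standard area
slope, intercept = fit_line(std_conc, area_ratio)

# Hypothetical urine extract: analyte and labeled-standard peak areas.
print(f"MEHP ~ {quantify(4200, 20000, slope, intercept):.2f} ng/mL")
```

Because the labeled standard co-elutes with the analyte and experiences the same extraction losses and matrix effects, the area ratio largely cancels those errors.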

Metabolic fate differs substantially between short-chain and long-chain (branched) phthalates. Short-chain phthalates such as DMP and DEP are typically hydrolyzed to monoester metabolites that are excreted directly in urine. Long-chain branched phthalates such as DEHP undergo further hydroxylation and oxidation of the monoester before excretion as phase 2 conjugates [23]. This complexity necessitates comprehensive metabolite profiling for accurate exposure assessment.
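Because DEHP is excreted as several oxidized metabolites, exposure studies often sum them on a molar basis rather than relying on MEHP alone. The sketch below includes MEHHP and MEOHP, common DEHP metabolites not named above. Their molecular weights are computed from the monoester formulas noted in the comments. The urinary concentrations are hypothetical.

```python
# Molecular weights (g/mol) computed from the monoester formulas.
MW = {
    "MEHP": 278.34,   # C16H22O4
    "MEHHP": 294.34,  # C16H22O5
    "MEOHP": 292.33,  # C16H20O5
    "MECPP": 308.33,  # C16H20O6
}


def molar_sum_dehp(conc_ng_ml):
    """Sum DEHP metabolites on a molar basis, returning nmol/L.

    ng/mL divided by g/mol gives umol/L; multiplying by 1000 gives nmol/L.
    Only metabolites present in the input are summed; unknown names raise KeyError.
    """
    return sum(1000.0 * c / MW[name] for name, c in conc_ng_ml.items())


# Hypothetical urinary concentrations (ng/mL).
sample = {"MEHP": 2.1, "MEHHP": 14.8, "MEOHP": 9.6, "MECPP": 21.3}
print(f"Sum DEHP ~ {molar_sum_dehp(sample):.1f} nmol/L")
```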

Figure 2: Phthalate Metabolism and Toxicity Pathways. This diagram maps the metabolic processing of phthalates (blue nodes) alongside their key mechanisms of toxicity (red nodes), culminating in adverse health outcomes.

Machine Learning Applications for Chemical Risk Assessment

Current ML Algorithm Deployment

The bibliometric analysis reveals that XGBoost and random forests currently dominate the ML landscape for environmental chemical research [1]. These algorithms are particularly effective for handling complex, non-linear relationships between chemical structures and biological activity. Additional commonly employed algorithms include support vector machines (SVMs), k-nearest neighbors (k-NN), Bernoulli naïve Bayes, and increasingly, deep neural networks for specific applications like receptor binding prediction [1].

ML applications span multiple scales, from molecular-level predictions of receptor binding and toxicological endpoints to environmental forecasting of chemical fate and transport [1]. At the molecular and cellular level, researchers deploy interpretable ML alongside classical learners to classify receptor binding, agonism, and antagonism, with large-scale consensus efforts improving robustness and external predictivity [1]. For environmental monitoring, ML models are widely applied to forecasting water, air, and land quality to support early warning systems and exposure assessment [1].
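The consensus idea mentioned above can be sketched as an unweighted majority vote across independently trained classifiers, with an "inconclusive" outcome when agreement is too weak. This is a simplification. Published consensus efforts typically weight models by performance and may report continuous scores. The function and labels here are hypothetical.

```python
from collections import Counter


def consensus_call(predictions, min_agreement=0.5):
    """Unweighted majority-vote consensus over per-model class labels.

    Returns the most common label if its vote share exceeds min_agreement,
    otherwise "inconclusive" (e.g., a tie between two models).
    """
    label, votes = Counter(predictions).most_common(1)[0]
    return label if votes / len(predictions) > min_agreement else "inconclusive"


# Hypothetical ER-agonism calls from independently trained models.
print(consensus_call(["active", "active", "inactive"]))
print(consensus_call(["active", "inactive"]))
```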

Integrated Computational-Experimental Workflow

Figure 3: ML-Driven Chemical Risk Assessment Framework. This workflow diagram illustrates the iterative cycle integrating experimental data generation with machine learning model development to prioritize chemicals for testing and support regulatory decisions.

Protocol for Developing QSAR Models for Toxicity Prediction:

- Data Curation:
  - Compile high-quality experimental data from in vitro and in vivo studies for model training, ensuring consistent endpoint measurements.
  - Apply rigorous data cleaning to remove duplicates and correct errors, paying particular attention to unit consistency and documentation of experimental conditions.
- Feature Engineering:
  - Calculate chemical descriptors using tools such as RDKit or PaDEL-Descriptor.
  - Apply feature selection techniques (e.g., mutual information, random forest importance) to identify the most predictive descriptors while minimizing redundancy.
- Model Training and Validation:
  - Train multiple algorithms (XGBoost, random forest, SVM, neural networks), using cross-validation on the training set to tune hyperparameters and detect overfitting.
  - Assess performance by external validation on a completely held-out test set, reporting accuracy, sensitivity, specificity, and area under the ROC curve (AUC).
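The external-validation metrics listed above can be computed from a held-out test set as shown below. AUC is computed in its rank form: the probability that a randomly chosen positive scores higher than a randomly chosen negative, which equals U / (n_pos × n_neg) for the Mann-Whitney U statistic. The labels, scores, and the 0.5 decision threshold are hypothetical assumptions.

```python
def binary_metrics(y_true, y_pred):
    """Accuracy, sensitivity (recall on positives) and specificity for 0/1 labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
    }


def roc_auc(y_true, scores):
    """AUC as the probability that a random positive outscores a random negative.

    Equals the Mann-Whitney U statistic divided by n_pos * n_neg; ties count as half a win.
    """
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical held-out test set: 1 = toxic, scores = predicted probability of toxicity.
y_true = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.7, 0.4, 0.6, 0.3, 0.1]
y_pred = [int(s >= 0.5) for s in scores]  # assumed decision threshold of 0.5

print(binary_metrics(y_true, y_pred))
print(f"AUC = {roc_auc(y_true, scores):.3f}")
```

Sensitivity and specificity depend on the chosen threshold, whereas AUC summarizes ranking quality across all thresholds. This is why the protocol asks for both kinds of metric.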

The emerging frontier in this field involves the application of explainable AI (XAI) techniques to elucidate the structural features and properties driving toxicity predictions, thereby enhancing regulatory acceptance and providing mechanistic insights [1]. Molecular-structure-based ML represents the most promising technology for rapid prediction of life-cycle environmental impacts of chemicals, though current applications are limited by data availability and quality challenges [25].
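Permutation importance is one simple, model-agnostic XAI technique. It is used here only as an illustration, not as the method used in the cited studies. The method shuffles one descriptor at a time and records how much predictive accuracy drops. The descriptors, data, and `toy_model` below are hypothetical. The toy model ignores its second descriptor by construction, so that descriptor's importance comes out as exactly zero.

```python
import random


def accuracy(model, X, y):
    """Fraction of rows where the model's prediction matches the label."""
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=20, seed=0):
    """Mean accuracy drop when each feature column is shuffled (model-agnostic)."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the label
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


# Hypothetical descriptors per chemical: [logP, polar surface area / 100].
X = [[1.2, 0.9], [4.1, 0.3], [2.0, 0.5], [5.3, 0.8],
     [0.7, 0.4], [3.8, 0.6], [2.5, 0.2], [4.6, 0.7]]
y = [0, 1, 0, 1, 0, 1, 0, 1]


def toy_model(row):
    """Hypothetical classifier: "toxic" if logP > 3; ignores the second descriptor."""
    return int(row[0] > 3.0)


print(permutation_importance(toy_model, X, y))
```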

The research landscape for environmental chemicals is evolving rapidly, with machine learning emerging as a transformative tool for risk assessment and chemical prioritization. Within this context, lignin, arsenic, and phthalates represent chemically distinct but conceptually similar challenges: substances with significant data gaps relative to their environmental prevalence and potential health impacts.

Future research should prioritize:

- Expanding the Chemical Portfolio for ML modeling to include emerging contaminants like lignin and phthalate substitutes.

- Systematic Coupling of ML outputs with human health data, addressing the current 4:1 bias toward environmental endpoints [1].

- Adoption of Explainable AI workflows to enhance model interpretability and regulatory acceptance [1].

- International Collaboration to translate ML advances into actionable chemical risk assessments across geopolitical boundaries.

The twenty-year Bangladesh cohort study provides compelling evidence that reducing chemical exposure, even after years of contamination, produces substantial health benefits [22]. This finding underscores the public health imperative of identifying and mitigating risks from understudied chemicals through integrated computational-experimental approaches. As ML methodologies continue to mature, they offer unprecedented potential to accelerate chemical risk assessment and protect vulnerable populations from emerging chemical threats.

From Algorithms to Action: Dominant ML Models and Their Cutting-Edge Applications in Chemical Research

The application of machine learning (ML) in environmental chemical research represents a paradigm shift in how scientists monitor chemical hazards, assess ecological risks, and protect human health. As the field has evolved from traditional toxicological approaches to data-intensive computational methods, specific ML algorithms have emerged as dominant tools for tackling the complex, high-dimensional datasets that characterize modern chemical and toxicological research. A comprehensive bibliometric analysis of 3,150 peer-reviewed articles (1985-2025) reveals an exponential publication surge since 2015, with China and the United States leading research output [13] [1]. The field is organized into eight thematic clusters centered on ML model development, water quality prediction, quantitative structure-activity relationship (QSAR) applications, and per-/polyfluoroalkyl substances (PFAS) research [1] [21]. Within this rapidly expanding field, tree-based ensemble methods, particularly XGBoost and random forests, have become the most cited and most widely implemented algorithms, while neural networks power increasingly sophisticated applications in environmental chemistry and toxicology [13] [21]. The migration of these tools toward dose-response modeling and regulatory applications signals a critical transition from theoretical research to actionable chemical risk assessment [1].

Bibliometric Dominance: Quantitative Analysis of Algorithm Prevalence

Table 1: Bibliometric Analysis of ML Algorithms in Environmental Chemical Research (2015-2025)

| Algorithm | Citation Prevalence | Primary Application Domains | Performance Advantages |
|---|---|---|---|