Microfluidic Chip Design for Pharmaceutical Analysis: Fundamentals, Applications, and Future Trends

Abstract

This article provides a comprehensive overview of the core principles and practical applications of microfluidic chip design tailored for pharmaceutical analysis. Aimed at researchers, scientists, and drug development professionals, it explores the foundational concepts of fluid mechanics and material science governing chip design. The scope extends to advanced applications in high-throughput drug screening, single-cell analysis, and organ-on-a-chip models. It further addresses critical challenges in design optimization and manufacturing, offering insights from troubleshooting and comparative validation studies. By synthesizing recent advancements, including the integration of artificial intelligence, this article serves as a strategic guide for leveraging microfluidic technology to accelerate and refine pharmaceutical research and development.

Core Principles of Microfluidic Chip Design: Mastering Fluid Mechanics and Materials

The behavior of fluids within microfluidic chips, which process minute volumes from 10⁻⁹ to 10⁻¹⁸ liters through channels tens to hundreds of micrometers wide, diverges significantly from macroscopic flow phenomena [1] [2]. In the context of pharmaceutical analysis and research, understanding these fundamentals is not merely academic; it is a prerequisite for designing robust, reproducible, and efficient Lab-on-a-Chip (LOC) devices for applications ranging from high-throughput drug screening to advanced pharmacological safety assessment [3] [4]. At the microscale, viscous and surface forces, such as viscous drag and surface tension, dominate over inertia and over body forces such as gravity, leading to a fluidic environment characterized by predictable laminar flow, diffusion-dominated mixing, and significant capillary effects [2] [5]. This paradigm shift enables the precise manipulation of picoliter-volume reagents, single cells, and drug-loaded nanoparticles, thereby providing a powerful toolkit for accelerating drug discovery and development [1]. The integration of these physical principles allows for the creation of sophisticated "Pharm-Lab-on-a-Chip" platforms that minimize reagent consumption, reduce analysis times, and enhance detection sensitivity, marking a transformative advancement in pharmaceutical sciences [4].

The Laminar Flow Regime

The Reynolds Number and the Laminar-Turbulent Transition

In fluid mechanics, the flow regime—whether laminar or turbulent—is determined by the dimensionless Reynolds number (Re), which represents the ratio of inertial forces to viscous forces [2] [5]. It is defined by the equation:

Re = ρνL/μ

Where:

- ρ is the fluid density (kg/m³)

- ν is the average flow velocity (m/s); note that ν here denotes velocity, not kinematic viscosity

- L is the characteristic linear dimension of the system, typically the hydraulic diameter of the channel (m)

- μ is the dynamic viscosity of the fluid (Pa·s)

Owing to the extremely small characteristic dimension (L) of microchannels, the Reynolds number in microfluidic systems is typically very low, nearly always less than 2000, and often less than 1 [2] [5]. In this low-Re regime, viscous forces dampen any perturbations that would lead to turbulence, resulting in a smooth, orderly flow pattern known as laminar flow [5]. In laminar flow, adjacent layers of fluid slide past one another without macroscopic mixing, creating predictable and parallel streamlines. This behavior is a cornerstone of microfluidic design, enabling precise spatial control over fluidic elements, which is exploited in applications such as hydrodynamic focusing for cell sorting, precise gradient generation for chemotaxis studies, and the creation of highly monodisperse droplets for nanoparticle synthesis [1] [2].

Experimental Analysis of Flow Parameters

Quantifying the relationship between flow velocity and the resulting flow regime is a fundamental experiment in microfluidics. The following protocol outlines a method to visualize and characterize laminar flow.

Experimental Protocol: Flow Visualization and Reynolds Number Characterization

- Objective: To experimentally determine the flow regime (laminar or turbulent) in a microchannel and correlate it with the calculated Reynolds number.

Materials & Setup:

- A straight microfluidic channel fabricated in PDMS or glass, bonded to a transparent substrate.

- Two independent, programmable syringe pumps for precise control of flow rates.

- Two aqueous solutions: one deionized water (dyed with a visible dye, e.g., food coloring), and one undyed.

- Inverted optical microscope with a high-speed camera for flow visualization.

Methodology:

- Channel Priming: Thoroughly prime the microchannel with deionized water to remove any air bubbles.

- Flow Configuration: Connect the syringes containing the dyed and undyed solutions to the two inlets of a Y-shaped or T-shaped junction microchannel. The main channel should be sufficiently long to allow for full flow development.

- Data Acquisition: Set the syringe pumps to identical, low flow rates, resulting in a low average velocity (ν). Observe the interface between the two streams at the junction and downstream.

- Flow Regime Mapping: Gradually increase the flow rates in a stepwise manner. At each step, capture images or video of the flow streamlines. Continue this process until a significant disruption of the parallel streamlines is observed.

- Parameter Calculation: For each step, calculate the Reynolds number using the equation above. The hydraulic diameter for a rectangular channel is given by ( D_h = 2wh/(w+h) ), where w is the width and h is the height of the channel.

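Expected Outcome: At low flow rates (Re << 2000), the dyed and undyed streams will flow side-by-side in parallel laminae with mixing occurring only via diffusion at their interface. As the flow rate increases and Re approaches and exceeds 2000, the distinct interface will begin to break down, indicating the onset of transitional or turbulent flow. For water in channels tens of micrometers across, reaching Re ≈ 2000 requires average velocities of tens of meters per second or more, so in most practical devices the streams remain laminar over the entire accessible flow-rate range.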
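The parameter-calculation step of this protocol can be sketched in a few lines of Python. This is a minimal illustration under assumed conditions: a water-like fluid (ρ = 1000 kg/m³, μ = 0.001 Pa·s) in a rectangular channel 100 µm wide and 50 µm high, with the hydraulic diameter used as the characteristic length. The function names are hypothetical and chosen only for this example.

```python
def hydraulic_diameter(width_m: float, height_m: float) -> float:
    """Hydraulic diameter of a rectangular channel: D_h = 2wh / (w + h)."""
    return 2.0 * width_m * height_m / (width_m + height_m)


def reynolds_number(density: float, velocity: float,
                    length: float, viscosity: float) -> float:
    """Re = rho * v * L / mu, with all quantities in SI units."""
    return density * velocity * length / viscosity


# Assumed example values (not measured data).
RHO = 1000.0   # fluid density, kg/m^3
MU = 1.0e-3    # dynamic viscosity, Pa*s
D_H = hydraulic_diameter(100e-6, 50e-6)  # about 66.7 micrometers

# Reynolds number at each flow-rate step of the experiment.
for v in (0.001, 0.01, 0.1, 1.0):
    re = reynolds_number(RHO, v, D_H, MU)
    print(f"v = {v:g} m/s -> Re = {re:.3g}")

# Average velocity at which Re would reach the nominal threshold of 2000.
v_transition = 2000.0 * MU / (RHO * D_H)
print(f"Re = 2000 would require about {v_transition:.0f} m/s")
```

For a different fluid or channel, only the four input values need to change; the characteristic length could also be swapped for the channel width if that convention is preferred, which shifts Re by a constant factor.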

Table 1: Quantitative Relationship between Flow Velocity and Reynolds Number in a Typical Microchannel (w = 100 µm, h = 50 µm, D_h ≈ 66.7 µm, ρ = 1000 kg/m³, μ = 0.001 Pa·s)

| Average Flow Velocity (ν, m/s) | Calculated Reynolds Number (Re) | Expected Flow Regime |
|---|---|---|
| 0.001 | 0.067 | Stable Laminar Flow |
| 0.01 | 0.67 | Laminar Flow |
| 0.1 | 6.7 | Laminar Flow |
| 1.0 | 67 | Laminar Flow |
| > 30 | > 2000 | Transition to Turbulence |

Diffusion at the Microscale

Principles and Kinetics of Diffusive Mixing

In the absence of turbulent eddies in laminar flow, the primary mechanism for molecular mixing is diffusion [2]. Diffusion is the process by which molecules move from a region of higher concentration to a region of lower concentration due to random thermal motion. The timescale for diffusive mixing is critically important in microfluidic reactions, such as rapid reagent quenching or initiating cell lysis. This timescale is approximated by the equation:

t ≈ x² / 2D

Where:

- t is the diffusion time (s)

- x is the diffusion distance, i.e., the characteristic length over which molecules must travel (m)

- D is the molecule-specific diffusion coefficient (m²/s)

The profound implication of this relationship for microfluidics is that the diffusion time scales with the square of the distance [2]. When the channel dimensions are reduced from the macroscopic scale (e.g., 1 cm in a beaker) to the microscale (e.g., 100 µm in a microchannel), the diffusion distance decreases by a factor of 100, and consequently, the diffusion time decreases by a factor of 10,000. This dramatic acceleration enables reaction and analysis times that are orders of magnitude faster than in conventional laboratory setups, a key advantage for high-throughput pharmaceutical screening [1] [2].
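The quadratic scaling can be checked numerically. The short sketch below evaluates t ≈ x²/2D for the beaker and microchannel distances mentioned above, assuming a diffusion coefficient of 10⁻⁹ m²/s as a typical order of magnitude for a small molecule in water; the function name is hypothetical.

```python
def diffusion_time(distance_m: float, diff_coeff: float) -> float:
    """Approximate one-dimensional diffusion time t = x^2 / (2 D), in seconds."""
    return distance_m ** 2 / (2.0 * diff_coeff)


# Assumed order-of-magnitude value for a small molecule in water.
D_SMALL_MOLECULE = 1.0e-9  # m^2/s

t_beaker = diffusion_time(1e-2, D_SMALL_MOLECULE)     # 1 cm
t_channel = diffusion_time(100e-6, D_SMALL_MOLECULE)  # 100 micrometers

print(f"1 cm:   {t_beaker:.0f} s (about {t_beaker / 3600:.1f} h)")
print(f"100 um: {t_channel:.1f} s")
print(f"speed-up factor: {t_beaker / t_channel:.0f}")
```

Under these assumptions the 100-fold reduction in distance shortens the diffusion time from roughly 14 hours to about 5 seconds, the factor of 10,000 stated above.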

Experimental Protocol for Quantifying Diffusive Mixing

Understanding and measuring the rate of diffusive mixing is essential for designing efficient microfluidic reactors and analysis systems.

Experimental Protocol: Diffusion Coefficient Measurement in a Laminar Flow Device

- Objective: To visualize and quantify the diffusive mixing of two parallel laminar streams and estimate the diffusion coefficient of a solute.

Materials & Setup:

- A straight microchannel with a Y- or T-junction for inlet streams.

- Two programmable syringe pumps.

- A buffer solution and a solution of a fluorescent dye (e.g., fluorescein) dissolved in the same buffer.

- Fluorescence microscope equipped with a photomultiplier tube (PMT) or a CCD camera for intensity profiling.

Methodology:

- Stream Alignment: Introduce the buffer and dye solutions into the two inlets at identical, low flow rates to establish stable, parallel laminar streams.

- Image Acquisition: Using the fluorescence microscope, capture a high-resolution image of the channel downstream from the junction, perpendicular to the flow direction. The fluorescence intensity will be high in the dye stream and low in the buffer stream, with a gradient at the interface.

- Intensity Profiling: Extract a fluorescence intensity profile across the width of the channel at a specific downstream point. This profile represents the concentration gradient of the dye.

- Data Fitting: The concentration profile can be fitted to the solution of Fick's second law of diffusion for the given boundary conditions. The diffusion coefficient (D) is the fitting parameter that aligns the theoretical curve with the experimental data.

- Validation: Repeat the experiment at different flow rates. While the flow rate will change the distance required for complete mixing, the fitted diffusion coefficient should remain constant.

Expected Outcome: The experiment will yield a sigmoidal fluorescence intensity profile across the channel. The width of the transition region between the two streams is a direct function of the diffusion coefficient and the time the fluids have been in contact (determined by the flow velocity and distance from the junction).
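The data-fitting step can be sketched as follows. This is a simplified illustration, not the procedure of any cited study: it assumes the interface is far from the channel walls, a uniform velocity (so the contact time is simply the downstream distance divided by the average velocity), and fluorescence intensity proportional to concentration. Under those assumptions the normalized profile is c(y)/c₀ = ½·erfc(y / (2√(Dt))). The "measured" profile here is synthetic, generated from an assumed D, and the fit is a plain least-squares search using only the standard library.

```python
import math


def profile(y: float, diff_coeff: float, t: float) -> float:
    """Normalized concentration c/c0 at lateral position y after contact time t."""
    return 0.5 * math.erfc(y / (2.0 * math.sqrt(diff_coeff * t)))


def sse(log10_d: float, ys, cs, t: float) -> float:
    """Sum of squared residuals between the model and the data for a trial D."""
    d = 10.0 ** log10_d
    return sum((profile(y, d, t) - c) ** 2 for y, c in zip(ys, cs))


def fit_diffusion_coefficient(ys, cs, t, lo=-12.0, hi=-8.0, n_grid=81, iters=60):
    """Least-squares estimate of D: coarse grid in log10(D), then golden-section refinement."""
    step = (hi - lo) / (n_grid - 1)
    grid = [lo + i * step for i in range(n_grid)]
    best = min(grid, key=lambda g: sse(g, ys, cs, t))
    a, b = max(lo, best - step), min(hi, best + step)
    phi = (math.sqrt(5.0) - 1.0) / 2.0
    for _ in range(iters):
        c = b - phi * (b - a)
        d = a + phi * (b - a)
        if sse(c, ys, cs, t) < sse(d, ys, cs, t):
            b = d
        else:
            a = c
    return 10.0 ** ((a + b) / 2.0)


# Synthetic example (assumed values, not experimental data).
D_TRUE = 4.0e-10            # m^2/s, assumed dye diffusion coefficient
T_CONTACT = 5e-3 / 1e-3     # 5 mm downstream at 1 mm/s -> 5 s of contact
ys = [i * 10e-6 for i in range(-20, 21)]           # -200 um ... +200 um
cs = [profile(y, D_TRUE, T_CONTACT) for y in ys]   # noise-free synthetic profile

D_fit = fit_diffusion_coefficient(ys, cs, T_CONTACT)
print(f"fitted D = {D_fit:.3e} m^2/s")
```

With real data the intensity profile would first be background-corrected and normalized, and the parabolic velocity profile may need to be taken into account; a dedicated curve-fitting routine would normally replace the simple search used here.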

Table 2: Diffusion Times for Common Molecules over Varying Microscale Distances (Approximate D = 10⁻⁹ m²/s for a small molecule in water)

| Diffusion Distance (x, µm) | Calculated Diffusion Time (t) | Practical Implication in Microfluidics |
|---|---|---|
| 1 | 0.5 ms | Nearly instantaneous mixing for very narrow channels |
| 10 | 50 ms | Rapid mixing, suitable for fast chemical reactions |
| 50 | 1.25 s | Moderate mixing time, may require enhanced mixer designs |
| 100 | 5 s | Slow mixing, passive diffusion is often insufficient |
| 1000 (1 mm) | 500 s (~8.3 min) | Impractically slow, highlighting need for active mixing |

Surface Tension and Capillary Action

The Dominance of Surface Forces

At the microscale, the surface-to-volume ratio of a fluid increases dramatically. This makes surface-related forces, such as surface tension and capillary action, overwhelmingly dominant compared to body forces like gravity [2] [5]. Surface tension arises from the cohesive forces between liquid molecules at an interface, minimizing the surface area. Capillary action is the ability of a liquid to flow in narrow spaces without the assistance of, or even in opposition to, external forces like gravity [5]. This is the fundamental principle behind many passive, pump-free microfluidic devices, including paper-based diagnostic strips and lateral flow assays (like home pregnancy tests) [2]. Furthermore, the manipulation of these interfacial forces is the basis for digital microfluidics, where discrete droplets are generated and moved as individual micro-reactors for high-throughput applications like single-cell analysis or combinatorial drug screening [2] [6].

Experimental Control of Droplet Formation

The controlled formation of droplets is a critical process in droplet-based microfluidics, used for creating uniform drug carriers and compartmentalized reactions.

Experimental Protocol: Analyzing Droplet Formation in a Flow-Focusing Geometry

- Objective: To investigate the influence of flow rates and interfacial tension on the size and frequency of droplets generated in a flow-focusing microfluidic device.

Materials & Setup:

- A flow-focusing microchannel, typically fabricated via soft lithography in PDMS or via injection molding in thermoplastics [2] [6].

- Two syringe pumps for the continuous (carrier) phase and the dispersed (droplet) phase.

- Immiscible fluids: e.g., an aqueous solution (dispersed phase) and mineral oil with a surfactant (continuous phase). The surfactant controls the interfacial tension.

- High-speed camera mounted on a microscope.

Methodology:

- System Priming: Prime the microfluidic device with the continuous phase (oil) to wet the channels and prevent unwanted aqueous adhesion.

- Droplet Generation: Initiate flow of both the continuous and dispersed phases. The continuous phase hydrodynamically "focuses" the dispersed phase, causing it to break off into droplets at the orifice.

- Parameter Variation:

  - Flow Rate Ratio (φ): Hold the continuous phase flow rate constant and systematically vary the dispersed phase flow rate. Capture video of droplet formation for each condition.

  - Interfacial Tension (γ): Repeat the experiment using continuous phases with different concentrations of surfactant, which alters the interfacial tension.

- Data Analysis: From the recorded videos, measure the resulting droplet diameter and formation frequency for each experimental condition. Plot droplet size as a function of the flow rate ratio and capillary number (Ca = μν/γ, which represents the relative effect of viscous forces versus surface tension).

Expected Outcome: The experiment will demonstrate that higher flow rate ratios (more dispersed phase) generally produce larger droplets, while higher continuous phase viscosity and velocity accelerate breakup, yielding smaller droplets [6]. Conversely, higher interfacial tension delays droplet detachment, resulting in larger droplets [6]. Recent computational fluid dynamics (CFD) studies have further quantified that the injection angle in a flow-focusing geometry also significantly impacts droplet characteristics, with a 90° angle yielding the maximum droplet diameter [6].
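For the data-analysis step, the capillary number can be computed directly from its definition, Ca = μν/γ. The values below are purely illustrative assumptions (an oil-like continuous phase and two interfacial tensions standing in for two surfactant concentrations); they are not measurements from the cited work.

```python
def capillary_number(viscosity: float, velocity: float,
                     interfacial_tension: float) -> float:
    """Ca = mu * v / gamma: viscous forces relative to interfacial tension."""
    return viscosity * velocity / interfacial_tension


# Assumed, illustrative values in SI units.
MU_CONTINUOUS = 0.03   # Pa*s, continuous (oil) phase viscosity
V_CONTINUOUS = 0.01    # m/s, continuous phase velocity at the orifice

ca_low_tension = capillary_number(MU_CONTINUOUS, V_CONTINUOUS, 0.005)   # more surfactant
ca_high_tension = capillary_number(MU_CONTINUOUS, V_CONTINUOUS, 0.020)  # less surfactant

print(f"Ca (gamma = 5 mN/m):  {ca_low_tension:.3f}")
print(f"Ca (gamma = 20 mN/m): {ca_high_tension:.3f}")
```

A higher interfacial tension gives a lower capillary number for the same flow conditions, which is consistent with the delayed breakup and larger droplets described above.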

The Scientist's Toolkit: Essential Research Reagents and Materials

The successful execution of microfluidic experiments and the fabrication of functional devices rely on a carefully selected set of materials and reagents. The table below details key components used in the field, with an emphasis on their role in studying fluid behavior and developing pharmaceutical analysis platforms.

Table 3: Essential Research Reagents and Materials for Microfluidic Research

| Item Name | Function / Application in Microfluidics |
|---|---|
| Polydimethylsiloxane (PDMS) | An elastomeric polymer used for rapid prototyping of microchannels via soft lithography; prized for its gas permeability (essential for cell culture), optical transparency, and biocompatibility [2] [5]. |
| Surfactants (e.g., Span 80, Tween 20) | Amphiphilic molecules used to stabilize emulsions in droplet-based microfluidics; they lower interfacial tension between immiscible phases, preventing droplet coalescence and enabling the generation of stable, monodisperse droplets for use as micro-reactors [6]. |
| Fluorescent Dyes (e.g., Fluorescein) | Critical tracer molecules for flow visualization and quantitative analysis; used to map streamlines in laminar flow, measure concentration gradients for diffusion studies, and quantify mixing efficiency [5]. |
| Programmable Syringe Pumps | Provide precise, computer-controlled pressure-driven or volume-driven flow of fluids into microchannels; essential for achieving stable flow conditions and for systematically varying flow parameters in experiments [6]. |
| Photoresist (e.g., SU-8) | A light-sensitive polymer used in photolithography to create high-resolution master molds on silicon wafers; these masters are the negative template for casting PDMS microchannels, defining the channel geometry [2] [5]. |
| Cyclic Olefin Copolymer (COC) | A thermoplastic polymer increasingly used for industrial-scale production of microfluidic chips via injection molding; offers excellent optical clarity, high chemical resistance, and low water absorption, making it suitable for diagnostic devices [2]. |
| Biocompatible Hydrogels (e.g., Matrigel) | Used to create 3D cell culture environments and as barrier structures within microchannels; essential for developing more physiologically relevant Organ-on-a-Chip models for pharmacological testing and disease modeling [5]. |

Optimizing Microchannel Geometry for Efficient Sample Transport and Mixing

In the pharmaceutical industry, the precision of analytical results and the efficacy of drug delivery systems are paramount. Microfluidic technology, which manipulates fluids at microscale dimensions, has emerged as a transformative tool, enabling high-throughput screening, precise dosing, and the creation of physiologically realistic microenvironments [1]. Within this domain, the geometry of microchannels is a critical design parameter that directly influences two fundamental processes: sample transport and mixing. Effective transport ensures that analyte bands reach their target without dispersion that could compromise diagnostic accuracy, while efficient mixing is essential for reactions, assays, and the synthesis of drug carriers [7] [8].

At the microscale, fluid flow is predominantly laminar, making turbulent mixing, common in macroscale systems, ineffective. Consequently, mixing relies primarily on molecular diffusion, which can be impractically slow for many applications [9]. Passive mixing strategies, which use channel geometry to induce secondary flows and chaotic advection, offer a powerful solution without the complexity and cost of external actuators [8] [9]. This guide delves into the optimization of microchannel geometry, providing a technical foundation for researchers and drug development professionals to design systems that enhance mixing performance and control sample dispersion, thereby improving the reliability and efficiency of pharmaceutical analysis.
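To make "impractically slow" concrete, the diffusion-time estimate t ≈ x²/2D given earlier in this article can be converted into a rough channel length needed for purely diffusive mixing. The worked estimate below assumes two side-by-side streams that must diffuse across the full channel width w, a uniform average velocity ν, and an illustrative small-molecule diffusion coefficient; all numerical values are assumptions chosen for the example.

```latex
\[
L_{mix} \;\approx\; \nu\, t \;\approx\; \frac{\nu\, w^{2}}{2D}
\]
\[
w = 100\ \mu\mathrm{m},\quad D = 10^{-9}\ \mathrm{m^2/s},\quad \nu = 10\ \mathrm{mm/s}
\;\;\Rightarrow\;\;
t \approx \frac{(10^{-4}\ \mathrm{m})^{2}}{2 \times 10^{-9}\ \mathrm{m^2/s}} = 5\ \mathrm{s},
\qquad
L_{mix} \approx 0.01\ \mathrm{m/s} \times 5\ \mathrm{s} = 5\ \mathrm{cm}
\]
```

A straight channel several centimeters long is difficult to accommodate on a compact chip, and the required length grows linearly with flow velocity, which is why geometries that shorten the effective diffusion distance are attractive.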

Fundamental Principles of Microfluidic Flow and Mixing

Fluid Dynamics at the Microscale

In microfluidic systems, fluid behavior is governed by a low Reynolds number (Re), a dimensionless quantity representing the ratio of inertial forces to viscous forces. This results in laminar flow, where fluids move in parallel, ordered layers without turbulence [10]. While this allows for precise fluid control, it poses a significant challenge for mixing, which becomes dependent on the slow process of molecular diffusion. The key transport mechanisms involved are:

- Molecular Diffusion: The random thermal motion of molecules from regions of high concentration to low concentration. Its effectiveness is described by Fick's law and is most significant over short distances.

- Advection: The transport of molecules by the bulk motion of the fluid. In pressure-driven flows, the velocity profile is parabolic (Poiseuille flow), meaning molecules in the center of the channel travel faster than those near the walls.

- Hydrodynamic Dispersion: The combined effect of a non-uniform velocity profile (advection) and molecular diffusion, which leads to the spreading of an analyte band as it travels through a microchannel. This can be detrimental in separation processes but beneficial for mixing [7].

Performance Metrics for Mixing and Transport

To quantitatively evaluate and optimize microchannel designs, researchers use several key metrics:

Mixing Index (Mi): This metric quantifies the homogeneity of a mixture at a specific cross-section of a channel. It is calculated using the formula:

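( \tau^2 = \frac{1}{n}\sum_{i=1}^{n}(\omega_i - \omega_{\infty})^2 ) and ( Mi = 1 - \frac{\tau^2}{\tau_{max}} )

where ( \omega_i ) is the mass fraction at sampling point ( i ), ( \omega_{\infty} ) is the fully mixed mass fraction, ( n ) is the number of sampling points, ( \tau^2 ) is the resulting variance of the mass fraction over the cross-section, and ( \tau_{max} ) is the maximum variance, corresponding to the completely unmixed state. A mixing index of 1 indicates complete mixing, while 0 signifies no mixing [8].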

Figure of Merit (FoM): This holistic metric balances mixing performance against the required energy input, defined as ( FoM = \frac{Mi}{\Delta p} ), where ( \Delta p ) is the pressure drop across the channel. A high FoM indicates efficient mixing with low parasitic power loss [8].
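The two mixing metrics can be evaluated from sampled mass fractions as sketched below. The code follows the expressions as written in this section and assumes that the normalizing term denotes the variance of the completely unmixed state (0.25 for two equal streams with mass fractions 0 and 1); some publications instead take a square root of the variance ratio, so the definition in the source being reproduced should be checked. The sample values and the pressure drop are hypothetical.

```python
def mixing_index(mass_fractions, fully_mixed: float, max_variance: float) -> float:
    """Mi = 1 - variance / max_variance for mass fractions sampled over a cross-section."""
    n = len(mass_fractions)
    variance = sum((w - fully_mixed) ** 2 for w in mass_fractions) / n
    return 1.0 - variance / max_variance


def figure_of_merit(mi: float, pressure_drop_pa: float) -> float:
    """FoM = Mi / delta_p: mixing achieved per unit pressure drop (1/Pa)."""
    return mi / pressure_drop_pa


OMEGA_INF = 0.5       # fully mixed mass fraction for two equal streams
MAX_VARIANCE = 0.25   # variance of the unmixed state (half the samples at 0, half at 1)

unmixed = [1.0, 1.0, 1.0, 0.0, 0.0, 0.0]
partial = [0.7, 0.6, 0.55, 0.45, 0.4, 0.3]   # hypothetical outlet samples
mixed = [0.5] * 6

mi_unmixed = mixing_index(unmixed, OMEGA_INF, MAX_VARIANCE)
mi_partial = mixing_index(partial, OMEGA_INF, MAX_VARIANCE)
mi_mixed = mixing_index(mixed, OMEGA_INF, MAX_VARIANCE)

# Hypothetical pressure drop of 500 Pa across the mixer.
fom_partial = figure_of_merit(mi_partial, 500.0)

print(mi_unmixed, round(mi_partial, 3), mi_mixed, fom_partial)
```

In a CFD workflow the list of mass fractions would come from the solver's cross-sectional sampling, and the pressure drop from the difference between inlet and outlet pressures.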

Analyte Band Dispersion: In transport and separation applications, minimizing dispersion is critical. It is often expressed as a percentage of band broadening, with lower values indicating better performance and more reliable diagnostic measurements [7].

Optimized Microchannel Geometries and Their Performance

Extensive research has identified several passive microchannel geometries that effectively enhance mixing and control transport. The following sections and tables summarize the optimized parameters and performance of key designs.

Wavy-Channel Micromixers

Wavy-channel designs feature sinusoidal walls, which are simple to manufacture, especially via stamping methods, making them economically attractive for industrial-scale production [8]. The geometry is defined by its width (w), height (h), wavy amplitude (a), and wavy frequency (f). Optimization studies using the Taguchi statistical method reveal that while higher amplitude and frequency generally improve the mixing index by creating stronger secondary flows, they also increase the pressure drop due to greater Darcy friction loss. Therefore, optimization must carefully balance these parameters to achieve a high Figure of Merit [8].

Table 1: Optimization Parameters and Performance for Wavy-Channel Micromixers [8]

| Geometric Parameter | Effect on Mixing Index (Mi) | Effect on Pumping Power | Optimization Goal |
|---|---|---|---|
| Wavy Amplitude (a) | Increases with higher amplitude | Increases with higher amplitude | Balance for high FoM |
| Wavy Frequency (f) | Increases with higher frequency | Increases with higher frequency | Balance for high FoM |
| Channel Width (w) | Influences flow profile and mixing | Affects flow resistance | Optimize with other parameters |
| Channel Height (h) | Influences flow profile and mixing | Affects flow resistance | Optimize with other parameters |

Grooved Serpentine Micromixers

This advanced topology combines two effective strategies: serpentine (curved) channels and grooved surfaces. Serpentine channels generate Dean vortices—two vertically stacked rotational flows caused by centrifugal forces. When asymmetric grooves (e.g., a staggered herringbone, SHB, pattern) are added to the channel bottom, they induce horizontally stacked vortices. The interaction between these orthogonal vortex systems creates complex, chaotic advection, dramatically enhancing mixing across the channel's cross-section [9]. Key geometric parameters for optimization include the inner radius of curvature (( R_{in} )) and the specific dimensions of the grooves (angle, depth, and apex position).

Table 2: Design Parameters and Performance of Grooved Serpentine Mixers [9]

| Parameter | Description | Optimized Value/Effect |
|---|---|---|
| Inner Radius (( R_{in} )) | Inner radius of the curved channel section | Optimized for mixing index >0.95 across Re 10-100 |
| Groove Angle | Angle of asymmetric grooves relative to channel axis | 45° |
| Groove Depth (( h_{groove} )) | Depth of the grooved patterns | 33 µm (50% of channel height) |
| Apex Position | Lateral position of the groove's apex | Switches at (2/3)W from the sidewall |
| Mixing Mechanism | Interaction of Dean flow (serpentine) and helical flow (grooves) | Creates complex vortices and saddle points |

Curved Microchannels for Dispersion Control

For applications like capillary electrophoresis and chromatography within lab-on-a-chip devices, controlling analyte band dispersion in curved sections is critical. Optimizing the curvature geometry can significantly reduce band broadening, which enhances resolution and diagnostic accuracy [7]. A key parameter is the internal-to-external curvature radius ratio (Rr).

Table 3: Impact of Curvature and Zeta Potential on Analyte Dispersion [7]

| Factor | Range | Impact on Analyte Band Dispersion |
|---|---|---|
| Curvature Radius Ratio (Rr) | 0.1 → 0.5 | Decreases dispersion from 42% to 15% |
| Wall Zeta Potential (ζ) | -0.1 V → -0.5 V | Increases dispersion from 25% to 90% |
| Microchannel Type | Type II (Optimized) | 60% reduction in dispersion post-optimization |

Experimental Protocols for Microchannel Optimization

A rigorous, iterative process of computational modeling and experimental validation is standard for optimizing microchannel geometry. The following protocol outlines a typical workflow.

Computational Fluid Dynamics (CFD) Modeling Protocol

Objective: To simulate fluid flow, species concentration, and mixing performance for a given microchannel geometry.

Software: Commercial CFD packages such as ANSYS Fluent or COMSOL Multiphysics [8] [9].

- Geometry Creation and Meshing: Create a precise 3D model of the microchannel (e.g., wavy, serpentine, grooved). Generate a computational mesh, ensuring finer elements near walls and in regions of expected high velocity or concentration gradients.

- Define Governing Equations and Boundary Conditions:

  - Physics Setup: Solve the steady-state incompressible Navier-Stokes equations (for momentum and continuity) and the transient species transport equation [8] [9].

  - Boundary Conditions:

    - Inlets: Specify inlet velocities or pressures for each fluid stream. Define the mass fraction of species (e.g., ωA=1, ωB=0 for a T-junction) [8].

    - Outlet: Set a pressure outlet (often atmospheric pressure).

    - Walls: Apply a no-slip boundary condition for velocity and a zero-flux condition for species.

- Solver Settings and Simulation:

  - Select a pressure-based solver.

  - Set fluid properties (density, viscosity, diffusion coefficient).

  - Run the simulation until residuals converge to a pre-defined criterion (e.g., 10⁻⁶).

- Post-Processing and Analysis:

  - Extract velocity fields and concentration contours across the channel.

  - Calculate the Mixing Index (Mi) at various cross-sections using the formula given under Performance Metrics for Mixing and Transport [8].

  - Calculate the pressure drop (( \Delta p )) between the inlet and outlet.

  - Compute the Figure of Merit (FoM).

Design of Experiment (DoE) and Optimization Protocol

Objective: To systematically explore the design space and identify the optimal geometric parameters.

- Parameter Selection: Identify key geometric variables to optimize (e.g., wavy amplitude/frequency, inner radius of curvature, groove dimensions).

- DoE Matrix Setup: Employ a statistical method like the Taguchi method to create an orthogonal array of simulation runs. This approach efficiently samples the parameter space with a minimal number of simulations [8].

- Execution and Analysis:

  - Run CFD simulations for each design in the Taguchi array.

  - For each run, record the performance metrics (Mi, ( \Delta p ), FoM).

  - Perform an analysis of variance (ANOVA) to determine the sensitivity of the performance metrics to each geometric parameter and identify the optimal parameter combination.
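The DoE steps above can be illustrated with the standard L9 orthogonal array, which covers four factors at three levels each in nine runs instead of the 81 of a full factorial. The sketch below is an assumption-laden illustration: the factor names and level values are hypothetical, the response function is a toy additive stand-in for a CFD run (not real data), and simple main-effects averaging is used in place of a full ANOVA.

```python
from itertools import combinations, product

# Standard L9(3^4) orthogonal array; levels are coded 0, 1, 2.
L9 = [
    (0, 0, 0, 0), (0, 1, 1, 1), (0, 2, 2, 2),
    (1, 0, 1, 2), (1, 1, 2, 0), (1, 2, 0, 1),
    (2, 0, 2, 1), (2, 1, 0, 2), (2, 2, 1, 0),
]

# Hypothetical factor levels for a wavy channel (illustrative only).
FACTORS = {
    "width_um": (100, 150, 200),
    "height_um": (50, 75, 100),
    "amplitude_um": (20, 40, 60),
    "waves_per_mm": (1, 2, 3),
}


def is_orthogonal(array, n_levels=3):
    """True if every pair of columns contains each level combination exactly once."""
    expected = sorted(product(range(n_levels), repeat=2))
    n_cols = len(array[0])
    return all(
        sorted((row[i], row[j]) for row in array) == expected
        for i, j in combinations(range(n_cols), 2)
    )


def placeholder_fom(row):
    """Toy additive response standing in for a CFD-derived FoM; NOT real data."""
    contributions = (
        (0.1, 0.3, 0.2),
        (0.2, 0.1, 0.05),
        (0.1, 0.4, 0.2),
        (0.3, 0.2, 0.1),
    )
    return sum(contributions[col][level] for col, level in enumerate(row))


def main_effects(array, responses):
    """Mean response at each level of each factor."""
    effects = {}
    for col, name in enumerate(FACTORS):
        means = []
        for level in range(3):
            vals = [r for row, r in zip(array, responses) if row[col] == level]
            means.append(sum(vals) / len(vals))
        effects[name] = means
    return effects


responses = [placeholder_fom(row) for row in L9]
effects = main_effects(L9, responses)

# Pick, for each factor, the level with the highest mean response.
best = {
    name: FACTORS[name][max(range(3), key=means.__getitem__)]
    for name, means in effects.items()
}
print(is_orthogonal(L9), best)
```

In practice each row of the array would be translated into a geometry, simulated, and scored, and the spread of the level means (or a formal ANOVA) would indicate which parameters most influence the Figure of Merit.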

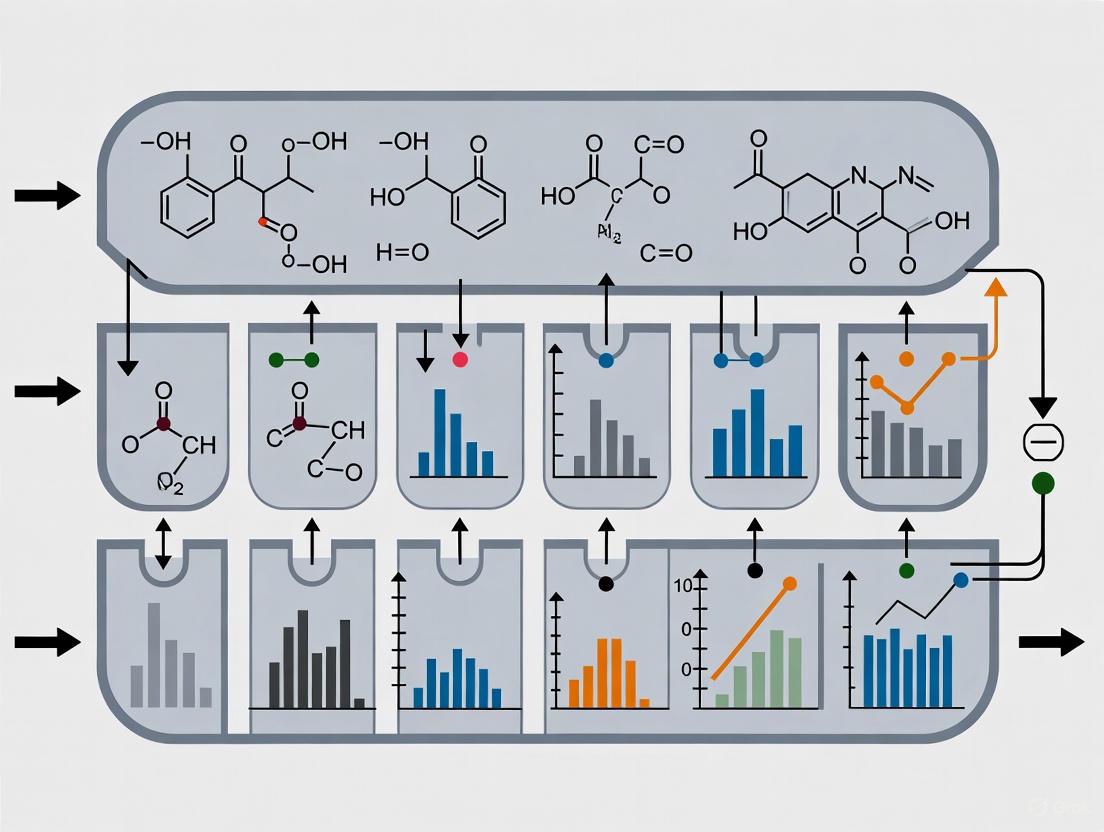

Workflow Visualization

The following diagram illustrates the integrated computational and experimental workflow for microchannel optimization.

[Figure: Microchannel Optimization Workflow]

The Scientist's Toolkit: Research Reagent Solutions

Successful experimentation in microfluidics requires specific materials and reagents. The following table details essential components for fabricating and operating optimized microchannels.

Table 4: Essential Research Reagents and Materials for Microfluidic Experimentation

| Item | Function/Description | Application Example |
|---|---|---|
| Polydimethylsiloxane (PDMS) | A silicone-based elastomer used for rapid prototyping of microchannels via soft lithography. Biocompatible and gas-permeable. | Standard material for academic prototyping of grooved serpentine and wavy channels [10] [9]. |
| Flexdym | A thermoplastic, biocompatible polymer enabling cleanroom-free fabrication. | Alternative to PDMS for more robust and mass-producible devices [10]. |
| Photoresist (e.g., SU-8) | A light-sensitive polymer used to create high-resolution molds on silicon wafers for soft lithography. | Creating the master mold for PDMS devices with features like herringbone grooves [9]. |
| Fluorescent Dyes | Tracers used to visualize and quantify fluid flow and mixing efficiency within microchannels. | Essential for experimental validation of mixing index in protocols [8]. |
| Buffer Solutions with adjusted Zeta Potential | Electrolyte solutions where ionic strength and pH are controlled to modify the wall zeta potential, affecting electroosmotic flow (EOF). | Critical for experiments focused on controlling analyte dispersion in electrokinetically-driven systems [7]. |
| Newtonian Fluids (e.g., Deionized Water, Glycerol solutions) | Fluids with constant viscosity, used to establish baseline hydraulic and mixing performance. | Used in initial CFD model validation and fundamental mixing studies [8] [9]. |

The strategic optimization of microchannel geometry is a cornerstone of effective microfluidic design for pharmaceutical research. As demonstrated, passive designs such as wavy channels, grooved serpentine mixers, and optimized curved channels can dramatically enhance mixing efficiency and control analyte transport by intelligently inducing secondary flows and chaotic advection. The quantitative data and protocols provided in this guide offer a clear roadmap for researchers.

The future of microfluidics in pharmaceuticals is inextricably linked to advances in design and manufacturing. Emerging trends, including AI-driven design optimization, the use of 3D printing for rapid prototyping of complex geometries, and the development of multi-layer hybrid systems, are pushing the boundaries of what is possible [10] [11]. By leveraging these optimized geometric strategies, scientists and drug development professionals can continue to build more reliable, efficient, and powerful microfluidic systems, accelerating the journey from discovery to clinical application.

The evolution of microfluidic technology has transformed pharmaceutical analysis research, enabling lab-on-a-chip systems that miniaturize and integrate complex laboratory functions. The selection of appropriate materials for microfluidic chip fabrication represents a fundamental decision that directly impacts device performance, experimental validity, and translational potential. Within the context of pharmaceutical research, material properties including biocompatibility, chemical resistance, optical characteristics, and fabrication feasibility must be carefully balanced against application-specific requirements. This guide provides a comprehensive technical comparison of predominant microfluidic materials—Polydimethylsiloxane (PDMS), glass, Polymethyl methacrylate (PMMA), and other engineering plastics—focusing on their suitability for pharmaceutical analysis applications. By synthesizing current research and experimental data, this review aims to equip researchers and drug development professionals with evidence-based criteria for optimal material selection in microfluidic chip design.

Fundamental Material Properties and Comparative Analysis

Material-Specific Characteristics and Pharmaceutical Applications

Polydimethylsiloxane (PDMS) remains the most widely used material for microfluidic prototyping in academic research settings. This silicone-based elastomer offers exceptional flexibility (elastic modulus of 300-500 kPa), optical transparency (240-1100 nm wavelength range), and high gas permeability beneficial for cell culture applications [12] [13]. However, PDMS exhibits significant limitations for pharmaceutical analysis, including hydrophobic molecule absorption, leaching of uncrosslinked oligomers, and limited chemical resistance to organic solvents, potentially compromising drug compound stability and quantitative analysis [12] [14]. The material's propensity to adsorb hydrophobic drugs and metabolites can significantly alter concentration profiles in pharmacokinetic studies [14].

Glass provides superior chemical resistance, minimal nonspecific adsorption, and excellent optical properties, making it invaluable for applications requiring high-performance liquid chromatography, capillary electrophoresis, and precise chemical synthesis [15] [16]. Its stable electroosmotic mobility and high thermal conductivity facilitate applications involving electrokinetic phenomena and thermal cycling [13]. However, glass processing demands specialized equipment, cleanroom facilities, and high-temperature bonding processes, increasing fabrication complexity and cost [16]. Its brittleness and poor gas permeability further limit certain cell culture applications [13].

Polymethyl methacrylate (PMMA) offers an advantageous balance of optical clarity, mechanical rigidity, and fabrication versatility. As a thermoplastic, PMMA can be processed using hot embossing, injection molding, or laser cutting, enabling cost-effective device replication [17] [18]. Its moderate UV resistance and biocompatibility with specific cell types make it suitable for various detection modalities and cellular assays [18] [14]. Surface modification via UV-ozone or plasma treatment enhances hydrophilicity and reduces adsorption of hydrophobic compounds, though treated surfaces may gradually revert to hydrophobic states [14].

Other Plastics including polystyrene (PS), polycarbonate (PC), and cyclic olefin copolymer (COC) offer specialized properties for pharmaceutical applications. PS is particularly valuable for cell culture studies due to its extensive use in biological laboratories and inherent biocompatibility [13] [14]. PC provides high thermal stability (glass transition temperature ~145°C) suitable for DNA thermal cycling applications [13]. COC exhibits low autofluorescence and excellent chemical resistance, making it ideal for sensitive detection applications [14].

Quantitative Material Comparison

Table 1: Comparative Properties of Microfluidic Materials for Pharmaceutical Applications

| Property | PDMS | Glass | PMMA | PS | COC |
|---|---|---|---|---|---|
| Biocompatibility | Good (with restrictions) [12] | Excellent [15] | Good with specific cell types [18] [14] | Excellent [13] [14] | Good [14] |
| Protein/Drug Adsorption | High (hydrophobic molecules) [12] [14] | Very Low [15] [13] | Moderate (reducible by treatment) [14] | Moderate (reducible by treatment) [14] | Low (after treatment) [14] |
| Optical Transparency | Excellent (240-1100 nm) [12] | Excellent [15] | Excellent [17] [18] | Excellent [13] | Excellent [14] |
| Gas Permeability | High [12] [13] | None [13] | Low [19] [18] | Low [13] | Low [14] |
| Chemical Resistance | Poor (swells in organic solvents) [12] | Excellent [15] [16] | Good [18] | Moderate [13] | Excellent [14] |
| Fabrication Complexity | Low [12] [20] | High [15] [16] | Moderate [17] [18] | Moderate [13] | Moderate [14] |
| Approximate Cost | Low [12] | High [16] | Low [17] [13] | Low [13] | Moderate [14] |

Table 2: Adsorption Properties of Testosterone and Metabolites on Different Materials [14]

| Material | Untreated Surface Adsorption | UV-Ozone Treated Surface Adsorption | Biocompatibility (HepG2 Culture) |
|---|---|---|---|
| PDMS | High | Not Stable | Good |
| PMMA | Moderate | Reduced | Moderate |
| PS | Moderate | Reduced | Excellent |
| PC | High | Significantly Reduced | Good |
| COC | Moderate | Significantly Reduced | Good |

Fabrication Methodologies and Experimental Protocols

PDMS Device Fabrication via Soft Lithography

The dominant protocol for PDMS microfluidic device fabrication employs soft lithography techniques, enabling rapid prototyping of microchannel networks with feature sizes down to the nanometer scale [12] [20]. The process begins with master mold fabrication, typically using silicon wafers patterned with SU-8 photoresist through photolithography [20]. PDMS prepolymer is prepared by mixing base and curing agent (commonly at 10:1 ratio for Sylgard 184), followed by degassing in a vacuum desiccator to remove entrapped air bubbles [20]. The mixture is poured onto the master mold and cured at 60-80°C for 1-2 hours [20]. Once cured, the PDMS replica is peeled from the mold, and access ports are created using biopsy punches. Bonding to glass substrates or other PDMS layers is achieved through oxygen plasma treatment, which activates silanol groups on both surfaces, enabling permanent covalent bonding when brought into conformal contact [12] [20]. The completed device is finally heated (60-80°C) for 1-2 hours to strengthen the bond [20].
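The 10:1 base-to-curing-agent ratio quoted above turns into weighing targets with simple arithmetic. The following is a minimal sketch, assuming the ratio is applied by mass; the function name `pdms_mix_masses` and the 33 g batch size are hypothetical and chosen only for illustration.

```python
def pdms_mix_masses(total_mass_g, base_parts=10.0, curing_parts=1.0):
    """Split a target prepolymer mass into base and curing-agent masses.

    Assumes the base:curing-agent ratio is applied by mass.
    """
    if total_mass_g <= 0:
        raise ValueError("total_mass_g must be positive")
    total_parts = base_parts + curing_parts
    base_g = total_mass_g * base_parts / total_parts
    curing_g = total_mass_g * curing_parts / total_parts
    return base_g, curing_g


# Hypothetical batch: 33 g of prepolymer for a single master mold.
base_g, curing_g = pdms_mix_masses(33.0)
print(f"base: {base_g:.1f} g, curing agent: {curing_g:.1f} g")
# prints "base: 30.0 g, curing agent: 3.0 g"
```

Other ratios can be explored by changing `base_parts` and `curing_parts`; the sketch only does the bookkeeping and says nothing about how a different ratio affects the cured material.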

PMMA Device Fabrication via Solvent Bonding and Hot Embossing

PMMA microfluidic devices can be fabricated through several approaches, with solvent bonding and hot embossing representing the most common methods [17] [18]. For solvent bonding, PMMA substrates are first machined using laser cutting or micromilling to create microchannel patterns [17]. The surfaces are cleaned sequentially with detergent, acetone, isopropanol, and deionized water in an ultrasonic bath, followed by nitrogen drying [17]. Optimal bonding employs solvent mixtures such as ethanol/acetone (1:1 ratio) applied to the PMMA surfaces, which facilitates transesterification reactions that create molecular bridges between substrates [17]. The assembled device is held under a controlled clamping force (30-50 N) and incubated in a vacuum oven at 50°C for 3 hours to complete bonding while minimizing channel deformation [17]. Hot embossing provides an alternative fabrication strategy, in which PMMA is heated above its glass transition temperature (∼105°C) and pressed against a master mold, then cooled to retain the imprinted pattern [18]. This method enables high-resolution, high-throughput production suitable for commercialization [18].
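The 30-50 N bonding load is quoted in newtons, which is a force; the pressure the bond line actually experiences depends on the contact area. The sketch below converts the load to an average contact pressure. The 75 mm x 25 mm footprint is a hypothetical chip size, not a value from the cited protocol, and the calculation ignores the area removed by the channels themselves.

```python
def contact_pressure_kpa(force_n, length_mm, width_mm):
    """Average contact pressure (kPa) for a force spread over a rectangle."""
    area_m2 = (length_mm * 1e-3) * (width_mm * 1e-3)
    if area_m2 <= 0:
        raise ValueError("chip dimensions must be positive")
    return force_n / area_m2 / 1e3  # Pa -> kPa


# Hypothetical chip footprint: 75 mm x 25 mm (microscope-slide format).
for force_n in (30.0, 50.0):
    pressure = contact_pressure_kpa(force_n, 75.0, 25.0)
    print(f"{force_n:.0f} N -> {pressure:.1f} kPa")
```

For this assumed footprint the load range corresponds to roughly 16 to 27 kPa; a smaller chip under the same clamp would see proportionally higher pressure.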

Glass Device Fabrication via Etching and Bonding

Glass microfluidic fabrication employs photolithography and etching techniques adapted from semiconductor processing [16]. The process begins with cleaning the glass substrates, followed by deposition of photoresist and exposure through a photomask defining the microchannel pattern [16]. Development removes exposed resist, and the revealed glass areas are etched using hydrofluoric acid-based solutions [16]. Access holes for fluidic interconnects are created via drilling, sand-blasting, or ultrasonic machining [16]. Bonding of patterned glass to cover plates utilizes thermal fusion bonding (above 600°C) or anodic bonding (∼200°C with applied voltage), creating chemically resistant and optically clear devices [16]. The high temperature and specialized equipment requirements present significant barriers to implementation in conventional research laboratories [15] [16].

Material Selection Framework for Pharmaceutical Applications

Application-Specific Recommendations

Drug Screening and ADME-Tox Studies: PDMS should be avoided due to significant small molecule absorption, particularly for hydrophobic compounds [12] [14]. COC and PS demonstrate superior performance, with COC offering excellent chemical resistance and low adsorption after surface treatment [14]. PS provides established biocompatibility for cell-based assays, though surface modification may be necessary to reduce protein and drug adsorption [14].

High-Pressure Chromatographic Separations: Glass remains the preferred material for applications requiring resistance to organic solvents and minimal sample interaction [15] [16]. For higher throughput or disposable formats, PMMA and COC provide viable alternatives with good chemical stability and lower manufacturing costs [18] [14].

Organ-on-a-Chip and Cell Culture Models: Traditional PDMS offers advantages for oxygen/carbon dioxide exchange but suffers from hydrophobic molecule absorption and potential leaching of uncrosslinked oligomers [12] [19]. Surface-treated PS provides a physiologically relevant substrate with extensive validation for mammalian cell culture [13] [14]. For advanced models requiring optical accessibility and electrical sensing, glass-PDMS hybrid systems offer complementary benefits [16].

Point-of-Care Diagnostic Devices: PMMA excels in disposable diagnostic applications due to its low cost, manufacturability, and optical clarity [17] [18]. For detection modalities requiring low background fluorescence, COC provides superior performance [14].

Decision Framework and Future Directions

Material selection should follow a systematic evaluation of application requirements: (1) Identify critical chemical compatibility needs based on solvents and analytes; (2) Determine necessary optical properties for detection modalities; (3) Evaluate biocompatibility requirements for biological components; (4) Assess manufacturing constraints including scalability and cost; (5) Consider operational parameters including pressure, temperature, and gas exchange needs [12] [15] [16]. Emerging trends include development of surface modification technologies to enhance material performance, composite material strategies that combine advantages of multiple materials, and increased adoption of thermoplastic materials for commercial applications [19] [13].
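A first pass through steps (1), (3), and (5) can be sketched as a simple filter over the qualitative ratings in the comparative properties table above. This is an illustrative sketch only: the ordinal ordering of the ratings, the function name `shortlist`, and the choice of three properties are assumptions, and the COC adsorption rating is the post-treatment value.

```python
# Ordinal scales (worst-to-best or lowest-to-highest) for the table's ratings.
CHEMICAL_RESISTANCE = ["Poor", "Moderate", "Good", "Excellent"]
ADSORPTION = ["Very Low", "Low", "Moderate", "High"]
GAS_PERMEABILITY = ["None", "Low", "High"]

# Ratings transcribed from the comparative properties table.
MATERIALS = {
    "PDMS": {"chemical_resistance": "Poor", "adsorption": "High", "gas_permeability": "High"},
    "Glass": {"chemical_resistance": "Excellent", "adsorption": "Very Low", "gas_permeability": "None"},
    "PMMA": {"chemical_resistance": "Good", "adsorption": "Moderate", "gas_permeability": "Low"},
    "PS": {"chemical_resistance": "Moderate", "adsorption": "Moderate", "gas_permeability": "Low"},
    "COC": {"chemical_resistance": "Excellent", "adsorption": "Low", "gas_permeability": "Low"},
}


def shortlist(min_chemical_resistance="Poor", max_adsorption="High",
              min_gas_permeability="None"):
    """Return the materials whose ratings satisfy all three thresholds."""
    keep = []
    for name, props in MATERIALS.items():
        chem_ok = (CHEMICAL_RESISTANCE.index(props["chemical_resistance"])
                   >= CHEMICAL_RESISTANCE.index(min_chemical_resistance))
        ads_ok = (ADSORPTION.index(props["adsorption"])
                  <= ADSORPTION.index(max_adsorption))
        gas_ok = (GAS_PERMEABILITY.index(props["gas_permeability"])
                  >= GAS_PERMEABILITY.index(min_gas_permeability))
        if chem_ok and ads_ok and gas_ok:
            keep.append(name)
    return keep


# Solvent-heavy assay with hydrophobic analytes.
print(shortlist(min_chemical_resistance="Good", max_adsorption="Low"))
# Cell culture that relies on gas exchange through the chip walls.
print(shortlist(min_gas_permeability="High"))
```

A filter like this only narrows the field; optical requirements, cost, and fabrication constraints from steps (2) and (4) still have to be weighed by hand or added as further columns.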

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Materials for Microfluidic Device Fabrication

| Material/Reagent | Function | Application Notes |
|---|---|---|
| Sylgard 184 PDMS Kit | Elastomeric substrate | Base:curing agent typically 10:1 ratio; degas before curing [20] |
| SU-8 Photoresist | Master mold fabrication | Negative tone epoxy resist; thickness varies with spin speed [20] |
| PMMA Sheets | Thermoplastic substrate | Optically clear; fabricate via laser cutting or micromilling [17] [18] |
| Ethanol/Acetone Mixture | Solvent bonding | 1:1 ratio optimal for PMMA bonding; minimal channel deformation [17] |
| Oxygen Plasma System | Surface activation | Creates silanol groups for PDMS-glass bonding; hydrophilizes surfaces [12] [20] |
| UV-Ozone Cleaner | Surface modification | Reduces adsorption on thermoplastics; enhances hydrophilicity [14] |
| Biopsy Punches | Access port creation | Create inlet/outlet ports in PDMS devices; various diameters available [20] |

Microfluidic technology, characterized by the manipulation of fluids in channels with dimensions of tens to hundreds of micrometers, has emerged as a transformative tool in pharmaceutical research and development [21] [22]. At the heart of any microfluidic system lies its architectural design, which dictates its functionality, throughput, and biological relevance. The evolution from simple planar (often referred to as two-dimensional or 2D) layouts to complex three-dimensional (3D) configurations represents a significant paradigm shift, enabling more sophisticated biomimetic environments and integrated analytical operations [23]. For researchers in drug development, the choice between planar and 3D architectures influences critical parameters including drug screening accuracy, predictability of human physiological responses, and overall experimental efficiency. This guide provides a technical examination of both architectural approaches, detailing their design principles, fabrication methodologies, and applications within modern pharmaceutical analysis.

Fundamental Design Principles and Material Selection

Core Principles of Microfluidic Operation

Microfluidic devices operate on fundamental principles that become particularly pronounced at the microscale. Laminar flow dominates in microchannels, with fluids flowing in parallel streams without turbulence, enabling precise control over mixing and chemical gradients [21] [24]. Surface effects become significantly enhanced due to the high surface-to-volume ratio, making surface chemistry and wettability critical design considerations [24]. The principle of miniaturization allows for reduced consumption of precious samples and reagents, lowering costs and enabling high-throughput experimentation [25] [24]. Furthermore, capillary action can be harnessed to move fluids without external pumping in certain designs, simplifying device operation [24].
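The claim that laminar flow dominates can be checked with the Reynolds number, Re = ρ·v·D_h/μ, where D_h = 2wh/(w + h) is the hydraulic diameter of a rectangular channel. The sketch below is illustrative: the channel dimensions and flow rate are hypothetical, and the fluid is assumed to be water-like (density about 1000 kg/m³, viscosity about 1 mPa·s).

```python
def hydraulic_diameter_m(width_m, height_m):
    """Hydraulic diameter of a rectangular channel: 2wh / (w + h)."""
    return 2.0 * width_m * height_m / (width_m + height_m)


def reynolds_number(flow_rate_ul_min, width_um, height_um,
                    density_kg_m3=1000.0, viscosity_pa_s=1.0e-3):
    """Reynolds number for pressure-driven flow in a rectangular channel."""
    width_m = width_um * 1e-6
    height_m = height_um * 1e-6
    flow_m3_s = flow_rate_ul_min * 1e-9 / 60.0      # uL/min -> m^3/s
    velocity_m_s = flow_m3_s / (width_m * height_m)  # mean velocity
    d_h = hydraulic_diameter_m(width_m, height_m)
    return density_kg_m3 * velocity_m_s * d_h / viscosity_pa_s


# Hypothetical channel: 100 um wide, 50 um deep, water at 10 uL/min.
re = reynolds_number(10.0, 100.0, 50.0)
print(f"Re = {re:.2f}")
```

For these assumed values Re is about 2, roughly three orders of magnitude below the transition to turbulence usually quoted for pipe flow (Re of about 2000), which is why parallel streams in such channels mix mainly by diffusion.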

Material Selection for Pharmaceutical Applications

Material choice is a critical determinant of microfluidic chip performance, affecting biocompatibility, chemical resistance, optical properties, and fabrication complexity.

- Polydimethylsiloxane (PDMS): A widely used elastomer in research settings, PDMS is favored for its optical transparency, gas permeability (beneficial for cell culture), and ease of prototyping. However, its porosity makes it susceptible to absorption of small molecules and swelling with organic solvents, which can limit its use in pharmaceutical analysis [26] [22].

- Glass and Silicon: These materials offer excellent optical clarity, high chemical resistance, and thermal stability. They are ideal for applications involving harsh solvents or high temperatures. Their rigidity, however, makes integrating active components like valves more challenging, and fabrication costs are typically higher [22].

- Thermoplastics (PMMA, COC, PC): Polymers like polymethylmethacrylate (PMMA) and cyclo-olefin copolymer (COC) provide a balance of properties, including good optical quality, mechanical strength, and suitability for mass production techniques like injection molding. They are often more chemically resistant than PDMS [22].

- Hydrogels: Materials such as alginate or collagen are used to create scaffolds within microfluidic devices, especially in 3D cell culture and organ-on-a-chip models. They provide a biomimetic extracellular matrix (ECM) that supports cell growth and function [26].

- 3D Printing Resins: Stereolithography (SLA) resins are increasingly used for rapid prototyping of complex 3D microfluidic architectures. Challenges remain with their optical transparency and inherent biocompatibility, often requiring post-processing surface functionalization to ensure cell adhesion and viability [27].

Table 1: Key Materials for Microfluidic Chip Fabrication in Pharmaceutical Research

| Material | Key Advantages | Key Limitations | Common Fabrication Methods | Ideal Use Cases |
|---|---|---|---|---|
| PDMS | Gas permeable, optically transparent, flexible, easy prototyping | Absorbs small molecules, swells with solvents, hydrophobic | Soft lithography, replica molding | Organ-on-chip, rapid prototyping, cell culture studies |
| Glass | Chemically inert, optically excellent, hydrophilic | Brittle, high fabrication cost, difficult to integrate valves | Etching, laser ablation, bonding | High-pressure/temperature reactions, analytical chemistry |
| PMMA | Good optical clarity, rigid, low cost | Susceptible to solvents, lower temperature resistance | CNC machining, injection molding, laser ablation | Disposable diagnostic chips, electrophoretic separations |
| Hydrogels | Biocompatible, mimic extracellular matrix, tunable properties | Mechanically soft, may degrade over time | Direct casting, photopolymerization | 3D cell culture, tissue engineering, drug screening |
| SLA Resins | High resolution, complex 3D geometries, rapid prototyping | Poor optical clarity, can require surface modification for cell adhesion | Stereolithography 3D printing | Custom, complex 3D channel networks, integrated devices |

Planar Microfluidic Chip Architectures

Design and Fabrication

Planar microfluidic chips are characterized by their essentially two-dimensional layout, where channels and chambers are fabricated in a single plane, typically on a flat substrate [23]. The fabrication of these devices has been standardized over decades. For PDMS-based devices, the primary method is soft lithography, where a mold (often made of SU-8 photoresist on a silicon wafer) is created using photolithography. PDMS polymer is then poured over this mold, cured, and peeled off, resulting in a slab of PDMS containing the channel network. This slab is subsequently bonded to a glass slide or another PDMS layer to seal the channels [22]. For thermoplastic materials like PMMA or COC, hot embossing and injection molding are common manufacturing techniques, especially for cost-effective mass production [22]. Laser ablation is another versatile method used to directly engrave microchannel patterns into polymer substrates [22].

Applications in Pharmaceutical Analysis

The simplicity and maturity of planar architectures make them well-suited for a range of pharmaceutical applications:

- High-Throughput Drug Screening (HTDS): The planar format is ideal for creating arrays of microchambers or channels for parallelized testing of drug compounds on cells or enzymes, significantly reducing reagent consumption and time compared to conventional 96-well plates [25] [28].

- Droplet-Based Microfluidics: Utilizing immiscible phases, planar devices can generate uniform picoliter to nanoliter droplets that act as isolated microreactors. This is powerful for screening drug combinations, encapsulating single cells for analysis, and synthesizing nanoparticles with precise control over size and polydispersity [25].

- Analytical Separations: When coupled with detection techniques like laser-induced fluorescence or mass spectrometry, planar chips provide an excellent platform for efficient separations of drug compounds and metabolites via techniques such as capillary electrophoresis, offering rapid analysis with high resolution [28] [22].

Figure 1: Overview of Planar Microfluidic Chip Technology

Three-Dimensional (3D) Microfluidic Chip Architectures

Design and Fabrication Strategies

3D microfluidic chips feature channel networks that extend and interconnect across multiple layers or planes, enabling complex fluidic pathways that more closely mimic the intricate vasculature of biological tissues [23]. This architecture allows for fluidic routing that is impossible in a single plane. Key fabrication strategies include:

- Multi-Layer Soft Lithography: This technique involves fabricating multiple layers of PDMS, each containing a patterned channel network, and then bonding them together in a stack. Vertical "vias" are incorporated to create fluidic connections between layers, enabling complex 3D flow control [23].

- Additive Manufacturing (3D Printing): Techniques like Stereolithography (SLA) are revolutionizing the fabrication of 3D microfluidics. SLA uses a laser to selectively cure photosensitive resin layer-by-layer, directly building monolithic devices with intricate internal 3D channels without the need for assembly [27] [29]. This approach offers unparalleled design freedom but faces challenges related to resin biocompatibility and optical clarity [27].

- Integrated Scaffolds: In this approach, 3D architecture refers not only to the fluidic channels but also to the internal structure of the device. Hydrogels or other porous scaffolds are patterned within microfluidic chambers to support 3D cell culture, creating a more physiologically relevant microenvironment for cells compared to flat, 2D surfaces [26].

Applications in Pharmaceutical Analysis

3D architectures unlock advanced applications that require spatial complexity and biomimicry:

- Organs-on-Chips: These are advanced 3D microfluidic devices that host living human cells arranged to simulate the structure and function of human organs. By incorporating multiple cell types, mechanical cues (e.g., cyclic stretch for lungs), and perfusion, they create a more predictive model for drug efficacy, toxicity, and pharmacokinetic studies, potentially reducing the reliance on animal models [23] [28].

- Advanced Disease Models: 3D chips can be used to create sophisticated models of human diseases, such as tumors. A 3D tumor-on-a-chip can incorporate cancer cells in a 3D hydrogel scaffold, perfused by microvessels, to study tumor invasion and the penetration of anti-cancer drugs in a more realistic context [26] [28].

- Multi-Organ Microphysiological Systems: By linking several organ-on-chip modules through microfluidic channels, researchers can create a "human-on-a-chip" system. This allows for the study of inter-organ interactions and systemic ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) of drug candidates, providing a holistic view of drug effects [25] [28].

Figure 2: Core Concepts of 3D Microfluidic Chip Architectures

Comparative Analysis: Planar vs. 3D Architectures

A direct comparison of planar and 3D microfluidic architectures reveals a trade-off between simplicity and biological relevance, guiding researchers in selecting the appropriate platform for their specific pharmaceutical analysis needs.

Table 2: Comparative Analysis of Planar vs. 3D Microfluidic Chip Architectures

| Parameter | Planar (2D) Architecture | Three-Dimensional (3D) Architecture |
|---|---|---|
| Design Complexity | Low; primarily 2D channel layouts [23] | High; complex multi-layer networks and interconnects [23] |
| Fabrication Throughput | High for established methods (e.g., soft lithography) [22] | Lower; more complex and time-consuming processes [27] |
| Biocompatibility & Cell Culture | Suitable for 2D monolayer cell culture, but lacks physiological context [26] | Superior; enables 3D cell culture that mimics native tissue structure and function [23] [26] |
| Biomimicry | Limited; cannot replicate complex tissue interfaces or gradients [23] | High; can recreate in vivo-like microenvironments, mechanical forces, and concentration gradients [23] [28] |
| Throughput & Scalability | High; easily parallelized for screening [25] | Moderate to Low; more complex to operate and scale [23] |
| Integration Potential | Good for combining sample prep, reaction, and detection [24] | Excellent; can integrate multiple organ models and complex fluidic logic on a single chip [23] [25] |
| Typical Applications | High-throughput drug screening, droplet assays, analytical separations [25] [28] | Organs-on-chips, complex disease models, multi-organ interaction studies [23] [28] |

Experimental Protocols for Key Pharmaceutical Applications

Protocol 1: High-Throughput Drug Screening Using a Planar Droplet Array

This protocol outlines the use of a planar droplet microfluidic platform for rapid screening of drug compound combinations [25].

- Chip Priming: Flush the oil phase (e.g., fluorinated oil with surfactant) through the continuous flow channels of the PDMS/glass droplet chip to fill them and prevent aqueous solution from entering.

- Droplet Generation: Simultaneously pump the aqueous phase (containing cells, buffer, and a drug compound) and the oil phase into the chip. Use a flow-focusing or T-junction droplet generator geometry to produce monodisperse, water-in-oil droplets (typical volume: 0.1 - 10 nL).

- Droplet Trapping: Guide the generated droplets into an on-chip array of hydrodynamic traps. Each trap is designed to hold a single droplet, creating a massive parallel array of isolated micro-reactors.

- Drug Exposure & Incubation: Once the array is loaded, stop the flow. The trapped droplets, each containing cells and a specific drug condition, are incubated on-chip. The chip can be placed in a controlled environment (e.g., 37°C, 5% CO₂) for several hours to days.

- Viability Readout: Introduce a fluorescent viability stain (e.g., Calcein-AM for live cells, Propidium Iodide for dead cells) into the droplets via a continuous flow or a second merging step. Image the entire droplet array using an automated fluorescence microscope.

- Data Analysis: Use image analysis software to quantify the fluorescence intensity in each droplet, calculating the ratio of live to dead cells to determine drug efficacy for each condition in the screen.
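The data analysis step can be sketched as follows, assuming the image analysis software has already segmented each droplet and reported per-droplet live and dead cell counts (Calcein-AM positive and Propidium Iodide positive, respectively). The function name, the condition labels, and the counts are hypothetical and serve only to show the aggregation.

```python
from collections import defaultdict


def viability_by_condition(droplets):
    """Mean live-cell fraction per drug condition.

    droplets: iterable of (condition, live_count, dead_count) tuples.
    Droplets with no detected cells are skipped.
    """
    fractions = defaultdict(list)
    for condition, live, dead in droplets:
        total = live + dead
        if total == 0:
            continue  # empty droplet: nothing to score
        fractions[condition].append(live / total)
    return {cond: sum(vals) / len(vals) for cond, vals in fractions.items()}


# Hypothetical counts exported from the imaging step.
droplets = [
    ("vehicle", 9, 1),
    ("vehicle", 8, 2),
    ("drug_A_1uM", 3, 7),
    ("drug_A_1uM", 5, 5),
    ("drug_A_1uM", 0, 0),  # empty droplet, ignored
]
for condition, viability in viability_by_condition(droplets).items():
    print(f"{condition}: {viability:.2f}")
```

Averaging per-droplet fractions weights every occupied droplet equally; pooling counts across droplets before dividing is an equally defensible choice when cell numbers per droplet vary widely.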

Protocol 2: Establishing a 3D Liver-on-a-Chip for Toxicity Testing

This protocol details the creation of a 3D biomimetic liver model to assess drug-induced toxicity [26] [28].

- Chip Fabrication: Use an SLA 3D printer to fabricate a multi-layer chip from a biocompatible resin. The design should include a central tissue chamber connected to two flanking perfusion channels. Subject the printed chip to post-processing (e.g., UV curing, ethanol washing) and surface functionalization (e.g., with oxygen plasma or ECM protein coating) to improve wettability and cell adhesion.

- Hydrogel Preparation: Mix primary human hepatocytes with a liquid basement membrane extract (BME) hydrogel (e.g., Matrigel) or collagen type I solution on ice to prevent premature gelation.

- 3D Cell Loading: Carefully pipette the cell-hydrogel mixture into the central tissue chamber of the chip. Allow the hydrogel to polymerize at 37°C for 20-30 minutes, forming a 3D tissue construct.

- Perfusion Culture: Connect the chip to a pneumatic or syringe pump system. Circulate cell culture medium through the flanking perfusion channels. The medium will diffuse into the 3D tissue, providing nutrients and oxygen while removing waste. Culture the tissue under flow for 7-14 days to allow for tissue maturation and formation of functional bile canaliculi.

- Drug Treatment: Introduce the drug candidate into the perfusion medium at the desired concentration. Maintain flow for a set period (e.g., 24-72 hours).

- Endpoint Analysis:

  - Metabolic Function: Collect effluent medium and measure the concentration of albumin and urea as markers of liver-specific function.

  - Cytotoxicity: Measure the release of lactate dehydrogenase (LDH) into the effluent medium.

  - Histology: At the end of the experiment, fix the tissue in the chip with paraformaldehyde, paraffin-embed, section, and stain (e.g., H&E for morphology, immunofluorescence for CYP450 enzymes) to assess structural integrity and protein expression.
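The LDH readout from the cytotoxicity endpoint is commonly normalized against two controls: spontaneous release from untreated tissue and maximum release from fully lysed tissue. That normalization is not specified in the protocol above, so the sketch below should be read as one conventional way to report the result; the function name and the absorbance values are hypothetical.

```python
def ldh_cytotoxicity_percent(sample, spontaneous, maximum):
    """Percent cytotoxicity from LDH signals.

    sample:      signal from drug-treated effluent
    spontaneous: signal from untreated control effluent
    maximum:     signal from a fully lysed control
    """
    span = maximum - spontaneous
    if span <= 0:
        raise ValueError("maximum control must exceed spontaneous control")
    return 100.0 * (sample - spontaneous) / span


# Hypothetical absorbance readings (arbitrary units).
percent = ldh_cytotoxicity_percent(sample=0.8, spontaneous=0.2, maximum=1.4)
print(f"cytotoxicity: {percent:.1f} %")
```

Because effluent is collected under flow, readings taken at different times reflect different collection volumes, so samples and controls should be gathered over matching intervals before they are compared.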

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Microfluidic Pharmaceutical Analysis

| Reagent/Material | Function | Example Use Case |
|---|---|---|
| PDMS (Sylgard 184) | Elastomeric polymer for flexible, gas-permeable chips [22] | Fabricating rapid prototypes for planar cell culture and droplet generators. |
| Fluorinated Oil w/ Surfactant | Continuous phase for forming and stabilizing aqueous droplets [25] | Creating stable water-in-oil emulsions for single-cell analysis or combinatorial drug screening. |
| Basement Membrane Extract (e.g., Matrigel) | Hydrogel scaffold mimicking the extracellular matrix [26] | Providing a 3D support structure for cultivating organoids or building organ-on-chip models. |
| Primary Human Cells | Biologically relevant cell source for predictive models [28] | Populating organ-on-chip devices (e.g., hepatocytes for liver chips, endothelial cells for vasculature). |
| Fluorescent Viability Stains (e.g., Calcein-AM/PI) | Live/Dead cell discrimination [25] | Quantifying drug-induced cytotoxicity in both 2D and 3D culture formats within microchips. |
| SLA Biocompatible Resin | Photopolymer for 3D printing monolithic chips [27] | Additively manufacturing devices with complex 3D internal architectures. |

The strategic selection between planar and 3D microfluidic architectures is a fundamental decision in the design of pharmaceutical research platforms. Planar chips offer a proven, high-throughput path for screening and analysis, while 3D architectures provide unprecedented biological fidelity for predictive modeling of human physiology and disease. The ongoing convergence of these fields—such as incorporating 3D cell culture units into highly parallel planar screening arrays—points to a future where microfluidic systems will offer both high content and high throughput [23] [28].

Future advancements will be driven by innovations in materials science, particularly the development of more biocompatible and functional 3D printing resins, and the integration of artificial intelligence for chip design and data analysis [23] [27]. Furthermore, the push for standardization and commercialization will be critical for translating these sophisticated lab-based technologies into robust, reliable tools that can be widely adopted within the pharmaceutical industry to streamline drug development pipelines and improve the success rate of new therapeutics [30] [28].

Transforming Pharma R&D: Microfluidic Applications from Drug Screening to Delivery

Lab-on-a-Chip (LOC) Systems for High-Throughput Drug Screening and Potency Testing

The development of new therapeutics is a complex process, characterized by extensive timelines, high costs, and a significant attrition rate: over 90% of screened drug candidates fail after entering clinical trials, largely because the models used in the initial screening phases do not accurately capture human physiological responses [31]. Within this challenging landscape, Lab-on-a-Chip (LOC) technology has emerged as a transformative tool for high-throughput drug screening (HTDS). LOC systems are defined by the miniaturization and integration of multiple laboratory functions (such as sample preparation, analysis, and detection) onto a single chip, typically measuring only a few square centimeters [25]. By leveraging microfluidics, the science of manipulating fluids at sub-millimeter scales, these systems enable high-throughput testing and flexible automation while offering the critical advantages of miniaturized size, low reagent consumption, high analytical accuracy, and user-friendliness [31] [25].

The fundamental principle behind LOC technology for pharmaceutical analysis is the replication of critical biological environments in a controlled, in vitro setting. This capability is paramount for improving the predictive power of early-stage drug screening. The internal dimensions of these chips, which range from micrometers to millimeters, lead to drastically reduced consumption of samples and reagents, often at the nanoliter and picoliter levels [25]. When combined with multichannel and array designs, this miniaturization allows for high-throughput screening that can increase the speed of analysis by hundreds of times compared to conventional methods, while simultaneously lowering associated costs [25]. For drug development professionals, this translates into a powerful platform that can more reliably predict the efficacy, toxicity, and pharmacokinetics of drug compounds in humans, thereby de-risking the pipeline and accelerating the journey from discovery to market.

Core LOC Technology Platforms and Their Applications

LOC systems are not a monolithic technology but encompass a diverse array of platforms, each tailored to address specific challenges in drug screening. The most impactful of these include organ-on-a-chip systems, droplet-based microfluidics, and chips designed for three-dimensional (3D) cell culture. Each platform offers a unique set of advantages for mimicking human physiology and conducting high-throughput potency testing.

Organ-on-a-Chip platforms are sophisticated microfluidic devices that contain continuously perfused, living human cells arranged to simulate tissue-level and organ-level functions. These systems provide a bridge between conventional 2D cell cultures and complex in vivo animal models. They can be configured as single-organ systems (e.g., skin-on-a-chip, kidney-on-a-chip) or as interconnected multi-organ chips [25]. A key application is the development of complex disease models, such as a glioblastoma (GBM) model surrounded by vascular cells to study the tumor microenvironment (TME) [32]. One advanced model constructs an arterial-like structure by encapsulating GBM spheroids with layers of human smooth muscle cells (SMCs) and human umbilical vein endothelial cells (HUVECs), thereby replicating the critical cell-cell interactions and blood flow-induced shear stress found in native tissues [32]. Comparative analyses using such models have revealed the significant role of proteins like platelet endothelial cell adhesion molecule (PECAM) in tumor-vascular interactions, demonstrating how organ-on-a-chip technology can uncover novel biological mechanisms and assess drug resistance [32].

Droplet Microfluidics involves compartmentalizing reactions or assays into nanoliter to picoliter volume droplets, which are generated and manipulated within an immiscible carrier fluid. This platform acts as a highly efficient micro-reactor system. Its primary advantages include separate compartments for each experiment, very low reagent consumption, excellent repeatability, and rapid mixing due to high surface-to-volume ratios [25]. For drug screening, droplet-based methods are exceptionally well-suited for high-throughput compound screening. They can be used to create in vitro microtumor models or for encapsulating cells in 3D cultures, providing a more physiologically relevant screening environment than traditional well plates [25] [33]. A prominent technique is the sequential operation droplet array, which allows for the screening of different drug dosing combinations and treatment durations to optimize therapeutic regimens with minimal consumption, a crucial capability for managing complex diseases requiring combination therapies [25] [31]. Compared to traditional 96-well plate screening, droplet microfluidic platforms can reduce sample consumption by approximately 200 times and slash reaction times from hours to just minutes [25] [31].

3D Cell Culture and Microfluidic Hydrogel Chips represent another major technological branch. Moving beyond flat, 2D cell monolayers, these systems allow cells to be embedded within hydrogels (e.g., alginate) in microchannels, creating a 3D microenvironment that more accurately recapitulates the biological and physiological parameters of cells in vivo [25] [33]. This 3D architecture facilitates superior cell-cell and cell-matrix interactions, which are critical for accurate assessment of drug potency and mechanisms of action. Microfluidic hydrogel chips are particularly adept at performing long-term cell culture and establishing diffusion-based nutrient and drug transport models that mimic natural tissues [25]. The ability to culture cells in three dimensions within a dynamic microfluidic environment provides a more predictive model for how a drug will penetrate and act upon tissues in the human body, addressing a major limitation of conventional screening assays.

Table 1: Comparison of Key LOC Technology Platforms for Drug Screening

| Platform Type | Key Advantages | Common Applications in Drug Screening | Inherent Challenges |
|---|---|---|---|
| Organ-on-a-Chip [25] [32] | Reduced complexity of operation; Models organ-level functionality; Can investigate multi-organ interactions | Disease modeling (e.g., tumor microenvironment); Toxicity testing; Absorption and metabolism studies | Difficult to fully replicate all organ functionalities; Can involve intricate design and manufacturing |
| Droplet Microfluidics [25] [33] [31] | Ultra-low consumption; High-throughput; Rapid mixing and response times; Compartmentalization | High-throughput compound screening; Single-cell analysis; Optimizing drug combination regimens | Complex manufacturing; Limited detection parameters; Not always ideal for quantification |
| 3D Cell Culture/Hydrogel Chips [25] [33] | Mimics in vivo cellular microenvironment; Recapitulates natural tissue diffusion; Suitable for long-term culture | Potency testing of anti-cancer drugs; Studies of drug penetration; Mechanistic action studies | Application range not universal; Methods for commercial promotion are still maturing |

Quantitative Performance and Detection Methodologies

A drug screening platform is ultimately judged by its performance metrics and its ability to generate reliable, quantitative data, and LOC systems perform well on both counts, particularly when coupled with advanced detection techniques. Direct comparisons with traditional methods make the advantage concrete: droplet-based microfluidics can reduce sample consumption by approximately 200-fold and cut reaction times from 2 hours to 2.5 minutes, a roughly 48-fold reduction, relative to standard 96-well plate screening [25] [31]. This gain in speed and efficiency is a cornerstone of high-throughput screening.
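The arithmetic behind these comparisons can be checked with a short Python sketch. The reaction times and the 200-fold figure come from the text; the 100 µL well volume is a hypothetical value chosen only to illustrate the scaling, and the function name is illustrative.

```python
def fold_change(conventional: float, microfluidic: float) -> float:
    """Return conventional / microfluidic, i.e. the fold reduction."""
    if microfluidic <= 0:
        raise ValueError("microfluidic value must be positive")
    return conventional / microfluidic


# Reaction times quoted in the text, in minutes (2 h vs. 2.5 min).
time_fold = fold_change(conventional=120.0, microfluidic=2.5)

# ASSUMPTION: 100 µL per well is a hypothetical working volume, not a value
# taken from the cited studies.
well_volume_ul = 100.0
volume_fold = 200.0  # approximate reduction quoted in the text
on_chip_volume_ul = well_volume_ul / volume_fold

print(f"Reaction-time reduction: {time_fold:.0f}-fold")
print(f"Implied on-chip volume per assay: {on_chip_volume_ul:.2f} µL")
```

Under these assumptions the time saving works out to 48-fold, and a 100 µL well assay would shrink to about 0.5 µL on-chip.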

To capture the rich biological data generated within these micro-environments, LOCs are often integrated with a variety of sensitive detection instruments. The choice of detection method depends on the specific assay and the type of analyte being measured. Common and powerful combinations include:

- Electrochemical Detection: Used for measuring metabolic activity and cell viability, often in real-time [25].

- Mass Spectrometry: Coupled via nano-HPLC-Chip-MS/MS interfaces for detailed analysis of metabolites, proteins, and secreted biomarkers from cells on-chip [25].

- Optical Detection: Includes methods like UV spectroscopy for concentration analysis, chemiluminescence for enzymatic assays, and surface-enhanced Raman spectroscopy for highly sensitive molecular fingerprinting [25].

- Impedance Sensing: Techniques like Electric Cell-substrate Impedance Sensing (ECIS) are deployed to monitor barrier function integrity in real-time, which is crucial for modeling endothelial and epithelial tissues and assessing toxin- or drug-induced damage [31].

Table 2: Key Quantitative Performance Metrics of LOC Systems

| Performance Parameter | LOC System Capability | Traditional Method (e.g., 96-well plate) | Significance for Drug Screening |
|---|---|---|---|
| Reagent/Sample Consumption [25] [31] | Nanoliter to picoliter scale | Microliter to milliliter scale | Drastically reduces costs, especially for rare/expensive compounds |
| Analytical Throughput [25] | High (via multiplexing and droplet arrays) | Moderate | Enables screening of vast compound libraries in a shorter time |
| Assay Response Time [25] [31] | Minutes (e.g., ~2.5 minutes) | Hours (e.g., ~2 hours) | Accelerates feedback for iterative drug design and optimization |
| Sensitivity (LOD) [31] | Sub-microgram per liter (e.g., 0.005–0.025 µg L⁻¹ for antidepressants) | Varies; detection limits are generally higher (i.e., less sensitive) | Allows detection of low-abundance biomarkers and subtle cellular responses |

A concrete example of a quantitative bioassay performed on an LOC is on-chip electromembrane-surrounded solid-phase microextraction (EM-SPME) for determining tricyclic antidepressants in biological fluids [31]. In this setup, a conductive coating of poly(3,4-ethylenedioxythiophene)–graphene oxide (PEDOT-GO) is electrodeposited on an SPME fiber. Coupled with gas chromatography–mass spectrometry, the method achieved limits of detection of 0.005 to 0.025 µg L⁻¹ and a wide linear range [31]. This example shows that LOC systems can combine sophisticated sample preparation with highly sensitive analysis, making them suitable for pharmacokinetic and metabolomic studies in drug development.
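The source does not state how these limits of detection were derived. One widely used convention, described in the ICH Q2 validation guideline, estimates LOD as 3.3σ/S and LOQ as 10σ/S, where S is the calibration slope and σ is the residual standard deviation of the calibration line. The Python sketch below applies that convention to hypothetical calibration data; the concentrations, peak areas, and function names are illustrative assumptions, not values from [31].

```python
from statistics import mean


def linear_fit(x, y):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


def residual_sd(x, y, slope, intercept):
    """Standard deviation of the residuals, with n - 2 degrees of freedom."""
    ss = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    return (ss / (len(x) - 2)) ** 0.5


def lod_loq(x, y):
    """Return (LOD, LOQ) using the 3.3*sigma/S and 10*sigma/S convention."""
    slope, intercept = linear_fit(x, y)
    sigma = residual_sd(x, y, slope, intercept)
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# HYPOTHETICAL calibration data: concentration in µg/L vs. detector peak area.
conc_ug_per_l = [0.05, 0.10, 0.50, 1.00, 5.00]
peak_area = [52.0, 98.0, 510.0, 1005.0, 4990.0]

lod, loq = lod_loq(conc_ug_per_l, peak_area)
print(f"LOD = {lod:.4f} µg/L, LOQ = {loq:.4f} µg/L")
```

Other conventions, such as a signal-to-noise ratio of 3, are also common, so reported LODs are comparable only when the estimation method is known.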

Detailed Experimental Protocol: Tumor-Vascular Interaction Screening

The following protocol details the creation and use of a glioblastoma (GBM) tumor-vascular model on a chip for high-throughput drug screening, based on a recently developed platform [32]. This protocol exemplifies the integration of several core LOC technologies, including 3D spheroid culture, co-culture systems, and dynamic flow.

Research Reagent Solutions and Essential Materials

Table 3: Essential Materials and Reagents for Tumor-Vascular LOC Model

| Item Name | Function/Description | Application in Protocol |
|---|---|---|
| Human Umbilical Vein Endothelial Cells (HUVECs) [32] | Forms the inner lining of the vascular model, mimicking capillary and arterial endothelium. | Used in both capillary (HUVECs only) and arterial (with SMCs) model configurations. |
| Human Smooth Muscle Cells (SMCs) [32] | Provides structural support to the vessel wall in the arterial model. | Co-cultured with HUVECs to create a layered arterial structure around the tumor spheroid. |
| Glioblastoma (GBM) Cell Line [32] | Forms the tumor core of the model, representing the disease target. | Cultured as 3D spheroids prior to encapsulation within the vascular cell layers. |
| Hydrogel Matrix (e.g., Alginate) [32] | A biocompatible polymer that forms a 3D scaffold for cell encapsulation. | Used to encapsulate the GBM spheroids and vascular cells, mimicking the extracellular matrix. |
| Cell Culture Media | Provides nutrients for maintaining cell viability and function. | Circulated through the microfluidic device to feed the constructs and apply shear stress. |
| Anti-Cancer Drug Candidates | The compounds whose efficacy and potency are being tested. | Introduced into the circulating media to assess their effect on the tumor-vascular model. |
| Cytokine/Antibody Assay Kits | For detecting secreted proteins (e.g., PECAM, drug resistance cytokines). | Used to collect and analyze effluent from the chip to quantify biological responses. |

Step-by-Step Workflow

GBM Spheroid Formation:

- Culture GBM cells in a low-adherence, U-bottom well plate to promote self-assembly into 3D spheroids.

- Incubate until spheroids reach a uniform and desired size (typically 150-300 µm in diameter).

Vascular Model Construction:

- For the Arterial Model: Prepare a mixed-cell suspension containing the pre-formed GBM spheroid, human SMCs, and HUVECs in a hydrogel precursor solution (e.g., alginate).

- For the Capillary Model: Prepare a suspension of the GBM spheroid with HUVECs only in the hydrogel solution.

- Load the cell-hydrogel mixture into the microfluidic chip's designated cell culture chamber. Use on-chip gelation triggers (e.g., exposure to calcium ions for alginate) to polymerize the hydrogel, thereby encapsulating the cells and forming the 3D construct.

On-Chip Culture and Perfusion:

- Connect the chip to a pneumatic or syringe pump system to initiate continuous perfusion of cell culture media.

- Set the flow rate to generate a physiologically relevant shear stress on the vascular endothelial layer (e.g., 0.5-5 dyn/cm²).

- Maintain the system under standard cell culture conditions (37°C, 5% CO₂) for a predetermined period to allow for model maturation and the establishment of robust cell-cell interactions.
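The pump flow rate needed to reach a target wall shear stress can be estimated from the channel geometry. The Python sketch below uses the standard parallel-plate approximation for a wide, shallow rectangular channel, τ = 6μQ/(wh²), which holds when the height is much smaller than the width. The channel dimensions and the medium viscosity are hypothetical placeholders, not parameters of the platform in [32]; substitute the values for the actual chip.

```python
DYN_PER_CM2_TO_PA = 0.1            # 1 dyn/cm² = 0.1 Pa
M3_PER_S_TO_UL_PER_MIN = 1e9 * 60  # 1 m³/s = 6e10 µL/min

# ASSUMPTIONS (hypothetical chip and fluid properties, not from the source):
WIDTH_M = 1.0e-3           # channel width: 1 mm
HEIGHT_M = 1.0e-4          # channel height: 100 µm
VISCOSITY_PA_S = 7.2e-4    # approximate viscosity of culture medium at 37 °C


def flow_rate_for_shear(tau_dyn_cm2, width_m, height_m, viscosity_pa_s):
    """Flow rate (µL/min) giving wall shear stress tau in a wide rectangular channel."""
    tau_pa = tau_dyn_cm2 * DYN_PER_CM2_TO_PA
    q_m3_s = tau_pa * width_m * height_m ** 2 / (6.0 * viscosity_pa_s)
    return q_m3_s * M3_PER_S_TO_UL_PER_MIN


def shear_for_flow_rate(q_ul_min, width_m, height_m, viscosity_pa_s):
    """Wall shear stress (dyn/cm²) produced by a flow rate given in µL/min."""
    q_m3_s = q_ul_min / M3_PER_S_TO_UL_PER_MIN
    tau_pa = 6.0 * viscosity_pa_s * q_m3_s / (width_m * height_m ** 2)
    return tau_pa / DYN_PER_CM2_TO_PA


for tau in (0.5, 1.0, 5.0):  # range suggested in the protocol
    q = flow_rate_for_shear(tau, WIDTH_M, HEIGHT_M, VISCOSITY_PA_S)
    print(f"tau = {tau:.1f} dyn/cm² -> Q = {q:.2f} µL/min")
```

Because shear stress scales with 1/h², small errors in the fabricated channel height shift the delivered shear noticeably, so measured rather than nominal dimensions are preferable here.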

Drug Administration and Screening:

- Switch the perfusion fluid from drug-free culture medium to medium containing the anti-cancer drug candidate(s) at specified concentrations.

- For high-throughput screening, the platform can be scaled using an array of chips or multiple chambers to test several drugs or concentrations in parallel.

- Maintain drug exposure for a set duration (e.g., 24-72 hours) under continuous perfusion.
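When the screen is scaled across an array of chambers, it helps to enumerate the conditions before loading the chips. The following Python sketch builds such a layout by crossing drugs, a serial dilution series, and exposure durations. The drug names, top concentration, dilution factor, and chamber numbering are hypothetical placeholders; only the 24-72 hour exposure window comes from the protocol.

```python
from itertools import product


def serial_dilution(top_conc_um, factor, n_points):
    """Concentrations (µM) from top_conc_um downward, each divided by `factor`."""
    return [top_conc_um / factor ** i for i in range(n_points)]


def build_screen(drugs, concentrations_um, durations_h):
    """Assign one chamber to every drug x concentration x duration combination."""
    return [
        {"chamber": idx, "drug": drug, "conc_um": conc, "duration_h": hours}
        for idx, (drug, conc, hours) in enumerate(
            product(drugs, concentrations_um, durations_h), start=1
        )
    ]


# HYPOTHETICAL inputs for illustration only.
drugs = ["drug_A", "drug_B"]
concentrations_um = serial_dilution(top_conc_um=10.0, factor=3.0, n_points=4)
durations_h = [24, 48, 72]  # exposure window suggested in the protocol

screen = build_screen(drugs, concentrations_um, durations_h)
print(f"{len(screen)} chambers required")
for condition in screen[:3]:
    print(condition)
```

A real layout would also reserve chambers for vehicle-only controls and replicates, which this sketch omits for brevity.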

Endpoint Analysis and Data Collection:

- Viability and Potency Assessment: After drug treatment, introduce fluorescent live/dead cell stains into the system. Use on-chip or off-chip fluorescence microscopy to quantify cell death within the tumor and vascular compartments.

- Molecular Analysis: Collect effluent from the chip outlet during and after drug treatment. Analyze the collected medium using ELISA or other immunoassays to quantify secreted biomarkers (e.g., PECAM) and drug resistance cytokines.

- Gene Expression: At the end of the experiment, retrieve the hydrogel constructs, dissociate the cells, and perform RNA extraction. Use qRT-PCR to analyze the expression of genes associated with tumor progression and metastasis (e.g., MMPs, VEGF) [32].
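Two of the endpoint readouts above reduce to simple calculations: percent viability from live/dead counts, and relative gene expression from qRT-PCR cycle thresholds. The Python sketch below uses the common 2^(-ΔΔCt) method, which assumes roughly 100% amplification efficiency for both the target and the reference gene. The source does not specify its quantification method, and all cell counts and Ct values shown are hypothetical.

```python
def percent_viability(live_count: int, dead_count: int) -> float:
    """Percentage of live cells among all counted cells."""
    total = live_count + dead_count
    if total == 0:
        raise ValueError("no cells counted")
    return 100.0 * live_count / total


def relative_expression(ct_target_treated, ct_ref_treated,
                        ct_target_control, ct_ref_control):
    """Fold change of a target gene (treated vs. control) by the 2^(-ddCt) method."""
    delta_ct_treated = ct_target_treated - ct_ref_treated
    delta_ct_control = ct_target_control - ct_ref_control
    delta_delta_ct = delta_ct_treated - delta_ct_control
    return 2.0 ** (-delta_delta_ct)


# HYPOTHETICAL readouts for one drug-treated chamber and its untreated control.
viability = percent_viability(live_count=80, dead_count=20)
vegf_fold = relative_expression(
    ct_target_treated=24.0, ct_ref_treated=18.0,   # e.g. VEGF vs. a reference gene
    ct_target_control=22.0, ct_ref_control=18.0,
)

print(f"Viability: {viability:.1f}%")
print(f"Relative expression (treated/control): {vegf_fold:.2f}")
```

With these example values the target gene is expressed at one quarter of the control level, since a ΔΔCt of 2 cycles corresponds to a four-fold difference.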

LOC systems represent a paradigm shift in the approach to high-throughput drug screening and potency testing. By enabling the creation of more physiologically relevant human models in a miniaturized, automated, and high-throughput format, this technology directly addresses the bottlenecks of cost, time, and predictive accuracy that have long constrained the pharmaceutical industry [25] [31]. Organ-on-a-chip disease models, droplet-based microreactors, and dynamic 3D cell culture together form a versatile toolkit that allows researchers to dissect complex drug-tissue interactions, uncover novel mechanisms of action and resistance, and generate high-quality quantitative data efficiently [32]. As these platforms continue to evolve, their adoption in academia and the pharmaceutical industry is poised to enhance the success rate of clinical trials and accelerate the delivery of new, effective therapeutics to patients.

Organ-on-a-Chip Models for Predictive Toxicology and Pharmacokinetic/Pharmacodynamic (PK/PD) Studies