Navigating Big Data in Environmental Science: Challenges, Solutions, and Future Frontiers

Abstract

This article provides a comprehensive analysis of the challenges and solutions associated with big data in environmental science. It explores the foundational 'Five Vs' of big data and their unique implications for environmental datasets, examines cutting-edge methodological applications from climate modeling to biodiversity conservation, and addresses critical troubleshooting areas like data quality and algorithmic bias. Furthermore, it discusses validation frameworks and the impact of data-driven insights on environmental policy. Designed for researchers and scientists, this review synthesizes current knowledge to guide the responsible and effective use of big data for tackling complex environmental problems.

The Big Data Landscape in Environmental Science: Defining the Challenge

Big Data represents a paradigm shift in scientific analysis, characterized by the Five V's: Volume, Velocity, Variety, Veracity, and Value. In environmental science, where research is critical for addressing climate change, biodiversity loss, and sustainable development, these characteristics present both unprecedented opportunities and formidable challenges. This whitepaper provides an in-depth technical examination of the Five V's, framing them within the context of environmental research. It details practical methodologies for managing large-scale environmental datasets, visualizes core workflows, and provides a toolkit of essential resources, aiming to equip researchers and scientists with the knowledge to navigate the complexities of Big Data in their pursuit of actionable environmental insights.

Big Data refers to extremely large and complex datasets that are difficult to process using traditional data management tools. The framework of the Five V's offers a lens to understand its unique dimensions [1]. For environmental science, this data deluge comes from a multitude of sources, including satellite remote sensing, climate models, in-situ sensors, social media, and genomic sequencing [2] [3] [4]. The capacity to harness this information is transforming the field, enabling large-scale analyses of agricultural production [4], precise monitoring of species distribution [5], and real-time assessment of community vulnerability to climate impacts [4]. However, the sheer scale and heterogeneity of these datasets necessitate advanced computational frameworks and carefully considered methodologies to ensure the derived insights are robust, reliable, and ultimately, of practical Value.

Deconstructing the Five V's: A Technical Guide

This section dissects each of the Five V's, providing definitions, contextualizing them within environmental research, and presenting associated challenges and solutions.

Volume

- Definition: Volume denotes the immense quantity of data generated and stored. Environmental datasets and archives now routinely reach terabytes (TB) and petabytes (PB), while global data totals are measured in zettabytes (ZB) [2] [1].

- Environmental Context: The Centre for Environmental Data Analysis (CEDA) archive in the UK, for instance, holds over 15 petabytes of data in more than 250 million files, with an influx of over 10 terabytes of new data daily [2]. Satellite missions, such as the Copernicus Programme's Sentinel fleet, are primary drivers of this data volume, with CEDA alone archiving over 8 PB of Sentinel data [2].

- Challenges & Solutions:

- Challenge: High infrastructure costs and performance bottlenecks associated with storing and processing massive datasets [2] [6].

- Solution: Implementation of tiered storage architectures (disk, object store, tape) and data lifecycle management policies [2]. CEDA employs a "fileset" system to logically group data for efficient storage management and a Near Line Archive (NLA) to automatically move less frequently accessed data to cost-effective tape storage, which users can recall on demand [2].
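The lifecycle idea behind tiered storage can be expressed as a simple rule that assigns each fileset to a tier according to how long it has gone unused. The sketch below is a hypothetical illustration: the `Fileset` record, the 30- and 365-day thresholds, and the fileset names are assumptions, and it does not reproduce CEDA's actual Near Line Archive logic, which the source does not describe in detail.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List


@dataclass
class Fileset:
    """Hypothetical record for a logical group of archived files."""
    name: str
    last_access: datetime


# Assumed thresholds; a real archive would tune these to its own cost model.
HOT_DAYS = 30
WARM_DAYS = 365


def assign_tier(fs: Fileset, now: datetime) -> str:
    """Return 'disk', 'object', or 'tape' from the time since last access."""
    idle = now - fs.last_access
    if idle <= timedelta(days=HOT_DAYS):
        return "disk"    # recently used: keep on fast disk
    if idle <= timedelta(days=WARM_DAYS):
        return "object"  # occasionally used: cheaper object store
    return "tape"        # rarely used: tape, recalled on demand


def plan_migrations(filesets: List[Fileset], now: datetime) -> Dict[str, List[str]]:
    """Group fileset names by the tier each one should live on."""
    plan: Dict[str, List[str]] = {"disk": [], "object": [], "tape": []}
    for fs in filesets:
        plan[assign_tier(fs, now)].append(fs.name)
    return plan


now = datetime(2025, 1, 1)
archive = [
    Fileset("sentinel2_recent", now - timedelta(days=3)),
    Fileset("reanalysis_v1", now - timedelta(days=200)),
    Fileset("campaign_1998", now - timedelta(days=2000)),
]
print(plan_migrations(archive, now))
```

A time-since-last-access rule is the simplest possible policy; real systems often also weigh file size, recall cost, and expected reuse.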

Table 1: Representative Data Volumes in Environmental Science

| Data Source | Exemplar Volume | Use Case in Environmental Research |
|---|---|---|
| Sentinel Satellite Missions (at CEDA) | Over 8 Petabytes (and growing daily) [2] | Monitoring ice sheet changes, forest fires, land use change, and sea surface temperatures [2] |
| CEDA Archive (Total) | Over 15 Petabytes, 250 million files [2] | Supporting atmospheric and earth observation research for the UK community [2] |
| Global Data Sphere (Prediction for 2025) | Over 180 Zettabytes [7] | Encompasses total global data creation and replication across all domains [7] |
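A quick back-of-the-envelope calculation with the CEDA figures helps make these volumes concrete. Because the source gives lower bounds ("over 15 petabytes", "over 10 terabytes daily"), the results are only rough indications, and decimal (SI) storage units are assumed.

```python
# Decimal (SI) units, as commonly used for storage capacity.
TB = 10**12
PB = 10**15

archive_bytes = 15 * PB        # "over 15 petabytes" (lower bound)
archive_files = 250_000_000    # "more than 250 million files"
daily_ingest = 10 * TB         # "over 10 terabytes of new data daily"

# Average file size in megabytes, and one year of ingest in petabytes.
mean_file_mb = archive_bytes / archive_files / 10**6
annual_ingest_pb = daily_ingest * 365 / PB

print(f"Mean file size: ~{mean_file_mb:.0f} MB")
print(f"Annual ingest: ~{annual_ingest_pb:.2f} PB/year")
```

On these assumptions the mean file is around 60 MB, and a year of ingest at the stated daily rate adds roughly 3.65 PB.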

Velocity

- Definition: Velocity describes the speed at which new data is generated, captured, and processed [1]. This can involve high-frequency streaming data requiring real-time analysis.

- Environmental Context: This is critical for early warning systems for natural disasters like floods and fires, and for real-time monitoring of air quality or pollutant levels [5] [1] [8]. Social media platforms generate continuous streams of geotagged data that can be mined for near real-time understanding of human interaction with the environment [3] [4].

- Challenges & Solutions:

- Challenge: Processing and analyzing high-speed data streams to enable timely insights and responses [6].

- Solution: Employing stream processing frameworks such as Apache Flink, typically fed by event-streaming platforms such as Apache Kafka, and leveraging cloud-based analytics platforms for scalable computation [6]. AI algorithms are increasingly used to predict phenomena such as energy production from renewable sources based on real-time weather patterns [8].
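The core idea of stream processing for early warning, evaluating each reading as it arrives against a bounded window of recent history, can be sketched without any framework. The snippet below is a minimal illustration assuming a fixed-size window of three readings and an arbitrary alert threshold of 35 on the rolling mean (not a regulatory limit). In production, comparable logic would typically run as an operator inside a system such as Apache Flink.

```python
from collections import deque
from typing import Iterable, Iterator, Tuple


def rolling_alerts(
    readings: Iterable[Tuple[str, float]],
    window: int = 3,
    threshold: float = 35.0,
) -> Iterator[Tuple[str, float]]:
    """Yield (timestamp, rolling_mean) each time the mean of the last
    `window` readings exceeds `threshold`.

    Readings are processed one at a time, so the input can be an
    unbounded stream (e.g. a generator reading from a message queue).
    """
    buf = deque(maxlen=window)  # holds only the most recent `window` values
    for ts, value in readings:
        buf.append(value)
        if len(buf) == window:
            mean = sum(buf) / window
            if mean > threshold:
                yield ts, mean


# Hypothetical PM2.5 feed (µg/m³) at five-minute intervals.
feed = [("10:00", 20.0), ("10:05", 30.0), ("10:10", 40.0),
        ("10:15", 50.0), ("10:20", 60.0)]
for ts, mean in rolling_alerts(feed):
    print(f"ALERT {ts}: rolling mean {mean:.1f}")
```

Because the generator holds only a small fixed buffer, memory use stays constant regardless of how long the stream runs.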

Variety

- Definition: Variety refers to the different types and formats of data, which can be structured (e.g., databases), semi-structured (e.g., JSON, XML), or unstructured (e.g., images, text, video) [1] [7].

- Environmental Context: Researchers routinely integrate diverse datasets. A single study might combine structured climate model output (e.g., NetCDF files), unstructured text from social media posts, imagery from satellite sensors and street-view cameras, and semi-structured JSON data from IoT sensors [3] [4].

- Challenges & Solutions:

- Challenge: Achieving interoperability between heterogeneous data formats and structures to enable unified analysis [2] [6].

- Solution: Adopting and enforcing community data standards. CEDA mandates the use of the Climate and Forecast (CF) conventions for NetCDF files, which standardize metadata and enable data from different sources to be compared [2]. Data integration platforms and semantic layers can also virtualize access to disparate sources [6].
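The following is a minimal sketch of format harmonization, assuming two hypothetical inputs: a JSON payload from an IoT sensor reporting Celsius, and a CSV export from a weather station reporting Fahrenheit. Both are mapped onto one record layout that borrows the CF convention's `standard_name` and `units` attributes (`air_temperature` is a CF standard name whose canonical unit is kelvin). The input field names and the mapping are assumptions. A production workflow would write CF-compliant NetCDF with dedicated libraries rather than Python dictionaries.

```python
import csv
import io
import json
from typing import Dict, List

Record = Dict[str, object]


def f_to_k(deg_f: float) -> float:
    """Convert degrees Fahrenheit to kelvin."""
    return (deg_f - 32.0) * 5.0 / 9.0 + 273.15


def from_iot_json(payload: str) -> Record:
    """Hypothetical IoT payload: {"ts": ..., "temp_c": ...} in degrees Celsius."""
    d = json.loads(payload)
    return {"time": d["ts"], "standard_name": "air_temperature",
            "units": "K", "value": d["temp_c"] + 273.15}


def from_station_csv(text: str) -> List[Record]:
    """Hypothetical station CSV with columns `date,temperature_f`."""
    return [{"time": row["date"], "standard_name": "air_temperature",
             "units": "K", "value": f_to_k(float(row["temperature_f"]))}
            for row in csv.DictReader(io.StringIO(text))]


records = [from_iot_json('{"ts": "2024-06-01T12:00Z", "temp_c": 20.0}')]
records += from_station_csv("date,temperature_f\n2024-06-01T12:00Z,68.0\n")
for r in records:
    print(r)
```

Once both sources share variable names and units, the two readings (20 °C and 68 °F) become directly comparable as the same value in kelvin.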

Veracity

- Definition: Veracity concerns the quality, accuracy, and trustworthiness of the data and its sources [1]. It is a cornerstone of credible scientific research.

- Environmental Context: Inherent biases in novel data sources pose significant challenges. For example, social media data (SMD) and street view imagery (SVI) can suffer from spatial sampling biases (e.g., oversampling of urban and tourist areas) and demographic representation issues [5] [3]. Similarly, imbalanced data, where certain classes of events (e.g., forest fires) or species observations are rare, can lead to flawed models if not properly addressed [5].

- Challenges & Solutions:

- Challenge: Ensuring data quality and managing uncertainty, especially with novel and unstructured data sources [5] [3].

- Solution: Implementing rigorous data cleaning, validation, and profiling processes [6]. Techniques to handle spatial autocorrelation (SAC) and data imbalance, such as spatial cross-validation and synthetic minority over-sampling, are essential for robust geospatial modeling [5]. Transparent data lineage tracking is also critical for auditability [6].
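Basic automated quality control often combines a few simple tests. The sketch below flags readings that fall outside a plausible physical range, jump implausibly from the last valid value, or repeat a timestamp. The bounds, the spike limit, and the example data are illustrative assumptions, not values taken from the cited sources.

```python
from typing import List, Optional, Tuple


def qc_flags(
    series: List[Tuple[str, float]],
    valid_min: float,
    valid_max: float,
    max_step: float,
) -> List[Tuple[str, float, List[str]]]:
    """Attach QC flags to each (timestamp, value) pair.

    'range'     value outside [valid_min, valid_max]
    'spike'     jump from the last in-range value larger than max_step
    'duplicate' timestamp already seen earlier in the series
    """
    seen = set()
    last_good: Optional[float] = None
    out = []
    for ts, v in series:
        flags: List[str] = []
        if not valid_min <= v <= valid_max:
            flags.append("range")
        if last_good is not None and abs(v - last_good) > max_step:
            flags.append("spike")
        if ts in seen:
            flags.append("duplicate")
        seen.add(ts)
        if "range" not in flags:
            last_good = v  # only in-range values become the spike reference
        out.append((ts, v, flags))
    return out


# Hypothetical relative-humidity readings (%), physically bounded to 0-100.
readings = [("t1", 55.0), ("t2", 57.0), ("t3", 140.0), ("t3", 58.0)]
for ts, v, flags in qc_flags(readings, 0.0, 100.0, max_step=20.0):
    print(ts, v, flags or "ok")
```

Comparing spikes against the last in-range value, rather than simply the previous value, keeps one bad reading from also flagging the valid reading that follows it.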

Value

- Definition: Value is the ultimate benefit derived from the analysis of Big Data. It represents the actionable insights and informed decision-making capabilities enabled by processing the other four V's [1] [9].

- Environmental Context: The value of Big Data in environmental science is demonstrated in its application to critical issues. It enables the prediction of climate change impacts on crop yields for food security [4], the identification of communities most vulnerable to climate risks [4], and the empirical measurement of Big Data Analytics' positive impact on corporate environmental performance [9].

- Challenges & Solutions:

- Challenge: Extracting meaningful, actionable, and trustworthy insights from the complexity and noise of Big Data [1].

- Solution: Employing advanced analytical techniques, including machine learning and AI, and ensuring close collaboration between domain scientists and data experts to frame research questions and interpret results effectively [5] [8]. Centralized semantic layers can help harmonize metrics and ensure all stakeholders are using consistent, trusted definitions [6].

Experimental Protocols for Big Data Analysis in Environmental Research

The workflow for data-driven geospatial modeling provides a robust framework for addressing Big Data challenges in environmental science [5]. The following protocol outlines the key stages.

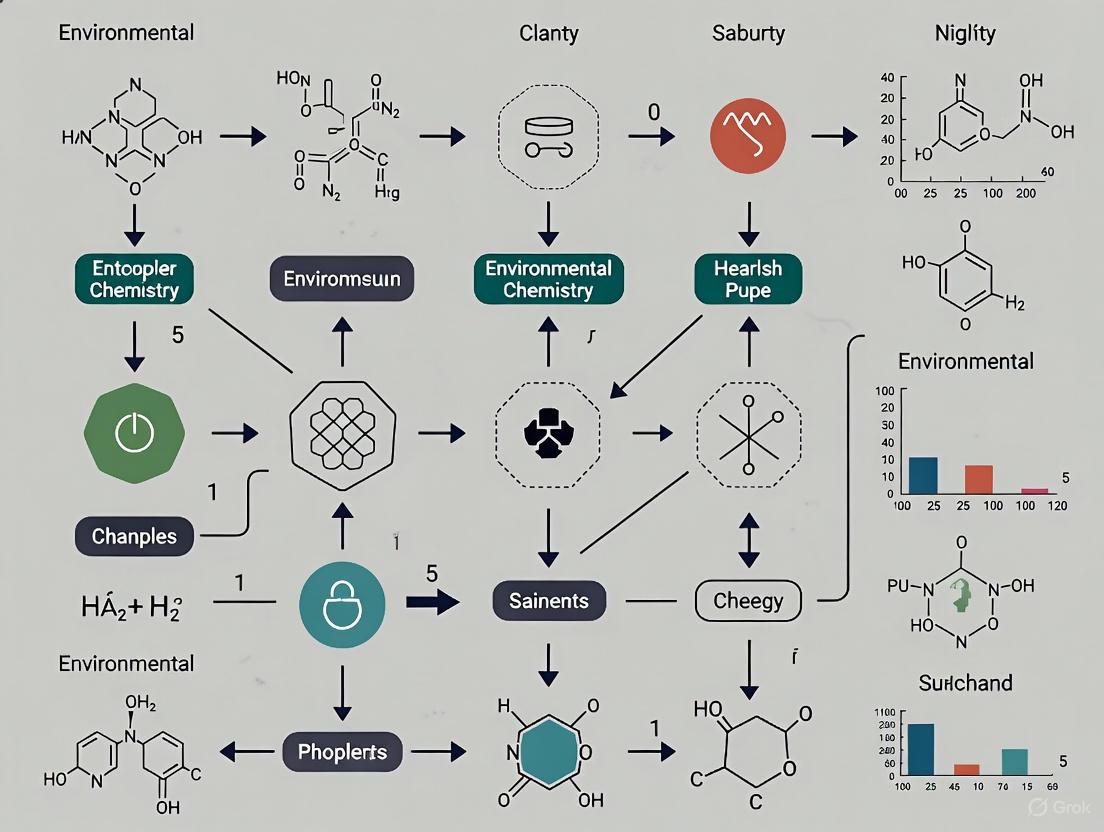

Diagram 1: Geospatial modeling workflow for environmental Big Data.

Problem Understanding and Data Collection

- Objective: Define the environmental research question and identify relevant, multi-modal data sources.

- Protocol:

- Problem Formulation: Clearly articulate the research question or hypothesis, for example, "Which factors influence urban park usage and perceived benefits?"

- Multi-Source Data Acquisition:

- Social Media Data (SMD): Collect geotagged photographs and text from platforms like Flickr or Twitter to understand human visitation and sentiment [3] [4]. APIs are typically used for large-scale data collection.

- Street View Imagery (SVI): Acquire image sequences from services like Google Street View to assess street-level green space visibility and quality [3].

- Mobility Data (MD): Obtain anonymized mobile device location data to quantify footfall and visitor origins more representatively [3].

- Ancillary Data: Integrate traditional data like census demographics, land cover maps, and weather records.

Data Preprocessing and Feature Engineering

- Objective: Clean, integrate, and transform raw data from multiple sources into a consistent format for analysis. This stage directly addresses Variety and Veracity.

- Protocol:

- Data Cleaning:

- SMD: Remove duplicate posts, bots, and irrelevant content. For images, use AI-based convolutional neural networks (CNNs) to classify content (e.g., presence of wildlife, vegetation) [4].

- SVI: Use semantic segmentation models (e.g., PSPNet) to calculate the Green View Index (GVI), quantifying the percentage of vegetation in each image [3].

- Data Integration: Spatially and temporally align all datasets using GIS software or computational libraries (e.g., GeoPandas in Python). A common spatial grid and time interval must be established.

- Addressing Data Bias (Veracity):

- Imbalance: For classification tasks (e.g., predicting rare fire events), apply techniques like SMOTE (Synthetic Minority Over-sampling Technique) to rebalance training data [5].

- Spatial Autocorrelation (SAC): Test for SAC using Moran's I or similar indices. If SAC is present, it must be accounted for in model validation (see Spatial Validation under Model Selection, Training, and Validation) [5].
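Global Moran's I compares each value's deviation from the mean with the deviations of its neighbours: I = (N / W) · Σᵢ Σⱼ wᵢⱼ (xᵢ − x̄)(xⱼ − x̄) / Σᵢ (xᵢ − x̄)², where wᵢⱼ are spatial weights and W is their sum. Values well above the expected value of −1/(N − 1) indicate clustering, values near it indicate spatial randomness, and strongly negative values indicate dispersion. The pure-Python sketch below assumes binary rook-contiguity weights on a small regular grid and omits significance testing; libraries such as PySAL's `esda` package provide tested implementations with permutation inference.

```python
from typing import List, Sequence


def morans_i(x: Sequence[float], w: List[List[float]]) -> float:
    """Global Moran's I for values x and an n-by-n spatial weight matrix w."""
    n = len(x)
    mean = sum(x) / n
    dev = [xi - mean for xi in x]
    w_sum = sum(sum(row) for row in w)
    num = sum(w[i][j] * dev[i] * dev[j] for i in range(n) for j in range(n))
    den = sum(d * d for d in dev)
    return (n / w_sum) * (num / den)


def rook_weights(rows: int, cols: int) -> List[List[float]]:
    """Binary weights on a grid: cells sharing an edge get weight 1."""
    n = rows * cols
    w = [[0.0] * n for _ in range(n)]
    for r in range(rows):
        for c in range(cols):
            i = r * cols + c
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                rr, cc = r + dr, c + dc
                if 0 <= rr < rows and 0 <= cc < cols:
                    w[i][rr * cols + cc] = 1.0
    return w


w = rook_weights(4, 4)
checker = [float((r + c) % 2) for r in range(4) for c in range(4)]  # dispersed
halves = [1.0 if c < 2 else 0.0 for r in range(4) for c in range(4)]  # clustered
print(morans_i(checker, w))
print(morans_i(halves, w))
```

On this 4 × 4 grid the checkerboard pattern yields −1 and the half-and-half pattern about 0.67, showing the sign convention for dispersion versus clustering.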

Model Selection, Training, and Validation

- Objective: Train a machine learning model to identify patterns and make predictions, while rigorously evaluating its performance to ensure generalizability.

- Protocol:

- Model Selection: Choose an algorithm based on the task. Random Forests or Gradient Boosting machines are common for tabular data, while CNNs are used for image analysis [5] [3].

- Model Training: Use a portion of the processed data to train the model, optimizing hyperparameters via cross-validation.

- Spatial Validation (Critical for Veracity): To avoid over-optimistic performance from SAC, use a spatial cross-validation technique. This involves partitioning data by location (e.g., k-fold by region) so that models are trained and tested on geographically distinct areas, providing a realistic measure of predictive power [5].

- Uncertainty Estimation: Quantify prediction uncertainty using methods like bootstrapping or quantile regression to communicate the reliability of the results [5].
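Spatial k-fold cross-validation can be implemented by assigning whole regions, rather than individual samples, to folds, so that no region contributes to both training and testing within the same fold. The sketch below assumes each sample already carries a region identifier (for example, a grid-block or watershed ID) and distributes shuffled regions across folds round-robin. scikit-learn's `GroupKFold`, with region IDs passed as `groups`, is a comparable, tested splitter.

```python
import random
from typing import Dict, Hashable, Iterator, List, Sequence, Tuple


def spatial_kfold(
    regions: Sequence[Hashable], k: int = 5, seed: int = 0
) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train_idx, test_idx) pairs whose folds are built from whole regions.

    regions[i] is the region ID of sample i (e.g. a grid block or watershed).
    Every sample from a region lands in the same test fold, so training and
    test samples never share a region.
    """
    unique = sorted(set(regions), key=str)  # fixed order before shuffling
    if k > len(unique):
        raise ValueError("k cannot exceed the number of distinct regions")
    rng = random.Random(seed)
    rng.shuffle(unique)
    fold_of: Dict[Hashable, int] = {r: i % k for i, r in enumerate(unique)}
    for fold in range(k):
        test = [i for i, r in enumerate(regions) if fold_of[r] == fold]
        train = [i for i, r in enumerate(regions) if fold_of[r] != fold]
        yield train, test


regions = ["north", "north", "south", "south", "east", "east", "west", "west"]
for train, test in spatial_kfold(regions, k=2, seed=42):
    print("test regions:", sorted({regions[i] for i in test}))
```

Scores averaged over these folds estimate how well the model transfers to unseen areas, which is usually lower than a random split would suggest when SAC is present.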

Model Deployment, Inference, and Analysis

- Objective: Apply the validated model to generate spatial predictions (e.g., maps) and derive actionable insights (Value).

- Protocol:

- Model Inference: Run the trained model on held-out data or new geographic areas to create prediction maps (e.g., maps of park visitation probability or ecosystem service value) [5] [3].

- Interpretation and Synthesis: Analyze model outputs and feature importance to understand driving factors. Combine quantitative results with qualitative domain knowledge to form conclusive, actionable recommendations for stakeholders and policymakers [4].
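One model-agnostic way to estimate feature importance is permutation: shuffle one feature column, re-score the model, and record how much the error grows. The sketch below assumes the fitted model is available as a plain prediction function and uses mean squared error. The toy model, feature meanings, and data are hypothetical, and in practice the scores should be computed on held-out (ideally spatially separated) data. scikit-learn's `sklearn.inspection.permutation_importance` implements the same idea for its estimators.

```python
import random
from typing import Callable, List, Sequence

Row = List[float]


def mse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean squared error."""
    return sum((a - b) ** 2 for a, b in zip(y_true, y_pred)) / len(y_true)


def perm_importance(
    predict: Callable[[Row], float],
    X: List[Row],
    y: Sequence[float],
    n_repeats: int = 5,
    seed: int = 0,
) -> List[float]:
    """Mean increase in MSE when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    base = mse(y, [predict(row) for row in X])
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            col = [row[j] for row in X]
            rng.shuffle(col)  # break the link between feature j and the target
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, col)]
            increases.append(mse(y, [predict(r) for r in X_perm]) - base)
        importances.append(sum(increases) / n_repeats)
    return importances


# Hypothetical fitted model: the target depends on feature 0 (e.g. green view)
# and ignores feature 1 (an irrelevant covariate).
X = [[float(i), float(i % 3)] for i in range(20)]
y = [2.0 * row[0] for row in X]
print(perm_importance(lambda r: 2.0 * r[0], X, y))
```

A feature the model ignores scores exactly zero, while a feature the model relies on produces a clear increase in error when shuffled.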

The Scientist's Toolkit: Essential Research Reagents & Solutions

This table catalogs key computational tools, standards, and data sources essential for handling the Five V's in environmental research.

Table 2: Key Research Reagent Solutions for Environmental Big Data

| Tool/Standard Category | Representative Examples | Primary Function |
|---|---|---|
| Data Formats & Standards | NetCDF (with CF Conventions), NASA Ames, BADC-CSV [2] | Standardized formats for climate and environmental data that ensure metadata richness and long-term interoperability. |
| Data Processing & Analysis | Climate Data Operators (CDO), NetCDF Operators (NCO), Python (cf-python, cf-plot) [2] | Command-line and programming tools for data manipulation, analysis, and visualization of structured geospatial data. |
| Computational Frameworks | Apache Hadoop, Apache Spark [10] | Distributed computing platforms that enable parallel processing of massive datasets across clusters of computers. |
| Machine Learning Libraries | Scikit-learn, TensorFlow, PyTorch [5] | Libraries for implementing a wide range of ML and deep learning models for classification, regression, and pattern recognition. |
| Novel Data Sources | Social Media Data (SMD), Street View Imagery (SVI), Mobility Data (MD) [3] | Provide high-resolution, human-centric data on landscape use, perceptions, and movement at large spatial scales. |
| Data Integration Tools | Talend, Informatica PowerCenter, IBM InfoSphere, CloverDX [7] | Platforms for combining, cleaning, and transforming data from disparate sources into a unified, analysis-ready format. |

The Five V's of Big Data provide a critical framework for understanding the transformative potential and inherent complexities of modern environmental research. Successfully navigating the challenges of Volume, Velocity, Variety, and Veracity is the pathway to deriving genuine Value—whether it be in crafting effective climate mitigation policies, protecting biodiversity, or building sustainable and resilient communities. The future of environmental science hinges on the interdisciplinary collaboration between domain experts and data scientists, the continued development and adoption of robust computational tools and standards, and a steadfast commitment to ethical and verifiable data practices. By embracing this data-driven paradigm, the research community can unlock deeper insights into the intricate workings of our planet and propel the development of effective solutions for its most pressing environmental challenges.

The field of environmental science is undergoing a profound transformation, driven by an unprecedented influx of large, complex, and diverse datasets. This "data deluge" originates from a proliferation of sources, from advanced satellite constellations to ground-based citizen sensing networks, collectively termed Environmental Big Data [11]. This paradigm shift presents both extraordinary opportunities and significant challenges for researchers and scientists. The integration of these diverse data streams is critical for developing a holistic understanding of complex Earth systems, yet it demands sophisticated computational architectures and novel analytical approaches to manage issues of volume, heterogeneity, and veracity [11] [12]. Framed within a broader thesis on understanding big data challenges in environmental science, this whitepaper provides a technical guide to the primary sources of this data deluge, their characteristics, and the methodologies for their effective use. It aims to equip researchers with the knowledge to navigate this complex landscape, leveraging these data for breakthroughs in environmental monitoring, climate research, and sustainable development.

Remote Sensing: The Aerial and Satellite Vantage Point

Remote sensing serves as a foundational pillar for environmental big data, providing synoptic, multi-scale observations of the Earth's surface and atmosphere. The field has evolved from basic aerial photography to the acquisition of high-resolution multispectral and hyperspectral data from a diverse array of platforms [11].

The following table categorizes the primary remote sensing data sources, their key attributes, and representative applications in environmental research.

Table 1: Primary Remote Sensing Data Sources and Characteristics

| Data Source | Key Characteristics | Environmental Applications | Examples / Specifications |
|---|---|---|---|
| Satellite Imagery | Broad coverage, multi-scale data, varying spatial & temporal resolution [11]. | Environmental monitoring, agriculture, urban planning, resource management [11]. | High-resolution optical, multispectral, hyperspectral, and Synthetic Aperture Radar (SAR) sensors [11]. |
| Unmanned Aerial Vehicles (UAVs) | High-resolution imagery, flexible data acquisition, user-defined intervals [11]. | Precision agriculture, infrastructure inspection, disaster response [11]. | Sensors include RGB, multispectral, and thermal cameras [11]. |
| Geospatial Big Data (GBD) | Provides data on human activity and socioeconomic patterns [13]. | Urban land use classification, human-environment interaction studies [13]. | Mobile device data, social media data, point-of-interest data [13]. |

Key Data Features for Analysis

The analytical value of remote sensing data is defined by several key features that researchers must understand to select appropriate data and algorithms [11] [13]:

- Spectral Features: The intensity of electromagnetic radiation across different wavelengths (e.g., visible, infrared, microwave). Hyperspectral sensors, which capture hundreds of narrow bands, are particularly powerful for distinguishing material properties [11].

- Spatial Features: The level of detail in an image, determined by pixel size. High spatial resolution is essential for identifying fine-scale features like individual trees or buildings [11].

- Temporal Features: The frequency of data acquisition over a specific location. High temporal resolution (e.g., from satellite constellations) is crucial for monitoring dynamic processes like crop growth or disaster progression [11].

- Textural Features: Patterns of spatial intensity variation within an image, useful for characterizing heterogeneous landscapes like urban areas or forests [13].
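As one concrete example of a spectral feature, the widely used Normalized Difference Vegetation Index (NDVI) combines near-infrared (NIR) and red reflectance: NDVI = (NIR − Red) / (NIR + Red). Healthy vegetation reflects strongly in the NIR and absorbs red light, pushing NDVI towards +1. The sketch below computes it per pixel for small, hypothetical reflectance lists. With real imagery, the same formula would be applied to whole raster bands.

```python
from typing import List, Optional


def ndvi(nir: List[float], red: List[float]) -> List[Optional[float]]:
    """Per-pixel NDVI = (NIR - Red) / (NIR + Red).

    Returns None where NIR + Red is zero, which would otherwise divide by zero.
    """
    out: List[Optional[float]] = []
    for n, r in zip(nir, red):
        total = n + r
        out.append((n - r) / total if total != 0 else None)
    return out


# Hypothetical surface reflectance (0-1) for three pixels:
# dense vegetation, sparsely vegetated soil, and a no-data pixel.
nir_band = [0.50, 0.30, 0.0]
red_band = [0.08, 0.25, 0.0]
print(ndvi(nir_band, red_band))
```

Band ratios such as this are often used as engineered inputs alongside the raw bands, because they suppress some illumination effects that affect both bands similarly.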

Citizen Science: The Ground-Level Data Ecosystem

Citizen science represents a paradigm shift in environmental data collection, democratizing the monitoring process by engaging the public in data gathering. This approach, also referred to as participatory sensing or citizen sensing, empowers communities to use low-cost sensors and digital tools to evidence local environmental issues [14] [15].

Methodologies and Frameworks for Action

For citizen science to move beyond data collection to tangible impact, a structured, action-oriented framework is essential. The following workflow outlines a replicable process for designing and implementing citizen sensing initiatives.

Citizen Sensing Workflow

This framework, derived from multi-year projects, emphasizes that data collection is only one component of a successful initiative [15]. Key stages include:

- Co-Design & Planning: Collaboratively defining the research question, selecting appropriate low-cost sensor technologies, and choosing sensor locations based on community knowledge. This fosters a sense of ownership and ensures data relevance [14] [15].

- Data Contextualization: Integrating quantitative sensor data with qualitative local experiences and observations. This "thick data" is crucial for interpreting sensor readings and understanding the real-world context of pollution issues [15].

- Action & Advocacy: Using the collaboratively generated insights to inform advocacy efforts, influence local policy, and drive community-led interventions aimed at reducing pollution exposure [14].

Experimental Protocol: Deploying a Community Air Quality Network

The Breathe London Community Programme provides a model for a robust experimental protocol in citizen science [14].

- Objective: To integrate community-based knowledge with scientific air quality monitoring to democratize data production and inform local policy.

- Materials:

- Sensors: A network of over 400 real-time, calibrated air pollution sensors (e.g., measuring PM2.5 and NO₂).

- Platform: A central data platform for aggregating and visualizing data in near real-time.

- Community Engagement Resources: Toolkits for workshops, data interpretation guides, and facilitation materials.

- Procedure:

- Recruitment & Partnership: Engage 60+ diverse community groups across the urban area.

- Co-Location Workshops: Facilitate sessions where community members choose sensor placements based on local knowledge and concerns (e.g., near schools, busy intersections, parks).

- Deployment & Calibration: Install and calibrate sensors in chosen locations, ensuring data reliability.

- Data Collection & Integration: Collect continuous sensor data alongside community observations and narratives.

- Collaborative Analysis: Host workshops for researchers and community members to jointly analyze data, identify patterns, and generate evidence-based insights.

- Knowledge Translation & Action: Support communities in using the evidence to advocate for policy changes, such as traffic rerouting or emission controls.
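Low-cost sensors are commonly calibrated by co-locating them with a reference-grade monitor and fitting a correction. The sketch below fits a simple linear correction, reference ≈ a + b · sensor, by ordinary least squares. This is an assumed, simplified approach for illustration, since the source does not specify the programme's calibration method. Real deployments often add terms for humidity and temperature.

```python
from typing import Sequence, Tuple


def fit_linear_calibration(
    sensor: Sequence[float], reference: Sequence[float]
) -> Tuple[float, float]:
    """Ordinary least squares fit of reference = a + b * sensor; returns (a, b)."""
    n = len(sensor)
    if n < 2 or n != len(reference):
        raise ValueError("need at least two paired co-location readings")
    mx = sum(sensor) / n
    my = sum(reference) / n
    sxx = sum((x - mx) ** 2 for x in sensor)
    sxy = sum((x - mx) * (y - my) for x, y in zip(sensor, reference))
    if sxx == 0:
        raise ValueError("sensor readings are constant; slope is undefined")
    b = sxy / sxx
    a = my - b * mx
    return a, b


def apply_calibration(raw: float, a: float, b: float) -> float:
    """Convert a raw sensor reading into a reference-equivalent value."""
    return a + b * raw


# Hypothetical co-location period: raw sensor vs reference NO2 (µg/m³).
raw = [10.0, 20.0, 30.0, 40.0]
ref = [25.0, 45.0, 65.0, 85.0]
a, b = fit_linear_calibration(raw, ref)
print(a, b, apply_calibration(35.0, a, b))
```

The fitted coefficients are then applied to readings after the sensor moves to its community-chosen site, and periodic re-co-location helps detect drift.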

The full potential of environmental big data is realized only through the integration of disparate data sources—such as satellite imagery, UAV data, IoT sensor streams, and citizen-generated data. This integration combines physical and socioeconomic aspects, enabling high-quality applications like detailed urban land use mapping [13]. However, this process faces significant challenges related to data semantics, format heterogeneity, and the integration of unstructured data [12].

Data Integration Strategies

Two primary integration strategies are employed in geospatial analysis, each with distinct advantages and limitations [13]:

Table 2: Comparison of Data Integration Strategies for Geospatial Analysis

| Integration Strategy | Description | Advantages | Challenges |
|---|---|---|---|
| Feature-Level Integration (FI) | Integrates raw or processed features from different sources (e.g., RS spectral features + GBD semantic features) into a single feature set for model training [13]. | Potentially higher model performance by capturing complex, cross-modal interactions [13]. | Susceptible to the "curse of dimensionality"; requires careful feature selection and alignment [13]. |
| Decision-Level Integration (DI) | Processes RS and GBD data independently using separate models, then merges the classification results (e.g., urban land cover + land use) based on decision rules [13]. | More flexible and robust; avoids issues of data misalignment; allows for domain-specific model optimization [13]. | May lose synergistic information that could be captured by joint analysis at the feature level [13]. |

The following diagram illustrates the architectural differences between these two dominant data fusion approaches.

Data Fusion Architectures
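The difference between the two strategies compared above can be shown in a few lines. In the sketch below, feature-level integration concatenates remote sensing and GBD feature vectors before a single model sees them, while decision-level integration combines the outputs of two separate classifiers with a rule. The feature values, the labels, and the merge rule (keep the GBD-derived land-use class only where the remote sensing model finds built-up land) are hypothetical.

```python
from typing import List


def feature_level(rs_features: List[float], gbd_features: List[float]) -> List[float]:
    """FI: build one joint feature vector per spatial unit for a single model."""
    return rs_features + gbd_features


def decision_level(land_cover: str, land_use: str) -> str:
    """DI: merge two independent predictions with a decision rule.

    land_cover comes from a remote sensing classifier and land_use from a
    separate GBD classifier. Illustrative rule: the land-use label is kept
    only where the land-cover model reports built-up land.
    """
    if land_cover == "built_up":
        return f"built_up/{land_use}"
    return land_cover


# Hypothetical spatial unit: two image-derived features, two GBD features
# (e.g. counts of points of interest by category).
rs = [0.12, 0.61]
gbd = [14.0, 3.0]
print(feature_level(rs, gbd))                    # joint vector for one FI model
print(decision_level("built_up", "commercial"))  # DI keeps the land-use label
print(decision_level("forest", "commercial"))    # DI falls back to land cover
```

The FI vector would feed one model that can learn cross-modal interactions, whereas the DI rule lets each classifier be trained and tuned on its own data type.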

The Scientist's Toolkit: Research Reagent Solutions

Navigating the data deluge requires a suite of technological "reagents" and platforms. This toolkit is essential for managing, processing, analyzing, and visualizing environmental big data.

Table 3: Essential Toolkit for Environmental Big Data Research

| Tool Category | Purpose & Function | Key Examples |
|---|---|---|
| Cloud Computing Platforms | Provide scalable infrastructure to store, process, and analyze petabyte-scale geospatial data without extensive local resources [11]. | Google Earth Engine, Amazon Web Services (AWS), Microsoft Azure [11]. |
| Citizen Science Platforms (CSPs) & Citizen Observatories (COs) | Web-based infrastructures for citizen science data collection, management, sharing, and participant engagement [16]. | iNaturalist (biodiversity), eBird (ornithology), Safecast (radiation) [16]. |
| Low-Cost Sensor Technologies | Enable hyperlocal, high-frequency environmental monitoring and democratize access to data production [14] [15]. | Air Quality Eggs, Smart Citizen Kits, and custom Do-It-Yourself (DIY) sensors for air/noise pollution [15]. |
| Data Integration & Analysis Tools | Address semantics and heterogeneity challenges in data fusion; apply ML/DL models for insight generation [12]. | Ontology-based integration systems; Convolutional Neural Networks (CNNs) for image analysis; Long Short-Term Memory (LSTM) networks for temporal data [11] [12]. |
| Data Visualization & Color Tools | Ensure accurate, accessible, and colorblind-friendly representation of complex environmental data [17] [18]. | ColorBrewer (palette selection), Coblis (color blindness simulation), Viz Palette (palette testing) [18]. |

Challenges and Future Research Directions

Despite the advancements, significant challenges persist in harnessing environmental big data. Key issues include data management and computational efficiency when processing petabytes of data, model interpretability as complex AI models often operate as "black boxes," and socio-technical barriers such as data privacy, equity in resource access, and overcoming power imbalances in citizen science [11] [14] [12].

Future research is poised to leverage emerging technologies to overcome these hurdles. Promising directions include the integration of quantum computing for complex geospatial simulations, federated learning to train models across decentralized data sources without sharing raw data (addressing privacy concerns), and the development of more advanced data fusion techniques that seamlessly combine physical remote sensing data with socio-economic GBD and citizen-sensed data for a more holistic understanding of environmental systems [11] [12].
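In federated learning, each participating site trains on its own data and shares only model parameters, which a coordinator then combines. One widely used aggregation rule, federated averaging (FedAvg), weights each site's parameters by its number of training samples. The sketch below shows only that aggregation step, with parameters as plain lists and hypothetical site sizes. Local training, communication, and additional privacy safeguards are out of scope.

```python
from typing import List, Sequence


def fed_avg(client_params: Sequence[List[float]], client_sizes: Sequence[int]) -> List[float]:
    """Sample-size-weighted average of client parameter vectors (FedAvg aggregation)."""
    if not client_params or len(client_params) != len(client_sizes):
        raise ValueError("need at least one client and one sample count per client")
    total = sum(client_sizes)
    dim = len(client_params[0])
    return [
        sum(p[k] * n for p, n in zip(client_params, client_sizes)) / total
        for k in range(dim)
    ]


# Three hypothetical monitoring networks with different amounts of local data.
global_params = fed_avg([[1.0, 0.0], [3.0, 2.0], [2.0, 4.0]], [100, 300, 100])
print(global_params)
```

The site with 300 samples pulls the global parameters towards its own, which is the intended behaviour when sites differ in data volume.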

Big data analytics is fundamentally transforming environmental science research, offering unprecedented capabilities to address complex ecological challenges. Framed within the broader thesis of understanding big data challenges in this field, this whitepaper examines key domains where data-driven approaches are making significant impacts. The integration of massive datasets from satellites, sensors, and citizen science initiatives presents both extraordinary opportunities and substantial methodological hurdles for researchers and scientists. This technical guide provides an in-depth examination of current applications, quantitative findings, and experimental protocols across four critical domains: climate science, biodiversity conservation, pollution control, and resource management, while addressing the pervasive data management and analytical challenges unique to environmental research.

Big Data Applications in Environmental Domains

Climate Science and Supply Chain Resilience

Big data analytics enables the creation of sophisticated climate models that predict temperature changes, sea-level rise, and extreme weather events with increasing accuracy [19]. These models help policymakers design proactive strategies to mitigate climate impacts and assess potential outcomes of various climate policies before implementation [8]. For operations and supply chain management, big data helps address climate change-related challenges, including raw material supply problems, changes in customer behavior and demand, production relocation, and changes in process efficiency and effectiveness [20].

Table 1: Big Data Applications in Climate Science and Supply Chains

| Application Area | Data Sources | Analytical Approaches | Key Outcomes |
|---|---|---|---|
| Climate Modeling | Satellite imagery, weather stations, ocean buoys [19] [8] | Machine learning algorithms, predictive analytics [8] | Forecast temperature changes, sea-level rise, extreme weather events [19] [8] |
| Supply Chain Resilience | Sensor data, social media, market data [20] | Big Data Analytics (BDA), real-time processing [20] | Address raw material supply problems, demand changes, process efficiency [20] |
| Renewable Energy Optimization | Weather patterns, energy production data, consumption trends [8] | AI algorithms, consumption trend analysis [8] | Predict energy production, optimize distribution, develop efficient energy grids [8] |

Biodiversity Conservation

The 30x30 biodiversity challenge—protecting 30% of land and sea by 2030—exemplifies data-driven conservation. Recent research using machine-based pattern recognition has mapped distributions for over 600,000 terrestrial and marine species based on millions of occurrence records from the Global Biodiversity Information Facility (GBIF) [21]. This represents a major advance in representativeness, with vertebrates accounting for 8.6% of species, plants 37.8%, and invertebrates 35.5% [21]. The study identified 242,414 conservation-critical species—either endemic or restricted to habitats smaller than 625 sq. km—of which 83,600 (34.5%) remain unprotected [21].

Table 2: Biodiversity Protection Status by Numbers

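| Metric | Terrestrial | Marine | Total |
|---|---|---|---|
| Conservation-Critical Species | 165,942 | 76,472 | 242,414 |
| Currently Protected Species | ~126,275 | ~32,549 | 158,814 (65.5%) |
| Unprotected Species | 39,667 | 43,923 | 83,600 (34.5%) |

Note: Protected counts are derived as conservation-critical minus unprotected species. The terrestrial and marine unprotected counts sum to 83,590, whereas the reported total is 83,600 [21]; the protected total of 158,814 is derived from the reported total.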
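The derived entries in this table follow from the reported counts by simple arithmetic, which the short script below makes explicit. It uses only the figures given in the table and attributed to [21].

```python
# Counts as reported for the 30x30 analysis (source: [21]).
critical = {"terrestrial": 165_942, "marine": 76_472}
unprotected = {"terrestrial": 39_667, "marine": 43_923}
reported_total_unprotected = 83_600

# Protected = conservation-critical minus unprotected, per realm.
protected = {realm: critical[realm] - unprotected[realm] for realm in critical}
total_critical = sum(critical.values())
share_unprotected = reported_total_unprotected / total_critical

print(protected)                    # realm-level protected counts
print(total_critical)               # total conservation-critical species
print(sum(unprotected.values()))    # sum of realm-level unprotected counts
print(f"{share_unprotected:.1%}")   # unprotected share, using the reported total
```

Recomputing derived figures this way is a cheap safeguard when counts are transcribed from a source into summary tables.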

AI-powered tools like image recognition track endangered species in real-time, while camera traps equipped with AI can identify and count animals, reducing the need for invasive human intervention [8]. These systems also detect poaching activities by analyzing patterns in human movement and behavior within protected areas [8].

Pollution Control and Emerging Contaminants

Big data approaches are increasingly used to replace or assist laboratory studies of emerging contaminants (ECs) such as microplastics, antibiotics, and PFAS [22]. Digital technology pilot zones in China have demonstrated significant effects in reducing pollutant emissions by empowering urban environmental governance [23]. The national digital technology integrated pilot zone can mitigate environmental pollution in prefecture-level cities by increasing public environmental awareness and encouraging green technology innovation [23].

AI-powered sensors monitor air quality in urban areas, identifying pollution hotspots and sources, while machine learning models detect correlations between traffic patterns and pollutant levels, enabling cities to implement data-driven policies to reduce emissions [8]. In water management, AI systems analyze data from rivers, lakes, and reservoirs to predict contamination risks and suggest timely interventions [8].

Sustainable Resource Management

Big data facilitates sustainable practices across agricultural and energy sectors. Precision agriculture leverages AI and big data to analyze soil quality, weather conditions, and crop health to recommend optimal planting, watering, and harvesting schedules [8]. This approach reduces resource wastage, enhances crop yields, and minimizes environmental impact by detecting early signs of pest infestations or plant diseases, enabling preventive measures without excessive chemical treatments [8].

In energy management, big data analytics helps balance supply and demand, improve energy efficiency, and integrate renewable energy sources [19] [8]. Smart grids use real-time data for this balancing, and AI algorithms predict energy production from weather patterns [8]. Tesla's Opticaster, for example, uses big data to optimize distributed energy resources for both economic benefit and sustainability objectives [19].

Experimental Protocols and Methodologies

Data-Driven Biodiversity Assessment Protocol

The World Bank's methodology for assessing progress toward the 30x30 target provides a replicable analytical framework [21]:

- Data Collection and Integration: Compile species occurrence records from GBIF and other biodiversity repositories, ensuring representation across taxa (vertebrates, plants, invertebrates, fungi).

- Species Distribution Modeling: Apply machine-learning-based pattern recognition to map the distributions of all recorded species using environmental covariates and spatial statistics.

- Conservation Status Classification: Identify conservation-critical species based on endemism (habitat confined to a single country) and habitat restriction (habitat smaller than 625 sq. km).

- Protection Gap Analysis: Overlay species distributions on protected area boundaries from the World Database on Protected Areas (WDPA) to identify unprotected species.

- Priority Area Delineation: Develop national templates that identify a sequence of priority areas, each extending species coverage cost-effectively, until full protection is achieved.
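The classification and gap-analysis steps can be sketched on a gridded representation. The sketch assumes each modeled species range has already been rasterized to grid-cell IDs and the protected areas to a set of protected cells. Several details are assumptions for illustration: the 25 sq. km cell size, the `min_fraction` coverage rule, and treating the two conservation-critical criteria as alternatives (either one suffices). The protocol text does not specify how the criteria combine or what level of overlap counts as protected.

```python
from dataclasses import dataclass

RANGE_THRESHOLD_KM2 = 625.0  # habitat-restriction cutoff from the protocol
CELL_AREA_KM2 = 25.0         # hypothetical grid: 5 km x 5 km cells


@dataclass
class Species:
    name: str
    countries: set[str]    # countries overlapped by the modeled range
    range_cells: set[int]  # grid-cell IDs predicted by the distribution model


def is_conservation_critical(sp: Species) -> bool:
    """Assumed rule: endemic to one country OR range below the area cutoff."""
    endemic = len(sp.countries) == 1
    restricted = len(sp.range_cells) * CELL_AREA_KM2 < RANGE_THRESHOLD_KM2
    return endemic or restricted


def is_protected(sp: Species, protected_cells: set[int], min_fraction: float = 0.1) -> bool:
    """Hypothetical criterion: at least min_fraction of the range lies in protected cells."""
    if not sp.range_cells:
        return False
    overlap = len(sp.range_cells & protected_cells) / len(sp.range_cells)
    return overlap >= min_fraction


def gap_analysis(species: list[Species], protected_cells: set[int]) -> tuple[int, list[str]]:
    """Return the number of conservation-critical species and the names of unprotected ones."""
    critical = [sp for sp in species if is_conservation_critical(sp)]
    unprotected = [sp.name for sp in critical if not is_protected(sp, protected_cells)]
    return len(critical), unprotected


species = [
    Species("A", {"X"}, {1, 2, 3}),             # endemic, partly protected
    Species("B", {"X", "Y"}, set(range(100))),  # widespread: not critical
    Species("C", {"X", "Y"}, {50, 51}),         # 50 sq. km range, unprotected
]
print(gap_analysis(species, protected_cells={2}))
```

A real analysis would work with raster or vector geometries from the distribution models and WDPA polygons rather than integer cell IDs. The set-overlap logic stays the same.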

Urban Pollution Monitoring Protocol

The quasi-experimental design used to evaluate digital technology pilot zones in Chinese cities provides a structured approach to urban pollution assessment [23]:

- Baseline Establishment: Collect historical pollution data (air quality indices, water quality metrics, waste management statistics) for prefecture-level cities before policy implementation.

- Treatment and Control Group Definition: Designate cities in digital technology pilot zones as the treatment group and select comparable non-pilot cities as the control group.

- Mechanism Analysis: Quantify mediating variables, including public environmental awareness (measured through search engine and social media data) and green technology innovation (tracked via patent applications and R&D investment).

- Difference-in-Differences (DID) Analysis: Apply propensity score matching combined with DID (PSM-DID) to isolate policy effects while controlling for confounding factors.

- Robustness Testing: Conduct parallel trend tests, placebo tests, and alternative model specifications to verify the findings.
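The core DID step can be illustrated with the textbook two-group, two-period estimator: the change in the outcome for pilot (treated) cities minus the change for control cities. The sketch below omits propensity score matching, covariates, and the robustness tests listed above. The city names and emission values are hypothetical.

```python
from statistics import mean


def did_estimate(panel):
    """2x2 difference-in-differences on group means.

    panel: iterable of (city, treated, post, outcome) tuples, where `treated`
    marks pilot-zone cities and `post` marks observations after the policy.
    """
    rows = list(panel)

    def group_mean(treated, post):
        return mean(y for _, t, p, y in rows if t == treated and p == post)

    treated_change = group_mean(True, True) - group_mean(True, False)
    control_change = group_mean(False, True) - group_mean(False, False)
    return treated_change - control_change


# Hypothetical annual emissions index for two pilot and two non-pilot cities.
panel = [
    ("city1", True, False, 100), ("city1", True, True, 90),
    ("city2", True, False, 110), ("city2", True, True, 96),
    ("city3", False, False, 102), ("city3", False, True, 99),
    ("city4", False, False, 98), ("city4", False, True, 95),
]
print(did_estimate(panel))  # negative values indicate a larger decline in pilot cities
```

The estimate has a causal reading only if treated and control cities would have followed parallel trends without the policy. The parallel trend tests in the robustness step probe exactly that assumption.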

Visualization of Research Workflows

Big Data Environmental Research Framework

Biodiversity Gap Analysis Methodology

The Scientist's Toolkit: Essential Research Tools and Solutions

Table 3: Key Tools and Platforms for Environmental Big Data Research

| Tool/Category | Specific Examples | Function/Application |
|---|---|---|
| Data Platforms & Repositories | Global Biodiversity Information Facility (GBIF), World Database on Protected Areas (WDPA) [21] | Provides standardized, global species occurrence data and protected area boundaries for biodiversity research |
| Analytical Frameworks | Apache Hadoop, Apache Spark, Cloud-native ecosystems [24] [25] | Enables distributed storage and processing of large and complex environmental datasets |
| Real-time Processing Tools | Apache Kafka, Apache Flink, AWS Kinesis [25] | Facilitates real-time ingestion and analysis of streaming environmental data from sensors and satellites |
| Machine Learning Libraries | TensorFlow, PyTorch, Scikit-learn (implied) | Supports species distribution modeling, climate pattern recognition, and pollution forecasting |
| Visualization Platforms | Tableau, Power BI, Custom Dashboards [25] | Transforms complex environmental data into interpretable visualizations for decision support |
| Spatial Analysis Tools | GIS Software, Remote Sensing Platforms | Processes geospatial data for habitat mapping, land use change detection, and conservation planning |
| Data Governance Solutions | Metadata Management Tools, Access Control Systems [24] [25] | Ensures data quality, security, and compliance with regulations throughout the research lifecycle |

Critical Implementation Challenges and Solutions

The implementation of big data strategies in environmental science faces several significant challenges that researchers must overcome to ensure reliable outcomes.

Data Management and Technical Hurdles

Big data management involves addressing the "Five V's" of big data: Volume (large datasets), Velocity (high-speed data generation), Variety (diverse data types), Veracity (data quality issues), and Value (extracting meaningful insights) [24]. Specific challenges include:

- Storage Scalability: The sheer volume of environmental data requires scalable storage solutions capable of accommodating exponential data growth [24] [25]

- Data Integration Complexity: Combining diverse data sources and formats (satellites, sensors, social media, scientific research) presents technical challenges due to inconsistencies and incompatibilities [19] [24]

- Real-time Processing Demands: Environmental monitoring often requires real-time or near-real-time processing capabilities, necessitating specialized architectures [25]
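The sketch below makes the real-time processing requirement concrete. It computes per-sensor averages over fixed, non-overlapping (tumbling) time windows as readings stream in. Platforms such as Apache Kafka and Apache Flink perform this kind of aggregation at scale. The sketch is plain Python and assumes events arrive in timestamp order. It does not model the handling of late or out-of-order events that production stream processors provide. The sensor IDs and values are hypothetical.

```python
from collections import defaultdict


def tumbling_window_means(events, window_s=60):
    """Per-sensor mean values over fixed, non-overlapping time windows.

    events: iterable of (timestamp_s, sensor_id, value), assumed time-ordered.
    Yields (window_start_s, {sensor_id: mean}) each time a window closes.
    """
    current = None
    sums = defaultdict(float)
    counts = defaultdict(int)
    for ts, sensor, value in events:
        start = ts - ts % window_s  # start of the window containing ts
        if current is not None and start != current:
            yield current, {s: sums[s] / counts[s] for s in sums}
            sums.clear()
            counts.clear()
        current = start
        sums[sensor] += value
        counts[sensor] += 1
    if current is not None:  # flush the final, still-open window
        yield current, {s: sums[s] / counts[s] for s in sums}


events = [(0, "a", 1.0), (30, "a", 3.0), (45, "b", 10.0), (61, "a", 5.0)]
for window_start, means in tumbling_window_means(events):
    print(window_start, means)
```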

Data Quality and Availability Issues

Environmental research faces particular data challenges, including matrix effects, trace-level concentrations, and the modeling of complex scenarios, which previous work has often ignored [22]. Large gaps remain between data-science findings and their eco-environmental meaning, and complex biological and ecological data call for more sophisticated ensemble models [22]. Additional challenges include:

- Ensuring Data Quality: Maintaining accuracy, completeness, and reliability of big data from disparate sources with varying quality levels [24] [25]

- Data Comparability and Timeliness: Climate data specifically faces hurdles in availability, quality, comparability, and timeliness, compounded by high acquisition costs [26]

Ethical and Governance Considerations

The ethical implications of big data include data ownership, consent, and the potential for misuse. They also include equitable access, so that benefits reach the vulnerable communities disproportionately affected by environmental challenges [19] [8]. Specific considerations include:

- Data Privacy and Security: Protecting sensitive information collected from IoT devices, satellite imagery, and other sources while complying with privacy regulations [19] [25]

- Transparency and Accountability: AI models must be interpretable to ensure recommendations are trustworthy and unbiased [8]

- Environmental Impact of Data Infrastructure: Data centers consume significant energy, and training a single large AI model has been estimated to emit "as much carbon as five cars over their entire lifetimes" [27]

Big data analytics presents transformative potential for addressing critical environmental challenges across climate science, biodiversity conservation, pollution control, and resource management domains. The methodologies and frameworks outlined in this technical guide provide researchers and scientists with structured approaches for leveraging data-driven insights while navigating the significant implementation challenges inherent in environmental data science. As the field advances, future research should focus on developing more sophisticated ensemble models with strong causal relationships, improving integration of diverse data sources, and establishing ethical frameworks that ensure equitable access and environmental sustainability of data infrastructure itself. Through continued refinement of these approaches, big data analytics will play an increasingly vital role in informing evidence-based environmental decision-making and policy development.

The System of Systems (SoS) Approach for Integrating Complex Environmental Data

The monumental challenge of modern environmental science lies in synthesizing disparate, complex, and voluminous data streams into a coherent understanding of planetary systems. A System of Systems (SoS) approach provides a critical framework for this integration, moving beyond isolated systems to manage complex interactions and emergent behaviors. An SoS is defined as a “set of systems or system elements that interact to provide a unique capability that none of the constituent systems can accomplish on its own” [28]. In environmental science, this translates to integrating diverse data acquisition platforms—satellites, ground-based sensors, unmanned aerial vehicles, and forecast models—into a unified analytical capability that provides insights no single system could deliver independently [29].

The big data challenges in environmental research correspond to four of the Five V's: volume (terabytes of satellite data per day), velocity (real-time sensor streams), variety (diverse formats and structures), and veracity (quality and uncertainty across sources). These challenges motivate the SoS approach, which manages complexity through structured architecting and standardized interfaces [30] [31]. When successfully implemented, this approach transforms environmental data integration. It enables researchers to address complex problems such as climate change modeling, ecosystem monitoring, and extreme weather prediction with unprecedented comprehensiveness [32] [33].

Core Characteristics and Types of System of Systems

Defining Characteristics of SoS

Systems of Systems are distinguished from traditional monolithic systems by five key characteristics first postulated by Maier and further refined in ISO/IEC/IEEE 21839 [28]:

- Operational Independence: Constituent systems operate independently to fulfill their own purposes and maintain their own operational viability outside the SoS context. For example, a satellite system within an environmental monitoring SoS continues to collect its designated Earth observation data regardless of its participation in the larger integrated system.

- Managerial Independence: Component systems are separately acquired, managed, and funded by different organizations. In environmental science, this manifests when satellite data from NASA, weather station data from NOAA, and ocean buoy data from academic institutions are integrated without centralized management [32] [28].

- Geographical Distribution: Constituent systems are spatially dispersed and communicate through networking infrastructure. This distribution is inherent in global environmental monitoring systems that combine assets across continents, oceans, and orbital planes.

- Emergent Behavior: The SoS delivers capabilities and behaviors that arise from the interactions among constituent systems and cannot be achieved by any single system alone. For instance, predicting hurricane paths emerges from integrating atmospheric, oceanic, and terrestrial sensing systems.

- Evolutionary Development: SoS develop and adapt over time as constituent systems are added, modified, or removed. This evolutionary process responds to changing scientific priorities, technological advancements, and emerging environmental challenges [30].

Types of System of Systems

SoS configurations exist along a spectrum of organizational integration and control, generally categorized into three primary types [28]:

Table 1: Types of Systems of Systems in Environmental Science Contexts

| SoS Type | Control Structure | Environmental Science Example |
|---|---|---|
| Directed | Created and managed to fulfill specific purposes; constituent systems operate subordinately | NOAA's integrated satellite system architecture with centrally coordinated satellite and ground system operations [32] |
| Acknowledged | Has recognized objectives and designated management but constituent systems retain independence | The Global Earth Observation System of Systems (GEOSS) with coordinated but independent national and organizational contributions |
| Collaborative | Constituent systems voluntarily interact to fulfill agreed purposes through collective standards | Ad-hoc research networks formed for specific campaigns (e.g., wildfire monitoring integrating satellite, UAV, and ground sensors) [29] |

Architecting SoS for Environmental Data Integration

Fundamental Architecting Principles

Architecting a successful SoS for environmental data requires specialized approaches distinct from traditional systems engineering. The core principles guiding this process include [30]:

- Focus on Interfaces and Interoperability: Since constituent systems maintain operational and managerial independence, SoS architecting primarily focuses on standardizing interfaces rather than redesigning internal system functions. This approach leverages existing system capabilities while ensuring they can interact effectively within the SoS framework.

- Design for Evolution and Reconfiguration: SoS architects must anticipate and accommodate continuous change, recognizing that environmental monitoring needs and technological capabilities will evolve. The architecting process employs stable intermediate steps, such as the Wave Model, to manage this evolution in controlled phases [30].

- Leverage Open Standards: Implementation of Open Systems Architectures (OSA) and standardized protocols enables interoperability while maintaining system independence. OSA is defined as "an architecture that adopts open standards supporting a modular, loosely coupled and highly cohesive system structure" [30], which is particularly crucial for integrating commercial and international partner systems.

- Ensure Cooperation Through Incentives: Recognizing that collaborative participation depends on mutual benefit, SoS architects must identify and implement incentives for constituent systems to participate. These may include data sharing agreements, access to enhanced capabilities, or funding arrangements that acknowledge contributions to the collective capability.
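The interface-first principle can be illustrated with a small adapter pattern. Each constituent system keeps its native internals but exposes a shared interface, and the SoS layer integrates only through that interface. The `Observation` record, the two feed classes, and their native formats are hypothetical. In practice the shared interface would be a published open standard rather than a Python protocol.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Observation:
    """Common record agreed at the SoS level (hypothetical schema)."""
    source: str
    variable: str
    time_utc: str  # ISO 8601 timestamp
    value: float
    unit: str


class ObservationSource(Protocol):
    """The only thing the SoS requires of a constituent system."""
    def observations(self, variable: str) -> list[Observation]: ...


class BuoyFeed:
    """Hypothetical buoy system: native rows of (time, value in degC)."""
    def __init__(self, rows):
        self._rows = rows

    def observations(self, variable):
        if variable != "air_temperature":
            return []
        return [Observation("buoy", variable, t, v, "degC") for t, v in self._rows]


class StationFeed:
    """Hypothetical weather-station system: native dicts with temperatures in kelvin."""
    def __init__(self, records):
        self._records = records

    def observations(self, variable):
        # Unit conversion happens inside the adapter, not in the SoS layer.
        return [
            Observation("station", variable, r["time"], r["kelvin"] - 273.15, "degC")
            for r in self._records
            if r["var"] == variable
        ]


def integrate(sources: list[ObservationSource], variable: str) -> list[Observation]:
    """Merge observations from all constituent systems, ordered by time."""
    merged = [obs for src in sources for obs in src.observations(variable)]
    return sorted(merged, key=lambda o: o.time_utc)


buoy = BuoyFeed([("2024-06-01T01:00Z", 18.4)])
station = StationFeed([{"var": "air_temperature", "time": "2024-06-01T00:00Z", "kelvin": 291.35}])
for obs in integrate([buoy, station], "air_temperature"):
    print(obs.source, obs.time_utc, round(obs.value, 2), obs.unit)
```

Adding a new constituent system means writing one more adapter. Neither the existing systems nor `integrate` change, which is the loose coupling the OSA definition describes.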

Interoperability as the Foundation

Interoperability represents the most critical technical consideration in environmental SoS architecting, extending far beyond simple data exchange to encompass multiple layers of coordination. The Network Centric Operations Industry Consortium (NCOIC) Interoperability Framework provides a comprehensive model for understanding these layers [30]:

Table 2: Layers of Interoperability in Environmental Data SoS

| Interoperability Layer | Technical Requirements | Implementation Examples |
|---|---|---|
| Network Transport | Physical connectivity and network protocols | Internet protocols, satellite communication links, wireless sensor networks |
| Information Services | Data/Object models, semantics, knowledge representation | OGC Sensor Web Enablement standards, CF conventions for climate data, ISO metadata standards |
| People, Processes & Applications | Aligned procedures, operations, and strategic objectives | Data sharing agreements, quality assurance protocols, collaborative analysis workflows |

The Sensor Web Enablement (SWE) suite from the Open Geospatial Consortium has emerged as a critical standards framework for environmental SoS, providing specific protocols including Sensor Observation Service (SOS) for requesting and retrieving sensor data, Sensor Planning Service (SPS) for tasking sensor systems, and SensorML for describing sensor systems and processes [29]. Implementation of these standards has been demonstrated in projects worldwide, including NASA's Earth Observing 1 satellite mission and the German-Indonesian Tsunami Early Warning System, proving their effectiveness in operational environmental monitoring scenarios [29].
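The sketch below shows how a client requests data from a Sensor Observation Service. It builds a GetObservation request using the key-value-pair (KVP) binding of SOS 2.0. The endpoint URL, offering, and observed-property identifiers are hypothetical. The parameter names reflect our reading of the SOS 2.0 KVP binding; check them against the OGC specification and the target server's GetCapabilities response before use.

```python
from urllib.parse import urlencode


def get_observation_url(endpoint, offering, observed_property, start, end):
    """Build an SOS 2.0 GetObservation request (KVP binding) for a time interval.

    start and end are ISO 8601 timestamps; the interval is applied to the
    observations' phenomenon time.
    """
    params = {
        "service": "SOS",
        "version": "2.0.0",
        "request": "GetObservation",
        "offering": offering,
        "observedProperty": observed_property,
        "temporalFilter": f"om:phenomenonTime,{start}/{end}",
    }
    return f"{endpoint}?{urlencode(params)}"  # urlencode percent-encodes the values


url = get_observation_url(
    "https://sensors.example.org/sos/kvp",            # hypothetical endpoint
    "buoy_network_offering",                          # hypothetical offering ID
    "http://example.org/properties/sea_temperature",  # hypothetical property URI
    "2024-06-01T00:00:00Z",
    "2024-06-02T00:00:00Z",
)
print(url)
```

Because every SOS instance accepts the same request shape, a client can query heterogeneous sensor networks by changing only the endpoint and identifiers.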

Methodologies and Implementation Protocols

Sensor Web Enablement Implementation Protocol

The implementation of OGC Sensor Web Enablement standards provides a proven methodology for integrating diverse environmental sensors into a coherent SoS. The following workflow details the core implementation protocol [29]:

- Sensor Characterization: Document sensor capabilities, measurement parameters, geographic location, and operational characteristics using SensorML, an XML-based encoding for describing sensor systems.

- Service Deployment: Implement SOS instances for each sensor system or data repository to provide standardized web service interfaces for data access. Each SOS instance handles requests for sensor information, observation data, and platform descriptions.

- Service Registration: Publish service metadata to a discovery catalog or registry that supports harvesting information from individual sensor services using SWE encodings. This registry must accommodate dynamically changing metadata, such as sensor location or operational status.

- Data Encoding Standardization: Format observational data using Observations & Measurements (O&M), a standard model with an XML encoding for representing sensor observations and measurements, which ensures consistent interpretation across systems.

- Client Application Development: Create analytical tools and visualization applications that interact with SOS instances through standard protocols, enabling integrated analysis across previously incompatible data sources.

This methodology has been successfully implemented in diverse environmental monitoring scenarios, including the Real Time Mission Monitor for managing field campaign assets and the SPoRT (Short-term Prediction Research and Transition) system for weather forecasting [29].
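The service-registration step requires a catalog whose entries can change while the system is running. The in-memory sketch below shows the idea. Services register their metadata, operators update location or status as conditions change, and clients discover operational services by observed property. The class and field names are hypothetical and stand in for a real SWE-aware catalog service.

```python
from dataclasses import dataclass


@dataclass
class ServiceRecord:
    """Hypothetical catalog entry for one SOS instance."""
    url: str
    observed_properties: set[str]
    location: tuple[float, float]  # (latitude, longitude) in decimal degrees
    status: str = "operational"


class SensorRegistry:
    """Minimal in-memory registry with mutable metadata."""

    def __init__(self):
        self._records: dict[str, ServiceRecord] = {}

    def register(self, sensor_id: str, record: ServiceRecord) -> None:
        self._records[sensor_id] = record

    def update(self, sensor_id: str, *, location=None, status=None) -> None:
        """Apply dynamic metadata changes, e.g. a drifting buoy or a sensor outage."""
        record = self._records[sensor_id]
        if location is not None:
            record.location = location
        if status is not None:
            record.status = status

    def discover(self, observed_property: str) -> list[str]:
        """IDs of operational services that observe the requested property."""
        return sorted(
            sid for sid, rec in self._records.items()
            if rec.status == "operational" and observed_property in rec.observed_properties
        )


registry = SensorRegistry()
registry.register("buoy-17", ServiceRecord("https://example.org/sos/buoy17", {"sea_temperature"}, (10.5, -45.2)))
registry.register("buoy-18", ServiceRecord("https://example.org/sos/buoy18", {"sea_temperature"}, (11.0, -44.8)))
registry.update("buoy-17", location=(10.6, -45.0))  # the buoy has drifted
registry.update("buoy-18", status="offline")        # temporary outage
print(registry.discover("sea_temperature"))
```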

Graph-Based Modeling for SoS Complexity Management

Graph-based modeling and visualization have emerged as essential methodologies for managing the complexity inherent in environmental SoS. The recently approved Systems Modeling Language (SysML) version 2.0 specification utilizes graph-based modeling, which provides scalability and robustness to collaborative engineering processes [34]. The implementation protocol includes:

- Node Identification: Define all constituent systems, data flows, and control relationships as nodes within the graph structure.

- Relationship Mapping: Establish edges (connections) between nodes to represent data exchanges, dependencies, and interactions.

- Layering Strategy: Implement abstraction layers to manage complexity, enabling users to navigate between high-level system overviews and detailed component views.

- Navigation Implementation: Support multiple navigation strategies including top-down (overview to details), bottom-up (specific element to context), and middle-out (abstraction level to details or broader context) approaches [34].
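A minimal sketch of the graph-based approach treats constituent systems and their components as nodes. Containment between abstraction layers is a parent link, and data exchanges are directed edges. The navigation methods correspond to the top-down, bottom-up, and middle-out strategies above. The node names are hypothetical, and this is a plain data structure, not a SysML v2 model.

```python
from collections import defaultdict


class SoSGraph:
    def __init__(self):
        self.nodes = set()                 # all registered nodes
        self.parent = {}                   # node -> enclosing node one layer up
        self.children = defaultdict(list)  # node -> nodes one layer down
        self.flows = defaultdict(set)      # directed data-exchange edges

    def add_node(self, node, parent=None):
        self.nodes.add(node)
        if parent is not None:
            self.parent[node] = parent
            self.children[parent].append(node)

    def add_flow(self, src, dst):
        self.flows[src].add(dst)

    def top_down(self, node):
        """Overview to details: depth-first listing of a node and everything beneath it."""
        order = [node]
        for child in self.children.get(node, []):
            order.extend(self.top_down(child))
        return order

    def bottom_up(self, node):
        """Specific element to context: path from a node up to the top layer."""
        path = [node]
        while path[-1] in self.parent:
            path.append(self.parent[path[-1]])
        return path

    def middle_out(self, node):
        """From an intermediate layer: its broader context and its details."""
        return self.bottom_up(node)[1:], self.top_down(node)[1:]

    def downstream(self, node):
        """All nodes reachable from `node` through data-exchange edges."""
        seen, stack = set(), [node]
        while stack:
            for nxt in self.flows.get(stack.pop(), ()):
                if nxt not in seen:
                    seen.add(nxt)
                    stack.append(nxt)
        return seen


g = SoSGraph()
g.add_node("EarthObsSoS")
g.add_node("SpaceSegment", parent="EarthObsSoS")
g.add_node("GroundSegment", parent="EarthObsSoS")
g.add_node("ForecastModel", parent="EarthObsSoS")
g.add_node("SatelliteA", parent="SpaceSegment")
g.add_node("BuoyNetwork", parent="GroundSegment")
g.add_flow("SatelliteA", "GroundSegment")
g.add_flow("BuoyNetwork", "GroundSegment")
g.add_flow("GroundSegment", "ForecastModel")
print(g.top_down("EarthObsSoS"))
print(g.bottom_up("SatelliteA"))
print(g.downstream("SatelliteA"))
```

Keeping containment and data flow as separate edge types allows the layered views to be navigated independently of the dependency analysis.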

SoS Architecture for Environmental Data Integration

Big Data Analytics Integration for Environmental SoS

The integration of big data analytics platforms with environmental SoS requires specialized methodologies to handle the volume, velocity, and variety of environmental data. Evidence from China's big data comprehensive pilot zones suggests that big data development also drives corporate green transformation through three primary pathways: enhancing ESG performance, strengthening green co-innovation capabilities, and advancing industrial structure [33]. The implementation protocol includes:

- Real-time Data Ingestion: Deploy distributed streaming platforms capable of handling high-velocity sensor data from diverse environmental monitoring systems.

- Automated Quality Control: Implement machine learning algorithms to identify anomalies, fill gaps, and flag questionable data across heterogeneous sources.

- Predictive Analytics: Develop models that forecast environmental conditions based on historical patterns, real-time data, and scenario simulations.

- Stakeholder Reporting: Generate accessible visualizations and automated reports that translate complex analytical results into actionable intelligence for researchers, policymakers, and operational decision-makers.

Organizations implementing these methodologies report significant benefits, with 72% of companies noting increased transparency and 65% identifying ESG risks more effectively [31].
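One simple, widely used way to flag questionable readings in the automated quality-control step is the modified z-score. It uses the median and the median absolute deviation (MAD), so the outliers being hunted do not distort the reference statistics. The sketch below is a rule-based stand-in for the machine learning QC described above. The 3.5 cutoff is a common rule of thumb rather than a value from the cited sources, and the readings are hypothetical.

```python
from statistics import median


def flag_anomalies(values, threshold=3.5):
    """Label each reading 'ok', 'suspect', or 'missing' using the modified z-score.

    modified z = 0.6745 * |x - median| / MAD, where MAD is the median absolute deviation.
    """
    present = [v for v in values if v is not None]
    if not present:
        return ["missing"] * len(values)
    med = median(present)
    mad = median(abs(v - med) for v in present)
    flags = []
    for v in values:
        if v is None:
            flags.append("missing")
        elif mad == 0:  # more than half the readings are identical
            flags.append("ok" if v == med else "suspect")
        elif 0.6745 * abs(v - med) / mad > threshold:
            flags.append("suspect")
        else:
            flags.append("ok")
    return flags


readings = [12.1, 12.3, None, 12.2, 55.0, 12.4]  # hypothetical water temperatures, deg C
print(flag_anomalies(readings))
```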

The Researcher's Toolkit: Essential Solutions for SoS Implementation

Successful implementation of environmental SoS requires a suite of specialized tools and standards that enable interoperability while respecting the independence of constituent systems. The following table details essential solutions currently employed in operational systems:

Table 3: Essential Tools and Standards for Environmental SoS Implementation

| Solution Category | Specific Protocols/Tools | Function in SoS Implementation |
|---|---|---|
| Interoperability Standards | OGC Sensor Web Enablement (SWE), SensorML, O&M Encoding | Provide standardized interfaces and data formats for integrating heterogeneous sensor systems and data repositories [29] |
| Data Analytics Platforms | Predictive Analytics, Digital Twins, Machine Learning Models | Enable forecasting of environmental conditions based on historical patterns and real-time data; simulate scenarios to optimize resource allocation [31] |
| Visualization & Modeling | Graph-Based Visualization, SysML v2.0, Cluster Mapping | Represent complex system relationships and dependencies; support navigation through large, interconnected data spaces [34] [35] |
| Data Acquisition & Management | Sensor Observation Service (SOS), Sensor Planning Service (SPS) | Handle near-real-time management of sensor data; enable user-driven acquisition requests and tasking of sensor systems [29] |

Case Study: NOAA's Environmental Monitoring SoS

The National Oceanic and Atmospheric Administration (NOAA) provides a compelling real-world example of SoS implementation for environmental data integration. Through its Office of Systems Architecture and Engineering (SAE), NOAA serves as lead systems engineer for the broader NOAA remote-sensing, data, products and services enterprise [32]. The implementation demonstrates key SoS characteristics:

NOAA is transitioning from independent Low Earth Orbit (LEO) and Geostationary Orbit (GEO) satellite missions to "a more agile Earth observation architecture based on enterprise-wide assessments of the mix of NOAA, partner, and commercial data sources" [32]. NOAA's own centrally coordinated satellite and ground operations resemble a directed SoS. The broader enterprise, however, exemplifies the acknowledged type: constituent systems (satellites, ground systems, partner assets) retain independent ownership and objectives while cooperating to achieve collective capabilities.

The architectural approach employs Open Systems Architecture principles to enable competition among suppliers and rapid deployment of new systems within the SoS. Key functions include conducting long-term architecture studies to identify cost-effective options, acquiring and assessing commercial satellite data, and facilitating the operationalization of partner data products [32]. This systematic approach to SoS engineering designs and develops integrated Earth observation and data information systems whose capabilities exceed those of any single constituent system. In doing so, it aims to accelerate the delivery of the nation's environmental information services.

The System of Systems approach represents a paradigm shift in how researchers integrate complex environmental data to address pressing scientific challenges. By architecting federated systems that maintain operational independence while achieving collective capabilities, environmental scientists can overcome the limitations of isolated data systems. The methodologies, standards, and implementations detailed in this technical guide provide a roadmap for constructing environmental SoS that are interoperable, evolvable, and capable of delivering emergent insights into complex Earth system processes.

As big data challenges continue to grow in environmental science, the SoS approach offers a structured framework for managing complexity while preserving the autonomy of constituent systems. The integration of open standards, graph-based modeling, and advanced analytics creates a foundation upon which researchers can build increasingly sophisticated understanding of our planet's interconnected systems, ultimately enabling more informed decisions for environmental stewardship and sustainability.

From Data to Decisions: Methodologies and Real-World Applications

Environmental science research is undergoing a paradigm shift, driven by an unprecedented influx of big data from diverse sources such as satellite imagery, IoT sensor networks, and climate simulations. The traditional research paradigm has become inadequate for processing these massive, heterogeneous datasets and extracting actionable insights in a timely manner [36]. The integration of Artificial Intelligence (AI), Machine Learning (ML), and Cloud Computing represents a foundational change, enabling researchers to overcome these challenges. These technologies collectively provide the computational framework and analytical power necessary to model complex environmental systems, predict future scenarios, and support evidence-based policy decisions. This technical guide examines the core technologies transforming environmental analysis, detailing their applications, implementation protocols, and the critical balance between their computational demands and environmental benefits.

AI and Machine Learning in Environmental Analysis

Methodological Approaches and Applications

AI and ML technologies are transforming environmental research by improving both computational efficiency and predictive accuracy. Compared with traditional methods, AI has been reported to reduce decision-making time in environmental data analysis by more than 60% [36], supporting faster resolution of complex environmental issues.

Table 1: Key Applications of AI and ML in Environmental Research

| Application Domain | ML Technique | Function | Impact/Effectiveness |
|---|---|---|---|
| Climate Physics & Weather Forecasting | Neural Networks, Ensemble Learning | Predicting weather systems & climate phenomena (e.g., El Niño) | Uses orders of magnitude fewer computing resources than physics-based models [37] |
| Pollutant Monitoring & Control | Machine Learning | Global distribution simulation of pollutants; Material screening & performance prediction | Enables instant detection & control of human health impacts [36] |
| Environmental Data Curation | Machine Learning | Filling missing observational data points; Creating robust climate records | Extrapolates from past conditions when observations are abundant [37] |
| Climate Risk Assessment | Predictive Modeling, Historical Data Analysis | Quantifying risks of extreme weather, flooding, droughts, and heatwaves | Provides comprehensive insights for strategic planning & resource allocation [38] |

Machine learning is particularly transformative in climate science, where it is driving change in three key areas: accounting for missing observational data, creating more robust climate models, and enhancing predictions [37]. ML algorithms can learn from historical data to predict future conditions without exclusively relying on solving underlying governing equations, thus conserving substantial computational resources.

Experimental Protocols and Workflows

A critical application of ML in environmental science involves improving parameterizations in climate models. The following workflow, derived from research at Georgia Tech, outlines this process [37]:

Protocol: ML-Enhanced Climate Model Parameterization

- High-Resolution Simulation: Run a physical climate model at extremely high resolutions for a short duration to minimize the need for parameterizing small-scale physical processes.

- Data Extraction: Use the high-resolution output to generate training data that captures the relationship between resolved-scale variables and the sub-grid-scale processes.

- Machine Learning Training: Apply machine learning (often neural networks) to derive equations that best approximate the physics occurring at scales below the grid resolution.

- Implementation in Coarser Model: Integrate the ML-derived parameterizations into a lower-resolution global climate model that can be run for centuries-long simulations.

- Validation and Iteration: Compare the output of the ML-augmented model with observational data and high-resolution benchmarks, refining the ML component as needed.
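The protocol can be illustrated end to end with a deliberately tiny toy problem:

- A synthetic one-dimensional "high-resolution" field stands in for the short high-resolution run.
- Block averaging defines the coarse grid.
- The unresolved (sub-grid) variance is the quantity to parameterize.
- A least-squares fit replaces the neural network.

Everything here is a hypothetical illustration, including the field, the grid sizes, and the quadratic feature set. It is not the Georgia Tech workflow itself; a real study would use multivariate climate-model output and a far richer learned model.

```python
import numpy as np

N_FINE, BLOCK = 4096, 16  # fine grid points; fine points per coarse cell


def synthetic_high_res(rng):
    """Toy stand-in for a short high-resolution run (not a climate model): a large-scale
    wave plus small-scale variability whose amplitude depends on the large-scale state."""
    x = np.linspace(0.0, 2.0 * np.pi, N_FINE, endpoint=False)
    large = np.sin(x)
    small = 0.3 * (1.0 + large) * np.sin(256 * x)  # one full period per coarse cell
    return large + small + 0.05 * rng.standard_normal(N_FINE)


def coarse_grain(u):
    """Block-average to the coarse grid; the sub-grid term is the unresolved variance."""
    blocks = u.reshape(-1, BLOCK)
    u_bar = blocks.mean(axis=1)
    subgrid = (blocks ** 2).mean(axis=1) - u_bar ** 2
    return u_bar, subgrid


def features(u_bar):
    """Resolved-scale predictors (hypothetical choice): 1, u_bar, u_bar^2."""
    return np.column_stack([np.ones_like(u_bar), u_bar, u_bar ** 2])


rng = np.random.default_rng(42)

# Steps 1-3: generate "high-resolution" data, extract training pairs, fit the model.
u_bar, target = coarse_grain(synthetic_high_res(rng))
coef, *_ = np.linalg.lstsq(features(u_bar), target, rcond=None)


def parameterization(u_coarse):
    """Step 4: the learned closure a coarse model would call at every time step."""
    return features(u_coarse) @ coef


# Step 5: validate on an independent realization.
u_val, target_val = coarse_grain(synthetic_high_res(rng))
pred = parameterization(u_val)
r2 = 1.0 - np.sum((target_val - pred) ** 2) / np.sum((target_val - target_val.mean()) ** 2)
print(f"validation R^2 = {r2:.3f}")
```

The design point is the separation of roles. The expensive fine-scale data are used only offline to fit `coef`, and the coarse model pays just the cost of evaluating `parameterization`.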

Additional standard protocols include:

- Predictive Climate Modeling: Utilizing neural networks, regression models, and ensemble learning to forecast trends in temperature, rainfall, sea-level rise, and extreme weather events. These models are trained on historical climate data to identify patterns and correlations [38].

- Environmental Data Gap Filling: Employing ML to create a more robust historical record by extrapolating from past conditions where observations are abundant, effectively patching spatial and temporal gaps in datasets [37].
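For short gaps, the simplest form of gap filling is linear interpolation between the nearest valid observations on either side. The sketch below fills only interior gaps up to a maximum length, where `max_gap` is a hypothetical parameter. Longer gaps and gaps at the series edges are left untouched; these are the cases where ML models trained on periods with abundant observations would be needed. The sketch illustrates the gap-filling task, not the ML methods cited in [37].

```python
def fill_gaps(values, max_gap=3):
    """Linearly interpolate interior runs of missing values (None) up to max_gap long.

    Gaps longer than max_gap, or touching either end of the series, are left as None.
    """
    filled = list(values)
    n = len(filled)
    i = 0
    while i < n:
        if filled[i] is not None:
            i += 1
            continue
        start = i
        while i < n and filled[i] is None:
            i += 1
        end = i  # index of the first valid value after the gap, or n
        gap = end - start
        if start > 0 and end < n and gap <= max_gap:
            left, right = filled[start - 1], filled[end]
            for k in range(gap):
                filled[start + k] = left + (right - left) * (k + 1) / (gap + 1)
    return filled


series = [10.0, None, None, 16.0, None, None, None, None, 30.0, None]  # hypothetical daily values
print(fill_gaps(series))
```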

Diagram 1: ML climate model workflow.

The Role of Sustainable Cloud Computing

Foundational Concepts and Infrastructure

Cloud computing provides the essential, scalable infrastructure that enables the storage and processing of massive environmental datasets. Sustainable cloud computing refers to the adoption of eco-friendly practices to reduce energy consumption, minimize carbon footprints, and improve efficiency in cloud-based operations [39]. This is achieved through several key techniques:

- Energy-efficient infrastructure: Utilizing AI-driven workload optimization and modern hardware to reduce power consumption.

- Carbon-aware computing: Scheduling workloads based on renewable energy availability to maximize the use of clean power sources.

- Green data centers: Leveraging renewable energy sources and advanced cooling techniques (e.g., liquid cooling, free-air cooling) to improve sustainability.

- Optimized resource utilization: Avoiding idle compute power by dynamically adjusting resources based on demand.

Leading cloud providers are making significant strides in sustainability. For instance, Google reported that despite a 27% overall increase in electricity consumption, it reduced its data center energy emissions by 12% in 2024 through efficiency improvements and clean energy procurement [40]. Its data centers now deliver six times more computing capacity per unit of electricity than five years ago, largely due to more efficient AI chips [40].

Operational Protocols for Sustainable Computing

Implementing sustainable practices in cloud computing involves specific technical protocols:

Protocol: Carbon-Aware Workload Scheduling

- Energy Source Monitoring: Integrate with real-time data feeds from grid operators and on-site renewable generation (solar, wind) to determine the current carbon intensity of available electricity.

- Workload Classification: Identify non-urgent, computationally intensive tasks (e.g., model training, batch data processing) that can be flexibly scheduled.

- Scheduling Optimization: Use optimization algorithms to delay flexible workloads until periods of high renewable energy availability or lower grid carbon intensity.

- Geographic Distribution: For organizations with multi-cloud or global data center presence, route workloads to regions where the carbon-free energy percentage is highest [40]. Tools like Windmill, which can run on platforms like Shakudo, enable precise timing of resource-intensive tasks for this purpose [39].
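The scheduling and geographic-distribution steps can be combined in a small search. Given hourly carbon-intensity forecasts for several regions, it chooses the region and start hour that minimize average intensity over a flexible job's run time, while still finishing before the job's deadline. The forecast values and region names are hypothetical. The sketch ignores data-transfer costs and capacity limits, and it does not reproduce the behavior of Windmill or any specific cloud scheduler.

```python
def best_window(forecast, duration_h, deadline_h):
    """Pick the (region, start_hour, mean_intensity) with the lowest average carbon intensity.

    forecast: {region: [gCO2/kWh for hour 0, 1, 2, ...]}
    The job runs for duration_h consecutive hours and must end by hour deadline_h.
    """
    best = None
    for region, series in forecast.items():
        last_start = min(deadline_h, len(series)) - duration_h
        for start in range(last_start + 1):
            avg = sum(series[start:start + duration_h]) / duration_h
            if best is None or avg < best[2]:
                best = (region, start, avg)
    if best is None:
        raise ValueError("no feasible window before the deadline")
    return best


forecast = {
    "region-a": [450, 420, 300, 180, 200, 410],  # solar-heavy midday dip
    "region-b": [380, 360, 350, 340, 330, 320],
}
print(best_window(forecast, duration_h=2, deadline_h=6))
```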

Protocol: Dynamic Resource Optimization

- Monitoring and Observability: Deploy comprehensive observability tools (e.g., HyperDX) across logs, metrics, and traces to identify underutilized resources and optimization opportunities [39].

- Automated Scaling: Implement automated scaling policies that dynamically allocate compute resources (e.g., using Kubeflow on Kubernetes) based on real-time workload demand, preventing over-provisioning [39].

- Resource Termination: Automatically identify and terminate idle or orphaned resources that consume power without adding value.

- Multi-Cloud Optimization: Leverage platforms that enable workload optimization across public, private, and hybrid cloud environments, allowing choice of providers that prioritize renewable energy [39].
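The automated-scaling and resource-termination steps can be illustrated with two small functions. The first applies the proportional rule documented for the Kubernetes Horizontal Pod Autoscaler, desired = ceil(current × currentMetric / targetMetric), and skips scaling when the ratio is within a tolerance of 1.0 (0.1 by default in Kubernetes). The second flags resources whose recent CPU use stays below a threshold. This is a standalone sketch, not Kubeflow or HyperDX code. The 5% idle threshold, the 12-sample window, and the replica bounds are hypothetical defaults.

```python
import math
from typing import Dict, List


def desired_replicas(current_replicas: int, current_util_pct: float,
                     target_util_pct: float, min_replicas: int = 1,
                     max_replicas: int = 20, tolerance: float = 0.1) -> int:
    """Proportional scaling rule: ceil(current * currentMetric / targetMetric)."""
    ratio = current_util_pct / target_util_pct
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: avoid churn
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


def idle_resources(cpu_history: Dict[str, List[float]],
                   threshold: float = 0.05, min_samples: int = 12) -> List[str]:
    """IDs of resources whose last `min_samples` CPU readings (fractions 0-1)
    all stay below `threshold`; candidates for termination after review."""
    idle = []
    for rid, samples in cpu_history.items():
        recent = samples[-min_samples:]
        if len(recent) >= min_samples and max(recent) < threshold:
            idle.append(rid)
    return sorted(idle)
```

Requiring a full window of low readings, rather than a single sample, avoids terminating resources that are only briefly quiet between batch jobs.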

Environmental Costs and Sustainable Mitigation

Quantifying the Environmental Footprint

The computational infrastructure powering AI and cloud services carries a substantial environmental footprint that must be accounted for in any comprehensive analysis. Research from Cornell University projects that, at the current rate of AI growth, AI computing in the United States would emit 24 to 44 million metric tons of carbon dioxide annually by 2030, the emissions equivalent of adding 5 to 10 million cars to U.S. roadways [41]. Water consumption is similarly significant, estimated at 731 to 1,125 million cubic meters per year, equal to the annual household water usage of 6 to 10 million Americans [41].
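Both equivalences can be sanity-checked by dividing them out. The short calculation below assumes that the low end of each projected range corresponds to the low end of its equivalence, and the high end to the high end. The source does not state how the ranges pair.

```python
# Ranges reported for 2030 in the Cornell projection [41]
co2_t = (24e6, 44e6)        # metric tons CO2 per year
cars = (5e6, 10e6)          # equivalent number of cars
water_m3 = (731e6, 1125e6)  # cubic meters of water per year
people = (6e6, 10e6)        # equivalent number of Americans

# Assumption: low ends pair with low ends, high ends with high ends
t_co2_per_car = [c / n for c, n in zip(co2_t, cars)]
m3_per_person = [w / p for w, p in zip(water_m3, people)]
litres_per_person_day = [m3 * 1000 / 365 for m3 in m3_per_person]

print(t_co2_per_car)          # 4.8 and 4.4 t CO2 per car per year
print(litres_per_person_day)  # roughly 334 and 308 litres per person per day
```

The implied factors stay nearly constant across each range. This is consistent with the equivalences having been derived from fixed per-car and per-person conversion factors.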

The power density required for AI is particularly intense; a generative AI training cluster might consume seven or eight times more energy than a typical computing workload [42]. Furthermore, each ChatGPT query consumes about five times more electricity than a simple web search, and the energy demands of inference are expected to eventually dominate as these models become ubiquitous [42].

Table 2: Projected Environmental Impact of U.S. AI Computing Infrastructure (2030)

| Impact Category | Projected Annual Volume | Equivalent To |
|---|---|---|
| Carbon Dioxide Emissions (U.S., 2030) | 24 to 44 million metric tons | 5 to 10 million cars on U.S. roadways [41] |
| Water Consumption (U.S., 2030) | 731 to 1,125 million cubic meters | Annual household water usage of 6 to 10 million Americans [41] |
| Data Center Electricity (Global, 2026) | Approaching 1,050 TWh | Would rank 5th among countries by electricity consumption, between Japan and Russia [42] |

Mitigation Strategies and Roadmaps

Research indicates that strategic interventions can significantly reduce these impacts. A comprehensive roadmap could cut carbon dioxide impacts by approximately 73% and water usage by 86% compared with worst-case scenarios [41]. The following diagram synthesizes this mitigation framework:

Diagram 2: AI environmental impact mitigation.

Key mitigation strategies include:

- Smart Siting: Locating data facilities in regions with lower water stress and cleaner energy profiles. The Midwest and "windbelt" states (Texas, Montana, Nebraska, South Dakota) offer the best combined carbon-and-water profile [41].

- Grid Decarbonization: Accelerating the clean-energy transition in locations where AI computing is expanding. If decarbonization does not catch up with computing demand, emissions could rise by roughly 20% [41].

- Operational Efficiency: Deploying energy- and water-efficient technologies, such as advanced liquid cooling and improved server utilization, which could remove another 7% of carbon dioxide emissions and lower water use by 29% [41].
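Ranking candidate sites by a combined carbon-and-water profile can be illustrated with a simple weighted score. This is a hypothetical sketch. The equal weights and the min-max normalization are illustrative choices, not the method used in [41]. Each site's inputs are assumed to be a grid carbon intensity (for example, in gCO2e/kWh) and a water-stress index, where lower is better for both.

```python
from typing import Dict, List, Tuple


def rank_sites(sites: Dict[str, Tuple[float, float]],
               w_carbon: float = 0.5, w_water: float = 0.5) -> List[str]:
    """Rank candidate sites, best first, by a weighted sum of min-max normalized
    grid carbon intensity and water-stress index (lower is better for both)."""
    def normalize(values: List[float]) -> List[float]:
        lo, hi = min(values), max(values)
        return [0.0 if hi == lo else (v - lo) / (hi - lo) for v in values]

    names = list(sites)
    carbon = normalize([sites[n][0] for n in names])
    water = normalize([sites[n][1] for n in names])
    score = {n: w_carbon * c + w_water * wa for n, c, wa in zip(names, carbon, water)}
    return sorted(names, key=score.get)
```

Because min-max normalization rescales against the candidates being compared, adding or removing a site can reorder the rest. Scoring against a fixed reference scale avoids that sensitivity.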

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools

| Tool/Solution | Type | Function in Research | Implementation Example |
|---|---|---|---|
| ML-Derived Parameterizations | Software Algorithm | Replaces traditional physics-based approximations in climate models, improving efficiency & accuracy | Deriving equations from high-res runs for use in coarse models [37] |
| Carbon-Aware Schedulers | Software Service | Schedules computationally intensive AI tasks during periods of high renewable energy availability | Using Windmill for 5x faster workflow scheduling to time tasks for off-peak hours [39] |
| Advanced Cooling Systems | Physical Infrastructure | Reduces water and energy consumption for data center cooling, directly mitigating environmental impact | Implementing liquid cooling & free-air techniques to reduce energy-intensive air conditioning [39] |
| Multi-Cloud Management Platforms | Software Platform | Optimizes workloads across cloud environments, enabling choice of providers with best renewable energy sources | Using Shakudo to manage hybrid deployments and diversify workloads [39] |
| HyperDX | Observability Tool | Provides comprehensive observability across logs, metrics, and traces to identify resource waste | Integrating on Shakudo's platform to find optimization opportunities [39] |
| Kubeflow | MLOps Tool | Automates scaling of machine learning workloads and intelligent resource allocation across clusters | Deploying on Shakudo for optimal resource management in ML pipelines [39] |

The integration of AI, machine learning, and sustainable cloud computing represents a powerful frontier in environmental science research, enabling researchers to overcome significant big data challenges. These technologies facilitate unprecedented capabilities in climate modeling, pollutant tracking, and predictive assessment. However, this computational advancement comes with a tangible environmental footprint that must be proactively managed through smart siting, grid decarbonization, and operational efficiency. The future of environmentally sustainable research depends on a continued commitment to technological innovation coupled with responsible implementation, ensuring that the tools used to understand and protect our planet do not simultaneously contribute to its degradation. As these fields evolve, researchers must remain vigilant in applying the mitigation strategies and sustainable protocols outlined in this guide to maintain a positive net environmental benefit.

The field of climate science is undergoing a profound transformation, increasingly relying on massive, multi-source datasets and machine learning (ML) to understand complex environmental systems. This shift introduces significant big data challenges, including the management of heterogeneous data streams, the need for robust uncertainty quantification, and the integration of physical principles with data-driven approaches. At the same time, these large and diverse datasets open the door to analytical approaches that go beyond traditional hypothesis-driven methods [43]. The core challenge lies in extracting meaningful patterns and reliable forecasts from this data deluge, a task for which machine learning has become an indispensable tool. However, as models grow more complex, fundamental questions about their reliability, interpretability, and physical consistency must be addressed within the broader context of environmental big data analytics.

Machine Learning Approaches in Climate Modeling

Comparative Performance of ML Techniques

Machine learning applications in climate modeling span from localized weather predictions to global climate projections. Different ML architectures offer distinct advantages depending on the specific prediction task, data characteristics, and computational constraints. The table below summarizes the performance of various ML techniques across different climate modeling applications:

Table 1: Performance of Machine Learning Techniques in Climate Applications

| ML Technique | Application Domain | Performance Highlights | Limitations |
|---|---|---|---|
| Linear Pattern Scaling (LPS) | Regional temperature estimation | Outperformed deep learning in certain climate scenarios [44] | Limited for non-linear phenomena like precipitation [44] |
| Long Short-Term Memory (LSTM) | Streamflow prediction | Remarkable performance in rainfall-runoff modeling [45] | Requires uncertainty quantification for changing conditions [45] |
| Random Forest | Building water quality prediction | Outperformed LSTM for free chlorine residual prediction [46] | |
| Deep Learning (Emulators) | Climate simulation | Faster execution (seconds vs. hours) [47] | Struggles with natural variability in climate data [44] |
| Conformal Prediction | Earth observation uncertainty | Provides statistically valid prediction regions [48] | Requires exchangeability assumption [48] |
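Conformal prediction can wrap any fitted model. The variant below is standard split conformal regression with absolute residuals as the nonconformity score. It is shown as a general illustration, not as the specific Earth-observation method of [48]. `pred_cal` and `pred_test` are assumed to come from a model trained on a separate data split.

```python
import numpy as np


def split_conformal_interval(y_cal, pred_cal, pred_test, alpha=0.1):
    """Split conformal intervals with absolute-residual nonconformity scores.

    Under exchangeability of calibration and test points, each interval
    contains the true value with probability at least 1 - alpha.
    """
    scores = np.abs(np.asarray(y_cal, dtype=float) - np.asarray(pred_cal, dtype=float))
    n = scores.size
    k = int(np.ceil((n + 1) * (1 - alpha)))  # finite-sample corrected rank
    q = np.inf if k > n else np.sort(scores)[k - 1]  # k-th smallest score
    pred_test = np.asarray(pred_test, dtype=float)
    return pred_test - q, pred_test + q
```

The guarantee is marginal, meaning it holds on average over test points, and it depends on the exchangeability assumption listed in the table. Spatially or temporally autocorrelated Earth-observation data can violate that assumption. With too few calibration points for the requested `alpha`, the interval becomes unbounded.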

Specialized ML Architectures for Climate Data

Beyond standard ML models, researchers have developed specialized architectures to address unique challenges in climate data. The PI3NN framework integrates with LSTM networks to quantify predictive uncertainty by training three neural networks: one for the mean prediction and two for the upper and lower bounds of the prediction interval [45]. This approach is particularly valuable under the non-stationary conditions created by climate change. For data assimilation, the Latent-EnSF technique uses variational autoencoders to encode sparse observations and model states into a shared latent space, achieving higher accuracy, faster convergence, and greater efficiency in medium-range weather forecasting and tsunami prediction [47]. These specialized architectures represent the cutting edge of ML research for environmental big data challenges.
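The three-model idea behind PI3NN can be sketched without deep learning. In this minimal sketch, least-squares linear models stand in for the three neural networks (and for the LSTM), so it runs with NumPy alone. One model fits the mean, and two fit the size of the positive and negative residuals. A root-finding step, here plain bisection, then scales each bound so that a chosen share of training points falls outside it. The function names are hypothetical, PI3NN's out-of-distribution detection is omitted, and this is not the reference implementation from [45].

```python
import numpy as np


def fit_linear(X, y):
    """Least-squares linear model with intercept; stands in for a neural network."""
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return lambda Xn: np.column_stack([np.ones(len(Xn)), Xn]) @ coef


def solve_scale(excess, width, tail, iters=100):
    """Bisection for (approximately) the smallest scale s >= 0 such that the
    share of points with excess > s * width is at most `tail`."""
    lo, hi = 0.0, 1.0
    while np.mean(excess > hi * width) > tail:
        hi *= 2.0  # grow the bracket until the bound is wide enough
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if np.mean(excess > mid * width) > tail:
            lo = mid
        else:
            hi = mid
    return hi


def pi3_style_intervals(X, y, confidence=0.9, eps=1e-9):
    # Model 1: mean prediction
    mean_model = fit_linear(X, y)
    resid = y - mean_model(X)
    up, down = resid > 0, resid < 0
    # Models 2 and 3: magnitude of positive and negative residuals
    upper_model = fit_linear(X[up], resid[up])
    lower_model = fit_linear(X[down], -resid[down])
    tail = (1.0 - confidence) / 2.0
    # Root-finding: scale each bound so `tail` of training points lie beyond it
    alpha = solve_scale(resid, np.maximum(upper_model(X), eps), tail)
    beta = solve_scale(-resid, np.maximum(lower_model(X), eps), tail)

    def predict(Xn):
        m = mean_model(Xn)
        return (m - beta * np.maximum(lower_model(Xn), eps), m,
                m + alpha * np.maximum(upper_model(Xn), eps))
    return predict
```

Fitting separate upper and lower models lets the interval be asymmetric and widen where the residuals are larger, which a single symmetric error bar cannot do.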

Experimental Protocols and Methodologies

Climate Emulation Benchmarking Protocol

Objective: To evaluate and compare the performance of simple physical models versus deep learning approaches for climate prediction tasks.

Materials and Data Sources:

- Climate model outputs or reanalysis data (temperature, precipitation)

- Benchmark datasets for climate emulator evaluation

- Computational resources for model training and validation

Methodology:

- Data Preparation: Collect climate model runs or observational data, ensuring coverage of relevant variables (e.g., surface temperature, precipitation) across the spatial and temporal domains of interest.

- Model Implementation:
  - Implement simple physical models (e.g., Linear Pattern Scaling)
  - Configure deep learning architectures (e.g., neural network-based emulators)

- Benchmarking:
  - Train both model types on historical climate data
  - Evaluate predictions against held-out test data
  - Account for natural climate variability (e.g., El Niño/La Niña oscillations) in evaluation metrics

- Validation:
  - Compare prediction accuracy for different variables (temperature vs. precipitation)
  - Assess computational efficiency and scalability
  - Analyze performance under different emission scenarios

Applying this protocol, the study in [44] found that simple models such as LPS can outperform deep learning for regional temperature estimation, while deep learning may be preferable for precipitation, underscoring the importance of problem-specific model selection.
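Linear Pattern Scaling is simple enough to write out in full. Each grid cell's local temperature is regressed on global-mean temperature, and the fitted intercepts and slopes are then applied to new global-mean values, such as those from an emission scenario. The following is a minimal NumPy sketch, assuming gridded fields have been flattened to a (time, cell) array. The function names are hypothetical, and this is not the benchmarking code used in [44].

```python
import numpy as np


def _design(global_mean):
    # Column of ones (intercept) plus global-mean temperature (slope)
    g = np.asarray(global_mean, dtype=float)
    return np.column_stack([np.ones_like(g), g])


def fit_lps(global_mean, local_fields):
    """Fit T_local[t, cell] ~ a[cell] + b[cell] * T_global[t] for every grid cell.

    global_mean: shape (n_times,); local_fields: shape (n_times, n_cells).
    Returns coefficients of shape (2, n_cells): row 0 = a, row 1 = b.
    """
    coef, *_ = np.linalg.lstsq(_design(global_mean), local_fields, rcond=None)
    return coef


def predict_lps(coef, global_mean):
    return _design(global_mean) @ coef


def rmse(pred, obs):
    return float(np.sqrt(np.mean((np.asarray(pred) - np.asarray(obs)) ** 2)))
```

In the benchmark above, the same held-out split and RMSE would be applied to the deep learning emulator. Where ensemble members are available, averaging targets across them is one way to keep natural variability from dominating the comparison.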

Uncertainty Quantification Framework for Hydrological Predictions

Objective: To quantify predictive uncertainty in ML-based streamflow predictions under changing climate conditions.

Materials and Data Sources:

- Historical streamflow data

- Meteorological observations (precipitation, temperature, etc.)

- Watershed characteristics

- PI3NN-LSTM computational framework

Methodology:

- Data Processing:
  - Compile temporal sequences of meteorological observations and streamflow measurements
  - Handle missing data and outliers
  - Normalize input features

- Model Configuration:
  - Implement LSTM network for streamflow prediction
  - Integrate PI3NN framework with three neural networks for uncertainty quantification
  - Apply network decomposition strategy to handle complex LSTM structures

- Training and Calibration:
  - Train initial LSTM on historical data
  - Calibrate prediction intervals using PI3NN to achieve desired confidence levels
  - Validate uncertainty bounds using known observations

- Evaluation:
  - Assess prediction interval coverage probability
  - Identify out-of-distribution samples where model confidence decreases
  - Compare uncertainty quantification with traditional methods

This methodology enables identification of when model predictions become less trustworthy due to changing environmental conditions, addressing a critical challenge in climate adaptation planning [45].
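The data-processing and evaluation steps of this protocol are easy to get subtly wrong, particularly by leaking test-period statistics into normalization. The following minimal NumPy sketch covers those steps, assuming daily series stored as (time, feature) arrays. The function names, the one-step-ahead target alignment, and linear gap filling are illustrative choices rather than the configuration used in [45]. Normalized inputs would then be `(x - mu) / sd`, using the `mu` and `sd` computed on the training period.

```python
import numpy as np


def fill_gaps(series):
    """Linearly interpolate NaN gaps; leading/trailing gaps take the nearest valid value."""
    s = np.asarray(series, dtype=float).copy()
    idx = np.arange(s.size)
    ok = ~np.isnan(s)
    s[~ok] = np.interp(idx[~ok], idx[ok], s[ok])
    return s


def zscore_fit(train):
    """Per-feature mean and std computed on the training period only (avoids leakage)."""
    mu, sd = train.mean(axis=0), train.std(axis=0)
    return mu, np.where(sd == 0, 1.0, sd)


def make_windows(features, target, lookback):
    """Pair each `lookback`-step input sequence with the target at the following step."""
    X = np.stack([features[i:i + lookback] for i in range(len(features) - lookback)])
    return X, np.asarray(target)[lookback:]


def picp(y, lower, upper):
    """Prediction interval coverage probability: share of observations inside [lower, upper]."""
    y = np.asarray(y, dtype=float)
    return float(np.mean((y >= lower) & (y <= upper)))
```

A PICP well below the nominal confidence level on recent data, while it holds on the calibration period, is one practical signal that conditions have drifted away from the training distribution.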

Figure 1: ML Climate Modeling Workflow

Visualization and Interpretability in Climate ML

Integrated ML-Visualization Systems

The complexity of ML models in climate science necessitates advanced visualization tools for interpretation and communication. CityAQVis is an interactive ML sandbox tool that predicts and visualizes pollutant concentrations using multi-source data, including satellite observations, meteorological parameters, and demographic information [49]. Through an intuitive graphical interface, it lets researchers build, compare, and visualize predictive models for ground-level pollutant concentrations, bridging the gap between complex model outputs and actionable insights for urban air quality management.

The system employs comparative visualization to analyze pollution patterns across different cities or temporal periods, allowing researchers to adaptively select optimal models based on performance across varying urban settings. This functionality addresses a critical big data challenge in environmental science: translating complex, high-dimensional model outputs into interpretable information for decision-makers [49].
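The adaptive model selection described above can be sketched generically. For each city, every candidate model is scored by cross-validated error, and the best one is kept. This hypothetical NumPy example is not CityAQVis code. The two candidates, a mean baseline and ordinary least squares, stand in for whatever pollutant models a researcher builds, and `X` would hold predictors such as satellite and meteorological variables.

```python
import numpy as np


def kfold_rmse(fit, X, y, k=5, seed=0):
    """Cross-validated RMSE; `fit(X, y)` must return a predict(X) function."""
    folds = np.array_split(np.random.default_rng(seed).permutation(len(y)), k)
    sq_errs = []
    for i, test in enumerate(folds):
        train = np.concatenate([f for j, f in enumerate(folds) if j != i])
        predict = fit(X[train], y[train])
        sq_errs.append((predict(X[test]) - y[test]) ** 2)
    return float(np.sqrt(np.mean(np.concatenate(sq_errs))))


def fit_mean(X, y):
    m = float(np.mean(y))
    return lambda Xn: np.full(len(Xn), m)


def fit_ols(X, y):
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return lambda Xn: np.column_stack([np.ones(len(Xn)), Xn]) @ coef


def select_per_city(data, models):
    """data: {city: (X, y)}; models: {name: fit}. Returns {city: (best_name, rmse_by_model)}."""
    result = {}
    for city, (X, y) in data.items():
        scores = {name: kfold_rmse(fit, X, y) for name, fit in models.items()}
        result[city] = (min(scores, key=scores.get), scores)
    return result
```

Random k-fold splits can overstate skill when observations are strongly autocorrelated in time. Blocked or time-ordered folds are a more conservative choice for pollutant time series.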

Interactive ML Platforms for Environmental Research