Opening the Black Box: Interpretable Machine Learning for Predictive Ecotoxicology and Safer Drug Development

Abstract

This article explores the critical role of model interpretability in applying machine learning to ecotoxicity prediction. As regulatory and research demands grow, 'black-box' models present significant challenges for trust and mechanistic understanding. We provide a comprehensive guide for researchers and drug development professionals, covering the foundational need for explainability, a practical overview of key interpretable ML techniques (including SHAP, PDP, and ALE), strategies to overcome implementation hurdles, and rigorous validation frameworks. By synthesizing the latest methodologies and applications, this work aims to equip scientists with the knowledge to build transparent, reliable, and regulatory-compliant predictive models that accelerate the identification of toxic hazards.

The Urgent Need for Transparency: Why Black-Box Models Fail in High-Stakes Ecotoxicology

FAQs: Understanding Black-Box Models

1. What exactly is a "black-box" model in machine learning?

A black-box model is an AI system whose internal decision-making processes are opaque and not easily understandable to humans [1]. Users can observe the data fed into the model (inputs) and the predictions or classifications it produces (outputs), but the reasoning behind how it transforms inputs into outputs remains hidden [2] [1]. This complexity is particularly characteristic of advanced models like deep neural networks, which can have hundreds or thousands of layers, making it difficult even for their creators to fully interpret their inner workings [1].

2. Why is the "black-box" problem particularly critical in scientific fields like ecotoxicology?

In scientific research, the goal is not only to make accurate predictions but also to gain knowledge and understand underlying mechanisms [3]. Black-box models can obscure scientific discovery because the model itself becomes the source of knowledge instead of the data, hiding the causal relationships researchers seek to understand [3] [4]. For ecotoxicology, this means you might predict a chemical's toxicity accurately but fail to identify the structural features or biological pathways causing that toxicity, which is essential for regulatory science and mechanistic understanding [5] [6].

3. Is there a necessary trade-off between model accuracy and interpretability?

No, this is a common misconception. For many problems involving structured data with meaningful features, there is often no significant performance difference between complex black-box models and simpler, interpretable models [4]. Furthermore, the ability to interpret a model's results can help you better process data and identify issues in the next experimental iteration, potentially leading to greater overall accuracy [4]. The belief in this trade-off can perpetuate reliance on black boxes when more interpretable models would be sufficient and more scientifically informative [4].

4. What are the main types of problems caused by using black-box models for high-stakes decisions?

- Reduced Trust and Validation Difficulty: It's challenging to validate outputs when you don't understand the decision process [1].

- Clever Hans Effect: Models may arrive at correct conclusions for the wrong reasons, such as using spurious correlations in your training data (e.g., annotations on X-rays instead of the medical imagery itself) that fail in real-world applications [1].

- Difficulty Debugging and Bias Detection: Uninterpretable models make it hard to identify errors, correct model behavior, or detect biases that could lead to discriminatory outcomes [3] [4].

- Ethical and Compliance Risks: The opacity can hide biases and make it difficult to prove regulatory compliance (e.g., with EU AI Act or CCPA) [1].

Troubleshooting Guides

Guide 1: Diagnosing a Misclassified Prediction

Scenario: Your deep learning model for classifying aquatic species misclassifies a healthy specimen as "highly stressed." You need to understand why.

| Step | Action | Tool/Technique Example | Expected Outcome |
|---|---|---|---|
| 1 | Isolate the Prediction | Select the specific data point (e.g., the image or chemical descriptor) that resulted in the misclassification. | A single instance for local explanation. |
| 2 | Apply Local Explainability | Use a method like LIME (Local Interpretable Model-agnostic Explanations) to create a local surrogate model [6]. | Identifies which features (e.g., pixels, molecular descriptors) most influenced this specific wrong prediction. |
| 3 | Analyze Feature Influence | Use SHAP (SHapley Additive exPlanations) to calculate feature importance for that instance [2] [5]. | A quantitative list of features and their contribution to the misclassification. |
| 4 | Hypothesize the Cause | Correlate the explainability output with your domain knowledge. Was the decision based on a biologically irrelevant artifact? | A testable hypothesis (e.g., "The model is confusing background substrate with toxicity indicators"). |

Guide 2: Proactively Validating Model Logic Before Deployment

Scenario: You have trained an XGBoost model to predict HC50 (ecotoxicity) values and need to ensure its logic is sound before publishing your results [5].

| Step | Action | Tool/Technique Example |
|---|---|---|
| 1 | Global Explainability | Apply SHAP summary plots to the entire training set to see the global feature importance [5] [6]. |
| 2 | Check Feature Dependence | Use ALE (Accumulated Local Effects) plots to understand the relationship between key features and the predicted outcome [5]. |
| 3 | Audit for Bias | Use the model's explanations to check if predictions are unduly influenced by features correlating with sensitive attributes [3]. |
| 4 | Contextualize with Domain Knowledge | Compare the model's explanation (e.g., "Feature X is most important") with established toxicological knowledge. Does it make sense? |

The Scientist's Toolkit: Key Reagents for Interpretable ML in Ecotoxicology

| Tool / Reagent | Type | Primary Function in Research |
|---|---|---|
| SHAP | Explainability Library | Quantifies the contribution of each input feature (e.g., molecular descriptor) to a single prediction, providing a unified measure of feature importance [2] [5] [6]. |
| LIME | Explainability Library | Creates a local, interpretable surrogate model (e.g., linear model) to approximate the predictions of the black-box model for a specific instance, making single decisions understandable [1] [6]. |
| ALE Plots | Diagnostic Plot | Shows how a feature influences the model's predictions on average, overcoming limitations of partial dependence plots when features are correlated [5]. |
| XGBoost | ML Algorithm | A powerful, high-performance gradient boosting framework that can be paired with SHAP/LIME to create models that are both accurate and explainable [5]. |
| Interpretable ML Models | ML Algorithm | Models like linear regression, decision trees, or rule-based learners that are inherently transparent and provide their own explanations, which are faithful to what the model computes [4]. |

Technical Support Center: Interpreting Your Black-Box Models

Frequently Asked Questions (FAQs)

1. What does it mean if my model has high accuracy but the variable importance plot shows unexpected features?

Your model may be learning from spurious correlations or data artifacts rather than genuine biological cause-and-effect relationships. For instance, an image classifier might learn to associate "snow" with "wolf" instead of actual animal features [2]. In toxicology, this could mean your model is using laboratory-specific artifacts instead of compound structural properties for prediction.

- Troubleshooting Steps:

- Investigate Feature Relationships: Use Partial Dependence Plots (PDPs) and Individual Conditional Expectation (ICE) curves to visualize the relationship between the unexpected feature and the model's prediction [7].

- Check for Data Leakage: Ensure no feature in your dataset contains information that would not be available at the time of prediction in a real-world scenario.

- Use Model-Agnostic Tools: Apply tools like SHAP (SHapley Additive exPlanations) to analyze predictions for individual instances to understand the driving features on a case-by-case basis [2].

2. How can I visualize the effect of a specific chemical feature on my model's toxicity prediction?

You can use graphical tools designed for interpretable machine learning to visualize covariate-response relationships [7].

- Troubleshooting Steps:

- Generate a Partial Dependence Plot (PDP): This shows the average relationship between a feature and the predicted outcome.

- Plot Individual Conditional Expectation (ICE) Curves: These show the relationship for each individual instance, helping you identify heterogeneity in the feature's effect.

- Calculate Accumulated Local Effects (ALE) Plots: These are more reliable than PDPs when features are correlated, as they show the effect of a feature in a localized region of the feature space [7].
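
To make the ALE step above more concrete, here is a minimal from-scratch sketch of a first-order ALE curve. It is an illustration under assumptions, not a library API: the function name `ale_1d`, the toy linear model, and the synthetic correlated features are all hypothetical, and dedicated packages handle edge cases (categorical features, empty bins) more carefully.

```python
import numpy as np

def ale_1d(predict, X, feature, n_bins=10):
    """First-order ALE curve for one feature (illustrative sketch)."""
    x = X[:, feature]
    # Quantile-based edges so each interval holds roughly the same number of rows.
    edges = np.unique(np.quantile(x, np.linspace(0, 1, n_bins + 1)))
    # Interval index per row: (edges[k], edges[k+1]]; the minimum joins interval 0.
    idx = np.clip(np.searchsorted(edges, x, side="left") - 1, 0, len(edges) - 2)
    local_effects = np.zeros(len(edges) - 1)
    counts = np.zeros(len(edges) - 1)
    for k in range(len(edges) - 1):
        rows = X[idx == k]
        if len(rows) == 0:
            continue
        lower, upper = rows.copy(), rows.copy()
        lower[:, feature] = edges[k]
        upper[:, feature] = edges[k + 1]
        # Average prediction change across the interval, using only rows that
        # actually fall inside it (this is what avoids unrealistic combinations).
        local_effects[k] = np.mean(predict(upper) - predict(lower))
        counts[k] = len(rows)
    ale = np.cumsum(local_effects)            # accumulate the local effects
    ale -= np.sum(ale * counts) / counts.sum()  # center on the data-weighted mean
    return edges[1:], ale

# Hypothetical example: two strongly correlated features and a known linear model.
rng = np.random.default_rng(0)
x0 = rng.normal(size=500)
X = np.column_stack([x0, x0 + 0.1 * rng.normal(size=500)])
predict = lambda A: 2.0 * A[:, 0] + A[:, 1]

grid, ale = ale_1d(predict, X, feature=0)
```

For this toy model the ALE curve for feature 0 rises with slope 2, the feature's own coefficient, even though it is strongly correlated with feature 1.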

3. My gradient boosted tree model for species distribution is a "black-box." How can I debug it and ensure it's learning ecologically relevant interactions?

Gradient boosted trees (GBT) are powerful but complex. Their black-box nature can be opened using several statistical tools [7].

- Troubleshooting Steps:

- Quantify Interaction Effects: Use statistical measures like Interaction Strength (IAS) and Friedman's \(H^2\) statistic to identify which features are involved in strong interactions [7].

- Visualize Key Variables: For the most important variables, create PDP and ALE plots to visualize their marginal effect on the prediction. In ecological contexts, key variables often include region, stability indices, and vegetation [7].

- Validate with Domain Knowledge: Compare the identified important features and interactions against established ecological knowledge. If a model highlights a previously unknown interaction, it warrants further, targeted investigation.

4. What should I do if my model's performance degrades when applied to a new geographical region or chemical space?

This indicates a model generalization failure, likely due to data distribution shift or the presence of confounding variables not accounted for in the original model.

- Troubleshooting Steps:

- Analyze Input Data Distributions: Visualize and compare the distributions of key features in your training data versus the new deployment data. A significant shift can explain performance degradation [8].

- Check for Confounders: Re-evaluate your feature set. In environmental health, factors like ecoregion or upstream human disturbance indices can be critical confounders that, if missing, limit a model's transferability [7].

- Employ Interpretability Tools: Use ICE curves or SHAP to analyze predictions in the new region. This can reveal if the model is applying the same logic incorrectly or if the underlying relationships have changed.
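
One simple way to carry out the distribution comparison described above is a per-feature two-sample Kolmogorov-Smirnov test. The sketch below assumes numeric features; the helper `flag_shifted_features`, the feature names, the significance threshold, and the synthetic data are all hypothetical choices for illustration.

```python
import numpy as np
from scipy.stats import ks_2samp

def flag_shifted_features(X_train, X_new, names, alpha=0.01):
    """Return (name, KS statistic) for features whose distributions differ."""
    flagged = []
    for j, name in enumerate(names):
        result = ks_2samp(X_train[:, j], X_new[:, j])
        if result.pvalue < alpha:
            flagged.append((name, round(float(result.statistic), 3)))
    return flagged

# Hypothetical data: the second feature is shifted in the new region.
rng = np.random.default_rng(0)
X_train = rng.normal(size=(400, 2))
X_new = np.column_stack([
    rng.normal(size=400),            # same distribution as training
    rng.normal(loc=1.5, size=400),   # shifted mean
])

shifted = flag_shifted_features(X_train, X_new, ["logKow", "molecular_weight"])
print(shifted)
```

A flagged feature is a prompt for closer inspection (for example with ICE curves in the new region), not proof that the shift explains the performance drop.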

Diagnostic Tables for Common Model Interpretation Issues

Table 1: Common "Black-Box" Model Symptoms and Diagnostic Tools

| Problem Symptom | Potential Cause | Recommended Diagnostic Tool(s) | Purpose of Tool |
|---|---|---|---|
| High validation accuracy but predictions are untrustworthy | Spurious correlations; model relying on data artifacts | SHAP, LIME, PDP/ICE Plots [7] [2] | Vet individual predictions and visualize feature-output relationships to identify illogical decision paths. |
| Model fails to generalize to new data | Overfitting; dataset shift; unaccounted confounders | ALE Plots; Input/Output Distribution Visualization [7] [8] | Isolate true feature effects from correlated ones and check for data drift. |
| Difficult to explain which features drive a prediction | Inherent complexity of the model (e.g., GBT, DNN) | Variable Importance Measures; SHAP; Model-specific tools (e.g., TensorBoard for DNNs) [9] [7] [2] | Quantify global and local feature contribution to the model's output. |
| Need to visualize complex model architecture | Debugging and optimization of neural networks | Netron, TensorBoard Model Graph [9] | Produce interactive visualizations of neural network layers and connections. |
| Suspected complex interaction effects | Non-linear relationships between features | Friedman's \(H^2\), Interaction Strength (IAS), 2D PDPs [7] | Quantify and visualize interaction strength between pairs of features. |

Table 2: Key Software Tools for Explainable AI (XAI) in Research

| Tool Name | Primary Function | Key Features for Ecotoxicology | Integration |
|---|---|---|---|
| SHAP | Unified framework for explaining model predictions | Quantifies each feature's contribution to a prediction for any model; ideal for justifying toxicity classifications [2]. | Python (R) |
| PDP/ICE Box | Visualizes marginal effect of a feature on prediction | Highlights individual instance heterogeneity (ICE) and average effect (PDP); useful for analyzing chemical dose-response [7]. | R |
| iml | Provides model-agnostic interpretation tools | Contains various methods including feature importance, PDPs, and ALE; flexible for different model types [7]. | R |
| TensorBoard | Suite of visualization tools | Tracks metrics, visualizes model graph, views histograms of weights/biases; good for deep learning models [9] [8]. | TensorFlow/PyTorch |
| Neptune.ai | Experiment tracking and model management | Logs and compares all model-building metadata; ensures reproducibility across complex toxicity studies [9]. | Cloud/On-prem |

Experimental Protocols for Model Interpretation

Protocol 1: Creating and Interpreting Partial Dependence Plots (PDPs)

- Objective: To visualize the average marginal effect of one or two features on the predicted outcome of a machine learning model.

- Methodology:

- Model Training: Train your chosen model (e.g., Gradient Boosted Tree) on your dataset.

- Feature Selection: Select the feature of interest (e.g., "Chemical Concentration").

- Grid Creation: Create a grid of values covering the range of the selected feature.

- Prediction and Averaging: For each value in the grid:

- Replace the actual feature values in the dataset with the current grid value.

- Use the trained model to generate predictions for this modified dataset.

- Average the predictions.

- Plotting: Plot the grid values on the x-axis and the average predictions on the y-axis [7].

- Interpretation: The resulting curve shows how the average model prediction changes as the feature of interest changes.
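
The grid, replace, predict, and average steps of this protocol can be sketched directly in a few lines. This is a minimal illustration, assuming a model exposed as a `predict` function over a NumPy array; the function name `partial_dependence_1d` and the toy model are hypothetical. The per-instance curves it keeps along the way are the ICE curves.

```python
import numpy as np

def partial_dependence_1d(predict, X, feature, grid_size=20):
    """Return the grid, the PDP (average curve), and the ICE curves."""
    # Grid creation: evenly spaced values over the observed feature range.
    grid = np.linspace(X[:, feature].min(), X[:, feature].max(), grid_size)
    ice = np.empty((X.shape[0], grid_size))
    for g, value in enumerate(grid):
        X_mod = X.copy()
        X_mod[:, feature] = value     # replace the feature with the grid value
        ice[:, g] = predict(X_mod)    # predict on the modified dataset
    pdp = ice.mean(axis=0)            # average the predictions per grid value
    return grid, pdp, ice

# Hypothetical model: quadratic in feature 0, linear in feature 1.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 2))
predict = lambda A: A[:, 0] ** 2 + A[:, 1]

grid, pdp, ice = partial_dependence_1d(predict, X, feature=0)
```

Plotting `pdp` against `grid` gives the PDP; plotting each row of `ice` against `grid` gives one ICE curve per instance.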

Protocol 2: Quantifying Feature Interactions using Friedman's H² Statistic

- Objective: To measure the strength of interaction between a feature of interest and all other features in the model.

- Methodology:

- Compute Partial Dependence: Calculate the partial dependence function, \(F_S(x_S)\), for the feature(s) of interest.

- Compute Individual Feature Dependence: Calculate the partial dependence function, \(F_j(x_j)\), for each individual feature.

- Calculate the Statistic: Use Friedman's \(H^2\) statistic, which is based on the variance of the interaction effects. A value of 0 implies no interaction, while a value of 1 implies that the entire effect of the features is due to interaction [7].

- Interpretation: A high \(H^2\) value for a feature indicates that its effect on the prediction is highly dependent on other features, suggesting a significant interaction that should be investigated further with 2D PDPs.
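
For reference, the statistic in this protocol is usually defined as shown below. The notation is an assumption introduced here rather than taken from the text above: \(\hat{f}\) is the fitted model, \(F_{\setminus j}\) is the partial dependence on all features except \(j\), \(x^{(i)}\) is the \(i\)-th of \(n\) instances, and all partial dependence functions are taken to be mean-centered.

\[
H_j^2 = \frac{\sum_{i=1}^{n} \left[ \hat{f}\left(x^{(i)}\right) - F_j\left(x_j^{(i)}\right) - F_{\setminus j}\left(x_{\setminus j}^{(i)}\right) \right]^2}{\sum_{i=1}^{n} \hat{f}^2\left(x^{(i)}\right)}
\]

The pairwise version, which measures the interaction between two specific features \(j\) and \(k\), has the same shape:

\[
H_{jk}^2 = \frac{\sum_{i=1}^{n} \left[ F_{jk}\left(x_j^{(i)}, x_k^{(i)}\right) - F_j\left(x_j^{(i)}\right) - F_k\left(x_k^{(i)}\right) \right]^2}{\sum_{i=1}^{n} F_{jk}^2\left(x_j^{(i)}, x_k^{(i)}\right)}
\]

In both cases the numerator is the part of the prediction that the separate partial dependence functions cannot reproduce, which is why a purely additive model yields a value of 0.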

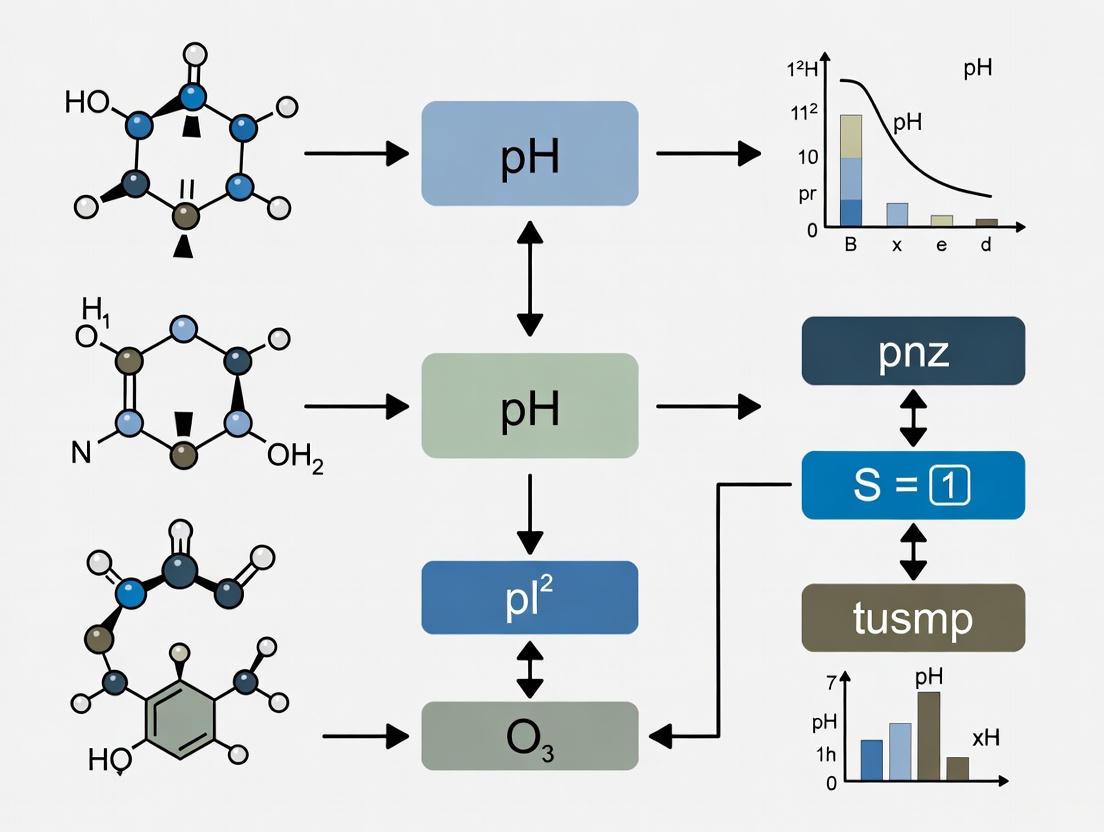

Workflow Visualization

Model Interpretation Workflow

The Scientist's Toolkit: Essential XAI Reagents & Software

Table 3: Key Research Reagents and Solutions for Interpretable Modeling

| Tool / "Reagent" | Type | Function in the "Experiment" |
|---|---|---|
| SHAP (SHapley Additive exPlanations) | Software Library | Provides a unified measure of feature importance for any prediction, explaining the output of any model by quantifying each feature's contribution [2]. |
| Partial Dependence Plots (PDP) | Visualization Method | Shows the average marginal effect of a feature on the model's prediction, helping to visualize the relationship's shape (e.g., linear, monotonic) [7]. |
| Accumulated Local Effects (ALE) Plots | Visualization Method | A more robust alternative to PDPs when features are correlated. It calculates the effect of a feature in localized intervals, preventing skewed results [7]. |
| Individual Conditional Expectation (ICE) Curves | Visualization Method | Plots the prediction path for each individual instance as a feature changes, revealing heterogeneity in the feature's effect and uncovering subgroups [7]. |
| Gradient Boosted Trees (GBT) with Interaction Constraints | Modeling Algorithm | A powerful prediction model that can be coupled with interpretability tools. Its flexibility allows it to capture complex, non-linear relationships in ecological data [7]. |
| Neptune.ai / MLflow | Experiment Tracker | Acts as a "lab notebook" for machine learning, logging parameters, metrics, and artifacts to ensure reproducibility and facilitate model comparison [9] [8]. |

Technical Support Center: FAQs & Troubleshooting Guides

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between a "black box" and a "white box" model in machine learning?

- White Box Models are characterized by their transparency; you can see and understand everything that’s going on inside them. Their decision-making process is fully traceable and comprehensible. Examples include linear regression, decision trees, and logistic regression [10]. They are inherently interpretable [11].

- Black Box Models are more complex, and their internal decision-making processes are opaque and difficult for humans to comprehend. Examples include deep neural networks, random forests, and gradient boosting machines [10]. They often deliver high performance but require post-hoc techniques to explain their outputs [12] [11].

2. Why is Explainable AI (XAI) critically important in ecotoxicology and drug development research?

XAI is crucial for several reasons [12] [11] [6]:

- Trust and Adoption: It helps researchers and regulators trust model predictions, especially when they inform critical decisions about chemical safety or therapeutic interventions.

- Debugging and Insight: Explanations can reveal spurious correlations, data leakage, or unstable features, leading to faster model improvement.

- Regulatory Compliance: Regulations increasingly push for transparency, fairness, and oversight in models that impact health and the environment. XAI helps meet these requirements.

- Scientific Discovery: In ecotoxicology, methods like SHAP can link predictive features to mechanistic toxicology, helping to generate new hypotheses about the biological pathways affected by pollutants [6] [13].

3. How do I choose between using an inherently interpretable model and applying post-hoc explanation techniques?

The choice involves a trade-off and should be guided by your project's specific needs [11] [10]:

- Use Inherently Interpretable Models (White Box) when operating in high-stakes, regulated domains, when working with smaller datasets, or when transparency is the primary requirement. Examples include decision trees or linear models.

- Use Post-hoc Explanation Techniques with complex models when the predictive task is highly complex and requires the superior accuracy of a black-box model, but explanations are still needed for validation and insight. Techniques like LIME and SHAP can be applied to models like random forests or neural networks [14].

4. What are the most common XAI techniques used for high-dimensional environmental data?

For high-dimensional data common in ecotoxicology, such as measurements of numerous chemical mixtures, the following techniques are particularly valuable [11] [6] [13]:

- SHAP (SHapley Additive exPlanations): Provides consistent, theoretically grounded feature attributions for both global and local explanations. It is highly effective for identifying the most influential predictors from a large set of features.

- LIME (Local Interpretable Model-agnostic Explanations): Explains individual predictions by approximating the complex model locally with an interpretable one.

- Partial Dependence Plots (PDP): Visualize the relationship between a feature and the predicted outcome on average.

5. Our model's performance is degrading over time. What could be the cause and how can XAI help?

Performance degradation is often caused by model drift [12] [11]. This can be:

- Data Drift (Covariate Shift): When the statistical properties of the input data change over time.

- Concept Drift: When the relationship between the input variables and the target variable changes.

XAI helps by continuously monitoring and comparing feature importance distributions and individual explanations over time. A significant change in the explanations or the features that drive predictions can serve as an early warning signal for model drift, allowing you to retrain or update the model proactively.

Troubleshooting Guide for Common XAI Issues

| Issue | Possible Causes | Diagnostic Steps | Recommended Solutions |
|---|---|---|---|
| Unstable/Conflicting Explanations | High model sensitivity; Noisy data; Unsuitable explanation technique [11]. | Check explanation stability for similar input instances; Use multiple explanation methods for comparison. | Simplify the model if possible; Use explanation methods with built-in stability guarantees; Increase data quality. |
| Explanations Lack Scientific Plausibility | Model has learned spurious correlations; Data quality issues; Domain knowledge not integrated [11]. | Validate explanations with a domain expert (e.g., an ecotoxicologist); Conduct a literature review on identified features. | Incorporate domain constraints (e.g., monotonicity); Use feature engineering informed by science; Prioritize models with plausible explanations. |
| Failure to Meet Regulatory Standards | Model is not sufficiently transparent; Lack of audit trail; Inadequate fairness assessments [12]. | Review regulatory guidelines (e.g., EU AI Act); Conduct an internal audit of model documentation and explanations. | Switch to an inherently interpretable model; Implement rigorous model cards and documentation; Use XAI for fairness and bias scanning. |
| Inability to Identify Key Features from Chemical Mixtures | High feature correlation; Complex interactions; Explanation method not capturing interactions [13]. | Use SHAP interaction values; Perform correlation analysis on features. | Employ techniques like SHAP that can handle interactions; Use recursive feature elimination (RFE) for feature selection [13]. |
| Long Training Time for Explainable Models | Large dataset; Complex model architecture; Inefficient explanation algorithms. | Profile code to identify bottlenecks; Start with a smaller subset of data. | Use faster, model-specific explanation methods; Leverage hardware acceleration (GPUs); Use approximation methods for explanations. |

Experimental Protocols & Methodologies

Protocol 1: Building an Interpretable ML Model for Ecotoxicity Prediction

This protocol is adapted from a study predicting depression risk from environmental chemical mixtures (ECMs) using an interpretable machine-learning framework [13].

1. Problem Formulation & Data Collection

- Objective: To predict a continuous or categorical ecotoxicological endpoint (e.g., LC50, mutagenicity) based on a set of features (e.g., chemical descriptors, exposure levels).

- Data Source: Utilize public databases or experimental data. For example, the study used data from the National Health and Nutrition Examination Survey (NHANES) [13].

2. Data Preprocessing & Feature Selection

- Handling Missing Data: For covariates with less than 20% missing data, impute using methods like k-nearest neighbors (KNN). Exclude variables with excessive missing data [13].

- Outlier Treatment: Adjust abnormal values using methods like Winsorization (e.g., setting a threshold at the 1st and 99th percentiles) [13].

- Feature Selection: Use Recursive Feature Elimination (RFE) with a 10-fold cross-validation to identify the most important features. This helps in reducing dimensionality and improving model interpretability [13].
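
The three preprocessing steps above could be chained roughly as follows with scikit-learn. This is a sketch on synthetic data, not the cited study's code: the dataset, the choice of `n_neighbors=5`, and the random forest used inside the feature eliminator are assumptions made for illustration.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import RFECV
from sklearn.impute import KNNImputer

# Synthetic stand-in for a feature matrix with missing values (hypothetical).
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 8))
y = (X[:, 0] + X[:, 1] > 0).astype(int)
X[rng.random(X.shape) < 0.05] = np.nan   # inject roughly 5% missing cells

# 1. Missing data: drop columns with 20% or more missing, KNN-impute the rest.
keep = np.isnan(X).mean(axis=0) < 0.20
X_imp = KNNImputer(n_neighbors=5).fit_transform(X[:, keep])

# 2. Outliers: winsorize each column at its 1st and 99th percentiles.
lower, upper = np.percentile(X_imp, [1, 99], axis=0)
X_win = np.clip(X_imp, lower, upper)

# 3. Feature selection: recursive feature elimination with 10-fold CV.
selector = RFECV(
    RandomForestClassifier(n_estimators=50, random_state=0),
    step=1,
    cv=10,
    scoring="roc_auc",
)
selector.fit(X_win, y)
selected = np.flatnonzero(selector.support_)
print("selected feature indices:", selected)
```

For brevity the imputation and winsorization here are fitted on the full dataset. In a real evaluation they would normally sit inside a pipeline so that they are refitted within each cross-validation fold, which avoids leaking information from held-out data.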

3. Model Training & Evaluation

- Model Selection: Train and compare multiple machine learning algorithms. The cited study evaluated nine, including Random Forest (RF), Gradient Boosting Machines (GBM), and Support Vector Machines (SVM) [13].

- Model Evaluation: Use a 10-fold cross-validation scheme. Evaluate models based on performance metrics like AUC (Area Under the ROC Curve), F1 score, and Root Mean Square Error (RMSE). In the cited study, the Random Forest model showed the best performance (AUC: 0.967) [13].
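
A model comparison of this kind might look like the sketch below, which scores three of the named algorithm families by mean AUC under 10-fold cross-validation. The synthetic dataset and all hyperparameters are placeholders, and the numbers it produces have no relation to the results reported in the cited study.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.svm import SVC

# Synthetic binary endpoint standing in for real toxicity labels (hypothetical).
X, y = make_classification(
    n_samples=300, n_features=10, n_informative=4, random_state=0
)

models = {
    "RF": RandomForestClassifier(n_estimators=100, random_state=0),
    "GBM": GradientBoostingClassifier(random_state=0),
    "SVM": SVC(random_state=0),
}

# The same stratified 10-fold split is reused so the models are compared fairly.
cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
auc = {
    name: cross_val_score(model, X, y, cv=cv, scoring="roc_auc").mean()
    for name, model in models.items()
}
best = max(auc, key=auc.get)
print(auc, "best:", best)
```

Swapping `scoring` for `"f1"` (classification) or `"neg_root_mean_squared_error"` (regression) gives the other metrics mentioned above.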

4. Model Explanation & Interpretation

- Global Explanation: Apply SHAP (SHapley Additive exPlanations) to the entire dataset to understand the overall importance of each feature in the model's predictions [13].

- Local Explanation: Use SHAP or LIME to explain individual predictions, which is crucial for understanding specific cases [11].

The following workflow diagram summarizes this experimental protocol:

Protocol 2: Implementing a Post-hoc XAI Technique with SHAP

This protocol details the steps to explain a "black-box" model's predictions using SHAP, which is highly applicable for understanding complex models in ecotoxicology [11] [6] [13].

1. Model Agnostic Setup

- Prerequisite: A trained machine learning model (e.g., a random forest or neural network) and a test dataset.

- Objective: To explain the model's output by calculating the contribution (Shapley value) of each feature to the final prediction for a single instance or the entire dataset.

2. SHAP Explanation Computation

- Choose a SHAP Explainer: Select the appropriate explainer for your model (e.g., `TreeExplainer` for tree-based models, `KernelExplainer` as a general-purpose method).

- Compute SHAP Values: Calculate the SHAP values for the instances you wish to explain. This provides a matrix of contributions for each feature per instance.

3. Visualization and Interpretation

- Summary Plot: Create a global summary plot (e.g., a bar plot of mean absolute SHAP values) to see which features are most important overall.

- Force Plot: Generate a local force plot for a single prediction to see how each feature pushed the model's output from the base value to the final prediction.

- Dependence Plot: Plot a feature's SHAP value against its feature value to understand the direction and nature of its effect (e.g., monotonic, non-linear).
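
To show what a Shapley value actually is, the sketch below computes exact values for one instance by enumerating every feature coalition. It deliberately does not call the SHAP library. It is a from-scratch illustration that is only feasible for a handful of features, and the function `shapley_values`, the toy model, and the background data are hypothetical.

```python
import itertools
import math
import numpy as np

def shapley_values(predict, x, background):
    """Exact Shapley values for one instance via coalition enumeration."""
    n = len(x)

    def value(subset):
        # Features in `subset` take the instance's values; the others are
        # marginalized by averaging predictions over the background rows.
        idx = np.array(subset, dtype=int)
        Z = background.copy()
        Z[:, idx] = x[idx]
        return predict(Z).mean()

    phi = np.zeros(n)
    for j in range(n):
        others = [k for k in range(n) if k != j]
        for size in range(n):
            weight = (math.factorial(size) * math.factorial(n - size - 1)
                      / math.factorial(n))
            for S in itertools.combinations(others, size):
                # Weighted marginal contribution of feature j to coalition S.
                phi[j] += weight * (value(S + (j,)) - value(S))
    return phi, value(())   # contributions and the base value

# Hypothetical model with one additive term and one interaction term.
rng = np.random.default_rng(0)
background = rng.normal(size=(100, 3))
predict = lambda A: 3.0 * A[:, 0] + A[:, 1] * A[:, 2]
x = np.array([1.0, 2.0, -1.0])

phi, base = shapley_values(predict, x, background)
print("base value:", base, "contributions:", phi)
```

The contributions plus the base value add up to the model's prediction for `x`, which is the property a force plot visualizes. The library's explainers compute or approximate these quantities far more efficiently than this enumeration.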

Essential Research Reagent Solutions

The following table details key computational "reagents" and tools essential for conducting interpretable machine learning research in ecotoxicology.

| Research Reagent / Tool | Function & Explanation |
|---|---|
| SHAP (SHapley Additive exPlanations) | A unified framework for interpreting model predictions. It assigns each feature an importance value for a particular prediction based on cooperative game theory, providing both global and local interpretability [11] [13]. |
| LIME (Local Interpretable Model-agnostic Explanations) | A technique that explains individual predictions by perturbing the input data and seeing how the predictions change. It then fits a simple, interpretable model (like linear regression) to the perturbed data to explain the local decision boundary [11] [14]. |
| Partial Dependence Plots (PDP) | A global model-agnostic method that visualizes the marginal effect one or two features have on the predicted outcome of a machine learning model, helping to understand the relationship between features and prediction [11]. |
| Random Forest with Recursive Feature Elimination (RFE) | An ensemble learning method that constructs many decision trees. When combined with RFE, it becomes a powerful tool for feature selection, helping to identify the most relevant predictors from a high-dimensional dataset, such as a complex chemical mixture [13]. |
| Model Cards | A documentation framework used to provide context and transparency about a machine learning model's intended use, performance characteristics, and limitations. This is crucial for auditability and regulatory compliance [12] [11]. |

Core Conceptual Relationships in XAI

The following diagram illustrates the logical relationships between core concepts in Explainable AI, from the fundamental trade-off to the ultimate goal of trustworthy AI.

Frequently Asked Questions (FAQs) on Model Interpretability

FAQ 1: Why is model interpretability suddenly so critical in our ecotoxicology research? Regulatory bodies are increasingly mandating transparency for model-based decisions, especially in environmental and health safety domains [15]. Furthermore, interpretability is an ethical imperative. It helps ensure that your models for predicting chemical toxicity (e.g., HC50) are not making decisions based on spurious correlations, which builds trust in your results and ensures accountability for the outcomes [2] [4]. Explaining a model's decision-making process is key to justifying its use in high-stakes scenarios like ecological risk assessment [2].

FAQ 2: What is the fundamental difference between an interpretable model and an explainable black-box model? An inherently interpretable model is designed to be transparent from the start, such as a linear model with meaningful coefficients or a short decision tree. Its internal logic is the explanation [4]. In contrast, an explainable black-box model (like a complex neural network or ensemble method) is opaque, and a second, separate technique (like SHAP or LIME) is used to generate post-hoc explanations for its predictions after the fact [16] [17]. The core distinction is that the former provides a single, faithful explanation, while the latter provides an approximation that may not be perfectly accurate [4].

FAQ 3: We need high accuracy. Must we sacrifice performance for interpretability? Not necessarily. A common misconception is that a trade-off between accuracy and interpretability is inevitable [4]. For many problems involving structured data with meaningful features—common in scientific fields—highly interpretable models like logistic regression or decision trees can achieve performance comparable to more complex black boxes [4]. The iterative process of building an interpretable model often leads to better data understanding and feature engineering, which can ultimately improve overall accuracy [4].

FAQ 4: When should we use model-agnostic interpretation methods like SHAP and LIME? SHAP and LIME are most valuable when the highest possible predictive accuracy depends on using a complex, black-box model, but you still have a regulatory or scientific need to explain its predictions [16] [17]. SHAP is excellent for quantifying the contribution of each feature to a single prediction [16], while LIME is designed to create a local, interpretable approximation around a specific prediction [16]. They should be used with the understanding that they are approximations of the model's behavior [4].

FAQ 5: Our Random Forest model for predicting fish population impact is performing well. How can we identify which features are driving its predictions? For a global understanding of your model, you can use Permutation Feature Importance to see which features cause the largest increase in model error when shuffled [16]. For a more detailed, instance-level explanation, SHAP (SHapley Additive exPlanations) is a powerful method that shows how each feature contributes to pushing the model's output from a base value to the final prediction for any given data point [2] [16] [17].
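
As a rough illustration of the permutation importance approach mentioned in FAQ 5, the sketch below fits a random forest on synthetic data and ranks features by how much shuffling each one degrades held-out performance. The feature names and the data-generating process are hypothetical.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

# Synthetic data: only the first two features influence the response.
rng = np.random.default_rng(0)
names = ["water_temperature", "dissolved_oxygen", "pH", "turbidity"]
X = rng.normal(size=(400, 4))
y = 3.0 * X[:, 0] + 0.5 * X[:, 1] + rng.normal(scale=0.1, size=400)

X_tr, X_te, y_tr, y_te = train_test_split(X, y, random_state=0)
model = RandomForestRegressor(n_estimators=100, random_state=0).fit(X_tr, y_tr)

# Shuffle each feature on held-out data and record the drop in score.
result = permutation_importance(model, X_te, y_te, n_repeats=10, random_state=0)
ranking = [names[i] for i in np.argsort(result.importances_mean)[::-1]]
print(ranking)
```

Computing the importance on held-out data rather than the training set keeps the ranking from rewarding features the model has merely memorized.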

Troubleshooting Guides

Problem: Model Explanations are Inconsistent or Unstable

Symptoms: Slightly changing the input data or re-running LIME/SHAP leads to significantly different explanations. The story behind the model's decision seems to change arbitrarily.

Diagnosis and Resolution:

Check for Local Explanation Instability:

- Cause: This is a known issue with some local surrogate methods like LIME, where the random sampling used to perturb instances can lead to different results [16].

- Solution: Increase the number of samples in LIME to stabilize the approximation. For critical results, do not rely on a single explanation; instead, run the explanation multiple times to ensure the core features identified are consistent. Consider using SHAP, which, while computationally more expensive, can provide more consistent results due to its theoretical foundation [16].
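One way to act on the advice to run the explanation multiple times is to count how often each feature lands among the top-ranked features. The sketch below assumes each run yields a mapping from feature name to weight. `noisy_explanation` is a hypothetical stand-in for a stochastic explainer such as LIME, and its weights are illustrative only.

```python
import random
from collections import Counter

def top_k_features(weights, k):
    """Names of the k features with the largest absolute weight."""
    return sorted(weights, key=lambda name: abs(weights[name]), reverse=True)[:k]

def selection_frequency(runs, k):
    """Fraction of runs in which each feature appears among the top k."""
    counts = Counter(name for weights in runs for name in top_k_features(weights, k))
    return {name: counts[name] / len(runs) for name in runs[0]}

# Hypothetical stand-in for a stochastic local explainer: fixed local weights
# plus sampling noise that changes with the seed. Values are illustrative only.
def noisy_explanation(seed, noise=0.1):
    rng = random.Random(seed)
    local_weights = {"logP": 0.80, "mol_weight": 0.30, "tpsa": 0.25, "h_donors": 0.02}
    return {name: w + rng.gauss(0, noise) for name, w in local_weights.items()}

runs = [noisy_explanation(seed) for seed in range(30)]
for name, freq in selection_frequency(runs, k=2).items():
    print(f"{name}: in the top 2 for {freq:.0%} of runs")
```

Features that appear in the top set in nearly every run can be reported with more confidence than features that drift in and out.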

Validate Feature Independence Assumptions:

- Cause: Methods like PDP and Permutation Feature Importance assume features are independent. If your ecotoxicology features are highly correlated (e.g., molecular weight and molecular volume), these methods can create unrealistic data points during analysis, leading to biased interpretations [16].

- Solution: Analyze your feature correlation matrix. If high correlations exist, consider using methods like Accumulated Local Effects (ALE) plots, which are designed to handle correlated features effectively [5].

Audit the Model for Overfitting:

- Cause: An overfitted model has learned noise in the training data rather than the generalizable underlying relationships. This makes its behavior, and therefore its explanations, highly sensitive to small input changes.

- Solution: Review your model's performance on a held-out test set. If performance on the test set is significantly worse than on the training set, implement stronger regularization, simplify the model, or gather more data.

Problem: Explanation Does Not Align with Domain Knowledge

Symptoms: Your model has high predictive accuracy, but the interpretation method highlights a feature that ecotoxicologists know is biologically irrelevant or suggests a relationship that is the inverse of established scientific consensus (e.g., a known toxicant is shown to decrease toxicity risk).

Diagnosis and Resolution:

Investigate for a Spurious Correlation:

- Cause: The model may have latched onto an artifact in your dataset. A famous example is a wolf/husky classifier that learned to detect "snow" in the background rather than the animal's features [2].

- Solution: Use your interpretation method as a debugging tool. Perform a thorough error analysis: are there subgroups of data where the model performs poorly? Manually inspect the instances where the counter-intuitive feature has a strong influence. This may reveal a data collection or labeling bias.

Check for Feature Interaction Effects:

- Cause: The effect of one feature may be dependent on the value of another. A PDP might show an average effect that hides important heterogeneous relationships [16].

- Solution: Move beyond one-dimensional plots. Use Individual Conditional Expectation (ICE) plots to see how predictions for individual instances change with a feature, which can reveal interactions [16]. Use SHAP interaction values to quantitatively analyze feature interactions.

Enforce Model Constraints with Interpretable Models:

- Cause: The black-box model is free to learn any relationship, even those that are scientifically impossible.

- Solution: If the problem persists, abandon the black-box approach. Switch to an inherently interpretable model where you can enforce constraints like monotonicity [4]. For example, you can constrain a feature like "pesticide concentration" so that increasing it never lowers the "mortality risk" score (a monotonically non-decreasing effect), ensuring the model's behavior aligns with fundamental domain knowledge.

Problem: Difficulty Meeting Regulatory Scrutiny with a Black-Box Model

Symptoms: Regulators or internal compliance officers are questioning your model, asking for a complete and faithful accounting of its logic, which your post-hoc explanations are failing to provide.

Diagnosis and Resolution:

Recognize the Fidelity Gap of Post-hoc Explanations:

- Cause: By definition, an explanation for a black-box model cannot have perfect fidelity. If it did, it would be the model [4]. Regulators are increasingly aware that these explanations can be inaccurate representations of the underlying model [15] [4].

- Solution: The most robust path to compliance is to use an inherently interpretable model [4]. Models like logistic regression, decision trees, or rule-based models provide their own transparent logic, which is exactly what they compute. This eliminates the fidelity gap and builds trust with regulators [4] [17].

Implement a Global Surrogate Model:

- Cause: You are temporarily locked into a black-box model for legacy reasons but need a global understanding.

- Solution: Train an interpretable model (e.g., a decision tree) to approximate the predictions of your black-box model across your entire dataset [16]. This surrogate model can provide a holistic view of the black box's logic. Be transparent that this is an approximation and report the fidelity (e.g., R-squared) between the surrogate and the original model [16].

Table 1: Comparison of Key Model-Agnostic Interpretability Methods

| Method | Scope | Key Advantage | Key Limitation | Best Use Case in Ecotoxicology |

|---|---|---|---|---|

| Partial Dependence Plot (PDP) [16] | Global | Intuitive visualization of a feature's average marginal effect. | Hides heterogeneous effects; assumes feature independence. | Understanding the average effect of a single chemical property (e.g., logP) on toxicity. |

| Individual Conditional Expectation (ICE) [16] | Local & Global | Uncovers individual heterogeneity and feature interactions. | Can become cluttered; hard to see the average effect. | Identifying if a toxicant affects a specific sub-population of fish differently. |

| Permutation Feature Importance [16] | Global | Simple, intuitive measure of a feature's importance to model performance. | Results can be unstable; requires access to true outcomes. | Auditing a model to find the top 3 most important molecular descriptors. |

| LIME [16] [17] | Local | Creates human-friendly, contrastive explanations for a single prediction. | Explanations can be unstable; sensitive to kernel settings. | Explaining why a specific chemical was flagged as "highly toxic" to a regulator. |

| SHAP [2] [16] [17] | Local & Global | Provides a unified, theoretically sound measure of feature contribution. | Computationally expensive for large datasets/models. | A comprehensive audit of model logic, both for individual predictions and overall behavior. |

Experimental Protocols

Protocol: Explaining a HC50 Prediction using SHAP

Objective: To interpret a trained XGBoost model predicting chemical ecotoxicity (HC50) by quantifying the contribution of each molecular descriptor to the prediction for a specific chemical.

Materials: Trained XGBoost model, pre-processed test dataset of chemical descriptors, Python environment with shap library installed.

Methodology:

- Model Training: Train an XGBoost regressor to predict HC50 values using a set of curated molecular descriptors [5].

- SHAP Explainer Initialization: Initialize a `TreeExplainer` from the `shap` library, passing your trained XGBoost model.

- SHAP Value Calculation: Calculate the SHAP values for the instance (chemical) you wish to explain. This is done by calling `explainer.shap_values(instance)`.

- Result Visualization:

  - Use `shap.force_plot()` to visualize the explanation for the single instance, showing how each feature pushed the prediction from the base value.

  - Use `shap.summary_plot()` to get a global view of feature importance across the entire dataset.

Troubleshooting: If the SHAP calculation is slow, consider using a representative sample of your training data as the background dataset for the explainer, rather than the full set.
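To make the "base value plus contributions" reading concrete, the sketch below computes exact Shapley values by brute-force enumeration for a three-feature model. It is not how `TreeExplainer` works internally (that algorithm exploits tree structure for speed). `toy_model`, the background rows, and the explained instance are hypothetical.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, instance, background):
    """Exact Shapley values by enumerating all feature coalitions.

    Features outside a coalition take their values from each background row
    in turn, and the resulting predictions are averaged.
    """
    n = len(instance)

    def value(coalition):
        total = 0.0
        for ref in background:
            mixed = [instance[j] if j in coalition else ref[j] for j in range(n)]
            total += predict(mixed)
        return total / len(background)

    phi = [0.0] * n
    for j in range(n):
        others = [i for i in range(n) if i != j]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[j] += weight * (value(s | {j}) - value(s))
    return value(set()), phi

# Hypothetical model with an interaction between features 0 and 1.
def toy_model(x):
    return 1.5 * x[0] + x[0] * x[1] - 0.5 * x[2]

background = [[0.0, 0.0, 0.0], [1.0, 2.0, 1.0], [2.0, 1.0, 3.0]]
chemical = [2.0, 3.0, 1.0]

base_value, phi = shapley_values(toy_model, chemical, background)
print("base value:", round(base_value, 4))
print("contributions:", [round(p, 4) for p in phi])
print("base + sum:", round(base_value + sum(phi), 4), "| prediction:", toy_model(chemical))
```

The last line checks the additivity property that a force plot visualizes: the base value plus all feature contributions equals the model's prediction for the instance. Enumeration scales exponentially with the number of features, so this is useful only as a teaching aid or a sanity check on very small models.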

Protocol: Validating a Global Surrogate Model

Objective: To create and validate a globally interpretable surrogate model that approximates the predictions of a black-box model for regulatory reporting.

Materials: Black-box model (e.g., Random Forest, Neural Network), training dataset, interpretable model algorithm (e.g., Logistic Regression, shallow Decision Tree).

Methodology:

- Generate Predictions: Use the black-box model to generate predictions (`Ŷ_blackbox`) for your training (or a hold-out) dataset.

- Train Surrogate Model: Train your chosen interpretable model using the original features of the dataset as inputs and `Ŷ_blackbox` as the target variable.

- Fidelity Assessment:

  - Use the surrogate model to make its own predictions (`Ŷ_surrogate`) on the same dataset.

  - Calculate the R-squared between `Ŷ_blackbox` and `Ŷ_surrogate` to measure how well the surrogate approximates the black box [16].

- Interpret and Report: Interpret the surrogate model (e.g., examine coefficients in linear regression, plot the decision tree) and document its logic. In your report, explicitly state the fidelity (R-squared) of the surrogate.

Troubleshooting: A low R-squared indicates the surrogate is a poor approximation. This suggests the black-box model's logic is too complex. Consider using a simpler black-box model or a different class of interpretable model.
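The fidelity calculation in this protocol can be sketched in a few lines. The example assumes a single input feature and a straight-line surrogate so that it stays dependency-free. `black_box` is a hypothetical stand-in for a trained model, and the concentrations are synthetic.

```python
import random

def fit_linear_surrogate(x, y):
    """Ordinary least squares for one feature: y ~ intercept + slope * x."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope

def r_squared(y_reference, y_approx):
    """Share of the variance in y_reference reproduced by y_approx."""
    mean_ref = sum(y_reference) / len(y_reference)
    ss_res = sum((r - a) ** 2 for r, a in zip(y_reference, y_approx))
    ss_tot = sum((r - mean_ref) ** 2 for r in y_reference)
    return 1.0 - ss_res / ss_tot

# Hypothetical black box: mostly linear in concentration, with mild curvature.
def black_box(conc):
    return 0.8 * conc + 0.05 * conc ** 2

rng = random.Random(0)
concentrations = [rng.uniform(0, 10) for _ in range(100)]
y_blackbox = [black_box(c) for c in concentrations]  # target is the model output, not the true label

intercept, slope = fit_linear_surrogate(concentrations, y_blackbox)
y_surrogate = [intercept + slope * c for c in concentrations]
fidelity = r_squared(y_blackbox, y_surrogate)
print(f"surrogate: y = {intercept:.3f} + {slope:.3f} * conc")
print(f"fidelity R^2 = {fidelity:.3f}")
```

The key design point is that the surrogate is trained and scored against the black box's predictions rather than the measured outcomes, so the R-squared describes how well the surrogate mimics the model, not how well either one predicts toxicity.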

Visual Workflow: From Black Box to Interpretable Model

The diagram below illustrates the logical pathways and decision points for achieving model interpretability in ecotoxicology research, bridging the gap between black-box models and regulatory acceptance.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software and Libraries for Interpretable ML Research

| Tool Name | Type / Category | Primary Function in Research |

|---|---|---|

| SHAP [16] [17] | Explanation Library | Quantifies the contribution of each feature to any prediction for any model, providing both local and global interpretability. |

| LIME [16] [17] | Explanation Library | Creates local, interpretable surrogate models to explain individual predictions of any black-box classifier or regressor. |

| InterpretML [17] | Unified Framework | Provides a single library for training interpretable models (like Explainable Boosting Machines) and for using model-agnostic explanation methods. |

| Eli5 [17] | Debugging & Inspection | Helps to debug and inspect machine learning classifiers and explain their predictions. Supports various ML frameworks. |

| ALE [5] | Visualization Tool | Generates Accumulated Local Effects plots, which are more reliable than Partial Dependence Plots when features are correlated. |

| XGBoost [5] | ML Algorithm | A highly performant gradient-boosting algorithm that can be used as a black-box model and later explained with SHAP due to its tree-based structure. |

A Practical Toolkit: Key Interpretable ML Techniques and Their Applications in Ecotoxicology

Technical Support Center

Frequently Asked Questions (FAQs)

Q: My SHAP summary plot shows high feature importance for a variable that is known to be biologically irrelevant in ecotoxicology. Is my model wrong?

- A: Not necessarily. This can indicate a strong statistical correlation in your dataset that is not causal. It could also be due to feature leakage, where information from the target variable is inadvertently included in the features. We recommend:

  - Conducting a domain expertise review to validate the finding.

  - Checking your data preprocessing pipeline for potential leakage.

  - Using SHAP dependence plots to investigate the relationship between the feature and the model's output.

Q: LIME provides different explanations for the same data point when I run it multiple times. Why is this happening and how can I trust the result?

- A: LIME's explanation is based on a locally sampled dataset, which is stochastic. The variability is a known characteristic. To increase stability:

  - Increase the `num_samples` parameter to generate a more stable local dataset.

  - Set a random seed (`random_state`) in your code for reproducible results.

  - Run LIME multiple times and consider the consensus or average of the top features as a more robust explanation.

Q: When explaining an image-based model for identifying toxic algae blooms, LIME highlights seemingly random pixels. What could be the cause?

- A: This is often due to the perturbation step in LIME for images. The super-pixel segmentation might not align with the biologically relevant features in the image.

  - Experiment with different segmentation algorithms (e.g., `quickshift`, `felzenszwalb`) supported by LIME's `SegmentationAlgorithm` wrapper.

  - Adjust segmentation parameters like `kernel_size` and `max_dist` to create more meaningful super-pixels.

  - Validate the highlighted regions with a domain expert to ensure they correspond to known visual indicators of the bloom.

Q: Calculating SHAP values for my large dataset is computationally very slow. Are there any optimizations?

- A: Yes. The model-agnostic KernelSHAP method can be slow. Consider these alternatives:

  - Use `TreeExplainer` (TreeSHAP) if your underlying model is tree-based (e.g., XGBoost, Random Forest). It is computationally efficient and exact.

  - For non-tree models, use the `SamplingExplainer` or `PartitionExplainer` as a faster, approximate alternative to `KernelExplainer`.

  - Compute SHAP values on a representative subset of your data or use parallel processing.

Troubleshooting Guides

Issue: SHAP Bar Plot Shows All Features with Near-Zero Importance

- Symptoms: The bar plot for a classification model shows all features with very low mean(|SHAP value|).

- Diagnosis: This typically occurs when the model's output stays close to the base value for most instances, for example because it predicts the majority class almost everywhere or because strong effects are confined to a small number of instances.

- Resolution:

  - Generate a beeswarm plot (`shap.plots.beeswarm`). This can reveal whether a feature has large SHAP values for only a few instances, which keeps its mean absolute value low.

  - Check for a highly imbalanced dataset. The model might be predicting the majority class without relying on strong feature signals.

  - Verify that the model has not been over-regularized, forcing all feature weights to be small.

Issue: LIME Explanation Fails with a "Model Prediction Error"

- Symptoms: The `explain_instance` function returns an error related to the model's prediction function.

- Diagnosis: The format of the data passed to the model's prediction function during LIME's perturbation step is incorrect.

- Resolution:

  - Ensure your `predict_fn` returns probabilities for classification (e.g., `model.predict_proba`) and not class labels.

  - Verify that the perturbed samples created by LIME are in the exact same format (shape, data type, and feature order) as your original training data.

  - For text explainers, ensure the `predict_fn` can handle a list of strings.

Quantitative Data Summary

Table 1: Comparison of SHAP and LIME Core Properties

| Property | SHAP | LIME |

|---|---|---|

| Explanation Scope | Global & Local | Local |

| Theoretical Foundation | Cooperative Game Theory (Shapley values) | Local Surrogate Model |

| Output | Additive feature importance values (Shapley values) | Linear model weights for the local vicinity |

| Stability | Higher (deterministic for exact methods such as TreeSHAP; sampling-based explainers such as KernelSHAP can vary between runs) | Lower (Stochastic due to sampling) |

| Computational Cost | Can be high for complex models/large datasets | Generally lower than SHAP |

| Feature Dependence | Partially accounted for (TreeSHAP's path-dependent mode); KernelSHAP assumes feature independence | Not inherently accounted for |

Table 2: Common SHAP Explainer Types and Their Use Cases in Ecotoxicology

| Explainer | Underlying Model Type | Use Case Example |

|---|---|---|

| `TreeExplainer` | Tree-based models (RF, XGBoost, etc.) | Predicting fish mortality based on chemical descriptors. |

| `KernelExplainer` | Any model (model-agnostic) | Interpreting a neural network for toxicity prediction. |

| `DeepExplainer` | Deep Learning models (TF, PyTorch) | Analyzing a CNN model for histopathology image classification. |

| `LinearExplainer` | Linear Models | Explaining a logistic regression model for binary toxicity classification. |

Experimental Protocols

Protocol: Global Feature Importance Analysis with SHAP for a Toxicity Prediction Model

- Train Model: Train your chosen model (e.g., XGBoost) on your ecotoxicology dataset (e.g., molecular structures and LC50 values).

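- Initialize Explainer: Create a `shap.TreeExplainer` for the trained model.

- Calculate SHAP Values: Call `explainer.shap_values()` on the dataset you want to explain (typically the test set).

- Generate Summary Plot: Pass the SHAP values and the corresponding feature matrix to `shap.summary_plot()`.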

- Interpretation: The plot displays features by their mean absolute impact on model output, providing a global view of which molecular descriptors drive toxicity predictions.
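The ranking shown in a summary bar plot is the mean absolute SHAP value per feature. The sketch below reproduces that aggregation for the simplest case, a linear model with independent features, where each SHAP value reduces to the weight times the feature's deviation from its mean. The weights, feature names, and data are hypothetical, and the closed form does not carry over to tree ensembles or neural networks.

```python
def linear_shap_values(weights, X):
    """SHAP values of a linear model, assuming independent features: w_j * (x_j - mean_j)."""
    n_features = len(weights)
    means = [sum(row[j] for row in X) / len(X) for j in range(n_features)]
    return [[weights[j] * (row[j] - means[j]) for j in range(n_features)] for row in X]

def mean_abs_shap(shap_matrix):
    """Global importance: mean absolute SHAP value per feature."""
    n_rows = len(shap_matrix)
    return [sum(abs(row[j]) for row in shap_matrix) / n_rows
            for j in range(len(shap_matrix[0]))]

# Hypothetical descriptors and linear-model weights.
feature_names = ["logP", "mol_weight", "tpsa"]
weights = [2.0, -0.5, 0.25]
X = [[1.0, 4.0, 2.0], [3.0, 2.0, 6.0], [2.0, 6.0, 4.0], [2.0, 4.0, 4.0]]

importance = mean_abs_shap(linear_shap_values(weights, X))
ranking = sorted(zip(feature_names, importance), key=lambda pair: pair[1], reverse=True)
for name, score in ranking:
    print(f"{name}: mean |SHAP| = {score}")
```

Because the aggregation uses absolute values, the ranking says how strongly a descriptor moves predictions, not in which direction. The sign information lives in the per-instance values.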

Protocol: Local Instance Explanation with LIME for a Single Compound Prediction

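- Setup LIME Explainer: Create a tabular LIME explainer (`lime.lime_tabular.LimeTabularExplainer`) from the training data and feature names.

- Select Instance: Choose a single compound from your test set (`X_test.iloc[instance_index]`).

- Generate Explanation: Call `explain_instance` with the selected row and the model's prediction function, and store the result as `exp`.

- Visualize: Display the explanation in a notebook: `exp.show_in_notebook(show_table=True)`.

- Interpretation: The output shows which features and their values contributed most to the prediction for that specific compound, allowing for hypothesis generation about its mechanism of action.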

Visualizations

SHAP vs LIME Workflow

SHAP Dependence Plot Logic

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Interpretable ML in Ecotoxicology

| Item | Function |

|---|---|

| SHAP Python Library (`shap`) | Core library for calculating and visualizing SHAP values for any model. |

| LIME Python Library (`lime`) | Core library for creating local, interpretable surrogate explanations. |

| Tree-based Models (e.g., XGBoost) | Often provide high performance and have fast, exact SHAP value calculators (TreeExplainer). |

| Domain-Knowledge Feature Set | A curated set of molecular descriptors or biological endpoints relevant to toxicology, crucial for validating explanation plausibility. |

| Curated Benchmark Dataset | A dataset with known toxicants and mechanisms, used to validate that explanation methods highlight the correct features. |

| Jupyter Notebook Environment | An interactive environment ideal for running explanation code and visualizing results side-by-side. |

Frequently Asked Questions (FAQs)

Q1: What is the core difference between a PDP and an ICE plot? A Partial Dependence Plot (PDP) shows the average effect that one or two features have on the predictions of a machine learning model [18] [19]. In contrast, an Individual Conditional Expectation (ICE) plot shows how the prediction for a single instance changes as the feature changes, displaying one line per instance [20] [21]. The PDP is the average of all ICE lines [22].

Q2: When should I use an ICE plot instead of a PDP? Use an ICE plot when you suspect your model has heterogeneous relationships or interactions [20] [21]. If the average effect shown by a PDP is flat, it might hide that the feature has positive effects for some instances and negative effects for others, which would be revealed in an ICE plot [23].
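The relationship between the two plots can be shown numerically. In this minimal sketch, `toy_model` and the four instances are hypothetical: the stressor raises the response for one species group and lowers it for the other, so the averaged curve is flat even though every individual curve has a clear slope.

```python
def ice_curves(predict, X, feature, grid):
    """One curve per instance: predictions as `feature` sweeps over `grid`."""
    curves = []
    for row in X:
        curve = []
        for value in grid:
            modified = list(row)
            modified[feature] = value  # overwrite only the feature of interest
            curve.append(predict(modified))
        curves.append(curve)
    return curves

def partial_dependence(curves):
    """The PDP is the pointwise average of the ICE curves."""
    return [sum(points) / len(points) for points in zip(*curves)]

# Hypothetical model: feature 0 is a stressor level, feature 1 is a species-group flag.
def toy_model(x):
    direction = 1.0 if x[1] == 1 else -1.0
    return direction * x[0]

X = [[0.2, 0], [0.5, 1], [0.7, 0], [0.9, 1]]
grid = [0.5, 1.0, 1.5]

curves = ice_curves(toy_model, X, feature=0, grid=grid)
pdp = partial_dependence(curves)
print("ICE curves:", curves)
print("PDP:", pdp)
```

Reading only the PDP here would suggest that the stressor has no effect. The ICE curves show two groups with opposite responses.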

Q3: What is the fundamental assumption of PDPs and ICE plots, and what happens when it is violated? Both methods assume that the features of interest are independent of the other features [18] [19]. When this assumption is violated (e.g., with correlated features), the plots are created using unrealistic data points. For example, you might see a prediction for a day with high rainfall and low humidity, a combination that never occurs in the real data, which can lead to misleading interpretations [18] [19].

Q4: How can I improve the interpretability of an ICE plot that is too crowded? For overcrowded ICE plots, you can:

- Use transparency for the lines [20].

- Plot only a sample of instances [20] [22].

- Use a centered ICE (c-ICE) plot, which anchors all curves to start at zero at a specific feature value, making it easier to see divergence [20] [21].

- Combine it with the PDP line to maintain a view of the average effect [20] [19].
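Centering is a small transformation of curves you already have. The sketch below assumes the ICE curves are stored as lists over a shared grid and anchors each one at the first grid point. The curve values are hypothetical.

```python
def center_ice(curves, anchor_index=0):
    """Subtract each curve's value at the anchor grid point from the whole curve."""
    return [[point - curve[anchor_index] for point in curve] for curve in curves]

# Hypothetical ICE curves for three instances over a shared four-point grid.
curves = [
    [0.25, 0.50, 0.75, 1.00],  # rising, low baseline
    [1.00, 1.25, 1.50, 1.75],  # rising at the same rate, higher baseline
    [2.00, 1.75, 1.50, 1.25],  # falling
]

centered = center_ice(curves)
for curve in centered:
    print(curve)
```

After centering, the first two curves coincide because they differ only in baseline, while the third clearly diverges. That is the pattern a c-ICE plot is meant to expose.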

Q5: In an ecotoxicology context, how can I visualize interactions between an environmental stressor and a landscape feature? You can use a two-way PDP to visualize the interaction between two features, such as impervious surface cover and watershed area, on a predicted biotic index [18] [7] [19]. This creates a surface or heatmap showing how the joint values of the two features affect the prediction.

Troubleshooting Guides

Issue 1: Uninterpretable or Misleading PDP/ICE Plots

Problem: Your Partial Dependence Plot appears flat, shows unexpected behavior in data-sparse regions, or you suspect it is being skewed by feature correlations.

Solution: Follow this diagnostic workflow to identify and address the issue.

Diagnostic Steps & Protocols

Overlay Feature Distribution: The first step is to visually inspect the data support for the PDP.

- Protocol: Using a plotting library (e.g., `matplotlib` or `seaborn`), add a rug plot or histogram to the x-axis of your PDP. This shows the distribution of the feature values in your training data [18].

- Interpretation: If the PDP shows a strong trend in a region with very few data points, that part of the plot is an extrapolation and should not be trusted.

Generate ICE Plots: This test determines if a flat PDP is hiding instance-level heterogeneity.

- Protocol: Using your machine learning library (e.g., `sklearn.inspection.PartialDependenceDisplay` with `kind='individual'` or `kind='both'`), generate an ICE plot for the same feature [19] [24].

- Interpretation: If the ICE lines are not parallel and show different slopes or directions, it indicates the presence of interactions between the feature of interest and other features [20] [21]. The flat PDP was an average of these opposing effects.

Check for Feature Correlations: This test validates a core assumption of the method.

- Protocol: Calculate a correlation matrix (for numerical features) or analyze dependency for categorical features on your training dataset. Visually inspect it with a heatmap.

- Interpretation: If the feature of interest is highly correlated (e.g., |r| > 0.7) with other features in the model, the PDP/ICE plots will be computed using unrealistic data combinations, violating the method's assumption and potentially rendering it invalid [18] [19].
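The correlation screen in this step can be sketched as follows. The 0.7 cutoff mirrors the example threshold in the text, and the column names and values are hypothetical. For real work a dataframe library's correlation method would normally replace the hand-written `pearson` helper.

```python
from itertools import combinations
from math import sqrt

def pearson(a, b):
    """Pearson correlation coefficient between two equal-length sequences."""
    mean_a, mean_b = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / sqrt(var_a * var_b)

def flag_correlated(columns, threshold=0.7):
    """Return (name_a, name_b, r) for every feature pair with |r| above the threshold."""
    flagged = []
    for a, b in combinations(columns, 2):
        r = pearson(columns[a], columns[b])
        if abs(r) > threshold:
            flagged.append((a, b, round(r, 3)))
    return flagged

# Hypothetical feature columns: slope and bed stability move together.
columns = {
    "watershed_slope": [1.0, 2.0, 3.0, 4.0, 5.0],
    "bed_stability": [2.1, 3.9, 6.2, 8.0, 9.8],
    "nitrate": [3.0, 1.0, 4.0, 1.0, 5.0],
}

flagged = flag_correlated(columns)
print(flagged)
```

Any pair returned here is a signal to treat the corresponding PDP or ICE plots with caution and to consider ALE plots instead. Pearson correlation only captures linear dependence, so a clean result does not prove independence.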

Issue 2: Implementing PDP/ICE for Categorical or Multi-class Models

Problem: You are working on a multi-class classification problem (e.g., predicting "Poor," "Fair," or "Good" ecological condition) or your features of interest are categorical, and you are unsure how to correctly generate and interpret the plots.

Solution: Adapt the standard procedure for categorical outputs and inputs.

Protocol for Multi-class Classification

- Software: `sklearn.inspection.PartialDependenceDisplay.from_estimator` has a `target` parameter for this purpose [19].

- Procedure: After training your model, you must generate one PDP or ICE plot per class. Each plot will show the relationship between the feature and the predicted probability for that specific class [18] [19].

- Example: In an ecotoxicology context, you might have three PDPs for a feature like "nitrate deposition": one showing its effect on the probability of a "Poor" MMI condition, one for "Fair," and one for "Good" [7].

Protocol for Categorical Features

- Procedure: The calculation is straightforward: for each category, force all data instances to have that category and average the predictions [18].

- Visualization: The partial dependence for a categorical feature is typically displayed as a bar plot [19]. For two-way interactions between categorical features, a heatmap is an effective visualization.
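The categorical procedure above translates directly into code. In this minimal sketch the rows are dictionaries, and `toy_model`, the land-use categories, and the nitrate values are hypothetical.

```python
def categorical_partial_dependence(predict, X, feature, categories):
    """For each category, set `feature` to it in every row and average the predictions."""
    result = {}
    for category in categories:
        predictions = []
        for row in X:
            modified = dict(row)
            modified[feature] = category  # force every instance into this category
            predictions.append(predict(modified))
        result[category] = sum(predictions) / len(predictions)
    return result

# Hypothetical model of a stress score driven by land use and nitrate.
def toy_model(row):
    land_use_effect = {"forest": 0.0, "agriculture": 0.25, "urban": 0.5}
    return land_use_effect[row["land_use"]] + 0.5 * row["nitrate"]

X = [
    {"land_use": "forest", "nitrate": 0.5},
    {"land_use": "urban", "nitrate": 1.0},
    {"land_use": "agriculture", "nitrate": 1.5},
    {"land_use": "forest", "nitrate": 1.0},
]

pd_values = categorical_partial_dependence(
    toy_model, X, "land_use", ["forest", "agriculture", "urban"]
)
for category, value in pd_values.items():
    print(f"{category}: {value}")
```

The resulting dictionary holds one value per category, which is what a partial dependence bar plot would display.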

Essential Research Reagent Solutions

| Reagent / Software Tool | Function in Analysis | Ecotoxicology Application Example |

|---|---|---|

| `sklearn.inspection.PartialDependenceDisplay` [19] [24] | Primary Python function for generating PDP and ICE plots. | Visualize the marginal effect of watershed area on a benthic macroinvertebrate index. |

| R `iml` Package [20] [7] | R package providing model-agnostic interpretability tools, including PDP and ICE. | Analyze the effect of riparian vegetation condition across different ecoregions. |

| R `pdp` Package [20] | R package dedicated to constructing partial dependence plots. | Plot the relationship between impervious surface cover and predicted stream health. |

| Centered ICE (c-ICE) [20] [21] | A variant of ICE plots where lines are anchored at a starting point. | Better visualize the divergence in effect of a toxin across different species. |

| Accumulated Local Effects (ALE) Plots [7] | An alternative to PDP that is faster and more reliable when features are correlated. | Accurately model the effect of bed stability, which is correlated with watershed slope. |

| Two-way PDP [18] [19] | A 3D plot or heatmap showing the interaction effect of two features on the prediction. | Investigate the joint effect of nitrate deposition and agriculture land use. |

Core Concepts & Troubleshooting FAQs

This section addresses frequently asked questions to build a foundational understanding of Accumulated Local Effects (ALE) plots and troubleshoot common issues.

FAQ 1: What is the core advantage of ALE over Partial Dependence Plots (PDP) in the presence of correlated features?

In real-world ecotoxicology data, features (e.g., chemical concentration, pH, water temperature) are often correlated. PDPs create unrealistic data instances by forcing all data points to have a specific feature value, ignoring correlations [25]. This can lead to biased estimates. ALE plots overcome this by using the conditional distribution and calculating differences in predictions within small intervals, which blocks the effect of other correlated features and provides a more reliable estimate of the feature's main effect [25] [26] [27].
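The interval-difference idea can be sketched for a single numeric feature as follows. This is a simplified illustration, not the algorithm of any specific package: the quantile binning, the count-weighted centering, `toy_model`, and the perfectly correlated toy data are all assumptions made for the example.

```python
def ale_first_order(predict, X, feature, n_bins=4):
    """First-order ALE for one numeric feature using quantile-based bin edges."""
    values = sorted(row[feature] for row in X)
    n = len(values)
    edges = sorted({values[min(n - 1, round(i * (n - 1) / n_bins))]
                    for i in range(n_bins + 1)})
    effects, counts = [], []
    for lower, upper in zip(edges[:-1], edges[1:]):
        # Instances inside (lower, upper]; the first interval also includes its lower edge.
        members = [row for row in X
                   if lower < row[feature] <= upper
                   or (lower == edges[0] and row[feature] == lower)]
        diffs = []
        for row in members:
            lo_row, hi_row = list(row), list(row)
            lo_row[feature], hi_row[feature] = lower, upper
            diffs.append(predict(hi_row) - predict(lo_row))  # local effect, other features untouched
        effects.append(sum(diffs) / len(diffs) if diffs else 0.0)
        counts.append(len(members))
    accumulated = [0.0]
    for effect in effects:
        accumulated.append(accumulated[-1] + effect)
    # Center so the count-weighted average over interval midpoints is zero.
    midpoints = [(a + b) / 2 for a, b in zip(accumulated[:-1], accumulated[1:])]
    mean_effect = sum(m * c for m, c in zip(midpoints, counts)) / sum(counts)
    return edges, [a - mean_effect for a in accumulated]

# Hypothetical model and data: feature 1 is perfectly correlated with feature 0.
def toy_model(x):
    return x[0] ** 2 + 3 * x[1]

X = [[i * 0.5, i * 0.5] for i in range(9)]
edges, ale = ale_first_order(toy_model, X, feature=0, n_bins=4)
for edge, value in zip(edges, ale):
    print(f"x0 = {edge}: ALE = {value:.3f}")
```

Because each difference changes only the feature of interest within a narrow interval and keeps each instance's other values as observed, the accumulated steps recover the quadratic effect of `x0` without absorbing the contribution of the correlated `x1`.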

FAQ 2: My ALE plot is very "wiggly" and unstable. What could be the cause and how can I fix it?

A wiggly or unstable ALE plot is often a symptom of data sparsity and an inappropriate number of intervals (bins) [26] [28]. In high-dimensional data, or data that is not uniformly distributed, some intervals may contain too few instances to reliably estimate the local effect.

- Solution: Reduce the number of intervals (`max_num_bins` parameter in R's `ale` package) to increase the number of data points per interval, creating a smoother, more stable plot [29] [28]. This trades off some detail for greater reliability.

FAQ 3: How do I interpret the y-axis value on an ALE plot for a continuous feature?

The ALE value is centered at zero. An ALE value at a specific feature value represents the main effect of that feature on the prediction compared to the average prediction of the dataset [25] [26] [28]. For example, in a model predicting fish mortality, if an ALE value of +0.15 is associated with a toxin concentration of 5 mg/L, it means that at this concentration, the model's predicted probability of mortality is, on average, 0.15 units higher than the average prediction across all data points [27].

FAQ 4: Can ALE plots be used for categorical features, and if so, how?

Yes, but it requires an extra step. Since ALE relies on accumulating local changes, the categories must be ordered in a meaningful way [26] [28]. The typical approach is to order categories based on their similarity to other features or their relationship with the target variable using methods like:

- Target Encoding: Using the average target value per category.

- Distance Similarity: Computing a similarity matrix based on other features [26].

Once ordered, the ALE calculation proceeds analogously to the numerical case.

FAQ 5: What is a key limitation of ALE plots that I should be aware of?

ALE plots primarily visualize the main effect of a single feature. While second-order ALE plots can show two-way interactions, ALE is not designed to easily reveal complex higher-order interactions between multiple features on its own. For this, you may need to supplement ALE with other techniques like SHAP interaction values [30] [28].

Visualizing the ALE Workflow

The following diagram illustrates the core computational workflow for generating an ALE plot for a single numerical feature, connecting the theoretical concepts to the practical steps.

Essential Research Reagent Solutions

The table below catalogs key software tools and packages essential for implementing ALE analysis in your research workflow.

| Research Reagent | Function & Explanation |

|---|---|

| `ale` R Package [29] | A comprehensive R package for calculating ALE data, creating plots, and performing statistical inference with bootstrap-based confidence intervals. Extends ALE for hypothesis testing. |

| `ALEPython` Package [31] | A Python package dedicated to quickly generating ALE plots for models developed in scikit-learn and other ML frameworks. |

| `alibi` Library [32] | A popular Python library for model inspection and interpretation. It includes an ALE implementation alongside other methods like Anchor, Counterfactuals, and CEM. |

| `iml` R Package [7] | An R package providing a unified interface for many interpretable machine learning methods, including ALE plots, partial dependence, and Shapley values. |

| `mgcv` R Package [29] | While not exclusively for ALE, the Generalized Additive Models (GAMs) in `mgcv` can serve as a highly interpretable "white-box" alternative or supplement for understanding complex, non-linear relationships. |

Comparative Analysis of Feature Effect Methods

The table below provides a structured comparison of ALE with two other common feature effect methods, PDP and M-Plots, summarizing their approaches and key differentiators.

| Method | Core Computational Approach | Handling of Correlated Features | Key Advantage | Key Disadvantage |

|---|---|---|---|---|

| Partial Dependence Plot (PDP) | Averages predictions over the marginal distribution of features [25]. | Poor. Creates unrealistic data instances when features are correlated, leading to biased effect estimates [25] [26]. | Intuitive and simple to understand. | Can be highly misleading with correlated features common in ecological data [7]. |

| Marginal Plots (M-Plots) | Averages predictions over the conditional distribution of the feature [25]. | Mixed. Avoids unrealistic data but mixes the effect of the feature of interest with effects of all correlated features [25] [27]. | Uses realistic data instances for averaging. | Does not isolate the pure effect of a single feature; effect is conflated. |

| Accumulated Local Effects (ALE) | Averages differences in predictions over the conditional distribution and accumulates them [25]. | Strong. Isolates the effect of the feature of interest by using differences, blocking the influence of correlated features [25] [33]. | Provides an unbiased estimate of the feature's main effect, even with correlated features. | More complex to implement and interpret than PDP; requires sufficient data in each interval [26]. |

In both data science and experimental sciences, a synergistic effect occurs when the combined effect of two or more features, drugs, or chemical agents is greater than the sum of their individual effects [34] [35]. Detecting and quantifying these interactions is crucial for advancing fields such as drug discovery, ecotoxicology, and the development of interpretable machine learning models. Synergistic interactions can reveal complex biological pathways, improve the efficacy of therapeutic treatments, and enhance the predictive power of statistical models. However, accurately identifying these interactions presents significant methodological challenges, particularly when working with high-dimensional data or complex biological systems. This guide addresses the core concepts, methods, and common pitfalls in synergy detection to support your research.

Core Concepts and Quantification Models

Defining Synergy and Antagonism

The effect of combining two or more factors is typically categorized into three primary classes:

- Synergy: The combined effect is greater than the sum of the individual effects.

- Additivity: The combined effect is equal to the sum of the individual effects.

- Antagonism: The combined effect is less than the sum of the individual effects [34].

Fundamental Quantitative Models

Two classical models dominate the quantification of synergistic effects in biological and chemical contexts. The choice between them depends on the underlying assumption of how the agents interact.

Table 1: Classical Models for Quantifying Synergistic Effects

| Model | Core Principle | Synergy Condition | Best Used When |

|---|---|---|---|

| Loewe Additivity Model [34] [36] | Dose equivalence: one drug's dose can be replaced by an equally effective dose of another. | \( \sum_{i=1}^{N} \frac{d_i}{D_i} < 1 \) | Two drugs are believed to have a similar mechanism of action or act on the same target pathway. |

| Bliss Independence Model [34] [36] | Probabilistic independence: drugs act through unrelated mechanisms. | \( E > E_1 + E_2 - E_1 E_2 \) | Two drugs are assumed to act independently on different cellular targets or pathways. |

A significant challenge in the field is the lack of consensus on which model to use, as they can sometimes yield different interpretations of the same data. Bliss may misjudge synergism in certain cases, while Loewe may overemphasize antagonistic effects [34]. Furthermore, a critical consideration from ecotoxicology research is that interactive effects can vary dramatically with the total concentration of the mixture, the ratio of the components, and the magnitude of the tested effect (e.g., LC10 vs. LC50). Testing only a single combination ratio or concentration can lead to biased or incomplete interpretations of synergy [37].
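As a rough illustration of the two synergy conditions in Table 1, the sketch below computes the Bliss expectation and the Loewe combination index. The function names and the numbers are hypothetical; effects are assumed to be fractional (0 to 1), d_i is read as the dose of agent i in the mixture, and D_i as the dose of agent i alone that produces the same effect.

```python
def bliss_expected(e1, e2):
    """Expected fractional effect of the combination under Bliss independence."""
    return e1 + e2 - e1 * e2

def bliss_excess(e_observed, e1, e2):
    """Positive values suggest synergy, negative values antagonism."""
    return e_observed - bliss_expected(e1, e2)

def loewe_index(mixture_doses, equi_effective_doses):
    """Sum of d_i / D_i: < 1 suggests synergy, 1 additivity, > 1 antagonism."""
    return sum(d / D for d, D in zip(mixture_doses, equi_effective_doses))

# Hypothetical numbers for illustration only.
excess = bliss_excess(e_observed=0.75, e1=0.30, e2=0.40)   # observed minus 0.58 expected
index = loewe_index(mixture_doses=[2.0, 5.0], equi_effective_doses=[10.0, 20.0])
```

Because interactive effects can change with concentration, ratio, and effect level, these quantities would normally be computed across a grid of dose combinations rather than at a single point.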

Methodologies for Detection and Quantification

Experimental Design and Workflow

A robust experimental workflow for synergy detection involves careful planning, execution, and data analysis. The following diagram outlines the key stages, highlighting critical decision points that can influence the outcome and interpretability of your study.

Computational and Machine Learning Approaches

Machine learning (ML) offers powerful tools for predicting synergistic effects without exhaustive experimental testing.

- Supervised Learning: ML models can be trained to classify drug pairs as synergistic or antagonistic, or to predict a continuous synergy score. For example, a study predicting the synergy between antimicrobial peptides and antimicrobial agents achieved 76.92% accuracy using a hyperparameter-optimized Light Gradient Boosting Machine (LightGBM) classifier [38].

- Feature Importance: The same study used ML to identify that the target microbial species, the minimum inhibitory concentrations (MICs) of the individual agents, and the type of antimicrobial agent were the most important features for prediction, aligning with domain knowledge [38].

- Novel Metrics for Large Datasets: For high-dimensional data, such as genomics, a model-free metric called the Relative Synergy Coefficient (RSC) has been developed. The RSC uses information theory to detect interacting features that provide more information together than the sum of their individual contributions. Its advantage is the ability to identify synergistic pairs even when the individual features have small main effects, which are often overlooked by other methods [35].
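The idea that two features can carry more information together than the sum of their individual contributions can be sketched with plain mutual information. This is not the RSC as defined in [35]; it is a generic information-gain difference, and the function names are hypothetical. The XOR example is the textbook case in which neither feature is informative alone.

```python
from collections import Counter
from math import log2

def mutual_information(xs, ys):
    """Plug-in estimate of I(X; Y) in bits for discrete sequences."""
    n = len(xs)
    pxy, px, py = Counter(zip(xs, ys)), Counter(xs), Counter(ys)
    return sum(c / n * log2(c * n / (px[x] * py[y])) for (x, y), c in pxy.items())

def synergy_gain(x1, x2, y):
    """I(X1, X2; Y) - I(X1; Y) - I(X2; Y); positive values indicate synergy."""
    joint = list(zip(x1, x2))
    return (mutual_information(joint, y)
            - mutual_information(x1, y)
            - mutual_information(x2, y))

# XOR: each feature alone says nothing about y, but the pair determines it.
x1 = [0, 0, 1, 1]
x2 = [0, 1, 0, 1]
y = [0, 1, 1, 0]
gain = synergy_gain(x1, x2, y)  # 1.0 bit
```

Plug-in estimates like this are biased upward on small samples, so in practice a permutation baseline or a bias correction would be needed before ranking feature pairs.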

The Scientist's Toolkit: Key Research Reagents & Databases

Leveraging publicly available data is crucial for building predictive models and validating findings. The table below lists essential databases for research on drug combination synergy.

Table 2: Key Databases for Drug Combination and Bioactivity Research

| Database Name | Type | URL | Key Description |

|---|---|---|---|

| DrugComb [34] | Synergistic Drug Combination | https://drugcomb.fimm.fi/ | Contains data on the response of cancer cell lines to drug combinations. |

| DrugCombDB [34] | Synergistic Drug Combination | http://drugcombdb.denglab.org/ | A database for drug combination screening. |

| NCI-ALMANAC [34] | Synergistic Drug Combination | https://dtp.cancer.gov/ncialmanac | A large dataset of drug combinations tested against cancer cell lines. |

| ChEMBL [34] | Bioactivity | https://www.ebi.ac.uk/chembl/ | A large-scale bioactivity database for drug-like molecules. |

| DrugBank [34] | Bioactivity | https://www.drugbank.com | Contains detailed drug data with comprehensive drug-target information. |

| GEO [34] | Gene Expression | https://www.ncbi.nlm.nih.gov/geo/ | A public repository of gene expression datasets. |

Troubleshooting Common Experimental Issues

FAQ 1: Why do different synergy models (Bliss vs. Loewe) give conflicting results for my drug combination?

This is a common occurrence and stems from their different fundamental assumptions [34] [36]. The Bliss independence model assumes the two drugs act through completely independent mechanisms. In contrast, the Loewe additivity model does not require this assumption and is often preferred when drugs might share a similar mechanism of action. There is no universal "best" model.

- Solution: The choice of model should be guided by your biological hypothesis. If the drugs target different pathways, Bliss may be more appropriate. If they target the same pathway, Loewe might be a better fit. For a comprehensive analysis, it is considered good practice to calculate synergy using both models and compare the results. Reporting both can provide a more nuanced understanding of the interaction.

FAQ 2: My in vitro synergy data does not translate to in vivo animal models. What could be the reason?

This is a major challenge in translational research. The discrepancy can arise from several factors specific to in vivo environments [36]:

- Pharmacokinetics (PK): The absorption, distribution, metabolism, and excretion (ADME) of the drugs in a living organism can lead to temporal and spatial variability in drug concentration at the target site, which is not present in a controlled in vitro setting.

- Tumor Microenvironment: Factors like hypoxia, pH, and stromal cell interactions in a real tumor can alter drug efficacy.

- Experimental Endpoints: Synergy might be transient and occur only at specific time points during treatment. Relying solely on a final endpoint like mouse survival might miss these temporal synergistic windows [36].

- Solution:

- Conduct PK/PD (pharmacodynamics) modeling to understand the drug exposure-response relationship in vivo.

- Measure tumor volume or other relevant biomarkers at multiple time points, not just at the end of the experiment, to capture temporal synergy [36].

- Use statistical methods designed for longitudinal data to analyze the time-series results.

FAQ 3: I am getting many false positive synergistic interactions in my high-throughput screen. How can I improve the reliability?

A key source of false positives, particularly when using the Chou-Talalay method (which is related to Loewe additivity), is the "additivity bias." This occurs when the individual effects of both drugs are potent (e.g., reducing viability to below 50%), making it appear that the combination is synergistic even when it is merely additive [36].

- Solution:

- Statistical Validation: Always complement a quantitative synergy score (like Combination Index or Excess over Bliss) with a statistical test (e.g., a t-test) comparing the measured combination effect to the expected additive effect [36].

- Dose-Response Curves: Avoid testing only a single high dose. Instead, generate full dose-response curves for single agents and their combinations to accurately define the additive effect line [37].

- Test Multiple Ratios: As advocated in ecotoxicology, test a wider spectrum of total concentrations and ratios to avoid biased interpretations based on a single data point [37].
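The statistical validation step can be sketched as follows, assuming replicate measurements of the combination effect and fixed single-agent effects. The function name and the replicate values are hypothetical. The sketch returns the one-sample t statistic (to be compared against a t distribution with n - 1 degrees of freedom) and, as a standard-library substitute for a t-test p-value, an exact one-sided sign-flip randomization p-value.

```python
from itertools import product
from math import sqrt
from statistics import mean, stdev

def excess_over_bliss_test(combo_replicates, e1, e2):
    """Compare replicate combination effects with the Bliss-expected additive effect.
    Simplification: e1 and e2 are treated as known, ignoring their own uncertainty."""
    expected = e1 + e2 - e1 * e2
    diffs = [e - expected for e in combo_replicates]
    n = len(diffs)
    t_stat = mean(diffs) / (stdev(diffs) / sqrt(n))
    # Sign-flip test: under the null, each difference is equally likely to be +/-.
    observed = sum(diffs)
    hits = sum(
        1
        for signs in product((1, -1), repeat=n)
        if sum(s * d for s, d in zip(signs, diffs)) >= observed - 1e-12
    )
    return mean(diffs), t_stat, hits / 2 ** n

# Hypothetical replicate fractional effects for one dose pair.
replicates = [0.70, 0.74, 0.69, 0.73, 0.72, 0.71]
mean_excess, t_stat, p_value = excess_over_bliss_test(replicates, e1=0.30, e2=0.40)
```

With only a handful of replicates the smallest attainable sign-flip p-value is 1 / 2^n, which is one reason to plan replicate numbers before the screen rather than after.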

Integration with Model Interpretability in Machine Learning

The challenge of interpreting synergistic effects mirrors the "black-box" problem in machine learning. Using complex models to predict synergy without understanding the "why" limits trust and utility [4] [2].

- The Trade-off Myth: A common belief is that one must sacrifice predictive accuracy for model interpretability. However, for structured data with meaningful features, this is often not true. Simple, interpretable models like logistic regression or decision trees can perform on par with deep neural networks, and the insights gained can lead to better data processing and ultimately, higher accuracy [4].

- The Path Forward: Instead of relying on post-hoc explanations for black-box models, a more reliable approach is to use inherently interpretable models [4]. These include:

- Sparse Linear Models: Models that use only a small number of features are easier for humans to validate.

- Decision Rules and Lists: Models that output simple "if-then" rules that can be directly validated by domain experts.

- Explainable Boosting Machines (EBMs): These are modern implementations of Generalized Additive Models (GAMs) that learn a separate function for each feature, making the contribution of each variable clear and intuitive.

By applying these interpretable ML techniques, researchers can not only predict synergistic interactions but also gain trustworthy, human-understandable insights into the key features driving those interactions, thereby bridging the gap between predictive power and scientific understanding.

Technical Support Center

FAQ & Troubleshooting Guide

Category 1: Model Performance & Interpretation

Q1: My model for predicting pesticide phytotoxicity has high accuracy (>90%), but the SHAP summary plot shows no clear feature importance. All SHAP values are clustered near zero. What does this mean and how can I fix it?

A: This pattern often points to data leakage, where information that would not be available at prediction time (for example, statistics computed on the test set, or a variable that acts as a proxy for the label) reaches the model during training. The model then finds a "shortcut" to make predictions, often via a confounding variable, rather than learning the true underlying relationship between the molecular features and toxicity.

Troubleshooting Steps:

- Audit Your Data Splitting Procedure: Ensure you are splitting data at the correct level. For time-series or related compounds, standard random splits can cause leakage. Use structured splits (e.g., by chemical scaffold using a toolkit like RDKit) or temporal splits.

- Check for Preprocessing Leaks: Confirm that any data scaling or normalization was fit only on the training data and then applied to the test data. Fitting a scaler on the entire dataset is a common mistake.

- Identify and Remove Confounding Variables: A typical confounder in phytotoxicity is the application concentration or logP, which may be perfectly correlated with both the features and the endpoint in your dataset. Stratify your data splits to ensure this variable is balanced.
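The first two troubleshooting steps can be sketched without any ML library: split by group so related compounds never straddle train and test, then fit the scaler on the training portion only. The scaffold labels and logP values below are made up, and the helper names are hypothetical; in a real workflow the group labels might come from a cheminformatics toolkit such as RDKit.

```python
import random
from statistics import mean, pstdev

def group_split(groups, test_frac=0.2, seed=0):
    """Assign whole groups (e.g., scaffold IDs) to either train or test."""
    unique = sorted(set(groups))
    random.Random(seed).shuffle(unique)
    n_test = max(1, round(test_frac * len(unique)))  # fraction of groups, not of rows
    test_groups = set(unique[:n_test])
    train_idx = [i for i, g in enumerate(groups) if g not in test_groups]
    test_idx = [i for i, g in enumerate(groups) if g in test_groups]
    return train_idx, test_idx

def fit_scaler(values):
    """Learn mean and standard deviation from TRAINING values only."""
    mu, sd = mean(values), pstdev(values)
    return mu, sd or 1.0  # avoid division by zero for constant features

def apply_scaler(values, mu, sd):
    return [(v - mu) / sd for v in values]

# Hypothetical data: one scaffold label and one descriptor (logP) per compound.
scaffolds = ["A", "A", "B", "B", "C", "C", "D", "D", "E", "E"]
logp = [1.2, 1.4, 2.8, 3.0, 0.5, 0.7, 4.1, 3.9, 2.0, 2.2]

train_idx, test_idx = group_split(scaffolds, test_frac=0.2)
mu, sd = fit_scaler([logp[i] for i in train_idx])
logp_train = apply_scaler([logp[i] for i in train_idx], mu, sd)
logp_test = apply_scaler([logp[i] for i in test_idx], mu, sd)  # reuses training statistics
```

The key design choice is that `fit_scaler` never sees test rows, so the test set remains an honest stand-in for unseen compounds.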

Q2: When interpreting my Random Forest model for ionic liquid toxicity, the permutation feature importance score for "Molecular Weight" is high, but the partial dependence plot (PDP) is flat. Why the contradiction?

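A: This usually means that the model uses "Molecular Weight" in combination with other features rather than through a simple main effect. Permutation importance measures the total loss in performance when the feature is shuffled, so it captures interaction effects and can be inflated when the feature is correlated with others, because permuting it creates unrealistic feature combinations. The PDP shows only the average marginal effect; opposing effects in different subgroups (for example, short versus long alkyl chains) can cancel and leave a flat curve.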

Resolution Strategy:

- Use SHAP dependence plots instead of PDPs. These plot the SHAP value for Molecular Weight against its feature value and color the points by a second feature, by default the one with the strongest estimated interaction (e.g., chain length). This often reveals the true, interactive relationship.

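- Consider fitting a regularized linear model as an interpretable cross-check. Note that Lasso (L1) performs its own feature selection and tends to keep one feature from a correlated group while dropping the rest, so Ridge (L2) or Elastic Net is usually the more stable choice when multicollinearity is the concern.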
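One situation that produces a high permutation importance together with a flat PDP is a feature that acts only through an interaction. The toy example below is an assumption-laden sketch (the model, the data, and the function names are all hypothetical): the first feature matters a great deal, but its positive and negative effects cancel when averaged.

```python
import random

def model(row):
    """Toy 'black box': feature 0 acts only through its interaction with feature 1."""
    return row[0] * row[1]

def mse(rows, targets):
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(targets)

def permutation_importance(rows, targets, j, seed=0):
    """Increase in MSE after shuffling column j across rows."""
    column = [r[j] for r in rows]
    random.Random(seed).shuffle(column)
    shuffled = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
    return mse(shuffled, targets) - mse(rows, targets)

def partial_dependence(rows, j, grid):
    """Average prediction with feature j forced to each grid value."""
    return [sum(model(r[:j] + [v] + r[j + 1:]) for r in rows) / len(rows) for v in grid]

# Feature 1 is +1 or -1 in equal numbers (think of two structural subgroups).
rng = random.Random(1)
rows = [[rng.uniform(-1, 1), 1.0 if i % 2 == 0 else -1.0] for i in range(200)]
targets = [model(r) for r in rows]

importance = permutation_importance(rows, targets, j=0)        # clearly above zero
pdp = partial_dependence(rows, 0, [-1.0, -0.5, 0.0, 0.5, 1.0])  # flat at zero
```

Plotting per-instance effects (for example, individual conditional expectation curves or SHAP dependence plots) would separate the two subgroups that the averaged PDP hides.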

Category 2: Data & Feature Handling

Q3: My dataset for chemical ecotoxicity is highly imbalanced (few toxic compounds). My model achieves 95% accuracy but fails to identify any true positives. How can I address this?