Overcoming Data Scarcity in Chemical Life Cycle Assessment with Machine Learning

Abstract

Life Cycle Assessment (LCA) is essential for quantifying the environmental footprint of chemicals, yet its application is often hampered by data scarcity, high costs, and slow processes. This article explores how Machine Learning (ML) is reshaping chemical LCA by enabling rapid, data-driven predictions even with limited datasets. We review the foundational challenges of data scarcity, detail emerging methodological approaches such as molecular-structure-based models, and provide troubleshooting strategies for data quality and model uncertainty. A comparative analysis of ML algorithms, including top-performing models such as SVM and XGBoost, offers validation and selection guidance. Tailored for researchers, scientists, and drug development professionals, this synthesis provides a roadmap for integrating ML into LCA workflows to accelerate the development of safer and more sustainable chemicals.

The Data Scarcity Challenge in Chemical Life Cycle Assessment

Troubleshooting Guides

Missing Life Cycle Inventory (LCI) Data for Novel Chemicals

- Problem: LCI data for new chemicals, intermediates, or catalysts are absent from standard LCA databases (e.g., ecoinvent), making a comprehensive assessment impossible [1].

- Why it Happens: Standard databases cover a limited number of chemicals (e.g., ~1,000 in ecoinvent), which is insufficient for complex, multi-step chemical syntheses [1].

- Solution: Implement an iterative retrosynthetic workflow to build LCI data from the ground up [1].

  - Deconstruct the target molecule into simpler precursors.

  - Identify which precursors exist in available databases.

  - For missing precursors, collect inventory data (energy, materials, waste) from published industrial routes, lab experiments, or patents.

  - Compile the LCI for the missing chemical by tallying the resource use and emissions from its synthesis pathway.
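
The tallying step can be sketched as a recursive roll-up: a chemical found in the database contributes its stored inventory, and a missing chemical is expanded through its synthesis route, with upstream burdens scaled by the mass of each input needed per kg of product. This is a minimal illustration, not the implementation used in [1]; the chemical names, the `DATABASE_LCI` and `ROUTES` dictionaries, and all numbers are hypothetical placeholders.

```python
from collections import defaultdict

# HYPOTHETICAL per-kg inventories for chemicals that ARE in the background database.
DATABASE_LCI = {
    "precursor_A": {"CO2_kg": 1.2, "water_m3": 0.010},
    "precursor_B": {"CO2_kg": 3.5, "water_m3": 0.020},
    "solvent_S": {"CO2_kg": 0.8, "water_m3": 0.005},
}

# HYPOTHETICAL routes for chemicals MISSING from the database: kg of each input
# per kg of product, plus direct on-site flows per kg of product.
ROUTES = {
    "intermediate_I": {
        "inputs": {"precursor_A": 1.4, "solvent_S": 5.0},
        "direct": {"CO2_kg": 2.0},
    },
    "target_T": {
        "inputs": {"intermediate_I": 1.6, "precursor_B": 0.7},
        "direct": {"CO2_kg": 4.0, "water_m3": 0.050},
    },
}


def cradle_to_gate_lci(chemical, _path=()):
    """Cumulative inventory per kg of `chemical`, recursing into ROUTES for
    any chemical that has no entry in DATABASE_LCI."""
    if chemical in DATABASE_LCI:
        return dict(DATABASE_LCI[chemical])
    if chemical not in ROUTES:
        raise KeyError(f"no database entry or route for {chemical!r}")
    if chemical in _path:
        raise ValueError(f"circular route involving {chemical!r}")

    route = ROUTES[chemical]
    totals = defaultdict(float, route.get("direct", {}))
    for precursor, kg_per_kg in route["inputs"].items():
        upstream = cradle_to_gate_lci(precursor, _path + (chemical,))
        for flow, amount in upstream.items():
            totals[flow] += kg_per_kg * amount  # scale upstream burdens to 1 kg of product
    return dict(totals)


print(cradle_to_gate_lci("target_T"))
```

A real route would also account for yields, solvent recovery, and waste treatment; these can be folded into the `inputs` and `direct` entries of each route.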

Data Scarcity for Emerging Technologies at Low TRL

- Problem: Prospective LCA of lab-scale technologies suffers from a lack of data on future large-scale production [2].

- Why it Happens: Emerging technologies are often developed at laboratory scale, where processes are not optimized for mass production, making it difficult to anticipate the performance and resource use of a future commercial-scale plant [2].

- Solution: Apply prospective LCA methodology with scenario modeling [2].

  - Collect foreground data based on research and expert interviews to model the technology at full scale.

  - Model the background system (e.g., energy grid) based on predictive future scenarios to avoid temporal mismatch.

  - Conduct scenario and sensitivity analyses to understand how data variability affects results and to provide a range of potential impacts.
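
The scenario and sensitivity steps can be sketched in Python for a single impact category. All inventory values, grid intensities, and the natural-gas emission factor below are hypothetical placeholders, and the one-at-a-time (±20%) sensitivity scheme is only one simple choice; in practice, background scenarios would typically come from published energy-system projections.

```python
# HYPOTHETICAL full-scale foreground inventory per kg of product
# (e.g. from upscaling lab data with expert input).
FOREGROUND = {"electricity_kWh": 12.0, "natural_gas_MJ": 30.0, "direct_CO2_kg": 0.5}

# HYPOTHETICAL background scenarios: grid carbon intensity in kg CO2-eq per kWh.
GRID_SCENARIOS = {"current_mix": 0.40, "2035_moderate": 0.25, "2050_low_carbon": 0.05}

GAS_EF = 0.056  # kg CO2-eq per MJ of natural gas burned; placeholder value


def gwp_per_kg(foreground, grid_ef, gas_ef=GAS_EF):
    """Global warming potential (kg CO2-eq) per kg of product."""
    return (foreground["electricity_kWh"] * grid_ef
            + foreground["natural_gas_MJ"] * gas_ef
            + foreground["direct_CO2_kg"])


def one_at_a_time(foreground, grid_ef, rel_change=0.2):
    """Vary each foreground parameter by +/- rel_change, holding the others fixed,
    and return the resulting (low, high) GWP for each parameter."""
    ranges = {}
    for key in foreground:
        low, high = dict(foreground), dict(foreground)
        low[key] *= 1 - rel_change
        high[key] *= 1 + rel_change
        ranges[key] = (gwp_per_kg(low, grid_ef), gwp_per_kg(high, grid_ef))
    return ranges


for name, grid_ef in GRID_SCENARIOS.items():
    print(f"{name}: {gwp_per_kg(FOREGROUND, grid_ef):.2f} kg CO2-eq/kg")
    for param, (lo, hi) in one_at_a_time(FOREGROUND, grid_ef).items():
        print(f"  {param} +/-20%: {lo:.2f} to {hi:.2f}")
```

Reporting the spread across scenarios and parameters, rather than a single number, is what turns the assessment into a range of potential impacts.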

Inconsistent Results from Inadequate System Boundaries

- Problem: LCA results for chemicals are incomparable due to inconsistent selection of system boundaries (e.g., gate-to-gate vs. cradle-to-gate) [3].

- Why it Happens: A practitioner may choose narrow boundaries to simplify the study or due to a lack of data on upstream supply chains [3].

- Solution: Adhere to the principle of "Cradle to Gate" as a minimum standard [3].

  - Ensure system boundaries always include raw material extraction and all processing steps up to the production of the finished chemical.

  - Avoid gate-to-gate boundaries, which focus only on direct (Scope 1) flows and neglect significant upstream impacts from material extraction and purification.

Frequently Asked Questions (FAQs)

Q1: What are the most critical data gaps when performing an LCA for a chemical, especially an Active Pharmaceutical Ingredient (API)? The most critical gaps are for specific intermediates, catalysts, and solvents used in multi-step syntheses. For example, a study on the antiviral drug Letermovir found that only 20% of the chemicals used were present in a standard LCA database [1]. Complex reagents like lithium diisopropylamide (LDA) or 1-Ethyl-3-(3-dimethylaminopropyl)carbodiimide (EDC) are typically missing, forcing practitioners to use inaccurate proxies or neglect their impacts entirely [1].

Q2: How does data scarcity affect the reliability of LCA results for chemical processes? Data scarcity introduces significant uncertainty and can lead to incomplete or inaccurate conclusions [4] [1]. When the life cycle inventory of a high-impact reagent is missing, the LCA may fail to identify the true environmental "hotspot," leading to misguided optimization efforts. For instance, an LCA might correctly flag a palladium-catalyzed coupling reaction as a hotspot but lack the data to compare the environmental footprint of alternative catalytic systems [1].

Q3: What is the difference between retrospective and prospective LCA, and why is it important for chemicals?

- Retrospective LCA assesses the environmental footprint of existing, established technologies based on current or historical data. It provides a static snapshot [2] [5].

- Prospective LCA is forward-looking and evaluates the potential impacts of emerging technologies that are not yet commercially mature. It models the technology in a future scenario at full scale, incorporating predictive background data and scenario analysis to guide early-stage R&D toward more sustainable designs [2].

This distinction is crucial for green chemistry, where decisions made at the lab bench can lock in environmental impacts for years. Prospective LCA helps avoid "regrettable developments" by providing early warnings [2].

Q4: Which machine learning techniques show the most promise for overcoming LCA data gaps? Supervised learning algorithms are particularly prominent. Studies frequently use Extreme Gradient Boosting (XGBoost), Random Forest, and Artificial Neural Networks to predict life cycle inventory data [4] [6]. These models can learn the relationship between a chemical's readily available properties (e.g., molecular, structural, physicochemical) and its LCI results, enabling predictions for new chemicals where only structural information is known [6] [5].

Experimental Protocols & Workflows

This protocol describes a method to build complete life cycle inventory data for a complex chemical synthesis by breaking it down into its constituent parts.

- Application: LCA of complex molecules (e.g., APIs, fine chemicals) with numerous intermediates not found in LCA databases.

- Principle: An iterative, retrosynthesis-based approach to fill data gaps without relying on proxies or class-averages.

Workflow: Iterative LCA for Chemical Synthesis

Materials and Reagents:

- LCA Software & Database: Brightway2, ecoinvent database v3.9.1 or newer [1].

- Chemical Synthesis Data: Detailed reaction data (masses, solvents, energy) for each synthesis step from literature, patents, or laboratory experiments [1].

- Functional Unit: 1 kg of the target chemical (e.g., final API) [1].

Step-by-Step Procedure:

- Goal and Scope: Define a cradle-to-gate system for producing 1 kg of the target molecule [1].

- Initial Inventory (Phase 1): Compile a list of all input chemicals for the synthesis. Check their availability in the LCA database [1].

- Iteration for Data Gaps: For each chemical missing from the database:

  - Perform a retrosynthetic analysis to identify simpler, commercially available precursors.

  - Use documented industrial or lab-scale routes to model the synthesis of the missing chemical.

  - Calculate the life cycle inventory for the missing chemical by scaling all input and output flows to produce 1 kg of that chemical.

  - Integrate this new LCI data into the model [1].

- LCA Calculation (Phase 2): Once a complete inventory is built, run the LCA calculation using impact assessment methods like ReCiPe 2016 (endpoints: Human Health, Ecosystem Quality, Resources) and IPCC 2021 for Global Warming Potential [1].

- Interpretation (Phase 3): Visualize the results to identify environmental hotspots within the synthesis route [1].
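
Phases 2 and 3 rest on the standard matrix formulation of LCA: solve A s = f for the scaling vector s (how much each process must run to deliver the functional unit f), compute the inventory g = B s, and weight it with characterization factors, h = c · g. The sketch below runs that arithmetic with NumPy on a hypothetical three-process system. It illustrates what LCA frameworks such as Brightway2 compute, not Brightway2's own API, and every matrix entry is an invented placeholder.

```python
import numpy as np

# HYPOTHETICAL technosphere matrix A: rows = products, columns = processes.
# Process 0 makes electricity (kWh), process 1 makes intermediate I (kg),
# process 2 makes target T (kg). Negative entries are inputs consumed.
A = np.array([
    [1.0, -2.0, -5.0],   # electricity
    [0.0,  1.0, -1.6],   # intermediate I
    [0.0,  0.0,  1.0],   # target T
])

# HYPOTHETICAL biosphere matrix B: rows = elementary flows (kg), columns = processes.
B = np.array([
    [0.40, 1.50, 2.00],  # CO2
    [0.00, 0.01, 0.00],  # CH4
])

# Characterization factors in kg CO2-eq per kg (illustrative GWP100 values).
c = np.array([1.0, 27.0])

f = np.array([0.0, 0.0, 1.0])  # functional unit: 1 kg of target T

s = np.linalg.solve(A, f)      # scaling vector: activity level of each process
g = B @ s                      # inventory: total elementary flows
h = c @ g                      # impact score, kg CO2-eq per kg of T

# Phase 3 hotspot view: impact contributed by each process.
contributions = c @ (B * s)    # B * s scales each column by its process activity
print(f"GWP = {h:.3f} kg CO2-eq; per process: {np.round(contributions, 3)}")
```

Here the electricity process dominates (3.28 of 8.112 kg CO2-eq), which is the kind of hotspot the interpretation phase is meant to surface.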

This protocol uses machine learning to predict missing life cycle impact assessment (LCIA) results for chemicals, facilitating rapid early-stage sustainability screening.

- Application: Early-stage design and screening of new chemicals or processes when LCI data is unavailable.

- Principle: ML models learn the relationship between a chemical's inherent properties and its LCA impacts, acting as a predictive surrogate for detailed LCA calculations.

Workflow: ML-Based Prediction of LCA Impacts

Materials and Reagents:

- ML Algorithms: Extreme Gradient Boosting (XGBoost), Random Forest, or Artificial Neural Networks, as implemented in Python libraries (e.g., scikit-learn, XGBoost) [4] [6].

- Input Features: Data on physicochemical, molecular, and structural properties of chemicals (e.g., molecular weight, polarity, bond types), obtainable from databases or lab measurements [6].

- Output Labels: LCI or LCIA results for impact categories like Global Warming Potential, Human Health, and Ecosystem Quality from a training dataset [6].

- Software: Python environment for data processing and model training.

Step-by-Step Procedure:

- Dataset Curation: Assemble a training dataset containing the input features (molecular properties) and output labels (LCIA results) for a wide range of known chemicals [6].

- Model Training: Train selected ML algorithms to map the input features to the output labels. Use a portion of the data for training and another for validation [6].

- Model Validation: Evaluate the trained model's performance on the validation set using metrics such as R² to check whether its predictions are accurate enough for the intended use [6].

- Prediction and Application: Use the validated model to predict the LCIA results for new chemicals based solely on their input features. Integrate these predictions into an early-stage LCA to guide sustainable process design [6].
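
The four steps map onto a few lines of scikit-learn. The sketch below is illustrative only: the three descriptors and the GWP-like label are synthetic, generated from an invented formula, so the R² it prints says nothing about real chemicals. A Random Forest is used here; `xgboost.XGBRegressor` exposes the same `fit`/`predict` interface and could be swapped in.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import r2_score
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(42)
n = 400

# HYPOTHETICAL descriptors standing in for real molecular/physicochemical features.
mol_weight = rng.uniform(50, 500, n)     # g/mol
n_rings = rng.integers(0, 5, n)          # number of rings
halogen_frac = rng.uniform(0, 0.3, n)    # fraction of halogen atoms
X = np.column_stack([mol_weight, n_rings, halogen_frac])

# SYNTHETIC label imitating a GWP value (kg CO2-eq/kg); real work would use LCIA results.
y = 2.0 + 0.01 * mol_weight + 1.5 * n_rings + 20.0 * halogen_frac + rng.normal(0, 0.5, n)

# Hold out 25 % of the chemicals for validation.
X_train, X_val, y_train, y_val = train_test_split(X, y, test_size=0.25, random_state=0)

model = RandomForestRegressor(n_estimators=300, random_state=0)
model.fit(X_train, y_train)
r2 = r2_score(y_val, model.predict(X_val))
print(f"validation R^2 = {r2:.2f}")

# Screening: predict GWP for new chemicals from their descriptors alone.
new_chemicals = np.array([[180.0, 1, 0.0], [320.0, 3, 0.2]])
predicted_gwp = model.predict(new_chemicals)
print(predicted_gwp)
```

With real data, predictions are most trustworthy for new chemicals whose descriptors fall within the range covered by the training set.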

Research Reagent Solutions: LCA & Data Science Tools

The following table details key computational and data resources essential for addressing data scarcity in chemical LCA.

| Research Reagent | Function/Benefit |
|---|---|
| Ecoinvent Database | A leading LCA database providing life cycle inventory data for thousands of core materials and energy processes. Serves as the foundational background data for most chemical LCA studies [1]. |
| Brightway2 LCA Software | An open-source LCA framework written in Python. It allows for high flexibility in managing LCA databases, performing calculations, and implementing custom workflows like iterative retrosynthesis [1]. |
| Extreme Gradient Boosting (XGBoost) | A powerful, scalable machine learning algorithm based on gradient boosting. It is highly effective for tabular data and is a prominent choice for predicting LCA results from chemical properties [4] [6]. |
| GLAM LCIA Method | The Global Guidance for Life Cycle Impact Assessment (GLAM) method provides a consensus-based, global set of factors for assessing impacts on human health, ecosystem quality, and resources, ensuring consistency across studies [7]. |
| Physics-Informed ML | A machine learning approach that integrates known physical laws or constraints into the model. Shown to be promising for LCA of complex systems like biochar production, improving prediction robustness [8]. |

Technical Support Center: FAQs & Troubleshooting Guides

This technical support center addresses common experimental challenges in machine learning (ML) research for life cycle assessment (LCA) of chemicals, particularly under conditions of data scarcity.

Frequently Asked Questions (FAQs)

FAQ 1: What are the most critical data quality issues that hinder ML model performance in chemical LCA?

Poor data quality is cited as the primary reason that up to 87% of AI projects fail to reach production [9]. The most critical issues are:

- Incompleteness: Missing data for life cycle inventory (LCI) flows or environmental impact factors creates significant gaps that models cannot learn from.

- Inconsistency: Heterogeneous data formats, varying units, and disparate reporting standards across different studies or databases (e.g., for chemical properties or emissions) prevent effective data integration [9] [5].

- Inaccuracy: Errors in underlying experimental measurements or outdated secondary data sources propagate through the model, leading to unreliable predictions [9].

FAQ 2: Our dataset for a specific chemical class is highly imbalanced, with very few positive hits for a particular toxicity endpoint. How can we address this?

Imbalanced data is a widespread challenge in chemistry that leads to models biased toward the majority class (e.g., predicting "non-toxic" for everything) [10]. The following table summarizes standard techniques to mitigate this:

| Technique Category | Specific Methods | Best-Suited For |
|---|---|---|
| Resampling | SMOTE, Borderline-SMOTE, ADASYN [10] | When the minority class has too few samples for the model to learn its characteristics. |
| Algorithmic | Cost-sensitive learning; Ensemble methods like Balanced Random Forests [11] [10] | When you want to avoid modifying the dataset directly and use the algorithm to handle imbalance. |
| Data Augmentation | Using physical models or LLMs to generate synthetic data [10] | When data is extremely scarce and expensive to generate experimentally. |

FAQ 3: A lack of standardization is causing major bottlenecks in our data integration pipeline. What are the operational impacts?

The absence of consistent standards for data formats, nomenclature, and modeling practices creates significant operational friction [12] [13]. Key impacts include:

- Increased Manual Work: Scientists spend excessive time on data cleaning, harmonization, and manual validation instead of research [12] [9].

- Higher Costs: Custom integration scripts, reconciliation of disparate data, and rectifying errors consume substantial resources [13].

- Data Silos: Incompatible systems and formats prevent departments from sharing information, leading to fragmented and non-reproducible analyses [12] [14].

FAQ 4: How can we quantify the uncertainty in our predictions when training data is scarce?

When data is limited, quantifying uncertainty becomes critical. Two recommended methodologies are:

- Probabilistic Imputation: Use ML models designed for uncertainty quantification, such as Gaussian Process Regression, to fill data gaps while providing confidence intervals for the imputed values [5].

- Surrogate and Hybrid Modeling: Develop simpler, interpretable surrogate ML models to approximate complex LCA calculations. These can be combined with physical models (physics-informed ML) to constrain predictions within scientifically plausible ranges, improving robustness despite scarce data [5].

Troubleshooting Guides

Issue: Model exhibits high accuracy but fails to predict rare events (e.g., a specific high-impact toxicity).

This is a classic symptom of a model trained on an imbalanced dataset.

- Diagnosis:

- Solution: Implement the SMOTE Algorithm.

  - Identify Minority Class: Isolate the samples belonging to the underrepresented class in your dataset.

  - Synthesize New Samples: For each sample in the minority class, find its k-nearest neighbors. Create new, synthetic examples along the line segments joining the original sample and its neighbors [10].

  - Re-train Model: Combine the original majority class data with the original and newly synthesized minority class data to create a balanced training set. Re-train your model on this new dataset.
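
The synthesis step can be written directly in NumPy to show what SMOTE does under the hood. This is a minimal sketch of the core interpolation idea, not a reference implementation: it picks base samples at random rather than cycling through every minority sample, and it omits variants such as Borderline-SMOTE and ADASYN. The toy data are invented; for production work, the imbalanced-learn library's `SMOTE` class provides a tested implementation.

```python
import numpy as np


def smote(X_minority, n_synthetic, k=5, seed=0):
    """Create n_synthetic samples by interpolating between randomly chosen
    minority samples and one of their k nearest minority-class neighbours."""
    rng = np.random.default_rng(seed)
    X_minority = np.asarray(X_minority, dtype=float)
    n = len(X_minority)
    if n < 2:
        raise ValueError("need at least two minority samples")
    k = min(k, n - 1)

    # Pairwise Euclidean distances within the minority class.
    diffs = X_minority[:, None, :] - X_minority[None, :, :]
    dist = np.sqrt((diffs ** 2).sum(axis=-1))
    np.fill_diagonal(dist, np.inf)                 # a sample is not its own neighbour
    neighbours = np.argsort(dist, axis=1)[:, :k]   # k nearest neighbours per sample

    synthetic = np.empty((n_synthetic, X_minority.shape[1]))
    for i in range(n_synthetic):
        base = rng.integers(n)                     # random minority sample
        nb = neighbours[base, rng.integers(k)]     # one of its k neighbours
        gap = rng.random()                         # position along the segment, in [0, 1)
        synthetic[i] = X_minority[base] + gap * (X_minority[nb] - X_minority[base])
    return synthetic


# Toy example: four minority samples and a (hypothetical) larger majority class.
X_minority = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
X_majority = np.random.default_rng(1).normal(5.0, 1.0, size=(14, 2))
X_new = smote(X_minority, n_synthetic=10, k=2)

# Re-training set: majority + original minority + synthetic minority (14 vs 14).
X_balanced = np.vstack([X_majority, X_minority, X_new])
y_balanced = np.array([0] * len(X_majority) + [1] * (len(X_minority) + len(X_new)))
```

Because every synthetic point lies on a segment between two real minority samples, SMOTE fills in the minority region rather than duplicating points, but it cannot create minority examples outside the region the real samples already span.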

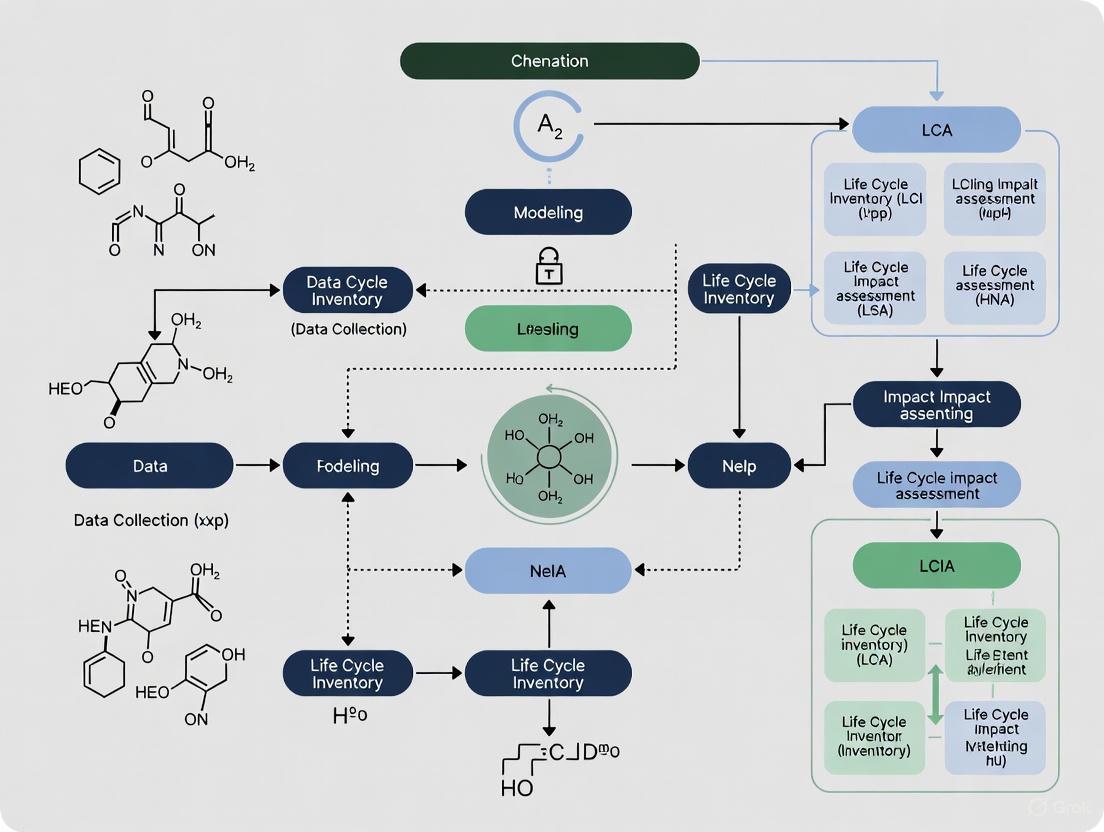

The following workflow diagram illustrates the process of addressing imbalanced data in an ML experiment for chemical LCA.

Issue: Inconsistent data formats from different LCA databases cause integration failures.

This problem stems from a lack of standardization, which creates data silos and complicates analysis [13].

- Diagnosis:

  - Audit the data sources. Identify conflicts in column headers, units (e.g., kg vs. g), chemical identifiers (e.g., CAS numbers vs. common names), and file formats.

- Solution: Establish a Data Quality Framework.

  - Define Requirements: Create a data dictionary that specifies acceptable formats, units, and mandatory fields for all incoming data [9].

  - Automate Validation: Implement scripts or use data profiling tools to automatically check new datasets against these specifications upon ingestion, flagging inconsistencies [9] [14].

  - Centralize with a Semantic Layer: Use a semantic layer or a unified platform to harmonize data definitions (e.g., standardizing the calculation for "global warming potential") across the organization, ensuring all researchers use consistent, trusted metrics [14].
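
The first two solution steps can be sketched with pandas. The data dictionary, column names, and unit table below are hypothetical; the CAS check-digit rule is the standard one for CAS Registry Numbers. The function flags problems and converts known mass units to kilograms rather than silently dropping rows, so a curator can review what was rejected.

```python
import re

import pandas as pd

# HYPOTHETICAL data dictionary: required fields, canonical unit, and known conversions.
DATA_DICTIONARY = {
    "required": ["cas_number", "flow_name", "amount", "unit"],
    "canonical_unit": "kg",
    "to_canonical": {"kg": 1.0, "g": 1e-3, "t": 1e3},
}


def cas_is_valid(cas):
    """Check CAS Registry Number format (NNNNNNN-NN-N) and its check digit."""
    if not re.fullmatch(r"\d{2,7}-\d{2}-\d", cas):
        return False
    digits = cas.replace("-", "")
    body, check = digits[:-1], int(digits[-1])
    # Check digit = sum of digits weighted 1, 2, 3, ... from the right, modulo 10.
    total = sum(w * int(d) for w, d in enumerate(reversed(body), start=1))
    return total % 10 == check


def validate_and_harmonize(df, dictionary=DATA_DICTIONARY):
    """Return (harmonized copy of df, list of issues) for an incoming inventory table."""
    missing = [col for col in dictionary["required"] if col not in df.columns]
    if missing:
        return df, [f"missing required columns: {missing}"]

    df = df.copy()
    df["amount"] = df["amount"].astype(float)
    issues = []

    cas_ok = df["cas_number"].astype(str).map(cas_is_valid).astype(bool)
    for idx in df.index[~cas_ok.to_numpy()]:
        issues.append(f"row {idx}: invalid CAS number {df.at[idx, 'cas_number']!r}")

    factors = df["unit"].map(dictionary["to_canonical"])
    unknown = factors.isna().to_numpy()
    for idx in df.index[unknown]:
        issues.append(f"row {idx}: unknown unit {df.at[idx, 'unit']!r}")

    known = ~unknown
    df.loc[known, "amount"] = df.loc[known, "amount"] * factors[known]
    df.loc[known, "unit"] = dictionary["canonical_unit"]
    return df, issues


incoming = pd.DataFrame({
    "cas_number": ["7732-18-5", "64-17-5", "64-17-4"],   # last one has a wrong check digit
    "flow_name": ["water", "ethanol", "ethanol"],
    "amount": [2500, 1.2, 3.0],
    "unit": ["g", "kg", "lb"],                            # "lb" is not in the dictionary
})
clean, problems = validate_and_harmonize(incoming)
print(clean)
print(problems)
```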

Experimental Protocols & Methodologies

Protocol 1: Handling Missing Life Cycle Inventory Data using Probabilistic Imputation

Objective: To estimate missing LCI data points (e.g., energy consumption for a specific chemical process) with associated uncertainty.

Materials: Existing, incomplete LCI database; Python/R environment with ML libraries (e.g., scikit-learn, GPy).

Procedure:

- Data Preparation: Construct a dataset where rows represent processes and columns represent inventory flows. Mark missing values.

- Model Selection: Employ a Gaussian Process Regression (GPR) model. GPR is well suited to this task because it provides both a mean prediction and a measure of uncertainty (variance) for each imputed value [5].

- Training: Train the GPR model on the available, complete data points to learn the relationships between different inventory flows.

- Imputation: Use the trained model to predict the missing values. For each missing data point, record both the imputed value and its standard deviation.

- Integration: Incorporate the imputed values and their uncertainties into the subsequent Life Cycle Impact Assessment (LCIA) phase, propagating the uncertainty through the final impact scores [5].
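
Protocol 1 can be sketched with scikit-learn's `GaussianProcessRegressor`, whose `predict(..., return_std=True)` returns both the mean and the standard deviation of each prediction. The three-flow LCI table is synthetic, the kernel (RBF plus a white-noise term) is one reasonable default rather than a recommendation from [5], and the normal distribution used for Monte Carlo propagation is a simplifying assumption; LCA databases often use lognormal distributions, which also avoid negative draws.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import RBF, ConstantKernel, WhiteKernel

rng = np.random.default_rng(0)

# HYPOTHETICAL LCI table: rows = processes,
# columns = [steam_MJ, cooling_water_m3, electricity_kWh].
n = 60
steam = rng.uniform(5, 50, n)
water = rng.uniform(0.1, 2.0, n)
electricity = 0.3 * steam + 4.0 * water + rng.normal(0, 0.5, n)  # synthetic relation
lci = np.column_stack([steam, water, electricity])

# Data preparation: mark ~20 % of electricity entries as missing.
missing = rng.random(n) < 0.2
lci[missing, 2] = np.nan

# Training: fit a GPR on complete rows (one length scale per input flow + a noise term).
kernel = ConstantKernel(1.0) * RBF(length_scale=[10.0, 1.0]) + WhiteKernel(noise_level=0.1)
gpr = GaussianProcessRegressor(kernel=kernel, normalize_y=True, random_state=0)
gpr.fit(lci[~missing, :2], lci[~missing, 2])

# Imputation: mean and standard deviation for each missing entry.
mean, std = gpr.predict(lci[missing, :2], return_std=True)
lci_imputed = lci.copy()
lci_imputed[missing, 2] = mean

# Integration: Monte Carlo propagation to a GWP score (grid factor is hypothetical).
GRID_EF = 0.4  # kg CO2-eq per kWh
draws = rng.normal(mean, std, size=(1000, mean.size)) * GRID_EF
gwp_mean = draws.mean(axis=0)
gwp_p5, gwp_p95 = np.percentile(draws, [5, 95], axis=0)
```

Reporting the 5th to 95th percentile band alongside the mean keeps the imputation uncertainty visible in the final impact scores instead of hiding it behind a point estimate.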

Protocol 2: Mitigating Class Imbalance in Toxicity Classification using SMOTE

Objective: To improve ML model recall for a rare toxicological endpoint.

Materials: Imbalanced chemical dataset (e.g., with molecular descriptors/features and a binary toxicity label); Python with imbalanced-learn library.

Procedure:

- Data Splitting: Split the dataset into training and testing sets. Crucially, apply resampling only to the training set to avoid data leakage and an over-optimistic evaluation.

- Apply SMOTE: On the training set only, use the SMOTE algorithm to generate synthetic examples of the minority (toxic) class until the class distribution is approximately balanced [10].

- Model Training: Train a classification model (e.g., Random Forest or XGBoost) on the resampled, balanced training set.

- Validation: Evaluate the model on the original, untouched test set. Compare the F1-score and recall for the minority class against the model trained on the imbalanced data.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational tools and methodologies essential for overcoming data scarcity in ML-driven chemical LCA.

| Tool / Solution | Function & Application |
|---|---|
| Gaussian Process Regression (GPR) | An ML method used for probabilistic imputation of missing data; provides predictions with confidence intervals, crucial for uncertainty analysis in LCI [5]. |
| SMOTE & Variants (e.g., Borderline-SMOTE) | Algorithms for generating synthetic samples of the minority class to balance datasets, directly addressing data scarcity for rare events in toxicity or impact classification [10]. |
| Semantic Layer | A centralized data management layer that harmonizes definitions, units, and metrics across disparate data sources, directly combating the problems caused by a lack of standardization [14]. |
| SQLFluff & dbt | Tools for enforcing SQL style guides and analytics engineering best practices. They standardize code, naming conventions, and documentation, improving reproducibility and collaboration in data operations [12]. |
| Physics-Informed ML (PIML) | A hybrid modeling approach that integrates known physical laws or constraints into ML models. This helps generate more plausible predictions even when training data is sparse [5]. |

The systemic hurdles in this field are interconnected, as shown in the following causality diagram.

Troubleshooting Guides & FAQs

Frequently Asked Questions

What are the primary causes of data scarcity in impact assessments? Data scarcity often arises from the high cost and complexity of generating high-fidelity data, the presence of data silos due to commercial interests, and the reliance on generic or industry-average proxies when specific, verifiable information is unavailable, especially from upstream suppliers [15] [16] [17].

How does data scarcity quantitatively affect Life Cycle Impact Assessment (LCIA) results? The uncertainty can be extreme. Case studies show that for most impact categories (e.g., acidification, ecotoxicity), the maximum reported impact values can be up to 10,000 times larger than the minimum values due to discrepancies in characterization factors and substance coverages across different assessment methods [18].

Can Machine Learning (ML) help overcome data scarcity in drug discovery? Yes, but it requires specific strategies. ML models, particularly deep learning, are data-hungry. In low-data regimes, researchers successfully use techniques like Transfer Learning (TL), Active Learning (AL), and Multi-Task Learning (MTL) to maximize the utility of limited datasets [15].

What is a foundational model in biomedical imaging, and how does it address data scarcity? A foundational model like UMedPT is a large network pre-trained on a multi-task database containing diverse image types and labels. This model learns versatile image representations, allowing it to match or exceed the performance of traditional models while using only 1-50% of the original training data for new, related tasks [19].

Troubleshooting Common Data Scarcity Issues

| Problem Scenario | Root Cause | Recommended Solution | Expected Outcome |
|---|---|---|---|
| Highly imbalanced predictive maintenance data, with very few failure instances [20]. | Proactive maintenance leads to rare failure events, so models cannot learn failure patterns. | Create "failure horizons" (labeling the last n observations before failure) and use Generative Adversarial Networks (GANs) to generate synthetic run-to-failure data [20]. | Increased failure instances in the dataset. ML model accuracy improvements of ~20% have been reported [20]. |
| Insufficient or low-quality data for training a reliable ML model in drug discovery [15]. | The property of interest is difficult/expensive to measure (e.g., synthesis outcomes, material stability). | Apply Transfer Learning (TL): Initialize model with weights from a pre-trained model on a large, related dataset. Alternatively, use Multi-Task Learning (MTL) to learn several related tasks simultaneously [15]. | Improved model performance and generalization, reducing the required dataset size for the primary task. |
| Uncertainty in LCA results due to different Life Cycle Impact Assessment (LCIA) method choices [18]. | LCIA methods provide different characterization factors and impact units for the same category. | Systematically evaluate results using multiple LCIA methods. Quantify and report the uncertainty range instead of relying on a single method [18]. | More transparent and robust impact assessments, enabling better-informed decision-making. |
| Data is distributed across organizations (data silos), impeding collaboration in drug discovery [15]. | Privacy concerns, intellectual property rights, and commercial competition. | Use Federated Learning (FL), a technique that trains an ML model across decentralized data sources without sharing the data itself [15]. | Collaborative model improvement while preserving data privacy and overcoming individual data scarcity. |

Quantitative Impact of Data Scarcity and Solutions

Table 1: Documented Uncertainties from LCIA Method Selection

This table summarizes the dramatic uncertainties in environmental impact assessment that arise from data and methodology choices, as revealed by a study of process-based LCI databases [18].

| Impact Category | Uncertainty Magnitude (Max vs. Min) | Primary Causes of Discrepancy |
|---|---|---|
| Global Warming | Low | Consistent characterization factors across methods. |
| Acidification | Up to 10,000x | Differences in total emission values, substance coverage, and characterization factor values [18]. |
| Ecotoxicity | Up to 10,000x | Differences in total emission values, substance coverage, and characterization factor values [18]. |
| Other Categories (e.g., Eutrophication) | Up to 10,000x | Differences in total emission values, substance coverage, and characterization factor values [18]. |
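
The max-versus-min figures in Table 1 are simple spreads across methods, and reporting the same spread for one's own study takes only a few lines. The scores below are invented for illustration, and the ratio is only meaningful once each method's result has been expressed on a comparable basis for that category.

```python
import math

# HYPOTHETICAL scores for ONE inventory characterized with three LCIA methods,
# assumed to be expressed on a comparable basis within each category.
scores = {
    "global_warming": {"method_A": 12.1, "method_B": 12.4, "method_C": 11.9},
    "acidification": {"method_A": 0.05, "method_B": 0.8, "method_C": 0.0004},
}


def method_spread(category_scores):
    """Max/min spread of positive scores across LCIA methods for one category."""
    values = [v for v in category_scores.values() if v > 0]
    low, high = min(values), max(values)
    return {"min": low, "max": high, "ratio": high / low,
            "orders_of_magnitude": math.log10(high / low)}


for category, by_method in scores.items():
    spread = method_spread(by_method)
    print(f"{category}: max/min = {spread['ratio']:.3g} "
          f"({spread['orders_of_magnitude']:.1f} orders of magnitude)")
```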

Table 2: Performance of a Foundational Model (UMedPT) Under Data Scarcity

This table shows how a foundational multi-task model in biomedical imaging maintains high performance even when training data is severely limited, offering a powerful solution to data scarcity [19].

| Task Domain | Task Name | Best ImageNet Performance (F1 Score) | UMedPT Performance with 1% of Data (F1 Score) | Data Reduction Compensated |
|---|---|---|---|---|
| In-Domain | CRC-WSI (Tissue Classification) | 95.2% | 95.4% | 99% [19] |
| In-Domain | Pneumo-CXR (Pneumonia Diagnosis) | 90.3% | 90.3% (matched) | 99% [19] |
| Out-of-Domain | Various Classification Tasks | Varies | Matched ImageNet performance | ≥50% [19] |

Experimental Protocols for Overcoming Data Scarcity

Protocol 1: Multi-Task Learning with a Foundational Model for Biomedical Imaging

Objective: To train a universal biomedical image representation (UMedPT) that performs robustly on data-scarce downstream tasks by leveraging multiple data sources and label types [19].

Workflow Diagram:

Methodology:

- Database Curation: Combine multiple small- and medium-sized biomedical imaging datasets into a single multi-task database. Include diverse image modalities (e.g., tomographic, microscopic, X-ray) and various labeling strategies (classification, segmentation, object detection) [19].

- Model Architecture:

  - Shared Encoder: A core convolutional neural network (e.g., ResNet) that serves as the foundational model for all tasks. This is the UMedPT model.

  - Task-Specific Heads: Lightweight output networks attached to the shared encoder, tailored for each task type (e.g., a decoder for segmentation, a classifier for classification).

- Training Loop: Use a gradient accumulation strategy to handle the memory constraints of multi-task learning. The model is trained to minimize the combined loss from all tasks simultaneously, forcing the shared encoder to learn versatile, general-purpose features [19].

- Application to Downstream Task: For a new, data-scarce task, the pre-trained UMedPT encoder can be used in two ways:

  - Frozen Feature Extractor: Keep the encoder weights frozen and train only a new task-specific head on the limited new data.

  - Fine-Tuning: Use the UMedPT weights to initialize the model and fine-tune the entire network on the new data [19].

Protocol 2: Using Generative Adversarial Networks (GANs) for Synthetic Data Generation in Predictive Maintenance

Objective: To generate synthetic run-to-failure sensor data that mirrors the patterns of real, scarce data, thereby creating a large enough dataset to train accurate machine learning models for failure prediction [20].

Workflow Diagram:

Methodology:

- Data Preprocessing: Clean and normalize historical run-to-failure sensor data. To address data imbalance, create "failure horizons" by labeling the last n time-step observations before a failure event as "failure" and all preceding ones as "healthy" [20].

- GAN Training:

  - Generator (G): A neural network that takes a random noise vector as input and outputs synthetic sensor data sequences.

  - Discriminator (D): A neural network that takes either real data from the training set or fake data from the Generator and classifies it as "real" or "fake."

  - Adversarial Process: Train both networks concurrently in a mini-max game. The Generator aims to produce data so realistic that it fools the Discriminator, while the Discriminator aims to become better at distinguishing real from fake [20].

- Synthetic Data Generation: Once trained, the Generator can be used to produce large volumes of synthetic run-to-failure data that possess relational patterns similar to the original, scarce data.

- Model Training: Train traditional ML models (e.g., Random Forest, ANN) or deep learning models on the augmented dataset containing both original and synthetic data to predict machine failures or estimate Remaining Useful Life (RUL) [20].
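
The failure-horizon labeling from the preprocessing step can be sketched with pandas; the GAN itself is beyond a short example. Column names and the toy sensor log are hypothetical, and the function assumes each unit's record ends at its failure event.

```python
import pandas as pd


def add_failure_horizon(df, n, unit_col="unit_id", time_col="cycle"):
    """Label the last n observations of each run-to-failure sequence as 1
    ("failure") and all earlier observations as 0 ("healthy").
    Assumes every unit's sequence ends at a failure event."""
    df = df.sort_values([unit_col, time_col]).copy()
    # 0 for each unit's final record, 1 for the one before it, and so on.
    steps_before_end = df.groupby(unit_col).cumcount(ascending=False)
    df["label"] = (steps_before_end < n).astype(int)
    return df


# HYPOTHETICAL sensor log: unit 1 runs 5 cycles, unit 2 runs 3 cycles.
log = pd.DataFrame({
    "unit_id": [1, 1, 1, 1, 1, 2, 2, 2],
    "cycle": [1, 2, 3, 4, 5, 1, 2, 3],
    "vibration": [0.1, 0.1, 0.2, 0.4, 0.9, 0.2, 0.5, 1.1],
})
labeled = add_failure_horizon(log, n=2)
print(labeled)
print("failure fraction:", labeled["label"].mean())
```

Raising n increases the share of "failure" labels, which eases the imbalance but blurs how close to the actual failure a labeled observation really is.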

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Computational Tools and Methods for Data-Scarce Research

| Tool / Method | Function | Field of Application |
|---|---|---|
| Transfer Learning (TL) | Transfers knowledge from a model trained on a large, source dataset to a new model for a target task with limited data [15]. | Drug Discovery, Biomedical Imaging, Materials Science. |
| Multi-Task Learning (MTL) | Trains a single model to perform multiple related tasks simultaneously, allowing shared representations to improve learning from scarce data for any single task [15] [19]. | Biomedical Imaging, Drug Property Prediction. |
| Generative Adversarial Networks (GANs) | Generates high-quality synthetic data that mimics the statistical properties of real, scarce data, effectively augmenting training datasets [20]. | Predictive Maintenance, Molecular Design. |
| Active Learning (AL) | Iteratively selects the most valuable data points from a pool of unlabeled data to be labeled by an expert, optimizing the cost of data annotation [15]. | Drug Discovery, Materials Screening. |
| Federated Learning (FL) | Enables collaborative training of ML models across multiple institutions without sharing the underlying data, overcoming data silos and privacy hurdles [15]. | Drug Discovery, Clinical Research. |
| Foundational Model (e.g., UMedPT) | A large model pre-trained on vast and diverse data, serving as a versatile feature extractor for many downstream tasks with minimal task-specific data required [19]. | Biomedical Image Analysis. |

Frequently Asked Questions (FAQs)

Q1: What are the most common data-related causes of poor performance in ML models for chemical and life science research? Poor model performance can often be traced to several common data issues, including:

- Corrupt data: Data that is mismanaged, improperly formatted, or combined with incompatible sources [21].

- Incomplete or insufficient data: Datasets with missing values or an insufficient volume of data for the model to learn effectively [22] [21].

- Imbalanced data: Datasets where data is unequally distributed, skewing model predictions toward the over-represented class [21].

- Overfitting: Occurs when a model is trained too closely to a limited set of data, capturing noise rather than the underlying signal, which harms its performance on new data [23] [22] [21].

- Underfitting: The opposite problem, where a model is too simple to capture meaningful relationships in the data, often because the data are more complex than the model can represent or because training was insufficient [23] [21].

Q2: How can Machine Learning address data scarcity in Life Cycle Inventory (LCI) for chemicals? ML offers several techniques to overcome LCI data gaps:

- Predictive Modeling and Imputation: ML models can predict and fill in missing inventory data by learning from existing, high-quality datasets [5] [24].

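- Data Augmentation: For classification tasks, techniques like SMOTE generate synthetic minority-class samples by interpolating between neighboring examples, which balances and enlarges small, imbalanced datasets [22]. SMOTE itself is designed for classification, so continuous inventory targets need other augmentation approaches.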

- Transfer Learning: Models pre-trained on large, general datasets can be fine-tuned for specific chemical processes, even with limited target data [22].

- Hybrid Modeling: Combining ML with process simulation or other mechanistic models can create robust surrogates where empirical data is lacking [5] [24].
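The following is a minimal sketch of predictive imputation. It assumes that a missing inventory value can be estimated from chemicals that are similar in descriptor space, and fills the gap with the mean of the k nearest neighbors. The descriptors (molecular weight and heavy-atom count), the energy values, and k are hypothetical. A real workflow would use curated descriptors and would also report the uncertainty of each imputed value.

```python
import numpy as np

def knn_impute(descriptors, values, k=3):
    """Fill NaN entries in `values` with the mean value of the k most
    similar chemicals (Euclidean distance on standardized descriptors)."""
    X = np.asarray(descriptors, dtype=float)
    y = np.asarray(values, dtype=float).copy()
    # Standardize so that no single descriptor dominates the distance
    std = X.std(axis=0)
    std[std == 0] = 1.0
    X = (X - X.mean(axis=0)) / std
    known = ~np.isnan(y)
    for i in np.where(~known)[0]:
        dist = np.linalg.norm(X[known] - X[i], axis=1)
        nearest = np.argsort(dist)[:k]
        y[i] = y[known][nearest].mean()
    return y

# Hypothetical descriptors: [molecular weight, heavy-atom count]
descriptors = [[46.07, 3], [60.10, 4], [74.12, 5], [88.15, 6], [180.16, 12]]
# Hypothetical energy demand (MJ/kg); the third chemical has no inventory data
values = [20.0, 25.0, np.nan, 35.0, 80.0]
filled = knn_impute(descriptors, values, k=2)
print(filled)
```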

Q3: What steps should I take during data preprocessing to ensure my model's reliability?

A robust preprocessing pipeline is crucial. Key steps include [21]:

- Handling Missing Data: Remove entries with excessive missing values or impute missing values using statistical measures (mean, median, mode).

- Balancing Datasets: Use resampling or data augmentation techniques to address class imbalance.

- Outlier Detection and Treatment: Use statistical methods (e.g., box plots) to identify and handle outliers that can skew model training.

- Feature Scaling: Apply normalization or standardization to bring all features onto a comparable scale, so that features with large numeric ranges do not dominate distance-based or gradient-based models during training.
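The preprocessing steps above can be sketched with NumPy. This illustrative version makes a few assumptions. It uses median imputation. It applies the box-plot rule (values beyond 1.5 × IQR) to flag outliers rather than delete them. It then standardizes the features. Every statistic is computed on the training split only, so the test data cannot leak into preprocessing.

```python
import numpy as np

def preprocess(X_train, X_test):
    """Median-impute, flag box-plot outliers, and standardize.
    All statistics come from the training split only."""
    X_train = np.array(X_train, dtype=float)   # copies, so inputs are not modified
    X_test = np.array(X_test, dtype=float)
    # 1. Replace NaNs with the training-set median of each column
    medians = np.nanmedian(X_train, axis=0)
    for X in (X_train, X_test):
        rows, cols = np.where(np.isnan(X))
        X[rows, cols] = medians[cols]
    # 2. Flag outliers with the box-plot rule (outside 1.5 * IQR from the quartiles)
    q1, q3 = np.percentile(X_train, [25, 75], axis=0)
    iqr = q3 - q1
    outliers = (X_train < q1 - 1.5 * iqr) | (X_train > q3 + 1.5 * iqr)
    # 3. Standardize with the training mean and standard deviation
    mean = X_train.mean(axis=0)
    std = X_train.std(axis=0)
    std[std == 0] = 1.0
    return (X_train - mean) / std, (X_test - mean) / std, outliers

X_train = [[1.0, 10.0], [2.0, np.nan], [3.0, 12.0], [4.0, 11.0], [100.0, 13.0]]
X_test = [[np.nan, 11.0]]
Xtr, Xte, outliers = preprocess(X_train, X_test)
print(outliers)   # inspect flagged points before deciding how to treat them
```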

Q4: How can I validate that my ML model will generalize to new data?

Robust validation is key to ensuring generalizability:

- Use Appropriate Test Sets: Always evaluate the final model on a completely held-out test set that was not used during model building or hyperparameter tuning [22].

- Apply Cross-Validation: Use techniques like k-fold cross-validation to get a more reliable estimate of model performance by training and testing on different data splits [23] [21].

- Avoid Information Leakage: Ensure no information from the test set inadvertently influences the training process, for example, during exploratory data analysis or feature selection [22].
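One way to combine these three rules is sketched below with scikit-learn on synthetic data; the descriptors and the linear target are assumptions. The test set is split off first. The scaler sits inside a Pipeline, so each cross-validation fold refits it on its own training portion only. The test set is scored once, at the end.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import KFold, cross_val_score, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X = rng.normal(size=(120, 5))                       # hypothetical descriptors
y = X @ np.array([1.5, -2.0, 0.0, 0.5, 0.0]) + rng.normal(scale=0.3, size=120)

# Hold out the test set first; it is not used again until the final evaluation
X_dev, X_test, y_dev, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

# Scaling inside the pipeline prevents fold-level leakage of scaling statistics
model = make_pipeline(StandardScaler(), Ridge(alpha=1.0))
cv = KFold(n_splits=5, shuffle=True, random_state=0)
cv_scores = cross_val_score(model, X_dev, y_dev, cv=cv, scoring="r2")

# One-time evaluation on the untouched test set
model.fit(X_dev, y_dev)
test_r2 = model.score(X_test, y_test)
print(f"CV R2 {cv_scores.mean():.3f} +/- {cv_scores.std():.3f}; test R2 {test_r2:.3f}")
```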

Troubleshooting Guides

Issue 1: Model is Overfitting the Training Data

Problem: Your model performs excellently on training data but poorly on unseen validation or test data.

| Step | Action | Key Considerations |

|---|---|---|

| 1. Diagnose | Check performance metrics (e.g., accuracy) on training vs. validation sets. A large gap indicates overfitting. | High training accuracy with low validation accuracy is a classic sign [23]. |

| 2. Simplify Model | Reduce model complexity (e.g., decrease the number of layers in a neural network, reduce tree depth). | Simpler models are less prone to memorizing noise [22]. |

| 3. Regularize | Apply regularization techniques (e.g., L1/L2 regularization, dropout in neural networks). | These techniques penalize model complexity during training [23]. |

| 4. Get More Data | Use data augmentation to artificially expand your training dataset. | This helps the model learn more generalizable patterns [22]. |

| 5. Tune Hyperparameters | Systematically search for optimal hyperparameters (e.g., learning rate, regularization strength). | Use cross-validation to guide the search, not the final test set [21]. |
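Steps 1 and 3 can be made concrete with a small experiment. The sketch below fits a deliberately over-flexible degree-10 polynomial to 15 noisy points, once without and once with an L2 (ridge) penalty. It then prints training versus validation RMSE. The data and penalty values are illustrative assumptions. By construction, the unpenalized fit has the lowest training error. The penalty shrinks the weights, and that shrinkage is the mechanism that usually narrows the train/validation gap.

```python
import numpy as np

rng = np.random.default_rng(1)
x_train = np.linspace(-1, 1, 15)
x_val = np.linspace(-0.95, 0.95, 40)
y_train = np.sin(2 * x_train) + rng.normal(scale=0.2, size=x_train.size)
y_val = np.sin(2 * x_val) + rng.normal(scale=0.2, size=x_val.size)

DEGREE = 10  # deliberately too flexible for 15 points

def design(x):
    return np.vander(x, DEGREE + 1, increasing=True)

def fit(x, y, lam):
    """Minimize ||A w - y||^2 + lam * ||w||^2 (lam = 0 is ordinary least squares)."""
    A = design(x)
    if lam == 0:
        return np.linalg.lstsq(A, y, rcond=None)[0]
    return np.linalg.solve(A.T @ A + lam * np.eye(A.shape[1]), A.T @ y)

def rmse(w, x, y):
    return float(np.sqrt(np.mean((design(x) @ w - y) ** 2)))

# Diagnose: compare training and validation error for each penalty strength
for lam in (0.0, 1e-2, 1e-1):
    w = fit(x_train, y_train, lam)
    print(f"lambda={lam:g}: train RMSE {rmse(w, x_train, y_train):.3f}, "
          f"val RMSE {rmse(w, x_val, y_val):.3f}, ||w|| {np.linalg.norm(w):.1f}")
```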

Issue 2: Handling High-Dimensional and Complex Data in Drug Discovery

Problem: Dealing with thousands of features (e.g., from 'omics' data, high-throughput screens) makes modeling slow and prone to overfitting.

| Step | Action | Key Considerations |

|---|---|---|

| 1. Exploratory Analysis | Perform exploratory data analysis to understand feature distributions and relationships. | Use domain expertise to guide this process [22]. |

| 2. Feature Selection | Use statistical methods (e.g., univariate selection) or model-based methods (e.g., feature importance from Random Forests) to keep the most informative original features. | Reduces training time and can improve model performance [21]. |

| 3. Dimensionality Reduction | Apply algorithms like Principal Component Analysis (PCA) or Autoencoders to project data into a lower-dimensional space. | PCA is linear, while autoencoders can capture non-linear relationships [23] [21]. |

| 4. Model Choice | Choose models suited for high-dimensional data, such as regularized linear models or tree-based ensembles. | For very complex patterns (e.g., image-based phenotyping), deep neural networks may be necessary [23] [25]. |
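Here is a minimal PCA sketch for step 3, assuming a numeric feature matrix. The data are centered, decomposed by singular value decomposition, and projected onto the fewest components that explain 95% of the variance. The 95% threshold is an assumption to adjust per study. The synthetic matrix has 50 features driven by 3 hidden factors.

```python
import numpy as np

def pca_reduce(X, variance_to_keep=0.95):
    """Project X onto the fewest principal components that together explain
    `variance_to_keep` of the total variance (PCA via SVD)."""
    X = np.asarray(X, dtype=float)
    Xc = X - X.mean(axis=0)                       # PCA requires centered data
    _, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    explained = S**2 / np.sum(S**2)               # variance ratio per component
    n_comp = int(np.searchsorted(np.cumsum(explained), variance_to_keep) + 1)
    return Xc @ Vt[:n_comp].T, explained[:n_comp]

rng = np.random.default_rng(0)
latent = rng.normal(size=(200, 3))                # 3 hidden factors
X = latent @ rng.normal(size=(3, 50)) + 0.01 * rng.normal(size=(200, 50))
Z, ratios = pca_reduce(X)
print(Z.shape, ratios.round(3))
```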

Issue 3: Integrating Inconsistent Data for Chemical LCA

Problem: LCA for chemicals requires combining inconsistent, incomplete data from various databases, literature, and simulations.

| Step | Action | Key Considerations |

|---|---|---|

| 1. Data Auditing | Systematically catalog available data sources, noting their scope, regionality, and data quality. | Use aggregators like openLCA Nexus or GLAD to find datasets [24]. |

| 2. Handle Missing Data | Use probabilistic imputation or ML-based methods to fill data gaps, propagating uncertainty. | This provides a more robust estimate than simple mean/median imputation [5]. |

| 3. Data Harmonization | Use Natural Language Processing (NLP) to automatically map and categorize processes from different databases. | Helps in standardizing the goal and scope phase of LCA [5]. |

| 4. Build Hybrid Models | Combine ML surrogates with traditional process-based LCA models. | ML can model complex, non-linear subsystems where data is scarce, while process models provide structure [5]. |
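Step 2's idea of propagating uncertainty can be sketched with a Monte Carlo simulation. This toy example is not taken from the cited work. A missing steam flow is imputed as a lognormal distribution, a common convention for LCI uncertainty, and propagated through a simple impact calculation, GWP = Σ amount × characterization factor. All amounts, factors, and the geometric standard deviation are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(42)
n_draws = 10_000

# Hypothetical inventory for 1 kg of product: (amount, GWP factor in kg CO2-eq per unit)
known_flows = {
    "electricity_kWh": (2.0, 0.5),
    "natural_gas_MJ": (10.0, 0.06),
}
# Missing flow: steam demand imputed as lognormal (median 5 MJ, geometric SD 1.5)
steam_median, steam_gsd, steam_cf = 5.0, 1.5, 0.08

steam_draws = rng.lognormal(mean=np.log(steam_median), sigma=np.log(steam_gsd), size=n_draws)
fixed_part = sum(amount * cf for amount, cf in known_flows.values())
gwp = fixed_part + steam_draws * steam_cf          # one GWP value per Monte Carlo draw

low, median, high = np.percentile(gwp, [2.5, 50, 97.5])
print(f"GWP median {median:.2f}, 95% interval [{low:.2f}, {high:.2f}] kg CO2-eq")
```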

Experimental Protocols & Workflows

Protocol 1: SPARROW for Cost-Aware Molecular Candidate Selection

This methodology, exemplified by the SPARROW framework, optimizes the selection of molecules for synthesis in drug discovery by balancing potential property value with synthetic cost [26].

1. Goal: Identify the optimal batch of molecular candidates that maximizes the likelihood of desired properties while minimizing collective synthesis costs.

2. Inputs:

- A set of potential molecular compounds (hand-designed, from catalogs, or AI-generated).

- A definition of the target properties.

- Data on synthetic pathways and associated costs.

3. Procedure:

- Data Collection: SPARROW gathers information on the molecules and their potential synthetic routes from online repositories and AI tools [26].

- Unified Optimization: The algorithm performs a single optimization step that considers [26]:

- The shared intermediate compounds and common experimental steps in batch synthesis.

- The costs of starting materials and the number of reaction steps.

- The likelihood of synthetic success.

- The predicted property values of the candidates.

- Output: It automatically selects the best subset of candidates and identifies the most cost-effective synthetic routes for the batch.

4. Outcome: A streamlined list of molecules for experimental testing that accounts for the complex, interdependent costs of batch synthesis, thereby accelerating the early drug discovery pipeline [26].
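Shared steps can make some batches cheaper than their members look individually. A brute-force toy illustrates this intuition. It is not the SPARROW algorithm or its objective. The candidates, scores, unit step cost, and trade-off weight are hypothetical, and real routes would come from retrosynthesis tools.

```python
from itertools import combinations

# Hypothetical candidates: (predicted property score, set of required reaction steps)
candidates = {
    "A": (0.90, {"s1", "s2", "s3"}),
    "B": (0.80, {"s1", "s2", "s4"}),
    "C": (0.85, {"s5", "s6", "s7"}),
    "D": (0.60, {"s1", "s4"}),
}
STEP_COST = 1.0     # assumed uniform cost per distinct reaction step
COST_WEIGHT = 0.2   # assumed trade-off between property value and synthesis cost

def batch_objective(batch):
    value = sum(candidates[m][0] for m in batch)
    # Steps shared between molecules in the batch are paid for only once
    steps = set().union(*(candidates[m][1] for m in batch))
    return value - COST_WEIGHT * STEP_COST * len(steps)

def best_batch(size):
    """Exhaustively score every batch of the given size (feasible only for toy sizes)."""
    return max(combinations(sorted(candidates), size), key=batch_objective)

print(best_batch(2))
```

Candidate C scores higher than B on its own. Even so, the pair (A, B) wins because A and B share two steps and therefore cost less as a batch.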

Protocol 2: ML-Driven Analysis of Cellular Images for Drug-Response Phenotyping

This protocol uses high-resolution cellular imaging and ML to rapidly screen compounds for therapeutic potential, as implemented by companies like Recursion [25].

1. Goal: To identify promising therapeutic compounds by detecting subtle drug-response patterns from cellular images.

2. Inputs:

- High-resolution cellular images from experiments where disease-relevant cell models are treated with various compounds.

- High-performance computing (HPC) infrastructure, such as GPU clusters.

3. Procedure:

- Massive Experimentation: Run up to 2.2 million biological image-based experiments per week to generate a high-dimensional dataset of cellular morphology [25].

- Image-Based Phenotypic Modeling: Train ML models (typically Deep Convolutional Neural Networks) to analyze the cellular images and predict how compounds affect the disease phenotype [25].

- Compute Optimization: Optimize GPU cluster usage to handle the massive computational load efficiently. Recursion reported a 35% improvement in efficiency and a 10x increase in computational throughput from such optimization [25].

- Funnel Reinvention: Use the ML predictions to eliminate weak candidates early, inverting the traditional discovery funnel to focus resources on the most promising leads [25].

4. Outcome: Accelerated identification of high-priority drug candidates, particularly for rare diseases, with increased confidence in downstream clinical success [25].

Table 1: Performance Metrics from AI/ML Applications in Drug Discovery

| Application / Company | Key Metric | Result | Impact |

|---|---|---|---|

| Recursion [25] | GPU Cluster Efficiency | Improved by 35% | Significant cost savings and faster processing |

| | Computational Throughput | Increased by 10x | Accelerated screening of molecules |

| | Annualized Net Value | Captured $2.8M | Direct financial benefit from optimization |

| Pharma Industry Average [23] | Drug Development Success Rate (Phase I to approval) | ~6.2% | Highlights industry-wide inefficiency ML aims to solve |

| Genentech (Roche) [27] | Traditional Drug Candidate Failure Rate | ~90% | Business rationale for adopting "lab in a loop" ML strategies |

Table 2: Machine Learning Techniques for LCA Data Scarcity

| Technique | Application in LCA | Benefit |

|---|---|---|

| Natural Language Processing (NLP) | Automating goal and scope definition; harmonizing data from different literature sources and databases [5]. | Increases speed and consistency of the initial LCA phase. |

| Probabilistic Imputation | Estimating missing Life Cycle Inventory (LCI) data while quantifying the introduced uncertainty [5]. | Creates more robust and transparent inventories compared to deterministic methods. |

| Surrogate & Hybrid Modeling | Creating simplified ML models that emulate complex process models or integrate real-time operational data [5]. | Drastically reduces computational cost and allows for dynamic LCA. |

| Gaussian Process Regression | Used in life cycle impact assessment (LCIA) for building surrogate models and for uncertainty quantification [5]. | Provides reliable predictions with built-in uncertainty estimates. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Resources

| Tool / Resource | Function | Relevance to Field |

|---|---|---|

| TensorFlow / PyTorch | Open-source programmatic frameworks for building and training machine learning models, especially deep neural networks [23]. | Essential for developing custom ML models for tasks like image analysis (PyTorch) or bioactivity prediction. |

| Scikit-learn | A free software library containing a wide range of traditional ML algorithms for classification, regression, and clustering [23]. | Ideal for prototyping models, performing feature selection, and preprocessing data. |

| openLCA Nexus / GLAD | Online aggregators that provide access to numerous Life Cycle Inventory (LCI) databases [24]. | Critical for finding LCI data sets for specific products or processes, helping to address data scarcity. |

| SPARROW Algorithm | A unified framework for the cost-aware down-selection of molecular candidates for synthesis [26]. | Directly addresses the challenge of balancing synthetic cost with molecular property optimization in drug discovery. |

| GPU Clusters (e.g., BioHive-1) | High-performance computing infrastructure that provides the massive parallel processing power required for training large ML models [25]. | Enables the scale of experimentation needed for AI-driven drug discovery (e.g., analyzing millions of cellular images). |

Technical Workflow Diagrams

ML-Enhanced LCA Workflow Diagram

AI vs Traditional Drug Discovery Funnel

Building Predictive Models: ML Techniques for Chemical Impact Forecasting

A technical support center for researchers tackling data scarcity in chemical LCA

Frequently Asked Questions & Troubleshooting Guides

This section addresses common challenges researchers face when integrating SMILES strings and feature engineering into Life Cycle Assessment (LCA) for machine learning (ML) projects focused on chemicals.

SMILES and Featurization

Q1: My ML model performs poorly even after featurizing SMILES strings. What are the common featurization methods I should try?

Different featurization methods can significantly impact model performance [28]. The table below summarizes key methods suitable for various research applications.

| Featurization Method | Description | Example Use Cases in Literature | Considerations for LCA/ML |

|---|---|---|---|

| General Features | Macroscopic, numerical descriptors (e.g., composition, temperature, costs) [28]. | Predicting sorption capacity of solid materials [28]. | Good for integrating process conditions (e.g., CAPEX) with molecular data. |

| SMILES (Simplified Molecular-Input Line-Entry System) | String-based representation of a molecule's structure [28]. | Widely used as a starting point for various featurizers in different research fields [28]. | String must be converted to machine-readable format; performance varies by method [28]. |

| Other Molecular Representations | Specialized methods (e.g., geometry files for DFT calculations) [28]. | Molecular calculations (e.g., DFT) [28]. | Can be computationally intensive; may require specialized expertise. |

How to troubleshoot featurization performance:

- Experiment with multiple featurizers: Test different algorithms available in toolkits like DeepChem. The fitting performance and computational speed vary significantly between methods [28].

- Validate SMILES integrity: Ensure your input SMILES strings are accurate and canonicalized before featurization.

- Start with established methods: Beginners in the field are advised to start with well-known methods like those based on SMILES [28].
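The sketch below is a first-pass integrity check to run before featurization. It flags SMILES strings with unbalanced branches, unclosed bracket atoms, or unpaired ring-closure labels. It is a crude syntactic filter written for illustration, not a SMILES parser. It does not check valences or aromaticity, and it does not canonicalize. Parsing and canonicalization should still be done with a cheminformatics toolkit such as RDKit.

```python
def crude_smiles_check(smiles: str) -> bool:
    """Return False for obviously malformed SMILES (crude syntactic check only)."""
    depth = 0              # open branch parentheses
    in_bracket = False     # inside a bracket atom such as [NH4+]
    open_rings = set()     # ring-closure labels currently open
    i = 0
    while i < len(smiles):
        c = smiles[i]
        if in_bracket:
            if c == "]":
                in_bracket = False          # digits inside brackets are not ring labels
        elif c == "[":
            in_bracket = True
        elif c == "(":
            depth += 1
        elif c == ")":
            depth -= 1
            if depth < 0:
                return False
        elif c == "%":                      # two-digit ring label, e.g. %10
            label = smiles[i + 1:i + 3]
            if len(label) < 2 or not label.isdigit():
                return False
            open_rings ^= {label}           # toggle: open, then close
            i += 2
        elif c.isdigit():
            open_rings ^= {c}
        i += 1
    return depth == 0 and not in_bracket and not open_rings

for s in ["CCO", "c1ccccc1", "CC(=O)O", "C1CC", "CC(C"]:
    print(s, crude_smiles_check(s))
```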

Q2: What code packages are essential for converting SMILES into machine-learning features?

You will typically need a combination of packages for data handling, molecule manipulation, ML modeling, and visualization [28].

Troubleshooting code execution:

- Issue: "ModuleNotFoundError" when importing packages.

- Solution: Ensure packages are installed in your environment. For example, install DeepChem using pip install deepchem. Check the documentation for specific version dependencies.
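The standard library can check whether each module is importable, which finds every missing package at once instead of one ModuleNotFoundError at a time. The package list below is an illustrative assumption. These are import names, which can differ from pip names; for example, scikit-learn is imported as sklearn.

```python
import importlib.util

# Illustrative import names for a SMILES-to-features workflow (adjust to your project)
REQUIRED = ["numpy", "pandas", "sklearn", "rdkit", "deepchem"]

def missing_packages(names=REQUIRED):
    """Return the top-level modules that cannot be found in the current environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]

print("Missing:", missing_packages() or "none")
```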

LCA and Machine Learning Integration

Q3: How can ML help overcome data scarcity in chemical LCA?

Machine learning can strengthen LCA across all four phases defined by ISO 14040 and 14044, making it more robust against data gaps [5].

- Goal & Scope: Natural Language Processing (NLP) can assist in automating scope definition [5].

- Life Cycle Inventory (LCI): ML techniques like probabilistic imputation can fill data gaps and quantify uncertainty in inventory data [5].

- Life Cycle Impact Assessment (LCIA): Surrogate and hybrid ML models can predict environmental impacts when primary data is scarce [5].

- Interpretation: ML can help provide calibrated, decision-oriented interpretation of results [5].

Q4: What is a robust ML methodology for LCA that is interpretable for chemical research?

Random Forest is widely used in chemistry and valued for its interpretability [28]. It combines an ensemble of independently trained decision trees, which often yields a stable and reliable model [28].

Experimental Protocol: Random Forest for LCA Prediction

- Data Preprocessing: Featurize your chemical compounds (e.g., from SMILES) using a chosen method from DeepChem. Split the data into training and testing sets.

- Model Training: Train a Random Forest regressor (or classifier) on the training data. The algorithm builds many decision trees; their predictions are averaged for regression or combined by majority vote for classification [28].

- Model Validation: Validate the model's performance on the held-out test set using metrics relevant to your study (e.g., Mean Absolute Error, R²).

- Interpretation: Analyze the model to identify which features (molecular descriptors or process parameters) were most important in predicting the LCA outcome.
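Below is a minimal scikit-learn sketch of this protocol. To keep it self-contained, the DeepChem featurization step is replaced by random numeric columns labeled as hypothetical descriptors, and the target is a synthetic impact score. The feature_importances_ values are scikit-learn's impurity-based importances.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_absolute_error, r2_score
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
feature_names = ["desc_0", "desc_1", "desc_2", "desc_3"]   # hypothetical descriptors
X = rng.normal(size=(300, 4))
# Synthetic impact score: driven mainly by desc_0, weakly by desc_1
y = 3.0 * X[:, 0] + 0.5 * X[:, 1] + rng.normal(scale=0.3, size=300)

# Preprocessing: split into training and held-out test sets
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

# Training: tree predictions are averaged for regression
rf = RandomForestRegressor(n_estimators=300, random_state=0)
rf.fit(X_train, y_train)

# Validation on the held-out test set
pred = rf.predict(X_test)
print(f"MAE {mean_absolute_error(y_test, pred):.3f}, R2 {r2_score(y_test, pred):.3f}")

# Interpretation: rank descriptors by impurity-based importance (sums to 1)
for name, imp in sorted(zip(feature_names, rf.feature_importances_), key=lambda t: -t[1]):
    print(f"{name}: {imp:.3f}")
```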

Q5: My LCA results show unexpected hotspots. How do I check if my molecular data is the problem?

Unexpected results, such as a minor product aspect having a huge impact, can indicate mistakes in the system model [29]. This is often caused by:

- Incorrect primary input data.

- Use of an incorrect or suboptimal dataset for a material [29].

Troubleshooting checklist:

- Conduct unit sanity checks: A common error is inputting numbers in a different unit than the dataset uses (e.g., kg vs. grams) or neglecting factors of 1000 (e.g., kWh vs. MWh) [29].

- Verify dataset relevance: Check that your background datasets are appropriate for your product's geographical and temporal scope. An outdated dataset or one from the wrong region can skew results [29].

- Check dataset-match: Ensure the reference dataset is the best available match for your specific material and its production method [29].

- Consult published literature: Compare your findings with other LCA studies on similar products to gauge expected outcomes [29].
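The unit sanity check can be partly automated. Convert every input explicitly into the unit of the dataset it is multiplied with, and fail loudly when no conversion is defined. The conversion table below is a small illustrative subset (1 kWh = 3.6 MJ). A real model would need the full set of units it uses.

```python
# Conversion factors from (input unit, dataset reference unit); illustrative subset
TO_REFERENCE = {
    ("g", "kg"): 1e-3,
    ("kg", "kg"): 1.0,
    ("t", "kg"): 1e3,
    ("kWh", "kWh"): 1.0,
    ("MWh", "kWh"): 1e3,
    ("MJ", "kWh"): 1 / 3.6,
}

def to_dataset_unit(amount, unit, dataset_unit):
    """Convert an inventory amount into the unit used by the background dataset."""
    try:
        return amount * TO_REFERENCE[(unit, dataset_unit)]
    except KeyError:
        # An undefined conversion is an error, not something to guess
        raise ValueError(f"No conversion defined from {unit} to {dataset_unit}") from None

print(to_dataset_unit(250, "g", "kg"), to_dataset_unit(2, "MWh", "kWh"))
```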

Data Management and Validation

Q6: What are the critical steps for documenting my LCA-ML workflow to ensure reproducibility?

Poor data documentation leads to errors, confusion, and a lack of transparency [29].

How to ensure robust documentation:

- Document every number, calculation, and assumption used in the LCA and ML model [29].

- Record data sources (references) and note any conflicting references.

- Estimate and document your uncertainty about the accuracy of key data points [29].

- Use external tools like Excel for detailed notes, links, and calculations, in addition to your LCA software [29].
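A lightweight way to follow these rules is to keep one structured record per number and save the records next to the model. Each record holds the number's source, its assumptions, and its estimated uncertainty. The schema and entries below are illustrative assumptions, not a standard format.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional

@dataclass
class DataPoint:
    """One documented input to the LCA-ML model (illustrative schema, not a standard)."""
    name: str
    value: float
    unit: str
    source: str                                   # reference or measurement ID
    assumption: str = ""                          # assumption behind the number, if any
    relative_uncertainty: Optional[float] = None  # e.g. 0.10 for an estimated +/-10%

log = [
    DataPoint("steam_demand", 5.0, "MJ/kg", "hypothetical: lab batch measurement",
              assumption="scaled linearly from lab to plant scale", relative_uncertainty=0.3),
    DataPoint("grid_ef", 0.4, "kg CO2-eq/kWh", "hypothetical: background dataset entry",
              relative_uncertainty=0.1),
]

# Save alongside the model so every number can be traced and re-checked
log_json = json.dumps([asdict(p) for p in log], indent=2)
print(log_json)
```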

Q7: Why is a sensitivity analysis crucial in an LCA-ML study, and how do I perform one?

Skipping the interpretation phase, including sensitivity analysis, is a common mistake [29]. It tells you how susceptible your results are to data uncertainties [29].

Protocol for Sensitivity Analysis:

- Identify Key Parameters: Select uncertain or highly influential data points, assumptions, or model parameters (e.g., the choice of featurization method, a specific inventory data value).

- Define Variation Ranges: Systematically vary these parameters over a plausible range (e.g., ±10% for a material's mass, testing alternative datasets).

- Re-run and Compare: Re-run your LCA-ML model with these variations and observe the change in the final results (e.g., Global Warming Potential).

- Interpret Results: Determine which parameters your results are most sensitive to. This highlights areas where better data quality is most needed and strengthens the credibility of your conclusions.
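The protocol can be sketched as a one-at-a-time (OAT) analysis on a toy model. The model computes GWP as the sum of amount × emission factor. The model, the parameter values, and the ±10% range are illustrative assumptions. An LCA-ML study would call its own model in place of gwp.

```python
def gwp(params):
    """Toy LCA model: GWP (kg CO2-eq) = sum of amount x emission factor."""
    return (params["solvent_kg"] * params["solvent_ef"]
            + params["electricity_kWh"] * params["grid_ef"])

baseline = {"solvent_kg": 2.0, "solvent_ef": 1.5, "electricity_kWh": 10.0, "grid_ef": 0.4}

def one_at_a_time(model, base, rel_change=0.10):
    """Vary each parameter by -/+ rel_change with the others fixed;
    return the % change in model output for each direction."""
    ref = model(base)
    results = {}
    for key in base:
        changes = []
        for sign in (-1, 1):
            varied = dict(base, **{key: base[key] * (1 + sign * rel_change)})
            changes.append(100 * (model(varied) - ref) / ref)
        results[key] = tuple(changes)
    return results

sens = one_at_a_time(gwp, baseline)
for key, (down, up) in sorted(sens.items(), key=lambda kv: -abs(kv[1][1])):
    print(f"{key}: {down:+.2f}% / {up:+.2f}%")
```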

Experimental Workflows & Data Pipelines

The following diagrams and tables provide a structured overview of the key components for building an LCA-ML pipeline for chemicals.

Workflow: From Data Scarcity to Impact Prediction

This diagram illustrates the integrated experimental workflow for applying molecular descriptors and ML to overcome data scarcity in chemical LCA.

Research Reagent Solutions: Essential Tools for LCA-ML

This table details key software, data, and methodological "reagents" essential for experiments in this field.

| Item Name | Type | Function / Application | Key Considerations |

|---|---|---|---|

| SMILES Strings | Data Representation | A string-based description of a molecule's structure; the foundational input for featurization [28]. | Ensure canonicalization for consistency. Widely used but requires conversion. |

| DeepChem | Software Library | A Python toolkit specifically designed for deep learning in chemistry, providing numerous molecular featurizers and ML models [28]. | Ideal for converting SMILES into machine-readable features and building subsequent models. |

| Random Forest | Algorithm | An interpretable ML method based on ensembles of decision trees; valued in chemistry for its stability and reliability [28]. | Provides feature importance scores, helping to understand which molecular descriptors drive LCA results. |

| Ecoinvent Database | LCA Data | A large, transparent background database often used (and sometimes prescribed) for LCI data [29]. | Avoid mixing database versions. Ensure geographical/temporal scope matches your study [29]. |

| Product Category Rules (PCRs) | Methodology | Standardized rules for conducting LCAs for specific product categories, ensuring comparability [29]. | Must be selected and applied correctly during the Goal and Scope phase to enable valid comparisons [29]. |

| Sensitivity Analysis | Methodology | Assesses how variations in input data (e.g., uncertain assumptions, molecular features) influence final LCA results [29]. | Critical for understanding the robustness of conclusions and the impact of data scarcity. |

Scientific FAQs: Your Algorithm Questions Answered

Q1: Which algorithm is most suitable for data-scarce scenarios in chemical life cycle assessment (LCA) research?

For data-scarce situations common in chemical LCA, Gaussian Process Regression (GPR) is particularly advantageous. GPR works well with small datasets, and, unlike most other algorithms, it returns an uncertainty estimate with every prediction. The quality of those estimates depends on choosing a suitable kernel. This matters in LCA, where data gaps are frequent: because GPR explicitly models prediction uncertainty, researchers can identify where predictions are less reliable due to data scarcity. GPR has also performed well in other data-limited scientific domains, such as predicting soil cohesion and other geotechnical properties. This suggests it suits the sparse data environments often encountered in chemical life cycle inventory analysis [30] [5].

Q2: How do XGBoost and ANN handle missing data in life cycle inventory datasets?

XGBoost has a built-in capability for handling missing values. During training, it automatically learns whether missing values should be assigned to the left or right child node during splits, based on which assignment provides the maximal loss reduction. This eliminates the need for extensive data imputation as a separate preprocessing step [31].

Artificial Neural Networks (ANNs), conversely, typically require complete datasets. Missing values must be handled through preprocessing techniques such as imputation (using mean, median, or more sophisticated methods) or complete-case analysis. This additional preprocessing step can introduce bias or increase computational overhead before model training can begin [30].
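The sketch below illustrates how a default direction can be learned. It runs a simplified, single-feature version of sparsity-aware split finding, using the gain formula from the XGBoost paper with L2 penalty lambda and no minimum-gain term gamma. It is an illustration, not XGBoost's implementation. The toy data are assumptions.

```python
import numpy as np

def best_split_with_default(x, grad, hess, lam=1.0):
    """Simplified sparsity-aware split search on one feature.

    Rows with NaN are tried on both sides of every threshold; the side with
    the larger gain becomes the learned default direction for missing values.
    """
    def score(G, H):
        return G * G / (H + lam)

    missing = np.isnan(x)
    G_miss, H_miss = grad[missing].sum(), hess[missing].sum()
    order = np.argsort(x[~missing])
    xs, gs, hs = x[~missing][order], grad[~missing][order], hess[~missing][order]
    G_tot, H_tot = grad.sum(), hess.sum()

    best = (-np.inf, None, None)          # (gain, threshold, default direction)
    G_left = H_left = 0.0
    for i in range(len(xs) - 1):
        G_left += gs[i]
        H_left += hs[i]
        if xs[i] == xs[i + 1]:
            continue                      # no threshold between equal values
        threshold = (xs[i] + xs[i + 1]) / 2
        for direction, (GL, HL) in (("left", (G_left + G_miss, H_left + H_miss)),
                                    ("right", (G_left, H_left))):
            GR, HR = G_tot - GL, H_tot - HL
            gain = 0.5 * (score(GL, HL) + score(GR, HR) - score(G_tot, H_tot))
            if gain > best[0]:
                best = (gain, threshold, direction)
    return best

x = np.array([1.0, 2.0, 3.0, 10.0, 11.0, np.nan, np.nan])
y = np.array([0.0, 0.0, 0.0, 5.0, 5.0, 5.0, 5.0])
grad, hess = -y, np.ones_like(y)          # squared-error loss at an initial prediction of 0
gain, threshold, default = best_split_with_default(x, grad, hess)
print(gain, threshold, default)
```

The missing rows have targets like those of the high-x rows. The best split therefore sends them to the right branch by default.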

Q3: Why would I choose GPR over XGBoost for uncertainty quantification in environmental impact assessment?

GPR provides native probabilistic predictions, delivering both an expected mean value and a measure of variance (uncertainty) for each prediction. This is intrinsic to its statistical framework, making it ideal for applications where understanding prediction confidence is critical, such as in environmental impact assessments and decision-making processes under uncertainty [32] [33].

XGBoost, while excellent for predictive accuracy, is primarily a deterministic model. It does not naturally provide prediction intervals. While techniques like quantile regression or jackknife-based methods can approximate uncertainty, these are add-ons to the core algorithm and not inherent properties [31].
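The native uncertainty of GPR can be shown in a few lines of NumPy. This sketch computes the posterior mean and standard deviation of a zero-mean GP with an RBF kernel. The hyperparameters are fixed assumptions: length scale 1, signal variance 1, and noise variance 0.01. In practice they would be fitted, for example by scikit-learn's GaussianProcessRegressor.

```python
import numpy as np

def rbf(a, b, length_scale=1.0, variance=1.0):
    """Squared-exponential (RBF) covariance between 1-D input arrays a and b."""
    d = a[:, None] - b[None, :]
    return variance * np.exp(-0.5 * (d / length_scale) ** 2)

def gp_predict(x_train, y_train, x_new, length_scale=1.0, noise=1e-2):
    """Posterior mean and std of a zero-mean GP with fixed hyperparameters."""
    K = rbf(x_train, x_train, length_scale) + noise * np.eye(len(x_train))
    K_s = rbf(x_train, x_new, length_scale)
    L = np.linalg.cholesky(K)                        # stable alternative to inverting K
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = rbf(x_new, x_new, length_scale).diagonal() - np.sum(v**2, axis=0)
    return mean, np.sqrt(np.maximum(var, 0.0))

x_obs = np.array([0.0, 1.0, 2.0, 3.0, 4.0])        # only five observations
y_obs = np.sin(x_obs)
mean, std = gp_predict(x_obs, y_obs, np.array([2.0, 10.0]))
# std is small at x=2 (observed) and close to the prior value 1 at x=10 (far from data)
print(mean.round(3), std.round(3))
```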

Q4: What are the key computational trade-offs between these algorithms for large-scale LCA models?

The table below summarizes the key computational considerations:

Table: Computational Trade-offs for LCA Models

| Algorithm | Computational Complexity | Memory Consumption | Best Suited for Problem Scale |

|---|---|---|---|

| GPR | High (O(n³) for training) [32] | Moderate to High | Small to medium datasets where uncertainty is a priority [32] |

| XGBoost | Moderate (can be optimized with parallel processing) [31] | High (can be memory-intensive) [31] | Large-scale datasets requiring high accuracy [31] [34] |

| ANN | Variable (depends on architecture & training) [30] | Variable (depends on architecture) | Large, complex datasets with non-linear patterns [30] |

Troubleshooting Guides

Issue 1: GPR Model Fitting is Too Slow or Fails to Converge

Problem: Training a GPR model on your LCA dataset is taking an excessively long time or failing to converge to a solution.

Solution: This is a common issue, as GPR training time scales cubically (O(n³)) with the number of data points [32].

- Reduce Dataset Size: For initial experiments, work with a smaller, representative subset of your data.

- Optimize the Kernel: Choose a simpler kernel (e.g., a simple RBF) instead of a complex composite kernel. Complex kernels increase the parameter space the optimizer must search [32].

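- Adjust Optimizer Parameters: In scikit-learn's GaussianProcessRegressor, increasing the n_restarts_optimizer parameter restarts the hyperparameter optimization from different initial values. This helps when fitting fails to converge or lands in a poor local optimum [32]. Each restart is another full optimization run, so this improves the fit at the cost of longer training.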
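The three remedies can be combined as in the following scikit-learn sketch. The synthetic 5,000-point dataset, the subset size of 300, and n_restarts_optimizer=5 are illustrative assumptions. The WhiteKernel term lets the fit estimate observation noise instead of forcing the model to interpolate every point.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import RBF, WhiteKernel

rng = np.random.default_rng(0)
X_full = rng.uniform(0, 10, size=(5000, 1))        # hypothetical large LCA dataset
y_full = np.sin(X_full[:, 0]) + rng.normal(scale=0.1, size=5000)

# 1. Work with a random subset to keep the O(n^3) training cost manageable
subset = rng.choice(len(X_full), size=300, replace=False)
X, y = X_full[subset], y_full[subset]

# 2. Simple kernel: one scaled RBF term plus a noise term
kernel = 1.0 * RBF(length_scale=1.0) + WhiteKernel(noise_level=0.1)

# 3. Restart the optimizer from random initial hyperparameters
gpr = GaussianProcessRegressor(kernel=kernel, n_restarts_optimizer=5,
                               normalize_y=True, random_state=0)
gpr.fit(X, y)
mean, std = gpr.predict(np.array([[2.5], [7.5]]), return_std=True)
print(gpr.kernel_)   # fitted hyperparameters
print(mean.round(3), std.round(3))
```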

Issue 2: XGBoost Model is Overfitting to Training Data

Problem: Your XGBoost model performs excellently on training data but poorly on validation or test data from your LCA study.

Solution: Overfitting is a known risk with XGBoost, especially with small datasets or improper parameter tuning [31].

- Apply Regularization: Use XGBoost's built-in L1 (alpha) and L2 (lambda) regularization parameters. These penalties shrink the leaf weights of each tree and discourage overly complex trees [31].

- Control Model Complexity:

- Reduce max_depth of the trees (e.g., from 6 to 3 or 4).

- Increase min_child_weight to require a larger minimum sum of instance weights (hessian) in each child node. For squared-error regression, this is equivalent to a minimum number of samples per leaf.

- Use Stochastic Boosting:

- Set subsample < 1.0 (e.g., 0.8) to train each tree on a random subset of the rows.

- Set colsample_bytree < 1.0 to use only a fraction of the features per tree.

- Lower the Learning Rate: Use a smaller learning_rate (e.g., 0.01 to 0.1) and increase the number of estimators (n_estimators) to compensate, ideally with early stopping on a validation set. This is a very effective strategy [31].
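The settings above can be collected into one starting configuration. The values below are illustrative starting points, not tuned recommendations. The keys are parameters of xgboost's scikit-learn-style XGBRegressor. The dictionary is left uninstantiated, so the snippet runs without xgboost installed.

```python
# Illustrative starting values for a small, overfitting-prone LCA dataset (not tuned)
xgb_params = {
    "max_depth": 3,               # shallower trees
    "min_child_weight": 5,        # larger minimum hessian sum per child
    "subsample": 0.8,             # row subsampling per tree
    "colsample_bytree": 0.8,      # feature subsampling per tree
    "reg_alpha": 0.1,             # L1 penalty on leaf weights
    "reg_lambda": 5.0,            # L2 penalty on leaf weights
    "learning_rate": 0.05,        # smaller steps...
    "n_estimators": 2000,         # ...with more trees, cut short by early stopping
    "early_stopping_rounds": 50,  # stop when the validation metric stops improving
}

# Usage (requires xgboost; early_stopping_rounds in the constructor needs version 1.6+):
# model = xgboost.XGBRegressor(**xgb_params)
# model.fit(X_train, y_train, eval_set=[(X_val, y_val)], verbose=False)
```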

Issue 3: Poor Generalization of ANN on Small LCA Datasets

Problem: An ANN model fails to learn effectively or generalizes poorly, which is a frequent challenge with the limited data typical in chemical LCA.

Solution: ANNs typically require large amounts of data. Mitigate this with strong regularization and architectural adjustments [30].

- Implement Robust Regularization: Use techniques like Dropout, which randomly deactivates a proportion of neurons during training to prevent co-adaptation, and L2 weight regularization.

- Simplify the Architecture: Drastically reduce the number of layers and neurons per layer. For small datasets, a network with just 1-2 hidden layers is often sufficient.

- Early Stopping: Halt the training process as soon as the validation performance stops improving. This prevents the model from learning noise in the training data.

- Leverage Transfer Learning: If possible, pre-train the ANN on a larger, related dataset from a public LCA database and then fine-tune it on your specific, smaller dataset.
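Early stopping is simple enough to sketch without depending on any framework. The class below tracks validation loss and signals a stop after patience epochs without improvement. The loss sequence is made up for illustration. Deep learning frameworks ship their own versions, such as the Keras EarlyStopping callback, and these should normally be preferred.

```python
class EarlyStopping:
    """Signal a stop when validation loss has not improved by more than
    `min_delta` for `patience` consecutive epochs; remember the best epoch."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch, val_loss):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss, self.best_epoch, self.bad_epochs = val_loss, epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

def run(val_losses, patience=3):
    """Return (epoch at which training stopped, best epoch to restore weights from)."""
    stopper = EarlyStopping(patience=patience)
    for epoch, loss in enumerate(val_losses):
        if stopper.step(epoch, loss):
            return epoch, stopper.best_epoch
    return len(val_losses) - 1, stopper.best_epoch

val_losses = [1.0, 0.8, 0.7, 0.72, 0.71, 0.75, 0.74, 0.6]   # illustrative values
print(run(val_losses))
```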

Experimental Protocol: Benchmarking Algorithms for LCA Prediction

This protocol outlines a standardized procedure for comparing the performance of ANN, XGBoost, and GPR on a dataset relevant to life cycle assessment, such as predicting chemical environmental impact scores.

Objective: To empirically evaluate and compare the predictive accuracy and uncertainty quantification capabilities of ANN, XGBoost, and GPR under data-scarce conditions.

1. Data Preparation

- Data Source: Use an LCA database (e.g., related to chemical properties or environmental impacts). Input features (X) may include molecular descriptors, process conditions, or prior inventory data. The target variable (y) is the impact score or property of interest [5].

- Data Splitting: Split the data into training (70%), validation (15%), and test (15%) sets. If the target distribution is highly skewed, stratify the split on quantile bins of the target so that each set covers its full range.

- Data Scaling: Standardize all input features (zero mean, unit variance) using statistics computed on the training set only. This is critical for ANN and helpful for GPR with a shared length scale. Tree-based XGBoost is insensitive to feature scaling, so it neither needs scaling nor is harmed by it.
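The splitting and scaling steps can be sketched in NumPy. Here, stratification for a continuous target is done by binning the target into quantiles, which is one common approach rather than the only one. The 5 bins, the seed, and the lognormal toy target are assumptions. The scaler statistics come from the training indices only.

```python
import numpy as np

def stratified_split(y, fractions=(0.70, 0.15, 0.15), n_bins=5, seed=0):
    """Split indices into train/val/test, stratifying a continuous target by
    quantile bins so each split covers the full (possibly skewed) range."""
    y = np.asarray(y, dtype=float)
    rng = np.random.default_rng(seed)
    edges = np.quantile(y, np.linspace(0, 1, n_bins + 1)[1:-1])   # interior bin edges
    bins = np.digitize(y, edges)
    splits = ([], [], [])
    for b in np.unique(bins):
        idx = rng.permutation(np.where(bins == b)[0])
        n_train = int(round(fractions[0] * len(idx)))
        n_val = int(round(fractions[1] * len(idx)))
        splits[0].extend(idx[:n_train])
        splits[1].extend(idx[n_train:n_train + n_val])
        splits[2].extend(idx[n_train + n_val:])
    return tuple(np.array(s, dtype=int) for s in splits)

rng = np.random.default_rng(1)
X = rng.normal(size=(200, 3))
y = rng.lognormal(mean=0.0, sigma=1.0, size=200)      # skewed, impact-score-like target
train, val, test = stratified_split(y)

mu, sd = X[train].mean(axis=0), X[train].std(axis=0)  # fit the scaler on training rows only
X_train, X_val, X_test = [(X[i] - mu) / sd for i in (train, val, test)]
print(len(train), len(val), len(test))
```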

2. Model Training & Hyperparameter Tuning

- GPR: Use a Radial Basis Function (RBF) kernel and let the optimizer fit its length_scale parameter by maximizing the log marginal likelihood. Set n_restarts_optimizer to 9 or 10 to reduce the risk of converging to a poor local optimum [32].

- XGBoost: Perform a grid or random search over key parameters: max_depth [3, 5, 7], learning_rate [0.01, 0.1, 0.2], subsample [0.8, 1.0], and colsample_bytree [0.8, 1.0]. Use the validation set for early stopping [31].

- ANN: Construct a simple MLP with 1-2 hidden layers. Tune the number of neurons [8, 16, 32], dropout rate [0.2, 0.5], and learning rate. Use the Adam optimizer and train with early stopping [30].

3. Model Evaluation

- Metrics: Calculate the following on the held-out test set:

- R² (Coefficient of Determination): Measures the proportion of variance explained.

- RMSE (Root Mean Square Error): Measures average prediction error.

- MAE (Mean Absolute Error): Provides a robust measure of average error.

- Uncertainty quality (for GPR): Examine the predicted standard deviations on the test set and check their calibration, for example whether roughly 95% of test targets fall within ±1.96 predicted standard deviations of the predicted mean.
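These metrics fit in a few NumPy functions, together with a simple calibration check for GPR's uncertainty estimates. The coverage check assumes approximately Gaussian predictive distributions, for which about 95% of targets should fall within ±1.96 standard deviations.

```python
import numpy as np

def regression_metrics(y_true, y_pred):
    """R², RMSE, and MAE on held-out data."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    resid = y_true - y_pred
    ss_res = np.sum(resid ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return {"R2": 1 - ss_res / ss_tot,
            "RMSE": float(np.sqrt(np.mean(resid ** 2))),
            "MAE": float(np.mean(np.abs(resid)))}

def interval_coverage(y_true, mean, std, z=1.96):
    """Fraction of targets inside mean +/- z*std (about 0.95 if well calibrated)."""
    y_true, mean, std = (np.asarray(a, float) for a in (y_true, mean, std))
    return float(np.mean(np.abs(y_true - mean) <= z * std))

m = regression_metrics([1.0, 2.0, 3.0], [1.0, 2.0, 4.0])
print(m)
```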

Table: Example Evaluation Metrics Reported in Separate Studies (Illustrative; values come from different datasets and are not directly comparable)

| Algorithm | R² (test) | RMSE (test) | MAE (test) | Uncertainty Quantification |

|---|---|---|---|---|

| Gaussian Process Regression | 0.95 [35] | 0.022 [35] | 0.014 [35] | Native, Probabilistic |

| XGBoost | 0.988 [34] | Not reported (described as low) [34] | Not reported; MAPE < 5.07% [34] | Add-on methods required |

| Artificial Neural Network | Varies with data [30] | Varies with data [30] | Varies with data [30] | Not native |

Algorithm Selection Workflow

Use the following workflow diagram to guide your choice of algorithm based on your LCA project's primary constraints and goals.

The Scientist's Toolkit: Essential Research Reagents & Software

Table: Key Computational Tools for ML in LCA Research

| Tool Name | Type | Primary Function in Research | Application Example |

|---|---|---|---|

| scikit-learn | Python Library | Provides unified implementation of GPR, ANN, data preprocessing, and model evaluation tools [32]. | Implementing a GPR model with an RBF kernel for predicting chemical impact scores [32]. |

| XGBoost | Python Library | Efficient, scalable implementation of gradient boosting for high-performance tabular data analysis [31]. | Building an ensemble model to classify high vs. low environmental impact chemicals with missing data [31]. |

| Radial Basis Function (RBF) Kernel | Algorithm Component | Defines covariance in GPR, assuming smooth, infinitely differentiable functions [32]. | Modeling the continuous relationship between molecular weight and biodegradation potential in LCA. |

| SHAP (SHapley Additive exPlanations) | Interpretation Library | Explains output of any ML model by quantifying feature contribution to each prediction [34]. | Identifying which molecular descriptors most influence the predicted toxicity in an XGBoost LCA model [34]. |

| K-fold Cross-Validation | Evaluation Technique | Robust method for model validation and hyperparameter tuning by rotating training/validation splits [31]. | Reliably estimating the real-world performance of an ANN on a limited LCA inventory dataset [31]. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the most suitable machine learning models for predicting characterization factors in Life Cycle Assessment (LCA)?

The choice of machine learning model depends on your specific data and prediction goal. Based on a systematic review and performance ranking of models in LCA applications, the following algorithms are often the most effective. The ranking below, determined using multi-criteria decision-making methods, can guide your selection [36].

Table: Performance Ranking of Machine Learning Models for LCA Applications [36]

| Machine Learning Model | Performance Score (0-1) | Key Strengths in LCA Context |
|---|---|---|
| Support Vector Machine (SVM) | 0.6412 | High performance in various LCA prediction tasks. |
| Extreme Gradient Boosting (XGB) | 0.5811 | Handles complex, non-linear relationships; can internally manage missing values. |
| Artificial Neural Networks (ANN) | 0.5650 | Powerful for modeling complex, high-dimensional datasets. |
| Random Forest (RF) | 0.5353 | Robust and handles high-dimensional data well. |
| Decision Trees (DT) | 0.4776 | Simple and interpretable. |
| Linear Regression (LR) | 0.4633 | Simple baseline model for linear relationships. |
| Adaptive Neuro-Fuzzy Inference System (ANFIS) | 0.4336 | Combines neural networks and fuzzy logic. |
| Gaussian Process Regression (GPR) | 0.2791 | Provides uncertainty estimates. |
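Because a literature-wide ranking is only a guide, it is worth checking the leading candidates on your own data under identical cross-validation folds. The following is a minimal sketch, assuming scikit-learn: Friedman #1 synthetic data stand in for real descriptors and characterization factors, and `GradientBoostingRegressor` substitutes for XGBoost to avoid an extra dependency. Its scores illustrate the procedure and are not expected to reproduce the ranking in [36].

```python
# Minimal sketch: compare model families from the ranking under identical k-fold CV.
# Assumptions: synthetic Friedman #1 data stand in for real LCA descriptors, and
# scikit-learn's GradientBoostingRegressor stands in for XGBoost.
import numpy as np
from sklearn.datasets import make_friedman1
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import RBF, WhiteKernel
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import KFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR


def compare_models(X, y, n_splits=5, seed=0):
    """Return the mean cross-validated R^2 of each candidate model."""
    candidates = {
        "SVM (SVR)": make_pipeline(StandardScaler(), SVR(C=10.0)),
        "Gradient boosting": GradientBoostingRegressor(random_state=seed),
        "Random forest": RandomForestRegressor(n_estimators=200, random_state=seed),
        "GPR (RBF kernel)": make_pipeline(
            StandardScaler(),
            GaussianProcessRegressor(kernel=RBF() + WhiteKernel(), normalize_y=True),
        ),
        "Linear regression": LinearRegression(),
    }
    # A fixed random_state makes every model see exactly the same folds.
    cv = KFold(n_splits=n_splits, shuffle=True, random_state=seed)
    return {
        name: float(np.mean(cross_val_score(model, X, y, cv=cv, scoring="r2")))
        for name, model in candidates.items()
    }


# A small, noisy, non-linear dataset to mimic a data-scarce setting.
X, y = make_friedman1(n_samples=80, n_features=6, noise=1.0, random_state=0)
scores = compare_models(X, y)
for name, r2 in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:20s} mean R^2 = {r2:.3f}")
```

SVR and GPR sit behind a `StandardScaler` because both are sensitive to feature scale; the tree ensembles do not need it.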

FAQ 2: My LCA inventory data has significant gaps and missing values. What is the best strategy to handle this?

Data gaps are a common challenge. A robust strategy is to use an advanced imputation library designed for complex data structures such as time series, rather than simple mean filling. The ImputeGAP library is a comprehensive solution of this kind: it supports a wide range of algorithms and realistic missing-data patterns [37].

Experimental Protocol: Data Imputation with ImputeGAP

- Data Contamination Analysis: Use the Contaminator module to analyze your data's existing missingness patterns. If you need to simulate gaps for testing, you can configure the number of missing blocks, the contamination rate (e.g., 1% to 80%), and their placement [37].

- Algorithm Selection and Tuning: The Imputer module provides access to multiple algorithm families (statistical, machine learning, matrix completion, and deep learning). Start the imputation with default parameters or customize them, and use the Optimizer module for hyperparameter tuning (e.g., via Ray Tune) to find the optimal configuration for your dataset [37].

- Imputation and Evaluation: Run the imputation and use the Tester module to benchmark algorithm performance. The library provides several metrics for evaluating the imputed values against ground-truth data, where available [37].

- Downstream Impact Assessment: A critical final step is to use the Evaluator module, which assesses how the different imputation methods affect the performance of your final predictive model for characterization factors, ensuring that the data repair leads to reliable outcomes [37].
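The contaminate, impute, and evaluate loop of this protocol can be illustrated without ImputeGAP itself. The sketch below uses scikit-learn imputers (not ImputeGAP's API) on a synthetic, low-rank matrix standing in for an inventory table: it hides a random 20% of known cells, fills them with three imputers, and scores each by RMSE on the hidden cells only. The matrix, the random-cell contamination scheme, and the rate are assumptions.

```python
# Minimal sketch of "contaminate -> impute -> evaluate" with scikit-learn imputers.
# Not ImputeGAP's API; the data and the contamination scheme are assumptions.
import numpy as np
from sklearn.experimental import enable_iterative_imputer  # noqa: F401 (enables IterativeImputer)
from sklearn.impute import IterativeImputer, KNNImputer, SimpleImputer


def contaminate(X, rate, seed=0):
    """Hide a fraction `rate` of cells at random; return the masked copy and the mask."""
    rng = np.random.default_rng(seed)
    mask = rng.random(X.shape) < rate
    X_missing = X.copy()
    X_missing[mask] = np.nan
    return X_missing, mask


def benchmark(X_true, rate=0.2, seed=0):
    """Return the RMSE on the hidden cells for each imputer."""
    X_missing, mask = contaminate(X_true, rate, seed)
    imputers = {
        "mean": SimpleImputer(strategy="mean"),
        "kNN": KNNImputer(n_neighbors=5),
        "iterative": IterativeImputer(random_state=seed, max_iter=20),
    }
    results = {}
    for name, imputer in imputers.items():
        X_filled = imputer.fit_transform(X_missing)
        # Only the artificially hidden cells have known ground truth.
        results[name] = float(np.sqrt(np.mean((X_filled[mask] - X_true[mask]) ** 2)))
    return results


# Synthetic, correlated "inventory" matrix: 150 processes x 6 flows driven by 2 latent factors.
rng = np.random.default_rng(42)
X_true = rng.normal(size=(150, 2)) @ rng.normal(size=(2, 6)) + 0.1 * rng.normal(size=(150, 6))
results = benchmark(X_true, rate=0.2)
for name, rmse in sorted(results.items(), key=lambda kv: kv[1]):
    print(f"{name:10s} RMSE on hidden cells = {rmse:.3f}")
```

For the downstream-impact step, the same loop would refit the characterization-factor model on each imputed matrix and compare its cross-validated scores, because the imputer with the lowest reconstruction error need not be the one that yields the best predictions.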

FAQ 3: How can I make my ML-based LCA model more interpretable for stakeholders?

Model interpretability is crucial for building trust. To explain your model's predictions, leverage explainable AI (XAI) techniques. The SHapley Additive exPlanations (SHAP) framework is a state-of-the-art method that is integrated into libraries like ImputeGAP for explaining imputations and Pharm-AutoML for explaining classification models [37] [38]. It quantifies the contribution of each input feature to a final prediction, helping you identify which factors most influence your characterization factors.
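To make concrete what SHAP quantifies, the sketch below computes exact Shapley values for a single prediction by enumerating every feature coalition, replacing features outside a coalition with values from a background sample. This is a brute-force illustration under assumed synthetic data and a random-forest model, not the shap library's algorithm; shap estimates comparable quantities far more efficiently, and this enumeration is only practical for a handful of features.

```python
# Brute-force Shapley values for one prediction (illustration only).
# Assumptions: synthetic data and a random-forest model stand in for an LCA
# predictor; features outside a coalition keep their background-sample values.
from itertools import combinations
from math import factorial

import numpy as np
from sklearn.ensemble import RandomForestRegressor


def coalition_value(model, x, background, coalition):
    """Average prediction when features in `coalition` are fixed to x's values
    and the remaining features keep their background values."""
    X_mix = background.copy()
    idx = list(coalition)
    if idx:
        X_mix[:, idx] = x[idx]
    return float(model.predict(X_mix).mean())


def shapley_values(model, x, background):
    """Exact Shapley value of every feature, enumerating all 2^(n-1) coalitions per feature."""
    n = x.shape[0]
    phi = np.zeros(n)
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                gain = (coalition_value(model, x, background, subset + (i,))
                        - coalition_value(model, x, background, subset))
                phi[i] += weight * gain
    return phi


rng = np.random.default_rng(0)
X = rng.normal(size=(200, 4))
y = 3 * X[:, 0] + X[:, 1] ** 2 + 0.1 * rng.normal(size=200)  # features 2 and 3 are irrelevant
model = RandomForestRegressor(n_estimators=100, random_state=0).fit(X, y)

background, x = X[:50], X[0]
phi = shapley_values(model, x, background)
print("Shapley values:", np.round(phi, 3))
base = model.predict(background).mean()
print("Sum check:", np.isclose(phi.sum(), model.predict(x[None, :])[0] - base))
```

The final check reflects the efficiency property of Shapley values: the per-feature contributions add up to the difference between this prediction and the average prediction over the background sample.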

Troubleshooting Guides

Issue 1: Poor Predictive Performance of the ML Model for Characterization Factors

Potential Causes and Solutions:

- Cause: Inadequate Data Preprocessing

- Solution: Apply rigorous data preprocessing, including normalization or standardization of features and correct handling of missing values, ideally with the advanced methods described in FAQ 2 rather than simple mean imputation. Data leakage during preprocessing is a common mistake; use pipelines that fit every preprocessing step on the training data only, so that no information from the test set leaks into training [38].

- Cause: Suboptimal Model or Hyperparameters

- Solution: Instead of relying on default models, use Automated Machine Learning (AutoML) frameworks to find the best model and hyperparameters. Frameworks like Pharm-AutoML automate the entire pipeline, including data preprocessing, model tuning, and selection, which can outperform manually configured models [38]. This is particularly useful for researchers with limited ML expertise.

- Cause: Lack of Human Expertise Integration

- Solution: Implement a human-in-the-loop framework. AI should augment, not replace, domain expertise. LCA practitioners must provide critical oversight, validate model predictions against scientific knowledge, define system boundaries, and ensure the model's logic aligns with the LCA's goal and scope [39].
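The first two solutions can be combined in a short sketch, assuming scikit-learn and synthetic data with 10% of values removed at random. Placing imputation and scaling inside a `Pipeline` means they are refitted on each training fold, which prevents leakage; `GridSearchCV` wrapped in an outer cross-validation loop (nested CV) serves here as a simple stand-in for an AutoML framework such as Pharm-AutoML and does not reflect that package's API. The parameter grid is illustrative.

```python
# Minimal sketch: leakage-safe preprocessing plus hyperparameter search (nested CV).
# Assumptions: synthetic data, 10% random missingness, an illustrative SVR grid.
import numpy as np
from sklearn.datasets import make_friedman1
from sklearn.impute import SimpleImputer
from sklearn.model_selection import GridSearchCV, KFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

X, y = make_friedman1(n_samples=120, n_features=8, noise=0.5, random_state=0)
rng = np.random.default_rng(0)
X[rng.random(X.shape) < 0.1] = np.nan  # simulate missing inventory values

# Imputer and scaler are fitted only on the training part of every split.
pipe = Pipeline([
    ("impute", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),
    ("model", SVR()),
])
param_grid = {"model__C": [1.0, 10.0, 100.0], "model__gamma": ["scale", 0.1]}

inner_cv = KFold(n_splits=3, shuffle=True, random_state=1)
outer_cv = KFold(n_splits=5, shuffle=True, random_state=2)
search = GridSearchCV(pipe, param_grid, cv=inner_cv, scoring="r2")

# The outer loop scores the whole tuning procedure on folds it never saw.
nested_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="r2")
print(f"Nested CV R^2: {nested_scores.mean():.3f} +/- {nested_scores.std():.3f}")

search.fit(X, y)  # final tuning and refit on all available data
print("Selected hyperparameters:", search.best_params_)
```

Reporting the nested score rather than `search.best_score_` avoids the optimistic bias of evaluating on the same folds used for tuning.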

Issue 2: My Data is Heterogeneous and Sparse, Making it Difficult to Train a Unified Model

Potential Causes and Solutions:

- Cause: Data from Multiple Incompatible Sources

- Solution: Use a modular framework designed for heterogeneous data. The ehrapy framework, while built for electronic health records, offers a proven paradigm for such challenges. Its workflow can be adapted for LCA [40]:

- Quality Control & Imputation: Inspect feature distributions and missing-value rates, then impute the missing values.

- Normalization & Encoding: Apply functions to achieve a uniform numerical representation.

- Lower-Dimensional Representation: Use techniques like PCA to project data into a unified, lower-dimensional space for analysis and modeling [40].


- Cause: Sparse or Unlabeled Data

- Solution: Explore semi-supervised or unsupervised learning methods. If labeled data for characterization factors is scarce, use unsupervised learning to find hidden structures or clusters in your inventory data. This can help identify groups of processes or products with similar environmental profiles [5] [39].
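The ehrapy-style steps above, followed by the unsupervised clustering suggested for sparse labels, can be sketched with scikit-learn (this is not ehrapy's API). The records are hypothetical: two numeric flows with simulated gaps plus a categorical region label, drawn from two groups of processes. Numeric features are median-imputed and standardized, the category is one-hot encoded, PCA projects everything into two dimensions, and k-means with an assumed k = 2 groups similar processes.

```python
# Minimal sketch of QC -> imputation -> encoding -> PCA -> clustering.
# Uses scikit-learn, not ehrapy; the records, missing rate, and k=2 are hypothetical.
import numpy as np
from sklearn.cluster import KMeans
from sklearn.decomposition import PCA
from sklearn.impute import SimpleImputer
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

rng = np.random.default_rng(0)
n = 60
# Two hypothetical groups of processes, e.g. from two inventory sources.
energy = np.concatenate([rng.normal(5, 1, n // 2), rng.normal(20, 3, n // 2)])
water = np.concatenate([rng.normal(2, 0.5, n // 2), rng.normal(8, 1, n // 2)])
region = np.array(["EU"] * (n // 2) + ["CN"] * (n // 2), dtype=object).reshape(-1, 1)

numeric = np.column_stack([energy, water])
numeric[rng.random(numeric.shape) < 0.1] = np.nan  # simulated reporting gaps

# 1) Quality control: missing-value rate per numeric feature.
print("Missing rate per feature:", np.round(np.isnan(numeric).mean(axis=0), 2))

# 2) Imputation and normalization for numeric features; one-hot encoding for categories.
numeric_clean = make_pipeline(SimpleImputer(strategy="median"), StandardScaler()).fit_transform(numeric)
categorical = OneHotEncoder().fit_transform(region).toarray()
X = np.hstack([numeric_clean, categorical])

# 3) Unified lower-dimensional representation.
Z = PCA(n_components=2).fit_transform(X)

# 4) Unsupervised grouping of processes with similar profiles.
labels = KMeans(n_clusters=2, n_init=10, random_state=0).fit_predict(Z)
print("Cluster sizes:", np.bincount(labels))
```

With real data, the number of clusters would need to be chosen and checked by a domain expert rather than fixed in advance.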

This table lists key software tools and libraries that facilitate the end-to-end workflow from data imputation to characterization factor prediction.

Table: Key Research Reagent Solutions for ML in LCA

| Tool / Library Name | Type | Primary Function in the Workflow | Reference/Link |
|---|---|---|---|
| ImputeGAP | Python Library | A comprehensive library for time series imputation, supporting multiple algorithms, realistic missing data simulation, and evaluation of downstream impact. | [37] |
| Pharm-AutoML | Python Package | An end-to-end Automated Machine Learning solution that automates data preprocessing, model tuning, selection, and interpretation, ideal for classification tasks. | [38] |
| Chemprop | Python Package | A message passing neural network specifically designed for molecular property prediction, which can be adapted for predicting chemical-specific characterization factors. | [41] |
| ChemXploreML | Desktop Application | A user-friendly app that allows chemists to predict molecular properties without deep programming skills, useful for filling chemical data gaps. | [42] |
| scikit-learn | Python Library | The fundamental library for machine learning in Python, providing a wide array of models for classification, regression, and clustering. | [37] [38] |
| SHAP (SHapley Additive exPlanations) | Python Library | A game-theoretic method to explain the output of any machine learning model, crucial for interpreting characterization factor predictions. | [37] [38] |
| ehrapy | Python Framework | A framework for analyzing heterogeneous and complex data, providing a workflow from data QC to statistical comparison and trajectory inference. | [40] |

Integrated Workflow for End-to-End LCA

The following diagram synthesizes the core components of the guides and FAQs above into a complete, iterative workflow for conducting an ML-augmented LCA, emphasizing the balance between automation and human expertise [5] [39].

Frequently Asked Questions (FAQs)

FAQ 1: What are the most suitable machine learning algorithms for predicting chemical toxicity and environmental impacts, especially when dealing with limited data?

The optimal machine learning algorithm depends on your data characteristics and endpoint, but several algorithms have performed strongly in these domains. For chemical toxicity, Gradient Boosting Decision Trees (GBDT) have been used successfully for endpoints such as zebrafish embryo toxicity, with SHAP value analysis helping to identify key high-risk pollutants such as ibuprofen [43]. For life-cycle environmental impact prediction, studies have found Extreme Gradient Boosting (XGBoost), Random Forests (RF), and Artificial Neural Networks (ANN) to be particularly effective [44]. When data are scarce, transfer learning can be valuable: a model pre-trained on a larger dataset is fine-tuned on the smaller, specific dataset [45]. Finally, always compare your chosen model against simple baseline models to confirm that its added complexity is justified [46].
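The baseline comparison can be as simple as scoring a model that always predicts the training mean. A minimal sketch, assuming scikit-learn, synthetic data, R^2 as the metric, `GradientBoostingRegressor` as the GBDT implementation, and an arbitrary 0.1 margin for deciding whether the gain is meaningful:

```python
# Minimal sketch: does a GBDT model beat a trivial mean predictor?
# Assumptions: synthetic data, R^2 metric, scikit-learn's GBDT, a 0.1 margin.
import numpy as np
from sklearn.datasets import make_friedman1
from sklearn.dummy import DummyRegressor
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import KFold, cross_val_score

X, y = make_friedman1(n_samples=100, n_features=10, noise=1.0, random_state=0)
cv = KFold(n_splits=5, shuffle=True, random_state=0)  # identical folds for both models

baseline = cross_val_score(DummyRegressor(strategy="mean"), X, y, cv=cv, scoring="r2")
gbdt = cross_val_score(GradientBoostingRegressor(random_state=0), X, y, cv=cv, scoring="r2")

print(f"Mean-predictor baseline R^2: {baseline.mean():.3f}")
print(f"GBDT R^2:                    {gbdt.mean():.3f}")
if gbdt.mean() - baseline.mean() < 0.1:
    print("Added complexity is not clearly justified on this data.")
```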

FAQ 2: How can I address the challenge of data scarcity in chemical life cycle assessment (LCA) when building an ML model?

Data scarcity is a fundamental challenge in this field. Researchers are tackling it through several strategies:

- Leveraging Transfer Learning: This involves adapting a model pre-trained on a large, general dataset to a specific, smaller dataset, which is common in chemical applications [45].

- Advocating for Open Data: There is a strong community push for the establishment of large, open, and transparent LCA databases for chemicals that cover a wider range of chemical types [47].

- Utilizing Generative AI: The integration of Large Language Models (LLMs) is expected to provide new impetus for database building and feature engineering, potentially helping to overcome data gaps [47].

- Incorporating Physical Knowledge: Physics-informed machine learning (PIML) frameworks can maintain high prediction accuracy even under data-scarce conditions by incorporating known physical laws [43].

- Data Representation: Constructing more efficient chemical-related descriptors and identifying the features most pertinent to LCA results are pivotal steps for making the most of limited data [47].
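Transfer learning, the first strategy above, can be sketched with a simple "freeze and retrain the head" scheme. Under assumed synthetic tasks (a source property with 1,000 labels and a related target endpoint with only 40), a scikit-learn `MLPRegressor` is pre-trained on the source data; its hidden layers are then frozen and reused as a feature extractor, and only a new ridge-regression output layer is fitted on the target data. The helper `hidden_features` is hypothetical and assumes the default ReLU activation. A network trained from scratch on the 40 target points serves as the comparison.

```python
# Minimal transfer-learning sketch: pre-train, freeze hidden layers, refit the output head.
# Assumptions: both tasks are synthetic and share the same 3 input descriptors.
import numpy as np
from sklearn.linear_model import RidgeCV
from sklearn.metrics import r2_score
from sklearn.neural_network import MLPRegressor

rng = np.random.default_rng(0)


def source_property(X):
    """Hypothetical property with abundant labels (the large, general dataset)."""
    return np.sin(X[:, 0]) + X[:, 1] ** 2 - X[:, 2]


def target_property(X):
    """Hypothetical related endpoint with scarce labels."""
    return 1.5 * source_property(X) + 0.5


def hidden_features(mlp, X):
    """Forward pass through the frozen hidden layers of a fitted MLPRegressor (ReLU)."""
    h = X
    for W, b in zip(mlp.coefs_[:-1], mlp.intercepts_[:-1]):
        h = np.maximum(h @ W + b, 0.0)
    return h


X_src = rng.uniform(-2, 2, size=(1000, 3))
X_tgt = rng.uniform(-2, 2, size=(40, 3))
X_test = rng.uniform(-2, 2, size=(500, 3))
y_tgt, y_test = target_property(X_tgt), target_property(X_test)

# 1) Pre-train on the large source dataset.
pretrained = MLPRegressor(hidden_layer_sizes=(64, 64), max_iter=500, random_state=0)
pretrained.fit(X_src, source_property(X_src))

# 2) Transfer: keep the hidden layers fixed and fit only a new linear output head.
head = RidgeCV(alphas=np.logspace(-4, 2, 13)).fit(hidden_features(pretrained, X_tgt), y_tgt)
transfer_r2 = r2_score(y_test, head.predict(hidden_features(pretrained, X_test)))

# Comparison: the same architecture trained from scratch on the 40 target points only.
scratch = MLPRegressor(hidden_layer_sizes=(64, 64), max_iter=2000, random_state=0)
scratch.fit(X_tgt, y_tgt)
scratch_r2 = r2_score(y_test, scratch.predict(X_test))

print(f"From scratch R^2: {scratch_r2:.3f} | transfer (frozen features) R^2: {transfer_r2:.3f}")
```

Freezing the feature extractor keeps the number of parameters fitted on the scarce target data small; with more target data, unfreezing and fine-tuning the whole network is a common next step.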

FAQ 3: My model performs well on the test set but poorly in real-world applications. What could be the cause?

This is often a problem of model generalizability. The issue likely stems from a mismatch between your training data and the real-world data you are applying the model to. This can occur if:

- The test set is not representative of the broader application domain [45]. For example, a model trained and tested on data for a specific class of compounds may fail when presented with a chemically different compound.

- The data sources are biased toward specific types of "star" compounds (e.g., metal dichalcogenides or halide perovskites) and do not represent the full chemical diversity you encounter later [45].

To mitigate this, use validation methods that test extrapolation performance, such as "leave-class-out" selection or scaffold splits, which give a more rigorous assessment of how the model handles novel chemistry [45].
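A leave-class-out evaluation can be sketched with scikit-learn's `GroupKFold`, which keeps each group entirely in either the training or the test fold. In this sketch the group labels are hypothetical compound-class IDs on synthetic data in which each class occupies its own region of descriptor space; in practice, scaffold IDs would come from a cheminformatics toolkit (e.g., Bemis-Murcko scaffolds in RDKit). Comparing the mean absolute error against an ordinary shuffled split shows how optimistic random splitting can be.

```python
# Minimal sketch: random split vs. leave-class-out split on synthetic grouped data.
# Assumptions: integer class IDs stand in for compound classes or scaffolds.
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GroupKFold, KFold, cross_val_score

rng = np.random.default_rng(0)
n_classes, per_class = 6, 30
groups = np.repeat(np.arange(n_classes), per_class)
# Each class sits in its own region of descriptor space (class-specific center).
centers = rng.normal(0.0, 3.0, size=(n_classes, 4))
X = centers[groups] + rng.normal(0.0, 0.5, size=(groups.size, 4))
y = np.sin(X[:, 0]) + 0.5 * X[:, 1] + rng.normal(0.0, 0.1, size=groups.size)

model = RandomForestRegressor(n_estimators=200, random_state=0)

# Random split: every class appears in both training and test folds.
random_mae = -cross_val_score(
    model, X, y, cv=KFold(n_splits=5, shuffle=True, random_state=0),
    scoring="neg_mean_absolute_error",
).mean()

# Leave-class-out: each fold holds out one entire class.
class_mae = -cross_val_score(
    model, X, y, groups=groups, cv=GroupKFold(n_splits=n_classes),
    scoring="neg_mean_absolute_error",
).mean()

print(f"Random-split MAE:     {random_mae:.3f}")
print(f"Leave-class-out MAE:  {class_mae:.3f}")
```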

FAQ 4: What are the best practices for data cleaning and preprocessing to ensure a robust ML model in chemical applications?

Robust models require meticulous data curation. Key steps include:

- Systematic Cleaning: Remove duplicates, entries with missing values, and non-physical or incoherent values. One study found that even major databases can contain over 10% erroneous data [45].

- Data Provenance: List all data sources, record data selection strategies, and include access dates or version numbers for the databases used. This is crucial for reproducibility [46] [45].

- Describe All Steps: Document every cleaning and normalization step applied to the raw data. It is also important to assess the range of values that were removed or modified during the process [45].

- Semi-Automated Workflows: For large databases, implement and share semi-automated data pipelines to ensure consistency and efficiency [45].
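These practices can be built into a semi-automated pipeline that logs every removal, so the cleaning steps and the values they affected can be reported alongside the data source and version. A minimal sketch in plain Python; the record fields, toy values, source label, and the rule that energy inputs must be non-negative are all hypothetical.

```python
# Minimal sketch of a cleaning step that records every removal for provenance.
# Assumptions: records are dicts; field names, example values, the source/version
# labels, and the non-negative-energy rule are all hypothetical.
from dataclasses import dataclass, field


@dataclass
class CleaningReport:
    source: str   # database name
    version: str  # version number or access date
    removed: dict = field(default_factory=dict)

    def log(self, reason, record):
        self.removed.setdefault(reason, []).append(record)


def clean(records, report, required=("smiles", "energy_MJ_per_kg")):
    """Drop incomplete, non-physical, and duplicate records, logging each removal."""
    seen, kept = set(), []
    for rec in records:
        if any(rec.get(key) is None for key in required):
            report.log("missing value", rec)
        elif rec["energy_MJ_per_kg"] < 0:  # hypothetical physical-validity rule
            report.log("non-physical value", rec)
        elif rec["smiles"] in seen:
            report.log("duplicate", rec)
        else:
            seen.add(rec["smiles"])
            kept.append(rec)
    return kept


raw = [  # hypothetical toy records, not real inventory data
    {"smiles": "CCO", "energy_MJ_per_kg": 25.0},
    {"smiles": "CCO", "energy_MJ_per_kg": 25.0},
    {"smiles": "c1ccccc1", "energy_MJ_per_kg": None},
    {"smiles": "CC(=O)O", "energy_MJ_per_kg": -3.0},
    {"smiles": "CC(C)O", "energy_MJ_per_kg": 40.0},
]
report = CleaningReport(source="example-db", version="hypothetical v1.0")
clean_rows = clean(raw, report)
for reason, rows in report.removed.items():
    values = [r["energy_MJ_per_kg"] for r in rows]
    print(f"{reason}: {len(rows)} removed, values {values}")
print(f"kept {len(clean_rows)} of {len(raw)} records from {report.source} ({report.version})")
```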

Troubleshooting Guides

Problem: Low Predictive Accuracy of the ML Model

| Potential Cause | Diagnostic Steps | Solution |
|---|---|---|
| Insufficient or Low-Quality Data | Check the dataset size and the extent of missing values; analyze data quality scores or lineage. | Collect more data if possible; apply rigorous data cleaning to remove errors and duplicates [45]; use data augmentation techniques or transfer learning [47]. |
| Poor Feature Representation | Evaluate whether the molecular descriptors capture relevant structural properties; compare performance with established descriptor sets. | Experiment with different molecular representations (e.g., graph neural networks for structure-activity relationships [45]); use standard open-source libraries such as RDKit, DScribe, or Matminer to generate descriptors [45]. |
| Inappropriate Model Selection | Compare model performance against simple baselines (e.g., predicting the mean); test simpler models (e.g., Random Forest) on the same data. | Justify the model choice by comparing it with simpler and state-of-the-art models [46]; for complex problems, consider deep learning (e.g., Graph Convolutional Networks (GCNs) fused with Deep Neural Networks (DNNs) have been used for toxicity prediction [43]). |
| Overfitting to the Training Data | Check for a large gap between training and validation accuracy. | Simplify the model architecture; apply stronger regularization (e.g., L1/L2); use a rigorous train/validation/test split and techniques such as k-fold cross-validation [46] [45]. |
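The overfitting diagnostic in the last row can be automated by sweeping model complexity and comparing training and validation scores. A minimal sketch, assuming scikit-learn's `validation_curve`, a decision tree whose `max_depth` is varied, synthetic data, and an arbitrary gap threshold of 0.2 in R^2:

```python
# Minimal sketch: flag overfitting from the train/validation gap across model complexity.
# Assumptions: synthetic data, a decision tree, and a 0.2 R^2 gap threshold.
import numpy as np
from sklearn.datasets import make_friedman1
from sklearn.model_selection import KFold, validation_curve
from sklearn.tree import DecisionTreeRegressor

X, y = make_friedman1(n_samples=150, n_features=8, noise=1.0, random_state=0)
depths = [1, 2, 3, 4, 6, 8, 12, 20]

train_scores, val_scores = validation_curve(
    DecisionTreeRegressor(random_state=0), X, y,
    param_name="max_depth", param_range=depths,
    cv=KFold(n_splits=5, shuffle=True, random_state=0), scoring="r2",
)
train_mean, val_mean = train_scores.mean(axis=1), val_scores.mean(axis=1)

for d, tr, va in zip(depths, train_mean, val_mean):
    flag = "  <- large gap, likely overfitting" if tr - va > 0.2 else ""
    print(f"max_depth={d:>2}: train R^2={tr:.2f}, validation R^2={va:.2f}{flag}")
print("Depth with best validation score:", depths[int(np.argmax(val_mean))])
```

The depth with the best validation score, not the best training score, is the one to keep; the same sweep works for regularization strength or network size.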

Problem: Model is Not Interpretable (The "Black Box" Issue)

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|