Pearson vs. Spearman Correlation: A Practical Guide for Environmental Data Analysis

Abstract

This article provides a comprehensive guide for researchers and environmental scientists on selecting and applying Pearson and Spearman correlation coefficients. It covers the foundational concepts of linear versus monotonic relationships, offers practical methodologies for analysis with real-world environmental examples, addresses common pitfalls and optimization strategies for complex ecological data, and presents a rigorous framework for validation and comparative assessment. The guide synthesizes key takeaways to empower robust statistical inference and enhance reproducibility in environmental and biomedical research.

Understanding Correlation: Linear vs. Monotonic Relationships in Environmental Datasets

In environmental science, understanding the relationships between variables—such as temperature and species diversity, or pollutant concentration and toxicity—is fundamental. Correlation analysis provides researchers with statistical tools to quantify the strength and direction of these bivariate associations. Two methods are predominantly used for this purpose: the Pearson correlation coefficient and the Spearman rank correlation coefficient. The appropriate selection between these methods is not merely a statistical formality; it is a critical decision that directly influences the validity of research findings, especially when dealing with environmental data that often violate the ideal assumptions required for parametric tests. This guide provides an objective comparison of Pearson and Spearman correlation coefficients, detailing their performance, underlying assumptions, and application protocols within environmental research contexts, supported by experimental data and methodological frameworks.

Statistical Foundations: Pearson vs. Spearman Correlation

Pearson Correlation Coefficient

The Pearson correlation coefficient (denoted as r) measures the strength and direction of a linear relationship between two continuous variables [1] [2]. It is defined as the covariance of the two variables divided by the product of their standard deviations, resulting in a value between -1 and +1 [2]. A value of +1 indicates a perfect positive linear relationship, -1 a perfect negative linear relationship, and 0 indicates no linear relationship [1] [3]. The formula for calculating the Pearson correlation coefficient for a sample is:

$$ r_{xy} = \frac{\sum_{i=1}^{n}(x_i - \bar{x})(y_i - \bar{y})}{\sqrt{\sum_{i=1}^{n}(x_i - \bar{x})^2} \sqrt{\sum_{i=1}^{n}(y_i - \bar{y})^2}} $$

Where:

- \( n \) is the number of data points

- \( x_i \) and \( y_i \) are the individual sample points

- \( \bar{x} \) and \( \bar{y} \) are the sample means [2]
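As a minimal sketch of the sample formula above, the function below computes r term by term using only the Python standard library. The function name `pearson_r` and the paired measurements are illustrative assumptions, not data from any cited study.

```python
import math

def pearson_r(x, y):
    """Sample Pearson correlation computed directly from the formula above."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must be paired and contain at least two points")
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    # Numerator: sum of cross-products of deviations from the means
    cross = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    # Denominator: product of the root sums of squared deviations
    ss_x = sum((xi - x_bar) ** 2 for xi in x)
    ss_y = sum((yi - y_bar) ** 2 for yi in y)
    return cross / (math.sqrt(ss_x) * math.sqrt(ss_y))

# Hypothetical paired measurements (illustrative values only)
water_temp_c = [12.1, 14.3, 15.8, 17.2, 19.5, 21.0]
dissolved_o2 = [10.6, 10.1, 9.7, 9.5, 9.0, 8.8]

r = pearson_r(water_temp_c, dissolved_o2)
print(f"r = {r:.3f}")
```

In practice a statistics package would normally be used instead; the explicit version is shown only to make each term of the formula visible.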

Spearman Rank Correlation Coefficient

The Spearman rank correlation coefficient (denoted as ρ or r_s) is a non-parametric measure that assesses the strength and direction of a monotonic relationship between two variables, whether linear or not [4] [5]. It is calculated by applying the Pearson correlation formula to the rank-ordered values of the variables rather than their raw values [5]. Spearman's ρ also ranges from -1 to +1, with similar interpretations for extreme values but pertaining to monotonicity rather than linearity. When there are no tied ranks, Spearman's ρ can be computed using the simplified formula:

$$ \rho = 1 - \frac{6 \sum d_i^2}{n(n^2 - 1)} $$

Where:

- \( d_i \) is the difference between the two ranks of each observation

- \( n \) is the number of observations [4] [5]
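The simplified formula can be sketched as follows, assuming no tied values. The function name and the dose-response style data are hypothetical; the data are chosen to be monotonic but clearly non-linear, which is the situation Spearman's ρ is meant to capture.

```python
def spearman_rho_no_ties(x, y):
    """Spearman's rho via the simplified formula; valid only without ties."""
    if len(x) != len(y):
        raise ValueError("x and y must be paired")
    if len(set(x)) != len(x) or len(set(y)) != len(y):
        raise ValueError("the simplified formula assumes no tied values")
    n = len(x)

    def ranks(values):
        # Rank 1 goes to the smallest value, rank n to the largest
        order = sorted(range(n), key=lambda i: values[i])
        result = [0] * n
        for rank, idx in enumerate(order, start=1):
            result[idx] = rank
        return result

    rx, ry = ranks(x), ranks(y)
    sum_d2 = sum((a - b) ** 2 for a, b in zip(rx, ry))
    return 1 - 6 * sum_d2 / (n * (n ** 2 - 1))

# Hypothetical data: the response grows with the cube of the concentration
concentration = [0.5, 1.0, 2.0, 4.0, 8.0, 16.0]
response = [c ** 3 for c in concentration]

print(spearman_rho_no_ties(concentration, response))  # 1.0
```

Because the ordering of the two variables agrees exactly, every rank difference is zero and ρ equals 1 even though the raw values do not fall on a straight line.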

Comparative Analysis: Key Differences and Similarities

Theoretical Comparison

Table 1: Fundamental Characteristics of Pearson and Spearman Correlation Coefficients

| Characteristic | Pearson Correlation | Spearman Correlation |
|---|---|---|
| Relationship Type | Linear | Monotonic (linear or non-linear) |
| Data Distribution | Assumes bivariate normal distribution | No distributional assumptions |
| Data Requirements | Continuous, interval or ratio data | Ordinal, interval, or ratio data |
| Basis of Calculation | Raw data values | Rank-ordered data |
| Sensitivity to Outliers | High sensitivity | Robust against outliers |
| Statistical Power | Higher when assumptions are met | Slightly lower power |

Performance in Environmental Data Analysis

Environmental data often present challenges that complicate correlation analysis, including non-normal distributions, outliers, and non-linear relationships. A 2024 study published in Ecological Modelling analyzed variable selection methods in Ecological Niche Models (ENM) and Species Distribution Models (SDM), finding that among 134 articles that applied correlation methods for variable selection, 47 used Pearson correlation, 18 used Spearman correlation, and 69 did not specify the method used [6]. This highlights a concerning lack of clarity and consistency in the application of correlation methods in environmental research.

The same study examined 56 bird species and found a tendency for non-normal distributions in environmental variables, suggesting that Spearman correlation might be more appropriate for many ecological applications [6]. However, the research also demonstrated that the choice between Pearson and Spearman correlation, combined with the strategy for extracting environmental information (species records versus calibration areas), created four distinct scenarios with significant implications for model outcomes [6].

Table 2: Correlation Strength Interpretation Guidelines

| Value Range | Pearson Interpretation | Spearman Interpretation |
|---|---|---|
| 0.7 to 1.0 or -0.7 to -1.0 | Strong linear association | Strong monotonic association |
| 0.5 to 0.7 or -0.5 to -0.7 | Moderate linear association | Moderate monotonic association |
| 0.3 to 0.5 or -0.3 to -0.5 | Weak linear association | Weak monotonic association |
| 0 to 0.3 or -0.3 to 0 | Little or no linear association | Little or no monotonic association |

Interpretation guidelines for correlation coefficients are similar for both methods, though they reference different types of relationships [1] [7].

Decision Framework for Method Selection

Statistical Assumptions and Validation

Pearson Correlation Assumptions:

- Linear relationship between variables

- Continuous variables measured on interval or ratio scales

- Bivariate normal distribution

- Homoscedasticity (constant variance of residuals)

- No significant outliers [1] [3]

Spearman Correlation Assumptions:

- Monotonic relationship between variables

- Variables must be on ordinal, interval, or ratio scales

- No distributional assumptions [4]

Validation of these assumptions should precede method selection. The linearity assumption for Pearson correlation can be checked visually using scatter plots, while normality can be assessed using statistical tests such as the Shapiro-Wilk test or graphical methods like Q-Q plots [8].

Application Guidelines for Environmental Data

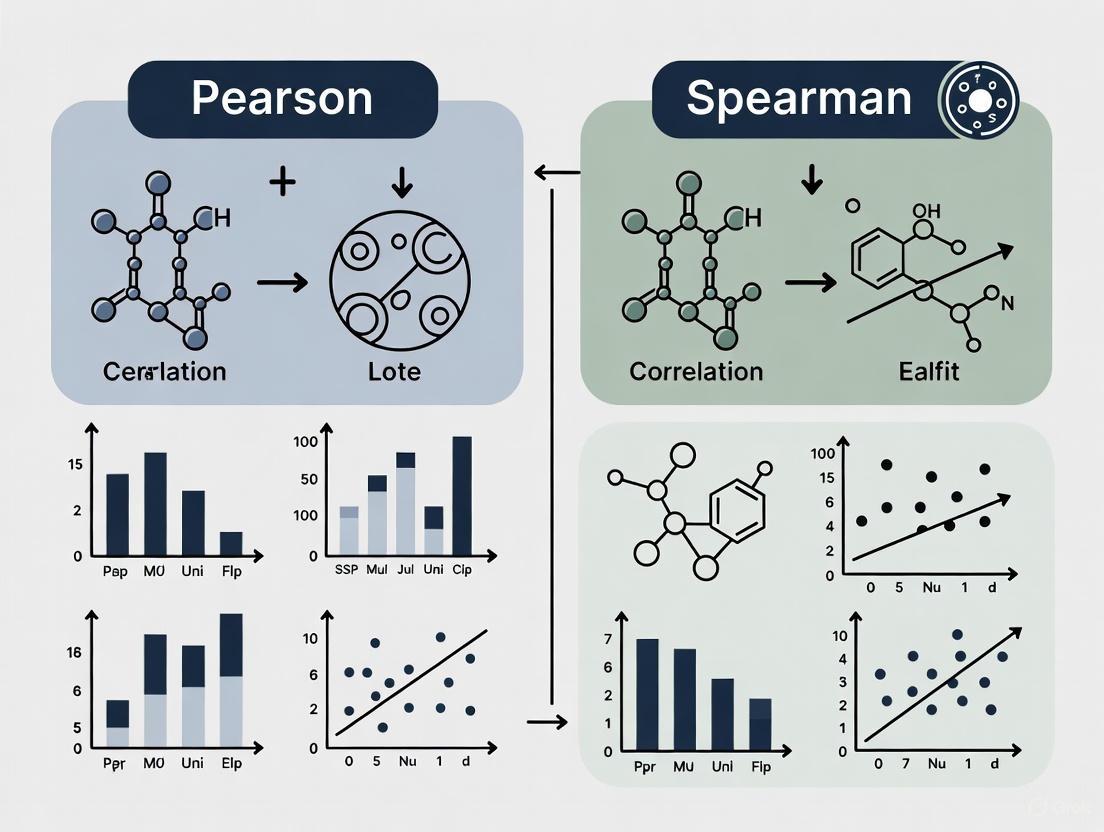

The decision workflow for selecting between Pearson and Spearman correlation in environmental research can be visualized as follows:

Figure 1: Decision workflow for selecting between Pearson and Spearman correlation methods in environmental research.
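The assumption lists above can be turned into a small decision helper. This is only a sketch of one reasonable reading of those lists, not a transcription of Figure 1; the function name, argument names, and return labels are all hypothetical, and each argument is a yes/no judgment the analyst makes after inspecting plots and test results.

```python
def choose_correlation_method(*, continuous, linear, approx_normal,
                              no_influential_outliers, monotonic):
    """Suggest a coefficient from yes/no answers to the assumption checks.

    All argument names are hypothetical; each is a boolean supplied by the
    analyst after exploratory analysis.
    """
    if continuous and linear and approx_normal and no_influential_outliers:
        return "pearson"
    if monotonic:
        # Ranks only need ordinal information and a consistent direction
        return "spearman"
    # Neither coefficient summarizes a non-monotonic pattern well
    return "neither"

# Example: skewed contaminant data with a consistent increasing trend
print(choose_correlation_method(
    continuous=True, linear=False, approx_normal=False,
    no_influential_outliers=False, monotonic=True,
))  # spearman
```

The "neither" branch is a reminder that a non-monotonic relationship calls for a different tool, such as a fitted curve, rather than either coefficient.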

Experimental Protocols and Case Studies

Standardized Experimental Protocol for Correlation Analysis

Protocol 1: Comprehensive Correlation Analysis for Environmental Variables

Data Collection and Preparation

- Collect paired values for two environmental variables of interest

- Document measurement units and sampling methodology

- Screen for data entry errors and missing values

Exploratory Data Analysis

- Generate descriptive statistics (mean, median, standard deviation)

- Create histograms for each variable to assess distribution

- Construct scatter plots to visualize bivariate relationships

- Identify potential outliers using box plots or statistical methods

Assumption Testing

- Test for normality using Shapiro-Wilk or Kolmogorov-Smirnov tests

- Assess linearity through visual inspection of scatter plots

- Evaluate homoscedasticity by examining residual plots (for linear relationships)

Correlation Analysis

- Based on assumption testing, select appropriate correlation method

- Calculate correlation coefficient using statistical software

- Determine statistical significance (p-value)

- Compute confidence intervals for correlation coefficient

Interpretation and Reporting

- Report correlation coefficient, sample size, and p-value

- Interpret strength and direction of relationship

- Discuss limitations and potential confounding factors

- Visualize results with appropriate graphics
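The protocol asks for a confidence interval but does not name a method. One standard option for Pearson's r is the Fisher z-transformation, sketched below under the assumption of roughly bivariate normal data. The function name and the example values of r and n are illustrative.

```python
import math
from statistics import NormalDist

def pearson_confidence_interval(r, n, confidence=0.95):
    """Approximate CI for Pearson's r using the Fisher z-transformation."""
    if n <= 3:
        raise ValueError("need more than three observations")
    if not -1 < r < 1:
        raise ValueError("r must lie strictly between -1 and 1")
    z = math.atanh(r)                      # Fisher transform of r
    se = 1 / math.sqrt(n - 3)              # standard error on the z scale
    z_crit = NormalDist().inv_cdf(0.5 + confidence / 2)
    # Back-transform the interval limits to the correlation scale
    return math.tanh(z - z_crit * se), math.tanh(z + z_crit * se)

# Hypothetical result: r = 0.55 from 40 paired samples
low, high = pearson_confidence_interval(0.55, 40)
print(f"95% CI: ({low:.2f}, {high:.2f})")
```

The interval is asymmetric around r because the back-transformation compresses values near the limits of -1 and +1.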

Environmental Case Studies

Case Study 1: Water Quality Monitoring

A water quality study analyzed the relationship between multiple water quality indicators and environmental drivers using correlation analysis [7]. Researchers employed Pearson correlation as an initial screening tool before proceeding to more comprehensive regression analysis. The correlation matrix helped identify variables with strong linear associations, which were then prioritized for further modeling.

Case Study 2: Ecological Niche Modeling

A 2024 study examined variable selection methods for Ecological Niche Models (ENM) and Species Distribution Models (SDM) for 56 bird species [6]. Researchers found that non-normal distributions were common in environmental variables, making Spearman correlation often more appropriate. The study highlighted how different variable selection strategies (using species records versus calibration areas) combined with choice of correlation method significantly impacted model outcomes.

Case Study 3: Environmental Forensics

Spearman's rank correlation has been successfully applied in environmental forensic investigations to detect monotonic trends in chemical concentration with time or space [9]. Its non-parametric nature makes it particularly valuable for analyzing contaminant data that often violate normality assumptions.

Advanced Considerations for Environmental Data

Compositional Data in Environmental Research

Environmental data often have compositional properties, such as congener patterns of pollutants or sediment composition, where components represent parts of a whole [10]. Standard correlation analysis applied directly to such data can yield biased results. The isometric log-ratio (ilr) transformation is recommended before applying correlation analysis to compositional data, as it maps the data from the simplex to the real space while preserving its properties [10]. Research has demonstrated that this approach increases the statistical power of correlation tests for compositional data, reducing both Type I and Type II error rates [10].
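A minimal sketch of an ilr transformation is given below. It uses pivot coordinates, which are one common choice of orthonormal basis; the cited work may use a different basis, so this is an assumption. The function name and the sediment fractions are hypothetical, and all parts must be strictly positive (zeros need separate treatment beforehand).

```python
import math

def ilr_pivot(composition):
    """Isometric log-ratio (pivot) coordinates of a strictly positive composition."""
    parts = len(composition)
    if parts < 2 or any(p <= 0 for p in composition):
        raise ValueError("need at least two strictly positive parts")
    logs = [math.log(p) for p in composition]
    coords = []
    for i in range(parts - 1):
        remaining = parts - i - 1                 # number of parts after position i
        mean_log_rest = sum(logs[i + 1:]) / remaining
        scale = math.sqrt(remaining / (remaining + 1))
        # Log-ratio of part i to the geometric mean of the remaining parts
        coords.append(scale * (logs[i] - mean_log_rest))
    return coords

# Hypothetical sediment sample: sand, silt, clay fractions summing to 1
print(ilr_pivot([0.2, 0.3, 0.5]))
```

A composition with D parts yields D - 1 unconstrained coordinates, and correlation analysis is then applied to those coordinates rather than to the raw proportions.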

Hidden Correlations and Threshold Effects

Under certain conditions, Pearson correlation can reveal hidden correlations that occur only above or below specific thresholds, even when data are not normally distributed [8]. This phenomenon is particularly relevant in environmental research where relationships between variables may change at different ranges of values. For example, a study of COVID-19 cases and web interest during the early pandemic stages in Italy found correlations only above a certain case threshold [8]. In such cases, iterative correlation analysis across different data ranges may be necessary to fully characterize variable relationships.

Research Reagent Solutions: Essential Tools for Correlation Analysis

Table 3: Essential Analytical Tools for Correlation Analysis in Environmental Research

| Tool Category | Specific Solutions | Function in Correlation Analysis |
|---|---|---|
| Statistical Software | R, Python (with pandas, scipy), SPSS, SAS | Calculate correlation coefficients, perform significance tests, generate visualizations |
| Normality Testing Tools | Shapiro-Wilk test, Kolmogorov-Smirnov test, Q-Q plots | Validate distributional assumptions for Pearson correlation |
| Data Visualization Tools | Scatter plots, histograms, box plots | Explore relationships, identify outliers, assess linearity/monotonicity |
| Data Transformation Tools | Logarithmic transformation, ilr transformation for compositional data | Address non-normality, work with compositional data |
| Sample Size Calculators | Power analysis tools, G*Power | Determine required sample size for adequate statistical power |

Both Pearson and Spearman correlation coefficients are valuable tools for measuring bivariate associations in environmental variables, yet they serve distinct purposes and rely on different assumptions. Pearson correlation is optimal for identifying linear relationships in normally distributed data, while Spearman correlation is more appropriate for monotonic relationships or when data violate parametric assumptions. The high prevalence of non-normal distributions in environmental data, as evidenced by recent research, often makes Spearman correlation the more suitable choice in ecological studies [6]. Researchers should systematically evaluate their data characteristics and research questions before selecting a correlation method, following the decision framework outlined in this guide. Proper application of these methods, with attention to underlying assumptions and potential pitfalls such as compositional data structures, will enhance the validity and interpretability of correlation analyses in environmental research.

Correlation analysis is a foundational statistical method used across scientific disciplines to quantify the strength and direction of the relationship between two variables. In environmental data research, understanding these relationships is crucial for model building, hypothesis testing, and predicting ecological outcomes. The Pearson correlation coefficient (r), developed by Karl Pearson, stands as one of the most widely employed measures for assessing linear relationships between continuous variables [2]. This product-moment correlation coefficient serves as a normalized measurement of covariance, always yielding values between -1 and +1 that indicate both the strength and direction of a linear association [2].

The interpretation of Pearson's r is straightforward: a value of +1 indicates a perfect positive linear relationship, -1 indicates a perfect negative linear relationship, and 0 indicates no linear relationship [11]. The strength of association is commonly interpreted using guidelines in which absolute values of 0.1 to 0.3 indicate small associations, 0.3 to 0.5 medium associations, and 0.5 to 1.0 large associations [12]. In ecological research, selecting appropriate correlation methods significantly impacts model reliability, as demonstrated in species distribution modeling, where variable selection methods affected model outcomes in a study of 56 bird species [6].

Theoretical Foundations of Pearson's Correlation

Mathematical Formulation

The Pearson correlation coefficient is mathematically defined as the covariance of two variables divided by the product of their standard deviations [2]. For a population, the coefficient (denoted as ρ) is calculated as:

$$ \rho_{X,Y} = \frac{\operatorname{cov}(X,Y)}{\sigma_X \sigma_Y} $$

where \( \operatorname{cov}(X,Y) \) represents the covariance between variables X and Y, while \( \sigma_X \) and \( \sigma_Y \) represent their standard deviations [2]. For sample data, the Pearson correlation coefficient (denoted as r) is calculated using the formula:

$$ r = \frac{\sum_{i=1}^{n}(x_i - \bar{x})(y_i - \bar{y})}{\sqrt{\sum_{i=1}^{n}(x_i - \bar{x})^2} \sqrt{\sum_{i=1}^{n}(y_i - \bar{y})^2}} $$

where \( x_i \) and \( y_i \) are the individual sample points, and \( \bar{x} \) and \( \bar{y} \) are the sample means [13]. This formula essentially normalizes the covariance, creating a dimensionless quantity that enables comparison across different measurement scales and units [2] [12].

Underlying Assumptions

The validity of Pearson's correlation coefficient depends on several key assumptions about the data and the relationship between variables [14] [12]. When these assumptions are violated, the resulting coefficient may be misleading or inaccurate. The core assumptions include:

Interval or Ratio Level Measurement: Both variables must be measured on a continuous scale (interval or ratio level) [14] [12]. Examples include temperature measured in Celsius, height in centimeters, or test scores from 0 to 100 [14].

Linear Relationship: The relationship between the two variables must be linear, meaning that the data points should follow a straight-line pattern when plotted on a scatterplot [14] [12].

Normality: Both variables should be approximately normally distributed [14]. This can be checked visually using histograms or Q-Q plots, or through formal statistical tests like the Shapiro-Wilk test [14].

Related Pairs: Each observation in the dataset must consist of a paired measurement for both variables [14]. For example, each participant in a study should have both a height and weight measurement.

Independence of Cases: The pairs of observations should be independent of each other, meaning that the value of one pair should not influence the value of another pair [12].

No Outliers: The data should not contain extreme outliers, as these can disproportionately influence the correlation coefficient [14].

Figure: Logical workflow for determining when to use Pearson's correlation based on its key assumptions.

Key Assumptions in Detail

Level of Measurement and Linearity

The level of measurement assumption requires that both variables are quantitative, measured at either the interval or ratio level [14] [12]. Interval variables have equal intervals between values but no true zero point (e.g., temperature in Celsius), while ratio variables have equal intervals and a true zero point (e.g., height, weight) [14]. When variables are measured on an ordinal scale (e.g., Likert scales, satisfaction rankings), Spearman's correlation becomes the more appropriate choice [15] [5].

The linearity assumption is fundamental to Pearson's correlation, as it specifically measures the strength of linear relationships [14] [12]. This assumption can be verified through visual inspection of a scatterplot: if the data points roughly follow a straight-line pattern, the linearity assumption is satisfied [14]. If the relationship appears curved or follows any other non-linear pattern, Pearson's correlation will not adequately capture the true relationship between variables [14] [11]. In such cases, even strong non-linear relationships may yield deceptively low Pearson correlation coefficients, leading to incorrect conclusions about variable associations.

Normality and Outlier Considerations

The normality assumption requires that both variables are roughly normally distributed [14]. This can be assessed visually using histograms (looking for a roughly bell-shaped distribution) or Q-Q plots (where data points should fall approximately along a 45-degree line) [14]. Formal statistical tests for normality include the Jarque-Bera test, Shapiro-Wilk test, or Kolmogorov-Smirnov test [14]. While Pearson's correlation is somewhat robust to minor violations of normality, severe non-normality can distort the correlation coefficient and associated p-values [14].

The no outliers assumption is critical because extreme values can disproportionately influence Pearson's correlation coefficient [14]. A single outlier can substantially alter the correlation value, potentially leading to erroneous conclusions [14]. For example, in a dataset where the Pearson correlation was 0.949 without an outlier, the coefficient dropped to 0.711 when one extreme value was introduced [14]. This sensitivity to outliers makes it essential to screen data for unusual values through scatterplots and diagnostic statistics before interpreting Pearson correlations [11].

Table 1: Methods for Verifying Pearson Correlation Assumptions

| Assumption | Diagnostic Method | Interpretation | Remediation for Violations |
|---|---|---|---|
| Level of Measurement | Review measurement methodology | Variables should be interval or ratio scale | Use Spearman's correlation for ordinal data [15] |
| Linearity | Scatterplot visualization | Points should follow straight-line pattern | Apply transformations or use Spearman's correlation [4] |
| Normality | Histograms, Q-Q plots, statistical tests | Approximately bell-shaped distribution | Use non-parametric alternatives or transform data [14] |
| No Outliers | Scatterplots, boxplots, residual analysis | No extreme values disproportionately influencing relationship | Consider robust statistical methods or remove outliers with justification [14] |

Comparative Analysis with Spearman's Correlation

Theoretical Differences

While Pearson's correlation measures linear relationships, Spearman's rank correlation assesses monotonic relationships, whether linear or not [5] [4]. Spearman's coefficient (denoted as ρ or r_s) is calculated by applying Pearson's formula to the rank-ordered data rather than the raw values [5]. This fundamental difference makes Spearman's correlation a non-parametric statistic that does not assume normality or linearity [4].

The mathematical formula for Spearman's correlation when there are no tied ranks is:

$$ \rho = 1 - \frac{6 \sum d_i^2}{n(n^2 - 1)} $$

where \( d_i \) represents the difference between the two ranks of each observation, and \( n \) is the sample size [5] [4]. This simplified formula demonstrates how Spearman's correlation focuses exclusively on the ordering of values rather than their precise numerical properties, making it less sensitive to the specific distribution characteristics of the data [5].

Practical Applications in Environmental Research

In ecological modeling and environmental research, the choice between Pearson and Spearman correlations has significant implications. A recent study analyzing variable selection methods in Species Distribution Models (SDMs) found that among 150 articles, 134 used correlation methods for variable selection, with 47 employing Pearson, 18 using Spearman, and 69 not specifying the method used [6]. This lack of methodological transparency and consistency poses challenges for reproducibility in ecological research [6].

The same study examined 56 bird species and found a tendency for non-normal distributions in environmental variables, suggesting that Spearman's correlation might often be more appropriate for ecological data [6]. Furthermore, the research demonstrated that the choice of correlation method (Pearson vs. Spearman) combined with the variable extraction strategy (species records vs. calibration area) created four distinct scenarios that significantly affected the composition of selected variables and subsequent model performance [6].

Table 2: Comparison of Pearson's and Spearman's Correlation Coefficients

| Characteristic | Pearson Correlation | Spearman Correlation |
|---|---|---|
| Relationship Type Measured | Linear [2] | Monotonic (linear or non-linear) [4] |
| Data Requirements | Interval or ratio level [14] | Ordinal, interval, or ratio level [15] |
| Distribution Assumptions | Both variables normally distributed [14] | No distribution assumptions [4] |
| Sensitivity to Outliers | High sensitivity [14] | Less sensitive [15] |
| Calculation Basis | Original data values [2] | Rank-ordered data [5] |
| Interpretation | Strength of linear relationship [11] | Strength of monotonic relationship [4] |

Experimental Protocols and Research Applications

Methodological Workflow for Correlation Analysis

Implementing proper correlation analysis in environmental research requires a systematic approach to ensure valid results. The following workflow provides a standardized protocol for conducting and interpreting correlation analyses:

Variable Screening: Examine each variable's distribution using histograms, Q-Q plots, and normality tests [14]. For environmental data, which often exhibits non-normal distributions, this step is particularly important for method selection [6].

Relationship Assessment: Create scatterplots to visually assess the form of the relationship between variables [14] [11]. Determine if the relationship appears linear (suggesting Pearson) or monotonic but non-linear (suggesting Spearman).

Outlier Detection: Identify potential outliers through scatterplots, boxplots, or statistical tests [14]. Document any extreme values and assess their potential impact on results.

Method Selection: Choose the appropriate correlation method based on the screening results. Pearson's correlation is appropriate when all assumptions are reasonably met, while Spearman's correlation is more appropriate for ordinal data, non-normal distributions, or when outliers are present [11] [4].

Coefficient Calculation: Compute the selected correlation coefficient using appropriate statistical software or the previously described formulas.

Significance Testing: Conduct hypothesis testing to determine if the observed correlation is statistically significant [11]. For Pearson's correlation, this typically involves calculating a t-statistic using the formula: t = r√[(n-2)/(1-r²)] [11].

Interpretation and Reporting: Interpret the coefficient value, direction, and statistical significance in the context of the research question. Report both the correlation coefficient and the p-value, along with a measure of uncertainty such as confidence intervals [11].
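The significance-testing step can be sketched directly from the t formula given in the workflow. The function name and the example values (r = 0.60, n = 30) are hypothetical; the resulting statistic is compared against a t distribution with n - 2 degrees of freedom to obtain a p-value, which is usually left to statistical software.

```python
import math

def pearson_t_statistic(r, n):
    """t statistic for testing H0: no linear correlation, with n - 2 degrees of freedom."""
    if n <= 2:
        raise ValueError("need more than two paired observations")
    if not -1 < r < 1:
        raise ValueError("r must lie strictly between -1 and 1")
    return r * math.sqrt((n - 2) / (1 - r ** 2))

# Hypothetical result: r = 0.60 from 30 paired samples
t = pearson_t_statistic(0.60, 30)
print(f"t = {t:.2f} on {30 - 2} degrees of freedom")
```

For a fixed r the statistic grows with n, which is why even weak correlations become statistically significant in large datasets.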

Figure: Experimental workflow for correlation analysis.

Case Study: Correlation Methods in Species Distribution Modeling

A comprehensive study published in Ecological Modelling (2024) provides a compelling case study on the practical implications of correlation method selection in environmental research [6]. The researchers analyzed variable selection practices in Ecological Niche Models (ENM) and Species Distribution Models (SDM), which are crucial tools in biogeography, ecology, and conservation [6].

The study implemented the following experimental protocol:

Literature Review: The researchers conducted a systematic review of 150 randomly selected articles from 2000-2023 that used ecological niche modeling [6]. They documented the correlation methods used and the variable extraction strategies employed.

Data Collection: For 56 bird species in the Americas, environmental data was extracted using two different strategies: from pixels with species records only, and from all pixels within a defined calibration area [6].

Normality Testing: The researchers conducted normality tests for the environmental variables per species, finding a tendency for non-normal distributions in ecological data [6].

Correlation Analysis: Both Pearson and Spearman correlations were calculated using the two extraction strategies, creating four distinct analytical scenarios [6].

Model Evaluation: For six selected species, different sets of variables were used to build species distribution models, and the performance of models based on different variable selection methods was compared [6].

The results demonstrated that the choice of correlation method and extraction strategy significantly affected which variables were selected and subsequently influenced model performance [6]. This highlights the critical importance of transparent methodological reporting and careful consideration of correlation methods in environmental research.

Research Reagent Solutions for Correlation Analysis

Table 3: Essential Tools for Correlation Analysis in Research

| Tool Category | Specific Examples | Function in Analysis |
|---|---|---|
| Statistical Software | SPSS Statistics, R, Stata, Excel | Calculate correlation coefficients and perform significance tests [15] [11] |
| Data Visualization Tools | Scatterplots, Histograms, Q-Q Plots | Assess linearity, normality, and identify outliers [14] [11] |
| Normality Tests | Shapiro-Wilk test, Jarque-Bera test, Kolmogorov-Smirnov test | Formally evaluate distributional assumptions [14] |
| Documentation Frameworks | Lab notebooks, electronic documentation systems | Ensure transparency and reproducibility of methodological choices [6] |

The Pearson correlation coefficient remains a fundamental statistical tool for assessing linear relationships between continuous variables in environmental research and other scientific disciplines. Its proper application requires careful attention to its underlying assumptions, including linearity, normality, interval/ratio measurement, and the absence of influential outliers. Violations of these assumptions can lead to misleading conclusions, making diagnostic testing an essential component of any correlation analysis.

In ecological and environmental research, where data often violate the strict assumptions of Pearson's correlation, Spearman's rank correlation provides a valuable non-parametric alternative for assessing monotonic relationships. The choice between these methods should be guided by the nature of the data and the research question, rather than convenience or convention. As demonstrated in species distribution modeling studies, this methodological decision significantly impacts variable selection and model outcomes, underscoring the need for transparent reporting and justification of analytical choices.

By understanding the theoretical foundations, assumptions, and practical applications of both Pearson and Spearman correlation coefficients, researchers can make informed methodological decisions that enhance the validity, reliability, and interpretability of their findings in environmental research and beyond.

Theoretical Foundations of Spearman's Correlation

Spearman's rank-order correlation coefficient, denoted as ρ (rho) or rₛ, is a non-parametric measure of the strength and direction of the monotonic relationship between two variables. As a nonparametric statistic, it does not rely on assumptions about the underlying data distribution, making it a robust tool for data analysis when the assumptions of parametric tests are violated [16] [17]. The coefficient can take values from +1 to -1, where +1 indicates a perfect positive monotonic relationship, -1 indicates a perfect negative monotonic relationship, and 0 suggests no monotonic association [18].

A key conceptual foundation is understanding what constitutes a monotonic relationship. This is a relationship where, as one variable increases, the other variable tends to also increase (or decrease) consistently, though not necessarily at a constant rate. This differs fundamentally from the linear relationship assessed by Pearson's correlation coefficient [16]. Monotonic relationships can be linear, but they can also be nonlinear while still maintaining a consistent directional trend, which Spearman's correlation is designed to detect [17]. This makes it particularly valuable for analyzing relationships in environmental data, where variables often exhibit complex, non-linear interdependencies.

The method operates by converting the raw data values into ranks before calculating the correlation. By working with the rank-ordered data rather than the original values, Spearman's correlation becomes less sensitive to outliers and can handle ordinal variables or continuous variables that do not meet normality assumptions [16] [13]. This ranking procedure effectively transforms the problem into one of assessing how well the relationship between the two variables can be described using a monotonic function, regardless of the specific measurement scales of the original data.

Calculation Methodology and Protocol

Step-by-Step Calculation Procedure

The standard method for calculating Spearman's rank-order correlation involves a systematic ranking process followed by application of the correlation formula. The procedure, from raw data to final correlation coefficient, runs as follows.

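The calculation begins with data ranking, where values for each variable are sorted and assigned ranks. By convention the smallest value receives rank 1, the next smallest rank 2, and so forth [16]. Ranking from largest to smallest instead (as the exam-score example in Table 1 does, where the highest score receives rank 1) yields the same coefficient, provided both variables are ranked in the same direction. A critical step in this process involves handling tied values. When two or more values are identical, they receive the average of the ranks they would have occupied. For example, if two values tie for ranks 6 and 7, both receive a rank of 6.5 [16].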
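The tie-handling rule can be sketched in base R, whose `rank()` function assigns average ranks by default. The vector below is hypothetical and chosen only so that two values tie for ranks 6 and 7, mirroring the example in the text.

```r
# Hypothetical measurements; the two 14s would occupy ranks 6 and 7
x <- c(3, 5, 8, 9, 11, 14, 14, 20)

# ties.method = "average" is the default; it is spelled out here for clarity
ranks_ascending <- rank(x, ties.method = "average")
print(ranks_ascending)
# 1.0 2.0 3.0 4.0 5.0 6.5 6.5 8.0

# Ranking in the opposite direction (largest value = rank 1) only requires negating x
ranks_descending <- rank(-x, ties.method = "average")
print(ranks_descending)
# 8.0 7.0 6.0 5.0 4.0 2.5 2.5 1.0
```

Either direction works for Spearman's coefficient as long as the same direction is applied to both variables.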

Once ranking is complete, the difference in ranks (d) for each pair of observations is calculated, squared (d²), and summed (Σd²). For data without tied ranks, the Spearman coefficient is calculated using the formula [16] [19]:

ρ = 1 - [6 × Σdᵢ²] / [n(n² - 1)]

where:

- dᵢ = difference between the two ranks for each observation

- n = number of observations

When tied ranks are present, the formula requires adjustment. In practice, with tied ranks, the calculation involves using the Pearson correlation formula applied to the rank values themselves rather than the simplified formula shown above [16] [5].
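A minimal sketch of this adjustment in base R, using hypothetical data that contain ties in both variables. It compares Pearson's formula applied to the average ranks, R's built-in Spearman option, and the simplified Σd² formula, which is only exact when no ties are present.

```r
# Hypothetical paired observations with ties in both variables
x <- c(2, 4, 4, 7, 9, 9, 9, 12)
y <- c(1, 3, 2, 5, 5, 8, 6, 9)

rx <- rank(x)  # average ranks for ties
ry <- rank(y)

# Tie-aware calculation: Pearson's formula applied to the ranks
rho_from_ranks <- cor(rx, ry, method = "pearson")

# Built-in Spearman coefficient, which should agree with the line above
rho_builtin <- cor(x, y, method = "spearman")

# Simplified formula; with ties it is only an approximation
n <- length(x)
rho_simplified <- 1 - 6 * sum((rx - ry)^2) / (n * (n^2 - 1))

print(c(from_ranks = rho_from_ranks,
        builtin    = rho_builtin,
        simplified = rho_simplified))
```

On these values the first two results coincide while the simplified formula drifts slightly, which is why the rank-based Pearson calculation is the safer default when ties occur.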

Practical Calculation Example

Consider the following example comparing exam scores in English and Mathematics for 10 students [16] [18]:

Table 1: Spearman's Correlation Calculation for Exam Scores

| English Score | Mathematics Score | Rank (English) | Rank (Mathematics) | Rank Difference (d) | d² |
|---|---|---|---|---|---|
| 56 | 66 | 9 | 4 | 5 | 25 |
| 75 | 70 | 3 | 2 | 1 | 1 |
| 45 | 40 | 10 | 10 | 0 | 0 |
| 71 | 60 | 4 | 7 | 3 | 9 |
| 62 | 65 | 6 | 5 | 1 | 1 |
| 64 | 56 | 5 | 9 | 4 | 16 |
| 58 | 59 | 8 | 8 | 0 | 0 |
| 80 | 77 | 1 | 1 | 0 | 0 |
| 76 | 67 | 2 | 3 | 1 | 1 |
| 61 | 63 | 7 | 6 | 1 | 1 |

From this table, Σd² = 25 + 1 + 0 + 9 + 1 + 16 + 0 + 0 + 1 + 1 = 54

With n = 10, we calculate: ρ = 1 - [6 × 54] / [10 × (100 - 1)] = 1 - (324/990) = 1 - 0.327 = 0.67

This result of 0.67 indicates a strong positive monotonic relationship between English and Mathematics exam ranks [18]. Students who ranked high in one subject tended to rank high in the other, demonstrating the practical interpretation of the Spearman coefficient.
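The worked example can be reproduced in base R as a check on the hand calculation. The scores are those listed in Table 1; because that table gives rank 1 to the highest score, the sketch ranks the negated values. The variable names are illustrative.

```r
# Exam scores from Table 1
english <- c(56, 75, 45, 71, 62, 64, 58, 80, 76, 61)
maths   <- c(66, 70, 40, 60, 65, 56, 59, 77, 67, 63)

# Rank 1 = highest score, as in the table
rank_english <- rank(-english)
rank_maths   <- rank(-maths)

# Rank differences and their squared sum
d <- rank_english - rank_maths
sum_d2 <- sum(d^2)
n <- length(english)

# Simplified formula (valid here because there are no ties)
rho_manual <- 1 - 6 * sum_d2 / (n * (n^2 - 1))

# Built-in calculation for comparison
rho_builtin <- cor(english, maths, method = "spearman")

print(sum_d2)                 # 54
print(round(rho_manual, 2))   # 0.67
print(round(rho_builtin, 2))  # 0.67
```

Since these scores contain no ties, the simplified formula and the built-in function return the same value.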

Comparative Analysis: Spearman vs. Pearson Correlation

Key Theoretical and Practical Differences

Understanding when to apply Spearman's versus Pearson's correlation is crucial for proper data analysis. These two correlation measures approach data relationship assessment from fundamentally different perspectives, as summarized in the comparative table below:

Table 2: Comparison of Pearson's and Spearman's Correlation Coefficients

| Aspect | Pearson's Correlation | Spearman's Correlation |
|---|---|---|
| Relationship Type Measured | Linear relationships | Monotonic relationships (linear or non-linear) |
| Data Distribution Assumptions | Assumes bivariate normal distribution | No distributional assumptions (distribution-free) |
| Data Level Requirement | Interval or ratio data | Ordinal, interval, or ratio data |
| Sensitivity to Outliers | Highly sensitive | Robust (less sensitive) |
| Basis of Calculation | Raw data values | Rank-ordered data |
| Primary Application Context | When linear relationship is expected | When monotonic relationship is suspected or data is ordinal |

The fundamental distinction lies in what each coefficient measures. Pearson's correlation specifically quantifies the strength and direction of a linear relationship between two continuous variables, assuming that the relationship between variables can be approximated by a straight line [13]. In contrast, Spearman's correlation assesses whether the relationship between two variables can be described by any monotonic function, whether linear or nonlinear [16] [17].

This distinction has significant implications for handling non-normal data. While Pearson's correlation requires the data to be approximately normally distributed for valid inference, Spearman's correlation makes no such distributional assumptions, making it particularly valuable for environmental data, which often deviates from normality [6] [13]. Additionally, because Spearman's method uses ranks rather than raw values, it is less affected by extreme observations or outliers that could disproportionately influence Pearson's correlation [13].
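The effect of a single extreme observation can be sketched with a small hypothetical series in base R. The values below are invented for illustration: a perfectly linear sequence in which the last measurement is replaced by an extreme value.

```r
# Hypothetical paired measurements
x <- 1:10
y_clean   <- 1:10          # perfectly linear
y_outlier <- c(1:9, 100)   # last observation replaced by an extreme value

pearson_clean    <- cor(x, y_clean,   method = "pearson")
pearson_outlier  <- cor(x, y_outlier, method = "pearson")
spearman_outlier <- cor(x, y_outlier, method = "spearman")

print(round(c(pearson_clean    = pearson_clean,
              pearson_outlier  = pearson_outlier,
              spearman_outlier = spearman_outlier), 2))
# pearson_clean 1.00, pearson_outlier 0.59, spearman_outlier 1.00
```

The ordering of the observations is unchanged by the extreme value, so the rank-based coefficient stays at 1 while Pearson's r drops to about 0.59.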

Empirical Comparison in Environmental Research

A recent study examining variable selection methods in Ecological Niche Models (ENM) and Species Distribution Models (SDM) analyzed 150 scientific articles and found that 134 used correlation methods for variable selection [6]. Among these, 47 employed Pearson's correlation, while only 18 specifically used Spearman's correlation, with 69 articles failing to specify which correlation method was used [6].

The same study explored four different combinations of correlation methods and data extraction strategies for 56 bird species, finding a tendency for non-normal distributions in the environmental variables [6]. This distribution characteristic makes Spearman's correlation particularly appropriate for environmental data analysis, as it does not require the normality assumption that is frequently violated in real-world environmental datasets.

When the researchers conducted normality tests for variables across species, they discovered that variables frequently exhibited non-normal distributions, reinforcing the value of Spearman's correlation for ecological applications [6]. The choice between correlation coefficients and extraction strategies led to different compositions of selected variable sets, ultimately affecting species distribution model outcomes [6].

Applications in Environmental Data Research

Environmental Variable Selection

In environmental research, Spearman's correlation plays a crucial role in variable selection for ecological modeling. The selection of appropriate environmental variables is essential for developing accurate Ecological Niche Models (ENM) and Species Distribution Models (SDM), as the suitability estimates produced by these models should reflect the actual biology of the species being studied [6]. Correlation methods, including Spearman's, help researchers identify and remove highly correlated environmental variables to reduce multicollinearity and prevent overfitting in predictions [6].
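A minimal base R sketch of correlation-based screening follows. The predictor values are hypothetical, and the |ρ| > 0.7 cutoff is an assumption made for illustration only; the source does not prescribe a threshold, and the appropriate value depends on the study.

```r
# Hypothetical environmental predictors at 20 sites
elevation     <- seq(100, 2000, length.out = 20)
temperature   <- 30 * exp(-elevation / 1500)         # decreases monotonically with elevation
precipitation <- rep(c(800, 1200, 950, 1100), 5)     # no systematic trend with elevation
env <- data.frame(elevation, temperature, precipitation)

# Pairwise Spearman correlation matrix
rho <- cor(env, method = "spearman")

# Assumed cutoff for calling two predictors "highly correlated"
threshold <- 0.7

# Inspect each pair once by restricting to the upper triangle
idx <- which(abs(rho) > threshold & upper.tri(rho), arr.ind = TRUE)
flagged <- data.frame(
  var1 = rownames(rho)[idx[, "row"]],
  var2 = colnames(rho)[idx[, "col"]],
  rho  = rho[idx]
)
print(flagged)
```

Here only the elevation and temperature pair is flagged, so one of the two would be a candidate for removal before model fitting. Which member of a flagged pair to keep is a biological judgment, not something the coefficient decides.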

A significant methodological consideration in this context is the strategy for extracting environmental information. Researchers can extract data either from pixels with species records or from all pixels within a defined calibration area [6]. The choice between these strategies, combined with the selection of correlation method (Pearson or Spearman), creates four distinct analytical scenarios that can yield meaningfully different results in species distribution modeling [6].

Advantages for Environmental Data

Environmental data often exhibits characteristics that make Spearman's correlation particularly advantageous. These datasets frequently contain non-normal distributions, outliers, and non-linear relationships between variables—all conditions where Spearman's correlation outperforms Pearson's [6] [13]. For example, relationships between environmental factors like altitude, temperature, and species abundance often follow monotonic but non-linear patterns that are better captured by rank-based correlation measures.

The versatility of Spearman's correlation in handling different data types makes it invaluable for environmental research. It can be applied to continuous variables (like temperature or pH measurements), discrete ordinal variables (like abundance ranks), and can properly handle tied values without compromising analytical integrity [5]. This flexibility ensures that researchers can maintain methodological rigor across diverse environmental datasets and research questions.

Essential Research Toolkit

Table 3: Essential Tools for Spearman's Correlation Analysis in Environmental Research

| Tool/Software | Function | Environmental Research Application |
|---|---|---|
| Statistical Software (SPSS, R) | Automated correlation calculation | Handles large environmental datasets and complex ranking procedures |
| Python (SciPy, pandas libraries) | Programming-based statistical analysis | Customizable analysis pipelines for specialized environmental data |
| Digital Light Microscope | Precise measurement of environmental samples | Measuring morphological traits in environmental specimens [13] |
| Geographic Information Systems (GIS) | Spatial data extraction | Extracting environmental variables from species records and calibration areas [6] |
| Normality Testing Methods | Distribution assessment | Determining whether Pearson or Spearman is more appropriate for specific variables [6] |

Spearman's rank-order correlation provides environmental researchers with a robust, versatile tool for assessing monotonic relationships in datasets that frequently violate the assumptions of parametric correlation methods. Its ability to handle non-normal distributions, ordinal data, and nonlinear monotonic relationships makes it particularly valuable for ecological niche modeling, species distribution modeling, and environmental variable selection.

The comparative analysis with Pearson's correlation reveals distinct applications for each method: Pearson's is optimal for linear relationships with normally distributed data, while Spearman's is superior for detecting consistent directional trends in data regardless of distributional characteristics or linearity. As environmental research continues to grapple with complex, multivariate datasets, the appropriate application of Spearman's rank correlation will remain essential for drawing valid inferences about relationships within ecological systems.

In environmental data research, understanding the relationships between variables, such as pollutant concentrations, climate factors, and ecological indicators, is fundamental. Correlation analysis serves as a primary tool for quantifying these associations, with the Pearson correlation coefficient and the Spearman correlation coefficient being among the most widely employed methods. The choice between these two coefficients is critical, as an inappropriate selection can lead to misleading conclusions about the strength and nature of relationships within complex environmental datasets. This guide provides an objective comparison of these two methods, focusing on their theoretical foundations, practical applications, and performance in the context of environmental science. By framing this comparison within a broader thesis on environmental data research, we aim to equip researchers, scientists, and drug development professionals with the knowledge to select and apply the correct correlation measure for their specific data characteristics and research questions.

Core Concepts and Mathematical Foundations

Pearson Correlation Coefficient

The Pearson correlation coefficient is a parametric statistic that measures the strength and direction of a linear relationship between two continuous variables [2] [20]. It is defined as the covariance of the two variables divided by the product of their standard deviations. For a sample, it is denoted by $r$ and its formula is expressed as:

$$ r_{xy} = \frac{\sum_{i=1}^{n}(x_i - \bar{x})(y_i - \bar{y})}{\sqrt{\sum_{i=1}^{n}(x_i - \bar{x})^2} \sqrt{\sum_{i=1}^{n}(y_i - \bar{y})^2}} $$

where $x_i$ and $y_i$ are the individual sample points, and $\bar{x}$ and $\bar{y}$ are the sample means [2]. The coefficient's value ranges from -1 to +1, where +1 indicates a perfect positive linear relationship, -1 a perfect negative linear relationship, and 0 indicates no linear relationship [21].
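The definition can be checked term by term in base R. The two vectors below are hypothetical; the sketch computes the numerator and denominator of the formula directly and compares the result with the built-in `cor()` function.

```r
# Hypothetical paired measurements
x <- c(2.1, 3.4, 4.8, 6.0, 7.7)
y <- c(1.9, 3.0, 5.2, 5.9, 8.1)

# Deviations from the sample means
dx <- x - mean(x)
dy <- y - mean(y)

# Numerator: sum of cross-products; denominator: product of root sums of squares
r_manual <- sum(dx * dy) / (sqrt(sum(dx^2)) * sqrt(sum(dy^2)))

# Built-in Pearson coefficient for comparison
r_builtin <- cor(x, y, method = "pearson")

print(c(manual = r_manual, builtin = r_builtin))
```

The two values agree to floating-point precision, since the n − 1 factors in the sample covariance and standard deviations cancel.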

Spearman Correlation Coefficient

The Spearman correlation coefficient is a non-parametric measure that assesses how well the relationship between two variables can be described using a monotonic function [20] [16]. A monotonic relationship is one where the variables tend to move in the same (or opposite) direction consistently, though not necessarily at a constant rate. Instead of using the raw data values, Spearman's method applies the Pearson correlation formula to the rank-ordered data [22].

For data without tied ranks, the formula is often simplified to: $$ \rho = 1 - \frac{6 \sum d_i^2}{n(n^2 - 1)} $$ where $d_i$ is the difference between the two ranks of each observation and $n$ is the sample size [16]. Like Pearson, it yields a value between -1 and +1, interpreted as the strength and direction of the monotonic relationship.

Comparative Analysis: Pearson vs. Spearman

The following table summarizes the key differences between the Pearson and Spearman correlation coefficients, providing a quick reference for researchers.

Table 1: Key Differences Between Pearson and Spearman Correlation Coefficients

| Aspect | Pearson Correlation Coefficient | Spearman Correlation Coefficient |
|---|---|---|
| Type of Relationship Measured | Linear relationships [20] [23] | Monotonic relationships (linear or non-linear) [20] [23] |
| Underlying Assumptions | Linearity, normality of data, homoscedasticity [21] [22] | No assumptions on distribution; requires a monotonic relationship [16] [22] |
| Data Types | Continuous interval or ratio data [24] [23] | Ordinal, interval, or ratio data; ideal for ranked data [24] [23] |
| Sensitivity to Outliers | Highly sensitive, as it uses raw data [23] [22] | Less sensitive, as it uses data ranks [23] [22] |
| Calculation Basis | Covariance and standard deviations of raw data values [2] [23] | Differences in ranks assigned to data points [16] [23] |
| Interpretation | Strength and direction of a linear relationship [2] [22] | Strength and direction of a monotonic relationship [16] [22] |

Guidelines for Method Selection in Environmental Research

Selecting the appropriate coefficient depends on the nature of the data and the research question.

- Use Pearson correlation when: Your data is continuous, meets the assumptions of normality and linearity, and you are specifically interested in quantifying a linear relationship. In environmental research, this could be applied to the relationship between two standardized climatic variables, such as temperature and air pressure, where the underlying physical laws suggest a linear association [20] [21].

- Use Spearman correlation when: The relationship appears monotonic but not linear, the data is ordinal, or the assumptions for Pearson correlation are violated. It is also more robust when dealing with outliers or non-normal distributions. This is particularly useful in environmental science for analyzing data like species abundance ranks against levels of a pollutant, or when using Likert-scale survey data about public perception of environmental risks [24] [16] [23].

Experimental Protocols and Data Analysis

Workflow for Correlation Analysis in Environmental Studies

A standardized workflow ensures a systematic and rigorous approach to correlation analysis. The following diagram outlines the key steps, from data preparation to interpretation.

Detailed Experimental Methodology

To illustrate a practical application, we outline a protocol for analyzing the relationship between tree girth and height, a common type of morphological data in ecological studies [22].

1. Research Question and Data Loading:

- Objective: To determine the strength and nature of the association between the girth and height of Black Cherry Trees.

- Data Source: The "trees" dataset, available in the R programming environment.

- Protocol: Load the dataset and perform an initial inspection to understand its structure using commands like `head(data, 3)` to view the first few entries [22].

2. Data Preparation and Visualization:

- Visualization: Create a scatter plot using a package like `ggplot2` in R. The code `ggplot(data, aes(x = Girth, y = Height)) + geom_point() + geom_smooth(method = "lm", se = TRUE, color = 'red')` generates a scatter plot with a linear trend line. This visual inspection is crucial for identifying the potential form (linear or monotonic) of the relationship [22].

3. Testing Statistical Assumptions:

- Normality Test: Check the normality of each variable using the Shapiro-Wilk test (`shapiro.test` function in R). A p-value greater than 0.05 suggests the data does not significantly deviate from normality [22]. This is a key step in deciding whether the data meets the assumptions for the Pearson correlation.

4. Computing Correlation Coefficients:

- Execution: Calculate both coefficients to compare.

- Pearson: `cor(data$Girth, data$Height, method = "pearson")`

- Spearman: `cor(data$Girth, data$Height, method = "spearman")`

In the example, the results were r = 0.519 (Pearson) and ρ = 0.441 (Spearman) [22].

5. Testing for Significance:

- Protocol: Use the `cor.test` function in R to determine if the calculated correlations are statistically significant (p-value < 0.05). This test evaluates whether the observed relationship is likely to exist in the population, not just the sample [22].
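The protocol steps above can be collected into one short base R script. This is a sketch rather than the original authors' code: it assumes the built-in `trees` dataset is assigned to a variable named `data`, omits the plotting step, and passes `exact = FALSE` to the Spearman test because the girth and height measurements contain ties.

```r
# Step 1: load the built-in Black Cherry Trees data and inspect it
data <- trees
print(head(data, 3))

# Step 3: Shapiro-Wilk normality test for each variable
normality_p <- sapply(data[c("Girth", "Height")],
                      function(v) shapiro.test(v)$p.value)
print(round(normality_p, 3))

# Steps 4 and 5: coefficients and significance tests in one call each
pearson_test  <- cor.test(data$Girth, data$Height, method = "pearson")
# exact = FALSE requests the asymptotic p-value, avoiding the warning raised for tied ranks
spearman_test <- cor.test(data$Girth, data$Height, method = "spearman",
                          exact = FALSE)

r_pearson    <- unname(pearson_test$estimate)
rho_spearman <- unname(spearman_test$estimate)

print(round(c(pearson = r_pearson, spearman = rho_spearman), 3))
# pearson 0.519, spearman 0.441
print(c(pearson_p = pearson_test$p.value, spearman_p = spearman_test$p.value))
```

Using `cor.test` for both steps keeps the estimate and its p-value together, which makes it harder to report a coefficient without its significance test.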

The Researcher's Toolkit for Correlation Analysis

Table 2: Essential Reagents and Solutions for Computational Analysis

| Item | Function/Description |
|---|---|
| R Statistical Software | An open-source programming language and environment for statistical computing and graphics, essential for performing correlation analyses and other data manipulations [22]. |
| RStudio IDE | An integrated development environment for R that provides a user-friendly interface for coding, visualization, and managing data analysis projects. |
| 'ggplot2' R Package | A powerful and widely-used data visualization package that enables the creation of sophisticated scatter plots to visually assess data relationships before formal analysis [22]. |
| Shapiro-Wilk Test | A statistical test for normality, available via the shapiro.test function in R, used to verify the assumption of normal distribution for Pearson correlation [22]. |
| 'cor.test' Function | The core function in R for calculating the value of a correlation coefficient (both Pearson and Spearman) and simultaneously testing its statistical significance [22]. |

Performance Evaluation with Environmental Data

Quantitative Results and Interpretation

In the tree morphology experiment, both correlation coefficients yielded positive values, confirming a positive association between tree girth and height. However, the differing values—0.519 for Pearson and 0.441 for Spearman—highlight the importance of method selection [22].

Both coefficients indicate a moderate positive association. The higher Pearson value suggests that the relationship has a linear component that the raw measurements capture. The Spearman coefficient is somewhat lower because ranking discards the spacing between data points, which can slightly weaken the measured association when the underlying relationship is roughly linear. Both correlations were found to be statistically significant (p-value < 0.05), allowing researchers to reject the null hypothesis of no association [22].

Limitations and Considerations for Environmental Research

While powerful, correlation coefficients have inherent limitations that researchers must consider, especially in complex environmental systems.

- Inability to Capture Nonlinear Relationships: A key limitation of the Pearson correlation is its focus solely on linearity. It can completely miss strong, but nonlinear, relationships (e.g., U-shaped or exponential curves) [25]. Spearman is an improvement as it captures any monotonic trend, but it may still be inadequate for complex, non-monotonic relationships common in ecological phenomena.

- Sensitivity to Variability and Outliers: As noted in neuroscience and psychology research, the Pearson correlation coefficient "lacks comparability across datasets, with high sensitivity to data variability and outliers, potentially distorting model evaluation results" [25]. Environmental data, often noisy and containing extreme values, is particularly susceptible to this issue, making Spearman a more robust choice in many scenarios.

- Correlation is Not Causation: This fundamental principle bears repeating. Establishing a correlation between two variables, such as a chemical pollutant and a decline in species health, does not prove that the pollutant caused the decline. Other confounding variables may be responsible for the observed relationship [24].
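The first limitation in the list above can be illustrated with a hypothetical U-shaped relationship in base R. The response below is fully determined by x, yet neither coefficient detects any association because the trend reverses direction.

```r
# Hypothetical U-shaped response: y depends entirely on x but is not monotonic
x <- -5:5
y <- x^2

pearson_u  <- cor(x, y, method = "pearson")
spearman_u <- cor(x, y, method = "spearman")

print(round(c(pearson = pearson_u, spearman = spearman_u), 3))
# both 0
```

A scatter plot would reveal the pattern immediately, which is one reason visual inspection is recommended before any coefficient is computed.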

The comparative analysis reveals that the choice between Pearson and Spearman correlation is not a matter of one being superior to the other, but rather of selecting the right tool for the specific data and research context. Pearson correlation is the appropriate measure for quantifying the strength of a linear relationship when the underlying data meets its parametric assumptions. In contrast, Spearman correlation serves as a versatile non-parametric alternative that is less sensitive to outliers and effective for capturing monotonic trends in ordinal data or data that violates normality.

For environmental researchers, this distinction is paramount. The highly variable and often non-normal nature of environmental data—from species counts to pollutant concentrations—makes Spearman's coefficient a frequently safer and more applicable choice. A thorough analysis should begin with visual data exploration, proceed with formal assumption testing, and may often include reporting both coefficients to provide a comprehensive view of the relationship. By adhering to this rigorous methodology, scientists can ensure their conclusions about relationships in the natural world are both statistically sound and ecologically meaningful.

In scientific data analysis, particularly within environmental research and drug development, the choice between Pearson's and Spearman's correlation coefficients is frequently reduced to a simple rule of thumb: use Pearson for normal distributions and Spearman for non-normal distributions. However, this oversimplification conceals a more fundamental distinction that directly impacts research conclusions—the critical difference between linearity and monotonicity. This guide objectively compares the performance of Pearson and Spearman correlation methods, providing experimental data and protocols to inform selection criteria for researchers analyzing complex environmental datasets. The distinction matters profoundly because selecting an inappropriate correlation measure can cause researchers to underestimate relationship strength or miss vital patterns entirely [8] [26] [27].

Theoretical Foundations: Linearity vs. Monotonicity

Defining the Relationship Types

The core distinction between Pearson and Spearman correlation coefficients lies in the type of relationship they are designed to detect:

- Pearson's Correlation (r): Measures the strength and direction of a linear relationship between two continuous variables. It assumes variables are normally distributed and works best when the relationship between variables can be approximated by a straight line [28] [27].

- Spearman's Correlation (ρ): Measures the strength and direction of a monotonic relationship between two variables. A monotonic relationship exists when as one variable increases, the other tends to also increase (or decrease), but not necessarily at a constant rate [28] [15] [27].

Visualizing the Critical Distinction

The following diagram illustrates the fundamental difference in what each correlation coefficient measures:

Figure 1: Correlation Method Selection Based on Relationship Type

Experimental Comparison: Performance Across Relationship Types

Comparative Analysis on Mathematical Functions

Experimental data from polynomial functions demonstrates how each correlation method performs across different relationship types:

Table 1: Pearson vs. Spearman Correlation on Monotonic Polynomial Functions [8]

| Variable | x | x² | x³ | x⁴ | x⁵ | x⁶ | x⁷ | x⁸ | x⁹ | x¹⁰ |
|---|---|---|---|---|---|---|---|---|---|---|
| Pearson (r) | 1.00 | 0.97 | 0.93 | 0.88 | 0.84 | 0.80 | 0.77 | 0.74 | 0.72 | 0.70 |
| Spearman (ρ) | 1.00 | 1.00 | 1.00 | 1.00 | 1.00 | 1.00 | 1.00 | 1.00 | 1.00 | 1.00 |
| Difference relative to Pearson (%) | 0 | 2.61 | 7.71 | 13.42 | 19.13 | 24.64 | 29.88 | 34.80 | 39.42 | 43.73 |

This experimental data reveals a crucial pattern: Spearman's correlation equals 1.00 for every function in the table, because each is strictly monotonic over the sampled range, while Pearson's correlation falls steadily as the power increases. For x¹⁰, r = 0.70 against ρ = 1.00, a gap of 43.73% when expressed relative to the Pearson value [8]. This demonstrates that for non-linear but monotonic relationships, Spearman's correlation gives a more accurate picture of how consistently the two variables move together.
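A comparison of this kind can be sketched in a few lines of base R. The source does not state which x values were used, so the integer grid 1 to 10 below is an assumption; with it, the x² column comes out at r ≈ 0.97 and a relative difference of about 2.61%, in line with the table. The other columns are not asserted here.

```r
# Assumed grid of positive x values (not stated in the source)
x <- 1:10
powers <- 1:10

# Correlation between x and each power of x
pearson  <- sapply(powers, function(k) cor(x, x^k, method = "pearson"))
spearman <- sapply(powers, function(k) cor(x, x^k, method = "spearman"))

# Difference expressed relative to the Pearson value, in percent
rel_diff <- 100 * (spearman - pearson) / pearson

result <- data.frame(
  power        = powers,
  pearson      = round(pearson, 2),
  spearman     = round(spearman, 2),
  rel_diff_pct = round(rel_diff, 2)
)
print(result)
```

Restricting x to positive values matters: even powers such as x² are monotonic only on one side of zero, so a grid spanning negative and positive values would no longer give ρ = 1.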

Real-World Environmental Application

In ecological niche modeling research analyzing 150 scientific articles, correlation methods were extensively used for variable selection:

Table 2: Correlation Method Application in Ecological Niche Modeling [6]

| Methodological Aspect | Number of Papers | Percentage |
|---|---|---|
| Used correlation for variable selection | 134 | 89.3% |
| Specified Pearson correlation | 47 | 35.1% |
| Specified Spearman correlation | 18 | 13.4% |
| Did not specify correlation type | 69 | 51.5% |
| Clarified variable extraction strategy | 39 | 29.1% |

This analysis revealed significant methodological gaps, with 51.5% of studies failing to specify which correlation coefficient they used, and 70.9% not clarifying how environmental variables were extracted [6]. This lack of methodological transparency directly impacts reproducibility in environmental research.

Practical Applications in Research Domains

Environmental Data Analysis

In environmental contexts, data frequently violate the normality assumption required for Pearson's correlation. For example:

- Water quality monitoring often generates high-dimensional, non-normal data where relationships between parameters (e.g., nutrient levels and algal blooms) may be monotonic but not linear [7].

- Ecological niche modeling requires selecting environmental variables with minimal multicollinearity. Research shows variable selection differs significantly based on whether Pearson or Spearman correlation is used, ultimately affecting species distribution predictions [6].

- Groundwater trend analysis often employs non-parametric methods like Spearman's correlation because environmental data typically contain outliers and rarely follow normal distributions [29].

Drug Discovery Applications

In pharmaceutical research, a large-scale analysis of machine learning models for 218 target proteins demonstrated practical implications of correlation choice:

Table 3: Feature Importance Correlation in Drug Discovery [30]

| Analysis Type | Pearson Correlation | Spearman Correlation |
|---|---|---|
| Median correlation across all protein pairs | 0.11 | 0.43 |
| Proteins sharing active compounds | Strong correlation | Strong correlation |
| Proteins with functional relationships | Detected | Detected |
| Proteins without obvious relationships | Weak correlation | Weak-to-moderate correlation |

This research found Spearman's correlation generally showed higher values across comparisons, potentially making it more sensitive for detecting subtle relationships in high-dimensional biological data [30].

Methodological Protocols

Experimental Workflow for Correlation Analysis

The following diagram outlines a systematic protocol for determining and applying appropriate correlation methods:

Figure 2: Protocol for Correlation Method Selection

Assumption Testing Procedures

Normality Assessment:

- Graphical method: Create Q-Q plots and histograms to visually assess distribution [8].

- Statistical tests: Employ Shapiro-Wilk test for formal normality testing [8] [6].

- Descriptive statistics: Calculate skewness and kurtosis with standard errors [8].
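The normality checks listed above can be sketched in base R. The concentration values are hypothetical, the skewness and kurtosis use simple moment-based definitions, and the standard errors are rough large-sample approximations (√(6/n) and √(24/n)) rather than the exact small-sample formulas some packages report.

```r
# Hypothetical right-skewed pollutant concentrations (illustrative values only)
conc <- c(0.2, 0.3, 0.3, 0.4, 0.5, 0.5, 0.6, 0.8, 1.1, 1.4, 2.0, 3.5, 6.2, 12.0)
n <- length(conc)

# Moment-based skewness and excess kurtosis
dev <- conc - mean(conc)
m2  <- mean(dev^2)
skewness        <- mean(dev^3) / m2^1.5
excess_kurtosis <- mean(dev^4) / m2^2 - 3

# Approximate standard errors (large-sample forms, an assumption for this sketch)
se_skewness <- sqrt(6 / n)
se_kurtosis <- sqrt(24 / n)

# Formal test: a small p-value indicates a departure from normality
sw <- shapiro.test(conc)

cat("skewness:", round(skewness, 2), "SE approx.", round(se_skewness, 2), "\n")
cat("excess kurtosis:", round(excess_kurtosis, 2), "SE approx.", round(se_kurtosis, 2), "\n")
cat("Shapiro-Wilk p-value:", signif(sw$p.value, 3), "\n")

# Graphical checks, to be run in an interactive session:
# hist(conc); qqnorm(conc); qqline(conc)
```

A statistic several times larger than its approximate standard error, together with a small Shapiro-Wilk p-value, would point toward a rank-based coefficient for that variable.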

Relationship Assessment:

- Linear relationship: Data should approximately follow a straight line with constant slope [28] [27].

- Monotonic relationship: Consistent increasing or decreasing trend without reversal of direction [28] [27].

- Non-monotonic relationship: Presence of peaks, valleys, or directional changes in the relationship [27].

Implementation in Statistical Software

SPSS Statistics:

- Navigate to: Analyze > Correlate > Bivariate...

- Select both Pearson and Spearman checkboxes for comprehensive analysis [15].

- For Spearman only: Deselect Pearson checkbox and select Spearman checkbox [15].

General Best Practices:

- Always visualize relationships with scatterplots before calculating correlations [28] [27].

- Report both coefficients when uncertain about relationship type [8].

- Document all assumption tests and methodological decisions for reproducibility [6].

The Scientist's Toolkit: Essential Materials for Correlation Analysis

Table 4: Research Reagent Solutions for Correlation Analysis

| Tool/Resource | Function/Purpose | Example Applications |
|---|---|---|
| Statistical Software (SPSS, R, etc.) | Calculate correlation coefficients and perform assumption tests | Implementation of Pearson/Spearman correlation with statistical significance testing [15] |
| Visualization Packages | Create scatterplots to assess relationship type | Identifying linear vs. monotonic patterns before analysis [28] [27] |
| Normality Testing Tools | Assess data distribution assumptions | Shapiro-Wilk test, skewness/kurtosis analysis [8] [6] |
| Environmental Variable Databases | Source of correlated parameters in ecological studies | Water quality monitoring, species distribution modeling [6] [7] |
| Bioactivity Databases | Compound-target interaction data for pharmaceutical applications | Drug discovery research, target relationship analysis [30] |

The distinction between linearity and monotonicity represents more than a statistical technicality—it fundamentally influences research conclusions across environmental science and drug development. Experimental evidence demonstrates that Pearson's correlation systematically underestimates relationship strength in non-linear monotonic associations, with differences exceeding 40% in some cases [8]. Meanwhile, methodological reviews reveal that many studies fail to adequately justify their correlation method selection, potentially compromising reproducibility [6].

For researchers working with environmental data, which frequently violates normality assumptions and exhibits complex relationships, Spearman's correlation often provides a more robust measure of association. However, the optimal approach involves comprehensive exploratory analysis—visualizing relationships, testing assumptions, and in cases of uncertainty, reporting both coefficients with clear methodological justification. By adopting this rigorous framework, scientists can ensure their correlation analyses accurately reflect underlying patterns in their data, leading to more reliable conclusions in environmental research and drug development.

Choosing and Applying Correlation Methods: A Step-by-Step Guide for Environmental Data

In environmental research, the choice between Pearson and Spearman correlation coefficients is a critical decision that directly impacts the validity of data interpretation. This guide provides a structured framework for selecting the appropriate correlation measure based on data distribution, relationship type, and research context. Through comparative analysis of experimental data and real-world scenarios from environmental monitoring, we demonstrate how proper methodology selection can reveal authentic biological relationships while avoiding common statistical pitfalls. Our findings indicate that while Pearson's correlation is optimal for linear relationships with normal data distribution, Spearman's rank correlation provides robust performance for monotonic relationships across diverse data conditions encountered in ecological studies.

Correlation analysis serves as a fundamental statistical tool in environmental science, enabling researchers to quantify relationships between ecological variables such as species abundance, nutrient concentrations, and environmental parameters. The pervasive use of correlation-based approaches in ecological studies necessitates rigorous methodology selection to ensure accurate interpretation of complex biological systems [31]. While Pearson's product-moment correlation and Spearman's rank correlation coefficient are both widely employed in scientific literature, inappropriate application remains common and can lead to fallacious identification of associations between variables [32].

The distinction between these correlation methods extends beyond mathematical formulation to their underlying assumptions and interpretive contexts. Pearson's r measures the strength and direction of linear relationships between continuous variables, while Spearman's ρ assesses monotonic relationships through rank transformation [8] [1]. This technical report establishes a comprehensive decision framework for researchers navigating the selection between these statistical tools, with particular emphasis on applications within environmental data research contexts including microbial ecology, pollution monitoring, and climate studies.

Theoretical Foundations

Pearson's Correlation Coefficient

Pearson's correlation coefficient (r) quantifies the strength and direction of a linear relationship between two continuous variables based on covariance and standard deviation calculations. The formula for calculating Pearson's r is expressed as:

$$r = \frac{\sum{(x_i - \bar{x})(y_i - \bar{y})}}{\sqrt{\sum{(x_i - \bar{x})^2}\sum{(y_i - \bar{y})^2}}}$$

where $x_i$ and $y_i$ are individual data points, and $\bar{x}$ and $\bar{y}$ are the means of the respective variables [1] [3]. The coefficient yields values ranging from -1 to +1, where +1 indicates a perfect positive linear relationship, -1 represents a perfect negative linear relationship, and 0 suggests no linear association [3].
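As a minimal sketch, the formula above can be written out term by term using only the Python standard library. The function name `pearson_r` and the temperature and abundance values are hypothetical illustrations, not data from any study cited here.

```python
import math


def pearson_r(x, y):
    """Pearson's r: sum of cross-deviations over the root of the product
    of the summed squared deviations."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    dx = [xi - mean_x for xi in x]
    dy = [yi - mean_y for yi in y]
    numerator = sum(a * b for a, b in zip(dx, dy))
    denominator = math.sqrt(sum(a * a for a in dx) * sum(b * b for b in dy))
    if denominator == 0:
        raise ValueError("r is undefined when either variable is constant")
    return numerator / denominator


# Hypothetical illustration data (assumed values, not measurements)
temperature = [10.0, 12.0, 14.0, 16.0, 18.0]
abundance = [2.1, 2.9, 4.2, 4.8, 6.0]
r = pearson_r(temperature, abundance)
print(f"Pearson r = {r:.3f}")
```

In practice an established statistics package would normally be used; the hand-written version is only meant to make each term of the formula visible.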

The assumptions underlying Pearson's correlation include:

- Continuous measurements for both variables

- Approximately normal distribution for each variable

- Linear relationship between variables

- Homoscedasticity (constant variance of residuals)

- Absence of significant outliers [1] [32]

Spearman's Rank Correlation Coefficient

Spearman's rank correlation coefficient (ρ) operates on rank-transformed data rather than raw values, evaluating the strength and direction of monotonic relationships (whether linear or nonlinear). The calculation converts the values of each variable to ranks and applies Pearson's formula to those ranks. When no ranks are tied, this simplifies to:

$$\rho = 1 - \frac{6\sum{d_i^2}}{n(n^2 - 1)}$$

where $d_i$ represents the difference between ranks of corresponding variables, and $n$ is the sample size [8] [9]. Spearman's ρ similarly ranges from -1 to +1, with extreme values indicating perfect monotonic relationships.
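The sketch below illustrates both routes to ρ: Pearson's formula applied to ranks (which also handles ties, assuming tied values receive the average of their positions) and the $d_i$ shortcut above, which agrees with it only when there are no ties. All function names and the sample values are hypothetical.

```python
import math


def average_ranks(values):
    """Rank values from 1 to n; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # positions are 0-based, ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson_r(x, y):
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def spearman_rho(x, y):
    """General definition: Pearson's r computed on the ranks."""
    return pearson_r(average_ranks(x), average_ranks(y))


def spearman_rho_shortcut(x, y):
    """d_i shortcut; exact only when neither variable contains ties."""
    n = len(x)
    d_squared = sum((a - b) ** 2 for a, b in zip(average_ranks(x), average_ranks(y)))
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))


# Hypothetical paired observations without ties (assumed values)
metal_ppb = [5, 12, 30, 48, 95, 210]
diversity = [3.1, 2.9, 3.0, 2.2, 1.6, 1.1]
rho = spearman_rho(metal_ppb, diversity)
rho_shortcut = spearman_rho_shortcut(metal_ppb, diversity)
print(f"rho = {rho:.4f}, shortcut = {rho_shortcut:.4f}")
```

When the data contain ties, the rank-based `spearman_rho` is the safer choice, because the shortcut is no longer exact.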

Spearman's correlation has less restrictive assumptions:

- Ordinal, interval, or ratio measurement scales

- Monotonic relationship (consistently increasing or decreasing)

- No distributional assumptions (nonparametric) [9] [33]

Comparative Analysis: Key Differences

Table 1: Fundamental Differences Between Pearson and Spearman Correlation Coefficients

| Characteristic | Pearson's r | Spearman's ρ |
|---|---|---|
| Relationship Type | Linear | Monotonic |
| Data Distribution | Assumes normality | Distribution-free |
| Data Requirements | Continuous, interval/ratio | Ordinal, interval, or ratio |
| Outlier Sensitivity | High sensitivity | Robust resistance |
| Calculation Basis | Raw values | Rank-transformed values |
| Statistical Power | Higher when assumptions met | Reduced due to rank transformation |

Interpretation Guidelines

Correlation strength is typically interpreted using established thresholds, though these should be considered alongside domain knowledge and statistical significance [8] [1]:

- Strong correlation: |r| or |ρ| > 0.7

- Moderate correlation: 0.3 ≤ |r| or |ρ| ≤ 0.7

- Weak correlation: |r| or |ρ| < 0.3

Statistical significance (p-value) indicates how unlikely a correlation at least as strong as the observed one would be if the true correlation were zero; it does not quantify relationship strength [8]. The American Statistical Association cautions against relying solely on binary significance thresholds, recommending effect sizes and confidence intervals for comprehensive interpretation [32].
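To make the thresholds and the confidence-interval recommendation concrete, the sketch below labels a coefficient using the cut-offs listed above and computes an approximate interval for Pearson's r with the Fisher z-transformation. The interval method is a standard approximation that assumes roughly bivariate normal data; it is an addition for illustration, not a procedure taken from the cited sources. The sample size `n = 20` is a hypothetical value, and the function names are illustrative.

```python
import math
from statistics import NormalDist


def strength_label(coefficient):
    """Apply the strength thresholds listed above to |r| or |rho|."""
    magnitude = abs(coefficient)
    if magnitude > 0.7:
        return "strong"
    if magnitude >= 0.3:
        return "moderate"
    return "weak"


def fisher_ci(r, n, level=0.95):
    """Approximate confidence interval for Pearson's r via Fisher's z.

    Assumes approximately bivariate normal data and n > 3.
    """
    if n <= 3:
        raise ValueError("n must be greater than 3")
    if not -1 < r < 1:
        raise ValueError("r must lie strictly between -1 and 1")
    z = math.atanh(r)                      # Fisher z-transform
    standard_error = 1 / math.sqrt(n - 3)
    critical = NormalDist().inv_cdf(0.5 + level / 2)
    return (math.tanh(z - critical * standard_error),
            math.tanh(z + critical * standard_error))


# r = 0.68 with a hypothetical sample size of 20
low, high = fisher_ci(0.68, n=20)
print(strength_label(0.68), f"95% CI: [{low:.2f}, {high:.2f}]")
```

An interval this wide shows why a point estimate and a p-value alone can overstate how precisely a relationship is known.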

Decision Framework

Figure 1: Decision Framework for Selecting Between Pearson and Spearman Correlation

Framework Application Guidelines

The decision pathway illustrated in Figure 1 provides a systematic approach for researchers to select the appropriate correlation method. Key considerations at each decision point include:

Data Type Assessment: Determine whether variables are continuous with meaningful numerical intervals (favoring Pearson) or ordinal with ranks without consistent intervals (requiring Spearman) [33].

Relationship Visualization: Prior to statistical testing, generate scatterplots to visually assess the relationship pattern. Linear patterns suggest Pearson, while consistently increasing/decreasing but curved patterns suggest Spearman [32].

Distribution Testing: Evaluate normality using statistical tests (Shapiro-Wilk) or descriptive statistics (skewness and kurtosis). For small sample sizes (n < 30), normality tests have limited power [8], so a non-significant result should not be read as confirmation of normality.

Outlier Evaluation: Identify influential observations that disproportionately affect correlation coefficients. Spearman's method is generally preferred when outliers cannot be justified for removal [32] [3].
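The four considerations above can be sketched as a small decision helper. This is one possible encoding, not a reproduction of Figure 1: the function name, argument names, and the order of the checks are assumptions, and every input is a judgment the analyst makes from plots and diagnostics. The "neither" branch for non-monotonic patterns is an added safeguard, since both coefficients presuppose at least a monotonic trend.

```python
def choose_correlation(ordinal, monotonic, linear, approx_normal,
                       influential_outliers):
    """Return (method, reason) from analyst judgments about the data.

    A sketch of the selection logic described above, not a fixed rule.
    """
    if not monotonic:
        return "neither", "pattern is not monotonic; one coefficient would mislead"
    if ordinal:
        return "spearman", "ordinal data lack consistent intervals"
    if not linear:
        return "spearman", "relationship is monotonic but curved"
    if not approx_normal:
        return "spearman", "normality assumption is doubtful"
    if influential_outliers:
        return "spearman", "outliers cannot be justified for removal"
    return "pearson", "continuous, linear, approximately normal, no influential outliers"


# Hypothetical assessment of a skewed pollutant variable with a curved trend
method, reason = choose_correlation(
    ordinal=False, monotonic=True, linear=False,
    approx_normal=False, influential_outliers=True,
)
print(method, "-", reason)
```

When the inputs are uncertain, reporting both coefficients with the reasoning behind each remains a reasonable fallback.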

Experimental Protocols & Case Studies

Protocol 1: Assessing River Water Quality Trends

Objective: Evaluate the relationship between industrial discharge concentrations and benthic macroinvertebrate diversity in a freshwater ecosystem.

Materials:

- Water quality sampling equipment

- Spectrophotometer for chemical analysis

- D-net for invertebrate collection

- Species identification keys

Methodology:

- Collect paired water and biological samples from 30 monitoring stations

- Measure heavy metal concentrations (continuous, parts per billion)

- Quantify macroinvertebrate diversity using Shannon-Wiener Index

- Test data distributions using Shapiro-Wilk normality test

- Apply Spearman's correlation due to expected non-normal distributions of pollution indicators

Interpretation: A strong negative Spearman correlation (ρ = -0.82, p < 0.001) indicates that increasing heavy metal concentrations are associated with reduced biological diversity, supporting environmental regulation development [9].

Protocol 2: Microbial Community Dynamics

Objective: Investigate relationships between temperature fluctuations and relative abundance of specific bacterial taxa in agricultural soils.

Materials:

- Soil coring equipment

- Temperature data loggers

- DNA extraction kits

- 16S rRNA sequencing reagents

Methodology:

- Monitor soil temperature at 2-hour intervals for 60 days

- Extract and sequence microbial DNA from weekly soil samples

- Calculate relative abundance of key bacterial families

- Assess linearity through scatterplot visualization

- Apply Pearson correlation due to normal distribution of temperature measurements and linear response patterns

Interpretation: A moderate positive Pearson correlation (r = 0.68, p = 0.003) between temperature and Pseudomonadaceae abundance suggests thermal niche preferences, informing climate change impact models [31].

Comparative Performance Analysis

Table 2: Comparative Analysis of Pearson vs. Spearman on Polynomial Relationships

| Variable Relationship | Pearson (r) | Spearman (ρ) | Deviation (Δ%) | Recommended Method |
|---|---|---|---|---|
| Linear (x) | 1.00 | 1.00 | 0.00 | Either |
| Quadratic (x²) | 0.97 | 1.00 | 2.61 | Spearman |
| Cubic (x³) | 0.93 | 1.00 | 7.71 | Spearman |
| Quartic (x⁴) | 0.88 | 1.00 | 13.42 | Spearman |
| Quintic (x⁵) | 0.84 | 1.00 | 19.13 | Spearman |

Data adapted from comparative analysis of polynomial functions demonstrating how Spearman perfectly detects monotonic relationships while Pearson sensitivity decreases with increasing nonlinearity [8].
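The qualitative pattern in Table 2 can be reproduced with a short sketch. The x range used here (the integers 1 to 20) is an assumption, so the Pearson values it prints need not match the table, which depends on the range used in the cited analysis. The helper names are illustrative.

```python
import math


def pearson_r(x, y):
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def simple_ranks(values):
    """Ranks from 1 to n; assumes all values are distinct (no ties)."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    for position, index in enumerate(order, start=1):
        ranks[index] = float(position)
    return ranks


# Assumed x range: strictly positive, so every power of x is monotonic
x = [float(v) for v in range(1, 21)]
results = {}
for power in range(1, 6):
    y = [v ** power for v in x]
    r = pearson_r(x, y)
    rho = pearson_r(simple_ranks(x), simple_ranks(y))  # Spearman on ranks
    results[power] = (r, rho)
    print(f"x^{power}: Pearson r = {r:.3f}, Spearman rho = {rho:.3f}")
```

Because each power of a positive x preserves the ordering of the data, the ranks are identical and ρ stays at 1, while r falls as the curvature increases.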

Table 3: COVID-19 Case Study - Correlation Between Web Interest and Pandemic Metrics

| Region | Coronavirus RSV | COVID-19 Cases | Medical Swabs |
|---|---|---|---|
| Lombardy | 100 | 240 | 3700 |
| Veneto | 79 | 43 | 3780 |
| Emilia-Romagna | 84 | 26 | 391 |
| Lazio | 60 | 3 | 124 |
| Piedmont | 82 | 3 | 141 |

| Coefficient | Web interest (RSV) vs. cases | Swabs vs. cases |
|---|---|---|
| Spearman ρ | 0.72 | 0.81 |
| Pearson r | 0.89 | 0.63 |

Real dataset from early COVID-19 pandemic in Italy demonstrating how Pearson correlation (r = 0.89) revealed a stronger relationship between web interest and cases than Spearman (ρ = 0.72) in this threshold-based phenomenon, while Spearman performed better for the swabs-cases relationship [8].

Research Reagent Solutions

Table 4: Essential Materials for Environmental Correlation Studies

| Research Material | Function/Application | Specification Guidelines |
|---|---|---|
| Statistical Software | Correlation computation and visualization | R (recommended), Python, SPSS, or SAS with normality testing and visualization capabilities |
| Data Loggers | Continuous environmental monitoring | Temperature, pH, conductivity sensors with appropriate measurement ranges and calibration |
| Sample Collection Equipment | Field sampling for ecological variables | Sterile containers, filtration apparatus, preservatives appropriate for target analytes |
| DNA/RNA Extraction Kits | Microbial community analysis | Commercial kits optimized for environmental samples with inhibition removal |
| Reference Materials | Quality assurance and method validation | Certified standards for target chemical analyses in appropriate matrices |
| Visualization Tools | Data exploration and relationship assessment | Graphing software capable of scatterplots, distribution histograms, and Q-Q plots |

Advanced Considerations & Limitations

Hidden Correlation Phenomena

Environmental data may exhibit threshold effects where correlations manifest only above or below certain values. In these cases, iterative correlation analysis using data subsets may reveal relationships obscured in full datasets [8]. For example, in the COVID-19 case study (Table 3), Pearson correlation outperformed Spearman in detecting the relationship between web search interest and case numbers because the correlation primarily existed above a certain outbreak threshold [8].
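One way to sketch the iterative subset analysis described above is to scan candidate thresholds on one variable and compute the correlation separately below and above each. The function `scan_thresholds`, the minimum subset size, and the data are all hypothetical; the data were constructed so that the response is flat below x = 7 and rises linearly from there.

```python
import math


def pearson_r(x, y):
    """Pearson's r; returns None when undefined (a constant variable)."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return None
    return sxy / math.sqrt(sxx * syy)


def scan_thresholds(x, y, min_n=4):
    """Correlate y with x separately below and above each candidate threshold."""
    rows = []
    for threshold in sorted(set(x)):
        below = [(a, b) for a, b in zip(x, y) if a < threshold]
        above = [(a, b) for a, b in zip(x, y) if a >= threshold]
        if len(below) < min_n or len(above) < min_n:
            continue  # skip splits that leave too few points on either side
        rows.append({
            "threshold": threshold,
            "r_below": pearson_r(*zip(*below)),
            "r_above": pearson_r(*zip(*above)),
        })
    return rows


# Hypothetical data: no trend below x = 7, linear increase from x = 7 upward
x = list(range(1, 13))
y = [5, 4, 5, 4, 5, 4, 6, 8, 10, 12, 14, 16]
rows = scan_thresholds(x, y)
for row in rows:
    print(row)
```

Scanning many thresholds amounts to running many tests on the same data, so a threshold found this way is best treated as a hypothesis to confirm on independent observations.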

Causation vs. Correlation

A significant correlation coefficient, regardless of magnitude, does not establish causation. Environmental systems contain numerous latent variables that can create spurious correlations. For instance, Martin-Plantera et al. demonstrated that marine bacterial population correlations primarily reflected shared seasonal responses rather than direct biological interactions [31]. Experimental validation through manipulation studies remains essential for causal inference.
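The effect of a shared driver can be illustrated with a first-order partial correlation, which removes the linear influence of a third variable z from the association between x and y. This is a generic textbook technique, not the method used in the cited study, and the monthly series below are synthetic: two hypothetical taxa that each follow the same seasonal signal plus an unrelated fluctuation.

```python
import math


def pearson_r(x, y):
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def partial_r(x, y, z):
    """Correlation of x and y after removing the linear effect of z."""
    r_xy = pearson_r(x, y)
    r_xz = pearson_r(x, z)
    r_yz = pearson_r(y, z)
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))


# Synthetic example: 24 months, a shared 12-month seasonal cycle
months = range(24)
season = [math.sin(2 * math.pi * t / 12) for t in months]
# Each hypothetical taxon tracks the season plus its own unrelated oscillation
taxon_a = [s + 0.3 * (-1) ** t for t, s in zip(months, season)]
taxon_b = [s + 0.3 * math.cos(2 * math.pi * t / 3) for t, s in zip(months, season)]

raw = pearson_r(taxon_a, taxon_b)
adjusted = partial_r(taxon_a, taxon_b, season)
print(f"raw r = {raw:.3f}, partial r given season = {adjusted:.3f}")
```

Here the strong raw correlation disappears once the seasonal signal is accounted for. Real latent variables are rarely measured this cleanly, which is why manipulation experiments remain necessary.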

Compositional Data Challenges

In microbial ecology, relative abundance data from sequencing experiments creates compositional constraints where changes in one taxon's abundance necessarily affect others. Standard correlation approaches applied to compositional data can produce misleading results, necessitating special methods like proportionality measures or centered log-ratio transformations [31].
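A minimal sketch of the centered log-ratio (CLR) transformation mentioned above: each component is log-transformed and centered on the mean log of its sample, which equals the log of the component divided by the sample's geometric mean. The function name, the optional `pseudocount` argument for handling zeros, and the abundance values are assumptions for illustration.

```python
import math


def clr(composition, pseudocount=0.0):
    """Centered log-ratio transform of one sample's composition.

    All components must be positive after adding the pseudocount.
    """
    shifted = [value + pseudocount for value in composition]
    if any(value <= 0 for value in shifted):
        raise ValueError("components must be positive; consider a pseudocount")
    logs = [math.log(value) for value in shifted]
    mean_log = sum(logs) / len(logs)  # log of the geometric mean
    return [log_value - mean_log for log_value in logs]


# Hypothetical relative abundances: rows are samples, columns are taxa
samples = [
    [0.50, 0.30, 0.20],
    [0.40, 0.35, 0.25],
    [0.25, 0.45, 0.30],
    [0.10, 0.50, 0.40],
]
clr_samples = [clr(row) for row in samples]
# Transpose so each tuple holds one taxon's CLR values across samples
taxon_columns = list(zip(*clr_samples))
print(taxon_columns[0])
```

The transformed taxon columns can then be passed to a correlation routine. How zeros are handled affects the result, so the pseudocount choice should be reported.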

The selection between Pearson and Spearman correlation coefficients represents a critical methodological decision in environmental research. This decision framework emphasizes the importance of matching statistical methods to data characteristics and research questions. Pearson's correlation provides optimal sensitivity for linear relationships with normally distributed data, while Spearman's method offers robust performance for ordinal data, non-normal distributions, and monotonic nonlinear relationships.

Environmental researchers should prioritize comprehensive data exploration, including visualization and distribution assessment, before selecting correlation methods. The experimental protocols and case studies presented demonstrate that context-aware application of these statistical tools can reveal meaningful ecological patterns while avoiding common misinterpretation pitfalls. As correlation analysis continues to evolve with emerging computational approaches, the fundamental principles outlined in this guide will maintain their relevance for validating hypotheses in complex environmental systems.

Table of Contents

- Introduction

- Theoretical Foundations and Selection Criteria

- Experimental Protocol for Correlation Analysis

- A Researcher's Toolkit for R

- Case Study: Application in Ecological Niche Modeling

- Results and Comparative Analysis

- Discussion and Best Practices