Preventing Overfitting in Environmental Machine Learning: A Cross-Validation Guide for Researchers

Abstract

This article provides a comprehensive guide for researchers and scientists on using cross-validation to prevent overfitting in environmental machine learning models. It covers foundational concepts like the bias-variance tradeoff, explores methodological applications in areas such as water quality and greenhouse gas prediction, addresses troubleshooting for data-scarce scenarios, and compares validation techniques to ensure model generalizability and reliability in biomedical and environmental research.

Understanding Overfitting: The Core Challenge in Environmental ML

Troubleshooting Guides

Guide 1: Diagnosing and Resolving Model Overfitting

Problem: My model performs excellently on training data but poorly on new, unseen data.

Explanation: This is the classic sign of overfitting, where your model has memorized the training data—including its noise and random fluctuations—instead of learning the underlying pattern or signal. It fits the training set too closely and fails to generalize [1] [2].

Diagnosis Steps:

- Split your data: Divide your dataset into separate training and test (or validation) sets [3].

- Evaluate separately: Train your model on the training set and then evaluate its performance on both the training and test sets.

- Compare performance: A significant performance gap between high training accuracy and low test accuracy indicates overfitting [4] [2]. For example, 99% training accuracy versus 55% test accuracy is a clear red flag [3].
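The three diagnosis steps can be sketched in a few lines. This is a minimal illustration, not a recommended model: the synthetic dataset, the 30% label-noise rate, and the 1-nearest-neighbour "memorizer" are all assumptions chosen so that the train/test gap is easy to see using only the Python standard library.

```python
import random

def one_nn_predict(train, x):
    """Predict by copying the label of the nearest training point (pure memorization)."""
    nearest = min(train, key=lambda point: abs(point[0] - x))
    return nearest[1]

def accuracy(train, data):
    """Fraction of points in `data` whose label the 1-NN model reproduces."""
    hits = sum(one_nn_predict(train, x) == y for x, y in data)
    return hits / len(data)

rng = random.Random(0)

# Hypothetical noisy data: the true label is 1 when x > 0.5,
# but 30% of the labels are flipped to simulate measurement noise.
data = []
for _ in range(400):
    x = rng.random()
    label = int(x > 0.5)
    if rng.random() < 0.3:
        label = 1 - label
    data.append((x, label))

# Step 1: split. Step 2: evaluate on both sets. Step 3: compare.
train, test = data[:300], data[300:]
train_acc = accuracy(train, train)  # each training point is its own nearest neighbour
test_acc = accuracy(train, test)    # memorized noise does not transfer to new points
gap = train_acc - test_acc

print(f"train={train_acc:.2f} test={test_acc:.2f} gap={gap:.2f}")
```

A large positive `gap` is the warning sign described above; how large a gap is acceptable depends on the task and the metric.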

Solutions:

- Apply Cross-Validation: Use k-fold cross-validation to get a more robust performance estimate and reduce dependency on a single data split [5].

- Simplify the Model: Reduce model complexity, for example by removing irrelevant features or applying regularization.

- Get More Data: If possible, increase the size of your training dataset to help the model better capture the true signal [3].

- Stop Training Early: For iterative learners like neural networks, use early stopping to halt the training process before the model begins to memorize the noise [1] [4].

Guide 2: Addressing Poor Generalization in Environmental ML Models

Problem: My model, trained on data from one geographical location or set of conditions, performs poorly when applied to a new environment.

Explanation: In environmental machine learning, scenario differences—such as variations in climate, soil type, instrumentation, or local ecosystems—can cause the data from a "source" location to have a different statistical distribution from the "target" location. This distribution shift leads to poor generalization [6] [7].

Diagnosis Steps:

- Check data stationarity: Ensure that the statistical properties of your training data (source) are consistent with those of the deployment environment (target). Environmental data is often non-stationary [8].

- Test on target data: Validate your source model directly on a small, representative sample from the target location to establish a performance baseline.

Solutions:

- Leverage Transfer Learning: Instead of training a new model from scratch, use a pre-trained model from a data-rich source location and fine-tune it using a small amount of data from your target location. This can significantly improve performance and reduce data and computational requirements [6] [7].

- Use Domain Adaptation Techniques: Employ methods that explicitly aim to minimize the distribution difference between the source and target data domains during training [7].

- Ensure Representative Data Splits: When partitioning your dataset, ensure that all partitions (training, validation, test) contain data that is statistically similar and representative of the different environmental conditions (e.g., all four seasons) [8].

Frequently Asked Questions (FAQs)

What is the fundamental difference between signal and noise in a dataset?

The signal is the true, underlying pattern you want your model to learn. It is the consistent relationship between input features and the output variable. Noise refers to the irrelevant information, random fluctuations, or errors inherent in any real-world dataset. An overfit model mistakenly learns the noise as if it were the signal [2] [3].

How does k-fold cross-validation help prevent overfitting?

K-fold cross-validation doesn't prevent overfitting in the model training process itself, but it is a powerful tool to detect it and guide model selection to avoid overfit models. By providing a more robust and reliable estimate of a model's performance on unseen data, it helps you choose a model that is more likely to generalize well [9] [5].

- It reduces the reliance on a single, potentially lucky or unlucky, train-test split.

- It uses all data for both training and validation, giving a better picture of model performance [5].

- The average performance across all folds is a more trustworthy metric for comparing different models or hyperparameters.

What is the trade-off between model complexity and overfitting?

Simpler models (with high bias) may fail to capture important patterns in the data, leading to underfitting. They perform poorly on both training and test data. More complex models (with high variance) have the capacity to capture intricate patterns but are also prone to learning the noise in the training set, leading to overfitting. The goal is to find the "sweet spot" where the model is complex enough to learn the signal but not so complex that it memorizes the noise [1] [4] [3].
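The tradeoff is often written as the standard decomposition of expected squared error. The notation here is an assumption introduced for illustration: the data are taken to follow $y = f(x) + \varepsilon$ with zero-mean noise of variance $\sigma^2$, and $\hat{f}$ is the model fitted on a random training set.

```latex
\mathbb{E}\!\left[\big(y - \hat{f}(x)\big)^2\right]
  = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
  + \underbrace{\mathbb{E}\!\left[\big(\hat{f}(x) - \mathbb{E}[\hat{f}(x)]\big)^2\right]}_{\text{variance}}
  + \underbrace{\sigma^2}_{\text{irreducible noise}}
```

Underfitting corresponds to a large bias term, overfitting to a large variance term, and no choice of model removes the noise term.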

In environmental ML, what are common data issues that lead to overfitting?

- Small Datasets: Many environmental studies have limited data, which gives the model fewer examples to learn from and increases the risk of memorization [1].

- Non-Stationarity: Environmental systems change over time (e.g., climate change, seasonal shifts). A model trained on past data may not generalize to future conditions if the data is not stationary [8].

- Poor Representation: If the training data does not adequately represent all the conditions the model will encounter (e.g., training a water quality model only on data from one type of watershed), the model will not generalize well [1] [7].

- Noisy Measurements: Data collected from environmental sensors often contains irrelevant information and measurement errors, which can be misinterpreted as signal by a complex model [1].

Experimental Protocols & Data

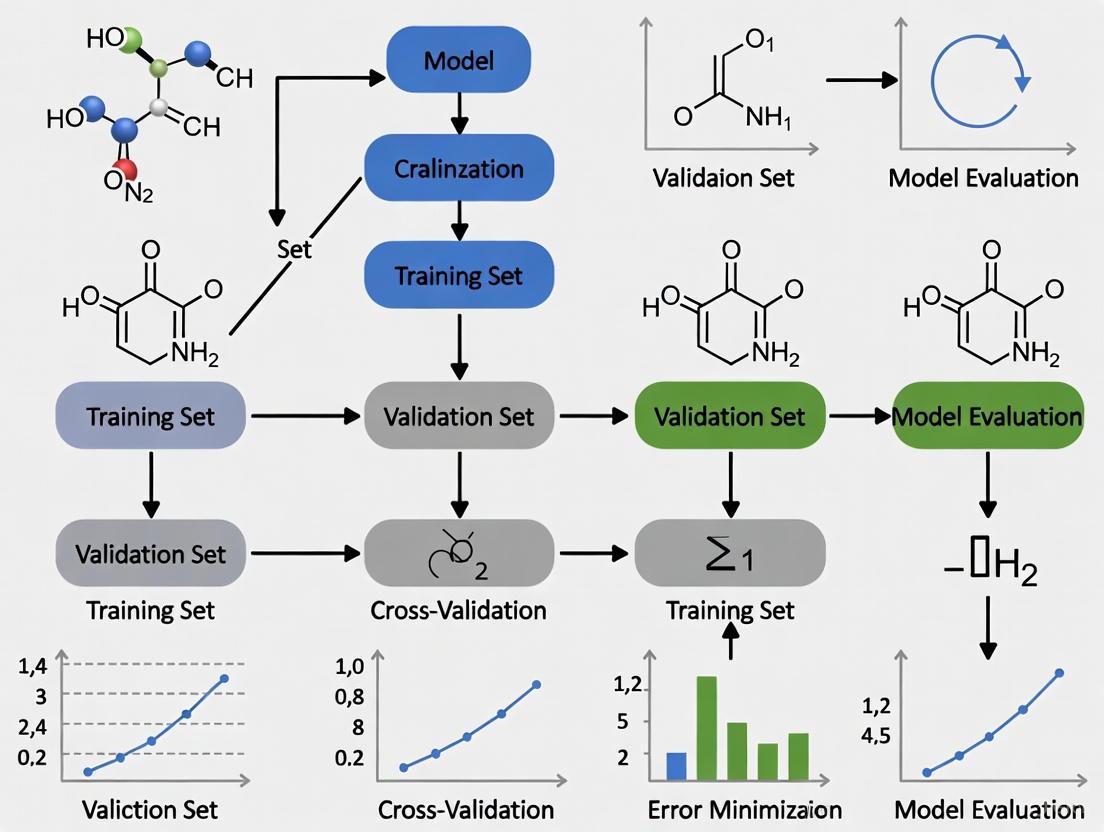

Detailed Methodology: K-Fold Cross-Validation

This protocol is essential for rigorously evaluating model performance and generalizability [1] [5].

- Shuffle and Partition: Randomly shuffle the entire dataset to eliminate any order effects. Split the data into `k` equal-sized subsets (called "folds"). A typical value for `k` is 5 or 10.

- Iterative Training and Validation: For each of the `k` iterations:

  - Holdout Fold: Designate one fold as the validation (test) set.

  - Training Folds: Combine the remaining `k-1` folds to form the training set.

  - Train Model: Train the model on the training set.

  - Validate Model: Evaluate the trained model on the holdout validation set and record the performance metric (e.g., R², accuracy).

- Average Results: Once all `k` iterations are complete, calculate the average of the `k` recorded performance metrics. This average provides a more robust estimate of the model's generalization error than a single train-test split.
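The protocol above can be sketched with the standard library alone, which keeps the mechanics visible. The function names (`k_fold_indices`, `cross_validate`), the mean-predicting baseline, and the toy data are hypothetical choices for illustration; in practice a library implementation would normally be used.

```python
import random
from statistics import mean

def k_fold_indices(n_samples, k, seed=0):
    """Shuffle the sample indices and deal them into k near-equal folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    return [indices[i::k] for i in range(k)]

def cross_validate(xs, ys, k, fit, metric, seed=0):
    """Train on k-1 folds, evaluate on the held-out fold, and average the k scores."""
    scores = []
    for holdout in k_fold_indices(len(xs), k, seed):
        held = set(holdout)
        train_idx = [i for i in range(len(xs)) if i not in held]
        model = fit([xs[i] for i in train_idx], [ys[i] for i in train_idx])
        scores.append(metric(model, [xs[i] for i in holdout], [ys[i] for i in holdout]))
    return mean(scores), scores

# Hypothetical baseline "model": always predict the mean of the training targets.
def fit_mean(xs, ys):
    return mean(ys)

def mae(model, xs, ys):
    """Mean absolute error of the constant prediction `model`."""
    return mean(abs(y - model) for y in ys)

# Toy data, assumed purely for demonstration.
xs = list(range(20))
ys = [2.0 * x + 1.0 for x in xs]
folds = k_fold_indices(len(xs), k=5)
avg_mae, fold_maes = cross_validate(xs, ys, k=5, fit=fit_mean, metric=mae)
```

Any pair of `fit` and `metric` callables with the same signatures can be swapped in, so the same loop serves for comparing models or hyperparameters.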

The table below summarizes hypothetical results from a model predicting median house value, demonstrating how k-fold validation provides a more reliable performance estimate [5].

| Evaluation Method | R² Score | Key Interpretation |

|---|---|---|

| Single Train-Test Split | 0.61 | Suggests the model explains 61% of the variance, but this is highly dependent on one specific data split. |

| 5-Fold Cross-Validation | 0.63 (Average) | Provides a more reliable and generalizable performance estimate by testing the model on multiple data splits. |

Model Generalization Workflow

The Bias-Variance Tradeoff

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational "reagents" and strategies for building robust, generalizable models in environmental ML research.

| Research Reagent / Solution | Function & Explanation |

|---|---|

| K-Fold Cross-Validation | A model evaluation method that provides a robust performance estimate by repeatedly training and testing on different data subsets, reducing variance in the estimate [1] [5]. |

| Regularization (L1/L2) | A training/optimization technique that applies a penalty to the model's complexity, forcing it to be simpler and reducing its tendency to fit noise [1] [4] [3]. |

| Transfer Learning | A methodology where a model developed for a data-rich source task is fine-tuned for a data-poor target task, drastically reducing data and computational needs for new environments [6] [7]. |

| Early Stopping | A training procedure that halts the iterative learning process once performance on a validation set stops improving, preventing the model from over-optimizing on the training data [1] [4]. |

| Ensemble Methods (Bagging/Boosting) | A class of techniques that combine predictions from multiple models to produce a single, more robust and accurate prediction, thereby smoothing out individual model errors [1] [3]. |

| Data Augmentation | A strategy to artificially expand the training set by creating modified versions of existing data, helping the model learn to be invariant to irrelevant variations (e.g., slight rotations of images) [1] [2]. |

The Bias-Variance Tradeoff in Environmental Predictions

Frequently Asked Questions (FAQs)

Q1: What is the practical significance of the bias-variance tradeoff for my environmental prediction model?

In environmental modeling, the bias-variance tradeoff is the challenge of balancing model simplicity and complexity to optimize prediction accuracy and generalization, which is crucial for developing robust sustainability solutions [10]. A model with high bias (overly simplistic) may miss important ecological relationships (underfitting), while a model with high variance (overly complex) may learn noise and spurious patterns from your specific dataset, failing when applied to new locations or time periods (overfitting) [10] [11]. Successfully managing this tradeoff directly impacts the reliability of predictions used for critical decisions in areas like climate policy, resource management, and conservation [11].

Q2: How can I tell if my model is suffering from high bias or high variance?

You can diagnose these issues by examining your model's performance metrics on training versus validation data:

| Condition | Typical Performance Pattern | Common in These Models |

|---|---|---|

| High Bias (Underfitting) | High error on both training and validation data [12]. | Overly simple models (e.g., linear regression used for a complex, non-linear process) [10] [12]. |

| High Variance (Overfitting) | Low error on training data, but high error on validation data [12]. | Highly complex models (e.g., deep decision trees, large neural networks) trained on limited or noisy data [10] [11]. |

Learning curves, which plot training and validation error against the size of the training set, are also effective tools for diagnosing these issues [12].
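A learning curve can be computed by refitting the model on growing subsets of the training data. The sketch below is an assumed example, not taken from the cited sources: it fits a straight line to a synthetic quadratic signal, so it illustrates the high-bias pattern in which training and validation error stay high and close together.

```python
import random
from statistics import mean

def fit_line(xs, ys):
    """Ordinary least squares for y = a + b*x (closed form)."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return my - b * mx, b

def mse(model, xs, ys):
    """Mean squared error of the fitted line on the given points."""
    a, b = model
    return mean((y - (a + b * x)) ** 2 for x, y in zip(xs, ys))

def learning_curve(train, val, sizes):
    """Return (n, training error, validation error) for each training-set size n."""
    val_xs, val_ys = zip(*val)
    curve = []
    for n in sizes:
        xs, ys = zip(*train[:n])
        model = fit_line(xs, ys)
        curve.append((n, mse(model, xs, ys), mse(model, val_xs, val_ys)))
    return curve

rng = random.Random(1)

def sample(n):
    """Hypothetical non-linear process: y = x^2 plus small Gaussian noise."""
    points = []
    for _ in range(n):
        x = rng.uniform(-1, 1)
        points.append((x, x * x + rng.gauss(0, 0.05)))
    return points

train, val = sample(400), sample(200)
curve = learning_curve(train, val, sizes=[10, 50, 400])
for n, train_err, val_err in curve:
    print(f"n={n:4d} train_mse={train_err:.3f} val_mse={val_err:.3f}")
```

If the two errors converge at a high value, adding data is unlikely to help and a more flexible model is the usual next step. A persistent gap between them points to high variance instead.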

Q3: My model performs well in cross-validation but fails in real-world deployment. Why?

This is a classic sign of overfitting and is often caused by a flaw in the validation method, especially for spatial or temporal environmental data. If your random cross-validation splits contain data from locations or time periods that are very similar to the training data, the validation score will be overly optimistic [13]. To detect this failure, you must use spatial or temporal cross-validation, where the validation set is explicitly separated from the training data in space or time. This mimics the true challenge of predicting into new, unseen contexts [13] [14].

Q4: What are the most effective techniques to reduce overfitting in my environmental ML model?

Several techniques are commonly employed to reduce variance and prevent overfitting:

- Regularization: Techniques like Lasso (L1) or Ridge (L2) regularization add a penalty to the model's loss function for complexity. Lasso can also perform feature selection by driving some feature coefficients to zero [11] [15].

- Spatial/Temporal Cross-Validation: This is critical for obtaining a realistic error estimate and for model selection. It involves splitting data into folds based on location or time, ensuring training and validation sets are sufficiently separated [13] [14].

- Ensemble Methods: Methods like Random Forests combine multiple models to average out their errors, which reduces variance and often leads to more robust predictions [10] [16].

- Data Augmentation: For issues like limited data, you can generate synthetic data points through techniques like interpolation or noise injection to improve the model's ability to generalize [11].
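To make the difference between Ridge and Lasso concrete, the sketch below uses the simplest possible setting: a single feature and no intercept, where both penalized solutions have a closed form. This setting and the function names are simplifying assumptions for illustration; real models have many features and are fitted numerically.

```python
import math

def _sums(xs, ys):
    """Return sum(x*y) and sum(x*x) for one feature."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy, sxx

def ols_weight(xs, ys):
    """Unpenalized least-squares weight."""
    sxy, sxx = _sums(xs, ys)
    return sxy / sxx

def ridge_weight(xs, ys, lam):
    """Minimizer of 0.5*sum((y - w*x)**2) + 0.5*lam*w**2: shrinks w smoothly."""
    sxy, sxx = _sums(xs, ys)
    return sxy / (sxx + lam)

def lasso_weight(xs, ys, lam):
    """Minimizer of 0.5*sum((y - w*x)**2) + lam*abs(w): soft-thresholding."""
    sxy, sxx = _sums(xs, ys)
    shrunk = max(abs(sxy) - lam, 0.0)
    return math.copysign(shrunk, sxy) / sxx

xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]
print(ols_weight(xs, ys))            # unpenalized fit
print(ridge_weight(xs, ys, 14.0))    # shrunk toward zero, never exactly zero
print(lasso_weight(xs, ys, 30.0))    # penalty large enough to zero the weight
```

The Ridge weight only reaches zero in the limit of an infinite penalty, whereas the Lasso weight becomes exactly zero once the penalty exceeds the feature's correlation with the target, which is why Lasso can act as a feature selector.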

Q5: Does the bias-variance tradeoff still apply to modern, highly complex models like deep neural networks?

While the classical view is that test error increases with model complexity after a certain point, recent research has observed a "double-descent" phenomenon in very large models like deep neural networks. Here, test error can decrease again as complexity increases far beyond the point of perfectly fitting the training data [17]. However, this does not mean the tradeoff is obsolete. It suggests that the number of parameters is a poor measure of effective complexity. These large models often have strong implicit regularization, meaning their effective complexity is controlled, preventing overfitting despite the high parameter count [17].

Troubleshooting Guides

Problem: Model Fails to Generalize Spatially

Symptoms: High accuracy in regions with dense training data, but poor performance in data-sparse regions or when making maps.

Solution Protocol: Implementing Spatial Block Cross-Validation

- Define Spatial Blocks: Partition your study area into distinct spatial blocks. The size is critical; blocks should be large enough to break the spatial autocorrelation between training and testing sets.

- Choose Block Size: Use tools like correlograms of your predictors to understand the spatial dependency structure and choose an appropriate block size [14].

- Assign Data to Folds: Group your data according to the blocks you created.

- Run Cross-Validation: Iteratively hold out all data within one (or more) blocks as the validation set and train the model on data from all other blocks.

- Validate and Select Model: Use the cross-validation score from this spatial blocking procedure to select your final model. This score provides a more realistic estimate of performance in unsampled locations.

The following workflow outlines this spatial cross-validation process:

Problem: Model is Overfitting Despite Using Complex Techniques

Symptoms: Performance remains poor on validation data even after applying techniques like Random Forests or Neural Networks.

Solution Protocol: A Systematic Anti-Overfitting Checklist

- Audit Your Validation Method: Ensure you are not using a random train/test split. Immediately implement spatial or temporal cross-validation as described above [13] [14].

- Apply Explicit Regularization:

- For regression models, implement Lasso (L1) or Ridge (L2) regularization. A study on air quality prediction in Tehran showed Lasso successfully enhanced model reliability by reducing overfitting and identifying key features [15].

- For neural networks, use techniques like Dropout or Early Stopping [11].

- Simplify the Model: If possible, reduce the number of features. Lasso regularization is particularly useful for this, as it automatically performs feature selection [15].

- Increase Data Quantity and Quality: If feasible, collect more data. Alternatively, use data augmentation (e.g., creating synthetic data points) to improve the model's exposure to variation [11].

Experimental Protocols for Model Validation

Detailed Protocol: Spatial Block Cross-Validation

Objective: To obtain a realistic estimate of a model's prediction error when applied to new, unseen geographic areas.

Materials & Input Data:

- A dataset of ecological measurements with geographic coordinates (e.g., `longitude`, `latitude`).

- Corresponding environmental predictor variables (e.g., satellite data, climate grids).

Methodology:

- Spatial Blocking:

- Overlay a grid on your study area or define blocks based on natural boundaries (e.g., watersheds, sub-basins). Leaving out whole subbasins has been shown to be an effective strategy [14].

- Key Parameter - Block Size: This is the most important choice. The block size should be larger than the range of spatial autocorrelation in your residuals. A study on marine remote sensing recommended using correlograms of the predictors to inform this choice [14].

- Fold Assignment: Assign each of your data points to the spatial block it falls into. These blocks will form the folds for cross-validation.

- Model Training & Validation:

  - For `k` folds, you will run `k` experiments. In each experiment `i`:

    - Set aside all data in block `i` as the test set.

    - Use all data from the remaining `k-1` blocks as the training set.

    - Train your model on the training set.

    - Use the trained model to predict values for the test set and calculate the chosen error metric (e.g., RMSE, MAE).

- Performance Calculation: Aggregate the error metrics from all `k` folds to produce a single, robust estimate of your model's spatial generalization error.
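The blocking and fold-assignment steps can be sketched as follows. This is a simplified illustration under stated assumptions: blocks are square grid cells of a hypothetical `block_size` in coordinate units, and whole blocks are dealt round-robin into `k` folds. Setting `k` to the number of occupied blocks reproduces the leave-one-block-out scheme described above. The block size itself still has to come from an autocorrelation analysis, which this sketch does not perform.

```python
import math
from collections import defaultdict

def assign_blocks(coords, block_size):
    """Map each (longitude, latitude) point to the grid cell that contains it."""
    return [(math.floor(lon / block_size), math.floor(lat / block_size))
            for lon, lat in coords]

def spatial_block_folds(coords, block_size, k):
    """Group points by block, then distribute whole blocks across k folds."""
    members = defaultdict(list)
    for idx, block in enumerate(assign_blocks(coords, block_size)):
        members[block].append(idx)
    folds = [[] for _ in range(k)]
    for i, block in enumerate(sorted(members)):
        folds[i % k].extend(members[block])
    return folds

def train_test_splits(folds):
    """Yield (train_idx, test_idx), holding out one fold of blocks at a time."""
    for i, test_idx in enumerate(folds):
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, test_idx

# Hypothetical sample locations in degrees.
coords = [(0.1, 0.1), (0.2, 0.3), (1.5, 0.2), (1.7, 0.4), (0.3, 1.6), (1.2, 1.9)]
folds = spatial_block_folds(coords, block_size=1.0, k=2)
for train_idx, test_idx in train_test_splits(folds):
    print("train:", train_idx, "test:", test_idx)
```

Because a block is never divided between training and testing, nearby points cannot appear on both sides of a split. Points on either side of a block boundary can still be close together, so some studies add a buffer zone, which is omitted here.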

Case Study Performance Table

The following table summarizes quantitative results from real-world environmental ML studies that employed various validation and regularization techniques:

| Study & Prediction Target | Models & Techniques Compared | Key Performance Metric (Best Model) | Experimental Takeaway |

|---|---|---|---|

| Air Quality in Tehran [15] | Lasso Regularization | R² (PM2.5) = 0.80; R² (O3) = 0.35 | Lasso effectively reduced overfitting for particulate matter, but performance was poor for gaseous pollutants, highlighting domain-specific challenges. |

| Groundwater Quality in Thailand [16] | RF-CV vs. ANN-CV | RMSE = 0.06, R² = 0.87 (RF-CV) | Random Forest integrated with Cross-Validation (RF-CV) significantly outperformed an Artificial Neural Network (ANN-CV) in this task. |

| Marine Chlorophyll-a [14] | Spatial Block CV vs. Random CV | N/A (Methodology Study) | Spatial block CV with appropriately sized blocks provided more realistic error estimates for spatial prediction tasks compared to naive random CV. |

The Scientist's Toolkit

Research Reagent Solutions

| Tool / Technique | Primary Function | Application in Environmental ML |

|---|---|---|

| Lasso (L1) Regularization [15] | Performs both regularization and feature selection by shrinking some coefficients to zero. | Ideal for creating simpler, more interpretable models and identifying the most important environmental drivers. |

| Spatial Block Cross-Validation [14] | Provides a realistic estimate of model error when predicting to new geographic locations. | Essential for any spatial mapping application (e.g., species distribution, soil property mapping) to avoid over-optimistic performance estimates. |

| Random Forest (Ensemble Method) [16] | Reduces variance by averaging predictions from multiple de-correlated decision trees. | A robust, go-to algorithm for many ecological predictions that helps stabilize predictions and reduce overfitting. |

| Learning Curves [12] | Diagnostic plots showing training/validation error vs. training set size or model complexity. | Used to visually diagnose whether a model is suffering from high bias or high variance, guiding further model improvement. |

Model Selection Logic Diagram

The following diagram visualizes the decision process for diagnosing and addressing common model problems related to bias and variance, guiding you toward a well-generalized final model:

Why Environmental Data is Particularly Prone to Overfitting

Frequently Asked Questions

1. Why is overfitting a more significant problem for environmental data than for other data types?

Environmental data possesses several unique characteristics that increase overfitting risk. Unlike data from controlled domains, ecological data is often spatially autocorrelated, meaning points close to each other are more similar than distant points [18]. This spatial structure violates the statistical assumption of data independence. Furthermore, environmental data can be noisy, imbalanced, and contain artifacts from collection processes, while the underlying ecological relationships are often complex and non-linear [11] [19]. When highly flexible Machine Learning (ML) models learn from such data, they can easily mistake local noise or artifacts for a true, generalizable signal.

2. I use k-fold cross-validation and get good results. Why is my model performing poorly when deployed in a new geographic area?

This is a classic sign of overfitting due to spatial autocorrelation [18]. Standard cross-validation randomly splits your data into training and testing folds. However, if your randomly selected test points are spatially close to your training points, they will be highly similar. The model may appear accurate because it is effectively being tested on data that is nearly identical to its training set. This does not assess how it will perform in a truly new, spatially distinct environment, a problem known as poor "out-of-domain generalization" or "transferability" [20]. To truly test for this, you should use a spatially independent validation set, such as holding out an entire region for testing.

3. What are the practical consequences of using an overfitted environmental model?

The consequences can be severe and far-reaching. Decision-makers who rely on overfitted models may:

- Develop inaccurate climate predictions, leading to poor preparedness for extreme weather events [11].

- Implement misguided environmental policies or conservation strategies [11].

- Misallocate valuable resources, such as funding for projects based on flawed biodiversity or flood risk maps [11] [19].

- Erode trust in data-driven scientific approaches when predictions repeatedly fail [11].

4. My complex ML model (e.g., Deep Neural Network) has a much higher cross-validation accuracy than a simpler one (e.g., Logistic Regression). Shouldn't I always use the best-performing model?

Not necessarily. Research has shown that while complex models may show a slight improvement in cross-validation performance, this often comes at the cost of severely reduced interpretability and a higher risk of overfitting [20]. One study on species distribution models found that the gain in predictive performance from more complex models was minor and was outweighed by their overfitting [20]. Furthermore, these "black box" models can learn ecologically implausible relationships that are difficult to interpret. A simpler, more interpretable model that is slightly less accurate may be more robust and useful for informing environmental management [20] [21].

5. Besides spatial issues, what other data quality problems contribute to overfitting?

Environmental data often suffers from several key issues [19]:

- Training Data Mismatches: Data collected from different sources or with different standards can introduce noise.

- Artifacts in Input Data: Errors like using "0" for missing values can be learned by sensitive ML algorithms as false signals.

- Imbalanced Datasets: For example, having more data from urban areas than rural ones can create a model biased toward the over-represented class [11].

- Insufficient Data Volume: There may simply not be enough data to capture the true complexity of the environmental system without also fitting the noise.
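The missing-value artifact mentioned above is straightforward to guard against before training. In this sketch the sentinel codes `0` and `-9999` and the example readings are hypothetical. Which codes mark missing data, and whether `0` is ever a legitimate measurement, must come from the dataset's own documentation.

```python
import math

def mask_sentinels(values, sentinels=(0, -9999)):
    """Replace sentinel codes with NaN so they are not treated as real measurements."""
    return [math.nan if v in sentinels else v for v in values]

def mean_ignoring_missing(values):
    """Average only the values that are actually present."""
    present = [v for v in values if not math.isnan(v)]
    return sum(present) / len(present) if present else math.nan

# Hypothetical pH readings where 0 and -9999 were logged for sensor dropouts.
readings = [7.1, 0, 6.9, -9999, 7.0]
naive_mean = sum(readings) / len(readings)   # dominated by the sentinel codes
cleaned = mask_sentinels(readings)
clean_mean = mean_ignoring_missing(cleaned)
```

Marking the values as missing, rather than silently dropping rows, keeps the decision about imputation explicit and lets it be made inside the cross-validation loop.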

Troubleshooting Guide: Diagnosing and Preventing Overfitting

Diagnosis: Is My Model Overfitting?

Look for the following warning signs in your experiments:

| Warning Sign | Description to Check |

|---|---|

| Performance Gap | A large discrepancy between high performance (e.g., accuracy) on training data and low performance on testing/validation data [22] [21]. |

| Poor Transferability | The model performs well on random test splits but fails when predicting for new spatial regions or time periods (out-of-domain generalization) [20]. |

| Overly Complex Relationships | Model interpretation tools (e.g., SHAP plots) reveal irregular, overly complex, or ecologically implausible response shapes [20]. |

| High Sensitivity | The model's performance or predictions change dramatically with minor changes to the input data or hyperparameters [23]. |

Prevention: Methodologies and Best Practices

Implement these strategies to build more robust models.

1. Employ Robust Validation Techniques

Standard random cross-validation is often insufficient for environmental data.

- Spatial Cross-Validation: Instead of splitting data randomly, hold out entire regions or blocks for validation. This tests the model's ability to predict in truly new locations [18].

- Time-Based Validation: For temporal data, train on older data and validate on newer data to simulate real-world forecasting [23].
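Time-based validation can be sketched as an expanding-window split. The function name and parameters are hypothetical, and the sketch assumes the samples are already sorted from oldest to newest.

```python
def expanding_window_splits(n_samples, n_splits, min_train):
    """Yield (train_idx, val_idx) pairs in which validation always follows training in time."""
    fold_size = (n_samples - min_train) // n_splits
    if fold_size < 1:
        raise ValueError("not enough samples for the requested splits")
    for i in range(n_splits):
        train_end = min_train + i * fold_size
        val_end = train_end + fold_size
        yield list(range(train_end)), list(range(train_end, val_end))

# Ten time-ordered samples, at least four used for the first training window.
splits = list(expanding_window_splits(n_samples=10, n_splits=3, min_train=4))
for train_idx, val_idx in splits:
    print("train:", train_idx, "validate:", val_idx)
```

Each split trains only on observations that precede the validation window, so the score reflects forecasting rather than interpolation between known time points.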

The workflow below illustrates a robust validation approach that incorporates spatial considerations.

2. Simplify the Model and Apply Regularization

If your model is overfitting, it may be too complex for the available data.

- Regularization Methods: Techniques like L1 (Lasso) and L2 (Ridge) regularization add a penalty term to the model's loss function, discouraging it from becoming overly complex by constraining the size of the model's weights [11] [21]. Typical lambda values range from 0.0001 to 0.01 [23], although the best value depends on the data and feature scaling and should be tuned by cross-validation.

- Feature Selection: Reduce the number of input variables by selecting only those with the strongest predictive power. Reducing feature sets by 30-40% can often lead to better generalization [23].

- Pruning: For decision trees, prune back the tree after training to remove less important branches [11] [22].

- Early Stopping: When using iterative models like Neural Networks, monitor the validation performance and halt training when performance on the validation set stops improving and starts to degrade [23] [11].
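The early-stopping rule can be expressed independently of any training framework. The sketch below takes a list of per-epoch validation losses as given. The `patience` and `min_delta` parameters are common conventions assumed here, not values from the cited sources.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for a sequence of per-epoch validation losses.

    Training stops once `patience` epochs pass without the loss improving
    on the best value seen so far by more than `min_delta`.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch
    return best_epoch, len(val_losses) - 1

# Hypothetical validation losses: improving, then degrading as the model overfits.
losses = [1.0, 0.8, 0.7, 0.72, 0.75, 0.9, 1.1]
best_epoch, stop_epoch = early_stopping_epoch(losses, patience=2)
```

In a real training loop, the model weights from `best_epoch` are the ones to keep, since the epochs between `best_epoch` and `stop_epoch` have already begun to degrade validation performance.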

3. Improve Data Quality and Diversity

A robust model starts with robust data.

- Data Augmentation: Artificially increase the size and diversity of your training set by creating slightly modified copies of existing data. For environmental data, this could include adding controlled noise or generating synthetic data via simulation [23] [11].

- Address Artifacts and Imbalances: Thoroughly clean data to remove artifacts (e.g., incorrect missing value codes). Use techniques like oversampling or SMOTE to balance imbalanced datasets [11] [19].
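As a minimal illustration of rebalancing, the sketch below implements plain random oversampling, which duplicates minority-class rows until every class matches the largest one. It is deliberately simpler than SMOTE, which synthesizes new points by interpolation. The class names and rows are hypothetical.

```python
import random
from collections import Counter

def random_oversample(rows, labels, seed=0):
    """Duplicate randomly chosen minority-class rows until all classes are the same size."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = max(counts.values())
    out_rows, out_labels = list(rows), list(labels)
    for cls, count in counts.items():
        pool = [row for row, label in zip(rows, labels) if label == cls]
        for _ in range(target - count):
            out_rows.append(rng.choice(pool))
            out_labels.append(cls)
    return out_rows, out_labels

# Hypothetical imbalanced training set: six urban samples, two rural samples.
rows = [[float(i)] for i in range(8)]
labels = ["urban"] * 6 + ["rural"] * 2
balanced_rows, balanced_labels = random_oversample(rows, labels)
```

Oversampling should be applied only to the training portion of each split. Duplicating rows before splitting places copies of the same sample in both training and validation sets and inflates the validation score.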

4. Use Ensemble Methods

Ensemble methods combine predictions from multiple models to improve generalization and reduce overfitting.

- Frameworks: Use ensemble ML frameworks (e.g., `mlr3`, `scikit-learn`), which come with built-in mechanisms to reduce overfitting [19].

- Methods: Common ensemble methods include bagging and boosting.

The Scientist's Toolkit

The table below details key computational tools and methodologies for preventing overfitting in environmental ML research.

| Tool / Method | Function in Overfitting Prevention |

|---|---|

| Spatial Cross-Validation | A resampling technique that holds out geographically distinct blocks of data for validation, directly testing model transferability and exposing spatial overfitting [18]. |

| Ensemble ML Frameworks (e.g., scikit-learn, mlr3) | Software libraries that provide built-in support for ensemble methods (bagging, boosting) and hyperparameter tuning, which inherently reduce overfitting through model averaging [19]. |

| L1 / L2 Regularization | A mathematical technique applied during model training that adds a penalty to the loss function based on model coefficient size, discouraging over-complexity [23] [11]. |

| Model Agnostic Interpretation Tools (e.g., SHAP, PDPs) | Software tools that help explain the predictions of any ML model, allowing researchers to check for ecologically implausible relationships learned by the model, a key sign of overfitting [20]. |

| Data Augmentation Techniques | Methods to artificially expand training datasets by creating modified versions (e.g., adding noise, interpolation), helping the model learn more generalizable patterns [23] [11]. |

Technical Support Center: Troubleshooting Guides and FAQs

Troubleshooting Guide: Is Your Model Overfitting?

Use this guide to diagnose and address overfitting in your environmental machine learning models.

| Symptom | Possible Cause | Diagnostic Check | Recommended Solution |

|---|---|---|---|

| High training accuracy, low validation/test accuracy [1] [24] | Excessive model complexity; Training for too many epochs [25] | Compare performance metrics on training vs. hold-out test sets [3] | Apply regularization (L1/L2); Use early stopping [1] [25] |

| Model performance is highly sensitive to small changes in input data [24] | Model has learned noise in the training dataset [3] | Introduce slight variations to validation data and observe prediction stability [26] | Simplify model architecture; Remove irrelevant features [25] [26] |

| Low training accuracy and low test accuracy [1] [24] | Model is too simple; Underfitting [1] | Check if a more complex model performs better on the training data [24] | Increase model complexity; Add relevant features; Train for more epochs [24] |

| Large gap between k-fold cross-validation scores and final test score [9] | Data splitting introduced bias; Information leak during preprocessing | Ensure preprocessing is fitted only on the training fold during cross-validation | Re-run cross-validation pipeline, ensuring no data contamination |
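The information leak in the last row is most often a preprocessing step, such as feature scaling, that was fitted on the full dataset before splitting. A minimal sketch of the leak-free pattern follows. The helper names are hypothetical, and the standardization is written out by hand so that it is clear which data the statistics come from.

```python
from statistics import mean, pstdev

def fit_scaler(values):
    """Learn the mean and standard deviation from training values only."""
    sd = pstdev(values)
    return mean(values), sd if sd > 0 else 1.0

def transform(values, scaler):
    """Standardize values with previously learned statistics."""
    mu, sd = scaler
    return [(v - mu) / sd for v in values]

def scale_within_fold(xs, train_idx, val_idx):
    """Fit the scaler on the training fold, then apply it to both folds."""
    scaler = fit_scaler([xs[i] for i in train_idx])
    x_train = transform([xs[i] for i in train_idx], scaler)
    x_val = transform([xs[i] for i in val_idx], scaler)
    return x_train, x_val

# Hypothetical feature with an extreme value that falls in the validation fold.
xs = [1.0, 2.0, 3.0, 100.0]
x_train, x_val = scale_within_fold(xs, train_idx=[0, 1, 2], val_idx=[3])

# The leaky alternative, shown for contrast: statistics computed on all data.
leaky_val = transform([xs[3]], fit_scaler(xs))
```

With the leak-free version, the extreme validation value remains visibly extreme after scaling. In the leaky version it has already influenced the mean and standard deviation, so the model is evaluated on data it has indirectly seen.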

Frequently Asked Questions (FAQs)

Q1: My model achieved 99% accuracy on the training set but only 55% on the test set. What should I do first?

This is a classic sign of overfitting [3]. Your first step should be to implement k-fold cross-validation to get a more robust estimate of your model's true performance [1] [5]. Next, consider applying regularization techniques (like L1 or L2) to penalize model complexity or using early stopping to halt the training process before it starts memorizing the noise in your data [1] [25].

Q2: For environmental data, which k-value is more suitable in k-fold cross-validation: 5 or 10?

The choice often depends on your dataset size. A value of k=10 is a common and reliable choice as it provides a good balance between bias and variance [5]. However, if you have a very limited dataset (a common issue in environmental studies [27]), k=5 can be more practical: each validation fold holds more samples, so the per-fold scores are less noisy, and the computational cost is lower, while the estimate is still better than one from a single train-test split. You should try both and compare the consistency of the resulting performance metrics.

Q3: How can I prevent my model from learning spurious correlations from irrelevant features in my environmental dataset?

Feature selection, or pruning, is key [1] [26]. You can use techniques provided by algorithms (like Random Forest's feature importance) or manually analyze and remove features that lack a plausible causal relationship with your target variable [1] [3]. Additionally, regularization methods automatically penalize models for relying on less important features, helping them focus on the strongest signals [1] [25].

Q4: We are developing a model to predict habitat suitability for a rare bird species with limited occurrence data. How can we avoid overfitting?

Small-sample models are highly susceptible to overfitting [27]. In this scenario, a combination of strategies is most effective:

- Data Augmentation: Slightly alter your existing data to create new, synthetic training samples (if applicable to your data type) [1] [25].

- Use Simpler Models: Start with less complex models like Regularized Logistic Regression before moving to highly complex algorithms [26].

- Rigorous Cross-Validation: Use k-fold cross-validation to tune your hyperparameters and validate your model's generalizability thoroughly [9] [5].

Quantitative Data on Overfitting Consequences

The table below summarizes real-world impacts and evidence of overfitting from machine learning research.

| Domain | Impact of Overfitting | Evidence from Research / Models |

|---|---|---|

| Environmental Science (Species Distribution) | Inaccurate habitat suitability projections, leading to flawed conservation strategies [28]. | In habitat modeling, overfit models fail to generalize to new geographical areas, misclassifying suitable habitats. Ensemble techniques are used to reduce this uncertainty [28]. |

| Healthcare / Drug Development | Unreliable diagnostic tools and wasted R&D resources on false leads [25] [26]. | An AI model trained on data from a single hospital may overfit to local practices or device artifacts, failing when deployed elsewhere [26]. |

| Financial Forecasting | Misleading market predictions, resulting in poor investment decisions [25] [26]. | Models trained only on historical data may memorize past trends and break down under novel economic conditions or regulatory changes [26]. |

| General ML Performance | High variance in model predictions, making it unreliable for deployment. | A model might show an R² score of 0.99 on training data but only 0.61 on a held-out test set, indicating overfitting [5]. Cross-validation provides a more realistic average score (e.g., 0.63) [5]. |

Experimental Protocol: K-Fold Cross-Validation

Objective: To obtain a robust performance estimate for a predictive model and mitigate overfitting.

Materials: Labeled dataset (e.g., environmental sensor data, species occurrence points), machine learning algorithm (e.g., Random Forest, XGBoost).

- Data Preparation: Shuffle the dataset randomly to eliminate any order effects [5].

- Splitting: Partition the data into k (e.g., 5, 10) mutually exclusive subsets (folds) of approximately equal size [1] [5].

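- Iterative Training and Validation: For each of the k iterations, hold out one fold as the validation set, train the model on the remaining k-1 folds, then evaluate it on the held-out fold and record the chosen performance metric.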

- Performance Calculation: After all k iterations, calculate the average of the k performance metrics obtained in the validation step. This average is the final cross-validation performance score [1] [5].

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key computational "reagents" and their functions for building robust environmental ML models.

| Item / Technique | Function in Experiment / Analysis |

|---|---|

| K-Fold Cross-Validation [1] [5] | A resampling procedure used to evaluate machine learning models on limited data. It provides a robust estimate of model performance and generalizability by rotating the data used for training and validation. |

| Regularization (L1/L2) [1] [25] | Techniques that prevent overfitting by adding a penalty term to the model's loss function. This penalty discourages the model from becoming overly complex and relying too heavily on any single feature. |

| Ensemble Methods (e.g., Random Forest) [1] [28] | Methods that combine predictions from multiple separate models (e.g., decision trees) to produce a more accurate and stable final prediction, reducing variance and overfitting. |

| Data Augmentation [1] [25] | A strategy to artificially increase the size and diversity of a training dataset by creating modified copies of existing data (e.g., rotating images, adding noise), helping the model learn more generalizable patterns. |

| Early Stopping [1] [25] | A technique used during iterative model training where the training process is halted once performance on a validation set stops improving, preventing the model from over-optimizing to the training data. |

Cross-Validation as a Primary Defense Mechanism

Troubleshooting Guide: Common Cross-Validation Pitfalls and Solutions

1. My model performs well during cross-validation but poorly on the final hold-out test set. What happened?

- Problem: This is a classic sign of indirect tuning to the validation data or of information leakage [29]. If you use the same cross-validation results both to iteratively refine your model or hyperparameters and to report performance, the selection process effectively "learns" from the validation folds, making the CV scores over-optimistic and non-generalizable [29] [30].

- Solution: Implement a nested cross-validation (or double CV) protocol [31]. Use an inner CV loop within your training data exclusively for hyperparameter tuning and model selection. Use an outer CV loop to provide an unbiased estimate of your model's performance on unseen data. Your final test set should be used only once, to evaluate the model chosen after the entire nested CV process is complete [29].

2. The performance metrics vary drastically across different cross-validation folds. Why?

- Problem: High variance in scores across folds often indicates that your dataset is too small, has an unbalanced class distribution, or contains hidden subclasses that are not uniformly distributed across folds [29]. A single, random train-test split may not reveal this instability.

- Solution:

- For imbalanced classification datasets, use Stratified K-Fold Cross-validation. This ensures each fold has the same proportion of class labels as the complete dataset, leading to more reliable performance estimates [32] [33].

- Ensure your data splits are subject-wise or patient-wise rather than record-wise, especially with environmental time-series data or medical data from the same subject. This prevents correlated samples from appearing in both training and testing sets, which can inflate performance metrics [29] [31].

3. Cross-validation is taking too long to run on my large dataset. Are there alternatives?

- Problem: K-fold CV requires training and validating the model k times, which can be computationally prohibitive for large models or datasets [32].

- Solution: For an initial, quick evaluation, the holdout method can be sufficient for very large datasets [32] [29]. Alternatively, repeated random sub-sampling validation (a.k.a. Monte Carlo CV) allows you to control the number of iterations independently of the size of the validation set [33]. You can run fewer iterations than in standard k-fold CV, though the results may have higher variance.
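
As a rough sketch of Monte Carlo CV, scikit-learn's ShuffleSplit lets the number of iterations and the validation fraction be set independently. The dataset, the model, and the choice of 3 iterations with a 20% validation share are illustrative assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import ShuffleSplit, cross_val_score

# Synthetic placeholder for a larger dataset (assumption).
X, y = make_classification(n_samples=2000, n_features=10, random_state=0)

# Three random 80/20 splits: n_splits and test_size are chosen independently.
mc_cv = ShuffleSplit(n_splits=3, test_size=0.2, random_state=0)
mc_scores = cross_val_score(LogisticRegression(max_iter=1000), X, y, cv=mc_cv)

print(f"mean accuracy={mc_scores.mean():.3f}, std={mc_scores.std():.3f}")
```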

4. How do I know if my model is overfit or underfit during cross-validation?

- Problem: It can be difficult to diagnose the model's state from a single metric.

- Solution: Compare the performance on the training and validation sets across folds [3] [1].

- Overfitting: High performance on the training set but significantly lower performance on the validation sets.

- Underfitting: Low performance on both the training and validation sets [3] [1]. A well-generalized model will have similar, stable performance across both training and validation splits [5].
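
One way to make this comparison concrete is to request training scores alongside validation scores. The sketch below assumes scikit-learn; the noisy synthetic data and the two decision trees are illustrative choices, and `train_val_scores` is a hypothetical helper.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import cross_validate
from sklearn.tree import DecisionTreeClassifier

# Noisy synthetic data (flip_y adds label noise) so memorization is visible.
X, y = make_classification(n_samples=300, n_features=20, n_informative=5,
                           flip_y=0.2, random_state=0)

def train_val_scores(model):
    """Return mean training and mean validation accuracy over 5 folds."""
    res = cross_validate(model, X, y, cv=5, return_train_score=True)
    return res["train_score"].mean(), res["test_score"].mean()

# An unrestricted tree can memorize the training folds.
deep_train, deep_val = train_val_scores(DecisionTreeClassifier(random_state=0))
# A depth-limited tree is constrained and usually shows a smaller gap.
shallow_train, shallow_val = train_val_scores(
    DecisionTreeClassifier(max_depth=3, random_state=0))

print(f"deep:    train={deep_train:.2f}, val={deep_val:.2f}")
print(f"shallow: train={shallow_train:.2f}, val={shallow_val:.2f}")
```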

Frequently Asked Questions (FAQs)

Q: What is the ideal number of folds, K, to use? A: There is no universal "best" K. The choice represents a bias-variance tradeoff [31].

- K=5 or K=10 are common choices in practice, as they provide a good balance between reliable performance estimation and computational cost [29] [33].

- Lower K (e.g., 2 or 3): Faster to compute, but the performance estimate may have higher bias (pessimistic) as the training set in each fold is smaller [32].

- Higher K (e.g., Leave-One-Out CV): Uses almost all data for training in each fold, leading to a less biased estimate. However, it is computationally expensive and can have higher variance, especially with small datasets [9] [32] [33].

Q: Can cross-validation completely eliminate overfitting? A: No. Cross-validation is primarily an evaluation technique to estimate how well your model will generalize and to detect overfitting [9]. It does not, by itself, prevent your model from overfitting. It is a diagnostic tool that should be used in conjunction with preventative measures like regularization, pruning, early stopping, and ensembling during the model training phase [3] [1]. Furthermore, if the model selection process itself is overly complex, you can "overfit the cross-validation scheme" by exploiting random variations in the data splits [9].

Q: How does k-fold cross-validation specifically help prevent overfitting? A: It mitigates overfitting through several mechanisms [5]:

- Robust Evaluation: By testing the model on multiple different data subsets and averaging the results, you get a more reliable estimate of generalization error than from a single train-test split.

- Reduces Split Dependency: It ensures the model's performance is not artificially high or low due to a fortunate or unfortunate single split of the data.

- Utilizes All Data: Every data point is used for both training and validation, providing a more complete picture of the model's learning behavior.

Q: When should I not use cross-validation? A: Cross-validation may be less suitable or require modification in these scenarios:

- Temporal Data: For time-series data, standard k-fold CV can leak future information into the past. Use specialized methods like rolling-forward or time-series split cross-validation.

- Very Large Datasets: With sufficiently large data, a single, well-constructed hold-out test set may be statistically reliable and more efficient [29].

- Grouped Data: If your data has inherent groupings (e.g., multiple samples from the same patient or location), use Group K-Fold to ensure all samples from a group are in either the training or test set, preventing information leakage [29] [31].
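
The temporal and grouped cases can be checked directly on the split indices. This is a small sketch assuming scikit-learn's TimeSeriesSplit and GroupKFold; the 24 observations and 6 hypothetical sites are made up for illustration.

```python
import numpy as np
from sklearn.model_selection import GroupKFold, TimeSeriesSplit

X = np.arange(24).reshape(-1, 1)       # 24 time-ordered observations
groups = np.repeat(np.arange(6), 4)    # 6 hypothetical sites, 4 samples each

# Temporal data: every training index precedes every validation index.
ts_splits = list(TimeSeriesSplit(n_splits=4).split(X))
for train_idx, val_idx in ts_splits:
    print("train up to", train_idx.max(), "-> validate", val_idx.min(), "to", val_idx.max())

# Grouped data: no site contributes to both training and validation.
group_splits = list(GroupKFold(n_splits=3).split(X, groups=groups))
for train_idx, val_idx in group_splits:
    print("validation sites:", sorted(set(groups[val_idx])))
```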

Comparison of Cross-Validation Techniques

The table below summarizes key characteristics of common cross-validation methods to help you select the most appropriate one for your experiment [32] [29] [33].

| Method | Description | Best Use Case | Advantages | Disadvantages |

|---|---|---|---|---|

| Holdout | One-time split into training and test sets (typically 50/50 or 80/20). | Very large datasets or quick initial model evaluation [32] [29]. | Simple and fast to compute [32]. | Performance estimate can be highly dependent on a single, potentially non-representative split; inefficient use of data [32] [29]. |

| K-Fold | Partitions data into K equal folds; each fold serves as a validation set once. | The general-purpose standard for small to medium-sized datasets [32] [29]. | Lower bias than holdout; makes efficient use of all data [32] [5]. | Computationally expensive (trains K models); higher variance with small K or small datasets [9] [32]. |

| Stratified K-Fold | K-Fold but ensures each fold preserves the percentage of samples for each class. | Classification problems with imbalanced classes [32] [31]. | Produces more reliable performance estimates for imbalanced data. | Not necessary for balanced datasets or regression problems. |

| Leave-One-Out (LOOCV) | A special case of K-Fold where K equals the number of data samples (N). | Very small datasets where maximizing training data is critical [33]. | Low bias; uses maximum data for training. | Computationally very expensive for large N; high variance in the estimate [9] [32]. |

| Repeated Random Sub-sampling | Randomly splits data into training and validation sets multiple times. | When you need to control the number of iterations independently of data size [33]. | More flexible than k-fold in split ratio and iterations. | Some observations may never be selected for validation, others multiple times; not exhaustive [33]. |

Experimental Protocol: Implementing Nested Cross-Validation

This protocol provides a detailed methodology for using nested cross-validation to reliably tune hyperparameters and select a model without overfitting to the test set [29] [31].

1. Problem Definition and Data Preparation

- Define Objective: Clearly state the predictive task (e.g., classify soil type from sensor data, predict compound toxicity).

- Data Preprocessing: Handle missing values, encode categorical variables, and scale features. Crucially, fit preprocessing transformers (like scalers) on the training fold only within the CV loop to prevent data leakage [30]. Using a Pipeline is highly recommended.

2. Define CV Schemes

- Outer Loop: Choose a CV strategy (e.g., 5-fold Stratified K-Fold) to assess the generalizability of the entire modeling process. This loop provides the final performance estimate.

- Inner Loop: Choose a CV strategy (e.g., 3-fold or 5-fold) for hyperparameter tuning and model selection within the training set provided by the outer loop.

3. Model Training and Tuning

- For each fold i in the Outer Loop:

  - The data is split into Training_outer_i and Test_outer_i.

  - On Training_outer_i, perform a grid or random search with the Inner Loop CV:

    - For each hyperparameter candidate, train a model on the inner training folds and evaluate it on the inner validation fold.

    - Average the performance across all inner folds to select the best hyperparameters.

  - Train a final model on the entire Training_outer_i dataset using the best hyperparameters.

  - Evaluate this final model on the held-out Test_outer_i set to get an unbiased performance score for that fold.

- Final Model: After completing the outer loop, average the performance scores from all outer folds. The final model to be deployed is then trained on the entire dataset using the hyperparameters that were found to be best on average across the outer loops.
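
The protocol above can be sketched with scikit-learn by passing a GridSearchCV object (inner loop) to cross_val_score (outer loop). The synthetic data, the logistic regression model, and the grid over C are assumptions for illustration. For the final model, this sketch simply re-runs the search on all data, a common alternative to averaging hyperparameters across outer folds.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=300, n_features=12, random_state=0)

# Scaling lives inside the pipeline so it is refit on every training fold.
pipe = Pipeline([("scale", StandardScaler()),
                 ("clf", LogisticRegression(max_iter=1000))])
param_grid = {"clf__C": [0.01, 0.1, 1.0, 10.0]}

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)

# Inner loop: hyperparameter search within each outer training set.
search = GridSearchCV(pipe, param_grid, cv=inner_cv, scoring="accuracy")
# Outer loop: each outer test fold is scored by a model tuned without seeing it.
outer_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="accuracy")
print(f"nested CV accuracy: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")

# Final model: repeat the search on all data and keep the refit estimator.
final_model = search.fit(X, y).best_estimator_
```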

The following diagram illustrates this nested workflow:

The Scientist's Toolkit: Essential Research Reagents & Software

The table below lists key computational tools and concepts essential for implementing robust cross-validation in environmental ML and drug development research.

| Tool / Concept | Function | Example Use in Cross-Validation |

|---|---|---|

| Scikit-learn (sklearn) | A comprehensive open-source Python library for machine learning [30]. | Provides implementations for KFold, StratifiedKFold, cross_val_score, GridSearchCV, and Pipeline, which are essential for building and evaluating models with CV [32] [30]. |

| Pipeline | A scikit-learn object that chains together data preprocessing and model estimation steps [30]. | Prevents data leakage by ensuring that all transformations (e.g., scaling) are fitted only on the training fold of each CV split and then applied to the validation fold [30]. |

| Hyperparameters | Model configuration parameters not learned from data (e.g., regularization strength, tree depth) [34]. | CV, especially Grid Search or Random Search, is used to find the optimal hyperparameter values that maximize a model's generalization performance [34] [30]. |

| Stratified Splitting | A sampling technique that maintains the original class distribution in each fold [32] [33]. | Critical for imbalanced datasets (common in medical/ecological studies) to ensure each fold is representative of the overall class balance, preventing skewed performance estimates [32] [31]. |

| Nested Cross-Validation | A double CV loop structure for unbiased hyperparameter tuning and performance estimation [31]. | The gold-standard protocol for obtaining a reliable performance estimate when both selecting a model and tuning its hyperparameters [29] [31]. |

Implementing Cross-Validation: Techniques for Environmental Datasets

Frequently Asked Questions (FAQs)

Q1: Does k-Fold Cross-Validation directly prevent my model from overfitting? No, k-fold cross-validation itself does not prevent overfitting. Its primary role is to provide a robust evaluation of your model's performance and, crucially, to detect the presence of overfitting [35] [5]. If your model shows high accuracy on training data but significantly lower accuracy across the validation folds, this performance gap is a clear indicator of overfitting [5] [36]. Preventing overfitting requires other techniques applied during model training, such as regularization, dropout, or early stopping [5].

Q2: My k-fold results have high variance between folds. What could be wrong? High variance in scores across folds can stem from several issues [37]:

- Small Dataset or Unlucky Splits: With small datasets, a single split can disproportionately impact the score. Solution: Use a repeated k-fold approach to create multiple random splits and average the results for a more stable estimate [37].

- Imbalanced Data: If some classes are rare, a standard k-fold might create folds without representative samples. Solution: Use Stratified K-Fold to preserve the percentage of samples for each class in every fold [37].

- Data Leakage: Information from the validation set may be influencing the training process. Solution: Ensure all preprocessing steps (like scaling) are fit solely on the training data within each fold and then applied to the validation data [37].

Q3: When should I not use standard k-Fold Cross-Validation? Standard k-fold is not suitable for all data types. Key exceptions include:

- Time Series Data: Standard k-fold breaks temporal dependencies. Use Time Series Cross-Validation (e.g., rolling window) instead [38] [37].

- Grouped Data: If your data has natural groupings (e.g., multiple samples from the same patient), you must keep all samples from the same group together in a fold. Use Group K-Fold to prevent over-optimistic performance estimates [37].

- Extremely Large Datasets: For very large datasets, a single, large hold-out test set might be sufficient and more computationally efficient than repeated model training [39].

Q4: How do I choose the right value of K?

The choice of k is a trade-off between computational cost and the bias-variance of your estimate [38] [5] [36]. The table below summarizes this trade-off:

| K Value | Bias | Variance | Computational Cost | Typical Use Case |

|---|---|---|---|---|

| Low (e.g., k=3, 5) | Higher | Lower | Lower | Large datasets, initial model prototyping [38]. |

| Medium (e.g., k=5, 10) | Balanced | Balanced | Moderate | Standard choice for most applications [38] [36]. |

| High (e.g., k=20, LOOCV) | Lower | Higher | Higher | Very small datasets where data is precious [38] [39]. |

Q5: What is the difference between k-Fold CV and Bootstrapping? Both are resampling methods, but they work differently [39] [40]:

| Aspect | k-Fold Cross-Validation | Bootstrapping |

|---|---|---|

| Method | Splits data into k mutually exclusive folds. | Samples data with replacement to create new datasets. |

| Data Usage | Each data point is in the test set exactly once. | On average, about 63.2% of the unique data points appear in each bootstrap training sample; the remaining ~36.8% are "out-of-bag" and available for testing [40]. |

| Primary Goal | Model evaluation and selection. | Estimating the uncertainty (e.g., variance, standard error) of a model's parameters or performance [39] [40]. |
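
The 63.2% figure follows from the probability that a given point is drawn at least once in n draws with replacement, 1 - (1 - 1/n)^n, which approaches 1 - 1/e as n grows. A quick numerical check, assuming NumPy and an arbitrary sample size of 10,000:

```python
import numpy as np

rng = np.random.default_rng(0)
n = 10_000

# One bootstrap sample: n indices drawn with replacement from n points.
sample = rng.integers(0, n, size=n)
in_bag_fraction = np.unique(sample).size / n

# Probability that a given point is drawn at least once.
expected = 1 - (1 - 1 / n) ** n

print(f"simulated in-bag fraction: {in_bag_fraction:.3f}")
print(f"theoretical value:         {expected:.3f}")
```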

Troubleshooting Guides

Issue 1: Consistently Poor Performance Across All Folds

This suggests a systematic problem with the model or data, not just overfitting.

Diagnosis Steps:

- Check for Underfitting: Compare training and validation scores. If both are low, the model is too simple to capture the underlying patterns [35].

- Inspect Feature Quality: The features provided to the model may not be predictive enough for the task.

- Verify Data Preprocessing: Ensure data is cleaned and scaled correctly. Remember to fit scalers (like StandardScaler) on the training fold only and then transform both training and validation data to avoid data leakage [37].

Resolution Protocol:

- For Underfitting: Use a more complex model (e.g., increase model depth for a neural network), reduce regularization, or perform feature engineering to create more informative inputs [41].

- Conduct an Ablation Study: Systematically add or remove features to understand their impact on performance, as demonstrated in environmental ML research [41].

Issue 2: Performance Gap Between Training and Validation Folds (Overfitting)

Diagnosis Steps:

- Calculate the Performance Gap: For each fold, subtract the validation score from the training score. A large average gap (e.g., training accuracy >95% with validation accuracy <80%) indicates overfitting [36].

- Examine Learning Curves: Plot the training and validation loss over epochs. A diverging gap is a classic sign of overfitting.

Resolution Protocol:

- Implement Regularization: Apply L1 (Lasso) or L2 (Ridge) regularization to penalize complex models [5].

- Use Dropout: For neural networks, incorporate dropout layers to randomly disable neurons during training, forcing the network to learn robust features [42] [41].

- Apply Early Stopping: Halt the training process when the validation performance stops improving, preventing the model from memorizing the training data [5].

- Perform Hyperparameter Tuning: Use methods like Bayesian Optimization combined with k-fold CV to systematically find hyperparameters (like learning rate and dropout rate) that generalize well [42].

Issue 3: High Variability in Scores Across Folds

Diagnosis Steps:

- Calculate Standard Deviation: Compute the standard deviation of the performance metric (e.g., accuracy) across the k folds. A high standard deviation indicates unstable model performance [36].

- Check Data Distribution: Verify if the dataset is small or has an imbalanced class distribution, which can lead to high-variance estimates.

Resolution Protocol:

- Increase the Number of Folds (k): A higher k (e.g., 10 instead of 5) can reduce the variance of the performance estimate [38] [36].

- Use Repeated K-Fold: Repeat the k-fold cross-validation process multiple times with different random shuffles of the data. The final performance is the average of all runs, which significantly reduces variance [37].

- Switch to Stratified K-Fold: For classification tasks with imbalanced classes, this ensures each fold is representative of the whole, leading to more stable results [37].
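
Repeated and stratified k-fold can be combined in one splitter. The sketch below assumes scikit-learn's RepeatedStratifiedKFold; the small imbalanced dataset, the 10 repeats, and the balanced-accuracy metric are illustrative choices.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RepeatedStratifiedKFold, cross_val_score

# Small, imbalanced synthetic dataset (assumption).
X, y = make_classification(n_samples=150, n_features=10,
                           weights=[0.8, 0.2], random_state=0)

# 5 folds repeated 10 times with different shuffles gives 50 scores.
cv = RepeatedStratifiedKFold(n_splits=5, n_repeats=10, random_state=0)
scores = cross_val_score(LogisticRegression(max_iter=1000), X, y,
                         cv=cv, scoring="balanced_accuracy")

print(f"mean={scores.mean():.3f}, std across folds={scores.std():.3f}")
```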

Experimental Protocols & Data

Protocol 1: Standard k-Fold Cross-Validation for Model Evaluation

This is the foundational protocol for robust performance estimation [38] [5].

Methodology:

- Shuffle: Randomly shuffle the dataset to remove any inherent order [5].

- Split: Partition the data into k (e.g., 5 or 10) subsets of approximately equal size, known as "folds" [38] [5].

- Iterate and Train: For each unique fold i:

  - Designate fold i as the validation set.

  - Combine the remaining k-1 folds to form the training set.

  - Train your model on the training set [5].

- Validate and Record: Evaluate the trained model on the validation set (fold i) and record the chosen performance metric (e.g., accuracy, R²) [5].

- Average Results: After all k iterations, calculate the average of the k recorded performance metrics. This average provides a robust estimate of the model's generalization ability [38] [5].
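
Written out as an explicit loop, the methodology above might look like the following sketch. It assumes scikit-learn; the synthetic regression data and the small Random Forest are placeholders.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import r2_score
from sklearn.model_selection import KFold

X, y = make_regression(n_samples=200, n_features=6, noise=10.0, random_state=0)

# Shuffle and split into k=5 folds.
kf = KFold(n_splits=5, shuffle=True, random_state=0)

fold_scores = []
for train_idx, val_idx in kf.split(X):
    # Train on the k-1 training folds.
    model = RandomForestRegressor(n_estimators=50, random_state=0)
    model.fit(X[train_idx], y[train_idx])
    # Validate on the held-out fold and record the metric.
    fold_scores.append(r2_score(y[val_idx], model.predict(X[val_idx])))

# Average the k recorded metrics.
cv_score = float(np.mean(fold_scores))
print(f"per-fold R2: {np.round(fold_scores, 3)}, mean: {cv_score:.3f}")
```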

Protocol 2: k-Fold CV with Bayesian Hyperparameter Optimization

This advanced protocol, proven in environmental ML research, finds hyperparameters that generalize well [42].

Methodology:

- Define Search Space: Specify the hyperparameters to optimize (e.g., learning rate, dropout rate, gradient clipping threshold) and their potential value ranges [42].

- Inner k-Fold Loop: For each set of hyperparameters suggested by the Bayesian optimizer:

- Perform a standard k-fold cross-validation (as in Protocol 1) on the training data.

- Use the average validation score from the inner k-fold to judge the quality of the hyperparameters.

- Select Best Hyperparameters: After the optimization process concludes, select the hyperparameter set that achieved the best average validation score in the inner loop.

- Final Model Training: Train a final model on the entire training dataset using these optimized hyperparameters.

- Unbiased Evaluation: Perform a final evaluation on a separate, held-out test set that was not involved in the optimization or training process [37] [42] [41].
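
The overall shape of this protocol is sketched below. Note the substitution: to stay within scikit-learn, the sketch uses RandomizedSearchCV as a stand-in for a Bayesian optimizer, so it illustrates the inner k-fold scoring and the held-out test evaluation rather than Bayesian optimization itself. The data, the model, and the search range for C are assumptions.

```python
from scipy.stats import loguniform
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RandomizedSearchCV, train_test_split

X, y = make_classification(n_samples=400, n_features=15, random_state=0)

# Hold out a test set that the search never sees.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)

# Each sampled candidate is scored by 5-fold CV on the training data only.
search = RandomizedSearchCV(
    LogisticRegression(max_iter=1000),
    param_distributions={"C": loguniform(1e-3, 1e2)},  # search space
    n_iter=15, cv=5, random_state=0)
search.fit(X_train, y_train)  # refit=True retrains the best candidate on all training data

# Single, final evaluation on the untouched test set.
test_accuracy = search.score(X_test, y_test)
print(search.best_params_, f"test accuracy={test_accuracy:.3f}")
```

A Bayesian optimizer such as those in scikit-optimize or Optuna would replace the random sampling of candidates while keeping the same inner-CV objective.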

Quantitative Results from Environmental ML Research: The effectiveness of combining k-fold with Bayesian optimization is demonstrated in land cover classification using the EuroSAT dataset [42].

| Optimization Method | Model | Overall Accuracy | Key Hyperparameters Tuned |

|---|---|---|---|

| Bayesian Optimization | ResNet18 | 94.19% | Learning rate, Gradient clipping, Dropout rate [42] |

| Bayesian Opt. + K-Fold CV | ResNet18 | 96.33% | Learning rate, Gradient clipping, Dropout rate [42] |

Protocol 3: Stratified Group K-Fold for Complex Data

This protocol addresses data with class imbalances and underlying group structures, a common scenario in scientific data [37].

Methodology:

- Identify Groups and Strata: Determine the grouping factor (e.g., patient ID, experimental batch) and the stratification factor (the target class labels).

- Split Preserving Structure: The splitting algorithm ensures that:

- The relative class frequencies (strata) are preserved in each fold.

- All samples from the same group are contained entirely within a single fold (no data from the same group is in both training and validation sets).

- Proceed with Standard k-fold: The rest of the k-fold process remains the same, providing a reliable performance estimate for grouped, imbalanced data.

The Scientist's Toolkit: Essential Research Reagents & Solutions

This table lists key computational "reagents" for implementing k-fold cross-validation in a research environment, particularly for environmental ML and drug development.

| Item / Solution | Function | Example / Brief Explanation |

|---|---|---|

| Scikit-Learn (sklearn) | Primary library for implementation. | Provides KFold, StratifiedKFold, GroupKFold, and cross_val_score classes [38] [5]. |

| Bayesian Optimizer | For efficient hyperparameter search. | Libraries like scikit-optimize or Optuna can be combined with k-fold to find optimal model parameters [42]. |

| Stratified K-Fold | Handles imbalanced classification datasets. | Ensures each fold has the same proportion of class labels as the full dataset [37]. |

| Group K-Fold | Prevents data leakage from correlated samples. | Essential when data points are grouped (e.g., multiple cell readings from one patient) [37]. |

| Repeated K-Fold | Reduces variance in performance estimates. | Runs k-fold multiple times with different random splits and averages the results [37]. |

| Separate Test Set | Provides an unbiased final evaluation. | A data holdout never used during model training or hyperparameter tuning [37] [41]. |

| Data Augmentation | Artificially increases training data diversity. | For image-based environmental models (e.g., satellite), applies rotations, flips, and zooms to improve generalization [42]. |

Stratified Cross-Validation for Imbalanced Environmental Data

FAQs and Troubleshooting Guides

This guide addresses common challenges researchers face when using stratified cross-validation for imbalanced environmental datasets, within the broader context of preventing overfitting.

Q1: My model performs well during cross-validation but poorly on new environmental samples. Why? This is a classic sign of overfitting, often due to data leakage during preprocessing. If you perform feature scaling or normalization on the entire dataset before splitting into cross-validation folds, information from the test set leaks into the training process [43]. The model learns patterns it wouldn't otherwise see, causing optimistic performance estimates.

- Solution: Always preprocess within each cross-validation fold. Use scikit-learn's Pipeline to ensure that scaling and other transformations are learned from the training fold and applied to the validation fold [30].
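
A leak-free setup of this kind might look like the sketch below, assuming scikit-learn; the synthetic data and logistic regression model are placeholders.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=300, n_features=10, random_state=0)

# The scaler is part of the estimator, so cross_val_score refits it on the
# training portion of every split and only transforms the validation portion.
pipe = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(pipe, X, y, cv=cv)

print(f"accuracy: {scores.mean():.3f} +/- {scores.std():.3f}")
```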

Q2: Is stratified cross-validation sufficient for handling severely imbalanced classes? Stratification ensures your folds are representative, but it does not change the class distribution in the training data [44]. If your dataset has a 1:100 imbalance, each training fold will also have a ~1:100 imbalance, which can bias the model toward the majority class.

- Solution: Combine stratified cross-validation with techniques that address class imbalance directly. Consider:

  - Class weights: Penalize misclassifications of the minority class more heavily (e.g., class_weight='balanced' in scikit-learn) [44].

  - Resampling: Use oversampling (e.g., SMOTE) or undersampling on the training fold only to balance classes, being careful not to apply it to the validation fold.
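
The "training fold only" rule for resampling can be sketched as below. To avoid extra dependencies, this example uses plain random oversampling via sklearn.utils.resample as a simple stand-in for SMOTE; the dataset and model are illustrative assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import f1_score
from sklearn.model_selection import StratifiedKFold
from sklearn.utils import resample

X, y = make_classification(n_samples=1000, n_features=10,
                           weights=[0.95, 0.05], random_state=0)

fold_f1 = []
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
for train_idx, val_idx in cv.split(X, y):
    X_tr, y_tr = X[train_idx], y[train_idx]
    minority = y_tr == 1
    # Oversample the minority class within the training fold only.
    X_up, y_up = resample(X_tr[minority], y_tr[minority], replace=True,
                          n_samples=int((~minority).sum()), random_state=0)
    X_bal = np.vstack([X_tr[~minority], X_up])
    y_bal = np.concatenate([y_tr[~minority], y_up])

    model = LogisticRegression(max_iter=1000).fit(X_bal, y_bal)
    # The validation fold keeps its original, imbalanced distribution.
    fold_f1.append(f1_score(y[val_idx], model.predict(X[val_idx])))

print(f"mean F1 on untouched validation folds: {np.mean(fold_f1):.3f}")
```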

Q3: How do I choose between StratifiedKFold and StratifiedShuffleSplit? The choice depends on your validation strategy. StratifiedKFold is for standard k-fold cross-validation. It splits the data into k distinct folds, each used once as a validation set. This is the most common method for robust model evaluation [45] [32]. StratifiedShuffleSplit instead draws a chosen number of independent random train/validation splits, each preserving the class distribution; because the splits are drawn independently, validation sets can overlap and some samples may never be validated. With a single split it gives a simple stratified hold-out validation set [44]. For reliable results in model selection, StratifiedKFold is generally preferred.
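
The difference shows up when you count how many distinct samples are ever used for validation. A small sketch assuming scikit-learn, with a made-up 80/20 label vector:

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold, StratifiedShuffleSplit

y = np.array([0] * 80 + [1] * 20)
X = np.zeros((100, 1))   # features are irrelevant for inspecting the splits

skf_val = [val for _, val in StratifiedKFold(n_splits=5).split(X, y)]
sss = StratifiedShuffleSplit(n_splits=5, test_size=0.2, random_state=0)
sss_val = [val for _, val in sss.split(X, y)]

# StratifiedKFold: the validation folds partition the data.
skf_covered = np.unique(np.concatenate(skf_val)).size
# StratifiedShuffleSplit: independent draws, so coverage may be incomplete.
sss_covered = np.unique(np.concatenate(sss_val)).size

print("distinct validated samples:", skf_covered, "vs", sss_covered)
print("minority per validation set:", [int(y[v].sum()) for v in sss_val])
```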

Q4: How can I reduce the high computational cost of repeated model training during cross-validation? Performing k-fold cross-validation requires training the model k times, which can be prohibitive for large models or datasets [46].

- Solution: Research has shown that for some models, especially those used with Three-Way Decisions (TWDs), computational analysis can be used to reduce redundant calculations across folds [46]. While specific implementations are model-dependent, general strategies include using fewer folds (e.g., k=5 instead of 10) if the dataset is large enough, and ensuring efficient hyperparameter tuning.

Failure of Standard K-Fold vs. Stratified K-Fold

The table below demonstrates how standard K-Fold cross-validation can create non-representative folds with imbalanced data, while Stratified K-Fold preserves the original distribution. This example is based on a synthetic dataset with a 99% majority class and 1% minority class (10 samples) [47].

| Fold # | Standard K-Fold (Train/Test) | Stratified K-Fold (Train/Test) |

|---|---|---|

| 1 | Train: 0=791, 1=9; Test: 0=199, 1=1 | Train: 0=792, 1=8; Test: 0=198, 1=2 |

| 2 | Train: 0=793, 1=7; Test: 0=197, 1=3 | Train: 0=792, 1=8; Test: 0=198, 1=2 |

| 3 | Train: 0=794, 1=6; Test: 0=196, 1=4 | Train: 0=792, 1=8; Test: 0=198, 1=2 |

| 4 | Train: 0=790, 1=10; Test: 0=200, 1=0 | Train: 0=792, 1=8; Test: 0=198, 1=2 |

| 5 | Train: 0=792, 1=8; Test: 0=198, 1=2 | Train: 0=792, 1=8; Test: 0=198, 1=2 |

As shown, Standard K-Fold can produce a fold (Fold 4) with zero minority class samples in the test set, making evaluation impossible. Stratified K-Fold maintains a consistent and representative number of minority samples in every fold [47].
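
A comparison of this kind can be reproduced in outline as follows. The sketch assumes scikit-learn and builds its own 1,000-sample label vector with 10 minority samples, so the standard K-Fold counts will differ from the table depending on the random seed.

```python
import numpy as np
from sklearn.model_selection import KFold, StratifiedKFold

rng = np.random.default_rng(1)
y = np.zeros(1000, dtype=int)
y[rng.choice(1000, size=10, replace=False)] = 1   # 10 minority samples (1%)
X = np.zeros((1000, 1))

def minority_per_fold(splitter):
    """Count minority samples in each validation fold."""
    return [int(y[val].sum()) for _, val in splitter.split(X, y)]

plain = minority_per_fold(KFold(n_splits=5, shuffle=True, random_state=1))
strat = minority_per_fold(StratifiedKFold(n_splits=5, shuffle=True, random_state=1))

print("standard K-Fold:  ", plain)   # counts vary from fold to fold
print("stratified K-Fold:", strat)   # two minority samples in every fold
```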

Experimental Protocol: Implementing Stratified K-Fold Cross-Validation

The following workflow and code provide a detailed methodology for implementing a robust stratified cross-validation protocol for an environmental ML task, such as predicting water quality management actions [48].

Code Implementation (Python using scikit-learn)
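
A minimal sketch of such a protocol, assuming scikit-learn. The synthetic 90/10 dataset stands in for real water quality data, and the Random Forest with balanced class weights is an illustrative model choice rather than the method of the cited study.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_validate
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic imbalanced dataset (assumption): about 10% minority class.
X, y = make_classification(n_samples=600, n_features=12,
                           weights=[0.9, 0.1], random_state=42)

# Preprocessing and model in one pipeline to avoid leakage across folds.
pipeline = Pipeline([
    ("scaler", StandardScaler()),
    ("clf", RandomForestClassifier(n_estimators=100, class_weight="balanced",
                                   random_state=42)),
])

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=42)

# Imbalance-aware metrics, with training scores kept to expose overfitting.
cv_results = cross_validate(pipeline, X, y, cv=cv,
                            scoring=["f1", "roc_auc"], return_train_score=True)

for metric in ("f1", "roc_auc"):
    train = cv_results[f"train_{metric}"].mean()
    val = cv_results[f"test_{metric}"].mean()
    print(f"{metric}: train={train:.3f}, validation={val:.3f}")
```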

The Scientist's Toolkit: Essential Research Reagents

The table below details key computational tools and concepts essential for implementing stratified cross-validation in environmental ML research.

| Item | Function / Purpose |

|---|---|

| StratifiedKFold (scikit-learn) | Splits data into k folds while preserving the percentage of samples for each target class. The core validator for imbalanced data [30]. |

| Pipeline (scikit-learn) | Chains together data preprocessing steps and a model estimator to prevent data leakage during cross-validation [30]. |

| F1-Score / ROC-AUC | Performance metrics robust to class imbalance, providing a better measure of model utility than accuracy alone [30]. |

| Class Weights | A model parameter (e.g., class_weight='balanced') that increases the cost of misclassifying minority samples, helping the model learn from all classes equally [44]. |

| SMOTETomek | A hybrid resampling technique that combines oversampling (SMOTE) and undersampling (Tomek links) to create a balanced dataset, used on the training fold only [48]. |

Spatial and Temporal Considerations for Environmental Data Splitting

Frequently Asked Questions

1. Why does standard random cross-validation fail for spatial environmental data? Standard random cross-validation fails because it ignores spatial autocorrelation—the principle that nearby geographic locations are more likely to have similar values than distant ones [49]. When you randomly split such data, information from a location very close to a "test" point is likely present in the "training" set. The model can then appear to perform well by effectively "cheating," learning local noise rather than the underlying spatial process, which leads to poor generalization to new geographic areas [49]. This results in an overoptimistic and unreliable performance estimate.

2. What is target-based spatial splitting, and how does it prevent overfitting? Target-based spatial splitting involves partitioning your data based on the spatial distribution of your samples, ensuring that training and test sets are geographically distant from one another [41]. For instance, you can hold out entire drive tests, cities, or watersheds for testing [41]. This method prevents overfitting by simulating a real-world scenario where the model must predict in a completely new location. It ensures the model learns broad, generalizable spatial patterns rather than memorizing local, site-specific variations.

3. How should I handle data that has both spatial and temporal dependencies? Handling spatio-temporal data requires a splitting strategy that respects both dependencies. The most robust method is spatio-temporal blocking:

- Spatial Dimension: Create clusters based on geographic location (e.g., regions, grids).

- Temporal Dimension: Define time blocks (e.g., years, seasons).

Hold out entire spatial clusters from specific time blocks for testing. For example, use all data from one or more regions in the most recent year as your test set. This prevents the model from using information from the same location at a similar time for both training and prediction, giving a true measure of its forecasting ability [50].
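A minimal sketch of such a split, assuming each record carries a region label and an observation year (both hypothetical arrays here). Discarding rows that share either the test regions or the test year is a conservative design choice of this sketch rather than a requirement stated in the cited work.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 600
# Hypothetical sample metadata: one region label and one year per record.
region = rng.choice(["north", "south", "east", "west"], size=n)
year = rng.integers(2019, 2024, size=n)  # years 2019..2023


def spatiotemporal_holdout(region, year, test_regions, test_year):
    """Boolean masks for a spatio-temporal block hold-out.

    Test  = held-out regions during the held-out year.
    Train = all other regions, strictly before the held-out year.
    Rows that share the test regions or the test period fall in neither set
    and act as a buffer.
    """
    in_test_region = np.isin(region, test_regions)
    test_mask = in_test_region & (year == test_year)
    train_mask = ~in_test_region & (year < test_year)
    return train_mask, test_mask


train_mask, test_mask = spatiotemporal_holdout(region, year, ["west"], 2023)
print(f"train rows: {train_mask.sum()}, test rows: {test_mask.sum()}, "
      f"buffer rows: {(~train_mask & ~test_mask).sum()}")
```

The buffer costs data, so with small datasets you may prefer to relax one of the two conditions and accept a less strict separation.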

4. My dataset is limited. Are there any spatial cross-validation techniques I can use?

Yes, Spatial k-Fold Cross-Validation is a powerful technique for limited data. Instead of holding out a single large block, the study area is divided into multiple spatial folds, often using a grid or clustering algorithm. The model is trained on k-1 folds and validated on the held-out fold, repeating the process until each fold has been used for validation [49]. This provides multiple performance estimates while ensuring training and test sets are spatially separated, reducing the risk of overfitting compared to a single hold-out set [41].

5. What are the key metrics to track to detect overfitting in spatial models? The primary indicator of overfitting is a significant performance gap between training and test sets. Track these metrics for both sets:

- Root Mean Squared Error (RMSE): Expressed in the same units as the target variable, which keeps error magnitudes interpretable [41].

- R-squared (R²): Indicates the proportion of variance explained.

- Mean Absolute Error (MAE).

A large discrepancy (e.g., high R² on training, low R² on test) signals overfitting [3]. Furthermore, you should analyze the spatial distribution of errors. If prediction errors are strongly clustered in specific geographic areas, it indicates the model is performing poorly in those regions due to a non-generalizable fit [49].
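As a rough illustration of tracking these metrics side by side, the sketch below computes RMSE, R², and MAE on a training and a test set and prints the gap. The data and the random forest are synthetic placeholders; in practice the two sets would come from a spatially separated split.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_absolute_error, mean_squared_error, r2_score


def regression_report(y_true, y_pred):
    """Return RMSE, R2 and MAE for one set of predictions."""
    return {
        "RMSE": float(np.sqrt(mean_squared_error(y_true, y_pred))),
        "R2": float(r2_score(y_true, y_pred)),
        "MAE": float(mean_absolute_error(y_true, y_pred)),
    }


# Synthetic placeholder data: one informative feature plus substantial noise.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5))
y = X[:, 0] + rng.normal(scale=1.0, size=200)
X_train, X_test = X[:150], X[150:]
y_train, y_test = y[:150], y[150:]

model = RandomForestRegressor(n_estimators=200, random_state=0).fit(X_train, y_train)
train_report = regression_report(y_train, model.predict(X_train))
test_report = regression_report(y_test, model.predict(X_test))

for name in train_report:
    print(f"{name}: train={train_report[name]:.3f}  test={test_report[name]:.3f}  "
          f"gap={test_report[name] - train_report[name]:+.3f}")
```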

Troubleshooting Guides

Problem: High Performance on Training Data, Poor Performance on New Regions

Symptoms:

- Model accuracy (e.g., R²) is high on training data but drops significantly on test data from a different geographic area [3].

- Prediction errors are not random but are clustered in specific, unseen locations [49].

Diagnosis: This is a classic sign of spatial overfitting. The model has learned patterns that are too specific to the training locations, including spatial noise, and has failed to capture the general processes that apply across the entire domain.

Solution: Implement Spatial Data Splitting.

- Define Spatial Blocks: Cluster your data into distinct geographic groups. This can be done using:

  - Administrative boundaries (e.g., states, counties).

  - Regular grids over the study area.

  - Clustering algorithms like K-Means on latitude and longitude coordinates [49].

- Apply a Spatial Hold-Out: Hold out entire spatial blocks to use as your test set. For example, if your data covers six different cities, train your model on five and use the sixth for testing [41].

- Validate and Refine: Train your model on the training blocks and evaluate its performance strictly on the held-out test block. The performance on this unseen block is the true measure of your model's generalizability.
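The three steps above can be sketched as follows, assuming scikit-learn and synthetic coordinates. The number of blocks, the model, and which block is held out are arbitrary illustrative choices, and K-Means on raw latitude/longitude treats degrees as planar distances, which is only a reasonable approximation over small study areas.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error

rng = np.random.default_rng(1)
n = 500
coords = rng.uniform(0, 100, size=(n, 2))   # hypothetical x/y (or lon/lat) positions
X = rng.normal(size=(n, 4))                 # hypothetical environmental predictors
y = X[:, 0] + 0.05 * coords[:, 0] + rng.normal(scale=0.5, size=n)

# Step 1: define spatial blocks by clustering the coordinates.
blocks = KMeans(n_clusters=6, n_init=10, random_state=1).fit_predict(coords)

# Step 2: hold out one entire block as the test set.
held_out_block = 0
test_mask = blocks == held_out_block

# Step 3: train on the remaining blocks and evaluate only on the held-out block.
model = RandomForestRegressor(n_estimators=100, random_state=1)
model.fit(X[~test_mask], y[~test_mask])

rmse_train = float(np.sqrt(mean_squared_error(y[~test_mask], model.predict(X[~test_mask]))))
rmse_test = float(np.sqrt(mean_squared_error(y[test_mask], model.predict(X[test_mask]))))
print(f"train RMSE: {rmse_train:.2f}, held-out block RMSE: {rmse_test:.2f}")
```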

Problem: Model Fails to Predict Future Time Periods Accurately

Symptoms:

- The model accurately predicts past events but performance degrades when predicting future dates.

- The model cannot capture shifting trends or seasonal variations over time.

Diagnosis: The model is temporally overfitted. A standard random split has likely leaked future information into the training phase, allowing the model to "peek" at the answers. It has not learned to forecast.

Solution: Implement Temporal Data Splitting.

- Order Data Chronologically: Ensure your dataset is sorted by time.

- Create a Time-Based Split:

  - For Model Validation: Use a forward-chaining (rolling-origin) method. For example:

    - Train on data from 2020-2022, validate on 2023.

    - Then, train on 2020-2023, validate on 2024.

  - For Final Evaluation: Designate the most recent period of data as a strict hold-out test set. For instance, use 2010-2020 for training and validation, and reserve 2021-2023 for final testing [50]. This simulates a real-world forecasting scenario.

- Incorporate Temporal Features: Explicitly include relevant temporal features (e.g., hour-of-day, day-of-year, seasonal indicators) to help the model learn cyclical patterns [50].
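A minimal sketch of the forward-chaining split and of a cyclical temporal feature, assuming NumPy only. The function names, the daily 2020-2024 records, and the 365.25-day period are hypothetical choices made for illustration.

```python
import numpy as np


def forward_chaining_splits(years, first_val_year, last_val_year):
    """Yield (val_year, train_idx, val_idx) with an expanding training window."""
    years = np.asarray(years)
    for val_year in range(first_val_year, last_val_year + 1):
        train_idx = np.flatnonzero(years < val_year)   # everything before val_year
        val_idx = np.flatnonzero(years == val_year)    # exactly the validation year
        if len(train_idx) and len(val_idx):
            yield val_year, train_idx, val_idx


def cyclical_day_of_year(day_of_year, period=365.25):
    """Encode day-of-year as (sin, cos) so late December sits next to early January."""
    angle = 2 * np.pi * np.asarray(day_of_year, dtype=float) / period
    return np.sin(angle), np.cos(angle)


# Hypothetical daily records covering 2020-2024 (365 rows per year for simplicity).
years = np.repeat(np.arange(2020, 2025), 365)
splits = list(forward_chaining_splits(years, 2023, 2024))
for val_year, train_idx, val_idx in splits:
    print(f"train {years[train_idx].min()}-{years[train_idx].max()} "
          f"-> validate {val_year}")

doy_sin, doy_cos = cyclical_day_of_year(np.arange(1, 366))
```

The training window only ever grows forward in time, so no validation year is seen during the fit that precedes it.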

Experimental Protocols & Data

Protocol 1: Spatial k-Fold Cross-Validation for Model Assessment

This protocol is ideal for evaluating a model's generalizability across space when you don't have a single large region to hold out [49].

- Spatial Partitioning: Divide your entire study area into k spatial folds (e.g., k=5 or 10). This can be done using a regular grid or spatial clustering.

- Iterative Training & Validation: For each iteration i (from 1 to k):

  - Assign all data points in fold i to the validation set.

  - Assign all data points in the remaining k-1 folds to the training set.

  - Train the model on the training set.

  - Predict on the validation set and calculate performance metrics (e.g., RMSE, R²).

- Performance Aggregation: After all k iterations, aggregate the performance metrics (e.g., calculate the mean and standard deviation). This gives a robust estimate of how your model will perform in new geographic areas.
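One way to sketch this protocol with scikit-learn is to derive spatial fold labels from the coordinates and pass them to `GroupKFold`, which guarantees that no label appears in both the training and validation sets. The synthetic data, K-Means partitioning, and random forest are assumptions of this example.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GroupKFold, cross_validate

rng = np.random.default_rng(2)
n = 600
coords = rng.uniform(0, 100, size=(n, 2))   # hypothetical sample locations
X = np.column_stack([rng.normal(size=(n, 3)), coords[:, 0] / 100])
y = 2 * X[:, 0] + X[:, 3] + rng.normal(scale=0.5, size=n)

# Spatial partitioning: one K-Means cluster per spatial fold.
k = 5
groups = KMeans(n_clusters=k, n_init=10, random_state=2).fit_predict(coords)

# Iterative training and validation: each cluster is held out exactly once.
cv = GroupKFold(n_splits=k)
results = cross_validate(
    RandomForestRegressor(n_estimators=100, random_state=2),
    X, y, groups=groups, cv=cv,
    scoring=["r2", "neg_root_mean_squared_error"],
)

# Performance aggregation: mean and standard deviation across folds.
r2 = results["test_r2"]
rmse = -results["test_neg_root_mean_squared_error"]
print(f"R2:   {r2.mean():.2f} +/- {r2.std():.2f}")
print(f"RMSE: {rmse.mean():.2f} +/- {rmse.std():.2f}")
```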

Table: Example Results from a 5-Fold Spatial Cross-Validation

| Fold | Region Description | R² | RMSE |

|---|---|---|---|

| 1 | Eastern Forest Zone | 0.85 | 1.2 |

| 2 | Western Agricultural Belt | 0.78 | 1.8 |

| 3 | Central Urban Area | 0.65 | 2.5 |

| 4 | Northern Highlands | 0.81 | 1.5 |

| 5 | Southern Basin | 0.75 | 2.0 |

| Mean ± Std Dev | | 0.77 ± 0.07 | 1.8 ± 0.5 |

Protocol 2: Spatial Hold-Out with Statistical Validation

This protocol, used in rigorous environmental ML studies, involves holding out entire geographical regions and repeating the experiment so that performance estimates are statistically stable [41].

- Define Geographic Hold-Outs: Identify m distinct geographical regions in your dataset (e.g., six different drive test locations) [41].

- Create Test Scenarios: For each of the m regions, create a scenario where that single region is the test set, and the other m-1 regions form the training/validation pool.

- Repeat and Measure: For each of the m test scenarios, run the model training and evaluation n times independently (e.g., n=20). In each run, use a different random split of the m-1 training regions into training and validation sets, and different random initial weights for the model [41].

- Statistical Analysis: For each test scenario, calculate the mean and standard deviation of your performance metrics (e.g., RMSE) across the n runs. This provides a stable performance estimate for that unseen region and quantifies the model's sensitivity to initial conditions and data sampling.
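The protocol can be sketched as below. This is not the cited study's implementation: the region labels and data are synthetic, the network is a small scikit-learn `MLPRegressor`, and n is reduced to 5 runs to keep the example quick. Here `random_state` seeds both the initial weights and the internal training/validation split used for early stopping, so each run differs in both respects.

```python
import numpy as np
from sklearn.metrics import mean_squared_error
from sklearn.neural_network import MLPRegressor

rng = np.random.default_rng(3)
n_samples = 450
region = rng.choice(["A", "B", "C"], size=n_samples)   # hypothetical region labels
X = rng.normal(size=(n_samples, 4))
y = X[:, 0] - 0.5 * X[:, 1] + rng.normal(scale=0.3, size=n_samples)


def repeated_geographic_holdout(X, y, region, n_runs=5):
    """For each held-out region, return (mean RMSE, std RMSE) over n_runs seeds."""
    results = {}
    for held_out in np.unique(region):
        test = region == held_out
        rmses = []
        for seed in range(n_runs):
            model = MLPRegressor(
                hidden_layer_sizes=(16,),
                early_stopping=True,       # holds out part of the training pool
                validation_fraction=0.2,   # as an internal validation set
                max_iter=500,
                random_state=seed,         # new weights and new split per run
            )
            model.fit(X[~test], y[~test])
            pred = model.predict(X[test])
            rmses.append(np.sqrt(mean_squared_error(y[test], pred)))
        results[str(held_out)] = (float(np.mean(rmses)), float(np.std(rmses)))
    return results


results = repeated_geographic_holdout(X, y, region)
for name, (mean_rmse, std_rmse) in results.items():
    print(f"held-out region {name}: RMSE {mean_rmse:.2f} +/- {std_rmse:.2f}")
```

A small standard deviation relative to the mean suggests the result for that region does not hinge on a lucky initialization or split.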

Table: Statistical Results from Geographic Hold-Out Tests (RMSE)

| Held-Out Test Region | Mean RMSE | Standard Deviation |

|---|---|---|

| London | 2.5 dB | 0.2 dB |

| Nottingham | 2.8 dB | 0.3 dB |

| Southampton | 2.4 dB | 0.1 dB |

| Overall Mean | 2.6 dB | - |

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Components for Spatial Environmental ML Experiments

| Item | Function & Explanation |

|---|---|

| Geographic Information Systems (GIS) Data | Provides the foundational spatial data (e.g., Digital Surface Models, land use maps) from which features like obstruction depth and distance are derived [41]. |

| Spatial Clustering Algorithms | Algorithms like K-Means or DBSCAN are used to group data points into geographic clusters for creating spatial folds or hold-out blocks [49]. |

| Spatial Autocorrelation Metrics | Statistical tools like Moran's I or Semivariograms are used in exploratory analysis to quantify and confirm the presence of spatial structure in the data, informing the splitting strategy [49]. |

| Specialized Cross-Validation Classes | Software classes that implement group-aware or spatial splitting schemes (e.g., GroupKFold in scikit-learn, applied to spatial block labels), ensuring proper separation of training and test data during model validation [49]. |

| High-Resolution Remote Sensing Data | Satellite or aerial imagery (e.g., MODIS surface reflectance data) used to create rich feature sets (e.g., spectral indices) that describe the environment for the model [51]. |

| Statistical Analysis Software | Tools like R or Python with Pandas are used to calculate performance metrics (mean, standard deviation) across multiple validation runs, providing a rigorous assessment of model performance and stability [41]. |
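As a small illustration of the spatial autocorrelation check listed in the table, the sketch below computes global Moran's I with NumPy. The binary k-nearest-neighbour weights and k=8 are assumptions of this example; dedicated spatial statistics packages offer other weighting schemes and significance tests.

```python
import numpy as np


def morans_i(values, coords, k=8):
    """Global Moran's I using binary k-nearest-neighbour spatial weights."""
    values = np.asarray(values, dtype=float)
    coords = np.asarray(coords, dtype=float)
    n = len(values)

    # Pairwise Euclidean distances; a point is never its own neighbour.
    dist = np.linalg.norm(coords[:, None, :] - coords[None, :, :], axis=-1)
    np.fill_diagonal(dist, np.inf)

    # w[i, j] = 1 if j is one of the k nearest neighbours of i, else 0.
    neighbours = np.argsort(dist, axis=1)[:, :k]
    w = np.zeros((n, n))
    w[np.repeat(np.arange(n), k), neighbours.ravel()] = 1.0

    z = values - values.mean()
    return float((n / w.sum()) * (z @ w @ z) / (z @ z))


rng = np.random.default_rng(5)
coords = rng.uniform(0, 100, size=(300, 2))
smooth_field = coords[:, 0] + rng.normal(scale=2.0, size=300)   # strong west-east gradient
random_field = rng.normal(size=300)                             # no spatial structure

i_smooth = morans_i(smooth_field, coords)
i_random = morans_i(random_field, coords)
print(f"Moran's I (smooth field): {i_smooth:.2f}")
print(f"Moran's I (random field): {i_random:.2f}")
```

Values well above zero indicate that neighbouring samples resemble each other, which is the situation where random splits become optimistic and spatial blocking is warranted.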

Technical Support & Troubleshooting

This section addresses common technical challenges researchers face when developing ensemble machine learning models for predicting greenhouse gas (GHG) emissions.

Frequently Asked Questions (FAQs)

FAQ 1: My ensemble model performs well on training data but poorly on new, unseen climate data. What is the cause and how can I fix it?

- Issue: This is a classic sign of overfitting, where the model has memorized noise and specific patterns in the training data rather than learning the underlying generalizable relationships [52] [53].

- Solution:

- Implement Rigorous Cross-Validation: Use K-Fold Cross-Validation instead of a simple train/test split to get a more reliable estimate of model performance on unseen data [32] [53]. A value of K=5 or K=10 is commonly recommended [32].

- Apply Regularization: Introduce regularization techniques (e.g., L1 or L2) to your model's cost function to penalize overly complex models and prevent them from fitting the training data too closely [52].

- Tune Hyperparameters: Systematically optimize model hyperparameters (e.g., tree depth in Random Forest, learning rate in boosting) on a dedicated validation set to find the right balance between bias and variance [52].
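A compact sketch combining these three points, assuming scikit-learn and synthetic data in place of real GHG observations. The grid values are arbitrary; in gradient boosting, shallower trees and a smaller learning rate both act as a form of regularization. Shuffled K-Fold is used here on the assumption that samples are independent, which does not hold for time-ordered flux data.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import GridSearchCV, KFold

# Synthetic placeholder data with a nonlinear signal and noise.
rng = np.random.default_rng(4)
X = rng.normal(size=(300, 6))
y = np.sin(X[:, 0]) + 0.5 * X[:, 1] + rng.normal(scale=0.3, size=300)

# Candidate settings controlling model complexity.
param_grid = {"max_depth": [2, 4], "learning_rate": [0.05, 0.2]}

search = GridSearchCV(
    GradientBoostingRegressor(n_estimators=100, random_state=4),
    param_grid,
    cv=KFold(n_splits=5, shuffle=True, random_state=4),   # K=5 as suggested above
    scoring="neg_root_mean_squared_error",
)
search.fit(X, y)

best_rmse = -search.best_score_
print(f"best params: {search.best_params_}, cross-validated RMSE: {best_rmse:.3f}")
```

Because the best score was used to pick the hyperparameters, it is slightly optimistic; a separate hold-out set or nested cross-validation gives a cleaner final estimate.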

FAQ 2: What is the best way to split my temporal GHG flux data to avoid data leakage?

- Issue: Standard shuffling in K-Fold Cross-Validation corrupts the inherent time-order of data, allowing the model to be trained on future data to predict the past, which gives an unrealistic performance estimate [53].

- Solution: Use Time Series Cross-Validation [53]. This method respects temporal order by ensuring that the training set always consists of data points that occur before the data points in the test set. This simulates a real-world forecasting scenario and prevents data leakage.
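A minimal sketch using scikit-learn's `TimeSeriesSplit`, assuming the rows are already sorted chronologically. The 120-sample series and five splits are illustrative values.

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

# Hypothetical series: 120 chronologically ordered flux measurements.
n = 120
X = np.arange(n).reshape(-1, 1)

tscv = TimeSeriesSplit(n_splits=5)
folds = list(tscv.split(X))

for i, (train_idx, test_idx) in enumerate(folds, start=1):
    # Every training index precedes every test index, so no future data leaks in.
    print(f"fold {i}: train rows 0..{train_idx[-1]}, "
          f"test rows {test_idx[0]}..{test_idx[-1]}")
```

The same splitter object can be passed as the `cv` argument to `cross_val_score` or `GridSearchCV` in place of a shuffled K-Fold.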

FAQ 3: How do I choose between a simple model and a complex ensemble for my GHG prediction task?

- Issue: A more complex model is not always better. It can be computationally expensive and more prone to overfitting, especially with limited data [54].

- Solution: The choice should be guided by: