QSAR Models for Environmental Chemicals: Principles, Applications, and Regulatory Validation

Abstract

This article provides a comprehensive overview of Quantitative Structure-Activity Relationship (QSAR) models for assessing the environmental impact and toxicity of chemical substances. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of QSAR, detailing key methodologies and their practical applications in predicting chemical persistence, bioaccumulation, and toxicity. The content addresses common challenges in model development, such as data quality and applicability domain definition, and outlines the OECD validation framework essential for regulatory acceptance. By synthesizing current research and emerging trends, including machine learning integration, this guide serves as a critical resource for leveraging QSAR in the development of safer chemicals and robust environmental risk assessments.

Understanding QSAR: Core Concepts and the Drive Toward New Approach Methodologies (NAMs)

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone of computational chemistry and toxicology, establishing statistically significant correlations between chemical structures and their biological activities, physicochemical properties, or environmental fate parameters [1]. These in silico methodologies have gained substantial importance in environmental chemicals research, particularly as regulatory requirements increasingly prioritize animal-free testing approaches under initiatives like the European Chemicals Strategy for Sustainability [2]. The fundamental premise of QSAR is that molecular structure encodes information that determines how chemicals interact with biological systems and environmental compartments, enabling researchers to predict properties of untested compounds based on structural similarities to well-characterized analogues.

The historical development of QSAR dates to the early 1960s, with the pioneering work of Hansch and Fujita establishing the foundation for correlating biological activity with physicochemical parameters [1]. Over more than five decades of maturation, QSAR modeling has evolved into a disciplined research area with well-defined protocols and procedures for the expert application of models to growing chemical libraries [3]. In environmental research, QSAR approaches are particularly valuable for addressing data gaps for cosmetic ingredients, industrial chemicals, and potential endocrine disruptors where traditional testing may be impractical, ethically concerning, or economically prohibitive [4] [2].

Molecular Descriptors: The Building Blocks of QSAR

Molecular descriptors serve as the quantitative foundation of QSAR models, translating structural information into numerical values that can be correlated with biological activity or chemical properties. These descriptors encompass diverse aspects of molecular structure, from simple atom counts to complex quantum-chemical calculations [5].

Table 1: Categories and Examples of Molecular Descriptors in QSAR Modeling

| Descriptor Category | Representative Examples | Computational Method | Interpretation |
|---|---|---|---|
| Constitutional | Molecular weight, atom counts, H-bond acceptors/donors | Empirical formulas based on structure and connectivity | Molecular size and composition |
| Electronic | HOMO/LUMO energies, dipole moment | Quantum chemical calculations (ab initio, semi-empirical) | Reactivity and charge distribution |
| Geometric | Molecular volume, surface area | Molecular mechanics or semi-empirical methods | Steric properties and shape |
| Topological | Connectivity indices, path counts | Graph theory applied to molecular structure | Branching patterns and molecular complexity |
| Hydrophobic | logP (octanol-water partition coefficient) | Fragment contribution methods (e.g., KOWWIN) | Solubility and membrane permeability |

The HOMO (Highest Occupied Molecular Orbital) and LUMO (Lowest Unoccupied Molecular Orbital) energies are particularly insightful electronic descriptors according to Frontier Orbital Theory. Molecules with high-lying (less negative) HOMO energies tend to be good nucleophiles, while those with low LUMO energies typically function as good electrophiles [5]. Similarly, polarizability descriptors characterize how readily a molecule's charge distribution distorts in response to an external electric field, influencing the London dispersion forces that affect binding interactions in biological systems [5].

For complex environmental chemicals, descriptor selection must align with the endpoint being modeled. For instance, hydrophobic descriptors like logP prove critical for predicting bioaccumulation potential, while electronic descriptors may better correlate with metabolic persistence or receptor-binding affinity [4] [2].

QSAR Model Development Workflow

The development of robust, predictive QSAR models follows a structured workflow emphasizing statistical rigor and external validation [3]. This process integrates multiple stages from data collection through model deployment, with particular attention to applicability domain definition and uncertainty quantification.

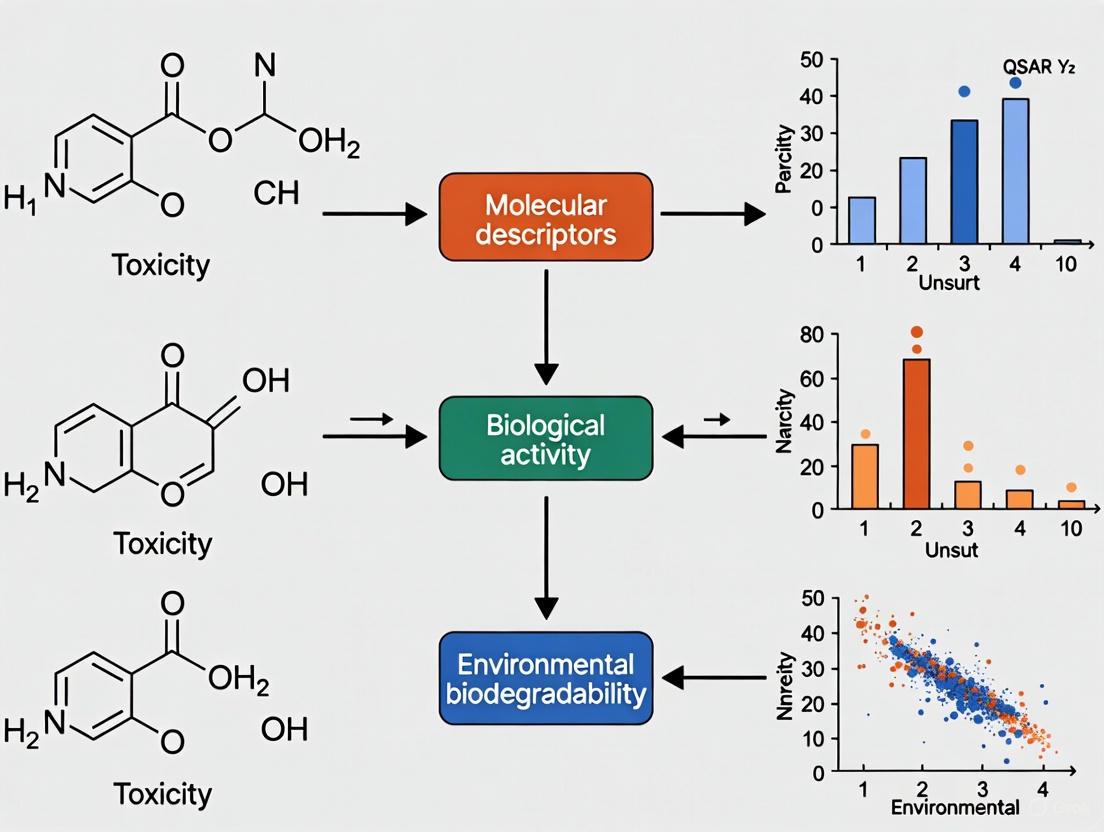

Figure 1: QSAR Model Development and Validation Workflow

Data Preparation and Curation

The initial phase involves assembling a high-quality dataset of chemical structures with associated experimental values for the target endpoint. Data curation addresses structure standardization, identifier conflicts, and outlier detection to ensure dataset consistency [3]. For environmental applications, this may involve compiling biodegradation rates, bioaccumulation factors (BCF), or toxicity values from reliable sources. Dataset balancing techniques address unequal representation of active versus inactive compounds, which can significantly impact model performance [3] [1].
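The snippet below is a minimal sketch of one part of this curation step. It merges replicate measurements per structure and flags replicates that conflict so they can be reviewed by hand. It assumes that structures have already been standardized to a common key, such as a canonical SMILES string, and that values are on a log scale, such as log BCF. The function name `curate`, the one-log-unit conflict threshold, and the records are illustrative choices, not a published protocol.

```python
from collections import defaultdict
from statistics import mean


def curate(records, max_log_range=1.0):
    """Merge replicate log-scale measurements that share a standardized structure key.

    Returns (curated, conflicts): `curated` maps each key to the mean of its
    replicates; `conflicts` lists keys whose replicates span more than
    `max_log_range` log units and therefore need manual review.
    """
    grouped = defaultdict(list)
    for key, value in records:
        grouped[key].append(value)

    curated, conflicts = {}, []
    for key, values in grouped.items():
        if max(values) - min(values) > max_log_range:
            conflicts.append(key)  # replicates disagree too much to average
        else:
            curated[key] = mean(values)
    return curated, conflicts


# Hypothetical records: (standardized structure key, log-scale endpoint value)
records = [
    ("structure_A", 1.10), ("structure_A", 1.30),
    ("structure_B", 2.00), ("structure_B", 3.50),
    ("structure_C", -0.30),
]
curated, conflicts = curate(records)
print(curated)    # structure_A averaged, structure_C kept as is
print(conflicts)  # ['structure_B']
```

Averaging only the replicates that agree keeps a single value per structure. Conflicts go to an expert rather than being silently averaged.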

Molecular Descriptor Calculation and Selection

Following data curation, molecular descriptors are calculated using specialized software. These may range from simple constitutional descriptors to quantum-chemical properties requiring substantial computational resources [5]. Descriptor selection techniques identify the most informative, non-redundant parameters to avoid overfitting, especially critical for small datasets common in environmental chemical research [2] [1]. For predicting thyroid hormone system disruption, for example, descriptors reflecting electronic properties and molecular size often prove most relevant to receptor-binding interactions [2].
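One common and simple way to implement descriptor selection is a filter with two passes. First drop near-constant descriptors, then drop any descriptor that is highly correlated with one already kept. The sketch assumes descriptors are stored as equal-length columns in a dict. The thresholds (variance below 1e-6, |r| above 0.95) and all descriptor values are hypothetical. Because the loop keeps the first descriptor of each correlated pair, column order matters.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def select_descriptors(table, min_variance=1e-6, max_abs_r=0.95):
    """Return names of informative, mutually non-redundant descriptors.

    table maps descriptor name -> list of values (one per compound, same order).
    """
    selected = []
    for name, values in table.items():
        n = len(values)
        m = sum(values) / n
        variance = sum((v - m) ** 2 for v in values) / (n - 1)
        if variance < min_variance:
            continue  # near-constant: carries no information about activity
        if any(abs(pearson(values, table[kept])) > max_abs_r for kept in selected):
            continue  # redundant with a descriptor that is already selected
        selected.append(name)
    return selected


# Hypothetical descriptor matrix for five compounds
descriptors = {
    "logP": [1.5, 2.1, 3.0, 3.8, 4.6],
    "MW": [78.1, 150.0, 92.1, 200.3, 120.5],
    "logP_shifted": [1.6, 2.2, 3.1, 3.9, 4.7],  # logP + 0.1, perfectly correlated
    "n_halogen": [0, 0, 0, 0, 0],                # constant
}
print(select_descriptors(descriptors))  # ['logP', 'MW']
```

Wrapper and embedded methods, such as stepwise regression or LASSO, search descriptor subsets against the model itself. They are common alternatives when a simple filter leaves too many candidates.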

Model Training and Validation

The core modeling phase applies statistical or machine learning algorithms to establish quantitative relationships between selected descriptors and the target property. Internal validation using techniques like cross-validation assesses model stability, while external validation with completely independent test sets provides the most rigorous measure of predictive power [3]. The applicability domain (AD) definition establishes the chemical space where model predictions can be considered reliable, a critical component for regulatory acceptance [4] [6]. To gain scientific acceptance, particularly for environmental hazard assessment, models must demonstrate statistical significance and, where possible, a mechanistic interpretation [3] [6].
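A one-descriptor linear model is enough to illustrate the difference between internal and external validation. The sketch computes two statistics:

- the leave-one-out cross-validated Q² (1 − PRESS/TSS) on the training set;
- an external Q² on an independent test set, using the training-set mean as reference (the Q²F1 variant).

The data are hypothetical. Real workflows would normally use a statistics or machine learning library rather than hand-written formulas.

```python
def fit_line(x, y):
    """Ordinary least-squares intercept and slope for y = b0 + b1 * x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    b1 = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    return my - b1 * mx, b1


def q2_loo(x, y):
    """Leave-one-out cross-validated Q2 = 1 - PRESS / TSS."""
    press = 0.0
    for i in range(len(x)):
        b0, b1 = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])  # refit without compound i
        press += (y[i] - (b0 + b1 * x[i])) ** 2
    my = sum(y) / len(y)
    return 1.0 - press / sum((v - my) ** 2 for v in y)


def q2_external(b0, b1, x_test, y_test, y_train_mean):
    """External Q2 (F1 variant): test-set residuals relative to the training mean."""
    press = sum((yt - (b0 + b1 * xt)) ** 2 for xt, yt in zip(x_test, y_test))
    ss = sum((yt - y_train_mean) ** 2 for yt in y_test)
    return 1.0 - press / ss


# Hypothetical training and external test data (descriptor x, endpoint y)
x_train = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
y_train = [1.1, 1.9, 3.2, 3.9, 5.1, 5.8]
x_test, y_test = [2.5, 4.5], [2.4, 4.6]

b0, b1 = fit_line(x_train, y_train)
print(round(q2_loo(x_train, y_train), 3))
print(round(q2_external(b0, b1, x_test, y_test, sum(y_train) / len(y_train)), 3))
```

The test compounds never enter `fit_line`. This separation is what makes the external statistic a check of predictive power rather than of fit.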

Experimental Protocols for QSAR Modeling

Protocol: HOMO Energy Calculation for Aromatic Compounds

This protocol details the calculation of HOMO energies as electronic descriptors for QSAR modeling of aromatic environmental chemicals, adapted from computational chemistry tutorials [5].

- Structure Building: Construct the molecular structure using a molecular builder interface (e.g., MOLDEN's ZMAT Editor). For substituted aromatics, build a phenyl ring and use the "Substitute atom by Fragment" function to add specific substituents.

- Quantum Chemical Calculation Setup: Select an ab initio quantum chemistry program (e.g., Gaussian, Firefly/PC GAMESS). Set the job type to geometry optimization at a chosen level of theory (e.g., Hartree-Fock or DFT) with a standard basis set (e.g., 6-31G*).

- Job Execution and Monitoring: Submit the calculation job and monitor progress by examining the log file. For aromatic compounds of moderate size, computation typically requires several minutes.

- Result Extraction: Upon completion, open the output file and load the optimized geometry. Access the orbital analysis module to visualize the HOMO and record its energy, which is reported in Hartrees (the atomic unit of energy).

- Comparative Analysis: Repeat the procedure for structurally related compounds and compare HOMO energies to determine relative reactivity as electron donors, which may correlate with metabolic transformation rates or electrophilic toxicity.
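Once HOMO and LUMO energies have been extracted, the comparative analysis step is simple arithmetic. The sketch below does three things:

- converts energies from Hartrees to electronvolts (1 Eh ≈ 27.2114 eV);
- computes the HOMO-LUMO gap;
- ranks compounds from the strongest to the weakest electron donor (highest HOMO first).

The compound names and orbital energies are hypothetical placeholders, not computed results.

```python
HARTREE_TO_EV = 27.211386  # 1 Hartree in electronvolts (CODATA, rounded)


def rank_donors(orbitals):
    """Convert (E_HOMO, E_LUMO) pairs from Hartrees to eV and rank compounds.

    Returns (name, HOMO in eV, HOMO-LUMO gap in eV) tuples, ordered from the
    highest (least negative) HOMO, i.e. the best electron donor, downwards.
    """
    rows = [
        (name, homo * HARTREE_TO_EV, (lumo - homo) * HARTREE_TO_EV)
        for name, (homo, lumo) in orbitals.items()
    ]
    return sorted(rows, key=lambda row: row[1], reverse=True)


# Hypothetical orbital energies (Hartrees) read from three output files
orbitals = {
    "compound_A": (-0.335, 0.120),
    "compound_B": (-0.300, 0.095),
    "compound_C": (-0.320, 0.110),
}
for name, homo_ev, gap_ev in rank_donors(orbitals):
    print(f"{name}: HOMO = {homo_ev:.2f} eV, gap = {gap_ev:.2f} eV")
```

Orbital energies are only comparable across compounds computed at the same level of theory and basis set. The protocol therefore repeats the identical calculation for every structure.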

Protocol: Polarizability Calculation for Bioaccumulation Assessment

Molecular polarizability serves as a valuable descriptor for predicting bioaccumulation potential and hydrophobic interactions in environmental fate modeling [5].

- Initial Structure Preparation: Obtain the 3D structure of the target compound either by building it manually or converting from SMILES notation using online translation tools. Save the structure as a 3D MOL file.

- Semi-empirical Calculation Configuration: Read the structure into computational software (e.g., MOLDEN) and select a semi-empirical method (e.g., MOPAC with PM6 parameter set) for efficient calculation of larger molecules.

- Polarizability-Specific Keywords: Set the calculation task to "Geometry Optimization" and include specific keywords (XYZ, STATIC, POLAR) in the job options to request polarizability calculation.

- Job Execution: Submit the calculation job. For typical drug-sized molecules, semi-empirical calculations generally complete within 20-60 seconds.

- Data Extraction: Examine the output file for the polarizability tensor components. Record the mean polarizability volume, reported in cubic Ångströms (Å³), for use as a molecular descriptor in bioaccumulation QSAR models.
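The mean (isotropic) polarizability used as a descriptor is one third of the trace of the polarizability tensor. The sketch below assumes the tensor has been read from the output file. If a program reports values in atomic units (bohr³) rather than Å³, they can be converted with 1 a.u. ≈ 0.1482 Å³, the cube of the Bohr radius (0.529177 Å). The tensor values are hypothetical.

```python
BOHR_TO_ANGSTROM = 0.529177          # Bohr radius in Å (rounded)
AU_TO_A3 = BOHR_TO_ANGSTROM ** 3     # 1 a.u. of polarizability volume ≈ 0.1482 Å³


def mean_polarizability(tensor, unit="A3"):
    """Isotropic polarizability, one third of the trace of a 3x3 tensor, in Å³.

    unit: "A3" if the tensor is already in Å³, "au" if it is in bohr³.
    """
    alpha = (tensor[0][0] + tensor[1][1] + tensor[2][2]) / 3.0
    if unit == "A3":
        return alpha
    if unit == "au":
        return alpha * AU_TO_A3
    raise ValueError(f"unsupported unit: {unit}")


# Hypothetical tensor (Å³); only the diagonal enters the mean
tensor = [[10.0, 0.2, 0.0],
          [0.2, 12.0, 0.1],
          [0.0, 0.1, 14.0]]
print(mean_polarizability(tensor))  # 12.0
```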

Applications in Environmental Chemicals Research

QSAR modeling has become indispensable for environmental hazard assessment, particularly for chemical categories where experimental data is limited or animal testing restrictions apply. The European Union's ban on animal testing for cosmetics has accelerated development and application of QSAR approaches for predicting environmental fate parameters of cosmetic ingredients [4].

Table 2: Recommended QSAR Models for Environmental Fate Assessment of Cosmetic Ingredients

| Environmental Fate Parameter | Recommended QSAR Models | Software Platform | Key Application Notes |
|---|---|---|---|
| Persistence (Biodegradation) | Ready Biodegradability IRFMN | VEGA | Higher performance for qualitative classification |
| | BIOWIN | EPISUITE | Quantitative prediction with applicability domain |
| | Leadscope model | Danish QSAR Database | Regulatory acceptance under REACH |
| Bioaccumulation (log Kow) | ALogP | VEGA | Direct measurement surrogate |
| | KOWWIN | EPISUITE | Fragment-based method |
| | ADMETLab 3.0 | Web platform | Integrated platform with multiple descriptors |
| Bioaccumulation (BCF) | Arnot-Gobas | VEGA | Mechanistic model approach |
| | KNN-Read Across | VEGA | Similarity-based prediction |
| Mobility (log Koc) | OPERA v. 1.0.1 | VEGA | Multiple parameter prediction |
| | KOCWIN-Log Kow | VEGA | Hydrophobicity-based estimation |

For persistence assessment, the Ready Biodegradability model (VEGA), Leadscope model (Danish QSAR Database), and BIOWIN (EPISUITE) have demonstrated the highest performance for cosmetic ingredients [4]. These models typically provide more reliable qualitative predictions (classifying compounds as biodegradable or persistent) than quantitative degradation rate estimates, especially when predictions fall within well-defined applicability domains [4].
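Several models can give qualitative persistence calls for the same substance. One simple way to combine them, while respecting applicability domains, is to vote only among predictions that fall inside each model's domain and to treat a tie or an empty vote as inconclusive. This majority-vote scheme is an illustrative assumption, not the procedure used in [4]. The model outputs below are hypothetical.

```python
from collections import Counter


def consensus_call(predictions):
    """Majority vote over in-domain predictions only.

    predictions: (model_name, label, in_domain) tuples. Returns the winning
    label, or None when no prediction is in domain or the vote is tied.
    """
    votes = Counter(label for _, label, in_domain in predictions if in_domain)
    if not votes:
        return None
    ranked = votes.most_common()
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return None  # tie: inconclusive, seek further evidence
    return ranked[0][0]


# Hypothetical outputs for one substance
predictions = [
    ("Ready Biodegradability IRFMN (VEGA)", "persistent", True),
    ("BIOWIN (EPISUITE)", "persistent", True),
    ("Leadscope (Danish QSAR Database)", "biodegradable", False),  # outside AD, ignored
]
print(consensus_call(predictions))  # persistent
```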

In bioaccumulation assessment, multiple models address different aspects of this complex endpoint. For the log P (log Kow) parameter, ALogP (VEGA), ADMETLab 3.0, and KOWWIN (EPISUITE) models have shown particular relevance for cosmetic ingredients [4]. For bioconcentration factor (BCF) prediction, the Arnot-Gobas model (VEGA) incorporates mechanistic understanding of fish physiology, while the KNN-Read Across model (VEGA) applies similarity-based approaches [4].

For mobility assessment in soil systems, VEGA's OPERA and KOCWIN-Log Kow estimation models provide reliable predictions of the soil organic carbon-water partition coefficient (Koc), a key parameter determining chemical movement in terrestrial environments [4].

Table 3: Essential Software and Databases for Environmental QSAR Research

| Resource Name | Type | Key Functionality | Environmental Application Examples |
|---|---|---|---|
| VEGA | Integrated QSAR Platform | Multiple validated models for toxicity and environmental fate | Persistence, bioaccumulation, and mobility prediction for cosmetic ingredients [4] |
| EPISUITE | Software Suite | Physicochemical property and environmental fate prediction | KOWWIN for log P, BIOWIN for biodegradation prediction [4] |
| Danish QSAR Database | Database | Regulatory-focused QSAR predictions | Leadscope model for persistence assessment [4] |
| ADMETLab 3.0 | Web Platform | Integrated ADMET property prediction | log Kow prediction for bioaccumulation assessment [4] |
| MOLDEN | Visualization Interface | Structure building, plus setup and visualization of quantum chemistry calculations run in external programs | HOMO/LUMO energy and polarizability calculations for descriptor generation [5] |
| OECD QSAR Toolbox | Regulatory Assessment | Grouping of chemicals and read-across | Regulatory hazard assessment for data-poor chemicals [6] |

Regulatory Framework and Future Perspectives

The regulatory acceptance of QSAR predictions continues to evolve, with the OECD (Q)SAR Assessment Framework (QAF) providing structured guidance for evaluating scientific rigor and establishing confidence in model predictions [6]. The QAF establishes principles for assessing both QSAR models and individual predictions, emphasizing transparent evaluation of uncertainties while maintaining flexibility for different regulatory contexts [6].

Machine learning approaches increasingly dominate the QSAR landscape. Bibliometric analyses reveal exponential growth in publications since 2015, with environmental science as the leading application area [7]. Algorithm development clusters around XGBoost, random forests, and support vector machines. A distinct risk assessment cluster indicates that these tools are migrating toward dose-response and regulatory applications [7].

Future directions in environmental QSAR modeling include expanding chemical domain coverage, systematically coupling ML outputs with human health data, adopting explainable artificial intelligence workflows, and fostering international collaboration to translate computational advances into actionable chemical risk assessments [7]. As the field progresses, integration of QSAR with adverse outcome pathway (AOP) frameworks will strengthen mechanistic understanding and regulatory acceptance, particularly for complex endpoints like thyroid hormone system disruption [2].

The European Union's Registration, Evaluation, Authorisation and Restriction of Chemicals (REACH) regulation is undergoing a fundamental transformation, shifting from traditional animal testing toward advanced non-animal methodologies. This paradigm shift is driven by a powerful combination of ethical imperatives, scientific advancements, and regulatory policy changes. Central to this transition are Quantitative Structure-Activity Relationship (QSAR) models and other New Approach Methodologies (NAMs), which enable researchers to predict chemical toxicity and fill critical data gaps without animal use. The European Chemicals Agency (ECHA) has committed to a structured phase-out, with a roadmap aiming to revise REACH information requirements by 2026 to explicitly accept non-animal-derived data [8]. This technical guide examines the regulatory framework, computational tools, and experimental strategies essential for navigating this transition, providing researchers and regulatory professionals with a comprehensive toolkit for implementing animal-free safety assessments compliant with evolving REACH requirements.

The Driving Forces: Regulatory and Ethical Framework

The regulatory landscape for chemical safety assessment is evolving rapidly toward eliminating animal testing, creating both imperatives and opportunities for research and industry professionals.

EU Policy and Legislative Timeline

The European Union has established a long-standing policy of replacing, reducing, and refining animal testing (the 3Rs principles) [9]. Key legislative milestones include Directive 2010/63/EU, which sets the explicit goal of phasing out animal use for research and regulatory purposes in the EU as soon as scientifically possible [9]. The European Commission is now preparing a detailed "Roadmap Towards Phasing Out Animal Testing for Chemical Safety Assessments" with the intention to publish this comprehensive plan by the first quarter of 2026 at the latest [9]. This roadmap will outline specific milestones and actions to be implemented in the short to longer term, serving as a prerequisite for transitioning toward an animal-free regulatory system.

REACH Requirements and Animal Testing as Last Resort

Under REACH, animal tests must be conducted only as a last resort, when all other means of generating the necessary information have been exhausted [10]. The regulation mandates a specific data gathering strategy. Registrants must first collect all available existing information, consider their specific information needs based on tonnage bands, identify missing information (data gaps), and only then generate new information [10]. Any new tests on environmental or human health properties must be carried out in GLP-certified laboratories; this requirement does not apply to physicochemical testing [10].

Global Regulatory Alignment and Initiatives

This transition is not isolated to the EU. The U.S. Food and Drug Administration (FDA) has announced plans to phase out animal testing requirements for monoclonal antibodies and other drugs, promoting the use of AI-based computational models, cell lines, and organoid toxicity testing [11]. Similarly, the U.S. Environmental Protection Agency (EPA) is incorporating NAMs into regulatory decisions, using approaches such as high-throughput transcriptomics, adverse outcome pathways, and high-throughput toxicokinetics for chemical assessments [12]. China has also begun allowing alternative methods for certain product categories, such as imported cosmetics, indicating a global shift in regulatory toxicology paradigms [8].

Computational Tools and QSAR Modeling for Data Gap Filling

Computational methods, particularly QSAR models, provide powerful approaches for filling data gaps without animal testing. These methodologies leverage existing chemical data to predict toxicity endpoints for new substances.

Fundamental Principles of QSAR Modeling

QSAR models mathematically link a chemical compound's structure to its biological activity or properties based on the fundamental principle that structural variations directly influence biological activity [13]. These models use physicochemical properties and molecular descriptors of chemicals as predictor variables, with biological activity or other chemical properties serving as response variables [13]. The general mathematical expression for this relationship is:

Activity = f(descriptors) + ϵ

Where "descriptors" are numerical representations of molecular structures, and "ϵ" represents the error not explained by the model [13]. QSAR modeling plays a crucial role in prioritizing compounds for further development, predicting properties, guiding chemical modifications, and most importantly, reducing animal testing by serving as validated alternatives in regulatory frameworks [13].
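The relation Activity = f(descriptors) + ϵ becomes concrete when f is taken to be linear, as in Multiple Linear Regression. The sketch below works in three steps:

- it fits the coefficients by ordinary least squares through the normal equations (XᵀX)b = Xᵀy;
- it solves those equations with a small Gaussian-elimination routine, so no external libraries are needed;
- it returns the residuals as the estimate of ϵ.

The descriptor values are synthetic, generated from a known linear relation so that the recovered coefficients can be checked.

```python
def solve(a, b):
    """Solve a @ x = b (square system) by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back-substitution
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_mlr(descriptors, activity):
    """OLS fit of activity = b0 + b1*d1 + ... + bp*dp + eps via the normal equations.

    descriptors: one row of p descriptor values per compound.
    Returns (coefficients [b0, b1, ..., bp], residuals), the residuals being
    the part of the activity the model does not explain (the eps term).
    """
    X = [[1.0] + list(row) for row in descriptors]  # prepend intercept column
    p = len(X[0])
    xtx = [[sum(r[i] * r[j] for r in X) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * y for r, y in zip(X, activity)) for i in range(p)]
    coef = solve(xtx, xty)
    residuals = [y - sum(c * v for c, v in zip(coef, r)) for r, y in zip(X, activity)]
    return coef, residuals


# Synthetic data generated from activity = 0.5 + 1.2*d1 - 0.3*d2 (no noise)
descriptors = [(1, 2), (2, 1), (3, 4), (4, 3), (5, 5)]
activity = [0.5 + 1.2 * d1 - 0.3 * d2 for d1, d2 in descriptors]
coef, residuals = fit_mlr(descriptors, activity)
print([round(c, 6) for c in coef])  # [0.5, 1.2, -0.3]
```

With real, noisy data the residuals are non-zero. Their spread is what the ϵ term in the equation represents.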

QSAR Model Development Workflow

Developing robust QSAR models requires a systematic workflow to ensure predictive reliability and regulatory acceptance:

- Dataset Compilation: Curate a high-quality dataset of chemical structures with associated biological activities from reliable sources, ensuring diversity and relevance to the chemical space of interest [13].

- Descriptor Calculation: Compute molecular descriptors that quantify structural, physicochemical, and electronic properties using software tools such as PaDEL-Descriptor, Dragon, or RDKit [13].

- Feature Selection: Apply selection techniques (filter, wrapper, or embedded methods) to identify the most relevant descriptors and avoid model overfitting [13].

- Model Building: Implement appropriate algorithms including Multiple Linear Regression (MLR), Partial Least Squares (PLS), Support Vector Machines (SVM), or Neural Networks (NN) based on dataset characteristics [13].

- Validation: Assess model performance using internal validation (cross-validation) and external validation with an independent test set [13].

- Applicability Domain: Define the chemical space where the model can make reliable predictions [13].
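A widely used way to define the applicability domain of a regression model is leverage. A query compound whose leverage exceeds the warning value h* = 3(p + 1)/n, where p is the number of descriptors and n the number of training compounds, lies outside the structural space of the training set. The sketch below handles the single-descriptor case (p = 1), where leverage has the closed form h = 1/n + (x − x̄)²/Σ(xⱼ − x̄)². Models with several descriptors need the full hat matrix X(XᵀX)⁻¹Xᵀ. The training values are hypothetical.

```python
def leverage(x_train, x_query):
    """Leverage of a query compound for a one-descriptor model with intercept."""
    n = len(x_train)
    mean_x = sum(x_train) / n
    sxx = sum((v - mean_x) ** 2 for v in x_train)
    return 1.0 / n + (x_query - mean_x) ** 2 / sxx


def in_domain(x_train, x_query):
    """True if leverage does not exceed the warning value h* = 3(p + 1)/n, here with p = 1."""
    h_star = 3 * (1 + 1) / len(x_train)
    return leverage(x_train, x_query) <= h_star


# Hypothetical training descriptor values (e.g. log Kow of ten training compounds)
x_train = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]
for x_query in (5.5, 10.0, 20.0):
    print(x_query, round(leverage(x_train, x_query), 3), in_domain(x_train, x_query))
```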

The following workflow diagram illustrates the QSAR model development process:

The OECD QSAR Toolbox: A Key Regulatory Tool

The OECD QSAR Toolbox is a freely available software application that supports reproducible and transparent chemical hazard assessment [14]. It offers critical functionalities for regulatory compliance under REACH:

- Data Retrieval: Access to approximately 63 databases covering over 155,000 chemicals and 3.3 million experimental data points [14].

- Metabolism Simulation: Capability to simulate metabolism and transformation products across different organisms and conditions [14].

- Analogue Identification: Functionality to find structurally and mechanistically defined analogues for read-across justification [14].

- Category Building: Tools to build and assess chemical categories for read-across and trend analysis [14].

- Data Gap Filling: Modules for filling data gaps using read-across, trend analysis, or external QSAR models [14].

- Reporting: Automated generation of assessment reports to ensure transparency and regulatory acceptance [14].

The Toolbox has been downloaded over 30,000 times globally, with significant adoption across Europe, Asia, and North America, reflecting its widespread use in regulatory hazard assessment [14].

Advanced Machine Learning Approaches

Beyond traditional QSAR, advanced machine learning solutions like DeepAutoQSAR provide automated, scalable platforms for training and applying predictive machine learning models [15]. These systems offer key capabilities including automated descriptor computation and model building with multiple machine learning architectures, customization with project-specific descriptors, uncertainty estimation for domain of applicability assessment, and visualization of atomic contributions toward target properties [15]. Such advanced platforms support both classical ML methods for smaller datasets and modern deep learning approaches for large-scale QSAR modeling, making them particularly valuable for complex toxicity endpoints [15].

Experimental Protocols and Alternative Methods

While computational approaches are essential, integrated testing strategies often incorporate advanced non-animal experimental methods for toxicity assessment.

Validated Alternative Methods for Key Endpoints

Substantial progress has been made in validating alternative methods for specific toxicity endpoints relevant to REACH. The table below summarizes key validated methods and their regulatory status:

Table 1: Validated Non-Animal Methods for Key Toxicity Endpoints

| Toxicity Endpoint | Method Name | Test Type | Regulatory Acceptance |
|---|---|---|---|
| Skin Corrosion | Reconstructed Human Epidermis (RhE) tests: Episkin, Epiderm, SkinEthic | In vitro | OECD TG 431 [16] |
| Skin Irritation | Reconstructed Human Epidermis (RhE) methods: Episkin, LabCyte EPI-MODEL24 | In vitro | OECD TG 439 [16] |
| Skin Sensitization | ARE-Nrf2 Luciferase Test (KeratinoSens) | In vitro | OECD TG 442D [16] |
| Skin Sensitization | Direct Peptide Reactivity Assay (DPRA) | In chemico | OECD TG 442C [16] |
| Skin Sensitization | Human Cell Line Activation Test (h-CLAT) | In vitro | OECD TG 442E [16] |
| Developmental Toxicity | Embryonic Stem Cell Test | In vitro | ESAC (2002) [16] |
| Eye Irritation | Bovine Corneal Opacity and Permeability (BCOP) | In vitro | OECD TG 437 [16] |
| Eye Irritation | Isolated Chicken Eye (ICE) | Ex vivo | OECD TG 438 [16] |

Protocol: Skin Sensitization Assessment Using Integrated Testing Strategies

Skin sensitization is one of the most advanced areas for non-animal assessment. The following protocol outlines an integrated approach to skin sensitization testing:

Objective: To assess the skin sensitization potential of a chemical without animal testing, following the Adverse Outcome Pathway (AOP) for skin sensitization.

Principle: This Integrated Approach to Testing and Assessment (IATA) combines multiple non-animal methods to cover key events in the skin sensitization AOP: molecular initiation (protein binding), cellular response (keratinocyte activation), and immune response (dendritic cell activation) [16].

Procedure:

Sample Preparation: Prepare test chemical at appropriate concentrations in suitable solvents based on solubility and chemical stability.

Direct Peptide Reactivity Assay (DPRA):

- Incubate test chemical with synthetic peptides containing either cysteine or lysine.

- Use HPLC to measure peptide depletion after 24 hours.

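- Classify reactivity from the mean cysteine and lysine peptide depletion: ≤6.38% (no or minimal), 6.38-22.62% (low), 22.62-42.47% (moderate), >42.47% (high). A mean depletion above 6.38% is considered a positive result.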

KeratinoSens Assay:

- Expose recombinant KeratinoSens cells (containing an antioxidant response element linked to luciferase reporter) to test chemical.

- Measure luciferase activity after 48 hours.

- Determine EC1.5 value (concentration causing 1.5-fold induction) and evaluate cell viability.

Human Cell Line Activation Test (h-CLAT):

- Expose THP-1 cells (human monocytic leukemia cell line) to test chemical.

- Measure expression of CD86 and CD54 surface markers by flow cytometry after 24 hours.

- Determine CV75 (concentration causing 75% cell viability) and evaluate marker expression.

Data Integration:

- Combine results from all three assays using a predefined decision tree or weight-of-evidence approach.

- Classify chemicals as sensitizers (subcategorized as weak, moderate, or strong) or non-sensitizers.

This integrated approach has been shown to provide accuracy comparable to the traditional Local Lymph Node Assay (LLNA) while eliminating animal use [16].
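The data integration step can be sketched in code using three rules:

- DPRA reactivity classes follow the cysteine 1:10/lysine 1:50 prediction model of OECD TG 442C.
- The h-CLAT call uses the OECD TG 442E positive criteria: relative fluorescence intensity (RFI) of CD86 ≥ 150% or CD54 ≥ 200%, at a concentration with at least 50% cell viability.
- The final hazard call is the "2 out of 3" majority rule of OECD Guideline 497.

This is a simplified sketch. The guideline's handling of borderline and inconclusive results is not reproduced, and neither are the KeratinoSens positive criteria, so `keratinosens_pos` is simply an assumed boolean input. All test results are hypothetical.

```python
def dpra_reactivity(mean_depletion_pct):
    """Reactivity class from mean cysteine/lysine depletion (cysteine 1:10 / lysine 1:50 model)."""
    if mean_depletion_pct <= 6.38:
        return "no or minimal"
    if mean_depletion_pct <= 22.62:
        return "low"
    if mean_depletion_pct <= 42.47:
        return "moderate"
    return "high"


def hclat_positive(rfi_cd86, rfi_cd54, viability_pct):
    """h-CLAT call at one concentration: CD86 RFI >= 150% or CD54 RFI >= 200%,
    counted only where cell viability is at least 50%."""
    return viability_pct >= 50 and (rfi_cd86 >= 150 or rfi_cd54 >= 200)


def two_out_of_three(dpra_pos, keratinosens_pos, hclat_pos):
    """Simplified '2 out of 3' hazard call: two concordant results decide."""
    return "sensitizer" if sum([dpra_pos, keratinosens_pos, hclat_pos]) >= 2 else "non-sensitizer"


# Hypothetical results for one test chemical
depletion = 18.0                      # mean % peptide depletion
dpra_pos = dpra_reactivity(depletion) != "no or minimal"
keratinosens_pos = True               # assumed outcome of the KeratinoSens prediction model
hclat_pos = hclat_positive(rfi_cd86=120, rfi_cd54=230, viability_pct=80)
print(dpra_reactivity(depletion), two_out_of_three(dpra_pos, keratinosens_pos, hclat_pos))
```

The "2 out of 3" rule yields a hazard call only. Potency subcategorization requires additional information.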

New Approach Methodologies (NAMs) in Development

Beyond validated methods, numerous NAMs are under development and evaluation for more complex toxicity endpoints:

- Organ-on-a-Chip Systems: Microfluidic devices containing human cells that simulate organ function and response, showing particular promise for repeated dose toxicity assessment [12].

- High-Content Imaging and Transcriptomics: Approaches using high-throughput gene expression profiling to predict toxicological outcomes, with applications in neurodevelopmental toxicity and endocrine disruption [12].

- Stem Cell-Based Assays: Methods using human embryonic stem cells or induced pluripotent stem cells to model developmental toxicity and organ-specific effects [12].

- Computational Toxicokinetics: Approaches like high-throughput toxicokinetic (HTTK) modeling that combine in vitro metabolism data with physiological models to predict in vivo chemical concentrations [12].
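The core of many HTTK reverse-dosimetry calculations is a steady-state plasma concentration (Css) for a constant dose rate. Renal clearance is approximated as GFR × fub, and hepatic clearance comes from the well-stirred liver model, Qh·fub·CLint/(Qh + fub·CLint). Because Css scales linearly with dose, an in vitro bioactive concentration can then be translated into an oral equivalent dose.

The sketch below assumes per-kilogram, per-hour units throughout and uses illustrative parameter values, not physiological defaults. Dedicated tools such as the httk R package add further scaling steps, for example from hepatocyte assay data, that are omitted here.

```python
def css_steady_state(dose_rate, fub, clint, gfr, qh):
    """Steady-state plasma concentration (mg/L) for a constant dose rate.

    dose_rate in mg/kg/h; clint (scaled intrinsic hepatic clearance), gfr
    (glomerular filtration rate) and qh (hepatic blood flow) in L/kg/h;
    fub is the unbound fraction in plasma (0-1).
    """
    cl_renal = gfr * fub                                # passive renal filtration
    cl_hepatic = qh * fub * clint / (qh + fub * clint)  # well-stirred liver model
    return dose_rate / (cl_renal + cl_hepatic)


def oral_equivalent_dose(active_conc, css_at_unit_dose):
    """Multiple of the unit dose that gives Css equal to active_conc (Css is linear in dose)."""
    return active_conc / css_at_unit_dose


# Illustrative values only: a unit dose of 1 mg/kg/day expressed per hour
unit_dose = 1.0 / 24.0
css = css_steady_state(unit_dose, fub=0.1, clint=1.0, gfr=0.1, qh=1.0)
oed = oral_equivalent_dose(active_conc=2.0, css_at_unit_dose=css)  # mg/kg/day
print(round(css, 3), round(oed, 2))
```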

The following diagram illustrates the integrated testing strategy for skin sensitization:

The Scientist's Toolkit: Essential Research Reagents and Platforms

Successful implementation of animal-free testing strategies requires specific research tools and platforms. The table below details essential resources for building a non-animal toxicology laboratory:

Table 2: Essential Research Tools for Animal-Free Chemical Assessment

| Tool/Platform | Type | Key Function | Regulatory Relevance |
|---|---|---|---|
| OECD QSAR Toolbox | Software | Data retrieval, read-across, category formation | REACH compliance for data gap filling [14] |
| Reconstructed Human Epidermis (RhE) Models | In vitro test system | Skin corrosion/irritation testing | OECD TG 431, 439 [16] |
| KeratinoSens Cell Line | In vitro test system | Detection of skin sensitizers via Nrf2 activation | OECD TG 442D [16] |
| THP-1 Cell Line | In vitro test system | Detection of dendritic cell activation in skin sensitization | OECD TG 442E [16] |
| DeepAutoQSAR | Machine learning platform | Automated QSAR model training and prediction | Predictive toxicology for complex endpoints [15] |
| Organ-on-a-Chip Systems | Advanced in vitro model | Repeated dose toxicity assessment | Next-generation risk assessment [12] |
| Metabolomic Platforms | Analytical technology | Biomarker discovery and pathway analysis | Mechanistic toxicology [12] |

Implementation Strategy and Change Management

Transitioning to animal-free testing requires careful planning and organizational commitment. ECHA has established a Change Management Working Group (CM WG) specifically to address these implementation challenges [9]. This group develops indicators to monitor progress toward replacing animal testing and creates collaboration models to promote trust among stakeholders and build confidence in non-animal assessment strategies [9].

Data Gathering Strategy Under REACH

A systematic, tiered approach to data gathering ensures compliance with REACH while minimizing animal testing:

- Collect Available Information: Gather all existing study results, scientific literature, and handbook data on the substance [10].

- Evaluate Information Needs: Identify specific information requirements based on tonnage bands and regulatory mandates [10].

- Identify Data Gaps: Compare existing information with requirements to determine missing data [10].

- Prioritize Non-Animal Methods: Implement QSAR, read-across, and in vitro methods before considering any animal testing [10].

This strategy ensures that animal testing remains truly a last resort, as required by REACH legislation.

Read-Across and Category Approaches

Read-across is a powerful data gap filling technique where properties of a data-poor "target" substance are predicted from similar, data-rich "source" substances [14]. The OECD QSAR Toolbox facilitates this approach through:

- Mechanistic Profiling: Identifying chemicals that share the same toxicological mechanisms based on structural alerts [14].

- Metabolic Similitude: Grouping chemicals that share common metabolic pathways or transformation products [14].

- Empirical Data Analysis: Building categories based on experimental data patterns across multiple endpoints [14].

Successful read-across requires rigorous justification of category consistency and documented scientific rationale to ensure regulatory acceptance.
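The core of a simple analogue-based read-across can be sketched as a similarity-weighted average over the most similar source substances. The sketch makes three simplifications:

- Fingerprints are Python sets of "on" bit indices. In practice they would be generated by a cheminformatics toolkit such as RDKit.
- Similarity is measured with the Tanimoto coefficient.
- Analogues below a similarity cut-off are discarded.

The cut-off, k, and all structures and values are hypothetical. This is a generic k-nearest-neighbour scheme, not the algorithm implemented in the OECD QSAR Toolbox.

```python
def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def read_across(target_fp, sources, k=3, min_similarity=0.5):
    """Similarity-weighted mean of the k most similar source substances.

    sources: (name, fingerprint_set, measured_value) tuples.
    Returns (prediction, analogue names), or (None, []) if no source reaches
    min_similarity, in which case the data gap stays open.
    """
    scored = sorted(
        ((tanimoto(target_fp, fp), name, value) for name, fp, value in sources),
        reverse=True,
    )
    analogues = [s for s in scored[:k] if s[0] >= min_similarity]
    if not analogues:
        return None, []
    weight = sum(sim for sim, _, _ in analogues)
    prediction = sum(sim * value for sim, _, value in analogues) / weight
    return prediction, [name for _, name, _ in analogues]


# Hypothetical fingerprints and endpoint values for three source substances
target = {1, 2, 3, 4}
sources = [
    ("source_1", {1, 2, 3, 4}, 2.0),
    ("source_2", {1, 2, 3, 5}, 3.0),
    ("source_3", {7, 8, 9}, 10.0),
]
prediction, used = read_across(target, sources)
print(used, round(prediction, 3))  # ['source_1', 'source_2'] 2.375
```

Structural similarity alone does not establish category consistency. The list of analogues used is returned so that it can be documented alongside the mechanistic justification.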

Overcoming Implementation Barriers

The transition to animal-free testing presents several challenges that organizations must address:

- Technical Complexity: Some toxicity endpoints, particularly reproductive toxicity and repeated dose toxicity, involve complex biological interactions that are difficult to model with current non-animal methods [8].

- Regulatory Validation: The process for validating new alternative methods can be time-consuming, though initiatives like the EPA's coordination on NAMs are accelerating this timeline [11].

- Expertise Development: Implementing alternative approaches requires multidisciplinary expertise in computational toxicology, cell biology, and regulatory science [9].

- Cost Considerations: While alternative methods generally reduce long-term testing costs, initial implementation requires investment in technology, training, and method transfer [8].

The regulatory imperative to reduce animal testing under REACH is accelerating, driven by scientific advances and ethical considerations. Several key developments will shape the future landscape:

Roadmap Implementation and Timeline

The EU's detailed roadmap for phasing out animal testing, scheduled for publication by Q1 2026, will establish specific milestones and actions for transitioning to animal-free regulatory systems [9]. This includes the revision of REACH information requirements by 2026 to enable explicit acceptance of non-animal-derived data [8]. While complete elimination of animal testing for complex endpoints may extend into the 2030s, the direction is clear and irreversible [8].

Scientific and Technological Advancements

Emerging technologies will continue to enhance the toolbox available for animal-free safety assessment:

- Advanced Organ-on-a-Chip Systems: Multi-organ platforms that enable the study of complex toxicological interactions [12].

- High-Resolution Transcriptomics: Methods for detecting subtle biological changes that predict adverse outcomes at earlier timepoints [12].

- Artificial Intelligence and Machine Learning: Enhanced prediction models that integrate diverse data sources including chemical structures, biological pathways, and existing toxicological data [15].

- Human Biomonitoring Integration: Approaches that incorporate real-world human exposure data into safety assessment [11].

Global Harmonization

International alignment on alternative methods will be crucial for global chemical management. Organizations such as the OECD play a vital role in harmonizing test guidelines and validation processes across regions [8]. The increasing acceptance of NAMs by regulatory agencies in the United States, Asia, and other regions suggests that the transition away from animal testing will continue to gain global momentum [8] [11].

In conclusion, the regulatory imperative to reduce animal testing under REACH represents both a significant challenge and opportunity for the scientific community. By embracing QSAR modeling, New Approach Methodologies, and integrated testing strategies, researchers can not only meet regulatory requirements but also advance the science of toxicology toward more human-relevant, predictive, and efficient safety assessment. The successful implementation of these approaches requires continued collaboration between researchers, regulators, and industry stakeholders to build confidence in animal-free methods while maintaining rigorous safety standards that protect human health and the environment.

Quantitative Structure-Activity Relationship (QSAR) modeling represents a computational approach that mathematically links a chemical compound's molecular structure to its biological activity or physicochemical properties [13]. These models operate on the fundamental principle that structural variations directly influence biological activity, enabling researchers to predict the behavior of untested chemicals based on their structural characteristics [13]. In environmental chemicals research, QSAR models have become indispensable tools for screening, ranking, and prioritizing chemicals that may pose hazards to humans and ecosystems, thereby supporting regulatory decision-making while reducing reliance on animal testing [17] [18]. The robustness of QSAR modeling stems from its ability to transform molecular structures into numerical descriptors, establish quantitative relationships between these descriptors and biological endpoints, and apply these relationships for predictive purposes across chemical classes [13].

The evolution of QSAR methodologies has progressed from traditional linear models based solely on chemical descriptors to advanced hybrid approaches that incorporate both chemical and biological information [18]. Recent innovations include Bio-QSARs that exploit biological information for exceptional predictive power in ecotoxicity assessment [18] and QSAR-QSIIR (Quantitative Structure In vitro-In vivo Relationship) models that bridge in vitro and in vivo data for more accurate predictions of parameters like bioconcentration factors [19]. These advancements, coupled with the integration of machine learning algorithms, have significantly expanded the applicability and reliability of QSAR models in environmental research.

Molecular Descriptors in QSAR

Definition and Fundamental Role

Molecular descriptors are numerical representations that quantify the structural, physicochemical, and electronic properties of molecules [13]. They serve as the independent variables in QSAR models, providing the quantitative foundation that links molecular structure to biological activity or environmental behavior. By encoding chemical information into mathematical form, descriptors enable the statistical identification of patterns that would be impossible to discern through chemical intuition alone. The selection and calculation of appropriate descriptors are therefore critical to developing robust QSAR models, as they must capture the structural features relevant to the endpoint being predicted [13].

Comprehensive Classification of Molecular Descriptors

Molecular descriptors can be categorized into several distinct classes based on the molecular properties they represent. The table below summarizes the primary descriptor types used in QSAR modeling for environmental research:

Table 1: Classification of Molecular Descriptor Types in QSAR Modeling

| Descriptor Type | Description | Examples | Applications in Environmental Research |
|---|---|---|---|
| Constitutional | Describe molecular composition without connectivity | Molecular weight, atom counts, bond counts | Preliminary screening of chemical inventories |
| Topological | Based on molecular connectivity and branching patterns | Molecular connectivity indices, Wiener index | Predicting bioavailability and degradation |
| Electronic | Characterize electron distribution and reactivity | Partial charges, HOMO/LUMO energies, dipole moment | Modeling reactivity in toxicological pathways |
| Geometric | Describe 3D molecular shape and size | Molecular volume, surface area, principal moments of inertia | Assessing receptor binding and transport properties |
| Thermodynamic | Quantify energy-related properties | Log P (octanol-water partition coefficient), solubility, vapor pressure | Predicting environmental fate, distribution, and bioaccumulation |
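To make the constitutional and topological rows of Table 1 concrete, the sketch below computes simple atom and bond counts and the Wiener index (the sum of shortest-path bond distances over all pairs of heavy atoms) from a hydrogen-suppressed molecular graph. The adjacency-dictionary input is an assumption made to keep the example dependency-free; descriptor packages such as RDKit or PaDEL-Descriptor derive the graph from a structure file or SMILES instead.

```python
from collections import deque


def atom_and_bond_counts(adjacency):
    """Constitutional descriptors: heavy-atom count and bond count."""
    n_atoms = len(adjacency)
    n_bonds = sum(len(neighbours) for neighbours in adjacency.values()) // 2
    return n_atoms, n_bonds


def wiener_index(adjacency):
    """Topological descriptor: sum of shortest-path (bond-count) distances
    over all unordered pairs of atoms in a hydrogen-suppressed graph."""
    total = 0
    for start in adjacency:
        # Breadth-first search yields the topological distance from `start`.
        dist = {start: 0}
        queue = deque([start])
        while queue:
            atom = queue.popleft()
            for nbr in adjacency[atom]:
                if nbr not in dist:
                    dist[nbr] = dist[atom] + 1
                    queue.append(nbr)
        total += sum(dist.values())
    # Every pair was counted once from each end.
    return total // 2


# Carbon skeletons of three C4/C5 alkanes (atoms numbered arbitrarily).
n_butane = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
isobutane = {0: [1, 2, 3], 1: [0], 2: [0], 3: [0]}
neopentane = {0: [1, 2, 3, 4], 1: [0], 2: [0], 3: [0], 4: [0]}
print(atom_and_bond_counts(n_butane), wiener_index(n_butane), wiener_index(isobutane))
```

n-Butane and isobutane have identical constitutional counts (4 atoms, 3 bonds) but Wiener indices of 10 and 9, which shows how topological descriptors capture branching that composition alone misses.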

The octanol-water partition coefficient (Log P) exemplifies a critically important thermodynamic descriptor in environmental QSARs, as it directly influences a chemical's potential for bioaccumulation and biomagnification in food chains [4]. Recent studies have highlighted Log P as a key predictor in bioconcentration factor (BCF) models, with tools like ALogP (VEGA), ADMETLab 3.0, and KOWWIN (EPISUITE) showing particularly strong performance in predicting this descriptor [4].
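The simplest way Log P enters a BCF model is through a linear regression of log BCF on log Kow. The sketch below shows that form only; it is not how ALogP, ADMETLab 3.0 or KOWWIN work internally. The default coefficients (slope 0.85, intercept −0.70) are the values often quoted for the early fish regression of Veith and co-workers and are used here purely for illustration.

```python
def log_bcf_from_log_kow(log_kow, slope=0.85, intercept=-0.70):
    """Linear relationship log BCF = slope * log Kow + intercept.
    Default coefficients are illustrative (an early fish regression)."""
    return slope * log_kow + intercept


def bcf_from_log_kow(log_kow, **coefficients):
    """Back-transform the predicted log BCF to a BCF value in L/kg."""
    return 10 ** log_bcf_from_log_kow(log_kow, **coefficients)


for log_kow in (2.0, 4.0, 6.0):
    print(f"log Kow {log_kow:.1f} -> log BCF {log_bcf_from_log_kow(log_kow):.2f}")
```

This gives log BCF values of roughly 1.0, 2.7 and 4.4. Linear relationships of this kind are generally reported to overestimate BCF for very hydrophobic substances (log Kow above about 6), which is one reason current tools combine Log P with other descriptors or use non-linear models.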

Descriptor Calculation and Selection Methods

The calculation of molecular descriptors employs specialized software tools that transform chemical structures into numerical representations. Commonly used platforms include PaDEL-Descriptor, Dragon, RDKit, Mordred, ChemAxon, and OpenBabel [13]. These tools can generate hundreds to thousands of descriptors for a given molecule, necessitating careful selection to avoid overfitting and improve model interpretability.

Feature selection techniques employed in QSAR modeling include:

- Filter Methods: Descriptors are ranked based on their individual correlation or statistical significance with the endpoint [13].

- Wrapper Methods: The modeling algorithm evaluates different descriptor subsets to identify the most informative combination [13].

- Embedded Methods: Feature selection occurs intrinsically during model training, as implemented in LASSO regression or random forests [13].
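A filter method can be sketched with nothing more than Pearson correlations. In the example below, descriptors are ranked by the absolute correlation of each with the endpoint, and a candidate is then skipped if it is strongly inter-correlated with one already selected. The inter-correlation limit of 0.9, the `max_features` value, and the descriptor names and values are hypothetical choices for illustration; wrapper and embedded methods are not shown.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient; 0.0 for a constant column."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0  # a constant descriptor carries no information
    return sxy / math.sqrt(sxx * syy)


def filter_select(descriptors, y, max_features=5, max_intercorr=0.9):
    """Rank descriptors by |r| with the endpoint, then greedily keep those
    whose |r| with every already-selected descriptor is <= max_intercorr."""
    ranked = sorted(descriptors, key=lambda name: abs(pearson(descriptors[name], y)), reverse=True)
    selected = []
    for name in ranked:
        if len(selected) == max_features:
            break
        if all(abs(pearson(descriptors[name], descriptors[s])) <= max_intercorr for s in selected):
            selected.append(name)
    return selected


# Hypothetical descriptor table for five chemicals.
y = [1.0, 2.0, 3.0, 4.0, 5.0]
descriptors = {
    "logP": [1.1, 2.0, 2.9, 4.2, 5.0],
    "logP_copy": [2.2, 4.0, 5.8, 8.4, 10.0],  # redundant: exactly 2 x logP
    "mw": [5.0, 3.0, 4.0, 1.0, 2.0],
    "const": [1.0, 1.0, 1.0, 1.0, 1.0],
}
selected = filter_select(descriptors, y, max_features=2)
print(selected)
```

Only one of the two redundant Log P columns survives, and the moderately correlated `mw` column fills the second slot; the constant column ranks last.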

Optimized descriptor selection is exemplified in recent QSAR-QSIIR research, where investigators selected 17 traditional molecular descriptors and 5 bioactivity descriptors from an initial pool of more than 200 molecular descriptors and 25 biological activity descriptors to construct highly accurate bioconcentration factor prediction models [19].

Endpoints in Environmental QSAR

Defining Endpoints and Their Regulatory Significance

In QSAR modeling, endpoints represent the measurable biological activities, toxicological effects, or physicochemical properties that models aim to predict [13]. For environmental research, these endpoints typically reflect key processes in chemical fate, transport, exposure, and effects on biological systems. Endpoints serve as the dependent variables in QSAR models and are directly linked to regulatory requirements for chemical risk assessment under frameworks such as REACH (Registration, Evaluation, Authorisation and Restriction of Chemicals) and CLP (Classification, Labelling and Packaging) [4].

Critical Endpoint Categories for Environmental Assessment

Environmental QSAR models address endpoints spanning multiple disciplinary domains, from physicochemical properties to ecological and human health effects. The following table systematizes the primary endpoint categories relevant to environmental chemicals research:

Table 2: Key Endpoint Categories in Environmental QSAR Modeling

| Endpoint Category | Specific Endpoints | Regulatory Relevance | Example Models |
|---|---|---|---|
| Physicochemical Properties | Log P, water solubility, vapor pressure, soil adsorption coefficient (Koc) | Environmental fate assessment, exposure modeling | OPERA, KOCWIN [4] |
| Environmental Fate & Transport | Biodegradation, photodegradation, hydrolysis, persistence | PBT assessment (Persistence, Bioaccumulation, Toxicity) | BIOWIN, Ready Biodegradability IRFMN [4] |
| Bioaccumulation | Bioconcentration Factor (BCF), Bioaccumulation Factor (BAF) | Chemical prioritization, trophic transfer assessment | Arnot-Gobas, KNN-Read Across [4], QSAR-QSIIR [19] |
| Ecotoxicological Effects | Aquatic toxicity (fish, daphnia, algae), terrestrial toxicity | Ecological risk assessment, safety thresholds | Bio-QSAR [18], TEST models [20] |
| Human Health Hazards | Acute toxicity, repeated dose toxicity, mutagenicity, carcinogenicity | Health risk assessment, chemical classification | TEST models [20], QSAR Toolbox profiles [14] |

The QSAR Toolbox exemplifies the comprehensive nature of modern endpoint prediction, incorporating 254 (Q)SAR models: 28 for physicochemical properties, 41 for environmental fate and transport, 39 for ecotoxicological information, and 146 for human health hazards [21].

Endpoint-Specific Methodological Considerations

Different endpoints require specialized modeling approaches. For bioaccumulation assessment, recent research demonstrates that hybrid QSAR-QSIIR models combining molecular descriptors with bioactivity descriptors achieve superior prediction accuracy for bioconcentration factors (BCF), with R² values of 0.8575 for the verification set and 0.7924 for the test set [19]. For persistence assessment, models like BIOWIN (EPISUITE) and the Ready Biodegradability IRFMN model (VEGA) have shown particularly strong performance for cosmetic ingredients and other chemical classes [4].

A critical consideration in endpoint prediction is the distinction between qualitative and quantitative predictions. Recent comparative studies suggest that qualitative predictions aligned with REACH and CLP regulatory criteria generally demonstrate higher reliability than quantitative predictions, particularly when the chemical being assessed falls within the model's applicability domain [4].
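One way to see why qualitative calls can be more reliable is to translate a quantitative BCF prediction into the REACH Annex XIII categories (bioaccumulative, B, if BCF > 2000 L/kg; very bioaccumulative, vB, if BCF > 5000 L/kg). The sketch below also checks whether that call would change if the prediction were off by an assumed fold error. The twofold error and the example BCF values are hypothetical. Predictions far from a threshold keep the same class despite sizeable numerical error, whereas those near a threshold do not. Applicability-domain checks are a separate, prior step and are not shown.

```python
B_THRESHOLD = 2000.0   # REACH Annex XIII: B if BCF > 2000 L/kg
VB_THRESHOLD = 5000.0  # REACH Annex XIII: vB if BCF > 5000 L/kg


def classify_bcf(bcf):
    """Map a BCF value (L/kg) to a qualitative bioaccumulation category."""
    if bcf > VB_THRESHOLD:
        return "vB"
    if bcf > B_THRESHOLD:
        return "B"
    return "not B"


def call_is_robust(predicted_bcf, fold_error):
    """True if the category is the same everywhere between predicted_bcf / fold_error
    and predicted_bcf * fold_error. Checking the two ends is sufficient because
    classify_bcf is monotonic in BCF."""
    return classify_bcf(predicted_bcf / fold_error) == classify_bcf(predicted_bcf * fold_error)


for bcf in (50.0, 3000.0, 20000.0):
    print(bcf, classify_bcf(bcf), call_is_robust(bcf, fold_error=2.0))
```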

Biological Basis for QSAR Predictions

Fundamental Principles of Biological Prediction

The biological basis of QSAR predictions rests on the fundamental principle that a chemical's biological activity arises from its molecular structure and properties [13]. This structure-activity relationship enables the extrapolation of biological behavior from chemical characteristics, forming the conceptual foundation for all QSAR modeling. The biological relevance of QSAR predictions has evolved from simple correlative relationships to mechanistically grounded models informed by adverse outcome pathways (AOPs) and mode-of-action classifications [22] [20].

Modern QSAR implementations increasingly incorporate biological context through various strategies. The Bio-QSAR approach enhances predictive power by integrating biological information about target species alongside chemical descriptors, resulting in models with exceptional accuracy (R² up to 0.92) for aquatic toxicity prediction [18]. Similarly, mode-of-action based QSARs first classify chemicals by their toxicological mechanism before applying specific quantitative models, thereby incorporating biological context directly into the prediction framework [20].

Molecular Initiating Events and Adverse Outcome Pathways

At the most fundamental biological level, QSAR predictions often target molecular initiating events (MIEs) within adverse outcome pathways (AOPs) [22]. These MIEs represent the initial interaction between a chemical and biological macromolecules that triggers subsequent cascades of effects at higher levels of biological organization. For endocrine-disrupting chemicals, for instance, MIEs may include binding to hormone receptors, interference with hormone synthesis, or disruption of transport proteins [22].

Recent reviews have identified 86 different QSAR models specifically addressing thyroid hormone system disruption, focusing on MIEs such as receptor binding and transport protein interactions [22]. These models demonstrate the trend toward biologically mechanistic QSAR development that aligns with AOP frameworks to enhance regulatory utility and scientific validity.

Incorporating Biological Complexity in Next-Generation QSAR

Advanced QSAR methodologies now explicitly address biological complexity through several innovative approaches:

- Bio-QSARs: These models incorporate biological descriptors such as species taxonomy, physiological traits, and Dynamic Energy Budget parameters alongside chemical descriptors to enable both cross-chemical and cross-species predictions [18].

- QSAR-QSIIR Hybrid Models: By integrating quantitative structure-in vitro-in vivo relationships, these approaches bridge between high-throughput in vitro data and in vivo outcomes, improving prediction accuracy for complex endpoints like bioconcentration [19].

- Mixed-Effects Modeling: Techniques like Gaussian Process Boosting accommodate biological variability by combining tree-boosting with mixed-effects modeling, allowing for variable test durations and inter-species differences [18].

The biological basis of QSAR predictions continues to expand with the incorporation of metabolomic information, protein-binding specificities, and pathway-level effects, moving beyond single-target approaches to network-based assessments that better reflect biological systems complexity.

Integrated Workflow for QSAR Modeling

Comprehensive QSAR Methodology

The development of robust QSAR models follows a systematic workflow that integrates descriptor calculation, endpoint selection, and biological validation. Figure 1 summarizes the key stages in this process.

Figure 1: QSAR Modeling Workflow

Experimental Protocols for Key QSAR Endpoints

Bioconcentration Factor (BCF) Prediction Protocol

The following protocol details the methodology for predicting bioconcentration factors using hybrid QSAR-QSIIR approaches as described in recent literature [19]:

Dataset Compilation: Curate a comprehensive dataset of chemicals with experimentally measured BCF values from peer-reviewed literature and regulatory sources. Ensure representation across diverse chemical classes and taxonomic groups.

Descriptor Calculation and Selection:

- Calculate an initial pool of >200 molecular descriptors using software such as Dragon or PaDEL-Descriptor.

- Compute bioactivity descriptors from high-throughput screening assays where available.

- Apply feature selection algorithms to identify the most predictive descriptor subset (typically 15-25 descriptors).

Model Training:

- Implement optimized machine learning algorithms such as 4-MLP (Multi-Layer Perceptron).

- Partition data into training (≈70%), verification (≈15%), and test sets (≈15%) using stratified sampling.

- Train multiple model architectures and select based on verification set performance.

Validation and Application:

- Perform internal validation through k-fold cross-validation (typically 5-10 folds).

- Conduct external validation using the hold-out test set.

- Calculate performance metrics (R², Q², RMSE) and compare against predefined acceptance criteria.

- Apply validated models to predict BCF for target chemicals (e.g., BTEX compounds in aquatic products).
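The partitioning and validation steps of the protocol above can be sketched without external libraries. Several assumptions apply. "Stratified sampling" for a continuous endpoint is implemented here by sorting on the endpoint and splitting each of five value bins 70/15/15. A one-descriptor least-squares line stands in for the MLP, so that the metric code stays short. Q² is computed as 1 − PRESS/SS_tot from k-fold out-of-fold predictions (using the overall mean; other Q² variants exist). The data are hypothetical and noise-free.

```python
import math
import random


def stratified_split(y, fractions=(0.70, 0.15, 0.15), n_bins=5, seed=0):
    """Return (train, verification, test) index lists. Samples are sorted by
    endpoint value and cut into n_bins bins; each bin is shuffled and divided
    in the requested proportions so every set spans the endpoint range."""
    rng = random.Random(seed)
    order = sorted(range(len(y)), key=lambda i: y[i])
    bin_size = math.ceil(len(order) / n_bins)
    train, verification, test = [], [], []
    for start in range(0, len(order), bin_size):
        members = order[start:start + bin_size]
        rng.shuffle(members)
        n_train = round(fractions[0] * len(members))
        n_verif = round(fractions[1] * len(members))
        train += members[:n_train]
        verification += members[n_train:n_train + n_verif]
        test += members[n_train + n_verif:]
    return train, verification, test


def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept (stand-in model)."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sxx
    return slope, my - slope * mx


def r2(y_true, y_pred):
    """Coefficient of determination, 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def rmse(y_true, y_pred):
    """Root-mean-square error in endpoint units (e.g. log BCF)."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def q2_kfold(x, y, k=5):
    """Cross-validated Q2 = 1 - PRESS / SS_tot from out-of-fold predictions."""
    press = 0.0
    for fold in range(k):
        held_out = [i for i in range(len(x)) if i % k == fold]
        kept = [i for i in range(len(x)) if i % k != fold]
        slope, intercept = fit_line([x[i] for i in kept], [y[i] for i in kept])
        press += sum((y[i] - (slope * x[i] + intercept)) ** 2 for i in held_out)
    mean = sum(y) / len(y)
    return 1.0 - press / sum((v - mean) ** 2 for v in y)


# Hypothetical data: one descriptor (x) and a noise-free endpoint (y).
x = [0.05 * i for i in range(100)]
y = [0.8 * v + 0.1 for v in x]
train, verification, test = stratified_split(y)
slope, intercept = fit_line([x[i] for i in train], [y[i] for i in train])
y_test = [y[i] for i in test]
y_test_pred = [slope * x[i] + intercept for i in test]
print(len(train), len(verification), len(test))  # 70 15 15
print(r2(y_test, y_test_pred), rmse(y_test, y_test_pred))
print(q2_kfold([x[i] for i in train], [y[i] for i in train], k=5))
```

With real, noisy data, the internal Q² and the external test-set R² would be compared against the predefined acceptance criteria rather than expected to approach 1.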

Environmental Persistence Assessment Protocol

For predicting chemical persistence using QSAR models [4]:

Endpoint Classification: Classify chemicals according to regulatory persistence criteria (e.g., REACH definitions for water, soil, and sediment compartments).

Model Selection: Identify appropriate models based on chemical domain and endpoint specificity:

- Ready Biodegradability IRFMN model (VEGA) for rapid screening.

- BIOWIN model (EPISUITE) for comprehensive persistence assessment.

- Leadscope model (Danish QSAR) for mechanism-based evaluation.

Prediction and Interpretation:

- Execute predictions across multiple models where feasible.

- Apply applicability domain filters to identify reliable predictions.

- Generate consensus predictions from multiple models to reduce uncertainty.

- Classify chemicals according to regulatory thresholds (e.g., persistent, readily biodegradable).
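The "applicability domain filter, then consensus" step can be sketched as a simple majority vote over in-domain predictions. The call labels, the in-domain flags, and the `min_models=2` rule are hypothetical; the real tools report results and domain assessments in their own formats. Majority voting is only one possible consensus rule, and weighted schemes are also used.

```python
from collections import Counter


def consensus_call(predictions, min_models=2):
    """predictions: list of (model_name, call, in_domain) tuples.

    Out-of-domain predictions are discarded and the remaining calls are
    combined by simple majority. Ties, or fewer than min_models reliable
    predictions, return 'inconclusive' to flag the substance for further work."""
    reliable = [call for _model, call, in_domain in predictions if in_domain]
    if len(reliable) < min_models:
        return "inconclusive"
    ranked = Counter(reliable).most_common()
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return "inconclusive"
    return ranked[0][0]


# Hypothetical outputs for one substance.
example = [
    ("Ready Biodegradability IRFMN (VEGA)", "readily biodegradable", True),
    ("BIOWIN (EPISUITE)", "readily biodegradable", True),
    ("Leadscope (Danish QSAR)", "not readily biodegradable", False),
]
print(consensus_call(example))  # readily biodegradable
```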

Advanced Machine Learning Approaches in QSAR

Contemporary QSAR modeling employs diverse machine learning algorithms, each with specific strengths for different prediction tasks:

Table 3: Machine Learning Algorithms in QSAR Modeling

| Algorithm Type | Examples | Advantages | Limitations | Typical Applications |
|---|---|---|---|---|
| Linear Methods | Multiple Linear Regression (MLR), Partial Least Squares (PLS) | High interpretability; low risk of overfitting when few descriptors are used | Limited capacity for complex non-linear relationships | Single-mechanism chemical sets |
| Tree-Based Methods | Random Forest, Gradient Boosting | Handles non-linear relationships, robust to outliers | Lower interpretability, requires careful tuning | Heterogeneous chemical datasets |
| Neural Networks | Multi-Layer Perceptron (MLP), Deep Learning | Captures complex interactions, high predictive power | High computational demand, risk of overfitting | Large-scale chemical datasets |
| Hybrid Methods | Gaussian Process Boosting, Mixed-Effects ML | Accommodates hierarchical data, biological variability | Implementation complexity | Cross-species toxicity prediction [18] |

The hierarchical methodology implemented in the EPA's Toxicity Estimation Software Tool (TEST) exemplifies the integration of multiple algorithms, where predictions are generated through weighted averages of models applied to structurally similar chemical clusters [20].

Research Reagent Solutions: Essential Tools for QSAR Implementation

The practical implementation of QSAR modeling relies on specialized software tools and computational resources that constitute the essential "reagent solutions" for in silico research. The following table details key resources available to researchers:

Table 4: Essential Research Reagent Solutions for QSAR Modeling

| Tool Category | Specific Tools | Key Functionality | Application in Environmental QSAR |
|---|---|---|---|
| Descriptor Calculation | PaDEL-Descriptor, Dragon, RDKit | Generate molecular descriptors from chemical structures | Calculate 1D-3D molecular features for model development |
| Integrated QSAR Platforms | QSAR Toolbox, VEGA, TEST | Comprehensive workflows from data collection to prediction | Regulatory assessment, data gap filling for hazard endpoints [14] [20] |
| Specialized Prediction Tools | EPI Suite, ADMETLab 3.0, Danish QSAR | Endpoint-specific model implementation | Persistence, bioaccumulation, toxicity prediction [4] |
| Model Development Environments | R, Python (scikit-learn), Weka | Custom model building and validation | Algorithm implementation, feature selection, performance evaluation |
| Data Resources | QSAR Toolbox Databases (3.2M+ data points) | Experimental data for training and validation | Read-across, category development, model training [14] |

These tools collectively enable the entire QSAR workflow, from initial data collection and descriptor calculation through model development, validation, and application. The QSAR Toolbox deserves particular emphasis as it provides access to approximately 63 databases containing over 155,000 chemicals and 3.3 million experimental data points, making it an invaluable resource for environmental chemical assessment [14].

Molecular descriptors, biological endpoints, and the mechanistic basis for prediction constitute the foundational triad of QSAR modeling in environmental chemicals research. Molecular descriptors provide the quantitative translation of chemical structure into model-ready parameters, while endpoints represent the biological phenomena and environmental behaviors that models aim to predict. The biological basis connecting these elements continues to evolve from correlative relationships toward mechanistically grounded predictions informed by adverse outcome pathways and mode-of-action classification.

Contemporary QSAR methodologies have achieved significant advances through the integration of machine learning, the development of hybrid QSAR-QSIIR approaches, and the creation of biologically enhanced Bio-QSAR models. These innovations have substantially expanded the applicability domains and predictive power of QSAR models while enhancing their biological relevance. The systematic workflow encompassing data curation, descriptor selection, model training, and rigorous validation remains essential for developing reliable predictions.

As QSAR modeling continues to evolve, emerging trends point toward greater incorporation of biological complexity, expanded applicability domains, and increased integration with new approach methodologies (NAMs). These developments will further solidify the role of QSAR as an indispensable tool in environmental chemical assessment, supporting the transition toward more efficient, ethical, and mechanistically informed chemical safety evaluation.

The challenge of assessing the potential hazards of tens of thousands of chemicals in the environment with limited traditional toxicity data has driven a paradigm shift in toxicology. The Adverse Outcome Pathway (AOP) framework has emerged as a critical tool for organizing biological information to support chemical safety assessment [23] [24]. This conceptual framework provides a structured approach for connecting mechanistic data to adverse outcomes of regulatory concern, thereby enabling more predictive toxicology. Within the context of Quantitative Structure-Activity Relationship (QSAR) modeling for environmental chemicals research, AOPs offer a biologically-grounded scaffold for interpreting computational predictions [25]. By framing chemical perturbations within a causal pathway leading to adverse effects, the AOP framework bridges the gap between molecular interactions predicted by QSAR models and adverse outcomes relevant to risk assessors and regulators.

The AOP concept represents an evolution of prior pathway-based approaches, building upon mode of action (MOA) analysis to create a chemically-agnostic, modular framework for organizing toxicological knowledge [26]. Its development aligns with the vision of "Toxicity Testing in the 21st Century," which emphasizes the use of in vitro methods and computational approaches to increase the depth and breadth of toxicological information while reducing animal testing [26]. For QSAR modelers working with environmental chemicals, the AOP framework provides the contextual basis for relating chemical structure to biological activity across multiple levels of biological organization.

Core Concepts and Principles of the AOP Framework

Foundational Definitions and Components

An Adverse Outcome Pathway is a conceptual construct that depicts a sequential chain of causally linked events beginning with a molecular interaction and culminating in an adverse outcome relevant to risk assessment [23] [27]. The core components of an AOP include:

- Molecular Initiating Event (MIE): The initial point of chemical interaction with a biomolecule within an organism, such as a chemical binding to a specific receptor or enzyme [23] [27]. The MIE represents the direct interaction between a stressor (chemical or non-chemical) and a biological target.

- Key Events (KEs): Measurable biological changes that occur at different levels of biological organization (cellular, tissue, organ) following the MIE [23]. These events are essential for progression along the pathway.

- Key Event Relationships (KERs): Descriptions of the causal or predictive linkages between two key events, explaining how one event leads to the next [23] [27]. KERs are supported by evidence of biological plausibility, empirical support, and quantitative understanding.

- Adverse Outcome (AO): The adverse effect at the individual or population level that is relevant for regulatory decision-making, such as impaired reproduction, organ toxicity, or population decline [23] [27].

The AOP framework is intentionally chemically-agnostic, meaning that it describes biological response pathways that can be initiated by any stressor capable of interacting with the specified MIE [23] [26]. This separation of the biological pathway from specific chemical properties enables broader application and facilitates the use of AOPs for predicting effects of multiple chemicals sharing a common MIE.

The "Biological Dominos" Analogy and AOP Networks

The sequential nature of AOPs is often described using a "biological dominos" analogy [23]. In this analogy, the MIE represents the first domino in a sequence. If this initial interaction is sufficiently strong, it triggers a cascade of subsequent events (key events) at increasingly complex levels of biological organization, ultimately leading to the adverse outcome. Each key event is viewed as "essential," meaning if it does not occur, none of the downstream key events will follow [23].

While individual AOPs are often depicted as linear sequences, the framework accommodates biological complexity through AOP networks [23] [24]. These networks consist of multiple AOPs linked by shared key events and key event relationships, creating a more realistic representation of biological systems where pathways intersect and interact. As more AOPs are developed, these networks become increasingly comprehensive, capturing the complexity of real biological systems and enabling predictions of interactive effects between different stressors [23].

Table 1: Core Components of an Adverse Outcome Pathway

| Component | Definition | Example |
|---|---|---|
| Molecular Initiating Event (MIE) | Initial interaction between stressor and biomolecule | Chemical binding to estrogen receptor |
| Key Event (KE) | Measurable biological change at different organizational levels | Altered gene expression in liver cells |
| Key Event Relationship (KER) | Causal linkage between two key events | How altered gene expression leads to tissue damage |
| Adverse Outcome (AO) | Adverse effect relevant for regulatory decision-making | Impaired reproduction in fish populations |
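The components in Table 1 and the network concept described above map naturally onto a directed graph. In this graph, events are nodes and key event relationships are edges. The sketch below uses a small, hypothetical and deliberately simplified network in which two MIEs converge on a shared key event. Event labels are illustrative and not taken from any AOP-Wiki entry. The code finds the events shared by both pathways, which are the junctions that turn linear AOPs into a network. It also illustrates the "biological dominos" idea: blocking an essential key event prevents every downstream event.

```python
from collections import deque

# Hypothetical, simplified AOP network: upstream event -> downstream events (KERs).
KERS = {
    "MIE: estrogen receptor binding": ["KE: altered hepatic gene expression"],
    "MIE: aromatase inhibition": ["KE: reduced plasma estradiol"],
    "KE: altered hepatic gene expression": ["KE: altered vitellogenin production"],
    "KE: reduced plasma estradiol": ["KE: altered vitellogenin production"],
    "KE: altered vitellogenin production": ["AO: impaired reproduction"],
}


def downstream(kers, start, blocked=frozenset()):
    """All events reachable from `start` along KERs without passing a blocked event."""
    if start in blocked:
        return set()
    seen = {start}
    queue = deque([start])
    while queue:
        event = queue.popleft()
        for nxt in kers.get(event, []):
            if nxt not in seen and nxt not in blocked:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def leads_to(kers, mie, ao, blocked=frozenset()):
    """True if the adverse outcome is reachable from the MIE."""
    return ao in downstream(kers, mie, blocked)


# Events reachable from both MIEs are where the two AOPs join into a network.
shared = (downstream(KERS, "MIE: estrogen receptor binding")
          & downstream(KERS, "MIE: aromatase inhibition"))
print(sorted(shared))
```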

Fundamental AOP Principles

The development and application of AOPs are guided by five fundamental principles that ensure scientific rigor and practical utility [23] [26]:

- AOPs are not stressor-specific: AOPs depict generalized sequences of biological effects that can be initiated by any stressor capable of interacting with the specified MIE, enabling application to multiple chemicals [23].

- AOPs are modular: Each AOP consists of units (key events) and linkages (key event relationships) that can be reassembled in different configurations, facilitating the construction of AOP networks [23].

- AOPs are living documents: As knowledge evolves, AOPs can be updated, refined, or expanded to incorporate new evidence and understanding [23].

- AOP networks are the functional unit for prediction: Individual AOPs are simplifications of biology, while networks of interconnected AOPs provide more realistic and comprehensive models for prediction [23].

- AOPs are tools for evaluating biological effects, not risk assessments: AOPs inform hazard identification but do not explicitly address exposure, which is required for complete risk assessment [23].

The AOP Framework and QSAR Modeling: A Strategic Integration

Bridging Molecular Interactions and Adverse Outcomes

The integration of QSAR modeling with the AOP framework creates a powerful approach for predicting chemical hazards [25]. QSAR models excel at predicting molecular interactions—precisely the types of events that serve as MIEs in AOPs. By positioning QSAR predictions within the context of an AOP, researchers can establish a causal connection between predicted molecular interactions and adverse outcomes of regulatory concern [25]. This integration addresses a fundamental challenge in computational toxicology: how to interpret molecular-level predictions in terms of meaningful adverse effects at the organism or population level.

The AOP framework simplifies complex systemic endpoints into discrete, measurable events at the molecular and cellular levels [25]. This simplification makes these endpoints more amenable to QSAR modeling, as relationships between chemical structure and these simpler events are often more straightforward to capture than relationships with complex apical outcomes [25]. For environmental chemicals research, this approach enables prioritization of chemicals based on their potential to initiate adverse outcome pathways, guiding targeted testing and risk assessment efforts.

AOP-Informed QSAR Model Development

The development of QSAR models for predicting MIE-related activity involves several methodological considerations [25]:

- Target Selection: AOP knowledge bases identify specific protein targets (receptors, enzymes, transporters) associated with MIEs upstream of adverse outcomes such as liver steatosis, cholestasis, nephrotoxicity, and developmental neurotoxicity [25].

- Bioactivity Data Curation: High-quality bioactivity data from sources like ChEMBL are curated and converted to binary classifications (active/inactive) based on standardized activity thresholds (e.g., 10,000 nM) [25].

- Model Building and Validation: Multiple machine learning algorithms are applied with comprehensive hyperparameter optimization, followed by rigorous external validation to assess predictive performance [25].

This approach has demonstrated strong predictive performance, with balanced accuracy exceeding 0.80 for most MIE targets, highlighting the utility of AOP-informed QSAR models for chemical screening and prioritization [25].
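The data-curation and evaluation steps above can be illustrated with a short sketch that binarizes potency values at 10,000 nM (10 µM) and scores a classifier by balanced accuracy, the mean of sensitivity and specificity. The sketch rests on three assumptions: lower values mean higher potency (as for IC50, EC50 or Ki), values exactly at the threshold count as active, and the measurements and model calls are hypothetical.

```python
ACTIVITY_THRESHOLD_NM = 10_000.0  # 10,000 nM = 10 µM


def binarize(potencies_nm, threshold_nm=ACTIVITY_THRESHOLD_NM):
    """Label 1 (active) if the potency value is at or below the threshold, else 0."""
    return [1 if value <= threshold_nm else 0 for value in potencies_nm]


def balanced_accuracy(y_true, y_pred):
    """Mean of sensitivity and specificity; less misleading than plain accuracy
    when actives and inactives are imbalanced."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    positives = sum(y_true)
    negatives = len(y_true) - positives
    if positives == 0 or negatives == 0:
        raise ValueError("balanced accuracy needs both active and inactive examples")
    return 0.5 * (tp / positives + tn / negatives)


measured_nm = [50, 800, 25_000, 120_000, 9_000, 15_000]  # hypothetical potencies
y_true = binarize(measured_nm)
y_pred = [1, 1, 0, 1, 0, 0]  # hypothetical model calls
score = balanced_accuracy(y_true, y_pred)
print(y_true, round(score, 3))
```

Here the model finds two of three actives and two of three inactives, giving a balanced accuracy of about 0.67, below the 0.80 level reported for most MIE targets.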

Quantitative AOPs: From Qualitative Description to Predictive Modeling

The Spectrum of Quantitative Approaches

While qualitative AOPs provide valuable conceptual frameworks, the development of quantitative AOPs (qAOPs) represents a critical advancement for predictive toxicology [28]. Quantitative AOPs incorporate mathematical relationships that describe how changes in the magnitude or timing of upstream key events predict changes in downstream events, ultimately enabling prediction of the adverse outcome under specific exposure conditions [28]. The continuum of quantitative approaches includes:

- Quantitative Key Event Relationships (qKERs): Mathematical models describing the relationship between two specific key events, which may take the form of regression equations, response-response relationships, or more complex computational models [28].

- Partial qAOPs: Quantitative models that describe relationships for more than one key event relationship within an AOP but not the entire pathway [28].

- Full qAOP models: Comprehensive mathematical constructs that model the dose-response or response-response relationships for all key event relationships in an AOP [28].

The selection of modeling approaches for qAOP development depends on the available data and the specific questions being addressed. Useful methods range from statistical models and Bayesian networks to ordinary differential equations and individual-based models [28].
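To make chained qKERs concrete, the sketch below composes three relationships into a simple full-chain model. First, a hypothetical external concentration is converted to occupancy at the MIE. Occupancy then drives a downstream key event. Finally, the key event sets the probability of the adverse outcome. The first two steps use Hill-type response-response functions and the last uses a logistic function. All function names and parameter values are illustrative assumptions. A real qAOP would derive each relationship and its parameters from data and might use Bayesian networks or ODEs instead.

```python
import math


def hill(x: float, top: float, ec50: float, n: float) -> float:
    """Hill-type response-response function rising from 0 toward `top`."""
    if x <= 0:
        return 0.0
    return top * x**n / (ec50**n + x**n)


# Hypothetical parameters throughout, for illustration only.
def mie_occupancy(conc_um: float) -> float:
    """Dose-response at the MIE: external concentration (µM) -> fractional target occupancy (0-1)."""
    return hill(conc_um, top=1.0, ec50=2.0, n=1.0)


def key_event_change(occupancy: float) -> float:
    """qKER 1: occupancy -> % change in a downstream key event relative to control."""
    return hill(occupancy, top=100.0, ec50=0.5, n=2.0)


def adverse_outcome_probability(ke_change_pct: float) -> float:
    """qKER 2: key-event magnitude -> probability of the adverse outcome (logistic)."""
    return 1.0 / (1.0 + math.exp(-(ke_change_pct - 60.0) / 10.0))


def predict_ao(conc_um: float) -> float:
    """Full chain: compose the relationships from exposure to adverse outcome."""
    return adverse_outcome_probability(key_event_change(mie_occupancy(conc_um)))


if __name__ == "__main__":
    for conc in (0.1, 1.0, 2.0, 10.0, 100.0):
        print(f"{conc:>6} µM -> P(AO) = {predict_ao(conc):.3f}")
```

Composing small functions mirrors the partial-to-full qAOP continuum. Each qKER can be tested or replaced on its own before the chain is trusted end to end.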

Building Quantitative AOP Models

The development of qAOP models follows a systematic process [28]:

- Question Formulation: Clearly define the assessment problem and the level of biological fidelity needed for the model to support decision-making.

- Evaluation of Applicability Domain: Assess whether the biological domain of the AOP (species, life stages, biological organization) aligns with the assessment question.

- Model Structure Development: Define the mathematical relationships between key events based on existing knowledge and data.

- Parameterization: Estimate model parameters using available experimental data.

- Validation and Refinement: Test model predictions against independent data and refine as needed.
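The sketch below illustrates the parameterization step. It estimates the two parameters of a Hill-type qKER, EC50 and slope n, from fractional response data using the classical Hill-plot linearization, ln(y/(1 − y)) = n·ln(x) − n·ln(EC50). This is an assumed, deliberately simple choice. Nonlinear least squares or Bayesian estimation generally handle noisy data and parameter uncertainty better. The data are synthetic.

```python
import numpy as np


def fit_hill_linearized(x, y):
    """Estimate (ec50, n) for y = x**n / (ec50**n + x**n) with 0 < y < 1.

    Hill-plot linearization: ln(y / (1 - y)) = n * ln(x) - n * ln(ec50),
    so a straight-line fit gives slope n and intercept -n * ln(ec50).
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    if np.any(x <= 0) or np.any((y <= 0) | (y >= 1)):
        raise ValueError("need x > 0 and fractional responses strictly between 0 and 1")
    slope, intercept = np.polyfit(np.log(x), np.log(y / (1.0 - y)), 1)
    n = slope
    ec50 = float(np.exp(-intercept / n))
    return ec50, float(n)


if __name__ == "__main__":
    # Synthetic upstream key-event levels and downstream fractional responses (true ec50 = 2, n = 1.5).
    x = np.array([0.5, 1.0, 2.0, 4.0, 8.0])
    y = x**1.5 / (2.0**1.5 + x**1.5)
    ec50, n = fit_hill_linearized(x, y)
    print(f"EC50 ~ {ec50:.3f}, n ~ {n:.3f}")  # EC50 ~ 2.000, n ~ 1.500
```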

Toxicokinetic models play an essential role in qAOPs by linking external exposures to internal doses at the site of the MIE, enabling extrapolation from in vitro to in vivo systems and across species [28].
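A minimal example of this toxicokinetic link is the one-compartment, first-order model commonly used for fish bioconcentration. It assumes a constant water concentration C_w, an uptake rate constant k1, and an elimination rate constant k2. The internal concentration then follows dC_int/dt = k1·C_w − k2·C_int, whose solution from C_int(0) = 0 is C_int(t) = (k1/k2)·C_w·(1 − e^(−k2·t)). The sketch below evaluates this solution with hypothetical parameter values. It ignores metabolism, growth dilution, and tissue distribution, any of which a real qAOP toxicokinetic component might need.

```python
import math


def internal_concentration(c_water: float, k1: float, k2: float, t_days: float) -> float:
    """Whole-body concentration under constant exposure, starting from C_int(0) = 0.

    c_water: external water concentration (e.g., µg/L)
    k1: uptake rate constant (L/kg/day); k2: elimination rate constant (1/day)
    Returns concentration in µg/kg for the example units above.
    """
    if k2 <= 0:
        raise ValueError("k2 must be positive")
    return (k1 / k2) * c_water * (1.0 - math.exp(-k2 * t_days))


def days_to_fraction_of_steady_state(k2: float, fraction: float = 0.95) -> float:
    """Time for C_int to reach `fraction` of its steady state, (k1/k2) * c_water."""
    return -math.log(1.0 - fraction) / k2


if __name__ == "__main__":
    # Hypothetical parameters: k1 = 500 L/kg/day, k2 = 0.1 /day, so the kinetic BCF = k1/k2 = 5000 L/kg.
    c_w, k1, k2 = 0.2, 500.0, 0.1
    for t in (1, 7, 28, 60):
        print(f"day {t:>2}: {internal_concentration(c_w, k1, k2, t):8.1f} µg/kg")
    print(f"t95 = {days_to_fraction_of_steady_state(k2):.1f} days")  # t95 = 30.0 days
```

Because the time to steady state depends only on k2, slowly eliminated chemicals can take much longer to reach the internal dose that drives the MIE than short in vitro exposures suggest.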

Table 2: Quantitative Modeling Approaches for AOP Development

| Model Type | Description | Application Context |
|---|---|---|
| Statistical Models | Regression-based relationships between key events | When empirical data are available but mechanistic understanding is limited |
| Bayesian Networks | Probabilistic graphs representing causal relationships | When dealing with uncertainty and multiple influencing factors |
| Ordinary Differential Equations | Systems of equations describing dynamic biological processes | When temporal dynamics and feedback mechanisms are important |
| Toxicokinetic-Toxicodynamic Models | Combined models of chemical disposition and biological effects | When extrapolating across exposure scenarios or species |

Practical Applications and Case Studies

Chemical Prioritization and Screening

The AOP framework provides a biologically grounded approach for prioritizing chemicals for further testing [23] [24]. By identifying MIEs linked to adverse outcomes of concern, screening programs can focus on detecting these initiating events using efficient in vitro or in silico methods [24]. For example, the U.S. Environmental Protection Agency has used AOPs to prioritize chemicals for endocrine disruptor screening, focusing on MIEs such as estrogen receptor binding and steroidogenesis inhibition [24]. This approach allows thousands of chemicals to be evaluated using high-throughput screening methods, with traditional testing reserved for chemicals that show activity in these initial screens.
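The screening logic described here, in which high-throughput MIE assays filter chemicals before traditional testing, can be sketched as a simple ranking. This is a hypothetical illustration. The assay names, the chemical identifiers, and the rule of ranking by the number of active MIE assays are assumptions chosen for clarity, and they do not represent the EPA's actual prioritization procedure.

```python
from dataclasses import dataclass, field

# Hypothetical MIE assays linked to endocrine-related AOPs (illustrative names only).
MIE_ASSAYS = frozenset({"ER_binding", "ER_agonism", "aromatase_inhibition", "steroidogenesis_inhibition"})


@dataclass
class ScreeningResult:
    chemical_id: str
    active_assays: set[str] = field(default_factory=set)


def prioritize(results: list[ScreeningResult], min_hits: int = 1) -> list[tuple[str, int]]:
    """Return (chemical_id, n_MIE_hits) for chemicals meeting `min_hits`, most hits first.

    Chemicals below the cutoff are set aside; those returned would be candidates
    for follow-up (e.g., traditional) testing.
    """
    ranked = []
    for r in results:
        hits = len(r.active_assays & MIE_ASSAYS)  # ignore actives in assays unrelated to the MIEs
        if hits >= min_hits:
            ranked.append((r.chemical_id, hits))
    # Sort by descending hit count, then by identifier for a reproducible order.
    ranked.sort(key=lambda item: (-item[1], item[0]))
    return ranked


if __name__ == "__main__":
    results = [
        ScreeningResult("chem_1", {"ER_binding", "ER_agonism"}),
        ScreeningResult("chem_2", set()),
        ScreeningResult("chem_3", {"aromatase_inhibition", "cytotoxicity"}),
    ]
    print(prioritize(results))  # [('chem_1', 2), ('chem_3', 1)]
```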

In the pharmaceutical sector, AOPs related to organ-specific toxicities (e.g., liver steatosis, cholestasis, nephrotoxicity) support early safety assessment by identifying potential MIEs that can be screened during drug development [25]. QSAR models trained to predict activity against MIE-related targets enable computational screening of compound libraries, flagging structures with potential safety liabilities before significant resources are invested in their development [25].

Cross-Species Extrapolation

A significant challenge in both human health and ecological risk assessment involves extrapolating toxicity data from tested species to untested species [23]. The AOP framework supports cross-species extrapolation by focusing on the conservation of key events and key event relationships across species [23]. Tools such as the U.S. EPA's SeqAPASS can evaluate the structural and functional conservation of proteins involved in MIEs across species, informing the domain of applicability for specific AOPs [23]. For example, if a fish species used in toxicity testing and an untested endangered fish species have conserved estrogen receptors, an AOP linking estrogen receptor activation to reproductive impairment would support extrapolation between these species [23].
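SeqAPASS itself works at three levels: BLAST-based comparison of primary protein sequences, comparison of conserved functional domains, and comparison of individual key amino acid residues. The sketch below is only a toy stand-in for the sequence- and residue-level ideas, and it assumes the sequences are already aligned. It computes percent identity over aligned positions and checks whether hypothetical key residues, such as ligand-binding positions, are conserved. The sequences and residue positions are invented for illustration.

```python
def percent_identity(aligned_a: str, aligned_b: str) -> float:
    """Percent identity over aligned columns, skipping columns with a gap ('-') in either sequence."""
    if len(aligned_a) != len(aligned_b):
        raise ValueError("sequences must be pre-aligned to the same length")
    compared = matches = 0
    for a, b in zip(aligned_a, aligned_b):
        if a == "-" or b == "-":
            continue
        compared += 1
        matches += a == b  # bool counts as 0 or 1
    return 100.0 * matches / compared if compared else 0.0


def key_residues_conserved(reference: str, query: str, positions: list[int]) -> bool:
    """True if the query matches the reference at every (0-based) aligned key position."""
    return all(reference[i] == query[i] for i in positions)


if __name__ == "__main__":
    tested_species = "MKTAYIAKQR"    # toy aligned fragment, not a real receptor sequence
    untested_species = "MKTSYIAKQR"
    print(percent_identity(tested_species, untested_species))                # 90.0
    print(key_residues_conserved(tested_species, untested_species, [1, 5]))  # True
```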

Assessment of Chemical Mixtures

Predicting the toxicity of chemical mixtures represents a particular challenge in risk assessment. AOP networks provide insights into mixture effects by identifying points of convergence where chemicals with different MIEs may impact shared key events [23]. If two chemicals affect the same key event through different MIEs, they may exhibit additive effects even if their initial molecular targets differ [23]. This understanding helps design efficient testing strategies for mixtures by focusing on key events where interactions are most likely to occur.
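One widely used way to quantify this kind of additivity is concentration addition. It assumes the components behave as dilutions of one another with respect to the shared key event, an assumption that should be checked case by case. The model is expressed in toxic units, TU_i = c_i / EC50_i. A mixture is predicted to produce the 50% effect when the toxic units sum to 1. For fixed component fractions p_i, the mixture EC50 is (Σ p_i / EC50_i)^-1. The sketch below uses hypothetical EC50 values. Independent action is the usual alternative model for chemicals assumed to act dissimilarly.

```python
def toxic_units(concentrations: list[float], ec50s: list[float]) -> float:
    """Sum of toxic units, TU_i = c_i / EC50_i (same concentration units for both)."""
    if len(concentrations) != len(ec50s):
        raise ValueError("one EC50 per component is required")
    return sum(c / e for c, e in zip(concentrations, ec50s))


def mixture_ec50_ca(fractions: list[float], ec50s: list[float]) -> float:
    """Concentration-addition EC50 for a mixture with fixed fractions p_i summing to 1."""
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("mixture fractions must sum to 1")
    return 1.0 / sum(p / e for p, e in zip(fractions, ec50s))


if __name__ == "__main__":
    ec50s = [10.0, 40.0]  # hypothetical EC50s (mg/L) of two chemicals acting on a shared key event
    print(toxic_units([5.0, 20.0], ec50s))                # 1.0 -> predicted 50% effect
    print(round(mixture_ec50_ca([0.5, 0.5], ec50s), 6))   # 16.0 (mg/L)
```

In this example, neither chemical alone reaches its EC50 at the tested concentrations. Under concentration addition, however, the mixture is predicted to produce a 50% effect.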

Key Databases and Computational Tools

Successful application of the AOP framework in research and regulatory contexts relies on specialized tools and resources that support AOP development, evaluation, and application:

- AOP-Knowledge Base (AOP-KB): The main AOP database managed by the Organisation for Economic Co-operation and Development (OECD), providing a centralized platform for AOP development and sharing [27]. The AOP-KB includes approximately 460 AOPs, 1,700 key events, and over 2,500 key event relationships [25].

- AOP-Wiki: An interactive, collaborative platform for AOP development where researchers can start building new AOPs, add information to existing AOPs, or find information on established AOPs [23] [25].

- ChEMBL Database: A manually curated database of bioactive molecules with drug-like properties, containing bioactivity data for targets relevant to MIEs [25].

- SeqAPASS Tool: A computational tool developed by the U.S. EPA that evaluates protein sequence similarity across species to inform the domain of applicability for AOPs [23].

Table 3: Essential Research Resources for AOP Development and Application

| Resource Category | Specific Tools/Databases | Primary Function |
|---|---|---|
| AOP Repositories | AOP-KB, AOP-Wiki | Collaborative development and storage of AOPs |
| Bioactivity Data | ChEMBL, ToxCast/Tox21 | Source of MIE-related bioactivity data |
| Chemical Information | PubChem, ACToR | Chemical structure and property data |
| Cross-Species Extrapolation | SeqAPASS | Assessment of functional conservation across species |
| QSAR Modeling | OECD QSAR Toolbox | Chemical category formation and read-across |

Experimental Protocols for AOP Development

The development of scientifically robust AOPs follows systematic protocols for evidence collection and evaluation [29]:

- Weight-of-Evidence Evaluation: A structured approach to evaluating the scientific support for key event relationships using modified Bradford Hill considerations [29]. This includes assessing biological plausibility, essentiality, empirical support, consistency, and analogies.

- Evidence Categorization: Classifying evidence based on study type (in vitro, in vivo, computational) and quality to transparently communicate the strength of support for each key event relationship.

- Uncertainty Characterization: Explicitly documenting uncertainties and knowledge gaps to guide future research and inform appropriate application of the AOP for decision-making.

Case studies illustrate how these protocols are applied in practice. For example, the development of AOPs for skin sensitization involved systematic evaluation of mechanistic data linking covalent protein binding to the activation of inflammatory responses and ultimately allergic responses [24]. This AOP has supported the development and validation of in vitro assays that can now replace traditional animal tests for skin sensitization assessment [24].

The Adverse Outcome Pathway framework represents a transformative approach for organizing toxicological knowledge to support predictive toxicology and risk assessment. By providing a structured representation of the causal connections between molecular initiating events and adverse outcomes, AOPs create a critical bridge between computational predictions (including QSAR models) and regulatory decisions. The integration of QSAR modeling with the AOP framework is particularly powerful for environmental chemicals research, as it enables interpretation of molecular-level predictions in the context of biologically plausible pathways to adverse effects.

As the field advances, several areas represent promising directions for future development. The construction of quantitative AOP models will enhance predictive capability by enabling dose-response predictions and identification of points of departure for risk assessment [28]. The expansion of AOP networks will better capture the complexity of biological systems and support prediction of mixture effects [23]. Continued development of computational tools for AOP development and application will increase accessibility and usability for researchers and regulators [25].

For QSAR modelers working with environmental chemicals, the AOP framework provides both context and direction: context for interpreting model predictions in terms of toxicological significance, and direction for focusing modeling efforts on molecular interactions with established connections to adverse outcomes. As both AOP development and computational modeling capabilities advance, their integration will play an increasingly important role in enabling efficient, mechanistically informed assessment of chemical hazards.

Building and Applying QSAR Models: From Algorithm Selection to Real-World Use Cases

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone of modern computational toxicology and environmental chemistry, providing crucial methodologies for predicting the fate and effects of chemicals when experimental data are limited or unavailable. The fundamental principle of QSAR is that the biological activity or physicochemical property of a molecule can be correlated with its structural and molecular features through statistical or machine learning models [30]. In the context of environmental research, this approach has become increasingly vital for regulatory compliance, particularly with growing restrictions on animal testing and the need to assess the thousands of chemicals in commercial use [4]. The European Union's ban on animal testing for cosmetics, for instance, has propelled the adoption of in silico predictive tools like QSAR as essential components for environmental risk assessment of cosmetic ingredients [4].

The evolution of QSAR has progressed from simple regression analyses of small series of structurally similar (congeneric) compounds to sophisticated machine learning techniques capable of analyzing large, structurally diverse datasets [30]. This transformation has been driven by interdisciplinary breakthroughs and community initiatives, positioning QSAR as a powerful tool for modeling the biophysical properties of numerous chemicals and assessing potential impacts of medicines, chemicals, and nanomaterials on human health and ecosystems [30]. For environmental scientists, QSAR models offer the ability to predict critical endpoints such as chemical persistence, bioaccumulation potential, mobility, and toxicity, thereby enabling proactive risk assessment and informed regulatory decision-making [4].

Traditional QSAR Approaches

Traditional QSAR methodologies established the foundation for correlating molecular structure with biological activity through interpretable mathematical relationships. These approaches typically rely on predefined molecular descriptors and linear statistical methods that provide transparent and mechanistically understandable models.

Historical Development and Fundamental Principles

QSAR was first established by Corwin Hansch as a natural extension of physical chemistry into the field of virtual drug screening [30]. Early QSAR approaches relied on traditional machine learning methods and interpretable, expert-defined features, which limited their versatility and accuracy across broader chemical domains [30]. The fundamental principle underlying all QSAR approaches is that molecules with similar structural features are expected to exhibit similar biological activities or physicochemical properties, a concept formally known as the similarity principle in chemical modeling [31]. This principle forms the theoretical basis for both traditional and modern QSAR approaches, though implementation strategies have evolved significantly.
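In practice, the similarity principle is usually applied by computing a similarity coefficient on binary structural fingerprints. The most common choice is the Tanimoto coefficient, |A ∩ B| / |A ∪ B|. The sketch below represents fingerprints as sets of "on" bit positions and predicts a property from the single most similar training compound, a minimal form of nearest-neighbor read-across. The fingerprints and property values are hypothetical. In practice, fingerprints would be generated by a cheminformatics toolkit, and predictions would usually draw on several neighbors and an applicability-domain check.

```python
def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    """Tanimoto (Jaccard) coefficient between two fingerprints given as sets of on-bits."""
    union = fp_a | fp_b
    if not union:
        return 0.0  # convention for two empty fingerprints (an assumption)
    return len(fp_a & fp_b) / len(union)


def nearest_neighbor_predict(
    query: set[int], training: dict[str, tuple[set[int], float]]
) -> tuple[str, float, float]:
    """Return (neighbor_id, similarity, predicted_value) from the most similar training compound."""
    best_id, (best_fp, best_value) = max(
        training.items(), key=lambda item: tanimoto(query, item[1][0])
    )
    return best_id, tanimoto(query, best_fp), best_value


if __name__ == "__main__":
    training = {
        "analog_1": ({1, 2, 3, 4}, 3.2),  # hypothetical fingerprint and property value (e.g., log Kow)
        "analog_2": ({10, 11, 12}, 1.1),
    }
    print(nearest_neighbor_predict({1, 2, 3, 5}, training))  # ('analog_1', 0.6, 3.2)
```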

Common Traditional Algorithms

Multiple Linear Regression (MLR)

Multiple Linear Regression represents one of the earliest and most straightforward QSAR approaches, establishing a linear relationship between molecular descriptors and the target activity. MLR models are valued for their interpretability. The sign and magnitude of each descriptor's coefficient indicate the direction and size of that descriptor's contribution to the predicted activity. Coefficients can be compared directly across descriptors when the descriptors have been standardized to a common scale. The general form of an MLR model is:

Activity = β₀ + β₁D₁ + β₂D₂ + ... + βₙDₙ + ε
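In this equation, the β terms are fitted coefficients, the D terms are molecular descriptors, and ε is the residual error. A model of this form can be fitted by ordinary least squares. The sketch below is a minimal illustration using NumPy. The two descriptor columns and the activity values are synthetic, generated from known coefficients so that the fit can be checked. A real QSAR model would add descriptor selection, external validation, and applicability-domain assessment.

```python
import numpy as np


def fit_mlr(descriptors: np.ndarray, activity: np.ndarray) -> np.ndarray:
    """Ordinary least-squares estimate of [b0, b1, ..., bn] in Activity = b0 + sum(bi * Di)."""
    X = np.column_stack([np.ones(descriptors.shape[0]), descriptors])  # prepend intercept column
    coeffs, *_ = np.linalg.lstsq(X, activity, rcond=None)
    return coeffs


def predict_mlr(coeffs: np.ndarray, descriptors: np.ndarray) -> np.ndarray:
    """Apply fitted coefficients to a descriptor matrix (one row per compound)."""
    return coeffs[0] + descriptors @ coeffs[1:]


def r_squared(y_obs: np.ndarray, y_pred: np.ndarray) -> float:
    """Coefficient of determination of predictions against observations."""
    ss_res = np.sum((y_obs - y_pred) ** 2)
    ss_tot = np.sum((y_obs - np.mean(y_obs)) ** 2)
    return float(1.0 - ss_res / ss_tot)


if __name__ == "__main__":
    # Synthetic descriptors D1, D2 for five compounds; activity built as 1.0 + 2.0*D1 - 0.5*D2.
    D = np.array([[1.0, 0.5], [2.0, 1.5], [3.0, 0.2], [4.0, 2.5], [5.0, 1.0]])
    y = 1.0 + 2.0 * D[:, 0] - 0.5 * D[:, 1]
    b = fit_mlr(D, y)
    print(np.round(b, 3))
    print(round(r_squared(y, predict_mlr(b, D)), 3))  # 1.0
```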