Specificity, Linearity, Range, LOD, and LOQ: A Comprehensive Guide to Analytical Method Validation

Abstract

This article provides researchers, scientists, and drug development professionals with a complete framework for validating the key parameters of analytical methods. Covering foundational concepts, practical methodologies, advanced troubleshooting, and regulatory compliance, it offers a step-by-step guide to establishing specificity, linearity, analytical range, Limit of Detection (LOD), and Limit of Quantitation (LOQ) to ensure reliable, accurate, and defensible data in pharmaceutical and clinical research.

Core Principles: Defining Specificity, Linearity, Range, LOD, and LOQ

In pharmaceutical analysis, specificity is the foundational parameter that confirms an analytical method can accurately measure the analyte of interest without interference from other components in a complex matrix. It provides the assurance that the signal measured belongs solely to the target molecule, even when faced with closely related impurities, degradation products, or matrix components. Within the framework of method validation guided by ICH Q2(R1), specificity works in concert with other critical parameters, including linearity, range, limit of detection (LOD), and limit of quantitation (LOQ), to ensure reliable analytical performance [1]. This comparison examines how these validation parameters perform across different analytical techniques and complex matrices, providing researchers with experimental data to guide method selection.

Comparative Analysis of Validation Parameters Across Techniques

Table 1: Validation Parameter Comparison Across Analytical Methods

| Analytical Method | Specificity Demonstration | Linearity Range | LOD | LOQ | Matrix | Key Applications |
|---|---|---|---|---|---|---|
| HPLC-UV [2] | Resolution of mesalamine from degradation products | 10-50 μg/mL | 0.22 μg/mL | 0.68 μg/mL | Pharmaceutical tablets | Stability-indicating methods |
| HPLC-MS/MS [3] | Identification of 3,4-DCQA, 3,5-DCQA, and 4,5-DCQA isomers | 1-50 μg/mL | 0.1 μg/mL | 1 μg/mL | Plant extract (Ligularia fischeri) | Natural product quantification |
| RP-HPLC [2] | Forced degradation studies (acid, base, oxidation, thermal, photolytic) | 20-50 μg/mL (7-point calibration) | - | - | Bulk drug and formulations | Assay validation and impurity profiling |
| HPLC-ESI-MS [4] | Specific identification of calactin in complex plant extract | 1-50 μg/mL | 0.1 μg/mL | 1 μg/mL | Calotropis gigantea stem bark | Bioactive compound quantification |

Table 2: Specificity Challenges in Complex Matrices

| Matrix Type | Specificity Challenges | Experimental Approach for Specificity Demonstration | Resolution Techniques |
|---|---|---|---|
| Pharmaceutical Tablets [2] | Excipients, degradation products, impurities | Forced degradation under stress conditions | Peak purity analysis, resolution factor >2 |
| Herbal Plant Extracts [3] | Structural isomers, co-eluting compounds, polyphenolic interference | HPLC-MS/MS with standard comparison | Isomer separation, mass transition identification |
| Biological Samples [1] | Proteins, metabolites, endogenous compounds | Sample preparation (SPE, precipitation), matrix blank analysis | Selective detection (MS/MS), chromatographic separation |

Experimental Protocols for Specificity Assessment

Protocol 1: HPLC-UV Specificity and Linearity Validation for Pharmaceutical Compounds

This protocol follows ICH Q2(R1) guidelines for validating a stability-indicating method for mesalamine in tablet formulations [2]:

Instrumentation and Conditions:

- HPLC System: Shimadzu UFLC with LC-20AD binary pump

- Column: Reverse-phase C18 (150 mm × 4.6 mm, 5 μm)

- Mobile Phase: Methanol:water (60:40 v/v)

- Flow Rate: 0.8 mL/min

- Detection: UV at 230 nm

- Injection Volume: 20 μL

- Run Time: 10 minutes

Specificity Assessment:

- Prepare sample solutions under forced degradation conditions:

  - Acidic degradation: 0.1 N HCl at 25°C for 2 hours, then neutralize with 0.1 N NaOH

  - Alkaline degradation: 0.1 N NaOH at 25°C for 2 hours, then neutralize with 0.1 N HCl

  - Oxidative degradation: 3% hydrogen peroxide at 25°C for 2 hours

  - Thermal degradation: 80°C dry heat for 24 hours

  - Photolytic degradation: UV exposure at 254 nm for 24 hours per ICH Q1B

- Inject degraded samples and demonstrate that mesalamine peak is resolved from all degradation products with resolution factor >2.0

- Confirm peak purity using photodiode array detection
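As a rough illustration of the resolution criterion in the steps above, the sketch below computes a resolution factor from retention times and baseline peak widths using the common formula Rs = 2(t2 − t1)/(w1 + w2). The retention times and widths are hypothetical values chosen for illustration, not data from the cited study.

```python
def resolution(t1, t2, w1, w2):
    """Resolution between two adjacent peaks.

    t1, t2: retention times (min); w1, w2: baseline peak widths (min).
    """
    return 2.0 * abs(t2 - t1) / (w1 + w2)

# Hypothetical peaks: one degradation product and the main analyte
degradant = {"rt": 2.10, "width": 0.30}
analyte = {"rt": 3.05, "width": 0.40}

rs = resolution(degradant["rt"], analyte["rt"],
                degradant["width"], analyte["width"])
passes = rs > 2.0  # acceptance criterion used in the protocol
print(f"Rs = {rs:.2f}, passes: {passes}")
```

In practice the same check would be repeated for every degradation peak adjacent to the analyte, under each stress condition.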

Linearity and Range:

- Prepare standard solutions at concentrations of 10, 20, 25, 30, 35, and 50 μg/mL

- Inject each concentration in triplicate

- Plot mean peak area against concentration

- Calculate regression parameters: slope = 173.53, y-intercept = -2435.64, R² = 0.9992

- The range is established as 10-50 μg/mL with R² ≥ 0.999

LOD and LOQ Determination:

- Based on signal-to-noise ratio of 3:1 for LOD and 10:1 for LOQ

- LOD = 0.22 μg/mL, LOQ = 0.68 μg/mL

- Verify LOQ by six replicate injections showing %RSD <2%
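The %RSD check on replicate injections can be sketched as follows. The six peak areas are hypothetical placeholders, and the sample standard deviation (n − 1 denominator) is assumed.

```python
from statistics import mean, stdev

def percent_rsd(values):
    """Relative standard deviation (sample SD / mean) in percent."""
    return 100.0 * stdev(values) / mean(values)

# Hypothetical peak areas from six replicate injections at the LOQ level
loq_areas = [118.2, 119.5, 117.8, 120.1, 118.9, 119.0]

rsd = percent_rsd(loq_areas)
meets_criterion = rsd < 2.0  # acceptance limit stated in the protocol
print(f"%RSD = {rsd:.2f}, meets criterion: {meets_criterion}")
```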

Protocol 2: HPLC-MS/MS Specificity for Natural Product Analysis

This protocol details the determination of dicaffeoylquinic acids (DCQAs) in Ligularia fischeri using HPLC-MS/MS [3]:

Sample Preparation:

- Plant material (leaves and stems) washed, cut, and freeze-dried

- Powder (5 g) extracted with 50 mL of solvent (100% DW, 30% EtOH, or 50% EtOH)

- Extraction at 60°C for 72 hours in water bath

- Centrifugation at 3000 rpm for 10 minutes

- Supernatant filtered through Whatman filter paper

- Concentration using rotary evaporator at 45°C

- Final extract filtered through 0.45 μm PVDF syringe filter

HPLC-MS/MS Conditions:

- HPLC System: Shimadzu Nexera Lite LC-40D

- MS System: X500R QTOF LC/MS/MS with ESI in positive mode

- Column: Prontosil C18 (250 mm × 4.6 mm, 5 μm)

- Mobile Phase: Water and acetonitrile with 0.1% formic acid, gradient elution

- Flow Rate: 0.5 mL/min

- Detection: UV-PDA at 284 nm and MS/MS

- MS Conditions: Spray voltage -4.5 kV, desolvation temperature 500°C, mass range m/z 100-2000

Specificity Assurance:

- Confirm absence of interfering substances at retention times of 3,4-DCQA, 3,5-DCQA, and 4,5-DCQA

- Use MS/MS fragmentation patterns to confirm compound identity

- Compare chromatograms with standard substances

- Verify no cross-interference between DCQA isomers

Validation Parameters:

- Linearity: Verified with standard curves for all three DCQAs

- Precision: Intra-day and inter-day %RSD <1%

- Accuracy: Recovery rates 99.05-99.25% (%RSD <0.32%)

- LOD/LOQ: Established per ICH Q2(R1) guidelines

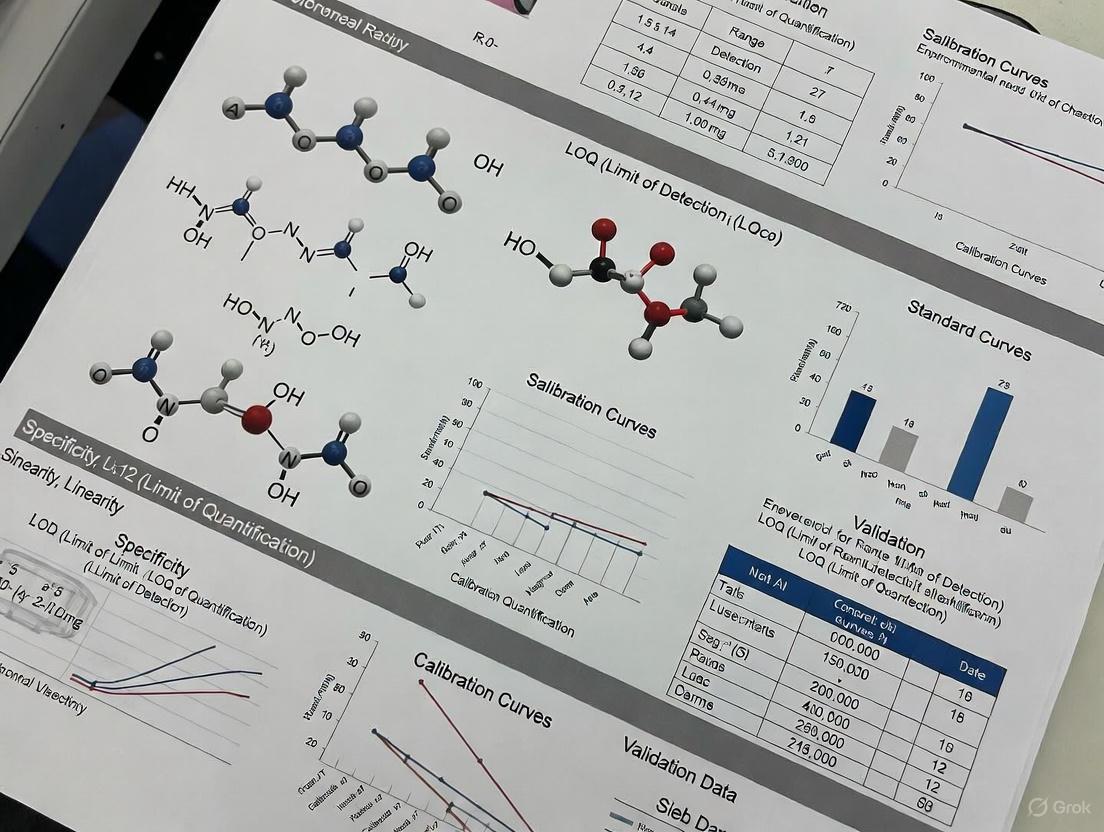

Visualization of Specificity Validation Workflow

Specificity Validation Workflow in Analytical Methods

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for Specificity Validation

| Reagent/Material | Function in Specificity Validation | Application Examples |
|---|---|---|
| HPLC-MS Grade Solvents [3] | Mobile phase preparation, sample reconstitution | Acetonitrile, methanol, water with 0.1% formic acid for HPLC-MS/MS |
| Reference Standards [3] [2] | Method calibration, peak identification | 3,4-DCQA, 3,5-DCQA, 4,5-DCQA standards; mesalamine API (99.8% purity) |
| Chromatography Columns [2] [4] | Stationary phase for compound separation | C18 columns (150-250 mm length, 4.6 mm ID, 5 μm particle size) |
| Sample Preparation Materials [3] | Extraction, purification, filtration | PVDF syringe filters (0.45 μm), Whatman filter paper, solid phase extraction cartridges |
| Degradation Reagents [2] | Forced degradation studies for specificity | 0.1 N HCl, 0.1 N NaOH, 3% H₂O₂ for stress testing |

The experimental data presented demonstrate that specificity remains the cornerstone parameter in analytical method validation, directly influencing the reliability of linearity, range, LOD, and LOQ determinations. The HPLC-UV method for mesalamine achieved an LOD of 0.22 μg/mL in tablet formulations, while the HPLC-MS/MS methods reached an LOD of 0.1 μg/mL in complex natural product matrices. The consistency in validation approaches across different techniques and matrices, all adhering to ICH Q2(R1), provides researchers with a standardized framework for demonstrating method specificity. As analytical challenges grow with increasingly complex matrices, the fundamental requirement remains unchanged: unequivocal demonstration that the measured signal originates solely from the target analyte, free from interference.

In pharmaceutical analysis and clinical diagnostics, the validity of any quantitative result hinges on the demonstrated performance of the analytical method itself. Within the framework of method validation, linearity and range are two fundamental parameters that establish the working boundaries within which an analyte can be accurately and precisely measured [5]. They are the foundation upon which reliable quantification is built.

Linearity refers to the ability of an analytical procedure to obtain test results that are directly proportional to the concentration (or amount) of the analyte in the sample within a given range [6] [7]. It describes how consistently the response scales with concentration across the levels tested. Range, on the other hand, is the interval between the upper and lower concentration levels of the analyte for which suitable levels of precision, accuracy, and linearity have been demonstrated [5] [7]. Essentially, linearity defines the quality of the proportional relationship, while the range defines the span of concentrations where this relationship holds true and is reliable.

This guide compares the theoretical concepts with practical experimental protocols, providing a clear roadmap for researchers and scientists to establish and verify these critical parameters.

Core concepts: distinguishing linearity from range

While deeply interconnected, linearity and range address distinct aspects of an analytical method's performance. The following table summarizes their key differences.

| Feature | Linearity | Range |
|---|---|---|
| Definition | Ability to produce results directly proportional to analyte concentration [5] [7] | Interval between upper and lower analyte concentrations that demonstrate suitable precision, accuracy, and linearity [5] [7] |
| Primary Focus | Quality of the concentration-response relationship [5] | Usable span of concentrations [5] |
| Demonstrated By | Calibration curve (response vs. concentration) [5] | Successful linearity, accuracy, and precision studies within the interval [7] |
| Key Metrics | Coefficient of determination (R²), slope, y-intercept, residual plots [8] [5] | Numerical interval (e.g., 50-150% of target concentration) [5] |
| Dependence | A property of the method's response | Defined based on linearity, accuracy, and precision data [5] |

A step-by-step experimental protocol

Establishing linearity and range is a systematic process. The stages below run from preparation to final determination.

Planning and preparation

The first phase involves careful design and preparation of calibration standards [8].

- Define the Range and Levels: Prepare a minimum of five concentration levels to establish a calibration curve [9] [8]. A common and often sufficient approach is to bracket the expected sample concentrations, typically from 50% to 150% of the target concentration or specification limit [8] [5]. For impurity testing, this might extend from the quantitation limit (QL) to 150% of the specification limit [5].

- Standard Preparation: Prepare standard solutions using calibrated equipment and certified reference materials. To avoid propagating errors, it is good practice to prepare standards independently rather than through serial dilution from a single stock solution [8]. The standards should be prepared in a matrix that matches the sample matrix as closely as possible to account for potential matrix effects [8].

Execution and data acquisition

- Analysis: Analyze each of the prepared concentration levels. To minimize systematic bias, run the standards in a random order rather than in ascending or descending concentration [8].

- Replication: Analyze each concentration level in triplicate to obtain a measure of repeatability at each point [8].

Analysis and evaluation

This phase involves statistical and visual assessment of the acquired data to judge the method's linearity.

- Regression Analysis: Plot the analytical response (e.g., peak area, absorbance) on the y-axis against the theoretical concentration on the x-axis. Using the least-squares method, perform a linear regression analysis to obtain the line of best fit, characterized by its slope, y-intercept, and coefficient of determination (R²) [8] [5].

- Residual Plot Examination: A critical step often overlooked is the visual inspection of the residual plot (the plot of the difference between the measured value and the value predicted by the regression line against concentration) [8]. A linear method will display residuals randomly scattered around zero. Any observable pattern (e.g., U-shaped curve, funnel shape) indicates a potential lack of linearity that a high R² value might mask [8].

Quantitative data assessment and acceptance criteria

The evaluation of linearity data requires more than just an R² value. The table below presents a typical dataset for an impurity method validation and the corresponding acceptance criteria.

Table: Example Linearity Data for an Impurity (e.g., Impurity A) [5]

| Level | Impurity Value (%) | Concentration (mcg/mL) | Area Response |
|---|---|---|---|
| QL (0.05%) | 0.05% | 0.5 | 15,457 |
| 50% | 0.10% | 1.0 | 31,904 |
| 70% | 0.14% | 1.4 | 43,400 |
| 100% | 0.20% | 2.0 | 61,830 |
| 130% | 0.26% | 2.6 | 80,380 |
| 150% | 0.30% | 3.0 | 92,750 |
| Slope | 30,746 | | |
| R² | 0.9993 | | |

Key acceptance criteria

- Coefficient of Determination (R²): For many pharmaceutical applications, an R² value of ≥ 0.995 (or often ≥ 0.997) is considered acceptable [8] [5]. However, a high R² alone is not a guarantee of linearity [8] [6].

- Visual Inspection: The calibration curve and, more importantly, the residual plot must be visually inspected for randomness and the absence of systematic patterns [8].

- Accuracy and Precision: For the range to be valid, the method must demonstrate acceptable accuracy (e.g., % recovery) and precision (e.g., %RSD) at the extremes and throughout the range [7]. The range is the interval where all these parameters are met.

In the example above, the R² of 0.9993 exceeds the typical threshold of 0.997, and the data visually forms a straight line (not shown), passing the linearity criteria. The range for this impurity would then be reported as 0.05% (QL) to 0.30% (150% of specification) [5].
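A minimal sketch of the regression and residual check, using the concentration and area values from the impurity table above, might look like the following. The function name `linear_fit` is illustrative, and because the table values are rounded, the computed R² may not match the reported figure to the last digit.

```python
from statistics import mean

def linear_fit(x, y):
    """Ordinary least-squares fit; returns slope, intercept, R², residuals."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    ss_res = sum(r ** 2 for r in residuals)
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot, residuals

# Concentration (mcg/mL) and area response from the impurity table
conc = [0.5, 1.0, 1.4, 2.0, 2.6, 3.0]
area = [15457, 31904, 43400, 61830, 80380, 92750]

slope, intercept, r2, residuals = linear_fit(conc, area)
print(f"slope = {slope:.0f}, intercept = {intercept:.0f}, R² = {r2:.4f}")
for c, r in zip(conc, residuals):
    # Residuals should scatter around zero without a visible trend
    print(f"{c:>4} mcg/mL  residual = {r:+.0f}")
```

Printing or plotting the residuals against concentration is what exposes curvature or a funnel shape that the R² value alone would hide.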

The scientist's toolkit: essential research reagents and materials

The following reagents, solutions, and instruments are foundational for conducting linearity and range experiments.

Table: Essential Materials for Linearity and Range Validation

| Item | Function in Validation |
|---|---|
| Certified Reference Material (CRM) | Provides a known quantity of the target analyte with high purity and certainty, used for preparing the primary stock solution to ensure accuracy [8]. |
| Blank Matrix | The analyte-free sample material (e.g., plasma, formulation excipients) used to prepare calibration standards, crucial for identifying and accounting for matrix effects [8]. |
| Linearity/Calibration Standards | A series of samples with known concentrations of the analyte, typically at 5-6 levels spanning the intended range (e.g., 50%, 80%, 100%, 120%, 150%), used to construct the calibration curve [9] [8] [5]. |
| Analytical Balance (Calibrated) | Used for accurate weighing of the reference standard and any other solid components, fundamental to preparing solutions of known concentration. |
| Volumetric Glassware/Pipettes (Calibrated) | Used for precise dilution and transfer of liquids to ensure each linearity standard is prepared at the correct theoretical concentration. |

Advanced considerations and troubleshooting

Even with a well-planned experiment, challenges can arise. Advanced statistical techniques can offer more robust evaluations of linearity, particularly for methods where the traditional R² is misleading [6]. Furthermore, being able to troubleshoot common issues is a critical skill.

- Beyond R²: The ICH Q2(R1) guideline defines linearity based on the proportionality of test results, not the instrumental response function. In techniques that use a non-linear calibration curve (e.g., ELISA, qPCR), assessing "sample dilution linearity" is more appropriate. One advanced method uses a double logarithmic transformation: take the log of both the theoretical and back-calculated concentrations and fit a line. A slope of 1.00 in this log-log plot indicates perfect proportionality, providing a more direct assessment of the linearity of results [6].

- Common Issues and Solutions:

- Poor R² or Non-Random Residuals: This can be caused by an incorrectly selected concentration range, detector saturation, matrix effects, or chemical interactions. Re-evaluate the working range and consider using weighted regression models if heteroscedasticity (variance changing with concentration) is observed [8] [6].

- Significant Y-Intercept: A large, non-zero intercept suggests a constant systematic error, such as interference from the blank matrix or an instrumental baseline drift. Investigate the blank response and method specificity [8] [7].

- Matrix Effects: If the analyte responds differently in the sample matrix than in a pure solvent, linearity will be compromised. Always prepare calibration standards in the blank matrix or employ a standard addition method to compensate [8].
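The log-log proportionality check described under "Beyond R²" can be sketched as below. The dilution series is hypothetical, and the helper name `loglog_slope` is an assumption made for illustration only.

```python
from math import log10
from statistics import mean

def loglog_slope(theoretical, measured):
    """Slope of log10(measured) regressed on log10(theoretical)."""
    lx = [log10(v) for v in theoretical]
    ly = [log10(v) for v in measured]
    x_bar, y_bar = mean(lx), mean(ly)
    sxy = sum((a - x_bar) * (b - y_bar) for a, b in zip(lx, ly))
    sxx = sum((a - x_bar) ** 2 for a in lx)
    return sxy / sxx

# Hypothetical dilution series: theoretical vs. back-calculated concentrations
theoretical = [100.0, 50.0, 25.0, 12.5, 6.25]
back_calculated = [98.0, 51.0, 24.6, 12.9, 6.1]

slope = loglog_slope(theoretical, back_calculated)
print(f"log-log slope = {slope:.3f}")  # values near 1.00 suggest proportionality
```

How close to 1.00 the slope must be is a method-specific acceptance decision and is not defined here.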

A thorough understanding and rigorous validation of linearity and range are non-negotiable for generating reliable quantitative data in drug development and clinical science. By moving beyond a sole reliance on R² and implementing a holistic protocol that includes visual residual analysis and stringent acceptance criteria, scientists can ensure their analytical methods are truly fit for purpose. This foundational work, documented with complete transparency, not only satisfies regulatory requirements but also instills confidence in every result the method produces.

In analytical chemistry and bioanalysis, the Limit of Detection (LOD) and Limit of Quantitation (LOQ) are fundamental performance characteristics that define the sensitivity and operational range of an analytical method. The LOD represents the lowest concentration of an analyte that can be reliably distinguished from the analytical background noise, while the LOQ is the lowest concentration that can be quantitatively measured with acceptable precision and accuracy [10]. These parameters are essential for methods intended to detect and measure trace levels of analytes, such as impurities in pharmaceuticals, biomarkers in biological samples, or contaminants in environmental samples [11].

Proper determination of LOD and LOQ is critical for validating analytical methods according to regulatory guidelines such as ICH Q2(R2) and for ensuring data quality in research and development [12]. Without established detection and quantitation limits, researchers cannot confidently interpret low-concentration results, potentially leading to incorrect conclusions in drug development studies, diagnostic test development, and quality control operations [13].

Defining LOD and LOQ Concepts

Fundamental Definitions and Distinctions

The Limit of Detection (LOD), also called Lower Limit of Detection (LLD), is the lowest analyte concentration that can be reliably distinguished from a blank sample but not necessarily quantified as an exact value [10] [14]. It represents the concentration at which detection is feasible, though without guaranteed precision or accuracy. Statistically, it is the point where the analyte signal becomes significantly different from the noise or blank signal with a stated confidence level, typically 99% [14].

The Limit of Quantitation (LOQ), also called Lower Limit of Quantification (LLOQ), is the lowest concentration at which the analyte can not only be detected but also measured with acceptable accuracy and precision [11] [15]. At or above the LOQ, the method can provide quantitative results that meet predefined performance criteria for bias and imprecision. The LOQ is always equal to or higher than the LOD [11].

For methods with a defined quantitative range, the Upper Limit of Quantification (ULOQ) represents the highest concentration that can be accurately measured, defining the upper boundary of the method's quantitative range [14].

Comparative Characteristics of LOD and LOQ

Table 1: Key Characteristics of LOD and LOQ

| Parameter | Limit of Detection (LOD) | Limit of Quantitation (LOQ) |
|---|---|---|
| Definition | Lowest concentration reliably detected | Lowest concentration quantified with acceptable accuracy and precision |
| Primary Function | Qualitative detection | Quantitative measurement |
| Signal-to-Noise Ratio | 3:1 [10] | 10:1 [10] |
| Statistical Confidence | 99% (distinguishable from blank) [14] | Defined by precision and accuracy requirements (typically ≤20% CV) [15] |
| Relative Position | Lower | Always ≥ LOD [11] |
| Typical Use Cases | Screening methods, impurity detection | Quantitative assays, pharmacokinetic studies |

Methodologies for Determining LOD and LOQ

Calculation Approaches and Formulas

Multiple approaches exist for determining LOD and LOQ, each with specific applications depending on the analytical technique and validation requirements.

Table 2: Common Methods for Determining LOD and LOQ

| Method | LOD Calculation | LOQ Calculation | Applications |
|---|---|---|---|
| Signal-to-Noise Ratio | Concentration giving S/N = 3:1 [10] | Concentration giving S/N = 10:1 [10] | Chromatographic methods with baseline noise [10] |
| Standard Deviation of Blank | $\mathrm{LoB} = \text{Mean}_{\text{blank}} + 1.645\,SD_{\text{blank}}$; $\mathrm{LOD} = \mathrm{LoB} + 1.645\,SD_{\text{low-concentration sample}}$ [11] | $\text{Mean}_{\text{blank}} + 10\,SD_{\text{blank}}$ (estimated) | Methods with consistent blank measurements |
| Calibration Curve Parameters | 3.3 × σ/S [10] | 10 × σ/S [10] | Instrumental methods where σ = SD of response, S = slope of calibration curve |
| Empirical Based on Precision | Not applicable | Lowest concentration with CV ≤ 20% [15] | Bioanalytical methods (BMV guidelines) |

The CLSI EP17 guideline defines the LOD as $\mathrm{LOD} = \mathrm{LoB} + 1.645 \times SD_{\text{low-concentration sample}}$, where LoB (Limit of Blank) is the highest apparent analyte concentration expected when replicates of a blank sample are tested [11]. This approach acknowledges the statistical overlap between blank and low-concentration samples, providing a more reliable detection limit.
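A minimal sketch of this calculation follows. It assumes the parametric form LoB = mean of blanks + 1.645 × SD of blanks, which is how the Limit of Blank is commonly computed. The replicate values are hypothetical, and far fewer replicates are shown than a real study would use.

```python
from statistics import mean, stdev

def limit_of_blank(blank_results):
    """LoB = mean_blank + 1.645 * SD_blank (parametric form, assumed here)."""
    return mean(blank_results) + 1.645 * stdev(blank_results)

def limit_of_detection(lob, low_sample_results):
    """LOD = LoB + 1.645 * SD of a low-concentration sample."""
    return lob + 1.645 * stdev(low_sample_results)

# Hypothetical replicate results (same concentration units throughout)
blank = [0.02, 0.05, 0.01, 0.04, 0.03, 0.00, 0.06, 0.03]
low_sample = [0.21, 0.25, 0.18, 0.23, 0.27, 0.20, 0.22, 0.24]

lob = limit_of_blank(blank)
lod = limit_of_detection(lob, low_sample)
print(f"LoB = {lob:.3f}, LOD = {lod:.3f}")
```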

Graphical Assessment Approaches

Advanced graphical approaches have been developed to provide more realistic assessments of LOD and LOQ. The accuracy profile and uncertainty profile methods use tolerance intervals and measurement uncertainty to define the valid quantification range [16]. These approaches simultaneously evaluate bias, precision, and total error to determine the lowest concentration where measurement uncertainty falls within acceptable limits [15] [16].

A 2025 comparative study found that classical statistical approaches often underestimate these limits, while uncertainty profiles provide precise estimates of measurement uncertainty and more reliable detection and quantitation limits [16].

Experimental Protocols for Determination

Sample Preparation and Analysis

The experimental determination of LOD and LOQ requires careful preparation and analysis of specific sample types. For a comprehensive assessment, two different kinds of samples are generally prepared: a "blank" sample containing no analyte, and a "spiked" sample containing a low concentration of the analyte of interest [17]. In some cases, multiple spiked samples at different concentrations may be prepared to bracket the expected detection and quantitation limits.

The blank solution should ideally have the same matrix as regular patient samples to account for matrix effects [17]. For methods validated according to CLSI guidelines, a recommended practice is to measure 60 replicates for establishing these parameters during method development, while 20 replicates may suffice for verification [11]. The samples should be analyzed over different days, using multiple instruments and reagent lots when possible, to capture expected performance under typical laboratory conditions [11].

Figure: LOD and LOQ Determination Workflow

Statistical Analysis and Verification

For the signal-to-noise approach, the LOD and LOQ are verified by visually examining chromatograms or spectra and confirming that the average signal at the proposed LOD is at least 3 times the baseline noise, and at the LOQ is at least 10 times the baseline noise [10].
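One way to make the signal-to-noise check numeric is sketched below. It assumes noise is estimated as the standard deviation of a blank baseline segment and signal as peak height above the mean baseline. Other conventions, such as those based on peak-to-peak noise, give different numbers. All values are hypothetical.

```python
from statistics import mean, stdev

def signal_to_noise(peak_height, baseline):
    """Peak height above the mean baseline, divided by the baseline SD."""
    return (peak_height - mean(baseline)) / stdev(baseline)

# Hypothetical detector readings from a blank baseline segment
baseline = [0.8, 1.1, 0.9, 1.2, 1.0, 0.7, 1.3, 1.0]

# Hypothetical peak heights at the proposed LOD and LOQ concentrations
sn_lod = signal_to_noise(1.7, baseline)
sn_loq = signal_to_noise(3.2, baseline)

print(f"S/N at proposed LOD: {sn_lod:.1f} (needs >= 3)")
print(f"S/N at proposed LOQ: {sn_loq:.1f} (needs >= 10)")
```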

When using the standard deviation and slope method, the residual standard deviation of the regression line or the standard deviation of the y-intercepts of regression lines serves as the measure of variability (σ), while the slope (S) of the calibration curve represents the sensitivity of the method [10]. The calculated LOD and LOQ should then be verified by analyzing samples at these concentrations and confirming that they meet the acceptance criteria.
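The standard deviation and slope method can be sketched as follows, taking σ as the residual standard deviation of the regression line. The low-level calibration data are hypothetical and the function name is illustrative.

```python
from math import sqrt
from statistics import mean

def lod_loq_from_calibration(conc, response):
    """Return (LOD, LOQ) as 3.3*sigma/S and 10*sigma/S."""
    x_bar, y_bar = mean(conc), mean(response)
    sxx = sum((x - x_bar) ** 2 for x in conc)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(conc, response))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    ss_res = sum((y - (intercept + slope * x)) ** 2
                 for x, y in zip(conc, response))
    sigma = sqrt(ss_res / (len(conc) - 2))  # residual SD of the regression
    return 3.3 * sigma / slope, 10.0 * sigma / slope

# Hypothetical low-level calibration data (concentration, response)
conc = [0.1, 0.2, 0.4, 0.6, 0.8, 1.0]
response = [52, 98, 205, 297, 405, 498]

lod, loq = lod_loq_from_calibration(conc, response)
print(f"LOD = {lod:.3f}, LOQ = {loq:.3f} (same units as conc)")
```

The calculated limits are estimates only; as noted above, they still need to be confirmed by analyzing samples prepared at those concentrations.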

For the accuracy profile approach, the LOQ is determined as the lowest concentration where the tolerance intervals (accounting for both bias and precision) fall within the acceptability limits [16]. This method simultaneously validates the entire method and establishes the quantification limit based on total error principles.

Advanced Applications and Statistical Considerations

Handling Data Below the LOQ

In practical research settings, analysts frequently encounter measurements that fall below the LOQ, presenting challenges for data interpretation and statistical analysis. Simply replacing sub-LOQ values with a fixed value such as LOQ/2 introduces significant bias in both mean and standard deviation estimates, particularly when a substantial proportion of data is affected [13].

A more statistically sound approach treats sub-LOQ values as left-censored data, acknowledging that the exact value is unknown but falls below a known threshold [13]. Statistical methods such as maximum likelihood estimation (MLE) can then be applied to fit distributions and estimate parameters that account for the censored nature of the data. Simulation studies demonstrate that this censoring approach maintains much better fidelity to the underlying data, providing reasonable estimates of mean and standard deviation even when up to 90% of observations fall below the LOQ [13].
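To illustrate the censoring idea, the sketch below fits a normal distribution to a small hypothetical dataset in which some results are only known to be below the LOQ. It assumes normally distributed data and uses a crude pattern search in place of a proper optimizer. It is not the procedure used in the cited simulation studies.

```python
from math import log
from statistics import NormalDist, mean, stdev

def censored_loglik(mu, sigma, observed, n_censored, loq):
    """Log-likelihood of a normal model with left-censoring at the LOQ."""
    dist = NormalDist(mu, sigma)
    densities = [dist.pdf(x) for x in observed]
    p_below = dist.cdf(loq)  # probability of a result falling below the LOQ
    if p_below <= 0.0 or min(densities) <= 0.0:
        return float("-inf")
    return sum(log(d) for d in densities) + n_censored * log(p_below)

def fit_censored_normal(observed, n_censored, loq, iterations=200):
    """Crude pattern search for the maximum-likelihood mu and sigma."""
    mu, sigma = mean(observed), stdev(observed)
    step = sigma
    best = censored_loglik(mu, sigma, observed, n_censored, loq)
    for _ in range(iterations):
        improved = False
        for d_mu, d_sigma in ((step, 0), (-step, 0), (0, step), (0, -step)):
            new_mu, new_sigma = mu + d_mu, sigma + d_sigma
            if new_sigma <= 0:
                continue
            ll = censored_loglik(new_mu, new_sigma, observed, n_censored, loq)
            if ll > best:
                mu, sigma, best, improved = new_mu, new_sigma, ll, True
        if not improved:
            step /= 2  # shrink the search radius once no move helps
    return mu, sigma

# Hypothetical data: 8 quantified results and 6 results reported as "< LOQ"
loq = 1.0
observed = [1.2, 1.5, 1.1, 2.0, 1.7, 1.3, 2.4, 1.9]
n_censored = 6

mu_hat, sigma_hat = fit_censored_normal(observed, n_censored, loq)
substituted = observed + [loq / 2] * n_censored  # naive LOQ/2 replacement
print(f"Censored MLE: mean = {mu_hat:.2f}, SD = {sigma_hat:.2f}")
print(f"LOQ/2 substitution: mean = {mean(substituted):.2f}, "
      f"SD = {stdev(substituted):.2f}")
```

For real data, a log-normal model or a dedicated survival-analysis routine is often a better fit than this simplified normal model.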

Figure: Statistical Handling of Sub-LOQ Data

Case Study: HPLC Method for Sotalol in Plasma

A 2025 study compared different approaches for assessing LOD and LOQ in a bioanalytical method using HPLC for determination of sotalol in plasma [16]. The researchers implemented three strategies: the classical approach based on statistical parameters, the accuracy profile method, and the uncertainty profile approach.

The study found that the classical strategy based on statistical concepts provided underestimated values of LOD and LOQ, potentially leading to overconfidence in the method's capabilities at low concentrations [16]. In contrast, both graphical methods (accuracy and uncertainty profiles) provided more relevant and realistic assessments. The uncertainty profile method was particularly valuable as it provided precise estimates of measurement uncertainty alongside the detection and quantitation limits [16].

This case study demonstrates the importance of selecting appropriate assessment methodologies based on the intended use of the analytical method and the required confidence in low-level measurements.

Essential Research Tools and Reagents

Analytical Method Development Toolkit

Table 3: Essential Research Reagent Solutions for LOD/LOQ Studies

| Reagent/Material | Function | Application Example |
|---|---|---|
| Blank Matrix | Provides analyte-free background for LoB determination | Blank plasma for bioanalytical methods [17] |
| Reference Standards | Precise analyte quantities for spiking experiments | Certified reference materials for calibration [18] |
| Internal Standards | Correction for analytical variability | Stable isotope-labeled analogs in HPLC-MS [16] |
| High-Purity Solvents | Minimize background interference | Traceselect grade for ICP-OES [18] |
| Calibration Solutions | Establish analytical response relationship | TraceCERT multielement standards [18] |

Successful determination of LOD and LOQ requires not only appropriate statistical approaches but also high-quality materials and reagents. The blank matrix should be commutable with actual patient specimens to ensure realistic assessment of background signals [11]. Reference standards with certified purity and concentration are essential for preparing accurate spiked samples at low concentrations near the expected detection and quantitation limits [18].

For chromatographic methods, the selection of appropriate columns and mobile phases significantly impacts method sensitivity. For example, in an RP-HPLC method developed for favipiravir quantification, an Inertsil ODS-3 C18 column with specific mobile phase composition was critical for achieving the necessary sensitivity [19]. Similarly, in ICP-OES methodology for quality assessment of radiopharmaceuticals, high-purity reagents and appropriate buffer systems were essential for accurate determination of trace metal impurities [18].

The Limit of Detection and Limit of Quantitation are critical performance characteristics that define the lower boundaries of an analytical method's capabilities. Appropriate determination of these parameters requires careful experimental design, proper statistical analysis, and verification using independent samples. While classical approaches based on signal-to-noise ratios or standard deviation calculations provide a starting point, advanced graphical methods such as accuracy profiles and uncertainty profiles offer more realistic assessments of method capabilities, particularly for regulated bioanalytical applications.

The choice of methodology should be guided by the intended use of the analytical method, regulatory requirements, and the necessary confidence in low-concentration measurements. Proper establishment and verification of LOD and LOQ ensure that analytical methods are "fit for purpose" and generate reliable data for research and regulatory decision-making. As analytical technologies continue to advance, pushing detection capabilities to increasingly lower levels, the appropriate determination and application of these fundamental method characteristics remains essential for scientific progress in pharmaceutical development and biomedical research.

In the pharmaceutical and clinical laboratory sciences, the reliability of analytical data is paramount to ensuring product quality, patient safety, and public health. This reliability is established through rigorous analytical method validation, a process governed by internationally recognized guidelines. Among these, the International Council for Harmonisation (ICH) Q2(R2), the United States Pharmacopeia (USP), and the Clinical and Laboratory Standards Institute (CLSI) form a foundational triad. While interconnected in their pursuit of data integrity, each organization provides a unique perspective and set of requirements. ICH Q2(R2) offers a broad, harmonized framework for the pharmaceutical industry, focusing on the validation of analytical procedures for drug substances and products [20]. The USP provides legally recognized, enforceable standards for quality, including mandatory and informational general chapters that detail specific testing procedures [21] [22]. CLSI, through its Evaluation Protocol (EP) series, delivers detailed, practical guidance for evaluating the performance of clinical laboratory tests, with a strong emphasis on statistical protocols and metrological traceability [23] [24]. This guide compares these three frameworks, focusing on their approaches to four key validation parameters: specificity, linearity and range, Limit of Detection (LOD), and Limit of Quantitation (LOQ). The aim is to equip researchers and drug development professionals with the knowledge to ensure regulatory compliance and scientific excellence.

The following table summarizes the core identity, regulatory standing, and primary audience of each guideline.

Table 1: Foundational Overview of ICH Q2(R2), USP, and CLSI Guidelines

| Feature | ICH Q2(R2) | USP General Chapters | CLSI EP Guidelines |

|---|---|---|---|

| Full Name & Issuer | Q2(R2) Validation of Analytical Procedures; Issued by ICH (adopted by FDA, EMA, etc.) [20] | United States Pharmacopeia General Chapters; Published by USP [21] [22] | Evaluation Protocol (EP) Standards; Published by CLSI [23] [25] |

| Primary Regulatory Scope | Pharmaceutical drug development and manufacturing (Chemical & Biotech) [20] | Pharmaceutical products, dietary supplements, and food ingredients [22] | Clinical laboratory tests (IVDs and LDTs) [23] |

| Regulatory Status | Harmonized guideline for regulatory submissions [20] | Legally recognized standards; chapters can be "required" (enforceable) or "informational" (guidance) [22] | Internationally accepted consensus standards and best practices [23] [25] |

| Core Focus in Validation | Providing a general framework for validating analytical procedures [20] | Providing specific, monograph-referenced testing methods and acceptance criteria [21] [22] | Providing detailed protocols for evaluating method performance characteristics (e.g., precision, accuracy) [23] |

The relationships and applications of these guidelines within the product lifecycle are illustrated below.

Figure 1: Guideline Scope in Product Lifecycle

Comparative Analysis of Key Validation Parameters

This section delves into the specific requirements and methodologies for critical validation parameters as defined by each guideline.

Specificity

Specificity is the ability to assess the analyte unequivocally in the presence of components that may be expected to be present, such as impurities, degradants, or matrix components.

- ICH Q2(R2): This guideline mandates that specificity be demonstrated using stressed samples or samples spiked with potential interferents. The response of the analytical procedure to the analyte in the presence of these materials is compared to the response of the analyte alone. For chromatographic methods, peak purity tests are often critical evidence [20].

- USP: The approach to specificity in USP general chapters is often tightly integrated into specific monographs. A required chapter may describe a general technique, but the acceptance criteria for resolving the analyte from specific impurities are detailed in the individual product monograph, providing a highly specific, product-oriented pass/fail standard [21] [22].

- CLSI: CLSI standards, most directly the EP07 (Interference Testing) protocol, provide detailed experimental designs for assessing specificity in the context of clinical sample matrices. This includes protocols for testing common interferents like hemolysis, icterus, and lipemia, as well as medications and endogenous substances, using statistical measures to determine clinical significance [23].

Linearity and Range

The linearity of an analytical procedure is its ability to obtain test results that are directly proportional to the concentration of analyte in the sample within a given range.

- ICH Q2(R2): Linearity is typically demonstrated by testing a minimum of five concentrations over the claimed range. The data is treated using statistical methods for linear regression (e.g., y = mx + b), and outputs such as the correlation coefficient, y-intercept, and residual sum of squares are used to confirm linearity. The verified range is established as the interval between the upper and lower concentration levels for which linearity, accuracy, and precision have been demonstrated [20].

- USP: USP general chapters provide procedures and acceptance criteria for verifying the linearity of instruments and methods. The approach is pragmatic and tied to compliance, often referencing standard solutions and specifying the minimum correlation coefficient required for a procedure to be considered acceptable for a given monograph [22].

- CLSI: CLSI guidelines, such as EP06 (Evaluation of Linearity of Quantitative Measurement Procedures), offer a highly detailed and statistically robust protocol. It often involves testing multiple replicates at several concentrations and uses polynomial regression analysis to distinguish between linear and non-linear responses. The guideline provides clear methods for determining the reportable range and establishing the limits of linearity [23].
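The polynomial-regression idea mentioned for CLSI EP06 can be sketched as follows. This is a simplified illustration, not the EP06 procedure itself: it fits first- and second-order polynomials and reports the largest percentage difference between them at the tested levels. The function names and the example data are assumptions made for this sketch, and the allowable deviation would have to be set by the laboratory.

```python
def polyfit(x, y, degree):
    """Least-squares polynomial fit; returns coefficients [c0, c1, ...]."""
    n = degree + 1
    # Normal equations A c = b built from power sums of x
    a = [[sum(xi ** (i + j) for xi in x) for j in range(n)] for i in range(n)]
    b = [sum(yi * xi ** i for xi, yi in zip(x, y)) for i in range(n)]
    # Gaussian elimination with partial pivoting
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(col + 1, n):
            factor = a[row][col] / a[col][col]
            for k in range(col, n):
                a[row][k] -= factor * a[col][k]
            b[row] -= factor * b[col]
    # Back substitution
    coef = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(a[i][j] * coef[j] for j in range(i + 1, n))
        coef[i] = (b[i] - tail) / a[i][i]
    return coef


def predict(coef, xi):
    """Evaluate the fitted polynomial at concentration xi."""
    return sum(c * xi ** k for k, c in enumerate(coef))


def max_deviation_from_linearity(x, y):
    """Return (quadratic coefficient, largest |% difference| between the
    quadratic and linear fits at the tested concentration levels)."""
    linear = polyfit(x, y, 1)
    quadratic = polyfit(x, y, 2)
    deviations = [
        100.0 * (predict(quadratic, xi) - predict(linear, xi)) / predict(linear, xi)
        for xi in sorted(set(x))
    ]
    return quadratic[2], max(abs(d) for d in deviations)


# Hypothetical response that bends slightly at high concentration
levels = [1, 2, 3, 4, 5]
responses = [1.95, 3.80, 5.55, 7.20, 8.75]
c2, worst = max_deviation_from_linearity(levels, responses)
print(f"quadratic term = {c2:.4f}, max deviation = {worst:.2f}%")
```

A quadratic term near zero and a small maximum deviation support a linear model; a formal evaluation would also test whether the higher-order coefficients are statistically significant.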

Limit of Detection (LOD) and Limit of Quantitation (LOQ)

The LOD is the lowest amount of analyte that can be detected, but not necessarily quantified. The LOQ is the lowest amount of analyte that can be quantitatively determined with suitable precision and accuracy.

- ICH Q2(R2): This guideline describes multiple approaches for determining LOD and LOQ. These include a visual evaluation, a signal-to-noise ratio (typically 3:1 for LOD and 10:1 for LOQ), and a calculation based on the standard deviation of the response and the slope of the calibration curve (LOD = 3.3σ/S and LOQ = 10σ/S, where σ is the standard deviation of the response and S is the slope of the calibration curve). The method chosen must be justified and supported by relevant data [20].

- USP: USP chapters often align with the ICH approaches, particularly the signal-to-noise and standard deviation/slope methods. The criteria for what constitutes an acceptable LOD/LOQ may be explicitly stated in specific monographs, especially for impurities and degradation products, ensuring consistency across testing laboratories [22].

- CLSI: CLSI EP17 (Limits of Detection and Quantitation) provides the most comprehensive and granular protocol for this parameter in a clinical setting. It guides laboratories through a multi-stage process involving the preparation of low-concentration samples, repeated measurements, and sophisticated statistical analysis to determine the blank limit, detection limit, and quantitation limit, along with their associated uncertainties [23].
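The standard-deviation-and-slope approach listed above can be worked through in a few lines. The sketch below assumes that σ is estimated as the residual standard deviation of a low-level calibration line, which is one of the accepted ways to estimate it; the function names and the data are illustrative only.

```python
import math


def linear_fit(x, y):
    """Ordinary least-squares slope and intercept."""
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean


def residual_sd(x, y, slope, intercept):
    """Residual standard deviation of the regression (n - 2 degrees of freedom)."""
    rss = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    return math.sqrt(rss / (len(x) - 2))


def lod_loq(x, y):
    """LOD = 3.3 * sigma / S and LOQ = 10 * sigma / S, in concentration units."""
    slope, intercept = linear_fit(x, y)
    sigma = residual_sd(x, y, slope, intercept)
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Hypothetical low-level calibration data (concentration, response)
conc = [0.0, 1.0, 2.0, 3.0]
resp = [0.1, 0.9, 2.1, 2.9]
lod, loq = lod_loq(conc, resp)
print(f"LOD = {lod:.3f}, LOQ = {loq:.3f}")
```

Values calculated this way are estimates; they are normally confirmed by analyzing samples prepared at or near the calculated limits.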

Table 2: Direct Comparison of LOD and LOQ Methodologies

| Guideline | Recommended LOD/LOQ Methods | Typical Experimental Design | Key Outputs & Acceptance |

|---|---|---|---|

| ICH Q2(R2) | (1) Visual Inspection; (2) Signal-to-Noise; (3) Standard Deviation of Response & Slope [20] | Analysis of samples with analyte at/near the expected limit. | LOD/LOQ values are reported. The LOQ must be demonstrated with specified precision and accuracy. |

| USP | Similar to ICH; signal-to-noise is commonly referenced. Acceptance may be monograph-specific [22]. | Verification per general chapter, with criteria defined in the specific product monograph. | The LOD/LOQ must meet the criteria set forth in the enforceable monograph. |

| CLSI EP17 | A detailed multi-protocol approach based on the measurement of low-level pools and replicates to characterize the entire detection/quantitation curve [23]. | Extensive replication of low-concentration samples and a blank. Statistical analysis of the resulting data distribution. | Establishes Blank Limit, Detection Limit, Quantitation Limit, and associated measurement uncertainty. |

Experimental Protocols and Data Presentation

To illustrate the application of these guidelines, consider a typical experiment for determining the Linearity Range and LOQ for a new active pharmaceutical ingredient (API).

Detailed Protocol for Linearity and LOQ Determination

1. Sample Preparation:

- Prepare a stock solution of the API of known high purity and concentration.

- From this stock, perform a serial dilution to create a minimum of five standard solutions spanning the expected range (e.g., 50% to 150% of the target assay concentration). The solutions should be prepared in the same matrix as the sample (e.g., dissolution medium, placebo mixture) [20].

2. Instrumental Analysis:

- Analyze each concentration level in triplicate using the developed analytical method (e.g., HPLC with UV detection). The analysis should be performed in a randomized order to minimize the impact of instrumental drift.

3. Data Analysis for Linearity:

- Plot the mean measured response (e.g., peak area) against the theoretical concentration for each level.

- Perform a linear regression analysis to obtain the correlation coefficient (r), slope, y-intercept, and residual sum of squares.

- Calculate the %Bias at each concentration: [(Observed Concentration - Theoretical Concentration) / Theoretical Concentration] * 100.

- The range is considered linear if the correlation coefficient exceeds a pre-defined limit (e.g., r > 0.998), the y-intercept is not statistically different from zero, and the %Bias at each point is within acceptable limits (e.g., ±2%) [20].

4. Data Analysis for LOQ:

- Identify the lowest concentration level in the linearity study that demonstrated acceptable precision and accuracy.

- Prepare and analyze six independent samples at this concentration level.

- Calculate the precision (as %RSD) and accuracy (as %Recovery) of these six replicates.

- The LOQ is confirmed if the %RSD is ≤ 5% and the %Recovery is within 95-105%. If these criteria are not met, repeat the analysis at a slightly higher concentration until they are satisfied [20].
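The calculations in steps 3 and 4 can be sketched as below. The acceptance limits are the example values quoted in the protocol (%RSD ≤ 5%, recovery 95-105%); the function names and the data are assumptions for illustration, and the observed concentration is taken to be the value back-calculated from the fitted line.

```python
import math
import statistics


def fit_line(conc, resp):
    """Ordinary least squares; returns slope, intercept, and correlation coefficient r."""
    n = len(conc)
    cx, cy = sum(conc) / n, sum(resp) / n
    sxx = sum((c - cx) ** 2 for c in conc)
    syy = sum((r - cy) ** 2 for r in resp)
    sxy = sum((c - cx) * (r - cy) for c, r in zip(conc, resp))
    slope = sxy / sxx
    return slope, cy - slope * cx, sxy / math.sqrt(sxx * syy)


def percent_bias(conc, resp, slope, intercept):
    """Back-calculate each level from the fitted line and compare with nominal."""
    return [100.0 * ((r - intercept) / slope - c) / c for c, r in zip(conc, resp)]


def check_loq(measured, nominal, max_rsd=5.0, recovery_limits=(95.0, 105.0)):
    """Return (%RSD, %Recovery, passed) for replicate results at the candidate LOQ."""
    mean = statistics.mean(measured)
    rsd = 100.0 * statistics.stdev(measured) / mean
    recovery = 100.0 * mean / nominal
    passed = rsd <= max_rsd and recovery_limits[0] <= recovery <= recovery_limits[1]
    return rsd, recovery, passed


# Hypothetical mean peak areas at 50-150% of the target concentration
conc = [50.0, 75.0, 100.0, 125.0, 150.0]
area = [1002.0, 1498.0, 2005.0, 2496.0, 3001.0]
slope, intercept, r = fit_line(conc, area)
print("r =", round(r, 5))
print("%bias per level:", [round(b, 2) for b in percent_bias(conc, area, slope, intercept)])

# Six hypothetical independent preparations at a nominal LOQ of 1.00
print(check_loq([0.98, 1.01, 1.00, 0.99, 1.02, 1.00], nominal=1.00))
```

Testing whether the y-intercept differs from zero needs its standard error and a t critical value, which are omitted here to keep the sketch short.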

The workflow for this integrated experiment is as follows:

Figure 2: Linearity & LOQ Validation Workflow

Research Reagent Solutions for Validation

The following table details essential materials and their functions in conducting these validation experiments.

Table 3: Essential Research Reagents and Materials for Method Validation

| Research Reagent / Material | Critical Function in Validation | Application Example |

|---|---|---|

| Certified Reference Material (CRM) | Provides a substance with a certified purity and assigned property value, used to establish trueness and calibration in quantitative analysis. | Used as the primary standard to prepare the stock solution for linearity and LOD/LOQ studies [24]. |

| Commutable Reference Material | A reference material whose properties demonstrate the same interrelationship as patient samples when measured by different analytical platforms. Crucial for ensuring calibration is valid across systems. | Used as a common calibrator to harmonize results across multiple measurement procedures in a laboratory network [24]. |

| Placebo/Blank Matrix | The analyte-free formulation or biological matrix used to prepare standards. Critical for assessing specificity, LOD, and the background signal. | Used in specificity experiments to confirm no interference from excipients or matrix components at the retention time of the analyte. |

| Stability-Indicating Materials | Samples subjected to stress conditions (heat, light, acid/base, oxidation) to generate degradants. Used to prove method specificity and stability-indicating capabilities. | Stressed samples are analyzed to demonstrate the method can accurately quantify the API in the presence of its degradation products. |

The choice and application of ICH Q2(R2), USP, and CLSI guidelines are not mutually exclusive but are dictated by the stage of development, the product's nature, and the intended use of the analytical data. ICH Q2(R2) provides the overarching, science-based principles for validating methods intended for regulatory submissions in the pharmaceutical industry. Its strength lies in its harmonized, flexible framework. The USP offers legally binding, product-specific standards; its strength is in providing explicit, enforceable methods and acceptance criteria for quality control and release testing. CLSI EP guidelines deliver granular, statistically rigorous protocols ideal for developing, verifying, and validating methods in a clinical laboratory setting, with a strong focus on understanding performance in a biological matrix.

For a comprehensive validation strategy, a drug development professional might begin method development using the principles of ICH Q2(R2), then refine the method using CLSI's detailed protocols (e.g., EP06 for linearity, EP17 for LOD/LOQ) to ensure robust statistical performance, and finally, confirm that the method meets the specific, enforceable standards outlined in the relevant USP monographs for product registration and commercial quality control. Understanding the synergies and specific applications of these three foundational pillars is essential for generating reliable, defensible, and regulatory-compliant analytical validation data.

Practical Protocols: How to Determine and Validate Each Parameter

In the rigorous world of pharmaceutical analysis, demonstrating that an analytical method accurately measures the intended analyte without interference from other components is paramount. This property, known as specificity, is a cornerstone of analytical method validation, which also includes linearity, range, LOD, and LOQ [26]. Within this framework, peak purity assessment is a critical technique for confirming specificity, especially for stability-indicating methods. It ensures that the chromatographic peak for the active pharmaceutical ingredient (API) is not compromised by co-elution with impurities, degradants, or excipients. This guide provides a comparative analysis of the primary techniques for peak purity and interference testing, supported by experimental data and detailed protocols.

Core Principles of Peak Purity Assessment

The fundamental goal of peak purity assessment is to demonstrate the spectral homogeneity of a chromatographic peak. A pure peak originates from a single compound, meaning its UV spectrum remains consistent throughout the peak's elution—at the upslope, apex, and downslope. Conversely, a change in the spectral shape across the peak is a strong indicator of co-elution [27].

These assessments are typically performed during forced degradation studies, where drug substances and products are stressed under various conditions (e.g., heat, light, acid/base hydrolysis, and oxidation) to generate potential degradants. A successful specificity test shows that the analytical method can separate and accurately quantify the API in the presence of these degradation products [26] [27].

Comparative Analysis of Peak Purity Techniques

The following table summarizes the key techniques available for peak purity assessment, each with distinct advantages and limitations.

Table 1: Comparison of Major Peak Purity Assessment Techniques

| Technique | Underlying Principle | Detection Capability | Key Strengths | Key Limitations |

|---|---|---|---|---|

| PDA-Facilitated UV PPA | Compares UV spectral shapes across a peak using algorithms (e.g., purity angle vs. threshold) [27]. | Detects co-eluting compounds with differing UV spectra. | Non-destructive and standard with most HPLC systems [27]; high efficiency and minimal extra cost [27]. | False negatives if impurities have similar UV spectra or poor UV response [27]; false positives from baseline shifts or suboptimal processing [27]. |

| Mass Spectrometry-Facilitated PPA | Monitors precursor ions, product ions, and/or adducts across a peak in TIC or EIC [27]. | Detects co-eluting compounds with differing mass spectra. | High sensitivity and selectivity [27]; provides structural identity of interferents. | Destructive technique; higher instrument cost and operational complexity; not compatible with all mobile phases (e.g., non-volatile buffers). |

| Two-Dimensional Correlation (2D-Corr) Analysis | Applies chemometrics to data from multi-channel detectors (e.g., coulometric array); synchronous/asynchronous maps reveal co-elution [28]. | Detects subtle differences in compound behavior across multiple detection channels. | Powerful for analyzing complex, overlapping peaks [28]; can be automated. | Requires specialized software and chemometric knowledge [28]; limited to specific multi-channel detectors. |

| Orthogonal Chromatography (2D-LC) | Separates the peak of interest using a second chromatographic dimension with a different separation mechanism. | High-resolution separation of co-eluting compounds. | Considered a "gold standard" for unambiguous separation. | Technically complex and time-consuming; requires sophisticated instrumentation. |

Supporting Experimental Data

A 2022 study utilizing 2D-corr analysis with a 16-sensor coulometric array detector provides a clear example of this technique's power. The analysis was performed on a peak from a Capsicum chili extract that appeared homogeneous by conventional HPLC. The 2D-corr synchronous and asynchronous contour plots revealed the presence of at least three co-eluting species, which were later identified by mass spectrometry as quinic acid, ascorbic acid, and phenylalanine [28]. This demonstrates how advanced chemometric techniques can uncover interferences missed by one-dimensional analysis.

Experimental Protocols for Key Techniques

Protocol 1: PDA-Facilitated Peak Purity Assessment

This is the most common protocol for establishing specificity in stability-indicating methods [27].

Sample Preparation:

- Forced Degradation: Subject the drug substance and product to relevant stress conditions (e.g., 0.1N HCl and 0.1N NaOH at 60°C for 1 week, 3% H₂O₂ at room temperature for 24 hours, heat at 105°C for 1 week, and light exposure per ICH conditions). Target 5-20% degradation [26] [27].

- Prepare samples of the unstressed API, placebo (excipients), and stressed samples.

Instrumentation and Data Acquisition:

- Use an HPLC system equipped with a Photodiode Array (PDA) detector.

- Inject the prepared samples and acquire chromatographic data with continuous spectral collection across the peaks of interest. Ensure the signal is within the linear range of the detector.

Data Processing and Analysis:

- Process the data using a Chromatography Data System (CDS) with a peak purity algorithm (e.g., Waters Empower, Agilent OpenLab, or Shimadzu LabSolutions).

- The software compares spectra from different time points across the peak (front, apex, tail) against a reference spectrum (usually the apex). It calculates a purity angle and a purity threshold [27].

- Interpretation: A peak is considered spectrally pure if the purity angle is less than the purity threshold [27].
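To make the spectral comparison concrete, the sketch below computes the angle between two UV spectra treated as vectors, and averages those angles against the apex spectrum. This is a deliberately simplified illustration: commercial CDS algorithms are vendor-specific and also account for baseline and noise, and the purity threshold they report is not reproduced here. The function names and the absorbance values are hypothetical.

```python
import math


def spectral_contrast_angle(spectrum_a, spectrum_b):
    """Angle in degrees between two spectra sampled at the same wavelengths.
    0 degrees means identical shape, regardless of overall intensity."""
    dot = sum(a * b for a, b in zip(spectrum_a, spectrum_b))
    norm_a = math.sqrt(sum(a * a for a in spectrum_a))
    norm_b = math.sqrt(sum(b * b for b in spectrum_b))
    # Clamp to guard against floating-point values just outside [-1, 1]
    cos_theta = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.degrees(math.acos(cos_theta))


def simplified_purity_angle(spectra, apex_index):
    """Mean contrast angle of all non-apex spectra against the apex spectrum."""
    apex = spectra[apex_index]
    angles = [
        spectral_contrast_angle(s, apex)
        for i, s in enumerate(spectra)
        if i != apex_index
    ]
    return sum(angles) / len(angles)


# Hypothetical absorbances at four wavelengths, taken at front, apex, and tail
front = [0.10, 0.30, 0.20, 0.05]
apex = [0.50, 1.50, 1.00, 0.25]
tail = [0.12, 0.30, 0.26, 0.05]  # slightly different shape
print(round(simplified_purity_angle([front, apex, tail], apex_index=1), 3))
```

In this simplified picture, a co-eluting compound with a different UV spectrum raises the angle, while a pure peak gives angles close to zero across the peak.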

Protocol 2: Mass Spectrometry-Facilitated Peak Purity

This technique is used when higher sensitivity and selectivity are required or when PDA results are inconclusive [27].

Sample Preparation: Follow the same forced degradation and sample preparation as in Protocol 1.

Instrumentation and Data Acquisition:

- Use an HPLC system coupled to a mass spectrometer (e.g., single quadrupole or tandem MS).

- Acquire data in full-scan mode, which yields the Total Ion Chromatogram (TIC), or in Selected Ion Monitoring (SIM) mode. For enhanced specificity, use Multiple Reaction Monitoring (MRM) on a tandem MS.

Data Processing and Analysis:

- Extract ion chromatograms (EICs) for the specific mass-to-charge ratio (m/z) of the API and potential degradants.

- Examine the mass spectra across the API peak (front, apex, tail).

- Interpretation: The peak is considered pure if the mass spectra across the peak show consistent precursor and product ions attributable only to the API. The presence of other ions or changing ratios indicates a co-eluting impurity [27].
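The "changing ratios" criterion can be illustrated with a simple consistency check on two ions monitored across the peak. The 20% tolerance, the function name, and the intensities are hypothetical choices made for this sketch; the source does not specify a numerical limit.

```python
def ion_ratio_consistent(quantifier, qualifier, apex_index, tolerance_pct=20.0):
    """Compare the qualifier/quantifier intensity ratio at each time point
    with the ratio at the apex. Returns (all_within_tolerance, % deviations)."""
    ratios = [q2 / q1 for q1, q2 in zip(quantifier, qualifier)]
    apex_ratio = ratios[apex_index]
    deviations = [100.0 * abs(r - apex_ratio) / apex_ratio for r in ratios]
    return all(d <= tolerance_pct for d in deviations), deviations


# Hypothetical intensities at the front, apex, and tail of the API peak
quant_ion = [100.0, 1000.0, 120.0]
qual_ion_pure = [30.0, 300.0, 36.0]      # constant ratio across the peak
qual_ion_coelution = [30.0, 300.0, 60.0]  # ratio drifts on the tail

print(ion_ratio_consistent(quant_ion, qual_ion_pure, apex_index=1)[0])
print(ion_ratio_consistent(quant_ion, qual_ion_coelution, apex_index=1)[0])
```

A stable ratio is consistent with a single compound; a drifting ratio suggests that another species contributes to one of the monitored ions and warrants inspection of the full spectra.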

Visualizing Workflows and Relationships

The following diagrams illustrate the logical workflow for establishing specificity and the scientific principles behind PDA-based peak purity assessment.

Diagram Title: Specificity Establishment Workflow

Diagram Title: PDA Peak Purity Angle Principle

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Materials for Peak Purity and Forced Degradation Studies

| Item | Function / Purpose | Example / Specification |

|---|---|---|

| High-Purity Reference Standards | Serves as the benchmark for identity, retention time, and spectral comparison. | API, known impurities, and degradation products [26]. |

| Chromatography Data System (CDS) | Software for instrument control, data acquisition, processing, and peak purity algorithm calculation. | Waters Empower, Agilent OpenLab, Shimadzu LabSolutions [27]. |

| PDA Detector | Captures full UV-Vis spectra for every data point across a chromatographic peak, enabling spectral comparison. | Standard feature on modern HPLC/UHPLC systems [29]. |

| LC-MS Instrumentation | Provides definitive identification of co-eluting species based on molecular weight and fragmentation pattern. | Single quadrupole MS (e.g., Agilent MSD) or tandem MS systems [27]. |

| Chemometric Software | Enables advanced data analysis techniques like 2D-Corr for complex peak deconvolution. | RStudio with custom scripts, or commercial multivariate analysis packages [28]. |

| Stress Reagents | Used in forced degradation studies to generate potential degradants. | Hydrochloric Acid (HCl), Sodium Hydroxide (NaOH), Hydrogen Peroxide (H₂O₂) [26]. |

Establishing specificity through robust peak purity testing is non-negotiable for developing reliable analytical methods in drug development. While PDA-based PPA is the most widely used and efficient first-line approach, its limitations necessitate a method-dependent strategy. MS-assisted PPA offers superior sensitivity and identification power, while emerging techniques like 2D-corr analysis provide powerful tools for deconvoluting complex co-elutions. The most defensible validation strategy often involves a combination of these techniques, supported by well-designed forced degradation studies, to provide irrefutable evidence that an analytical method is truly stability-indicating and fit for its intended purpose.

Linearity and range are foundational parameters in analytical method validation, ensuring that a method produces results that are directly proportional to the concentration of the analyte in a given sample [30]. These parameters confirm that an analytical procedure will perform reliably across the entire spectrum of expected concentrations, providing assurance that the method is fit-for-purpose for its intended application, whether for drug substance assay, impurity quantification, or other critical quality attribute testing.

The linearity of an analytical procedure is its ability, within a defined range, to obtain test results that are directly proportional to the concentration (amount) of analyte in the sample [7]. This proportional relationship is fundamental for accurate quantification, as it allows for the reliable calculation of unknown concentrations from instrumental responses using a calibration curve. The range of an analytical procedure is the interval between the upper and lower concentrations of analyte (including these concentrations) for which it has been demonstrated that the analytical procedure has a suitable level of precision, accuracy, and linearity [7]. The range is therefore dependent on the established linearity but also incorporates accuracy and precision considerations.

For pharmaceutical analysis, establishing linearity and range is not merely a regulatory formality but a scientific necessity to ensure that product quality and patient safety are not compromised by unreliable analytical measurements. Properly validated methods form the bedrock of quality control systems, process understanding, and product knowledge throughout the drug development lifecycle [31].

Regulatory Framework and Key Definitions

Regulatory Expectations

International regulatory guidelines provide a framework for linearity and range validation, though specific acceptance criteria should be justified based on the method's intended use [31]. The International Council for Harmonisation (ICH) Q2(R1) guideline provides the primary framework for analytical method validation, while the United States Pharmacopeia (USP) chapters <1225> and <1033> offer additional guidance, particularly emphasizing that acceptance criteria should be consistent with the method's intended purpose [31] [7].

The FDA states that "an analytical procedure is developed to test a defined characteristic of the drug substance or drug product against established acceptance criteria for that characteristic" [31]. This underscores the importance of designing validation studies that reflect the actual conditions under which the method will be used, with acceptance criteria that ensure reliable performance.

Distinguishing Linearity from Range

While linearity and range are interrelated, they represent distinct validation characteristics:

Linearity demonstrates the quality of the relationship between concentration and response, indicating how well the method can quantify varying amounts of the analyte [5]. It is evaluated through statistical measures of the calibration curve, including correlation coefficient, slope, and y-intercept.

Range defines the span of usable concentrations where the method performs with suitable precision, accuracy, and linearity [5]. It represents the practical operating interval where the method has been demonstrated to be reliable.

Other related terms include working range (the range where the method gives results with an acceptable uncertainty, potentially wider than the linear range) and calibration range (the interval between upper and lower analyte concentrations that can be determined with demonstrated accuracy, precision, and linearity) [32].

Experimental Design for Linearity and Range Studies

Preparation of Standards

The foundation of a robust linearity study lies in the careful preparation of calibration standards. A minimum of five concentration levels is recommended, with many guidelines suggesting 5-8 levels for adequate characterization of the linear response [9] [8] [7]. These levels should be evenly distributed across the specified range, typically spanning 50% to 150% of the target concentration or the expected working range [8] [32].

For impurity methods, the range should cover from the quantitation limit (QL) to at least 150% of the specification limit [5]. Each concentration level should be prepared independently rather than through serial dilution from a single stock solution to avoid propagating errors [8]. Analyzing each level in triplicate provides essential data for assessing precision across the concentration range and improves the reliability of the statistical evaluation [8].
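A short sketch of the design described above: evenly spaced levels across 50-150% of the target, each analyzed in triplicate. The randomized run order is an addition borrowed from common practice to limit the effect of instrumental drift; the function names, the target concentration, and the seed are hypothetical.

```python
import random


def linearity_levels(target, low_pct=50.0, high_pct=150.0, n_levels=5):
    """Evenly spaced concentrations between low_pct and high_pct of the target."""
    if n_levels < 5:
        raise ValueError("at least five concentration levels are recommended")
    step = (high_pct - low_pct) / (n_levels - 1)
    return [target * (low_pct + i * step) / 100.0 for i in range(n_levels)]


def injection_sequence(levels, replicates=3, seed=None):
    """Randomized run order covering every level `replicates` times."""
    runs = [level for level in levels for _ in range(replicates)]
    random.Random(seed).shuffle(runs)
    return runs


levels = linearity_levels(target=100.0)  # e.g. 100 µg/mL target (hypothetical)
print(levels)
print(injection_sequence(levels, replicates=3, seed=1))
```

For an impurity method the same helper could be called with the quantitation limit and 150% of the specification limit expressed as percentages of the chosen target.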

Concentration Selection and Range Bracketing

Proper selection of concentration levels requires strategic bracketing of the expected sample concentrations. The calibration range should extend beyond the expected sample concentrations so that sample results are obtained by interpolation rather than extrapolation, with points distributed evenly across the working range [8]. The table below illustrates recommended concentration spacing for linearity studies:

| Concentration Level | Bracket Selection Criteria | Typical Spacing |

|---|---|---|

| Lower Limit | 50-80% of LLOQ | Tighter spacing |

| Low Range | 1-2× LLOQ | 25-50% intervals |

| Mid Range | Expected sample range | 50-100% intervals |

| High Range | 80-100% of ULOQ | 25-50% intervals |

When designing the range, consider the analyte's physicochemical properties and its behavior across different concentrations. Some compounds exhibit linear responses only within specific ranges due to solubility limitations or detection thresholds [8]. Account for potential matrix effects that may cause non-linearity at concentration extremes, particularly with biological samples where protein binding can affect linearity at higher concentrations [8].

Experimental Workflow

The following diagram illustrates the comprehensive workflow for conducting linearity and range studies, from initial planning through final documentation:

Statistical Evaluation and Acceptance Criteria

Regression Analysis and Residual Evaluation

Statistical evaluation of linearity data requires more than just calculating a correlation coefficient. While the coefficient of determination (R²) is commonly used, with typical minimum acceptance values between 0.995 and 0.997 [8] [5], this value alone can be misleading as it may mask subtle non-linear patterns [8].

A more comprehensive approach includes visual inspection of residual plots, which provides essential evidence of linearity that numerical values alone might miss [31] [8]. In a properly linear method, residuals should be randomly distributed around zero with no discernible pattern. Systematic patterns in residual plots may indicate issues with the regression model:

- U-shaped curves suggest a quadratic relationship, potentially requiring a non-linear model [8]

- Funnel shapes indicate heteroscedasticity (non-constant variance), possibly necessitating data transformation or weighted regression [8]

- Consistent directional trends may suggest matrix effects or other systematic biases

The use of studentized residuals can further enhance linearity assessment. By establishing limits at ±1.96 (95% confidence level), one can statistically determine the point at which an assay response ceases to be linear [31].
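As an illustration of the residual checks described in this section, the sketch below computes internally studentized residuals for a straight-line fit and flags levels beyond ±1.96. It assumes ordinary least squares with a single predictor; the function names and the data are hypothetical, and the check is more informative with a larger number of points than the minimum of five.

```python
import math


def studentized_residuals(x, y):
    """Internally studentized residuals for a simple linear regression.
    Assumes the fit is not perfect (residual SD greater than zero)."""
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    slope = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y)) / sxx
    intercept = y_mean - slope * x_mean
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    s = math.sqrt(sum(e * e for e in residuals) / (n - 2))
    # Leverage of each point in a one-predictor model
    leverage = [1.0 / n + (xi - x_mean) ** 2 / sxx for xi in x]
    return [e / (s * math.sqrt(1.0 - h)) for e, h in zip(residuals, leverage)]


def flag_nonlinear_points(x, y, limit=1.96):
    """Concentrations whose studentized residual exceeds the limit."""
    return [xi for xi, r in zip(x, studentized_residuals(x, y)) if abs(r) > limit]


# Hypothetical response that flattens at the top of the range
conc = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
resp = [10.1, 19.8, 30.2, 40.1, 49.7, 60.3, 69.9, 79.0, 85.0, 88.0]
print([round(r, 2) for r in studentized_residuals(conc, resp)])
print("flagged levels:", flag_nonlinear_points(conc, resp))
```

Plotting these values against concentration gives the residual plot discussed above; a run of same-signed residuals at one end of the range is as informative as any single flagged point.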

Acceptance Criteria Comparison

Acceptance criteria for linearity and range should be established based on the method's intended use and the analytical technology employed. The table below summarizes typical acceptance criteria for different method types and parameters:

| Validation Parameter | Traditional Criteria | Tolerance-Based Criteria | Application Context |

|---|---|---|---|

| Linearity (R²) | ≥0.995 to ≥0.997 [8] [5] | N/A | Universal |

| Range | 50-150% of target [8] | Covers 80-120% of product specifications [31] | Drug substance/product assay |

| Repeatability | %RSD based on concentration | ≤25% of tolerance (chemical methods) [31] | Relative to specification limits |

| Bias/Accuracy | % recovery based on theoretical | ≤10% of tolerance [31] | Ensures minimal method bias relative to specifications |

For bioassays, which typically exhibit higher variability, more lenient criteria may be appropriate, such as ≤50% of tolerance for repeatability while maintaining ≤10% of tolerance for bias [31].

Tolerance-based criteria are particularly valuable as they evaluate method performance relative to the product specification limits the method is intended to measure [31]. This approach directly addresses the method's impact on out-of-specification (OOS) rates and provides a more meaningful assessment of its fitness for purpose.
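One way to express the tolerance-based criteria numerically is sketched below. The formulas are an assumption for illustration: repeatability is scaled by a coverage factor of 5.15 standard deviations (spanning about 99% of a normal distribution) and divided by the specification width, and bias is divided by the same width. The source does not state the exact formula, so the factor and function names should be treated as hypothetical.

```python
def repeatability_pct_tolerance(sd, lsl, usl, coverage=5.15):
    """Repeatability spread as a percentage of the specification width.
    `coverage` is an assumed multiplier (5.15 SD spans ~99% of a normal distribution)."""
    return 100.0 * coverage * sd / (usl - lsl)


def bias_pct_tolerance(bias, lsl, usl):
    """Absolute method bias as a percentage of the specification width."""
    return 100.0 * abs(bias) / (usl - lsl)


# Hypothetical assay with specification limits of 90-110% label claim
rep = repeatability_pct_tolerance(sd=0.5, lsl=90.0, usl=110.0)
bias = bias_pct_tolerance(bias=1.0, lsl=90.0, usl=110.0)
print(f"repeatability: {rep:.1f}% of tolerance (example limit: 25%)")
print(f"bias: {bias:.1f}% of tolerance (example limit: 10%)")
```

Expressed this way, the same absolute standard deviation can be acceptable for a product with wide specifications and unacceptable for one with narrow specifications.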

Troubleshooting Common Linearity Issues

Identifying and Addressing Non-linearity

When linearity problems occur during method validation, systematic troubleshooting is essential. Common issues and their solutions include:

Detector saturation at high concentrations: This manifests as a flattening of the response curve at higher concentrations. Solutions include sample dilution, reducing injection volume, or using a shorter pathlength in UV detection [8].

Matrix effects causing non-linearity: Particularly problematic in biological samples, where matrix components can interfere with analyte response. Solutions include improved sample cleanup, using matrix-matched calibration standards, or employing the standard addition method [8].

Insufficient detector response at low concentrations: This results in poor linearity at the lower end of the range. Approaches to address this include sample concentration, increasing injection volume, or using a more sensitive detection technique [8].

Inappropriate regression model: Simple linear regression may be inadequate for some analytical responses. When visual inspection of residuals reveals systematic patterns, consider weighted regression (for heteroscedastic data) or polynomial fitting for defined curvilinear relationships [8].
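For the heteroscedastic case mentioned above, a weighted least-squares fit can be sketched as follows. The 1/x² weighting is a common choice when variance grows with concentration, but it is an assumption here rather than something the source prescribes; the function name and the data are illustrative.

```python
def weighted_linear_fit(x, y, weights):
    """Weighted least-squares slope and intercept for y = intercept + slope * x."""
    sw = sum(weights)
    swx = sum(w * xi for w, xi in zip(weights, x))
    swy = sum(w * yi for w, yi in zip(weights, y))
    swxx = sum(w * xi * xi for w, xi in zip(weights, x))
    swxy = sum(w * xi * yi for w, xi, yi in zip(weights, x, y))
    slope = (sw * swxy - swx * swy) / (sw * swxx - swx ** 2)
    intercept = (swy - slope * swx) / sw
    return slope, intercept


# Hypothetical wide-range calibration where scatter grows with concentration
conc = [1.0, 2.0, 5.0, 10.0, 50.0, 100.0]
resp = [3.1, 6.0, 15.4, 29.0, 155.0, 290.0]

unweighted = weighted_linear_fit(conc, resp, [1.0] * len(conc))
weighted = weighted_linear_fit(conc, resp, [1.0 / c ** 2 for c in conc])
print("unweighted slope, intercept:", unweighted)
print("1/x^2 weighted slope, intercept:", weighted)
```

With equal weights the function reduces to ordinary least squares. Down-weighting the high standards usually improves back-calculated accuracy at the low end of the range, which can be checked by comparing %bias per level for both fits.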

Expanding the Linear Range

For techniques with inherently narrow linear ranges, such as LC-MS, several strategies can extend the usable range:

Employ isotopically labeled internal standards (ILIS): While the signal-concentration dependence for the analyte may not be linear, the ratio of analyte to internal standard signals may exhibit linearity across a wider concentration range [32].

Reduce charge competition in ESI-MS: In LC-ESI-MS, decreasing the flow rate (e.g., using nano-ESI) can reduce charge competition and extend the linear dynamic range [32].

Strategic dilution of samples: For samples with concentrations outside the linear range, appropriate dilution can bring the measurement within the established linear range [32].
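The dilution strategy amounts to simple arithmetic, sketched below. The function name and the range limits are hypothetical; the only logic shown is that the diluted measurement must fall inside the validated range before the dilution factor is applied.

```python
def report_diluted_result(measured_conc, dilution_factor, range_low, range_high):
    """Back-calculate the original concentration of a diluted sample.

    measured_conc   -- concentration found in the diluted sample
    dilution_factor -- e.g. 10 for a 1:10 dilution
    range_low/high  -- limits of the validated linear range (same units)
    """
    if not (range_low <= measured_conc <= range_high):
        raise ValueError(
            "Diluted result is outside the validated range; "
            "choose a different dilution factor and re-analyze."
        )
    return measured_conc * dilution_factor

# Hypothetical example: 1:10 dilution, validated range 1 to 100 units.
print(report_diluted_result(50.0, 10, 1.0, 100.0))
```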

Essential Research Reagents and Materials

Successful linearity and range studies require high-quality materials and reagents. The following table outlines essential items and their functions:

| Reagent/Material | Function | Critical Considerations |
|---|---|---|
| Certified Reference Standards | Provides known purity analyte for accurate standard preparation | Should be traceable to certified reference materials |
| Appropriate Solvent/Matrix | Dissolves and stabilizes standards across concentration range | Should match sample matrix to account for matrix effects |
| Blank Matrix | Assesses specificity and establishes baseline response | Must be free of interfering components |
| High-Purity Water | Preparation of mobile phases and aqueous standards | Should be HPLC-grade or equivalent |
| Volumetric Glassware | Accurate preparation and dilution of standards | Class A recommended for highest accuracy |
| Calibrated Pipettes | Precise transfer of solutions during standard preparation | Regular calibration essential for measurement accuracy |
| Stable Internal Standard | Normalizes analytical response (for internal standard methods) | Should be structurally similar but resolvable from analyte |

Documentation and Regulatory Compliance

Meeting Documentation Requirements

Comprehensive documentation is essential for demonstrating regulatory compliance. Documentation should include:

Raw data alongside statistical analysis results, including correlation coefficient, y-intercept, slope, and residual values, with acceptance criteria clearly stated for each parameter [8]

Complete audit trail of data processing steps, including any data points excluded from regression analysis and the scientific rationale for exclusion [8]

Visual representations of calibration curves and residual plots to support numerical statistics [8]

Justification for the selected range based on the method's intended use and the demonstrated linearity, accuracy, and precision across that range [7]

Regulatory authorities expect that "the validation target acceptance criteria should be chosen to minimize the risks inherent in making decisions from bioassay measurements and to be reasonable in terms of the capability of the art" [31]. When product specifications exist, "acceptance criteria can be justified on the basis of the risk that measurements may fall outside of the product specification" [31].

Method Transfer Considerations

When transferring methods between laboratories, additional verification of linearity and range is typically required. Acceptance criteria for method transfer often include:

- R² > 0.995 for linear regression

- Slope ratio of 0.98-1.02 between transferring and receiving laboratories

- Residuals within ±2% across the validated range [8]

These criteria ensure that the method performance remains consistent across different laboratory environments, instruments, and analysts.
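The three transfer criteria listed above can be checked programmatically, as in the sketch below. Several details are assumptions made for illustration: R² and the residual check are applied to the receiving laboratory's data, the slope ratio is taken as receiving over sending, and residuals are expressed as a percentage of the predicted response. The function name and data are hypothetical.

```python
import numpy as np

def check_transfer(conc, resp_sending, resp_receiving,
                   r2_min=0.995, slope_ratio_range=(0.98, 1.02),
                   max_residual_pct=2.0):
    """Evaluate method-transfer linearity criteria for two laboratories."""
    conc = np.asarray(conc, dtype=float)

    def fit(resp):
        resp = np.asarray(resp, dtype=float)
        slope, intercept = np.polyfit(conc, resp, 1)
        predicted = slope * conc + intercept
        ss_res = np.sum((resp - predicted) ** 2)
        ss_tot = np.sum((resp - resp.mean()) ** 2)
        r2 = 1.0 - ss_res / ss_tot
        residual_pct = 100.0 * (resp - predicted) / predicted
        return slope, r2, residual_pct

    slope_s, _, _ = fit(resp_sending)
    slope_r, r2_r, resid_r = fit(resp_receiving)
    ratio = slope_r / slope_s

    return {
        "r2_ok": bool(r2_r > r2_min),
        "slope_ratio_ok": bool(slope_ratio_range[0] <= ratio <= slope_ratio_range[1]),
        "residuals_ok": bool(np.all(np.abs(resid_r) <= max_residual_pct)),
    }

# Hypothetical data from the two laboratories at shared concentration levels.
levels = [10, 20, 40, 80, 100]
sending = [20.1, 39.8, 80.3, 159.6, 200.2]
receiving = [20.3, 40.1, 80.9, 161.0, 201.9]
print(check_transfer(levels, sending, receiving))
```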

Properly conducted linearity and range studies are fundamental to establishing reliable analytical methods that generate meaningful data throughout the drug development lifecycle. By implementing rigorous experimental designs, applying appropriate statistical evaluations with tolerance-based acceptance criteria when possible, and thoroughly documenting the process, researchers can ensure their methods are truly fit-for-purpose. The approaches outlined in this guide provide a framework for developing scientifically sound, regulatory-compliant methods that support the accurate assessment of drug product quality and ultimately contribute to patient safety. As regulatory guidance continues to evolve, particularly with the increasing application of AI and advanced analytical technologies [33] [34], the fundamental principles of proper linearity and range validation remain essential for generating trustworthy analytical data.

In analytical chemistry, the Limit of Detection (LOD) and Limit of Quantification (LOQ) are fundamental parameters that define the sensitivity and applicability of an analytical procedure. The LOD represents the lowest concentration of an analyte that can be reliably detected—but not necessarily quantified—under stated experimental conditions, answering the question, "Is there something there?" [35] [10]. In contrast, the LOQ is the lowest concentration that can be quantified with acceptable precision and accuracy, addressing the question, "How much is there?" [11] [36]. These parameters are essential components of method validation, providing critical information about the capability of any analytical method to determine very low concentrations of analytes, which is particularly crucial in pharmaceutical analysis, food safety testing, and environmental monitoring [10] [37].

The International Council for Harmonisation (ICH) guideline Q2(R2) on the validation of analytical procedures recognizes multiple approaches for determining these limits, including visual evaluation, signal-to-noise ratio, and calibration curve-based methods using standard deviation and slope [35] [38]. However, research has demonstrated that these approaches are far from equivalent, yielding significantly different values and varying degrees of reliability [16] [39] [37]. This comparison guide objectively examines these three established methodologies, providing experimental protocols, comparative data, and practical recommendations to help researchers select the most appropriate approach for their specific analytical needs.

Key Definitions and Regulatory Context

According to ICH Q2(R2), the LOD is "the lowest amount of analyte in a sample which can be detected but not necessarily quantitated as an exact value," while the LOQ is "the lowest amount of analyte in a sample which can be quantitatively determined with suitable precision and accuracy" [38]. These definitions establish the fundamental distinction between detection (confirming presence) and quantification (measuring amount). The Clinical and Laboratory Standards Institute (CLSI) further refines this concept by introducing the Limit of Blank (LoB), defined as the highest apparent analyte concentration expected to be found when replicates of a blank sample containing no analyte are tested [11] [36]. This establishes a statistical baseline for distinguishing true analyte signals from background noise.
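As an illustration of the LoB concept, the sketch below uses the parametric estimate commonly associated with the CLSI approach, LoB = mean of blanks + 1.645 × SD of blanks, which corresponds to the 95th percentile under an assumed Gaussian distribution of blank results. The function name and data are hypothetical, and the applicable guideline should be consulted for the exact procedure and replicate requirements.

```python
import statistics

def limit_of_blank(blank_results, z=1.645):
    """Parametric LoB estimate: mean of blanks + z * sample SD of blanks.

    z = 1.645 corresponds to the one-sided 95th percentile of a
    Gaussian distribution (an assumption about the blank results).
    """
    return statistics.mean(blank_results) + z * statistics.stdev(blank_results)

# Hypothetical apparent concentrations measured on blank replicates.
blanks = [0.02, 0.05, 0.01, 0.04, 0.03, 0.06, 0.02, 0.03, 0.04, 0.05]
print(round(limit_of_blank(blanks), 4))
```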

The ICH guideline explicitly endorses three primary approaches for determining LOD and LOQ [35] [10]:

- Visual Evaluation: Direct assessment by analysis of samples with known concentrations.

- Signal-to-Noise Ratio: Applicable for procedures exhibiting baseline noise.

- Standard Deviation and Slope: Based on the standard deviation of the response and the slope of the calibration curve.

Each method carries distinct assumptions, applications, and limitations, which must be understood to ensure appropriate implementation and interpretation of results.

Decision Workflow for Method Selection

The following diagram illustrates a systematic approach for selecting the most appropriate LOD/LOQ determination method based on analytical requirements and method characteristics:

Comparative Analysis of Methods

Visual Evaluation Method

Experimental Protocol

The visual evaluation method, also referred to as the empirical method, involves the direct analysis of samples with known concentrations of analyte to establish the minimum level at which detection or quantification is feasible [37]. The step-by-step methodology includes:

- Sample Preparation: Prepare a series of blank samples (containing no analyte) and spike samples with known, gradually reduced concentrations of the target analyte in the appropriate matrix [37]. For aflatoxin analysis in hazelnuts, this involved adding 250 μL of a 10-fold diluted stock standard solution to 25g blank samples to achieve 1 μg/kg total aflatoxin concentration [37].

- Analysis: Analyze multiple replicates (typically n=10) of each concentration level using the complete analytical procedure [37].

- Detection Assessment: Determine the lowest concentration where the analyte can be consistently detected by visual inspection of chromatograms or analytical outputs.

- Calculation: For the LOD, use the formula LOD = 3 × SD + B~ave~, where SD is the standard deviation of measurements and B~ave~ is the average concentration of spike samples [37]. For the LOQ, apply LOQ = 10 × SD + B~ave~ [37].
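The calculation step can be sketched as follows, using the formulas quoted above (LOD = 3 × SD + average, LOQ = 10 × SD + average). The use of the sample standard deviation (n − 1 denominator), the function name, and the example data are assumptions for illustration.

```python
import statistics

def empirical_limits(spike_results):
    """Return (LOD, LOQ) from replicate results of a low-level spiked sample.

    LOD = 3 * SD + B_ave and LOQ = 10 * SD + B_ave, where SD is the
    sample standard deviation and B_ave the mean of the spike results.
    """
    sd = statistics.stdev(spike_results)
    b_ave = statistics.mean(spike_results)
    return 3 * sd + b_ave, 10 * sd + b_ave

# Hypothetical replicate results (n = 10) for a spiked sample, in ug/kg.
replicates = [0.95, 1.02, 0.98, 1.05, 0.97, 1.01, 0.99, 1.03, 0.96, 1.04]
lod, loq = empirical_limits(replicates)
print("LOD:", round(lod, 3), "LOQ:", round(loq, 3))
```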

Applications and Limitations

The visual method is particularly valuable for non-instrumental procedures or methods where baseline noise is not easily measurable [10]. Examples include inhibition zone tests for antibiotics or titration endpoints [10]. The primary advantage of this approach is its practical simplicity and direct empirical observation, which often provides realistic, practically relevant limits [37]. However, this method suffers from subjectivity, as it relies on analyst judgment, and may yield less precise values compared to instrumental approaches [35]. It is generally considered more arbitrary than statistical approaches and is often best employed as a confirmatory technique alongside other methods [35].

Signal-to-Noise Ratio Method

Experimental Protocol

The signal-to-noise (S/N) method is exclusively applicable to analytical procedures that exhibit measurable baseline noise, such as chromatographic or spectroscopic techniques [10] [37]. The implementation protocol consists of:

- Blank Analysis: Analyze a sufficient number of blank samples (n≥10) to characterize the baseline noise. The noise can be measured as peak-to-peak variation in a clean region of the chromatogram near the analyte retention time [37].

- Low-Concentration Sample Analysis: Analyze multiple replicates of samples containing known low concentrations of analyte [40].

- Signal-to-Noise Calculation: Compare measured signals from samples with known low concentrations of analyte with those of blank samples [37]. The S/N ratio is calculated by comparing the average peak height values of samples containing the analyte and the noise peak-to-peak average value of blank samples [37].

- Threshold Application: Establish the LOD at an S/N ratio of 3:1 and the LOQ at an S/N ratio of 10:1 [10] [37]. Some regulatory bodies may accept a 2:1 ratio for LOD estimation [10].
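The S/N calculation and threshold check described in these steps can be sketched as below. The ratio is computed as described above (average analyte peak height divided by average peak-to-peak blank noise). Conventions for defining S/N differ between sources, so the definition in the applicable procedure takes precedence. Function names and data are hypothetical.

```python
import statistics

def signal_to_noise(peak_heights, blank_noise_p2p):
    """Average analyte peak height divided by average peak-to-peak blank noise."""
    return statistics.mean(peak_heights) / statistics.mean(blank_noise_p2p)

def classify(sn, lod_threshold=3.0, loq_threshold=10.0):
    """Compare an S/N ratio against the 3:1 (LOD) and 10:1 (LOQ) thresholds."""
    if sn >= loq_threshold:
        return "at or above LOQ"
    if sn >= lod_threshold:
        return "at or above LOD"
    return "below LOD"

# Hypothetical peak heights at one low concentration and blank noise values.
heights = [0.31, 0.29, 0.33, 0.30, 0.32]
noise = [0.09, 0.11, 0.10, 0.10, 0.10]
sn = signal_to_noise(heights, noise)
print(round(sn, 2), classify(sn))
```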

Applications and Limitations

The S/N method is widely implemented in chromatographic techniques like HPLC, UPLC, and GC, where baseline noise is readily measurable [10] [41]. Its key advantages include instrument-based objectivity, straightforward implementation, and direct visualization of method performance at low concentrations [35]. However, this approach requires a stable baseline with consistent noise characteristics and may not adequately account for matrix effects or extraction variability [41]. The S/N method also depends on injection volume and chromatographic conditions, making it somewhat less robust for cross-laboratory comparisons without strict protocol standardization [41].

Standard Deviation and Slope Method (Calibration Curve)

Experimental Protocol

The standard deviation and slope method, based on calibration curve parameters, is considered the most statistically rigorous approach by many researchers [35] [16]. The methodology involves:

- Calibration Curve Preparation: Construct a specific calibration curve using samples containing the analyte in the range of the expected LOD and LOQ [35] [37]. The calibration standards should be prepared in the same matrix as the samples and processed through the entire analytical procedure.

- Regression Analysis: Perform linear regression analysis on the calibration data. From the regression output, obtain the slope (S) of the calibration curve and the standard error of the regression or the standard deviation of the y-intercepts [35].

- Calculation: Apply the ICH-recommended formulas: LOD = 3.3 × σ / S and LOQ = 10 × σ / S, where σ represents the standard deviation of the response and S is the slope of the calibration curve [35] [10]. The standard deviation (σ) can be estimated as the standard error of the calibration curve obtained from linear regression analysis [35].
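The regression and calculation steps can be sketched as follows. Here σ is estimated as the residual standard deviation of the regression (n − 2 degrees of freedom), which is one of the options mentioned above; the function name and the calibration data are hypothetical.

```python
import numpy as np

def lod_loq_from_calibration(conc, response):
    """Return (LOD, LOQ) using LOD = 3.3 * sigma / S and LOQ = 10 * sigma / S.

    sigma is the residual standard deviation of the linear regression
    and S is the slope of the calibration curve.
    """
    conc = np.asarray(conc, dtype=float)
    response = np.asarray(response, dtype=float)
    slope, intercept = np.polyfit(conc, response, 1)
    residuals = response - (slope * conc + intercept)
    # Two parameters (slope, intercept) are estimated, hence n - 2.
    sigma = np.sqrt(np.sum(residuals ** 2) / (len(conc) - 2))
    return 3.3 * sigma / slope, 10.0 * sigma / slope

# Hypothetical low-level calibration data near the expected limits.
conc = [0.5, 1.0, 2.0, 4.0, 6.0, 8.0]
resp = [1.1, 2.0, 4.2, 7.9, 12.1, 15.8]
lod, loq = lod_loq_from_calibration(conc, resp)
print("LOD:", round(lod, 3), "LOQ:", round(loq, 3))
```

Because both limits share the same σ and slope, the LOQ is always 10/3.3 (about three) times the LOD under this approach.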

Applications and Limitations