Ultimate Guide to Smartphone Colorimetric Calibration: Methods for Precise Quantitative Analysis in Biomedical Research

This comprehensive guide explores advanced calibration methodologies for smartphone-based quantitative colorimetric analysis, tailored for researchers and drug development professionals.

Abstract

This comprehensive guide explores advanced calibration methodologies for smartphone-based quantitative colorimetric analysis, tailored for researchers and drug development professionals. It covers foundational principles of smartphone colorimetry, detailed calibration protocols using specialized apps and software, strategies to overcome illumination and hardware variability, and rigorous validation against reference spectrophotometers. The article provides practical frameworks for implementing robust, field-deployable colorimetric sensors for applications spanning clinical diagnostics, therapeutic drug monitoring, and environmental analysis, addressing both current capabilities and future directions in mobile sensing technology.

Smartphone Colorimetry Fundamentals: Principles, Advantages, and Core Components

Core Principles of Smartphone-Based Colorimetry and CIELAB Color Space

Core Principles and Frequently Asked Questions

Fundamental Concepts

Q1: What is the key advantage of using the CIELAB color space over standard RGB in smartphone colorimetry?

The CIELAB color space (also written L*a*b*) provides significant advantages for scientific colorimetric analysis. Unlike RGB, which is device-dependent and highly sensitive to lighting changes, CIELAB is designed to approximate human vision and is a device-independent, standardized color model. Its a* and b* chromatic coordinates exhibit inherent resistance to illumination changes, a phenomenon explained by the concept of "equichromatic surfaces." This makes CIELAB particularly valuable for quantitative analysis, as it is intended to be a perceptually uniform space where a given numerical change corresponds to a similar perceived change in color. While no space is perfectly uniform, CIELAB is highly effective for detecting small color differences. In practice, this enables much broader measurement ranges than absorbance-based techniques, with comparable limits of detection and without the need for complex, controlled lighting housings [1] [2] [3].

Q2: What are the common connection issues with colorimeter apps and how are they solved?

Connection problems often stem from incorrect pairing procedures and permission settings.

- Incorrect Pairing Method: Do not pair the colorimeter directly from your phone's main Bluetooth settings. Instead, open the dedicated application (e.g., LScolor app) and select your device's Serial Number (SN) from within the app's connection interface [4].

- Location Services Not Enabled: For both iOS and Android devices, the "Location" permission must be enabled for the app to scan for and connect to Bluetooth Low Energy (BLE) devices. This can be enabled via your phone's Settings menu [4].

- Insufficient App Permissions: If the app stalls on the initial screen, it may lack necessary permissions. Reinstalling the app and granting all requested permissions (especially "Location" and "Access/Modify Internal Storage") upon first launch typically resolves this [4].

Calibration and Measurement

Q3: Why is calibration critical and what methods improve accuracy?

Calibration is essential to overcome biases introduced by variable smartphone hardware and environmental factors. Research has systematically quantified that lighting conditions and viewing angles can introduce substantial bias, with color deviation (ΔE) increasing by up to 64% at oblique angles [3]. Advanced calibration methods use a color reference chart (e.g., a Spyder Color Checkr) to implement a matrix-based color correction. This methodology can reduce inter-device and lighting-dependent variations by 65–70% [3]. For the highest accuracy, an augmented reality-guided approach can be used. This system directs the user to capture an image at an optimal angle to minimize non-Lambertian reflectance, and when combined with a novel color correction algorithm, can reduce color variance by up to 90% [5].
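As an illustration of what a matrix-based correction can look like, the sketch below fits a 3×3 matrix by ordinary least squares so that measured chart patches map onto their known reference values. It is a generic linear fit, not the specific algorithm of the cited studies; the function names, the four-patch chart, and the patch values are all hypothetical, and the values are assumed to be linear RGB scaled to 0..1.

```python
def solve_linear(a, b):
    """Solve a @ x = b by Gauss-Jordan elimination with partial pivoting.

    a is an n x n list of rows; b is an n x m list of rows.
    """
    n = len(a)
    aug = [list(a[i]) + list(b[i]) for i in range(n)]  # augmented matrix [a | b]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("singular system: reference patches are not independent")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        pivot_value = aug[col][col]
        aug[col] = [v / pivot_value for v in aug[col]]
        for r in range(n):
            if r != col:
                factor = aug[r][col]
                aug[r] = [vr - factor * vc for vr, vc in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def fit_correction_matrix(measured, reference):
    """Least-squares 3x3 matrix M such that measured_row @ M approximates reference_row."""
    # Normal equations: (A^T A) M = A^T B, with A = measured and B = reference
    ata = [[sum(m[i] * m[j] for m in measured) for j in range(3)] for i in range(3)]
    atb = [[sum(m[i] * r[j] for m, r in zip(measured, reference)) for j in range(3)]
           for i in range(3)]
    return solve_linear(ata, atb)


def apply_correction(matrix, rgb):
    """Apply the fitted matrix to one measured colour triple."""
    return [sum(rgb[i] * matrix[i][j] for i in range(3)) for j in range(3)]


# Hypothetical chart: four patches with known reference values
reference = [(1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0), (0.5, 0.5, 0.5)]
# Hypothetical camera readings of the same patches under a colour cast
measured = [(0.8, 0.05, 0.0), (0.0, 0.9, 0.0), (0.0, 0.0, 1.1), (0.4, 0.475, 0.55)]

matrix = fit_correction_matrix(measured, reference)
corrected_gray = apply_correction(matrix, measured[3])
```

A real chart has many more patches, which makes the fit overdetermined and more robust to noise in any single patch. The same matrix is then applied to the sample ROI captured in the same image.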

Q4: What is a fundamental limitation of RGB-based colorimetry?

A key limitation is the artificial discontinuities created when highly saturated colors exceed the sRGB color gamut. During kinetic monitoring, for example, this can manifest as "shouldering" effects in the data that are not present in reference spectrophotometric measurements. This occurs because the RGB color space cannot accurately represent all visible colors, leading to clipping and distortion for colors outside its gamut [3].

Experimental Protocols and Data

Detailed Methodology for Illumination-Invariant Colorimetric Sensing

This protocol is based on research demonstrating that careful optimization of color space boosts performance [1].

- Sample Preparation: Prepare samples or sensors that produce monotonal shadings with spectral compositions covering a wide range of the visible spectrum.

- Image Acquisition: Place the sample adjacent to a standardized color reference chart. Capture images using a smartphone camera. For optimal results, use an app that guides the user to a consistent viewing angle to minimize reflective effects [5].

- Color Data Extraction: Use a region of interest (ROI) selection algorithm to automatically extract raw color data from both the sample and the reference chart.

- Color Space Conversion: Convert the raw image data (typically sRGB) first to CIE XYZ values, and then to the CIELAB color space using standard formulas. The most common conversion uses the D65 standard illuminant as the reference white point [2] [3].

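- \( L^* = 116\, f(Y/Y_n) - 16 \)

- \( a^* = 500 \left( f(X/X_n) - f(Y/Y_n) \right) \)

- \( b^* = 200 \left( f(Y/Y_n) - f(Z/Z_n) \right) \)

where \( f(t) = t^{1/3} \) if \( t > \left(\frac{6}{29}\right)^3 \), and \( f(t) = \frac{1}{3}\left(\frac{29}{6}\right)^2 t + \frac{4}{29} \) otherwise. \( X_n, Y_n, Z_n \) are the tristimulus values of the reference white point.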

- Color Correction: Apply a matrix-based color correction algorithm using the known reference values from the color chart to the measured values. This corrects for device-specific and lighting-specific biases [3] [5].

- Quantitative Analysis: Use the corrected \( a^* \) and \( b^* \) coordinates for quantitative analysis, as they provide the highest illumination-invariance. Construct calibration curves by plotting these chromatic coordinates against analyte concentration.
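The color space conversion step in this protocol can be sketched in a few lines of Python. The sketch assumes 8-bit sRGB input, the standard sRGB transfer curve, the commonly published linear-sRGB-to-XYZ matrix, and a D65 white point normalized to Y = 1; the function names are illustrative only.

```python
def srgb_to_linear(c):
    """Undo the sRGB transfer curve for one channel value in [0, 1]."""
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


# Linear sRGB -> CIE XYZ matrix for a D65 white point
SRGB_TO_XYZ = (
    (0.4124564, 0.3575761, 0.1804375),
    (0.2126729, 0.7151522, 0.0721750),
    (0.0193339, 0.1191920, 0.9503041),
)

# D65 reference white (Xn, Yn, Zn), normalized so that Yn = 1
D65_WHITE = (0.95047, 1.00000, 1.08883)


def f(t):
    """Piecewise function used in the CIELAB definition."""
    delta = 6 / 29
    return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29


def srgb8_to_lab(r, g, b):
    """Convert one 8-bit sRGB triple to (L*, a*, b*) relative to D65."""
    linear = [srgb_to_linear(v / 255) for v in (r, g, b)]
    x, y, z = (sum(m * c for m, c in zip(row, linear)) for row in SRGB_TO_XYZ)
    xn, yn, zn = D65_WHITE
    fx, fy, fz = f(x / xn), f(y / yn), f(z / zn)
    return (116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz))


lab_white = srgb8_to_lab(255, 255, 255)
lab_red = srgb8_to_lab(255, 0, 0)
```

In a full pipeline the mean RGB of each ROI would be color-corrected first and then passed through `srgb8_to_lab`, with only a* and b* carried forward into the calibration curve.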

Performance Comparison of Color Spaces

The table below summarizes key performance characteristics of different color spaces used in smartphone-based colorimetry, based on research findings.

Table 1: Quantitative Comparison of Color Spaces in Smartphone Colorimetry

| Color Space | Illumination Invariance | Perceptual Uniformity | Typical Measurement Range | Key Advantage |
|---|---|---|---|---|
| RGB | Low [1] | Low [2] | Limited by gamut clipping [3] | Simple to acquire, direct from sensor |
| sRGB | Low [1] | Low [2] | Limited, prone to "shouldering" at high saturation [3] | Standard for consumer digital images |
| CIELAB | High (a*, b* coordinates) [1] | High (intended) [2] | Broad, outperforms absorbance-based techniques [1] | Device-independent, illumination-resistant |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Materials for Smartphone-Based Colorimetric Experiments

| Item | Function / Application |

|---|---|

| Color Reference Chart (e.g., Spyder Color Checkr, RAL Classic charts) | Provides known color values for calibrating and correcting color data from smartphone cameras, critical for reducing inter-device variability [3] [5]. |

| Paper-Based Microfluidics / Lateral Flow Assays | Serve as low-cost, portable, and disposable platforms for colorimetric reactions in point-of-care diagnostics and environmental testing [6]. |

| Polymeric Dye Films (e.g., with nitrophenol or azobenzene moieties) | Provide reversible, continuous color changes in response to analytes like pH; are robust for long-term monitoring as the dye is covalently fixed [7]. |

| Nanoparticle-Based Sensors (e.g., Gold, Silver NPs) | Act as colorimetric probes; color changes occur due to aggregation or specific reactions, enabling detection of various chemical and biological targets [6]. |

| Standard Illuminant Data (D65 or D50) | Used as the reference white point \( (X_n, Y_n, Z_n) \) for accurate conversion from CIE XYZ to the CIELAB color space [2] [3]. |

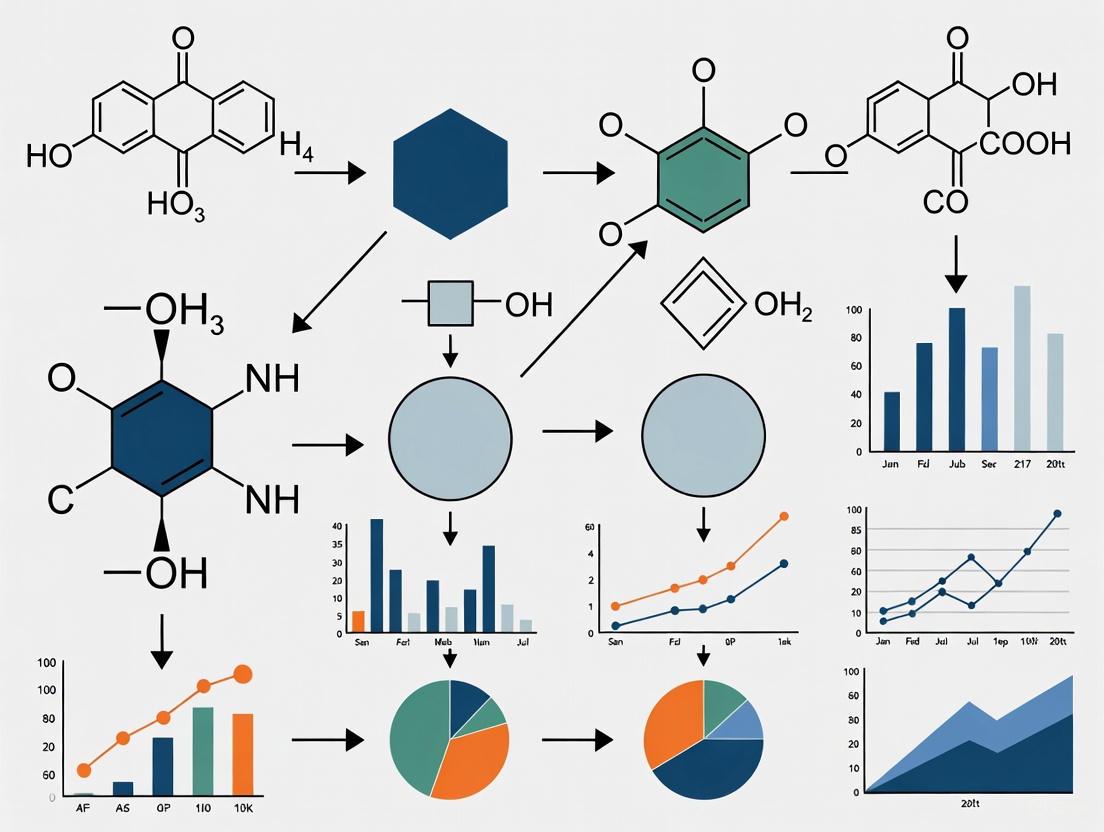

Workflow Visualization

The following diagram illustrates the complete workflow for achieving accurate, illumination-invariant colorimetric measurements using a smartphone.

The core logical relationship in optimizing smartphone colorimetry is summarized below.

Smartphone-based quantitative colorimetric analysis represents a significant shift in diagnostic and environmental testing, moving from traditional centralized laboratories to portable, point-of-need applications. This methodology leverages the ubiquitous smartphone as a powerful analytical tool, combining optical sensors with sophisticated software to perform quantitative chemical analysis. The core principle involves using the smartphone's camera to capture images of colorimetric reactions—where a change in color indicates the presence or concentration of a target analyte—and then using image processing algorithms to convert color intensity into quantitative data.

This technical support center provides researchers and scientists with essential troubleshooting guides, detailed protocols, and FAQs to overcome common challenges in implementing these systems, with a specific focus on robust calibration methods essential for obtaining research-grade data.

★ Technical Troubleshooting Guide: FAQs & Solutions

FAQ 1: How can I minimize the impact of varying ambient lighting on measurement accuracy?

- Problem: Inconsistent lighting conditions cause significant signal variance, leading to poor data reproducibility.

- Solution: Implement a reference color correction system directly within your sensor design.

- Procedure: Integrate three reference cells (e.g., with low-, medium-, and high-blue intensity) on the same sensor strip as your test zone [8]. Capture an image of the entire sensor. For analysis, first convert the RGB values of the reference cells to absorbance. Then, use the following relationship to correct the sensing area's signal:

Corrected Abs = (Abs of Sensing Area) / (Correlation Slope of Blue References) [8]

- This method digitally normalizes the image, effectively canceling out the effects of variable illumination and different camera qualities [8].

FAQ 2: What smartphone camera settings are critical for reproducible results?

- Problem: Automatic camera processing (white balance, auto-focus, color enhancement) introduces unpredictable variability.

- Solution: Always use the manual or "Pro" mode and capture images in RAW format if possible [8].

- Essential Settings:

- Manual White Balance: Set to a fixed value (e.g., "Daylight" or a specific color temperature).

- Manual Focus: Ensure the sensor is in sharp focus.

- Disable Filters: Turn off all automatic color enhancement, filters, and HDR modes.

- RAW Format: Using RAW image capture bypasses the phone's built-in JPEG processing pipeline, providing unprocessed data from the sensor that is ideal for quantitative analysis [8].

FAQ 3: My colorimetric data is noisy. How can I improve signal stability?

- Problem: High coefficient of variation in replicate measurements.

- Solution: Ensure you are analyzing the correct color channel and using a standardized image processing workflow.

- Channel Selection: For reactions that produce a red complex (e.g., the thiocyanatoiron(III) complex), the blue channel often provides the most sensitive and inverse relationship for quantification [9] [8]. Confirm this by analyzing the RGB deconvolution of your specific reaction.

- Standardized Analysis: Use a consistent region of interest (ROI) size and location when analyzing images with software like ImageJ. Calculate the absolute absorbance for the most relevant color channel using the formula:

A = -log(I/I₀), where I is the mean intensity of the test zone and I₀ is the mean intensity of an on-sensor white reference area [8].
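The absorbance formula above can be applied directly to ROI pixel values exported from the image. The following is a minimal sketch that assumes a base-10 logarithm (the usual absorbance convention) and single-channel intensities; the pixel lists are made-up numbers.

```python
import math


def mean_intensity(pixels):
    """Average single-channel intensity over the pixels of an ROI."""
    return sum(pixels) / len(pixels)


def absorbance(i_sample, i_white):
    """A = -log10(I / I0) for a test zone and its white reference."""
    if i_sample <= 0 or i_white <= 0:
        raise ValueError("intensities must be positive to take a logarithm")
    return -math.log10(i_sample / i_white)


# Hypothetical blue-channel pixel values from the test zone and white reference
test_zone = [118, 121, 120, 119, 122]
white_ref = [240, 238, 241, 239, 242]

a_blue = absorbance(mean_intensity(test_zone), mean_intensity(white_ref))
```

Averaging over the ROI before taking the logarithm keeps single noisy pixels from dominating the result.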

FAQ 4: How can I validate the accuracy of my smartphone method against a gold standard?

- Problem: Uncertainty about the reliability of a novel smartphone-based assay.

- Solution: Perform a method comparison study using a standard laboratory instrument, such as a UV-Vis spectrophotometer.

- Validation Protocol: Prepare a series of standard concentrations. Analyze each sample with both the smartphone method and the UV-Vis spectrophotometer. Use a statistical test, such as a dependent (paired) samples t-test, to determine whether the two sets of results differ significantly at the 95% confidence level (α = 0.05). Validation is considered successful when no statistically significant difference is found (p > 0.05) [9].
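For the paired comparison described above, the test statistic can be computed with the Python standard library alone. The sketch below returns the t statistic and degrees of freedom; the critical value is left as an input to be read from a t-table (for example 2.571 for 5 degrees of freedom, two-sided, α = 0.05). The sample values are hypothetical.

```python
import math
from statistics import mean, stdev


def paired_t_statistic(method_a, method_b):
    """Return (t, degrees_of_freedom) for paired measurements of the same samples."""
    if len(method_a) != len(method_b) or len(method_a) < 2:
        raise ValueError("need at least two paired measurements")
    diffs = [a - b for a, b in zip(method_a, method_b)]
    sd = stdev(diffs)
    if sd == 0:
        raise ValueError("all paired differences are identical; t is undefined")
    return mean(diffs) / (sd / math.sqrt(len(diffs))), len(diffs) - 1


def methods_agree(method_a, method_b, t_critical):
    """True when |t| stays below the tabulated critical value."""
    t, _ = paired_t_statistic(method_a, method_b)
    return abs(t) < t_critical


# Hypothetical concentrations (same six standards, two instruments)
smartphone = [3.1, 5.9, 9.2, 11.8, 15.1, 18.0]
uv_vis = [3.0, 6.0, 9.0, 12.0, 15.0, 18.1]
agree = methods_agree(smartphone, uv_vis, t_critical=2.571)
```

Where SciPy is available, `scipy.stats.ttest_rel` returns the same statistic together with a p-value.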

★ Detailed Experimental Protocol: Determining a Chemical Equilibrium Constant (Kc)

This protocol outlines the methodology for determining the equilibrium constant (Kc) of the thiocyanatoiron(III) complex, [Fe(SCN)]²⁺, adapting a published inquiry-based activity for researchers [9].

Materials and Equipment

Table: Essential Research Reagents and Equipment

| Item | Specification / Function |

|---|---|

| Iron(III) Nitrate | Provides the Fe³⁺ ions for complex formation [9]. |

| Potassium Thiocyanate (KSCN) | Provides the SCN⁻ ions for complex formation [9]. |

| Nitric Acid | Provides an acidic medium to prevent iron hydrolysis [9]. |

| White Well-Plate | Provides a uniform white background for consistent imaging, replacing traditional test tubes or cuvettes [9]. |

| Autopipettes | For accurate and precise liquid handling (e.g., volumes from 10–1000 µL) [9]. |

| Smartphone | Must have a camera with manual control capabilities [8]. |

| Light Control Box | Optional but recommended to create consistent, uniform illumination for image capture [9]. |

| ImageJ Software | Open-source image processing software for quantitative color intensity analysis [9]. |

Procedure

Step 1: Preparation of Standard Solutions

- Prepare a stock solution of 2.00 × 10⁻² M Fe(NO₃)₃ in 0.25 M HNO₃.

- Prepare a stock solution of 2.00 × 10⁻² M KSCN.

- Create a series of 5-6 standard solutions with known concentrations of [Fe(SCN)]²⁺ by mixing varying volumes of the two stock solutions and diluting with 0.25 M HNO₃ to a constant final volume.

Step 2: Image Acquisition

- Place the well-plate containing the standard solutions and your test samples inside the light control box.

- Using a smartphone mounted on a stand, capture images of the well-plate with all camera settings fixed in manual mode, as detailed in the troubleshooting guide above [8].

Step 3: Image Analysis with ImageJ

- Open the image in ImageJ.

- For each well, use the "Oval" selection tool to define a consistent Region of Interest (ROI) within the solution.

- Use the "Analyze > Measure" function to obtain the mean intensity values for the Red, Green, and Blue (RGB) channels.

- Also measure the mean intensity of a white reference area on the well-plate (I₀).

- Calculate the absorbance for the most relevant color channel (typically Blue for the red complex):

A = -log( I_well / I_whiteReference ).

Step 4: Data Analysis and Kc Calculation

- Plot the absorbance (A) of the standard solutions against the known concentration of [Fe(SCN)]²⁺ to create a calibration curve and obtain a linear regression equation.

- For the equilibrium samples, use this calibration curve to determine the equilibrium concentration of [Fe(SCN)]²⁺ from the measured absorbance.

- Using an ICE (Initial, Change, Equilibrium) table and the stoichiometry of the reaction Fe³⁺ + SCN⁻ ⇌ [Fe(SCN)]²⁺, calculate the equilibrium concentrations of all species.

- Calculate the equilibrium constant:

Kc = [Fe(SCN)²⁺] / ([Fe³⁺][SCN⁻]).

Table: Example Data Structure for Kc Determination

| Initial [Fe³⁺] (M) | Initial [SCN⁻] (M) | Absorbance (Blue) | Equilibrium [Fe(SCN)²⁺] (M) | Equilibrium [Fe³⁺] (M) | Equilibrium [SCN⁻] (M) | Kc |
|---|---|---|---|---|---|---|
| 2.00 × 10⁻³ | 2.00 × 10⁻⁴ | 0.15 | From calibration curve | Initial [Fe³⁺] − equilibrium [Fe(SCN)²⁺] | Initial [SCN⁻] − equilibrium [Fe(SCN)²⁺] | Calculated |
| 2.00 × 10⁻³ | 4.00 × 10⁻⁴ | 0.28 | ... | ... | ... | ... |
| ... | ... | ... | ... | ... | ... | ... |
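The ICE-table arithmetic of Step 4 reduces to a few subtractions once the calibration curve is known. The sketch below uses the first row of the example table; the calibration slope is a placeholder chosen only to make the arithmetic concrete and is not a value from the cited work.

```python
def kc_from_absorbance(fe_initial, scn_initial, absorbance, slope, intercept=0.0):
    """Equilibrium constant for Fe3+ + SCN- <=> [Fe(SCN)]2+ (1:1 stoichiometry).

    slope and intercept come from the linear calibration A = slope * c + intercept.
    """
    complex_eq = (absorbance - intercept) / slope  # equilibrium [Fe(SCN)2+]
    fe_eq = fe_initial - complex_eq                # ICE table: initial minus change
    scn_eq = scn_initial - complex_eq
    if complex_eq <= 0 or fe_eq <= 0 or scn_eq <= 0:
        raise ValueError("calibration result is inconsistent with initial concentrations")
    return complex_eq / (fe_eq * scn_eq)


HYPOTHETICAL_SLOPE = 3000.0  # absorbance units per mol/L, placeholder only

kc = kc_from_absorbance(
    fe_initial=2.00e-3,
    scn_initial=2.00e-4,
    absorbance=0.15,
    slope=HYPOTHETICAL_SLOPE,
)
```

With these placeholder numbers the complex concentration is 5.0 × 10⁻⁵ M and Kc comes out near 171; real values depend entirely on the measured calibration.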

★ Visual Workflows for Experimental and Calibration Processes

Smartphone Colorimetry Workflow

Three-Reference Calibration System

Frequently Asked Questions (FAQs)

Q1: My colorimetric results are inconsistent across different smartphones. What is the primary cause and how can it be mitigated?

The primary cause is the automatic image processing (e.g., auto-white balance, color enhancement) performed by smartphone cameras and the variability in ambient lighting [8]. To mitigate this:

- Use Manual/Pro Mode: Operate the smartphone camera in manual or "Pro" mode to disable all automatic enhancements [8].

- Capture RAW Images: Use RAW image format capture where available, as it provides unprocessed sensor data. This can be enabled on Samsung phones in "Pro Mode" or on iPhones using third-party apps like Halide Mark II [8].

- Incorporate a Reference: Use a sensor design that includes an internal reference area or reference cells (e.g., a white blotting paper or colored reference cells) within the same image to allow for post-capture correction of lighting variations [8].

Q2: How can I control lighting conditions without an expensive laboratory setup?

A low-cost, controlled imaging environment can be created using a simple light-diffusing imaging box. This box shields the sensor from ambient daylight and improves the signal-to-noise ratio [10]. For more advanced control, an ambient ring light-based smartphone platform can be used to provide consistent, uniform illumination [11].

Q3: What are the essential features to look for in a clip-on accessory for smartphone colorimetry?

An effective clip-on accessory should:

- Provide Controlled Illumination: Integrate a stable light source, such as the phone's own LED flash channeled through optical fibers, to ensure consistent excitation [12].

- Ensure Optical Alignment: Precisely align optical components (lenses, diffraction gratings) with the phone's camera and flash using a custom-fabricated cradle [12].

- Standardize Sample Positioning: Include a cartridge or holder to maintain a fixed distance and angle between the sample, the light source, and the camera lens [12] [13].

Troubleshooting Guides

Issue 1: Inconsistent Color Values Under Varying Ambient Light

Problem: Analyte concentration results vary significantly when the same sample is imaged in different locations (e.g., in a bright vs. a dim lab).

Solution: Implement a multi-reference cell correction method.

- Step 1: Sensor Design. Incorporate a three-reference-cell system directly onto the sensor strip. These cells should have varying intensities of a stable color, with blue reference cells demonstrated to perform well [8].

- Step 2: Image Capture. Capture the sensor image under your experimental conditions, ensuring the reference cells are in the frame.

- Step 3: Calculate Correction Factor.

- Use image processing software (e.g., ImageJ) to convert the RGB values of the reference cells to absorbance values:

Abs = -log(I/I₀), where I is the reference cell intensity and I₀ is the white reference intensity [8].

- A linear correlation is established between the absorbance values of the reference cells captured under uncontrolled conditions and their values from a controlled condition (e.g., inside a light box) [8].

- The slope of this correlation is your correction factor.

- Step 4: Apply Correction. Correct the absorbance of your sensing area using the formula:

Corrected Abs = (Abs of Sensing Area) / (Correlation Slope from Blue References)[8].
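Steps 3 and 4 can be sketched as a small least-squares fit followed by a division. One assumption is made explicit here: the controlled-condition absorbances are treated as x and the uncontrolled ones as y, so that dividing by the slope maps a field reading back onto the controlled scale. The function names and reference values are hypothetical.

```python
def least_squares_slope(x, y):
    """Slope of the ordinary least-squares line y = slope * x + intercept."""
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    if sxx == 0:
        raise ValueError("reference cells must have different absorbances")
    return sxy / sxx


def corrected_absorbance(abs_sensing, refs_controlled, refs_uncontrolled):
    """Corrected Abs = (Abs of sensing area) / (slope of the reference correlation)."""
    slope = least_squares_slope(refs_controlled, refs_uncontrolled)
    return abs_sensing / slope


# Hypothetical absorbances of the low, medium, and high blue reference cells
refs_light_box = [0.20, 0.50, 0.90]  # controlled condition
refs_field = [0.24, 0.60, 1.08]      # same cells in the uncontrolled image

abs_corrected = corrected_absorbance(0.36, refs_light_box, refs_field)
```

Because the reference cells sit in the same image as the sensing area, the slope is recomputed for every capture rather than stored once per phone.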

Issue 2: Non-Linear or Unreliable Calibration Curves

Problem: The calibration curve plotted from RGB values has a poor correlation coefficient, making quantitative analysis unreliable.

Solution: Optimize the image analysis workflow and color model.

- Step 1: Validate Imaging Setup. Ensure you have followed the guidance in the answers to FAQ Q1 and Q2 above. Consistency is key.

- Step 2: Convert Color Spaces. RGB "gray values" are inversely related to color intensity. Convert RGB to CMY (Cyan, Magenta, Yellow) values using the formula CMY = 255 - RGB to obtain values proportional to the color intensity developed in the assay [10].

- Step 3: Select the Optimal Color Channel. Test all RGB and CMY channels against your calibration standards. The channel that shows the most significant and consistent change with analyte concentration should be selected for building your final calibration curve. For example, the blue (B) channel may be optimal for a blue-colored product [10] [14].

- Step 4: Use Open-Source Software. Utilize robust, scientific image processing software like ImageJ for color quantification, as it can provide more precise and reproducible results than simple mobile apps [10] [11].
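Steps 2 and 3 can be combined into a short screening routine: convert each standard's mean RGB to CMY and rank the channels by how strongly they correlate with concentration. Since CMY = 255 - RGB is only a sign flip, each CMY channel has the same correlation magnitude as its RGB counterpart, so ranking C, M, and Y covers both sets. The data and function names below are hypothetical.

```python
import math


def rgb_to_cmy(rgb):
    """CMY = 255 - RGB for one 8-bit triple."""
    return tuple(255 - v for v in rgb)


def pearson_r(x, y):
    """Pearson correlation coefficient; returns 0.0 if either series is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)


def best_cmy_channel(concentrations, rgb_means):
    """Return (channel_name, scores) for the CMY channel with the largest |r|."""
    cmy = [rgb_to_cmy(rgb) for rgb in rgb_means]
    scores = {
        name: pearson_r(concentrations, [row[i] for row in cmy])
        for i, name in enumerate("CMY")
    }
    best = max(scores, key=lambda k: abs(scores[k]))
    return best, scores


# Hypothetical standards: concentration and mean ROI colour of each
concentrations = [0, 1, 2, 3]
rgb_means = [(200, 180, 100), (180, 179, 101), (160, 181, 99), (140, 180, 100)]

channel, scores = best_cmy_channel(concentrations, rgb_means)
```

Correlation is only a first screen; the slope of the chosen channel (its sensitivity) and its replicate scatter are worth checking before committing to it.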

Experimental Protocols & Data Presentation

Protocol: Quantitative Iron Detection in Whole Blood

This protocol is adapted from a peer-reviewed method for smartphone-based iron quantification [8].

1. Sensor Fabrication and Assembly:

- Materials: A 3D-printed top and bottom sensor frame, four membrane layers (general nylon, fiberglass, asymmetric polysulfone, hydrophilic nylon), white blotting paper for the reference area, and three blue reference cells.

- Assembly: Laser-cut membranes into 6x6 mm squares. Assemble them between the 3D-printed frames, with the hydrophilic nylon (4th layer) impregnated with iron-capturing reagents. Include the white reference and blue reference cells on the side. Individually pack finished sensors in aluminized Mylar bags with desiccant [8].

2. Sensor Testing and Image Acquisition:

- Sample Application: Apply a liquid sample to the sampling port with the reading side face down. After a 10-minute reaction time, flip the sensor so the reading side is face up [8].

- Smartphone Imaging:

- Use smartphones with cameras set to manual/Pro mode (e.g., iPhone XR, Samsung Galaxy S10+, Samsung Note 8).

- Disable all filters and color enhancements.

- If supported, enable RAW image capture.

- Capture the image in a consistent manner, ensuring the entire sensing and reference area is in frame [8].

3. Image Analysis and Data Correction:

- Software: Use ImageJ (version 1.54g or later).

- ROI Analysis: Select regions of interest (ROIs) for the sensing area, white reference (I₀), and the three blue reference cells.

- Calculate Absorbance: For each ROI, obtain the mean intensity (I) and calculate absolute absorbance: Abs = -log(I/I₀).

- Apply In-Image Correction: Use the calculated slope from the blue reference cells to correct the sensing area absorbance, as detailed in the troubleshooting guide above [8].

The table below summarizes performance data for this method across different phone models and lighting conditions [8].

Table 1: Performance of Smartphone-Based Iron Quantification

| Smartphone Model | Lighting Condition (Lux) | Coefficient of Variation (CV) | Improvement vs. Previous Method |

|---|---|---|---|

| Samsung Galaxy S10+ | Controlled (1316 ± 3) | ~5% | Baseline |

| iPhone XR | Variable (6 - 1693) | ~5% | Consistent performance across lights |

| Samsung Note 8 | Variable (6 - 1693) | ~5% | Consistent performance across lights |

| Average across platform | Mixed | 5.13% | Absorbance results improved by 8.80% |
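The coefficient of variation reported in the table is the sample standard deviation expressed as a percentage of the mean. A minimal sketch with made-up replicate values:

```python
from statistics import mean, stdev


def cv_percent(values):
    """Coefficient of variation (%) = 100 * sample standard deviation / mean."""
    avg = mean(values)
    if avg == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return 100 * stdev(values) / avg


# Hypothetical replicate readings of the same sample
replicates = [95, 100, 105]
cv = cv_percent(replicates)
```

Computing the CV per phone and per lighting condition, as in the table, makes it easy to see whether the in-image correction holds up outside the light box.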

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents and Materials for Colorimetric Assays

| Item | Function / Application | Example from Literature |

|---|---|---|

| Citric Acid, Ascorbic Acid, Thiourea (Reagent A) | Component of a reducing agent mixture for iron quantification; facilitates the colorimetric reaction [8]. | Used with Ferene to detect iron in blood [8]. |

| Ferene (Reagent B) | Chromogenic agent that reacts with iron to produce a colored complex [8]. | Used for iron quantification, measured at 590 nm [8]. |

| Phosphotungstate Reagent | Oxidizing agent used in the detection of uric acid; produces a blue-colored product in an alkaline medium [10]. | Detection of uric acid in artificial and real urine samples [10]. |

| Hydrophilic Nylon Membrane | The fourth layer in a sensor stack; impregnated with capturing reagents for the target analyte [8]. | Serves as the reaction site in the iron sensor [8]. |

| Paper-based Test Strips | A solid support for dry reagent pads that change color upon exposure to a liquid analyte. | Used in urinalysis for glucose, ketones, pH, etc. [12] [11]. |

Workflow Visualization

Diagram Title: Smartphone Colorimetric Analysis Workflow

Diagram Title: Troubleshooting Guide for Data Consistency

Diagram Title: Clip-on Spectrometer Assembly Path

Frequently Asked Questions (FAQs)

Q1: Why do I get different RGB values when using different smartphones to analyze the same sample?

Smartphone cameras undergo device-specific processing (demosaicing, gamma correction, sharpening, and compression) that alters raw sensor data, leading to inter-device variability [15]. This is compounded by differences in camera sensors, lenses, and built-in image processing algorithms [16]. Furthermore, ambient lighting conditions and the camera angle relative to the sample can introduce significant bias [16] [17]. A study measuring urine samples with five different smartphones found that without color correction, agreement between devices was poor, particularly for the Red channel [17].

Q2: My colorimetric assay shows inconsistent results between replicates. What are the common causes?

Inconsistent replicates often stem from three main areas:

- Sample Handling: Variability in pipetting accuracy or inconsistent preparation of biological samples can alter results [18].

- Reagent Issues: Using expired or improperly stored reagents can change their reactivity. Reagents must be prepared according to instructions and stored at recommended temperatures, protected from light if necessary [18].

- Assay Conditions: Non-optimized incubation times and temperatures can lead to variable reaction rates and background formation [18]. Always include blank and positive controls to account for non-specific absorbance and assess assay performance.

Q3: How can I improve the reliability of my smartphone-based colorimetric measurements?

Implement these key steps:

- Use a Controlled Imaging Environment: Capture images in a light-box or customized photo box to block ambient light and standardize lighting conditions [10] [17].

- Employ Color Correction: Use a color calibration card (e.g., Datacolor SpyderCHECKR) in your images. A matrix-based correction method can reduce inter-device and lighting-dependent variations by 65–70% [16] [17].

- Explore Robust Color Spaces: While RGB is common, the Saturation channel from HSV (Hue-Saturation-Value) color space can provide more reliable results for intensity-based assays and is less susceptible to ambient lighting noise [15].

- Ensure Proper Calibration: Always calibrate your system with standard solutions across the expected concentration range to create a reliable calibration curve [18].

Q4: What does it mean if my calculated absorbance value is greater than 2.00?

Absorbance readings above 2.00 typically indicate that your sample solution is too concentrated or too dark [19]. In this range, very little light passes through the sample, making the signal unreliable and difficult to distinguish from noise. For accurate readings, you should dilute your sample so that its absorbance falls within the useful range of 0.05 to 1.0 [19].
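A short worked relation shows why high absorbance values are unreliable. Assuming the base-10 definition of absorbance, the transmitted fraction T falls by a factor of ten for each absorbance unit:

```latex
\[
T = \frac{I}{I_0} = 10^{-A}
\qquad\Rightarrow\qquad
A = 0.05:\ T \approx 0.891, \quad
A = 1:\ T = 0.10, \quad
A = 2:\ T = 0.01
\]
```

At A = 2 only 1% of the reference intensity remains. For an 8-bit channel with a white reference near 255, that corresponds to roughly 2 to 3 counts, which is comparable to typical pixel noise.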

Q5: My assay's color is very intense, but the RGB values seem to max out and don't change with higher concentrations. What is happening?

This indicates that you are likely dealing with a highly saturated color that exceeds the gamut, or reproducible color range, of the standard sRGB color space [16]. This can create artificial discontinuities in your data. The solution is to dilute your samples to bring the color intensity back into a range where the RGB values change proportionally with concentration.
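One simple way to spot this condition before building a calibration curve is to count how many ROI pixels have a channel pinned at the bottom or top of the 8-bit range. The check below is a heuristic sketch, not a method from the cited studies; the limits and the example pixels are assumptions.

```python
def clipped_fraction(pixels, low=0, high=255):
    """Fraction of ROI pixels with at least one channel at or beyond a limit."""
    if not pixels:
        raise ValueError("ROI contains no pixels")
    clipped = sum(
        1 for px in pixels if any(c <= low or c >= high for c in px)
    )
    return clipped / len(pixels)


# Hypothetical ROI: two of the four pixels have a saturated channel
roi = [(255, 10, 10), (200, 0, 5), (100, 100, 100), (120, 130, 140)]
fraction = clipped_fraction(roi)
```

A fraction well above zero for the highest standards suggests that those points should be diluted or excluded rather than fitted.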

Troubleshooting Guides

Problem: High Background Noise or Signal

Possible Causes and Solutions:

- Cause 1: Non-specific reactions or contaminated reagents.

- Solution: Use high-purity reagents and include a blank control to subtract the background signal [18].

- Cause 2: Sub-optimal assay conditions.

- Solution: Carefully optimize incubation times and temperatures according to manufacturer guidelines to minimize non-specific background formation [18].

- Cause 3: Complex sample matrix (e.g., lipids, proteins in biological fluids).

- Solution: Dilute the sample, or use pre-clearing techniques like centrifugation or filtration to remove interfering particulates [18].

Problem: Poor Agreement Between Smartphones

Possible Causes and Solutions:

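- Cause 1: Differing built-in image processing pipelines across smartphone brands.

- Solution: Capture in manual/Pro mode with automatic enhancements disabled, use RAW format where available [8], and apply matrix-based color correction with a calibration card [16] [17].

- Cause 2: Varying lighting conditions and camera angles.

- Solution: Image samples in a light box or photo box and keep the camera position fixed relative to the sample [10] [17].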

- Cause 3: Relying on the native RGB color channels which are highly sensitive to ambient noise.

- Solution: Convert images to HSV color space and use the Saturation channel for analysis, which has been shown to be more robust to lighting variations [15].
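The Saturation channel can be extracted with Python's standard `colorsys` module, which expects channel values scaled to 0..1. The sketch below averages saturation over an ROI; the helper names and pixel values are illustrative.

```python
import colorsys


def saturation(rgb8):
    """HSV saturation (0..1) of one 8-bit RGB triple."""
    r, g, b = (v / 255 for v in rgb8)
    _, s, _ = colorsys.rgb_to_hsv(r, g, b)
    return s


def mean_saturation(pixels):
    """Average saturation over the pixels of an ROI."""
    return sum(saturation(px) for px in pixels) / len(pixels)


# Hypothetical ROI pixels from a faintly coloured test zone
roi = [(200, 100, 100), (200, 100, 100), (255, 255, 255), (255, 255, 255)]
s_mean = mean_saturation(roi)
```

Saturation is (max - min) / max of the three channels, so a uniform brightening or dimming of all channels changes it far less than it changes any single RGB value.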

Problem: Non-Linear or Poor Correlation in Calibration Curve

Possible Causes and Solutions:

- Cause 1: Sample concentrations are outside the linear dynamic range of the assay.

- Solution: Dilute or concentrate your samples to fit within the established linear range [19].

- Cause 2: Improper color value extraction or region of interest (ROI) selection.

- Solution: Use consistent ROI size and location. Employ software like ImageJ to quantify average color intensity across a defined area rather than a single point [10].

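- Cause 3: Using an inappropriate color channel for analysis.

- Solution: Test all RGB and CMY channels against the calibration standards and build the curve from the channel that changes most consistently with analyte concentration [10] [14].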

Experimental Protocols

Protocol: Smartphone-Based Determination of Uric Acid

1. Principle: In an alkaline medium, uric acid reduces phosphotungstate reagent, producing a blue-colored tungsten complex. The intensity of this blue color is proportional to the concentration of uric acid.

2. Materials and Reagents:

- Uric acid standard solution

- Phosphotungstate reagent (Folin reagent)

- Sodium carbonate (Na₂CO₃) solution, 10%

- Artificial urine or diluted real urine sample

- Glass cuvettes

- Smartphone (e.g., Samsung Galaxy A52)

- Imaging box with white background

- Computer with ImageJ software

3. Procedure:

- Color Development: Transfer aliquots of uric acid standard (1-5 mL of a 30 µg/mL solution) into a series of 10 mL volumetric flasks. Add 3.0 mL of 10% Na₂CO₃ to each flask and let stand for 10 minutes. Then, add 1.0 mL of phosphotungstate reagent, mix well using a vortex, and dilute to the mark with distilled water. The final concentration range should be 3.0–15 µg/mL.

- Imaging: Place the solutions in glass cuvettes. Position them in an imaging box to eliminate external light interference. Capture an image using the smartphone camera against a white background. Ensure the image includes all samples and a color calibration card if used.

- Image Analysis with Image J:

- Open the image file (TIFF format is preferred) in Image J.

- Crop the image to remove blank edges, ensuring each sample segment is included.

- For each sample, select a consistent Region of Interest (ROI) within the colored solution.

- Use Image J's analysis tools to measure the mean gray value for the Red, Green, and Blue (RGB) channels within the ROI.

- Convert the RGB values to CMY (Cyan-Magenta-Yellow) values using the formula: CMY = 255 - RGB [10]. The CMY value is proportional to the color intensity.

4. Data Analysis:

- Plot the CMY values (or the values from the most responsive channel) against the known uric acid concentrations to generate a calibration curve.

- Use the regression equation of this curve to determine the concentration of unknown samples.
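The image-analysis and data-analysis steps above can be sketched in a few lines of NumPy. This is only an illustration: the concentrations follow the 3.0 to 15 µg/mL range of the protocol, but the red-channel readings are invented, and the function names (`rgb_to_cmy`, `fit_calibration`, `predict_concentration`) are hypothetical helpers rather than part of ImageJ or any cited method.

```python
import numpy as np

def rgb_to_cmy(rgb):
    """CMY = 255 - RGB, applied channel-wise to 8-bit values."""
    return 255.0 - np.asarray(rgb, dtype=float)

def fit_calibration(conc, signal):
    """Ordinary least-squares line; returns slope, intercept, and R^2."""
    conc = np.asarray(conc, dtype=float)
    signal = np.asarray(signal, dtype=float)
    slope, intercept = np.polyfit(conc, signal, 1)
    predicted = slope * conc + intercept
    ss_res = np.sum((signal - predicted) ** 2)
    ss_tot = np.sum((signal - signal.mean()) ** 2)
    return slope, intercept, 1.0 - ss_res / ss_tot

def predict_concentration(signal, slope, intercept):
    """Invert the calibration line for an unknown sample."""
    return (signal - intercept) / slope

# Standards from the protocol (µg/mL) and made-up mean red-channel readings.
conc = np.array([3.0, 6.0, 9.0, 12.0, 15.0])
red = np.array([200.0, 180.0, 160.0, 140.0, 120.0])

cyan = rgb_to_cmy(red)  # the cyan value rises as the blue complex darkens
slope, intercept, r2 = fit_calibration(conc, cyan)
unknown = predict_concentration(rgb_to_cmy(150.0), slope, intercept)
print(f"slope={slope:.3f}, intercept={intercept:.2f}, R2={r2:.4f}, unknown={unknown:.2f} µg/mL")
```

With real data the fit will not be perfect; an R² well below the values in Table 1 is a prompt to revisit ROI selection, channel choice, or the concentration range.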

Workflow: Smartphone-Based Colorimetric Analysis

The following diagram illustrates the general workflow for a quantitative smartphone-based colorimetric analysis, from sample preparation to concentration determination.

Data Presentation

Table 1: Comparison of Colorimetric Analysis Methods for Quantitative Determination

This table summarizes key performance characteristics of different analytical methods as demonstrated in the determination of uric acid [10].

| Method | Linear Range (µg/mL) | Correlation Coefficient (R²) | Key Advantages | Key Limitations |

|---|---|---|---|---|

| DIC / Image J | 3.0 – 15 | ~0.99 (CMY values) | High precision; uses free, open-source software; good for static analysis [10]. | Requires transfer to computer for analysis. |

| DIC / RGB Color Detector App | 3.0 – 15 | ~0.97 (B channel) | Direct on-phone analysis; portable and rapid [10]. | Lower correlation than Image J; primarily semi-quantitative [10]. |

| UV/VIS Spectrophotometry | 3.0 – 15 | ~0.99 (Absorbance) | Gold standard; high accuracy and precision [10]. | Requires expensive, non-portable laboratory equipment. |

| HSV Saturation Method | Varies by assay | High (as per study) | Robust to ambient lighting; improved limit of detection; enables equipment-free analysis [15]. | Performance is application-specific; requires validation. |

Table 2: Research Reagent Solutions for Smartphone Colorimetry

This table details essential materials and their functions for setting up a smartphone-based colorimetric experiment, as reported in the cited literature.

| Item | Function / Application | Example from Literature |

|---|---|---|

| Color Calibration Card | Standardizes color measurements across different devices and lighting conditions by providing reference colors for correction [16] [17]. | Datacolor SpyderCHECKR 24 [17]. |

| Imaging Box / Light Box | Provides a controlled environment with consistent, diffuse lighting, shielding the sample from variable ambient light [10] [17]. | Custom-built polystyrene foam box with LED light source [17]. |

| Image Analysis Software | Used to quantitatively extract color intensity values from digital images. | Image J (open-source) [10], Adobe Photoshop [17]. |

| Open-Source Mobile Apps | Allows for direct, on-device color extraction, useful for rapid or field-based screening. | RGB Color Detector [10], Color Picker [11]. |

| Standard Cuvettes / Petri Dishes | Hold liquid samples for imaging; ensure consistent optical path length and placement. | Glass cuvettes [10], disposable Petri dishes [17]. |

Technical Specifications & Methodologies

For high-precision requirements, especially in kinetic studies, a more advanced color correction is needed:

- Image Capture with References: Always capture the sample alongside a color calibration chart that includes known reference colors.

- Reference Color Processing: Use a spectrophotometer to measure the visible transmission/reflectance spectra of the color chart patches. Convert these spectra to reference CIE XYZ values using standard illuminants (e.g., D65) and observer functions.

- Matrix Calculation: Extract the smartphone's RGB values for each corresponding color patch. Calculate a correction matrix (e.g., using linear least squares) that best maps the smartphone RGB values to the reference XYZ values.

- Application: Apply this correction matrix to all subsequent sample images taken with the same smartphone and lighting setup to obtain standardized color values.
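A minimal sketch of the matrix calculation and application steps, assuming linear (RAW or linearized) RGB values and a plain 3x3 matrix with no offset term. The patch values below are synthetic: the "reference" XYZ values are generated from a known matrix so that the fit can be checked. The helper names are hypothetical.

```python
import numpy as np

def fit_correction_matrix(rgb_patches, xyz_reference):
    """Least-squares 3x3 matrix M such that xyz ≈ M @ rgb for every chart patch."""
    rgb = np.asarray(rgb_patches, dtype=float)    # shape (n_patches, 3)
    xyz = np.asarray(xyz_reference, dtype=float)  # shape (n_patches, 3)
    m_transposed, *_ = np.linalg.lstsq(rgb, xyz, rcond=None)  # solves rgb @ M.T ≈ xyz
    return m_transposed.T

def apply_correction(matrix, rgb):
    """Apply the fitted matrix to one RGB triplet or an (n, 3) array of triplets."""
    return np.asarray(rgb, dtype=float) @ matrix.T

# Synthetic example: six chart patches and a known "true" mapping.
true_matrix = np.array([[0.412, 0.358, 0.180],
                        [0.213, 0.715, 0.072],
                        [0.019, 0.119, 0.950]])
chart_rgb = np.array([[0.9, 0.1, 0.1], [0.1, 0.8, 0.2], [0.2, 0.1, 0.9],
                      [0.5, 0.5, 0.5], [0.9, 0.9, 0.1], [0.1, 0.6, 0.7]])
chart_xyz = chart_rgb @ true_matrix.T

fitted = fit_correction_matrix(chart_rgb, chart_xyz)
sample_xyz = apply_correction(fitted, [0.3, 0.4, 0.6])
print(np.round(fitted, 3))
print(np.round(sample_xyz, 4))
```

In practice the chart should have many more patches than unknowns (24 is common), and the residuals on held-out patches give a direct estimate of the remaining color error.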

Logical Relationship: From Sample to Quantitative Result

The following diagram maps the logical pathway of converting a physical sample's property into a quantitative analytical result using smartphone colorimetry, highlighting critical transformation steps.

The Role of Standard Illuminants and Observers in Color Measurement

Frequently Asked Questions

1. What are standard illuminants and observers, and why are they critical for smartphone colorimetry? Standard illuminants are published theoretical sources of visible light with defined spectral power distributions (SPDs), providing a basis for comparing colors under different lighting [20] [21]. Standard observers are mathematical functions representing the average human eye's color response under a specific field of view [22] [23]. In smartphone-based colorimetry, they are fundamental for transforming device-specific camera responses into standardized, reproducible color values, ensuring your quantitative results are accurate and comparable across different devices, locations, and times.

2. I'm setting up my smartphone imaging system. Which standard illuminant should I use to simulate daylight? You should use a D-series illuminant, specifically CIE Standard Illuminant D65 [20] [21]. It is intended to represent average daylight with a correlated color temperature (CCT) of approximately 6500 K and is the standard representative daylight illuminant for colorimetric calculations [20] [24]. While D50 (5003 K) is also used in some industries like photography, D65 is the canonical choice for scientific applications requiring representative daylight [21].

3. My color measurements don't match visual assessments. Could the standard observer be the issue? Yes. The CIE 1931 2° Standard Observer is based on a narrow field of view and may not correlate well with visual assessments, especially if your sample is large or viewed peripherally [22]. For a wider field of view, the CIE 1964 10° Supplementary Standard Observer is more representative of how the human eye perceives color in such contexts and is recommended for instrumental color measurement [22] [23]. Ensure your color analysis software is configured for the correct observer.

4. How can I achieve a consistent D65 illuminant in my smartphone setup? Achieving a perfect artificial source for D65 is challenging [20]. The most practical approach is to use a high-quality daylight-simulating LED panel with a high Color Rendering Index (CRI > 95). Characterize the LED's SPD with a spectrometer if possible, and use the CIE's metamerism index to assess its quality as a daylight simulator [20]. Alternatively, for less critical applications, you can perform a white balance calibration on your smartphone using a standard white reference tile under your chosen light source.

5. What causes two samples to match under my phone's flash but look different outdoors? This is a classic case of metamerism [25]. Two colors with different spectral compositions are metamers if they match under one illuminant but not under another [25]. Your phone's flash (typically a white LED) and daylight (approximated by D65) have different SPDs. If your samples are metameric, they can match under one of these sources and differ under the other. This underscores the importance of using and reporting a standard illuminant in your analysis.

Essential Concepts for Experiment Design

The following tables summarize the core components you must define for your colorimetric experiments.

Table 1: Common CIE Standard Illuminants [20] [21] [24]

| Illuminant | Correlated Color Temperature (CCT) | Represents | Key Application in Smartphone Colorimetry |

|---|---|---|---|

| A | 2856 K | Typical incandescent / tungsten-filament lighting. | Use when samples are imaged under incandescent (tungsten) lighting. |

| D50 | 5003 K | "Horizon" daylight. | Common in photography and graphic arts; a daylight reference. |

| D55 | 5500 K | Mid-morning / mid-afternoon daylight. | An alternative daylight reference. |

| D65 | 6504 K | Noon daylight (standard). | The default for representing average daylight. |

| F Series | Varies (e.g., F2: 4230 K) | Various fluorescent lamps. | Use when measuring under typical office or lab fluorescent lighting. |

Table 2: CIE Standard Colorimetric Observers [22] [23] [25]

| Standard Observer | Field of View | Description | Recommended Use |

|---|---|---|---|

| CIE 1931 | 2° (≈ thumbnail at arm's length) | First standardized function; based on the belief color-sensing cones were in a 2° foveal arc [22]. | Colorimeters; quality control for small samples [22]. |

| CIE 1964 | 10° (≈ palm at arm's length) | Supplementary standard; more representative of typical human color perception [22]. | Recommended for spectrophotometry and formulating color for larger samples [22]. |

Experimental Protocol: Smartphone Color Measurement Calibration

This protocol provides a methodology for calibrating a smartphone-based colorimetric analysis system using standard illuminants and observers.

1. Objective: To establish a standardized workflow for capturing and analyzing color data with a smartphone, ensuring measurements are traceable to CIE standards.

2. Materials and Reagents

Table 3: Research Reagent Solutions & Essential Materials

| Item | Function / Specification |

|---|---|

| Smartphone | Fixed in a stand; camera settings locked (ISO, shutter speed, white balance). |

| Controlled Light Box | Equipped with high-CRI D65-simulating LEDs. |

| Color Calibration Chart | Chart with known colorimetric values (e.g., X-Rite ColorChecker). |

| Standard White Reference Tile | Provides a consistent white point for white balance and reflectance calibration. |

| Image Analysis Software | Software capable of converting RGB to CIE XYZ and CIELAB values (e.g., Python, Matlab, ImageJ with plugins). |

3. Workflow Diagram: The following diagram illustrates the logical workflow for a calibrated smartphone color measurement experiment.

4. Step-by-Step Procedure

- System Setup: Place the smartphone on a stable stand inside the light box, ensuring the camera lens is parallel to the sample plane. Power on the D65-simulating LED light source and allow it to warm up for a consistent output.

- Reference Capture: Place the color calibration chart within the field of view. Capture an image in RAW format (DNG) if supported; otherwise use the highest-quality JPEG setting (minimal compression).

- Sample Capture: Replace the calibration chart with your test sample and capture an image under identical settings and geometry.

- Image Pre-processing: Use software to correct for lens distortion and extract the average RGB values from each color patch of the chart and the region of interest in your sample. Perform a white balance correction using the known white reference tile.

- Colorimetric Transformation: Build a transformation matrix that maps the device's calibrated RGB values from the chart image to the known CIE XYZ values of the chart patches. The XYZ tristimulus values for a color with a spectral power distribution S(λ) are defined as:

$$X = \int S(\lambda)\,\bar{x}(\lambda)\,d\lambda, \qquad Y = \int S(\lambda)\,\bar{y}(\lambda)\,d\lambda, \qquad Z = \int S(\lambda)\,\bar{z}(\lambda)\,d\lambda$$

where x̄, ȳ, and z̄ are the color-matching functions for the chosen standard observer [25]. Apply this matrix to the RGB values from your sample image to convert them to standardized CIE XYZ, and subsequently to a perceptually uniform color space like CIELAB.

- Data Analysis: Perform your quantitative analysis (e.g., concentration determination) using the calibrated CIELAB values, particularly the lightness (L*) and chromaticity (a*, b*) coordinates.
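The final XYZ-to-CIELAB step can be sketched as follows. This is a minimal version of the standard CIE conversion; the default white point is an assumption (D65 with the 2° observer, XYZ scaled so that Y = 100) and should be replaced by the white point matching your own illuminant and observer.

```python
def xyz_to_lab(x, y, z, white=(95.047, 100.0, 108.883)):
    """Convert CIE XYZ to CIELAB relative to a reference white (default: D65, 2° observer)."""
    def f(t):
        delta = 6 / 29
        # Cube root above the threshold, linear segment below it (standard CIE definition).
        return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29

    fx, fy, fz = f(x / white[0]), f(y / white[1]), f(z / white[2])
    lightness = 116 * fy - 16
    a_star = 500 * (fx - fy)
    b_star = 200 * (fy - fz)
    return lightness, a_star, b_star

# The reference white itself should map to L* = 100, a* = b* = 0.
print(xyz_to_lab(95.047, 100.0, 108.883))
# A hypothetical sample measurement.
print(xyz_to_lab(30.0, 25.0, 40.0))
```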

Troubleshooting Guide

| Problem | Potential Cause | Solution |

|---|---|---|

| Poor repeatability | Inconsistent lighting geometry or camera settings. | Use a fixed light box and mount. Lock all camera settings (ISO, shutter speed, white balance). |

| Measurements differ from benchtop spectrometer | Mismatch in standard illuminant or observer definitions. | Verify and align the illuminant (e.g., D65) and observer (e.g., 1964 10°) settings in all analysis software. |

| Colors look different under another phone | Uncalibrated device-dependent RGB space. | Implement the calibration protocol using a standard chart to transform to device-independent color spaces (XYZ, CIELAB). |

| Samples match in app but not visually | Metamerism or use of the 2° observer for a large sample [25]. | Check for metamerism by comparing under a second standard illuminant. Switch to the 1964 10° standard observer for analysis [22]. |

Step-by-Step Calibration Protocols: From Basic Apps to Advanced Reference Systems

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: My application is giving inconsistent RGB values for the same sample. What could be the cause? Inconsistent readings are often due to variable lighting conditions. Ensure all measurements are taken in a controlled, uniform lighting environment. Avoid shadows and direct light on the sample. Furthermore, use a fixed-distance holder or a 3D-printed jig to maintain a consistent distance and angle between the smartphone camera and the sample for every measurement [26].

Q2: How can I validate the accuracy of my smartphone colorimeter setup? A reliable method is to use certified colorimetric tiles or standards with known reference values [26]. Measure these standards with your setup and calculate the CIELab color difference (ΔE) between your measured values and the certified values. A lower ΔE indicates higher accuracy. For greater precision, consider using a clip-on dispersive grating, which has been shown to improve colorimetric performance compared to using the smartphone camera alone [26].

Q3: What is the difference between the RGB Detector and PhotoMetrix Pro apps for calibration? While both apps can be used for color detection, their calibration approaches differ. The RGB Detector app used in research has auto-calibrating capabilities, converting camera output to RGB coordinates that are independent of the camera model [26]. PhotoMetrix Pro is known for providing more advanced analytical functionalities, allowing for the construction of calibration curves for quantitative analysis. The choice depends on whether you need basic color detection (RGB Detector) or a more comprehensive analytical tool (PhotoMetrix Pro).

Q4: Why is a "sandwich-type" lateral flow assay mentioned in the context of smartphone colorimetry? The sandwich-type Lateral Flow Immunoassay (LFA) represents an advanced application of smartphone-based colorimetry. It combines immunochromatographic test strips with a smartphone application for automated image acquisition, calibration, and classification [27]. This integration allows for semi-quantitative analysis of specific biomarkers, such as 25-hydroxyvitamin D, by converting the color intensity of a test line into a quantitative result, demonstrating the move towards decentralized diagnostics [27].

Troubleshooting Common Experimental Issues

| Problem | Possible Cause | Solution |

|---|---|---|

| High variation between replicate measurements | Inconsistent camera focus or sample illumination. | Use a fixed-focus setting on the camera app and a dedicated light source (e.g., built-in white LED) [26]. |

| Calibration curve has poor linearity (low R² value) | Improper color space usage or sample concentration outside dynamic range. | Ensure RGB values are correctly transformed into a suitable color space (e.g., CIELab) for analysis [26], and confirm that all standards fall within the assay's dynamic range. |

| App cannot distinguish between similar colors | Limited color resolution of the smartphone camera or insufficient contrast. | Use a clip-on dispersive grating to enhance measurement precision [26]. |

| Results are not reproducible across different smartphone models | Variances in camera sensors and built-in image processing. | Use an app with auto-calibrating capability or perform a device-specific calibration with certified standards [26]. |

Experimental Protocols and Data Presentation

Protocol: Smartphone Colorimetry with Certified Standards

This methodology outlines the steps for performing a reliable colorimetric measurement and calibration using a smartphone and certified reference tiles [26].

- Equipment Setup: Smartphone, a 3D-printed or fixed holder to maintain a constant sample distance, certified color tiles (e.g., red, green, blue, yellow), and a white reference tile (e.g., Spectralon) [26].

- Application Configuration: Install a color detection application (e.g., RGB Detector). Ensure the smartphone's flash (white LED) is enabled as a consistent light source [26].

- Image Acquisition: Place the white reference tile in the holder and capture an image for white balancing. Then, place each certified color tile in the holder and capture multiple images (e.g., 5 replicates).

- Data Extraction: Use the application to obtain the average RGB values for each tile from the captured images.

- Data Conversion: Convert the averaged RGB values to tristimulus XYZ coordinates using a predefined conversion matrix. An example matrix from literature is [26]:

$$\begin{bmatrix} X \\ Y \\ Z \end{bmatrix} = \begin{bmatrix} 0.412 & 0.358 & 0.180 \\ 0.213 & 0.715 & 0.072 \\ 0.019 & 0.119 & 0.950 \end{bmatrix} \begin{bmatrix} R \\ G \\ B \end{bmatrix}$$

These XYZ values are then transformed into the CIELab color space to calculate the color difference ΔE [26].

- Validation: Calculate the ΔE between your measured CIELab values and the certified values for the tiles to quantify the accuracy of your setup [26].
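The conversion and validation steps can be sketched as below. Several things here are assumptions rather than details from the cited protocol: the RGB values are taken to be linear and scaled to [0, 1], the white point is derived from the matrix itself, and ΔE is computed with the simple CIE76 Euclidean formula (the source does not state which ΔE formula was used). The matrix appears to be the standard linear-sRGB (D65) matrix rounded to three decimals. The tile values are invented.

```python
import math

# Matrix quoted in the protocol above.
RGB_TO_XYZ = (
    (0.412, 0.358, 0.180),
    (0.213, 0.715, 0.072),
    (0.019, 0.119, 0.950),
)

def rgb_to_xyz(rgb):
    """Map linear RGB in [0, 1] to XYZ, scaled so that white has Y close to 100."""
    return tuple(100.0 * sum(m * c for m, c in zip(row, rgb)) for row in RGB_TO_XYZ)

WHITE_XYZ = rgb_to_xyz((1.0, 1.0, 1.0))  # assumed reference white

def xyz_to_lab(xyz, white=WHITE_XYZ):
    """Standard CIE XYZ -> CIELAB conversion relative to the given white."""
    def f(t):
        d = 6 / 29
        return t ** (1 / 3) if t > d ** 3 else t / (3 * d * d) + 4 / 29
    fx, fy, fz = (f(v / w) for v, w in zip(xyz, white))
    return (116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz))

def delta_e_ab(lab1, lab2):
    """CIE76 color difference: Euclidean distance in CIELAB."""
    return math.dist(lab1, lab2)

# Hypothetical measurement of a red tile and a hypothetical certified value.
measured_lab = xyz_to_lab(rgb_to_xyz((0.80, 0.10, 0.12)))
certified_lab = (45.0, 65.0, 45.0)
print("measured Lab:", tuple(round(v, 2) for v in measured_lab))
print("Delta E*ab:  ", round(delta_e_ab(measured_lab, certified_lab), 2))
```

If the app reports gamma-encoded sRGB values (0 to 255), they would need to be linearized before applying the matrix; skipping that step is a common source of large ΔE values.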

Quantitative Performance Data from Literature

The table below summarizes the colorimetric performance achievable with different smartphone setups, as reported in research. The color difference (ΔE) and resolution (δE) are key metrics for assessing accuracy and precision [26].

Table 1: Performance of Smartphone Colorimetry on Certified Color Tiles

| Color Tile | Color Difference using RGB Detector (ΔE) | Color Difference using GoSpectro Grating (ΔE) |

|---|---|---|

| Yellow (YW) | Data not specified in results | Smallest difference observed [26] |

| Cyan (CY) | Data not specified in results | Smallest difference observed [26] |

| Purple (PU) | Data not specified in results | Biggest difference observed [26] |

| Red (RD) | Data not specified in results | Biggest difference observed [26] |

| All Tiles (Average) | Acceptable results for quick evaluation [26] | Best results, highest precision [26] |

Workflow Visualization

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Materials for Smartphone-Based Quantitative Colorimetric Analysis

| Item | Function in the Experiment |

|---|---|

| Certified Colorimetric Tiles (e.g., from Labsphere) | Provide a set of colors with known, certified tristimulus values. They are essential for validating the accuracy and precision of the smartphone colorimeter by calculating the color difference ΔE [26]. |

| White Reference Tile (e.g., Spectralon) | Serves as a high-reflectance standard for white balancing and calibration before sample measurement, ensuring consistent and accurate color capture [26]. |

| Fixed-Distance Holder / 3D-Printed Jig | A physical fixture that maintains a consistent distance and angle between the smartphone camera and the sample. This is critical for achieving reproducible results by eliminating variability from hand-held operation [26]. |

| Clip-On Dispersive Grating (e.g., GoSpectro) | An accessory that clips onto the smartphone camera, turning it into a basic spectrophotometer. It significantly enhances colorimetric precision over using the camera alone by dispersing light and allowing wavelength-based analysis [26]. |

| Lateral Flow Assay (LFA) Strips | Used in advanced applications for detecting specific analytes (e.g., vitamins, pathogens). The smartphone app quantitatively reads the color intensity of the test line, enabling semi-quantitative analysis at the point of care [27]. |

| Anti-Idiotype Antibody | A specialized reagent used in a "sandwich-type" LFA for small molecules like Vitamin D. It allows for a more sensitive and reproducible assay format compared to traditional competitive assays [27]. |

Troubleshooting Guides

FAQ 1: How can I convert my RGB immunofluorescence images to a colorblind-friendly format in ImageJ?

Problem: A user needs to convert RGB immunofluorescence images to a cyan/magenta/yellow (CMY) format to make them colorblind-friendly but finds that the color scheme is not retained when saving and reopening the files.

Solution: The most reliable method involves splitting the original channels and then re-merging them with the desired CMY color assignments, rather than converting an existing RGB image.

Detailed Protocol:

- Open your original image in ImageJ/Fiji.

- Split the channels: Run Image > Color > Split Channels. This creates separate grayscale images for each channel (e.g., "red," "green," "blue").

- Re-merge with new colors: Run Image > Color > Merge Channels...

- In the dialog box, assign the red channel to the cyan channel, the green channel to the magenta channel, and the blue channel to the yellow channel.

- For example, in the "C5 (cyan)" dropdown, select your original image's "(red)" channel.

- In the "C6 (magenta)" dropdown, select your original image's "(green)" channel.

- In the "C7 (yellow)" dropdown, select your original image's "(blue)" channel.

- Ensure the "Create composite" box is unchecked to generate a true RGB image with the new color mapping [28].

- Save the new image: Use File > Save As > Tiff to preserve the color scheme. This method creates an RGB image that should retain the CMY colors when reopened in other software like Photoshop or Illustrator [28].

Important Consideration: Note that simply recoloring channels as cyan, magenta, and yellow may not be fully effective for all types of color blindness, as dichromatic observers cannot discern colors from three mixed channels. A more robust alternative is to use the Colorblind Action Bar plugin for Fiji, which performs a semi-CYM conversion designed to address oversaturation and be more perceptible [28].

FAQ 2: Why does my image appear all black after importing, even though the data is valid?

Problem: After loading an image, it displays as entirely black or very dark, but the user knows the data is present.

Solution: This is a common issue with high-bit-depth images (e.g., 12-bit, 14-bit, or 16-bit) where the actual data occupies only a small portion of the full display range.

Troubleshooting Steps:

- Verify Data Presence: Move your mouse over the image and observe the status bar at the bottom of the main ImageJ window. If pixel values other than zero are displayed as you move the cursor, your data is intact [29].

- Auto-adjust Contrast:

- Go to Image > Adjust > Brightness/Contrast... (or press Shift+C).

- In the dialog that opens, click the Auto button. This will rescale the display to map the minimum and maximum intensity values in your image data to black and white, making the features visible [29].

- Prevent Future Issues (during import): If you are using the Bio-Formats Importer for specific file types, you can disable autoscaling upon import:

- Use File > Import > Bio-Formats.

- Select your file.

- In the import options, uncheck the "Autoscale" box.

- Click OK. The data will then be scaled to the maximum value of the bit depth instead of being autoscaled to the image's actual intensity range [29].
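To see why a valid high-bit-depth image can look black, and what a display rescale does, here is a small NumPy sketch of a plain min-max stretch to 8 bits. It only illustrates the idea described above; ImageJ's own Auto button may use a different (histogram-based) rule, and the function name is hypothetical.

```python
import numpy as np

def autoscale_for_display(image):
    """Linearly map the image's own min..max range onto 0..255 for display."""
    img = np.asarray(image, dtype=np.float64)
    lo, hi = img.min(), img.max()
    if hi == lo:  # flat image: nothing to stretch
        return np.zeros(img.shape, dtype=np.uint8)
    return np.round((img - lo) / (hi - lo) * 255).astype(np.uint8)

# Hypothetical 16-bit data occupying only a sliver of the 0..65535 range.
raw = np.array([[100, 180], [260, 500]], dtype=np.uint16)
display = autoscale_for_display(raw)
print(display)
```

Shown against the full 16-bit range, a maximum of 500 is under 1% of white, which is why the unscaled image appears black. The stretch changes only what is displayed; quantitative analysis should still use the raw values.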

FAQ 3: How do I convert an intensity-based color scale to a concentration-based scale?

Problem: A user has an 8-bit image with a color scale from 0 to 255 and needs to convert this to a concentration scale, for example, 0 to 150 mmol/liter.

Solution: Use ImageJ's calibration tool to establish a mathematical relationship between pixel intensity and concentration.

Experimental Protocol:

- Open your image in ImageJ.

- Open the Calibrate Tool: Navigate to Analyze > Calibrate...

- Perform Calibration:

- In the Calibrate dialog, you will see a plot of Intensity vs. Value.

- From the "Function" dropdown, select the calibration model that best fits your data (e.g., Linear, Polynomial).

- You need known standard concentrations to create the calibration curve. Enter the known concentration values in the "Value" column corresponding to their measured intensity values.

- Click "OK" to apply the calibration. Once calibrated, ImageJ will display concentration values instead of raw intensity values when you use tools like the mouse pointer to probe the image [30].

- Alternative Method for Image Conversion: If you need to create a new image where the pixel values directly represent concentration:

- First, convert your image to 32-bit float using Image > Type > 32-bit. This prevents data clipping during mathematical operations.

- Then, use Process > Math > Macro... to apply the calibration function (e.g., v=(v/255)*150) to convert the 0-255 intensity range to a 0-150 concentration range [30].
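Outside ImageJ, the same linear rescaling can be sketched with NumPy. This mirrors the example expression v=(v/255)*150 and assumes the relationship between intensity and concentration really is linear across the full 8-bit range; the function name and the pixel values are hypothetical.

```python
import numpy as np

def intensity_to_concentration(image_8bit, max_concentration=150.0):
    """Map 8-bit intensities (0-255) linearly onto 0..max_concentration."""
    # Convert to float first, like Image > Type > 32-bit, so values are not truncated.
    img = np.asarray(image_8bit).astype(np.float32)
    return img / 255.0 * max_concentration

pixels = np.array([[0, 51, 255]], dtype=np.uint8)
concentration = intensity_to_concentration(pixels)
print(concentration)  # values in mmol/liter under the stated linear assumption
```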

FAQ 4: What should I do if ImageJ freezes or becomes unresponsive during processing?

Problem: ImageJ stops responding to inputs during an operation.

Solution: Generate a "thread dump" or "stack trace" to capture the program's state, which is invaluable for developers to diagnose the problem.

Detailed Protocol:

- The Easy Way (Within ImageJ):

- Press Shift + \ (backslash) while ImageJ is the active window.

- If successful, a new window containing the stack trace will open.

- Press Ctrl+A (or Cmd+A on Mac) to select all text, then Ctrl+C (or Cmd+C) to copy it. You can then paste this into a bug report [29].

- The Fallback Method (Using the Console):

- If the first method fails, launch ImageJ from the system console/terminal to capture log output.

- Linux/macOS: Open a terminal and run DEBUG=1 /path/to/ImageJ (adjust the path as needed).

- Windows: Make a copy of ImageJ-win64.exe, rename it to debug.exe, and run it. This launches ImageJ with an attached command prompt.

- Reproduce the freeze.

- On Linux/macOS: Press Ctrl + \ in the terminal window to print the stack trace. Select the text with your mouse and copy it.

- On Windows: Press Ctrl + Pause (the Break key) in the command prompt. Click the icon in the upper left corner of the window, choose Edit > Mark, select the stack trace with your mouse, and press Enter to copy it [29].

Experimental Protocols & Data Presentation

Quantitative CMY Conversion Workflow

The following diagram illustrates the core methodology for converting an RGB image to a quantitative, colorblind-friendly CMY format in ImageJ.

Comparison of Colorblind-Friendly Conversion Methods

The table below summarizes the two primary methods for creating accessible images, helping you choose the right approach for your research.

| Method | Key Feature | Best Use Case | Limitation |

|---|---|---|---|

| Manual Channel Merge [28] | Direct reassignment of original channels to Cyan, Magenta, and Yellow during merge. | Full control over channel-color mapping; requires a specific, consistent color scheme. | May not be effective for all forms of color blindness; can lead to oversaturation. |

| Colorblind Action Bar Plugin [28] | Semi-automated plugin designed specifically for color accessibility. | General-purpose creation of colorblind-friendly figures; handles oversaturation better. | Less granular control than manual method; requires plugin installation. |

Essential Research Reagent Solutions for Colorimetric Analysis

This table lists key materials and software tools essential for conducting quantitative colorimetric analysis, particularly in the context of smartphone-based and ImageJ-driven research.

| Item | Function in Research | Example Application |

|---|---|---|

| TCS3200 Color Sensor [31] | A programmable RGB color light-to-frequency converter that captures raw color data from samples. | Integrating with a Raspberry Pi to create a low-cost, portable colorimetric sensor for protein assays (e.g., BCA, Bradford) [31]. |

| Micro-BCA/Bradford Assay Kits [31] | Standardized chemical reagents that produce a color change proportional to protein concentration. | Generating calibration curves for quantitative protein analysis using image-based or sensor-based colorimetry [31]. |

| Standardized Color Charts | Provides a known reference for color correction and white balancing across different lighting conditions. | Essential for calibrating smartphone cameras or scanners to ensure reproducible color data acquisition. |

| Fiji/ImageJ Software | Open-source image analysis platform with built-in tools and plugins for color space conversion, calibration, and quantification. | Performing CMY conversion, intensity-to-concentration calibration, and colocalization analysis [29] [30] [28]. |

| Colorblind Action Bar Plugin [28] | A specialized Fiji plugin that transforms images into colorblind-friendly color spaces. | Preparing scientific figures and microscopy images that are accessible to a wider audience, including those with color vision deficiencies [28]. |

Troubleshooting Guides and FAQs

Frequently Asked Questions

1. What is the primary purpose of a multi-cell color reference sticker in smartphone colorimetry? The primary purpose is to achieve color constancy. These stickers contain patches of known colors, allowing software to mathematically model and correct for the variable illumination conditions (color temperature, brightness) present when an image is captured [32]. This transformation ensures the colors in the image accurately represent the true colors of the scene, independent of the lighting, which is critical for quantitative measurements [32].

2. My color-corrected results are still inconsistent. What could be wrong? Inconsistent results after correction can stem from several issues:

- Localized Shadows or Glare: The color correction algorithm assumes even illumination across the reference sticker. If shadows or glare affect only part of the sticker, as indicated by diverging color measurements from oppositely placed chips, the correction will be unreliable [32].

- Low-Quality Reference Sticker: The printing quality of the color stickers is critical. High variation in the printed color patches (with a high ΔE00 score, e.g., >5.3) will directly impair the system's precision [32].

- Incorrect Camera Settings: Using automatic settings or JPEG processing can introduce unpredictable color shifts [33] [34]. Whenever possible, use a manual camera mode and capture in a raw image format to minimize automated processing.

3. How do I validate that my smartphone sensor and reference system are working correctly? A validation procedure should test each component of your system [32]:

- Phone Sensor Consistency: Photograph a known color patch (e.g., from a Pantone library) multiple times under identical lighting. Convert the images to the CIELAB color space and calculate the mean ΔE00 between all pairwise comparisons. A mean ΔE00 of ≤1.0 indicates excellent sensor repeatability [32].

- Sticker Quality: Measure the color values of patches from several randomly selected stickers from your print batch. The ΔE00 between the measured color and the known reference value should be as low as possible (e.g., <3 on average) [32].

4. Are there alternatives to using a physical color card for illumination correction? Yes, retrospective computational methods like BaSiCPy exist. These software-based approaches derive an illumination correction function directly from a set of your images, without needing a reference object captured in every shot [35]. This is particularly useful for correcting uneven illumination (vignetting) in fields like fluorescence microscopy [35].
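The sensor-consistency check from question 3 (mean ΔE00 over all pairwise comparisons of repeated shots) can be sketched as follows. The `ciede2000` function is a compact implementation of the published CIEDE2000 formula with the weighting factors kL, kC, and kH all set to 1; the replicate CIELAB readings are made up for illustration, and the function names are hypothetical.

```python
import math
from itertools import combinations

def ciede2000(lab1, lab2):
    """CIEDE2000 color difference between two CIELAB triplets (kL = kC = kH = 1)."""
    L1, a1, b1 = lab1
    L2, a2, b2 = lab2
    c_bar = (math.hypot(a1, b1) + math.hypot(a2, b2)) / 2
    g = 0.5 * (1 - math.sqrt(c_bar**7 / (c_bar**7 + 25**7)))
    a1p, a2p = (1 + g) * a1, (1 + g) * a2
    c1p, c2p = math.hypot(a1p, b1), math.hypot(a2p, b2)
    h1p = math.degrees(math.atan2(b1, a1p)) % 360
    h2p = math.degrees(math.atan2(b2, a2p)) % 360

    d_l, d_c = L2 - L1, c2p - c1p
    if c1p * c2p == 0:
        d_h_deg, h_bar = 0.0, h1p + h2p
    else:
        d_h_deg = h2p - h1p
        if d_h_deg > 180:
            d_h_deg -= 360
        elif d_h_deg < -180:
            d_h_deg += 360
        if abs(h1p - h2p) <= 180:
            h_bar = (h1p + h2p) / 2
        elif h1p + h2p < 360:
            h_bar = (h1p + h2p + 360) / 2
        else:
            h_bar = (h1p + h2p - 360) / 2
    d_h = 2 * math.sqrt(c1p * c2p) * math.sin(math.radians(d_h_deg / 2))

    l_bar, c_bar_p = (L1 + L2) / 2, (c1p + c2p) / 2
    t = (1 - 0.17 * math.cos(math.radians(h_bar - 30))
         + 0.24 * math.cos(math.radians(2 * h_bar))
         + 0.32 * math.cos(math.radians(3 * h_bar + 6))
         - 0.20 * math.cos(math.radians(4 * h_bar - 63)))
    s_l = 1 + 0.015 * (l_bar - 50) ** 2 / math.sqrt(20 + (l_bar - 50) ** 2)
    s_c = 1 + 0.045 * c_bar_p
    s_h = 1 + 0.015 * c_bar_p * t
    d_theta = 30 * math.exp(-(((h_bar - 275) / 25) ** 2))
    r_c = 2 * math.sqrt(c_bar_p**7 / (c_bar_p**7 + 25**7))
    r_t = -math.sin(math.radians(2 * d_theta)) * r_c
    return math.sqrt((d_l / s_l) ** 2 + (d_c / s_c) ** 2 + (d_h / s_h) ** 2
                     + r_t * (d_c / s_c) * (d_h / s_h))

def mean_pairwise_delta_e00(labs):
    """Mean CIEDE2000 difference over every pair of replicate measurements."""
    pairs = list(combinations(labs, 2))
    return sum(ciede2000(p, q) for p, q in pairs) / len(pairs)

# Hypothetical CIELAB readings of one color patch photographed four times.
replicates = [(52.1, 40.3, 20.1), (52.4, 40.0, 20.5), (51.9, 40.6, 19.8), (52.2, 40.2, 20.2)]
print(f"mean pairwise dE00 = {mean_pairwise_delta_e00(replicates):.3f}")
```

A result at or below 1.0 would meet the repeatability criterion quoted in question 3; for production use, an established color-science library is preferable to a hand-written formula.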

Troubleshooting Common Problems

| Problem | Possible Cause | Solution |

|---|---|---|

| High variation in corrected color values | 1. Inconsistent lighting conditions. 2. Shadows or glare on the reference sticker. 3. Low-quality printed color stickers. | 1. Use a controlled, diffuse light source. 2. Reposition the setup to avoid shadows/glare. Check for color divergence in mirrored patches [32]. 3. Source stickers from a printer that guarantees low ΔE00 variation (e.g., <5.3) [32]. |

| Corrected image colors look unnatural | The color transfer algorithm is too aggressive or using an inappropriate method. | Ensure the software uses a localized transfer, applying corrections only within the region of interest defined by the reference card to avoid over-correction of the entire scene [32]. |

| Different results from different smartphones | Proprietary JPEG processing and auto-white-balance algorithms vary by manufacturer and model [33] [34]. | 1. Use a manual camera mode and disable all auto-features (white balance, exposure, saturation). 2. Use a machine learning classifier trained on data from multiple phone models to improve inter-phone repeatability [34]. |

| The system fails to correct for extreme color temperatures | The color gamut of the reference sticker may not be broad enough to cover the transform required for extreme lighting. | Use an enhanced color sticker design that includes a wider range of brightness and hues, extending beyond the original ColorChecker palette to capture a broader range of possible transforms [32]. |

Experimental Protocols and Data

Protocol: Validating a Smartphone-Based Color Correction Pipeline

This protocol outlines the key experiments to validate a system like the HueDx platform, which uses a multi-cell reference sticker [32].

1. Objective

To empirically measure the performance and limitations of a smartphone-based color correction system, including the phone hardware, reference sticker quality, and software correction capabilities.

2. Materials and Reagents

- Smartphone with a manual camera mode (e.g., iPhone 11 or newer).

- Custom multi-cell color reference sticker (e.g., HueCard).

- Controlled lighting environment with variable color temperature and brightness.

- Colorimetric assay of interest (e.g., paper-based total protein diagnostic assay).

- Standardized color patches (e.g., Pantone Cool Gray 1C, Neutral Black).

3. Methodology

Step 1: Phone Sensor Quantification

- Place a standardized color patch in a controlled, evenly lit environment.

- Capture ten images of the patch without moving the phone or the patch.

- Manually isolate the patch in each image and convert the pixels to the CIELAB color space.

- Calculate the average color value for each image and then compute the ΔE00 (Delta E 2000) pairwise between all images.

- Report the mean ΔE00 and max ΔE00. A mean ΔE00 ≤ 1.0 indicates the sensor has imperceptible variation and is sufficiently consistent [32].
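The pairwise comparison in Step 1 can be sketched as follows. This is a minimal, illustrative implementation of the CIEDE2000 formula with unit weighting factors (kL = kC = kH = 1) in plain Python; the function names `ciede2000` and `pairwise_delta_e` and the sample L*a*b* values are hypothetical and are not taken from the software described in [32].

```python
import math
from itertools import combinations

def ciede2000(lab1, lab2):
    """CIEDE2000 difference between two (L*, a*, b*) triples, with kL = kC = kH = 1."""
    L1, a1, b1 = lab1
    L2, a2, b2 = lab2
    # Rescale a* to correct chroma non-uniformity near the neutral axis.
    c_bar = (math.hypot(a1, b1) + math.hypot(a2, b2)) / 2
    g = 0.5 * (1 - math.sqrt(c_bar**7 / (c_bar**7 + 25**7)))
    a1p, a2p = (1 + g) * a1, (1 + g) * a2
    c1p, c2p = math.hypot(a1p, b1), math.hypot(a2p, b2)
    h1p = math.degrees(math.atan2(b1, a1p)) % 360
    h2p = math.degrees(math.atan2(b2, a2p)) % 360

    d_l = L2 - L1
    d_c = c2p - c1p
    if c1p * c2p == 0:
        # Hue is undefined for a neutral color, so the hue difference is zero.
        d_h_deg, h_bar = 0.0, h1p + h2p
    else:
        d_h_deg = h2p - h1p
        if d_h_deg > 180:
            d_h_deg -= 360
        elif d_h_deg < -180:
            d_h_deg += 360
        h_bar = (h1p + h2p) / 2
        if abs(h1p - h2p) > 180:
            h_bar += 180 if h1p + h2p < 360 else -180
    d_h = 2 * math.sqrt(c1p * c2p) * math.sin(math.radians(d_h_deg / 2))

    l_bar = (L1 + L2) / 2
    c_bar_p = (c1p + c2p) / 2
    t = (1 - 0.17 * math.cos(math.radians(h_bar - 30))
         + 0.24 * math.cos(math.radians(2 * h_bar))
         + 0.32 * math.cos(math.radians(3 * h_bar + 6))
         - 0.20 * math.cos(math.radians(4 * h_bar - 63)))
    s_l = 1 + 0.015 * (l_bar - 50) ** 2 / math.sqrt(20 + (l_bar - 50) ** 2)
    s_c = 1 + 0.045 * c_bar_p
    s_h = 1 + 0.015 * c_bar_p * t
    d_theta = 30 * math.exp(-(((h_bar - 275) / 25) ** 2))
    r_c = 2 * math.sqrt(c_bar_p**7 / (c_bar_p**7 + 25**7))
    r_t = -math.sin(math.radians(2 * d_theta)) * r_c
    return math.sqrt((d_l / s_l) ** 2 + (d_c / s_c) ** 2 + (d_h / s_h) ** 2
                     + r_t * (d_c / s_c) * (d_h / s_h))

def pairwise_delta_e(lab_means):
    """Return (mean, max) ΔE00 over every unordered pair of per-image mean colors."""
    diffs = [ciede2000(p, q) for p, q in combinations(lab_means, 2)]
    return sum(diffs) / len(diffs), max(diffs)

# Hypothetical per-image mean L*a*b* values for ten captures of one patch.
captures = [(81.2, 0.3, -1.1), (81.4, 0.2, -1.0), (81.1, 0.4, -1.2),
            (81.3, 0.3, -0.9), (81.2, 0.1, -1.1), (81.5, 0.3, -1.0),
            (81.0, 0.2, -1.3), (81.3, 0.4, -1.1), (81.2, 0.3, -1.0),
            (81.4, 0.2, -1.2)]
mean_de, max_de = pairwise_delta_e(captures)
print(f"mean ΔE00 = {mean_de:.3f}, max ΔE00 = {max_de:.3f}")
```

Ten images give 45 unordered pairs, so the mean reflects every comparison rather than each image against a single reference frame.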

Step 2: Sticker Manufacturing Quality Control

- Randomly select a sample of printed color stickers from the production batch (e.g., 10 of 400).

- Image each sticker under standard laboratory illumination conditions.

- For each color patch on each sticker, measure the average color value and calculate the ΔE00 against its known reference value.

- Report the mean ΔE00, max ΔE00, and standard deviation across all patches and all stickers. High-quality prints should have a mean ΔE00 < 3 and a max ΔE00 < 5.3 [32].
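As a rough illustration of the Step 2 report, the sketch below summarizes ΔE00 values that have already been computed for each patch on each sampled sticker. The function name `sticker_qc`, its parameters, and the example numbers are hypothetical; only the thresholds (mean < 3, max < 5.3) come from the text above.

```python
import statistics

def sticker_qc(delta_e_by_sticker, mean_limit=3.0, max_limit=5.3):
    """Summarize per-patch ΔE00 values; one inner list per sampled sticker."""
    values = [v for sticker in delta_e_by_sticker for v in sticker]
    summary = {
        "mean": statistics.mean(values),
        "max": max(values),
        "sd": statistics.stdev(values),  # sample standard deviation
    }
    # The batch passes only if both the mean and the worst patch are in range.
    summary["pass"] = summary["mean"] < mean_limit and summary["max"] < max_limit
    return summary

# Hypothetical ΔE00 values: three stickers, four patches each.
batch = [[1.8, 2.4, 3.1, 2.0],
         [2.2, 2.9, 4.6, 1.7],
         [1.5, 2.1, 3.3, 2.6]]
print(sticker_qc(batch))
```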

Step 3: Color Correction Pipeline Efficacy

- Capture images of the color reference sticker and a colorimetric assay under a wide range of illumination conditions (varying brightness and color temperature).

- Process the images through the color correction pipeline (e.g., using white-balancing, multivariate Gaussian distributions, and dynamic lookup tables).

- Calculate the ΔE00 between the corrected images and a reference image taken under standard lab conditions.

- A ΔE00 < 3 after correction indicates the system has successfully restored the images to near-imperceptible levels of difference [32].

Step 4: Real-World Application in Diagnostics

- Perform a colorimetric assay (e.g., total protein test) with samples of known concentration.

- Capture and analyze images with and without using the color correction pipeline.

- Compare the coefficient of variation (CV) in precision testing and calculate the limits of blank (LoB), detection (LoD), and quantitation (LoQ) for both methods. A well-functioning system will show a lower CV and lower LoB/LoD/LoQ with color correction [32].
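The precision and detection-limit comparison in Step 4 could be computed along these lines. The source does not state which formulas were used in [32]; this sketch assumes the common parametric definitions LoB = mean(blank) + 1.645·SD(blank) and LoD = LoB + 1.645·SD(low-concentration sample). LoQ is omitted because it depends on a user-defined accuracy goal. All function names and readings are hypothetical.

```python
import statistics

def cv_percent(replicates):
    """Coefficient of variation in percent (sample SD divided by mean)."""
    return 100 * statistics.stdev(replicates) / statistics.mean(replicates)

def limit_of_blank(blank):
    """Assumed parametric LoB: mean of blanks plus 1.645 SD of blanks."""
    return statistics.mean(blank) + 1.645 * statistics.stdev(blank)

def limit_of_detection(blank, low_sample):
    """Assumed parametric LoD: LoB plus 1.645 SD of a low-concentration sample."""
    return limit_of_blank(blank) + 1.645 * statistics.stdev(low_sample)

# Hypothetical replicate readings (arbitrary concentration units).
uncorrected = [4.6, 5.4, 5.1, 4.3, 5.6]
corrected = [4.9, 5.1, 5.0, 4.8, 5.2]
print(f"CV uncorrected: {cv_percent(uncorrected):.1f}%")
print(f"CV corrected:   {cv_percent(corrected):.1f}%")

blank = [0.02, 0.05, 0.03, 0.04, 0.01]
low = [0.20, 0.26, 0.23, 0.18, 0.24]
print(f"LoB = {limit_of_blank(blank):.3f}, LoD = {limit_of_detection(blank, low):.3f}")
```

Running the same functions on corrected and uncorrected readings of the same samples gives the side-by-side comparison the protocol asks for.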

The following table summarizes expected performance metrics for a robust system based on the HueDx study [32].

Table 1: Key Performance Indicators (KPIs) for System Validation

| Validation Target | Metric | Expected Outcome | Industry Standard |

|---|---|---|---|

| Phone Sensor | Mean ΔE00 (pairwise) | ≤ 1.0 | ≤ 1.0 (Imperceptible) [32] |

| Reference Sticker | Max ΔE00 (vs. reference) | < 5.3 | ≤ 5.0 (Small perceptible difference) [32] |

| Color Correction | ΔE00 after correction | < 3.0 | N/A |

| Diagnostic Assay (Precision) | Coefficient of Variation (CV) | Almost 2x lower with correction | Lower is better [32] |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Smartphone Colorimetry

| Item | Function | Example/Specification |

|---|---|---|

| Multi-Cell Color Reference Card | Provides known color values for the software to model and correct for prevailing illumination conditions. | HueCard; contains 48 color patches and 2 black/white references, mirroring an enhanced ColorChecker palette [32]. |

| Smartphone with Manual Controls | Acts as the image acquisition device. Requires control over focus, white balance, ISO, and shutter speed. | iPhone 11 or newer; or Android phones supporting third-party camera apps with manual mode [32]. |

| Controlled Light Source | Provides consistent, diffuse illumination to minimize shadows and glare, which are challenging to correct. | D65 standard illuminant (6500K color temperature) is a common calibration target [36] [37]. |

| Colorimetric Assay | The test medium that produces a color change proportional to the analyte concentration. | Paper-based total protein diagnostic assay; hydrogen peroxide test strips [32] [34]. |

| Calibration Software | Applies color transfer algorithms to transform the image based on the reference sticker. | HueTools; utilizes algorithms like multivariate Gaussian distributions and dynamic lookup tables [32]. |

| Colorimeter | A device used for high-accuracy color measurement to validate the system and create reference values. | X-Rite i1 Display Pro; used for calibrating displays and measuring color patches [36]. |

Workflow and System Diagrams

[Figure: Illumination Correction Workflow]

[Figure: System Components and Algorithms]

Frequently Asked Questions (FAQs)

Q1: Why should I use CIELAB over RGB for smartphone-based colorimetric analysis?

CIELAB offers significant advantages for quantitative analysis because it is designed to be perceptually uniform, meaning numerical changes correspond more closely to perceived color changes. Crucially, research shows that the CIELAB color space—specifically its a* and b* chromatic coordinates—exhibits inherent resistance to illumination changes. This makes it superior to RGB models, which are highly sensitive to lighting variations and limit reliability. Using CIELAB can enable housing-free, illumination-invariant detection, simplifying your field setup [1].

Q2: My CIELAB results seem perceptually inaccurate, especially for highly saturated colors. Why?

You are likely observing the limitations of CIELAB in accounting for the Helmholtz-Kohlrausch effect. This phenomenon describes how strongly saturated colors can appear brighter than their measured L* (lightness) value suggests. For example, a saturated red may look significantly brighter than a gray with the same L* value. This is a known issue where CIELAB undervalues the contribution of saturation to perceived lightness [38].

Q3: How can I optimize the median cut algorithm when working in CIELAB color space?

Standard median cut in CIELAB does not always improve results because it may not prioritize lightness (L*) error sufficiently. To optimize it, consider scaling the L* channel more aggressively than the a* and b* channels. Experimental evidence shows that weighting the L* channel, for instance by a factor of two, forces the algorithm to reduce perceptual lightness error more effectively, leading to visibly better results, such as the removal of color blotches in quantized images [39].

Q4: What are the common pitfalls when measuring color data for analysis?

Common mistakes include:

- Relying on visual assessment: Human perception is subjective and prone to fatigue. Always use instrument-based measurement [40].

- Ignoring environmental factors: Ambient light, temperature, and humidity can affect color measurements. Control these factors where possible [40].

- Misunderstanding device output: Ensure you know whether your smartphone app or spectrophotometer provides data in RGB, CIELAB, or another space, and understand the required conversions [2] [40].

Troubleshooting Guides

Issue: Results Are Inconsistent Under Different Lighting Conditions

Problem: Color measurements taken with a smartphone vary unpredictably when lighting changes.

Solution:

- Switch to CIELAB Chromaticity Coordinates: Base your analysis on the a* and b* channels of CIELAB instead of RGB values. These coordinates form "equichromatic surfaces" that are inherently more resilient to illumination changes [1].

- Use a Controlled Lighting Box: For critical measurements, use a simple light control box with adjustable LEDs to ensure consistent, uniform illumination during image capture [41].

- Employ a Reference Chart: Include a standardized color reference chart (e.g., X-Rite ColorChecker) within your captured image. This allows for post-hoc color correction and calibration across different lighting scenarios.

Issue: Poor Color Quantization or Palette Generation

Problem: When reducing an image to a limited color palette (e.g., for analysis), the results are perceptually poor.

Solution: This is common when using algorithms like median cut in an unoptimized color space.

- Implement Lightness-Scaled CIELAB: Apply median cut in CIELAB, but scale the L* channel relative to a* and b*. A relative weight of 2:1 for L* versus a*/b* has been shown to improve results by better prioritizing perceptual lightness [39].

- Verify with Pixel Mapping: Ensure the final pixel mapping step (assigning the original colors to the nearest palette color) is also performed in a perceptual color space like CIELAB or Oklab for greatest accuracy [39].
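The two points above can be sketched in plain Python. This is a simplified median cut, not the implementation from [39]: it splits the box with the largest extent at the median of its widest axis, after multiplying L* by `l_weight`. The names `median_cut_lab` and `nearest_palette_index` are hypothetical, and the final mapping step uses unweighted Euclidean distance in CIELAB as an assumption.

```python
def _extent(box, axis):
    values = [p[axis] for p in box]
    return max(values) - min(values)

def median_cut_lab(pixels, n_colors, l_weight=2.0):
    """Reduce (L*, a*, b*) pixels to at most n_colors palette entries."""
    # Scaling L* makes lightness spread count more when choosing the split axis.
    boxes = [[(l_weight * L, a, b) for L, a, b in pixels]]
    while len(boxes) < n_colors:
        splittable = [i for i, box in enumerate(boxes) if len(box) > 1]
        if not splittable:
            break
        widest = max(splittable,
                     key=lambda i: max(_extent(boxes[i], ax) for ax in range(3)))
        box = boxes.pop(widest)
        axis = max(range(3), key=lambda ax: _extent(box, ax))
        box = sorted(box, key=lambda p: p[axis])
        mid = len(box) // 2
        boxes += [box[:mid], box[mid:]]
    # Average each box and undo the L* scaling to get real CIELAB colors.
    return [(sum(p[0] for p in box) / len(box) / l_weight,
             sum(p[1] for p in box) / len(box),
             sum(p[2] for p in box) / len(box)) for box in boxes]

def nearest_palette_index(color, palette):
    """Map a CIELAB color to the closest palette entry (squared Euclidean distance)."""
    return min(range(len(palette)),
               key=lambda i: sum((c - p) ** 2 for c, p in zip(color, palette[i])))

# Hypothetical pixels: a* spans 30 units, L* spans only 20.
pixels = [(40.0, -15.0, 0.0), (40.0, 15.0, 0.0), (60.0, -15.0, 0.0), (60.0, 15.0, 0.0)]
print(median_cut_lab(pixels, 2, l_weight=1.0))  # splits along a*
print(median_cut_lab(pixels, 2, l_weight=2.0))  # splits along L*
```

With these sample pixels, the unweighted run separates the colors by a* and merges the two lightness levels, while the 2:1 weighting keeps the two lightness levels distinct.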

Issue: Converting Between RGB and CIELAB is Causing Errors

Problem: Converted colors seem incorrect or out of gamut.

Solution:

- Linearize RGB Data: RGB values from cameras and displays are typically gamma-encoded. You must first linearize these RGB values (remove the gamma correction) before applying the transformation to CIEXYZ and then to CIELAB [2].

- Specify the Correct White Point: CIELAB is calculated relative to a reference white point (e.g., D65 for typical displays, D50 for printing). Using the wrong white point will lead to incorrect conversions. Ensure your conversion math uses the one appropriate for your context [2].

- Use Floating-Point Calculations: An 8-bit integer implementation of CIELAB can lead to significant quantization errors and clipping. For research purposes, always perform conversions using floating-point arithmetic [2].
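The three points above can be combined into a short conversion routine. This sketch assumes the image is sRGB-encoded with 8-bit channels and uses the standard sRGB-to-XYZ matrix with a D65 reference white; a D50 workflow would additionally need a chromatic adaptation step (e.g., Bradford), which is not shown. The function names are hypothetical.

```python
# D65 reference white (2° observer), normalized so that Y = 1.
WHITE_D65 = (0.95047, 1.00000, 1.08883)

def srgb_to_linear(c8):
    """Undo the sRGB gamma encoding for one 8-bit channel value."""
    c = c8 / 255.0
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

def _f(t):
    """CIELAB companding function with its linear segment near zero."""
    delta = 6 / 29
    return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29

def srgb_to_lab(rgb8):
    """Convert an 8-bit sRGB triple to floating-point CIELAB (D65)."""
    r, g, b = (srgb_to_linear(c) for c in rgb8)
    # Linear sRGB to CIEXYZ (sRGB primaries, D65 white).
    x = 0.4124564 * r + 0.3575761 * g + 0.1804375 * b
    y = 0.2126729 * r + 0.7151522 * g + 0.0721750 * b
    z = 0.0193339 * r + 0.1191920 * g + 0.9503041 * b
    fx, fy, fz = (_f(v / w) for v, w in zip((x, y, z), WHITE_D65))
    return 116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz)

L, a, b = srgb_to_lab((255, 0, 0))
print(f"L* = {L:.2f}, a* = {a:.2f}, b* = {b:.2f}")
```

All intermediate values stay in floating point; rounding to integers, if required at all, should happen only when the final result is stored.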

Experimental Protocols & Data Presentation

Protocol: Smartphone-Assisted Colorimetric Determination of an Equilibrium Constant (Kc)

This protocol is adapted for determining the Kc of the thiocyanatoiron(III) complex and serves as a model for quantitative analysis [41].

1. Principle

The equilibrium concentration of the red [Fe(SCN)]²⁺ complex ion is determined from the blue-channel (B) intensity of smartphone-captured images; the red complex absorbs blue light, so the B value falls as its concentration rises. A calibration curve built from standards of known concentration is then used to find the unknown concentrations in test mixtures for the Kc calculation [41].
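A minimal sketch of this principle is shown below. The source does not say how blue intensity is mapped to concentration, so the example assumes a Beer-Lambert-style pseudo-absorbance, A = -log10(B / B_blank), fitted against concentration by ordinary least squares. Kc uses the standard 1:1 mass-action expression. All function names and numbers are hypothetical.

```python
import math

def pseudo_absorbance(blue, blue_blank):
    """Assumed absorbance-like signal from mean blue intensities."""
    return -math.log10(blue / blue_blank)

def fit_line(x, y):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def equilibrium_constant(fe_initial, scn_initial, complex_eq):
    """Kc = [Fe(SCN)2+] / ([Fe3+][SCN-]) for a 1:1 complex, all in mol/L."""
    return complex_eq / ((fe_initial - complex_eq) * (scn_initial - complex_eq))

# Hypothetical standards: complex concentration (mol/L) and mean blue intensity.
blank_blue = 210.0
conc = [2.0e-5, 4.0e-5, 6.0e-5, 8.0e-5]
blue = [188.0, 169.0, 151.0, 136.0]
signal = [pseudo_absorbance(b, blank_blue) for b in blue]
slope, intercept = fit_line(conc, signal)

# Hypothetical test mixture: read the curve backwards, then compute Kc.
unknown = (pseudo_absorbance(160.0, blank_blue) - intercept) / slope
print(f"[Fe(SCN)]2+ = {unknown:.2e} mol/L")
print(f"Kc = {equilibrium_constant(1.0e-3, 2.0e-4, unknown):.0f}")
```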

2. Key Research Reagent Solutions

| Reagent / Equipment | Function / Specification |

|---|---|

| Iron(III) Nitrate Solution | Provides the Fe³⁺ reactant ion. |

| Potassium Thiocyanate Solution | Provides the SCN⁻ reactant ion. |

| Nitric Acid (Aqueous) | Provides an acidic medium to prevent iron precipitation. |

| White 20-Well Acrylic Plate | Serves as a reusable, multi-sample container for reaction mixtures. |

| Adjustable Autopipettes (10-200 µL, 100-1000 µL) | Ensures accurate and precise liquid handling. |

| Light Control Box | Provides consistent, uniform illumination for reproducible image capture. |

| ImageJ Software | Used to process images and determine the mean blue intensity in a Region of Interest (ROI). |

3. Step-by-Step Methodology

- Step 1: Preparation of Standard Solutions. Prepare a series of standard solutions with known concentrations of the [Fe(SCN)]²⁺ complex.