Unlocking Environmental Insights: A Comprehensive Guide to Exploratory Data Analysis Goals and Methods

Abstract

This article provides researchers and environmental professionals with a systematic framework for applying Exploratory Data Analysis (EDA) to environmental research challenges. Covering foundational principles, methodological applications, troubleshooting strategies, and validation techniques, we demonstrate how EDA serves as a critical first step in understanding complex environmental datasets. Through practical examples from ecosystem monitoring, geochemical mapping, and spatial analysis, we illustrate how EDA identifies patterns, informs hypothesis development, guides appropriate statistical methods, and supports evidence-based environmental decision-making. The integration of traditional statistical methods with modern spatial analysis and emerging AI tools positions EDA as an essential methodology for addressing contemporary environmental research challenges.

Laying the Groundwork: Core Principles and Exploratory Objectives of EDA

Understanding EDA as an Essential First Step in Environmental Data Analysis

Exploratory Data Analysis (EDA) is an indispensable approach for investigating datasets, summarizing their core characteristics, and identifying underlying patterns through visual and statistical methods. In the context of environmental research, where data is often complex, multi-faceted, and spatially correlated, EDA serves as a critical first step before any formal modeling or hypothesis testing [1] [2]. This guide details the application of EDA for researchers and scientists, outlining its importance, core methodologies, and specific adaptations for handling environmental data, including geospatial analysis.

The primary purpose of EDA is to understand the data's structure, identify obvious errors, detect outliers, uncover interesting relationships among variables, and check assumptions that will inform subsequent, more sophisticated analyses [2] [3]. For environmental professionals, this process is vital. Biological monitoring data, for instance, is often affected by multiple stressors, and initial explorations of stressor correlations are crucial before attempting to relate them to biological response variables [1]. EDA provides insights into candidate causes that should be included in a causal assessment, ensuring that statistical analyses yield meaningful and reliable results [1].

Core Methodologies and Analytical Protocols

The following sections describe the fundamental techniques used in EDA, ranging from single-variable analysis to the exploration of complex multivariate relationships.

Univariate Analysis

Univariate analysis focuses on a single variable to understand its distribution and identify unusual values.

- Objective: To describe the central tendency, spread, and shape of the distribution of an individual variable [1] [4].

- Protocol: Begin by calculating summary statistics (mean, median, standard deviation, variance, skewness, and kurtosis) [3]. This should be followed by graphical displays.

- Key Visualizations:

- Histograms: A bar plot showing the frequency of observations within specified intervals, useful for visualizing the overall distribution and skewness [1] [4]. For example, a histogram of log-transformed total nitrogen can reveal whether the data approximates a normal distribution [1].

- Box Plots: A compact graphical representation displaying the median, quartiles, and potential outliers of a dataset. They are particularly useful for comparing distributions across different subsets [1].

- Quantile-Quantile (Q-Q) Plots: Used to assess whether a variable follows a theoretical distribution, such as normality. Deviations from the straight line indicate departures from the assumed distribution [1] [3].
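The summary statistics in the protocol above can be computed with Python's standard library alone. This is a minimal sketch under stated assumptions: the nitrogen values are hypothetical, and skewness and kurtosis use simple moment formulas. Packages that apply small-sample bias corrections (for example, pandas' `Series.skew()`) will therefore report somewhat different values.

```python
import math
import statistics


def summarize(values):
    """Basic univariate summary statistics for a sequence of numbers.

    Skewness and excess kurtosis use moment formulas with an n denominator
    (no small-sample bias correction).
    """
    n = len(values)
    mean = statistics.fmean(values)
    variance = statistics.variance(values)  # sample variance, n - 1 denominator
    m2 = sum((x - mean) ** 2 for x in values) / n
    m3 = sum((x - mean) ** 3 for x in values) / n
    m4 = sum((x - mean) ** 4 for x in values) / n
    return {
        "n": n,
        "mean": mean,
        "median": statistics.median(values),
        "sd": math.sqrt(variance),
        "variance": variance,
        "skewness": m3 / m2 ** 1.5,
        "excess_kurtosis": m4 / m2 ** 2 - 3.0,  # 0 for a normal distribution
    }


# Hypothetical total nitrogen concentrations (mg/L) with one unusually high value.
tn = [0.42, 0.55, 0.61, 0.48, 0.73, 0.52, 0.66, 0.58, 2.90]
tn_summary = summarize(tn)
for name, value in tn_summary.items():
    print(f"{name}: {value:.3f}")
```

A mean well above the median and a large positive skewness both point to the right skew that a histogram or Q-Q plot of these values would show.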

Bivariate Analysis

Bivariate analysis explores the relationship between two variables.

- Objective: To measure the association and understand the functional relationship between pairs of variables [1] [4].

- Protocol: Use scatterplots as an initial graphical tool, followed by the calculation of correlation coefficients.

- Key Methods:

- Scatterplots: A graphical display with one variable on each axis, used to visualize relationships (linear, nonlinear) and identify issues like outliers or non-constant variance [1].

- Correlation Analysis: Measures the degree of association.

- Pearson's (r): Measures the degree of linear association.

- Spearman's (ρ): A rank-based measure that is more robust to outliers and can capture monotonic nonlinear associations [1].

- Conditional Probability Analysis (CPA): Used to estimate the probability of an event (e.g., a biologically impaired site) given the occurrence of another condition (e.g., a stressor exceeding a threshold). This requires a dichotomous response variable [1].
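To illustrate the difference between the two correlation coefficients, the sketch below computes both from first principles on a hypothetical stressor gradient with an exponential (monotonic but nonlinear) response. It is a minimal illustration; in practice `scipy.stats.pearsonr` and `scipy.stats.spearmanr`, or pandas' `DataFrame.corr(method=...)`, provide the same coefficients.

```python
import math


def pearson(x, y):
    """Pearson's r: covariance scaled by the product of standard deviations."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def ranks(values):
    """1-based ranks; tied values share the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    r = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of positions i..j, shifted to 1-based
        for k in range(i, j + 1):
            r[order[k]] = avg
        i = j + 1
    return r


def spearman(x, y):
    """Spearman's rho: Pearson's r computed on the ranks."""
    return pearson(ranks(x), ranks(y))


# Hypothetical stressor gradient with a monotonic but strongly nonlinear response.
stressor = [1, 2, 3, 4, 5, 6, 7, 8]
response = [math.exp(s) for s in stressor]
print(f"Pearson r    = {pearson(stressor, response):.3f}")
print(f"Spearman rho = {spearman(stressor, response):.3f}")
```

Spearman's ρ equals 1 here because the response never decreases as the stressor increases, whereas Pearson's r is pulled well below 1 by the curvature and by the few very large values.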

Multivariate Analysis

Environmental processes are rarely driven by single factors. Multivariate techniques are essential for understanding interactions between three or more variables.

- Objective: To visualize and summarize the structure of a dataset with multiple variables, often to reduce dimensionality or identify clusters [1] [5].

- Protocol: Employ techniques like variable clustering and Principal Component Analysis (PCA).

- Key Methods:

- Variable Clustering: An automated method that groups variables based on their pairwise correlations, helping to identify redundant variables or underlying latent factors [5].

- Principal Component Analysis (PCA): A technique that creates new, uncorrelated summary variables (principal components) as weighted combinations of the original variables. It is used for dimension reduction and to avoid collinearity problems in subsequent regression analyses [5]. The results are often visualized with a biplot, which displays both the variable loadings and the sample scores; the cosine of the angle between two variable vectors approximates their correlation, and distances between sample points approximate the multivariate distances between samples [5].

- Heat Maps: A graphical representation of a correlation matrix for all quantitative variables, providing a quick overview of interrelationships in the dataset [4].
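The PCA step can be sketched with NumPy's singular value decomposition. This is a minimal illustration, not the `princomp()` implementation cited in the toolkit table. It standardizes each variable first, so the analysis is effectively on the correlation matrix. The three water-quality variables are simulated, with conductivity and chloride built to share a common "salinity" signal.

```python
import numpy as np


def pca(data):
    """PCA on standardized variables (correlation-matrix PCA) via SVD.

    Returns (explained_variance_ratio, loadings, scores). Columns of
    `loadings` are component directions; rows of `scores` are samples.
    """
    X = np.asarray(data, dtype=float)
    # Standardize so variables measured in different units contribute equally.
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    U, s, Vt = np.linalg.svd(Z, full_matrices=False)
    eigenvalues = s ** 2 / (Z.shape[0] - 1)  # variance of each component
    ratio = eigenvalues / eigenvalues.sum()
    loadings = Vt.T
    scores = Z @ loadings
    return ratio, loadings, scores


rng = np.random.default_rng(0)
# Hypothetical data: conductivity and chloride share a latent salinity signal,
# while dissolved oxygen varies independently.
salinity = rng.normal(size=200)
conductivity = salinity + 0.1 * rng.normal(size=200)
chloride = salinity + 0.1 * rng.normal(size=200)
oxygen = rng.normal(size=200)
ratio, loadings, scores = pca(np.column_stack([conductivity, chloride, oxygen]))
print("explained variance ratio:", np.round(ratio, 3))
print("PC1 loadings:", np.round(loadings[:, 0], 3))
```

With two of the three variables sharing most of their variance, the first component should explain roughly two-thirds of the total and weight conductivity and chloride about equally; the component scores are uncorrelated by construction.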

Spatial EDA

A critical component of environmental data analysis is understanding spatial patterns and dependencies.

- Objective: To identify spatial trends, detect spatial outliers, and characterize the range of spatial autocorrelation [3].

- Protocol: Begin by mapping sample locations and posting results. Use interpolation and variogram analysis to model spatial structure.

- Key Methods:

- Mapping and Trend Surface Analysis: Posting data on a map and using interpolation (e.g., spline interpolation) or regression on coordinates to visualize and model large-scale spatial trends [3].

- Variogram (Semivariogram) Analysis: The primary tool for assessing spatial correlation. It plots the semivariance of data pairs as a function of the distance between them (lag). Key features include:

- Nugget: Represents micro-scale variation or measurement error.

- Sill: The plateau the variogram reaches, representing the total variance.

- Range: The lag distance at which the sill is reached, indicating the limit of spatial autocorrelation [3].

- Directional Variograms: Used to evaluate anisotropy, where spatial correlation depends on direction as well as distance [3].
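The empirical semivariogram behind these definitions can be computed directly from pairwise distances using the classical estimator: gamma(h) = 1/(2 N(h)) times the sum of (z_i - z_j)^2 over the N(h) point pairs whose separation falls in lag bin h. The sketch below is a plain-Python illustration under simplifying assumptions: omnidirectional lag bins of fixed width, and a hypothetical transect whose values rise linearly with distance. Packages such as gstat in R provide this estimator together with variogram model fitting.

```python
import math
from collections import defaultdict


def empirical_variogram(coords, values, lag_width, max_lag):
    """Classical (Matheron) omnidirectional semivariogram estimator.

    gamma(h) = 1 / (2 * N(h)) * sum over pairs in bin h of (z_i - z_j) ** 2
    Returns (mean lag distance, semivariance, pair count) for each bin.
    """
    sq_sums = defaultdict(float)
    dist_sums = defaultdict(float)
    counts = defaultdict(int)
    n = len(values)
    for i in range(n):
        for j in range(i + 1, n):
            d = math.dist(coords[i], coords[j])
            if d > max_lag:
                continue
            b = int(d // lag_width)  # index of the lag bin containing d
            sq_sums[b] += (values[i] - values[j]) ** 2
            dist_sums[b] += d
            counts[b] += 1
    return [
        (dist_sums[b] / counts[b], sq_sums[b] / (2 * counts[b]), counts[b])
        for b in sorted(counts)
    ]


coords = [(float(x), 0.0) for x in range(20)]  # hypothetical transect, 1 m spacing
values = [float(x) for x in range(20)]  # hypothetical values with a linear trend
for lag, gamma, pairs in empirical_variogram(coords, values, lag_width=1.0, max_lag=5.0):
    print(f"lag {lag:.1f}: gamma = {gamma:.2f} ({pairs} pairs)")
```

Because this hypothetical transect carries a linear trend, the semivariance keeps rising with lag (here it equals h²/2) instead of levelling off at a sill. That pattern signals a trend to remove before nugget, sill, and range can be interpreted.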

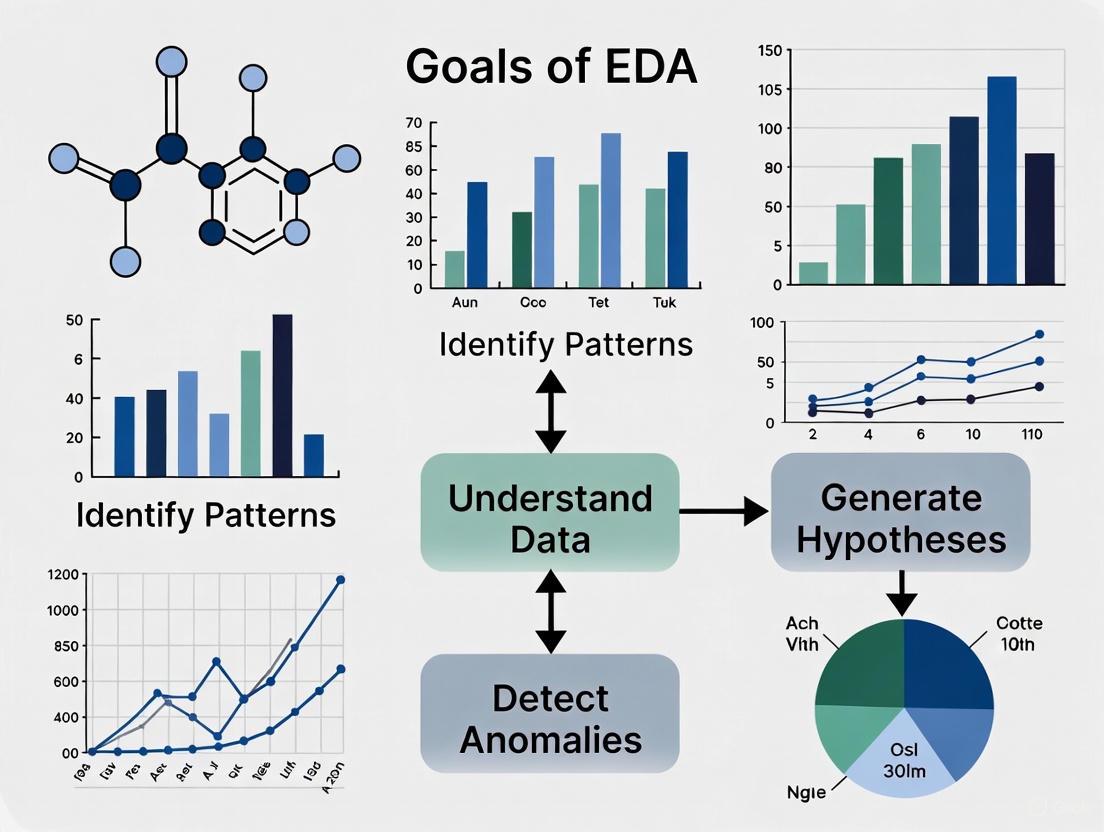

The following diagram illustrates the core workflow of EDA in environmental science, connecting the various analytical phases:

Essential Research Toolkit

Environmental data analysts rely on a combination of statistical software, programming languages, and specialized packages to perform EDA effectively. The table below summarizes the key tools and their applications.

Table 1: Key Software Tools for Environmental EDA

| Tool Category | Specific Tools | Primary Use in EDA | Environmental Application Example |
|---|---|---|---|
| Programming Languages | R, Python [2] [6] | Data manipulation, statistical analysis, and visualization. | R's varclus() function for variable clustering; Python's pandas for data summary [5] [7]. |
| Statistical Packages | CADStat [1] | Menu-driven package with specific tools for environmental data. | Calculating conditional probabilities for stressor-response relationships [1]. |
| Specialized R Packages and Functions | Hmisc (varclus()), base R stats (princomp()) [5] | Multivariate analysis (e.g., variable clustering, PCA). | Running PCA with options for outlier-resistant correlations [5]. |
| Geospatial Packages | R (gstat); Python (scikit-gstat for variograms, scikit-learn for trend regression) | Spatial trend analysis and variogram modeling. | Creating empirical variograms to determine the range of spatial autocorrelation [3]. |

Advanced Applications and Workflows

Geospatial EDA Workflow

For environmental data with spatial components, a specialized EDA workflow is required to account for location-based correlations. The process involves both standard and spatial-specific techniques to guide the selection of appropriate geostatistical models.

Table 2: Protocol for Geospatial EDA

| Step | Action | Tool/Method | Purpose |
|---|---|---|---|
| 1 | Map sample locations and post results. | GIS-based mapping with posted values. | Visualize spatial distribution and compare with site features [3]. |
| 2 | Perform initial non-spatial EDA. | Histograms, Q-Q plots, summary statistics. | Check data quality, distribution, and identify global outliers [3]. |
| 3 | Assess and model spatial trend. | Scatterplot by coordinates, trend surface analysis. | Identify and remove large-scale spatial trends (detrending) [3]. |
| 4 | Analyze spatial correlation. | Empirical (sample) variogram. | Quantify the spatial structure and determine the range of influence [3]. |
| 5 | Check for anisotropy. | Directional variograms. | Determine if spatial correlation is direction-dependent [3]. |
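Step 3 (detrending) can be sketched as an ordinary least-squares fit of a first-order trend surface, z = b0 + b1·x + b2·y, whose residuals then go into the variogram steps. This is a minimal NumPy illustration with simulated coordinates and a simulated west-to-east gradient; real applications may need a higher-order surface or a trend model chosen from the mapped data.

```python
import numpy as np


def detrend_first_order(x, y, z):
    """Fit z = b0 + b1*x + b2*y by least squares.

    Returns (coefficients, residuals); the residuals are the detrended values
    used for variogram analysis.
    """
    x, y, z = (np.asarray(a, dtype=float) for a in (x, y, z))
    design = np.column_stack([np.ones_like(x), x, y])
    coef, *_ = np.linalg.lstsq(design, z, rcond=None)
    residuals = z - design @ coef
    return coef, residuals


rng = np.random.default_rng(42)
x = rng.uniform(0, 1000, 150)  # hypothetical easting (m)
y = rng.uniform(0, 1000, 150)  # hypothetical northing (m)
# Hypothetical concentration: regional west-to-east gradient plus local noise.
z = 5.0 + 0.01 * x + rng.normal(0, 0.5, 150)
coef, resid = detrend_first_order(x, y, z)
print("trend coefficients (b0, b1, b2):", np.round(coef, 4))
print("residual standard deviation:", round(float(resid.std(ddof=3)), 3))
```

The recovered slope in x should be close to the simulated 0.01 per metre, with the y coefficient near zero; the residuals average zero because the model includes an intercept.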

The following diagram outlines this iterative investigative process for spatial data:

Data Quality and Assumption Checking

A fundamental goal of EDA is to ensure data quality and validate assumptions for planned statistical analyses.

- Handling Missing Data and Outliers: EDA helps identify missing values and outliers. Outliers can be actual extreme values (critical for the study) or result from measurement error, and EDA aids in determining which is the case [3]. The process may involve excluding abnormal data that skews results, but such choices must always be justified [3].

- Testing Distributional Assumptions: Many statistical and geospatial methods assume data is approximately normally distributed and not highly skewed. EDA uses histograms and Q-Q plots to assess this. If data is highly skewed, transformations (e.g., logarithmic) are applied prior to analysis [1] [3].

- Checking for Independence: A key assumption for many classic statistical tests is that data are independent and identically distributed (i.i.d.). EDA, particularly spatial EDA, is critical for checking this, as spatial data are often not independent—each measurement is correlated to some degree with its neighbors [3].
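The effect of a logarithmic transformation on skewness can be checked numerically. In this minimal sketch the metal concentrations are hypothetical; the transformation requires strictly positive values, so zeros or non-detects would need separate handling.

```python
import math


def skewness(values):
    """Moment-based (population) skewness: m3 / m2 ** 1.5."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    return m3 / m2 ** 1.5


# Hypothetical right-skewed metal concentrations (mg/kg) spanning orders of magnitude.
conc = [3.1, 4.8, 5.5, 7.2, 9.9, 12.4, 18.0, 26.5, 41.0, 95.0]
log_conc = [math.log10(c) for c in conc]  # requires strictly positive values
print(f"skewness raw: {skewness(conc):.2f}, log10: {skewness(log_conc):.2f}")
```

For these values the skewness drops from roughly 1.9 to roughly 0.5, so the log scale is much closer to symmetric; a Q-Q plot of the transformed data is the usual visual confirmation.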

Exploratory Data Analysis is the foundational step that transforms raw environmental data into actionable understanding. By systematically employing univariate, bivariate, multivariate, and spatial techniques, researchers can ensure their data is of high quality, their model assumptions are met, and their subsequent analyses are sound. In an era of increasing data complexity, a rigorous EDA process is not optional but essential for generating reliable scientific insights and making informed environmental management decisions.

Identifying General Patterns and Unexpected Features in Environmental Data

This technical guide provides environmental researchers with a comprehensive framework for conducting Exploratory Data Analysis (EDA) to identify general patterns and unexpected features within complex environmental datasets. EDA serves as a critical first step in the data analysis pipeline, enabling scientists to understand data structure, detect anomalies, test hypotheses, and inform subsequent statistical modeling [1] [2]. Within environmental research, where data often exhibit spatial dependencies, multiple stressors, and complex interactions, EDA provides essential insights for designing robust analytical approaches that yield meaningful ecological conclusions [1] [8].

Exploratory Data Analysis represents an approach to analyzing datasets that emphasizes identifying general patterns, detecting outliers, and uncovering unexpected features through visual and statistical methods [1] [2]. Originally developed by American mathematician John Tukey in the 1970s, EDA techniques remain fundamental to data discovery processes across scientific disciplines [2]. In environmental science, EDA helps researchers understand complex relationships among multiple stressors and biological response variables before formal hypothesis testing [1]. This approach is particularly valuable for environmental data, which often contain missing values, outliers, mixed attribute types, and high dimensionality [8].

The primary goals of EDA in environmental research include: ensuring data quality before advanced analysis; understanding variable distributions and relationships; identifying spatial and temporal patterns; detecting outliers and anomalous events; informing selection of appropriate statistical techniques; and generating hypotheses for further investigation [2] [9]. By employing EDA, environmental scientists can transform raw data into actionable insights that support evidence-based decision-making for environmental management and policy development [8].

Fundamental EDA Techniques for Environmental Data

Univariate Analysis

Univariate analysis examines the distribution and properties of individual variables, forming the foundation of EDA [9]. This approach helps researchers understand data structure, identify anomalies, and determine appropriate transformations or statistical tests.

Table 1: Univariate Graphical Methods for Environmental Data

| Method | Description | Environmental Application Example | Key Information Revealed |
|---|---|---|---|
| Histograms | Graphical representation of data distribution using bins or intervals [1] | Distribution of total nitrogen concentrations in stream water [1] | Shape of distribution, central tendency, spread, gaps, skewness |
| Boxplots | Compact display of distribution based on five-number summary (min, Q1, median, Q3, max) [1] | Comparing nutrient concentrations across different watersheds | Central tendency, spread, skewness, outliers |
| Stem-and-leaf plots | Hybrid display showing both individual data points and overall distribution [2] | Preliminary analysis of small environmental datasets | Individual values, shape of distribution, gaps |
| Cumulative Distribution Functions (CDF) | Shows probability that observations are not larger than a specified value [1] | Assessing proportion of lakes exceeding water quality thresholds | Complete distribution, percentiles, exceedance probabilities |
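The CDF row of the table can be illustrated with an empirical CDF, F(t) = (number of observations ≤ t) / n, from which the proportion of sites exceeding a threshold is 1 - F(t). The phosphorus values and the 30 µg/L threshold below are hypothetical, and the sketch weights every lake equally; survey designs with unequal inclusion probabilities would need weighted estimates.

```python
from bisect import bisect_right


def ecdf(values):
    """Return F(t): the proportion of observations not larger than t."""
    data = sorted(values)
    n = len(data)

    def F(t):
        # bisect_right counts how many sorted values are <= t.
        return bisect_right(data, t) / n

    return F


# Hypothetical total phosphorus (ug/L) in 12 lakes and a hypothetical threshold.
tp = [8, 12, 15, 18, 22, 25, 28, 31, 36, 44, 52, 70]
F = ecdf(tp)
threshold = 30
print(f"P(TP <= {threshold}) = {F(threshold):.2f}")
print(f"proportion exceeding = {1 - F(threshold):.2f}")
```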

Bivariate and Multivariate Analysis

Bivariate analysis explores relationships between two variables, while multivariate analysis examines interactions among three or more variables simultaneously [9]. These approaches are essential for understanding complex environmental systems where multiple factors interact.

Table 2: Bivariate and Multivariate Analysis Techniques

| Technique | Type | Description | Environmental Application |
|---|---|---|---|
| Scatterplots | Bivariate graphical | Plots paired observations of two variables on x-y axes [1] | Visualizing stressor-response relationships [1] |
| Scatterplot Matrix | Multivariate graphical | Multiple scatterplots displayed in matrix format [1] | Examining pairwise relationships among multiple water quality parameters |
| Correlation Analysis | Bivariate statistical | Measures strength and direction of association between variables [1] | Quantifying relationship between pollutant concentrations and biological indicators |
| Conditional Probability Analysis | Bivariate/Multivariate | Probability of an event given another event has occurred [1] | Estimating probability of biological impairment given stressor thresholds |

Advanced EDA Methodologies for Environmental Applications

Conditional Probability Analysis for Stressor Identification

Conditional Probability Analysis (CPA) provides a valuable framework for assessing relationships between environmental stressors and biological responses [1]. This technique is particularly useful when dealing with dichotomous outcomes (e.g., impaired/not impaired) and continuous stressor variables.

Methodology:

- Define Response Threshold: Establish a biologically meaningful threshold for the response variable (e.g., relative abundance of sensitive taxa < 40% indicates poor condition) [1]

- Calculate Conditional Probabilities: For each potential stressor threshold (Xc), compute P(Y|X > Xc), where Y represents the adverse biological condition

- Visualize Relationship: Plot probability of observing biological impairment against increasing stressor levels

- Interpret Pattern: Identify stressor thresholds at which probability of impairment increases substantially

Environmental Application Example: In sediment quality assessment, CPA can estimate the probability of observing benthic macroinvertebrate impairment when fine sediment percentage exceeds specific thresholds [1]. The analysis reveals how impairment probability changes with increasing stressor levels, informing management decisions about acceptable sediment thresholds.
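The four CPA steps can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the fine-sediment and sensitive-taxa values are hypothetical, and impairment uses the 40% sensitive-taxa threshold from the first step. The sketch reports point estimates only, without the uncertainty estimates a full analysis would normally add.

```python
def conditional_probability(stressor, impaired, thresholds):
    """Estimate P(impaired | stressor > Xc) for each candidate threshold Xc.

    `impaired` is the dichotomous response (True = poor biological condition).
    Returns (Xc, probability, n_sites_above); thresholds with no sites above
    them are skipped.
    """
    results = []
    for xc in thresholds:
        above = [imp for x, imp in zip(stressor, impaired) if x > xc]
        if above:
            results.append((xc, sum(above) / len(above), len(above)))
    return results


# Hypothetical survey of 10 stream sites.
fines = [5, 8, 12, 15, 20, 25, 30, 35, 40, 50]        # % fine sediment
sensitive = [62, 55, 58, 47, 44, 38, 41, 30, 22, 15]  # % sensitive taxa
impaired = [s < 40 for s in sensitive]  # step 1: response threshold
for xc, p, n in conditional_probability(fines, impaired, [0, 10, 20, 30, 40]):
    print(f"Xc = {xc:>2}%: P(impaired | fines > Xc) = {p:.2f} (n = {n})")
```

In this toy dataset the estimated probability of impairment rises from 0.4 across all sites to 0.8 once fines exceed 20%. Estimates at the highest thresholds rest on very few sites (the `n` column), so they should be read with caution.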

Correlation Analysis for Multiple Stressors

Environmental systems often involve multiple, correlated stressors that jointly affect biological communities [1]. Correlation analysis helps identify these interrelationships, preventing spurious conclusions in subsequent analyses.

Protocol for Correlation Analysis:

- Select Appropriate Coefficient:

- Pearson's r: For linear relationships between normally distributed variables

- Spearman's ρ: For monotonic relationships or non-normal data

- Kendall's τ: Alternative rank-based measure with similar applications to Spearman's

- Calculate Pairwise Correlations: Compute correlations between all stressor variables

- Visualize Correlation Matrix: Create heatmap or similar visualization to identify strongly correlated stressors

- Interpret Ecological Significance: Consider whether correlations represent causal relationships or coincidental patterns

Table 3: Correlation Coefficients for Environmental Data Analysis

| Coefficient | Data Requirements | Strengths | Limitations | Interpretation |
|---|---|---|---|---|
| Pearson's r | Interval/ratio data, linear relationship, normality | Measures strength of linear relationship | Sensitive to outliers, assumes linearity | -1 to +1, with 0 indicating no linear relationship |
| Spearman's ρ | Ordinal, interval, or ratio data, monotonic relationship | Robust to outliers, no distribution assumptions | Less powerful than Pearson's for linear relationships | -1 to +1, measures monotonic relationship strength |
| Kendall's τ | Ordinal, interval, or ratio data, monotonic relationship | Handles ties better than Spearman's, more intuitive interpretation | Smaller absolute values than Spearman's for same relationship | -1 to +1, represents probability of concordance minus discordance |
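The concordance interpretation in the last column can be made concrete by counting pairs directly. The sketch below computes Kendall's τ-b, the tie-adjusted variant, which equals the simple concordant-minus-discordant proportion when there are no ties. It is an O(n²) illustration on hypothetical ranks; `scipy.stats.kendalltau` offers an efficient implementation.

```python
import math
from itertools import combinations


def kendall_tau_b(x, y):
    """Kendall's tau-b from pair counts (O(n^2), fine for small samples).

    Without ties this equals (concordant - discordant) / total pairs,
    i.e. P(concordant pair) - P(discordant pair).
    """
    concordant = discordant = tied_x_only = tied_y_only = 0
    for (x1, y1), (x2, y2) in combinations(zip(x, y), 2):
        dx, dy = x1 - x2, y1 - y2
        if dx == 0 and dy == 0:
            continue  # tied on both variables: excluded from every count
        elif dx == 0:
            tied_x_only += 1
        elif dy == 0:
            tied_y_only += 1
        elif (dx > 0) == (dy > 0):
            concordant += 1
        else:
            discordant += 1
    denom = math.sqrt((concordant + discordant + tied_x_only)
                      * (concordant + discordant + tied_y_only))
    return (concordant - discordant) / denom


# Hypothetical ranks of two stressors at five sites.
print(kendall_tau_b([1, 2, 3, 4, 5], [2, 1, 4, 3, 5]))  # 0.6: 8 concordant, 2 discordant
```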

Experimental Protocols for Environmental EDA

Systematic EDA Framework for Complex Environmental Datasets

Recent research demonstrates the value of systematic EDA frameworks for addressing challenges in complex environmental datasets, such as the Whole Building Life Cycle Assessment (WBLCA) dataset comprising 244 North American buildings [8]. The following protocol provides a structured approach for environmental researchers:

Phase 1: Data Characterization and Preparation

- Distinguish Attributes: Separate metadata, categorical variables, and continuous variables

- Address High Dimensionality: Identify relevant variable subsets based on research questions

- Document Data Sources: Record provenance, collection methods, and potential quality issues

Phase 2: Univariate Analysis

- Distribution Assessment: For each variable, examine distribution shape using histograms, boxplots, and Q-Q plots

- Normality Testing: Evaluate deviation from normal distribution using statistical tests and visualizations

- Summary Statistics: Calculate mean, median, standard deviation, skewness, and kurtosis

- Identify Data Issues: Flag outliers, missing values, and potential measurement errors

Phase 3: Bivariate and Multivariate Analysis

- Pairwise Relationships: Conduct correlation analysis and scatterplot examination for variable pairs

- Group Differences: Implement one-way ANOVA and post-hoc analysis for categorical groupings

- Interaction Effects: Apply two-way ANOVA to identify interacting factors

- Multivariate Visualization: Create scatterplot matrices, heatmaps, and other multivariate graphics
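The one-way ANOVA used for group differences in Phase 3 compares between-group and within-group variability through the F statistic. This stdlib-only sketch uses hypothetical embodied-carbon intensities for three building use types and computes F only; `scipy.stats.f_oneway` returns both F and its p-value, and post-hoc comparisons (for example, Tukey's HSD) are not shown.

```python
def one_way_anova_f(groups):
    """F statistic for a one-way ANOVA across k groups.

    F = (between-group SS / (k - 1)) / (within-group SS / (N - k))
    """
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    means = [sum(g) / len(g) for g in groups]
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((v - m) ** 2 for g, m in zip(groups, means) for v in g)
    return (ss_between / (k - 1)) / (ss_within / (n_total - k))


# Hypothetical embodied-carbon intensities (kg CO2e/m2) by building use type.
office = [310, 320, 330]
residential = [340, 350, 360]
education = [370, 380, 390]
f_stat = one_way_anova_f([office, residential, education])
print(f"F = {f_stat:.1f} on (2, 6) degrees of freedom")
```

Here the group means differ by 30 units while the spread within each group is small, giving F = 27; identical groups would give F = 0.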

Phase 4: Feature Engineering and Selection

- Create Derived Variables: Develop ratios, composites, and other meaningful variable transformations

- Identify Influential Features: Use mutual information and other techniques to select important predictors

- Validate Feature Selection: Ensure selected features align with domain knowledge and research questions
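Mutual information, mentioned in Phase 4 for identifying influential features, measures how much knowing one variable reduces uncertainty about another. The sketch below is the simple plug-in estimate for categorical variables. The building attributes are hypothetical, and continuous features would first need binning or a dedicated estimator such as the nearest-neighbour approach behind scikit-learn's `mutual_info_regression`.

```python
import math
from collections import Counter


def mutual_information(x, y):
    """Plug-in mutual information (bits) between two categorical variables.

    I(X; Y) = sum over (a, b) of p(a, b) * log2(p(a, b) / (p(a) * p(b)))
    """
    n = len(x)
    px, py, pxy = Counter(x), Counter(y), Counter(zip(x, y))
    return sum(
        (c / n) * math.log2((c / n) / ((px[a] / n) * (py[b] / n)))
        for (a, b), c in pxy.items()
    )


# Hypothetical: primary structural material vs. embodied-carbon class per building.
material = ["timber", "timber", "concrete", "concrete", "steel", "steel", "timber", "concrete"]
carbon_class = ["low", "low", "high", "high", "high", "medium", "low", "high"]
print(f"I(material; carbon class) = {mutual_information(material, carbon_class):.3f} bits")
```

A value of zero indicates independence; higher values flag candidate predictors, which should still be checked against domain knowledge as the last step above recommends.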

Protocol for Spatial EDA of Environmental Data

Spatial analysis represents a critical component of environmental EDA, enabling researchers to identify geographic patterns, hotspots, and spatial dependencies [1].

Methodology:

- Data Mapping: Create maps displaying sampling locations and variable values

- Spatial Autocorrelation: Calculate Moran's I or similar metrics to assess spatial clustering

- Variogram Analysis: For geostatistical data, examine spatial dependence structure

- Hotspot Identification: Use local indicators of spatial association (LISA) to detect significant clusters
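Global Moran's I, listed above for assessing spatial clustering, compares each site's deviation from the mean with those of its neighbours. This minimal sketch assumes binary distance-band weights (a neighbour is any other site within `max_dist`) and uses hypothetical transect values. It omits the significance test; libraries such as PySAL's `esda` or R's `spdep` provide fuller implementations, including the local (LISA) version.

```python
import math


def morans_i(coords, values, max_dist):
    """Global Moran's I with binary weights: w_ij = 1 if i != j and d_ij <= max_dist.

    I = (n / W) * sum_ij w_ij (z_i - zbar)(z_j - zbar) / sum_i (z_i - zbar) ** 2
    Positive values suggest clustering of similar values, negative values a
    dispersed (checkerboard) pattern; the expectation under no spatial
    autocorrelation is -1 / (n - 1).
    """
    n = len(values)
    mean = sum(values) / n
    dev = [v - mean for v in values]
    num = 0.0
    w_sum = 0
    for i in range(n):
        for j in range(n):
            if i != j and math.dist(coords[i], coords[j]) <= max_dist:
                num += dev[i] * dev[j]
                w_sum += 1
    return (n / w_sum) * num / sum(d * d for d in dev)


transect = [(float(x), 0.0) for x in range(6)]  # hypothetical 6 sites, 1 km apart
clustered = [1, 1, 1, 0, 0, 0]    # high values grouped at one end
alternating = [1, 0, 1, 0, 1, 0]  # checkerboard-like pattern
print(f"clustered:   I = {morans_i(transect, clustered, 1.0):.2f}")
print(f"alternating: I = {morans_i(transect, alternating, 1.0):.2f}")
```

With adjacent sites as neighbours, the clustered pattern gives I = 0.6 and the alternating one I = -1, both to be compared with the no-autocorrelation expectation of -1/(n - 1) = -0.2.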

The Environmental Scientist's EDA Toolkit

Table 4: Essential Tools for Environmental Exploratory Data Analysis

| Tool Category | Specific Tools/Software | Key Functions | Environmental Applications |
|---|---|---|---|
| Programming Languages | Python (Pandas, NumPy, Matplotlib, Seaborn) [9] | Data manipulation, statistical analysis, visualization | Water quality trend analysis, ecological indicator assessment |
| Programming Languages | R (ggplot2, dplyr, tidyr) [2] [9] | Statistical computing, advanced visualization, data tidying | Statistical analysis of monitoring data, spatial pattern detection |
| Specialized Environmental Tools | CADStat [1] | Correlation analysis, conditional probability, visualization | Stressor identification, causal analysis in biological monitoring |
| Statistical Techniques | K-means clustering [2] | Unsupervised grouping of similar observations | Classification of monitoring sites, identification of similar watersheds |
| Statistical Techniques | Principal Component Analysis (PCA) [9] | Dimension reduction for high-dimensional data | Identifying major gradients in multivariate environmental data |
| Visualization Methods | Scatterplot matrices [1] | Simultaneous display of multiple pairwise relationships | Exploring correlations among multiple water quality parameters |
| Visualization Methods | Cumulative distribution functions [1] | Display complete distribution of values | Assessing compliance with water quality standards across regions |

Data Visualization and Accessibility in Environmental EDA

Effective visualization is fundamental to EDA, enabling researchers to identify patterns, relationships, and anomalies that might be overlooked in numerical summaries [10]. For environmental data, which often involves complex spatial and multivariate relationships, accessible visual design is particularly important.

Color Contrast Guidelines for Environmental Data Visualization:

- Text Elements: Maintain contrast ratio of at least 4.5:1 for standard text against background [11] [10]

- Large Text: Use a minimum contrast ratio of 3:1 for large-scale text (approximately 18 point, or 14 point bold) [11]

- Graphical Elements: Ensure contrast ratio of at least 3:1 between adjacent data elements (e.g., bars in bar graph, pie chart segments) [10]

- Non-Color Coding: Supplement color with patterns, shapes, or direct labels to convey meaning without relying solely on color perception [10]
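The contrast ratios above follow the WCAG 2.x definition: (L1 + 0.05) / (L2 + 0.05), where L1 and L2 are the relative luminances of the lighter and darker colors. Below is a minimal sketch for checking a palette, assuming colors are given as `#RRGGBB` hex strings; the gray-on-white example is hypothetical.

```python
def relative_luminance(hex_color):
    """WCAG 2.x relative luminance of an sRGB color given as '#RRGGBB'."""
    linear = []
    for i in (1, 3, 5):
        c = int(hex_color[i:i + 2], 16) / 255
        # Undo the sRGB gamma encoding (WCAG 2.x uses the 0.03928 threshold).
        linear.append(c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4)
    r, g, b = linear
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(color_a, color_b):
    """WCAG contrast ratio, from 1.0 (identical colors) to 21.0 (black on white)."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


# Hypothetical palette check: mid-gray axis labels on a white background.
ratio = contrast_ratio("#767676", "#FFFFFF")
print(f"{ratio:.2f}:1, meets 4.5:1 for standard text: {ratio >= 4.5}")
```

Gray `#767676` on white just meets the 4.5:1 threshold for standard text, while the slightly lighter `#777777` falls just short; the same function can check the 3:1 threshold between adjacent data elements.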

Accessible Visualization Practices for Environmental Data:

- Direct Labeling: Position labels adjacent to data points rather than relying on legends alone [10]

- Pattern Differentiation: Use distinct patterns (e.g., stripes, dots) in addition to color for differentiating elements

- Data Tables: Provide supplemental data tables alongside visualizations to support different learning preferences [10]

- Text Descriptions: Include alternative text for images and longer descriptions for complex visualizations [10]

Case Study: EDA of North American Building Life Cycle Assessment Data

A recent systematic EDA of Whole Building Life Cycle Assessment (WBLCA) data demonstrates the practical application of EDA principles to complex environmental datasets [8]. This study analyzed 244 real-world North American buildings using a structured EDA framework to understand embodied carbon patterns.

Key Findings and Methodological Insights:

- Weak Correlations: Embodied carbon intensity showed weak correlations with most meta-features, highlighting the complexity of environmental impact drivers [8]

- Influential Factors: Building materials and usage type emerged as the most influential factors on embodied carbon, identified through multivariate EDA techniques [8]

- Data Challenges: The EDA framework successfully addressed common environmental data challenges including high dimensionality, mixed attribute types, missing values, and outliers [8]

- Decision Support: The EDA revealed nuanced patterns that conventional simplified analyses would miss, supporting more informed decision-making for low-carbon building design [8]

This case study illustrates how systematic EDA can extract meaningful insights from complex environmental datasets, providing a foundation for advanced analysis, predictive modeling, and evidence-based environmental decision-making [8].

Exploratory Data Analysis represents an indispensable approach for identifying general patterns and unexpected features in environmental data. By employing the techniques, protocols, and tools outlined in this guide, environmental researchers can transform complex, multidimensional datasets into actionable insights that support environmental management and policy decisions. The systematic application of EDA—from basic univariate analysis to advanced multivariate techniques—ensures that subsequent statistical modeling and hypothesis testing are built upon a thorough understanding of data structure, quality, and inherent patterns. As environmental challenges grow increasingly complex, rigorous EDA will continue to play a critical role in extracting meaningful signals from noisy environmental data and informing sustainable solutions.

Detecting Outliers and Their Environmental Significance

In the realm of environmental research, Exploratory Data Analysis (EDA) serves as a critical first step for identifying general patterns, unexpected features, and outliers within datasets. [1] These outliers—observations that deviate significantly from the majority of the data—can arise from multiple sources, including sensor malfunctions, measurement inaccuracies, transmission errors, or genuine rare environmental events. [12] [13] The reliable detection of outliers is not merely a statistical exercise; it is fundamental to ensuring the integrity of subsequent analyses, from assessing model reliability to informing policy decisions for environmental conservation and public health protection. [12] This guide provides an in-depth technical framework for detecting and interpreting outliers within the broader thesis of EDA, equipping researchers with methodologies to discern erroneous measurements from critical environmental signals.

Fundamental Concepts and Importance

The Dual Nature of Outliers

In environmental datasets, outliers possess a dual nature. They can represent data quality issues that must be identified and mitigated to prevent skewed or erroneous model predictions. For instance, a malfunctioning water level sensor may report values that are physically impossible, compromising flood forecasting models. [13] Conversely, outliers can also signify critical environmental phenomena, such as a sudden spike in heavy metal concentration indicating a pollution event or an extreme rainfall measurement heralding a major storm. [12] The core challenge for researchers is to distinguish between these two types of outliers, a process that requires both robust technical methods and domain-specific knowledge.

Impact on Machine Learning Models

The presence of outliers can profoundly impact the development and performance of machine learning (ML) models designed to predict environmental conditions. Studies on predicting heavy metal (HM) contamination in soils have demonstrated that outliers can lead to inaccurate data patterns, detrimentally affecting model robustness and predictive accuracy. [12] Research shows that applying outlier detection techniques such as Density-Based Spatial Clustering of Applications with Noise (DBSCAN) before model training can substantially improve the performance of ensemble algorithms such as XGBoost. For example, the R² values for predicting Chromium (Cr), Nickel (Ni), Cadmium (Cd), and Lead (Pb) improved by 11.11%, 6.33%, 14.47%, and 5.68%, respectively, after outlier management. [12] These results support the view that managing outliers in the input data is crucial for improving the precision of environmental contamination predictions.

Methodologies for Outlier Detection

A range of methodologies exists for outlier detection, from traditional statistical approaches to advanced machine learning algorithms. The choice of method depends on the data's nature, size, and distribution, as well as the availability of pre-labeled data.

Traditional Statistical and Exploratory Methods

Exploratory Data Analysis (EDA) utilizes several graphical and statistical techniques to understand variable distributions and identify potential outliers. [1]

- Boxplots: A box and whisker plot provides a compact distribution summary. The box is defined by the 25th and 75th percentiles, with a line at the median. Whiskers typically extend to the most extreme observations lying within 1.5 × (75th percentile - 25th percentile), that is, 1.5 times the interquartile range (IQR), beyond the edges of the box, and data points beyond the whiskers are often identified as outliers. [1] This method is particularly useful for comparing distributions across different data subsets.

- Histograms: A histogram summarizes data distribution by grouping observations into intervals (bins) and counting observations in each. Intervals with exceptionally low or high counts may indicate outliers. [1]

- Scatterplots: These graphical displays plot one variable against another, helping to visualize relationships and identify unusual observations that fall far from the general data cluster. [1]

- Q-Q Plots: Quantile-Quantile plots compare a variable's distribution to a theoretical distribution (e.g., normal distribution). Deviations from the straight line can indicate outliers, among other distributional issues. [1]
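The boxplot rule can also be applied numerically, which is convenient for screening many sensor records at once. This sketch computes Tukey's fences with Python's `statistics.quantiles`. The water-level readings are hypothetical, and because quartile definitions differ between software packages, the fences may not match a particular plotting library exactly.

```python
import statistics


def iqr_outliers(values, k=1.5):
    """Flag values beyond Tukey's fences: Q1 - k*IQR and Q3 + k*IQR.

    Quartiles use statistics.quantiles(method="inclusive").
    Returns (flagged_values, (lower_fence, upper_fence)).
    """
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < lower or v > upper], (lower, upper)


# Hypothetical hourly water-level readings (m) containing one suspicious spike.
levels = [1.20, 1.22, 1.19, 1.25, 1.23, 1.21, 1.24, 9.99, 1.22, 1.20]
flagged, fences = iqr_outliers(levels)
print("flagged:", flagged, "fences:", [round(f, 3) for f in fences])
```

The rule only nominates candidates: whether the 9.99 m reading is a sensor fault or a genuine event still requires the domain judgment described earlier.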

Machine Learning Approaches

Machine learning offers powerful, automated techniques for outlier detection, broadly categorized into supervised and unsupervised learning.

Unsupervised Learning: These methods are valuable when labeled data (data pre-classified as normal or outlier) are unavailable.

- Isolation Forest (IF): This algorithm is based on the principle that outliers are few and different, making them easier to isolate. It builds binary trees by repeatedly selecting a random feature and a random split value within that feature's range. Samples that are isolated with fewer splits (i.e., that end up at shallower depths in the trees) are considered outliers. IF is non-parametric, has linear time complexity, and is efficient for large datasets. [13] It has been successfully applied to detect outliers in hydrological time series data from rainfall and water level stations. [13]

- DBSCAN (Density-Based Spatial Clustering of Applications with Noise): A clustering algorithm that groups together closely packed points. Points that do not belong to any cluster are labeled as noise (outliers). It is effective for identifying outliers in spatial data and has been used to improve ML model efficacy for heavy metal prediction. [12]
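To make the DBSCAN principle concrete, the following is a minimal from-scratch sketch in plain Python (3.8+, standard library only). It is an illustration, not the implementation used in [12]; in practice a library implementation such as scikit-learn's `DBSCAN` would normally be used. The function name `dbscan`, the toy sample coordinates, and the `eps`/`min_samples` values are hypothetical choices. Points labeled `-1` are noise, i.e., the candidate outliers.

```python
import math

def dbscan(points, eps, min_samples):
    """Label each point with a cluster id (0, 1, ...) or -1 for noise (candidate outlier)."""
    n = len(points)
    labels = [None] * n  # None = not yet visited

    def neighbors(i):
        # Indices within distance eps of point i, including i itself
        return [j for j in range(n) if math.dist(points[i], points[j]) <= eps]

    cluster = -1
    for i in range(n):
        if labels[i] is not None:
            continue
        seeds = neighbors(i)
        if len(seeds) < min_samples:
            labels[i] = -1  # provisional noise; may later become a border point
            continue
        cluster += 1        # i is a core point: start a new cluster
        labels[i] = cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster  # noise reachable from a core point -> border point
            if labels[j] is not None:
                continue             # already assigned; border points are not expanded
            labels[j] = cluster
            j_neighbors = neighbors(j)
            if len(j_neighbors) >= min_samples:
                queue.extend(j_neighbors)  # j is also a core point: keep expanding
    return labels

# Hypothetical sample locations: two dense sampling clusters and one isolated point
samples = [(0, 0), (0, 1), (1, 0), (1, 1),
           (10, 10), (10, 11), (11, 10), (11, 11),
           (5, 30)]
print(dbscan(samples, eps=1.5, min_samples=3))  # [0, 0, 0, 0, 1, 1, 1, 1, -1]
```

When DBSCAN is applied to multivariate data (for example, metal concentrations together with soil properties), features are usually standardized first, because `eps` is a single distance threshold shared by all dimensions.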

Supervised Learning: These methods are applicable when a labeled dataset is available for training.

- XGBoost (Extreme Gradient Boosting): An ensemble learning algorithm that can be trained on historical data containing known outlier patterns to classify new data points. In comparative studies, XGBoost demonstrated superior outlier detection performance compared to Isolation Forest when labeled data were available, yielding fewer false positives and false negatives. [13]

Table 1: Comparison of Outlier Detection Methods

| Method | Type | Key Principle | Advantages | Disadvantages |
|---|---|---|---|---|
| Boxplot | Statistical | Identifies points outside 1.5 × IQR | Simple, fast, intuitive | Less effective for high-dimensional data |
| Isolation Forest | Unsupervised ML | Isolates outliers via random splits | No labels needed, efficient for large data | May struggle with high-dimensional clustered data |
| DBSCAN | Unsupervised ML | Density-based clustering | Effective for spatial data, identifies arbitrary clusters | Sensitive to hyperparameters (eps, min_samples) |
| XGBoost | Supervised ML | Ensemble tree-based classification | High accuracy with labeled data, handles complex patterns | Requires labeled training data |

Experimental Protocols and Workflows

Implementing a robust outlier detection strategy requires a structured workflow. The following protocols detail key methodologies and their application in environmental case studies.

Protocol 1: Machine Learning with Outlier Detection for Soil Heavy Metals

This protocol is adapted from studies on predicting heavy metal contamination in soils using ML and advanced outlier detection techniques. [12]

- Data Collection: Collect soil samples (e.g., 150 samples from a study region). Analyze for heavy metal concentrations (Cr, Ni, Cd, Pb) and potential influencing factors (soil characteristics, climate, geology, land use). [12]

- Data Screening: Perform initial EDA using boxplot analysis to visualize data distributions and identify obvious outliers across variables like soil pH, organic matter, and metal concentrations. [12] [1]

- Outlier Detection: Apply an outlier detection algorithm such as DBSCAN to the dataset. DBSCAN will cluster densely packed data points and mark points in low-density regions as outliers. [12]

- Model Training: Train multiple machine learning models (e.g., XGBoost, LightGBM) on the dataset both with and without the outliers identified in the previous step.

- Model Evaluation: Compare model efficacy using metrics like R² (coefficient of determination). The study demonstrated substantial improvements in R² for Cr, Ni, Cd, and Pb after using DBSCAN, supporting the importance of outlier management. [12]

- Feature Importance Analysis: Use the trained model (e.g., XGBoost) to determine the relative importance of different soil factors on heavy metal contents. The cited study reported the relative contribution of soil characteristics as 80% for Cr, 72.61% for Ni, 53.35% for Cd, and 63.47% for Pb. [12]

- Spatial Analysis: Employ spatial analysis techniques like LISA (Local Indicators of Spatial Association) on the predicted values to identify significant contamination hotspots and understand spatial autocorrelation. [12]
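The LISA step can be illustrated with the local Moran's I statistic, I_i = (z_i / m2) × Σ_j w_ij z_j, where z_i is the deviation of location i from the mean and m2 is the mean of the squared deviations. The sketch below is a minimal plain-Python version that assumes a simple one-dimensional sampling transect in which each sample's neighbors are the adjacent samples, with row-standardized weights. The transect values and the function name `local_morans_i` are hypothetical. It computes only the statistic and the high/low quadrant; identifying *significant* hotspots, as in [12], additionally requires a permutation test, and real studies use two-dimensional spatial weights (for example, from GIS software or PySAL's `esda` package).

```python
def local_morans_i(values, neighbors):
    """Local Moran's I for each location.

    values    -- measured or predicted values (e.g., metal concentrations)
    neighbors -- neighbors[i] is a list of indices adjacent to location i
    Returns (I_i, quadrant) tuples; quadrant compares the value and the average
    of its neighbors to the overall mean: "HH", "LL", "HL" or "LH".
    """
    n = len(values)
    mean = sum(values) / n
    z = [v - mean for v in values]          # deviations from the mean
    m2 = sum(d * d for d in z) / n          # second moment (variance with divisor n)
    results = []
    for i in range(n):
        # Row-standardized weights: each neighbor gets weight 1 / (number of neighbors)
        lag = sum(z[j] for j in neighbors[i]) / len(neighbors[i])
        local_i = z[i] * lag / m2
        quadrant = ("H" if z[i] > 0 else "L") + ("H" if lag > 0 else "L")
        results.append((local_i, quadrant))
    return results

# Hypothetical transect of 10 soil samples with a contamination hotspot in the middle
transect = [1, 1, 1, 1, 9, 9, 9, 1, 1, 1]
adjacent = [[j for j in (i - 1, i + 1) if 0 <= j < len(transect)]
            for i in range(len(transect))]
for idx, (stat, quad) in enumerate(local_morans_i(transect, adjacent)):
    print(idx, round(stat, 3), quad)
```

Positive values in the "HH" quadrant (here, the center of the hotspot) flag high values surrounded by high values, which is the pattern LISA maps as a contamination cluster.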

Protocol 2: Quality Control of Hydrological Time Series Data

This protocol outlines a framework for quality control and outlier detection in hydrological data, such as rainfall and water levels, crucial for flood forecasting and water resource management. [13]

- Data Acquisition: Gather time series data from monitoring stations (e.g., daily rainfall and water level data from multiple stations in a river basin). [13]

- Data Preprocessing: Handle missing values by excluding intervals containing them from the model training process. [13]

- Model Selection and Application:

  - If labeled data is available: Employ a supervised learning algorithm like XGBoost, trained on historical data labeled with known outlier patterns (e.g., sensor malfunctions, transmission errors). [13]

  - If labeled data is unavailable: Employ an unsupervised learning algorithm like Isolation Forest (IF). IF will build multiple isolation trees to identify data points that are isolated with fewer splits as anomalies. [13]

- Validation and Implementation: Validate the model's performance by comparing its detections against known events or manual checks. Implement the chosen model within an automated quality control (AQC) system to enable real-time monitoring and outlier detection, shifting from manual processes to data-driven management. [13]
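The unsupervised branch of Protocol 2 can be sketched with a simplified, from-scratch Isolation Forest in plain Python: random axis-parallel splits, a height limit of ceil(log2 ψ) for subsample size ψ, and the anomaly score s = 2^(-E[h(x)] / c(ψ)), where h(x) is the path length and c(ψ) the average path length used for normalization. This is an illustration of the algorithm, not the implementation used in [13]; production work would typically rely on a library such as scikit-learn's `IsolationForest`. The water-level series, the injected spike, and all parameter values are hypothetical. Scores close to 1 indicate likely anomalies.

```python
import math
import random

EULER_GAMMA = 0.5772156649

def c_factor(n):
    """Average path length of an unsuccessful binary-search-tree lookup among n points."""
    if n <= 1:
        return 0.0
    if n == 2:
        return 1.0
    return 2.0 * (math.log(n - 1) + EULER_GAMMA) - 2.0 * (n - 1) / n

def build_tree(points, depth, max_depth, rng):
    """Grow one isolation tree with random feature/threshold splits."""
    if depth >= max_depth or len(points) <= 1:
        return ("leaf", len(points))
    dim = rng.randrange(len(points[0]))      # random feature
    lo = min(p[dim] for p in points)
    hi = max(p[dim] for p in points)
    if lo == hi:                              # identical values cannot be split
        return ("leaf", len(points))
    split = rng.uniform(lo, hi)               # random threshold within the feature's range
    left = [p for p in points if p[dim] < split]
    right = [p for p in points if p[dim] >= split]
    return ("node", dim, split,
            build_tree(left, depth + 1, max_depth, rng),
            build_tree(right, depth + 1, max_depth, rng))

def path_length(x, tree, depth=0):
    """Depth at which x lands, plus c(size) for the unsplit points left in that leaf."""
    if tree[0] == "leaf":
        return depth + c_factor(tree[1])
    _, dim, split, left, right = tree
    return path_length(x, left if x[dim] < split else right, depth + 1)

def isolation_forest_scores(points, n_trees=100, sample_size=256, seed=0):
    """Anomaly score 2 ** (-mean path length / c(subsample size)) for every point."""
    rng = random.Random(seed)
    psi = min(sample_size, len(points))
    if psi < 2:
        raise ValueError("need at least 2 points")
    max_depth = math.ceil(math.log2(psi))
    trees = [build_tree(rng.sample(points, psi), 0, max_depth, rng) for _ in range(n_trees)]
    return [2.0 ** (-sum(path_length(x, t) for t in trees) / n_trees / c_factor(psi))
            for x in points]

# Hypothetical daily water levels (m) with an injected sensor spike at index 50
levels = [2.0 + 0.1 * math.sin(i / 5) for i in range(100)]
levels[50] = 9.5
scores = isolation_forest_scores([(v,) for v in levels])
worst = max(range(len(scores)), key=scores.__getitem__)
print(worst, round(scores[worst], 2))
```

The seed makes the run reproducible, and the injected spike receives the highest score. Each point here has a single feature (the raw level); additional features such as day-to-day differences could be appended to each tuple.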

Outlier Detection and Interpretation Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagents and Computational Tools

| Item/Tool | Type | Function in Outlier Detection & Environmental Analysis |
|---|---|---|
| Soil Samples | Physical Sample | Primary matrix for laboratory analysis of heavy metal concentrations (e.g., Cr, Ni, Cd, Pb) and other soil characteristics. [12] |
| Hydrological Sensors | Field Instrument | Collects time-series data on rainfall and water levels, which are prone to outliers from malfunctions or extreme events. [13] |
| Python/R Libraries | Software | Provide implementations of statistical tests, ML algorithms (Isolation Forest, XGBoost, DBSCAN), and visualization tools (boxplots, scatterplots). [12] [13] |
| CAMS Global Reanalysis Data | Atmospheric Data | Provides coarse-resolution global data on air pollutants; used as a base for downscaling and anomaly detection projects. [14] |
| Spatial Analysis Software (e.g., GIS) | Software | Enables spatial EDA and the application of techniques like LISA and Moran's I to identify contamination hotspots and spatial outliers. [12] |

Visualization of Method Selection and Relationships

Choosing the correct outlier detection method is a critical decision point in the analytical workflow. The following diagram outlines the logical decision process based on data characteristics and research goals.

Method Selection for Outlier Detection
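The decision logic summarized in Table 1 and Protocol 2 can also be written out as a small function, which doubles as a text-based alternative to a flowchart. The function name, its arguments, and the order of the checks are hypothetical; they condense this guide's comparisons rather than reproduce a published decision rule.

```python
def suggest_outlier_method(has_labels: bool, is_spatial: bool, n_features: int) -> str:
    """Return a starting-point outlier detection method (hypothetical helper)."""
    if has_labels:
        # A labeled history of known errors supports supervised classification
        return "XGBoost (supervised)"
    if is_spatial:
        # Spatial samples: density-based clustering, noise points are candidates
        return "DBSCAN (unsupervised)"
    if n_features == 1:
        # A single variable: quick graphical screening with the 1.5 * IQR rule
        return "Boxplot / 1.5 * IQR rule"
    # Unlabeled, multivariate or large datasets
    return "Isolation Forest (unsupervised)"

print(suggest_outlier_method(has_labels=False, is_spatial=False, n_features=3))
# Isolation Forest (unsupervised)
```

Whatever method is suggested, flagged points remain candidates for investigation rather than automatic deletions.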

Within the framework of Exploratory Data Analysis, the detection and correct interpretation of outliers are foundational to robust environmental research. Whether through traditional statistical graphics or advanced machine learning techniques like Isolation Forest and XGBoost, managing outliers directly enhances the accuracy of predictive models for soil contamination, hydrological forecasting, and air quality assessment. [12] [13] The experimental protocols and workflows detailed in this guide provide a roadmap for researchers to systematically improve data integrity. By effectively identifying and reconciling these anomalous data points, scientists can ensure their analyses yield reliable, actionable insights, ultimately supporting informed decisions for environmental conservation and public health protection.

Examining Variable Distributions Through Histograms, Boxplots, and Q-Q Plots

Exploratory Data Analysis (EDA) is an essential first step in any data analysis, aimed at identifying general patterns, unexpected features, and outliers within datasets [1]. In the context of environmental research, where data is often complex and influenced by multiple natural and anthropogenic factors, understanding the distribution of variables is not merely preliminary work but a foundational component of a scientifically defensible analysis [15]. This initial exploration helps researchers understand the underlying structure of their data, check the assumptions required for more sophisticated statistical techniques, and design analyses that yield meaningful, reliable results about environmental conditions [1] [2].

The process of establishing soil background values, for instance, relies heavily on understanding data distributions, as many statistical tests have underlying assumptions about how the data is distributed [15]. Applying a statistical test to a dataset that does not meet its distributional assumptions can produce erroneous and misleading conclusions, potentially compromising environmental decision-making [15]. This guide will detail the methodologies for using three pivotal graphical tools—histograms, boxplots, and Q-Q plots—to examine variable distributions effectively.

Key Graphical Methods for Distribution Analysis

Histograms

A histogram is a graphical representation that summarizes the distribution of a continuous variable by grouping observations into intervals (also called classes or bins) and counting the number of observations in each interval [1]. It provides a visual impression of the shape, spread, and central tendency of the data, making it easy to identify patterns such as skewness, modality, and the presence of gaps.

Detailed Methodology

The construction of a histogram involves the following steps:

- Data Preparation: Begin with a single continuous variable. The EPA's analysis of log-transformed total nitrogen from the EMAP-West Streams Survey is a prime environmental example [1].

- Interval (Bin) Selection: Choose the number and width of the intervals. The appearance and interpretability of a histogram can depend significantly on this choice. While there is no single fixed rule, common strategies include:

  - Using a rule of thumb like Sturges' formula or the square-root choice for the number of bins.

  - Experimenting with different bin widths in software to see which best reveals the underlying structure of the data without being too smooth or too rough.

- Axis Labeling:

  - The x-axis represents the range of the data, divided into the chosen intervals.

  - The y-axis can represent the frequency (count of observations), the percent or fraction of the total, or the density (where the area of the bar represents the relative frequency) [1].

- Plotting: Draw a bar for each interval, with the height corresponding to the count (or proportion) of observations within that interval. The bars are typically drawn adjacent to each other to emphasize the continuous nature of the data.
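The bin-selection rules and counting step described above can be computed directly. The sketch below, in plain Python, implements Sturges' formula (k = ceil(log2 n) + 1) and the square-root choice (k = ceil(sqrt n)), then counts observations in equal-width bins. The function names and example values are hypothetical; in practice NumPy's `histogram` (which accepts `bins="sturges"` or `bins="sqrt"`) or matplotlib's `hist` performs these steps for you.

```python
import math

def sturges_bins(n):
    """Sturges' rule: ceil(log2(n)) + 1 bins."""
    return math.ceil(math.log2(n)) + 1

def sqrt_bins(n):
    """Square-root choice: ceil(sqrt(n)) bins."""
    return math.ceil(math.sqrt(n))

def histogram_counts(values, k):
    """Count values in k equal-width bins spanning [min, max]; return (edges, counts)."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / k
    counts = [0] * k
    for v in values:
        idx = int((v - lo) / width) if width > 0 else 0
        counts[min(idx, k - 1)] += 1  # the maximum value belongs to the last bin
    edges = [lo + i * width for i in range(k + 1)]
    return edges, counts

print(sturges_bins(100), sqrt_bins(100))  # 8 10
edges, counts = histogram_counts(list(range(10)), k=5)
print(counts)                              # [2, 2, 2, 2, 2]
```

Plotting the same variable with both bin counts is a quick way to check whether an apparent feature, such as a second peak or a gap, is robust to the binning choice.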

Table 1: Key Characteristics and Interpretation of Histograms

| Characteristic | Description | Interpretation in Environmental Context |
|---|---|---|
| Symmetry | Whether the distribution is mirror-imaged around a central point. | Asymmetrical, skewed distributions are common for natural soil concentrations (often positively skewed) [15]. |
| Modality | The number of prominent peaks (modes). | A single peak (unimodal) may suggest one population; multiple peaks (bimodal/multimodal) can suggest multiple populations or mixtures of materials, which should be investigated [15]. |
| Skewness | The tendency of the distribution to tail off to one side. | Positive skew (tail to the right) indicates many low values and a few very high values, common for pollutant concentrations. |
| Gaps | Intervals with no observations. | May indicate data quality issues or the presence of different geological strata or source populations. |

Boxplots (Box-and-Whisker Plots)

A boxplot (or box-and-whisker plot) provides a compact, standardized visual summary of a distribution based on a five-number summary: minimum, first quartile (Q1), median, third quartile (Q3), and maximum [1] [2]. Its primary strength lies in its ability to highlight the central value, spread, and potential outliers in the data, making it particularly useful for comparing distributions across different subsets (e.g., soil samples from different depths or regions) [1].

Detailed Methodology

The construction of a standard boxplot follows this protocol [1]:

- Calculate the Five-Number Summary:

  - Minimum: The smallest data point, excluding outliers.

  - First Quartile (Q1): The 25th percentile; 25% of the data fall below this value.

  - Median (Q2): The 50th percentile; the middle value of the dataset.

  - Third Quartile (Q3): The 75th percentile; 75% of the data fall below this value.

  - Maximum: The largest data point, excluding outliers.

- Draw the Box:

  - A box is drawn from Q1 to Q3. The length of this box is the Interquartile Range (IQR), which contains the middle 50% of the data.

  - A line is drawn inside the box at the median.

- Draw the Whiskers:

  - The upper whisker extends from Q3 to the largest data point that is less than or equal to Q3 + 1.5 * IQR.

  - The lower whisker extends from Q1 to the smallest data point that is greater than or equal to Q1 - 1.5 * IQR.

- Plot Outliers:

  - Any data points that fall outside the whisker span (i.e., greater than Q3 + 1.5 * IQR or less than Q1 - 1.5 * IQR) are plotted as individual points or dots. These are considered potential outliers and warrant further investigation in an environmental context [1].
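The boxplot construction steps above translate into a few lines of plain Python using the standard-library `statistics.quantiles` function. Several quartile conventions exist; `method="inclusive"` interpolates linearly between order statistics (the same convention as NumPy's default percentile method), so results may differ slightly from other software. The function name and the concentration values are hypothetical.

```python
import statistics

def boxplot_stats(values):
    """Quartiles, Tukey whisker ends (1.5 * IQR rule) and potential outliers."""
    q1, median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    lower_fence = q1 - 1.5 * iqr
    upper_fence = q3 + 1.5 * iqr
    inside = [v for v in values if lower_fence <= v <= upper_fence]
    return {
        "q1": q1, "median": median, "q3": q3, "iqr": iqr,
        "whisker_low": min(inside),   # smallest value >= Q1 - 1.5 * IQR
        "whisker_high": max(inside),  # largest value <= Q3 + 1.5 * IQR
        "outliers": sorted(v for v in values if v < lower_fence or v > upper_fence),
    }

# Hypothetical soil arsenic concentrations (mg/kg) with one unusually high value
arsenic = [4.1, 5.0, 5.3, 5.8, 6.2, 6.5, 7.0, 7.4, 8.1, 31.0]
stats = boxplot_stats(arsenic)
print(stats["whisker_low"], stats["whisker_high"], stats["outliers"])  # 4.1 8.1 [31.0]
```

In keeping with the guidance above, the flagged value is a candidate for investigation (for example, a sampling, laboratory, or localized contamination issue), not something to delete automatically.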

Table 2: Components of a Boxplot and Their Scientific Meaning

| Component | Statistical Value | Interpretation |
|---|---|---|
| Box | Spans the Interquartile Range (IQR) from Q1 to Q3. | Represents the middle 50% of the data, showing the core spread of the distribution. |
| Median Line | The middle value of the dataset (50th percentile). | Indicates the central tendency of the data. A median not in the center of the box suggests skewness. |
| Whiskers | Extend to the minimum and maximum values within 1.5 × IQR of the quartiles. | Show the range of typical data values. The length of the whiskers indicates the variability of the lower and upper quarters of the data. |
| Outliers | Data points beyond the whiskers. | Potential anomalies that may be due to measurement error, contamination, or rare natural events. Must be investigated, not automatically removed. |

Quantile-Quantile (Q-Q) Plots

A Q-Q plot (or probability plot) is a graphical technique used to compare a sample dataset to a theoretical distribution (e.g., the normal distribution) or to compare two sample datasets [1] [15]. It is one of the most powerful tools for assessing whether a dataset follows a particular distribution, which is a critical assumption for many parametric statistical tests used in environmental modeling [15].

Detailed Methodology

The protocol for creating a Q-Q plot against a theoretical normal distribution is as follows:

- Data Preparation and Sorting: Take the variable of interest and sort the data points in ascending order.

- Theoretical Quantile Calculation: For each ordered data point, calculate its corresponding theoretical quantile (z-score) from a standard normal distribution. This is typically done by assigning each observation a plotting position, such as (i - 0.5) / n, where i is the rank of the observation and n is the sample size, and then taking the standard normal quantile (the inverse of the cumulative distribution function) of that probability.

- Plotting: Plot the points on a scatterplot where:

  - The x-axis represents the theoretical quantiles (from the normal distribution).

  - The y-axis represents the actual observed quantiles (the sorted data values).

- Reference Line: A reference line is added to the plot, representing where the points would lie if the data perfectly followed the theoretical distribution. For standardized data this is the 45-degree line y = x; for data in original units, a line through the first and third quartiles (or one with intercept equal to the sample mean and slope equal to the sample standard deviation) is commonly used.
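The Q-Q construction above can be sketched in plain Python with `statistics.NormalDist` (Python 3.8+). As a simple numerical companion to the plot, the sketch also computes the correlation between theoretical and observed quantiles (a probability-plot correlation coefficient); values close to 1 indicate points lying near a straight line. The synthetic lognormal data and the function names are assumptions for illustration; they mimic the EPA total nitrogen example, in which a log transform brings skewed data closer to normality.

```python
import math
from statistics import NormalDist

def normal_qq_points(values):
    """Pair standard normal quantiles with sorted observations, using (i - 0.5) / n."""
    observed = sorted(values)
    n = len(observed)
    std_normal = NormalDist(mu=0.0, sigma=1.0)
    theoretical = [std_normal.inv_cdf((i - 0.5) / n) for i in range(1, n + 1)]
    return theoretical, observed

def pearson_r(x, y):
    """Pearson correlation, used here to measure how straight the Q-Q pattern is."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# Synthetic right-skewed (lognormal) concentrations, standing in for total nitrogen
concentrations = [math.exp(0.8 * NormalDist().inv_cdf((i - 0.5) / 40)) for i in range(1, 41)]

theo_raw, obs_raw = normal_qq_points(concentrations)
theo_log, obs_log = normal_qq_points([math.log(c) for c in concentrations])
print(round(pearson_r(theo_raw, obs_raw), 3))  # less than 1 for the skewed raw data
print(round(pearson_r(theo_log, obs_log), 3))  # 1.0: a straight line after the log transform
```

The coefficient complements, rather than replaces, visual inspection of the plot, because it cannot show where along the distribution the departures occur.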

Interpretation

- Data Points Follow the Line: If the plotted points fall approximately along the straight reference line, it suggests the data are consistent with the theoretical distribution (e.g., normal) [1].

- Systematic Deviations from the Line: A consistently bowed (curved) pattern indicates skewness, whereas an S-shaped pattern, with the two ends bending in opposite directions, indicates heavier or lighter tails than the theoretical distribution. The EPA highlights the utility of Q-Q plots in demonstrating how a log-transform can make total nitrogen data better approximate a normal distribution [1].

- Identification of Multiple Populations: The presence of distinct breaks or curves in the Q-Q plot can suggest that the data come from more than one underlying population, a key consideration when defining a target population for background soil studies [15].

Practical Workflow and Implementation

The following diagram illustrates a logical workflow for employing these three graphical methods in sequence to thoroughly examine a variable's distribution.

Graphical Workflow for Distribution Analysis

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Distributional Analysis

| Tool / Reagent | Function / Purpose | Example in Environmental Research |
|---|---|---|
| Statistical Software (R/Python) | Provides the computational environment to generate visualizations and calculate summary statistics. | R with ggplot2 for creating publication-quality histograms and boxplots. Python with SciPy and matplotlib for generating Q-Q plots and assessing normality [2]. |
| Probability Distribution Tables/Functions | Serves as the theoretical benchmark against which empirical data is compared. | The normal distribution is a common benchmark, but lognormal or gamma distributions may be more appropriate for skewed environmental data like soil contaminant concentrations [15]. |
| Data Visualization Guidelines | A set of principles to ensure graphs are accurate, clear, and accessible. | Using sufficient color contrast (≥ 4.5:1 for text) for readability [16], employing intuitive colors (e.g., green for vegetation indices), and using grey for less important context elements [17]. |
| ProUCL Software (USEPA) | A specialized statistical software package for environmental applications. | Used for calculating background threshold values, especially with datasets that are skewed and not normally distributed [15]. |

Critical Data Considerations for Environmental Research

Before applying any graphical or statistical method, data must meet certain quality and characteristic standards to ensure defensible results [15].

- Minimum Sample Size: A sufficient sample size is critical. While some guidance suggests a minimum of 8–10 samples to calculate statistical parameters, the typical heterogeneity of soils leads many agencies to recommend at least 20 samples for a robust analysis [15].

- Data Representativeness: The dataset must be a random, unbiased sample of the target population (e.g., arsenic in a specific soil type). Combining data from different populations (e.g., shallow and deep soils) without justification can lead to erroneous background values [15]. Graphical displays like Q-Q plots and histograms are key tools for identifying the presence of multiple populations [15].

- Data Independence: A core assumption for most statistical tests is that each sample is independent and not influenced by other measurements [15]. Sampling design must account for this.

Histograms, boxplots, and Q-Q plots are not merely isolated graphics but are interconnected tools that, when used in concert, provide a comprehensive picture of a variable's distribution. For environmental researchers and scientists, this rigorous exploratory process is indispensable. It confirms that subsequent, more complex statistical analyses and models—which often form the basis for risk assessments and regulatory decisions—are built upon a sound and well-understood data foundation. By following the detailed methodologies and workflows outlined in this guide, professionals can ensure their findings are both statistically valid and scientifically defensible.

Generating Hypotheses About Stressor-Response Relationships in Environmental Systems

In environmental research, stressor-response relationships describe how biological systems change in response to varying levels of environmental stressors. As defined by the U.S. Environmental Protection Agency (EPA), this relationship follows the fundamental principle that as exposure to a stressor increases, the intensity or frequency of a biological effect increases correspondingly [18]. Understanding these relationships is a core component of causal assessment in environmental systems, enabling researchers to identify anthropogenic impacts and guide restoration efforts.

This process is intrinsically linked to Exploratory Data Analysis (EDA). EDA serves as the critical first step in identifying general patterns, outliers, and unexpected features within environmental datasets [1]. In biological monitoring, where sites are often affected by multiple, co-occurring stressors, initial explorations of stressor correlations are essential before attempting to relate stressor variables to biological responses. EDA provides the foundational insights that inform the development of robust, testable causal hypotheses about the mechanisms affecting ecological communities.

Foundational Concepts and Evidence Integration

The evaluation of stressor-response hypotheses typically relies on multiple lines of evidence, which can be categorized based on the source and nature of the data. Two primary types of evidence used in frameworks like the EPA's CADDIS are detailed below.

Table 1: Types of Stressor-Response Evidence from Field Studies

| Evidence Type | Definition | Supporting Evidence Example | Weakening Evidence Example |
|---|---|---|---|
| Stressor-Response from the Field [18] | Relationships derived from data collected at the impaired site or from a set of spatially contiguous sites. | Mayfly taxonomic richness correlates inversely with % embeddedness; high embeddedness sites have low taxa counts. | No clear pattern exists between % embeddedness and mayfly richness; sites with high and low embeddedness have similar taxa counts. |
| Stressor-Response from Other Field Studies [19] | Relationships derived from other, similar field studies, used to assess if the stressor at the impaired site is at levels sufficient to cause the observed effect. | State monitoring data shows mayfly richness declines once fine silt coverage exceeds 10%; at the case site, coverage is 15%. | State monitoring data shows mayfly richness declines once fine silt coverage exceeds 10%; at the case site, coverage is only 5%. |

Hypotheses are evaluated by scoring the strength and consistency of the evidence. The following table provides a generalized scoring framework for stressor-response relationships from field data.

Table 2: Scoring Evidence for Stressor-Response Relationships from Field Data [18]

| Finding | Interpretation | Score |
|---|---|---|
| A strong effect gradient is observed relative to exposure to the candidate cause at spatially linked sites, and the gradient is in the expected direction. | Strongly supports the case, but is not convincing due to potential confounding. | ++ |
| A weak effect gradient is observed at spatially linked sites, OR a strong gradient is observed at non-linked sites in the expected direction. | Somewhat supports the case, but not strongly supportive due to potential confounding or random error. | + |
| An uncertain effect gradient is observed. | Neither supports nor weakens the case, as the evidence is ambiguous. | 0 |
| An inconsistent effect gradient is observed at spatially linked sites, OR a strong gradient is observed at non-linked sites in an unexpected direction. | Somewhat weakens the case, but not strongly weakening due to potential confounding or error. | - |
| A strong effect gradient is observed at spatially linked sites, but the relationship is not in the expected direction. | Strongly weakens the case, but is not convincing due to potential confounding. | -- |

Methodological Workflow for Hypothesis Generation

The process of generating and evaluating stressor-response hypotheses involves a sequence of steps from initial data exploration to causal assessment. The following diagram visualizes this core analytical workflow.

Exploratory Data Analysis (EDA) Techniques

EDA is the essential first step for generating initial hypotheses about potential stressors. Key graphical methods include [1]:

- Histograms and Boxplots: Used to examine the distribution of individual stressor and response variables. Boxplots are particularly useful for comparing distributions across different site categories (e.g., reference vs. impaired).

- Scatterplots: Fundamental for visualizing the relationship between pairs of variables (e.g., a stressor on the x-axis and a biological response on the y-axis). Scatterplots can reveal nonlinear relationships, non-constant variance, and outliers that may influence subsequent statistical analyses.

- Scatterplot Matrices: A convenient way to display pairwise relationships between several variables simultaneously, providing a broad overview of potential interactions in the dataset.

- Quantile-Quantile (Q-Q) Plots: Used to check whether a variable follows a particular theoretical distribution (e.g., normality), which can inform the selection of appropriate statistical tests.

Statistical Analysis of Relationships

Once potential relationships are identified visually, statistical methods are employed to quantify them.

- Correlation Analysis: Quantifies the strength and direction of association between two variables; a correlation coefficient is a standardized form of their covariance. Pearson's product-moment correlation coefficient (r) measures linear association, while Spearman's rank-order coefficient (ρ) and Kendall's tau (τ) are more robust to outliers and also capture non-linear relationships, provided they are monotonic [1].

- Conditional Probability Analysis (CPA): Used to estimate the probability of observing a biological effect (Y) given that a particular stressor condition (X) is present or exceeds a threshold [1]. This requires dichotomizing the biological response variable (e.g., defining "poor" vs. "good" condition). The probability is calculated as P(Y|X) = P(X and Y) / P(X).

- Regression Techniques: Field stressor-response relationships are commonly analyzed with regression. Quantile regression may be particularly useful for identifying upper or lower limits of a biological response across a gradient of stressor intensity [19].
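Two of the measures above, Spearman's rank correlation and a conditional probability, can be computed in a few lines of plain Python. This sketch is an illustration, not CADStat's implementation: the function names, the embeddedness and mayfly richness values, the impairment cutoff (fewer than 6 taxa), and the stressor threshold are all hypothetical. The example relationship is monotonic but non-linear, so Spearman's ρ reaches -1 while Pearson's r does not.

```python
import math

def pearson_r(x, y):
    """Pearson product-moment correlation (linear association)."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def average_ranks(values):
    """1-based ranks; tied values receive the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        for k in range(start, end + 1):
            ranks[order[k]] = (start + end) / 2 + 1
        start = end + 1
    return ranks

def spearman_rho(x, y):
    """Spearman's rho: the Pearson correlation of the ranks."""
    return pearson_r(average_ranks(x), average_ranks(y))

def conditional_probability(stressor, impaired, threshold):
    """P(impaired | stressor >= threshold) = count(X and Y) / count(X)."""
    exceed = [s >= threshold for s in stressor]
    n_x = sum(exceed)
    n_xy = sum(1 for e, bad in zip(exceed, impaired) if e and bad)
    return n_xy / n_x if n_x else float("nan")

embeddedness = [10, 20, 30, 40, 50, 60, 70, 80]  # % embeddedness (hypothetical)
mayfly_taxa = [20, 12, 8, 6, 5, 4, 3, 2]          # mayfly richness (hypothetical)
impaired = [taxa < 6 for taxa in mayfly_taxa]     # dichotomized biological response

print(round(pearson_r(embeddedness, mayfly_taxa), 2))       # -0.9
print(round(spearman_rho(embeddedness, mayfly_taxa), 2))    # -1.0
print(conditional_probability(embeddedness, impaired, 40))  # 0.8
```

Repeating the conditional probability over a range of thresholds shows how the chance of impairment changes along the stressor gradient.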

Successfully navigating a stressor-response analysis requires a suite of conceptual and statistical tools. The following table outlines essential resources for environmental researchers.

Table 3: Research Reagent Solutions for Stressor-Response Analysis

| Tool or Technique | Primary Function | Application Context |
|---|---|---|
| Scatterplots & Correlation Coefficients [1] | Visually and statistically assess the relationship between two continuous variables. | Initial exploration of data to identify potential causal links and data issues (e.g., outliers, non-linearity). |
| Conditional Probability Analysis (CPA) [1] | Estimate the probability of a biological effect given the presence or level of a stressor. | Useful when the response variable can be meaningfully dichotomized (e.g., impaired/not impaired). |
| Boxplots (Box and Whisker Plots) [1] | Compact visual summary of a variable's distribution, including median, quartiles, and outliers. | Comparing the distribution of a stressor or response metric across different site groups or conditions. |
| Multivariate Visualization (e.g., PCA) [18] [19] | Reduce dimensionality and group highly correlated stressors to understand co-occurrence patterns. | Addressing confounding when multiple stressors co-vary, helping to identify groups of stressors that increase/decrease together. |
| CADStat [1] | A menu-driven software package that provides tools for calculating correlations, conditional probabilities, and other statistical measures relevant to causal analysis. | Applying standardized analytical methods within the EPA CADDIS causal assessment framework. |

Visualization and Accessibility in Scientific Communication

Effective communication of stressor-response findings is critical. Adhering to principles of accessible data visualization ensures that charts and diagrams are understandable by the broadest possible audience [10].

- Color and Contrast: Use colors with a high contrast ratio (at least 4.5:1 for text and 3:1 for graphical elements) [10]. Never use color as the sole means of conveying information; supplement with patterns, shapes, or direct labels [10].

- Clarity and Annotation: Eliminate visual clutter and use descriptive titles, subtitles, and annotations. Annotations should explain not only "what" is being measured but "why" it matters and "how" to read the chart, using plain language and avoiding acronyms [20].

- Accessible Flowcharts: For complex diagrams, provide a text-based alternative. This can be an ordered list with "If X, then go to Y" language or a structured heading hierarchy that conveys the same logical flow [21]. The alt text for a flowchart image should summarize the overall relationship as you would describe it over the phone [21].
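The 4.5:1 and 3:1 thresholds refer to the contrast ratio defined in the Web Content Accessibility Guidelines (WCAG) 2.x, which can be checked programmatically. The sketch below implements the WCAG relative-luminance and contrast-ratio formulas in plain Python; the function names and example colors are illustrative choices. WCAG 2.x specifies a linearization threshold of 0.03928 (the sRGB standard uses 0.04045), a difference that does not affect any 8-bit color value.

```python
def relative_luminance(hex_color):
    """WCAG 2.x relative luminance of an sRGB color given as '#RRGGBB'."""
    hex_color = hex_color.lstrip("#")
    channels = [int(hex_color[i:i + 2], 16) / 255 for i in (0, 2, 4)]
    # Undo the sRGB gamma curve for each channel
    linear = [c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4 for c in channels]
    r, g, b = linear
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(color_a, color_b):
    """(L_lighter + 0.05) / (L_darker + 0.05), ranging from 1 to 21."""
    lighter, darker = sorted((relative_luminance(color_a), relative_luminance(color_b)),
                             reverse=True)
    return (lighter + 0.05) / (darker + 0.05)

print(round(contrast_ratio("#000000", "#FFFFFF"), 1))  # 21.0
print(round(contrast_ratio("#767676", "#FFFFFF"), 2))  # 4.54 (meets 4.5:1 for text)
print(contrast_ratio("#777777", "#FFFFFF") >= 4.5)     # False
```

A check like this can be run over a figure's palette before publication, although it does not replace the advice above not to rely on color alone.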

The rigorous generation and testing of hypotheses about stressor-response relationships form the bedrock of scientific causal assessment in environmental systems. This process is inherently iterative, grounded in thorough exploratory data analysis, and reliant on the integration of multiple lines of evidence. By applying a structured workflow—from initial data exploration using EDA techniques, through quantitative analysis with correlation and regression, to critical evaluation against evidence from other studies—researchers can move from observational patterns to defensible causal inferences. Adhering to best practices in data visualization and accessibility ensures that these complex relationships are communicated effectively, fostering robust scientific discourse and informing sound environmental decision-making.

Assessing Data Quality and Recognizing Measurement Limitations

Exploratory Data Analysis (EDA) serves as a critical first step in environmental research, establishing a foundation for robust scientific conclusions. This approach identifies general patterns, outliers, and unexpected features within datasets before formal statistical modeling is conducted [1]. In the context of environmental monitoring, where sites are often affected by multiple interacting stressors, initial explorations of data quality and variable relationships are paramount. Understanding measurement limitations at this early stage guides the selection of appropriate analytical techniques and ensures that subsequent analyses yield meaningful, reliable results that accurately represent complex environmental systems [1].

The growing integration of big data analytics into environmental quality monitoring further amplifies the importance of rigorous data assessment [22]. As researchers increasingly employ advanced data science techniques and machine learning algorithms to analyze vast environmental datasets, establishing robust protocols for evaluating data quality becomes essential for effective evidence-based policymaking [22]. This technical guide provides environmental researchers with comprehensive methodologies for assessing data quality and recognizing measurement limitations within the EDA framework, enabling more transparent and reproducible environmental science.

Foundational Concepts in Data Quality Assessment

Data Quality Dimensions in Environmental Context

Data quality in environmental research encompasses multiple dimensions that collectively determine the fitness-for-use of datasets for specific analytical purposes. Key dimensions include:

- Completeness: The degree to which expected data values are present without gaps or missing observations, critical for time-series analysis of environmental parameters.

- Accuracy: The closeness of measured values to true values or accepted reference standards, particularly important for chemical concentration measurements and sensor data.

- Precision: The repeatability of measurements under unchanged conditions, relevant for laboratory analyses and field instrument calibration.

- Consistency: The absence of contradictions in datasets across time and space, essential for long-term environmental monitoring studies.

- Representativeness: The extent to which data accurately reflects the environmental population or phenomenon being studied, crucial for spatial analyses and ecological surveys.
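For time-series records, completeness can be checked numerically as well as visually. The sketch below assumes a pandas DataFrame whose rows are ordered at a regular time step. It reports the fraction of non-missing values per variable and the longest run of consecutive missing observations. The helper `completeness_report` and the example record are hypothetical.

```python
import numpy as np
import pandas as pd

def completeness_report(df: pd.DataFrame) -> pd.DataFrame:
    """Per-column completeness and longest run of consecutive missing values.

    Assumes rows are ordered in time at a regular interval (e.g. daily).
    """
    rows = []
    for col in df.columns:
        missing = df[col].isna().to_numpy()
        # Longest run of consecutive missing observations (a gap in the series)
        longest, current = 0, 0
        for m in missing:
            current = current + 1 if m else 0
            longest = max(longest, current)
        rows.append({
            "variable": col,
            "fraction_present": 1.0 - missing.mean(),
            "longest_gap": longest,
        })
    return pd.DataFrame(rows).set_index("variable")

# Hypothetical daily record with a sensor outage in dissolved oxygen
idx = pd.date_range("2021-06-01", periods=10, freq="D")
data = pd.DataFrame({
    "water_temp_c": [18.1, 18.4, 18.9, 19.2, 19.0, 18.7, 18.5, 18.8, 19.1, 19.3],
    "do_mg_l": [8.2, 8.0, np.nan, np.nan, np.nan, 7.6, 7.8, np.nan, 7.9, 8.1],
}, index=idx)

report = completeness_report(data)
print(report)
```

A series can have acceptable overall completeness and still contain a multi-day gap. Reporting both numbers makes that distinction visible before any time-series analysis.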

FAIR Principles for Environmental Data

Adhering to Findable, Accessible, Interoperable, and Reusable (FAIR) principles significantly enhances data quality in environmental research [23]. Implementing community-centric metadata reporting formats makes Earth and environmental science data more transparent and reusable, addressing critical interoperability challenges that often hinder data integration across disciplines [23]. These standardized formats provide guidelines for consistently formatting data within specific environmental science domains, facilitating both human understanding and machine-actionability of complex environmental datasets.

Table 1: FAIR Principles Implementation for Environmental Data Quality

| Principle | Quality Dimension Addressed | Implementation in Environmental Research |
|---|---|---|
| Findable | Completeness | Persistent identifiers (DOIs), Rich metadata, Indexed in searchable repositories |
| Accessible | Representativeness | Standardized retrieval protocols, Authentication and authorization where appropriate, Long-term preservation |
| Interoperable | Consistency | Use of controlled vocabularies, Standard data formats, Qualified references to other metadata |
| Reusable | Accuracy | Multiple attributes of provenance, Detailed usage licenses, Community reporting formats |

Methodologies for Assessing Data Quality

Distribution Analysis Techniques

Examining how values of different variables are distributed represents an essential initial step in EDA for assessing data quality [1]. Multiple graphical approaches reveal distribution characteristics that inform both quality assessment and subsequent analytical choices:

Histograms summarize data distribution by placing observations into intervals and counting observations in each interval [1]. The y-axis can represent number of observations, percent of total, fraction of total, or density. In environmental applications, histograms can reveal measurement limitations such as detection limit effects, where values cluster at instrument detection thresholds.
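A quick numeric companion to the histogram is to count how many results sit exactly at the reporting limit. This sketch assumes that censored results were stored as the detection-limit value itself. Other datasets use qualifier flags or "<DL" strings instead. The helper `detection_limit_pileup` and the nitrate values are hypothetical.

```python
import numpy as np

def detection_limit_pileup(values, detection_limit, bins=10):
    """Histogram counts plus the share of values sitting at the detection limit.

    Assumes censored results were recorded as the detection limit itself,
    which is one common (but not universal) reporting convention.
    """
    values = np.asarray(values, dtype=float)
    counts, edges = np.histogram(values, bins=bins)
    at_dl = np.isclose(values, detection_limit)
    return counts, edges, at_dl.mean()

# Hypothetical nitrate results (mg/L) with a 0.05 mg/L reporting limit
nitrate = np.array([0.05, 0.05, 0.05, 0.05, 0.12, 0.31, 0.05, 0.48,
                    0.05, 0.22, 0.05, 0.90, 0.05, 0.17, 0.05, 0.63])
counts, edges, share = detection_limit_pileup(nitrate, detection_limit=0.05, bins=5)
print(counts, share)
```

A dominant first bin combined with a large share of values at the limit suggests censoring. Means and standard deviations computed naively on such data would be biased.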

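Boxplots provide compact distribution summaries built on the five-number summary (minimum, first quartile, median, third quartile, maximum) [1]. In the common Tukey convention, the box spans the first to third quartile. The whiskers extend to the most extreme observations within 1.5 times the interquartile range (IQR) of the box, and points beyond the whiskers are drawn individually. These visualizations are particularly valuable for comparing distributions across environmental subsets (e.g., sampling sites, time periods). They also flag potential measurement errors, which often appear as extreme outliers.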
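The quantities drawn in a boxplot can be computed directly. This sketch uses NumPy's default linear-interpolation percentiles and 1.5 × IQR fences; other software may compute quartiles slightly differently. The helper `boxplot_summary` and the conductance values are hypothetical.

```python
import numpy as np

def boxplot_summary(values, k=1.5):
    """Five-number summary and Tukey fences (Q1 - k*IQR, Q3 + k*IQR)."""
    x = np.asarray(values, dtype=float)
    q1, median, q3 = np.percentile(x, [25, 50, 75])
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    # Observations beyond the fences are the points a boxplot draws individually
    outliers = x[(x < lower) | (x > upper)]
    return {
        "min": x.min(), "q1": q1, "median": median, "q3": q3, "max": x.max(),
        "lower_fence": lower, "upper_fence": upper, "outliers": outliers,
    }

# Hypothetical specific conductance (µS/cm) at one site; 4100 may be a
# transcription error (e.g. a misplaced decimal) worth verifying
conductance = [410, 395, 402, 388, 420, 415, 399, 4100, 407, 392]
summary = boxplot_summary(conductance)
print(summary["outliers"])
```

Flagged values are candidates for verification against field sheets or laboratory records, not automatic deletions.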

Quantile-Quantile (Q-Q) Plots graphically compare variable distributions to theoretical distributions or to other variables [1]. A common application checks normality assumptions, with deviations from linearity indicating distributional issues that may suggest measurement limitations or need for data transformation before analysis.
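`scipy.stats.probplot` computes the points of a normal Q-Q plot. By default it also fits a least-squares line through them, and the fit's correlation coefficient summarizes how straight the plot is. This sketch uses simulated lognormal data as a stand-in for a skewed concentration variable and compares that coefficient before and after a log transformation. The simulated data are an assumption. Passing `plot=matplotlib.pyplot` draws the plots themselves.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(42)
# Simulated lognormal concentrations, a common shape for environmental data
conc = rng.lognormal(mean=1.0, sigma=0.9, size=200)

# probplot returns ((theoretical quantiles, ordered data), (slope, intercept, r));
# r is the correlation of the Q-Q points, so r near 1 means a near-straight line
(_, _), (_, _, r_raw) = stats.probplot(conc, dist="norm")
(_, _), (_, _, r_log) = stats.probplot(np.log(conc), dist="norm")
print(f"Q-Q correlation, raw: {r_raw:.3f}  log-transformed: {r_log:.3f}")
```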

Cumulative Distribution Functions (CDFs) display the probability that an observation of a variable is no larger than a specified value [1]. In environmental monitoring with probability sampling designs, weighted CDFs provide population-level estimates that account for the sampling design, addressing representativeness limitations. In these CDFs, each site is weighted by the inverse of its inclusion probability.
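A design-weighted empirical CDF can be computed by sorting the observations and accumulating their weights. This minimal sketch assumes each site's weight is the reciprocal of its inclusion probability and that values are not tied. The helper `weighted_ecdf` and the survey values are hypothetical. Dedicated survey-analysis software also provides variance estimates, which are not shown here.

```python
import numpy as np

def weighted_ecdf(values, inclusion_prob):
    """Design-weighted empirical CDF.

    Each site is weighted by 1 / inclusion probability (roughly, the number of
    population units it represents). Returns sorted values and the estimated
    proportion of the population with values <= each sorted value.
    Assumes no tied values.
    """
    x = np.asarray(values, dtype=float)
    w = 1.0 / np.asarray(inclusion_prob, dtype=float)
    order = np.argsort(x)
    x_sorted, w_sorted = x[order], w[order]
    cdf = np.cumsum(w_sorted) / w_sorted.sum()
    return x_sorted, cdf

# Hypothetical survey: some streams were sampled with lower inclusion
# probability, so each of those stands in for more of the stream population
tss = [12.0, 35.0, 8.0, 50.0, 20.0]   # total suspended solids, mg/L
pi = [0.10, 0.40, 0.10, 0.40, 0.20]   # inclusion probabilities
x_sorted, cdf = weighted_ecdf(tss, pi)
for v, p in zip(x_sorted, cdf):
    print(f"P(TSS <= {v:5.1f}) ~ {p:.2f}")
```

An unweighted CDF of the same five values would give each site a step of 0.2. The weighted version instead gives more influence to the low-probability sites, which represent a larger share of the population.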

Relationship Analysis Methods

Scatterplots graphically display matched data with one variable on each axis, visualizing relationships and identifying potential data quality issues [1]. These plots reveal characteristics such as nonlinear relationships or non-constant variance that influence analytical choices and may indicate measurement limitations in environmental datasets.

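Correlation Analysis quantifies the strength of association between two random variables in matched data [1]. Pearson's coefficient, for example, is the covariance scaled by the product of the two standard deviations. Different correlation coefficients serve complementary roles in data quality assessment: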

- Pearson's product-moment correlation coefficient (r): Measures degree of linear association

- Spearman's rank-order correlation coefficient (ρ): Uses data ranks, more robust to outliers

- Kendall's tau (τ): Rank-based. In the absence of ties, it equals the probability that a randomly chosen pair of observations is concordant minus the probability that it is discordant.

Different correlation coefficients may provide divergent estimates depending on data distribution, offering insights into potential measurement limitations and data quality issues [1].
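The sketch below uses hypothetical data containing one extreme pair to show how the three coefficients can diverge. It relies on `scipy.stats.pearsonr`, `spearmanr`, and `kendalltau`. With this particular outlier, Pearson's r becomes negative while both rank-based coefficients stay positive. A discrepancy like this would prompt a check of the suspect record.

```python
import numpy as np
from scipy import stats

# Hypothetical paired observations: stressor (x) and response (y).
# The last pair is a single extreme value, e.g. an unverified lab result.
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 40.0])
y = np.array([2.1, 2.9, 3.8, 5.2, 5.9, 7.1, 8.0, 8.8, 10.1, 1.0])

r, _ = stats.pearsonr(x, y)      # linear association, sensitive to the outlier
rho, _ = stats.spearmanr(x, y)   # Pearson correlation of the ranks
tau, _ = stats.kendalltau(x, y)  # concordant minus discordant pair proportion
print(f"Pearson r = {r:.2f}, Spearman rho = {rho:.2f}, Kendall tau = {tau:.2f}")
```

Here Spearman's ρ is 5/11 and Kendall's τ is 0.6, because the outlier only reverses its own pairings. Pearson's r, by contrast, is dominated by the single extreme point.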

Conditional Probability Analysis (CPA) applies conditional probability concepts to dichotomized environmental response variables [1]. This technique requires defining thresholds that categorize samples into two classes (e.g., impaired/unimpaired), then estimating the probability of observing environmental impairment given particular stressor conditions. CPA is most meaningful when applied to field data collected using randomized, probabilistic sampling designs [1].
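The CPA calculation itself reduces to counting. This unweighted sketch estimates P(impaired | stressor ≥ t) for a few candidate stressor levels t. It applies P(Y | X) = P(Y ∩ X) / P(X) using sample proportions and assumes sites have already been dichotomized as impaired or unimpaired. For data from a probability survey, the counts would be replaced with sums of design weights. The function name and the data are hypothetical.

```python
import numpy as np

def conditional_probability_curve(stressor, impaired, thresholds):
    """P(impaired | stressor >= t) for each candidate stressor level t.

    Unweighted sketch: every site counts equally.
    """
    s = np.asarray(stressor, dtype=float)
    imp = np.asarray(impaired, dtype=bool)
    probs = []
    for t in thresholds:
        exceed = s >= t                      # event X: stressor at or above t
        if exceed.sum() == 0:
            probs.append(np.nan)             # no sites satisfy the condition
        else:
            # P(Y | X) = P(Y and X) / P(X), estimated from counts
            probs.append((imp & exceed).sum() / exceed.sum())
    return np.array(probs)

# Hypothetical fine-sediment cover (%) and a dichotomized biotic index
sediment = [5, 10, 15, 20, 25, 30, 35, 40, 45, 50]
impaired = [0, 0, 0, 1, 0, 1, 1, 0, 1, 1]   # 1 = index below impairment threshold
cp = conditional_probability_curve(sediment, impaired, thresholds=[0, 20, 40])
print(cp)
```

Evaluating many thresholds and plotting the result against the stressor gives the CPA curve described in the protocol below.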

Table 2: Statistical Measures for Data Quality Assessment in Environmental Research

| Method | Primary Quality Dimension | Calculation | Application Context |
|---|---|---|---|
| Pearson's r | Consistency | r = Σ[(x_i - x̄)(y_i - ȳ)] / [√Σ(x_i - x̄)² · √Σ(y_i - ȳ)²] | Linear relationships between normally distributed variables |
| Spearman's ρ | Consistency | ρ = 1 - [6Σd_i² / (n(n² - 1))], where d_i = difference between the ranks of x_i and y_i (exact when there are no ties) | Monotonic relationships, non-normal data, ordinal measurements |
| Kendall's τ (tau-b) | Consistency | τ_b = (C - D) / √[(C + D + T_x)(C + D + T_y)], where C = concordant pairs, D = discordant pairs, T_x and T_y = pairs tied only in x or only in y | Small sample sizes, many tied ranks |
| Conditional Probability | Accuracy | P(Y \| X) = P(Y ∩ X) / P(X), where Y = response, X = stressor | Stressor identification in causal analysis with dichotomous response |
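As a check on the formulas in Table 2, the sketch below implements Pearson's r, the rank-difference form of Spearman's ρ, and Kendall's τ_b directly. It then compares them with SciPy's implementations; `scipy.stats.kendalltau` computes tau-b by default. The helper names and data are hypothetical. The pairwise loop for τ_b is O(n²) and intended only for illustration.

```python
import itertools
import numpy as np
from scipy import stats

def pearson_r(x, y):
    # Sum of cross-products of deviations over the product of root sums of squares
    dx, dy = x - x.mean(), y - y.mean()
    return np.sum(dx * dy) / (np.sqrt(np.sum(dx**2)) * np.sqrt(np.sum(dy**2)))

def spearman_rho_no_ties(x, y):
    # Rank-difference formula; exact only when neither variable has ties
    d = stats.rankdata(x) - stats.rankdata(y)
    n = len(x)
    return 1 - 6 * np.sum(d**2) / (n * (n**2 - 1))

def kendall_tau_b(x, y):
    c = d = t_x = t_y = 0
    for i, j in itertools.combinations(range(len(x)), 2):
        sx, sy = np.sign(x[i] - x[j]), np.sign(y[i] - y[j])
        if sx == 0 and sy == 0:
            continue        # tied in both variables: excluded from all counts
        elif sx == 0:
            t_x += 1        # tied only in x
        elif sy == 0:
            t_y += 1        # tied only in y
        elif sx == sy:
            c += 1          # concordant pair
        else:
            d += 1          # discordant pair
    return (c - d) / np.sqrt((c + d + t_x) * (c + d + t_y))

# Hypothetical paired data without ties
x = np.array([3.1, 1.2, 4.8, 2.2, 5.0, 3.9, 0.7])
y = np.array([2.0, 1.1, 3.5, 2.4, 4.1, 2.9, 0.5])
# Hypothetical paired data with ties, to exercise the tau-b correction
xt = np.array([1.0, 2.0, 2.0, 3.0, 4.0, 4.0])
yt = np.array([1.0, 3.0, 2.0, 2.0, 5.0, 5.0])

r_ref, _ = stats.pearsonr(x, y)
rho_ref, _ = stats.spearmanr(x, y)
tau_ref, _ = stats.kendalltau(xt, yt)
print(pearson_r(x, y), r_ref)
print(spearman_rho_no_ties(x, y), rho_ref)
print(kendall_tau_b(xt, yt), tau_ref)
```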

Experimental Protocols for Data Quality Assessment

Protocol for Distribution-Based Quality Assessment

Purpose: Systematically evaluate data quality through distribution analysis to identify measurement limitations and inform analytical approaches.

Materials Required:

- Environmental dataset with relevant variables

- Statistical software (R, Python, or specialized tools like CADStat [1])

- Visualization capabilities

Procedure:

- Generate distribution visualizations: Create histograms, boxplots, and Q-Q plots for all key variables [1].

- Assess normality: Examine Q-Q plots for deviations from linearity indicating non-normality.

- Identify outliers: Use boxplots to detect extreme values requiring verification.

- Compare subsets: Generate separate boxplots for different sampling locations, time periods, or experimental conditions.

- Evaluate transformations: Apply appropriate transformations (e.g., log transformation for environmental concentration data) and reassess distributions.

- Document findings: Record distribution characteristics, identified outliers, and transformation decisions.
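The non-graphical checks in this procedure can be tabulated per sampling location. This sketch assumes a long-format pandas DataFrame with one concentration column and one site column. It uses the Shapiro-Wilk test as a numerical complement to the Q-Q plot. Several elements are illustrative assumptions rather than part of the protocol: the 0.05 significance level, the column names, the helper `distribution_check`, and the simulated zinc data.

```python
import numpy as np
import pandas as pd
from scipy import stats

def distribution_check(df, value_col, group_col, alpha=0.05):
    """Per-group summary of skewness, IQR outliers, and Shapiro-Wilk results."""
    rows = []
    for group, sub in df.groupby(group_col):
        x = sub[value_col].dropna().to_numpy(dtype=float)
        q1, q3 = np.percentile(x, [25, 75])
        iqr = q3 - q1
        # Count values beyond the 1.5 x IQR boxplot fences
        n_out = int(np.sum((x < q1 - 1.5 * iqr) | (x > q3 + 1.5 * iqr)))
        _, p_raw = stats.shapiro(x)
        # Log transform is only defined for strictly positive values
        if np.all(x > 0):
            _, p_log = stats.shapiro(np.log(x))
        else:
            p_log = np.nan
        rows.append({
            group_col: group,
            "n": len(x),
            "skewness": stats.skew(x),
            "n_outliers": n_out,
            "shapiro_p_raw": p_raw,
            "shapiro_p_log": p_log,
            # Suggest a log transform only if it moves the data from rejected to not rejected
            "log_suggested": bool(p_raw < alpha and p_log >= alpha),
        })
    return pd.DataFrame(rows)

# Simulated (hypothetical) zinc concentrations at two sites, long format
rng = np.random.default_rng(1)
zinc = pd.DataFrame({
    "site": ["upstream"] * 40 + ["downstream"] * 40,
    "zinc_ug_l": np.concatenate([
        rng.lognormal(mean=2.0, sigma=0.8, size=40),
        rng.lognormal(mean=2.8, sigma=0.8, size=40),
    ]),
})
result = distribution_check(zinc, "zinc_ug_l", "site")
print(result)
```

The resulting table can serve as the written record called for in the final step. Formal normality tests should be read alongside the plots, since with large samples they flag departures too small to matter.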

Protocol for Relationship-Based Quality Assessment

Purpose: Identify relationships between variables and detect potential data quality issues through correlation and conditional probability analysis.

Materials Required:

- Matched environmental dataset with stressor and response variables

- Statistical software with correlation and probability calculation capabilities

- Threshold values for dichotomizing response variables (for CPA)

Procedure:

- Create scatterplot matrices: Generate pairwise scatterplots for multiple variables to visualize relationships [1].

- Calculate correlation coefficients: Compute Pearson's, Spearman's, and Kendall's coefficients for variable pairs.

- Compare coefficient patterns: Identify discrepancies between coefficients that may indicate nonlinear relationships or outliers.

- Dichotomize response variables: Apply scientifically defensible thresholds to categorize response variables (e.g., biologically impaired/unimpaired) [1].

- Compute conditional probabilities: Calculate probabilities of observing response categories given stressor conditions.

- Visualize CPA results: Plot conditional probabilities against stressor gradients to identify potential thresholds.
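The coefficient calculation and comparison steps can be automated with pandas. Its `DataFrame.corr` accepts `method="pearson"`, `"spearman"`, or `"kendall"`, and the Kendall option requires SciPy. This sketch flags pairs where Pearson's r and Spearman's ρ differ by more than a tolerance, which hints at nonlinearity or influential outliers. Kendall's τ is reported but not used in the flag, because it is usually smaller in magnitude than ρ for the same data. The helper name, the arbitrary 0.1 tolerance, and the noise-free example data are hypothetical.

```python
import itertools
import numpy as np
import pandas as pd

def coefficient_discrepancies(df, tol=0.1):
    """Pairwise Pearson, Spearman, and Kendall coefficients with a divergence flag."""
    methods = ["pearson", "spearman", "kendall"]
    mats = {m: df.corr(method=m) for m in methods}
    rows = []
    for a, b in itertools.combinations(df.columns, 2):
        row = {"var_1": a, "var_2": b}
        row.update({m: mats[m].loc[a, b] for m in methods})
        # A large Pearson-Spearman gap hints at nonlinearity or influential outliers
        row["flag"] = bool(abs(row["pearson"] - row["spearman"]) > tol)
        rows.append(row)
    return pd.DataFrame(rows)

# Hypothetical, noise-free data so the result is reproducible
nutrient = np.linspace(0.0, 5.0, 60)
df = pd.DataFrame({
    "nutrient": nutrient,
    "chlorophyll": np.exp(1.5 * nutrient),                      # monotonic, strongly nonlinear
    "turbidity": 2.0 * nutrient + 0.5 * np.sin(7 * nutrient),  # near-linear with a wiggle
})
res = coefficient_discrepancies(df)
print(res)
```

The nutrient-chlorophyll pair is flagged because the relationship is perfectly monotonic (ρ = τ = 1) but far from linear. A flagged pair is a prompt to inspect its scatterplot, not a verdict on data quality.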

Visualization of Data Quality Assessment Workflows

Figure 1: Comprehensive workflow for assessing data quality and recognizing measurement limitations in environmental research, integrating distribution analysis and relationship assessment methodologies.

Table 3: Key Tools and Resources for Environmental Data Quality Assessment

| Tool/Resource | Function | Application Context |
|---|---|---|
| CADStat [1] | Menu-driven package for data visualization and statistical methods | Calculating correlations, conditional probabilities; EDA for environmental data |
| ESS-DIVE Reporting Formats [23] | Community-centric (meta)data reporting formats | Standardizing data structure and metadata for FAIR environmental data |
| Scatterplot Matrices [1] | Multiple scatterplots displayed in matrix format | Visualizing pairwise relationships between multiple variables simultaneously |
| Probability Sampling Designs [1] | Statistical sampling approaches with known inclusion probabilities | Ensuring data representativeness for population-level inference |
| FAIR Data Principles [23] | Guidelines for Findable, Accessible, Interoperable, Reusable data | Enhancing data transparency, reproducibility, and reuse potential |
| Community Crosswalks [23] | Tabular maps of existing data standards and resources | Identifying gaps in standards and harmonizing variables across datasets |

Implementing systematic approaches to assess data quality and recognize measurement limitations represents a fundamental component of exploratory data analysis in environmental research. Through distribution analysis, relationship assessment, and adherence to FAIR data principles, researchers can ensure their datasets support robust scientific conclusions and evidence-based environmental policymaking. The integration of big data analytics into environmental quality monitoring [22] makes these rigorous assessment protocols increasingly vital for deriving meaningful insights from complex environmental datasets. As environmental challenges grow more complex, systematic data quality assessment enables researchers to accurately characterize environmental systems, identify emerging threats, and develop effective management strategies supported by high-quality, trustworthy data.

From Theory to Practice: Essential EDA Methods for Environmental Applications

Exploratory Data Analysis (EDA) is an essential first step in any data-driven environmental research project. It involves investigating data sets to summarize their main characteristics, often using visual methods to discover patterns, spot anomalies, test hypotheses, and check assumptions before formal modeling [2]. Within the environmental sciences, EDA is particularly crucial due to the complexity, volume, and inherent spatiotemporal variability of ecological and climatic data [24] [1]. This technical guide details the application of three foundational EDA visualization techniques—scatterplots, histograms, and boxplots—within the context of environmental research, providing researchers with detailed methodologies and practical frameworks for their implementation.

Core Visualization Techniques for Environmental Data

The following table summarizes the primary functions and environmental data applications of the three core visualization techniques.

Table 1: Core Visualization Techniques for Environmental Data Analysis

| Visualization Type | Primary Function in EDA | Common Environmental Data Applications | Key Insights Revealed |
|---|---|---|---|
| Scatterplot [25] [1] | Display relationships or correlations between two continuous variables. | Stressor-response relationships, such as a measured stressor plotted against a biological response metric [1]. | Trends, outliers, clusters, and the strength/direction of correlations between variables. |
| Histogram [25] [1] | Show how values are distributed across ranges or bins. | Pollution level distributions; Rainfall patterns [25] [26]. | Whether data is normally distributed, skewed, or multi-modal (having multiple peaks). |
| Boxplot (Box & Whisker) [1] [27] | Provide a compact summary of a variable's distribution. | Comparing distributions across different groups; Summarizing massive datasets like historical temperature records [27]. | Central tendency, spread, skewness, and identification of outliers across groups or over time. |

Technical Protocols and Application in Environmental Research

Scatterplots