Unveiling the Chemical Universe: A Comprehensive Guide to HRMS for Non-Target Screening of Environmental Pollutants

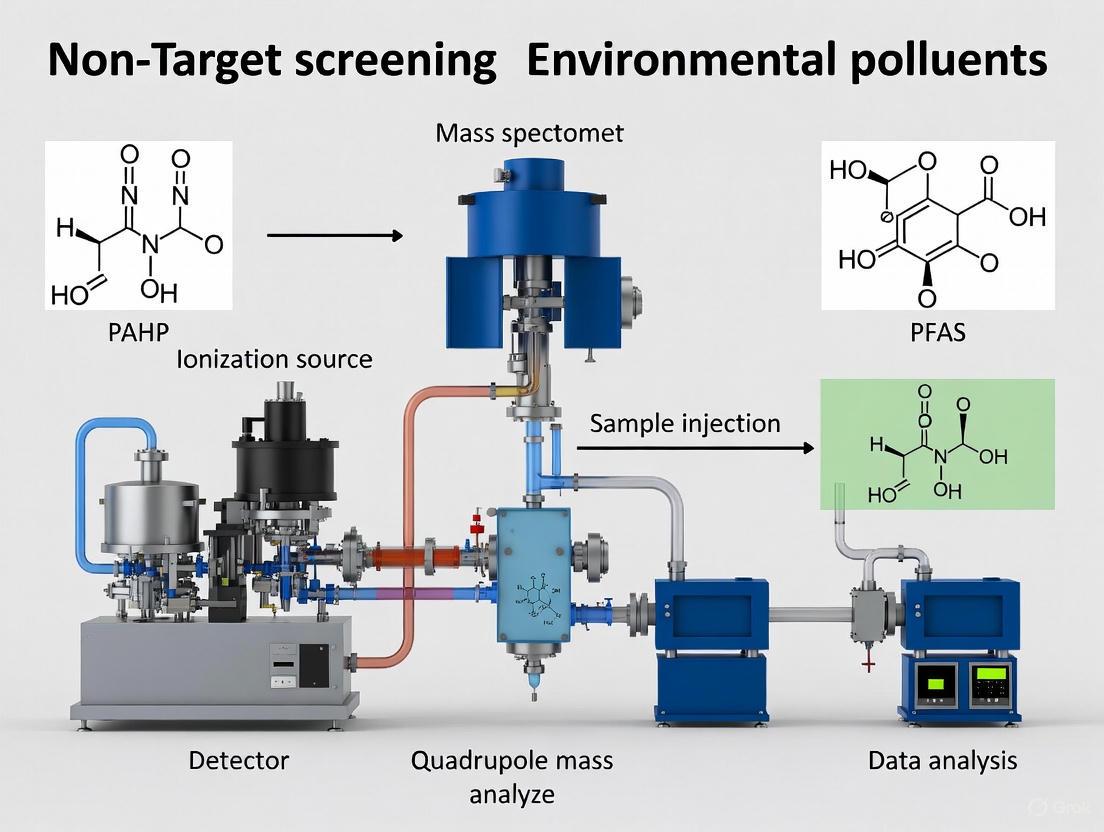

Abstract

This article provides a comprehensive overview of the application of High-Resolution Mass Spectrometry (HRMS) in non-target screening (NTS) for identifying unknown and emerging environmental contaminants. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of NTS, detailing advanced methodological workflows from data acquisition to processing. The content addresses key challenges in troubleshooting and optimization, and critically evaluates validation strategies and comparative performance against traditional techniques. By synthesizing current research and real-world applications, this guide serves as a vital resource for leveraging HRMS to achieve a holistic understanding of complex environmental pollutant mixtures, thereby enhancing regulatory science and prioritization efforts.

The New Paradigm: Why HRMS and Non-Target Screening are Revolutionizing Environmental Analysis

Traditional environmental monitoring frameworks, such as the European Water Framework Directive (WFD), focus on a limited set of priority substances (PS) and River Basin Specific Pollutants (RBSP). While this approach is useful for regulating known contaminants, it overlooks the vast majority of chemical pollutants present in the environment. Current WFD monitoring addresses only 45 priority substances across the EU, with member states monitoring an average of approximately 55 regulated compounds per river catchment [1]. This represents just a fraction of the over 350,000 chemicals and chemical mixtures registered for commercial use globally [2]. This narrow focus creates a significant gap in environmental risk assessment, allowing newly emerging contaminants and transformation products to remain undetected until they potentially cause ecological harm.

High-resolution mass spectrometry (HRMS) enables non-target screening (NTS), a powerful approach that moves beyond conventional targeted analysis. Unlike targeted methods that look for predefined compounds, NTS employs broad screening to detect thousands of organic substances simultaneously in a single analysis [3]. This paradigm shift allows researchers to identify chemicals of emerging concern (CECs), transformation products, and previously unknown contaminants, providing a more comprehensive basis for environmental monitoring and protection [1].

The NTS Solution: A Multi-Dimensional Approach

Non-target screening with HRMS generates extensive datasets, often containing thousands of chemical features per sample. The major challenge lies in prioritizing these features for identification. Recent research has established that no single strategy is sufficient; instead, a combination of complementary approaches is required to focus resources on the most relevant contaminants [4]. The integration of seven key prioritization strategies creates a robust framework for efficient compound identification.

Table 1: The Seven Key Prioritization Strategies for Non-Target Screening

| Strategy Number | Strategy Name | Core Principle | Key Applications |
|---|---|---|---|
| P1 | Target and Suspect Screening | Matching features against predefined databases of known or suspected contaminants | Identifying compounds with known environmental relevance; early complexity reduction |
| P2 | Data Quality Filtering | Applying quality control measures to remove artifacts and unreliable signals | Foundation step to reduce false positives and improve data accuracy |
| P3 | Chemistry-Driven Prioritization | Using HRMS data properties to prioritize specific compound classes | Finding halogenated compounds (e.g., PFAS), transformation products, homologues |
| P4 | Process-Driven Prioritization | Using spatial, temporal, or process-based comparisons | Identifying persistent compounds, newly formed compounds, source apportionment |
| P5 | Effect-Directed Prioritization | Linking chemical features to biological effects | Directly targeting bioactive contaminants; virtual EDA (vEDA) using statistical models |
| P6 | Prediction-Based Prioritization | Using models and machine learning to estimate risk or concentration | Calculating risk quotients (PEC/PNEC) without full structural elucidation |
| P7 | Pixel- or Tile-Based Analysis | Using the chromatographic image to pinpoint regions of interest | Managing complex datasets (especially 2D chromatography); early-stage exploration |

These strategies can be grouped into four complementary domains that address different aspects of feature reduction: chemical (P1, P3), toxicological (P5, P6), external (P4), and preprocessing (P2, P7) [4]. The sequential application of these strategies enables a stepwise reduction from thousands of detected features to a manageable number of high-priority compounds worthy of further investigation.

Workflow Integration and Cumulative Filtering

The power of these prioritization strategies emerges from their integration into a cohesive workflow. For example, an initial analysis might detect 5,000 features in an environmental sample. Target and suspect screening (P1) could flag 300 of these as known or suspected contaminants. Data quality filtering (P2) and chemistry-driven prioritization (P3) might then reduce this list to 100 features by removing low-quality signals and chemically irrelevant compounds. Subsequent process-driven prioritization (P4) could identify 20 features linked to poor removal in a wastewater treatment plant. Finally, effect-directed (P5) and prediction-based (P6) prioritization might highlight 10 features present in a toxic fraction, with 5 ultimately prioritized based on predicted risk [4]. This cumulative filtering approach efficiently narrows complex datasets to a focused list of environmentally relevant contaminants.
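The cumulative filtering described above can be sketched as an ordered chain of filters applied to a feature table, logging how many features survive each step. This is a minimal illustration under stated assumptions, not the procedure of [4]: the `Feature` fields are hypothetical stand-ins for the outcome of each strategy, every step is treated as a hard filter (in practice, features that fail P1 may still be pursued), the RQ ≥ 1 cut-off is an assumed convention, and the three features are synthetic.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Feature:
    """One LC-HRMS feature; the flag fields are hypothetical placeholders."""
    feature_id: int
    mz: float
    rt_min: float
    suspect_hit: bool        # P1: matched a suspect-list entry
    passes_qc: bool          # P2: survived blank, replicate and peak-shape checks
    chem_relevant: bool      # P3: e.g. flagged by mass-defect or isotope filters
    poor_removal: bool       # P4: e.g. high effluent/influent ratio in a WWTP
    in_toxic_fraction: bool  # P5: present in a bioassay-active fraction
    predicted_rq: float      # P6: predicted risk quotient (PEC/PNEC)


Step = tuple[str, Callable[[Feature], bool]]


def prioritize(features: list[Feature], steps: list[Step]):
    """Apply each filter in order and log how many features survive each step."""
    log = [("detected", len(features))]
    remaining = features
    for label, keep in steps:
        remaining = [f for f in remaining if keep(f)]
        log.append((label, len(remaining)))
    return remaining, log


# Synthetic toy data (not from any study).
features = [
    Feature(1, 285.0790, 6.2, True, True, True, True, True, 2.5),
    Feature(2, 413.2662, 9.8, True, True, True, False, False, 0.1),
    Feature(3, 199.1810, 4.1, False, True, True, True, True, 5.0),
]
steps: list[Step] = [
    ("P1 suspect hit", lambda f: f.suspect_hit),
    ("P2+P3 quality and chemistry", lambda f: f.passes_qc and f.chem_relevant),
    ("P4 poor WWTP removal", lambda f: f.poor_removal),
    ("P5 in toxic fraction", lambda f: f.in_toxic_fraction),
    ("P6 RQ >= 1 (assumed cut-off)", lambda f: f.predicted_rq >= 1.0),
]

remaining, log = prioritize(features, steps)
for label, n in log:
    print(f"{label}: {n}")
```

For the toy data the survivor counts are 3, 2, 2, 1, 1, 1; with a real feature table the same log reproduces the kind of funnel described in the text.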

Detailed Experimental Protocols

Protocol 1: Implementing an Integrated Prioritization Workflow

This protocol outlines the step-by-step procedure for applying the seven prioritization strategies to NTS data, from initial data acquisition to final compound identification.

3.1.1 Materials and Equipment

- Liquid chromatography system coupled to high-resolution mass spectrometer (LC-HRMS)

- Sample set including environmental samples, blanks, and quality controls

- Data processing software (e.g., MZmine 2, XCMS)

- Compound databases (e.g., PubChemLite, CompTox Chemicals Dashboard, NORMAN Suspect List Exchange)

- Statistical analysis software (e.g., R, Python with appropriate packages)

3.1.2 Procedure

Sample Preparation and Analysis

- Prepare samples using appropriate extraction methods (e.g., solid-phase extraction for water samples)

- Analyze samples using LC-HRMS with data-dependent acquisition (DDA) or data-independent acquisition (DIA)

- Include procedural blanks, solvent blanks, and pooled quality control (QC) samples throughout the analytical sequence

Data Preprocessing and Feature Detection

- Convert raw data to open formats (e.g., mzML)

- Perform peak picking, retention time alignment, and gap filling using data processing software

- Generate a feature table containing m/z, retention time, and intensity values

Sequential Prioritization

- Apply P1 (Target and Suspect Screening): Screen features against suspect lists of known environmental contaminants using accurate mass (typically ≤5 ppm error) and isotope patterns

- Apply P2 (Data Quality Filtering): Remove features present in blanks, those with poor peak shapes, and those showing low reproducibility across replicates

- Apply P3 (Chemistry-Driven Prioritization): Use mass defect filtering to identify halogenated compounds; search for homologous series and diagnostic fragments

- Apply P4 (Process-Driven Prioritization): Compare feature intensities across sample groups (e.g., upstream vs. downstream, influent vs. effluent) using statistical tests; perform correlation analysis with process parameters

- Apply P5 (Effect-Directed Prioritization): Correlate feature intensities with biological effect data; for virtual EDA, use statistical models like partial least squares discriminant analysis

- Apply P6 (Prediction-Based Prioritization): Use in silico tools (e.g., MS2Quant, MS2Tox) to predict concentration and toxicity; calculate risk quotients

- Apply P7 (Pixel-Based Approaches): For complex samples, apply pixel-based analysis before feature detection to identify regions of interest
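A minimal Python sketch of the P1 accurate-mass match and a simple P2 blank filter follows. It assumes singly charged [M+H]+ or [M-H]- ions only, uses the 5 ppm window from the text, and applies a hypothetical 10-fold sample-to-blank threshold; isotope-pattern, retention-time and adduct checks are not implemented here. The two suspect entries (carbamazepine, caffeine) are illustrative, with masses computed from their molecular formulas.

```python
from dataclasses import dataclass

PROTON = 1.007276  # proton mass (Da), used to form [M+H]+ / [M-H]-


@dataclass
class Suspect:
    name: str
    monoisotopic_mass: float  # neutral monoisotopic mass (Da)


# Illustrative suspect entries; masses computed from the molecular formulas.
SUSPECTS = [
    Suspect("carbamazepine", 236.094963),  # C15H12N2O
    Suspect("caffeine", 194.080376),       # C8H10N4O2
]


def ppm_error(measured: float, theoretical: float) -> float:
    """Signed mass error in parts per million."""
    return (measured - theoretical) / theoretical * 1e6


def match_suspects(feature_mz: float, suspects: list[Suspect],
                   mode: str = "pos", tol_ppm: float = 5.0) -> list[tuple[str, float]]:
    """P1: return (name, ppm error) for suspects whose [M+H]+ or [M-H]- lies within tol_ppm."""
    shift = PROTON if mode == "pos" else -PROTON
    hits = []
    for s in suspects:
        err = ppm_error(feature_mz, s.monoisotopic_mass + shift)
        if abs(err) <= tol_ppm:
            hits.append((s.name, round(err, 2)))
    return hits


def passes_blank_filter(sample_intensities: list[float], blank_intensities: list[float],
                        min_fold: float = 10.0) -> bool:
    """P2: keep a feature only if its mean sample intensity is >= min_fold x the highest blank."""
    blank_max = max(blank_intensities, default=0.0)
    if blank_max == 0.0:
        return True  # not detected in any blank
    sample_mean = sum(sample_intensities) / len(sample_intensities)
    return sample_mean / blank_max >= min_fold


print(match_suspects(237.1025, SUSPECTS))            # one carbamazepine hit at about +1.1 ppm
print(passes_blank_filter([1.0e6, 1.2e6], [5.0e4]))  # True (22-fold above the blank)
```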

Compound Identification

- Acquire MS/MS spectra for prioritized features

- Compare experimental spectra with spectral libraries (e.g., MassBank, NIST)

- Use in silico fragmentation tools to predict spectra for candidate structures

- Apply confidence levels for identification according to established guidelines

Validation

- Confirm identities using analytical standards when available

- Perform semi-quantification for prioritized compounds

- Integrate results with risk assessment frameworks

Protocol 2: Toxicological Priority Index (ToxPi) for Risk-Based Prioritization

This protocol details the procedure for implementing the Toxicological Priority Index (ToxPi), a semi-quantitative risk scoring system that integrates multiple criteria to prioritize contaminants based on their potential risk.

3.2.1 Materials and Equipment

- List of identified compounds from NTS

- Chemical property prediction software (e.g., OPERA, EPI Suite)

- Toxicity databases (e.g., CompTox Chemicals Dashboard, ECOTOX)

- Statistical software with visualization capabilities

3.2.2 Procedure

Data Matrix Compilation

- For each compound, compile the following parameters:

  - Detection frequency across samples

  - Mean relative abundance (peak area)

  - Bioconversion half-life (experimental or predicted)

  - Bioconcentration factor (BCF) or bioaccumulation factor (experimental or predicted)

  - Predicted no-effect concentration (PNEC)

Data Normalization

- Normalize each parameter to a 0-1 scale using min-max normalization or percentile ranking

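- For parameters where lower values indicate greater concern (e.g., PNEC, since a lower no-effect concentration means higher toxicity), invert the normalized scale (1 - x) so that higher values always indicate higher concern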

ToxPi Calculation

- Calculate the overall ToxPi score as a weighted sum of normalized values

- Alternatively, use the ToxPi graphical approach, slicing the score into wedges representing different parameters

Priority Setting

- Rank compounds based on their ToxPi scores

- Set a threshold for priority concern (e.g., mean + 1 standard deviation of all scores)

- Visually inspect the ToxPi profiles to understand the drivers of concern for high-priority compounds
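The normalization, inversion, weighted-sum and threshold steps can be sketched in a few lines of Python. This is a simplified stand-in for the ToxPi approach, not the official ToxPi software: min-max scaling, equal weights and the use of the sample standard deviation in the mean + 1 SD rule are assumptions, and compounds A to C with their parameter values are hypothetical.

```python
import statistics


def min_max(values, invert=False):
    """Scale values to 0-1; invert=True maps the lowest raw value to 1 (e.g. for PNEC)."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0] * len(values)  # a constant parameter cannot rank compounds
    scaled = [(v - lo) / (hi - lo) for v in values]
    return [1.0 - s for s in scaled] if invert else scaled


def toxpi_scores(data, weights, inverted):
    """Weighted sum of the normalized slice values for each compound."""
    compounds = list(data)
    scores = {c: 0.0 for c in compounds}
    for param, weight in weights.items():
        column = min_max([data[c][param] for c in compounds], invert=param in inverted)
        for c, value in zip(compounds, column):
            scores[c] += weight * value
    return scores


def priority_compounds(scores):
    """Compounds scoring above mean + 1 standard deviation (sample SD) of all scores."""
    values = list(scores.values())
    threshold = statistics.mean(values) + statistics.stdev(values)
    return sorted((c for c, s in scores.items() if s > threshold), key=scores.get, reverse=True)


# Hypothetical compounds and values, for illustration only.
data = {
    "A": {"detection_freq": 1.0, "abundance": 5e6, "half_life_d": 30, "bcf": 500, "pnec_ug_l": 0.1},
    "B": {"detection_freq": 0.5, "abundance": 1e6, "half_life_d": 5, "bcf": 50, "pnec_ug_l": 10},
    "C": {"detection_freq": 0.2, "abundance": 2e5, "half_life_d": 2, "bcf": 10, "pnec_ug_l": 100},
}
weights = {p: 1.0 for p in data["A"]}  # equal weights (an assumption)
scores = toxpi_scores(data, weights, inverted={"pnec_ug_l"})
print(scores)
print(priority_compounds(scores))  # ['A']
```

With equal weights, compound A scores 5.0 (the maximum for five slices), B about 1.63 and C 0, so only A exceeds the mean + 1 SD threshold (about 4.76). Because parameters such as PNEC and BCF can span orders of magnitude, log-transforming them before scaling is a variant worth considering.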

Application

- Focus further resources (standard acquisition, monitoring) on high-priority compounds

- Use the results to inform regulatory monitoring programs and risk management decisions

Table 2: Case Study Application of NTS and ToxPi in Tropical Island Watersheds [5]

| Study Aspect | Application Details | Key Outcomes |
|---|---|---|
| Sample Location | Three major rivers in Hainan Province: Changhua, Wanquan, and Nandu | Comprehensive characterization of emerging pollutants in understudied tropical ecosystems |
| NTS Results | 177 high-confidence compounds identified | Detected pharmaceuticals, industrial additives, pesticides, and natural products |
| Source Apportionment | Non-negative matrix factorization (NMF) machine learning approach | Revealed distinct anthropogenic signatures: domestic sewage, pharmaceutical discharges, agricultural runoff |
| Risk Prioritization | Toxicological Priority Index (ToxPi) with multiple criteria | Prioritized 29 substances of elevated concern (ToxPi > 4.41); key compounds: stearic acid, tretinoin, ethyl myristate |
| Framework Value | NTS combined with machine learning and semi-quantitative risk scoring | Established a replicable framework for pollution assessment under data-limited conditions |

Successful implementation of NTS requires both specialized materials and computational resources. The following table details key components of the NTS toolkit.

Table 3: Essential Research Reagents and Computational Tools for NTS

| Tool Category | Specific Tools/Resources | Function in NTS Workflow |
|---|---|---|
| HRMS Instrumentation | LC-HRMS, GC-HRMS, GC×GC-HRMS, LC×LC-HRMS | Separation and accurate mass measurement of complex environmental samples |
| Reference Standards | Analytical standards for target compounds, isotope-labeled internal standards | Quantification and confirmation of compound identities |
| Database Resources | PubChemLite, CompTox Dashboard, NORMAN Suspect List Exchange | Compound identification via mass and spectral matching |
| Spectral Libraries | MassBank, NIST HRMS Library, mzCloud | MS/MS spectrum matching for structural elucidation |
| Data Processing Software | MZmine 2, XCMS, MS-DIAL | Feature detection, alignment, and data reduction |
| In Silico Prediction | MS2Tox, MS2Quant, CFM-ID, MetFrag | Prediction of toxicity, concentration, and fragmentation patterns |
| Statistical Platforms | R with specialized packages (e.g., patRoon, IPO); Python | Multivariate statistics, trend analysis, and data visualization |

The limitations of traditional monitoring approaches, focused on a narrow set of priority pollutants, are evident in the face of increasing chemical complexity in the environment. Non-target screening with HRMS, particularly when implementing integrated prioritization strategies, provides a powerful framework to address these limitations. By combining chemical, toxicological, and process-based approaches, researchers can efficiently transition from thousands of detected features to a focused list of high-priority contaminants deserving further investigation and potential regulatory attention.

The future of comprehensive environmental monitoring lies in the widespread adoption of these approaches, enhanced by harmonized protocols, open data exchange, and interdisciplinary collaboration. As these methodologies continue to mature and become more accessible, they will play an increasingly vital role in protecting ecosystem and human health from the complex mixture of pollutants present in our environment.

High-Resolution Mass Spectrometry (HRMS) has revolutionized environmental monitoring by enabling non-target screening (NTS) to detect and identify unknown chemical contaminants. A foundational capability of HRMS-based NTS is the creation of a digital archive of full-scan HRMS analyses and HRMS/MS spectra [1]. This archive can be exploited retrospectively as new concerns or knowledge about specific substances emerge, providing a powerful mechanism for proactive chemical risk assessment [1]. This application note details the protocols for leveraging digital archiving to investigate future environmental threats without re-sampling, framed within broader research on HRMS for non-target screening of environmental pollutants.

The Digital Archiving Workflow

The digital archiving workflow transforms raw environmental sample data into a reusable knowledge base for retrospective investigation. The process, depicted in the diagram below, ensures that data acquired today remains valuable for addressing tomorrow's analytical challenges.

This workflow enables researchers to investigate compounds that were not targets or even known at the time of original analysis, such as newly identified persistent, mobile, and toxic (PMT) substances or emerging transformation products [1] [6].

Key Applications and Quantitative Findings

Digital archiving has enabled significant discoveries across multiple environmental compartments. The table below summarizes key findings from recent studies utilizing retrospective analysis.

Table 1: Key Findings from Retrospective HRMS Studies in Environmental Monitoring

| Sample Matrix | Number of Features Detected | Prioritized Compounds | Key Findings | Reference |
|---|---|---|---|---|
| Urban Stormwater (First Flush) | 7,707 total features | 42 PMT/vPvM compounds | 66% of quantified PMTs present in >50% of samples; 11 PMTs reported in runoff for the first time | [6] |
| River Rhine Monitoring | Not specified | Quaternary phosphonium compounds | Significant emissions (tons/year) identified over at least a decade | [1] |
| Stormwater vs. Rainwater | 280 (LC-ESI-) and 1,156 (GC-APCI) significantly different features | Tolyltriazole, methyl salicylate, 1,3-diphenylguanidine | Runoff considerably more polluted than rainwater | [6] |

Experimental Protocols

Protocol 1: Building a Retrospective HRMS Archive

Purpose: To establish a standardized procedure for creating digital archives of environmental samples suitable for retrospective NTS.

Materials:

- High-resolution mass spectrometer (Orbitrap, Q-TOF, or FT-ICR)

- Liquid or gas chromatography system

- Sample collection equipment appropriate for matrix

- Data storage system with adequate capacity and backup

Procedure:

Sample Collection and Preparation

- Collect representative environmental samples (water, soil, biota) using clean techniques

- For water matrices: Use solid-phase extraction (SPE) with mixed-mode cartridges to broaden compound coverage [7]

- For solid matrices: Employ accelerated solvent extraction with organic solvents

- Include procedural blanks, quality control samples, and replicates

HRMS Data Acquisition

- Utilize both data-dependent acquisition (DDA) and data-independent acquisition (DIA) methods for comprehensive coverage [8]

- Apply generic chromatographic gradients (e.g., 5-100% organic solvent) to maximize separation range [7]

- Use positive and negative electrospray ionization (ESI) modes for LC-HRMS analyses

- For GC-HRMS, employ electron ionization (EI) complemented by atmospheric pressure chemical ionization (APCI) for molecular ion information [7] [9]

- Store raw data in open, non-proprietary formats when possible to ensure long-term accessibility

Metadata Documentation

- Record comprehensive sample information: location, date, time, collection method, and preparation details

- Document instrument parameters: ionization settings, mass resolution, calibration status

- Track quality control measures and performance data
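One way to keep this metadata machine-readable next to the archived files is a small structured record serialized to JSON. The sketch below assumes that design; the field names and all values are hypothetical placeholders rather than a community standard, and `raw_file` simply points at an open-format (e.g., mzML) copy of the run.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ArchiveRecord:
    """Metadata stored next to one archived HRMS run (field names are hypothetical)."""
    sample_id: str
    matrix: str
    location: str
    collected_utc: str         # ISO 8601 date-time of sampling
    collection_method: str
    preparation: str           # extraction and clean-up details
    instrument: str
    ionization: str            # e.g. "ESI+", "ESI-", "EI", "APCI"
    resolution_fwhm: int       # resolution setting
    resolution_ref_mz: float   # m/z at which resolution_fwhm is specified
    last_calibration_utc: str  # calibration status
    raw_file: str              # open-format copy, e.g. an mzML file
    qc_notes: list[str] = field(default_factory=list)


def to_json(record: ArchiveRecord) -> str:
    return json.dumps(asdict(record), indent=2, sort_keys=True)


def from_json(text: str) -> ArchiveRecord:
    return ArchiveRecord(**json.loads(text))


# Placeholder values for illustration only.
record = ArchiveRecord(
    sample_id="SITE-A-0001",
    matrix="river water",
    location="site A (placeholder)",
    collected_utc="2024-05-14T08:30:00Z",
    collection_method="grab sample, pre-cleaned glass bottle",
    preparation="SPE, mixed-mode cartridge",
    instrument="LC-Orbitrap (model placeholder)",
    ionization="ESI+",
    resolution_fwhm=120000,
    resolution_ref_mz=200.0,
    last_calibration_utc="2024-05-13T17:00:00Z",
    raw_file="archive/SITE-A-0001_pos.mzML",
    qc_notes=["procedural blank run in same batch"],
)
assert from_json(to_json(record)) == record  # lossless round trip
```

Plain JSON keeps the record readable without the software that produced it, which matters for archives meant to be reopened years later.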

Protocol 2: Retrospective Suspect Screening for Emerging Contaminants

Purpose: To interrogate archived HRMS data for newly recognized contaminants of concern using updated suspect lists.

Materials:

- Archived HRMS data files in open or vendor formats

- Updated suspect lists (e.g., NORMAN, EPA CompTox, UBA PMT list)

- Data processing software (vendor-specific or open-source platforms)

Procedure:

Data Processing and Feature Detection

- Reprocess raw data using current software algorithms to extract molecular features

- Apply retention time alignment and peak picking consistency filters across samples

- Use blank subtraction to eliminate background and instrumental artifacts

Database Matching and Prioritization

- Import updated suspect lists (e.g., the list of 350 PMT substances from the German Environment Agency, UBA) [6]

- Apply mass tolerance filters (typically ≤5 ppm) for accurate mass matching

- Apply the seven prioritization strategies:

- P1: Target and suspect screening against known contaminants

- P2: Data quality filtering to remove artifacts and unreliable signals

- P3: Chemistry-driven prioritization (mass defect, homologue series)

- P4: Process-driven prioritization (spatial/temporal trends)

- P5: Effect-directed prioritization (bioassay correlations)

- P6: Prediction-based prioritization (risk quotients)

- P7: Pixel/tile-based approaches for complex datasets [4] [10]
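Homologue-series searching under P3 is often done with a Kendrick mass defect (KMD): rescaling masses so that a repeating unit such as CF2 has an integer mass makes members of the same series share a KMD. The sketch assumes CF2 as the repeating unit (typical for PFAS), the round-to-nearest KMD convention and a hypothetical grouping tolerance of 0.001; the m/z values are the theoretical [M-H]- ions of PFHxA, PFOA and PFNA plus carbamazepine [M+H]+ as a non-member.

```python
CF2_EXACT = 12.0 + 2 * 18.99840316  # exact mass of CF2 (Da)
CF2_NOMINAL = 50


def kendrick_mass_defect(mz, base_exact=CF2_EXACT, base_nominal=CF2_NOMINAL):
    """KMD on a mass scale where the repeating unit has an integer (nominal) mass."""
    kendrick_mass = mz * base_nominal / base_exact
    return round(kendrick_mass) - kendrick_mass


def group_by_kmd(mzs, tol=0.001):
    """Greedy grouping of m/z values whose KMDs agree within tol (candidate homologue series)."""
    groups = []
    last_kmd = None
    for mz in sorted(mzs, key=kendrick_mass_defect):
        kmd = kendrick_mass_defect(mz)
        if groups and abs(kmd - last_kmd) <= tol:
            groups[-1].append(mz)
        else:
            groups.append([mz])
        last_kmd = kmd
    return groups


# Theoretical [M-H]- of PFHxA, PFOA, PFNA and [M+H]+ of carbamazepine.
print(group_by_kmd([312.9728, 412.9664, 462.9632, 237.1022]))
# [[237.1022], [312.9728, 412.9664, 462.9632]]
```

A shared KMD is only a first hint; members of a candidate series should also differ by whole multiples of the repeating unit and elute in a plausible retention-time order.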

Confidence Assessment and Reporting

- Apply Schymanski et al. confidence levels for identification

- For Level 1 confirmation, acquire authentic standards for retention time and fragmentation matching

- Use in-silico fragmentation tools (e.g., CSI:FingerID, MetFrag) for structural predictions

- Report findings with appropriate confidence level assignments
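The level logic can be made explicit in code, which helps keep assignments consistent across analysts. The function below is a simplified reading of the Schymanski et al. scheme (Level 1 confirmed structure, 2a probable structure by library match, 2b probable structure by diagnostic evidence, 3 tentative candidate(s), 4 unequivocal molecular formula, 5 exact mass of interest); the `Evidence` fields are hypothetical, and real assignments involve expert judgment that boolean flags cannot fully capture.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    """Evidence collected for one feature (hypothetical fields)."""
    unequivocal_formula: bool = False       # isotope/adduct data support a single formula
    candidate_structures: int = 0           # plausible structures still under consideration
    library_match: bool = False             # MS/MS matches a library spectrum (2a)
    diagnostic_evidence: bool = False       # diagnostic fragments/context support one structure (2b)
    reference_standard_match: bool = False  # RT and MS/MS confirmed with an authentic standard


def confidence_level(e: Evidence) -> str:
    """Simplified assignment of Schymanski et al. identification confidence levels."""
    if e.reference_standard_match:
        return "1"   # confirmed structure
    if e.library_match:
        return "2a"  # probable structure, library spectrum match
    if e.diagnostic_evidence and e.candidate_structures == 1:
        return "2b"  # probable structure, diagnostic evidence
    if e.candidate_structures >= 1:
        return "3"   # tentative candidate(s)
    if e.unequivocal_formula:
        return "4"   # unequivocal molecular formula
    return "5"       # exact mass of interest only


print(confidence_level(Evidence(unequivocal_formula=True, candidate_structures=4)))  # 3
```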

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of digital archiving for retrospective analysis requires specific tools and databases. The following table details essential components of the NTS research toolkit.

Table 2: Essential Research Reagents and Materials for HRMS Digital Archiving

| Tool Category | Specific Examples | Function | Application Notes |
|---|---|---|---|
| HRMS Instrumentation | Q-TOF, Orbitrap, FT-ICR MS | Provides accurate mass measurements (<5 ppm) and high resolution (>25,000) for molecular formula assignment | Benchtop instruments increasingly accessible; enables sensitive non-target detection [1] [7] |
| Suspect List Databases | NORMAN Suspect List Exchange, EPA CompTox, UBA PMT List | Curated lists of potential environmental contaminants for suspect screening | Regular updates essential for retrospective analysis; NORMAN contains >100,000 compounds [4] [7] |
| MS/MS Spectral Libraries | NIST, mzCloud, MassBank | Reference fragmentation spectra for compound identification | GC-EI libraries well-established; LC-MS/MS libraries growing but limited by reproducibility issues [7] |
| Data Processing Software | Compound Discoverer, XCMS, MS-DIAL, MZmine | Processes raw HRMS data, performs feature detection, alignment, and statistical analysis | Open-source options increase accessibility and method transparency [9] |
| Quantification Approaches | MS2Quant, Prediction models | Estimates concentration without reference standards using fragmentation patterns or prediction models | Enables risk assessment even when standards unavailable [4] |

Digital archiving of HRMS data represents a paradigm shift in environmental monitoring, transforming single-point analyses into enduring resources for chemical safety assessment. By implementing the protocols outlined in this application note, research institutions and regulatory bodies can build chemical exposure knowledge bases that increase in value over time. As instrumental capabilities advance and chemical databases expand, the retrospective analysis of archived environmental samples will play an increasingly crucial role in identifying future chemical threats before they escalate into widespread contamination issues.

High-Resolution Mass Spectrometry (HRMS) has become an indispensable tool for the non-targeted screening (NTS) of environmental pollutants, with Orbitrap technology emerging as a leading analytical platform. Orbitrap mass spectrometers are electrostatic ion traps: ions injected by "electrodynamic squeezing" are captured around a central spindle-shaped electrode, where they orbit and simultaneously oscillate along its axis at a frequency inversely proportional to the square root of their mass-to-charge ratio (m/z). The image current induced by these oscillations is converted by Fourier transformation into a high-resolution, accurate-mass (HRAM) spectrum, making the Orbitrap a Fourier transform mass analyzer analogous to FT-ion cyclotron resonance (ICR) instruments, yet in a more compact and easier-to-operate format [11].
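For reference, the standard Orbitrap relation behind this statement can be written as below, where k is a constant fixed by the electrode geometry and applied voltage and q is the ion charge; after calibration, m/q follows directly from the measured axial frequency. The ratio on the right is a worked example for two singly charged ions.

$$
\omega_z = \sqrt{\frac{k}{m/q}}
\qquad\Longrightarrow\qquad
\frac{m}{q} = \frac{k}{\omega_z^{2}},
\qquad
\frac{\omega_z(m/z\ 200)}{\omega_z(m/z\ 800)} = \sqrt{\frac{800}{200}} = 2
$$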

The distinguishing capability of Orbitrap technology lies in its resolution and mass accuracy. Depending on the model, these instruments reach a maximum resolution of up to 1,000,000 FWHM at m/z 200 while maintaining sub-1 ppm mass accuracy, supporting confident identification of unknown compounds and trace-level contaminants in complex environmental matrices without sacrificing selectivity or sensitivity [11]. According to the same source [11], this performance exceeds that of Q-TOF systems, whose lower resolution in the small-molecule mass range can lead to false identifications.

For environmental scientists investigating chemical pollutants, Orbitrap-based platforms provide the analytical robustness necessary to detect, identify, and quantify a diverse array of organic contaminants—from legacy persistent organic pollutants (POPs) to emerging contaminants of concern—even when present at trace concentrations in challenging sample matrices [9] [1].

Key Technical Specifications and Performance Metrics

Orbitrap mass spectrometers offer a range of technical specifications that make them particularly suitable for non-targeted screening of environmental samples. High resolution and accurate mass allow isobaric compounds (those with the same nominal mass but different exact masses) to be distinguished and support confident molecular formula assignment for unknowns [11].
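Whether two isobaric ions can be separated comes down to comparing the resolving power they require (R = m/Δm) with what the instrument delivers at that m/z. Because the Orbitrap frequency falls with the square root of m/z, resolution at a fixed transient length also falls roughly as 1/sqrt(m/z). A minimal sketch under those assumptions; the ion pair and the 120,000 setting are hypothetical, and separating two peaks by exactly one FWHM is a bare minimum (unequal intensities need more).

```python
import math


def required_resolving_power(mz1: float, mz2: float) -> float:
    """Resolving power R = m / delta_m needed to separate two ions (FWHM definition)."""
    return ((mz1 + mz2) / 2) / abs(mz1 - mz2)


def orbitrap_resolution_at(mz: float, r_ref: float, mz_ref: float = 200.0) -> float:
    """Approximate Orbitrap resolution at mz, given r_ref at mz_ref (R scales as 1/sqrt(m/z))."""
    return r_ref * math.sqrt(mz_ref / mz)


needed = required_resolving_power(400.0000, 400.0040)     # hypothetical isobaric pair
available = orbitrap_resolution_at(400.0, r_ref=120_000)  # hypothetical setting at m/z 200
print(f"needed ~{needed:,.0f}, available ~{available:,.0f}, resolved: {available >= needed}")
```

Here roughly 100,000 would be needed, while a 120,000 (at m/z 200) setting gives only about 84,850 at m/z 400, so this hypothetical pair would not be baseline-separated.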

Table 1: Performance Metrics of Orbitrap Systems for Environmental Analysis

| Performance Parameter | Specification Range | Significance for Environmental NTS |
|---|---|---|
| Mass Resolution | Up to 1,000,000 FWHM at m/z 200 | Separates isobaric compounds with minimal mass differences; reduces chemical noise |
| Mass Accuracy | <1 ppm (typical) | Enables confident molecular formula assignment; reduces false positives |
| Dynamic Range | Wide dynamic range | Enables detection of trace-level contaminants alongside abundant matrix components |
| Detection Capability | Sub-ppb to ppt levels | Suitable for monitoring environmental contaminants at concentrations relevant for regulation |
| Scan Modes | Full scan, targeted MS/MS, DDA, DIA | Flexible data acquisition for comprehensive contaminant screening |

The hybrid configurations of Orbitrap instruments, particularly those combining quadrupole mass filters with Orbitrap mass analyzers (Q-Orbitrap systems), provide additional functionality for structural elucidation. These configurations enable MS/MS experiments with high-resolution fragment detection, which is crucial for confirming the identity of unknown pollutants through their fragmentation patterns [12] [8]. The Thermo Scientific Orbitrap Exploris series and Q Exactive series represent such hybrid systems that have been successfully applied to environmental analysis [11].

Recent advancements include the development of specialized instruments like the Orbitrap Exploris EFOX Mass Detector, specifically designed for environmental and food safety testing. This system applies Orbitrap technology to the quantification of trace-level contaminants such as per- and polyfluoroalkyl substances (PFAS), pesticides, and other organic xenobiotics, making high-resolution testing more accessible for routine laboratory analysis [13].

Applications in Non-Target Screening of Environmental Pollutants

Orbitrap-based HRMS has demonstrated strong capability in characterizing complex environmental mixtures through non-targeted screening. In one comprehensive study, researchers combined GC-Q-Orbitrap-HRMS with chromatogram segmentation and Cl/Br-specific screening algorithms to identify halogenated organic pollutants (HOPs) in fly ash, egg, and sediment samples [12]. This approach yielded 122 HOP formulas with tentatively assigned structures, 28 of which were detected across multiple matrices. When isomers were considered, the study revealed a total of 1059 HOP congeners, demonstrating the congener-specific analytical capability of Orbitrap technology [12].

The quantitative analysis revealed large concentration differences across environmental compartments, with total HOP levels of 4.7 μg g⁻¹ in fly ash, 41.2 μg g⁻¹ in egg, and 750.8 μg g⁻¹ in sediment [12]. Organochlorines predominated across the halogenated categories, and halogenated benzenes, halogenated dioxins, halogenated biphenyls/terphenyls, and halogenated polycyclic aromatic hydrocarbons (H-PAHs) were the main structural categories. The study also identified dozens of novel or little-known HOP formulas, including mixed chlorinated/brominated PAHs with ≥4 aromatic rings and polychlorinated terphenyls [12].

HRMS-based NTS has also proven valuable for regulatory environmental monitoring. The International Commission for the Protection of the Rhine (ICPR) has implemented NTS using HRMS since 2012, documenting ten major spill events of previously undetected compounds, totaling approximately 25 tons of chemical load in the River Rhine in 2014 alone [1]. This monitoring led to the discovery of quaternary phosphonium compounds, industrial process intermediates not registered under REACH, which were subsequently shown to have cytotoxic and genotoxic potential [1].

Table 2: Representative Environmental Contaminants Identified Using Orbitrap HRMS

| Contaminant Class | Specific Compounds Identified | Environmental Matrices | Analytical Approach |
|---|---|---|---|
| Halogenated Organic Pollutants (HOPs) | Halogenated benzenes, dioxins, biphenyls, terphenyls, PAHs | Fly ash, eggs, sediment | GC-Q-Orbitrap-HRMS with chromatogram segmentation [12] |
| Emerging Industrial Chemicals | Quaternary phosphonium compounds | River water | LC-HRMS and GC-HRMS NTS [1] |
| Per- and Polyfluoroalkyl Substances (PFAS) | Various PFAS congeners | Water, biological samples | LC-Orbitrap-HRMS [9] [13] |
| Pharmaceuticals and Personal Care Products | Diverse pharmaceutical compounds | Water, wastewater | LC-HRMS NTS [9] [8] |
| Pesticides and Transformation Products | Current-use and legacy pesticides | Soil, sediment, water | GC- and LC-Orbitrap-HRMS [9] [8] |

HRMS-based screening, including Orbitrap platforms, has been applied across multiple environmental compartments. A comprehensive review of non-targeted analysis (NTA) and suspect screening analysis (SSA) reported the detection of per- and polyfluoroalkyl substances (PFAS) and pharmaceuticals in water, pesticides and polyaromatic hydrocarbons (PAHs) in soil and sediment, volatile and semi-volatile organic compounds in air, flame retardants in dust, and plasticizers in consumer products [9]. This broad coverage of chemical classes underscores the versatility of HRMS platforms such as the Orbitrap for comprehensive environmental exposomics.

Experimental Protocols for Environmental Sample Analysis

Sample Preparation and Extraction

Proper sample preparation is fundamental for successful non-targeted screening of environmental pollutants. While specific protocols vary depending on the sample matrix, the general principles include:

Sample Collection and Preservation: Environmental samples (water, soil, sediment, biota) should be collected using clean procedures to avoid contamination, preserved appropriately (often at 4°C or frozen), and processed within designated holding times to maintain sample integrity.

Extraction Techniques: Solid-phase extraction (SPE) is commonly employed for water samples, while pressurized liquid extraction (PLE), QuEChERS, or sonication-assisted extraction are used for solid matrices. The selection of extraction solvent, pH adjustment, and cleanup media significantly influences the detectable chemical space [9].

Extract Concentration and Reconstitution: Following extraction, extracts are typically concentrated by gentle evaporation under a stream of nitrogen and reconstituted in solvents compatible with the chromatographic system (often methanol or acetonitrile mixed with water).

Instrumental Analysis Parameters

Chromatographic separation coupled to Orbitrap HRMS detection forms the core of NTS workflows. Both liquid chromatography (LC) and gas chromatography (GC) approaches are employed, with 51% of environmental NTS studies using only LC-HRMS, 32% using only GC-HRMS, and 16% utilizing both platforms to expand chemical coverage [9].

LC-Orbitrap-HRMS Method:

- Chromatography: Reversed-phase LC using C18 columns with water and organic modifiers (methanol or acetonitrile), often with formic acid or ammonium buffers to enhance ionization [14].

- Ionization: Electrospray ionization (ESI) in positive, negative, or switching modes. Among LC-HRMS studies, 43% use both ESI+ and ESI-, while 18% use only ESI+, and 22% use only ESI- [9].

- Mass Analysis: Full-scan MS data acquisition at resolution ≥60,000 (at m/z 200) with mass accuracy calibration using standard compounds.

- Data Acquisition: Both data-dependent acquisition (DDA) and data-independent acquisition (DIA) methods are employed. DDA selects precursor ions based on intensity or specific features for MS/MS fragmentation, while DIA sequentially fragments all ions within predefined mass windows without precursor selection [8].

GC-Q-Orbitrap-HRMS Method:

- Chromatography: Non-polar or mid-polar capillary GC columns with temperature programming optimized for the volatility range of target analytes.

- Ionization: Electron ionization (EI) is standard; some methods complement EI with chemical ionization (CI) for molecular ion information [9].

- Mass Analysis: Full-scan MS data acquisition at resolution ≥60,000 with accurate mass measurement.

- Specialized Screening: Algorithm-based approaches such as Cl/Br-specific screening have been developed for selective detection of halogenated compounds through isotope pattern recognition [12].
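The isotope-pattern idea behind Cl/Br-specific screening can be illustrated with a short sketch. It is not the published algorithm of [12]. It assumes a centroided spectrum given as (m/z, intensity) pairs and a single halogen atom per ion. A peak is flagged when a partner appears at the 37Cl minus 35Cl or 81Br minus 79Br mass difference with a plausible M+2/M intensity ratio. The tolerance and ratio windows are hypothetical values chosen for illustration.

```python
from typing import List, Tuple

# Exact heavy-minus-light isotope mass differences (Da)
DELTA_CL = 36.965903 - 34.968853  # 37Cl - 35Cl, about 1.99705
DELTA_BR = 80.916291 - 78.918338  # 81Br - 79Br, about 1.99795

# Hypothetical M+2/M intensity-ratio windows for ONE halogen atom
# (natural abundances give roughly 0.32 for Cl and 0.97 for Br)
RATIO_WINDOWS = {"Cl": (0.25, 0.40), "Br": (0.85, 1.10)}


def flag_halogens(peaks: List[Tuple[float, float]],
                  tol_da: float = 0.001) -> List[Tuple[float, str]]:
    """Return (m/z, label) for peaks with an M+2 partner consistent with Cl or Br."""
    hits = []
    for mz, inten in peaks:
        for label, delta in (("Cl", DELTA_CL), ("Br", DELTA_BR)):
            lo, hi = RATIO_WINDOWS[label]
            for mz2, inten2 in peaks:
                # Partner must sit at the isotope spacing AND show a plausible ratio
                if abs((mz2 - mz) - delta) <= tol_da and lo <= inten2 / inten <= hi:
                    hits.append((mz, label))
                    break
    return hits


spectrum = [(300.0000, 1000.0), (301.9970, 320.0),
            (400.0000, 500.0), (401.9980, 490.0), (500.0000, 800.0)]
print(flag_halogens(spectrum))
```

The two isotope spacings differ by less than 1 mDa. At this tolerance, the sketch therefore separates the Cl and Br cases by intensity ratio rather than by mass difference alone. Ions carrying several halogen atoms would need wider, atom-count-specific ratio windows.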

Data Processing and Compound Identification

The processing of HRMS data for NTS involves multiple steps that can be optimized using design of experiments (DoE) approaches [14]:

Peak Detection and Alignment: Automated software (e.g., MZmine, Compound Discoverer) detects chromatographic peaks, deconvolutes co-eluting compounds, and aligns features across samples.

Molecular Formula Assignment: High-resolution accurate-mass (HRAM) data enable the generation of candidate molecular formulas within a specified mass error tolerance (typically <5 ppm).
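The sketch below makes the tolerance criterion concrete. It computes the mass error in ppm, (m_measured - m_theoretical) / m_theoretical × 10^6, and enumerates CHNO compositions within 5 ppm of a neutral monoisotopic mass. It is a brute-force illustration under stated assumptions:

- Only C, H, N and O are considered, and the element ranges are arbitrary.
- The input is a neutral mass; an [M+H]+ value would first have 1.007276 Da subtracted.
- The isotope-pattern scoring and heuristic rules used by real formula generators are omitted.

```python
from itertools import product
from typing import List, Tuple

# Monoisotopic masses (Da) of the most abundant isotopes
MONO = {"C": 12.0, "H": 1.00782503207, "N": 14.0030740048, "O": 15.99491461956}


def ppm_error(measured: float, theoretical: float) -> float:
    """Mass error in parts per million."""
    return (measured - theoretical) / theoretical * 1e6


def formula_string(c: int, h: int, n: int, o: int) -> str:
    """Hill-order string, omitting absent elements and counts of 1."""
    parts = [("C", c), ("H", h), ("N", n), ("O", o)]
    return "".join(f"{el}{k if k > 1 else ''}" for el, k in parts if k > 0)


def candidate_formulas(neutral_mass: float, tol_ppm: float = 5.0, max_c: int = 40,
                       max_n: int = 6, max_o: int = 12) -> List[Tuple[str, float]]:
    """Brute-force CHNO formulas whose monoisotopic mass lies within tol_ppm."""
    hits = []
    for c, n, o in product(range(1, max_c + 1), range(max_n + 1), range(max_o + 1)):
        partial = c * MONO["C"] + n * MONO["N"] + o * MONO["O"]
        h = round((neutral_mass - partial) / MONO["H"])  # nearest hydrogen count
        # Ring-double-bond equivalents c - h/2 + n/2 + 1 >= 0 bound H from above
        if h < 0 or h > 2 * c + n + 2:
            continue
        err = ppm_error(neutral_mass, partial + h * MONO["H"])
        if abs(err) <= tol_ppm:
            hits.append((formula_string(c, h, n, o), round(err, 2)))
    return sorted(hits, key=lambda hit: abs(hit[1]))


# Neutral monoisotopic mass measured for a hypothetical feature
print(candidate_formulas(194.0804)[:5])
```

Caffeine (C8H10N4O2) appears among the candidates for this mass, but it may not be the only one. Isotope patterns and MS/MS evidence are still needed to narrow the list.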

Compound Identification: Strategies include:

- Suspect Screening: Comparison against chemical databases (e.g., NORMAN, CompTox), with an identification confidence level reported for each match [9].

- True NTS: Structural elucidation through interpretation of MS/MS fragmentation patterns and retention time prediction.

- Library Matching: Spectral matching against reference libraries (e.g., NIST, mzCloud).
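The confidence levels mentioned above are commonly reported on the five-level scale of Schymanski et al. (2014). The decision logic can be sketched as a function over boolean evidence flags. The flag names are hypothetical. Real level assignment also involves expert judgement and sub-levels (e.g., 2a/2b) that this simplification collapses.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    """Hypothetical evidence flags gathered for one feature."""
    reference_standard: bool = False   # MS, MS/MS and RT match an authentic standard
    library_spectrum: bool = False     # unambiguous library spectrum match
    diagnostic_evidence: bool = False  # diagnostic fragments/context, no library match
    candidate_structure: bool = False  # plausible structure(s), not distinguishable
    unequivocal_formula: bool = False  # formula fixed by accurate mass, isotopes, adducts


def schymanski_level(ev: Evidence) -> int:
    """Return the highest-confidence level (1 = best) the evidence supports."""
    if ev.reference_standard:
        return 1  # confirmed structure
    if ev.library_spectrum or ev.diagnostic_evidence:
        return 2  # probable structure (2a library, 2b diagnostic)
    if ev.candidate_structure:
        return 3  # tentative candidate(s)
    if ev.unequivocal_formula:
        return 4  # unequivocal molecular formula
    return 5      # exact mass of interest only


print(schymanski_level(Evidence(unequivocal_formula=True, library_spectrum=True)))
```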

Figure: Workflow for Environmental NTS Using Orbitrap HRMS

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of Orbitrap-based NTS requires carefully selected reagents, materials, and software tools. The following table outlines essential components of the environmental analyst's toolkit:

Table 3: Essential Research Reagents and Materials for Orbitrap-Based NTS

| Category | Specific Items | Function/Purpose |
|---|---|---|
| Chromatography | U/HPLC system (e.g., Thermo Scientific Vanquish); GC system; C18, HILIC, or other LC columns; GC capillary columns; Mobile phases (water, methanol, acetonitrile); Buffer additives (formic acid, ammonium salts) | Compound separation prior to mass analysis; Reduction of matrix effects; Optimization of ionization efficiency |
| Sample Preparation | Solid-phase extraction (SPE) cartridges; QuEChERS kits; Solvents (acetone, hexane, ethyl acetate, dichloromethane); Filtration devices; Internal standards (isotope-labeled compounds) | Sample cleanup and concentration; Matrix component removal; Compensation for extraction and ionization variability |
| Mass Calibration | Calibration solutions (e.g., Pierce LTQ Velos ESI Positive and Negative Ion Calibration Solutions); Mass accuracy standards | Instrument mass calibration and performance verification; Ensuring sub-ppm mass accuracy |
| Data Processing Software | Commercial (Compound Discoverer, MassHunter); Open-source (MZmine 2, MS-DIAL); Databases (NORMAN, CompTox, mzCloud, NIST) | Molecular feature extraction; Compound identification; Data visualization and interpretation |
| Quality Control | Procedure blanks; Matrix spikes; Reference materials; Solvent blanks | Contamination assessment; Process efficiency monitoring; Data quality assurance |

The selection of specific reagents and materials should be guided by the target analytes and sample matrices. For example, the optimization of MZmine 2 parameters using design of experiments approaches has been shown to significantly improve peak detection performance in environmental samples, enabling detection of 75-100% of peaks compared to manual evaluation [14]. Additionally, the use of both positive and negative ionization modes and complementary LC and GC separation expands the detectable chemical space for comprehensive environmental analysis [9].

Method Optimization and Data Acquisition Strategies

Optimal performance in non-targeted screening requires careful optimization of both instrumental parameters and data processing methods. Research has demonstrated that shorter MS cycle times in Orbitrap instruments significantly improve the quality of automatic peak detection, suggesting that full scan acquisition without additional MS2 experiments may be preferable for initial screening [14].
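One plausible reason for the cycle-time effect is a general chromatographic sampling argument. The number of MS1 data points recorded across a peak is roughly the peak width divided by the cycle time. The sketch assumes a hypothetical 6 s wide peak and illustrative scan durations, and it ignores instrument overheads. It shows how each added MS2 scan lengthens the cycle and thins out the MS1 trace that peak-picking relies on.

```python
def cycle_time_s(ms1_scan_s: float, n_ms2: int, ms2_scan_s: float) -> float:
    """One cycle = one full scan plus n_ms2 fragmentation scans (overheads ignored)."""
    return ms1_scan_s + n_ms2 * ms2_scan_s


def points_per_peak(peak_width_s: float, cycle_s: float) -> float:
    """Approximate number of MS1 data points recorded across one chromatographic peak."""
    return peak_width_s / cycle_s


# Hypothetical values: 6 s wide UHPLC peak, 0.25 s full scan, 0.10 s per MS2 scan
for n_ms2 in (0, 5, 10):
    ct = cycle_time_s(0.25, n_ms2, 0.10)
    print(f"{n_ms2} MS2 scans: cycle {ct:.2f} s, {points_per_peak(6.0, ct):.1f} points per peak")
```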

For data acquisition, two primary approaches dominate environmental NTS:

Data-Dependent Acquisition (DDA): This method performs a full MS scan followed by MS/MS scans on the most abundant precursor ions meeting specific intensity thresholds. While DDA accounts for approximately 60% of NTS applications, it may miss lower-abundance compounds due to preferential selection of intense ions [8].

Data-Independent Acquisition (DIA): This approach fragments all ions within sequential mass windows without precursor selection, ensuring comprehensive MS/MS data collection. Although more challenging for data processing due to complex fragmentation spectra, DIA represents approximately 19% of NTS applications and provides more complete compound coverage [8].
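The difference between the two acquisition modes can be sketched in a few lines:

- The DDA function picks the top-N most intense precursors above an intensity threshold, skipping ions on a dynamic-exclusion list.
- The DIA function tiles the precursor range into fixed isolation windows, so every ion is fragmented in some window.

Top-N, threshold, tolerance and window width are hypothetical parameters. Real instrument logic, such as charge filtering, isotope exclusion and overlapping windows, is omitted.

```python
import math
from typing import List, Sequence, Tuple

Peak = Tuple[float, float]  # (m/z, intensity) in the survey scan


def dda_select(survey: Sequence[Peak], top_n: int, min_intensity: float,
               excluded: Sequence[float], tol: float = 0.01) -> List[float]:
    """Pick up to top_n precursors that are intense enough and not dynamically excluded."""
    eligible = [(mz, i) for mz, i in survey
                if i >= min_intensity and not any(abs(mz - x) <= tol for x in excluded)]
    eligible.sort(key=lambda p: p[1], reverse=True)  # most intense first
    return [mz for mz, _ in eligible[:top_n]]


def dia_windows(mz_min: float, mz_max: float, width: float) -> List[Tuple[float, float]]:
    """Tile the whole precursor range into consecutive isolation windows."""
    n = math.ceil((mz_max - mz_min) / width)
    return [(mz_min + k * width, min(mz_min + (k + 1) * width, mz_max)) for k in range(n)]


survey = [(200.1, 5e5), (250.2, 1e6), (300.3, 2e4), (350.4, 8e5), (400.5, 3e5)]
print(dda_select(survey, top_n=2, min_intensity=5e4, excluded=[250.2]))
print(dia_windows(100.0, 400.0, 100.0))
```

In the DDA call, the weak ion at m/z 300.3 is never selected for fragmentation, which is the coverage gap described above. In DIA, it falls inside the 300 to 400 window and is co-fragmented with everything else there, which is why DIA spectra are harder to process.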

The design of experiments (DoE) methodology has proven valuable for optimizing data processing parameters in software such as MZmine 2, providing a systematic approach to maximize peak detection while minimizing false positives [14]. This approach is particularly important given that different environmental questions and regulatory needs require tailored NTS strategies [1].
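The DoE idea can be illustrated with a minimal sketch. It builds a two-level full factorial design over three peak-picking parameters and estimates each factor's main effect on a measured response. The parameter names and levels are hypothetical placeholders, not actual MZmine 2 settings. The response stands in for whatever criterion a study uses, such as agreement with manually evaluated peaks. The designs used in [14] may differ, for example by using fractional factorial or response-surface designs.

```python
from itertools import product
from typing import Dict, List, Sequence


def full_factorial(levels: Dict[str, Sequence[float]]) -> List[Dict[str, float]]:
    """Every combination of factor levels (2**k runs for k two-level factors)."""
    names = list(levels)
    return [dict(zip(names, combo)) for combo in product(*(levels[n] for n in names))]


def main_effects(runs: List[Dict[str, float]], responses: Sequence[float]) -> Dict[str, float]:
    """Main effect = mean response at a factor's high level minus mean at its low level."""
    effects = {}
    for name in runs[0]:
        low, high = min(r[name] for r in runs), max(r[name] for r in runs)
        y_hi = [y for r, y in zip(runs, responses) if r[name] == high]
        y_lo = [y for r, y in zip(runs, responses) if r[name] == low]
        effects[name] = sum(y_hi) / len(y_hi) - sum(y_lo) / len(y_lo)
    return effects


# Hypothetical peak-picking parameters (not actual MZmine 2 setting names)
levels = {"noise_level": [1e3, 1e4], "min_peak_height": [5e3, 5e4], "mz_tolerance_ppm": [3.0, 10.0]}
runs = full_factorial(levels)
# Each run would be processed and scored; responses are then passed to main_effects()
```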

Figure: Data Acquisition Strategies for NTS

The future development of Orbitrap technology and associated methodologies continues to address current challenges in environmental NTS. These include improving compound identification confidence, harmonizing reporting standards, and building more efficient data processing workflows to handle the increasingly large and complex datasets generated by comprehensive environmental monitoring programs [1] [8].

High-Resolution Mass Spectrometry (HRMS) has revolutionized the analysis of environmental samples by enabling comprehensive detection and identification of organic contaminants [15]. The screening approaches for these analyses can be categorized into three distinct paradigms: target, suspect, and non-target screening. Each method offers a different balance between specificity, scope, and analytical effort, making them suitable for various applications in environmental monitoring and regulatory science [1]. The integration of liquid chromatography with HRMS (LC-HRMS) provides the separation power and mass accuracy necessary to resolve and identify compounds in complex environmental matrices such as water, sediments, and biological tissues [16] [17].

In modern environmental analysis, the immense diversity of chemical substances from industrial, agricultural, and domestic sources presents a significant analytical challenge. Conventional targeted methods, while quantitative and precise, cover only a limited number of pre-defined analytes [1]. The complementary use of suspect and non-target screening allows researchers to cast a wider net, detecting known contaminants without reference standards (suspect screening) and discovering entirely unknown chemicals (non-target screening) [17]. This hierarchical approach to chemical analysis is particularly valuable for identifying Chemicals of Emerging Concern (CECs) and addressing the "known unknowns" and "unknown unknowns" in environmental compartments [4] [10].

Defining the Screening Workflows

Target Screening

Target screening is a hypothesis-driven approach focused on the definitive identification and quantification of a predefined set of analytes. This method relies on reference standards to confirm compound identity through exact mass, retention time, and fragmentation spectrum matching [17]. Target screening provides the highest level of confidence in compound identification and is the foundation for regulatory compliance monitoring. For example, under the EU Water Framework Directive, monitoring focuses on 45 Priority Substances using targeted methods [1]. The key characteristics of target screening include its quantitative nature, dependence on authentic standards, and limited scope to known compounds of immediate interest.

Suspect Screening

Suspect screening represents an intermediate approach where analysts search for compounds suspected to be present in samples based on existing knowledge, but without available reference standards for confirmation [17]. This method leverages suspect lists and databases containing thousands of potential environmental contaminants, such as the NORMAN Suspect List Exchange or the US EPA's CompTox Chemicals Dashboard [4] [10]. Identification is based on matching exact mass, isotope patterns, and sometimes in silico-predicted fragmentation patterns, resulting in tentative identification unless confirmed with standards. Suspect screening significantly expands the monitoring scope beyond target methods while providing more structured identification than purely non-target approaches.

Non-Target Screening (NTS)

Non-target screening (NTS) is a hypothesis-generating approach that aims to comprehensively detect all measurable organic compounds in a sample without prior knowledge or expectations [18] [1]. As a true discovery tool, NTS seeks to identify previously unrecognized contaminants, transformation products, or entirely new chemical entities. The non-target workflow involves detecting chromatographic features, prioritizing them based on various criteria, and ultimately elucidating their structures [4] [10]. NTS is particularly valuable for identifying CECs that may escape conventional monitoring programs, as demonstrated by the detection of quaternary phosphonium compounds in the Rhine River that had been emitted for years without regulation [1].

Table 1: Comparative Analysis of Screening Workflows

| Parameter | Target Screening | Suspect Screening | Non-Target Screening |
|---|---|---|---|
| Scope | Limited to predefined analytes | Database-dependent (hundreds to thousands) | Virtually unlimited |
| Identification Confidence | Confirmed (with standards) | Tentative (without standards) | Tentative to confirmed |
| Quantification | Absolute (with standards) | Semi-quantitative | Semi-quantitative at best |
| Data Acquisition | Targeted MS/MS | Data-dependent (DDA) or data-independent (DIA) | DDA or DIA |
| Primary Application | Regulatory compliance | Research, prioritization | Discovery, research |
| Data Processing | Targeted extraction | Suspect list matching | Feature detection, prioritization |

Experimental Protocols for HRMS-Based Screening

Sample Preparation and LC-HRMS Analysis

A generalized protocol for water sample analysis across all screening approaches involves careful sample collection, preservation, and preparation. Water samples should be collected in pre-cleaned glass containers, stored at 4°C, and processed within 48 hours. Filtration through 0.2-μm polycarbonate or glass fiber filters removes particulate matter [18]. Solid-phase extraction (SPE) using polymeric sorbents (e.g., Oasis HLB) provides broad-spectrum extraction of contaminants with varying physicochemical properties. For non-target screening, minimal sample cleanup preserves the comprehensive chemical profile, though this may increase matrix effects.

For LC-HRMS analysis, reversed-phase chromatography with C18 columns (e.g., 100 × 2.1 mm, 1.8-μm particle size) provides effective separation with gradient elution using water and methanol or acetonitrile, both modified with 0.1% formic acid or ammonium acetate for positive and negative electrospray ionization, respectively [18] [17]. The acquisition should include full-scan MS1 data at high resolution (≥50,000 FWHM) and data-dependent MS/MS fragmentation at stepped collision energies to maximize structural information. Both positive and negative ionization modes are essential for comprehensive coverage [16].

Data Processing Workflows

Target Screening Data Processing: For target screening, data processing involves extracting specific ion chromatograms for each target compound using a narrow mass window (typically 5 ppm). Identification requires matching both exact mass and retention time to the reference standard, with MS/MS fragmentation confirmation [17].
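A minimal sketch of this extraction step follows. It assumes scans are available as (retention time, list of (m/z, intensity)) tuples. Intensities within ±5 ppm of the target m/z are summed per scan, and the apex retention time is compared with the reference-standard value. The retention-time tolerance (0.1 min), the minimum intensity, and the example m/z and retention times are illustrative assumptions. The MS/MS confirmation step is not included.

```python
from typing import List, Tuple

Scan = Tuple[float, List[Tuple[float, float]]]  # (retention time in min, [(m/z, intensity), ...])


def extract_xic(scans: List[Scan], target_mz: float,
                tol_ppm: float = 5.0) -> List[Tuple[float, float]]:
    """Sum intensities within +/- tol_ppm of target_mz for every scan."""
    half_width = target_mz * tol_ppm * 1e-6  # ppm window expressed in Da
    return [(rt, sum(i for mz, i in peaks if abs(mz - target_mz) <= half_width))
            for rt, peaks in scans]


def matches_target(scans: List[Scan], target_mz: float, ref_rt: float, tol_ppm: float = 5.0,
                   rt_tol: float = 0.1, min_intensity: float = 1e3) -> bool:
    """Exact-mass plus retention-time check against a reference standard."""
    xic = extract_xic(scans, target_mz, tol_ppm)
    apex_rt, apex_intensity = max(xic, key=lambda point: point[1])
    return apex_intensity >= min_intensity and abs(apex_rt - ref_rt) <= rt_tol


scans = [(5.0, [(237.1023, 2e3)]),
         (5.1, [(237.1021, 8e4), (237.1100, 5e5)]),  # 237.1100 lies outside the window
         (5.2, [(237.1022, 3e4)])]
print(matches_target(scans, target_mz=237.1022, ref_rt=5.12))
```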

Suspect Screening Data Processing: Suspect screening workflows involve extracting potential suspects based on exact mass from comprehensive databases, followed by evaluation of isotope patterns and comparison with in silico or library MS/MS spectra when available [17]. Software platforms such as UNIFI provide integrated workflows for suspect screening with automated matching against curated libraries [17].
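The exact-mass step of suspect screening amounts to two operations:

1. Convert each suspect's neutral monoisotopic mass into expected adduct m/z values.
2. Search the feature list for those values within a ppm tolerance.

In the sketch below, the adduct set is deliberately limited to [M+H]+ and [M-H]- (proton mass 1.007276 Da). The feature list is hypothetical, and caffeine (neutral monoisotopic mass 194.080376 Da) stands in for a suspect-list entry. Hits remain tentative until isotope patterns and MS/MS spectra have been checked.

```python
from typing import Dict, List, Tuple

PROTON = 1.007276  # Da
ADDUCTS = {"[M+H]+": +PROTON, "[M-H]-": -PROTON}  # deliberately minimal adduct set


def match_suspects(features: List[Tuple[str, float, str]], suspects: Dict[str, float],
                   tol_ppm: float = 5.0) -> List[Tuple[str, str, str]]:
    """features: (feature_id, m/z, polarity '+' or '-'); suspects: name -> neutral mass."""
    hits = []
    for name, neutral in suspects.items():
        for adduct, shift in ADDUCTS.items():
            expected = neutral + shift
            for fid, mz, polarity in features:
                # Polarity must match the adduct's charge sign and m/z must be within tolerance
                if polarity == adduct[-1] and abs(mz - expected) / expected * 1e6 <= tol_ppm:
                    hits.append((fid, name, adduct))
    return hits


features = [("F1", 195.0877, "+"), ("F2", 195.0877, "-"), ("F3", 300.0000, "+")]
print(match_suspects(features, {"caffeine": 194.080376}))
```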

Non-Target Screening Data Processing: Non-target data processing begins with feature detection, using algorithms such as those in MZmine3 or XCMS to locate chromatographic peaks representing unique molecular ions [18]. This is followed by retention time alignment, isotope and adduct annotation, and gap filling. The resulting feature table is then prioritized using strategies such as statistical analysis, blank subtraction, and intensity thresholds [4] [18]. Two distinct approaches to NTS data processing are:

- Feature Profiling (FP) with software such as MZmine3: This approach detects and aligns chromatographic peaks across samples to create a feature table with mass-to-charge ratio (m/z), retention time, and intensity [18].

- Component Profiling (CP) with methods such as Regions of Interest Multivariate Curve Resolution-Alternating Least Squares (ROIMCR): This approach performs bilinear decomposition of compressed data matrices to resolve "pure" component profiles without prior peak picking [18].
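The blank-subtraction, replicate and intensity-threshold steps mentioned above can be sketched on an aligned feature table. The rules below are common heuristics used here as illustrative assumptions, not values from [4] or [18]:

- The sample-to-blank ratio must be at least 3.
- The feature must be detected in at least 2 replicates.
- The mean intensity must be at least 10^4.

```python
from statistics import mean
from typing import Dict, List

# feature_id -> {"sample": replicate intensities, "blank": blank intensities}; 0 = not detected
FeatureTable = Dict[str, Dict[str, List[float]]]


def filter_features(table: FeatureTable, min_fold: float = 3.0,
                    min_detect: int = 2, min_intensity: float = 1e4) -> List[str]:
    """Return feature IDs that pass replicate, intensity and blank checks."""
    kept = []
    for fid, values in table.items():
        detected = [x for x in values["sample"] if x > 0]
        if len(detected) < min_detect:
            continue  # not reproducible across replicates
        sample_mean = mean(detected)
        if sample_mean < min_intensity:
            continue  # below intensity threshold
        blank_max = max(values["blank"], default=0.0)
        if blank_max > 0 and sample_mean / blank_max < min_fold:
            continue  # not clearly above the procedural blank
        kept.append(fid)
    return kept


table = {
    "F1": {"sample": [5e4, 6e4, 5.5e4], "blank": [0.0]},
    "F2": {"sample": [5e4, 5e4, 5e4], "blank": [3e4]},
    "F3": {"sample": [5e4, 0.0, 0.0], "blank": [0.0]},
    "F4": {"sample": [2e3, 3e3, 2.5e3], "blank": []},
}
print(filter_features(table))
```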

Table 2: Key Research Reagent Solutions for HRMS Screening

| Reagent/Category | Function/Application | Examples/Specifications |
|---|---|---|
| HRMS Instrumentation | Accurate mass measurement at high mass resolution | Orbitrap, Q-TOF, FT-ICR mass analyzers |
| Chromatography Systems | Compound separation prior to MS detection | UHPLC with C18 columns (100 × 2.1 mm, 1.8 μm) |
| Reference Standards | Target compound confirmation and quantification | Authentic chemical standards for calibration |
| Suspect Databases | Digital libraries for suspect screening | NORMAN, CompTox Dashboard, PubChemLite |
| SPE Sorbents | Sample extraction and concentration | Oasis HLB, polymeric mixed-mode sorbents |
| Internal Standards | Quality control, signal correction | Isotopically labeled analog standards |
| Data Processing Software | Feature detection, statistical analysis | MZmine3, XCMS, ROIMCR, patRoon |

Workflow Integration and Prioritization Strategies

Complementary Workflow Implementation

The most effective environmental monitoring strategies integrate all three screening approaches to leverage their complementary strengths [17] [1]. An integrated workflow begins with non-target screening to obtain a comprehensive chemical profile of samples. Detected features are then filtered through suspect screening against extensive databases, providing tentative identifications for known environmental contaminants. Finally, a subset of high-priority compounds is confirmed and quantified through target screening with authentic standards. This hierarchical approach maximizes both the scope of chemical coverage and the confidence in identification for critical contaminants.

Recent studies demonstrate this integrated approach. In one assessment of a Mediterranean river basin, target screening of 171 pesticides and 33 pharmaceuticals was combined with suspect screening against a library of 2,200 components and with non-target discovery [17]. This strategy identified 68 contaminants through suspect screening, 6 of which were confirmed with reference standards. Non-target screening additionally identified eprosartan, an antihypertensive drug not included in the original suspect list [17].

Prioritization Strategies in Non-Target Screening

The primary challenge in NTS is the sheer number of detected features, often thousands per sample, which makes prioritization essential for efficient resource allocation [4] [10]. Seven key prioritization strategies have been identified:

- Target and Suspect Screening (P1): Using predefined databases of known or suspected contaminants to narrow candidates early in the process [4] [10].

- Data Quality Filtering (P2): Removing artifacts and unreliable signals based on occurrence in blanks, replicate consistency, and peak shape [4] [10].

- Chemistry-Driven Prioritization (P3): Focusing on compound-specific properties to identify classes of interest, such as using mass defect filtering for halogenated compounds [4] [10].

- Process-Driven Prioritization (P4): Using spatial, temporal, or technical processes (e.g., upstream vs. downstream comparisons) to highlight relevant features [4] [10].

- Effect-Directed Prioritization (P5): Integrating biological response data with chemical analysis through Effect-Directed Analysis (EDA) or virtual EDA [4] [10].

- Prediction-Based Prioritization (P6): Calculating risk quotients using predicted concentrations and toxicities when full identification is incomplete [4] [10].

- Pixel- or Tile-Based Approaches (P7): For complex datasets, especially in 2D chromatography, localizing regions of high variance before peak detection [4] [10].
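One common form of mass defect filtering for fluorinated compounds (P3) uses a Kendrick mass scale based on the CF2 repeat unit. Masses are rescaled so that CF2 weighs exactly 50. Members of a homologous series that differ only by CF2 units then share the same Kendrick mass defect (KMD). The sketch below groups m/z values by KMD. The grouping tolerance is an illustrative assumption. The example ions are the theoretical [M-H]- m/z values of C4 to C8 perfluorocarboxylic acids plus one unrelated ion.

```python
from typing import Dict, List

CF2_EXACT = 12.0 + 2 * 18.998403163  # monoisotopic mass of CF2, about 49.99681 Da
CF2_NOMINAL = 50


def kendrick_mass_defect(mz: float) -> float:
    """CF2-based Kendrick mass defect: nominal Kendrick mass minus Kendrick mass."""
    kendrick_mass = mz * CF2_NOMINAL / CF2_EXACT
    return round(kendrick_mass) - kendrick_mass


def cf2_series(mzs: List[float], tol: float = 0.002) -> List[List[float]]:
    """Group m/z values whose KMDs agree within tol; keep groups with 2+ members."""
    groups: List[Dict] = []
    for mz in sorted(mzs):
        kmd = kendrick_mass_defect(mz)
        for group in groups:
            if abs(group["kmd"] - kmd) <= tol:
                group["members"].append(mz)
                break
        else:  # no existing group matched: start a new one
            groups.append({"kmd": kmd, "members": [mz]})
    return [g["members"] for g in groups if len(g["members"]) > 1]


# [M-H]- ions of C4-C8 perfluorocarboxylic acids plus one unrelated ion
ions = [212.9792, 262.9760, 312.9728, 362.9696, 412.9664, 301.1410]
print(cf2_series(ions))
```

Because each acid differs from the next by one CF2 unit, all five share essentially the same KMD and form one series, while the unrelated ion is left out.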

These strategies are most effective when combined, enabling stepwise reduction from thousands of features to a focused shortlist of high-priority compounds [4]. For example, an initial suspect screening might flag 300 features, which data quality and chemistry-driven filters reduce to 100. Process-driven comparison could then identify 20 features linked to poor removal in a treatment plant, with effect-directed and prediction-based methods finally prioritizing 5 features based on demonstrated toxicity and predicted risk [4].
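The stepwise reduction described above can be expressed as a chain of filters applied to a feature list, with a count reported after each step. The sketch is schematic. The feature attributes and all thresholds are hypothetical and chosen only to mirror the P1 to P6 logic; they are not taken from [4]. Effect-directed prioritization (P5) is omitted because it requires bioassay data. The risk quotient is taken as predicted concentration divided by predicted no-effect concentration.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Feature:
    fid: str
    suspect_hit: bool      # P1: matched a suspect list
    in_blank: bool         # P2: also present in procedural blanks
    chem_flag: bool        # P3: e.g. flagged by mass defect or isotope pattern
    removal_pct: float     # P4: removal across a treatment plant, in percent
    risk_quotient: float   # P6: predicted concentration / predicted no-effect concentration


Step = Tuple[str, Callable[[Feature], bool]]

# Hypothetical thresholds chosen only to illustrate the funnel
STEPS: List[Step] = [
    ("P1 suspect screening", lambda f: f.suspect_hit),
    ("P2 data quality", lambda f: not f.in_blank),
    ("P3 chemistry-driven", lambda f: f.chem_flag),
    ("P4 poor removal in treatment", lambda f: f.removal_pct < 20.0),
    ("P6 predicted risk", lambda f: f.risk_quotient >= 1.0),
]


def prioritize(features: List[Feature], steps: List[Step]) -> List[Feature]:
    """Apply filters in order, reporting how many features survive each step."""
    remaining = list(features)
    for name, keep in steps:
        remaining = [f for f in remaining if keep(f)]
        print(f"{name}: {len(remaining)} features remain")
    return remaining
```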

Comparative Performance and Applications

Workflow Performance Assessment

Studies comparing NTS data processing workflows reveal significant differences in their performance characteristics. A 2025 comparative analysis of MZmine3 (feature profiling) and ROIMCR (component profiling) demonstrated that both approaches could differentiate treatment and temporal effects in wastewater-impacted river water, but with distinct characteristics [18]. MZmine3 showed increased sensitivity to treatment effects but greater susceptibility to false positives, while ROIMCR provided superior consistency and reproducibility with clearer temporal patterns, though with lower treatment sensitivity [18].

The choice between data acquisition modes also impacts performance. Both Data-Dependent Acquisition (DDA) and Data-Independent Acquisition (DIA) have complementary advantages for obtaining MS2 information for database matching [19]. DDA provides cleaner MS/MS spectra for library matching, while DIA ensures fragmentation data for all detected ions, reducing the risk of missing important compounds [19].

Table 3: Performance Metrics of NTS Data Processing Workflows

| Performance Metric | MZmine3 (FP-based) | ROIMCR (CP-based) |
|---|---|---|
| Temporal Variation Capture | 20.5–31.8% variance | 35.5–70.6% variance |
| Treatment Effect Sensitivity | Higher (11.6–22.8% variance) | Lower |
| False Positive Rate | Increased susceptibility | Reduced susceptibility |
| Reproducibility | Moderate | Superior |
| Data Dimensionality | Feature table (peaks) | Component profiles |
| Best Application | Treatment effect studies | Temporal trend analysis |

Regulatory and Research Applications

The three screening workflows have distinct but complementary roles in environmental monitoring and chemicals management. Target screening remains essential for regulatory compliance, such as monitoring Priority Substances under the EU Water Framework Directive [1]. Suspect screening supports chemical prioritization and regulatory processes, such as adding chemicals to the WFD Watch List or re-evaluating substances under REACH [1]. Non-target screening serves as a discovery tool for identifying previously unknown contaminants and transformation products, as demonstrated by the detection of significant emissions of quaternary phosphonium compounds in the Rhine River [1] [10].

The retrospective analysis capability of stored HRMS data represents a particularly powerful application of NTS. As digital archives of full-scan HRMS analyses, these datasets can be re-interrogated as new concerns emerge or new knowledge about specific substances develops [1]. This future-proofs environmental monitoring programs against newly identified threats without requiring re-sampling or re-analysis.

The hierarchical framework of target, suspect, and non-target screening represents a comprehensive strategy for addressing the immense complexity of chemical mixtures in environmental systems. While each approach has distinct strengths and limitations, their integrated implementation provides the most powerful solution for contemporary environmental analytical challenges. Target screening delivers the quantitative rigor required for regulatory compliance, suspect screening expands monitoring scope to hundreds or thousands of potential contaminants, and non-target screening enables discovery of previously unrecognized environmental contaminants.

The implementation of harmonized protocols, quality control procedures, and data sharing infrastructures will be crucial for advancing these screening approaches from research tools to routine monitoring applications [1]. Future developments in HRMS instrumentation, data processing algorithms, and predictive modeling will further enhance the sensitivity, scope, and efficiency of all three screening paradigms. As these methodologies continue to mature, their integration into regulatory frameworks will be essential for comprehensive chemicals management and effective environmental protection.

High-Resolution Mass Spectrometry (HRMS) has emerged as a pivotal analytical technology supporting diverse regulatory frameworks across environmental and pharmaceutical domains. Its unparalleled ability to perform precise quantitative analysis and comprehensive non-target screening makes it uniquely positioned to address complex challenges within the European Water Framework Directive (WFD), the Regulation for the registration, evaluation, authorisation and restriction of chemicals (REACH), and the stringent characterization of biosimilar medicinal products. In environmental monitoring, HRMS enables the detection and identification of previously unknown contaminants, thereby strengthening the evidence base for regulatory action [1]. In pharmaceutical development, advanced HRMS platforms provide the rigorous analytical data required to demonstrate biosimilarity, supporting a paradigm shift toward more efficient regulatory pathways [20]. This application note details how HRMS methodologies underpin these critical regulatory areas, providing detailed protocols and data interpretation frameworks for researchers and regulatory professionals.

Regulatory Drivers for HRMS Application

Environmental Monitoring: The Water Framework Directive (WFD) and REACH

Modern environmental regulation requires a proactive approach to chemical risk management, moving beyond a limited set of predefined target substances.

- The WFD Challenge: The WFD monitors 45 Priority Substances (PS) and an average of 55 River Basin Specific Pollutants (RBSP) to assess the chemical status of water bodies. However, research studies using HRMS routinely detect hundreds to thousands of substances in environmental samples, revealing a significant monitoring gap [1].

- The REACH Connection: Data on environmental occurrence, gathered via HRMS, can feed back into the chemical registration process. REACH Annex XIII now allows the use of environmental monitoring data in a weight-of-evidence approach for assessing substance persistence and bioaccumulation [1].

- The NTS Solution: Non-target screening (NTS) with HRMS allows for the untargeted detection of thousands of organic chemicals in a single analysis, creating a "digital archive" of the sample that can be re-interrogated as new concerns emerge [1]. This capability is crucial for identifying new substances for the WFD's "Watch List" mechanism and for triggering substance evaluation under REACH.

Pharmaceutical Development: Biosimilar Characterization

The global regulatory landscape for biosimilars is evolving toward a more streamlined, science-driven approach that heavily relies on advanced analytical characterization.

- The Regulatory Shift: Regulatory agencies, including the European Medicines Agency (EMA) and the U.S. Food and Drug Administration (FDA), are moving toward a "tailored clinical approach" [20]. For some biosimilars, comparative clinical efficacy trials (Phase III) may be waived if comprehensive analytical and functional data demonstrate a high degree of similarity to the reference product.

- The HRMS Imperative: This shift places immense importance on robust Chemistry, Manufacturing, and Controls (CMC) packages and analytical comparability. HRMS is critical for characterizing the biosimilar's structure, including primary amino acid sequence and complex post-translational modifications (e.g., glycosylation), with sufficient precision to prove "no clinically meaningful differences" from the reference product [21] [20].

- Global Harmonization Challenges: Regulatory pathways for biosimilars, while maturing, still lack full international harmonization. Differences in requirements for clinical studies, extrapolation of indications, and interchangeability between regions like the EU, US, and Latin America complicate global market entry [21]. A robust, HRMS-driven analytical similarity package forms the universal foundation for any regulatory submission.

HRMS-Based Methodologies and Protocols

Comprehensive Non-Target Screening for Environmental Monitoring

This protocol outlines a robust workflow for the identification of unknown organic pollutants in water samples, supporting WFD and REACH regulatory goals.

Materials and Reagents:

- Water Samples: Surface water, groundwater, or effluent samples.

- Solid Phase Extraction (SPE) Cartridges: e.g., Oasis HLB or equivalent.

- LC-MS Grade Solvents: Methanol, Acetonitrile, and Water with 0.1% Formic Acid.

- Internal Standard Mixture: A suite of stable isotope-labeled compounds for quality control.

- HRMS System: LC coupled to a Q-TOF or Orbitrap mass spectrometer.

Experimental Workflow:

The following diagram illustrates the comprehensive NTS workflow, from sample preparation to final reporting.

Detailed Procedural Steps:

Sample Preparation: Collect water samples in pre-cleaned glass bottles. Acidify if necessary and filter through 0.45 μm glass fiber filters. Perform solid-phase extraction (SPE) using a hydrophilic-lipophilic balanced sorbent. Elute with a suitable solvent (e.g., methanol), evaporate to dryness under a gentle nitrogen stream, and reconstitute in the initial mobile phase for LC-MS analysis [5] [22].

HRMS Data Acquisition:

- Chromatography: Utilize reversed-phase C18 column with a water/acetonitrile or water/methanol gradient. Maintain column temperature at 40°C.

- Ionization: Employ electrospray ionization (ESI) in both positive and negative modes to maximize compound coverage.

- Mass Spectrometry: Acquire data in full-scan mode with a resolution of >50,000 (FWHM) to ensure accurate mass measurements. Simultaneously, use data-dependent acquisition (DDA) to fragment the most intense ions, generating MS/MS spectra for structural elucidation [23] [22] [15].
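The resolution requirement can be related to the mass difference that must be separated. To a first approximation, the FWHM resolving power needed is R = m/Δm. As a worked illustration with hypothetical numbers, two ions near m/z 300 that differ by 5 mDa need R ≈ 60,000.

For Orbitrap analyzers, resolving power also falls roughly with the square root of m/z. A setting quoted at m/z 200 is therefore lower at higher m/z; Q-TOF resolution varies much less with m/z.

```python
import math


def required_resolving_power(mz: float, delta_mz: float) -> float:
    """First-approximation FWHM resolving power needed to separate peaks delta_mz apart."""
    return mz / delta_mz


def orbitrap_resolution_at(mz: float, r_ref: float, mz_ref: float = 200.0) -> float:
    """Orbitrap resolving power scales roughly with 1/sqrt(m/z)."""
    return r_ref * math.sqrt(mz_ref / mz)


# Hypothetical case: two ions near m/z 300 separated by 5 mDa
print(required_resolving_power(300.0, 0.005))
# A 60,000 (at m/z 200) setting evaluated at m/z 800
print(orbitrap_resolution_at(800.0, r_ref=60_000))
```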

Data Processing and Evaluation:

- Use software (e.g., patRoon, XCMS) for feature detection, peak picking, and alignment.

- Perform suspect screening against curated databases (e.g., NORMAN Suspect List Exchange, MassBank) [24].

- For true non-targets, use the software to generate molecular formulas from the accurate mass and isotope patterns.

- Interpret MS/MS spectra and propose structures, potentially using in-silico fragmentation tools [1] [22].

Prioritization and Identification:

- Prioritization: Apply a risk-based scoring system, such as the Toxicological Priority Index (ToxPi), which integrates criteria like detection frequency, relative abundance, persistence, bioaccumulation potential, and predicted toxicity [5].

- Identification Confidence: Follow the Schymanski scale for reporting identification confidence, from Level 1 (confirmed structure) to Level 5 (exact mass of interest) [22].
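The ToxPi idea of combining several evidence streams into one score can be sketched in simplified form. Each slice, such as detection frequency, relative abundance or predicted toxicity, is min-max scaled to 0 to 1 across the features being ranked. The overall score is then the weight-normalized sum of the slices. This mirrors the general ToxPi approach but omits the options of the actual ToxPi software, such as alternative scaling transforms and missing-value handling. The slice names, values and weights below are made up for illustration.

```python
from typing import Dict


def toxpi_scores(data: Dict[str, Dict[str, float]],
                 weights: Dict[str, float]) -> Dict[str, float]:
    """data: feature -> {slice: raw value}, where higher raw values mean higher concern.

    Each slice is min-max scaled to 0-1 across features, then slices are combined
    as a weighted average, giving an overall score between 0 and 1.
    """
    features = list(data)
    scaled = {f: {} for f in features}
    for s in weights:
        values = [data[f][s] for f in features]
        lo, hi = min(values), max(values)
        for f in features:
            scaled[f][s] = 0.0 if hi == lo else (data[f][s] - lo) / (hi - lo)
    total_weight = sum(weights.values())
    return {f: sum(w * scaled[f][s] for s, w in weights.items()) / total_weight
            for f in features}


# Hypothetical features with made-up slice values
data = {
    "feature_A": {"detection_frequency": 1.0, "relative_abundance": 100.0, "predicted_toxicity": 0.2},
    "feature_B": {"detection_frequency": 0.5, "relative_abundance": 10.0, "predicted_toxicity": 0.9},
    "feature_C": {"detection_frequency": 0.0, "relative_abundance": 1.0, "predicted_toxicity": 0.0},
}
weights = {"detection_frequency": 1.0, "relative_abundance": 1.0, "predicted_toxicity": 2.0}
scores = toxpi_scores(data, weights)
print(sorted(scores, key=scores.get, reverse=True))
```

With toxicity weighted double, feature_B outranks the more frequent and more abundant feature_A. The choice of weights is therefore itself a prioritization decision that should be reported.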

Analytical Similarity Assessment for Biosimilars

This protocol describes the use of HRMS for the comprehensive structural characterization of a biosimilar candidate against its reference product.

Materials and Reagents:

- Reference Product and Biosimilar Candidate: Multiple lots of each.

- Denaturants and Reductants: Guanidine HCl, Dithiothreitol (DTT).

- Enzymes: Trypsin, IdeS, PNGase F.

- LC-MS Grade Solvents: Water, Acetonitrile with 0.1% Formic Acid.

- HRMS System: LC coupled to high-resolution mass spectrometer (Orbitrap preferred).

Experimental Workflow:

The following diagram outlines the key steps in the analytical similarity assessment.

Detailed Procedural Steps:

Intact Mass Analysis:

- Desalt the intact protein using a rapid buffer exchange cartridge or column.

- Inject onto a reversed-phase or size-exclusion column coupled to the HRMS.

- Use ESI and deconvolute the resulting charge-state envelope to determine the molecular mass (typically the average mass for large proteins, or the monoisotopic mass where isotopes are resolved). Compare the biosimilar and reference product profiles [15].
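The core of charge-envelope deconvolution is that an [M+zH]z+ ion appears at m/z = (M + z·m_p)/z. Each envelope peak can therefore be converted back to a neutral mass, M = z(m/z - m_p), with m_p = 1.007276 Da. For two adjacent peaks with charges z and z + 1, the charge follows from z = (m2 - m_p)/(m1 - m2). The sketch assumes a clean envelope of consecutive charge states and averages the per-peak mass estimates. Production deconvolution algorithms in vendor software are considerably more sophisticated. The 148,000 Da protein is hypothetical.

```python
from typing import List

PROTON = 1.007276  # Da


def charge_from_adjacent(mz_lower_charge: float, mz_higher_charge: float) -> int:
    """Charge z of the higher-m/z peak, given its neighbour carrying charge z + 1.

    From z * (m1 - p) = (z + 1) * (m2 - p)  =>  z = (m2 - p) / (m1 - m2).
    """
    return round((mz_higher_charge - PROTON) / (mz_lower_charge - mz_higher_charge))


def neutral_mass(mz: float, z: int) -> float:
    """Neutral mass M of an [M+zH]z+ ion."""
    return z * (mz - PROTON)


def deconvolute(envelope: List[float]) -> float:
    """envelope: m/z values of consecutive charge states, sorted by decreasing m/z."""
    z0 = charge_from_adjacent(envelope[0], envelope[1])
    masses = [neutral_mass(mz, z0 + k) for k, mz in enumerate(envelope)]
    return sum(masses) / len(masses)


# Simulated envelope for a hypothetical 148,000 Da protein at charges 50-52
envelope = [(148_000.0 + z * PROTON) / z for z in (50, 51, 52)]
print(round(deconvolute(envelope), 3))
```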

Peptide Mapping:

- Denature, reduce, and alkylate the protein. Desalt if necessary.

- Digest with a specific protease (e.g., trypsin) and/or other enzymes (e.g., IdeS for antibodies) to generate peptides.

- Analyze the digested sample using LC-HRMS/MS with a C18 column and a water/acetonitrile gradient.

- Use a data-dependent method to acquire MS and MS/MS spectra for peptide identification.

- Process data using software to identify peptides, confirm the amino acid sequence, and locate post-translational modifications (PTMs) such as oxidation, deamidation, and most critically, glycosylation [20] [15].
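Peptide maps are usually summarized by sequence coverage, the percentage of residues covered by identified peptides, which is compared against coverage targets such as >95%. The sketch below performs an in silico tryptic digest using the classic rule: cleave C-terminal to K or R, but not before P, with no missed cleavages allowed. It then computes coverage from a set of peptides assumed to have been identified. The sequence is a short made-up example, not a real therapeutic protein.

```python
from typing import Iterable, List


def tryptic_digest(seq: str) -> List[str]:
    """In silico trypsin digest: cleave after K or R unless the next residue is P.

    No missed cleavages are allowed in this simplified sketch.
    """
    peptides, start = [], 0
    for i, residue in enumerate(seq):
        next_residue = seq[i + 1] if i + 1 < len(seq) else ""
        if residue in "KR" and next_residue != "P":
            peptides.append(seq[start:i + 1])
            start = i + 1
    if start < len(seq):  # C-terminal peptide not ending in K/R
        peptides.append(seq[start:])
    return peptides


def sequence_coverage(seq: str, identified: Iterable[str]) -> float:
    """Percentage of residues covered by at least one identified peptide."""
    covered = [False] * len(seq)
    for pep in identified:
        pos = seq.find(pep)
        while pos != -1:
            for i in range(pos, pos + len(pep)):
                covered[i] = True
            pos = seq.find(pep, pos + 1)
    return 100.0 * sum(covered) / len(seq)


protein = "MAKPEVLRSTGKWFR"  # made-up sequence
print(tryptic_digest(protein))
print(sequence_coverage(protein, {"MAKPEVLR", "WFR"}))
```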

Glycan Analysis:

- Release N-linked glycans enzymatically using PNGase F.

- Label the released glycans with a fluorescent tag (e.g., 2-AB).

- Analyze using LC-HRMS or LC-fluorescence, comparing the glycan profile (glycoform distribution) of the biosimilar to the reference product [21].

Data Analysis and Similarity Assessment:

- Integrate data from all experiments to perform a side-by-side comparative analysis.

- Use orthogonal methods (e.g., functional bioassays, capillary electrophoresis) to complement HRMS findings.

- The overall goal is to demonstrate that the biosimilar is highly similar to the reference product despite expected microheterogeneity, with no clinically meaningful differences in structural or quality attributes [21] [20].

Data Presentation and Interpretation

Table 1: Key regulatory and technical parameters for HRMS applications.

| Application Area | Key Regulatory/Technical Parameter | Typical Requirement or Value | Purpose |
|---|---|---|---|
| Environmental NTS | HRMS Mass Resolution | >50,000 FWHM [23] | Sufficient resolution to separate isobaric compounds and determine elemental composition. |
| | Mass Accuracy | < 5 ppm [23] [15] | Confident assignment of molecular formula. |
| | Identification Confidence | Schymanski Level 1-5 [22] | Standardized reporting of identification certainty for new/emerging substances. |
| Biosimilar Characterization | Intact Mass Accuracy | < 50 Da (for large proteins) [15] | Confirmation of correct primary structure and major PTMs. |
| | Peptide Mapping Coverage | >95% [20] | Comprehensive verification of amino acid sequence and identification of PTM sites. |
| | Regulatory Goal | "No clinically meaningful differences" [21] [20] | Foundation for streamlined clinical development and regulatory approval. |
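The two HRMS figures of merit in the table reduce to simple arithmetic: mass error in ppm, and resolving power R = m/Δm. The sketch below computes both. The example m/z values are hypothetical, and treating the required FWHM peak width as equal to the m/z difference is a rough approximation, not a formal resolution criterion.

```python
"""Sketch: mass-accuracy (ppm) and resolving-power arithmetic for HRMS."""


def ppm_error(measured_mz: float, theoretical_mz: float) -> float:
    """Mass error in parts per million relative to the theoretical m/z."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def within_tolerance(measured_mz: float, theoretical_mz: float, tol_ppm: float = 5.0) -> bool:
    """True if the absolute mass error is within tol_ppm (default: the < 5 ppm criterion)."""
    return abs(ppm_error(measured_mz, theoretical_mz)) <= tol_ppm


def required_resolving_power(mz1: float, mz2: float) -> float:
    """Rough resolving power R = m / delta_m needed to distinguish two nearby ions.

    Uses the mean m/z and treats the required FWHM peak width as the m/z difference.
    """
    return ((mz1 + mz2) / 2) / abs(mz1 - mz2)


if __name__ == "__main__":
    # Hypothetical values for illustration
    print(f"{ppm_error(237.1030, 237.1020):.2f} ppm")
    print(within_tolerance(237.1030, 237.1020))
    print(f"R needed: {required_resolving_power(200.000, 200.004):,.0f}")
```

For instance, two ions near m/z 200 that differ by 0.004 need R of roughly 50,000, which is consistent with the >50,000 FWHM requirement above.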

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key reagents, materials, and software solutions for HRMS-based regulatory studies.

| Item Name | Function/Description | Application Context |
|---|---|---|
| Hydrophilic-Lipophilic Balanced (HLB) SPE Cartridges | Broad-spectrum extraction of diverse organic pollutants from water samples. | Environmental NTS for WFD/REACH [22] |
| Stable Isotope-Labeled Internal Standards | Correction for matrix effects and analyte loss during sample preparation; quality control. | Both Environmental NTS and Biosimilar Analysis |
| NORMAN Suspect List Exchange Database | A collaborative repository of suspect lists for emerging environmental contaminants. | Environmental NTS for suspect screening [24] |
| patRoon Software Platform | Open-source software for structured non-target screening data processing. | Environmental NTS workflow management [22] |
| PNGase F Enzyme | Enzyme that releases N-linked glycans from glycoproteins for detailed analysis. | Biosimilar Characterization (Glycan Profiling) |
| Trypsin Protease | High-purity enzyme for specific digestion of proteins into peptides for sequence mapping. | Biosimilar Characterization (Peptide Mapping) |
| ToxPi (Toxicological Priority Index) Framework | A visual and computational framework for integrating multiple data streams to prioritize chemicals based on risk. | Environmental NTS for risk-based prioritization [5] |
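Suspect screening against a list such as those in the NORMAN Suspect List Exchange comes down to comparing measured feature m/z values with the expected adduct m/z of each suspect within a ppm window. The sketch below considers only [M+H]+ ions and a hypothetical two-entry list; it does not read the actual NORMAN file formats, and a real workflow would also use other adducts, isotope patterns, retention time, and MS/MS evidence before assigning a confidence level.

```python
"""Sketch: suspect screening of HRMS features against a suspect list by accurate mass."""

PROTON_MASS = 1.007276  # Da; only the [M+H]+ adduct is considered here

# Hypothetical two-entry suspect list (name -> neutral monoisotopic mass in Da).
# Real NORMAN SLE lists are much larger and are not parsed by this sketch.
SUSPECTS = {
    "carbamazepine": 236.094963,  # C15H12N2O
    "caffeine": 194.080376,  # C8H10N4O2
}


def match_suspects(feature_mzs: list[float], suspects: dict[str, float],
                   tol_ppm: float = 5.0) -> list[tuple[float, str, float]]:
    """Return (feature m/z, suspect name, ppm error) for every match within tol_ppm."""
    hits = []
    for mz in feature_mzs:
        for name, neutral_mass in suspects.items():
            expected_mz = neutral_mass + PROTON_MASS
            error_ppm = (mz - expected_mz) / expected_mz * 1e6
            if abs(error_ppm) <= tol_ppm:
                hits.append((mz, name, round(error_ppm, 2)))
    return hits


if __name__ == "__main__":
    features = [237.1024, 195.0877, 300.0000]  # hypothetical feature list
    for hit in match_suspects(features, SUSPECTS):
        print(hit)
```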

High-Resolution Mass Spectrometry stands as a cornerstone technology for addressing some of the most pressing challenges in modern environmental and pharmaceutical regulation. Its application in non-target screening provides the comprehensive data necessary to move beyond a limited list of target pollutants under the WFD and REACH, enabling a more proactive and protective approach to chemical risk management. Simultaneously, its unparalleled analytical power is driving a scientific and regulatory evolution in the biosimilar sector, where demonstrating structural similarity at the molecular level can form the basis for abbreviated clinical development pathways. The protocols and frameworks outlined in this document provide a foundation for researchers to generate robust, regulatory-grade data that supports the protection of human health and the environment, as well as the efficient development of safe and effective biologic medicines.

From Data to Discovery: Essential Workflows and Real-World Applications of HRMS-NTS

Sample Preparation and Chromatographic Separation for Complex Matrices

The comprehensive analysis of environmental pollutants requires advanced analytical techniques capable of identifying both known and unknown contaminants in complex sample matrices. High-resolution mass spectrometry (HRMS) has emerged as a powerful tool for non-target screening (NTS), enabling the detection and identification of thousands of organic micropollutants without prior knowledge of their identity [1]. This application note details standardized protocols for sample preparation and chromatographic separation tailored for the analysis of complex environmental samples, supporting the broader research objectives in environmental pollutant characterization and risk assessment.

The challenge in analyzing complex environmental samples lies in the vast number of potential chemical contaminants with varying physicochemical properties present at trace concentrations alongside interfering matrix components [25] [26]. Effective strategies must address these challenges through optimized sample preparation to isolate compounds of interest and advanced separation techniques to resolve complex mixtures prior to HRMS detection.

Sample Preparation Protocols for Complex Matrices

Proper sample preparation is critical for successful NTS, as it directly impacts analyte recovery, matrix effects, and overall method sensitivity. The following protocols have been optimized for environmental matrices including water, biosolids, and biota samples.

Solid-Phase Extraction (SPE) for Water Samples

Protocol for Comprehensive Pollutant Extraction [26]

Sample Collection and Preservation: Collect water samples in pre-cleaned amber glass containers. Adjust pH to 7.0 ± 0.5 if necessary and store at 4°C until processing (preferably within 24 hours).

SPE Cartridge Preparation: Condition Oasis HLB cartridges (200 mg, 6 cc) sequentially with 5 mL methanol followed by 5 mL ultrapure water at a flow rate of approximately 5 mL/min. Do not allow the sorbent to dry completely.

Sample Loading: Pass 500 mL to 1000 mL of water sample through the cartridge at a controlled flow rate of 5-10 mL/min using a vacuum manifold system. For highly contaminated samples, reduce sample volume to 100-250 mL.

Cartridge Washing: After sample loading, wash with 5-10 mL of ultrapure water to remove interfering salts and polar matrix components. Allow the cartridge to run dry for 5 minutes under vacuum.

Analyte Elution: Elute retained compounds with 2 × 5 mL of methanol into a clean collection tube. Alternatively, for comprehensive coverage, use a methanol:dichloromethane (1:1, v/v) mixture.

Extract Concentration: Evaporate the eluate to near dryness under a gentle nitrogen stream at 30-40°C. Reconstitute the residue in 100-500 μL of initial mobile phase compatible with the subsequent chromatographic separation (typically methanol or acetonitrile with 0.1% formic acid).

Sample Storage: Store prepared extracts at -20°C until analysis (preferably within 48 hours).
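The volumes in this protocol determine the enrichment achieved by SPE, and hence how an extract concentration translates back to the water sample. The sketch below shows that arithmetic. The function names are hypothetical, and the recovery correction is an assumed input (for example, from spike experiments), not something the protocol prescribes.

```python
"""Sketch: SPE concentration factor and back-calculation to the water sample."""


def concentration_factor(sample_volume_ml: float, final_volume_ul: float) -> float:
    """Ratio of sample volume loaded to final reconstituted extract volume."""
    return sample_volume_ml * 1000.0 / final_volume_ul


def concentration_in_sample(extract_conc: float, conc_factor: float,
                            recovery_fraction: float = 1.0) -> float:
    """Back-calculate the water concentration from the extract concentration.

    Units follow extract_conc (e.g. ng/mL in, ng/mL out). recovery_fraction is an
    assumed or separately measured SPE recovery (1.0 = no correction).
    """
    return extract_conc / (conc_factor * recovery_fraction)


if __name__ == "__main__":
    # Range of the protocol above: 500-1000 mL loaded, 100-500 uL final volume
    print(concentration_factor(500, 500))   # lowest enrichment in that range
    print(concentration_factor(1000, 100))  # highest enrichment in that range
    # Hypothetical extract concentration of 50 ng/mL, 90 % recovery
    print(concentration_in_sample(50.0, concentration_factor(500, 500), 0.9))
```

With the stated volumes, enrichment spans roughly 1,000- to 10,000-fold, which is why the reduced volumes for highly contaminated samples help avoid overloading the cartridge and the detector.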

Table 1: SPE Method Variations for Different Analyte Classes

| Analyte Class | Recommended Sorbent | Sample Volume | Elution Solvent | Average Recovery (%) |
|---|---|---|---|---|
| Polar Pharmaceuticals | Oasis HLB | 500 mL | Methanol | 85-105 |
| PFAS Compounds | WAX + Graphitized Carbon | 250 mL | Ammonium hydroxide in methanol | 75-95 |
| Pesticides | C18 + PS-DVB | 500 mL | Ethyl acetate | 80-100 |
| Very Polar/Ionic Compounds | Mixed-mode anion/cation exchange | 1000 mL | Methanol with 2% formic acid | 60-85 |
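Recovery values like those in the table are typically obtained from matrix spikes: the amount found in a spiked sample, minus the amount already present, divided by the amount added. The sketch below computes this and checks it against the table's ranges. The function names and the spiking results are hypothetical.

```python
"""Sketch: matrix-spike recovery checked against the ranges in the table above."""

# Average recovery ranges (%) as listed in the SPE table
RECOVERY_RANGES = {
    "Polar Pharmaceuticals": (85.0, 105.0),
    "PFAS Compounds": (75.0, 95.0),
    "Pesticides": (80.0, 100.0),
    "Very Polar/Ionic Compounds": (60.0, 85.0),
}


def spike_recovery(spiked_result: float, unspiked_result: float, amount_added: float) -> float:
    """Recovery (%) = (spiked - unspiked) / amount added * 100, all in the same units."""
    return 100.0 * (spiked_result - unspiked_result) / amount_added


def within_expected_range(recovery_pct: float, analyte_class: str) -> bool:
    """True if the recovery falls inside the tabulated range for the analyte class."""
    low, high = RECOVERY_RANGES[analyte_class]
    return low <= recovery_pct <= high


if __name__ == "__main__":
    # Hypothetical results (ng/L): 95 in spiked sample, 5 in unspiked, 100 added
    rec = spike_recovery(95.0, 5.0, 100.0)
    print(rec, within_expected_range(rec, "Polar Pharmaceuticals"))
```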

Quick, Easy, Cheap, Effective, Rugged, and Safe (QuEChERS) Method for Solid Samples

Protocol for Biosolids and Biota Samples [26]

Sample Homogenization: Homogenize 5 g of wet biosolid or biota sample with 10 mL acetonitrile in a 50 mL centrifuge tube.

Salting Out: Add QuEChERS extraction packet containing 4 g MgSO₄, 1 g NaCl, 1 g sodium citrate, and 0.5 g disodium hydrogen citrate sesquihydrate. Shake vigorously for 1 minute.

Centrifugation: Centrifuge at 4000 × g for 5 minutes to separate phases.

Cleanup: Transfer 1 mL of the acetonitrile supernatant to a d-SPE tube containing 150 mg MgSO₄, 25 mg PSA, and 25 mg C18 sorbent. Shake for 30 seconds and centrifuge at 4000 × g for 2 minutes.

Concentration: Transfer the cleaned extract to an autosampler vial and concentrate under nitrogen if necessary. For very low concentration analytes, evaporate to 100 μL and reconstitute in 50 μL acetonitrile.
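Because NTS results are often normalised to sample mass, it helps to know how much original sample each millilitre of extract, and each injection, represents. The sketch below does that arithmetic for the 5 g / 10 mL acetonitrile ratio used above. It assumes the acetonitrile volume is unchanged by partitioning (an approximation for wet samples), and the 5 μL injection volume is an assumption borrowed from the RPLC method, not part of this protocol.

```python
"""Sketch: matrix-equivalent arithmetic for the QuEChERS extract."""


def matrix_equivalent_g_per_ml(sample_mass_g: float, extraction_volume_ml: float,
                               concentration_factor: float = 1.0) -> float:
    """Grams of original sample represented by 1 mL of final extract.

    Assumes the acetonitrile volume is unchanged by partitioning (an approximation
    for wet samples) and that concentration_factor reflects any evaporation step.
    """
    return sample_mass_g / extraction_volume_ml * concentration_factor


def injected_matrix_mg(g_per_ml: float, injection_volume_ul: float) -> float:
    """Milligrams of sample equivalent injected (g/mL x uL = mg)."""
    return g_per_ml * injection_volume_ul


if __name__ == "__main__":
    eq = matrix_equivalent_g_per_ml(5.0, 10.0)  # 5 g sample, 10 mL acetonitrile
    print(eq, injected_matrix_mg(eq, 5.0))      # assumed 5 uL injection
```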

Alternative Sample Preparation Techniques

Electromembrane Extraction (EME) [26]

EME shows particular promise for ionic and ionizable analytes. The protocol involves:

- Place the sample (donor solution) in a vial containing a hollow fiber whose wall pores are impregnated with an organic solvent (typically 2-nitrophenyl octyl ether) that forms the supported liquid membrane.

- Apply an electrical potential (typically 10-300 V) across the membrane to facilitate extraction of charged analytes into an acceptor solution.

- Typical extraction time: 10-30 minutes with significantly reduced solvent consumption compared to traditional techniques.
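EME performance is usually summarised by extraction recovery (the fraction of analyte transferred from donor to acceptor) and enrichment factor (the ratio of final acceptor to initial donor concentration). The sketch below computes both; the volumes and concentrations are hypothetical and the function names are illustrative.

```python
"""Sketch: extraction recovery and enrichment factor for electromembrane extraction."""


def eme_recovery_pct(donor_volume_ml: float, donor_initial_conc: float,
                     acceptor_volume_ml: float, acceptor_final_conc: float) -> float:
    """Recovery (%) = amount in acceptor / amount initially in donor * 100."""
    return 100.0 * (acceptor_volume_ml * acceptor_final_conc) / (
        donor_volume_ml * donor_initial_conc)


def enrichment_factor(donor_initial_conc: float, acceptor_final_conc: float) -> float:
    """Ratio of final acceptor concentration to initial donor concentration."""
    return acceptor_final_conc / donor_initial_conc


if __name__ == "__main__":
    # Hypothetical volumes and concentrations (same concentration units in both phases)
    print(eme_recovery_pct(1.0, 10.0, 0.025, 320.0), enrichment_factor(10.0, 320.0))
```

The two quantities are linked: the enrichment factor equals the recovery fraction times the donor-to-acceptor volume ratio, so 80 % recovery with a 40:1 volume ratio gives an enrichment factor of 32.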

Pyrolysis/Thermal Desorption GC-HRMS [27]

For complex solid matrices like plastics and biosolids:

- Weigh 0.5-1 mg of sample into a pyrolysis cup.

- For thermal desorption: Heat at 300°C for 1-2 minutes to volatilize additives without degrading polymer matrix.

- For pyrolysis: Ramp to 600-800°C to decompose polymeric materials.

- Directly transfer desorbed/pyrolyzed compounds to GC-HRMS system for analysis.

Chromatographic Separation Methods

Effective chromatographic separation is essential for reducing matrix effects and resolving isobaric compounds prior to HRMS detection. The following methods have been optimized for NTS of environmental pollutants.

Reversed-Phase Liquid Chromatography (RPLC)

Standard Protocol for Medium to Non-Polar Compounds [25] [26]

- Column: C18 stationary phase (100 × 2.1 mm, 1.7-1.8 μm particle size)

- Mobile Phase A: Water with 0.1% formic acid

- Mobile Phase B: Acetonitrile or methanol with 0.1% formic acid

- Flow Rate: 0.3 mL/min

- Temperature: 40°C

- Injection Volume: 5-10 μL

Gradient Program:

| Time (min) | %B | Description |
|---|---|---|
| 0 | 5 | Initial conditions |
| 1 | 5 | Hold for equilibration |
| 15 | 95 | Linear gradient |
| 20 | 95 | Wash at high organic |
| 20.1 | 5 | Return to initial conditions |
| 25 | 5 | Re-equilibration |
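A gradient table like this defines a piecewise-linear program, so the mobile-phase composition at any time can be obtained by linear interpolation between nodes. The sketch below encodes the RPLC program above and also computes the gradient slope (for example, 90 %B over 14 min, about 6.4 %B/min). The function names are illustrative, and the sketch ignores the instrument's dwell volume, which delays the composition actually reaching the column.

```python
"""Sketch: %B at any time in a piecewise-linear LC gradient program."""

from bisect import bisect_right

# (time in min, %B) pairs transcribed from the RPLC gradient table above
RPLC_GRADIENT = [(0.0, 5.0), (1.0, 5.0), (15.0, 95.0), (20.0, 95.0), (20.1, 5.0), (25.0, 5.0)]


def percent_b_at(time_min: float, program: list[tuple[float, float]]) -> float:
    """Linearly interpolate mobile-phase composition between gradient nodes."""
    times = [t for t, _ in program]
    if time_min <= times[0]:
        return program[0][1]
    if time_min >= times[-1]:
        return program[-1][1]  # hold the final composition after the program ends
    i = bisect_right(times, time_min)  # times[i - 1] <= time_min < times[i]
    (t0, b0), (t1, b1) = program[i - 1], program[i]
    return b0 + (b1 - b0) * (time_min - t0) / (t1 - t0)


def gradient_slope(program: list[tuple[float, float]], start: int, end: int) -> float:
    """Average change in %B per minute between two gradient nodes."""
    (t0, b0), (t1, b1) = program[start], program[end]
    return (b1 - b0) / (t1 - t0)


if __name__ == "__main__":
    for t in (0.5, 8.0, 17.0, 22.0):
        print(t, percent_b_at(t, RPLC_GRADIENT))
    print(f"{gradient_slope(RPLC_GRADIENT, 1, 2):.2f} %B/min")
```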

Hydrophilic Interaction Liquid Chromatography (HILIC)

Protocol for Very Polar and Ionic Compounds [26]

- Column: HILIC stationary phase (150 × 2.1 mm, 1.7-1.8 μm particle size)

- Mobile Phase A: Acetonitrile with 0.1% formic acid

- Mobile Phase B: Water with 0.1% formic acid

- Flow Rate: 0.4 mL/min

- Temperature: 35°C

- Injection Volume: 2-5 μL

Gradient Program:

| Time (min) | %A | Description |
|---|---|---|
| 0 | 95 | High organic for retention |
| 2 | 95 | Hold for weak elution |
| 15 | 50 | Linear gradient to aqueous |
| 18 | 50 | Wash |
| 18.1 | 95 | Return to initial conditions |
| 23 | 95 | Re-equilibration |
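HILIC columns generally need more re-equilibration than reversed-phase columns, so it is useful to express the re-equilibration step in column volumes. The sketch below estimates the column's mobile-phase volume from its geometry and counts how many volumes are pumped between 18.1 and 23 min at 0.4 mL/min. The total porosity of 0.65 is an assumed typical value, not a measured property of any specific column, and the system dwell volume is ignored.

```python
"""Sketch: estimate column volumes pumped during HILIC re-equilibration."""

import math


def column_volume_ml(length_mm: float, inner_diameter_mm: float,
                     total_porosity: float = 0.65) -> float:
    """Approximate mobile-phase volume of a packed column.

    total_porosity = 0.65 is an assumed typical value, not a measured one.
    """
    radius_cm = inner_diameter_mm / 20.0  # mm diameter -> cm radius
    length_cm = length_mm / 10.0
    return math.pi * radius_cm ** 2 * length_cm * total_porosity  # cm^3 == mL


def equilibration_column_volumes(duration_min: float, flow_ml_min: float,
                                 length_mm: float, inner_diameter_mm: float) -> float:
    """Number of column volumes delivered during a re-equilibration step."""
    return duration_min * flow_ml_min / column_volume_ml(length_mm, inner_diameter_mm)


if __name__ == "__main__":
    # HILIC method above: 150 x 2.1 mm, 0.4 mL/min, re-equilibration 18.1-23 min
    print(f"{column_volume_ml(150, 2.1):.3f} mL per column volume")
    print(f"{equilibration_column_volumes(23 - 18.1, 0.4, 150, 2.1):.1f} column volumes")
```

Under these assumptions the 4.9 min step delivers roughly six column volumes; if retention times drift between injections, lengthening this step is a common first adjustment.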

Two-Dimensional Liquid Chromatography (2D-LC)

Comprehensive Protocol for Complex Mixtures [26]

- First Dimension: HILIC separation (150 × 1.0 mm)

- Second Dimension: RPLC separation (50 × 4.6 mm)

- Modulation Time: 30-60 seconds

- Transfer: two-position, ten-port switching valve

- Analysis Time: 60-120 minutes for comprehensive analysis

This configuration provides orthogonality, with HILIC separating by polarity and RPLC by hydrophobicity.
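Two quick calculations clarify what these settings imply. The number of first-dimension fractions is the analysis time divided by the modulation time. The ideal peak capacity of a comprehensive 2D separation is the product of the two dimensions' peak capacities, an upper bound that assumes full orthogonality and no undersampling. The sketch below computes both; the 1D and 2D peak capacities in the example are hypothetical.

```python
"""Sketch: sampling and ideal peak-capacity arithmetic for comprehensive 2D-LC."""


def modulation_count(analysis_time_min: float, modulation_time_s: float) -> int:
    """Number of first-dimension fractions transferred to the second dimension."""
    return int(analysis_time_min * 60 // modulation_time_s)


def ideal_peak_capacity(n_first: int, n_second: int) -> int:
    """Product rule: upper bound assuming full orthogonality and no undersampling."""
    return n_first * n_second


if __name__ == "__main__":
    # Ranges from the protocol: 60-120 min run, 30-60 s modulation
    for run_min, mod_s in [(60, 30), (120, 60), (120, 30)]:
        print(run_min, mod_s, modulation_count(run_min, mod_s))
    # Hypothetical 1D and 2D peak capacities
    print(ideal_peak_capacity(50, 20))
```

In practice the achieved peak capacity is lower, because partial correlation between HILIC and RPLC retention and undersampling of first-dimension peaks both reduce it.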

Table 2: Chromatographic Method Selection Guide Based on Analyte Properties

| Analyte Characteristics | Recommended Separation | Key Parameters | Typical Applications |
|---|---|---|---|
| Non-polar to medium polar (log P > 1) | RPLC | C18 column, water/acetonitrile gradient | Pesticides, pharmaceuticals, industrial chemicals |
| Very polar/ionic (log P ≤ 1) | HILIC | Silica/aminopropyl column, acetonitrile/water gradient | Artificial sweeteners, polar pharmaceuticals, metabolites |
| Mixed polarity compounds | 2D-LC (HILIC × RPLC) | Complementary mechanisms | Comprehensive NTS of complex environmental extracts |
| Volatile and semi-volatile | GC | DB-5MS column, temperature programming | Plastic additives, flame retardants, personal care products |
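The selection guide can be read as a small set of rules. The sketch below encodes them using the log P threshold of 1 from the table: GC for volatile analytes, RPLC or HILIC when all expected analytes fall on one side of the threshold, and 2D-LC when they span both. This is a simplification for illustration with a hypothetical function name; real method selection also weighs pKa or log D, thermal stability, and the matrix.

```python
"""Sketch: rule-based choice of separation mode mirroring the selection table."""


def recommend_separation(log_p_values: list[float], volatile: bool = False) -> str:
    """Suggest a separation mode for the analytes expected in an extract.

    Uses the log P threshold of 1 from the table above; this is a simplification.
    """
    if not log_p_values:
        raise ValueError("need at least one log P value")
    if volatile:
        return "GC"
    if all(lp > 1 for lp in log_p_values):
        return "RPLC"
    if all(lp <= 1 for lp in log_p_values):
        return "HILIC"
    return "2D-LC (HILIC x RPLC)"  # analytes span both polarity ranges


if __name__ == "__main__":
    print(recommend_separation([2.5, 4.0]))
    print(recommend_separation([-0.5, 0.3]))
    print(recommend_separation([-0.5, 3.0]))
```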

Workflow Visualization

Figure 1: Comprehensive Workflow for NTS of Environmental Pollutants

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Sample Preparation and Analysis

| Item | Function/Application | Examples/Specifications |
|---|---|---|
| Oasis HLB Cartridges | Broad-spectrum SPE for polar and non-polar compounds | 60 μm particle size, 200 mg/6 cc; suitable for pharmaceuticals, pesticides, and industrial chemicals |
| QuEChERS Extraction Kits | Efficient extraction for solid matrices | Contains MgSO₄, NaCl, sodium citrate for salting-out; d-SPE cleanup with PSA/C18 for matrix removal |
| Mixed-mode SPE Cartridges | Targeted extraction of ionic compounds | Combined reversed-phase and ion-exchange mechanisms; ideal for PFAS, acidic/basic pharmaceuticals |
| U/HPLC Columns (C18) | RPLC separation of medium to non-polar compounds | 100-150 mm × 2.1 mm, 1.7-1.8 μm particles; temperature stable to 60°C |
| HILIC Columns | Separation of very polar and ionic compounds | 150 mm × 2.1 mm, 1.7-1.8 μm silica/aminopropyl particles; compatible with high organic mobile phases |
| GC Columns (DB-5MS) | Separation of volatile and semi-volatile compounds | 30 m × 0.25 mm × 0.25 μm; low-bleed stationary phase suitable for HRMS detection |
| MALDI Matrices | Matrix-assisted laser desorption/ionization | For direct analysis of biosolids and solid samples; enables mass spectrometry imaging |
| Tuning/Calibration Solutions | Mass accuracy calibration for HRMS | Contains reference compounds across the mass range; helps achieve < 1 ppm mass accuracy |
| Internal Standards | Quantification and process monitoring | Isotopically labeled analogs of target compounds; corrects for matrix effects and recovery losses |

Advanced Applications and Methodologies

Non-Target Screening Data Processing and Prioritization

Following chromatographic separation and HRMS analysis, effective data processing strategies are essential for managing the complexity of NTS data. The following prioritization strategies have been validated for environmental samples [10] [28]: