Validating In Silico Chromatographic Modeling: A New Paradigm for Greener, Faster Environmental Analysis


Abstract

This article explores the transformative role of in silico chromatographic modeling in environmental analysis, addressing a critical need for efficient and sustainable methodologies. It establishes the foundational principles of computer-assisted method development, detailing how models predict retention and optimize separations without extensive experimentation. The scope extends to practical applications in non-targeted screening for identifying unknown environmental contaminants and enhancing method greenness by replacing hazardous solvents. The article provides a critical troubleshooting guide for optimizing complex protein and small molecule interactions and presents a multi-faceted validation framework comparing in silico predictions with experimental results across pharmaceutical and environmental case studies. Aimed at researchers and analytical scientists, this review synthesizes current evidence to validate in silico modeling as a robust, reliable tool that accelerates development cycles, reduces environmental impact, and improves the accuracy of environmental monitoring.

The Foundations of In Silico Chromatography: Principles, Drivers, and Core Concepts

Defining In Silico Modeling in Separation Science

In silico modeling refers to the use of computer simulations, data-driven algorithms, and mechanistic theories to predict the outcomes of scientific experiments, thereby reducing or replacing laboratory work. In the field of separation science, particularly chromatography, these models have become powerful tools for accelerating method development, optimizing operating conditions, and enhancing the environmental sustainability of analytical techniques [1] [2]. The core principle involves creating a digital representation of the chromatographic process, which can include the physics of mass transfer and fluid dynamics, or employ statistical and machine-learning models that correlate molecular structure with retention behavior [3] [2]. This approach aligns with the broader pharmaceutical industry's shift toward Quality by Design (QbD) and digitalization, providing a structured framework for developing robust and efficient separation methods [4].

The validation of in silico models is paramount for their adoption in research and regulated environments. For environmental analysis, where methods must be both precise and sustainable, in silico modeling offers a pathway to simultaneously map separation performance and environmental impact, enabling scientists to make informed decisions that balance analytical needs with green chemistry principles [1].

Core Methodologies and Comparison

In silico modeling in separation science is not a monolithic approach but encompasses several distinct methodologies. The table below provides a comparative overview of the primary techniques.

Table 1: Comparison of Primary In Silico Modeling Methodologies in Separation Science

| Methodology | Core Principle | Typical Applications | Data Requirements | Key Advantages |

|---|---|---|---|---|

| Mechanistic Modeling [3] [2] | Uses first-principle equations (e.g., mass balance, adsorption kinetics) to simulate the chromatography process. | Flowsheet optimization, preparative purification, scale-up [3]. | Adsorption isotherm parameters, column characteristics, operating conditions. | High predictive power under varied conditions; strong mechanistic insight. |

| Quantitative Structure–Retention Relationship (QSRR) [5] [2] | Correlates molecular descriptors (e.g., size, polarity) of analytes with their chromatographic retention. | Method development for novel compounds, green solvent replacement [5]. | Database of analyte structures and their retention times. | Requires no prior experimentation for new molecules if descriptors are known. |

| Artificial Neural Networks (ANNs) / Surrogate Modeling [3] | Machine-learning models trained on data (from experiments or mechanistic simulations) to predict outcomes. | Complex flowsheet optimization, rapid screening of conditions [3]. | Large datasets of input conditions and corresponding output performance. | Extremely fast predictions once trained; good for navigating large design spaces. |

| Linear Solvent Strength (LSS) Theory [6] [2] | A semi-empirical model that linearly relates the log of retention factor to the mobile phase composition. | Initial method scouting, gradient optimization for small molecules and proteins [6]. | Retention factors at two or more mobile phase compositions. | Simple and widely used; provides a good first approximation. |

Each methodology offers a unique balance of computational efficiency, predictive accuracy, and required input data. The choice of model often depends on the specific stage of method development and the available information about the system.

Experimental Protocols for Model Validation

Protocol: QSRR for Green Method Development

This protocol, adapted from recent research, outlines the use of QSRR to develop a greener chromatographic method by replacing a fluorinated mobile phase additive [1] [5].

- Problem Definition: Start with an existing chromatographic method that uses a solvent with a high environmental impact (e.g., a fluorinated additive or acetonitrile). The goal is to find a greener alternative while maintaining or improving separation performance.

- Data Collection & Molecular Descriptor Calculation: For the target analytes, obtain or calculate a set of molecular descriptors (e.g., Wlambda3.unity, ATSc5, geomShape) that encode structural information [5]. Simplified Molecular Input Line Entry System (SMILES) strings are commonly used as input for descriptor calculation software [2].

- Model Building: Using a Design of Experiments (DoE) approach, develop a multiple regression model that correlates the molecular descriptors and chromatographic conditions (e.g., proportion of ethanol, pH, temperature) with the retention time [5]. The model is validated internally and externally to ensure its predictive power (e.g., R² prediction > 99.7%) [5].

- In Silico Screening: Use the validated model to map the Analytical Method Greenness Score (AMGS) across the entire separation landscape. This allows for the simultaneous evaluation of separation performance (e.g., resolution of critical pairs) and environmental impact [1].

- Experimental Verification: Run a limited set of laboratory experiments under the optimal conditions identified by the model to confirm the prediction. For example, a study successfully reduced the AMGS from 9.46 to 4.49 by switching from a fluorinated to a chlorinated additive, while also improving the resolution of a critical pair from fully overlapped to 1.40 [1].
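The model-building and validation steps above can be sketched as an ordinary least-squares multiple regression with a held-out external set. This is only an illustration under stated assumptions: the data are synthetic, the three "descriptors" and the single condition column are hypothetical stand-ins, and the coefficients are invented, so none of the numbers correspond to the cited study [5].

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in data: 40 runs x 4 predictors (3 hypothetical molecular
# descriptors plus one chromatographic condition such as % ethanol).
n_runs, n_predictors = 40, 4
X = rng.normal(size=(n_runs, n_predictors))
true_coefs = np.array([1.5, -0.8, 0.4, -2.0])  # invented for the demo
t_r = 6.0 + X @ true_coefs + rng.normal(scale=0.05, size=n_runs)

# Hold out the last 10 runs as an external validation set.
X_train, X_test = X[:30], X[30:]
y_train, y_test = t_r[:30], t_r[30:]


def add_intercept(M):
    """Prepend a column of ones so the regression includes an intercept."""
    return np.column_stack([np.ones(len(M)), M])


def r_squared(y_true, y_pred):
    """Coefficient of determination, 1 - SS_res / SS_tot."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return 1.0 - ss_res / ss_tot


# Fit retention time = intercept + sum(coef_i * predictor_i) by least squares.
coefs, *_ = np.linalg.lstsq(add_intercept(X_train), y_train, rcond=None)

r2_internal = r_squared(y_train, add_intercept(X_train) @ coefs)
r2_external = r_squared(y_test, add_intercept(X_test) @ coefs)
print(f"R2 (training) = {r2_internal:.4f}, R2 (external) = {r2_external:.4f}")
```

Reporting the external R² separately from the training R² mirrors the internal/external validation distinction in the protocol; a real workflow would replace the synthetic matrix with calculated descriptors and measured retention times.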

Protocol: Nonlinear Predictive Modeling for Biomolecules

This protocol is critical for accurately modeling the retention of large molecules like proteins and peptides, which can undergo conformational changes during chromatography [6].

- Initial Scouting Runs: Perform a limited set of initial experiments. For a protein mixture, this typically involves running three different gradient slopes (e.g., 10-70% B in 10, 20, and 30 minutes) at three different temperatures (e.g., 20, 40, and 60 °C) to build a foundational dataset [6].

- Model Fitting with Polynomial Regression: Input the experimental data into chromatography simulation software (e.g., ACD/LC Simulator). The key step is to deploy a second-degree polynomial fit for the relationship between the natural log of the retention factor (ln k) and the inverse of temperature (1/T), rather than a standard linear fit [6].

- Resolution Map Generation: The software uses the fitted model to generate a three-dimensional resolution map. This map visualizes the combined effects of gradient time and temperature on the separation quality, identifying the "sweet spot" for optimal resolution [6].

- Model Validation and Comparison: Compare the predicted chromatograms generated by both linear and polynomial models against a new experimental run at the identified optimum conditions. Studies show that using the second-degree polynomial fit can achieve remarkably accurate retention time predictions (ΔtR < 0.1%), whereas a first-degree fit may yield significant errors [6]. The accuracy of the model is further enhanced when using strong chaotropic reagents (e.g., perchloric acid), which denature proteins and simplify their retention behavior [6].
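The comparison between a first-degree and a second-degree fit of ln k against 1/T can be sketched as follows. The retention data here are synthetic (an invented curved relationship), the three scouting temperatures follow the protocol above, and the 1000/T scaling is a numerical convenience rather than something taken from the cited software [6].

```python
import numpy as np


def inv_temp(temp_c):
    """Return 1000/T with T in kelvin; the scaling only improves conditioning."""
    return 1000.0 / (np.asarray(temp_c, dtype=float) + 273.15)


def synthetic_ln_k(temp_c):
    """Invented curved ln k vs 1/T relationship, used only to exercise the fits."""
    x = inv_temp(temp_c)
    return -40.0 + 22.0 * x - 2.8 * x**2


# Three scouting temperatures, as in the protocol (20, 40, 60 degrees C).
scout_temps = [20.0, 40.0, 60.0]
ln_k_scout = synthetic_ln_k(scout_temps)

# First-degree (linear van't Hoff) and second-degree polynomial fits.
linear = np.polyfit(inv_temp(scout_temps), ln_k_scout, 1)
quadratic = np.polyfit(inv_temp(scout_temps), ln_k_scout, 2)

# Check both models at a temperature that was not used for fitting.
check_temp = 50.0
truth = synthetic_ln_k(check_temp)
err_linear = abs(np.polyval(linear, inv_temp(check_temp)) - truth)
err_quadratic = abs(np.polyval(quadratic, inv_temp(check_temp)) - truth)
print(f"|error in ln k| at {check_temp} C: linear={err_linear:.4f}, "
      f"quadratic={err_quadratic:.2e}")
```

Because the synthetic data are exactly quadratic, the second-degree fit reproduces the held-out point almost perfectly; with real protein data the improvement would depend on how strongly the van't Hoff plot curves.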

Performance Data and Validation

The validation of in silico models is demonstrated through quantitative improvements in both analytical and environmental metrics. The following table summarizes key performance data from recent studies.

Table 2: Quantitative Performance Outcomes of In Silico Modeling in Separation Science

| Application Context | Key Performance Metric | Result with In Silico Approach | Experimental Validation |

|---|---|---|---|

| Replacing Fluorinated Additive [1] | Analytical Method Greenness Score (AMGS) | Reduced from 9.46 to 4.49 | Resolution of critical pair improved from co-elution to 1.40 |

| Replacing Acetonitrile with Methanol [1] | Analytical Method Greenness Score (AMGS) | Reduced from 7.79 to 5.09 | Critical resolution was preserved |

| Preparative Chromatography [1] | Active Pharmaceutical Ingredient (API) Loading | Increased by 2.5× | Reduced replicates needed for purification by 2.5× |

| Flowsheet Optimization [3] | Computational Time | Reduced by 50% using ANNs vs. Mechanistic Models | Identified 3 out of 4 best flowsheets |

| Retention Time Prediction for Proteins [6] | Prediction Accuracy (ΔtR) | < 0.1% error with 2nd-degree polynomial fit | Significant error observed with 1st-degree linear fit |

These data points provide strong evidence that in silico modeling is not merely a theoretical exercise but a practical tool that delivers verified improvements in efficiency, sustainability, and accuracy.

Essential Research Reagent Solutions

Implementing in silico modeling requires a combination of software tools and theoretical frameworks. The table below details key components of the research "toolkit."

Table 3: Essential Reagents and Tools for In Silico Chromatographic Modeling

| Tool / Solution | Function / Description | Role in In Silico Workflow |

|---|---|---|

| Mechanistic Model Software (e.g., CADET) [3] | Solves systems of partial differential equations for chromatography (e.g., general rate model). | Provides a first-principles digital twin for detailed process simulation and scale-up. |

| QSRR/QSPR Software & Descriptors [5] [2] | Calculates molecular descriptors (e.g., from SMILES strings) and builds retention models. | Predicts retention behavior for new molecules solely from their chemical structure. |

| Artificial Neural Networks (ANNs) [3] | Machine learning models that act as surrogates for slower mechanistic models. | Dramatically speeds up optimization and screening of vast operational landscapes. |

| Linear Solvent Strength (LSS) Theory [2] | A simple model relating retention factor to mobile phase composition: log k = log k₀ - Sφ, where k₀ is the retention factor extrapolated to pure weak solvent. | Forms the basis for many initial simulations and gradient scouting predictions. |

| Linear Solvation Energy Relationship (LSER) [2] | Models retention based on solute-solvent interactions (e.g., hydrogen bonding, polarity). | Offers a semi-mechanistic approach to predict retention based on physicochemical properties. |

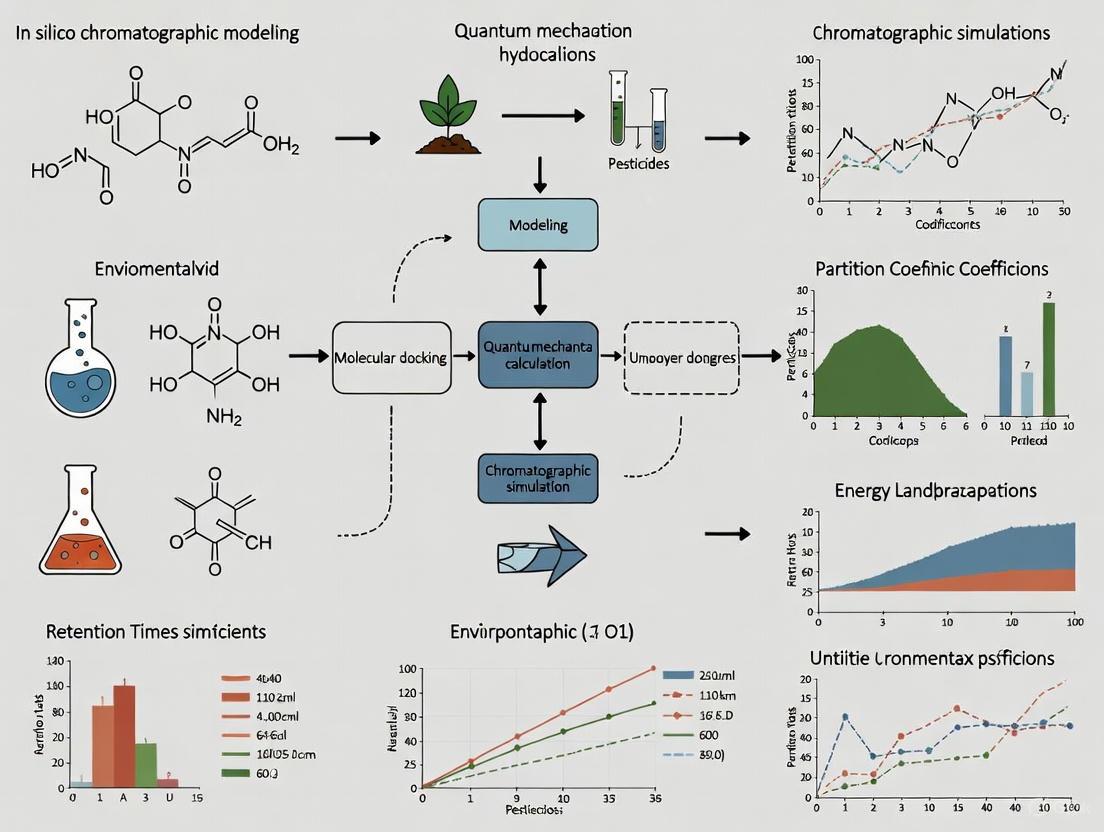

Visualized Workflows

The following diagram illustrates the integrated workflow for developing and validating a greener analytical method using a QSRR-driven in silico approach.

Figure 1: QSRR Workflow for Green Method Development.

The workflow for modeling biomolecules requires special attention to the retention model, as depicted in the decision pathway below.

Figure 2: Decision Pathway for Biomolecule Retention Modeling.

Analytical chemistry, particularly chromatography, plays a vital role in industrial R&D, from pharmaceuticals to environmental science. However, its significant environmental footprint stems from the extensive use of solvents, energy consumption, and waste generation [7]. In an era of heightened environmental awareness, the field is undergoing a critical transformation toward Green Analytical Chemistry (GAC), which aims to minimize this footprint while maintaining analytical performance [8]. This paradigm shift is driven by both regulatory pressures and corporate sustainability goals, making the development of greener methods an urgent priority for researchers and drug development professionals.

A cornerstone of this transformation is the adoption of in silico modeling and computer-assisted method development. These approaches offer a rapid, accurate, and robust technique to design greener chromatographic methods by significantly reducing the need for laborious, resource-intensive laboratory experimentation [1]. This guide provides a comparative analysis of traditional experimental methods against emerging in silico approaches, evaluating their performance, environmental impact, and practical applicability within environmental and pharmaceutical research contexts.

Comparative Analysis: Traditional Experimentation vs. In Silico Modeling

The journey toward greener chromatography necessitates a fundamental change in how methods are developed. The traditional, trial-and-error approach is increasingly being supplemented, and in some cases replaced, by computational predictions. The table below provides an objective comparison of these two paradigms.

Table 1: Comparison of Traditional and In Silico Method Development Approaches

| Aspect | Traditional Experimental Approach | In Silico Modeling Approach |

|---|---|---|

| Core Principle | Physical trial-and-error in the laboratory | Computer simulation and predictive modeling |

| Solvent Consumption | High (large volumes for scouting gradients) | Reduced by up to 65% through pre-optimization [9] |

| Experimental Waste | Significant (failed runs, method refinement) | Minimal (the most eco-friendly experiments are those on a computer) [7] |

| Development Time | Laborious, involving significant analyst time | Rapid, accelerated by predictive algorithms [1] |

| Method Greenness | Often less optimal; greenness is a secondary concern | Actively optimized; the Analytical Method Greenness Score (AMGS) can be mapped across the separation landscape [1] |

| Key Performance Outcome | Critical pair resolution achieved through repeated experiments | Resolution improved from fully overlapped to 1.40 via simulation-guided solvent replacement [1] |

| Environmental Impact Scoring (e.g., AGREE) | Typically lower scores due to hazardous solvents and high waste | Higher scores facilitated by the use of greener solvents like methanol and waste prevention [1] [8] |

Key Insights from Comparative Data

The data demonstrates that in silico modeling is not merely a direct substitute for experimentation but a transformative tool that redefines the development workflow. For the first time, the Analytical Method Greenness Score (AMGS) can be visualized and optimized across the entire separation parameter space, allowing scientists to make informed decisions that balance performance with environmental impact from the outset [1]. A prime example is the replacement of problematic solvents: in silico modeling enabled a switch from a fluorinated mobile phase additive to a chlorinated alternative, reducing the AMGS from 9.46 to 4.49 while simultaneously resolving a critical pair of analytes [1]. Furthermore, acetonitrile can be replaced with more environmentally friendly methanol, reducing the AMGS from 7.79 to 5.09 while preserving critical resolution [1].

Experimental Validation: Protocols and Greenness Assessment

The validation of in silico models relies on rigorous experimental protocols that verify their predictive accuracy. The following section details a standard methodology for validating an in silico-predicted chromatographic method, using a case study from recent literature.

Detailed Experimental Protocol for Method Validation

Objective: To calibrate and validate a digital twin for the purification of a multi-component mixture using linear gradient ion exchange chromatography [10].

Materials:

- Proteins: A three-component protein mixture.

- Chromatography System: An Orbit software-controlled system (or equivalent) for automated method execution.

- Column: Appropriate ion-exchange column.

- Buffers: Elution buffers A and B (e.g., low- and high-salt buffers at optimized pH).

Procedure:

- Automated Model Calibration: The software automatically generates a mathematical model structure and performs a series of six necessary assays to obtain data for model calibration. This includes gradient elution experiments to determine adsorption isotherms and kinetic parameters for each component [10].

- Model-Based Optimization: The calibrated model is used for multi-objective optimization (e.g., balancing purity, yield, and duration) to suggest optimal operating points for purifying a target component [10].

- Experimental Validation: A seventh experiment is conducted under one of the suggested optimal conditions to validate the model's predictive capability. The success criterion is often a target purity (e.g., ≥95%) for the collected fraction [10].

Outcome: The study demonstrated that the automated procedure could generate a calibrated model capable of satisfactorily reproducing experimental chromatograms. The validation run under the optimized condition respected the 95% purity requirement, confirming the model's accuracy [10].
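The purity criterion used in the validation step can be illustrated with a deliberately simplified calculation. The sketch below assumes each component elutes as an ideal Gaussian peak and computes the purity of a collected fraction from peak areas inside the collection window; this is a stand-in for illustration, not the mechanistic model or software used in [10], and all peak parameters are hypothetical.

```python
import math


def gaussian_area(t_start, t_end, t_r, sigma, area=1.0):
    """Area of a Gaussian peak (total area `area`) between t_start and t_end."""
    def z(t):
        return (t - t_r) / (sigma * math.sqrt(2.0))
    return 0.5 * area * (math.erf(z(t_end)) - math.erf(z(t_start)))


def fraction_purity(window, peaks, target):
    """Purity of component `target` in the fraction collected over `window`.

    peaks: list of (t_r, sigma, area) tuples, one per component.
    """
    t_start, t_end = window
    amounts = [gaussian_area(t_start, t_end, *peak) for peak in peaks]
    total = sum(amounts)
    return amounts[target] / total if total > 0 else 0.0


# Hypothetical three-component mixture; retention times in minutes.
peaks = [(8.0, 0.4, 1.0), (10.0, 0.4, 1.0), (12.0, 0.4, 1.0)]
purity = fraction_purity(window=(9.2, 10.8), peaks=peaks, target=1)
print(f"purity = {purity:.4f}, meets 95% criterion: {purity >= 0.95}")
```

Narrowing the window raises purity at the cost of yield, which is the trade-off that the multi-objective optimization in the protocol is meant to balance.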

Quantifying Environmental Impact: Greenness Assessment Tools

The greenness of analytical methods can be quantitatively evaluated using several established metrics. The case study below applies these tools to a sample preparation method, illustrating a standardized approach for environmental impact assessment.

Table 2: Greenness Assessment Metrics for Analytical Methods

| Metric Tool | Type of Output | Key Assessment Criteria | Application in Case Study (SULLME Method) |

|---|---|---|---|

| Modified GAPI (MoGAPI) | Semi-quantitative pictogram | Visual assessment of the entire analytical workflow | Score: 60/100. Strengths: Green solvents, microextraction. Weaknesses: Toxic substances, waste generation [8]. |

| AGREE | Numerical score (0-1) & pictogram | Based on the 12 Principles of Green Analytical Chemistry | Score: 0.56. Benefits: Miniaturization, automation. Drawbacks: Toxic solvents, low throughput [8]. |

| AGSA | Numerical score & star diagram | Reagent safety, energy use, waste, etc. | Score: 58.33. Manual handling and numerous hazard pictograms were key limitations [8]. |

| Carbon Footprint Reduction Index (CaFRI) | Numerical score | Life-cycle carbon emissions | Score: 60/100. Favorable: Low energy use. Unfavorable: No renewable energy, >10 mL organic solvent used [8]. |

This multidimensional evaluation highlights how complementary metrics provide a comprehensive view of a method's sustainability, crucial for making informed, environmentally responsible choices [8].

Visualizing the Workflow: From In Silico Design to Green Validation

The integration of in silico tools into the method development lifecycle creates a more efficient and sustainable workflow. The following diagram maps this logical pathway.

In Silico Method Development and Greenness Validation Workflow

This workflow highlights the iterative cycle of computational design and minimal laboratory testing. It begins with defining the separation goal, followed by in silico method design where initial conditions are simulated. The process then moves to greenness optimization, where tools like AMGS are used to map the environmental and performance landscape [1]. After in silico validation confirms the method's viability, only a final, targeted laboratory experiment is needed for confirmation, drastically reducing the environmental footprint compared to traditional scouting.

The Scientist's Toolkit: Essential Reagents and Solutions for Green Chromatography

The practical implementation of greener chromatography relies on a suite of computational and chemical tools. The following table catalogs key research reagents and solutions central to developing and validating in silico models for environmentally friendly separations.

Table 3: Essential Research Reagents and Solutions for Green In Silico Chromatography

| Item Name | Function/Description | Application in Green Chemistry |

|---|---|---|

| In Silico Modeling Software | Computer software that uses complex algorithms to predict optimal chromatographic conditions (pH, gradient, etc.) and simulate outcomes. | Prevents waste by minimizing trial-and-error experimentation; enables mapping of the greenness score (AMGS) across the separation landscape [1] [7]. |

| Methanol | A polar protic solvent commonly used as a mobile phase component. | A greener alternative to acetonitrile; in silico modeling facilitates its implementation while preserving critical resolution, reducing the environmental impact [1]. |

| Hydrogen Carrier Gas | A mobile phase for Gas Chromatography (GC). | An alternative to helium, mitigating supply shortages and offering a greener operational profile [9]. |

| Supercritical CO₂ | A supercritical fluid used as a mobile phase in Supercritical Fluid Chromatography (SFC). | A non-toxic, recyclable solvent that significantly reduces the need for organic solvents, aligning with green chemistry principles [9] [11]. |

| Bio-Based/Green Solvents | Solvents derived from renewable resources with lower toxicity and better biodegradability. | Used to replace hazardous solvents as guided by solvent selection guides (e.g., ACS GCI-PR guide), reducing environmental and safety risks [7]. |

| Ionic Liquids | Salts in a liquid state, used as mobile phase additives or in stationary phases. | Can replace more hazardous solvents and offer unique selectivity, contributing to waste reduction and safer processes [7]. |

The urgent drive for sustainability is irrevocably changing the practice of analytical chemistry. The comparative data and experimental protocols presented in this guide objectively demonstrate that in silico chromatographic modeling is a mature, validated technology that offers a decisive path forward. By transitioning from a reliance on physical experimentation to a strategy of computational prediction and targeted validation, researchers and drug development professionals can simultaneously achieve two critical goals: upholding the highest standards of analytical performance and significantly reducing the environmental footprint of their work. This synergy between scientific excellence and environmental responsibility is the foundation of the future analytical laboratory.

In silico chromatographic modeling represents a paradigm shift in separation science, offering a powerful strategy to reduce extensive laboratory experimentation, accelerate method development, and minimize solvent waste. At the heart of these computational approaches lie retention models that predict how analytes behave under varying chromatographic conditions. The Linear Solvent Strength (LSS) model has served as the fundamental predictive framework for decades, prized for its simplicity and effectiveness in many reversed-phase liquid chromatography (RPLC) applications. However, the increasing complexity of analytical samples—from pharmaceutical compounds to environmental contaminants—has driven the development of sophisticated models that transcend LSS limitations. This guide objectively compares the core predictive frameworks, evaluating their mathematical foundations, applicability, and experimental validation to inform researchers' selection for environmental analysis and drug development.

Core Predictive Models: Mathematical Foundations and Experimental Protocols

Linear Solvent Strength (LSS) Theory

The LSS model establishes a linear relationship between the logarithm of the retention factor (k) and the volume fraction of the organic modifier (φ) in the mobile phase [12] [2]. Its fundamental equation is:

log k = log k₀ - Sφ

where k₀ is the extrapolated retention factor in pure weak solvent (e.g., water), and S is a solute-specific solvent strength parameter [12]. For small molecules under standard RPLC conditions, this model provides a robust approximation, enabling accurate retention time predictions across a range of organic modifier concentrations.
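As a small worked example, the LSS equation can be turned into an isocratic retention-time prediction using the standard definition of the retention factor, k = (tR - t₀) / t₀. The parameter values below (log k₀ = 3.0, S = 5.0, t₀ = 1.0 min) are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def lss_retention_factor(log_k0: float, S: float, phi: float) -> float:
    """Retention factor k from the LSS equation log k = log k0 - S * phi."""
    return 10 ** (log_k0 - S * phi)


def isocratic_retention_time(log_k0: float, S: float, phi: float, t0: float) -> float:
    """Retention time from the definition k = (tR - t0) / t0."""
    return t0 * (1.0 + lss_retention_factor(log_k0, S, phi))


# Hypothetical analyte: log k0 = 3.0, S = 5.0; column dead time t0 = 1.0 min.
for phi in (0.30, 0.40, 0.50):
    k = lss_retention_factor(3.0, 5.0, phi)
    t_r = isocratic_retention_time(3.0, 5.0, phi, t0=1.0)
    print(f"phi = {phi:.2f}: k = {k:.2f}, tR = {t_r:.2f} min")
```

At φ = 0.40 this gives log k = 3.0 - 5.0 × 0.40 = 1.0, so k = 10 and tR = 11 min, which shows how strongly a modest change in organic modifier fraction shifts retention under the log-linear model.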

Experimental Protocol for LSS Parameter Determination (Gradient Method)

A common approach for determining LSS parameters (log k₀ and S) involves two or more gradient elution experiments [12]. The protocol proceeds as follows:

- Preliminary Experiments: Perform two gradient runs with different gradient times (tg1, tg2) while maintaining the same initial and final mobile phase composition.

- Retention Time Measurement: Record the analyte retention times (tr) from these initial experiments.

- Calculation of Derived Variables: For each gradient, calculate the normalized gradient slope (s), defined as s = (t₀ × Δφ) / tg, where t₀ is the column dead time and Δφ is the change in organic modifier volume fraction. Then, determine the organic modifier fraction at elution (Ce).

- Linear Regression: Plot Ce versus log(s) for the analyte. According to LSS theory, this relationship is linear: Ce = α log(s) + β.

- Parameter Extraction: The LSS parameters are calculated from the linear regression coefficients: the slope α yields the S parameter (S = 1/α), and the intercept β is used to calculate log k₀ using the relation log k₀ = S × β - log(2.3 × S) [12]. This method is particularly effective for biomolecules like proteins, which often exhibit strongly linear LSS behavior [12].
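The steps above can be sketched in a few lines of plain Python. Two points are assumptions rather than statements from [12]: the composition at elution is taken as Ce = φ_start + (Δφ / tg) × (tR - t₀ - t_dwell), and the synthetic retention times used for the round-trip check are generated from the same large-retention LSS approximation that the regression relies on. All function and variable names are illustrative.

```python
import math


def gradient_lss_parameters(t_r, t_g, t0, phi_start, phi_end, t_dwell=0.0):
    """Estimate (S, log k0) from two or more gradient runs.

    Uses Ce = alpha * log10(s) + beta, then S = 1/alpha and
    log k0 = S * beta - log10(2.3 * S).
    """
    d_phi = phi_end - phi_start
    xs, ys = [], []
    for tr, tg in zip(t_r, t_g):
        s = t0 * d_phi / tg  # normalized gradient slope
        # Assumed expression for the modifier fraction at elution.
        ce = phi_start + (d_phi / tg) * (tr - t0 - t_dwell)
        xs.append(math.log10(s))
        ys.append(ce)
    n = len(xs)
    x_mean, y_mean = sum(xs) / n, sum(ys) / n
    alpha = (sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
             / sum((x - x_mean) ** 2 for x in xs))
    beta = y_mean - alpha * x_mean
    S = 1.0 / alpha
    log_k0 = S * beta - math.log10(2.3 * S)
    return S, log_k0


def simulate_gradient_tr(S, log_k0, t_g, t0, phi_start, phi_end, t_dwell=0.0):
    """Synthetic retention time from the same approximation (demo data only)."""
    d_phi = phi_end - phi_start
    s = t0 * d_phi / t_g
    ce = (math.log10(s) + math.log10(2.3 * S) + log_k0) / S
    return t0 + t_dwell + (ce - phi_start) * t_g / d_phi


# Round-trip check with hypothetical values: S = 10, log k0 = 4.
gradient_times = [10.0, 30.0]
retention_times = [simulate_gradient_tr(10.0, 4.0, tg, 1.0, 0.05, 0.95)
                   for tg in gradient_times]
S_est, log_k0_est = gradient_lss_parameters(
    retention_times, gradient_times, t0=1.0, phi_start=0.05, phi_end=0.95)
print(f"S = {S_est:.3f}, log k0 = {log_k0_est:.3f}")
```

With only two runs the regression line passes exactly through both points, so any measurement error goes directly into S and log k₀; adding a third gradient time gives a basic check on linearity.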

Advanced and Multimodal Retention Models

For complex separations where the LSS model fails—such as those involving multimodal stationary phases or a wide range of organic solvent compositions—more advanced empirical and mechanistic models are required.

Quadratic and Complex Empirical Models

The quadratic model extends the LSS relationship by adding a second-order term to account for curvature in the log k vs. φ plot: log k = log k₀ + Aφ + Bφ². Other three-parameter empirical models (e.g., those incorporating reciprocal or square root terms) offer additional flexibility to fit U-shaped or multimodal retention curves, which are common in hydrophilic interaction liquid chromatography (HILIC) and mixed-mode chromatography [13].
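A minimal sketch of fitting the quadratic model, log k = log k₀ + Aφ + Bφ², and comparing it with a straight-line (LSS-type) fit is shown below. The retention data are synthetic and the coefficient values are invented for the demonstration.

```python
import numpy as np

# Synthetic isocratic data with deliberate curvature (invented coefficients).
phi = np.array([0.20, 0.30, 0.40, 0.50, 0.60])
log_k = 2.5 - 6.0 * phi + 3.0 * phi**2

# np.polyfit returns coefficients from the highest power down: B, A, log k0.
B, A, log_k0 = np.polyfit(phi, log_k, 2)

# Straight-line fit for comparison (LSS form, with slope = -S).
slope, intercept = np.polyfit(phi, log_k, 1)

max_resid_quadratic = np.max(np.abs(np.polyval([B, A, log_k0], phi) - log_k))
max_resid_linear = np.max(np.abs(np.polyval([slope, intercept], phi) - log_k))

print(f"quadratic: log k0 = {log_k0:.3f}, A = {A:.3f}, B = {B:.3f}")
print(f"max residual: linear = {max_resid_linear:.4f}, "
      f"quadratic = {max_resid_quadratic:.2e}")
```

Three coefficients need at least three distinct mobile phase compositions, which is why the quadratic model carries a higher experimental load than the two-run LSS approach.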

Box-Cox Transformation for Multimodal Systems

A unified approach for modeling complex retention in trimodal chromatography (combining reversed-phase, cation-exchange, and anion-exchange mechanisms) uses the Box-Cox transformation [13]. This framework can fit a variety of curve shapes, from U-shaped to multimodal, using a single generalized equation. The model introduces sophisticated descriptors like turning points and symmetry parameters to provide a deeper fundamental interpretation of the chromatographic behavior.

Quantitative Structure-Retention Relationship (QSRR) Models

QSRR models represent a fundamentally different, structure-based predictive approach. They correlate molecular descriptors derived from a compound's chemical structure with its chromatographic retention [2] [14] [15].

- Mechanism: Molecular descriptors—which can be one-dimensional (e.g., molecular weight), two-dimensional (e.g., topological indices), or three-dimensional (e.g., steric properties)—are calculated from the molecular structure, often represented by a SMILES (Simplified Molecular Input Line Entry System) string [14] [15].

- Model Building: Machine learning algorithms (e.g., multiple linear regression, random forests, or neural networks) are trained on a dataset of known retention times to establish a mathematical relationship between the descriptors and retention [14].

- Prediction: The trained model can predict the retention of new compounds based solely on their structural information, requiring no prior experimentation under the specific chromatographic conditions [2]. For example, robust QSRR models using the Monte Carlo technique have been successfully developed to predict the retention times of hundreds of pesticide residues [15].

The following workflow diagram illustrates the predictive logic and relationships between these core modeling frameworks.

Comparative Analysis of Predictive Frameworks

The choice of a predictive model involves trade-offs between simplicity, accuracy, and the required experimental input. The following table provides a direct comparison of the core frameworks.

Table 1: Objective Comparison of Chromatographic Retention Models

| Model | Mathematical Form | Key Applications | Experimental Load | Limitations |

|---|---|---|---|---|

| Linear Solvent Strength (LSS) [12] [2] | log k = log k₀ - Sφ | Standard RPLC for small molecules and proteins [12]. | Low (2 initial runs) | Limited accuracy for wide φ ranges and multimodal mechanisms. |

| Quadratic & Empirical Models | log k = log k₀ + Aφ + Bφ² | Wider φ ranges in RPLC and HILIC [13]. | Moderate (3+ initial runs) | Requires more data; parameters can be less interpretable. |

| Box-Cox Transformation [13] | Unified equation for U-shaped/multimodal curves | Trimodal (RP/CEX/AEX) and mixed-mode systems [13]. | High (requires design of multiple initial runs) | Complex model fitting; specialized computational knowledge needed. |

| QSRR [2] [14] [15] | RT = f(molecular descriptors) | Novel compound identification; green method development [1] [14]. | Very Low (once model is trained) | Depends on availability and quality of training data; transferability between systems can be low [14]. |

Experimental Validation and Performance Data

The practical utility of in silico models is confirmed by their demonstrated predictive accuracy in real-world separation challenges.

Performance in Greening Chromatographic Methods

In silico modeling based on retention models enables the systematic design of greener chromatographic methods without sacrificing performance. A recent study showcased this by using modeling software to replace acetonitrile with greener methanol and to substitute a fluorinated mobile phase additive (trifluoroacetic acid) with trichloroacetic acid [1] [16]. The results were quantified using the Analytical Method Greenness Score (AMGS), where a lower score indicates a greener method [16]:

- Solvent Replacement: The AMGS was reduced from 7.79 (acetonitrile) to 5.09 (methanol) while preserving critical resolution [16].

- Additive Replacement: The switch from TFA to TCA dramatically reduced the AMGS from 9.46 to 4.49, simultaneously improving chromatography by increasing the resolution of a critical pair from fully overlapped to 1.40 [16]. This validates modeling as a robust strategy for meeting green chemistry principles.
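To put the two AMGS changes on a common scale, the relative reductions can be computed directly from the reported scores. The helper name below is illustrative; the input values are the scores quoted above.

```python
def percent_reduction(before: float, after: float) -> float:
    """Relative reduction of a score, in percent of the starting value."""
    return 100.0 * (before - after) / before


# AMGS values as reported in the text (lower is greener).
cases = {
    "TFA -> TCA additive": (9.46, 4.49),
    "acetonitrile -> methanol": (7.79, 5.09),
}
for label, (before, after) in cases.items():
    print(f"{label}: {percent_reduction(before, after):.1f}% lower AMGS")
```

This works out to roughly a 52.5% reduction for the additive replacement and a 34.7% reduction for the solvent replacement.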

Performance in Complex Separation Scenarios

Advanced models are essential when dealing with complex retention mechanisms.

- Trimodal Chromatography: For 45 antidiabetic-related compounds analyzed on a trimodal RP/CEX/AEX stationary phase, a unified Box-Cox model effectively described complex U-shaped and multimodal retention curves. The study found that using molar fraction as an independent variable provided better predictive performance than traditional volume fraction [13].

- Peptide and Protein Analysis: While LSS theory works well for intact proteins, its application to peptides requires validation. A simple Excel-based LSS protocol showed that prediction accuracy for peptides depended heavily on two criteria: a sufficiently high initial retention factor (kᵢ) and a linear retention model. When these conditions were met, prediction errors were minimal [12].

Table 2: Summary of Key Experimental Validations from Recent Literature

| Application Scenario | Model Used | Reported Outcome | Source |

|---|---|---|---|

| Solvent & Additive Replacement | Commercial Software (LSS-based) | Reduced AMGS score; maintained or improved resolution [16]. | [1] [16] |

| Peptide/Protein Retention Prediction | Simplified LSS Calculation | Accurate for proteins and peptides meeting linearity/retention criteria [12]. | [12] |

| Pesticide Residue Analysis | QSRR with Monte Carlo | R² = 0.842 on external validation set for 823 pesticides [15]. | [15] |

| Antidiabetic Drug Analysis | Box-Cox Transformation | Successfully modeled U-shaped/multimodal curves in trimodal LC [13]. | [13] |

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of predictive frameworks relies on key reagents, software, and materials.

Table 3: Essential Reagents and Resources for Predictive Modeling

| Category | Specific Item / Example | Critical Function in Modeling |

|---|---|---|

| Chromatography Reagents | LC-MS Grade Solvents (Acetonitrile, Methanol) | Ensures reproducibility and prevents detector interference during initial scouting runs. |

| Mobile Phase Additives | Formic Acid, Trifluoroacetic Acid (TFA), Ammonium Acetate | Modifies pH and ionic strength, critically impacting ionization and retention of analytes. |

| Reference Standards | Pharmacopeial Standards (e.g., uracil as a \(t_0\) marker) | Essential for accurate determination of system dead time and retention factors [12]. |

| Software & Databases | ACD/LC Simulator, DryLab, CORAL, Open-Source Python Algorithms [17] | Performs retention modeling, peak tracking, and optimization based on experimental data. |

| Molecular Descriptors | Software like PaDEL, Dragon, or Online Calculators | Generates numerical descriptors from molecular structure for QSRR model building [14]. |

| Public Databases | METLIN SMRT, PredRet, NIST RI [14] | Provides retention data for training and validating QSRR models across different systems. |

The validation of in silico chromatographic modeling, particularly for impactful fields like environmental analysis, rests on a tiered ecosystem of predictive frameworks. The LSS model remains a powerful, efficient tool for standard reversed-phase separations. However, the increasing complexity of analytical challenges necessitates a broader toolkit. Quadratic and Box-Cox transformed models provide the mathematical flexibility to capture non-ideal and multimodal retention behaviors. Meanwhile, QSRR approaches represent a transformative, data-driven paradigm that can predict retention based solely on molecular structure, offering tremendous potential for reducing experimental waste. The choice of model is not a question of which is best in absolute terms, but which is the most appropriate for the specific separation mechanism, analyte set, and development constraints at hand.
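To make the link between these model families concrete, the sketch below implements the standard Box-Cox power transform. How the cited work [13] applies it (for example, to the retention factor or to the mobile-phase composition) is not specified here, so this shows only the textbook transform. With λ = 0 it reduces to the natural logarithm, the form that LSS-type models assume, while other values of λ give the extra curvature.

```python
import math


def box_cox(y: float, lam: float) -> float:
    """Box-Cox transform of a strictly positive value."""
    if y <= 0:
        raise ValueError("Box-Cox requires y > 0")
    if abs(lam) < 1e-12:
        return math.log(y)          # limiting case lambda -> 0
    return (y ** lam - 1.0) / lam


def inverse_box_cox(z: float, lam: float) -> float:
    """Map a transformed value back to the original scale."""
    if abs(lam) < 1e-12:
        return math.exp(z)
    return (lam * z + 1.0) ** (1.0 / lam)


# Illustrative retention factor and a few lambda values (hypothetical).
k = 4.0
for lam in (0.0, 0.25, 0.5, 1.0):
    z = box_cox(k, lam)
    print(f"lambda = {lam:<4}: transformed k = {z:.4f}, "
          f"round trip = {inverse_box_cox(z, lam):.4f}")
```

In practice λ becomes an additional fitted parameter, which is what lets a single functional form cover linear, curved, and U-shaped retention behavior.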

Quantitative Structure–Property Relationship (QSPR) modeling represents a cornerstone of modern computational chemistry, enabling the prediction of chemical properties based solely on molecular structure. The integration of machine learning (ML) algorithms has transformed QSPR from a traditionally linear modeling approach into a powerful predictive framework capable of capturing complex, nonlinear relationships. Within environmental analysis, particularly for in silico chromatographic modeling, this synergy provides researchers with robust tools to predict the behavior of persistent organic pollutants (POPs) without resorting to laborious experimental measurements. This guide examines the foundational methodologies, compares the performance of leading ML algorithms, and details experimental protocols for validating QSPR models, with a specific focus on applications in environmental chemistry for researchers and drug development professionals.

Core Methodologies: QSPR Workflow and Machine Learning Algorithms

The development of a reliable QSPR model follows a structured workflow, from data collection to model deployment. Adherence to the Organisation for Economic Co-operation and Development (OECD) principles for validation is paramount to ensure the model's reliability, robustness, and regulatory acceptance.

The Standard QSPR Modeling Workflow

The following diagram illustrates the critical stages in developing and validating a QSPR model.

Figure 1: QSPR Model Development Workflow. This flowchart outlines the standard procedure for building a validated QSPR model, including iterative refinement loops.

Key Machine Learning Algorithms in QSPR

Various machine learning algorithms, from linear to highly nonlinear, are employed in QSPR studies. The choice of algorithm depends on the complexity of the structure-property relationship and the size of the dataset.

Table 1: Comparison of Common Machine Learning Algorithms in QSPR

| Algorithm | Type | Key Advantages | Typical QSPR Performance (R²/ Q²) | Ideal Use Cases |

|---|---|---|---|---|

| Multiple Linear Regression (MLR) | Linear | High interpretability, simple implementation | R²: 0.873-0.891 [18] | Linear relationships, small datasets, initial screening |

| Artificial Neural Network (ANN) | Nonlinear | High predictive power, captures complex nonlinearities | Q²ext: 0.880-0.971 [19] | Large, complex datasets with strong nonlinear trends |

| Random Forest (RF) | Nonlinear (Ensemble) | Robust to overfitting, provides feature importance | R²: 0.919-0.975 [19] | Datasets with many descriptors, feature selection needed |

| Support Vector Machine (SVM) | Nonlinear | Effective in high-dimensional spaces, memory efficient | Applied to log KPE-w prediction [19] | Complex datasets with clear margin of separation |

| Gradient-Boosting Decision Tree (GBDT) | Nonlinear (Ensemble) | High predictive accuracy, handles mixed data types | R²adj: 0.925, Q²ext: 0.811 [18] | Best-performing model in recent plant cuticle-air partition studies |

| k-Nearest Neighbor (kNN) | Instance-based | Simple, no model training, adapts to new data easily | Applied to log KPE-w prediction [19] | Local structure-property relationships, similarity-based reasoning |

Comparative Performance Analysis of QSPR Approaches

Case Study: Predicting Polyethylene-Water Partition Coefficients

A pivotal study directly compared multiple ML algorithms for predicting the polyethylene-water partition coefficients (KPE-w) of polychlorinated biphenyls (PCBs), critical parameters in passive sampling of aquatic environments [19]. The researchers developed 10 different in silico models using five algorithms and validated them with experimental data for 16 PCBs.

Table 2: Performance Metrics for log KPE-w Prediction of PCBs [19]

| Model Type | Goodness-of-Fit (R²adj) | Robustness (Q²LOO) | External Prediction (Q²ext) | Residuals (log units) |

|---|---|---|---|---|

| RF-2 Model (Recommended) | 0.919 - 0.975 | 0.870 - 0.954 | 0.880 - 0.971 | Within ± 0.3 |

| ANN-based Models | High | High | High | Approaching ± 0.3 |

| SVM-based Models | High | High | High | Approaching ± 0.3 |

| MLR-based Models | Good | Good | Good | Larger than nonlinear models |

The study concluded that the Random Forest (RF-2) model demonstrated superior performance and was recommended for predicting KPE-w values [19]. Mechanism interpretations revealed that the number of chlorine atoms and ortho-substituted chlorines were the most significant structural parameters affecting KPE-w.

Emerging Hybrid Approaches: q-RASPR

A novel quantitative Read-Across Structure-Property Relationship (q-RASPR) approach integrates traditional QSPR with chemical similarity information from read-across techniques [20]. This hybrid framework, applied to predict the properties of POPs like PCBs and PBDEs, has shown enhanced predictive accuracy, especially for compounds with limited experimental data. By incorporating similarity-based descriptors and error metrics, q-RASPR improves robustness and reduces overfitting, resulting in models with superior external validation performance compared to conventional QSPRs [20].

Experimental Protocols for QSPR Validation

Protocol 1: Three-Phase System for Experimental Verification of Partition Coefficients

Objective: To rapidly and accurately determine LDPE-water partition coefficients (KPE-w) for experimental validation of QSPR models [19].

Materials:

- Test Compounds: e.g., 16 Polychlorinated Biphenyl (PCB) congeners.

- Polymer Phase: Low-density polyethylene (LDPE) sheets.

- Aqueous Phase: Deionized water or buffer solution.

- Surfactant: To form micelles (e.g., sodium dodecyl sulfate).

- Apparatus: Glass vials with Teflon-lined caps, agitator, temperature-controlled incubator, analytical instrument (e.g., GC-MS or HPLC-MS).

Methodology:

- System Setup: A three-phase system (aqueous phase, surfactant micelles, LDPE) is prepared in sealed vials. The surfactant concentration should be above the critical micelle concentration.

- Equilibration: The target compounds are introduced, and the system is agitated at a constant temperature (e.g., 20-30°C) until equilibrium is reached. The micellar phase significantly accelerates equilibration compared to traditional two-phase systems.

- Analysis: The equilibrium concentration of the analyte in each phase is measured.

- Calculation: KPE-w is calculated as the product of the LDPE-micellar pseudo-phase partition coefficient (KPE-mic) and the micelle-water partition coefficient (Kmic-w).

- Validation: The experimentally determined log KPE-w values are compared to the QSPR-predicted values, with residuals within ±0.3 log units considered excellent agreement [19].
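The calculation and validation steps reduce to a product of two coefficients followed by a residual check on the log scale. The sketch below illustrates this with hypothetical coefficient values; only the product relation and the ±0.3 log-unit criterion come from the protocol above.

```python
import math


def log_k_pe_w(k_pe_mic: float, k_mic_w: float) -> float:
    """log10 of K_PE-w = K_PE-mic * K_mic-w."""
    return math.log10(k_pe_mic * k_mic_w)


def within_tolerance(log_exp: float, log_pred: float, tol: float = 0.3) -> bool:
    """True if the residual (experimental - predicted) is within +/- tol log units."""
    return abs(log_exp - log_pred) <= tol


# Hypothetical measured coefficients for one congener.
k_pe_mic = 2.0        # LDPE / micellar pseudo-phase
k_mic_w = 5.0e5       # micelle / water

log_exp = log_k_pe_w(k_pe_mic, k_mic_w)
log_pred = 6.2        # hypothetical QSPR prediction

print(f"experimental log K_PE-w = {log_exp:.2f}")
print(f"residual = {log_exp - log_pred:+.2f}, "
      f"acceptable = {within_tolerance(log_exp, log_pred)}")
```

Because the coefficients multiply, their logarithms add, so measurement uncertainty in either phase ratio carries directly into the final log KPE-w.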

Protocol 2: QSPR Model Development and Internal Validation

Objective: To construct and internally validate a QSPR model according to OECD guidelines [19] [18].

Materials:

- Software: alvaDesc, CORAL, or PaDEL-Descriptor for descriptor calculation; R, Python, or specialized QSPR software for modeling.

- Dataset: A curated set of chemical structures and their associated experimental property data.

Methodology:

- Data Collection and Curation: Experimental property values (e.g., log KPE-w) are gathered from the literature. Duplicate entries are consolidated, with replicate measurements for the same compound averaged to give a single consistent value.

- Descriptor Calculation and Selection: Thousands of molecular descriptors are calculated. Redundant and non-informative descriptors are removed. The final set is selected using methods like Variance Inflation Factor (VIF < 10) to avoid multicollinearity [18].

- Data Set Splitting: The dataset is divided into a training set (~70-80%) for model development and a test set (~20-30%) for external validation. The split should follow a rational, documented scheme (e.g., random selection or selection based on structural similarity).

- Model Construction: A machine learning algorithm (e.g., MLR, RF, GBDT) is applied to the training set to establish a mathematical relationship between the descriptors and the target property.

- Internal Validation: The model's performance is assessed using the training data with techniques like Leave-One-Out (LOO) cross-validation, which yields Q²LOO, and bootstrapping, which yields Q²BOOT. A Q² > 0.5 is generally considered acceptable [19] [18].

- External Validation and Applicability Domain: The model's true predictive power is evaluated by predicting the hold-out test set (Q²ext). The applicability domain is defined to identify compounds for which the model can reliably make predictions [20].
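The internal and external validation statistics can be illustrated with a deliberately small example. The sketch below uses a one-descriptor linear model and hypothetical data (chlorine count against log KPE-w); it is not a model from [18] or [19]. Q²LOO is computed as 1 - PRESS/SS_tot. Q²ext uses the training-set mean in the denominator, one of several definitions in use.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sxx
    return slope, mean_y - slope * mean_x


def q2_loo(xs, ys):
    """Leave-one-out cross-validated Q2 on the training set."""
    press = 0.0
    for i in range(len(xs)):
        slope, intercept = fit_line(xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:])
        press += (ys[i] - (slope * xs[i] + intercept)) ** 2
    mean_y = sum(ys) / len(ys)
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - press / ss_tot


def q2_ext(train_x, train_y, test_x, test_y):
    """External Q2, referenced to the training-set mean."""
    slope, intercept = fit_line(train_x, train_y)
    mean_train = sum(train_y) / len(train_y)
    press = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(test_x, test_y))
    ss = sum((y - mean_train) ** 2 for y in test_y)
    return 1.0 - press / ss


# Hypothetical data: number of chlorine atoms vs. log K_PE-w.
train_x = [2, 3, 4, 5, 6, 7, 8]
train_y = [4.6, 5.1, 5.4, 6.0, 6.3, 6.9, 7.2]
test_x = [3, 5, 7]
test_y = [5.0, 5.9, 6.8]

loo = q2_loo(train_x, train_y)
ext = q2_ext(train_x, train_y, test_x, test_y)
print(f"Q2_LOO = {loo:.3f}, Q2_ext = {ext:.3f}")
print("passes Q2 > 0.5 screen:", loo > 0.5 and ext > 0.5)
```

The same two functions work with any regressor if `fit_line` is swapped for a different fitting routine, which keeps the validation logic separate from the choice of algorithm.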

The experimental workflow for model validation is summarized below.

Figure 2: QSPR Model Validation Workflow. This process highlights the critical steps of internal and external validation required for a robust model.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for QSPR-Supported Environmental Analysis

| Reagent/Material | Function in Research | Application Example |

|---|---|---|

| Low-Density Polyethylene (LDPE) | Sorbent phase in passive sampling devices | Determining freely dissolved concentrations of hydrophobic contaminants (e.g., PCBs, PBDEs) in water [19] |

| Octadecyl Silica (C18) Columns | Stationary phase for reversed-phase chromatography | Predicting skin permeability or environmental partitioning behavior of compounds [21] |

| Chaotropic Reagents (e.g., TFA, Perchloric Acid) | Mobile phase additives in LC for biomolecules | Denaturing proteins/peptides for more predictable retention modeling in chromatographic method development [6] |

| AlvaDesc / PaDEL-Descriptor Software | Calculation of molecular descriptors from chemical structures | Generating thousands of 1D, 2D, and 3D molecular descriptors for QSPR model development [19] [22] |

| CORAL Software | QSPR model development using SMILES notation | Building models based on the Monte Carlo optimization method with SMILES-based descriptors [23] |

The integration of machine learning with foundational QSPR principles has created a powerful, data-driven paradigm for predicting chemical properties. As demonstrated, algorithm selection is critical, with Random Forest and Gradient-Boosting Decision Trees often outperforming traditional linear models in complex prediction tasks like estimating partition coefficients for environmental analysis. The emergence of hybrid approaches like q-RASPR further enhances predictive accuracy and reliability. For researchers in environmental and pharmaceutical sciences, adherence to rigorous experimental protocols and comprehensive validation, as outlined in this guide, is essential for developing QSPR models that are not only predictive but also trustworthy for informing regulatory decisions and guiding sustainable chemical design.

The field of analytical chemistry, particularly chromatographic separation, faces a critical challenge: balancing high-performance method development with the urgent need for greener, more sustainable laboratory practices. Traditional chromatography often relies on large volumes of environmentally detrimental solvents and involves laborious, trial-and-error experimentation that consumes significant analyst time and resources. Against this backdrop, in silico modeling has emerged as a transformative approach, enabling researchers to develop analytical and preparative chromatographic methods that are both high-performing and environmentally conscious. This paradigm shift allows scientists to map separation landscapes that simultaneously optimize for retention parameters and greenness scores, creating a new framework for sustainable analytical science. The integration of computational tools represents a fundamental advancement in how separation scientists approach method development, moving from purely empirical optimization to a predictive, knowledge-driven discipline that aligns with the principles of Green Analytical Chemistry.

In Silico Modeling: A Framework for Greener Chromatography

Fundamental Principles and Mechanisms

In silico modeling applies computational power to predict chromatographic behavior, replacing resource-intensive laboratory experimentation with simulation. This approach leverages quantitative structure-retention relationships (QSRR), which correlate molecular descriptors of analytes with their chromatographic retention parameters [24]. By modeling the interactions between analytes, stationary phases, and mobile phases, these tools can accurately predict retention times, peak shapes, and resolution under various chromatographic conditions. The predictive models are built using a combination of machine learning algorithms—including random forest (RF) and artificial neural networks (ANN)—and mechanistic models based on physicochemical principles [25] [26]. This enables researchers to virtually screen thousands of potential method conditions in silico before performing minimal validation experiments in the laboratory.

The transition to in silico methods represents more than just a technical improvement—it fundamentally changes the environmental calculus of analytical chemistry. As Handlovic et al. demonstrated, this approach allows the analytical method greenness score (AMGS) to be mapped across the entire separation landscape, enabling simultaneous optimization for both performance and environmental impact [1]. This dual-parameter optimization was previously nearly impossible with traditional method development approaches, as the relationship between chromatographic parameters and environmental impact is complex and multidimensional.

Key Modeling Approaches

Mechanistic Models: These models are based on physicochemical principles describing mass transport and protein sorption, such as the general rate model and steric mass action model [26]. They provide a priori predictions but require calibration with empirical data and substantial computational resources.

Data-Driven Models: Built without prior knowledge of underlying mechanisms, these models use machine learning and statistical regression analysis to establish correlations between dependent and independent variables [26]. They are particularly valuable for poorly characterized systems.

Hybrid Models: Combining mechanistic and data-driven approaches, hybrid models offer the benefits of both worlds and can form the basis for digital twins of production processes [26].

Quantifying Greenness: Metrics and Environmental Impact

The Analytical Method Greenness Score (AMGS)

A critical advancement in sustainable chromatography is the development of standardized metrics to quantify environmental impact. The Analytical Method Greenness Score (AMGS) is one such metric for evaluating the environmental footprint of chromatographic methods, with a lower score indicating a greener method [1]. It enables direct comparison between different method conditions and objective assessment of sustainability improvements. The AMGS incorporates multiple factors, including solvent toxicity, energy consumption, and waste generation, providing a comprehensive view of a method's environmental impact.

Solvent Replacement Strategies

Chromatography's primary environmental impact comes from solvent use, making solvent substitution a key strategy for improving greenness. Research demonstrates two primary replacement strategies with significant environmental benefits:

Table 1: Solvent Replacement Strategies and Their Greenness Impact

| Replacement Strategy | Specific Change | Greenness Improvement | Performance Outcome |

|---|---|---|---|

| Fluorinated to Chlorinated Additive | Trifluoroacetic acid (TFA) replaced with trichloroacetic acid (TCA) | AMGS reduced from 9.46 to 4.49 [1] | Critical pair resolution improved from fully overlapped to 1.40 [1] |

| Acetonitrile to Methanol | Acetonitrile replaced with environmentally friendlier methanol | AMGS reduced from 7.79 to 5.09 [1] | Critical resolution preserved [1] |

These solvent substitutions demonstrate that environmental improvements can coincide with performance enhancements or maintenance, countering the traditional assumption that greener methods necessarily compromise analytical quality.

Experimental Protocols for In Silico Method Development

QSRR Model Development Protocol

The development of robust QSRR models follows a systematic protocol that ensures predictive accuracy and applicability across different chromatographic systems:

Analyte Selection and Descriptor Calculation: Select a diverse set of representative analytes (7 UV filters were used in one study) and calculate molecular descriptors using software such as Mordred, which can compute over 1800 2D and 3D descriptors [25].

Experimental Design: Employ Design of Experiments (DoE) to systematically explore the chromatographic parameter space, including factors such as ethanol proportion in mobile phase, pH, flow rate, and column temperature [24].

Model Training: Use multiple regression analysis or machine learning algorithms to correlate molecular descriptors with retention times. High-performing models can achieve determination coefficients (R²) of 99.82% [24].

Model Validation: Conduct internal and external validation using techniques such as 5-fold cross-validation to ensure predictive power, with prediction coefficients (R²pred) of 99.71% achievable [24] [25].

Chromatographic Profile Simulation: Apply Monte Carlo methods to simulate full chromatographic profiles, providing a comprehensive view of separation under various conditions [24].
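One simple way to layer Monte Carlo sampling on top of QSRR predictions is to propagate the prediction error of two neighboring peaks into a distribution of resolution values. The sketch below is not the procedure of the cited study [24]. The predicted retention times, the prediction standard deviation, the baseline peak width, and the Rs ≥ 1.5 target are all hypothetical inputs.

```python
import random


def resolution(t1: float, t2: float, w1: float, w2: float) -> float:
    """Rs = 2 * |t2 - t1| / (w1 + w2), with baseline peak widths."""
    return 2.0 * abs(t2 - t1) / (w1 + w2)


def fraction_resolved(t1_pred, t2_pred, sd, width,
                      n_draws=10_000, rs_min=1.5, seed=0):
    """Share of simulated runs in which the pair reaches rs_min.

    Each draw perturbs both predicted retention times with independent
    Gaussian noise of standard deviation `sd` (the model's prediction error).
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_draws):
        t1 = rng.gauss(t1_pred, sd)
        t2 = rng.gauss(t2_pred, sd)
        if resolution(t1, t2, width, width) >= rs_min:
            hits += 1
    return hits / n_draws


# Hypothetical critical pair: predicted at 5.0 and 5.5 min, 0.25 min wide peaks.
nominal_rs = resolution(5.0, 5.5, 0.25, 0.25)
p_resolved = fraction_resolved(5.0, 5.5, sd=0.05, width=0.25)

print(f"nominal Rs = {nominal_rs:.2f}")
print(f"fraction of simulated runs with Rs >= 1.5: {p_resolved:.3f}")
```

Reporting a fraction of simulated runs rather than a single predicted resolution shows how much of the apparent separation survives the model's own uncertainty.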

Relative Response Factor Prediction Protocol

For quantification in non-targeted analysis, machine learning algorithms can predict relative response factors (RRFs), enabling concentration estimates without analytical standards:

Dataset Preparation: Compile datasets from different instrumental setups (e.g., CE-ESI+, LC-QTOF/MS ESI+/-) with known RRFs [25].

Descriptor Selection: Utilize Abraham descriptors or other physicochemical properties that influence ionization efficiency [25].

Algorithm Application: Implement random forest or artificial neural network models to predict RRFs based on physicochemical properties [25].

Concentration Calculation: Divide measured abundance (peak area or height) by the predicted RRF to estimate chemical concentrations [25].

This protocol has demonstrated particular success in ESI+ mode, with mean absolute errors as low as 0.19 log units for RRF prediction [25].
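The final step of this protocol, and the practical meaning of an error quoted in log units, can be sketched in a few lines. The abundance and RRF values are hypothetical, and the RRF units (response per ng/mL) are an assumption made for illustration. Only the division step and the 0.19 log-unit figure come from the text above.

```python
def estimate_concentration(abundance: float, predicted_rrf: float) -> float:
    """Concentration estimate = measured abundance / predicted RRF."""
    if predicted_rrf <= 0:
        raise ValueError("predicted RRF must be positive")
    return abundance / predicted_rrf


def fold_error(log_error: float) -> float:
    """Convert an error in log10 units into a multiplicative factor."""
    return 10.0 ** log_error


# Hypothetical feature: peak area and predicted response per ng/mL.
peak_area = 2.0e5
rrf = 4.0e3

conc = estimate_concentration(peak_area, rrf)
factor = fold_error(0.19)          # mean absolute error reported for ESI+ [25]

print(f"estimated concentration: {conc:.1f} ng/mL")
print(f"0.19 log units is about a {factor:.2f}-fold error, "
      f"i.e. roughly {conc / factor:.1f} to {conc * factor:.1f} ng/mL")
```

Because the reported value is a mean absolute error, the range printed here indicates a typical deviation rather than a guaranteed bound.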

Comparative Performance Data: Traditional vs. In Silico Approaches

Environmental and Efficiency Metrics

The implementation of in silico approaches demonstrates significant advantages over traditional method development across multiple performance metrics:

Table 2: Performance Comparison of Traditional vs. In Silico Method Development

| Performance Metric | Traditional Approach | In Silico Approach | Improvement Factor |

|---|---|---|---|

| Experimental effort | 100% baseline | ~25% of traditional approach [26] | 75% reduction [26] |

| Method optimization time | Weeks to months | Days to weeks | 2-4x acceleration [1] |

| Solvent consumption during development | High | Significantly reduced | Not quantified but substantial |

| Process understanding | Empirical | Mechanistic with deeper insight | Enhanced fundamental understanding [26] |

| Preparative purification efficiency | Standard loading | 2.5× increased loading [1] | 2.5× fewer replicates needed [1] |

Greenness Score Improvements Across Applications

The environmental benefits of in silico optimization extend across various chromatographic applications, with demonstrated AMGS reductions:

Pharmaceutical Analysis: Implementation of in silico modeling for pharmaceutical compounds enabled AMGS reductions from 9.46 to 4.49 (52.5% improvement) while improving critical pair resolution from fully overlapped to 1.40 [1].

Preparative Chromatography: Using resolution maps to capitalize on peak crossover increased active pharmaceutical ingredient loading by 2.5×, directly reducing solvent consumption and waste generation in preparative applications [1].

These improvements demonstrate that in silico approaches not only reduce environmental impact but also enhance operational efficiency and throughput, creating a compelling business case alongside the sustainability benefits.

Visualization of the In Silico Method Development Workflow

The following diagram illustrates the integrated workflow for developing greener chromatographic methods using in silico modeling:

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of in silico chromatography modeling requires both computational tools and physical materials. The following table details key resources in this emerging field:

Table 3: Essential Research Reagents and Computational Tools for In Silico Chromatography

| Tool/Reagent Category | Specific Examples | Function/Purpose |

|---|---|---|

| Molecular Descriptor Software | Mordred [25], UFZ-LSER database [25] | Calculates 2D/3D molecular descriptors for QSRR modeling |

| Machine Learning Platforms | TensorFlow [25], custom RF/ANN algorithms [25] | Predicts retention behavior and relative response factors |

| Chromatographic Stationary Phases | C18 columns [24] [27], ion-exchange resins [26] | Provides separation mechanism for method validation |

| Mobile Phase Modifiers | Fluorinated additives, chlorinated alternatives, methanol, acetonitrile [1] | Enables selectivity optimization and greenness improvement |

| Model Validation Standards | Pharmaceutical compounds, UV filters, endogenous metabolites [24] [25] [27] | Verifies predictive model accuracy against experimental data |

| Process Modeling Software | GoSilico Chromatography Modeling Software [26] | Facilitates mechanistic modeling of purification processes |

The integration of in silico modeling into chromatographic method development represents a paradigm shift, extending the mapped separation landscape from traditional retention parameters to comprehensive greenness scores. This approach demonstrates that environmental sustainability and analytical performance are not mutually exclusive but can be optimized simultaneously through computational prediction. The documented AMGS reduction from 9.46 to 4.49, coupled with maintained or improved resolution, provides compelling evidence for the value of this approach [1]. As the field advances, the integration of more sophisticated machine learning algorithms, expanded chemical space coverage, and real-time process analytical technologies will further enhance the predictive power and environmental benefits of in silico chromatography modeling. For researchers and pharmaceutical developers, adopting these methodologies offers a clear path to reducing environmental impact while accelerating analytical development timelines and deepening fundamental process understanding.

From Theory to Practice: Methodological Approaches and Environmental Applications

Workflow for Computer-Assisted Chromatographic Method Development

In the field of environmental analysis, the identification and quantification of unknown chemicals in complex samples presents a significant challenge. Non-targeted screening (NTS) with liquid chromatography coupled to high-resolution mass spectrometry (LC/HRMS) often detects thousands of features, the vast majority of which remain unannotated, constituting what we refer to as the "unknown chemical space" [28]. The validation of in silico chromatographic modeling has emerged as a critical approach to address this challenge, providing a framework for structural annotation of LC/HRMS features and their further prioritization without extensive laboratory experimentation. Computer-assisted method development leverages predictive technology and complex algorithms to optimize chromatographic parameters, significantly reducing the time and resources required for method development while improving separation quality [29] [7].

The broader thesis of this guide centers on validating these in silico approaches specifically for environmental research, where samples are particularly complex and contain diverse chemical constituents. This validation requires careful assessment of optimization algorithms, software platforms, and workflow efficiency to establish reliable protocols for environmental analytical laboratories. As green chemistry principles gain prominence in analytical science, the environmental impact of chromatographic processes—including solvent consumption, waste generation, and energy use—has become a significant concern, further driving the adoption of in silico methods [7].

Core Workflow for Computer-Assisted Method Development

Fundamental Workflow Components

Computer-assisted chromatographic method development follows a systematic workflow that integrates theoretical modeling with targeted experimental validation. The process transforms traditional trial-and-error approaches into an efficient, predictive science.

The workflow begins with clearly defining separation objectives based on the analytical goals, which may include targeted compound analysis or untargeted characterization of complex environmental samples [29]. For pharmaceutical applications, method requirements are guided by Quality by Design (QbD) principles established in ICH guidelines Q8, Q9, and Q10, which emphasize predefined objectives and thorough process understanding [30]. Subsequent steps involve analyte characterization using predictive software tools to determine physicochemical properties such as pKa, logP, and logD, which inform the selection of appropriate initial conditions [31].
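The link between pKa, logP, and logD can be made explicit with the usual textbook relation for a monoprotic compound, which assumes that only the neutral species partitions into the organic phase. The sketch below uses that simplification with hypothetical property values; commercial predictors such as those cited above may use more elaborate models.

```python
import math


def log_d(log_p: float, pka: float, ph: float, kind: str) -> float:
    """logD of a monoprotic acid or base at a given pH.

    Assumes only the neutral form partitions (a common simplification).
    kind: "acid" or "base".
    """
    if kind == "acid":
        exponent = ph - pka        # ionized fraction grows above the pKa
    elif kind == "base":
        exponent = pka - ph        # ionized fraction grows below the pKa
    else:
        raise ValueError("kind must be 'acid' or 'base'")
    return log_p - math.log10(1.0 + 10.0 ** exponent)


# Hypothetical acidic analyte: logP = 3.0, pKa = 4.5.
for ph in (2.5, 4.5, 7.0):
    print(f"pH {ph}: logD = {log_d(3.0, 4.5, ph, 'acid'):.2f}")
```

For an acid like this one, a low-pH mobile phase keeps logD close to logP and so favors reversed-phase retention, which is the kind of reasoning that informs the choice of initial conditions.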

A critical phase involves in silico modeling and retention prediction, where chromatographic simulations map the separation landscape under various conditions [32]. This phase significantly reduces the need for extensive laboratory experiments. Limited experimental validation follows to verify model predictions and collect essential data for model refinement. Sophisticated optimization algorithms are then applied to identify optimal method parameters before final method validation and documentation [33].

Key Optimization Algorithms and Performance

Optimization algorithms play a pivotal role in computer-assisted method development, with different algorithms exhibiting distinct strengths depending on the specific application context, required iteration budget, and optimization goals.

Table 1: Comparison of Optimization Algorithms for Chromatographic Method Development [33]

| Algorithm | Data Efficiency | Time Efficiency | Optimal Use Cases | Limitations |

|---|---|---|---|---|

| Bayesian Optimization (BO) | Highest | Low at large iteration counts; best suited to budgets under 200 iterations | Search-based optimization, limited iteration budget | Unfavorable computational scaling with large iteration counts |

| Differential Evolution (DE) | High | Highest | Dry optimization (in silico), large iteration budgets | Less effective for search-based optimization |

| Genetic Algorithm (GA) | Moderate | Moderate | General purpose optimization | Outperformed by DE and BO in specific scenarios |

| CMA-ES | Moderate | Moderate | Complex optimization landscapes | Not typically best-performing for chromatography |

| Random Search | Low | Low | Baseline comparison | Not efficient for production use |

| Grid Search | Lowest | Lowest | Systematic screening | Computationally expensive and inefficient |

The selection of optimization algorithms must consider the specific context of environmental analysis, where samples often contain diverse chemical constituents with varying properties. Bayesian optimization has demonstrated exceptional performance in data efficiency, making it particularly valuable for search-based optimization requiring fewer than 200 iterations [33]. This approach is well-suited for environmental applications where reference standards may be unavailable for many compounds, and experimental runs must be minimized. In contrast, differential evolution excels in time efficiency for dry, in silico optimization, making it ideal for virtual screening of large method parameter spaces before any laboratory work [33].

The performance of these algorithms is significantly influenced by the chromatographic response function (CRF) and sample complexity, emphasizing the importance of selecting appropriate quality metrics aligned with analytical goals [33]. For environmental research targeting specific pollutant classes, targeted CRFs may enhance optimization efficiency, while untargeted analysis of complex environmental samples may require different quality descriptors focused on peak capacity and resolution.
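A dry, in silico optimization of this kind can be sketched with a toy response function and a small differential evolution loop. Everything here is an illustrative assumption rather than the setup benchmarked in [33]: the three analytes and their LSS parameters, the dead time and plate number, the CRF (worst-pair resolution capped at 1.5 minus a run-time penalty), and the DE/rand/1/bin variant with its settings.

```python
import math
import random

T0 = 1.0            # dead time in minutes (hypothetical)
PLATES = 10_000     # column plate number (hypothetical)
# Hypothetical LSS parameters (log_kw, S) for three analytes.
ANALYTES = [(2.0, 4.0), (2.3, 4.5), (2.9, 5.5)]


def retention_times(phi: float):
    """Isocratic retention times from log k = log_kw - S * phi, sorted."""
    return sorted(T0 * (1.0 + 10.0 ** (lkw - s * phi)) for lkw, s in ANALYTES)


def crf(phi: float, time_weight: float = 0.02) -> float:
    """Toy CRF: worst-pair resolution (capped at 1.5) minus a run-time penalty."""
    times = retention_times(phi)
    widths = [4.0 * t / math.sqrt(PLATES) for t in times]
    rs_min = min(
        2.0 * (t2 - t1) / (w1 + w2)
        for t1, t2, w1, w2 in zip(times, times[1:], widths, widths[1:])
    )
    return min(rs_min, 1.5) - time_weight * times[-1]


def differential_evolution(objective, bounds, pop_size=20, generations=80,
                           f=0.7, cr=0.9, seed=0):
    """Maximize `objective` over box `bounds` with DE/rand/1/bin."""
    rng = random.Random(seed)
    dim = len(bounds)
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    scores = [objective(x) for x in pop]
    for _ in range(generations):
        for i in range(pop_size):
            a, b, c = rng.sample([j for j in range(pop_size) if j != i], 3)
            forced = rng.randrange(dim)   # at least one mutated coordinate
            trial = []
            for d, (lo, hi) in enumerate(bounds):
                if d == forced or rng.random() < cr:
                    value = pop[a][d] + f * (pop[b][d] - pop[c][d])
                    value = min(max(value, lo), hi)   # clip to bounds
                else:
                    value = pop[i][d]
                trial.append(value)
            trial_score = objective(trial)
            if trial_score >= scores[i]:              # greedy selection
                pop[i], scores[i] = trial, trial_score
    best = max(range(pop_size), key=scores.__getitem__)
    return pop[best], scores[best]


best_x, best_score = differential_evolution(lambda x: crf(x[0]), [(0.05, 0.95)])
print(f"best organic fraction: {best_x[0]:.3f}, CRF = {best_score:.3f}")
```

Changing the CRF (for example, the run-time weight or the resolution cap) shifts the optimum, which is one way to see why the choice of response function matters as much as the choice of algorithm.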

Software Tools and Platforms

Commercial Method Development Suites

Several comprehensive software platforms have been developed to support computer-assisted chromatographic method development, integrating multiple tools into unified workflows.

Table 2: Commercial Software Platforms for Chromatographic Method Development

| Software Platform | Key Features | Optimization Capabilities | Environmental Application Features |
|---|---|---|---|
| ACD/Method Selection Suite [31] | Physicochemical property prediction, column selection, retention modeling | 1D, 2D, and 3D modeling for LC/GC parameters; customizable suitability criteria | Solvent reduction tools, greenness scoring, waste minimization |
| Empower Method Development Tools [34] | Automated screening, method validation manager, system suitability testing | Design of Experiments (DoE), autonomous column/solvent screening | Compliance-ready documentation, method performance monitoring |
| In Silico Platform for UV Filters [5] | QSRR modeling, Monte Carlo simulation, retention prediction | DoE with molecular descriptors, pH/solvent optimization | Specialized for organic UV filters in environmental analysis |

These platforms enable method simulation under different conditions, allowing researchers to visualize potential separations before conducting physical experiments [31]. The ACD/Method Selection Suite incorporates predictive tools for physicochemical properties and column selection, facilitating rational starting condition selection [31]. The software enables modeling in 1D, 2D, or 3D parameter spaces and allows users to define custom suitability criteria based on resolution, run time, and retention factors. Similarly, Empower Method Development Tools automate traditionally manual steps, including creating methods, running and processing data, and comparing outcomes from multiple experimental conditions [34].

Specialized platforms have also been developed for specific environmental applications, such as the in silico platform for UV filters that combines Quantitative Structure-Retention Relationship (QSRR) modeling with Monte Carlo methods to predict chromatographic behavior of organic UV filters without experimentation [5]. This specialized approach demonstrates how computer-assisted method development can be tailored to specific environmental contaminant classes.
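The general idea of pairing a retention model with Monte Carlo sampling can be sketched as follows. This is not the platform described in [5]: the linear model form, its coefficients, the single descriptor value, and the assumed variability in ethanol proportion and flow rate are all hypothetical and serve only to show how sampled operating conditions propagate into a distribution of predicted retention times.

```python
import random
import statistics

# Hypothetical linear QSRR coefficients (illustrative only, not from [5]).
COEFFS = {
    "intercept": 20.0,
    "descriptor": 1.5,     # contribution of one molecular descriptor
    "ethanol_pct": -0.2,   # min per percent ethanol
    "flow_ml_min": -4.0,   # min per mL/min
}


def predict_retention(descriptor, ethanol_pct, flow_ml_min):
    """Predicted retention time (min) from the assumed linear model."""
    return (COEFFS["intercept"]
            + COEFFS["descriptor"] * descriptor
            + COEFFS["ethanol_pct"] * ethanol_pct
            + COEFFS["flow_ml_min"] * flow_ml_min)


def monte_carlo_retention(descriptor, ethanol_pct, flow_ml_min,
                          n_draws=5000, seed=1):
    """Mean and standard deviation of retention under sampled conditions."""
    rng = random.Random(seed)
    draws = []
    for _ in range(n_draws):
        # Assumed normal variability around the set points.
        ethanol = rng.gauss(ethanol_pct, 0.5)
        flow = rng.gauss(flow_ml_min, 0.02)
        draws.append(predict_retention(descriptor, ethanol, flow))
    return statistics.mean(draws), statistics.stdev(draws)


mean_rt, sd_rt = monte_carlo_retention(descriptor=2.0, ethanol_pct=60.0,
                                       flow_ml_min=1.0)
print(f"predicted retention: {mean_rt:.2f} +/- {sd_rt:.2f} min")
```

Repeating the simulation for each analyte gives a predicted retention window rather than a single value, which helps judge whether two compounds are likely to overlap once ordinary instrument variability is taken into account.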

Green Chemistry and Sustainability Integration

The adoption of computer-assisted method development directly supports the implementation of green chemistry principles in analytical laboratories [7]. By minimizing trial-and-error experimentation, these approaches significantly reduce solvent consumption and waste generation, key environmental concerns in chromatographic processes.

Software tools contribute to sustainability through:

- Solvent reduction and replacement: In silico modeling enables method development with minimal solvent usage and facilitates the identification of greener solvent alternatives [32]. For example, acetonitrile can be replaced with more environmentally friendly methanol, reducing the Analytical Method Greenness Score (AMGS) from 7.79 to 5.09 while preserving critical resolution [32].

- Waste prevention: Predictive tools minimize unnecessary experiments, preventing waste generation at the source rather than treating it after creation [7].

- Energy efficiency: Methods developed in silico typically require shorter run times and lower solvent volumes, reducing energy consumption associated with solvent delivery and waste disposal [7].

The integration of greenness scoring directly into method development software represents a significant advancement, allowing researchers to visualize both separation performance and environmental impact simultaneously when evaluating different method conditions [32].

Experimental Protocols and Validation

Standardized Experimental Methodology

Validating in silico chromatographic modeling requires rigorous experimental protocols that assess both predictive accuracy and method robustness. The following methodology outlines a standardized approach for validating computer-assisted method development in environmental analysis.

Initial System Configuration and Parameter Selection:

- Analyte Selection and Standard Preparation: Select a representative set of target analytes relevant to environmental monitoring. For UV filter analysis, representative compounds included benzophenone-3, butyl methoxydibenzoylmethane, ethylhexyl triazone, and octocrylene, prepared at appropriate concentrations in suitable solvents [5].

- Instrumental Conditions: Utilize LC/HRMS systems capable of precise mobile phase delivery, column temperature control, and automated sampling. Maintain consistent detection parameters (e.g., UV wavelength, MS ionization mode) throughout method validation.

- Chromatographic Column Selection: Screen multiple stationary phases with different selectivity characteristics using column comparison tools based on Tanaka parameters or hydrophobic subtraction models [29] [31].

- Mobile Phase Composition: Systematically vary organic modifier composition (acetonitrile, methanol), pH (within column stability limits), and additive concentration based on predicted analyte properties.

Data Acquisition and Model Calibration:

- Initial Scouting Runs: Perform a limited set of initial experiments (typically 10-20 runs) across the analytical design space, varying critical parameters identified during in silico modeling [5].

- Retention Time Recording: Precisely measure retention times for all analytes across different chromatographic conditions, ensuring adequate peak resolution and detection sensitivity.

- Model Training: Input experimental retention data into prediction software to train retention models using multiple regression analysis or machine learning algorithms. For the UV filter platform, this approach achieved a determination coefficient (R²) of 99.82% and adjusted determination coefficient (R² adj) of 99.80% [5].

- Model Validation: Conduct additional validation experiments (not used in model training) to assess prediction accuracy, calculating coefficients of prediction (R² pred) to verify model performance [5].
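The goodness-of-fit statistics named in the calibration steps above can be computed as in the sketch below. The retention times are invented for illustration, and applying the R² formula to runs held out of training is only one common way to estimate predictive performance; it is an assumption here, since the exact R² pred definition used in [5] is not stated.

```python
def r_squared(observed, predicted):
    """Coefficient of determination between observed and predicted values."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot


def adjusted_r_squared(r2, n_obs, n_predictors):
    """R² penalized for the number of model terms."""
    return 1.0 - (1.0 - r2) * (n_obs - 1) / (n_obs - n_predictors - 1)


# Hypothetical retention times (min) for five runs.
observed = [1.0, 2.0, 3.0, 4.0, 5.0]
predicted = [1.1, 1.9, 3.2, 3.8, 5.0]

r2 = r_squared(observed, predicted)
r2_adj = adjusted_r_squared(r2, n_obs=len(observed), n_predictors=1)
print(f"R2 = {r2:.4f}, adjusted R2 = {r2_adj:.4f}")
```

A large gap between the training R² and the value obtained on held-out runs is a practical warning sign of overfitting, which is why the protocol keeps validation experiments separate from model training.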

Optimization and Final Validation:

- Algorithm Application: Apply selected optimization algorithms (Bayesian optimization, differential evolution, etc.) to identify optimal chromatographic conditions based on predefined quality criteria [33].

- Method Verification: Experimentally verify predicted optimal conditions, comparing observed versus predicted chromatographic profiles.

- Robustness Testing: Evaluate method robustness by deliberately varying critical parameters (temperature ±2°C, flow rate ±0.1 mL/min, mobile phase composition ±2%) and assessing system suitability criteria [34].

- Greenness Assessment: Calculate environmental impact metrics such as the Analytical Method Greenness Score (AMGS) to quantify sustainability improvements [32].
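The robustness step above can be planned programmatically. The sketch below enumerates a three-level full factorial around a nominal method using the tolerances quoted in the text (±2°C, ±0.1 mL/min, ±2% mobile phase composition). The nominal set points are hypothetical, and the full factorial layout is an assumption; a fractional or Plackett-Burman design would reduce the number of runs.

```python
from itertools import product


def robustness_conditions(nominal, deltas):
    """All low/nominal/high combinations of the listed parameters."""
    names = list(nominal)
    levels = [
        (nominal[name] - deltas[name], nominal[name], nominal[name] + deltas[name])
        for name in names
    ]
    return [dict(zip(names, combo)) for combo in product(*levels)]


# Hypothetical nominal method; deltas follow the tolerances given in the text.
nominal = {"temperature_c": 30.0, "flow_ml_min": 1.0, "organic_pct": 40.0}
deltas = {"temperature_c": 2.0, "flow_ml_min": 0.1, "organic_pct": 2.0}

conditions = robustness_conditions(nominal, deltas)
print(len(conditions))
print(conditions[0])
```

Each generated condition can first be evaluated with the trained retention model, so that only the combinations predicted to threaten system suitability need to be run on the instrument.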

Research Reagents and Essential Materials

Successful implementation of computer-assisted chromatographic method development requires specific reagents, software, and analytical resources.

Table 3: Essential Research Reagents and Materials for Computer-Assisted Method Development

| Category | Specific Items | Function/Purpose | Examples/Notes |
|---|---|---|---|
| Chromatographic Columns | C18, C8, phenyl, cyano, HILIC, chiral | Stationary phases with different selectivity mechanisms | Selected based on Tanaka parameters or hydrophobic subtraction model [29] |
| Mobile Phase Solvents | Acetonitrile, methanol, tetrahydrofuran, water | Solvent selection based on analyte properties and green chemistry principles | Solvent selection guides (e.g., ACS GCI-PR guide) inform greener choices [7] |
| Additives and Buffers | Formic acid, ammonium acetate, ammonium formate, phosphate buffers | Mobile phase modifiers to control ionization and improve separation | Concentration typically 0.05-0.1%; volatile additives preferred for MS compatibility |
| Software Tools | ACD/Method Selection Suite, Empower, in-house platforms | Method prediction, optimization, and data management | Vendor-neutral tools facilitate data integration from multiple instruments [31] [34] |
| Reference Standards | Target analytes, internal standards, system suitability mixtures | Method development and validation reference materials | Critical for confirming tentative annotations in environmental samples [28] |

Applications in Environmental Analysis

Environmental Case Studies and Applications

Computer-assisted method development has demonstrated significant utility in environmental analysis, particularly for complex sample matrices and emerging contaminants.

Analysis of Organic UV Filters: A specialized in silico platform was developed to predict chromatographic profiles of organic UV filters using QSRR and Monte Carlo methods [5]. The platform utilized molecular descriptors (Wlambda3.unity, ATSc5, and geomShape) alongside chromatographic parameters (ethanol proportion, pH, flow rate, temperature) to build predictive models with exceptional accuracy (R² = 99.82%, R² adj = 99.80%). This approach enabled method development without experimentation, providing comprehensive understanding of retention behavior across various chromatographic conditions specifically for environmental UV filter analysis.

Wastewater Sample Analysis: In silico methods have been applied to structural annotation of LC/HRMS features in wastewater samples, where non-targeted screening typically detects thousands of features [28]. Approaches combining spectral library matching with in silico fragmentation tools (MetFrag, CFM-ID) have enabled tentative identification of hundreds of compounds in complex environmental samples. In one application, 884 and 550 of 3764 and 3845 prioritized LC/HRMS features were tentatively identified in positive and negative ESI modes, respectively, with 25 annotations subsequently confirmed using analytical standards [28].

Greener Method Transformation: Computer-assisted method development enabled the transformation of existing chromatographic methods to greener alternatives without sacrificing separation performance [32]. For example, in silico modeling facilitated the replacement of fluorinated mobile phase additives with chlorinated alternatives, reducing the AMGS from 9.46 to 4.49, with a reported critical resolution of 1.40 versus fully overlapped peaks. Similarly, acetonitrile was replaced with the more environmentally friendly methanol, reducing the AMGS from 7.79 to 5.09 while preserving critical resolution [32].
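To put the reported AMGS changes on a common scale, the short calculation below converts each before and after pair into a relative reduction. It uses only the scores quoted above from [32]; the helper function name is an illustrative choice, and the calculation does not reproduce how AMGS itself is computed.

```python
def relative_reduction(before, after):
    """Percentage decrease from `before` to `after`."""
    return (before - after) / before * 100.0


# AMGS values quoted in the text (lower is greener).
additive_swap = relative_reduction(9.46, 4.49)
solvent_swap = relative_reduction(7.79, 5.09)

print(f"additive replacement: {additive_swap:.1f}% lower AMGS")
print(f"acetonitrile to methanol: {solvent_swap:.1f}% lower AMGS")
```

Expressed this way, the additive replacement roughly halves the score, while the solvent replacement lowers it by about one third.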

Performance Benchmarking and Validation Metrics

Rigorous performance assessment is essential for validating computer-assisted method development approaches, particularly for environmental applications where sample complexity presents unique challenges.

Table 4: Performance Metrics for Computer-Assisted Method Development

| Validation Parameter | Assessment Method | Acceptance Criteria | Environmental Application Considerations |
|---|---|---|---|
| Prediction Accuracy | Goodness-of-fit between predicted and experimental retention times | R² > 0.99 for retention models [5] | Matrix effects in environmental samples may reduce accuracy |
| Spectral Matching | Cosine similarity, spectral entropy, MS2DeepScore [28] | Variable based on application; level 2b confidence per Schymanski scale [28] | Environmental samples may contain unknown transformation products |
| Method Greenness | Analytical Method Greenness Score (AMGS) [32] | Lower scores indicate greener methods | Balance greenness with method performance requirements |
| Separation Quality | Resolution, peak capacity, run time | Application-dependent; typically resolution >1.5 between critical pairs | Environmental samples may have higher complexity requiring greater peak capacity |
| Annotation Confidence | Confirmation with analytical standards | Proportion of tentative annotations confirmed | Limited availability of standards for environmental transformation products |
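Of the spectral matching metrics listed in Table 4, cosine similarity is the simplest to illustrate. The sketch below bins two MS/MS peak lists on a fixed m/z grid and computes the cosine of the resulting intensity vectors. It is a simplified version: the bin width, the absence of intensity or m/z weighting, and the example spectra are all assumptions, and dedicated libraries typically use tolerance-based peak matching instead of fixed bins.

```python
import math
from collections import defaultdict


def bin_spectrum(peaks, bin_width=0.01):
    """Sum intensities of (m/z, intensity) peaks that fall in the same m/z bin."""
    binned = defaultdict(float)
    for mz, intensity in peaks:
        binned[round(mz / bin_width)] += intensity
    return binned


def cosine_similarity(spec_a, spec_b, bin_width=0.01):
    """Cosine of the angle between two binned spectra (0 = no shared peaks)."""
    a = bin_spectrum(spec_a, bin_width)
    b = bin_spectrum(spec_b, bin_width)
    dot = sum(a[key] * b[key] for key in a.keys() & b.keys())
    norm_a = math.sqrt(sum(value ** 2 for value in a.values()))
    norm_b = math.sqrt(sum(value ** 2 for value in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical fragment spectra as (m/z, relative intensity) pairs.
measured = [(77.04, 30.0), (105.03, 100.0), (151.04, 45.0)]
library = [(77.04, 25.0), (105.03, 100.0), (229.09, 10.0)]

print(round(cosine_similarity(measured, library), 3))
```

A score near 1 indicates closely matching fragment patterns, but the threshold for accepting a tentative annotation remains application dependent, as the table notes.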

The validation of in silico approaches must also consider practical implementation factors, including computational efficiency and algorithm scalability. Bayesian optimization demonstrates superior data efficiency but becomes impractical for dry optimization requiring large iteration budgets due to unfavorable computational scaling [33]. In contrast, differential evolution offers excellent time efficiency for such applications, highlighting the importance of selecting optimization algorithms aligned with specific environmental analysis goals and computational resources [33].

Computer-assisted chromatographic method development represents a paradigm shift in analytical science, transforming traditional trial-and-error approaches into efficient, predictive workflows. The validation of in silico modeling for environmental analysis provides powerful tools for addressing the complex challenge of identifying and quantifying diverse chemicals in environmental samples. Through the integration of sophisticated optimization algorithms, predictive software platforms, and rigorous validation protocols, researchers can develop high-quality chromatographic methods with significantly reduced time, cost, and environmental impact.

The continued advancement of these approaches will likely focus on improving prediction accuracy for novel chemical entities, expanding application to emerging contaminant classes, and further enhancing sustainability through greener solvent systems and minimized resource consumption. As environmental analytical challenges grow more complex, computer-assisted method development will play an increasingly vital role in enabling comprehensive environmental monitoring and protection.

Application in Non-Targeted Screening (NTS) for Unknown Environmental Chemicals