XGBoost vs. Random Forest: A Comparative Analysis for Advanced Environmental Modeling

Abstract

This article provides a comprehensive comparative analysis of two powerful ensemble machine learning algorithms, XGBoost and Random Forest, within environmental science applications. Tailored for researchers and data scientists, it explores the foundational principles, methodological applications, and optimization strategies for both models. Drawing on recent, high-impact studies across air and water quality, climate science, and renewable energy, we dissect their performance, computational efficiency, and suitability for specific environmental tasks. The analysis synthesizes evidence-based guidance on model selection, tuning, and validation to empower professionals in building more accurate, efficient, and interpretable predictive tools for tackling complex ecological challenges.

Understanding XGBoost and Random Forest: Core Principles for Environmental Science

Ensemble learning has emerged as a powerful paradigm in machine learning, combining multiple models to achieve superior predictive performance compared to individual estimators. Within this domain, two fundamentally distinct approaches—bagging (Bootstrap Aggregating) and boosting—have demonstrated remarkable effectiveness across diverse applications. Random Forest exemplifies the bagging approach, while XGBoost (Extreme Gradient Boosting) represents a sophisticated implementation of boosting. In environmental research, where predictive accuracy directly impacts decision-making for contamination prevention, resource management, and public health protection, selecting the appropriate ensemble method is crucial. This guide provides a comprehensive comparison of these two dominant paradigms, supported by experimental data and methodological frameworks tailored for scientific applications.

Theoretical Foundations

Bagging and the Random Forest Algorithm

Bagging, or Bootstrap Aggregating, is a parallel ensemble method designed primarily to reduce variance and prevent overfitting. The algorithm creates multiple subsets of the original dataset through bootstrap sampling (sampling with replacement), trains a base model (typically a decision tree) on each subset independently, and aggregates their predictions through averaging (for regression) or majority voting (for classification) [1] [2].

Random Forest extends this concept by incorporating feature randomness along with data randomness. When building each tree, instead of considering all features for splits, it randomly selects a subset of features at each candidate split, further decorrelating the trees and enhancing the ensemble's robustness [3] [4]. This dual randomization—of data and features—makes Random Forest particularly resistant to overfitting, even with noisy environmental datasets.

Boosting and the XGBoost Algorithm

Boosting represents a sequential ensemble approach where models are built consecutively, with each new model focusing on the errors of its predecessors. Unlike bagging's parallel construction, boosting creates an additive model where subsequent weak learners are trained to correct the residual errors of the combined existing ensemble [1] [2].

XGBoost is an advanced gradient boosting implementation that optimizes the model training process through several key innovations: a regularized objective function (L1 and L2 regularization) to control model complexity, more accurate tree pruning using a maximum depth parameter followed by backward pruning, handling of missing values, and computational optimizations like weighted quantile sketch for efficient candidate split proposal [3] [4]. The algorithm builds trees sequentially, with each tree learning from the mistakes of previous trees through gradient descent, progressively minimizing a differentiable loss function.

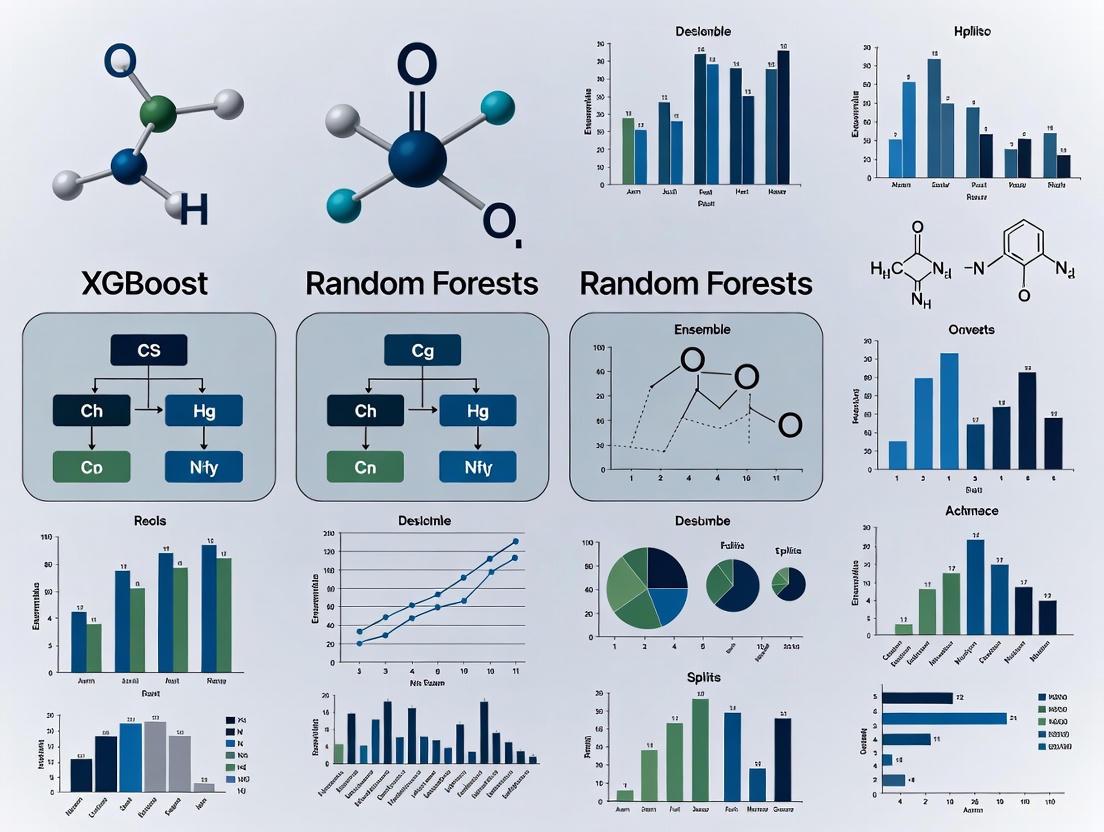

Figure 1: Sequential Workflow of Boosting Algorithms like XGBoost

Methodological Comparison

Architectural Differences

The fundamental distinction between these paradigms lies in their training methodologies. Random Forest employs a parallel architecture where trees are built independently, while XGBoost utilizes a sequential approach where each tree depends on its predecessors [3] [4].

Figure 2: Architectural Comparison of Bagging and Boosting Approaches

Handling of Overfitting

Both algorithms employ distinct strategies to prevent overfitting. Random Forest utilizes its inherent randomness—both in data sampling (bootstrap aggregation) and feature selection—to create diverse trees whose collective prediction generalizes well [4]. The ensemble nature averages out individual tree variances.

XGBoost incorporates explicit regularization terms (L1 and L2) into its objective function, which penalizes complex models to prevent overfitting [4]. Additionally, it employs tree pruning techniques, stopping tree growth when no significant positive gain is detected, resulting in simpler, more generalized trees compared to standard decision trees [3].

Performance on Class Imbalance

In environmental applications with inherent class imbalances (e.g., rare contamination events), XGBoost is often reported to perform better. It provides the scale_pos_weight parameter, which increases the weight of the minority (positive) class, and its sequential fitting concentrates on instances the current ensemble predicts poorly [3] [4]. Random Forest has no comparable mechanism in the base algorithm, though the imbalance can be mitigated through class weighting (for example, class_weight in scikit-learn) or balanced bootstrap samples.
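As a rough illustration of the weighting options mentioned above, the sketch below computes two commonly used heuristics from hypothetical label counts: the negative-to-positive ratio often suggested as a starting value for scale_pos_weight, and the "balanced" class-weight formula, n_samples / (n_classes * class_count). The label counts are invented for the example, and both values should be treated as starting points for tuning rather than as settings used in the cited studies.

```python
from collections import Counter

# Hypothetical labels: 950 clean sites (0) and 50 contaminated sites (1).
labels = [0] * 950 + [1] * 50
counts = Counter(labels)

# Heuristic starting value for XGBoost's scale_pos_weight:
# number of negative instances divided by number of positive instances.
scale_pos_weight = counts[0] / counts[1]

# "Balanced" class weights: n_samples / (n_classes * class_count).
n_classes = len(counts)
balanced_weights = {
    cls: len(labels) / (n_classes * n) for cls, n in counts.items()
}

print(f"scale_pos_weight = {scale_pos_weight:.1f}")
print(f"balanced class weights = {balanced_weights}")
```

With these counts the minority class receives a weight about 19 times larger than the majority class under either heuristic, which is the same ratio expressed in two different conventions.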

Experimental Comparison in Environmental Applications

Soil and Groundwater Contamination Prediction

A recent study evaluated XGBoost, LightGBM, and Random Forest for predicting soil and groundwater contamination risks from gas stations, utilizing field data from basic and environmental information, maintenance records, and environmental monitoring [5]. The models were assessed using multiple performance metrics with the following results:

Table 1: Performance Comparison for Contamination Risk Prediction

| Model | Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) | AUC-ROC |
|---|---|---|---|---|---|
| XGBoost | 87.4 | 88.3 | 87.2 | 87.8 | 0.95 |
| LightGBM | 86.2 | 87.1 | 85.3 | 86.2 | 0.94 |
| Random Forest | 85.1 | 86.6 | 83.0 | 84.8 | 0.93 |

The study concluded that while all three models demonstrated satisfactory predictive capabilities, XGBoost exhibited optimal performance across all evaluation metrics [5]. The consistency across metrics suggests XGBoost's advantage in capturing complex contamination patterns in environmental data.
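As a quick consistency check on Table 1, the F1-score can be recomputed from the reported precision and recall as their harmonic mean, F1 = 2PR / (P + R). The sketch below uses only the values printed in the table; because those values are rounded to one decimal place, a difference of up to about 0.1 between the recomputed and reported F1 is expected.

```python
def f1_from_precision_recall(precision, recall):
    """Harmonic mean of precision and recall (same units as the inputs)."""
    return 2 * precision * recall / (precision + recall)


# (precision %, recall %, reported F1 %) copied from Table 1.
table_1 = {
    "XGBoost": (88.3, 87.2, 87.8),
    "LightGBM": (87.1, 85.3, 86.2),
    "Random Forest": (86.6, 83.0, 84.8),
}

recomputed = {
    name: f1_from_precision_recall(p, r) for name, (p, r, _) in table_1.items()
}

for name, (_, _, reported) in table_1.items():
    print(f"{name}: recomputed F1 = {recomputed[name]:.2f}, reported = {reported}")
```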

Air Quality Classification

Another comparative analysis classified Jakarta's Air Pollution Index (ISPU) into three categories (Good, Moderate, Unhealthy) using Logistic Regression, Random Forest, and XGBoost [6]. The research employed 1,367 data points combining weather and air quality data from 2021-2024 and evaluated three feature selection scenarios:

Table 2: Air Quality Classification Accuracy with Different Feature Selection Methods

| Model | No Feature Selection | Random Projection | Pearson Correlation |
|---|---|---|---|
| XGBoost | 98.91% | 97.25% | 98.91% |
| Random Forest | 97.08% | 95.61% | 97.08% |
| Logistic Regression | 96.41% | 89.74% | 96.41% |

XGBoost achieved the highest accuracy in all three feature selection scenarios [6]. In Table 2, Pearson Correlation selection matched the accuracy obtained with no feature selection for every model, while Random Projection lowered it, most sharply for Logistic Regression. The study reports that tree-based methods such as XGBoost and Random Forest benefit from appropriate feature selection, which here preserved accuracy with fewer inputs and aided interpretability.
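To make the idea of correlation-based feature selection concrete, here is a minimal sketch of a Pearson filter: each feature's correlation with the target is computed, and features whose absolute correlation falls below a threshold are dropped. The feature names, values, numeric target, and the 0.5 threshold are all hypothetical; the cited study does not specify its exact procedure here, so this should not be read as a reproduction of it.

```python
from math import sqrt


def pearson(a, b):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / sqrt(var_a * var_b)


# Hypothetical daily measurements and a hypothetical numeric pollution index.
features = {
    "pm25": [12, 35, 55, 80, 110, 150],
    "humidity": [70, 65, 72, 68, 71, 66],
    "wind_speed": [5.0, 4.2, 3.1, 2.5, 1.8, 1.0],
}
target = [20, 45, 60, 95, 120, 160]

threshold = 0.5  # assumed cut-off on |r|; tune for the dataset at hand
correlations = {name: pearson(values, target) for name, values in features.items()}
selected = [name for name, r in correlations.items() if abs(r) >= threshold]

print(correlations)
print("selected features:", selected)
```

Using the absolute value keeps strongly negative predictors such as wind speed, which would be lost if only positive correlations were retained.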

Aquaculture Water Quality Management

A 2025 study developed machine learning models for optimizing water quality management decisions in tilapia aquaculture, comparing Random Forest, Gradient Boosting, XGBoost, Support Vector Machines, Logistic Regression, and Neural Networks [7]. Using a synthetic dataset representing 20 critical water quality scenarios with 21 comprehensive parameters, the research found that multiple models including Random Forest, Gradient Boosting, XGBoost, and Neural Networks achieved perfect accuracy on the held-out test set. Cross-validation confirmed high performance across all top models, with the Neural Network achieving the highest mean accuracy (98.99% ± 1.64%), though XGBoost and Random Forest also demonstrated exceptional performance in this environmental management application [7].

The Researcher's Toolkit

Essential Algorithm Parameters

Table 3: Key Hyperparameters for Random Forest and XGBoost

| Parameter | Random Forest | XGBoost | Function |
|---|---|---|---|
| Number of Trees | n_estimators | n_estimators | Controls number of weak learners in ensemble |
| Tree Complexity | max_depth | max_depth | Limits tree depth to prevent overfitting |
| Feature Sampling | max_features | colsample_by* | Controls fraction of features used for splits |
| Instance Sampling | max_samples | subsample | Controls fraction of data used for each tree |
| Learning Rate | Not applicable | learning_rate (eta) | Shrinks feature weights to make boosting more robust |
| Regularization | Not inherent | reg_alpha, reg_lambda | L1 and L2 regularization to prevent overfitting |

Experimental Protocol Guidelines

For researchers conducting comparative studies between Random Forest and XGBoost in environmental applications, the following methodological framework is recommended:

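Data Preprocessing: Address missing values and encode categorical variables; scaling numerical features is optional for these two models, since tree-based splits are insensitive to monotonic rescaling. XGBoost has built-in missing value handling, while Random Forest implementations often require explicit imputation [4].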

Class Imbalance Treatment: For contamination prediction with rare events, employ techniques like SMOTETomek (as used in the aquaculture study) [7] or adjust class weights (class_weight in Random Forest, scale_pos_weight in XGBoost) [3].

Feature Selection: Implement correlation-based feature selection (like Pearson Correlation) to enhance model performance and interpretability, particularly beneficial for tree-based methods [6].

Hyperparameter Tuning: Utilize grid search or Bayesian optimization with cross-validation. For XGBoost, include learning rate, regularization parameters, and early stopping rounds [8].

Evaluation Metrics: Employ multiple metrics including accuracy, precision, recall, F1-score, and AUC-ROC curves, as environmental decisions often require balancing different types of errors [5].

Validation Strategy: Implement k-fold cross-validation (typically 10-fold as used in multiple studies) with held-out test sets to ensure robustness of results [6].
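The evaluation and validation steps above can be sketched with scikit-learn as follows. This is a minimal example under stated assumptions: the dataset is synthetic (make_classification with a 90/10 class split), the Random Forest settings are illustrative, and stratified 10-fold cross-validation is one reasonable reading of the k-fold recommendation rather than the exact setup of any cited study.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_validate

# Synthetic, imbalanced stand-in for an environmental dataset (assumption).
X, y = make_classification(
    n_samples=600, n_features=10, n_informative=5,
    weights=[0.9, 0.1], random_state=0,
)

# class_weight="balanced" reweights classes inversely to their frequency.
model = RandomForestClassifier(
    n_estimators=100, class_weight="balanced", random_state=0
)

# Stratified folds keep the minority-class share similar in every fold.
cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
metrics = ["accuracy", "precision", "recall", "f1", "roc_auc"]
scores = cross_validate(model, X, y, cv=cv, scoring=metrics)

summary = {m: scores[f"test_{m}"].mean() for m in metrics}
for name, value in summary.items():
    print(f"{name}: {value:.3f}")
```

Reporting several metrics side by side matters on imbalanced data, where accuracy alone can look high even if few rare events are detected.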

Both Random Forest and XGBoost represent powerful ensemble methods with distinct characteristics suited to different environmental research applications. Random Forest, with its parallel architecture and inherent simplicity, provides robust performance with less extensive hyperparameter tuning, making it suitable for initial explorations and when model interpretability is prioritized. XGBoost, with its sequential error-correction approach and regularization capabilities, typically achieves higher predictive accuracy at the cost of increased computational complexity and more intensive parameter optimization.

The consistent outperformance of XGBoost across multiple environmental applications—from contamination prediction to air quality classification—suggests its superiority when maximum predictive accuracy is the primary objective. However, Random Forest remains a formidable alternative, particularly in scenarios with limited computational resources or when requiring rapid model prototyping. The selection between these ensemble paradigms should be guided by specific project requirements, data characteristics, and operational constraints, with the experimental evidence provided offering a foundation for informed algorithmic decision-making in environmental research contexts.

In the domain of machine learning, ensemble methods significantly enhance predictive performance by combining multiple models. Random Forest and XGBoost represent two fundamentally different approaches to this combination. Random Forest employs a technique called bagging (Bootstrap Aggregating), building multiple decision trees independently and in parallel [9] [10]. In contrast, XGBoost (eXtreme Gradient Boosting) utilizes a boosting technique, constructing decision trees sequentially, with each new tree learning from the errors of its predecessors [11] [12]. This core mechanistic difference—parallel independence versus sequential dependency—shapes their respective strengths, performance characteristics, and suitability for various applications, including environmental research where predictive accuracy and model interpretability are paramount.

The Inner Workings of Random Forest

The Random Forest algorithm, trademarked by Leo Breiman and Adele Cutler, creates its "forest" by introducing randomness into the construction of multiple decision trees, ensuring they are decorrelated [9] [13].

The Pillars of Random Forest: Bagging and Feature Randomness

The algorithm's robustness stems from two key randomization techniques applied during training:

- Bagging (Bootstrap Aggregating): Each tree in the forest is trained on a different bootstrap sample of the original training data [10]. This involves randomly selecting data points with replacement, meaning the same data point can appear multiple times in a single tree's training subset. Some data points are left out of each sample; these are called "out-of-bag" (OOB) samples and can be used for internal cross-validation [9].

- Feature Randomness (The Random Subspace Method): When splitting a node during the construction of a tree, the algorithm does not consider all available features. Instead, it randomly selects a subset of features (e.g., the square root of the total number of features for classification) and finds the best split from within this subset [10]. This process is repeated at every node.

These two sources of randomness ensure that the individual decision trees in the forest are diverse and not highly correlated with one another [9] [10].
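The two randomization steps can be illustrated in a few lines of plain Python. The sketch below draws one bootstrap sample, identifies the out-of-bag rows, and picks a random feature subset of size sqrt(n_features) for a single split. The sample size, feature names, and seed are arbitrary choices for the example; real implementations repeat the feature draw at every node of every tree.

```python
import random
from math import isqrt

random.seed(42)  # fixed seed so the sketch is repeatable

n_samples = 1000
indices = list(range(n_samples))

# Bagging: sample row indices with replacement, same size as the dataset.
bootstrap = [random.choice(indices) for _ in range(n_samples)]
in_bag = set(bootstrap)

# Rows never drawn are "out-of-bag" for this tree.
oob = [i for i in indices if i not in in_bag]
oob_fraction = len(oob) / n_samples

# Feature randomness: consider only sqrt(n_features) candidates at a split.
feature_names = [f"feature_{j}" for j in range(16)]  # hypothetical names
k = isqrt(len(feature_names))
split_candidates = random.sample(feature_names, k)

print(f"out-of-bag fraction: {oob_fraction:.3f}")
print(f"features considered at this split: {split_candidates}")
```

For large samples the out-of-bag fraction approaches 1/e, roughly 37%, which is why each tree leaves a sizeable set of rows available for out-of-bag error estimation.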

Independent and Parallel Tree Construction

A critical characteristic of Random Forest is that each decision tree is constructed independently [14]. There is no flow of information or feedback between trees during the training process. The algorithm can be summarized as follows:

- For each tree in the forest (e.g., 100, 500, or 1000 trees):

  - Take a bootstrap sample from the training data.

  - Build a decision tree using this sample by recursively splitting nodes. At each node, only a random subset of features is considered for the split.

  - Grow the tree fully or until a stopping criterion is met, typically without pruning [10].

Because the trees are independent, the entire process is embarrassingly parallel. All trees can be built simultaneously if sufficient computational resources are available, which can significantly speed up training time on large datasets [14].

The Prediction Process: Aggregation

Once all trees are built, predictions are made by aggregating the results from every tree in the forest.

- For classification tasks, the final prediction is determined by majority voting. Each tree "votes" for a class, and the class with the most votes wins [13] [15].

- For regression tasks, the final prediction is the average of the predictions from all the individual trees [13] [15].

This aggregation of numerous, slightly different models reduces overall variance and mitigates the overfitting commonly seen in single, complex decision trees [9].
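The aggregation step is simple enough to write out directly. The sketch below takes hypothetical per-tree outputs and combines them by majority vote for classification and by averaging for regression; the class labels and numeric estimates are made up for illustration.

```python
from collections import Counter
from statistics import mean


def majority_vote(votes):
    """Return the most common class label; ties go to the label seen first."""
    return Counter(votes).most_common(1)[0][0]


def average_prediction(estimates):
    """Return the mean of the per-tree numeric predictions."""
    return mean(estimates)


# Hypothetical outputs from five trees for a single observation.
tree_votes = ["Moderate", "Good", "Moderate", "Unhealthy", "Moderate"]
tree_estimates = [41.0, 39.5, 44.0, 40.5, 42.0]

predicted_class = majority_vote(tree_votes)
predicted_value = average_prediction(tree_estimates)

print(predicted_class)
print(predicted_value)
```

Some libraries vary this scheme: scikit-learn's Random Forest classifier, for instance, averages the trees' predicted class probabilities instead of counting hard votes, which usually gives the same label but smoother probability estimates.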

Diagram 1: Random Forest parallel training and aggregation workflow.

The Sequential Power of XGBoost

XGBoost is an advanced implementation of gradient boosting that builds models in a sequential, additive manner, with each new model focusing on the mistakes of the previous ones [11] [12].

The Core Boosting Mechanism: Learning from Errors

Unlike Random Forest, XGBoost builds its ensemble of trees one after the other, and each new tree is trained to correct the residual errors of the combined previous trees. The process is as follows:

- Initialization: Start with a simple initial prediction (e.g., the mean of the target variable for regression or the log-odds for classification) [16].

- Sequential Iteration: For each subsequent boosting round (t=1 to T):

  a. Compute Residuals/Gradients: Calculate the pseudo-residuals (negative gradients) of the loss function (e.g., squared error for regression, log loss for classification) with respect to the current ensemble's predictions [16]. For a squared error loss, this is simply (Actual Value - Predicted Value).

  b. Build a Tree to Predict Residuals: A new, typically shallow, decision tree is built to predict these residuals. This tree identifies patterns in the errors of the current model.

  c. Update the Ensemble: The new tree's predictions are added to the existing ensemble's predictions to form an improved model. The contribution of the new tree is controlled by a learning rate (eta), a small value (e.g., 0.1) that prevents overfitting by taking small, cautious steps [11] [16].
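The loop described above can be sketched in plain Python for squared error loss. This is a deliberately simplified illustration of generic gradient boosting, not XGBoost itself: the base learners are one-split stumps on a single feature, and there is no regularization, second-order information, or subsampling. The data, learning rate, and number of rounds are arbitrary choices for the example.

```python
def fit_stump(x, residuals):
    """Fit a one-split regression tree (stump) to the residuals by least squares."""
    best = None
    for threshold in sorted(set(x))[:-1]:  # keeps both sides of the split non-empty
        left = [r for xi, r in zip(x, residuals) if xi <= threshold]
        right = [r for xi, r in zip(x, residuals) if xi > threshold]
        left_value = sum(left) / len(left)
        right_value = sum(right) / len(right)
        sse = (sum((r - left_value) ** 2 for r in left)
               + sum((r - right_value) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_value, right_value)
    return best[1:]


def predict_stump(stump, xi):
    threshold, left_value, right_value = stump
    return left_value if xi <= threshold else right_value


def mse(y, pred):
    return sum((yi - pi) ** 2 for yi, pi in zip(y, pred)) / len(y)


# Small made-up regression problem with a jump between x=5 and x=6.
x = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
y = [1.2, 1.9, 3.1, 3.9, 5.2, 9.8, 10.1, 11.0, 12.2, 12.9]

learning_rate = 0.1
n_rounds = 50

# Initialization: start from the mean of the target.
pred = [sum(y) / len(y)] * len(y)
initial_mse = mse(y, pred)

stumps = []
for _ in range(n_rounds):
    # (a) residuals = actual - predicted (negative gradient of squared error)
    residuals = [yi - pi for yi, pi in zip(y, pred)]
    # (b) fit a weak learner to the residuals
    stump = fit_stump(x, residuals)
    stumps.append(stump)
    # (c) add the shrunken correction to the running prediction
    pred = [pi + learning_rate * predict_stump(stump, xi) for xi, pi in zip(x, pred)]

final_mse = mse(y, pred)
print(f"MSE before boosting: {initial_mse:.3f}, after {n_rounds} rounds: {final_mse:.3f}")
```

Each round removes only a fraction of the remaining error, which is the effect of the learning rate: more rounds are needed, but no single tree dominates the ensemble.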

Key Optimizations in XGBoost

XGBoost incorporates several advanced features that contribute to its "eXtreme" performance and efficiency:

- Regularization: The objective function includes L1 (Lasso) and L2 (Ridge) regularization terms that penalize model complexity, directly helping to prevent overfitting [11] [12].

- Second-Order Gradient Optimization: XGBoost uses both the first (gradient) and second (Hessian) derivatives of the loss function for a more precise and faster optimization process [17].

- Handling Missing Values: The algorithm can automatically learn the best direction to handle missing data values during training [12].

- Stochastic Boosting: Inspired by Random Forest, XGBoost can introduce randomness by using random subsets of the data (row sampling) and/or features (column sampling) for each tree, which increases robustness and further reduces overfitting [16].
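For readers who prefer a formula, the regularization and second-order items above are usually written as follows. The notation is the conventional one and is introduced here for illustration, not taken from the cited sources: l is the loss, f_k is the k-th tree, T is its number of leaves, w is its vector of leaf weights, gamma, lambda, and alpha are the penalty strengths, and I_j is the set of training rows falling in leaf j. The last line gives the closed-form leaf weight for the simplified case alpha = 0.

```latex
\begin{aligned}
\mathcal{L} &= \sum_{i=1}^{n} l\bigl(y_i, \hat{y}_i\bigr) + \sum_{k=1}^{K} \Omega(f_k),
\qquad
\Omega(f) = \gamma T + \tfrac{1}{2}\lambda \lVert w \rVert_2^2 + \alpha \lVert w \rVert_1 \\[4pt]
\mathcal{L}^{(t)} &\approx \sum_{i=1}^{n} \Bigl[ g_i\, f_t(x_i) + \tfrac{1}{2} h_i\, f_t(x_i)^2 \Bigr] + \Omega(f_t),
\qquad
g_i = \frac{\partial\, l\bigl(y_i, \hat{y}_i^{(t-1)}\bigr)}{\partial\, \hat{y}_i^{(t-1)}},
\quad
h_i = \frac{\partial^2 l\bigl(y_i, \hat{y}_i^{(t-1)}\bigr)}{\partial \bigl(\hat{y}_i^{(t-1)}\bigr)^2} \\[4pt]
w_j^{*} &= -\frac{\sum_{i \in I_j} g_i}{\sum_{i \in I_j} h_i + \lambda}
\qquad (\alpha = 0)
\end{aligned}
```

For squared error loss written as l = (y - ŷ)² / 2, the gradient is g_i = ŷ_i - y_i and the Hessian is h_i = 1, so the negative gradient is exactly the residual (Actual Value - Predicted Value) and the optimal leaf weight reduces to a shrunken mean of the residuals in that leaf.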

Dependent and Sequential Tree Construction

The trees in an XGBoost model form a single, dependent sequence [16]. The structure and purpose of Tree t are entirely dependent on the collective errors made by Trees 1 to t-1. Because of this dependency, boosting rounds cannot run concurrently the way Random Forest trees can; XGBoost instead parallelizes work within each tree, such as the search for split points across features. The final prediction is the sum of the predictions from all trees in the sequence, each weighted by the learning rate [11].

Diagram 2: XGBoost sequential training and residual correction workflow.

Direct Comparative Analysis: Random Forest vs. XGBoost

The table below provides a structured comparison of the two algorithms based on their core mechanics and characteristics.

Table 1: Algorithmic comparison between Random Forest and XGBoost.

| Feature | Random Forest | XGBoost |
|---|---|---|
| Core Mechanism | Bagging (Bootstrap Aggregating) [9] | Boosting (Gradient Boosting) [11] |
| Tree Relationship | Independent, built in parallel [14] | Dependent, built sequentially [16] |
| Goal of New Tree | To grow a deep, unpruned tree on a random data/feature subset [10] | To correct the residuals/errors of the previous ensemble [16] |
| Randomization | Bootstrap sample per tree & random feature subset per split [10] | Stochastic options: data/feature subsampling per round [16] |
| Overfitting Control | Averaging many uncorrelated trees [9] | Learning rate, regularization, & early stopping [11] [12] |
| Prediction Aggregation | Majority vote (classification) or averaging (regression) [15] | Summation of weighted tree predictions [11] |
| Parallelization | High (Trees are built independently) [14] | Limited (Tree building is sequential) |

Performance and Practical Considerations

- Bias-Variance Tradeoff: Random Forest primarily reduces variance by averaging multiple deep, potentially overfit trees. XGBoost reduces bias by sequentially focusing on difficult-to-predict instances [14].

- Training Speed: Random Forest can be faster to train on multi-core systems due to its parallel nature. XGBoost training is sequential but is highly optimized for speed and can be faster on a single core, especially with its ability to handle missing data internally [12].

- Hyperparameter Tuning: Random Forest is generally simpler to tune (key parameters: number of trees, features per split). XGBoost has more hyperparameters (e.g., learning rate, max depth, regularization terms) which can lead to superior performance but requires more careful tuning [11].

Experimental Protocols for Environmental Research

To objectively evaluate these algorithms in a research context, such as predicting pollutant levels or species distribution, a standardized experimental protocol is essential.

Common Workflow for Model Comparison

Data Preparation:

- Dataset: Use a relevant environmental dataset (e.g., atmospheric sensor data, soil chemistry measurements, satellite imagery features).

- Preprocessing: Handle missing values. For Random Forest, imputation may be needed, whereas XGBoost can handle them natively [12]. Split data into training (70%), validation (15%), and test (15%) sets.

Model Training & Hyperparameter Tuning:

- Random Forest: Perform a grid search over key parameters like n_estimators (number of trees) and max_features (number of features considered per split). Use out-of-bag error or cross-validation on the training set [9] [13].

- XGBoost: Perform a grid or random search over learning_rate, max_depth, subsample, colsample_bytree, and regularization parameters (lambda, alpha). Use the validation set for early stopping to determine the optimal number of rounds [18] [12].

Model Evaluation:

- Evaluate the final models on the held-out test set using task-appropriate metrics.

- For Regression (e.g., predicting temperature or concentration): Use Root Mean Squared Error (RMSE) and R-squared (R²).

- For Classification (e.g., land cover classification): Use Accuracy, F1-Score, and Area Under the ROC Curve (AUC).
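The workflow above can be sketched end to end for a regression task. The example below makes several assumptions that should be stated plainly: the data are synthetic, scikit-learn's GradientBoostingRegressor is used as a stand-in for XGBoost, and the number of boosting rounds is chosen after training by scanning validation error with staged_predict, which approximates early stopping rather than reproducing XGBoost's own mechanism.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.metrics import mean_squared_error, r2_score
from sklearn.model_selection import train_test_split

# Synthetic stand-in for an environmental regression dataset (assumption).
X, y = make_regression(n_samples=1000, n_features=10, noise=15.0, random_state=0)

# 70% training, then the remaining 30% split evenly into validation and test.
X_train, X_rest, y_train, y_rest = train_test_split(
    X, y, test_size=0.30, random_state=0
)
X_val, X_test, y_val, y_test = train_test_split(
    X_rest, y_rest, test_size=0.50, random_state=0
)

booster = GradientBoostingRegressor(
    n_estimators=300, learning_rate=0.1, max_depth=3, subsample=0.8, random_state=0
)
booster.fit(X_train, y_train)

# Validation error after each boosting round; pick the round that minimizes it.
val_mse = [mean_squared_error(y_val, p) for p in booster.staged_predict(X_val)]
best_rounds = int(np.argmin(val_mse)) + 1

# Final, one-time evaluation on the held-out test set at the chosen round.
test_pred = list(booster.staged_predict(X_test))[best_rounds - 1]
test_rmse = float(np.sqrt(mean_squared_error(y_test, test_pred)))
test_r2 = r2_score(y_test, test_pred)

print(f"best number of rounds: {best_rounds}")
print(f"test RMSE: {test_rmse:.2f}, test R^2: {test_r2:.3f}")
```

Keeping the test set out of every tuning decision, including the choice of the number of rounds, is what makes the final RMSE and R² an honest estimate of performance on new data.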

The Scientist's Toolkit: Essential Software and Hyperparameters

Table 2: Key software implementations and hyperparameters for researchers.

| Tool / Parameter | Function / Purpose | Relevant Algorithm |
|---|---|---|
| scikit-learn (RandomForestRegressor/Classifier) | Python library for implementing Random Forest [13]. | Random Forest |
| xgboost (XGBRegressor/XGBClassifier) | Python library for the optimized XGBoost algorithm [18] [12]. | XGBoost |
| n_estimators | Number of trees in the forest/boosting rounds. | Both |
| max_features / colsample_bytree | Controls the randomness of feature selection. | Both |
| learning_rate (eta) | Shrinks the contribution of each tree to prevent overfitting. | XGBoost |
| max_depth | Maximum depth of each tree, controlling model complexity. | Both |
| subsample | Fraction of training data used for a tree/boosting round. | Both |
| lambda / alpha | L2 and L1 regularization terms on weights. | XGBoost |

Random Forest and XGBoost, while both being powerful tree-based ensemble methods, are founded on distinct algorithmic philosophies. Random Forest leverages independent, parallel tree construction through bagging and feature randomness, creating a robust model that is highly resistant to overfitting and easy to parallelize. XGBoost employs a sequential, dependent tree construction where each new tree corrects the errors of the previous ones, a process refined with regularization and advanced optimization to often achieve state-of-the-art predictive accuracy. The choice between them in environmental science, or any field, depends on the specific problem constraints, the need for interpretability versus absolute accuracy, and the available computational resources. Understanding their fundamental mechanics is the first step toward making an informed modeling decision.

In environmental science, where data is often complex, noisy, and limited, the selection of an appropriate machine learning model is paramount. The bias-variance tradeoff represents a fundamental concept in this selection process, dictating a model's ability to capture genuine ecological patterns (bias) versus its susceptibility to learning spurious noise in the training data (variance). This guide provides a comparative analysis of two dominant ensemble algorithms—XGBoost and Random Forests—within the context of environmental applications. We objectively evaluate their performance through experimental data, detail methodological protocols from relevant environmental studies, and provide resources to inform researchers and scientists in deploying these models effectively.

Theoretical Foundations: Bias and Variance in Tree Ensembles

Conceptualizing the Tradeoff

The bias of a model is the error arising from its simplifying assumptions about the underlying data relationship, leading to underfitting. The variance is the error from sensitivity to fluctuations in the training set, leading to overfitting [19]. The goal is to minimize the total expected error, which is the sum of bias², variance, and irreducible error [20].
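Written out, the decomposition referred to above takes the following standard form. It assumes the data are generated as y = f(x) + ε, with noise of mean zero and variance σ², and that the expectation is taken over training sets and noise; the symbols are conventional notation introduced here for illustration.

```latex
\mathbb{E}\bigl[(y - \hat{f}(x))^2\bigr]
= \underbrace{\bigl(\mathbb{E}[\hat{f}(x)] - f(x)\bigr)^2}_{\text{bias}^2}
+ \underbrace{\mathbb{E}\bigl[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\bigr]}_{\text{variance}}
+ \underbrace{\sigma^2}_{\text{irreducible error}}
```

Only the first two terms depend on the model, which is why ensemble methods target one or the other: averaging many trees shrinks the variance term, while sequential error correction shrinks the bias term.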

Algorithmic Approaches: Bagging vs. Boosting

Random Forest and XGBoost both create ensembles of decision trees but use different strategies to manage the bias-variance tradeoff.

- Random Forest (Bagging): This method builds multiple deep, complex trees in parallel on bootstrapped data samples. Individual trees have low bias but high variance. By averaging their predictions, Random Forest effectively reduces the final variance without significantly increasing bias [20] [21].

- XGBoost (Boosting): This method builds trees sequentially, where each new tree corrects the errors of the previous ensemble. The trees are typically weaker (higher bias), but the additive model progressively reduces the overall bias. XGBoost integrates strong regularization (L1 and L2) directly into its objective function to control the complexity of the trees, thereby managing variance [20] [17].

The diagram below illustrates the core structural and operational differences between these two approaches.

Experimental Performance Comparison in Environmental Applications

Empirical studies across diverse environmental domains provide concrete evidence of how these theoretical tradeoffs translate into performance.

Quantitative Performance Metrics

The following table summarizes key findings from recent environmental research, comparing the performance of XGBoost and Random Forest.

Table 1: Comparative Model Performance in Environmental Research Studies

| Application Domain | Primary Metric | XGBoost Performance | Random Forest Performance | Key Findings and Context |
|---|---|---|---|---|
| Habitat Suitability Modeling (Bird Species in Ethiopia) [22] | AUC-ROC | 0.99 | 0.98 | XGBoost achieved the highest predictive accuracy; Precipitation of the driest month was the most critical environmental variable. |
| Air Quality Classification (Jakarta, Indonesia) [6] | Accuracy | 98.91% | 97.08% | XGBoost consistently outperformed Random Forest across different feature selection scenarios. |
| Air Quality Classification (Jakarta, Indonesia) [6] | F1-Score | Highest | High | XGBoost achieved the highest F1 score, indicating superior precision-recall balance. |
| Customer Churn Prediction (Imbalanced Data) [23] | F1-Score | Consistently Highest (with SMOTE) | Poor under severe imbalance | Highlights XGBoost's robustness to class imbalance, a common issue in ecological data like rare species detection. |

Analysis of Experimental Results

The data consistently shows that XGBoost often holds a slight performance edge over Random Forest in terms of pure predictive accuracy (e.g., AUC, Accuracy, F1-Score). This can be attributed to its sequential, error-correcting nature and built-in regularization, which allows it to model complex, non-linear relationships in environmental data effectively without overfitting [20] [17].

However, the choice is context-dependent. For instance, in the study on imbalanced data [23], Random Forest's performance degraded significantly, whereas XGBoost, especially when paired with sampling techniques like SMOTE, remained robust. This suggests that for problems like predicting rare species occurrences or extreme pollution events, XGBoost might be the more reliable choice.

Detailed Experimental Protocols from Cited Studies

To ensure reproducibility and provide a clear methodological framework, this section outlines the experimental designs from key studies referenced in this guide.

Habitat Suitability Modeling (Bird Species in Ethiopia) [22]

- Objective: To model the current and future habitat suitability for Crithagra xantholaema in Ethiopia under climate change scenarios.

- Data:

  - Occurrence Data: 188 spatially filtered presence records from the Global Biodiversity Information Facility (GBIF).

  - Predictor Variables: 19 bioclimatic variables (e.g., annual mean temperature, precipitation of the driest month) at ~1 km² resolution from WorldClim.

- Model Training & Tuning:

  - Four models were trained: XGBoost, Random Forest, Support Vector Machine, and MaxEnt.

  - Models were tuned and evaluated using a suite of metrics, including AUC-ROC, accuracy, precision, and F1-score.

- Future Projections: The trained models were projected onto future climate scenarios (2050 and 2070) from the HadGEM3-GC31-LL CMIP6 model under SSP245 and SSP585 pathways.

Air Quality Classification (Jakarta, Indonesia) [6]

- Objective: To classify Jakarta's Air Pollution Index (ISPU) into categories (Good, Moderate, Unhealthy) using weather and air quality data.

- Data: 1,367 data points combining weather and air quality measurements from 2021-2024.

- Experimental Design:

  - Algorithms Compared: Logistic Regression, Random Forest, and XGBoost.

  - Feature Selection: Three scenarios were tested: no feature selection, Random Projection, and Pearson Correlation.

  - Evaluation: Models were evaluated based on F1-score, 10-fold cross-validation, accuracy, precision, and recall.

Implementing and experimenting with these models requires a standard set of computational tools and data sources. The following table details essential "research reagents" for environmental machine learning workflows.

Table 2: Essential Computational Tools and Data Sources for Environmental ML

| Tool / Resource | Type | Primary Function in Research | Example in Cited Studies |
|---|---|---|---|
| XGBoost Library | Software Library | Provides scalable implementation of gradient boosting for training and prediction. | Used as the primary model in all cited XGBoost applications [6] [20] [22]. |
| Scikit-learn | Software Library | Offers implementations of Random Forest, Logistic Regression, and data preprocessing tools. | Serves as a common benchmark and tool for model comparison [6] [24]. |
| WorldClim Database | Data Repository | Provides global, high-resolution historical and future climate data. | Source of 19 bioclimatic variables for habitat suitability modeling [22]. |
| Global Biodiversity Info Facility (GBIF) | Data Repository | Aggregates and provides access to species occurrence data from worldwide sources. | Source of 188 presence records for Crithagra xantholaema [22]. |
| SMOTE | Algorithm | Synthetically generates samples for the minority class to address class imbalance. | Used with XGBoost to improve performance on severely imbalanced churn data [23]. |

The comparative analysis reveals that both XGBoost and Random Forest are powerful tools for environmental research. XGBoost, with its bias-reducing, sequential boosting and integrated regularization, frequently demonstrates a small but consistent advantage in predictive accuracy across diverse tasks, from habitat modeling to air quality classification. It shows particular strength in handling imbalanced datasets. Random Forest remains a highly robust and effective method, often achieving performance very close to XGBoost, and operates through a simpler, parallelizable training process that is less prone to overfitting on its own.

The ultimate choice between them should be guided by the specific problem, data characteristics, and computational resources. For researchers who seek the highest possible predictive performance and are willing to engage in careful hyperparameter tuning, XGBoost is an excellent choice. For applications requiring a robust, quickly deployable baseline model with less tuning, Random Forest is a remarkably effective and reliable alternative.

This guide provides an objective comparison of two dominant machine learning algorithms, XGBoost and Random Forest, with a specific focus on their application in environmental and drug development research. For scientists in these fields, selecting and properly tuning an algorithm is crucial for building predictive models with high real-world validity, whether for forecasting air quality or predicting drug entrapment efficiency.

The following sections break down the core hyperparameters for each model, present comparative experimental data from relevant research, and provide methodologies for optimization.

Core Hyperparameters at a Glance

The performance of tree-based models is highly dependent on the configuration of their hyperparameters. The tables below summarize the critical levers for each algorithm.

Random Forest Hyperparameters

| Hyperparameter | Function & Impact on Model | Default Value | Common Tuning Range |
|---|---|---|---|
| n_estimators | Number of trees in the forest. More trees generally increase performance but also computational cost. [25] [26] | 100 [26] | 100 to 1000 [25] |
| max_features | Max features considered for a split. Lower values increase diversity and reduce overfitting. [25] [26] | "sqrt" [26] | "sqrt", "log2", 0.2 (20%) [25] |
| max_depth | Maximum depth of each tree. Limits tree complexity; None allows full expansion. [26] | None [26] | 3 to 20, or None [26] |
| min_samples_leaf | Minimum samples required to be at a leaf node. Larger values prevent overfitting on noisy data. [25] | 1 [25] | 1 to 50+ [25] |
| min_samples_split | Minimum samples required to split an internal node. [26] | 2 [26] | 2, 5, 10 [26] |
| bootstrap | Whether to use bootstrap samples when building trees. [26] | True [26] | True, False [26] |
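As a minimal sketch of how the Random Forest hyperparameters above map onto code, the example below sets each of them explicitly on scikit-learn's `RandomForestClassifier`. The synthetic dataset, the 70:30 split, and the value `n_estimators=300` are illustrative assumptions, not settings taken from the cited studies.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

# Synthetic stand-in for an environmental dataset (assumption, not study data).
X, y = make_classification(
    n_samples=500, n_features=10, n_informative=5, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0, stratify=y
)

# Each argument corresponds to one row of the hyperparameter table.
rf = RandomForestClassifier(
    n_estimators=300,      # number of trees
    max_features="sqrt",   # features considered at each split
    max_depth=None,        # None lets every tree grow fully
    min_samples_leaf=1,    # minimum samples at a leaf
    min_samples_split=2,   # minimum samples needed to split a node
    bootstrap=True,        # train each tree on a bootstrap sample
    random_state=0,
)
rf.fit(X_train, y_train)

test_accuracy = rf.score(X_test, y_test)
print(f"Held-out accuracy: {test_accuracy:.3f}")
```

Raising `min_samples_leaf` or lowering `max_depth` is usually the first adjustment to try when the gap between training and held-out accuracy is large.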

XGBoost Hyperparameters

| Hyperparameter | Function & Impact on Model | Default Value | Common Tuning Range |
|---|---|---|---|
| n_estimators | Number of boosting rounds (trees). [27] | - | 100 to 1000 [27] |
| learning_rate/eta | Shrinks feature weights at each step, making the boosting process more conservative. [28] | 0.3 [28] | 0 to 1 [28] [27] |
| max_depth | Maximum depth of a tree. Increased depth makes model more complex. [28] | 6 [28] | 1 to 20 [27] |
| subsample | Subsample ratio of training instances. Prevents overfitting. [28] | 1 [28] | 0.5 to 1 [27] |
| colsample_bytree | Subsample ratio of columns when constructing each tree. [28] | 1 [28] | 0.5 to 1 [27] |
| reg_alpha | L1 regularization term on weights. Increases model conservatism. [28] | 0 [28] | 10e-7 to 10 [27] |
| reg_lambda | L2 regularization term on weights. Increases model conservatism. [28] | 1 [28] | 0 to 1 [27] |
| gamma | Minimum loss reduction required to make a further partition. A form of regularization. [28] | 0 [28] | 0 to 100 [27] |
| scale_pos_weight | Controls balance of positive/negative weights for unbalanced classes. [28] | 1 [28] | e.g., sum(negative) / sum(positive) [28] |
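The `scale_pos_weight` rule of thumb in the last row, sum(negative) / sum(positive), can be illustrated with a short calculation. The sketch below computes it for a hypothetical imbalanced label vector and collects the table's hyperparameters into a dictionary whose keys match the keyword arguments of the scikit-learn-style wrapper `xgboost.XGBClassifier`. The label counts and the specific values are assumptions for illustration, and the block stops short of training so that it runs without XGBoost installed.

```python
import numpy as np

# Hypothetical binary labels: 1 = pollution exceedance (rare), 0 = normal.
y_train = np.array([0] * 90 + [1] * 10)

n_negative = int(np.sum(y_train == 0))
n_positive = int(np.sum(y_train == 1))

# Heuristic from the table: ratio of negative to positive samples.
scale_pos_weight = n_negative / n_positive

# Illustrative starting values; keys follow the hyperparameter table.
xgb_params = {
    "n_estimators": 300,
    "learning_rate": 0.1,
    "max_depth": 6,
    "subsample": 0.8,
    "colsample_bytree": 0.8,
    "reg_alpha": 0.0,
    "reg_lambda": 1.0,
    "gamma": 0.0,
    "scale_pos_weight": scale_pos_weight,
}

print(xgb_params["scale_pos_weight"])  # 9.0
```

With XGBoost available, the dictionary could be unpacked as `XGBClassifier(**xgb_params)`.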

Comparative Performance in Research Applications

Experimental data from real-world research demonstrates how these algorithms perform in practice. The table below summarizes results from environmental science and pharmaceutical development studies.

| Research Context | Algorithm | Key Performance Metrics | Best Feature Selection Method | Reference / Dataset |
|---|---|---|---|---|
| Air Quality Index Classification (Jakarta) [6] | XGBoost | Accuracy: 98.91% | Pearson Correlation | 1,367 data points (weather & air quality, 2021-2024) [6] |
| Air Quality Index Classification (Jakarta) [6] | Random Forest | Accuracy: 97.08% | Pearson Correlation | Same dataset [6] |
| Air Quality Index Classification (Jakarta) [6] | Logistic Regression | Lower accuracy (suffers when features are removed) | - | Same dataset [6] |
| Liposomal Drug Entrapment Prediction [29] | XGBoost & Random Forest | Identified key predictive factors: water solubility, drug log P, size. | Genetic Algorithm | 500 data points [29] |

Tuning Methodologies and Protocols

To achieve the performance levels cited, researchers must systematically tune hyperparameters. Below are detailed protocols for the most common and effective methods.

Protocol 1: Grid Search with Cross-Validation

This method performs an exhaustive search over a predefined set of hyperparameters.
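A minimal sketch of Protocol 1 using scikit-learn's `GridSearchCV` is given here. The synthetic data, the small grid, and the choice of Random Forest as the estimator are assumptions made to keep the example fast; the same pattern applies to any scikit-learn-compatible estimator.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV

# Synthetic stand-in data (assumption, not from the cited studies).
X, y = make_classification(
    n_samples=300, n_features=8, n_informative=4, random_state=0
)

# Every combination in this grid is evaluated: 2 * 2 * 2 = 8 candidates.
param_grid = {
    "n_estimators": [50, 100],
    "max_depth": [3, None],
    "max_features": ["sqrt", "log2"],
}

grid_search = GridSearchCV(
    estimator=RandomForestClassifier(random_state=0),
    param_grid=param_grid,
    cv=5,                 # 5-fold cross-validation per candidate
    scoring="accuracy",
)
grid_search.fit(X, y)

print("Best parameters:", grid_search.best_params_)
print("Best mean CV accuracy:", round(grid_search.best_score_, 3))
```

The cost grows multiplicatively with each added parameter value, which is why grid search suits small, well-motivated grids.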

Protocol 2: Randomized Search with Cross-Validation

This method is more efficient than GridSearchCV for large parameter spaces, as it evaluates a fixed number of random parameter combinations. [26]
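Protocol 2 can be sketched with scikit-learn's `RandomizedSearchCV`, which samples a fixed number of candidates (`n_iter`) from distributions instead of enumerating a grid. The distributions, the value `n_iter=10`, and the synthetic data below are illustrative assumptions.

```python
from scipy.stats import randint, uniform
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import RandomizedSearchCV

# Synthetic stand-in data (assumption, not from the cited studies).
X, y = make_classification(
    n_samples=300, n_features=8, n_informative=4, random_state=0
)

# Distributions to sample from; randint's upper bound is exclusive.
param_distributions = {
    "n_estimators": randint(50, 201),      # integers in [50, 200]
    "max_depth": randint(3, 21),           # integers in [3, 20]
    "min_samples_leaf": randint(1, 11),    # integers in [1, 10]
    "max_features": uniform(0.2, 0.8),     # floats in [0.2, 1.0]
}

random_search = RandomizedSearchCV(
    estimator=RandomForestClassifier(random_state=0),
    param_distributions=param_distributions,
    n_iter=10,            # fixed budget of sampled candidates
    cv=5,
    scoring="accuracy",
    random_state=0,
)
random_search.fit(X, y)

print("Best parameters:", random_search.best_params_)
print("Best mean CV accuracy:", round(random_search.best_score_, 3))
```

The budget is set by `n_iter` regardless of how many hyperparameters are searched, which is the source of the efficiency gain over an exhaustive grid.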

Protocol 3: Bayesian Optimization with Hyperopt

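This automatic tuning technique uses Bayesian methods, specifically the Tree-structured Parzen Estimator (TPE), to model the hyperparameter space and focus new trials on promising regions, which typically makes it more efficient than grid or random search. [27]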

The Scientist's Toolkit: Essential Research Reagents & Solutions

Building and tuning these models requires a standard set of software tools and libraries.

| Item / Solution | Function in Research | Typical Use Case |
|---|---|---|
| Scikit-learn | Provides implementations of Random Forest, Logistic Regression, and tuning tools like GridSearchCV. [26] | General-purpose machine learning, data preprocessing, and model evaluation. |
| XGBoost Library | Highly optimized library for gradient boosting; offers Scikit-learn compatible interfaces as well as its own API. [28] [30] | High-performance boosting for structured/tabular data where maximum accuracy is desired. |
| Hyperopt | A Python library for Bayesian optimization over complex search spaces. [27] | Efficiently finding the best hyperparameters when the search space is large. |
| Genetic Algorithms | An optimization technique inspired by natural selection, used for feature selection and hyperparameter tuning. [29] | Simultaneously optimizing model parameters and selecting the most informative features from a dataset. |

Algorithmic Workflows: From Data to Prediction

Understanding the fundamental difference in how Random Forest and XGBoost build their models is key to effective tuning. The diagrams below illustrate their core workflows.

Random Forest uses bagging to build independent trees in parallel and aggregates their results. [4] [3]

XGBoost uses boosting to build trees sequentially, with each new tree correcting the errors of the previous ones. [4] [30] [3]
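The contrast between the two workflows can be sketched from scratch with plain decision trees. This is a simplified illustration under stated assumptions, not either library's implementation: a real Random Forest also subsamples features at each split, and XGBoost adds regularization and second-order gradient information. The toy sine-wave data and all settings are hypothetical.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor

rng = np.random.default_rng(0)
X = rng.uniform(-3, 3, size=(200, 1))
y = np.sin(X[:, 0]) + rng.normal(scale=0.1, size=200)

# Bagging: trees are trained independently on bootstrap samples,
# then their predictions are averaged.
bagged_trees = []
for _ in range(50):
    idx = rng.integers(0, len(X), size=len(X))  # rows drawn with replacement
    bagged_trees.append(DecisionTreeRegressor(max_depth=4).fit(X[idx], y[idx]))
bagging_pred = np.mean([tree.predict(X) for tree in bagged_trees], axis=0)

# Boosting: trees are trained one after another, each fitted to the
# residual errors left by the ensemble built so far.
learning_rate = 0.1
boosting_pred = np.full(len(y), y.mean())
for _ in range(50):
    residuals = y - boosting_pred
    tree = DecisionTreeRegressor(max_depth=2).fit(X, residuals)
    boosting_pred += learning_rate * tree.predict(X)

baseline_mse = float(np.mean((y - y.mean()) ** 2))
bagging_mse = float(np.mean((y - bagging_pred) ** 2))
boosting_mse = float(np.mean((y - boosting_pred) ** 2))
print(f"baseline={baseline_mse:.3f} bagging={bagging_mse:.3f} boosting={boosting_mse:.3f}")
```

The bagging loop has no dependency between iterations and could run in parallel, whereas each boosting iteration needs the predictions of all previous ones.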

XGBoost and Random Forest in Action: Diverse Environmental Case Studies

The rapid degradation of environmental quality due to industrialization and urbanization has necessitated the development of advanced monitoring and prediction systems. Machine learning (ML) has emerged as a powerful tool for accurately forecasting air and water quality parameters, enabling proactive environmental management. Within this domain, ensemble learning algorithms, particularly eXtreme Gradient Boosting (XGBoost), have demonstrated exceptional performance in handling complex, nonlinear environmental data.

This comparative analysis examines the application of XGBoost relative to other machine learning models, including Random Forest, LightGBM, and traditional algorithms, within the specific contexts of air quality index classification and wastewater parameter forecasting. The performance evaluation is grounded in empirical evidence from recent scientific studies, focusing on key metrics such as predictive accuracy, robustness, and interpretability. Understanding the relative strengths of these algorithms provides researchers and environmental professionals with critical insights for selecting appropriate modeling approaches to address specific environmental forecasting challenges.

Performance Comparison of Machine Learning Models

Air Quality Index (AQI) Prediction

The accurate classification and prediction of air quality indices are crucial for public health advisories and environmental policy. Recent research consistently shows that ensemble methods, especially XGBoost, achieve superior performance in this domain.

Table 1: Model Performance in Air Quality Index Classification and Prediction

| Study Focus | Best Performing Model | Key Performance Metrics | Comparative Models | Data Source |

|---|---|---|---|---|

| Jakarta's Air Pollution Index Classification [6] | XGBoost | Accuracy: 98.91% (with Pearson Correlation feature selection) | Random Forest, Logistic Regression | 1,367 data points (weather & air quality, 2021-2024) |

| Daily AQI Prediction in Eastern Türkiye [31] | XGBoost | R²: 0.999, RMSE: 0.234, MAE: 0.158 | LightGBM, Support Vector Machine (SVM) | Meteorological and pollutant data (2016-2024) |

| AQI Prediction in Indian Urban Areas [31] | XGBoost | R²: 0.9850, RMSE: 11.2696, MAE: 8.3845 | AdaBoost, CatBoost, Random Forest, SVM | PM2.5, PM10, NO2, SO2, meteorological data |

In a direct comparison for classifying Jakarta's Air Pollution Index, XGBoost not only achieved the highest accuracy but also demonstrated consistent superiority across all feature selection scenarios tested, including without feature selection, Random Projection, and Pearson Correlation [6]. Random Forest also showed strong performance with an accuracy of 97.08%, particularly when using Pearson Correlation for feature selection, while Logistic Regression's performance was more susceptible to feature elimination [6]. The long-term assessment in eastern Türkiye further cemented XGBoost's leading position for regression-based AQI prediction, showcasing its ability to model complex AQI fluctuations with remarkable precision using meteorological and pollutant predictors [31].

Water Quality and Wastewater Parameter Forecasting

The application of machine learning in water science spans from predicting the Water Quality Index (WQI) in natural rivers to forecasting critical effluent parameters in wastewater treatment plants (WWTPs). Ensemble methods dominate this sphere as well.

Table 2: Model Performance in Water Quality and Wastewater Forecasting

| Study Focus | Best Performing Model | Key Performance Metrics | Comparative Models | Key Influential Parameters |

|---|---|---|---|---|

| Water Quality Classification [32] | LightGBM & XGBoost | Accuracy: 99.65% | Random Forest, Support Vector Machines | Dissolved Oxygen (DO), Biological Oxygen Demand (BOD), Turbidity |

| WQI Prediction [32] | XGBoost | R²: 0.9685 | LightGBM, Random Forest | Dissolved Oxygen (DO), BOD |

| Stacked Ensemble for WQI Prediction [33] | Stacked Ensemble (XGBoost, CatBoost, RF, etc.) | R²: 0.9952, MAE: 0.7637, RMSE: 1.0704 | Individual base models (XGBoost, CatBoost, etc.) | DO, BOD, Conductivity, pH |

| Wastewater Effluent Prediction [34] | Gradient Boosting & XGBoost | MAE: 3.667, R²: 97.53% (Total Nitrogen) | Decision Tree, Random Forest, LightGBM | Effluent Volatile Suspended Solids (VSS) |

For water quality classification, LightGBM and XGBoost achieved state-of-the-art accuracy, nearing perfect classification scores [32]. In WQI prediction, a stacked ensemble model that incorporated XGBoost as a base learner achieved the highest reported performance, outperforming all individual models, including a standalone XGBoost [33]. This highlights that while XGBoost is exceptionally powerful, its capabilities can be further enhanced through meta-ensemble approaches. In wastewater treatment, different ensemble models excelled at predicting different parameters; Gradient Boosting was best for Total Suspended Solids (TSS) and Total Nitrogen, while XGBoost was superior for Chemical Oxygen Demand (COD) and Biochemical Oxygen Demand (BOD) prediction [34]. A consistent finding across water and air studies is the significant performance gain achieved through hyperparameter tuning [32].

Experimental Protocols and Methodologies

The superior performance of the models discussed above is underpinned by rigorous and systematic experimental methodologies. The following workflows are representative of the protocols used in the cited research.

Generalized Workflow for Environmental Quality Prediction

The following diagram illustrates the common end-to-end pipeline for developing machine learning models in this domain.

Detailed Methodological Breakdown

Data Preprocessing and Feature Selection

The initial stage involves preparing the raw environmental data for modeling. This typically includes:

- Data Cleaning: Handling missing values through methods like median imputation [33] and addressing outliers using techniques such as the Interquartile Range (IQR) method [33].

- Normalization: Scaling numerical features to a common range to ensure stable and efficient model training [33].

- Feature Selection: Identifying the most predictive input variables is a critical step. Studies have systematically compared techniques like:

- Pearson Correlation: Effectively removes weakly related features, significantly boosting performance for tree-based models like XGBoost and Random Forest [6].

- Recursive Feature Elimination (RFE): Iteratively constructs models and removes the weakest features until the optimal subset is identified [34].

- SelectKBest & Mutual Information: Filter-based methods that select features according to univariate statistical tests [34].
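The cleaning and normalization steps listed above can be sketched with pandas. The column names and values are hypothetical, and two choices are assumptions rather than details from the cited studies: outliers are clipped to the IQR fences (dropping them is an equally common option), and min-max scaling is used for normalization.

```python
import numpy as np
import pandas as pd

# Hypothetical water quality measurements with gaps and one outlier.
df = pd.DataFrame({
    "dissolved_oxygen": [6.5, 7.1, np.nan, 6.8, 7.0, 25.0],
    "bod": [2.1, 2.4, 2.2, np.nan, 2.3, 2.5],
})

# 1. Median imputation: fill each gap with its column median.
imputed = df.fillna(df.median())

# 2. IQR method: clip values beyond 1.5 * IQR from the quartiles.
q1 = imputed.quantile(0.25)
q3 = imputed.quantile(0.75)
iqr = q3 - q1
clipped = imputed.clip(lower=q1 - 1.5 * iqr, upper=q3 + 1.5 * iqr, axis=1)

# 3. Min-max normalization: rescale each column to the range [0, 1].
normalized = (clipped - clipped.min()) / (clipped.max() - clipped.min())

print(normalized.round(3))
```

In a real workflow the medians, fences, and scaling bounds would be computed on the training split only and then applied to the test split, to avoid leaking information.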

The application of Pearson Correlation for feature selection was a key factor in achieving the 98.91% accuracy with XGBoost for air quality classification in Jakarta [6]. Conversely, the use of Random Projection, a randomized dimensionality reduction technique, led to a noticeable performance drop across all models, underscoring that the choice of feature selection method is highly consequential [6].
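A correlation-based filter of this kind can be sketched as follows. The variable names, the synthetic data, and the threshold of 0.3 are hypothetical; the cited study's exact selection rule is not given here, so this shows only the general idea of dropping features that are weakly correlated with the target.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)
n = 200

# Synthetic predictors: two pollutant measures and one unrelated variable.
pm25 = rng.normal(50, 10, n)
pm10 = pm25 * 1.5 + rng.normal(0, 5, n)
humidity = rng.normal(70, 8, n)  # independent of the target in this toy data
aqi = 0.8 * pm25 + 0.3 * pm10 + rng.normal(0, 3, n)

features = pd.DataFrame({"pm25": pm25, "pm10": pm10, "humidity": humidity})
target = pd.Series(aqi, name="aqi")

# Absolute Pearson correlation of every feature with the target.
correlations = features.corrwith(target).abs()

# Keep only features whose correlation meets the (assumed) threshold.
threshold = 0.3
selected = correlations[correlations >= threshold].index.tolist()

print(correlations.round(3).to_dict())
print("Selected features:", selected)
```

Pearson correlation captures only linear association, so a feature with a strong nonlinear effect could be filtered out by this rule.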

Model Training and Hyperparameter Tuning

The core of the experimental protocol involves the training and optimization of the ML models.

- Training/Test Split: Data is typically partitioned, for example, using a 70:30 ratio for training and testing, respectively [35].

- Hyperparameter Tuning: Models are not used with default settings. Optimization techniques like grid search [32] are employed to systematically explore combinations of hyperparameters (e.g., learning rate, tree depth, number of estimators) to find the configuration that yields the best performance.

- Cross-Validation: A robust evaluation method like k-fold cross-validation (e.g., 5-fold or 10-fold [6]) is used during training to ensure that the model's performance is consistent and not dependent on a particular data split.
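The split and cross-validation steps above might be combined as in the sketch below. The synthetic regression data and the Random Forest settings are assumptions; the 70:30 split and 5-fold scheme follow the examples mentioned in the text.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import KFold, cross_val_score, train_test_split

# Synthetic stand-in data (assumption, not from the cited studies).
X, y = make_regression(n_samples=300, n_features=6, noise=10.0, random_state=0)

# 70:30 split; the test set is held back until the very end.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0
)

model = RandomForestRegressor(n_estimators=100, random_state=0)

# 5-fold cross-validation on the training portion only.
cv = KFold(n_splits=5, shuffle=True, random_state=0)
cv_r2 = cross_val_score(model, X_train, y_train, cv=cv, scoring="r2")
print("Fold R² scores:", cv_r2.round(3))

# Final fit on all training data, then a single evaluation on the test set.
model.fit(X_train, y_train)
test_r2 = model.score(X_test, y_test)
print("Held-out R²:", round(test_r2, 3))
```

For time-ordered environmental data, a shuffled `KFold` can leak future information into training folds; scikit-learn's `TimeSeriesSplit` is one alternative in that case.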

Hybrid Modeling for Complex Temporal Data

For forecasting complex, time-dependent parameters in wastewater treatment, a single model is often insufficient. One study proposed a dual hybrid framework that integrates Long Short-Term Memory (LSTM) and XGBoost to leverage their complementary strengths [35]. The logical relationship of this hybrid approach is shown below.

This hybrid framework overcomes the limitations of standalone models. The LSTM component excels at capturing temporal dependencies in the sequential data, while XGBoost robustly models non-linear relationships. The integration can occur in two primary ways: one model uses LSTM to extract temporal features that are then fed into XGBoost, while the other uses XGBoost to generate an initial prediction and an LSTM to learn the complex residual errors [35]. This approach consistently outperformed standalone models in predicting key effluent indicators like chemical oxygen demand and ammonia nitrogen [35].

Successful implementation of machine learning models for environmental forecasting relies on a suite of computational tools, algorithms, and interpretability frameworks.

Table 3: Essential Research Reagents and Computational Tools

| Category | Item/Technique | Specific Function in Research | Exemplary Application |
|---|---|---|---|
| Core Algorithms | XGBoost (eXtreme Gradient Boosting) | High-performance gradient boosting for classification and regression tasks. | Air/water quality index prediction [6] [32] [31]. |
| Core Algorithms | LightGBM (Light Gradient Boosting Machine) | Efficient gradient boosting framework designed for speed and large datasets. | Water quality classification [32]. |
| Core Algorithms | Random Forest | Ensemble of decision trees for robust modeling, resistant to overfitting. | Baseline model for performance comparison [6] [34]. |
| Core Algorithms | LSTM (Long Short-Term Memory) | Captures long-range temporal dependencies in time-series data. | Forecasting wastewater parameters in hybrid models [35]. |
| Interpretability Tools | SHAP (SHapley Additive exPlanations) | Explains model output by quantifying the contribution of each feature. | Identifying DO and BOD as key WQI predictors [32] [33]. |
| Feature Selection Methods | Pearson Correlation | Selects features based on linear correlation with the target variable. | Improved accuracy and interpretability for XGBoost/RF in air quality [6]. |
| Feature Selection Methods | Recursive Feature Elimination (RFE) | Recursively removes features to find the most important subset. | Identifying effluent VSS as a critical predictor in wastewater [34]. |
| Computational Libraries | Python (pandas, scikit-learn, NumPy) | Data manipulation, model implementation, and numerical computation. | Core programming environment for model development [35] [33]. |

The comparative analysis presented in this guide supports several conclusions. Across the air and water quality studies reviewed, XGBoost was repeatedly among the top-performing algorithms, delivering high accuracy and robustness on diverse environmental datasets. Its performance is closely rivaled by other ensemble methods like LightGBM and Random Forest, and in some tasks a stacked ensemble or standard Gradient Boosting outperformed it [33] [34], while traditional and simpler models often fall short.

The effectiveness of any model is heavily dependent on rigorous experimental protocols, including appropriate feature selection, hyperparameter tuning, and robust validation. For forecasting complex temporal processes, such as in wastewater treatment, hybrid models that combine the strengths of different algorithms (e.g., LSTM and XGBoost) represent the cutting edge, offering enhanced predictive performance. Finally, the integration of Explainable AI (XAI) techniques like SHAP is no longer optional but a critical component for building trust, validating model decisions, and extracting scientifically meaningful insights from these powerful predictive tools. This empowers researchers and policymakers to move from simple forecasting to actionable, data-driven environmental management.

Net Ecosystem Exchange (NEE) represents the net flux of carbon dioxide between an ecosystem and the atmosphere, serving as a primary gauge of an ecosystem's carbon sink strength [36]. Quantitatively, NEE is the difference between carbon dioxide uptake through photosynthesis and carbon release through ecosystem respiration (from both autotrophs and heterotrophs) [37] [38]. This metric has become increasingly crucial for analyzing the carbon balance of different areas and understanding the feedbacks between the terrestrial biosphere and atmosphere in the context of global change [37] [39]. As a paradigm shift in tracking land-based CO2 sequestration and emissions, NEE provides a holistic parameter that accounts for all major carbon pools—above- and below-ground biomass, soil organic matter, and dead organic matter—making it superior to approaches that focus on single carbon pools [36].
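The definition above can be written compactly. The notation is an assumption introduced here for illustration: GPP denotes gross photosynthetic uptake, and R_a and R_h denote autotrophic and heterotrophic respiration. The sign convention, in which negative NEE indicates a net carbon sink, is the common atmospheric convention and is assumed rather than taken from the cited sources.

```latex
\mathrm{NEE} = R_{\mathrm{eco}} - \mathrm{GPP},
\qquad
R_{\mathrm{eco}} = R_a + R_h
```

As a hypothetical worked example, an ecosystem with a gross uptake of 10 and a total respiration of 7 (both in g C m⁻² day⁻¹) would have

```latex
\mathrm{NEE} = 7 - 10 = -3 \ \mathrm{g\,C\,m^{-2}\,day^{-1}}
```

that is, a net sink of 3 g C m⁻² day⁻¹ under this convention.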

The quantification of NEE is particularly important given the ongoing rise in global carbon emissions, which have increased rapidly over the last 50 years and have not yet peaked [40]. Current climate policies are projected to reduce emissions but remain insufficient to keep temperature rise below 2°C, with current trajectories pointing toward approximately 2.7°C of warming by 2100 [40]. Within this context, accurate monitoring of ecosystem carbon fluxes through NEE becomes essential for climate policy-making and for assessing the effectiveness of nature-based solutions in mitigating climate change [37] [36].

Methodological Approaches for NEE Quantification

Traditional Biophysical and Remote Sensing Methods

Traditional approaches to estimating NEE have relied on a combination of field measurements and satellite remote sensing. The eddy covariance (EC) technique has been a cornerstone method, providing continuous, direct measurements of carbon fluxes at the ecosystem scale [37]. This method uses tower-based instruments to measure the vertical flux of CO2, providing integrated measurements within tower footprints [37]. However, a significant limitation of EC is that it cannot directly measure NEE at regional or global scales, creating a critical need for scaling up beyond the tower footprint [37].

To address this limitation, researchers have developed remote sensing models that combine vegetation indices and environmental parameters. One prominent approach is the multiple-linear regression (MR) model which relates the Enhanced Vegetation Index (EVI) and Land Surface Temperature (LST) derived from the Moderate Resolution Imaging Spectroradiometer (MODIS), along with photosynthetically active radiation (PAR), to estimate site-level NEE [37]. At the deciduous-dominated Harvard Forest, this MR model demonstrated strong performance with R² = 0.84 for training datasets (2001-2004) and R² = 0.76 for validation datasets (2005-2006) [37]. Other models include the Temperature and Greenness (TG) model based on MODIS EVI and LST products, and the Greenness and Radiation (GR) model utilizing chlorophyll indices and PAR [37].
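A multiple-linear regression of this kind can be sketched with ordinary least squares. The exact functional form and coefficients of the published MR model are not reproduced here; the purely linear form, the synthetic EVI, LST, and PAR values, and the coefficients used to generate them are all assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 120

# Synthetic predictors standing in for MODIS EVI, LST (in °C) and PAR.
evi = rng.uniform(0.1, 0.8, n)
lst = rng.uniform(0.0, 30.0, n)
par = rng.uniform(10.0, 60.0, n)

# Hypothetical "true" relationship used only to generate toy NEE values.
nee = 1.0 - 8.0 * evi + 0.02 * lst - 0.03 * par + rng.normal(0, 0.3, n)

# Design matrix with an intercept column, solved by least squares.
design = np.column_stack([np.ones(n), evi, lst, par])
coef, *_ = np.linalg.lstsq(design, nee, rcond=None)

# Coefficient of determination on the fitted data.
pred = design @ coef
r2 = 1.0 - np.sum((nee - pred) ** 2) / np.sum((nee - nee.mean()) ** 2)

print("Intercept and coefficients (EVI, LST, PAR):", coef.round(3))
print("R²:", round(float(r2), 3))
```

Fitting on one period and computing R² on a later one, as in the 2001-2004 and 2005-2006 split described above, gives a more honest estimate than the in-sample value printed here.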

These traditional remote sensing approaches provide valuable spatial and temporal coverage but face challenges in capturing the complex, nonlinear relationships between environmental drivers and carbon fluxes, particularly across diverse ecosystems and under changing climate conditions [37].

Emerging Machine Learning Approaches

Machine learning (ML) models have emerged as powerful tools for modeling complex environmental processes like NEE, capable of capturing nonlinear relationships and identifying key drivers from multi-source data [41]. These algorithms offer significant advantages in handling high-dimensional features and modeling complex interactions that challenge traditional statistical methods [41].

Among the most prominent ML approaches are tree-based ensemble methods, including Random Forest and XGBoost (Extreme Gradient Boosting), which have been widely applied in environmental research due to their robust performance and ability to handle complex, heterogeneous datasets [42] [41]. These models can integrate diverse data sources—including satellite imagery, meteorological data, soil properties, and land use characteristics—to generate accurate predictions of carbon fluxes across spatial and temporal scales [42] [41].

The superiority of ML approaches lies in their ability to learn complex patterns directly from data without relying on pre-specified functional forms, automatically handle interactions between predictor variables, provide feature importance rankings to identify key drivers, and maintain robust performance even with missing data or collinear predictors [42] [41]. These characteristics make them particularly suitable for NEE estimation across heterogeneous landscapes and under varying environmental conditions.

Comparative Analysis: Methodological Performance

The evaluation of different NEE estimation methods relies on standardized experimental protocols and high-quality data sources. For traditional approaches, the protocol typically involves collecting eddy covariance measurements from flux tower networks (such as AmeriFlux and FluxNet), combined with satellite-derived products like MODIS EVI, LST, and PAR measurements [37] [39]. These datasets are then processed using statistical models (e.g., multiple regression) to establish relationships between remote sensing indices and ground-truth NEE measurements [37].

For machine learning approaches, the workflow generally involves four phases: (1) data collection and processing of spatial form indicators, building characteristics, and energy consumption patterns; (2) correlation analysis to identify significant predictors; (3) model construction and training using algorithms like Random Forest and XGBoost; and (4) spatial form optimization and carbon emission prediction based on model results [41]. The data collection encompasses detailed field surveys, government statistics, and publicly available energy consumption information to ensure completeness and accuracy [41].

Figure 1: Machine Learning Workflow for NEE Prediction. This diagram illustrates the four-phase methodology described above for developing machine learning models to predict Net Ecosystem Exchange, from data preparation to practical application.

Quantitative Performance Comparison

The performance of different methodological approaches can be compared through various statistical metrics, including R-squared (R²) values, Root Mean Square Error (RMSE), and other model accuracy measures. The table below summarizes the performance of various approaches based on experimental results from multiple studies:
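For reference, the three metrics used most often in these comparisons can be computed as in the sketch below. The helper name `regression_metrics` and the small observed and predicted arrays are hypothetical.

```python
import numpy as np


def regression_metrics(y_true, y_pred):
    """Return R², RMSE and MAE for paired observations and predictions."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    residuals = y_true - y_pred

    rmse = float(np.sqrt(np.mean(residuals ** 2)))  # penalizes large errors
    mae = float(np.mean(np.abs(residuals)))         # average absolute error

    ss_res = float(np.sum(residuals ** 2))
    ss_tot = float(np.sum((y_true - y_true.mean()) ** 2))
    r2 = 1.0 - ss_res / ss_tot                      # share of variance explained

    return {"r2": r2, "rmse": rmse, "mae": mae}


# Hypothetical observed and predicted fluxes.
observed = [1.0, 2.0, 3.0, 4.0]
predicted = [1.5, 2.0, 2.5, 4.0]

metrics = regression_metrics(observed, predicted)
print({name: round(value, 4) for name, value in metrics.items()})
```

RMSE and MAE carry the units of the target variable, so they are comparable across studies only when the same quantity and units are predicted, whereas R² is unitless.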

Table 1: Performance Comparison of NEE Estimation Methods

| Method Category | Specific Model | Application Context | Performance Metrics | Reference |

|---|---|---|---|---|

| Traditional Remote Sensing | Multiple Regression (MR) | Harvard Forest (Deciduous) | R² = 0.76-0.84, RMSE = 1.33-1.54 g C m⁻² day⁻¹ | [37] |

| Traditional Remote Sensing | Global NEE Estimation Model | Global Terrestrial Systems | R² = 0.60-0.68 for different ecosystems | [39] |

| Machine Learning | XGBoost | Rural Residential Carbon Emissions | Superior prediction accuracy and generalization ability | [41] |

| Machine Learning | Random Forest | Rural Residential Carbon Emissions | High accuracy, lower than XGBoost | [41] |

| Machine Learning | XGBoost | Heavy Metal Source Apportionment | Accuracy: 87.4%, Precision: 88.3% | [42] |

| Machine Learning | Random Forest | Heavy Metal Source Apportionment | Accuracy: 85.1%, Precision: 86.6% | [42] |

| Machine Learning | XGBoost | Soil/Groundwater Contamination | Accuracy: 87.4%, AUC: 0.95 | [5] |

| Machine Learning | Random Forest | Soil/Groundwater Contamination | Accuracy: 85.1%, AUC: 0.93 | [5] |

The performance data reveals consistent patterns across different environmental applications. In studies comparing XGBoost and Random Forest for various environmental prediction tasks, XGBoost consistently outperforms Random Forest across all evaluation metrics [42] [41] [5]. In one comprehensive analysis, the performance ranking across multiple metrics consistently showed: XGBoost > LightGBM > Random Forest [5].

For traditional remote sensing approaches, the multiple regression model demonstrated strong performance with R² values of 0.84 for training and 0.76 for validation periods in a temperate deciduous forest [37]. However, these models may have limitations in capturing complex nonlinear relationships across diverse ecosystems compared to machine learning approaches [41].

Advantages and Limitations Across Methods

Each methodological approach presents distinct advantages and limitations for NEE estimation and environmental applications. Traditional remote sensing models provide physically interpretable relationships based on established ecological principles and offer direct connections to biophysical processes [37]. They benefit from long data records and well-understood behavior across different ecosystems. However, they often struggle with capturing complex nonlinearities and may have limited transferability across diverse ecosystem types [37].

Machine learning approaches, particularly ensemble methods like XGBoost and Random Forest, excel at handling complex, high-dimensional datasets and automatically capturing nonlinear relationships without pre-specified functional forms [42] [41]. They provide robust performance even with missing data and can integrate diverse data sources. However, these models often function as "black boxes" with limited interpretability of underlying mechanisms, require substantial computational resources for training, and need careful tuning to prevent overfitting [42] [41].

Hybrid approaches that combine process-based understanding with the pattern recognition capabilities of machine learning are emerging as a promising direction for future research, potentially leveraging the strengths of both methodological paradigms [41].

The Scientist's Toolkit: Essential Research Solutions

Data Collection and Measurement Technologies

Table 2: Essential Research Tools for NEE and Carbon Emissions Monitoring

| Tool Category | Specific Technology | Primary Function | Key Features | Application Context |

|---|---|---|---|---|

| Field Measurement | Eddy Covariance System | Direct CO2 flux measurement | Continuous, ecosystem-scale measurements | Tower-based flux monitoring [37] [38] |

| Remote Sensing | MODIS (Terra/Aqua Satellites) | Vegetation indices (EVI, LSWI) and LST | Global coverage, 8-day composites | Regional NEE upscaling [37] |

| Gas Analyzer | Picarro G2508 CRDS System | High-precision GHG concentration measurement | Simultaneous CH4, CO2, N2O quantification | Laboratory and field emissions [43] |

| Data Processing | Soil Flux Processor | GHG flux calculations from concentration data | Integration with CRDS systems | Experimental flux calculations [43] |

| Modeling Framework | Python-based ML Libraries | Implementation of RF, XGBoost algorithms | Handling high-dimensional features | Carbon emission prediction [41] |

Machine Learning Algorithms in Environmental Research

The integration of machine learning into environmental research has created a specialized toolkit of algorithms and approaches specifically suited for carbon cycle science. Random Forest operates by constructing multiple decision trees during training and outputting the mode of the classes (classification) or mean prediction (regression) of the individual trees [42]. This ensemble approach reduces overfitting and provides robust feature importance measures, making it valuable for identifying key drivers of carbon fluxes [42] [41].

XGBoost (Extreme Gradient Boosting) represents a more advanced implementation of gradient boosted trees, designed for speed and performance [42] [41]. It builds trees sequentially, with each new tree correcting errors made by previously grown trees, and incorporates regularization to prevent overfitting [42]. This approach has demonstrated superior performance in multiple environmental applications, including heavy metal source apportionment in farmland soils [42], prediction of rural residential carbon emissions [41], and assessment of soil and groundwater contamination risks [5].

The application of these algorithms to NEE prediction typically involves training on multi-source datasets including satellite-derived vegetation indices, meteorological data, soil properties, land use characteristics, and topographic information [41]. The models learn complex relationships between these predictor variables and measured carbon fluxes, then generate predictions across spatial and temporal scales [41].

Implications for Climate Policy and Ecosystem Management

The advancement of NEE monitoring methodologies has significant implications for climate policy and ecosystem management. Accurate, scalable NEE monitoring enables agrifood companies to track and assess global supply chain performance with a unified tool, understand whole ecosystem health and productivity, and avoid using averages and emission factors that don't fully capture local variations [36]. This is particularly important given that up to 90% of a food & beverage company's greenhouse gas emissions are Scope 3, originating from their supply chain [36].

From a global perspective, NEE mapping provides critical insights into continental-scale carbon balances. Research has estimated the global annual NEE to be -18.41 billion tons C, with forests accounting for 51.75% of this global CO2 absorption [39]. However, the distribution is uneven: Asia, North America, and Europe have essentially exhausted the capacity of their ecosystems to absorb the CO2 they emit [39]. This information is crucial for international climate agreements and for allocating emission-control responsibilities more fairly, by considering both the emissions a region generates and its natural capacity to absorb carbon [39].

The integration of machine learning approaches with traditional methods enhances our capacity to monitor nature-based solutions and ecosystem restoration efforts, providing science-based, primary data to track and share climate progress [36]. As these methodologies continue to evolve, they offer the potential for more accurate carbon accounting, more effective climate policies, and better management of ecosystems for carbon sequestration.

The global push for sustainable energy and industrial processes has catalyzed the development of technologies that optimize resource utilization while minimizing environmental impact. Among these, syngas production from waste biomass and CO2 flooding for enhanced oil recovery (EOR) represent two critical pathways in the waste-to-energy paradigm and carbon capture, utilization, and storage (CCUS) framework, respectively [44] [45]. The optimization of these complex processes benefits significantly from advanced computational approaches, particularly machine learning (ML) models that can handle nonlinear relationships and multiple variables.

The application of explainable ML models, such as XGBoost, provides unprecedented capabilities for predicting key performance indicators and identifying optimal operational parameters [44]. This comparative analysis examines the experimental methodologies, performance outcomes, and optimization approaches for both syngas production via co-gasification and CO2 flooding for EOR, with a focus on data-driven optimization techniques that enhance efficiency, yield, and environmental sustainability.

Syngas Production from Co-Gasification

Syngas production through co-gasification involves the thermochemical conversion of waste biomass and low-quality coal into synthesis gas, primarily containing hydrogen (H₂), carbon monoxide (CO), and methane (CH₄) [44]. This process occurs through four main stages: drying (moisture expulsion), pyrolysis (thermal decomposition at 300-500°C), combustion (partial oxidation for heat generation), and gasification (char conversion to CO and H₂). The complexity of this multi-stage process necessitates sophisticated modeling approaches to optimize operational parameters and maximize syngas yield and quality.

Experimental data for ML model development is typically gathered from published literature on coal-biomass co-pyrolysis, incorporating ultimate analysis, proximate analysis, and operational settings as control factors [44]. Key parameters include reaction temperature, biomass mixing ratio, feedstock characteristics (moisture content, ash composition, energy density), and gasification conditions. These variables serve as inputs for predicting syngas yield and lower heating value (LHV).

Machine Learning Optimization and Performance

In syngas production optimization, five ML algorithms have been evaluated: Linear Regression (LR), Support Vector Regression (SVR), Gaussian Process Regression (GPR), Extreme Gradient Boosting (XGBoost), and Categorical Boosting (CatBoost) [44]. The comparison identifies XGBoost as the best-performing model for both syngas yield and LHV prediction. Table 1 lists its metrics; values for the other four models are not reported here.

Table 1: Performance Metrics of Machine Learning Models for Syngas Prediction

| Model | R² (Syngas Yield) | MSE (Syngas Yield) | MAPE (Syngas Yield) | R² (LHV) | MSE (LHV) | MAPE (LHV) |
|---|---|---|---|---|---|---|
| XGBoost | 0.9786 | 10.82 | 9.8% | 0.9992 | 0.03 | 0.83% |
| CatBoost | - | - | - | - | - | - |
| GPR | - | - | - | - | - | - |
| SVR | - | - | - | - | - | - |
| LR | - | - | - | - | - | - |
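For reference, the metrics reported in Table 1 (R², MSE, MAPE), together with the RMSE used later for MMP prediction, can be computed as in the following sketch. The observed and predicted values are hypothetical and are not taken from the cited study.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return R², MSE, RMSE and MAPE (in percent) for paired observations."""
    n = len(y_true)
    mean_true = sum(y_true) / n
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))  # residual sum of squares
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)          # total sum of squares
    mse = ss_res / n
    # MAPE is undefined when an observed value is zero.
    mape = 100.0 / n * sum(abs((t - p) / t) for t, p in zip(y_true, y_pred))
    return {
        "r2": 1.0 - ss_res / ss_tot,
        "mse": mse,
        "rmse": math.sqrt(mse),
        "mape_percent": mape,
    }


# Hypothetical observed vs. predicted values.
observed = [10.0, 20.0, 30.0, 40.0]
predicted = [12.0, 18.0, 33.0, 39.0]
metrics = regression_metrics(observed, predicted)
print(metrics)
```

Because MSE and RMSE carry the units of the target variable while R² and MAPE are unitless, the MSE values for syngas yield and LHV in Table 1 are not directly comparable with each other.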

Through explainable AI (XAI) methods, particularly Shapley Additive Explanations (SHAP) and Local Interpretable Model-agnostic Explanations (LIME), researchers have identified reaction temperature and biomass mixing ratio as the most significant control factors affecting syngas yield [44]. These techniques provide transparency and interpretability, enabling researchers to understand the underlying factors driving model predictions and optimize process parameters accordingly.
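To make the idea behind SHAP concrete, the sketch below computes exact Shapley values for a single prediction by enumerating all feature coalitions. It rests on several assumptions: `toy_yield_model` is a hypothetical stand-in rather than the model from the cited study, the feature values are invented, and "absent" features are replaced by a single baseline instance, whereas the SHAP library typically averages over a background dataset and uses faster tree-specific algorithms.

```python
from itertools import combinations
from math import factorial


def toy_yield_model(features):
    """Hypothetical stand-in for a trained syngas-yield model."""
    t = features["temperature"]
    r = features["mixing_ratio"]
    m = features["moisture"]
    return 0.05 * t + 20.0 * r - 0.5 * m + 0.01 * t * r


def shapley_values(model, instance, baseline):
    """Exact Shapley values; cost grows as 2^n, so only viable for few features."""
    names = list(instance)
    n = len(names)

    def value(coalition):
        # Features in the coalition take the instance value, others the baseline value.
        mixed = {k: (instance[k] if k in coalition else baseline[k]) for k in names}
        return model(mixed)

    phi = {}
    for name in names:
        others = [k for k in names if k != name]
        total = 0.0
        for size in range(n):
            for subset in combinations(others, size):
                weight = factorial(size) * factorial(n - size - 1) / factorial(n)
                total += weight * (value(set(subset) | {name}) - value(set(subset)))
        phi[name] = total
    return phi


# Hypothetical operating point and reference point.
instance = {"temperature": 900.0, "mixing_ratio": 0.5, "moisture": 10.0}
baseline = {"temperature": 700.0, "mixing_ratio": 0.2, "moisture": 15.0}
phi = shapley_values(toy_yield_model, instance, baseline)
for name, contribution in sorted(phi.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name}: {contribution:+.2f}")
```

The contributions always sum to the difference between the prediction for the instance and the prediction for the baseline, which is the property that lets SHAP attribute a prediction to individual control factors.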

CO2 Flooding for Enhanced Oil Recovery

Technical Fundamentals and Methodologies

CO2-enhanced oil recovery is a cornerstone technology in CCUS applications, leveraging CO2's unique properties to improve oil mobility and recovery rates [45]. The process involves injecting CO2 into reservoirs, where it mixes with crude oil, reduces viscosity, and enhances displacement efficiency. A critical parameter in CO2-EOR is the minimum miscibility pressure (MMP), defined as the lowest pressure required for complete miscibility between CO2 and oil [45]. Maintaining injection pressure above MMP ensures minimal interfacial tension and optimal displacement efficiency.

Experimental determination of MMP employs several laboratory methods, including slim-tube tests, rising bubble apparatus, and vanishing interfacial tension techniques [45]. The slim-tube method, considered the reference standard, simulates reservoir conditions by gradually increasing injection pressure to observe CO2-oil miscibility. Despite its precision, this approach is time-consuming, costly, and impractical for rapid field applications, driving the development of computational prediction methods.
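Slim-tube results are commonly interpreted by plotting oil recovery against injection pressure and locating the point where the steep low-pressure trend meets the flat high-pressure trend. The sketch below illustrates that interpretation under stated assumptions: the pressure and recovery values are hypothetical, the split between the two trends is chosen by hand, and each trend is fitted with an ordinary least-squares line. It is not the procedure of any specific study cited here.

```python
def fit_line(points):
    """Ordinary least-squares line through (pressure, recovery) points."""
    n = len(points)
    mean_x = sum(p for p, _ in points) / n
    mean_y = sum(r for _, r in points) / n
    sxx = sum((p - mean_x) ** 2 for p, _ in points)
    sxy = sum((p - mean_x) * (r - mean_y) for p, r in points)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def estimate_mmp(points, split_index):
    """Intersect the immiscible trend (before split) with the miscible trend (after)."""
    slope_low, intercept_low = fit_line(points[:split_index])
    slope_high, intercept_high = fit_line(points[split_index:])
    return (intercept_high - intercept_low) / (slope_low - slope_high)


# Hypothetical slim-tube data: (injection pressure in MPa, oil recovery in %).
slim_tube = [(10.0, 60.0), (12.0, 70.0), (14.0, 80.0),
             (17.0, 90.5), (19.0, 91.5), (21.0, 92.5)]
mmp = estimate_mmp(slim_tube, split_index=3)
print(f"Estimated MMP: {mmp:.1f} MPa")
```

Each point on such a curve requires a separate displacement run, which is one reason the method is slow and costly in practice.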

Data-Driven Prediction Models

Machine learning approaches for MMP prediction utilize extensive experimental datasets encompassing reservoir temperature, crude oil composition, and injected gas characteristics [45]. An improved XGBoost model incorporates the critical temperature of the injected gas as a novel feature and employs Particle Swarm Optimization (PSO) for hyperparameter tuning, reaching R² = 0.9845 and RMSE = 1.0303 on the testing set (Table 2).
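The following is a minimal sketch of how particle swarm optimization searches a hyperparameter space. It makes several assumptions: `validation_error` is a hypothetical smooth surrogate standing in for a cross-validated error that would normally require training a model at every evaluation, the two parameters and their bounds are illustrative, and the inertia and acceleration coefficients are common textbook choices rather than the settings used in the cited work.

```python
import random


def validation_error(params):
    """Hypothetical surrogate for cross-validated RMSE; minimum of 1.0 at (0.1, 6.0)."""
    learning_rate, max_depth = params
    return 100.0 * (learning_rate - 0.1) ** 2 + 0.05 * (max_depth - 6.0) ** 2 + 1.0


def pso(objective, bounds, n_particles=20, n_iters=60, w=0.7, c1=1.5, c2=1.5, seed=0):
    rng = random.Random(seed)
    dim = len(bounds)
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]                      # best position seen by each particle
    pbest_val = [objective(p) for p in pos]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]     # best position seen by the swarm
    for _ in range(n_iters):
        for i in range(n_particles):
            for d, (lo, hi) in enumerate(bounds):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                # Keep each particle inside the search bounds.
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lo), hi)
            val = objective(pos[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = pos[i][:], val
    return gbest, gbest_val


search_bounds = [(0.01, 0.5), (2.0, 12.0)]  # (learning rate, tree depth), illustrative
best_params, best_error = pso(validation_error, search_bounds)
print(best_params, best_error)
```

In a real tuning run, integer-valued hyperparameters such as tree depth would be rounded before each model is trained, and the objective would be the validation error of the fitted model.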

Table 2: Performance Comparison of MMP Prediction Methods

| Method | R² (Training) | RMSE (Training) | R² (Testing) | RMSE (Testing) | Key Features |
|---|---|---|---|---|---|
| Improved XGBoost | 0.9991 | 0.2347 | 0.9845 | 1.0303 | Critical temperature of injection gas, PSO optimization |
| Traditional Empirical Correlations | Varies | Varies | Varies | Varies | Reservoir temperature, C5+ molecular weight |
| ANN Models | - | - | - | - | Nonlinear pattern recognition |
| Numerical Simulation | - | - | - | - | Equation-of-state modeling |

Feature importance analysis through SHAP reveals that reservoir temperature represents the most significant factor affecting MMP, followed by volatile oil fractions and C5+ molecular weight [45]. This interpretability provides valuable insights for designing injection strategies and understanding the underlying physical relationships governing miscibility.

Advanced CO2 Flooding Techniques

Comparative Performance of Gas Injection Media

Research on extra-low permeability reservoirs demonstrates significant variations in displacement efficiency among different gas injection media [46]. Experimental evaluations compare CO2, CH4, and oxygen-reduced air flooding through slim-tube tests and long-core flooding experiments, measuring minimum miscibility pressures and displacement efficiencies under controlled conditions.

Table 3: Comparison of Gas Flooding Media Performance in Extra-Low Permeability Reservoirs

| Flooding Medium | Minimum Miscibility Pressure | Displacement Efficiency | Miscibility under Reservoir Conditions |
|---|---|---|---|
| CO2 Flooding | 15.5 MPa | 85.08% | Miscible |
| Water Flooding | - | 55.75% | - |
| CH4 Flooding | 36.5 MPa | 47.23% | Immiscible |
| Oxygen-Reduced Air Flooding | Cannot achieve miscibility | 36.30% | Immiscible |

The experimental protocols involve establishing displacement efficiency through long-core flooding experiments at reservoir conditions, with CO2 achieving superior performance due to its miscibility and effective viscosity reduction [46]. The studies further demonstrate that water flooding followed by CO2 flooding represents the optimal combination, achieving the highest displacement efficiency of 86.61% among the evaluated schemes.
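The differences between schemes are easier to compare as percentage-point changes relative to water flooding. The short calculation below uses only the displacement efficiencies quoted in this section; the variable names are illustrative.

```python
# Displacement efficiencies (%) as quoted in this section.
displacement_efficiency = {
    "CO2 flooding": 85.08,
    "Water flooding": 55.75,
    "CH4 flooding": 47.23,
    "Oxygen-reduced air flooding": 36.30,
    "Water flooding followed by CO2 flooding": 86.61,
}

water = displacement_efficiency["Water flooding"]

# Change in percentage points relative to water flooding.
gain_over_water = {
    name: round(value - water, 2)
    for name, value in displacement_efficiency.items()
    if name != "Water flooding"
}

# Schemes ordered from highest to lowest displacement efficiency.
ranked = sorted(displacement_efficiency, key=displacement_efficiency.get, reverse=True)

for name in ranked:
    if name in gain_over_water:
        print(f"{name}: {gain_over_water[name]:+.2f} percentage points vs. water flooding")
```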

Cosolvent-Enhanced CO2 Flooding

Innovative approaches to CO2 flooding involve cosolvents to improve performance in heavy oil reservoirs. Dimethyl ether (DME)-enhanced CO2 flooding represents a technological advancement that addresses viscous fingering effects and enhances both oil recovery and CO2 sequestration [47]. Experimental methodologies include developing thermodynamic phase equilibrium models using the Peng-Robinson equation of state and conducting numerical simulations to compare performance with conventional CO2 flooding.
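As background for the Peng-Robinson equation of state mentioned above, the sketch below evaluates its standard pure-component form for CO2. It is an illustration only: phase equilibrium models for the heavy oil-CO2-DME system additionally require mixing rules and binary interaction parameters, which are not shown. The critical constants are commonly tabulated values for CO2, and the temperature and molar volume are arbitrary example inputs.

```python
import math

R = 8.314462618  # universal gas constant, J/(mol*K)

# Commonly tabulated critical properties of CO2 (assumed here, not from the source).
CO2_TC = 304.13    # critical temperature, K
CO2_PC = 7.3773e6  # critical pressure, Pa
CO2_OMEGA = 0.22394  # acentric factor


def peng_robinson_pressure(temperature, molar_volume, tc, pc, omega):
    """Pressure (Pa) of a pure component from the Peng-Robinson equation of state."""
    a = 0.45724 * R ** 2 * tc ** 2 / pc
    b = 0.07780 * R * tc / pc
    kappa = 0.37464 + 1.54226 * omega - 0.26992 * omega ** 2
    alpha = (1.0 + kappa * (1.0 - math.sqrt(temperature / tc))) ** 2
    repulsive = R * temperature / (molar_volume - b)
    attractive = a * alpha / (molar_volume ** 2 + 2.0 * b * molar_volume - b ** 2)
    return repulsive - attractive


T = 350.0  # K, example temperature
V = 1.0e-3  # m^3/mol, example molar volume

p_pr = peng_robinson_pressure(T, V, CO2_TC, CO2_PC, CO2_OMEGA)
p_ideal = R * T / V
print(f"Peng-Robinson: {p_pr / 1e6:.3f} MPa, ideal gas: {p_ideal / 1e6:.3f} MPa")
```

At these conditions the attractive term lowers the pressure below the ideal-gas value, which is the kind of non-ideal behavior the equation is used to capture in miscibility calculations.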

The results demonstrate that DME promotes single-phase state formation in the heavy oil-CO2-DME system, enhances CO2 solubility in heavy oil, lowers interfacial tension, and inhibits excessive extraction of light hydrocarbon components [47]. Under identical pressure conditions, DME-assisted CO2 flooding achieves higher final CO2 sequestration rates compared to conventional CO2 flooding, reinforcing pore-scale trapping within the reservoir.

Research Reagent Solutions

Table 4: Essential Research Materials and Their Applications

| Reagent/Material | Function/Application | Field |
|---|---|---|
| Silver Nanopowder | Cathodic catalyst for CO2 electroreduction to CO | CO2 Electrolysis |
| Iridium Oxide Nanopowder | Anodic catalyst for oxygen evolution reaction | CO2 Electrolysis |
| Nafion Membrane | Cation exchange membrane in MEA assemblies | CO2 Electrolysis |
| Dimethyl Ether | Cosolvent to enhance CO2 solubility in heavy oil | CO2-EOR |
| KHCO3 Electrolyte | Anolyte solution for CO2 electrolysis systems | CO2 Electrolysis |
| Sigracet 39 BB Carbon Paper | Gas diffusion layer for MEA cathodes | CO2 Electrolysis |

This comparative analysis demonstrates the critical role of machine learning, particularly XGBoost models, in optimizing complex energy and industrial processes. For syngas production, XGBoost achieves the highest reported prediction accuracy for both yield (R² = 0.9786) and heating value (R² = 0.9992), with reaction temperature and biomass mixing ratio identified as the most influential parameters. In CO2-EOR applications, an improved XGBoost model with PSO optimization predicts MMP with a testing R² of 0.9845, offering valuable insights for injection strategy design.

The experimental data consistently shows CO2's superior performance as a displacement medium compared to alternatives like CH4 and oxygen-reduced air, particularly in extra-low permeability reservoirs. Advanced techniques such as DME-enhanced CO2 flooding further improve recovery efficiency while promoting geological CO2 sequestration. The integration of explainable AI methodologies provides transparency and interpretability, enabling researchers to understand underlying factor relationships and optimize process parameters effectively across both domains.

These data-driven approaches represent significant advancements over traditional empirical correlations and experimental methods, offering more efficient, accurate, and scalable solutions for optimizing renewable energy systems and industrial processes within the broader context of environmental sustainability and resource efficiency.

Experimental Workflows

In the context of rapid global urbanization, accurate mapping of urban impervious surfaces (UIS) has become critical for studies on climate change, environmental change, and urban sustainability [48]. The conversion of natural land surfaces to UIS triggers numerous environmental challenges, including urban heat islands, waterlogging, and soil erosion [48]. High-resolution land use classification is essential for analyzing the impacts of urbanization on the environment and for supporting sustainable urban development, with machine learning models like XGBoost and Random Forest playing increasingly pivotal roles in this domain [49] [41] [50]. This guide provides a comparative analysis of these algorithms within environmental applications, detailing their performance, experimental protocols, and implementation frameworks to inform researchers and scientists in the field.

Machine Learning Models in Environmental Remote Sensing: A Comparative Performance Analysis

The selection of an appropriate machine learning model is fundamental to the success of land use classification projects. The table below summarizes the performance of key algorithms as evidenced by recent environmental applications.

Table 1: Comparative performance of machine learning models in environmental remote sensing applications

| Application Domain | Random Forest Performance | XGBoost Performance | Performance Metrics | Key Findings |

|---|---|---|---|---|